Linear PCA vs. Kernel PCA for Genomic Data: A Comprehensive Guide for Biomedical Researchers


Abstract

This article provides a thorough comparison of Linear and Kernel Principal Component Analysis (PCA) for analyzing high-dimensional genomic data. Tailored for researchers, scientists, and drug development professionals, it covers foundational concepts, practical implementation guidelines, and optimization strategies. We explore the strengths and limitations of each method in contexts like population genetics, gene expression analysis, and trait prediction, addressing critical challenges such as interpretability and missing data. The guide synthesizes evidence from recent studies to help practitioners select the right tool, enhance analytical robustness, and unlock deeper biological insights from complex genomic datasets.

The Core Concepts: Understanding PCA and Its Role in Genomics

Defining Principal Component Analysis (PCA) and its Linear Assumptions

Principal Component Analysis (PCA) is a foundational dimensionality reduction technique that simplifies complex datasets by transforming correlated variables into a smaller set of uncorrelated principal components [1] [2]. These new variables are linear combinations of the original features, constructed to successively capture the maximum possible variance within the data while remaining orthogonal to one another [1]. The method is fundamentally a linear transformation, projecting data onto a new set of axes defined by the directions of maximal variance, making it highly effective for exploratory data analysis, noise reduction, and data compression [3] [2].

The mathematical foundation of PCA rests on linear algebra operations. The process begins by centering each feature on its mean and, in most applications, scaling it to unit variance so that every feature contributes equally to the analysis [1] [4]. PCA then computes the covariance matrix to quantify how the variables co-vary [1] [4]. The principal components are the eigenvectors of this covariance matrix, and the corresponding eigenvalues give the amount of variance each component explains [1] [2] [4]. Because the components are derived from the dataset itself rather than specified a priori, PCA is an adaptive data analysis technique [2].
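The steps above map onto a few lines of NumPy. This is a minimal sketch, assuming a small dense matrix with samples in rows; the function name `pca_eig` and the simulated data are illustrative. It also checks the defining property that the component scores are uncorrelated, with variances equal to the eigenvalues.

```python
import numpy as np

def pca_eig(X, k):
    """PCA via eigendecomposition of the covariance matrix of standardized data.

    X: (n_samples, n_features) array. Returns PC scores (n, k), loadings (p, k)
    and the k largest eigenvalues (the variance of each component).
    """
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)   # zero mean, unit variance per feature
    C = np.cov(Z, rowvar=False)                        # p x p covariance matrix
    eigvals, eigvecs = np.linalg.eigh(C)               # symmetric solver, ascending order
    order = np.argsort(eigvals)[::-1]                  # re-sort by decreasing variance
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    W = eigvecs[:, :k]                                 # loadings of the top-k components
    return Z @ W, W, eigvals[:k]

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
X[:, 1] += 2 * X[:, 0]                                 # add a linear correlation
T, W, lam = pca_eig(X, k=3)
print(lam / X.shape[1])   # share of total variance (total = p after standardization)
print(np.allclose(np.cov(T, rowvar=False), np.diag(lam)))  # True: scores are uncorrelated
```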

The Linear Assumptions of Standard PCA

The standard PCA framework operates under several critical linear assumptions that dictate its applicability and performance. First and foremost, PCA assumes linear relationships between all variables in the dataset [5] [3]. This linearity is essential because PCA identifies components through linear combinations of original variables. When underlying data structures are non-linear, PCA may fail to capture important patterns and relationships [6].

Additionally, PCA requires that variables are measured on continuous scales, though ordinal variables are frequently used in practice [5]. The technique also demands adequate sampling for reliable results, with recommendations varying from an absolute minimum of 150 cases to 5-10 cases per variable [5]. Furthermore, the data must contain sufficient correlations between variables to justify reduction to fewer components, which can be verified using Bartlett's test of sphericity [5].
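Bartlett's test of sphericity checks whether the correlation matrix R differs from the identity; its statistic is −(n − 1 − (2p + 5)/6)·ln|R|, compared with a chi-square distribution on p(p − 1)/2 degrees of freedom. A minimal sketch, assuming SciPy is available and using simulated data that share one hypothetical latent factor:

```python
import numpy as np
from scipy.stats import chi2

def bartlett_sphericity(X):
    """Bartlett's test of sphericity. H0: the correlation matrix is the identity.

    Returns (statistic, degrees of freedom, p-value). Requires n > p so that the
    correlation matrix is non-singular.
    """
    n, p = X.shape
    R = np.corrcoef(X, rowvar=False)
    _, logdet = np.linalg.slogdet(R)              # log|R|, stable for near-singular R
    stat = -(n - 1 - (2 * p + 5) / 6) * logdet
    df = p * (p - 1) / 2
    return stat, df, chi2.sf(stat, df)

rng = np.random.default_rng(0)
base = rng.normal(size=(300, 1))
X = base + 0.3 * rng.normal(size=(300, 4))        # four variables sharing one factor
stat, df, pval = bartlett_sphericity(X)
print(stat, df, pval)   # a tiny p-value supports reducing these variables
```

With genomic matrices where p ≥ n, R is singular and ln|R| is −∞, so the statistic is undefined; in practice the test is only meaningful on a modest subset of variables.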

PCA is also notably sensitive to feature scales, necessitating standardization prior to analysis to prevent variables with larger ranges from dominating the component structure [1] [3]. The presence of significant outliers can substantially distort results, as these extreme values exert disproportionate influence on the variance-maximizing process [5] [3]. Finally, PCA assumes that greater variance corresponds to more important information, which may not always hold true for specific analytical objectives [1].
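A small simulation makes the scale sensitivity concrete. In this sketch (all values hypothetical), two correlated variables sit on unit scale and an unrelated third variable is 1000 times larger; without standardization, the first component simply points at the large-scale variable.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 500
a = rng.normal(size=n)
b = 0.8 * a + 0.6 * rng.normal(size=n)     # correlated with a, similar scale
c = 1000 * rng.normal(size=n)              # unrelated to a and b, 1000x larger scale
X = np.column_stack([a, b, c])

def first_loading(M):
    """Return the PC1 loading vector of the column-centered matrix M."""
    Mc = M - M.mean(axis=0)
    _, _, Vt = np.linalg.svd(Mc, full_matrices=False)
    return Vt[0]

raw_pc1 = first_loading(X)
scaled_pc1 = first_loading((X - X.mean(axis=0)) / X.std(axis=0))
print(np.round(np.abs(raw_pc1), 3))     # PC1 is essentially the large-scale column c
print(np.round(np.abs(scaled_pc1), 3))  # after scaling, PC1 reflects the a-b correlation
```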

Table 1: Core Linear Assumptions of Standard PCA

| Assumption | Description | Consequence of Violation |
|---|---|---|
| Linearity | Relationships between variables are linear | Fails to capture non-linear patterns |
| Scale Sensitivity | Variables must be standardized | Variables with larger ranges dominate components |
| Outlier Sensitivity | Data should not contain significant outliers | Component directions are disproportionately influenced |
| Variance Equals Importance | Directions with maximum variance are most informative | May preserve noise instead of signal |
| Adequate Correlation | Variables must be sufficiently correlated | Data reduction becomes ineffective |

Kernel PCA: Extending PCA to Non-Linear Domains

Kernel PCA (KPCA) represents a sophisticated extension of conventional PCA that effectively handles non-linear data structures through the application of the kernel trick [7] [6]. This approach enables PCA to operate in a higher-dimensional feature space without explicitly computing the coordinates in that space, instead focusing on the inner products between data points [6]. By applying a non-linear mapping function to transform the original data, KPCA can capture complex relationships that standard linear PCA would miss, while still leveraging the computational efficiency of linear algebra operations in the transformed space [6].
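The kernel trick can be checked numerically for a kernel whose feature map is small enough to write down. Assuming 2-D inputs and the homogeneous degree-2 polynomial kernel k(x, y) = (x · y)², the explicit map is φ(x) = (x₁², √2·x₁x₂, x₂²). For the RBF kernel no finite map exists, because its feature space is infinite-dimensional, which is exactly where evaluating only the kernel pays off.

```python
import numpy as np

def phi(x):
    """Explicit degree-2 feature map for 2-D input: (x1^2, sqrt(2) x1 x2, x2^2)."""
    x1, x2 = x
    return np.array([x1 ** 2, np.sqrt(2) * x1 * x2, x2 ** 2])

def poly2_kernel(x, y):
    """Homogeneous polynomial kernel of degree 2: k(x, y) = (x . y)^2."""
    return float(np.dot(x, y)) ** 2

x = np.array([1.0, 2.0])
y = np.array([3.0, -1.0])
explicit = float(np.dot(phi(x), phi(y)))  # inner product computed in feature space
implicit = poly2_kernel(x, y)             # same value, without ever forming phi
print(explicit, implicit)

# The same identity for a whole kernel matrix on random points
rng = np.random.default_rng(0)
A = rng.normal(size=(5, 2))
K_implicit = (A @ A.T) ** 2
K_explicit = np.array([[phi(a) @ phi(b) for b in A] for a in A])
print(np.allclose(K_implicit, K_explicit))  # True
```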

The kernel function itself serves as a measure of similarity between data points, with common choices including the radial basis function (RBF), polynomial, and sigmoid kernels [8] [6]. For genomic data research, this capability is particularly valuable as biological systems often exhibit non-linear interactions. For instance, in spatial transcriptomics, KPCA has successfully integrated single-cell RNA-seq with spatial transcriptomics data, enabling accurate inference of RNA velocity in spatially resolved tissues at single-cell resolution [8]. The KSRV framework demonstrates KPCA's practical utility by employing an RBF kernel to model the complex, non-linear relationships present in genomic data, outperforming methods relying on linear dimensionality reduction [8].

A significant challenge with KPCA, however, is the interpretation of its components. Unlike standard PCA where component loadings directly indicate variable contributions, KPCA components are less interpretable [6]. To address this limitation, researchers have integrated random forest conditional variable importance measures (cforest) with KPCA to identify key variables, enabling both non-linear pattern detection and meaningful biological interpretation [6].
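cforest comes from the R package party. As a rough Python stand-in, the sketch below fits scikit-learn's `RandomForestRegressor` to predict the first kernel principal component from the original variables and ranks the variables with `permutation_importance`. This is a swapped-in technique: it is not conditional importance and can overstate correlated variables. The data (two informative variables, four low-variance noise variables) and `gamma=0.05` are hypothetical.

```python
import numpy as np
from sklearn.decomposition import KernelPCA
from sklearn.ensemble import RandomForestRegressor
from sklearn.inspection import permutation_importance

rng = np.random.default_rng(0)
n = 300
# Hypothetical data: variables 0 and 1 carry the structure, 2-5 are low-variance noise
X = np.column_stack([
    rng.normal(scale=3.0, size=n),
    rng.normal(scale=2.0, size=n),
    rng.normal(scale=0.1, size=(n, 4)),
])

# Scores on the first kernel principal component (RBF kernel, gamma chosen by hand)
kpc1 = KernelPCA(n_components=1, kernel="rbf", gamma=0.05).fit_transform(X)[:, 0]

# Proxy for cforest: permutation importance of a forest that predicts KPC1
rf = RandomForestRegressor(n_estimators=200, random_state=0).fit(X, kpc1)
result = permutation_importance(rf, X, kpc1, n_repeats=10, random_state=0)
importance = result.importances_mean
ranking = np.argsort(importance)[::-1]
print(ranking)  # variables ordered from most to least important for KPC1
```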

Comparative Analysis: Linear PCA vs. Kernel PCA

Theoretical and Methodological Differences

The fundamental distinction between linear PCA and kernel PCA lies in their approach to data transformation. While linear PCA identifies orthogonal directions of maximum variance in the original feature space through eigendecomposition of the covariance matrix, kernel PCA operates by implicitly mapping data to a higher-dimensional space where non-linear patterns become linearly separable [7] [6]. This methodological divergence leads to significantly different capabilities and limitations for each technique.

Linear PCA generates components that are linear combinations of original variables, maintaining interpretability through component loadings that indicate each variable's contribution [1] [2]. In contrast, kernel PCA produces components in a high-dimensional feature space where direct interpretation becomes challenging [6]. Computational requirements also differ substantially, with linear PCA being more efficient for large datasets due to its reliance on straightforward linear algebra operations, while kernel PCA requires handling of the kernel matrix whose size scales with the square of the number of samples [7] [6].
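The quadratic growth of the kernel matrix is easy to quantify. Assuming dense float64 storage (8 bytes per entry), the sketch below uses the 2,504 samples of the 1000 Genomes dataset mentioned in the benchmarking protocol and a hypothetical 100,000-cell single-cell dataset. Eigendecomposing the matrix adds roughly O(n³) time on top of this memory cost.

```python
def dense_matrix_gb(n, bytes_per_entry=8):
    """Memory (decimal GB) of a dense n x n float64 matrix, e.g. a kernel matrix."""
    return n * n * bytes_per_entry / 1e9

print(dense_matrix_gb(2_504))      # ~0.05 GB: 1000 Genomes sample count
print(dense_matrix_gb(100_000))    # 80 GB: hypothetical 100,000-cell dataset
print(dense_matrix_gb(1_000_000))  # 8,000 GB: a p x p covariance for one million SNPs
```

The last line shows why, when p ≫ n, genome-scale linear PCA is usually computed from an n × n sample relationship matrix or a truncated SVD rather than the p × p SNP covariance matrix.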

Table 2: Theoretical Comparison of Linear PCA and Kernel PCA

| Characteristic | Linear PCA | Kernel PCA |
|---|---|---|
| Transformation Type | Linear | Non-linear (via kernel trick) |
| Component Interpretability | High (direct loadings) | Low (implicit feature space) |
| Computational Complexity | O(np² + p³) via the covariance matrix, or O(min(n²p, np²)) via SVD, for n samples and p variables | O(n²p) to build the kernel matrix, O(n³) to eigendecompose it, O(n²) memory |
| Data Assumptions | Linear relationships | Non-linear patterns can be captured |
| Handling Redundancy | Removes linear correlations | Addresses non-linear dependencies |
| Common Applications | Exploratory analysis, data compression | Complex pattern recognition, biological data |

Performance Comparison in Genomic Research Context

Empirical evaluations in genomic research contexts demonstrate the complementary strengths of linear and kernel PCA. For population genetics studies analyzing tens of millions of single-nucleotide polymorphisms (SNPs), linear PCA implementations like VCF2PCACluster have proven highly effective at determining population structure with minimal computational resources [9]. This tool achieves remarkable efficiency, requiring only approximately 0.1 GB of memory when analyzing over 81 million SNPs from the 1000 Genomes Project, while successfully distinguishing African (AFR), Asian (EAS/SAS), European (EUR), and Americas (AMR) populations [9].

In more complex biological scenarios where non-linear relationships prevail, kernel PCA demonstrates superior performance. In metabolomics research, KPCA successfully captured non-linear variations in metabolic data that conventional PCA failed to detect [6]. When applied to urinary metabolic and elemental data, KPCA effectively dispersed samples according to individual differences and dietary patterns, while linear PCA concentrated most samples in particular positions, obscuring meaningful patterns [6]. Similarly, in spatial transcriptomics, the KSRV framework leveraging KPCA more accurately reconstructed spatial differentiation trajectories in mouse brain development and organogenesis compared to linear methods [8].

Table 3: Empirical Performance Comparison on Biological Datasets

| Dataset & Application | Linear PCA Performance | Kernel PCA Performance |
|---|---|---|
| 1000 Genomes Project (Population Genetics) | Accurate population clustering with minimal memory (0.1 GB) [9] | Not applied; linear sufficient |
| Spatial Transcriptomics (Mouse Brain) | Limited by linear assumptions in integration [8] | Accurate RNA velocity inference and trajectory reconstruction [8] |
| Metabolic Profiling (Human Urine) | Concentrated samples, obscured patterns [6] | Effective dispersion by individual/diet, identified hippurate as key metabolite [6] |
| Genomic Selection (Pig Populations) | GBLUP model as baseline [10] | NTLS framework with ML improved accuracy [10] |

Experimental Protocols for Genomic Data Analysis

Benchmarking Protocol for PCA Performance Evaluation

Robust evaluation of PCA methods in genomic research requires standardized protocols to ensure fair comparison and reproducible results. The following methodology, adapted from benchmarking studies [9], outlines a comprehensive approach for comparing linear and kernel PCA performance on genomic datasets:

Dataset Preparation: Begin with standard VCF-formatted SNP data, such as the 1000 Genomes Project dataset encompassing 1,055,401 SNPs across 2,504 samples [9]. Apply quality control filters including minor allele frequency (MAF > 0.05), missingness per marker (Miss < 0.25), and a Hardy-Weinberg equilibrium (HWE) p-value threshold [9]. For non-linear benchmark tasks, incorporate spatial transcriptomics data integrating single-cell RNA-seq with spatial location information [8].
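The QC filters can be sketched for a genotype matrix held in memory. This is a minimal illustration, assuming genotypes coded 0/1/2 with `np.nan` for missing calls and using the 1-df chi-square HWE test (exact tests are often preferred for rare variants). The HWE cutoff `hwe_p_min=1e-6` is a hypothetical default, since the protocol does not state its threshold, and the function names are illustrative.

```python
import math
import numpy as np

def hwe_chisq_pvalue(n_aa, n_ab, n_bb):
    """1-df chi-square test for Hardy-Weinberg equilibrium from genotype counts."""
    n = n_aa + n_ab + n_bb
    q = (2 * n_bb + n_ab) / (2 * n)                   # alternative-allele frequency
    expected = np.array([n * (1 - q) ** 2, 2 * n * q * (1 - q), n * q ** 2])
    observed = np.array([n_aa, n_ab, n_bb], dtype=float)
    if np.any(expected == 0):
        return 1.0                                    # monomorphic site: not informative
    stat = float(((observed - expected) ** 2 / expected).sum())
    return math.erfc(math.sqrt(stat / 2))             # chi-square(1) survival function

def qc_mask(G, maf_min=0.05, miss_max=0.25, hwe_p_min=1e-6):
    """Boolean mask of SNPs (columns of G) passing MAF, missingness and HWE filters."""
    keep = np.zeros(G.shape[1], dtype=bool)
    for j in range(G.shape[1]):
        g = G[:, j]
        called = g[~np.isnan(g)]
        miss = 1 - called.size / g.size
        if called.size == 0 or miss >= miss_max:
            continue                                  # too many missing calls
        q = called.mean() / 2
        maf = min(q, 1 - q)
        counts = [(called == k).sum() for k in (0, 1, 2)]
        keep[j] = maf > maf_min and hwe_chisq_pvalue(*counts) >= hwe_p_min
    return keep

rng = np.random.default_rng(0)
G = rng.binomial(2, 0.3, size=(500, 4)).astype(float)
G[:, 1] = rng.binomial(2, 0.01, size=500)             # rare variant: should fail MAF
G[:300, 2] = np.nan                                   # 60% missing: should fail missingness
G[:, 3] = np.where(rng.random(500) < 0.5, 0.0, 2.0)   # no heterozygotes: should fail HWE
print(qc_mask(G))
```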

Data Preprocessing: Standardize the data to have mean zero and unit variance for each variable to ensure equal contribution to component determination [1] [4]. For kernel PCA, select appropriate kernel functions (e.g., RBF with optimized sigma parameter) through sensitivity analysis [8] [6].

Implementation and Execution: For linear PCA, employ efficient implementations such as VCF2PCACluster or PLINK2 [9]. For kernel PCA, utilize frameworks like KSRV for genomic applications [8]. Execute both methods with identical computational resources, recording execution time and memory consumption.

Evaluation Metrics: Assess computational efficiency through peak memory usage and processing time [9]. Evaluate biological accuracy by measuring clustering consistency with known population structures [9] or through weighted cosine similarity between estimated and reference vectors for trajectory inference [8]. For genomic selection applications, compare predictive accuracy for traits such as days to 100 kg, back fat thickness, and number of piglets born alive [10].
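The trajectory metric can be sketched as a weighted mean of per-observation cosine similarities between estimated and reference vectors. The exact weighting used in [8] is not described here, so the weights below are a hypothetical input (uniform by default), and the function name is illustrative.

```python
import numpy as np

def weighted_cosine_similarity(V_est, V_ref, w=None):
    """Weighted mean of per-observation cosine similarities between two vector fields.

    V_est, V_ref: (n, d) arrays, e.g. estimated and reference velocity vectors per cell.
    w: nonnegative per-observation weights (hypothetical; uniform if None).
    """
    V_est, V_ref = np.asarray(V_est, dtype=float), np.asarray(V_ref, dtype=float)
    num = (V_est * V_ref).sum(axis=1)
    den = np.linalg.norm(V_est, axis=1) * np.linalg.norm(V_ref, axis=1)
    # Zero-length vectors get a similarity of 0 instead of a division error
    cos = np.divide(num, den, out=np.zeros_like(num), where=den > 0)
    w = np.ones(len(cos)) if w is None else np.asarray(w, dtype=float)
    return float((w * cos).sum() / w.sum())

V_ref = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
V_est = np.array([[2.0, 0.0], [0.0, -1.0], [1.0, 0.0]])
print(weighted_cosine_similarity(V_est, V_ref))               # uniform weights
print(weighted_cosine_similarity(V_est, V_ref, w=[1, 0, 1]))  # ignores the second cell
```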

Workflow for Genomic Data Analysis Using PCA

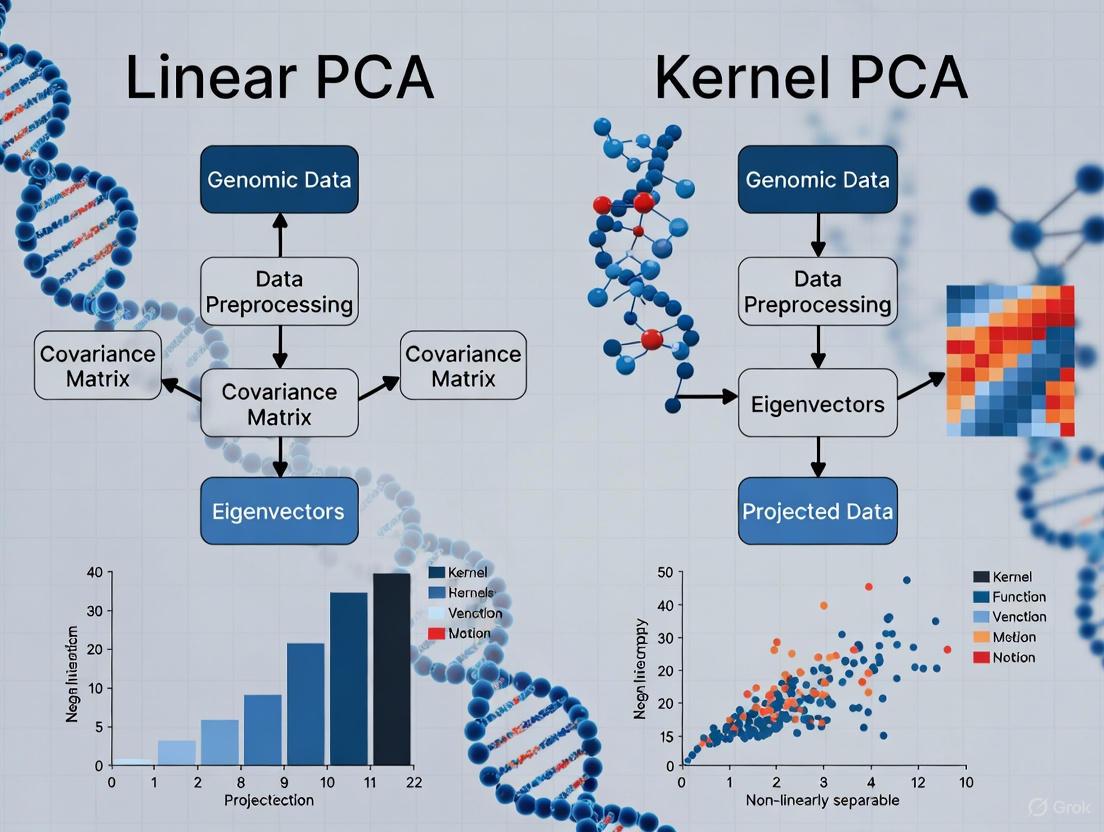

The following diagram illustrates the standardized workflow for applying both linear and kernel PCA to genomic data, highlighting their divergent paths for handling linear versus non-linear patterns:

PCA Workflow for Genomic Data

Essential Computational Tools for Genomic PCA

Implementing PCA in genomic research requires specialized software tools capable of handling large-scale biological datasets while providing appropriate algorithmic variants for different data structures. The following table catalogs key software solutions and their capabilities for both linear and kernel PCA applications in genomic research:

Table 4: Computational Tools for PCA in Genomic Research

| Tool | PCA Type | Key Features | Genomic Applications |
|---|---|---|---|
| VCF2PCACluster [9] | Linear | Memory-efficient (0.1 GB for 81M SNPs), fast processing, clustering integration | Population structure analysis, large-scale SNP datasets |
| PLINK2 [9] | Linear | Established standard, comprehensive QC features, format conversion | Genome-wide association studies, population genetics |
| KSRV Framework [8] | Kernel PCA (RBF) | Spatial transcriptomics integration, RNA velocity inference | Cellular differentiation trajectories, developmental biology |
| KPCA with cforest [6] | Kernel PCA (ANOVA) | Random forest variable importance, metabolite identification | Metabolomics, biomarker discovery, nutritional studies |
| scikit-learn [4] | Linear & Kernel PCA | Python implementation, versatile kernels, integration with ML pipelines | General-purpose genomic analysis, prototyping algorithms |

Linear PCA remains an indispensable tool for genomic research, particularly for population structure analysis where its efficiency and interpretability provide significant advantages [9] [2]. Its linear assumptions, while limiting in some contexts, yield highly computationally efficient algorithms capable of processing tens of millions of SNPs with minimal memory requirements [9]. However, as genomic research increasingly addresses complex non-linear phenomena such as cellular differentiation, gene expression dynamics, and metabolic pathways, kernel PCA offers a powerful extension that can capture these sophisticated patterns [8] [6].

The choice between linear and kernel PCA should be guided by the specific research question, data characteristics, and computational resources. Linear PCA suffices for many population genetics applications where linear patterns dominate and interpretability is paramount [9]. In contrast, kernel PCA excels in contexts where biological systems exhibit inherent non-linearities, such as spatial transcriptomics and metabolomics, despite its greater computational demands and interpretive challenges [8] [6]. Future methodological developments will likely focus on hybrid approaches that balance efficiency with flexibility, along with improved interpretation techniques for non-linear component analyses [7] [6].

In the field of genomics, researchers routinely encounter datasets where the number of variables (p) vastly exceeds the number of observations (n), creating a significant p >> n problem. This high-dimensionality is further complicated by multicollinearity—extreme correlations between genetic variants due to linkage disequilibrium—which makes traditional statistical models unstable or impossible to fit [11] [12]. Genomic data from technologies like SNP microarrays or RNA sequencing can measure tens of thousands to millions of features (genes, SNPs) from only hundreds of samples. Principal Component Analysis (PCA) has emerged as a fundamental tool to address these challenges, with both linear and nonlinear (kernel) variants offering distinct approaches to dimensionality reduction in genomic studies [13] [14].

Linear vs. Kernel PCA: Core Methodological Differences

Linear PCA

Linear PCA is an unsupervised linear dimensionality reduction technique that projects data onto a new set of orthogonal axes called principal components [15]. These components are chosen to maximize the variance of the projected data, with the first component (PC1) capturing the largest possible variance, the second (PC2) capturing the next largest while being orthogonal to PC1, and so on [15]. The method works by performing an eigen decomposition of the covariance matrix of the centered data, with the eigenvectors defining the directions of the new components [15].

Kernel PCA (KPCA)

Kernel PCA extends linear PCA by applying the kernel trick to implicitly map data into a higher-dimensional feature space [16] [6]. This mapping allows KPCA to capture complex nonlinear relationships in the data that linear PCA would miss. After the transformation, standard PCA is performed in this new feature space, enabling the discovery of nonlinear patterns and structures [16]. The choice of kernel function—such as linear, polynomial, Gaussian (RBF), or sigmoid—provides flexibility in how the data is transformed [16].

The diagram below illustrates the conceptual difference between the two approaches:

Comparative Strengths and Limitations

Table 5: Methodological Comparison of Linear PCA and Kernel PCA

| Feature | Linear PCA | Kernel PCA |
|---|---|---|
| Mathematical Foundation | Eigen decomposition of covariance matrix | Kernel trick + eigen decomposition of kernel matrix |
| Linearity Assumption | Assumes linear relationships in data | Captures nonlinear patterns and interactions |
| Computational Complexity | Lower complexity, suitable for large datasets | Higher complexity, especially for large sample sizes |
| Interpretability | Components are linear combinations of original variables | Interpretability challenging due to implicit mapping |
| Key Advantage | Computational efficiency, simplicity, interpretability | Flexibility in capturing complex data structures |
| Primary Limitation | Cannot capture nonlinear patterns | Computational demands, parameter selection complexity |

Performance Comparison in Genomic Applications

Simulation Studies and Direct Comparisons

In a comprehensive simulation study specifically designed for high-dimensional genomic data integration, researchers found that the first few kernel principal components showed poorer performance compared to linear principal components for classification tasks [13]. The study developed a copula-based simulation algorithm that accounted for the degree of dependence and nonlinearity observed in real genomic datasets, then compared linear and kernel PCA methods for data integration and death classification [13]. Surprisingly, the results indicated that reducing dimensions using linear PCA with a logistic regression model provided adequate classification performance for genomic data, though integrating information from multiple datasets using either approach improved classification accuracy [13].

Genomic Prediction Performance

Research comparing principal component regression (PCR) with genomic REML (GREML) for genomic prediction across populations revealed that GREML slightly outperformed PCR in most scenarios [11] [12]. The study utilized pre-corrected average daily milk, fat, and protein yields of 1,609 first lactation Holstein heifers from Ireland, UK, the Netherlands, and Sweden, genotyped with 50k SNPs [12]. The highest achievable PCR accuracies were obtained across a wide range of principal components (from one to more than 1,000), but selecting the optimal number of components remained challenging [12].
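Because PCR accuracy depends on how many components are kept, the choice can be explored with k-fold cross-validation. A minimal NumPy sketch, assuming a continuous phenotype, simulated factor-structured data (all numbers hypothetical), and accuracy measured as the correlation between predicted and observed values; `pcr_cv` is an illustrative name, not the procedure used in [12].

```python
import numpy as np

def pcr_cv(X, y, ks, n_folds=5, seed=0):
    """Mean k-fold cross-validated correlation between PCR predictions and y, per k."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    results = {k: [] for k in ks}
    for test in folds:
        train = np.setdiff1d(np.arange(len(y)), test)
        mu = X[train].mean(axis=0)                   # center with training data only
        Xtr, Xte = X[train] - mu, X[test] - mu
        y_mean = y[train].mean()
        _, _, Vt = np.linalg.svd(Xtr, full_matrices=False)
        for k in ks:
            V = Vt[:k].T                             # top-k loadings (p x k)
            beta, *_ = np.linalg.lstsq(Xtr @ V, y[train] - y_mean, rcond=None)
            pred = y_mean + Xte @ V @ beta           # regression on the k PC scores
            results[k].append(np.corrcoef(pred, y[test])[0, 1])
    return {k: float(np.mean(r)) for k, r in results.items()}

rng = np.random.default_rng(1)
F = rng.normal(size=(200, 5))                        # hypothetical latent factors
X = F @ rng.normal(size=(5, 500)) + rng.normal(size=(200, 500))
y = F[:, 0] + 0.5 * F[:, 1] + 0.5 * rng.normal(size=200)
scores = pcr_cv(X, y, ks=[1, 5, 20, 100])
best_k = max(scores, key=scores.get)
print(scores, best_k)
```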

Table 6: Experimental Performance in Genomic Studies

| Study/Application | Linear PCA Performance | Kernel PCA Performance | Key Metrics |
|---|---|---|---|
| Genomic Data Integration [13] | Adequate for classification | Poor performance in first few components | Classification accuracy, AUC |
| Across-Population Genomic Prediction [12] | Slightly less accurate than GREML | Not evaluated | Prediction accuracy, correlation |
| Metabolite-Diet Association [6] | Limited pattern separation | Effective clustering by individual differences | Pattern separation, clustering quality |
| Cancer Classification [17] | Used in combination with other methods | Not evaluated | Classification accuracy |

Successful Application of KPCA in Metabolomics

Despite generally mixed performance, KPCA has demonstrated notable success in specific genomic applications. In NMR-based metabolic profiling, KPCA effectively revealed nonlinear metabolic relationships that conventional PCA failed to capture [6]. The study incorporated a random forest conditional variable importance measure (cforest) to identify key metabolites following KPCA, successfully identifying hippurate as the most important variable associated with dietary patterns [6]. This KPCA-incorporated analytical approach enabled researchers to capture input-output responses between urinary metabolites and nutritional intake that remained hidden with linear methods [6].

Experimental Protocols and Methodologies

Standard PCA Protocol for Genomic Data

The typical workflow for applying linear PCA to genomic data involves several key steps [12]:

Data Preparation: Format genotype data into a matrix X of order (n × p) where n individuals have been genotyped for p SNPs, with elements coded as 0, 1, or 2 representing homozygous reference, heterozygous, and homozygous alternative genotypes respectively.

Data Standardization: Center the data by subtracting the mean of each variable, with optional scaling to unit variance.

Covariance Matrix Computation: Calculate the covariance matrix (or correlation matrix) of the standardized genotype data.

Eigen Decomposition: Perform eigen decomposition of the covariance matrix to obtain eigenvalues and eigenvectors.

Component Selection: Choose the top k eigenvectors (principal components) based on eigenvalues (variance explained) or a predetermined threshold (e.g., 95% variance explained).

Data Projection: Project the standardized data onto the selected principal components to obtain the transformed dataset T = XW, where X is the standardized (n × p) genotype matrix and W (p × k) contains the selected eigenvectors.
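The protocol above can be sketched for a 0/1/2 genotype matrix. Because p ≫ n for genotype data, this sketch uses the thin SVD of the standardized matrix, which yields the same eigenvectors as the covariance eigendecomposition without forming the p × p matrix. The two simulated populations and the function name `genotype_pca` are hypothetical.

```python
import numpy as np

def genotype_pca(G, var_threshold=0.95, scale=True):
    """Linear PCA of an (n x p) genotype matrix coded 0/1/2."""
    X = G - G.mean(axis=0)                          # center each SNP
    if scale:
        sd = G.std(axis=0, ddof=1)
        X = X / np.where(sd > 0, sd, 1.0)           # unit variance; monomorphic SNPs stay 0
    _, s, Vt = np.linalg.svd(X, full_matrices=False)
    eigvals = s ** 2 / (X.shape[0] - 1)             # eigenvalues of the covariance matrix
    explained = np.cumsum(eigvals) / eigvals.sum()
    k = int(np.searchsorted(explained, var_threshold) + 1)  # smallest k reaching threshold
    W = Vt[:k].T                                    # p x k matrix of selected eigenvectors
    T = X @ W                                       # T = XW
    return T, W, eigvals[:k]

rng = np.random.default_rng(0)
p = 2000
f1 = rng.uniform(0.05, 0.95, p)
f2 = np.clip(f1 + rng.normal(0, 0.15, p), 0.01, 0.99)   # diverged allele frequencies
G = np.vstack([rng.binomial(2, f1, (50, p)), rng.binomial(2, f2, (50, p))]).astype(float)
labels = np.repeat([0, 1], 50)
T, W, lam = genotype_pca(G)
print(T.shape)
print(T[labels == 0, 0].mean(), T[labels == 1, 0].mean())  # PC1 separates the populations
```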

Kernel PCA Implementation Protocol

The workflow for Kernel PCA includes additional steps related to kernel selection and parameter tuning [16] [6]:

Kernel Selection: Choose an appropriate kernel function based on data characteristics (linear, polynomial, Gaussian RBF, sigmoid).

Parameter Tuning: Optimize kernel parameters (e.g., degree for polynomial kernels, sigma for Gaussian kernels) through cross-validation.

Kernel Matrix Computation: Compute the kernel matrix K where each element Kij represents the similarity between subjects i and j based on the chosen kernel function.

Center the Kernel Matrix: Modify the kernel matrix to ensure it represents data centered in the feature space.

Eigen Decomposition: Perform eigen decomposition of the centered kernel matrix.

Component Selection and Projection: Similar to linear PCA, but operating on the kernel matrix rather than the original data.
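The kernel PCA protocol can be followed end to end in NumPy. This is a minimal sketch, assuming an RBF kernel and a small dense dataset. When no γ is given it falls back to the median heuristic (γ = 1/(2σ²), with σ² the median squared pairwise distance), a common starting point rather than a method from the cited studies; a sensitivity analysis would rerun the function over a grid of γ values.

```python
import numpy as np

def rbf_kernel(A, B, gamma):
    """K[i, j] = exp(-gamma * ||a_i - b_j||^2) for rows a_i of A and b_j of B."""
    d2 = (A ** 2).sum(axis=1)[:, None] + (B ** 2).sum(axis=1)[None, :] - 2 * A @ B.T
    return np.exp(-gamma * np.maximum(d2, 0.0))

def median_heuristic_gamma(X):
    """gamma = 1 / (2 sigma^2), sigma^2 = median squared pairwise distance (a heuristic)."""
    sq = (X ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2 * X @ X.T
    return 1.0 / (2.0 * np.median(d2[np.triu_indices(len(X), k=1)]))

def kernel_pca(X, n_components, gamma=None):
    """RBF kernel PCA: kernel matrix, centering, eigendecomposition, projection."""
    if gamma is None:
        gamma = median_heuristic_gamma(X)
    n = X.shape[0]
    K = rbf_kernel(X, X, gamma)
    one = np.full((n, n), 1.0 / n)                  # n x n matrix with entries 1/n
    Kc = K - one @ K - K @ one + one @ K @ one      # center the data in feature space
    eigvals, eigvecs = np.linalg.eigh(Kc)           # ascending eigenvalues
    idx = np.argsort(eigvals)[::-1][:n_components]
    lam, alpha = eigvals[idx], eigvecs[:, idx]      # alpha: unit-norm eigenvectors
    scores = alpha * np.sqrt(lam)                   # projections of the training samples
    return scores, lam, Kc

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))
scores, lam, Kc = kernel_pca(X, n_components=2)
print(scores.shape, lam)
```

Projecting new samples would additionally require centering their kernel rows with the training-set statistics, which this sketch omits.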

The following diagram illustrates the comparative experimental workflows:

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 7: Key Analytical Tools for Genomic Dimensionality Reduction

| Tool/Technique | Function | Application Context |
|---|---|---|
| Linear PCA | Linear dimensionality reduction | Population stratification, noise reduction, multicollinearity resolution |
| Kernel PCA | Nonlinear dimensionality reduction | Capturing complex genetic interactions, metabolic pathway analysis |
| Random Forest cforest | Variable importance measurement | Identifying key biomarkers post-KPCA analysis [6] |
| Copula-based Simulation | Generating realistic genomic data | Method comparison accounting for genomic data structures [13] |
| Genomic Relationship Matrix (G matrix) | Quantifying genetic similarity | Mixed models replacing pedigree relationships [12] |
| Cross-validation Protocols | Model selection and validation | Determining optimal number of components [12] |

The comparative analysis reveals that linear PCA remains a robust and efficient choice for many genomic applications, particularly when dealing with population structure, multicollinearity, and the p >> n problem [13] [12]. Its computational efficiency, interpretability, and generally adequate performance make it suitable for initial data exploration and dimensionality reduction in high-dimensional genomic studies.

Kernel PCA offers complementary strengths for specific scenarios where nonlinear patterns are theoretically important, such as in metabolic profiling or when analyzing complex gene-gene interactions [6]. However, its increased computational demands, parameter sensitivity, and challenging interpretation have limited its widespread adoption in genomics.

For researchers navigating these methodologies, the evidence suggests: (1) Begin with linear PCA for initial data exploration and dimensionality reduction; (2) Reserve kernel PCA for situations where nonlinear relationships are strongly suspected or initial linear approaches prove inadequate; (3) Consider hybrid approaches that combine PCA with machine learning methods like random forests for enhanced pattern detection [6]; and (4) Carefully validate the choice of dimensionality reduction method based on the specific analytical goals and data characteristics of each study.

The analysis of genomic data presents a fundamental challenge: the number of variables (e.g., SNPs, genes) vastly exceeds the number of observations (samples or cells), a phenomenon known as the "curse of dimensionality." Principal Component Analysis (PCA) has emerged as a ubiquitous solution to this problem, providing a robust mathematical framework for simplifying complex biological data while preserving essential patterns and structures. As a dimensionality reduction technique, PCA transforms high-dimensional genomic data into a smaller set of uncorrelated variables called principal components, which capture the maximum variance in the data [1]. This process enables researchers to visualize population structure, identify key quality control metrics, and uncover underlying biological relationships that would otherwise remain hidden in the high-dimensional space.

Kernel PCA (KPCA) extends traditional linear PCA to capture complex nonlinear relationships in data [18]. While linear PCA identifies principal components that are linear combinations of the original variables, KPCA uses the kernel trick to implicitly map data into a higher-dimensional feature space where nonlinear patterns can be captured by linear methods. This extension is relevant to genomic research, where biological relationships often exhibit nonlinear characteristics. This article provides a comprehensive comparison of linear PCA and kernel PCA, examining their respective performances, applications, and methodological considerations within genomic research contexts, from population genetics to single-cell transcriptomics.

Theoretical Foundations: Linear PCA vs. Kernel PCA

Mathematical Underpinnings of Linear PCA

Principal Component Analysis operates on a simple yet powerful geometric principle: identifying the orthogonal directions (principal components) in which the data exhibits maximum variance. The algorithm follows a standardized computational pipeline [1]:

- Standardization: Centering and scaling the data to ensure each variable contributes equally to the analysis

- Covariance Matrix Computation: Calculating how variables vary from the mean relative to each other

- Eigen Decomposition: Determining eigenvectors (principal components) and eigenvalues (variance explained) from the covariance matrix

- Feature Selection: Retaining components that capture significant variance

- Data Projection: Transforming the original data onto the new principal component axes

The principal components are constructed as linear combinations of the initial variables, with the first component capturing the largest possible variance, the second component capturing the next highest variance while being uncorrelated with the first, and so on [1]. Geometrically, the components define a rotated set of orthogonal axes ordered by the variance they retain, so a scatter plot of the first two components shows as much of the spread between samples as any two-dimensional linear projection can.

Kernel PCA: Extending to Nonlinear Domains

Kernel PCA extends traditional PCA to capture nonlinear structures by leveraging the kernel trick, a mathematical approach that enables operations in high-dimensional feature spaces without explicit computation of coordinates [18]. The fundamental innovation of KPCA lies in its implicit mapping of original data points from their input space to a higher-dimensional feature space using a nonlinear function φ:

\[ \varphi: \mathbb{R}^d \rightarrow \mathbb{R}^D, \quad D \gg d \]

Instead of performing eigen-decomposition on the covariance matrix of the original data, KPCA works with the kernel matrix \(K\), where each entry \(K_{ij} = k(x_i, x_j) = \langle \varphi(x_i), \varphi(x_j) \rangle\) represents the similarity between data points \(x_i\) and \(x_j\) in this high-dimensional feature space [18]. Common kernel functions include the Radial Basis Function (RBF), polynomial, and sigmoid kernels, each imposing different geometric properties on the transformed feature space.

Table 8: Common Kernel Functions in Kernel PCA

| Kernel Type | Mathematical Form | Key Parameters | Best Suited For |
|---|---|---|---|
| Radial Basis Function (RBF) | \(k(x_i, x_j) = \exp(-\gamma \lVert x_i - x_j \rVert^2)\) | γ (inverse width; γ = 1/(2σ²) for bandwidth σ) | Complex nonlinear structures |
| Polynomial | \(k(x_i, x_j) = (x_i \cdot x_j + c)^d\) | d (degree), c (coefficient) | Polynomial relationships |
| Sigmoid | \(k(x_i, x_j) = \tanh(\alpha \, x_i \cdot x_j + c)\) | α, c | Neural network-like structures |
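The three kernels in the table can be written directly in NumPy. The sketch below (parameter values are arbitrary illustrations) also checks the smallest eigenvalue of each kernel matrix: RBF and polynomial kernels (with c ≥ 0 and integer d) always give positive semidefinite matrices, whereas the sigmoid kernel does not for every parameter choice, so its matrix need not correspond to inner products in any feature space.

```python
import numpy as np

def rbf(X, gamma):
    """RBF kernel matrix exp(-gamma * ||x_i - x_j||^2)."""
    sq = (X ** 2).sum(axis=1)
    return np.exp(-gamma * np.maximum(sq[:, None] + sq[None, :] - 2 * X @ X.T, 0.0))

def polynomial(X, c, d):
    """Polynomial kernel matrix (x_i . x_j + c)^d."""
    return (X @ X.T + c) ** d

def sigmoid(X, alpha, c):
    """Sigmoid kernel matrix tanh(alpha * x_i . x_j + c)."""
    return np.tanh(alpha * X @ X.T + c)

def min_eigenvalue(K):
    """Smallest eigenvalue; a positive semidefinite matrix has none meaningfully below 0."""
    return np.linalg.eigvalsh(K).min()

rng = np.random.default_rng(0)
X = rng.normal(size=(50, 4))
for name, K in [("rbf", rbf(X, 0.5)), ("poly", polynomial(X, 1.0, 3)),
                ("sigmoid", sigmoid(X, 1.0, -1.0))]:
    print(name, min_eigenvalue(K))
```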

Performance Comparison: Empirical Evidence Across Genomic Applications

Population Genetics and Ancestry Analysis

In population genetics, PCA has become the standard method for visualizing population structure and inferring ancestry patterns from genome-wide SNP data. Linear PCA efficiently identifies major population divergences, revealing genetic clusters that often correspond to geographic origins [19]. Kernel PCA may, in principle, better capture fine-scale population structure and continuous gradients of genetic variation that follow nonlinear patterns, but the population-genetics results cited in this guide rely on linear PCA and do not benchmark this advantage directly.

The performance of PCA in population genetics is heavily dependent on implementation considerations. VCF2PCACluster exemplifies modern optimized PCA tools, achieving identical accuracy to established software like PLINK2 and GCTA while demonstrating significantly better performance in memory usage (~0.1 GB versus >200 GB for 81.2 million SNPs) [9]. This memory efficiency stems from its line-by-line processing strategy, where memory usage depends solely on sample size rather than the number of SNPs, making it particularly suitable for large-scale genome-wide studies.
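Memory that depends only on sample size follows from accumulating an n × n relationship matrix one SNP at a time. The sketch below illustrates that general strategy with a standardized genomic relationship matrix; it is not VCF2PCACluster's implementation, and the function name and input format (an iterable of 0/1/2 genotype vectors, e.g. parsed VCF records) are assumptions. Eigenvectors of the accumulated matrix, scaled by the square roots of their eigenvalues, give the sample PC scores.

```python
import numpy as np

def streaming_grm(snp_rows, n_samples):
    """Accumulate an n x n genomic relationship matrix one SNP at a time.

    snp_rows: iterable of length-n genotype vectors coded 0/1/2.
    Memory is O(n^2), independent of how many SNPs are streamed.
    """
    A = np.zeros((n_samples, n_samples))
    m = 0
    for g in snp_rows:
        g = np.asarray(g, dtype=float)
        f = g.mean() / 2                              # alternative-allele frequency
        if f <= 0.0 or f >= 1.0:
            continue                                  # skip monomorphic sites
        z = (g - 2 * f) / np.sqrt(2 * f * (1 - f))    # standardized genotypes
        A += np.outer(z, z)
        m += 1
    return A / m

rng = np.random.default_rng(0)
G = rng.binomial(2, rng.uniform(0.1, 0.9, 500), size=(40, 500))  # 40 samples x 500 SNPs
A = streaming_grm((G[:, j] for j in range(G.shape[1])), n_samples=40)
eigvals, eigvecs = np.linalg.eigh(A)
order = np.argsort(eigvals)[::-1][:2]
pcs = eigvecs[:, order] * np.sqrt(eigvals[order])    # top-2 PC scores of the samples
print(pcs.shape)
```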

Table 9: Performance Comparison of PCA Tools for Genomic Analysis

| Tool | Input Format | Key Features | Memory Usage | Computation Time |
|---|---|---|---|---|
| VCF2PCACluster | VCF | Kinship estimation, PCA, clustering, visualization | ~0.1 GB (independent of SNP number) | ~610 min for 81.2M SNPs |
| PLINK2 | VCF/PLINK | General genetic analysis | >200 GB for 81.2M SNPs | Comparable to VCF2PCACluster |
| GCTA | VCF/BED | Heritability analysis, PCA | High | Similar to PLINK2 |
| TASSEL | Multiple | Evolutionary genetics | >150 GB | >400 min |
| GAPIT3 | Multiple | Genome-wide association study | >150 GB | >400 min |

Single-Cell Transcriptomics and Spatial Genomics

In single-cell RNA sequencing (scRNA-seq) and spatial transcriptomics, kernel methods have demonstrated particular utility for tackling nonlinear relationships in cellular differentiation trajectories. The KSRV (Kernel PCA-based Spatial RNA Velocity) framework integrates scRNA-seq with spatial transcriptomics using Kernel PCA to accurately infer RNA velocity in spatially resolved tissues at single-cell resolution [8]. When validated using 10x Visium data and MERFISH datasets, KSRV showed superior accuracy and robustness compared to existing methods like SIRV and spVelo, successfully revealing spatial differentiation trajectories in the mouse brain and during mouse organogenesis [8].

The KSRV algorithm follows a systematic three-step process that highlights the practical application of kernel methods in genomic research [8]:

- Integration: scRNA-seq and spatial transcriptomics (ST) data are independently projected into a nonlinear latent space via kernel PCA with a radial basis function (RBF) kernel, followed by alignment using domain adaptation

- Prediction: Based on aligned latent representations, KSRV predicts spliced and unspliced expression at each spatial spot by borrowing information from nearby single cells using k-nearest neighbors (kNN) regression with k=50

- Velocity Estimation: With the enriched data, spatial RNA velocity vectors are estimated and used to reconstruct cell differentiation trajectories in space at single-cell resolution

Figure 1: KSRV Workflow for Spatial RNA Velocity Inference

Quality Control in Industrial and Manufacturing Applications

Beyond genomic research, PCA and kernel PCA play crucial roles in quality control and process monitoring, particularly in industrial applications where multivariate process data requires continuous surveillance. While conventional PCA effectively monitors linear processes, its kernel variant proves essential for detecting faults in systems with nonlinear characteristics [20].

In a comprehensive comparison of monitoring techniques for industrial processes like the Tennessee Eastman Process and Cement Rotary Kiln, Reduced KPCA (RKPCA) methods demonstrated significant advantages in fault detection accuracy while addressing KPCA's computational challenges [20]. These approaches utilize data reduction techniques like Spectral Clustering (SpC) and Random Sampling (RnS) to retain the most relevant observations, maintaining detection performance while reducing computation time and storage space by up to 70% compared to conventional KPCA [20].
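The random-sampling idea can be sketched as follows. This assumes the reduction simply fits KPCA on a random subset of the in-control observations and projects every sample through that smaller model; the published RKPCA methods [20] may select and use observations differently. Fitting on m of n observations shrinks the kernel matrix from n × n to m × m. The Hotelling-style T² statistic, the empirical 99% control limit, the toy data, and γ are illustrative choices added here and are not taken from [20].

```python
import numpy as np
from sklearn.decomposition import KernelPCA

rng = np.random.default_rng(1)
X_normal = rng.normal(size=(5000, 10))          # toy in-control process data
X_new = rng.normal(size=(200, 10)) + 1.5        # toy shifted (faulty) samples

# Random-sampling (RnS-style) reduction: keep m of the n training observations
m = 500
idx = rng.choice(len(X_normal), size=m, replace=False)
kpca = KernelPCA(n_components=5, kernel="rbf", gamma=0.05)
kpca.fit(X_normal[idx])                          # kernel matrix is m x m, not n x n

# Hotelling-style T^2 on the kernel principal component scores
train_scores = kpca.transform(X_normal)
var = train_scores.var(axis=0)
t2_train = (train_scores**2 / var).sum(axis=1)
limit = np.percentile(t2_train, 99)              # empirical 99% control limit

t2_new = (kpca.transform(X_new) ** 2 / var).sum(axis=1)
print(f"alarm rate on shifted data: {(t2_new > limit).mean():.2f}")
```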

For monitoring mixed attribute and variable quality characteristics, the Kernel PCA Mix Chart has outperformed the PCA Mix Chart, particularly for detecting small mean shifts when categorical data have imbalanced proportions [21]. This matters in manufacturing settings where, for example, 95% of products are of good quality and only 5% are defective, which is the kind of imbalance under which [21] reports the kernel chart's advantage.

Experimental Protocols and Methodological Considerations

Standardized Protocol for Population Genetics PCA

For researchers implementing PCA in population genetic studies, the following protocol ensures reproducible results:

- Data Preprocessing: Exclude SNPs with missingness >10%, minor allele frequency (MAF) < 0.05, or a Hardy-Weinberg equilibrium p-value < 10⁻⁶ [9]

- Kinship Matrix Calculation: Use normalizedIBS or centeredIBS methods to account for genetic relatedness and mitigate confounding factors [9]

- PCA Implementation: Perform an eigendecomposition of the kinship matrix using optimized tools such as VCF2PCACluster

- Component Selection: Retain components that each explain >1% of the variance, or choose the number of components at the inflection point of the scree plot

- Visualization: Generate 2D/3D plots of the first 2-3 principal components, coloring samples by putative populations

- Cluster Validation: Apply EM-Gaussian, K-Means, or DBSCAN clustering on top PCs and compare with known sample labels
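The protocol can be sketched end to end on simulated genotypes. This is a minimal illustration under stated assumptions, not VCF2PCACluster's implementation. Genotypes form a samples × SNPs matrix of 0/1/2 counts with NaN for missing calls. The Hardy-Weinberg filter is omitted for brevity. The kinship is a standardized-genotype (GRM-style) matrix rather than normalizedIBS or centeredIBS. K-Means stands in for the clustering options listed.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import adjusted_rand_score

rng = np.random.default_rng(42)

# Simulated data: two populations (60 samples each), 3000 SNPs
n_per_pop, n_snps = 60, 3000
base = rng.uniform(0.05, 0.95, n_snps)
freqs = [np.clip(base + rng.normal(0, 0.1, n_snps), 0.01, 0.99) for _ in range(2)]
G = np.vstack([rng.binomial(2, f, size=(n_per_pop, n_snps)) for f in freqs]).astype(float)
labels = np.repeat([0, 1], n_per_pop)
G[rng.random(G.shape) < 0.02] = np.nan          # ~2% missing calls

# 1. SNP filtering: missingness > 10% and MAF < 0.05 are excluded
miss = np.isnan(G).mean(axis=0)
p = np.nanmean(G, axis=0) / 2.0
maf = np.minimum(p, 1 - p)
keep = (miss <= 0.10) & (maf >= 0.05)
G, p = G[:, keep], p[keep]

# 2. Kinship from standardized genotypes; missing entries set to 0 (= SNP mean)
Z = (G - 2 * p) / np.sqrt(2 * p * (1 - p))
Z = np.nan_to_num(Z, nan=0.0)
K = Z @ Z.T / Z.shape[1]

# 3. Eigendecomposition of the kinship matrix, sorted in descending order
eigvals, eigvecs = np.linalg.eigh(K)
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# 4. Component selection: keep PCs that each explain > 1% of the variance
explained = eigvals / eigvals.sum()
n_keep = int((explained > 0.01).sum())
pcs = eigvecs[:, :n_keep] * np.sqrt(np.maximum(eigvals[:n_keep], 0))

# 5-6. Cluster on the top two PCs and compare with the known labels
top2 = eigvecs[:, :2] * np.sqrt(np.maximum(eigvals[:2], 0))
clusters = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(top2)
print(n_keep, adjusted_rand_score(labels, clusters))
```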

Kernel PCA Implementation for Nonlinear Data

When implementing kernel PCA for genomic data with suspected nonlinear patterns, the following protocol is recommended:

Kernel Selection: Choose an appropriate kernel function based on data characteristics:

- RBF kernel for complex nonlinear structures (a common heuristic starting value is γ = 1/(number of features × variance of the data), to be refined by tuning)

- Polynomial kernel for known polynomial relationships

- Sigmoid kernel for neural network applications [18]

Parameter Optimization: Use grid search with cross-validation to identify optimal kernel parameters, as performance heavily depends on proper tuning [18]

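Computational Considerations: The kernel matrix has one entry per pair of samples, so its memory cost grows quadratically with sample size and a full eigendecomposition grows cubically. For large datasets (>10,000 samples), use reduced KPCA approaches such as SpC or RnS to keep the computation feasible [20]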

Interpretation: Address the black-box nature of kernel methods using interpretation techniques like KPCA-IG (Interpretable Gradient), which computes feature importance based on partial derivatives of the kernel function [22]
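Kernel PCA is unsupervised, so one way to apply grid search with cross-validation is the pattern used in scikit-learn's documentation. KernelPCA is chained with a downstream classifier in a Pipeline, and GridSearchCV scores each kernel setting by held-out classification performance. The sketch below assumes labelled samples are available. The synthetic concentric-circles data, the logistic-regression classifier, and the grid values are illustrative choices.

```python
from sklearn.datasets import make_circles
from sklearn.decomposition import KernelPCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Toy nonlinear data: two concentric circles, not linearly separable
X, y = make_circles(n_samples=400, factor=0.3, noise=0.05, random_state=0)

pipe = Pipeline([
    ("scale", StandardScaler()),
    ("kpca", KernelPCA(n_components=2)),
    ("clf", LogisticRegression(max_iter=1000)),
])

# Candidate kernels and parameters; each setting is scored by 5-fold CV
param_grid = [
    {"kpca__kernel": ["rbf"], "kpca__gamma": [0.01, 0.1, 1.0, 10.0]},
    {"kpca__kernel": ["poly"], "kpca__degree": [2, 3], "kpca__gamma": [1.0]},
]
search = GridSearchCV(pipe, param_grid, cv=5, scoring="roc_auc")
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```

Scoring through a downstream task ties the choice of γ or degree to a concrete objective. If no labels exist, other criteria such as reconstruction error or cluster quality would be needed instead.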

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Essential Computational Tools for PCA in Genomic Research

| Tool/Resource | Function | Application Context | Key Advantage |
|---|---|---|---|
| VCF2PCACluster | Kinship estimation, PCA, clustering | Population genetics | Memory efficiency independent of SNP number |
| KSRV Framework | Spatial RNA velocity inference | Single-cell/spatial transcriptomics | Nonlinear integration of scRNA-seq and spatial data |
| KPCA-IG | Feature interpretation in kernel PCA | High-dimensional omics data | Data-driven feature importance ranking |
| RKPCA with SpC/RnS | Fault detection in nonlinear processes | Quality control, process monitoring | Reduced computation while maintaining detection accuracy |
| Kernel PCA Mix Chart | Monitoring mixed quality characteristics | Industrial quality control | Superior performance with imbalanced categorical data |

Discussion: Strategic Selection for Genomic Research Applications

The choice between linear PCA and kernel PCA depends fundamentally on the research question, data characteristics, and computational resources. Linear PCA remains the preferred method for initial data exploration, visualization of clear population structures, and quality control of large-scale genomic datasets where computational efficiency is paramount. Its advantages include computational efficiency, straightforward interpretation, and well-established methodologies [1].

Kernel PCA demonstrates superior performance in scenarios involving complex nonlinear relationships, such as cellular differentiation trajectories, subtle population substructures, and systems with strong interactive effects [8] [22]. The limitations of KPCA—including computational demands, sensitivity to kernel parameters, and interpretability challenges—are being actively addressed through methodological advances like reduced KPCA and interpretation frameworks like KPCA-IG [20] [22].

As genomic technologies continue to evolve, producing increasingly complex and high-dimensional data, the integration of kernel methods with traditional linear approaches will likely become standard practice. Frameworks like KSRV that strategically combine linear and nonlinear dimensionality reduction techniques point toward a future where researchers can flexibly adapt their analytical approach to the inherent structure of their biological data, ultimately leading to more accurate models of complex biological systems.

For researchers in genomics, analyzing high-dimensional data like single-cell RNA sequencing (scRNA-seq) results often means confronting complex, nonlinear relationships between variables. Traditional Principal Component Analysis (PCA), a linear method, can struggle to capture these intricate patterns, potentially obscuring meaningful biological insights [23] [24]. Kernel PCA (KPCA) addresses this fundamental limitation, offering a powerful nonlinear alternative that has become instrumental in advancing genomic research [8] [22].

The Core Principle: From Linear to Nonlinear Separation

The central idea behind KPCA is conceptually elegant: it uses a kernel function to implicitly map input data into a higher-dimensional feature space, where complex nonlinear structures in the original data become linearly separable. Principal components are then identified in this new space [23] [24].

This process relies on the "kernel trick," which allows the model to compute the inner products (a measure of similarity) in the high-dimensional feature space without ever needing to calculate the coordinates of the data in that space explicitly [22]. This makes the computation feasible, even for very high-dimensional genomic data.
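The kernel trick can be checked numerically in the simplest case. For x, y in ℝ², the homogeneous degree-2 polynomial kernel k(x, y) = (x · y)² equals the inner product of the explicit feature map φ(x) = (x₁², √2·x₁x₂, x₂²). The kernel therefore returns the feature-space similarity without ever forming φ. The two toy vectors are arbitrary.

```python
import numpy as np


def phi(v):
    # Explicit degree-2 feature map for a 2D vector
    return np.array([v[0] ** 2, np.sqrt(2) * v[0] * v[1], v[1] ** 2])


def poly2_kernel(x, y):
    # The same similarity computed directly in the input space
    return float(np.dot(x, y)) ** 2


x = np.array([1.0, 2.0])
y = np.array([3.0, 1.0])
explicit = float(np.dot(phi(x), phi(y)))   # inner product in feature space
implicit = poly2_kernel(x, y)              # kernel trick: no feature map needed
print(explicit, implicit)
```

For higher degrees or the RBF kernel, the explicit feature space becomes very large or infinite-dimensional. The kernel evaluation still costs only one dot product or distance computation, which is why KPCA can work with n × n kernel matrices instead of explicit coordinates.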

The following diagram illustrates the conceptual workflow of KPCA compared to linear PCA.

The Mathematical Foundation

The kernel trick replaces the data matrix with a matrix of pairwise kernel evaluations. Consider a dataset of \(n\) observations \(\mathbf{x}_1, \ldots, \mathbf{x}_n\) with \(\mathbf{x}_i \in \mathbb{R}^p\). A kernel function \(k: \chi \times \chi \to \mathbb{R}\) must be symmetric and positive semi-definite [22].

KPCA operates on the kernel matrix \(\mathbf{K}\), where each element \(\mathbf{K}_{ij} = k(\mathbf{x}_i, \mathbf{x}_j)\) represents the pairwise similarity between data points \(i\) and \(j\) in the high-dimensional feature space [22]. The principal components in this space are obtained by solving an eigenvalue problem on the centered kernel matrix \(\tilde{\mathbf{K}}\) [22].
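Written out, the standard centering is \(\tilde{\mathbf{K}} = \mathbf{K} - \mathbf{1}_n\mathbf{K} - \mathbf{K}\mathbf{1}_n + \mathbf{1}_n\mathbf{K}\mathbf{1}_n\), where \(\mathbf{1}_n\) is the n × n matrix with every entry equal to 1/n. The score of sample i on component k is \(\sqrt{\lambda_k}\,\alpha_{ik}\), where \(\boldsymbol{\alpha}_k\) is the unit-norm eigenvector of \(\tilde{\mathbf{K}}\) with eigenvalue \(\lambda_k\). The minimal NumPy sketch below follows these steps on toy data. As a check, it compares the result with scikit-learn's KernelPCA up to the sign of each component, since eigenvectors are defined only up to sign. The data and γ are illustrative.

```python
import numpy as np
from sklearn.decomposition import KernelPCA
from sklearn.metrics.pairwise import rbf_kernel

rng = np.random.default_rng(0)
# Toy data: 100 samples, 20 features with unequal variances
X = rng.normal(size=(100, 20)) * np.linspace(2.0, 0.5, 20)
gamma, n_comp = 0.02, 3

# 1. Kernel matrix K_ij = k(x_i, x_j)
K = rbf_kernel(X, gamma=gamma)

# 2. Center in feature space: K~ = K - 1n K - K 1n + 1n K 1n
n = K.shape[0]
one_n = np.full((n, n), 1.0 / n)
K_c = K - one_n @ K - K @ one_n + one_n @ K @ one_n

# 3. Eigendecomposition (eigh returns ascending order, so reverse)
eigvals, eigvecs = np.linalg.eigh(K_c)
eigvals, eigvecs = eigvals[::-1][:n_comp], eigvecs[:, ::-1][:, :n_comp]

# 4. Scores of the training samples: unit eigenvectors scaled by sqrt(eigenvalue)
Z_manual = eigvecs * np.sqrt(eigvals)

Z_sklearn = KernelPCA(n_components=n_comp, kernel="rbf", gamma=gamma,
                      eigen_solver="dense").fit_transform(X)
print(np.allclose(np.abs(Z_manual), np.abs(Z_sklearn), atol=1e-6))
```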

Kernel Functions in Practice

The choice of kernel function determines the type of nonlinear relationships the model can capture. The table below summarizes common kernels used in genomic studies.

Table 1: Common Kernel Functions in KPCA

| Kernel Name | Mathematical Form | Key Characteristics | Typical Use Cases in Genomics |
|---|---|---|---|
| Radial Basis Function (RBF) | \(K(\mathbf{x}_i, \mathbf{x}_j) = \exp(-\gamma \lVert \mathbf{x}_i - \mathbf{x}_j \rVert^2)\) | Captures local, complex nonlinear structures; highly flexible [8] [24]. | Analyzing data with intricate, local patterns like cellular differentiation trajectories [8]. |
| Polynomial | \(K(\mathbf{x}_i, \mathbf{x}_j) = (\mathbf{x}_i \cdot \mathbf{x}_j + c)^d\) | Captures global, polynomial relationships; complexity controlled by degree \(d\) [24]. | Useful for feature interactions that can be modeled by polynomial functions. |

KPCA vs. Linear PCA: A Performance Benchmark

The theoretical advantage of KPCA is best understood through its performance in practical applications. The following examples illustrate cases in which it can outperform linear PCA on nonlinear data structures.

Case Study: The Swiss Roll Dataset

The "Swiss Roll" is a classic synthetic dataset in which a flat 2D sheet is rolled into a spiral in 3D space. Linear PCA cannot "unroll" it: the projection retains the spiral shape and leaves different sections of the sheet linearly inseparable [24]. By contrast, [24] reports that KPCA with an RBF kernel unrolls the manifold, separating the different sections of the spiral and revealing the simpler underlying structure. In practice, this result is sensitive to the choice of γ.
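The comparison can be reproduced with scikit-learn's make_swiss_roll generator, which also returns the position t of each point along the rolled-up sheet. As a rough check, the sketch below measures how monotonically the first component tracks t using the absolute Spearman correlation. It does this for linear PCA and for RBF kernel PCA at several γ values. The γ grid, noise level, and sample size are arbitrary assumptions, and the scores are printed so the effect of γ can be inspected directly.

```python
from scipy.stats import spearmanr
from sklearn.datasets import make_swiss_roll
from sklearn.decomposition import PCA, KernelPCA

# t is the position along the rolled-up sheet (the "unrolled" coordinate)
X, t = make_swiss_roll(n_samples=1000, noise=0.05, random_state=0)


def tracks_t(Z):
    # |Spearman rho| between the first component and t; 1.0 means perfectly monotone
    rho, _ = spearmanr(Z[:, 0], t)
    return abs(rho)


scores = {"linear PCA": tracks_t(PCA(n_components=2).fit_transform(X))}
for gamma in [0.001, 0.01, 0.1]:
    Z = KernelPCA(n_components=2, kernel="rbf", gamma=gamma).fit_transform(X)
    scores[f"RBF KPCA, gamma={gamma}"] = tracks_t(Z)

for name, rho in scores.items():
    print(f"{name}: {rho:.2f}")
```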

Case Study: Spatial Transcriptomics and RNA Velocity

A direct benchmark in a cutting-edge genomic application comes from the KSRV framework, a method for inferring spatial RNA velocity. KSRV integrates scRNA-seq data with spatial transcriptomics data using KPCA. The developers validated KSRV on 10x Visium and MERFISH datasets, showing it was more accurate and robust at revealing spatial differentiation trajectories in the mouse brain and during mouse organogenesis compared to existing methods like SIRV and spVelo, which may rely on linear assumptions [8]. This demonstrates KPCA's power in integrating complex, multi-modal genomic data to uncover dynamic biological processes.

Quantitative Performance Comparisons

The table below summarizes key findings from benchmarks comparing dimensionality reduction techniques, including PCA and its alternatives.

Table 2: Benchmarking Dimensionality Reduction Techniques

| Method | Reported Performance / Characteristics | Context / Dataset |
|---|---|---|
| Kernel PCA (KPCA) | Successfully revealed spatial differentiation trajectories; more accurate/robust than SIRV/spVelo [8]. | Spatial RNA velocity inference (10x Visium, MERFISH) [8]. |
| Random Projections (RP) | Surpassed PCA in computational speed; rivaled or exceeded PCA in preserving data variability and clustering quality [25]. | Benchmarking on scRNA-seq datasets [25]. |
| Standard PCA | Performance typically degrades with increasing data size; sensitive to outliers; assumes linearity [25]. | General limitation noted in benchmarking study [25]. |

Experimental Protocols for Genomic Data

Implementing KPCA effectively on genomic data requires a structured workflow. The following diagram and protocol detail the key steps, drawing from methodologies used in recent studies.

Detailed Methodology for Spatial RNA Velocity Inference (KSRV)

The KSRV framework provides a clear protocol for using KPCA with genomic data [8]:

- Data Integration and Domain Adaptation: Begin with scRNA-seq and spatial transcriptomics (ST) data. Identify a common gene set and apply a domain adaptation framework (like PRECISE) to align the distributions of the two datasets and mitigate batch effects before dimensionality reduction [8].

- Nonlinear Projection with Kernel PCA: Independently project both the scRNA-seq and ST data into a shared nonlinear latent space using Kernel PCA with an RBF kernel. Compute the eigenvectors of the kernel matrices to extract principal components [8].

- Latent Space Alignment: Apply singular value decomposition (SVD) to orthogonalize the components from the two datasets. Retain only those components with a cosine similarity exceeding a defined threshold (e.g., 0.3) to finalize the common latent space [8].

- Prediction and Imputation: For each spot in the spatial data, predict its unmeasured spliced and unspliced gene expression. This is done by performing a k-nearest neighbors (kNN) regression (e.g., k=50) on its nearest neighbors from the aligned scRNA-seq data in the latent space, using a weighted average based on cosine distance [8].

- Velocity Calculation and Trajectory Inference: Using the imputed expression values, compute RNA velocity vectors for each spatial cell. Project these vectors onto the tissue's spatial coordinates to visualize and reconstruct cell differentiation trajectories [8].
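The prediction step (step 4) can be sketched as follows. This is a simplified illustration, not KSRV's implementation. It skips the domain-adaptation and SVD alignment steps by fitting a single RBF kernel PCA on the scRNA-seq expression of the shared genes and projecting the spatial spots into that space. All data are random placeholders. scikit-learn's KNeighborsRegressor with metric="cosine" and weights="distance" weights each of the k = 50 neighbours by inverse cosine distance, which is only one possible reading of "a weighted average based on cosine distance".

```python
import numpy as np
from sklearn.decomposition import KernelPCA
from sklearn.neighbors import KNeighborsRegressor

rng = np.random.default_rng(0)
n_cells, n_spots, n_shared, n_genes = 1000, 300, 100, 400

# Placeholder count-like data (non-negative)
sc_shared = rng.poisson(2.0, size=(n_cells, n_shared)).astype(float)
sc_spliced = rng.poisson(2.0, size=(n_cells, n_genes)).astype(float)
sc_unspliced = rng.poisson(1.0, size=(n_cells, n_genes)).astype(float)
st_shared = rng.poisson(2.0, size=(n_spots, n_shared)).astype(float)

# Nonlinear latent space (simplification: one KPCA fitted on scRNA-seq only)
kpca = KernelPCA(n_components=20, kernel="rbf", gamma=1.0 / n_shared)
sc_latent = kpca.fit_transform(np.log1p(sc_shared))
st_latent = kpca.transform(np.log1p(st_shared))

# kNN regression (k = 50): each spot borrows spliced/unspliced values from
# its nearest scRNA-seq cells, weighted by inverse cosine distance
knn = KNeighborsRegressor(n_neighbors=50, metric="cosine", weights="distance")
knn.fit(sc_latent, np.hstack([sc_spliced, sc_unspliced]))
pred = knn.predict(st_latent)
st_spliced, st_unspliced = pred[:, :n_genes], pred[:, n_genes:]
print(st_spliced.shape, st_unspliced.shape)
```

The imputed spliced and unspliced matrices would then feed a standard RNA velocity estimator in step 5.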

The Researcher's Toolkit for KPCA

Table 3: Essential Research Reagent Solutions for KPCA in Genomics

| Tool / Resource | Function / Description | Relevance to KPCA |
|---|---|---|
| scRNA-seq Data (e.g., from 10X Genomics) | Provides high-resolution, single-cell gene expression profiles. | Serves as a foundational data source for integration with spatial data using frameworks like KSRV [8]. |
| Spatial Transcriptomics Data (e.g., 10x Visium, MERFISH) | Provides gene expression data with preserved spatial context within a tissue. | The key dataset for which KPCA helps infer dynamics (e.g., RNA velocity) [8]. |
| RBF Kernel | A kernel function to measure nonlinear similarity between data points. | The core function that enables KPCA to capture complex, nonlinear patterns in genomic data [8] [24]. |
| KSRV Framework | A computational framework for inferring spatial RNA velocity. | A specific, validated implementation of KPCA for a pressing genomic challenge [8]. |
| Domain Adaptation Tools (e.g., PRECISE) | Methods to align data distributions from different sources or technologies. | Critical pre-processing step for integrating diverse genomic datasets (e.g., scRNA-seq and ST) before applying KPCA [8]. |
| k-Nearest Neighbors (kNN) Regression | An algorithm used for prediction based on local neighbors in a latent space. | Used in conjunction with KPCA to impute missing gene expression values in spatial data [8]. |

In the analysis of high-dimensional genomic data, the limitations of linear PCA are becoming increasingly apparent. Kernel PCA provides a mathematically sound and practically validated framework for uncovering the nonlinear patterns that define cellular heterogeneity, differentiation, and spatial organization. As genomic technologies continue to generate data of increasing complexity and scale, leveraging nonlinear alternatives like KPCA will be crucial for researchers and drug developers aiming to extract the most profound biological insights from their data.

In the field of genomic research, where data dimensionality is exceptionally high and biological relationships are often nonlinear, kernel functions have emerged as a powerful mathematical framework for data transformation and analysis. Kernel methods operate on a fundamental principle: they implicitly map data from its original input space into a higher-dimensional feature space where complex, nonlinear patterns become linearly separable. This "kernel trick" allows researchers to perform sophisticated analyses without explicitly computing the coordinates in the higher-dimensional space, making computationally intensive genomic analyses feasible. Within this framework, Linear, Polynomial, and Radial Basis Function (RBF) kernels represent the most widely adopted approaches, each with distinct characteristics that make them suitable for different genomic scenarios. The application of these kernels extends beyond classification to include dimensionality reduction through Kernel Principal Component Analysis (KPCA), which provides a nonlinear alternative to standard PCA and can more effectively capture the complex manifold of biological sample spaces [22].

The selection of an appropriate kernel function is particularly crucial in genomics, where the choice influences the model's ability to capture the underlying biological reality. As we compare these fundamental kernels, it's important to recognize that they form the foundation for more advanced methods now being developed for single-cell and multi-omics integration, such as Multiple Kernel Learning (MKL) frameworks that can transparently model both transcriptomic and epigenomic modalities [26]. This guide provides a systematic comparison of Linear, Polynomial, and RBF kernels specifically within the context of genomic data transformation, offering researchers evidence-based guidance for method selection.

Kernel Functions: Mathematical Foundations and Biological Interpretations

Core Kernel Concepts

Kernel functions fundamentally measure the similarity between pairs of data points based on their genomic features. Mathematically, a kernel function \(k(\mathbf{x}_i, \mathbf{x}_j)\) computes the inner product between two data points \(\mathbf{x}_i\) and \(\mathbf{x}_j\) after they have been transformed by a feature mapping function \(\phi\) that projects them into a higher-dimensional space: \(k(\mathbf{x}_i, \mathbf{x}_j) = \langle \phi(\mathbf{x}_i), \phi(\mathbf{x}_j) \rangle\). The requirement for a valid kernel is that it must be symmetric and positive semi-definite, ensuring a solid statistical foundation for its use in penalized regression models [14]. This mathematical framework allows genomic researchers to work with similarity measures between samples rather than explicit coordinate representations, which is particularly advantageous when dealing with the high dimensionality of genomic data where the number of features (genes, SNPs, etc.) often vastly exceeds the number of observations.

The biological interpretation of kernel functions relates to how they quantify functional or structural relationships between biological samples. In genome-wide association studies (GWAS), for instance, kernels can be designed to reflect shared genetic variation, while in gene expression analysis, they might capture coordinated expression patterns. The kernel matrix (or Gram matrix) generated by applying a kernel function to all pairs of samples in a dataset effectively encodes a similarity network of biological samples, which can then be used for various analyses including classification, regression, clustering, and dimensionality reduction. This network perspective aligns well with the complex interconnected nature of biological systems.

Kernel Functions for Genomic Data

Table 1: Comparison of Primary Kernel Functions for Genomic Data

| Kernel Type | Mathematical Formulation | Key Parameters | Genomic Interpretation | Ideal Use Cases |
|---|---|---|---|---|
| Linear | \(k(\mathbf{x}_i, \mathbf{x}_j) = \mathbf{x}_i^\top \mathbf{x}_j\) | None | Measures simple covariance between genomic profiles | Linearly separable data, high-dimensional datasets, baseline comparisons |
| Polynomial | \(k(\mathbf{x}_i, \mathbf{x}_j) = (\mathbf{x}_i^\top \mathbf{x}_j + c)^d\) | Degree (d), coefficient (c) | Captures multiplicative interaction effects between genomic features | Modeling pathway interactions, epistasis in genetics, higher-order feature combinations |
| RBF (Gaussian) | \(k(\mathbf{x}_i, \mathbf{x}_j) = \exp\left(-\frac{\lVert \mathbf{x}_i - \mathbf{x}_j \rVert^2}{2\sigma^2}\right)\) | Bandwidth (γ), where \(\gamma = \frac{1}{2\sigma^2}\) | Creates local similarity neighborhoods based on exponential decay of similarity with distance | Capturing complex nonlinear relationships, clustering similar expression patterns, most biological datasets |
| Weighted Linear | \(k(\mathbf{x}_i, \mathbf{x}_j) = \sum_{m=1}^{q} w_m G_{im} G_{jm}\) | Weights \(w_m\) for each SNP/feature | Gives greater weight to shared rare alleles than to shared common ones | GWAS, population genetics, familial relatedness |
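In matrix form, the weighted linear kernel is \(\mathbf{K} = \mathbf{G}\,\mathrm{diag}(\mathbf{w})\,\mathbf{G}^\top\). The weighting rule in the sketch below is an illustrative assumption: \(w_m = 1/(2p_m(1-p_m))\), with \(p_m\) the minor allele frequency. This is the inverse of the binomial genotype variance, so it grows as variants become rarer. Other weighting schemes are also in use. The genotypes are random toy data.

```python
import numpy as np

rng = np.random.default_rng(0)
maf = np.array([0.40, 0.20, 0.05, 0.01])              # toy minor allele frequencies
G = rng.binomial(2, maf, size=(5, 4)).astype(float)    # 5 individuals x 4 SNPs

# Hypothetical weighting: inverse binomial variance, larger for rarer variants
w = 1.0 / (2 * maf * (1 - maf))

# k(x_i, x_j) = sum_m w_m G_im G_jm, computed as K = G diag(w) G^T
K = (G * w) @ G.T

# Same kernel with an explicit double loop, as a check
K_loop = np.array([[np.sum(w * G[i] * G[j]) for j in range(5)] for i in range(5)])
print(np.round(w, 2))
print(np.allclose(K, K_loop))
```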

The Linear Kernel represents the simplest approach, computing a standard dot product between two sample vectors. It assumes a linear relationship between genomic features and the outcome of interest, making it computationally efficient and less prone to overfitting, though it may fail to capture complex biological interactions. In practice, linear kernels work well for genomic datasets where the number of features already provides sufficient representational power, or when seeking a computationally efficient baseline model.

The Polynomial Kernel introduces nonlinearity by computing the d-th degree polynomial of the linear dot product. The degree parameter (d) controls the complexity of interactions the kernel can capture—with degree 2 capturing pairwise interactions, degree 3 capturing three-way interactions, and so on. This makes polynomial kernels particularly suitable for modeling biological phenomena like epistasis in genetics or pathway interactions in transcriptomics, where the combined effect of multiple genomic features is not merely additive [14].

The Radial Basis Function (RBF) Kernel, also known as the Gaussian kernel, takes a different approach by measuring similarity as an exponentially decaying function of the Euclidean distance between samples. The γ parameter (or bandwidth) controls the influence range of a single sample—small values create a broader influence, while large values create tighter, more localized similarity neighborhoods. The RBF kernel is particularly powerful for genomic data because it can capture complex nonlinear relationships without explicitly defining their functional form, making it suitable for most biological datasets where the true underlying relationship is unknown [14] [27].

Experimental Comparison: Benchmarking Kernel Performance on Genomic Data

Experimental Protocol for Kernel Evaluation

To objectively compare kernel performance on genomic data, researchers must implement a standardized evaluation protocol. A robust experimental framework includes the following key components:

Dataset Selection and Preparation: Utilize diverse genomic datasets representing different biological contexts (e.g., gene expression, SNP data, epigenomic markers). The datasets should vary in key characteristics including number of features, sample size, and biological complexity. Prior to analysis, perform data scaling and normalization as kernel performance, particularly for linear kernels, can be significantly impacted by feature scales [28].

Kernel Implementation: Apply each kernel function to transform the genomic data. For linear kernels, use the direct dot product implementation. For polynomial kernels, test multiple degree values (typically 2, 3, and 4) to capture different interaction levels. For RBF kernels, employ a range of γ values, often determined through cross-validation.

Dimensionality Reduction and Analysis: Apply Kernel PCA to each transformed dataset to visualize the data structure in reduced dimensions. For classification tasks, implement Support Vector Machines (SVM) with each kernel type.

Evaluation Metrics: Quantify performance using multiple metrics including:

- Classification Performance: Area Under the Receiver Operating Characteristic Curve (AUROC)

- Computational Efficiency: Training time and memory usage

- Representation Quality: Variance explained in KPCA, cluster separation metrics

Statistical Validation: Implement repeated train-test splits (e.g., 100 repetitions of 80/20 splits) with cross-validation to optimize hyperparameters and ensure robust performance estimates [26].
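A compact version of this protocol is sketched below, using synthetic data from make_classification as a stand-in for a real genomic dataset. Each outer repetition makes a stratified 80/20 split. Kernel parameters are tuned by inner 5-fold cross-validation on the training part, using polynomial degrees 2 to 4 and a small γ grid for RBF, and AUROC is scored on the held-out 20%. Only 10 repetitions are used to keep the example fast; the protocol above suggests 100. The C and γ grids are illustrative assumptions.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, StratifiedShuffleSplit
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in: 200 samples, 500 features, 20 informative
X, y = make_classification(n_samples=200, n_features=500, n_informative=20,
                           random_state=0)

grids = {
    "linear": {"svc__kernel": ["linear"], "svc__C": [0.1, 1, 10]},
    "poly": {"svc__kernel": ["poly"], "svc__degree": [2, 3, 4], "svc__C": [0.1, 1, 10]},
    "rbf": {"svc__kernel": ["rbf"], "svc__gamma": ["scale", 1e-4, 1e-3],
            "svc__C": [0.1, 1, 10]},
}
outer = StratifiedShuffleSplit(n_splits=10, test_size=0.2, random_state=0)
results = {name: [] for name in grids}

for train, test in outer.split(X, y):
    for name, grid in grids.items():
        # Scaling sits inside the pipeline, so it is fitted on training folds only
        model = GridSearchCV(make_pipeline(StandardScaler(), SVC()), grid,
                             cv=5, scoring="roc_auc")
        model.fit(X[train], y[train])
        scores = model.decision_function(X[test])
        results[name].append(roc_auc_score(y[test], scores))

for name, aucs in results.items():
    print(f"{name}: AUROC {np.mean(aucs):.3f} +/- {np.std(aucs):.3f}")
```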

Quantitative Performance Comparison

Benchmarking studies across diverse genomic datasets reveal consistent patterns in kernel performance. The following table summarizes key findings from multiple genomic studies comparing kernel functions:

Table 2: Experimental Performance Comparison Across Genomic Datasets

| Study Context | Best Performing Kernel | Performance Metric | Linear Kernel | Polynomial Kernel | RBF Kernel | Key Findings |
|---|---|---|---|---|---|---|
| Single-cell Multiomics Classification [26] | RBF | AUROC | 0.82-0.89 | 0.85-0.91 | 0.89-0.94 | RBF consistently outperformed linear across multiple cancer types (breast, prostate, lung) |
| Spatial RNA Velocity Inference (KSRV) [8] | RBF | Trajectory Accuracy | N/A | N/A | Superior | RBF-based KPCA effectively captured nonlinear spatial gene expression patterns |
| Genomic Prediction [29] | Non-parametric Methods | Pearson's r | 0.62 (mean) | N/A | +0.014 to +0.025 (non-parametric methods vs. linear) | Non-linear methods showed modest but significant gains over linear alternatives |
| Scintillation Detection [27] | Fine Gaussian (RBF) | Detection Accuracy | Lowest | Medium | Highest | Fine Gaussian SVM outperformed linear and polynomial kernels in complex signal detection |
| Computational Efficiency [28] | Linear (with scaling) | Training Time | 0.0021 s (scaled) | Hours (unscaled) | 0.0039 s (scaled) | Data scaling dramatically reduced linear kernel training time (from 0.8672 s to 0.0021 s) |

The experimental evidence consistently demonstrates that RBF kernels generally achieve superior performance for most genomic applications, particularly when capturing complex nonlinear relationships present in biological systems. In single-cell multiomics classification, scMKL utilizing RBF kernels achieved AUROC values between 0.89-0.94, outperforming linear kernels which ranged from 0.82-0.89 across breast, prostate, and lung cancer datasets [26]. Similarly, in the KSRV framework for spatial transcriptomics, RBF-based Kernel PCA successfully revealed spatial differentiation trajectories in the mouse brain and during mouse organogenesis by effectively modeling nonlinear relationships [8].

However, linear kernels maintain important advantages in specific scenarios. With proper data scaling, linear kernels can achieve competitive performance with significantly reduced computational requirements—in some benchmarks training 7× faster and using 12× less memory than more complex alternatives [26]. This makes linear kernels particularly valuable for initial exploratory analysis, extremely high-dimensional genomic data, or when computational resources are constrained.

Polynomial kernels occupy a middle ground: they capture multiplicative interactions better than linear kernels, but their performance depends heavily on parameter tuning. This applies particularly to the degree, which should match the expected complexity of biological interactions in the system under study.

Kernel PCA vs. Linear PCA: A Genomic Perspective

The fundamental difference between linear PCA and kernel PCA lies in their approach to dimensionality reduction. Linear PCA identifies linear directions of maximum variance in the original data space, while kernel PCA first projects data into a higher-dimensional feature space via a kernel function, then performs linear PCA in that space. This enables kernel PCA to capture nonlinear patterns that would be inaccessible to standard PCA.
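The relationship between the two methods can be checked directly. With a linear kernel, the feature map is the identity, so kernel PCA should reproduce linear PCA scores up to the sign of each component. The sketch below verifies this with scikit-learn on random toy data whose features have unequal variances, which keeps the leading eigenvalues distinct.

```python
import numpy as np
from sklearn.decomposition import PCA, KernelPCA

rng = np.random.default_rng(0)
# Toy data: 80 samples, 10 features with standard deviations 10, 9, ..., 1
X = rng.normal(size=(80, 10)) * np.arange(10, 0, -1)

Z_pca = PCA(n_components=3).fit_transform(X)
Z_kpca = KernelPCA(n_components=3, kernel="linear",
                   eigen_solver="dense").fit_transform(X)

# Columns agree up to a sign flip per component
print(np.allclose(np.abs(Z_pca), np.abs(Z_kpca), atol=1e-6))
```

Replacing kernel="linear" with "rbf" or "poly" is the only change needed to move from the linear to the nonlinear analysis, which makes the linear kernel a convenient baseline.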

In genomic applications, this distinction has profound implications. As noted in benchmark studies, "the relationships between the variables may be nonlinear, making linear methods unsuitable. Hence, with high-dimensional data such as genomic data, where the number of features is usually much larger than the number of samples, nonlinear methods like kernel methods can provide a valid alternative for data analysis" [22]. Kernel PCA has proven particularly valuable for spatial transcriptomics analysis, where it enables accurate inference of RNA velocity in spatially resolved tissue at single-cell resolution by capturing nonlinear gene expression dynamics [8].

However, kernel PCA introduces interpretability challenges. The original features are summarized in pairwise kernel similarity scores, making it difficult to identify which genomic features drive the observed patterns. Recent methodological advances like KPCA Interpretable Gradient (KPCA-IG) address this limitation by computing partial derivatives of the kernel to identify influential variables, providing a data-driven feature importance ranking specifically designed for high-dimensional genomic datasets [22].

Implementing kernel methods effectively for genomic analysis requires both computational tools and biological knowledge resources. The following table outlines key solutions and their applications:

Table 3: Essential Research Reagent Solutions for Genomic Kernel Applications

| Resource Category | Specific Solutions | Function/Purpose | Genomic Application Examples |
|---|---|---|---|
| Computational Frameworks | scMKL [26], KSRV [8], ktest [30] | Specialized kernel methods for single-cell and spatial genomics | Multiomics integration, spatial trajectory inference, differential analysis |
| Kernel Implementations | SVM (scikit-learn), KPCA (scikit-learn), KernelRidge | General-purpose kernel method implementations | Baseline comparisons, custom analysis pipelines, method development |
| Biological Knowledge Bases | Hallmark Gene Sets (MSigDB), JASPAR, Cistrome [26] | Curated biological pathways and regulatory information | Biologically-informed kernel construction, pathway-centric analysis |
| Benchmarking Resources | EasyGeSe [29] | Curated genomic datasets for method validation | Performance benchmarking across diverse species and traits |
| Interpretability Tools | KPCA-IG [22] | Feature importance ranking for kernel PCA | Identification of influential genomic features in nonlinear analysis |

Specialized computational frameworks like scMKL (Single-Cell Multiple Kernel Learning) have been specifically designed to address the unique challenges of genomic data, integrating both transcriptomic and epigenomic modalities while maintaining interpretability through group Lasso regularization [26]. Similarly, KSRV (Kernel PCA-based Spatial RNA Velocity) implements kernel PCA with RBF kernels to infer developmental trajectories from spatial transcriptomics data [8].

For biologically-informed analysis, leveraging curated knowledge bases is essential. The Molecular Signatures Database (MSigDB) provides Hallmark gene sets that can guide kernel construction for transcriptomic data, while JASPAR and Cistrome offer transcription factor binding site information for epigenomic analysis [26]. These resources enable researchers to move beyond generic kernel functions to construct biologically meaningful similarity measures that reflect known regulatory relationships.
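The sketch below illustrates biologically informed kernel construction. It builds one RBF kernel per gene set and averages them into a single similarity matrix for kernel PCA. Equal weights are an assumption made for brevity; scMKL instead learns kernel weights with group Lasso regularization [26]. The gene names, set names, bandwidth rule, and simulated expression values are hypothetical. In practice the sets would come from a resource such as the MSigDB Hallmark collection.

```python
import numpy as np
from sklearn.decomposition import KernelPCA
from sklearn.metrics.pairwise import rbf_kernel

def gene_set_kernel(expr, genes, gene_sets):
    """Hypothetical helper: equal-weight average of per-gene-set RBF kernels.

    expr: cells x genes matrix; genes: column names; gene_sets: {set name: genes}.
    """
    index = {g: i for i, g in enumerate(genes)}
    kernels = []
    for members in gene_sets.values():
        cols = [index[g] for g in members if g in index]   # ignore unmeasured genes
        if not cols:
            continue
        # Bandwidth 1 / (number of genes in the set): an assumption for simplicity
        kernels.append(rbf_kernel(expr[:, cols], gamma=1.0 / len(cols)))
    if not kernels:
        raise ValueError("no gene set overlaps the measured genes")
    return np.mean(kernels, axis=0)

# Hypothetical data: 30 cells, 20 genes, two toy gene sets
rng = np.random.default_rng(1)
genes = [f"G{i}" for i in range(20)]
expr = np.log1p(rng.lognormal(size=(30, 20)))
gene_sets = {"SET_A": ["G0", "G1", "G2", "G3"], "SET_B": ["G10", "G11", "G12", "GX"]}

K = gene_set_kernel(expr, genes, gene_sets)
scores = KernelPCA(n_components=2, kernel="precomputed").fit_transform(K)
```

Each per-set kernel sees only the genes in its set, so cells are compared pathway by pathway before the similarities are combined.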

Benchmarking resources like EasyGeSe provide curated collections of genomic datasets across multiple species, enabling systematic evaluation of kernel methods and ensuring robust, generalizable performance [29]. As new kernel methods are developed, such resources become increasingly important for objective comparison and validation.

The comparative analysis of kernel functions for genomic data transformation reveals a consistent pattern: while RBF kernels generally provide superior performance for capturing complex biological relationships, linear kernels maintain value for computationally efficient analysis of high-dimensional data, particularly when properly scaled. Polynomial kernels offer a middle ground for capturing specific interaction effects but require careful parameter tuning.

The choice between linear and kernel PCA ultimately depends on the research question and data characteristics. For initial exploration or when biological relationships are expected to be primarily linear, standard PCA provides interpretable results with computational efficiency. For capturing complex nonlinear patterns in gene expression, spatial organization, or cellular trajectories, kernel PCA with RBF kernels offers significantly enhanced capability, albeit with increased computational demands and interpretability challenges.

Future directions in genomic kernel methods point toward multiple kernel learning approaches that integrate diverse data types and biological knowledge, interpretability enhancements that bridge the gap between complex models and biological insight, and scalability improvements that enable application to increasingly large genomic datasets. As single-cell and spatial technologies continue to advance, kernel methods will play an increasingly important role in unraveling the complex, nonlinear relationships that define biological systems, ultimately accelerating discoveries in basic science and therapeutic development.

From Theory to Practice: Implementing PCA and KPCA on Genomic Datasets

A Step-by-Step Workflow for Linear PCA on Genotype Matrices

Principal Component Analysis (PCA) remains a cornerstone technique for visualizing population structure and correcting for confounding in genomic studies. While kernel PCA offers advantages for capturing complex non-linear relationships, linear PCA maintains critical importance for its computational efficiency, straightforward interpretation, and well-established theoretical foundations in genetics. The leading principal components of genomic relationship matrices effectively capture genetic relatedness and population stratification, with the first few components typically sufficient for visualizing overarching population structures [31]. This guide provides a detailed workflow for implementing linear PCA on genotype matrices, objectively compares its performance with kernel alternatives, and presents experimental data to inform method selection for genomic research.

Theoretical Foundation: Linear PCA vs. Kernel PCA in Genomics

Linear PCA for Genomic Data

Linear PCA identifies orthogonal directions of maximum variance in the original feature space of the genotype data. For a centered genotype matrix $X \in \mathbb{R}^{n \times p}$ (with $n$ individuals and $p$ markers), PCA performs an eigendecomposition of the covariance matrix $C = \frac{1}{n-1} X^\top X$ or, equivalently, a singular value decomposition (SVD) of $X$ itself [32]. The resulting principal components (PCs) are linear combinations of the original genotypes, ordered by the amount of genetic variation they capture. The projection of sample $i$ onto PC $k$ is $t_{ki} = v_k^\top x_i$, where $v_k$ is the $k$-th eigenvector of $C$ and $x_i$ is the $i$-th row of $X$ [32]. In population genetics, these projections often correspond to geographic ancestry or breeding patterns when applied to genome-wide data.
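The equivalence between the covariance eigendecomposition and the SVD, and the projection $t_{ki} = v_k^\top x_i$, can be checked numerically. This sketch uses simulated 0/1/2 genotypes for two hypothetical populations. The sample size, marker count, and allele-frequency range are arbitrary assumptions.

```python
import numpy as np

rng = np.random.default_rng(42)
n, p = 60, 200
# Simulated 0/1/2 genotypes for two hypothetical populations
freqs = rng.uniform(0.1, 0.9, size=(2, p))
pop = np.repeat([0, 1], n // 2)
G = rng.binomial(2, freqs[pop]).astype(float)

X = G - G.mean(axis=0)                    # center each marker
C = X.T @ X / (n - 1)                     # p x p covariance matrix
evals, evecs = np.linalg.eigh(C)
order = np.argsort(evals)[::-1]
evals, V_eig = evals[order], evecs[:, order]

U, s, Vt = np.linalg.svd(X, full_matrices=False)   # X = U diag(s) V^T
V = Vt.T

# Nonzero eigenvalues of C equal squared singular values of X divided by n - 1
assert np.allclose(evals[: n - 1], s[: n - 1] ** 2 / (n - 1))
# Leading eigenvectors agree up to sign
assert np.isclose(abs(V[:, 0] @ V_eig[:, 0]), 1.0)

T = X @ V[:, :2]                          # row i holds t_ki = v_k^T x_i for k = 1, 2
assert np.allclose(T, U[:, :2] * s[:2])   # the same scores, read directly off the SVD
```

For $p \gg n$ the SVD route is usually preferred because it never forms the $p \times p$ matrix $C$.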

Kernel PCA for Capturing Non-Linear Patterns

Kernel PCA extends this concept by implicitly mapping genotypes into a higher-dimensional feature space via a non-linear mapping $\varphi(x)$ and then performing linear PCA in that transformed space [33]. This enables it to capture patterns beyond simple covariance structures. The kernel trick avoids computing $\varphi(x)$ explicitly: every calculation uses the $n \times n$ kernel matrix $K$ with $K_{ij} = k(x_i, x_j) = \langle \varphi(x_i), \varphi(x_j) \rangle$, which represents the similarity between samples $i$ and $j$ in the transformed space [30]. $K$ is double-centered before its eigendecomposition so that the mapped samples have zero mean. Common kernel functions include the radial basis function (RBF) and polynomial kernels. In genomics, this enables identification of subtle population structures and complex differentiation patterns that may not align with simple linear axes of variation.
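A short sketch makes the kernel trick and the centering step concrete. Given only a kernel matrix, kernel PCA double-centers it, eigendecomposes it, and scales the eigenvectors to obtain the sample projections. The helper `kernel_pca_scores` is hypothetical, and the RBF bandwidth `gamma` and the random data are assumptions. The result is compared with scikit-learn's `KernelPCA` only up to the sign of each component.

```python
import numpy as np
from sklearn.decomposition import KernelPCA

def kernel_pca_scores(K, n_components):
    """Hypothetical helper: kernel PCA projections from a precomputed kernel matrix."""
    n = K.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n    # centering matrix
    Kc = J @ K @ J                          # centers the mapped samples phi(x_i)
    vals, vecs = np.linalg.eigh(Kc)
    order = np.argsort(vals)[::-1][:n_components]
    return vecs[:, order] * np.sqrt(vals[order])   # training-sample projections

rng = np.random.default_rng(7)
X = rng.normal(size=(50, 10))
gamma = 0.05                                        # assumed RBF bandwidth
sq_dist = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
K = np.exp(-gamma * sq_dist)                        # K_ij = exp(-gamma ||x_i - x_j||^2)

ours = kernel_pca_scores(K, 3)
ref = KernelPCA(n_components=3, kernel="rbf", gamma=gamma).fit_transform(X)
```

Only `K` enters `kernel_pca_scores`, so the same function works for any valid kernel, including a precomputed genomic similarity matrix.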

Comparative Advantages in Genomic Context

Table 1: Theoretical Comparison of Linear and Kernel PCA for Genomic Data

| Feature | Linear PCA | Kernel PCA |
|---|---|---|
| Computational Complexity | $O(\min(nk^2, n^2k))$ for truncated SVD [31] | Higher due to kernel matrix computation and decomposition |
| Interpretability | High - PCs are linear combinations of original genotypes | Reduced - Components in feature space lack direct genetic interpretation |
| Memory Requirements | Moderate - Works with genotype matrix or covariance | High - Requires storing n×n kernel matrix |
| Handling of Non-Linear Patterns | Limited to linear relationships | Excellent for complex population structures |
| Implementation Maturity | Extensive tools and established best practices | Emerging methods with ongoing development |

Experimental Comparison: Performance Benchmarks

Computational Efficiency

Recent benchmarks demonstrate substantial advantages of optimized linear PCA implementations for large-scale genomic data. The randPedPCA package enables rapid pedigree PCA using sparse matrix representations, achieving a speed-up greater than 10,000 times compared to naive PCA implementations [31]. This efficiency allows analysis of extremely large pedigrees, exemplified by processing the UK Kennel Club registered Labrador Retriever population of almost 1.5 million individuals [31].

For genotype data, linear PCA implementations leveraging randomized SVD algorithms show similar scalability advantages. The SF-GWAS framework implements secure federated PCA for genome-wide association studies, successfully processing UK Biobank-scale datasets of 410,000 individuals while maintaining practical runtimes [34]. These results underscore the maturity of linear PCA for biobank-scale genomic datasets.

Biological Relevance and Accuracy

Table 2: Empirical Performance Comparison on Genomic Datasets

| Method | Dataset | Performance Metrics | Key Findings |
|---|---|---|---|
| Linear PCA (randPedPCA) | Simulated pedigree data | >10,000× speed-up vs. naive PCA [31] | Enables analyses impossible with naive PCA |
| Linear PCA (SF-GWAS) | UK Biobank (n=410,000) | 5.3 days total runtime [34] | Practical for biobank-scale datasets |
| Kernel PCA (KSRV) | Mouse brain spatial transcriptomics | Superior to SIRV and spVelo methods [33] | Accurately reveals spatial differentiation trajectories |
| Kernel test (ktest) | Single-cell ChIP-Seq data | Identifies epigenomic heterogeneity [30] | Detects subtle population variations missed by other methods |

Kernel methods, including kernel PCA, show particular strength in applications that require capturing complex differentiation patterns. The KSRV framework, which employs kernel PCA to integrate single-cell RNA-seq with spatial transcriptomics, revealed spatial differentiation trajectories in the mouse brain and during mouse organogenesis [33]. Similarly, the ktest package applies kernel Fisher discriminant analysis, a related kernel method, to single-cell epigenomic data, identifying pre-existing subpopulations of breast cancer cells with persister-like epigenomic profiles before treatment [30]. These results highlight the advantage of kernel methods for detecting subtle biological variation in complex cellular systems.

Step-by-Step Workflow for Linear PCA on Genotype Matrices

Data Preprocessing and Quality Control

Genotype Encoding and Standardization: Raw genotype data should be encoded as 0, 1, or 2 copies of the counted allele, then centered and, optionally, scaled. For a genotype matrix $X$, centering typically subtracts twice the estimated allele frequency from each marker, $W = X - \mathbf{1}(2p)^\top$, where $p$ is the vector of allele frequencies and $\mathbf{1}$ is a length-$n$ vector of ones [34]. Some implementations further divide marker $j$ by $\sqrt{2p_j(1-p_j)}$, its expected standard deviation under Hardy-Weinberg equilibrium, so that each marker contributes equally to the covariance structure.
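The centering and Hardy-Weinberg scaling amount to a few lines of NumPy. This sketch assumes a complete samples-by-markers matrix with no missing values and with monomorphic markers already removed. The function name and the toy matrix are hypothetical.

```python
import numpy as np

def standardize_genotypes(G, scale=True):
    """Hypothetical helper: center (and optionally scale) a complete 0/1/2 matrix.

    G is samples x markers with no missing values and no monomorphic markers.
    """
    p = G.mean(axis=0) / 2.0                  # estimated allele frequency per marker
    W = G - 2.0 * p                           # W = X - 2p, broadcast across samples
    if scale:
        sd = np.sqrt(2.0 * p * (1.0 - p))     # expected SD under Hardy-Weinberg equilibrium
        if np.any(sd == 0):
            raise ValueError("monomorphic marker: remove it before scaling")
        W = W / sd
    return W, p

G = np.array([[0, 1, 2],
              [1, 1, 0],
              [2, 0, 0],
              [1, 2, 0]], dtype=float)
W, p = standardize_genotypes(G)
```

Setting `scale=False` gives the covariance-based analysis; the default gives the correlation-based one.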

Quality Control Procedures: Prior to PCA, implement standard genomic QC filters: exclude markers with high missingness rates (>5%), significant deviation from Hardy-Weinberg equilibrium ($p < 10^{-6}$), and low minor allele frequency (MAF < 0.01). Sample-level QC should exclude individuals with excessive missing data (>10%) and identify unexpected duplicates or related individuals [34]. These steps ensure that technical artifacts do not dominate the principal components.
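The sketch below implements the marker-level and sample-level filters listed above. It makes two simplifying assumptions. First, missing genotypes are coded as `NaN`. Second, Hardy-Weinberg deviation is assessed with a 1-degree-of-freedom chi-square test, whereas tools such as PLINK use an exact test. Samples are filtered before markers here, which is a design choice. The function names and toy data are hypothetical; the thresholds mirror the text.

```python
import numpy as np
from scipy.stats import chi2

def hwe_chisq_pvalue(n0, n1, n2):
    """1-df chi-square test of Hardy-Weinberg proportions from genotype counts."""
    n = n0 + n1 + n2
    q = (n1 + 2 * n2) / (2 * n)               # frequency of the counted allele
    expected = (n * (1 - q) ** 2, 2 * n * q * (1 - q), n * q ** 2)
    stat = sum((obs - exp) ** 2 / exp for obs, exp in zip((n0, n1, n2), expected))
    return chi2.sf(stat, df=1)

def genotype_qc(G, max_sample_missing=0.10, max_snp_missing=0.05,
                min_maf=0.01, min_hwe_p=1e-6):
    """Hypothetical helper: G is samples x markers, 0/1/2 with NaN for missing."""
    keep_samples = np.isnan(G).mean(axis=1) <= max_sample_missing
    G = G[keep_samples]
    n0, n1, n2 = ((G == g).sum(axis=0) for g in (0, 1, 2))
    with np.errstate(divide="ignore", invalid="ignore"):
        q = (n1 + 2 * n2) / (2 * (n0 + n1 + n2))
        maf = np.minimum(q, 1 - q)
        # Monomorphic markers give NaN statistics; treat them as untested (MAF removes them)
        p_hwe = np.nan_to_num(hwe_chisq_pvalue(n0, n1, n2), nan=1.0)
        keep_snps = ((np.isnan(G).mean(axis=0) <= max_snp_missing)
                     & (maf >= min_maf) & (p_hwe >= min_hwe_p))
    return G[:, keep_snps], keep_samples, keep_snps

# Toy data: column 1 is monomorphic, column 2 has no heterozygotes,
# and column 3 is missing in 10% of samples
rng = np.random.default_rng(3)
G = rng.binomial(2, 0.3, size=(200, 10)).astype(float)
G[:, 1] = 0.0
G[:, 2] = np.where(np.arange(200) < 100, 0.0, 2.0)
G[:20, 3] = np.nan
Gq, keep_samples, keep_snps = genotype_qc(G)
```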

Core PCA Implementation

Algorithm Selection: For large genotype matrices (n > 10,000), randomized SVD algorithms provide the best balance of computational efficiency and accuracy. These algorithms approximate the top principal components without computing the full covariance matrix, using random projections to identify the subspace containing the dominant eigenvectors [31]. When only a few leading PCs are needed (typically the case for population structure visualization), randomized methods can halve the running time required compared to traditional approaches [31].
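Here is a bare-bones randomized SVD, in the style of Halko, Martinsson and Tropp, illustrating how random projections recover the leading subspace. It uses oversampling plus a few power iterations. It is an assumption-laden sketch (oversampling of 10, four power iterations, simulated three-population genotypes), not the algorithm used by randPedPCA or any particular package, and `randomized_pca` is a hypothetical name. Production code would typically use a tested routine such as `sklearn.utils.extmath.randomized_svd`.

```python
import numpy as np

def randomized_pca(X, k, oversample=10, n_iter=4, seed=0):
    """Hypothetical helper: approximate top-k PCs of a column-centered matrix X."""
    rng = np.random.default_rng(seed)
    omega = rng.standard_normal((X.shape[1], k + oversample))
    Y = X @ omega                            # random samples from the column space of X
    for _ in range(n_iter):                  # power iterations sharpen the spectral gap
        Q, _ = np.linalg.qr(Y)               # re-orthonormalize for numerical stability
        Y = X @ (X.T @ Q)
    Q, _ = np.linalg.qr(Y)                   # orthonormal basis for the dominant subspace
    B = Q.T @ X                              # small (k + oversample) x p matrix
    Ub, s, Vt = np.linalg.svd(B, full_matrices=False)
    scores = (Q @ Ub[:, :k]) * s[:k]         # PC scores for the n samples
    return scores, s[:k], Vt[:k]

# Simulated genotypes for three hypothetical populations
rng = np.random.default_rng(1)
n_per, p = 100, 1000
freqs = rng.uniform(0.1, 0.9, size=(3, p))
pop = np.repeat([0, 1, 2], n_per)
G = rng.binomial(2, freqs[pop]).astype(float)
X = G - G.mean(axis=0)

scores, s_rand, _ = randomized_pca(X, k=2)
s_full = np.linalg.svd(X, compute_uv=False)   # exact reference, feasible only for small X
```

The expensive operations are products with `X` and `X.T` against thin matrices. The method therefore never needs the $p \times p$ covariance matrix or a full decomposition.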

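Variance Standardization: For visualization of population structure, many analyses use the genotype correlation matrix (equivalently, PCA on markers scaled to unit variance) rather than the covariance matrix. This equalizes contributions across markers with different allele frequencies, so that common variants, which have the largest genotype variance, do not dominate the leading components. Because scaling up-weights rare variants, whose estimated frequencies are noisy, it is usually paired with the minor allele frequency filter described above.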

Figure 1: Linear PCA workflow for genotype data

Special Considerations for Large Pedigrees

For pedigree data represented by the additive relationship matrix $A$, efficient PCA can be performed without explicitly constructing this dense matrix by leveraging the sparse Cholesky factor $L^{-1}$ of $A^{-1}$ [31]. The randPedPCA package implements this approach, computing matrix-vector products with $A$ by solving triangular systems with $L^{-1}$, which requires only $O(n)$ operations [31]. This allows PCA on pedigrees with millions of individuals using standard computational resources.
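SciPy is enough to sketch the sparse-factor trick. Under Henderson's decomposition $A = TDT^\top$, the inverse factor is $L^{-1} = D^{-1/2}(I - P)$, where $P$ holds $1/2$ in each known-parent column. It has at most three non-zeros per row, so each product $Av = L(L^\top v)$ costs two sparse triangular solves. This is an illustrative sketch, not randPedPCA's code. It assumes that parents precede their offspring in the ordering and that parents are not themselves inbred, which fixes the Mendelian sampling variances in $D$. It also uses a toy six-animal pedigree, and the helper names are hypothetical.

```python
import numpy as np
import scipy.sparse as sp
from scipy.sparse.linalg import LinearOperator, eigsh, spsolve_triangular

def pedigree_Linv(sire, dam):
    """Hypothetical helper: sparse L^{-1} = D^{-1/2} (I - P), with A = L L^T.

    sire, dam: 0-based parent indices (-1 if unknown); parents precede offspring.
    ASSUMPTION: parents are not inbred, so the Mendelian sampling variance
    d_i is 0.5 (both parents known), 0.75 (one known) or 1.0 (none known).
    """
    n = len(sire)
    rows, cols, vals = [], [], []
    for i in range(n):
        known = [int(q) for q in (sire[i], dam[i]) if q >= 0]
        scale = 1.0 / np.sqrt({2: 0.5, 1: 0.75, 0: 1.0}[len(known)])
        rows.append(i)
        cols.append(i)
        vals.append(scale)
        for q in known:                       # -1/2 in each known parent's column
            rows.append(i)
            cols.append(q)
            vals.append(-0.5 * scale)
    return sp.csr_matrix((vals, (rows, cols)), shape=(n, n))

def relationship_operator(Linv, center=True):
    """A (optionally double-centered) as a LinearOperator; A itself is never formed."""
    n = Linv.shape[0]
    Linv_T = Linv.T.tocsr()

    def matvec(v):
        v = np.ravel(v).astype(float)
        if center:
            v = v - v.mean()
        y = spsolve_triangular(Linv_T, v, lower=False)   # L^{-T} y = v, so y = L^T v
        z = spsolve_triangular(Linv, y, lower=True)      # L^{-1} z = y, so z = L y = A v
        return z - z.mean() if center else z

    return LinearOperator((n, n), matvec=matvec, dtype=float)

# Toy pedigree: 0, 1, 3 are founders; 2 = 0 x 1; 4 = 2 x 3; 5 = 2 x 1
sire = np.array([-1, -1, 0, -1, 2, 2])
dam = np.array([-1, -1, 1, -1, 3, 1])
Linv = pedigree_Linv(sire, dam)
A_op = relationship_operator(Linv, center=False)
A_dense = np.column_stack([A_op.matvec(e) for e in np.eye(len(sire))])  # check only

# Leading PCs of the centered relationship matrix, without ever forming A
vals, vecs = eigsh(relationship_operator(Linv), k=2, which="LA")
```

Building `A_dense` is only a check on this toy example. At scale, only `Linv` and the operator would be kept in memory.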

Advanced Methodological Considerations

Handling Missing Data in Ancient DNA

Ancient DNA datasets present unique challenges with extreme missingness (often <1% of SNPs observed). Standard PCA projection methods like SmartPCA can produce misleading results when missingness patterns correlate with true population structure [32]. The TrustPCA framework addresses this by quantifying projection uncertainty through a probabilistic model that estimates the distribution of possible PC coordinates given the observed SNPs [32]. This approach reveals when PCA placements are statistically robust versus highly uncertain due to sparse data.
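A small simulation illustrates two things: projecting a sample observed at only a subset of SNPs, and the growth in uncertainty as coverage falls. This is a resampling sketch, not TrustPCA's probabilistic model. It projects onto reference loadings by least squares using only the observed SNPs, in the spirit of SmartPCA's least-squares projection. It then measures how much the resulting PC coordinates spread under random SNP masking. The helper names, the simulated two-population panel, and the coverage levels are assumptions.

```python
import numpy as np

def project_observed(x, V, observed):
    """Least-squares PC coordinates of one standardized sample from observed SNPs only."""
    coords, *_ = np.linalg.lstsq(V[observed], x[observed], rcond=None)
    return coords

def projection_spread(x, V, frac_observed, n_rep=200, seed=0):
    """Hypothetical helper: SD of PC coordinates under random SNP masking."""
    rng = np.random.default_rng(seed)
    p = len(x)
    m = max(V.shape[1], int(round(frac_observed * p)))
    draws = [project_observed(x, V, rng.choice(p, size=m, replace=False))
             for _ in range(n_rep)]
    return np.std(draws, axis=0)

# Hypothetical reference panel: two populations, 2,000 SNPs
rng = np.random.default_rng(5)
n, p = 100, 2000
pop = np.repeat([0, 1], n // 2)
G = rng.binomial(2, rng.uniform(0.1, 0.9, size=(2, p))[pop]).astype(float)
f = G.mean(axis=0) / 2
keep = (f > 0) & (f < 1)                       # drop monomorphic SNPs before scaling
Z = (G[:, keep] - 2 * f[keep]) / np.sqrt(2 * f[keep] * (1 - f[keep]))
_, _, Vt = np.linalg.svd(Z, full_matrices=False)
V = Vt[:2].T                                    # loadings of the first two PCs

x = Z[0]
full = project_observed(x, V, np.arange(len(x)))   # all SNPs observed
sd_half = projection_spread(x, V, 0.5)
sd_one_percent = projection_spread(x, V, 0.01)
```

Least squares matters here. A plain dot product with the observed loadings would shrink coordinates toward the origin in proportion to the missing fraction, whereas the least-squares fit rescales for it.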

Federated PCA for Privacy-Preserving Analysis

Multi-institutional genomic studies require privacy-preserving methods. SF-GWAS implements secure federated PCA using a hybrid homomorphic encryption and secure multiparty computation framework [34]. This approach performs PCA on distributed datasets without sharing raw genotypes, achieving runtimes of 44 hours for UK Biobank-scale data (n=275,812) while providing cryptographic privacy guarantees [34]. Federated PCA produces results virtually identical to pooled analysis, overcoming limitations of meta-analysis approaches that can produce biased results with heterogeneous datasets [34].
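A federated analysis can reproduce the pooled result because the covariance matrix decomposes into per-site sums. The sketch below shows only that algebra, with plaintext summaries and no encryption or secure computation, so it offers none of SF-GWAS's privacy guarantees. Sharing a full $p \times p$ Gram matrix would also be impractical at genome scale. The function names and the three simulated sites are hypothetical.

```python
import numpy as np

def local_summaries(X):
    """Per-site statistics: sample count, column sums, and the p x p Gram matrix."""
    return X.shape[0], X.sum(axis=0), X.T @ X

def federated_covariance(summaries):
    """Pooled sample covariance rebuilt from per-site sums (no privacy protection)."""
    n = sum(s[0] for s in summaries)
    total = sum(s[1] for s in summaries)
    gram = sum(s[2] for s in summaries)
    mean = total / n
    # sum_i (x_i - mean)(x_i - mean)^T = sum_i x_i x_i^T - n * mean mean^T
    return (gram - n * np.outer(mean, mean)) / (n - 1)

# Three hypothetical sites holding different numbers of samples
rng = np.random.default_rng(11)
sites = [rng.binomial(2, 0.3, size=(m, 50)).astype(float) for m in (40, 25, 35)]

C_fed = federated_covariance([local_summaries(X) for X in sites])
C_pooled = np.cov(np.vstack(sites), rowvar=False)
evals, evecs = np.linalg.eigh(C_fed)          # PCA from the federated covariance
```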

Dimensionality Reduction for Genomic Prediction

In genomic selection, PCA and other dimensionality reduction (DR) methods serve as valuable pre-processing steps before prediction modeling. Studies evaluating DR for genomic prediction found that only a fraction of the features was sufficient to achieve maximum prediction accuracy, regardless of the DR method used [35]. This suggests that carefully implemented linear PCA can capture most of the genetically relevant variation while dramatically reducing computational demands for downstream prediction tasks.
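One way to check how few components suffice is to put PCA in front of a prediction model and sweep the number of components under cross-validation. This scikit-learn sketch pairs PCA with ridge regression on simulated additive genotypes. The trait model, the candidate component counts, and the ridge penalty are assumptions, and the resulting scores say nothing about real breeding populations.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import Ridge
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline

# Simulated genotypes and an additive trait with heritability of about 0.5
rng = np.random.default_rng(2)
n, p = 300, 1000
G = rng.binomial(2, rng.uniform(0.05, 0.5, size=p), size=(n, p)).astype(float)
beta = np.zeros(p)
causal = rng.choice(p, size=50, replace=False)
beta[causal] = rng.normal(size=50)
g = G @ beta
y = g + rng.normal(scale=g.std(), size=n)

results = {}
for k in (10, 50, 150):
    # PCA is refit inside each training fold, so test folds do not leak into the PCs
    model = make_pipeline(PCA(n_components=k), Ridge(alpha=1.0))
    results[k] = cross_val_score(model, G, y, cv=5, scoring="r2").mean()
```

Keeping PCA inside the pipeline matters. Fitting it once on all samples before cross-validation would let the held-out individuals shape the components.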

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software Tools for Genomic PCA Implementation

| Tool | Function | Application Context |
|---|---|---|
| randPedPCA (R) | Rapid pedigree PCA using sparse matrices | Large pedigree visualization [31] |
| SF-GWAS | Secure federated PCA for GWAS | Multi-institutional genomic studies [34] |
| TrustPCA | Uncertainty quantification for PCA projections | Ancient DNA with extensive missingness [32] |
| KSRV | Kernel PCA for spatial transcriptomics | Spatial RNA velocity inference [33] |
| ktest | Kernel testing for single-cell differential analysis | Identifying subtle population heterogeneity [30] |
| PLINK | Standardized genotype QC and PCA | General population genetics [34] |