Mastering Gene Expression Analysis with MATLAB PCA: A Comprehensive Guide for Biomedical Research

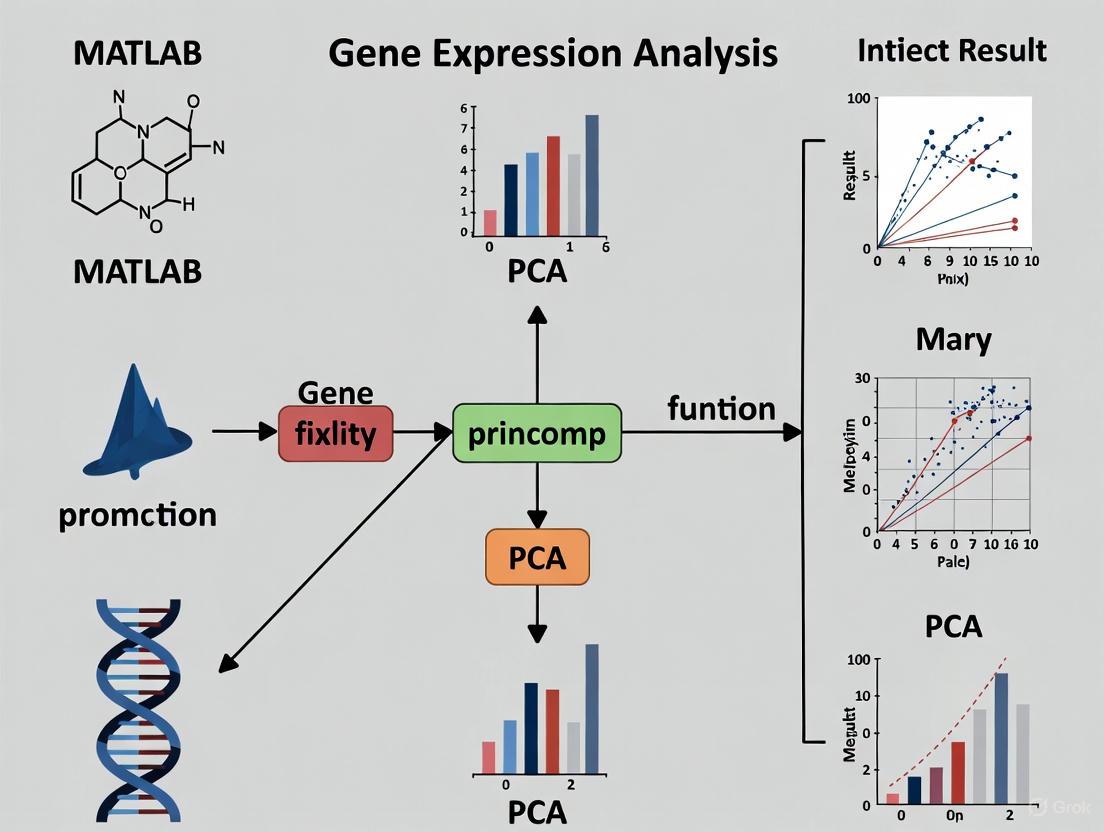

Abstract

This comprehensive guide explores the application of Principal Component Analysis (PCA) in MATLAB for analyzing high-dimensional gene expression data. Tailored for researchers, scientists, and drug development professionals, the article covers foundational PCA concepts, step-by-step implementation workflows, advanced troubleshooting techniques, and validation frameworks. Drawing from real gene expression case studies and current methodologies, it demonstrates how PCA enables dimensionality reduction, pattern discovery, and biomarker identification in genomic research. The content addresses critical challenges including data preprocessing, computational optimization, and integration with other bioinformatics tools, providing a complete resource for extracting meaningful biological insights from complex expression datasets.

Understanding PCA Fundamentals for Genomic Data Exploration

Theoretical Foundation of PCA

Principal Component Analysis (PCA) is a powerful statistical method for simplifying complex datasets. It operates by transforming multiple potentially correlated variables into a smaller set of uncorrelated variables called principal components (PCs). These components are linear combinations of the original variables and are ordered so that the first few retain most of the variation present in the original dataset [1]. In mathematical terms, PCA identifies the eigenvectors and eigenvalues of the data covariance matrix, where the eigenvectors (principal components) indicate directions of maximum variance, and the eigenvalues quantify the amount of variance carried by each component [2].

In biomedical research, this dimensionality reduction is particularly valuable for analyzing high-dimensional data where the number of variables (e.g., genes, proteins, metabolic markers) far exceeds the number of observations (the "large d, small n" problem) [2]. PCA helps researchers visualize high-dimensional data, identify patterns, detect outliers, and uncover hidden structures without prior knowledge of sample classes [3] [1]. The principal components themselves are often referred to as "metagenes," "super genes," or "latent genes" in genomic studies, as they effectively capture coordinated biological variation across multiple molecular entities [2].
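The eigenvector and eigenvalue description above can be traced by hand on a small matrix. The following is a minimal sketch in base MATLAB, assuming a made-up 6 × 3 data matrix; the variable names mirror the outputs discussed later in this guide but are otherwise illustrative.

```matlab
% Synthetic data: 6 observations (rows) x 3 variables (columns); values are made up
X = [2.5 2.4 0.5;
     0.5 0.7 1.9;
     2.2 2.9 0.8;
     1.9 2.2 1.1;
     3.1 3.0 0.3;
     2.3 2.7 0.9];

mu = mean(X, 1);                              % column means
Xc = X - repmat(mu, size(X, 1), 1);           % mean-centre each variable

C = cov(X);                                   % sample covariance matrix (p x p)
[V, D] = eig(C);                              % eigenvectors and eigenvalues of C
[latent, order] = sort(diag(D), 'descend');   % largest variance first
coeff = V(:, order);                          % principal component directions
score = Xc * coeff;                           % data expressed in the new axes

explained = 100 * latent / sum(latent);       % percent variance per component
```

The scores are uncorrelated by construction: `cov(score)` is diagonal, with the sorted eigenvalues on the diagonal.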

PCA in Practice: A MATLAB-Centric Workflow for Gene Expression Analysis

Data Preparation and Preprocessing

The initial phase of PCA involves meticulous data preparation to ensure meaningful results. For gene expression analysis, this begins with loading the dataset, typically containing expression values (often log2 ratios), gene names, and experimental time points or conditions [4]. A critical preprocessing step involves filtering to remove uninformative genes and handle missing values, as microarray data often contains empty spots marked as 'EMPTY' and missing measurements represented as NaN [4] [5].

Essential filtering steps include:

- Removing empty spots: `emptySpots = strcmp('EMPTY',genes);`
- Eliminating genes with missing values: `nanIndices = any(isnan(yeastvalues),2);`
- Applying variance filters to remove genes with small variance: `mask = genevarfilter(yeastvalues);`
- Low absolute value filtering: `genelowvalfilter(yeastvalues,genes,'absval',log2(3));`
- Entropy-based filtering: `geneentropyfilter(yeastvalues,genes,'prctile',15);` [4] [5]

These filtering steps dramatically reduce dataset size—from 6,400 genes to approximately 614 informative genes in the yeast data example—while retaining biologically relevant information related to the phenomenon under investigation (e.g., metabolic shifts) [4].
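The first three filtering steps can be sketched without any toolbox on a toy matrix. This is only an illustration: the gene labels and values are invented, and the fixed variance cutoff is a hand-rolled stand-in for genevarfilter rather than its actual behavior.

```matlab
% Toy stand-ins for the yeast variables (labels and values are illustrative only)
genes = {'G1'; 'EMPTY'; 'G2'; 'G3'; 'G4'; 'G5'};
yeastvalues = [ 0.10  0.90  1.80  2.50;    % G1: strong change over time
                0.00  0.10  0.00  0.10;    % EMPTY: control spot
                0.20  NaN   0.40  0.50;    % G2: one missing measurement
                0.30  0.31  0.30  0.29;    % G3: nearly flat profile
               -1.20 -0.40  0.60  1.50;    % G4: strong change over time
                0.50  0.10 -0.60 -1.10];   % G5: strong change over time

% 1) Drop spots labelled 'EMPTY'
emptySpots = strcmp('EMPTY', genes);
yeastvalues(emptySpots, :) = [];
genes(emptySpots) = [];

% 2) Drop genes with any missing value
nanIndices = any(isnan(yeastvalues), 2);
yeastvalues(nanIndices, :) = [];
genes(nanIndices) = [];

% 3) Variance filter: keep genes whose variance across time points exceeds a cutoff
v = var(yeastvalues, 0, 2);
cutoff = 0.01;                             % hypothetical threshold for this toy data
mask = v > cutoff;
yeastvalues = yeastvalues(mask, :);
genes = genes(mask);
```

On this toy input only G1, G4, and G5 survive: the control spot, the gene with a missing value, and the flat profile are removed in turn.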

Implementing PCA with princomp

In MATLAB, PCA can be performed using the princomp function. The basic syntax is `[coeff,score,latent,tsquared] = princomp(a)`, where:

- `a` is the input data matrix (observations × variables)
- `coeff` contains principal component coefficients (loadings)
- `score` holds the principal component scores
- `latent` stores the eigenvalues (variances of principal components)
- `tsquared` contains Hotelling's T-squared statistic for each observation [6]

In newer MATLAB releases princomp is superseded by the pca function, which returns the same four outputs first.

Critical Implementation Note: The princomp function assumes rows represent observations. For gene expression data where rows typically represent genes and columns represent samples, the matrix must be transposed (for example, `princomp(a')`) whenever samples are the observations of interest [6]. MATLAB computes PCA using singular value decomposition (SVD), the same algorithm used by most statistical software [6].
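The effect of data orientation, and the SVD route mentioned above, can be illustrated in base MATLAB. This is a sketch under stated assumptions: the 5 × 4 matrix `expr` is invented, and the code reproduces the textbook SVD computation rather than the internals of princomp or pca.

```matlab
% Toy expression matrix: 5 genes (rows) x 4 samples (columns); values are made up
expr = [1.0 2.0 3.0 4.0;
        2.1 3.9 6.2 8.0;
        0.5 0.4 0.6 0.5;
        4.0 3.1 1.9 1.0;
        1.0 1.2 0.9 1.1];

X = expr';                          % transpose: samples become rows (observations)
[n, p] = size(X);                   % n = 4 samples, p = 5 genes

mu = mean(X, 1);
Xc = X - repmat(mu, n, 1);          % centre each gene across samples

[U, S, V] = svd(Xc, 'econ');        % economy-size SVD of the centred data
coeff  = V;                         % loadings: one row per gene
score  = U * S;                     % scores: one row per sample
latent = diag(S).^2 / (n - 1);      % component variances
```

With samples as rows, `coeff` has one row per gene and `score` one row per sample. Without the transpose the roles swap and the components describe time points or samples instead of genes.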

Table 1: MATLAB PCA Function Outputs and Interpretation

| Output Variable | Mathematical Meaning | Biological Interpretation |
|---|---|---|
| `coeff` (loadings) | Principal component coefficients | Influence of original genes on each PC |
| `score` | Projection of data into PC space | Sample positions in new coordinate system |
| `latent` (eigenvalues) | Variances of principal components | Amount of variance explained by each PC |
| `tsquared` | Hotelling's T-squared statistic | Multivariate distance from each observation to center |

Advanced PCA Applications in MATLAB

Beyond basic implementation, MATLAB supports advanced PCA applications:

Weighted PCA: Incorporates variable weights, often the inverse variable variances, through the 'VariableWeights' option of pca.

Handling Missing Data with ALS: The Alternating Least Squares (ALS) algorithm, selected with the 'algorithm','als' option of pca, handles datasets with missing values.

Data Normalization for PCA: Proper normalization, for example with zscore, ensures each variable contributes equally.
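The three options above might be exercised as follows. This sketch assumes the Statistics and Machine Learning Toolbox is installed (for pca and zscore) and uses an invented 8 × 3 matrix whose columns sit on very different scales.

```matlab
% Requires Statistics and Machine Learning Toolbox (pca, zscore).
% Toy data: 8 observations x 3 variables on very different scales (made up).
X = [1.0 200 0.10;
     2.0 180 0.40;
     3.0 260 0.35;
     4.0 240 0.80;
     5.0 310 0.70;
     6.0 290 1.10;
     7.0 350 1.00;
     8.0 330 1.50];

% Weighted PCA: weight each variable by the inverse of its sample variance
[wcoeff, wscore, wlatent] = pca(X, 'VariableWeights', 'variance');

% Closely related alternative: standardise first, then run ordinary PCA
Z = zscore(X);
[zcoeff, zs, zlatent] = pca(Z);

% Missing data: ALS estimates components without discarding incomplete rows
Xmiss = X;
Xmiss(2, 3) = NaN;                       % pretend one measurement is missing
[acoeff, ascore] = pca(Xmiss, 'Algorithm', 'als');
```

Inverse-variance weighting and prior standardization should give the same component variances, since both amount to PCA on the correlation matrix. The weighted coefficients in `wcoeff` are not orthonormal, so they are not directly interchangeable with `zcoeff`.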

Visualization and Interpretation of Results

Visualizing Principal Components

Effective visualization is crucial for interpreting PCA results. The principal component scores can be visualized using scatter plots, for example `scatter(score(:,1),score(:,2))`.

A scree plot displays the variance explained by each principal component and helps determine how many components to retain.

Biplots combine both the scores (observations) and loadings (variables) in a single plot, showing how original variables contribute to the principal components and how observations are positioned relative to these components [8].
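A score plot and a scree plot can be drawn with base graphics alone. The sketch below uses invented scores and variances in place of real PCA output, and creates the figure off-screen so it also runs in batch mode.

```matlab
% Toy scores and variances standing in for the outputs of a PCA run (made up)
score  = [-2.1  0.3;
          -1.0 -0.5;
           0.2  0.9;
           1.1 -0.4;
           1.8 -0.3];
latent = [2.5; 0.4; 0.1];

explained = 100 * latent / sum(latent);      % percent variance per component

fig = figure('Visible', 'off');              % off-screen figure

subplot(1, 2, 1);
scatter(score(:,1), score(:,2), 36, 'filled');
xlabel('PC1'); ylabel('PC2'); title('Score plot');

subplot(1, 2, 2);
plot(1:numel(explained), explained, 'o-');   % variance against component index
xlabel('Principal component'); ylabel('Variance explained (%)');
title('Scree plot');
```

In practice the points in the score plot would be colored by experimental condition, and the elbow of the scree curve read off as a candidate cutoff.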

Interpreting Variance Contributions

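The proportion of variance explained by each principal component indicates its relative importance in capturing dataset structure. It is each eigenvalue divided by the sum of all eigenvalues, expressed as a percentage: `explained = latent./sum(latent)*100`.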

Table 2: Variance Explanation in PCA (Example from Yeast Data)

| Principal Component | Variance Explained (%) | Cumulative Variance (%) |
|---|---|---|
| PC1 | 79.83 | 79.83 |
| PC2 | 9.59 | 89.42 |
| PC3 | 4.08 | 93.50 |
| PC4 | 2.65 | 96.14 |
| PC5 | 2.17 | 98.32 |
| PC6 | 0.97 | 99.29 |
| PC7 | 0.71 | 100.00 |

Data derived from [4]

In practice, the first few components (typically 2-4) often capture the majority of biologically relevant information, though this varies by dataset [3]. For the yeast diauxic shift data, the first two components explain nearly 90% of total variance [4], while in larger human tissue datasets, the first three components typically explain approximately 36% of variability [3].
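The cumulative column of Table 2 and a variance-based cutoff follow directly from the per-component percentages. A short sketch, with the 90% and 95% targets chosen here purely for illustration:

```matlab
% Percent variance per component, copied from Table 2 (yeast example)
explained = [79.83 9.59 4.08 2.65 2.17 0.97 0.71];

cumulative = cumsum(explained);          % running total across components
k90 = find(cumulative >= 90, 1);         % first component count reaching 90%
k95 = find(cumulative >= 95, 1);         % first component count reaching 95%

fprintf('Components needed for 90%%: %d, for 95%%: %d\n', k90, k95);
```

Two components stop just short of 90% (89.42%), so a strict 90% rule keeps three components and a 95% rule keeps four.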

Biological Interpretation of Components

Interpreting principal components biologically requires identifying which original variables (genes) contribute most strongly to each component. Genes with high absolute loading values (typically above 0.4 to 0.5) on a particular PC are considered influential [8]. Researchers then examine these genes for common biological functions, pathway membership, or regulatory elements.

In the yeast diauxic shift example, the 15th gene (YAL054C, ACS1) showed strong up-regulation during the metabolic shift, representing a biologically meaningful pattern captured by PCA [4]. In clinical CAH (congenital adrenal hyperplasia) studies, PCA successfully differentiated patient subtypes and treatment efficacy based on endocrine profiles [9].

Experimental Design and Protocol

Complete PCA Workflow for Gene Expression Analysis

[Figure: PCA Workflow for Gene Expression Data]

Step-by-Step Experimental Protocol

Step 1: Data Acquisition and Initialization

- Load gene expression data into MATLAB workspace: `load yeastdata.mat`
- Verify data structure: `numel(genes)` returns number of genes
- Check data dimensions: `size(yeastvalues)` should match genes × time points [4]

Step 2: Data Cleaning and Filtering

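- Remove empty spots: `emptySpots = strcmp('EMPTY',genes);`
- Eliminate genes with missing values: `nanIndices = any(isnan(yeastvalues),2);`
- Apply variance filter: `mask = genevarfilter(yeastvalues);`
- Implement low absolute value filter: `genelowvalfilter(yeastvalues,genes,'absval',log2(3));`
- Execute entropy-based filtering: `geneentropyfilter(yeastvalues,genes,'prctile',15);` [4] [5]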

Step 3: Data Normalization and PCA Computation

- Normalize data to zero mean and unit variance (for example, with zscore or mapstd)
- Perform PCA with a variance retention threshold
- Alternatively, compute PCA directly on the filtered data [4] [5]
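Step 3 can be sketched in base MATLAB as standardization followed by PCA and a retention cutoff. The data matrix and the 95% threshold are assumptions made for illustration; with the Statistics and Machine Learning Toolbox, zscore and pca would replace the manual lines.

```matlab
% Toy matrix: 6 samples (rows) x 4 genes (columns); values are made up
X = [1.0 10 0.20 5.0;
     2.0 12 0.10 4.0;
     3.0 15 0.40 3.5;
     4.0 19 0.30 2.0;
     5.0 20 0.50 1.5;
     6.0 24 0.45 0.5];
n = size(X, 1);

% Standardise each gene to zero mean and unit variance
Z = (X - repmat(mean(X, 1), n, 1)) ./ repmat(std(X, 0, 1), n, 1);

[U, S, V] = svd(Z, 'econ');                    % PCA of the standardised data
latent = diag(S).^2 / (n - 1);                 % component variances
explained = 100 * latent / sum(latent);        % percent variance per component

threshold = 95;                                % hypothetical retention target (%)
k = find(cumsum(explained) >= threshold, 1);   % smallest k reaching the target
coeffK = V(:, 1:k);                            % retained loadings
scoreK = Z * coeffK;                           % retained scores
```

Because the data are standardized, the component variances sum to the number of genes, which is a quick sanity check on the normalization.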

Step 4: Component Selection and Validation

- Calculate variance proportions: `explained = latent./sum(latent)*100;`
- Generate scree plot: `pareto(explained);`
- Determine optimal component count using the eigenvalue >1 criterion (Kaiser's rule), which applies to PCA on standardized variables [9] [8]
- Validate component stability through bootstrap resampling
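Kaiser's rule is easiest to see on a correlation matrix whose eigenvalues are known in advance. The matrix below is hypothetical: four genes forming two correlated pairs, with no correlation between the pairs.

```matlab
% Hypothetical correlation matrix: genes 1-2 correlate at 0.8, genes 3-4 at 0.6
R = [1.0 0.8 0.0 0.0;
     0.8 1.0 0.0 0.0;
     0.0 0.0 1.0 0.6;
     0.0 0.0 0.6 1.0];

ev = sort(eig(R), 'descend');     % eigenvalues of the correlation matrix
nKaiser = sum(ev > 1);            % Kaiser's rule: keep components with eigenvalue > 1
```

Each 2 × 2 block contributes eigenvalues 1 ± r, so the spectrum is 1.8, 1.6, 0.4, 0.2 and the rule retains two components, one per correlated pair. On real data the eigenvalues would come from `corrcoef` applied to the expression matrix.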

Step 5: Visualization and Interpretation

- Create 2D score plots: `scatter(score(:,1),score(:,2));`
- Generate biplots: `biplot(coeff(:,1:2),'Scores',score(:,1:2));`
- Identify high-loading genes: `[~,idx] = sort(abs(coeff(:,1)),'descend');`
- Perform functional enrichment on high-loading genes
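The ranking step in Step 5 can be extended to report gene names alongside signed loadings. In this sketch the gene names and the loading vector are invented, and `topN` is a hypothetical reporting limit.

```matlab
% Hypothetical gene names and a toy loading vector for PC1 (values are made up)
genes = {'GENE_A'; 'GENE_B'; 'GENE_C'; 'GENE_D'; 'GENE_E'};
coeff = [0.10; -0.70; 0.05; 0.65; -0.26];      % first column of a loading matrix

[~, idx] = sort(abs(coeff(:,1)), 'descend');   % rank genes by |loading| on PC1
topN = 2;                                      % hypothetical number of genes to report
topGenes = genes(idx(1:topN));
topLoadings = coeff(idx(1:topN), 1);           % keep the sign: direction of contribution

for i = 1:topN
    fprintf('%s\t%+.2f\n', topGenes{i}, topLoadings(i));
end
```

Sorting on the absolute value keeps strongly negative loadings in view. Their sign indicates that the gene moves opposite to the positively loaded genes along that component.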

Research Reagent Solutions

Table 3: Essential Computational Tools for PCA in Gene Expression Research

| Tool/Resource | Function | Implementation in MATLAB |
|---|---|---|
| Bioinformatics Toolbox | Specialized functions for genomic data | Required for genevarfilter, genelowvalfilter |
| Statistics and Machine Learning Toolbox | Core statistical algorithms | Provides pca, princomp functions |
| Gene Expression Data | Primary research material | Import from GEO, ArrayExpress |
| Quality Control Metrics | Data reliability assessment | RLE (Relative Log Expression) [3] |
| Normalization Algorithms | Data standardization | zscore, mapstd functions |
| Visualization Packages | Results presentation | scatter, pareto, biplot |

Applications in Biomedical Research

PCA has diverse applications across biomedical domains, each leveraging its dimensionality reduction capabilities:

Exploratory Data Analysis and Visualization: PCA enables researchers to visualize high-dimensional gene expression data in 2D or 3D spaces, revealing sample clusters, outliers, and patterns without prior hypotheses [2]. For example, PCA of global human gene expression datasets consistently separates hematopoietic cells, neural tissues, and cell lines along the first three components [3].

Clustering and Sample Stratification: By reducing dimensionality while preserving biological variation, PCA facilitates more robust clustering of samples or genes. The principal component scores can be used as input for clustering algorithms like K-means or hierarchical clustering, often yielding more biologically meaningful partitions than raw data [4].

Regression Analysis for Predictive Modeling: In pharmacogenomic studies, PCA addresses multicollinearity when predicting clinical outcomes from genomic profiles. Principal components serve as uncorrelated predictors in regression models, enabling stable parameter estimation even with high-dimensional data [8] [2].

Biomarker Discovery and Signature Development: PCA helps identify coordinated gene expression patterns that differentiate disease states or treatment responses. In congenital adrenal hyperplasia, PCA-derived "endocrine profiles" successfully predicted treatment efficacy with 80-92% accuracy [9].

Data Quality Assessment: PCA components often capture technical artifacts, such as batch effects or RNA degradation, enabling quality control and normalization. The fourth PC in some gene expression datasets correlates with array quality metrics rather than biological variables [3].

Technical Considerations and Limitations

Critical Implementation Factors

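Data Distribution Assumptions: PCA is often described as assuming normally distributed data. Strictly, it relies only on means and covariances, which summarize the data most completely when it is approximately normal, and it is robust to moderate departures. For severely non-normal data, transformations (log, rank) may improve performance [2].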

Missing Data Strategies: Options include complete-case analysis, imputation (mean, median, k-nearest neighbors), or specialized algorithms like PCA-ALS [7].

Scaling and Centering: Proper normalization is essential when variables have different measurement units. Mean-centering ensures PC directions maximize variance, while scaling (unit variance) prevents dominance by high-variance variables [8].

Component Selection Criteria: No universal rule exists for determining how many components to retain. Common approaches include:

- Kaiser's criterion (eigenvalue >1)

- Scree plot inflection point

- Proportion of variance explained (e.g., >70-90%)

- Cross-validation predictive accuracy [8]

Methodological Limitations

Linear Assumption: PCA captures only linear relationships between variables. Nonlinear dimensionality reduction techniques (t-SNE, UMAP) may be preferable for complex data structures [3].

Variance-Biased Interpretation: PCA prioritizes high-variance directions, which may not always align with biologically important signals, particularly when relevant signals have small effect sizes [3] [1].

Sample Composition Sensitivity: PCA results depend heavily on dataset composition. Rare cell types or conditions may be overlooked unless sufficiently represented [3]. In one study, liver-specific patterns only emerged in PC4 when liver samples comprised adequate proportions (>3.9%) of the dataset [3].

Interpretation Challenges: While PCA reduces dimensionality, interpreting biological meaning from principal components requires additional analysis, as each component represents complex combinations of original variables [1].

Advanced PCA Extensions

Several PCA variants address specific analytical challenges:

Sparse PCA: Incorporates regularization to produce components with fewer non-zero loadings, enhancing interpretability by focusing on key variables [2].

Supervised PCA: Guides component identification using outcome variables, improving relevance for predictive modeling [2].

Functional PCA: Adapted for time-course gene expression data, capturing dynamic patterns across experimental time points [2].

Rough PCA: Integrates rough set theory with PCA for improved feature selection in classification tasks [10].

Troubleshooting and Optimization

Common Implementation Issues

Incorrect Data Orientation: Ensure the data matrix has observations as rows and variables as columns before applying princomp [6].

Missing Value Handling: Choose appropriate strategies based on missing data mechanism and extent. The 'pairwise' option in MATLAB's pca function uses available data for each variable pair but may produce non-positive definite covariance matrices [7].

Component Instability: With small sample sizes or high noise, components may vary across samples. Consider bootstrap validation to assess component reliability.
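One way to run such a bootstrap check is to resample the observations and compare the first component of each replicate against the full-data reference. This sketch uses deterministic toy data with one dominant trend; the replicate count `nBoot` is an arbitrary choice.

```matlab
% Toy data: 20 samples x 3 genes with one dominant shared trend (made up)
t = (1:20)';
X = [t, 2*t + sin(t), 0.5*cos(t)];
n = size(X, 1);

% Reference PC1 from the full data
Xc = X - repmat(mean(X, 1), n, 1);
[~, ~, V] = svd(Xc, 'econ');
pc1 = V(:, 1);

nBoot = 200;                           % hypothetical number of bootstrap replicates
similarity = zeros(nBoot, 1);
for b = 1:nBoot
    idx = randi(n, n, 1);              % resample samples with replacement
    Xb = X(idx, :);
    Xb = Xb - repmat(mean(Xb, 1), n, 1);
    [~, ~, Vb] = svd(Xb, 'econ');
    % |cosine| between bootstrap PC1 and reference PC1 (the sign of a PC is arbitrary)
    similarity(b) = abs(Vb(:, 1)' * pc1);
end

stability = median(similarity);        % values near 1 suggest a stable component
```

A median close to 1 indicates that the component direction barely moves under resampling. Values that scatter widely suggest the component reflects noise or a handful of influential samples.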

Interpretation Difficulty: When biological interpretation proves challenging, try:

- Rotating components (varimax, promax)

- Focusing on genes with extreme loading values

- Pathway enrichment analysis of high-loading genes

- Comparing with known biological patterns

Performance Optimization

Computational Efficiency: For very large datasets (>10,000 variables), consider:

- Randomized SVD algorithms

- Initial variable filtering

- Subsampling approaches

- Sparse PCA implementations
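The randomized SVD idea from the list above can be sketched in a few lines: project onto a small random subspace, orthonormalize, and run an exact SVD on the much smaller projected matrix. The matrix sizes, exact rank, and oversampling value are illustrative assumptions, and this is a basic variant without power iterations.

```matlab
% Toy low-rank matrix: 200 samples x 1000 "genes", exact rank 3 (synthetic)
n = 200; p = 1000; r = 3;
A = randn(n, r) * randn(r, p);
A = A - repmat(mean(A, 1), n, 1);         % centre columns, as PCA requires

k = 3;                                    % number of components wanted
oversample = 5;                           % hypothetical oversampling parameter

% 1) A random projection captures the dominant column space of A
Omega = randn(p, k + oversample);
[Q, ~] = qr(A * Omega, 0);                % orthonormal basis, n x (k + oversample)

% 2) Exact SVD of the much smaller projected matrix
B = Q' * A;                               % (k + oversample) x p
[Ub, S, V] = svd(B, 'econ');

coeff  = V(:, 1:k);                       % approximate loadings
score  = Q * Ub(:, 1:k) * S(1:k, 1:k);    % approximate scores
latent = diag(S(1:k, 1:k)).^2 / (n - 1);  % approximate component variances
```

For an exactly low-rank matrix the leading singular values match the full SVD to rounding error. For real expression data with a slowly decaying spectrum the approximation is looser, and a few power iterations are commonly added.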

Biological Relevance Enhancement:

- Incorporate biological knowledge through pathway-guided PCA

- Integrate with complementary methods (cluster analysis, differential expression)

- Validate findings in independent datasets

- Correlate components with clinical or phenotypic data

This protocol provides a comprehensive foundation for applying Principal Component Analysis to biomedical data using MATLAB, enabling researchers to extract meaningful biological insights from complex high-dimensional datasets.

Principal Component Analysis (PCA) is a quantitatively rigorous method for visualizing and analyzing data with many variables, which is particularly relevant in gene expression studies where researchers often measure dozens or hundreds of system variables simultaneously [11]. In multivariate statistics like gene expression analysis, the fundamental challenge lies in visualizing data that has many variables, as groups of variables often move together due to measuring the same underlying driving principles governing biological systems [11]. PCA addresses this by generating a new set of variables called principal components, where each component represents a linear combination of the original variables, forming an orthogonal basis for the space of the data with no redundant information [11].

The core mathematical principles of PCA—variance maximization and orthogonal transformation—make it particularly valuable for genomic studies. The first principal component is a single axis in space where the projection of observations creates a new variable with maximum variance among all possible axis choices [11]. The second principal component is another axis, perpendicular to the first, that again maximizes variance among remaining choices [11]. This sequential variance maximization across orthogonal components allows researchers to capture the majority of data variance in just a few dimensions, enabling efficient visualization and analysis of high-dimensional gene expression data.

Theoretical Foundation: Variance Maximization and Orthogonal Transformation

The Mathematics of Variance Maximization

The principle of variance maximization in PCA operates on the fundamental objective of finding component directions that capture maximum data variance. Mathematically, for a column-centered data matrix X with n observations and p variables, PCA seeks a set of orthogonal vectors that successively maximize the retained variance. The first principal component is determined by the direction vector w₁ that maximizes the variance of the projected data:

$$\mathbf{w}_1 = \underset{\|\mathbf{w}\| = 1}{\arg\max}\; \mathbf{w}^{\top} X^{\top} X \mathbf{w}$$

Subsequent components w₂, w₃, ..., wₚ are found similarly with the additional constraint that each new component must be orthogonal to all previous ones (wᵢᵀwⱼ = 0 for i ≠ j). This orthogonal transformation ensures that each component captures residual variance not explained by previous components, with the full set of principal components forming an orthogonal basis for the original data space [11].
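The link between the maximization problem for w₁ and an eigenvalue problem can be made explicit with a Lagrange multiplier. The derivation below is a standard sketch that assumes X is column-centered; λ is the multiplier introduced here for the unit-norm constraint.

$$
\begin{aligned}
\mathcal{L}(\mathbf{w}, \lambda) &= \mathbf{w}^{\top} X^{\top} X \mathbf{w} - \lambda\,(\mathbf{w}^{\top}\mathbf{w} - 1) \\
\frac{\partial \mathcal{L}}{\partial \mathbf{w}} &= 2\, X^{\top} X \mathbf{w} - 2\lambda \mathbf{w} = 0
\;\;\Longrightarrow\;\; X^{\top} X \mathbf{w} = \lambda \mathbf{w} \\
\mathbf{w}^{\top} X^{\top} X \mathbf{w} &= \lambda\, \mathbf{w}^{\top}\mathbf{w} = \lambda
\end{aligned}
$$

Every stationary point is therefore an eigenvector of XᵀX, and the objective equals the corresponding eigenvalue, so w₁ is the eigenvector with the largest eigenvalue. Dividing that eigenvalue by n − 1 gives the variance of the first component.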

Orthogonal Transformation in Dimensionality Reduction

The orthogonal transformation in PCA converts correlated variables into a set of uncorrelated components ordered by their variance contribution. This transformation is achieved through the eigendecomposition of the sample covariance matrix, which is proportional to XᵀX when X is column-centered; the eigenvectors represent the principal component directions (loadings), and the eigenvalues correspond to their respective variances [11] [7]. The nesting property of PCA ensures that components are hierarchically organized, meaning that the first k components of a p-dimensional analysis (where k < p) are identical to the components obtained from an analysis requiring only k components [12]. This property is particularly valuable for progressive dimensionality reduction in gene expression studies.

Table 1: Mathematical Components of PCA Transformation

| Component | Mathematical Representation | Interpretation |
|---|---|---|
| Principal Components (Loadings) | Columns of coefficient matrix coeff | Linear combinations of original variables defining new orthogonal axes |
| Scores | score = (X − mu) × coeff | Projection of the mean-centered data onto principal component space |
| Variances | latent (eigenvalues) | Amount of variance explained by each principal component |
| Explained Variance | explained = (latent/sum(latent)) × 100 | Percentage of total variance accounted for by each component |

MATLAB Implementation for Gene Expression Analysis

Data Preparation and Preprocessing Protocol

Before applying PCA to gene expression data, proper preprocessing is essential to ensure meaningful results. The following protocol outlines the critical steps for preparing microarray data using MATLAB, specifically demonstrated with yeast gene expression data during the diauxic shift [5] [4]:

- Load gene expression data from microarray experiments containing expression values, gene names, and measurement time points (for example, `load yeastdata.mat`).
- Filter non-informative genes by removing empty spots and genes with missing values, using `strcmp` and `isnan`.
- Apply statistical filters to retain biologically relevant genes using the Bioinformatics Toolbox functions `genevarfilter`, `genelowvalfilter`, and `geneentropyfilter`.
- Normalize data to standardize variable scales before PCA application, for example with `mapstd`.

This preprocessing protocol typically reduces a dataset from thousands of genes to a more manageable number of several hundred most significant genes, focusing analysis on genes with substantial expression changes during biological processes like the diauxic shift [4].

PCA Computation and Result Interpretation

The core PCA implementation in MATLAB utilizes the pca function, which returns multiple outputs for analyzing gene expression data, as in `[coeff,score,latent,tsquared,explained,mu] = pca(X)` [7].

Table 2: MATLAB PCA Output Components for Gene Expression Analysis

| Output Variable | Interpretation | Application in Gene Expression Analysis |
|---|---|---|
| `coeff` (Principal component coefficients) | Linear combinations of original genes defining each PC | Identifies which genes contribute most to each component |
| `score` (Principal component scores) | Representation of original data in principal component space | Enables visualization of sample-to-sample relationships |
| `latent` (Principal component variances) | Eigenvalues of covariance matrix | Quantifies importance of each component |
| `explained` (Percentage of variance explained) | Percentage of total variance accounted for by each component | Determines how many components to retain for analysis |
| `mu` (Estimated means of variables) | Mean of each variable (gene) in original data | Useful for data reconstruction and interpretation |

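The visualization of PCA results enables researchers to identify patterns in gene expression data, most commonly through a scatter plot of the first two columns of score.

In small, low-dimensional designs such as the seven-time-point yeast dataset, the first two principal components can account for close to 90% of total variance, enabling effective two-dimensional visualization of high-dimensional data [11] [4]. In large, heterogeneous datasets the leading components explain a much smaller share, for example about 36% for the first three components in human tissue collections [3].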

Experimental Protocols for Gene Expression Analysis

Complete Workflow for Dimensionality Reduction

This protocol provides a comprehensive methodology for applying PCA to gene expression data, from initial data preparation through result interpretation:

Data Acquisition and Quality Control

- Load microarray or RNA-seq data into MATLAB workspace
- Verify data integrity and structure using the `whos` and `summary` commands
- Identify and handle missing values using imputation or removal strategies

Gene Filtering and Selection

- Remove control spots and empty measurements using the `strcmp` function
- Eliminate genes with excessive missing values using the `isnan` function
- Apply variance-based filtering with `genevarfilter` to retain informative genes
- Implement expression level filtering with `genelowvalfilter`
- Use entropy-based filtering with `geneentropyfilter` to select genes with dynamic expression patterns

Data Normalization and Standardization

- Normalize data using the `mapstd` function to achieve zero mean and unit variance
- Verify normalization effectiveness through statistical summaries

PCA Computation and Component Selection

- Execute PCA using the `pca` function with appropriate algorithm options
- Calculate the cumulative variance explained using `cumsum(latent./sum(latent)*100)`
- Determine optimal number of components to retain based on variance thresholds (typically 70-95% cumulative variance)
- Extract component coefficients and scores for downstream analysis

Result Visualization and Interpretation

- Create scatter plots of principal component scores

- Color-code data points by experimental conditions or sample types

- Identify clusters and outliers in the reduced-dimensionality space

- Correlate principal components with biological variables or experimental factors

Advanced Applications: Orthogonal Regression for Gene Expression Trajectories

Beyond dimensionality reduction, PCA enables orthogonal regression (total least squares) for modeling relationships in gene expression data where all variables contain measurement error [12]. This protocol adapts PCA for orthogonal regression analysis of expression trajectories:

This approach minimizes perpendicular distances from data points to the fitted model, making it appropriate when there is no natural distinction between predictor and response variables—a common scenario in time-course gene expression studies [12].
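For two variables, orthogonal regression reduces to reading the fitted line off the principal components. The sketch below uses noise-free synthetic points on y = 2x + 1 so the result can be checked by eye; real expression data would be noisy.

```matlab
% Toy 2-D data lying exactly on y = 2x + 1 (synthetic; real data would be noisy)
x = (0:5)';
y = 2*x + 1;
D = [x y];
n = size(D, 1);

mu = mean(D, 1);                        % the fitted line passes through the centroid
Dc = D - repmat(mu, n, 1);
[~, ~, V] = svd(Dc, 'econ');

direction = V(:, 1);                    % first PC: direction of the fitted line
normal    = V(:, 2);                    % last PC: direction of the perpendicular residuals

slope     = direction(2) / direction(1);
intercept = mu(2) - slope * mu(1);

residuals = Dc * normal;                % signed perpendicular distance of each point
```

The first component gives the line's direction and the last gives its normal, so the residuals are the scores on the last component. The same construction extends to fitting planes in higher dimensions.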

Visualization and Computational Tools

Research Reagent Solutions for Computational Gene Expression Analysis

Table 3: Essential MATLAB Tools and Functions for PCA-Based Gene Expression Analysis

| Tool/Function | Category | Purpose in Gene Expression Analysis |
|---|---|---|
| `pca` | Core PCA Function | Computes principal components, scores, and variances from expression data |
| `pcacov` | Covariance-based PCA | Performs PCA when only covariance/correlation matrix is available |
| `genevarfilter` | Gene Filtering | Identifies genes with variance above specified percentile threshold |
| `genelowvalfilter` | Gene Filtering | Removes genes with very low absolute expression values |
| `geneentropyfilter` | Gene Filtering | Filters genes based on profile entropy to select informative genes |
| `mapstd` | Data Preprocessing | Normalizes data to zero mean and unit variance before PCA |
| `scatter` | Visualization | Creates 2D/3D scatter plots of principal component scores |
| `clustergram` | Cluster Analysis | Generates heat maps with dendrograms based on PCA-reduced data |

Workflow Visualization Using Graphviz

The complete PCA workflow for gene expression analysis can be drawn as a Graphviz (DOT) diagram:

[Figure: PCA Workflow for Gene Expression Data. The diagram illustrates the sequential process from data loading through biological interpretation, with decision points for component selection.]

The variance maximization principle in PCA can be visualized through the following conceptual diagram:

[Figure: Variance Maximization through Orthogonal Transformation. The diagram illustrates how PCA sequentially extracts components that capture maximum variance while maintaining orthogonality.]

Applications in Drug Development and Biomedical Research

PCA's dual principles of variance maximization and orthogonal transformation provide powerful approaches for addressing key challenges in pharmaceutical research and development. In biomarker discovery, PCA enables researchers to identify patterns in high-dimensional genomic data that distinguish treatment responders from non-responders, improving the signal-to-noise ratio through variance-focused dimensionality reduction. The orthogonal transformation ensures that the components are mutually uncorrelated, which helps separate distinct sources of variation and facilitates interpretation of complex molecular signatures.

In compound screening and mechanism of action studies, PCA reduces high-content screening data to its essential components, allowing researchers to cluster compounds with similar effects and identify potential novel therapeutic agents. The variance maximization principle prioritizes components that explain the greatest differences between compound treatments, while orthogonal transformation eliminates redundant information across multiple assay endpoints. This application is particularly valuable in target identification and validation phases of drug development.

Pharmacogenomics applications leverage PCA to stratify patient populations based on genomic profiles, identifying subpopulations that may benefit from targeted therapies. By maximizing captured variance in gene expression data, PCA reveals the dominant patterns of transcriptional regulation that differentiate patient subgroups, supporting personalized medicine approaches. The orthogonal components frequently correspond to distinct biological pathways or regulatory mechanisms, providing insights into the molecular basis of treatment response variability.

Advantages of PCA for High-Dimensional Gene Expression Data

Principal Component Analysis (PCA) serves as a cornerstone technique for the analysis of high-dimensional gene expression data. By reducing dimensionality, PCA enhances computational efficiency, mitigates overfitting, and facilitates the visualization of underlying data structures. When implemented via MATLAB's princomp function, PCA provides researchers with a powerful tool to uncover biologically significant patterns in transcriptomic studies, supporting advancements in biomarker discovery and drug development. This application note details the theoretical advantages, practical protocols, and critical interpretive considerations for employing PCA in gene expression analysis.

Gene expression datasets from technologies like microarrays and RNA-sequencing are characterized by a massive number of variables (genes) per observation, creating a high-dimensional space that challenges conventional statistical analysis [13] [14]. This high-dimensionality leads to issues such as increased computational cost, the curse of dimensionality, and difficulty in visualization. Principal Component Analysis (PCA) is a linear dimensionality reduction technique that addresses these challenges by transforming the original correlated variables into a new set of uncorrelated variables called principal components (PCs), which are ordered by the amount of variance they capture from the data [15]. This document frames the application of PCA within the context of MATLAB's princomp function, providing a structured guide for life science researchers.

Core Advantages of PCA in Gene Expression Analysis

The application of PCA to gene expression data confers several distinct advantages crucial for scientific research and drug development.

Table 1: Key Advantages of PCA for Gene Expression Data

| Advantage | Mechanism | Impact on Research |
|---|---|---|
| Computational Efficiency | Reduces the number of features for downstream analysis [15]. | Enables faster model training and clustering of large datasets (e.g., thousands of samples) [16]. |
| Noise Reduction | Isolates dominant signals by concentrating variance into the first few PCs, effectively filtering out low-variance noise [5]. | Improves the signal-to-noise ratio, leading to more robust identification of biologically relevant patterns. |
| Data Visualization | Projects high-dimensional data onto 2D or 3D plots using the first 2-3 PCs [4] [3]. | Allows researchers to visually assess sample clustering, identify outliers, and generate hypotheses about group relationships. |
| Overfitting Prevention | Mitigates the "curse of dimensionality" by reducing the feature space used in predictive modeling [15]. | Enhances the generalizability of models for clinical outcome prediction or disease classification. |
| Uncovered Data Structure | Reveals major axes of variation in an unsupervised manner, without prior knowledge of sample groups [3]. | Can identify novel subclasses of diseases, batch effects, or the influence of major biological processes (e.g., cell cycle, immune response). |

Beyond these general benefits, studies have shown that the first few principal components in large, heterogeneous gene expression datasets often have clear biological interpretations, such as separating hematopoietic cells, neural tissues, and cell lines [3]. Furthermore, PCA facilitates the handling of correlated structures among genes, a common feature in transcriptomics, by creating new, uncorrelated variables for subsequent analysis [14].

Experimental Protocol: PCA with MATLAB princomp

This protocol details the steps for performing PCA on a gene expression matrix, using a public yeast diauxic shift dataset [4] [5] as an example. The workflow encompasses data loading, preprocessing, PCA execution, and result interpretation.

Workflow Visualization

The following diagram illustrates the complete analytical pipeline from raw data to clustered results.

Step-by-Step Procedures

Step 1: Data Loading and Exploration

Begin by loading the dataset into the MATLAB workspace. The example dataset yeastdata.mat contains expression levels for 6,400 genes across seven time points.

Explore the data dimensions and content:
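A minimal sketch of this step, assuming the Bioinformatics Toolbox is installed so that yeastdata.mat is on the MATLAB path. The variable names yeastvalues, genes, and times are the ones stored in that file; everything else is illustrative.

```matlab
% Load the yeast diauxic shift data shipped with the Bioinformatics Toolbox.
% Assumption: yeastdata.mat is on the path and defines yeastvalues, genes, times.
load yeastdata.mat

% Rows of yeastvalues are genes; columns are the sampled time points.
[numGenes, numTimes] = size(yeastvalues);
fprintf('%d expression profiles measured at %d time points\n', numGenes, numTimes);

% Peek at the first few gene identifiers and the sampling times.
disp(genes(1:5));
disp(times);

% Count profiles containing at least one missing value (NaN).
numWithNaN = sum(any(isnan(yeastvalues), 2));
fprintf('%d profiles contain at least one NaN\n', numWithNaN);
```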

Step 2: Data Preprocessing and Filtering

High-quality input data is critical for a meaningful PCA. Preprocessing involves removing non-informative genes and handling missing values.

- Remove Empty Spots: Filter out control spots not associated with genes.

- Handle Missing Values: Eliminate genes with any missing data (NaN).

- Filter by Variance: Remove genes with little variation over time, as they contribute minimal information to the PCA model. The genevarfilter function retains genes with variance above the 10th percentile.

- Filter by Absolute Value and Entropy (Optional): Further refine the gene set by removing genes with very low absolute expression levels (genelowvalfilter) or low profile entropy (geneentropyfilter).

After these steps, the dataset is reduced to a manageable number of highly informative genes (e.g., 614 from an initial 6,400) [4] [5].
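To make the logic of the missing-value and variance filters concrete, the sketch below reproduces the idea on synthetic data using only base MATLAB. It is an illustration of percentile-based thresholding, not the internal implementation of genevarfilter; the matrix sizes and the way the percentile is computed are assumptions.

```matlab
% Synthetic stand-in for an expression matrix: 200 genes x 7 time points.
rng(0);                                  % reproducible example
data = randn(200, 7);
data(1:20, :) = 0.01 * randn(20, 7);     % 20 nearly flat profiles
data(5, 3) = NaN;                        % one profile with a missing value

% Step 1: drop profiles containing any NaN.
keep = ~any(isnan(data), 2);
data = data(keep, :);

% Step 2: compute the variance of each gene across time points.
geneVar = var(data, 0, 2);

% Step 3: keep genes whose variance exceeds the 10th percentile.
% The cut-off is read from the sorted variances to stay in base MATLAB.
sortedVar = sort(geneVar);
cutoff = sortedVar(ceil(0.10 * numel(sortedVar)));
mask = geneVar > cutoff;
filtered = data(mask, :);

fprintf('Kept %d of %d genes\n', size(filtered, 1), size(data, 1));
```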

Table 2: Essential Research Reagents & Computational Tools

| Item Name | Function / Purpose in Analysis |

|---|---|

| Gene Expression Matrix | The primary data input; rows typically represent genes and columns represent samples or experimental conditions. |

| Bioinformatics Toolbox (MATLAB) | Provides specialized functions for biological data analysis, such as genevarfilter and clustergram. |

| MATLAB princomp Function | The core function that performs Principal Component Analysis, returning components, scores, and variances. |

| Statistics and Machine Learning Toolbox | Provides additional clustering algorithms (e.g., kmeans, linkage) for downstream analysis of PCA results. |

Step 3: Executing Principal Component Analysis

Perform PCA on the preprocessed data matrix using the princomp function.
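The call can be sketched as follows on synthetic data. The example uses pca, which newer MATLAB releases provide in place of princomp; princomp takes the same input and returns the same first three outputs. The matrix X and its dimensions are assumptions chosen for illustration.

```matlab
% Synthetic stand-in for a filtered expression matrix:
% 100 observations (rows) by 7 variables (columns).
rng(1);
X = randn(100, 7) * diag([5 3 2 1 1 0.5 0.2]);

% pca centers the columns and returns coefficients, scores, and variances.
[coeff, score, latent] = pca(X);

disp(size(coeff));    % one column per principal component
disp(size(score));    % one row per observation
disp(size(latent));   % one variance per component, in descending order
```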

Output Interpretation:

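- COEFF (Principal Component Coefficients): A p x p matrix, where p is the number of variables (columns of the input matrix). Each column defines a principal component as a linear combination of all original variables. The first column is the first PC, which captures the most variance.

- SCORE (Principal Component Scores): An n x p matrix, where n is the number of observations (rows of the input matrix). This is the projection of the original data onto the new principal component axes. It represents the transformed dataset in the PC space and is used for visualization and clustering.

- VARIANCE (Eigenvalues): A vector containing the variances explained by each principal component.

When the matrix is arranged with genes in rows and time points in columns, as in the yeast example, each gene is an observation and each time point is a variable, so p = 7. To treat genes as variables instead, transpose the matrix before the call.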

Step 4: Result Interpretation and Downstream Analysis

a. Variance Explained: Calculate the percentage of total variance accounted for by each PC. This helps determine how many components to retain.

In the yeast example, the first two PCs may account for over 89% of the cumulative variance [4], meaning a 2D scatter plot of the first two PCs faithfully represents most of the data's structure.

b. Data Visualization: Create a scatter plot of the first two principal components to visualize sample relationships.

c. Downstream Clustering: Use the PC scores (often from the first ~20 PCs) as input for clustering algorithms like K-means or hierarchical clustering to identify groups of samples with similar expression profiles.
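Steps a to c can be sketched together as below. The data are synthetic, and the choices of two clusters, two retained components, and five k-means replicates are assumptions made only for the example.

```matlab
rng(2);
% Two synthetic groups of 50 observations each, 7 variables.
X = [randn(50, 7); randn(50, 7) + 3];

[coeff, score, latent] = pca(X);

% a. Percentage of variance explained by each component and its running total.
pctExplained = 100 * latent / sum(latent);
cumExplained = cumsum(pctExplained);

% b. Scatter plot of the first two principal component scores.
figure;
scatter(score(:, 1), score(:, 2), 20, 'filled');
xlabel(sprintf('PC1 (%.1f%%)', pctExplained(1)));
ylabel(sprintf('PC2 (%.1f%%)', pctExplained(2)));
title('Scores on the first two principal components');

% c. K-means clustering on the leading scores (k = 2 is an assumption).
numPCs = 2;
clusterIdx = kmeans(score(:, 1:numPCs), 2, 'Replicates', 5);
```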

Critical Considerations and Best Practices

Successful application of PCA requires attention to several key factors to ensure biologically valid interpretations.

Data Normalization is Crucial: The choice of normalization method (e.g., min-max, z-score, log transformation) profoundly impacts the PCA solution and its biological interpretation [17]. Z-score normalization is a common choice as it standardizes all genes to a mean of zero and a standard deviation of one, preventing highly abundant genes from dominating the first PCs.

Interpretation of Higher Components: While the first few PCs often capture major batch effects or dominant biological processes, biologically relevant information can reside in higher principal components [3]. For example, tissue-specific or subtype-specific signals may be found in PC4 and beyond. Dismissing these components outright could lead to a loss of critical insights.

Understand the Limitations: PCA is a linear technique and may struggle to capture complex non-linear relationships in gene expression data. It is also sensitive to outliers. The sample composition of the dataset heavily influences the principal components; an over-represented tissue type will dominate the early PCs, which may not generalize to other sample sets [3].
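The normalization point above can be illustrated with a small synthetic example in which three variables differ in scale by several orders of magnitude. The scales and sample size are assumptions; the sketch only shows how z-scoring changes the share of variance assigned to PC1.

```matlab
rng(3);
% Three synthetic genes on very different scales (columns = genes).
X = [1000 * randn(50, 1), 10 * randn(50, 1), 0.1 * randn(50, 1)];

% Without scaling, the high-variance gene dominates PC1.
[coeffRaw, ~, ~, ~, explainedRaw] = pca(X);

% Z-score each column: zero mean, unit standard deviation.
Z = zscore(X);
[coeffZ, ~, ~, ~, explainedZ] = pca(Z);

fprintf('PC1 explains %.1f%% (raw) vs %.1f%% (z-scored)\n', ...
        explainedRaw(1), explainedZ(1));
```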

PCA, particularly when implemented through MATLAB's princomp function, is an indispensable tool for the exploratory analysis of high-dimensional gene expression data. Its ability to enhance computational efficiency, enable intuitive visualization, and reveal the underlying structure of complex transcriptomic datasets makes it a fundamental first step in many bioinformatics workflows. By following the detailed protocols and considerations outlined in this application note, researchers and drug development professionals can leverage PCA to distill meaningful biological insights from genomic big data, thereby accelerating scientific discovery and therapeutic development.

Principal Component Analysis (PCA) is a quantitatively rigorous method for simplifying multivariate data sets by reducing their dimensionality. In MATLAB, PCA transforms a set of possibly correlated variables into a set of linearly uncorrelated variables called principal components. These components are orthogonal to each other and form a basis for the data, ordered such that the first component captures the maximum variance in the data, the second captures the next highest variance while being orthogonal to the first, and so on [11]. This technique is particularly valuable for researchers analyzing high-dimensional data, such as gene expression profiles from microarray experiments, where visualizing relationships between more than three variables becomes challenging [4] [11]. The MATLAB ecosystem provides several functions for performing PCA, each with distinct advantages for specific data scenarios commonly encountered in bioinformatics and computational biology research.

Within the context of gene expression analysis, PCA enables researchers to identify predominant patterns of gene expression changes under experimental conditions, such as during the diauxic shift in Saccharomyces cerevisiae (baker's yeast) [5] [4]. By applying PCA to expression data, scientists can reduce thousands of gene expression measurements to a few principal components that capture the most significant variations, thereby revealing underlying biological processes and relationships that might otherwise remain hidden in the high-dimensional data space. This approach facilitates the identification of co-expressed genes, potential regulatory networks, and key molecular drivers of phenotypic changes.

Table 1: Core PCA Functions in the MATLAB Ecosystem

| Function | Input Data Type | Key Features | Best Use Cases |

|---|---|---|---|

| pca | Raw data matrix (n-by-p) | Uses SVD or eigenvalue decomposition; handles missing data with 'algorithm','als' [7] | Standard PCA on complete data or data with few missing values |

| pcacov | Covariance matrix (p-by-p) | Performs PCA on precomputed covariance matrix; does not standardize variables [18] | When only covariance matrix is available or computational efficiency is critical |

| ppca | Raw data matrix with missing values | Probabilistic approach using EM algorithm; handles missing data [19] | Data with significant missing values (>10-20%) assumed missing at random |

Theoretical Foundations and Algorithmic Differences

Mathematical Underpinnings of PCA

The fundamental mathematical operation behind PCA involves the eigenvalue decomposition of the covariance matrix of the data or the singular value decomposition (SVD) of the data matrix itself [7] [6]. When using the pca function on raw data, MATLAB centers the data by default and employs the SVD algorithm, which factorizes the data matrix X into USVᵀ, where the columns of V represent the principal components (eigenvectors of XᵀX) and the diagonal elements of S are proportional to the square roots of the eigenvalues [7]. The pcacov function operates directly on a covariance matrix, performing eigenvalue decomposition to obtain the principal components, but does not automatically standardize the variables to unit variance [18]. For standardized variable analysis, researchers must preprocess the covariance matrix into a correlation structure before applying pcacov.
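The equivalence of the two routes can be checked numerically with base MATLAB functions. This is a sketch on synthetic data, not a reproduction of what pca does internally; the factor n - 1 that links singular values to eigenvalues follows from the sample covariance definition.

```matlab
rng(4);
X = randn(30, 5) * randn(5, 5);     % synthetic 30 x 5 data matrix
n = size(X, 1);

% Center each column, as pca does by default.
Xc = X - mean(X, 1);

% Route 1: SVD of the centered data, Xc = U*S*V'.
[~, S, V] = svd(Xc, 'econ');
latentSvd = diag(S).^2 / (n - 1);    % eigenvalues from singular values

% Route 2: eigenvalue decomposition of the sample covariance matrix.
[W, D] = eig(cov(X));
[latentEig, order] = sort(diag(D), 'descend');
W = W(:, order);

% The variances agree; the eigenvectors agree up to sign.
disp(max(abs(latentSvd - latentEig)));
disp(max(max(abs(abs(V) - abs(W)))));
```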

Probabilistic PCA Framework

Probabilistic PCA (PPCA) extends classical PCA within a probabilistic framework, modeling the data using a Gaussian distribution and introducing a latent variable model that represents the principal components [19] [20]. The key advantage of this approach is its foundation on maximum likelihood estimation, which enables handling of missing data through an expectation-maximization (EM) algorithm. Unlike conventional PCA, PPCA provides a proper probability density model that can be used for statistical inference and offers greater robustness to noise in the data [20]. The EM algorithm iteratively estimates the missing values and model parameters until convergence, making it particularly suitable for gene expression datasets where missing values frequently occur due to experimental artifacts or measurement limitations.

Figure 1: The Expectation-Maximization Workflow of PPCA for Handling Missing Data

Comparative Analysis of PCA Functions

Functional Capabilities and Limitations

Each PCA function in MATLAB's ecosystem presents distinct advantages and limitations for gene expression research. The standard pca function offers the most comprehensive set of features for complete datasets, including support for different algorithms (SVD and Eigenvalue decomposition), variable weighting options, and the ability to return multiple output statistics such as Hotelling's T-squared values [7]. The pcacov function provides computational efficiency for scenarios where the covariance matrix is already available or when working with tall arrays that exceed memory limitations [18]. Meanwhile, ppca specializes in handling datasets with values missing at random, employing an iterative EM algorithm that converges to maximum likelihood estimates of the principal components while simultaneously imputing missing values [19].

Table 2: Output Components of PCA Functions in MATLAB

| Output | pca | pcacov | ppca | Description |

|---|---|---|---|---|

| coeff | ✓ | ✓ | ✓ | Principal component coefficients (loadings) |

| score | ✓ | ✗ | ✓ | Representations of input data in principal component space |

| latent | ✓ | ✓ | ✓ | Principal component variances (eigenvalues) |

| tsquared | ✓ | ✗ | ✗ | Hotelling's T-squared statistic for each observation |

| explained | ✓ | ✓ | ✗ | Percentage of total variance explained by each component |

| mu | ✓ | ✗ | ✓ | Estimated mean of each variable |

Performance Considerations for Gene Expression Data

When processing large-scale gene expression datasets, computational performance becomes a significant consideration. For the standard pca function, the SVD algorithm generally provides better numerical stability, while eigenvalue decomposition may offer performance benefits for certain matrix structures [7]. The ppca function typically requires more computational resources due to its iterative EM algorithm, with the number of iterations controlled through options structures that can modify termination criteria and display settings [19]. For massive datasets that exceed memory limitations, the pcacov function enables a distributed computing approach where researchers can compute the covariance matrix from tall arrays and then perform PCA on the resulting covariance matrix [18].

Experimental Protocols for Gene Expression Analysis

Data Preprocessing and Filtering

Comprehensive preprocessing of gene expression data is essential before applying PCA to ensure meaningful results. The protocol begins with loading the expression data, typically represented as a matrix where rows correspond to genes and columns to experimental conditions or time points [5] [4]. For yeast expression data during diauxic shift, the dataset includes expression values (log2 ratios) measured at seven time points [4]. Initial preprocessing involves removing empty spots and genes with missing values, followed by applying variance-based and entropy-based filtering to retain only genes with informative expression profiles [5] [4].

Code 1: Gene Filtering Protocol for Yeast Expression Data Prior to PCA
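A minimal sketch of this filtering protocol, assuming the Bioinformatics Toolbox is installed. The thresholds passed to genelowvalfilter and geneentropyfilter are illustrative assumptions and should be tuned for each dataset.

```matlab
% Assumption: Bioinformatics Toolbox is installed (yeastdata.mat and the
% gene*filter functions ship with it).
load yeastdata.mat                      % yeastvalues, genes, times
numInitial = numel(genes);

% Remove empty microarray spots, which are labelled 'EMPTY'.
emptySpots = strcmp('EMPTY', genes);
yeastvalues(emptySpots, :) = [];
genes(emptySpots) = [];

% Remove genes with any missing measurement.
nanRows = any(isnan(yeastvalues), 2);
yeastvalues(nanRows, :) = [];
genes(nanRows) = [];

% Variance filter: by default drops genes below the 10th percentile.
mask = genevarfilter(yeastvalues);
yeastvalues = yeastvalues(mask, :);
genes = genes(mask);

% Optional filters (threshold values are assumptions for this sketch).
[~, yeastvalues, genes] = genelowvalfilter(yeastvalues, genes, 'AbsValue', log2(3));
[~, yeastvalues, genes] = geneentropyfilter(yeastvalues, genes, 'Percentile', 15);

fprintf('Retained %d of %d profiles\n', numel(genes), numInitial);
```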

Protocol for Standard PCA Analysis

After preprocessing, standard PCA can be applied to identify patterns in the filtered gene expression data. The protocol involves normalizing the data to zero mean and unit variance, followed by principal component extraction using the pca function [4]. Researchers can then visualize the results through scatter plots of principal component scores and analyze the variance explained by each component to determine how many principal components to retain for subsequent analysis.

Code 2: Standard PCA Protocol for Gene Expression Analysis
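A minimal sketch of this protocol on synthetic time-course data. The simulated induced and repressed profiles, the column-wise z-scoring, and the 90% retention threshold are all assumptions made for illustration.

```matlab
rng(5);
% Synthetic stand-in for filtered data: 300 genes (rows) x 7 time points.
t = linspace(0, 1, 7);
up   = repmat(t, 150, 1)  + 0.2 * randn(150, 7);   % induced over time
down = repmat(-t, 150, 1) + 0.2 * randn(150, 7);   % repressed over time
expr = [up; down];

% Standardize each column, then run PCA with all six outputs.
Z = zscore(expr);
[coeff, score, latent, tsquared, explained, mu] = pca(Z);

% Keep the smallest number of components reaching 90% cumulative variance
% (the 90% threshold is an assumption).
numKeep = find(cumsum(explained) >= 90, 1);

% Plot genes in the plane of the first two components.
figure;
scatter(score(:, 1), score(:, 2), 15, 'filled');
xlabel(sprintf('PC1 (%.1f%%)', explained(1)));
ylabel(sprintf('PC2 (%.1f%%)', explained(2)));
title(sprintf('%d components reach 90%% of the variance', numKeep));
```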

Protocol for Probabilistic PCA with Missing Data

When working with gene expression datasets containing missing values, PPCA provides a robust alternative. This protocol demonstrates how to apply ppca to handle missing data, which commonly occurs in microarray experiments due to technical artifacts [19]. The method is particularly valuable when the missing data mechanism can be assumed to be missing at random, as it provides maximum likelihood estimates of the principal components while simultaneously imputing missing values.

Code 3: Probabilistic PCA Protocol for Handling Missing Values in Gene Expression Data
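A minimal sketch of this protocol on synthetic data with entries removed at random. The number of components k = 2, the 10% missing rate, and the iteration limit are assumptions; the reconstruction field S.Recon is used here as a simple stand-in for imputed values.

```matlab
rng(6);
% Synthetic low-rank data: 200 observations x 7 variables plus noise.
Y = randn(200, 2) * randn(2, 7) + 0.1 * randn(200, 7);

% Knock out about 10% of the entries at random to mimic missing spots.
missing = rand(size(Y)) < 0.10;
Ymiss = Y;
Ymiss(missing) = NaN;

% Fit probabilistic PCA with k = 2 components (k is an assumption).
opts = statset('MaxIter', 500);
k = 2;
[coeff, score, pcvar, mu, v, S] = ppca(Ymiss, k, 'Options', opts);

% S.Recon holds the rank-k reconstruction, which can fill missing entries.
Yfilled = Ymiss;
Yfilled(missing) = S.Recon(missing);
```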

Protocol for PCA on Covariance Matrix

For scenarios where the covariance matrix is already available or when working with tall arrays that exceed memory limitations, pcacov offers an efficient alternative [18]. This protocol demonstrates how to compute the covariance matrix from expression data and perform PCA directly on the covariance structure, which can be particularly useful for large-scale genomic studies.

Code 4: PCA on Covariance Matrix Protocol for Large-Scale Expression Data
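A minimal sketch of this protocol on synthetic data. Because pcacov returns no scores, the projection is computed by hand; the use of corrcov to obtain a correlation matrix for the standardized analysis is one possible choice, not the only one.

```matlab
rng(7);
X = randn(500, 6) * randn(6, 6);    % synthetic 500 x 6 data

% Compute the covariance once; only this p x p matrix is needed afterwards.
C = cov(X);
[coeff, latent, explained] = pcacov(C);

% pcacov does not return scores; project the centered data manually.
score = (X - mean(X, 1)) * coeff;

% For standardized variables, convert covariance to correlation first.
R = corrcov(C);
[coeffStd, latentStd, explainedStd] = pcacov(R);
```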

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Gene Expression PCA Analysis

| Tool/Function | Purpose | Application Context |

|---|---|---|

| Bioinformatics Toolbox | Provides specialized functions for genomic data analysis | Required for genevarfilter, genelowvalfilter, and geneentropyfilter functions [5] [4] |

| Statistics and Machine Learning Toolbox | Implements core PCA functions and clustering algorithms | Essential for pca, pcacov, and ppca functions [19] [7] [18] |

| genevarfilter | Filters genes with small variance across experimental conditions | Removes uninformative genes with static expression profiles [5] [4] |

| genelowvalfilter | Removes genes with very low absolute expression values | Eliminates genes with minimal expression signal [5] [4] |

| geneentropyfilter | Filters genes with low entropy expression profiles | Selects genes with dynamic expression patterns across conditions [5] [4] |

| mapstd | Normalizes data to zero mean and unit variance | Standard preprocessing step before PCA [5] |

Data Interpretation and Visualization Strategies

Analyzing PCA Outputs for Biological Insight

Interpreting PCA results requires understanding the biological significance of each output component. The principal component coefficients (loadings) indicate how much each original variable (gene) contributes to a particular principal component, revealing which genes have the strongest influence on the observed patterns [7] [6]. The principal component scores represent the original data projected into the principal component space, enabling visualization of sample relationships [6]. The variances (eigenvalues) indicate the importance of each principal component, while the explained variance percentage quantifies how much of the total data variability each component captures [7] [4]. For gene expression time course data, these outputs can identify groups of co-expressed genes and temporal expression patterns that correspond to specific biological processes.

Figure 2: Pathway from PCA Outputs to Biological Interpretation in Gene Expression Analysis

Advanced Visualization Techniques

Effective visualization of PCA results enhances the extraction of biological insights from gene expression data. The mapcaplot function provides an interactive environment for exploring principal components, allowing researchers to select data points across multiple scatter plots and identify corresponding genes [21]. For publication-quality figures, the scatter function can create 2D plots of the first two principal components, which often capture the majority of data variance [5] [4]. When analyzing time course expression data, researchers can color-code data points by time points or experimental conditions to visualize temporal patterns and transitions, such as the metabolic shift from fermentation to respiration in yeast [4]. Cluster analysis techniques, including hierarchical clustering and k-means applied to principal component scores, can further elucidate gene expression patterns and identify potential regulatory modules.

The MATLAB PCA ecosystem offers a comprehensive suite of functions tailored to different data scenarios in gene expression research. The standard pca function serves as the primary tool for complete datasets, while ppca provides specialized handling for data with missing values through its probabilistic framework and EM algorithm [19] [20]. The pcacov function offers computational efficiency for scenarios where covariance matrices are precomputed or when working with large-scale data that exceeds memory limitations [18]. For researchers analyzing gene expression data, following systematic protocols for data filtering, normalization, and dimensionality assessment ensures biologically meaningful results. By selecting the appropriate PCA function based on data characteristics and research objectives, scientists can effectively uncover patterns in high-dimensional genomic data, leading to deeper insights into transcriptional regulation and cellular responses.

Principal Component Analysis (PCA) is a fundamental dimension reduction technique widely used in gene expression analysis. It transforms high-dimensional data into a new coordinate system, highlighting the dominant patterns of variation and enabling researchers to visualize sample similarities, identify outliers, and uncover latent biological structures. For scientists working with genomic datasets, which often contain tens of thousands of genes (variables) across relatively few samples, PCA provides a critical first step in exploratory data analysis. This application note details the interpretation of core PCA outputs—coefficients, scores, latent values, and explained variance—within the context of gene expression research using MATLAB, specifically framing these concepts within a broader thesis on the princomp function and its applications.

Core Components of PCA Output

The output of PCA consists of several interconnected matrices and vectors that collectively describe the transformed data. Understanding their statistical meaning and biological interpretation is essential for proper analysis.

Table: Core Outputs from MATLAB's PCA Function

| Output Term | Mathematical Definition | Biological Interpretation in Gene Expression | MATLAB Variable |

|---|---|---|---|

| Coefficients (Loadings) | Eigenvectors of the covariance matrix; weights for each gene in the principal components. | Contribution of each gene to a PC. High absolute values mark genes important for the sample separation along that PC. | coeff |

| Scores | Projections of the original data onto the new principal component axes. | Representation of each sample in the new, low-dimensional PC space. Used to visualize sample clustering. | score |

| Latent (Eigenvalues) | Eigenvalues of the covariance matrix. | The variance captured by each respective principal component. | latent |

| Explained Variance | Percentage of the total variance explained by each PC (e.g., latent/sum(latent)*100). | Helps decide how many PCs are biologically relevant versus noise. | explained |

Coefficients (Loadings)

The principal component coefficients, also known as loadings, form the transformation matrix that defines the direction of the principal components in the original variable space [7]. Each column of the coefficient matrix coeff contains the coefficients for one principal component, with these columns sorted in descending order of component variance [7]. In gene expression analysis, where variables correspond to genes, these coefficients indicate the weight or contribution of each gene to a specific principal component. A high absolute value of a coefficient for a gene within a principal component signifies that this gene strongly influences the direction and separation of samples along that component. For instance, in a large-scale gene expression compendium, the first few principal components often have high loadings for genes specific to major biological programs like hematopoiesis, neural function, or cellular proliferation [3].

Scores

Principal component scores are the representations of the original data in the newly established principal component space [7]. Rows of the score matrix correspond to individual observations (e.g., patient samples, cell lines), and columns correspond to the principal components. These scores are obtained by projecting the original, typically mean-centered, data onto the principal component axes defined by the coefficients. Plotting these scores—for example, PC1 vs. PC2—allows for the visualization of the overall data structure, enabling researchers to identify clusters of samples with similar gene expression profiles, detect outliers, and hypothesize about underlying biological or technical effects [22] [23].

Latent Values and Explained Variance

The latent output is a vector containing the eigenvalues of the covariance matrix of the input data [7]. These eigenvalues represent the variance explained by each corresponding principal component. The explained output directly quantifies the percentage of the total variance in the original dataset that is captured by each principal component, calculated as the corresponding latent value divided by the sum of all latent values [7] [23]. This metric is critical for assessing the importance of each component and determining the number of components to retain for further analysis. In gene expression studies, it is common for the first few components to explain a limited portion of the total variance (e.g., 20-40% for PC1), with the cumulative explained variance increasing gradually with subsequent components [3]. A scree plot, which plots the explained variance or eigenvalues against the component number, is a standard tool for this evaluation. The cumulative explained variance can be visualized and calculated using the cumsum function on the explained vector [24].

Experimental Protocol: PCA for Gene Expression Analysis

This protocol outlines the steps for performing and interpreting PCA on a gene expression matrix using MATLAB, where rows correspond to samples and columns to genes.

Sample Preparation and Data Preprocessing

- Data Matrix Assembly: Compile your gene expression data into a numerical matrix X of size n x p, where n is the number of observations (samples) and p is the number of variables (genes) [7].

- Data Normalization: Normalize the data to ensure genes are comparable. A common approach is to transform the data to Z-scores, which centers each gene on zero mean and scales it to unit variance using Z = zscore(X) [2]. This step is crucial when the variances of the original variables differ by orders of magnitude [25].

- Handling Missing Values: Identify and handle missing values (NaN). MATLAB's pca function offers several methods via the 'Rows' name-value pair. The 'complete' option removes observations with any NaN values before calculation, which is the default. Alternatively, the 'pairwise' option can be used with the eigenvalue decomposition algorithm, though this may result in a non-positive definite covariance matrix [7].
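The two 'Rows' settings can be compared on a small synthetic matrix, as sketched below. The matrix size and the positions of the missing values are assumptions for illustration.

```matlab
rng(8);
X = randn(40, 5);
X(3, 2)  = NaN;       % two observations with one missing value each
X(17, 4) = NaN;

% Default behaviour: rows containing NaN are excluded from the fit.
[coeffC, scoreC] = pca(X, 'Rows', 'complete');

% Pairwise covariance estimates require the eigenvalue algorithm.
[coeffP, ~, latentP] = pca(X, 'Algorithm', 'eig', 'Rows', 'pairwise');

% With 'complete', the excluded observations receive NaN scores.
disp(find(any(isnan(scoreC), 2))');
```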

Computational Procedure in MATLAB

- Execute PCA: Perform PCA on the preprocessed data matrix. Use a command that returns all necessary outputs, such as [coeff, score, latent, tsquared, explained, mu] = pca(Z). Here, Z is the normalized data matrix. The output mu contains the estimated means of each variable, which is useful for reconstruction [7].

- Determine Significant Components: Examine the explained vector to decide how many principal components to retain. This can be done by:

  - Creating a scree plot of the explained vector against the component number.

  - Setting a threshold for cumulative variance (e.g., 70-90%) or looking for an "elbow" in the scree plot where the explained variance drops off markedly.

- Visualize Results:

  - Sample Clustering: Create a 2D or 3D scatter plot of the principal component scores to visualize sample relationships.

  - Biplot Generation: Create a biplot to overlay the scores (samples as points) and the coefficients (genes as vectors) on the same graph, showing their relationship [22].

- Interpret Loadings: To identify genes that drive separation along a specific principal component (e.g., PC1), sort the coefficients for that component and examine the genes with the highest absolute values [26].
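The computational procedure above can be sketched end to end as follows. The data are synthetic, with five genes constructed to separate two sample groups; the gene labels, group sizes, and the choice to show only the five top-ranked genes in the biplot are assumptions.

```matlab
rng(9);
% Synthetic stand-in: 100 samples (rows) x 20 genes (columns).
numSamples = 100;
numGenes = 20;
X = randn(numSamples, numGenes);
X(1:50, 1:5) = X(1:50, 1:5) + 4;     % genes 1-5 differ between two groups

% Hypothetical gene labels for annotation.
geneNames = arrayfun(@(k) sprintf('gene%02d', k), 1:numGenes, ...
                     'UniformOutput', false);

% Normalize and run PCA with all outputs.
Z = zscore(X);
[coeff, score, latent, tsquared, explained, mu] = pca(Z);

% Scree plot with the cumulative percentage overlaid.
figure;
plot(explained, 'o-'); hold on;
plot(cumsum(explained), 's--'); hold off;
xlabel('Principal component'); ylabel('Variance explained (%)');
legend('Individual', 'Cumulative', 'Location', 'east');

% Rank genes by absolute loading on PC1.
[~, order] = sort(abs(coeff(:, 1)), 'descend');
topGenes = geneNames(order(1:5));
disp(topGenes);

% Biplot of the first two components, drawing only the top-ranked genes.
figure;
biplot(coeff(order(1:5), 1:2), 'Scores', score(:, 1:2), 'VarLabels', topGenes);
```

Restricting the biplot to a handful of genes keeps the figure readable; with thousands of genes the full set of vectors would obscure the sample points.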

PCA Workflow for Gene Expression Data

Advanced Interpretation in a Genomic Context

Applying PCA to gene expression data comes with specific considerations and challenges that researchers must address for a valid biological interpretation.

Dimensionality and Sample Composition

A key finding in genomics is that the apparent intrinsic dimensionality of gene expression data is often higher than initially assumed. While the first three principal components might capture large-scale, dominant patterns (e.g., separating hematopoietic cells, neural tissues, and cell lines), significant tissue-specific or condition-specific information can reside in higher-order components [3]. The sample composition of the dataset profoundly influences the resulting principal components. If a particular tissue or cell type is over-represented, it will likely dominate the early components. For example, a dataset with a high proportion of liver samples may show a liver-specific separation in PC4, which would be absent in a dataset with fewer liver samples [3]. This underscores the importance of considering sample cohort structure when interpreting PCA results.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for PCA-Based Gene Expression Analysis

| Tool / Resource | Function in Analysis | Application Context |

|---|---|---|

| MATLAB Statistics and Machine Learning Toolbox | Provides the core pca and biplot functions for computation and visualization. | Primary environment for performing the PCA analysis and generating initial plots. [7] [22] |

| Predefined Gene Labels | A cell array of gene symbols corresponding to the columns of the data matrix. | Critical for annotating vectors in a biplot to identify which genes drive component separation. [22] |

| Custom Scripting for Visualization | MATLAB scripts for generating enhanced scree plots and score scatter plots. | Allows for tailored visualization that clearly communicates the variance explained and sample clustering. [24] |

| Biological Annotation Databases | Resources like GO, KEGG, or MSigDB for functional enrichment analysis. | Used to interpret the biological meaning of genes with high loadings on a given principal component. |

Case Study: Analysis of a Public Microarray Dataset

To illustrate the interpretation of PCA outputs, consider a re-analysis of a large public microarray dataset, such as the one from Lukk et al. (2010), which contains 5,372 samples from 369 different tissues and cell types [3].

- Procedure: The gene expression data was normalized and subjected to PCA using MATLAB. The first four principal components were retained for detailed examination.

- Results and Interpretation:

- Variance Explained: The first three PCs explained approximately 36% of the total variance in the data, indicating that a substantial amount of information remained in higher components [3].

- Component Interpretation: PC1 was strongly associated with hematopoietic cells, PC2 with malignancy and proliferation, and PC3 with neural tissues. A subsequent analysis of a different dataset revealed that PC4 separated liver and hepatocellular carcinoma samples, a finding attributed to the higher proportion of such samples in that specific dataset [3].

- Residual Information: By projecting the data onto the first three PCs and analyzing the residual information, it was shown that significant tissue-specific information (e.g., distinguishing between different brain regions) was retained in the higher components (PC4 and beyond) [3].
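The residual analysis described in the last item can be sketched in base MATLAB as below. The data are synthetic and the cut at three components mirrors the case study; this is an illustration of the projection arithmetic, not a reproduction of the published analysis.

```matlab
rng(10);
X = randn(80, 10) * randn(10, 10);   % synthetic 80 x 10 data
mu = mean(X, 1);
Xc = X - mu;

% Principal axes from the SVD of the centered data.
[~, S, V] = svd(Xc, 'econ');

% Rank-3 approximation and residual.
k = 3;
scoreK   = Xc * V(:, 1:k);
approxK  = scoreK * V(:, 1:k)';
residual = Xc - approxK;

% Fraction of total variance left in components k+1 and beyond.
fracResidual = sum(residual(:).^2) / sum(Xc(:).^2);
fprintf('%.1f%% of the variance remains after removing %d PCs\n', ...
        100 * fracResidual, k);

% The residual can itself be analysed, for example by a second decomposition.
[~, Sres, ~] = svd(residual, 'econ');
```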

Interpreting a Biplot for Gene Expression Data

A rigorous interpretation of PCA outputs—coefficients, scores, latent values, and explained variance—is fundamental for extracting meaningful biological insights from complex gene expression datasets. The coefficients reveal the genes that drive major patterns of variation, the scores show how samples are arranged according to these patterns, and the explained variance quantifies the importance of each pattern. Researchers must be mindful of the data's structure and scale, as these factors directly influence the PCA results. By following the detailed protocols and considerations outlined in this document, scientists and drug development professionals can reliably use PCA as a powerful, unsupervised tool for quality control, hypothesis generation, and the exploration of the fundamental dimensionality of their genomic data.

Application Note: Principal Component Analysis of Gene Expression During the Diauxic Shift in Yeast

The diauxic shift in Saccharomyces cerevisiae represents a crucial metabolic transition from fermentative growth on glucose to respiratory growth on ethanol, accompanied by extensive gene expression reprogramming [27] [28]. This physiological transition serves as an excellent model for studying metabolic adaptation and regulatory networks, with implications for understanding similar processes in cancer cells, particularly the Warburg effect [27]. This application note demonstrates how Principal Component Analysis (PCA) via MATLAB's princomp function can reveal key patterns in transcriptional regulation during this shift, providing a framework for analyzing similar transformations in cancer genomics.

Experimental Dataset

The analysis utilizes a publicly available microarray dataset from DeRisi et al. (1997) that captures temporal gene expression of Saccharomyces cerevisiae during the diauxic shift [5] [4]. Expression levels were measured at seven time points as yeast transitioned from fermentation to respiration. The raw dataset contains 6,400 expression profiles, though filtering techniques reduce this to the most biologically relevant genes.

Table: Dataset Overview of Yeast Diauxic Shift Experiment

| Parameter | Specification |
|---|---|
| Organism | Saccharomyces cerevisiae (Baker's Yeast) |
| Experimental Condition | Diauxic Shift (Fermentation to Respiration) |
| Time Points Measured | 7 time points during metabolic transition |
| Initial Gene Count | 6,400 genes |
| Technology | DNA Microarray |
| Public Accession | GSE28 (Gene Expression Omnibus) |

Methodology: PCA with MATLAB princomp

Data Preprocessing and Filtering

Before performing PCA, the expression data must be filtered to remove uninformative genes:

Load Data: Load the yeast dataset into MATLAB workspace.

Remove Empty Spots: Filter out empty microarray spots.

Handle Missing Data: Remove genes with missing values (NaN).

Apply Variance Filter: Retain genes with variance above the 10th percentile.

Apply Low-Value Filter: Remove genes with low absolute expression values.

Apply Entropy Filter: Remove genes with low entropy profiles.
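
The filtering steps above can be sketched with Bioinformatics Toolbox functions. This is a minimal sketch, assuming the toolbox and its bundled yeastdata.mat demo file (with variables yeastvalues and genes) are available. The thresholds passed to genelowvalfilter and geneentropyfilter are illustrative assumptions, not values given in the text.

```matlab
% Load the demo dataset shipped with the Bioinformatics Toolbox (assumed available).
% It provides yeastvalues (genes x time points) and genes (spot names).
load yeastdata.mat
nStart = numel(genes);

% 1) Remove spots labeled 'EMPTY' on the array.
emptySpots = strcmp('EMPTY', genes);
yeastvalues(emptySpots, :) = [];
genes(emptySpots) = [];

% 2) Remove genes with any missing measurement.
hasNaN = any(isnan(yeastvalues), 2);
yeastvalues(hasNaN, :) = [];
genes(hasNaN) = [];

% 3) Variance filter: by default drops genes below the 10th percentile of variance.
mask = genevarfilter(yeastvalues);
yeastvalues = yeastvalues(mask, :);
genes = genes(mask);

% 4) Low-value filter (the log2(3) cutoff is an illustrative assumption).
[~, yeastvalues, genes] = genelowvalfilter(yeastvalues, genes, 'absval', log2(3));

% 5) Entropy filter (the 15th percentile cutoff is an illustrative assumption).
[~, yeastvalues, genes] = geneentropyfilter(yeastvalues, genes, 'prctile', 15);

fprintf('Genes retained: %d of %d\n', numel(genes), nStart);
```

Each filter keeps the expression matrix and the gene name list in step, so row i of yeastvalues always corresponds to genes{i}.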

Principal Component Analysis

The core analysis utilizes the princomp function (or pca in newer versions) on the preprocessed data:

Perform PCA: Calculate principal components, scores, and variances.

Variance Explanation: Calculate the percentage of variance explained by each component.

Visualization: Create a scatter plot of the first two principal components.
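
The three steps above might look as follows with the pca function from the Statistics and Machine Learning Toolbox. This is a sketch under stated assumptions: the matrix X is a synthetic stand-in for a filtered expression matrix (genes as rows, seven time points as columns), so the numbers it prints are not results from the yeast dataset.

```matlab
% Synthetic stand-in for a filtered expression matrix (assumption):
% 300 genes (rows) x 7 time points (columns). Replace with real data.
rng(0);                                   % reproducible random numbers
trend = linspace(-1, 1, 7);               % one shared temporal pattern
X = randn(300, 1) * trend + 0.3 * randn(300, 7);

% pca treats rows as observations and columns as variables.
% coeff: 7x7 component coefficients; score: 300x7 coordinates of each gene;
% latent: component variances; explained: percent variance per component.
[coeff, score, latent, ~, explained] = pca(X);

% Percentage and cumulative percentage of variance explained.
cumExplained = cumsum(explained);
disp(table((1:numel(explained))', explained, cumExplained, ...
    'VariableNames', {'PC', 'Explained', 'Cumulative'}));

% Scatter plot of the first two principal component scores.
figure;
scatter(score(:, 1), score(:, 2), 12, 'filled');
xlabel(sprintf('PC1 (%.1f%%)', explained(1)));
ylabel(sprintf('PC2 (%.1f%%)', explained(2)));
title('Principal component scatter plot');
```

On older releases that still include princomp, its first three outputs have the same meaning, and the percentages can be computed as 100*latent/sum(latent).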

Results and Bioinformatics Interpretation

PCA reveals distinct expression patterns separating the fermentative and respiratory growth phases. The first principal component (PC1) typically captures the majority of variance (approximately 80%), representing the dominant expression program shift between metabolic states [4]. The second component (PC2) often captures additional variance (approximately 10%), potentially reflecting finer-scale regulatory events.

Table: Variance Explained by Principal Components in a Typical Diauxic Shift Dataset

| Principal Component | Percentage of Variance Explained | Cumulative Percentage |
|---|---|---|
| PC1 | 79.8% | 79.8% |
| PC2 | 9.6% | 89.4% |
| PC3 | 4.1% | 93.5% |
| PC4 | 2.6% | 96.1% |
| PC5 | 2.2% | 98.3% |
| PC6 | 1.0% | 99.3% |
| PC7 | 0.7% | 100.0% |
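
As a worked check on the table, the cumulative column is simply the running sum of the per-component percentages. The short sketch below reproduces it and finds how many components are needed to reach a chosen threshold; the 90% and 95% targets are illustrative choices, not recommendations from the source.

```matlab
% Percent variance per component, copied from the table above.
explained = [79.8 9.6 4.1 2.6 2.2 1.0 0.7];

% The running total reproduces the "Cumulative Percentage" column.
cumExplained = cumsum(explained);

% Smallest number of components that reaches each target (illustrative targets).
k90 = find(cumExplained >= 90, 1);
k95 = find(cumExplained >= 95, 1);

fprintf('Components needed for >= 90%%: %d\n', k90);
fprintf('Components needed for >= 95%%: %d\n', k95);
```

With these values, three components pass 90% (93.5% cumulative) and four pass 95% (96.1% cumulative).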

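The seven components in the table above correspond to the seven time points, which means the analysis treats genes as observations and time points as variables. In this orientation each gene receives a score on PC1, and genes with large-magnitude PC1 scores are those most strongly altered during the metabolic shift, including genes involved in carbon metabolism, mitochondrial function, and stress response. The PC1 coefficients, one per time point, describe how this dominant pattern differs between fermentative and respiratory growth. Because the sign of a principal component is arbitrary, which phase appears on the negative side and which on the positive side depends on the decomposition.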

Advanced Protocol: Integrating Metabolomics with Transcriptomics Using Multi-Omics PCA

Modern systems biology increasingly relies on multi-omics approaches that integrate different molecular data layers to gain comprehensive insights into biological systems [27] [29]. This protocol extends the basic PCA approach to integrate gene expression and metabolomics data from diauxic shift studies, providing a framework for similar integrations in cancer research where transcriptomic and metabolomic dysregulation are hallmarks of malignancy.

Experimental Design

The integrated analysis utilizes both transcriptomic profiles and untargeted intracellular metabolomic data collected during the diauxic shift in yeast. Samples are collected during both pre-diauxic (fermentative) and post-diauxic (respiratory) phases [27]. For cancer studies, equivalent designs would compare tumor versus normal tissues.

Multi-Omics Integration Methodology

Data Preprocessing

Normalization: Independently normalize transcriptomic and metabolomic datasets using Z-score normalization.

Data Merging: Combine normalized datasets into a single matrix with samples as rows and features (genes + metabolites) as columns.

Batch Effect Correction: Apply ComBat or similar algorithms if data originated from different analytical batches.

Concatenation-Based PCA

Perform PCA on the combined dataset to identify patterns that capture covariance between transcriptomic and metabolomic features.
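
A minimal sketch of concatenation-based PCA is given here, assuming the Statistics and Machine Learning Toolbox (for zscore and pca). The matrices transcripts and metabolites, the sample and feature counts, and the simulated phase effect are all hypothetical stand-ins for real measurements.

```matlab
rng(0);
nSamples = 12;                 % e.g., 6 pre-diauxic + 6 post-diauxic samples
nGenes = 40;                   % hypothetical number of transcript features
nMetab = 15;                   % hypothetical number of metabolite features

% Synthetic stand-ins: a shared phase effect plus noise in both layers.
phase = [-ones(6, 1); ones(6, 1)];
transcripts = phase * randn(1, nGenes) + 0.5 * randn(nSamples, nGenes);
metabolites = phase * randn(1, nMetab) + 0.5 * randn(nSamples, nMetab);

% Z-score each layer independently (column-wise).
Zt = zscore(transcripts);
Zm = zscore(metabolites);

% Concatenate: samples as rows, genes followed by metabolites as columns.
combined = [Zt, Zm];

% With fewer samples than features, pca returns at most nSamples-1 components.
[coeff, score, ~, ~, explained] = pca(combined);

% Keep track of which coefficient rows belong to which layer.
isGene = [true(1, nGenes), false(1, nMetab)];
geneLoadings = coeff(isGene, 1);
metabLoadings = coeff(~isGene, 1);
fprintf('PC1 explains %.1f%% of the variance\n', explained(1));
```

Because the layer with more features contributes more columns, it can dominate the leading components. Whether to rescale each block further is a design choice that depends on the study.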

Result Interpretation

- Joint Components: Identify principal components that represent coordinated variation in both gene expression and metabolite abundance.

- Feature Loadings: Examine high-loading genes and metabolites on each component to infer functional relationships.

- Pathway Mapping: Use enrichment analysis to map high-loading features to biological pathways.
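
One way to examine feature loadings is to rank features by the absolute value of their coefficient on a component and check whether both data layers appear among the top entries. The sketch below assumes the Statistics and Machine Learning Toolbox and uses synthetic data in which four hypothetical features (gene1, gene2, met1, met2) share a common factor. The feature names and sizes are illustrative only.

```matlab
rng(0);
nSamples = 500; nGenes = 20; nMetab = 10;   % hypothetical sizes
featureNames = ["gene" + (1:nGenes), "met" + (1:nMetab)];

% Synthetic matrix in which gene1, gene2, met1, and met2 share one factor.
factor = randn(nSamples, 1);
X = randn(nSamples, nGenes + nMetab);
driven = [1, 2, nGenes + 1, nGenes + 2];
X(:, driven) = X(:, driven) + 3 * factor;
X = zscore(X);

[coeff, ~, ~, ~, explained] = pca(X);

% Rank features by absolute PC1 coefficient and keep the top four.
[~, order] = sort(abs(coeff(:, 1)), 'descend');
topIdx = order(1:4);
topFeatures = featureNames(topIdx);

% A joint component has high-loading features from both layers.
isGene = topIdx <= nGenes;
fprintf('Top PC1 features: %s\n', strjoin(topFeatures, ', '));
fprintf('Genes: %d, metabolites: %d\n', sum(isGene), sum(~isGene));
```

The ranked feature names can then be passed to an enrichment tool of choice for pathway mapping.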

Key Findings from Integrated Diauxic Shift Studies

Integrated analysis of diauxic shift reveals:

- Metabolic Rewiring: Distinct metabolic profiles characterize fermentative versus respiratory phases, with 215 metabolic features significantly altered [27].

- Pathway Enrichments: Significant perturbations occur in central carbon metabolism, glycerophospholipid metabolism, glutathione metabolism, and amino acid metabolism [27].

- Regulatory Insights: Deletion of specific regulatory genes (YGR067C, TDA1, RTS3) causes distinct metabolic perturbations, revealing their functional roles [27].

Table: Research Reagent Solutions for Diauxic Shift and Cancer Genomics Studies

| Reagent/Resource | Function/Application | Example Source/Provider |
|---|---|---|
| Yeast Deletion Strains (e.g., tda1Δ) | Functional characterization of genes during metabolic shifts | EUROSCARF Deletion Library [30] |
| RNA Extraction Kit (e.g., RNeasy) | High-quality RNA isolation for transcriptomics | QIAGEN [30] |
| SC Medium | Defined growth medium for controlled yeast cultivation | Formulated in-house per Sherman (2002) |
| Illumina Sequencing | High-throughput RNA sequencing | NgI, Azenta [30] |
| Mass Spectrometry | Untargeted metabolomics profiling | Various platforms (e.g., LC-MS) [27] |
| MATLAB Bioinformatics Toolbox | Gene expression analysis and PCA | MathWorks [5] [4] |
| exvar R Package | Integrated analysis of gene expression and genetic variation | GitHub [31] |

Application in Cancer Genomics: From Yeast Models to Human Tumors

Cross-Species Analytical Framework

The analytical framework established in yeast diauxic shift studies directly translates to cancer genomics, particularly in investigating the Warburg effect (aerobic glycolysis) where cancer cells preferentially utilize fermentation over respiration even in oxygen-rich conditions [27] [28].

Comparative Analysis: Diauxic Shift versus Cancer Metabolic Dysregulation

Table: Comparative Metabolic Features Between Yeast Diauxic Shift and Cancer Warburg Effect

| Biological Feature | Yeast Diauxic Shift | Cancer Warburg Effect |
|---|---|---|
| Preferred Metabolic State | Transition: Fermentation → Respiration | Locked: Aerobic Glycolysis (Fermentation) |
| Regulatory Proteins | Mig1p, Hxk2p, Tda1p, HAP Complex | HIF-1, MYC, p53, AKT/mTOR |
| Key Metabolic Pathways | Glycolysis, TCA Cycle, Oxidative Phosphorylation | Glycolysis, Lactate Fermentation, Pentose Phosphate |
| Gene Expression Analysis | PCA reveals phase-specific clusters [4] | PCA separates tumor subtypes and grades |
| Mitochondrial Function | Activated post-shift for respiration | Often impaired despite functional capacity |
| Technological Approaches | Microarrays, RNA-seq, Mass Spectrometry [27] | Single-cell RNA-seq, Spatial Transcriptomics [29] |

Transcriptomics in Cancer Studies: Advanced Methodologies

Modern cancer transcriptomics employs several advanced approaches beyond standard PCA:

Weighted Gene Co-expression Network Analysis (WGCNA): Identifies modules of highly correlated genes and assesses their preservation between normal and tumor tissues [32].

Single-Cell RNA Sequencing: Reveals transcriptional heterogeneity within tumors, identifying rare cell populations and resistance mechanisms [29].

Spatial Transcriptomics: Maps gene expression within tissue architecture, preserving spatial context of tumor-microenvironment interactions [33].

Integrated Variant Analysis: Tools like the exvar package combine expression and genetic variant analysis from RNA-seq data [31].

Practical Considerations for MATLAB Implementation

Handling Large-Scale Genomic Data

- Memory Management: Use tall arrays for datasets exceeding memory limits.

- Parallel Computing: Accelerate PCA computation with the Parallel Computing Toolbox.

- Cloud Integration: Leverage cloud resources for computationally intensive analyses [29].

Algorithm Selection

- SVD vs. Eigenvalue Decomposition: Choose appropriate algorithms based on data dimensions and missing value patterns [7].

- Missing Data Handling: Implement appropriate strategies (complete case, imputation) based on missing data patterns [7].
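
The algorithm and missing-data choices map onto the 'Algorithm' and 'Rows' name-value options of pca. The following sketch, assuming the Statistics and Machine Learning Toolbox and a small synthetic matrix, compares SVD with eigenvalue decomposition on complete data and then contrasts complete-case analysis with alternating least squares (ALS) on data with missing values.

```matlab
rng(1);
X = randn(100, 6) * randn(6, 6);      % synthetic correlated data (assumption)
Xmiss = X;
Xmiss([3 17 42], 2) = NaN;            % introduce a few missing values

% Complete data: SVD (the default) and eigenvalue decomposition
% should give the same component variances up to rounding.
[~, ~, latentSvd] = pca(X, 'Algorithm', 'svd');
[~, ~, latentEig] = pca(X, 'Algorithm', 'eig');

% Complete-case analysis: rows containing NaN are left out of the fit
% and receive NaN scores.
[~, scoreComplete] = pca(Xmiss, 'Rows', 'complete');

% Alternating least squares estimates the components despite missing values
% and returns a score for every row.
[coeffAls, scoreAls] = pca(Xmiss, 'Algorithm', 'als');

fprintf('Max variance difference (svd vs eig): %.2e\n', ...
    max(abs(latentSvd - latentEig)));
fprintf('Rows with NaN scores (complete case): %d\n', ...
    sum(any(isnan(scoreComplete), 2)));
```

Complete-case analysis is simple but discards every row with a gap, while ALS keeps all rows at the cost of an iterative fit. Which trade-off is acceptable depends on how much data is missing and why.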

The application of PCA through MATLAB's princomp/pca functions provides a powerful analytical framework for extracting biologically meaningful patterns from gene expression data, from fundamental metabolic transitions in model organisms like yeast to complex dysregulations in cancer. The protocols outlined here for both standard and multi-omics PCA create a foundation for researchers to investigate complex biological systems, with direct relevance to drug development through identification of key regulatory pathways and potential therapeutic targets. As genomic technologies evolve, integrating these classical statistical approaches with modern machine learning methods will further enhance our ability to decipher complex biological networks in both basic and translational research contexts.

Step-by-Step PCA Workflow for Gene Expression Analysis

In gene expression analysis, and particularly before running Principal Component Analysis (PCA) with princomp or pca, the first critical step is loading and importing microarray or RNA-seq data correctly. MATLAB's Bioinformatics Toolbox provides specialized functions and objects for handling data from various technological platforms [34] [35]. For researchers and drug development professionals, understanding these import mechanisms is fundamental to the biological validity of subsequent analyses, including dimensionality reduction and pattern discovery. Proper data handling is the foundation for all downstream steps, from basic differential expression testing to multivariate methods like PCA that reveal hidden structure in high-dimensional genomic data.

Microarray Data Handling

Data Import and Preprocessing

Microarray technology enables high-throughput measurement of gene expression levels using oligonucleotide or cDNA probes attached to a solid surface [36]. MATLAB provides specialized functions for importing data from various microarray file formats and platforms:

- Platform-Specific Import Functions: Use `gprread` for GenePix Results files, `agferead` for Agilent Feature Extraction files, `ilmnbsread` for Illumina BeadStudio data, and `imageneread` for ImaGene Results files [34].

- GEO Data Access: Retrieve public data from the Gene Expression Omnibus (GEO) using the `getgeodata`, `geoseriesread`, and `geosoftread` functions [34].

- Data Management Objects: Store and manage experimental data using specialized objects including `bioma.ExpressionSet`, `bioma.data.ExptData`, and `bioma.data.MIAME` for MIAME-standard experiment information [34] [37].

A critical preprocessing workflow involves filtering to remove uninformative genes before proceeding to advanced analysis like PCA.
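
To illustrate what such filters do, the sketch below applies logical masks to a synthetic matrix and records how many genes survive each step. It approximates the idea behind the toolbox filters with general-purpose functions (prctile is assumed to be available) and omits the entropy filter. The matrix, the 10th percentile cutoff, and the log2(3) cutoff are illustrative assumptions.

```matlab
rng(0);
expr = 2 * randn(2000, 7);                 % synthetic log2 ratios: genes x time points
expr(1:500, :) = 0.05 * randn(500, 7);     % genes with flat, near-zero profiles
expr(randperm(numel(expr), 50)) = NaN;     % scatter some missing values
counts = size(expr, 1);                    % gene count before filtering

% Step 1: drop genes with any missing value.
keep = ~any(isnan(expr), 2);
expr = expr(keep, :);
counts(end + 1) = size(expr, 1);

% Step 2: drop genes whose variance is at or below the 10th percentile.
v = var(expr, 0, 2);
keep = v > prctile(v, 10);
expr = expr(keep, :);
counts(end + 1) = size(expr, 1);

% Step 3: drop genes whose largest absolute value is below a cutoff
% (log2(3) is an illustrative assumption).
keep = max(abs(expr), [], 2) >= log2(3);
expr = expr(keep, :);
counts(end + 1) = size(expr, 1);

fprintf('Genes after each step: %s\n', mat2str(counts));
```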

This filtering sequence typically reduces a dataset from thousands of genes to several hundred most informative profiles, creating a manageable set for PCA analysis while preserving biologically relevant expression patterns [5] [4].

Experimental Design and Normalization Considerations

Microarray data acquisition begins with hybridization of labeled samples to complementary DNA probes fixed on a solid surface [36]. The quantification process involves distinguishing foreground probe intensity from local background, typically using mean or median summaries of pixel intensities within defined regions [36]. The resulting data represent fluorescence intensities that reflect relative gene expression levels, which are commonly log2-transformed to approximate normal distributions suitable for parametric statistical analysis [36] [4].
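
The background correction and log2 transformation described above can be written out for a handful of spots. This sketch assumes a two-channel design in which expression is summarized as a red-to-green ratio, which the text does not specify, and all intensity values are hypothetical.

```matlab
% Hypothetical foreground and background intensities for four spots.
fgRed   = [1200  450 8250  300];
bgRed   = [ 200  150  250  280];
fgGreen = [ 700  450 1250  290];
bgGreen = [ 200  150  250  300];

% Background-corrected signal per channel.
red   = fgRed - bgRed;
green = fgGreen - bgGreen;

% Spots whose corrected signal is not positive cannot be log-transformed;
% mark them as missing so that later filters can remove them.
valid = red > 0 & green > 0;
logRatio = nan(size(red));
logRatio(valid) = log2(red(valid) ./ green(valid));

disp(logRatio);
```

On this scale a value of 1 means a two-fold higher signal in the red channel, 0 means no difference, and the last spot is flagged as missing because its green signal falls below background.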

Table 1: Key MATLAB Functions for Microarray Data Analysis

| Function | Category | Purpose |
|---|---|---|
| `gprread` | Data Import | Read GenePix Results file |
| `geoseriesread` | Data Import | Read GEO Series data |
| `genevarfilter` | Preprocessing | Filter genes with low variance |
| `genelowvalfilter` | Preprocessing | Filter genes with low expression |
| `mattest` | Analysis | Two-sample t-test for differential expression |
| `mafdr` | Analysis | False discovery rate estimation |
| `clustergram` | Visualization | Heat map with hierarchical clustering |
| `mapcaplot` | Visualization | Interactive PCA scatter plot |
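
As a brief usage sketch for the two analysis functions in Table 1, the example below runs a per-gene t-test with mattest and adjusts the p-values with mafdr. It assumes the Bioinformatics Toolbox is installed. The control and treated matrices are synthetic, and the 0.05 threshold is an illustrative choice.

```matlab
rng(0);
nGenes = 1000;
control = randn(nGenes, 5);                  % synthetic log2 expression, 5 replicates
treated = randn(nGenes, 5);
treated(1:50, :) = treated(1:50, :) + 3;     % 50 genes with a simulated shift

% Two-sample t-test per gene (rows are genes, columns are replicates).
pvals = mattest(control, treated);

% Benjamini-Hochberg adjusted p-values.
fdr = mafdr(pvals, 'BHFDR', true);

nSig = sum(fdr < 0.05);
fprintf('Genes with adjusted p-value < 0.05: %d\n', nSig);
```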

RNA-seq Data Handling

Data Import and Preprocessing Workflow

RNA sequencing represents a more recent technological approach that enables comprehensive transcriptome quantification through high-throughput sequencing of cDNA fragments [38]. Unlike microarray intensities, RNA-seq data originate as sequence reads that require extensive preprocessing before quantitative analysis:

- Raw Sequence Import: Use