Mastering Heteroskedasticity in RNA-seq PCA: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a comprehensive framework for addressing heteroskedasticity in RNA-seq data analysis, particularly when using Principal Component Analysis (PCA). Heteroskedasticity—where variance depends on mean expression—violates key assumptions of standard PCA and can severely distort biological interpretation. We explore foundational concepts explaining why count data exhibits this property and its detrimental effects on dimensionality reduction. The guide compares established and emerging methodological solutions including variance-stabilizing transformations, Pearson residuals, and model-based factor analysis. Through troubleshooting guidance and empirical validation from recent benchmarks, we deliver practical recommendations for selecting appropriate methods based on data characteristics. This resource empowers researchers and drug development professionals to implement robust preprocessing pipelines that preserve biological signal in transcriptomic studies.

Understanding Heteroskedasticity: Why RNA-seq Data Breaks Standard PCA Assumptions

Frequently Asked Questions (FAQs)

1. What is heteroskedasticity in the context of RNA-seq data? Heteroskedasticity refers to the phenomenon where the variance of gene expression is not constant across different levels of mean expression. In RNA-seq data, this manifests as a specific dependency: genes with low read counts tend to exhibit different variability than genes with high read counts. Specifically, the variance observed is typically greater than the mean, a characteristic known as overdispersion, and the relationship between the mean and variance often follows a quadratic trend rather than the linear relationship assumed under a Poisson model [1] [2].
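For reference, the quadratic trend described above is commonly written under a negative binomial (gamma-Poisson) model as follows. The symbols $\mu$ (mean count) and $\alpha$ (dispersion) are notation assumed here for illustration:

$$
\operatorname{Var}(Y) = \mu + \alpha\,\mu^{2}, \qquad \alpha \ge 0
$$

With $\alpha = 0$ this reduces to the Poisson case, where the variance equals the mean; any $\alpha > 0$ gives overdispersion that grows quadratically with $\mu$.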

2. Why is heteroskedasticity a problem for RNA-seq analysis? Most standard bulk RNA-seq analysis methods, including many differential expression tools, assume equal variance across experimental groups (homoscedasticity). When this assumption is violated due to heteroskedasticity, it can lead to:

- Poor error control, increasing false positives (Type I errors).

- Reduced statistical power, increasing false negatives (Type II errors).

- Compromised detection of differentially expressed genes (DEGs), as variance can be both under- and over-estimated [3] [4].

3. How can I visually assess if my data exhibits heteroskedasticity? You can use several diagnostic plots to identify unequal variances:

- Mean-Variance Scatter Plots: Plot the log of the mean expression against the log of the variance for each gene. A strong, correlated trend indicates a mean-variance relationship [2].

- Voom Trend Plot: Plot the mean-variance trend calculated by the `voom` function. Distinct, separated trends for different experimental groups indicate group heteroscedasticity [3].

- Multi-dimensional Scaling (MDS) Plots or PCA Plots: Samples from a group with higher variability will appear more spread out in the plot dimensions compared to samples from a group with lower variability [3] [5].
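The mean-variance scatter plot described in this list can be sketched in base R as below. This is a minimal illustration on simulated negative binomial counts; the matrix `counts`, the dispersion of 0.1, and all object names are assumptions for the example, and a real analysis would start from a normalized genes x samples matrix.

```r
# Simulated counts stand in for a real genes x samples matrix (assumption).
set.seed(1)
n_genes <- 2000; n_samples <- 6
mu <- rlnorm(n_genes, meanlog = 4, sdlog = 1.5)          # gene-level means
counts <- matrix(rnbinom(n_genes * n_samples, mu = mu, size = 1 / 0.1),
                 nrow = n_genes)                          # dispersion 0.1

gene_mean <- rowMeans(counts)
gene_var  <- apply(counts, 1, var)
keep <- gene_mean > 0 & gene_var > 0                      # logs must be finite

# Rank correlation between mean and variance across genes
rho <- cor(gene_mean[keep], gene_var[keep], method = "spearman")

plot(log10(gene_mean[keep]), log10(gene_var[keep]), pch = 16, cex = 0.4,
     xlab = "log10 mean count", ylab = "log10 variance")
abline(0, 1, lty = 2)   # Poisson reference line: variance equal to mean
```

Points lying above the dashed line across most of the range are the overdispersion signature; the Spearman coefficient gives a single-number summary of how tightly variance tracks the mean.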

4. My PCA plot shows heteroskedasticity. What should I do next? If your PCA or other diagnostics reveal group heteroscedasticity, you should employ statistical methods designed to handle it.

- For differential expression analysis, consider methods like `voomByGroup` or `voomWithQualityWeights` with a blocked design (voomQWB), which account for group-specific variances [3].

- Ensure you are using information-sharing approaches (e.g., empirical Bayes shrinkage in DESeq2 or edgeR) that model the mean-variance relationship, as they are more robust than methods that do not [1] [6].

5. Does normalization affect heteroskedasticity? Yes, normalization is a critical step that can influence the mean-variance relationship. Between-sample normalization methods, such as TMM (Trimmed Mean of M-values) used in edgeR or the median-of-ratios method used in DESeq2, are designed to account for technical variations like sequencing depth. Using an appropriate normalization method is foundational for ensuring that technical variability does not artificially introduce or mask biological heteroskedasticity [7] [4] [6].

Troubleshooting Guides

Issue 1: Identifying Heteroskedasticity in Pseudo-bulk RNA-seq Data

Problem: A researcher is analyzing a pseudo-bulk single-cell RNA-seq dataset and wants to determine if heteroskedasticity exists between different experimental conditions (e.g., healthy vs. diseased).

Investigation Protocol:

- Create Pseudo-bulk Samples: Aggregate raw counts from single cells into sample-level summaries (e.g., sum counts per gene for each biological replicate) [3].

- Normalize Data: Perform between-sample normalization (e.g., using TMM in edgeR or the median-of-ratios method in DESeq2) to adjust for library size [7] [6].

- Generate Diagnostic Plots:

  - MDS/PCA Plot: Visualize overall sample similarities and distances. Look for differences in spread (dispersion) between sample groups [3] [5].

  - Calculate Group-specific Voom Trends:

    - For each experimental group, use the `voom` function separately to obtain group-specific mean-variance trends.

    - Plot these trends together. Well-separated curves or curves with distinct shapes indicate group heteroscedasticity [3].

  - Estimate Biological Coefficient of Variation (BCV):

    - Using a tool like `edgeR`, estimate the common BCV for each group separately. A larger range of BCV values across groups (e.g., 0.15 to 0.50) suggests heteroskedasticity [3].
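To make the group-wise BCV comparison concrete, the sketch below uses a crude method-of-moments estimate in base R rather than edgeR's own dispersion estimator. The simulated data, the group labels, the dispersions, and the helper `moment_bcv` are all hypothetical; the numbers it prints are only meant to show the direction of the comparison.

```r
set.seed(2)
n_genes <- 1000
group <- factor(rep(c("healthy", "diseased"), each = 4))
true_disp <- c(healthy = 0.03, diseased = 0.20)         # assumed dispersions
mu <- rlnorm(n_genes, meanlog = 5, sdlog = 1)
counts <- sapply(as.character(group), function(g)
  rnbinom(n_genes, mu = mu, size = 1 / true_disp[[g]]))

# Scale each sample to the mean library size (crude depth normalisation)
lib <- colSums(counts)
scaled <- t(t(counts) / lib) * mean(lib)

# Moment estimate of the NB dispersion per gene: (var - mean) / mean^2
moment_bcv <- function(x) {
  m <- rowMeans(x); v <- apply(x, 1, var)
  disp <- pmax((v - m) / m^2, 0)[m > 10]   # ignore very low counts
  sqrt(median(disp))                       # BCV is the square root of dispersion
}
bcv <- sapply(levels(group), function(g) moment_bcv(scaled[, group == g]))
print(round(bcv, 3))
```

With only a few replicates per group the moment estimate is noisy and biased downward, which is one reason a dedicated estimator such as the one in `edgeR` is preferable in practice.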

Interpretation of Key Metrics: The table below summarizes quantitative indicators of heteroskedasticity from real datasets.

| Dataset | Evidence of Heteroskedasticity | Common BCV Range |

|---|---|---|

| Human PBMCs [3] | Healthy controls had distinctly lower voom trends than patient groups. | 0.154 (Healthy) to 0.241 (Asymptomatic) |

| Human Lung Macrophages [3] | Group-specific voom trends had distinct shapes and were well-separated. | 0.338 to 0.495 |

| Mouse Lung Tissue [3] | Minor differences in voom trends; 24m samples more spread out in MDS. | 0.197 to 0.240 |

| LNCaP Cell Line (DHT vs Mock) [2] | Strong correlation between mean and variance (Spearman > 0.91). | Not Provided |

Issue 2: Addressing Heteroskedasticity in Differential Expression Analysis

Problem: A scientist has confirmed the presence of group heteroscedasticity and needs to perform a differential expression analysis that controls for it to avoid inflated false discovery rates.

Solution Protocol:

- Method Selection: Choose a differential expression method that explicitly accounts for unequal group variances. Two recommended approaches are:

  - `voomByGroup`: This method fits a separate mean-variance trend for each experimental group in the dataset, allowing for group-specific variance modeling [3].

  - `voomWithQualityWeights` with Blocked Design (voomQWB): This approach assigns quality weights at the group level (as "blocks") rather than at the individual sample level, effectively adjusting the variance estimates for entire groups [3].

- Implementation in R: The analysis typically starts with a DGEList object (created using `edgeR`). Following this, the `voomByGroup` or `voomQWB` function is applied, and the resulting object is used in a standard `limma` pipeline for linear modeling and empirical Bayes moderation [3].

- Validation:

- Compare the results (number of DEGs, p-value distribution) with methods that assume homoscedasticity.

- Check if the method provides better error control and power through simulation studies or benchmark datasets known to have heteroskedasticity [3].
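The voomQWB route in the protocol above might look roughly like the following. This is a sketch under assumptions: the counts are simulated, the two-group design is hypothetical, and group-level weights are requested through the `var.group` argument of `limma::voomWithQualityWeights`, which is taken here to correspond to the blocked design described in the text. `voomByGroup` is not shown because its installation source is not stated in this guide.

```r
library(edgeR)   # also attaches limma

# Hypothetical inputs: simulated pseudo-bulk counts and a two-group design
set.seed(3)
group  <- factor(rep(c("ctrl", "case"), each = 4), levels = c("ctrl", "case"))
counts <- matrix(rnbinom(2000 * 8, mu = 200, size = 5), nrow = 2000,
                 dimnames = list(paste0("gene", 1:2000), paste0("s", 1:8)))

y <- DGEList(counts = counts, group = group)
y <- calcNormFactors(y, method = "TMM")
design <- model.matrix(~ group)

# Group-level quality weights: one variance factor per level of `group`
v <- voomWithQualityWeights(y, design, var.group = group)

# Standard limma pipeline on the weighted log-CPM values
fit <- eBayes(lmFit(v, design))
res <- topTable(fit, coef = 2, number = Inf)
head(res)
```

Running the same pipeline with plain `voom(y, design)` and comparing the p-value distributions is one simple way to carry out the validation step listed above.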

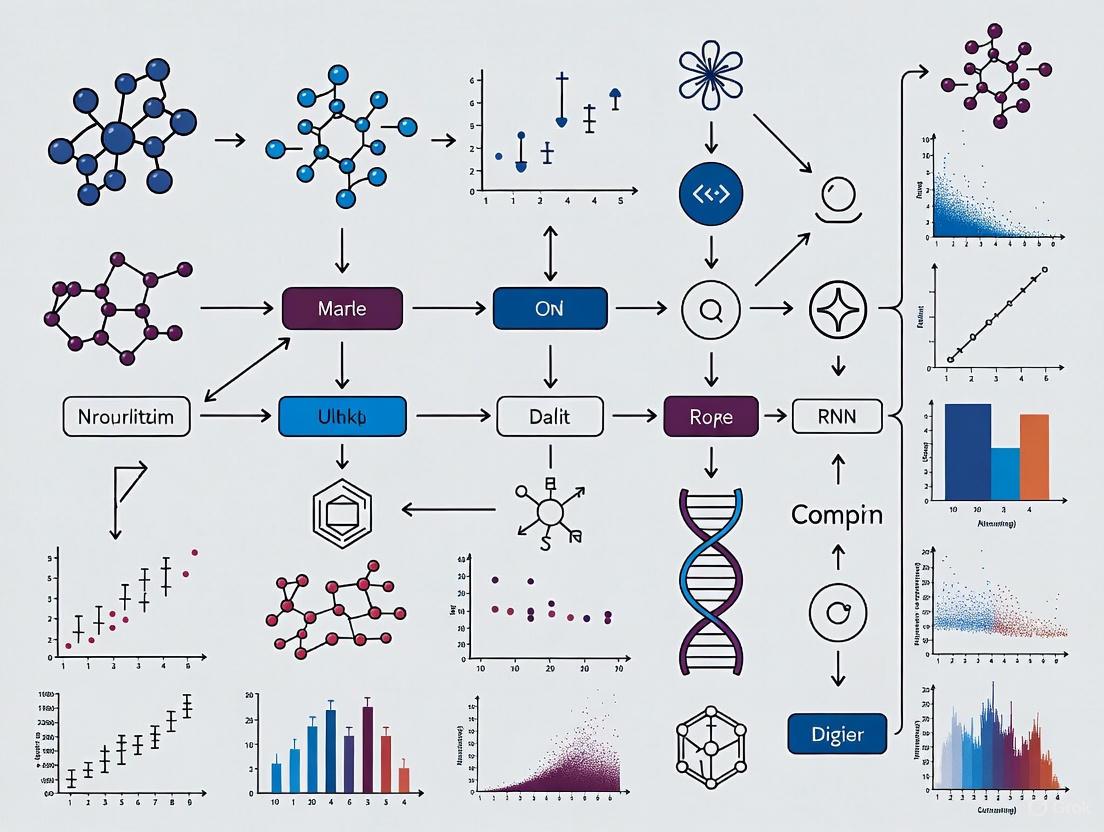

Logical Workflow for Heteroskedastic Data: The following diagram illustrates the decision pathway for analyzing RNA-seq data when heteroskedasticity is suspected.

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Method | Function | Use Case |

|---|---|---|

| voom (limma) [3] [1] | Models the mean-variance trend in log-CPM data and generates precision weights for linear modeling. | Standard differential expression analysis for RNA-seq data. |

| voomByGroup [3] | Extends voom by fitting group-specific mean-variance trends. | Differential expression when groups have unequal variances. |

| voomWithQualityWeights (voomQWB) [3] | Assigns sample or group-level quality weights to down-weight outliers or adjust for group-wise variability. | Handling group heteroscedasticity or individual sample outliers. |

| DESeq2 [6] | Uses empirical Bayes shrinkage to estimate dispersions and fold changes, modeling overdispersion with a negative binomial distribution. | A robust standard for differential expression analysis that handles mean-variance dependence. |

| edgeR [3] [1] | Employs empirical Bayes shrinkage to estimate dispersions under a negative binomial model. | A robust standard for differential expression analysis. |

| TMM Normalization [7] [4] | A between-sample normalization method that corrects for differences in sequencing depth and RNA composition. | Standard first-step normalization for most RNA-seq analyses. |

| PCA/MDS Plots [3] [5] | Dimension reduction techniques to visualize overall sample similarities and disparities. | Quality control and initial assessment of group variability. |

Why is heteroskedasticity a problem for standard data analysis tools like PCA?

Answer: Principal Component Analysis (PCA) and many distance metrics are most effective and reliable when applied to data with uniform variance across its dynamic range. This property is known as homoscedasticity.

Single-cell and bulk RNA-seq data are inherently heteroskedastic. In these datasets, the variance of gene counts depends strongly on their mean expression level. Highly expressed genes show much greater variance than lowly expressed genes [8]. When you apply standard PCA to raw or simply transformed counts, this heteroskedasticity introduces a major bias: the first principal components often simply reflect the difference between high-variance and low-variance genes, rather than meaningful biological signal [9] [10]. This can mask true biological heterogeneity, such as the presence of rare cell types, and reduce the power of downstream analyses like clustering [9].

How can I visually identify heteroskedasticity in my own dataset?

Answer: A simple and effective diagnostic tool is the MA-plot, which plots the log-fold change between two samples against the average expression level [10].

In a homoscedastic scenario, the spread of log-fold changes would be consistent across all expression levels. In RNA-seq data, you will typically see that the spread of points (the variance) is much larger for genes with low average expression and smaller for genes with high average expression. This "funnel-shaped" pattern is a classic signature of heteroskedasticity [10].
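The funnel shape can be reproduced with two simulated technical replicates. The sketch below assumes pure Poisson noise and a pseudo-count of 0.5, both chosen only for illustration; in practice `a` and `b` would be two columns of a real count matrix.

```r
set.seed(4)
mu <- rlnorm(3000, meanlog = 4, sdlog = 1.5)   # wide range of expression
a <- rpois(length(mu), mu)                     # replicate 1
b <- rpois(length(mu), mu)                     # replicate 2, same true means

M <- log2(a + 0.5) - log2(b + 0.5)             # log-fold change
A <- (log2(a + 0.5) + log2(b + 0.5)) / 2       # average log expression

plot(A, M, pch = 16, cex = 0.4); abline(h = 0, lty = 2)

# Spread of M among lowly vs highly expressed genes
sd_low  <- sd(M[A < quantile(A, 0.25)])
sd_high <- sd(M[A > quantile(A, 0.75)])
c(low = sd_low, high = sd_high)
```

Because both replicates share the same true means, every non-zero log-fold change is noise, and the larger spread in the low-expression quartile reflects heteroskedasticity on the log scale.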

The workflow below outlines the process of diagnosing heteroskedasticity and exploring potential solutions.

Comparison of Common Transformation and Modeling Approaches

The following table summarizes the core methodologies available to address heteroskedasticity, along with their key characteristics.

| Method/Approach | Core Principle | Key Advantages | Key Limitations |

|---|---|---|---|

| Shifted Logarithm [8] | Applies a log transformation with a pseudo-count ($\log(y/s + y_0)$). | Simple, fast, and computationally efficient. | Can be sensitive to the choice of pseudo-count $y_0$; may not fully stabilize variance [8]. |

| Pearson Residuals (e.g., scTransform) [9] [8] | Rescales counts based on residuals from a fitted model (e.g., negative binomial GLM). | Effectively stabilizes variance; is widely used. | Model misspecification can introduce biases; computationally intensive for very large datasets [9]. |

| Model-Based DR (e.g., scGBM, GLM-PCA) [9] | Directly models count data using a probabilistic framework (e.g., Poisson or negative binomial) to infer latent factors. | Directly handles count nature of data; allows for uncertainty quantification in the low-dimensional space [9]. | Can be slow and have convergence issues on large datasets; can be more complex to implement [9]. |

What are the step-by-step protocols for implementing these solutions?

Protocol 1: Analysis Pipeline Using Pearson Residuals

This protocol uses a Generalized Linear Model (GLM) to calculate Pearson residuals, which are used for downstream PCA.

Key Research Reagents & Solutions:

| Item | Function in the Protocol |

|---|---|

| UMI Count Matrix | The raw input data; a genes (rows) x cells (columns) matrix of integer counts. |

| Size Factors ($s_c$) | Cell-specific scaling factors to correct for technical variability in sequencing depth. Often calculated as the total count per cell [8]. |

| Gamma-Poisson (Negative Binomial) GLM | A statistical model that accounts for overdispersion in count data, providing fitted mean ($μ$) and dispersion ($α$) parameters for each gene [8]. |

| Pearson Residuals | The variance-stabilized output; calculated as (observed count - fitted value) / standard deviation of the fitted model [8]. |
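As a rough illustration of the residual formula in the table, the sketch below computes Pearson residuals in closed form and feeds them to PCA. It swaps the per-gene GLM fit for a simpler depth-only null model (fitted mean = row total x column total / grand total) with one shared dispersion `alpha`; that simplification, the value 0.05, the clipping threshold, and the simulated data are all assumptions and not what `sctransform` does internally.

```r
set.seed(5)
n_genes <- 500; n_cells <- 200
depth <- runif(n_cells, 0.5, 2)                         # cell-specific depth
gene_mu <- rlnorm(n_genes, meanlog = 0, sdlog = 1.5)
counts <- matrix(rnbinom(n_genes * n_cells,
                         mu = outer(gene_mu, depth), size = 1 / 0.05),
                 nrow = n_genes)                        # genes x cells
counts <- counts[rowSums(counts) > 0, ]                 # drop all-zero genes

alpha <- 0.05                                           # assumed shared dispersion
# Fitted means under a depth-only null model
mu_hat <- outer(rowSums(counts), colSums(counts)) / sum(counts)
# (observed - fitted) / model standard deviation
resid <- (counts - mu_hat) / sqrt(mu_hat + alpha * mu_hat^2)

# Clip extreme residuals to +/- sqrt(number of cells), a common heuristic
clip <- sqrt(ncol(counts))
resid <- pmin(pmax(resid, -clip), clip)

pca <- prcomp(t(resid), center = TRUE, scale. = FALSE)  # cells as rows
```

The residual matrix is dense, so for large datasets it is usually computed only for a selected subset of genes.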

Protocol 2: Analysis Pipeline Using Model-Based Dimensionality Reduction

This protocol uses a model like scGBM, which performs dimensionality reduction directly on the counts, integrating normalization and latent factor discovery into a single, unified step [9].

Key Research Reagents & Solutions:

| Item | Function in the Protocol |

|---|---|

| UMI Count Matrix | The raw input data. |

| Poisson Bilinear Model | The core model, e.g., used by scGBM, which factorizes the count matrix into gene loadings ($Λ$), cell scores ($X$), and offset terms [9]. |

| Fast Estimation Algorithm | An optimized algorithm (e.g., iteratively reweighted SVD) that makes fitting the model to large datasets (millions of cells) computationally feasible [9]. |

| Cluster Cohesion Index (CCI) | A metric that leverages the model's uncertainty quantification to assess the confidence that a cluster represents a distinct biological population versus an artifact of sampling variability [9]. |

A fundamental challenge in RNA-Seq data analysis, particularly for single-cell RNA sequencing (scRNA-seq), is the presence of heteroskedasticity—where the variability of gene expression data depends on its mean expression level. This phenomenon means that highly expressed genes show more count variation than lowly expressed genes, violating the uniform variance assumption of many standard statistical methods [8]. This heteroskedasticity arises from both biological sources (genuine cell-to-cell variation in transcript abundance) and technical sources (measurement noise, sampling efficiency, and library preparation artifacts). When performing dimensionality reduction via Principal Component Analysis (PCA), failing to properly account for this heteroskedasticity can induce spurious heterogeneity, mask true biological variability, and lead to incorrect biological interpretations [9]. This technical support guide provides troubleshooting protocols to help researchers disentangle these complex variance structures.

Troubleshooting Guides & FAQs

FAQ 1: My PCA plot shows separation that I suspect is technical, not biological. How can I confirm this?

Answer: Suspect technical drivers in your PCA when separation strongly correlates with known technical covariates like sequencing depth or batch.

- Investigation Protocol:

- Correlate PCs with Technical Metrics: Calculate correlations between your principal components and metrics like total UMIs per cell, mitochondrial percentage, or batch identity.

- Visualize Covariates: Plot these technical metrics onto your PCA plot. Color the data points by, for example, the total number of UMIs per cell (`nCount_RNA` in Seurat) or batch ID. A clear gradient or grouping by a technical metric indicates a strong technical influence.

- Employ Variance-Stabilizing Transformations: Instead of simple log-transformation, use methods designed to handle heteroskedasticity. Pearson residuals (from tools like `sctransform`) or the `acosh`-based transformation are theoretically better suited for stabilizing variance across the dynamic range of expression data [8].
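The first two checks in this protocol can be sketched in base R as follows. The data are simulated so that sequencing depth is the only source of structure, and `total_umis` is a stand-in for a per-cell metric such as `nCount_RNA`; the deliberately naive `log1p` transform without depth correction is an assumption made to keep the artifact visible.

```r
set.seed(6)
n_genes <- 300; n_cells <- 150
depth <- rlnorm(n_cells, meanlog = 0, sdlog = 0.7)      # technical covariate
gene_mu <- rlnorm(n_genes, meanlog = 1, sdlog = 1)
counts <- matrix(rpois(n_genes * n_cells, outer(gene_mu, depth)),
                 nrow = n_genes)

# Naive transform without depth correction, so the artifact stays visible
logc <- log1p(counts)
pca <- prcomp(t(logc), center = TRUE)

total_umis <- colSums(counts)                           # per-cell depth metric
# Correlate the first five PCs with log depth
pc_cor <- cor(pca$x[, 1:5], log(total_umis), method = "spearman")
print(round(pc_cor, 2))

# Colour cells by depth on the PC1/PC2 plane
depth_bin <- as.integer(cut(log(total_umis), 10))
plot(pca$x[, 1], pca$x[, 2], pch = 16, col = hcl.colors(10)[depth_bin],
     xlab = "PC1", ylab = "PC2")
```

A component whose correlation with depth is close to 1 in absolute value, together with a smooth colour gradient along that axis, points to a technical rather than biological driver.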

FAQ 2: How can I handle unequal variance (heteroscedasticity) between experimental groups in pseudo-bulk analysis?

Answer: Standard bulk RNA-Seq differential expression tools like limma-voom, edgeR, and DESeq2 often assume equal variance across groups (homoscedasticity). When this assumption is violated, they can produce inflated false discovery rates or lose power [11].

- Solution Protocol:

- Diagnose Heteroscedasticity:

  - Inspect Multi-dimensional Scaling (MDS) plots for differences in spread between sample groups.

  - Examine group-specific mean-variance (voom) trends. Separated or differently shaped trends for different groups indicate heteroscedasticity [11].

  - Compare the common biological coefficient of variation (BCV) across groups. Larger BCVs indicate greater within-group biological variation [11].

- Apply Robust Methods: Use differential expression methods designed to handle group heteroscedasticity.

  - `voomByGroup`: Fits group-specific mean-variance trends.

  - `voomWithQualityWeights` with a blocked design (voomQWB): Assigns quality weights at the group level rather than the sample level, effectively adjusting variance estimates for entire groups [11].

FAQ 3: What is the best data transformation before PCA to mitigate technical variation?

Answer: No single transformation is universally "best," but benchmarks indicate that a logarithm with a carefully chosen pseudo-count is a robust and computationally efficient choice. The key is to avoid transformations that leave strong residual dependencies on technical factors like sequencing depth [8].

- Transformation Comparison Protocol: The table below summarizes common transformation approaches and their performance in mitigating technical variation.

Table 1: Comparison of Data Transformation Methods for scRNA-seq PCA

| Transformation Approach | Key Principle | Effectiveness in Benchmarking | Technical Artifact Reduction |

|---|---|---|---|

| Shifted Logarithm (e.g., log(y/s + y0)) | Delta-method based variance stabilization [8]. | Good to excellent performance; a strong default choice [8]. | Can leave a residual dependency on size factors if y0 is chosen poorly. |

| Pearson Residuals (e.g., sctransform) | Residuals from a regularized negative binomial GLM [8]. | Good performance; effectively stabilizes variance. | Better at mixing cells with different size factors compared to simple log transform [8]. |

| Analytic Pearson Residuals (APR) | Closed-form approximation of Pearson residuals [9]. | Can capture signal in common cell types but may struggle with rare cell types [9]. | Varies based on data structure. |

| Latent Expression (Sanity, Dino) | Infers a latent, true expression state from a generative model [8]. | Appealing theoretical properties, but variable empirical performance. | Designed to model and separate technical noise. |

| Model-based (scGBM) | Performs dimensionality reduction directly on counts using a Poisson bilinear model, unifying normalization and factorization [9]. | Better captures biological signal and removes unwanted variation in benchmarks [9]. | Directly models count distribution, avoiding transformation-induced artifacts. |
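The shifted logarithm in the first row of Table 1 can be written in a few lines of base R. In this sketch the size factors are total counts per cell rescaled to average 1, and the pseudo-count `y0 = 1` is a placeholder rather than a recommendation; the simulated data and object names are likewise assumptions.

```r
set.seed(10)
n_genes <- 400; n_cells <- 100
depth <- rlnorm(n_cells, sdlog = 0.5)
counts <- matrix(rpois(n_genes * n_cells, outer(rlnorm(n_genes, 0, 1), depth)),
                 nrow = n_genes)                 # genes x cells

# Size factors: total counts per cell, rescaled to average 1
s <- colSums(counts) / mean(colSums(counts))

y0 <- 1    # pseudo-count; 1 is a placeholder, not a recommendation
shifted_log <- log(t(t(counts) / s) + y0)        # log(y / s + y0)

# Check how strongly PC1 still tracks the size factors after the transform
pca <- prcomp(t(shifted_log), center = TRUE)
depth_cor <- cor(pca$x[, 1], log(s), method = "spearman")
depth_cor
```

Repeating the last two lines for several values of `y0` is a quick way to see the residual dependency on size factors that the table mentions.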

FAQ 4: My dataset contains rare cell types. Why are they not appearing in my dimensionality reduction?

Answer: Rare cell types can be masked by the high variance of more abundant "null" genes when using standard PCA on transformed data. The normalization process can inadvertently downweight the signal from genes that define rare populations [9].

- Protocol for Rare Cell Type Discovery:

- Leverage Model-based Methods: Consider using a model-based dimensionality reduction tool like scGBM (single-cell generalized bilinear model), which is built on a Poisson bilinear model. This method directly models the count data, which can help preserve the signal from rare cell types that is lost in standard log+PCA or SCT+PCA workflows [9].

- Focus on Feature Selection: Prior to PCA, use rigorous feature selection to identify Highly Variable Genes (HVGs). This reduces the dilution of signal by non-informative genes.

- Validate with Clustering Cohesion: The scGBM method provides a Cluster Cohesion Index (CCI), which quantifies the confidence that a cluster represents a biologically distinct population versus an artifact of sampling variability. This is a powerful tool for validating the presence of rare cell types [9].

Experimental Protocols for Key Analyses

Protocol 1: Pseudo-bulk Analysis with Heteroscedasticity Checks

Application: Differential expression analysis from scRNA-seq data across pre-defined groups (e.g., conditions, genotypes) while accounting for group-level variance differences.

Materials:

- Input: Single-cell count matrix with cell-level metadata (sample, cluster/cell type).

- Software: R environment with packages `limma`, `edgeR`, and `variancePartition` or `missMethyl`.

Step-by-Step Instructions:

- Pseudo-bulk Creation: Aggregate raw counts from all cells belonging to the same combination of sample and cell type (or cluster) to create a pseudo-bulk count matrix.

- Data Normalization: Calculate normalization factors (e.g., using `edgeR::calcNormFactors`) on the pseudo-bulk matrix.

- Heteroscedasticity Diagnosis:

  - Generate an MDS plot and color points by group. Observe if some groups exhibit greater spread than others.

  - Use `edgeR::estimateDisp` to calculate a common biological coefficient of variation (BCV) for the dataset. Compare BCVs across groups by estimating them separately.

  - Plot group-specific mean-variance trends using the `voom` function on subsets of data for each group. Look for trends that are separated along the y-axis or have different shapes [11].

- Differential Expression:

  - If significant heteroscedasticity is detected, use `voomByGroup` or `voomQWB`.

  - If group variances are similar, proceed with standard `voom`, `edgeR`, or `DESeq2`.

- Model Fitting: Fit a linear model in `limma` accounting for the relevant experimental design and conduct empirical Bayes moderation (`eBayes`). Extract differentially expressed genes using `topTable`.

Protocol 2: Model-Based Dimensionality Reduction with scGBM

Application: Capturing a low-dimensional representation of single-cell data that is robust to technical noise and suitable for identifying rare cell types.

Materials:

- Input: Raw UMI count matrix (genes x cells).

- Software: R and the `scGBM` package available from GitHub (https://github.com/phillipnicol/scGBM) [9].

Step-by-Step Instructions:

- Model Fitting: Fit the scGBM model to the raw count matrix. The model is defined as:

- $Y_{gc} \sim \text{Poisson}(\exp(\mu_g + \nu_c + \mathbf{\Lambda}_g^\top \mathbf{F}_c))$

- Where $Y_{gc}$ is the count for gene $g$ in cell $c$, $\mu_g$ is a gene-specific intercept, $\nu_c$ is a cell-specific intercept (accounting for depth), and $\mathbf{\Lambda}_g$ and $\mathbf{F}_c$ are the latent factor loadings and scores, respectively [9].

- Extract Outputs: From the fitted model, extract the low-dimensional embedding (matrix of $\mathbf{F}_c$) and the associated uncertainty estimates for each cell's position.

- Downstream Clustering: Use a clustering algorithm (e.g., Louvain, Leiden) on the low-dimensional embedding to identify cell populations.

- Uncertainty Quantification: For each cluster, calculate the Cluster Cohesion Index (CCI) provided by scGBM. This index leverages the uncertainty in each cell's latent position.

- A high CCI indicates a tight, well-separated cluster with high confidence that it represents a true biological population.

- A low CCI suggests the cluster may be an artifact of technical noise or sampling variability and should be interpreted with caution [9].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Computational Tools for RNA-Seq Variation Analysis

| Item/Tool | Function/Benefit | Considerations for Use |

|---|---|---|

| ERCC Spike-In Mix | A set of synthetic RNA controls of known concentration used to standardize RNA quantification, determine sensitivity, dynamic range, and control for technical variation between runs [12]. | Not recommended for samples of very low concentration. Should be spiked in at a checkerboard pattern across samples for robust comparison [12]. |

| UMIs (Unique Molecular Identifiers) | Short random nucleotide sequences that tag individual mRNA molecules before PCR amplification. Enable accurate correction for PCR amplification bias and errors, providing a true digital count of original transcript molecules [12]. | Essential for deep sequencing (>50 million reads/sample) or low-input library preps. Require specific bioinformatic processing (e.g., with fastp or umis tools) for UMI extraction and deduplication [12]. |

| Ribo-depletion Kits | Remove abundant ribosomal RNA (rRNA), allowing for efficient sequencing of other RNA species like mRNA and lncRNA. Crucial for studying non-polyadenylated transcripts or samples with degraded RNA (e.g., FFPE) [12]. | Required for analysis of bacterial transcripts, lncRNA, or when using FFPE or blood samples (often combined with globin depletion) [12]. |

| scGBM R Package | A model-based dimensionality reduction tool that directly models UMI counts, quantifies uncertainty in cell embeddings, and helps distinguish biological signal from artifact [9]. | Particularly useful for analyzing datasets with rare cell types or when confidence in cluster definitions is required. |

| Limma with voomByGroup/voomQWB | Extensions to the standard limma-voom pipeline that account for unequal variance between experimental groups in pseudo-bulk analyses, improving error control and power [11]. | Should be employed after diagnostic plots (MDS, BCV, voom trends) indicate the presence of group heteroscedasticity. |

Core Concepts: Understanding Spurious Separation in PCA

What is the fundamental relationship between variance and PCA in RNA-seq data? Principal Component Analysis (PCA) summarizes the largest sources of variance in a dataset. In RNA-seq, the first principal component (PC1) captures the most variation, PC2 the next largest, and so on [13]. When technical factors like library size (the total number of reads per sample) differ systematically between groups, this can become a dominant, non-biological source of variance that PCA may mistake for a meaningful signal [14].

How can expression levels mislead the analysis? Genes with very high raw counts naturally exhibit larger absolute variations. If data is not properly scaled before PCA, these highly expressed genes can disproportionately influence the principal components, creating separation patterns based on technical artifacts rather than true biological differences [15].

Diagnostic Guide: Identifying Technical Drivers in Your PCA

Use the following workflow to determine if your sample separation is biologically meaningful or technically driven.

Diagnostic Table: Patterns of Spurious vs. Biological Separation

| Diagnostic Feature | Spurious Technical Separation | True Biological Separation |

|---|---|---|

| Library Size vs. PC1 Correlation | Strong correlation (e.g., R² > 0.8) [14] | Weak or no correlation |

| RLE Plot Pattern | Boxplot medians not aligned around zero; systematic wobble [14] | Medians aligned around zero; similar IQR across samples |

| Leading Genes in PC Loadings | Dominated by very highly expressed genes (e.g., ribosomal proteins, mitochondrial genes) [15] | Biologically relevant marker genes for the experimental conditions |

| Scree Plot Pattern | PC1 explains an exceptionally high percentage of variance (e.g., >50%) [16] | Variance more evenly distributed across several PCs |

| Replicate Clustering | Poor clustering of biological replicates within experimental groups | Tight clustering of biological replicates by experimental condition |

Normalization Protocols: Correcting Technical Variation

How do I normalize for library size differences? Library size normalization is essential because samples with more reads will naturally have higher counts across most genes. The Counts Per Million (CPM) method calculates scaled proportions:

Protocol: CPM Normalization

- Let $Y_{ij}$ be the count for gene $i$ in sample $j$

- Let $N_j$ be the library size for sample $j$ (sum of all counts)

- Calculate: $\text{CPM}_{ij} = \frac{Y_{ij}}{N_j} \times 10^6$ [14]

R Implementation:
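A minimal base-R sketch of the CPM formula above. The toy matrix, the object names, and the pseudo-count of 1 in the log step are illustrative assumptions and not part of the original protocol.

```r
# Toy genes x samples count matrix (values are illustrative only)
counts <- matrix(c(10, 0, 90,
                   20, 5, 175),
                 nrow = 3,
                 dimnames = list(c("g1", "g2", "g3"), c("s1", "s2")))

lib_size <- colSums(counts)                     # N_j, one value per sample
cpm_mat <- t(t(counts) / lib_size) * 1e6        # Y_ij / N_j * 10^6

log_cpm <- log2(cpm_mat + 1)                    # pseudo-count of 1 is an assumption
```

Every column of `cpm_mat` sums to one million by construction, which is a convenient sanity check on real data.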

How do I handle compositional differences between samples? The TMM (Trimmed Mean of M-values) method is more robust for between-sample normalization, as it accounts for the fact that RNA-seq data represents a compositional landscape where increases in some genes necessarily mean decreases in others [14].

Protocol: TMM Normalization using edgeR

- Filter lowly expressed genes: `keep <- filterByExpr(count_matrix, group = group)`

- Create DGEList object: `y <- DGEList(counts = count_matrix[keep, ])`

- Calculate normalization factors: `y <- calcNormFactors(y, method = "TMM")`

- Convert to log-CPM: `logcpm <- cpm(y, log = TRUE)` [14]

Data Transformation Protocols for PCA

Should I scale genes before PCA? Yes. Without scaling, highly expressed genes will dominate the PCA results regardless of their biological relevance.

Protocol: Z-score Normalization for PCA
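One way to carry out gene-wise z-scoring before PCA is sketched below in base R. The input `logexpr` is a placeholder for a normalized, log-transformed genes x samples matrix; the simulated values and object names are assumptions.

```r
set.seed(7)
# Placeholder log-expression matrix, genes x samples (assumption):
# the first 100 genes have low variance, the last 100 high variance
logexpr <- matrix(rnorm(200 * 12, mean = 5, sd = rep(c(0.2, 2), each = 100)),
                  nrow = 200)

sds <- apply(logexpr, 1, sd)
logexpr <- logexpr[sds > 0, ]            # constant genes cannot be scaled

# scale() standardises columns, so transpose to put genes in columns
z <- scale(t(logexpr), center = TRUE, scale = TRUE)    # samples x genes

pca <- prcomp(z, center = FALSE, scale. = FALSE)       # already standardised
var_explained <- pca$sdev^2 / sum(pca$sdev^2)
round(var_explained[1:3], 3)
```

Calling `prcomp(t(logexpr), scale. = TRUE)` directly gives the same result; the explicit version only makes the standardisation step visible. Note that scaling gives noisy low-expression genes the same weight as well-measured ones, which is why it is usually paired with the gene filtering described next.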

Should I select genes before PCA? Filtering to the most variable genes often improves signal-to-noise ratio.

Protocol: Highly Variable Gene Selection
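A simple variance-ranking version of this selection step is sketched below. It assumes the input has already been variance-stabilized (otherwise the ranking mostly reflects expression level), and the cutoff `n_top`, the simulated matrix, and the planted group effect are hypothetical.

```r
set.seed(8)
logexpr <- matrix(rnorm(1000 * 10), nrow = 1000,
                  dimnames = list(paste0("gene", 1:1000), NULL))
logexpr[1:50, 6:10] <- logexpr[1:50, 6:10] + 3   # 50 genes carry a group effect

n_top <- 100                                     # number of genes to keep (tunable)
gene_var <- apply(logexpr, 1, var)
hvg <- names(sort(gene_var, decreasing = TRUE))[seq_len(n_top)]

# PCA restricted to the selected genes, samples as rows
pca <- prcomp(t(logexpr[hvg, ]), center = TRUE, scale. = TRUE)
```

Dedicated tools fit a mean-variance trend and rank genes by their excess over it; plain variance ranking is used here only to keep the example short.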

Advanced Solutions for Heteroskedastic Data

What is Biwhitened PCA (BiPCA) and when should I use it? BiPCA is a principled framework specifically designed to handle heteroskedastic noise in count data like RNA-seq. It overcomes the limitation of standard PCA, which assumes uniform noise variance across all measurements [17].

How BiPCA Works:

- Biwhitening: Rescales rows and columns of the data matrix to standardize noise variances

- Rank Estimation: Determines the true dimensionality of the biological signal

- Optimal Denoising: Applies singular value shrinkage to recover the underlying signal matrix [17]
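The biwhitening step alone can be illustrated with a short Sinkhorn-style iteration. This sketch assumes Poisson noise, so each count doubles as an estimate of its own noise variance, and it does not cover rank estimation or singular value shrinkage; it is not the BiPCA package's implementation, and all names are hypothetical.

```r
set.seed(9)
lambda <- outer(rlnorm(100), rlnorm(80)) * 5       # simulated Poisson means
Y <- matrix(rpois(length(lambda), lambda), nrow = nrow(lambda))
Y <- Y[rowSums(Y) > 0, colSums(Y) > 0]             # scaling needs non-empty rows/columns
m <- nrow(Y); n <- ncol(Y)

# Find row factors x and column factors y so that the scaled variance
# matrix x_i * Y_ij * y_j has row sums n and column sums m
x <- rep(1, m); y <- rep(1, n)
for (iter in 1:200) {
  x <- n / as.vector(Y %*% y)
  y <- m / as.vector(crossprod(Y, x))
}
V_scaled <- x * Y * rep(y, each = m)

# Biwhitened data: rows scaled by sqrt(x), columns by sqrt(y)
Yw <- sqrt(x) * Y * rep(sqrt(y), each = m)
sv <- svd(Yw)$d                                    # spectrum used for rank estimation
```

After this rescaling the average noise variance is 1 in every row and column, which is what lets the singular values of `Yw` be compared against a known noise-only spectrum.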

When to Consider BiPCA:

- When standard normalization methods fail to remove technical batch effects

- When working with complex single-cell or spatial transcriptomics data

- When you suspect heteroskedastic noise is obscuring biological signals

Research Reagent Solutions

Essential Computational Tools for Robust PCA

| Tool/Resource | Function | Application Context |

|---|---|---|

| DESeq2 [14] | Differential expression analysis and data transformation | Provides variance-stabilizing transformation for PCA input |

| edgeR [14] | Normalization using TMM and related methods | Library size and composition normalization |

| pcaExplorer [18] | Interactive exploration of PCA results | Diagnostic visualization and interpretation |

| BiPCA [17] | Advanced PCA for heteroskedastic count data | When standard PCA shows persistent technical artifacts |

| Unique Molecular Identifiers (UMIs) [19] | Correction for amplification bias | Single-cell RNA-seq and low-input protocols |

Frequently Asked Questions

Q1: My PCA shows clear group separation but I suspect it's driven by library size. How can I confirm this? Calculate the correlation between PC1 values and library sizes. A strong correlation (e.g., Pearson r > 0.7) suggests library size is driving the separation. Then, re-run PCA on properly normalized data (e.g., TMM-normalized log-CPM) and check if the separation persists [14].

Q2: I've normalized my data but technical batch effects still dominate my PCA. What should I do? Consider these advanced approaches:

- Include batch as a covariate in your normalization model

- Use ComBat or other batch correction algorithms [19]

- Implement BiPCA, which specifically addresses heteroskedastic noise [17]

- Ensure you're using the correct experimental design formula

Q3: How much variance should PC1 explain in a good quality RNA-seq dataset? There's no universal threshold, but in a well-controlled experiment with strong biological signals, PC1 typically explains 20-40% of total variance. If PC1 explains >50%, carefully check for technical confounders. Also examine whether PC1 separation corresponds to your experimental groups or technical factors [16].

Q4: Should I use raw counts or transformed data for PCA? Never use raw counts. Always apply appropriate normalization and transformation. The standard pipeline is: raw counts → filtering of lowly expressed genes → normalization (e.g., TMM) → transformation (e.g., log-CPM) → optional scaling → PCA [14].

Q5: My negative controls (e.g., DMSO) don't cluster together in PCA. What does this indicate? This suggests strong technical variation or failed experiments. Negative controls should cluster tightly in PCA. If they don't, investigate RNA quality (RIN values), batch effects, or sample processing errors before proceeding with differential expression analysis [20].

Practical Solutions: Variance Stabilization and Model-Based Approaches for RNA-seq PCA

Troubleshooting Guides

FAQ 1: Why does my PCA plot still show a strong batch effect related to sequencing depth even after applying a shifted logarithm transformation?

This is a common issue that often stems from an improperly chosen pseudo-count (y₀) in the shifted logarithm transformation. The transformation is log(y/s + y₀), where y is the raw count and s is the size factor [8].

- Problem: The value of y₀ is frequently set in an arbitrary way, for example, by using Counts Per Million (CPM) normalization, which implicitly sets a very small pseudo-count. An incorrect y₀ fails to adequately stabilize the variance, leaving unwanted technical variation (like library size) in the data [8].

- Solution: Instead of an arbitrary choice, parameterize the shifted logarithm based on the data's overdispersion. The recommended pseudo-count is y₀ = 1 / (4α), where α is a typical overdispersion value for your dataset. For many single-cell RNA-seq datasets, a value of α = 0.5 is a reasonable starting point, which sets y₀ = 0.5 [8].
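The parameterization above can be sketched in a few lines. The helper names `pseudo_count` and `shifted_log` and the example counts and size factors are hypothetical; the formulas are the ones given in the text.

```python
import math

def pseudo_count(alpha):
    """Pseudo-count derived from a typical overdispersion: y0 = 1 / (4 * alpha)."""
    return 1.0 / (4.0 * alpha)

def shifted_log(y, s, alpha=0.5):
    """Shifted logarithm log(y / s + y0) for a raw count y and size factor s."""
    return math.log(y / s + pseudo_count(alpha))

# Illustrative counts for one gene in three cells, with hypothetical size factors.
counts = [0, 3, 12]
size_factors = [0.8, 1.0, 1.4]
transformed = [shifted_log(y, s) for y, s in zip(counts, size_factors)]
print(pseudo_count(0.5), transformed)
```

With α = 0.5, a zero count maps to log(0.5) regardless of the size factor, so zeros stay finite and only moderately negative.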

FAQ 2: When should I use the acosh transformation over the shifted logarithm?

The acosh transformation is the theoretically exact variance-stabilizing transformation for data following a gamma-Poisson (negative binomial) distribution [8].

- Problem: The shifted logarithm is only an approximation of the acosh transformation.

- Solution: Use the acosh transformation when you know or have a reliable estimate of the overdispersion parameter (α). The transformation is defined as g(y) = (1/√α) * acosh(2αy + 1) [8]. In practice, the shifted logarithm with a correctly chosen pseudo-count often performs similarly, but the acosh is theoretically more sound when its assumptions are met.
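A small numerical check can make the variance-stabilizing property concrete. The sketch below assumes the gamma-Poisson variance μ + αμ² and uses the first-order (delta-method) approximation Var[g(Y)] ≈ g'(μ)² · Var[Y]; the function names are hypothetical. The approximation describes the design of the transformation and is least accurate at very low means.

```python
import math

def acosh_transform(y, alpha):
    """g(y) = (1 / sqrt(alpha)) * acosh(2 * alpha * y + 1)."""
    return math.acosh(2.0 * alpha * y + 1.0) / math.sqrt(alpha)

def delta_method_variance(mu, alpha, h=1e-4):
    """First-order approximation of Var[g(Y)] for a gamma-Poisson count with
    mean mu and variance mu + alpha * mu**2, using a numerical derivative."""
    slope = (acosh_transform(mu + h, alpha) - acosh_transform(mu - h, alpha)) / (2.0 * h)
    return slope ** 2 * (mu + alpha * mu ** 2)

alpha = 0.5
approx_variances = [delta_method_variance(mu, alpha) for mu in (0.5, 5.0, 50.0, 500.0)]
print(approx_variances)  # all close to 1 under this approximation
```

The approximate variance is the same across three orders of magnitude in the mean, which is the sense in which the transformation removes the mean-variance dependence.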

FAQ 3: My data contains many zeros. Are these transformations still valid?

Yes, both the shifted logarithm and acosh transformations can handle zero counts.

- Problem: The logarithm of zero is undefined, which is why a pseudo-count is added in the shifted logarithm approach. The acosh function is defined for input values greater than or equal to 1, which is achieved by the term (2αy + 1) even when y = 0 [8].

- Solution: Ensure your transformation parameters are set correctly. For the shifted logarithm, a proper pseudo-count (y₀) avoids creating large negative values for zero counts. For the acosh, the input for a zero count is 1, and acosh(1) = 0.

Comparison of Variance-Stabilizing Transformations

The table below summarizes the core properties of the two primary transformations discussed.

Table 1: Key Characteristics of Shifted Logarithm and acosh Transformations

| Feature | Shifted Logarithm | acosh Transformation |
|---|---|---|
| Formula | log(y/s + y₀) [8] | (1/√α) * acosh(2αy + 1) [8] |
| Key Parameter | Pseudo-count (y₀) | Overdispersion (α) |
| Parameter Guidance | y₀ = 1/(4α); α = 0.5 is a good start for scRNA-seq [8] | Requires a reliable estimate of the gene-specific or typical overdispersion [8] |
| Theoretical Basis | Approximate variance stabilizer | Exact variance stabilizer for gamma-Poisson data [8] |
| Handling of Zero Counts | Defined, as y₀ is added [8] | Defined, as 2α*0 + 1 = 1 [8] |

Experimental Protocol: Applying Transformations for RNA-seq PCA

This protocol details the steps for applying variance-stabilizing transformations prior to Principal Component Analysis (PCA).

1. Preprocessing and Size Factor Calculation

- Input: Raw count matrix (genes x cells).

- Calculate Size Factors: For each cell c, compute a size factor s_c to normalize for differences in sequencing depth. A standard method is: s_c = (Total UMIs in cell c) / L, where L is the average of total UMIs across all cells [8].

- Filtering: Remove genes with zero counts across all cells [21].

2. Transformation Application

- Option A: Shifted Logarithm

  - Estimate a typical overdispersion (α) for your dataset. If unknown, start with α = 0.5 [8].

  - Calculate the pseudo-count: y₀ = 1 / (4α).

  - Apply the transformation: log(y/s + y₀).

- Option B: acosh Transformation

  - Obtain a reliable estimate of the overdispersion parameter α (e.g., per-gene estimates from a gamma-Poisson GLM) [8].

  - Apply the transformation: (1/√α) * acosh(2αy + 1).

3. Post-Transformation and PCA

- Z-score Normalization (Optional but Recommended): After transformation, center and scale each gene (across cells) to have zero mean and unit variance. This ensures all genes contribute equally to the PCA [21].

- Perform PCA: Execute PCA on the transformed and scaled matrix. Use the resulting principal components for downstream analysis like clustering and visualization.
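The protocol steps above can be sketched end to end in plain Python. This is a toy illustration under stated assumptions: the function names and the 4-gene count matrix are hypothetical, Option A (shifted logarithm with α = 0.5) is used, and only the first principal component is extracted, by power iteration. A real analysis would rely on an established PCA implementation.

```python
import math

def size_factors(counts):
    """counts is genes x cells; s_c = total UMIs in cell c / mean total."""
    n_cells = len(counts[0])
    totals = [sum(gene[c] for gene in counts) for c in range(n_cells)]
    mean_total = sum(totals) / n_cells
    return [t / mean_total for t in totals]

def shifted_log(counts, s, alpha=0.5):
    """Apply log(y / s_c + y0) with y0 = 1 / (4 * alpha) to every entry."""
    y0 = 1.0 / (4.0 * alpha)
    return [[math.log(y / s[c] + y0) for c, y in enumerate(gene)] for gene in counts]

def zscore_genes(matrix):
    """Center and scale each gene (row) across cells."""
    scaled = []
    for gene in matrix:
        mean = sum(gene) / len(gene)
        sd = math.sqrt(sum((x - mean) ** 2 for x in gene) / (len(gene) - 1))
        scaled.append([(x - mean) / sd if sd > 0 else 0.0 for x in gene])
    return scaled

def first_pc(matrix, n_iter=200):
    """Power iteration for the leading principal component of a gene-centered
    genes x cells matrix. Returns gene loadings and per-cell scores."""
    n_genes, n_cells = len(matrix), len(matrix[0])
    loadings = [1.0 / math.sqrt(n_genes)] * n_genes  # arbitrary starting vector
    for _ in range(n_iter):
        scores = [sum(matrix[g][c] * loadings[g] for g in range(n_genes))
                  for c in range(n_cells)]
        loadings = [sum(matrix[g][c] * scores[c] for c in range(n_cells))
                    for g in range(n_genes)]
        norm = math.sqrt(sum(w * w for w in loadings))
        loadings = [w / norm for w in loadings]
    scores = [sum(matrix[g][c] * loadings[g] for g in range(n_genes))
              for c in range(n_cells)]
    return loadings, scores

# Toy genes x cells matrix (illustrative values only).
raw = [[0, 0, 0, 0],
       [10, 12, 1, 2],
       [3, 4, 20, 25],
       [100, 130, 90, 120]]
counts = [gene for gene in raw if sum(gene) > 0]  # drop all-zero genes
s = size_factors(counts)
scaled = zscore_genes(shifted_log(counts, s))
loadings, pc1_scores = first_pc(scaled)
print(pc1_scores)
```

In this toy example the first two cells and the last two cells fall on opposite sides of PC1, reflecting the two expression patterns built into the matrix rather than their sequencing depth.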

Workflow Visualization

The following diagram illustrates the logical decision pathway and steps for applying these transformations in an RNA-seq analysis pipeline.

Table 2: Key Computational Tools and Concepts for Variance-Stabilizing Transformations

| Item Name | Function / Description |
|---|---|
| Size Factor (s_c) | A per-cell scaling factor used to normalize for variations in total sequencing depth (library size) [8]. |
| Overdispersion (α) | A parameter from the gamma-Poisson model quantifying extra-Poisson variation; crucial for parameterizing both transformations [8]. |
| Pseudo-count (y₀) | A small constant added to normalized counts to avoid taking the logarithm of zero in the shifted logarithm approach [8]. |
| Gamma-Poisson GLM | A statistical model used to fit count data and estimate parameters like overdispersion, which can inform the transformations [8]. |
| Z-score Normalization | A post-transformation step that standardizes each gene to mean=0 and variance=1, ensuring equal weight in PCA [21]. |

Conceptual Foundation: From Heteroskedasticity to Pearson Residuals

What are Pearson residuals and why are they needed for single-cell RNA-seq data?

In single-cell RNA-sequencing (scRNA-seq) data analysis, Pearson residuals are a normalization approach that addresses the fundamental issue of heteroskedasticity: technical variation in gene counts that depends on expression level and sequencing depth. Traditional normalization methods like log-normalization struggle with this because the variance in raw UMI count data is mean-dependent [22].

Pearson residuals are calculated as part of the SCTransform workflow, which uses a regularized negative binomial regression model. The core formula is:

Pearson residual = (Observed count - Expected count) / √(Expected variance) [23]

This transformation effectively standardizes gene expression values such that:

- Positive values indicate higher-than-expected expression

- Zero values indicate expected expression levels

- Negative values indicate lower-than-expected expression [23]
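The formula and its sign interpretation can be shown with a minimal sketch. It assumes a negative binomial variance of the form μ + μ²/θ, which is the usual parameterization for this model; the function name and the example values are hypothetical, and the expected count would in practice come from the fitted regression model.

```python
import math

def nb_pearson_residual(observed, expected, theta):
    """(observed - expected) / sqrt(variance), assuming the negative binomial
    variance expected + expected**2 / theta."""
    variance = expected + expected ** 2 / theta
    return (observed - expected) / math.sqrt(variance)

# Hypothetical gene with an expected count of 4 and theta = 10.
for observed in (9, 4, 0):
    print(observed, nb_pearson_residual(observed, expected=4.0, theta=10.0))
```

The three calls give a positive, a zero, and a negative residual respectively, matching the interpretation listed above.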

How do Pearson residuals address heteroskedasticity in PCA?

In traditional PCA applied to RNA-seq data, heteroskedasticity creates a serious technical artifact: the principal components are often dominated by technical variance rather than biological signals. This occurs because highly expressed genes naturally exhibit more variance, causing them to disproportionately influence the component loadings [22] [24].

Pearson residuals resolve this by stabilizing variance across all expression levels. After transformation, genes with different expression levels become comparable because the residuals have approximately the same variance regardless of mean expression [25] [23]. This ensures that PCA captures biological heterogeneity rather than technical artifacts.

Table 1: Comparison of Normalization Approaches for scRNA-seq PCA

| Method | Variance Handling | Technical Effect Removal | Biological Preservation | Recommended Use |
|---|---|---|---|---|
| Log-Normalization | Incomplete stabilization | Partial | Moderate | Standard workflows |
| Pearson Residuals | Complete stabilization | Effective | High | Heteroskedastic data |
| GLM-PCA | Model-based | Effective | High | Advanced users |

Implementation Frameworks: sctransform and Analytic Variants

What is the theoretical foundation of sctransform?

The sctransform method, introduced by Hafemeister and Satija (2019), employs a regularized negative binomial regression framework [22]. For each gene, it models UMI counts as:

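$$X_{cg} \sim \mathrm{NB}(\mu_{cg}, \theta_g), \qquad \ln(\mu_{cg}) = \beta_{0g} + \beta_{1g}\,\log_{10}(\hat{n}_c)$$

Where:

- $X_{cg}$ represents UMI counts for cell c and gene g

- $\theta_g$ is the gene-specific dispersion parameter of the negative binomial distribution (the variance is $\mu_{cg} + \mu_{cg}^2/\theta_g$, so smaller values mean stronger overdispersion)

- $\beta_{0g}$ and $\beta_{1g}$ are gene-specific intercept and slope parameters

- $\hat{n}_c$ is the observed sequencing depth for cell c [26]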

The original implementation encountered parameter instability, particularly for lowly-expressed genes, which was addressed through a regularization procedure that smooths parameters across genes with similar expression levels [22].

What are the key improvements in sctransform v2?

The updated sctransform v2 incorporates several critical enhancements based on analysis of 59 scRNA-seq datasets [27]:

- Fixed slope parameter: The GLM slope is fixed to ln(10) with log10(total UMI) as predictor

- Improved parameter estimation: Reduces uncertainty and bias from fitting GLMs for lowly-expressed genes

- Lower bound on gene-level standard deviation: Prevents genes with extremely low expression from having artificially high Pearson residuals [27]

These improvements are invoked in Seurat via the vst.flavor = "v2" parameter in the SCTransform() function [27].
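A short worked identity, using the symbols of the sctransform model given earlier, shows why a slope of ln(10) pairs naturally with a log10 predictor: the depth term becomes a plain offset, so the expected count scales in direct proportion to sequencing depth.

$$\ln(\mu_{cg}) = \beta_{0g} + \ln(10)\,\log_{10}(\hat{n}_c) = \beta_{0g} + \ln(\hat{n}_c) \quad\Longrightarrow\quad \mu_{cg} = e^{\beta_{0g}}\,\hat{n}_c$$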

What is the "analytic Pearson residuals" approach?

Subsequent research by Lause et al. (2021) demonstrated that the original sctransform model was overspecified, leading to noisy parameter estimates [26] [28]. They proposed a more parsimonious model with a theoretical foundation:

$$\mu_{cg} = p_g\,n_c, \qquad \ln(\mu_{cg}) = \ln(p_g) + \ln(n_c) = \beta_{0g} + \ln(n_c)$$

This model fixes the slope parameter to 1 when using ln(nc) as a predictor (known as an "offset" in GLM terminology), resulting in a single-parameter model that has an analytic solution [26] [28]. This analytic approach produces stable estimates without requiring post-hoc smoothing of parameters.
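A minimal sketch of the analytic computation follows. The expected count is the closed-form estimate implied by the offset model: cell total times gene total divided by the grand total. Two choices are assumptions not stated in the text above: a single fixed θ = 100 shared by all genes, and clipping of residuals to ±√(number of cells). The function name and toy matrices are hypothetical.

```python
import math

def analytic_pearson_residuals(counts, theta=100.0):
    """counts is cells x genes, with no all-zero genes or cells.
    Expected counts are mu_cg = n_c * (gene total / grand total)."""
    n_cells, n_genes = len(counts), len(counts[0])
    cell_totals = [sum(row) for row in counts]
    gene_totals = [sum(counts[c][g] for c in range(n_cells)) for g in range(n_genes)]
    grand_total = sum(cell_totals)
    clip = math.sqrt(n_cells)  # assumed clipping bound
    residuals = []
    for c in range(n_cells):
        row = []
        for g in range(n_genes):
            mu = cell_totals[c] * gene_totals[g] / grand_total
            z = (counts[c][g] - mu) / math.sqrt(mu + mu * mu / theta)
            row.append(max(-clip, min(clip, z)))
        residuals.append(row)
    return residuals

# Counts that differ only by sequencing depth give residuals of zero.
depth_only = [[2, 4], [4, 8]]
print(analytic_pearson_residuals(depth_only))

# A cell with an unexpected burst of the second gene gets a positive residual there.
with_signal = [[10, 0, 5], [12, 1, 6], [9, 30, 4]]
residuals = analytic_pearson_residuals(with_signal)
print(residuals[2][1])
```

No per-gene model fitting or parameter smoothing is involved, which is where the stability of the analytic variant comes from.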

Figure 1: SCTransform Normalization Workflow

Troubleshooting Guide: Common Implementation Challenges

How should I interpret the different data layers in the SCT assay?

After running SCTransform, the Seurat object contains a new "SCT" assay with three distinct layers that serve different purposes:

Table 2: SCT Assay Layers and Their Applications

| Data Layer | Content | Purpose | Downstream Use |
|---|---|---|---|
| counts | Corrected UMI counts | Depth-adjusted counts | Differential expression |
| data | log1p(corrected counts) | Normalized expression | Visualization |
| scale.data | Pearson residuals | Variance-stabilized values | PCA, clustering, integration [25] [23] |

A common point of confusion arises when users inadvertently use the wrong layer for specific analyses. For optimal results:

- Use scale.data (Pearson residuals) for dimensionality reduction (PCA, UMAP) and clustering

- Use data or counts for visualization and differential expression [27] [23]

Why might Pearson residuals fail to resolve heteroskedasticity in my data?

Several factors can limit the effectiveness of Pearson residuals:

- Extreme batch effects: When technical variation exceeds biological variation, additional integration methods may be needed

- Poor model convergence: For very sparse datasets, the negative binomial model may not fit properly

- Insufficient regularization: The default parameters may not be optimal for all dataset types

If PCA still shows strong technical patterns after SCTransform, consider:

- Increasing the ncells parameter to use more cells for parameter estimation

- Including additional covariates in the model using vars.to.regress

- Trying the v2 flavor with vst.flavor = "v2" [27] [29]

How do I prepare SCT-normalized data for differential expression?

A critical implementation detail often overlooked is the need to run PrepSCTFindMarkers() before performing differential expression with SCT-normalized data [27]. This function ensures that the corrected counts are properly scaled for DE analysis:

Without this preparation step, the results may be suboptimal or misleading [27].

Experimental Protocols and Implementation

Basic SCTransform Workflow for PCA

For researchers addressing heteroskedasticity in PCA, the following protocol implements the current best practices:

Installation and setup:

Normalization with SCTransform v2:

Dimensionality reduction:

Validation of heteroskedasticity correction:

Integration Workflow for Multiple Samples

When working with multiple conditions or batches, the Pearson residuals enable effective integration:

Separate normalization:

Feature selection and integration preparation:

Integration using Pearson residuals:

This workflow preserves biological variance while removing technical artifacts, making PCA results more biologically interpretable [27].

Table 3: Critical Computational Tools for Pearson Residuals Implementation

| Tool/Resource | Function | Application Context |
|---|---|---|
| sctransform R package | Core normalization algorithm | Primary implementation |
| glmGamPoi package | Accelerated model fitting | Speed improvement for large datasets |
| Seurat (v4.1+) | Integration framework | Downstream analysis |
| Scanpy (v1.9+) | Python implementation | Python workflows [26] |

Advanced Applications and Methodological Comparisons

When should I choose analytic Pearson residuals over standard sctransform?

The analytic approach provides particular advantages in specific scenarios:

- Computational efficiency: The closed-form solution requires less computation

- Theoretical purity: Avoids ad hoc smoothing steps

- Reproducibility: Deterministic results without stochastic elements [26] [28]

However, the standard sctransform implementation with regularization may be more robust for extremely heterogeneous datasets where the Poisson assumption is violated.

How do Pearson residuals compare to other variance-stabilizing transformations?

Table 4: Variance-Stabilization Methods Comparison

| Method | Theoretical Basis | Handling of Zeros | PCA Performance |
|---|---|---|---|
| Log(x+1) | Heuristic | Poor | Suboptimal due to mean-variance relationship |
| VST (DESeq2) | Parametric empirical Bayes | Moderate | Good for bulk RNA-seq |
| Pearson Residuals | Negative binomial GLM | Excellent | Optimal for scRNA-seq PCA [26] [22] |

The key advantage of Pearson residuals emerges from their direct modeling of UMI count distributions, which accurately captures the statistical properties of single-cell data, particularly the mean-variance relationship and zero inflation.

Figure 2: Method Comparison for PCA Preparation

Frequently Asked Questions

Can I apply Pearson residuals to non-UMI data?

Pearson residuals as implemented in sctransform are specifically designed for UMI-based data, which follows negative binomial or Poisson distributions. For non-UMI data (e.g., TPM, FPKM), alternative approaches like regular log-normalization or VST may be more appropriate, as the count-based assumptions may not hold.

How many variable features should I use with SCTransform?

The default of 3,000 variable features generally works well, but this parameter can be adjusted based on dataset size and complexity. For larger datasets (>50,000 cells), increasing to 5,000 may capture additional biological signal. The variable.features.n parameter controls this setting [29].

Why does SCTransform sometimes produce different results than log-normalization?

SCTransform explicitly models the mean-variance relationship in count data, while log-normalization applies a heuristic transformation. This fundamental difference means SCTransform better preserves biological heterogeneity while removing technical noise, often resulting in:

- Improved cluster separation

- Reduced influence of sequencing depth on PCA

- Enhanced identification of rare cell populations [25] [22]

Can I combine SCTransform with other normalization methods?

SCTransform is designed as a complete replacement for the standard NormalizeData, FindVariableFeatures, and ScaleData workflow. Combining it with additional normalization is generally unnecessary and may remove meaningful biological variance. The method is specifically engineered to produce data ready for downstream dimensional reduction.

Method Comparison Table

The table below summarizes key characteristics of the three model-based factor analysis methods.

| Method | Underlying Model | Key Features | Computational Scalability | Uncertainty Quantification |
|---|---|---|---|---|
| GLM-PCA [9] [8] | Poisson or Negative Binomial | Latent factor estimation in log space; an early model-based alternative to PCA on transformations. | Suffers from slow runtime and convergence issues on datasets with millions of cells [9] [30]. | Not specified in available literature. |
| NewWave [8] | Negative Binomial (with optional zero-inflation) | An optimized implementation of the ZINB-WAVE approach; allows for zero inflation. | Can be computationally burdensome on large datasets [30]. | Not specified in available literature. |
| scGBM [9] [30] | Poisson Bilinear Model | Fast estimation via iteratively reweighted SVD; quantifies uncertainty in latent positions; provides a Cluster Cohesion Index (CCI). | Scales to datasets with millions of cells [9] [30]. | Yes, quantifies uncertainty for each cell's latent position and propagates it to clustering [9] [30]. |

Troubleshooting Common Issues

Problem: Method fails to separate rare cell types in the low-dimensional embedding.

- Potential Cause: Standard transformations (e.g., log(1+x), scTransform) can downweight the signal from marker genes for rare populations and upweight noise from null genes [9].

- Solution:

- Consider using scGBM, which, in simulations, has been shown to better capture signal from rare cell types compared to some transformation-based PCA methods [9].

- Validate findings using the Cluster Cohesion Index (CCI) provided by scGBM to assess the confidence that a cluster represents a biologically distinct population versus an artifact of sampling variability [9] [30].

Problem: PCA results are dominated by a few high-variance genes, masking biological signal.

- Potential Cause: When applying standard PCA, if the data is not properly scaled, genes with high raw variance (often highly expressed genes) will dominate the principal components, even if they contain little biological signal [31].

- Solution:

- For standard PCA, always use scaling (scale. = TRUE in prcomp()) to give all genes equal weight in the analysis [31].

- As a more integrated solution, use a model-based method like GLM-PCA or scGBM, which inherently model the mean-variance relationship of count data and can avoid this artifact [9] [30].

Problem: Long runtimes or failure to converge on large datasets.

- Potential Cause: Some model-based methods, like the original GLM-PCA and ZINB-WAVE, were not designed for the scale of modern single-cell datasets (millions of cells) [9] [30].

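- Solution: Consider scGBM, which uses fast estimation via iteratively reweighted SVD and is reported to scale to datasets with millions of cells [9] [30].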

Frequently Asked Questions (FAQs)

Q: Why should I use a model-based method instead of simple log-transformation and PCA?

A: Standard transformations like log(1+x) applied to count data can induce spurious heterogeneity and mask true biological variability. The discrete nature and mean-variance relationship of count data make models based on normal distributions inappropriate. Model-based methods like GLM-PCA, NewWave, and scGBM directly model the count matrix (e.g., using Poisson or Negative Binomial distributions), which avoids these biases and provides a more statistically sound foundation for dimensionality reduction [9] [30].

Q: How do I handle heteroskedasticity in single-cell RNA-seq data?

A: Heteroskedasticity (the dependence of variance on the mean) is an inherent property of count data. The primary strategies are:

- Variance-Stabilizing Transformation (VST): Methods like the shifted logarithm or Pearson residuals (e.g., scTransform) attempt to stabilize the variance across the dynamic range before applying PCA [8].

- Direct Count Modeling: Methods like GLM-PCA, NewWave, and scGBM incorporate the mean-variance relationship directly into their probabilistic models, making them a natural choice for dealing with heteroskedasticity [9] [30].

- Compositional Data Analysis (CoDA): An emerging approach that treats the data as compositions and uses log-ratio transformations, which can also address heteroskedasticity [32].

Q: Which model-based method is best for uncertainty quantification?

A: Among the methods listed, scGBM specifically emphasizes uncertainty quantification. It provides estimates of the uncertainty in each cell's latent position. Furthermore, it leverages these uncertainties to define a Cluster Cohesion Index (CCI), which helps assess the confidence associated with cell clustering results [9] [30].

Q: My pseudo-bulk data shows unequal variance between experimental groups. How does this affect model-based factor analysis?

A: While not directly addressed by GLM-PCA, NewWave, or scGBM, heteroscedasticity between groups in pseudo-bulk data is a known issue that can hamper the detection of differentially expressed genes. If your primary goal is differential expression analysis on pseudo-bulk data, consider methods designed for this, like voomByGroup or voomWithQualityWeights with a blocked design, which explicitly model group-level heteroscedasticity [11]. For cell-level analysis, the model-based factor methods discussed here remain appropriate.

Experimental Protocol: Benchmarking Dimensionality Reduction Methods

This protocol outlines how to evaluate the performance of GLM-PCA, NewWave, and scGBM on a single-cell RNA-seq dataset.

Data Simulation and Preparation

- Simulated Data: Generate a count matrix with known ground truth, such as:

- Real Data: Use a well-annotated public dataset (e.g., from the 10X Genomics PBMC collection) where major cell types have been established.

Application of Dimensionality Reduction Methods

- Apply each method (GLM-PCA, NewWave, scGBM) to the count matrix according to their package documentation to obtain a low-dimensional embedding (e.g., 50 dimensions).

- As a benchmark, also apply standard methods:

Performance Evaluation Metrics

- Cluster Separation: Apply a clustering algorithm (e.g., Louvain) on each embedding. Calculate metrics like Adjusted Rand Index (ARI) against the ground truth labels.

- Rare Cell Type Detection: Visually inspect 2D visualizations (UMAP/t-SNE) of the embeddings for the separation of known rare populations [9].

- Computational Efficiency: Record the runtime and memory usage for each method on the same hardware.

- Biological Signal Preservation: For the simulated data with all genes differentially expressed, check if the PC1 loadings from each method are independent of the genes' base mean expression, which indicates an unbiased capture of signal [30].
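For the cluster separation metric, the Adjusted Rand Index can be computed directly from the contingency counts of two labelings. The sketch below uses the standard pair-counting formula; the function name and the toy labels are hypothetical, and in practice an established implementation from a statistics library would normally be used.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between two labelings of the same cells."""
    n = len(labels_true)
    pair_counts = Counter(zip(labels_true, labels_pred))
    true_counts = Counter(labels_true)
    pred_counts = Counter(labels_pred)
    # Number of cell pairs grouped together in both, in truth, and in prediction.
    sum_both = sum(comb(v, 2) for v in pair_counts.values())
    sum_true = sum(comb(v, 2) for v in true_counts.values())
    sum_pred = sum(comb(v, 2) for v in pred_counts.values())
    expected = sum_true * sum_pred / comb(n, 2)
    max_index = 0.5 * (sum_true + sum_pred)
    if max_index == expected:  # degenerate case, e.g. a single cluster in both
        return 1.0
    return (sum_both - expected) / (max_index - expected)

# Toy ground truth and two hypothetical clusterings of six cells.
truth = ["T", "T", "T", "B", "B", "NK"]
same_partition = [2, 2, 2, 0, 0, 1]   # identical grouping, different label names
imperfect = [0, 0, 1, 1, 1, 2]        # one T cell grouped with the B cells

print(adjusted_rand_index(truth, same_partition))
print(adjusted_rand_index(truth, imperfect))
```

Because the index depends only on which cells are grouped together, relabeling clusters does not change the score.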

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Analysis |
|---|---|
| scGBM R Package [9] | Implements the core scGBM algorithm for fast, model-based dimensionality reduction with uncertainty quantification. |
| NewWave R/Bioconductor Package [8] | Provides the optimized implementation of the negative binomial (and zero-inflated) factor model. |
| Seurat R Package | A toolkit for single-cell analysis; can be used to run standard PCA on transformed data (e.g., log-normalized or scTransform residuals) for comparison [9] [8]. |
| Scanpy Python Package | A scalable toolkit for single-cell analysis in Python; its default Log+PCA method serves as a useful benchmark [9]. |
| PCAtools / scater | R/Bioconductor packages for visualizing and interpreting the results of PCA, such as creating scree plots and PCA biplots. |
| Benchmarking Dataset (e.g., PBMC3k) | A standard, well-characterized single-cell dataset used to validate and compare the performance of different methods. |

Workflow Diagram: Method Selection for scRNA-seq Factor Analysis

The diagram below illustrates a logical workflow for choosing a dimensionality reduction method based on your data and research goals.

Frequently Asked Questions (FAQs)

Q1: What are latent variables, and why are they used in expression estimation? Latent variables are unobserved factors that influence your observed data. In RNA-seq analysis, they are used to model and correct for unobserved confounders—such as batch effects, hidden technical artifacts, or unknown biological variations—that are not accounted for by your known covariates. Properly estimating these latent factors is essential as it can significantly increase the statistical power for detecting true biological signals, such as expression quantitative trait loci (eQTLs) [33].

Q2: My pseudo-bulk single-cell data is sparse and skewed. How does this affect latent variable inference? The inherent properties of single-cell RNA-seq data, including sparsity (many zero counts), distributional skewness (non-normal, right-skewed expression), and mean-variance dependency, directly challenge the assumptions of standard methods like PCA and PEER. If these properties are not addressed, they can lead to highly correlated and unreliable latent factors (with Pearson correlations between factors ranging from 0.63 to 0.99), ultimately compromising your downstream analysis and eQTL discovery power [33].

Q3: What is the practical difference between an Empirical Bayes and a Fully Bayesian approach? The primary difference lies in how prior information is handled. Empirical Bayes (eBayes) methods, used by tools like DESeq2, first use the data to estimate the hyperparameters (e.g., the prior distribution for dispersions or fold changes) and then treat these estimates as known constants for subsequent inference. This is a computationally efficient approximation [34] [6]. In contrast, a Fully Bayesian analysis uses Markov Chain Monte Carlo (MCMC) to simultaneously estimate all parameters and their joint posterior distributions, explicitly accounting for the uncertainty in the hyperparameters. While fully Bayesian is more statistically rigorous, it is also far more computationally intensive [34].

Q4: How can I stabilize noisy fold-change estimates for low-count genes? Shrinkage estimation is the key technique for stabilizing fold-change estimates. By sharing information across genes, this method shrinks the noisy log-fold changes of lowly expressed genes toward a more stable, common value. This results in more reliable and interpretable effect sizes, helping to prevent misinterpretation of the large but highly variable fold changes often seen for genes with low counts [6].
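The idea behind shrinkage can be illustrated with a generic normal-normal empirical Bayes sketch. This is not the procedure DESeq2 implements; it assumes each estimated log-fold change is normally distributed around its true value with a known standard error, and that true values share a zero-centered normal prior whose variance is estimated from the data by a simple method of moments. All names and values are hypothetical.

```python
def shrink_log_fold_changes(lfc, se):
    """Shrink noisy log-fold changes toward zero.

    Model: lfc_hat ~ N(beta, se**2) and beta ~ N(0, prior_var).
    The posterior mean is lfc_hat * prior_var / (prior_var + se**2)."""
    n = len(lfc)
    # Empirical Bayes step: estimate the prior variance from all genes.
    prior_var = max(0.0, sum(b * b for b in lfc) / n - sum(s * s for s in se) / n)
    return [b * prior_var / (prior_var + s * s) for b, s in zip(lfc, se)]

# Hypothetical genes: the first two have low counts and large standard errors.
lfc = [3.0, -2.5, 0.4, 1.1]
se = [2.0, 1.8, 0.2, 0.3]
shrunk = shrink_log_fold_changes(lfc, se)
print(shrunk)
```

Estimates with large standard errors are pulled strongly toward zero, while precisely measured ones are left almost unchanged, which is the behavior described above for low-count genes.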

Troubleshooting Guides

Problem 1: Highly Correlated Latent Factors in Single-Cell Pseudo-Bulk Analysis

Symptoms: The inferred latent factors (e.g., PEER factors or principal components) are highly correlated with each other, and the first factor explains an overwhelmingly large proportion of the variance.

Diagnosis: This is a classic pitfall arising from applying methods designed for bulk RNA-seq directly to pseudo-bulk single-cell data without accounting for its unique properties, such as sparsity and skewness [33].

Solution Protocol: Follow this step-by-step quality control and transformation procedure to generate valid latent variables:

- Gene Filtering: Exclude genes that have a high proportion of zeros across individuals. A common and effective threshold is to remove genes with zero expression in ≥ 90% of samples (π₀ ≥ 0.9) [33].

- Data Transformation: Apply a log transformation with a pseudo-count to the expression matrix. Use log(x + 1) [33].

- Standardization: Scale the transformed data so that each gene has a mean of 0 and a standard deviation of 1 [33].

- Utilize Highly Variable Genes (HVGs): For a computationally efficient alternative that captures most of the relevant variation, generate latent factors using only the top 2000 Highly Variable Genes (ranked by variance-to-mean ratio). This approach can achieve similar eGene discovery power while being approximately 6.2 times faster than using all genes [33].
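The QC and transformation steps above can be sketched as a single function. The names and toy data are hypothetical, and one detail is an assumption: the variance-to-mean ratio used to rank genes is computed here on the untransformed values, since the text does not specify which scale to use.

```python
import math

def sample_variance(values):
    mean = sum(values) / len(values)
    return sum((v - mean) ** 2 for v in values) / (len(values) - 1)

def prepare_expression(expr, max_zero_fraction=0.9, n_hvg=2000):
    """expr maps gene -> non-negative pseudo-bulk expression per individual.
    Returns log1p-transformed, standardized values for the top variable genes."""
    # Gene filtering: drop genes with zeros in >= 90% of individuals.
    kept = {g: v for g, v in expr.items()
            if sum(x == 0 for x in v) / len(v) < max_zero_fraction}

    # HVG selection by variance-to-mean ratio (assumed: untransformed scale).
    def vmr(values):
        return sample_variance(values) / (sum(values) / len(values))
    top = sorted(kept, key=lambda g: vmr(kept[g]), reverse=True)[:n_hvg]

    prepared = {}
    for g in top:
        logged = [math.log1p(x) for x in kept[g]]      # log(x + 1)
        sd = math.sqrt(sample_variance(logged))
        if sd == 0:
            continue                                   # constant gene, no information
        mean = sum(logged) / len(logged)
        prepared[g] = [(x - mean) / sd for x in logged]  # mean 0, sd 1
    return prepared

# Toy data for ten individuals (illustrative values only).
expr = {
    "geneA": [0, 0, 0, 0, 0, 0, 0, 0, 0, 3],   # 90% zeros, removed
    "geneB": [5, 8, 0, 12, 7, 9, 4, 6, 10, 3],
    "geneC": [100, 140, 90, 200, 150, 80, 120, 160, 110, 95],
}
prepared = prepare_expression(expr, n_hvg=2)
print(sorted(prepared))
```

The resulting matrix is what would be passed to PCA or PEER to infer latent factors.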

Table 1: Impact of QC Steps on Latent Factor Quality

| Step | Objective | Outcome |
|---|---|---|
| Exclude genes (π₀ ≥ 0.9) | Reduce sparsity & skewness | Mitigates violation of normality assumptions |
| log(x+1) transformation | Address right-skewness | Reduces median skewness of expression distribution |
| Standardization | Normalize gene variance | Prevents highly expressed genes from dominating |
| Use Top 2000 HVGs | Computational efficiency | ~6.2x faster runtime with minimal power loss |

Problem 2: Suboptimal Number of Latent Factors in eQTL Modelling

Symptoms: The number of significant eGenes detected does not increase monotonically with the number of factors fitted; instead, it peaks and then decreases. The optimal number varies unpredictably between cell types.

Diagnosis: Using a fixed, arbitrary number of factors (e.g., 5, 10, 15) for all datasets is insufficient. The optimal number is data-dependent and must be determined empirically [33].

Solution Protocol: Perform a sensitivity analysis to identify the dataset-specific optimal number of factors.

- Incremental Fitting: Run your eQTL association model multiple times, incrementally increasing the number of latent factors (PFs/PCs) used as covariates from 0 to a sufficiently high number (e.g., 50) [33].

- Monitor eGene Discovery: For each run, record the number of eGenes detected at a specified significance threshold.

- Identify the Inflection Point: Plot the number of eGenes against the number of factors fitted. The optimal number is typically just before the point where the curve plateaus or begins to decline. Research has shown that this pattern varies significantly across cell types, necessitating this empirical approach [33].
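One way to make the inflection-point choice reproducible is a simple rule: pick the smallest number of factors whose eGene count is within a small tolerance of the maximum. The sketch below is a hypothetical heuristic, not a method from the cited work; the 2% tolerance and the eGene counts are made up for illustration, and the plot should still be inspected.

```python
def choose_n_factors(egene_counts, tolerance=0.02):
    """egene_counts maps number of latent factors -> eGenes detected.
    Returns the smallest number of factors reaching at least
    (1 - tolerance) of the best eGene count."""
    best = max(egene_counts.values())
    for n_factors in sorted(egene_counts):
        if egene_counts[n_factors] >= (1.0 - tolerance) * best:
            return n_factors

# Hypothetical sensitivity analysis for one cell type.
egene_counts = {0: 120, 5: 210, 10: 260, 15: 288, 20: 291, 25: 290, 30: 280}
chosen = choose_n_factors(egene_counts)
print(chosen)
```

Running the same rule separately for each cell type respects the observation that the optimal number is dataset-specific.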

Problem 3: Inaccurate Allele-Specific Expression (ASE) Quantification

Symptoms: ASE estimates are biased due to reference genome alignment preference or an inability to accurately assign reads to parental alleles, especially across different isoforms.

Diagnosis: Standard alignment to a single (haploid) reference genome introduces systematic bias at heterozygous SNP sites. Methods that only consider reads overlapping known SNPs lack the power for precise isoform-level quantification [35].

Solution Protocol: Implement a unified Bayesian framework for allele-specific inference.

- Input Preparation: Create diploid genome sequences (paternal and maternal) for the sample from personal variant call files (VCFs) using tools like `vcf2diploid` [35].

- Alignment: Align RNA-seq reads to the combined paternal and maternal cDNA sequences, retaining all possible alignment locations for each read using an aligner like Bowtie2 with the `-k` option [35].

- Joint Quantification with a Bayesian Model: Use a method like ASE-TIGAR, which employs a probabilistic generative model. In this model, the haplotype origin (`H_n`) of each read is treated as a latent variable. The model simultaneously estimates:

  - Isoform abundance parameters (`θ`)

  - Allelic preference parameters (`ϕ`) for each isoform

  Variational Bayesian inference is used to efficiently approximate the posterior distributions of these parameters, optimizing read alignments and providing accurate ASE estimates [35].

Diagram 1: ASE Estimation Workflow. A Bayesian pipeline for accurate allele-specific expression quantification, integrating diploid genomes and probabilistic assignment of reads.

The Scientist's Toolkit: Key Reagents & Computational Solutions

Table 2: Essential Materials and Software for Bayesian Expression Analysis

| Name | Type | Function / Application |

|---|---|---|

| DESeq2 [6] | R/Bioconductor Package | Differential expression analysis using shrinkage estimation for dispersion and fold changes to handle heteroskedasticity. |

| ASE-TIGAR [35] | Software Pipeline | Bayesian estimation of allele-specific expression and isoform abundance from RNA-Seq data given diploid genomes. |

| Bowtie2 [35] | Alignment Software | Aligns sequencing reads to a reference genome, with options to retain multiple alignments crucial for ASE analysis. |

| PEER [33] | R/Python Library | Infers latent factors (unobserved confounders) from expression data to increase power in association studies like eQTL mapping. |

| Diploid Genome Sequences [35] | Data Input | Paternal and maternal cDNA sequences in FASTA format, essential for unbiased ASE analysis. |

| vcf2diploid [35] | Software Tool | Generates personal diploid genome sequences from a variant call file (VCF), a key pre-processing step for ASE. |

Advanced Methodologies

Experimental Protocol: A Fully Bayesian Analysis of RNA-Seq Count Data

This protocol outlines the steps for performing a fully Bayesian analysis of a hierarchical model, as applied in a heterosis study, which can be adapted for other count-based expression analyses [34].

Model Specification:

- Define a hierarchical model appropriate for your experimental design. For a typical two-group comparison, this often involves a negative binomial model for the read counts `K_ij` to handle overdispersion.

- The mean parameter `μ_ij` is modeled via a log-link function to a linear predictor containing the experimental conditions and other covariates.

- Place prior distributions on all unknown parameters, including dispersion parameters and group coefficients.
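As a rough sketch of the likelihood part of such a model, the code below evaluates a negative binomial log-likelihood for one gene with a log link and a single group indicator. The parameterization by mean `mu` and dispersion `alpha` (variance `mu + alpha * mu**2`), the function names, and the example counts are assumptions made for illustration; priors and the hierarchical structure across genes are deliberately left out.

```python
import math


def nb_logpmf(k, mu, alpha):
    """Log-probability of count k under a negative binomial with
    mean mu and dispersion alpha (variance = mu + alpha * mu**2)."""
    r = 1.0 / alpha  # "size" parameter
    return (math.lgamma(k + r) - math.lgamma(r) - math.lgamma(k + 1)
            + r * math.log(r / (r + mu)) + k * math.log(mu / (r + mu)))


def gene_log_likelihood(counts, groups, intercept, group_effect, alpha):
    """Sum of log-probabilities for one gene across samples.

    groups holds 0/1 indicators; the mean follows the log link
    log(mu_j) = intercept + group_effect * groups[j].
    """
    total = 0.0
    for k, x in zip(counts, groups):
        mu = math.exp(intercept + group_effect * x)
        total += nb_logpmf(k, mu, alpha)
    return total


# Hypothetical counts for one gene: three samples per group.
counts = [12, 9, 15, 31, 27, 40]
groups = [0, 0, 0, 1, 1, 1]
print(gene_log_likelihood(counts, groups, math.log(12.0), math.log(2.7), 0.1))
```

In a fully Bayesian treatment this log-likelihood would be added to the log-prior of each parameter to form the unnormalized log-posterior that the sampler explores.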

Computational Implementation:

- Due to the high dimensionality (tens of thousands of genes), general-purpose MCMC software (e.g., JAGS, Stan) may be computationally intractable for the entire dataset.

- Implement a customized, efficient MCMC algorithm. Leverage parallelization frameworks, such as those designed for Graphics Processing Units (GPUs), to ameliorate the computational burden and achieve reasonable time frames [34].

Posterior Inference:

- Run the MCMC sampler to obtain samples from the joint posterior distribution of all parameters.

- Use these samples to calculate posterior probabilities and credible intervals for the effects of interest (e.g., the probability of heterosis or differential expression).

- This approach provides well-calibrated posterior probabilities and explicitly accounts for uncertainty in all model parameters [34].
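Once posterior draws are available, the summaries described above reduce to simple counting and sorting. The sketch below assumes the draws for one effect are already in a list; the name `summarize_posterior` and the simulated draws (which stand in for real MCMC output) are hypothetical.

```python
import random


def summarize_posterior(draws, level=0.95):
    """Return P(effect > 0) and an equal-tailed credible interval
    computed from posterior draws of a single effect."""
    ordered = sorted(draws)
    n = len(ordered)
    tail = (1.0 - level) / 2.0
    lower = ordered[int(tail * n)]
    upper = ordered[min(n - 1, int((1.0 - tail) * n))]
    prob_positive = sum(1 for d in ordered if d > 0) / n
    return prob_positive, (lower, upper)


# Stand-in for MCMC output: draws of a log fold change (simulated here).
random.seed(0)
draws = [random.gauss(0.8, 0.4) for _ in range(4000)]
prob, interval = summarize_posterior(draws)
print(prob, interval)
```

The same function can be applied gene by gene, which is how a posterior probability of differential expression would typically be tabulated.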

Experimental Protocol: Variational Bayesian Inference for Allele-Specific Expression

This protocol details the core statistical methodology behind the ASE-TIGAR pipeline [35].

Define the Generative Model:

- For each sequencing read `n`, define latent variables: isoform origin `T_n`, haplotype origin `H_n`, and start position `S_n`.

- The observed data is the read sequence `R_n`.

- The model parameters are `θ` (isoform abundances) and `ϕ` (allelic preferences for each isoform).

- The joint probability is:

`p(T_n, H_n, S_n, R_n | θ, ϕ) = p(T_n | θ) * p(H_n | T_n, ϕ) * p(S_n | T_n, H_n) * p(R_n | T_n, H_n, S_n)`
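To make the factorization concrete, the toy sketch below computes, for a single read, the posterior probability of each candidate (isoform, haplotype) assignment given fixed parameter values. It is an illustration under simplifying assumptions, not the ASE-TIGAR implementation: the function name is hypothetical, `phi[t]` is taken to be the probability of the paternal haplotype for isoform `t`, and `read_lik` lumps `p(S_n | T_n, H_n) * p(R_n | T_n, H_n, S_n)` into one number per retained alignment.

```python
def assignment_posterior(theta, phi, read_lik):
    """Posterior over (isoform, haplotype) assignments for one read.

    theta:    {isoform: abundance}, playing the role of p(T_n | theta)
    phi:      {isoform: probability of the paternal haplotype}
    read_lik: {(isoform, haplotype): likelihood of the read's position
               and sequence under that assignment}
    """
    weights = {}
    for (t, h), lik in read_lik.items():
        p_h = phi[t] if h == "paternal" else 1.0 - phi[t]
        weights[(t, h)] = theta[t] * p_h * lik
    total = sum(weights.values())
    return {key: w / total for key, w in weights.items()}


# Hypothetical read that aligns to both haplotypes of one isoform,
# matching the paternal copy much better at a heterozygous site.
theta = {"iso1": 0.7, "iso2": 0.3}
phi = {"iso1": 0.5, "iso2": 0.8}
read_lik = {("iso1", "paternal"): 0.9, ("iso1", "maternal"): 0.1}
print(assignment_posterior(theta, phi, read_lik))
```

Retaining every alignment location for a read is what makes this normalization meaningful: each retained alignment contributes one candidate assignment to the sum.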

Variational Bayesian (VB) Inference:

- Objective: Approximate the true, intractable posterior distribution `p(Z | R, θ, ϕ)` (where `Z` represents all latent variables) with a simpler distribution `q(Z)`.

- Process: Iteratively optimize the parameters of `q(Z)` to minimize the Kullback-Leibler (KL) divergence between `q(Z)` and the true posterior. This is computationally more efficient than MCMC for this problem structure.

- Outcome: The process yields approximate posterior distributions for the haplotype assignments and point estimates for the model parameters `θ` and `ϕ`, enabling accurate ASE quantification [35].
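The reason minimizing the KL divergence is tractable follows from a standard identity, written here in the notation of this protocol (the symbol `\mathcal{L}(q)` for the evidence lower bound is introduced for illustration):

```latex
\log p(R \mid \theta, \phi)
  = \underbrace{\mathbb{E}_{q(Z)}\!\left[\log p(R, Z \mid \theta, \phi) - \log q(Z)\right]}_{\mathcal{L}(q)}
  + \mathrm{KL}\!\left(q(Z) \,\|\, p(Z \mid R, \theta, \phi)\right)
```

Because the left-hand side does not depend on `q`, and the KL term is non-negative, maximizing `\mathcal{L}(q)` is equivalent to minimizing the KL divergence. The lower bound involves only the joint probability defined in the generative model, so the intractable posterior never has to be evaluated directly.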

Understanding Heteroskedasticity in RNA-seq Data

What is heteroskedasticity and why does it matter for RNA-seq PCA?

Heteroskedasticity refers to the non-constant variability in data across different measurement levels. In RNA-seq count tables, this manifests as genes with high expression levels showing greater variance than lowly expressed genes [8]. This creates significant challenges for Principal Component Analysis (PCA) because standard PCA performs best with uniform variance data [8]. The gamma-Poisson (negative binomial) distribution characteristic of UMI-based single-cell RNA-seq data produces a quadratic mean-variance relationship (Var[Y] = μ + αμ²), where highly expressed genes dominate the variance and can disproportionately influence principal components [8].
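A few numbers show how quickly the quadratic term takes over. The sketch below simply evaluates the mean-variance formula quoted above; the overdispersion value `alpha = 0.05` is an arbitrary illustrative choice.

```python
def gamma_poisson_variance(mu, alpha):
    """Variance of a gamma-Poisson count with mean mu and overdispersion alpha."""
    return mu + alpha * mu ** 2


alpha = 0.05  # assumed overdispersion, for illustration only
for mu in (1, 10, 100, 1000):
    var = gamma_poisson_variance(mu, alpha)
    # The ratio var / mu would be 1 at every mean under a pure Poisson model.
    print(mu, var, var / mu)
```

With these settings the variance-to-mean ratio grows from close to 1 for a gene with mean 1 to about 51 for a gene with mean 1000, which is why untransformed counts let a handful of highly expressed genes set the direction of the leading components.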

Data Transformation Methods to Address Heteroskedasticity

What transformation methods effectively stabilize variance for RNA-seq PCA?

Multiple transformation approaches have been developed to address heteroskedasticity in RNA-seq data. The table below summarizes the primary methods, their mechanisms, and implementation considerations:

| Method | Underlying Principle | Key Features | Performance Notes |

|---|---|---|---|

| Delta Method | Applies non-linear function based on mean-variance relationship [8] | Includes acosh transformation (1/√α · acosh(2αy+1)) and shifted logarithm (log(y+y₀)) [8] | Simple approach; performance can be affected by size factor scaling issues [8] |

| Pearson Residuals | Models count data with gamma-Poisson GLM, calculates (observed - expected)/√variance [8] | Implemented in tools like sctransform and transformGamPoi; requires fitting generalized linear model [8] | Better handles size factor variability; stabilizes variance across expression range [8] |

| Latent Expression | Infers underlying expression states using Bayesian models [8] | Includes Sanity Distance, Sanity MAP, Dino, and Normalisr; estimates "true" expression [8] | Theoretical appeal but complex implementation; performance varies [8] |

| Factor Analysis | Directly models count distribution in low-dimensional space [8] | GLM PCA and NewWave; uses gamma-Poisson sampling distribution [8] | Avoids transformation entirely; directly produces latent representation [8] |

| Biwhitened PCA (BiPCA) | Adaptive row/column rescaling to standardize noise variances [17] | Makes noise homoscedastic and analytically tractable; reveals true data rank [17] | Recent method; enhances biological interpretability of count data [17] |

Table 1: Comparison of transformation methods for addressing heteroskedasticity in RNA-seq data

How do I implement the shifted logarithm transformation?

The shifted logarithm remains a widely used approach despite its simplicity. The basic transformation is:

g(y) = log(y/s + y₀)

Where y represents raw counts, s is the size factor for each cell, and y₀ is the pseudo-count [8]. The critical consideration is proper parameterization:

- Size factors (s): Typically calculated as `s_c = (∑_g y_gc)/L`, where the numerator is the total UMIs for cell c, and L is a scaling factor [8]

- Pseudo-count (y₀): Should be parameterized based on typical overdispersion (α) using `y₀ = 1/(4α)` rather than using arbitrary values [8]

- Avoid arbitrary scaling: Using fixed values like L=10,000 (Seurat) or L=1,000,000 (CPM) implies different overdispersion assumptions that may not match your data [8]
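The parameterization can be sketched as follows. This is a minimal illustration with hypothetical function names: it assumes L is set to the mean total count per cell so that size factors average to 1, and it takes α as a user-supplied typical overdispersion. The helper `implied_alpha` uses the algebraic identity log(y/s + 1) = log(y + s) - log(s): with s = total/L and a pseudo-count of 1, the effective pseudo-count relative to mean-normalized size factors is (typical total)/L, so the implied overdispersion is L/(4 · typical total).

```python
import math


def shifted_log(counts, alpha):
    """Apply g(y) = log(y / s + y0) with y0 = 1 / (4 * alpha).

    counts: list of cells, each a list of per-gene UMI counts.
    Size factors are total counts divided by the mean total (assumption).
    """
    totals = [sum(cell) for cell in counts]
    mean_total = sum(totals) / len(totals)
    size_factors = [t / mean_total for t in totals]
    y0 = 1.0 / (4.0 * alpha)
    return [[math.log(y / s + y0) for y in cell]
            for cell, s in zip(counts, size_factors)]


def implied_alpha(typical_total, L):
    """Overdispersion implied by log(y / s + 1) when s = total / L."""
    return L / (4.0 * typical_total)


# Two hypothetical cells with the same composition but different depth.
print(shifted_log([[1, 3], [2, 6]], alpha=0.25))
# Implied overdispersion for a typical depth of 5,000 UMIs per cell.
print(implied_alpha(5000, 10_000), implied_alpha(5000, 1_000_000))
```

Under the assumed depth of 5,000 UMIs per cell, a fixed L of 10,000 corresponds to α = 0.5 while L of 1,000,000 corresponds to α = 50, which illustrates how far apart the two conventions are.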

Figure 1: Workflow for proper implementation of shifted logarithm transformation

Batch Effect Correction Methods

How can I correct for batch effects in RNA-seq data before PCA?

Batch effects represent systematic non-biological variations that can compromise data reliability. Several methods are available for batch correction:

| Method | Approach | Data Format | Key Advantage |

|---|---|---|---|

| ComBat-Seq | Negative binomial model using empirical Bayes framework [36] | Preserves integer count data [36] | Specifically designed for RNA-seq count data [36] |

| ComBat-ref | Enhanced ComBat-seq with reference batch selection [36] | Adjusts counts toward reference batch [36] | Superior statistical power with dispersed batches [36] |

| Include as Covariate | Includes batch as covariate in linear models [36] | Works with standard DE tools | Simple implementation in edgeR/DESeq2 [36] |

| RUVSeq | Removes unwanted variation using control genes [36] | Flexible factor analysis | Handles unknown batch effects [36] |

Table 2: Batch effect correction methods for RNA-seq data analysis

What is the step-by-step protocol for ComBat-ref batch correction?

ComBat-ref builds upon ComBat-seq but innovates by selecting the batch with the smallest dispersion as a reference:

- Model RNA-seq counts using a negative binomial distribution for each batch

- Estimate batch-specific dispersion parameters (λ_i) for each gene

- Select reference batch with the smallest dispersion parameter

- Adjust other batches toward the reference batch using the model `log(μ̃_ijg) = log(μ_ijg) + γ_1g - γ_ig`, where μ_ijg is the expected count, γ represents batch effects, and batch 1 denotes the reference batch [36]

- Set adjusted dispersion to match the reference batch (λ̃_i = λ_1)
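The mean and dispersion adjustment in the last two steps can be sketched for a single gene as below. This is only an illustration of the formula: the function name and inputs are hypothetical, the batch effects and dispersions are assumed to have been estimated already, and the step that maps observed counts onto the adjusted distribution is not shown.

```python
import math


def adjust_to_reference(mu, gamma, dispersion):
    """Shift each batch's expected count toward the reference batch.

    mu:         {batch: expected count for one gene in one sample}
    gamma:      {batch: estimated batch effect for that gene (log scale)}
    dispersion: {batch: estimated dispersion}
    Returns the reference batch and {batch: (adjusted mean, adjusted dispersion)}.
    """
    ref = min(dispersion, key=dispersion.get)  # smallest dispersion wins
    adjusted = {}
    for b in mu:
        log_mu_adj = math.log(mu[b]) + gamma[ref] - gamma[b]
        adjusted[b] = (math.exp(log_mu_adj), dispersion[ref])
    return ref, adjusted


# Hypothetical gene where batch B doubles the expected count.
mu = {"A": 100.0, "B": 200.0}
gamma = {"A": 0.0, "B": math.log(2.0)}
dispersion = {"A": 0.1, "B": 0.3}
print(adjust_to_reference(mu, gamma, dispersion))
```

The reference batch is left unchanged by construction, since its own batch effect cancels in the adjustment.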

Troubleshooting Common PCA Problems

Why does my PCA still show strong batch effects after transformation?

If PCA visualization continues to show batch-driven clustering after transformation, consider these potential issues:

- Insufficient modeling of batch effects: Simple transformations like shifted logarithm may not fully remove the influence of size factors on the variance structure [8]

- Incorrect parameterization: Using arbitrary pseudo-counts (y₀) rather than those derived from data-specific overdispersion estimates [8]

- Unaccounted-for technical covariates: Library preparation method, sequencing depth, or RNA quality can introduce batch effects that require explicit modeling [37]

Solution: Apply dedicated batch correction methods like ComBat-seq or include batch as a covariate in your model. Ensure your experimental design includes representation of each biological condition across all batches [37].

How can I objectively detect outlier samples in my RNA-seq data?

Traditional PCA is sensitive to outliers, which can disproportionately influence component orientation. Robust PCA methods provide objective outlier detection:

- Implement PcaGrid or PcaHubert from the rrcov R package [38]

- Apply to your transformed count data

- Identify samples flagged as outliers based on robust distance measures

- Investigate the nature of outliers (technical artifacts vs. true biological variation) [38]

PcaGrid has demonstrated 100% sensitivity and specificity in tests with positive control outliers [38]. Removing technical outliers before differential expression analysis significantly improves performance, but biological outliers should be retained to preserve natural biological variance [38].
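PcaGrid and PcaHubert are R functions, so the sketch below does not reproduce them. It illustrates the general idea of a robust distance with a much simpler stand-in technique: a median/MAD-based modified z-score applied to one per-sample score (for example, a sample's coordinate on a principal component). The function name, the cutoff of 3.5, and the example scores are assumptions for illustration.

```python
import statistics


def flag_outliers(scores, cutoff=3.5):
    """Return indices of samples whose modified z-score exceeds cutoff.

    Uses the median and the median absolute deviation (MAD), which are
    far less affected by the outliers themselves than mean and SD.
    """
    med = statistics.median(scores)
    mad = statistics.median([abs(x - med) for x in scores])
    if mad == 0:
        return []  # no spread to measure against
    return [i for i, x in enumerate(scores)
            if abs(0.6745 * (x - med) / mad) > cutoff]


# Hypothetical per-sample scores with one extreme sample at index 6.
scores = [0.1, -0.2, 0.3, 0.0, -0.1, 0.2, 8.0]
print(flag_outliers(scores))
```

A flagged index is only a prompt for investigation; whether the sample reflects a technical artifact or genuine biology still has to be decided from metadata and quality metrics.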

Why is my PCA dominated by highly expressed genes?

This common problem stems from the mean-variance relationship in RNA-seq data. Solutions include:

- Apply variance-stabilizing transformations that specifically address the quadratic mean-variance relationship [8]

- Use Pearson residuals from gamma-Poisson GLMs, which effectively stabilize variance across the dynamic range [8]

- Consider Biwhitened PCA (BiPCA) which adaptively rescales rows and columns to standardize noise variances [17]
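As an illustration of the Pearson residual option, the sketch below uses the simplest possible mean model: the expected count for a gene in a cell is the product of the gene total and the cell total divided by the grand total, and the residual is scaled by the gamma-Poisson standard deviation. This is an assumption-laden simplification, not the regularized regression used by sctransform; the function name and the fixed `alpha` are hypothetical, and genes with zero total counts should be removed beforehand to avoid division by zero.

```python
import math


def pearson_residuals(counts, alpha):
    """Residuals (y - mu) / sqrt(mu + alpha * mu**2) for a genes x cells table.

    counts: list of genes, each a list of per-cell counts.
    mu is the product of marginal totals divided by the grand total.
    """
    gene_totals = [sum(row) for row in counts]
    cell_totals = [sum(col) for col in zip(*counts)]
    grand_total = sum(gene_totals)
    residuals = []
    for g, row in enumerate(counts):
        out = []
        for c, y in enumerate(row):
            mu = gene_totals[g] * cell_totals[c] / grand_total
            out.append((y - mu) / math.sqrt(mu + alpha * mu ** 2))
        residuals.append(out)
    return residuals


# Hypothetical table: the second gene is expressed three times higher
# than the first, but both track sequencing depth perfectly.
print(pearson_residuals([[10, 20], [30, 60]], alpha=0.05))
```

Because both genes in the example vary only with depth, all residuals are zero, so neither gene would contribute to a subsequent PCA regardless of its expression level.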