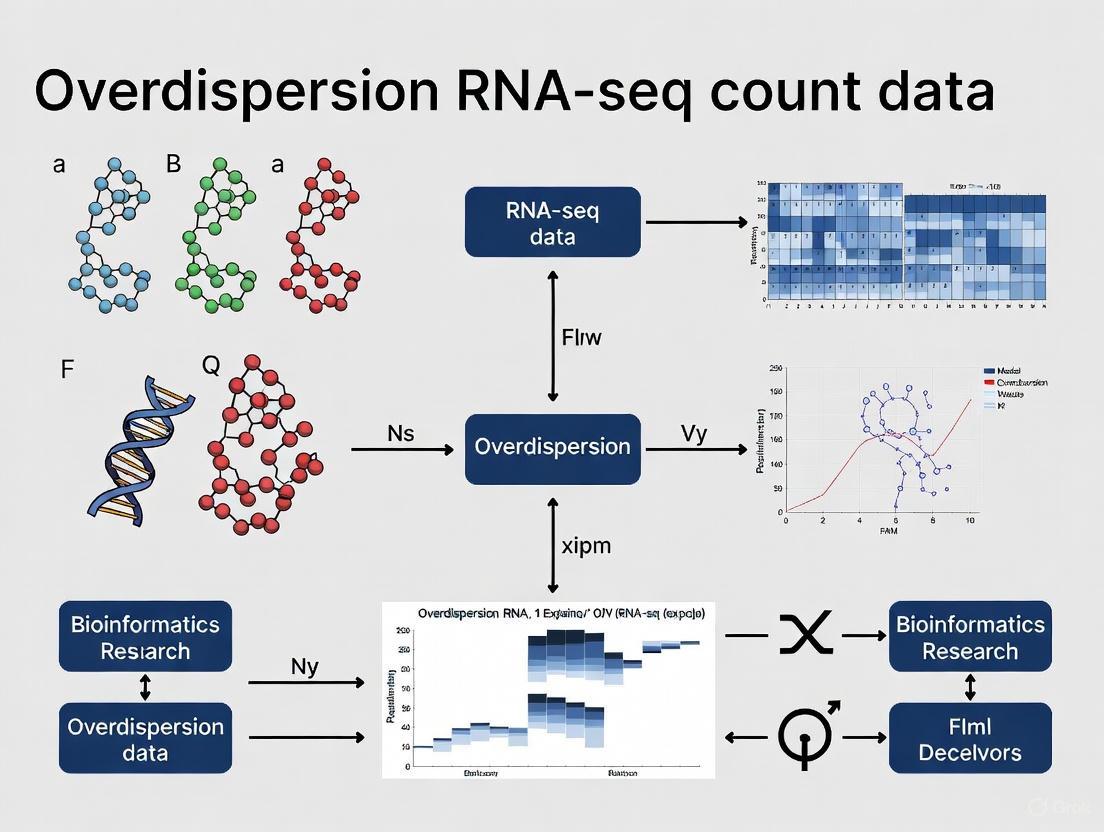

Mastering Overdispersion in RNA-seq Data: From Foundational Concepts to Advanced Solutions for Reliable Gene Expression Analysis

This comprehensive guide addresses the critical challenge of overdispersion in RNA-seq count data, where observed variance exceeds mean expression levels.


Abstract

This comprehensive guide addresses the critical challenge of overdispersion in RNA-seq count data, where observed variance exceeds mean expression levels. Tailored for researchers, scientists, and drug development professionals, we explore the fundamental causes of overdispersion stemming from both technical and biological variability. The article provides a detailed examination of established and emerging methodological approaches including negative binomial models, quasi-Poisson regression, variance-stabilizing transformations, and regularized generalized linear models. Through practical troubleshooting guidance and comparative analysis of popular tools like DESeq2, edgeR, and newer methods such as DEHOGT and sctransform, we equip researchers with strategies to optimize their analysis pipelines. Validation frameworks and benchmarking considerations ensure robust differential expression analysis, ultimately supporting more accurate biological interpretations and discoveries in biomedical research.

Understanding RNA-seq Overdispersion: From Biological Sources to Statistical Characterization

FAQs: Understanding Overdispersion in RNA-seq Data

What is overdispersion in the context of RNA-seq data?

In RNA-seq data, overdispersion refers to the phenomenon where the variance of gene expression counts is greater than the mean [1] [2]. This violates the assumption of a Poisson distribution, where the mean and variance are expected to be equal [3]. This excess variability is observed as a quadratic mean-variance relationship, meaning that as the mean expression of a gene increases, its variance increases at an even faster rate [2].
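The contrast between the two mean-variance relationships can be written compactly. The expressions below assume the negative binomial parameterization commonly used for RNA-seq counts; the symbols $\mu$ (mean count) and $\phi$ (dispersion) are introduced here for illustration.

$$
\begin{aligned}
\text{Poisson:} \quad & \operatorname{Var}(Y) = \mu \\
\text{Negative binomial:} \quad & \operatorname{Var}(Y) = \mu + \phi\mu^{2} \\
& \mathrm{CV}^{2}(Y) = \frac{\operatorname{Var}(Y)}{\mu^{2}} = \frac{1}{\mu} + \phi
\end{aligned}
$$

The $1/\mu$ term is sampling noise that fades at high expression, while $\phi$ is the part that remains. Under this parameterization, $\sqrt{\phi}$ can be read as the coefficient of variation that persists between replicates however deeply a gene is sequenced.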

Why does overdispersion occur in RNA-seq experiments?

Overdispersion arises from both technical and biological sources [2]:

- Technical variability: The process of measuring gene expression is inherently noisy. For a gene with a low number of transcripts in a cell, the number captured during library preparation can vary significantly between replicates due to sampling stochasticity. You might capture all transcripts in one sample but only a few in another [4].

- Biological variability: True biological differences in gene expression exist between replicates that are genetically identical or from the same treatment group. This variability is often the effect of interest but contributes to the overall variance observed in the data [2].
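The two sources can be illustrated with a small simulation. The sketch below is a toy under stated assumptions, not part of any RNA-seq package: technical replicates are modeled as Poisson draws around a fixed expression level, and biological replicates as Poisson draws whose underlying level itself follows a gamma distribution (which yields negative binomial counts). The mean of 50 and dispersion of 0.1 are arbitrary illustrative values.

```python
import math
import random
from statistics import mean, variance

def poisson_draw(lam, rng):
    """Draw one Poisson(lam) count using Knuth's multiplication method."""
    threshold = math.exp(-lam)
    k, p = 0, rng.random()
    while p > threshold:
        k += 1
        p *= rng.random()
    return k

def simulate(mu, phi, n, rng):
    """Return (technical, biological) count lists of length n.

    technical:  Poisson(mu), sampling noise only.
    biological: Poisson(rate) with rate ~ Gamma(mean=mu, variance=phi*mu^2).
    """
    technical = [poisson_draw(mu, rng) for _ in range(n)]
    shape, scale = 1.0 / phi, mu * phi
    biological = [poisson_draw(rng.gammavariate(shape, scale), rng)
                  for _ in range(n)]
    return technical, biological

rng = random.Random(1)  # fixed seed so the run is repeatable
technical, biological = simulate(mu=50, phi=0.1, n=5000, rng=rng)
ratio_technical = variance(technical) / mean(technical)
ratio_biological = variance(biological) / mean(biological)
print(f"technical only:         variance/mean = {ratio_technical:.2f}")
print(f"technical + biological: variance/mean = {ratio_biological:.2f}")
```

With these settings the first ratio should come out close to 1 and the second close to 1 + 0.1 × 50 = 6, which is the excess that a Poisson model would miss.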

How does overdispersion impact differential expression analysis?

Ignoring overdispersion in statistical models leads to inflated false positive rates. Models that assume a Poisson distribution (mean = variance) will underestimate the uncertainty for most genes. This can cause you to incorrectly identify genes as being differentially expressed when they are not. Properly modeling overdispersion is therefore essential for robust and reliable biological conclusions [1] [2].

I have heard that log-transforming counts can help with heterogeneity. Is this sufficient?

While a log transformation can help stabilize variance and make the data more homoscedastic, it is often an ad-hoc solution that does not fully address the underlying statistical issue [4]. Dedicated methods for RNA-seq data, such as those based on the negative binomial distribution, explicitly model the mean-variance relationship, providing a more principled and powerful approach for differential expression testing [1] [2].

Troubleshooting Guides

Problem: High False Positive Rates in Differential Expression Analysis

Potential Cause: The analysis pipeline does not adequately account for overdispersion in the count data.

Solution:

- Diagnose: Plot the mean versus the variance of your genes (on a log-log scale). A clear trend above the line of equality (the Poisson line) indicates overdispersion [1] [5].

- Switch Models: Avoid methods that assume a Poisson distribution. Instead, use software designed to handle overdispersion.

- Recommended Tools:

- edgeR: Uses a negative binomial model and empirical Bayes methods to "shrink" gene-wise dispersion estimates towards a common or trended value, improving stability for studies with small sample sizes [1] [2].

- DESeq2: Similarly employs a negative binomial model and shares information across genes to estimate dispersion, using a trended prior [1].

- limma-voom: Transforms the data to model the mean-variance relationship at the observation level and incorporates this relationship into the linear modeling framework using precision weights [2].

- BBSeq: Offers a beta-binomial model as an alternative for modeling overdispersion [1].

Workflow for diagnosing and addressing overdispersion:

Problem: Analyzing Data with Very Small Sample Sizes

Potential Cause: With few replicates, gene-wise dispersion estimates are highly unreliable.

Solution: Leverage information across all genes to stabilize dispersion estimates.

- Use Information-Sharing Methods: Employ the "common," "trended," or "tagwise" dispersion options in edgeR, or the dispersion estimation in DESeq2 [1] [2]. These methods use empirical Bayes to shrink noisy, gene-wise estimates towards a fitted trend, borrowing strength from the entire dataset.

- Consider the voom Method: The voom method in the limma package can also be effective for small sample sizes, as it robustly fits a mean-variance trend to create precision weights for each observation [2].

Key Experimental Protocols

Protocol: Visualizing and Confirming the Mean-Variance Relationship

Purpose: To empirically demonstrate the presence of overdispersion in your dataset.

Methodology:

- Data Input: Start with a raw count matrix (genes as rows, samples as columns).

- Filtering: Filter out genes with zero counts across all samples to avoid trivial results [5].

- Normalization: Normalize the counts to account for differences in library size between samples. A simple Counts Per Million (CPM) calculation is a good start [5].

- Formula: $\text{CPM} = \frac{\text{Reads for gene}}{\text{Total reads in sample}} \times 10^6$

- Calculation: For each gene, calculate the mean and variance of its normalized expression across samples in a condition.

- Visualization: Create a scatter plot of the log-transformed mean against the log-transformed variance for all genes [1] [5].

- Interpretation: Add a reference line (slope=1, intercept=0) representing the Poisson expectation (mean = variance). Data points consistently above this line indicate overdispersion.
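The calculation steps of this protocol can be sketched in a few lines. The code below is a minimal illustration using only the Python standard library; the function names and the toy matrix are hypothetical, and plotting is left out. One caveat worth keeping in mind: after CPM scaling, the slope-1 line matches the Poisson expectation exactly only when library sizes are close to one million reads, because scaling a count by a factor multiplies its variance by the square of that factor.

```python
import math
from statistics import mean, variance

def counts_per_million(counts):
    """Scale a genes-by-samples count matrix so each sample sums to one million."""
    n_samples = len(counts[0])
    library_sizes = [sum(row[j] for row in counts) for j in range(n_samples)]
    return [[row[j] / library_sizes[j] * 1e6 for j in range(n_samples)]
            for row in counts]

def mean_variance_points(counts):
    """Return (gene_index, log10 mean, log10 variance) for each informative gene."""
    points = []
    for gene, row in enumerate(counts_per_million(counts)):
        m, v = mean(row), variance(row)
        if m > 0 and v > 0:  # the log scale needs strictly positive values
            points.append((gene, math.log10(m), math.log10(v)))
    return points

# Hypothetical toy matrix: 3 genes (rows) x 4 replicates of one condition (columns).
toy_counts = [
    [100, 120, 80, 100],
    [900, 880, 920, 900],
    [0, 0, 0, 0],
]
for gene, log_mean, log_var in mean_variance_points(toy_counts):
    side = "above" if log_var > log_mean else "on or below"
    print(f"gene {gene}: log10 mean={log_mean:.2f}, "
          f"log10 var={log_var:.2f} ({side} the slope-1 line)")
```

The returned points are what would be passed to a scatter plot; the all-zero gene is dropped, mirroring the filtering step.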

Protocol: Differential Expression Analysis Accounting for Overdispersion (using edgeR)

Purpose: To identify differentially expressed genes while properly controlling for overdispersion.

Methodology:

- Create a DGEList object: Input the count matrix and sample group information into edgeR [5].

- Filter lowly expressed genes: Remove genes that are not expressed at a sufficient level in a minimum number of samples.

- Normalize for composition: Use the calcNormFactors function to calculate scaling factors that normalize for differences in library size and RNA composition [5].

- Estimate dispersion: This is the critical step for handling overdispersion.

- Step 1: estimateCommonDisp calculates a single, overall dispersion value for all genes.

- Step 2: estimateTagwiseDisp estimates gene-specific (tagwise) dispersions, which are shrunk towards the common or a trended dispersion based on the gene's abundance [5].

- Test for DE: Fit a negative binomial generalized linear model (GLM) and test for differential expression using a likelihood ratio test or a quasi-likelihood F-test.

- Interpret results: The resulting p-values account for the overdispersion in the data, leading to more reliable inference.

Table 1: Comparison of primary statistical models used to handle overdispersion in RNA-seq data.

| Model / Method | Underlying Distribution | Key Feature | Typical Use Case | Software/Tool |

|---|---|---|---|---|

| Poisson | Poisson | Assumes mean = variance; generally inadequate for real biological data [3]. | Technical replicates only [3]. | Basic GLM |

| Negative Binomial | Negative Binomial | Explicitly models overdispersion via a dispersion parameter; allows variance > mean [1] [2]. | Standard for bulk RNA-seq DGE analysis. | edgeR [2] [5], DESeq2 [1] |

| Beta-Binomial | Beta-Binomial | An alternative to the negative binomial for modeling overdispersion in count data [1]. | Alternative to Negative Binomial. | BBSeq |

| Variance Modeling | Linear Model (on transformed data) | Models the mean-variance trend to create precision weights for each observation [2]. | Flexible approach, integrates with limma's methods. | limma-voom [2] |

| Hurdle Model | Two-component (e.g., Logistic + Gaussian) | Jointly models detection rate (0 vs >0) and expression level (if >0) [6]. | Single-cell RNA-seq data with high dropout rates. | MAST [6] |

Table 2: Key research reagents and computational tools for RNA-seq experiments focused on overdispersion.

| Item / Resource | Type | Function / Purpose |

|---|---|---|

| ERCC Spike-In Controls | Synthetic RNA Mix | A set of exogenous RNA controls at known concentrations used to assess technical variation, accuracy, and the dynamic range of an experiment [7]. |

| UMIs (Unique Molecular Identifiers) | Oligonucleotide Barcodes | Short random sequences added to each molecule during library prep to correct for PCR amplification bias and duplicates, refining count estimates [7]. |

| Poly-A Selection / rRNA Depletion Kits | Library Prep Reagent | Methods to enrich for mRNA by targeting polyadenylated tails or removing abundant ribosomal RNA, crucial for defining the transcript population being sequenced [7]. |

| edgeR | Software / Bioconductor Package | A statistical software package using a negative binomial model to assess differential expression, with robust methods for dispersion estimation [2] [5]. |

| DESeq2 | Software / Bioconductor Package | A widely used Bioconductor package for differential analysis of count data, based on a negative binomial generalized linear model [1]. |

| limma-voom | Software / Bioconductor Package | A method that transforms count data and models the mean-variance trend to allow the use of linear models for RNA-seq analysis [2]. |

FAQs: Core Concepts and Troubleshooting

FAQ 1: What is the fundamental difference between technical and biological variance?

- Biological Variance refers to the natural variation in gene expression that occurs between different biological samples (e.g., different individuals, animals, or independently cultured cells) due to genetic heterogeneity, environmental exposures, and stochastic biological processes [8] [9]. It is the variation of scientific interest in most studies.

- Technical Variance arises from the experimental and measurement processes themselves. This includes variability from steps like RNA extraction, library preparation, sequencing lane effects, and platform-specific biases [8] [10]. Unlike biological variance, technical variance is not a property of the system you are studying.

FAQ 2: Why is distinguishing between these variance types critical in RNA-seq analysis?

Accurately distinguishing between technical and biological variance is essential for valid statistical inference. Failure to do so can lead to two major problems:

- Inflated False Positives: If biological variance is underestimated (e.g., by using too few replicates), the analysis may mistake natural variation for a treatment effect, identifying genes as differentially expressed when they are not [9].

- Handling Overdispersion: RNA-seq data is count-based. A Poisson distribution assumes the mean equals the variance, but in real data, biological variance almost always makes the observed variance greater than the mean—a phenomenon called overdispersion [11]. Statistical models that account for this, such as the Negative Binomial distribution, are required for accurate detection of differential expression [11] [12].

FAQ 3: My Negative Binomial GLM still shows overdispersion. What are my options?

If a standard Negative Binomial model does not fully account for the variance in your data, consider these strategies:

- Investigate Hidden Biases: Check for unaccounted-for batch effects or other confounding factors (e.g., sample processing date, researcher) that introduce systematic technical variation. Including these as factors in your statistical model can help [10] [9].

- Use Quasi-Likelihood Methods: Models such as quasi-Poisson regression treat the overdispersion as a nuisance parameter and scale the standard errors accordingly, giving more conservative and robust inference even if the exact source of overdispersion is unknown [12].

- Model Dependent Data: If the overdispersion is due to correlations between measurements (e.g., from the same patient over time), a Generalized Linear Mixed Model (GLMM) with a subject-level random effect can explicitly model this dependence structure [12].
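For the quasi-likelihood option, the scaling of the standard errors can be made explicit. The expressions below are a standard textbook form rather than the procedure of any particular package; $n$ (number of observations), $p$ (number of model coefficients), $\hat{\mu}_i$ (fitted means), and $\hat{\beta}$ (a fitted coefficient) are notation assumed here.

$$
\operatorname{Var}(Y_i) = k\,\mu_i, \qquad
\hat{k} = \frac{1}{n - p}\sum_{i=1}^{n}\frac{(y_i - \hat{\mu}_i)^{2}}{\hat{\mu}_i}, \qquad
\operatorname{SE}_{\text{quasi}}(\hat{\beta}) = \sqrt{\hat{k}}\;\operatorname{SE}_{\text{Poisson}}(\hat{\beta})
$$

An estimate of $\hat{k} = 4$, for example, doubles every standard error relative to the plain Poisson fit, which is why borderline findings under a Poisson model often disappear.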

FAQ 4: How can I proactively minimize technical variance in my experimental design?

- Randomization and Blocking: Randomly assign samples to processing batches to avoid confounding your treatment groups with technical batches (e.g., all controls processed on one day, all treatments on another) [10] [9].

- Multiplexing: Use sample barcoding to run all samples across all sequencing lanes, which helps distribute and average out lane-specific technical effects [10].

- Spike-In Controls: Use synthetic RNA spike-ins (e.g., ERCC RNA) added to each sample at known concentrations. These serve as an internal standard to measure and correct for technical variability across samples [13] [14].

The table below summarizes the characteristics and appropriate statistical models for different variance structures in RNA-seq count data.

Table 1: Characteristics and Modeling of Variance in RNA-seq Data

| Variance Type | Source | Mean-Variance Relationship | Recommended Distribution/Model |

|---|---|---|---|

| Technical (Poisson) | Sequencing depth, library amplification [11] | Var = μ (Variance equals the mean) | Poisson |

| Biological (Overdispersed) | Natural variation between individuals or cells [11] | Var = μ + φμ² (Variance is a quadratic function of the mean) | Negative Binomial [11] [12] |

| Scaled Biological | General overdispersion, often with unknown sources | Var = kμ (Variance is proportional to the mean) | Quasi-Poisson [11] [12] |

Experimental Protocols for Variance Analysis

Protocol: Designing an Experiment to Estimate Biological Variance

- Define Biological Replicates: Plan for independent biological replicates. For cell lines, this means cells from different passages or different frozen stocks cultured independently. For tissues, this means RNA extracted from different individuals [14] [9].

- Determine Replicate Number: Use a power analysis if possible. If not, a minimum of 3 biological replicates per condition is typically recommended, with 4-8 being ideal for reliably estimating biological variance and detecting more differentially expressed genes [14] [9].

- Avoid Confounding: Ensure that biological or technical groupings are not perfectly aligned with your experimental conditions. For example, do not process all control samples on one day and all treatment samples on another. Instead, process samples from all groups in each batch [9].

- Metadata Collection: Meticulously record all potential sources of technical variation (e.g., RNA extraction date, library preparation kit lot, sequencing lane) to be used as covariates in the statistical analysis [9].

Protocol: A Workflow for Diagnosing Overdispersion

- Initial Model Fitting: Fit a Poisson Generalized Linear Model (GLM) to your count data.

- Goodness-of-Fit Test: Calculate Pearson's chi-square statistic for the model. A statistic that greatly exceeds the residual degrees of freedom (a ratio well above 1) is a strong indicator of overdispersion [11].

- Model Comparison: Refit the data using a Negative Binomial GLM, which explicitly includes a parameter to model overdispersion.

- Residual Analysis: Examine the residuals from the Negative Binomial model. If patterns of overdispersion remain, investigate the need for including batch effects or other hidden covariates in the model [12].
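The first two steps of this workflow can be sketched for a single gene. The example below is a simplified illustration, not a replacement for a GLM routine: it assumes the only predictor is a group label and that library sizes are equal (no offset), in which case the Poisson fitted value for each sample is simply its group mean. The function name and counts are hypothetical.

```python
def pearson_dispersion(counts, groups):
    """Pearson chi-square, residual df, and their ratio for one gene.

    Assumes a Poisson model with one mean per group and no offset,
    so the fitted value of every sample is its group mean.
    """
    by_group = {}
    for y, g in zip(counts, groups):
        by_group.setdefault(g, []).append(y)
    fitted = {g: sum(ys) / len(ys) for g, ys in by_group.items()}
    chi_square = sum((y - fitted[g]) ** 2 / fitted[g]
                     for y, g in zip(counts, groups) if fitted[g] > 0)
    residual_df = len(counts) - len(by_group)
    return chi_square, residual_df, chi_square / residual_df

# Hypothetical counts for one gene: 4 control and 4 treated samples.
groups = ["ctrl"] * 4 + ["trt"] * 4
gene_counts = [10, 25, 4, 41, 60, 150, 95, 15]
chi_square, residual_df, ratio = pearson_dispersion(gene_counts, groups)
print(f"Pearson chi-square = {chi_square:.1f} on {residual_df} df "
      f"(ratio = {ratio:.1f})")
```

A ratio near 1 is consistent with Poisson variation; the toy gene above lands far higher, which would prompt the move to a negative binomial fit in the next step.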

Visualizing Variance Structures and Workflows

The following diagram illustrates the decomposition of total observed variance in an RNA-seq dataset into its biological and technical components.

Diagram 1: Deconstructing Total Variance into Biological and Technical Components.

This diagram outlines a logical troubleshooting workflow for addressing overdispersion in RNA-seq data analysis.

Diagram 2: A Troubleshooting Workflow for Addressing Overdispersion.

Table 2: Key Research Reagent Solutions for Managing Variance

| Tool / Reagent | Primary Function | Role in Managing Variance |

|---|---|---|

| ERCC RNA Spike-Ins | Synthetic RNA controls at known concentrations [13] | Allows direct measurement and correction of technical variance across samples, as their abundance should not change biologically. |

| SIRV Spike-Ins | Complex synthetic spike-in controls for isoform-level analysis [14] | Measures dynamic range, sensitivity, and quantification accuracy of the entire RNA-seq assay, helping to control for technical performance. |

| Barcodes/Indexes | Unique nucleotide sequences ligated to each sample's cDNA [10] | Enables multiplexing, allowing multiple samples to be sequenced in the same lane, thus averaging out lane-specific technical effects. |

| rRNA Depletion Kits | Remove abundant ribosomal RNA [14] | Reduces technical bias in transcript representation, improving the accuracy of mRNA quantification, especially for lowly expressed genes. |

| Stranded Library Prep Kits | Preserve the directionality of transcripts during library construction [10] | Minimizes misannotation artifacts and improves quantification accuracy for overlapping genes, a source of technical noise. |

In RNA-seq data analysis, choosing the appropriate statistical model for count data is fundamental to drawing accurate biological conclusions. The Poisson and Negative Binomial distributions are central to this modeling process, each with distinct advantages and limitations. This guide explores the core differences between these distributions, providing researchers with practical frameworks for selecting the right model based on their experimental data characteristics.

Frequently Asked Questions (FAQs)

What are the fundamental differences between Poisson and Negative Binomial distributions for RNA-seq data?

Poisson Distribution assumes the variance equals the mean, which works well for technical replicates where the only source of variation is sampling noise [3] [15]. However, this assumption becomes limiting with biological replicates where additional variability is present.

Negative Binomial Distribution explicitly models overdispersion (variance greater than the mean) by introducing a dispersion parameter, making it more appropriate for biological replicates where extra variation exists beyond sampling noise [16] [15].

When should I use Poisson versus Negative Binomial models for my RNA-seq data?

Table 1: Guidelines for Distribution Selection Based on Data Characteristics

| Data Characteristic | Recommended Distribution | Key Rationale |

|---|---|---|

| Technical replicates | Poisson | Only sampling noise is present [3] |

| Biological replicates | Negative Binomial | Accounts for biological variability [15] |

| Low expression genes | Negative Binomial | Poisson underestimates variance for low counts [17] |

| UMI-based scRNA-seq | Poisson | Works well with unique molecular identifiers [18] |

| Presence of hub genes | Poisson Log-Normal | Better detects highly connected regulatory genes [17] |

Why do Negative Binomial models sometimes fail to control false discovery rates in real datasets?

Despite their theoretical advantages, Negative Binomial models can perform poorly in practice because real sequence count data are not always well described by the NB distribution [16]. Goodness-of-fit tests have demonstrated violations of NB assumptions in many publicly available RNA-Seq datasets, leading to inflated false discovery rates when the distributional assumptions are not met [16].

How does zero inflation affect distribution choice for RNA-seq data?

RNA-seq data frequently exhibit zero inflation (excess zeros beyond what standard distributions predict). In these cases, neither standard Poisson nor Negative Binomial distributions may be adequate. Researchers should consider zero-inflated mixture models that simultaneously account for multiple sequencing preferences and zero inflation [19].
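A quick way to judge whether zeros are in excess is to compare the observed fraction of zeros with what each distribution predicts at the same mean. The sketch below uses the closed-form zero probabilities of the Poisson and negative binomial distributions; the dispersion value `phi` is assumed to be supplied from elsewhere (for example, a package's estimate), and the counts are hypothetical.

```python
import math

def expected_zero_fraction(mu, phi=0.0):
    """P(count = 0) under Poisson (phi = 0) or negative binomial (phi > 0)."""
    if phi == 0:
        return math.exp(-mu)
    return (1.0 + phi * mu) ** (-1.0 / phi)

def zero_summary(counts, phi):
    """Observed zero fraction next to the Poisson and NB expectations."""
    n = len(counts)
    mu = sum(counts) / n
    observed = sum(1 for y in counts if y == 0) / n
    return {
        "observed": observed,
        "poisson": expected_zero_fraction(mu),
        "negative_binomial": expected_zero_fraction(mu, phi),
    }

# Hypothetical counts for one gene across 12 cells or samples.
toy_counts = [0, 0, 0, 0, 0, 0, 12, 9, 15, 0, 11, 13]
summary = zero_summary(toy_counts, phi=0.5)
for name, value in summary.items():
    print(f"{name:>17}: {value:.3f}")
```

When the observed fraction sits well above both expectations, as in this toy case, a zero-inflated or hurdle-type model becomes worth considering.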

Troubleshooting Guides

Problem: Poor False Discovery Rate Control

Symptoms: Too many false positives in differential expression analysis; simulation studies show FDR exceeding nominal levels.

Solutions:

- Test distributional assumptions using goodness-of-fit tests before selecting your model [16]

- Consider nonparametric methods when distributional assumptions are violated [16]

- Explore regularized Negative Binomial regression to prevent overfitting [20]

Problem: Inadequate Model Fit for Low-Abundance Genes

Symptoms: Poor performance in detecting differentially expressed low-abundance genes; unreliable variance estimates for low-count features.

Solutions:

- Implement Poisson Log-Normal models that show improved performance for low-abundance transcripts [17]

- Utilize variance stabilization transformations to handle the mean-variance relationship [20]

- Apply information sharing across genes through regularization to stabilize parameter estimates [20]

Experimental Protocols

Protocol 1: Goodness-of-Fit Testing for Distribution Selection

Purpose: Formally test whether Poisson or Negative Binomial distributions appropriately describe your RNA-seq data.

Materials:

- RNA-seq count matrix

- Statistical software (R recommended)

- Goodness-of-fit testing packages (e.g., sctransform, scpoisson)

Procedure:

- Extract count data for a homogeneous cell population or treatment group

- Fit both Poisson and Negative Binomial distributions to your count data

- Calculate goodness-of-fit statistics using Kolmogorov-Smirnov tests or specialized smooth tests for count data [16]

- Compare observed versus expected quantiles using Q-Q plots with simulation envelopes [18]

- Formally test for overdispersion using dedicated statistical tests [16]

- Select the distribution that provides the best fit while maintaining model parsimony
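The fitting and selection steps can be approximated without specialized packages. The sketch below fits both distributions to the counts of one gene and compares them by AIC. It is a rough illustration under stated assumptions: the dispersion comes from a method-of-moments estimate rather than maximum likelihood, so the AIC values are approximate, and the formal tests cited in the procedure are not reproduced. All names and data are hypothetical.

```python
import math
from statistics import mean, variance

def poisson_loglik(counts, mu):
    """Log-likelihood of the counts under Poisson(mu)."""
    return sum(y * math.log(mu) - mu - math.lgamma(y + 1) for y in counts)

def negbin_loglik(counts, mu, phi):
    """Log-likelihood under a negative binomial with mean mu, dispersion phi."""
    r = 1.0 / phi  # the NB "size" parameter
    return sum(
        math.lgamma(y + r) - math.lgamma(r) - math.lgamma(y + 1)
        + r * math.log(r / (r + mu)) + y * math.log(mu / (r + mu))
        for y in counts
    )

def compare_fits(counts):
    """Return (AIC Poisson, AIC negative binomial, moment estimate of phi)."""
    mu, s2 = mean(counts), variance(counts)
    phi = max((s2 - mu) / mu ** 2, 1e-8)  # floored so the NB stays defined
    aic_poisson = 2 * 1 - 2 * poisson_loglik(counts, mu)
    aic_negbin = 2 * 2 - 2 * negbin_loglik(counts, mu, phi)
    return aic_poisson, aic_negbin, phi

# Hypothetical counts for one gene in a homogeneous group of samples.
toy_counts = [0, 3, 12, 45, 7, 1, 30, 2]
aic_poisson, aic_negbin, phi = compare_fits(toy_counts)
better = "negative binomial" if aic_negbin < aic_poisson else "Poisson"
print(f"AIC Poisson = {aic_poisson:.1f}, AIC NB = {aic_negbin:.1f}, "
      f"phi = {phi:.2f} -> {better}")
```

The AIC penalizes the extra dispersion parameter, which is one simple way to respect the parsimony criterion in the last step.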

Protocol 2: Handling Overdispersed Data with Negative Binomial Regression

Purpose: Properly implement Negative Binomial regression for overdispersed RNA-seq data.

Materials:

- Count data matrix with experimental design metadata

- R packages edgeR, DESeq2, or sctransform

Procedure:

- Calculate library sizes for each sample

- Estimate genewise dispersions using empirical Bayes methods to share information across genes [21]

- Include sequencing depth as covariate in the generalized linear model [20]

- Regularize parameter estimates by pooling information across genes with similar abundances [20]

- Validate model assumptions by examining residual plots and checking for remaining overdispersion

Workflow Visualization

Decision Workflow for RNA-seq Distribution Selection

Research Reagent Solutions

Table 2: Essential Tools for RNA-seq Distribution Analysis

| Tool/Reagent | Primary Function | Application Context |

|---|---|---|

| edgeR [21] | Negative Binomial-based differential expression | Bulk RNA-seq with biological replicates |

| DESeq2 [16] | Generalized linear modeling with dispersion shrinkage | Bulk RNA-seq, various experimental designs |

| sctransform [20] | Regularized negative binomial regression | Single-cell RNA-seq with UMI counts |

| scpoisson [18] | Independent Poisson distribution framework | UMI-based scRNA-seq data |

| BBSeq [21] | Beta-binomial modeling for overdispersed data | Alternative to NB for RNA-seq counts |

| Goodness-of-fit tests [16] | Validate distributional assumptions | Model selection and validation |

In RNA-seq data analysis, a fundamental challenge is the presence of overdispersion—where the variance of gene expression counts exceeds what would be expected under simpler statistical models like the Poisson distribution. This overdispersion exhibits a specific pattern known as the mean-variance relationship, which is consistently observed as quadratic in form [2]. Unlike the linear mean-variance relationship implicit in a Poisson model, a quadratic relationship indicates that variability increases disproportionately with the mean expression level [2].

Understanding this relationship is not merely a statistical exercise; it is critical for drawing robust biological conclusions. Properly modeling this variance ensures that tests for differential expression are accurate, preventing an excess of false positives or false negatives. This technical guide and FAQ addresses the core issues researchers face when dealing with overdispersion and mean-variance patterns in their data, providing actionable troubleshooting advice framed within the broader thesis of managing overdispersion in genomic research.

Frequently Asked Questions (FAQs) & Troubleshooting

FAQ 1: Why does a quadratic mean-variance relationship exist in my RNA-seq data?

The quadratic relationship arises from the confluence of biological and technical factors.

- Biological Variance: Genuine differences in gene expression exist between biological replicates, even within the same treatment group. This variance is often greater for genes at medium to high expression levels.

- Technical Variance: The process of RNA-seq measurement is inherently noisy. Library preparation, sequencing depth, and sampling effects introduce variability. This technical variation is well-modeled by a Poisson process, but the combined biological and technical variance results in overdispersion that increases with expression level, creating a quadratic trend [4] [2].

- Troubleshooting Tip: If you do not observe a mean-variance trend at all, it is often a sign of insufficient biological replication. With too few replicates, the software cannot reliably estimate the gene-wise dispersion, which is essential for modeling this relationship.

FAQ 2: My analysis is sensitive to highly variable genes. How can I stabilize the variance across different expression levels?

This is a common issue because high-expression genes with large variances can dominate the analysis. The solution is to apply a variance-stabilizing transformation.

- Logarithmic Transformation: A simple log-transform (e.g., $\log(y_{gi} + 0.5)$, with $y_{gi}$ the count for gene g in sample i) can help stabilize variance and make the data more amenable to linear modeling [2].

- Voom Transformation: The voom method in the limma package models the mean-variance trend in the log-counts-per-million data and generates precision weights for each observation. These weights are then used in a standard linear model, which accounts for the unequal variances across the dynamic range of expression and ensures that lowly expressed genes are not overwhelmed by the variance of highly expressed ones [2].

- Troubleshooting Tip: After applying a transformation like voom, always check the "mean-variance trend" plot produced by the software. The voom plot itself should show a smooth trend; once the precision weights have been applied in the fitted model, the residual variances should no longer trend with mean expression.
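The offset in the log transformation is easiest to appreciate on concrete numbers. The sketch below computes log2 counts per million with a 0.5 offset on the count and a 1.0 offset on the library size, the form described for voom's log-CPM values; the function name and example library size are hypothetical, and the precision weights are not computed here.

```python
import math

def log_cpm(count, library_size, prior=0.5):
    """log2 counts per million with small offsets so zero counts stay finite."""
    return math.log2((count + prior) / (library_size + 1.0) * 1e6)

library_size = 20_000_000  # hypothetical sequencing depth for one sample
for count in (0, 1, 10, 100, 1000):
    print(f"count = {count:>4}: log-CPM = {log_cpm(count, library_size):.2f}")
```

Because of the offset, a zero count maps to a finite value instead of minus infinity, and fold differences become additive shifts that a linear model can work with.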

FAQ 3: Should I use a method that assumes a common dispersion, or should I use gene-wise dispersion estimates?

While a common dispersion estimate (where all genes share the same dispersion value) is computationally simpler, gene-specific variances exist and must be accounted for in the analysis [2].

- The Problem with Common Dispersion: Assuming a common dispersion for all genes is an oversimplification. It fails to capture the true variability in the data, leading to suboptimal statistical power. Genes with genuinely high variability will be under-penalized, while stable genes will be over-penalized.

- The Solution: Shrinkage Estimation. Methods like those in edgeR and DESeq2 use empirical Bayes techniques to "shrink" gene-wise dispersion estimates towards a shared mean-variance trend. This approach borrows information from the entire dataset to produce more robust dispersion estimates for each gene, which is particularly crucial for experiments with small sample sizes [2] [21].

- Troubleshooting Tip: If your analysis with gene-wise dispersions is unstable or fails to converge, check for outliers or genes with extremely low counts. These can sometimes destabilize estimates.
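The idea behind shrinkage can be conveyed with a deliberately simplified sketch. It is not the algorithm used by edgeR or DESeq2: gene-wise dispersions are method-of-moments estimates, the shared target is their plain average rather than a fitted trend, and the `weight` given to each gene's own estimate is a fixed, hypothetical value instead of being derived from the data.

```python
from statistics import mean, variance

def moment_dispersion(counts):
    """Method-of-moments NB dispersion: (variance - mean) / mean^2, floored at 0."""
    m, v = mean(counts), variance(counts)
    return max((v - m) / m ** 2, 0.0) if m > 0 else 0.0

def shrink_dispersions(count_matrix, weight=0.3):
    """Pull each gene-wise estimate towards the average across genes.

    weight is the (hypothetical) trust placed in a gene's own estimate;
    real tools determine the amount of shrinkage from the data.
    """
    gene_wise = [moment_dispersion(row) for row in count_matrix]
    common = mean(gene_wise)
    shrunk = [weight * d + (1 - weight) * common for d in gene_wise]
    return gene_wise, common, shrunk

# Hypothetical counts: 4 genes (rows) x 3 replicates (columns).
toy_matrix = [
    [10, 12, 11],
    [100, 180, 60],
    [50, 55, 45],
    [5, 30, 1],
]
gene_wise, common, shrunk = shrink_dispersions(toy_matrix)
for raw, final in zip(gene_wise, shrunk):
    print(f"gene-wise = {raw:.3f} -> shrunk = {final:.3f} (common = {common:.3f})")
```

With only three replicates the raw estimates swing between zero and very large values; after shrinkage they occupy a narrower, more plausible range, which is the stabilizing effect described above.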

Experimental Protocols for Investigating Mean-Variance Relationships

Protocol: Visualizing the Mean-Variance Trend

Objective: To empirically observe the quadratic relationship between mean expression and variance in a raw RNA-seq count dataset.

Materials:

- A count matrix (genes as rows, samples as columns).

- R with Bioconductor.

- The edgeR or DESeq2 package.

Methodology:

- Load Data: Load your raw count matrix into R. Ensure that the data have not been normalized or transformed for this initial step.

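- Calculate Mean and Variance: For each gene, compute the average expression (mean) and the variance across the replicate samples of a single condition. Pooling samples from different conditions would mix genuine differential expression into the variance.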

- Create a Scatter Plot: Generate a scatter plot with the log-transformed mean on the x-axis and the log-transformed variance on the y-axis.

- Overlay Trend Lines:

- Add a red line with a slope of 1, representing the Poisson mean-variance relationship (where mean = variance).

- Fit and overlay a loess curve (a local regression smoother) to the data points to visualize the empirical trend.

Interpretation: The loess curve will typically lie above the red Poisson line, demonstrating overdispersion. On these log-log axes, a quadratic mean-variance relationship appears as a curve whose slope rises above 1 at higher expression levels, indicating that variance grows faster than the mean.
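The expected shape on log-log axes follows directly from the negative binomial variance function. Assuming $\operatorname{Var}(Y) = \mu + \phi\mu^{2}$, with $\mu$ the mean and $\phi$ the dispersion (notation assumed here), the local slope of the curve is:

$$
\frac{d\,\log \operatorname{Var}(Y)}{d\,\log \mu}
= \frac{\mu\,(1 + 2\phi\mu)}{\mu + \phi\mu^{2}}
= \frac{1 + 2\phi\mu}{1 + \phi\mu}
$$

The slope is close to 1 when $\phi\mu$ is small (low expression, Poisson-like behavior) and approaches 2 when $\phi\mu$ is large (high expression, where the quadratic term dominates).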

Protocol: Differential Expression Analysis Accounting for Overdispersion

Objective: To perform a robust differential expression analysis that correctly models the mean-variance relationship.

Materials:

- A raw count matrix.

- A metadata file describing the experimental groups.

- R and Bioconductor packages DESeq2 or limma with voom.

Methodology using DESeq2:

- Create Dataset: Construct a DESeqDataSet from the count matrix and metadata.

- Estimate Size Factors: Calculate normalization factors to account for differences in library size using estimateSizeFactors.

- Estimate Dispersions: The critical step is estimateDispersions. This function will:

- Estimate a gene-wise dispersion for every gene.

- Fit a smooth curve capturing how dispersion depends on mean expression across all genes.

- Shrink the gene-wise dispersion estimates towards this trend to generate robust final dispersion values.

- Test for DE: Perform the differential expression test using the negative binomial Wald test (nbinomWaldTest).
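The size-factor step is the easiest of these to reproduce by hand. The sketch below implements the median-of-ratios idea that underlies the default size-factor estimation in DESeq2; it is a simplified illustration in Python rather than the package's implementation, and the function name and toy matrix are hypothetical.

```python
import math
from statistics import median

def size_factors(counts):
    """Median-of-ratios size factors for a genes-by-samples count matrix."""
    n_samples = len(counts[0])
    # Keep only genes without zero counts so the geometric mean is defined.
    usable = [row for row in counts if all(c > 0 for c in row)]
    log_geo_means = [sum(math.log(c) for c in row) / n_samples for row in usable]
    factors = []
    for j in range(n_samples):
        # Log ratio of each gene's count to that gene's geometric mean.
        log_ratios = [math.log(row[j]) - lgm
                      for row, lgm in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors

# Hypothetical toy matrix: sample 2 was sequenced twice as deeply as sample 1.
toy_counts = [
    [10, 20],
    [20, 40],
    [30, 60],
    [0, 5],
]
factors = size_factors(toy_counts)
print([round(f, 3) for f in factors])
```

Dividing each sample's counts by its size factor puts the samples on a common scale; taking the median makes the estimate robust to a minority of strongly differentially expressed genes.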

Methodology using Voom/Limma:

- Normalize and Transform: Convert raw counts to log-counts-per-million (log-CPM) using the voom function.

- Weight Observations: The voom function estimates the mean-variance trend and calculates a precision weight for each observation.

- Linear Modeling: Use the weighted data in a standard linear modeling pipeline with the limma package to test for differential expression.

Data Presentation: Comparison of Key RNA-seq Analysis Methods

The following table summarizes the characteristics of the primary methods used to handle the mean-variance relationship in RNA-seq data.

Table 1: Comparison of RNA-seq Analysis Methods for Overdispersed Data

| Method | Core Model | Dispersion Estimation Strategy | Key Advantage | Best Suited For |

|---|---|---|---|---|

| DESeq2 | Negative Binomial | Shrinks gene-wise estimates towards a fitted mean-dispersion trend. | High sensitivity and specificity; robust for a wide range of experiments. | Most standard experiments; performs well in benchmark studies. |

| edgeR | Negative Binomial | Common, trended, or tagwise (gene-wise) dispersion with empirical Bayes shrinkage. | Excellent statistical power; highly interpretable biological coefficient of variation. | Experiments with complex designs and multiple factors. |

| Voom/Limma | Linear Model on transformed data | Models the mean-variance trend to create precision weights for each observation. | Holds its Type I error rate well; integrates with vast limma toolkit for complex designs. | Large experiments with continuous covariates or multiple factors. |

| BBSeq | Beta-Binomial | Can fit gene-wise overdispersion or model it as a function of the mean. | Flexible handling of discrete and continuous covariates. | An alternative when negative binomial models show poor fit. |

The Scientist's Toolkit: Essential Research Reagents & Computational Tools

Table 2: Key Research Reagent Solutions for RNA-seq Analysis

| Item | Function in Analysis |

|---|---|

| R/Bioconductor | The core open-source software environment for statistical computing and genomic analysis. |

| DESeq2 Package | Provides functions to import, normalize, model dispersion, and test for differential expression using a negative binomial model. |

| edgeR Package | A flexible tool for the analysis of count data, offering multiple options for dispersion estimation and hypothesis testing. |

| Limma & Voom | The limma package provides a framework for linear models, and the voom function transforms count data for use within this framework. |

| High-Quality Annotation | A transcript database (e.g., from Ensembl or GENCODE) is essential for accurately assigning reads to genes. |

Visualization of Analysis Workflows

The following diagram illustrates the logical workflow for a differential expression analysis that accounts for the mean-variance relationship, comparing the two primary methodologies.

RNA-seq Analysis Pathways for Overdispersion

Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

Q1: What is overdispersion in RNA-seq data, and why is it a problem? Overdispersion refers to the observed variance in sequencing read counts that is larger than what would be expected under a simple statistical model, like the Poisson distribution. It is a problem because if unaccounted for, it can lead to an inflated false discovery rate in differential expression analysis, meaning you might incorrectly identify genes as being differentially expressed [22] [23].

Q2: What is the single largest source of technical variation leading to overdispersion? Multiple studies identify the library preparation step as the most significant source of technical variation. This process, which includes RNA extraction, conversion to cDNA, and PCR amplification, introduces biases and fluctuations that manifest as overdispersion in the final count data [10].

Q3: Should I prioritize increasing my sequencing depth or the number of biological replicates to improve detection power? Recent toxicogenomics research demonstrates that increasing biological replication provides a greater improvement in statistical power and reproducibility than increasing sequencing depth. While depth is important, studies show that moving from 2 to 4 replicates significantly enhances the consistent identification of differentially expressed genes across various sequencing depths [24].

Q4: How does sequencing depth influence overdispersion? The relationship is inverse; as sequencing depth increases, the overdispersion rate decreases. This means measurements become more accurate and less variable with deeper sequencing. However, the rate of improvement is slower than a simple Poisson model would predict, necessitating specialized statistical models like the beta-binomial distribution that account for this dynamic overdispersion [22] [23].

Q5: Does local sequence composition directly cause overdispersion? While local sequences (e.g., those causing random hexamer priming bias) can create a non-uniform distribution of reads across a gene, their direct influence on the overdispersion rate between replicates is less significant. After adjusting for the effect of sequencing depth, the impact of the local sequence itself on overdispersion is notably reduced [22].

Troubleshooting Common Problems

Problem: High false discovery rate in differential expression analysis.

- Potential Cause: Overdispersion in the count data is not being properly accounted for by the statistical model.

- Solution: Employ statistical methods designed to model overdispersed data, such as those based on the negative binomial (e.g., DESeq2, edgeR) or beta-binomial distributions. These tools explicitly estimate a dispersion parameter for each gene, leading to more reliable results [22] [10] [25].

Problem: Inconsistent results for differentially expressed genes between technical replicates.

- Potential Cause: Technical noise, primarily stemming from the library preparation process.

- Solution: Ensure rigorous standardization of library preparation protocols. Where possible, use multiplexing and distribute samples across different sequencing lanes to avoid confounding technical batch effects with biological effects of interest [10].

Problem: Low reproducibility of gene expression patterns across experiments.

- Potential Cause: Insufficient biological replication, leading to an inability to distinguish true biological signal from random biological variation.

- Solution: Increase the number of biological replicates. A well-powered experiment typically requires at least 3-4 biological replicates per condition. Evidence suggests this is more critical for reproducibility than ultra-high sequencing depth [24].

The following tables synthesize key quantitative findings from the literature regarding the impact of experimental factors on overdispersion and detection power.

Table 1: Impact of Sequencing Depth and Biological Replication on Differential Expression Analysis

This table summarizes findings from a systematic study on the effects of subsampling sequencing depth and replicate number [24].

| Experimental Factor | Level | Impact on Differentially Expressed Gene (DEG) Detection |

|---|---|---|

| Biological Replicates | 2 Replicates | High variability; >80% of ~2000 DEGs were unique to specific sequencing depths. |

| Biological Replicates | 4 Replicates | Substantially improved reproducibility; over 550 genes were consistently identified across most depths. |

| Sequencing Depth | Various (5-100%) | With low replication, DEG lists were highly dependent on depth. With 4 replicates, core pathways were consistently detected even at lower depths. |

Table 2: Statistical Modeling of Overdispersion in RNA-seq Data

This table compares different statistical models used to account for overdispersion [22] [23].

| Statistical Model | Handles Overdispersion? | Relationship with Sequencing Depth | False Discovery Rate (FDR) Performance |

|---|---|---|---|

| Poisson / Binomial | No | Assumed to follow √N | High, as it underestimates true variance. |

| Standard Beta-Binomial | Yes | Assumes a constant overdispersion parameter. | Lower than Binomial, but may not be optimal. |

| Dynamic Beta-Binomial | Yes | Explicitly models decreasing overdispersion with increasing depth. | Demonstrated lower FDR than other models. |

Experimental Protocols

Detailed Methodology: Estimating Base-Level Overdispersion

This protocol is adapted from a study that investigated the dependency of overdispersion on sequencing depth and local sequence [22].

1. Dataset Preparation:

- Obtain RNA-seq data from a consortium-level project with large sample sizes (e.g., ENCODE spike-in dataset or MAQC dataset).

- Align sequencing reads to the appropriate reference genome or transcriptome (e.g., Bowtie for alignment to a transcriptome, or a splice-aware aligner such as STAR for alignment to a genome).

- For each gene, truncate a number of nucleotides from the end equivalent to the read length to avoid regions with no data.

- Extract the count data n_ij and m_ij, representing the number of mapped reads starting at the j-th nucleotide of the i-th gene for two samples being compared.

2. Normalization and Proportion Calculation:

- Calculate the overall neutral proportion p_n, assuming most genes do not change. This is done across all base pairs of all genes: p_n = (Σ_i Σ_j n_ij) / (Σ_i Σ_j n_ij + Σ_i Σ_j m_ij).

- For a more gene-centric approach, the proportion for the i-th gene can be estimated as: p_i = (Σ_j n_ij) / (Σ_j n_ij + Σ_j m_ij).
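The two proportions in this step reduce to simple sums. The Python sketch below computes p_n and p_i from per-base start counts; the dictionaries, gene names, and counts are hypothetical and only serve to show the arithmetic.

```python
def neutral_proportion(n, m):
    """p_n: share of sample-1 reads pooled over all bases of all genes.

    `n` and `m` map a gene name to its list of per-base read-start counts
    in the two samples being compared.
    """
    total_n = sum(sum(v) for v in n.values())
    total_m = sum(sum(v) for v in m.values())
    return total_n / (total_n + total_m)

def gene_proportion(n, m, gene):
    """p_i: the same share, restricted to the bases of one gene."""
    sum_n, sum_m = sum(n[gene]), sum(m[gene])
    return sum_n / (sum_n + sum_m)

# Hypothetical per-base counts for two genes with three positions each.
n_counts = {"g1": [3, 5, 2], "g2": [10, 20, 10]}
m_counts = {"g1": [2, 5, 3], "g2": [30, 20, 10]}

p_n = neutral_proportion(n_counts, m_counts)     # 50 / 120
p_g1 = gene_proportion(n_counts, m_counts, "g1")  # 10 / 20
p_g2 = gene_proportion(n_counts, m_counts, "g2")  # 40 / 100
```

Because p_n pools every gene, highly expressed genes dominate it, which is one reason the gene-centric p_i can be preferable.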

3. Estimation of Overdispersion Parameter θ_ij:

- Model the counts using a beta-binomial distribution, which introduces an overdispersion parameter θ_ij.

- The parameter θ_ij can be estimated from the variance observed between replicates using the formula: θ_ij = [ (1/R) * Σ_r ( σ²_pij,r / ( p_n,r * (1 - p_n,r) ) ) ] - 1 / (n_ij,r + m_ij,r), where R is the number of replicate pairs, σ²_pij,r is the variance of the proportion for base j of gene i in replicate pair r, and p_n,r is the neutral proportion for that replicate pair [22].

4. Modeling with Sequencing Depth and Local Sequence:

- Fit a full model that includes the effects of both local sequence context and sequencing depth on the overdispersion rate.

- Compare this to reduced models containing only one of these effects to evaluate their individual contributions.

Workflow Diagram for Protocol

The diagram below outlines the key steps in the experimental protocol for analyzing overdispersion.

Signaling Pathways and Logical Relationships

Relationship Between Experimental Factors and Overdispersion

The following diagram illustrates the logical relationships and influence pathways between the key experimental factors discussed and their impact on overdispersion and downstream analysis.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for RNA-seq Experiments Focused on Managing Overdispersion

| Item | Function / Description | Relevance to Overdispersion |

|---|---|---|

| External RNA Control Consortium (ERCC) Spike-ins | Synthetic RNA transcripts added to samples in known quantities. | Serve as an internal control to monitor technical variance and assess the performance of statistical models in capturing overdispersion [22]. |

| Strand-Specific dUTP Protocol | A library preparation method that preserves information about the original strand of the RNA. | Helps reduce biases in transcript assignment, thereby contributing to more accurate count data and mitigating one source of overdispersion [22]. |

| Quality Control Tools (FastQC, MultiQC) | Software for assessing raw sequence data quality, base quality scores, and sequence content. | Critical for identifying poor-quality data and technical artifacts that can contribute to spurious variance and overdispersion [26] [25]. |

| Alignment Tools (STAR, HISAT2) | Splice-aware software for accurately mapping RNA-seq reads to a reference genome. | Accurate alignment minimizes misassignment of reads, which is a potential source of noise and overdispersion, especially for paralogous genes [26] [25]. |

| Differential Expression Tools (DESeq2, edgeR, limma-voom) | Statistical software packages that use negative binomial or related models to test for differential expression. | These tools directly model gene-specific overdispersion, which is essential for controlling false positives and generating reliable biological insights [22] [26] [25]. |

FAQ: Core Concepts and Troubleshooting

Q1: What is overdispersion in RNA-seq data and why should I care? Overdispersion occurs when the variance in your count data is larger than what would be expected under a simple theoretical model, such as the Poisson distribution where the variance equals the mean [27]. In RNA-seq data, this is the rule rather than the exception—the variance of read counts consistently exceeds the mean [28] [21]. You should care because failing to account for overdispersion leads to flawed statistical inference: standard tests become anti-conservative, and you lose control over your false discovery rate (FDR), meaning you might identify many genes as differentially expressed when they are not [29] [30].

Q2: How does overdispersion directly impact False Discovery Rate (FDR) estimation? Correlation and overdispersion in your data inflate both the bias and variance of the standard FDR estimator [29]. Essentially, the method used to estimate the FDR relies on the behavior of the number of significant discoveries. Overdispersion causes this number to be more variable than assumed under independence. This increased variance translates directly to instability and inaccuracy in the FDR estimates you obtain, meaning the reported FDR may be significantly lower than the actual proportion of false positives in your results [29]. In some cases, like with an exchangeable correlation structure, the standard FDR estimator can fail to be consistent altogether [29].

Q3: My analysis has limited replicates. How does overdispersion affect me? Small sample sizes drastically exacerbate the challenges of overdispersion. With few replicates, it is very difficult to obtain a stable, gene-specific estimate of the overdispersion parameter [28] [31]. Methods that do not share information across genes will have low power to detect true differential expression. This is precisely why modern tools like DESeq2 and edgeR use shrinkage estimators—they "borrow information" from all genes to obtain more robust dispersion estimates, which is crucial for reliable testing when replicates are scarce [31].

Q4: I've heard I should use a negative binomial model. Is this sufficient?

The negative binomial (NB) model is a fundamental and powerful advancement over the Poisson model for RNA-seq data, as it explicitly models the variance as a quadratic function of the mean (Var = μ + αμ²) [28] [31]. However, simply applying an NB model with gene-wise dispersion estimates is often insufficient with limited replicates. The key is how the dispersion is estimated. Methods like DESeq2 and the proposed DEHOGT go a step further by adapting to the specific overdispersion patterns in your data, with DESeq2 using shrinkage towards a mean trend [31] and DEHOGT using a gene-wise scheme that integrates information across all conditions [28] [32].

Q5: What are the practical consequences of ignoring overdispersion? Ignoring overdispersion has two primary consequences, both detrimental to the validity of your conclusions:

- Inflated False Discovery Rate (FDR): You will identify more genes as statistically significant than your error threshold allows. Your list of "hits" will be contaminated with a higher number of false positives [29] [30].

- Reduced Power and Reproducibility: Paradoxically, while the overall number of discoveries might seem high, the power to detect true, reproducible biological effects can be reduced because the statistical tests are not properly calibrated for the data's inherent noise structure [28].

The following table summarizes the characteristics and approaches of several key methods for handling overdispersion in differential expression analysis.

Table 1: Comparison of Statistical Methods for Overdispersed RNA-seq Data

| Method | Core Distribution | Dispersion Estimation Strategy | Key Feature | Reported Advantage |

|---|---|---|---|---|

| DESeq2 [31] | Negative Binomial | Shrinkage towards a smooth trend of dispersion over mean. Empirical Bayes. | Shrunken LFC estimates. | Improved stability and interpretability; powerful for small sample sizes. |

| edgeR [28] [21] | Negative Binomial | Moderates dispersion towards a common or trended value. | Weighted conditional likelihood. | Established, powerful method for a wide range of designs. |

| DEHOGT [28] [32] | Quasi-Poisson / Negative Binomial | Gene-wise estimation without homogeneous dispersion assumption. | Joint estimation across all conditions. | Enhanced power for heterogeneous overdispersion; more flexible modeling. |

| BBSeq [21] | Beta-Binomial | Models overdispersion as a function of the mean or as a free parameter. | Logistic regression framework. | Favorable power and flexibility, especially for small samples. |

| Standard GLM | Poisson / Negative Binomial | Gene-wise, no information sharing. | - | Low power with small n; high FDR if overdispersion is unaccounted for. |

Experimental Protocols for Robust FDR Control

Protocol A: Standard Differential Expression Analysis with DESeq2

This protocol is the current best practice for most RNA-seq experiments and includes steps to account for overdispersion.

- Data Input and Preprocessing: Begin with a count matrix K where rows are genes and columns are samples. Calculate size factors s_j to correct for differences in library depth using the median-of-ratios method [31].

- Model Fitting: For each gene i, model the counts K_ij using a Negative Binomial generalized linear model (GLM):

  μ_ij = s_j * q_ij

  Var(K_ij) = μ_ij + α_i * μ_ij²

  log2(q_ij) = X_j * β_i

  Here, α_i is the dispersion parameter and X is the design matrix.

- Dispersion Estimation:

- Calculate a gene-wise dispersion estimate (maximum likelihood).

- Fit a smooth curve predicting dispersion as a function of the mean expression (the trend).

- Shrink gene-wise estimates towards the trend using an empirical Bayes approach to obtain final robust dispersion values [31].

- Hypothesis Testing and FDR Control:

- Test the null hypothesis (LFC = 0) using the Wald test.

- Apply independent filtering to remove low-count genes, which have little power to detect differences.

- Calculate adjusted p-values using the Benjamini-Hochberg procedure to control the FDR across all tested genes.
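The final adjustment step is compact enough to write out. Below is a minimal pure-Python sketch of the Benjamini-Hochberg adjustment, assuming a plain list of p-values with no missing entries; the function name and example p-values are illustrative, and independent filtering is not included.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original order."""
    n = len(pvalues)
    order = sorted(range(n), key=lambda i: pvalues[i])
    adjusted = [0.0] * n
    running_min = 1.0
    # Walk from the largest p-value down so that adjusted values
    # never increase as the raw p-value decreases.
    for rank in range(n, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * n / rank)
        adjusted[idx] = running_min
    return adjusted

# Hypothetical Wald-test p-values for five genes.
pvals = [0.001, 0.04, 0.03, 0.2, 0.5]
padj = benjamini_hochberg(pvals)

# Genes called significant at a 10% FDR threshold.
significant = [i for i, p in enumerate(padj) if p < 0.10]
```

Note that the second and third genes end up with the same adjusted value: the monotonicity step caps the adjusted p-value of 0.03 at the value obtained for 0.04.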

Protocol B: Two-Stage Testing for Complex Experiments with stageR

For experiments involving multiple hypotheses per gene (e.g., differential transcript usage, complex multi-condition designs), a conventional FDR control on the hypothesis level can inflate the gene-level FDR. The stageR package implements a two-stage procedure to address this [33].

- Screening Stage (Gene-Level):

- Perform an omnibus test for each gene (e.g., a global likelihood ratio test) that aggregates evidence across all related hypotheses for that gene.

- Adjust the resulting p-values for multiple testing using the FDR procedure. This yields a set of significant genes that pass a gene-level FDR threshold.

- Confirmation Stage (Transcript/Hypothesis-Level):

- For each gene that passed the screening stage, test the individual hypotheses (e.g., for specific transcripts).

- Control the within-gene FDR for these confirmatory tests. This step provides resolution on which specific feature within the gene is driving the signal.

This approach combines the high power of aggregated tests with the resolution of individual hypothesis testing, ensuring that the gene-level FDR is controlled while still identifying specific significant events [33].

The Scientist's Toolkit: Essential Reagents & Solutions

Table 2: Key Research Reagent Solutions for RNA-seq Analysis

| Item / Resource | Function / Purpose | Implementation Example |

|---|---|---|

| DESeq2 [31] [34] | Primary tool for differential expression analysis. Performs normalization, dispersion shrinkage, and statistical testing. | R/Bioconductor: DESeqDataSetFromMatrix() -> DESeq() -> results() |

| edgeR [28] [21] | Alternative robust tool for differential expression, also using negative binomial models and dispersion moderation. | R/Bioconductor: DGEList() -> calcNormFactors() -> estimateDisp() -> exactTest(), or glmQLFit() -> glmQLFTest() |

| stageR [33] | For complex experimental designs to control the gene-level FDR when testing multiple hypotheses per gene. | R/Bioconductor: Two-stage procedure using stageR after obtaining p-values from a primary DE tool. |

| Quasi-Poisson / Negative Binomial Models [28] [30] | The foundational statistical models that explicitly parameterize overdispersion, forming the basis of most modern methods. | R: glm(..., family=quasipoisson) or MASS::glm.nb(...) |

| Trimmed Mean of M-values (TMM) [28] | Normalization method to correct for sample-specific biases (e.g., sequencing depth, RNA composition). | Used in edgeR's calcNormFactors function. |

| Median-of-Ratios Method [31] | Normalization method to estimate size factors that correct for differences in sequencing depth between samples. | Used in DESeq2 during the DESeq() function. |

Methodological Approaches for Overdispersed Data: Practical Implementation and Workflow Integration

Frequently Asked Questions (FAQs)

1. What are count data and why do they require special statistical models? Count data are a type of statistical data where observations can only take non-negative integer values {0, 1, 2, 3, ...} that arise from counting rather than ranking [35]. They require specialized models like Generalized Linear Models (GLMs) because they differ from normal data in several key ways: they are discrete, often exhibit positive skew, can have an abundance of zeros, and typically show a variance that increases with the mean [36]. The normal distribution, which is continuous and has a range from -∞ to +∞, is inappropriate for such data [37].

2. What is the fundamental problem with using ordinary linear regression for count data? Using ordinary linear regression for count data violates several key assumptions. The normal distribution assumes a continuous response and constant variance (homoscedasticity), whereas count data are discrete and typically show a mean-variance relationship [3]. Furthermore, a linear model can predict negative values, which are impossible for count data [37] [38]. This misspecification can lead to incorrect inferences and poor predictive performance [37].

3. What is overdispersion in the context of count data modeling? Overdispersion occurs when the variance in the observed count data is larger than the mean [35] [39]. The Poisson distribution assumes that the mean and variance are equal (a property called equidispersion). When data are overdispersed, which is common in real-world applications like RNA-seq analysis, the Poisson model becomes less ideal as it underestimates the variability, potentially leading to an increased number of false discoveries in differential expression analysis [40] [41].

4. How do I choose between a Poisson and a Negative Binomial model for my data? The choice depends on whether your data exhibit overdispersion. The Poisson model is suitable when the mean and variance of your counts are approximately equal [35]. If your data show overdispersion (variance > mean), the Negative Binomial model is more appropriate because it includes an additional parameter to model the variance separately from the mean [41] [42]. You can check for overdispersion by comparing the residual deviance to the degrees of freedom or by using diagnostic plots [37].

5. What should I do if my count data contain an excess of zeros? For data with a high proportion of zeros that cannot be explained by a standard Poisson or Negative Binomial distribution, zero-inflated models are recommended [43]. These models combine a count component (e.g., Poisson or Negative Binomial) with a point mass at zero, providing a more flexible framework for datasets with more zeros than expected [43].

Troubleshooting Guides

Issue 1: Diagnosing and Addressing Overdispersion

Problem: After fitting a Poisson GLM, diagnostic checks indicate overdispersion, meaning the variance of the response is significantly larger than its mean. This violates a key Poisson assumption and can lead to biased standard errors and misleading p-values [35] [41].

Solution:

- Confirm Overdispersion: Fit a Poisson model and check if the residual deviance is much larger than the residual degrees of freedom.

- Switch Distributions: Fit a model that can handle overdispersion. The most common alternative is the Negative Binomial (NB) model [41] [42].

- Consider Advanced Models: For complex overdispersion patterns, especially in RNA-seq data with small sample sizes, consider methods like DESeq2 [41] or DEHOGT (Differentially Expressed Heterogeneous Overdispersion Genes Testing), which integrate sample information from all conditions for more flexible overdispersion modeling [40].
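The first check in the list above can be done by hand for the simplest case. The sketch below assumes an intercept-only Poisson model, where the fitted mean is the sample mean and the residual degrees of freedom are n - 1, and computes both the residual deviance ratio and the closely related Pearson ratio. The function names and counts are hypothetical; with covariates, the fitted means would come from the GLM fit instead.

```python
import math
from statistics import mean

def poisson_deviance_ratio(counts):
    """Residual deviance / residual df for an intercept-only Poisson model."""
    mu = mean(counts)
    deviance = 0.0
    for y in counts:
        if y > 0:  # the y * log(y / mu) term is 0 when y == 0
            deviance += y * math.log(y / mu)
        deviance -= (y - mu)
    return 2 * deviance / (len(counts) - 1)

def pearson_ratio(counts):
    """Pearson chi-square / residual df for the same model."""
    mu = mean(counts)
    chi_sq = sum((y - mu) ** 2 / mu for y in counts)
    return chi_sq / (len(counts) - 1)

stable = [10, 12, 9, 9]        # hypothetical low-variability gene
noisy = [100, 160, 60, 80]     # hypothetical overdispersed gene

print(poisson_deviance_ratio(stable), pearson_ratio(stable))
print(poisson_deviance_ratio(noisy), pearson_ratio(noisy))
```

Ratios near 1 are compatible with a Poisson model; a ratio far above 1, as for the second gene here, points towards a Negative Binomial or quasi-Poisson fit.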

Issue 2: Model Producing Negative or Non-Integer Predictions

Problem: Your model is predicting impossible values for counts, such as negative numbers or fractions.

Solution:

- Use a Proper Link Function: Ensure you are using a GLM with a log link function. The log link ensures that the linear predictor, when transformed, yields a positive mean (μ). The model is specified as: \( \log(\mu_i) = \beta_0 + \beta_1 x_{1,i} + \dots + \beta_n x_{n,i} \quad \text{or} \quad \mu_i = e^{\beta_0 + \beta_1 x_{1,i} + \dots + \beta_n x_{n,i}} \) [37] [38].

- This guarantees that all predicted mean values are positive, and the actual counts are generated from a discrete distribution like Poisson or Negative Binomial [36].
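A short Python sketch shows why the log link cannot produce a negative mean: whatever value the linear predictor takes, the exponential maps it to a positive number. The coefficients and covariate values are made up for illustration.

```python
import math

def predicted_mean(betas, x):
    """Inverse log link: mu = exp(beta_0 + sum_k beta_k * x_k)."""
    eta = betas[0] + sum(b * xi for b, xi in zip(betas[1:], x))
    return math.exp(eta)

betas = [1.0, -2.0]  # hypothetical intercept and slope

mu_a = predicted_mean(betas, [0.0])  # linear predictor = 1
mu_b = predicted_mean(betas, [3.0])  # linear predictor = -5, mean still positive
```

An identity-link linear model with the same coefficients would predict -5 for the second observation, which is not a valid count mean.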

Issue 3: Handling Zero-Inflated Count Data

Problem: Your dataset contains a large number of zero counts that a standard Poisson or NB model does not fit well.

Solution:

- Implement a Zero-Inflated Model: Use a zero-inflated Poisson (ZIP) or zero-inflated negative binomial (ZINB) model [43]. These models consist of two parts:

- A binary component (e.g., logistic regression) that models the probability that an observation is an excess zero.

- A count component (Poisson or NB) that models the non-zero counts.

- This approach provides a more accurate and interpretable model for data with excess zeros, such as in insurance claims or species abundance studies [43].
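The two-part structure described above translates directly into a probability mass function. This Python sketch implements the zero-inflated Poisson case, assuming a single mixing probability `pi` for excess zeros and a Poisson rate `lam`; both parameter names and the example values are illustrative.

```python
import math

def poisson_pmf(k, lam):
    """P(Y = k) for a Poisson distribution with rate lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)

def zip_pmf(k, lam, pi):
    """Zero-inflated Poisson: with probability pi the observation is an
    excess zero, otherwise it is drawn from Poisson(lam)."""
    if k == 0:
        return pi + (1 - pi) * poisson_pmf(0, lam)
    return (1 - pi) * poisson_pmf(k, lam)

lam, pi = 2.0, 0.3
p_zero_poisson = poisson_pmf(0, lam)  # about 0.135
p_zero_zip = zip_pmf(0, lam, pi)      # about 0.395
total = sum(zip_pmf(k, lam, pi) for k in range(50))
```

With these values the zero-inflated model assigns roughly three times as much probability to a zero count as the plain Poisson model, while the probabilities still sum to 1.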

Experimental Protocols for RNA-seq Count Data Analysis

This protocol outlines a differential expression analysis pipeline for RNA-seq count data using a Negative Binomial GLM framework, addressing the common challenge of overdispersion.

Methodology

1. Model Specification: RNA-seq read counts (\(K_{ij}\) for gene \(i\) in sample \(j\)) are modeled using a Negative Binomial distribution to account for overdispersion [41]: \(K_{ij} \sim \text{NB}(\mu_{ij}, \sigma^2_{ij})\), where the mean (\(\mu_{ij}\)) and variance (\(\sigma^2_{ij}\)) are linked by: \(\sigma^2_{ij} = \mu_{ij} + s_j^2 v_{\rho}(q_{i,\rho(j)})\). Here, \(v_{\rho}\) is a smooth function describing how the raw variance depends on the expected mean abundance \(q_{i,\rho}\), fitted via local regression [41].

2. Parameter Estimation:

- Size Factors (\(s_j\)): Calculate to normalize for different sequencing depths across samples using the median-of-ratios method [41]: \( \hat{s}_j = \text{median}_i \frac{k_{ij}}{\left(\prod_{v=1}^{m} k_{iv}\right)^{1/m}} \).

- Expression Strengths (\(q_{i,\rho}\)): Estimate for each gene \(i\) in condition \(\rho\) as the average of normalized counts from replicates [41]: \( \hat{q}_{i,\rho} = \frac{1}{m_\rho} \sum_{j: \rho(j)=\rho} \frac{k_{ij}}{\hat{s}_j} \).
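The two estimators in this step can be sketched in plain Python. The code assumes a small gene-by-sample count matrix held as nested lists and skips genes with a zero count in any sample, since their geometric mean is zero and the ratio is undefined. The function names and counts are hypothetical.

```python
import math
from statistics import median

def size_factors(counts):
    """Median-of-ratios size factors.

    `counts` is a list of genes, each a list of counts (one per sample).
    """
    n_samples = len(counts[0])
    # Keep genes with no zero counts so the geometric mean is positive.
    usable = [row for row in counts if all(c > 0 for c in row)]
    # Log of each gene's geometric mean across samples.
    log_geo = [sum(math.log(c) for c in row) / n_samples for row in usable]
    factors = []
    for j in range(n_samples):
        log_ratios = [math.log(row[j]) - lg for row, lg in zip(usable, log_geo)]
        factors.append(math.exp(median(log_ratios)))
    return factors

def expression_strength(gene_counts, factors, sample_indices):
    """Mean of normalized counts over the samples of one condition."""
    normalized = [gene_counts[j] / factors[j] for j in sample_indices]
    return sum(normalized) / len(normalized)

# Hypothetical matrix: sample 2 was sequenced twice as deeply as sample 1.
counts = [
    [10, 20],
    [100, 200],
    [50, 100],
    [0, 5],  # skipped when computing size factors
]
s_hat = size_factors(counts)
q_hat = expression_strength(counts[0], s_hat, [0, 1])
```

Here the second size factor is exactly twice the first, so dividing by the size factors puts both samples on a common scale.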

3. Testing for Differential Expression:

- Fit a Negative Binomial GLM for each gene using the model parameters estimated above.

- Test the significance of coefficients related to experimental conditions using likelihood ratio tests or Wald tests.

Comparative Data Tables

Properties of Common Count Data Distributions

| Distribution | Modeling Approach | Variance Function | Key Application Context |

|---|---|---|---|

| Poisson | Poisson Regression [3] [38] | \(Var(Y) = \mu\) [3] | Ideal for equidispersed counts; technical replicates in RNA-seq [3] |

| Negative Binomial (NB) | NB Regression [41] [42] | \(Var(Y) = \mu + \alpha\mu^2\) [41] | Handles overdispersion; biological replicates in RNA-seq [41] |

| Generalized Poisson (GP) | Generalized Poisson Regression [35] | \(Var(Y) = (1 + \alpha\mu)^2 \mu\) [35] | Flexible alternative for both over- and under-dispersion [35] |

| COM-Poisson | Conway-Maxwell-Poisson Regression [35] | \(Var(Y)\) is a function of \(\lambda\) and \(\nu\) [35] | Generalizes Poisson to handle over- and under-dispersion [35] |

Model Selection Guide Based on Data Characteristics

| Data Characteristic | Recommended Model | Rationale | Example R Function |

|---|---|---|---|

| Equidispersion (Mean ≈ Variance) | Poisson Regression [35] | Theoretically correct and simplest model for counts. | glm(..., family=poisson) [37] |

| Overdispersion (Variance > Mean) | Negative Binomial Regression [41] [42] | Extra parameter accommodates extra-Poisson variation. | stan_glm(..., family=neg_binomial_2) [42] |

| Excess Zeros | Zero-Inflated (Poisson/NB) Model [43] | Explicitly models two processes: one for zeros and one for counts. | zeroinfl(...) (from pscl package) |

| Complex Overdispersion (e.g., in RNA-seq) | DESeq2 [41] or DEHOGT [40] | Uses local regression to model mean-variance trend across all genes. | DESeq() (from DESeq2 package) |

| Tool / Reagent | Function / Purpose | Example in Analysis |

|---|---|---|

| R/Bioconductor Environment | Open-source software platform for statistical computing and genomic analysis. | Primary environment for implementing specialized packages like DESeq2 and edgeR [41]. |

| DESeq2 Package | Performs differential expression analysis based on a Negative Binomial GLM with shrinkage estimators. | Models overdispersion in RNA-seq data and tests for differentially expressed genes [41]. |

| edgeR Package | Analyzes RNA-seq data using a Negative Binomial model with empirical Bayes methods. | Provides robust differential expression analysis for count data [41]. |

| DEHOGT Method | Implements heterogeneous overdispersion genes testing. | Detects differentially expressed genes with enhanced power in cases of overdispersion and limited sample size [40] [39]. |

| stan_glm Function (rstanarm) | Fits Bayesian GLMs using Stan, allowing for incorporation of prior knowledge. | Fits Bayesian Poisson and Negative Binomial models, useful for robust inference and prediction [42]. |

A technical guide for researchers addressing overdispersion in RNA-seq count data analysis

Frequently Asked Questions

1. What does the Negative Binomial variance function μ + φμ² actually mean in practical terms?

In RNA-Seq data analysis, the variance function μ + φμ² represents how the variability in your count data changes with the mean expression level. The first term (μ) accounts for Poisson-like sampling variance that would be present even in perfectly homogeneous biological samples. The second term (φμ²) captures additional biological variability between replicates that exceeds Poisson sampling noise. The dispersion parameter φ quantifies the extent of this overdispersion - when φ = 0, the model reduces to Poisson regression, while larger φ values indicate greater overdispersion [44].
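A small worked example makes the two terms tangible. The Python sketch below splits the variance into its Poisson part and its extra part for a low-count and a high-count gene, assuming a dispersion of φ = 0.1 for both; the function name and numbers are illustrative.

```python
def nb_variance(mu, phi):
    """Split Var = mu + phi * mu^2 into (poisson_part, extra_part, total)."""
    poisson_part = mu
    extra_part = phi * mu ** 2
    return poisson_part, extra_part, poisson_part + extra_part

phi = 0.1  # hypothetical dispersion shared by both genes

low = nb_variance(10, phi)     # Poisson part 10, extra part 10
high = nb_variance(1000, phi)  # Poisson part 1000, extra part 100000

# Squared coefficient of variation: Var / mu^2 = 1/mu + phi
cv2_low = low[2] / 10 ** 2       # 0.1 + 0.1
cv2_high = high[2] / 1000 ** 2   # 0.001 + 0.1
```

At a mean of 10 the two sources contribute equally, while at a mean of 1000 the φμ² term accounts for almost all of the variance. Dividing by μ² gives a squared coefficient of variation of 1/μ + φ, so for highly expressed genes the coefficient of variation approaches √φ.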

2. My model fails to initialize with errors about parameter estimation. What could be wrong?

Initialization errors often occur when working with complex Negative Binomial models, particularly when using Bayesian estimation methods. As one researcher reported, you might encounter messages like: "Initialization between (-2, 2) failed after 100 attempts" when using Stan for RNA-Seq count modeling [45]. This typically indicates that:

- Your dispersion priors might be too vague; try constraining them (e.g., changing cauchy(0, 5) to cauchy(0, 1)) [45].

- The model may be poorly identified with your current data dimensions.

- You may need to specify initial values or consider reparameterizing your model [45].

3. When should I use Negative Binomial regression instead of Poisson for RNA-Seq data?

Use Negative Binomial regression when your count data exhibits overdispersion - where the variance exceeds the mean. This is routinely the case with RNA-Seq data due to biological variability beyond technical sampling noise. You can identify this need through descriptive statistics: if conditional variances within experimental groups are higher than conditional means, Negative Binomial regression is appropriate [46] [47]. The Likelihood Ratio Test comparing Poisson and Negative Binomial models also provides formal evidence - a significant test (p < 0.05) supports using the Negative Binomial approach [47].

4. How do I interpret the coefficients from a Negative Binomial regression?

Negative Binomial regression coefficients are interpreted on the log scale. For a coefficient β₁, a one-unit increase in the predictor variable is associated with a β₁ change in the log count of the response, holding other variables constant. For easier interpretation, you can exponentiate coefficients to get incidence rate ratios [47]. For example, if exp(β₁) = 0.94, this indicates a 6% decrease in the expected count for each one-unit increase in the predictor variable.
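The conversion from a log-scale coefficient to a percentage change can be written out as a short calculation. The coefficient value below is an assumption, chosen so that it exponentiates to roughly 0.94.

```python
import math

beta1 = -0.0619                        # hypothetical coefficient on the log scale
rate_ratio = math.exp(beta1)           # incidence rate ratio
percent_change = (rate_ratio - 1.0) * 100.0

print(round(rate_ratio, 3), round(percent_change, 1))
```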

5. Can I model nonlinear relationships using Negative Binomial regression?

Yes, through Negative Binomial Additive Models (NBAMSeq), which extend generalized additive models to count data with overdispersion. This approach allows modeling nonlinear covariate effects while maintaining the advantages of Negative Binomial distribution for RNA-Seq data. NBAMSeq has shown improved performance in detecting nonlinear effects while maintaining equivalent performance for linear effects compared to standard methods like DESeq2 and edgeR [48].

Dispersion Modeling Strategies for RNA-Seq Data

Table: Comparison of Dispersion Estimation Methods in Negative Binomial RNA-Seq Analysis

| Method | Key Principle | Parameters for Dispersion | Advantages | Limitations |
|---|---|---|---|---|
| Genewise | Estimates dispersion separately for each gene | m parameters (one per gene) | Captures gene-specific variability | High variance with small samples [44] |
| Common | Assumes same dispersion for all genes | 1 parameter | Maximum parsimony | Often too simplistic for real data [44] |
| NBP | Models dispersion as function of mean: log(φ) = α₀ + α₁log(μ) | 2 parameters | Accounts for mean-variance trend | Assumes specific parametric form [44] |
| NBQ | Quadratic extension of NBP: log(φ) = α₀ + α₁log(μ) + α₂[log(μ)]² | 3 parameters | More flexible mean-variance relationship | Increased complexity [44] |
| Non-parametric | Smooth function of dispersion on mean | Data-driven | Maximum flexibility | Requires sufficient data points [44] |
| Tagwise | Weighted average of common/trend and genewise estimates | Empirical Bayes shrinkage | Balanced approach | Computational complexity [44] |
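The NBP and NBQ rows of the table can be read as simple functions of the mean. The sketch below follows the formulas exactly as written in the table. The function names and α values are assumptions for illustration, and the NBPSeq package's own parameterization may differ in detail.

```python
import math

def nbp_dispersion(mu, a0, a1):
    """NBP: log(phi) = a0 + a1 * log(mu)."""
    return math.exp(a0 + a1 * math.log(mu))

def nbq_dispersion(mu, a0, a1, a2):
    """NBQ: log(phi) = a0 + a1 * log(mu) + a2 * log(mu)^2."""
    log_mu = math.log(mu)
    return math.exp(a0 + a1 * log_mu + a2 * log_mu ** 2)

# Hypothetical coefficients: dispersion decreasing with the mean (a1 < 0).
for mu in (10, 100, 1000):
    print(mu, round(nbp_dispersion(mu, -1.0, -0.3), 4),
          round(nbq_dispersion(mu, -1.0, -0.3, 0.02), 4))
```

Setting `a1 = 0` recovers the common-dispersion model, and setting `a2 = 0` reduces NBQ to NBP, which is one way to see how the rows of the table nest inside each other.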

Experimental Protocol: Negative Binomial Model Fitting for RNA-Seq Data

Materials and Reagents

Table: Essential Research Reagent Solutions for RNA-Seq Analysis

| Reagent/Resource | Function/Purpose |
|---|---|
| RNA extraction kit | Isolate high-quality RNA from biological samples |
| Illumina HiSeq/MiSeq platform | High-throughput sequencing of cDNA fragments |
| DESeq2 (Bioconductor) | Negative Binomial-based differential expression analysis [48] |
| edgeR (Bioconductor) | Negative Binomial modeling with empirical Bayes dispersion estimation [48] |
| NBAMSeq (Bioconductor) | Negative Binomial additive models for nonlinear effects [48] |
| NBPSeq (R package) | Implements NBP and NBQ dispersion modeling approaches [44] |

Step-by-Step Methodology

Data Preprocessing

- Obtain raw count matrix from aligned RNA-Seq reads

- Filter genes with low expression (e.g., remove genes that do not reach a count of at least 10 in a minimum number of samples, such as the size of the smallest experimental group)

- Calculate normalization factors to account for library size differences using methods like median-of-ratios [48]
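The median-of-ratios idea mentioned in the last step can be sketched in plain Python. This is a minimal illustration under simplifying assumptions (genes with any zero count are skipped, and the tiny count matrix is made up); it is not the DESeq2 implementation.

```python
import math
from statistics import median

def median_of_ratios(counts):
    """counts: list of genes, each a list of per-sample counts. Returns one size factor per sample."""
    n_samples = len(counts[0])
    usable, log_geo_means = [], []
    for row in counts:
        if all(c > 0 for c in row):  # the geometric mean is undefined with zeros
            usable.append(row)
            log_geo_means.append(sum(math.log(c) for c in row) / n_samples)
    factors = []
    for j in range(n_samples):
        # Log ratio of each gene's count to its geometric mean across samples.
        log_ratios = [math.log(row[j]) - lg for row, lg in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors

# Hypothetical matrix: sample 2 was sequenced twice as deeply as sample 1.
counts = [[10, 20], [100, 200], [5, 10], [0, 3]]
size_factors = median_of_ratios(counts)
print([round(f, 3) for f in size_factors])
```

Dividing each sample's counts by its size factor puts samples on a comparable scale. Taking the median makes the estimate robust to a minority of strongly differentially expressed genes.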

Exploratory Data Analysis

- Examine mean-variance relationship across genes

- Check for overdispersion by comparing sample variances to means within experimental groups

- Assess potential nonlinear patterns using scatterplots of counts versus key covariates [48]
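One crude way to quantify the mean-variance check above is a method-of-moments dispersion estimate, obtained by solving s² = m + φm² for φ. The sketch below assumes equal library sizes and uses made-up counts. It is a rough exploratory device, not the shrinkage estimator used by DESeq2 or edgeR.

```python
from statistics import mean, variance

def moment_dispersion(counts):
    """Solve s^2 = m + phi * m^2 for phi, truncating at zero."""
    m, s2 = mean(counts), variance(counts)
    if m == 0:
        return 0.0
    return max(0.0, (s2 - m) / m ** 2)

control = [12, 30, 7, 25]  # hypothetical replicate counts for one gene
phi_hat = moment_dispersion(control)
print(round(phi_hat, 4))
```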

Model Specification

- Define the log-link function: log(μ) = β₀ + β₁X₁ + ... + βₚXₚ + offset(log(library size))

- Select appropriate dispersion modeling strategy based on sample size and biological context

- For small sample sizes (n < 10), prefer shrinkage methods (tagwise, trended)

- For larger sample sizes, consider genewise or flexible approaches (NBQ, non-parametric)
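To make the role of the offset concrete, the sketch below evaluates the log-link model for a single two-level predictor. The function name, coefficients, and library size are assumptions for illustration.

```python
import math

def expected_count(lib_size, beta0, beta1, x):
    """Mean count under log(mu) = beta0 + beta1 * x + log(lib_size)."""
    return math.exp(beta0 + beta1 * x + math.log(lib_size))

# Hypothetical gene: 1 read per 100,000 at baseline, doubled under treatment.
beta0, beta1 = math.log(1e-5), math.log(2.0)
baseline = expected_count(1_000_000, beta0, beta1, x=0)
treated = expected_count(1_000_000, beta0, beta1, x=1)
print(round(baseline, 2), round(treated, 2))
```

Because the library size enters as an offset rather than as a fitted coefficient, β₁ describes a change in relative expression and is not confounded with sequencing depth.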

Parameter Estimation

- Estimate regression coefficients using maximum likelihood

- Estimate dispersion parameters using the selected method

- For complex models, use iterative algorithms (e.g., Newton-Raphson, Fisher scoring)
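The iterative estimation step can be illustrated with a small Fisher scoring (iteratively reweighted least squares) loop for one gene and one binary predictor, with the dispersion φ held fixed. This is a sketch under those assumptions, with made-up counts and a hypothetical function name. Production tools also estimate φ and handle many more edge cases.

```python
import math

def fit_nb_glm(y, x, lib_size, phi, n_iter=50, tol=1e-10):
    """Fit log(mu) = b0 + b1 * x + log(lib_size) by Fisher scoring with fixed phi."""
    offset = [math.log(n) for n in lib_size]
    b0, b1 = math.log(sum(y) / sum(lib_size)), 0.0  # start from the pooled rate
    for _ in range(n_iter):
        s00 = s01 = s11 = t0 = t1 = 0.0
        for yi, xi, oi in zip(y, x, offset):
            eta = b0 + b1 * xi
            mu = math.exp(eta + oi)
            w = mu / (1.0 + phi * mu)   # IRLS weight: mu^2 / Var(y) under the log link
            z = eta + (yi - mu) / mu    # working response (offset excluded)
            s00 += w
            s01 += w * xi
            s11 += w * xi * xi
            t0 += w * z
            t1 += w * xi * z
        det = s00 * s11 - s01 * s01     # solve the 2x2 weighted normal equations
        new_b0 = (s11 * t0 - s01 * t1) / det
        new_b1 = (s00 * t1 - s01 * t0) / det
        converged = abs(new_b0 - b0) + abs(new_b1 - b1) < tol
        b0, b1 = new_b0, new_b1
        if converged:
            break
    return b0, b1

y = [12, 30, 7, 25, 60, 110, 45, 150]  # hypothetical counts
x = [0, 0, 0, 0, 1, 1, 1, 1]           # 0 = control, 1 = treated
b0, b1 = fit_nb_glm(y, x, lib_size=[1.0] * 8, phi=0.3)
print(round(b0, 4), round(b1, 4))
```

With equal library sizes and a two-group design, the fitted group means equal the observed group means, so `b1` matches the log ratio of those means. That gives a convenient sanity check on the loop.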

Model Diagnostics

- Check goodness-of-fit using Pearson residuals and simulation-based tests [44]

- Examine residual plots for patterns suggesting model inadequacy

- Verify dispersion structure appropriateness using diagnostic plots

Statistical Inference

- Test differential expression using Wald tests or likelihood ratio tests

- Adjust for multiple testing using Benjamini-Hochberg FDR control

- Interpret coefficients as log-fold-changes or exponentiate for rate ratios
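The Benjamini-Hochberg adjustment named above is short enough to write out. The sketch below computes adjusted p-values using the standard step-up rule. The function name and the input p-values are made up for illustration.

```python
def benjamini_hochberg(pvalues):
    """Return BH-adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

pvals = [0.01, 0.04, 0.03, 0.20]  # hypothetical per-gene p-values
padj = benjamini_hochberg(pvals)
print([round(p, 4) for p in padj])
```

Genes whose adjusted p-value falls below the chosen FDR threshold (for example 0.05) are reported as differentially expressed.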

Workflow Visualization

Negative Binomial Regression Analysis Workflow for RNA-Seq Data

Key Troubleshooting Guidelines

Problem: Convergence issues during model fitting

- Solution: Check for complete separation or near-zero counts; consider adding a small pseudo-count or using penalized likelihood methods

Problem: Residual plots show systematic patterns

- Solution: Consider more flexible dispersion models (NBQ instead of NBP) or Negative Binomial Additive Models (NBAMSeq) for nonlinear covariate effects [48]

Problem: Unrealistically wide confidence intervals

- Solution: Use dispersion shrinkage methods to borrow information across genes, particularly beneficial with small sample sizes [44]

Problem: Computational bottlenecks with large datasets

- Solution: Utilize optimized implementations in DESeq2 or edgeR, which use efficient algorithms for high-dimensional RNA-Seq data [48]

The variance function μ + φμ² in Negative Binomial regression provides a flexible framework for addressing the overdispersion inherent in RNA-Seq count data, with multiple strategies available for estimating the dispersion parameter φ based on your specific experimental design and sample size considerations.

In RNA-Seq data analysis, a fundamental assumption of standard Poisson regression—that the variance of a count variable equals its mean (variance = μ)—is frequently violated. This phenomenon, where the observed variance exceeds the mean, is known as overdispersion [49] [50]. Overdispersion is empirically common in RNA-Seq read counts, meaning the data exhibits extra-Poisson variability, which, if overlooked, can lead to biased and misleading inferences about gene associations [51].

Quasi-Poisson regression directly addresses this by generalizing the Poisson model. It introduces a dispersion parameter (θ or φ) that scales the variance, making it a linear function of the mean. The model assumes a mean-variance relationship of Var(Y) = θ × μ, where θ > 1 indicates overdispersion [49] [50]. This approach maintains the interpretability of Poisson regression while providing more reliable, conservative inference for overdispersed count data common in transcriptome profiling [49] [51].

Frequently Asked Questions (FAQs)

1. What is the primary advantage of using a Quasi-Poisson model over a standard Poisson model for RNA-Seq data?

The primary advantage is its ability to correctly handle overdispersed count data. Standard Poisson regression underestimates the variance when overdispersion is present, leading to artificially small standard errors, inflated Type I error rates (false positives), and potentially misleading conclusions. Quasi-Poisson regression accounts for this by estimating a dispersion parameter, which scales the standard errors, resulting in more reliable and conservative statistical inference [49] [50].

2. How do I decide between using a Quasi-Poisson model and a Negative Binomial model?

Both models handle overdispersion, but they define the mean-variance relationship differently and have different theoretical foundations.

- Variance Structure: Quasi-Poisson assumes a linear relationship where `Var(Y) = θ × μ` [51] [50]. The Negative Binomial assumes a quadratic relationship where `Var(Y) = μ + μ²/θ` [51] [52]. Note that θ plays a different role in each formula: it is a variance multiplier in Quasi-Poisson and a size parameter in the Negative Binomial, where it is the reciprocal of the dispersion φ in μ + φμ².

- Theoretical Basis: Quasi-Poisson is a quasi-likelihood model that does not specify a full probability distribution. It is well-suited for providing robust standard errors and is a practical choice when the goal is reliable inference on coefficients without a full likelihood model [50]. Negative Binomial is a true probability model based on the Gamma-Poisson mixture, making it a full Generalized Linear Model (GLM). It is more appropriate when a full probabilistic interpretation is desired, or when overdispersion is severe [50] [52].

Table: Comparison of Quasi-Poisson and Negative Binomial Models

| Feature | Quasi-Poisson | Negative Binomial |
|---|---|---|
| Handles Overdispersion? | Yes | Yes |
| Variance Function | Linear: θ × μ [51] | Quadratic: μ + μ²/θ [51] |
| Full Probability Distribution? | No (Quasi-likelihood) [50] | Yes (Gamma-Poisson) [52] |
| Estimation Method | Quasi-likelihood [50] | Maximum Likelihood [52] |
| Model Selection (AIC/BIC) | Not applicable [50] | Yes [50] |
| Best for Severe Overdispersion? | Less ideal [50] | Yes [50] |
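The practical difference between the two variance functions in the table can be seen by evaluating them side by side. The parameter values and function names below are assumptions for illustration. The crossover point follows from setting θ_qp × μ equal to μ + μ²/θ_nb.

```python
def quasi_poisson_variance(mu, theta_qp):
    """Linear variance function: theta_qp * mu."""
    return theta_qp * mu

def negative_binomial_variance(mu, theta_nb):
    """Quadratic variance function: mu + mu^2 / theta_nb."""
    return mu + mu ** 2 / theta_nb

def crossover_mean(theta_qp, theta_nb):
    """Mean at which both variance functions agree."""
    return (theta_qp - 1.0) * theta_nb

theta_qp, theta_nb = 3.0, 5.0  # hypothetical parameter values
for mu in (5, 10, 100):
    print(mu, quasi_poisson_variance(mu, theta_qp), negative_binomial_variance(mu, theta_nb))
print("crossover at mean", crossover_mean(theta_qp, theta_nb))
```

Below the crossover mean the Quasi-Poisson model implies the larger variance, and above it the Negative Binomial does. The two models can therefore rank genes differently even when both are fitted to the same data.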

3. My model is still exhibiting poor fit after switching to Quasi-Poisson. What are the next steps?

If a Quasi-Poisson model does not adequately capture the variability in your data, consider these steps:

- Evaluate Model Diagnostics: Check residual plots (e.g., deviance or Pearson residuals) for patterns that suggest other unmet assumptions or the presence of outliers [49] [52].

- Switch to Negative Binomial: The quadratic variance form of the Negative Binomial model often provides a better fit for data with substantial overdispersion, because it allows the variance to grow faster than the mean as expression increases [50].

- Check for Zero-Inflation: If your RNA-Seq count data contains an excess of zero counts beyond what standard count distributions expect, models like Zero-Inflated Poisson (ZIP) or Zero-Inflated Negative Binomial (ZINB) may be necessary [52].

- Verify Data Preprocessing: Ensure that read counts have been properly normalized (e.g., using TMM normalization) to account for differences in sequencing depth and RNA composition between samples, which is a critical precursor to any count modeling [51].

4. Can I use AIC or BIC to compare a Quasi-Poisson model with other models?

No, you cannot. Since the Quasi-Poisson model is not based on a full likelihood function, it does not have a true log-likelihood value from which to calculate Akaike Information Criterion (AIC) or Bayesian Information Criterion (BIC) [50]. For model selection that involves Quasi-Poisson, you must rely on other goodness-of-fit measures. A Likelihood Ratio Test is available only between full-likelihood models, for example to compare a Negative Binomial model against the standard Poisson model nested within it [52].

Troubleshooting Guides

Issue 1: Detecting and Confirming Overdispersion in Your Dataset

Problem: You have fitted a standard Poisson regression model but suspect the results are unreliable due to overdispersion.

Solution: Perform an overdispersion test after fitting a Poisson model.

Protocol:

- Fit a Poisson Model: First, run a standard Poisson regression on your RNA-Seq count data.

- Calculate the Dispersion Statistic: A common metric is the Pearson Chi-squared dispersion statistic. It is calculated as the Pearson Chi-squared statistic divided by the residual degrees of freedom.

- Interpret the Result:

- A statistic approximately equal to 1 suggests that the Poisson assumption (variance = mean) is reasonable.

- A statistic significantly greater than 1 (e.g., 1.5, 2, or higher) provides clear evidence of overdispersion and indicates that a Quasi-Poisson or Negative Binomial model should be used [50].
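The protocol above can be sketched for one gene in a two-group design. With equal library sizes, the Poisson fitted value for each sample is its group mean, so no iterative fitting is needed in this special case. The counts and the function name are made up for illustration.

```python
from statistics import mean

def pearson_dispersion(counts_by_group):
    """Pearson chi-squared divided by residual degrees of freedom.

    Assumes every group has a positive mean count.
    """
    chi2, n_obs = 0.0, 0
    for counts in counts_by_group.values():
        mu_hat = mean(counts)  # Poisson fitted value for this group
        chi2 += sum((y - mu_hat) ** 2 / mu_hat for y in counts)
        n_obs += len(counts)
    residual_df = n_obs - len(counts_by_group)  # one mean estimated per group
    return chi2 / residual_df

counts = {"control": [12, 30, 7, 25], "treated": [60, 110, 45, 150]}
dispersion = pearson_dispersion(counts)
print(round(dispersion, 2))
```

A value this far above 1 would indicate that Poisson standard errors are much too small for these counts.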

Issue 2: Implementing Quasi-Poisson Regression in R

Problem: You need practical guidance on implementing a Quasi-Poisson regression model for your RNA-Seq analysis.

Protocol:

- Data Preparation: Ensure your dependent variable is a count (non-negative integers) and that independent variables (predictors like condition, treatment) are correctly formatted [49].

- Model Fitting: Use the `glm()` function in R, specifying the `family` argument as `quasipoisson`.

- Interpret Output:

- Coefficients: Represent the log of the incidence rate ratio for a one-unit change in the predictor. A positive coefficient indicates an increase in the log count [49] [52].

- Standard Errors: Will be inflated compared to a standard Poisson model, correctly reflecting the greater uncertainty due to overdispersion [50].

- P-values: Based on the adjusted standard errors, they are more conservative and trustworthy [49].
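To show what the inflated standard errors amount to, the sketch below works through a two-group comparison by hand rather than calling R's `glm()`. It assumes equal library sizes, in which case the Poisson standard error of the log ratio has a closed form and the Quasi-Poisson standard error is that value multiplied by the square root of the Pearson dispersion estimate. The counts and the function name are made up for illustration.

```python
import math
from statistics import mean

def quasi_poisson_two_group(control, treated):
    """Return (log ratio, Poisson SE, Quasi-Poisson SE, dispersion estimate)."""
    mu_c, mu_t = mean(control), mean(treated)
    log_ratio = math.log(mu_t / mu_c)
    # Poisson SE of a log rate ratio depends only on the total counts per group.
    se_poisson = math.sqrt(1.0 / sum(control) + 1.0 / sum(treated))
    chi2 = (sum((y - mu_c) ** 2 / mu_c for y in control)
            + sum((y - mu_t) ** 2 / mu_t for y in treated))
    theta_hat = chi2 / (len(control) + len(treated) - 2)
    se_quasi = se_poisson * math.sqrt(theta_hat)  # scale by sqrt(dispersion)
    return log_ratio, se_poisson, se_quasi, theta_hat

log_ratio, se_p, se_q, theta_hat = quasi_poisson_two_group(
    [12, 30, 7, 25], [60, 110, 45, 150])
print(round(log_ratio, 3), round(se_p, 3), round(se_q, 3), round(theta_hat, 2))
```

The coefficient itself is identical under both models. Only the standard error, and therefore the test statistic and p-value, changes.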

Issue 3: Choosing the Right Model for Your Experimental Data

Problem: You are unsure whether Quasi-Poisson or Negative Binomial is the best choice for your specific research goals.

Solution: Follow a structured decision workflow.

Experimental Protocols & Workflows

Protocol 1: Comprehensive RNA-Seq Count Data Analysis with Overdispersion