Mastering Scree Plots: A Step-by-Step Guide to Selecting the Optimal Number of Principal Components for Biomedical Data

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on using scree plots to determine the optimal number of principal components in Principal Component Analysis (PCA). Covering foundational theory, practical implementation, and advanced validation techniques, it addresses the critical challenge of dimensionality reduction in high-dimensional biomedical datasets, such as those from genomic, transcriptomic, and clinical studies. The content bridges statistical methodology with real-world application, enabling professionals to enhance model performance, avoid overfitting, and extract meaningful biological insights from complex data.

Understanding the Why: The Core Principles of PCA and the Role of the Scree Plot

The curse of dimensionality describes a set of phenomena that arise when analyzing and organizing data in high-dimensional spaces, which do not occur in low-dimensional settings. In biomedical research, this concept has become increasingly critical with the proliferation of high-throughput technologies that generate vast amounts of features (dimensions) per observation. Patient health states can now be characterized by multimodal data streams including medical imaging, clinical variables, genome sequencing, clinician-patient conversations, and continuous signals from wearables [1]. This high-volume, personalized data aggregated over patients' lives has spurred development of artificial intelligence models for higher-precision diagnosis, prognosis, and tracking.

The fundamental challenge emerges when the number of features (p) becomes very large, often exceeding the sample size (n), creating what statisticians call "small n, large p" problems. As dimensionality increases, the available data becomes sparse in the corresponding feature space, with potentially catastrophic consequences for model generalizability. This sparsity creates "dataset blind spots"—contiguous regions of feature space without any observations—which can lead to highly variable estimates of true model performance and unexpected failures when deployed in real-world clinical settings [1]. The curse of dimensionality thus represents a rate-limiting factor in developing robust AI models that generalize reliably beyond their training data.

Theoretical Foundations and Mathematical Principles

The Geometry of High-Dimensional Spaces

The curse of dimensionality manifests through several counterintuitive geometric properties. As dimensionality increases, the volume of the space grows exponentially, causing data points to become increasingly sparse. This sparsity undermines the concept of proximity that many statistical and machine learning algorithms rely upon. In high dimensions, most data points reside in the outskirts of the feature space, and the average distance between points becomes large and homogeneous [1].
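The homogenization of distances can be seen in a small simulation. The following is a minimal sketch, assuming uniformly distributed random points (an illustrative choice, not taken from the cited work); the function name `relative_contrast` is hypothetical. It measures how much the farthest neighbor of a point differs from its nearest neighbor, relative to the nearest distance.

```python
import numpy as np

def relative_contrast(n_points, n_dims, rng):
    """Return (max - min) / min of the distances from one point to all others."""
    X = rng.uniform(size=(n_points, n_dims))
    # Euclidean distances from the first point to every other point
    d = np.linalg.norm(X[1:] - X[0], axis=1)
    return (d.max() - d.min()) / d.min()

rng = np.random.default_rng(0)
dims = [2, 10, 100, 1000]
contrast = {d: relative_contrast(500, d, rng) for d in dims}

for d in dims:
    print(f"dims={d:>4}  relative contrast={contrast[d]:.2f}")
```

As the number of dimensions grows, the relative contrast shrinks, meaning the nearest and farthest points end up at nearly the same distance.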

The combinatorial explosion of possible feature value combinations means that fewer individuals are close to average for many measurements simultaneously than for any single measurement alone [2]. This phenomenon explains why designing airplane cockpits for the "average pilot" across multiple body measurements failed—virtually no pilots were average across all dimensions. Similarly, in precision medicine, a patient with 10 independent risk factors each with 10% prevalence implies a probability of only 1 in 10 billion of finding a similar previous patient for comparison [2].
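The arithmetic behind this figure is short. Assuming the ten risk factors are independent and that a "similar" patient is one who carries all ten, the probability that a given previous patient matches is:

\[ P(\text{match on all 10 factors}) = \prod_{i=1}^{10} 0.1 = 0.1^{10} = 10^{-10} \]

That is one in ten billion.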

Implications for Statistical Inference

High-dimensional spaces present fundamental challenges for statistical inference. The large feature space increases the risk of overfitting, where models learn patterns specific to the training data that do not generalize. This occurs because with limited samples in high dimensions, algorithms can appear to find "patterns" that are actually statistical artifacts [1].

The Watson for Oncology case exemplifies this problem—trained on high-dimensional historical patient data but with small sample sizes ranging from 106 cases for ovarian cancer to 635 cases for lung cancer, the system proved susceptible to dataset blind spots and produced incorrect treatment recommendations when encountering data from these blind spots post-deployment [1].

Manifestations in Biomedical Data Types

Genomic Data

Genomic selection represents a prime example where high-dimensional data challenges emerge. The development of high-throughput genotyping technologies has yielded dense genomic marker data, often comprising tens of thousands of single nucleotide polymorphisms (SNPs) [3]. With typical study sample sizes of a few hundred individuals, genomic prediction must estimate large numbers of marker effects (p) using limited observations (n).

Table 1: Dimensionality Challenges in Genomic Studies

| Data Characteristic | Typical Scale | Dimensionality Challenge |
|---|---|---|
| Number of markers (SNPs) | 26,000+ [3] | Far exceeds sample size |
| Sample size | 315 lines [3] | Small n, large p problem |
| Environmental factors | Multiple environments | Increases complexity through G×E interactions |
| Prediction accuracy | Varies with DR method | Plateaus with fraction of features [3] |

Clinical and Digital Health Data

Digital health data presents particularly challenging high-dimensional scenarios. Medical images such as MRI brain scans have sub-millimeter resolution, yielding a million or more voxels per scan. Continuous wearable-device data is recorded at tens or hundreds of samples per second, while speech signals are sampled at roughly 16,000 to 44,000 samples per second [1]. These data streams create massive clinical data footprints with highly complex information.

In speech-based digital biomarker discovery, researchers transform raw speech samples into high-dimensional feature vectors containing hundreds to thousands of features to detect neurological diseases. However, clinical speech databases typically contain only tens or hundreds of patients, creating the "perfect storm" of high-dimensional data with small sample size used to model complex phenomena [1].

Dimensionality Reduction Methodologies

Principal Component Analysis (PCA) Fundamentals

Principal Component Analysis stands as one of the most widely used dimensionality reduction techniques in biomedical research. PCA transforms a complex dataset with many variables into a simpler one that retains critical trends and patterns by identifying principal components—directions that maximize variance and are orthogonal to each other [4] [5].

The algorithm follows a systematic process:

- Standardization: Normalizing data so each variable contributes equally

- Covariance Matrix Computation: Quantifying how each pair of variables varies together around their means

- Eigenvectors and Eigenvalues Identification: Finding new axes that maximize variance

- Sorting and Ranking: Prioritizing components by variance capture ability

- Feature Vector Formation: Selecting subset of eigenvectors as principal components

- Transformation: Mapping original data into principal component space [5]

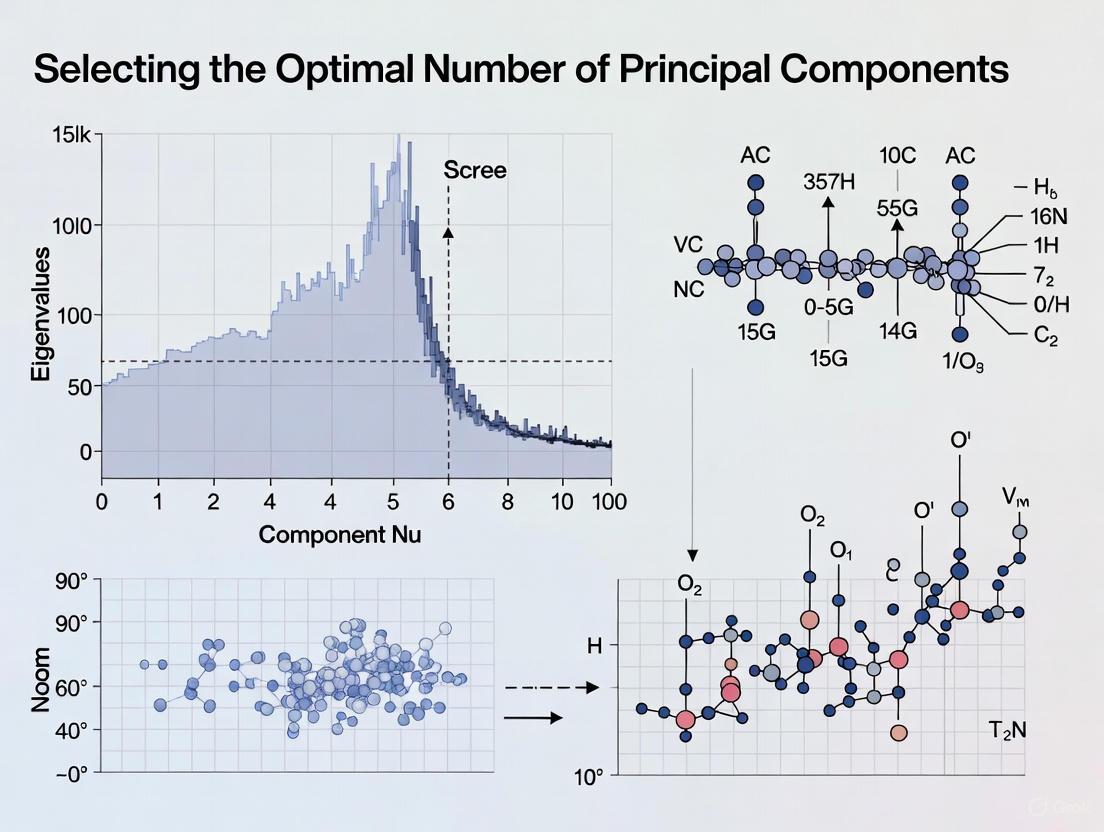
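The six steps listed above can be sketched with NumPy. This is a minimal illustration under stated assumptions, not a reference implementation: the function name `pca_eig` and the toy data are hypothetical, and the sample standard deviation (`ddof=1`) is used for standardization.

```python
import numpy as np

def pca_eig(X, n_components):
    """PCA via eigendecomposition of the covariance matrix of standardized data."""
    # 1. Standardization: zero mean and unit variance per variable
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # 2. Covariance matrix of the standardized data
    C = np.cov(Z, rowvar=False)
    # 3. Eigenvalues and eigenvectors (eigh is intended for symmetric matrices)
    eigvals, eigvecs = np.linalg.eigh(C)
    # 4. Sort by decreasing eigenvalue
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # 5. Feature vector: keep the leading eigenvectors
    W = eigvecs[:, :n_components]
    # 6. Transformation into principal component space
    scores = Z @ W
    return eigvals, W, scores

# Hypothetical toy data: 100 samples, 5 variables driven by 2 latent factors
rng = np.random.default_rng(42)
latent = rng.standard_normal((100, 2))
X = latent @ rng.standard_normal((2, 5)) + 0.3 * rng.standard_normal((100, 5))

eigvals, W, scores = pca_eig(X, n_components=2)
print(np.round(eigvals, 3))
```

The variance of each column of `scores` equals the corresponding eigenvalue, which is why eigenvalues can be read directly as "variance captured" on a scree plot.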

Determining the Optimal Number of Principal Components

Selecting the correct number of principal components represents a critical hyperparameter tuning process in PCA. Multiple methods exist for this determination, each with distinct advantages:

Table 2: Methods for Selecting Optimal Number of Principal Components

| Method | Description | Application Context |
|---|---|---|
| Scree Plot | Visual identification of "elbow" where eigenvalues drop off [6] | Exploratory data analysis |
| Variance Threshold | Specifying the fraction of variance to retain as a float between 0 and 1 [4] | When specific variance retention needed |
| Kaiser's Rule | Retaining components with eigenvalues >1 [4] [6] | Initial screening, tends to overestimate |
| Parallel Analysis | Comparing observed eigenvalues with those from random uncorrelated data; reported as more accurate than the scree plot or Kaiser's rule [6] | When accuracy critical |
| Performance Metrics | Using RMSE (regression) or Accuracy (classification) [4] | When downstream model performance paramount |

The scree plot method, the focus of this article, plots the eigenvalues of the principal components in descending order, where each eigenvalue measures the variance captured along its eigenvector. Researchers select all components up to the point where the bend (elbow) occurs in the scree plot [4]. For genomic prediction applications, studies show that regardless of the dimensionality reduction method and prediction model used, only a fraction of the features is sufficient to achieve maximum correlation [3].

Experimental Protocol: PCA with Scree Plot Analysis

Objective: Implement PCA with scree plot analysis for dimensionality reduction in high-dimensional genomic data.

Materials:

- Genomic dataset (e.g., SNP markers)

- Computing environment with linear algebra capabilities

- Visualization tools for scree plot generation

Procedure:

- Standardize the input data to have zero mean and unit variance

- Compute covariance matrix of standardized data

- Calculate eigenvectors and eigenvalues from covariance matrix

- Sort eigenvectors by decreasing eigenvalues

- Plot ordered eigenvalues (scree plot)

- Identify the "elbow" point where eigenvalues drop off markedly

- Select principal components up to the elbow point

- Transform original data to reduced dimensional space

Validation:

- Compare explained variance ratio for selected components

- Evaluate downstream model performance with cross-validation

- Assess computational efficiency gains
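When the number of markers far exceeds the number of samples, forming the full p × p covariance matrix can be avoided. The sketch below is one possible variation on the procedure above, assuming a singular value decomposition of the standardized data is acceptable; the simulated 0/1/2 marker matrix and its dimensions are hypothetical stand-ins for real genotype data.

```python
import numpy as np

rng = np.random.default_rng(1)
n, p = 60, 2000  # hypothetical small-n, large-p dimensions
X = rng.integers(0, 3, size=(n, p)).astype(float)  # SNP-like codes 0/1/2

# Standardize each marker; guard against constant columns
Xc = X - X.mean(axis=0)
sd = Xc.std(axis=0, ddof=1)
sd[sd == 0] = 1.0
Z = Xc / sd

# Thin SVD: Z = U diag(s) Vt, so the covariance eigenvalues are s**2 / (n - 1)
U, s, Vt = np.linalg.svd(Z, full_matrices=False)
eigvals = s**2 / (n - 1)          # already sorted in descending order
explained = eigvals / eigvals.sum()
scores = U * s                    # equivalent to Z @ Vt.T

print("components available:", len(eigvals))
print("first five eigenvalues:", np.round(eigvals[:5], 2))
```

Because the data are centered, at most n − 1 eigenvalues are nonzero, so the scree plot for such data has at most n − 1 informative points regardless of how many markers were genotyped. The `eigvals` array can be passed to any plotting tool to draw the scree plot.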

Advanced Dimensionality Reduction Techniques

Feature Selection vs. Feature Projection

Dimensionality reduction techniques broadly fall into two categories: feature selection and feature projection. Feature selection methods identify and retain the most relevant features, reducing complexity while maintaining interpretability. These include embedded methods (LASSO regularization), filters (statistical measures), and wrappers (feature subset evaluation) [5].

Feature projection techniques transform data into lower-dimensional space, maintaining essential structures while reducing complexity. These include manifold learning (t-SNE, UMAP), principal component analysis (PCA), linear discriminant analysis (LDA), and autoencoders [5]. For genomic prediction, feature selection approaches often prove preferable as they avoid interpretability issues associated with linear combinations of original features [3].

Comparative Analysis of Dimensionality Reduction Methods

Table 3: Dimensionality Reduction Techniques for Biomedical Data

| Method | Type | Key Characteristics | Biomedical Applications |
|---|---|---|---|
| PCA | Feature projection | Linear, maximizes variance | Genomic prediction, imaging data |
| t-SNE | Manifold learning | Non-linear, preserves local structure | Single-cell RNA sequencing, visualization |
| UMAP | Manifold learning | Preserves local/global structure, scalable | Large-scale biomedical data |
| LDA | Feature projection | Supervised, maximizes class separation | Diagnostic classification |
| Autoencoders | Neural network | Non-linear, deep learning approach | Complex pattern recognition |
| Feature Selection | Feature selection | Maintains original feature interpretability | Genomic marker selection |

Research demonstrates that in genomic selection, dimensionality reduction methods significantly improve computational efficiency while maintaining prediction accuracy. Studies applying DR methods to chickpea genomic data containing 315 lines phenotyped in nine environments with 26,817 markers showed that only a fraction of features was sufficient to achieve maximum correlation, regardless of the DR method and prediction model used [3].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents and Computational Tools

| Item | Function | Application Note |
|---|---|---|
| High-Throughput Genotyping Platform | Generates dense SNP array data | Foundation for genomic selection studies [3] |
| Scikit-learn PCA Implementation | Python-based PCA with hyperparameter tuning | Enables n_components optimization [4] |
| R Statistical Environment with factoextra | Scree plot visualization and eigenvalue analysis | Provides fviz_eig() for variance plots [4] |
| Parallel Analysis Algorithms | Determines significant components beyond eigenvalue >1 | More accurate than Kaiser's rule [6] |
| Cross-Validation Framework | Estimates out-of-sample performance | Critical for evaluating generalizability [1] |
| Manifold Learning Libraries (UMAP, t-SNE) | Non-linear dimensionality reduction | Handles complex biomedical data structures [5] |

Implementation Protocols for Biomedical Applications

Protocol: Genomic Prediction with Dimensionality Reduction

Objective: Implement dimensionality reduction as a pre-processing step for genomic selection models to improve computational efficiency.

Background: Genomic selection must estimate large numbers of marker effects using limited observations, complicated by environment and genotype by environment (G×E) interactions [3].

Materials and Reagents:

- Plant or animal lines with genotype and phenotype data

- Genotyping platform for SNP markers

- Computing resources for large-scale matrix operations

Procedure:

Data Preparation

- Collect genotype data (e.g., 26,817 SNP markers [3])

- Acquire phenotype measurements across multiple environments

- Format data into marker matrix and response variables

Dimensionality Reduction Application

- Apply selected DR method (PCA, feature selection, etc.)

- Create reduced datasets with increasing marker numbers

- Compute respective prediction accuracy for each size

Model Training and Validation

- Implement genomic prediction models (GBLUP, Bayesian methods)

- Incorporate environment and G×E interaction effects

- Apply cross-validation scheme appropriate for data structure

Performance Evaluation

- Calculate prediction accuracy as correlation between predicted and observed

- Plot accuracy versus number of features retained

- Identify point where accuracy plateaus

Expected Outcomes: Prediction accuracy values plateau beyond a certain feature set size, with further increases providing no significant improvement [3].
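The accuracy-versus-features evaluation can be sketched as follows. This is a simplified stand-in, not the cited study's method: it assumes principal component regression with ordinary least squares in place of GBLUP or Bayesian models, ignores environment and G×E effects, and uses simulated data. The function name `cv_accuracy_by_components` and all data-generating parameters are hypothetical.

```python
import numpy as np

def cv_accuracy_by_components(X, y, ks, n_folds=5, seed=0):
    """Cross-validated correlation between predicted and observed y
    when regressing on the first k principal component scores."""
    rng = np.random.default_rng(seed)
    n = len(y)
    folds = np.array_split(rng.permutation(n), n_folds)
    preds = {k: np.empty(n) for k in ks}
    for test_idx in folds:
        train_idx = np.setdiff1d(np.arange(n), test_idx)
        mu = X[train_idx].mean(axis=0)
        # Fit PCA on the training fold only, to avoid information leakage
        _, _, Vt = np.linalg.svd(X[train_idx] - mu, full_matrices=False)
        for k in ks:
            T_train = (X[train_idx] - mu) @ Vt[:k].T
            T_test = (X[test_idx] - mu) @ Vt[:k].T
            A_train = np.column_stack([np.ones(len(train_idx)), T_train])
            A_test = np.column_stack([np.ones(len(test_idx)), T_test])
            beta, *_ = np.linalg.lstsq(A_train, y[train_idx], rcond=None)
            preds[k][test_idx] = A_test @ beta
    return {k: float(np.corrcoef(preds[k], y)[0, 1]) for k in ks}

# Simulated data: 200 samples, 50 features driven by 3 latent factors
rng = np.random.default_rng(3)
n, p = 200, 50
F = rng.standard_normal((n, 3))
W = rng.standard_normal((3, p)) * np.array([[3.0], [2.0], [1.0]])
X = F @ W + 0.5 * rng.standard_normal((n, p))
y = 0.5 * F[:, 0] + F[:, 2] + 0.3 * rng.standard_normal(n)

ks = [1, 2, 3, 5, 10, 20]
accuracy = cv_accuracy_by_components(X, y, ks)
for k in ks:
    print(f"k={k:>2}  accuracy={accuracy[k]:.3f}")
```

In this simulated setting, accuracy rises until the components cover the three latent factors and then levels off, which is the plateau pattern the protocol asks the analyst to look for.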

Protocol: Digital Biomarker Development with Speech Analysis

Objective: Develop AI models for neurological disease detection from speech signals while mitigating the effects of the curse of dimensionality.

Background: Speech production involves distributed neuronal activation, with disturbances from neurological disease manifesting as signal changes. Speech signals sampled at high frequencies create high-dimensional feature spaces [1].

Procedure:

Data Acquisition

- Collect speech samples according to standardized protocol

- Include participants with target condition (e.g., MCI) and healthy controls

- Record minimum of several minutes of speech per participant

Feature Extraction

- Transform raw speech samples into feature vectors

- Include features like type-to-token ratio (TTR) and lexical density (LD)

- Normalize features to common scale (e.g., 0-1)

Dimensionality Assessment

- Evaluate feature space sparsity

- Identify dataset blind spots in relevant feature space

- Apply dimensionality reduction appropriate for sample size

Model Development with Generalizability Testing

- Train multiple model architectures on available data

- Evaluate performance on held-out test set

- Implement robustness checks for blind spot regions

Critical Considerations: Small clinical speech databases (tens to hundreds of participants) with high-dimensional features create ideal conditions for the curse of dimensionality [1].

The curse of dimensionality presents fundamental challenges in biomedical research as data dimensionality continues to grow with technological advances. The phenomenon of dataset blind spots and performance misestimation requires methodological approaches that prioritize generalizability over training set performance. Dimensionality reduction techniques, particularly those incorporating scree plot analysis for optimal component selection, offer powerful strategies to mitigate these effects.

Future directions in biomedical research will likely incorporate more sophisticated approaches to the dimensionality challenge. Advanced Inferential Medicine frameworks that use "modelbases" rather than solely relying on ever-larger databases represent promising alternatives [2]. Similarly, randomized algorithms for dimensionality reduction may provide computational advantages for massive-scale biomedical data [3]. As precision medicine advances, recognizing and addressing the cursed dimensions will be essential for translating high-dimensional data into clinically meaningful insights.

In multivariate statistics and dimensionality reduction, a scree plot serves as a fundamental graphical tool for determining the optimal number of components to retain in Principal Component Analysis (PCA). PCA is a linear dimensionality reduction technique that transforms high-dimensional data into a set of linearly uncorrelated variables called principal components, which capture the maximum variance in the data [7] [8]. The scree plot, first introduced by Raymond B. Cattell in 1966, provides researchers with a visual method to balance information retention against model simplicity [9].

The plot derives its name from the geological term "scree," referring to the accumulation of loose stones or rocky debris that forms at the base of a mountain slope [9]. This analogy perfectly captures the visual appearance of a typical scree plot: a steep descent followed by a gradual "rubble" of less significant components. For researchers in drug development and other scientific fields working with high-dimensional data, the scree plot offers an intuitive approach to one of PCA's most critical challenges—determining how many principal components effectively capture the essential patterns in their data without overfitting or unnecessary complexity.

Theoretical Foundation: Eigenvalues and Variance Explained

Principal Components and Eigenvalues

Principal components are new variables constructed as linear combinations of the original variables in a dataset [8]. These components are calculated in sequence such that:

- The first principal component (PC1) accounts for the largest possible variance in the data

- The second principal component (PC2) accounts for the next highest variance while being uncorrelated (orthogonal) to the first

- Each subsequent component follows the same pattern, explaining remaining variance while maintaining orthogonality to previous components [8] [10]

Eigenvalues represent the amount of variance carried by each principal component [11]. Mathematically, if we have a data matrix \(X\) with covariance matrix \(\Sigma\), the eigenvalues \(\lambda_i\) are obtained through eigendecomposition of \(\Sigma\) and satisfy the equation:

\[ \Sigma v_i = \lambda_i v_i \]

where \(v_i\) are the eigenvectors (principal components). The size of each eigenvalue corresponds directly to the importance of its associated principal component: larger eigenvalues indicate components that capture more substantial portions of the total variance in the dataset [8].

Variance Explained Calculation

The proportion of variance explained by each principal component is calculated by dividing its eigenvalue by the sum of all eigenvalues [8]:

\[ \text{Proportion of Variance for PC}_i = \frac{\lambda_i}{\sum_{j=1}^{p} \lambda_j} \]

where \(p\) equals the total number of variables (and components) in the original dataset.

The cumulative variance explained by the first \(k\) components is:

\[ \text{Cumulative Variance} = \frac{\sum_{j=1}^{k} \lambda_j}{\sum_{j=1}^{p} \lambda_j} \]

This cumulative measure helps researchers determine what percentage of the original information is preserved when retaining \(k\) components [11].

Table 1: Sample Eigenanalysis Results from a PCA Study [11]

| Principal Component | Eigenvalue | Proportion of Variance | Cumulative Proportion |
|---|---|---|---|
| PC1 | 3.5476 | 0.443 | 0.443 |
| PC2 | 2.1320 | 0.266 | 0.710 |
| PC3 | 1.0447 | 0.131 | 0.841 |
| PC4 | 0.5315 | 0.066 | 0.907 |
| PC5 | 0.4112 | 0.051 | 0.958 |
| PC6 | 0.1665 | 0.021 | 0.979 |
| PC7 | 0.1254 | 0.016 | 0.995 |
| PC8 | 0.0411 | 0.005 | 1.000 |
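The proportion and cumulative columns of this table can be recomputed from the eigenvalues alone. The sketch below uses only the eigenvalues listed in the table; the helper `components_for_threshold` is a hypothetical name introduced for illustration.

```python
# Eigenvalues copied from the eigenanalysis table above
eigenvalues = [3.5476, 2.1320, 1.0447, 0.5315, 0.4112, 0.1665, 0.1254, 0.0411]

total = sum(eigenvalues)
proportion = [ev / total for ev in eigenvalues]

# Running sum of the proportions gives the cumulative variance explained
cumulative = []
running = 0.0
for prop in proportion:
    running += prop
    cumulative.append(running)

def components_for_threshold(cumulative, threshold):
    """Smallest number of components whose cumulative proportion reaches the threshold."""
    for k, cum in enumerate(cumulative, start=1):
        if cum >= threshold:
            return k
    return len(cumulative)

for i, (ev, prop, cum) in enumerate(zip(eigenvalues, proportion, cumulative), start=1):
    print(f"PC{i}: eigenvalue={ev:.4f}  proportion={prop:.3f}  cumulative={cum:.3f}")

print("components for 80%:", components_for_threshold(cumulative, 0.80))
print("components for 90%:", components_for_threshold(cumulative, 0.90))
```

The eigenvalues sum to 8, the number of variables, which is what one expects when PCA is run on standardized data. An 80% threshold is met by three components and a 90% threshold by four.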

Visual Interpretation of Scree Plots

Anatomy of a Scree Plot

A scree plot displays eigenvalues on the y-axis against the corresponding principal component number on the x-axis [7] [9]. The components are always arranged in descending order of their eigenvalues, creating a characteristic downward curve [7].

Most scree plots share a common visual pattern: starting high on the left, falling rather quickly, and then flattening out at some point [7]. This distinctive shape emerges because the first component typically explains much of the variability, the next few components explain a moderate amount, and the latter components explain only a small fraction of the overall variability [7].

The Elbow Criterion

The primary interpretation method for scree plots is the elbow criterion, which involves identifying the point where the curve bends—the "elbow"—and selecting all components just before this flattening occurs [7] [9]. According to the scree test, the "elbow" of the graph represents where the eigenvalues seem to level off, and factors or components to the left of this point should be retained as significant [9].

Once the eigenvalues have dropped sharply and begun to level off, an additional component adds relatively little to the information already extracted [7]. In the example provided in Table 1, the eigenvalues fall steeply through the third principal component and flatten afterward, suggesting that three components capture the essential variance in the data while the remaining components contribute minimally [11].

Methodological Protocols for Scree Plot Analysis

Standard PCA and Scree Plot Generation Workflow

Step-by-Step Experimental Protocol

Protocol 1: Comprehensive Scree Plot Analysis for Component Selection

Data Standardization

- Standardize the range of continuous initial variables by subtracting the mean and dividing by the standard deviation for each value of each variable [8] [10]

- Purpose: Ensures each variable contributes equally to the analysis and prevents variables with larger ranges from dominating those with smaller ranges [8]

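Covariance Matrix Computation

- Compute the covariance matrix of the standardized data

Eigen Decomposition

- Calculate the eigenvalues and eigenvectors of the covariance matrix and sort them by decreasing eigenvalue

Scree Plot Generation

- Plot the ordered eigenvalues on the y-axis against the component number on the x-axis

Component Selection

- Identify the elbow where the eigenvalues level off and retain the components to the left of it

Data Transformation (Optional)

- Project the standardized data onto the retained eigenvectors to obtain the reduced-dimensional representation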

Complementary Component Selection Methods

Alternative and Supporting Criteria

While the scree plot provides a visual method for component selection, researchers often combine it with quantitative approaches for more robust results:

- Kaiser's Rule: Retain components with eigenvalues greater than 1 [7] [4]

- Proportion of Variance Criterion: Select components that collectively explain at least 80-90% of the total variance [7] [11]

- Parallel Analysis: Compare data eigenvalues with those from uncorrelated normal variables, retaining components where data eigenvalues exceed random eigenvalues [6]

Table 2: Comparison of Component Selection Methods [7] [11] [6]

| Method | Procedure | Advantages | Limitations |
|---|---|---|---|
| Scree Plot (Elbow Criterion) | Visual identification of slope change in eigenvalue plot | Intuitive, graphical, widely applicable | Subjective interpretation, multiple elbows possible |
| Kaiser's Rule | Retain components with eigenvalues > 1 | Simple, objective criterion | Often overestimates the number of components, especially for large variable sets |
| Variance Proportion | Retain components until cumulative variance reaches threshold (e.g., 80-90%) | Direct control over information retention | Does not consider component significance, may include trivial components |
| Parallel Analysis | Compare with eigenvalues from random uncorrelated data | Objective, based on statistical significance | Requires simulation, more computationally intensive |
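Kaiser's rule and parallel analysis are both simple to sketch. The example below is a minimal version under stated assumptions: eigenvalues are taken from the correlation matrix, the random reference data are standard normal, and the 95th percentile of the simulated eigenvalues serves as the threshold (a common but not universal choice). The function name `parallel_analysis` and the simulated two-factor dataset are hypothetical.

```python
import numpy as np

def parallel_analysis(X, n_sims=200, percentile=95, seed=0):
    """Count leading components whose eigenvalues exceed those of random normal data."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    observed = np.sort(np.linalg.eigvalsh(np.corrcoef(X, rowvar=False)))[::-1]
    simulated = np.empty((n_sims, p))
    for s in range(n_sims):
        Z = rng.standard_normal((n, p))  # uncorrelated reference data, same shape
        simulated[s] = np.sort(np.linalg.eigvalsh(np.corrcoef(Z, rowvar=False)))[::-1]
    threshold = np.percentile(simulated, percentile, axis=0)
    exceeds = observed > threshold
    # Retain components up to (not including) the first one that fails the test
    n_retain = p if exceeds.all() else int(np.argmin(exceeds))
    return n_retain, observed, threshold

# Simulated data: 10 variables, two blocks of 5 each driven by one latent factor
rng = np.random.default_rng(7)
n, p = 300, 10
factors = rng.standard_normal((n, 2))
loadings = np.zeros((2, p))
loadings[0, :5] = 1.0
loadings[1, 5:] = 1.0
X = factors @ loadings + 0.5 * rng.standard_normal((n, p))

n_retain, observed, threshold = parallel_analysis(X)
kaiser = int((observed > 1).sum())

print("observed eigenvalues:", np.round(observed, 2))
print("Kaiser's rule retains:", kaiser)
print("parallel analysis retains:", n_retain)
```

With two strong, well-separated factors the two criteria agree. On noisier data with many variables they often diverge, which is where comparing them alongside the scree plot becomes informative.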

Integrated Decision Framework

For robust component selection, researchers should:

- Generate scree plot and identify potential elbow points [7] [9]

- Apply Kaiser's rule as a quick reference count, keeping in mind that it tends to overestimate [4]

- Calculate cumulative variance explained by components before the elbow [11]

- Compare with parallel analysis results if available [6]

- Consider domain knowledge and analysis objectives for final decision [4]

In Table 1, three components have eigenvalues >1 (Kaiser's Rule) and the scree plot also levels off after the third component, yet these three explain only 84.1% of the variance, so a 90% variance threshold would call for a fourth. Even on a small example, the methods can yield different recommendations that require researcher judgment [11].

Practical Applications in Scientific Research

Drug Development and Biomarker Discovery

Scree plots play a crucial role in pharmaceutical research where high-dimensional data is prevalent:

- Genomic and Transcriptomic Data: PCA with scree plot analysis helps reduce dimensionality from thousands of genes to manageable components while preserving biological signal [12]

- Proteomic Profiling: Identifying major patterns in protein expression data for biomarker discovery [13]

- Chemical Compound Analysis: Analyzing spectroscopic data to identify major molecular components in drug formulations [14]

For example, in a study predicting breast cancer using PCA with logistic regression, scree plot analysis helped determine the optimal number of components to retain from six clinical and radiological features, including mean radius, texture, perimeter, and area of breast lumps [10].

Data Visualization and Exploration

When the primary goal of PCA is data visualization, researchers typically select exactly 2 or 3 principal components regardless of the elbow position, as these can be directly visualized in 2D or 3D plots [4]. This approach sacrifices statistical optimality for interpretability, allowing researchers to identify clusters, outliers, and patterns in complex datasets.

Troubleshooting and Methodological Considerations

Common Challenges in Scree Plot Interpretation

- Multiple Elbows: Some scree plots exhibit several points where the slope changes, making elbow identification ambiguous [9]

- Gradual Slope Transitions: Without a clear elbow, the scree plot may show a smooth, gradual decline, providing no obvious cutoff point [7]

- Subjectivity: Different researchers may identify different elbow points in the same scree plot [9]

Solutions and Advanced Approaches

- Kneedle Algorithm: Computational method that automatically detects knees (elbows) in curves by finding points of maximum curvature [9]

- Combined Criteria: Using scree plots alongside other methods like parallel analysis or variance thresholds [6] [4]

- Validation Techniques: Assessing stability of component selection through cross-validation or bootstrap methods [6]
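Automated elbow detection can be illustrated with a deliberately simplified heuristic. The sketch below is not the Kneedle algorithm itself. It assumes a cruder rule: rescale the scree curve to the unit square, draw a straight chord from the first to the last point, and take the point that lies farthest below the chord. The function name `knee_by_chord_distance` is hypothetical, and the eigenvalues are those from the eigenanalysis table earlier in this article.

```python
import numpy as np

def knee_by_chord_distance(eigenvalues):
    """Return the 1-based component number farthest below the first-to-last chord."""
    y = np.asarray(eigenvalues, dtype=float)
    x = np.arange(1, len(y) + 1, dtype=float)
    # Rescale both axes to [0, 1] so the result does not depend on units
    xn = (x - x[0]) / (x[-1] - x[0])
    yn = (y - y.min()) / (y.max() - y.min())
    # Height of the chord joining the first and last points at each x
    chord = yn[0] + (yn[-1] - yn[0]) * xn
    gap = chord - yn
    return int(x[np.argmax(gap)])

eigenvalues = [3.5476, 2.1320, 1.0447, 0.5315, 0.4112, 0.1665, 0.1254, 0.0411]
knee = knee_by_chord_distance(eigenvalues)
n_retain = knee - 1  # keep the components to the left of the detected elbow

print("detected elbow at component:", knee)
print("components retained:", n_retain)
```

For these eigenvalues the heuristic places the elbow at the fourth component, so three components are retained. The gap at the third component is nearly as large, which illustrates why automated detection reduces but does not eliminate the ambiguity of elbow identification.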

Essential Research Reagent Solutions

Table 3: Key Computational Tools for Scree Plot Analysis

| Tool/Software | Application Context | Key Functions | Implementation Example |
|---|---|---|---|
| R Statistical Software | General statistical analysis | Comprehensive PCA and visualization | plot(fit <- princomp(mydata, cor=TRUE)) [6] |
| Python Scikit-learn | Machine learning applications | PCA with automated variance calculation | PCA(n_components=0.85) # keeps 85% variance [4] |
| factoextra R Package | Enhanced visualization | Specialized scree plot generation | fviz_eig(pca_model, addlabels=TRUE) [4] |
| SpectroChemPy | Spectroscopic data analysis | Domain-specific PCA implementation | pca.screeplot() [14] |
| MATLAB | Engineering and signal processing | Matrix computations and eigenanalysis | Minka's PCA dimensionality toolbox [6] |

Scree plots remain an essential tool in the multivariate analysis toolkit, providing researchers across scientific domains with a visually intuitive method for determining the optimal number of components in PCA. While the technique has acknowledged limitations regarding subjectivity, when combined with complementary criteria like Kaiser's rule and parallel analysis, it offers a robust framework for balancing information retention with model parsimony.

For drug development professionals and researchers working with high-dimensional biological data, mastering scree plot interpretation represents a critical skill in the era of big data analytics. By following the standardized protocols outlined in this article and leveraging the appropriate computational tools, scientists can make informed decisions about component selection that enhance the validity and interpretability of their multivariate analyses.

Historical Origins and Theoretical Foundations

The scree plot, a cornerstone of multivariate statistics, was introduced by Raymond B. Cattell in 1966 in his seminal paper, "The Scree Test For The Number Of Factors," published in Multivariate Behavioral Research [15] [9]. This graphical tool was designed to address a fundamental challenge in exploratory factor analysis (EFA) and principal component analysis (PCA): determining the optimal number of components or factors to retain from a dataset [15] [9].

Cattell coined the term "scree" from the geological word for the accumulation of loose stones or debris at the base of a mountain cliff [15]. He provided the following rationale for the name:

"Such a plot falls first in a steep curve but then straightens out in a line which runs with only trivial and irregular deviations from straightness to the nth factor… This straight end portion we began calling the scree—from the straight line of rubble and boulders which forms at the pitch of sliding stability at the foot of a mountain. The initial implication was that this scree represents a 'rubbish' of small error factors" [15].

The theoretical underpinning of the scree test is that the variance in observed data can be partitioned into two distinct parts: common variance and unique variance. Cattell proposed that the scree plot visually separates the few major factors or components (the "mountain") that represent common variance shared across multiple variables from the numerous minor factors (the "scree") that represent unique or error variance specific to individual variables [15] [16]. This conceptual framework provides a principled approach for distinguishing psychologically or scientifically meaningful factors from those attributable to random error or measurement specificity.

The Scree Test in Principal Component Analysis

Relationship between PCA and Scree Plots

Principal Component Analysis (PCA) is a linear dimensionality reduction technique that transforms data to a new coordinate system, with the first principal component capturing the largest variance in the data, the second the next largest, and so on [17]. The scree plot serves as a critical diagnostic tool in PCA by visualizing the eigenvalues associated with each successive component, which represent the magnitude of variance each component explains [11] [9].

The fundamental connection between PCA and scree plots lies in how eigenvalues are calculated and interpreted. In PCA, eigenvalues are derived from the covariance or correlation matrix of the data and represent the variances of the principal components [17] [11]. The scree plot graphically displays these eigenvalues in descending order, allowing researchers to identify the point where the explained variance drops off markedly [9].
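The choice between the covariance and the correlation matrix changes what the scree plot shows. The following sketch, using hypothetical independent variables on very different scales, compares the two sets of eigenvalues.

```python
import numpy as np

rng = np.random.default_rng(5)
# Hypothetical data: 4 independent variables with scales 1, 10, 100, 1000
X = rng.standard_normal((200, 4)) * np.array([1.0, 10.0, 100.0, 1000.0])

# Eigenvalues of the covariance matrix (raw scales) and correlation matrix
eig_cov = np.sort(np.linalg.eigvalsh(np.cov(X, rowvar=False)))[::-1]
eig_corr = np.sort(np.linalg.eigvalsh(np.corrcoef(X, rowvar=False)))[::-1]

share_cov = eig_cov / eig_cov.sum()
share_corr = eig_corr / eig_corr.sum()

print("covariance-based variance shares: ", np.round(share_cov, 3))
print("correlation-based variance shares:", np.round(share_corr, 3))
print("sum of correlation eigenvalues:", round(float(eig_corr.sum()), 6))
```

On the covariance matrix, the first component is dominated by the largest-scale variable, so the scree plot shows a dramatic but uninformative drop. On the correlation matrix the eigenvalues sum to the number of variables, so an eigenvalue of 1 corresponds to the variance of one standardized variable. That is the reference point behind the eigenvalue >1 criterion.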

Visualizing the Scree Test Logic

The following diagram illustrates the logical workflow and decision process when applying Cattell's scree test to determine the number of principal components to retain.

Figure 1: Logical workflow for applying Cattell's scree test in PCA

Quantitative Framework for Component Selection

The scree test operates within a specific quantitative framework where eigenvalues serve as the fundamental metric for decision-making. The following table summarizes key statistical measures used in conjunction with scree plots for component selection.

Table 1: Key Quantitative Metrics in PCA and Scree Plot Interpretation

| Metric | Calculation | Interpretation in Scree Plot | Role in Component Selection |

|---|---|---|---|

| Eigenvalue | Variance of each principal component [11] | Y-axis value; represents "size" of each component [4] [11] | Components with larger eigenvalues are more meaningful; Kaiser criterion suggests retaining eigenvalues >1 [11] |

| Proportion of Variance | (Eigenvalue / Total Variance) × 100 [11] | Height of each bar in a variance explained plot [4] | Indicates individual contribution of each component to total variance explained [11] |

| Cumulative Variance | Sum of proportions up to current component [11] | Step-line in a cumulative variance plot [4] | Helps determine if retained components explain sufficient total variance (often 70-90%) [11] |

| Component Number | Sequence of components (1 to p) | X-axis value; ordered from largest to smallest eigenvalue [9] | Determines position relative to "elbow"; components before elbow are retained [9] |

Practical Application and Protocol

Step-by-Step Experimental Protocol

The following protocol provides a detailed methodology for implementing the scree test in PCA, suitable for researchers across various disciplines including pharmaceutical research and biomarker discovery.

Protocol 1: Implementing Cattell's Scree Test for Principal Component Selection

Purpose: To determine the optimal number of principal components to retain using Cattell's scree test methodology.

Materials and Software Requirements:

- Dataset with n observations and p variables

- Statistical software with PCA capability (e.g., R, Python with scikit-learn, Minitab)

- Visualization tools for creating scree plots

Procedure:

Data Preparation:

- Standardize variables if using correlation matrix for PCA (essential when variables have different units or scales) [11].

- Ensure sample size adequacy (generally n > 50 and n > 5×p for stable results).

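Initial PCA Execution:

- Run PCA on the correlation matrix (standardized variables) or the covariance matrix and extract the eigenvalue of each component.

Scree Plot Construction:

- Plot the eigenvalues in descending order on the y-axis against component number on the x-axis.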

Visual Inspection and Elbow Identification:

- Examine the plot for a distinct "elbow" or point of maximum curvature where the slope changes from steep to gradual [15] [9].

- Identify the component number immediately before this elbow point.

- Retain all components to the left of this point (higher eigenvalues) as meaningful [9].

- Discard components to the right of this point (the "scree") as representing error variance [15].

Validation and Interpretation:

- Calculate cumulative variance explained by retained components to ensure sufficient explanatory power (typically 70-90% for most applications) [11].

- Compare with other retention criteria (e.g., Kaiser rule, parallel analysis) for consensus [4] [11].

- Interpret retained components by examining variable loadings (eigenvectors) to assign meaningful conceptual labels [11].

Troubleshooting Notes:

- For ambiguous plots with multiple elbows, consider domain knowledge, theoretical expectations, or more objective methods like parallel analysis [15] [9].

- If the scree plot shows a smooth curve without a clear elbow, this may indicate the absence of strong factor structure in the data.

- When different scaling methods produce different plots, report the method used and consider consistency across methods [9].

Research Reagent Solutions for PCA Implementation

Table 2: Essential Analytical Tools for PCA and Scree Plot Implementation

| Tool/Software | Specific Function | Application Context | Implementation Example |

|---|---|---|---|

| Statistical Software (R) | prcomp(), princomp() functions for PCA; fviz_eig() from factoextra for visualization [4] [18] | Comprehensive statistical analysis and visualization | fviz_eig(pca_model, addlabels=TRUE, linecolor="Red", ylim=c(0,50)) creates scree plot with variance percentages [4] |

| Python Scikit-learn | PCA() class from sklearn.decomposition [4] | Machine learning pipelines and data preprocessing | pca.explained_variance_ returns eigenvalues; pca.n_components_ shows selected components [4] |

| Minitab Statistical Software | Eigenanalysis and scree plot generation [11] | Quality control and industrial statistics | Provides eigenvalues, proportions, cumulative variance, and eigenvectors in standardized output [11] |

| Kaiser Criterion | Automated component selection based on eigenvalue >1 rule [11] | Initial screening and comparison with scree test results | Useful when combined with scree plot; sometimes retains slightly different number of components [4] [11] |

Comparative Analysis and Methodological Integration

Comparison of Component Retention Methods

The scree test should not be used in isolation but rather as part of a comprehensive approach to component retention. The following table compares major retention methods, highlighting their relative strengths and limitations.

Table 3: Comparative Analysis of Component/Factor Retention Methods

| Method | Theoretical Basis | Implementation | Advantages | Limitations |

|---|---|---|---|---|

| Scree Test (Cattell, 1966) | Visual identification of break point between major components and error factors [15] [9] | Subjective visual inspection of eigenvalue plot [15] | Intuitively appealing; based on structure of own data; identifies meaningful break points [15] | Subjective; multiple elbows possible; unreliable without clear break; axis scaling affects appearance [9] |

| Kaiser Criterion (Kaiser, 1960) | Retain components with eigenvalues >1 (if using correlation matrix) [11] | Automated threshold application | Objective; easy to implement; widely available in software [11] | Often overfactors or underfactors; particularly problematic with many variables (>50) [4] |

| Variance Explained | Retain components until predetermined variance percentage reached (e.g., 70-90%) [11] | Cumulative proportion calculation | Pragmatic; ensures sufficient information retention; application-specific [11] | Arbitrary threshold; may include trivial components or exclude meaningful ones [4] |

| Parallel Analysis (Horn, 1965) | Compare actual eigenvalues with those from random data [19] | Simulation with random datasets | More objective; controls for sampling error; good accuracy [19] | Computationally intensive; not always available in software; implementation variations exist [19] |

Integration with Broader Research Workflow

The scree test functions within a comprehensive analytical workflow for multivariate data analysis. The following diagram illustrates how Cattell's scree test integrates with other methodological approaches in a typical research pipeline for optimal component selection.

Figure 2: Integration of scree test within comprehensive component selection workflow

Critical Evaluation and Contemporary Applications

Methodological Limitations and Refinements

Despite its enduring popularity, the scree test has faced methodological criticisms that researchers must acknowledge:

Subjectivity Concerns: The identification of the "elbow" point remains inherently subjective, with different analysts potentially identifying different break points on the same plot [9]. This inter-rater variability can affect the reliability of results, particularly in regulatory contexts where methodological consistency is valued.

Multiple Elbow Ambiguity: Some scree plots display multiple points of curvature, creating uncertainty about which elbow represents the true break between meaningful components and scree [15] [9]. This situation often arises in complex datasets with hierarchical factor structures.

Scale Sensitivity: The visual appearance of scree plots can vary depending on the scaling of axes, particularly the y-axis range, potentially influencing elbow identification [9]. This lack of standardization complicates cross-study comparisons.

Retention Conservatism: Evidence suggests the scree test may sometimes retain too few components, potentially excluding meaningful factors that explain substantively important variance [9].

Recent computational advances have addressed these limitations through objective algorithmic approaches. The Kneedle algorithm formalizes elbow detection by identifying the point of maximum curvature mathematically, reducing subjectivity [9]. Similarly, parallel analysis enhances objectivity by comparing actual eigenvalues to those derived from random datasets [19].
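To make the idea of algorithmic elbow detection concrete, here is a simplified geometric heuristic: it picks the point on the rescaled scree curve that lies farthest from the straight line joining the first and last points. This is a sketch in the spirit of maximum-curvature methods and is not the Kneedle algorithm itself; the function name and the example eigenvalues are hypothetical.

```python
import numpy as np

def elbow_by_chord_distance(eigenvalues):
    """Return the 1-based component index farthest from the chord joining
    the first and last points of the scree curve (hypothetical helper)."""
    y = np.asarray(eigenvalues, dtype=float)
    x = np.arange(1, len(y) + 1, dtype=float)
    # Rescale both axes to [0, 1] so the result does not depend on axis units.
    xn = (x - x[0]) / (x[-1] - x[0])
    yn = (y - y.min()) / (y.max() - y.min())
    # Perpendicular distance of each point from the line through the endpoints.
    dx, dy = xn[-1] - xn[0], yn[-1] - yn[0]
    dist = np.abs(dy * (xn - xn[0]) - dx * (yn - yn[0])) / np.hypot(dx, dy)
    return int(np.argmax(dist)) + 1

# Illustrative eigenvalues with a sharp break after the second component.
example = [4.2, 2.1, 0.6, 0.5, 0.4, 0.3, 0.2]
elbow = elbow_by_chord_distance(example)
print(elbow)
```

For these values the heuristic places the elbow at component 3, where the curve flattens. Whether the elbow component itself is retained is a convention the analyst should state explicitly; retaining only the components before it would keep two here. Because both axes are rescaled first, the result is unaffected by the y-axis range that can mislead visual inspection.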

Applications in Pharmaceutical and Biomedical Research

The scree test maintains particular relevance in pharmaceutical and biomarker research where dimensionality reduction precedes critical analyses:

Biomarker Discovery: In high-throughput genomic, proteomic, and metabolomic studies, scree tests help identify the minimal number of components that capture the majority of the variance in biomarker panels, facilitating development of simplified diagnostic models.

Clinical Outcome Assessment: During patient-reported outcome (PRO) measure validation, scree tests determine the dimensionality of underlying constructs, ensuring measurement tools adequately capture relevant health domains without overfactoring.

Drug Response Profiling: In pharmacogenomic studies, scree tests assist in identifying dominant patterns of drug response variability, potentially corresponding to distinct molecular subtypes with therapeutic implications.

Quality by Design (QbD): In pharmaceutical manufacturing, scree tests help identify critical process parameters (CPPs) from multivariate process data by distinguishing influential factors from noise in process analytical technology (PAT) datasets.

The enduring utility of Cattell's scree test across these diverse applications stems from its intuitive visual framework for distinguishing signal from noise—a fundamental challenge in all scientific domains dealing with complex multivariate systems.

The scree plot is a foundational graphical tool used primarily in principal component analysis (PCA) and factor analysis to aid in selecting the optimal number of components or factors to retain. Originally proposed by Raymond Cattell in 1966, the technique visualizes the eigenvalues associated with each component, ordered from largest to smallest, to reveal the underlying variance structure of multivariate data [15]. The name "scree" derives from the characteristic rock debris found at the base of mountains, metaphorically representing the point where eigenvalues transition from the steep "mountain face" of meaningful components to the flat "rubble" of trivial variance [15]. For researchers in drug development and biomedicine, proper interpretation of scree plots enables more scientifically defensible decisions about dimensionality reduction, ensuring that captured components represent genuine biological signals rather than random noise.

In practical applications across omics sciences and pharmaceutical research, the scree plot provides an intuitive visual method for balancing parsimony against information retention. By identifying an inflection point known as the "elbow" or "knee," analysts can determine when additional components contribute diminishing returns to explained variance [7]. This approach is particularly valuable for gene expression analysis, spectroscopic data, and clinical biomarker studies where high-dimensional datasets require simplification without sacrificing critical biological information [20]. The subjective nature of traditional scree plot interpretation has spurred development of more formalized criteria, yet its enduring popularity across scientific disciplines underscores its fundamental utility for exploring multivariate data structure.

Theoretical Foundation and Interpretation Criteria

Conceptual Framework of Variance Decomposition

Principal component analysis operates on the fundamental principle of explaining maximum variance through orthogonal linear transformations of original variables. Each eigenvalue \( \lambda_i \) derived from the covariance or correlation matrix represents the variance captured by its corresponding component; dividing it by the sum of all eigenvalues gives the proportion of total variance [21]. Mathematically, if we have a scaled covariance matrix \( X'X/(NT) \) with eigenvalues \( \lambda_{1,N} \geq \lambda_{2,N} \geq \dots \geq \lambda_{N,N} \), the total variance equals the sum of all eigenvalues; in correlation-based PCA this sum equals the number of variables, so the proportions of variance sum to 1 [21]. The scree plot simply visualizes these eigenvalues in descending order of magnitude, creating a characteristic downward curve that reveals the relative importance of successive components.

The theoretical justification for the elbow method rests on distinguishing systematic variation from random noise. Components preceding the elbow theoretically represent structured variance reflecting genuine relationships among variables, while those following the elbow primarily represent random error or noise [13]. In biological datasets, this distinction corresponds to separating technical artifacts and stochastic variation from meaningful biological signals. The scree plot thus serves as a diagnostic for determining the intrinsic dimensionality of a dataset—the number of components needed to capture its essential structure before encountering diminishing returns.

Established Interpretation Methods

Table 1: Criteria for Scree Plot Interpretation in Component Selection

| Method | Key Principle | Implementation | Advantages | Limitations |

|---|---|---|---|---|

| Traditional Elbow | Visual identification of inflection point | Locate point where slope changes from steep to flat | Intuitive; No calculations needed | Subjective; Multiple elbows possible |

| Kaiser-Guttman | Retain components with eigenvalues >1 | Calculate eigenvalues from correlation matrix | Objective; Easy to implement | Often overestimates components in high-dimensional data |

| Variance Explained | Cumulative proportion of total variance | Retain components until ~80-90% variance explained [22] | Directly addresses information retention | Arbitrary threshold; Sample size dependent |

| Parallel Analysis | Comparison to random data eigenvalues | Simulate data with no factors; retain components exceeding random eigenvalues [13] | Statistical foundation; Reduces overfitting | Computationally intensive; Requires simulations |

The most straightforward approach to scree plot interpretation remains Cattell's original visual method, which seeks the point where the steep decline in eigenvalues transitions to a more gradual slope [7]. This "elbow" represents the optimal trade-off between parsimony and comprehensiveness. For example, in a PCA of the 50-item Big Five Personality Inventory, the scree plot typically shows a distinct elbow after five components, corresponding to the theoretical five-factor structure of personality [15]. Similarly, analysis of Fisher's iris dataset reveals that the first two principal components explain approximately 96% of the total variance, with subsequent components contributing minimally [23].

The Kaiser-Guttman criterion (eigenvalue >1) provides a simple quantitative alternative, particularly when scree plots show ambiguous patterns [7] [22]. However, this method tends to overestimate components in high-dimensional datasets like gene expression arrays, where most variables exhibit minimal correlation [20]. The proportion of variance explained approach sets a predetermined threshold (commonly 80-90%) and selects the minimum number of components needed to reach this threshold [22]. This method directly addresses the information retention goal of dimensionality reduction but relies on an arbitrary cutoff that may not reflect underlying data structure.
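The Kaiser-Guttman rule reduces to a one-line count. The sketch below assumes the eigenvalues come from a correlation matrix, which is the only setting in which the threshold of 1 has its usual meaning; the function name and the eigenvalues are hypothetical.

```python
import numpy as np

def kaiser_count(eigenvalues, threshold=1.0):
    """Count components whose eigenvalue exceeds the threshold.
    Only meaningful for eigenvalues of a correlation matrix."""
    return int(np.sum(np.asarray(eigenvalues, dtype=float) > threshold))

# Hypothetical eigenvalues from a correlation-matrix PCA of 8 variables (they sum to 8).
eigenvalues = [3.1, 1.6, 1.05, 0.9, 0.6, 0.4, 0.25, 0.1]
n_kaiser = kaiser_count(eigenvalues)
print(n_kaiser)
```

In this illustration the third eigenvalue (1.05) is retained and the fourth (0.9) is dropped, although both sit close to the cutoff. Small sampling fluctuations could flip either decision, which is one reason the rule is usually reported alongside other criteria rather than on its own.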

Experimental Protocols and Implementation

Standardized Workflow for Scree Plot Analysis

Diagram 1: Standardized workflow for scree plot analysis from data preparation through final component selection decision.

Detailed Protocol for PCA and Scree Plot Generation

Protocol 1: Principal Component Analysis with Scree Plot Visualization

This protocol outlines the complete procedure for performing PCA and generating scree plots for component selection, with specific examples from gene expression analysis and pharmaceutical applications.

Data Preparation and Preprocessing

- Standardize variables if using a correlation matrix (recommended when variables have different units of measurement) [23]

- Center variables by subtracting means (automatically performed by most PCA algorithms)

- Address missing data through imputation or casewise deletion

- For gene expression data: apply appropriate normalization (e.g., quantile normalization) before PCA [20]

Matrix Decomposition and Eigenvalue Calculation

- Compute the covariance matrix \( X'X/(N-1) \) for centered data, or the correlation matrix for standardized data

- Perform eigenvalue decomposition: \( X'X/(N-1) = V \Lambda V' \), where \( \Lambda = \mathrm{diag}(\lambda_1, \lambda_2, \dots, \lambda_p) \) contains the eigenvalues

- Sort eigenvalues in descending order: \( \lambda_1 \geq \lambda_2 \geq \dots \geq \lambda_p \)

- Calculate proportion of variance explained: \( \mathrm{Variance}_i = \lambda_i / \sum_{j=1}^{p} \lambda_j \)

- Compute cumulative variance explained: \( \mathrm{Cumulative}_i = \sum_{j=1}^{i} \lambda_j / \sum_{j=1}^{p} \lambda_j \)
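The decomposition steps above can be sketched in a few lines of NumPy. This is an illustrative implementation under stated assumptions: observations are in rows, the helper name `eigen_summary` is hypothetical, and the input is simulated data rather than a real omics matrix.

```python
import numpy as np

def eigen_summary(X, standardize=True):
    """Eigenvalues, proportion of variance, and cumulative proportion
    for a data matrix X with observations in rows (hypothetical helper)."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                    # center each variable
    if standardize:
        Xc = Xc / X.std(axis=0, ddof=1)        # unit variance -> correlation matrix
    S = Xc.T @ Xc / (X.shape[0] - 1)           # X'X / (N - 1)
    eigenvalues = np.linalg.eigvalsh(S)[::-1]  # eigvalsh is ascending; reverse it
    proportion = eigenvalues / eigenvalues.sum()
    cumulative = np.cumsum(proportion)
    return eigenvalues, proportion, cumulative

# Simulated data: four independent variables with different spreads.
rng = np.random.default_rng(1)
X = rng.normal(size=(100, 4)) @ np.diag([3.0, 2.0, 1.0, 0.5])
eigenvalues, proportion, cumulative = eigen_summary(X, standardize=False)
for i, (lam, prop, cum) in enumerate(zip(eigenvalues, proportion, cumulative), start=1):
    print(f"PC{i}: eigenvalue={lam:.3f}  proportion={prop:.3f}  cumulative={cum:.3f}")
```

With `standardize=False` the eigenvalues should correspond to what scikit-learn reports as `explained_variance_`, and the proportions to `explained_variance_ratio_`. Setting `standardize=True` switches to the correlation matrix, which is the appropriate choice when variables are measured in different units.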

Scree Plot Generation and Visualization

- Create a line plot with component numbers on x-axis and corresponding eigenvalues on y-axis

- Use adequate labeling: "Principal Component" on x-axis, "Eigenvalue" or "Variance Explained" on y-axis [22]

- Add reference lines: horizontal line at eigenvalue=1 (Kaiser criterion) and vertical lines at potential elbows

- For enhanced interpretation, create dual-panel plots showing both individual and cumulative variance [22]
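A dual-panel figure following these plotting recommendations might look like the sketch below. It assumes matplotlib is installed; the eigenvalues are hypothetical and the output filename `scree_plot.png` is an arbitrary choice.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend so the script runs without a display
import matplotlib.pyplot as plt
import numpy as np

# Hypothetical eigenvalues from a correlation-matrix PCA of 8 variables.
eigenvalues = np.array([3.1, 1.6, 1.05, 0.9, 0.6, 0.4, 0.25, 0.1])
components = np.arange(1, len(eigenvalues) + 1)
proportion = eigenvalues / eigenvalues.sum()
cumulative = np.cumsum(proportion)

fig, (ax_scree, ax_cum) = plt.subplots(1, 2, figsize=(10, 4))

# Left panel: scree plot with the Kaiser reference line at eigenvalue = 1.
ax_scree.plot(components, eigenvalues, marker="o")
ax_scree.axhline(1.0, linestyle="--", color="gray", label="Eigenvalue = 1")
ax_scree.set_xlabel("Principal Component")
ax_scree.set_ylabel("Eigenvalue")
ax_scree.set_title("Scree plot")
ax_scree.legend()

# Right panel: individual (bars) and cumulative (step line) proportion of variance.
ax_cum.bar(components, proportion, alpha=0.6, label="Individual")
ax_cum.step(components, cumulative, where="mid", color="black", label="Cumulative")
ax_cum.set_xlabel("Principal Component")
ax_cum.set_ylabel("Proportion of Variance Explained")
ax_cum.set_title("Variance explained")
ax_cum.legend()

fig.tight_layout()
fig.savefig("scree_plot.png", dpi=150)
```

Vertical lines at candidate elbows can be added with `ax_scree.axvline(k, ...)` once a candidate `k` has been chosen. The eigenvalue axis is left to start at its default range, since truncating it changes how sharp the elbow appears.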

Implementation in Statistical Software

- R: Use prcomp() or princomp() functions, with screeplot() for visualization [13]

- Python: Utilize sklearn.decomposition.PCA() with manual plotting of the explained_variance_ attribute [22]

- SAS: Implement PROC PRINCOMP with the plots=scree option [23]

- Specialized packages: bio3d for biological data, vegan for ecological applications [24]

Research Reagents and Computational Tools

Table 2: Essential Research Reagent Solutions for Scree Plot Analysis

| Tool/Category | Specific Examples | Function/Purpose | Implementation Considerations |

|---|---|---|---|

| Statistical Environments | R, Python with scikit-learn, SAS PROC PRINCOMP | Provides PCA algorithms and eigenvalue calculation | R offers extensive visualization; Python integrates with machine learning workflows |

| Visualization Packages | ggplot2 (R), matplotlib (Python), SAS ODS Graphics | Creates publication-quality scree plots | Customize colors, labels, and reference lines for clear interpretation |

| Specialized PCA Modules | bio3d (R), scikit-bio (Python), MULTBIPLOT (SAS) | Implements domain-specific variations and enhancements | Bio3d particularly suited for molecular and structural biology data |

| Data Preprocessing Tools | PreProcessCore (R), sklearn.preprocessing (Python) | Handles normalization, scaling, and missing data | Critical for omics data where normalization significantly impacts results |

| Benchmarking Methods | Parallel analysis, permutation tests, factor congruence | Provides objective validation of visual elbow selection | Parallel analysis compares eigenvalues to random data expectation [13] |

Advanced Applications and Methodological Extensions

Extended Application Across Methodological Domains

The elbow method and scree plot interpretation have expanded beyond traditional factor analysis to diverse applications in computational biology and pharmaceutical research. In nonnegative matrix factorization (NMF) for gene expression analysis, the Unit Invariant Knee (UIK) method adapts the elbow approach to determine optimal factorization rank by identifying inflection points in the residual sum of squares [20]. This application demonstrates how the fundamental elbow concept transfers to related dimensionality reduction techniques, providing objective criteria for rank selection in matrix factorization problems.

In factor mixture modeling (FMM), researchers face the challenge of class enumeration—determining the correct number of latent classes in heterogeneous populations. The elbow plot method has been adapted for this context by plotting information criterion values (AIC, BIC) against the number of classes rather than eigenvalues against components [25]. Simulation studies demonstrate that this approach correctly identifies the generating model 90% of the time for two- and three-class FMMs, performing particularly well compared to alternative criteria in biologically plausible scenarios [25].

Formal Mathematical Frameworks for Elbow Detection

Diagram 2: Formal elbow detection methodologies extending beyond visual interpretation, showing relationships between mathematical approaches and their primary application domains.

Recent methodological developments have formalized the intuitive elbow concept through mathematical frameworks. One approach compares surfaces under the scree plot, operationalizing Cattell's "steep" versus "not steep" distinction by analyzing differences in consecutive eigenvalue products [21]. Formally, this method examines the sequence \( DJ_N(k) = (k+1)\lambda_{k+1,N} - k\lambda_{k,N} \), where \( \lambda_{k,N} \) represents the k-th largest eigenvalue, identifying the elbow where this difference stabilizes [21]. This quantitative approach reduces subjectivity while maintaining the scree test's conceptual foundation.

The Unit Invariant Knee (UIK) method represents another formalization, specifically designed for rank selection in NMF of gene expression data [20]. Rather than relying on visual inspection, UIK algorithmically identifies the first inflection point in the curvature of the residual sum of squares, corresponding to the point of maximum deceleration in variance explanation. This approach offers computational efficiency and objectivity while avoiding arbitrary threshold parameters that plague alternative metrics like the cophenetic correlation coefficient [20].

Comparative Analysis and Validation Framework

Empirical Performance Across Methodological Contexts

Table 3: Performance Comparison of Elbow Detection Methods Across Applications

| Application Context | Optimal Method | Performance Metrics | Key Considerations | Reference Examples |

|---|---|---|---|---|

| Gene Expression NMF | Unit Invariant Knee (UIK) | Computational efficiency; Agreement with known dimensions | Superior to cophenetic metric; Free from prior rank input | Acute lymphoblastic leukemia data [20] |

| Factor Mixture Models | Elbow plot of BIC values | 90% accuracy for 2-3 class models | Outperforms lowest value criterion for simple structures | Personality assessment data [25] |

| Traditional PCA | Parallel analysis with scree plot | Minimizes overfactoring; Statistical justification | More robust than Kaiser criterion alone | Big Five Inventory [15] |

| Clinical Biomarker PCA | Variance explained (80-90%) with scree validation | Biological interpretability; Clinical relevance | Balances statistical and practical considerations | Iris dataset [23] [22] |

Empirical evaluations across diverse methodological contexts reveal that elbow-based methods demonstrate strong performance when appropriately matched to analytical goals. In factor mixture models for psychological assessment, the elbow plot method correctly identified generating models with 90% accuracy for two- and three-class conditions, outperforming the lowest value criterion and difference methods in these biologically plausible scenarios [25]. However, performance diminished for complex four-class conditions with two factors, highlighting the importance of context-specific method selection.

For gene expression analysis utilizing nonnegative matrix factorization, the Unit Invariant Knee method demonstrated significant computational advantages over consensus matrix-based approaches while maintaining accuracy against simulated data with known dimensions [20]. This combination of efficiency and objectivity makes formalized elbow methods particularly valuable for high-dimensional biological data where visual inspection becomes impractical and computational efficiency is paramount.

Validation Protocols and Goodness-of-Fit Assessment

Bootstrap Validation for Elbow Stability

- Generate multiple bootstrap samples from original dataset

- Perform PCA on each resample and construct corresponding scree plots

- Calculate consistency of elbow location across resamples

- High variation in elbow position suggests unreliable component selection
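The bootstrap steps listed above can be sketched as follows. The elbow rule used here (the point farthest from the chord joining the ends of the rescaled scree curve) is a stand-in chosen for illustration; any reproducible elbow criterion could be substituted. Function names, the number of resamples, and the simulated data are all assumptions.

```python
import numpy as np
from collections import Counter

def correlation_eigenvalues(X):
    """Eigenvalues of the correlation matrix of X, largest first."""
    return np.linalg.eigvalsh(np.corrcoef(X, rowvar=False))[::-1]

def elbow_index(eigenvalues):
    """Stand-in elbow rule: 1-based index of the point farthest from the
    chord joining the first and last points of the rescaled scree curve."""
    y = np.asarray(eigenvalues, dtype=float)
    xn = np.linspace(0.0, 1.0, len(y))
    yn = (y - y.min()) / (y.max() - y.min())
    # With endpoints (0, 1) and (1, 0) the distance is proportional to |1 - x - y|.
    return int(np.argmax(np.abs(1.0 - xn - yn))) + 1

def bootstrap_elbows(X, n_boot=200, seed=0):
    """Elbow location in each bootstrap resample of the rows of X."""
    rng = np.random.default_rng(seed)
    n = X.shape[0]
    elbows = []
    for _ in range(n_boot):
        rows = rng.integers(0, n, size=n)  # sample observations with replacement
        elbows.append(elbow_index(correlation_eigenvalues(X[rows])))
    return Counter(elbows)

# Simulated data: 10 variables driven by 2 latent factors plus noise.
rng = np.random.default_rng(42)
factors = rng.normal(size=(300, 2))
loadings = rng.normal(size=(2, 10))
X = factors @ loadings + 0.5 * rng.normal(size=(300, 10))

counts = bootstrap_elbows(X)
for k, c in sorted(counts.items()):
    print(f"elbow at component {k}: {c / sum(counts.values()):.0%} of resamples")
```

If nearly all resamples agree on one location, the elbow can be reported with more confidence. A spread across several locations is a signal to lean on complementary criteria.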

Parallel Analysis Implementation

- Simulate uncorrelated random datasets matching original data dimensions

- Perform PCA on simulated data and compute average eigenvalues

- Plot simulated eigenvalues alongside observed eigenvalues

- Retain components where observed eigenvalues exceed simulated eigenvalues [13]
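A minimal sketch of these parallel analysis steps is given below. It uses the mean of the simulated eigenvalues as the reference, as described above; a common variant uses an upper percentile such as the 95th instead. The function name, the number of simulations, and the two-factor simulated data are illustrative assumptions.

```python
import numpy as np

def parallel_analysis(X, n_sim=200, seed=0):
    """Compare observed correlation-matrix eigenvalues with the mean
    eigenvalues of uncorrelated normal data of the same shape."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    rng = np.random.default_rng(seed)
    observed = np.linalg.eigvalsh(np.corrcoef(X, rowvar=False))[::-1]
    simulated = np.empty((n_sim, p))
    for s in range(n_sim):
        noise = rng.normal(size=(n, p))
        simulated[s] = np.linalg.eigvalsh(np.corrcoef(noise, rowvar=False))[::-1]
    reference = simulated.mean(axis=0)
    # Retain leading components up to the first one that fails to exceed the reference.
    exceeds = observed > reference
    n_retain = p if exceeds.all() else int(np.argmin(exceeds))
    return observed, reference, n_retain

# Simulated data: two latent factors, each driving five of ten variables.
rng = np.random.default_rng(7)
factors = rng.normal(size=(300, 2))
loadings = np.zeros((2, 10))
loadings[0, :5] = 0.8
loadings[1, 5:] = 0.8
X = factors @ loadings + 0.6 * rng.normal(size=(300, 10))

observed, reference, n_retain = parallel_analysis(X)
print("observed :", np.round(observed, 2))
print("reference:", np.round(reference, 2))
print("components retained:", n_retain)
```

Plotting `observed` and `reference` on the same axes gives the overlay described in the third step, and the crossing point of the two curves marks the retention cutoff.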

Goodness-of-Fit and Interpretability Checks

- Examine residuals after extracting selected components

- Assess biological plausibility of component loadings

- For gene expression: check enrichment of component-associated genes in functional pathways

- For clinical data: validate component associations with external clinical variables

The integration of multiple validation approaches strengthens component selection decisions, particularly when different criteria suggest conflicting solutions. Residual analysis provides diagnostic information about model adequacy, with standardized residuals greater than 2 in absolute value indicating potential misfit [13]. Parallel analysis offers statistical justification by comparing observed eigenvalues to those expected from random data, reducing capitalization on chance patterns [13]. Most critically in pharmaceutical and biological applications, component solutions must demonstrate interpretability within established biological frameworks, ensuring that statistical dimensions correspond to meaningful biological constructs.

Scree plot analysis remains an essential tool for determining intrinsic data dimensionality across biological and pharmaceutical research contexts. The visual elbow method provides an accessible starting point, while formal extensions like the Unit Invariant Knee method offer objective, computationally efficient alternatives for high-throughput applications. Successful implementation requires matching method selection to analytical goals—favoring variance-explained thresholds for clinically oriented studies, parallel analysis for exploratory psychometrics, and algorithmic approaches for genomic applications requiring objectivity and efficiency.

For researchers implementing these techniques, a sequential approach combining multiple criteria typically yields the most defensible results. Begin with visual scree plot examination to identify candidate solutions, then apply appropriate quantitative criteria (variance explained, parallel analysis, or UIK) for validation. Finally, assess the biological interpretability and stability of proposed solutions through resampling and external validation. This comprehensive approach leverages both the intuitive appeal of traditional scree plots and the statistical rigor of contemporary extensions, ensuring component selection decisions that are both mathematically sound and scientifically meaningful within drug development and biomedical research contexts.

Within the framework of research on selecting the optimal number of principal components (PCs), the scree plot stands as a foundational graphical tool introduced by Raymond Cattell in 1966 [15] [9]. Its primary function is to aid in determining the dimensionality of a dataset in analyses like Principal Component Analysis (PCA) and Exploratory Factor Analysis (EFA). This protocol directly compares the scree plot method against a prevalent variance-based method—the cumulative variance threshold—evaluating their theoretical bases, applications, and performance in practical research scenarios, particularly for scientific and drug development professionals.

The core challenge in PCA is to balance parsimony and information retention. While simple variance thresholds offer an objective criterion, the scree plot provides a visual assessment of the underlying data structure, making the choice between them context-dependent [11] [26].

Theoretical Foundations

The Scree Plot Method

A scree plot is a line graph that displays the eigenvalues of principal components in descending order of magnitude [27]. The name "scree," derived from geology, refers to the loose rock debris that accumulates at the base of a mountain, metaphorically representing the point where eigenvalues level off and form a straight line of "rubbish" components [15]. The key to interpretation lies in identifying the "elbow" or inflection point—the location where the steep decline in eigenvalues transitions to a gradual flattening [7] [9]. Components to the left of this elbow are considered meaningful and are retained for further analysis.

The Cumulative Variance Threshold Method

This method involves selecting the smallest number of principal components such that their cumulative explained variance meets or exceeds a pre-defined threshold [11] [28]. Common thresholds in practice are 80%, 90%, or 95% of the total variance [26]. The proportion of variance explained by each component is calculated as its eigenvalue divided by the sum of all eigenvalues [27]. This approach provides an objective and easily automatable criterion for component selection.

Comparative Theoretical Basis

| Feature | Scree Plot Method | Cumulative Variance Method |

|---|---|---|

| Underlying Principle | Visual identification of the point where eigenvalues from meaningful components transition to "rubbish" components [15] | Achieving a pre-specified level of information retention (variance explained) [11] |

| Primary Output | A suggested number of components, \( k \), based on the elbow [7] | A suggested number of components, \( k \), based on a variance threshold [28] |

| Key Strength | Reflects the inherent structure and dimensionality of the data [27] | Simple, objective, and ensures a measurable level of information preservation [11] |

| Key Weakness | Subjective interpretation can lead to ambiguity, especially with multiple elbows [9] | Does not directly assess the true dimensionality and may retain noise to meet the threshold [26] |

Experimental Protocols

Protocol A: Generating and Interpreting a Scree Plot

This protocol details the steps for creating a scree plot from a multivariate dataset, such as gene expression data or protein structural variables [27] [26].

Procedure:

- Data Standardization: Standardize the data matrix \( X \) (with \( p \) variables and \( n \) observations) to have a mean of zero and unit variance for each variable. This is crucial when variables are on different scales [29].

- Compute Covariance/Correlation Matrix: Calculate the \( p \times p \) covariance matrix (if variables are on comparable scales) or correlation matrix (for standardized data) [27] [26].

- Eigenvalue Decomposition: Perform eigenvalue decomposition on the matrix to extract eigenvalues \( \lambda_1 \geq \lambda_2 \geq \dots \geq \lambda_p \geq 0 \) and corresponding eigenvectors [27].

- Plot Eigenvalues: Create a line plot with the component index (1 to \( p \)) on the x-axis and the corresponding eigenvalue on the y-axis [7].

- Identify the Elbow: Visually inspect the plot for the point of maximum curvature where the steep slope transitions to a flatter line. The components before this elbow are retained [9].

Protocol B: Applying a Cumulative Variance Threshold

This protocol provides an objective, non-visual method for selecting the number of components [11] [28].

Procedure:

- Execute Steps 1-3 of Protocol A: Standardize the data and compute eigenvalues.

- Calculate Variance Proportions: For each component ( i ), compute the proportion of variance explained: ( \frac{\lambda_i}{\sum_{j=1}^{p} \lambda_j} ) [27].

- Calculate Cumulative Variance: For the first ( k ) components, compute the cumulative proportion of variance: ( \frac{\sum_{i=1}^{k} \lambda_i}{\sum_{j=1}^{p} \lambda_j} ) [11].

- Apply Threshold: Determine the smallest integer ( k ) for which the cumulative variance meets or exceeds the chosen threshold (e.g., 80%, 90%, 95%) [26] [28].
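
Protocol B reduces to a few lines of array arithmetic. The sketch below assumes the eigenvalues are already available; the function name `components_for_threshold` and the example eigenvalues are illustrative assumptions, not values from any cited study.

```python
import numpy as np

def components_for_threshold(eigvals, threshold=0.90):
    """Smallest k whose cumulative variance proportion meets the threshold.

    Returns (k, proportions, cumulative).
    """
    eigvals = np.sort(np.asarray(eigvals, dtype=float))[::-1]
    proportions = eigvals / eigvals.sum()      # lambda_i / sum_j lambda_j
    cumulative = np.cumsum(proportions)        # running total over the first k
    # First index where cumulative >= threshold; the small tolerance guards
    # against floating-point rounding when the threshold is hit exactly.
    k = int(np.searchsorted(cumulative, threshold - 1e-12) + 1)
    return min(k, len(eigvals)), proportions, cumulative

# Illustrative eigenvalues (assumption), summing to 8.
example_eigvals = [4.0, 2.0, 1.0, 0.5, 0.3, 0.2]
k80, props, cum = components_for_threshold(example_eigvals, 0.80)
k95, _, _ = components_for_threshold(example_eigvals, 0.95)
print(k80, k95)            # 3 5
print(np.round(cum, 4))    # cumulative proportions: 0.5, 0.75, 0.875, ...
```

With these example eigenvalues, three components reach 87.5% of the variance and so satisfy an 80% threshold, while a 95% threshold requires five. The selected ( k ) can therefore depend strongly on the threshold chosen.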

Data Presentation and Comparison

Quantitative Comparison of Methods

The following table synthesizes key performance metrics for the two methods based on a review of the literature and practical applications [7] [11] [26].

Table 1: Comparative Analysis of Component Selection Methods

| Criterion | Scree Plot | Cumulative Variance Threshold |

|---|---|---|

| Objectivity | Low (subjective visual interpretation) [9] | High (precise numerical criterion) [11] |

| Ease of Automation | Low | High [28] |

| Handling of Ambiguous Cases | Poor (multiple elbows complicate decisions) [9] | Good (provides an unambiguous answer) |

| Information Preservation Guarantee | None directly | Explicit (e.g., ensures 90% variance kept) [11] |

| Sensitivity to Data Dimensionality | High (plot shape changes with ( p )) [27] | Low (robust across different ( p )) |

| Commonly Cited Performance | Often agrees with Kaiser criterion (eigenvalue >1) in clear cases [7] | Effective for descriptive purposes at ~80%; requires ≥90% for subsequent analyses [11] |

Empirical Example from a Protein Dynamics Study

In a PCA of a protein trajectory using alpha carbon atoms, the scree plot showed a distinct kink (elbow) after the first 20 modes. These 20 modes defined the "essential space," capturing the large-scale motions governing biological function. A cumulative variance threshold of 80% might have retained fewer components, potentially omitting biologically relevant but lower-variance motions, while a 95% threshold might have retained over 100 components, many representing small-scale noise [26]. This demonstrates the scree plot's utility in identifying a parsimonious and biologically meaningful subspace.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for PCA and Scree Plot Analysis

| Tool / Resource | Function / Description | Example Use Case |

|---|---|---|

| Statistical Software (R/Python) | Provides the computational environment for performing PCA, eigenvalue decomposition, and visualization [27] [29]. | R's prcomp() or princomp() functions; Python's sklearn.decomposition.PCA. |

| Standardization Algorithm | Pre-processing step to center and scale variables to mean=0 and variance=1 [29]. | Essential when variables are on different scales (e.g., gene expression levels vs. clinical measurements). |

| Eigenvalue Decomposition Solver | The numerical linear algebra core that computes eigenvalues and eigenvectors from a covariance/correlation matrix [27]. | Automated within PCA functions of standard statistical packages. |

| Visualization Package | Generates the scree plot and other diagnostic graphs (e.g., cumulative variance plot) [28]. | R's ggplot2 for custom plots; Python's matplotlib. |

| Parallel Analysis Script | A more objective alternative/complement to the scree test that compares data eigenvalues to those from random data [6]. | Used to validate the number of components suggested by the scree plot, reducing subjectivity. |

Integrated Workflow for Component Selection

The following diagram synthesizes the protocols into a recommended decision framework for researchers.

The choice between a scree plot and a simple variance threshold is not mutually exclusive. A robust analysis should employ both methods as complementary diagnostic tools [11] [28] [6].

- Use a Scree Plot When: The primary goal is to discover the inherent dimensionality of the data, especially during the exploratory phase of research. It is particularly valuable in fields like bioinformatics and protein dynamics where the goal is to identify a small set of "essential" components that capture major structural or functional shifts, even if they don't explain an arbitrarily high percentage of variance [26].

- Use a Variance Threshold When: The analysis requires an objective, reproducible criterion for component selection. It is ideal for automated pipelines, when a specific amount of information retention is required for downstream analysis, or for descriptive purposes where explaining a fixed amount of variance (e.g., 80-90%) is sufficient [11].

For research demanding high confidence, the scree plot should be the starting point for hypothesis generation about data dimensionality, with its suggestion validated against a variance threshold and other objective methods like parallel analysis [6]. This integrated approach ensures that model parsimony is achieved without sacrificing critical, domain-relevant information.
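
As one way to carry out the validation step, parallel analysis can be sketched as below. The sketch assumes a common variant in which observed correlation-matrix eigenvalues are compared with the 95th percentile of eigenvalues from random normal data of the same shape. The function names, the number of simulations, and the synthetic dataset are assumptions for illustration and are not taken from [6].

```python
import numpy as np

def correlation_eigenvalues(X):
    """Eigenvalues of the correlation matrix of X, sorted descending."""
    R = np.corrcoef(X, rowvar=False)
    return np.linalg.eigvalsh(R)[::-1]

def parallel_analysis(X, n_sim=200, percentile=95, seed=0):
    """Count leading components whose eigenvalue exceeds the random reference."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    rng = np.random.default_rng(seed)
    observed = correlation_eigenvalues(X)
    simulated = np.empty((n_sim, p))
    for s in range(n_sim):
        # Uncorrelated normal data with the same n and p as the real data.
        simulated[s] = correlation_eigenvalues(rng.normal(size=(n, p)))
    reference = np.percentile(simulated, percentile, axis=0)
    exceeds = observed > reference
    # Retain components up to (not including) the first that fails the test.
    k = p if exceeds.all() else int(np.argmin(exceeds))
    return k, observed, reference

# Synthetic data (assumption): two blocks of five variables, one factor each.
rng = np.random.default_rng(7)
factors = rng.normal(size=(150, 2))
loadings = np.zeros((2, 10))
loadings[0, :5] = 1.0
loadings[1, 5:] = 1.0
X = factors @ loadings + 0.5 * rng.normal(size=(150, 10))

k, observed, reference = parallel_analysis(X)
print("components retained:", k)
```

The retained count can then be compared with the scree-plot elbow and with the cumulative variance threshold. Agreement among the three supports the chosen dimensionality, whereas disagreement signals that the choice needs domain-informed justification.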

From Theory to Practice: A Hands-On Guide to Generating and Interpreting Scree Plots in R and Python

This application note provides a detailed protocol for the critical data preprocessing step of standardization and centering prior to Principal Component Analysis (PCA) in clinical research. Proper preprocessing is fundamental for generating reliable scree plots and accurately determining the optimal number of principal components, which directly impacts the validity of downstream analyses in drug development and biomarker discovery. We present experimental validation demonstrating that inappropriate preprocessing can lead to misinterpretation of data structure, ultimately compromising research conclusions. The guidelines herein are designed to ensure that PCA outcomes are both biologically meaningful and statistically robust.

Principal Component Analysis (PCA) is a powerful dimensionality reduction technique widely used to analyze high-dimensional clinical datasets, such as genomic profiles, patient health records, and medical imaging data [8] [30]. By transforming original variables into a new set of uncorrelated variables (principal components), PCA helps identify key patterns, trends, and sources of variation within complex biological systems [31].

The process of PCA is highly sensitive to the variances of the initial variables [8]. Clinical data often contain variables measured on different scales (e.g., blood glucose levels in mmol/L, gene expression counts, and age in years). If variables with larger numerical ranges are not standardized, they will dominate the PCA procedure, potentially obscuring biologically relevant patterns from variables with smaller ranges [8] [32]. Standardization and centering correct for this by ensuring all variables contribute equally to the analysis. This preprocessing step is not merely a technical formality but a crucial determinant for the accurate interpretation of scree plots and the correct selection of principal components that capture genuine biological signal rather than measurement artifacts [30].

The Critical Role of Preprocessing in PCA

The Problem of Differing Scales in Clinical Data

In clinical and biomarker research, datasets are inherently heterogeneous. Consider a simple dataset containing:

- Binary or Categorical Variables: Presence or absence of a genetic mutation (0 or 1), patient sex (0/1).

- Ordinal Variables: Disease severity scores ranging from 0 to 5.

- Continuous Variables with Large Ranges: Serum cholesterol levels (e.g., 150-300 mg/dL), gene expression values spanning several orders of magnitude.

Without preprocessing, a variable like serum cholesterol will exert a disproportionately large influence on the principal components compared to a binary variable, simply due to its numerical range [32]. The PCA algorithm, which operates by maximizing variance in the derived components, will be biased towards variables with larger scales, as they contribute more to the total variance calculated in the sum of squares [32]. This can lead to a misleading representation where the first few principal components primarily reflect scale differences rather than underlying biological relationships.

The Mathematical Foundation: Covariance vs. Correlation Matrix

The need for standardization is rooted in the mathematics of PCA, which is typically solved via the Singular Value Decomposition (SVD) of the data matrix [32].

- Without Standardization (Covariance Matrix PCA): PCA is performed on the covariance matrix of the original data. The covariance between two variables is scale-dependent, meaning that the resulting principal components will be dominated by the variables with the largest variances.

- With Standardization (Correlation Matrix PCA): Standardizing data (centering and scaling to unit variance) before applying PCA is equivalent to performing PCA on the correlation matrix [8]. This ensures that all variables are treated as equally important, each contributing one unit of variance to the total variance.

Geometrically, centering the data (subtracting the mean) ensures the point swarm is repositioned around the origin of the coordinate system, which is a prerequisite for the "lines and planes of closest fit" that PCA seeks [31]. Scaling then equalizes the "length" of each coordinate axis, creating a uniform spherical space where directions of maximum variance can be identified without bias [31].
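
The equivalence between standardized-data PCA and correlation-matrix PCA can be checked numerically. The following sketch uses three simulated variables whose names and scales (glucose, cholesterol, age) are hypothetical and chosen only to mimic the mixed units discussed above.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 300
# Hypothetical clinical variables on very different scales (assumption).
X = np.column_stack([
    rng.normal(5.5, 1.0, size=n),     # e.g. glucose, mmol/L
    rng.normal(200.0, 40.0, size=n),  # e.g. cholesterol, mg/dL
    rng.normal(60.0, 12.0, size=n),   # e.g. age, years
])

# Center and scale to unit sample variance.
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

cov_raw = np.cov(X, rowvar=False)        # covariance-matrix PCA input
cov_std = np.cov(Z, rowvar=False)        # covariance of standardized data
corr_raw = np.corrcoef(X, rowvar=False)  # correlation matrix of the raw data

# Standardizing first gives exactly the correlation matrix.
print(np.allclose(cov_std, corr_raw))

eig_cov = np.linalg.eigvalsh(cov_raw)[::-1]
eig_corr = np.linalg.eigvalsh(corr_raw)[::-1]
print("covariance PCA, variance share:", np.round(eig_cov / eig_cov.sum(), 3))
print("correlation PCA, variance share:", np.round(eig_corr / eig_corr.sum(), 3))
```

In the covariance case the first component is dominated by the variable with the largest variance (here the simulated cholesterol column), while in the correlation case each variable contributes one unit and the eigenvalues sum to the number of variables.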

Experimental Protocols

Protocol: Data Standardization and Centering for PCA

This protocol details the two-step process for standardizing a clinical data matrix ( X ) with ( N ) rows (observations, e.g., patients) and ( P ) columns (variables, e.g., biomarkers).

Step 1: Centering

The goal of centering is to reposition the data so that its mean is at the origin.

- Calculation: For each variable ( j ) in the data matrix, compute its mean, ( \bar{x}_j ).

- Transformation: Subtract the mean of the variable from each individual value.

- Outcome: The mean of every variable in the centered dataset is zero.

Step 2: Scaling (Standardization to Unit Variance)

The goal of scaling is to adjust the variables so they all have a uniform scale and contribute equally to the analysis.

- Calculation: For each centered variable ( j ), compute its standard deviation, ( s_j ).

- Transformation: Divide each centered value by the standard deviation of its variable.

- ( x_{\text{standardized},ij} = \frac{x_{\text{centered},ij}}{s_j} = \frac{x_{ij} - \bar{x}_j}{s_j} ) [8]

- Outcome: Every standardized variable has a mean of zero and a standard deviation (and variance) of one.
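
The two steps above can be sketched as a small NumPy function. The name `standardize`, the choice of the sample standard deviation (`ddof=1`), and the toy matrix are assumptions made for this illustration. Note that scikit-learn's `StandardScaler` divides by the population standard deviation (`ddof=0`), so its output differs slightly for small ( N ).

```python
import numpy as np

def standardize(X, ddof=1):
    """Center each column to mean 0 and scale it to unit variance.

    Returns the standardized matrix together with the column means and
    standard deviations, so the same transformation can be reapplied
    to new observations.
    """
    X = np.asarray(X, dtype=float)
    means = X.mean(axis=0)              # Step 1: column means
    centered = X - means                # Step 1: subtract the mean
    sds = centered.std(axis=0, ddof=ddof)  # Step 2: column standard deviations
    if np.any(sds == 0):
        raise ValueError("A constant column cannot be scaled to unit variance.")
    return centered / sds, means, sds   # Step 2: divide by the standard deviation

# Toy matrix (assumption): 4 patients x 2 biomarkers on different scales.
X = np.array([[150.0, 0.0],
              [200.0, 1.0],
              [250.0, 1.0],
              [300.0, 0.0]])
Z, means, sds = standardize(X)
print(np.round(Z.mean(axis=0), 12))   # column means are zero up to rounding
print(np.round(Z.std(axis=0, ddof=1), 12))  # column standard deviations are one
```

Returning `means` and `sds` is a design choice: when a model is later applied to held-out patients, those observations should be transformed with the statistics estimated on the original data rather than re-standardized on their own.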

Workflow Visualization

The following diagram illustrates the complete standardization workflow and its role in the broader PCA process for clinical data.

Experimental Validation: Impact of Preprocessing on Simulated Clinical Data

To empirically demonstrate the necessity of standardization, we followed an experimental procedure adapted from a published simulation study [32].

Objective: To visualize how preprocessing choices can create artificial clusters or mask true data structure in a PCA output and subsequent scree plot.

Methodology:

- Data Generation: A dataset was simulated with 200 observations (N = 200) and 5 continuous variables drawn from a standard normal distribution. A sixth variable was added to represent a dominant clinical variable (e.g., a highly abundant protein or a binary resource index), which took values of 0 or 5 assigned randomly [32].

- Preprocessing & Analysis: The dataset was analyzed under three conditions:

- A. No Preprocessing: PCA was applied directly to the raw data.

- B. Normalization (L2 Norm): Data was normalized using the L2 norm (scaling the magnitude of each observation's vector to 1).

- C. Standardization (Centering & Unit Variance Scaling): Data was processed as per the Data Standardization and Centering protocol described above.

- Visualization: For each condition, a two-component PCA was performed, and the results were visualized in a score plot. The corresponding scree plots were also generated.

Results:

- Condition A (No Preprocessing): The score plot showed two distinct clusters, entirely driven by the dominant sixth variable. The scree plot suggested a strong, potentially misleading, underlying data structure.

- Condition B (Normalization): The clustering effect persisted, though the plot's appearance changed.

- Condition C (Standardization): The score plot correctly showed a random scatter of points with no apparent clusters, and the scree plot reflected the true, random nature of the data, accurately indicating a lack of strong latent structure [32].

Conclusion: This experiment confirms that standardization is essential to prevent variables with larger scales from dominating the PCA and leading to false conclusions about clustering or data patterns in clinical research.
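
A simulation in the spirit of this experiment can be reproduced with the sketch below. It is an approximation under stated assumptions rather than the code of the cited study: the random seed, the equal probability of the values 0 and 5, and the helper `explained_variance_ratio` are introduced here for illustration. PCA is computed from the covariance matrix, which centers the data internally, as standard PCA routines do.

```python
import numpy as np

def explained_variance_ratio(X):
    """Proportion of variance per principal component (covariance-matrix PCA)."""
    eigvals = np.linalg.eigvalsh(np.cov(X, rowvar=False))[::-1]
    eigvals = np.clip(eigvals, 0.0, None)  # remove tiny negative rounding errors
    return eigvals / eigvals.sum()

rng = np.random.default_rng(42)
n = 200
noise = rng.normal(size=(n, 5))            # five standard normal variables
dominant = rng.choice([0.0, 5.0], size=n)  # sixth variable: 0 or 5 at random
X_raw = np.column_stack([noise, dominant])

# Condition B: scale each observation (row) to unit L2 norm.
X_l2 = X_raw / np.linalg.norm(X_raw, axis=1, keepdims=True)
# Condition C: center each variable and scale it to unit variance.
X_std = (X_raw - X_raw.mean(axis=0)) / X_raw.std(axis=0, ddof=1)

ratios = {
    "A: raw": explained_variance_ratio(X_raw),
    "B: L2-normalized": explained_variance_ratio(X_l2),
    "C: standardized": explained_variance_ratio(X_std),
}
for name, r in ratios.items():
    print(f"{name:18s} PC1 share = {r[0]:.3f}, PC2 share = {r[1]:.3f}")
```

In the raw condition the sixth variable has a variance of roughly 6.25 against 1 for each of the others, so the first component absorbs most of the total variance and the scree plot shows a sharp, artificial elbow. After standardization the shares are close to one sixth each, consistent with the absence of latent structure.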

The Researcher's Toolkit: Reagents and Computational Solutions

Table 1: Essential Tools for Implementing PCA Preprocessing and Analysis.

| Item | Function in PCA Preprocessing | Example Solutions / Notes |

|---|---|---|

| Statistical Software | Provides functions for data centering, scaling, and PCA computation. | R (prcomp(), scale()), Python/scikit-learn (StandardScaler(), PCA()) [33] [6] |