Navigating Batch Effects in Histone Modification Analysis: From Foundational Concepts to Clinical Translation


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on managing batch effects in histone modification studies. It covers the foundational principles of why batch effects are a critical concern in epigenomic data, explores established and emerging computational correction methods like ComBat and Harmony, and offers practical troubleshooting strategies to avoid false discoveries. The content further benchmarks performance across different scenarios, including single-cell multi-omics, and discusses how robust batch correction is pivotal for validating biological insights, identifying therapeutic targets, and advancing precision oncology.

Why Batch Effects Compromise Histone Modification Data and Biological Discovery

In histone modification profiling, a batch effect is technical variation introduced during the experimental process rather than by true biological differences. These non-biological variations arise from differences in sample processing, personnel, reagent lots, sequencing runs, or instrumentation. If not identified and corrected, batch effects can mask true biological signals, produce spurious ones, and compromise the validity of research findings [1].

This guide provides troubleshooting and best practices for researchers to diagnose, address, and prevent batch effects in epigenomic studies.

Understanding Batch Effects: Causes and Impacts

Batch effects originate from multiple sources throughout the experimental workflow. The table below summarizes the primary culprits:

Table: Common Sources of Batch Effects in Histone Modification Profiling

| Source Category | Specific Examples |
|---|---|
| Sequencing Processes | Different sequencing runs, instruments, or lanes [1] |
| Reagent Variations | Changes in antibody lots, reagent batches, or kit manufacturers [1] [2] |
| Sample Handling | Variations in personnel, sample preparation protocols, or transposition time [1] [3] |
| Temporal & Environmental Factors | Experiments conducted on different days, or changes in temperature/humidity [1] |

Why Batch Effects Matter

The impact of batch effects extends across your analysis:

- Differential Analysis: May flag features (genes, peaks, or regions) that differ between batches rather than between biological conditions [1].

- Clustering Algorithms: Can group samples by batch instead of true biological similarity [1].

- Data Integration: Meta-analyses combining data from multiple sources become particularly vulnerable [1].

- Downstream Interpretation: Pathway analysis could highlight technical artifacts instead of meaningful biology [1].

Diagnosing Batch Effects: A Practical Workflow

Visual inspection is a critical first step in diagnosing batch effects. The steps below provide a systematic approach for researchers.

Key Diagnostic Steps

- Visualize with Principal Component Analysis (PCA): Before any correction, generate a PCA plot colored by batch. If samples cluster primarily by batch rather than biological condition, this confirms significant batch effects [1].

- Examine Negative Controls: In mass spectrometry-based histone analysis, monitor internal control peptides and background signals to distinguish technical artifacts from biological signals [2].

- Check Replicate Concordance: Poor agreement between biological replicates processed in different batches often indicates batch effects. This can manifest in CUT&Tag, ChIP-seq, or other antibody-based enrichment assays due to variable antibody efficiency or sample preparation [3].
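
As a numeric complement to the PCA check above, one rough option is to ask, for a single feature, how much of its variance is explained by batch versus biological condition. The sketch below uses one-way ANOVA eta-squared in plain Python. The signal values, sample labels, and the function name `eta_squared` are hypothetical illustrations, not part of any cited workflow.

```python
from statistics import mean

def eta_squared(values, labels):
    """Fraction of total variance explained by a grouping (one-way ANOVA eta^2)."""
    grand = mean(values)
    ss_total = sum((v - grand) ** 2 for v in values)
    if ss_total == 0:
        return 0.0  # constant signal: nothing to explain
    groups = {}
    for v, lab in zip(values, labels):
        groups.setdefault(lab, []).append(v)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups.values())
    return ss_between / ss_total

# Hypothetical normalized signal for one region across eight samples.
signal    = [5.1, 5.3, 7.0, 7.2, 5.0, 5.4, 7.1, 6.9]
batch     = ["b1", "b1", "b2", "b2", "b1", "b1", "b2", "b2"]
condition = ["ctl", "trt", "ctl", "trt", "trt", "ctl", "ctl", "trt"]

batch_r2 = eta_squared(signal, batch)
condition_r2 = eta_squared(signal, condition)
print(f"variance explained by batch: {batch_r2:.2f}, by condition: {condition_r2:.2f}")
```

A feature whose variance is dominated by batch, as in this toy example, is the kind of pattern that would also show up as batch-driven clustering in a PCA plot. In practice the calculation would be repeated across many features.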

Batch Effect Correction Strategies

Two primary approaches exist for handling batch effects: data correction and statistical modeling.

Table: Comparison of Batch Effect Correction Methods

| Method | Underlying Approach | Best For | Considerations |
|---|---|---|---|
| ComBat-seq [1] | Empirical Bayes framework | RNA-seq count data; smaller sample sizes | Uses Bayesian shrinkage to adjust for batch effects |
| limma's removeBatchEffect [1] | Linear model adjustment | Normalized expression data; limma-voom workflows | Well-integrated with established differential expression pipelines |
| Harmony [4] | Iterative clustering in PCA space | Single-cell data; large datasets | Fast runtime; effective for complex cell populations |
| Including Batch as a Covariate [1] | Statistical modeling | Designed experiments; differential analysis | Adjusts for batch during statistical testing without transforming data |
| Mixed Linear Models (MLM) [1] | Fixed and random effects | Complex designs; hierarchical batch effects | Powerful for nested or crossed random effects |

Implementation Notes

- Choice of Method: The optimal method depends on data type (counts vs. normalized), experimental design, and the nature of the batch effects [1].

- Visual Validation: After applying a correction method, regenerate PCA plots. Successful correction should show reduced clustering by batch and improved grouping by biological condition [1].

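- Avoid Over-correction: Aggressive correction can remove true biological signal along with technical variation. Some methods, such as LIGER, explicitly assume that some differences between datasets may be biological; whichever method is used, confirm that known biological differences survive correction [4].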

Frequently Asked Questions

Q1: My replicates from different batches show poor agreement. Is this always a batch effect?

Not necessarily. First, verify data quality. For chromatin assays like CUT&Tag or ChIP-seq, check for low read counts, uneven signal distribution, or antibody efficiency issues. If these are ruled out and the clustering is by batch, it is likely a batch effect [3].

Q2: Can I correct for batch effects if I forgot to record batch information during the experiment?

Yes, but it is challenging. Surrogate Variable Analysis (SVA) can estimate unmodeled batch effects. However, proactively recording all experimental metadata is always the best practice [1].

Q3: How many replicates do I need to reliably detect and correct for batch effects in histone PTM analysis?

For mass spectrometry-based histone PTM analysis, evidence suggests at least n=4 per condition is necessary to measure changes of 20% or greater, assuming α=0.05 and power=0.80. Sufficient replicates are crucial for statistical power to distinguish batch effects from biological variation [2].
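
To see how a figure like n=4 can arise from α=0.05, power=0.80, and a 20% change, the sketch below applies the standard normal-approximation sample-size formula for comparing two group means. The 10% coefficient of variation is an assumption chosen for illustration and is not taken from [2]; the function name `replicates_per_group` is likewise hypothetical.

```python
from math import ceil
from statistics import NormalDist

def replicates_per_group(effect, cv, alpha=0.05, power=0.80):
    """Normal-approximation sample size for a two-group comparison of means.

    effect and cv are both fractions of the mean
    (e.g. 0.20 for a 20% change, 0.10 for a 10% coefficient of variation).
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_power = NormalDist().inv_cdf(power)
    return ceil(2 * (z_alpha + z_power) ** 2 * (cv / effect) ** 2)

# Assumed 10% CV; replace with the variability measured in your own assay.
n_needed = replicates_per_group(effect=0.20, cv=0.10)
print(n_needed)
```

Under the assumed 10% CV the approximation gives 4 replicates per group; doubling the CV to 20% raises it to 16. The normal approximation tends to be optimistic at very small n, so a t-based calculation would be the more careful choice for final planning.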

Q4: I am profiling multiple histone modifications. Should I correct each mark separately?

Generally, yes. Different histone marks have unique distributions and signal-to-noise characteristics. A correction model should be tailored to the specific properties of each mark. For example, broad marks like H3K27me3 require different handling than sharp promoter marks like H3K4me3 [3].

Q5: After batch correction, my negative controls look strange. What could be wrong?

Over-correction might be occurring. Some methods can be aggressive, especially with small sample sizes. Check if the correction preserves known biological patterns. Using a method like ComBat-seq, which borrows information across genes, can be more robust for smaller studies [1] [5].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Reagents for Histone Modification Profiling and Batch Effect Mitigation

| Reagent / Material | Critical Function | Considerations for Batch Effects |
|---|---|---|
| Specific Histone Antibodies [3] [2] | Immunoenrichment of target modifications (e.g., H3K27me3, H3K4me3) | Major Source: Varying specificity/affinity between lots. Solution: Use the same validated lot for an entire study. |
| Protein A-Tn5 Conjugates [6] [7] | Targeted tagmentation in methods like CUT&Tag and Paired-Tag | Pre-assembled complexes can vary. Aliquot and use consistent batches. |
| Sequencing Kits & Reagents [1] | Library preparation and sequencing | Different reagent lots or kits can introduce systematic variation. |
| Internal Standard Peptides (for MS) [2] | Normalization in mass spectrometry | Enables accurate quantification and helps control for run-to-run technical variation. |
| Cell Line Controls [2] | Quality control and process monitoring | Include control samples in every batch to track technical variability. |
| Barcoded Adapters & Primers [6] [7] | Sample multiplexing and library indexing | Allows pooling of samples from different conditions early to minimize batch effects. |

Proactive Planning: Best Practices for Prevention

Preventing batch effects is more effective than correcting them.

- Randomize and Balance: Distribute biological conditions and sample types evenly across sequencing runs, reagent lots, and processing days [1] [2].

- Record Comprehensive Metadata: Meticulously document every step, including reagent lot numbers, instrument IDs, personnel, and processing dates [1].

- Utilize Technical Replicates: Process the same control sample across all batches to quantify technical noise [2].

- Plan for Multiplexing: Use barcoding to process samples from different experimental groups together, thereby reducing lane-to-lane or run-to-run variation [6] [7].

- Validate Antibodies: Ensure antibody specificity for your application, as cross-reactivity can create artifacts mistaken for biology [3] [2].
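
The "Randomize and Balance" practice above can be sketched as a stratified assignment: shuffle samples within each condition, then deal them across batches so that each batch receives a similar mix. This is a minimal illustration; the sample names, the number of batches, and the helper `assign_batches` are all hypothetical.

```python
import random
from collections import defaultdict

def assign_batches(samples, n_batches, seed=0):
    """Stratified randomization: shuffle within each condition, then deal
    samples round-robin so every batch receives a similar mix of conditions.

    samples: list of (sample_id, condition) tuples.
    Returns {batch_index: [(sample_id, condition), ...]}.
    """
    rng = random.Random(seed)  # fixed seed so the layout can be reproduced
    by_condition = defaultdict(list)
    for sample in samples:
        by_condition[sample[1]].append(sample)

    batches = defaultdict(list)
    slot = 0
    for condition in sorted(by_condition):
        group = by_condition[condition]
        rng.shuffle(group)
        for sample in group:
            batches[slot % n_batches].append(sample)
            slot += 1
    return dict(batches)

samples = [(f"ctl_{i}", "control") for i in range(6)] + \
          [(f"trt_{i}", "treated") for i in range(6)]
layout = assign_batches(samples, n_batches=3)
for batch_id, members in sorted(layout.items()):
    print(batch_id, [sid for sid, _ in members])
```

With six controls and six treated samples over three batches, each batch ends up with two of each. Recording the seed alongside the other metadata makes the layout auditable later.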

Technical Support Center: Batch Effect Correction in Histone Modification Studies

Core Concepts and FAQs

FAQ 1: What exactly is a batch effect in the context of histone modification studies?

A batch effect is a technical source of variation that occurs when samples processed in different groups (or "batches") show systematic non-biological differences. In histone modification research, this can manifest as apparent differences in ChIP-seq read counts or enrichment profiles that are not due to the actual epigenetic state but rather to technical factors [8] [9]. These effects are a major threat to data integrity as they can be misinterpreted as genuine biological signals, leading to false conclusions.

FAQ 2: What are the common causes of batch effects?

Batch effects can originate from multiple sources throughout the experimental workflow [1] [8]:

- Different sequencing runs or instruments

- Variations in reagent lots or antibody batches (critical for ChIP-seq)

- Changes in sample preparation protocols or personnel

- Environmental conditions (e.g., temperature, humidity) in the lab

- Time-related factors, especially in long-term studies spanning months or years

FAQ 3: How do batch effects specifically impact the analysis of broad histone marks like H3K27me3?

Histone modifications with broad genomic footprints, such as the repressive mark H3K27me3, present a particular analytical challenge [10]. Their diffuse patterns, which can span thousands of base pairs, often yield low signal-to-noise ratios in ChIP-seq data. Batch effects can obscure true differential enrichment regions or create artificial ones. Specialized tools like histoneHMM, a bivariate Hidden Markov Model, are often required for accurate differential analysis, as standard peak-calling methods designed for sharp marks can produce high false positive or negative rates [10].

Detection and Diagnosis

How can you determine if your data suffer from batch effects? The table below summarizes common diagnostic approaches.

| Method | Description | What to Look For |
|---|---|---|
| Principal Component Analysis (PCA) [1] [8] | An unsupervised technique that reduces data dimensionality to its main axes of variation. | Samples clustering strongly by batch (e.g., processing date) rather than by biological condition in a 2D plot of the first few principal components. |
| t-SNE/UMAP Examination [8] | Non-linear dimensionality reduction methods used for visualizing high-dimensional data. | Cells or samples from the same biological group forming separate clusters based on their batch of origin. |
| Quantitative Metrics [8] | Scores like kBET (k-nearest neighbor batch effect test) or ARI (Adjusted Rand Index). | Metrics that indicate poor mixing of batches. Interpretation depends on the metric and on the labels it is computed against: an ARI between clusters and biological labels should approach 1 after integration, whereas an ARI between clusters and batch labels should approach 0. |
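
To make the ARI row concrete, the sketch below computes the Adjusted Rand Index from its contingency-table definition and compares a clustering against both biological and batch labels. The cluster assignments and labels are hypothetical, and this hand-rolled function is only an illustration; established libraries provide tested implementations.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_a, labels_b):
    """Adjusted Rand Index between two labelings of the same samples."""
    n = len(labels_a)
    pairs = Counter(zip(labels_a, labels_b))
    sum_cells = sum(comb(c, 2) for c in pairs.values())
    sum_a = sum(comb(c, 2) for c in Counter(labels_a).values())
    sum_b = sum(comb(c, 2) for c in Counter(labels_b).values())
    expected = sum_a * sum_b / comb(n, 2)
    max_index = (sum_a + sum_b) / 2
    if max_index == expected:  # degenerate case, e.g. a single cluster
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

# Hypothetical clustering of eight samples after integration.
clusters  = [0, 0, 1, 1, 0, 0, 1, 1]
cell_type = ["A", "A", "B", "B", "A", "A", "B", "B"]
batch     = ["b1", "b1", "b1", "b1", "b2", "b2", "b2", "b2"]

ari_type = adjusted_rand_index(clusters, cell_type)
ari_batch = adjusted_rand_index(clusters, batch)
print(f"ARI vs cell type: {ari_type:.2f}, ARI vs batch: {ari_batch:.2f}")
```

Here the clusters reproduce the biological labels exactly while carrying essentially no information about batch, which is the pattern a successful integration should approach.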

Correction Protocols and Methodologies

This section provides detailed methodologies for correcting batch effects, a critical step before downstream differential analysis.

Protocol 1: Batch Correction using ComBat-seq (for RNA-seq count data)

ComBat-seq uses an empirical Bayes framework to adjust for batch effects in raw count data, making it suitable for RNA-seq and similar datasets [1].

- Set up the R environment: install and load the sva package, which provides ComBat-seq.

- Prepare your data. You need a raw count matrix (exprmx) and a metadata table (meta) that includes a batch column and a treatment (biological condition) column [1].

- Filter lowly expressed genes to reduce noise.

- Run ComBat-seq on the filtered count matrix, supplying the batch assignments from meta.

- Validate the correction by performing PCA on the corrected data and visualizing to confirm reduced batch clustering [1].
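
The filtering step in this protocol can be illustrated independently of R. The sketch below keeps a feature only if it reaches a minimum count in a minimum number of samples. The thresholds (10 reads in at least 2 samples), the region names, and the helper `filter_low_counts` are assumptions for illustration rather than values prescribed by [1].

```python
def filter_low_counts(counts, min_count=10, min_samples=2):
    """Keep features with at least min_count reads in at least min_samples samples.

    counts: {feature_id: [count_sample1, count_sample2, ...]}
    """
    return {
        feature: row
        for feature, row in counts.items()
        if sum(c >= min_count for c in row) >= min_samples
    }

# Hypothetical raw counts for three regions across four samples.
raw_counts = {
    "region_1": [120, 98, 143, 110],
    "region_2": [0, 3, 1, 0],
    "region_3": [15, 2, 40, 8],
}
kept = filter_low_counts(raw_counts)
print(sorted(kept))
```

A reasonable choice for the sample threshold is the size of the smallest experimental group, so that a feature present in only one condition is not discarded.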

Protocol 2: Integration of Batch in Statistical Models (Recommended for Differential Expression)

A statistically sound alternative to pre-correcting data is to include batch as a covariate in your linear model during differential analysis. This is the preferred method in many frameworks [11].

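In DESeq2: add batch to the design formula ahead of the condition term (for example, design = ~ batch + treatment), so that the treatment effect is estimated after accounting for batch.

In limma: build the design matrix from the same two terms (for example, model.matrix(~ batch + treatment)) and pass it to the model fit.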
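
Independently of either package, the effect of adding batch as a covariate can be shown with ordinary least squares on a toy example. The sketch below is plain Python, not DESeq2 or limma code. It fits one feature with and without a batch term in a deliberately unbalanced design, and every value in it (baseline 5, batch shift 2, treatment effect 1, no noise) is hypothetical.

```python
def solve(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def fit_ols(design, y):
    """Least-squares coefficients from the normal equations (X'X) beta = X'y."""
    k = len(design[0])
    xtx = [[sum(row[i] * row[j] for row in design) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * v for row, v in zip(design, y)) for i in range(k)]
    return solve(xtx, xty)

# Unbalanced toy design: three of four treated samples sit in batch 1.
batch     = [0, 0, 0, 1, 1, 1, 1, 0]
treatment = [0, 0, 1, 1, 1, 1, 0, 0]
y = [5 + 2 * b + 1 * t for b, t in zip(batch, treatment)]

naive = fit_ols([[1, t] for t in treatment], y)                    # intercept, treatment
adjusted = fit_ols([[1, b, t] for b, t in zip(batch, treatment)], y)  # + batch term
print("treatment effect ignoring batch:", round(naive[1], 3))
print("treatment effect with batch covariate:", round(adjusted[2], 3))
```

Ignoring batch doubles the apparent treatment effect in this example, while the model with a batch term recovers the simulated value. If batch and treatment were completely confounded, the design matrix would be rank-deficient and the system could not be solved, which mirrors why confounded designs cannot be rescued by modeling alone.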

Protocol 3: Specialized Differential Analysis for Broad Histone Marks with histoneHMM

For differential analysis of broad histone marks like H3K27me3 or H3K9me3 between two samples (e.g., case vs. control), histoneHMM provides a robust workflow [10].

- Data Preprocessing: Process ChIP-seq reads for each sample and its input control through a standard pipeline (alignment, duplicate removal).

- Binning the Genome: Divide the reference genome into consecutive 1000 bp windows [10].

- Read Counting: Count the number of reads mapping into each window for both the ChIP and input control samples.

- Run histoneHMM: Apply the model to the binned read counts from the two samples being compared.

- Interpret Output: The algorithm outputs probabilistic classifications for each genomic region: modified in both samples, unmodified in both, or differentially modified [10].
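
The binning and read-counting steps above can be sketched in a few lines. This is not histoneHMM code; it only illustrates how aligned read positions on one chromosome are turned into per-window counts of the kind such a tool takes as input. The read positions, chromosome length, and helper name `bin_counts` are hypothetical.

```python
from collections import Counter

BIN_SIZE = 1000  # window width in bp, as in the protocol above

def bin_counts(read_starts, chrom_length, bin_size=BIN_SIZE):
    """Count reads per consecutive fixed-width window on one chromosome.

    read_starts: 0-based start positions of aligned reads.
    Returns a list with one count per window.
    """
    n_bins = -(-chrom_length // bin_size)  # ceiling division
    hits = Counter(pos // bin_size for pos in read_starts if 0 <= pos < chrom_length)
    return [hits.get(i, 0) for i in range(n_bins)]

# Hypothetical read start positions on a 3,500 bp toy chromosome.
chip_reads  = [12, 450, 999, 1000, 1500, 2750, 2999, 3100]
input_reads = [100, 1200, 2500, 3050]

chip_bins = bin_counts(chip_reads, chrom_length=3500)
input_bins = bin_counts(input_reads, chrom_length=3500)
print(chip_bins, input_bins)
```

In a real pipeline the positions would come from the deduplicated alignments, and the same windows must be used for every sample so that counts are comparable.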

Troubleshooting Common Issues

Issue 1: Overcorrection and Loss of Biological Signal

- Symptoms:

  - Cluster-specific markers include ubiquitous genes (e.g., ribosomal genes) [8].

  - Significant overlap between markers for different cell types or conditions [8].

  - Absence of expected canonical markers for a known biological group [8].

  - Few or no significant hits in differential expression analysis for pathways known to be active [8].

- Solutions:

  - Avoid using the biological variable of interest (e.g., case/control status) as a covariate in batch correction algorithms like ComBat, especially with unbalanced designs, as this can lead to overfitting and artificial inflation of group differences [11].

  - Prefer the approach of including batch as a covariate in the final statistical model for differential testing (see Protocol 2) [11].

  - Always run negative controls, such as permuting batch labels, to test if the correction method creates artificial separation even when none should exist [11].

Issue 2: Handling Incrementally Added Data

- Problem: In longitudinal studies, new batches are continuously added. Traditional methods require re-processing all data simultaneously, which can alter previously corrected data and disrupt longitudinal consistency [12].

- Solution: Use an incremental batch correction framework like iComBat, a modification of ComBat designed for DNA methylation data. It allows new batches to be adjusted to a pre-existing reference without the need to re-correct the entire dataset from scratch, ensuring stable and consistent results over time [12].
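
The general idea of adjusting a new batch to a frozen reference can be shown with a simple location-scale rescaling. This is a deliberately simplified sketch and not the iComBat algorithm, which adds empirical Bayes estimation [12]. The values and the helper names `fit_reference` and `adjust_to_reference` are hypothetical.

```python
from statistics import mean, pstdev

def fit_reference(values):
    """Freeze location and scale estimated from already-corrected data."""
    return {"mean": mean(values), "sd": pstdev(values)}

def adjust_to_reference(new_values, reference):
    """Rescale one new batch onto the frozen reference; earlier data is untouched."""
    m, s = mean(new_values), pstdev(new_values)
    if s == 0:
        return [reference["mean"]] * len(new_values)
    return [reference["mean"] + reference["sd"] * (v - m) / s for v in new_values]

# Hypothetical values for one feature.
reference = fit_reference([0.40, 0.50, 0.60])   # estimated once, then stored
new_batch = [0.70, 0.80, 0.90]                  # arrives later with a shift
adjusted_batch = adjust_to_reference(new_batch, reference)
print([round(v, 3) for v in adjusted_batch])
```

Because the reference parameters are stored rather than re-estimated, previously released values never change when a batch is added. The sketch assumes the new batch has a biological composition similar to the reference; if it does not, this rescaling would remove real differences.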

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Material | Critical Function |
|---|---|
| High-Quality Histone Modification Specific Antibodies | The specificity of the antibody used for chromatin immunoprecipitation (ChIP) is paramount. Different lots or sources can have varying affinities, directly introducing batch effects. Using antibodies from the same validated lot for a full study is crucial [9]. |
| Universal Reference Materials | In proteomics and other MS-based studies, a universal reference sample (like those from the Quartet Project) profiled across all batches enables ratio-based scaling methods, which are highly effective for cross-batch integration [13]. |
| Standardized Reagent Lots | Using the same lots of all key reagents (e.g., enzymes, buffers, kits) across all batches minimizes a major source of technical variation [1] [9]. |
| Quartet Protein Reference Materials | Specifically for proteomics, these reference materials provide a ground truth for benchmarking and correcting batch effects across multiple labs and instrumentation platforms [13]. |

Batch effects are technical sources of variation introduced during high-throughput experiments due to differences in experimental conditions, reagents, personnel, or instrumentation over time [14]. In epigenomic studies, particularly those investigating histone modifications, these non-biological variations can confound data analysis, dilute true biological signals, and lead to misleading or irreproducible conclusions [14] [15]. The profound negative impact of batch effects includes increased variability, decreased statistical power, and potentially incorrect conclusions when batch effects correlate with biological outcomes of interest [14]. For example, in a clinical trial setting, a simple change in RNA-extraction solution resulted in incorrect classification outcomes for 162 patients, 28 of whom received incorrect or unnecessary chemotherapy regimens [14]. This technical guide provides troubleshooting resources to identify, mitigate, and correct for batch effects in epigenomic workflows focused on histone modification studies.

FAQs on Batch Effects in Epigenomics

Q1: What are the most common sources of batch effects in histone modification studies? Batch effects in histone modification workflows arise from multiple sources throughout the experimental pipeline. The most prevalent include reagent lot variations (especially antibodies for chromatin immunoprecipitation), platform differences (e.g., between Illumina Infinium I and II designs), processing time variations, and operator effects [16] [17]. For histone-specific workflows, antibody lot consistency is particularly critical as different lots may have varying affinities for specific histone post-translational modifications such as H3K27ac or H3K4me3 [18]. Other significant factors include sample storage conditions (temperature, duration, freeze-thaw cycles), DNA bisulfite conversion efficiency for methylation analyses, and scanner variability in array-based platforms [14] [16].

Q2: How can I determine if my dataset has significant batch effects? Multiple visualization and statistical approaches can detect batch effects. Principal component analysis (PCA) is commonly used to visualize whether samples cluster by batch rather than biological group [15]. For single-cell epigenomic data, the k-nearest neighbor batch effect test (kBET) provides a quantitative measure of how well batches are mixed at the local level [15]. Additionally, monitoring the coefficient of variation (CV) across technical replicates processed in different batches can reveal batch-specific technical variances [19]. In Illumina Methylation BeadChip data, examining the distribution of M values before and after batch processing can identify residual technical variance [16].

Q3: My biological groups are completely confounded with batch (e.g., all controls in batch 1, all treatments in batch 2). Can I still correct for batch effects? Complete confounding between biological groups and batches presents the most challenging scenario for batch effect correction [20]. In such cases, most standard correction algorithms may remove biological signal along with technical variation [20]. The most effective approach in confounded designs incorporates reference materials processed concurrently with study samples in each batch [20]. By scaling absolute feature values of study samples relative to those of concurrently profiled reference material(s), the ratio-based method (Ratio-G) can effectively correct batch effects even when biological and technical variables are completely confounded [20]. Without reference materials, correction in completely confounded scenarios should be approached with extreme caution and validation through independent experiments is recommended.

Q4: Are batch effects more problematic in single-cell epigenomics compared to bulk analyses? Yes, single-cell technologies (e.g., scRNA-seq, scATAC-seq) present additional challenges for batch effect management [14] [15]. Single-cell data suffers from higher technical variations due to lower input material, higher dropout rates, increased cell-to-cell heterogeneity, and a higher proportion of zero counts [14] [21]. The automated C1 microfluidic platform (Fluidigm) demonstrates that technical variability across batches remains substantial even with unique molecular identifiers (UMIs) and spike-in controls [21]. Batch effects and the selection of correction algorithms have been shown to be predominant factors in large-scale and/or multi-batch single-cell data [14].

Q5: What quality control measures can minimize batch effects during experimental design? Proactive experimental design is the most effective strategy for batch effect management. Key considerations include: (1) processing cases and controls simultaneously in randomized order, (2) including technical replicates across batches, (3) using multi-channel pipettes or automated liquid handlers to reduce operator-induced variation, (4) incorporating reference materials in each batch, and (5) documenting reagent lot numbers for potential covariate adjustment [16] [17]. For single-cell automation systems, select platforms that enable parallel processing of various experimental groups within the same run [17]. Additionally, integrated imaging helps identify true single-cell samples to exclude doublets or empty wells that can introduce technical artifacts [17].
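
The coefficient-of-variation check mentioned in Q2 can be sketched as follows: compute the CV of technical replicates within each batch, then the CV after pooling replicates across batches. A pooled CV much larger than the within-batch CVs points to batch-level shifts. The replicate values are hypothetical.

```python
from statistics import mean, stdev

def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean."""
    return stdev(values) / mean(values)

# Hypothetical signal for one control sample measured as technical replicates.
replicates = {
    "batch_1": [100.0, 104.0, 98.0],
    "batch_2": [131.0, 127.0, 135.0],
}

within = {b: coefficient_of_variation(v) for b, v in replicates.items()}
pooled = coefficient_of_variation([x for v in replicates.values() for x in v])

for b, cv in within.items():
    print(f"{b}: CV = {cv:.3f}")
print(f"pooled across batches: CV = {pooled:.3f}")
```

In this toy case each batch is internally tight while the pooled CV is several times larger, so most of the spread comes from the difference between batches rather than from replicate noise.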

Troubleshooting Guides

Table 1: Common Sources of Batch Variation in Epigenomic Workflows

| Source Category | Specific Examples | Affected Epigenomic Methods | Detection Methods |
|---|---|---|---|
| Reagent Variations | Antibody lot differences, enzyme batch effects (bisulfite conversion), buffer composition | ChIP-seq, CUT&Tag, DNA methylation arrays | Correlation analysis of controls, spike-in controls [21] |
| Platform Effects | Scanner differences, probe design (Infinium I vs II), array position effects | Methylation BeadChips, microarray-based methods | PCA, probe-specific error analysis [16] |
| Processing Time | Bisulfite conversion duration, immunoprecipitation time, hybridization time | All methods, particularly time-sensitive enzymatic steps | Time-series analysis, examination of temporal patterns [14] |
| Sample Storage | Freeze-thaw cycles, storage temperature prior to processing, storage duration | All epigenomic methods, particularly histone modification analyses | Sample integrity metrics, correlation with storage logs [14] |
| Operator Effects | Pipetting technique, protocol deviations, sample handling | All manual protocols, particularly complex multi-step workflows | Intra- vs inter-operator variance analysis [17] |

Mitigation Protocol: Reference Material-Based Batch Correction

The ratio-based method using reference materials is particularly effective for confounded batch-group scenarios [20].

Materials Needed:

- Well-characterized reference material (e.g., commercial standards or internal controls)

- Study samples for processing

- Identical processing reagents across batches

- Standardized protocols

Procedure:

- Experimental Design: Include reference materials in each processing batch alongside study samples. For histone modification studies, use a standardized chromatin source with well-characterized modification patterns.

- Concurrent Processing: Process reference materials and study samples simultaneously using identical reagents and conditions.

- Data Generation: Generate omics data (e.g., sequencing counts, methylation β-values) for both reference and study samples.

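- Ratio Calculation: For each feature (e.g., gene, peak, CpG site), calculate the ratio of the study-sample value to the value of the reference material profiled in the same batch: $\text{Ratio} = \text{Feature value}_{\text{study sample}} / \text{Feature value}_{\text{reference material}}$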

- Data Integration: Use ratio-scaled values for downstream analyses and cross-batch integration.

Validation:

- Assess separation of biological groups in PCA plots pre- and post-correction

- Evaluate consistency of reference material profiles across batches

- Monitor signal-to-noise ratio improvements
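
The ratio calculation in the procedure above can be sketched as a per-batch division of study-sample values by the reference profile from the same batch. The example assumes a purely multiplicative batch effect, which is the case where ratios cancel it exactly. The peak names, values, and the helper `ratio_scale` are hypothetical.

```python
def ratio_scale(study, reference):
    """Divide each study-sample feature by the same feature in the reference
    material profiled in the same batch.

    study: {sample_id: {feature: value}}; reference: {feature: value}.
    Features that are missing or zero in the reference are skipped.
    """
    return {
        sample: {f: v / reference[f] for f, v in features.items() if reference.get(f)}
        for sample, features in study.items()
    }

# Batch 2 carries a hypothetical 1.5x technical inflation on every feature.
batch1_ref   = {"peak_a": 100.0, "peak_b": 50.0}
batch1_study = {"s1": {"peak_a": 200.0, "peak_b": 25.0}}
batch2_ref   = {"peak_a": 150.0, "peak_b": 75.0}
batch2_study = {"s2": {"peak_a": 300.0, "peak_b": 37.5}}

scaled = {**ratio_scale(batch1_study, batch1_ref),
          **ratio_scale(batch2_study, batch2_ref)}
print(scaled)
```

After scaling, the two samples have identical ratio profiles even though their raw values differ by the batch factor. Because each batch is scaled only against its own reference, the approach does not need the biological groups to be balanced across batches.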

Correction Algorithm Selection Guide

Table 2: Batch Effect Correction Algorithms for Epigenomic Data

| Algorithm | Best Suited Data Types | Strengths | Limitations | Software Implementation |
|---|---|---|---|---|
| Ratio-based (Ratio-G) | Multi-omics data, confounded designs | Effective in confounded scenarios, simple implementation | Requires reference materials | Custom implementation [20] |
| ComBat | Microarray, bulk sequencing data | Handles balanced designs, empirical Bayes framework | Struggles with confounded designs, may over-correct | sva R package [15] [16] |
| Harmony | Single-cell data, multi-omics integration | Integrates across modalities, preserves biological variance | Requires cell type alignment, computational intensity | Harmony R package [20] |
| BMC (Per Batch Mean-Centering) | Balanced designs, preliminary correction | Simple, fast implementation | Ineffective for confounded designs | Custom implementation [20] |
| RUVm | Methylation array data | Handles probe-type differences, designed for methylation data | May require control probes | missMethyl R package [16] |
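
The BMC row can be illustrated with a short sketch that also shows why the table lists it as ineffective for confounded designs. Per-batch mean-centering subtracts each batch's mean from its samples, one feature at a time. The values below are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def batch_mean_center(values, batches):
    """Subtract each batch's mean from its samples (one feature at a time)."""
    by_batch = defaultdict(list)
    for v, b in zip(values, batches):
        by_batch[b].append(v)
    centers = {b: mean(vs) for b, vs in by_batch.items()}
    return [v - centers[b] for v, b in zip(values, batches)]

# Balanced design: each batch holds one control (first) and one treated sample.
balanced = batch_mean_center([5, 6, 8, 9], ["b1", "b1", "b2", "b2"])

# Confounded design: batch 1 holds only controls, batch 2 only treated samples.
confounded = batch_mean_center([5, 5, 9, 9], ["b1", "b1", "b2", "b2"])

print("balanced:", balanced)
print("confounded:", confounded)
```

In the balanced case the batch shift disappears and the treated-minus-control difference of 1 is preserved. In the confounded case every value collapses to zero, so the biological difference is removed together with the batch effect.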

Experimental Protocols for Batch Effect Assessment

Protocol: Systematic Assessment of Antibody Lot Variation in ChIP-seq

Purpose: To evaluate batch effects introduced by different antibody lots in histone modification ChIP-seq experiments.

Materials:

- Identical chromatin samples

- Multiple lots of antibody targeting specific histone mark (e.g., H3K27me3)

- ChIP-seq kit reagents

- Library preparation materials

Procedure:

- Sample Allocation: Divide identical chromatin aliquots across different antibody lots, ensuring other reagents remain constant.

- Parallel Processing: Perform ChIP-seq protocol simultaneously for all antibody lots using standardized conditions.

- Library Preparation: Prepare sequencing libraries with unique barcodes for each lot.

- Sequencing: Pool libraries and sequence simultaneously on same flow cell.

- Data Analysis:

  - Map reads and call peaks for each antibody lot

  - Calculate correlation coefficients between peak profiles across lots

  - Identify lot-specific peaks and consensus peaks

  - Assess variance attributable to antibody lot versus biological signal

Interpretation: High correlation (>0.9) between lots indicates minimal batch effects. Lot-specific peaks suggest antibody-specific biases requiring correction.
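
The data analysis steps of this protocol can be sketched with a Pearson correlation between lot signal profiles and simple set operations on peak identifiers. Matching peaks by identical identifiers is a simplification, since real peak sets are usually compared by genomic interval overlap. The signal values, peak names, and helper names are hypothetical.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)

def compare_lots(peaks_a, peaks_b):
    """Split peak identifiers into consensus and lot-specific sets."""
    return {
        "consensus": peaks_a & peaks_b,
        "lot_a_only": peaks_a - peaks_b,
        "lot_b_only": peaks_b - peaks_a,
    }

# Hypothetical signal in five shared windows for two antibody lots.
lot_a_signal = [10, 20, 30, 40, 50]
lot_b_signal = [12, 19, 33, 41, 48]
r = pearson(lot_a_signal, lot_b_signal)

# Hypothetical peak calls, identified by window name.
overlap = compare_lots({"w1", "w2", "w3", "w4"}, {"w2", "w3", "w4", "w7"})

print(f"correlation between lots: {r:.3f}")
print(overlap)
```

In this toy example the correlation is above the 0.9 threshold named in the interpretation, while one peak per lot is lot-specific and would merit a closer look.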

Protocol: Longitudinal Processing Time Assessment

Purpose: To evaluate effects of processing time variations on epigenomic data quality.

Materials:

- Sample replicates

- Standardized reagents

- Timing documentation system

Procedure:

- Staggered Processing: Intentionally process identical sample replicates at different time points (e.g., different days, different positions in workflow).

- Metadata Collection: Meticulously document processing times for each step.

- Data Generation: Process all samples using otherwise identical conditions.

- Time-effect Analysis:

  - Correlate processing times with data quality metrics

  - Identify time-sensitive steps in workflow

  - Quantify variance explained by processing time

Interpretation: Significant correlations between processing time and data metrics indicate time-sensitive steps requiring stricter standardization.
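
The time-effect analysis can be sketched with a rank correlation between processing time and a quality metric. Spearman correlation is used here because it only assumes a monotonic relationship. The incubation times and the fraction-of-reads-in-peaks values are hypothetical, as is the choice of that metric.

```python
from math import sqrt

def ranks(values):
    """Average ranks (1-based); tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result

def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    rx, ry = ranks(x), ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / sqrt(var_x * var_y)

# Hypothetical data: minutes at one step vs. fraction of reads in peaks.
minutes = [30, 45, 60, 90, 120, 150]
frip    = [0.31, 0.30, 0.27, 0.24, 0.20, 0.18]

rho = spearman(minutes, frip)
print(f"Spearman rho = {rho:.2f}")
```

A strongly negative rho, as in this toy series, would flag the step as time-sensitive. With only a handful of replicates the estimate is noisy, so it is better treated as a screening signal than as a formal test.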

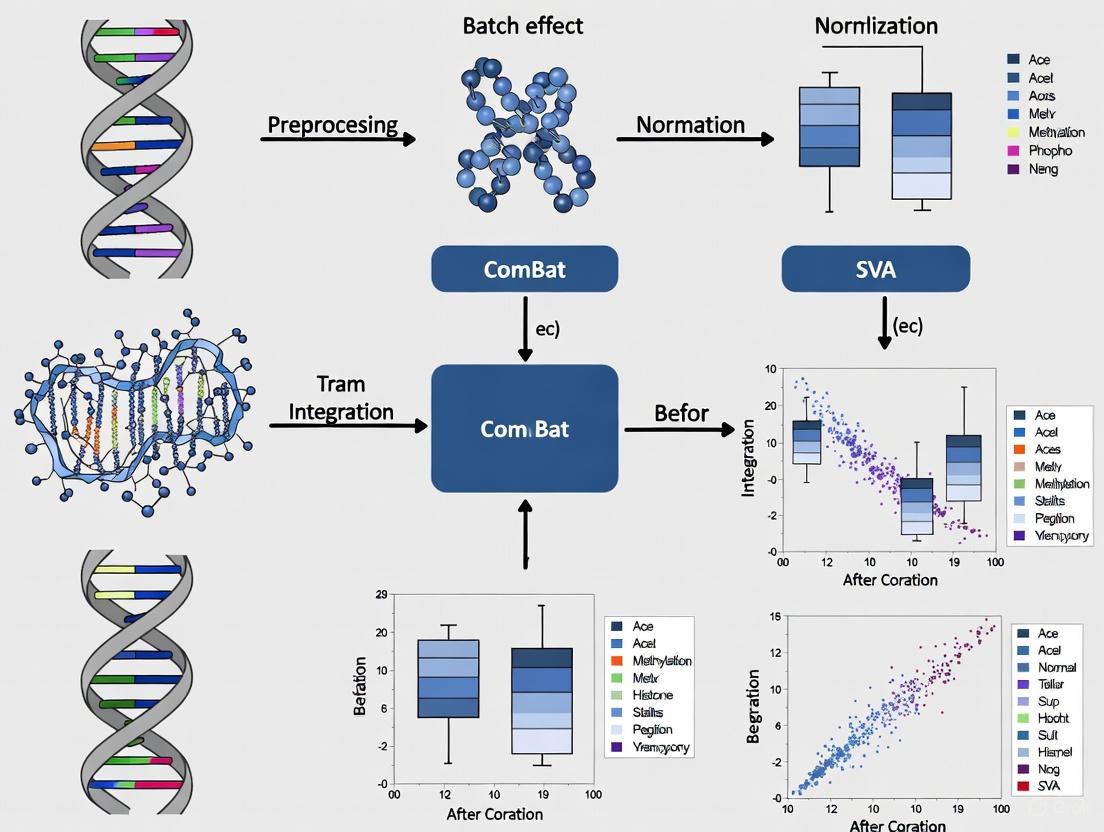

Visualization of Batch Effect Management

Batch Effect Management Workflow

Research Reagent Solutions

Table 3: Essential Materials for Batch Effect Management in Epigenomics

| Reagent/Material | Function | Implementation Examples | Considerations |
|---|---|---|---|
| Reference Materials | Normalization standards for cross-batch comparison | Quartet multiomics reference materials, commercial chromatin standards, in-house reference samples | Should be well-characterized, stable, and biologically relevant [20] |
| UMIs (Unique Molecular Identifiers) | Correct for amplification bias in sequencing | Incorporate in library preparation for scRNA-seq, ChIP-seq | Reduces technical variability but doesn't eliminate all batch effects [21] |
| Spike-in Controls | External standards for normalization | ERCC RNA spike-ins, foreign chromatin spikes | May not experience all processing steps as endogenous samples [21] |
| Control Cell Lines | Biological reference standards | Well-characterized cell lines (e.g., K562, HEK293) processed in each batch | Provides biological context for technical variation assessment [20] |
| Standardized Antibody Lots | Reduce immunoprecipitation variability | Large-volume purchases of validated antibody lots | Critical for histone modification studies; test lot-to-lot consistency [18] |

This case study examines a critical challenge in epigenomics research: the introduction of false positive findings during the statistical correction of batch effects in high-throughput data. We detail a pilot study using the Illumina Infinium HumanMethylation450 (450k) BeadChip that serves as a cautionary tale for researchers working with DNA methylation microarrays and, by extension, other genomic data types like histone modification analyses [22].

The core issue arose when researchers, following a standard analysis pipeline, applied the empirical Bayes tool ComBat to correct for technical batch effects. In the initial, unbalanced study design, this correction dramatically and erroneously inflated the number of significant differentially methylated positions (DMPs), creating thousands of false discoveries [22]. This case underscores a fundamental principle: statistical correction is not a substitute for sound experimental design. The lessons learned are directly transferable to histone modification research (e.g., ChIP-seq, CUT&Tag), where batch effects from different processing days, reagent lots, or sequencing runs can similarly confound results if not properly managed in the experimental plan [23] [18].

Background: The Batch Effect Problem in Epigenomics

Batch effects are systematic technical variations that are not related to the biological variables under investigation. In microarray and next-generation sequencing workflows, these can be introduced by:

- Processing Time: Samples processed on different days or weeks.

- Reagent Batches: Use of different lots of chemicals or kits.

- Personnel: Different technicians handling samples.

- Array Position: Physical location of a sample on a chip or slide [22] [16].

In the featured 450k array pilot study, the two primary sources of batch effect were identified as "row" and "chip" [22]. When these technical factors are unevenly distributed across biological groups (e.g., all cases processed on one chip and all controls on another), they become confounded. This confounding makes it impossible to distinguish whether observed data variation stems from the biology of interest or from the technical artifact, leading to a high risk of both false positives and false negatives [24] [16].

The Case: Initial Analysis and False Discovery

Experimental Setup and Unbalanced Design

The pilot study aimed to investigate differences in placental DNA methylation (n=30 samples) across three different MTHFR genotype groups. These 30 samples were part of a larger set of 84 samples run across seven 450k chips [22].

- Biological Variable of Interest: MTHFR genotype group (Variant 677, Variant 1298, Reference).

- Technical Batch Variables: Chip (n=7), Row (n=6), and bisulfite conversion batch.

A critical flaw in the "initial analysis" was that the distribution of the 30 pilot samples across the seven chips was unbalanced with respect to the genotype groups. This created a confounded design where the technical variable (chip) was not orthogonal to the biological variable (genotype) [22].

Data Processing and Aberrant Results

The initial analysis followed a standard data processing pipeline: quality control and normalization steps came first, followed by the critical batch correction step.

Principal Component Analysis (PCA) revealed that the top principal components (PC3, PC4, PC6) were significantly associated with the technical row and chip variables, confirming the presence of batch effects [22]. The decision was made to correct for these using ComBat.

The outcome was alarming. After applying ComBat to the unbalanced design, the analysis returned 9,612 to 19,214 significant DMPs (FDR < 0.05), despite no significant differences being present prior to correction. The authors were suspicious of this dramatic and biologically implausible increase in findings [22].

Troubleshooting Guide & FAQ: Resolving the Batch Effect Crisis

This section addresses the specific problems encountered in the case study and provides actionable guidance for researchers.

Frequently Asked Questions

Q1: Our PCA shows a strong batch effect. Why did applying ComBat make our results worse, not better? A1: ComBat and similar methods can introduce false signal when the study design is unbalanced or confounded [24]. This occurs when the technical batch variable (e.g., processing chip) is perfectly or highly correlated with your biological variable of interest (e.g., disease status). The algorithm mistakenly "corrects" the biological signal as if it were technical noise, which can either remove real signal or, as in this case, create artificial signal. This is a classic symptom of a design flaw, not necessarily a tool flaw [22] [24].

Q2: How can we check if our study design is confounded before running the experiment? A2: Before processing samples, create a sample allocation table. Map every sample against its biological group and its planned technical batch (chip, row, processing date). Visually inspect this table to ensure that biological groups are evenly distributed across all technical batches. A simulated version of this table for the initial flawed design would show genotype groups clustered on specific chips rather than spread across them [22].
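The allocation check described in A2 can be sketched in a few lines of Python. This is a minimal illustration, not the case study's own procedure: the sample identifiers, group labels, and chip names are hypothetical, and the helper names `allocation_table` and `confounded_batches` are introduced here only for the example.

```python
from collections import Counter

def allocation_table(samples):
    """Count samples per (batch, group) cell.

    samples: iterable of (sample_id, group, batch) tuples.
    Returns {batch: {group: count}} with zeros filled in.
    """
    counts = Counter((batch, group) for _, group, batch in samples)
    batches = sorted({b for b, _ in counts})
    groups = sorted({g for _, g in counts})
    return {b: {g: counts.get((b, g), 0) for g in groups} for b in batches}

def confounded_batches(table):
    """Return batches that contain samples from only one biological group."""
    return [b for b, row in table.items()
            if sum(1 for n in row.values() if n > 0) == 1]

# Hypothetical allocation plan: chip1 and chip2 each hold a single group.
plan = [
    ("s1", "reference", "chip1"), ("s2", "reference", "chip1"),
    ("s3", "variant677", "chip2"), ("s4", "variant677", "chip2"),
    ("s5", "reference", "chip3"), ("s6", "variant677", "chip3"),
]
table = allocation_table(plan)
for batch, row in table.items():
    print(batch, row)
print("single-group batches:", confounded_batches(table))
```

Any batch reported by `confounded_batches` cannot be separated from the biology it carries, so it is worth reshuffling the plan before samples are processed.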

Q3: We discovered an unbalanced design after data collection. What are our options? A3:

- Revise the Analysis: If possible, incorporate a larger number of samples processed in a balanced manner. In the case study, a "revised processing" with more samples successfully reduced batch effects without introducing false signal [22].

- Include Batch as a Covariate: Instead of using an aggressive correction tool like ComBat, include the batch variable as a simple covariate in your linear model during differential analysis. This is a more conservative approach.

- Assess and Filter Probes: Be aware that some microarray probes are notoriously prone to batch effects. One study identified 4,649 probes that consistently required high levels of correction across multiple datasets. Consider filtering these out if they are not critical to your research question [16].

Troubleshooting Flowchart for Batch Effects

Follow this logical pathway to diagnose and address batch effects in your methylation or histone data.

Revised Best-Practice Protocol

Learning from the initial failure, the researchers established a revised, robust protocol for DNA methylation analysis. This protocol is equally applicable to other epigenomic workflows.

Step-by-Step Analytical Workflow

Thoughtful Experimental Design

- Action: Allocate samples to chips, rows, and processing batches using a stratified randomization to ensure biological groups are balanced across all technical factors [22].

- Rationale: This is the single most important step in preventing false discoveries. Prevention is better than correction.
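One way to implement a stratified randomization is to shuffle samples within each biological group and then deal them round-robin across batches. The sketch below assumes this simple scheme; the function name `stratified_allocation`, the fixed seed, the sample identifiers, and the choice of five batches are all illustrative assumptions rather than details from the case study.

```python
import random
from collections import defaultdict

def stratified_allocation(sample_groups, n_batches, seed=0):
    """Assign samples to batches so each group is spread as evenly as possible.

    sample_groups: dict mapping sample_id -> biological group.
    Returns a dict mapping sample_id -> batch index (0 .. n_batches - 1).
    """
    rng = random.Random(seed)  # fixed seed so the allocation is reproducible
    by_group = defaultdict(list)
    for sample, group in sorted(sample_groups.items()):
        by_group[group].append(sample)

    assignment = {}
    offset = 0  # continue the round-robin across groups to keep batch sizes even
    for group in sorted(by_group):
        members = by_group[group]
        rng.shuffle(members)  # randomize order within the stratum
        for i, sample in enumerate(members):
            assignment[sample] = (offset + i) % n_batches
        offset += len(members)
    return assignment

# Hypothetical study: 30 samples, three genotype groups of 10, five batches.
labels = ["reference", "variant677", "variant1298"]
groups = {f"s{i:02d}": labels[i % 3] for i in range(30)}
assignment = stratified_allocation(groups, n_batches=5)
```

With this scheme the per-batch count of any group differs by at most one between batches, so no batch can end up holding a single group unless the group sizes force it.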

Quality Control (QC) & Normalization

- Action: Perform standard QC on samples and probes. Remove poorly performing samples, probes with high detection p-values, and probes known to be problematic (e.g., cross-hybridizing probes, polymorphic probes, probes on sex chromosomes) [22] [25]. Apply appropriate normalization (e.g., SWAN for 450k data) to correct for technical biases between probe types [22].

Batch Effect Assessment

- Action: Use PCA and unsupervised clustering to visually and statistically inspect the data. Test the top principal components for association with both biological and technical variables [22] [16].

- Rationale: This step diagnoses the presence and severity of batch effects and checks for residual confounding after randomization.

Cautious Batch Effect Correction

- Action: If a balanced design is confirmed, apply a batch correction method like ComBat or Harman.

- Critical Note: Always use M-values for batch correction as they are statistically more valid for linear models. Convert back to Beta-values for interpretation and reporting [16].
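The conversion between the two scales uses the standard logit relationship, M = log2(Beta / (1 - Beta)). The sketch below shows the round trip; the clipping constant `eps`, which keeps Beta-values of exactly 0 or 1 from producing infinite M-values, is an assumption of this example rather than a recommendation from the cited studies.

```python
import math

def beta_to_m(beta, eps=1e-6):
    """Convert a Beta-value (0..1) to an M-value via the log2 logit."""
    beta = min(max(beta, eps), 1 - eps)  # assumed clipping to avoid log of 0
    return math.log2(beta / (1 - beta))

def m_to_beta(m):
    """Convert an M-value back to a Beta-value for reporting."""
    return 1 / (1 + 2 ** -m)

m_value = beta_to_m(0.2)        # run batch correction on this scale
beta_again = m_to_beta(m_value)  # convert back for interpretation
```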

Post-Correction Diagnostic

- Action: Repeat the PCA and clustering from the Batch Effect Assessment step. Confirm that the association between principal components and batch variables has been minimized, while the biological signal remains [16].

The Scientist's Toolkit: Key Research Reagents & Materials

The following table lists essential materials and computational tools used in the featured case study and relevant to the broader field.

| Item Name | Function/Description | Relevance to Field |
|---|---|---|
| Illumina Infinium HumanMethylation450 BeadChip | Microarray measuring methylation status at >450,000 CpG sites. | Primary platform in the case study. Batch effects are inherent to this and similar array platforms [22]. |
| ComBat (R sva package) | Empirical Bayes method for adjusting for batch effects in genomic data. | Central tool in the case study. Powerful, but should be applied only to balanced designs, and with caution, to avoid false positives [22] [24]. |
| Harman | A probabilistic model-based method for correcting batch effects. | An alternative to ComBat. Also effective but requires the same careful consideration of study design [16]. |
| SWAN Normalization | Subset-quantile Within Array Normalization for Illumina Methylation arrays. | Used in the case study to normalize differences between Type I and Type II probes on the 450k array [22]. |
| Specific Histone Markers (e.g., H3K27ac) | Marker of active gene enhancers; can be cyclically modified by metabolic stimuli like palmitate [26]. | Links the case study to histone research. Batch effects in histone ChIP-seq/CUT&Tag data can obscure true biological signals like these. |
| CUT&Tag | Low-input, high-resolution method for mapping histone modifications and protein-DNA interactions. | Modern technique for histone studies. Its data are also susceptible to batch effects from different processing runs [18]. |

Implications for Histone Modification Studies

The lessons from this DNA methylation case study are profoundly relevant to research on histone modifications. Histone marks, such as H3K27ac (an activation mark) and H3K27me3 (a repression mark), are dynamic and can be influenced by environmental factors like lipid overload [26]. Studies investigating these changes using techniques like ChIP-seq or CUT&Tag are equally vulnerable to technical batch effects.

- Technical Variability: Differences in antibody lots, chromatin shearing efficiency, library preparation kits, and sequencing runs can all introduce batch effects.

- Risk of Confounding: If samples from different experimental conditions (e.g., control vs. drug-treated) are processed in separate batches, the observed differences in histone mark enrichment could be technical artifacts rather than true biological effects.

Therefore, the core principle established in this case study must be adopted in histone research: *rigorous experimental design with balanced sample processing across technical batches is the most effective strategy to ensure the integrity and reproducibility of epigenomic findings* [23] [18].

Frequently Asked Questions

What are batch effects and why are they problematic in multi-omics studies? Batch effects are technical, non-biological variations introduced when samples are processed in different groups (batches) due to factors like different sequencing runs, reagent lots, personnel, or instruments [20] [1] [9]. They are problematic because they can:

- Skew analysis, leading to false-positive or false-negative findings [20].

- Cause samples to cluster by batch rather than biological condition in analyses like PCA, misleading conclusions [1].

- Severely compromise data integration and meta-analyses, making it difficult to distinguish true biological signals from technical noise [20] [27].

Why is batch effect correction especially critical when integrating histone modification data with other omics? Histone modification data, such as from ChIP-seq or Paired-Tag, is highly cell-type-specific [7]. When integrating this with other omics layers (e.g., transcriptomics), strong batch effects can completely obscure the true, coordinated biological relationships between chromatin state and gene expression [7] [28]. Furthermore, without correction, it becomes nearly impossible to integrate datasets of multiple histone marks from different batches to understand combinatorial regulatory mechanisms [7].

What is the most robust method for correcting batch effects, particularly in confounded study designs? A ratio-based method is highly effective, especially when batch effects are completely confounded with biological factors of interest (e.g., all samples from biological group A are processed in one batch, and all from group B in another) [20] [29]. This method involves scaling the absolute feature values of study samples relative to those of a concurrently profiled reference material in the same batch [20]. This approach has been shown to outperform other algorithms in confounded scenarios commonly found in longitudinal and multi-center studies [20].

What are the risks of improperly applying batch effect correction algorithms? Two main risks exist:

- Over-correction: Removing true biological variation along with technical noise, which can lead to false negatives [20] [30].

- Under-correction: Leaving residual technical bias in the data, which can lead to false positives and obscure real biological signals [30]. It is crucial to validate correction methods to ensure known biological signals persist [30].

Troubleshooting Guides

Guide 1: My multi-omics data shows strong batch clustering in PCA. What should I do?

Symptoms:

- Principal Component Analysis (PCA) plots show clear separation of samples by processing date, sequencing run, or other technical factors, rather than by biological group [1].

- Differential expression or differential peak analysis identifies many significant features that are correlated with batch.

Solutions:

- Visualize the Batch Effect: Begin by generating a PCA plot colored by batch to confirm the presence and extent of the effect [1].

- Apply a Batch Effect Correction Algorithm (BECA): Choose an algorithm based on your data and experimental design. The following table summarizes common methods.

| Method | Best For | Key Principle | Considerations |
|---|---|---|---|
| Ratio-based Scaling [20] [29] | Multi-omics studies, confounded designs | Scales study sample data relative to a common reference material processed in the same batch. | Requires planning to include a reference material in every batch. |
| ComBat-seq [1] | Bulk RNA-seq count data | Uses an empirical Bayes framework to adjust for batch effects in raw count data. | Specifically designed for RNA-seq; part of the sva R package. |
| removeBatchEffect (limma) [1] | Normalized expression data (e.g., log-CPM) | Removes batch effects using linear models. | Corrected data should not be used directly for DE analysis; include batch in design matrix instead. |
| Harmony [20] [9] | Single-cell data, multi-sample integration | Iteratively clusters cells in PCA space and corrects the embeddings so that clusters mix across batches. | Effective for balanced and confounded scenarios in various data types. |
| Mixed Linear Models (MLM) [1] | Complex designs with random effects | Models batch as a random effect to calculate residuals for correction. | Powerful for hierarchical or nested batch structures. |

- Validate the Correction: After applying a BECA, perform PCA again. A successful correction will show reduced clustering by batch and improved clustering by biological condition [1].

Guide 2: I am designing a multi-omics study. How can I prevent batch effects?

Best Practices for Experimental Design:

- Plan for Reference Materials: Incorporate a well-characterized reference material (e.g., from the Quartet Project) into every batch of your experiment. This enables the use of the robust ratio-based correction method [20] [29].

- Randomize and Balance: Whenever possible, randomize samples from different biological groups across processing batches. Avoid confounded designs where one biological group is processed entirely in a single batch [20].

- Standardize Protocols: Use the same equipment, reagents, protocols, and personnel across the entire study. If changes are unavoidable, ensure they constitute a new batch and that reference materials are included [9].

- Document Everything: Meticulously record all technical and sample metadata. This is essential for identifying the sources of batch effects during analysis [31].

Best Practices for Data Preprocessing:

- Standardize and Harmonize: Process raw data from different omics platforms using consistent scaling, normalization, and transformation approaches before integration [31].

- Regress Out Confounders: For histone modification data, regress out the effects of known confounders before training models or integrating datasets [28].

Experimental Protocols

Protocol 1: Ratio-Based Batch Effect Correction Using Reference Materials

This protocol is adapted from large-scale multiomics studies and is effective for transcriptomics, proteomics, and metabolomics data [20] [29].

Key Materials:

- Reference Material: A stable, well-characterized control sample (e.g., Quartet multiomics reference materials) [20].

- Study Samples: The experimental samples to be corrected.

Methodology:

- Experimental Setup: In every batch of your experiment, concurrently profile both your study samples and one or more aliquots of the reference material.

- Data Generation: Generate your multiomics data (e.g., RNA-seq, proteomics, metabolomics) as usual for all samples and the reference material.

- Ratio Calculation: For each feature (e.g., gene, protein, metabolite) in each study sample, transform the absolute value into a ratio relative to the average value of that feature in the reference material profiled in the same batch.

Ratio = Value_study_sample / Value_reference_material

- Data Integration: Use the resulting ratio-scaled values for all downstream integrated analyses. This scaling effectively anchors the data from each batch to a common standard, removing batch-specific technical variation [20].
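The ratio calculation above can be sketched as follows. This is a minimal illustration under stated assumptions: the data layout (nested dictionaries), the function name `ratio_scale`, and the toy values are hypothetical, and the reference is averaged per batch as described in the Ratio Calculation step. Features with a reference value of zero are not handled here and would need filtering first.

```python
from statistics import mean

def ratio_scale(values, batch_of, reference_ids):
    """Scale each study sample by the same-batch reference average.

    values: dict sample_id -> {feature: value}
    batch_of: dict sample_id -> batch label
    reference_ids: set of sample_ids that are reference material aliquots
    Returns ratio-scaled values for study samples only.
    """
    # Average the reference aliquots per batch and per feature.
    ref_means = {}
    for batch in set(batch_of.values()):
        refs = [s for s in reference_ids if batch_of[s] == batch]
        if not refs:
            raise ValueError(f"batch {batch!r} has no reference material")
        features = values[refs[0]].keys()
        ref_means[batch] = {f: mean(values[s][f] for s in refs) for f in features}

    # Divide every study-sample feature by its batch's reference average.
    scaled = {}
    for sample, feats in values.items():
        if sample in reference_ids:
            continue
        ref = ref_means[batch_of[sample]]
        scaled[sample] = {f: v / ref[f] for f, v in feats.items()}
    return scaled

# Toy data: batch B reads everything twice as high as batch A.
values = {
    "refA": {"gene1": 10.0}, "sA": {"gene1": 20.0},
    "refB": {"gene1": 20.0}, "sB": {"gene1": 40.0},
}
batch_of = {"refA": "A", "sA": "A", "refB": "B", "sB": "B"}
scaled = ratio_scale(values, batch_of, reference_ids={"refA", "refB"})
```

In the toy data the two study samples differ twofold in raw values purely because of the batch, yet both come out with the same ratio, which is the anchoring behavior the protocol relies on.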

Protocol 2: Integrating Histone Modification and Transcriptome Data with Paired-Tag

This protocol describes the workflow for joint profiling, a powerful method for generating matched single-cell multiomics data [7].

Key Materials:

- Antibodies: Specific antibodies against the histone modifications of interest (e.g., H3K4me3, H3K27ac).

- Paired-Tag Reagents: Protein A-fused Tn5 transposase, well-specific DNA barcodes for transposase adaptors and reverse transcription (RT) primers, and reagents for combinatorial barcoding [7].

- Nuclei: Permeabilized nuclei from your target tissue or cell line.

Methodology:

- Antibody Binding: Incubate permeabilized nuclei with antibodies against specific histone modifications. This targets the protein A-fused Tn5 transposase to specific chromatin regions [7].

- Tagmentation and RT: Perform tagmentation to fragment the targeted chromatin. Subsequently, perform reverse transcription (RT) to generate cDNA. The transposase adaptors and RT primers contain the first round of sample barcodes [7].

- Combinatorial Barcoding: Use a ligation-based strategy in 96-well plates to introduce second and third rounds of DNA barcodes to the nuclei, attaching them to both chromatin DNA fragments and cDNA [7].

- Library Preparation: Pool the barcoded nuclei, lyse them, and purify the chromatin DNA and cDNA. These are then amplified and split into two separate sequencing libraries: one for histone modifications (DNA) and one for the transcriptome (cDNA) [7].

- Data Analysis: After sequencing, bioinformatic processing is used to generate cell-type-resolved maps of chromatin state and transcriptome from the same cells, enabling direct correlation of epigenetic state and gene expression [7].

The Scientist's Toolkit

Research Reagent Solutions

| Item | Function in Batch Correction / Multi-omics Integration |
|---|---|
| Quartet Reference Materials [20] [29] | Publicly available multiomics reference materials (DNA, RNA, protein, metabolite) derived from four related cell lines. Used for ratio-based batch correction and quality control across batches and platforms. |
| Histone Modification Antibodies [7] | Target specific histone marks (e.g., H3K27ac for active enhancers, H3K27me3 for repressed regions) in assays like ChIP-seq or Paired-Tag. High specificity is critical for accurate epigenomic profiling. |
| Protein A-fused Tn5 Transposase [7] | An engineered enzyme used in Paired-Tag. It is targeted to chromatin by histone modification antibodies and simultaneously fragments DNA and adds sequencing adaptors. |
| Combinatorial Barcodes [7] | Unique DNA sequences used to label cells or nuclei from different samples or batches, allowing them to be pooled for processing and computationally de-multiplexed after sequencing. |

Functional Guide to Common Histone Modifications

Understanding the biological interpretation of histone marks is key to analyzing integrated data.

| Histone Mark | Common Functional Role | Genomic Context |
|---|---|---|
| H3K4me3 [32] | Activation; a classic promoter mark. | Tightly localized at active gene promoters. |
| H3K27ac [32] | Activation; marks active enhancers and promoters. | Broad regions at active regulatory elements. |
| H3K4me1 [32] | Primed/poised enhancer mark. | Found broadly at both active and inactive enhancers. |
| H3K27me3 [32] | Repression; polycomb-mediated silencing. | Diffuse regions over developmentally repressed genes. |
| H3K9me3 [32] | Repression; often associated with repetitive elements. | Localized to heterochromatic and repetitive regions. |
| H3K36me3 [32] | Transcriptional elongation. | Enriched across the gene body of actively transcribed genes. |

A Practical Guide to Batch Effect Correction Methods and Their Applications

Fundamental Concepts: What Are Batch Effects and Why Do They Matter?

What is a batch effect in high-throughput genomics? A batch effect is a technical source of variation that introduces non-biological differences between groups of samples processed in separate experimental runs. These can arise from differences in reagents, personnel, laboratory conditions, instrument calibration, or processing time. In the context of histone modification studies and other multi-omics data, if left uncorrected, these effects can confound real biological signals, leading to both false-positive and false-negative findings and potentially jeopardizing the reproducibility of research [20] [33].

How can a confounded study design complicate batch effect correction? A confounded design occurs when a biological factor of interest (e.g., disease status) is completely aligned with a batch. For instance, if all control samples are processed in Batch 1 and all case samples in Batch 2, it becomes statistically challenging to distinguish whether observed differences are truly biological or merely technical artifacts. In such scenarios, many standard correction methods risk removing the genuine biological signal along with the technical noise [20].

The Methodological Spectrum: A Comparative Guide

The table below summarizes key batch-effect correction algorithms, their underlying principles, and their applicability to different research scenarios, such as histone modification studies.

Table 1: Comparison of Batch Effect Correction Methodologies

| Method Name | Core Principle | Typical Input Data | Key Application Scenario | Considerations for Histone Modification Studies |
|---|---|---|---|---|
| ComBat [34] | Empirical Bayes framework to adjust for location (additive) and scale (multiplicative) batch effects. | Multi-omics (Microarray, RNA-seq, DNAm) | Cross-sectional studies with known batches. | Can introduce false positives if batch and biology are confounded [24]. |
| Longitudinal ComBat [34] | Extends ComBat by incorporating subject-specific random effects to account for within-subject repeated measures. | Longitudinal 'omics data | Longitudinal studies with repeated measurements from the same subjects. | Protects biological time effects from being over-corrected. |
| BRIDGE [34] | Empirical Bayes using "bridge samples" (technical replicates measured across multiple batches). | Microarray, DNA methylation | Confounded longitudinal studies with bridging samples. | Leverages replicate design to separate time from batch effects. |
| GMQN [35] | Reference-based Gaussian Mixture Quantile Normalization. | DNA Methylation BeadChip | Correcting public data where raw intensity files are unavailable. | Uses a reference distribution to correct probe bias and batch effects. |
| Ratio-based (e.g., Ratio-G) [20] | Scales feature values of study samples relative to a concurrently profiled reference material. | Multi-omics (Transcriptomics, Proteomics, Metabolomics) | Both balanced and confounded scenarios, provided reference materials are used. | Highly effective for confounded designs; requires running reference samples in each batch. |
| Machine Learning Quality-Aware Correction [33] | Uses a machine-learning model to predict sample quality (P_low) and corrects data based on this metric. | RNA-seq | Detecting and correcting batches from quality differences when batch info is unknown. | Corrects quality-related batch effects without prior batch knowledge. |
| iComBat [5] | An incremental version of ComBat that allows new batches to be adjusted without recorrecting old data. | DNA Methylation array | Longitudinal studies or trials with sequentially added data batches. | Maintains data consistency in long-term or ongoing studies. |

Workflow and Decision Diagrams for Your Experiments

Integrating a batch effect correction strategy into your experimental workflow is crucial for data integrity. The following diagram outlines a logical decision pathway to select an appropriate method based on your experimental design.

Diagram 1: Method selection workflow.

Once a method is selected, the general correction process follows a series of standardized steps, from raw data to corrected analysis-ready data, as visualized below.

Diagram 2: Generic batch correction pipeline.

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: I used ComBat on my DNA methylation data, but now I have an unexpectedly high number of significant hits. What could be wrong? This is a known risk. Simulation studies have demonstrated that applying ComBat to data where batch effects are perfectly confounded with the biological groups of interest can systematically introduce false positive results. The inflation of significant findings is more pronounced with smaller sample sizes and a higher number of batch factors [24]. Before correction, cross-tabulate batch against biological group to check for confounding, and visualize your data with PCA to see how strongly samples separate by batch. If the design is confounded, a method like the ratio-based approach using a reference material may be more suitable [20].

Q2: My study involves collecting samples from the same individuals over time (longitudinal design). How do I correct for batch effects without removing the biological time signal? Standard methods like ComBat assume sample independence and can over-correct in longitudinal settings. You should use methods specifically designed for dependent data.

- Option 1: Longitudinal ComBat incorporates subject-specific random effects to model within-subject correlation, protecting temporal biological signals [34].

- Option 2: BRIDGE is highly effective if your study includes "bridge samples" (technical replicates from the same individual profiled in multiple batches), as it uses these to disentangle time effects from batch effects [34].

Q3: I am integrating public histone data from multiple studies, and the raw data or batch information is missing. What are my options? For this challenging but common scenario, reference-based methods are your best option.

- GMQN: This method was developed for DNA methylation data where raw intensity files are missing. It uses a large, standardized reference dataset to correct for probe bias and batch effects, requiring only the processed signal intensity files [35]. The principle can inspire similar approaches for histone data.

- Machine Learning Quality Score: One study on RNA-seq data used a machine-learning model to predict sample quality (P_low), which was then used to detect and correct for batches, even without prior batch information [33].

Q4: What is the most robust method for a confounded study design where my biological groups are processed in completely separate batches? The reference-material-based ratio method (Ratio-G) has been shown to be particularly effective in completely confounded scenarios. By scaling the absolute feature values of your study samples relative to the values of a common reference material processed concurrently in every batch, you effectively cancel out the batch-specific technical variation. A large-scale benchmark study found it "much more effective and broadly applicable than others" in such difficult situations [20].

Essential Research Reagents and Materials

The following table lists key reagents and computational tools that are fundamental to implementing effective batch effect correction strategies in a research environment.

Table 2: Key Research Reagent Solutions for Batch Effect Correction

| Item Name / Solution | Type | Primary Function | Relevance to Batch Correction |
|---|---|---|---|
| Quartet Reference Materials [20] | Biological Material | Matched DNA, RNA, protein, and metabolite reference materials from four cell lines. | Serves as a universal reference for ratio-based correction across multi-omics studies, enabling robust correction in confounded designs. |
| Common Reference Sample | Biological Material | A well-characterized, stable biological sample (e.g., a commercial cell line). | Processed in every batch to serve as an internal technical control for methods like Ratio-G and to monitor technical variation. |
| Bridge Samples [34] | Technical Replicates | Aliquots from the same subject measured across multiple batches/timepoints. | Informs batch-effect correction in longitudinal studies by directly measuring technical variation across batches for the same biological material. |
| R sva Package | Software / Computational Tool | Contains the ComBat function for empirical Bayes batch correction. | A widely used tool for correcting batch effects when batches are known and the design is not severely confounded. |
| GMQN R Package [35] | Software / Computational Tool | Implements Gaussian Mixture Quantile Normalization. | A specialized tool for correcting batch effects and probe bias in public DNA methylation array data where raw data is missing. |
| seqQscorer [33] | Software / Computational Tool | A machine learning tool that predicts NGS sample quality (P_low score). | Enables batch effect detection and correction based on predicted quality scores, useful when batch information is not available. |

Experimental Protocols and Validation Metrics

Protocol: Implementing a Ratio-Based Correction for a Confounded Study

This protocol is adapted from the Quartet Project [20].

- Experimental Design: Incorporate a common reference material (e.g., a Quartet reference or a commercial cell line) into every batch of your experiment. The number of replicates for the reference should match that of your study samples.

- Data Generation: Process all samples (study and reference) using your standard histone modification profiling protocol (e.g., ChIP-seq).

- Data Extraction: Generate quantitative matrices for your histone marks (e.g., read counts in peaks or signal intensities).

- Ratio Calculation: For each feature (e.g., genomic region) in every study sample, calculate a ratio value:

Ratio = (Feature Value in Study Sample) / (Feature Value in Reference Material)

It is common to use a summary measure (e.g., median) of the reference replicates per batch for this calculation.

- Downstream Analysis: Use the resulting ratio matrix for all subsequent analyses (e.g., differential analysis, clustering). The data are now scaled relative to the constant reference, mitigating batch-specific technical variation.

How to Validate Correction Success: Key Metrics

After applying a batch correction method, it is critical to assess its performance.

- Principal Component Analysis (PCA): Visualize the data before and after correction. Successful correction is indicated by the mixing of samples from different batches in the PCA plot, whereas before they formed separate clusters by batch [33] [20].

- Signal-to-Noise Ratio (SNR): Measures the separation of distinct biological groups after integration. An increase post-correction indicates improved biological signal clarity [20].

- Differential Feature Analysis: In a controlled benchmark (e.g., using reference materials), a good correction increases the true positive rate for known differentially modified features while minimizing false positives [20].

- Clustering Metrics: Internal clustering metrics like the Dunn Index or Gamma can quantify the improvement in sample grouping by biological type rather than by batch [33].
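As an illustration of the clustering metrics mentioned above, the Dunn Index is the smallest distance between points in different clusters divided by the largest distance between points in the same cluster. The sketch below computes it on hypothetical two-dimensional coordinates (for example, the first two principal components); the function name `dunn_index` and the toy data are assumptions made for this example.

```python
import math
from itertools import combinations

def dunn_index(points, labels):
    """Dunn Index: minimum between-cluster distance / maximum cluster diameter."""
    clusters = {}
    for point, label in zip(points, labels):
        clusters.setdefault(label, []).append(point)

    # Largest distance between two points that share a cluster.
    max_diameter = max(
        (math.dist(a, b)
         for members in clusters.values()
         for a, b in combinations(members, 2)),
        default=0.0,
    )
    if max_diameter == 0:
        raise ValueError("every cluster is a single point; index is undefined")

    # Smallest distance between two points in different clusters.
    min_separation = min(
        math.dist(a, b)
        for (_, m1), (_, m2) in combinations(clusters.items(), 2)
        for a in m1
        for b in m2
    )
    return min_separation / max_diameter

# Hypothetical PC1/PC2 coordinates for four samples after correction.
pcs = [(0.0, 0.0), (0.0, 1.0), (5.0, 0.0), (5.0, 1.0)]
biology = ["control", "control", "treated", "treated"]
batch = ["b1", "b2", "b1", "b2"]

by_biology = dunn_index(pcs, biology)  # samples grouped by condition
by_batch = dunn_index(pcs, batch)      # samples grouped by batch
```

In this toy layout the index is much higher when samples are labeled by biology than by batch, which is the pattern a successful correction should produce.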

Batch effects are technical sources of variation introduced during experimental procedures that can confound biological results in high-throughput data analysis. In microarray studies, these effects can arise from various sources including different processing times, reagent batches, operators, or specific chip positions (row effects) [36]. The ComBat method, developed by Johnson et al., has emerged as a powerful statistical approach for identifying and correcting these unwanted variations, thereby enhancing data quality and reliability for downstream analysis [37] [36].

ComBat employs an empirical Bayes framework that effectively adjusts for batch effects while preserving biologically meaningful signals [36]. This method has proven particularly valuable in histone modification studies, where technical artifacts can obscure important epigenetic patterns relevant to cancer research and therapeutic development [38]. By integrating ComBat correction into their analytical pipelines, researchers can significantly improve the consistency and reproducibility of their microarray data, especially when combining datasets from multiple sources or experimental batches.

Understanding ComBat's Methodology

Core Algorithm and Mechanism

ComBat operates through a sophisticated empirical Bayes approach that stabilizes the parameter estimates across batches, making it particularly effective even when dealing with small sample sizes [36]. The method works by standardizing data both across genes and batches, then estimating batch-specific parameters (location and scale adjustments) through a parametric empirical Bayes framework before applying these adjustments to remove batch effects [37] [36].

The algorithm follows these key steps:

- Standardization: Normalizes the data across features to make batch effects comparable

- Parameter Estimation: Calculates batch-specific mean and variance parameters using empirical Bayes estimation

- Adjustment: Applies shrinkage estimators to remove batch effects while preserving biological variation

This approach allows ComBat to effectively handle situations where the number of samples per batch is small, as it "borrows information" across genes to stabilize the parameter estimates [36].
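The three steps can be sketched in a few lines of plain Python. This is a deliberately simplified illustration and not the sva implementation: it assumes no biological covariates, shrinks only the batch location estimates (using a closed-form normal posterior mean rather than ComBat's iterative solution), and leaves the batch scale estimates unshrunk. The function name combat_like_adjust and the toy matrix are hypothetical.

```python
from statistics import mean, pvariance


def combat_like_adjust(data, batches):
    """Simplified location/scale batch adjustment with shrunken batch means.

    data:    list of features, each a list of per-sample values
    batches: list of batch labels, one per sample
    """
    batch_ids = sorted(set(batches))
    index = {b: [j for j, lab in enumerate(batches) if lab == b] for b in batch_ids}
    n_samples = len(batches)

    # Step 1: standardize each feature (grand mean, pooled within-batch SD)
    grand, pooled_sd, z = [], [], []
    for row in data:
        alpha = mean(row)
        resid_sq = 0.0
        for b in batch_ids:
            m = mean(row[j] for j in index[b])
            resid_sq += sum((row[j] - m) ** 2 for j in index[b])
        sd = (resid_sq / n_samples) ** 0.5 or 1.0  # guard against zero variance
        grand.append(alpha)
        pooled_sd.append(sd)
        z.append([(v - alpha) / sd for v in row])

    adjusted = [list(row) for row in data]
    for b in batch_ids:
        cols = index[b]
        n_b = len(cols)
        # Step 2: per-feature location and scale estimates for this batch
        gamma_hat = [mean(zr[j] for j in cols) for zr in z]
        delta_sq = [pvariance([zr[j] for j in cols]) or 1.0 for zr in z]
        # Prior on the batch location, estimated from all features
        # ("borrowing information" across features)
        gamma_bar = mean(gamma_hat)
        tau_sq = pvariance(gamma_hat)
        # Step 3: shrink the location estimate toward the prior mean, then adjust
        for g, zr in enumerate(z):
            gamma_star = (n_b * tau_sq * gamma_hat[g] + delta_sq[g] * gamma_bar) / (
                n_b * tau_sq + delta_sq[g]
            )
            for j in cols:
                adjusted[g][j] = (
                    pooled_sd[g] * (zr[j] - gamma_star) / delta_sq[g] ** 0.5 + grand[g]
                )
    return adjusted


# Toy matrix: 3 features x 6 samples, with batch B shifted upward by about 5
toy = [[1, 2, 3, 6, 7, 8], [2, 4, 3, 7, 9, 8], [0, 1, 2, 5, 6, 8]]
toy_batches = ["A", "A", "A", "B", "B", "B"]
corrected = combat_like_adjust(toy, toy_batches)
```

Because the location estimates are shrunk toward the across-feature average, the batch difference is strongly reduced but not forced to exactly zero for every feature, which is the behaviour that protects against overfitting when batches are small.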

ComBat Variants and Their Applications

Several variants of ComBat have been developed to address specific data types and analytical challenges:

Table 1: ComBat Variants and Their Specific Applications

| Variant Name | Data Type | Key Features | Best Use Cases |
|---|---|---|---|
| Standard ComBat | Continuous, normalized data | Parametric empirical Bayes framework | Microarray data, normalized expression values |
| ComBat_seq | RNA-seq count data | Negative binomial regression | Raw count data from sequencing experiments [39] |
| Non-parametric ComBat | Various distributions | Non-parametric adjustments | When distributional assumptions aren't met [36] |

Technical Support: Troubleshooting Common ComBat Issues

Frequently Asked Questions

Q1: My data shows unexpected patterns after ComBat correction. What could be wrong? This often occurs when biological groups are confounded with batch groups. Before applying ComBat, verify that your biological variables of interest are distributed across multiple batches. If a biological group exists in only one batch, ComBat may incorrectly remove biological signal along with batch effects [37]. Always visualize your data using PCA before and after correction to identify such issues.
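A quick way to check for this kind of confounding before correction is to cross-tabulate biological group against batch. The sketch below is a minimal example in plain Python; the function name confounded_groups and the sample labels are hypothetical.

```python
from collections import defaultdict


def confounded_groups(batches, groups):
    """Return biological groups that occur in only one batch."""
    batches_per_group = defaultdict(set)
    for batch, group in zip(batches, groups):
        batches_per_group[group].add(batch)
    return sorted(g for g, seen in batches_per_group.items() if len(seen) < 2)


sample_batches = ["b1", "b1", "b2", "b2", "b2"]
sample_groups = ["tumor", "normal", "tumor", "normal", "metastasis"]

# Any group listed here cannot be separated from its batch by ComBat
print(confounded_groups(sample_batches, sample_groups))
```

An empty result does not guarantee a balanced design; it only rules out the extreme case in which a group is fully nested within a single batch.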

Q2: How do I handle missing values in my dataset before running ComBat? ComBat cannot directly handle missing values. You must either impute missing values using appropriate methods (e.g., k-nearest neighbors imputation) or remove features with excessive missingness prior to running ComBat. The specific approach should be determined by the proportion of missing data and the experimental design.
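The filtering-then-imputing pattern might look like the sketch below. It uses per-feature median imputation as a simpler stand-in for k-nearest neighbors imputation, and the 20% missingness threshold is an arbitrary assumption that should be tuned to the dataset. The function name filter_and_impute is hypothetical.

```python
from statistics import median


def filter_and_impute(matrix, max_missing_fraction=0.2):
    """Drop features with too many missing values, then median-impute the rest.

    matrix: list of features, each a list of per-sample values (None = missing)
    Returns (kept_feature_indices, imputed_rows).
    """
    kept_indices, imputed = [], []
    for i, row in enumerate(matrix):
        observed = [v for v in row if v is not None]
        missing_fraction = (len(row) - len(observed)) / len(row)
        if not observed or missing_fraction > max_missing_fraction:
            continue  # feature removed before batch correction
        fill = median(observed)
        kept_indices.append(i)
        imputed.append([fill if v is None else v for v in row])
    return kept_indices, imputed


features = [
    [1.0, None, 3.0, 4.0, 5.0],   # 20% missing: kept and imputed
    [None, None, 1.0, 2.0, 3.0],  # 40% missing: dropped
    [1.0, 2.0, 3.0, 4.0, 5.0],    # complete: kept unchanged
]
kept, clean = filter_and_impute(features)
```

Returning the indices of the retained features makes it straightforward to keep feature annotations aligned with the filtered matrix.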

Q3: Can I use ComBat for very small sample sizes (n < 5 per batch)? While ComBat's empirical Bayes framework is designed to handle small sample sizes better than traditional methods, extreme cases with very few samples per batch may lead to unstable results. In such situations, consider using non-parametric ComBat or exploring alternative methods like Harmony, which may be more robust for very small batches [40].

Q4: How does ComBat handle extreme outliers in the data? ComBat is somewhat sensitive to extreme outliers, which can disproportionately influence parameter estimates. It's recommended to identify and address significant outliers before applying ComBat, either through transformation or winsorization, though careful consideration should be given to whether outliers represent technical artifacts or genuine biological signals.
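Winsorization can be sketched with the standard library alone. The example below clips values to the 5th and 95th percentiles; those cut-offs, the function name winsorize, and the toy values are assumptions for illustration, and the appropriate limits depend on the data.

```python
from statistics import quantiles


def winsorize(values, lower_pct=5, upper_pct=95):
    """Clip values below/above the given percentiles to those percentiles."""
    # 99 cut points: index 0 is the 1st percentile, index 98 the 99th
    cuts = quantiles(values, n=100, method="inclusive")
    low, high = cuts[lower_pct - 1], cuts[upper_pct - 1]
    return [min(max(v, low), high) for v in values]


signal = list(range(1, 20)) + [100]  # one extreme value at the end
clipped = winsorize(signal)
print(max(signal), "->", max(clipped))
```

Values inside the percentile range are left untouched, so only the tails of each feature are affected.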

Common Error Messages and Solutions

Table 2: Troubleshooting Common ComBat Errors

| Error Message | Likely Cause | Solution |
|---|---|---|
| "Error in model.matrix" | Incorrect specification of model parameters or batch variables | Verify that batch and model variables are properly formatted as factors with appropriate levels [36] |
| Matrix dimension mismatches | Inconsistent dimensions between expression data and sample information | Ensure the sample names in expression matrix columns exactly match row names in phenotype data [37] |
| Convergence issues | Highly heterogeneous batches or insufficient sample size | Increase iterations or consider non-parametric ComBat variant [36] |
| Memory allocation errors | Large dataset size exceeding computational capacity | Process data in chunks or increase memory allocation; consider using ComBat implementations optimized for large datasets [41] |
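Dimension mismatches are often easiest to catch with an explicit check before correction. The sketch below, in plain Python, verifies that expression-matrix column names and phenotype row names contain the same samples and returns the column order that aligns them. The function name align_samples and the sample identifiers are hypothetical.

```python
def align_samples(expression_columns, phenotype_rows):
    """Return column indices that put the expression matrix in phenotype order.

    Raises ValueError if the two sets of sample names differ or contain duplicates.
    """
    if len(set(expression_columns)) != len(expression_columns):
        raise ValueError("duplicate sample names in expression matrix")
    if len(set(phenotype_rows)) != len(phenotype_rows):
        raise ValueError("duplicate sample names in phenotype data")
    unmatched = set(expression_columns) ^ set(phenotype_rows)
    if unmatched:
        raise ValueError(f"sample names present in only one table: {sorted(unmatched)}")
    position = {name: i for i, name in enumerate(expression_columns)}
    return [position[name] for name in phenotype_rows]


order = align_samples(["s2", "s1", "s3"], ["s1", "s2", "s3"])
# Reorder each feature row so that columns follow the phenotype table
row = [20.0, 10.0, 30.0]
aligned_row = [row[i] for i in order]
```

Failing loudly on unmatched names is a deliberate choice here: silently dropping samples can leave the batch vector misaligned with the data.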

Experimental Protocols and Workflows

Standard ComBat Implementation Protocol

For microarray data analysis in histone modification studies, follow this detailed protocol:

Materials Needed:

- Normalized expression matrix (genes as rows, samples as columns)

- Batch information for each sample

- Biological covariates to preserve (optional)

- R statistical environment with sva package installed

Step-by-Step Procedure:

Data Preparation and Quality Control

- Format expression data as a matrix with row and column names

- Ensure batch information is encoded as a factor variable

- Perform initial PCA to visualize batch effects prior to correction

- Log-transform data if necessary to stabilize variance

Model Specification

- Define the model matrix incorporating biological variables of interest

- Specify batch variable separately from biological covariates

- For complex designs, include interaction terms if appropriate

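ComBat Execution

- Run the ComBat function from the sva package, passing the expression matrix, the batch factor, and the model matrix of biological covariates to preserve

- Keep the default parametric adjustment unless the distributional assumptions appear to be violated, in which case use the non-parametric option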

Post-Correction Validation

- Perform PCA on corrected data to visualize batch effect removal

- Compare cluster dendrograms before and after correction

- Verify preservation of biological signals through differential expression analysis
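A simple numeric complement to the validation plots is the fraction of variance explained by batch (and, separately, by biological group) before and after correction. The sketch below computes this ratio (eta squared) for a single vector, such as the first principal component score or one feature. The function name variance_explained and the toy values are assumptions for illustration.

```python
from statistics import mean


def variance_explained(values, labels):
    """Fraction of the total sum of squares explained by group means (eta squared)."""
    overall = mean(values)
    total = sum((v - overall) ** 2 for v in values)
    if total == 0:
        return 0.0
    groups = {}
    for value, label in zip(values, labels):
        groups.setdefault(label, []).append(value)
    between = sum(len(g) * (mean(g) - overall) ** 2 for g in groups.values())
    return between / total


batch = ["A", "A", "A", "B", "B", "B"]
pc1_before = [1, 2, 3, 6, 7, 8]   # batch B shifted upward
pc1_after = [1, 2, 3, 1, 2, 3]    # shift removed

print("batch share before:", variance_explained(pc1_before, batch))
print("batch share after: ", variance_explained(pc1_after, batch))
```

A successful correction should lower the share explained by batch while leaving the share explained by the biological variable of interest largely intact.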

ComBat Workflow Visualization

Diagram 1: ComBat Analysis Workflow - This workflow illustrates the sequential steps for proper implementation of ComBat correction in microarray studies.

Integration with Histone Modification Research

Special Considerations for Epigenetic Studies

In histone modification research, particularly in cancer epigenetics, ComBat correction requires additional considerations due to the unique characteristics of epigenetic data:

Preserving Biologically Relevant Variation: Histone modification patterns often exhibit subtle variations that drive important biological processes in carcinogenesis [38] [42]. When applying ComBat to such data, researchers must carefully distinguish between technical artifacts and genuine biological signals, particularly when studying modifications like H3K4 methylation, H3K27 acetylation, or novel modifications such as lactylation and succinylation [42].

Batch Effect Identification in Epigenetic Data:

- Chip-Specific Effects: Variations between microarray chips can introduce systematic biases in histone modification measurements

- Antibody Batch Effects: Different lots of immunoprecipitation antibodies can yield varying enrichment efficiencies

- Processing Time Effects: Extended processing times may affect histone modification stability

Research Reagent Solutions for Quality Control

Table 3: Essential Research Reagents and Tools for ComBat-Assisted Histone Modification Studies

| Reagent/Tool | Function | Quality Control Application |
|---|---|---|
| Reference epigenome standards | Inter-laboratory calibration | Normalization control for cross-batch comparisons |
| Spike-in controls | Technical variation assessment | Distinguishing technical from biological variation |
| Antibody validation panels | IP efficiency verification | Controlling for antibody-related batch effects |
| Automated processing systems | Reduction of operator-induced variability | Minimizing personnel-related batch effects |
| SVA R package | Surrogate variable analysis | Identifying unknown sources of batch effects [36] |

Advanced Topics and Recent Developments

Integration with Other Batch Correction Methods

ComBat can be effectively combined with other preprocessing and normalization approaches to enhance its performance:

Multi-Stage Correction Strategies: For complex experimental designs involving multiple sources of variation, consider implementing a sequential correction approach:

- Apply quantile normalization to address distributional differences

- Use ComBat to correct for known batch effects

- Employ SVA to remove residual unknown batch effects [36]
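The first stage of this sequence, quantile normalization, can be sketched as follows: each sample's values are replaced by the average of the values that share the same rank across samples. This is a minimal plain-Python illustration with hypothetical names; ties are broken by position rather than averaged, which established implementations may handle differently.

```python
def quantile_normalize(columns):
    """Force every sample to share the same empirical distribution.

    columns: list of samples, each a list of feature values of equal length
    """
    n_features = len(columns[0])
    sorted_cols = [sorted(col) for col in columns]
    # Reference distribution: mean of the k-th smallest value across samples
    reference = [
        sum(col[k] for col in sorted_cols) / len(columns) for k in range(n_features)
    ]
    normalized = []
    for col in columns:
        # Feature indices ordered from smallest to largest value in this sample
        order = sorted(range(n_features), key=lambda i: col[i])
        out = [0.0] * n_features
        for rank, feature_index in enumerate(order):
            out[feature_index] = reference[rank]
        normalized.append(out)
    return normalized


samples = [[5, 2, 3], [4, 1, 6]]
print(quantile_normalize(samples))
```

After this step every sample has an identical set of values, differing only in which feature holds which value, so subsequent batch correction operates on comparable distributions.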

Comparison with Alternative Methods: While ComBat remains popular for its robustness and simplicity, newer methods like Harmony and scVI offer advantages for specific data types [40]. The choice between methods depends on data characteristics:

- ComBat: Ideal for known batch effects with moderate sample sizes

- Harmony: Better suited for large datasets with complex batch structures

- scVI: Preferred for single-cell data and complex hierarchical designs

ComBat in Multi-Omics Integration

The principles underlying ComBat have been extended to integrated analyses combining microarray data with other data types commonly used in histone modification research:

Cross-Platform Integration: When combining microarray-based histone modification data with RNA-seq expression data or mass spectrometry-based proteomics, platform-specific batch effects must be addressed. Modified ComBat implementations can handle such cross-platform integration while preserving biologically meaningful correlations between data types.

Temporal Batch Effects: For longitudinal histone modification studies, temporal batch effects require special consideration. Extensions of ComBat that incorporate time-series components can address these complex batch effect structures while preserving dynamic biological patterns relevant to cancer progression and treatment response [38].

By implementing these ComBat protocols and troubleshooting guidelines within the framework of histone modification research, scientists and drug development professionals can significantly enhance the reliability and interpretability of their epigenetic studies, ultimately accelerating the discovery of novel therapeutic targets and biomarkers.

Frequently Asked Questions

Q1: I'm new to single-cell data integration. Which method should I try first? A1: Based on comprehensive benchmarks, Harmony is recommended as the first method to try due to its significantly shorter runtime and competitive performance in integrating batches while preserving biological variation [4] [43]. It is also the only method among the top performers that can integrate datasets of up to ~1 million cells on a personal computer [44].

Q2: My datasets have very different cell type compositions. Will these methods still work? A2: Yes, but the choice of method is important. Benchmarking studies tested this scenario (non-identical cell types) and found that Harmony, LIGER, and Seurat 3 all performed well [4]. LIGER was specifically designed to handle cases where biological differences (like unique cell types) are confounded with technical batch effects [4].

Q3: After integration, my count matrix is modified. Can I use it for differential expression analysis? A3: You must proceed with caution. Methods that directly return a corrected count matrix (e.g., ComBat, MNN Correct, Seurat 3) can be used for downstream analysis, but be aware that the process may introduce artifacts [45]. Methods that only correct an embedding (e.g., Harmony, BBKNN, LIGER) are primarily designed for clustering and visualization; for differential expression, technical variation should be accounted for using other means, such as including batch as a covariate in a linear model [45] [46].
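To make the "batch as a covariate" idea concrete, the sketch below fits an ordinary least-squares model with an intercept, a group indicator, and a batch indicator for a single feature, and reports the group coefficient. It is a minimal plain-Python illustration for two groups and two batches, solved through the normal equations; the function names and toy values are hypothetical, and a real analysis would use a dedicated statistics package.

```python
def ols(design, response):
    """Least-squares coefficients via the normal equations (small designs only)."""
    p = len(design[0])
    xtx = [[sum(row[a] * row[b] for row in design) for b in range(p)] for a in range(p)]
    xty = [sum(row[a] * y for row, y in zip(design, response)) for a in range(p)]
    # Gauss-Jordan elimination with partial pivoting on the augmented system
    aug = [xtx[a] + [xty[a]] for a in range(p)]
    for col in range(p):
        pivot = max(range(col, p), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("rank-deficient design (is group confounded with batch?)")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(p):
            if r != col:
                factor = aug[r][col] / aug[col][col]
                aug[r] = [x - factor * y for x, y in zip(aug[r], aug[col])]
    return [aug[a][p] / aug[a][a] for a in range(p)]


def group_effect_adjusted_for_batch(values, groups, batches, group_level, batch_level):
    """Coefficient of the group indicator in the model: value ~ group + batch."""
    design = [
        [1.0, float(g == group_level), float(b == batch_level)]
        for g, b in zip(groups, batches)
    ]
    return ols(design, values)[1]


# Toy feature: baseline 10, treatment adds 2, batch b2 adds 5
values = [10.0, 12.0, 15.0, 17.0, 10.0, 17.0]
groups = ["ctrl", "treated", "ctrl", "treated", "ctrl", "treated"]
batches = ["b1", "b1", "b2", "b2", "b1", "b2"]
effect = group_effect_adjusted_for_batch(values, groups, batches, "treated", "b2")
```

The rank-deficiency error is the numerical counterpart of the confounding problem: if every treated sample sits in one batch, the group and batch effects cannot be estimated separately.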

Q4: The batch effect in my multi-omics histone modification data is severe. What should I check? A4: First, ensure your data preprocessing (normalization, highly variable gene selection) is robust. For severe batch effects, try Harmony first for its speed and reliability. If integration remains poor, LIGER or Seurat 3 are viable alternatives, as they use different algorithms that might capture the complex variation in your data more effectively [4]. Always validate that the integrated data shows good mixing of batches while keeping known cell types (e.g., from histone modification patterns) separate.
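One way to put a number on "good mixing of batches" is to look at each cell's nearest neighbours in the integrated embedding and count how many come from a different batch. The sketch below is a brute-force plain-Python illustration suitable only for small examples; the function name batch_mixing_score, the choice of k, and the toy coordinates are assumptions, and it is not one of the published integration metrics.

```python
import math


def batch_mixing_score(points, batches, k=3):
    """Mean fraction of each point's k nearest neighbours from a different batch."""
    if k >= len(points):
        raise ValueError("k must be smaller than the number of points")
    scores = []
    for i, point in enumerate(points):
        others = [j for j in range(len(points)) if j != i]
        nearest = sorted(others, key=lambda j: math.dist(point, points[j]))[:k]
        scores.append(sum(batches[j] != batches[i] for j in nearest) / k)
    return sum(scores) / len(scores)


separated = [(0,), (1,), (2,), (10,), (11,), (12,)]
separated_batches = ["a", "a", "a", "b", "b", "b"]
mixed = [(0,), (1,), (2,), (3,), (4,), (5,)]
mixed_batches = ["a", "b", "a", "b", "a", "b"]

print("separated:", batch_mixing_score(separated, separated_batches, k=2))
print("mixed:    ", batch_mixing_score(mixed, mixed_batches, k=2))
```

Running the same function with cell type labels in place of batch labels gives the complementary check: that score should stay low if known cell types remain separate after integration.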

Q5: The batch correction seems to have mixed my distinct cell types. What went wrong? A5: This can happen if the batch correction is too strong. Some methods, particularly those using adversarial learning, are prone to mixing embeddings of unrelated cell types that have unbalanced proportions across batches [47]. To fix this, try reducing the integration strength parameter in your chosen method (if available). Alternatively, switch to a method like Harmony, which has been shown to introduce fewer such artifacts [45].

Troubleshooting Guides

Poor Data Integration after Running Harmony, LIGER, or Seurat 3

Problem: After running an integration method, batches remain separate in the UMAP/t-SNE plot, or biological cell types have been incorrectly merged.

Solutions:

- Verify Preprocessing: Ensure that all datasets have been normalized and the same set of highly variable genes has been used as input. Inconsistent preprocessing is a common cause of failed integration.

- Adjust Method Parameters: Each method has key parameters that control the integration strength.

- For Harmony, you can adjust the theta parameter, which controls the degree of batch correction. A higher value increases correction strength [44].

- For LIGER, the k (number of factors) and lambda (regularization parameter) can be tuned to improve results [4].

- For Seurat 3, the