Open Reading Frame Prediction in Microbes: Methods, Tools, and Applications in Drug Discovery



Abstract

Accurate prediction of Open Reading Frames (ORFs) is fundamental to deciphering microbial genomes, identifying novel gene products, and understanding pathogenicity. This article provides a comprehensive guide for researchers and drug development professionals, covering the foundational principles of microbial ORFs, from classic definitions to the challenges of small ORFs (smORFs) and proto-genes. It details and compares current computational methods—including ab initio, homology-based, and machine learning tools—applied to both isolate genomes and complex metagenomic data. The content further addresses critical troubleshooting and optimization strategies for handling annotation inconsistencies and data quality issues. Finally, it outlines rigorous validation frameworks integrating Ribo-seq and mass spectrometry to distinguish functional coding sequences, concluding with the translational impact of robust ORF prediction on uncovering new antimicrobial targets and virulence factors.

The Microbial ORF Blueprint: From Basic Concepts to Functional Genomics

In genomic research, an Open Reading Frame (ORF) is defined as a portion of a DNA sequence that does not contain a stop codon and has the potential to be translated into a protein [1]. This fundamental concept is paramount in gene prediction and annotation, especially in microbial genomics where efficient genome scanning is critical for identifying potential protein-coding genes. An ORF represents a sequence of DNA triplets bounded by start and stop codons, which can be transcribed into mRNA and subsequently translated into protein [2]. In the context of microbial genomes, ORF identification serves as a primary method for cataloging the functional elements of a genome, enabling researchers to hypothesize about gene function and regulatory mechanisms based on sequence characteristics alone.

The terminology originates from the concept of a "frame of reference" where the RNA code is "read" by ribosomes to synthesize proteins [1]. The "open" designation indicates that the ribosomal reading pathway remains unobstructed by termination signals, allowing for continuous amino acid incorporation into the growing polypeptide chain. In prokaryotic systems, where genes are not interrupted by introns, ORF identification is particularly straightforward compared to eukaryotic genomes, making microbial genomes ideal for studying the principles of ORF prediction and annotation [3] [4].

The Genetic Code: Start, Stop, and Reading Frames

Codons and Reading Frames

The genetic code is interpreted in groups of three nucleotides called codons, each specifying a particular amino acid or signaling the termination of protein synthesis [1]. Of the 64 possible codons, 61 specify amino acids while 3 (TAA, TAG, and TGA in DNA; UAA, UAG, and UGA in RNA) function as stop codons that terminate translation [1] [4]. Translation typically initiates at a start codon, most often AUG in mRNA (ATG in DNA), which codes for methionine [4].

Because DNA is interpreted in these triplet groups, any DNA sequence can be read in three different reading frames depending on the starting nucleotide position [1] [4]. Since DNA is double-stranded with two anti-parallel strands, and each strand has three possible reading frames, every DNA molecule actually has six possible reading frames for analysis [1] [4]. This is a critical consideration in genome annotation, as the correct frame must be identified to accurately predict the encoded protein.

Table 1: Genetic Code Components Essential for ORF Identification

| Component | Sequence(s) in DNA | Biological Function |
|---|---|---|
| Start Codon | ATG (also GTG, TTG in some cases) | Initiates protein translation; codes for formylmethionine (prokaryotes) or methionine (eukaryotes) |
| Stop Codons | TAA, TAG, TGA | Terminates protein translation; releases the completed polypeptide from the ribosome |
| Typical Codon Length | 3 nucleotides (triplet) | Encodes a single amino acid or termination signal |
| Standard ORF Structure | Start + (3n nucleotides) + Stop | Defines a complete protein-coding sequence without interruption |

The Six-Frame Translation

The concept of six-frame translation is fundamental to ORF prediction in microbial genomes. As DNA has two complementary strands (5'→3' and 3'→5'), and each can be read in three different frames, comprehensive ORF detection requires scanning all six possibilities [4]. For example, considering the sequence 5'-ACGACGACGACGACGACG-3', the three possible reading frames on this strand would be:

- Frame 1: ACG ACG ACG ACG ACG ACG

- Frame 2: A CGA CGA CGA CGA CGA CG

- Frame 3: AC GAC GAC GAC GAC GAC G [5]

The complementary strand would present three additional reading frames for analysis. In actual genomic sequences, stop codons appear frequently in non-coding frames, while true protein-coding regions maintain an open reading frame of significant length [5] [2].
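The six-frame idea can be sketched in a few lines of Python. This is a minimal illustration, not the implementation of any particular tool; the helper names `reverse_complement`, `codons`, and `six_frames` are hypothetical, and the input is assumed to be an uppercase string containing only A, C, G, and T.

```python
# Illustrative helpers; the names are hypothetical and not taken from any tool.
COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq: str) -> str:
    """Return the reverse complement of an uppercase DNA string."""
    return seq.translate(COMPLEMENT)[::-1]

def codons(seq: str, offset: int) -> list[str]:
    """Split seq into complete triplets, starting at offset 0, 1, or 2."""
    return [seq[i:i + 3] for i in range(offset, len(seq) - 2, 3)]

def six_frames(seq: str) -> dict[str, list[str]]:
    """Map frame labels (+1..+3 forward, -1..-3 reverse) to codon lists."""
    rc = reverse_complement(seq)
    frames = {}
    for offset in range(3):
        frames[f"+{offset + 1}"] = codons(seq, offset)
        frames[f"-{offset + 1}"] = codons(rc, offset)
    return frames

frames = six_frames("ACGACGACGACGACGACG")
for label in ("+1", "+2", "+3", "-1", "-2", "-3"):
    print(label, " ".join(frames[label]))
```

Because only complete triplets are kept, an 18-nucleotide input yields six codons in frames +1 and -1 and five codons in the shifted frames; the leftover flanking nucleotides are simply dropped.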

Figure 1: The Six Reading Frames of DNA. Every double-stranded DNA sequence has six potential reading frames—three on the forward strand and three on the reverse strand—that must be analyzed for ORF identification.

ORF Prediction in Microbial Genomes

Computational Identification of ORFs

ORF prediction begins with scanning DNA sequences for extended stretches between start and stop codons. In a random DNA sequence with equal proportions of each nucleotide, 3 of the 64 possible codons are stop codons, so a stop codon would be expected approximately once every 21 codons (64/3 ≈ 21) [4] [2]. Therefore, simple gene prediction algorithms for prokaryotes typically look for a start codon followed by an open reading frame long enough to encode a typical protein, with codon usage that matches the frequencies characteristic of the organism's coding regions [4] [2].

Most algorithms employ a minimum length threshold to distinguish likely protein-coding ORFs from random occurrences. While specific thresholds vary, commonly used values include 100 codons [2] or 150 codons [4]. The longer an ORF is, the more likely it represents a genuine protein-coding gene rather than a random sequence lacking stop codons [1]. Additional evidence such as codon usage bias, ribosome binding sites upstream of start codons, and sequence homology to known proteins further strengthens ORF predictions [3] [4].
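The rationale for length thresholds can be made concrete with a short calculation. The sketch below assumes independent, equally frequent nucleotides, which real genomes violate (GC-rich genomes contain fewer chance stop codons), so the numbers are only a rough guide. The function name `p_open_run` is illustrative.

```python
STOP_CODONS = 3      # TAA, TAG, TGA
TOTAL_CODONS = 64    # 4 ** 3 possible triplets

p_stop = STOP_CODONS / TOTAL_CODONS
expected_spacing = 1 / p_stop  # mean number of codons per stop codon

def p_open_run(n_codons: int) -> float:
    """Probability that n consecutive random codons contain no stop codon."""
    return (1 - p_stop) ** n_codons

print(f"expected spacing between stops: {expected_spacing:.1f} codons")
for n in (21, 100, 150):
    print(f"P(no stop in {n} codons) = {p_open_run(n):.2e}")
```

Under this simple model, a stop-free run of 100 codons arises by chance less than 1% of the time at any given position, and a run of 150 codons less than 0.1% of the time, which is why thresholds in this range remove most spurious ORFs.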

Table 2: Key Criteria for ORF Prediction in Microbial Genomes

| Criterion | Typical Parameters | Rationale |
|---|---|---|
| Minimum ORF Length | 100-150 codons (300-450 bp) | Reduces false positives from random occurrences without stop codons; most authentic proteins exceed this length |
| Start Codon | ATG (most common), GTG, TTG | Standard initiation codons recognized by bacterial ribosomes |
| Stop Codons | TAA, TAG, TGA | Translation termination signals that define ORF boundaries |
| Codon Usage Bias | Organism-specific codon frequency tables | Authentic genes typically show non-random codon usage matching genomic patterns |
| Ribosome Binding Site | Shine-Dalgarno sequence (AGGAGG) 5-10 bp upstream of start | Prokaryotic translation initiation site that validates start codon selection |
| Sequence Conservation | BLAST homology to known proteins | ORFs with significant similarity to proteins in databases more likely to represent genuine genes |

Distinguishing Coding from Non-Coding ORFs

While ORF prediction algorithms can identify potential coding sequences, not all ORFs represent functional genes. Several analytical approaches help distinguish protein-coding ORFs from non-coding sequences:

Sequence Conservation: Genuine protein-coding sequences typically show evolutionary conservation across related species, while non-functional ORFs accumulate mutations more rapidly [2].

Codon Adaptation Index (CAI): This measurement evaluates how similar the codon usage of an ORF is to the preferred codon usage of highly expressed genes in the organism [4].

Homology Searches: Comparing predicted ORFs against protein databases using tools like BLAST can identify conserved domains and functional motifs that support coding potential [4].
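As an illustration of the Codon Adaptation Index mentioned above, the following sketch computes a CAI as the geometric mean of per-codon relative adaptiveness values derived from a reference gene set. The reference sequences are toy data, the pseudocount of 0.5 for unobserved codons is an assumption made here to avoid taking the logarithm of zero, and the function names are hypothetical.

```python
import math
from collections import Counter, defaultdict
from itertools import product

# Standard genetic code, codons ordered T, C, A, G at each position.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}

def split_codons(seq):
    """Split an in-frame sequence into complete triplets."""
    return [seq[i:i + 3] for i in range(0, len(seq) - 2, 3)]

def relative_adaptiveness(reference_genes):
    """Weight of each codon = its count / count of the most used synonym."""
    counts = Counter()
    for gene in reference_genes:
        counts.update(split_codons(gene))
    synonyms = defaultdict(list)
    for codon, aa in CODON_TABLE.items():
        synonyms[aa].append(codon)
    weights = {}
    for aa, codons in synonyms.items():
        if aa == "*" or len(codons) == 1:
            continue  # stops, Met, and Trp carry no usage information
        best = max(counts[c] for c in codons)
        if best == 0:
            continue  # amino acid absent from the reference set
        for c in codons:
            # Assumed pseudocount of 0.5 for codons never seen in the reference.
            weights[c] = max(counts[c], 0.5) / best
    return weights

def cai(orf, weights):
    """Geometric mean of codon weights; codons without a weight are skipped."""
    logs = [math.log(weights[c]) for c in split_codons(orf) if c in weights]
    return math.exp(sum(logs) / len(logs)) if logs else float("nan")

# Toy stand-ins for highly expressed genes (hypothetical sequences).
reference = ["ATGCTGAAAGAACTGCTGAAATAA", "ATGGAACTGAAACTGGAACTTTAA"]
weights = relative_adaptiveness(reference)
preferred = "ATGCTGAAAGAATAA"   # uses the most frequent synonyms
rare = "ATGCTTAAGGAGTAA"        # uses infrequent synonyms
print(round(cai(preferred, weights), 3), round(cai(rare, weights), 3))
```

In practice the reference set would be a curated list of highly expressed genes from the organism (for example, ribosomal protein genes), and a CAI close to 1 indicates codon usage similar to that set.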

In bacterial genomes, a substantial fraction of gene content differences, particularly in free-living bacteria, comes from ORFans—ORFs that have no known homologs in databases and consequently have no assigned function [2]. These present particular challenges for functional annotation and may represent taxonomically restricted genes with specialized functions.

Experimental Protocols for ORF Analysis

Computational ORF Prediction Workflow

Figure 2: Computational Workflow for ORF Prediction. The standard bioinformatics pipeline for identifying and validating open reading frames in microbial genomes.

Procedure:

Sequence Acquisition: Obtain the complete genomic DNA sequence of the microorganism of interest. For prokaryotic genome annotation, this may be a single circular chromosome or include additional plasmid sequences [3] [6].

Six-Frame Translation: Use computational tools (e.g., ORF Finder, OrfPredictor) to translate the DNA sequence in all six reading frames [4]. Most tools allow selection of the appropriate genetic code for the organism (standard, bacterial, etc.).

ORF Identification: Scan each reading frame for start codons followed by a sequence without stop codons until a termination signal is encountered. Most algorithms will identify all such regions regardless of length [4] [7].

Initial Filtering: Apply length thresholds (typically 100-150 codons) to eliminate likely spurious ORFs [4] [2]. Shorter ORFs may be retained for special consideration if studying small proteins.

Codon Usage Analysis: Evaluate the codon usage bias of potential ORFs against organism-specific codon frequency tables. Authentic protein-coding regions typically exhibit non-random codon usage [4] [2].

Homology Searching: Perform BLASTP searches of predicted amino acid sequences against protein databases (e.g., UniProt, RefSeq) to identify homologous sequences and functional domains [4].

Annotation: Assign putative functions based on homology, conserved domains, and genomic context (e.g., operon structure). ORFs without significant homology should be annotated as "hypothetical proteins" [3].
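The identification and filtering steps of this procedure can be sketched as a small six-frame scanner. This is a simplified illustration rather than the algorithm of ORF Finder or any other named tool: it assumes a linear sequence containing only A, C, G, and T, accepts ATG, GTG, and TTG as start codons, reports the longest ORF for each stop codon, and ignores circular chromosomes and ambiguous bases. The function name `find_orfs` is hypothetical.

```python
START_CODONS = {"ATG", "GTG", "TTG"}
STOP_CODONS = {"TAA", "TAG", "TGA"}
COMPLEMENT = str.maketrans("ACGT", "TGCA")

def find_orfs(seq: str, min_codons: int = 100) -> list[tuple[str, int, int, int]]:
    """Return (strand, start, end, n_codons) for each ORF.

    start/end are 0-based, end-exclusive forward-strand coordinates that
    include the stop codon; n_codons counts the start but not the stop.
    """
    seq = seq.upper()
    n = len(seq)
    orfs = []
    for strand, dna in (("+", seq), ("-", seq.translate(COMPLEMENT)[::-1])):
        for frame in range(3):
            orf_start = None
            for i in range(frame, n - 2, 3):
                codon = dna[i:i + 3]
                if orf_start is None and codon in START_CODONS:
                    orf_start = i  # first in-frame start after the last stop
                elif orf_start is not None and codon in STOP_CODONS:
                    n_codons = (i - orf_start) // 3
                    if n_codons >= min_codons:
                        begin, end = orf_start, i + 3
                        if strand == "-":
                            # convert reverse-strand coordinates to forward
                            begin, end = n - end, n - begin
                        orfs.append((strand, begin, end, n_codons))
                    orf_start = None
    return orfs

demo = "CC" + "ATG" + "GCT" * 5 + "TAA" + "GG"
print(find_orfs(demo, min_codons=5))
```

The demo sequence uses a deliberately low threshold of 5 codons so that the short toy ORF is reported; with the default of 100 codons it would be filtered out, which mirrors the initial filtering step.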

Whole-Genome ORF Array Analysis

Whole-genome ORF arrays (WGAs) represent an experimental approach for analyzing ORF content and expression across microbial genomes [8]. This methodology involves:

Materials:

- DNA microarrays containing probes for all ORFs in one or more reference genomes [8]

- Genomic DNA or cDNA from target organisms

- Fluorescent labeling reagents (e.g., Cy3, Cy5)

- Hybridization and washing solutions

- Microarray scanner

Protocol:

Array Design: Construct microarrays with oligonucleotide probes representing each ORF in the reference genome(s). For comparative genomics, design should ensure specific hybridization under stringent conditions [8].

Sample Preparation: Extract genomic DNA from microbial strains of interest. Fragment DNA and label with fluorescent dyes (e.g., Cy5). Label reference DNA with a different dye (e.g., Cy3) [8] [9].

Hybridization: Mix labeled test and reference DNA samples and hybridize to the microarray under appropriate stringency conditions. This allows competitive binding of sequences to their complementary probes [8] [9].

Washing and Scanning: Wash arrays to remove non-specifically bound DNA and scan using a microarray scanner to quantify fluorescence signals at each probe location [9].

Data Analysis: Calculate fluorescence ratios (test/reference) for each ORF. ORFs with similar sequences between test and reference strains will show balanced signals, while divergent or absent ORFs will show imbalanced ratios [8].
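The ratio-based call in the data analysis step might look like the sketch below. The intensities are invented for illustration, the two-fold cutoff (one log2 unit) is an assumption rather than a value from the cited work, and a real analysis would first apply background correction and normalization. The function name `classify_orfs` is hypothetical.

```python
import math

def classify_orfs(signals, threshold=1.0):
    """Call each ORF from its test/reference fluorescence ratio.

    signals maps an ORF name to (test_intensity, reference_intensity);
    both intensities must be positive. threshold is in log2 units.
    """
    calls = {}
    for orf, (test, ref) in signals.items():
        log_ratio = math.log2(test / ref)
        if log_ratio <= -threshold:
            calls[orf] = ("absent_or_divergent", log_ratio)
        else:
            calls[orf] = ("present", log_ratio)
    return calls

# Hypothetical background-corrected intensities.
signals = {"orf_0001": (1200.0, 1150.0), "orf_0002": (90.0, 1100.0)}
calls = classify_orfs(signals)
for orf, (call, log_ratio) in calls.items():
    print(f"{orf}: {call} (log2 ratio {log_ratio:+.2f})")
```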

This approach has been successfully applied to examine relatedness among bacterial strains, identify genomic islands, and associate specific ORFs with phenotypic traits like host specificity or antibiotic resistance [8].

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Research Reagents for ORF Analysis in Microbial Genomics

| Reagent/Resource | Function in ORF Analysis | Examples/Specifications |
|---|---|---|
| ORF Prediction Software | Identifies potential protein-coding regions in DNA sequences | ORF Finder [4], OrfPredictor [4], ORF Investigator [4], ORFik [4] |
| Sequence Annotation Tools | Provides structural and functional annotation of predicted ORFs | NCBI Prokaryotic Genome Annotation Pipeline [3], RAST, Prokka |
| Whole-Genome ORF Arrays | Experimental validation of ORF presence/absence and expression | Custom-designed microarrays with probes for all ORFs in reference genome(s) [8] |
| BLAST Databases | Homology searching to assign putative functions to predicted ORFs | NCBI nr database, UniProt, organism-specific databases |
| Genetic Code Tables | Specifies codon-amino acid relationships for different organisms | Standard code, bacterial code, alternative mitochondrial codes |
| Codon Usage Tables | Organism-specific codon frequency references for coding potential assessment | Codon Usage Database (https://www.kazusa.or.jp/codon/) |
| DNA Sequencing Kits | Generate sequence data for ORF identification and verification | Illumina DNA Prep, PacBio SMRTbell, Oxford Nanopore ligation sequencing kits [6] |

Applications in Microbial Research

Gene Finding and Genome Annotation

ORF identification represents the fundamental first step in gene finding and genome annotation for microbial sequences [4] [2]. In prokaryotes, where genes lack introns, ORFs typically correspond directly to protein-coding genes. The process of annotating a newly sequenced bacterial genome involves:

- Computational ORF Prediction: Using algorithms to identify all potential protein-coding regions [3] [6].

- Functional Assignment: Assigning putative functions based on homology to characterized proteins [3].

- Locus Tag Assignment: Providing systematic identifiers for each predicted gene (e.g., OBB_0001) [3].

- Protein ID Assignment: Assigning tracking identifiers to all predicted proteins (e.g., gnl|dbname|string) [3].

The NCBI Prokaryotic Genome Annotation Pipeline provides specific guidelines for this process, including standardized protein naming conventions that avoid references to subcellular location, molecular weight, or species of origin [3].

Bacterial Resistance Characterization

ORF analysis has important applications in identifying and tracking antibiotic resistance mechanisms in bacterial pathogens. A recently patented method demonstrates how ORF-based screening can identify bacterial resistance characteristics through the following approach:

- ORF Prediction: Identify all potential ORFs in bacterial genomes [10].

- Variant Detection: Identify genetic variations (single nucleotide polymorphisms, insertions, deletions) within ORFs [10].

- Association Analysis: Correlate specific ORF variants with drug resistance phenotypes using machine learning and statistical algorithms [10].

- Feature Selection: Apply positive predictive value (PPV) calculations to identify ORFs with the strongest association to resistance [10].

This method has been applied to clinically important pathogens including Staphylococcus aureus, Escherichia coli, Klebsiella pneumoniae, Pseudomonas aeruginosa, and Acinetobacter baumannii to identify resistance features for various drug classes including β-lactams, glycopeptides, and quinolones [10].
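The positive predictive value used for feature selection can be illustrated with a small calculation. This is a generic sketch of the PPV statistic, not a reproduction of the patented method; the isolate data and variant names are invented, and the helper `ppv` is hypothetical.

```python
def ppv(has_variant, is_resistant):
    """Fraction of variant-carrying isolates that are resistant: TP / (TP + FP)."""
    tp = sum(v and r for v, r in zip(has_variant, is_resistant))
    fp = sum(v and not r for v, r in zip(has_variant, is_resistant))
    return tp / (tp + fp) if (tp + fp) else float("nan")

# Hypothetical data: one entry per isolate.
resistant = [True, True, True, False, False, True]
variants = {
    "orfA_var": [True, True, True, False, False, False],
    "orfB_var": [True, False, True, False, True, False],
}

scores = {name: ppv(carriers, resistant) for name, carriers in variants.items()}
ranking = sorted(scores, key=scores.get, reverse=True)
for name in ranking:
    print(f"{name}: PPV = {scores[name]:.2f}")
```

A high PPV alone does not establish causation; a variant carried by only a few isolates can score well by chance, so PPV is usually considered together with the number of carriers.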

Comparative Genomics and Evolutionary Studies

ORF content analysis facilitates comparative studies of microbial evolution and phylogeny. Key applications include:

Genome Reduction Studies: Analysis of ORF content in bacterial parasites and symbionts reveals patterns of massive genome reduction, where these organisms retain only a subset of genes present in their free-living ancestors [2].

Horizontal Gene Transfer: Identification of ORFs with atypical GC content or codon usage can reveal genes acquired through horizontal transfer, often containing virulence or antibiotic resistance functions [2].

Strain Differentiation: Comparing ORF content among strains of the same species using whole-genome ORF arrays helps identify strain-specific genes that may contribute to phenotypic differences [8].
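The GC-content screen for candidate horizontally transferred ORFs can be sketched as a simple outlier test. The z-score cutoff of 2.0 and the toy sequences are assumptions for illustration; published methods typically combine GC content with codon usage and other signals. The function names are hypothetical.

```python
from statistics import mean, pstdev

def gc_content(seq: str) -> float:
    """Fraction of G and C nucleotides in a non-empty sequence."""
    seq = seq.upper()
    return (seq.count("G") + seq.count("C")) / len(seq)

def atypical_gc(orfs: dict[str, str], z_cutoff: float = 2.0) -> list[str]:
    """Return names of ORFs whose GC content deviates strongly from the mean."""
    gc = {name: gc_content(seq) for name, seq in orfs.items()}
    values = list(gc.values())
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return []  # all ORFs have identical GC content
    return [name for name, v in gc.items() if abs(v - mu) / sigma >= z_cutoff]

# Toy genome: nine ORFs at 50% GC and one AT-rich ORF at 20% GC.
orfs = {f"core_{i}": "ATGC" * 25 for i in range(9)}
orfs["island_orf"] = "ATATG" * 20
print(atypical_gc(orfs))
```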

Challenges and Future Directions

While ORF prediction is relatively mature for prokaryotic genomes, several challenges remain:

Short ORFs (sORFs): Traditional algorithms often miss small open reading frames encoding proteins shorter than 100 amino acids [4]. These sORFs may encode functional microproteins or sORF-encoded proteins (SEPs) with important regulatory functions [4]. Recent studies indicate that 5'-UTRs of approximately 50% of mammalian mRNAs contain one or several upstream ORFs (uORFs), and similar regulatory elements exist in bacterial systems [4].

ORFans: A substantial fraction of ORFs in bacterial genomes have no known homologs (ORFans), presenting challenges for functional prediction [2]. These may represent rapidly evolving genes, taxon-specific adaptations, or false positive predictions.

Definitional Ambiguity: Surprisingly, at least three definitions of ORFs are in use in the scientific literature [7]. Some definitions require both start and stop codons, while others define ORFs simply as sequences bounded by stop codons with length divisible by three, regardless of the presence of a start codon [4] [7]. This definitional ambiguity can lead to inconsistencies in gene prediction and counting.
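The effect of the competing definitions on ORF counts can be shown on a toy sequence. The sketch below scans a single reading frame and compares a stop-to-stop definition with a start-to-stop definition that requires an ATG; the sequence and function names are illustrative only.

```python
STOPS = {"TAA", "TAG", "TGA"}

def stop_to_stop(seq: str) -> list[list[str]]:
    """Stop-free codon runs in frame 0, each terminated by a stop codon."""
    runs, current = [], []
    for i in range(0, len(seq) - 2, 3):
        codon = seq[i:i + 3]
        if codon in STOPS:
            if current:
                runs.append(current)
            current = []
        else:
            current.append(codon)
    return runs  # a trailing run without a stop codon is not reported

def start_to_stop(seq: str) -> list[list[str]]:
    """Same runs, trimmed to begin at the first ATG; runs without ATG are dropped."""
    orfs = []
    for run in stop_to_stop(seq):
        if "ATG" in run:
            orfs.append(run[run.index("ATG"):])
    return orfs

seq = "GCTGCTTAA" + "CCCATGAAATGA" + "GGGTAG"
print(len(stop_to_stop(seq)), "ORFs under the stop-to-stop definition")
print(len(start_to_stop(seq)), "ORF under the start-to-stop definition")
```

The same 27-nucleotide frame contains three ORFs under one definition and one under the other, which is why ORF counts from different tools are comparable only when the definition is stated.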

Future directions in ORF research include the integration of ribosome profiling (Ribo-seq) data to validate translation of predicted ORFs, development of machine learning approaches that incorporate multiple genomic features for improved prediction accuracy, and standardized functional characterization of the vast number of currently hypothetical proteins identified through ORF prediction in microbial genomes.

Small open reading frames (smORFs), typically defined as sequences shorter than 100-150 codons, represent a vast and largely unexplored frontier within the genomes of microbes and other organisms [11] [12]. For decades, conventional genome annotation pipelines systematically excluded these sequences, dismissing them as random noise or biologically irrelevant "junk DNA" due to their small size and the associated high false-positive prediction rate [13] [12]. This historical bias has hidden a potentially rich repository of functional elements. The advent of advanced genomic, ribonomic, and proteomic technologies has fundamentally overturned this view, revealing that thousands of smORFs are translated into functional microproteins—a diverse class of polypeptides with critical roles in regulation, metabolism, and stress response [11] [13] [14]. This technical guide examines the challenges and methodologies central to smORF and microprotein research, framed within the broader objective of advancing open reading frame prediction and functional annotation in microbial systems.

The Technical Challenge of smORF Annotation

The primary challenge in smORF research stems from their fundamental characteristics. Their short length means they possess lower statistical coding potential, making them difficult to distinguish from the millions of smORFs that occur stochastically throughout any genome [11] [15]. Furthermore, many microproteins exhibit intermediate evolutionary conservation and can emerge de novo, rendering traditional homology-based searches less effective [13] [15]. This creates a "needle in a haystack" problem, where identifying genuinely functional smORFs among a background of non-functional sequences is a significant computational and experimental hurdle [11].

Table 1: Key Challenges in smORF and Microprotein Research

| Challenge Domain | Specific Obstacle | Consequence |
|---|---|---|
| Computational Prediction | Low statistical coding potential due to short length [11] | High false-positive and false-negative rates in annotation |
| Computational Prediction | Intermediate evolutionary conservation; prevalence of de novo genes [13] [15] | Limited utility of standard homology-based tools |
| Experimental Detection | Small size and low abundance of microproteins [12] | Difficult detection via standard mass spectrometry |
| Experimental Detection | Overlap with canonical coding sequences (CDSs) [13] | Complicates genetic knockout and functional screening |
| Functional Validation | Distinguishing regulatory translation from protein-coding function [15] | Labor-intensive requirement for individual validation |

Methodological Framework: From smORF Discovery to Functional Validation

A multi-faceted, integrated approach is required to confidently identify and characterize smORFs and their encoded microproteins. The following sections outline the core methodological pillars of this field.

Computational Discovery and Prioritization

Bioinformatic tools form the first line of smORF discovery. Initial identification often involves using programs like getORF (provided by EMBOSS) to scan intergenic and RNA-derived sequences for all possible start-to-stop codon stretches [11]. However, given the immense number of putative smORFs, prioritization is essential. Machine learning frameworks are increasingly valuable for this task.

For instance, ShortStop is a recently developed tool that classifies translated smORFs into two categories: SAMs (Swiss-Prot Analog Microproteins), which resemble known microproteins, and PRISMs (Physicochemically Resembling In Silico Microproteins), which are synthetic sequences serving as a proxy for non-functional peptides [16] [15]. This classification helps researchers focus on the ~8% of smORFs that are most likely to be functional [15]. Other algorithms, such as PhyloCSF and miPFinder, leverage phylogenetic codon substitution frequencies and machine learning, respectively, to identify smORFs with high coding potential [13].

Figure 1: A Computational Workflow for smORF Discovery and Prioritization.

Empirical Evidence of Translation

Computational predictions require empirical validation. Ribosome Profiling (Ribo-seq) has been a revolutionary technology in this regard [13] [12]. This method involves deep sequencing of ribosome-protected mRNA fragments, providing a genome-wide snapshot of actively translating ribosomes. The key strength of Ribo-seq is its ability to reveal the three-nucleotide periodicity of ribosome movement, which not only confirms translation but also defines the exact reading frame [13]. Specialized variants like Translation Initiation Sequencing (TI-seq), which uses drugs like retapamulin in prokaryotes to capture initiating ribosomes, are particularly powerful for pinpointing authentic start codons [13].
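The three-nucleotide periodicity signal can be quantified by counting how footprint positions distribute over the three codon positions of a candidate ORF. The sketch below assumes that each footprint has already been reduced to a single P-site coordinate (the offset from the read's 5' end is experiment-specific and is not computed here); the positions are invented and the function name `frame_fractions` is hypothetical.

```python
from collections import Counter

def frame_fractions(psite_positions, orf_start):
    """Fraction of P-site positions at codon positions 0, 1, and 2 of the ORF."""
    counts = Counter((pos - orf_start) % 3 for pos in psite_positions)
    total = sum(counts.values())
    return [counts[frame] / total for frame in range(3)]

# Hypothetical P-site coordinates for footprints mapped to one candidate ORF.
orf_start = 100
psites = [100, 103, 106, 109, 112, 115, 118, 101, 110, 121]
fractions = frame_fractions(psites, orf_start)
print(fractions)
```

A strong enrichment in one codon position, as in this toy example, is the pattern expected for a translated ORF; reads distributed evenly across the three positions would not support translation in that frame.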

Direct evidence of the translated microprotein is provided by proteogenomics, which integrates mass spectrometry (MS) with genomic data [17] [12]. This involves creating custom protein sequence databases from in silico translated smORFs and searching MS data against them. A major technical hurdle is the poor detection of microproteins in standard MS workflows, which can be mitigated by size-selective enrichment protocols (e.g., acid- and cartridge-based enrichment) to isolate small proteins below 17 kDa before MS analysis [15].

Functional Characterization and Validation

Establishing translation is only the first step; determining function is the ultimate goal. CRISPR-based functional screens have emerged as a powerful method for this. In a recent study, researchers used CRISPR to knock out thousands of smORF genes in a pre-fat cell model, identifying dozens that regulated fat cell proliferation or lipid accumulation [18]. This high-throughput approach can rapidly pinpoint smORFs critical for specific phenotypes.

For a deeper mechanistic understanding, structural biology techniques offer invaluable insights. Experimental structures determined via X-ray crystallography, cryo-electron microscopy, and NMR spectroscopy can reveal how a microprotein functions at the molecular level, for instance, by showing how it binds and modulates a larger protein complex [13].

Table 2: Key Experimental Reagents and Solutions for smORF Research

| Research Reagent / Tool | Function / Application |
|---|---|
| Ribosome Profiling (Ribo-seq) [13] [12] | Genome-wide mapping of actively translating ribosomes to provide empirical evidence of smORF translation. |
| Translation Initiation Inhibitors (e.g., Retapamulin, Onc112) [13] | Used in TI-seq to capture initiating ribosomes and accurately define translation start sites. |
| Size-Selective Protein Enrichment Cartridges [15] | Enrich for sub-17 kDa proteins from complex lysates to improve microprotein detection by mass spectrometry. |
| CRISPR sgRNA Libraries [18] | Enable high-throughput, pooled knockout screens to assess the functional importance of thousands of smORFs in a specific phenotype. |
| Synthetic Microproteins [19] | Chemically synthesized peptides for in vitro and in vivo functional assays, antibiotic testing, and structural studies (e.g., CD spectroscopy). |

Applications and Future Directions in Microbial Research

The study of smORFs is moving from discovery to application, particularly in microbiology and therapeutic development. A striking example is the use of deep learning to mine archaeal proteomes for encrypted antimicrobial peptides (AMPs). One study used the APEX 1.1 deep learning framework to identify over 12,000 putative AMPs, termed "archaeasins," from 233 archaeal proteomes [19]. Subsequently, 93% of a subset of 80 synthesized archaeasins showed antimicrobial activity in vitro, with one lead candidate, archaeasin-73, demonstrating efficacy comparable to polymyxin B in a mouse infection model [19]. This highlights the immense potential of smORFs as a source of new antibiotics.

Furthermore, the rapid evolution of microprotein genes suggests they may play key roles in host-pathogen interactions and immunity [14]. Their quick turnover rate is a hallmark of genes involved in evolutionary arms races, making them exciting candidates for understanding immune defense and autoimmune diseases [14].

Figure 2: From Functional Microprotein to Therapeutic Application.

The exploration of smORFs and microproteins represents a paradigm shift in our understanding of genomic coding potential. Moving beyond the simplistic view of a genome dominated by long, conserved open reading frames requires a sophisticated toolkit that integrates computational prioritization, advanced 'omics technologies, and high-throughput functional validation. For researchers studying microbes, this expanding universe of small elements offers a new layer of regulatory complexity and a promising reservoir of novel antibiotic and therapeutic candidates. As computational tools like ShortStop and deep learning models continue to evolve in tandem with sensitive proteomic and CRISPR screening methods, the systematic illumination of this "hidden proteome" will undoubtedly yield profound new insights into biology and medicine.

The emergence of new genes from previously non-coding sequences, known as de novo gene birth, represents a radical pathway for genomic innovation. This whitepaper explores the proto-gene model, which posits that functional genes evolve through transitional proto-gene phases generated by widespread translational activity in non-genic sequences. Within the context of microbial research, understanding these nascent open reading frames (ORFs) is paramount for refining gene prediction algorithms and comprehending evolutionary adaptation. We synthesize recent findings from eukaryotic and bacterial systems, present quantitative analyses of proto-gene properties, detail experimental methodologies for their identification, and provide visual frameworks for their study. The evidence confirms that proto-genes are not evolutionary artifacts but dynamic elements that arise frequently, can persist in populations, and serve as a reservoir for new gene functions.

The traditional view of gene evolution has centered on mechanisms that modify pre-existing genes, such as duplication and divergence. However, comparative genomics has revealed an abundance of lineage-specific genes across diverse taxa, many of which lack recognizable homologs. This observation, coupled with pervasive transcription and translation of non-genic sequences, supports the occurrence of de novo gene birth. The proto-gene hypothesis formalizes this process, suggesting that new functional genes evolve through intermediate proto-gene stages—transitory sequences translated from non-genic ORFs that provide adaptive potential [20] [21].

This model is particularly relevant for microbial research, where accurate ORF prediction is complicated by an abundance of short, taxonomically restricted sequences. In bacteria, whose genomes are generally compact, the very possibility of de novo gene birth was long doubted. Yet, recent studies confirm that proto-genes emerge regularly in bacterial populations, challenging traditional gene annotation pipelines and demanding refined computational and experimental approaches for their discovery [22] [23].

Quantitative Properties of Proto-genes

Proto-genes exhibit distinct sequence and structural properties that differentiate them from both established genes and non-coding sequences. These properties evolve along a continuum, reflecting their transitional status.

Genomic Features and Evolutionary Continuum

Analyses in model organisms like Saccharomyces cerevisiae demonstrate that proto-genes and young genes are shorter, less expressed, and evolve more rapidly than established genes. Their sequence composition is intermediate, with amino acid abundances and codon usage biases becoming more gene-like with evolutionary age [20]. A study of 23,135 human proto-genes further elucidated features correlated with their age and mechanism of emergence, summarized in Table 1 [24].

Table 1: Properties of Human Proto-genes by Genomic Emergence Mechanism

| Emergence Mechanism | Description | Intron Origin | Enriched Regulatory Motifs | 5' UTR mRNA Stability |
|---|---|---|---|---|
| Overprinting | Overlap with pre-existing exons on same or opposite strand | Correlated with genomic position | Core promoter motifs | Higher (similar to established genes) |
| Exonisation | Emergence within an intron, often via intron retention | ~41% may capture existing introns | Enhancers and TATA motifs | Lower |
| From Scratch | Emergence in intergenic regions; requires co-occurrence of all regulatory elements | Correlated with genomic position | Enhancers and TATA motifs | Lower |

Prevalence and Rates of Emergence

The propensity for proto-gene emergence is a subject of intense investigation. In a long-term evolution experiment (LTEE) with Escherichia coli, after 50,000 generations, almost 9% of nongenic regions located away from known genes were associated with high-density transcripts, of which about 25% underwent translation [23]. Contrary to expectations, this emergence occurs at a uniform rate across distant bacterial taxa despite significant genomic differences, suggesting taxon-specific mechanisms regulate their origination and persistence [22]. In yeast, hundreds of short, species-specific ORFs show evidence of translation and adaptive potential, with de novo gene birth from this reservoir potentially being more prevalent than sporadic gene duplication [20].

Experimental Protocols for Proto-gene Detection

Rigorous identification of proto-genes requires a multi-faceted approach, integrating comparative genomics, transcriptomics, and proteomics to distinguish functional coding sequences from spurious ORFs.

Integrated Genomic, Transcriptomic, and Proteomic Analysis

This protocol, adapted from recent bacterial studies, outlines a comprehensive strategy for proto-gene discovery [22].

- Objective: To identify and validate novel protein-coding genes that have emerged from non-coding sequences in a microbial genome.

- Procedure:
  - Genome Sequencing and ORF Prediction:
    - Sequence the genome of the target strain and its close relatives to establish synteny.
    - Predict all possible ORFs using only a permissive minimum length (e.g., a cutoff between 9 and 30 codons) rather than strict length filters, including ORFs that overlap annotated genes on the sense and antisense strands.
  - Comparative Genomics:
    - Perform homology searches (e.g., using BLASTp) against translated ORFs from outgroup taxa to identify taxonomically restricted ORFs (ORFans).
    - Manually inspect ORFans to confirm the absence of homologs, and check for syntenic, non-coding sequences in outgroups to validate de novo emergence.
  - Transcriptomic Analysis (RNA-seq):
    - Culture cells under multiple growth conditions and stressors to capture condition-specific expression.
    - Extract total RNA and prepare strand-specific RNA-seq libraries.
    - Sequence and map reads to the genome. Identify transcribed regions that do not overlap annotated genomic features.
  - Ribosome Profiling (Ribo-seq):
    - Treat cells with a translation elongation inhibitor to arrest translating ribosomes (chloramphenicol is the usual choice in bacteria; cycloheximide acts on eukaryotic ribosomes).
    - Digest RNA and isolate ribosome-protected mRNA footprints.
    - Sequence footprints and map them to the genome to confirm that ORFs are actively translated.
  - Proteomic Validation (Mass Spectrometry):
    - Generate protein extracts from the same conditions used for transcriptomics.
    - Digest proteins with trypsin and analyze peptides by liquid chromatography-tandem mass spectrometry (LC-MS/MS).
    - Search mass spectra against a customized database containing all predicted ORFs (including novel candidates) and annotated genes.
    - Apply stringent validation thresholds (e.g., q-value < 0.0001) and manually inspect fragmentation spectra to minimize false positives from decoy sequences.
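The q-value threshold in the final proteomic step can be made concrete with a small target-decoy calculation. The sketch below is a generic illustration, not the pipeline used in the cited study: it assumes each peptide-spectrum match (PSM) carries a score where higher is better and a flag marking decoy hits, and the function name `target_decoy_qvalues` is hypothetical.

```python
def target_decoy_qvalues(psms):
    """Estimate q-values for peptide-spectrum matches by target-decoy counting.

    psms: iterable of (score, is_decoy) tuples; a higher score is a better match.
    Returns a list of (score, is_decoy, q_value) sorted by descending score.
    """
    ranked = sorted(psms, key=lambda p: p[0], reverse=True)

    # FDR at each rank = decoys accepted / targets accepted down to that score.
    fdrs = []
    targets = decoys = 0
    for _score, is_decoy in ranked:
        if is_decoy:
            decoys += 1
        else:
            targets += 1
        fdrs.append(decoys / max(targets, 1))

    # q-value = smallest FDR at this threshold or any more permissive one.
    qvals = []
    running_min = float("inf")
    for fdr in reversed(fdrs):
        running_min = min(running_min, fdr)
        qvals.append(running_min)
    qvals.reverse()

    return [(s, d, q) for (s, d), q in zip(ranked, qvals)]


def accepted_targets(psms, q_cutoff=0.0001):
    """Keep only target PSMs whose q-value is below the cutoff."""
    return [(s, q) for s, d, q in target_decoy_qvalues(psms) if not d and q < q_cutoff]
```

With a cutoff as strict as 0.0001, a single decoy hit near the top of the ranking is enough to exclude everything scoring below it, which is why manual inspection of the surviving spectra remains part of the protocol.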


Workflow Visualization

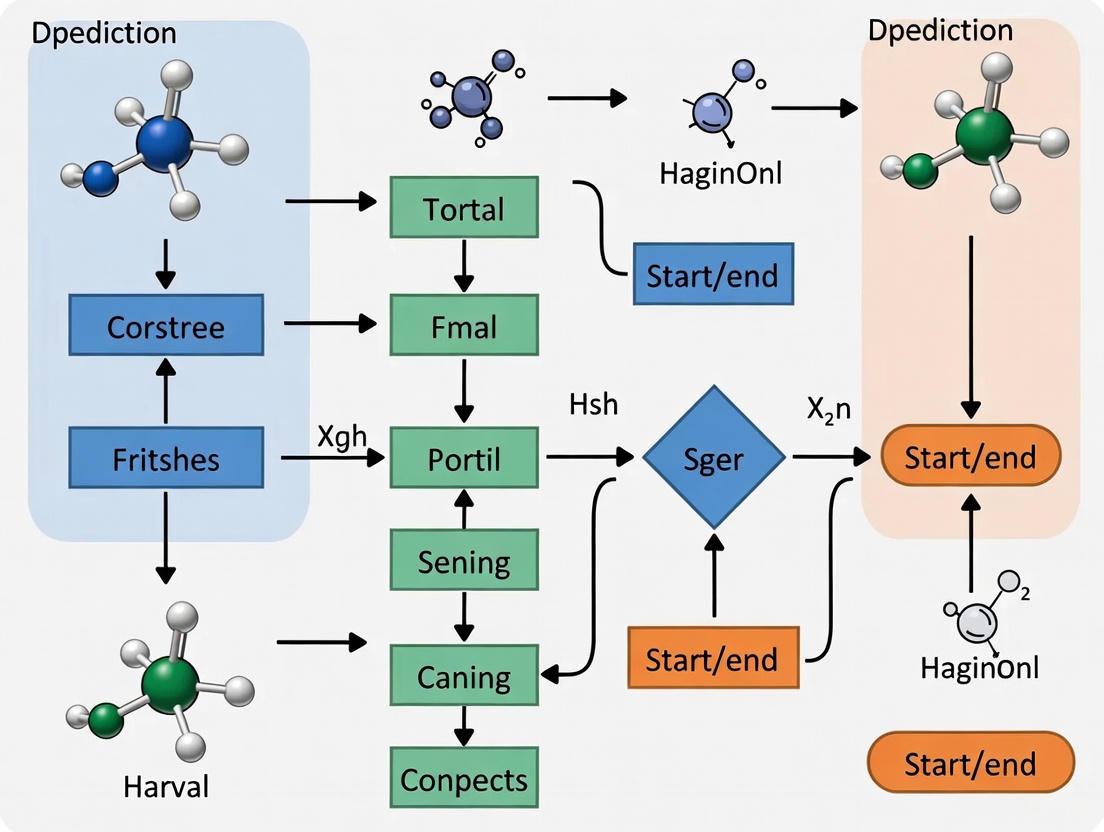

The following diagram illustrates the logical workflow and data integration points of the proto-gene identification protocol.

Signaling Pathways and Evolutionary Models

The emergence of proto-genes is not a singular event but a process governed by molecular signals and evolutionary pressures. Two non-mutually exclusive models have been proposed to explain this process.

Regulatory Motif Recruitment and Evolutionary Models

A key driver of proto-gene emergence is the acquisition of regulatory sequences. Research in the E. coli LTEE revealed that proto-genes most frequently emerge downstream of new mutations that fuse pre-existing regulatory sequences to previously silent regions, often via insertion element (IS) activity or chromosomal translocations. The formation of entirely new promoters is a rarer event [25]. This recruitment of regulatory elements jumpstarts transcription, the first critical step toward gene birth.

The evolutionary trajectory of these transcribed proto-genes is explained by two primary models, as illustrated in the following pathway diagram.

The Scientist's Toolkit: Research Reagent Solutions

Studying proto-genes requires specialized reagents and methodologies to detect and characterize these often elusive, weakly expressed elements. The following table details key resources.

Table 2: Essential Research Reagents for Proto-gene Analysis

| Reagent / Method | Function in Proto-gene Research | Key Considerations |
|---|---|---|
| Strand-Specific RNA-seq | Identifies transcripts originating from non-genic regions, including antisense strands. | Critical for detecting overlapping transcripts and assigning ORFs to the correct strand. |
| Ribo-seq (Ribosome Profiling) | Provides genome-wide snapshot of translated ORFs by sequencing ribosome-protected mRNA fragments. | Confirms translation; can reveal short or non-canonical ORFs missed by annotation. |
| High-Stringency Mass Spectrometry | Validates the existence of novel proteins at the peptide level. | Requires customized search databases and stringent statistical thresholds (e.g., q<0.0001) to avoid false positives from decoy hits [22]. |
| Long-Term Evolution Experiments (LTEE) | Directly observes the emergence and fixation of proto-genes in real-time. | Provides temporal data on mutation origins and population dynamics; exemplified by the E. coli LTEE [25] [23]. |
| Synthetic Random Peptide Libraries | Empirically tests the bioactivity and adaptive potential of random sequences. | Studies show a significant fraction of random peptides can affect cellular growth, supporting the plausibility of de novo birth [21]. |

The study of proto-genes has transformed from a controversial idea to a vibrant field demonstrating that genomes are more dynamic and creative than previously imagined. For microbial researchers, this paradigm shift underscores the necessity of moving beyond static gene catalogs. Accurate ORF prediction must now account for a fluid continuum of sequences, from non-coding DNA to proto-genes and established genes. Future efforts will need to leverage the powerful experimental tools outlined herein—particularly integrated multi-omics and controlled evolution experiments—to distinguish functional proto-genes from transcriptional noise. Understanding the birth of new genes from non-coding sequences not only clarifies a fundamental evolutionary process but also opens new avenues for discovering lineage-specific functions that could be targeted in drug development or harnessed in biotechnology.

The accurate identification of open reading frames (ORFs) represents a fundamental challenge in microbial genomics, with profound implications for understanding bacterial physiology, pathogenesis, and drug target discovery. Traditional genome annotation relied on assumptions that each gene contains a single, sufficiently long ORF and that minimal length cutoffs prevent spurious annotations [26]. However, emerging evidence demonstrates that these assumptions are incorrect, leading to a significant underestimation of microbial coding potential. The serendipitous discoveries of translated ORFs encoded upstream and downstream of annotated ORFs, from alternative start sites nested within annotated ORFs, and from RNAs previously considered noncoding have revealed that genetic information is more densely coded and that the proteome is more complex than previously anticipated [26].

This newly recognized complexity includes an abundance of small ORFs (sORFs) that encode functional small proteins, alternative ORFs (alt-ORFs) that expand the coding capacity of transcriptional units, and leaderless transcripts that employ non-canonical translation initiation mechanisms [26] [27]. These elements constitute what has been termed the "dark proteome" of microbes—functional genomic elements that have remained largely overlooked despite their potential significance for understanding bacterial biology and developing novel antimicrobial strategies. For researchers in drug development, these overlooked genomic regions represent potential new targets for therapeutic intervention, particularly as they often regulate critical metabolic processes and stress responses in pathogenic bacteria.

Computational Methods for ORF Prediction

Fundamental Concepts and Challenges

Computational identification of ORFs involves detecting DNA sequences uninterrupted by stop codons, but distinguishing truly coding from non-coding ORFs presents significant challenges. The primary obstacle lies in the fact that random DNA sequences statistically contain occasional stretches without stop codons, making length-based filtering necessary but potentially misleading [26] [28]. This challenge is particularly acute for short ORFs, whose length approaches statistical random ORF background frequencies and whose amino acid sequences provide limited bioinformatic value for traditional gene-finding algorithms [28]. Furthermore, conventional gene prediction tools that rely on sequence conservation and codon usage bias may fail to identify species-specific or rapidly evolving small protein-coding genes [26].
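The statistical background that makes short ORFs hard to call can be estimated with a back-of-the-envelope calculation. The sketch below assumes uniformly random, independent bases, so that 3 of the 64 codons are stops; real genomes deviate from this with GC content, and the function names are illustrative only.

```python
def prob_stop_free(n_codons, stop_fraction=3 / 64):
    """Probability that n consecutive random codons contain no stop codon."""
    return (1 - stop_fraction) ** n_codons


def expected_random_orfs(genome_bp, min_codons, stop_fraction=3 / 64):
    """Rough expected count of stop-free stretches of at least min_codons codons.

    Across six reading frames there are about 2 * genome_bp codon positions.
    Each stop codon opens a new stretch, and that stretch reaches min_codons
    with probability (1 - stop_fraction) ** min_codons.
    """
    codon_positions = 2 * genome_bp
    return codon_positions * stop_fraction * prob_stop_free(min_codons, stop_fraction)


if __name__ == "__main__":
    for cutoff in (10, 30, 100):
        print(cutoff, round(expected_random_orfs(4_000_000, cutoff)))
```

Under these assumptions the expected count falls geometrically with the length cutoff, which is why a cutoff of around 100 codons removes most random ORFs but also discards genuine small proteins.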

The problem is further compounded in metagenomic studies, where limited genomic context and the inherent fragmentation of assembled contigs complicate accurate gene prediction [29]. In bacterial and archaeal genomes, genes are not interrupted by introns and intergenic space is minimal, so a short read is likely to encode a gene fragment uninterrupted by a stop codon. Indel errors in reads from earlier sequencing technologies shifted the reading frame and made read-level ORF prediction unreliable; modern Illumina-based sequencers generate reads in which indel errors are rare, so ORF prediction directly on reads is now more dependable [29].

Computational Tools and Algorithms

Table 1: Computational Tools for ORF Prediction and Analysis

| Tool | Methodology | Application Context | Key Features |
|---|---|---|---|
| OrfM [29] | Aho-Corasick algorithm to find regions uninterrupted by stop codons | Metagenomic reads, large datasets | Platform-agnostic; 4-5x faster than GetOrf/Translate; minimal length threshold: 96 bp |
| RNAcode [30] | Evolutionary signatures (substitution patterns, gap patterns) | Multiple sequence alignments | Statistical model without machine learning; works across all life domains; provides P-values |
| RiboCode [31] | Improved Wilcoxon signed-rank test on ribosome profiling data | Translation annotation from Ribo-seq | Identifies actively translated ORFs using triplet periodicity; handles noisy data |
| RanSEPs [26] | Random forest-based scoring of sORFs | Bacterial sORF identification | Species-specific scoring based on coding potential |

Several computational approaches have been developed to address these challenges. OrfM represents a highly efficient solution for large-scale metagenomic datasets, applying the Aho-Corasick algorithm to rapidly identify regions uninterrupted by stop codons [29]. This approach is particularly valuable for processing the enormous volume of data generated by modern sequencing platforms, as it demonstrates significantly faster processing times compared to traditional tools like GetOrf and Translate.
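The core task OrfM performs, finding stretches uninterrupted by stop codons in all six frames, can be sketched in a few lines. The example below is a plain codon-by-codon scan, not the Aho-Corasick automaton that OrfM uses, and is far slower; it only illustrates the output one would expect. The 96 bp default mirrors the threshold listed in the table above, and the function names are hypothetical.

```python
STOPS = {"TAA", "TAG", "TGA"}
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq):
    """Reverse complement of an uppercase DNA string."""
    return seq.translate(_COMPLEMENT)[::-1]


def stop_free_regions(seq, min_len=96):
    """Yield (strand, frame, start, end, subseq) for stop-free stretches.

    Coordinates are 0-based, half-open, and relative to the scanned strand
    (the reverse complement for strand "-"). Stretches may be truncated by
    the ends of the read, as is typical for metagenomic fragments.
    """
    seq = seq.upper()
    for strand, s in (("+", seq), ("-", revcomp(seq))):
        for frame in range(3):
            run_start = frame
            last = frame
            for i in range(frame, len(s) - 2, 3):
                if s[i:i + 3] in STOPS:
                    if i - run_start >= min_len:
                        yield strand, frame, run_start, i, s[run_start:i]
                    run_start = i + 3
                last = i + 3
            # Stretch running to the end of the read in this frame.
            if last - run_start >= min_len:
                yield strand, frame, run_start, last, s[run_start:last]
```

Each reported stretch is a candidate coding fragment, not a confirmed gene; downstream homology search or coding-potential scoring is still needed.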

For evolutionary analysis, RNAcode provides a robust method for detecting protein-coding regions in multiple sequence alignments by combining information from nucleotide substitution patterns and gap patterns in a unified statistical framework [30]. The algorithm calculates expected amino acid similarity scores under a neutral nucleotide model and identifies deviations from this expectation that indicate coding potential. This method is particularly valuable for analyzing conserved genomic regions without prior annotation.

RiboCode takes a different approach by leveraging ribosome profiling data to identify actively translated ORFs based on the characteristic three-nucleotide periodicity of ribosome-protected fragments [31]. This method employs an improved Wilcoxon signed-rank test and P-value integration strategy to examine whether an ORF has more in-frame ribosome protected fragments than out-of-frame reads, providing evidence of active translation rather than mere coding potential.
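The idea of testing for in-frame enrichment can be illustrated with a much simpler statistic than the one RiboCode implements. The sketch below swaps in a one-sided binomial test for RiboCode's modified Wilcoxon signed-rank test: it asks whether more P-site positions fall in frame 0 than the one-third expected by chance. The inputs (a list of P-site coordinates and ORF boundaries) and function names are assumptions for illustration.

```python
from math import comb


def frame_counts(psite_positions, orf_start, orf_end):
    """Count P-site positions per reading frame within [orf_start, orf_end)."""
    counts = [0, 0, 0]
    for pos in psite_positions:
        if orf_start <= pos < orf_end:
            counts[(pos - orf_start) % 3] += 1
    return counts


def inframe_enrichment_pvalue(counts):
    """One-sided binomial P(X >= in-frame count) under a null of p = 1/3."""
    n = sum(counts)
    k = counts[0]
    return sum(
        comb(n, i) * (1 / 3) ** i * (2 / 3) ** (n - i)
        for i in range(k, n + 1)
    )
```

A small p-value indicates that footprints favour frame 0, which is the signature of triplet periodicity; unlike RiboCode, this toy test ignores how reads are distributed along the ORF.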

Experimental Validation of Coding Potential

Ribosome Profiling (Ribo-seq)

Ribosome profiling has emerged as a powerful technique for experimentally mapping translated regions genome-wide. This method involves deep sequencing of ribosome-protected mRNA fragments, providing a snapshot of actively translated sequences at nucleotide resolution [26]. The technique relies on the fact that ribosomes protect approximately 30 nucleotides of mRNA from nuclease digestion, and sequencing these protected fragments reveals both the position and reading frame of translating ribosomes.

The standard ribosome profiling protocol involves several critical steps: (1) rapid harvesting of cells and flash-freezing to capture translational events; (2) nuclease digestion of unprotected mRNA regions; (3) size selection of ribosome-protected fragments (RPFs); (4) library preparation and deep sequencing; and (5) computational analysis to map RPFs to the reference genome [31]. Strong start and stop codon peaks along with clear 3-nucleotide periodicity provide unambiguous evidence of translation, allowing researchers to distinguish coding from non-coding ORFs regardless of their length or conservation [26].

To enhance the specificity of translation initiation site identification, modified protocols such as TIS-seq, GTI-seq, and QTI-seq have been developed. These methods use translation inhibitors like harringtonine, lactimidomycin, or puromycin to stall initiating ribosomes at start codons, enabling direct capture of translation initiation events [26]. Application of QTI-seq in mouse cells revealed that approximately 50% of mRNAs contain at least one upstream ORF (uORF) occupied by ribosomes, highlighting the prevalence of alternative translation initiation sites [26].

Mass Spectrometry-Based Approaches

Mass spectrometry provides direct evidence of protein expression by detecting peptide sequences derived from translated ORFs. Traditional proteomic approaches compare mass spectrometric data against databases of previously annotated proteins, but this approach inevitably misses novel small proteins and alternative ORFs [26]. To address this limitation, researchers now employ custom databases generated from all possible translations of a genome, enabling the detection of previously unannotated proteins.
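A custom search database of this kind can be generated by translating every stop-free segment in all six frames. The following is a minimal sketch that assumes the standard codon assignments and an arbitrary minimum peptide length; the header format `orf|strand+frame|index` is invented for illustration and is not a convention of any particular search engine.

```python
from itertools import product

_BASES = "TCAG"
_AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(_BASES, repeat=3), _AMINO)}
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq):
    """Reverse complement of an uppercase DNA string."""
    return seq.translate(_COMPLEMENT)[::-1]


def translate(nt):
    """Translate complete codons; unknown codons (e.g. containing N) become X."""
    return "".join(CODON_TABLE.get(nt[i:i + 3], "X") for i in range(0, len(nt) - 2, 3))


def six_frame_database(seq, min_aa=10):
    """Return (header, peptide) pairs for every stop-free segment >= min_aa."""
    seq = seq.upper()
    entries = []
    for strand, s in (("+", seq), ("-", revcomp(seq))):
        for frame in range(3):
            protein = translate(s[frame:])
            for idx, peptide in enumerate(protein.split("*")):
                if len(peptide) >= min_aa:
                    entries.append((f"orf|{strand}{frame}|{idx}", peptide))
    return entries


def to_fasta(entries):
    """Serialise (header, peptide) pairs as FASTA text."""
    return "".join(f">{name}\n{peptide}\n" for name, peptide in entries)
```

Six-frame databases are much larger than annotated proteomes, which inflates the search space and is one reason the stringent thresholds discussed elsewhere in this article are needed.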

Several specialized mass spectrometry approaches have been developed for small protein detection. Peptidomics methods that inhibit proteolysis and use electrostatic repulsion hydrophilic interaction chromatography (ERLIC) for peptide separation have identified 90 new proteins in human cells, many matching proteins encoded by alt-ORFs [26]. In bacteria, N-terminomics approaches that inhibit the deformylase enzyme and enrich for formylated N-terminal peptides allow specific detection of translation initiation sites, because bacterial translation initiates with N-formylmethionyl-tRNA [26]. Application of this method in Listeria monocytogenes revealed 6 putative sORFs and 19 putative alt-ORFs with translation initiation sites internal to an annotated ORF [26].

Integrated Workflow for Experimental Validation

The most robust experimental approaches combine multiple complementary methods to validate coding potential. A typical integrated workflow begins with computational prediction of putative ORFs, followed by ribosome profiling to assess ribosome engagement, and culminates with mass spectrometry to confirm protein expression. This multi-step approach maximizes both sensitivity and specificity in ORF annotation.

Diagram 1: Experimental validation workflow for ORF annotation showing sequential steps from computational prediction to functional validation.

Leaderless Transcription and Translation

Prevalence and Mechanism

Leaderless transcripts represent a significant departure from canonical bacterial translation initiation mechanisms. These mRNAs lack a 5' untranslated region (UTR) and Shine-Dalgarno ribosome-binding site, instead beginning immediately with the initiation codon [27]. While initially considered rare anomalies, genomic studies have revealed that leaderless transcription is surprisingly common in certain bacterial lineages. In mycobacteria, nearly one-quarter of transcripts are leaderless, indicating this represents a major feature of their translational landscape rather than an exception [27].

The mechanism of leaderless translation differs fundamentally from canonical initiation. Rather than involving 30S ribosomal subunit binding to a Shine-Dalgarno sequence, leaderless translation appears to be mediated by direct binding of 70S ribosomes to the 5' end of the mRNA [27]. Experimental studies using translational reporters in mycobacteria have demonstrated that an AUG or GUG (collectively designated RUG) at the mRNA 5' end is both necessary and sufficient for leaderless translation initiation [28] [27]. This mechanism is comparably robust to leadered initiation in these species, suggesting it represents a biologically significant alternative translation strategy rather than an inefficient backup system.

The conservation of this mechanism across domains of life suggests it may represent an ancient mode of translation initiation [27]. Leaderless genes are particularly common in archaea and mitochondria, supporting the hypothesis that this mechanism predates the Shine-Dalgarno-dependent initiation that characterizes most well-studied bacterial model systems [27].
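The RUG rule described above translates directly into a simple screen over mapped transcription start sites (TSSs). The sketch below assumes TSS coordinates are 0-based positions of the first transcribed nucleotide on the reference sequence; the function names are hypothetical, and a positive call is only a candidate that still needs ribosome profiling or reporter evidence.

```python
LEADERLESS_STARTS = {"ATG", "GTG"}  # DNA equivalents of AUG and GUG (RUG)
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def revcomp(seq):
    """Reverse complement of an uppercase DNA string."""
    return seq.translate(_COMPLEMENT)[::-1]


def first_transcribed_codon(genome, tss, strand):
    """Return the first three transcribed nucleotides, or '' if out of range."""
    genome = genome.upper()
    if strand == "+":
        return genome[tss:tss + 3]
    if tss < 2:
        return ""
    # On the minus strand the transcript runs leftward from the TSS.
    return revcomp(genome[tss - 2:tss + 1])


def is_leaderless_candidate(genome, tss, strand):
    """True when the transcript begins directly with ATG or GTG."""
    return first_transcribed_codon(genome, tss, strand) in LEADERLESS_STARTS
```

Applied to every TSS in a mapping dataset, the fraction of positive calls gives a rough estimate of how common leaderless transcripts are in a given genome.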

Functional Significance of Leaderless sORFs

Leaderless transcripts often encode small proteins that function as regulatory elements, particularly in metabolic pathways. In mycobacteria, many leaderless sORFs contain consecutive cysteine codons (polycysteine tracts) upstream of genes involved in cysteine metabolism [28]. These sORFs function as cysteine-responsive attenuators that regulate expression of downstream operonic genes in response to cellular cysteine availability.

The regulatory mechanism involves ribosome stalling at polycysteine tracts when charged cysteine-tRNA levels are low. Under cysteine-replete conditions, ribosomes quickly translate through the polycysteine-encoding sORF, allowing formation of an mRNA secondary structure that sequesters the ribosome-binding site of the downstream gene [28]. When cysteine is limited, ribosomes stall at the consecutive cysteine codons, preventing formation of this inhibitory structure and allowing translation of the downstream genes involved in cysteine biosynthesis [28]. This mechanism enables individual operons to respond independently to cysteine availability while ensuring coordinated regulation across the metabolic pathway.
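Candidate attenuator sORFs of this kind can be flagged by scanning for runs of consecutive in-frame cysteine codons. The sketch below is a minimal illustration; the threshold of two consecutive codons and the function name are assumptions, not values taken from the cited work.

```python
CYS_CODONS = {"TGT", "TGC"}


def polycysteine_tracts(orf_nt, min_run=2):
    """Find runs of consecutive in-frame cysteine codons in an ORF.

    orf_nt: nucleotide sequence starting at the first base of the start codon.
    Returns a list of (first_codon_index, run_length) tuples, 0-based.
    """
    orf_nt = orf_nt.upper()
    codons = [orf_nt[i:i + 3] for i in range(0, len(orf_nt) - 2, 3)]
    tracts = []
    run_start = None
    # The trailing empty string acts as a sentinel that closes an open run.
    for idx, codon in enumerate(codons + [""]):
        if codon in CYS_CODONS:
            if run_start is None:
                run_start = idx
        else:
            if run_start is not None and idx - run_start >= min_run:
                tracts.append((run_start, idx - run_start))
            run_start = None
    return tracts
```

Combining this screen with genomic context, for example requiring a cysteine biosynthesis gene immediately downstream, would narrow the hits to plausible regulatory sORFs.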

Diagram 2: Regulatory mechanism of polycysteine leaderless sORFs in cysteine-responsive attenuation.

Research Reagent Solutions

Table 2: Essential Research Reagents for ORF and Leaderless Transcription Studies

| Reagent/Category | Specific Examples | Function/Application |
|---|---|---|
| Ribosome Profiling Reagents | Harringtonine, Lactimidomycin, Puromycin | Translation inhibitors that stall initiating/elongating ribosomes for precise mapping of translation events |
| Mass Spectrometry Reagents | Deformylase inhibitors, Chromatography materials (e.g., ERLIC) | Enrichment of N-terminal peptides and separation of small proteins for proteomic detection |
| Computational Tools | OrfM, RiboCode, RNAcode | Bioinformatic prediction of ORFs and assessment of coding potential from sequence and ribosome data |
| Translation Reporters | Luciferase, GFP | Empirical assessment of translation initiation efficiency and regulatory mechanisms |
| Sequence Datasets | RNA-seq, Ribo-seq, TSS mapping data | Empirical evidence for transcript boundaries and ribosome occupancy |

Comparative Analysis of Methodologies

Table 3: Performance Comparison of ORF Identification Methods

| Method Type | Sensitivity | Specificity | Applications | Limitations |
|---|---|---|---|---|
| Bioinformatic Prediction | Moderate (high false negative rate for sORFs) | Variable (high false positive rate) | Initial genome annotation, high-throughput screening | Limited by training data, misses novel genes |
| Ribosome Profiling | High for translated ORFs | High (with 3-nt periodicity) | Genome-wide mapping of translation, uORF identification | Does not confirm protein stability/function |
| Mass Spectrometry | Lower for small proteins | Very high (direct protein evidence) | Validation of protein expression, protein-level quantification | Limited by protein size, abundance, and detectability |
| Integrated Approaches | Very high | Very high | Comprehensive ORF annotation, functional studies | Resource-intensive, technically complex |

The field of ORF annotation has evolved dramatically from its initial reliance on simplistic assumptions about coding potential. We now recognize that microbial genomes employ diverse coding strategies, including alternative ORFs, small proteins, and leaderless transcription, that significantly expand their functional capability. For researchers and drug development professionals, these previously overlooked genomic elements represent both a challenge and an opportunity—a challenge because they complicate genome annotation efforts, but an opportunity because they may reveal novel biological mechanisms and potential therapeutic targets.

Robust identification of coding regions requires integrated approaches that combine computational prediction with experimental validation through ribosome profiling and mass spectrometry. The specialized case of leaderless transcription demonstrates how species-specific adaptations can dramatically reshape translational landscapes, with nearly one-quarter of mycobacterial transcripts employing this non-canonical initiation mechanism. As sequencing technologies continue to advance, particularly with the improved contiguity provided by long-read metagenomic sequencing [32], our ability to detect and characterize these elusive genomic elements will continue to improve, promising new insights into microbial biology and novel avenues for therapeutic intervention.

A Practical Toolkit for Microbial ORF Prediction: From Algorithms to Real-World Data

Ab initio gene prediction represents a critical methodology for identifying protein-coding genes in genomic sequences without relying on experimental data or known homologs. This whitepaper examines the core computational frameworks, primarily Hidden Markov Models (HMMs), that power tools like GeneMark to decipher genetic signatures within microbial genomes. We detail the underlying algorithms, provide performance comparisons against emerging deep learning tools such as Helixer, and present standardized protocols for gene prediction in novel fungal genomes. Within the context of open reading frame (ORF) prediction in microbial research, this guide equips researchers and drug development professionals with the technical knowledge to select, implement, and critically evaluate ab initio annotation tools, thereby strengthening the foundation for downstream functional genomics and target identification.

Ab initio gene prediction is a computational approach that identifies protein-coding regions in DNA sequences using intrinsic signals and statistical patterns alone. Unlike evidence-based methods that require RNA-seq data or homologous proteins, ab initio tools rely on fundamental genetic signatures such as start and stop codons, splice sites (in eukaryotes), codon usage bias, and nucleotide composition to distinguish coding from non-coding sequences [33] [34]. This capability is particularly vital for annotating novel genomes where extrinsic evidence is scarce or unavailable.

The core challenge in microbial gene prediction lies in the accurate identification of translation initiation sites. The "longest ORF" rule, often used as a simple heuristic, has a theoretical accuracy of only approximately 75%, underscoring the need for more sophisticated models that incorporate the context of the ribosomal binding site (RBS) and its variable spacer length [34]. Hidden Markov Models have emerged as the predominant statistical framework to address this complexity, enabling the integration of multiple probabilistic signals into a unified gene-finding system.

Core Computational Framework: Hidden Markov Models

Theoretical Foundations

A Hidden Markov Model is a statistical model that represents a doubly embedded stochastic process: an unobservable Markov chain of hidden states and a set of observable symbols emitted by these states [35]. Its power in modeling biological sequences stems from its capacity to capture dependencies between adjacent sequence elements.

An HMM is defined by the parameter set $\lambda = (A, B, \pi)$ [35]:

- State Space ($Q$): The set of all $N$ possible hidden states (e.g., exon, intron, intergenic).

- Observation Space ($V$): The set of all $M$ possible observable symbols (e.g., A, C, G, T).

- Transition Probability Matrix ($A$): An $N \times N$ matrix where $a_{ij} = P(x_{t+1} = q_j \mid x_t = q_i)$ defines the probability of transitioning from state $i$ to state $j$.

- Emission Probability Matrix ($B$): An $N \times M$ matrix where $b_j(k) = P(o_t = v_k \mid x_t = q_j)$ defines the probability of emitting symbol $k$ while in state $j$.

- Initial State Distribution ($\pi$): A vector of probabilities $\pi_i = P(x_1 = q_i)$ for starting in each state $i$.

The HMM approach to gene prediction rests on two key assumptions [35]:

- The Homogeneous Markov Property: The state at time $t$ depends only on the state at time $t-1$.

- Observation Independence: Each observation $o_t$ depends only on the current state $x_t$.

The Three Fundamental Problems and Algorithms

HMMs are applied to gene prediction through three canonical problems, each with a corresponding algorithmic solution [35].

Problem 1: Evaluation - Computing the probability $P(O \mid \lambda)$ that a given observation sequence $O$ was generated by the model $\lambda$. This is solved efficiently by the forward pass of the Forward-Backward Algorithm, which uses dynamic programming to avoid summing explicitly over all $N^T$ possible state paths for a sequence of length $T$.
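A minimal sketch of the forward recursion is given below for a toy two-state model (coding versus non-coding). All probabilities are invented for illustration and are not trained values; real gene finders use far richer state spaces and work in log space to avoid numerical underflow on long sequences.

```python
# Toy two-state model; all probabilities are illustrative assumptions.
STATES = ("C", "N")  # C = coding, N = non-coding
START = {"C": 0.5, "N": 0.5}
TRANS = {"C": {"C": 0.9, "N": 0.1}, "N": {"C": 0.1, "N": 0.9}}
EMIT = {
    "C": {"A": 0.2, "C": 0.3, "G": 0.3, "T": 0.2},
    "N": {"A": 0.3, "C": 0.2, "G": 0.2, "T": 0.3},
}


def forward(obs, states=STATES, start_p=START, trans_p=TRANS, emit_p=EMIT):
    """Return P(O | lambda) by summing over all state paths with the forward pass.

    alpha[j] holds the probability of the observations so far, ending in state j.
    """
    alpha = {s: start_p[s] * emit_p[s][obs[0]] for s in states}
    for symbol in obs[1:]:
        alpha = {
            j: sum(alpha[i] * trans_p[i][j] for i in states) * emit_p[j][symbol]
            for j in states
        }
    return sum(alpha.values())
```

Each step costs on the order of $N^2$ operations, so the whole sequence is evaluated in time proportional to $N^2 T$ instead of enumerating $N^T$ paths.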

Problem 2: Decoding - Determining the most likely sequence of hidden states $X$ given the observations $O$ and the model $\lambda$. This is solved by the Viterbi Algorithm, another dynamic programming approach that finds the optimal path through the state space. The algorithm recursively computes $\delta_t(i)$, the probability of the most probable path ending in state $i$ at time $t$, and backtraces using $\psi_t(i)$ to reconstruct the full state sequence [35].
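The recursion and backtrace can be sketched as follows, again on a toy two-state model whose probabilities are invented for illustration. The variables `delta` and `psi` correspond to the quantities named above, stored as log probabilities to avoid underflow.

```python
import math

# Toy two-state model; all probabilities are illustrative assumptions.
STATES = ("C", "N")  # C = coding, N = non-coding
START = {"C": 0.5, "N": 0.5}
TRANS = {"C": {"C": 0.9, "N": 0.1}, "N": {"C": 0.1, "N": 0.9}}
EMIT = {
    "C": {"A": 0.2, "C": 0.3, "G": 0.3, "T": 0.2},
    "N": {"A": 0.3, "C": 0.2, "G": 0.2, "T": 0.3},
}


def viterbi(obs, states=STATES, start_p=START, trans_p=TRANS, emit_p=EMIT):
    """Return the most probable hidden-state path for the observations."""
    # delta[t][j]: log probability of the best path ending in state j at time t.
    delta = [{s: math.log(start_p[s]) + math.log(emit_p[s][obs[0]]) for s in states}]
    # psi[t][j]: predecessor state on that best path.
    psi = [{}]
    for t in range(1, len(obs)):
        delta.append({})
        psi.append({})
        for j in states:
            best_i = max(states, key=lambda i: delta[t - 1][i] + math.log(trans_p[i][j]))
            delta[t][j] = (
                delta[t - 1][best_i]
                + math.log(trans_p[best_i][j])
                + math.log(emit_p[j][obs[t]])
            )
            psi[t][j] = best_i
    # Backtrace from the best final state.
    path = [max(states, key=lambda s: delta[-1][s])]
    for t in range(len(obs) - 1, 0, -1):
        path.append(psi[t][path[-1]])
    path.reverse()
    return path
```

In a gene finder the decoded path is then converted into feature coordinates: each maximal run of a coding state becomes a predicted coding segment.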

Problem 3: Learning - Estimating the model parameters $\lambda = (A, B, \pi)$ that maximize $P(O \mid \lambda)$. This can be approached via:

- Supervised Learning: When labeled training data (known state sequences) is available, parameters are derived directly from observed frequencies of transitions and emissions [35].

- Unsupervised Learning (Baum-Welch Algorithm): An Expectation-Maximization (EM) algorithm used when no labeled data exists. It iteratively refines model parameters until convergence, making it essential for analyzing novel genomes [34].
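The supervised case reduces to counting and normalising. The sketch below estimates $\pi$, $A$, and $B$ from sequences with known state labels; the pseudocount (add-one smoothing by default) is an assumption added so that unseen transitions or emissions do not receive zero probability, and the function name is hypothetical. Baum-Welch follows the same normalisation step but replaces the hard counts with expected counts computed from the forward and backward passes.

```python
from collections import Counter


def estimate_hmm(labeled, states, symbols, pseudocount=1.0):
    """Estimate (pi, A, B) from (observations, state_path) pairs of equal length."""
    start, trans, emit = Counter(), Counter(), Counter()
    for obs, path in labeled:
        start[path[0]] += 1
        for symbol, state in zip(obs, path):
            emit[(state, symbol)] += 1
        for prev, nxt in zip(path, path[1:]):
            trans[(prev, nxt)] += 1

    def normalise(counts, row, columns):
        # Turn one row of counts into a smoothed probability distribution.
        total = sum(counts[(row, c)] for c in columns) + pseudocount * len(columns)
        return {c: (counts[(row, c)] + pseudocount) / total for c in columns}

    n_start = sum(start.values()) + pseudocount * len(states)
    pi = {s: (start[s] + pseudocount) / n_start for s in states}
    A = {s: normalise(trans, s, states) for s in states}
    B = {s: normalise(emit, s, symbols) for s in states}
    return pi, A, B
```

Setting `pseudocount=0` gives the raw frequency estimates, which is only safe when every state has at least one observed transition and emission in the training data.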

The following diagram illustrates the logical workflow and data flow between these core HMM algorithms.

Implementation in GeneMark and Comparative Tool Analysis

The GeneMark Suite: From Prokaryotes to Eukaryotes

The GeneMark family of tools exemplifies the evolution of HMM-based ab initio prediction. Its implementations are tailored to different taxonomic groups and data availability [33]:

- GeneMarkS and GeneMark.hmm: Utilize unsupervised training for prokaryotic genomes. GeneMarkS improved gene start prediction by integrating a model of the RBS through Gibbs sampling multiple alignment [34].

- GeneMark-ES: An extension for eukaryotic genomes that employs unsupervised training without requiring predetermined training sets. Version 2 enhanced its intron submodel to accommodate variations in splicing mechanisms across fungal phyla like Ascomycota, Basidiomycota, and Zygomycota [36].

- GeneMark-ET, EP, ETP: Integrate external evidence such as RNA-seq reads (ET), cross-species protein sequences (EP), or both (ETP) into the self-training framework of GeneMark-ES [33].

Performance Comparison of Modern Ab Initio Tools

The following table summarizes a quantitative performance comparison of contemporary ab initio tools as reported in recent evaluations.

Table 1: Performance Comparison of Ab Initio Gene Prediction Tools

| Tool | Core Methodology | Training Requirement | Key Phylogenetic Strength | Reported Performance (F1 Score) |
|---|---|---|---|---|
| Helixer [37] | Deep Learning (CNN + RNN) + HMM post-processing | Pretrained models; no species-specific training | Plants, Vertebrates | Phase F1 notably higher than GeneMark-ES/AUGUSTUS in plants/vertebrates |
| GeneMark-ES [37] [36] | Hidden Markov Model | Unsupervised (self-training) | Fungi, Invertebrates | Competitive with Helixer in fungi; strong in some invertebrates |
| AUGUSTUS [37] | Hidden Markov Model | Supervised or unsupervised | General eukaryotes | Performance varies; can be outperformed by Helixer, especially with softmasking |
| Tiberius [37] | Deep Neural Network | Mammal-specific training | Mammalia | Outperforms Helixer in mammals (e.g., ~20% higher gene precision/recall) |

Helixer, a recently developed tool, represents a significant shift by using a combination of convolutional and recurrent neural networks for base-wise classification of genic features (e.g., coding regions, UTRs), followed by an HMM-based tool (HelixerPost) to assemble final gene models [37]. While its pretrained models achieve state-of-the-art performance in plants and vertebrates, traditional HMM tools like GeneMark-ES and AUGUSTUS remain highly competitive, and in some cases superior, for specific clades like fungi [37].

Experimental Protocol: Gene Prediction in Novel Fungal Genomes

This section provides a detailed methodology for annotating a newly sequenced fungal genome using the ab initio algorithm GeneMark-ES, based on its application as described in Ter-Hovhannisyan et al. (2008) [36].

Input Requirements and Preparation

- Genomic Sequence Data: The anonymous genomic DNA sequence of the target fungal genome in FASTA format. The genome assembly should be as contiguous as possible to maximize prediction accuracy.

- Computational Resources: A standard high-performance computing (HPC) cluster or server with sufficient memory (RAM) to hold the entire genome and model parameters in memory during computation.

- Software: GeneMark-ES software, available for download from the Georgia Institute of Technology website [33].

Step-by-Step Procedure

Software Installation and Setup:

- Download the GeneMark-ES distribution package.

- Compile the source code according to the provided instructions, ensuring all library dependencies are met.

- Add the compiled binaries to the system `PATH`.

Algorithm Execution:

- Run the GeneMark-ES algorithm using the basic command structure:

- The `--ES` flag triggers the unsupervised self-training mode specific to eukaryotes.

Iterative Unsupervised Training (Internal Process):

- The algorithm initiates a multi-pass bootstrapping process. It begins by identifying regions with strong coding potential using a simplified model.

- These initial predictions are used to estimate parameters for the initial HMM, including models for exons, introns, and intergenic regions.

- The model is refined iteratively. The intron submodel is enhanced progressively to its full complexity, accommodating fungal-specific splice mechanisms (e.g., with and without branch point sites) [36].

- The process converges when parameter estimates stabilize between iterations.

Genome Parsing and Prediction:

- The final, trained HMM is used to parse the entire genome sequence via the Viterbi algorithm [35].

- This step identifies the most probable path through the hidden states (e.g., intergenic, exon, intron), thereby defining the coordinates and structures of putative genes.

Output Generation:

- The primary output is a Gene Transfer Format (GTF) or General Feature Format (GFF) file containing the coordinates of all predicted genes, exons, introns, and other relevant features.

- The tool also typically generates a file with the predicted protein sequences in FASTA format.
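A quick sanity check on the annotation output is to total the coding length per gene. The sketch below assumes the file follows the usual nine-column, tab-separated GTF layout with a `gene_id "..."` attribute on each CDS record; the exact attribute strings written by GeneMark-ES are not given here, so treat the field names and the example records as assumptions.

```python
import re
from collections import defaultdict

_GENE_ID = re.compile(r'gene_id "([^"]+)"')


def cds_length_per_gene(lines):
    """Sum CDS lengths (in bp) per gene_id from GTF-formatted lines."""
    totals = defaultdict(int)
    for line in lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and comments
        cols = line.rstrip("\n").split("\t")
        if len(cols) < 9 or cols[2] != "CDS":
            continue
        match = _GENE_ID.search(cols[8])
        if match is None:
            continue
        start, end = int(cols[3]), int(cols[4])
        totals[match.group(1)] += end - start + 1  # GTF coordinates are 1-based, inclusive
    return dict(totals)


def flag_non_triplet(totals):
    """Return gene IDs whose total CDS length is not a multiple of three."""
    return sorted(gene for gene, length in totals.items() if length % 3 != 0)
```

Genes flagged by `flag_non_triplet` often point to partial models at contig ends or to parsing problems, and are worth inspecting before downstream functional annotation.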

Output Analysis and Validation

- Structural Validation: Compare the predicted gene structures against any available expressed sequence tags (ESTs) or RNA-seq data from public databases to validate splice sites and intron-exon boundaries.

- Functional Annotation: Perform BLASTP searches of the predicted protein sequences against non-redundant (Nr) and fungal-specific protein databases (e.g., UniProt, FungiDB) to assign putative functions.

- Benchmarking: Assess the completeness of the predicted proteome using tools like Benchmarking Universal Single-Copy Orthologs (BUSCO) to determine the fraction of conserved fungal genes that were successfully identified [37].

The following workflow diagram maps the key stages of this protocol.

The following table catalogues key computational tools and data resources essential for conducting ab initio gene prediction and subsequent validation.

Table 2: Essential Reagents and Resources for Ab Initio Gene Prediction Research

| Item Name | Type | Function / Application | Example / Source |
|---|---|---|---|
| Ab Initio Prediction Software | Software Tool | Core engine for predicting gene models from sequence alone. | GeneMark-ES [33] [36], Helixer [37], AUGUSTUS [37] |
| Reference Genome Sequence | Data | The assembled genomic DNA sequence to be annotated. | Target organism's FASTA file. |
| High-Performance Computing (HPC) Cluster | Infrastructure | Provides the computational power required for training models and parsing large genomes. | Local university cluster, cloud computing (AWS, Google Cloud). |
| BUSCO Dataset | Data / Software | Benchmarks annotation completeness by searching for universal single-copy orthologs. | BUSCO software with lineage-specific datasets (e.g., fungi_odb10) [37]. |
| Sequence Homology Databases | Database | Provides independent evidence for validating the predicted protein sequences. | UniProt, Nr, FungiDB. |
| Curated Model Parameters | Data | Pre-computed HMM parameters for well-studied species, which can also be used for related organisms. | Species-specific parameters available on GeneMark.hmm website [38]. |

Ab initio gene prediction, powered by robust statistical models like HMMs, remains an indispensable component of modern genomics. While established tools such as the GeneMark suite continue to offer reliable, unsupervised annotation across diverse taxa, the field is being advanced by new deep learning approaches like Helixer, which show exceptional performance in specific phylogenetic groups. The accuracy of these tools directly impacts downstream research, from functional gene characterization in academic labs to target identification in drug discovery pipelines. As genomic sequencing continues to outpace functional characterization, the refinement of these computational methods will be paramount for unlocking the biological insights encoded within microbial DNA.

In microbial genomics, accurately identifying homologous sequences (genes sharing a common evolutionary ancestor) is a fundamental task. Homology can be divided into orthology, which arises from speciation events, and paralogy, which results from gene duplication events [39]. For researchers focused on open reading frame (ORF) prediction in microbes, distinguishing between the two is critical, because orthologs typically retain the same biological function across species, while paralogs may evolve new functions [39]. This distinction matters for functional annotation, comparative genomics, and phylogenetic studies. The core challenge is that orthologs and paralogs often differ only slightly in sequence, so analyses are prone to misidentifying paralogs as orthologs [39]. This technical guide outlines methods using BLAST and custom databases to improve ortholog identification, framed within the context of microbial ORF research.

The BLAST Toolsuite: Programs and Applications

The Basic Local Alignment Search Tool (BLAST) suite is the cornerstone of modern homology search. Selecting the appropriate BLAST program is the first critical step in any analysis pipeline [40].

Table 1: Core BLAST Programs for Nucleotide and Protein Analysis

| Program | Query Type | Database Type | Primary Use Case | Key Consideration |
|---|---|---|---|---|
| BLASTN [40] | Nucleotide | Nucleotide | Compare a DNA sequence against a nucleotide database (e.g., to find similar genomic regions). | Default database is the "nucleotide collection (nt/nr)". Less sensitive for distant relationships due to degeneracy of the genetic code. |
| BLASTP [40] | Protein | Protein | Compare a protein sequence against a protein database (e.g., to infer function). | Often coupled with motif searches for detecting weaker sequence similarity. |
| BLASTX [40] | Nucleotide (translated) | Protein | Analyze a nucleotide sequence by translating it in all six reading frames and comparing the products to a protein database. | Ideal for confirming protein-coding potential of a novel DNA sequence, such as a predicted ORF. |
| TBLASTN [40] | Protein | Nucleotide (translated) | Search a translated nucleotide database using a protein query. | Useful for finding homologous genes in unfinished genomes or environmental sequences. |
| TBLASTX [40] | Nucleotide (translated) | Nucleotide (translated) | Compare a translated nucleotide query against a translated nucleotide database. | Computationally intensive; used for deep analysis of nucleotide sequences where protein homology is low. |
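
The six-frame translation that BLASTX and TBLASTX rely on can be sketched in a few lines. This is a minimal illustration using the standard genetic code, not the BLAST implementation; the function names are chosen for this example, and codons containing ambiguous bases are simply rendered as "X".

```python
from itertools import product

# Standard genetic code, with codons enumerated in T, C, A, G order.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(codon): aa
               for codon, aa in zip(product(BASES, repeat=3), AMINO)}

def reverse_complement(seq):
    """Return the reverse complement of an uppercase DNA sequence."""
    return seq.translate(str.maketrans("ACGT", "TGCA"))[::-1]

def translate(seq):
    """Translate complete codons only; unknown codons become 'X'."""
    return "".join(CODON_TABLE.get(seq[i:i + 3], "X")
                   for i in range(0, len(seq) - 2, 3))

def six_frame_translations(seq):
    """Map frame labels (+1..+3, -1..-3) to their translated protein strings."""
    seq = seq.upper()
    frames = {}
    for strand, template in (("+", seq), ("-", reverse_complement(seq))):
        for offset in range(3):
            frames[f"{strand}{offset + 1}"] = translate(template[offset:])
    return frames

frames = six_frame_translations("ATGGCCTAA")
for label, protein in frames.items():
    print(label, protein)
```

Stop codons appear as `*`, so a frame with a long stretch free of `*` is a candidate ORF in that frame.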

For more complex analyses, advanced iterative BLAST methods are available. PSI-BLAST (Position-Specific Iterative BLAST) creates a position-specific scoring matrix (PSSM) from the initial search results and uses it for subsequent searches, dramatically improving sensitivity for detecting remote homologs [40]. DELTA-BLAST (Domain Enhanced Lookup Time Accelerated BLAST) further improves performance by using a database of pre-constructed PSSMs [40].

Orthology Detection: From Basic Hits to Sophisticated Inference

While BLAST is a powerful tool for finding homologs, additional layers of analysis are required to infer orthology with high confidence. Simple methods like Reciprocal Best Hit (RBH), where two genes from two different species are each other's best BLAST hit, are a starting point but can be error-prone, particularly in the presence of paralogs [39].
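
A minimal sketch of the RBH heuristic follows, assuming that hits have already been reduced to (query, subject, bit score) tuples for each search direction. The gene identifiers and scores are invented for illustration. The example also shows the limitation noted above: RBH reports only one partner per gene, so a paralog with a slightly lower score is silently dropped, and if the true ortholog were missing the paralog could be reported in its place.

```python
def best_hits(hits):
    """Map each query to its highest-scoring subject."""
    best = {}
    for query, subject, bitscore in hits:
        if query not in best or bitscore > best[query][1]:
            best[query] = (subject, bitscore)
    return {query: subject for query, (subject, _score) in best.items()}

def reciprocal_best_hits(a_vs_b, b_vs_a):
    """Return sorted (gene_a, gene_b) pairs that are each other's best hit."""
    best_ab = best_hits(a_vs_b)
    best_ba = best_hits(b_vs_a)
    return sorted((a, b) for a, b in best_ab.items() if best_ba.get(b) == a)

# Hypothetical hits: a1 and a2 are paralogs in species A that both match b1.
a_vs_b = [("a1", "b1", 410.0), ("a1", "b2", 95.0),
          ("a2", "b1", 380.0), ("a3", "b3", 250.0)]
b_vs_a = [("b1", "a1", 405.0), ("b1", "a2", 378.0),
          ("b2", "a1", 90.0), ("b3", "a3", 255.0)]

pairs = reciprocal_best_hits(a_vs_b, b_vs_a)
print(pairs)
```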

More robust, phylogeny-based methods have been developed to address these shortcomings. These methods use evolutionary relationships to distinguish orthologs from paralogs but are computationally demanding and can be affected by uncertainties in phylogenetic tree reconstruction [39]. The Mestortho algorithm represents a novel evolutionary distance-based approach that operates on the principle of minimum evolution [39]. It postulates that a set of sequences consisting purely of orthologs will have a smaller sum of branch lengths (the Minimum Evolution Score, or MES) on a phylogenetic tree than a set that includes paralogous relationships [39]. The algorithm computationally evaluates possible sequence sets to find the one with the smallest MES, which is then identified as the orthologous cluster.

Table 2: Comparison of Orthology Detection Methods

| Method | Underlying Principle | Key Advantage | Key Limitation |
|---|---|---|---|
| Reciprocal Best Hit (RBH) [39] | BLAST-based heuristic (reciprocity) | Simple and fast to compute. | High error rate in the presence of paralogs; ignores evolutionary distance. |
| Reciprocal Smallest Distance (RSD) [39] | Evolutionary distance (maximum likelihood) | Uses a more robust evolutionary distance than BLAST E-values. | Still susceptible to falsely detecting homoplasious paralogs as orthologs. |
| Orthostrapper [39] | Phylogeny and bootstrap resampling | Uses bootstrap values to assess confidence, overcoming some tree topology issues. | Computationally intensive and can be slow for large datasets. |
| Mestortho [39] | Evolutionary distance and minimum evolution | Appears free from problems of incorrect topologies of species and gene trees; good balance of sensitivity and specificity. | Requires a multiple sequence alignment as input. |

Specialized databases and resources are essential for orthology analysis. Clusters of Orthologous Groups (COGs) provide a phylogenetic classification of proteins from completed microbial genomes, where each COG consists of orthologs from at least three lineages [40]. The eggNOG database provides automated construction of Non-supervised Orthologous Groups (NOGs) together with functional annotation [40]. The KEGG Automatic Annotation Server (KAAS) assigns KEGG Orthology (KO) identifiers to genes via BLAST comparisons, enabling pathway mapping [40].

Experimental Protocols and Workflows

Protocol 1: Basic BLAST Workflow for Homology Search

This protocol describes the standard procedure for conducting a BLAST search to identify homologous sequences, a prerequisite for more specialized ortholog detection.

- Sequence Preparation: Obtain the query sequence (nucleotide or protein) in FASTA format. For nucleotide sequences encoding proteins, BLASTX is often the most informative program.

- Program and Database Selection: Select the appropriate BLAST program based on your query and goal (see Table 1). Choose a relevant database (e.g., "Non-redundant protein sequences (nr)" for a comprehensive search or a specific genomic database for a targeted search). The EMBL-EBI BLAST server allows searching against specific data like prokaryote or bacteriophage sequences [40].

- Parameter Configuration: Adjust algorithm parameters as needed. Critical parameters include:

- Result Interpretation: Analyze the output, focusing on significant hits with low E-values, high percent identity, and high query coverage. Use built-in visualizations like pairwise alignment graphs and summary statistics to aid interpretation [41].
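
The result-interpretation step can be partly automated. The sketch below filters BLAST tabular output (the default 12-column `-outfmt 6` layout) by E-value, percent identity, and query coverage. The threshold values, the function name `filter_hits`, and the example rows are assumptions for illustration only; suitable cutoffs depend on the organism and the question. Because query coverage is not one of the default columns, it is derived here from the aligned query coordinates and a separately supplied table of query lengths.

```python
def filter_hits(lines, query_lengths, max_evalue=1e-5,
                min_identity=30.0, min_coverage=0.7):
    """Keep hits that pass E-value, percent-identity, and query-coverage cutoffs."""
    kept = []
    for line in lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 12:
            continue  # not a default outfmt 6 row
        # Default columns: qseqid sseqid pident length mismatch gapopen
        #                  qstart qend sstart send evalue bitscore
        qseqid, sseqid = fields[0], fields[1]
        pident = float(fields[2])
        qstart, qend = int(fields[6]), int(fields[7])
        evalue = float(fields[10])
        coverage = (abs(qend - qstart) + 1) / query_lengths[qseqid]
        if (evalue <= max_evalue and pident >= min_identity
                and coverage >= min_coverage):
            kept.append((qseqid, sseqid, pident, round(coverage, 3), evalue))
    return kept

# Hypothetical rows and query lengths, built only to exercise the filter.
rows = [
    "\t".join(["orf1", "protA", "85.0", "200", "30", "0", "1", "200", "1", "200", "1e-100", "400"]),
    "\t".join(["orf1", "protB", "25.0", "200", "150", "0", "1", "200", "1", "200", "1e-8", "60"]),
    "\t".join(["orf2", "protC", "60.0", "50", "20", "0", "1", "50", "10", "59", "1e-10", "80"]),
]
lengths = {"orf1": 210, "orf2": 300}

passing = filter_hits(rows, lengths)
print(passing)
```

In this example only the first row survives: the second fails the identity cutoff and the third covers too little of its query.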

Protocol 2: Ortholog Identification Using the Mestortho Algorithm

This protocol details the steps for using the Mestortho program to extract orthologs from a set of homologous sequences [39].

- Input Alignment Preparation: Generate a multiple sequence alignment of homologous sequences in ClustalW, FASTA, or Phylip format. The sequence identifiers must include species information.

- Reference Sequence Designation: Select a reference sequence to define the orthologous cluster of interest.

- Program Execution: Run Mestortho on the prepared alignment. The program will:

  - Classify sequences into those with single and multiple occurrences per species.

  - Generate exhaustive combinatorial sets from sequences with multiple occurrences per species.

  - Reconstruct a Neighbor-Joining (NJ) tree for each merged dataset and calculate its Minimum Evolution Score (MES).

  - Select the dataset with the smallest MES as the orthologous cluster.

- Output Analysis: Mestortho provides the list of orthologous sequences, the MES, co-orthology relationships, and the NJ tree of the orthologs [39].
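
The enumerate-and-select logic of the program execution step can be sketched as follows. This is not the Mestortho implementation: to keep the example short, the score is a placeholder (the sum of pairwise distances within a candidate set) standing in for the MES, which Mestortho computes as the sum of branch lengths on an NJ tree. The sequence names, species grouping, and distances are invented for illustration.

```python
from itertools import combinations, product

def candidate_sets(seqs_by_species, reference):
    """Yield every one-sequence-per-species set that contains the reference."""
    choices = []
    for _species, seqs in sorted(seqs_by_species.items()):
        # The reference fixes the choice for its own species.
        choices.append([reference] if reference in seqs else sorted(seqs))
    yield from product(*choices)

def placeholder_score(seq_set, dist):
    """Stand-in for the MES: sum of pairwise distances (NOT NJ branch lengths)."""
    return sum(dist[frozenset(pair)] for pair in combinations(seq_set, 2))

def pick_min_score_set(seqs_by_species, reference, dist):
    """Select the candidate set with the smallest placeholder score."""
    return min(candidate_sets(seqs_by_species, reference),
               key=lambda seq_set: placeholder_score(seq_set, dist))

# Hypothetical input: species Y carries two homologs (y1, y2).
seqs_by_species = {"X": ["x1"], "Y": ["y1", "y2"], "Z": ["z1"]}
dist = {
    frozenset(("x1", "y1")): 0.10, frozenset(("x1", "y2")): 0.50,
    frozenset(("x1", "z1")): 0.20, frozenset(("y1", "z1")): 0.15,
    frozenset(("y2", "z1")): 0.60, frozenset(("y1", "y2")): 0.55,
}

best = pick_min_score_set(seqs_by_species, "x1", dist)
print(best)
```

The number of candidate sets grows as the product of the per-species copy numbers, which is why exhaustive enumeration becomes expensive for large gene families.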

Workflow Visualization: From ORF Prediction to Ortholog Identification

The following diagram illustrates the integrated workflow for predicting open reading frames in a microbial genome and subsequently identifying their orthologs via homology searching.

Implementation and Customization

Leveraging Custom Databases and Local Implementations

While web-based BLAST services are convenient, using custom databases offers significant advantages for specialized research. Local BLAST implementations allow researchers to create databases from proprietary or specific sets of genomes, enabling faster, confidential searches tailored to their projects [41]. Tools like SequenceServer provide a user-friendly interface for setting up local BLAST servers, facilitating the sharing of custom databases and analyses within a team [41]. This is particularly useful for ongoing microbial genomics projects where internal sequence data is continuously generated.
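
A minimal sketch of a local search with the NCBI BLAST+ command-line tools is shown below, wrapped in Python so the commands can be assembled and inspected before anything is run. The file names (`my_genomes.faa`, `predicted_orfs.faa`, `hits.tsv`), the database name, and the E-value cutoff are hypothetical placeholders. The commands are executed only if the BLAST+ binaries are found on the PATH.

```python
import shutil
import subprocess

def makeblastdb_cmd(fasta, db_name, dbtype="prot"):
    """Build the argument list that formats a FASTA file as a BLAST database."""
    return ["makeblastdb", "-in", fasta, "-dbtype", dbtype, "-out", db_name]

def blastp_cmd(query, db_name, out_path, evalue=1e-5):
    """Build a protein-vs-protein search that writes tabular (outfmt 6) results."""
    return ["blastp", "-query", query, "-db", db_name,
            "-evalue", str(evalue), "-outfmt", "6", "-out", out_path]

def run_if_available(cmd):
    """Run the command only when its executable is installed; report whether it ran."""
    if shutil.which(cmd[0]) is None:
        return False
    subprocess.run(cmd, check=True)
    return True

# Hypothetical file and database names.
build = makeblastdb_cmd("my_genomes.faa", "my_genomes_db")
search = blastp_cmd("predicted_orfs.faa", "my_genomes_db", "hits.tsv")
print(" ".join(build))
print(" ".join(search))
```

Passing the argument list directly to `subprocess.run` (rather than a shell string) avoids quoting problems when file paths contain spaces.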

For orthology analysis, resources like the Actinobacteriophage Database allow for direct BLAST analyses against a curated set of phages infecting Actinobacterial hosts [40]. Similarly, the Database of Bacterial ExoToxins (DBETH) provides a specialized database for homology searches related to bacterial exotoxins [40].

Table 3: Key Bioinformatics Resources for Homology Search and Orthology Detection

| Resource Name | Type/Function | Brief Description and Utility |
|---|---|---|
| NCBI BLAST Suite [40] | Core Search Engine | The standard toolkit for basic local alignment search against public repositories. Essential for initial homology assessment. |
| ORFfinder [42] | ORF Prediction Tool | Identifies open reading frames in DNA sequences. The first step in characterizing the protein-coding potential of a microbial genome. |
| Mestortho [39] | Orthology Detection Software | A specialized program that uses the minimum evolution principle to identify orthologs from a set of homologs with high reliability. |
| SequenceServer [41] | Custom BLAST Server | Software to set up and run a local BLAST server with custom databases, enabling secure, fast, and collaborative analysis. |
| COG/eggNOG [40] | Orthologous Group Databases | Pre-computed clusters of orthologs. Used for functional annotation and evolutionary classification of novel protein sequences. |
| HHpred [40] | Remote Homology Detection | A sensitive method for database searching and structure prediction based on Hidden Markov Model (HMM) comparison, useful for detecting very distant relationships. |
| SmORFinder [43] | Specialized ORF Annotation | A tool combining profile HMMs and deep learning to identify and annotate small open reading frames (smORFs) in microbial genomes. |

Visualization and Advanced Analysis

Advanced visualization tools can greatly enhance the interpretation of homology and orthology data. Kablammo is a web-based tool that creates interactive, publication-ready visualizations of BLAST results, making it easy to identify interesting alignments [40]. For synteny analysis—the study of conserved gene order—GeCoViz provides fast and interactive visualization of custom genomic regions, which can be anchored by a target gene found via BLAST [40]. This is crucial for confirming orthology, as true orthologs often reside in conserved genomic contexts.