Optimizing Cloud-Based STAR RNA-seq Workflows: A Comprehensive Guide for Scalable and Cost-Effective Transcriptomics

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing and optimizing the STAR aligner for RNA-seq analysis in cloud environments. Covering foundational concepts through advanced optimization strategies, it details how cloud-native architectures enable processing of terabyte-scale datasets efficiently. The content explores cost-performance trade-offs, practical troubleshooting for common pipeline challenges, and validation methodologies to ensure biological accuracy. By synthesizing recent performance analyses and optimization techniques, this guide serves as an essential resource for teams seeking to leverage cloud computing for high-throughput transcriptomics while maintaining analytical rigor and reproducibility.

Cloud Computing and STAR Aligner: Foundations for Modern Transcriptomics

The field of genomics is experiencing an unprecedented data explosion. The integration of cutting-edge sequencing technologies, artificial intelligence, and multi-omics approaches has reshaped biological research and opened new insights into human biology and disease [1]. Next-Generation Sequencing (NGS) technologies, particularly RNA sequencing (RNA-seq), have democratized genomic research by making large-scale sequencing faster, cheaper, and more accessible than ever before [1]. However, this progress has created a major computational challenge: sequencing a single human genome generates approximately 150 gigabytes of data, while large-scale studies can require petabytes of storage capacity [2]. Traditional computing methods, which rely on local servers and personal computers, struggle with datasets of this size, creating bottlenecks that slow scientific discovery [2]. Cloud computing has emerged as an essential solution to this data deluge, providing researchers with on-demand access to scalable, high-performance computing resources without the need for massive infrastructure investments [3] [2]. This shift is particularly important for modern RNA-seq analysis, where tools like the STAR aligner require substantial computational resources to process the volumes of data generated by transcriptomic studies [4] [5]. By leveraging cloud infrastructure, researchers can perform analyses in a fraction of the time required by traditional methods, enabling rapid insights into gene expression, alternative splicing, and transcriptional regulation that were previously impractical [4].

The Computational Challenge of RNA-seq Data

Scale of the Data Problem

The data generation capabilities of modern sequencing technologies have far outpaced traditional computational capacity. While the initial Human Genome Project took 13 years to complete, today's cloud-based platforms can process whole-genome sequencing in hours [2]. This acceleration comes with massive data storage and processing requirements that challenge institutional computing resources. RNA-seq experiments, especially those utilizing single-cell or spatial transcriptomics approaches, regularly generate terabytes of data that require specialized, scalable infrastructure for efficient processing [1] [6].

The fundamental challenge lies not only in the volume of data but also in its complexity. RNA-seq analysis involves multiple computationally intensive steps, including quality control, alignment, quantification, and differential expression analysis [4] [7]. Each step demands significant memory, processing power, and storage capacity, often requiring different computational optimizations. For example, the STAR aligner, one of the most widely used tools for RNA-seq alignment, requires substantial memory for genome indexing - often needing 48 GB or more for human genomes [4]. This memory requirement alone exceeds the capacity of most personal computers and many institutional servers, particularly when multiple analyses need to be run concurrently.

Limitations of Traditional Computing Infrastructure

Traditional computing infrastructure struggles with RNA-seq data on multiple fronts. Local servers and workstations typically have fixed capacity, creating bottlenecks during peak usage periods and remaining underutilized during slower periods [2]. The procurement process for additional hardware can take weeks or months, significantly delaying research progress [2]. Furthermore, maintaining and upgrading physical infrastructure requires substantial financial investment and specialized IT expertise, diverting resources from core research activities [3].

The pay-as-you-go model of cloud computing eliminates these constraints by providing instant access to virtually unlimited computational resources [3] [2]. Researchers can scale their computing power based on immediate project needs, accessing supercomputer-level resources when required without long-term commitment or infrastructure management [2]. This flexibility is particularly valuable for RNA-seq studies with variable data volumes or processing requirements across different stages of analysis.

Table 1: Comparative Analysis of Computing Approaches for RNA-seq Analysis

| Computing Aspect | Traditional Computing | Cloud Computing |
|---|---|---|
| Resource Scalability | Fixed capacity; requires hardware procurement for expansion | Dynamic, on-demand scaling based on workload requirements |
| Cost Structure | High upfront capital expenditure for hardware | Pay-as-you-go model with no upfront costs |
| Accessibility | Limited to physical location or institutional network | Global access via internet connection |
| Maintenance Responsibility | User/institution responsible for hardware and software maintenance | Cloud provider manages infrastructure maintenance and updates |
| Typical Setup Time | Weeks to months for new hardware | Minutes to hours for provisioning new resources |
| Suitability for Large RNA-seq Studies | Limited by local storage and processing constraints | Virtually unlimited capacity for large-scale studies |

Cloud-Native RNA-seq Workflow Architecture

Core Components of Cloud-Based RNA-seq Analysis

Implementing an effective cloud-based RNA-seq analysis pipeline requires several interconnected components that leverage the unique advantages of cloud infrastructure. The core architecture typically includes: (1) scalable object storage for raw sequencing data and processed files, (2) on-demand virtual machines with appropriate CPU and memory configurations, (3) containerization technologies for reproducible tool deployment, (4) workflow management systems for pipeline orchestration, and (5) data analysis platforms for visualization and interpretation [4] [7].

Cloud storage solutions such as Amazon S3 and Google Cloud Storage provide durable, scalable repositories for massive RNA-seq datasets, with automatic replication and robust access controls [3]. These storage systems seamlessly integrate with cloud computing resources, enabling efficient data transfer during processing. The computational heavy lifting occurs on virtual machines specifically configured for bioinformatics workloads, with instance types optimized for memory-intensive operations like genome alignment or CPU-intensive tasks like quality control [4] [5].

Containerization technologies, particularly Docker, play a crucial role in ensuring reproducibility and simplifying dependency management [4] [7]. By packaging analysis tools and their dependencies into standardized containers, researchers can create portable, version-controlled analysis environments that produce consistent results across different cloud platforms and computing environments. This approach eliminates the "it works on my machine" problem that frequently plagues bioinformatics analyses.

Workflow Management Systems

Workflow management systems like Nextflow provide the orchestration layer that coordinates the various steps of RNA-seq analysis across cloud resources [7]. These systems enable researchers to define complex, multi-step pipelines in a reproducible manner, with automatic handling of job scheduling, failure recovery, and resource management. Nextflow's integration with Google Batch, for example, allows seamless execution of RNA-seq pipelines on cloud infrastructure without manual intervention [7].

Platforms like Nextflow Tower further simplify workflow execution by providing a web-based interface for monitoring pipeline progress, managing computational environments, and tracking analysis metrics [7]. This abstraction layer makes sophisticated cloud-based RNA-seq analysis accessible to researchers without extensive computational expertise, democratizing access to high-performance bioinformatics capabilities.

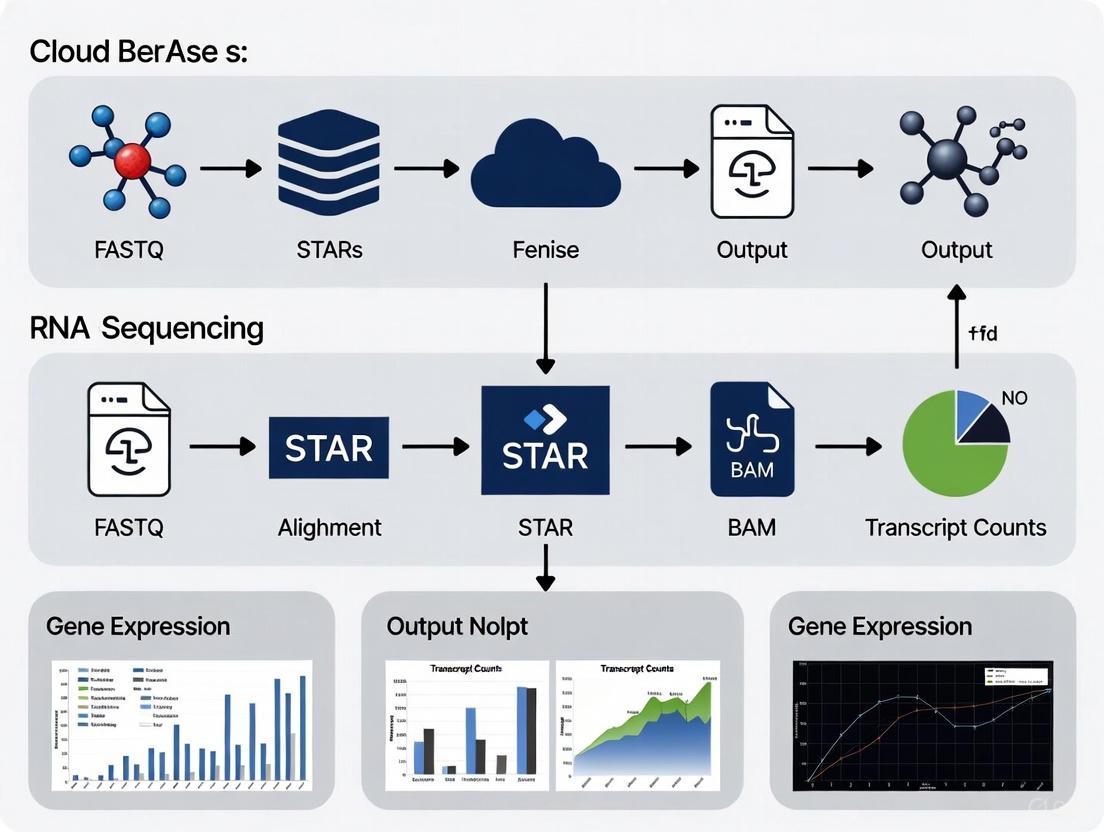

Cloud-Based RNA-seq Analysis Workflow: This diagram illustrates the core steps in a cloud-native RNA-seq analysis pipeline, from raw data processing in cloud storage through alignment, quantification, and differential expression analysis performed within scalable cloud computing resources.

STAR Aligner Implementation: Protocols and Optimization

Genome Index Generation

The first critical step in STAR-based RNA-seq analysis is generating a genome index, which significantly impacts alignment speed and accuracy. This process requires both the reference genome sequence in FASTA format and gene annotation in GTF or GFF format [4] [7]. For human studies, the GRCh38 reference genome and corresponding GENCODE annotations (version 36 or later) represent current best practices [4] [8].

The genome index generation should be performed on a cloud instance with sufficient memory - typically a machine with 48 GB RAM or more for human genomes [4]. The following protocol outlines the key steps:

- Data Preparation: Download reference genome and annotation files from authoritative sources such as GENCODE and store them in cloud storage for reproducible access [4] [7].

- Containerized Execution: Use Docker containers to ensure version control and reproducibility of the indexing process. A specialized container with STAR pre-installed can be deployed on cloud instances [4].

- Index Command Execution: Run STAR in genomeGenerate mode with appropriate parameters. A typical invocation uses 8 CPU cores (--runThreadN 8) and sets the overhang value (--sjdbOverhang 100) to the read length minus 1, which corresponds to 101-base reads [4].

- Storage of Index: Once generated, the genome index should be stored in cloud storage for reuse across multiple alignment jobs, avoiding redundant computation [4] [7].
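The index generation step above can be sketched as a small Python helper that assembles the STAR argument list. This is a minimal illustration, not the command used in the cited protocol: the function name, the output directory, and the reference file names are hypothetical placeholders, while the STAR options themselves (--runMode genomeGenerate, --runThreadN, --genomeDir, --genomeFastaFiles, --sjdbGTFfile, --sjdbOverhang) are standard STAR parameters.

```python
import shlex
from typing import List


def build_star_index_cmd(genome_dir: str, fasta: str, gtf: str,
                         read_length: int = 101, threads: int = 8) -> List[str]:
    """Return the argument list for a STAR genomeGenerate run.

    The overhang is set to read_length - 1, as described in the protocol.
    """
    if read_length < 2:
        raise ValueError("read_length must be at least 2")
    return [
        "STAR",
        "--runMode", "genomeGenerate",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", fasta,
        "--sjdbGTFfile", gtf,
        "--sjdbOverhang", str(read_length - 1),
    ]


if __name__ == "__main__":
    cmd = build_star_index_cmd(
        genome_dir="star_index",                    # hypothetical output directory
        fasta="GRCh38.primary_assembly.genome.fa",  # hypothetical local file name
        gtf="gencode.v36.annotation.gtf",           # hypothetical local file name
    )
    # Print a copy-pasteable shell command; nothing is executed here.
    print(shlex.join(cmd))
```

Building the command as a list rather than a single string keeps paths with spaces safe when the list is later passed to a process launcher or a container entry point.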

RNA-seq Alignment and Quantification

After genome index generation, the actual alignment of RNA-seq data can proceed. This process maps the sequencing reads in FASTQ format to the reference genome and generates alignment files (BAM) along with gene-level counts [4] [7]. A cloud-optimized approach enables parallel processing of multiple samples, dramatically reducing overall processing time.

The alignment protocol involves:

- Sample Processing Configuration: Create a tab-separated value (TSV) file specifying the input FASTQ files and output paths for each sample. This enables parallel processing of multiple samples through array jobs [4].

- Alignment Execution: For each sample, run the STAR alignment command. A typical invocation uses 4 CPU cores (--runThreadN 4) and decompresses gzipped FASTQ files on the fly (--readFilesCommand zcat) [4].

- Quantification: Following alignment, tools like RSEM (RNA-Seq by Expectation Maximization) can be used to estimate gene and isoform abundances [7]. Integrating this step into the cloud pipeline allows the transition from alignment to quantification without data transfer bottlenecks.

- Results Loading to BigQuery: For large-scale studies, results can be loaded directly into cloud-based data warehouses like Google BigQuery for efficient querying and downstream analysis [7]. This approach facilitates collaborative exploration of results across research teams.
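The sample manifest and per-sample alignment steps above can be sketched as follows. This is an illustrative sketch under stated assumptions: the manifest column names (sample_id, fastq_1, fastq_2, output_prefix), the example paths, and the helper names are hypothetical, and the mapping from an array-task index to a manifest row is one plausible way to drive array jobs rather than the cited protocol's exact mechanism.

```python
import csv
import io
import shlex
from typing import Dict, List

# Hypothetical manifest: column names and paths are illustrative only.
EXAMPLE_MANIFEST = (
    "sample_id\tfastq_1\tfastq_2\toutput_prefix\n"
    "S1\tS1_R1.fastq.gz\tS1_R2.fastq.gz\tout/S1_\n"
    "S2\tS2_R1.fastq.gz\tS2_R2.fastq.gz\tout/S2_\n"
)


def read_manifest(tsv_text: str) -> List[Dict[str, str]]:
    """Parse a tab-separated manifest with a header row into one dict per sample."""
    return list(csv.DictReader(io.StringIO(tsv_text), delimiter="\t"))


def build_star_align_cmd(sample: Dict[str, str], genome_dir: str,
                         threads: int = 4) -> List[str]:
    """Return the STAR argument list for one paired-end, gzipped sample."""
    return [
        "STAR",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--readFilesIn", sample["fastq_1"], sample["fastq_2"],
        "--readFilesCommand", "zcat",
        "--outFileNamePrefix", sample["output_prefix"],
    ]


def command_for_task(manifest_text: str, task_index: int,
                     genome_dir: str) -> List[str]:
    """Select the manifest row for a zero-based array-task index."""
    rows = read_manifest(manifest_text)
    return build_star_align_cmd(rows[task_index], genome_dir)


if __name__ == "__main__":
    for index in range(2):
        print(shlex.join(command_for_task(EXAMPLE_MANIFEST, index, "star_index")))
```

Each array task only needs its own index and the shared manifest, so samples can be aligned independently and in parallel.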

Table 2: Computational Requirements for Key RNA-seq Analysis Steps

| Analysis Step | Recommended Cloud Instance Type | Memory Requirements | Approximate Execution Time* | Key Software Tools |
|---|---|---|---|---|
| Genome Indexing | Memory-optimized (e.g., n1-highmem-8) | 48+ GB | 60-90 minutes | STAR, HISAT2 |
| Read Alignment | Compute-optimized (e.g., n1-standard-8) | 32+ GB | 30-60 minutes per sample | STAR, HISAT2 |
| Gene Quantification | Standard instances (e.g., n1-standard-4) | 16+ GB | 15-30 minutes per sample | RSEM, featureCounts |
| Differential Expression | Memory-optimized (e.g., n1-highmem-4) | 32+ GB | Varies by sample size | DESeq2, edgeR, limma |
| Quality Control | Standard instances (e.g., n1-standard-2) | 8+ GB | 10-20 minutes per sample | FastQC, MultiQC |

*Execution times are approximate and depend on sample size, read depth, and instance specifications.

Performance Analysis and Cost Optimization

Computational Efficiency Gains

Cloud-based implementation of STAR RNA-seq workflows delivers substantial performance improvements over traditional computing approaches. Recent research demonstrates that optimized cloud architectures can process tens to hundreds of terabytes of RNA-seq data with significant efficiency gains [5]. One key optimization - early stopping of alignment processes - can reduce total alignment time by approximately 23% without compromising results accuracy [5].

The scalability of cloud environments enables additional performance enhancements through parallel processing. Unlike traditional computing environments where samples are typically processed sequentially due to resource constraints, cloud platforms can align hundreds of samples simultaneously through array jobs [4]. This approach reduces the total analysis time from weeks to hours for large-scale studies, accelerating the research timeline dramatically.

Performance analysis of the STAR aligner in cloud environments has identified optimal instance types for different stages of RNA-seq processing [5]. Memory-optimized instances provide the best price-to-performance ratio for genome indexing and alignment steps, while compute-optimized instances are more efficient for quantification and quality control steps. This specialized allocation of resources further enhances overall workflow efficiency.

Cost Management Strategies

While cloud computing offers unparalleled scalability, cost management remains an important consideration. Several strategies can optimize cloud spending for RNA-seq analyses:

- Spot/Preemptible Instances: Using spot instances (AWS) or preemptible instances (Google Cloud) can reduce compute costs by 60-90% for fault-tolerant workflows [4] [5]. These instances are offered at discounted prices, with the tradeoff that they may be terminated if capacity is needed elsewhere.

- Rightsizing Resources: Selecting appropriately sized instances for each analysis step prevents overprovisioning. Monitoring tools provided by cloud platforms can identify underutilized resources and suggest more cost-effective instance types [3].

- Storage Lifecycle Policies: Automated storage lifecycle policies move data to cheaper storage classes (e.g., Amazon S3 Glacier or the Google Cloud Storage Archive class) when it is no longer frequently accessed, reducing storage costs by up to 70% [3].

- Workflow Optimization: Recent research has demonstrated that algorithm-level optimizations, such as the early stopping technique for STAR alignment, not only improve performance but also directly reduce computational costs by minimizing resource utilization [5].
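The arithmetic behind the spot-instance trade-off above can be made concrete with a short calculation. This is a back-of-the-envelope sketch: the function name, the hourly price, the discount, and the fraction of work repeated after interruptions are all hypothetical inputs chosen for illustration, not measured values from the cited studies.

```python
def estimate_compute_cost(samples: int, hours_per_sample: float,
                          hourly_price: float, discount: float = 0.0,
                          rerun_fraction: float = 0.0) -> float:
    """Estimate total compute cost in the currency of hourly_price.

    discount: fractional price reduction for spot/preemptible capacity
        (0.7 means 70% cheaper than on-demand).
    rerun_fraction: extra fraction of compute hours repeated because
        interrupted jobs must be restarted.
    """
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in [0, 1)")
    if rerun_fraction < 0.0:
        raise ValueError("rerun_fraction must be non-negative")
    total_hours = samples * hours_per_sample * (1.0 + rerun_fraction)
    return total_hours * hourly_price * (1.0 - discount)


if __name__ == "__main__":
    # Hypothetical workload: 100 samples, 45 minutes each, 0.50 per instance-hour.
    on_demand = estimate_compute_cost(100, 0.75, 0.50)
    spot = estimate_compute_cost(100, 0.75, 0.50, discount=0.7, rerun_fraction=0.1)
    print(f"on-demand: {on_demand:.2f}")
    print(f"spot:      {spot:.2f}")
    print(f"net saving: {1.0 - spot / on_demand:.0%}")
```

The point of the rerun term is that the effective saving is smaller than the headline discount whenever interruptions force work to be repeated, which is why checkpointing or short per-sample jobs matter for spot usage.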

Table 3: Cost Optimization Strategies for Cloud-Based RNA-seq Analysis

| Strategy | Implementation Approach | Potential Cost Reduction | Considerations |
|---|---|---|---|
| Spot/Preemptible Instances | Use for fault-tolerant workflow steps | 60-90% for compute costs | Possible job interruption; requires checkpointing |
| Rightsizing Instances | Monitor CPU and memory utilization; select optimal instance types | 20-40% for compute costs | Requires performance testing and monitoring |
| Storage Tiering | Implement lifecycle policies to archive old data | 40-70% for storage costs | Retrieval latency for archived data |
| Container Optimization | Use lightweight base images; multi-stage builds | 10-20% for storage and data transfer | Requires Docker expertise |
| Workflow Optimization | Implement early stopping; efficient algorithm selection | 15-25% for compute costs | Requires validation of result quality |

Essential Research Reagents and Computational Tools

Successful implementation of cloud-based RNA-seq analysis requires both wet-lab reagents and computational resources. The following toolkit encompasses the essential components for end-to-end RNA-seq studies in cloud environments.

Table 4: Research Reagent Solutions for Cloud-Based RNA-seq Analysis

| Category | Specific Tools/Platforms | Function/Purpose |
|---|---|---|
| Cloud Platforms | Google Cloud Platform (GCP), Amazon Web Services (AWS) | Provides scalable infrastructure for storage and computation |
| Workflow Management | Nextflow, dsub, Cromwell | Orchestrates multi-step analysis pipelines across cloud resources |
| Containerization | Docker, Singularity | Packages tools and dependencies for reproducible execution |
| RNA-seq Alignment | STAR, HISAT2 | Aligns RNA sequencing reads to reference genome |
| Quality Control | FastQC, MultiQC, Trim Galore | Assesses data quality; performs adapter trimming and quality filtering |
| Quantification | RSEM, featureCounts, Salmon | Estimates gene and transcript abundance from aligned reads |
| Differential Expression | DESeq2, edgeR, limma | Identifies statistically significant expression changes between conditions |
| Data Storage & Analysis | Google BigQuery, Amazon Athena | Enables scalable querying and analysis of results data |
| Reference Data Sources | GENCODE, NCBI RefSeq, Ensembl | Provides reference genomes and annotation files |
| Public Data Repositories | TCGA, GTEx, GEO | Sources of publicly available RNA-seq data for validation and meta-analysis |

The integration of cloud computing with RNA-seq analysis represents a paradigm shift in how researchers approach transcriptomic studies. The scalable nature of cloud infrastructure directly addresses the computational challenges posed by ever-increasing data volumes, while simultaneously reducing barriers to high-performance bioinformatics [3] [2]. The implementation of STAR-based workflows in cloud environments demonstrates tangible benefits in both performance and cost-efficiency, particularly through optimizations that can reduce alignment time by 23% or more [5].

As RNA-seq technologies continue to evolve toward single-cell and spatial transcriptomics approaches, generating even larger and more complex datasets, the importance of cloud computing will only intensify [1] [6]. The protocols and strategies outlined in this article provide researchers with a framework for leveraging cloud infrastructure to accelerate discovery while maintaining reproducibility and analytical rigor. By adopting these cloud-native approaches, the research community can fully harness the potential of RNA-seq technology to advance our understanding of biology and disease.

The accurate alignment of high-throughput RNA sequencing (RNA-seq) data is a foundational step in genomic analysis, enabling the interpretation of the transcriptome's complex structure. Unlike DNA sequencing, RNA-seq must account for spliced transcripts, where non-contiguous exons are joined together after intron removal. This presents a unique computational challenge: mapping short sequencing reads that may span these splice junctions back to a reference genome. The Spliced Transcripts Alignment to a Reference (STAR) software was developed specifically to address this challenge, outperforming earlier aligners by a factor of more than 50 in mapping speed while simultaneously improving sensitivity and precision [9]. Its ability to rapidly and accurately process vast datasets, such as the large ENCODE Transcriptome RNA-seq dataset comprising over 80 billion reads, has made it an indispensable tool in modern genomics [9]. For researchers implementing cloud-based RNA-seq workflows, STAR's performance characteristics directly influence computational resource requirements, execution time, and ultimately, the cost and feasibility of large-scale transcriptomic studies [10].

STAR's core functionality extends beyond basic alignment to include unbiased de novo detection of canonical and non-canonical splices, as well as chimeric (fusion) transcripts [9] [11]. Furthermore, it is capable of mapping full-length RNA sequences, making it suitable for the emerging long-read sequencing technologies [9]. In cloud-based implementations, where managing computational resources is critical, understanding STAR's underlying algorithm and its configuration is essential for optimizing workflow efficiency. The following sections detail the algorithmic foundations of STAR, its performance advantages, and practical protocols for its implementation in transcriptomic research.

Algorithmic Principles of STAR

The STAR algorithm employs a novel strategy fundamentally different from many earlier RNA-seq aligners, which were often extensions of contiguous DNA short-read mappers. Instead, STAR aligns non-contiguous sequences directly to the reference genome using a method based on sequential maximum mappable seed search followed by a clustering and stitching procedure [9]. This two-phase approach allows it to efficiently identify splice junctions in a single alignment pass without prior knowledge of their locations.

Core Two-Phase Alignment Strategy

STAR's operation can be divided into two main phases: seed searching and clustering/stitching/scoring.

Seed Search with Maximal Mappable Prefix (MMP): The central concept of STAR's first phase is the search for the Maximal Mappable Prefix (MMP). For a given read sequence and starting position, the MMP is the longest substring of the read, beginning at that position, that exactly matches one or more substrings of the reference genome [9]. This search is implemented through uncompressed suffix arrays (SAs), which allow string matching that scales logarithmically with genome size [9]. The process is sequential: after finding the MMP for the beginning of a read, the algorithm repeats the search on the remaining unmapped portion. This progression lets STAR pinpoint splice junction locations precisely without arbitrary read splitting. Uncompressed SAs confer a significant speed advantage at the cost of higher memory usage than aligners that use compressed indexes [9].

Clustering, Stitching, and Scoring: In the second phase, STAR assembles complete read alignments by stitching together all seeds discovered in the first phase. Seeds are first clustered by proximity to selected "anchor" seeds. All seeds mapping within user-definable genomic windows around these anchors are then stitched together using a frugal dynamic programming algorithm. This algorithm allows for any number of mismatches but only one insertion or deletion per seed-pair alignment [9]. A key feature is the concurrent processing of paired-end read mates. STAR treats the mates as a single sequence, which increases alignment sensitivity, as only one correct anchor from one mate is sufficient to accurately align the entire read pair [9].
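The seed search phase described above can be illustrated with a toy Python sketch. It is not STAR's implementation: it builds a naive suffix array over a tiny made-up genome, handles exact matches on one strand only, and omits the clustering, stitching, and scoring phase entirely. All function names and the example sequences are hypothetical.

```python
from typing import List, Tuple


def build_suffix_array(genome: str) -> List[int]:
    """Naive uncompressed suffix array: start positions sorted by suffix text."""
    return sorted(range(len(genome)), key=lambda i: genome[i:])


def _narrow(genome: str, sa: List[int], lo: int, hi: int,
            offset: int, ch: str) -> Tuple[int, int]:
    """Restrict SA interval [lo, hi) to suffixes whose character at offset is ch."""
    def char_at(rank: int) -> str:
        pos = sa[rank] + offset
        return genome[pos] if pos < len(genome) else ""  # "" sorts before any base

    left, right = lo, hi
    while left < right:                 # lower bound: first rank with char >= ch
        mid = (left + right) // 2
        if char_at(mid) < ch:
            left = mid + 1
        else:
            right = mid
    new_lo, right = left, hi
    while left < right:                 # upper bound: first rank with char > ch
        mid = (left + right) // 2
        if char_at(mid) <= ch:
            left = mid + 1
        else:
            right = mid
    return new_lo, left


def maximal_mappable_prefix(read: str, start: int, genome: str,
                            sa: List[int]) -> Tuple[int, List[int]]:
    """Longest exact match of read[start:] in the genome, with its positions."""
    lo, hi, length = 0, len(sa), 0
    while start + length < len(read):
        new_lo, new_hi = _narrow(genome, sa, lo, hi, length, read[start + length])
        if new_lo == new_hi:            # no suffix extends the match further
            break
        lo, hi, length = new_lo, new_hi, length + 1
    return length, (sorted(sa[lo:hi]) if length else [])


def seed_search(read: str, genome: str, sa: List[int]) -> List[Tuple[int, int, List[int]]]:
    """Sequential MMP search: restart on the unmapped remainder after each seed."""
    seeds, start = [], 0
    while start < len(read):
        length, positions = maximal_mappable_prefix(read, start, genome, sa)
        if length == 0:
            start += 1                  # toy choice: skip a base absent from the genome
            continue
        seeds.append((start, length, positions))
        start += length
    return seeds


if __name__ == "__main__":
    # Made-up genome: exon "ACGTGCA", intron "GTTTTTTAG", exon "CCTGATC".
    genome = "ACGTGCAGTTTTTTAGCCTGATC"
    sa = build_suffix_array(genome)
    print(seed_search("GTGCACCTGA", genome, sa))
```

For the spliced read in the example, the search yields two seeds, one ending at the last base of the first exon (genome position 2, length 5) and one starting at the first base of the second exon (position 16, length 5), so the gap between them marks the intron without the read ever being split at an arbitrary point.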

Handling Complex Alignments

The MMP strategy enables STAR to manage various sequencing artifacts and complex genomic events.

- Mismatches, Indels, and Poly-A Tails: If an MMP search is interrupted by mismatches, the resulting seeds act as anchors that can be extended to form gapped alignments. In cases where extension does not yield a good genomic match, STAR can identify poly-A tails, adapter sequences, or low-quality sequencing tails [9].

- Chimeric and Fusion Transcript Detection: STAR can detect chimeric alignments where different parts of a read map to distal genomic loci, different chromosomes, or different strands. This includes instances where the chimeric junction lies in the unsequenced portion between paired-end mates, allowing for sensitive fusion transcript discovery [9] [11].

Table 1: Key Algorithmic Components of STAR and Their Functions

| Algorithmic Component | Description | Function in Alignment |
|---|---|---|
| Maximal Mappable Prefix (MMP) | Longest exact match between a read substring and the reference genome [9]. | Acts as an anchor; identifies potential exonic segments and splice junctions. |
| Uncompressed Suffix Array (SA) | Data structure for efficient string matching of the reference genome [9]. | Enables fast MMP search with logarithmic scaling; increases mapping speed. |
| Seed Clustering & Stitching | Process of grouping nearby MMPs and connecting them with gapped alignments [9]. | Reconstructs the complete alignment of a spliced read across introns. |
| Concurrent Paired-End Processing | Treating mate pairs as a single sequence during alignment [9]. | Increases sensitivity by leveraging information from both reads simultaneously. |

Figure: The core two-step workflow of the STAR aligner algorithm.

Performance and Advantages in Transcriptomics

STAR's design confers significant performance and functional benefits that make it particularly suited for both large-scale consortia projects and cloud-based analytical workflows.

Speed and Accuracy

STAR was developed to address the throughput bottlenecks presented by modern sequencing technologies. In its original publication, it demonstrated the capability to align 550 million 2x76 bp paired-end reads per hour on a standard 12-core server, a speed that was more than 50 times faster than other contemporary aligners [9]. This high mapping speed does not come at the cost of accuracy. Experimental validation of 1960 novel intergenic splice junctions discovered by STAR using Roche 454 sequencing confirmed a high precision rate of 80-90% [9]. This combination of speed and precision is crucial for processing the tens to hundreds of terabytes of data generated in large-scale projects like the Transcriptomics Atlas [10].

Superior Detection of Splicing Events

A key advantage of STAR over "lightweight" pseudoalignment methods is its detailed alignment-based approach. Studies have shown that the choice of alignment methodology can significantly influence subsequent transcript abundance estimates and differential expression analysis [12]. While lightweight methods are fast and memory-efficient, they can suffer from spurious mappings because they do not validate fragment mappings with a formal alignment score [12]. STAR, as a full aligner, provides more accurate mapping in complex regions, which translates to more reliable quantification, especially for applications requiring the discovery of novel splice junctions, fusion genes, or other complex transcriptional events [13] [12]. This makes STAR the "superior option" when the research aim extends beyond simple gene-level quantification to include splice variant analysis [13].

Cloud-Based Performance Optimizations

Recent research into optimizing STAR in cloud environments has identified specific strategies to enhance its performance and cost-effectiveness further.

- Early Stopping: One significant optimization is the implementation of an early stopping feature, which can reduce the total alignment time by 23% [10] [5]. This optimization leverages the fact that for many samples, a sufficient amount of reads can be aligned quickly, and prolonged runtime yields diminishing returns, a feature exploitable in quality-controlled, large-scale datasets.

- Instance Selection and Spot Usage: Cloud deployments benefit from selecting the most cost-efficient EC2 instance types, typically those with high memory and CPU resources that match STAR's demands. Furthermore, the use of spot instances (preemptible cloud VMs) has been verified as a viable and cost-saving strategy for running this resource-intensive aligner [10].

Table 2: Comparative Analysis of STAR vs. Pseudoaligners like Kallisto

| Feature | STAR (Alignment-Based) | Pseudoaligners (e.g., Kallisto) |
|---|---|---|
| Core Method | Full spliced alignment to the genome using MMP and seeding [9]. | Pseudoalignment to a transcriptome index using k-mer matching [13]. |
| Primary Output | Read counts per gene, splice junction counts, BAM alignment files [13]. | Transcript abundance estimates (TPM, counts) [13]. |
| Key Strength | High accuracy for novel splice/fusion detection; provides genomic coordinates [13] [12]. | Extremely fast and memory-efficient; ideal for high-sample-size quantification [13]. |
| Computational Load | High memory (tens of GiBs) and CPU; benefits from high-throughput disks [10]. | Low memory and CPU requirements [14]. |
| Ideal Use Case | Discovery of novel isoforms, splice junctions, and fusion genes [13]. | Rapid gene-level quantification on well-annotated transcriptomes [13]. |

Experimental Protocols and Cloud Implementation

Implementing STAR effectively requires careful attention to experimental design, data preparation, and computational configuration. The following protocols are adapted from benchmark studies and cloud optimization experiments.

Protocol: Standard RNA-seq Alignment with STAR

This protocol outlines the key steps for aligning bulk RNA-seq data using STAR, from data preparation to quantification.

Step 1: Preprocessing of Raw Sequencing Data

- Input: Raw sequencing reads in FASTQ format. For paired-end experiments, two FASTQ files are required.

- Quality Control: Use tools like FastQC to assess read quality.

- Adapter Trimming: Remove adapter sequences using tools such as Cutadapt or Trimmomatic. Adapter contamination reduces alignment quality and is a common issue in raw reads [14].

Step 2: Generating the Genome Index

- Input Requirements: A reference genome sequence (FASTA format) and corresponding annotation file (GTF format).

- STAR Command: Run STAR in genomeGenerate mode. The key parameter --sjdbOverhang should be set to the read length minus 1. This step is computationally intensive but needs to be performed only once for a given genome and annotation combination.

- Computational Note: Indexing the human genome requires approximately 30 GB of RAM [10].
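The "read length minus 1" rule in Step 2 can be derived from the data rather than assumed. The sketch below scans the first records of a FASTQ file and returns the longest read length minus 1. The function names and the record cap are hypothetical, and the parser assumes the common four-lines-per-record FASTQ layout without wrapped sequence lines.

```python
import gzip
import io
from typing import Iterable

# Tiny made-up FASTQ content used only to exercise the functions below.
EXAMPLE_FASTQ = "@r1\nACGTACGT\n+\nIIIIIIII\n@r2\nACGTA\n+\nIIIII\n"


def max_read_length(fastq_lines: Iterable[str], max_records: int = 100_000) -> int:
    """Longest sequence among the first max_records FASTQ records (4 lines each)."""
    longest = 0
    for line_no, line in enumerate(fastq_lines):
        if line_no // 4 >= max_records:
            break
        if line_no % 4 == 1:            # second line of each record holds the bases
            longest = max(longest, len(line.strip()))
    return longest


def sjdb_overhang(read_length: int) -> int:
    """Overhang value for STAR indexing: read length minus 1."""
    if read_length < 2:
        raise ValueError("read length must be at least 2")
    return read_length - 1


def sjdb_overhang_from_fastq(path: str) -> int:
    """Open a plain or gzipped FASTQ file and compute the overhang from it."""
    opener = gzip.open if path.endswith(".gz") else open
    with opener(path, "rt") as handle:
        return sjdb_overhang(max_read_length(handle))


if __name__ == "__main__":
    length = max_read_length(io.StringIO(EXAMPLE_FASTQ))
    print(length, sjdb_overhang(length))
```

Using the maximum rather than the mean matters for trimmed data, where read lengths vary and the longest reads determine how much flanking sequence the splice junction database needs.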

Step 3: Aligning Reads

- Input: Trimmed FASTQ files and the pre-built genome index.

- Key STAR Parameters:

  - --runThreadN: Number of threads for parallel execution.
  - --readFilesCommand: Command used to read compressed input files (e.g., --readFilesCommand zcat).
  - --outSAMtype: Output format, typically BAM sorted by coordinate (--outSAMtype BAM SortedByCoordinate).
  - --quantMode: Enables quantification output. --quantMode GeneCounts writes read counts per gene directly [10].

- Output: A sorted BAM file with alignments and a file with read counts per gene.
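The per-gene count file mentioned in the output of Step 3 can be read with a few lines of Python. With --quantMode GeneCounts, STAR writes a tab-separated ReadsPerGene.out.tab file with four summary rows (N_unmapped, N_multimapping, N_noFeature, N_ambiguous) followed by one row per gene, with columns for unstranded counts and for each of the two strand conventions. The sketch below is illustrative: the function name, the strandedness labels, and the example gene identifiers and numbers are hypothetical.

```python
import csv
import io
from typing import Dict, Tuple

SUMMARY_ROWS = {"N_unmapped", "N_multimapping", "N_noFeature", "N_ambiguous"}

# Column index per library type; the label names are this sketch's own convention.
STRAND_COLUMNS = {"unstranded": 1, "forward": 2, "reverse": 3}

# Made-up example content in the ReadsPerGene.out.tab layout.
EXAMPLE_COUNTS = (
    "N_unmapped\t10\t10\t10\n"
    "N_multimapping\t5\t5\t5\n"
    "N_noFeature\t3\t20\t4\n"
    "N_ambiguous\t1\t0\t1\n"
    "GENE_A\t100\t2\t98\n"
    "GENE_B\t7\t7\t0\n"
)


def parse_reads_per_gene(text: str, strandedness: str = "unstranded"
                         ) -> Tuple[Dict[str, int], Dict[str, int]]:
    """Split GeneCounts output into (summary rows, per-gene counts)."""
    column = STRAND_COLUMNS[strandedness]
    summary: Dict[str, int] = {}
    genes: Dict[str, int] = {}
    for row in csv.reader(io.StringIO(text), delimiter="\t"):
        if not row:
            continue                    # tolerate blank lines
        target = summary if row[0] in SUMMARY_ROWS else genes
        target[row[0]] = int(row[column])
    return summary, genes


if __name__ == "__main__":
    summary, genes = parse_reads_per_gene(EXAMPLE_COUNTS, "reverse")
    print(summary)
    print(genes)
```

Choosing the column that matches the library preparation matters: in the made-up example, GENE_A has almost all of its reads in the reverse-strand column, which is the pattern expected from a reverse-stranded library.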

Step 4: Downstream Quantification and Analysis

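- While STAR can produce gene counts directly, tools like Salmon (in alignment-based mode) can be used for potentially more accurate transcript-level quantification [12]. Salmon's alignment-based mode expects alignments to the transcriptome, which STAR writes when --quantMode TranscriptomeSAM is enabled. The coordinate-sorted genomic BAM file can also be used for variant calling, visualization, and detecting novel events.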

Protocol: Cloud-Native STAR Workflow Optimization

For implementing STAR at scale in a cloud environment, specific architectural and configuration optimizations are recommended based on recent research [10].

Step 1: Architectural Design

- Use a scalable, cloud-native architecture built on managed services. A microservices approach, containerizing the pipeline with Docker, allows for reproducibility and efficient resource management [14]. AWS Batch or Kubernetes with workflow managers (e.g., Argo Workflows) are suitable for orchestration.

Step 2: Data Distribution Strategy

- The precomputed STAR genome index must be efficiently distributed to all worker instances. Solutions include pre-loading index files onto a shared network file system or using fast cloud storage with caching mechanisms to avoid becoming a bottleneck.

Step 3: Resource Configuration and Optimization

- Instance Selection: Choose compute-optimized (e.g., C5) or memory-optimized (e.g., R5) EC2 instance families that provide a balance of CPU and memory. Test for the most cost-efficient type.

- Parallelism: Analyze the scalability of STAR on a single node to find the optimal core count, as performance may not scale linearly beyond a certain point [10].

- Cost Saving: Leverage spot instances for alignment tasks, as they have been shown to be suitable for running STAR, offering significant cost reductions [10].

- Early Stopping: Implement the early stopping optimization, which can halt alignment once a sufficient proportion of reads have been mapped, reducing runtime and cost by 23% [10] [5].
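One way to act on the parallelism and instance-selection advice above is to benchmark a representative sample at several core counts and compare the cost per sample. The sketch below assumes, as a simplification, that price scales linearly with vCPU count; the timings and the price are made-up placeholders, not measurements from the cited study.

```python
from typing import Dict, Tuple


def cheapest_core_count(
    timings_s: Dict[int, float], price_per_vcpu_hour: float
) -> Tuple[int, float]:
    """Return (core_count, cost_per_sample) with the lowest cost.

    timings_s maps a core count to the measured wall-clock seconds
    for aligning one sample with that many threads.
    """
    costs = {
        cores: cores * price_per_vcpu_hour * seconds / 3600.0
        for cores, seconds in timings_s.items()
    }
    best = min(costs, key=costs.get)
    return best, costs[best]


# Placeholder benchmark: runtime improves with more cores, but sub-linearly.
example_timings = {8: 3600.0, 16: 2000.0, 32: 1500.0}
best_cores, best_cost = cheapest_core_count(example_timings, price_per_vcpu_hour=0.05)
print(best_cores, round(best_cost, 4))
```

In this placeholder scenario the smallest configuration is the cheapest per sample even though it is the slowest, which is the kind of trade-off the benchmarking step is meant to expose.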

The following diagram visualizes this optimized cloud-based workflow.

Successful execution of a STAR-based RNA-seq pipeline relies on a suite of computational reagents and resources. The table below details these essential components.

Table 3: Key Research Reagent Solutions for STAR RNA-seq Workflows

| Resource/Reagent | Function and Role in the Workflow |

|---|---|

| Reference Genome | A high-quality reference genome (e.g., GRCh38 for human) in FASTA format. Serves as the foundational scaffold for read alignment [10]. |

| Genome Annotation | A comprehensive annotation file (GTF/GFF format) specifying known gene and transcript models. Crucial for generating the splice junction database during STAR indexing and for accurate quantification [10]. |

| STAR Genome Index | A precomputed index of the reference genome and annotations, generated by STAR. This data structure is loaded into memory during alignment to enable ultra-fast searching [10] [9]. |

| SRA Toolkit | A collection of tools for accessing and manipulating sequencing data from the NCBI Sequence Read Archive (SRA). It is used to retrieve (prefetch) and convert (fasterq-dump) public data into the FASTQ format for alignment [10]. |

| High-Performance Computing (HPC) or Cloud Resources | STAR requires substantial computational resources. A server with multiple CPU cores and large RAM capacity (e.g., >30 GB for human genome) is essential. Cloud instances (e.g., AWS EC2) provide scalable, on-demand resources for large studies [10]. |

| Downstream Analysis Tools | Software like DESeq2 or the quantification tool Salmon (in alignment mode) that processes the output of STAR (BAM or count files) for differential expression analysis and biological interpretation [10] [12]. |

The selection of a cloud platform is a critical first step in implementing a cloud-based STAR RNA-seq workflow. The table below provides a high-level comparison of the key platforms used in genomics research.

Table 1: Core Cloud Platform Comparison for Genomics Workflows

| Feature | AWS (Amazon Web Services) | Google Cloud Platform (GCP) | Terra |

|---|---|---|---|

| Market Share (2025) | ~30% [15] | ~13% [15] | N/A (Built on GCP/AWS) |

| Primary Genomics Focus | End-to-end managed workflows & storage [16] | Data analytics, AI/ML, and scalable compute [15] | Collaborative, user-friendly biomedical research [2] |

| Key Genomics Service | AWS HealthOmics [16] | Google Cloud Life Sciences, Vertex AI [17] | Pre-configured, community-driven analysis platform [2] |

| Cost Model for Compute | Per-second billing, Savings Plans, Spot Instances [15] | Per-second billing, Sustained-Use Discounts, Committed Use Discounts [15] [17] | Integrated billing; often managed via workspaces |

| Strengths | Broadest service catalog, strong ecosystem, automated scaling with HealthOmics [16] [18] [15] | Leading in data analytics and AI, superior Kubernetes management, automatic discounts [15] [17] | Lower technical barrier, pre-validated workflows, built-in data governance and collaboration [2] |

Experimental Protocols for STAR RNA-seq Across Platforms

The following protocols detail the implementation of a STAR RNA-seq alignment workflow on the major cloud platforms.

Protocol: Automated RNA-seq Analysis on AWS HealthOmics

This protocol leverages AWS HealthOmics to orchestrate a Nextflow-based RNA-seq pipeline, minimizing infrastructure management [16].

- Input Preparation: Create a CSV file containing the GEO Accession IDs (e.g., GSM123456) to be analyzed and upload it to a designated Amazon S3 bucket [16].

- Workflow Trigger: An S3 event notification automatically invokes an AWS Lambda function, which parses the CSV and launches a Step Functions state machine [16].

- Data Ingestion: The state machine uses AWS Batch for parallelized ingestion of FASTQ files from public repositories like the NCBI GEO. It checks an Amazon DynamoDB table to avoid re-downloading existing files and uses fasterq-dump for efficient data transfer [16].

- Pipeline Execution: Upon ingestion completion, a sample sheet is generated and placed in an S3 bucket, triggering another Lambda function that submits the RNA-seq workflow (e.g., nf-core RNA-seq) to AWS HealthOmics [16].

- Output Handling: AWS HealthOmics executes the workflow, handling all compute provisioning and scaling. Upon completion, output files (e.g., BAM alignments, count tables) are automatically written to a specified S3 bucket [16].

Protocol: STAR Alignment on Google Cloud Platform

This protocol uses dsub for batch job management on GCP, offering fine-grained control over compute resources [4].

Preliminary Setup:

Reference Genome Indexing:

Sequence Alignment:

Protocol: Analysis Using the Terra Platform

Terra provides a centralized, collaborative environment for running pre-configured workflows, ideal for researchers seeking a lower-code solution [2].

- Workspace Setup: Navigate to the Terra platform and either create a new workspace or join an existing one dedicated to your project. Pre-configured workspaces for common analyses like bulk RNA-seq are often available.

- Data Configuration:

- Import or select your FASTQ files (hosted in a Google Bucket or other compatible cloud storage) into the Terra workspace's "Data" tab.

- Use Terra's interactive data table to link your samples (e.g., FASTQ files) to the analysis workflow.

- Workflow Selection & Configuration:

- In the "Workflows" tab, choose a pre-loaded STAR RNA-seq workflow, such as the "RNA with UMIs" pipeline from the Broad Institute's WARP repository [19].

- In the workflow configuration, specify your input data by matching the column names from your data table. Set the required reference genome (e.g., GRCh38) and any desired workflow parameters.

- Execution and Monitoring: Launch the workflow. Terra will submit the job to the cloud backend (GCP or AWS) and provide a real-time dashboard to monitor execution status and logs. Results, such as BAM files and quality metrics, will be automatically deposited back into your workspace's cloud storage.

Workflow Visualization and Data Flow

The end-to-end process of a cloud-based STAR RNA-seq analysis, from raw data to gene expression counts, follows a logical progression. The diagram below illustrates the major steps and their relationships.

Successful execution of a cloud-based RNA-seq experiment requires both biological and computational "reagents." The table below details the key materials and resources needed.

Table 2: Essential Research Reagents and Computational Resources

| Category | Item | Specifications / Example Source | Function in Workflow |

|---|---|---|---|

| Raw Sequencing Data | FASTQ Files | From NCBI GEO, SRA, or in-house sequencing [16] | The primary input data containing sequenced reads. |

| Reference Genome | FASTA File | GRCh38 primary assembly from GENCODE or GCP Broad references [4] [19] | The reference sequence for aligning reads. |

| Gene Annotations | GTF/GFF3 File | GENCODE v36 (or latest) comprehensive gene annotation [4] [20] | Provides gene model coordinates for alignment and counting. |

| STAR Aligner | STAR Software | Available via Bioconda or pre-installed in Docker images (e.g., registry.gitlab.com/hylkedonker/rna-seq) [4] | Performs fast and accurate splicing-aware alignment of RNA-seq reads [4]. |

| Post-Alignment Tools | Samtools | Available via Bioconda [20] | Converts, sorts, and indexes SAM/BAM files for downstream analysis [20]. |

| Quantification Tool | HTSeq-count / RNA-SeQC | Available via Bioconda or pre-packaged in pipelines [19] [20] | Generates gene-level count data from aligned reads. |

| Workflow Manager | Nextflow / WDL | Used by nf-core and WARP pipelines on AWS HealthOmics and Terra [16] [19] | Defines and executes the portable, scalable computational workflow. |

| Containerization | Docker Image | e.g., registry.gitlab.com/hylkedonker/rna-seq [4] | Ensures consistency and reproducibility of the software environment. |

Understanding Cloud-Native Architecture for Scalable Bioinformatics

The analysis of RNA sequencing (RNA-seq) data is a fundamental task in modern molecular biology and medicine, providing crucial insights into gene expression and its role in health and disease [10]. The STAR (Spliced Transcripts Alignment to a Reference) aligner has emerged as a widely used tool for this purpose, valued for its accuracy and ability to handle large-scale transcriptomic datasets [10] [4]. However, processing the hundreds of terabytes of data generated by high-throughput sequencing technologies presents significant computational challenges, requiring substantial memory, processing power, and high-throughput disk systems [10].

Cloud-native architecture addresses these challenges by leveraging the scalability, flexibility, and cost-efficiency of cloud computing platforms. This approach allows researchers to design systems that can dynamically scale to meet computational demands, avoiding the limitations and upfront costs of traditional on-premises high-performance computing (HPC) infrastructure. For bioinformatics pipelines like those based on STAR, a cloud-native approach enables the processing of large datasets in a fraction of the time required by traditional computing environments [4]. This document provides detailed application notes and protocols for implementing a cloud-native STAR RNA-seq workflow, developed within the context of broader research on cloud-based genomic analysis implementation.

Cloud-Native Architecture for Bioinformatics

A cloud-native architecture is designed from the ground up to leverage cloud services and infrastructure, focusing on scalability, resilience, and manageability. For data- and compute-intensive bioinformatics applications like the Transcriptomics Atlas pipeline, this involves a thoughtful integration of various cloud services and components [10].

The core architectural pattern for a cloud-native bioinformatics pipeline typically involves a coordinated sequence of steps: input data retrieval, format conversion, genomic alignment, and downstream analysis [10]. The alignment phase, often the most resource-intensive, benefits significantly from parallelization strategies that distribute workloads across multiple cloud instances. Cloud batch processing systems such as AWS Batch or Kubernetes-native solutions like Argo Workflows are commonly employed to manage these distributed computations, abstracting away the underlying infrastructure complexity [10].

Key considerations in this architecture include the efficient distribution of large reference datasets (such as the STAR index) to worker instances, selection of appropriate instance types based on the application's memory and CPU requirements, and implementation of robust data management strategies to handle intermediate files and final results [10]. The adoption of containerization technologies like Docker ensures consistency and reproducibility across computing environments, facilitating the packaging of complex bioinformatics tools and their dependencies [4].

Table 1: Core Components of a Cloud-Native Bioinformatics Architecture

| Component | Function | Example Technologies |

|---|---|---|

| Compute Management | Orchestrates and scales workload execution | AWS Batch, Kubernetes, Google Cloud dsub [10] [4] |

| Object Storage | Provides scalable and durable storage for large genomic datasets | AWS S3, Google Cloud Storage [10] [4] |

| Container Registry | Stores and manages containerized application images | Docker Hub, Google Container Registry [4] |

| Reference Data Management | Handles distribution of genomic references and indices | Dedicated data services, optimized data distribution techniques [10] |

| Security & Access Control | Manages authentication and data protection | Cloud IAM, encryption protocols, attestation frameworks [21] [22] |

Performance Analysis and Optimization

Rigorous performance analysis is essential for designing a cost-effective and efficient cloud-native bioinformatics pipeline. Research into running the Transcriptomics Atlas pipeline in AWS cloud has yielded quantitative insights into optimization strategies for the STAR aligner [10].

Application-Specific Optimizations

- Early Stopping: Implementation of an early stopping feature based on intermediate results can significantly reduce processing time. Experiments demonstrate that this optimization can reduce total alignment time by approximately 23%, thereby increasing overall pipeline throughput [10].

- Parallelism Configuration: Identifying the optimal level of parallelism within a single compute node is crucial. Beyond a certain core count, STAR exhibits performance degradation due to increased I/O wait times and resource contention. Benchmarking is necessary to determine the most cost-effective core allocation for specific instance types [10].

- Genomic Index Distribution: Efficiently distributing the large STAR genomic index (often tens of gigabytes) to worker instances is a critical bottleneck. Solutions that pre-cache indexes on fast local storage or use high-throughput network file systems can dramatically reduce startup latency [10].
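The source does not spell out the exact stopping rule, so the following is only a sketch of one plausible form of early stopping: once a checkpoint fraction of the reads has been processed, a sample whose mapping rate is still below a threshold is abandoned. The function name and both thresholds are hypothetical and would need tuning against real data.

```python
def should_stop_early(
    reads_processed: int,
    total_reads: int,
    mapped_reads: int,
    checkpoint_fraction: float = 0.10,  # hypothetical checkpoint
    min_mapped_rate: float = 0.30,      # hypothetical threshold
) -> bool:
    """Decide whether to abort an alignment based on intermediate results."""
    if total_reads <= 0 or reads_processed <= 0:
        return False
    progress = reads_processed / total_reads
    if progress < checkpoint_fraction:
        # Too early to judge; keep aligning.
        return False
    mapped_rate = mapped_reads / reads_processed
    return mapped_rate < min_mapped_rate


print(should_stop_early(5_000, 100_000, 500))      # before the checkpoint
print(should_stop_early(20_000, 100_000, 2_000))   # low mapping rate
print(should_stop_early(20_000, 100_000, 15_000))  # healthy mapping rate
```

In a real pipeline the three counters would come from the aligner's intermediate progress output, polled periodically by the job wrapper.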

Cloud Infrastructure Optimizations

- Instance Type Selection: The choice of virtual machine instance type directly impacts performance and cost. Memory-optimized instances (e.g., AWS R5 or Google Cloud n2-highmem series) are often suitable for STAR, which requires substantial RAM. Research indicates that careful selection can identify the most cost-efficient instance type for alignment tasks [10] [4].

- Spot Instance Usage: Leveraging preemptible or spot instances can reduce compute costs by 60-80% compared to on-demand pricing. Studies confirm that spot instances are generally suitable for running resource-intensive aligners like STAR, though checkpointing or job-retry strategies are recommended for fault tolerance in large-scale runs [10] [4].

- Storage Optimization: Pairing compute instances with appropriate high-throughput block storage (e.g., local SSDs or provisioned IOPS volumes) is necessary to prevent disk I/O from becoming a bottleneck during alignment [10].

Table 2: Quantitative Impact of Optimizations on STAR Workflow [10]

| Optimization Technique | Measured Impact | Key Consideration |

|---|---|---|

| Early Stopping | 23% reduction in total alignment time | Requires analysis of intermediate results |

| Optimal Core Allocation | Prevents performance degradation | Specific to instance type and data size |

| Spot Instance Utilization | Significant cost reduction (60-80%) | Requires handling of potential preemption |

| Efficient Index Distribution | Reduces job startup latency | Critical for maintaining high throughput |

Experimental Protocols

Protocol 1: Baseline STAR Alignment in Cloud Environment

This protocol establishes the foundational setup and execution of the STAR aligner in a cloud environment, forming the baseline against which optimizations can be measured.

Research Reagent Solutions:

- Genomic Reference and Annotation: A reference genome (e.g., GRCh38) in FASTA format and a corresponding annotation file in GFF3 or GTF format (e.g., from GENCODE). These provide the coordinate system and gene models for aligning reads [4].

- RNA-seq Read Files: Sequencing data in FASTQ format, containing the short reads to be aligned. These can be sourced from public repositories like the NCBI Sequence Read Archive (SRA) [10] [4].

- STAR Genomic Index: A precomputed index from the reference and annotation files. This is a critical data structure that dramatically accelerates the alignment process and must be distributed efficiently to compute nodes [10] [4].

Methodology:

Infrastructure Provisioning:

- Select a memory-optimized cloud instance type (e.g., n2-highmem-32 on Google Cloud or r5.8xlarge on AWS) with sufficient RAM for the target genome and adequate vCPUs [10] [4].

- Attach high-throughput local SSD storage to handle the I/O demands of alignment.

- Provision cloud object storage (e.g., Google Cloud Storage, AWS S3) for initial input data and final output storage [4].

Data Preparation:

- Upload reference genome FASTA file and annotation GTF file to a designated bucket in cloud object storage [4].

- Upload or transfer RNA-seq FASTQ files to the cloud storage input directory. If using SRA files, retrieve them using prefetch and convert to FASTQ using fasterq-dump from the SRA Toolkit [10].
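The SRA retrieval step can be sketched as two command lines per accession, built here as argument lists. The helper name, the accession, and the output directory are placeholders; the commands are printed rather than executed.

```python
from typing import List


def build_sra_cmds(accession: str, out_dir: str, threads: int = 4) -> List[List[str]]:
    """Return the prefetch and fasterq-dump commands for one SRA accession."""
    return [
        # Download the SRA archive for the accession.
        ["prefetch", accession],
        # Convert it to FASTQ, writing paired reads to separate files.
        [
            "fasterq-dump", accession,
            "--split-files",
            "--threads", str(threads),
            "--outdir", out_dir,
        ],
    ]


# Placeholder accession, for illustration only.
sra_cmds = build_sra_cmds("SRR000001", "fastq")
for c in sra_cmds:
    print(" ".join(c))
```

Running the two commands in order (for example with subprocess.run and check=True) requires the SRA Toolkit on the PATH and enough scratch space for the temporary files created during conversion.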

Genome Index Generation:

Alignment Execution:

Protocol 2: Optimized Large-Scale Deployment

This protocol builds upon the baseline, incorporating optimizations for processing tens to hundreds of terabytes of RNA-seq data in a cost-efficient and high-throughput manner.

Methodology:

Orchestrated Batch Processing:

- Deploy the pipeline using a managed batch processing service (e.g., AWS Batch) or a Kubernetes-based workflow manager (e.g., Argo Workflows) to automate cluster creation, job submission, and resource scaling [10].

- Configure the compute environment to use a mix of on-demand and spot instances to optimize for both availability and cost, implementing job retry logic for failed spot instances [10].

Implementation of Early Stopping:

- Modify the alignment workflow to check for and utilize existing intermediate results from previous, potentially failed, runs. This prevents recomputation of already completed alignment stages [10].

- In the workflow logic, add a preprocessing step that verifies the state of alignment for each sample before allocating full compute resources.
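The pre-check described above can be approximated by looking for STAR's final outputs before scheduling a sample. This sketch assumes alignments were written with --outSAMtype BAM SortedByCoordinate to a local or mounted path; the helper names and sample prefixes are illustrative, and an object-store variant would need the provider's SDK instead of pathlib.

```python
import tempfile
from pathlib import Path
from typing import Dict, List


def alignment_complete(out_prefix: str) -> bool:
    """True if STAR's sorted BAM and final log exist for this prefix."""
    bam = Path(out_prefix + "Aligned.sortedByCoord.out.bam")
    log = Path(out_prefix + "Log.final.out")
    return bam.is_file() and bam.stat().st_size > 0 and log.is_file()


def samples_to_run(prefixes: Dict[str, str]) -> List[str]:
    """Return the sample names that still need to be aligned."""
    return [name for name, prefix in prefixes.items() if not alignment_complete(prefix)]


# Demo with placeholder files: sampleA looks finished, sampleB does not.
with tempfile.TemporaryDirectory() as tmp:
    done_prefix = str(Path(tmp) / "sampleA_")
    Path(done_prefix + "Aligned.sortedByCoord.out.bam").write_bytes(b"placeholder")
    Path(done_prefix + "Log.final.out").write_text("finished\n")
    pending = samples_to_run(
        {"sampleA": done_prefix, "sampleB": str(Path(tmp) / "sampleB_")}
    )

print(pending)
```

Checking for the final log as well as the BAM guards against treating a partially written BAM from an interrupted spot instance as a finished result.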

Optimized Data Distribution:

- Pre-cache the STAR genomic index on a fast, shared file system accessible by all worker nodes or replicate it to the local SSD of each worker instance at startup to minimize data transfer times [10].

- For input FASTQ files, ensure they are located in cloud object storage in the same region as the compute cluster to reduce data transfer latency and costs.

Performance Monitoring and Validation:

- Instrument the pipeline to collect detailed metrics on execution time, cost per sample, and resource utilization.

- Validate the biological accuracy of the optimized outputs by comparing key quality metrics (e.g., alignment rate, read distribution across features) with those generated by the non-optimized baseline protocol.
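A simple validation check along these lines is to extract the uniquely mapped read percentage from each run's Log.final.out and confirm that the optimized run stays within a tolerance of the baseline. The tolerance and the example log lines are assumptions for illustration, not values from the cited work.

```python
import re

# Matches the summary line STAR writes to Log.final.out.
_UNIQUE_RATE = re.compile(r"Uniquely mapped reads %\s*\|\s*([0-9.]+)%")


def unique_mapping_rate(log_text: str) -> float:
    """Extract the uniquely mapped read percentage from Log.final.out text."""
    match = _UNIQUE_RATE.search(log_text)
    if match is None:
        raise ValueError("uniquely mapped read rate not found in log text")
    return float(match.group(1))


def within_tolerance(baseline: float, optimized: float, max_abs_diff: float = 1.0) -> bool:
    """True if the two percentages differ by at most max_abs_diff points."""
    return abs(baseline - optimized) <= max_abs_diff


# Made-up log excerpts standing in for two real runs.
baseline_log = "        Uniquely mapped reads % |\t91.20%\n"
optimized_log = "        Uniquely mapped reads % |\t90.85%\n"

baseline_rate = unique_mapping_rate(baseline_log)
optimized_rate = unique_mapping_rate(optimized_log)
print(baseline_rate, optimized_rate, within_tolerance(baseline_rate, optimized_rate))
```

The same pattern extends to other summary metrics, and gene-level count tables can be compared separately, for example by correlating counts between the two runs.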

Workflow and Data Flow Visualization

The following diagrams illustrate the logical organization of the cloud-native STAR RNA-seq workflow and the flow of data between its core components, providing a visual summary of the protocols described above.

The Scientist's Toolkit

This section details the essential software, data, and cloud resources required to implement the cloud-native STAR RNA-seq workflow.

Table 3: Essential Research Reagents and Resources for Cloud-Native STAR Workflow

| Category | Resource | Description and Function |

|---|---|---|

| Bioinformatics Tools | STAR Aligner | Performs the core alignment of RNA-seq reads to the reference genome, handling spliced alignments [10] [4]. |

| | SRA Toolkit | Provides utilities (prefetch, fasterq-dump) for retrieving and converting sequencing data from the NCBI SRA database [10]. |

| | DESeq2 / R | Used for downstream normalization and differential expression analysis of the count data generated by STAR [10]. |

| Reference Data | Reference Genome | A species-specific reference sequence (e.g., GRCh38 for human) in FASTA format, serving as the alignment scaffold [10] [4]. |

| | Gene Annotation | A GTF/GFF3 file defining genomic coordinates of genes and transcripts, used for generating the STAR index and assigning reads to features [4]. |

| | STAR Genomic Index | A precomputed index from the reference genome and annotation, crucial for accelerating the alignment process [10] [4]. |

| Cloud Services & Tools | Workflow Orchestrator | Manages and scales job execution (e.g., dsub, AWS Batch, Argo Workflows) across the cloud compute fleet [10] [4]. |

| | Object Storage | Provides durable and scalable storage for input, output, and reference files (e.g., AWS S3, Google Cloud Storage) [10] [4]. |

| | Container Image | A Docker image containing the required bioinformatics software (STAR, SRA Toolkit) and their dependencies, ensuring runtime consistency [4]. |

The implementation of cloud-based STAR RNA-seq workflows presents researchers with a critical economic decision: selecting the appropriate computational infrastructure. The choice between the pay-as-you-go cloud model and traditional High-Performance Computing (HPC) infrastructure significantly impacts research budgets, scalability, and operational flexibility [23]. Next-generation sequencing technologies have democratized genomic research while simultaneously increasing the costs associated with data storage, analysis, and interpretation [24]. This application note provides a structured economic comparison and detailed protocols to guide researchers in optimizing their computational expenditures for transcriptomic studies.

The STAR (Spliced Transcripts Alignment to a Reference) aligner represents a particularly resource-intensive component in RNA-seq workflows, requiring substantial memory allocation (approximately 30GB for human genome indices) and significant processing capabilities [25]. Understanding the economic tradeoffs between computational approaches is essential for maximizing research output within constrained budgets, particularly as the NGS-based RNA-sequencing market continues expanding at a CAGR of 20.1% [26].

Economic Comparison: Architectural and Financial Considerations

Fundamental Architectural Differences

The economic implications of infrastructure selection stem from fundamental architectural differences between cloud computing and traditional HPC systems.

Table 1: Architectural Comparison Between HPC and Cloud Computing

| Feature | High-Performance Computing (HPC) | Cloud Computing |

|---|---|---|

| Core Architecture | Tightly-Coupled Clusters/Supercomputers | Loosely-Coupled, Distributed Systems |

| Interconnect | Ultra-Low Latency (InfiniBand HDR/NDR, ~100ns-1µs) | Standard High-Bandwidth Ethernet (RoCEv2, ~µs) |

| Compute Focus | Raw Flops, Parallel Scaling (CPU/GPU Density) | Service Breadth, Elasticity, Managed Services |

| Storage | Parallel File Systems (Lustre, GPFS - High IOPS/BW) | Object Storage (S3), Block Storage, File (NFS) |

| Management | Complex, Specialized (Job Schedulers - Slurm, PBS) | Simplified, API-Driven, Self-Service |

| Deployment Model | Often On-Prem, Dedicated Colo, Cloud HPC "Pods" | Public Cloud, Private Cloud, Hybrid Cloud |

| Cost Model | High Capex (Hardware) / Lower Opex (Power, Staff) | Low/No Capex / Pay-as-you-Go Opex |

| Scalability | Scale-Up/Scale-Out (Pre-planned, less elastic) | Highly Elastic (Instant Up/Down) |

| Tenancy | Typically Dedicated | Multi-Tenant (Shared Resources) |

| Best For | Tightly-Coupled, Latency-Sensitive Simulations | Variable Workloads, Bursty Patterns, Managed AI/ML [23] |

These architectural differences directly influence economic efficiency for various workload types. HPC systems excel for tightly-coupled parallel applications where tasks constantly communicate, while cloud computing provides superior economic value for variable, bursty, or embarrassingly parallel workloads where tasks can be executed independently [23].

Diagram 1: Architectural and economic decision framework for computational infrastructure.

Comprehensive Cost Analysis

The economic analysis reveals distinct cost structures that favor different usage patterns and project timelines.

Table 2: Economic Comparison of Computational Infrastructure Options

| Cost Factor | Traditional HPC Infrastructure | Pay-As-You-Go Cloud | Reserved Cloud Instances |

|---|---|---|---|

| Initial Investment | High upfront capital expenditure ($50,000+) | Minimal to no upfront costs | Moderate commitment fees |

| Ongoing Operational Costs | Lower operational costs (power, cooling, maintenance) | Pay-per-use billing | Discounted rates (up to 50-70% off on-demand) |

| Staffing Requirements | Specialized IT staff for management and maintenance | Reduced operational complexity | Reduced operational complexity |

| Utilization Efficiency | Cost-effective at high utilization rates (>70%) | Cost-effective for variable workloads | Cost-effective for steady-state workloads |

| Scalability Cost | Significant additional capital investment | Instant, granular scaling | Planned scaling with commitments |

| Hardware Refresh | Additional capital investment every 3-5 years | Automatically included in service | Automatically included in service |

| Experimental Cost Example | N/A | $127 (ECS Fargate for 17.3TB data) | ~$50 (with Spot instances) [25] |

The economic advantage shifts based on utilization patterns. For steady-state workloads exceeding 30-50% utilization, traditional HPC or reserved cloud instances become more economical than pay-as-you-go models [27]. Research indicates that running steady-state workloads in pay-as-you-go mode can be more expensive than reserving capacity, even when the reserved resources sit idle more than 50% of the time [27].
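The utilization threshold can be illustrated with a simplified model: a reserved instance is billed for every hour at a discounted rate, while pay-as-you-go is billed only for the hours used. Under that assumption (which ignores upfront fees, storage, and staffing), reserving becomes cheaper once utilization exceeds one minus the discount. The function names and the example price are illustrative.

```python
def break_even_utilization(reserved_discount: float) -> float:
    """Utilization above which a reserved instance beats pay-as-you-go.

    reserved_discount is the fractional discount off the on-demand rate
    (e.g. 0.5 for 50% off).
    """
    if not 0.0 <= reserved_discount < 1.0:
        raise ValueError("reserved_discount must be in [0, 1)")
    return 1.0 - reserved_discount


def hourly_costs(on_demand_rate: float, reserved_discount: float, utilization: float):
    """Return (pay_as_you_go, reserved) average cost per wall-clock hour."""
    pay_as_you_go = on_demand_rate * utilization          # billed only when used
    reserved = on_demand_rate * (1.0 - reserved_discount)  # billed every hour
    return pay_as_you_go, reserved


for discount in (0.5, 0.7):
    print(discount, round(break_even_utilization(discount), 2))

payg, reserved = hourly_costs(on_demand_rate=2.0, reserved_discount=0.5, utilization=0.6)
print(payg, reserved)
```

With discounts of 50% and 70%, this model gives break-even points of 50% and 30% utilization, which lines up with the 30-50% range quoted above.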

STAR RNA-Seq Workflow Cost Considerations

Specific cost patterns emerge when implementing STAR RNA-seq workflows in cloud environments:

- STAR alignment constitutes approximately 70-75% of total pipeline execution time, making it the primary cost driver [25]

- Memory requirements for human genome alignment (~30GB) necessitate appropriate instance selection to balance cost and performance [25]

- Storage costs for intermediate files (FASTQ formats up to 220GB) represent significant recurring expenses [25]

- Potential optimizations including spot instances (up to 70% savings) and early termination of low-quality sequences (23% reduction in alignment time) can substantially reduce costs [25]

Experimental Protocols for Economic Analysis

Protocol 1: Cloud Cost Estimation for STAR RNA-Seq

Objective: Systematically estimate computational costs for implementing STAR RNA-seq workflow in cloud environments.

Materials:

- Cloud cost estimator tools (AWS Pricing Calculator, Google Cloud Pricing Calculator)

- Sample RNA-seq dataset (FASTQ format)

- Reference genome index

Methodology:

Workload Characterization:

- Quantify input dataset size (FASTQ files)

- Determine appropriate instance type based on memory requirements (minimum 32GB for non-human genomes, 48GB+ for human genomes)

- Estimate processing time using benchmarking data (5-10 minutes for index loading plus alignment time)

Storage Cost Calculation:

- Calculate input data storage requirements

- Project output data volume (typically 1.5-2x input size)

- Determine appropriate storage class (standard, infrequent access, archive) based on access patterns

Compute Cost Estimation:

- Apply formula: Total Cost = (Instance Cost per Hour × Estimated Runtime) + (Storage Cost per GB × Storage Volume) + Data Transfer Costs

- Incorporate 30% buffer for unexpected failures or optimization cycles [28]
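The cost formula and the 30% buffer can be written as a small function. The prices and volumes in the example are placeholders chosen for easy arithmetic, not quotes from any provider, and the storage rate is assumed to cover the whole retention period.

```python
def estimate_total_cost(
    instance_cost_per_hour: float,
    runtime_hours: float,
    storage_cost_per_gb: float,
    storage_gb: float,
    data_transfer_cost: float = 0.0,
    buffer_fraction: float = 0.30,
) -> float:
    """Total Cost = compute + storage + transfer, plus a contingency buffer."""
    compute = instance_cost_per_hour * runtime_hours
    storage = storage_cost_per_gb * storage_gb
    base = compute + storage + data_transfer_cost
    return base * (1.0 + buffer_fraction)


# Placeholder inputs: $2.00/h for 10 h, 500 GB at $0.02/GB, $5 of transfer.
# Base = 20 + 10 + 5 = 35; with the 30% buffer the estimate is 45.5.
estimated_cost = estimate_total_cost(2.00, 10, 0.02, 500, data_transfer_cost=5.0)
print(round(estimated_cost, 2))
```

Setting buffer_fraction to 0 recovers the bare formula, which is useful when comparing the estimate against actual billing data afterwards.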

Optimization Assessment:

- Evaluate spot instance compatibility for fault-tolerant workloads

- Assess reserved instance discounts for long-term projects

- Consider multi-cloud strategies to leverage competitive pricing [28]

Economic Analysis: Document cost projections and compare against traditional HPC alternatives using total cost of ownership calculations.

Protocol 2: Performance Benchmarking Across Platforms

Objective: Quantitatively compare STAR RNA-seq workflow performance and cost across computational infrastructures.

Materials:

- Standardized RNA-seq dataset (100-1000 samples)

- STAR workflow implementation

- Cloud computing account (AWS, GCP, or Azure)

- Access to HPC infrastructure

Methodology:

Experimental Setup:

- Configure identical STAR workflow parameters across all platforms

- Select comparable hardware specifications (vCPUs, memory, storage I/O)

- Implement consistent monitoring for resource utilization and timing

Execution Protocol:

- Process standardized dataset on each platform

- Record precise execution times for each workflow step

- Monitor actual resource consumption (CPU hours, memory allocation, storage I/O)

Cost Calculation:

- Cloud environments: Use actual consumption-based billing data

- HPC environments: Calculate amortized hardware costs, power, cooling, and support staff

Data Collection:

- Record total execution time

- Calculate total cost per sample

- Document any operational complexities or failures

Analysis: Compare cost-effectiveness across platforms while considering operational overhead and scalability limitations.

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Essential Research Reagents and Computational Solutions for Cloud-Based RNA-Seq

| Item | Function/Application | Specification Notes |

|---|---|---|

| STAR Aligner | RNA-seq read alignment to reference genome | Requires 30GB RAM for human genome; most resource-intensive workflow step [25] |

| Reference Genome Index | Pre-computed genome index for alignment acceleration | Human genome index ~30GB; requires pre-loading into memory [25] |

| Elastic Block Storage (EBS) | Scalable storage for intermediate FASTQ files | GP3 volume with 500MiB/s throughput recommended for I/O intensive tasks [25] |

| Elastic File System (EFS) | Shared storage for reference indices across multiple instances | Enables efficient index sharing without redundant loading [25] |

| SRA Toolkit | Access and conversion of sequence read archive data | Converts SRA to FASTQ format; requires substantial temporary storage [25] |

| Containerization | Consistent execution environment across platforms | Docker containers encapsulate complex dependencies and ensure reproducibility [24] |

| Workflow Management | Orchestration of multi-step analysis pipelines | Tools like Nextflow or Cromwell enable scalable, reproducible analyses [29] |

| Monitoring Tools | Resource utilization tracking and cost attribution | Cloud-specific monitoring (AWS CloudWatch) essential for cost control [28] |

Economic Decision Framework

The economic decision between pay-as-you-go cloud models and traditional HPC infrastructure depends primarily on workload characteristics and utilization patterns.

Diagram 2: Economic decision framework for computational infrastructure selection.

Decision Guidelines:

Choose Traditional HPC when:

Choose Pay-As-You-Go Cloud when:

Choose Reserved Cloud Instances when:

- Steady-state workload patterns are predictable

- Long-term projects justify commitment-based discounts (up to 70% savings) [27]

- Cloud infrastructure is preferred but pay-as-you-go costs would be prohibitive

Implement Hybrid Approach when:

- Base workload runs on-premises with cloud bursting for peak demands

- Specific workloads are cloud-optimized while others require specialized HPC

- Organizational strategy involves gradual cloud migration [23]
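The reserved-versus-on-demand guideline can be made concrete with a small break-even calculation. The sketch below assumes a deliberately simplified cost model (reserved capacity is billed for every hour of the term, on-demand only for hours actually used); the function names and the hourly rate in the example are hypothetical, and only the 70% discount ceiling comes from the guidelines above.

```python
HOURS_PER_YEAR = 8760.0


def on_demand_cost(hourly_rate: float, hours_used: float) -> float:
    """Pay-as-you-go: billed only for the hours actually consumed."""
    return hourly_rate * hours_used


def reserved_cost(hourly_rate: float, discount: float,
                  term_hours: float = HOURS_PER_YEAR) -> float:
    """Reserved: discounted rate, but billed for the whole term (model assumption)."""
    return hourly_rate * (1.0 - discount) * term_hours


def break_even_utilization(discount: float) -> float:
    """Fraction of the term an instance must be busy before reserving pays off.

    Setting rate * u * H equal to rate * (1 - d) * H gives u = 1 - d.
    """
    return 1.0 - discount


if __name__ == "__main__":
    rate = 1.00  # hypothetical on-demand price in USD per hour
    discount = 0.70  # upper bound quoted in the guidelines
    u = break_even_utilization(discount)
    print(f"break-even utilization: {u:.0%}")
    print(f"on-demand at break-even: {on_demand_cost(rate, u * HOURS_PER_YEAR):.2f}")
    print(f"reserved for the year:   {reserved_cost(rate, discount):.2f}")
```

Under this model, a workload that keeps an instance busy for less than the break-even fraction of the year is cheaper on demand, which is why predictable steady-state usage is the first criterion for reserving capacity.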

The economic analysis shows that pay-as-you-go cloud models and traditional HPC infrastructure each suit different research scenarios. For STAR RNA-seq workflows, cloud implementations are most economical for small-to-medium scale projects, bursty workloads, and research environments with limited capital funding. Traditional HPC infrastructure remains more economical for large-scale, consistently utilized workloads, where the high upfront investment can be amortized across multiple projects and years.

Researchers should carefully characterize their workload patterns, project timelines, and technical requirements before selecting computational infrastructure. The protocols and decision framework provided in this application note enable systematic economic evaluation to optimize research expenditures while maintaining computational efficiency for transcriptomic studies.

Implementing End-to-End STAR RNA-seq Pipelines in the Cloud

As part of implementing cloud-based STAR RNA-seq workflows, this application note details a standardized, scalable pipeline architecture that takes data from raw Sequence Read Archive (SRA) files to analysis-ready differential expression results. RNA-seq data capture many aspects of gene expression, and this complexity calls for robust, reproducible analysis strategies [31]. The protocol outlined here meets this need by integrating state-of-the-art bioinformatics tools within a structured framework that uses cloud computing environments, giving researchers and drug development professionals a complete path from data acquisition to biological insight.

Pipeline Architecture and Workflow

The overarching pipeline architecture is modular, ensuring flexibility and scalability. The workflow can be conceptually divided into three major phases: Data Acquisition and Preprocessing, Read Alignment and Quantification, and Downstream Analysis. This structure allows researchers to execute the entire pipeline seamlessly or to run specific modules independently based on their requirements [32].

The following diagram illustrates the complete workflow and the logical relationships between its core components:

Quantitative Pipeline Comparison

Multiple RNA-seq pipeline solutions are available, each with distinct features, computational environments, and capabilities. The table below provides a structured comparison of several prominent options to guide researchers in selecting the most appropriate platform for their cloud-based STAR RNA-seq workflow implementation.

Table 1: Comparative Analysis of RNA-Seq Analysis Pipelines

| Pipeline Name | Core Features | Computational Environment | Quantification Tools | Differential Expression | Key References |
|---|---|---|---|---|---|
| mainakm7/RNAseq_pipeline [32] | SRA download, alignment, QC, alternative splicing (rMATS) | HPC (SLURM), Singularity/Docker | featureCounts, Kallisto | Yes (via downstream analysis) | Integrated tool documentation |
| nf-core/rnaseq [33] | Extensive QC, trimming, multiple alignment routes, pseudoalignment | Nextflow, Cloud/Cluster | featureCounts, Salmon, Kallisto | No (output for statistical environments) | Ewels et al., 2020 |
| RumBall [34] | Read mapping, normalization, statistical modeling, GO enrichment | Docker | Internally integrated popular tools | Yes | Protocol publication |
| RAP [31] | Quality checks, expression quantification, alternative splicing, chimeric transcripts | Cloud computing web application | Cufflinks, HTSeq | Yes (Cuffdiff2, DESeq) | D'Antonio et al., 2015 |
| LncExpDB Pipeline [35] | Standardized processing for lncRNA expression database | Modular command-line tools | featureCounts, Kallisto | Implied by database purpose | Database methodology |

Detailed Experimental Protocol

Phase 1: Data Acquisition and Preprocessing

Downloading SRA Files

The pipeline begins with acquiring raw sequencing data from public repositories like the Sequence Read Archive (SRA). This can be accomplished using command-line tools.

- Using wget: The -c parameter enables resume capability, which is crucial for large file transfers, while -t 0 sets retry attempts to infinite, ensuring robust download completion [35].

- Using sratoolkit: The SRA toolkit provides specialized utilities for efficient data access and extraction.
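The two download routes can be sketched as small command builders, which makes the flags explicit and keeps the commands easy to log or hand to a workflow manager. This is a minimal sketch: the URL, accession, and directory names are hypothetical placeholders, and the functions only assemble and print the commands rather than running them.

```python
import shlex


def wget_command(url: str, out_dir: str = ".") -> list[str]:
    """Build a resumable wget download command."""
    # -c resumes a partial download, -t 0 retries without limit,
    # -P sets the directory the file is saved into.
    return ["wget", "-c", "-t", "0", "-P", out_dir, url]


def prefetch_command(accession: str, out_dir: str = ".") -> list[str]:
    """Build an SRA Toolkit prefetch command for one run accession."""
    return ["prefetch", accession, "--output-directory", out_dir]


if __name__ == "__main__":
    # Hypothetical URL and accession, for illustration only.
    print(shlex.join(wget_command("https://example.org/data/SRR0000000.sra", "sra")))
    print(shlex.join(prefetch_command("SRR0000000", "sra")))
```

Either list can be passed to subprocess.run(cmd, check=True) once the real accession or URL is known.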

Converting SRA to FASTQ

The downloaded SRA files are converted into standard FASTQ format using fasterq-dump, which is faster and more efficient than the older fastq-dump.

- Command:

Here, -p shows progress, -e 32 specifies the use of 32 threads to speed up the process, and --split-3 separates paired-end reads into two files while handling unpaired reads appropriately [35].
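A conversion call with the parameters described above might be assembled as follows. This is a sketch under stated assumptions: the input path and output directory are hypothetical, and the expected file names reflect the usual --split-3 naming for paired-end runs, which is worth confirming against the installed toolkit version.

```python
import os
import shlex


def fasterq_dump_command(sra_path: str, out_dir: str, threads: int = 32) -> list[str]:
    """Build a fasterq-dump call: progress bar, N threads, split-3 output."""
    return ["fasterq-dump", "-p", "-e", str(threads), "--split-3",
            "-O", out_dir, sra_path]


def expected_fastq_files(accession: str, out_dir: str) -> dict[str, str]:
    """Files a paired-end run typically yields with --split-3."""
    return {
        "read1": os.path.join(out_dir, f"{accession}_1.fastq"),
        "read2": os.path.join(out_dir, f"{accession}_2.fastq"),
        # Reads whose mate is missing are written to a third file, if any exist.
        "unpaired": os.path.join(out_dir, f"{accession}.fastq"),
    }


if __name__ == "__main__":
    # Hypothetical accession and directories.
    print(shlex.join(fasterq_dump_command("sra/SRR0000000", "fastq")))
    for role, path in expected_fastq_files("SRR0000000", "fastq").items():
        print(role, path)
```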

Quality Control and Trimming

Raw sequencing reads often contain adapter sequences and low-quality bases that can adversely affect alignment. Trimmomatic is used for this purpose.

- For Paired-End Data: This command removes Illumina adapters, cuts the first 3 bases, trims low-quality bases from the start and end, and discards reads shorter than 15 bases after trimming [35].
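One way to express the paired-end trimming described above is shown below. It is a sketch, not the protocol's original command: the mapping of "cuts the first 3 bases" to HEADCROP:3, the quality threshold of 3 for LEADING and TRAILING, the ILLUMINACLIP settings 2:30:10, the TruSeq3-PE.fa adapter file, the trimmomatic wrapper name, and the output file names are all assumptions. Only the adapter removal, the 3-base cut, the end trimming, and the 15-base minimum length come from the text.

```python
import shlex


def trimmomatic_pe_command(read1: str, read2: str, out_prefix: str,
                           adapters: str = "TruSeq3-PE.fa",
                           threads: int = 8, quality: int = 3,
                           min_len: int = 15) -> list[str]:
    """Build a Trimmomatic paired-end command (steps run in the order listed)."""
    return [
        "trimmomatic", "PE", "-threads", str(threads), "-phred33",
        read1, read2,
        # Trimmomatic PE writes paired and unpaired survivors for each mate.
        f"{out_prefix}_1.paired.fastq.gz", f"{out_prefix}_1.unpaired.fastq.gz",
        f"{out_prefix}_2.paired.fastq.gz", f"{out_prefix}_2.unpaired.fastq.gz",
        f"ILLUMINACLIP:{adapters}:2:30:10",  # remove Illumina adapters
        "HEADCROP:3",                        # cut the first 3 bases
        f"LEADING:{quality}",                # trim low-quality bases at the start
        f"TRAILING:{quality}",               # trim low-quality bases at the end
        f"MINLEN:{min_len}",                 # drop reads shorter than min_len
    ]


if __name__ == "__main__":
    # Hypothetical input files and output prefix.
    cmd = trimmomatic_pe_command("fastq/SRR0000000_1.fastq",
                                 "fastq/SRR0000000_2.fastq",
                                 "trimmed/SRR0000000")
    print(shlex.join(cmd))
```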

Phase 2: Read Alignment and Quantification

Alignment to Reference Genome with STAR

The STAR aligner is optimized for RNA-seq data as it accurately handles splice junctions.

- Command:

Key parameters include --outSAMtype BAM SortedByCoordinate to generate a sorted BAM file, and --outFilterMultimapNmax 20 to control the number of multiple alignments allowed per read [35]. This parameter combination is recommended for quantifying abundance in deep-sequencing samples [35].
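An alignment call that uses the two key parameters above could be assembled like this. The sketch assumes paired-end input, a pre-built genome index directory, and hypothetical paths and thread count; the --readFilesCommand zcat branch is an addition for gzip-compressed input and is not part of the parameter set discussed in the text.

```python
import shlex


def star_align_command(genome_dir: str, read1: str, read2: str,
                       out_prefix: str, threads: int = 16) -> list[str]:
    """Build a STAR alignment command for one paired-end sample."""
    cmd = [
        "STAR",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--readFilesIn", read1, read2,
        "--outFileNamePrefix", out_prefix,
        "--outSAMtype", "BAM", "SortedByCoordinate",  # coordinate-sorted BAM
        "--outFilterMultimapNmax", "20",              # max loci a read may map to
    ]
    if read1.endswith(".gz"):
        # Assumption: gzip input is decompressed on the fly with zcat.
        cmd += ["--readFilesCommand", "zcat"]
    return cmd


def sorted_bam_path(out_prefix: str) -> str:
    """STAR appends this fixed suffix to the prefix for sorted BAM output."""
    return f"{out_prefix}Aligned.sortedByCoord.out.bam"


if __name__ == "__main__":
    # Hypothetical index location, reads, and prefix.
    cmd = star_align_command("ref/star_index",
                             "trimmed/S1_1.paired.fastq.gz",
                             "trimmed/S1_2.paired.fastq.gz",
                             "aligned/S1_")
    print(shlex.join(cmd))
    print(sorted_bam_path("aligned/S1_"))
```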

Gene-Level Quantification with featureCounts

Following alignment, reads are assigned to genomic features. This step requires knowing the library's strandedness, which can be inferred automatically using tools like infer_experiment.py from the RSeQC package [35].

- Command for Paired-End Data:

The -s 2 parameter indicates reverse strandedness, which is common for many library prep kits; this should be adjusted based on empirical inference [35].
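The strandedness check and the counting step can be tied together as sketched below. The 0.8 decision threshold, the helper names, and all file paths are hypothetical; the -s values follow featureCounts' convention (0 unstranded, 1 forward, 2 reverse). Newer Subread releases may also need --countReadPairs alongside -p to count fragments rather than reads, so that flag is exposed as an option to check against the installed version.

```python
import shlex


def strandedness_flag(frac_forward: float, frac_reverse: float,
                      min_fraction: float = 0.8) -> int:
    """Map infer_experiment.py fractions to a featureCounts -s value.

    The threshold is a heuristic assumption, not a published cutoff.
    """
    if frac_forward >= min_fraction:
        return 1  # forward-stranded
    if frac_reverse >= min_fraction:
        return 2  # reverse-stranded
    return 0      # treat as unstranded


def infer_experiment_command(bam: str, bed: str) -> list[str]:
    """RSeQC strandedness inference on an aligned BAM and a BED gene model."""
    return ["infer_experiment.py", "-r", bed, "-i", bam]


def featurecounts_command(bams: list[str], gtf: str, out_file: str,
                          strand: int = 2, threads: int = 8,
                          count_read_pairs: bool = False) -> list[str]:
    """Build a paired-end featureCounts command for one or more BAM files."""
    cmd = ["featureCounts", "-p", "-s", str(strand), "-T", str(threads),
           "-a", gtf, "-o", out_file]
    if count_read_pairs:
        cmd.append("--countReadPairs")
    return cmd + list(bams)


if __name__ == "__main__":
    # Hypothetical fractions, as if read from infer_experiment.py output.
    strand = strandedness_flag(frac_forward=0.03, frac_reverse=0.94)
    print(shlex.join(infer_experiment_command("aligned/S1.bam", "ref/genes.bed")))
    print(shlex.join(featurecounts_command(["aligned/S1.bam"], "ref/genes.gtf",
                                           "counts/counts.txt", strand=strand)))
```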

Phase 3: Downstream Analysis

While the core processing pipeline ends with a count matrix, the subsequent differential expression analysis is critical for extracting biological meaning. The generated count matrix can be imported directly into statistical environments like R for analysis with packages such as DESeq2 or limma [33]. Furthermore, platforms like RumBall encapsulate this process within a Docker container, guiding users through statistical modeling and gene ontology enrichment to interpret the results in the context of biological pathways [34].

The Scientist's Toolkit

Successful implementation of a cloud-based STAR RNA-seq workflow requires a suite of specialized research reagents and software solutions. The following table details the essential materials and their critical functions within the pipeline.

Table 2: Key Research Reagent Solutions for RNA-Seq Pipeline Implementation

| Tool/Resource | Category | Primary Function | Protocol-Specific Role |
|---|---|---|---|
| SRA Toolkit [35] | Data Access | Download and extract data from SRA | Converts SRA accession IDs into analysis-ready FASTQ files |
| Trimmomatic [35] | Quality Control | Remove adapter sequences and low-quality bases | Ensures read quality and removes contaminants for accurate alignment |
| STAR Aligner [32] [35] | Alignment | Maps RNA-seq reads to a reference genome | Precisely aligns spliced transcripts and identifies junction reads |
| featureCounts [35] | Quantification | Assign aligned reads to genomic features | Generates the gene-count matrix for differential expression testing |
| RSeQC [35] | QC & Utility | Evaluate data quality and infer experiment type | Determines library strandedness automatically from aligned data |
| Docker/Singularity [32] [34] | Containerization | Package software into portable, reproducible units | Ensures pipeline consistency and portability across cloud environments |
| Nextflow [33] | Workflow Management | Orchestrate pipeline execution across platforms | Enables scalable, reproducible workflows on cloud and HPC systems |
| Reference Genome & GTF [35] | Reference Data | Provide species-specific genomic sequence and annotation | Serves as the template for read alignment and gene quantification |

Within the framework of a comprehensive thesis on implementing cloud-based STAR RNA-seq workflows, efficient data preparation is a critical foundational step. The NCBI SRA Toolkit provides the essential utilities for accessing and converting publicly available sequencing data from the Sequence Read Archive (SRA) into analysis-ready FASTQ files. This protocol focuses specifically on optimizing the SRA to FASTQ conversion process, a prerequisite for subsequent alignment and differential expression analysis in transcriptomic studies. The massive scale of modern RNA-seq experiments, often involving hundreds of samples, demands efficient and reliable conversion methodologies that leverage cloud computing capabilities for scalable processing [36]. As genomic datasets continue to grow exponentially, with SRA currently housing over 30 petabytes of sequencing data, optimized data retrieval and conversion protocols become increasingly vital for productive research [10].

SRA Toolkit Tools and Configuration

Essential Toolkit Components

The SRA Toolkit encompasses several command-line utilities designed for specific aspects of data retrieval and processing [37]:

- prefetch: Downloads SRA files from NCBI repositories to local storage

- fasterq-dump: The preferred tool for rapid conversion of SRA files to FASTQ format (multithreaded)

- fastq-dump: The legacy tool for SRA to FASTQ conversion (single-threaded, being deprecated)

- vdb-config: Interactive configuration tool for setting SRA Toolkit parameters

- srapath: Verifies accessibility and location of SRA accession files

Critical Configuration Steps

Proper configuration of the SRA Toolkit is essential for optimal performance, particularly in cloud environments:

For researchers working with controlled-access data (such as dbGaP), additional configuration with AWS or GCP credentials is required [38]. The toolkit must also be configured to accept cloud egress charges when accessing original submission files stored in commercial clouds [37].

Table 1: SRA Toolkit Configuration Settings for Cloud Environments

| Setting | Recommended Value | Purpose |
|---|---|---|
| Remote Access | Enabled | Allows fetching data from NCBI, AWS, and GCP |
| Temporary Directory | Local scratch space | Improves I/O performance during conversion |
| Cloud Identity Reporting | Enabled | Enables free access to public data in cloud repositories |
| File Caching | Enabled with sufficient RAM | Reduces redundant download operations |
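Two of the settings above can also be applied non-interactively, which is useful when instances are provisioned by script. The sketch below uses vdb-config option names as they appear in recent SRA Toolkit releases; they should be checked against vdb-config --help on the installed version, and the provider argument is a hypothetical convenience of this example. The temporary directory is not set here because it can be passed per run to fasterq-dump with -t.

```python
import shlex
from typing import Optional


def vdb_config_commands(provider: Optional[str] = None) -> list[list[str]]:
    """Build vdb-config calls for a cloud instance.

    provider: "aws", "gcp", or None when no egress charges should be accepted.
    """
    # Report the instance's cloud identity so in-cloud public data can be served.
    cmds = [["vdb-config", "--report-cloud-identity", "yes"]]
    if provider in ("aws", "gcp"):
        # Opt in to charges for original submission files in commercial clouds.
        cmds.append(["vdb-config", f"--accept-{provider}-charges", "yes"])
    elif provider is not None:
        raise ValueError(f"unsupported provider: {provider!r}")
    return cmds


if __name__ == "__main__":
    for cmd in vdb_config_commands("aws"):
        print(shlex.join(cmd))
```

For a one-off setup on a single machine, the interactive mode (vdb-config --interactive) covers the same settings through a menu.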

Performance Comparison: fasterq-dump vs fastq-dump

Tool Selection Guidelines

The SRA Toolkit provides two primary utilities for FASTQ conversion, with significant performance implications:

fasterq-dump is now the recommended tool for all new workflows, as explicitly stated in NIH documentation: "fastq-dump is being deprecated. Use fasterq-dump instead - it is much faster and more efficient" [37]. This utility employs multi-threading (default: 6 threads) and optimized caching mechanisms to accelerate the conversion process.

fastq-dump remains available but operates as a single-threaded process with significantly lower performance. It retains utility only for specific use cases such as extracting small subsets of data or when direct compression during conversion is required [39].

Quantitative Performance Metrics

Performance testing reveals substantial differences between the two tools:

Table 2: Performance Comparison: fasterq-dump vs fastq-dump

| Tool | Threads | Default Splitting | Compression During Conversion | Subset Extraction | Relative Speed |
|---|---|---|---|---|---|
| fasterq-dump | 6 (default) | --split-3 | No (requires post-processing) | No | ~3-5x faster |
| fastq-dump | 1 | Manual (--split-3 required) | Yes (--gzip) | Yes (-X) | 1x (baseline) |

Empirical testing demonstrates that combining prefetch with fasterq-dump provides the fastest overall workflow, as prefetch downloads the SRA file in its native compressed format, which fasterq-dump then processes in parallel [39]. This approach can reduce processing time by 23-40% compared to standalone fasterq-dump execution [10].

Experimental Protocols

Protocol 1: Standard SRA to FASTQ Conversion

This protocol describes the optimal method for converting SRA accessions to FASTQ format using the fasterq-dump utility [37] [38]:

Environment Setup: Load the SRA Toolkit module and configure temporary storage:

Download SRA File: Use prefetch to download the SRA file:

Convert to FASTQ: Execute fasterq-dump with appropriate parameters:

The -p flag shows progress, -t specifies the temporary directory, and -O sets the output directory.

Compress Output (optional): Compress the resulting FASTQ files to save space:
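The steps of this protocol can be sketched as an ordered plan of commands that is printed by default and executed only on request. All directory names and the accession are hypothetical. The sketch also assumes that prefetch places the run in a subdirectory named after the accession, that fasterq-dump accepts that path as input, and that gzip is an acceptable compressor. Loading the toolkit through an environment module is site-specific and is left out.

```python
import os
import shlex
import subprocess


def conversion_plan(accession: str, sra_dir: str, tmp_dir: str,
                    out_dir: str, paired: bool = True) -> list[list[str]]:
    """Return the ordered commands for one accession."""
    if paired:
        fastqs = [os.path.join(out_dir, f"{accession}_{mate}.fastq") for mate in (1, 2)]
    else:
        fastqs = [os.path.join(out_dir, f"{accession}.fastq")]
    return [
        ["mkdir", "-p", sra_dir, tmp_dir, out_dir],   # environment setup
        ["prefetch", accession, "-O", sra_dir],       # download the SRA file
        ["fasterq-dump", "-p", "-t", tmp_dir, "-O", out_dir,
         os.path.join(sra_dir, accession)],           # convert to FASTQ
        ["gzip"] + fastqs,                            # optional compression
    ]


def run_plan(plan: list[list[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in plan:
        print(shlex.join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # stop at the first failure


if __name__ == "__main__":
    # Hypothetical accession and scratch locations; dry run only.
    run_plan(conversion_plan("SRR0000000", "sra", "/tmp/sra_tmp", "fastq"))
```

Keeping the plan as data makes it straightforward to fan out over many accessions, for example one plan per worker instance.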