Optimizing Gene Caller Performance on Draft Assemblies: Strategies for Accurate Variant Detection in Genomic Research

Abstract

This article provides a comprehensive guide for researchers and bioinformaticians on overcoming the challenges of gene calling and variant detection in draft genome assemblies. It explores the foundational impact of assembly quality on downstream analyses, evaluates the latest AI-powered and conventional variant calling methodologies, and presents practical optimization and troubleshooting strategies. Furthermore, it outlines robust validation frameworks and comparative benchmarking approaches to ensure high-confidence results. Tailored for professionals in genomics and drug development, this resource synthesizes current best practices to enhance the accuracy and reliability of genetic variant discovery in complex genomic studies.

Understanding the Impact of Draft Assembly Quality on Gene Calling

Frequently Asked Questions (FAQs)

1. What are the most common types of errors in draft genome assemblies? Draft genomes typically contain two primary categories of errors. Small-scale errors include single nucleotide polymorphisms (SNPs) and small insertions or deletions (indels). Large-scale structural errors involve misjoined contigs, where two unlinked genomic fragments are improperly connected, leading to erroneous scaffolds. These structural errors can significantly distort downstream comparative genomic studies. [1]

2. How does genome fragmentation affect gene annotation? Low-quality, fragmented assemblies directly lead to inaccurate gene annotations. A primary issue is the "cleaved gene model," where a single gene is incorrectly annotated as multiple separate genes due to assembly breaks. This causes overestimation of single-exon genes and depletion of multi-exon genes, with one study finding that over 40% of gene families can have the wrong number of genes in draft assemblies. [2]

3. Why are repetitive regions problematic for assembly? Repetitive elements pose a significant challenge because when read lengths are shorter than the repetitive element itself, it becomes difficult to uniquely anchor these reads. This creates ambiguity, causing assembly fragmentation for interspersed repeats (like transposable elements) and collapse for tandem repeats (like satellites or multicopy genes), where the true number of repeat units is lost. Regions like telomeres, centromeres, and heterochromatic sex chromosomes (W, Y) are often missing. [3]

4. My assembly has a good N50 contig length. Does this mean it is high quality? Not necessarily. While N50 is a useful metric for assembly continuity, it can be misleading. It is possible to have an assembly with long contigs that also contains a high rate of misassemblies. A comprehensive quality assessment should also evaluate accuracy using tools like BUSCO (for completeness), CRAQ (for error identification), and LAI (for assessing repetitive element assembly). [1]

5. What is genomic "dark matter" and how can we assemble it? Genomic "dark matter" refers to regions that are systematically missing or misassembled in drafts due to technological biases, primarily repeat-rich regions and areas with extreme GC content. Assembling this dark matter requires a multiplatform approach, combining long-read sequencing (PacBio, Nanopore) to span repeats, linked-reads, and proximity-ligation data (Hi-C) to scaffold, and often PCR-free libraries to mitigate GC-bias. [3]

6. Can I use short-read data to improve an existing draft assembly? Yes. Methods like the IMAGE (Iterative Mapping and Assembly for Gap Elimination) pipeline use Illumina reads to improve draft assemblies. This approach aligns reads to contig ends and performs local assemblies to produce gap-spanning contigs, effectively closing gaps and merging contigs without the need for new data. This has been shown to close over 50% of gaps in some genome projects. [4]

Troubleshooting Guides

Problem 1: High Proportion of Fragmented or Missing Genes

Symptoms: BUSCO analysis reveals a high number of fragmented or missing benchmark genes. Gene callers predict an abnormally high number of single-exon genes.

Diagnosis and Solutions:

- Root Cause: Genome fragmentation is causing cleaved gene models. [2]

- Solution A (Wet Lab): Generate paired-end RNA-seq data. This provides direct evidence of transcript structures, allowing annotation tools to connect exons that were separated in the draft assembly. [2]

- Solution B (Computational): Employ a combination of gene prediction methods. For metagenomic-style short reads, a consensus of multiple predictors (e.g., GeneMark, Orphelia, MGA) can boost annotation accuracy by 1-4%. For longer reads, a majority vote or intersection of predictors is often best. [5]

Problem 2: Suspected Structural Errors and Misassemblies

Symptoms: Alignments of raw reads back to the assembly show regions with no coverage, very low coverage, or a high density of "clipped" reads, indicating potential misjoins.

Diagnosis and Solutions:

- Root Cause: Large-scale structural assembly errors, such as contig misjoins. [1]

- Solution: Use a tool like CRAQ (Clipping information for Revealing Assembly Quality). This reference-free tool maps raw reads (both NGS and long-reads) back to the assembly to identify Clip-based Regional Errors (CREs) and Clip-based Structural Errors (CSEs) at single-nucleotide resolution. It can distinguish true assembly errors from heterozygous sites. [1]

- Protocol:

- Map all available raw sequencing reads (Illumina, PacBio, or Nanopore) back to your draft assembly using a sensitive aligner.

- Run CRAQ using the assembly and the alignment file (BAM).

- Inspect the output for regions with high densities of CREs and CSEs. CSEs often indicate breakpoints for misjoined contigs.

- Split the assembly contigs at these identified breakpoints before proceeding with scaffold construction using Hi-C or optical mapping data. [1]
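The clipping signal this protocol relies on can be illustrated with a small sketch. This is not CRAQ's algorithm, only a coarse approximation of the idea: it reads SAM-style CIGAR strings and counts, per window, the reads that carry a long soft or hard clip. The function names, the window size, the 20-base clip threshold, and the example records are all assumptions made for illustration.

```python
import re
from collections import Counter

# One CIGAR operation: a length followed by an operation code from the SAM format.
CIGAR_OP = re.compile(r"(\d+)([MIDNSHP=X])")

def clip_lengths(cigar):
    """Return (left_clip, right_clip) in bases, looking only at the outermost operation on each side."""
    ops = CIGAR_OP.findall(cigar)
    left = int(ops[0][0]) if ops and ops[0][1] in "SH" else 0
    right = int(ops[-1][0]) if len(ops) > 1 and ops[-1][1] in "SH" else 0
    return left, right

def clipped_reads_per_window(alignments, window=1000, min_clip=20):
    """alignments: iterable of (contig, 1-based start, cigar).

    Counts reads whose longer clip is at least min_clip bases. Reads are binned
    by alignment start, which only approximates the position of a right-hand clip.
    """
    counts = Counter()
    for contig, start, cigar in alignments:
        if max(clip_lengths(cigar)) >= min_clip:
            counts[(contig, (start - 1) // window)] += 1
    return counts

# Hypothetical alignment records, as they might be read from a SAM file.
example = [
    ("ctg1", 1500, "30S70M"),
    ("ctg1", 1800, "100M"),
    ("ctg1", 1900, "60M40S"),
    ("ctg2", 10, "5S95M"),
]
hotspots = clipped_reads_per_window(example)
```

Windows with unusually high counts are candidates for closer inspection; whether they reflect a misjoin or a heterozygous site still needs the kind of analysis CRAQ performs.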

Problem 3: Poor Assembly of Repetitive or GC-Rich Regions

Symptoms: Specific genomic features (e.g., telomeres, centromeres, MHC genes, microchromosomes) are absent or poorly assembled. Coverage is uneven, with dips in high-GC or high-AT regions.

Diagnosis and Solutions:

- Root Cause: Technological limitations of short-read sequencing, including PCR amplification bias and inability to span long repeats. [3]

- Solution: Implement a multi-platform sequencing strategy. The most effective way to resolve genomic dark matter is to integrate complementary technologies. [3]

- Workflow:

- Core Data: Generate long-reads (PacBio HiFi or Nanopore UL) as the foundation. These reads are long enough to span most repetitive elements.

- Scaffolding: Use proximity ligation (Hi-C) or optical mapping data to scaffold the long-read contigs into chromosome-scale structures.

- Polishing and Validation: Polish the assembly with high-accuracy short reads or use the latest long-read chemistries. Validate using independent methods.

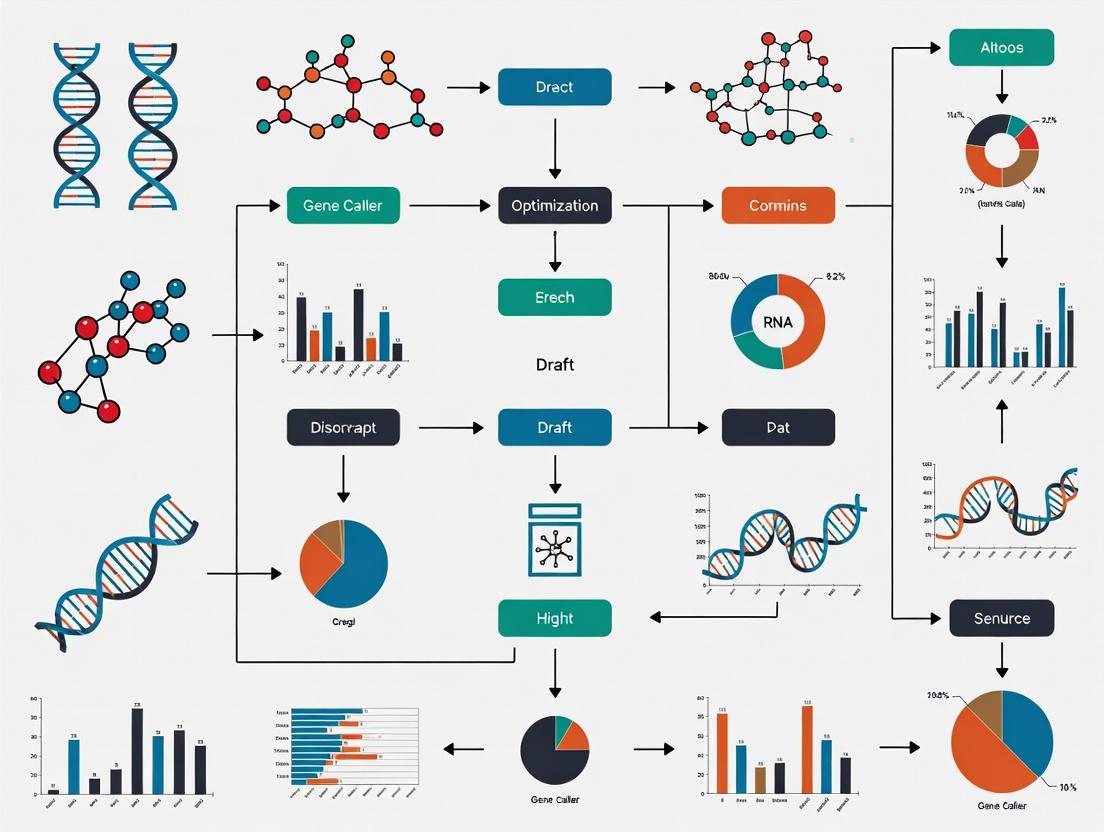

The following workflow diagram illustrates this multi-platform strategy:

Quantitative Data on Assembly Errors and Corrections

Table 1: Performance of Error Identification Tools on Simulated Data

This table compares the precision and recall of different assembly assessment tools for identifying assembly errors, based on a simulated dataset. [1]

| Tool | Type | Recall (CREs) | Precision (CREs) | Recall (CSEs) | Precision (CSEs) |
|---|---|---|---|---|---|
| CRAQ | Reference-free | >97% | >97% | >97% | >97% |
| Inspector | Reference-free | ~96% | ~96% | 28% | High |
| Merqury | Reference-free (k-mer based) | - | - | - | 87.7% (F1 Score)* |
| QUAST-LG | Reference-based | >98% | >98% | >98% | >98% |

Note: CREs = Clip-based Regional Errors; CSEs = Clip-based Structural Errors. *Merqury does not distinguish between CREs and CSEs. [1]

Table 2: Gene Prediction Program Performance by Read Length

This table summarizes the optimal strategy for combining gene prediction programs to improve annotation accuracy for different read lengths, as used in metagenomics. [5]

| Read Length | Optimal Combination Strategy | Reported Improvement in Accuracy |
|---|---|---|
| 100 bp | Consensus of all methods (majority vote) | ~4% improvement |
| 200 - 400 bp | Consensus of all methods (majority vote) | ~1% improvement |
| ≥ 500 bp | Intersection of GeneMark and Orphelia predictions | Best performance |
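The two combination strategies in the table can be sketched as follows. This is a minimal illustration, assuming each predictor's output has already been reduced to a set of `(start, end, strand)` calls and that two calls count as the same gene only when they match exactly; real pipelines usually need a more tolerant matching rule. The coordinates below are invented.

```python
from collections import Counter

def majority_vote(predictions):
    """predictions: dict mapping predictor name -> set of (start, end, strand) calls.

    Returns the calls reported by more than half of the predictors.
    """
    counts = Counter(call for calls in predictions.values() for call in set(calls))
    needed = len(predictions) // 2 + 1
    return {call for call, n in counts.items() if n >= needed}

def intersection(predictions, names):
    """Return the calls reported by every one of the named predictors."""
    return set.intersection(*(set(predictions[name]) for name in names))

# Hypothetical calls from three predictors on the same read.
calls = {
    "GeneMark": {(10, 310, "+"), (500, 800, "-")},
    "Orphelia": {(10, 310, "+"), (900, 1200, "+")},
    "MGA": {(10, 310, "+"), (500, 800, "-")},
}
consensus = majority_vote(calls)                      # short-read strategy
strict = intersection(calls, ["GeneMark", "Orphelia"])  # long-read strategy
```

The majority vote keeps a call that two of three predictors agree on, while the intersection keeps only what both named predictors report, trading sensitivity for specificity.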

Experimental Protocols

Protocol 1: Iterative Mapping and Assembly for Gap Elimination (IMAGE)

This protocol uses Illumina reads to close gaps and extend contigs in an existing draft assembly. [4]

- Read Alignment: Align all available Illumina paired-end reads to the draft assembly.

- Read Gathering: Collect reads that align to the ends of contigs.

- Local Assembly: Perform a local de novo assembly of the gathered reads using an assembler like Velvet. This produces new, gap-spanning contigs.

- Contig Extension/Merging: Integrate the newly assembled Illumina contigs back into the reference assembly to extend or merge existing contigs.

- Iteration: Repeat the process until no further gaps can be closed.
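The read-gathering step can be pictured with a short sketch. It is not the IMAGE implementation, only an illustration under simple assumptions: alignments are available as `(read_id, contig, start, aligned_length)` tuples, and a read is "at a contig end" if it overlaps the first or last `margin` bases. The function name and the 300-base margin are hypothetical.

```python
def reads_near_contig_ends(alignments, contig_lengths, margin=300):
    """alignments: iterable of (read_id, contig, 1-based start, aligned_length).

    Returns {(contig, "left" or "right"): [read_id, ...]} for reads that overlap
    the first or last `margin` bases of a contig.
    """
    gathered = {}
    for read_id, contig, start, aligned_len in alignments:
        end = start + aligned_len - 1
        length = contig_lengths[contig]
        if start <= margin:
            gathered.setdefault((contig, "left"), []).append(read_id)
        if end > length - margin:
            gathered.setdefault((contig, "right"), []).append(read_id)
    return gathered

# Hypothetical contig and alignments.
lengths = {"ctg1": 5000}
hits = reads_near_contig_ends(
    [("r1", "ctg1", 1, 100), ("r2", "ctg1", 2500, 100), ("r3", "ctg1", 4850, 100)],
    lengths,
)
```

Each group of gathered reads would then be passed to a local assembler, and the loop repeated on the extended contigs.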

The logical flow of the IMAGE protocol is shown below:

Protocol 2: Structural Annotation with the MAKER2 Pipeline

This is a foundational protocol for de novo genome annotation, which is critical for generating training data for gene callers. [6]

- Repeat Masking:

- Run `RepeatModeler` to construct a species-specific repeat library.

- Mask the genome assembly using `RepeatMasker` with the custom library and RepBase databases.

- Ab Initio Gene Predictor Training:

- Train `Augustus` using `BUSCO` to generate a species-specific gene prediction model.

- Train `SNAP` by running MAKER2 initially with EST or protein evidence to generate initial gene models, then using those models to train SNAP (recommended for 3 iterations).

- Evidence Integration and Annotation:

- Run the full MAKER2 pipeline, providing the masked genome, trained models, and any available transcriptomic (EST/RNA-seq) or protein evidence data.

- MAKER will integrate all evidence to produce a final, consensus set of gene annotations.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Assembly Improvement and Validation

| Reagent / Tool | Function / Application | Key Features / Notes |
|---|---|---|
| PacBio SMRT Sequencing | Long-read sequencing to span repetitive regions and resolve complex genomic structures. [3] | Provides kilobase-long reads; HiFi reads offer high accuracy. |
| Oxford Nanopore Sequencing | Long-read sequencing for ultra-long fragments, enabling telomere-to-telomere assembly. [3] | Read length primarily limited by DNA input integrity. |
| Hi-C Proximity Ligation | Scaffolding technology to order and orient contigs into chromosome-scale scaffolds. [3] | Captures chromatin interaction data for scaffolding. |
| BUSCO | Assessment of genome assembly and annotation completeness based on evolutionarily informed single-copy orthologs. [6] | Quantifies the presence of expected genes; a key quality metric. |
| CRAQ Software | Reference-free tool to identify assembly errors at single-nucleotide resolution using read clipping information. [1] | Distinguishes between assembly errors and heterozygous sites. |
| MAKER2 Annotation Pipeline | Integrates multiple sources of evidence to produce high-quality genome annotations. [6] | Can incorporate ab initio predictions, ESTs, and protein homology. |
| Helixer | Deep learning-based tool for ab initio gene prediction in eukaryotic genomes. [7] | Does not require species-specific training or extrinsic data. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the core principles for comprehensively evaluating a genome assembly?

Genome assembly quality is assessed based on three fundamental principles, often called the "3 Cs":

- Contiguity: Measures how much of the genome is assembled into long, uninterrupted segments. Metrics like N50 indicate this; a higher N50 suggests a less fragmented assembly [8] [9].

- Completeness: Assesses how much of the expected genomic sequence is present in the assembly. This evaluates whether any parts of the genome are missing [8] [9].

- Correctness: Evaluates the accuracy of each base pair and the larger-scale structure in the assembly. It ensures the assembled sequence is a true representation of the biological source, free from base-level errors or large-scale misassemblies [8] [9] [10].

FAQ 2: My assembly has a high BUSCO score but my gene caller performance is poor. Why?

A high BUSCO score confirms the presence of conserved gene space but does not guarantee the quality of repetitive regions or structural accuracy [11] [10]. Gene callers rely on accurate identification of open reading frames (ORFs), which can be disrupted by:

- Frameshifts: Assembly errors that cause shifts in the reading frame, often due to indels [8].

- Fragmented Genes: Incomplete assembly of gene sequences, leaving them in separate contigs [12].

- Misassembled Repetitive Regions: Errors in complex regions rich in repeats can lead to spurious gene predictions [11] [13]. Using the LTR Assembly Index (LAI) is recommended to assess the assembly quality of repetitive regions, which directly impacts gene caller accuracy [11].

FAQ 3: When should I use LAI over BUSCO, and can I use them together?

LAI and BUSCO are complementary metrics that assess different genomic regions. The following table outlines their specific uses and how they can be integrated.

| Metric | Primary Use Case | Genomic Region Assessed | Interpretation of High Score |
|---|---|---|---|
| BUSCO | Evaluating gene space completeness [11] [9]. | Universal single-copy orthologs (gene space). | Essential genes are complete and present; the assembly is suitable for gene-centric analyses [14]. |
| LAI | Assessing the assembly of repetitive regions, crucial for plant genomes [11]. | Long Terminal Repeat (LTR) retrotransposons. | Repetitive regions are well-assembled; the assembly has high structural completeness, beneficial for gene prediction in repeats [11]. |

They should be used together for a holistic view. An ideal assembly achieves high scores in both BUSCO and LAI [11] [13].

FAQ 4: What is a "good" N50 value for my genome assembly?

There is no universal "good" N50 value, as it depends on the genome's size and complexity. The key is context:

- Comparison to Genome Size: The N50 should be a significant fraction of the chromosome length. Some studies suggest the ratio of contig count to chromosome pair number (CC ratio) is a more robust metric [10].

- Technology Benchmark: In the era of long-read sequencing, a contig N50 over 1 Mb is often considered good [8].

- Trend, Not Absolute Value: Use N50 to compare different assemblies of the same organism. A consistently increasing N50 across assembly versions indicates improvement.
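For readers who want to compute the metric themselves, here is a minimal sketch of the standard N50 definition: the contig length at which the running total of contigs, sorted from longest to shortest, first reaches half of the assembly length. The two example assemblies are invented to show how the same total length can yield different N50 values.

```python
def n50(contig_lengths):
    """Return the N50: the length L such that contigs of length >= L
    together cover at least half of the total assembly length."""
    if not contig_lengths:
        raise ValueError("need at least one contig length")
    total = sum(contig_lengths)
    running = 0
    for length in sorted(contig_lengths, reverse=True):
        running += length
        if 2 * running >= total:
            return length

# Two hypothetical assemblies of the same 1,000 kb genome (lengths in kb).
fragmented = [100] * 10
contiguous = [400, 300, 200, 100]
print(n50(fragmented), n50(contiguous))
```

Note that N50 says nothing about whether the long contigs are joined correctly, which is why it should be read alongside correctness metrics.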

FAQ 5: How can I measure assembly correctness without a reference genome?

Several reference-free methods are available:

- K-mer Analysis: Tools like `merqury` compare the k-mers (short subsequences of length k) in your assembly to those in high-quality short-read data from the same sample. This can identify base-level errors and estimate completeness [8] [9].

- Transcriptome Alignment: Mapping full-length transcript isoforms (e.g., from Iso-Seq data) to the assembly can reveal frameshifts and indels in coding regions, which are likely assembly errors [8].
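The k-mer idea can be shown on a toy scale. The sketch below is not Merqury: it ignores reverse complements, k-mer multiplicity, and sequencing errors in the reads, and it works on plain strings. The QV estimate uses the commonly cited relation in which the per-base accuracy is taken as the read-supported fraction of assembly k-mers raised to the power 1/k; treat that formula and all names here as assumptions for illustration.

```python
import math

def kmers(seq, k):
    """Set of all k-length substrings of seq."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}

def kmer_check(assembly_seq, read_seqs, k):
    """Return (assembly k-mers absent from the reads, estimated consensus QV)."""
    asm = kmers(assembly_seq, k)
    if not asm:
        raise ValueError("assembly is shorter than k")
    reads = set()
    for read in read_seqs:
        reads |= kmers(read, k)
    unsupported = asm - reads
    if not unsupported:
        return unsupported, math.inf  # no detectable errors at this k
    supported_fraction = (len(asm) - len(unsupported)) / len(asm)
    p_base_correct = supported_fraction ** (1 / k)
    return unsupported, -10 * math.log10(1 - p_base_correct)

# Toy data: the second assembly differs from the reads at one base.
reads = ["ACGTACG", "TACGTTT"]
clean_missing, clean_qv = kmer_check("ACGTACGTTT", reads, k=4)
bad_missing, bad_qv = kmer_check("ACGTAGGTTT", reads, k=4)
```

A single wrong base removes every k-mer that overlaps it, which is why k-mer methods are sensitive to base-level errors.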

Troubleshooting Guides

Issue 1: Low BUSCO Score

A low BUSCO score indicates missing conserved genes in your assembly.

- Potential Cause 1: Insufficient sequencing coverage or high duplication rate.

- Solution: Check your sequencing depth. Remap the raw reads to the assembly to calculate the mapping rate and coverage uniformity. Low coverage may require additional sequencing.

- Potential Cause 2: High fragmentation in the assembly.

- Solution: Examine your contig N50 and number of contigs. A highly fragmented assembly will break genes apart. Consider reassembling with different parameters or using long-read sequencing data for improved contiguity [13].

- Potential Cause 3: Contamination from other organisms.

- Solution: Run screening tools (e.g., Kraken2) on your raw reads and assembly to detect and remove contaminant sequences.

Issue 2: Low LAI Score

A low LAI suggests poor assembly of repetitive regions, which is common in plant genomes [11].

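- Potential Cause 1: Use of short-read sequencing technology.

- Solution: Add long-read data (e.g., PacBio or Nanopore); reads that span entire LTR retrotransposons allow them to be assembled intact.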

- Potential Cause 2: Inappropriate assembler or parameters.

- Solution: Use assemblers specifically designed for long reads and complex genomes (e.g., CANU, Flye, HiCanu). Optimize parameters for your data type and genome [13].

- Potential Cause 3: Inadequate sequencing depth for repeats.

- Solution: Ensure sufficient sequencing depth. The LAI calculation itself requires a certain level of assembly quality to even be computed, as it relies on identifying intact LTR-RTs [11].

Issue 3: Inconsistent Gene Annotations Across Orthologs

This is a common problem in pangenome studies where orthologous genes are predicted and annotated separately for each genome.

- Potential Cause: Inconsistent gene prediction and annotation.

- Solution: Use a graph-based gene caller like `ggCaller` [12]. `ggCaller` performs gene prediction directly on a population-wide de Bruijn graph, ensuring consistent start/stop codon identification and functional annotation across all orthologs in the dataset, thereby improving gene caller performance [12].

Experimental Protocols

Protocol 1: Installing and Running BUSCO v6.0.0

BUSCO assesses genome completeness based on universal single-copy orthologs [14].

Methodology:

- Installation with Conda: Install BUSCO into a dedicated Conda environment.

- Run BUSCO Assessment:

- Mandatory Parameters:

- `-i [SEQUENCE_FILE]`: Input genome assembly in FASTA format.

- `-m [MODE]`: Set mode to `genome` for genome assemblies.

- `-l [LINEAGE_DATASET]`: Specify the BUSCO lineage dataset (e.g., `eukaryota_odb10`).

- Recommended Parameters:

- `-c [CPU]`: Number of CPU threads to use.

- `-o [OUTPUT_NAME]`: Prefix for output files and folders.

- Example Command: `busco -i [SEQUENCE_FILE] -m genome -l [LINEAGE_DATASET] -c [CPU] -o [OUTPUT_NAME]`

- Interpretation: The results are summarized in a short summary file (`short_summary.*.txt`), reporting the percentage of complete, fragmented, and missing BUSCOs.
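When BUSCO is run from a script, the parameters above can be assembled programmatically. The sketch below only builds the argument list from the flags described in this protocol; the file name, output prefix, and thread count are placeholders, and actually launching the command (for example with `subprocess.run`) is left out.

```python
import shlex

def build_busco_command(sequence_file, lineage, output_name, cpus=1, mode="genome"):
    """Assemble a BUSCO argument list from the parameters described in this protocol."""
    return [
        "busco",
        "-i", sequence_file,   # input assembly in FASTA format
        "-m", mode,            # "genome" for genome assemblies
        "-l", lineage,         # BUSCO lineage dataset
        "-c", str(cpus),       # CPU threads
        "-o", output_name,     # prefix for output files and folders
    ]

# Placeholder inputs for illustration only.
cmd = build_busco_command("draft_assembly.fasta", "eukaryota_odb10", "draft_busco", cpus=8)
print(shlex.join(cmd))
```

Keeping the command as a list rather than a single string avoids quoting problems when file names contain spaces.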

Protocol 2: Calculating the LTR Assembly Index (LAI)

The LAI evaluates assembly quality by quantifying the completeness of LTR retrotransposons (LTR-RTs) [11].

Methodology:

- Workflow Overview: The LAI workflow involves identifying intact LTR-RTs and calculating an index based on their ratio to fragmented ones [11].

- Software Tools: The process uses a combination of tools:

- `LTR_FINDER_parallel` and `LTRharvest` for initial LTR-RT candidate detection.

- `LTR_retriever` to process the outputs and identify intact LTR-RTs.

- The `LAI` program to compute the final index [11].

- Web Tool Alternative: For users without a high-performance computing setup, the PlantLAI webserver (https://bioinformatics.um6p.ma/PlantLAI) provides a free service to calculate LAI for plant genomes [11].

The logical sequence and data flow between these tools in a standard LAI analysis is as follows:

Quantitative Data Tables

Table 1: LAI Quality Classification for Diploid Plant Genomes

Based on a large-scale assessment of 1,136 plant genomes suitable for LAI calculation [11].

| LAI Value Range | Quality Classification | Number of Genomes (in study) | Implication for Gene Calling |
|---|---|---|---|
| 0 ≤ LAI < 10 | Draft | 476 | High fragmentation in repetitive regions; gene calling in these areas will be unreliable. |
| 10 ≤ LAI < 20 | Reference | 472 | Moderate quality for repeats; suitable for many gene-centric studies. |
| LAI ≥ 20 | Gold | 135 | High continuity in repetitive regions; optimal for comprehensive gene calling and annotation. |
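The classification in this table is simple to apply in a script. The sketch below assumes the boundary values belong to the higher class (an LAI of exactly 10 is "Reference", exactly 20 is "Gold"); the function name is hypothetical.

```python
def classify_lai(lai):
    """Map an LAI value to the quality classes in the table above.

    Assumes boundary values fall into the higher class.
    """
    if lai < 0:
        raise ValueError("LAI cannot be negative")
    if lai < 10:
        return "Draft"
    if lai < 20:
        return "Reference"
    return "Gold"

labels = [classify_lai(value) for value in (4.2, 10, 19.9, 23.5)]
```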

Table 2: Key Metrics for Interpreting Assembly Quality

A summary of critical metrics and their target values for high-quality assemblies [8] [9] [10].

| Metric | Category | Target Value / Interpretation | Notes |
|---|---|---|---|
| N50 / N90 | Contiguity | As high as possible; context-dependent. | Compare against estimated chromosome length. Sensitive to fragmentation [10]. |
| BUSCO (%) | Completeness | > 95% (Complete) | Indicates essential gene space is present [8] [9]. |
| LAI | Completeness (Repetitive) | ≥ 20 (Gold) | Plant-specific; critical for repetitive region quality [11]. |
| Number of Gaps | Contiguity | As low as possible. | Directly indicates breaks in the assembly. |
| k-mer-based Consensus Quality (QV) | Correctness | > 40 (Q40) | A consensus quality value (QV) of 40 indicates a base error rate of 1 in 10,000 [10]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Data Resources for Assembly Evaluation

| Item Name | Function / Application | Reference / Source |
|---|---|---|
| BUSCO | Assesses assembly completeness by searching for universal single-copy orthologs. | [14] [9] |
| LTR_retriever | Pipeline to identify intact LTR retrotransposons and calculate the LAI. | [11] |
| PlantLAI | A web-based repository and tool for calculating and accessing LAI values for plant genomes. | [11] |
| ggCaller | A graph-based gene caller for consistent gene prediction and annotation across a pangenome. | [12] |
| merqury | A reference-free tool to assess assembly quality and phasing using k-mer spectra. | [8] [13] |
| QUAST | A comprehensive tool for evaluating and comparing genome assemblies, with or without a reference. | [9] [10] |

How Assembly Defects Propagate to Gene Prediction and Variant Calling Errors

Troubleshooting Guide: Common Issues and Solutions

This guide addresses specific, complex errors that researchers encounter when assembly defects impact downstream gene prediction and variant calling.

FAQ 1: Why does my variant call set show an unexpected distribution of insertions and deletions (INDELs), particularly in repetitive sequences?

- Problem: A high number of false INDELs, or an anomalous ratio of insertions to deletions, often points to a repeat collapse or expansion in the draft assembly. When an assembler mistakes multiple distinct repeat copies for a single copy (collapse), the reads originating from the different copies are forced into one location. The natural, small variations between these copies (e.g., a missing base in one copy) manifest as apparent INDELs in the variant caller [16].

- Diagnosis:

- Check for a localized spike in read depth at the locus. A repeat collapse forces reads from multiple genomic locations into one, resulting in coverage that is a multiple of the average [16].

- Use a tool like `amosvalidate` to scan for the specific signatures of repeat collapse, such as mate-pairs that appear "stretched" or "compressed" or that link to unexpected contigs [16].

- Solution: Re-assemble the region using a long-read sequencing technology. Long reads can span entire repetitive regions, allowing the assembler to correctly resolve the number and arrangement of repeat copies, thereby eliminating the false INDELs [17] [18].

FAQ 2: Why are my gene predictions fragmented or missing known conserved domains, even with high BUSCO completeness?

- Problem: This is a classic symptom of a mis-assembly, such as a rearrangement or inversion, that has broken a contiguous coding sequence. While the total number of genes (BUSCO score) may be high, their structural integrity can be compromised. Automated annotation tools like PROKKA or RAST are particularly vulnerable to mis-annotating shorter gene sequences, especially those for transposases or hypothetical proteins, in flawed assemblies [19].

- Diagnosis:

- Perform a self-alignment of the assembled contigs to identify large-scale structural problems.

- Validate the assembly using mate-pair or Hi-C data. Mis-assemblies will show as a high number of mate-pairs with incorrect orientations or distances, violating the constraints of the sequencing library [16].

- Solution: Implement a hybrid assembly and polishing strategy. Combine long-read data (e.g., from Oxford Nanopore) with high-accuracy short reads (e.g., from Illumina). Assemble with a long-read assembler like Flye, then polish the assembly with multiple rounds of tools like Racon followed by Pilon, which uses short reads to correct base-level errors [18]. This approach significantly improves both assembly continuity and base-level accuracy, leading to more reliable gene models.

FAQ 3: Why does my clinical variant screening miss known pathogenic variants listed in ClinVar?

- Problem: This is a recall (sensitivity) failure often caused by the variant caller's inability to place short reads confidently in "hard-to-map" regions of the genome. These regions, which include segmental duplications and areas with low complexity or homopolymers, are often poorly assembled or represented in draft genomes [20]. If the region is absent or garbled in the assembly, the variant within it cannot be called.

- Diagnosis:

- Use a predictive model like StratoMod to identify genomic contexts where your specific sequencing and variant calling pipeline is likely to have low recall [20].

- Manually inspect the alignment (BAM) files in the genomic loci of the missed ClinVar variants to see if read mapping is poor.

- Solution: For clinical or diagnostic applications, do not rely on a single technology. Use a multi-platform sequencing strategy. For example, while Illumina excels in homopolymer-rich regions, Oxford Nanopore Technologies (ONT) shows higher performance in segmental duplications and hard-to-map regions [20]. A pipeline that integrates calls from both can achieve a more complete variant set.

FAQ 4: How can I distinguish a true somatic mutation from an artifact introduced during assembly?

- Problem: Assembly artifacts, such as those from PCR duplicates or base-calling errors, can mimic true low-allele-fraction somatic variants. This is a critical issue in cancer genomics [17] [21].

- Diagnosis:

- Scrupulously mark and remove PCR duplicates using tools like Picard or by employing unique molecular identifiers (UMIs) during library preparation [17].

- Check the strand bias of the putative variant. True variants should be supported by reads from both strands, whereas some sequencing artifacts appear preferentially on one strand.

- Solution: Apply error-correction and polishing to your sequencing reads before assembly. For long-read data, use tools like Canu or Racon for pre-assembly error correction. For the final assembly, perform iterative polishing with high-fidelity short reads [18] [22]. This drastically reduces the baseline error rate, making true biological signals easier to distinguish.
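The strand-bias check from the diagnosis step can be sketched as a simple filter. This is a deliberately crude heuristic rather than a statistical test: it looks only at how the variant-supporting reads split between strands. The thresholds (`min_balance`, `min_reads`) and the labels returned are hypothetical and would need tuning for real data.

```python
def strand_balance(alt_forward, alt_reverse):
    """Fraction of variant-supporting reads on the less-represented strand (0.0 to 0.5)."""
    total = alt_forward + alt_reverse
    if total == 0:
        raise ValueError("no variant-supporting reads")
    return min(alt_forward, alt_reverse) / total

def flag_strand_bias(alt_forward, alt_reverse, min_balance=0.1, min_reads=6):
    """Label a putative variant from its forward and reverse supporting read counts."""
    if alt_forward + alt_reverse < min_reads:
        return "insufficient evidence"
    if strand_balance(alt_forward, alt_reverse) < min_balance:
        return "possible artifact"
    return "balanced"

# Hypothetical read counts for three candidate variants.
results = [flag_strand_bias(12, 0), flag_strand_bias(7, 5), flag_strand_bias(2, 1)]
```

Production variant callers typically use a formal test on reference and alternate counts for both strands; this sketch only conveys the intuition.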

Table 1: Impact of Assembly Polishing on Accuracy

| Polishing Scheme | BUSCO Completeness (%) | SNV Accuracy (Q-Score) | INDEL Accuracy (Q-Score) | Key Improvement |
|---|---|---|---|---|
| No Polishing | 95.2 | 28 | 22 | Baseline (high error rate) |
| Racon (1 round) | 96.1 | 35 | 29 | Major error reduction |
| Racon + Pilon | 98.7 | 40 | 35 | Optimal for gene prediction & variant calling |

Source: Adapted from benchmarking data on HG002 human genome material [18].

Table 2: Annotation Error Rates by Gene Function

| Gene Functional Category | Typical Error Rate in Draft Assemblies | Common Annotation Error |
|---|---|---|
| Transposases / Mobile Elements | ~2.1% | Frameshifts, premature stops |
| Short Hypothetical Proteins (<150 nt) | ~0.9% | Missed or incorrectly defined ORFs |
| Conserved Single-Copy Orthologs | <0.1% | Highly reliable |
| Genes in Repetitive Regions | Highly Variable | Fragmentation, missed exons |

Source: Based on a comparison of assembly and annotation tools for *E. coli* clones [19].

Experimental Protocols

Protocol 1: Validating an Assembly for Mis-assemblies

Purpose: To identify large-scale errors (collapses, expansions, rearrangements) in a draft genome assembly using sequencing read data.

Materials: Draft assembly (FASTA), original sequencing reads (FASTQ) and their alignments to the assembly (BAM).

Methodology:

- Depth of Coverage Analysis: Calculate the per-base read depth using `samtools depth`. Plot the distribution. Peaks of coverage that are multiples of the average suggest repeat collapses [16].

- Mate-Pair Analysis: Using a tool like `amosvalidate`, check for mate-pairs that violate library constraints, i.e., pairs that are too far apart, in the wrong orientation, or link different contigs. These are strong indicators of a breakpoint in a mis-assembly [16].

- Self-Consistency Check: Look for correlated single-nucleotide polymorphisms (SNPs) across multiple reads in a small region. While SNPs can be real, a cluster of them in a repeat region can indicate that reads from different repeat copies have been forced together, each bringing their own unique variants [16].
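The depth-of-coverage step can be sketched as a windowed scan. The example assumes the per-base depths for one contig have already been loaded into a list (for instance from the third column of `samtools depth -a` output) and flags windows whose mean depth is well above the contig-wide median. The window size and the 1.8x ratio are arbitrary illustrative choices, not recommended values.

```python
from statistics import median

def collapsed_repeat_candidates(depths, window=1000, min_ratio=1.8):
    """depths: per-base read depths for one contig.

    Returns (start, end, ratio) tuples, with 0-based half-open coordinates, for
    windows whose mean depth is at least min_ratio times the contig median.
    """
    baseline = median(depths)
    if baseline == 0:
        return []
    hits = []
    for start in range(0, len(depths), window):
        chunk = depths[start:start + window]
        ratio = (sum(chunk) / len(chunk)) / baseline
        if ratio >= min_ratio:
            hits.append((start, start + len(chunk), round(ratio, 2)))
    return hits

# Synthetic contig: 30x background with a 1 kb region at roughly double depth.
synthetic = [30] * 3000 + [62] * 1000 + [30] * 2000
candidates = collapsed_repeat_candidates(synthetic)
```

A ratio near 2 is what a two-copy repeat collapsed into one locus would produce; such windows are worth cross-checking against the mate-pair and SNP-cluster evidence described above.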

Protocol 2: A Hybrid Workflow for High-Quality, Variant-Ready Assemblies

Purpose: To generate a contiguous and base-accurate genome assembly suitable for sensitive gene prediction and variant calling.

Materials: High-molecular-weight DNA, Oxford Nanopore Technologies (ONT) PromethION sequencer, Illumina NovaSeq sequencer.

Methodology:

- Data Generation: Sequence the genome to a target coverage of 40-50x with ONT long reads and 30-35x with Illumina short reads [18].

- Assembly: Perform de novo assembly using a long-read assembler such as Flye [18] [23].

- Iterative Polishing:

- Long-Read Polishing: Polish the Flye assembly with Racon, using the raw ONT reads, for 2-3 rounds. This corrects the majority of stochastic sequencing errors [18].

- Short-Read Polishing: Further polish the assembly with Pilon, using the high-accuracy Illumina short reads. This corrects residual errors, particularly in homopolymer regions [18].

- Validation: Assess the final assembly quality using QUAST (for contiguity), BUSCO (for gene completeness), and Merqury (for base-level accuracy) [18].

Workflow and Relationship Diagrams

Diagram: Error Propagation Pathway

Diagram: Assembly Validation and Polishing Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Robust Genome Analysis

| Tool / Resource | Function | Relevance to Problem |
|---|---|---|
| Flye | Long-read de novo assembler | Creates highly contiguous initial assemblies, providing the best scaffold for downstream analysis [18]. |
| Racon & Pilon | Assembly polishing tools | Corrects base-level errors; Racon uses long reads, Pilon uses short reads for maximum accuracy [18] [22]. |
| amosvalidate | Mis-assembly detection pipeline | Automates the detection of large-scale assembly errors like collapses and rearrangements [16]. |
| StratoMod | Interpretable ML classifier | Predicts where a specific variant calling pipeline is likely to miss true variants (low recall), allowing for targeted validation [20]. |
| BUSCO | Assembly completeness assessment | Benchmarks the assembly against a set of expected universal single-copy orthologs, ensuring gene space is captured [18] [22]. |
| Unique Molecular Identifiers (UMIs) | Molecular barcoding | Tags original DNA molecules to identify and remove PCR duplicates, preventing false positive variant calls [17]. |

Troubleshooting Guides and FAQs

How does genome assembly quality directly impact gene annotation completeness and accuracy?

Poor genome assembly quality leads to fragmented, missing, or misassembled genes, which directly compromises downstream genetic and genomic studies. Key impacts include [24]:

- Fragmented Genes: Incomplete assemblies result in gene models being split across multiple contigs. This prevents the identification of complete coding sequences and can lead to erroneous conclusions about gene function.

- Inaccurate Gene Counts: Draft assemblies can both add and subtract genes; over 40% of gene families may have an inaccurate number of genes in draft assemblies compared to higher-quality versions [24].

- Compromised Transcriptomic Analyses: Assembly errors, such as premature stop codons and frame-shift indels, critically affect RNA-seq read mapping. This results in downstream errors that obscure meaningful biological signals and propagate into false candidate gene identification [24].

What are the most reliable metrics to evaluate the functional completeness of a Triticeae genome assembly for gene-related studies?

For gene-related studies, the most reliable metrics extend beyond basic assembly contiguity (like N50) [24]:

- BUSCO (Benchmarking Universal Single-Copy Orthologs): Evaluates the presence of a predefined set of highly conserved orthologous genes as a proxy for genome-wide completeness. A high percentage of complete BUSCOs indicates good gene space representation [25] [24].

- Transcript Mappability: The efficiency with which RNA-seq reads from the same organism map back to the assembly. Key indicators include high overall alignment rate, covered length, and depth. This directly reflects how well the assembly represents the expressed genome [24].

- Gene Annotation Statistics: The number of high-confidence genes annotated and the proportion of the assembly they represent can indicate effective gene space capture [25].
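Since the metrics above are meant to complement contiguity statistics such as N50, it can help to see how N50 itself is derived. The following is a minimal sketch, assuming contig lengths have already been extracted into a list of integers; the function name `n50` and the example lengths are illustrative only.

```python
def n50(lengths):
    """Return the N50: the length L such that contigs of length >= L
    together account for at least half of the total assembly size."""
    if not lengths:
        raise ValueError("no contig lengths supplied")
    total = sum(lengths)
    running = 0
    # Walk from the longest contig downwards until half the assembly is covered.
    for length in sorted(lengths, reverse=True):
        running += length
        if 2 * running >= total:
            return length


contigs_kb = [100, 80, 50, 30, 20, 20]  # hypothetical contig lengths in kb
print(n50(contigs_kb))  # prints 80
```

A high N50 says nothing about whether genes are intact, which is why the gene-centric metrics listed above are the more informative ones for annotation work.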

Which reference genome assemblies are currently recommended for wheat, rye, and triticale research?

Selecting an appropriate reference is critical to minimize bias. Recent evaluations recommend [24]:

- Wheat (T. aestivum): The SY Mattis assembly is recommended as a robust reference for gene-related studies.

- Rye (S. cereale): The Lo7 assembly is a recommended reference.

- Triticale (× Triticosecale): For this hybrid, the combination of the SY Mattis (wheat) and Lo7 (rye) assemblies is suggested. Furthermore, it is recommended to incorporate the D genome sequence in reference assemblies for triticale, as introgression, translocation, and substitution of the D genome into the triticale genome frequently occur during breeding [24].

Quantitative Data on Assembly and Annotation

Table 1: Comparison of Key Metrics for Two Rye Genome Assemblies

| Metric | Weining Rye Assembly [25] | Lo7 Rye Assembly [24] |

|---|---|---|

| Assembly Size | 7.74 Gb | 6.74 Gb |

| Estimated Genome Size | 7.86 Gb | Information Not Specified |

| Percentage Assigned to Chromosomes | 93.67% | Information Not Specified |

| Contig N50 | 480.35 kb | Information Not Specified |

| Scaffold N50 | 1.04 Gb | Information Not Specified |

| Total Repeat Content | 90.31% | Information Not Specified |

| Number of High-Confidence Genes | 45,596 | 34,441 |

Table 2: Assembly and Gene Annotation Metrics across Triticeae Species [25] [24]

| Species & Genotype | Genome Type | Assembly Size (Gb) | Number of Predicted Genes |

|---|---|---|---|

| Rye (Weining) | RR | 7.74 | 45,596 (HC) |

| Rye (Lo7) | RR | 6.74 | 34,441 |

| Hexaploid Wheat (Chinese Spring) | AABBDD | 14.58 | 106,914 |

| Tetraploid Wheat | AABB | ~10.5 - 10.7 | 66,559 - 88,002 |

Experimental Protocols

Protocol: Evaluating Assembly Completeness and Correctness with BUSCO and RNA-seq

This protocol is adapted from methodologies used in recent evaluations of Triticeae genomes [24].

1.0 Evaluation of Functional Completeness with BUSCO

- 1.1 Select an appropriate lineage-specific BUSCO dataset (e.g., viridiplantae_odb10 for plants).

- 1.2 Run the BUSCO analysis on the genome assembly using standard parameters.

- 1.3 Interpret Results: The output reports the percentage of complete (single-copy and duplicated), fragmented, and missing BUSCO genes. A high-quality assembly will have a high percentage of complete BUSCOs (e.g., >95%) and a low percentage of fragmented and missing genes [25] [24].
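The interpretation step can be automated once the one-line score string from a BUSCO summary is available. The sketch below assumes the commonly seen layout `C:..%[S:..%,D:..%],F:..%,M:..%,n:..`; the exact layout can differ between BUSCO versions, and the example string and the helper names `parse_busco` and `passes_threshold` are hypothetical.

```python
import re

# Assumed layout of the score line; adjust the pattern if your BUSCO version differs.
BUSCO_LINE = re.compile(
    r"C:(?P<C>[\d.]+)%\[S:(?P<S>[\d.]+)%,D:(?P<D>[\d.]+)%\],"
    r"F:(?P<F>[\d.]+)%,M:(?P<M>[\d.]+)%,n:(?P<n>\d+)"
)


def parse_busco(line):
    """Extract complete (C), single-copy (S), duplicated (D), fragmented (F)
    and missing (M) percentages plus the number of BUSCOs searched (n)."""
    match = BUSCO_LINE.search(line)
    if match is None:
        raise ValueError("not a recognisable BUSCO score line")
    scores = {key: float(val) for key, val in match.groupdict().items() if key != "n"}
    scores["n"] = int(match.group("n"))
    return scores


def passes_threshold(scores, min_complete=95.0):
    """Apply the >95% complete guideline mentioned in step 1.3."""
    return scores["C"] > min_complete


example = "C:96.2%[S:93.1%,D:3.1%],F:1.4%,M:2.4%,n:425"  # made-up values
scores = parse_busco(example)
print(scores["C"], passes_threshold(scores))
```

In polyploid species such as hexaploid wheat, a high duplicated (D) fraction is expected, so the complete score is usually the more meaningful figure to threshold on.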

2.0 Assessment of Assembly Correctness via RNA-seq Read Mapping

- 2.1 Obtain high-quality RNA-seq reads from the same genotype, if possible.

- 2.2 Map the RNA-seq reads to the genome assembly using a splice-aware aligner (e.g., STAR, HISAT2).

- 2.3 Calculate Key Metrics:

- Overall Alignment Rate: The percentage of reads that successfully map to the assembly.

- Covered Length: The total number of bases in the assembly covered by at least one read.

- Mapping Depth: The average depth of coverage across the transcribed regions.

- 2.4 Analyze Results: High values for all three metrics indicate an assembly that accurately represents the transcribed regions of the genome. A low alignment rate or numerous coverage gaps suggest assembly errors or missing sequences [24].
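The three metrics in step 2.3 can be computed from simple text summaries. This is a minimal sketch, assuming per-base depth is available as tab-separated rows of sequence name, position, and depth (the style of output produced by `samtools depth`) and that mapped and total read counts are already known; the function names are illustrative.

```python
def coverage_metrics(depth_lines):
    """Return (covered_length, mean_depth) from 'seq<TAB>pos<TAB>depth' rows.

    covered_length counts positions with depth >= 1; mean_depth is averaged
    over those covered positions only.
    """
    covered = 0
    depth_sum = 0
    for line in depth_lines:
        line = line.strip()
        if not line:
            continue
        _seq, _pos, depth = line.split("\t")
        depth = int(depth)
        if depth > 0:
            covered += 1
            depth_sum += depth
    mean_depth = depth_sum / covered if covered else 0.0
    return covered, mean_depth


def alignment_rate(mapped_reads, total_reads):
    """Overall alignment rate as a percentage of all reads."""
    return 100.0 * mapped_reads / total_reads if total_reads else 0.0


rows = ["chr1R\t1\t4", "chr1R\t2\t0", "chr1R\t3\t6"]  # hypothetical depth rows
print(coverage_metrics(rows), alignment_rate(90, 100))
```

Comparing these values across candidate assemblies, using the same read set, is what makes them informative; the absolute numbers depend on library depth and tissue.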

Protocol: The TRITEX Assembly Pipeline for Chromosome-Scale Assemblies

The TRITEX pipeline is an open-source workflow for constructing high-quality, chromosome-scale sequence assemblies of Triticeae genomes. The following overview summarizes its key steps [26].

1.0 Input Data Preparation

The pipeline utilizes a combination of sequencing data libraries [26]:

- PCR-free Illumina Paired-End (PE) libraries with tight insert size distribution (~450 bp).

- Mate-Pair (MP) libraries with varying insert sizes (e.g., 2-4 kb, 5-7 kb, 8-10 kb).

- 10X Genomics Linked-Read libraries.

- Hi-C data for chromatin conformation capture.

- (Optional) Ultra-dense genetic maps.

2.0 Iterative Unitig Assembly with Multi-k-mer Approach

- 2.1 Merge and error-correct PE450 reads to create long, accurate fragments.

- 2.2 Perform iterative de novo assembly using Minia3, starting with a k-mer size of 100 and progressively increasing to 500.

- 2.3 Use the unitigs from the final iteration (k=500) for scaffolding. This approach achieves unitig N50 of 20-30 kb [26].

3.0 Scaffolding and Mis-assembly Correction

- 3.1 Scaffold the unitigs using SOAPdenovo2 with the PE800 and mate-pair libraries (MP3, MP6, MP9), achieving scaffold N50 beyond 1 Mb.

- 3.2 Detect and correct mis-joins introduced during assembly and scaffolding using physical coverage information from 10X linked reads [26].

4.0 Construction of Chromosomal Pseudomolecules

- 4.1 Order and orient the corrected super-scaffolds along chromosomes using Hi-C data and genetic maps.

- 4.2 Visually inspect contact matrix heatmaps for each chromosome to identify and correct remaining chimeras or misoriented blocks.

- 4.3 Repeat cycles of inspection and correction until contact matrices show the expected Rabl configuration (strong main diagonal, weak anti-diagonal) [26].

TRITEX Pipeline Workflow: From raw data to chromosome-scale assembly. [26]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Sequencing Technologies and Software for Triticeae Genome Assembly

| Reagent / Tool | Function / Application | Relevance to Triticeae Genomics |

|---|---|---|

| PacBio Long-Read Sequencing | Generates long reads (HiFi), ideal for resolving complex repetitive regions. | Used in the Weining rye and improved Chinese Spring wheat assemblies to span repetitive elements and close gaps [25] [27]. |

| Oxford Nanopore (ONT) | Produces ultra-long reads, valuable for assembling across centromeres and telomeres. | Key technology for achieving a near-complete assembly of wheat Chinese Spring, including centromeric regions [27]. |

| Hi-C Sequencing | Captures chromatin conformation data for scaffolding and ordering contigs into chromosomes. | Used in TRITEX and other pipelines to order super-scaffolds into chromosomal pseudomolecules and validate assembly structure [25] [26]. |

| 10X Genomics Linked Reads | Provides long-range phasing information and helps in detecting mis-assemblies. | A critical component of the TRITEX pipeline for super-scaffolding and correcting mis-joins [26]. |

| BUSCO | Software to assess genome completeness based on universal single-copy orthologs. | A standard metric for evaluating the functional gene space completeness of assemblies like Weining rye [25] [24]. |

| TRITEX Pipeline | An open-source computational workflow for chromosome-scale sequence assembly. | Provides a standardized, open-source method for assembling complex Triticeae genomes to high quality [26]. |

Logical flow of how assembly quality enables downstream applications.

Selecting and Applying Modern Gene and Variant Callers

Variant calling is a fundamental process in genomics that involves identifying genetic variants, such as single nucleotide polymorphisms (SNPs) and insertions/deletions (indels), from sequencing data. The advent of artificial intelligence (AI) has revolutionized this field, introducing tools that offer superior accuracy compared to traditional statistical methods [28]. For researchers working with draft assemblies, selecting and optimizing the appropriate AI-based variant caller is crucial for generating reliable results in downstream analyses. This technical support center focuses on three leading deep learning-based variant callers—DeepVariant, Clair3, and DeepTrio—providing troubleshooting guidance, performance comparisons, and experimental protocols to optimize their performance in genomic research.

The table below summarizes the key characteristics, strengths, and limitations of DeepVariant, Clair3, and DeepTrio.

| Tool | Primary Developer | Core Methodology | Supported Data Types | Key Advantages | Common Challenges |

|---|---|---|---|---|---|

| DeepVariant | Google Health [28] | Convolutional Neural Networks (CNNs) analyzing pileup images of aligned reads [28] | Illumina, PacBio HiFi, ONT [28] | High accuracy, automatically produces filtered variants without need for post-calling refinement [28] | High computational cost; warnings about missing GPU libraries when run on CPU [28] [29] |

| Clair3 | HKU-BAL [30] | Combines pileup calling for speed with full-alignment for precision [30] | ONT, PacBio, Illumina; specialized versions for RNA (Clair3-RNA) and multi-platform data (Clair3-MP) [30] [31] [32] | Fast runtime, superior performance at lower coverages, active development with regular updates [30] [28] | Requires matching the model to specific sequencing technology for optimal results [30] |

| DeepTrio | Google Health [28] | Extension of DeepVariant using CNNs to analyze family trio data (child and parents) jointly [28] | Designed for trio WGS and WES | Improves accuracy by leveraging familial inheritance patterns, excellent for de novo mutation detection [28] [33] | Complex setup for trio analysis; may require quality score (QUAL) filtering adjustments to retain true positive calls [34] |

Quantitative benchmarking on bacterial nanopore sequencing data demonstrates the high performance of these deep learning tools. In a comprehensive study, Clair3 and DeepVariant achieved SNP F1 scores of 99.99% and indel F1 scores of approximately 99.5% using high-accuracy basecalling models, outperforming traditional methods and even matching or exceeding the accuracy of short-read "gold standard" Illumina data [35].

Troubleshooting Guides and FAQs

DeepVariant

Q: I see warnings about missing libraries (e.g., libcuda.so.1, libcudart.so.11.0) when running DeepVariant. Does this affect the results?

A: These warnings are typically non-critical if you are running DeepVariant on a CPU-only system. The messages indicate that TensorFlow is looking for GPU (CUDA) libraries but cannot find them, and the process falls back to using the CPU [29]. You can safely ignore these warnings, as the tool will continue to execute. Note that TF_ENABLE_ONEDNN_OPTS=0 does not silence the CUDA library warnings: it turns off oneDNN custom operations, which TensorFlow reports in a separate informational message, and switching them on or off may slightly alter numerical results due to different computation orders [29]. To reduce TensorFlow log output in general, the TF_CPP_MIN_LOG_LEVEL environment variable can be raised (e.g., to 2).

Q: What is the computational resource requirement for running DeepVariant?

A: DeepVariant is computationally intensive. It is compatible with both GPU and CPU, but using a GPU significantly accelerates processing. Users should ensure they have substantial memory and storage available for whole-genome sequencing data [28].

Clair3

Q: How do I select the correct pre-trained model for my sequencing data in Clair3?

A: Clair3's performance is highly dependent on using a model trained for your specific sequencing technology and chemistry. The developers provide different models for ONT (e.g., R9.4.1, R10.4.1), PacBio HiFi, and Illumina data [30]. For specialized applications, use the dedicated tool versions:

- Bacterial genomics: Use the fine-tuned R10.4.1 model trained on 12 bacterial genomes for improved performance [30].

- RNA-seq data: Use Clair3-RNA, which is specifically optimized for the challenges of long-read RNA sequencing, such as uneven coverage and RNA editing events [31].

- Multi-platform data: Use Clair3-MP to integrate data from different technologies (e.g., ONT and Illumina) for improved variant calling in difficult genomic regions [32].

Q: How can I improve Clair3's performance in complex genomic regions?

A: For a single data type, ensure you are using the most accurate basecalling model available (e.g., "sup" for ONT). If you have access to sequencing data from a second platform (e.g., both ONT and Illumina for the same sample), using Clair3-MP is highly recommended. It has been shown to significantly boost SNP and indel F1 scores in difficult-to-map regions like segmental duplications and large low-complexity regions [32].

DeepTrio

Q: How should I handle QUAL scores in DeepTrio output to avoid missing true positive calls?

A: Some users report that a default QUAL threshold (e.g., 10) applied during the joint genotyping step with glnexus_cli can filter out true positive calls, which often have QUAL scores between 0 and 30 [34]. To address this, you can change the --config parameter in glnexus_cli from DeepVariantWGS to DeepVariant_unfiltered. This disables the stringent quality filter, allowing you to apply custom, more appropriate filtration later in your pipeline [34].
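Once the built-in filter is disabled, a custom QUAL cut-off can be applied downstream. The sketch below assumes uncompressed, tab-separated VCF text lines, where QUAL is the sixth column; the threshold of 5 and the function name `filter_by_qual` are illustrative choices, not recommendations from the DeepTrio or GLnexus authors.

```python
def filter_by_qual(vcf_lines, min_qual):
    """Keep header lines and records whose QUAL is at least min_qual.

    Records with a missing QUAL ('.') are dropped in this sketch.
    """
    kept = []
    for line in vcf_lines:
        if line.startswith("#"):
            kept.append(line)  # always retain meta-information and header lines
            continue
        fields = line.rstrip("\n").split("\t")
        qual = fields[5]  # VCF columns: CHROM POS ID REF ALT QUAL FILTER INFO ...
        if qual != "." and float(qual) >= min_qual:
            kept.append(line)
    return kept


records = [
    "#CHROM\tPOS\tID\tREF\tALT\tQUAL\tFILTER\tINFO",
    "chr1\t100\t.\tA\tG\t3\t.\t.",
    "chr1\t200\t.\tC\tT\t12\t.\t.",
    "chr1\t300\t.\tG\tA\t.\t.\t.",
]
for line in filter_by_qual(records, min_qual=5):  # hypothetical threshold
    print(line)
```

Choosing the threshold against a truth set, rather than by convention, avoids reintroducing the loss of true positives described above.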

Q: For what primary use case is DeepTrio designed?

A: DeepTrio is specifically designed for germline variant calling in family trios (a child and both parents). Its key strength is leveraging the Mendelian inheritance patterns within the trio to improve the accuracy of variant calling for all members, which is particularly powerful for identifying de novo mutations (new variants in the child that are absent in the parents) with high confidence [28] [33].

Experimental Protocols for Benchmarking

To ensure the optimal performance of variant callers in your research, follow this standardized benchmarking workflow.

Step 1: Data Preparation and Truth Set Generation

Generating a reliable set of known variants (a "truth set") is critical for meaningful benchmarking.

- Recommended Method (Projection): For draft assemblies or bacterial genomes, a robust method involves creating a mutated reference genome. This is done by identifying variants between your sample's reference and a closely related "donor" genome (e.g., with ~99.5% Average Nucleotide Identity). The high-confidence variants from this comparison are then applied to your original reference to create a new "mutated" reference. This guarantees a biologically realistic set of known variants against which to test your caller [35].

- Materials:

- Reference Genome: Your sample's assembled reference.

- Closely Related Donor Genome: A genome from a different strain of the same species.

- Software Tools: minimap2 and mummer for variant discovery between the two genomes, and bcftools for variant manipulation [35].
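The core of the projection method is applying a list of known variants to the reference sequence. In practice a dedicated tool such as bcftools would handle this on real VCF and FASTA files; the sketch below only illustrates the idea on an in-memory string. It assumes variants are non-overlapping tuples of (1-based position, REF allele, ALT allele), and the function name and toy data are hypothetical.

```python
def apply_variants(reference, variants):
    """Return the mutated sequence after applying non-overlapping variants.

    Variants are applied from the rightmost position to the leftmost so that
    indels do not shift the coordinates of variants still to be applied.
    """
    seq = reference
    for pos, ref, alt in sorted(variants, key=lambda v: v[0], reverse=True):
        start = pos - 1  # convert 1-based position to 0-based index
        if seq[start:start + len(ref)] != ref:
            raise ValueError(f"REF allele does not match reference at position {pos}")
        seq = seq[:start] + alt + seq[start + len(ref):]
    return seq


reference = "ACGTACGTAC"
truth_set = [(2, "C", "T"), (5, "A", "AGG"), (8, "TA", "T")]  # SNP, insertion, deletion
mutated = apply_variants(reference, truth_set)
print(mutated)  # prints ATGTAGGCGTC
```

Because every variant in the truth set is known by construction, any call that differs from it during benchmarking can be attributed to the caller rather than to uncertainty in the truth.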

Step 2: Read Alignment and Variant Calling

- Alignment: Align your sample's sequencing reads to the mutated reference generated in Step 1 using an appropriate aligner (e.g., minimap2 for long-read ONT or PacBio data) [35] [32].

- Variant Calling: Run the AI-based variant callers (DeepVariant, Clair3, etc.) on the resulting BAM file, using the mutated reference as the reference genome. This will produce a VCF file of variant calls.

Step 3: Performance Evaluation

- Tool: Use vcfdist to compare your called variants (VCF) against the known truth set [35].

- Key Metrics: The tool will classify variants into True Positives (TP), False Positives (FP), and False Negatives (FN), allowing you to calculate:

- Precision: TP / (TP + FP) - The proportion of called variants that are correct.

- Recall: TP / (TP + FN) - The proportion of true variants that were detected.

- F1 Score: The harmonic mean of precision and recall, providing a single metric for overall performance [35].
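The three metrics follow directly from the TP, FP, and FN counts. A minimal sketch, with illustrative counts and a hypothetical function name:

```python
def precision_recall_f1(tp, fp, fn):
    """Compute precision, recall and F1 from true/false positive and
    false negative counts, returning 0.0 where a ratio is undefined."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)  # harmonic mean
    return precision, recall, f1


# Hypothetical counts: 90 correct calls, 10 spurious calls, 30 missed variants.
precision, recall, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

Reporting SNPs and indels separately is advisable, since a single pooled F1 score can hide weak indel performance behind a much larger number of SNPs.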

The Scientist's Toolkit: Essential Research Reagents and Materials

The table below lists key resources required for implementing the experimental protocols and troubleshooting steps outlined in this guide.

| Item Name | Function / Application | Specification Notes |

|---|---|---|

| High-Quality Reference Genome | Serves as the baseline for read alignment and variant identification. | For non-model organisms, use your own draft assembly. Ensure contiguity is as high as possible. |

| Closely Related Donor Genome | Used to create a biologically realistic variant truth set via the projection method. | Select a strain with an ANI of ~99.5% to your reference for a suitable number of variants [35]. |

| Sequencing Data | The raw data for variant discovery. | Can be ONT, PacBio, or Illumina. Use the same DNA extraction for cross-platform comparisons to avoid biases [35]. |

| Minimap2 Aligner | Efficiently aligns long-read sequencing data to a reference genome. | The standard aligner for ONT and PacBio data; used in multiple benchmarking studies [35] [32]. |

| vcfdist Software | Evaluates variant calling accuracy by comparing a VCF file to a known truth set. | Critical for calculating precision, recall, and F1 scores in a standardized way [35]. |

| Clair3 Pre-trained Models | Provides the trained neural network parameters for specific sequencing technologies. | Must be selected to match your sequencing platform (e.g., ONT R10.4.1) for optimal results [30]. |

Advanced Applications and Integration

Multi-Platform Sequencing with Clair3-MP

Integrating data from different sequencing technologies can significantly boost performance. Clair3-MP is specifically designed for this purpose.

Protocol: To use Clair3-MP, simply provide the BAM files from different platforms (e.g., ONT and Illumina) as input. The tool leverages the strengths of each: the long-range information from ONT to resolve complex regions and the high base-level accuracy of Illumina to polish indel calls [32].

Expected Outcome: The most significant improvements are observed in difficult genomic regions. For example, when combining 30x coverage each from ONT and Illumina, Clair3-MP showed notable increases in the F1 score for SNPs and indels within large low-complexity regions, segmental duplications, and collapse duplication regions compared to using either dataset alone [32].

Optimizing for Specific Organisms

While pre-trained models are often based on human data, they can generalize well. However, for optimal performance in bacterial genomics, use Clair3's R10.4.1 model fine-tuned on 12 bacterial genomes [30]. Benchmarking on a diverse panel of 14 bacterial species demonstrated that deep learning-based callers like Clair3 and DeepVariant could achieve variant accuracy that matches or exceeds Illumina sequencing, making long-read technology a viable and powerful option for bacterial outbreak investigation and epidemiology [35].

This guide provides technical support for researchers using Sawfish and DRAGEN SV structural variant callers. The following table summarizes their core architectures to help you select the appropriate tool.

| Feature | Sawfish | DRAGEN SV |

|---|---|---|

| Primary Technology | Pacific Biosciences (PacBio) HiFi Reads [36] | Illumina Short-Read & Paired-End Sequencing [37] [38] |

| Variant Call Type | Joint SV & Copy Number Variant (CNV) caller [36] | SV and indel caller (≥50 bp); CNV via separate workflow [38] [39] |

| Core Methodology | Local sequence assembly [36] | Breakend association graph & integrated Manta methods [37] [38] |

| Optimal Use Case | Germline SV discovery & genotyping from HiFi data [36] | Germline (small cohorts), somatic (tumor-normal/tumor-only) from short-read data [38] |

| Key Strength | Unified view of SVs and CNVs; high resolution from assembly [36] | Efficient, single-workflow analysis combining paired and split-read evidence [38] |

Troubleshooting Guides

Issue 1: Poor SV Call Accuracy in Low-Complexity Regions (LCRs)

Problem Description

A high rate of false-positive or false-negative SV calls in repetitive genomic areas, such as low-complexity regions (LCRs) and segmental duplications (SegDups). LCRs cover only about 1.2% of the GRCh38 genome but contain a significant majority (~69%) of confident SVs in benchmark samples like HG002 [40]. They also account for most long-read SV calling errors: between 77.3% and 91.3% of the erroneous calls made by long-read callers fall within LCRs [40].

Diagnostic Steps

- Annotate Your Calls: Use the BED file of LCRs (e.g., from resources like UCSC Genome Browser) to intersect with your SV callset. A high concentration of calls in these regions, especially if they disagree between callers, suggests this issue [40].

- Use a Graph Reference: Align your reads to a graph-based pangenome reference (e.g., DRAGEN Multigenome graph). This provides better resolution in polymorphic or complex regions compared to a linear reference [39].

- Leverage Multiple Callers: Run several SV callers on the same dataset. A lack of consensus on a specific SV call in an LCR is a strong indicator of a potential false positive [40].
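The annotation step amounts to an interval overlap test between SV calls and LCR regions. A minimal sketch follows, assuming BED-style 0-based half-open intervals and SV calls already reduced to (chromosome, start, end) tuples; the helper names and coordinates are hypothetical, and a linear scan like this is only suitable for small inputs.

```python
def load_bed(lines):
    """Parse BED lines into {chrom: [(start, end), ...]}."""
    regions = {}
    for line in lines:
        if not line.strip() or line.startswith(("#", "track", "browser")):
            continue
        chrom, start, end = line.rstrip("\n").split("\t")[:3]
        regions.setdefault(chrom, []).append((int(start), int(end)))
    return regions


def overlaps_region(chrom, start, end, regions):
    """True if the half-open interval [start, end) overlaps any region on chrom."""
    return any(start < r_end and r_start < end
               for r_start, r_end in regions.get(chrom, []))


def fraction_in_regions(calls, regions):
    """Fraction of SV calls that overlap at least one annotated region."""
    if not calls:
        return 0.0
    hits = sum(overlaps_region(c, s, e, regions) for c, s, e in calls)
    return hits / len(calls)


lcr = load_bed(["chr1\t100\t200", "chr2\t50\t60"])
sv_calls = [("chr1", 150, 180), ("chr1", 200, 300), ("chr2", 10, 55), ("chr3", 1, 2)]
print(fraction_in_regions(sv_calls, lcr))  # prints 0.5
```

For genome-scale callsets, an interval tree or a dedicated interval tool would replace the linear scan, but the overlap condition stays the same.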

Resolution

- For DRAGEN SV: Utilize the --sv-exclude-regions option with a BED file of problematic LCRs to remove these regions from the graph-building process [37] [38].

- For Sawfish: Ensure you are using the highest possible read depth and quality of HiFi data, as assembly-based methods benefit significantly from accurate long reads in repetitive regions [36] [15].

- General Best Practice: Manually inspect the read alignments in a tool like IGV for SVs in LCRs that are critical to your research. Treat all such calls with caution and require orthogonal validation [40].

Issue 2: Inability to Detect Very Large (>1 Mb) Germline CNVs

Problem Description

The SV caller fails to report or confidently genotype very large deletions and duplications that span over one megabase.

Diagnostic Steps

- Check Variant Size Limits: DRAGEN SV does not report germline deletions and duplications larger than 1 Mb by default, as it relies on split-read and read-pair breakpoint evidence, which can be insufficient for such large regions [38].

- Verify Caller Capability: Confirm that your analysis is using the correct tool for the variant type. Sawfish is designed for a unified view of SVs and CNVs [36], whereas DRAGEN SV is optimized for breakpoint detection. For large CNVs without clear breakpoints, a dedicated CNV caller is often needed.

Resolution

- For DRAGEN SV:

- Adjust the maximum scorable variant size using the command-line options --sv-max-del-scored-variant-size (for deletions) and --sv-max-dup-scored-variant-size (for duplications) [38].

- For comprehensive CNV calling, run the separate DRAGEN CNV Workflow in addition to the SV caller, as it uses read-depth analysis which can detect these large events [39].

- For Sawfish: This issue is less likely, as Sawfish performs integrated copy number segmentation synchronized with SV breakpoints, which is specifically designed to improve the classification of large variants [36].

Issue 3: Excessive Runtime or Memory Usage

Problem Description

The analysis pipeline runs unusually slowly or fails due to excessive memory consumption.

Diagnostic Steps

- Inspect Read Quality: High levels of discordant alignments can overburden the SV caller with excessive candidates. Check the DRAGEN aligner metrics for soft-clipped bases, supplementary alignments, and improperly paired reads. If these metrics are significantly above 10%, it indicates sample quality issues [38].

- Check Sample Size: DRAGEN SV is optimized for the joint analysis of 5 or fewer diploid individuals. While larger cohorts are not blocked, they may lead to stability or call quality issues [38].

- Review Input Data: For Sawfish, ensure your input HiFi reads meet the recommended quality and length specifications. Low-quality data can cause the local assembly step to become computationally expensive [36].

Resolution

- Address Data Quality: Investigate potential upstream issues in library preparation, input material quantity, and sequencing run quality [38].

- Use Exclusion Regions: Provide a BED file of regions to exclude (--sv-exclude-regions in DRAGEN) to reduce the computational burden during graph building [37] [38].

- Subsample for Testing: Run your analysis on a single chromosome or a genomic subset to first verify the workflow and parameters.

Frequently Asked Questions (FAQs)

Q1: Can I use DRAGEN SV for somatic variant calling in cancer research?

Yes, DRAGEN SV provides scoring models for three primary applications: 1) germline variants in small sets of diploid samples, 2) somatic variants in a matched tumor-normal sample pair, and 3) somatic and germline variants in a tumor-only sample [38]. For liquid tumor samples, enable the liquid tumor mode to account for tumor-in-normal (TiN) contamination [38].

Q2: What is the minimum recommended sequencing depth for reliable SV calling with these tools?

While requirements vary, a benchmarking study on long-read data found that variant calling after genome assembly with a 10x sequencing depth of accurate HiFi data allowed reliable detection of true-positive variants, providing a cost-effective methodology [15]. For short-read data, higher depths (e.g., 25-30x) are typically used.

Q3: How does Sawfish achieve a "unified view" of SVs and CNVs?

Sawfish applies copy number segmentation to sequencing coverage levels and synchronizes SV breakpoints with copy number change boundaries. This process allows it to merge redundant calls into single variants that describe both breakpoint and copy number detail, providing a combined assessment of all large variants in a sample [36].

Q4: What is "forced genotyping" in DRAGEN SV and when should I use it?

Forced genotyping allows you to score a set of known SVs from an input VCF file against your sample data, even if the evidence for those variants is weak or absent. This is particularly useful for:

- Detecting known SVs in low-depth samples at higher recall.

- Confidently asserting the absence of a known SV allele (homozygous reference genotype) [38].

It can be run in standalone mode or integrated with standard SV discovery [38].

Q5: My assembly is from a non-human species (e.g., a crop plant). Are these callers still applicable?

The core algorithms may be applicable, but performance is highly dependent on the genome. For complex, repetitive genomes like those of wheat, rye, or triticale, the completeness and correctness of the reference assembly itself are critical. Before SV calling, evaluate your draft assembly's quality using tools like BUSCO and RNA-seq read mappability to ensure it is a robust reference [24]. Sawfish, designed for HiFi data, may be particularly well-suited for such organisms [36].

Experimental Workflow & Benchmarking

Standardized SV Calling Protocol

To ensure reproducible and robust SV detection in your research, follow this generalized workflow. The specific tools (Sawfish or DRAGEN) would be inserted at the variant calling stage.

Performance Benchmarking Insights

Independent evaluations provide critical data on SV caller performance. The following table summarizes key benchmarking results from a 2025 study that compared various tools using the HG002 benchmark dataset [39].

| Caller | Sequencing Technology | Key Performance Finding |

|---|---|---|

| DRAGEN v4.2 | Illumina Short-Read (srWGS) | Delivered the highest accuracy among ten srWGS callers tested [39]. |

| Manta + minimap2 | Illumina Short-Read (srWGS) | Achieved performance comparable to DRAGEN, highlighting the impact of alignment software [39]. |

| Sniffles2 | PacBio Long-Read (lrWGS) | Outperformed other tested tools for PacBio data [39]. |

| Duet | Oxford Nanopore (lrWGS) | Achieved the highest accuracy at coverages up to 10x [39]. |

| Dysgu | Oxford Nanopore (lrWGS) | Yielded the best results at higher coverages [39]. |

The Scientist's Toolkit

| Research Reagent / Resource | Function / Application |

|---|---|

| HG002 Benchmark Dataset (GIAB) | A gold-standard set of truth variants from the Genome in a Bottle Consortium used to validate and benchmark SV calling performance [39] [40]. |

| GRCh38 & T2T-CHM13 Reference Genomes | Linear reference genomes. The T2T-CHM13 is a complete, gapless assembly that can improve SV calling in previously unresolved regions [41] [39]. |

| Graph-Based Pangenome Reference | A reference structure that incorporates multiple haplotypes, providing better resolution for SV calling in complex and polymorphic regions compared to linear references [39]. |

| LCR and SegDup Annotation BED Files | Genomic interval files that define low-complexity and segmentally duplicated regions. Used to filter or interpret SV calls prone to high error rates [39] [40]. |

| BUSCO Software | Tool to assess the completeness and quality of genome assemblies based on universal single-copy orthologs, which is a prerequisite for confident SV calling [24]. |

| truvari Software | A benchmark tool for comparing two SV callsets, used to calculate performance metrics like precision and recall against a truth set [40]. |

Frequently Asked Questions (FAQs)

Q1: Why should I use multiple variant callers instead of just the single best one?

Using a combination of multiple variant callers is recommended because there is no single caller that consistently outperforms all others across different datasets, sequencing technologies, and variant types [42] [43]. Individual callers have different strengths and weaknesses, and their performance can vary widely. Combining them leverages their complementary approaches to increase overall detection accuracy and confidence in the called variants [42] [44].

Q2: What is the simplest way to combine calls from multiple variant callers?

A straightforward and effective method is to use a consensus strategy. For Single Nucleotide Variants (SNVs), accept variants called by a majority of the tools in your combination (specifically, n-1 callers, where n is the total number of callers used) [42]. For indel calling, combining two callers and accepting variants called by both is a good starting point, as adding more callers does not necessarily increase accuracy [42].
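The consensus rules described above can be sketched with plain set operations. This assumes each caller's output has already been reduced to a set of (chromosome, position, REF, ALT) tuples; the caller outputs shown are made up, and the function names are illustrative.

```python
from collections import Counter


def consensus_calls(callsets, min_support):
    """Return variants reported by at least min_support of the callsets."""
    counts = Counter()
    for calls in callsets:
        counts.update(set(calls))  # each caller votes at most once per variant
    return {variant for variant, votes in counts.items() if votes >= min_support}


def snv_consensus(callsets):
    """SNV rule: accept variants called by at least n-1 of the n callers."""
    return consensus_calls(callsets, max(1, len(callsets) - 1))


def indel_consensus(callset_a, callset_b):
    """Indel rule: accept only variants called by both of two callers."""
    return set(callset_a) & set(callset_b)


caller_a = {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T")}
caller_b = {("chr1", 100, "A", "G"), ("chr1", 300, "G", "A")}
caller_c = {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T")}
print(sorted(snv_consensus([caller_a, caller_b, caller_c])))
```

Variants must be normalized to the same representation before comparison, otherwise the same indel written two ways will be counted as two different calls.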

Q3: My combined pipeline is running very slowly and using a lot of memory. How can I fix this?

This is a common issue when processing large genomic datasets. To resolve it, we recommend the following steps:

- Identify the Bottleneck: Check the logs to determine which specific step (e.g., a particular variant caller) is consuming the most resources [45].

- Optimize Data Flow: If processing a large volume of data, implement pagination or split the workflow into primary and secondary pipelines to manage memory usage more effectively [45].

- Adjust Deployment: After restructuring, test the pipeline. If resource errors persist, consider increasing the computational resources (e.g., memory allocation) available for the pipeline run [45].

Q4: How can I systematically assign confidence to variants from a combined caller?

Beyond simple consensus, you can use a statistical approach like stacked generalization (stacking) [43]. This involves building a model, such as a logistic regression, that uses the detection status of each caller (and optionally other genomic features like sequencing depth) as input to predict the probability of a site being a true somatic mutation [43]. This model, trained on a validation dataset, provides a confidence score for each variant call.
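To make the stacking idea concrete, the sketch below fits a small logistic regression by gradient descent on the detection status of three callers. It is a toy illustration under stated assumptions, not the model from [43]: the training data are invented, the learning rate and epoch count are arbitrary, and a real analysis would use an established statistics library and a properly validated training set.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(x, weights, bias):
    """Probability that a site is a true variant given caller detections x."""
    return sigmoid(bias + sum(w * xi for w, xi in zip(weights, x)))


def train_stacker(features, labels, lr=0.5, epochs=500):
    """Fit logistic regression weights with batch gradient descent."""
    weights = [0.0] * len(features[0])
    bias = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w = [0.0] * len(weights)
        grad_b = 0.0
        for x, y in zip(features, labels):
            err = predict_proba(x, weights, bias) - y  # gradient of log-loss
            grad_b += err
            for j, xi in enumerate(x):
                grad_w[j] += err * xi
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias


# Hypothetical validation sites: columns are detected-by caller A, B, C (1/0).
X = [[1, 1, 1], [1, 1, 0], [1, 0, 1], [0, 1, 1], [1, 0, 0],
     [0, 1, 0], [0, 0, 1], [0, 0, 0], [1, 1, 0], [1, 0, 0]]
y = [1, 1, 1, 1, 0, 0, 0, 0, 0, 1]  # 1 = validated true variant
weights, bias = train_stacker(X, y)
print(round(predict_proba([1, 1, 1], weights, bias), 3))
```

Unlike a fixed voting rule, the fitted weights let a caller that is more reliable on the validation data contribute more to the final confidence score.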

Troubleshooting Guides

Issue 1: Low Concordance Between Variant Callers

Problem: You have applied multiple callers to the same dataset, but there is very little overlap in the variants they report.

Investigation & Resolution:

- Confirm Data Inputs: Ensure all variant callers are using the same aligned read files (BAM/CRAM) and reference genome version. Inconsistent inputs are a common source of discordance.

- Check Caller Versions and Parameters: Differences in default parameters or software versions can significantly impact results. Document and standardize the versions and key settings (e.g., minimum mapping quality, minimum base quality) used for all callers [42].

- Analyze Variant Characteristics: Investigate where the disagreements occur. Discordance is often higher in specific genomic contexts, such as low-complexity regions, areas with low sequencing coverage, or for specific variant types like indels [44]. Visualize the read alignments at discordant sites using a tool like IGV to assess supporting evidence.

- Leverage a Gold Standard: If available, use a validated reference standard or a set of manually curated true positives for your dataset to understand each caller's performance and bias [42] [43].
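One way to quantify the discordance before inspecting individual sites is a pairwise overlap score. The sketch below computes the Jaccard index between call sets; the caller names and variant tuples are invented, and representing each variant as a `(contig, position, ref, alt)` tuple is an assumption about how the VCF records were normalized beforehand.

```python
from itertools import combinations

def jaccard(a, b):
    """Jaccard index of two call sets: shared calls / all distinct calls."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def pairwise_concordance(calls):
    """Return {(caller_x, caller_y): jaccard} for every pair of callers."""
    return {
        (x, y): jaccard(calls[x], calls[y])
        for x, y in combinations(sorted(calls), 2)
    }

# Hypothetical call sets keyed by caller name.
calls = {
    "caller_a": {("contig1", 100, "A", "G"), ("contig1", 250, "C", "T"),
                 ("contig2", 40, "G", "A")},
    "caller_b": {("contig1", 100, "A", "G"), ("contig2", 40, "G", "A")},
    "caller_c": {("contig1", 100, "A", "G"), ("contig3", 7, "T", "C")},
}

concordance = pairwise_concordance(calls)
for pair, score in concordance.items():
    print(pair, round(score, 3))
```

A pair with an unusually low score compared with the others is a reasonable place to start checking inputs, versions, and parameters.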

Issue 2: Pipeline Execution Fails Due to Timeout or Memory Overflow

Problem: The pipeline execution is aborted because it exceeds the allowed time or memory limits.

Investigation & Resolution:

- Analyze Logs: Use the pipeline's monitoring interface to check the execution logs. Identify the specific point of failure and which connector or variant caller was running [45] [46].

- Increase Resource Limits: If the pipeline is consistently hitting limits, increase the execution timeout or the allocated memory in the pipeline's configuration [45].

- Restructure the Pipeline: For large datasets, avoid processing everything in a single large job.

- Optimize Data Handling: Ensure that temporary and staging file locations are correctly configured and have sufficient space [46].
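The restructuring step above does not prescribe how to split the work. One common approach, shown here purely as an assumption, is to divide the assembly into fixed-size regions so that each caller job handles one region at a time. The contig names, lengths, and chunk size are hypothetical.

```python
def make_chunks(contig_lengths, chunk_size):
    """Split each contig into 1-based, inclusive (contig, start, end) intervals."""
    chunks = []
    for contig, length in contig_lengths.items():
        for start in range(1, length + 1, chunk_size):
            end = min(start + chunk_size - 1, length)
            chunks.append((contig, start, end))
    return chunks

# Hypothetical draft assembly with two contigs.
contig_lengths = {"contig1": 2500, "contig2": 800}
chunks = make_chunks(contig_lengths, chunk_size=1000)

for contig, start, end in chunks:
    # Region strings in this form are accepted by many tools; check each caller's docs.
    print(f"{contig}:{start}-{end}")
```

Variants that span a chunk boundary can be missed or duplicated, so per-region outputs usually need careful merging, and small overlapping margins may be worth considering.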

Issue 3: High False Positive Rate in Final Variant Set

Problem: The final list of variants, generated by combining multiple callers, contains an unacceptably high number of false positives upon validation.

Investigation & Resolution:

- Adjust Consensus Stringency: If you are using a union or a low-intersection threshold (e.g., accepting variants from any caller), try a more stringent rule. For SNVs, requiring variants to be called by n-1 callers often provides a better balance between sensitivity and precision [42].

- Apply Additional Filtering: Implement post-calling filters based on genomic features. Common filters include:

- Variant Quality Score: Apply a minimum threshold.

- Read Depth: Filter out variants with extremely low or high depth.

- Variant Allele Frequency (VAF): Filter based on the fraction of reads supporting the variant.

- Mapping Quality: Exclude variants where the supporting reads have low mapping quality.

- Use a Regenotyping Tool: Tools like SV2 re-assess the genotype likelihoods of structural variants using a support-vector machine, which can help filter out false positives [44].

- Intersection with Known Artifacts: Filter the variant calls against databases of known repetitive or problematic genomic regions (e.g., DAC Blacklisted Regions, RepeatMasker) to remove common artifacts [44].
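The post-calling filters listed above can be sketched as a single predicate over per-variant summary values. Every threshold below is a placeholder rather than a recommendation from the cited studies, and the record fields (`qual`, `depth`, `alt_reads`, `mean_mapq`) are hypothetical names for values that would first need to be extracted from each caller's VCF.

```python
def passes_filters(v, min_qual=30.0, min_depth=10, max_depth=200,
                   min_vaf=0.05, min_mapq=30.0):
    """Return True if a variant record passes all post-calling filters.

    All default thresholds are illustrative placeholders; tune them per dataset.
    """
    if v["depth"] <= 0:
        return False
    vaf = v["alt_reads"] / v["depth"]  # fraction of reads supporting the variant
    return (
        v["qual"] >= min_qual
        and min_depth <= v["depth"] <= max_depth
        and vaf >= min_vaf
        and v["mean_mapq"] >= min_mapq
    )

# Invented example records.
variants = [
    {"id": "v1", "qual": 55.0, "depth": 60, "alt_reads": 18, "mean_mapq": 58.0},
    {"id": "v2", "qual": 12.0, "depth": 45, "alt_reads": 10, "mean_mapq": 60.0},   # low quality
    {"id": "v3", "qual": 80.0, "depth": 900, "alt_reads": 300, "mean_mapq": 59.0}, # extreme depth
    {"id": "v4", "qual": 40.0, "depth": 50, "alt_reads": 1, "mean_mapq": 57.0},    # low VAF
]

kept = [v["id"] for v in variants if passes_filters(v)]
```

An upper depth bound is included because extremely high depth often indicates collapsed repeats, which are a particular concern in draft assemblies.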

The Scientist's Toolkit: Research Reagent Solutions

The following table details key software tools and resources used in building and evaluating comprehensive variant detection pipelines.

| Item Name | Function/Benefit |
|---|---|
| BWA-MEM [42] [44] | A widely used algorithm for aligning sequencing reads to a reference genome. This is a critical first step to generate input data (BAM files) for variant callers. |
| GAEP (Genome Assembly Evaluating Pipeline) [47] | A comprehensive pipeline for evaluating genome assembly quality from multiple perspectives, including continuity, completeness, and correctness, which provides the foundational genomic context for variant calling. |
| Benchmarking Universal Single-Copy Orthologs (BUSCO) [24] | A tool to assess the completeness of a genome assembly based on evolutionarily informed expectations of gene content. This helps evaluate the quality of the reference used for variant calling. |
| Ensemble/Venn Diagram Strategy [42] [43] | A simple yet powerful method to combine variant calls by taking the intersection or union of outputs from different callers. |
| Stacked Generalization (Stacking) [43] | A statistical framework for combining the outputs of multiple variant callers into a single, more accurate classifier by using a logistic regression model. |
| Integrative Genomics Viewer (IGV) [44] | A high-performance visualization tool for interactive exploration of large genomic datasets, essential for the manual validation of variant calls. |
| SV2 [44] | A support-vector machine-based tool for re-genotyping structural variants, which helps improve genotype accuracy and filter false positives. |

Experimental Protocols & Data Presentation

Protocol 1: Building a Simple Consensus Caller

This protocol outlines a straightforward method for combining SNV calls from multiple callers.

Methodology:

- Caller Selection and Execution: Select at least three somatic SNV callers that employ different core algorithms (e.g., based on probabilistic models, machine learning, etc.) [42]. Run all selected callers on the same tumor-normal paired BAM files using default or standardized parameters.

- Data Preparation: Extract the "PASS" variant calls from each caller's output VCF file. Convert the VCF files into a standardized format that records the genomic position and the caller(s) that identified each variant.

- Generate Consensus: For each genomic position, count the number of callers that reported a variant. Apply the consensus rule: accept SNVs that were called by n-1 callers, where n is the total number of callers used [42].

- Performance Evaluation: Compare the final set of consensus variants against a validated reference standard (if available) to calculate performance metrics like precision, sensitivity, and F1-score [42].
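The consensus and evaluation steps of this protocol can be sketched as follows. The example interprets the rule as "called by at least n-1 callers", which is an assumption about how the threshold is applied; the caller names, variant tuples, and truth set are invented, and variants are assumed to be already normalized to `(contig, position, ref, alt)` tuples from PASS records.

```python
from collections import Counter

def consensus_calls(calls_by_caller, min_support=None):
    """Keep variants reported by at least `min_support` callers (default: n-1)."""
    n = len(calls_by_caller)
    if min_support is None:
        min_support = max(1, n - 1)
    support = Counter(v for calls in calls_by_caller.values() for v in set(calls))
    return {v for v, count in support.items() if count >= min_support}

def evaluate(called, truth):
    """Return (precision, sensitivity, F1) of a call set against a truth set."""
    tp = len(called & truth)
    precision = tp / len(called) if called else 0.0
    sensitivity = tp / len(truth) if truth else 0.0
    denom = precision + sensitivity
    f1 = 2 * precision * sensitivity / denom if denom else 0.0
    return precision, sensitivity, f1

# Hypothetical PASS calls from three callers.
v1, v2, v3, v4 = (("chr1", 100, "A", "G"), ("chr1", 250, "C", "T"),
                  ("chr2", 40, "G", "A"), ("chr3", 7, "T", "C"))
calls_by_caller = {
    "caller_a": {v1, v2, v3},
    "caller_b": {v1, v2},
    "caller_c": {v1, v4},
}
truth = {v1, v2, v3}  # stand-in for a validated reference standard

consensus = consensus_calls(calls_by_caller)        # n-1 = 2 supporting callers
precision, sensitivity, f1 = evaluate(consensus, truth)
```

Passing `min_support=1` reproduces the union strategy and `min_support=len(calls_by_caller)` the strict intersection, which makes it easy to compare the three strategies on the same data.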

Protocol 2: Evaluating Assembly Quality for Variant Calling

A high-quality genome assembly is crucial for accurate variant detection. This protocol describes key evaluation methods.

Methodology:

- BUSCO Analysis: Run BUSCO analysis on the genome assembly using a lineage-specific dataset (e.g., poales_odb10 for plants). BUSCO assesses completeness by searching for a set of universal single-copy orthologs [24].

- Transcript Mapping: Map RNA-seq reads from the same species back to the genome assembly using a splice-aware aligner like HISAT2 or STAR. Calculate key metrics such as the overall alignment rate, the total number of bases covered, and the average read depth across genes [24].

- Analysis of Assembly Errors: Investigate the assembled gene sequences for the presence of internal stop codons, which can indicate frame-shift errors or mis-assemblies. A high frequency of these errors is a significant negative indicator of assembly accuracy [24].

- Integrated Assessment: Integrate the results from the steps above. A high-quality assembly for gene-related studies will typically show a high proportion of complete BUSCOs, a high RNA-seq read mapping rate, and a low frequency of internal stop codons in its gene models [24].
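The internal stop codon check in this protocol can be approximated with a short script. This sketch assumes the standard genetic code, coding sequences that start in frame at their first base, and a terminal codon that is allowed to be a stop; the gene identifiers and sequences are invented.

```python
STOP_CODONS = {"TAA", "TAG", "TGA"}  # standard genetic code (assumption)

def count_internal_stops(cds):
    """Count in-frame stop codons, ignoring the final complete codon."""
    seq = cds.upper()
    usable = len(seq) - len(seq) % 3          # drop trailing partial codon
    codons = [seq[i:i + 3] for i in range(0, usable, 3)]
    return sum(1 for codon in codons[:-1] if codon in STOP_CODONS)

def fraction_with_internal_stops(cds_by_gene):
    """Fraction of gene models containing at least one internal stop codon."""
    if not cds_by_gene:
        return 0.0
    flagged = sum(1 for seq in cds_by_gene.values()
                  if count_internal_stops(seq) > 0)
    return flagged / len(cds_by_gene)

# Invented coding sequences.
cds_by_gene = {
    "gene1": "ATGAAATAA",       # ATG AAA TAA: only a terminal stop
    "gene2": "ATGTAGAAATAA",    # ATG TAG AAA TAA: one internal stop
}

internal = {gene: count_internal_stops(seq) for gene, seq in cds_by_gene.items()}
fraction = fraction_with_internal_stops(cds_by_gene)
```

The resulting fraction can be compared across candidate assemblies alongside the BUSCO and RNA-seq mapping results.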

Quantitative Data on Caller Combination Performance

Table 1: Performance of Different Consensus Strategies on WGS SNV Calling. Data adapted from a study combining six SNV callers on a somatic reference standard [42].

| Consensus Strategy | Median F1-Score (across combinations) | Key Observation |
|---|---|---|
| Union (Any caller) | Lower F1 | Highest sensitivity, but lowest precision. |
| Majority (n-1 callers) | Highest Median F1 | Optimal balance of precision and sensitivity. |
| Intersection (All callers) | Lower F1 | Highest precision, but lowest sensitivity. |

Table 2: Impact of Assembly Quality on RNA-seq Mapping. Data based on an evaluation of multiple Triticeae crop genome assemblies [24].

| Assembly Quality Metric | Correlation with RNA-seq Mappability |
|---|---|
| BUSCO Completeness (Complete Genes) | Positive Correlation |
| Frequency of Internal Stop Codons | Significant Negative Correlation |
| Contig N50 (Continuity) | Positive influence on mappability in complex genomes. |

Workflow and Relationship Diagrams

Diagram: Variant Detection Pipeline Workflow.

Diagram: Variant Acceptance Logic.

The DRAGEN (Dynamic Read Analysis for Genomics) platform provides a highly optimized framework for comprehensive genomic variant discovery, offering exceptional speed and accuracy. For research focused on optimizing gene caller performance on draft assemblies, DRAGEN delivers a unified solution that identifies all variant types—from single-nucleotide variations (SNVs) to structural variations (SVs)—within approximately 30 minutes of computation time from raw reads to variant detection [48]. This performance is achieved through hardware acceleration, machine learning-based variant detection, and multigenome mapping with pangenome references [49].

DRAGEN's comprehensive approach is particularly valuable for draft assembly optimization, as it simultaneously detects SNVs, insertions/deletions (indels), short tandem repeats (STRs), structural variations (SVs), and copy number variations (CNVs) using a single workflow [48]. This eliminates the need for multiple specialized tools and ensures consistent variant calling across different variant classes. The platform's ability to handle challenging genomic regions through specialized methods for medically relevant genes (including HLA, SMN, GBA, and LPA) further enhances its utility for research applications requiring high sensitivity and precision [49].

Core Workflow: From Raw Reads to Variant Calls

The DRAGEN pipeline transforms raw sequencing data into comprehensive variant calls through a series of optimized steps. The entire process leverages hardware acceleration and sophisticated algorithms to achieve both speed and accuracy, typically completing a whole-genome analysis in approximately 30 minutes [48]. The workflow begins with quality assessment and proceeds through alignment, duplicate marking, and variant calling across all variant classes simultaneously.

Diagram: DRAGEN Comprehensive Variant Calling Workflow. The pipeline processes raw sequencing data through quality control, mapping, and simultaneous detection of multiple variant types.

Key Algorithmic Components

DRAGEN's variant detection employs several sophisticated algorithmic approaches tailored to different variant types. For SNV and indel calling (<50 bp), the platform uses de Bruijn graph assembly followed by a hidden Markov model with sample-specific noise estimation [49]. For structural variations (≥50 bp) and copy number variations (≥1 kbp), DRAGEN extends the Manta algorithm with improvements including a new mobile element insertion detector, optimized proper pair parameters for large deletion calling, and refined assembly steps [49]. The CNV caller employs a modified shifting levels model with the Viterbi algorithm to identify the most likely state of input intervals [49].

The platform's multigenome mapping approach uses a pangenome reference consisting of GRCh38 plus 64 haplotypes from 32 samples, enabling better representation of sequence diversity [48] [49]. This approach significantly improves mapping accuracy in complex genomic regions, which is particularly valuable for optimizing gene caller performance on draft assemblies. The entire mapping process for a 35× WGS paired-end dataset requires approximately 8 minutes of computation time [49].

Troubleshooting Guides and FAQs

Common Workflow Issues and Solutions

Q: The analysis stopped unexpectedly with an FPGA error. How can I recover?

A: If you pressed Ctrl+C during a DRAGEN step or encountered an unexpected termination, the FPGA may require resetting. To recover from an FPGA error, shut down and restart the server [50]. For analyses run via SSH, Illumina recommends using screen or tmux to prevent unexpected termination of analysis sessions [50].

Q: Why does my analysis fail with permission errors? A: Running analyses as the root user can lead to permissions issues when managing data generated by the software [50]. Always use a non-root user for analyses. If using CIFS (SMB 1.0) mounted volumes, you may encounter permission check issues that cause the Nextflow workflow to exit prematurely when using a non-root user [50]. The workaround is to use newer SMB protocols or configure Windows Active Directory for analysis with non-root users.

Q: How can I increase coverage using multiple FASTQ files?