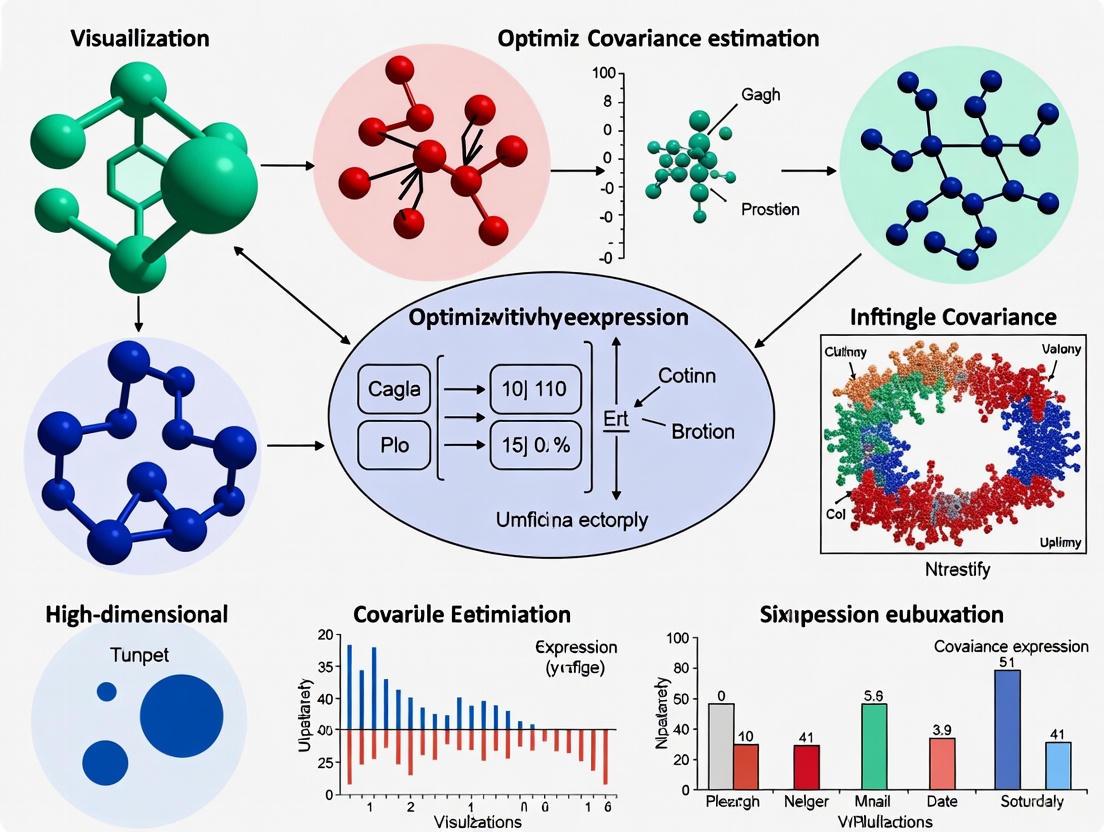

Optimizing High-Dimensional Covariance Estimation for Robust Gene Expression Analysis in Biomedical Research

Abstract

Accurate covariance matrix estimation is fundamental for analyzing high-dimensional gene expression data, enabling critical tasks in drug discovery and disease research such as co-expression network analysis, module identification, and biomarker discovery. This article provides a comprehensive guide for researchers and drug development professionals, exploring the foundational challenges of high-dimensional data where the number of genes (p) far exceeds sample sizes (n). We detail cutting-edge methodological solutions including sparse regularization, conditional covariance regression, and robust estimation techniques. The content further addresses practical troubleshooting for outlier management and computational optimization, while presenting rigorous validation frameworks and comparative analyses to ensure biological relevance and statistical reliability in genomic applications.

Navigating the High-Dimensional Landscape: Why Covariance Matters in Genomics

In high-dimensional genomic studies, researchers often face the "p >> n" problem, where the number of features p (genes, SNPs, or other molecular markers) vastly exceeds the number of observed samples or individuals n. This imbalance creates significant statistical challenges for accurate covariance estimation, which is crucial for genomic prediction, gene expression analysis, and identifying biological relationships.

The primary consequence of high-dimensionality is that the standard sample covariance matrix becomes unstable, prone to overfitting, and often singular (non-invertible), complicating many downstream statistical analyses [1] [2]. In plant breeding and human genomics, this scenario has become increasingly common with the advent of high-throughput phenotyping (HTP) platforms and other technologies that generate massive amounts of secondary feature data [1]. Effectively managing this dimensionality is essential for producing reliable, reproducible biological insights.
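The singularity described above is easy to see numerically. The following is a minimal sketch on simulated data (the sizes `n_samples = 20` and `n_genes = 100` are arbitrary illustration values, not taken from any cited study): the sample covariance of mean-centered data has rank at most n - 1, so most of its eigenvalues are numerically zero.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, n_genes = 20, 100          # p >> n; sizes chosen only for illustration
X = rng.normal(size=(n_samples, n_genes))

# Sample covariance across genes; rowvar=False treats columns as variables.
S = np.cov(X, rowvar=False)

rank = np.linalg.matrix_rank(S)
eigvals = np.linalg.eigvalsh(S)
n_zero = int(np.sum(eigvals < 1e-10))  # eigenvalues that are numerically zero

print(f"shape={S.shape}, rank={rank}, near-zero eigenvalues={n_zero}")
```

Because the matrix is rank deficient, any downstream step that needs its inverse (for example partial correlations or mixed-model equations) requires one of the stabilizing strategies discussed next.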

Computational & Statistical Strategies

Several statistical methods have been developed to address the challenges of high-dimensional genomic data. These approaches generally aim to reduce dimensionality, stabilize estimates, or incorporate prior biological knowledge.

Table 1: Strategies for High-Dimensional Covariance Estimation

| Method | Core Principle | Best-Suited Applications | Key Advantages |
|---|---|---|---|
| glfBLUP [1] | Uses genetic latent factor analysis to reduce secondary features to a lower-dimensional set of uncorrelated factors for multitrait genomic prediction. | Plant breeding programs with high-throughput phenotyping (HTP) data. | Data-driven dimensionality reduction; produces interpretable, biologically relevant parameters. |
| Covariance Matrix Shrinkage [2] | Shrinks the extreme sample covariance estimates towards a more stable target matrix, reducing estimation error. | Portfolio optimization in finance; general high-dimensional problems even with sufficient data. | Desensitizes optimizers to small input variations; helps control leverage and estimation risk. |
| Factor Analysis-Based Methods (e.g., MegaLMM) [1] | Models data as linear combinations of independent factors and unique trait contributions. | High-dimensional phenotypic and genomic data. | Flexible modeling of complex underlying structures in noisy data. |
| Regularized Selection Index (siBLUP) [1] | Linearly combines secondary features into a single regularized index. | Scenarios requiring a single composite trait from many correlated features. | Reduces dimensionality to a single index, simplifying the prediction model. |
| LASSO-Based Methods (lsBLUP) [1] | Uses LASSO regression to predict a focal trait from high-dimensional features. | Situations where feature selection is desired alongside prediction. | Performs variable selection, helping to identify the most predictive features. |

Detailed Experimental Protocols

Protocol 1: glfBLUP for Genomic Prediction with HTP Data

This protocol outlines the steps for implementing the genetic latent factor Best Linear Unbiased Prediction (glfBLUP) pipeline, designed to integrate high-dimensional secondary phenotyping data into genomic selection models [1].

Objective: To improve the accuracy of genomic prediction for a focal trait (e.g., crop yield) by incorporating high-dimensional secondary features (e.g., hyperspectral reflectivity measurements) while overcoming multicollinearity and the p >> n problem.

Materials:

- Data Matrix (`Y_s`): A matrix containing `n` individuals (rows) and `p` secondary features (columns).

- Kinship Matrix (`K`): A genomic relationship matrix derived from SNP markers.

- Focal Trait Data (`y_f`): A vector of phenotypic measurements for the trait of interest.

- Computational Environment: Statistical software capable of factor analysis and mixed-model solving (e.g., R, Python).

Workflow Diagram: High-Dimensional Genomic Prediction Workflow

Methodology:

- Model Secondary Features: Decompose the secondary feature data (`Y_s`) into genetic (`G_s`) and residual (`E_s`) components. The model is represented as: `vec(Y_s) ~ N(0, Σ_ss^g ⊗ ZKZ^T + Σ_ss^ε ⊗ I_n)` where `Σ_ss^g` and `Σ_ss^ε` are the genetic and residual covariance matrices among secondary features, and `Z` is an incidence matrix linking individuals to genotypes [1].

- Dimensionality Reduction via Factor Analysis: Fit a maximum likelihood factor model to the estimated genetic correlation matrix. The goal is to explain the covariance structure using a smaller number (`k`) of uncorrelated genetic latent factors.

- Estimate Factor Scores: Calculate genetic latent factor scores for each genotype. These scores represent the reduced-dimension summary of the original `p` secondary features.

- Multitrait Genomic Prediction: Incorporate the estimated latent factor scores as additional traits in a multivariate genomic best linear unbiased prediction (GBLUP) model alongside the focal trait. This model leverages the shared genetic information between the latent factors and the focal trait to enhance prediction accuracy.
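To make the Kronecker structure of the first step concrete, here is a small sketch that assembles the covariance of vec(Y_s) and draws one simulated data matrix from it. It is not the glfBLUP implementation from [1]. The matrices are random stand-ins, the helper `random_spd` is hypothetical, and `Z` is assumed to be the identity (one record per genotype).

```python
import numpy as np

rng = np.random.default_rng(1)
n, p = 6, 3   # toy sizes: 6 individuals, 3 secondary features


def random_spd(dim, rng):
    """Return a random symmetric positive definite matrix (toy stand-in)."""
    A = rng.normal(size=(dim, dim))
    return A @ A.T + dim * np.eye(dim)


K = random_spd(n, rng)            # stand-in for a genomic relationship matrix
Z = np.eye(n)                     # assumption: one record per genotype
Sigma_g = random_spd(p, rng)      # genetic covariance among secondary features
Sigma_e = random_spd(p, rng)      # residual covariance among secondary features

# Cov(vec(Y_s)) = Sigma_g (x) Z K Z^T + Sigma_e (x) I_n
V = np.kron(Sigma_g, Z @ K @ Z.T) + np.kron(Sigma_e, np.eye(n))

# Draw one realisation; vec() stacks columns, so reshape in column-major order.
y_vec = rng.multivariate_normal(np.zeros(n * p), V)
Y_s = y_vec.reshape((n, p), order="F")
```

Even in this toy case `V` is an (n·p) × (n·p) matrix, which illustrates why reducing the `p` features to `k` latent factors before the multitrait model is attractive.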

Protocol 2: Phospho-Flow Cytometry for High-Dimensional Single-Cell Signaling

This protocol provides a framework for acquiring high-dimensional single-cell data, a common scenario in immunology and cancer research where the number of parameters per cell (p) can exceed the number of independent samples (n) [3].

Objective: To measure intracellular protein phosphorylation signaling networks at single-cell resolution across multiple cell subsets in human peripheral blood mononuclear cells (PBMCs) in response to stimuli.

Materials:

- Biological Sample: Cryopreserved human PBMCs.

- Stimuli: Cytokines (e.g., IL-4, IFN-γ, IL-6) and other stimulants (e.g., H₂O₂).

- Antibody Cocktail: Metal-tagged antibodies for mass cytometry targeting cell surface markers (for immunophenotyping) and intracellular phospho-proteins.

- Fixative: 16% Paraformaldehyde.

- Permeabilization Buffer: Ice-cold methanol.

- Mass Cytometer (e.g., Fluidigm CyTOF) or High-Parameter Flow Cytometer.

Workflow Diagram: Phospho-Flow Cytometry Experimental Pipeline

Methodology:

- Cell Stimulation: Thaw and rest PBMCs. Aliquot cells and stimulate with pre-optimized concentrations of cytokines (e.g., 20 ng/mL IL-4, IFN-γ) or other stimuli for a precise duration (e.g., 15 minutes) to activate specific signaling pathways [3].

- Fixation and Permeabilization: Immediately halt signaling and preserve phospho-epitopes by adding paraformaldehyde. Subsequently, permeabilize cells with ice-cold methanol to allow intracellular antibody access.

- Antibody Staining: Incubate cells with a pre-titrated antibody cocktail. The panel should include markers to identify major immune cell lineages (e.g., CD45, CD3, CD19, CD14) and phospho-specific antibodies targeting signaling proteins (e.g., pSTAT1, pSTAT3, pAKT).

- Data Acquisition: Acquire data on a mass cytometer, which converts metal-tag signals into digital data for each cell, resulting in a high-dimensional matrix where rows are single cells and columns are measured parameters.

- Data Analysis: Use dimensionality reduction techniques (e.g., UMAP, t-SNE) and clustering algorithms (e.g., FlowSOM) to visualize and identify cell populations and their associated signaling states [3].

Troubleshooting Guide & FAQs

Frequently Asked Questions

Q1: My covariance matrix is singular during genomic prediction. What are my options? A: When p > n the sample covariance matrix is necessarily singular, because its rank cannot exceed n - 1. Solutions include using shrinkage estimators to stabilize the matrix [2] or employing dimensionality reduction techniques like glfBLUP, which uses factor analysis to project the data onto a lower-dimensional space of latent factors, ensuring a full-rank, invertible covariance structure for downstream analysis [1].

Q2: How can I validate my findings from high-dimensional data to ensure they are not artifacts? A: Independent validation is crucial. For genomic findings, confirm genetic variants using an orthogonal method like targeted PCR. In gene expression studies, validate RNA-seq results with qPCR on selected genes [4]. Always process negative controls alongside experimental samples to identify contamination, and use cross-validation within your statistical models to assess generalizability [4].

Q3: What are the most common sources of error in high-dimensional biological data? A: The most pervasive errors include:

- Sample Mislabeling/Mix-ups: Can affect up to 5% of samples in some labs, leading to fundamentally incorrect conclusions [4].

- Batch Effects: Systematic technical variations between processing batches that can be mistaken for biological signals [4].

- Technical Artifacts: PCR duplicates, adapter contamination, and sequencing errors can mimic real biological phenomena [4].

Q4: Why is shrinkage beneficial even when I have more samples than features (n > p)? A: Shrinkage is not only for ensuring a positive definite matrix. Its primary benefit is the reduction of estimation error. By shrinking noisy sample estimates towards a more stable target, you desensitize downstream analyses (like mean-variance optimization) to small fluctuations in the input data, leading to more robust and stable results, such as less extreme portfolio weights in financial applications or more reliable genetic parameter estimates [2].
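The effect described in Q4 can be illustrated with a simple linear shrinkage toward a scaled identity target. This is a sketch under stated assumptions: the data are simulated with a true covariance equal to the identity, and the shrinkage intensity `alpha = 0.5` is fixed by hand rather than estimated from the data (data-driven choices such as the Ledoit-Wolf estimator exist, for example `sklearn.covariance.LedoitWolf`).

```python
import numpy as np


def shrink_to_identity(S, alpha):
    """Linear shrinkage: (1 - alpha) * S + alpha * mu * I, with mu = trace(S) / p."""
    p = S.shape[0]
    mu = np.trace(S) / p
    return (1.0 - alpha) * S + alpha * mu * np.eye(p)


rng = np.random.default_rng(2)
n, p = 60, 40                      # n > p, so S is invertible but noisy
X = rng.normal(size=(n, p))        # true covariance is the identity
S = np.cov(X, rowvar=False)
S_shrunk = shrink_to_identity(S, alpha=0.5)   # alpha fixed by hand for illustration

cond_sample = np.linalg.cond(S)
cond_shrunk = np.linalg.cond(S_shrunk)
err_sample = np.linalg.norm(S - np.eye(p), "fro")
err_shrunk = np.linalg.norm(S_shrunk - np.eye(p), "fro")

print(f"condition number: {cond_sample:.1f} -> {cond_shrunk:.1f}")
print(f"Frobenius error:  {err_sample:.2f} -> {err_shrunk:.2f}")
```

In this setting the shrunk estimate is both better conditioned and closer to the truth, even though the unshrunk matrix was already invertible.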

The Scientist's Toolkit

Table 2: Essential Research Reagent Solutions

| Item | Function/Application | Key Characteristics |
|---|---|---|
| Cell-ID Intercalator-Ir [3] | Nucleic acid stain for mass cytometry used to identify intact, DNA-containing cells. | Distinguishes whole cells from debris; allows for cell viability assessment. |
| EQ Four Element Calibration Beads [3] | Used to normalize signal intensity and correct for instrument drift over time in mass cytometry. | Essential for data quality control and ensuring comparability across acquisition runs. |
| Cryopreserved Human PBMCs [3] | A standardized source of primary human immune cells for functional signaling assays. | Allows for experiment-to-experiment reproducibility; reflects native biological states. |
| Phospho-Specific Antibody Panels [3] | Antibodies conjugated to heavy metals (mass cytometry) or fluorochromes to detect post-translational modifications (e.g., phosphorylation) at single-cell resolution. | Enable multiplexed measurement of signaling network activity in complex cell populations. |
| DNase I [3] | Enzyme that digests DNA; used during cell processing to prevent clumping caused by released DNA from dead cells. | Improves cell viability and sample quality for accurate single-cell analysis. |

### Troubleshooting Guide: Co-expression Network Analysis & Drug Repurposing

Q1: My high-dimensional co-expression matrix is not positive definite, causing model failures. How can I resolve this?

A: In high-dimensional settings (p > n), the sample covariance matrix is unstable and cannot be positive definite; at best it is positive semidefinite with zero eigenvalues. To address this:

- Solution 1: Employ Regularization Techniques. Use methods like sparse covariance regression, which imposes an `L1` penalty to shrink off-diagonal elements toward zero, promoting sparsity and ensuring a positive definite estimate [5] [6].

- Solution 2: Apply Modified Cholesky Decomposition (MCD). This technique decomposes the covariance matrix into a unit lower triangular matrix and a diagonal matrix, guaranteeing a positive definite estimate. If no natural variable ordering exists, use an ensemble approach that averages estimates from multiple random variable orderings to improve accuracy [6].
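The decomposition and the ordering ensemble can be sketched as follows. This is an illustration only, not the estimator of [6]. It starts from an already positive definite matrix, and it uses hard-thresholding of the triangular factor as a crude stand-in for the penalized regressions a real sparse estimator would use. The function names and the `threshold` value are hypothetical.

```python
import numpy as np


def ldl_from_cholesky(Sigma):
    """Factor a positive definite Sigma as L @ D @ L.T with unit lower-triangular L."""
    C = np.linalg.cholesky(Sigma)      # Sigma = C @ C.T, C lower triangular
    d = np.diag(C)
    L = C / d                          # divide column j by C[j, j] -> unit diagonal
    D = np.diag(d ** 2)
    return L, D


def ensemble_mcd(Sigma, n_orderings=20, threshold=0.1, seed=0):
    """Average sparsified L D L^T estimates over random variable orderings."""
    rng = np.random.default_rng(seed)
    p = Sigma.shape[0]
    total = np.zeros_like(Sigma)
    for _ in range(n_orderings):
        perm = rng.permutation(p)
        L, D = ldl_from_cholesky(Sigma[np.ix_(perm, perm)])
        L[np.abs(L) < threshold] = 0.0     # crude sparsification (illustrative stand-in)
        est = L @ D @ L.T                  # positive definite: D > 0 and diag(L) = 1
        inv = np.argsort(perm)             # inverse permutation restores the gene order
        total += est[np.ix_(inv, inv)]
    return total / n_orderings


rng = np.random.default_rng(8)
X = rng.normal(size=(200, 8))
Sigma = np.cov(X, rowvar=False)
L, D = ldl_from_cholesky(Sigma)
Sigma_ens = ensemble_mcd(Sigma)
```

Any unit lower triangular `L` combined with a positive diagonal `D` yields a positive definite product, which is why modifying `L` does not break positive definiteness, and an average of positive definite matrices is again positive definite.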

Q2: How can I identify if genetic variants (eQTLs) are influencing my gene co-expression patterns? A: You need to detect co-expression Quantitative Trait Loci (co-expression QTLs).

- Method: Implement a high-dimensional covariance regression framework. This models the covariance matrix itself as a function of subject-level covariates, such as genetic variations. The model uses a combined sparsity structure to identify which covariates have non-zero effects and which specific edges in the co-expression network are modulated by them [5].

- Tool: The method of moments estimation with a sparse group lasso penalty can be applied. A subsequent debiased inference procedure allows for statistical testing to determine the significance of specific covariate effects on gene-gene co-expressions [5].

Q3: My network-based drug repurposing pipeline is yielding too many candidate drugs. How can I prioritize the most promising ones? A: Effective prioritization requires integrating multiple data layers.

- Strategy 1: Leverage Cell-Type-Specific Networks. Integrate single-cell genomics data to build cell-type-specific gene regulatory networks. Drugs that target key transcription factors or genes within disease-relevant cell-type-specific modules are higher-priority candidates [7].

- Strategy 2: Incorporate Transcriptional Reversal Evidence. Use graph neural networks to prioritize novel risk genes. Then, screen drug candidates for their ability to reverse the disorder-associated transcriptional phenotype. Drugs with experimental evidence for reversing this signature should be prioritized [7].

- Strategy 3: Utilize AI and Network Proximity. Apply network-based AI models that measure the proximity of a drug's targets to disease modules in heterogeneous knowledge graphs. Candidates with targets significantly closer to the disease module are more likely to be effective [8].
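As an illustration of Strategy 3, one simple proximity measure is the average, over a drug's targets, of the shortest-path distance to the nearest disease-module gene. The sketch below implements that measure on a toy unweighted network. The gene names, drugs, and graph are hypothetical, and this particular "closest distance" definition is an assumption, since the exact measure used in [8] is not specified here.

```python
from collections import deque


def shortest_path_lengths(graph, source):
    """Breadth-first search distances from source in an unweighted graph."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in graph.get(node, ()):
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist


def closest_proximity(graph, drug_targets, disease_genes):
    """Mean over drug targets of the distance to the nearest disease gene.

    Assumes every target can reach at least one disease gene.
    """
    total = 0
    for target in drug_targets:
        dist = shortest_path_lengths(graph, target)
        total += min(dist[g] for g in disease_genes if g in dist)
    return total / len(drug_targets)


# Toy path network G1 - G2 - G3 - G4 - G5 - G6 (hypothetical genes)
graph = {
    "G1": ["G2"], "G2": ["G1", "G3"], "G3": ["G2", "G4"],
    "G4": ["G3", "G5"], "G5": ["G4", "G6"], "G6": ["G5"],
}
disease_module = {"G1", "G2"}
prox_drug_x = closest_proximity(graph, ["G2", "G3"], disease_module)   # 0.5
prox_drug_y = closest_proximity(graph, ["G5", "G6"], disease_module)   # 3.5
```

Here the hypothetical drug X would rank above drug Y. In practice the raw distance is usually compared against randomly drawn target sets to judge whether the proximity is significant; that step is omitted from this sketch.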

Q4: How can I handle highly correlated secondary features from high-throughput phenotyping in genomic prediction? A: High correlation (multicollinearity) complicates parameter estimation and interpretation.

- Solution: Dimensionality Reduction via Factor Analysis. Use methods like genetic latent factor BLUP (glfBLUP). This pipeline applies factor analysis to reduce high-dimensional, correlated secondary features into a smaller set of uncorrelated genetic latent factors. These factors are then used in a multivariate genomic prediction model, which improves accuracy and provides biologically interpretable parameters [1].

### Frequently Asked Questions (FAQs)

Q1: What is the key advantage of using network-based approaches over traditional methods for drug repurposing? A: Network-based approaches move beyond single-target strategies by modeling the complex interactions between biomolecules. The core principle is that drugs whose target proteins are located close to a disease module in a molecular network (e.g., protein-protein interaction networks) have a higher probability of therapeutic efficacy. This systems-level view can identify non-obvious drug-disease associations that traditional methods might miss [8].

Q2: Are there specific AI models particularly suited for drug repurposing? A: Yes, different AI models excel at different tasks:

- Graph Neural Networks (GNNs): Ideal for analyzing network-structured data, such as identifying novel risk genes and drug candidates within biological networks [7].

- Heterogeneous Knowledge Graph Mining: Integrates diverse data types (e.g., drug–target, drug–disease, protein–protein interactions) to predict new drug-disease associations [8].

- Random Forests and Support Vector Machines (SVMs): Often used for classification tasks, such as predicting whether a drug will have efficacy for a specific disease indication based on its features [8].

Q3: My real-time PCR data shows amplification in No-Template Controls (NTCs). What could be the cause? A: Amplification in NTCs typically indicates contamination. Common causes are:

- Carryover contamination from high-concentration samples or amplicons.

- Contaminated reagents, including the assay itself (though TaqMan assays are guaranteed not to amplify in NTCs), water, or master mix [9].

- Probe or primer degradation can also sometimes lead to nonspecific amplification. A full troubleshooting guide for real-time PCR is recommended to diagnose the specific issue [9].

Table 1: Key Outputs from a Single-Cell Network Medicine Study on Psychiatric Disorders [7]

| Analysis Category | Key Finding | Quantitative Outcome |
|---|---|---|
| Gene Regulator Identification | Cell-type-specific druggable transcription factors were identified. | Analysis performed across 23 cell-type-level gene regulatory networks. |
| Drug Candidate Prioritization | Graph neural networks and a repurposing framework identified drug molecules. | 220 drug molecules identified as potential candidates. |
| Transcriptional Reversal | Evidence found for drugs reversing disease-associated gene expression. | 37 drugs showed supporting evidence. |
| Genetic Mechanism Insight | Cell-type-specific eQTLs linking genetic variation to drug targets were discovered. | 335 drug-cell eQTLs discovered. |

Table 2: Essential Research Reagent Solutions for Computational Analysis

| Reagent / Solution | Function / Application | Key Feature |
|---|---|---|
| TaqMan Gene Expression Assays [9] | Target-specific amplification and detection in gene expression studies (qPCR). | Guaranteed no amplification in No-Template Controls (NTCs). |
| High-Dimensional Covariance Regression Model [5] | Estimates a conditional covariance matrix that varies with high-dimensional covariates (e.g., for co-expression QTL detection). | Uses a sparse group lasso penalty for variable selection and positive definiteness. |
| Ensemble Sparse Covariance Estimator [6] | Provides a positive definite and sparse estimate of the covariance matrix when no natural variable ordering exists. | Averages estimates from multiple random variable orderings via the Modified Cholesky Decomposition. |
| glfBLUP (Genetic Latent Factor BLUP) Pipeline [1] | Integrates high-dimensional, correlated secondary phenotyping data into genomic prediction models. | Uses factor analysis for dimensionality reduction and produces interpretable latent factors. |

### Experimental Protocols

Protocol 1: Network-Based Drug Repurposing Using Single-Cell Genomics [7]

- Data Integration: Collect population-scale single-cell genomics data for the disease of interest.

- Network Construction: Reconstruct 23 cell-type-specific gene regulatory networks.

- Module Identification: Identify druggable transcription factors and co-regulated gene modules within these cell-type-specific networks.

- Risk Gene Prioritization: Apply graph neural networks on the identified modules to prioritize novel risk genes.

- Drug Screening: Leverage the prioritized genes in a network-based drug repurposing framework to identify drug molecules that target the specific cell-type-level pathology.

- Validation: Test the top candidate drugs for their ability to reverse the disorder-associated transcriptional phenotype in a relevant model system.

Protocol 2: Detecting Co-expression Quantitative Trait Loci (co-expression QTLs) [5]

- Model Formulation: Specify a covariance regression model where the covariance matrix `Σ` is a linear function of genetic covariates `x`.

- Parameter Estimation: Employ a blockwise coordinate descent algorithm to solve the resulting least squares problem with a combined sparse group lasso penalty.

- Statistical Inference: Apply a computationally efficient debiased inference procedure to quantify the uncertainty of the estimated parameters and identify statistically significant co-expression QTLs.

- Interpretation: Analyze the resulting model to determine which genetic variants modulate the co-expression between specific pairs of genes.
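The model formulation and the least squares step can be illustrated in a deliberately simplified, low-dimensional form. The sketch below simulates one SNP (coded 0/1/2) that strengthens the co-expression of two genes, then recovers the effect by regressing each entry of the outer product y yᵀ on the genotype. It is unpenalized and omits the sparse group lasso, blockwise coordinate descent, and debiased inference of [5]. All sizes and effect values are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(3)
n, p = 20000, 3
x = rng.integers(0, 3, size=n).astype(float)    # genotype coded 0/1/2 (one SNP)

B0 = np.eye(p)                                   # baseline covariance
B1 = np.zeros((p, p))
B1[0, 1] = B1[1, 0] = 0.3                        # SNP modulates the gene 0 / gene 1 edge

# Simulate mean-zero expression with covariance Sigma(x) = B0 + x * B1.
Y = np.empty((n, p))
for g in (0.0, 1.0, 2.0):
    idx = np.flatnonzero(x == g)
    Y[idx] = rng.multivariate_normal(np.zeros(p), B0 + g * B1, size=idx.size)

# Method of moments: E[y y^T | x] = B0 + x * B1, so regress each entry of
# the per-sample outer product on the design [1, x] by ordinary least squares.
outer = np.einsum("ij,ik->ijk", Y, Y).reshape(n, p * p)
design = np.column_stack([np.ones(n), x])
coef, *_ = np.linalg.lstsq(design, outer, rcond=None)
B0_hat = coef[0].reshape(p, p)
B1_hat = coef[1].reshape(p, p)

print(np.round(B1_hat, 2))
```

With p in the thousands and many covariates, the number of coefficients explodes, which is where the sparsity penalty and the inference procedure described in the protocol become necessary.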

### Workflow and Pathway Visualizations

Frequently Asked Questions (FAQs)

1. What is the curse of dimensionality and how does it affect my gene expression data? The curse of dimensionality refers to phenomena that arise when analyzing data in high-dimensional spaces, such as gene expression datasets with thousands of features (genes) but relatively few samples. As dimensions increase, data becomes sparse, making it difficult to find meaningful patterns [10]. In practice, this leads to several issues: the Euclidean distance between samples becomes less informative, the volume of the space increases so fast that available data becomes sparse, and the amount of data needed for reliable results grows exponentially with dimensionality [10]. For genetic research, this can manifest as difficulty in identifying truly significant gene regulatory relationships amid the high-dimensional noise.

2. Why does my model perform poorly on validation data despite high training accuracy? This is a classic symptom of overfitting, which is a direct consequence of the curse of dimensionality [11]. When your feature dimension (p) greatly exceeds your sample size (n), models can memorize noise and spurious correlations in the training data rather than learning generalizable patterns [11]. This is particularly problematic in gene expression studies where you might have expression measurements for 20,000 genes but only a few hundred cell samples. The Hughes phenomenon specifically describes how classifier performance improves with additional features up to a point, then deteriorates as more features are added [11].

3. What are the most effective ways to mitigate dimensionality issues in covariance estimation? The most effective approaches include dimensionality reduction techniques like Principal Component Analysis (PCA), feature selection methods, and regularization [12] [13]. For covariance estimation specifically, methods that leverage sparsity assumptions or use regularized covariance estimators often perform best. The QWENDY method, which uses quadruple covariance matrices to infer gene regulatory networks from single-cell expression data, represents a specialized approach that addresses these challenges by studying how covariance matrices evolve across time points [14].

4. How much data do I need for reliable high-dimensional analysis? A common rule of thumb suggests at least 5 training examples for each dimension in your representation [10]. However, in genetic research where collecting samples is expensive and time-consuming, this is often impractical. With 20,000 genes, this would require 100,000 samples. Therefore, dimensionality reduction or feature selection becomes essential. The key is ensuring you have sufficient data to support the complexity of your model while accounting for the exponential data requirements imposed by high-dimensional spaces [10].

Troubleshooting Guides

Problem: Poor Model Generalization

Symptoms

- High accuracy on training data but significantly lower accuracy on test/validation data

- Model performance deteriorates as more features are added

- High variance in performance across different data splits

Diagnosis and Solutions

Table: Solutions for Poor Generalization

| Solution | Implementation | Use Case |
|---|---|---|
| L1 Regularization (Lasso) | Apply L1 penalty to shrink less important feature coefficients to zero [13] | When you need automated feature selection and interpretability |
| Feature Selection | Use SelectKBest with ANOVA F-value or similar statistical measures [12] | When domain knowledge suggests many irrelevant features |
| Dimensionality Reduction (PCA) | Project data onto principal components that capture most variance [13] | When features are correlated and you want to preserve global structure |
| Ensemble Methods | Use Random Forests or other ensemble techniques [12] | When you have heterogeneous data with complex interactions |

Step-by-Step Protocol: Regularized Covariance Estimation

- Preprocessing: Remove constant features using VarianceThreshold and impute missing values [12]

- Feature Scaling: Standardize features to zero mean and unit variance using StandardScaler [13]

- Feature Selection: Apply SelectKBest with f_classif to select top k features [12]

- Dimensionality Reduction: Apply PCA to further reduce dimensionality [12]

- Model Training: Implement regularized model (Lasso/Random Forest) on reduced feature set [12]

- Validation: Use robust cross-validation to ensure generalizability [11]
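The steps above can be chained in a single scikit-learn `Pipeline`, so that every preprocessing step is refit inside each cross-validation fold and no information leaks from held-out samples. The following is a minimal sketch on simulated data. The sample sizes, `k=50`, `n_components=10`, and the choice of a random forest are illustrative assumptions rather than recommended settings.

```python
import numpy as np
from sklearn.pipeline import Pipeline
from sklearn.impute import SimpleImputer
from sklearn.feature_selection import VarianceThreshold, SelectKBest, f_classif
from sklearn.preprocessing import StandardScaler
from sklearn.decomposition import PCA
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

rng = np.random.default_rng(4)
n_samples, n_genes = 80, 500
X = rng.normal(size=(n_samples, n_genes))
y = np.repeat([0, 1], n_samples // 2)
X[y == 1, :10] += 1.5        # the first 10 genes carry class signal
X[:, -5:] = 0.0              # five constant (uninformative) genes

pipeline = Pipeline([
    ("impute", SimpleImputer(strategy="median")),          # fill missing values
    ("drop_constant", VarianceThreshold(threshold=0.0)),   # remove constant features
    ("scale", StandardScaler()),                           # zero mean, unit variance
    ("select", SelectKBest(score_func=f_classif, k=50)),   # ANOVA F-value filter
    ("pca", PCA(n_components=10)),                         # further reduction
    ("model", RandomForestClassifier(n_estimators=200, random_state=0)),
])

cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipeline, X, y, cv=cv)
print(f"mean CV accuracy: {scores.mean():.2f}")
```

Running feature selection on the full dataset before splitting would inflate the reported accuracy, which is why the selection step sits inside the pipeline.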

Problem: Computational Bottlenecks

Symptoms

- Extremely long training times

- Memory overflow errors

- Inability to process full dataset

Diagnosis and Solutions

Table: Computational Optimization Strategies

| Strategy | Implementation | Expected Improvement |
|---|---|---|
| Dimensionality Reduction | Apply PCA to reduce feature count while preserving ~95% variance [13] | Reduces computational complexity from O(p³) to O(k³), where k is the number of retained components (k < p) |
| Feature Selection | Remove irrelevant features before model training [12] | Eliminates redundant computations |
| Algorithm Selection | Use algorithms optimized for high-dimensional data [10] | Better scaling with dimensionality |
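The first row of the table can be sketched with a plain SVD: choose the smallest number of components `k` whose cumulative explained variance reaches 95%, then work with the n × k score matrix instead of the original n × p data. The simulated low-rank data below are an assumption made so that the effect is visible; real expression data will typically need more components.

```python
import numpy as np

rng = np.random.default_rng(5)
n, p, true_rank = 100, 300, 5
# Low-rank signal plus small noise, loosely mimicking correlated gene modules (toy data)
factors = rng.normal(size=(n, true_rank)) * np.array([10, 8, 6, 4, 3])
loadings = rng.normal(size=(true_rank, p))
X = factors @ loadings + 0.1 * rng.normal(size=(n, p))

Xc = X - X.mean(axis=0)                          # center each gene
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
explained = s**2 / np.sum(s**2)                  # variance share per component
cumulative = np.cumsum(explained)

# Smallest k with cumulative explained variance >= 0.95
k = int(np.searchsorted(cumulative, 0.95) + 1)
Z = Xc @ Vt[:k].T                                # n x k reduced representation

print(f"kept k={k} of p={p} dimensions")
```

Any covariance-based step that follows then operates on a k × k matrix computed from `Z` rather than on the p × p matrix.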

Problem: Uninformative Distance Metrics

Symptoms

- Clustering algorithms fail to find meaningful patterns

- Nearest neighbor searches return seemingly random results

- Distance concentration, where most pairwise distances become similar [10]

Diagnosis and Solutions

Step-by-Step Protocol: Meaningful Distance Calculation

- Feature Scaling: Normalize all features to comparable ranges using StandardScaler [13]

- Feature Weighting: Assign weights to features based on importance [13]

- Dimensionality Reduction: Apply PCA or t-SNE to project to lower dimensions [13]

- Alternative Metrics: Consider correlation-based distance or other domain-specific similarity measures
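Distance concentration can be checked directly by comparing the relative contrast, (max - min) / min over all pairwise distances, in low and high dimensions. The sketch below uses simulated Gaussian data (an assumption) and also includes a correlation-based distance as one example of an alternative metric.

```python
import numpy as np


def relative_contrast(X):
    """(max - min) / min over all pairwise Euclidean distances between rows."""
    sq = np.sum(X**2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T
    iu = np.triu_indices(X.shape[0], k=1)          # each pair once, no diagonal
    d = np.sqrt(np.clip(d2[iu], 0.0, None))        # clip tiny negative round-off
    return (d.max() - d.min()) / d.min()


def correlation_distance(X):
    """1 - Pearson correlation between samples (rows)."""
    return 1.0 - np.corrcoef(X)


rng = np.random.default_rng(6)
n = 100
contrast_low = relative_contrast(rng.normal(size=(n, 2)))
contrast_high = relative_contrast(rng.normal(size=(n, 2000)))
D_corr = correlation_distance(rng.normal(size=(n, 2000)))

print(f"relative contrast: 2 dims = {contrast_low:.1f}, 2000 dims = {contrast_high:.2f}")
```

A relative contrast close to zero means the nearest and farthest neighbours are almost equally far away, which is the symptom listed above.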

Experimental Protocols

Protocol 1: Comprehensive Dimensionality Reduction Pipeline

This protocol adapts best practices for gene expression data [12].

Protocol 2: Covariance Matrix Estimation for Gene Regulatory Networks

This protocol implements concepts from QWENDY methodology for gene regulatory network inference [14].

Workflow Overview

Key Steps:

- Input Preparation: Single-cell expression data across multiple time points (typically 4 time points as in QWENDY) [14]

- Covariance Calculation: Compute covariance matrices for each time point

- Matrix Evolution Analysis: Study how covariance structures change over time

- Analytical Solution: Solve regulatory relationships without optimization [14]

- Network Inference: Rank regulatory relationships by strength

Protocol 3: Visualization of High-Dimensional Gene Expression Data

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Computational Tools for High-Dimensional Gene Expression Analysis

| Tool/Reagent | Function | Application Note |
|---|---|---|
| PCA (Principal Component Analysis) | Linear dimensionality reduction [13] | Preserves global data structure; ideal for initial data exploration |
| t-SNE | Nonlinear dimensionality reduction for visualization [13] | Preserves local structures; excellent for cluster visualization |
| SelectKBest | Feature selection based on statistical tests [12] | Efficiently filters irrelevant features using ANOVA F-value |
| Lasso Regression (L1) | Regularized regression with built-in feature selection [13] | Automatically shrinks irrelevant feature coefficients to zero |
| Random Forest | Ensemble method robust to high dimensionality [12] | Handles heterogeneous data well; provides feature importance scores |
| VarianceThreshold | Removes low-variance features [12] | Effective first step to eliminate uninformative genes |
| StandardScaler | Feature standardization [13] | Critical for meaningful distance calculations in high dimensions |
| QWENDY Method | Gene regulatory network inference via covariance matrices [14] | Specifically designed for temporal single-cell gene expression data |

Advanced Diagnostic Framework

Decision Framework for Method Selection

This technical support resource provides gene expression researchers with practical solutions to overcome the challenges of high-dimensional data analysis, with specific emphasis on optimizing covariance estimation through proven statistical foundations of sparsity and regularization.

Troubleshooting Guide: Frequently Asked Questions

Q1: Why does my covariance matrix fail to identify statistically significant pathways in high-dimensional gene expression data?

A: This commonly occurs when technical variance dominates biological signal. Implement these specific solutions:

- Apply Count-Based Transformations: For RNA-seq data, use a variance-stabilizing transformation (VST) or log2(X+1) transform before covariance estimation to mitigate mean-variance dependence [15].

- Utilize Regularization Techniques: Employ a statistical shrinkage method such as the Schäfer-Strimmer covariance estimator to stabilize eigenvalues when genes (p) >> samples (n). This prevents ill-conditioned matrices that obscure true biological correlations.

- Incorporate Pathway-Informed Priors: Use databases like KEGG or Reactome to construct a biological network prior. Fusing this structured information with your expression data via graphical lasso can enhance signal detection in sparse data environments.

Q2: How can I distinguish technical covariance from biologically relevant co-expression in my analysis?

A: Distinguishing these sources is critical for valid interpretation. Follow this experimental and computational protocol:

- Execute a Negative Control Experiment: Process synthetic RNA spike-ins (e.g., from the External RNA Controls Consortium, ERCC) alongside your biological samples. Calculate the covariance structure of these spike-in controls, which reflects pure technical noise.

- Compute a Corrected Covariance Matrix: Subtract the technical covariance matrix (from spike-ins) from your biological sample covariance matrix. This yields a technical-noise-filtered covariance estimate.

- Validate with Orthogonal Methods: Correlate high-weight gene pairs from your corrected covariance analysis with known protein-protein interactions (e.g., from STRING database) or confirm co-localization in single-molecule RNA FISH experiments [16].

Q3: My pathway enrichment results are inconsistent after replicating the experiment with new samples. How can I improve robustness?

A: Inconsistency often stems from unaccounted batch effects or overfitting. Implement this multi-step solution:

- Design with Batch Covariates: Record processing batches, technician ID, and reagent lots. Model these as covariates in your conditional covariance estimation.

- Apply ComBat or SVA: Use these algorithms before covariance calculation to remove hidden sub-groups and batch effects that artificially inflate correlations.

- Apply Stability Selection: Instead of relying on a single covariance estimate, use bootstrapping (e.g., 1,000 resamples). A stable, biologically relevant pathway will appear in more than 90% of bootstrap replicates, while spurious correlations will be unstable.
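The bootstrap idea can be sketched at the level of individual gene pairs: resample the samples with replacement, recompute the correlation matrix, and record how often each pair exceeds a threshold. The simulated data, the threshold of 0.5, and the function name `edge_stability` are assumptions for illustration. Scoring whole pathways would require an additional aggregation step that is not shown.

```python
import numpy as np


def edge_stability(X, threshold=0.5, n_boot=1000, seed=0):
    """Fraction of bootstrap resamples in which |corr(i, j)| exceeds threshold."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    counts = np.zeros((p, p))
    for _ in range(n_boot):
        idx = rng.integers(0, n, size=n)          # resample samples with replacement
        R = np.corrcoef(X[idx], rowvar=False)
        counts += np.abs(R) > threshold
    return counts / n_boot


rng = np.random.default_rng(7)
n, p = 60, 5
X = rng.normal(size=(n, p))
# Make genes 0 and 1 truly co-expressed (population correlation 0.9)
X[:, 1] = 0.9 * X[:, 0] + np.sqrt(1 - 0.9**2) * rng.normal(size=n)

freq = edge_stability(X)
stable_edges = np.argwhere(np.triu(freq > 0.9, k=1))   # pairs kept in >90% of resamples
print(stable_edges)
```

Pairs that clear the cutoff only occasionally are the ones most likely to disappear when the experiment is replicated.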

Q4: What are the best practices for visualizing covariance structures in relation to molecular pathways?

A: Effective visualization bridges statistical patterns with biology.

- Create a Hierarchical Layout: In your network graph, position genes belonging to the same pathway within a shared parent node. Use the DOT `cluster` attribute to visually group them.

- Map Covariance Strength to Edge Properties: Set the `penwidth` attribute of edges to be proportional to the magnitude of the partial correlation coefficient. Use the `color` attribute (e.g., `#EA4335` for positive, `#4285F4` for negative correlations) to distinguish interaction types.

- Integrate Node Functional Data: Color-code node borders (`color` attribute) based on additional functional data (e.g., `#FBBC05` for transcription factors, `#34A853` for metabolic enzymes). This overlay provides immediate functional context to the covariance structure.
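As a rough sketch of these conventions, the following helper writes DOT text directly as a string, so no Graphviz bindings are needed. The function name `partial_corr_to_dot`, the role labels `"tf"` and `"enzyme"`, and the example genes are illustrative assumptions. Pathway grouping is done with subgraphs whose names start with `cluster`, which Graphviz draws as boxes.

```python
def partial_corr_to_dot(pathways, edges, node_roles=None, max_penwidth=5.0):
    """Build a DOT description of an undirected partial-correlation network.

    pathways:   dict mapping pathway name -> list of gene names
    edges:      list of (gene_a, gene_b, partial_correlation)
    node_roles: optional dict mapping gene name -> "tf" or "enzyme"
    """
    role_colors = {"tf": "#FBBC05", "enzyme": "#34A853"}
    node_roles = node_roles or {}
    lines = ["graph coexpression {"]
    for i, (name, genes) in enumerate(pathways.items()):
        # Subgraphs named cluster_* are rendered as grouped boxes.
        lines.append(f"  subgraph cluster_{i} {{")
        lines.append(f'    label="{name}";')
        for gene in genes:
            border = role_colors.get(node_roles.get(gene), "black")
            lines.append(f'    "{gene}" [color="{border}"];')
        lines.append("  }")
    for a, b, r in edges:
        color = "#EA4335" if r > 0 else "#4285F4"      # positive vs. negative
        width = max(0.5, max_penwidth * abs(r))        # thicker = stronger
        lines.append(f'  "{a}" -- "{b}" [penwidth={width:.2f}, color="{color}"];')
    lines.append("}")
    return "\n".join(lines)

# Hypothetical example network.
pathways = {"p53 signaling": ["TP53", "MDM2"], "Glycolysis": ["HK2"]}
edges = [("TP53", "MDM2", 0.8), ("TP53", "HK2", -0.5)]
dot = partial_corr_to_dot(pathways, edges, node_roles={"TP53": "tf", "HK2": "enzyme"})
```

The resulting string can be saved to a `.dot` file and rendered with the Graphviz command-line tools.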

Experimental Protocols for Covariance-Pathway Integration

Protocol 1: Establishing Causality in Covariance Networks

Objective: Determine if correlated gene expression implies regulatory causality.

Methodology:

- Perturbation Experiment: Select a high-degree hub gene from your covariance network. Perform a CRISPR-based knockout or siRNA knockdown in a relevant cell line (e.g., Huh-7 for liver metabolism studies) [17].

- Expression Profiling: Profile the transcriptome (RNA-seq) of the perturbed cells and isogenic controls in triplicate.

- Differential Covariance Analysis: Recompute the covariance matrix for both conditions. A true regulatory target of the hub gene will show significantly weakened correlations (adjusted p-value < 0.05) with its expected partners in the knockout network.

- Validation: Confirm the identified target via qPCR and assess the functional consequence with a relevant phenotypic assay (e.g., proliferation, metabolite shift).

Protocol 2: Temporal Covariance Tracking for Dynamic Pathways

Objective: Capture how covariance structures evolve during a biological process.

Methodology:

- Time-Series Sampling: Design an experiment with dense time-point sampling (e.g., a T-cell activation assay with samples at 0, 2, 4, 8, 12, 24, 48 hours post-stimulation) [18].

- Sliding-Window Covariance: For each consecutive set of 3 time points (e.g., 0-2-4h, 2-4-8h), calculate a separate covariance matrix. This creates a temporal sequence of covariance matrices.

- Identify Module Dynamics: Perform non-negative matrix factorization (NMF) on the sequence of matrices to identify gene modules with synchronous covariance strengthening or weakening.

- Link to Functional Outcomes: Correlate the activation trajectory of specific modules with measured functional outcomes (e.g., cytokine secretion from [18]), establishing a covariance-to-phenotype relationship.
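The sliding-window step can be sketched as follows, assuming the expression data are stored as an array of shape (time points, replicates, genes). That layout and the function name `sliding_window_covariances` are assumptions for illustration. Pooling replicates across the time points of a window also folds mean shifts between time points into the covariance, which may or may not be desired.

```python
import numpy as np

def sliding_window_covariances(expr, window=3):
    """Covariance matrix for each run of `window` consecutive time points.

    expr has shape (T, R, p): T time points, R replicates, p genes.
    Returns an array of shape (T - window + 1, p, p).
    """
    T, R, p = expr.shape
    covs = []
    for start in range(T - window + 1):
        # Pool all replicates from the time points in this window.
        block = expr[start:start + window].reshape(-1, p)
        covs.append(np.cov(block, rowvar=False))
    return np.stack(covs)

# Simulated stand-in for 7 time points (0-48 h), 3 replicates, 4 genes.
rng = np.random.default_rng(0)
expr = rng.normal(size=(7, 3, 4))
covs = sliding_window_covariances(expr)
```

With seven time points and a window of three, this yields five matrices, one per window, which can then be passed to the module-detection step.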

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential reagents and computational tools for covariance-pathway research.

| Item Name | Function/Benefit in Analysis | Example Use-Case |

|---|---|---|

| Lipid Nanoparticles (LNPs) [16] [19] | Deliver gene editing machinery (CRISPR) for in vitro and in vivo perturbation studies to test causality in covariance networks. | Knockdown of hub genes identified in a covariance network to validate regulatory influence. |

| External RNA Controls (ERCC) | Quantify technical noise and batch effects, allowing for computational correction of the covariance matrix. | Spiking into RNA-seq samples to create a per-experiment technical covariance profile for noise subtraction. |

| Variant Call Format (VCF) Files [20] | Provide genotype information to distinguish covariance driven by cis-acting genetic variants (eQTLs) from other regulatory mechanisms. | Integrating SNP data from VCF files to perform conditional covariance analysis, controlling for underlying genetic structure. |

| Wastewater Sample Sequencing [15] | A novel source for population-level pathogen genomic data to study pathogen-host interaction networks and co-evolution. | Tracking community-wide spread of viral variants (e.g., XBB.1.5) to correlate with host gene expression response patterns. |

| Phenotypic Age Acceleration (PhenoAgeAccel) Metric [17] | A biomarker of biological aging used to stratify cohorts and test if covariance structures differ by biological vs. chronological age. | Grouping patient-derived samples by PhenoAgeAccel to identify age-related dysregulation in co-expression networks. |

| Single Nucleotide Variant (SNV) Fingerprinting [15] | High-sensitivity method for tracking specific low-abundance sequences in complex mixtures, useful for validating subtle network changes. | Detecting the early emergence of specific gene isoforms or pathogen strains that may influence host network covariance. |

Table: Key quantitative findings from recent studies relevant to experimental design and interpretation.

| Study Focus / Parameter | Quantitative Result | Relevance to Covariance Estimation |

|---|---|---|

| Antibody Titer & Infection Risk [17] | Antibody >2500 IU/mL: HR=0.04; PhenoAgeAccel >0: HR=4.75 | Demonstrates a non-linear relationship between a molecular marker (antibody) and outcome, justifying non-linear covariance measures like distance correlation. |

| Wastewater-Based Epidemiology (WBE) Lead Time [15] | XBB.1.5 detection in wastewater vs. clinical: ~2 weeks earlier; XBB.1.9: ~months earlier | Supports using WBE data as an early, sensitive source for constructing dynamic, time-shifted host-pathogen co-expression networks. |

| Vaccine Efficacy (VE) Over Time [18] | VE vs. infection (initial): 60-80%; VE (6-12 months): 40-60%; VE vs. severe disease: >80% (stable) | Shows different temporal decay rates for different biological processes, motivating the use of time-windowed covariance analysis. |

| Immune Response Predictors [17] | Natural infection vs. vaccination odds ratio for high antibody: OR=124.79; ≥3 vaccine doses: OR=4.36 | Highlights powerful confounding factors (immune history) that must be regressed out before estimating the core covariance structure of interest. |

Visualizing Workflows and Pathways

The following diagrams, generated with Graphviz, illustrate core experimental and analytical workflows.

Diagram 1: Technical Noise Correction Protocol

Diagram 2: Covariance-Pathway Integration Analysis

Diagram 3: Key Molecular Pathways with High Covariance

Advanced Statistical Frameworks for Conditional Covariance Modeling

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between estimating a sparse covariance matrix versus a sparse inverse covariance matrix?

Sparsity in a covariance matrix (Σ) indicates marginal independencies between variables; a zero entry between two variables means they are uncorrelated. In contrast, sparsity in an inverse covariance matrix (Σ⁻¹) indicates conditional independencies; a zero entry means two variables are independent given all the other variables. The choice depends on whether your research question involves the direct relationship between variables or their relationship after accounting for others [21].
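A small numerical example may make the distinction concrete. The three-variable chain below is a made-up illustration: genes 1 and 3 interact only through gene 2, so the inverse covariance has a zero in the (1, 3) position while the covariance itself does not.

```python
import numpy as np

# Precision (inverse covariance) matrix of a chain: gene1 - gene2 - gene3.
# The zero in position (0, 2) encodes conditional independence of genes 1 and 3.
Omega = np.array([
    [ 2.0, -1.0,  0.0],
    [-1.0,  2.0, -1.0],
    [ 0.0, -1.0,  2.0],
])

# The implied covariance matrix is dense: genes 1 and 3 are marginally correlated.
Sigma = np.linalg.inv(Omega)

# Partial correlations come from the precision matrix: -Omega_ij / sqrt(Omega_ii * Omega_jj).
d = np.sqrt(np.diag(Omega))
partial_corr = -Omega / np.outer(d, d)
np.fill_diagonal(partial_corr, 1.0)
```

Here `Sigma[0, 2]` equals 0.25 even though `Omega[0, 2]` is exactly zero, so a sparse Σ⁻¹ does not imply a sparse Σ.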

Q2: My optimization problem for sparse covariance estimation is non-convex. How can I reliably solve it?

The penalized likelihood problem for sparse covariance estimation is indeed non-convex. A standard and reliable approach is to use a majorize-minimize (MM) algorithm. This method works by iteratively solving a sequence of simpler, convex problems that majorize the original non-convex objective. Specifically, the concave part of the objective (the log det Σ term) is replaced with its tangent plane at the current estimate, leading to a convex problem that can be solved at each iteration until convergence [21].

Q3: When applying sparse Quadratic Discriminant Analysis (QDA) to genomics data, how do I choose the block size for the covariance structure?

The optimal block size depends on the underlying (and unknown) biological structure. Simulation studies in high-dimensional settings (e.g., with 10,000 genes) suggest that a block size of 100 is a robust default choice that performs well even when the true block size is different. Performance can be sensitive to this parameter, so it is recommended to perform cross-validation over a range of potential block sizes (e.g., 100, 150, 200, ..., 400) if computationally feasible [22].

Q4: Why is simple elementwise thresholding sometimes competitive with more complex methods for identifying sparsity?

Research has shown that for the specific task of identifying the zero-pattern (the sparsity structure) in a covariance matrix, simple elementwise thresholding of the sample covariance matrix can be surprisingly competitive with more complex penalized likelihood methods. This is because the primary goal in structure identification is to correctly set small, noisy entries to zero, which thresholding does directly. However, more advanced methods may still provide benefits in the accuracy of the estimated non-zero values and ensure positive definiteness [21].
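Elementwise thresholding is simple enough to sketch in a few lines. The function name `threshold_covariance`, the threshold value, and the small example matrix are assumptions for illustration. The diagonal is left untouched so that variances are not shrunk.

```python
import numpy as np

def threshold_covariance(S, tau, kind="hard"):
    """Threshold the off-diagonal entries of a covariance matrix at level tau."""
    diag = np.diag(np.diag(S))
    off = S - diag
    if kind == "hard":
        # Keep entries whose magnitude reaches tau, zero out the rest.
        off = np.where(np.abs(off) >= tau, off, 0.0)
    elif kind == "soft":
        # Shrink every entry toward zero by tau.
        off = np.sign(off) * np.maximum(np.abs(off) - tau, 0.0)
    else:
        raise ValueError("kind must be 'hard' or 'soft'")
    return off + diag

S = np.array([
    [1.00,  0.50,  0.05],
    [0.50,  1.00, -0.10],
    [0.05, -0.10,  1.00],
])
S_hard = threshold_covariance(S, tau=0.2, kind="hard")
S_soft = threshold_covariance(S, tau=0.2, kind="soft")
# Thresholding does not guarantee positive definiteness, so check it explicitly.
min_eig = np.linalg.eigvalsh(S_hard).min()
```

Hard thresholding keeps the 0.50 entry unchanged and zeroes the two small entries; soft thresholding additionally shrinks the surviving entry to 0.30.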

Troubleshooting Guides

Problem: Algorithm Fails to Converge or Produces Non-Positive Definite Matrix

Symptoms: The estimation algorithm throws an error related to positive definiteness, fails to converge after a large number of iterations, or produces a covariance matrix with invalid eigenvalues.

Solutions:

- Check Initialization: Use a positive definite matrix for initialization, such as the diagonal sample covariance matrix, `diag(S₁₁, ..., S_pp)`, rather than the potentially singular full sample covariance matrix `S` [21].

- Adjust Penalty Parameter (λ): A value of `λ` that is too small leaves the estimate close to the potentially singular sample covariance matrix, while a value that is too large over-penalizes off-diagonal elements and shrinks away real signal. Implement a cross-validation routine to select a `λ` that balances sparsity and model fit.

- Inspect the Majorize-Minimize Loop: If using a custom implementation of the MM algorithm, verify that the convex problem at each iteration is being solved correctly and that the objective function is non-increasing with each step [21].
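When diagnosing these failures, it helps to test positive definiteness directly. The sketch below uses a Cholesky factorization as the test and, as a generic repair that is not part of the cited method, clips eigenvalues at a small floor. The helper names and the floor value are assumptions for illustration.

```python
import numpy as np

def is_positive_definite(A):
    """Return True if the Cholesky factorization of A succeeds."""
    try:
        np.linalg.cholesky(A)
        return True
    except np.linalg.LinAlgError:
        return False

def clip_eigenvalues(A, floor=1e-6):
    """Symmetrize A and raise any eigenvalue below `floor` up to `floor`."""
    A = (A + A.T) / 2
    w, V = np.linalg.eigh(A)
    return (V * np.maximum(w, floor)) @ V.T

# An indefinite "covariance" estimate (eigenvalues 3 and -1).
A = np.array([[1.0, 2.0], [2.0, 1.0]])
A_fixed = clip_eigenvalues(A)

# A diagonal initialization is positive definite whenever all variances are positive.
init = np.diag(np.diag(A))
```

Eigenvalue clipping changes the estimate, so it is better treated as a diagnostic or last resort than as a substitute for proper initialization and tuning.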

Problem: Poor Classification Performance with Sparse QDA on Genomic Data

Symptoms: The Sparse QDA (SQDA) classifier yields high misclassification rates on test data.

Solutions:

- Tune the Error Margin Parameter: The error margin determines which feature blocks are selected. If the sample size is small, use a larger error margin (e.g., 0.10 to 0.15) to include more potentially predictive features and guard against high variance. For larger sample sizes, a smaller error margin (e.g., 0.05) can more aggressively exclude noise [22].

- Re-evaluate the Block Diagonal Assumption: SQDA's performance can degrade if the true covariance structure is not well-approximated by a block-diagonal matrix. Explore other structured estimators (e.g., banded [23]) or assess the correlation structure of your data beforehand.

- Increase Sample Size if Possible: Simulation studies show that the performance of SQDA, like many high-dimensional methods, is significantly influenced by sample size. Performance generally improves with more samples [22].

Experimental Protocols

Protocol 1: Sparse Covariance Matrix Estimation via Majorize-Minimize Algorithm

This protocol details the steps for estimating a sparse covariance matrix using a penalized likelihood approach [21].

1. Problem Formulation:

* Objective: Minimize the following penalized negative log-likelihood:

Minimize_{Σ ≻ 0} { log det Σ + tr(Σ⁻¹S) + λ‖P * Σ‖₁ }

* Parameters:

* S: The sample covariance matrix.

* λ: The regularization parameter controlling sparsity.

* P: A penalty matrix of non-negative elements (often P_ij = 1 for i ≠ j and 0 on the diagonal to avoid shrinking variances).

2. Algorithm Initialization:

* Initialize Σ̂⁽⁰⁾ with a positive definite matrix, typically diag(S₁₁, ..., S_pp).

3. Iterative Majorize-Minimize Steps: Repeat until convergence:

* Majorization Step: At iteration t, form the convex majorizing function using the current estimate Σ̂⁽ᵗ⁻¹⁾.

* Minimization Step: Solve the convex optimization problem:

Σ̂⁽ᵗ⁾ = arg min_{Σ ≻ 0} [ tr( (Σ̂⁽ᵗ⁻¹⁾)⁻¹ Σ ) + tr(Σ⁻¹S) + λ‖P * Σ‖₁ ]

* Convergence Check: Stop when the relative change in the objective function or the estimate Σ̂ falls below a predefined tolerance (e.g., 10⁻⁵).

4. Tuning Parameter Selection:

* Use K-fold cross-validation (e.g., K=5 or K=10) to select the λ value that maximizes the predictive likelihood on the held-out validation folds.
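The loop structure of this protocol can be sketched as below. The outer loop follows the majorize-minimize steps above; the inner solver is an assumption of this sketch: a proximal-gradient iteration with backtracking, chosen because it needs only NumPy. Function names, step-size rules, and tolerances are illustrative, and cross-validation over λ is omitted.

```python
import numpy as np

def soft_threshold(A, T):
    return np.sign(A) * np.maximum(np.abs(A) - T, 0.0)

def penalized_nll(Sigma, S, lam, P):
    """log det(Sigma) + tr(Sigma^-1 S) + lam * ||P * Sigma||_1 (elementwise product)."""
    _, logdet = np.linalg.slogdet(Sigma)
    return logdet + np.trace(np.linalg.solve(Sigma, S)) + lam * np.sum(P * np.abs(Sigma))

def mm_sparse_covariance(S, lam, max_outer=50, max_inner=200, tol=1e-5):
    p = S.shape[0]
    P = 1.0 - np.eye(p)                          # penalize off-diagonal entries only
    Sigma = np.diag(np.diag(S)).astype(float)    # positive definite initialization
    prev_obj = penalized_nll(Sigma, S, lam, P)
    for _ in range(max_outer):
        Sigma0_inv = np.linalg.inv(Sigma)        # tangent plane of log det at current estimate

        def surrogate(M):
            return (np.trace(Sigma0_inv @ M) + np.trace(np.linalg.solve(M, S))
                    + lam * np.sum(P * np.abs(M)))

        for _ in range(max_inner):               # minimize the convex majorizer
            M_inv = np.linalg.inv(Sigma)
            grad = Sigma0_inv - M_inv @ S @ M_inv
            current = surrogate(Sigma)
            step, accepted = 1.0, Sigma
            while step > 1e-10:                  # backtrack until PD and non-increasing
                cand = soft_threshold(Sigma - step * grad, step * lam * P)
                cand = (cand + cand.T) / 2
                if np.linalg.eigvalsh(cand).min() > 1e-8 and surrogate(cand) <= current:
                    accepted = cand
                    break
                step *= 0.5
            change = np.linalg.norm(accepted - Sigma) / np.linalg.norm(Sigma)
            Sigma = accepted
            if change < tol:
                break
        obj = penalized_nll(Sigma, S, lam, P)
        if abs(prev_obj - obj) <= tol * abs(prev_obj):
            break
        prev_obj = obj
    return Sigma

# Simulated data: genes 0 and 1 are correlated, the rest are independent.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
X[:, 1] += 0.8 * X[:, 0]
S = np.cov(X, rowvar=False)
Sigma_hat = mm_sparse_covariance(S, lam=0.1)
Sigma_diag = mm_sparse_covariance(S, lam=10.0)   # very large penalty
```

Because each accepted inner step keeps the iterate positive definite and does not increase the majorizer, the penalized objective should not increase from one outer iteration to the next, which is the monotonicity check recommended in the troubleshooting guide.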

Protocol 2: Sparse QDA for Tissue Classification from Gene Expression

This protocol outlines the application of Sparse QDA (SQDA) for classifying tissue types (e.g., tumor vs. normal) using high-dimensional gene expression data [22].

1. Preprocessing:

* Normalize the gene expression data (e.g., TPM, FPKM) across all samples.

* Split data into training and independent testing sets.

2. Block-Based Feature Selection:

* Block Formation: On the training set, group genes into blocks of a predetermined size (e.g., 100 genes). Blocks can be formed based on correlation or arbitrarily.

* Block Evaluation: Perform cross-validation within the training set for each block independently. Calculate the cross-validation error for each block.

* Block Selection: Retain only those blocks whose cross-validation error is within a pre-specified error margin (e.g., 0.05) of the smallest error observed among all blocks.

3. Model Estimation:

* For each class k (e.g., tumor, normal), estimate a sparse covariance matrix Σₖ using only the genes from the selected blocks. Use the method described in Protocol 1 or a comparable sparse estimator.

* Estimate the class mean vectors μₖ using the sample means on the training data.

4. Classification:

* For a new test sample x, assign it to the class k that maximizes the quadratic discriminant function:

δₖ(x) = -(1/2)(x - μₖ)ᵀ Σₖ⁻¹ (x - μₖ) - (1/2) log det Σₖ + log πₖ

where πₖ is the prior probability of class k.
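The classification step translates directly into code. The sketch below evaluates δₖ(x) for each class and picks the largest; the function names and the two-class toy parameters are assumptions for illustration, and the covariance matrices are taken as already estimated.

```python
import numpy as np

def qda_score(x, mu, Sigma, prior):
    """Quadratic discriminant score for one class."""
    diff = x - mu
    _, logdet = np.linalg.slogdet(Sigma)
    mahalanobis = diff @ np.linalg.solve(Sigma, diff)   # (x - mu)^T Sigma^-1 (x - mu)
    return -0.5 * mahalanobis - 0.5 * logdet + np.log(prior)

def qda_predict(x, mus, Sigmas, priors):
    """Index of the class with the largest discriminant score."""
    scores = [qda_score(x, m, S, pi) for m, S, pi in zip(mus, Sigmas, priors)]
    return int(np.argmax(scores))

# Toy two-class setup (e.g., normal = 0, tumor = 1) on two selected genes.
mus = [np.array([0.0, 0.0]), np.array([3.0, 3.0])]
Sigmas = [np.eye(2), np.eye(2)]
priors = [0.5, 0.5]
label_a = qda_predict(np.array([0.2, -0.1]), mus, Sigmas, priors)
label_b = qda_predict(np.array([2.9, 3.2]), mus, Sigmas, priors)
```

Using `solve` and `slogdet` avoids forming the explicit inverse and determinant, which is more stable when Σₖ is poorly conditioned.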

Workflow and Relationship Diagrams

Sparse Covariance Estimation Workflow

Covariance Graph vs. Markov Network

Research Reagent Solutions

The following table lists key computational tools and methodological "reagents" essential for implementing structured covariance estimation.

| Research Reagent | Function/Brief Explanation | Key Context |

|---|---|---|

| Lasso Penalty (‖P * Σ‖₁) | A regularization term added to the likelihood that encourages sparsity by shrinking small covariance entries to zero [21]. | The core penalty function in sparse covariance estimation [21]. |

| Majorize-Minimize (MM) Algorithm | An optimization procedure that iteratively solves a sequence of convex approximations to a non-convex problem, ensuring convergence to a local minimum [21]. | Used to solve the non-convex penalized likelihood problem for sparse covariance estimation [21]. |

| Beta-Mixture Shrinkage Prior | A Bayesian prior distribution used to induce sparsity in covariance matrix estimates, implemented via block Gibbs sampling [24]. | Foundation for the bmspcov and sbmspcov functions in the bspcov R package [24]. |

| Joint ℓ₁-norm & Eigenvalue Penalty | A penalty function that promotes both sparsity and well-conditioning of the estimated matrix by also controlling the variance of its eigenvalues [25]. | Enables simultaneous estimation of a sparse and well-conditioned covariance matrix [25]. |

| Tapering/Thresholding Estimators | Simple, non-iterative estimators that set matrix entries to zero based on their magnitude (thresholding) or their position relative to the diagonal (tapering) [23]. | Proven to achieve minimax optimal rates for estimating banded and sparse covariance operators [23]. |

| Block-Based Cross-Validation | A feature selection method where variables are grouped into blocks, and blocks are retained based on their individual cross-validation performance [22]. | Used in Sparse QDA (SQDA) for high-dimensional genomic data to select informative gene blocks [22]. |

What is covariance regression and why is it used in genetics research?

Covariance regression models how a covariance matrix changes with subject-level covariates, rather than assuming a common covariance structure across all subjects [5]. In genetics, this allows gene co-expression networks to vary with individual characteristics like genetic variations, age, or sex. This is crucial for identifying co-expression quantitative trait loci (QTLs)—genetic variants that affect how genes are co-expressed [5].

How does this differ from traditional covariance estimation?

Traditional methods assume a homogeneous population with a common covariance matrix, while covariance regression accounts for individual heterogeneity. High-dimensional implementations incorporate sparsity to ensure estimability and interpretability when both responses and covariates are high-dimensional [5].

Troubleshooting Guide: Common Experimental Issues

Model Fitting & Convergence Problems

| Problem | Possible Causes | Solutions |

|---|---|---|

| Failure to converge [26] | Initial parameter values too far from optimum; highly scattered data; insufficient data in critical X ranges [26]. | Provide better initial estimates; collect less scattered data; increase sample size in key variable ranges [26]. |

| Computationally prohibitive runtime | High-dimensional responses and covariates; complex model structure [5]. | Use sparse estimation methods (sparse group lasso); implement blockwise coordinate descent algorithms [5]. |

| Non-positive definite covariance estimate | Small sample size; model misspecification; convergence issues [6]. | Check sample size adequacy; ensure proper regularization; verify algorithm convergence [5]. |

Interpretation & Validity Concerns

| Problem | Possible Causes | Solutions |

|---|---|---|

| Uninterpretable covariate effects | Overly complex model; lack of sparsity; inappropriate parameterization [5]. | Apply sparsity penalties; use debiased inference for significance testing; simplify model structure [5]. |

| Violation of homogeneity assumptions | Regression slopes not homogeneous across groups; heterogeneous populations [27]. | Test homogeneity of regression slopes assumption before interpretation [27]. |

| Sensitivity to variable ordering | Using Cholesky-based methods without natural variable ordering [6]. | Use ensemble methods across multiple orderings; consider permutation-invariant approaches [6]. |

Frequently Asked Questions (FAQs)

Method Selection & Setup

What are the key considerations when choosing a covariance regression model for gene expression data?

For high-dimensional gene expression data with subject-level covariates, prioritize methods that: (1) accommodate both continuous and discrete covariates, (2) enforce sparsity on covariate effects and affected edges, (3) guarantee positive definite covariance estimates, and (4) scale computationally to large dimensions [5]. Sparse covariance regression with combined sparsity structures is particularly suitable [5].

How do I handle non-Gaussian data or non-linear relationships in covariance regression?

Copula Gaussian graphical models can handle non-Gaussian data including binary, ordinal, and mixed measurements [28]. For non-linear effects, consider Bayesian nonparametric approaches or random forests, though these may increase computational demands [5].

Implementation & Technical Issues

What diagnostics should I run after fitting a covariance regression model?

Check: (1) convergence of the optimization algorithm, (2) positive definiteness of the estimated covariance matrices across covariate values, (3) residual patterns for systematic misfit, and (4) stability of sparsity patterns through cross-validation [5] [26].

How can I ensure my covariance estimates are positive definite?

Methods based on Cholesky decomposition, modified Cholesky decomposition, or matrix logarithm transformations naturally guarantee positive definiteness [6]. For other approaches, verify that theoretical conditions for positive definiteness are satisfied, particularly in high dimensions [5].

What are the trade-offs between Bayesian and frequentist approaches to covariance regression?

Bayesian methods (e.g., BGGM) offer natural uncertainty quantification and can handle complex data types but have higher computational demands [28]. Frequentist approaches with sparse regularization and debiased inference provide computational efficiency and scalability to very high dimensions while still enabling inference [5].

Experimental Protocols

Protocol: Sparse Covariance Regression for Co-expression QTL Detection

Purpose: Identify genetic variants that modify gene co-expression patterns.

Workflow:

Materials:

- Gene expression matrix: ( Y \in \mathbb{R}^{n \times p} ) with ( n ) subjects and ( p ) genes [5]

- Genetic variant data: ( X \in \mathbb{R}^{n \times q} ) with ( q ) SNPs [5]

- Additional covariates: ( Z \in \mathbb{R}^{n \times r} ) (e.g., age, sex) [5]

Procedure:

- Data Preprocessing: Normalize gene expression data, quality control on genetic variants, adjust for batch effects if present.

- Model Specification: Implement sparse covariance regression model: ( \Sigma_i = \Psi_0 + \sum_{j=1}^q x_{ij}\Psi_j ) where ( \Psi_j ) are sparse coefficient matrices [5].

- Parameter Estimation: Apply blockwise coordinate descent algorithm with sparse group lasso penalty to estimate ( \Psi_j ) matrices [5].

- Statistical Inference: Implement debiased inference procedure to identify significant genetic effects on covariance elements [5].

- Interpretation: Map significant co-expression QTLs to gene networks and biological pathways.
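To make the model in the Model Specification step concrete, the sketch below evaluates the subject-specific covariance for a single SNP coded 0, 1, or 2. The coefficient matrices are made-up values and the function name `subject_covariance` is an assumption; estimation of the coefficient matrices is not shown.

```python
import numpy as np

def subject_covariance(x_i, Psi0, Psis):
    """Sigma_i = Psi_0 + sum_j x_ij * Psi_j for one subject's covariate vector x_i."""
    Sigma = Psi0.copy()
    for x_ij, Psi_j in zip(x_i, Psis):
        Sigma = Sigma + x_ij * Psi_j
    return Sigma

# Hypothetical coefficients for p = 3 genes and q = 1 SNP.
Psi0 = np.eye(3)                       # baseline covariance
Psi1 = np.zeros((3, 3))
Psi1[0, 1] = Psi1[1, 0] = 0.3          # the SNP modifies only the gene 1 - gene 2 edge

covs = {g: subject_covariance([g], Psi0, [Psi1]) for g in (0, 1, 2)}
min_eigs = {g: np.linalg.eigvalsh(S).min() for g, S in covs.items()}
```

In this toy setting each copy of the minor allele adds 0.3 to the covariance between the first two genes. The linear form does not by itself guarantee positive definiteness for every covariate value, so the minimum eigenvalue is worth checking over the observed range of genotypes.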

Protocol: Covariance-on-Covariance Regression for Multi-omics Integration

Purpose: Model how covariance structures in one molecular domain (e.g., proteomics) predict covariance structures in another (e.g., transcriptomics).

Workflow:

Materials:

- Outcome data: ( y_{it} \in \mathbb{R}^q ) for ( t=1,\ldots,v_i ) observations per subject [29]

- Predictor data: ( x_{is} \in \mathbb{R}^p ) for ( s=1,\ldots,u_i ) observations per subject [29]

- Covariance matrices: ( \Sigma_i \in \mathbb{R}^{q\times q} ) (outcome) and ( \Delta_i \in \mathbb{R}^{p\times p} ) (predictor) [29]

Procedure:

- Covariance Estimation: Calculate subject-specific covariance matrices for both domains.

- Projection Identification: Find linear projections ( \gamma ) and ( \theta ) that maximize association: ( \log(\gamma^\top\Sigma_i\gamma) = \alpha\log(\theta^\top\Delta_i\theta) + w_i^\top\beta ) [29].

- Model Fitting: Estimate association parameters using ordinary least squares or maximum likelihood.

- Significance Testing: Evaluate strength of covariance-on-covariance relationships.

- Biological Interpretation: Relate significant associations to known biological networks and pathways.
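Once the projections are fixed, the model-fitting step reduces to an ordinary regression on log-variances. The sketch below assumes the projections are already known and fits the association parameters by least squares. The function name `fit_cov_on_cov`, the simulated matrices, and the treatment of ( w_i ) as an intercept plus one covariate are all assumptions for illustration; finding the projections themselves is not shown.

```python
import numpy as np

def fit_cov_on_cov(Sigmas, Deltas, W, gamma, theta):
    """OLS fit of log(gamma' Sigma_i gamma) on log(theta' Delta_i theta) and covariates W."""
    y = np.array([np.log(gamma @ S @ gamma) for S in Sigmas])
    x = np.array([np.log(theta @ D @ theta) for D in Deltas])
    design = np.column_stack([x, W])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef[0], coef[1:]            # alpha, beta

# Noise-free simulation with known parameters.
rng = np.random.default_rng(0)
n, q, p = 20, 3, 4
alpha_true, beta_true = 0.7, np.array([0.2, -0.5])
W = np.column_stack([np.ones(n), rng.normal(size=n)])      # intercept + one covariate
d = rng.uniform(0.5, 2.0, size=n)                          # subject-level predictor variance
Deltas = [d_i * np.eye(p) for d_i in d]
Sigmas = [np.exp(w @ beta_true) * d_i ** alpha_true * np.eye(q) for w, d_i in zip(W, d)]
gamma = np.ones(q) / np.sqrt(q)                            # unit-norm projections
theta = np.ones(p) / np.sqrt(p)

alpha_hat, beta_hat = fit_cov_on_cov(Sigmas, Deltas, W, gamma, theta)
```

With noise-free inputs the fit recovers the simulated parameters, which is a useful sanity check before applying the same code to estimated subject-level covariance matrices.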

Research Reagent Solutions

| Research Need | Solution Options | Function & Application |

|---|---|---|

| High-dimensional covariance estimation | Modified Cholesky decomposition (MCD) [6]; Ensemble sparse estimation [6] | Guarantees positive definiteness; handles no natural ordering of genes |

| Bayesian covariance modeling | BGGM R package [28]; Matrix-F prior distribution [28] | Bayesian inference for Gaussian graphical models; hypothesis testing |

| Covariance regression implementation | Sparse group lasso [5]; Debiased inference [5] | Simultaneous sparsity on covariates and their effects; uncertainty quantification |

| Non-Gaussian data modeling | Copula GGMs [28]; Binary/ordinal data methods [28] | Handles diverse data types common in genetic studies |

| Covariance-on-covariance regression | Common diagonalization approaches [29]; OLS-type estimators [29] | Models relationships between covariance matrices from different domains |

Welcome to the Technical Support Center

This resource provides troubleshooting guides and frequently asked questions (FAQs) to support researchers, scientists, and drug development professionals in applying shrinkage estimation techniques to optimize covariance matrix and regression coefficient estimation in high-dimensional gene expression research.

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental trade-off that shrinkage estimation techniques address?

Shrinkage techniques manage the bias-variance trade-off deliberately. In high-dimensional settings, like with genomic data where the number of genes (predictors) far exceeds the number of samples, maximum likelihood estimates often exhibit high variance. Shrinkage methods introduce a small amount of bias by pulling estimates toward a target (like zero or a diagonal structure) to achieve a substantial reduction in variance, leading to lower overall prediction error and more stable, generalizable models [30] [31] [32].

FAQ 2: When should I choose Lasso over Ridge Regression for my gene expression data?

The choice depends on your goal. Lasso (L1 regularization) is preferable when you believe only a small subset of genes are relevant and you wish to perform feature selection, as it can shrink some coefficients to exactly zero [33] [32]. Ridge (L2 regularization) is a better choice when you believe many genes may have small, non-zero effects and are potentially correlated; it shrinks coefficients but does not set them to zero, which helps manage multicollinearity [31] [33]. If you need both feature selection and handling of correlated predictors, Elastic Net is a suitable hybrid [31] [33].
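The different behavior of the two penalties is easiest to see in the idealized case of an orthonormal design, where both estimators have closed forms in terms of the least-squares coefficients. The sketch below assumes the objectives ‖y - Xβ‖² + λΣβ² for Ridge and ‖y - Xβ‖² + λΣ|β| for Lasso; the coefficient values are made up.

```python
import numpy as np

def ridge_orthonormal(b_ols, lam):
    """Ridge solution when X'X = I: proportional shrinkage, never exactly zero."""
    return b_ols / (1.0 + lam)

def lasso_orthonormal(b_ols, lam):
    """Lasso solution when X'X = I: soft-thresholding at lam / 2."""
    return np.sign(b_ols) * np.maximum(np.abs(b_ols) - lam / 2.0, 0.0)

b_ols = np.array([3.0, 0.4, -1.5])     # hypothetical least-squares coefficients
b_ridge = ridge_orthonormal(b_ols, lam=1.0)
b_lasso = lasso_orthonormal(b_ols, lam=1.0)
```

Ridge halves every coefficient, giving (1.5, 0.2, -0.75), while Lasso subtracts a fixed amount and removes the small one entirely, giving (2.5, 0, -1.0). Real gene expression designs are far from orthonormal, so this only illustrates the qualitative difference.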

FAQ 3: My high-dimensional covariance matrix is ill-conditioned. How can shrinkage help?

The sample covariance matrix becomes unreliable or even singular when the number of features p is large compared to the number of observations n. Shrinkage estimators stabilize this by combining the high-variance sample covariance matrix with a low-variance, structured target matrix (e.g., a diagonal matrix). This reduces the condition number and minimizes the Mean Squared Error (MSE) of the estimate, which is crucial for downstream analyses like linear discriminant analysis [30] [34].
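The effect on conditioning can be checked numerically. The sketch below shrinks a singular sample covariance matrix toward its own diagonal with a hand-picked weight; the function name `shrink_to_diagonal`, the value λ = 0.3, and the simulated data are assumptions for illustration, and no data-driven choice of λ is attempted.

```python
import numpy as np

def shrink_to_diagonal(S, lam):
    """Convex combination of S and its diagonal: (1 - lam) * S + lam * diag(S)."""
    target = np.diag(np.diag(S))
    return (1.0 - lam) * S + lam * target

# n = 20 samples, p = 50 genes: the sample covariance matrix is rank deficient.
rng = np.random.default_rng(0)
X = rng.normal(size=(20, 50))
S = np.cov(X, rowvar=False)
S_shrunk = shrink_to_diagonal(S, lam=0.3)

rank_S = np.linalg.matrix_rank(S)              # at most n - 1 = 19
min_eig_shrunk = np.linalg.eigvalsh(S_shrunk).min()
cond_shrunk = np.linalg.cond(S_shrunk)
```

Because the diagonal target is positive definite whenever all sample variances are positive, any λ > 0 makes the combined estimate invertible, and its smallest eigenvalue is at least λ times the smallest variance.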

FAQ 4: What is the Oracle Property and why is it important?

An estimator has the oracle property if it performs asymptotically as well as if the true model were known in advance. Specifically, it identifies the predictors whose true coefficients are zero with probability tending to 1, and it provides asymptotically unbiased and efficient estimates for the non-zero coefficients. Methods like the Adaptive Lasso possess this property, whereas the standard Lasso in general does not, making them attractive for variable selection in high-dimensional biological data [31] [35].

Troubleshooting Guides

Issue 1: Poor Model Generalization on New Data

- Symptoms: The model fits the training gene expression data perfectly but performs poorly on independent validation cohorts.

- Diagnosis: This is a classic sign of overfitting, where the model has high variance and has learned the noise in the training data.

- Solution: Apply regularization.

- Standardize your gene expression predictors to have a mean of zero and a standard deviation of one.

- Use cross-validation to tune the shrinkage penalty parameter `λ`.

- For Ridge Regression, a closed-form solution exists, and `λ` can be selected via REML [31].

- For Lasso or Elastic Net, use algorithms like coordinate descent to solve the optimization problem and select the `λ` that minimizes cross-validated error [31] [35].
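The standardize-then-tune recipe can be sketched for Ridge Regression, whose closed-form solution keeps the example short. Here λ is chosen by k-fold cross-validation rather than REML, which is a substitution made for this sketch; the function names, the λ grid, and the simulated data are likewise assumptions. Standardization is computed on the training folds only to avoid leaking information from held-out samples.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Closed-form ridge solution: (X'X + lam * I)^-1 X'y."""
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(p), X.T @ y)

def cv_ridge(X, y, lambdas, k=5, seed=0):
    """Return the lambda with the lowest mean k-fold squared prediction error."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)
    errors = []
    for lam in lambdas:
        fold_err = []
        for test_idx in folds:
            train_idx = np.setdiff1d(np.arange(len(y)), test_idx)
            mu, sd = X[train_idx].mean(axis=0), X[train_idx].std(axis=0)
            X_tr = (X[train_idx] - mu) / sd        # standardize with training statistics
            X_te = (X[test_idx] - mu) / sd
            y_mean = y[train_idx].mean()
            beta = ridge_fit(X_tr, y[train_idx] - y_mean, lam)
            pred = X_te @ beta + y_mean
            fold_err.append(np.mean((y[test_idx] - pred) ** 2))
        errors.append(np.mean(fold_err))
    return lambdas[int(np.argmin(errors))], np.array(errors)

# Simulated p >> n data: 40 samples, 100 genes, 5 of them truly associated.
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 100))
beta_true = np.zeros(100)
beta_true[:5] = 1.0
y = X @ beta_true + 0.5 * rng.normal(size=40)
lambdas = [0.1, 1.0, 10.0, 100.0]
best_lam, cv_errors = cv_ridge(X, y, lambdas)
```

The same cross-validation loop applies to Lasso or Elastic Net once `ridge_fit` is replaced by a coordinate-descent solver.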

Issue 2: Inability to Handle Highly Correlated Transcription Factors

- Symptoms: The model is unstable; small changes in the data lead to large changes in the selected genes and their coefficient estimates.

- Diagnosis: Standard Lasso may arbitrarily select one variable from a group of highly correlated predictors and ignore the others.

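- Solution: Switch to Elastic Net, which combines the L1 and L2 penalties and tends to keep or drop highly correlated genes as a group rather than picking one arbitrarily [31] [33].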

Issue 3: Selecting the Optimal Shrinkage Target for Covariance Matrices

- Symptoms: Your covariance matrix estimate does not lead to improvements in downstream tasks like classification.

- Diagnosis: The choice of the shrinkage target significantly impacts performance. A one-size-fits-all target (e.g., identity matrix) may not be optimal.

- Solution: Choose a target based on the suspected data structure.

- If gene expressions are expected to have heterogeneous variances, shrink towards a diagonal matrix with unequal variances rather than an identity matrix [30].

- If you suspect a sparse covariance structure, consider targets that promote sparsity or use non-parametric, data-splitting estimation techniques [34].

Experimental Protocols and Workflows

Protocol 1: Implementing a Shrinkage-based Linear Discriminant Analysis (LDA) for Cancer Subtype Classification

This protocol details the steps to classify samples (e.g., tumor vs. normal) using high-dimensional gene expression data.

Principle: Fisher's LDA requires estimating the precision matrix (inverse covariance matrix) and mean vectors for each class. In high-dimensional settings, the sample covariance matrix is unstable or non-invertible, necessitating shrinkage.

Procedure:

- Data Preprocessing: Log-transform and standardize the gene expression data per sample.

- Estimate Precision Matrix:

- Calculate the sample covariance matrix `S`.

- Apply a shrinkage estimator. For example, use the Ledoit-Wolf linear shrinkage towards an identity matrix: `S' = (1 - λ)S + λI`, where `λ` is analytically determined [34].

- Invert `S'` to get the precision matrix estimate.

- Estimate Mean Vectors:

- For each class, instead of the simple sample mean, consider a shrinkage mean estimator (e.g., Non-Parametric Empirical Bayes - NPEB) to further reduce noise [34].

- Construct Discriminant Rule: Plug the shrunken estimates of the precision matrix and mean vectors into the standard Fisher's linear discriminant function [34].

- Validation: Evaluate classification accuracy using cross-validation or an independent test set.
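The procedure above can be sketched end to end with NumPy. To keep the example self-contained, the shrinkage weight is a hand-picked constant rather than the analytically determined Ledoit-Wolf value, and plain class means are used instead of a shrinkage mean estimator; both are simplifications of this sketch. Function names and the simulated data are assumptions.

```python
import numpy as np

def fit_shrinkage_lda(X, y, lam):
    """Estimate class means, a shrunken pooled covariance, and its inverse."""
    classes = np.unique(y)
    means = np.array([X[y == c].mean(axis=0) for c in classes])
    centered = X - means[np.searchsorted(classes, y)]
    S = centered.T @ centered / (len(y) - len(classes))       # pooled sample covariance
    S_shrunk = (1.0 - lam) * S + lam * np.eye(X.shape[1])     # S' = (1 - lam) S + lam I
    precision = np.linalg.inv(S_shrunk)
    priors = np.array([np.mean(y == c) for c in classes])
    return classes, means, precision, priors

def predict_lda(X, model):
    """Linear discriminant: x' P mu_k - 0.5 * mu_k' P mu_k + log(prior_k)."""
    classes, means, precision, priors = model
    scores = (X @ precision @ means.T
              - 0.5 * np.sum(means @ precision * means, axis=1)
              + np.log(priors))
    return classes[np.argmax(scores, axis=1)]

# Simulated data: 100 genes, the first 10 are shifted in class 1.
rng = np.random.default_rng(0)

def simulate(n_per_class):
    X0 = rng.normal(size=(n_per_class, 100))
    X1 = rng.normal(size=(n_per_class, 100))
    X1[:, :10] += 2.0
    return np.vstack([X0, X1]), np.repeat([0, 1], n_per_class)

X_train, y_train = simulate(15)        # n = 30 < p = 100
X_test, y_test = simulate(100)
model = fit_shrinkage_lda(X_train, y_train, lam=0.5)
accuracy = np.mean(predict_lda(X_test, model) == y_test)
```

Without the shrinkage step the pooled covariance here would be singular and could not be inverted, which is the situation the protocol is designed to handle.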

Workflow Diagram: The following diagram illustrates the logical workflow for implementing a shrinkage-based LDA classifier.

Protocol 2: Fitting a Regularized Accelerated Failure Time (AFT) Model for Survival Analysis

This protocol is for identifying genes associated with patient survival time from high-dimensional, low-sample-size microarray data.

Principle: The AFT model directly relates log survival time to gene expression. Regularization is required to select relevant genes and prevent overfitting.

Procedure:

- Data Preparation: Handle censored survival data. One approach is to use Kaplan-Meier weights to impute the censored observations [35].

- Model Fitting with L1/2 Regularization:

- The objective is to minimize: `||W(y - Xβ)||² + λ||β||_{1/2}` where `W` are Kaplan-Meier weights, `y` is log survival time, `X` is the gene expression matrix, and `β` are the coefficients [35].

- Use a coordinate descent algorithm with a Newton-Raphson iterative method to solve this non-convex optimization problem [35].

- Tuning Parameter Selection: Use `k`-fold cross-validation to select the penalty parameter `λ` that minimizes the prediction error.

- Gene Selection and Prediction: The final model will have a sparse set of genes with non-zero coefficients. These are the selected prognostic genes, and the model can be used to predict survival for new patients.
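The Kaplan-Meier weights used in the data preparation step can be computed as the jumps of the Kaplan-Meier estimator at the ordered observation times. The sketch below uses that standard form, which is an assumption about the exact weighting in the cited work; the function name and the toy survival data are illustrative, and the L1/2-penalized solver itself is not shown.

```python
import numpy as np

def kaplan_meier_weights(time, event):
    """Kaplan-Meier jump weights for right-censored data.

    time:  observed times (event or censoring)
    event: 1 if the event was observed, 0 if censored
    Censored observations receive weight 0.
    """
    time = np.asarray(time, dtype=float)
    event = np.asarray(event, dtype=int)
    n = len(time)
    # Sort by time; at tied times, events are placed before censored observations.
    order = np.lexsort((1 - event, time))
    d = event[order]
    w_sorted = np.zeros(n)
    surv = 1.0                                  # running product over earlier ranks
    for i in range(n):                          # i is the 0-based rank
        w_sorted[i] = surv * d[i] / (n - i)
        surv *= ((n - i - 1) / (n - i)) ** d[i]
    weights = np.empty(n)
    weights[order] = w_sorted                   # back to the original sample order
    return weights

# Four patients; the second is censored at time 5.
w = kaplan_meier_weights(time=[2, 5, 3, 8], event=[1, 0, 1, 1])
w_uncensored = kaplan_meier_weights(time=[4, 1, 3], event=[1, 1, 1])
```

With no censoring every observation receives weight 1/n, so the weighted least-squares criterion reduces to the ordinary one. In the censored example the mass of the censored patient is passed on to the later event, giving weights (0.25, 0, 0.25, 0.5).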

Method Comparison and Selection

Table 1: Comparison of Key Shrinkage and Regularization Methods

| Method | Penalty Term | Key Property | Best Suited For | Considerations |

|---|---|---|---|---|

| Ridge Regression [31] [33] | L2: (\lambda \sum \beta_j^2) | Shrinks coefficients, but not to zero. | Scenarios with many small, non-zero effects; handling multicollinearity among genes. | Does not perform feature selection; all genes remain in the model. |

| Lasso Regression [31] [33] | L1: (\lambda \sum |\beta_j|) | Can shrink coefficients to exactly zero. | Feature selection when the true model is sparse (few important genes). | Unstable with highly correlated genes; may select one randomly. |

| Elastic Net [31] [33] | L1 + L2: (\lambda1 \sum |\betaj| + \lambda2 \sum \betaj^2) | Groups correlated variables; performs selection. | Datasets with highly correlated genes (e.g., genes in a pathway). | Has two tuning parameters to optimize. |

| Adaptive Lasso [31] | L1: (\lambda \sum \hat{\omega}_j |\beta_j|) | Satisfies the Oracle Property; consistent variable selection. | When unbiased variable selection is the primary goal. | Requires constructing data-driven weights. |

| L1/2 Regularization [35] | Lq: (\lambda \sum |\beta_j|^{1/2}) | Can yield sparser solutions than L1. | Survival analysis (e.g., AFT model) to achieve high sparsity and accuracy. | Non-convex optimization; requires specialized algorithms. |

| Covariance Shrinkage [30] | Shrinkage toward a diagonal target | Reduces MSE of covariance matrix. | When the diagonal elements (variances) of the covariance matrix exhibit substantial variation. | Improves estimation of the inverse covariance matrix. |
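The "Key Property" column for Ridge and Lasso can be made concrete in the simplest possible setting. The sketch below assumes a single coefficient with an orthonormal design, so that the unpenalized least-squares estimate is z and the loss is (z - b)^2; the function names are illustrative only.

```python
import numpy as np

def ridge_shrink(z, lam):
    # Minimizer of (z - b)^2 + lam * b^2: proportional shrinkage, never exactly zero.
    return z / (1.0 + lam)

def lasso_shrink(z, lam):
    # Minimizer of (z - b)^2 + lam * |b|: soft-thresholding at lam / 2.
    return np.sign(z) * np.maximum(np.abs(z) - lam / 2.0, 0.0)

z = np.array([3.0, -0.4, 1.0])   # unpenalized estimates for three genes
print(ridge_shrink(z, 1.0))      # all three remain non-zero
print(lasso_shrink(z, 1.0))      # the small middle effect is set to zero
```

Ridge rescales every coefficient by the same factor, whereas Lasso subtracts a fixed amount and zeroes anything smaller than the threshold, which is why only Lasso performs feature selection.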

Method Selection Workflow Diagram: The following diagram provides a logical guide for selecting an appropriate shrinkage method based on your research goals and data structure.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Computational Tools and Packages for Shrinkage Estimation

| Tool / Package Name | Environment | Primary Function | Application in Gene Expression Research |

|---|---|---|---|

| glmnet [31] [36] | R | Efficiently fits Lasso, Ridge, and Elastic Net models. | Workhorse package for regularized regression to identify gene-outcome associations. |

| Coordinate Descent Algorithm [31] [35] | Core Algorithm | Optimization method for fitting regularized models. | Enables efficient computation for high-dimensional gene expression data where p >> n. |

| Cross-Validation (e.g., k-fold) | Universal | Method for tuning the penalty parameter λ. | Prevents overfitting by selecting λ that maximizes predictive performance on unseen data. |

| Non-Parametric Empirical Bayes (NPEB) [34] | R / Custom | Shrinks mean estimates toward a common value. | Improves estimation of class-specific mean expression levels in discriminant analysis. |

| Kaplan-Meier Weights [35] | Survival Software | Handles right-censored data in survival models. | Allows for the implementation of regularized AFT models with censored survival times. |
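As a rough illustration of the cross-validation entry in the table, the sketch below tunes λ by k-fold cross-validation. It uses ridge regression as a stand-in because it has a closed-form solution; the same loop applies to Lasso, Elastic Net, or L1/2 fits if `ridge_fit` is swapped for the corresponding solver. All function names here are hypothetical, and the λ grid should contain positive values so the linear system stays solvable when p exceeds n.

```python
import numpy as np

def ridge_fit(X, y, lam):
    # Closed-form ridge solution: (X'X + lam * I)^{-1} X'y.
    p = X.shape[1]
    return np.linalg.solve(X.T @ X + lam * np.eye(p), X.T @ y)

def kfold_cv_lambda(X, y, lambdas, k=5, seed=0):
    """Return the lambda with the lowest mean held-out squared error."""
    n = X.shape[0]
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n), k)
    cv_error = []
    for lam in lambdas:
        errs = []
        for test_idx in folds:
            train_idx = np.setdiff1d(np.arange(n), test_idx)
            beta = ridge_fit(X[train_idx], y[train_idx], lam)
            errs.append(np.mean((y[test_idx] - X[test_idx] @ beta) ** 2))
        cv_error.append(np.mean(errs))
    best = int(np.argmin(cv_error))
    return lambdas[best], np.array(cv_error)
```

Each sample is held out exactly once, so the averaged error estimates performance on unseen data rather than fit to the training set.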

Troubleshooting Guides & FAQs

Frequently Asked Questions (FAQs)

Q1: What are the minimum hardware requirements to run a standard PhenoPLIER analysis? Running a full PhenoPLIER analysis is computationally intensive. The recommended hardware includes an Intel Core i5 (or equivalent) with at least 4 cores, 64 GB of RAM (32 GB may suffice for smaller scopes), and approximately 1.2 TB of free disk space to accommodate input data and result files [37].

Q2: My analysis failed during the data projection step. What could be the cause? This is often related to data formatting. Ensure your gene-trait association file (e.g., from a TWAS) is properly formatted. The input should be a matrix where rows represent traits and columns represent genes, containing standardized effect sizes. Verify that the gene identifiers match those used in the pre-computed latent variable (LV) model [38] [37].

Q3: How can I interpret the biological meaning of a significant Latent Variable (LV)? Each LV represents a group of co-expressed genes. To interpret an LV, examine its gene weights and annotation. High-weight genes drive the LV's association. Furthermore, check if the LV is associated with specific pathways, cell types, or biological conditions from the Recount3 dataset, which provides context for its function [38].

Q4: Are there faster alternatives to run PhenoPLIER for a proof-of-concept study? Yes, you can run the provided quick demo, which uses a subset of real data. Depending on your internet connection and hardware, the demo should take between 2 and 5 minutes to complete, allowing you to quickly validate your setup and understand the workflow [37].

Q5: How does PhenoPLIER compare to single-gene approaches? PhenoPLIER's module-based approach offers increased robustness in detecting genetic associations. It can prioritize biologically important candidate targets that lack strong individual gene-level associations in TWAS, which single-gene strategies might miss [38].

Common Errors and Solutions

| Error / Issue | Probable Cause | Solution |

|---|---|---|

| Environment setup failure | Missing dependencies or incorrect Conda environment. | Use the provided Docker image (docker pull miltondp/phenoplier) to ensure a consistent, pre-configured environment [37]. |

| Memory overflow during computation | Large dataset exceeds available RAM. | Reduce the number of traits or genes in the initial analysis. Ensure your system meets the 64 GB RAM recommendation [37]. |

| LV-trait association results are nonsensical | Incorrect gene identifier mapping between your dataset and the LV model. | Standardize all gene identifiers to a common format (e.g., ENSEMBL IDs) as used in the MultiPLIER model [38] [37]. |

| Unable to replicate known drug-disease link | The relevant gene module may not be active in the cell types/tissues profiled in your dataset. | Context is key. Ensure the gene expression compendium used to build the LVs includes cell types or conditions relevant to the drug's mechanism of action [38]. |
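Several of the issues above come down to mismatched gene identifiers. A quick pre-flight check along the following lines can catch the problem before projection. This helper is hypothetical and not part of PhenoPLIER; it assumes Ensembl-style identifiers (where a trailing ".N" is a version suffix that can be dropped) and uses an arbitrary 80% overlap threshold.

```python
def check_gene_id_overlap(input_ids, model_ids, min_fraction=0.8):
    """Report how many input gene IDs are also present in the LV model."""
    # Assumption: Ensembl IDs such as "ENSG00000141510.17"; strip the version suffix.
    input_set = {g.split(".")[0] for g in input_ids}
    model_set = {g.split(".")[0] for g in model_ids}
    shared = input_set & model_set
    fraction = len(shared) / len(input_set) if input_set else 0.0
    return {
        "n_shared": len(shared),
        "fraction": fraction,
        "ok": fraction >= min_fraction,  # threshold is an illustrative choice
        "missing": sorted(input_set - model_set),
    }

report = check_gene_id_overlap(
    ["ENSG00000141510.17", "ENSG00000012048"],
    ["ENSG00000141510", "ENSG00000139618"],
)
print(report)
```

A low overlap fraction usually means the two sources use different identifier systems (for example, gene symbols versus Ensembl IDs) and need to be mapped to a common format first.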

Experimental Protocols & Methodologies

Core Protocol: Executing a PhenoPLIER Analysis

The following methodology outlines the key steps for applying the PhenoPLIER framework to map gene-trait associations into a latent space of gene modules [38].

1. Input Data Preparation

- Gene-Trait Associations: Obtain summary statistics from a Transcriptome-Wide Association Study (TWAS). The primary input is a matrix of standardized effect sizes (e.g., from S-MultiXcan or S-PrediXcan) for genes across multiple traits [38].

- Latent Variable (LV) Model: Download the pre-computed MultiPLIER model. This model, derived from the Recount3 compendium of RNA-seq samples, contains the latent space representation (gene modules) [38] [37].

- Optional - Drug Perturbation Data: For drug-repurposing analyses, include transcriptional response data from resources like the LINCS L1000 database [38].

2. Projection of Data into Latent Space

This step converts gene-level statistics into module-level scores.

- For each trait (or drug perturbation) and each LV, calculate an LV score.

- The score is computed as the sum of the product of the standardized gene effect sizes and the corresponding gene weights within that LV [38].

- Mathematically: ( \text{LV}_{\text{score}} = \sum_i w_i z_i ), where ( w_i ) is the weight of gene ( i ) in the LV, and ( z_i ) is the z-score of gene ( i ) for the trait.
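Computed for all traits and all LVs at once, the weighted sum above is a single matrix product. The sketch below assumes a traits-by-genes matrix of z-scores and a genes-by-LVs weight matrix whose gene axes are already aligned; `project_to_lv_space` is an illustrative name, not a function from the PhenoPLIER codebase.

```python
import numpy as np

def project_to_lv_space(z_scores, lv_weights):
    """z_scores: traits x genes; lv_weights: genes x LVs. Returns traits x LVs."""
    z_scores = np.asarray(z_scores, dtype=float)
    lv_weights = np.asarray(lv_weights, dtype=float)
    if z_scores.shape[1] != lv_weights.shape[0]:
        raise ValueError("gene axes do not match; align gene identifiers first")
    # Entry (t, l) is sum_i w_il * z_ti, the LV score of trait t on LV l.
    return z_scores @ lv_weights

Z = np.array([[1.0, 2.0, 0.0],     # trait 1 z-scores for genes 1..3
              [0.0, -1.0, 3.0]])   # trait 2
W = np.array([[0.5, 0.0],          # gene 1 weights on LV1, LV2
              [0.5, 1.0],
              [0.0, 2.0]])
print(project_to_lv_space(Z, W))
```

The shape check fails early when the gene sets differ in size, which is the most common symptom of an identifier mismatch between the association file and the LV model.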

3. Association Testing and Inference

- Perform regression analysis to test the association between the LV scores and traits.

- Identify LVs that are significantly associated with the trait of interest. These LVs represent gene modules whose collective expression pattern is linked to the trait [38].

- Annotate significant LVs using the prior knowledge from the MultiPLIER model to understand the biological context (e.g., cell type, pathway) of the association.

4. Downstream Analysis: Clustering and Drug Repurposing

- Trait Clustering: Cluster traits based on their LV association profiles to identify traits with shared transcriptomic underpinnings [38].

- Drug-Disease Prediction: Map drug perturbation profiles into the same LV space. A drug whose transcriptional response signature opposes the LV signature of a disease is predicted to be a potential therapeutic [38].
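One simple way to quantify whether a drug signature "opposes" a disease signature in LV space is the negative Pearson correlation between the two LV profiles. This scoring rule is an assumption chosen for illustration and is not necessarily the one used in [38]; the function names and toy profiles are hypothetical.

```python
import numpy as np

def opposition_score(drug_lv, disease_lv):
    """Negative Pearson correlation: positive when the drug opposes the disease profile."""
    drug_lv = np.asarray(drug_lv, dtype=float)
    disease_lv = np.asarray(disease_lv, dtype=float)
    return -float(np.corrcoef(drug_lv, disease_lv)[0, 1])

def rank_drugs(drug_profiles, disease_lv):
    """Sort (drug name, score) pairs by decreasing opposition score."""
    scores = {name: opposition_score(p, disease_lv) for name, p in drug_profiles.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)

disease = [1.0, 2.0, 3.0]                      # disease LV association profile
drugs = {"drug_a": [3.0, 2.0, 1.0],            # reverses the disease pattern
         "drug_b": [1.0, 2.0, 3.0]}            # mimics the disease pattern
print(rank_drugs(drugs, disease))
```

Drugs at the top of the ranking have LV profiles that run counter to the disease profile and would be the candidates to examine further.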

Workflow Visualization

The diagram below illustrates the key steps and data flow in a standard PhenoPLIER analysis.

Key Research Reagent Solutions

The table below lists essential materials and resources required to implement the PhenoPLIER framework.

| Category | Item / Resource | Function / Description | Source / Reference |

|---|---|---|---|

| Software | PhenoPLIER Code | The main analysis pipeline and codebase. | GitHub: greenelab/phenoplier [37] |

| | Docker Image | A containerized environment for reproducible analysis. | Docker Hub: miltondp/phenoplier [37] |

| Data | MultiPLIER Model | Pre-computed latent space of gene modules from Recount3. | Included in PhenoPLIER setup [38] [37] |

| | PhenomeXcan | A comprehensive resource of gene-trait associations for discovery. | Used in the original study [38] |

| | LINCS L1000 | Database of drug-induced transcriptional profiles. | Used for drug-repurposing analysis [38] |

| Computing | High-Performance Workstation/Cluster | Runs analysis; requires substantial RAM and storage. | Minimum 32-64 GB RAM, ~1.2 TB storage [37] |

Advanced Configuration: Optimizing Covariance Estimation

Theoretical Context in High-Dimensional Research

PhenoPLIER's foundation lies in condensing high-dimensional gene expression data into a lower-dimensional latent space. This process is intrinsically linked to the challenge of covariance estimation. In high-dimensional settings where the number of features (genes) far exceeds the number of samples, traditional covariance estimators fail [5] [1].

The framework addresses this by using matrix factorization techniques (PLIER) that not only reconstruct the data but also enforce alignment with prior knowledge, effectively imposing a structure that guides the estimation of the underlying covariance patterns among genes [38]. This is crucial because gene-gene interactions form the basis of the co-expression modules.

Advanced methods like high-dimensional covariance regression are being developed to model how covariance matrices themselves change with subject-level covariates (e.g., genetic variants). This allows for the detection of co-expression Quantitative Trait Loci (co-expression QTLs), which are genetic variants that affect the covariance between genes, providing even deeper mechanistic insights [5].
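The failure of the traditional estimator when genes outnumber samples is easy to reproduce on simulated data. The sketch below shows that the sample covariance matrix has rank at most n - 1, and that a convex combination with its own diagonal (a simple shrinkage estimator) restores positive definiteness. The fixed shrinkage intensity `alpha` is an arbitrary choice for illustration; in practice it would be chosen from the data.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 20, 100                          # 20 samples, 100 genes (p >> n)
X = rng.standard_normal((n, p))

S = np.cov(X, rowvar=False)             # p x p sample covariance
print(np.linalg.matrix_rank(S))         # at most n - 1, so S cannot be inverted

def shrink_to_diagonal(S, alpha):
    """Convex combination of S and its diagonal, with alpha in (0, 1]."""
    target = np.diag(np.diag(S))
    return (1.0 - alpha) * S + alpha * target

S_shrunk = shrink_to_diagonal(S, alpha=0.2)
print(np.linalg.eigvalsh(S_shrunk).min() > 0)   # shrunk estimate is positive definite
```

Imposing structure, whether by shrinkage as here or by a factorization aligned with prior knowledge as in PLIER, is what makes the estimate usable for downstream analysis.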

Relationship Between Key Concepts

The following diagram illustrates the logical and computational relationships between high-dimensional data, covariance estimation, and the derivation of gene modules in frameworks like PhenoPLIER.

Troubleshooting Guides

Guide 1: Interpreting Unclear Results from Covariance-Based Co-Expression Networks

Problem: After constructing a gene co-expression network using a high-dimensional covariance matrix to identify toxicological pathways, the results are unclear or do not align with known biology.

Explanation & Solution: This often occurs when the estimated covariance matrix is noisy or unreliable, failing to accurately capture the true biological relationships. This is common when the number of genes (p) is large relative to the number of samples (n).

- Step 1: Verify Data Preprocessing. Ensure your gene expression data has been properly normalized to correct for technical biases like sequencing depth and gene length, which can severely distort covariance estimates [39].

- Step 2: Apply a Robust Covariance Estimator. The standard sample covariance matrix performs poorly in high dimensions. Replace it with a robust estimator designed for such settings.

- For general use: Consider a robust pilot estimator that satisfies concentration properties even when data are not sub-Gaussian, then apply adaptive thresholding to obtain a sparse, reliable matrix [40].

- For covariate-dependent analyses: If co-expression is believed to vary with subject-level covariates (e.g., genotype, dose), use a high-dimensional covariance regression framework. This models the covariance matrix as a function of covariates, which is crucial for identifying features like co-expression Quantitative Trait Loci (QTLs) [5].

- Step 3: Validate with Visualization. Use parallel coordinate plots to visually inspect the relationships between samples for your top candidate genes. Clean differential expression should show consistent patterns within treatment groups and inconsistent patterns between them. Messy lines may indicate poor signal-to-noise ratio [39].
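The adaptive thresholding idea in Step 2 can be sketched as follows. For simplicity this version uses the ordinary sample covariance as the pilot estimate rather than the robust pilot estimator described in [40], and it applies entry-wise hard thresholding with a threshold proportional to the estimated variability of each entry. The constant `delta=2.0` and the function name are assumptions for illustration.

```python
import numpy as np

def adaptive_threshold_cov(X, delta=2.0):
    """Entry-wise adaptive hard thresholding of the sample covariance (rows = samples)."""
    X = np.asarray(X, dtype=float)
    n, p = X.shape
    Xc = X - X.mean(axis=0)
    S = Xc.T @ Xc / n
    # theta[i, j]: variance across samples of the products (x_i - mean_i)(x_j - mean_j).
    theta = (Xc**2).T @ (Xc**2) / n - S**2
    lam = delta * np.sqrt(np.maximum(theta, 0.0) * np.log(p) / n)
    # Keep an entry only if it exceeds its own noise-level threshold.
    S_thr = np.where(np.abs(S) > lam, S, 0.0)
    np.fill_diagonal(S_thr, np.diag(S))  # always keep the variances
    return S_thr
```

Because each entry gets its own threshold, weakly supported gene pairs are zeroed out while strongly co-expressed pairs survive, which gives a sparser and more interpretable network than thresholding every entry at one global cutoff.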

Guide 2: Validating Target Engagement in a New Cellular Model

Problem: A chemical probe that is potent in biochemical assays shows no phenotypic effect in a new cellular model, leading to uncertainty about whether it engages its intended target.

Explanation & Solution: Lack of efficacy can stem from either invalid target biology or a failure to engage the target in the new cellular context. Measuring target engagement—the direct interaction between the small molecule and its protein target in a living system—is essential to distinguish between these possibilities [41].

- Step 1: Choose the Appropriate Method. Select a method based on your target protein and available tools.

- For Kinase Targets: Use chemoproteomic platforms like kinobeads or the KiNativ platform. These methods allow broad profiling of inhibitor-kinase interactions directly in native proteomes, revealing both on-target and off-target engagement [41].

- For Covalent Inhibitors: Use Activity-Based Protein Profiling (ABPP). Competitive ABPP with tagged covalent probes can directly measure target engagement in living cells [41].

- For Non-Covalent/Reversible Inhibitors: Use Cellular Thermal Shift Assay (CETSA) or photoaffinity labeling-based methods coupled with mass spectrometry to stabilize or capture probe-protein interactions [41].