Optimizing Parameters for Model Quality Assessment: Advanced Strategies for Biomedical Researchers

Abstract

This article provides a comprehensive guide for researchers, scientists, and drug development professionals on optimizing parameters for model quality assessment. It explores foundational principles from cutting-edge CASP16 evaluations, details methodological applications of machine learning and genetic algorithms, presents troubleshooting and optimization techniques for complex biological models, and establishes robust validation and comparative frameworks. By synthesizing the latest advancements, this resource aims to equip professionals with practical strategies to enhance the reliability, accuracy, and clinical utility of predictive models in biomedical research.

Foundations of Model Quality Assessment: Core Concepts and Evolving Standards

Defining Model Quality Assessment in Biomedical Research

In biomedical research, the assessment of model quality is not merely a technical checkpoint but a fundamental requirement for ensuring research validity, reproducibility, and eventual clinical translation. Whether working with magnetic resonance imaging (MRI) biomarkers, artificial intelligence algorithms, or sequence alignment tools, researchers face the common challenge of defining and measuring what constitutes a "high-quality" model within their specific domain. This technical support center addresses the multifaceted nature of model quality assessment across various biomedical research contexts, providing troubleshooting guidance and methodological frameworks to enhance the rigor and reliability of your research outputs.

Understanding Quality Dimensions Across Biomedical Models

Table 1: Key Quality Dimensions Across Biomedical Research Models

| Quality Dimension | Imaging & Biomarkers (MRI) | AI/ML Models | Sequence Alignment | Biological Drugs |
|---|---|---|---|---|
| Accuracy | Biological relevance, measurement precision [1] | Factual correctness, reduction of hallucinations [2] [3] | Reference standard comparison, TM-score [4] | Therapeutic potency, identity confirmation [5] |
| Robustness | Scanner/platform reproducibility [1] | Generalization across datasets [2] | Parameter sensitivity, gap penalty stability [6] | Batch-to-batch consistency [5] |
| Reproducibility | Harmonization across sites/vendors [1] | Code sharing, reproducibility checklists [1] [7] | Overlap score, multiple overlap score [6] | Manufacturing process control [5] |
| Interpretability | Well-characterized confounds [1] | Explainability, transparency [8] | Alignment visualization [6] | Impurity profiling, characterization [5] |
| Technical Validation | Phantom studies, traveling-heads [1] | Benchmarking, qualitative error analysis [2] | Statistical testing, reference alignment [4] | Safety testing, stability studies [5] |

Troubleshooting Guides

Common Problem: Poor Model Generalization Across Datasets

Issue: Your model performs well on training data but fails to generalize to external validation sets or real-world clinical data.

Diagnosis Steps:

- Assess Dataset Similarity: Evaluate distribution shifts between training and validation datasets using statistical distance measures [2]

- Check for Data Leakage: Ensure no patient overlap or temporal contamination exists between sets

- Analyze Failure Patterns: Categorize errors by data source, patient demographics, or acquisition parameters [1]
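The first two diagnosis steps can be sketched in a few lines. This is a minimal illustration rather than a prescribed method: it uses the two-sample Kolmogorov-Smirnov statistic as one possible statistical distance, and a set intersection to flag patient overlap. The function names, feature values, and patient identifiers are hypothetical.

```python
from bisect import bisect_right


def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    gap = 0.0
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


def shared_patients(train_ids, validation_ids):
    """Return patient identifiers present in both sets (possible leakage)."""
    return sorted(set(train_ids) & set(validation_ids))


# Hypothetical values of one feature (e.g. mean image intensity) at two sites.
train_feature = [0.9, 1.0, 1.1, 1.2, 1.3]
external_feature = [1.6, 1.7, 1.8, 1.9, 2.0]

shift = ks_statistic(train_feature, external_feature)
leaked = shared_patients(["p01", "p02", "p03"], ["p03", "p04"])
print(f"KS statistic: {shift:.2f}, overlapping patients: {leaked}")
```

A statistic near 0 suggests similar distributions for that feature, while a value near 1 indicates almost no overlap. Any non-empty overlap list should be resolved before performance is reported.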

Solutions:

- Implement domain adaptation techniques or harmonization strategies to align different datasets [1]

- Apply rigorous cross-validation with appropriate grouping factors (e.g., by medical center)

- Use data augmentation strategies that mimic real-world variability [8]
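Cross-validation with a grouping factor means that all samples from one group (for example, one medical center) stay in the same fold. The sketch below shows one simple way to build such folds with a greedy assignment. The function name and the example center labels are assumptions for illustration, and established libraries offer equivalent grouped splitters.

```python
from collections import defaultdict


def grouped_folds(groups, n_folds):
    """Assign sample indices to folds so that no group spans two folds.

    Groups are placed largest-first into whichever fold is currently smallest.
    """
    members = defaultdict(list)
    for index, group in enumerate(groups):
        members[group].append(index)

    folds = [[] for _ in range(n_folds)]
    for group in sorted(members, key=lambda g: (-len(members[g]), str(g))):
        smallest = min(range(n_folds), key=lambda f: len(folds[f]))
        folds[smallest].extend(members[group])
    return folds


# Hypothetical center label for each of eight samples.
centers = ["A", "A", "A", "B", "B", "C", "C", "D"]
folds = grouped_folds(centers, n_folds=2)

for held_out, test_idx in enumerate(folds):
    train_idx = [i for f, fold in enumerate(folds) if f != held_out for i in fold]
    test_centers = sorted({centers[i] for i in test_idx})
    train_centers = sorted({centers[i] for i in train_idx})
    print(f"fold {held_out}: test centers {test_centers}, train centers {train_centers}")
```

Because each center appears in exactly one fold, the held-out score reflects performance on centers the model has not seen during training.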

Prevention:

- Follow the METRICS checklist for comprehensive study design and reporting [7]

- Collect diverse, representative training data from multiple sources

- Establish prospective validation protocols before model deployment

Common Problem: Inconsistent Results Across Experimental Replicates

Issue: Your model or assay produces variable outcomes when repeated under apparently identical conditions.

Diagnosis Steps:

- Quantify Variability: Calculate coefficient of variation or intra-class correlation coefficients

- Identify Variation Sources: Distinguish technical from biological variability using variance component analysis

- Audit Procedural Consistency: Review protocol adherence across personnel and sessions
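The two variability statistics named in the first step can be computed as follows. This is a sketch under stated assumptions: the intra-class correlation shown is the one-way random-effects form, ICC(1,1), which is only one of several ICC variants, and the replicate values are invented for illustration.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean."""
    return stdev(values) / mean(values)


def icc_oneway(measurements):
    """ICC(1,1) for a list of subjects, each a list of k replicate values."""
    n = len(measurements)
    k = len(measurements[0])
    grand = mean(v for row in measurements for v in row)
    row_means = [mean(row) for row in measurements]
    ms_between = k * sum((m - grand) ** 2 for m in row_means) / (n - 1)
    ms_within = sum(
        (v - m) ** 2 for row, m in zip(measurements, row_means) for v in row
    ) / (n * (k - 1))
    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical duplicate measurements for three samples.
replicates = [[10.1, 9.9], [12.0, 12.2], [15.1, 14.9]]
print(f"ICC(1,1): {icc_oneway(replicates):.3f}")
print(f"CV of one repeated control: {coefficient_of_variation([9.0, 10.0, 11.0]):.2f}")
```

An ICC close to 1 means that most of the variance comes from true differences between samples rather than from replicate noise.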

Solutions:

- Implement standardized operating procedures with detailed quality control checkpoints [1]

- Introduce reference standards or controls in each experimental batch [5]

- Apply statistical harmonization methods to adjust for batch effects [1]

Prevention:

- Establish robust training and certification for all personnel

- Maintain detailed documentation of all procedural details and environmental conditions

- Implement automated quality control pipelines with predefined acceptability criteria [1]

Common Problem: Unexplainable or Clinically Implausible Model Outputs

Issue: Your model generates predictions that lack biological plausibility or cannot be explained in terms of domain knowledge.

Diagnosis Steps:

- Perform Ablation Studies: Identify which input features most drive predictions

- Conduct Domain Expert Review: Have clinicians or biologists assess face validity of outputs

- Check for Confounding: Evaluate whether models are leveraging spurious correlations

Solutions:

- Incorporate domain knowledge constraints during model development [8]

- Implement explainable AI techniques that provide insight into decision processes [8]

- Use adversarial validation to detect dataset-specific artifacts

Prevention:

- Involve domain experts throughout model development, not just at validation stage

- Prioritize interpretable models when possible, balancing performance with explainability

- Conduct thorough literature reviews to ensure biological plausibility of findings

Experimental Protocols for Quality Assessment

Protocol 1: Comprehensive Model Validation Across Multiple Datasets

Purpose: To establish model robustness and generalizability across diverse patient populations and data acquisition conditions.

Materials:

- Primary training dataset

- At least three independent validation datasets from different sources

- Computing infrastructure for model training and evaluation

- Statistical analysis software (R, Python, etc.)

Procedure:

- Data Characterization: Document key characteristics of each dataset (demographics, acquisition parameters, clinical protocols) [1]

- Blinded Evaluation: Apply trained model to validation sets without outcome labels visible

- Performance Quantification: Calculate relevant metrics (accuracy, AUC, calibration measures) for each dataset separately

- Stratified Analysis: Evaluate performance across clinically relevant subgroups (e.g., by disease severity, age, sex)

- Statistical Comparison: Test for significant performance differences across datasets using appropriate methods (e.g., DeLong's test for AUC comparisons)

- Qualitative Assessment: Have domain experts review model outputs for plausibility and clinical relevance [2]
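The performance quantification step, evaluated separately per dataset against a predefined threshold, might look like the sketch below. AUC is computed directly from its pairwise definition (the probability that a positive case scores higher than a negative one). The dataset names, labels, scores, and the 0.80 threshold are hypothetical, and the significance testing step (e.g., DeLong's test) is not covered here.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of positive/negative pairs ranked correctly (ties count 0.5)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC requires at least one positive and one negative case")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


def evaluate_datasets(datasets, minimum_auc):
    """Report AUC for each dataset and whether it meets the predefined threshold."""
    report = {}
    for name, (labels, scores) in datasets.items():
        auc = roc_auc(labels, scores)
        report[name] = {"auc": auc, "passes": auc >= minimum_auc}
    return report


# Hypothetical validation sets: (true labels, model scores).
validation_sets = {
    "site_a": ([0, 0, 1, 1], [0.10, 0.40, 0.35, 0.80]),
    "site_b": ([0, 1, 0, 1], [0.20, 0.90, 0.30, 0.70]),
}
report = evaluate_datasets(validation_sets, minimum_auc=0.80)
for name, result in report.items():
    print(name, result)
```

Keeping the threshold as an explicit argument makes it easy to fix it before the validation data are examined, as the quality control items require.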

Quality Control:

- Predefine acceptable performance thresholds for each dataset

- Document all data preprocessing steps consistently across datasets

- Maintain version control for both model code and evaluation scripts

Protocol 2: Inter-Rater Reliability Assessment for Qualitative Evaluations

Purpose: To establish consistency and objectivity when human evaluation is required for model output assessment.

Materials:

- Set of model outputs for evaluation (minimum n=50 recommended)

- At least three independent raters with appropriate domain expertise

- Structured evaluation rubric with clearly defined criteria

- Statistical software for reliability calculations

Procedure:

- Rater Training: Conduct standardized training session using examples not included in formal assessment

- Independent Rating: Each rater evaluates all outputs independently using the structured rubric

- Data Collection: Record ratings systematically with timestamps and rater identifiers

- Reliability Calculation: Compute inter-rater reliability statistics (Cohen's κ, ICC, or Fleiss' κ depending on design) [7]

- Consensus Meeting: Discuss discrepant ratings to identify sources of disagreement

- Rubric Refinement: Modify evaluation criteria based on consensus discussions to improve future reliability
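For a design with three or more raters assigning categorical labels, the reliability calculation can be done with Fleiss' κ. The following is a minimal sketch that assumes every item is rated by the same number of raters. The category labels and ratings are invented for illustration.

```python
from collections import Counter


def fleiss_kappa(ratings):
    """Fleiss' kappa for a list of items, each a list with one label per rater.

    Undefined (division by zero) if every rating falls in a single category.
    """
    n_items = len(ratings)
    n_raters = len(ratings[0])
    counts = [Counter(item) for item in ratings]
    categories = set().union(*counts)

    # Per-item agreement: share of rater pairs that agree on that item.
    per_item = [
        (sum(c ** 2 for c in item.values()) - n_raters) / (n_raters * (n_raters - 1))
        for item in counts
    ]
    observed = sum(per_item) / n_items

    # Chance agreement from the overall category proportions.
    proportions = [
        sum(item[cat] for item in counts) / (n_items * n_raters) for cat in categories
    ]
    expected = sum(p ** 2 for p in proportions)
    return (observed - expected) / (1 - expected)


# Hypothetical ratings: four model outputs, three raters each.
ratings = [
    ["plausible", "plausible", "plausible"],
    ["implausible", "implausible", "implausible"],
    ["plausible", "plausible", "implausible"],
    ["implausible", "implausible", "implausible"],
]
kappa = fleiss_kappa(ratings)
print(f"Fleiss' kappa: {kappa:.2f}, meets 0.6 threshold: {kappa > 0.6}")
```

The comparison against 0.6 mirrors the example threshold given in the quality control items. The appropriate cut-off should be fixed before the study starts.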

Quality Control:

- Establish minimum reliability thresholds before study initiation (e.g., κ > 0.6)

- Monitor for rater drift over time with periodic recalibration

- Document all consensus decisions and rubric modifications

Frequently Asked Questions

Q: How many datasets do I need to properly validate my model's generalizability?

A: While there's no universal number, current best practices suggest at least three independent datasets from different sources (e.g., different medical centers, patient populations, or acquisition protocols) [1]. The key is demonstrating consistent performance across clinically relevant variations that your model would encounter in real-world deployment.

Q: What should I do when my quantitative metrics look good but domain experts question the model's outputs?

A: This discrepancy often indicates that your evaluation metrics may not capture important domain-specific considerations. Prioritize expert feedback over metric optimization in such cases. Implement explainability techniques to understand model behavior, and consider refining your model to incorporate domain knowledge constraints or biological plausibility checks [8].

Q: How can I assess quality when no gold standard reference exists?

A: In the absence of a gold standard, employ consensus approaches with multiple experts, use surrogate outcomes with established validity, or implement cross-validation strategies that leverage the available data most effectively. For sequence alignment, tools like MUMSA use consensus across multiple alignment methods as a proxy for biological accuracy [6].

Q: What are the most critical components to document for model reproducibility?

A: The METRICS checklist provides a comprehensive framework covering: Model used and exact settings, Evaluation approach, Timing of testing, Transparency of data source, Range of tested topics, Randomization of query selection, Individual factors in query selection, Count of queries executed, and Specificity of prompts and language used [7].

Q: How do I balance between model performance and interpretability in biomedical applications?

A: The appropriate balance depends on the specific application context. For high-stakes clinical decision support, favor interpretability even at some performance cost. For exploratory research, more complex models may be acceptable if coupled with robust validation. Consider hybrid approaches that combine interpretable components with high-performance algorithms where needed [8].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for Quality Assessment Experiments

| Item | Function in Quality Assessment | Example Applications | Quality Considerations |
|---|---|---|---|
| Reference Phantoms | Standardized objects with known properties for instrument calibration [1] | MRI scanner validation, assay calibration | Stability, traceability to reference standards |
| Benchmark Datasets | Pre-validated datasets for model comparison and benchmarking [2] [4] | Algorithm validation, performance claims | Composition documentation, pre-defined train/test splits |
| Statistical Harmonization Tools | Methods to adjust for technical variability across sites or batches [1] | Multi-center studies, batch effect correction | Transparency of assumptions, parameter sensitivity |
| Adversarial Validation Sets | Intentionally challenging cases to test model limitations [2] | Robustness assessment, failure mode analysis | Representative of edge cases, clinical relevance |
| Explainability Toolkits | Software libraries for model interpretation and visualization [8] | Understanding model decisions, feature importance | Methodological appropriateness, visual clarity |
| Version Control Systems | Tracking of code, data, and model versions for reproducibility [1] | Experimental documentation, collaboration | Consistent use across team, integration with data management |

Advanced Quality Assessment Framework

Key Recommendations for Implementation

Define Quality Context-Specifically: Recognize that quality requirements differ based on application context—what qualifies as high-quality for biomarker discovery may differ from clinical triage applications [1].

Implement Continuous Monitoring: Quality assessment should not be a one-time event but rather an ongoing process throughout the model lifecycle, with regular re-evaluation as data distributions and application contexts evolve [8].

Engage Multidisciplinary Teams: Include domain experts, statisticians, clinical end-users, and sometimes even patients in defining quality criteria and evaluation processes to ensure all relevant perspectives are considered [8].

Document Transparently: Maintain comprehensive documentation of all quality assessment procedures, results, and limitations to enable proper interpretation and reproducibility of your findings [7].

Plan for Failures: Develop protocols for responding to quality assessment failures, including model retraining, refinement, or in some cases, decommissioning when quality standards cannot be maintained.

Frequently Asked Questions (FAQs)

General Concepts

Q1: What is the difference between global accuracy and local confidence in predictive models?

A1: Global accuracy assesses the overall correctness of a model's predictions across an entire dataset, while local confidence provides granular, per-prediction reliability estimates. In drug discovery, global metrics like accuracy can be misleading with imbalanced data, whereas local confidence measures help identify specific predictions that can be trusted.

Q2: Why are traditional metrics like accuracy insufficient for drug discovery models?

A2: Traditional metrics often fail because drug discovery datasets are typically imbalanced, with far more inactive compounds than active ones. A model can achieve high accuracy by always predicting "inactive" while missing all active compounds, which are the primary targets. Domain-specific metrics like precision-at-K and rare event sensitivity are better suited.

Q3: How can I incorporate confidence estimates into my decision-making process?

A3: Utilize models that provide uncertainty estimates and integrate them into a probabilistic framework. For example, compare the confidence intervals of predictions against your project's success criteria to calculate the probability that a compound will meet your thresholds, rather than relying solely on a single predicted value.
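As a worked illustration of the probabilistic framing in Q3, the sketch below converts a predicted value and its uncertainty into the probability of meeting a success criterion. It assumes the prediction error is approximately Gaussian, which is a modelling assumption and not something stated in the source. The property, values, and threshold are hypothetical.

```python
import math


def probability_at_least(predicted_mean, predicted_std, threshold):
    """P(true value >= threshold) under an assumed Gaussian predictive distribution."""
    z = (threshold - predicted_mean) / (predicted_std * math.sqrt(2))
    return 0.5 * math.erfc(z)


# Hypothetical compounds: predicted pIC50 with standard deviation; criterion pIC50 >= 6.
compounds = {
    "cmpd_1": (7.0, 0.5),   # well above the criterion, low uncertainty
    "cmpd_2": (6.2, 1.0),   # slightly above the criterion, high uncertainty
}
for name, (mu, sigma) in compounds.items():
    p = probability_at_least(mu, sigma, threshold=6.0)
    print(f"{name}: P(pIC50 >= 6.0) = {p:.2f}")
```

Ranking compounds by this probability, rather than by the point prediction alone, penalizes candidates whose apparent advantage lies within their own uncertainty.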

Technical Implementation

Q4: What methodologies can improve local confidence measures in protein complex prediction?

A4: Advanced pipelines like DeepSCFold use sequence-based deep learning to predict protein-protein structural similarity (pSS-score) and interaction probability (pIA-score). These provide a foundation for constructing deep paired multiple-sequence alignments, significantly enhancing interface accuracy in complex structure modeling.

Q5: How do I evaluate machine learning models for drug-target interaction (DTI) prediction?

A5: Beyond standard metrics, employ confidence measures based on causal intervention. This technique modifies embedding representations and re-scores drug-target triplets, improving the authenticity and accuracy of link predictions in knowledge graphs compared to traditional ranking methods.

Troubleshooting Guides

Poor Global Performance

Problem: Your model shows high global accuracy but fails to identify active compounds in validation experiments.

Solution:

- Step 1: Check your dataset balance. Calculate the ratio of active to inactive compounds.

- Step 2: Switch to domain-specific metrics. Implement precision-at-K to evaluate performance on top-ranked predictions.

- Step 3: Optimize for rare event sensitivity if searching for low-frequency active compounds.

- Step 4: Rebalance your training data or use weighted loss functions to address class imbalance.
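The four steps above can be illustrated on a toy screening set. This is a minimal sketch with invented labels and scores. The inverse-frequency class weights shown for Step 4 are one common choice, not the only one.

```python
def accuracy(labels, predictions):
    return sum(y == p for y, p in zip(labels, predictions)) / len(labels)


def recall(labels, predictions):
    """Sensitivity for the active (label 1) class: TP / (TP + FN)."""
    true_pos = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    return true_pos / sum(labels)


def precision_at_k(labels, scores, k):
    """Fraction of actives among the k highest-scoring compounds."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    return sum(label for _, label in ranked[:k]) / k


def balanced_class_weights(labels):
    """Inverse-frequency weights so that both classes contribute equally to the loss."""
    n, n_active = len(labels), sum(labels)
    return {0: n / (2 * (n - n_active)), 1: n / (2 * n_active)}


# Hypothetical screen: 10 compounds, 2 actives (label 1).
labels = [1, 0, 0, 0, 1, 0, 0, 0, 0, 0]
scores = [0.90, 0.80, 0.10, 0.20, 0.70, 0.30, 0.05, 0.40, 0.15, 0.25]
always_inactive = [0] * len(labels)

print("active ratio:", sum(labels) / len(labels))
print("always-inactive accuracy:", accuracy(labels, always_inactive))
print("always-inactive recall:", recall(labels, always_inactive))
print("precision@3:", precision_at_k(labels, scores, k=3))
print("class weights:", balanced_class_weights(labels))
```

The trivial "always inactive" baseline reaches 80% accuracy here while recovering no actives, which is the failure pattern described in the problem statement.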

Unreliable Local Confidence Estimates

Problem: Confidence scores for individual predictions don't correlate with actual error rates.

Solution:

- Step 1: Implement calibration techniques to ensure confidence scores reflect true probabilities.

- Step 2: Use causal intervention methods for knowledge graph embeddings, which actively intervene on input vectors to assess robustness.

- Step 3: Define applicability domains to identify when your model is extrapolating beyond its reliable knowledge space.

- Step 4: Incorporate multiple uncertainty sources, including model uncertainty and data noise.
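Before applying a calibration technique (Step 1), it helps to measure how far confidence scores are from observed accuracy. The sketch below computes the expected calibration error (ECE) with equal-width bins. ECE is a swapped-in diagnostic chosen for illustration, since the text does not name a specific calibration measure, and the example scores are invented.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted mean gap between average confidence and accuracy within each bin."""
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes in the last bin
        bins[index].append((conf, hit))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        mean_conf = sum(c for c, _ in members) / len(members)
        bin_accuracy = sum(h for _, h in members) / len(members)
        ece += len(members) / total * abs(mean_conf - bin_accuracy)
    return ece


# Hypothetical over-confident model: 95% confidence, but only 3 of 4 predictions correct.
confidences = [0.95, 0.95, 0.95, 0.95]
correct = [1, 1, 1, 0]
print(f"ECE: {expected_calibration_error(confidences, correct):.2f}")
```

A value near zero means that confidence scores can be read as probabilities. Larger values indicate that recalibration is needed before the scores are used for decisions.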

Inadequate Protein Complex Interface Prediction

Problem: Your protein complex models have good global structure but poor interface accuracy.

Solution:

- Step 1: Move beyond sequence co-evolution. Integrate structure-aware information using methods that predict structural complementarity.

- Step 2: Construct better paired multiple sequence alignments using both sequence similarity and predicted interaction probabilities.

- Step 3: Focus evaluation on interface-specific metrics like interface TM-score rather than global quality measures.

- Step 4: Utilize specialized model quality assessment methods like DeepUMQA-X for complex structures.

Key Metrics and Parameters Tables

Classification Metrics for Drug Discovery

Table 1: Comparison of evaluation metrics for classification models in drug discovery

| Metric | Calculation | Optimal Use Cases | Limitations |
|---|---|---|---|
| Accuracy | (TP+TN)/(TP+TN+FP+FN) | Balanced datasets, preliminary screening | Misleading with imbalanced data |
| Precision-at-K | TP among top K predictions / K | Virtual screening, lead prioritization | Doesn't evaluate full ranking |
| Rare Event Sensitivity | TP/(TP+FN) for rare class | Toxicity prediction, rare disease targets | Requires careful threshold setting |
| F1 Score | 2×(Precision×Recall)/(Precision+Recall) | Balanced importance of precision and recall | May dilute focus on critical predictions |
| Pathway Impact Metrics | Enrichment in relevant biological pathways | Target validation, mechanism understanding | Requires comprehensive pathway databases |

Regression and Structure Prediction Metrics

Table 2: Key metrics for regression and structure prediction models

| Metric | Application | Interpretation | Ideal Range |
|---|---|---|---|
| TM-score | Protein structure prediction | Measures structural similarity (0-1 scale) | >0.5: correct fold; >0.8: high accuracy |
| pLDDT | Per-residue confidence (AlphaFold) | Predicted Local Distance Difference Test (0-100 scale) | >90: high confidence; <50: low confidence |
| Interface RMSD | Protein complex prediction | Root Mean Square Deviation at binding interface | Lower values indicate better prediction |
| Enrichment Factor | Virtual screening | Fold-enrichment of actives in top ranked compounds | Higher values indicate better performance |
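The enrichment factor in the last row can be made concrete with a short calculation. This sketch uses the common definition (hit rate in the top-ranked fraction divided by the hit rate in the whole library). The labels, scores, and the 20% cut-off are hypothetical.

```python
def enrichment_factor(labels, scores, fraction):
    """Hit rate among the top-ranked fraction divided by the overall hit rate."""
    n_top = max(1, round(fraction * len(labels)))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    top_hit_rate = sum(label for _, label in ranked[:n_top]) / n_top
    overall_hit_rate = sum(labels) / len(labels)
    return top_hit_rate / overall_hit_rate


# Hypothetical screen: 10 compounds, 2 actives (label 1).
screen_labels = [1, 0, 0, 0, 1, 0, 0, 0, 0, 0]
screen_scores = [0.90, 0.80, 0.10, 0.20, 0.70, 0.30, 0.05, 0.40, 0.15, 0.25]
ef_20 = enrichment_factor(screen_labels, screen_scores, fraction=0.2)
print(f"EF at top 20%: {ef_20:.1f}")
```

A value of 1 corresponds to random selection. In this toy example the top 20% of the ranking contains actives at 2.5 times the library-wide rate.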

Experimental Protocols

Protocol 1: Implementing Causal Intervention for DTI Prediction Confidence

Purpose: Enhance confidence measurement in drug-target interaction prediction using knowledge graph embeddings with causal intervention.

Materials:

- Knowledge graph datasets (Hetionet, BioKG, or DRKG)

- KGE models (TransR, HolE, TuckER, etc.)

- Causal intervention confidence measurement algorithm

Methodology:

- Knowledge Graph Embedding Training
  - Train selected KGE models on your drug-target dataset using standard parameters
  - For high-dimensional models, set embedding dimension to 200
  - For low-dimensional models, set embedding dimension to 50
- Causal Intervention Implementation
  - For each drug-target pair, intervene on the embedding representation
  - Replace entity embeddings with top-K most similar entities (K=3,5,10,100,200,300)
  - Reconstruct new triplets with intervened entities
- Confidence Score Calculation
  - Compute new scores for intervened triplets
  - Derive final confidence through consistency calculation
  - Compare with traditional confidence measures
- Validation
  - Evaluate using established DTI benchmarks
  - Measure improvement in prediction authenticity and accuracy
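The intervention and rescoring steps can be sketched on toy embeddings. Several parts of this example are assumptions made purely for illustration and are not taken from the protocol: a TransE-style distance score stands in for the KGE models listed in the materials, nearest neighbours are found by Euclidean distance, and the consistency measure (one over one plus the mean score shift) is a placeholder because the source does not specify the consistency formula. All entity names and vectors are hypothetical.

```python
import math


def transe_score(head, relation, tail):
    """TransE-style plausibility: negative distance between head + relation and tail."""
    return -math.sqrt(sum((h + r - t) ** 2 for h, r, t in zip(head, relation, tail)))


def nearest_entities(name, candidates, embeddings, k):
    """The k candidates whose embeddings are closest to the named entity."""
    others = [c for c in candidates if c != name]
    return sorted(others, key=lambda c: math.dist(embeddings[c], embeddings[name]))[:k]


def intervention_confidence(drug, relation, target, drugs, embeddings, k):
    """Placeholder consistency: high when swapping in similar drugs barely moves the score."""
    original = transe_score(embeddings[drug], relation, embeddings[target])
    neighbours = nearest_entities(drug, drugs, embeddings, k)
    shifts = [
        abs(transe_score(embeddings[n], relation, embeddings[target]) - original)
        for n in neighbours
    ]
    return 1.0 / (1.0 + sum(shifts) / len(shifts))


# Hypothetical 2-dimensional embeddings.
embeddings = {
    "drug_a": [0.0, 0.0],
    "drug_b": [0.0, 0.0],   # behaves identically to drug_a
    "drug_c": [3.0, 4.0],   # very different from drug_a
    "target_x": [1.0, 0.0],
}
binds = [1.0, 0.0]          # relation vector
drugs = ["drug_a", "drug_b", "drug_c"]

for k in (1, 2):
    conf = intervention_confidence("drug_a", binds, "target_x", drugs, embeddings, k)
    print(f"K={k}: confidence {conf:.3f}")
```

In the protocol, K ranges up to 300 and embeddings have 50 or 200 dimensions. The toy sizes here only show the mechanics of replacing an entity, rebuilding the triplet, and comparing scores.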

Protocol 2: Protein Complex Structure Modeling with DeepSCFold

Purpose: Implement high-accuracy protein complex structure prediction with enhanced local confidence estimates.

Materials:

- Monomeric protein sequences for complex components

- Multiple sequence databases (UniRef30, UniRef90, UniProt, Metaclust, BFD, MGnify, ColabFold DB)

- DeepSCFold pipeline

- AlphaFold-Multimer for structure prediction

Methodology:

- Monomeric MSA Generation
  - Generate individual MSAs for each protein chain from multiple sequence databases
  - Use standard tools (HHblits, JackHMMER, MMseqs) with default parameters
- Structural Similarity and Interaction Probability Prediction
  - Apply pSS-score model to rank homologs by structural similarity to query
  - Apply pIA-score model to predict interaction probabilities between chains
  - Integrate species annotations and known complex data from PDB
- Paired MSA Construction
  - Combine monomeric MSAs using structural similarity and interaction probability
  - Create multiple paired MSA variants for comprehensive sampling
- Complex Structure Prediction and Selection
  - Run AlphaFold-Multimer with constructed paired MSAs
  - Select top-1 model using DeepUMQA-X quality assessment
  - Use selected model as template for final iteration

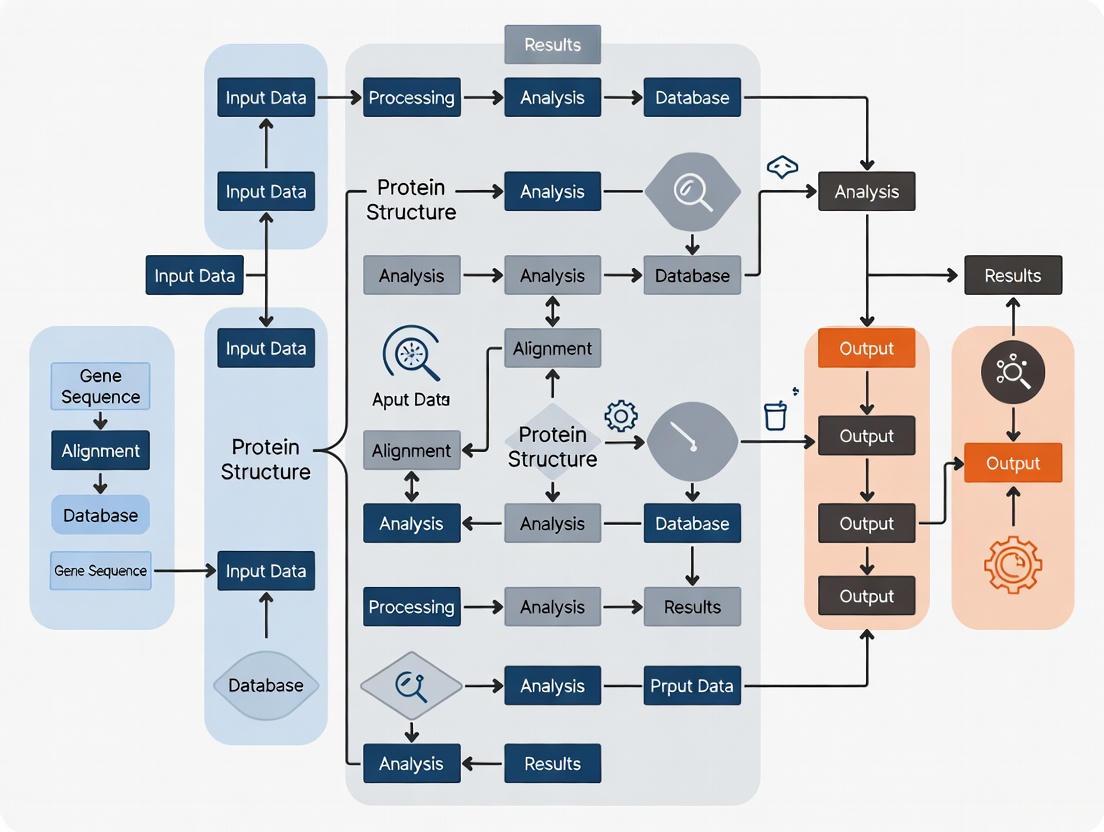

Workflow and Relationship Diagrams

Model Quality Assessment Workflow

Confidence Measurement Comparison

Research Reagent Solutions

Table 3: Essential computational tools and resources for model quality assessment

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| AlphaFold-Multimer | Software | Protein complex structure prediction | Predicting quaternary structures of protein assemblies |
| DeepSCFold | Pipeline | Enhanced complex modeling with structural complementarity | When standard methods lack co-evolution signals |
| Causal Intervention CI | Algorithm | Confidence measurement for knowledge graphs | Drug-target interaction prediction verification |
| ESMPair | Tool | Paired MSA construction using protein language models | Capturing inter-chain co-evolutionary information |
| StarDrop | Software | Multi-parameter optimization with uncertainty | Compound prioritization with confidence estimates |
| MOE (Molecular Operating Environment) | Platform | Comprehensive molecular modeling and QSAR | Structure-based drug design and ADMET prediction |

Frequently Asked Questions (FAQs) on CASP16 Framework and Complex Assembly Challenges

Q1: What were the primary evaluation modes introduced in CASP16 for assessing model quality?

A1: CASP16 expanded its evaluation framework with three primary modes to rigorously assess model accuracy, especially for multimeric assemblies [9]:

- QMODE1: Assessed the global accuracy of the entire structure.

- QMODE2: Focused on the local accuracy of interface residues between subunits.

- QMODE3: Tested model selection performance from large-scale pools of pre-generated models, such as those created by MassiveFold. A novel penalty-based ranking scheme was developed to handle interdependent scores and varying quality distributions [9].

Q2: Which target types remained the most challenging in CASP16, and why?

A2: Antibody-antigen (AA) complexes were notably the most challenging targets [10] [11]. The primary difficulty stems from the lack of co-evolutionary signals across the protein-protein interfaces, which AlphaFold-based methods heavily rely on [11]. This challenge is pronounced in host-pathogen interactions, where the evolutionary history of interaction is shorter, further limiting these signals [11].

Q3: What was the key bottleneck in prediction pipelines identified in CASP16?

A3: Model ranking and selection emerged as a major bottleneck [10] [11]. While top groups could generate high-accuracy models through massive sampling, most struggled to identify their best model as their first (top-ranked) submission. The performance in model selection varied significantly across monomeric, homomeric, and heteromeric targets, highlighting the ongoing challenge for complex assemblies [9].

Q4: Did CASP16 demonstrate progress in predicting complex stoichiometry?

A4: CASP16 introduced a Phase 0 experiment that required predictors to predict protein complex structures without prior knowledge of the stoichiometry [10] [11]. The results indicated moderate success; however, stoichiometry prediction remains particularly challenging for high-order assemblies and targets that lack homologous templates in the database [10] [11].

Q5: How did traditional docking methods fare against deep learning approaches in CASP16?

A5: Notably, the kozakovvajda group significantly outperformed other methods on challenging antibody-antigen targets by achieving over a 60% success rate without primarily relying on AlphaFold-Multimer (AFM) or AlphaFold3 (AF3) [10] [11] [12]. They employed a traditional protein-protein docking approach coupled with extensive sampling and integration of machine learning with physics-based knowledge, demonstrating that alternative strategies beyond the current AF-based paradigm are highly promising for specific target classes [11] [12].

Troubleshooting Guides for Model Quality Assessment

Guide 1: Improving Model Selection (QMODE3 Performance)

Problem: Inability to consistently select the highest-quality model from a large pool of decoys, leading to suboptimal first model submissions.

Solutions:

- Incorporate AlphaFold3-Derived Features: Results showed that methods using per-atom pLDDT from AlphaFold3 performed best in estimating local accuracy and demonstrated high utility for experimental structure solution [9].

- Implement Advanced Ranking Schemes: Adopt penalty-based ranking methodologies similar to those developed for CASP16's QMODE3 assessment, which are designed to handle score interdependence and varying prediction quality distributions across different target types [9].

- Analyze Pipeline Efficacy: Review the model ranking pipeline of top-performing groups like PEZYFoldings, which demonstrated a notable advantage in selecting their best models as their first models [10] [11].

Guide 2: Handling Targets with Weak Co-evolutionary Signals

Problem: Poor prediction accuracy for complexes like antibody-antigen or host-pathogen interactions, where interface co-evolution is minimal.

Solutions:

- Hybrid Sampling Strategy: Do not rely exclusively on AFM/AF3. For AA targets, complement deep learning with traditional docking approaches and massive sampling, as proven by the success of the kozakovvajda group [11] [12].

- Optimize Modeling Constructs: Follow the strategy of leading groups like the Yang lab, which refined the "constructs" (using partial rather than full sequences) for modeling, thereby improving input quality for the prediction engine [11].

- Leverage Phase 2 Resources: For resource-limited groups, utilize large pre-computed model pools like the MassiveFold (MF) dataset provided in CASP16 Phase 2, which contained thousands of models per target to enable large-scale sampling and selection even without extensive computing resources [10] [11].

Guide 3: Improving Accuracy Beyond Default AFM/AF3 Runs

Problem: Default runs of AlphaFold-Multimer or AlphaFold3 do not yield optimal results for complex targets.

Solutions:

- Optimize Input MSAs: Top-performing groups consistently outperformed default AFM/AF3 predictions by curating and optimizing input Multiple Sequence Alignments (MSAs) [10] [11].

- Employ Massive Model Sampling: Generate a large number of models (massive sampling) and then apply rigorous selection criteria. This was a key strategy used by top performers in both CASP15 and CASP16 [10] [11].

- Refine Construct Strategy: Carefully select the protein segments (constructs) used for modeling. Using partial sequences rather than full sequences can significantly enhance the final model accuracy [11].

Quantitative Data from CASP16 Assessment

Table 1: Performance of Leading Groups in CASP16 Oligomer Prediction

| Group Name | Core Modeling Engine | Key Strategy | Notable Achievement / Success Rate |
|---|---|---|---|
| MULTICOM series & Kiharalab | AlphaFold-Multimer (AFM) / AlphaFold3 (AF3) | Optimized MSAs, massive sampling, construct refinement | Top performers based on best model quality across all phases [10] [11] |
| kozakovvajda | Traditional protein-protein docking (non-AFM/AF3 primary) | Extensive sampling, machine learning integrated with physics | >60% success rate on antibody-antigen targets [10] [11] [12] |
| PEZYFoldings | AFM/AF3 | Superior model ranking pipeline | Demonstrated notable advantage in selecting best model as first model [10] [11] |
| Yang-Multimer | AFM/AF3 | Refined modeling constructs | Performance lead more pronounced when evaluating first submitted models [10] [11] |
| AF3-server | AlphaFold3 (via web server) | Default AF3 server parameters | Provided baseline performance; many participants outperformed it with optimized pipelines [11] |

Table 2: CASP16 Model Quality Assessment (MQA) Evaluation Metrics

| Evaluation Mode | Scope of Assessment | Primary Metric / Challenge | Key Insight from CASP16 |
|---|---|---|---|
| QMODE1 | Global structure accuracy | Overall fold similarity [9] | - |
| QMODE2 | Interface residue accuracy | Accuracy of interfacial contacts [9] | - |
| QMODE3 | Model selection from large pools | Penalty-based ranking for score interdependence [9] | Performance varied significantly across monomeric, homomeric, and heteromeric targets [9] |

Experimental Protocols for Key CASP16 Experiments

Protocol 1: Phase 0 – Stoichiometry Prediction Experiment

Objective: To predict the complete structure of a protein complex without prior knowledge of its subunit stoichiometry [11].

Methodology:

- Input: Amino acid sequences of the constituent protein chains without stoichiometric information [11].

- Prediction: Participants submit quaternary structure models that include their predicted stoichiometry.

- Assessment: Predictions are evaluated on both the correctness of the assembled stoichiometry and the accuracy of the three-dimensional structural model [11].

Interpretation: This experiment tests the pipeline's ability to infer quaternary structure from sequence alone, a common scenario in biological research. Success in this phase indicates a more powerful and autonomous modeling system [11].

Protocol 2: Phase 2 – Large-Scale Model Selection Experiment

Objective: To assess the ability to identify high-quality models from a massive pool of pre-generated candidate structures [9] [11].

Methodology:

- Input Provision: Participants are provided with a large pool of predicted structures (e.g., 8,040 models per target) generated by MassiveFold (MF) [11].

- Selection Task: Predictors run their Quality Assessment (QA) tools to select and rank the best models from this pool.

- Evaluation (QMODE3): Submissions are evaluated using a novel penalty-based ranking scheme that accounts for the interdependence of scores and the distribution of model quality within the pool. The focus is on the ability to select the best models from thousands of alternatives [9].

Interpretation: This protocol is designed to accelerate progress in model selection methods, a major bottleneck. It allows resource-limited groups to focus on developing advanced QA algorithms without needing massive computing power for sampling [11].
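To make the selection task concrete, the sketch below scores a quality-assessment method by its top-1 selection loss: the gap in true quality between the best model in the pool and the model the method ranked first. This is a deliberately simpler stand-in and not the penalty-based ranking scheme used for QMODE3, whose details are not given here. The model names and scores are hypothetical.

```python
def top1_selection_loss(true_quality, predicted_quality):
    """True-quality gap between the best model in the pool and the top-ranked pick."""
    chosen = max(predicted_quality, key=predicted_quality.get)
    best = max(true_quality.values())
    return best - true_quality[chosen]


def mean_selection_loss(targets):
    """Average top-1 loss over targets, each given as a (true, predicted) pair of dicts."""
    losses = [top1_selection_loss(true, predicted) for true, predicted in targets]
    return sum(losses) / len(losses)


# Hypothetical pool for one target: true quality (e.g. TM-score) vs. QA estimate.
pool_true = {"model_001": 0.62, "model_002": 0.88, "model_003": 0.75}
pool_predicted = {"model_001": 0.70, "model_002": 0.81, "model_003": 0.85}

loss = top1_selection_loss(pool_true, pool_predicted)
print(f"top-1 selection loss: {loss:.2f}")
```

A loss of zero means the method picked the best available model. With pools of thousands of models per target, even small estimation errors can move the top-ranked pick away from the best model.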

Experimental Workflow and Signaling Pathways

Research Reagent Solutions

| Tool / Resource Name | Function in Experiment | Application Note |

|---|---|---|

| AlphaFold-Multimer (AFM) | Core engine for protein complex structure prediction [10] [11] | Default performance was significantly outperformed by top groups who optimized its inputs and conducted massive sampling [10]. |

| AlphaFold3 (AF3) | Core engine for predicting complexes of proteins, DNA, RNA, and ligands [10] [11] | Reduced MSA dependence is promising for targets like antibody-antigen complexes. Provides per-atom pLDDT for local confidence estimation [9] [11]. |

| MassiveFold (MF) | Generated large pools of models (e.g., 8,040/target) for community use [11] | Enabled resource-limited groups to participate in large-scale sampling and focus on model selection in Phase 2 [11]. |

| ColabFold | Provides standardized multiple sequence alignments (MSAs) [11] | Used in the "Model 6" experiment to isolate the effect of MSA quality from other methodological advances [11]. |

| kozakovvajda Docking Pipeline | Traditional protein-protein docking with extensive sampling [11] [12] | Demonstrated superior performance on antibody-antigen targets, integrating machine learning with physics-based sampling [12]. |

| PEZYFoldings QA Pipeline | Model Quality Assessment and Ranking [10] [11] | Identified as having a notable advantage in selecting the best model as the first model, a key bottleneck for others [10]. |

The Critical Role of pLDDT and AlphaFold3-Derived Features in Modern Assessment

Frequently Asked Questions (FAQs)

Q1: What does the pLDDT score measure in AlphaFold 3, and how should I interpret its values?

A1: The pLDDT (predicted Local Distance Difference Test) is a per-atom estimate of AlphaFold 3's confidence in its structural prediction, scaled from 0 to 100 [13]. Higher scores indicate higher expected accuracy. Unlike AlphaFold 2, which calculated pLDDT per amino acid residue, AlphaFold 3 provides this score for every individual atom, offering more granular insight for all molecule types in a complex, including proteins, nucleotides, and ligands [13]. The scores are typically interpreted using the following scale:

Table: Interpretation of pLDDT Confidence Scores

| pLDDT Score Range | Confidence Level | Typical Structural Interpretation |

|---|---|---|

| > 90 | Very High | High backbone and side chain accuracy [14] |

| 70 - 90 | Confident | Correct backbone, potential side chain misplacement [14] |

| 50 - 70 | Low | Low confidence; may be unstructured or incorrect [14] |

| < 50 | Very Low | Very likely to be incorrect [14] [13] |

Low pLDDT scores can indicate two main scenarios: either the region is naturally flexible or intrinsically disordered, or AlphaFold 3 lacks sufficient information to predict it with confidence [14].
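The interpretation scale above can be applied programmatically when triaging many predictions. The following is a minimal sketch, not part of any AlphaFold tooling: the function names are hypothetical, and the handling of the exact boundary values (90, 70, 50) is an assumption, since the table lists overlapping range endpoints.

```python
def plddt_band(score):
    """Map a pLDDT value (0-100) to the confidence band from the table above.

    Boundary handling is an assumption: 90 and 70 fall into "confident",
    50 falls into "low".
    """
    if not 0.0 <= score <= 100.0:
        raise ValueError(f"pLDDT must be within [0, 100], got {score}")
    if score > 90:
        return "very high"
    if score >= 70:
        return "confident"
    if score >= 50:
        return "low"
    return "very low"


def band_fractions(scores):
    """Return the fraction of atoms (or residues) that fall in each band."""
    counts = {"very high": 0, "confident": 0, "low": 0, "very low": 0}
    for s in scores:
        counts[plddt_band(s)] += 1
    total = len(scores)
    return {band: (n / total if total else 0.0) for band, n in counts.items()}


# Example with made-up per-atom scores.
print(band_fractions([95.2, 88.0, 61.5, 42.3]))
```

A high fraction in the "very low" band is a prompt to check for disorder or missing information, as described above, rather than a verdict on the whole model.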

Q2: My AlphaFold 3 prediction has a region with very low pLDDT (<50) that looks like tangled "barbed wire." What does this mean?

A2: This "barbed wire" appearance is a recognized behavior in low-confidence regions [15]. It is characterized by wide looping coils, an absence of packing contacts, and numerous validation outliers, indicating a non-protein-like conformation [15]. In such regions, the atomic coordinates are considered non-predictive, meaning they have no meaningful relationship to the true biological structure. For downstream structural biology tasks, such as preparing models for molecular replacement, these regions should be removed [15].

Q3: How do I assess the confidence of predicted interactions within a complex using AlphaFold 3?

A3: For complexes, metrics that evaluate relative positioning are more informative than global scores. AlphaFold 3 provides several key confidence scores for this purpose [13]:

- Predicted Aligned Error (PAE): This measures the confidence in the relative position of any two tokens (e.g., residues, atoms) in the structure [13]. High PAE values indicate low confidence in their relative placement. A PAE plot is essential for visualizing interaction confidence. Low PAE between different molecules (e.g., a protein and a ligand) suggests a stable, confidently predicted interaction [13].

- ipTM and pairwise ipTM: The interface pTM (ipTM) score measures the precision of each entity's prediction within the context of the whole complex. A pairwise ipTM score above 0.8 indicates a confidently predicted interaction between two specific molecules [13].

- pTM: This assesses the accuracy of the overall complex structure. However, it is less useful for small structures or short chains, where PAE and pLDDT are better indicators [13].
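To make the PAE guidance concrete, the sketch below averages the PAE over all token pairs that span two chains. It assumes the PAE matrix has already been loaded as a square list of lists and that a chain label is available for each token; the function name and the input layout are illustrative assumptions, not the AlphaFold 3 output schema.

```python
def mean_interchain_pae(pae, chain_ids, chain_a, chain_b):
    """Average PAE (in angstroms) over token pairs spanning chain_a and chain_b.

    pae       -- square list of lists, pae[i][j] for tokens i and j
    chain_ids -- chain label of each token, same length as pae
    """
    idx_a = [i for i, c in enumerate(chain_ids) if c == chain_a]
    idx_b = [i for i, c in enumerate(chain_ids) if c == chain_b]
    if not idx_a or not idx_b:
        raise ValueError("both chains must contain at least one token")
    # PAE is not symmetric, so both off-diagonal blocks are included.
    values = [pae[i][j] for i in idx_a for j in idx_b]
    values += [pae[j][i] for i in idx_a for j in idx_b]
    return sum(values) / len(values)


# Toy 4-token example: two tokens in chain A, two in chain B.
toy_pae = [
    [0, 1, 4, 6],
    [1, 0, 5, 7],
    [3, 5, 0, 1],
    [6, 8, 1, 0],
]
print(mean_interchain_pae(toy_pae, ["A", "A", "B", "B"], "A", "B"))  # 5.5
```

A single mean can hide a small, well-defined interface inside a large and otherwise uncertain block, so it complements rather than replaces visual inspection of the PAE plot.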

Q4: A region of my protein has a medium pLDDT score (e.g., 60) but appears well-structured. Is this region usable?

A4: Potentially, yes. Beyond the pLDDT score, it is crucial to examine the local structure and packing. Research has identified a "near-predictive" mode within some low-pLDDT regions, where the conformation can be nearly accurate and useful for applications like molecular replacement [15]. You can use tools like phenix.barbed_wire_analysis to automatically categorize regions of an AlphaFold prediction based on pLDDT, packing scores, and MolProbity validation metrics to identify these valuable near-predictive segments [15].

Troubleshooting Guide

Problem 1: Over-interpreting low-confidence regions as structured domains.

- Symptoms: Relying on the Cartesian coordinates of regions with pLDDT < 50 for functional analysis or as molecular replacement search models.

- Solutions:

- Categorize low-pLDDT behavior: Use analysis tools (e.g., phenix.barbed_wire_analysis) to distinguish between non-predictive "barbed wire," intermediate "pseudostructure," and potentially useful "near-predictive" regions [15].

- Validate with packing metrics: Near-predictive regions will have adequate packing contacts. For helix and coil residues, a score of >0.6 contacts per heavy atom is considered adequately packed [15].

- Cross-reference with disorder databases: Compare low-pLDDT regions with annotations in databases like MobiDB to determine if the region is a known intrinsically disordered region (IDR) [15].

Problem 2: Misjudging protein-ligand or protein-nucleic acid interaction confidence.

- Symptoms:

- Assuming a ligand's position is correct based solely on its proximity to the protein in the predicted model.

- Being unsure if two proteins in a complex truly interact.

- Solutions:

- Analyze the PAE plot: Focus on the PAE between the molecules of interest. Low PAE values (indicating high confidence) between a protein and a ligand, or between two protein chains, strongly suggest a predicted interaction [13].

- Check the pairwise ipTM score: For protein-protein interactions within a complex, a pairwise ipTM score > 0.8 indicates high confidence in the interaction interface [13].

- Examine ligand pLDDT: AlphaFold 3 provides a pLDDT score for every ligand atom, which estimates the confidence in its position relative to the polymers in the complex [13].

Problem 3: Poor overall complex model confidence scores (low pTM/ipTM) due to flexible regions.

- Symptoms: The overall pTM and ipTM scores for a complex are low (e.g., pTM < 0.5, ipTM < 0.6), making the entire prediction seem unreliable.

- Solutions:

- Inspect the PAE for ordered sub-regions: Disordered regions can drive down global scores. Carefully examine the PAE plot between the well-ordered (high pLDDT) parts of the macromolecules. If the PAE between these ordered parts is low, it suggests their relative orientation is confidently predicted, regardless of the low overall scores [13].

- Consider biological context: The flexible regions might be biologically relevant linkers or intrinsically disordered regions that do not adopt a fixed structure [14].

Experimental Protocols for Confidence Metric Validation

Protocol 1: Systematic Analysis of Low-pLDDT Regions using phenix.barbed_wire_analysis

This protocol helps characterize the behavior of low-confidence regions in AlphaFold predictions, as described in [15].

- Input Preparation: Obtain your AlphaFold-predicted structure in PDB or mmCIF format. Ensure the pLDDT values are stored in the B-factor column (this is the standard AlphaFold output).

- Tool Execution: Run the phenix.barbed_wire_analysis tool on the structure file. The tool performs the following steps automatically:

- Adds hydrogens to the structure using Reduce.

- Performs contact analysis with Probe to calculate packing scores.

- Identifies secondary structure elements.

- Runs MolProbity validations (Ramachandran, CaBLAM, rotamers, etc.).

- Output Interpretation: The tool categorizes each residue into one of several behavioral modes:

- High-confidence (pLDDT ≥ 70): Usable for most structural biology applications.

- Near-predictive (pLDDT < 70): Resembles folded protein and may be a nearly accurate prediction. Identified by adequate packing and fewer validation outliers.

- Pseudostructure (pLDDT < 70): An intermediate behavior with misleading, ill-formed secondary-structure-like elements.

- Barbed Wire (pLDDT < 70): Extremely non-protein-like, characterized by a high density of validation outliers and no packing contacts. These regions should be removed for downstream tasks.

- Downstream Application: Use the tool's output to create a pruned structure file containing only high-confidence and near-predictive residues for use in molecular replacement or as a refined starting model.

The workflow for this analysis is summarized in the following diagram:

Protocol 2: Validating Protein-Protein Interactions in a Complex

This protocol uses AlphaFold 3's confidence metrics to evaluate the reliability of a predicted binary protein complex.

- Run AlphaFold 3: Generate the structural model of the complex.

- Extract Confidence Metrics: From the output, collect the following data:

- The pLDDT scores for each chain to assess local model quality.

- The PAE plot, which is a 2D matrix showing the predicted error in the relative position of every residue (or token) pair.

- The pairwise ipTM score for the two protein chains.

- Analyze the Pairwise ipTM: A score above 0.8 indicates a confidently predicted interaction between the two proteins [13].

- Interpret the PAE Matrix: Examine the block of the PAE matrix that corresponds to the interaction between the two proteins. Consistently low PAE values (darker colors in the plot) across the interface indicate high confidence in the relative orientation of the two subunits. High PAE would suggest the relative orientation is uncertain.

- Triangulate Evidence: A confident interaction is supported by a combination of a high pairwise ipTM score and a low-PAE interface between the two protein chains.
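The triangulation step can be written down as a small decision helper. This is a sketch under stated assumptions: the 0.8 pairwise ipTM cutoff comes from the protocol above, whereas the PAE cutoff of 5 Å is a placeholder chosen for illustration and should be set for your own system. The function name and return layout are hypothetical.

```python
def interaction_is_confident(pairwise_iptm, interface_pae,
                             iptm_cutoff=0.8, pae_cutoff=5.0):
    """Combine pairwise ipTM and interface PAE into a single call.

    pairwise_iptm -- pairwise ipTM for the two chains
    interface_pae -- iterable of PAE values (angstroms) across the interface
    pae_cutoff    -- assumed threshold on the mean interface PAE
    """
    values = list(interface_pae)
    if not values:
        raise ValueError("interface_pae must contain at least one value")
    mean_pae = sum(values) / len(values)
    iptm_ok = pairwise_iptm > iptm_cutoff
    pae_ok = mean_pae <= pae_cutoff
    return {
        "iptm_ok": iptm_ok,
        "pae_ok": pae_ok,
        "mean_interface_pae": mean_pae,
        "confident": iptm_ok and pae_ok,
    }


print(interaction_is_confident(0.86, [2.1, 3.4, 2.8]))
```

Returning the individual criteria alongside the final call makes disagreements visible, for example a high ipTM paired with a high-PAE interface, which warrants manual inspection.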

Table: Key Resources for AlphaFold3 Assessment and Troubleshooting

| Resource Name | Type | Primary Function | Relevance to Assessment |

|---|---|---|---|

| AlphaFold Protein Structure Database (AFDB) [15] | Database | Repository of pre-computed AlphaFold predictions for proteomes. | Provides a large-scale dataset for surveying prediction behaviors and validating findings. |

| MobiDB [15] | Database | Curated database of protein disorder annotations. | Allows cross-referencing of low-pLDDT regions with known intrinsically disordered regions (IDRs). |

| Phenix Software Suite (specifically phenix.barbed_wire_analysis) [15] | Software Tool | Automates the categorization of AlphaFold predictions into behavioral modes (e.g., Barbed Wire, Near-Predictive). | Critical for identifying which low-pLDDT regions may still have predictive value. |

| MolProbity [15] | Software Tool | Provides comprehensive structure validation, including Ramachandran, rotamer, and clash analysis. | Generates objective metrics to complement pLDDT and identify non-protein-like geometry. |

| Protein Data Bank (PDB) [16] | Database | Archive of experimentally determined 3D structures of proteins and nucleic acids. | Serves as the source of "ground truth" for validating and training structure prediction models. |

| EQAFold [17] | Method/Algorithm | An enhanced framework that refines AlphaFold's pLDDT prediction head for more accurate self-confidence scores. | Represents a cutting-edge approach to improving the reliability of confidence metrics themselves. |

Frequently Asked Questions (FAQs)

Q1: What are the primary evaluation modes used in CASP16 for model quality assessment?

A1: CASP16 employed three main evaluation modes (QMODE) to assess the accuracy of protein structure models. QMODE1 focused on estimating the global structure accuracy of the entire model. QMODE2 shifted the focus to the accuracy specifically at the interface residues of multimeric assemblies. QMODE3 was a novel mode designed to test the performance of methods in selecting high-quality models from large pools of AlphaFold2-derived models generated by MassiveFold [9].

Q2: Why has the research focus in model accuracy estimation shifted towards protein complexes?

A2: The focus has shifted because of the dramatic success of AlphaFold2 in accurately predicting single-domain protein (monomer) structures. With the problem of monomer structure prediction largely considered solved, the importance of assessing single-domain model quality has decreased. The field's new frontier and primary challenge is now the estimation of model accuracy for protein complexes, which is crucial for understanding cellular function [18].

Q3: What was a key methodological advancement in CASP16's QMODE3 evaluation?

A3: A key advancement for the QMODE3 evaluation in CASP16 was the development and implementation of a novel penalty-based ranking scheme. This new scheme was specifically designed to handle the challenges of score interdependence and the varying distributions of prediction quality across different models [9].

Q4: Which methods performed best in the CASP16 assessment?

A4: The results from CASP16 showed that methods which incorporated features derived from AlphaFold3 were the top performers. In particular, the use of per-atom pLDDT scores was highly effective for estimating local accuracy. These methods also demonstrated high utility for experimental structure solution workflows [9].

Q5: What are the main challenges in estimating the accuracy of protein complex models?

A5: Current challenges in the field fall into four facets: generating accurate Topology Global Scores, reliable Interface Total Scores, precise Interface Residue-Wise Scores, and trustworthy Tertiary Residue-Wise Scores [18].

Troubleshooting Common Experimental Issues

Issue 1: Poor performance in selecting high-quality models from a large pool (QMODE3).

- Problem: Your method struggles to identify the best models from a large set of pre-generated predictions (e.g., from MassiveFold).

- Solution:

- Incorporate AlphaFold3 Features: Integrate per-atom confidence measures (pLDDT) from AlphaFold3, which were a key differentiator for top-performing methods in CASP16 [9].

- Implement Advanced Ranking: Develop or adopt a ranking system that accounts for score interdependence between models, similar to the penalty-based scheme used in CASP16 [9].

- Validate Across Assemblies: Test your selection protocol separately on monomeric, homomeric, and heteromeric targets, as performance can vary significantly between these categories [9].
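When validating a selection protocol across target categories, a simple and widely used diagnostic is the top-1 ranking loss: the true quality of the best model in the pool minus the true quality of the model your method ranked first. The sketch below computes that loss per target and averages it per category. It is not the CASP16 penalty-based scheme, whose details are not given here; all names and the toy scores are hypothetical.

```python
def top1_ranking_loss(predicted, true):
    """Best true score in the pool minus the true score of the top-ranked model.

    predicted, true -- dicts mapping model name to score (higher is better)
    """
    if not predicted or predicted.keys() != true.keys():
        raise ValueError("predicted and true must cover the same, non-empty model set")
    selected = max(predicted, key=predicted.get)
    return max(true.values()) - true[selected]


def mean_loss_by_category(targets):
    """Average the top-1 loss separately for each target category.

    targets -- iterable of (category, predicted_scores, true_scores)
    """
    sums, counts = {}, {}
    for category, predicted, true in targets:
        loss = top1_ranking_loss(predicted, true)
        sums[category] = sums.get(category, 0.0) + loss
        counts[category] = counts.get(category, 0) + 1
    return {c: sums[c] / counts[c] for c in sums}


# Toy data: scores are invented for illustration only.
toy_targets = [
    ("monomeric", {"m1": 0.9, "m2": 0.8}, {"m1": 0.88, "m2": 0.80}),
    ("heteromeric", {"m1": 0.9, "m2": 0.8}, {"m1": 0.40, "m2": 0.75}),
]
print(mean_loss_by_category(toy_targets))
```

A loss of zero means the best available model was selected; a markedly larger mean loss in one category points to where the selection method needs work.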

Issue 2: Inaccurate estimation of interface residue accuracy in complexes (QMODE2).

- Problem: Your accuracy estimates for residues at the interface between protein chains are unreliable.

- Solution:

- Focus on Interface-Specific Metrics: Move beyond global scores and utilize metrics specifically designed to evaluate interface geometry and residue contacts [18].

- Combine Residue-Wise and Total Scores: Employ a multi-faceted approach that evaluates both the total interface quality and the per-residue accuracy at the interface [18].

- Leverage Consensus Information: For residue-wise scores, consider consensus-based methods that leverage predictions from multiple models to improve reliability [18].

Issue 3: Differentiating between high-quality and near-native models.

- Problem: Your method fails to make fine-grained distinctions between models that are already very good.

- Solution:

- Utilize Local Confidence Measures: Employ per-atom or per-residue local confidence measures, which provide a more granular view of model quality than a single global score [9].

- Benchmark on Diverse Targets: Ensure your method is trained and tested on a diverse set of targets, including complexes of varying sizes and types, to prevent overfitting to a particular protein topology [9].

Quantitative Data from CASP16 Evaluation

Table 1: CASP16 Model Quality Assessment (MQA) Evaluation Modes and Metrics

| Evaluation Mode | Primary Focus | Key Metrics / Methods | Notable CASP16 Findings |

|---|---|---|---|

| QMODE1 | Global Structure Accuracy | OpenStructure-based metrics for overall model quality [9] | Foundation for assessing entire model structure. |

| QMODE2 | Interface Residues Accuracy | OpenStructure-based metrics focused on interface regions of complexes [9] | Critical for evaluating multimeric protein assemblies. |

| QMODE3 | Model Selection Performance | Novel penalty-based ranking scheme for large model pools [9] | Performance varied significantly between monomeric, homomeric, and heteromeric targets. |

Table 2: Key Methodological Approaches in Complex EMA Research

| Approach Facet | Description | Purpose | Associated Challenges |

|---|---|---|---|

| Topology Global Score | A single score estimating the overall quality of the complex structure. | To provide a quick, overall quality check and enable initial model ranking [18]. | May lack the granularity to identify local errors, especially at interfaces. |

| Interface Total Score | A score that specifically assesses the entire interface region between chains. | To evaluate the global quality of the interaction interface in a complex [18]. | Might average out very good and very poor regions within the same interface. |

| Interface Residue-Wise Score | A per-residue score estimating accuracy at the interface. | To pinpoint specific residues at the interface that are likely modeled incorrectly [18]. | Requires high precision to be useful for guiding model refinement. |

| Tertiary Residue-Wise Score | A per-residue score for the entire model (monomer or complex). | To identify local errors anywhere in the structure, not just the interface [9]. | Computational cost and integrating information across the entire structure. |

Experimental Workflow for Model Assessment

The following diagram illustrates a generalized experimental protocol for conducting a model quality assessment, integrating the QMODE frameworks from CASP16.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Protein Model Quality Assessment

| Resource / Tool | Type | Primary Function in EMA |

|---|---|---|

| AlphaFold2 | Software / Database | Generates high-accuracy protein structure predictions; used to create large model pools for assessment and selection [9]. |

| AlphaFold3 | Software | Provides advanced structure prediction for complexes and, crucially, per-atom confidence measures (pLDDT) for local accuracy estimation [9]. |

| MassiveFold | Software / Pipeline | Used to generate large-scale, AlphaFold2-derived model pools, which are the basis for model selection tasks like QMODE3 [9]. |

| OpenStructure | Software Framework | Provides the core set of metrics and tools used in CASP for the official evaluation of model accuracy (e.g., for QMODE1 and QMODE2) [9]. |

| per-atom pLDDT | Confidence Metric | A local accuracy measure output by AlphaFold3; identifies reliable and unreliable regions within a model at the atomic level [9]. |

Methodological Approaches: Implementing Machine Learning and Optimization Algorithms

Frequently Asked Questions (FAQs)

General Parameter Optimization

What is the fundamental trade-off in parameter tuning?

Parameter tuning primarily involves the bias-variance tradeoff. Increasing model complexity (e.g., greater depth in tree-based models) reduces bias, allowing the model to better fit training data. However, more complex models require more data to fit effectively and can lead to overfitting. The optimal model carefully balances complexity with predictive power. [19]

My model shows high training accuracy but low test accuracy. What should I do?

This indicates overfitting. Control overfitting by directly controlling model complexity through parameters like max_depth and min_child_weight, or by adding randomness using parameters like subsample and colsample_bytree. Reducing the step size (eta or learning rate) can also help, though you must remember to increase the number of training rounds (num_round) accordingly. [19]

Hyperparameter tuning isn't yielding significant improvements. Why?

If extensive tuning shows minimal gains, possible reasons include:

- The default parameters are already suitable for your dataset.

- The dataset lacks sufficient complexity to benefit from further tuning.

- You might have reached the performance limit of your chosen algorithm.

- The issue may lie with the data itself, such as poor feature quality or insufficient signal. [20] [21]

- Investigate your feature set; too many features relative to samples can be detrimental. [21]

BP-ANN Optimization

How can I overcome issues like local minima and slow convergence in BP-ANN?

To address common drawbacks like converging to local minima, slow speed, and poor generalization, use optimization algorithms. The Grey Wolf Optimization (GWO) algorithm has been shown to effectively optimize initial weights and biases, leading to faster convergence and better performance compared to algorithms like Particle Swarm Optimization (PSO). [22]
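For readers unfamiliar with GWO, the sketch below shows the core update in its commonly described form: the three best wolves (alpha, beta, delta) guide every other wolf, and the exploration coefficient a decays linearly from 2 toward 0. It minimizes a generic objective over a bounded vector. In the BP-ANN setting that vector would hold the initial weights and biases and the objective would be a training or validation loss; here a toy quadratic stands in. The function name, defaults, and bound clamping are assumptions, not the configuration used in [22].

```python
import random


def grey_wolf_optimize(objective, dim, bounds, n_wolves=20, n_iter=100, seed=0):
    """Minimize objective(vector) with a basic Grey Wolf Optimizer (n_wolves >= 3)."""
    rng = random.Random(seed)
    lo, hi = bounds
    wolves = [[rng.uniform(lo, hi) for _ in range(dim)] for _ in range(n_wolves)]
    best_pos, best_val = None, float("inf")
    for it in range(n_iter):
        ranked = sorted(wolves, key=objective)
        # Copy the leaders so in-place updates below do not alter them.
        alpha, beta, delta = (list(w) for w in ranked[:3])
        alpha_val = objective(alpha)
        if alpha_val < best_val:
            best_pos, best_val = list(alpha), alpha_val
        a = 2.0 * (1.0 - it / n_iter)  # decays linearly from 2 toward 0
        for w in wolves:
            for d in range(dim):
                guided = []
                for leader in (alpha, beta, delta):
                    A = 2.0 * a * rng.random() - a
                    C = 2.0 * rng.random()
                    dist = abs(C * leader[d] - w[d])
                    guided.append(leader[d] - A * dist)
                # New position: mean of the three leader-guided moves, clamped.
                w[d] = min(hi, max(lo, sum(guided) / 3.0))
    final = min(wolves, key=objective)
    if objective(final) < best_val:
        best_pos, best_val = list(final), objective(final)
    return best_pos, best_val


def sphere(v):
    """Toy objective with its minimum at the origin."""
    return sum(x * x for x in v)


position, value = grey_wolf_optimize(sphere, dim=3, bounds=(-5.0, 5.0))
print(position, value)
```

Tracking the best solution seen so far means the returned value never gets worse than the best member of the initial population, even if later iterations drift.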

What is a good strategy for designing the feature vector for a BP-ANN?

Design your feature set with the assistance of the Minimal Redundancy Maximum Relevance (MRMR) method. This helps select features that have high relevance to the target variable while being minimally redundant with each other, leading to a more effective model. [22]

SVM Optimization

Which performance metrics are most important for tuning SVMs in medical applications?

The choice of optimization metric significantly impacts SVM performance. For biomedical applications, consider a range of indices measuring different aspects:

- Discrimination: Area Under the ROC Curve (AUC)

- Calibration: Hosmer-Lemeshow goodness-of-fit statistic (HL χ²)

- Overall Error: Mean Squared Error (MSE) or Mean Cross-Entropy Error (CEE) [23]

Models optimized for different metrics yield different performance characteristics. [23]
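The discrimination and overall-error indices listed above are straightforward to compute from predicted probabilities. The sketch below uses only the standard library: AUC via the pairwise (Mann-Whitney) formulation, plus MSE and mean cross-entropy. The Hosmer-Lemeshow statistic is omitted because it depends on a binning choice. Function names are illustrative.

```python
import math


def auc(y_true, y_prob):
    """AUC as the probability that a positive case outscores a negative one."""
    pos = [p for y, p in zip(y_true, y_prob) if y == 1]
    neg = [p for y, p in zip(y_true, y_prob) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative case")
    # Ties between a positive and a negative count as half a win.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def mse(y_true, y_prob):
    """Mean squared error between outcomes (0/1) and predicted probabilities."""
    return sum((p - y) ** 2 for y, p in zip(y_true, y_prob)) / len(y_true)


def cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean cross-entropy error; probabilities are clipped to avoid log(0)."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(y_true)


labels = [0, 0, 1, 1]
probs = [0.1, 0.4, 0.35, 0.8]
print(auc(labels, probs), mse(labels, probs), cross_entropy(labels, probs))
```

Because AUC depends only on the ordering of predictions while MSE and cross-entropy depend on their values, a model can rank patients well and still be poorly calibrated, which is why the indices are reported together.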

What are the main parameters to tune for an SVM?

Key hyperparameters include:

- Regularization parameter (C): Controls the trade-off between achieving a low error on training data and minimizing model complexity. A smaller C encourages a larger margin, even if it leads to more training errors. [24]

- Kernel parameters: The choice of kernel (e.g., linear, polynomial, Radial Basis Function) and its parameters (e.g., gamma for RBF, degree for polynomial) are critical for handling non-linear decision boundaries. [25] [24] [23]

How do I handle non-linearly separable data with SVMs?

Use the kernel trick. A kernel function implicitly maps the original data into a higher-dimensional feature space, where a linear decision boundary may separate classes that are not linearly separable in the original space. The Radial Basis Function (RBF) kernel is a popular choice for this transformation. [25] [24]
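As an illustration of what the RBF kernel computes, the sketch below evaluates k(x, y) = exp(-gamma * ||x - y||^2) for two feature vectors. This is the standard definition; the function name is illustrative, and in practice an SVM library evaluates the kernel internally.

```python
import math


def rbf_kernel(x, y, gamma):
    """RBF (Gaussian) kernel: exp(-gamma * squared Euclidean distance)."""
    if len(x) != len(y):
        raise ValueError("x and y must have the same length")
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-gamma * squared_distance)


a, b = [1.0, 2.0], [2.0, 4.0]
# A larger gamma makes similarity fall off faster with distance.
for gamma in (0.01, 0.1, 1.0):
    print(gamma, rbf_kernel(a, b, gamma))
```

This is why gamma is described as controlling the influence of a single training example: with a large gamma, only very close points count as similar, which produces more flexible boundaries that are also more prone to overfitting.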

RF & XGBoost Optimization

How should I handle an imbalanced dataset in XGBoost?

Your strategy depends on your goal:

- If you care only about the overall performance metric (like AUC), balance positive and negative weights using scale_pos_weight.

- If you need to predict the right probability, do not re-balance the dataset. Instead, set max_delta_step to a finite number (e.g., 1) to help convergence. [19]
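The two strategies translate into two different parameter sets. The sketch below builds them as plain dictionaries, using the negative-to-positive count ratio as the starting value for scale_pos_weight (the value the XGBoost documentation suggests considering). The helper name and the toy labels are illustrative; the dictionaries are meant to be passed to an XGBoost training call, which is not shown here.

```python
def suggested_scale_pos_weight(labels):
    """Ratio of negative to positive cases, a common starting value."""
    n_pos = sum(1 for y in labels if y == 1)
    n_neg = sum(1 for y in labels if y == 0)
    if n_pos == 0:
        raise ValueError("no positive cases in labels")
    return n_neg / n_pos


labels = [0] * 90 + [1] * 10  # toy labels with 10% positives

# Goal 1: ranking quality (e.g., AUC) matters most, so re-weight positives.
params_for_ranking = {
    "objective": "binary:logistic",
    "eval_metric": "auc",
    "scale_pos_weight": suggested_scale_pos_weight(labels),
}

# Goal 2: calibrated probabilities matter, so leave class weights untouched
# and bound the update step to help convergence.
params_for_probability = {
    "objective": "binary:logistic",
    "eval_metric": "logloss",
    "max_delta_step": 1,
}

print(params_for_ranking)
print(params_for_probability)
```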

What are the key parameters for controlling overfitting in XGBoost?

Control overfitting through parameters that manage model complexity and randomness:

- Model Complexity: max_depth, min_child_weight, gamma.

- Randomness: subsample, colsample_bytree. [19]

Using a lower learning_rate with a higher number of estimators (n_estimators) can also improve performance and reduce overfitting. [20]

Troubleshooting Guides

Guide: Diagnosing Poor Tuning Results in Tree-Based Models (XGBoost/RF)

Problem: Extensive hyperparameter tuning yields minimal to no improvement over a model with default parameters. [20]

Investigation Protocol:

Verify Your Evaluation Setup

- Ensure you are using a robust validation method like k-fold cross-validation to reliably estimate model performance and avoid misleading results from a single train-test split. [20]

Audit the Hyperparameter Search

- Check search spaces: Ensure the ranges for key parameters are wide enough. For example, if using a low learning rate, ensure n_estimators is searched over a sufficiently high range (e.g., up to 10,000). [20]

- Focus on impactful parameters: Prioritize tuning subsample and colsample_bytree, as they are often critical for performance. [20]

- Run enough trials: When using advanced HPO frameworks like Optuna, ensure a sufficient number of trials (e.g., several hundred to a few thousand) to adequately explore the search space. [20]

Conduct a Data-Centric Analysis

- Perform feature selection: A large number of features (e.g., 200) relative to samples can be problematic. Use techniques like SelectKBest or analyze feature importance/correlation to reduce dimensionality. [21]

- Analyze prediction errors: Manually review the cases where the model fails in order to understand error patterns and potential data issues. [21]

- Check for data quality: Look for outliers and handle missing values appropriately, as low R² scores can often stem from uncleaned data. [21]

Benchmark with Simpler Models

- Try simpler models like Linear or Ridge Regression as a baseline. If they perform similarly, the data problem may not be highly complex, or the features may lack strong predictive power. [21]

Guide: Optimizing an SVM for a Biomedical Classification Task

Problem: Tuning an SVM model for a high-stakes domain like medical prediction requires careful consideration of performance metrics and model parameters. [23]

Experimental Protocol:

Data Preparation and Kernel Selection

- Standardize features, as SVMs are sensitive to the scale of data.

- Choose appropriate kernels based on data characteristics. Radial (SVM-R) and polynomial (SVM-P) kernels are common starting points due to their good performance in many domains. [23]

Define the Optimization Strategy

- Select optimization metrics: Choose metrics based on clinical relevance. For a comprehensive view, optimize for multiple metrics like AUC (discrimination) and HL χ² (calibration). [23]

- Choose a tuning method: Use a systematic approach like grid search or advanced frameworks like Optuna or Hyperopt. [25] [26] [23]

Execute a Nested Cross-Validation

- Use a nested approach with inner and outer loops to select the best parameters on training data and provide an unbiased evaluation of model performance on unseen test data. [23]

Compare to a Baseline Model

- Compare the optimized SVM's performance to a traditional model like logistic regression optimized using the same indices to validate its utility. [23]

Table: Key SVM Parameters for Biomedical Tuning

| Parameter | Description | Considerations for Biomedical Data |

|---|---|---|

| Kernel Type | Transform function for non-linear data | Radial (RBF) and Polynomial kernels are widely used. [23] |

| C (Soft Margin) | Penalty for misclassified training points | A smaller C creates a wider margin, potentially improving generalization. [24] |

| Kernel Parameter (e.g., gamma, degree) | Defines the influence of a single training example (gamma) or polynomial complexity (degree). | Critical for model performance; requires careful tuning via grid search or evolutionary algorithms. [23] |

Guide: Building a Robust BP-ANN for Sensor Data

Problem: Creating a BP-ANN model for gas identification and concentration measurement using a single ultrasonically radiated sensor. [22]

Methodology:

Feature Vector Design

- Extract relevant features from the sensor's response curve with and without ultrasound.

- Use the Minimal Redundancy Maximum Relevance (MRMR) algorithm to select an optimal set of features that are maximally relevant to the target while being minimally redundant. [22]

Model Structure Selection

- Experiment with different network architectures. Research suggests a Double Hidden Layer BP (DHBP) network can outperform single hidden layer (SHBP) and recurrent (Elman) networks for this specific task. [22]

Parameter Optimization

- Employ the Grey Wolf Optimization (GWO) algorithm to find the optimal initial weights and biases for the network, overcoming the BP-ANN's tendency to get stuck in local minima. [22]

Model Evaluation

- Evaluate the final model on its gas recognition accuracy (%) and mean concentration measurement error (%) on a held-out test set. [22]

Table: BP-ANN Model Comparison for Gas Analysis [22]

| Model Aspect | Option A | Option B | Best Performing Option |

|---|---|---|---|

| Feature Selection | Manual/Experience-based | MRMR-assisted | MRMR-assisted |

| Network Structure | Single Hidden Layer (SHBP) | Double Hidden Layer (DHBP) | Double Hidden Layer (DHBP) |

| Optimization Algorithm | Particle Swarm (PSO) | Grey Wolf (GWO) | Grey Wolf (GWO) |

| Reported Performance | --- | --- | 97.3% accuracy, 5.79% error |

The Scientist's Toolkit: Essential Research Reagents

Table: Key Hyperparameter Optimization Frameworks & Tools

| Tool / Solution | Function / Description | Common Application Context |

|---|---|---|

| Optuna | A hyperparameter optimization framework that uses define-by-run APIs for efficient parameter search. [25] | Multi-class SVM tuning, [25] XGBoost/LightGBM tuning. [19] [20] |

| Hyperopt | A Python library for serial and parallel optimization over awkward search spaces. [25] | Multi-class SVM tuning, [25] general model optimization. |

| Grid Search (e.g., GridSearchCV) | Exhaustive search over a specified parameter grid. | Foundational tuning method for SVMs and other models. [23] |

| Grey Wolf Optimization (GWO) | A metaheuristic algorithm inspired by grey wolf hunting behavior. | Optimizing initial weights and biases in BP-ANN models. [22] |

| k-Fold Cross-Validation | A resampling procedure used to evaluate models on limited data samples. | Essential for robust model evaluation and hyperparameter tuning across all frameworks. [25] [20] |

Experimental Protocols & Workflows

Detailed Protocol: Nested CV for SVM in Medical Data

This protocol is adapted from a study optimizing SVM mortality prediction models. [23]

Objective: To develop and evaluate a robust SVM model for predicting patient mortality after percutaneous coronary intervention (PCI).

Materials (Research Reagents):

- Data: 7914 PCI cases with 21 clinically relevant features (e.g., Age, CHF Class, Creatinine, Diabetes). [23]

- Software: GIST SVM toolkit or equivalent (e.g., scikit-learn). [23]

- Kernels: Radial Basis Function (RBF) and Polynomial. [23]

Procedure:

Data Partitioning:

- Randomly split the entire dataset into a training set (e.g., 70%) and a hold-out test set (e.g., 30%). Use stratification if the outcome is imbalanced. [23]

Nested Tuning Loop:

- Outer Loop (Performance Estimation): Perform k-fold cross-validation (e.g., 3-fold) on the training set.

- Inner Loop (Parameter Selection): For each fold of the outer loop, further split the training fold into a "kernel training" set and a "sigmoid training" set (or use a validation set). Use this inner loop to run a grid search or another HPO method to find the best kernel parameters and soft margin constant (C). [23]

Model Training and Evaluation:

- Train the final model with the best-averaged parameters on the entire training set.

- Evaluate the final model on the untouched hold-out test set using the chosen performance metrics (AUC, HL χ², MSE, CEE). [23]

The workflow for this nested tuning process is as follows:
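A minimal sketch of the nested loop in plain Python. It is not the cited study's code: a toy one-dimensional threshold classifier stands in for the SVM, its tunable `shift` stands in for the kernel parameters and C, and all function names and the synthetic data are assumptions made for illustration. The hold-out split and the sigmoid calibration set are omitted to keep the structure of the two loops visible.

```python
import random
from statistics import mean

def kfold_indices(n, k, seed):
    """Shuffle range(n) and deal the indices into k roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def take(seq, idx):
    return [seq[i] for i in idx]

def complement(n, held_out):
    """Indices in range(n) that are not in `held_out`."""
    held = set(held_out)
    return [i for i in range(n) if i not in held]

def fit_threshold(xs, ys, shift):
    """Toy stand-in for an SVM: class-mean midpoint moved by the tunable `shift`."""
    pos = [x for x, y in zip(xs, ys) if y == 1]
    neg = [x for x, y in zip(xs, ys) if y == 0]
    if not pos or not neg:               # degenerate fold: fall back to the shift alone
        return shift
    return (mean(pos) + mean(neg)) / 2 + shift

def accuracy(threshold, xs, ys):
    return mean(float((x > threshold) == (y == 1)) for x, y in zip(xs, ys))

def cv_score(xs, ys, shift, k, seed):
    """Inner loop: mean validation accuracy of one candidate value over k folds."""
    scores = []
    for val in kfold_indices(len(xs), k, seed):
        tr = complement(len(xs), val)
        model = fit_threshold(take(xs, tr), take(ys, tr), shift)
        scores.append(accuracy(model, take(xs, val), take(ys, val)))
    return mean(scores)

def nested_cv(xs, ys, grid, outer_k=3, inner_k=3, seed=0):
    """Outer loop estimates performance; the inner loop only ever sees the outer training fold."""
    outer_scores, chosen = [], []
    for fold_no, test in enumerate(kfold_indices(len(xs), outer_k, seed)):
        tr = complement(len(xs), test)
        tr_x, tr_y = take(xs, tr), take(ys, tr)
        best = max(grid, key=lambda s: cv_score(tr_x, tr_y, s, inner_k, seed + fold_no + 1))
        model = fit_threshold(tr_x, tr_y, best)
        outer_scores.append(accuracy(model, take(xs, test), take(ys, test)))
        chosen.append(best)
    return outer_scores, chosen

# Synthetic, made-up data: two overlapping Gaussian classes.
rng = random.Random(42)
labels = [i % 2 for i in range(90)]
features = [rng.gauss(2.0 * y, 1.0) for y in labels]

scores, chosen = nested_cv(features, labels, grid=[-1.0, 0.0, 1.0])
print("outer-fold accuracy:", [round(s, 2) for s in scores])
print("selected shift per fold:", chosen)
```

The point of the structure is that each outer test fold is scored by a model whose parameter was chosen without access to that fold, so the outer scores are not inflated by the tuning step.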

Workflow: Systematic Approach to Resistant Tuning

This workflow provides a logical pathway for troubleshooting when hyperparameter tuning shows minimal returns, synthesizing recommendations from multiple sources. [20] [21]

Integrating Genetic Algorithms for Multi-Objective Parameter Optimization

Troubleshooting Guides & FAQs

Q1: My genetic algorithm converges to a suboptimal solution. What could be wrong? This is often caused by a lack of genetic diversity, leading to premature convergence. To address this:

- Increase Mutation Rate: Slightly raise the mutation probability to introduce more diversity. A typical range is 0.01 to 0.1 [27].

- Review Selection Pressure: Ensure your selection method (e.g., tournament or roulette wheel selection) does not favor a few top individuals too aggressively, which can cause the population to become homogeneous too quickly [28].

- Implement Elitism Strategically: While elitism preserves good solutions, retaining too many can dominate the population. Copy only the single best or a few best solutions to the next generation [28].
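The three adjustments above correspond to three parameters of a generational GA. The sketch below is plain Python and is not taken from the cited sources: the OneMax toy problem (fitness is the number of 1-bits), the function names, and the default values are illustrative assumptions.

```python
import random

def tournament(pop, fitness, k, rng):
    """Return the fittest of k randomly drawn individuals (larger k = stronger selection pressure)."""
    return max(rng.sample(pop, k), key=fitness)

def crossover(a, b, rng):
    """One-point crossover of two equal-length bit lists."""
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]

def mutate(bits, rate, rng):
    """Flip each bit independently with probability `rate`."""
    return [b ^ 1 if rng.random() < rate else b for b in bits]

def evolve(fitness, n_bits=20, pop_size=30, generations=40,
           mutation_rate=0.05, tournament_k=2, n_elite=1, seed=0):
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_bits)] for _ in range(pop_size)]
    best_history = []
    for _ in range(generations):
        ranked = sorted(pop, key=fitness, reverse=True)
        best_history.append(fitness(ranked[0]))
        # Elitism: copy only the top n_elite individuals unchanged.
        next_pop = [ind[:] for ind in ranked[:n_elite]]
        while len(next_pop) < pop_size:
            p1 = tournament(pop, fitness, tournament_k, rng)
            p2 = tournament(pop, fitness, tournament_k, rng)
            next_pop.append(mutate(crossover(p1, p2, rng), mutation_rate, rng))
        pop = next_pop
    return max(pop, key=fitness), best_history

best, history = evolve(fitness=sum)
print("best fitness:", sum(best), "first generation:", history[0])
```

Rerunning `evolve` with a larger `tournament_k` or `n_elite`, or a smaller `mutation_rate`, is a quick way to see how each setting affects how fast the population becomes homogeneous.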

Q2: How can I handle linear and bound constraints in my multi-objective optimization problem?

The gamultiobj solver and similar genetic algorithm implementations can directly handle linear and bound constraints [29].

- Bound Constraints: Define lower (lb) and upper (ub) bounds for each variable to restrict the search space.

- Linear Constraints: Specify linear inequality constraints (A*x <= b) and equality constraints (Aeq*x = beq). The algorithm will ensure solutions satisfy these within a defined tolerance [29].

If you use custom crossover or mutation functions, you must ensure they also produce offspring that satisfy these constraints.
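When writing a custom operator, it can help to check offspring explicitly. The sketch below is plain Python rather than MATLAB and does not reproduce any solver's internals: `is_feasible` and `clip_to_bounds` are hypothetical helper names, and the bounds, constraint, and tolerance are made-up values.

```python
def dot(row, x):
    return sum(a * v for a, v in zip(row, x))

def is_feasible(x, lb, ub, A=(), b=(), Aeq=(), beq=(), tol=1e-6):
    """Check lb <= x <= ub, A*x <= b and Aeq*x = beq, each within `tol`."""
    in_bounds = all(lo - tol <= v <= hi + tol for v, lo, hi in zip(x, lb, ub))
    ineq_ok = all(dot(row, x) <= rhs + tol for row, rhs in zip(A, b))
    eq_ok = all(abs(dot(row, x) - rhs) <= tol for row, rhs in zip(Aeq, beq))
    return in_bounds and ineq_ok and eq_ok

def clip_to_bounds(x, lb, ub):
    """Simple repair for bound constraints only: clamp each variable into [lb, ub]."""
    return [min(max(v, lo), hi) for v, lo, hi in zip(x, lb, ub)]

lb, ub = [0.0, 0.0], [10.0, 10.0]
A, b = [[1.0, 1.0]], [12.0]                   # x1 + x2 <= 12

child = [11.5, 3.0]                           # offspring of a hypothetical custom mutation
print(is_feasible(child, lb, ub, A, b))       # False: x1 exceeds its upper bound
repaired = clip_to_bounds(child, lb, ub)      # [10.0, 3.0]
print(is_feasible(repaired, lb, ub, A, b))    # False: 10 + 3 = 13 > 12
print(is_feasible([8.0, 3.0], lb, ub, A, b))  # True
```

The second check shows why clipping alone is not enough: a point can sit inside its bounds and still violate a linear constraint, so a custom operator needs either a repair step for both or a resampling loop.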

Q3: What does it mean if my algorithm's performance plateaus for many generations? A plateau often indicates that the algorithm is exploring the search space but is not finding fitter solutions.

- Check Exploration vs. Exploitation: The balance may be off. If mutation rates are too low, the algorithm can stall around existing solutions; if they are too high, the search degrades toward a random walk. Adjust the rate in whichever direction restores the balance [30].

- Vectorize Your Fitness Function: If coding in MATLAB, vectorizing your fitness function can significantly speed up evaluation. This means writing the function to process a matrix of points (a population) all at once, rather than one point at a time [29]. Vectorization does not change the search itself, but faster generations let you run more of them, or a larger population, within the same time budget.

- Analyze the Pareto Front: Use visualization tools like gaplotpareto to see if the front of non-dominated solutions is still spreading. If it is, the algorithm may still be making progress in diversity even if the hypervolume isn't changing drastically [29].
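For two minimized objectives, the hypervolume mentioned above is the area dominated by the front and bounded by a reference point that is worse than every solution. The following plain-Python sketch computes it; the function name, the reference point (5, 5), and the two example fronts are assumptions made for illustration.

```python
def hypervolume_2d(points, ref):
    """Area dominated by `points` and bounded by `ref`, with both objectives minimized."""
    area, prev_f2 = 0.0, ref[1]
    for f1, f2 in sorted(points):              # ascending in the first objective
        if f1 >= ref[0] or f2 >= prev_f2:
            continue                           # outside the reference box, or dominated
        area += (ref[0] - f1) * (prev_f2 - f2) # new horizontal slice added by this point
        prev_f2 = f2
    return area

ref = (5.0, 5.0)
front_gen_10 = [(1.0, 4.0), (2.0, 2.0), (4.0, 1.0)]
front_gen_20 = front_gen_10 + [(3.0, 1.5)]     # one extra non-dominated point

print(hypervolume_2d(front_gen_10, ref))   # 11.0
print(hypervolume_2d(front_gen_20, ref))   # 11.5
```

In this example the front gained a new trade-off point while the hypervolume rose by less than 5%, which is the situation described in the last bullet: diversity is improving even though the scalar indicator barely moves.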

Q4: How do I choose an appropriate fitness function for a multi-objective problem? The fitness function is critical as it guides the search.

- Direct Objective Calculation: In multi-objective optimization, the fitness function typically returns a vector where each element is the value of a different objective you wish to minimize or maximize. For example, a function may return [objective1(x), objective2(x)] [29].

- Pareto Dominance: The genetic algorithm's selection process is then based on Pareto dominance, where a solution is better if it is superior in at least one objective without being worse in any other. You do not need to combine objectives into a single score [29].
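Both points can be illustrated with a short plain-Python sketch. The two objectives below (both minimized) and the candidate values are invented for the example, and `dominates` and `pareto_front` are hypothetical helper names rather than part of any solver.

```python
def fitness(x):
    """Hypothetical two-objective fitness: returns a vector, not a single score."""
    return (x ** 2, (x - 2) ** 2)

def dominates(a, b):
    """True if `a` is no worse than `b` in every objective and strictly better in at least one."""
    return all(p <= q for p, q in zip(a, b)) and any(p < q for p, q in zip(a, b))

def pareto_front(scores):
    """Keep the score vectors that no other vector dominates."""
    return [s for s in scores if not any(dominates(o, s) for o in scores)]

candidates = [-1.0, 0.0, 1.0, 2.0, 3.0]
scores = [fitness(x) for x in candidates]
front = pareto_front(scores)
print(front)   # [(0.0, 4.0), (1.0, 1.0), (4.0, 0.0)]
```

The candidates x = -1 and x = 3 drop out because another candidate is at least as good on both objectives; the three survivors represent different trade-offs, and none of them can be ranked above the others without adding a preference.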

Experimental Protocols & Data

Detailed Methodology: Optimizing a Dosing Guideline

This protocol is based on a study that used a GA to optimize vancomycin dosing in adults [31].

1. Problem Definition:

- Objective: To find the optimal combination of loading and maintenance doses (in mg) and dosing intervals (tau, in hours) for different patient weight and kidney function classes.

- Constraints: Dose strengths, dosing interval lengths, and maximum infusion rates were constrained to ensure clinical feasibility [31].

2. GA Configuration:

- Genotype Encoding: The solution (a "genotype") was encoded as a set of control variables representing the specific doses and intervals for each patient class [31].

- Fitness Evaluation: A population of 512 solutions was used. The fitness of each dosing regimen was evaluated using a pharmacokinetic/pharmacodynamic (PK/PD) model to simulate outcomes like drug concentration (Cmax, Cmin) and area under the curve (AUC). The goal was to optimize these metrics towards therapeutic targets [31].

- Selection and Evolution: A steady-state GA was employed. In each generation, the least-fit 10% of solutions were purged and replaced by new offspring created from the fitter members of the population [31].
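The steady-state replacement step can be sketched in plain Python as follows. This is not the study's implementation: the PK/PD-based fitness is replaced by a toy function, individuals are single numbers rather than dosing genotypes, and the choice to breed from the fitter half of the survivors is an assumption, since the source only says "fitter members".

```python
import random

def steady_state_step(pop, fitness, make_child, rng, purge_fraction=0.10):
    """Replace the least-fit fraction of `pop` with offspring of fitter survivors."""
    ranked = sorted(pop, key=fitness, reverse=True)          # fittest first
    n_purge = int(len(ranked) * purge_fraction)
    survivors = ranked[:len(ranked) - n_purge]
    breeders = survivors[:max(2, len(survivors) // 2)]       # assumption: fitter half breeds
    children = [make_child(*rng.sample(breeders, 2)) for _ in range(n_purge)]
    return survivors + children

rng = random.Random(0)

def toy_fitness(x):
    """Toy objective: closeness to an arbitrary target value of 3.0."""
    return -abs(x - 3.0)

def blend(a, b):
    """Toy offspring: parental average plus a small random perturbation."""
    return (a + b) / 2 + rng.gauss(0.0, 0.1)

population = [rng.uniform(0.0, 10.0) for _ in range(512)]
new_population = steady_state_step(population, toy_fitness, blend, rng)
print(len(new_population), "individuals;", len(population) - 461, "replaced")
```

With 512 individuals and a 10% purge, 51 are replaced per generation and the other 461 carry over unchanged, so good solutions are never lost between generations.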

3. Performance Analysis: The optimized GA-based dosing guideline was compared against established clinical guidelines. The table below summarizes key performance metrics, demonstrating the GA's ability to derive an effective regimen [31].

Table 1: Performance Comparison of Vancomycin Dosing Guidelines

| Performance Metric | Original Guideline | GA-Based Solution |

|---|---|---|

| Cmax after Loading Dose (mg/L) | 26.5 | 33.7 |

| Cmin after Loading Dose (mg/L) | 9.01 | 15.7 |

| AUC₀–₂₄h (mg·h)/L | 376 | 485 |

| Fraction of AUC in Target Range (0-24h) | 0.336 | 0.492 |

Detailed Methodology: Molecular Design with STELLA

This protocol outlines the use of the STELLA framework, which employs an evolutionary algorithm for de novo drug design [32].

1. Workflow Overview: The STELLA workflow is an iterative process consisting of four main stages [32]:

- Initialization: An initial pool of molecules is generated by applying fragment-based mutations to a user-provided seed molecule.

- Molecule Generation: New variants are created from the pool using three operators:

- FRAGRANCE Mutation: A fragment replacement method.

- MCS-based Crossover: Recombines molecules based on their maximum common substructure.

- Trimming: Edits molecules to optimize properties.

- Scoring: Each generated molecule is evaluated using an objective function that incorporates multiple user-defined pharmacological properties (e.g., docking score, quantitative estimate of drug-likeness (QED)).

- Clustering-based Selection: All molecules are clustered by structural similarity. The best-scoring molecule from each cluster is selected for the next iteration, ensuring a balance between quality and diversity. The clustering threshold is progressively tightened to shift focus from exploration to exploitation [32].
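The selection stage can be approximated with a greedy "leader" clustering, sketched below in plain Python. This is an illustration of the idea and not STELLA's code: fingerprints are represented as plain sets of feature ids, Tanimoto similarity is an assumed similarity measure, the molecules and scores are invented, and the schedule by which the threshold changes between iterations is not modeled (it is passed in as an argument).

```python
def tanimoto(a, b):
    """Similarity of two fingerprints represented as sets of feature ids."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def cluster_select(molecules, threshold):
    """Visit molecules best-first; a molecule becomes a cluster leader only if it
    is less similar than `threshold` to every existing leader. Because of the
    best-first order, each leader is the top-scoring member of its cluster."""
    leaders = []
    for mol in sorted(molecules, key=lambda m: m["score"], reverse=True):
        if all(tanimoto(mol["fp"], lead["fp"]) < threshold for lead in leaders):
            leaders.append(mol)
    return leaders

# Invented example pool: two pairs of structurally similar molecules.
pool = [
    {"name": "A", "fp": {1, 2, 3, 4}, "score": 0.90},
    {"name": "B", "fp": {1, 2, 3, 5}, "score": 0.80},
    {"name": "C", "fp": {7, 8, 9},    "score": 0.70},
    {"name": "D", "fp": {7, 8, 10},   "score": 0.95},
]

print([m["name"] for m in cluster_select(pool, threshold=0.5)])   # ['D', 'A']
print([m["name"] for m in cluster_select(pool, threshold=0.7)])   # ['D', 'A', 'B', 'C']
```

At the lower threshold only one representative per structural family survives; at the higher threshold near-duplicates are treated as separate clusters, so the threshold directly controls how much structural diversity the selection enforces.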

2. Performance Benchmarking: In a case study to identify PDK1 inhibitors, STELLA was benchmarked against REINVENT 4, a deep learning-based tool. The results below highlight the performance advantage of the metaheuristic approach [32].

Table 2: Molecular Generation Performance: STELLA vs. REINVENT 4

| Metric | REINVENT 4 | STELLA |

|---|---|---|

| Number of Hit Compounds | 116 | 368 |

| Hit Rate Per Iteration/Epoch | 1.81% | 5.75% |

| Mean Docking Score (GOLD PLP Fitness) | 73.37 | 76.80 |

| Mean QED | 0.75 | 0.77 |

Workflow Visualization

The following diagram illustrates the core workflow of a genetic algorithm for multi-objective optimization, integrating concepts from the cited experimental protocols [29] [32] [28].

GA Workflow for Multi-Objective Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Libraries for Genetic Algorithm Research

| Item Name | Function / Application |

|---|---|

| MATLAB Global Optimization Toolbox | Provides the gamultiobj function for performing multi-objective optimization using a genetic algorithm. It includes features for constraint handling, visualization, and vectorization [29]. |

| Geatpy | A Python library for evolutionary computing and genetic algorithms. It was used for multi-objective optimization of neutron transport parameters, demonstrating its capability in handling complex, computationally intensive scientific problems [33]. |

| STELLA Framework | A metaheuristics-based generative molecular design framework. It combines an evolutionary algorithm with a clustering-based method for extensive multi-parameter optimization in drug discovery [32]. |

| R (with tidyverse) | Used for data management, calculations, and graphical analysis within a GA workflow for clinical dosing optimization [31]. |

| OpenMOC | An open-source neutron transport code. It serves as a simulation environment whose parameters can be optimized using genetic algorithms, illustrating the use of GAs to tune complex computational models [33]. |

Frequently Asked Questions (FAQs)

FAQ 1: What are the key confidence metrics in AlphaFold, and how should I interpret them? AlphaFold provides two primary confidence metrics that are crucial for assessing prediction reliability. The predicted Local Distance Difference Test (pLDDT) is a per-residue estimate of local confidence, where scores above 90 indicate high reliability, 70-90 are good, 50-70 are low, and below 50 should be considered very low confidence, often corresponding to disordered regions [34]. The Predicted Aligned Error (PAE) is a pairwise metric: it gives the expected positional error (in Å) at one residue when the predicted and true structures are aligned on another residue, so low PAE values between two domains indicate high confidence in their relative positioning [35]. Consult both metrics before using models for downstream applications.
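The pLDDT bands can be applied programmatically to get a quick summary of a model. The sketch below is plain Python with made-up per-residue scores; the function names are hypothetical, and the handling of values that fall exactly on a boundary (90, 70, 50) is an assumption, since the ranges above do not specify it.

```python
def plddt_band(score):
    """Map one pLDDT value to the confidence bands described above."""
    if score > 90:
        return "high"
    if score >= 70:
        return "good"
    if score >= 50:
        return "low"
    return "very low"

def band_fractions(plddt):
    """Fraction of residues in each band."""
    counts = {}
    for score in plddt:
        band = plddt_band(score)
        counts[band] = counts.get(band, 0) + 1
    return {band: n / len(plddt) for band, n in counts.items()}

# Made-up per-residue scores for an eight-residue stretch.
example = [95.2, 91.0, 88.4, 72.1, 65.0, 48.3, 35.9, 92.7]
print(band_fractions(example))
# {'high': 0.375, 'good': 0.25, 'low': 0.125, 'very low': 0.25}
```

A summary like this is only a screening step; it says nothing about relative domain placement, which is what the PAE matrix is for.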

FAQ 2: How can I improve predictions for protein complexes and multimeric structures? Predicting protein complexes remains challenging due to difficulties in capturing inter-chain interaction signals [36]. For multimer prediction, consider using specialized implementations like AlphaFold-Multimer or ColabFold with explicit multiple-chain input [37]. Recent advances like DeepSCFold have demonstrated improvements by using sequence-derived structural complementarity rather than relying solely on co-evolutionary signals, achieving 11.6% and 10.3% improvement in TM-score compared to AlphaFold-Multimer and AlphaFold3 respectively on CASP15 targets [36]. For large complexes, CombFold can assemble structures from subunit predictions [37].

FAQ 3: What parameter adjustments can optimize prediction accuracy for challenging targets? Several key parameters can be tuned for improved results. The max_recycles parameter (found in ColabFold advanced settings) controls the number of iterative refinement cycles; increasing this to 12-48 with a tolerance (tol) of 0.5-1.0 Å can significantly improve convergence [37]. For proteins with limited evolutionary information, consider integrating physicochemical and statistical features or using structural complementarity approaches that don't rely solely on co-evolution [36]. Implementing model quality assessment feedback loops has also shown promise for iterative refinement [38].
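The interaction between a recycle limit and a tolerance can be illustrated with a generic early-stopping loop. The sketch below is plain Python and is not ColabFold code: `refine_once` is a toy update, the change between iterations is measured as a simple RMS difference over a flat list of coordinates, and how ColabFold itself measures convergence is not modeled.

```python
import math

def recycle(coords, refine_once, max_recycles=12, tol=0.5):
    """Apply `refine_once` until the RMS change falls below `tol`
    or `max_recycles` iterations have been run. Returns (coords, iterations)."""
    for n in range(1, max_recycles + 1):
        new = refine_once(coords)
        change = math.sqrt(sum((a - b) ** 2 for a, b in zip(new, coords)) / len(coords))
        coords = new
        if change < tol:
            return coords, n
    return coords, max_recycles

target = [10.0, 10.0, 10.0, 10.0]

def toy_refine(coords):
    """Toy update: move every coordinate halfway toward a fixed target."""
    return [x + 0.5 * (t - x) for x, t in zip(coords, target)]

start = [0.0, 0.0, 0.0, 0.0]
print(recycle(start, toy_refine, max_recycles=12, tol=0.5)[1])   # 5
print(recycle(start, toy_refine, max_recycles=12, tol=1.0)[1])   # 4
print(recycle(start, toy_refine, max_recycles=3, tol=0.5)[1])    # 3 (budget spent first)
```

The last call shows the reason for raising the recycle limit: with too small a budget the loop stops before the change has fallen below the tolerance, so the tolerance never takes effect.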

Troubleshooting Guides

Problem: Low Confidence Predictions (pLDDT < 70)

Symptoms: Large regions of the model shown in yellow, orange, or red in the confidence visualization; high variability between different model instances.

Solution Protocol:

- Verify your input sequence: Ensure proper formatting without non-standard amino acids or formatting characters [37].

- Enhance multiple sequence alignments: Supplement with additional sequence databases (UniRef30, UniRef90, Metaclust, BFD) if using a local installation [36].

- Increase sampling: Generate multiple models (num_models = 3-5) with different random seeds to assess consistency [37].

- Adjust recycling parameters: Set max_recycles to 12-48 with tolerance 0.5-1.0 Å to allow proper convergence [37].

- Consider alternative approaches: For persistent low-confidence regions, investigate protein language models like ESMFold which may perform better when evolutionary information is sparse [39].

Problem: Inaccurate Protein-Protein Interaction Interfaces

Symptoms: Incorrect binding orientations despite high monomer confidence; high PAE between interacting domains; biologically implausible interfaces.

Solution Protocol: