Optimizing Principal Component Selection for RNA-seq Analysis: A Practical Guide for Biomedical Researchers


Abstract

Selecting the optimal number of principal components (PCs) is a critical step in RNA-seq data analysis that directly impacts the accuracy of downstream interpretations, from differential expression to cell type identification. This guide provides a comprehensive framework for researchers and drug development professionals, covering the foundational principles of Principal Component Analysis (PCA) in transcriptomics, practical methods for determining component numbers, troubleshooting common pitfalls, and validation strategies using both traditional metrics and emerging trajectory-aware evaluation methods. By synthesizing current best practices and comparative analyses of dimensionality reduction techniques, this article empowers scientists to make informed decisions that enhance the biological relevance and reproducibility of their RNA-seq findings.

Understanding PCA in RNA-seq: From Mathematical Foundations to Biological Interpretation

The Role of Dimensionality Reduction in High-Dimensional Transcriptomics Data

Dimensionality reduction (DR) is a critical preprocessing step in the analysis of high-dimensional transcriptomics data. Technologies like single-cell RNA sequencing (scRNA-seq) can measure the expression of thousands of genes across tens of thousands of cells, creating datasets that are computationally challenging and suffer from the "curse of dimensionality" [1]. DR techniques address this by projecting data into a lower-dimensional space, preserving essential biological signals while reducing noise and redundancy. This enables effective visualization, clustering, and trajectory inference, which are fundamental for identifying novel cell types, understanding cellular heterogeneity, and tracing developmental lineages [2]. Within the context of optimizing the number of principal components for RNA-seq research, selecting appropriate DR methods and parameters becomes paramount for generating biologically meaningful results.

FAQs and Troubleshooting Guides

FAQ 1: What are the fundamental differences between PCA and non-linear methods like UMAP and t-SNE?

Answer: PCA is a linear dimensionality reduction technique that creates new, uncorrelated variables (principal components) through an orthogonal transformation of the original data. The principal components are linear combinations of the original features and are ranked by the amount of variance they explain, with the first component capturing the most variance [1] [2]. It is highly interpretable, computationally efficient, and is typically used to obtain the top 10-50 principal components for downstream analysis tasks [1].

In contrast, t-SNE and UMAP are non-linear neighbor-embedding techniques. They excel at revealing local structure and cluster relationships in high-dimensional data [1] [2].

- t-SNE focuses on preserving local similarities by modeling probabilities in high and low-dimensional spaces, making it excellent for identifying distinct cell populations [3] [2].

- UMAP often provides better preservation of global structure (the relationships between clusters) compared to t-SNE and generally has superior run-time performance [2] [4].

The choice between them depends on the analysis goal: use PCA for initial, fast dimensionality reduction or when interpretability is key; use t-SNE for detailed cluster separation analysis; and use UMAP for a balance of local and global structure preservation with faster computation [1] [4].

FAQ 2: How do I choose the number of principal components to retain for my RNA-seq analysis?

Answer: Optimizing the number of principal components (PCs) is a crucial step. Retaining too few can discard biologically relevant signal, while too many can introduce noise. There is no universal rule, but several strategies can guide this decision within the context of your RNA-seq research:

- Scree Plot Analysis: Visualize the percentage of variance explained by each consecutive principal component. A common approach is to look for an "elbow" in the plot—the point where the marginal gain in explained variance drops significantly. The components before this elbow are typically retained.

- Cumulative Variance Explained: Set a threshold for the total variance you wish to capture (e.g., 80-90%) and select the number of PCs required to reach it.

- Downstream Task Performance: A practical method is to use the output of PCA (the selected PCs) for downstream tasks like clustering and then evaluate the biological coherence of the results. The optimal number of PCs is often the one that leads to the most stable and biologically interpretable clusters.

Remember that PCA yields many components, not just two. A plot of PC1 vs. PC2 shows only the two largest sources of variation, and important signals may be hidden in higher components [5].
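The scree-plot and cumulative-variance strategies above can be sketched with NumPy alone. This is a minimal illustration on synthetic data, not a recommended pipeline: the function names (`explained_variance_ratio`, `n_pcs_for_threshold`, `elbow_index`) are hypothetical, and the elbow rule used here (largest vertical gap below the chord joining the first and last scree points) is only one of several possible heuristics.

```python
import numpy as np

def explained_variance_ratio(X):
    """Fraction of total variance explained by each PC of X (samples x genes)."""
    Xc = X - X.mean(axis=0)                    # center each gene
    s = np.linalg.svd(Xc, compute_uv=False)    # singular values, descending
    var = s ** 2                               # proportional to PC variances
    return var / var.sum()

def n_pcs_for_threshold(ratio, threshold=0.9):
    """Smallest number of PCs whose cumulative explained variance reaches threshold."""
    cumulative = np.cumsum(ratio)
    k = int(np.searchsorted(cumulative, threshold)) + 1
    return min(k, len(ratio))

def elbow_index(ratio):
    """1-based index of the PC lying farthest below the chord of the scree curve."""
    ratio = np.asarray(ratio, dtype=float)
    n = len(ratio)
    chord = ratio[0] + (ratio[-1] - ratio[0]) * np.arange(n) / (n - 1)
    return int(np.argmax(chord - ratio)) + 1

# Synthetic example (assumption): 50 samples, 200 genes, 3 strong latent factors plus noise.
rng = np.random.default_rng(0)
latent = rng.normal(size=(50, 3)) * np.array([10.0, 6.0, 4.0])
X = latent @ rng.normal(size=(3, 200)) + rng.normal(size=(50, 200))

ratio = explained_variance_ratio(X)
print("PCs needed for 90% variance:", n_pcs_for_threshold(ratio, 0.9))
print("Elbow at PC:", elbow_index(ratio))
```

Whatever number these heuristics suggest, it is worth rerunning the downstream clustering with a few neighboring values to check that the conclusions are stable.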

FAQ 3: My PCA plot shows unexpected clustering, like a strong batch effect. What should I do?

Answer: Unexpected clustering in a PCA plot, such as a clear separation driven by processing batch rather than biological condition, is a common issue that indicates a batch effect [6].

Troubleshooting Steps:

- Confirm the Artifact: Correlate the principal component driving the separation (e.g., PC1) with your experimental metadata (e.g., sequencing batch, preparation date, technician). If the clusters align with a technical rather than biological variable, you have identified a batch effect.

- Incorporate Batch in Model: If using a tool like DESeq2 for differential expression, you can include the batch as a covariate in your design formula (e.g., `design = ~ batch + condition`). This will adjust for the batch effect during modeling [6].

- Investigate the Drivers: Examine the genes that contribute most (have the highest loadings) to the principal component responsible for the batch effect. This can provide insight into the nature of the technical artifact [5].

If the batch effect is confirmed and cannot be statistically corrected, it may compromise the data's utility for detecting true biological differences, and the experiment may need to be re-run with better-controlled conditions.
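One way to carry out the "Confirm the Artifact" step is to measure how much of each PC's variance is explained by a categorical metadata column. The sketch below is an illustration on synthetic data; the helper names `pc_scores` and `r_squared_by_group` are hypothetical, and the R² is the ordinary one-way ANOVA ratio of between-group to total sum of squares.

```python
import numpy as np

def pc_scores(X, n_pcs=5):
    """PC scores for X (samples x genes) via SVD of the centered matrix."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    return U[:, :n_pcs] * s[:n_pcs]

def r_squared_by_group(scores, labels):
    """Fraction of each PC's variance explained by a categorical label."""
    labels = np.asarray(labels)
    grand_mean = scores.mean(axis=0)
    total = ((scores - grand_mean) ** 2).sum(axis=0)
    between = np.zeros(scores.shape[1])
    for group in np.unique(labels):
        members = scores[labels == group]
        between += len(members) * (members.mean(axis=0) - grand_mean) ** 2
    return between / total

# Synthetic example (assumption): 20 samples in two batches, with batch B
# shifted on the first 30 of 100 genes.
rng = np.random.default_rng(0)
batch = np.array(["A"] * 10 + ["B"] * 10)
X = rng.normal(size=(20, 100))
X[batch == "B", :30] += 3.0

scores = pc_scores(X, n_pcs=3)
r2 = r_squared_by_group(scores, batch)
print("R^2 of batch on PC1..PC3:", np.round(r2, 2))
```

A value near 1 for a given PC suggests that the component is largely a batch axis. The same function can be run against the biological condition labels for comparison.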

FAQ 4: Why does my UMAP/t-SNE visualization look different every time I run it?

Answer: Non-linear methods like UMAP and t-SNE use stochastic processes (random initialization) and optimization algorithms like gradient descent. Therefore, slight variations in the resulting plots between runs are normal [3].

How to Ensure Reproducibility and Trust Your Results:

- Set a Random Seed: Always set a random seed (e.g., `set.seed(123)` in R) before running t-SNE or UMAP. This ensures that you get the exact same output every time you run the code on the same data.

- Understand Parameter Sensitivity: These methods are sensitive to hyperparameters. For t-SNE, the `perplexity` parameter influences the balance between local and global structure. For UMAP, the `n_neighbors` parameter determines the scale of the structures it captures. It is good practice to run the algorithms with different parameter values to see if the core conclusions hold [2] [4].

- Focus on the Biology: The exact positions of clusters may shift between seeds, but the overall topology (the presence of distinct clusters and their relative relationships) should be stable across runs with different seeds. Trust the visualization if it consistently shows the same major biological structures.
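The effect of seeding can be illustrated without running a full embedding. The sketch below uses a hypothetical `random_init` function as a stand-in for the random starting layout of a stochastic method; it is not how t-SNE or UMAP are implemented. In Python, scikit-learn's `TSNE` and umap-learn's `UMAP` both expose a `random_state` argument that serves the same purpose as `set.seed` in R.

```python
import numpy as np

def random_init(n_cells, n_dims=2, seed=None):
    """Stand-in for the random starting coordinates of a stochastic embedding."""
    rng = np.random.default_rng(seed)
    return rng.normal(scale=1e-4, size=(n_cells, n_dims))

a = random_init(100, seed=123)
b = random_init(100, seed=123)   # same seed as a
c = random_init(100, seed=456)   # different seed

same_seed_identical = np.array_equal(a, b)
different_seed_differs = not np.array_equal(a, c)
print(same_seed_identical, different_seed_differs)
```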

Performance Comparison of Dimensionality Reduction Methods

The following table summarizes key characteristics of popular DR methods based on benchmarking studies, providing a guide for method selection. Note that performance can vary based on dataset and specific implementation.

Table 1: Comparison of Dimensionality Reduction Methods for Transcriptomic Data

| Method | Type | Key Strengths | Key Limitations | Best Use Cases |

|---|---|---|---|---|

| PCA [1] [2] | Linear | Computationally efficient; highly interpretable; preserves global variance. | Poor handling of non-linear data; less effective for visualization of complex clusters. | Initial data exploration; noise reduction; as input for other DR methods. |

| t-SNE [1] [2] [4] | Non-linear | Excellent preservation of local structure and cluster separation. | High computational cost; sensitive to parameters; poor preservation of global structure. | Identifying and visualizing distinct cell populations and local similarities. |

| UMAP [1] [2] [4] | Non-linear | Better preservation of global structure than t-SNE; faster runtime. | Sensitive to parameter choices; can produce artificial trajectories. | General-purpose non-linear visualization where both local and global structure are important. |

| PaCMAP [4] | Non-linear | Designed to optimize both local and global structure; robust to parameter choices. | Less established in some bioinformatics pipelines. | When a balanced preservation of local and global structure is required. |

Table 2: Quantitative Benchmarking of DR Methods (Based on Representative Studies)

| Method | Local Structure Preservation | Global Structure Preservation | Stability | Computational Speed |

|---|---|---|---|---|

| PCA | Low [4] | High [4] | High | Very Fast [3] |

| t-SNE | Very High [2] [4] | Low [4] | Moderate | Slow [3] |

| UMAP | High [2] [4] | Moderate [4] | High [2] | Moderate [3] |

| PaCMAP | High [4] | High [4] | High [4] | Fast [4] |

Experimental Protocols and Workflows

Protocol 1: Standard Dimensionality Reduction Workflow for scRNA-seq Data

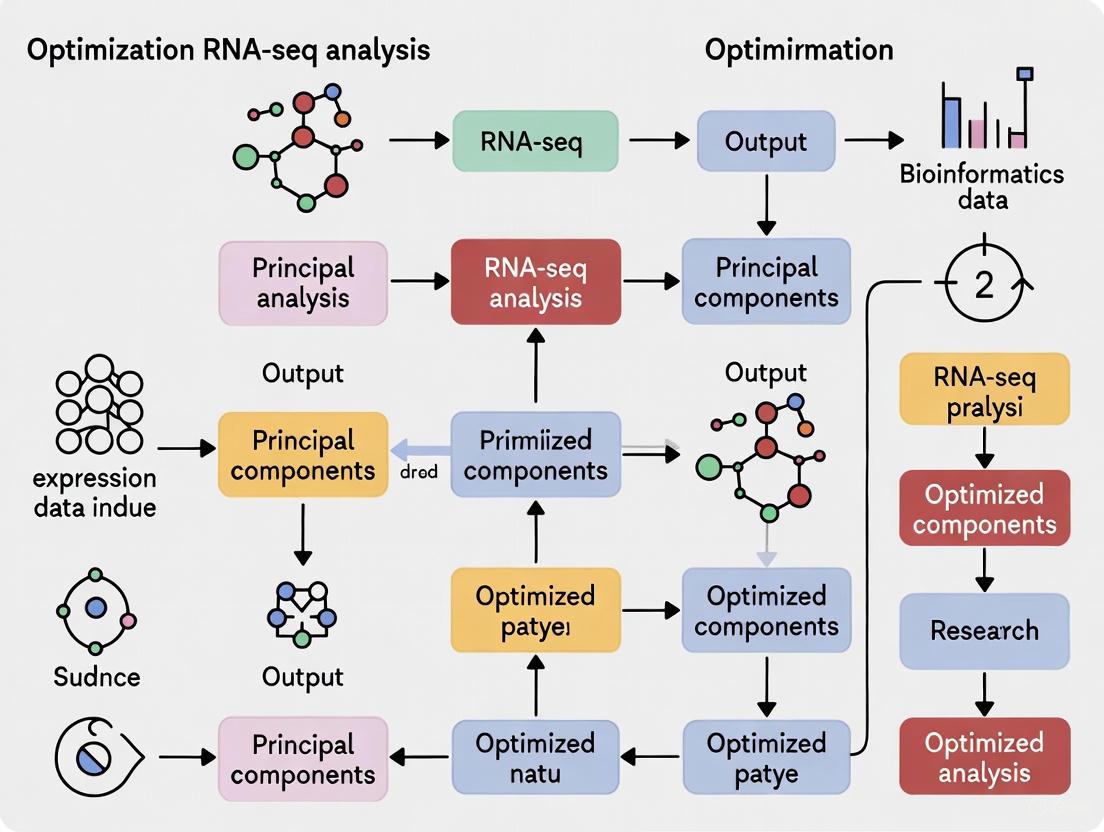

The following diagram outlines a standard workflow for applying dimensionality reduction to single-cell RNA-seq data, as implemented in tools like Scanpy.

Standard DR Workflow for scRNA-seq

Detailed Methodology:

- Raw Count Matrix: Begin with a gene-by-cell count matrix.

- Quality Control & Normalization: Filter out low-quality cells and genes, and correct for technical variations like sequencing depth. A common approach is to use the shifted logarithm transformation on normalized counts [1].

- Feature Selection: Identify highly variable genes that are likely to contain biologically relevant information. This step reduces noise before DR [1].

- Primary Dimensionality Reduction (PCA): Perform PCA on the scaled data of highly variable genes. This step efficiently reduces dimensionality and denoises the data. The top principal components are used for subsequent analysis [1].

- Non-linear Reduction (t-SNE/UMAP): For visualization, compute a neighborhood graph from the PCA-reduced data, then apply a non-linear method like UMAP or t-SNE to project the data into 2D or 3D [1].

- Clustering & Visualization: Use the low-dimensional embedding to identify cell clusters and visualize them.

- Biological Interpretation: Annotate clusters based on known marker genes and perform differential expression analysis to understand the biological meaning.
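The preprocessing and PCA steps of this protocol can be sketched in plain NumPy on synthetic counts. This is a simplified illustration under stated assumptions: the thresholds (500 counts per cell, 3 cells per gene, 200 variable genes, 30 PCs) are arbitrary, and ranking genes by raw variance is a stand-in for the mean-variance-aware selection that dedicated tools perform. Cells are stored in rows here, which is also how Scanpy arranges its data.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic counts (assumption): 300 cells x 1000 genes, two populations
# differing in the first 50 genes.
base = rng.gamma(shape=2.0, scale=1.0, size=1000)
lam = np.tile(base, (300, 1))
lam[150:, :50] *= 4
counts = rng.poisson(lam).astype(float)

# Quality control: drop shallow cells and rarely detected genes.
counts = counts[counts.sum(axis=1) >= 500]
counts = counts[:, (counts > 0).sum(axis=0) >= 3]

# Normalization: scale each cell to the median depth, then shifted log.
depth = counts.sum(axis=1, keepdims=True)
logn = np.log1p(counts / depth * np.median(depth))

# Feature selection: keep the most variable genes (simplified ranking).
n_hvg = 200
hvg_idx = np.argsort(logn.var(axis=0))[::-1][:n_hvg]
hvg = logn[:, hvg_idx]

# Scaling and PCA via SVD; keep the top components for downstream steps.
scaled = (hvg - hvg.mean(axis=0)) / hvg.std(axis=0)
U, s, Vt = np.linalg.svd(scaled, full_matrices=False)
n_pcs = 30
pcs = U[:, :n_pcs] * s[:n_pcs]
print(pcs.shape)
```

The `pcs` matrix is what a neighborhood graph, UMAP or t-SNE, and clustering would take as input in the later steps.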

Protocol 2: Method Selection for Specific Biological Questions

Use this decision guide to select an appropriate dimensionality reduction method based on your research goals.

DR Method Selection Guide

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Key Software Tools for Dimensionality Reduction in Transcriptomics

| Tool / Package | Function | Key Features | Common Use Case |

|---|---|---|---|

| Scanpy [1] | Python-based scRNA-seq analysis | Integrated workflow from QC to DR and clustering; implements PCA, t-SNE, UMAP. | Comprehensive analysis of single-cell data in a Python environment. |

| Seurat | R-based scRNA-seq analysis | Popular, well-documented R toolkit with extensive visualization and DR capabilities. | Standard for single-cell analysis in the R ecosystem. |

| DESeq2 [6] [5] | R package for differential expression | Includes utilities for PCA on stabilized transformed counts (e.g., VST or rlog). | Visualizing sample-to-sample distances in bulk RNA-seq experiments. |

| FlowJo [7] | Commercial flow cytometry analysis | Provides plugins for running UMAP and t-SNE directly on flow cytometry data. | Dimensionality reduction and visualization for high-dimensional cytometry. |

| GrandPrix [2] | DR tool based on Gaussian Processes | Uses variational inference for scalable probabilistic DR. | Probabilistic dimensionality reduction for large datasets. |

| ZIFA [2] | Zero-Inflated Factor Analysis | Explicitly models dropout events common in scRNA-seq data. | Dimensionality reduction when dropouts are a major concern. |

Principal Component Analysis (PCA) is a fundamental dimensionality reduction technique widely used in transcriptomic studies, including RNA sequencing (RNA-seq) research. In the context of high-dimensional genomic data where the number of genes (variables) far exceeds the number of samples (observations)—a classic P≫N problem—PCA provides an essential tool for exploratory data analysis, visualization, and noise reduction [8] [9]. The method simplifies complex datasets by transforming original variables into a new set of uncorrelated variables called principal components (PCs), which successively capture maximum variance in the data [10] [11]. For RNA-seq researchers, PCA enables efficient data exploration by identifying dominant patterns, detecting batch effects, and visualizing sample relationships in lower-dimensional space [8].

Mathematical Principles of PCA

Core Mathematical Foundation

PCA operates through a linear transformation that projects high-dimensional data onto a new coordinate system defined by principal components [12]. The mathematical procedure involves several key steps:

Standardization and Centering: Variables are centered by subtracting their means and often scaled to unit variance to ensure equal contribution [10]. This step is crucial when variables have different measurement units or scales.

Covariance Matrix Computation: The covariance matrix captures how variables deviate from their means together [10]. For a dataset with p variables, this produces a p×p symmetric matrix where diagonal elements represent variances and off-diagonal elements represent covariances between variable pairs [10].

Eigendecomposition: The covariance matrix is decomposed into eigenvectors and eigenvalues [10] [11]. Eigenvectors determine the directions of the new feature space (principal components), while eigenvalues indicate the magnitude of variance carried by each component [10].

The fundamental optimization problem solved by PCA is finding the weight vector w(1) that maximizes the variance of the projected data [12]:

$$w_{(1)} = \underset{\|w\|=1}{\arg\max}\ \|Xw\|^2 = \underset{w}{\arg\max}\ \frac{w^{\top} X^{\top} X w}{w^{\top} w}$$

This Rayleigh quotient is maximized when w is the principal eigenvector of XᵀX [12]. Subsequent components are found recursively by removing the variance explained by previous components and repeating the process on the residual matrix [12].
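A quick numerical check of this statement, assuming a small random centered matrix as X: the Rayleigh quotient evaluated at the leading eigenvector of XᵀX equals the largest eigenvalue, and no randomly drawn direction exceeds it. The helper name `rayleigh` is hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
X = rng.normal(size=(40, 6)) @ rng.normal(size=(6, 6))
X = X - X.mean(axis=0)                      # column-center the data

def rayleigh(w, X):
    """Rayleigh quotient w^T X^T X w / w^T w."""
    return (w @ X.T @ X @ w) / (w @ w)

eigvals, eigvecs = np.linalg.eigh(X.T @ X)  # eigenvalues in ascending order
w1 = eigvecs[:, -1]                         # principal eigenvector
best = rayleigh(w1, X)

# Compare against many random directions.
random_best = max(rayleigh(w, X) for w in rng.normal(size=(1000, 6)))
print(best, random_best)
```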

Geometric and Algebraic Interpretations

Geometrically, PCA can be viewed as fitting an ellipsoid to the data, where each axis represents a principal component [12]. The eigenvectors define the axes directions, while eigenvalues correspond to the lengths of these axes [12]. Algebraically, PCA is equivalent to singular value decomposition (SVD) of the column-centered data matrix [11]. This connection provides computational advantages and deeper theoretical insights into the method.
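The equivalence between the covariance eigendecomposition and the SVD of the centered data can be verified directly. In this sketch on random data, the squared singular values divided by n − 1 reproduce the covariance eigenvalues, and the right singular vectors match the eigenvectors up to sign.

```python
import numpy as np

rng = np.random.default_rng(2)
X = rng.normal(size=(30, 5))
Xc = X - X.mean(axis=0)                     # column-centered data
n = Xc.shape[0]

# Route 1: eigendecomposition of the covariance matrix.
cov = Xc.T @ Xc / (n - 1)
eigvals, eigvecs = np.linalg.eigh(cov)      # ascending order
eigvals, eigvecs = eigvals[::-1], eigvecs[:, ::-1]

# Route 2: SVD of the centered data matrix.
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
svd_vals = s ** 2 / (n - 1)

# Directions agree up to sign, so compare absolute dot products.
alignment = np.abs(np.sum(eigvecs * Vt.T, axis=0))
print(np.allclose(eigvals, svd_vals), np.allclose(alignment, 1.0))
```

For RNA-seq matrices with tens of thousands of genes, the SVD route avoids forming the gene-by-gene covariance matrix at all.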

Variance Explanation in PCA

Concept of Explained Variance

In PCA, variance explained refers to the proportion of total dataset variability captured by each principal component [10]. The sum of all eigenvalues always equals the total variance of the data [11]. When variables are standardized, each variable contributes one unit of variance, so the total variance (and therefore the sum of the eigenvalues) equals the number of variables [11].

The variance explained by the k-th principal component is calculated as:

$$\text{Variance Explained}(k) = \frac{\lambda_k}{\sum_{i} \lambda_i}$$

where λk is the k-th eigenvalue, and the denominator represents the sum of all eigenvalues [10]. This ratio indicates how much of the total information (variance) in the original data is compressed into each component [10].
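As a worked example of this ratio, take four hypothetical eigenvalues 4, 2, 1, and 1 (chosen only to keep the arithmetic simple). The function name `variance_explained` is illustrative.

```python
from itertools import accumulate

def variance_explained(eigenvalues):
    """Proportion of total variance carried by each component."""
    total = sum(eigenvalues)
    return [lam / total for lam in eigenvalues]

eigenvalues = [4.0, 2.0, 1.0, 1.0]            # hypothetical values, sum = 8
ratios = variance_explained(eigenvalues)      # [0.5, 0.25, 0.125, 0.125]
cumulative = list(accumulate(ratios))         # [0.5, 0.75, 0.875, 1.0]
print(ratios, cumulative)
```

Here the first component alone carries half of the total variance, and the first two together carry 75%.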

Variance Interpretation Guidelines for RNA-seq Data

Table 1: Interpretation of Variance Explained in PCA for RNA-seq Studies

| Variance Distribution | Implication for RNA-seq Analysis | Recommended Action |

|---|---|---|

| First 2 PCs capture >70% variance | Strong global structure likely driven by few technical or biological factors | Investigate top loadings for biological interpretation; check for batch effects |

| Variance spread evenly across many PCs | Complex data with many contributing factors | Consider higher-dimensional analysis; avoid over-interpreting 2D visualizations |

| <50% total variance in first 5 PCs | High noise or weak signals | Apply more aggressive filtering; consider biological replication |

| Sharp drop after first few PCs (elbow) | Clear separation between signal and noise | Use elbow for dimensionality selection |

For RNA-seq data, where the intrinsic dimensionality is often lower than the measured 20,000+ genes, PCA effectively separates biological signals from technical noise [8] [9]. The first few components typically capture dominant biological processes or major batch effects, while later components often represent random noise or subtle biological variations [8].

Troubleshooting PCA in RNA-seq Experiments

Common Issues and Solutions

Table 2: Troubleshooting Guide for PCA in RNA-seq Analysis

| Problem | Potential Causes | Solutions | Prevention Strategies |

|---|---|---|---|

| Batch effects dominating PC1 | Sample processing dates, different sequencers, library preparation batches | Include batch as covariate in model; apply batch correction methods | Randomize samples across batches; include control samples in each batch |

| Non-linear patterns not captured | PCA assumes linear relationships; non-linear biological patterns | Apply non-linear methods (t-SNE, UMAP, kernel PCA) alongside PCA | Use PCA for initial assessment, then apply non-linear methods for subpopulation identification |

| Inconsistent results after data filtering | Different gene filtering thresholds altering covariance structure | Standardize filtering (e.g., retain genes with expression >5 in ≥50% samples) | Document and maintain consistent preprocessing pipelines |

| PCA visualization shows no clear separation | Biological heterogeneity; subtle transcriptomic differences | Increase sample size; focus on known marker genes; use supervised methods | Conduct power analysis before experimentation; define biological hypotheses clearly |

| Unexpected outliers in PCA plot | Sample quality issues, contamination, or unique biological states | Check RNA quality metrics (RIN); verify sample identity; inspect outlier-specific genes | Implement strict QC thresholds; sequence technical replicates |

Addressing PCA Limitations in Biological Data

PCA has specific mathematical limitations that can impact its effectiveness for RNA-seq data:

Linearity Assumption: PCA identifies linear relationships, but biological systems often exhibit nonlinear patterns [13] [14]. When applied to data with nonlinear structures, PCA may fail to capture meaningful relationships and potentially obscure genuine associations [13] [14].

Orthogonality Constraint: Principal components are mathematically constrained to be orthogonal, which may not align with the true underlying biological factors that could be correlated [15].

Variance Maximization Focus: PCA prioritizes high-variance features, which may not always correspond to biologically meaningful signals, especially when relevant biological processes manifest as subtle transcriptional changes [15].

Scale Sensitivity: PCA results are sensitive to data scaling. Without proper standardization, genes with higher expression levels or greater variability can dominate the leading components [15] [10].

Experimental Protocols for PCA in RNA-seq

Standardized RNA-seq PCA Workflow

RNA-seq PCA Workflow Diagram

Step 1: Data Preprocessing

- Quality Control: Filter samples based on RNA quality metrics (RIN >7) and library quality controls [8].

- Gene Filtering: Remove genes with low expression (e.g., <5 counts across most samples) to reduce noise [8].

- Normalization: Apply appropriate normalization (e.g., TPM, FPKM, or variance-stabilizing transformations) to account for sequencing depth and gene length differences [8].

Step 2: Data Transformation

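- Log Transformation: Apply a log2 transformation with a pseudocount (e.g., log2(x + 1)) to normalized counts to stabilize variance and reduce the influence of extreme values. This step is not needed if a variance-stabilizing transformation was already applied during normalization.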

- Standardization: Scale genes to have zero mean and unit variance (z-score normalization) to prevent high-expression genes from dominating the analysis [10].

Step 3: PCA Implementation

- Covariance Matrix: Compute the covariance matrix of the transformed data matrix [10].

- Eigendecomposition: Perform eigendecomposition to extract eigenvectors (loadings) and eigenvalues (variances) [10] [11].

- Component Scores: Project original data onto principal components to generate PC scores for each sample [11].

Step 4: Results Interpretation

- Variance Assessment: Calculate percentage of variance explained by each component [10].

- Visualization: Create PCA plots (PC1 vs PC2, etc.) to visualize sample relationships [8].

- Loading Examination: Identify genes with highest absolute loadings on each component to interpret biological meaning [11].
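Steps 1 to 4 can be sketched end to end on a synthetic bulk count matrix. This is an illustration under several assumptions: the counts are simulated, counts-per-million stands in for the normalization step, the filter (at least 5 counts in at least half of the samples) is one possible threshold, and the gene names are placeholders. The SVD is used in place of an explicit covariance eigendecomposition, which gives the same components without building a gene-by-gene matrix.

```python
import numpy as np

rng = np.random.default_rng(3)
n_genes, n_samples = 2000, 12
# Synthetic counts (assumption), genes x samples.
counts = rng.negative_binomial(n=5, p=0.05, size=(n_genes, n_samples)).astype(float)
gene_names = np.array([f"gene_{i}" for i in range(n_genes)])

# Step 1: filter low-expression genes, then normalize for sequencing depth.
keep = (counts >= 5).sum(axis=1) >= n_samples / 2
counts, gene_names = counts[keep], gene_names[keep]
cpm = counts / counts.sum(axis=0) * 1e6

# Step 2: log2 transform with a pseudocount, then z-score each gene.
X = np.log2(cpm + 1).T                        # samples x genes
sd = X.std(axis=0, ddof=1)
varying = sd > 0                              # drop constant genes before scaling
X, gene_names, sd = X[:, varying], gene_names[varying], sd[varying]
Z = (X - X.mean(axis=0)) / sd

# Step 3: PCA via SVD; rows of Vt are the loading vectors.
U, s, Vt = np.linalg.svd(Z, full_matrices=False)
scores = U * s                                # sample coordinates on each PC
loadings = Vt.T                               # genes x PCs

# Step 4: variance explained and the top-loading genes on PC1.
explained = s ** 2 / np.sum(s ** 2)
top_pc1 = gene_names[np.argsort(np.abs(loadings[:, 0]))[::-1][:10]]
print(np.round(explained[:3], 3), top_pc1[:3])
```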

Optimizing Number of Components Selection

Component Selection Decision Diagram

Several methods can determine the optimal number of components to retain:

- Scree Plot Visualization: Plot eigenvalues in descending order and look for an "elbow" point where the curve flattens [15].

- Cumulative Variance Threshold: Retain components until a predetermined variance percentage is reached (typically 70-90%) [15].

- Broken Stick Model: Compare observed eigenvalues with those expected from random data, retaining components that explain more variance than expected by chance [15].

- Bootstrap Methods: Use resampling to generate confidence intervals for eigenvalues and retain components with eigenvalues significantly >1 [15].

For RNA-seq studies, we recommend a composite approach combining multiple methods with biological validation to determine the optimal number of components.
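Two of these rules are simple enough to sketch in pure Python. The broken-stick expectation used below is the standard formula b_k = (1/p) Σ_{i=k}^{p} 1/i for p components; the eigenvalues are hypothetical values for six standardized variables, and the function names are illustrative.

```python
def broken_stick(p):
    """Expected variance proportions for p components under the broken-stick model."""
    return [sum(1.0 / i for i in range(k, p + 1)) / p for k in range(1, p + 1)]

def n_components_broken_stick(eigenvalues):
    """Count leading components whose observed proportion exceeds the expectation."""
    total = sum(eigenvalues)
    observed = [lam / total for lam in eigenvalues]
    expected = broken_stick(len(eigenvalues))
    n = 0
    for obs, exp in zip(observed, expected):
        if obs <= exp:
            break
        n += 1
    return n

def n_components_kaiser(eigenvalues):
    """Kaiser rule: count eigenvalues above 1 (assumes standardized variables)."""
    return sum(1 for lam in eigenvalues if lam > 1.0)

eigenvalues = [3.0, 1.8, 0.6, 0.3, 0.2, 0.1]   # hypothetical, sum = 6
print(n_components_broken_stick(eigenvalues), n_components_kaiser(eigenvalues))
```

When the rules disagree on real data, the disagreement itself is informative and is a reason to fall back on biological validation.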

Research Reagent Solutions for RNA-seq PCA

Table 3: Essential Research Reagents and Tools for RNA-seq PCA Analysis

| Reagent/Tool | Function in PCA Workflow | Implementation Example |

|---|---|---|

| Quality Control Tools (FastQC, MultiQC) | Assess RNA and library quality before PCA | Filter samples with RIN <7 and unusual GC content [8] |

| Normalization Methods (DESeq2, edgeR, limma) | Standardize counts across samples for valid comparisons | Apply variance-stabilizing transformation to raw counts [8] |

| Statistical Platforms (R, Python) | Perform covariance matrix computation and eigendecomposition | Use R's prcomp() or Python's sklearn.decomposition.PCA [8] |

| Visualization Packages (ggplot2, plotly) | Create PCA plots and scree plots for interpretation | Generate interactive 3D PCA plots for sample exploration [8] |

| Batch Correction Tools (ComBat, limma removeBatchEffect) | Address technical confounding in PCA visualization | Apply prior to PCA when batch information is known [8] |

Frequently Asked Questions (FAQs)

Q1: Why does PC1 often separate my experimental groups while PC2 shows batch effects? A: PC1 captures the largest variance source in your data, which could be either biological or technical. When experimental groups show strong transcriptomic differences, these often dominate PC1. Batch effects typically appear in subsequent components when they explain less variance than the biological effect. To address this, include batch information in your experimental design and apply batch correction methods before PCA.

Q2: How many components should I retain for differential expression analysis? A: This question mainly arises when PCs are included as covariates (or regressed out) to adjust for unwanted variation. In that setting, retaining too many components may remove biological signal, while retaining too few may leave confounding noise. A practical approach is to retain components that collectively explain 70-80% of total variance, or those up to the scree plot elbow. Validate by checking that known biological signals remain in the residual data.

Q3: Can PCA be used for feature selection in RNA-seq? A: While PCA transforms rather than selects features, examining PC loadings can identify genes driving observed patterns. Genes with extreme loading values (positive or negative) on biologically relevant components represent candidates for further investigation. However, for formal feature selection, methods like LASSO or dedicated feature selection techniques are more appropriate.

Q4: Why do my PCA results change dramatically after removing low-count genes? A: Low-count genes contribute predominantly noise to the covariance structure. Their removal eliminates spurious correlations, potentially revealing stronger biological patterns. This is expected behavior and generally improves analysis quality. Maintain consistent filtering thresholds (e.g., requiring ≥5 counts in ≥50% of samples) across comparisons.

Q5: How can I handle non-linear patterns that PCA fails to capture? A: For non-linear data structures, consider complementing PCA with non-linear dimensionality reduction techniques such as t-SNE, UMAP, or kernel PCA [13] [15] [14]. These methods can capture complex relationships that linear PCA might miss, though they may sacrifice some interpretability.

Q6: What does it mean when my PCA shows no clear clustering pattern? A: Absence of clear clusters may indicate: (1) genuine biological homogeneity among samples, (2) high technical noise overwhelming biological signals, (3) insufficient sample size to detect differences, or (4) relevant biology captured in higher components. Investigate quality metrics, increase sample size, or explore higher-dimensional projections.

Frequently Asked Questions (FAQs)

What is the primary purpose of a PCA biplot in RNA-seq analysis? A PCA biplot is a powerful visualization tool that allows researchers to simultaneously observe both the similarity between samples (as points) and the influence of original variables (genes or transcripts, as vectors) in the reduced dimensional space of the principal components. It helps in identifying patterns, such as clusters of samples based on biological replicates or batches, and the genes that are driving these patterns [16] [17].

How can I tell if a principal component captures biological signal or technical noise? There is no single definitive method, but a combination of approaches is best. Biplot interpretation is key: if the component separates samples along known biological groups (e.g., treatment vs. control) and is associated with genes with known biological relevance, it likely represents biological variation. Technical noise (e.g., batch effects, sequencing depth) often correlates with technical covariates and may not form biologically meaningful patterns. The scree plot helps determine if the variance explained by a component is substantial enough to be considered signal [16] [18].

My samples form tight clusters in the biplot, but not by biological group. What does this mean? This is a strong indicator that a major source of variation in your data is not your primary biological variable of interest. The clustering could be driven by technical artifacts, such as batch effects from different processing dates, or by a strong, unaccounted-for biological covariate (e.g., patient sex, age). You should investigate the metadata for the samples within each cluster to identify the common factor [17].

Why are the angles between the variable vectors in the biplot important? The angles between the vectors representing variables (genes) indicate their correlation with one another in the dataset projected onto the PC plane.

- A small angle between two vectors indicates that the two variables are positively correlated [16].

- Vectors pointing in opposite directions (close to 180°) indicate that the variables are negatively correlated [16].

- A 90° angle suggests the two variables are not correlated [16].
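The link between angles and correlations can be checked numerically. The sketch below assumes four synthetic standardized genes and variable vectors scaled by the square root of each eigenvalue. With all components retained, the cosine of the angle between two vectors equals their correlation exactly; in a 2D biplot it is only an approximation, and it is better for genes that are well represented by the plotted PCs.

```python
import numpy as np

rng = np.random.default_rng(4)
n = 200
g1 = rng.normal(size=n)
g2 = g1 + 0.3 * rng.normal(size=n)      # positively correlated with g1
g3 = -g1 + 0.3 * rng.normal(size=n)     # negatively correlated with g1
g4 = rng.normal(size=n)                 # roughly uncorrelated with g1
X = np.column_stack([g1, g2, g3, g4])

Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
U, s, Vt = np.linalg.svd(Z, full_matrices=False)
eigvals = s ** 2 / (n - 1)
vectors = Vt.T * np.sqrt(eigvals)       # one row per gene, one column per PC

def cosine(a, b):
    """Cosine of the angle between two vectors."""
    return a @ b / (np.linalg.norm(a) * np.linalg.norm(b))

corr = np.corrcoef(Z, rowvar=False)
cos_full = cosine(vectors[0], vectors[1])         # all PCs: equals corr[0, 1]
cos_2d = cosine(vectors[0, :2], vectors[1, :2])   # what a PC1/PC2 biplot shows
print(round(corr[0, 1], 3), round(cos_full, 3), round(cos_2d, 3))
```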

How many principal components should I include for a robust biplot in RNA-seq? The goal is to include enough PCs to capture the true biological signal while excluding those representing mostly noise. A scree plot, which plots the eigenvalues (amount of variance explained) for each PC, is the primary diagnostic tool. Look for the "elbow" – the point where the curve bends and the eigenvalues start to flatten out. PCs before this elbow are typically retained [16]. Common rules of thumb include the Kaiser rule (retain PCs with eigenvalues > 1) and retaining enough PCs to explain a sufficient proportion of total variance (e.g., 70-80%) [16] [18]. For RNA-seq data with many variables, the elbow is often more informative than the Kaiser rule.

Troubleshooting Common Biplot Interpretation Challenges

Problem 1: The Biplot is Dominated by a Single Technical Covariate

- Symptoms: Samples cluster strongly by batch, sequencing lane, or other technical factors, obscuring biological groups. The vectors for genes associated with the technical factor are long and dominate the principal components.

- Diagnostic Checklist:

  - Check the sample metadata and color points in the biplot by different technical covariates.

  - Confirm the finding with a scree plot. The first one or two PCs may explain an unusually high proportion of variance if a strong batch effect is present.

- Solutions:

  - Pre-processing: Apply a batch correction algorithm (e.g., ComBat, Harmony, or the `removeBatchEffect` function from the limma R/Bioconductor package) to the normalized count data before performing PCA.

  - Include as Covariate: In downstream linear models, include the technical covariate as a fixed effect to account for its variation.

Problem 2: Indistinct Clustering and Poor Separation of Biological Groups

- Symptoms: The biplot shows a "cloud" of points with no clear separation between expected biological groups (e.g., different cell types or disease states).

- Diagnostic Checklist:

  - Verify the quality control metrics for your samples. High levels of technical noise in individual samples can mask biological signal.

  - Ensure you are using the most variable genes as input for the PCA, not all genes. PCA is driven by variance, and using low-variance genes adds mostly noise.

- Solutions:

  - Filter Genes: Perform PCA on the top N (e.g., 1000-5000) most variable genes across samples.

  - Check PC Selection: The separation might be present in a higher PC (e.g., PC3 or PC4). Examine pairwise plots of higher PCs.

  - Consider Alternative Methods: If the relationships are highly non-linear, methods like t-SNE or UMAP might reveal clusters that PCA cannot. However, always interpret these with caution as they prioritize local over global structure [16].
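The "Filter Genes" solution amounts to ranking genes by variance and keeping the top N. A minimal sketch, assuming a samples-by-genes matrix of log-scale expression values; the function name `top_variable_genes` and the synthetic data are hypothetical.

```python
import numpy as np

def top_variable_genes(expr, n_top=1000):
    """Column indices of the n_top highest-variance genes in expr (samples x genes)."""
    variances = expr.var(axis=0, ddof=1)
    n_top = min(n_top, expr.shape[1])
    return np.argsort(variances)[::-1][:n_top]

# Synthetic example (assumption): 12 samples x 50 genes, gene 7 made highly variable.
rng = np.random.default_rng(5)
expr = rng.normal(size=(12, 50))
expr[:, 7] *= 20.0

idx = top_variable_genes(expr, n_top=10)
filtered = expr[:, idx]                   # input matrix for PCA
print(idx[0], filtered.shape)
```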

Problem 3: Misinterpretation of Variable Vectors and Distances

- Symptoms: Incorrect conclusions about the correlation between genes or the importance of a gene for a specific sample.

- Diagnostic Checklist:

- Recall that the absolute length of a variable vector indicates how well it is represented in the 2D PC space—a short vector means its variation is not well captured by the plotted PCs [16].

- Remember that the position of a sample point relative to a variable vector should be interpreted by projecting the point onto the vector. A point does not "belong" to the gene whose vector it is physically closest to on the plot [17].

- Solutions:

- Focus on Angles: When assessing gene-gene relationships, prioritize the interpretation of the angles between vectors over their absolute positions [16].

- Project Points: To understand which variables influence a sample, mentally drop a perpendicular line from the sample point onto a variable vector. The position of this projection along the vector's direction indicates the influence.
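
Both solutions are simple geometry, and a small worked example may make them concrete. The sketch below computes the cosine of the angle between two gene vectors and the signed projection of a sample onto a gene vector. All coordinates and names (`gene_a`, `gene_b`, `gene_c`, `sample`) are made up for illustration, and the angle is only a reliable guide to correlation when the genes are well represented in the plotted PCs.

```python
import math

def cosine_between(u, v):
    """Cosine of the angle between two 2D vectors (near 1: same direction, near -1: opposite)."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.hypot(*u) * math.hypot(*v))

def scalar_projection(point, vector):
    """Signed length of the perpendicular projection of a point onto a vector's direction."""
    dot = sum(a * b for a, b in zip(point, vector))
    return dot / math.hypot(*vector)

# Hypothetical biplot coordinates (PC1, PC2)
gene_a = (0.8, 0.1)
gene_b = (0.7, 0.3)
gene_c = (-0.6, -0.2)
sample = (2.0, 1.0)

cos_ab = cosine_between(gene_a, gene_b)   # acute angle: suggests positive correlation
cos_ac = cosine_between(gene_a, gene_c)   # obtuse angle: suggests negative correlation
proj_a = scalar_projection(sample, gene_a)
proj_c = scalar_projection(sample, gene_c)
print(round(cos_ab, 2), round(cos_ac, 2), round(proj_a, 2), round(proj_c, 2))
```

A positive projection means the sample lies on the high side of that gene's direction and a negative projection on the low side, regardless of which arrow tip the point happens to sit closest to.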

Quantitative Guide to PCA Outputs

Table 1: Key PCA Statistics and Their Interpretation

| Statistic | Description | Interpretation in RNA-seq Context | Ideal Value/Range |

|---|---|---|---|

| Eigenvalue | The variance accounted for by each principal component (PC) [18]. | A high eigenvalue for a PC suggests it captures a major source of variation in the data (biological or technical). | Retain PCs with eigenvalue >1 (Kaiser rule, which is meaningful only when genes are scaled to unit variance) or those before the "elbow" in the scree plot [16] [18]. |

| Proportion of Variance | (Eigenvalue / Total Variance) for each PC [18]. | Indicates what percentage of the total dataset variability is explained by a specific PC. | The first 2-3 PCs should capture a large portion (e.g., >50-70%) for a good 2D/3D summary [16]. |

| Cumulative Proportion | The running total of variance explained by consecutive PCs [18]. | Helps decide the total number of PCs to retain for downstream analysis (e.g., clustering). | Aim for >80% of total variance explained by all retained PCs [16] [18]. |

| Loadings | The coefficients (weights) of the original variables in the linear combination that forms each PC [19]. | The absolute value of a gene's loading on a PC indicates that gene's importance for that PC. | Genes with high absolute loadings (positive or negative) are the key drivers of the variation captured by that PC. |

Experimental Protocol: PCA for RNA-seq Data Analysis

The following workflow is standard for extracting and interpreting principal components from RNA-seq data.

1. Data Preprocessing and Normalization

- Input: Raw count matrix (genes x samples).

- Normalization: Account for differences in library size. For RNA-seq, methods like DESeq2's median-of-ratios or edgeR's TMM are standard. This produces normalized, often log-transformed (e.g., log2(CPM+1)), expression values.

- Gene Selection: Filter to include only the top N genes with the highest variance across samples. This focuses the PCA on biologically informative genes.

2. Performing the Principal Component Analysis

- Standardization: Center the data (mean = 0) and, crucially, scale each gene to have unit variance (standard deviation = 1). This prevents highly expressed genes from dominating the PCs purely due to their scale [20].

- Calculation: Perform singular value decomposition (SVD) or eigen-decomposition on the standardized data matrix. This can be done with functions like prcomp() or princomp() in R [20].

3. Visualization and Interpretation (Biplot Creation)

- Scores Plot: Plot the sample coordinates (PC scores) for the first two PCs (PC1 vs. PC2). Color points by known biological and technical groups to identify patterns.

- Loadings Plot: Superimpose the variable loadings as vectors onto the scores plot to create a biplot [16]. The directions and lengths of these vectors indicate the contribution of each gene to the PCs.

4. Determination of Component Number

- Generate a Scree Plot: Plot the eigenvalues of each PC in descending order.

- Apply Decision Rules: Use the scree test (look for the "elbow") and the Kaiser criterion (eigenvalue >1) to decide how many PCs represent meaningful signal versus noise [16] [18].
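
Steps 2 and 4 of this workflow can be sketched with plain NumPy. The example assumes a samples x genes matrix that is already normalized, log-transformed, and free of zero-variance genes; the function name `run_pca` and the simulated two-group data are illustrative assumptions, not part of any package.

```python
import numpy as np

def run_pca(expr):
    """PCA of a (samples x genes) matrix via SVD after centering and unit-variance scaling."""
    x = np.asarray(expr, dtype=float)
    x = x - x.mean(axis=0)                    # center each gene
    x = x / x.std(axis=0, ddof=1)             # scale each gene (assumes no constant genes)
    u, s, vt = np.linalg.svd(x, full_matrices=False)
    eigenvalues = s ** 2 / (x.shape[0] - 1)   # variance captured by each PC
    scores = u * s                            # sample coordinates in PC space
    loadings = vt.T                           # gene weights, one column per PC
    return scores, loadings, eigenvalues

# Simulated data (hypothetical): 12 samples, 30 genes, 10 genes differ between two groups
rng = np.random.default_rng(42)
n_samples, n_genes = 12, 30
group = np.repeat([0, 1], n_samples // 2)
expr = rng.normal(0.0, 1.0, size=(n_samples, n_genes))
expr[:, :10] += 4.0 * group[:, None]

scores, loadings, eigenvalues = run_pca(expr)
prop_var = eigenvalues / eigenvalues.sum()
n_kaiser = int(np.sum(eigenvalues > 1.0))     # Kaiser criterion on scaled data
print(np.round(prop_var[:3], 3), n_kaiser)
```

Because every gene is scaled to unit variance, the eigenvalues sum to the number of genes, which is what gives the eigenvalue >1 cutoff its meaning: a retained PC explains more variance than a single standardized gene.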

The logical relationships and workflow for interpreting PCA in the context of RNA-seq data are summarized in the following diagram.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for PCA in RNA-seq

| Tool / Resource | Function | Application Note |

|---|---|---|

| R Statistical Software | Open-source environment for statistical computing and graphics. | The primary platform for performing custom PCA and generating high-quality biplots. |

| Seurat R Package | A comprehensive toolkit for single-cell RNA-seq data analysis [21]. | Its RunPCA() function is optimized for scRNA-seq data and is widely used in the field. |

| FactoMineR & factoextra R Packages | Provides user-friendly functions for multivariate analysis, including PCA and visualization [20]. | Excellent for creating publication-ready biplots and scree plots with minimal code. |

| Scikit-learn (Python) | Machine learning library that includes a robust PCA implementation. | Used for PCA in Python-based bioinformatics pipelines via the decomposition.PCA module [19]. |

| DESeq2 / edgeR R Packages | Methods for differential expression analysis of RNA-seq data. | Used for the initial normalization and transformation of raw count data prior to PCA. |

The Critical Link Between PC Selection and Downstream Analysis Quality

Frequently Asked Questions (FAQs)

FAQ 1: Why is the number of Principal Components (PCs) so critical for my RNA-seq analysis? Selecting the correct number of PCs is a fundamental step that directly impacts all subsequent analysis. Too few PCs can oversimplify your data, discarding biologically meaningful variation and leading to poorly separated clusters. Conversely, too many PCs introduce noise, which can result in overfitting and spurious findings, reducing the reproducibility and reliability of your results such as differential expression or trajectory inference [22]. The goal is to retain the signal—the biological variation of interest—while excluding technical noise.

FAQ 2: My PCA plot shows a strong separation along PC1 that correlates with sequencing batch. Is this a problem? Yes, this is a common and significant problem known as batch effects. PCA is highly effective at revealing major sources of variation in your data, which are often technical artifacts like those from different sequencing batches, library preparation dates, or overall sequencing depth [23] [24]. If these technical factors dominate the biological signal (e.g., differences between cancer subtypes), your downstream analysis will be confounded. It is crucial to address these batch effects through normalization or correction methods before proceeding with PC selection and further analysis.

FAQ 3: Are there automated methods to choose the number of PCs, and are they reliable? Several automated methods exist, such as the elbow method in a scree plot or statistical tests like the Tracy-Widom statistic. However, their reliability can vary. The Tracy-Widom statistic, for example, is known to be highly sensitive and may inflate the number of significant PCs [25]. While these methods provide a valuable starting point, they should not be followed blindly. The optimal number of PCs often requires a combination of automated metrics, visual inspection of PCA plots, and biological reasoning based on the expected cell types or conditions in your experiment.

FAQ 4: How does PC selection specifically affect my clustering results? The number of PCs used as input for clustering algorithms (e.g., K-means, hierarchical clustering) directly controls the resolution and accuracy of the identified cell populations or sample groups. Using an insufficient number of PCs may cause distinct cell types to merge, while excessive PCs can lead to the splitting of a homogeneous population into multiple false sub-clusters due to noise [26]. Benchmarking studies have shown that the quality of clustering, as measured by metrics like Within-Cluster Sum of Squares (WCSS) and Mutual Information, is highly dependent on the dimensionality of the input data [26].

Troubleshooting Guides

Issue 1: Poor Cluster Separation in Downstream Analysis

Symptoms

- Clusters on your t-SNE or UMAP plot are poorly defined or overlapping.

- Known, biologically distinct cell types or sample groups do not form separate clusters.

- Clustering metrics (e.g., silhouette score) are low.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Insufficient PCs retained, discarding biological signal. [22] | 1. Generate a scree plot and note the "elbow" point. 2. Check the proportion of variance explained by the last PC you included. 3. Re-run clustering with a gradually increasing number of PCs and observe cluster stability. | Increase the number of PCs used for clustering. A good practice is to use the number of PCs where the cumulative variance explained reaches a plateau (e.g., 80-90%). |

| Technical variation (batch effects) dominating the first few PCs. [23] | 1. Color your PCA plot by technical metrics (e.g., batch, sequencing depth). 2. Check if these technical factors strongly correlate with the first few PCs. | Apply a batch correction algorithm (e.g., ComBat, Harmony) to your data before performing PCA. Re-check the PCA plot to confirm the batch effect is reduced. |

| Over-correction or data distortion from using too many PCs. [22] | 1. Observe if small, likely spurious, clusters appear when using a very high number of PCs. 2. Check if the results become less reproducible on a different subset of the data. | Systematically reduce the number of PCs and monitor the stability of the major clusters. Use a statistically guided method (e.g., Tracy-Widom) to set an upper limit. |
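
The diagnostic step "check if these technical factors strongly correlate with the first few PCs" can be made quantitative. One simple option, sketched below, is the fraction of a PC's variance explained by a categorical factor (the R-squared of a one-way ANOVA). The function name and the six PC1 scores are invented for illustration.

```python
import numpy as np

def variance_explained_by_factor(pc_scores, labels):
    """Fraction of a PC's variance explained by a categorical factor (one-way ANOVA R^2)."""
    pc_scores = np.asarray(pc_scores, dtype=float)
    labels = np.asarray(labels)
    grand = pc_scores.mean()
    ss_total = np.sum((pc_scores - grand) ** 2)
    ss_between = sum(
        np.sum(labels == g) * (pc_scores[labels == g].mean() - grand) ** 2
        for g in np.unique(labels)
    )
    return float(ss_between / ss_total)

# Hypothetical PC1 scores for six samples, with their batch and condition labels
pc1 = np.array([-3.1, -2.8, -3.3, 2.9, 3.2, 3.1])
batch = np.array(["run1", "run1", "run1", "run2", "run2", "run2"])
condition = np.array(["ctrl", "treated", "ctrl", "treated", "ctrl", "treated"])

r2_batch = variance_explained_by_factor(pc1, batch)
r2_condition = variance_explained_by_factor(pc1, condition)
print(round(r2_batch, 3), round(r2_condition, 3))
```

In this toy case nearly all of PC1 tracks the sequencing run rather than the condition, which is the pattern that would call for batch correction before choosing the number of PCs.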

Issue 2: Inconsistent or Non-Reproducible Results

Symptoms

- PCA results change dramatically with minor changes in the dataset (e.g., adding or removing a few samples).

- Findings cannot be replicated in a similar, independent dataset.

- Principal components are unstable and cannot be biologically interpreted.

| Potential Cause | Diagnostic Steps | Solution |

|---|---|---|

| Outliers are exerting undue influence on the PCA. [23] | 1. Create a PCA plot and look for samples that are isolated from the main cloud of data points. 2. Calculate multivariate standard deviation ellipses; samples outside a 2 or 3 standard deviation threshold are potential outliers. [23] | Identify and scrutinize outlier samples. If they are determined to be technical artifacts, consider their careful removal. If they are valid biological outliers, they may warrant separate analysis. |

| Inappropriate data preprocessing. | 1. Verify the normalization method used (e.g., for RNA-seq, CPM, TPM, or DESeq2's median of ratios). 2. Check if the data was scaled (mean-centered and unit-variance) before PCA, which is standard practice. [23] | Re-visit your preprocessing steps. Ensure the data is properly normalized and scaled before performing PCA. |

| The selected number of PCs is not robust. [25] | 1. Use a resampling method (e.g., bootstrapping) to see if the selected PCs are stable. 2. Check if the proportion of variance explained by key PCs is consistently high across subsamples. | Choose a number of PCs that is stable across multiple resampling iterations. Consider using more robust dimensionality reduction methods like Randomized SVD for large datasets. [26] |
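
The standard-deviation-ellipse check for outliers can be approximated numerically. Because PC scores are uncorrelated, a sample lies outside the k-SD ellipse when the square root of its summed squared z-scores exceeds k. The sketch below assumes the input columns are PC scores; the function name `flag_outliers` and the toy scores are hypothetical.

```python
import numpy as np

def flag_outliers(scores, n_sd=3.0):
    """Indices of samples lying outside the n_sd standard-deviation ellipse in PC space."""
    scores = np.asarray(scores, dtype=float)
    z = (scores - scores.mean(axis=0)) / scores.std(axis=0, ddof=1)
    distance = np.sqrt(np.sum(z ** 2, axis=1))   # standardized distance from the centroid
    return np.where(distance > n_sd)[0]

# Toy PC1/PC2 scores (hypothetical): 20 samples, with sample 5 placed far out on PC1
pc1 = np.linspace(-2.0, 2.0, 20)
pc2 = np.tile([-1.0, 1.0], 10)
pc1[5], pc2[5] = 12.0, 0.0
scores = np.column_stack([pc1, pc2])

outliers = flag_outliers(scores, n_sd=3.0)
print(outliers)
```

A flagged sample is only a candidate: an extreme sample also inflates the standard deviation it is judged against, so with very few samples a stricter review of QC metrics is more informative than any fixed threshold.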

Experimental Protocols & Data Presentation

Protocol 1: A Standard Workflow for PC Selection in RNA-seq Analysis

Objective: To systematically determine the optimal number of principal components for downstream clustering analysis of RNA-seq data.

Materials and Reagents:

- Normalized Count Matrix: A gene expression matrix (samples x genes) that has been normalized for sequencing depth and transformed (e.g., VST for bulk RNA-seq, log-normalized for scRNA-seq).

- Computational Environment: R or Python with necessary libraries (e.g., stats, scikit-learn).

Methodology:

- Data Preprocessing: Center and scale the normalized expression matrix so that each gene has a mean of zero and a standard deviation of one. This ensures all genes contribute equally to the PCA. [23]

- PCA Computation: Perform PCA on the preprocessed matrix. Retain a generous number of potential PCs (e.g., 50) for initial evaluation.

- Generate a Scree Plot: Plot the proportion (or percentage) of variance explained by each consecutive principal component. Look for the "elbow" – the point where the explained variance drops sharply and then forms a gradual tail.

- Calculate Cumulative Variance: Compute the cumulative variance explained by the first k PCs. A common threshold is to choose the smallest k that explains a substantial amount (e.g., 80-90%) of the total variance.

- Inspect Batch Effects: Color the PCA plot (PC1 vs. PC2) by technical batches. If strong batch effects are present, apply a correction method and return to the PCA Computation step.

- Downstream Validation: Use the selected PCs as input for your clustering algorithm. Evaluate clustering quality using internal metrics (e.g., Dunn Index, Gap Statistic for unlabeled data) or external metrics (e.g., Hungarian algorithm, Mutual Information for labeled data). [26] Iterate with different PC numbers to find the value that optimizes your chosen metrics.
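
The cumulative-variance step of this protocol reduces to finding the smallest k whose running total crosses the chosen threshold. A minimal sketch follows; the variance ratios are invented for illustration and the helper name `n_pcs_for_threshold` is an assumption.

```python
from itertools import accumulate

def n_pcs_for_threshold(variance_ratios, threshold=0.80):
    """Smallest k such that the first k PCs reach the cumulative variance threshold."""
    for k, cumulative in enumerate(accumulate(variance_ratios), start=1):
        if cumulative >= threshold:
            return k
    return len(variance_ratios)  # threshold never reached: keep every PC supplied

# Hypothetical proportion of variance explained by the first 10 PCs
ratios = [0.35, 0.20, 0.12, 0.08, 0.06, 0.05, 0.05, 0.04, 0.03, 0.02]

k80 = n_pcs_for_threshold(ratios, threshold=0.80)
k90 = n_pcs_for_threshold(ratios, threshold=0.90)
print(k80, k90)
```

Running the clustering step at both values, and at a few in between, is a direct way to see whether the conclusions depend on where in the 80-90% range the cutoff is placed.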

Quantitative Comparison of PC Selection Methods

The table below summarizes various methods for selecting the number of PCs, highlighting their advantages and limitations.

| Method | Description | Advantages | Limitations | Reported Performance/Threshold |

|---|---|---|---|---|

| Elbow (Scree) Plot | Visual identification of the point where variance gain drops. [22] | Intuitive and simple to implement. | Subjective; the "elbow" is not always clear. | Often used as a first heuristic; effective when a clear plateau is visible. |

| Cumulative Variance | Selects PCs that explain a pre-defined percentage of total variance (e.g., 90%). [22] | Provides a quantitative and reproducible threshold. | The threshold is arbitrary; may retain irrelevant PCs or discard biological signal if set poorly. | A common threshold is 80-90%, but this is dataset-dependent. |

| Tracy-Widom Statistic | Statistical test to identify PCs that explain more variance than expected by noise. [25] | Provides a p-value for significance of each PC. | Known to be highly sensitive, often inflating the number of significant PCs. [25] | In one population genetics study, it was found to be overly sensitive compared to other methods. [25] |

| Random Matrix Theory (RMT) | Uses RMT to distinguish signal eigenvalues from noise eigenvalues. [27] | Mathematically grounded and less sensitive to outliers. | Methodologically complex; requires specialized implementation. | In single-cell RNA-seq, an RMT-guided sparse PCA approach consistently outperformed standard PCA in cell-type classification. [27] |
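
To give a feel for the random matrix idea in the last row, the sketch below counts eigenvalues of the gene-gene correlation matrix that exceed the Marchenko-Pastur upper edge, (1 + sqrt(p/n))^2 for n samples and p unit-variance genes. This is a textbook simplification that assumes independent, identically distributed noise; it is not the RMT-guided sparse PCA method of [27], and the function name and simulated data are assumptions.

```python
import numpy as np

def count_signal_pcs_mp(x):
    """Count correlation-matrix eigenvalues above the Marchenko-Pastur upper edge."""
    n, p = x.shape
    z = (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)          # unit-variance genes
    eigenvalues = np.linalg.svd(z, compute_uv=False) ** 2 / (n - 1)
    upper_edge = (1.0 + np.sqrt(p / n)) ** 2                  # largest eigenvalue expected from pure noise
    return int(np.sum(eigenvalues > upper_edge)), float(upper_edge)

# Simulated data (hypothetical): 200 cells x 100 genes with two planted latent factors
rng = np.random.default_rng(11)
n_cells, n_genes = 200, 100
x = rng.normal(size=(n_cells, n_genes))
x[:, :30] += rng.normal(size=(n_cells, 1))     # factor 1 shared by genes 0-29
x[:, 30:60] += rng.normal(size=(n_cells, 1))   # factor 2 shared by genes 30-59

n_signal, edge = count_signal_pcs_mp(x)
print(n_signal, round(edge, 3))
```

Real expression data have correlated, non-Gaussian noise, so the edge should be read as a rough lower bound on what counts as signal rather than as a formal test.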

The Scientist's Toolkit: Research Reagent Solutions

| Item/Tool | Function in PC Analysis & RNA-seq |

|---|---|

| PCA Algorithms (SVD) | The core computational method for decomposing the data matrix into principal components. Essential for the initial reduction. [23] |

| Batch Correction Tools (e.g., Harmony, ComBat) | Algorithms used to remove technical variation (batch effects) that can confound the first few PCs and mask biological signal. [23] |

| Clustering Algorithms (e.g., K-means, Hierarchical) | Downstream methods used to group samples or cells based on the reduced-dimensional space provided by the selected PCs. [26] |

| Variance Stabilizing Transformation (VST) | A preprocessing step for RNA-seq count data that stabilizes variance across the mean expression range, making it more suitable for PCA. |

| Randomized SVD | An approximate PCA method that offers significant computational speed-ups for very large datasets while maintaining accuracy. [26] |

| Sparse PCA Algorithms | Methods that produce principal components with sparse loadings, making them easier to interpret biologically (e.g., identifying marker genes). [27] |

Workflow Visualization

The following diagram illustrates the logical workflow for troubleshooting and optimizing PC selection, integrating the concepts from the FAQs and guides above.

Diagram Title: PC Selection and Troubleshooting Workflow

Frequently Asked Questions

What are eigenvectors and eigenvalues in the context of RNA-seq PCA? In PCA, eigenvectors (also called loadings) define the direction of the principal components, which are the new axes of the projected data. They represent the "recipe" or linear combination of the original genes that make up each new component [28] [16]. Eigenvalues quantify the amount of variance the data has along each corresponding eigenvector. A higher eigenvalue means that its principal component captures more variation from the original dataset [5] [10].

My first two Principal Components (PCs) explain a low percentage of variance. Is my experiment a failure? Not necessarily. A low cumulative variance (e.g., below 60-70%) for PC1 and PC2 simply means that the strongest sources of variation in your data are not two-dimensional. The key is to check if the observed separation aligns with your experimental design. The relevant biological signal might be captured in PC3 or PC4 [5]. Use the scree plot to decide how many PCs to examine.

How do I interpret a PCA biplot? A PCA biplot overlays a score plot (showing samples as dots) and a loading plot (showing variables/genes as vectors) [16].

- Sample Clusters: Samples located close to each other have similar gene expression profiles.

- Variable Influence: The direction and length of a vector show how strongly that gene influences the PCs. Longer vectors have a greater influence.

- Correlations: The angle between vectors indicates the correlation between genes. Acute angles suggest positive correlation, obtuse angles suggest negative correlation, and 90-degree angles suggest no correlation [16].

How many principal components should I retain for my RNA-seq analysis? There is no universal answer, but two common methods are:

- The Scree Plot: Plot the eigenvalues (or the proportion of variance explained) for each PC and look for the "elbow" – the point where the curve bends and the slope flattens. Retain the PCs before the elbow [29] [16].

- The Kaiser Rule: Retain all PCs with an eigenvalue greater than 1 [16]. For RNA-seq, a cumulative variance of 70-80% captured by the retained PCs is often a good target [16].

Troubleshooting Guide: Interpreting Your PCA Output

Problem: No Clear Group Separation in the PCA Plot

- Potential Cause 1: The largest sources of variation in your data are technical (e.g., batch effects, library preparation dates) rather than biological. These can obscure the biological signal of interest.

- Solution: Investigate if the samples cluster by technical factors. If so, include these as covariates in your differential expression analysis (e.g., using DESeq2 or limma).

- Potential Cause 2: The biological effect you are studying is weak or the variation between groups is small compared to the variation within groups.

- Solution: Check the variance explained by higher PCs (e.g., PC3, PC4). The signal might be present but not visible in PC1 and PC2 [5]. Ensure your sample size has sufficient statistical power to detect the effect.

Problem: An Individual Sample is an Outlier

- Potential Cause: The outlier sample may suffer from poor RNA quality, a failed library construction, or a sample mix-up.

- Solution: First, check the QC metrics for that specific sample (mapping rates, ribosomal RNA contamination, etc.). If the QC is poor, consider removing the sample. If the QC is acceptable, the sample might represent a genuine biological outlier worth further investigation.

Problem: The Scree Plot Shows No Clear "Elbow"

- Potential Cause: Your dataset has many small, independent sources of variation, with no single dominant factor.

- Solution: Use the Kaiser rule (retain PCs with eigenvalue >1) or a cumulative variance threshold (e.g., retain enough PCs to explain >80% of the total variance) [16]. You may also need to rely more on your biological hypothesis to decide which PCs are relevant.

Core Concepts and Data Interpretation

Eigenvectors and Eigenvalues

PCA simplifies high-dimensional RNA-seq data (thousands of genes) by creating new, uncorrelated variables called Principal Components (PCs). The first PC (PC1) is the direction that captures the maximum variance in the data, the second PC (PC2) captures the next highest variance while being orthogonal to PC1, and so on [29] [10].

- Eigenvectors (Loadings): These are the coefficients assigned to each original gene in the linear combination that forms a PC. They indicate the contribution, or "weight," of each gene to that PC [30] [28]. A gene with a high absolute loading value on PC1 is a major driver of the largest variation in your dataset.

- Eigenvalues: The eigenvalue for a PC is a single number derived from the sum of the squared distances from the projected points to the origin [28]; dividing that sum by n - 1 (where n is the number of samples) gives the variance captured by that PC. The higher the eigenvalue, the more important that PC is in describing the data.

The relationship between eigenvalues and the proportion of variance explained is calculated as follows:

Proportion of Variance for PCn = (Eigenvalue of PCn) / (Sum of all Eigenvalues)
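
The two definitions above can be checked numerically. The sketch below, which uses simulated data and is only an illustration, confirms that the sum of squared projected distances divided by n - 1 matches the eigenvalues of the covariance matrix, and then applies the proportion-of-variance formula.

```python
import numpy as np

# Simulated, centered data (hypothetical): 10 samples x 4 variables with unequal spread
rng = np.random.default_rng(3)
x = rng.normal(size=(10, 4)) @ np.diag([3.0, 2.0, 1.0, 0.5])
x = x - x.mean(axis=0)

# Eigen-decomposition of the covariance matrix, sorted from largest to smallest eigenvalue
cov = np.cov(x, rowvar=False)
eigenvalues, eigenvectors = np.linalg.eigh(cov)
order = np.argsort(eigenvalues)[::-1]
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]

# Project samples onto each PC and sum the squared distances to the origin
scores = x @ eigenvectors
ss_distances = np.sum(scores ** 2, axis=0)
variance_from_ss = ss_distances / (x.shape[0] - 1)

# Proportion of variance for PCn = eigenvalue of PCn / sum of all eigenvalues
proportion = eigenvalues / eigenvalues.sum()
print(np.round(proportion, 3))
```

The proportions always sum to 1, so a scree plot of eigenvalues and a scree plot of proportions have the same shape and differ only in the y-axis scale.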

The following diagram illustrates the core relationships in a PCA result:

The Scree Plot

A scree plot is a critical diagnostic tool for deciding how many PCs to retain. It is a simple line plot that shows the eigenvalue (or the proportion of variance explained) for each principal component, ordered from largest to smallest [30] [16].

- How to Read It: The x-axis represents the principal component number (PC1, PC2, PC3...), and the y-axis represents the eigenvalue or the proportion of variance explained.

- The "Elbow": The ideal scree plot is steep at first, then bends at an "elbow" before flattening out. The components before this elbow are the ones that contain the most signal and should be retained. Components after the elbow are often considered to represent noise [16].

The table below summarizes a typical scree plot output and its interpretation:

| Principal Component | Eigenvalue | Proportion of Variance Explained (%) | Cumulative Variance (%) | Recommended Action |

|---|---|---|---|---|

| PC1 | 12.5 | 38.8 | 38.8 | Retain |

| PC2 | 5.1 | 16.3 | 55.1 | Retain |

| PC3 | 2.7 | 8.5 | 63.6 | Retain (Borderline) |

| PC4 | 1.5 | 4.8 | 68.4 | Discard |

| PC5 | 1.1 | 3.4 | 71.8 | Discard |

Example interpretation: The scree plot shows an elbow after PC3. The first three PCs explain ~64% of the total variance and are likely to contain the most biologically relevant information.

Experimental Protocol: Running PCA on RNA-seq Data

This protocol outlines the key steps for performing and interpreting a PCA on a normalized RNA-seq count matrix using R.

Objective: To reduce the dimensionality of the gene expression data and visualize sample relationships and key drivers of variation.

Materials and Reagents:

| Item | Function / Explanation |

|---|---|

| Normalized Count Matrix | Input data. Raw counts should be normalized (e.g., via TMM in edgeR or variance stabilizing transformation in DESeq2) to correct for library size and other technical biases [5] [31]. |

| R Statistical Environment | Software platform for computation. |

| prcomp() function | The core R function used to perform PCA. It is preferred over princomp() for better numerical accuracy [30]. |

| ggplot2 package | R package used for creating publication-quality visualizations, including PCA and scree plots. |

Step-by-Step Procedure:

Normalization & Transformation:

- Begin with a raw count matrix. Do not use TPM values for differential analysis or PCA that feeds into it; use normalized counts (e.g., from DESeq2 or edgeR) [5].

- It is common to transform the normalized counts using a logarithmic function (e.g., log2(norm_counts + 1)) or a variance-stabilizing transformation (VST) to reduce the influence of extremely highly expressed genes.

Data Preparation:

- The prcomp() function expects samples to be in rows and variables (genes) to be in columns. Since a typical RNA-seq matrix has samples as columns and genes as rows, you must transpose the matrix.

- Code: pca_matrix <- t(my_normalized_log_transformed_matrix)

Perform PCA:

- Execute the PCA using the prcomp() function. The scale. argument is critical.

- Scaling: Setting scale. = TRUE is generally recommended. This standardizes the data so that each gene has a mean of 0 and a standard deviation of 1, ensuring that highly expressed genes do not dominate the PCA simply because of their scale [30] [10].

- Code: pca_result <- prcomp(pca_matrix, scale. = TRUE)

Extract Outputs:

- Scores (PC scores): pca_scores <- pca_result$x (The coordinates of your samples in the new PC space).

- Loadings (Eigenvectors): pca_loadings <- pca_result$rotation (The weight of each gene in each PC).

- Eigenvalues: pca_eigenvalues <- (pca_result$sdev)^2 (The variance explained by each PC).

Generate Diagnostic Plots:

- Scree Plot:

- PCA Plot (PC1 vs. PC2):

Interpret Results:

- Use the scree plot to determine the number of meaningful PCs.

- Examine the PCA plot for clustering of samples according to experimental conditions and identify any outliers.

- Analyze the loadings for the key PCs to identify which genes are the strongest drivers of the observed variation.
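
The last interpretation step, ranking genes by their loadings, is easy to automate. The sketch below is written in Python rather than R and assumes a genes x PCs loadings array, which corresponds to the layout of pca_result$rotation; the gene names, loading values, and the helper `top_loading_genes` are all hypothetical.

```python
import numpy as np

def top_loading_genes(loadings, gene_names, pc=0, n_top=5):
    """Genes with the largest absolute loading on one PC, with their signed weights."""
    weights = np.asarray(loadings, dtype=float)[:, pc]
    order = np.argsort(np.abs(weights))[::-1][:n_top]   # strongest drivers first
    return [(gene_names[i], float(weights[i])) for i in order]

# Hypothetical loadings for five genes on PC1 and PC2
gene_names = ["GENE_A", "GENE_B", "GENE_C", "GENE_D", "GENE_E"]
loadings = np.array([
    [0.70, 0.10],
    [-0.65, 0.05],
    [0.20, 0.80],
    [0.05, -0.55],
    [0.15, 0.15],
])

top_pc1 = top_loading_genes(loadings, gene_names, pc=0, n_top=2)
top_pc2 = top_loading_genes(loadings, gene_names, pc=1, n_top=2)
print(top_pc1)
print(top_pc2)
```

The sign matters for interpretation: genes with opposite signs on the same PC vary in opposite directions along that component, so both ends of the ranking are worth inspecting.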

Practical Methods for Determining Optimal Principal Components in RNA-seq Workflows

Frequently Asked Questions

Q1: What is a Scree Plot and why is it critical for RNA-seq PCA? A Scree Plot is a simple line segment plot that shows the fraction of total variance in the data explained by each successive principal component (PC) in a Principal Component Analysis (PCA) [32]. It is essential for RNA-seq research because it helps determine the optimal number of PCs to retain, balancing the need for dimensionality reduction against the loss of critical biological information [33] [30]. This prevents over-interpretation of noise or under-representation of true transcriptomic signal.

Q2: My Scree Plot has multiple elbows. How do I determine the correct number of components? Multiple elbows are a common challenge, making the test subjective [32]. In such cases, employ these additional, more objective criteria to make a final decision:

- Kaiser-Guttman Criterion: Retain all PCs with eigenvalues greater than 1 [32] [34].

- Proportion of Variance Explained: Retain enough PCs to cumulatively explain at least 70-80% of the total variance in your dataset [32].

- Contextual Evaluation: For RNA-seq, consider if the selected PCs separate your biological replicates (e.g., by treatment group or cell type) in the resulting score plot. A poorly chosen cutoff may obscure these patterns.

Q3: After selecting PCs via the Scree Plot, my sample clusters still look poor. What could be wrong? A poor score plot can result from several issues:

- Low-Quality Samples: The dataset may contain low-quality RNA-seq samples that do not represent the population. Use a PCA plot of transcript integrity numbers (TIN scores) to identify and potentially remove such outliers before re-running the analysis [35].

- Insufficient Scaling: If your data was not properly scaled (centered and normalized) before PCA, highly expressed genes can dominate the first PC, masking other sources of variation. Ensure you perform scaling during the PCA computation [36] [30].

- High Noise: If the biological signal is weak, PCA might be capturing technical noise. Consider if PCA is the best method; alternatives like t-SNE or UMAP might be more effective for visualization, though they do not provide variance explained metrics like a Scree Plot [32].

Q4: Are there more robust alternatives to the standard Scree Plot? Yes. The standard Scree Plot can be sensitive to outliers. You can consider:

- Robust PCA (RPCA): This method decomposes the data into a low-rank component and a sparse error component, making it more resilient to outliers [37]. The cited examples come from imaging data (dead pixels or X-ray spikes); in RNA-seq the analogous case is an anomalous sample.

- Automated Elbow Detection: Some software libraries, like HyperSpy, can automatically estimate the "elbow" or "knee" by finding the point in the Scree Plot with the maximum distance to the line connecting the first and last points [37].
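
The maximum-distance idea behind automated elbow detection can be written out directly. The sketch below is a generic illustration of the geometry and not HyperSpy's implementation; the function name `find_elbow` and the four eigenvalues are hypothetical.

```python
import math

def find_elbow(values):
    """1-based index of the point farthest from the line joining the first and last points."""
    n = len(values)
    x1, y1, x2, y2 = 1, values[0], n, values[-1]
    length = math.hypot(x2 - x1, y2 - y1)
    best_k, best_distance = 1, 0.0
    for k, y in enumerate(values, start=1):
        # Perpendicular distance from (k, y) to the line through (x1, y1) and (x2, y2)
        distance = abs((y2 - y1) * k - (x2 - x1) * y + x2 * y1 - y2 * x1) / length
        if distance > best_distance:
            best_k, best_distance = k, distance
    return best_k

# Hypothetical eigenvalues for a four-component PCA
eigenvalues = [8.45, 3.37, 1.21, 0.59]
elbow = find_elbow(eigenvalues)
print(elbow)
```

The result depends on the units of the y-axis, so eigenvalues and proportions of variance can give different elbows on the same data. Whether the elbow component itself is retained is a convention that should be stated alongside the result.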

Experimental Protocol: Scree Plot Analysis for RNA-seq Data

This protocol details the steps to perform PCA and generate a Scree Plot from a gene expression matrix, common in RNA-seq analysis.

1. Data Preparation and Preprocessing Begin with a gene expression matrix (e.g., FPKM, TPM, or counts after variance stabilization) where rows represent samples and columns represent genes.

- Subset Numerical Data: Ensure all data is numerical. Remove any non-numerical columns (e.g., gene symbols should be row names, not a column) [38] [39].

- Transpose the Matrix: PCA in this context expects samples as rows and variables (genes) as columns. If your matrix has genes as rows, transpose it [30].

- Center and Scale the Data: It is critical to standardize the data so that each gene has a mean of 0 and a standard deviation of 1. This prevents highly expressed genes from disproportionately influencing the PCs [36] [30]. Most PCA functions have a parameter for this (e.g., scale.unit = TRUE in R's FactoMineR or scale. = TRUE in prcomp) [34] [39].

2. Perform Principal Component Analysis Execute PCA on the preprocessed data. The following code snippets use R and Python.

- Using R (prcomp):

- Using R (FactoMineR):

- Using Python (scikit-learn):
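
For the Python route, a minimal sketch with scikit-learn might look like the following. It assumes a samples x genes matrix that is already normalized and log-transformed; the simulated matrix `expr` and the choice of five components are placeholders for illustration.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

# Simulated expression matrix (hypothetical): 12 samples x 40 genes, two sample groups
rng = np.random.default_rng(0)
expr = rng.normal(size=(12, 40))
expr[:6, :8] += 3.0

# Center and scale each gene. StandardScaler divides by the population SD (ddof=0),
# whereas R's prcomp uses the sample SD, so eigenvalues differ by a factor n/(n-1);
# the explained variance ratios are unaffected.
scaled = StandardScaler().fit_transform(expr)

pca = PCA(n_components=5)
scores = pca.fit_transform(scaled)           # sample coordinates (PC scores)

eigenvalues = pca.explained_variance_        # variance captured by each PC
variance_ratio = pca.explained_variance_ratio_
cumulative = np.cumsum(variance_ratio)
print(np.round(variance_ratio, 3), np.round(cumulative, 3))
```

The loadings are available as `pca.components_`, stored with one row per PC, which is the transpose of the genes x PCs layout returned by prcomp in R.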

3. Extract Variance Explained and Create the Scree Plot Extract the variance explained by each PC and create the plot.

- Calculate Key Metrics:

- Variances (Eigenvalues): The raw variance captured by each PC. Calculated as the square of the standard deviations of the PCs (pca$sdev in R) [38].

- Proportion of Variance Explained: Each eigenvalue divided by the total sum of all eigenvalues [30] [39].

- Cumulative Variance Explained: The running total of the proportion of variance explained.

- Create the Scree Plot:

- Using R (ggplot2):

- Using Python (matplotlib):

4. Interpret the Plot and Determine the Number of Components Identify the "elbow" point—the PC where the slope of the curve sharply decreases and begins to flatten. The PCs just before this point are typically retained [32]. Validate this choice using the Kaiser rule (eigenvalue > 1) and the cumulative variance threshold (e.g., >80%) [32].

The logical workflow for this entire process is summarized in the following diagram:

Quantitative Data for Scree Plot Interpretation

The table below summarizes the key metrics for a hypothetical RNA-seq PCA with 4 principal components. This structure helps in objectively evaluating the Scree Plot.

Table 1: Key Metrics for Scree Plot Interpretation (Hypothetical Data)

| Principal Component | Eigenvalue | Variance Explained (%) | Cumulative Variance Explained (%) | Kaiser Rule (Eigenvalue >1) | Meets 80% Threshold? |

|---|---|---|---|---|---|

| PC1 | 8.45 | 62.01% | 62.01% | Yes | No |

| PC2 | 3.37 | 24.74% | 86.75% | Yes | Yes |

| PC3 | 1.21 | 8.91% | 95.66% | Yes | Yes |

| PC4 | 0.59 | 4.34% | 100.00% | No | Yes |

Interpretation Guide:

- Elbow Point: In this example, the steepest drop in variance is from PC1 to PC2, and the curve declines more gradually afterward, making PC2 the likely elbow.

- Kaiser Rule: Suggests retaining PC1, PC2, and PC3 (eigenvalues >1).

- 80% Variance Threshold: Is achieved by PC2 (86.75%).

- Final Decision: For dimensionality reduction and visualization in a 2D plot, selecting PC1 and PC2 is justified as they explain most of the variance (86.75%) and the elbow is at PC2. If more signal is required, PC3 could be included.

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Tools for PCA and Scree Plot Analysis in RNA-seq

| Tool Name / Software Package | Language/Environment | Primary Function | Relevance to RNA-seq PCA |
|---|---|---|---|
| FactoMineR & factoextra | R | Multivariate data analysis and visualization. | Streamlines PCA implementation and generates publication-ready Scree Plots and score plots [34]. |
| prcomp() | R (base stats) | Core function for performing PCA. | A standard and reliable function for computing principal components [38] [30]. |
| scikit-learn | Python | Machine learning library. | Provides the PCA class for performing decomposition and accessing explained variance [36]. |
| ggplot2 | R | Grammar of graphics plotting system. | Offers flexible and customizable creation of Scree Plots [39]. |
| ggfortify | R | Unified interface for plotting results of various models. | Can quickly plot prcomp results, including Scree Plots and sample score plots [35]. |
| RSeQC | Command Line / Python | RNA-seq quality control tool. | Calculates TIN scores, enabling RNA quality assessment via PCA to identify outlier samples [35]. |
| Cufflinks/Cuffnorm | Command Line | Tool for transcript assembly and expression quantification. | Generates FPKM values, a common normalized input matrix for PCA in RNA-seq [35]. |

Frequently Asked Questions

1. What is the cumulative explained variance ratio in PCA? The cumulative explained variance ratio is the sum of the variance percentages explained by the first m principal components. It indicates how much of the original data's information is captured when you retain those components. For example, if the first principal component (PC1) explains 50% of the variance and the second (PC2) explains 30%, the cumulative explained variance ratio up to PC2 is 80% [33].

2. Why is a threshold in the 70-90% range commonly used for selecting principal components? This range is a widely adopted heuristic that balances information retention with dimensionality reduction. Retaining enough components to capture 70-90% of the total variance is intended to preserve most of the signal, including important biological variation, while discarding low-variance components that are more likely to reflect noise [40]. The specific target within this range can be adjusted based on the study's goals.

3. My PCA plot doesn't show clear group separation. Should I increase the number of components? Increasing the number of components might reveal separation in higher dimensions (e.g., PC3 vs. PC4). However, you should first check the variance explained by these higher components. If the cumulative variance is already high (e.g., >90%), the lack of separation might be due to high biological similarity between samples or high technical noise, rather than an insufficient number of components [8].

4. How does RNA-seq data preprocessing affect the cumulative variance? The choice of normalization and transformation can substantially change the variance structure. For instance, computing log-CPM (log counts per million) values after TMM normalization is a common approach that adjusts for library size differences before performing PCA. These steps help ensure that the largest sources of variance in the data reflect biological conditions rather than technical artifacts [31].

5. Are fixed thresholds like 70-90% always appropriate? No. The optimal threshold is dataset-dependent. For some studies, the first two components might capture over 90% of the variance, making the decision straightforward. In other, more complex datasets, you might need many more components to reach 70% variance. Always complement the threshold with other methods, like the scree plot, to make an informed decision [41].

Experimental Protocols & Data Analysis

Protocol 1: A Standard Workflow for PCA on RNA-seq Data

This protocol outlines the key steps for performing and interpreting PCA on a gene expression matrix from an RNA-seq experiment [31] [42].

- Input Data Preparation: Start with a raw count matrix where rows represent genes and columns represent samples. Prepare a metadata table that describes the experimental conditions for each sample (e.g., treatment, batch, patient ID).

- Normalization and Transformation: Normalize the raw counts to account for differences in library size and sequencing depth. A common method is to calculate log-CPM (log counts per million) values, often using the TMM (Trimmed Mean of M-values) normalization method [31].

- Data Scaling: Standardize the transformed data by applying a Z-score normalization across samples for each gene. This means centering each gene's expression to a mean of zero and scaling it to unit variance. This step ensures that all genes contribute equally to the PCA and prevents highly expressed genes from dominating the variance [31].

- PCA Execution: Perform the principal component analysis on the normalized, transformed, and scaled data matrix. This can be done using standard statistical software or dedicated packages like pcaExplorer in R [42].

- Component Selection: Determine the number of components to retain using the methods detailed in the following sections.

- Visualization and Interpretation: Visualize the samples in the space defined by the first few principal components (e.g., PC1 vs. PC2). Color the points by the experimental variables from your metadata to assess whether the primary sources of variance align with the study design [43] [8].
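The protocol above can be sketched end to end with NumPy. The block is illustrative only: the count matrix is simulated, the function names are hypothetical, and library-size CPM stands in for TMM-based effective library sizes, which a real analysis would typically obtain from a dedicated package.

```python
import numpy as np


def log_cpm(counts, pseudocount=1.0):
    """log2(CPM + pseudocount) for a genes x samples count matrix.

    Simplification: raw library sizes are used; TMM would rescale them first.
    """
    lib_size = counts.sum(axis=0, keepdims=True)      # total reads per sample
    cpm = counts / lib_size * 1e6
    return np.log2(cpm + pseudocount)


def zscore_genes(expr):
    """Center each gene (row) to mean 0 and scale to unit variance across samples."""
    mean = expr.mean(axis=1, keepdims=True)
    sd = expr.std(axis=1, ddof=1, keepdims=True)
    keep = sd[:, 0] > 0                               # drop genes with no variation
    return (expr[keep] - mean[keep]) / sd[keep]


def pca_scores(scaled):
    """PCA via SVD; returns sample scores and the proportion of variance per PC."""
    X = scaled.T                                      # samples x genes, already centered
    U, s, _ = np.linalg.svd(X, full_matrices=False)
    scores = U * s
    var = s**2 / (X.shape[0] - 1)
    return scores, var / var.sum()


# Simulated input: 200 genes x 6 samples, the last three samples "treated".
rng = np.random.default_rng(1)
base = rng.gamma(shape=2.0, scale=50.0, size=(200, 1))
mu = np.repeat(base, 6, axis=1)
mu[:30, 3:] *= 3.0                                    # 30 genes up in treated samples
mu[30:60, 3:] /= 3.0                                  # 30 genes down in treated samples
counts = rng.poisson(mu).astype(float)
condition = np.array(["control"] * 3 + ["treated"] * 3)

scaled = zscore_genes(log_cpm(counts))
scores, var_ratio = pca_scores(scaled)
for name, (pc1, pc2) in zip(condition, scores[:, :2]):
    print(f"{name:8s} PC1={pc1:7.2f} PC2={pc2:7.2f}")
```

Printing the scores next to the metadata labels is the text equivalent of coloring a PC1 vs. PC2 plot by condition.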

Protocol 2: Method Comparison for Selecting the Number of Components

This protocol applies and compares multiple data-driven methods to select the number of principal components, moving beyond a fixed threshold [41].

- Objective: To robustly determine the number of principal components (k) for downstream analysis.

- Experimental Input: A normalized, scaled, and transformed RNA-seq gene expression matrix.

- Methodology: Apply the following six methods and synthesize the results.

- Expected Output: A final value for k (the number of components) that is supported by multiple lines of evidence.

Data-Driven Threshold Selection Methods

The table below summarizes six methods for selecting the optimal number of principal components, a process also known as hyperparameter tuning for n_components [41].

| Method | Methodology | Implementation Steps | Best Use Case |
|---|---|---|---|
| The Scree Plot | Visual inspection of the variance explained by each component. | Plot the eigenvalues or percentage of variance against the component number. Look for an "elbow" point where the curve bends and the slope flattens. | Quick, intuitive initial assessment. |
| Cumulative Variance Rule | Retain components until a pre-defined threshold of total variance is captured. | Calculate the cumulative explained variance ratio. Select the smallest number of components where this ratio first meets or exceeds a threshold (e.g., 70%, 80%, or 90%). | When a specific amount of information retention is required [40]. |
| Kaiser Criterion | Retain components with eigenvalues greater than 1. | Calculate eigenvalues from the PCA. Any component with an eigenvalue > 1 is considered more informative than a single standardized variable. | As a conservative baseline method; often used in social sciences. |
| Proportion of Variance Explained | Ensure each retained component explains at least a minimum level of variance. | Set a minimum threshold for the proportion of variance a component must explain (e.g., 5%). Retain all components that meet this criterion. | Filtering out very minor components. |
| Broken Stick Model | Compare observed eigenvalues to those expected from random data. | Retain components whose observed eigenvalues are larger than the eigenvalues generated by a broken stick distribution. | Robust, statistically grounded selection against a null model. |
| Parallel Analysis | Compare eigenvalues to those from a randomized dataset. | Perform PCA on multiple randomly permuted versions of your dataset. Retain components in the real data whose eigenvalues exceed those from the permuted data. | Considered one of the most accurate methods for avoiding overfitting. |
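The two null-model methods in the table are the least obvious to implement, so a minimal NumPy sketch is given here. It makes several assumptions: PCA is run on standardized variables, the broken-stick expectation for component k of p is taken as (1/p) * sum_{i=k}^{p} 1/i, and parallel analysis uses the 95th percentile of eigenvalues from column-wise permutations. The function names and the simulated matrix are hypothetical.

```python
import numpy as np


def pca_eigenvalues(X):
    """Eigenvalues of the correlation matrix of X (samples x variables)."""
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    s = np.linalg.svd(Z, compute_uv=False)
    return s**2 / (X.shape[0] - 1)


def broken_stick(eigenvalues):
    """Number of leading PCs whose variance share exceeds the broken-stick expectation."""
    p = len(eigenvalues)
    share = eigenvalues / eigenvalues.sum()
    # Expected share of the k-th largest piece of a stick broken at random into p pieces.
    expected = np.array([np.sum(1.0 / np.arange(k, p + 1)) / p for k in range(1, p + 1)])
    k = 0
    while k < p and share[k] > expected[k]:
        k += 1
    return k


def parallel_analysis(X, n_perm=200, quantile=0.95, seed=0):
    """Number of leading PCs whose eigenvalue exceeds the permutation null."""
    rng = np.random.default_rng(seed)
    observed = pca_eigenvalues(X)
    null = np.empty((n_perm, len(observed)))
    for b in range(n_perm):
        # Shuffle each column independently to break correlations between variables.
        permuted = np.column_stack([rng.permutation(col) for col in X.T])
        null[b] = pca_eigenvalues(permuted)
    cutoff = np.quantile(null, quantile, axis=0)
    k = 0
    while k < len(observed) and observed[k] > cutoff[k]:
        k += 1
    return k


# Simulated data: 60 samples x 20 variables driven by two latent factors.
rng = np.random.default_rng(0)
factors = rng.normal(size=(60, 2))
loadings = np.zeros((2, 20))
loadings[0, :10] = 1.5
loadings[1, 10:] = 1.5
X = factors @ loadings + rng.normal(size=(60, 20))

eig = pca_eigenvalues(X)
k_kaiser = int(np.sum(eig > 1))
k_stick = broken_stick(eig)
k_parallel = parallel_analysis(X)
print("Kaiser:", k_kaiser, "| Broken stick:", k_stick, "| Parallel analysis:", k_parallel)
```

Comparing the counts returned by each rule is one way to carry out the synthesis step in Protocol 2. Agreement between methods supports the chosen k, while disagreement is a cue to inspect the scree plot more closely.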

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in PCA for RNA-seq |
|---|---|
| R/Bioconductor | An open-source software environment for the statistical analysis and comprehension of genomic data, including many packages for RNA-seq analysis [42]. |
| pcaExplorer | A dedicated R/Bioconductor package that provides an interactive Shiny app for exploring RNA-seq data with PCA. It aids in quality control, visualization, and functional interpretation of components [42]. |
| DESeq2 | A widely used R package for differential expression analysis. It provides robust methods for normalizing RNA-seq count data, which is a critical preprocessing step before PCA [42]. |
| Scikit-learn (Python) | A popular machine learning library for Python that includes a well-implemented PCA() class, useful for applying the various selection methods described [41]. |
| HTSeq / featureCounts | Software tools used to process raw sequencing reads into a count matrix by assigning reads to genomic features, which serves as the primary input for PCA [44] [42]. |
| Log-CPM Values | The log-transformed counts-per-million values. This transformation is part of standard RNA-seq data preprocessing to make samples comparable before conducting PCA [31]. |
| TMM Normalization | A normalization method used to compute effective library sizes, which are then used to calculate CPM values, helping to remove technical variation [31]. |
| Z-score Normalization | The process of mean-centering and scaling variables to unit variance. Applied across samples for each gene, it ensures all genes contribute equally to the PCA results [31]. |

Frequently Asked Questions

FAQ 1: How does batch effect correction influence the selection of principal components? Batch effect correction directly alters the covariance structure of your data, which is what Principal Component Analysis (PCA) operates on. When successful, it removes technical variance, allowing principal components (PCs) to capture more biological signal. Studies show that applying batch effect correction to RNA-seq data before PCA can significantly improve the performance of downstream classifiers in cross-study predictions [45] [46]. However, the outcome is context-dependent; improper correction can sometimes remove biological signal along with technical noise, worsening performance [45] [47].

FAQ 2: I've applied batch effect correction, but my samples still cluster by batch in the PCA. What should I do?

This indicates residual batch effects. First, verify that your experimental design includes all your conditions of interest in each batch; correction is extremely difficult if a biological condition is confounded with a single batch [48]. Second, consider alternative or additional correction methods. Some advanced approaches integrate quality scores (e.g., Plow) or use a reference batch with minimal dispersion (ComBat-ref) for more robust correction [47] [49]. Finally, explore whether outlier samples are driving the effect, as their removal can sometimes improve correction [47].

FAQ 3: Does the type of normalization I use before PCA matter for RNA-seq count data? Yes, profoundly. Normalization accounts for technical variability like sequencing depth. Using raw counts can lead to PCs dominated by this technical variance rather than biology.

- Log-Normalization: A common approach (e.g., log(1 + CPM)), but it can induce artificial bias with small counts [50].

- Count-Based Models (e.g., Pearson Residuals, GLM-PCA): These directly model the count nature of RNA-seq data and can account for batches and cell-specific factors during normalization, often providing a better foundation for PCA [50] [51].

The choice between methods involves a trade-off between theoretical advantages and computational efficiency [50].
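To make the two options concrete, the sketch below computes both transforms on a simulated cells x genes count matrix. The Pearson-residual function follows one published analytic formulation (negative binomial null model with a fixed overdispersion `theta` and clipping at the square root of the number of cells). Whether this is the exact variant meant by [50] is an assumption, and the function names, `theta=100`, and the simulated data are illustrative choices.

```python
import numpy as np


def log_normalize(counts):
    """log(1 + CPM) for a cells x genes count matrix."""
    depth = counts.sum(axis=1, keepdims=True)          # total counts per cell
    return np.log1p(counts / depth * 1e6)


def pearson_residuals(counts, theta=100.0):
    """Analytic Pearson residuals under a negative binomial null model.

    Expected counts are the outer product of cell totals and gene totals
    divided by the grand total; theta is an assumed fixed overdispersion.
    """
    total = counts.sum()
    mu = counts.sum(axis=1, keepdims=True) * counts.sum(axis=0, keepdims=True) / total
    resid = (counts - mu) / np.sqrt(mu + mu**2 / theta)
    clip = np.sqrt(counts.shape[0])                    # clip to +/- sqrt(number of cells)
    return np.clip(resid, -clip, clip)


# Simulated counts: 100 cells x 300 genes with varying sequencing depth.
rng = np.random.default_rng(2)
depth = rng.uniform(0.5, 2.0, size=(100, 1))           # per-cell depth factor
gene_mean = rng.gamma(shape=0.5, scale=4.0, size=(1, 300))
counts = rng.poisson(depth * gene_mean).astype(float)
counts = counts[:, counts.sum(axis=0) > 0]             # drop genes with no counts

log_expr = log_normalize(counts)
resid = pearson_residuals(counts)
print("log-normalized range:", log_expr.min(), "to", round(float(log_expr.max()), 2))
print("residual range:", round(float(resid.min()), 2), "to", round(float(resid.max()), 2))
```

Either matrix can then be passed to PCA. Running both and comparing the leading components is a simple way to see how strongly the normalization choice shapes the result.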

FAQ 4: What is the most reliable method for choosing the number of principal components after preprocessing? There is no single universally "correct" answer; the optimal method depends on your goal [52].

- For General Exploration: The scree plot (plotting eigenvalues) lets you visually identify an "elbow" where the variance explained per component drops off [53].

- For Retaining a Specific Amount of Information: The cumulative explained variance method is straightforward. You select the number of components required to explain a preset threshold (e.g., 80-90%) of the total variance in the data [52] [54].

- For Downstream Supervised Learning: Treat the number of PCs as a hyperparameter. Use cross-validation on your final model (e.g., a classifier) to select the number of components that yields the best predictive performance (e.g., highest F1-score) [52].
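For the supervised case, a minimal scikit-learn sketch of treating the number of PCs as a hyperparameter is shown here. The data are simulated, and the classifier, the candidate grid, and the fold count are arbitrary choices made for illustration.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, StratifiedKFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Simulated expression matrix: 80 samples x 300 genes, two classes.
rng = np.random.default_rng(0)
n_samples, n_genes = 80, 300
y = np.repeat([0, 1], n_samples // 2)
X = rng.normal(size=(n_samples, n_genes))
X[y == 1, :15] += 1.0                      # 15 genes shifted in class 1

# Scaling and PCA sit inside the pipeline so they are refit on each training fold.
pipe = Pipeline([
    ("scale", StandardScaler()),
    ("pca", PCA()),
    ("clf", LogisticRegression(max_iter=1000)),
])
grid = {"pca__n_components": [2, 5, 10, 20, 30]}
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
search = GridSearchCV(pipe, grid, scoring="f1", cv=cv)
search.fit(X, y)

best_k = search.best_params_["pca__n_components"]
print("Best number of PCs:", best_k, "| mean F1:", round(search.best_score_, 3))
```

Keeping the scaler and PCA inside the pipeline matters: fitting them on the full dataset before cross-validation would leak information from the held-out folds into the component definitions.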

Troubleshooting Guides

Issue 1: Biological Signal Is Lost After Batch Effect Correction

Problem: After applying batch effect correction, the separation between your biological groups (e.g., tumor vs. normal) in the PCA plot has diminished or disappeared.

| Potential Cause | Solution | Supporting Evidence |

|---|---|---|

| Over-Correction | Use a milder correction method or a reference-based approach (e.g., ComBat-ref) that adjusts one batch towards a stable reference, helping to preserve biological signal [49]. | A study on RNA-seq data preprocessing found that correction improved performance on one test set but worsened it on another, highlighting the risk of over-correction [45] [46]. |