Optimizing STAR for Large-Scale RNA-seq: A Comprehensive Guide to Accelerate Biomedical Discovery

Abstract

This guide provides researchers, scientists, and drug development professionals with a comprehensive framework for optimizing the STAR aligner in large-scale RNA-seq studies. Covering foundational principles, advanced methodological workflows, practical troubleshooting, and rigorous validation strategies, it addresses critical challenges in cloud infrastructure, computational efficiency, and cost-effectiveness. Drawing from recent performance analyses and real-world applications, we present actionable optimization techniques that can significantly reduce execution time and computational costs while maintaining high data quality, ultimately accelerating transcriptomic research in drug discovery and clinical applications.

Understanding STAR Aligner Fundamentals for Large-Scale Transcriptomics

The Role of STAR in Modern RNA-seq Analysis Pipelines

The Spliced Transcripts Alignment to a Reference (STAR) aligner employs a novel two-step algorithm that enables ultrafast and accurate mapping of RNA-seq reads, which is particularly crucial for handling spliced transcripts where exons are non-contiguous [1] [2].

Core Algorithmic Steps

STAR's alignment strategy consists of two main phases [1] [2]:

Seed Searching: For each read, STAR searches for the Maximal Mappable Prefix (MMP), the longest sequence starting at the first base of the read that exactly matches one or more locations on the reference genome. The search is then repeated on the unmapped remainder of the read. Because only the still-unmapped portions are searched, the algorithm is extremely efficient. STAR implements the MMP search with uncompressed suffix arrays, whose search time scales logarithmically with reference genome size.

Clustering, Stitching, and Scoring: In the second phase, seeds are clustered based on proximity to "anchor" seeds, then stitched together using a dynamic programming algorithm that allows for mismatches, insertions, deletions, and splice junctions. This process reconstructs complete read alignments across splice junctions.
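To make the seed-search step concrete, here is a toy Python sketch of repeated MMP searches over a suffix array. It is a deliberate simplification and not STAR's implementation: the suffix array is built by plain sorting (fine for a 26 bp toy genome, hopeless for a real one), mismatches are not tolerated, and clustering, stitching, and scoring are omitted.

```python
def build_suffix_array(genome: str) -> list[int]:
    """Start positions of all suffixes of `genome`, in lexicographic order (naive build)."""
    return sorted(range(len(genome)), key=lambda i: genome[i:])


def common_prefix_len(a: str, b: str) -> int:
    """Number of leading characters shared by `a` and `b`."""
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return n


def maximal_mappable_prefix(read: str, genome: str, sa: list[int]) -> tuple[int, list[int]]:
    """Length and genome positions of the longest prefix of `read` found exactly in `genome`."""
    # Binary search (logarithmic in genome size) for where `read` sits among the sorted suffixes.
    lo, hi = 0, len(sa)
    while lo < hi:
        mid = (lo + hi) // 2
        if genome[sa[mid]:] < read:
            lo = mid + 1
        else:
            hi = mid
    # The longest exact match is shared with a suffix adjacent to that insertion point.
    best = max(
        (common_prefix_len(read, genome[sa[k]:]) for k in (lo - 1, lo) if 0 <= k < len(sa)),
        default=0,
    )
    if best == 0:
        return 0, []
    # For brevity, collect every occurrence of the prefix with a linear scan.
    prefix = read[:best]
    return best, sorted(p for p in sa if genome.startswith(prefix, p))


def seed_search(read: str, genome: str, sa: list[int]) -> list[tuple[int, int, list[int]]]:
    """Repeat the MMP search on the unmapped remainder; returns (read_offset, length, positions)."""
    seeds = []
    start = 0
    while start < len(read):
        length, positions = maximal_mappable_prefix(read[start:], genome, sa)
        if length == 0:
            start += 1  # base absent from the genome: skip it
            continue
        seeds.append((start, length, positions))
        start += length
    return seeds


if __name__ == "__main__":
    # Toy "gene": exon1 + GT...AG intron + exon2; the read is the spliced exon1 + exon2.
    genome = "ACGTTGCA" + "GTCCCCCCAG" + "TTGACCAT"
    sa = build_suffix_array(genome)
    print(seed_search("ACGTTGCA" + "TTGACCAT", genome, sa))
```

For the toy read, the first MMP covers read bases 0-7 at genome position 0 and the second covers read bases 8-15 at genome position 18. The 10 bp genomic gap between them is the GT...AG intron that the clustering and stitching phase would then have to score as a splice junction.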

Performance Characteristics

STAR demonstrates exceptional performance characteristics that make it suitable for large-scale RNA-seq analyses [1]:

| Performance Metric | Capability | Comparison to Other Aligners |
|---|---|---|
| Mapping Speed | Aligns 550 million 2×76 bp paired-end reads per hour on a 12-core server | >50x faster than other aligners |
| Read Length Adaptability | Suitable for both short (36 bp) and long reads (several kb) | Outperforms aligners designed only for short reads |
| Memory Requirements | 16-32 GB for mammalian genomes | Higher than some aligners but justified by performance gains |
| Accuracy | 80-90% validation rate for novel splice junctions | High precision and sensitivity |

Implementation Guide: Running STAR in Practice

Basic Two-Step Workflow

Running STAR is a two-step process: a genome index is generated once, and the reads from each sample are then aligned against that index [2].
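A minimal Python sketch of the two steps, expressed as STAR argument lists. The file names, thread count, and the choice of sorted BAM plus gene counts are illustrative assumptions; the flags are standard STAR options that appear elsewhere in this guide. The sketch only builds and prints the commands, which makes them easy to review or hand to a scheduler.

```python
import shlex


def genome_generate_cmd(genome_dir, fasta_files, gtf, read_length, threads):
    """Step 1: build the genome index (once per genome, annotation, and STAR version)."""
    return [
        "STAR", "--runMode", "genomeGenerate",
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", *fasta_files,
        "--sjdbGTFfile", gtf,
        "--sjdbOverhang", str(read_length - 1),  # ReadLength - 1
        "--runThreadN", str(threads),
    ]


def align_cmd(genome_dir, fastq_files, out_prefix, threads):
    """Step 2: align one sample, writing a coordinate-sorted BAM and per-gene counts."""
    cmd = [
        "STAR", "--genomeDir", genome_dir,
        "--readFilesIn", *fastq_files,  # R1 [R2]
        "--runThreadN", str(threads),
        "--outFileNamePrefix", out_prefix,
        "--outSAMtype", "BAM", "SortedByCoordinate",
        "--quantMode", "GeneCounts",
    ]
    if all(name.endswith(".gz") for name in fastq_files):
        cmd += ["--readFilesCommand", "gunzip", "-c"]  # decompress input on the fly
    return cmd


if __name__ == "__main__":
    # Hypothetical paths, for illustration only.
    print(shlex.join(genome_generate_cmd("star_index", ["genome.fa"], "genes.gtf", 100, 8)))
    print(shlex.join(align_cmd("star_index", ["s1_R1.fastq.gz", "s1_R2.fastq.gz"], "s1_", 8)))
```

Separating the two builders mirrors the workflow: the indexing command runs once, while the alignment command is generated per sample.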

Critical Parameters for Large-Scale Analyses

Optimizing STAR parameters is essential for handling large-scale datasets efficiently. Below are key parameters with recommended settings:

| Parameter Category | Key Parameter | Recommended Setting | Function |
|---|---|---|---|
| Genome Indexing | `--sjdbOverhang` | ReadLength - 1 (the default of 100 works well for most read lengths) | Specifies the length of the genomic sequence around annotated junctions |
| Read Alignment | `--outFilterMultimapNmax` | 10 (default) | Maximum number of loci a read may map to; reads mapping to more loci are reported as unmapped |
| Output Control | `--outSAMtype` | `BAM SortedByCoordinate` | Outputs sorted BAM files for downstream analysis |
| Quantification | `--quantMode` | `GeneCounts` | Outputs read counts per gene |

Troubleshooting Common STAR Issues

Frequently Encountered Problems and Solutions

Problem: "FATAL ERROR: quality string length is not equal to sequence length"

This common error typically indicates issues with input FASTQ files [3].

- Cause: Malformed FASTQ records, often due to improper trimming or file corruption

- Solution:
  - Inspect the problematic read using `grep -A 3 "READ_ID" file.fastq`
  - Verify that the sequence and quality strings have equal length
  - Check trimming parameters; avoid arbitrary cropping that might create inconsistencies
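The length check can be automated across a whole file. This sketch assumes plain four-line FASTQ records (no wrapped sequence lines) and only reports problems; it is a diagnostic aid, not a repair tool.

```python
import gzip
import sys
from itertools import islice


def check_fastq(lines):
    """Return (record_index, header, problem) for malformed four-line FASTQ records."""
    problems = []
    it = iter(lines)
    index = 0
    while True:
        record = [line.rstrip("\r\n") for line in islice(it, 4)]
        if not record:
            break  # clean end of file
        if len(record) < 4:
            problems.append((index, record[0], "truncated record"))
            break
        header, seq, plus, qual = record
        if not header.startswith("@") or not plus.startswith("+"):
            problems.append((index, header, "bad header or separator line"))
        elif len(seq) != len(qual):
            problems.append((index, header, f"sequence length {len(seq)} != quality length {len(qual)}"))
        index += 1
    return problems


if __name__ == "__main__" and len(sys.argv) > 1:
    path = sys.argv[1]
    opener = gzip.open if path.endswith(".gz") else open
    with opener(path, "rt") as handle:
        for index, header, problem in check_fastq(handle):
            print(f"record {index} ({header}): {problem}")
```

Run it as `python check_fastq.py file.fastq.gz`; a truncated final record usually points to an incomplete upload or download rather than a trimming problem.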

Problem: Excessive Memory Usage

- Cause: Mammalian genomes require substantial RAM [4] [1]

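- Solution:
  - Allocate at least 32 GB RAM for human/mouse genomes
  - Use `--genomeSAsparseD` (e.g., 2) when generating the index to build a sparser suffix array, which reduces memory requirements for large genomes at the cost of some mapping speed
  - Consider using a compute cluster with sufficient resources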

Problem: Slow Alignment Performance

- Cause: Insufficient computational resources or suboptimal parameters [5]

- Solution:
  - Increase the thread count with `--runThreadN` based on available cores
  - Use fast local storage for temporary files (set with `--outTmpDir`)
  - Implement the optimizations described under Optimization Strategies for Large-Scale Datasets below

Optimization Strategies for Large-Scale Datasets

Cloud-Based Scaling and Cost Optimization

Recent research has identified specific optimizations for running STAR in cloud environments for large-scale transcriptomics projects [5]:

| Optimization Category | Strategy | Impact |
|---|---|---|
| Computational | Early stopping of alignment process | 23% reduction in total alignment time |
| Infrastructure | Selecting appropriate EC2 instance types | Significant cost reduction |
| Cost Management | Using spot instances for non-critical jobs | Up to 70% cost savings without performance loss |
| Data Distribution | Efficient STAR index distribution to worker nodes | Reduced startup time for parallel processing |
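The table does not spell out the early-stopping criterion used in [5], so the following is only a hypothetical illustration of why such a rule saves time: align a first fraction of each sample's reads, check the uniquely mapped rate (for example, as reported in STAR's `Log.progress.out`), and abandon samples that fall below a threshold. The 10% checkpoint and 30% threshold are placeholders, not values from [5].

```python
from dataclasses import dataclass


@dataclass
class Sample:
    name: str
    unique_pct_at_checkpoint: float  # uniquely mapped % observed at the checkpoint
    full_runtime_h: float            # runtime if aligned to completion


def plan_early_stopping(samples, checkpoint_fraction=0.1, min_unique_pct=30.0):
    """Return (names_stopped, total_runtime_h) under a hypothetical early-stopping rule.

    Samples below `min_unique_pct` at the checkpoint are aborted, so they only
    cost `checkpoint_fraction` of their full runtime.
    """
    stopped, total = [], 0.0
    for s in samples:
        if s.unique_pct_at_checkpoint < min_unique_pct:
            stopped.append(s.name)
            total += s.full_runtime_h * checkpoint_fraction
        else:
            total += s.full_runtime_h
    return stopped, total


if __name__ == "__main__":
    batch = [Sample("good", 85.0, 2.0), Sample("contaminated", 12.0, 2.0)]
    print(plan_early_stopping(batch))
```

How much such a rule saves depends on how many samples in a batch fail the check and how early the check runs.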

Performance Tuning Parameters

For large-scale analyses, these advanced parameters can significantly improve performance:

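- `--limitOutSJcollapsed`: Sets the buffer size for collapsed splice junctions; increase it if STAR stops with an error because a sample contains very many novel junctions
- `--outBAMsortingThreadN`: Number of threads dedicated to parallel BAM sorting
- `--genomeLoad`: Controls whether the genome index is loaded into shared memory, so that several STAR jobs on one node can share a single copy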
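A sketch of how these options fit together when several samples share one node. It encodes one constraint from the STAR manual: `--limitBAMsortRAM` may only be left at 0 (its default) with `--genomeLoad NoSharedMemory`, so a shared-memory genome needs an explicit sorting budget. The 10 GB budget and 4 sorting threads in the example are arbitrary assumptions.

```python
def align_args(genome_dir, genome_load="NoSharedMemory", sort_ram_bytes=0, sort_threads=None):
    """Build STAR alignment options for sorted-BAM output with a chosen --genomeLoad mode."""
    if genome_load != "NoSharedMemory" and sort_ram_bytes <= 0:
        # With a shared-memory genome STAR cannot default the sort buffer to the
        # index size, so an explicit --limitBAMsortRAM is required.
        raise ValueError("set sort_ram_bytes (--limitBAMsortRAM) when sharing the genome")
    args = [
        "STAR", "--genomeDir", genome_dir,
        "--genomeLoad", genome_load,
        "--outSAMtype", "BAM", "SortedByCoordinate",
    ]
    if sort_ram_bytes > 0:
        args += ["--limitBAMsortRAM", str(sort_ram_bytes)]
    if sort_threads:
        args += ["--outBAMsortingThreadN", str(sort_threads)]
    return args


def unload_cmd(genome_dir):
    """Remove a genome kept in shared memory once all jobs on the node have finished."""
    return ["STAR", "--genomeLoad", "Remove", "--genomeDir", genome_dir]


if __name__ == "__main__":
    print(align_args("star_index", "LoadAndKeep", sort_ram_bytes=10_000_000_000, sort_threads=4))
    print(unload_cmd("star_index"))
```

Per-sample options such as `--readFilesIn` and `--outFileNamePrefix` would be appended to these arguments for each job.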

Essential Research Reagent Solutions

Core Computational Tools for STAR Pipeline

| Tool/Resource | Function | Usage in Pipeline |
|---|---|---|
| STAR Aligner [4] [1] | Spliced alignment of RNA-seq reads | Core alignment algorithm; maps reads to reference genome |
| SRA-Toolkit [5] | Access and conversion of SRA files | prefetch downloads SRA files; fasterq-dump converts to FASTQ |
| DESeq2 [5] | Differential expression analysis | Normalization and statistical analysis of count data from STAR |
| SAMtools | Processing alignment files | Handles BAM file operations and utilities |

| Resource | Content | Application |
|---|---|---|
| Ensembl Database | Reference genomes and annotations | Provides FASTA and GTF files for genome indexing |
| NCBI SRA [5] | Public repository of sequencing data | Source of input RNA-seq datasets for analysis |
| iGenome | Pre-built reference indices | Community-shared genome indices for various species |

Frequently Asked Questions (FAQs)

Q: What are the minimum computational resources required for STAR with human genome alignment? A: Mammalian genomes require at least 16GB of RAM, ideally 32GB. Multi-core processors (8-12 cores) significantly improve performance through parallelization [4] [1].

Q: How does STAR handle paired-end reads differently from single-end? A: STAR processes paired-end reads as a single entity, clustering and stitching seeds from both mates concurrently. This increases sensitivity as only one correct anchor from either mate is sufficient for accurate alignment [1].

Q: Can STAR detect novel splice junctions and fusion transcripts? A: Yes, STAR can perform unbiased de novo detection of canonical and non-canonical splices, as well as chimeric (fusion) transcripts, without prior knowledge of junction loci [1].

Q: What is the recommended read length for optimal STAR performance?

A: STAR works efficiently with various read lengths, from short (36 bp) to long reads (several kb). The --sjdbOverhang parameter should ideally be set to read length minus 1, although the default value of 100 works well in most cases [2].

Q: How can I validate that my STAR installation is working correctly? A: The STAR GitHub repository provides test datasets and examples. You can compile the software and run a small test alignment to verify proper functionality [4].

Computational Demands and Challenges of Large-Scale RNA-Seq Datasets

Technical Support Center

This section provides troubleshooting guidance and FAQs for researchers optimizing STAR (Spliced Transcripts Alignment to a Reference) for large-scale RNA-seq datasets.

Troubleshooting Guides

Issue 1: Genome Generation Process Killed Due to Memory Allocation Failure

Problem

During genome index generation, the process is killed, and the terminal shows an error similar to: terminate called after throwing an instance of 'std::bad_alloc' what(): std::bad_alloc [6].

Explanation

The std::bad_alloc error typically indicates that the computer has run out of available RAM while building the genome index. STAR's algorithm uses uncompressed suffix arrays (SAs) for speed, which requires significant memory, especially for large genomes like human (hg38) [1] [6]. This is often exacerbated when running the software within a Virtual Machine (VM), as the host system also requires memory, reducing the amount fully available to STAR [6].

Solution

- Increase Available RAM: The most effective solution is to access a computing node with more RAM. Building a human genome index often requires more than the 32 GB of RAM available in the reported scenario [6].

- Use Pre-built Indices: If available, download a pre-built genome index for your reference genome and STAR version to avoid the generation step entirely [6].

- Optimize Virtual Machine Settings: If using a VM, ensure the allocated RAM is no more than ~80% of the host's total physical RAM to prevent memory swapping, which uses much slower storage drives [6].

- Consider Alternative Aligners: For systems with limited RAM, consider aligners like HISAT2, Salmon, or Kallisto, which may have lower memory footprints, especially if the primary goal is gene-level quantification [6].

Issue 2: Extremely Slow Alignment Speed

Problem

STAR alignment for a sample is anomalously slow, taking days instead of hours to complete [7].

Explanation

A primary cause of severely slow alignment is a reference genome composed of a very large number of contigs or scaffolds (e.g., millions). This disrupts the efficient clustering and stitching of seeds in STAR's algorithm [7]. While STAR is designed for high-speed mapping (e.g., >50x faster than other aligners [1]), performance degrades drastically when the number of contigs exceeds 50,000-100,000 [7].

Solution

- Consolidate Contigs: Concatenate many short contigs into a single "super-contig" separated by `N` padding.
  - Sort contigs by length and keep the longest ones (e.g., 50,000) separate.
  - Combine the remaining short contigs into one super-contig. Padding each short contig to a uniform length (e.g., 1 kb) simplifies post-alignment coordinate conversion [7].
  - Generate the genome index using the combined FASTA files (long contigs and the super-contig) [7].
- Modify Annotations: Modify the GTF annotation file to match the new genome structure by either:
  - removing annotations that fall on the short contigs, or
  - shifting those annotations onto super-contig coordinates (see Protocol 1: Optimizing a Complex Genome for STAR Alignment).
- Post-Alignment Processing: After mapping, convert alignment coordinates from the super-contig back to the original separate contigs [7].

Issue 3: Storage Space Exceeded During Analysis

Problem An alignment job fails because it exceeds the storage quota, even though the initial FASTQ files are smaller than the quota [8].

Explanation RNA-seq analysis creates intermediate and output files that can be much larger than the original input files. STAR alignment, in particular, can generate substantial temporary data and output (e.g., BAM files) that quickly consume storage space [8].

Solution

- Check and Purge Data: Review your analysis directory and permanently delete any unneeded data from previous runs. On systems like Galaxy, purge deleted data and reset the quota calculation by logging out and back in [8].

- Monitor Output File Types: Be aware that outputs like `Aligned.sortedByCoord.out.bam` and extensive log files (`Log.out`) are generated and consume space [7] [2].
- Switch Aligners: If storage pressure persists, use a lighter-weight aligner like HISAT2 [8].

Frequently Asked Questions (FAQs)

Q1: What are the core algorithmic steps in STAR that make it fast, and why is it memory-intensive? STAR's speed comes from a two-step process: 1) Seed searching: It uses sequential Maximal Mappable Prefix (MMP) searches against an uncompressed suffix array (SA) of the reference genome, allowing for extremely fast lookup with logarithmic scaling [1] [2]. 2) Clustering/stitching: Seeds are clustered and stitched together based on proximity [1]. The memory intensity primarily arises from storing and manipulating the uncompressed SA of the entire genome in RAM for rapid access [1].

Q2: How do I choose the value for the critical --sjdbOverhang parameter?

The --sjdbOverhang parameter should be set to the maximum read length minus 1 [2]. For example, for 100 bp paired-end reads, use --sjdbOverhang 99. This parameter specifies the length of the donor/acceptor sequence on each side of a junction, and the default value of 100 is sufficient for most cases, even with varying read lengths [2].
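Because `--sjdbOverhang` should track the longest mate, it can be derived from the data. This sketch scans only the first 100,000 records of each FASTQ file (an assumption that trades completeness for speed; scan everything if read lengths vary after trimming) and returns the maximum mate length minus 1.

```python
import gzip
import sys


def max_read_length(lines, max_records=None):
    """Longest sequence line among four-line FASTQ records (optionally only the first N)."""
    longest = 0
    for i, line in enumerate(lines):
        if max_records is not None and i >= 4 * max_records:
            break
        if i % 4 == 1:  # the sequence line of each record
            longest = max(longest, len(line.rstrip("\r\n")))
    return longest


def sjdb_overhang(fastq_paths, max_records=100_000):
    """Suggested --sjdbOverhang: maximum mate length across the files minus 1."""
    longest = 0
    for path in fastq_paths:
        opener = gzip.open if path.endswith(".gz") else open
        with opener(path, "rt") as handle:
            longest = max(longest, max_read_length(handle, max_records))
    return longest - 1


if __name__ == "__main__" and len(sys.argv) > 1:
    print(sjdb_overhang(sys.argv[1:]))
```

For the 2×100 bp example above, both mates are 100 bp long, so the sketch reports 99.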

Q3: My genome has a standard number of chromosomes. How much RAM do I need for genome generation and alignment? While requirements vary by genome size, for a human genome (hg38):

- Genome Generation: This is the most memory-intensive step. The process was reported to fail with 32 GB of RAM [6]. A separate successful run was performed on a cluster with "much higher memory allocation," suggesting that 32 GB is likely insufficient, and 64 GB or more may be needed [6].

- Read Alignment: This requires less RAM than indexing. One successful example for aligning to a human genome (chr1 only) requested 16 GB of memory (`--mem 16G`) [2]. For a full genome, a safe starting point is 32 GB of RAM.

Q4: Can STAR align long reads from technologies like PacBio? Yes, STAR can align long reads. However, there is a built-in maximum read length limit. Users have reported needing to adjust this threshold instead of trimming their long-read FASTQ files to meet the default limit [9].

Experimental Protocols for Performance Benchmarking

Protocol 1: Optimizing a Complex Genome for STAR Alignment

This protocol addresses the challenge of slow alignment with highly fragmented genomes [7].

Sort and Separate Contigs:

- Input: Reference genome FASTA file.

- Use a script (e.g., in Python or Bioawk) to sort all contigs by length in descending order.

- Output 1 (`Long.fa`): The top N longest contigs (e.g., 50,000).
- Output 2 (`Short.fa`): All remaining contigs.
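A minimal Python sketch of this step, assuming the whole FASTA fits in memory and that the contig name is the header text up to the first whitespace. The 50,000 cutoff is the example value from the step above.

```python
import sys


def read_fasta(lines):
    """Yield (name, sequence) pairs; the name is the header text up to the first whitespace."""
    name, chunks = None, []
    for line in lines:
        line = line.rstrip("\r\n")
        if line.startswith(">"):
            if name is not None:
                yield name, "".join(chunks)
            name, chunks = line[1:].split()[0], []
        elif line:
            chunks.append(line)
    if name is not None:
        yield name, "".join(chunks)


def split_by_length(records, n_long):
    """Sort contigs longest first; return (top n_long contigs, all remaining contigs)."""
    ranked = sorted(records, key=lambda record: len(record[1]), reverse=True)
    return ranked[:n_long], ranked[n_long:]


def write_fasta(records, path, width=60):
    """Write (name, sequence) pairs as FASTA with fixed-width sequence lines."""
    with open(path, "w") as out:
        for name, seq in records:
            out.write(f">{name}\n")
            for i in range(0, len(seq), width):
                out.write(seq[i:i + width] + "\n")


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1]) as handle:
        long_contigs, short_contigs = split_by_length(read_fasta(handle), n_long=50_000)
    write_fasta(long_contigs, "Long.fa")
    write_fasta(short_contigs, "Short.fa")
```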

Create Super-Contig:

- Write a script to process `Short.fa`.
- Pad each short contig to a uniform length (e.g., 1000 bp) with `N` characters.
- Concatenate all padded sequences into a single sequence in a new FASTA file (`SuperContig.fa`), assigning it a unique name (e.g., `chrSuper`).
- Critical: Record the start coordinate within the super-contig for each original short contig.
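A sketch of the padding and bookkeeping, assuming fixed-size slots so that each contig's offset is simply its slot index times the slot length. Rejecting contigs that fill a whole slot is a design choice of this sketch, so that every contig is followed by at least one `N`.

```python
def build_super_contig(short_records, slot_len=1000):
    """Concatenate (name, seq) records into one N-padded sequence using fixed-size slots.

    Returns the super-contig sequence and a map of contig -> 0-based start offset.
    """
    pieces, offsets = [], {}
    for i, (name, seq) in enumerate(short_records):
        if len(seq) >= slot_len:
            # Keep at least one N after every contig so adjacent contigs never abut.
            raise ValueError(f"{name} ({len(seq)} bp) does not fit a {slot_len} bp slot")
        offsets[name] = i * slot_len
        pieces.append(seq + "N" * (slot_len - len(seq)))
    return "".join(pieces), offsets


def write_offsets(offsets, lengths, path):
    """Save the coordinate map as tab-separated (contig, 0-based offset, unpadded length)."""
    with open(path, "w") as out:
        for name, offset in offsets.items():
            out.write(f"{name}\t{offset}\t{lengths[name]}\n")
```

The super-contig sequence can then be written to `SuperContig.fa` under the name `chrSuper`, and the offset file is the map used by the annotation and post-alignment steps.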

Modify Annotation File (GTF):

- Option A (Filtering): Use `grep` or `awk` to filter the original GTF file, removing all annotation lines where the chromosome name matches a contig in the `Short.fa` file.
- Option B (Coordinate Transformation): Write a script to parse the original GTF and the coordinate map from Step 2. For each feature on a short contig, add the contig's 0-based start offset within the super-contig to its start and end positions, and change the chromosome name to `chrSuper`.
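Option B can be sketched as a per-line transform. It assumes a tab-separated, nine-column GTF with 1-based coordinates and the 0-based offsets recorded in Step 2; comment lines and features on contigs absent from the map pass through unchanged.

```python
def shift_gtf_line(line, offsets, super_name="chrSuper"):
    """Move a GTF feature from a short contig onto the super-contig (Option B)."""
    if line.startswith("#"):
        return line
    fields = line.rstrip("\n").split("\t")
    if len(fields) != 9 or fields[0] not in offsets:
        return line  # not a feature on a consolidated short contig
    shift = offsets[fields[0]]
    fields[0] = super_name
    fields[3] = str(int(fields[3]) + shift)  # start (1-based)
    fields[4] = str(int(fields[4]) + shift)  # end (1-based, inclusive)
    return "\t".join(fields) + "\n"
```

Applying it to every line of the original GTF and writing the results yields the modified annotation file used for indexing.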

Generate Genome Index:

- Command: `STAR --runMode genomeGenerate --genomeDir /path/to/NewIndex --genomeFastaFiles Long.fa SuperContig.fa --sjdbGTFfile AnnotModified.gtf --runThreadN [Number]` [7].

Align Reads and Convert Coordinates:

- Perform alignment using the new index.
- Post-process the resulting BAM file using a custom script to convert coordinates of alignments on `chrSuper` back to their original contig names using the recorded map.
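The coordinate arithmetic at the heart of that script can be sketched as below, assuming the offset map and unpadded lengths recorded in Step 2. Rewriting the BAM records themselves (RNAME/POS, the mate fields RNEXT/PNEXT, and the `@SQ` header lines, for example with pysam) and handling alignments whose end runs into the padding are left out.

```python
import bisect


class SuperContigMap:
    """Translate 1-based chrSuper positions back to (original contig, 1-based position)."""

    def __init__(self, offsets, lengths):
        # offsets: contig -> 0-based start in chrSuper; lengths: contig -> unpadded length
        self.names = sorted(offsets, key=offsets.get)
        self.starts = [offsets[name] for name in self.names]
        self.lengths = lengths

    def to_original(self, pos):
        i = bisect.bisect_right(self.starts, pos - 1) - 1  # last contig starting at or before pos
        if i < 0:
            raise ValueError(f"position {pos} precedes the first contig")
        name = self.names[i]
        local = pos - self.starts[i]
        if local > self.lengths[name]:
            raise ValueError(f"position {pos} falls in the N padding after {name}")
        return name, local
```

Binary search over the sorted offsets also works when slots are not uniform; with uniform slots, `(pos - 1) // slot_len` would give the same contig index directly.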

Protocol 2: Standard Workflow for Spliced Alignment with STAR

This is the standard protocol for aligning RNA-seq reads with STAR [2].

Genome Index Generation:

Read Alignment:

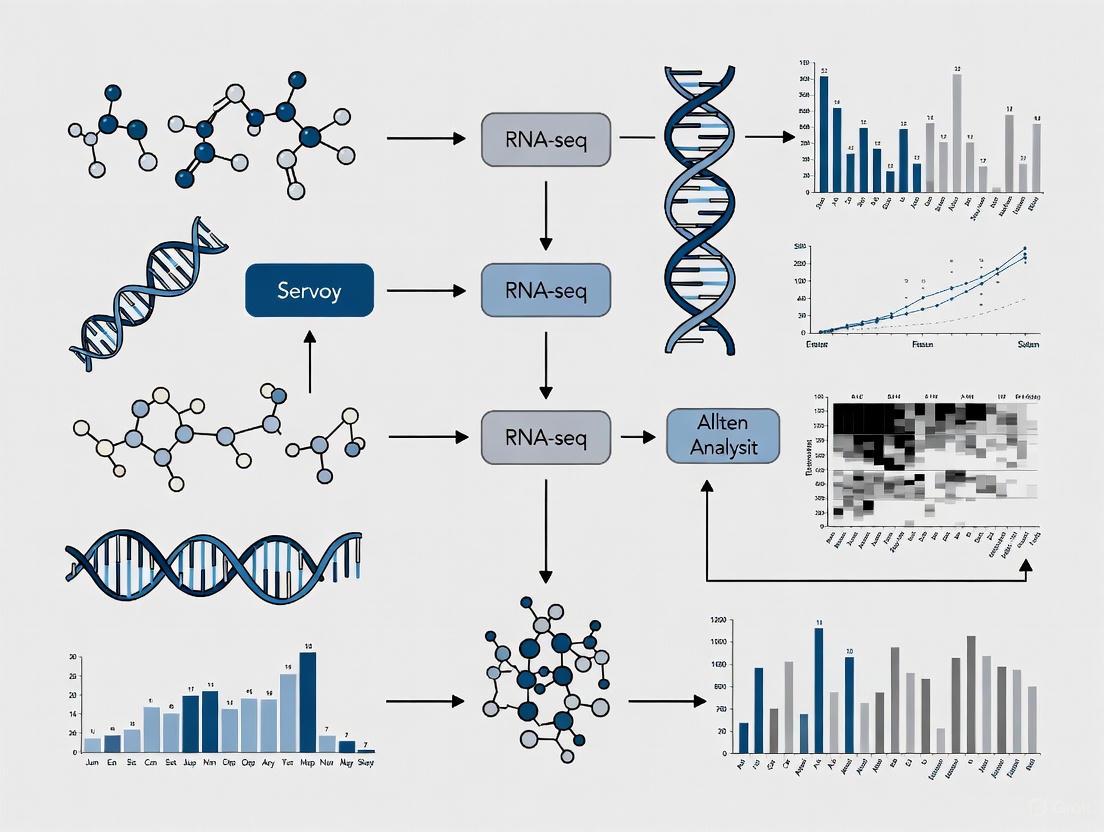

Workflow and Relationship Diagrams

STAR Alignment and Troubleshooting Workflow

STAR's Two-Step Alignment Algorithm

Research Reagent Solutions

This table details key computational "reagents" and their functions for a STAR-based RNA-seq analysis pipeline.

| Item | Function in Analysis | Example/Note |
|---|---|---|
| STAR Aligner | Performs the core task of spliced alignment of RNA-seq reads to a reference genome. | Ultrafast speed, but memory-intensive; requires careful parameter tuning [1] [2]. |
| Reference Genome (FASTA) | The DNA sequence of the organism used as the map for aligning sequencing reads. | Quality and contiguity are critical. A fragmented genome severely impacts STAR's speed [7]. |
| Annotation File (GTF/GFF) | Provides genomic coordinates of known genes, transcripts, and exons. | Used during genome indexing to improve junction detection sensitivity [2]. |
| Pre-built Genome Index | A pre-computed set of files that allows STAR to skip the time- and memory-intensive indexing step. | Can be downloaded if available for your genome and STAR version, saving computational resources [6]. |
| Computational Resources | Adequate RAM, CPU cores, and storage space are essential reagents for running STAR successfully. | A lack of these will cause job failures (e.g., std::bad_alloc) [6] [8]. |

The STAR (Spliced Transcripts Alignment to a Reference) workflow is a multi-stage process that converts raw sequencing data from the Sequence Read Archive (SRA) into sorted BAM files ready for downstream analysis. The table below summarizes the key stages, their main tools, and critical output files for quality assessment [10] [5] [11].

| Workflow Stage | Primary Tool(s) | Key Inputs | Key Outputs | Purpose & Importance |
|---|---|---|---|---|
| 1. Data Retrieval | SRA-Toolkit (prefetch, fasterq-dump) [5] | SRA accession numbers | FASTQ files | Obtains raw sequence reads from public repositories like NCBI SRA [5]. |
| 2. Quality Control (QC) | Falco (FastQC), MultiQC, Cutadapt [10] | Raw FASTQ files | QC reports (HTML), trimmed FASTQ | Assesses sequence quality, adapter contamination, and overall library health [10]. |
| 3. Genome Indexing | STAR | Genome FASTA, annotation GTF | Genome indices | Creates a reference index for rapid and accurate splice-aware alignment [5]. |
| 4. Alignment | STAR [10] [5] [11] | Trimmed FASTQ, genome indices | SAM/BAM files, mapping statistics | Maps sequencing reads to the reference genome, accounting for introns. |
| 5. Post-Alignment QC & Quantification | STAR, RSEM, Salmon [11] | Aligned BAM files | Read counts per gene, QC metrics | Generates a count matrix for differential expression analysis and assesses alignment quality [10] [11]. |
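As a sketch of how the per-sample stages chain together, the function below lists the commands for one paired-end SRA run in dependency order. It assumes the stage-3 index already exists, that `fasterq-dump --split-files` names the mates `<accession>_1.fastq` and `<accession>_2.fastq`, and that FastQC stands in for the QC stage; the accession and directories are placeholders.

```python
import shlex


def sample_plan(accession, genome_dir, workdir="work", threads=8):
    """Ordered commands for one paired-end SRA run (index from stage 3 assumed to exist)."""
    fq1 = f"{workdir}/{accession}_1.fastq"
    fq2 = f"{workdir}/{accession}_2.fastq"
    return [
        ["prefetch", accession],                                            # 1. retrieval
        ["fasterq-dump", "--split-files", "--outdir", workdir, accession],  # 1. SRA -> FASTQ
        ["fastqc", "-o", workdir, fq1, fq2],                                # 2. QC
        ["STAR", "--genomeDir", genome_dir, "--readFilesIn", fq1, fq2,      # 4. alignment
         "--runThreadN", str(threads),
         "--outFileNamePrefix", f"{workdir}/{accession}_",
         "--outSAMtype", "BAM", "SortedByCoordinate",
         "--quantMode", "GeneCounts"],                                      # 5. gene counts
    ]


if __name__ == "__main__":
    for cmd in sample_plan("SRR0000000", "star_index"):
        print(shlex.join(cmd))
```

To execute rather than print, each command could be passed to `subprocess.run(cmd, check=True)` so that a failed stage stops the chain.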

The following diagram illustrates the logical flow and dependencies between these stages:

Frequently Asked Questions (FAQs) and Troubleshooting

General Workflow Questions

Q1: What are the key advantages of using STAR over other aligners for large-scale RNA-seq projects?

STAR is a well-established and accurate aligner that performs splice-aware alignment, which is essential for accurately mapping RNA-seq reads across exon-intron boundaries [11]. For large-scale projects, its efficiency in processing tens of terabytes of data is critical [5]. Furthermore, a hybrid approach using STAR for initial alignment followed by Salmon for quantification leverages the detailed alignment information from STAR for quality control while using Salmon's advanced models for handling uncertainty in read assignment, providing a robust best-practice solution [11].

Q2: Should I trim my RNA-seq reads before alignment with STAR?

For standard RNA-seq libraries, trimming offers little to no benefit and is often unnecessary prior to mapping with STAR [12]. STAR is designed to handle adapter sequences and varying read quality internally. Trimming is generally only recommended for specialized library types, such as small RNA libraries.

Common STAR Errors and Solutions

Users frequently encounter specific issues during the STAR alignment step. The table below outlines common problems, their potential causes, and recommended solutions.

| Problem | Symptoms / Error Messages | Likely Causes | Solutions & Troubleshooting Steps |
|---|---|---|---|
| Empty/Small BAM files [13] [12] | BAM file is very small (e.g., 20 MB for human); quality scores in BAM are "?"; most gene counts are zero | Incorrect reference genome; high rate of unmapped reads; potential issues with the input FASTQ | 1. Check the `Log.final.out` and `ReadsPerGene.out.tab` STAR output files to confirm the mapping rate [12]. 2. Verify you are using the correct, high-quality reference genome and annotation (GTF) for your species. 3. Ensure the genome index was built with the same GTF file used in the analysis. |
| BAM Sorting Error [14] | `FATAL ERROR: number of bytes expected from the BAM bin does not agree with the actual size on disk` | Insufficient disk space during BAM sorting; limit on open files (`ulimit`) | 1. Ensure hundreds of GB of free disk space are available [14]. 2. Increase the `ulimit -n` value (e.g., to 10000) [14]. 3. Use the `--limitBAMsortRAM` parameter to control memory usage for sorting. |
| Low Mapping Rate | Low percentage of uniquely mapped reads in `Log.final.out` | Poor RNA quality (degraded samples); contamination (e.g., from host or other species); library preparation issues; mismatched genome | 1. Check RNA quality metrics (RIN/RQN) before sequencing [15]. 2. For specific sample types like blood, consider additional depletion (e.g., globin removal) [16]. 3. Investigate potential contamination by aligning to a combined reference (e.g., human + viral) [12]. |
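Checking `Log.final.out` across many samples is easy to script. This sketch relies only on the `label | value` layout of that file; the 70% cutoff for flagging samples is a hypothetical choice, not a STAR or literature threshold.

```python
from pathlib import Path


def parse_log_final(lines):
    """Parse STAR Log.final.out 'label | value' lines into a dict of strings."""
    summary = {}
    for line in lines:
        if "|" not in line:
            continue  # section titles such as 'UNIQUE READS:'
        label, value = line.split("|", 1)
        summary[label.strip()] = value.strip()
    return summary


def uniquely_mapped_pct(summary):
    """Uniquely mapped reads as a float percentage, e.g. '85.32%' -> 85.32."""
    return float(summary["Uniquely mapped reads %"].rstrip("%"))


def flag_low_mapping(summaries, min_unique_pct=70.0):
    """Sample names whose uniquely mapped rate falls below a chosen cutoff."""
    return sorted(name for name, s in summaries.items() if uniquely_mapped_pct(s) < min_unique_pct)


if __name__ == "__main__":
    # Assumes each run used --outFileNamePrefix <sample>_ in the current directory.
    logs = {p.name: parse_log_final(p.read_text().splitlines()) for p in Path(".").glob("*Log.final.out")}
    print(flag_low_mapping(logs))
```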

Q3: How can I optimize STAR for speed and cost-efficiency in a cloud environment?

Significant performance gains can be achieved through several optimizations [5]:

- Early Stopping: Implementing an early stopping feature can reduce total alignment time by up to 23% [5].

- Instance Selection: Choose compute-optimized (C-series), general-purpose (M-series), or memory-optimized (R-series) cloud instances with high-throughput disks. The optimal level of parallelism (number of CPU cores) should be determined through benchmarking.

- Spot Instances: STAR is suitable for using spot instances (preemptible VMs), which can drastically reduce costs without significantly impacting workflow reliability [5].

- Index Distribution: Pre-distributing the STAR genome index to worker instances, rather than building it on-the-fly, saves considerable time [5].

Experimental Protocols for Key Workflow Stages

Protocol 1: Building a STAR Genome Index

A correct genome index is foundational for a successful alignment.

Methodology:

- Gather Input Files: Download the reference genome sequence in FASTA format and the corresponding annotation in GTF format from a source like Ensembl.

- Run the STAR indexing command. Explanation of key parameters [11]:
  - `--runMode genomeGenerate`: Directs STAR to run in genome indexing mode.
  - `--genomeDir`: Path to the directory where the index will be stored.
  - `--sjdbOverhang 99`: Specifies the length of the genomic sequence around annotated junctions. This should be set to `ReadLength - 1`; for common 100 bp paired-end reads, `99` is the ideal value.
  - `--runThreadN`: Number of CPU threads to use for faster indexing.

Protocol 2: Executing the Alignment and Generating a Sorted BAM

This is the core step where reads are mapped to the reference genome.

Methodology:

- Input: Quality-checked (and optionally trimmed) FASTQ files and the pre-built genome index.

- Run the STAR alignment command. Explanation of key parameters [10] [11] [12]:
  - `--readFilesIn`: Specifies the paths to the input FASTQ files (R1 and R2 for paired-end).
  - `--readFilesCommand "gunzip -c"`: Tells STAR how to decompress gzipped input files.
  - `--outSAMtype BAM SortedByCoordinate`: Outputs the alignments directly as a coordinate-sorted BAM file, which is the standard input for many downstream tools.
  - `--quantMode GeneCounts`: Instructs STAR to count the number of reads per gene, generating a `ReadsPerGene.out.tab` file based on the provided GTF. This is a crucial file for differential expression analysis.
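`ReadsPerGene.out.tab` has four columns: gene ID, counts for an unstranded protocol, counts for reads whose first mate is on the RNA strand (column 3), and counts for reads whose second mate is (column 4), preceded by the summary rows `N_unmapped`, `N_multimapping`, `N_noFeature`, and `N_ambiguous`. Which column to pass to DESeq2 depends on library strandedness, and the sketch below infers it by comparing columns 3 and 4. The 90% dominance cutoff is an assumption, not a published threshold.

```python
import sys


def column_totals(lines):
    """Sum columns 2-4 of ReadsPerGene.out.tab, skipping the N_* summary rows."""
    totals = [0, 0, 0]
    for line in lines:
        fields = line.split()
        if len(fields) != 4 or fields[0].startswith("N_"):
            continue
        for i in range(3):
            totals[i] += int(fields[i + 1])
    return totals


def guess_strandedness(totals, dominance=0.9):
    """Heuristic: a stranded library puts most gene-assigned reads in column 3 or column 4."""
    _, forward, reverse = totals
    assigned = forward + reverse
    if assigned == 0:
        return "unknown"
    if forward / assigned >= dominance:
        return "forward (column 3)"
    if reverse / assigned >= dominance:
        return "reverse (column 4)"
    return "unstranded (column 2)"


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1]) as handle:
        print(guess_strandedness(column_totals(handle)))
```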

Protocol 3: Implementing the STAR-Salmon Hybrid Workflow

This best-practice workflow combines the alignment-based QC of STAR with the robust quantification of Salmon.

Methodology [11]:

- Perform alignment with STAR using the `--quantMode TranscriptomeSAM` parameter. In addition to the genomic alignments, this writes a BAM file of alignments translated into transcript coordinates (`Aligned.toTranscriptome.out.bam`).
- Use this transcriptome BAM file as direct input to Salmon in its alignment-based mode (`salmon quant -a`).
- Salmon will then generate highly accurate, bias-corrected abundance estimates for genes and transcripts, effectively handling the uncertainty of multi-mapping reads.
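A sketch of the two commands, assuming the transcript FASTA contains the same transcripts as the GTF used to build the STAR index (Salmon matches transcripts by name). `-l A` asks Salmon to infer the library type; the paths are hypothetical.

```python
import shlex


def star_salmon_commands(genome_dir, fastq_files, prefix, transcripts_fa, salmon_out, threads=8):
    """STAR alignment with transcriptome projection, then Salmon alignment-based quantification."""
    star = [
        "STAR", "--genomeDir", genome_dir,
        "--readFilesIn", *fastq_files,
        "--runThreadN", str(threads),
        "--outFileNamePrefix", prefix,
        "--outSAMtype", "BAM", "SortedByCoordinate",
        "--quantMode", "TranscriptomeSAM",
    ]
    transcriptome_bam = prefix + "Aligned.toTranscriptome.out.bam"  # written by TranscriptomeSAM
    salmon = [
        "salmon", "quant",
        "-t", transcripts_fa,     # transcript sequences matching the indexing GTF
        "-l", "A",                # let Salmon infer the library type
        "-a", transcriptome_bam,  # alignment-based mode
        "-p", str(threads),
        "-o", salmon_out,
    ]
    return star, salmon


if __name__ == "__main__":
    for cmd in star_salmon_commands("star_index", ["s1_R1.fq", "s1_R2.fq"], "s1_", "transcripts.fa", "s1_salmon"):
        print(shlex.join(cmd))
```

The genome-sorted BAM is kept for alignment-level QC, while Salmon works only from the transcriptome BAM.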

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of the STAR workflow depends on both bioinformatics tools and high-quality starting materials. The table below details key resources and their functions.

| Item / Resource | Function / Role in the Workflow | Critical Specifications & Notes |
|---|---|---|
| Total RNA | The starting biological material for library preparation. | Quantity: ≥ 1-2 µg is ideal [15]. Quality: RIN (RNA Integrity Number) > 8 or RQN > 7 for polyA selection [15]. |
| Stranded Library Prep Kit | Converts RNA into a sequence-ready library. | Strandedness: Stranded (directional) libraries are strongly recommended as they preserve the information about which genomic strand was transcribed [15]. |
| rRNA Depletion Kit | Removes abundant ribosomal RNA (rRNA) to enrich for mRNA and other RNAs. | Selection: Required for non-polyadenylated RNAs (e.g., bacteria, lncRNA) or degraded samples (e.g., FFPE) [16] [15]. |
| Reference Genome (FASTA) | The DNA sequence of the target organism used as the mapping scaffold. | Source: Use a primary source like Ensembl or GENCODE. Must match the annotation file. |
| Annotation File (GTF/GFF) | Defines the genomic coordinates of genes, transcripts, and exons. | Source: Must be from the same source and version as the reference genome for accurate alignment and quantification [11]. |
| STAR Aligner | The core software that performs splice-aware alignment of RNA-seq reads. | Resources: Requires significant RAM (~32 GB for human) and fast storage for optimal performance [5]. |
| SRA-Toolkit | A set of tools to download and extract data from the NCBI Sequence Read Archive. | Tools: prefetch downloads SRA files; fasterq-dump converts them to FASTQ format [5]. |

Frequently Asked Questions (FAQs)

Q1: What are the most common causes of genome index generation failures in STAR?

The most frequent issues are insufficient RAM, incompatible reference genome and annotation file formats, and incorrect parameter settings for complex genomes. For large genomes like wheat (~13.5 GB), you may encounter std::bad_alloc errors due to memory limitations, requiring parameter adjustments like reducing --genomeChrBinNbits [17].

Q2: Why do my reads fail to align after successful trimming? This often indicates truncated FASTQ files or quality control issues. The error "quality string length is not equal to sequence length" suggests file corruption during upload or trimming. Always verify read quality with tools like FastQC before alignment [18].

Q3: What does "no valid exon lines in the GTF file" mean and how do I fix it? This occurs when STAR cannot parse exon features from your annotation file. Solutions include: removing header lines from the GTF file, ensuring the GTF uses the same chromosome naming convention (e.g., "chr1" vs. "1") as your reference genome, or obtaining a properly formatted GTF from sources like UCSC or Ensembl [18].

Q4: How can I optimize STAR for large-scale RNA-seq datasets in cloud environments? Research shows that early stopping optimization can reduce total alignment time by 23% [5]. Additionally, select compute-optimized instance types, use spot instances for cost efficiency, ensure proper data partitioning, and implement efficient STAR index distribution to worker nodes [5].

Troubleshooting Guides

Genome Index Generation Issues

Problem: std::bad_alloc error or crash during genome indexing [17].

Solutions:

- Reduce memory usage:
  - Use fewer threads (memory requirements increase linearly with thread count)
  - Adjust `--genomeChrBinNbits` for genomes with many scaffolds: `min(18, log2(GenomeLength/NumberOfReferences))`
  - Use `--limitGenomeGenerateRAM` to explicitly set the memory limit
- Verify input files:
  - Ensure the reference FASTA is not corrupted
  - Check that GTF/GFF files are properly formatted and compatible
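The scaling rules for the two memory-related indexing parameters can be computed directly. The STAR manual's version of the `--genomeChrBinNbits` rule also includes a read-length floor, `min(18, log2(max(GenomeLength/NumberOfReferences, ReadLength)))`, and it recommends `min(14, log2(GenomeLength)/2 - 1)` for `--genomeSAindexNbases`. Rounding down to an integer is an assumption of this sketch.

```python
import math


def genome_chr_bin_nbits(genome_length, n_references, read_length=100):
    """--genomeChrBinNbits for genomes with many references (log2 rounded down)."""
    return min(18, int(math.log2(max(genome_length / n_references, read_length))))


def genome_sa_index_nbases(genome_length):
    """--genomeSAindexNbases scaling, mainly relevant for small genomes (rounded down)."""
    return min(14, int(math.log2(genome_length) / 2 - 1))


if __name__ == "__main__":
    # A 3 Gb assembly split into one million scaffolds averages 3,000 bp per reference.
    print(genome_chr_bin_nbits(3_000_000_000, 1_000_000))  # -> 11
    print(genome_sa_index_nbases(3_000_000_000))
```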

Table: Recommended Parameters for Large Genomes [17]

| Genome Size | Threads | genomeChrBinNbits | Minimum RAM |
|---|---|---|---|
| < 3 GB | 8-12 | 14 | 32 GB |
| 3-10 GB | 4-8 | 14-15 | 64 GB |
| > 10 GB | 2-4 | 15-16 | 125+ GB |

Read Alignment Failures

Problem: "FATAL ERROR in reads input" or low mapping rates [18] [19].

Solutions:

- Validate input reads:
  - Check for truncated FASTQ files by comparing sequence and quality string lengths
  - Re-upload corrupted files rather than attempting repair
  - Run quality control with FastQC or similar tools pre-alignment
- Address systematic alignment errors:
  - Be aware that splice-aware aligners can introduce erroneous spliced alignments between repeated sequences [19]
  - Consider post-alignment filtering with tools like EASTR to remove falsely spliced alignments
  - For ribo-minus libraries, expect higher rates of spurious alignments requiring additional filtering [19]

Table: Common Alignment Error Patterns and Solutions [18] [19]

| Error Pattern | Probable Cause | Solution |
|---|---|---|
| "quality string length ≠ sequence length" | Truncated FASTQ | Re-upload files, verify integrity |
| Low mapping rate, many multi-mappers | Repetitive genome regions | Use EASTR filtering, adjust `--outFilterMultimapNmax` |
| "Phantom" introns in repetitive regions | Alignment artifacts between repeats | Keep `--alignEndsType Local` (the STAR default) and filter with EASTR |

Reference-Annotation Mismatch

Problem: "no valid exon lines in the GTF file" or reference-annotation identifier mismatch [18].

Solutions:

- Ensure chromosome naming consistency:
  - UCSC genomes use "chr1" while Ensembl uses "1"
  - Obtain reference and annotation from the same source when possible
  - Use conversion tools like `Replace column by values` if a mismatch exists
- Obtain a properly formatted annotation:
  - Download from UCSC: https://hgdownload.soe.ucsc.edu/goldenPath/<database>/bigZips/genes/
  - Remove header lines from GTF files before use
  - Validate that the GTF contains "exon" features in the third column
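Both checks can be run before indexing. This sketch counts `exon` features, collects GTF sequence names, and maps them onto FASTA names by adding or removing a `chr` prefix. It deliberately leaves other differences, such as `MT` versus `chrM` or scaffold naming schemes, unmapped for manual review.

```python
def fasta_names(lines):
    """Sequence names from FASTA header lines (text up to the first whitespace)."""
    return {line[1:].split()[0] for line in lines if line.startswith(">")}


def gtf_summary(lines):
    """Return (set of seqnames, number of 'exon' feature lines) for a GTF."""
    seqnames, exon_lines = set(), 0
    for line in lines:
        if line.startswith("#") or not line.strip():
            continue  # header/comment or blank lines
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 9:
            continue  # not a valid nine-column GTF record
        seqnames.add(fields[0])
        if fields[2] == "exon":
            exon_lines += 1
    return seqnames, exon_lines


def rename_map(gtf_names, fasta_names):
    """Map GTF seqnames onto FASTA names by adding or removing a 'chr' prefix."""
    mapping = {}
    for name in gtf_names:
        if name in fasta_names:
            mapping[name] = name
        elif "chr" + name in fasta_names:
            mapping[name] = "chr" + name
        elif name.startswith("chr") and name[3:] in fasta_names:
            mapping[name] = name[3:]
    return mapping
```

An exon count of zero reproduces the "no valid exon lines" situation, and any GTF name missing from the returned mapping needs attention before indexing.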

Workflow Optimization

STAR Alignment Strategy

STAR Alignment Workflow Diagram

Experimental Protocols

Protocol: Comprehensive STAR Alignment for Large-Scale Studies [5] [2]

Genome Index Generation:

- Download reference genome (FASTA) and annotations (GTF) from consistent sources

- Generate genome index:

Read Alignment:

- Execute alignment:

Post-Alignment Processing:

- Convert SAM to BAM: samtools view -bS Aligned.out.sam > Aligned.out.bam

- Sort BAM: samtools sort Aligned.out.bam > Aligned.sorted.bam

- Index BAM: samtools index Aligned.sorted.bam

- Assess quality with Qualimap or RNA-SeQC
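The index-generation and alignment commands themselves are not reproduced in this protocol. As an illustration only, the sketch below builds them as argument lists using commonly documented STAR options (--runMode genomeGenerate, --genomeDir, --genomeFastaFiles, --sjdbGTFfile, --sjdbOverhang, --readFilesIn, --readFilesCommand, --outFileNamePrefix) together with the samtools post-processing steps; the function names, file names and thread count are hypothetical, so check the options against the STAR manual for your version.

```python
# Hypothetical command builders; options follow the STAR and samtools manuals.


def star_index_cmd(genome_dir, fasta, gtf, read_length, threads=8):
    """STAR genome index generation command as an argument list."""
    return [
        "STAR", "--runMode", "genomeGenerate",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", fasta,
        "--sjdbGTFfile", gtf,
        # The STAR manual recommends read length - 1 for --sjdbOverhang.
        "--sjdbOverhang", str(read_length - 1),
    ]


def star_align_cmd(genome_dir, fastqs, prefix, threads=8):
    """STAR alignment command; writes <prefix>Aligned.out.sam by default."""
    cmd = ["STAR", "--runThreadN", str(threads),
           "--genomeDir", genome_dir,
           "--readFilesIn", *fastqs,
           "--outFileNamePrefix", prefix]
    if all(f.endswith(".gz") for f in fastqs):
        cmd += ["--readFilesCommand", "zcat"]  # decompress gzipped FASTQ on the fly
    return cmd


def samtools_cmds(prefix):
    """Convert, sort and index the SAM file produced by star_align_cmd."""
    sam = f"{prefix}Aligned.out.sam"
    bam = f"{prefix}Aligned.out.bam"
    sorted_bam = f"{prefix}Aligned.sorted.bam"
    return [
        ["samtools", "view", "-b", "-o", bam, sam],
        ["samtools", "sort", "-o", sorted_bam, bam],
        ["samtools", "index", sorted_bam],
    ]
```

Each list can be passed to subprocess.run(cmd, check=True). STAR can also write a coordinate-sorted BAM directly with --outSAMtype BAM SortedByCoordinate, which removes the separate conversion and sorting steps.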

Research Reagent Solutions

Table: Essential Materials for STAR Alignment Workflows [20] [2]

| Reagent/Resource | Function | Source Examples |
|---|---|---|
| Reference Genome FASTA | Genomic scaffold for read alignment | Ensembl, NCBI, UCSC |
| Annotation File (GTF) | Gene model information for splice-aware alignment | Ensembl, GENCODE, RefSeq |
| STAR Aligner Software | Spliced alignment of RNA-seq reads | GitHub: https://github.com/alexdobin/STAR |
| Quality Control Tools | Pre- and post-alignment quality assessment | FastQC, Qualimap, MultiQC |
| SAM/BAM Tools | Processing and analysis of alignment files | Samtools, BEDTools |

Advanced Optimization Techniques

For large-scale analyses processing "tens or hundreds of terabytes of RNA-sequencing data" [5], implement these cloud-native strategies:

Early Stopping Optimization: Terminating alignments whose mapping rate is still too low after a fraction of the reads has been processed reduces total alignment time by 23% [5]

Resource Allocation:

- Select compute-optimized instance types (c5 family on AWS)

- Leverage spot instances for cost reduction

- Implement auto-scaling based on workload

Data Distribution:

- Pre-distribute STAR indices to worker nodes so that jobs do not repeatedly download or regenerate them

- Use high-throughput storage solutions for temporary files

- Implement efficient data partitioning strategies for parallel processing

These foundational practices in reference genome preparation and index structure optimization form the basis for efficient, scalable RNA-seq analysis using the STAR aligner, particularly crucial for large-scale transcriptomics studies in both research and drug development contexts.

How can I improve the runtime of the STAR aligner in a cloud environment?

Optimizing STAR in the cloud involves selecting the right compute resources and configuration. Adhere to the following methodology for cost-efficient and scalable alignment:

Experimental Protocol for Cloud Optimization:

- Instance Selection: Choose compute-optimized (C family), general-purpose (M family), or memory-optimized (R family) Amazon EC2 instances. Test different types to identify the most cost-effective option for your specific data and STAR version [5].

- Parallelism Tuning: Conduct a scalability test by running STAR on a subset of your data while varying the number of CPU cores (the --runThreadN parameter). Plot runtime against core count to find where performance gains plateau; that point indicates the optimal core count for your instance type [5].

- Leverage Spot Instances: For interrupt-tolerant, large-scale batch jobs, use AWS Spot Instances to significantly reduce compute costs [5].

- Implement Early Stopping: Monitor STAR's Log.progress.out file and terminate alignments whose mapping rate is still below a threshold (e.g., 30%) once a fraction of the reads (e.g., 10%) has been processed; this reduces total alignment time by an estimated 23% [5]. Separately, checking whether a sample's final BAM index already exists from a previous successful run lets you skip re-aligning that sample.
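Once you have wall times for a few --runThreadN values, the plateau search in the parallelism-tuning step can be automated. This is a minimal sketch: plateau_core_count and its 10% minimum-gain threshold are hypothetical choices, and the timings in the test are made up.

```python
def plateau_core_count(runtimes, min_gain=0.10):
    """Smallest core count after which doubling/adding cores stops paying off.

    runtimes: {core_count: wall_time} from a scalability test (any time unit).
    min_gain: hypothetical minimum fractional runtime reduction per step.
    """
    cores = sorted(runtimes)
    for prev, nxt in zip(cores, cores[1:]):
        gain = (runtimes[prev] - runtimes[nxt]) / runtimes[prev]
        if gain < min_gain:
            return prev  # the next step up no longer buys enough speed
    return cores[-1]  # still improving at the largest tested count
```

Because the "right" threshold depends on how you trade cost against turnaround, it is worth re-running the search with the actual per-hour price of each instance size in mind.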

Performance and Scalability Data:

| Optimization Technique | Expected Performance Improvement | Key Consideration |
|---|---|---|
| Optimal Core Allocation | Reduces runtime until a plateau is reached [5] | Prevents resource wastage; the optimal number is instance- and data-dependent. |
| Use of Spot Instances | Significant cost reduction for large-scale processing [5] | Instance termination can occur; design workflows to be fault-tolerant. |
| Early Stopping | Up to 23% reduction in total alignment time [5] | Requires monitoring each job's mapping rate and a termination threshold. |

The pipeline tools after STAR (Picard/GATK) do not scale well and become a bottleneck. How can this be addressed?

This is a recognized limitation in scalable RNA-seq variant calling pipelines. The sequential nature of tools like Picard's MarkDuplicates and GATK's HaplotypeCaller limits their ability to utilize multiple cores efficiently [21].

Experimental Protocol for Cluster-Level Parallelization:

- Data Partitioning: Split the input FASTQ files into smaller read chunks, or split the aligned BAM files from STAR into non-overlapping chunks based on genomic regions [21].

- Distributed Processing: Use a distributed computing framework like Apache Spark to process these chunks in parallel across multiple nodes in a cluster. A solution like SparkRA has been developed specifically for this purpose [21].

- Result Merging: After parallel processing, the results from each chunk (e.g., variant calls) are aggregated and merged into a final, unified output file [21].
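A common way to define non-overlapping genomic regions for the partitioning step is to tile each chromosome into fixed-size windows using the reference's .fai index. The sketch below (hypothetical helper names read_fai and make_regions) emits chrom:start-end strings, the region syntax accepted by samtools view on an indexed BAM. It is not SparkRA's actual partitioning scheme, and production variant-calling setups usually pad windows so that events spanning a boundary are not lost.

```python
def read_fai(fai_lines):
    """Map sequence name -> length from a samtools faidx .fai index."""
    sizes = {}
    for line in fai_lines:
        if line.strip():
            name, length = line.split("\t")[:2]  # columns 1-2: name, length
            sizes[name] = int(length)
    return sizes


def make_regions(chrom_sizes, chunk_size):
    """Yield non-overlapping 1-based inclusive regions such as 'chr1:1-10000000'."""
    for chrom, length in chrom_sizes.items():
        start = 1
        while start <= length:
            end = min(start + chunk_size - 1, length)
            yield f"{chrom}:{start}-{end}"
            start = end + 1
```

For example, each region from make_regions(read_fai(open("genome.fa.fai")), 10_000_000) could be handed to a separate task that runs samtools view -b Aligned.sorted.bam on that region before the downstream tools.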

Scalability Data for Distributed Pipelines:

| Scaling Scenario | Speedup | Notes |
|---|---|---|
| Single node (20 hyper-threaded cores) | ~4x faster than the original GATK pipeline (5 h reduced to 1.3 h) [21] | Achieved by parallelizing the bottlenecked Picard and GATK tools. |
| Cluster (16 nodes) | ~7.7x faster than a single node [21] | Demonstrates effective scaling across multiple compute nodes. |
| Versus Halvade-RNA | ~1.2x faster on a cluster [21] | Performance gain attributed to Spark's in-memory processing vs. Hadoop's disk-based model. |

What are the essential quality control checkpoints in a transcriptomics pipeline?

A robust QC protocol is critical for generating reliable data. Checks should be performed at multiple stages.

- Experimental Protocol for Tiered Quality Control:

- Raw Read QC: Use FastQC to analyze sequence quality scores, GC content, adapter contamination, overrepresented k-mers, and duplicated reads. Outliers with significant deviations (e.g., >30% disagreement in GC content) should be investigated or discarded [22].

- Alignment QC: After mapping with STAR, use tools like Picard, RSeQC, or Qualimap to assess the percentage of mapped reads, uniformity of read coverage across exons, and strand specificity. A low mapping percentage or strong 3' bias can indicate poor RNA quality or library preparation issues [22].

- Expression Quantification QC: Analyze the distribution of read counts across genes and samples. Check for correlation between biological replicates and use Principal Component Analysis (PCA) to identify potential sample outliers or batch effects [23] [22].
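The replicate-correlation check can be sketched as a quick screen before a full PCA. This simplified illustration assumes a dict of raw gene counts per sample (one experimental group, shared gene order); it correlates log2(count + 1) profiles and flags samples whose median correlation with the other replicates falls below a hypothetical threshold of 0.9. The function names are hypothetical, and the screen does not replace PCA in DESeq2 or similar tools.

```python
import math
from itertools import combinations
from statistics import median


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def flag_outlier_replicates(counts, min_median_r=0.9):
    """counts: {sample: [gene counts]} for replicates of one group."""
    logs = {s: [math.log2(c + 1) for c in v] for s, v in counts.items()}
    corr = {s: [] for s in logs}
    for a, b in combinations(logs, 2):
        r = pearson(logs[a], logs[b])
        corr[a].append(r)
        corr[b].append(r)
    # The median is robust to a single bad partner, so good replicates
    # are not flagged just because they correlate poorly with an outlier.
    return sorted(s for s, rs in corr.items() if median(rs) < min_median_r)
```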

Quality Control Workflow for Transcriptomics

How should I design my experiment for a large-scale Transcriptomics Atlas study?

Proper experimental design ensures that the data generated has the statistical power to answer your biological questions.

- Experimental Protocol for Study Design:

- Sequencing Depth: Determine the required number of sequenced reads per sample. While five million mapped reads may suffice for quantifying medium- to high-abundance transcripts, deeper sequencing (e.g., 50-100 million reads) is necessary to detect lowly expressed genes or novel isoforms [22].

- Biological Replicates: Include a sufficient number of biological replicates (e.g., cells or tissues from different individuals) to account for natural biological variation. The number of replicates depends on the expected effect size and variability, and is crucial for statistical power in differential expression analysis [22].

- Batch Effects: Minimize technical artifacts by processing samples from different experimental groups simultaneously. Randomize sample processing order and, if batches are unavoidable, include control samples across all batches to enable statistical correction later [23] [22].
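One way to implement randomized, balanced processing is to shuffle samples within each experimental group and deal them round-robin across batches, so that no batch contains only one group. The sketch below assumes a list of (sample_id, group) pairs; assign_batches is a hypothetical helper, and the fixed seed keeps the assignment reproducible.

```python
import random
from collections import defaultdict


def assign_batches(samples, n_batches, seed=0):
    """samples: list of (sample_id, group). Returns {batch_index: [sample_ids]}.

    Every batch receives members of each group whenever the group has at
    least n_batches samples; processing order within a batch is shuffled too.
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for sample_id, group in samples:
        by_group[group].append(sample_id)
    batches = {b: [] for b in range(n_batches)}
    slot = 0
    for group in sorted(by_group):
        ids = by_group[group][:]
        rng.shuffle(ids)  # random order within the group
        for sample_id in ids:
            batches[slot % n_batches].append(sample_id)  # deal round-robin
            slot += 1
    for ids in batches.values():
        rng.shuffle(ids)  # randomize processing order within each batch
    return batches
```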

My pipeline failed with a "MissingOutputException" during assembly. What does this mean?

This error is raised by Snakemake (other workflow managers, such as Nextflow, report analogous missing-output errors) and indicates that a rule's job completed without error, but an expected output file was not created.

- Troubleshooting Protocol:

- Verify Tool Output: Check the log files of the failed rule (e.g., run_spades). The tool itself may have failed internally or produced output files with names different from those the pipeline expected [24].

- Check File System Latency: If the output files appear after a delay, use your workflow manager's --latency-wait option (or equivalent) to increase the time the system waits for outputs before declaring an error [24].

- Create Symbolic Links: If the tool generates the correct file but under a different name, modify the pipeline script to create a symbolic link (using ln -s) from the actual output file to the filename the pipeline expects [24].
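If you prefer to create the link from Python (for example inside a Snakemake run: block) rather than with ln -s, a minimal sketch looks like this. link_expected_output is a hypothetical helper; pass it the file the tool actually wrote and the name your rule declares as output.

```python
import os
from pathlib import Path


def link_expected_output(actual, expected):
    """Create `expected` as a symlink to `actual` if only `actual` exists.

    Returns True when a link was created, False otherwise.
    """
    actual, expected = Path(actual), Path(expected)
    if expected.exists() or not actual.exists():
        return False
    expected.parent.mkdir(parents=True, exist_ok=True)
    # A relative target keeps the link valid if the whole directory moves.
    os.symlink(os.path.relpath(actual, expected.parent), expected)
    return True
```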

| Item | Function in the Pipeline | Specification Notes |
|---|---|---|
| STAR Aligner | Maps RNA-seq reads to a reference genome, handling spliced alignment accurately and efficiently [25]. | Requires a pre-computed genome index. Resource-heavy (RAM: tens of GiB) [5]. |
| SRA-Toolkit | Provides utilities (prefetch, fasterq-dump) to download and convert public RNA-seq data from the NCBI SRA database into FASTQ format [5]. | Essential for populating a Transcriptomics Atlas with public datasets. |
| Reference Genome | A FASTA file serving as the foundational scaffold for read alignment and quantification [5] [26]. | Sources include Ensembl and UCSC. Must match the organism and version of the annotation file. |
| Gene Annotation (GTF) | A GTF file defining the coordinates of known genes, transcripts, and exons, used for read counting and quantification [26]. | Critical for accurate gene-level and isoform-level analysis. |
| Apache Spark | A distributed in-memory computing framework used to parallelize non-scalable pipeline steps (e.g., Picard/GATK tools) across a compute cluster [21]. | Key for overcoming scalability bottlenecks in large-scale processing. |

Scalability Bottleneck and Solution in RNA-seq Pipeline

Implementing Scalable STAR Workflows: From Cloud Architecture to Experimental Design

Designing Cloud-Native Architectures for STAR Alignment Pipelines

Troubleshooting Guides

Performance and Cost Optimization

Issue: Pipeline execution is too slow or computationally expensive.

- Potential Cause 1: Using an outdated or inefficient reference genome.

- Solution: Use the latest Ensembl genome release. One study found that using "toplevel" sequences from Ensembl release 111 instead of release 108 resulted in a 12x faster execution time on average and reduced the required index size from 85 GiB to 29.5 GiB [27].

- Potential Cause 2: Processing datasets with inherently low mappability.

- Solution: Implement an "early stopping" strategy. By analyzing the Log.progress.out file after 10% of reads are processed, you can terminate jobs with insufficient mapping rates (e.g., below 30%). This can reduce total STAR execution time by approximately 23% [27] [5].

- Potential Cause 3: Suboptimal cloud instance type selection.

- Solution: For STAR's high memory requirements, consider memory-optimized instances (e.g., AWS r6a.4xlarge). Test different instance families and sizes to find the most cost-efficient type for your specific data [27] [5].

Issue: Instance fails to start or the pipeline crashes due to memory overflow.

- Potential Cause 1: The precomputed genomic index is larger than the instance's available memory.

- Solution: Ensure the instance type has enough RAM to load the entire STAR index. For the 29.5 GiB human genome index (Ensembl 111), an instance with at least 32 GiB of RAM is a reasonable starting point [27].

- Potential Cause 2: Multiple processes are competing for memory on a single node.

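- Solution: Manage the level of parallelism. Threads within one STAR process share a single copy of the index, but each separate STAR process loads its own copy unless the genome is loaded into shared memory (--genomeLoad). Ensure that the combined memory footprint of all processes on the node does not exceed the available system RAM [5].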
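A back-of-the-envelope capacity check helps decide how many STAR processes to pack onto one node. The sketch assumes each process holds its own copy of the index plus a fixed overhead for buffers, unless the index is shared through STAR's shared-memory genome loading (--genomeLoad LoadAndKeep). max_star_processes and its 4 GiB defaults are hypothetical placeholders; replace them with peak memory measured on your own data.

```python
import math


def max_star_processes(node_ram_gib, index_gib, per_process_overhead_gib=4.0,
                       reserve_gib=4.0, shared_index=False):
    """Rough count of concurrent STAR processes that fit in node RAM.

    Hypothetical helper: the overhead and reserve defaults are placeholders.
    shared_index=True models one index copy in shared memory (--genomeLoad).
    """
    usable = node_ram_gib - reserve_gib  # keep some RAM for the OS and I/O
    if shared_index:
        usable -= index_gib  # a single shared copy of the index
        per_process = per_process_overhead_gib
    else:
        per_process = index_gib + per_process_overhead_gib
    return max(0, math.floor(usable / per_process))
```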

Data and Workflow Management

Issue: Failures in downloading or accessing input data (SRA files).

- Potential Cause: Network timeouts or issues with the source data repository.

- Solution: Implement robust error handling and retry logic in your workflow script for data download steps (e.g., when using prefetch and fasterq-dump from the SRA Toolkit) [5].
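The retry logic can be as simple as re-running a failed command with exponential backoff. In this minimal sketch, run_with_retries is a hypothetical helper; it treats any non-zero exit code as retryable (you may want to narrow that), and it accepts injectable runner and sleep functions so the logic can be tested without network access.

```python
import subprocess
import time


def run_with_retries(cmd, attempts=3, base_delay_s=30.0,
                     runner=subprocess.run, sleep=time.sleep):
    """Run `cmd`, retrying on non-zero exit with exponential backoff.

    Waits base_delay_s, 2*base_delay_s, ... between attempts and re-raises
    the last CalledProcessError once all attempts are used.
    """
    for attempt in range(1, attempts + 1):
        try:
            return runner(cmd, check=True)
        except subprocess.CalledProcessError:
            if attempt == attempts:
                raise
            sleep(base_delay_s * 2 ** (attempt - 1))
```

For example, run_with_retries(["prefetch", srr_id]) followed by run_with_retries(["fasterq-dump", srr_id]), where srr_id holds the run accession being processed.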

Issue: Difficulty managing and scaling thousands of alignment jobs.

- Potential Cause: Manual job scheduling and a static cluster cannot efficiently handle the workload.

- Solution: Use a dynamic, cloud-native architecture.

- Queue-Based Work Distribution: Use a messaging service (e.g., AWS SQS) to hold all the SRA IDs that need processing. Worker instances can poll this queue for tasks [27] [5].

- Auto-Scaling: Use an Auto-Scaling Group to automatically launch or terminate worker instances based on the number of tasks in the queue [27].

- Spot Instances: Leverage spot instances for significant cost savings; they are suitable for fault-tolerant, batch-processing jobs like STAR alignment [5].
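The queue-based pattern boils down to a worker loop that deletes a message only after its sample has been processed, so failed samples reappear for another worker. The sketch below is written against a hypothetical two-method TaskQueue interface rather than a specific SDK; with Amazon SQS, receive would wrap boto3's receive_message and delete would call delete_message with the message's ReceiptHandle.

```python
from typing import Callable, Optional, Protocol, Tuple


class TaskQueue(Protocol):
    """Hypothetical minimal queue interface (an SQS adapter would wrap boto3)."""

    def receive(self) -> Optional[Tuple[str, str]]:
        """Return (receipt, sra_id), or None when no work is left."""
        ...

    def delete(self, receipt: str) -> None:
        ...


def worker_loop(queue: TaskQueue, process: Callable[[str], None]) -> int:
    """Process SRA IDs until the queue is empty; return the number completed."""
    completed = 0
    while (message := queue.receive()) is not None:
        receipt, sra_id = message
        try:
            process(sra_id)  # e.g. download, align with STAR, upload results
        except Exception as exc:
            # Not deleting the message lets SQS redeliver it after the
            # visibility timeout, so another worker can retry the sample.
            print(f"{sra_id} failed ({exc}); leaving it on the queue")
            continue
        queue.delete(receipt)
        completed += 1
    return completed
```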

Frequently Asked Questions (FAQs)

Q1: Which cloud instance type is the most cost-effective for running STAR? The most cost-effective instance depends on the genome size and your throughput requirements. Conduct a small-scale benchmark with your specific data. Memory-optimized instances (e.g., AWS R6a family) are often a good fit. Using spot instances instead of on-demand can also lead to substantial cost reductions [5].

Q2: How can I quickly check if my STAR alignment is likely to succeed?

Monitor the Log.progress.out file, which reports the current percentage of mapped reads. If the mapping rate is very low (e.g., <10%) after processing a substantial portion of the reads (e.g., 10%), the job is a candidate for early termination, saving time and resources [27].

Q3: Our lab is new to cloud computing. What is the easiest way to run a STAR pipeline in the cloud? Consider using managed workflow services and pre-built cloud environments. The NIGMS Sandbox provides reusable tutorials and Jupyter notebooks for RNA-seq analysis on Google Cloud Platform, which can serve as a template [28].

Q4: What are the key differences between alignment-based (STAR) and alignment-free (Salmon) methods? The table below summarizes the core differences, which can guide your tool selection [29] [28].

Table: Comparison of RNA-seq Quantification Methods

| Feature | Alignment-Based (STAR) | Alignment-Free (Salmon, Kallisto) |
|---|---|---|
| Core Method | Maps reads to a reference genome | Uses pseudo-alignment in k-mer space |
| Pros | Accurate splice junction detection; Good for novel transcript discovery | Much faster; Allows for bootstrap re-sampling |
| Cons | Computationally intensive & slower | May miss novel splice boundaries; Less accurate for novel transcripts |
| Best For | Complex transcriptomes; Splice-aware analysis | Large datasets where speed is critical |

Experimental Protocols and Data

Protocol: Implementing Early Stopping for STAR

- Execute STAR: Run the alignment as usual. STAR writes the Log.progress.out file to its output directory by default, so no extra flag is needed (--quantMode controls gene/transcript quantification, not progress logging).

- Monitor Progress: While the job is running, periodically check the Log.progress.out file to extract the current percentage of mapped reads.

- Apply Threshold: Define a minimum mapping rate threshold (e.g., 30%) and a decision point (e.g., after 10% of total reads have been processed).

- Terminate or Continue: If the mapping rate at the decision point is below the threshold, manually or automatically terminate the job. Otherwise, allow it to continue to completion [27].
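The monitoring and threshold steps can be sketched as below. This assumes the column layout of Log.progress.out in recent STAR versions (time fields, speed, read number, read length, then columns starting with % mapped unique, with % mapped multi as the third percentage column); verify this against a log from your own STAR version. The mapping rate here is taken as unique plus multi-mapped percentage. Log.progress.out reports reads processed, not the total, so total_reads must come from elsewhere (for example the FASTQ line count divided by 4, or SRA run metadata). Function names and default thresholds are hypothetical.

```python
# Hypothetical early-stopping helpers; the column layout is an assumption.


def parse_progress(text):
    """Return (reads_processed, mapped_pct) from the last data row, or None.

    Assumed row layout: time fields, speed, read number, read length,
    % mapped unique, mapped length (no %), % MMrate, % mapped multi, ...
    mapped_pct = % unique + % multi.
    """
    last = None
    for line in text.splitlines():
        tokens = line.split()
        pct = [i for i, tok in enumerate(tokens) if tok.endswith("%")]
        if len(pct) >= 3 and pct[0] >= 2:  # header lines contain no '%' tokens
            first = pct[0]
            reads = int(tokens[first - 2])  # read number column
            unique = float(tokens[first].rstrip("%"))
            multi = float(tokens[pct[2]].rstrip("%"))
            last = (reads, unique + multi)
    return last


def should_stop(progress_text, total_reads, decision_fraction=0.10,
                min_mapped_pct=30.0):
    """True once the decision point is reached and the mapping rate is too low."""
    progress = parse_progress(progress_text)
    if progress is None:
        return False  # no data rows written yet
    reads, mapped_pct = progress
    if reads < decision_fraction * total_reads:
        return False  # too early to decide
    return mapped_pct < min_mapped_pct
```

A wrapper script could call should_stop on the file contents every few minutes and terminate the STAR process when it returns True.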

Protocol: Benchmarking Genome Versions

- Generate Indices: Build separate STAR indices for the different genome versions (e.g., Ensembl 108 vs. 111) using the same "toplevel" sequence type.

- Standardize Test Set: Select a representative subset of FASTQ files from your dataset for benchmarking.

- Control Environment: Run STAR alignment for the test set on both indices using the same instance type and parameters.

- Measure Outcomes: Record the total execution time, memory usage, and final mapping rate for each run [27].

Table: Sample Experimental Results for Genome Version Benchmarking

| Genome Version | Index Size (GiB) | Total Execution Time | Mean Mapping Rate |
|---|---|---|---|
| Ensembl Release 108 | 85.0 | 155.8 hours | >90% |
| Ensembl Release 111 | 29.5 | 12.7 hours | >90% |
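The headline ratios can be recomputed directly from the table, which is a useful sanity check when you run the same benchmark on your own data. The helper name compare_builds is hypothetical; the values are the ones listed above.

```python
def compare_builds(baseline, candidate):
    """Return (speedup, index size ratio) of candidate relative to baseline."""
    speedup = baseline["hours"] / candidate["hours"]
    index_ratio = baseline["index_gib"] / candidate["index_gib"]
    return speedup, index_ratio


# Values taken from the benchmarking table.
ens108 = {"index_gib": 85.0, "hours": 155.8}
ens111 = {"index_gib": 29.5, "hours": 12.7}
speedup, ratio = compare_builds(ens108, ens111)
print(f"{speedup:.1f}x faster, index {ratio:.1f}x smaller")  # 12.3x faster, index 2.9x smaller
```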

Workflow and Architecture Diagrams

Cloud-Native STAR Pipeline Architecture

STAR Alignment Early Stopping Logic

The Scientist's Toolkit

Table: Essential Research Reagents and Resources for a Cloud STAR Pipeline

| Resource Name | Function / Purpose | Key Details |
|---|---|---|
| STAR Aligner | Spliced alignment of RNA-seq reads to a reference genome. | Version 2.7.10b; requires high RAM; supports novel junction detection [27] [1]. |
| SRA Toolkit | Download and convert sequence data from the NCBI SRA database. | Contains prefetch (download) and fasterq-dump (convert to FASTQ) [5]. |
| Ensembl Reference Genome | The reference sequence and annotation for alignment. | Use the latest "toplevel" unmasked genome for best results (e.g., Release 111) [27] [29]. |
| DESeq2 | Differential expression analysis from count data. | R package for normalization and statistical testing post-alignment [27] [28]. |
| Cloud Object Storage (S3) | Long-term, durable storage for pipeline inputs and results. | Holds STAR indices, raw SRA/FASTQ files, and final output files (e.g., BAM, counts) [27] [5]. |

Optimal AWS EC2 Instance Selection for Resource-Intensive Alignments

A technical guide for researchers scaling genomic discoveries in the cloud

This technical support center provides targeted guidance for researchers and scientists encountering computational challenges while running resource-intensive alignment tools, such as STAR, on AWS EC2. The recommendations are framed within the context of optimizing large-scale RNA-seq data analysis, a critical step in modern genomics and drug development research.

Frequently Asked Questions

1. My STAR alignment job failed with a message that it was "killed" or exceeded its memory allocation. What happened?

This error typically occurs when the EC2 instance runs out of RAM. The STAR aligner loads the entire genomic index into memory, which can require tens of gigabytes, depending on the genome [5] [27].

- Solution: Select a memory-optimized instance family (e.g., R, X, or high-memory instances [30]). For the human genome, start with an instance that has at least 128 GB of RAM, such as an r6a.4xlarge, which has been successfully used in transcriptomics research [27]. Always verify your genome's index size and choose an instance with ample overhead.

2. How can I reduce cloud computing costs without significantly increasing processing time?

Consider the following cost-saving strategies:

- Use Spot Instances: For interruptible and fault-tolerant workflows, Spot Instances can provide significant savings. Research has confirmed the applicability of Spot Instances for running the STAR aligner [5].

- Implement Early Stopping: An "early stopping" optimization can be implemented by monitoring the Log.progress.out file generated by STAR. Terminating jobs with a mapping rate below a certain threshold (e.g., 30%) after processing only 10% of the reads can reduce total execution time by nearly 20% [27].

- Right-size Your Resources: Using a newer genome release (e.g., Ensembl Release 111) can drastically reduce index size and runtime. One experiment showed a 12x speedup and an index size reduction from 85 GiB to 29.5 GiB, allowing for the use of smaller, cheaper instances [27].

3. My data download and ingestion steps are a bottleneck. How can I improve this?

The initial data preparation stage often involves parallel downloads and format conversions.

- Solution: Architect your workflow to use dynamic parallelism. For example, use AWS Step Functions with a Map state to launch multiple AWS Batch jobs in parallel, each handling a specific Sequence Read Run (SRR) ID [31]. This approach efficiently scales the ingestion of FASTQ files from repositories like the NCBI SRA.

4. What is the best way to select an instance type for my specific alignment workload?

With over 800 EC2 instance types available, use the AWS EC2 Instance Selector CLI tool. This tool allows you to filter instance types based on your specific resource needs [32].

- Example Command: To find current generation, x86_64 instances with at least 64 vCPUs and 128 GiB of memory, you could run:

Troubleshooting Guides

Issue: High Compute Costs for Large-Scale RNA-seq Analysis

Problem: Processing tens of terabytes of RNA-seq data with the STAR aligner is proving to be prohibitively expensive.

Diagnosis and Resolution:

Application-Level Optimization:

- Use Updated Genomic References: As highlighted in the FAQs, always use the latest version of your genomic references. The reduction in compute requirements from a newer Ensembl release is one of the most effective optimizations [27].

- Parallelize Across a Cluster: For processing many samples, do not rely on a single large instance. Design a scalable, cloud-native architecture where a manager node distributes tasks to a pool of worker EC2 instances. Workers can pull SRA IDs from a queue (like Amazon SQS), process them, and upload results to a shared store (like Amazon S3). An Auto Scaling Group can manage the worker pool, scaling it based on the number of tasks [27].

Infrastructure-Level Optimization:

- Select Cost-Efficient Instances: Empirical performance analysis is crucial. Research into transcriptomics pipelines has identified that the r6a.4xlarge instance type offers a good balance of memory and compute for STAR alignment tasks [27]. The following table summarizes instance families relevant to bioinformatics workloads [30]:

| Instance Category | Example Families | Ideal For |
|---|---|---|
| Compute Optimized | C, Hpc [30] | Steps requiring high-performance processing (e.g., fasterq-dump). |
| Memory Optimized | R, X, High Memory, Z [30] | STAR alignment (loads entire index into RAM). |
| General Purpose | M, T [30] | General pipeline orchestration, lower-resource tasks. |

Issue: Alignment Workflow Failures or Unreliable Execution

Problem: The pipeline fails intermittently due to node failures or resource exhaustion.

Diagnosis and Resolution:

Checkpointing and State Management:

- Use a database like Amazon DynamoDB to track the status of data ingestion and alignment jobs. This provides checkpointing and avoids repetitive processing of the same sample, saving cost and time [31].

- For workflows, use an orchestrator like AWS Step Functions, which adds reliability and makes it easier to trace invocations and troubleshoot errors [31].

Building for Resilience:

- If using Spot Instances, design your application to handle interruptions gracefully. This can be achieved by frequently checkpointing progress to a persistent store like S3, so a new instance can resume the work [27].
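As far as we know, STAR cannot resume a partially aligned sample, so checkpointing is usually done per sample: record each sample's status durably and skip completed samples on restart. The sketch below swaps DynamoDB for a local JSON file to stay self-contained; SampleStatus and run_pending are hypothetical names, and in practice the status store must live somewhere that survives the instance (DynamoDB, or a file synced to S3).

```python
import json
from pathlib import Path


class SampleStatus:
    """Minimal per-sample status store backed by a JSON file (DynamoDB stand-in)."""

    def __init__(self, path):
        self.path = Path(path)
        self.state = json.loads(self.path.read_text()) if self.path.exists() else {}

    def is_done(self, sample):
        return self.state.get(sample) == "done"

    def mark(self, sample, status):
        self.state[sample] = status
        tmp = self.path.with_suffix(".tmp")
        tmp.write_text(json.dumps(self.state))
        tmp.replace(self.path)  # atomic rename: a crash never leaves half a file


def run_pending(samples, status, align):
    """Align every sample not already marked done; interrupted ones are redone."""
    for sample in samples:
        if status.is_done(sample):
            continue
        status.mark(sample, "running")
        align(sample)
        status.mark(sample, "done")
```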

The following reagents and software tools are critical for setting up and executing a STAR-based RNA-seq analysis pipeline in the AWS cloud [31] [5] [27].

| Item | Function |
|---|---|
| SRA-Toolkit | A collection of tools to download (prefetch) and convert (fasterq-dump) sequence files from the NCBI SRA database into FASTQ format. |
| STAR Aligner | A widely used, accurate aligner for mapping RNA-seq reads to a reference genome. It is resource-intensive, requiring significant RAM and CPU. |
| Reference Genome | A species-specific reference (e.g., from Ensembl). Using the latest "toplevel" genome is recommended for completeness, and newer releases can also offer large performance gains. |
| Annotation File (GTF/GFF3) | Provides genomic feature coordinates. Used by STAR during alignment to inform splice junction discovery and for downstream quantification. |
| DESeq2 | An R package used for normalizing count data and identifying differentially expressed genes from the output of STAR. |

Experimental Protocols & Optimization Methodologies

Protocol: Early Stopping for Low-Quality Alignments

This protocol describes how to implement an early stopping optimization to save computational resources.

- Execute STAR Alignment: Initiate the STAR aligner as usual.

- Monitor Progress File: During execution, periodically read the Log.progress.out file generated by STAR.

- Calculate Mapping Rate: Extract the current percentage of mapped reads from the log.

- Apply Decision Logic: Once a predetermined fraction of total reads (e.g., 10%) has been processed, check the mapping rate.

- Terminate or Continue: If the mapping rate is below a set threshold (e.g., 30%), terminate the alignment job early. Otherwise, allow it to continue to completion [27].

This workflow is visualized below, illustrating the logical flow for this optimization.

Protocol: Selecting an Optimal EC2 Instance Type

This methodology outlines an experimental approach to select the most cost-effective instance type for your specific alignment workload.

- Define a Benchmark Dataset: Select a representative subset of your RNA-seq samples (e.g., 10-20 files with varying sizes).

- Choose Candidate Instances: Based on general guidance, select a few candidate instance types from the memory-optimized (R) and compute-optimized (C) families. The r6a.4xlarge is a strong candidate to include [27].

- Run Controlled Experiments: Process the same benchmark dataset on each candidate instance type. Use orchestration tools like AWS Batch to ensure consistent runtime conditions [33].

- Collect Metrics: For each run, record:

- Total execution time (wall time).

- CPU and memory utilization (via Amazon CloudWatch).

- Total cost (based on instance price and runtime).

- Analyze and Select: Identify the instance type that delivers the best balance of performance and cost (e.g., lowest cost per sample while meeting time constraints). Research has shown that systematic analysis can identify the most suitable and cost-efficient instance type for STAR [5].
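The analysis step reduces to computing cost per sample for each candidate and picking the cheapest one that meets your time budget. In this sketch, BenchmarkRun and pick_instance are hypothetical names, and the instance names and prices in the test are placeholders, not real AWS prices; substitute the on-demand or spot prices you actually paid.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class BenchmarkRun:
    instance_type: str
    hourly_price_usd: float  # the price you actually paid (on-demand or spot)
    wall_time_h: float       # total wall time for the whole benchmark set
    n_samples: int

    @property
    def cost_per_sample(self) -> float:
        return self.hourly_price_usd * self.wall_time_h / self.n_samples

    @property
    def hours_per_sample(self) -> float:
        return self.wall_time_h / self.n_samples


def pick_instance(runs: List[BenchmarkRun],
                  max_hours_per_sample: Optional[float] = None) -> Optional[BenchmarkRun]:
    """Cheapest run per sample among those meeting the optional time limit."""
    eligible = [r for r in runs
                if max_hours_per_sample is None
                or r.hours_per_sample <= max_hours_per_sample]
    return min(eligible, key=lambda r: r.cost_per_sample) if eligible else None
```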

The high-level architecture for a scalable, cloud-native alignment pipeline is shown below, integrating many of the solutions discussed.

Frequently Asked Questions

1. What is a STAR index and why is distributing it efficiently so important? The STAR index is a pre-computed reference structure created from a reference genome and annotations. STAR uses this index to perform its ultra-fast alignment of RNA-seq reads [1]. For large-scale analyses processing tens to hundreds of terabytes of data, the alignment step is a major bottleneck [5]. Efficiently distributing this index to all compute workers is a critical challenge, as delays in transferring this large file (often ~30 GB for the human genome) can drastically impact the overall time and cost of a research project [5].

2. What are the main strategies for distributing the STAR index to compute instances? Research into cloud-based transcriptomics pipelines has identified three primary methods [5]:

- Shared File System: The index is stored on a single, high-performance network-attached storage (e.g., AWS EFS, Lustre) that all compute instances can access.

- Container Image: The index is packaged directly into a Docker container image, which is then deployed to every compute instance.

- Instance Storage: The index is copied to the local, high-throughput disk (e.g., NVMe SSD) of each compute instance at the start of a job.
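For the instance-storage strategy, the copy should happen once per instance and be safe to interrupt. A minimal sketch, assuming the index is readable from a mounted path such as a shared filesystem (for S3, aws s3 sync would take the place of copytree); stage_index and the .copy_complete marker name are hypothetical.

```python
import shutil
from pathlib import Path


def stage_index(source_dir, local_dir):
    """Copy a STAR index to local disk once per instance; return the local path.

    The marker file is written only after the copy finishes, so an
    interrupted copy is discarded and redone instead of being reused.
    """
    source_dir, local_dir = Path(source_dir), Path(local_dir)
    marker = local_dir / ".copy_complete"
    if marker.exists():
        return local_dir  # already staged by an earlier job on this instance
    if local_dir.exists():
        shutil.rmtree(local_dir)  # remove a partial copy
    shutil.copytree(source_dir, local_dir)
    marker.touch()
    return local_dir
```

Jobs then pass the returned path to STAR's --genomeDir, so only the first job on each instance pays the transfer cost.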

3. Which instance types are most cost-effective for running STAR alignments?

Performance analyses indicate that compute-optimized instance types (e.g., the c5 family in AWS EC2) are among the most suitable and cost-effective for the STAR aligner. The alignment performance scales with the number of cores, making instances with a high vCPU count beneficial. Furthermore, using spot instances (preemptible, lower-cost cloud instances) has been verified as a viable and reliable option for running these resource-intensive aligners, leading to significant cost reductions [5].

4. How much memory (RAM) is required to run STAR? STAR is memory-intensive. The minimum requirement is approximately 10 times the genome size in bytes. For the human genome (~3 billion bases), this equates to about 30 GB of RAM, with 32 GB being a common recommendation to ensure smooth operation [2] [34].
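The rule of thumb is easy to apply to any genome. A minimal sketch, assuming you have a samtools faidx .fai index for the genome (column 2 holds each sequence's length); the helper names are hypothetical. Note that 30 GB (decimal) is about 27.9 GiB, which matters when comparing against instance specifications listed in GiB.

```python
def genome_length_from_fai(fai_lines):
    """Sum sequence lengths (column 2) of a samtools faidx .fai index."""
    return sum(int(line.split("\t")[1]) for line in fai_lines if line.strip())


def star_ram_estimate(genome_length_bp, bytes_per_base=10):
    """Rule of thumb: ~10 bytes of RAM per genome base. Returns (GB, GiB)."""
    total_bytes = genome_length_bp * bytes_per_base
    return total_bytes / 1e9, total_bytes / 2**30


gb, gib = star_ram_estimate(3_000_000_000)  # ~3 billion bases, human-sized
print(f"{gb:.1f} GB = {gib:.1f} GiB")  # 30.0 GB = 27.9 GiB
```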

Troubleshooting Guide

| Problem | Possible Cause | Solution |
|---|---|---|
| High job startup latency | Index is being downloaded from an external source for every job. | Pre-load the index into a shared filesystem or use a container image to eliminate transfer time at runtime [5]. |
| Slow alignment speed (I/O wait) | Index is stored on a slow or congested network filesystem. | Use a high-throughput filesystem (e.g., Lustre) or, for the best performance, copy the index to the instance's local NVMe storage [5]. |
| "Out of Memory" error | The compute instance does not have enough RAM for the selected reference genome. | Select an instance type with sufficient RAM (e.g., >30 GB for human). Monitor memory usage in the STAR log files [2] [34]. |
| Inconsistent performance across workers | Underlying hardware or network performance varies between compute nodes. | Use a uniform instance type for all workers and ensure the index distribution method provides consistent access speeds [5]. |

Experimental Protocols & Data

Protocol 1: Benchmarking Index Distribution Methods

This methodology is adapted from cloud-based performance analyses of the STAR aligner workflow [5].

- Aim: To quantitatively compare the efficiency of different STAR index distribution strategies.

- Experimental Setup:

- Methods: Implement the three distribution strategies: Shared File System (e.g., NFS/EFS), Container Image, and Local Instance Storage.

- Metrics: Measure the total alignment time, which includes the time to make the index available to the worker and the core alignment execution time.

- Infrastructure: Run the experiment on a cluster of compute-optimized instances (e.g., AWS c5.9xlarge) using a spot instance fleet to assess cost-effectiveness.

- Key Findings:

- Storing the index on the local instance storage (NVMe) provided the fastest alignment times, as it eliminates network latency.

- Using a container image resulted in the most stable and consistent job startup times.

- The shared filesystem was the simplest to implement but could become a performance bottleneck with many concurrent workers [5].

Table 1: Quantitative Comparison of STAR Index Distribution Methods

| Distribution Method | Relative Alignment Time | Ease of Implementation | Consistency | Best For |
|---|---|---|---|---|
| Local Instance Storage | Fastest | Medium | High | Performance-critical, homogeneous clusters |
| Container Image | Medium | High | Highest | Dynamic, scalable cloud environments |
| Shared File System | Slowest (can be a bottleneck) | Easiest | Low (with many workers) | Prototyping or small-scale clusters |

Protocol 2: Selecting an Optimal Instance Type

- Aim: To identify the most cost-efficient cloud instance for STAR alignment jobs.

- Experimental Setup:

- Run identical STAR alignment jobs on a variety of instance types (e.g., compute-optimized c5, memory-optimized r5, general-purpose m5).

- Record the total execution time and calculate the cost based on the instance's hourly price.

- Key Findings:

- Compute-optimized instances (c5) generally provide the best balance of CPU power and cost for STAR's multi-threaded workload.

- The performance scales with the number of cores, but with diminishing returns. An optimal core count should be determined empirically for your specific dataset [5].

- Spot instances can be used reliably for STAR alignment, reducing compute costs by 60-80% without significantly impacting throughput [5].

Table 2: Research Reagent Solutions for STAR Alignment

| Item | Function / Description | Example / Specification |
|---|---|---|
| STAR Aligner | The core software for performing spliced alignment of RNA-seq reads to a reference genome. | Version 2.7.10b or later [5]. |
| Reference Genome | The standard DNA sequence for the species being studied, used to create the alignment index. | Human genome assembly GRCh38 (hg38) [2]. |
| Annotation File | A GTF file containing known gene models, which STAR uses to improve junction mapping. | Ensembl annotation (e.g., Homo_sapiens.GRCh38.92.gtf) [2]. |
| SRA Toolkit | A suite of tools to download and convert public RNA-seq data from repositories like NCBI SRA. | Used for prefetch and fasterq-dump to obtain input FASTQ files [5]. |
| Containerization | Technology to package the STAR software, its dependencies, and the genome index into a portable image. | Docker or Singularity images [5]. |

Workflow Visualization

STAR Index Distribution Strategy Selection

Leveraging Spot Instances for Cost-Effective Large-Scale Processing

Frequently Asked Questions

Q1: What are Spot Instances and why should I use them for my STAR alignment workflow? Spot Instances are cloud computing resources offered at up to a 90% discount compared to On-Demand prices, allowing you to access spare cloud capacity [35]. For large-scale RNA-seq projects processing terabytes of data, this can translate to annual savings of £120,000 or more [36]. They are ideal for fault-tolerant, flexible workloads like genomic alignment.

Q2: Can I reliably use Spot Instances for production-level research pipelines? Yes, with proper design. Although Spot Instances can be interrupted with as little as 30 seconds to 2 minutes of notice, depending on the provider [35], strategies such as checkpointing and a hybrid of Spot and On-Demand Instances can maintain reliability for critical operations while maximizing savings [36]. Automation tools can further manage this complexity [35].

Q3: My STAR job was interrupted. How can I avoid losing progress? Implement a checkpointing system. This involves regularly saving the state of your alignment process. If an interruption occurs, the job can resume from the last checkpoint instead of starting over [36]. Designing your workflow with fault-tolerance in mind is key to leveraging Spot Instances successfully.
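STAR does not, to our knowledge, resume a partially completed alignment, so checkpointing is usually done at the granularity of whole samples (or pre-split chunks of reads): a manifest records which samples have finished, and a replacement instance skips them. The sketch below keeps the manifest as a local JSON file for simplicity; in a Spot deployment it would live on persistent storage such as Amazon S3, where the temp-file-and-rename step used here does not apply. `run_pending`, `mark_done`, `load_done`, and the `align` callback are hypothetical names.

```python
import json
from pathlib import Path


def load_done(manifest: Path) -> set:
    """Read the set of sample IDs already aligned (empty if no manifest yet)."""
    if manifest.exists():
        return set(json.loads(manifest.read_text()))
    return set()


def mark_done(manifest: Path, sample_id: str) -> None:
    """Record a finished sample. Writing to a temp file and renaming it means
    an interruption mid-write cannot leave a corrupted manifest behind."""
    done = load_done(manifest)
    done.add(sample_id)
    tmp = manifest.with_suffix(".tmp")
    tmp.write_text(json.dumps(sorted(done)))
    tmp.replace(manifest)


def run_pending(samples, manifest: Path, align) -> list:
    """Align every sample not yet in the manifest and return the ones processed.
    `align` is your own function that runs STAR and saves outputs for one sample."""
    done = load_done(manifest)
    processed = []
    for sample_id in samples:
        if sample_id in done:
            continue  # finished before an earlier interruption
        align(sample_id)
        mark_done(manifest, sample_id)  # checkpoint only after outputs are saved
        processed.append(sample_id)
    return processed
```

A sample interrupted mid-alignment is simply rerun from the start, so the work lost per interruption is bounded by one sample.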

Q4: Which instance types are most cost-effective for STAR alignment on Spot? Research indicates that memory-optimized and high-throughput compute instances are often well-suited for the STAR aligner [5]. To select the best Spot Instance, use your cloud provider's Spot Instance Advisor to check the frequency of interruption and choose less popular instance types to improve stability [35].

Troubleshooting Guides

Problem: Frequent Spot Instance Interruptions

Solution: Improve instance selection and distribution.

- Diversify Your Fleet: Instead of relying on a single instance type, use a Spot Fleet (AWS) or similar managed group. Request multiple instance types simultaneously across different Availability Zones to increase your chances of obtaining and maintaining capacity [35].

- Consult the Spot Advisor: Before launching, check the Frequency of Interruption for your chosen instance type in the cloud console. Opt for types with a lower historical interruption rate (e.g., <5%) [35].

- Leverage Rebalance Recommendations: Enable this feature to receive an early warning when a Spot Instance is at an elevated risk of interruption. This allows your autoscaling group to proactively launch a replacement instance before the current one is terminated [37].

Problem: Data Loss or Pipeline Failure on Interruption

Solution: Architect your pipeline for resilience.

- Implement Checkpointing for STAR: Configure your workflow to periodically save alignment progress to persistent storage (e.g., Amazon S3). This is crucial for long-running alignment jobs. Upon interruption, a new instance can be spun up to continue from the last saved state [36].

- Use Persistent Storage: Ensure all input data, reference genomes (like the STAR index), and output directories are mounted on robust, network-attached storage (e.g., AWS FSx, EBS) that persists independently of the compute instance's lifecycle.

- Design with Microservices: In containerized environments (e.g., Docker, Kubernetes), design your application to be stateless. This allows pods to be easily terminated and restarted on new instances without affecting the overall service [35].
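On AWS, a pending Spot interruption is exposed through the instance metadata service at the `spot/instance-action` path, which returns HTTP 404 until a notice is issued and then a small JSON document with the action and its time. The sketch below fetches that document using IMDSv2 and computes how many seconds remain, which a sidecar loop could use to flush partial outputs or mark the current sample for retry. The function names are hypothetical, and the polling interval and upload logic are left to your pipeline.

```python
import json
import urllib.error
import urllib.request
from datetime import datetime, timezone

IMDS = "http://169.254.169.254/latest"


def fetch_instance_action(timeout: float = 2.0):
    """Return the raw instance-action JSON, or None if no interruption is scheduled.
    Uses IMDSv2: obtain a short-lived session token, then query the metadata path."""
    token_req = urllib.request.Request(
        f"{IMDS}/api/token", method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": "60"})
    token = urllib.request.urlopen(token_req, timeout=timeout).read().decode()
    req = urllib.request.Request(
        f"{IMDS}/meta-data/spot/instance-action",
        headers={"X-aws-ec2-metadata-token": token})
    try:
        return urllib.request.urlopen(req, timeout=timeout).read().decode()
    except urllib.error.HTTPError as err:
        if err.code == 404:  # 404 means no interruption is pending
            return None
        raise


def seconds_until_interruption(body, now=None):
    """Parse a notice such as {"action": "terminate", "time": "2017-09-18T08:22:00Z"}
    and return the seconds left, or None when there is no notice."""
    if body is None:
        return None
    notice = json.loads(body)
    when = datetime.strptime(notice["time"], "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    return (when - now).total_seconds()
```

A watcher would call `seconds_until_interruption(fetch_instance_action())` every few seconds and start saving state as soon as it returns a number.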

Problem: Insufficient Spot Capacity or High Prices

Solution: Optimize your bidding and fallback strategy.

- Set a Maximum Price: When configuring your Spot request, set your maximum price to the On-Demand price (on AWS this is the default). Price then becomes an unlikely reason for interruption, although the provider can still reclaim the instance when it needs the capacity back [35].

- Adopt a Hybrid Strategy: For a production-grade pipeline, use a mix of Spot and On-Demand Instances. Configure your cluster to run the majority of workloads on Spot Instances but fail over to On-Demand Instances during periods of scarce Spot capacity or for critical, time-sensitive jobs that cannot tolerate interruptions [36] [37].
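The hybrid policy can be reduced to a small per-job decision. The sketch below is one hypothetical rule: time-critical jobs always go to On-Demand, and other jobs run on Spot until they have been interrupted a chosen number of times, after which they fall back to On-Demand. The `Job` fields, `choose_capacity`, and the default of two Spot attempts are illustrative assumptions, not values taken from [36] or [37]; orchestrators can often express similar rules natively.

```python
from dataclasses import dataclass


@dataclass
class Job:
    sample_id: str
    time_critical: bool = False   # e.g. a deadline-bound clinical sample
    spot_interruptions: int = 0   # times this job has already been reclaimed on Spot


def choose_capacity(job: Job, max_spot_attempts: int = 2) -> str:
    """Return "on-demand" or "spot" for the next attempt of this job."""
    if job.time_critical:
        return "on-demand"  # cannot tolerate an interruption
    if job.spot_interruptions >= max_spot_attempts:
        return "on-demand"  # stop retrying on Spot after repeated reclaims
    return "spot"
```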

The table below summarizes potential cost savings from using Spot Instances for HPC workloads, which includes resource-intensive tasks like RNA-seq alignment with STAR [36].

| Instance Type | On-Demand Hourly Rate (£) | Spot Hourly Rate (£) | Typical Savings (%) |
|---|---|---|---|
| Standard Compute | 0.10 | 0.02 | 80 |
| High-Memory | 0.60 | 0.15 | 75 |
| GPU | 2.25 | 0.45 | 80 |

Monthly Cost Scenarios (instances running continuously, 720 hours per month) [36]:

- High-Memory Instances (10 instances):

- On-Demand Cost: £4,320

- Spot Cost: £1,080

- Savings: £3,240 (75%)

- GPU Instances (5 instances):

- On-Demand Cost: £8,100

- Spot Cost: £1,620

- Savings: £6,480 (80%)
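The monthly figures above are consistent with 720 hours of continuous use per month (30 days x 24 hours). The sketch below reproduces them from the hourly rates in the table, which makes it easy to rerun the comparison with your own prices and fleet sizes; `monthly_cost` and `spot_savings` are hypothetical helper names.

```python
HOURS_PER_MONTH = 720  # 30 days x 24 h; reproduces the scenario figures above


def monthly_cost(hourly_rate: float, n_instances: int, hours: float = HOURS_PER_MONTH) -> float:
    """Cost of running n_instances continuously for one month."""
    return hourly_rate * n_instances * hours


def spot_savings(on_demand_rate: float, spot_rate: float, n_instances: int):
    """Return (on-demand cost, spot cost, absolute savings, savings %)."""
    on_demand = monthly_cost(on_demand_rate, n_instances)
    spot = monthly_cost(spot_rate, n_instances)
    saved = on_demand - spot
    return on_demand, spot, saved, 100 * saved / on_demand


# Hourly rates from the table above (GBP)
print(spot_savings(0.60, 0.15, 10))  # High-Memory, 10 instances
print(spot_savings(2.25, 0.45, 5))   # GPU, 5 instances
```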

Experimental Protocol: Optimizing STAR in the Cloud

This protocol outlines key optimizations for running the STAR aligner cost-effectively on cloud infrastructure, incorporating findings from performance analyses [5].

1. Initial Data and Index Distribution

- Objective: Minimize startup latency by efficiently distributing the STAR genomic index to worker instances.

- Methodology:

- Store the precomputed genomic index in a high-throughput object storage service (e.g., Amazon S3).

- Upon instance launch, use a parallelized download tool to transfer the index to a high-performance local SSD or a fast, scalable network file system (e.g., FSx for Lustre) shared across nodes.
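A minimal sketch of the local staging step, assuming the index is already reachable as a directory (for example, an FSx for Lustre mount or a prior download). It copies the index files to local SSD concurrently and writes a marker file last, so later jobs on the same instance reuse a completed copy, while a copy cut short by an interruption is simply repeated. For pulling straight from S3, the AWS CLI (`aws s3 cp --recursive`) already issues concurrent requests. `stage_index` is a hypothetical helper, and it copies only top-level files, matching the usual flat layout of a STAR index directory.

```python
import shutil
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def stage_index(src: Path, dst: Path, workers: int = 8) -> Path:
    """Copy a STAR genome index directory to local disk once per instance."""
    marker = dst / ".staged"
    if marker.exists():
        return dst  # already staged by an earlier job on this instance
    dst.mkdir(parents=True, exist_ok=True)
    files = [p for p in src.iterdir() if p.is_file()]
    # The index holds a few very large files (e.g. Genome, SA, SAindex),
    # so copying them in parallel helps on high-throughput storage.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # list() forces completion and re-raises any copy error
        list(pool.map(lambda p: shutil.copy2(p, dst / p.name), files))
    marker.touch()  # written last: its presence means the copy is complete
    return dst
```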

2. Early Stopping Optimization

- Objective: Reduce total alignment time by stopping the fasterq-dump tool once sufficient data is retrieved.

- Methodology:

- Integrate a progress monitoring script into the data download and conversion step.

- Configure the script to terminate the fasterq-dump process once a predetermined, sufficient file size is reached. This optimization has been shown to reduce total alignment time by 23% [5].
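The monitoring script can be sketched as a small watchdog that launches a command, polls the size of the file it writes, and terminates it once a threshold is reached. The command, output path, and byte threshold are placeholders for your own fasterq-dump invocation; depending on version and options, fasterq-dump may write temporary files before producing the final FASTQ, so confirm which file actually grows. Because the process is stopped mid-write, the last FASTQ record is likely incomplete and should be dropped before alignment. `monitor_and_stop` is a hypothetical name.

```python
import os
import subprocess
import time


def monitor_and_stop(cmd, output_path, max_bytes, poll_interval=1.0):
    """Run cmd; terminate it once output_path reaches max_bytes.

    Returns True if the process was stopped early, False if it exited on its own.
    """
    proc = subprocess.Popen(cmd)
    try:
        while proc.poll() is None:
            if os.path.exists(output_path) and os.path.getsize(output_path) >= max_bytes:
                proc.terminate()       # polite stop (SIGTERM on POSIX)
                proc.wait(timeout=30)
                return True
            time.sleep(poll_interval)
        return False
    finally:
        if proc.poll() is None:        # still running, e.g. wait() timed out
            proc.kill()
            proc.wait()
```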

3. Determining Optimal Intra-Node Parallelism

- Objective: Find the most cost-efficient number of CPU cores for STAR on a given instance type.

- Methodology:

- Select a representative RNA-seq sample and a target instance type (e.g., a high-memory instance).

- Run the STAR alignment multiple times, varying the --runThreadN parameter (e.g., from 4 to the maximum vCPUs on the instance).

- Measure the wall-clock time and total cost for each run. The optimal thread count is often below the maximum: STAR's scaling diminishes as threads are added, so fewer cores on a cheaper instance can be more cost-effective [5].
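The sweep can be scripted as below. `star_command` builds a STAR invocation from standard options (`--runThreadN`, `--genomeDir`, `--readFilesIn`, `--outFileNamePrefix`, `--outSAMtype`), `timed_run` measures wall-clock time, and `pick_threads` applies one possible heuristic that is our assumption rather than the method of [5]: keep the largest thread count whose parallel efficiency, relative to the smallest count tested, stays above a threshold. All function names, paths, and the 0.7 threshold are hypothetical placeholders.

```python
import subprocess
import time


def star_command(threads, genome_dir, reads, prefix):
    """Build a STAR alignment command; reads is a list of 1 or 2 FASTQ paths."""
    return ["STAR", "--runThreadN", str(threads),
            "--genomeDir", genome_dir,
            "--readFilesIn", *reads,
            "--outFileNamePrefix", prefix,
            "--outSAMtype", "BAM", "Unsorted"]


def timed_run(cmd, runner=subprocess.run):
    """Run cmd and return its wall-clock time in seconds."""
    start = time.perf_counter()
    runner(cmd, check=True)
    return time.perf_counter() - start


def pick_threads(times, min_efficiency=0.7):
    """times maps thread count -> wall-clock seconds.

    Parallel efficiency of n threads relative to the smallest count b is
    (T_b / T_n) / (n / b). Return the largest n whose efficiency is at least
    min_efficiency (the smallest count always qualifies).
    """
    base = min(times)
    best = base
    for n in sorted(times):
        efficiency = (times[base] / times[n]) / (n / base)
        if efficiency >= min_efficiency:
            best = n
    return best


# Example sweep against a real index and sample (paths are placeholders):
# times = {}
# for n in (4, 8, 16, 32):
#     cmd = star_command(n, "/local/star_index", ["s_1.fastq", "s_2.fastq"], f"out/t{n}_")
#     times[n] = timed_run(cmd)
# print(pick_threads(times))
```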

4. Validating Spot Instance Suitability

- Objective: Confirm that the STAR workflow can run reliably and with significant savings on Spot Instances.

- Methodology:

- Deploy the optimized pipeline on a Spot Fleet using multiple instance types and Availability Zones.

- Run a large batch of jobs (e.g., processing hundreds of SRA samples).

- Monitor the job success rate, total execution time, and total cost.

- Compare these metrics against a baseline run entirely on On-Demand Instances to calculate actual savings and assess reliability [5].
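A minimal sketch of the comparison, assuming each job record carries a success flag and the cost attributed to it. For Spot jobs that cost should include time lost to interrupted attempts; otherwise the savings are overstated. The record format, `summarize`, and `compare_runs` are hypothetical.

```python
def summarize(jobs):
    """Return (success rate, total cost) for a list of job records."""
    succeeded = sum(1 for job in jobs if job["succeeded"])
    return succeeded / len(jobs), sum(job["cost"] for job in jobs)


def compare_runs(spot_jobs, on_demand_jobs):
    """Compare a Spot batch against an On-Demand baseline of the same samples."""
    spot_rate, spot_cost = summarize(spot_jobs)
    od_rate, od_cost = summarize(on_demand_jobs)
    return {
        "spot_success_rate": spot_rate,
        "on_demand_success_rate": od_rate,
        "spot_cost": spot_cost,
        "on_demand_cost": od_cost,
        "savings_pct": 100 * (od_cost - spot_cost) / od_cost,
    }
```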

Workflow Visualization

Optimized RNA-seq Alignment with Spot Instances

The Scientist's Toolkit: Research Reagent Solutions

| Item / Tool | Function in the Experiment |
|---|---|
| STAR Aligner | A splice-aware aligner that accurately maps RNA-seq reads to a reference genome. It is resource-intensive but provides highly reliable results, making it a primary focus for cloud optimization [5] [38]. |
| SRA-Toolkit | A collection of tools to download (prefetch) and convert (fasterq-dump) RNA-seq files from the NCBI SRA database into the FASTQ format required by STAR [5]. |
| Spot Instance Advisor | A cloud provider tool that provides historical data on interruption rates and potential savings for different instance types, aiding in the selection of stable Spot Instances [37]. |
| High-Throughput File System (e.g., FSx for Lustre) | Provides a fast, scalable storage backend for hosting the large STAR genomic index and handling high I/O demands during parallel alignment, reducing bottlenecks [5] [39]. |
| Automation & Orchestration (e.g., AWS Batch, Nextflow) | Managed services or workflow managers that automate the deployment, scaling, and fault-tolerance of the pipeline, crucial for managing a fleet of Spot Instances and handling interruptions [39] [35]. |

Troubleshooting Guides

Guide 1: Resolving Transcript Length Mismatch Between STAR and Salmon

Problem Description

Users encounter a critical error when passing STAR-aligned BAM files to Salmon for quantification. The error message indicates a sequence length discrepancy, for example: "SAM file says target NM_001001193.1 has length 508, but the FASTA file contains a sequence of length [502 or 501]" [40]. This prevents successful quantification.

Diagnosis and Root Cause

This is a known issue stemming from how STAR generates the transcriptome BAM file (--quantMode TranscriptomeSAM). STAR derives transcript coordinates and lengths from the genome FASTA and GTF annotation it was given, so its transcriptome alignment can disagree with a reference transcriptome FASTA obtained from a different source, particularly in how transcript boundaries or sequences are represented [40] [41]. The issue is not with Salmon itself but with the input generated by the alignment step [40].

Solution Steps

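- Re-index your transcriptome: Build the Salmon reference from a transcriptome FASTA derived from the same genome FASTA and GTF used to build the STAR index (for example, extracted with a tool such as gffread), or rebuild the STAR index from the genome and annotation that match your transcriptome FASTA. Consistency between the reference files used at all stages is crucial.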

- Explore alternative aligners: As a troubleshooting step, consider using a different aligner specifically for generating the transcriptome BAM file, as suggested in community discussions [40].

- Bypass alignment for quantification: If the primary goal is gene expression quantification, you can run Salmon directly on the FASTQ files in its mapping-based mode (historically called quasi-mapping), without STAR alignment. This is a valid and often faster approach. One user reported success by running an analysis without an aligner and linking the output to the Salmon directory [41].
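Before re-indexing, it can help to confirm exactly which transcripts disagree. The sketch below compares the `@SQ` lengths in the transcriptome BAM header (for example, the output of `samtools view -H Aligned.toTranscriptome.out.bam`) with the sequence lengths in the FASTA given to Salmon; an empty result means the references agree. The function names are hypothetical, and FASTA IDs are taken as the first whitespace-separated word of each header, which may need adjusting for headers such as GENCODE's `|`-delimited ones.

```python
def sam_header_lengths(header_text):
    """Map SN -> LN from the @SQ lines of a SAM/BAM header."""
    lengths = {}
    for line in header_text.splitlines():
        if line.startswith("@SQ"):
            fields = dict(f.split(":", 1) for f in line.split("\t")[1:])
            lengths[fields["SN"]] = int(fields["LN"])
    return lengths


def fasta_lengths(fasta_text):
    """Map record ID (first word of the '>' line) -> sequence length."""
    lengths, name = {}, None
    for line in fasta_text.splitlines():
        if line.startswith(">"):
            name = line[1:].split()[0]
            lengths[name] = 0
        elif name is not None:
            lengths[name] += len(line.strip())
    return lengths


def length_mismatches(sam_lengths, fa_lengths):
    """Return (transcript, bam_length, fasta_length) for every disagreement;
    fasta_length is None when the transcript is missing from the FASTA."""
    return [(t, n, fa_lengths.get(t))
            for t, n in sorted(sam_lengths.items()) if fa_lengths.get(t) != n]
```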

Prevention Strategy

Always use identical, version-controlled reference genomes and transcriptome FASTA files across your entire workflow, from genome indexing with STAR to quantification with Salmon.

Guide 2: Addressing High Multi-Mapping Rates in STAR Alignment

Problem Description

Unique mapping rates for human RNA-seq data are typically expected to be 80-90%, but some experiments report uniquely mapped reads as low as 58-75%. A high percentage of reads (e.g., 18-35%) are mapped to multiple loci, raising concerns about data quality and the validity of downstream analysis [42].

Diagnosis and Root Cause

High multi-mapping rates can result from several factors:

- Technical artifacts: Insufficient ribosomal RNA (rRNA) depletion during library preparation can be a contributor [42].

- Biological factors: The presence of highly homologous gene families or paralogous sequences makes it intrinsically difficult to assign some reads uniquely [42].

- RNA quality: Degraded or low-quality RNA samples can exacerbate multi-mapping.

Solution Steps

- Check for rRNA contamination: Use fast and sensitive tools like bbduk to quantify the level of rRNA contamination in your raw reads. One analysis found that a 2% rRNA level was not significant enough to explain a 30% multi-mapping rate [42].

- Evaluate downstream impact: Generate a PCA/MDS plot post-quantification. If samples cluster by experimental group rather than by multi-mapping rate, it is generally acceptable to proceed with differential expression analysis [42].

- Use appropriate counting tools: Tools like Salmon, kallisto, or RSEM use an expectation-maximization (EM) algorithm to apportion multi-mapping reads among transcripts/genes, which is preferable to simply discarding them [42].
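STAR reports these rates in `Log.final.out` as `metric | value` lines, so they are easy to collect across many samples. The sketch below parses that file and flags samples against thresholds; the 80% unique-mapping floor follows the typical range quoted in this guide, while the 20% multi-mapping ceiling is an arbitrary placeholder. `parse_star_log`, `percent`, and `flag_sample` are hypothetical names.

```python
def parse_star_log(text):
    """Parse STAR's Log.final.out into {metric name: raw value string}."""
    metrics = {}
    for line in text.splitlines():
        if "|" in line:  # section headers such as "UNIQUE READS:" have no "|"
            key, value = line.split("|", 1)
            metrics[key.strip()] = value.strip()
    return metrics


def percent(metrics, key):
    """Convert a value such as '85.27%' to the float 85.27."""
    return float(metrics[key].rstrip("%"))


def flag_sample(metrics, min_unique=80.0, max_multi=20.0):
    """Return human-readable warnings for one sample (empty list if none)."""
    flags = []
    if percent(metrics, "Uniquely mapped reads %") < min_unique:
        flags.append("low unique mapping")
    if percent(metrics, "% of reads mapped to multiple loci") > max_multi:
        flags.append("high multi-mapping")
    return flags


# Usage: flag_sample(parse_star_log(open("Log.final.out").read()))
```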

Interpretation Guidelines

The following table summarizes key alignment metrics and their interpretations for STAR output:

Table 1: Interpreting Key STAR Alignment Metrics

| Metric | Typical Range (Human) | Interpretation | Action Required |
|---|---|---|---|
| Uniquely Mapped Reads | 80-90% [42] | Ideal alignment rate | None |