Optimizing Your RNA-seq Preprocessing Workflow: A Practical Guide for Reliable Gene Expression Analysis

Abstract

A robust RNA-seq preprocessing workflow is the critical foundation for all downstream transcriptomic analyses, directly impacting the accuracy and reproducibility of biological insights. This article provides a comprehensive guide for researchers and drug development professionals, detailing each step from foundational concepts and best-practice methodologies to advanced troubleshooting and validation techniques. We explore the necessity of quality control, adapter trimming, and read alignment, compare the performance of popular tools like FastQC, Trimmomatic, HISAT2, and Salmon, and outline strategies for workflow optimization to enhance efficiency and cost-effectiveness. By integrating validation and comparative analysis, this guide empowers scientists to construct and optimize reliable, species-appropriate RNA-seq preprocessing pipelines for confident differential gene expression and biomarker discovery.

Laying the Groundwork: Core Principles of RNA-seq Preprocessing

RNA sequencing (RNA-seq) is a fundamental technique in molecular biology that enables detailed analysis of the transcriptome. This technical support guide details the critical steps and considerations for the preprocessing phase of RNA-seq data analysis, which transforms raw sequencing data (FASTQ files) into a gene count matrix suitable for downstream differential expression and other bioinformatic analyses. This process is a cornerstone of reproducible research and is vital for extracting accurate biological insights from transcriptomic data [1] [2].

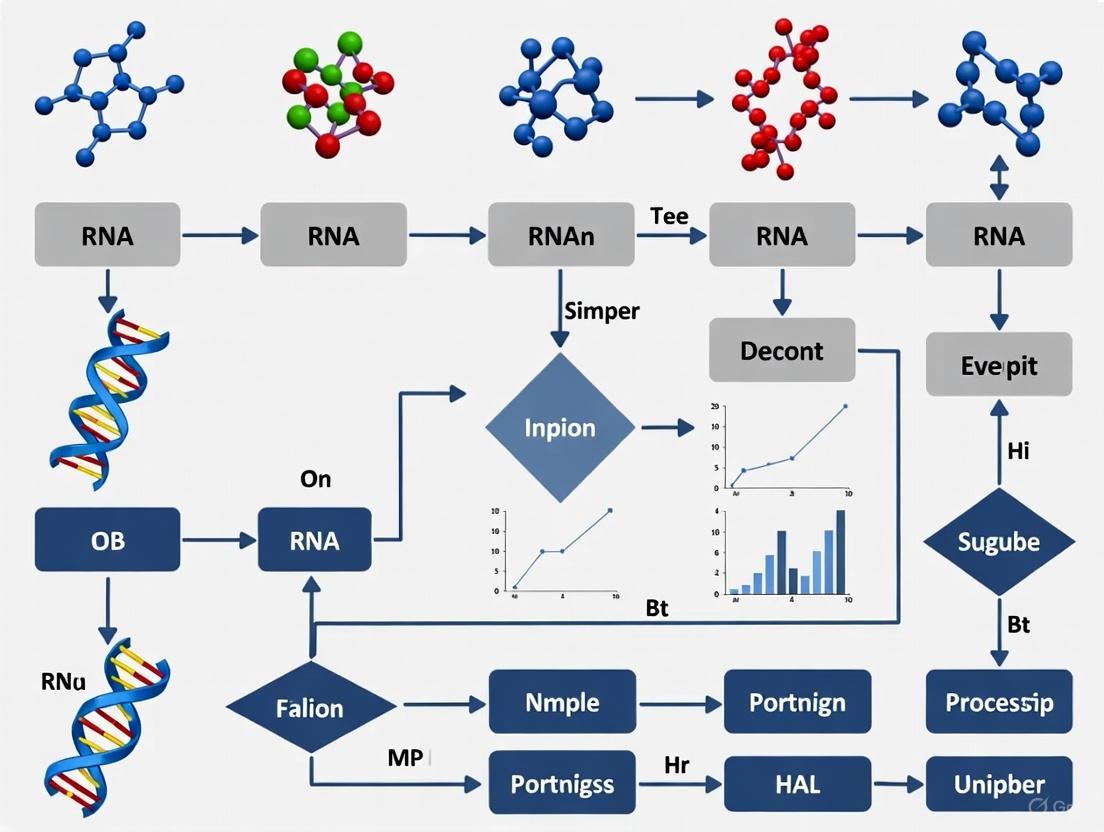

The following workflow provides a high-level overview of the primary steps in the RNA-seq preprocessing pipeline, from raw data to a normalized count matrix.

Troubleshooting Guides

Troubleshooting RNA Extraction and Quality

Successful RNA-seq analysis begins with high-quality RNA. The table below outlines common RNA extraction issues, their causes, and recommended solutions [3].

| Problem | Causes | Solutions |
|---|---|---|
| RNA Degradation | RNase contamination; improper storage; repeated freeze-thaw cycles. | Use RNase-free reagents and equipment; store samples at -85°C to -65°C; aliquot samples to avoid freeze-thaw cycles [3]. |
| Low Purity (Downstream Inhibition) | Protein, polysaccharide, or fat contamination; salt residue. | Reduce starting sample volume; increase volume of lysis reagent; increase number of ethanol rinses [3]. |
| Genomic DNA Contamination | High sample input; incomplete removal of DNA during extraction. | Reduce sample input volume; use reverse transcription reagents with a genomic DNA removal module [3]. |
| Low RNA Yield | Excessive sample amount; inadequate lysis reagent volume; incomplete homogenization. | Adjust sample amount to ensure effective homogenization; ensure sufficient volume of lysis reagent (e.g., TRIzol) [3]. |

Troubleshooting Computational Preprocessing

Issues can also arise during the computational steps of the pipeline. Sound experimental design and appropriate tool selection prevent many of them and are critical for ensuring data quality [1] [2].

| Problem | Causes | Solutions |
|---|---|---|
| Poor Read Alignment | Incorrect alignment tool parameters; high sequence divergence; adapter contamination. | Use splice-aware aligners (e.g., STAR); trim adapter sequences with tools like fastp or Trim Galore; consider species-specific optimization of parameters [1]. |
| Technical Variation & Batch Effects | Library preparation batch effects; differences in flow cell or sequencing lane. | Multiplex samples across lanes; use a randomized or blocked experimental design; apply batch effect correction tools (e.g., ComBat, limma) during normalization [4] [5] [2]. |
| Inaccurate Quantification | PCR duplicates; ambiguous read mapping; not accounting for gene length or sequencing depth. | For single-cell data, use UMIs to collapse PCR duplicates; use quantification tools (e.g., Salmon, kallisto) that are aware of transcript length and library depth [5] [6]. |

Frequently Asked Questions (FAQs)

Q1: What is the difference between FASTA and FASTQ file formats? The FASTA format contains sequence data (nucleotide or amino acid) preceded by a description line starting with a ">" symbol. The FASTQ format, used for raw sequencing data, extends this by including quality scores for each base call in the sequence, which are essential for quality control [7] [8].

Q2: Why is normalization necessary in RNA-seq, and what are the common methods? Normalization adjusts raw count data to remove technical variations (e.g., sequencing depth, gene length) that would otherwise mask true biological differences [5]. Common methods include:

- Within-sample: TPM (Transcripts Per Million) and FPKM/RPKM adjust for sequencing depth and gene length, allowing for comparisons of expression between different genes within the same sample [5].

- Between-sample: TMM (Trimmed Mean of M-values) assumes most genes are not differentially expressed and calculates scaling factors relative to a reference sample to compare expression across samples [5].
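
To make the within-sample case concrete, the following minimal Python sketch computes TPM from raw counts and gene lengths. The function name `tpm`, the gene names, and all numbers are made-up illustration values, not output from any cited tool.

```python
def tpm(counts, lengths_bp):
    """Convert raw read counts to TPM (Transcripts Per Million).

    counts and lengths_bp are dicts keyed by gene name (hypothetical data).
    """
    # Step 1: length-normalize each gene (reads per kilobase).
    rpk = {gene: counts[gene] / (lengths_bp[gene] / 1000) for gene in counts}
    # Step 2: scale so that the values within one sample sum to one million.
    scale = sum(rpk.values()) / 1_000_000
    return {gene: value / scale for gene, value in rpk.items()}


# Made-up example values for illustration only.
counts = {"geneA": 100, "geneB": 200, "geneC": 300}
lengths_bp = {"geneA": 1000, "geneB": 2000, "geneC": 6000}
result = tpm(counts, lengths_bp)
```

In this toy sample, geneC has the most reads but the lowest TPM because it is also the longest gene, which is the length effect that within-sample normalization is meant to remove.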

Q3: What is a "batch effect" and how can it be corrected? A batch effect is technical variation introduced when samples are processed in different groups (batches), such as on different days or by different personnel. This variation can confound biological results [4] [5]. Computational correction methods like ComBat (which uses empirical Bayes statistics) and tools in the Seurat package can be used to remove these effects after normalization, provided the batch information is known [4] [5].

Q4: How are PCR duplicates handled differently in bulk vs. single-cell RNA-seq? In bulk RNA-seq, duplicates are often removed based on their genomic start and end coordinates. In single-cell RNA-seq, Unique Molecular Identifiers (UMIs) are used. Reads with the same UMI that map to the same gene are considered technical duplicates from PCR amplification and are collapsed into a single count, while reads with different UMIs are considered to come from original mRNA molecules and are counted separately [6].
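
The UMI logic can be sketched in a few lines of Python. This is a simplified illustration, assuming reads have already been assigned to a cell barcode and a gene; the function name and the example barcodes are hypothetical, and the exact-match comparison ignores sequencing errors within UMIs, which dedicated tools such as UMI-tools account for.

```python
from collections import defaultdict


def count_molecules(reads):
    """Collapse PCR duplicates by counting distinct UMIs per (cell, gene).

    reads is an iterable of (cell_barcode, gene, umi) tuples (hypothetical input).
    """
    umis = defaultdict(set)
    for cell, gene, umi in reads:
        # Identical UMIs for the same cell and gene collapse into one set entry.
        umis[(cell, gene)].add(umi)
    return {key: len(umi_set) for key, umi_set in umis.items()}


reads = [
    ("CELL1", "GAPDH", "AACG"),
    ("CELL1", "GAPDH", "AACG"),  # PCR duplicate of the read above
    ("CELL1", "GAPDH", "TTGC"),  # different UMI: a distinct molecule
    ("CELL2", "GAPDH", "AACG"),  # same UMI, but in another cell
]
molecule_counts = count_molecules(reads)
```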

Q5: What are the key considerations for designing an RNA-seq experiment?

- Replicates: Biological replicates (samples from different individuals/conditions) are essential for capturing biological variance and should be prioritized over technical replicates [2].

- Sequencing Depth and Read Length: Sufficient depth is needed to detect expressed genes, especially those with low expression. Longer reads and paired-end layouts can improve alignment accuracy and the ability to detect alternative splicing events [2].

- Randomization: To avoid confounding batch effects with biological conditions, samples from all experimental groups should be randomized during library preparation and sequencing [2].

The Scientist's Toolkit: Research Reagent Solutions

The following table lists essential tools, software, and materials used in a typical RNA-seq preprocessing workflow.

| Item | Function in the Pipeline |
|---|---|
| fastp / Trim Galore | Used for quality control, adapter trimming, and filtering of low-quality reads from raw FASTQ files [1]. |
| STAR | A splice-aware aligner that accurately maps RNA-seq reads to a reference genome, accounting for intron-exon boundaries [6] [2]. |
| Salmon / kallisto | Tools for transcript quantification. They use lightweight alignment or pseudoalignment to rapidly estimate the abundance of transcripts from RNA-seq data [6] [8]. |
| UMI-tools / zUMIs | Software packages designed to process single-cell RNA-seq data, including the demultiplexing of samples and collapsing of PCR duplicates using UMIs [6]. |
| edgeR / DESeq2 | R packages used for downstream differential expression analysis. They take a raw (un-normalized) count matrix as input, perform their own normalization internally, and employ statistical models based on the negative binomial distribution [2]. |
| Cell Ranger | A specialized pipeline (from 10x Genomics) for processing single-cell RNA-seq data generated using their platform, handling all steps from demultiplexing to count matrix generation [6]. |

Optimized Workflow and Normalization Strategies

The default parameters of analysis software are not universally optimal across all species. A comprehensive study testing 288 analysis pipelines on fungal data established that careful selection and tuning of tools at each step can provide more accurate biological insights than using default configurations [1]. The diagram below illustrates the key decision points and strategies for normalization and batch effect correction within an optimized workflow.

FAQs on Experimental Design

Q1: What is the minimum number of biological replicates required for a robust RNA-seq experiment?

Biological replicates are essential for capturing the natural variation within a population and are a prerequisite for statistical significance testing in differential expression analysis. The absolute minimum is 3 replicates per condition, with 4 being the recommended minimum [9]. This is because statistical methods for differential expression, such as DESeq2, need enough samples per group (in practice at least 3) to estimate variance reliably [10]. Treating cells from a single sample as replicates in single-cell RNA-seq is a statistical mistake; biological replicates (multiple independent samples) are still necessary, and methods like "pseudobulking" should be used to account for between-sample variation [11].

Q2: How do I choose between sequencing depth and the number of replicates when resources are limited?

When facing budget constraints, the general recommendation is to prioritize the number of biological replicates over extreme sequencing depth [12]. More replicates provide a better estimate of biological variance, which increases the statistical power to detect differentially expressed genes. A well-replicated study with modest depth is more robust than an under-replicated one sequenced very deeply. If possible, a staged sequencing approach can be a compromise, where the same samples are sequenced in multiple runs and the data is combined later [12].

Q3: Should I use paired-end or single-end sequencing for my experiment?

The choice depends on your research goals. Paired-end (PE) sequencing is preferable for applications like de novo transcript discovery, isoform expression analysis, and alternative splicing studies because it provides more mapping information [13]. For standard gene expression profiling in well-annotated organisms, the cheaper single-end (SE) reads are often sufficient [13]. The NIH CCBR also notes that single-end sequencing is typically the most economical option [9].

Q4: How does my library preparation method impact the data I can generate?

The library preparation method directly determines the type of RNA species you can analyze and the applications possible [12]. The key decision is often between a 3' enrichment method and a whole transcriptome approach.

- 3' mRNA-Seq: This method is cost-effective and excellent for gene expression profiling. However, as reads are localized to the 3' end of transcripts, it is not suitable for investigating alternative splicing, differential transcript usage, or transcript isoform identification [12].

- Whole Transcriptome Sequencing: This provides full transcript coverage and is necessary for the analyses mentioned above. It requires either poly(A) enrichment (for coding mRNA) or rRNA depletion (for non-coding RNAs or degraded samples) [13] [12]. The table below summarizes these choices.

Table 1: Choosing a Library Preparation Method

| Method | Best For | Pros | Cons |
|---|---|---|---|
| 3' mRNA-Seq | Gene expression profiling, high-throughput projects [12] | Highly multiplexable, minimal computational resources needed [12] | Not suitable for splicing or isoform analysis [12] |
| Whole Transcriptome (Poly(A)) | Analyzing polyadenylated mRNA (coding) [13] [12] | Focuses on mRNA; high fraction of exonic reads [13] | Requires high-quality RNA (RIN >8); misses non-polyA RNA [13] [9] |
| Whole Transcriptome (rRNA depletion) | Non-coding RNAs, degraded samples (e.g., FFPE), prokaryotes [13] [12] | Works with degraded RNA; captures both coding and non-coding RNA [12] | More expensive; higher ribosomal RNA residue possible [13] |

Q5: How can I avoid batch effects in my RNA-seq study?

Technical variation from library preparation and sequencing lanes is a major source of batch effects [2]. To minimize this:

- Process all RNA extractions simultaneously. Extractions done at different times can introduce strong batch effects [9].

- Multiplex your samples. If possible, all samples should be indexed, pooled, and run across all sequencing lanes so that lane effects are not confounded with experimental variables [2] [9].

- Use a blocking design. If full multiplexing is impossible, ensure that replicates for each experimental condition are distributed across all processing batches and sequencing lanes. This allows bioinformatic tools to measure and correct for these technical effects later [2] [9].

Troubleshooting Guides

Problem: Inconsistent or statistically weak differential expression results.

- Potential Cause 1: Insufficient replicates.

- Solution: Increase the number of biological replicates. With only 2 replicates, it is impossible to reliably estimate biological variance, and statistical power is very low. Plan for at least 3-4 biological replicates per condition [9].

- Potential Cause 2: Confounding batch effects.

- Solution: Review your experimental metadata. If samples were processed in batches, use statistical models in your differential expression analysis (e.g., in DESeq2) to include "batch" as a covariate to correct for its effect [9].

- Potential Cause 3: Incorrect analysis for single-cell data.

- Solution: Do not treat individual cells as biological replicates. Use a "pseudobulk" approach, where counts are aggregated for each cell type within each biological sample, and then standard bulk RNA-seq differential expression tools are applied to these aggregated counts [11].
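
The pseudobulk aggregation described in the last solution can be sketched as follows. This is a minimal Python illustration with a hypothetical input layout (one dict per cell) and invented counts; real workflows usually operate on sparse matrices and hand the aggregated counts to a bulk tool afterwards.

```python
from collections import defaultdict


def pseudobulk(cells):
    """Sum per-cell counts into one profile per (sample, cell_type)."""
    totals = defaultdict(lambda: defaultdict(int))
    for cell in cells:
        key = (cell["sample"], cell["cell_type"])
        for gene, count in cell["counts"].items():
            totals[key][gene] += count
    # Convert nested defaultdicts to plain dicts for easier inspection.
    return {key: dict(genes) for key, genes in totals.items()}


# Invented cells from two biological samples (donors).
cells = [
    {"sample": "donor1", "cell_type": "T", "counts": {"CD3E": 2, "ACTB": 5}},
    {"sample": "donor1", "cell_type": "T", "counts": {"CD3E": 1, "ACTB": 7}},
    {"sample": "donor2", "cell_type": "T", "counts": {"CD3E": 4}},
]
profiles = pseudobulk(cells)
```

After aggregation, the unit of replication is the donor rather than the cell: two T-cell profiles, one per biological sample.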

Problem: Low mapping rates or biased coverage.

- Potential Cause 1: Poor RNA quality.

- Potential Cause 2: Inadequate read trimming.

- Potential Cause 3: Incorrect library type for the organism.

The Scientist's Toolkit: Key Reagents and Materials

Table 2: Essential Research Reagents and Materials for RNA-seq

| Item | Function |
|---|---|
| RNA Extraction Kit | Isolates high-quality total RNA from cells or tissues. The choice of kit may vary based on sample type (e.g., bacterial vs. mammalian) and RNA species of interest (e.g., small RNAs) [12] [10]. |
| Library Preparation Kit | Converts purified RNA into a sequencing-compatible library. The specific kit determines the scope of the experiment (e.g., 3' counting, whole transcriptome, small RNA) [12] [10]. |
| Poly(dT) Magnetic Beads | Used in poly(A) selection to enrich for messenger RNA by binding to the poly-adenylated tail. This removes ribosomal RNA (rRNA) [13]. |
| Ribosomal RNA Depletion Probes | Probes that hybridize to and remove abundant rRNA, allowing for the sequencing of other RNA species. Essential for prokaryotes, non-polyA RNAs, or degraded samples [13] [12]. |
| Spike-in Control RNAs (e.g., ERCC, SIRV) | Synthetic RNA molecules added to the sample in known quantities. They are used to assess technical performance, detect amplification biases, and sometimes to aid in normalization [12]. |
| Unique Molecular Identifier (UMI) Adapters | Adapters containing random molecular barcodes that tag individual RNA molecules before amplification. This allows for the accurate counting of original transcript molecules and correction for PCR duplication bias [11]. |

Experimental Design and Analysis Workflow

The following diagram outlines the key decision points and their consequences in planning an RNA-seq study, integrating the concepts of replicates, library preparation, and sequencing strategy.

To ensure the success of your RNA-seq experiment and the optimization of your preprocessing workflow, adhere to these core principles:

- Replicate Power: Biological replicates are non-negotiable. Plan for a minimum of 3, and ideally 4 or more, independent biological replicates per condition to ensure statistically robust results [9].

- Design to Control Batch Effects: From the start, design your experiment to minimize technical confounding. Process samples randomly and use multiplexing across sequencing lanes to make batch effects measurable and correctable [2] [9].

- Align Methods with Goals: Let your biological question dictate your technical choices. If studying isoform usage, you must use a whole transcriptome, paired-end sequencing approach. For simple gene counting, 3' mRNA-Seq is sufficient and more cost-effective [13] [12].

- Validate with a Pilot Study: Before launching a large-scale project, conduct a pilot experiment with a representative subset of samples. This allows you to verify that your chosen RNA quality, library preparation, and sequencing depth parameters will deliver the data required to answer your research question [12].

FAQs and Troubleshooting Guides

This guide addresses common questions and issues researchers encounter when working with key file formats in the RNA-seq preprocessing workflow. Proper handling of these formats is crucial for generating accurate and reproducible results in transcriptome analysis.

FASTQ Format

What is the basic structure of a FASTQ file? Each sequence in a FASTQ file consists of exactly four lines [14] [15]:

- Sequence Identifier and Description: Begins with an `@` character and contains information about the sequencing run and the cluster [14] [16].

- The Sequence: The actual base calls (A, C, T, G, N) [14].

- A Separator: A `+` sign, which may optionally be followed by the same sequence identifier [14].

- Quality Scores: Encoded quality scores for each base in the sequence line. The length of this line must match the length of the sequence line [14] [16]. The scores are Phred-scaled and typically use ASCII characters with an offset of 33 (Sanger encoding) [15].
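
The four-line layout and the Phred+33 encoding can be illustrated with a small Python sketch. The function name and the record contents are invented for this example, and the parser assumes unwrapped records (exactly four lines each), as produced by current sequencers.

```python
def parse_fastq_record(lines):
    """Parse one four-line FASTQ record into (identifier, sequence, scores)."""
    header, sequence, separator, quality = (line.rstrip("\n") for line in lines)
    if not header.startswith("@") or not separator.startswith("+"):
        raise ValueError("not a valid FASTQ record")
    if len(sequence) != len(quality):
        raise ValueError("sequence and quality lengths differ")
    # Phred+33: subtract the ASCII offset to recover each Q score.
    scores = [ord(char) - 33 for char in quality]
    return header[1:], sequence, scores


# Invented record for illustration.
record = ["@read1 example\n", "ACGTN\n", "+\n", "II5+!\n"]
name, seq, quals = parse_fastq_record(record)
```

Here the character `I` decodes to Q40 and `!` to Q0, the lowest possible score in this encoding.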

How can I quickly check the number of reads in a FASTQ file? You can use command-line tools:

- Using `seqkit stats`: The command `seqkit stats your_file.fq` provides comprehensive statistics, including the number of sequences [14].

- Using basic Bash commands: The command `echo $(( $(wc -l < your_file.fq) / 4 ))` calculates the number of records by dividing the total line count by four [16].

My FASTQ files are too large to open in a text editor. How can I view them? FASTQ files are not meant to be opened in standard text editors due to their size [15]. Use command-line tools instead:

- Use `head -n 4 your_file.fq` to view the first read [14].

- Use `zcat` for compressed `.gz` files (e.g., `zcat your_file.fastq.gz | head -n 4`).

How do I combine multiple FASTQ files from the same sample?

You can consolidate files using the `cat` command. However, for a large number of files, a more robust, scripted method is recommended [16].
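
One way to script the consolidation is sketched below in Python. It is an illustrative alternative rather than the method from [16]; the function name, file names, and reads are hypothetical. It appends files byte-for-byte in sorted order, which also works for `.gz` inputs because concatenated gzip members form a valid gzip file.

```python
import gzip
import shutil
import tempfile
from pathlib import Path


def concatenate_fastq(input_paths, output_path):
    """Append files byte-for-byte in sorted name order, like `cat a b > out`."""
    ordered = sorted(Path(p) for p in input_paths)
    with open(output_path, "wb") as merged:
        for path in ordered:
            with open(path, "rb") as part:
                shutil.copyfileobj(part, merged)
    return ordered


# Demonstration with two tiny gzip-compressed lane files (made-up reads).
with tempfile.TemporaryDirectory() as tmp:
    lanes = []
    for lane, bases in (("L002", "TTGA"), ("L001", "ACGT")):
        path = Path(tmp) / f"sample_{lane}_R1.fastq.gz"
        with gzip.open(path, "wt") as handle:
            handle.write(f"@read_{lane}\n{bases}\n+\nIIII\n")
        lanes.append(path)
    merged_path = Path(tmp) / "sample_R1.fastq.gz"
    concatenate_fastq(lanes, merged_path)
    with gzip.open(merged_path, "rt") as handle:
        merged_lines = handle.read().splitlines()
```

Sorting the inputs keeps the lane order deterministic. For paired-end data, the R1 and R2 files need to be merged in the same lane order so that read pairs stay synchronized.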

The base quality of my reads is low. What should I do? Low quality at the 3' end of reads is common. Use trimming/filtering tools like fastp or Trim Galore (which integrates Cutadapt and FastQC) to remove low-quality bases and adapter sequences before proceeding to alignment [1]. This step can significantly improve the subsequent mapping rate [1].

SAM/BAM Format

What is the difference between SAM and BAM files?

- SAM (Sequence Alignment Map) is a human-readable, tab-delimited text format [17].

- BAM is the compressed binary version of SAM. It is much smaller in size and allows for efficient indexing and random access, which is required by many downstream analysis tools [17].

What are the mandatory fields in a SAM/BAM alignment record? Each alignment line has 11 mandatory fields [18]:

Table: Mandatory Fields in a SAM/BAM File

| Col | Field | Type | Brief Description |
|---|---|---|---|
| 1 | QNAME | String | Query template name (read identifier) |
| 2 | FLAG | Integer | Bitwise flag encoding multiple properties (e.g., paired, mapped in a proper pair, reverse strand, secondary alignment) |
| 3 | RNAME | String | Reference sequence name the read aligns to |
| 4 | POS | Integer | 1-based leftmost mapping position of the first aligned base |
| 5 | MAPQ | Integer | Mapping quality, indicating the reliability of the alignment (-10 log10(Pr(mapping position is wrong))) |
| 6 | CIGAR | String | String that describes the alignment (e.g., matches, deletions, insertions, splicing) |
| 7 | RNEXT | String | Reference name of the mate/next read |
| 8 | PNEXT | Integer | Position of the mate/next read |
| 9 | TLEN | Integer | Observed template length (insert size) |
| 10 | SEQ | String | The raw sequence itself |
| 11 | QUAL | String | The base quality scores, encoded in the same Phred+33 format as the FASTQ file |

How do I interpret the bitwise FLAG field?

The FLAG is an integer that is the sum of numeric codes (bits) representing different alignment attributes. For example, a FLAG value of 99 (64 + 32 + 2 + 1) for a paired-end read means: the read is paired (1), both reads are mapped in a proper pair (2), its mate maps to the reverse strand (32), and it is the first read in the pair (64). Use online tools like the SAMflag tool or the `samtools flags` command to decode these values [18].
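
Decoding a FLAG by hand is a matter of testing each bit. The sketch below is a minimal Python illustration; the bit values follow the SAM specification, while the function name and the short descriptions are chosen for this example.

```python
# Bit values as defined in the SAM specification; descriptions are paraphrased.
SAM_FLAG_BITS = {
    0x1: "read paired",
    0x2: "read mapped in proper pair",
    0x4: "read unmapped",
    0x8: "mate unmapped",
    0x10: "read reverse strand",
    0x20: "mate reverse strand",
    0x40: "first in pair",
    0x80: "second in pair",
    0x100: "not primary alignment",
    0x200: "read fails quality checks",
    0x400: "PCR or optical duplicate",
    0x800: "supplementary alignment",
}


def decode_flag(flag):
    """Return the description of every bit that is set in a SAM FLAG."""
    return [name for bit, name in SAM_FLAG_BITS.items() if flag & bit]


decoded_99 = decode_flag(99)
decoded_147 = decode_flag(147)
```

FLAG 147 (128 + 16 + 2 + 1) is the typical partner of 99: the second read of the same properly paired fragment, aligned to the reverse strand.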

My downstream tool requires a sorted BAM file. How do I create one?

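Use `samtools sort` (for example, `samtools sort -@ 4 -o sorted.bam input.bam`, where the file names and thread count are placeholders). Sorting by coordinate is essential for many tasks, including variant calling and visualization [17]. The `-@` option allows you to use multiple threads to speed up the process [17].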

How can I view alignments for a specific genomic region?

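You first need to index the sorted BAM file with `samtools index` and then use `samtools view` with a region string [17]. For example, `samtools index sorted.bam` followed by `samtools view sorted.bam chr1:10000-20000` prints the alignments overlapping that interval (the file and region names are placeholders).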

What is the CIGAR string and why is it important for RNA-seq?

The CIGAR (Compact Idiosyncratic Gapped Alignment Report) string describes how a read aligns to the reference. It is crucial for RNA-seq because it can represent spliced alignments. Operations like N (skipped region from the reference) denote introns, showing where splicing has occurred. For example, a CIGAR string of 50M1000N50M means 50 bases align to the reference (M covers both matches and mismatches), then 1000 reference bases are skipped (an intron), followed by another 50 aligned bases [17].
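
The example above can be reproduced with a short Python sketch that splits a CIGAR string into operations and reports the span on the reference, the read length, and the intron sizes. The function names are chosen for illustration; which operations consume reference or read bases follows the SAM specification.

```python
import re

# One CIGAR operation: a length followed by a single operation character.
CIGAR_OP = re.compile(r"(\d+)([MIDNSHP=X])")


def parse_cigar(cigar):
    """Split a CIGAR string into (length, operation) tuples."""
    return [(int(length), op) for length, op in CIGAR_OP.findall(cigar)]


def summarize(cigar):
    ops = parse_cigar(cigar)
    # M, D, N, = and X consume reference bases.
    reference_span = sum(n for n, op in ops if op in "MDN=X")
    # M, I, S, = and X consume read bases.
    read_length = sum(n for n, op in ops if op in "MIS=X")
    # N marks a skipped reference region, i.e. an intron in RNA-seq.
    introns = [n for n, op in ops if op == "N"]
    return {
        "reference_span": reference_span,
        "read_length": read_length,
        "introns": introns,
    }


summary = summarize("50M1000N50M")
```

For `50M1000N50M`, the 100-base read covers 1,100 bases of the reference because the 1,000-base intron is skipped.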

GTF/GFF3 Format

What is the difference between GFF3 and GTF files? While both are 9-column, tab-delimited formats for genomic annotations, they have key differences in history and usage [19] [20]:

Table: Comparison of GFF3 and GTF File Formats

| Aspect | GTF (Gene Transfer Format) | GFF3 (General Feature Format version 3) |
|---|---|---|
| Origin & Focus | Evolved from GFF2; more gene-centric. | Developed by the Sequence Ontology Project; broader scope for all genomic features (genes, RNAs, regulatory regions). |
| Feature Types (3rd column) | Limited types (e.g., gene, transcript, exon, CDS, UTR) [19]. | A wider variety of types (e.g., gene, mRNA, tRNA, ncRNA, chromosome, biological_region) [19]. |
| Attribute Column (9th column) | Uses specific, structured key-value pairs (e.g., gene_id "ENSG000001"; transcript_id "ENST000001"). | More flexible key-value pairs. Uses a flat list of tags. Relationships are often defined with the Parent tag. |
| Hierarchy Representation | Implied hierarchy based on shared gene_id and transcript_id attributes. | Explicit hierarchy using the ID and Parent attributes to build a tree structure. |
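
The difference in the attribute column is easiest to see when parsing it. The following Python sketch handles the two styles under simplifying assumptions: values contain no semicolons, repeated GTF keys overwrite each other, and URL-escaped characters in GFF3 are not decoded. The function names and identifiers are illustrative only.

```python
def parse_gtf_attributes(field):
    """Parse GTF column 9, e.g. 'gene_id "G1"; transcript_id "T1";'."""
    attributes = {}
    for item in field.strip().split(";"):
        item = item.strip()
        if item:
            # GTF separates key and quoted value with a space.
            key, _, value = item.partition(" ")
            attributes[key] = value.strip().strip('"')
    return attributes


def parse_gff3_attributes(field):
    """Parse GFF3 column 9, e.g. 'ID=T1;Parent=G1'."""
    attributes = {}
    for item in field.strip().split(";"):
        if item:
            # GFF3 separates key and value with '='.
            key, _, value = item.partition("=")
            attributes[key.strip()] = value.strip()
    return attributes


gtf = parse_gtf_attributes('gene_id "ENSG000001"; transcript_id "ENST000001";')
gff3 = parse_gff3_attributes("ID=transcript:ENST000001;Parent=gene:ENSG000001")
```

In the GTF record the transcript is linked to its gene through the shared `gene_id`, whereas in the GFF3 record the link is the explicit `Parent` value.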

Should I use GTF or GFF3 for RNA-seq analysis?

For standard RNA-seq differential expression analysis, the GTF format from Ensembl is most commonly used. Its structured attribute column with consistent gene_id and transcript_id tags is directly compatible with quantification tools like featureCounts and HTSeq [20]. However, always check which format your specific analysis tool requires.

The gene IDs in my GTF/GFF3 file do not match the ones in my expression matrix. What is wrong?

This is a common issue. The problem often lies in the version numbers of the gene identifiers. For example, ENSG000001 and ENSG000001.5 are treated as different IDs. Ensure that the annotation file (GTF/GFF3) and the reference genome used for alignment are from the same source and version. Mismatches can lead to failed quantification.
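
A quick way to test whether version suffixes explain the mismatch is to compare the identifiers with the suffix removed. The Python sketch below uses a hypothetical helper and invented IDs; whether stripping versions is acceptable for the final analysis depends on the annotation releases involved.

```python
def strip_version(gene_id):
    """Drop a trailing '.<digits>' version suffix, if present."""
    base, dot, suffix = gene_id.rpartition(".")
    return base if dot and suffix.isdigit() else gene_id


# Invented identifiers for illustration.
annotation_ids = ["ENSG000001.5", "ENSG000002.1"]
matrix_ids = ["ENSG000001", "ENSG000003"]

exact_matches = sorted(set(annotation_ids) & set(matrix_ids))
versionless_matches = sorted(
    {strip_version(i) for i in annotation_ids}
    & {strip_version(i) for i in matrix_ids}
)
```

If the version-stripped comparison recovers most identifiers while the exact comparison recovers none, the two files likely differ only in how versions are written.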

How can I convert between GFF3 and GTF formats? Use dedicated bioinformatics tools like AGAT or GenomeTools to convert between formats while preserving the integrity of the annotation information [20]. For example, AGAT provides the `agat_convert_sp_gff2gtf.pl` script for converting GFF3 to GTF.

The Scientist's Toolkit

Table: Essential Tools and File Formats for RNA-seq Preprocessing

| Item Name | Function / Role in the Workflow |
|---|---|
| FastQC | Generates a quality control report for raw or trimmed FASTQ files, highlighting potential issues [14]. |
| fastp / Trim Galore | Performs adapter trimming and quality filtering of FASTQ reads to improve downstream mapping [1]. |
| STAR / HISAT2 | Aligns (maps) sequencing reads from FASTQ files to a reference genome, producing SAM/BAM files. |
| SAMtools | A versatile toolkit for manipulating SAM/BAM files, including sorting, indexing, and filtering [17]. |
| BAM Index (.bai) | A separate index file for a sorted BAM file that enables fast random access to genomic regions [17]. |
| Ensembl GTF Annotation | Provides gene model annotations, defining the coordinates of genes, transcripts, and exons for read quantification [19] [20]. |
| seqkit | A cross-platform and efficient toolkit for FASTA/Q file manipulation [14] [16]. |

Experimental Protocols and Data

Protocol: Assessing FASTQ File Quality with FastQC and seqkit

- Obtain Basic Statistics: Run `seqkit stats *.fq` to get a quick overview of read counts and average read lengths for all your files [14].

- Generate a Quality Report: Run `fastqc *.fq` to start the analysis. FastQC will process each file and generate an HTML report [14].

- Interpret Key FastQC Modules:

  - Per Base Sequence Quality: Check that quality scores are high (e.g., >Q28) across all bases, with a common drop at the read ends.

  - Per Base Sequence Content: The four lines for A, C, G, T should be roughly parallel. Deviations can indicate adapter contamination or overrepresented sequences.

  - Overrepresented Sequences: Identifies sequences (like adapters) that appear much more frequently than others.

Quantitative Data from a Typical RNA-seq Run

The table below shows example statistics generated by seqkit stats for a paired-end RNA-seq dataset, illustrating the consistency expected between read pairs (R1 and R2) [14]:

Table: Example FASTQ File Statistics for HBR and UHR Samples

| File | Format | Type | Number of Sequences | Average Length (bp) |
|---|---|---|---|---|
| HBR1R1.fq | FASTQ | DNA | 118,571 | 100 |
| HBR1R2.fq | FASTQ | DNA | 118,571 | 100 |
| HBR2R1.fq | FASTQ | DNA | 144,826 | 100 |
| HBR2R2.fq | FASTQ | DNA | 144,826 | 100 |
| UHR1R1.fq | FASTQ | DNA | 227,392 | 100 |
| UHR1R2.fq | FASTQ | DNA | 227,392 | 100 |

Workflow Visualization

The following diagram illustrates the role of each file format within a standardized RNA-seq preprocessing workflow, which is critical for optimization research [1].

Frequently Asked Questions

Q1: What is RNA-seq strandedness and why is incorrectly specifying it so detrimental? Strandedness refers to whether the RNA-seq library preparation protocol preserves the original orientation (strand) of the RNA transcript. Incorrectly specifying this parameter during analysis can lead to severe consequences, including:

- Loss of >95% of reads during mapping to a reference [21].

- Increased false positives and negatives in differential expression analysis, with one study noting over 10% false positives and over 6% false negatives [21].

- Compromised accuracy in transcript assembly and quantification, especially for genes that overlap on opposite strands [21] [13].

Q2: How can I quickly determine the strandedness of my RNA-seq data if it's not documented? If strandedness is unavailable from metadata or the sequencing facility, you can use dedicated tools.

- `how_are_we_stranded_here`: A Python library designed to quickly infer strandedness from paired-end RNA-seq data. It works by pseudoaligning a sample of reads (default: 200,000) to a transcriptome and then using RSeQC's `infer_experiment.py` to determine the read orientation relative to transcripts. It is fast, with runs often taking less than a minute [21] [22].

- RSeQC's `infer_experiment.py`: This tool can be run directly on an aligned BAM file. It samples reads and compares their genomic coordinates and strand to a reference gene model to gauge the strand-specific protocol used [23].

Q3: Should I always discard single-end (SE) reads from a paired-end (PE) library during pre-processing? Not necessarily. Common practice involves:

- Processing PE reads where both pairs pass quality filtering as proper pairs.

- Retaining reads where only one of the pair passes filtering as a single-end read. This prevents the loss of valuable data that still indicates the presence of a transcript [24] [25]. These "orphaned" reads are typically counted as one fragment, the same as a properly aligned pair [24].

Q4: What is a Q30 quality score and why is it considered a benchmark? The Phred Quality Score (Q score) measures the probability that a base was called incorrectly by the sequencer [26] [27]. It is defined as Q = -10 log10(P), where P is the error probability. A score of Q30 therefore means there is a 1 in 1,000 probability of an incorrect base call, which translates to a base call accuracy of 99.9% [26]. At this level base-calling errors are rare, which minimizes false-positive variant calls and provides a high-confidence foundation for downstream analysis [26].
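
The relationship between Q scores and error probabilities is the standard Phred formula, and it can be checked numerically with a few lines of Python. The function names below are chosen for this illustration.

```python
import math


def error_probability(q):
    """Probability of an incorrect base call for Phred score q."""
    return 10 ** (-q / 10)


def phred_score(p):
    """Phred score for an error probability p (0 < p <= 1)."""
    return -10 * math.log10(p)


p_q20 = error_probability(20)   # about 0.01, i.e. 1 error in 100 calls
p_q30 = error_probability(30)   # about 0.001, i.e. 1 error in 1,000 calls
accuracy_q30 = 1 - p_q30        # about 0.999
q_for_one_in_ten_thousand = phred_score(0.0001)  # about 40
```

Each step of 10 on the Q scale corresponds to a tenfold change in error probability, which is why Q30 is ten times stricter than Q20.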

Experimental Protocols for Key Determinations

Protocol 1: Quick Strandedness Determination using how_are_we_stranded_here

This protocol is designed for rapid, pre-alignment quality control [21].

- Installation: Install the library via pip (`pip install how_are_we_stranded_here`) or conda (`conda install --channel bioconda how_are_we_stranded_here`).

- Prerequisite: Obtain the transcriptome FASTA and GTF annotation files for your organism.

- Indexing: Create a kallisto index of the organism's transcriptome. Note: This is the most time-consuming step (e.g., ~6-7 minutes for human) but is reusable for the same species [21].

- Execution: Run the tool on your paired-end FASTQ files. By default, it samples 200,000 reads for pseudoalignment.

- Interpretation: The tool outputs a "stranded proportion."

  - Proportion ~1.0: Data is stranded (all reads explained by one orientation).

  - Proportion ~0.5: Data is unstranded (a near-even mix of orientations) [21].

Protocol 2: Strandedness Determination from a BAM File using RSeQC

Use this protocol if you already have aligned BAM files [23].

- Tool Setup: Ensure RSeQC is installed, with `infer_experiment.py` accessible.

- Data Preparation: Convert your GTF annotation file to BED12 format using the `gtf2bed` script or a similar tool.

- Execution: Run `infer_experiment.py` on your sorted BAM file, providing the BED12 file as the reference gene model.

- Interpretation: The tool reports the fraction of reads explained by "1++,1--,2+-,2-+" (FR/Second Strand) and "1+-,1-+,2++,2--" (RF/First Strand).

  - If one fraction is >0.9, your data is stranded, and you should use the corresponding layout.

  - If the two fractions are close to 0.5, your data is unstranded [23].
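
The interpretation rules can be written down as a small decision function. This Python sketch is an illustration, not part of RSeQC: the 0.9 cutoff comes from the protocol above, while the function name, the labels, and the tolerance used to decide that two fractions are "close" are assumptions made for this example.

```python
def classify_strandedness(fraction_forward, fraction_reverse,
                          stranded_cutoff=0.9, balance_tolerance=0.2):
    """Classify a library from the two fractions reported for a BAM file.

    fraction_forward: reads explained by "1++,1--,2+-,2-+" (FR/Second Strand)
    fraction_reverse: reads explained by "1+-,1-+,2++,2--" (RF/First Strand)
    balance_tolerance is an assumed threshold, not an RSeQC setting.
    """
    if fraction_forward > stranded_cutoff:
        return "stranded-forward"
    if fraction_reverse > stranded_cutoff:
        return "stranded-reverse"
    if abs(fraction_forward - fraction_reverse) < balance_tolerance:
        return "unstranded"
    # Intermediate values may indicate mixed or problematic libraries.
    return "undetermined"


calls = [
    classify_strandedness(0.95, 0.03),
    classify_strandedness(0.02, 0.97),
    classify_strandedness(0.49, 0.50),
    classify_strandedness(0.70, 0.25),
]
```

Returning an explicit "undetermined" label for intermediate fractions avoids silently forcing an ambiguous library into one of the three categories.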

Structured Data for Easy Comparison

Table 1: Decoding Strandedness Terminology Across Software

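Software tools use different terms for the same library types. This table helps translate between them [23]. In the first column, PE and SE denote paired-end and single-end libraries, and SF and SR denote stranded-forward and stranded-reverse protocols.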

| Library Type | Infer Experiment (RSeQC) Output |

HISAT2 | featureCounts | HTSeq-Count |

|---|---|---|---|---|

| PE - SF | "1++,1--,2+-,2-+" | --rna-strandness F |

-s 1 |

--stranded=yes |

| PE - SR | "1+-,1-+,2++,2--" | --rna-strandness R |

-s 2 |

--stranded=reverse |

| SE - SF | "++,--" | --rna-strandness F |

-s 1 |

--strended=yes |

| SE - SR | "+-,-+" | --rna-strandness R |

-s 2 |

--stranded=reverse |

| Unstranded | Undecided / ~50% split | --rna-strandness unspecified |

-s 0 |

--stranded=no |
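To reduce the chance of transcribing the wrong flag, the translation can be kept in one place in code. The sketch below is illustrative. The function name and the `"SF"`/`"SR"`/`"unstranded"` labels are assumptions chosen to mirror the table. For HISAT2 it uses FR/RF for paired-end and F/R for single-end reads, as described in the HISAT2 manual, and emits no option for unstranded data.

```python
def strand_flags(layout, strandedness):
    """Map a library description to per-tool strandedness arguments.

    layout: "PE" (paired-end) or "SE" (single-end)
    strandedness: "SF" (forward), "SR" (reverse), or "unstranded"
    """
    if layout not in ("PE", "SE"):
        raise ValueError(f"unknown layout: {layout}")
    if strandedness not in ("SF", "SR", "unstranded"):
        raise ValueError(f"unknown strandedness: {strandedness}")

    if strandedness == "unstranded":
        # HISAT2 defaults to unstranded, so no option is passed.
        return {
            "hisat2": [],
            "featureCounts": ["-s", "0"],
            "htseq-count": ["--stranded=no"],
        }

    forward = strandedness == "SF"
    if layout == "PE":
        hisat2_code = "FR" if forward else "RF"
    else:
        hisat2_code = "F" if forward else "R"
    return {
        "hisat2": ["--rna-strandness", hisat2_code],
        "featureCounts": ["-s", "1" if forward else "2"],
        "htseq-count": ["--stranded=yes" if forward else "--stranded=reverse"],
    }


print(strand_flags("PE", "SR"))
```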

Table 2: Interpreting Sequencing Quality Scores

This table defines standard quality thresholds used in data trimming and filtering [26] [27].

| Quality Score (Q) | Probability of Incorrect Base Call | Base Call Accuracy | Common Application in RNA-seq |

|---|---|---|---|

| Q20 | 1 in 100 | 99% | A common, though lenient, threshold for trimming low-quality bases [27]. |

| Q30 | 1 in 1,000 | 99.9% | The benchmark for high-quality data; ensures virtually all reads are perfect [26]. |

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions

| Item | Function in RNA-seq Preprocessing |

|---|---|

| FastQC | Provides an initial quality report on raw sequence data in FASTQ files, assessing per-base sequence quality, GC content, adapter contamination, and more [25] [13]. |

| Trimmomatic / fastp | Performs adapter trimming and removal of low-quality bases to clean the raw reads, which is crucial for improving downstream mapping rates [1] [25] [13]. |

| RSeQC | A suite of tools for comprehensive quality control on RNA-seq data after alignment, including the vital infer_experiment.py for strandedness determination [21] [23] [13]. |

| STAR / HISAT2 | "Splice-aware" aligners that accurately map RNA-seq reads to a reference genome, accounting for reads that span exon-intron junctions [25] [13]. |

| kallisto | A pseudoaligner used for fast transcript-level quantification and a key component of the how_are_we_stranded_here workflow [21]. |

Workflow Diagrams

Strandedness Determination Workflow

RNA-seq Preprocessing Quality Control

A Step-by-Step Guide to Implementing a Robust Preprocessing Pipeline

Core Concepts: FastQC and MultiQC

What are FastQC and MultiQC, and what are their primary functions in an RNA-seq workflow?

FastQC is a quality control tool that provides a simple way to perform initial quality checks on raw sequence data from high-throughput sequencing pipelines. Its main functions are to import data from BAM, SAM, or FastQ files; provide a quick overview of potential problem areas; generate summary graphs and tables for rapid data assessment; and export results to HTML-based reports for easy sharing and documentation [28] [29].

MultiQC is an aggregation tool that searches specified directories for analysis logs and compiles them into a single interactive HTML report. It is particularly valuable in RNA-seq workflows for comparing QC metrics across all samples simultaneously, enabling researchers to quickly identify outliers and consistency issues. At the time of the cited tutorial, MultiQC could parse output from 36 different bioinformatics tools, including FastQC, STAR, Qualimap, and Salmon, providing a unified view of quality throughout the RNA-seq pipeline [30].

What is the fundamental structure of a FASTQ file, and how are quality scores encoded?

A FASTQ file contains sequence reads with quality information and follows a specific four-line structure for each record [28]:

- Line 1: Always begins with '@' followed by information about the read

- Line 2: The actual DNA sequence

- Line 3: Always begins with a '+' and sometimes contains the same information as line 1

- Line 4: Has a string of characters representing quality scores; must contain the same number of characters as line 2

Quality scores (Phred scores) are encoded as ASCII characters and represent the probability that a base was called incorrectly. The most commonly used encoding is fastqsanger (Phred-33). The quality score is calculated as Q = -10 × log10(P), where P is the probability that a base call is erroneous [28].

Table: Interpretation of Phred Quality Scores

| Phred Quality Score | Probability of Incorrect Base Call | Base Call Accuracy |

|---|---|---|

| 10 | 1 in 10 | 90% |

| 20 | 1 in 100 | 99% |

| 30 | 1 in 1000 | 99.9% |

| 40 | 1 in 10,000 | 99.99% |
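The relationship Q = -10 × log10(P) and the Phred+33 encoding can be checked with a few lines of Python. This is a minimal sketch. The function names are illustrative, and the offset of 33 assumes the fastqsanger encoding described above.

```python
import math


def phred_to_error_prob(q):
    """Convert a Phred score Q to the error probability P = 10^(-Q/10)."""
    return 10 ** (-q / 10)


def error_prob_to_phred(p):
    """Convert an error probability P to a Phred score Q = -10 * log10(P)."""
    return -10 * math.log10(p)


def decode_quality_string(qual, offset=33):
    """Decode a FASTQ quality line into integer Phred scores.

    offset=33 assumes Phred+33 (fastqsanger) encoding.
    """
    return [ord(ch) - offset for ch in qual]


# 'I' is ASCII 73, '5' is 53, '+' is 43, '!' is 33.
scores = decode_quality_string("I5+!")
for q in scores:
    print(q, phred_to_error_prob(q))
```

Each character in line 4 of a record decodes to the score for the base at the same position in line 2, which is why the two lines must have equal length.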

Troubleshooting Common FastQC Issues

Why does my RNA-seq data "fail" Per base sequence content, and is this truly problematic?

This is a common concern. The "Per base sequence content" module often raises a failure flag for RNA-seq data due to non-randomness in the first 10-12 bases, which is actually expected behavior rather than indicating poor data quality [31].

Root Cause: During RNA-seq library preparation, 'random' hexamer priming occurs. This priming is not perfectly random, leading to an enrichment of particular bases in these initial nucleotide positions. This bias is a technical artifact of the library preparation method, not a sequencing error.

Assessment & Solution: This "failure" can generally be ignored for RNA-seq data, as it reflects an expected library-preparation artifact rather than a sequencing quality issue. Most alignment and quantification tools (STAR, HISAT2, Salmon) can adequately handle this bias during mapping [31].

Why do I see a drop in quality scores toward the end of reads, and when should I be concerned?

A gradual decrease in quality toward the 3' end of reads is common in Illumina sequencing and can result from two expected phenomena [31]:

- Signal Decay: Fluorescent signal intensity naturally decays with each sequencing cycle due to degrading fluorophores and a proportion of strands in the cluster not elongating.

- Phasing: As cycles progress, clusters lose synchronicity as some strands experience random failure of nucleotides to incorporate, leading to signal blurring.

Worrisome patterns that warrant contacting your sequencing facility include [31]:

- Sudden, dramatic drops in quality scores at specific positions

- Consistently low quality scores across the entire read length

- Large percentages of reads with low average quality scores

Table: Interpreting Quality Score Patterns in FastQC

| Pattern | Likely Cause | Action Required |

|---|---|---|

| Gradual decrease at 3' end | Expected signal decay/phasing | None typically needed |

| Sharp, sudden quality drop | Instrument failure | Contact sequencing facility |

| Consistently low scores across all bases | Overclustering or poor sequencing | Contact sequencing facility |

| Small bump of low-quality reads | Minor sequencing issue | Check if percentage is significant |

My MultiQC report shows conflicting pass/fail status between different quality metrics. How should I interpret this?

This situation, where samples pass one quality metric but fail another, requires careful interpretation of the underlying data rather than relying solely on the pass/fail status [32].

Example Scenario: In one case, samples showed "good" results for per sequence quality scores (all samples passed) but "failed" for sequence quality histograms showing mean quality scores for each base position. The solution was to recognize that while the overall average quality was high (>35), FastQC's failure trigger was based on lower quartile values falling below thresholds at specific base positions [32].

Interpretation Strategy:

- Focus on the actual data trends rather than binary pass/fail indicators

- Check if "failures" occur in expected areas (e.g., first few bases in RNA-seq)

- Compare metrics across all samples to identify true outliers

- Use MultiQC's interactive features to explore the underlying data behind each metric

Experimental Protocols

Protocol: Running FastQC Quality Assessment

Objective: Assess quality of raw RNA-seq FASTQ files using FastQC [28].

Materials Needed:

- Raw FASTQ files from RNA-seq experiment

- Computer cluster with SLURM scheduler or local compute environment

- FastQC software module

Methodology:

- Access Computing Environment: Start an interactive session on the cluster.

- Navigate to Data Directory.

- Load FastQC Module.

- Run FastQC with Multi-threading (using 6 cores for 6 FASTQ files).

- Organize Results.

Alternative Job Submission Script:

For larger datasets, create a job submission script (mov10_fastqc.run) with SLURM directives [28].
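The FastQC run in the methodology above can be sketched from Python. The example only builds the argument list. `-t` (threads) and `-o` (output directory) are standard FastQC options, while the file names and output path are hypothetical. Cluster-specific steps such as starting a SLURM session or loading a module depend on your site and are not shown.

```python
def build_fastqc_cmd(fastq_files, outdir, threads=None):
    """Assemble a FastQC command line as an argument list.

    -t sets how many files are processed in parallel and -o sets the
    output directory, which FastQC expects to exist already.
    """
    files = [str(f) for f in fastq_files]
    if threads is None:
        # One thread per file, mirroring the 6-core / 6-file example.
        threads = max(1, len(files))
    return ["fastqc", "-t", str(threads), "-o", str(outdir), *files]


# Hypothetical file names, for illustration only.
cmd = build_fastqc_cmd(["sample1.fq", "sample2.fq"], "results/fastqc")
print(" ".join(cmd))
# To execute: import subprocess; subprocess.run(cmd, check=True)
```

The same list can be written into a job submission script or passed directly to `subprocess.run` in an interactive session.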

Protocol: Generating MultiQC Reports for Cross-Sample Comparison

Objective: Aggregate and compare QC metrics from multiple tools and samples using MultiQC [30].

Materials Needed:

- Output from FastQC, STAR, Qualimap, and/or Salmon

- MultiQC software module

Methodology:

- Create Output Directory.

- Load MultiQC Module.

- Run MultiQC (example with multiple tool outputs).

- Transfer and View Report: Use FileZilla or SCP to transfer the HTML report to your local machine for viewing in a web browser.

Protocol: Comprehensive RNA-seq QC Assessment

Objective: Perform complete quality assessment from raw reads to alignment statistics [30] [25].

Key Metrics to Track:

- Number of raw reads

- Percentage of reads aligned to genome

- Percentage of reads associated with genes

- Sequence duplication levels

- 5'-3' bias

- GC content distribution

Assessment Guidelines:

- Alignment Rates: A good quality sample should have at least 75% of reads uniquely mapped. Values below 60% warrant troubleshooting [30].

- Sequence Duplication: High duplication percentages may indicate low library complexity or over-amplification [30].

- 5'-3' Bias: Investigate biases approaching 0.5 or 2, which could indicate RNA degradation or sample preparation issues [30].

- Exonic vs. Intergenic Reads: Expect >60% exonic reads for well-annotated organisms. High intergenic reads (>30%) may indicate DNA contamination [30].
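The assessment guidelines above can be applied programmatically to a per-sample metrics table. The sketch below is illustrative. The metric key names are hypothetical and would need to be mapped to the columns your QC tools actually report, and the thresholds are the ones quoted in the guidelines.

```python
def assess_sample(metrics):
    """Return a list of warnings for one sample's QC metrics.

    Expected keys (hypothetical names): pct_uniquely_mapped, bias_5_to_3,
    pct_exonic, pct_intergenic. Percentages are on a 0-100 scale.
    """
    issues = []

    mapped = metrics["pct_uniquely_mapped"]
    if mapped < 60:
        issues.append("uniquely mapped reads below 60%: troubleshoot alignment")
    elif mapped < 75:
        issues.append("uniquely mapped reads below the 75% guideline")

    bias = metrics["bias_5_to_3"]
    if bias <= 0.5 or bias >= 2.0:
        issues.append("5'-3' bias at or beyond 0.5 / 2: check for RNA degradation")

    if metrics["pct_exonic"] <= 60:
        issues.append("exonic reads not above 60%")

    if metrics["pct_intergenic"] > 30:
        issues.append("intergenic reads above 30%: possible DNA contamination")

    return issues


good = {"pct_uniquely_mapped": 88, "bias_5_to_3": 1.05, "pct_exonic": 72, "pct_intergenic": 8}
poor = {"pct_uniquely_mapped": 52, "bias_5_to_3": 2.4, "pct_exonic": 41, "pct_intergenic": 35}
print(assess_sample(good))
print(len(assess_sample(poor)))
```

Flags produced this way are prompts for inspection across all samples, not automatic exclusion criteria.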

Workflow Visualization

Table: Essential Software Tools for RNA-seq Quality Control

| Tool Name | Primary Function | Key Application in RNA-seq QC |

|---|---|---|

| FastQC | Raw read quality assessment | Provides initial quality metrics on raw FASTQ files, identifying adapter contamination, quality score distribution, and sequence biases [29] [25] |

| MultiQC | QC metric aggregation | Compiles results from multiple tools and samples into a single interactive report, enabling cross-sample comparison and outlier detection [30] |

| Trimmomatic | Read trimming | Removes adapter sequences and low-quality bases from reads, improving downstream alignment rates [25] |

| Trim Galore | Wrapper for trimming | Integrates Cutadapt and FastQC to perform adapter and quality trimming with automatic quality reporting [1] |

| fastp | Rapid preprocessing | Performs adapter trimming, quality filtering, and polyG tail trimming with integrated QC reporting [1] |

| STAR | Splice-aware alignment | Aligns RNA-seq reads to reference genome, providing mapping statistics and splice junction information [30] [25] |

| Salmon | Transcript quantification | Provides fast transcript-level quantification and mapping rate statistics without full alignment [30] |

| Qualimap | Alignment QC | Evaluates alignment characteristics including coverage bias, RNA-seq specificity, and 5'-3' bias [30] |

Table: Critical QC Metrics and Interpretation Guidelines

| QC Metric | Optimal Range | Potential Issue | Recommended Action |

|---|---|---|---|

| % Uniquely Mapped Reads | >75% | <60% indicates potential alignment issues | Check RNA integrity, reference genome compatibility, or alignment parameters [30] |

| % Duplicate Reads | Variable, <50% typical | High duplication suggests low complexity | Consider library preparation optimization if consistently high [30] |

| 5'-3' Bias | ~1.0 (0.8-1.2) | Values <0.5 or >2 indicate bias | May indicate RNA degradation; check RIN scores if available [30] |

| % Exonic Reads | >60% for human/mouse | <50% may indicate DNA contamination | Verify ribosomal RNA depletion efficiency [30] |

| % GC Content | Species-specific | Deviations from expected distribution | May indicate contamination or technical artifacts [31] |

| Average Quality Score | >Q30 across most bases | Scores below this range | Consider quality trimming or contact sequencing facility [28] [25] |

Within the framework of RNA-seq preprocessing workflow optimization research, the initial steps of adapter trimming and quality filtering are paramount. These steps directly influence the quality of all subsequent analyses, including alignment accuracy, quantification reliability, and the ultimate biological interpretations derived from differential expression and alternative splicing studies [1]. Current research demonstrates that the default parameters of analysis software are often applied uniformly across data from different species without optimization. However, the performance and accuracy of these tools can vary significantly when processing data from diverse organisms, including humans, animals, plants, and fungi [1]. For laboratory researchers, navigating the complex landscape of analytical tools to construct a bespoke and efficient workflow presents a substantial challenge. This guide addresses this gap by providing a detailed, evidence-based troubleshooting resource for three predominant trimming tools—Trimmomatic, fastp, and Cutadapt—to empower researchers in building robust, optimized RNA-seq preprocessing pipelines.

Tool Comparison and Selection Guide

Selecting an appropriate trimming tool is the first critical decision in the preprocessing workflow. The choice often balances factors such as operational simplicity, processing speed, and the richness of features. The following table summarizes the key characteristics, strengths, and limitations of Trimmomatic, fastp, and Cutadapt to guide this selection.

Table 1: Comparison of Adapter Trimming and Quality Filtering Tools

| Tool | Primary Strength | Best For | Common Challenges | Citation |

|---|---|---|---|---|

| Trimmomatic | High sensitivity for adapter detection in paired-end data (palindrome mode) | Users requiring robust, proven methods for complex adapter contamination | Complex parameter setup; confusing adapter file specifications | [33] [34] [35] |

| fastp | Integrated quality control and reporting; rapid processing speed | Researchers seeking an all-in-one solution for fast preprocessing and QC | May provide different results compared to other tools on the same data | [1] [33] |

| Cutadapt | Flexible and precise handling of diverse adapter types and configurations | Applications requiring custom or non-standard adapter layouts | Requires clear understanding of 5' vs. 3' adapter definitions | [36] |

The decision process can be visualized as a workflow to help researchers select the most suitable tool based on their primary requirements.

Troubleshooting Common Adapter Trimming Issues

Trimmomatic: No Adapters Trimmed Despite Known Contamination

Problem Description: A user runs Trimmomatic with a known correct adapter sequence but the tool reports that zero reads were trimmed, even when visual inspection (e.g., via grep) confirms adapter presence in the raw FASTQ files [33].

Diagnosis and Solution: This common issue typically stems from two main sources: incorrect adapter sequence specification or misunderstanding Trimmomatic's operational modes.

- Incorrect Adapter Sequence: The sequences provided to Trimmomatic may need to be the reverse complement of the adapter sequences observable in the raw FASTQ files, particularly in palindromic mode. If your adapter sequence is AGATCGGAAGAGC, you might need to supply its reverse complement to Trimmomatic [33].

- Operational Mode Confusion: Trimmomatic has two distinct modes for adapter clipping: "simple mode" and "palindrome mode." Palindrome mode is selected through the adapter names in the provided FASTA file: entries whose names begin with Prefix and end in /1 or /2 (e.g., >Prefix/1) are treated as palindrome pairs. This mode is highly sensitive for paired-end data but requires specific sequence formatting. For a fair comparison with other tools that use the "old fashioned" way of cutting, it is often recommended to avoid the palindromic mode and use the standard simple mode with the common core adapter sequence (e.g., AGATCGGAAGAGC) [33].

Experimental Protocol for Validation: To systematically diagnose and resolve this issue, follow this protocol:

- Verify Adapter Presence: Use grep -B1 -A2 "AGATCGGAAGAGC" your_raw_file.fastq | head -20 to visually confirm adapter contamination.

- Check Adapter File: Ensure your adapter FASTA file contains the correct sequences. For standard Illumina adapters, using just the common core sequence AGATCGGAAGAGC is often sufficient and more reliable [33].

- Run a Controlled Test: Execute Trimmomatic with a minimal parameter set on single-end data, ensuring you are not using the palindromic mode inadvertently.

- Compare with Alternate Tool: Run the same dataset through fastp or bbduk with the same core adapter sequence to verify if the issue is tool-specific [33].
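The adapter check in this protocol can also be done in Python, which makes it easy to test both the adapter and its reverse complement. The following is a minimal sketch. The function names are illustrative, and the input is assumed to be a well-formed four-line-per-record FASTQ with no wrapped sequence lines.

```python
def reverse_complement(seq):
    """Return the reverse complement of a DNA sequence (A, C, G, T, N)."""
    return seq.translate(str.maketrans("ACGTN", "TGCAN"))[::-1]


def count_adapter_reads(fastq_lines, adapter):
    """Count reads whose sequence line contains the adapter.

    Returns (reads_with_adapter, total_reads).
    """
    hits = total = 0
    for i, line in enumerate(fastq_lines):
        if i % 4 == 1:  # second line of each 4-line record is the sequence
            total += 1
            if adapter in line:
                hits += 1
    return hits, total


# Two toy records; the first read carries the core Illumina adapter.
records = [
    "@read1", "ACGTACGTAGATCGGAAGAGCACAC", "+", "IIIIIIIIIIIIIIIIIIIIIIIII",
    "@read2", "ACGTACGTACGTACGTACGTACGTA", "+", "IIIIIIIIIIIIIIIIIIIIIIIII",
]
adapter = "AGATCGGAAGAGC"
print(count_adapter_reads(records, adapter))
print(reverse_complement(adapter))
```

For a real file, pass an open text handle (for example from `gzip.open(path, "rt")`) in place of the list. Comparing the counts for the adapter and its reverse complement indicates which orientation is actually present in the reads.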

Inconsistent Trimming Results Across Different Tools

Problem Description: When analyzing the same dataset with Trimmomatic, fastp, and Cutadapt, the percentage of trimmed reads and bases differs drastically, leading to confusion about which result to trust [33].

Diagnosis and Solution: The discrepancies arise from several factors inherent to how these tools are designed and configured.

- Different Default Behaviors: Each tool has a unique set of default parameters and underlying algorithms for adapter detection and quality trimming. For instance, some tools may be more aggressive in quality-based trimming by default.

- Adapter Sequence and k-mer Matching: The k-mer size used for matching (k in bbduk or similar parameters in other tools) significantly impacts sensitivity. A longer k requires a more exact match, potentially reducing false positives but also missing partial adapter sequences [33].

- Under-the-Hood Filtering: Tools may apply additional, non-obvious steps that are not immediately apparent to the user, such as bbduk's tbo (trim adapters based on where paired reads overlap) and tpe (trim both reads of a pair to the same length) [33].

Experimental Protocol for Harmonization: To perform a fair and consistent comparison between tools:

- Standardize the Adapter: Use the exact same core adapter sequence for all tools (e.g., AGATCGGAAGAGC). Do not rely on auto-detection for benchmarking [33].

- Document All Parameters: Explicitly set and document all key parameters related to stringency, such as error rates and minimum match lengths, aiming for equivalent values across tools where possible.

- Benchmark with a Subset: Use a subset of your data (e.g., 1 million reads) for rapid iteration and comparison.

- Evaluate Downstream Impact: The true test of a trimming tool's efficacy is its effect on downstream analysis. Compare the alignment rate, the percentage of uniquely mapped reads, and the number of detected genes in the subsequent RNA-seq workflow after using each tool's output [1].
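For the subset step in this protocol, a quick way to obtain a benchmarking subset is to take the first N records of a FASTQ file. The sketch below assumes four lines per record. It takes the head of the file, not a random sample, so it is suited to rapid iteration and not to unbiased estimates. For paired-end data, apply it with the same N to both files so that mates stay in sync.

```python
from itertools import islice


def head_fastq(lines, n_reads):
    """Return an iterator over the lines of the first n_reads FASTQ records.

    Assumes exactly four lines per record (no wrapped sequences).
    """
    return islice(lines, 4 * n_reads)


# Toy input with three records.
toy = [
    "@r1", "ACGT", "+", "IIII",
    "@r2", "TTGA", "+", "IIII",
    "@r3", "GGCC", "+", "IIII",
]
subset = list(head_fastq(toy, 2))
print(len(subset) // 4)

# With real files (paths are hypothetical):
# import gzip
# with gzip.open("sample_R1.fq.gz", "rt") as src, open("subset_R1.fq", "w") as dst:
#     dst.writelines(head_fastq(src, 1_000_000))
```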

fastp: Unbalanced Base Distribution After Trimming

Problem Description: After processing with fastp, while overall base quality (Q20/Q30) is improved, an unbalanced base distribution is observed in the tail of reads, potentially indicating over-trimming or a processing artifact [1].

Diagnosis and Solution: This issue, noted in benchmarking studies, suggests that while fastp effectively enhances data quality, it may introduce sequence biases in certain scenarios [1]. This can be particularly critical for applications like single-cell RNA-seq or when analyzing transcriptome ends.

- Parameter Tuning: Investigate and adjust parameters related to quality filtering and read cutting, such as --cut_front, --cut_tail, --cut_window_size, and --cut_mean_quality.

- Leverage Reporting: Use the comprehensive JSON report generated by fastp to identify the specific stage where the distribution becomes unbalanced.

- Tool Supplementation: If the issue persists, consider using fastp primarily for its speed and adapter trimming, followed by a more conservative quality trimming with another tool, or switch to an alternative like Trimmomatic's MAXINFO (target=0.5), which adaptively trims reads based on a balance between read length and accuracy [1] [35].

Frequently Asked Questions (FAQs)

Q1: What is the single most important parameter to get right in adapter trimming?

A1: The correct adapter sequence itself is paramount. Using the wrong sequence will lead to a failure to remove contaminants, regardless of other parameter tuning. Always confirm the adapter type used in your library preparation kit with your sequencing facility. For standard Illumina multiplexed adapters, the core sequence AGATCGGAAGAGC is a common starting point [33].

Q2: Should I trim for quality even if my data looks good and the aligner handles it?

A2: Yes, it is generally considered best practice. While modern aligners are robust, proactive quality trimming improves alignment rates and reduces mis-mappings. Evidence suggests that tailored parameter configurations, as opposed to default settings, can provide more accurate biological insights [1]. Trimming low-quality bases gives a clearer picture of the actual high-quality sequence data available for downstream analysis [34].

Q3: How do I handle 5' adapters versus 3' adapters in Cutadapt?

A3: In Cutadapt, the option used defines the adapter type. Use -a for a regular 3' adapter (the adapter follows the sequence of interest) and -g for a regular 5' adapter (the adapter precedes the sequence of interest). Furthermore, you can "anchor" adapters to the starts or ends of reads for more precise matching: use -g ^ADAPTER for an anchored 5' adapter (must be at the very start of the read) and -a ADAPTER$ for an anchored 3' adapter (must be at the very end of the read) [36].
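The option rules in A3 can be captured in a small helper that returns the Cutadapt arguments for one adapter. This is an illustrative sketch. The function name and the `"3"`/`"5"` labels are assumptions, while `-a`, `-g`, `^`, and `$` are used as described above.

```python
def cutadapt_adapter_args(sequence, end, anchored=False):
    """Build the Cutadapt option for a single adapter.

    end: "3" for a 3' adapter (-a) or "5" for a 5' adapter (-g).
    anchored: require the adapter at the very end (3') or start (5') of the read.
    """
    if end == "3":
        spec = sequence + "$" if anchored else sequence
        return ["-a", spec]
    if end == "5":
        spec = "^" + sequence if anchored else sequence
        return ["-g", spec]
    raise ValueError("end must be '3' or '5'")


print(cutadapt_adapter_args("AGATCGGAAGAGC", "3"))
print(cutadapt_adapter_args("ADAPTER", "5", anchored=True))
```

When these arguments are passed as a list to `subprocess.run`, no shell quoting is needed. When typed in a shell, specifications containing `^` or `$` should be quoted.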

Q4: What is the recommended quality threshold for leading/trailing trimming?

A4: A value of 3 is often used to remove the special Illumina 'low quality segment' (marked with a quality score of 2). For more aggressive quality-based trimming, a threshold in the range of 10-15 is common, with 15 being the stricter setting that removes more bases [35]. The choice can be informed by the quality score distribution in your FastQC report.
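To make the effect of a leading/trailing threshold concrete, the sketch below removes bases from each end of a read while their quality is below the threshold. It is a simplified illustration of end trimming in the style of Trimmomatic's LEADING and TRAILING steps, not a reimplementation of any tool. The function name is hypothetical.

```python
def trim_ends(seq, quals, leading=3, trailing=3):
    """Trim low-quality bases from both ends of a read.

    Bases are removed from the start while quality < leading and from the
    end while quality < trailing. Returns the trimmed (sequence, qualities).
    """
    start = 0
    while start < len(quals) and quals[start] < leading:
        start += 1
    end = len(quals)
    while end > start and quals[end - 1] < trailing:
        end -= 1
    return seq[start:end], quals[start:end]


# With a threshold of 3, only the Q2 bases at the ends are removed.
print(trim_ends("ACGTAC", [2, 2, 30, 30, 2, 2]))
# A threshold of 15 also removes the Q12 base at the 3' end.
print(trim_ends("ACGTAC", [20, 30, 30, 30, 30, 12], leading=15, trailing=15))
```

Interior low-quality bases are left untouched by this kind of end trimming, which is why it is usually combined with a sliding-window step and a minimum-length filter.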

Table 2: Key Research Reagent Solutions for RNA-seq Preprocessing

| Item | Function/Description | Example/Note |

|---|---|---|

| Reference Materials | Provides "ground truth" for benchmarking and validating workflow performance. | Quartet and MAQC reference RNA samples with known differential expression profiles [37]. |

| Spike-in Control RNAs | Allows for monitoring of technical performance and normalization. | External RNA Control Consortium (ERCC) synthetic RNAs spiked into samples [37]. |

| Adapter Sequence Files | FASTA files containing standard Illumina adapter and primer sequences for accurate trimming. | Files like TruSeq3-SE.fa or TruSeq3-PE.fa provided with Trimmomatic [34]. |

| Quality Control Software | Assesses the quality of raw and processed sequencing data. | FastQC is the standard tool; fastp provides integrated QC [1] [34]. |

Optimizing the adapter trimming and quality filtering stage is a non-trivial yet crucial component of RNA-seq preprocessing workflow optimization. As large-scale benchmarking studies have revealed, the choice of tools and their parameters introduces a significant source of variation in final results, especially when aiming to detect subtle differential expression with clinical relevance [37]. The following integrated workflow summarizes the key steps for reliable preprocessing, from raw data to trimmed output.

The evidence is clear: a one-size-fits-all approach to RNA-seq preprocessing is suboptimal. Researchers are encouraged to benchmark their chosen tools on a subset of their data, leveraging reference materials where possible, to establish a tailored and validated preprocessing workflow that ensures the highest data quality for their specific biological questions and study systems [1] [37].

A technical support guide for researchers optimizing RNA-seq preprocessing workflows.

Accurate alignment of transcribed RNA to a reference genome is a critical step in RNA sequencing analysis, enabling the connection of genomic information with phenotypic and physiological data [38]. The choice of alignment tool directly impacts the quality of all downstream analyses, including differential gene expression and transcript isoform discovery. This guide provides a structured comparison of mainstream alignment strategies—the full-aligners HISAT2 and STAR, and the pseudoaligner Salmon—to help you select and troubleshoot the optimal tool for your specific research context within a drug development or scientific research environment.

Tool Selection Guide

1. Which tool should I choose for standard gene expression quantification in a model organism with a good reference genome?

For most standard gene expression analyses, all three tools are excellent choices, as they produce highly correlated raw count distributions and significantly overlapping differential expression results [38]. The decision often comes down to your specific computational constraints and research goals.

- Choose STAR when you prioritize high sensitivity for detecting novel splice junctions and have access to substantial computational resources (e.g., a server with >30 GB RAM for the human genome).

- Choose HISAT2 if you are working with limited memory (RAM) or need a faster runtime. It is a robust and efficient general-purpose aligner [39] [25].

- Choose Salmon when your primary goal is fast and accurate gene/transcript quantification and you are willing to forgo the generation of a genomic BAM file, which is useful for visualization but computationally expensive to create.

2. How do I approach alignment if my primary interest is in differential expression analysis for drug treatment studies?

If your goal is Differential Gene Expression (DGE) analysis, you can confidently use any of these tools with a standard downstream analysis package like DESeq2. A comparative study showed that while the raw counts from different mappers are highly correlated, the choice of the differential expression software can have a more dramatic impact on the final results than the choice of aligner itself [38]. Therefore, ensure consistency in your DGE methodology across comparisons.

3. What are the key computational considerations when choosing an aligner?

The computational profiles of these tools differ significantly, which is a crucial factor in project planning, especially when processing large datasets.

Table 1: Computational Resource Comparison of RNA-seq Aligners

| Aligner | Typical Memory Usage | Speed | Primary Index Type | Key Consideration |

|---|---|---|---|---|

| STAR | High (e.g., ~38 GB for human GRCh37) [40] | Fast, but resource-intensive [41] | Suffix Array [39] | Requires high-throughput disks for optimal scaling with multiple threads [41]. |

| HISAT2 | Lower than STAR [40] | Very fast (~3x faster than other aligners in one comparison) [39] | Graph FM-index [38] | Ideal for environments with memory limitations. |

| Salmon | Low (in quasi-mapping mode) | Very fast ("lightweight") [42] | Duplex-linked de Bruijn graph [38] | Bypasses traditional alignment, leading to significant speed and resource gains. |

4. My aligner reports many multimapped reads. Is this a problem, and how should it be handled?

Multimapped reads, which align to multiple locations in the genome due to repetitive sequences or paralogs, are a common challenge. Most aligners, including BWA and STAR, will report a quantitative measure of these multireads [39]. Tools like Salmon and kallisto have built-in statistical models to probabilistically assign these reads, which is a key advantage of their quantification-focused approach [38]. For alignment-based tools, it is standard practice to either discard multireads or use tools that can account for them in a probabilistic manner during the quantification step.

Troubleshooting FAQs

1. I am seeing a high rate of spurious spliced alignments in repetitive regions. How can I resolve this?

Widely used splice-aware aligners like STAR and HISAT2 can sometimes introduce erroneous spliced alignments between repeated sequences (e.g., Alu elements in humans) [43]. This can lead to the inclusion of falsely spliced transcripts, or "phantom" introns, in your analysis.

- Solution: Use a tool like EASTR (Emending Alignments of Spliced Transcript Reads) to post-process your alignment files. EASTR detects and removes these falsely spliced alignments by examining the sequence similarity between the flanking regions of an intron and their frequency in the genome [43]. Applying EASTR before transcript assembly has been shown to substantially reduce false positive introns, exons, and transcripts in diverse species, including human, maize, and Arabidopsis thaliana [43].

2. A large percentage of my reads are not aligning. What are the potential causes and fixes?

A high rate of unaligned reads can stem from both biological and technical issues [39].

- Potential Causes:

- Reference Mismatch: Your sample may have significant genetic polymorphisms relative to the reference genome, or the reference may be incomplete.

- Adapter Contamination: Sequencing adapters were not adequately trimmed from your raw reads.

- Low-Quality Reads: The presence of many low-quality bases prevents confident alignment.

- High RNA Complexity: Libraries prepared with rRNA-depletion (ribo-minus) methods have been observed to have a higher proportion of spurious alignments and may present more mapping challenges compared to poly(A) selected libraries [43].

- Troubleshooting Steps:

- Re-inspect Raw Reads: Use FastQC and MultiQC on your raw and trimmed FASTQ files to check for adapter content, overall quality, and GC content [25].

- Verify Trimming: Ensure your pre-processing step (using tools like Trimmomatic or Trim Galore!) successfully removed adapters and low-quality bases [25] [44].

- Check Reference Compatibility: Confirm that you are using the correct version of the reference genome and that your annotation file (GTF/GFF) matches the genome version.

3. How can I determine the strandedness of my RNA-seq library, and why is it important?

Stranded information is crucial when analyzing genomic regions with overlapping genes on opposite DNA strands. Incorrectly specifying strandedness can lead to misassignment of reads and inaccurate quantification.

- Solution: Most modern pipelines can automatically infer strandedness. For example, the nf-core/rnaseq pipeline sub-samples reads and runs Salmon to infer the strandedness, classifying the library as forward-stranded, reverse-stranded, or unstranded based on configurable thresholds [40]. The results are then displayed in the MultiQC report for verification [40]. You can also check your library preparation kit's manual, as the method (e.g., the standard dUTP method) determines the expected strand orientation.

Experimental Protocols & Workflows

Standard Operating Procedure (SOP): RNA-seq Alignment and Quantification

The following workflow outlines the key steps for RNA-seq data processing, from raw reads to gene counts [25] [42].

Phase 1: Pre-processing of Raw Reads

- Step 1.1: Quality Check. Use FastQC on raw FASTQ files to assess read quality, GC content, and adapter contamination. Collate reports across all samples with MultiQC [25].

- Step 1.2: Adapter and Quality Trimming. Use a tool like Trimmomatic or Trim Galore! to perform three key clean-up steps simultaneously [25]:

- Remove adapter sequences.

- Trim low-quality bases from the ends of reads (e.g., Phred score < 25).

- Discard reads that become very short (e.g., < 20 bases) after trimming.

- Step 1.3: Quality Re-check. Run FastQC again on the trimmed FASTQ files to confirm improvements in data quality [25].

Phase 2: Alignment and Quantification

This phase can follow one of two primary paths, as shown in the workflow diagram.

Protocol 1: Traditional Alignment-based Approach

- Alignment: Use a splice-aware aligner like STAR or HISAT2 to map reads to the reference genome. The aligner must be provided with:

- The reference genome (FASTA) and its pre-built index.

- Gene annotation file (GTF/GFF) that matches the genome version.

- Information on whether the data is single-end or paired-end and the library's strandedness [25].

- Quantification: Use a tool like featureCounts or HTSeq to generate a count matrix based on the genomic alignments (BAM files), assigning reads to genes.

Protocol 2: Lightweight Alignment/Pseudoalignment Approach

- Pseudoalignment and Quantification: Use Salmon (in quasi-mapping mode) or Kallisto. These tools require a reference transcriptome (FASTA) and bypass the generation of a full genomic BAM file, directly producing a count matrix of transcript abundances [42]. This method is typically much faster and less resource-intensive [42].

Phase 3: Downstream Analysis

- The final count matrix from either protocol is used for Differential Expression Analysis with tools like DESeq2 or edgeR [42].

Validation and Benchmarking Protocol

To validate your RNA-seq pipeline and results, consider this benchmarking approach:

- Housekeeping Gene Set: Identify a set of constitutively expressed genes in your dataset. Their expression should remain stable across conditions, providing a benchmark for precision [44].

- qRT-PCR Validation: Select a subset of genes (e.g., 30-32) representing high, low, and medium expression levels for validation using quantitative RT-PCR. Use a robust normalization method (e.g., global median normalization or the most stable gene) for the qRT-PCR data [44].

- Performance Metrics: Compare the RNA-seq results against the qRT-PCR gold standard to measure the accuracy and precision of the different alignment and quantification pipelines [44].
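As one possible way to quantify agreement in the last step, the sketch below compares log2 fold changes from RNA-seq with those from qRT-PCR using the Pearson correlation and the root-mean-square deviation. The choice of these two metrics, the function names, and the gene values are assumptions made for illustration; the numbers are hypothetical toy data, not results.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def rmsd(x, y):
    """Root-mean-square deviation between paired measurements."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)) / len(x))


# Hypothetical log2 fold changes for the same genes (toy values only).
rnaseq_lfc = {"geneA": 2.1, "geneB": -1.4, "geneC": 0.2, "geneD": 3.0}
qpcr_lfc = {"geneA": 1.9, "geneB": -1.1, "geneC": 0.0, "geneD": 2.7}

# Compare only genes measured by both methods, in a fixed order.
genes = sorted(rnaseq_lfc.keys() & qpcr_lfc.keys())
x = [rnaseq_lfc[g] for g in genes]
y = [qpcr_lfc[g] for g in genes]
r = pearson(x, y)
deviation = rmsd(x, y)
```

Running the same comparison for each candidate alignment and quantification pipeline gives a common scale on which they can be ranked against the qRT-PCR measurements.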

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Category | Function / Application |

|---|---|---|

| STAR | Software | Spliced Transcripts Alignment to a Reference; aligns RNA-seq reads using a suffix array index [39] [25]. |

| HISAT2 | Software | Hierarchical Indexing for Spliced Alignment of Transcripts 2; uses a graph FM-index for memory-efficient alignment [38] [25]. |

| Salmon | Software | Provides fast, bias-aware quantification of transcript expression using quasi-mapping or pseudoalignment [38] [42]. |

| DESeq2 | Software | Performs differential gene expression analysis from count data using a negative binomial model [38] [42]. |

| Trimmomatic | Software | A flexible tool for the adapter removal and quality trimming of FASTQ files [25] [44]. |

| FastQC | Software | Provides quality control reports for raw sequencing data, highlighting potential issues [25]. |

| EASTR | Software | Post-alignment tool that detects and removes falsely spliced alignments in repetitive regions [43]. |

| Reference Genome (FASTA) | Data | The genomic sequence of the organism used as the alignment scaffold [25]. |

| Gene Annotation (GTF/GFF) | Data | File specifying the locations and structures of genes and transcripts on the reference genome [25]. |

| SRA Toolkit | Software | Collection of tools to access and convert data from the NCBI Sequence Read Archive (SRA) [41]. |

Frequently Asked Questions (FAQs)

Q1: A very high percentage of my reads are being reported as __no_feature by HTSeq-count. What are the primary causes?

- Incorrect Strandedness Setting: This is a very common cause. The default setting in HTSeq-count is `--stranded=yes`. If your RNA-Seq library was prepared with a non-strand-specific protocol, this will cause approximately half of your reads to be lost as they will not be assigned to features on the correct strand. Always confirm your library type and set the `--stranded` option accordingly (`yes`, `no`, or `reverse`) [45] [46].

- Mismatched Chromosome Names: The chromosome names (seqnames) in your BAM file and your GTF/GFF file must match exactly. A common discrepancy is "chr1" in the BAM file versus "1" in the GTF file, or vice versa. Visually inspect the headers of both files to ensure consistency [47] [48] [49].

- Incorrect Feature Type or Attribute: By default, HTSeq-count uses `exon` as the feature `--type` and `gene_id` as the `--idattr`. If your annotation file uses different values (e.g., `CDS` for type or `ID` for the attribute), you must specify these parameters in the command [45] [46].

- Poor Read Alignment or Library Quality: If reads do not align correctly to genomic regions containing features, they cannot be counted. Examine your BAM files in a genome browser alongside the annotation file to verify that reads align to exonic regions. Also, check for issues like high levels of sequence duplication or overrepresented sequences from poor library construction [49].
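The chromosome-name check described above can be automated. The following is a minimal Python sketch that compares the reference names in a SAM/BAM header with the first column of a GTF/GFF file; the function names and the returned dictionary layout are assumptions for illustration, and the header text is assumed to have been exported beforehand (for example with `samtools view -H`).

```python
def sam_header_seqnames(header_lines):
    """Collect reference names from the @SQ lines of a SAM/BAM header."""
    names = set()
    for line in header_lines:
        if line.startswith("@SQ"):
            for field in line.rstrip("\n").split("\t")[1:]:
                if field.startswith("SN:"):
                    names.add(field[3:])
    return names


def gtf_seqnames(gtf_lines):
    """Collect the first column (seqname) of non-comment GTF/GFF lines."""
    names = set()
    for line in gtf_lines:
        if line.strip() and not line.startswith("#"):
            names.add(line.split("\t", 1)[0])
    return names


def strip_chr(name):
    """Drop a leading 'chr' so that 'chr1' and '1' compare equal."""
    return name[3:] if name.startswith("chr") else name


def compare_seqnames(header_lines, gtf_lines):
    """Report shared and unshared sequence names between BAM and annotation."""
    bam_names = sam_header_seqnames(header_lines)
    ann_names = gtf_seqnames(gtf_lines)
    shared = bam_names & ann_names
    # Heuristic: nothing matches as-is, but names match once 'chr' is ignored.
    prefix_mismatch = not shared and bool(
        {strip_chr(n) for n in bam_names} & {strip_chr(n) for n in ann_names}
    )
    return {
        "shared": shared,
        "bam_only": bam_names - ann_names,
        "gtf_only": ann_names - bam_names,
        "chr_prefix_mismatch": prefix_mismatch,
    }
```

An empty `shared` set together with `chr_prefix_mismatch` set to `True` points to the "chr1" versus "1" discrepancy rather than to a genuinely different reference build.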

Q2: What is the difference between HTSeq-count's union, intersection-strict, and intersection-nonempty modes?

These modes determine how a read is assigned when it overlaps multiple genomic features. The following table summarizes the three overlap resolution modes [45]:

Table: HTSeq-count Overlap Resolution Modes

| Mode | Description | Use Case |

|---|---|---|

| `union` | The read is assigned to a feature only if the union of all features overlapped by any base of the read contains exactly one feature. If the union contains more than one feature the read is counted as `__ambiguous`; if it is empty, as `__no_feature`. This is the default and is recommended for most analyses. | Standard RNA-seq analysis. Provides a balanced approach. |

| `intersection-strict` | The read is assigned only if every single base of the read overlaps the same single feature. A read that extends beyond the feature is counted as `__no_feature`. | Very conservative counting; may underestimate counts. |

| `intersection-nonempty` | Bases of the read that overlap no feature are ignored; the read is assigned if exactly one feature is common to all of the remaining bases. | Less conservative than intersection-strict. |
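The three modes can be expressed compactly in terms of sets. The sketch below follows the definitions in the HTSeq documentation, where each position of the read has a set of overlapping features; it is an illustration of the logic, not HTSeq's own code, and the function name `assign_read` and the list-of-sets input are assumptions.

```python
def assign_read(position_features, mode="union"):
    """Assign a read to a feature given the feature set at each read position.

    position_features: list of sets, one per aligned base of the read,
    each holding the IDs of the features overlapping that base.
    """
    if mode == "union":
        # All features touched anywhere along the read.
        candidates = set().union(*position_features)
    elif mode == "intersection-strict":
        # Features that cover every base of the read.
        candidates = (
            set.intersection(*position_features) if position_features else set()
        )
    elif mode == "intersection-nonempty":
        # Like strict, but bases overlapping no feature are ignored.
        nonempty = [s for s in position_features if s]
        candidates = set.intersection(*nonempty) if nonempty else set()
    else:
        raise ValueError(f"unknown mode: {mode}")

    if len(candidates) == 1:
        return next(iter(candidates))
    return "__no_feature" if not candidates else "__ambiguous"


# A read that hangs off the end of gene A (last two bases overlap nothing).
partial = [{"A"}, {"A"}, {"A"}, set(), set()]
# A read fully inside gene A whose last three bases also overlap gene B.
nested = [{"A"}, {"A"}, {"A", "B"}, {"A", "B"}, {"A", "B"}]
```

For `partial`, `union` and `intersection-nonempty` assign the read to gene A while `intersection-strict` reports `__no_feature`; for `nested`, `union` reports `__ambiguous` while both intersection modes assign the read to gene A.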

Q3: My quantification tool (HTSeq-count or featureCounts) fails to run or produces zero counts, even though my BAM file has alignments. What should I check?

- File Format Validity: Ensure your BAM file is not corrupted or truncated. Use tools like `samtools quickcheck` to validate the BAM file. If using a SAM file as input for HTSeq-count, confirm it has a proper header [50] [51].

- Reference Mismatch: The most severe cause is a complete mismatch between the reference genome used for alignment and the annotation file used for counting. For example, attempting to count reads aligned to a transcriptome (e.g., with XM/XP accessions) using a genome-based GTF file will fail because the coordinates are incompatible. Always use an annotation file that corresponds to the exact same reference build your reads were aligned to [47] [52].

- GTF/GFF Formatting: Check that your annotation file is properly formatted. Some tools are sensitive to header lines or specific fields. Ensure the file is not malformed [49].

Troubleshooting Guides

Diagnosis Workflow

The following diagram outlines a logical workflow for diagnosing common issues in read counting.

Guide: Resolving Strandedness Issues

Incorrect strandedness settings are a prevalent source of low count yields. This protocol provides a method to empirically determine the correct setting.

Experimental Protocol: Determining RNA-seq Library Strandedness

- Select a Subset: Isolate a subset of your BAM file (e.g., 100,000 properly aligned read pairs) using `samtools view`.

- Run Inferring Experiment: Use the `infer_experiment.py` script from the RSeQC package [49], passing `-r genes.bed` (a BED file of gene coordinates) and `-i sample_subset.bam` (your subset BAM file).

- Interpret Output: The script reports three fractions, for example:

Fraction of reads failed to determine: 0.05

Fraction of reads explained by "1++,1--,2+-,2-+": 0.90

Fraction of reads explained by "1+-,1-+,2++,2--": 0.05

This result indicates that 90% of reads are in the forward-strand orientation (read 1 maps to the same strand as the gene), meaning the data is strand-specific. You would then use `--stranded=yes` in HTSeq-count. If the second fraction dominates instead, the library is reverse-stranded and `--stranded=reverse` applies. If the two fractions are roughly equal (~0.5 each), your data is unstranded, and you should use `--stranded=no`.
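The interpretation step can be scripted. The sketch below parses the text printed by `infer_experiment.py` and suggests a value for HTSeq-count's `--stranded` option. The function name and the cut-offs (0.8 for a stranded call, 0.4 to 0.6 for an unstranded call) are assumptions chosen for illustration, not values defined by RSeQC or HTSeq.

```python
import re

# Matches lines such as: Fraction of reads explained by "1++,1--,2+-,2-+": 0.90
_FRACTION = re.compile(r'Fraction of reads explained by "([^"]+)":\s*([0-9.]+)')


def suggest_htseq_stranded(report_text, stranded_cutoff=0.8):
    """Suggest 'yes', 'reverse', or 'no' from infer_experiment.py output.

    Returns None when the fractions fit neither pattern clearly, in which
    case the library should be inspected manually.
    """
    fractions = {key: float(val) for key, val in _FRACTION.findall(report_text)}
    # Paired-end keys start with "1++" / "1+-"; single-end with "++" / "+-".
    forward = sum(v for k, v in fractions.items() if k.startswith(("1++", "++")))
    reverse = sum(v for k, v in fractions.items() if k.startswith(("1+-", "+-")))

    if forward >= stranded_cutoff:
        return "yes"
    if reverse >= stranded_cutoff:
        return "reverse"
    if 0.4 <= forward <= 0.6 and 0.4 <= reverse <= 0.6:
        return "no"
    return None


example = (
    "Fraction of reads failed to determine: 0.05\n"
    'Fraction of reads explained by "1++,1--,2+-,2-+": 0.90\n'
    'Fraction of reads explained by "1+-,1-+,2++,2--": 0.05\n'
)
suggestion = suggest_htseq_stranded(example)
```

Intermediate results (for example 0.70 versus 0.25) return `None` on purpose, since they may indicate contamination or a mixed library and deserve a closer look rather than an automatic setting.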

Parameter Optimization Tables

Table: Key HTSeq-count Parameters for Optimization [45]

| Parameter | Default Value | Description & Optimization Guidance |

|---|---|---|

| `--stranded` | `yes` | Critical to check. Set to `no` for non-strand-specific libraries and to `reverse` for certain stranded protocols [46]. |

| `--mode` | `union` | Determines how reads overlapping multiple features are handled. `union` is recommended for most use cases [45] [46]. |

| `--type` | `exon` | The feature type from the GTF/GFF file to count against. Must match the annotation (e.g., could be "CDS", "mRNA") [45] [46]. |

| `--idattr` | `gene_id` | The GTF attribute used to group features into counting units. Must match the annotation (e.g., gene_id, ID, transcript_id) [45] [52]. |

| `-a` (`--minaqual`) | 10 | Minimum alignment quality (MAPQ) score. Reads with lower scores are skipped. Adjust if many uniquely mapped reads have low scores. |

Table: Interpretation of HTSeq-count's Special Counters [45]

| Special Counter | Description | Troubleshooting Implication |

|---|---|---|

| `__no_feature` | Read does not overlap any feature. | High percentage: Check strandedness, chromosome names, annotation file, or alignment quality. |

| `__ambiguous` | Read overlaps multiple features. | High percentage: Expected in genes with overlapping exons/UTRs. Consider a different `--mode`. |

| `__alignment_not_unique` | Read multi-mapped to the genome. | High percentage: Expected in repetitive regions. Filter your BAM for uniquely mapped reads first. |

| `__too_low_aQual` | Read was skipped due to low mapping quality. | Check the distribution of MAPQ scores in your BAM file. |
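To see at a glance which special counter dominates, the counts file can be summarized as fractions of all reads. The sketch below assumes the single-sample, two-column, tab-separated output of HTSeq-count, in which special counters are the rows whose names start with two underscores; the function name is an assumption.

```python
def summarize_htseq_counts(lines):
    """Return the fraction of reads per special counter plus 'assigned'.

    lines: iterable of 'name<TAB>count' rows from a single-sample
    HTSeq-count output file.
    """
    assigned = 0
    special = {}
    for line in lines:
        line = line.strip()
        if not line:
            continue
        name, value = line.split("\t")[:2]
        if name.startswith("__"):
            special[name] = int(value)  # e.g. __no_feature, __ambiguous
        else:
            assigned += int(value)      # reads counted for a real feature

    total = assigned + sum(special.values())
    if total == 0:
        return {}
    fractions = {name: count / total for name, count in special.items()}
    fractions["assigned"] = assigned / total
    return fractions
```

A typical use is `summarize_htseq_counts(open("sample.counts.txt"))`, where the file name is hypothetical; a large `__no_feature` fraction then leads back to the strandedness and chromosome-name checks described earlier.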

The Scientist's Toolkit

Table: Essential Research Reagents and Tools for Post-Alignment Quantification

| Item | Function / Explanation |

|---|---|

| SAM/BAM File | The aligned sequencing reads, the primary input for quantification. Must be sorted (by name or position) as required by the tool [45]. |

| Annotation File (GTF/GFF3) | Provides the genomic coordinates of features (e.g., genes, exons). Must be from the same genome build and have consistent chromosome naming as the BAM file [48] [49]. |

| HTSeq-count | A Python-based script to assign reads to genomic features and generate a count matrix. Highly configurable for different annotation styles [45]. |

| featureCounts | A popular alternative from the Subread package, known for fast performance and efficient handling of multi-mapping reads [46] [49]. |

| samtools | A toolkit for manipulating SAM/BAM files. Used for sorting, indexing, subsetting, and validating alignment files before quantification [45] [51]. |

| RSeQC | A Python package for RNA-seq quality control. Its infer_experiment.py script is crucial for determining library strandedness [49]. |

| Genome Browser (IGV/UCSC) | Allows visual inspection of read alignments against the reference genome and annotation tracks, which is vital for verifying assignments and troubleshooting [46] [49]. |

In RNA sequencing (RNA-seq) analysis, normalization is not merely an optional step but a fundamental prerequisite for ensuring data integrity and biological validity. It adjusts raw transcriptomic data to account for various technical factors that may mask actual biological effects and lead to incorrect conclusions [5]. The process corrects for technical variability including sequencing depth, gene length, and sample-to-sample variability [5]. This technical guide explores four prominent normalization methods (CPM, RPKM/FPKM, TPM, and TMM), with particular emphasis on their applicability for cross-sample comparison, a critical consideration in robust experimental design.

Understanding the Normalization Landscape

Why Normalization is Essential

RNA-seq generates raw count data that is influenced by multiple technical factors rather than just biological differences. Sequencing depth varies between samples, meaning a sample with more total reads will naturally have higher counts for most genes [5]. Gene length also affects quantification, as longer transcripts generate more fragments than shorter ones at the same expression level [5]. Normalization methods exist to minimize these technical variables, ensuring reliable transcriptomic data comparison [5].
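A small numeric sketch makes the two effects concrete. The gene names, counts, and lengths below are hypothetical toy values, and the helper names are assumptions; the point is only that identical expression profiles yield different raw counts when depth or transcript length differs.

```python
def per_million(counts):
    """Scale raw counts so that each sample sums to one million."""
    total = sum(counts.values())
    return {gene: count * 1_000_000 / total for gene, count in counts.items()}


def per_kilobase(counts, lengths_bp):
    """Divide each gene's count by its length in kilobases."""
    return {gene: count / (lengths_bp[gene] / 1000) for gene, count in counts.items()}


# Two samples with the same composition; the second was sequenced 3x deeper.
shallow = {"geneA": 100, "geneB": 300, "geneC": 600}
deep = {gene: 3 * count for gene, count in shallow.items()}

# Raw counts differ threefold, but depth-scaled values are identical.
scaled_shallow = per_million(shallow)
scaled_deep = per_million(deep)

# Within one sample, longer genes collect more reads at equal expression:
# after length scaling, all three toy genes have the same value.
lengths_bp = {"geneA": 1000, "geneB": 3000, "geneC": 6000}
length_scaled = per_kilobase(shallow, lengths_bp)
```

Here `deep["geneA"]` is three times `shallow["geneA"]` although nothing differs biologically, and `geneC` has six times the raw count of `geneA` purely because it is six times longer.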

The Three Stages of RNA-seq Normalization

RNA-seq normalization occurs at three distinct levels, each serving different analytical purposes:

- Within-sample normalization: Enables comparison of gene expression within an individual sample by adjusting for transcript length and sequencing depth [5].

- Within-dataset normalization (between samples): Facilitates comparison across different samples within the same experiment by accounting for technical variations like sequencing depth [5].

- Across-datasets normalization: Corrects for batch effects when integrating data from multiple independent studies sequenced at different times, with varying methods, or across multiple facilities [5].

Normalization Methods Deep Dive

CPM (Counts Per Million)