PCA Loading Calculation for Gene Selection in Transcriptomics: A Comprehensive Guide for Biomedical Researchers


Abstract

This article provides a comprehensive guide to Principal Component Analysis (PCA) loading calculation for effective gene selection in transcriptomic studies. Targeting researchers, scientists, and drug development professionals, we explore the mathematical foundations of PCA loadings, practical methodologies for gene selection across diverse applications including drug response analysis and spatial transcriptomics, optimization strategies to address common pitfalls, and comparative validation against alternative dimensionality reduction techniques. By synthesizing recent benchmarking studies and methodological advances, this resource equips researchers with robust frameworks for extracting biologically meaningful gene signatures from high-dimensional transcriptomic data, ultimately enhancing drug discovery and clinical translation.

Understanding PCA Loadings: Mathematical Foundations and Biological Interpretation in Transcriptomics

Transcriptomics data, which captures genome-wide expression levels, is characterized by an extreme asymmetry between features and samples: a typical experiment may profile over 40,000 gene products from only a few dozen or hundred biological samples [1]. This "large d, small n" problem, where the number of dimensions (genes) vastly exceeds the number of observations (samples), constitutes the core challenge in transcriptomics analysis [1]. Without effective dimensionality reduction, downstream statistical analyses become computationally infeasible and statistically unreliable due to multicollinearity, overfitting, and noise accumulation.

The curse of dimensionality manifests in several critical ways in transcriptomic studies. First, the distance between data points becomes less meaningful in high-dimensional space, complicating clustering and classification. Second, the abundance of non-informative genes (those exhibiting only random or technical variation) can obscure biologically meaningful signals. Third, the computational burden of processing tens of thousands of features can be prohibitive, especially for interactive exploratory analysis. Principal Component Analysis (PCA) has emerged as a fundamental tool to address these challenges by transforming the original high-dimensional gene expression data into a lower-dimensional space of principal components (PCs) that capture the dominant patterns of variation [1].

PCA Fundamentals and Gene Selection Rationale

Theoretical Basis of PCA

Principal Component Analysis is a multivariate technique that identifies orthogonal directions of maximum variance in high-dimensional data. Mathematically, given a gene expression matrix X with p genes (features) and n samples (observations), PCA computes the eigenvectors and eigenvalues of the covariance matrix Σ of X [1]. These eigenvectors, called principal components (PCs), form a new set of uncorrelated variables that are linear combinations of the original genes:

\[ PC_i = w_{i1}\,\mathrm{Gene}_1 + w_{i2}\,\mathrm{Gene}_2 + \cdots + w_{ip}\,\mathrm{Gene}_p \]

where the coefficients \( w_{ij} \) are the loadings representing the contribution of each gene to the respective principal component. The eigenvalues correspond to the amount of variance explained by each PC, with the first PC capturing the largest possible variance, the second PC capturing the next largest variance while being orthogonal to the first, and so on [1].
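The decomposition described above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference implementation: the function name, the toy data, and the samples-by-genes orientation are assumptions made for the example. For real transcriptomic matrices with far more genes than samples, an SVD of the centered data is usually preferable to forming the full gene-by-gene covariance matrix.

```python
import numpy as np

def pca_by_eigendecomposition(X):
    """PCA of X (samples x genes) via the covariance matrix of the genes."""
    Xc = X - X.mean(axis=0)                  # mean-center each gene
    cov = np.cov(Xc, rowvar=False)           # genes x genes covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)   # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]        # re-sort from largest to smallest
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # Column i of eigvecs holds the loadings w_i1..w_ip of PC_i,
    # so column i of scores is PC_i = sum_j w_ij * gene_j.
    scores = Xc @ eigvecs
    return eigvals, eigvecs, scores

rng = np.random.default_rng(0)
X = rng.normal(size=(20, 5))                 # toy data: 20 samples, 5 genes
eigvals, W, scores = pca_by_eigendecomposition(X)
print(np.round(eigvals / eigvals.sum(), 3))  # fraction of variance per PC
```

The variance of each score column equals the corresponding eigenvalue, which is why the eigenvalues can be read as variance explained.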

The fundamental property that makes PCA valuable for addressing the curse of dimensionality is that typically only a small number of PCs (often 2-10) are needed to capture the majority of biological variation in the data, while the remaining components often represent noise [1]. This enables a dramatic reduction from tens of thousands of gene dimensions to a manageable number of meta-features (PCs) for downstream analysis.

PCA Loadings for Gene Selection

While PCA is commonly used for sample visualization and clustering, its loading structure provides a powerful mechanism for gene selection. The absolute magnitude of a gene's loading on a principal component indicates how strongly that gene influences the component's direction [2]. Genes with larger absolute loadings contribute more substantially to the observed patterns of variation in the data.

The calculation of PCA loadings enables a data-driven approach to feature selection that prioritizes genes based on their contribution to meaningful variation rather than arbitrary thresholds. This methodology is particularly valuable because it responds to the specific covariance structure of each dataset, identifying genes that collectively explain the dominant biological patterns. When applying PCA loading-based gene selection, researchers typically:

- Perform PCA on the normalized gene expression matrix

- Examine the loadings for biologically interpretable components (often the first 2-5 PCs)

- Select genes exceeding a threshold in absolute loading values (e.g., >0.02 as used in one protocol) [2]

- Validate the selected gene set through downstream biological interpretation and functional analysis

This approach effectively filters out genes that contribute minimally to the major axes of variation, thereby mitigating the curse of dimensionality while preserving biologically relevant signals.
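The four steps listed above might look as follows in Python. This is a hedged sketch: the function name, the toy data, and the threshold of 0.3 are illustrative assumptions. Because each loading vector has unit length, a sensible cutoff depends on the number of genes, so the 0.02 value from the cited protocol should not be carried over to other datasets without checking.

```python
import numpy as np

def select_genes_by_loading(X, gene_names, n_components=2, threshold=0.02):
    """Keep genes whose absolute loading exceeds `threshold` on any of the first PCs.

    X is samples x genes and is assumed to be normalized already.
    """
    Xc = X - X.mean(axis=0)
    # SVD of the centered data: rows of Vt are unit-length loading vectors.
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    loadings = Vt[:n_components].T                     # genes x components
    keep = (np.abs(loadings) > threshold).any(axis=1)
    return [g for g, k in zip(gene_names, keep) if k], loadings

# Toy data: genes g0 and g1 follow a shared factor, the rest are low-level noise.
rng = np.random.default_rng(0)
f = rng.normal(size=30)
X = 0.1 * rng.normal(size=(30, 6))
X[:, 0] += 3 * f
X[:, 1] -= 3 * f
genes = [f"g{i}" for i in range(6)]
selected, loadings = select_genes_by_loading(X, genes, n_components=1, threshold=0.3)
print(selected)
```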

Protocol: PCA Loading Calculation for Gene Selection

Experimental Workflow

The following protocol details the complete workflow for PCA-based gene selection, from data preprocessing through gene prioritization. This methodology is adapted from established bioinformatics protocols with specific modifications for enhanced gene selection [2].

Step-by-Step Methodology

Step 1: Data Preprocessing and Quality Control

Begin with a raw count matrix from RNA-seq or normalized intensity values from microarray data. For RNA-seq data, apply appropriate normalization methods such as TPM (Transcripts Per Million) or DESeq2's median of ratios. For microarray data, use RMA (Robust Multi-array Average) or quantile normalization. Examine sample-level metrics including total counts, detected genes, and outlier samples. Remove samples failing quality thresholds.

Step 2: Initial Gene Filtering

Apply basic filters to reduce the initial gene set to those demonstrating evidence of biological relevance:

- Statistical significance filtering: Retain genes with p-value < 0.05 in differential expression analysis between comparison groups [2]

- Fold change thresholding: Include genes with absolute fold change > 1.2 between conditions [2]

- Expression level filtering: Optional minimum expression threshold (e.g., ≥1 TPM in ≥20% of samples)

Note: In the referenced protocol, the pool of 4,731 genes was preselected based on statistical significance (p < 0.05) and fold change (absolute fold change > 1.2) [2].
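As an illustration of Step 2, the three filters can be combined into a single boolean mask. The sketch below assumes that fold changes are supplied on the log2 scale (so the 1.2 cutoff becomes log2(1.2) in either direction) and that the TPM matrix is samples x genes; both are assumptions for the example, and the function name is hypothetical.

```python
import numpy as np

def prefilter_genes(pvalues, log2_fold_changes, tpm,
                    p_cut=0.05, fc_cut=1.2, min_tpm=1.0, min_frac=0.2):
    """Return a boolean mask of genes passing all three filters."""
    pvalues = np.asarray(pvalues, dtype=float)
    log2_fc = np.asarray(log2_fold_changes, dtype=float)
    tpm = np.asarray(tpm, dtype=float)                 # samples x genes
    significant = pvalues < p_cut
    # Symmetric threshold: up- and down-regulation are treated alike.
    changed = np.abs(log2_fc) > np.log2(fc_cut)
    # Fraction of samples in which each gene reaches the minimum TPM.
    expressed = (tpm >= min_tpm).mean(axis=0) >= min_frac
    return significant & changed & expressed

pvalues = [0.01, 0.20, 0.03, 0.04, 0.001]
log2_fc = [1.0, 2.0, 0.1, -0.5, -0.5]
tpm = np.array([
    [5.0, 5.0, 5.0, 0.0, 2.0],
    [5.0, 5.0, 5.0, 0.0, 3.0],
    [5.0, 5.0, 5.0, 0.0, 0.0],
    [5.0, 5.0, 5.0, 0.0, 0.0],
    [5.0, 5.0, 5.0, 0.0, 0.0],
])
mask = prefilter_genes(pvalues, log2_fc, tpm)
print(mask)
```

In this toy input the second gene fails the p-value filter, the third fails the fold change filter, and the fourth fails the expression filter.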

Step 3: PCA Calculation

Center the filtered data matrix so that each gene has mean zero, and optionally scale each gene to unit variance. Scaling is recommended when genes have substantially different variances. Perform the PCA decomposition in your preferred computational environment:

R implementation:

MATLAB implementation:

Step 4: Loading Extraction and Interpretation

Extract the loading matrix, where columns represent principal components and rows represent genes. Identify the most biologically relevant components for further analysis—typically the first 2-5 components that explain the majority of variance. Examine the loading patterns to interpret biological themes associated with each component.

Step 5: Gene Selection Based on Loading Threshold

Calculate the Euclidean norm of loadings across selected components or examine absolute loadings from individual components. Apply a loading threshold to select influential genes:

Loading norm calculation:

Note: A cutoff of 0.02 was used to select genes with the highest norm value as they have the largest influence on PCA distribution of samples in the referenced protocol [2].
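Steps 3 to 5 can be sketched together in Python with NumPy. This is not the referenced protocol's code, which used R and MATLAB; the function name, the default of three components, and the toy matrix are assumptions, and the 0.02 cutoff is taken from the note above purely as a default.

```python
import numpy as np

def loading_norm_selection(X, n_components=3, cutoff=0.02, scale=False):
    """Center (and optionally scale) X, run PCA, and select genes by loading norm.

    X is samples x genes. Returns selected gene indices, per-gene norms,
    and the fraction of variance explained by the components used.
    """
    Xc = X - X.mean(axis=0)
    if scale:
        # Assumes no gene has zero variance; filter such genes beforehand.
        Xc = Xc / Xc.std(axis=0, ddof=1)
    _, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    explained = s**2 / np.sum(s**2)
    loadings = Vt[:n_components].T                 # genes x components
    norms = np.linalg.norm(loadings, axis=1)       # Euclidean norm across components
    selected = np.flatnonzero(norms > cutoff)
    return selected, norms, explained[:n_components]

rng = np.random.default_rng(0)
X = rng.normal(size=(12, 50))                      # toy data: 12 samples, 50 genes
selected, norms, explained = loading_norm_selection(X, n_components=3)
print(len(selected), np.round(explained, 3))
```

Because the loading vectors are orthonormal, the squared norms sum to the number of components used, so the typical norm shrinks as the gene count grows. A fixed cutoff therefore selects a different share of genes in datasets of different size.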

Step 6: Validation and Downstream Analysis

Validate the selected gene set through functional enrichment analysis (Gene Ontology, KEGG pathways). Proceed with downstream analyses using the reduced gene set, including clustering, classification, or network construction.

Research Reagent Solutions

Table 1: Essential research reagents and computational tools for PCA-based gene selection

| Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| Statistical Environment | R Statistical Language [1] | Data preprocessing, PCA implementation, visualization | General transcriptomics analysis |
| Statistical Environment | MATLAB [2] | High-performance PCA computation | Engineering-focused research environments |
| PCA Packages | prcomp (R) [1] | Efficient PCA computation | Standard PCA implementation |
| PCA Packages | princomp (MATLAB) [1] | PCA with various normalization options | MATLAB-based workflows |
| PCA Packages | SmartPCA (EIGENSOFT) [3] | Advanced PCA with outlier detection | Population genetics, large-scale studies |
| Visualization Tools | ggplot2 (R) | Loading plots, variance explanation | Publication-quality figures |
| Visualization Tools | UMAP [4] | Non-linear dimensionality reduction | Comparative visualization with PCA |
| Gene Expression Platforms | TempO-Seq [5] | Targeted transcriptome profiling | Focused gene panels, fixed samples |
| Gene Expression Platforms | RNA-Seq pipelines | Whole transcriptome sequencing | Comprehensive expression profiling |

Comparative Performance of Dimensionality Reduction Methods

Benchmarking Framework and Metrics

While PCA remains widely used, recent benchmarking studies have evaluated its performance against modern dimensionality reduction techniques. A comprehensive assessment of 30 DR methods used both internal and external validation metrics to evaluate their ability to preserve biological patterns in drug-induced transcriptomic data from the Connectivity Map (CMap) dataset [4].

Internal validation metrics assess the inherent structure of the reduced space without reference to external labels [4]:

- Davies-Bouldin Index (DBI): Measures cluster separation (lower values better)

- Silhouette Score: Quantifies cluster cohesion and separation (higher values better)

- Variance Ratio Criterion (VRC): Assesses between-cluster vs within-cluster variance

External validation evaluates how well the reduced representation aligns with known biological categories [4]:

- Normalized Mutual Information (NMI): Measures agreement between clusters and true labels

- Adjusted Rand Index (ARI): Assesses similarity between data partitions
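To make the external metrics concrete, the Adjusted Rand Index can be computed from pair counts alone. The sketch below uses the standard pair-counting formula in pure Python and is meant only as an illustration; the function name is an assumption, and in practice established libraries such as scikit-learn provide tested implementations of ARI and NMI.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Adjusted Rand Index between two partitions of the same samples."""
    n = len(labels_true)
    total_pairs = comb(n, 2)
    if total_pairs == 0:
        return 1.0                                   # fewer than two samples
    contingency = Counter(zip(labels_true, labels_pred))
    sum_cells = sum(comb(c, 2) for c in contingency.values())
    sum_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_true * sum_pred / total_pairs     # agreement expected by chance
    max_index = (sum_true + sum_pred) / 2
    if max_index == expected:
        return 1.0                                   # degenerate case, e.g. one cluster
    return (sum_cells - expected) / (max_index - expected)

# Same partition with renamed labels gives perfect agreement.
ari_same = adjusted_rand_index([0, 0, 1, 1], [1, 1, 0, 0])
# A partition that splits every true cluster scores below chance level.
ari_crossed = adjusted_rand_index([0, 0, 1, 1], [0, 1, 0, 1])
print(ari_same, ari_crossed)
```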

Performance Comparison

Table 2: Performance comparison of dimensionality reduction methods on transcriptomic data

| Method | Category | Key Strengths | Limitations | Performance Ranking |
|---|---|---|---|---|
| PCA [4] | Linear | Computational efficiency, interpretability | Poor preservation of local structure | Consistently ranked in bottom performers [4] |
| t-SNE [4] | Non-linear | Excellent local structure preservation | Computational intensity, parameter sensitivity | Top performer for local structure |
| UMAP [4] | Non-linear | Balance of local/global structure | Complex parameter tuning | Top performer for biological similarity |
| PaCMAP [4] | Non-linear | Enhanced global structure preservation | Limited track record | Top performer across multiple metrics [4] |
| PHATE [4] | Non-linear | Captures trajectory relationships | Specialized for developmental data | Strong for dose-response data [4] |
| Sparse PCA [1] | Linear | Built-in feature selection | Computational complexity | Improved interpretability vs standard PCA |

The benchmarking revealed that PCA consistently underperformed compared to modern non-linear methods across multiple evaluation metrics, particularly in preserving biological similarity and enabling accurate clustering of transcriptomic profiles [4]. Specifically, PaCMAP, TRIMAP, t-SNE, and UMAP consistently ranked in the top five across diverse evaluation datasets, while PCA ranked relatively poorly despite its widespread application [4].

Advanced Applications and Integrative Approaches

Supervised and Sparse PCA Variations

To address limitations of standard PCA, several enhanced variants have been developed:

Supervised PCA incorporates response variable information to guide component identification, often improving predictive performance for specific biological endpoints [1]. This approach is particularly valuable when prior knowledge exists about sample groupings or outcomes of interest.

Sparse PCA incorporates regularization to produce loading vectors with many exact zero entries, resulting in more interpretable components that depend on smaller gene subsets [1]. This built-in feature selection can directly address the curse of dimensionality by automatically identifying sparse gene sets that capture dominant data structures.

Functional PCA extends the methodology to time-course gene expression data, modeling continuous temporal patterns rather than static snapshots [1]. This is particularly relevant for developmental studies or perturbation time series.

Integration with Explainable Machine Learning

Recent approaches integrate PCA with explainable machine learning frameworks to enhance biomarker discovery. The DeepGene pipeline employs a two-stage ensemble strategy that combines filter-based techniques (including PCA loading analysis) with explainable AI methods to identify robust, interpretable gene biomarkers [6].

This integrated approach demonstrated 3-10% improvement in classification accuracy across six cancer microarray datasets compared to conventional methods, while providing greater stability in feature selection [6]. The ensemble strategy mitigates the instability often observed in individual feature selection methods, including PCA loading thresholds.

Similarly, SHAP (Shapley Additive exPlanations) analysis has been successfully integrated with transcriptomics classification to identify cell-type specific immune signatures in age-related macular degeneration [7]. This approach identified 81 genes that effectively distinguished disease from control samples (AUC-ROC of 0.80), with the resulting model providing biological insights into disease mechanisms [7].

Pathway and Network-Informed PCA

Moving beyond gene-level analysis, PCA can be applied to predefined biological pathways or network modules. Rather than analyzing all genes simultaneously, this approach:

- Groups genes into functional units based on prior knowledge (pathways, protein complexes)

- Applies PCA separately to each functional unit

- Uses the resulting PCs as representative features for downstream analysis

This strategy respects the modular organization of biological systems while substantially reducing dimensionality, and has been shown to improve interpretation and replication of findings [1].
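A minimal sketch of the pathway-level procedure is shown below. The pathway dictionary, gene names, and function name are hypothetical, and the choice to keep only the first PC per pathway and to skip pathways with fewer than two measured genes is an assumption made for the example.

```python
import numpy as np

def pathway_scores(X, gene_names, pathways):
    """Summarize each pathway by the first-PC scores of its member genes.

    X is samples x genes; `pathways` maps a pathway name to a list of gene names.
    """
    index = {g: i for i, g in enumerate(gene_names)}
    features = {}
    for name, members in pathways.items():
        cols = [index[g] for g in members if g in index]   # ignore unmeasured genes
        if len(cols) < 2:
            continue                                       # PCA is not informative here
        sub = X[:, cols] - X[:, cols].mean(axis=0)
        U, s, _ = np.linalg.svd(sub, full_matrices=False)
        features[name] = U[:, 0] * s[0]                    # PC1 score per sample
    return features

rng = np.random.default_rng(0)
X = rng.normal(size=(15, 6))
genes = [f"g{i}" for i in range(6)]
pathways = {
    "A": ["g0", "g1", "g2"],
    "B": ["g3", "g4", "g5", "not_measured"],
    "C": ["g0"],
}
features = pathway_scores(X, genes, pathways)
print(sorted(features))
```

Each retained pathway contributes one feature per sample, so the dimensionality of the downstream analysis equals the number of pathways rather than the number of genes.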

Implementation Considerations and Best Practices

Critical Parameter Optimization

Successful application of PCA-based gene selection requires careful attention to several parameters:

Loading threshold selection: Rather than using arbitrary thresholds, consider data-driven approaches such as:

- Permutation-based significance (shuffling labels to establish null distribution)

- Top-k gene selection (fixed number of most influential genes)

- Proportion of variance explained (cumulative contribution to component variance)

Component selection: The number of components to include in loading calculations should balance variance explanation and biological interpretability. While scree plots and the Kaiser criterion (eigenvalue > 1, which is meaningful only when PCA is run on standardized data, i.e., on the correlation matrix) provide traditional guidance, consider also:

- Tracy-Widom statistics for significance testing [1]

- Biological coherence of component loadings

- Downstream analysis performance with different component sets
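One data-driven way to choose the number of components is a permutation test in the spirit of parallel analysis, sketched below. This technique is substituted here as an illustration and is not one of the methods named in the cited sources: each gene is permuted independently across samples to destroy gene-gene correlation, and an observed component is kept only if its share of variance exceeds what the permuted data produce. The function name, the number of permutations, and alpha are assumptions.

```python
import numpy as np

def n_components_by_permutation(X, n_perm=100, alpha=0.05, seed=0):
    """Count leading PCs whose variance share exceeds a permutation null."""
    rng = np.random.default_rng(seed)
    Xc = X - X.mean(axis=0)
    obs = np.linalg.svd(Xc, compute_uv=False) ** 2
    obs_ratio = obs / obs.sum()
    exceed = np.zeros_like(obs_ratio)
    for _ in range(n_perm):
        # Permute every gene (column) independently across samples.
        Xp = np.column_stack([rng.permutation(col) for col in Xc.T])
        null = np.linalg.svd(Xp, compute_uv=False) ** 2
        exceed += (null / null.sum()) >= obs_ratio
    pvals = (exceed + 1) / (n_perm + 1)
    keep = 0
    for p in pvals:                  # stop at the first non-significant component
        if p >= alpha:
            break
        keep += 1
    return keep, pvals

# Toy data: one shared factor drives the first 10 of 30 genes.
rng = np.random.default_rng(0)
factor = rng.normal(size=(40, 1))
X = rng.normal(size=(40, 30))
X[:, :10] += 2.0 * factor
n_keep, pvals = n_components_by_permutation(X, n_perm=50)
print(n_keep)
```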

Limitations and Caveats

Despite its utility, PCA-based gene selection has important limitations that researchers must consider:

Interpretation challenges: A recent critical evaluation highlighted that PCA results can be highly sensitive to data artifacts and analytical choices, potentially leading to biased interpretations [3]. The same study demonstrated that PCA outcomes can be easily manipulated to generate desired results through selective population inclusion or marker selection [3].

Variance bias: PCA prioritizes high-variance genes, and high variance does not always indicate biological significance. Technical artifacts or outlier samples can disproportionately influence components.

Linear assumptions: PCA captures only linear relationships between genes, potentially missing important non-linear dependencies that methods like UMAP or t-SNE can detect [4].

Recommendations for Robust Analysis

To maximize reliability and biological relevance of PCA-based gene selection:

- Apply multiple feature selection methods and compare results (ensemble approaches often outperform individual methods) [6]

- Validate selected gene sets in independent datasets when possible

- Incorporate biological knowledge through pathway-informed analyses or integration with functional annotations

- Compare with non-linear methods like UMAP or PaCMAP, particularly when analyzing complex biological responses [4]

- Document all parameter choices and conduct sensitivity analyses to ensure robustness of findings

The curse of dimensionality remains a fundamental challenge in transcriptomics, and PCA loading calculation provides a mathematically principled approach to gene selection that addresses this issue. While traditional PCA offers computational efficiency and interpretability, recent benchmarking indicates that modern non-linear methods often outperform PCA in preserving biological structures [4]. Nevertheless, PCA-based gene selection continues to offer value, particularly when enhanced through sparse implementations, integration with explainable machine learning, or pathway-informed modifications.

The evolving landscape of dimensionality reduction in transcriptomics points toward ensemble approaches that combine multiple feature selection strategies [6] [7], with PCA remaining a valuable component in this multifaceted toolkit. As transcriptomics technologies continue to advance, producing increasingly complex and high-dimensional data, the development of robust dimensionality reduction methods will remain essential for extracting biologically meaningful insights from the vast complexity of gene expression data.

Principal Component Analysis (PCA) is an indispensable dimensionality reduction technique in transcriptomics, where researchers routinely analyze datasets containing thousands of genes (variables) across limited samples. This high-dimensionality creates significant challenges for visualization, analysis, and mathematical operations—a phenomenon known as the "curse of dimensionality" [8]. PCA addresses this by performing an orthogonal transformation that converts potentially correlated gene expression variables into a set of linearly uncorrelated principal components, ordered such that the first component captures the greatest variance in the data [9]. This transformation enables researchers to project high-dimensional gene expression data into lower-dimensional spaces while preserving essential structural information, thereby simplifying the identification of patterns, clusters, and outliers within complex biological datasets [8] [9].

The calculation and interpretation of PCA loadings—the coefficients representing the contribution of each original variable to each principal component—are particularly crucial for gene selection in transcriptomics research. These loadings provide mechanistic insights into which genes drive the observed variation between samples, allowing researchers to prioritize genes for further experimental investigation and biological interpretation [10]. This protocol will deconstruct PCA methodology with emphasis on loading calculation and its practical application for gene selection in transcriptomic studies.

Theoretical Foundations: The Mathematics of PCA

Variance Maximization and Component Extraction

The fundamental operation of PCA begins with the covariance matrix computation derived from the mean-centered gene expression matrix [11]. For a dataset with genes as variables and samples as observations, this step identifies how each gene's expression co-varies with others across samples. The eigenvalues and eigenvectors of this covariance matrix are then calculated, with eigenvectors representing the principal components (directions of maximum variance) and eigenvalues indicating the amount of variance explained by each component [11] [9]. These eigenvectors are sorted in decreasing order of their corresponding eigenvalues, establishing the hierarchy of principal components from most to least informative [11].

The mathematical transformation can be represented as follows: given a mean-centered gene expression matrix \( X \), PCA solves the eigendecomposition problem \( C = V \Lambda V^T \), where \( C \) is the covariance matrix of \( X \), \( V \) contains the eigenvectors (principal components), and \( \Lambda \) is a diagonal matrix containing the eigenvalues. The projection of the original data into the principal component space is achieved through the linear transformation \( Y = XV \), where \( Y \) represents the coordinates of samples in the new component space [11] [9].

Loading Calculation and Interpretation

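PCA loadings are defined here as the eigenvectors scaled by the square root of their corresponding eigenvalues. When each gene is standardized to unit variance, these scaled values equal the correlations between the original genes and the principal components; for data that are only mean-centered, they equal the gene-component covariances divided by the component's standard deviation. The loading \( l_{ij} \) for gene \( i \) on component \( j \) is calculated as \( l_{ij} = v_{ij} \sqrt{\lambda_j} \), where \( v_{ij} \) is the \( i \)-th element of the \( j \)-th eigenvector and \( \lambda_j \) is the \( j \)-th eigenvalue [10]. Note that some software, such as R's prcomp, reports the unscaled eigenvectors (its rotation matrix) as loadings, so the convention in use should be checked before applying a threshold.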

These loadings provide crucial biological insights by:

- Identifying genes that contribute most significantly to each component

- Revealing coordinated gene expression patterns

- Enabling biological interpretation of components through gene functional analysis

- Guiding feature selection for downstream analyses [10]

For accurate interpretation, researchers should note that genes with larger absolute loading values on a specific component have greater influence on that component's direction in the expression space.
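The relationship between scaled loadings and gene-component correlations can be checked numerically. The sketch below standardizes a small toy matrix (an assumption made so that the correlation interpretation holds exactly) and compares the scaled eigenvectors with correlations computed directly from the data.

```python
import numpy as np

rng = np.random.default_rng(1)
X = rng.normal(size=(50, 4)) @ rng.normal(size=(4, 4))    # correlated toy "genes"
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)          # standardize each gene

eigvals, V = np.linalg.eigh(np.cov(Z, rowvar=False))
order = np.argsort(eigvals)[::-1]
eigvals, V = eigvals[order], V[:, order]

loadings = V * np.sqrt(eigvals)       # l_ij = v_ij * sqrt(lambda_j)
scores = Z @ V                        # sample coordinates on each PC

# Correlation of every gene i with every component j, computed directly.
corr = np.array([[np.corrcoef(Z[:, i], scores[:, j])[0, 1] for j in range(4)]
                 for i in range(4)])
print(np.allclose(loadings, corr))
```

On standardized data the squared loadings of each gene sum to one across all components, which gives the scaled loadings a natural interpretation as shares of that gene's variance.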

Table 1: Key Mathematical Components of PCA

| Component | Mathematical Representation | Biological Interpretation |
|---|---|---|
| Eigenvalues | λ₁, λ₂, ..., λₚ | Variance explained by each principal component |
| Eigenvectors | v₁, v₂, ..., vₚ | Direction of maximum variance in expression space |
| Loadings | lᵢⱼ = vᵢⱼ × √λⱼ | Correlation between gene i and component j |
| Projected Data | Y = X × V | Sample coordinates in new component space |

PCA-Based Gene Selection Protocol

Computational Framework for Loading Analysis

The following protocol outlines a standardized approach for PCA-based gene selection from transcriptomics data, incorporating quality control and validation steps essential for robust biological interpretation.

Data Preprocessing and Quality Control

- Input Data Requirements: Begin with a normalized gene expression matrix (samples × genes) from RNA-seq or microarray experiments. For single-cell RNA-seq data, ensure appropriate normalization for technical artifacts and dropout events [12].

- Normalization Procedures: Apply relevant normalization methods (e.g., TPM for bulk RNA-seq, UMI count normalization for scRNA-seq) to make expression values comparable across samples.

- Mean-Centering: For each gene, subtract the mean expression across all samples to center the data, a prerequisite for PCA computation [11] [10].

- Quality Assessment: Examine data distributions and remove genes with excessive zeros or minimal variation, as they contribute little to principal components.

PCA Implementation and Loading Extraction

- Covariance Matrix Calculation: Compute the covariance matrix from the preprocessed, mean-centered gene expression matrix [11].

- Eigen Decomposition: Perform eigen decomposition on the covariance matrix to obtain eigenvalues and eigenvectors using standard numerical algorithms [11].

- Loading Calculation: Scale eigenvectors by the square root of corresponding eigenvalues to generate the loading matrix [10].

- Variance Explanation Assessment: Calculate the proportion of variance explained by each component as the ratio of its eigenvalue to the sum of all eigenvalues.
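The variance explanation step reduces to simple arithmetic on the eigenvalues. The following sketch computes the per-component ratios and the smallest number of components reaching a cumulative target; the 80% target and the function name are illustrative assumptions, not recommendations from the cited sources.

```python
def explained_variance(eigenvalues, target=0.8):
    """Return per-component variance ratios and the number of PCs reaching `target`."""
    ordered = sorted(eigenvalues, reverse=True)
    total = sum(ordered)
    ratios = [ev / total for ev in ordered]
    cumulative = 0.0
    for k, ratio in enumerate(ratios, start=1):
        cumulative += ratio
        if cumulative >= target:
            return ratios, k
    return ratios, len(ratios)

# Eigenvalues 4, 3, 2, 1 give ratios 0.4, 0.3, 0.2, 0.1;
# the first three components together explain 90% of the variance.
ratios, n_needed = explained_variance([4.0, 3.0, 2.0, 1.0], target=0.8)
print(ratios, n_needed)
```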

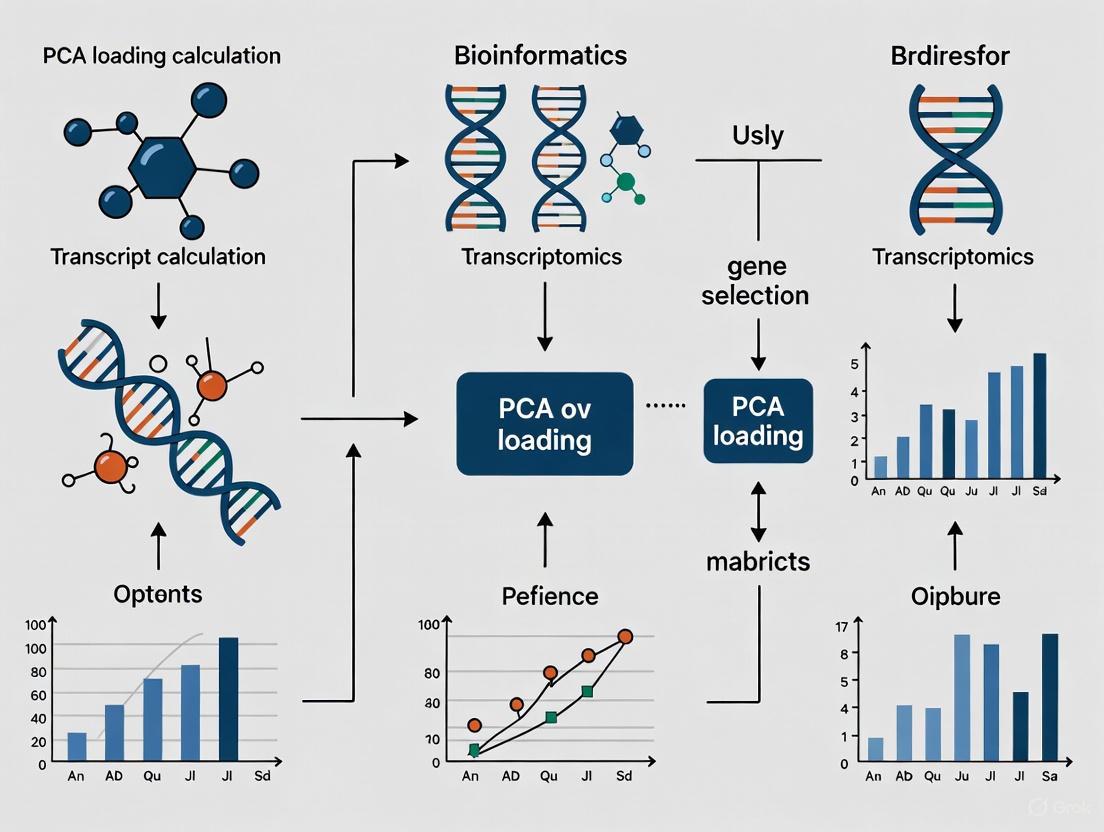

Diagram 1: PCA Workflow for Gene Selection. This workflow illustrates the standardized protocol for extracting biologically relevant genes through PCA loading analysis.

Advanced PCA Applications in Transcriptomics

PCA-Based Unsupervised Feature Extraction (PCAUFE)

The PCAUFE method represents a sophisticated application of loading analysis for identifying critical genes from transcriptomic data with limited samples. This approach leverages statistical outlier detection in principal component spaces to select biologically relevant features [10]:

- Component Selection: Identify principal components that best separate biological conditions (e.g., diseased vs. healthy) using statistical tests on component loadings.

- Outlier Gene Identification: Apply χ² tests to detect genes embedded as outliers in the relevant principal component spaces.

- Multiple Testing Correction: Adjust P-values using Benjamini-Hochberg procedure to control false discovery rates.

- Biological Validation: Confirm selected genes encode proteins with functional relationships to the studied condition [10].

In a COVID-19 transcriptomics study, PCAUFE successfully identified 123 critical genes from 60,683 candidate probes, demonstrating superior selection efficiency compared to conventional methods like LIMMA, edgeR, and DESeq2 [10].
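The outlier-detection idea behind PCAUFE can be sketched as follows. This is a simplified illustration under stated assumptions, not the published implementation: PCA is applied with genes as observations, a single component is assumed to have been chosen already, gene scores are assumed to be approximately Gaussian under the null, and the adjusted P-value cutoff of 0.01 is a placeholder. With one component, the χ² test has one degree of freedom, so its upper tail can be written with the complementary error function.

```python
import math
import numpy as np

def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted P-values."""
    p = np.asarray(pvals, dtype=float)
    n = p.size
    order = np.argsort(p)
    ranked = p[order] * n / np.arange(1, n + 1)
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]   # enforce monotonicity
    adjusted = np.empty(n)
    adjusted[order] = np.minimum(ranked, 1.0)
    return adjusted

def pcaufe_like_selection(X, component=0, fdr=0.01):
    """X is genes x samples. Returns indices of genes flagged as outliers on one PC."""
    Xc = X - X.mean(axis=0)                          # center each sample across genes
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    gene_scores = U[:, component] * s[component]     # one score per gene
    z2 = (gene_scores / gene_scores.std()) ** 2      # squared standardized score
    # Chi-squared upper tail with 1 degree of freedom: P(Z^2 > x) = erfc(sqrt(x / 2)).
    pvals = np.array([math.erfc(math.sqrt(v / 2.0)) for v in z2])
    return np.flatnonzero(benjamini_hochberg(pvals) < fdr)

# Toy data: 200 genes x 6 samples; the first 5 genes separate the two sample groups.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 6))
X[:5, :3] += 8.0
X[:5, 3:] -= 8.0
selected = pcaufe_like_selection(X)
print(selected)
```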

Kernel PCA for Spatial Transcriptomics

Recent advances have extended PCA applications through nonlinear transformations using kernel functions. Kernel PCA employs radial basis function (RBF) kernels to project single-cell RNA-seq and spatial transcriptomics data into shared latent spaces, enabling integration of multimodal data for spatial RNA velocity inference [13]. This approach captures complex nonlinear relationships between gene expression patterns and spatial organization within tissues, advancing our understanding of spatially dynamic biological processes like cellular differentiation [13].
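For readers unfamiliar with Kernel PCA, a compact NumPy sketch of the RBF variant follows. It illustrates the general technique only and is not the KSRV code; the function name and the default gamma of one over the number of features are assumptions. Unlike linear PCA, the result has no explicit per-gene loadings, because the components live in the kernel-induced feature space rather than in gene space.

```python
import numpy as np

def rbf_kernel_pca(X, n_components=2, gamma=None):
    """Project the rows of X onto the leading RBF kernel principal components."""
    X = np.asarray(X, dtype=float)
    if gamma is None:
        gamma = 1.0 / X.shape[1]                     # heuristic default (assumption)
    sq = np.sum(X**2, axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T   # squared Euclidean distances
    K = np.exp(-gamma * np.maximum(d2, 0.0))         # RBF kernel matrix
    n = K.shape[0]
    one = np.full((n, n), 1.0 / n)
    Kc = K - one @ K - K @ one + one @ K @ one       # center in feature space
    eigvals, eigvecs = np.linalg.eigh(Kc)
    idx = np.argsort(eigvals)[::-1][:n_components]
    eigvals, eigvecs = eigvals[idx], eigvecs[:, idx]
    # Coordinates of the input points on each kernel component.
    return eigvecs * np.sqrt(np.maximum(eigvals, 0.0)), eigvals

rng = np.random.default_rng(0)
X = rng.normal(size=(30, 8))                         # toy data: 30 cells, 8 genes
Y, lam = rbf_kernel_pca(X, n_components=2)
print(Y.shape)
```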

Comparative Performance Analysis

Case Study: COVID-19 Transcriptomic Analysis

A comprehensive evaluation of PCA-based gene selection was performed through analysis of COVID-19 patient transcriptomes. The study compared PCAUFE against established gene selection methodologies using multiple classification approaches to predict COVID-19 status from selected genes [10].

Table 2: Performance Comparison of Gene Selection Methods in COVID-19 Transcriptomics

| Selection Method | Number of Genes Selected | Logistic Regression AUC | SVM AUC | Random Forest AUC |
|---|---|---|---|---|
| PCAUFE | 123 | >0.9 | >0.9 | >0.9 |
| LIMMA | 18,458 | ~0.9 | ~0.9 | ~0.9 |
| edgeR | 4,452 | ~0.9 | ~0.9 | ~0.9 |
| DESeq2 | 5,696 | ~0.9 | ~0.9 | ~0.9 |

The results indicate that PCAUFE achieved comparable classification performance (>0.9 AUC across all models) using far fewer genes (123) than conventional methods, which selected thousands of genes [10]. This efficiency in gene selection enhances biological interpretability by focusing attention on a manageable set of high-value targets.

Methodological Comparisons in Spatial Transcriptomics

In spatial transcriptomics, PCA-based approaches have demonstrated advantages for gene panel selection and data integration. The PERSIST framework, which uses deep learning models inspired by PCA principles, outperformed conventional selection methods like Seurat and Cell Ranger in identifying informative gene targets for spatial transcriptomics studies [14]. Similarly, Kernel PCA-based integration of single-cell RNA-seq with spatial transcriptomics in the KSRV framework demonstrated superior accuracy and robustness compared to existing methods like SIRV and spVelo [13].

Research Reagent Solutions

Table 3: Essential Computational Tools for PCA in Transcriptomics

| Tool/Package | Application Context | Key Function |
|---|---|---|
| BiPCA [12] | Omics count data | Rank estimation and denoising for heterogeneous data |
| PCAUFE [10] | Disease transcriptomics | Unsupervised feature extraction from limited samples |
| KSRV [13] | Spatial transcriptomics | Nonlinear data integration using Kernel PCA |
| PERSIST [14] | Spatial transcriptomics | Deep learning-based gene panel selection |
| ScanPy [14] | Single-cell transcriptomics | PCA implementation and gene selection based on variance |

Implementation Protocol: Kernel PCA for Spatial Transcriptomics

Advanced Workflow for Spatial Data Integration

The KSRV framework demonstrates a cutting-edge application of PCA methodology for integrating single-cell RNA-seq with spatial transcriptomics data [13]:

- Domain Adaptation: Apply PRECISE domain adaptation framework to align distributions of single-cell and spatial transcriptomics data, mitigating batch effects.

- Kernel Matrix Generation: Perform Kernel PCA with radial basis function (RBF) kernel separately on each dataset to generate kernel matrices capturing nonlinear relationships.

- Component Alignment: Compute eigenvectors of kernel matrices and apply singular value decomposition (SVD) to orthogonalize components, retaining those with cosine similarity >0.3.

- Latent Space Projection: Project both datasets onto the resulting common latent space to achieve alignment while preserving nonlinear gene expression patterns.

- Expression Prediction: Use k-nearest neighbors (k=50) regression in the aligned space to predict spliced and unspliced gene expression at each spatial location [13].

Diagram 2: Kernel PCA Protocol for Spatial Transcriptomics. This workflow enables spatial RNA velocity inference through nonlinear integration of single-cell and spatial omics data.

This protocol has been validated using 10x Visium and MERFISH datasets, successfully revealing spatial differentiation trajectories in mouse brain and organogenesis models [13]. The method enables reconstruction of spatial differentiation trajectories at single-cell resolution, demonstrating generalizability and biological interpretability across diverse datasets.
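The final expression prediction step of the workflow, k-nearest neighbors regression in the aligned latent space, can be illustrated in isolation. The sketch below assumes that both datasets have already been projected into a common space and uses an unweighted average over neighbors; whether KSRV weights neighbors differently is not stated here, so the averaging scheme, the function name, and the toy arrays are all assumptions.

```python
import numpy as np

def knn_predict(ref_latent, ref_values, query_latent, k=50):
    """Predict values at query points as the mean over the k nearest reference points.

    ref_latent:   (n_ref, d) latent coordinates of reference cells
    ref_values:   (n_ref, g) expression values to transfer (e.g. spliced counts)
    query_latent: (n_query, d) latent coordinates of spatial locations
    """
    k = min(k, ref_latent.shape[0])
    diff = query_latent[:, None, :] - ref_latent[None, :, :]
    d2 = (diff**2).sum(axis=2)                           # squared distances
    nearest = np.argpartition(d2, k - 1, axis=1)[:, :k]  # indices of k nearest
    return ref_values[nearest].mean(axis=1)

rng = np.random.default_rng(0)
ref_latent = rng.normal(size=(60, 3))
ref_values = rng.normal(size=(60, 4))
pred_self = knn_predict(ref_latent, ref_values, ref_latent, k=1)
pred_all = knn_predict(ref_latent, ref_values, ref_latent, k=60)
print(pred_self.shape, pred_all.shape)
```

With k=1 every reference point recovers its own values, and with k equal to the reference size every prediction collapses to the global mean, which brackets the smoothing behavior expected at k=50.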

PCA loading calculation represents a powerful methodology for gene selection in transcriptomics research, bridging statistical dimensionality reduction with biological discovery. Through both linear and nonlinear implementations, PCA-based approaches enable efficient identification of functionally relevant genes from high-dimensional expression data. The continued evolution of PCA methodologies—including PCAUFE for unsupervised feature extraction and Kernel PCA for spatial transcriptomics integration—ensures these techniques remain indispensable for researchers seeking to extract meaningful biological insights from complex transcriptomic datasets. As transcriptomics technologies continue advancing toward higher dimensionality and spatial resolution, sophisticated PCA applications will remain essential tools for linking gene expression patterns to biological function and disease pathology.

Principal Component Analysis (PCA) serves as a fundamental dimensionality reduction technique in transcriptomics research, enabling researchers to navigate high-dimensional gene expression data. The interpretation of loading values is paramount for identifying genes that drive biological variability and inform subsequent gene selection strategies. This Application Note provides a detailed protocol for calculating, interpreting, and applying PCA loadings within transcriptomics, focusing on the critical relationship between high absolute loadings and gene importance. We outline standardized methodologies for data preprocessing, dimensionality assessment, and biological interpretation, supplemented with structured tables and workflow visualizations to guide researchers and drug development professionals in making principled feature selection decisions.

In the context of transcriptomics, where datasets characteristically encompass thousands of genes (variables) across relatively few samples or cells, PCA provides a powerful mechanism to reduce complexity and reveal underlying biological signals. The technique operates by creating new, uncorrelated variables known as principal components (PCs), which are linear combinations of the original genes and successively capture the maximum possible variance within the data [15]. The coefficients defining these linear combinations are the PCA loadings.

Each loading value quantifies the contribution and direction of influence of a single original gene to a single principal component. A loading's magnitude (absolute value) indicates the weight or importance of that gene in defining the component's direction, while its sign (positive or negative) indicates the nature of the correlation between the gene and the component [16]. Genes with high absolute loadings on a particular PC are the most influential variables in the orientation of that component within the high-dimensional expression space. Consequently, identifying these genes is a critical step for feature selection, as they often represent drivers of the major axes of biological variation, such as cell type identity, pathway activation, or response to experimental perturbation [17].

Interpretation of High Absolute Loadings

Quantitative and Qualitative Assessment

Interpreting loading values requires assessing both their numerical value and their pattern across multiple components. The following points are crucial for accurate interpretation:

- Magnitude and Relative Weight: The larger the absolute value of a loading, the more important the corresponding gene is in the calculation of the component. This is because the loading assigns a weight to the original variable (gene) within the linear combination that forms the PC [16]. There is no universal threshold for a "high" loading; its determination is often subjective and should be guided by the distribution of loading values for that specific PC and the researcher's specialized knowledge [16].

- Variance Explanation: When loadings are scaled as gene-component correlations (the eigenvector entry multiplied by the square root of the component's eigenvalue, computed on standardized data), the square of a loading value (e.g., 0.659² = 0.434) represents the proportion of that gene's variance that is explained by the principal component [18]. For a gene with a loading of 0.659 on PC1, this component explains 43.4% of that gene's variance. This metric helps contextualize the biological impact of a gene's contribution.

- Identification of Key Drivers: By examining the genes with the highest absolute loadings on a biologically relevant PC, researchers can pinpoint the key transcriptional drivers of the variation captured by that component. For example, in a single-cell analysis of kidney tissue, a component (F1) with high positive loadings for specific genes was interpreted as representing the identity program of proximal tubule cells [17].
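The variance-explanation point can be checked numerically. The following is a minimal sketch on simulated data, assuming loadings are scaled as eigenvector times the square root of the eigenvalue on standardized genes; the data, variable names, and dimensions are illustrative assumptions, not values from the cited studies.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, n_genes = 60, 8

# Simulated expression: one shared latent factor plus noise (illustrative only)
latent = rng.normal(size=(n_samples, 1))
weights = rng.uniform(0.2, 1.5, size=(1, n_genes))
X = latent @ weights + rng.normal(size=(n_samples, n_genes))

# Standardize each gene (column) so PCA operates on the correlation matrix
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

corr = np.cov(Z, rowvar=False)
eigvals, eigvecs = np.linalg.eigh(corr)        # eigh returns ascending order
order = np.argsort(eigvals)[::-1]
eigvals, eigvecs = eigvals[order], eigvecs[:, order]

# Correlation-scaled loadings: eigenvector entry * sqrt(eigenvalue)
scaled_loadings = eigvecs * np.sqrt(eigvals)

# Direct correlation between each gene and the PC1 scores, for comparison
pc1_scores = Z @ eigvecs[:, 0]
gene_pc1_corr = np.array(
    [np.corrcoef(Z[:, j], pc1_scores)[0, 1] for j in range(n_genes)]
)

# Squared scaled loading = fraction of each gene's variance explained by PC1
explained_by_pc1 = scaled_loadings[:, 0] ** 2
print(np.round(explained_by_pc1, 3))
```

Unit-length eigenvector entries alone do not carry this interpretation; their squares sum to 1 across genes for a given PC, whereas the squared scaled loadings sum to 1 across all PCs for a given gene.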

A Protocol for Systematic Loading Interpretation

The following workflow outlines a standardized protocol for interpreting PCA loadings in a transcriptomics study. This procedure ensures a systematic approach from data preparation to biological insight.

Figure 1: A standardized workflow for the interpretation of PCA loadings in transcriptomics data analysis.

Step 1: Data Preprocessing and Normalization

Before performing PCA, the raw gene count matrix must be preprocessed and normalized. The choice of normalization method can significantly impact the correlation structure of the data and, consequently, the PCA model and the resulting loadings [19]. For instance, a comparative evaluation of twelve normalization methods for RNA-seq data demonstrated that while PCA score plots might appear similar, the biological interpretation of the models can depend heavily on the normalization method applied [19]. Standard steps include quality control, filtering of lowly expressed genes, and normalization to account for library size and other technical biases.

Step 2: Perform PCA and Extract the Loading Matrix

Conduct PCA, typically on the correlation matrix, to ensure all genes are standardized and contribute equally regardless of their expression level. The output includes a loading matrix, where rows represent genes and columns represent principal components. Each entry is a loading value for a specific gene on a specific PC [16].

Step 3: Determine the Number of Significant Principal Components

Not all PCs are biologically meaningful. Use objective criteria to decide how many components to retain for interpretation. Common methods include:

- Kaiser Criterion: Retain PCs with eigenvalues greater than 1 [16].

- Scree Plot: Retain PCs before the point where the plot of eigenvalues levels off, forming an "elbow" [16].

- Cumulative Proportion of Variance Explained: Retain a number of PCs that explain an acceptable level of total variance (e.g., 70-90%) for the specific application [16].
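The three retention criteria above can be sketched in a few lines. This is an illustrative example on simulated data with three planted factors; the 80% cumulative-variance target and the "largest gap" elbow heuristic are assumptions chosen for the sketch, not recommendations from the cited sources.

```python
import numpy as np

rng = np.random.default_rng(1)
n_samples, n_genes = 40, 200

# Simulated data with three latent factors (illustrative only)
factors = rng.normal(size=(n_samples, 3))
mixing = rng.normal(size=(3, n_genes))
X = 2.0 * (factors @ mixing) + rng.normal(size=(n_samples, n_genes))

# Standardize genes so eigenvalues refer to the correlation matrix
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# Eigenvalues of the correlation matrix obtained from singular values of Z
singular_values = np.linalg.svd(Z, compute_uv=False)
eigvals = singular_values ** 2 / (n_samples - 1)
explained_ratio = eigvals / eigvals.sum()

# Kaiser criterion: eigenvalues greater than 1
n_kaiser = int(np.sum(eigvals > 1.0))

# Cumulative variance: smallest number of PCs reaching the target (assumed 80%)
cumulative = np.cumsum(explained_ratio)
n_cumulative = int(np.searchsorted(cumulative, 0.80) + 1)

# Crude elbow heuristic: position of the largest drop between eigenvalues
drops = eigvals[:-1] - eigvals[1:]
n_elbow = int(np.argmax(drops) + 1)

print(n_kaiser, n_cumulative, n_elbow)
```

When genes greatly outnumber samples, many noise eigenvalues of the correlation matrix exceed 1, so the Kaiser criterion tends to retain more components than the other two; comparing the criteria side by side is usually more informative than relying on one.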

Step 4: Identify Genes with High Absolute Loadings for Each Significant PC

For each significant PC, sort the genes by the absolute value of their loadings. The genes at the top of this list are the major contributors to that component. The specific number of genes to select from this list is a trade-off between comprehensiveness and focus, and may be guided by the scree plot of loading values or a pre-defined cut-off (e.g., top 50 genes).
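As a minimal sketch of this ranking step, the example below sorts genes by absolute loading on a chosen PC. The gene identifiers, the simulated data, and the helper name `top_genes` are hypothetical and serve only to illustrate the procedure.

```python
import numpy as np

rng = np.random.default_rng(2)
n_samples, n_genes = 200, 100
gene_names = np.array([f"gene_{i}" for i in range(n_genes)])  # hypothetical IDs

# Simulated data: the first five genes share a strong common signal
X = rng.normal(size=(n_samples, n_genes))
signal = rng.normal(size=n_samples)
X[:, :5] += 4.0 * signal[:, None]

# Standardize genes, then obtain loadings from the right singular vectors
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
_, _, Vt = np.linalg.svd(Z, full_matrices=False)
loadings = Vt.T                      # rows = genes, columns = PCs


def top_genes(loadings, gene_names, pc_index, n_top=50):
    """Return (gene, loading) pairs sorted by decreasing absolute loading."""
    order = np.argsort(-np.abs(loadings[:, pc_index]))[:n_top]
    return [(str(gene_names[i]), float(loadings[i, pc_index])) for i in order]


top5_pc1 = top_genes(loadings, gene_names, pc_index=0, n_top=5)
for name, value in top5_pc1:
    print(f"{name}\t{value:+.3f}")
```

The signed loading is kept alongside the ranking because the sign distinguishes genes that move together from genes that move in opposition along the component, even though the overall sign of a PC is arbitrary.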

Step 5: Interpret the Biological Function of High-Loading Genes

Functionally annotate the list of high-loading genes for a given PC using gene ontology (GO) enrichment analysis, pathway mapping (e.g., KEGG), and literature review. This step transforms a statistical result into a biological hypothesis. For example, a PC with high loadings for known immune cell marker genes likely represents a major axis of immune cell variation in the dataset.

Step 6: Validate Findings Through Downstream Analysis

Corroborate the biological interpretation by examining the distribution of PC scores (the projected values of observations onto the PC) across known experimental conditions or cell types. If a PC is interpreted as a "cell cycle" component, its scores should show systematic variation between cell cycle phases. Validation can also involve independent experiments or cross-referencing with other omics data.

Critical Considerations and Best Practices

The Impact of Data Normalization

The choice of normalization method is not merely a preprocessing step but a critical analytical decision that directly influences loading values. Different normalization techniques alter the variance and covariance structure of the data, which in turn affects which genes have high loadings on the leading components [19]. Researchers should test the robustness of their key findings across different normalization schemes that are appropriate for their data type (e.g., plate-based vs. droplet-based scRNA-seq).

Loadings vs. Other Metrics for Gene Selection

While PCA loadings are a powerful tool for feature selection, they are not the only available method. The performance of any feature selection strategy, including PCA-based selection, is highly dependent on the dataset. For routine tasks like identifying abundant and well-separated cell types, even randomly selected genes can perform adequately if the number of features is large enough [20]. However, for more challenging tasks such as identifying rare cell types or subtle cellular states, the selection strategy becomes paramount. In these cases, a principled method like selecting genes with high PCA loadings on relevant components can significantly outperform a random selection, even when using the entire transcriptome [20] [21].

The Scientist's Toolkit

Table 1: Essential Research Reagent Solutions for PCA-Based Transcriptomics Analysis

| Item Name | Function/Application in Protocol |

|---|---|

| scRNA-seq Normalization Tools (e.g., SCTransform, Scanpy's pp.normalize_total) | Corrects for technical variation in sequencing depth and other biases, establishing a reliable foundation for PCA. The choice of tool impacts loading interpretation [19]. |

| PCA Computational Library (e.g., Scikit-learn in Python, prcomp in R) | Performs the core principal component analysis, calculating eigenvectors (loadings) and eigenvalues for the input normalized data matrix [15]. |

| Feature Selection Framework (e.g., PERSIST, BigSur) | Provides a principled, potentially supervised approach to gene selection that can complement or be compared to PCA loading-based selection, especially for spatial transcriptomics [14] or challenging clustering tasks [20]. |

| Pathway Enrichment Tool (e.g., g:Profiler, Enrichr) | Functionally annotates lists of high-loading genes to determine the biological processes, pathways, and functions represented by a principal component [17]. |

| Factor Interpretation Software (e.g., sciRED) | Aids in the interpretation of factors (PCs) by automatically matching them to known covariates and providing interpretability metrics, thus streamlining the biological validation of loading-based hypotheses [17]. |

The interpretation of high absolute loadings in PCA is a cornerstone of effective gene selection in transcriptomics research. By rigorously adhering to the protocols outlined herein—from thoughtful data normalization to the systematic identification and validation of high-loading genes—researchers can reliably extract meaningful biological insights from complex datasets. This approach facilitates the discovery of key transcriptional drivers underlying major axes of variation, ultimately informing hypothesis generation and prioritization in both basic research and drug development pipelines.

In transcriptomics research, managing the high dimensionality of data, where the number of genes (variables) far exceeds the number of samples (observations), presents a significant challenge. This "curse of dimensionality" complicates analysis, visualization, and interpretation [8]. Principal Component Analysis (PCA) serves as a fundamental dimensionality reduction technique that addresses this by transforming complex datasets into a simpler set of summary indices known as principal components (PCs) [22]. While the computational aspects of PCA are well-documented, understanding the geometric relationship between principal components and their loadings provides deeper insight for interpreting biological patterns in gene expression data. This geometric foundation is crucial for applications such as gene selection, where loadings identify the genes contributing most significantly to observed variation [14].

This application note elaborates on the geometric interplay between principal components and loadings, providing detailed protocols for their calculation and interpretation within transcriptomics. We frame this relationship within the context of gene selection for spatial transcriptomics studies, where optimally chosen gene panels are essential for effective experimental design [14].

Theoretical Foundation: A Geometric Interpretation

The Principal Components as Axes of Maximum Variance

Geometrically, PCA can be understood as fitting a line, plane, or hyperplane to a swarm of data points in a high-dimensional space. Each data point, representing a sample in transcriptomics, is positioned within a variable space where each original variable (e.g., a gene's expression level) constitutes one coordinate axis [22]. The goal is to approximate this data cloud in a least-squares sense.

The principal components (PCs) are the orthogonal axes of this new coordinate system. The first principal component (PC1) is the line that passes through the average point of the data and best accounts for the shape of the point swarm, representing the direction of maximum variance [23] [22]. The second principal component (PC2) is orthogonal to the first and accounts for the next largest source of variation, and so on. Each observation can be projected onto these new axes, yielding new coordinate values known as scores [22].

The Loadings as Directional Cosines

The loadings provide the critical link between the original variables and the new principal components. Geometrically, the principal component loadings express the orientation of the model plane (defined by the PCs) within the original high-dimensional variable space [22].

Mathematically, the direction of a principal component (e.g., PC1) in relation to the original variables is defined by the cosine of the angles between the PC axis and the original variable axes. A loading is essentially this cosine value, indicating how much each original variable contributes to the formation of a new component [22]. A loading vector p1 contains the directional cosines for PC1, defining its position relative to all original variables.
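The directional-cosine reading of a loading can be verified directly. The sketch below uses three simulated "genes" (the covariance values are arbitrary assumptions) and shows that each entry of the unit-length loading vector p1 equals the cosine of the angle between the PC1 axis and the corresponding gene axis.

```python
import numpy as np

rng = np.random.default_rng(3)

# Three correlated "genes" measured in 50 samples (illustrative covariance)
cov = np.array([[1.0, 0.8, 0.3],
                [0.8, 1.0, 0.2],
                [0.3, 0.2, 1.0]])
X = rng.multivariate_normal(mean=np.zeros(3), cov=cov, size=50)
Xc = X - X.mean(axis=0)              # PCA passes through the average point

_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
p1 = Vt[0]                           # loading vector of PC1, unit length

# Cosine of the angle between the PC1 axis and each original gene axis
gene_axes = np.eye(3)                # unit vectors along each gene axis
cosines = gene_axes @ p1 / (np.linalg.norm(gene_axes, axis=1) * np.linalg.norm(p1))
angles_deg = np.degrees(np.arccos(cosines))

# Scores: coordinates of each centered sample projected onto PC1
t1 = Xc @ p1

print(np.round(p1, 3), np.round(angles_deg, 1))
```

Because the squared cosines sum to 1, a gene whose axis lies close to the PC (small angle, cosine near ±1) necessarily leaves little weight for the remaining genes.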

Table 1: Geometric Interpretation of PCA Elements

| Element | Geometric Meaning | Mathematical Representation | Transcriptomics Interpretation |

|---|---|---|---|

| Variable Space | K-dimensional space (K = number of genes) where each gene is an axis [22]. | K coordinate axes defined by original variables. | The high-dimensional space of all measured genes. |

| Principal Component (PC) | New orthogonal axis; direction of maximum variance in the data cloud [23]. | Eigenvector of the covariance matrix. | A composite "meta-gene" capturing coordinated expression. |

| Loading | Directional cosine; orientation of a PC relative to original gene axes [22]. | pₖ = Vₖ (Eigenvector), or cos(α) between PC and gene axis. | Weight representing a gene's contribution to a PC. |

| Score | Coordinate of a sample's projection onto a PC [22]. | tᵢ = Vᵀxᵢ (Projection of observation i onto PC). | The expression level of a sample for the "meta-gene". |

Calculation Protocols

Protocol 1: Standard PCA and Loading Calculation

This protocol details the steps for performing PCA and extracting loadings from a gene expression matrix, where rows represent samples (observations) and columns represent genes (variables).

Table 2: Key Reagent Solutions for PCA in Transcriptomics

| Research Reagent / Tool | Function / Description | Example Implementations |

|---|---|---|

| Standardized Data Matrix (X) | Input data; rows are samples, columns are genes. | Normalized and transformed gene expression counts (e.g., TPM, FPKM). |

| Covariance Matrix Calculator | Computes the covariance matrix of the mean-centered data. | numpy.cov in Python, cov() in R. |

| Eigen Decomposition Solver | Calculates eigenvectors and eigenvalues of the covariance matrix. | numpy.linalg.eigh in Python (for symmetric matrices), eigen() in R, EIGENSOFT [24]. |

| SVD Solver | Alternative, numerically stable method for PCA computation. | svd() and prcomp() in R (prcomp is SVD-based), scipy.linalg.svd in Python. |

| Bioconductor Packages | Specialized tools for genomic data analysis. | Exvar R package for gene expression and genetic variation analysis [25]. |

Procedure:

- Data Preprocessing and Standardization: Begin with a normalized gene expression matrix. Standardize the data by centering each variable (gene) on its mean and scaling it to unit variance. This ensures that all genes contribute equally to the analysis, preventing highly expressed genes from dominating the variance purely due to their scale [23].

- Covariance Matrix Computation: Compute the covariance matrix of the standardized data. This symmetric matrix (of size P×P, where P is the number of genes) captures how all pairs of genes vary together from their means [23].

- Eigen Decomposition: Perform eigen decomposition on the covariance matrix. The resulting eigenvectors (v_k) define the principal components, and their corresponding eigenvalues (λ_k) represent the amount of variance explained by each PC [23].

- Extraction of Loadings: The eigenvectors themselves are the loadings. The eigenvector v_1 is the loading vector for PC1, where each element is the loading of a specific gene on PC1 [22].
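The four steps of this procedure can be sketched as follows. This is a minimal NumPy illustration on a small simulated matrix; the function name `pca_loadings` is hypothetical, and the SVD cross-check is included only to show that both routes give the same eigenvalues and loadings up to sign.

```python
import numpy as np


def pca_loadings(X):
    """Loadings (genes x PCs) and eigenvalues via eigen decomposition.

    X is a samples x genes matrix of normalized expression values.
    """
    # Step 1: center each gene and scale to unit variance
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # Step 2: covariance matrix of the standardized data (genes x genes)
    C = np.cov(Z, rowvar=False)
    # Step 3: eigen decomposition; eigh is intended for symmetric matrices
    eigvals, eigvecs = np.linalg.eigh(C)
    order = np.argsort(eigvals)[::-1]          # sort by decreasing variance
    # Step 4: the ordered eigenvectors are the loading vectors
    return eigvecs[:, order], eigvals[order], Z


rng = np.random.default_rng(4)
X = rng.normal(size=(25, 6)) @ rng.normal(size=(6, 6))   # simulated matrix
loadings, eigvals, Z = pca_loadings(X)

# Cross-check against the SVD route on the same standardized matrix
_, s, Vt = np.linalg.svd(Z, full_matrices=False)
svd_eigvals = s ** 2 / (Z.shape[0] - 1)

print(np.round(eigvals / eigvals.sum(), 3))   # proportion of variance per PC
```

For real transcriptomic matrices with tens of thousands of genes, forming the gene-by-gene covariance matrix explicitly is expensive, which is one practical reason the SVD route is often preferred.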

Protocol 2: Loading-Driven Gene Selection for Spatial Transcriptomics

This protocol utilizes PCA loadings to identify informative gene targets for spatial transcriptomics studies, leveraging reference single-cell RNA-sequencing (scRNA-seq) data.

Procedure:

- Reference Data Preparation: Obtain a reference scRNA-seq dataset for the cell population of interest. Preprocess the data using standard normalization and log-transformation.

- Dimensionality Reduction and Loading Calculation: Perform PCA on the reference dataset using Protocol 1. Extract the loading matrix.

- Gene Ranking and Selection: For a target number of principal components (e.g., the first 10 PCs that explain ~99% of cumulative variance), rank genes based on the absolute value of their loadings. Genes with the largest absolute loadings on the most significant PCs contribute most strongly to the major axes of variation.

- Panel Finalization: Select the top-ranked genes from step 3 to form the final gene panel. This panel can be validated by assessing its power to reconstruct the full scRNA-seq expression profile or to accurately predict cell type labels in a supervised manner [14].
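A compact sketch of steps 2 to 4 is given below. The ranking score (squared loadings on the first few PCs, weighted by the variance each PC explains) and the in-sample least-squares reconstruction check are assumptions made for illustration; they are one plausible way to implement the protocol, not the method of reference [14]. The data are simulated and centered but not scaled.

```python
import numpy as np

rng = np.random.default_rng(5)
n_cells, n_genes, n_pcs, panel_size = 300, 80, 5, 15

# Simulated log-normalized reference data driven by three latent programs
programs = rng.normal(size=(n_cells, 3))
gene_weights = rng.normal(size=(3, n_genes)) * (rng.random((3, n_genes)) < 0.3)
X = programs @ gene_weights + 0.5 * rng.normal(size=(n_cells, n_genes))

# PCA via SVD of the centered matrix; rows of Vt are loading vectors
Xc = X - X.mean(axis=0)
_, s, Vt = np.linalg.svd(Xc, full_matrices=False)
explained = s ** 2 / np.sum(s ** 2)

# Hypothetical ranking score: variance-weighted squared loadings on top PCs
gene_score = (Vt[:n_pcs].T ** 2) @ explained[:n_pcs]
panel = np.argsort(-gene_score)[:panel_size]

# Validation: reconstruct all genes from the panel genes by least squares
coef, *_ = np.linalg.lstsq(Xc[:, panel], Xc, rcond=None)
residual = Xc - Xc[:, panel] @ coef
r2 = 1.0 - np.sum(residual ** 2) / np.sum(Xc ** 2)

print(f"panel size: {panel_size}, in-sample reconstruction R^2: {r2:.3f}")
```

The R² here is computed on the same cells used to fit the reconstruction, so it is optimistic; evaluating on held-out cells would give a more honest estimate of panel quality.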

Advanced Methodologies and Applications

Probabilistic and Geometric Extensions

Standard PCA operates within a Euclidean framework. However, biological data, such as neural population activity, may be distributed around a nonlinear manifold [26]. Probabilistic Geometric PCA (PGPCA) extends the standard framework by incorporating a predefined nonlinear manifold, deriving a geometric coordinate system to capture data deviations from this manifold [26]. The loadings in this context are learned via an Expectation-Maximization (EM) algorithm, as the standard singular value decomposition (SVD) used in PCA is no longer directly applicable [26].

For hyperspectral image analysis in remote sensing, which shares similarities with spatial transcriptomics in handling high-dimensional data, the geometrically approximated PCA (gaPCA) method has been developed. Unlike standard PCA, which maximizes variance, gaPCA approximates principal components by focusing on the geometric range of the data, potentially preserving more information related to smaller or rare structures [27].

Sparse PCA for Enhanced Gene Selection

A limitation of standard PCA is that each principal component is typically a linear combination of all genes, making biological interpretation challenging. Sparse PCA addresses this by imposing sparsity constraints on the loadings, forcing many loadings to zero [28]. This results in principal components that are linear combinations of only a small subset of genes, thereby directly yielding a gene panel for downstream experiments [28]. The challenge lies in selecting the appropriate sparsity parameter. A Random Matrix Theory (RMT)-guided sparse PCA has been proposed to automate this selection, making the process nearly parameter-free and robust for single-cell RNA-seq data [28].
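To make the effect of a sparsity constraint concrete, the sketch below computes a sparse leading loading vector with a soft-thresholded power iteration. This is a deliberately simple stand-in for sparse PCA, not the RMT-guided method of [28]; the function name `sparse_pc1`, the threshold value, and the simulated data are all assumptions.

```python
import numpy as np


def sparse_pc1(Z, threshold, n_iter=200):
    """Leading sparse loading vector via soft-thresholded power iteration."""
    C = np.cov(Z, rowvar=False)
    v = np.linalg.eigh(C)[1][:, -1]      # start from the dense PC1 loading
    for _ in range(n_iter):
        w = C @ v
        # Soft-thresholding shrinks small entries exactly to zero
        w = np.sign(w) * np.maximum(np.abs(w) - threshold, 0.0)
        norm = np.linalg.norm(w)
        if norm == 0.0:
            raise ValueError("threshold too large: all loadings are zero")
        v = w / norm
    return v


rng = np.random.default_rng(6)
n_samples, n_genes = 200, 50

# Simulated data: the first six genes share a common signal
X = rng.normal(size=(n_samples, n_genes))
X[:, :6] += 2.0 * rng.normal(size=(n_samples, 1))
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

v_sparse = sparse_pc1(Z, threshold=0.8)
selected_genes = np.flatnonzero(v_sparse)   # genes with non-zero loading
print(selected_genes)
```

The threshold plays the role of the sparsity parameter discussed above: a larger value yields fewer non-zero loadings, and choosing it by hand is exactly the difficulty that automated approaches aim to remove.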

Protocol 3: RMT-Guided Sparse PCA for Robust Gene Selection

This protocol uses advanced statistical theory to infer a sparse gene panel from scRNA-seq data.

Procedure:

- Data Biwhitening: Transform the scRNA-seq count matrix X using a biwhitening procedure. This involves estimating diagonal matrices A (cell-cell covariance) and B (gene-gene covariance), then transforming the data to Z = C X D, where C and D are chosen so that the variances of Z across cells and genes are approximately 1. This stabilizes variance and prepares the data for RMT analysis [28].

- Outlier Eigenspace Identification: Compute the covariance matrix of the biwhitened data Z. Use RMT principles to identify the "outlier eigenspace": the set of eigenvectors whose corresponding eigenvalues lie outside the support of the noise distribution predicted by RMT. This space contains the true biological signal [28].

- Sparse PCA with RMT Guidance: Apply a sparse PCA algorithm to the biwhitened data. Use the RMT-based angle predictions between the signal and outlier eigenspaces to automatically guide the selection of the sparsity parameter. This ensures the inferred sparse components faithfully represent the true signal subspace [28].

- Gene Panel Extraction: The resulting sparse principal components will have loadings where only a subset of entries are non-zero. The genes corresponding to these non-zero loadings constitute the final, robust gene panel [28].
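The outlier-eigenspace idea in step 2 can be illustrated with the Marchenko-Pastur upper edge, which bounds the eigenvalues expected from pure noise of unit variance. The sketch below is a simplification under stated assumptions: per-gene standardization is used in place of biwhitening, the data are simulated Gaussian values rather than counts, and no sparsity step is performed.

```python
import numpy as np

rng = np.random.default_rng(7)
n_cells, n_genes = 400, 100

# Simulated data: two planted programs of ten genes each, plus noise
X = rng.normal(size=(n_cells, n_genes))
X[:, :10] += 1.5 * rng.normal(size=(n_cells, 1))
X[:, 10:20] += 1.5 * rng.normal(size=(n_cells, 1))

# Stand-in for biwhitening: standardize each gene to unit variance
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# Eigenvalues of the gene-gene covariance matrix, largest first
eigvals = np.linalg.eigvalsh(np.cov(Z, rowvar=False))[::-1]

# Marchenko-Pastur upper edge for unit-variance noise, gamma = genes / cells
gamma = n_genes / n_cells
mp_upper_edge = (1.0 + np.sqrt(gamma)) ** 2

# Eigenvalues beyond the edge are candidate signal ("outlier") components
n_outliers = int(np.sum(eigvals > mp_upper_edge))
print(f"MP upper edge: {mp_upper_edge:.2f}, outlier eigenvalues: {n_outliers}")
```

In this toy setting the two planted programs produce eigenvalues far above the noise edge; with real count data the variance structure is heterogeneous, which is the motivation for the biwhitening step described above.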

The geometric relationship between principal components and loadings is fundamental to interpreting PCA in transcriptomics. Loadings, defined as the directional cosines that orient the new component axes within the original gene space, provide the key to identifying genes that drive major sources of variation in the data. The protocols outlined—from standard PCA loading calculation to advanced sparse PCA methods—provide a structured approach for leveraging this geometric insight. This enables the informed selection of targeted gene panels for spatial transcriptomics, enhancing the efficiency and resolution of studies aimed at understanding cellular organization and function within tissues.

In transcriptomics research, principal component analysis (PCA) is a foundational tool for dimensionality reduction, gene selection, and exploratory data analysis. The calculation of PCA loadings—the coefficients representing the contribution of each original variable (gene) to the principal components—is critically dependent on proper data preprocessing. Normalization, the process of removing unwanted technical variation while preserving biological signal, fundamentally shapes the covariance structure of the data and consequently determines the PCA loading calculations [19]. This application note examines how normalization choices impact PCA loading calculations within transcriptomics research, providing experimental protocols and analytical frameworks for researchers, scientists, and drug development professionals.

Table 1: Common Normalization Methods in Transcriptomics and Their Core Principles

| Normalization Method | Core Principle | Assumptions | Suitability for PCA |

|---|---|---|---|

| TMM (Trimmed Mean of M-values) | Scales libraries based on a trimmed mean of log expression ratios [29] [30] | Most genes are not differentially expressed [30] [31] | High - Reduces between-sample technical variability [30] |

| RLE (Relative Log Expression) | Calculates a scaling factor as the median of ratios to a pseudoreference [30] | Similar to TMM; most genes are non-DE [30] | High - Produces stable PCA results with low variability [30] |

| Quantile | Forces all samples to have identical expression distributions [29] [31] | All samples should have similar expression profiles [31] | Variable - May distort biological signals in unbalanced data [19] [31] |

| TPM/FPKM | Within-sample normalization accounting for sequencing depth and gene length [30] [32] | Appropriate for comparing expression within a sample [30] | Lower - Can introduce artifacts in between-sample PCA [30] |

| Median | Centers expression values using the median of each sample [29] | Central tendency is comparable across samples [29] | Moderate - Simple approach but sensitive to outliers |

Normalization Impact on PCA Loading Calculations

Theoretical Foundations

The mathematical relationship between normalization and PCA loadings is direct and consequential. PCA operates by eigenvalue decomposition of the covariance or correlation matrix of the expression data. Normalization methods directly manipulate this covariance structure by:

- Adjusting the mean and variance of individual genes across samples

- Reshaping the distribution of expression values

- Altering the pairwise correlations between genes

Research has demonstrated that while the overall visualization in PCA score plots might appear similar across normalization methods, the biological interpretation derived from the loading vectors differs substantially [19]. This is particularly critical for gene selection, where researchers identify biologically relevant features based on their contributions to principal components.

Empirical Evidence of Normalization Effects

Comparative studies reveal several key patterns in how normalization impacts PCA outcomes:

- Between-sample methods (TMM, RLE, GeTMM) produce more stable loading patterns and generate PCA models with lower variability compared to within-sample methods (TPM, FPKM) [30]

- Quantile normalization can produce misleading loading calculations when the assumption of similar expression distributions across samples is violated, which occurs in unbalanced transcriptome data such as cancer vs. normal samples or different tissue types [19] [31]

- The magnitude and direction of gene loadings can shift significantly across normalization methods, potentially altering which genes are selected as important features in downstream analysis [19]

Table 2: Impact of Normalization Methods on PCA Loading Stability and Data Structure

| Normalization Method | Loading Stability | Data Correlation Structure | Performance with Unbalanced Data |

|---|---|---|---|

| TMM | High | Preserves biological covariance | Good - Robust to moderate imbalances |

| RLE | High | Maintains inter-gene relationships | Good - Similar to TMM |

| GeTMM | High | Incorporates gene length correction | Good - Handles various data types |

| Quantile | Variable | Forces identical distributions | Poor - Can introduce artifacts |

| TPM/FPKM | Lower | Emphasizes within-sample patterns | Poor - Increases between-sample variability |

| UQ (Upper Quartile) | Moderate | Uses upper quartile scaling | Moderate |

Experimental Protocols for Evaluating Normalization Impact

Protocol 1: Systematic Assessment of Normalization Methods

Objective: To evaluate how different normalization methods impact PCA loading calculations and gene selection in transcriptomic data.

Materials and Reagents:

- RNA-seq or microarray dataset with appropriate experimental design

- Computational environment (R/Python) with necessary packages

- Reference gene sets or spike-in controls (if available) for validation [31]

Procedure:

- Data Preparation: Begin with raw count data from RNA-seq or intensity data from microarrays. Perform basic quality control to remove low-quality samples and genes.

- Normalization Application: Apply multiple normalization methods (see Table 1) to the dataset using standardized parameters.

- PCA Execution: Perform PCA on each normalized dataset using the same computational parameters and number of components.

- Loading Extraction: Extract the loading vectors for the first 5-10 principal components from each analysis.

- Comparative Analysis:

- Calculate the correlation between loading vectors across normalization methods

- Identify the top 100 genes by absolute loading value for each method and component

- Assess overlap in selected genes across methods using Jaccard similarity indices

- Biological Validation: Perform gene set enrichment analysis on selected genes to evaluate biological coherence.
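The comparative analysis in step 5 can be sketched as below for two normalizations. The simulated counts, the choice of log-CPM versus an RLE-style median-of-ratios scaling, and all helper names are assumptions made for illustration; in practice the normalizations would come from established packages.

```python
import numpy as np


def log_cpm(counts):
    """Library-size scaling to counts per million, then log1p."""
    lib = counts.sum(axis=1, keepdims=True)
    return np.log1p(counts / lib * 1e6)


def log_median_of_ratios(counts):
    """RLE-style size factors: median ratio to a geometric-mean reference."""
    expressed = np.all(counts > 0, axis=0)        # genes with no zero counts
    log_counts = np.log(counts[:, expressed])
    log_ref = log_counts.mean(axis=0)             # log geometric mean per gene
    size_factors = np.exp(np.median(log_counts - log_ref, axis=1))
    return np.log1p(counts / size_factors[:, None])


def pc_loadings(X, n_pcs):
    """Loadings (genes x PCs) from the SVD of the centered matrix."""
    Xc = X - X.mean(axis=0)
    return np.linalg.svd(Xc, full_matrices=False)[2][:n_pcs].T


def jaccard(a, b):
    a, b = set(a), set(b)
    return len(a & b) / len(a | b)


rng = np.random.default_rng(8)
n_samples, n_genes, top_n = 24, 500, 100
base = rng.gamma(shape=2.0, scale=50.0, size=n_genes)
group = np.repeat([0, 1], n_samples // 2)
fold = np.ones((n_samples, n_genes))
fold[group == 1, :40] = 4.0                       # 40 genes up in group 1
depth = rng.uniform(0.5, 2.0, size=(n_samples, 1))
counts = rng.poisson(base * fold * depth).astype(float)   # samples x genes

normalized = {"log_cpm": log_cpm(counts),
              "median_of_ratios": log_median_of_ratios(counts)}
loadings = {name: pc_loadings(X, n_pcs=5) for name, X in normalized.items()}

a, b = loadings["log_cpm"][:, 0], loadings["median_of_ratios"][:, 0]
pc1_corr = abs(np.corrcoef(a, b)[0, 1])           # PC sign is arbitrary
overlap = jaccard(np.argsort(-np.abs(a))[:top_n], np.argsort(-np.abs(b))[:top_n])
print(f"|corr| of PC1 loadings: {pc1_corr:.3f}, top-{top_n} Jaccard: {overlap:.3f}")
```

The absolute value of the correlation is used because the sign of a principal component is arbitrary; the same comparison can be repeated for each retained component and each pair of normalization methods.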

Protocol 2: Validation Using Unbalanced Transcriptomic Data

Objective: To assess normalization performance when analyzing datasets with global expression shifts, such as cancer vs. normal samples.

Materials and Reagents:

- Transcriptomic dataset with known global shifts (e.g., different tissues, cancer/normal, different developmental stages)

- Housekeeping gene sets or invariant transcripts for reference [31]

Procedure:

- Dataset Selection: Identify or create a dataset with expected global expression differences between conditions.

- Normalization Application: Apply both conventional (quantile, TPM) and specialized methods (GRSN, Xcorr) designed for unbalanced data [31].

- PCA and Loading Analysis:

- Perform PCA on each normalized dataset

- Extract loading vectors and identify condition-associated genes

- Performance Metrics:

- Calculate the silhouette width for sample separation in PCA space [32]

- Assess the proportion of variance explained by biological vs. technical factors

- Evaluate the false discovery rate for identifying known condition-specific markers
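As an example of the first performance metric, the sketch below computes the mean silhouette width of known condition labels in the space of the first two PC scores. The implementation is a plain NumPy version written for this illustration; the simulated two-group data and the comparison against shuffled labels are assumptions.

```python
import numpy as np


def mean_silhouette(points, labels):
    """Mean silhouette width using Euclidean distances between points."""
    labels = np.asarray(labels)
    dist = np.linalg.norm(points[:, None, :] - points[None, :, :], axis=2)
    widths = []
    for i in range(len(points)):
        same = labels == labels[i]
        same[i] = False                       # exclude the point itself
        if not same.any():
            widths.append(0.0)                # convention for singletons
            continue
        a = dist[i, same].mean()              # mean distance within own group
        b = min(dist[i, labels == other].mean()
                for other in np.unique(labels) if other != labels[i])
        widths.append((b - a) / max(a, b))
    return float(np.mean(widths))


rng = np.random.default_rng(9)
n_per_group, n_genes = 20, 200
labels = np.repeat([0, 1], n_per_group)

# Simulated normalized data with a shift in 30 genes for the second group
X = rng.normal(size=(2 * n_per_group, n_genes))
X[labels == 1, :30] += 3.0

# PC scores for the first two components
Xc = X - X.mean(axis=0)
U, s, _ = np.linalg.svd(Xc, full_matrices=False)
scores = U[:, :2] * s[:2]

sil_true = mean_silhouette(scores, labels)
sil_shuffled = mean_silhouette(scores, rng.permutation(labels))
print(f"true labels: {sil_true:.3f}, shuffled labels: {sil_shuffled:.3f}")
```

Running the same calculation on each normalized version of a dataset gives a single number per method that can be compared directly, with the shuffled-label value serving as a baseline.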

[Workflow diagram not reproduced: experimental protocol for evaluating normalization impact.]

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Key Research Reagent Solutions for Normalization Studies

| Reagent/Resource | Function | Application Context |

|---|---|---|

| ERCC Spike-in Controls [32] | External RNA controls for normalization | Platform-specific normalization assessment |

| Housekeeping Gene Panels [31] | Endogenous invariant references | Validation of normalization performance |

| Reference Transcript Sets [31] | Data-driven invariant features | Normalization of unbalanced data |

| UMI Barcodes [32] | Unique Molecular Identifiers for accurate counting | Digital counting protocols to reduce technical noise |

| Platform-Specific Kits (10X Genomics, Smart-seq) [32] | Library preparation with protocol-specific biases | Technology-specific normalization optimization |

Implementation Framework and Recommendations

Decision Framework for Normalization Selection

Based on empirical evidence, we propose the following decision framework for selecting normalization methods in PCA-based gene selection:

For balanced experimental designs with similar expression distributions across samples, TMM or RLE normalization methods provide stable loading calculations and reliable gene selection [30].

For unbalanced data with global expression shifts (e.g., different tissues, cancer vs. normal), consider data-driven reference methods that identify invariant transcript sets rather than assuming most genes are non-differentially expressed [31].

For single-cell RNA-seq data, employ platform-specific normalization that accounts for zero inflation and technical noise, which dramatically impact covariance structures and subsequent loading calculations [32].

Always validate normalization choices by:

- Comparing loading stability across methods

- Assessing biological coherence of selected genes

- Testing robustness through data subsampling
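The subsampling check can be sketched as follows: PC1 loadings are recomputed on random subsets of samples and compared with the full-data loadings by absolute cosine similarity. The 80% subsample fraction, the 50 repetitions, and the simulated data are assumptions chosen for illustration.

```python
import numpy as np


def pc1_loading(X):
    """Unit-length PC1 loading vector from the SVD of the centered matrix."""
    Xc = X - X.mean(axis=0)
    return np.linalg.svd(Xc, full_matrices=False)[2][0]


rng = np.random.default_rng(10)
n_samples, n_genes = 60, 150

# Simulated normalized data: 20 genes share a common signal
X = rng.normal(size=(n_samples, n_genes))
X[:, :20] += 2.0 * rng.normal(size=(n_samples, 1))

reference = pc1_loading(X)
similarities = []
for _ in range(50):
    # Draw 80% of the samples without replacement
    idx = rng.choice(n_samples, size=int(0.8 * n_samples), replace=False)
    sub = pc1_loading(X[idx])
    # Both vectors have unit length, so the dot product is the cosine;
    # the absolute value handles the arbitrary sign of a PC
    similarities.append(abs(float(reference @ sub)))

stability = float(np.mean(similarities))
print(f"mean |cosine| between full-data and subsampled PC1: {stability:.3f}")
```

Values close to 1 indicate that the loading vector, and therefore the genes it highlights, is not driven by a handful of samples; repeating the check under each normalization method makes the stability comparison explicit.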

Integration with Downstream Analysis

The impact of normalization extends beyond initial PCA to influence downstream analysis including:

- Differential expression results when using PCA-reduced data

- Cell type identification in single-cell studies [14]

- Pathway analysis based on genes selected through high loadings

- Biomarker discovery pipelines relying on stable feature selection

Therefore, the normalization method should be considered an integral component of the entire analytical workflow rather than an independent preprocessing step.

Normalization methods fundamentally reshape the covariance structure of transcriptomic data, directly impacting PCA loading calculations and subsequent gene selection. Between-sample normalization methods like TMM and RLE generally provide more stable loading patterns for PCA-based analysis compared to within-sample methods. In specialized contexts with unbalanced data or specific technological platforms, researchers should select normalization methods that align with their data characteristics and biological questions. Through systematic evaluation using the protocols outlined in this application note, researchers can make informed decisions about normalization to ensure robust and biologically meaningful PCA loading calculations in transcriptomics research.

Practical Implementation: Calculating and Applying PCA Loadings for Gene Selection

Principal Component Analysis (PCA) is a multivariate statistical technique employed to systematically reduce the dimensionality of high-dimensional transcriptomics data while preserving major sources of variation [33] [34]. In the context of gene expression analysis, PCA transforms the gene expression matrix into a set of orthogonal principal components (PCs), where each PC represents a linear combination of gene expression values [34]. The loadings—the coefficients assigned to each gene in these linear combinations—provide a powerful mechanism for gene selection by quantifying the contribution of individual genes to each PC [17]. Genes with high absolute loading values on biologically relevant PCs are considered drivers of the observed transcriptional variation. This protocol details a comprehensive workflow from raw RNA-sequencing count data to the selection of biologically informative genes using PCA loadings, framed within a broader thesis on robust gene selection strategies for transcriptomics research.

Materials

Research Reagent Solutions

Table 1: Essential Research Reagents and Computational Tools

| Item Name | Function/Application | Specifications |

|---|---|---|

| R or Python Environment | Primary computational platform for data analysis | R (≥4.2.0) with Bioconductor packages, or Python (≥3.8) with scikit-learn and Scanpy. |

| Normalization Algorithms | Corrects for technical variations (e.g., sequencing depth) | Methods include TPM, DESeq2's median-of-ratios, or scran's pooling-based size factors [19]. |

| PCA Implementation | Performs the core dimensionality reduction | Functions: prcomp() in R, PCA from scikit-learn, or specialized tools like GraphPCA for spatial data [35]. |

| Varimax Rotation | Enhances the interpretability of PC loadings | A rotation method that simplifies the loading structure, making genes associate more strongly with fewer components [17]. |

| Pathway Analysis Tools | Functional interpretation of selected genes | Tools like clusterProfiler for Gene Ontology or KEGG pathway enrichment [36]. |

Protocol

Data Preprocessing and Normalization

Time Taken: Approximately 1-2 hours, depending on dataset size.

- Load Data: Import the raw gene-by-sample count matrix into your analytical environment (e.g., R or Python).

- Filter Genes: Remove genes with low expression across the majority of samples to reduce noise. A common threshold is to keep genes with at least 10 counts in a minimum of 5-10% of all samples.

- Normalize Data: Apply a normalization method to correct for library size and other technical artifacts. The choice of method significantly impacts the PCA outcome and its biological interpretation [19].

- For bulk RNA-seq data, methods like Transcripts Per Million (TPM) or the median-of-ratios method (e.g., from DESeq2) are widely used.

- For single-cell or spatial transcriptomics data, consider methods implemented in tools like Scanpy or Seurat, which may include library size normalization followed by log-transformation (log1p).

- Standardize Genes (Optional): Center each gene's expression values to have a mean of zero and, in some cases, scale to have a unit variance. This step is crucial if the analysis aims to identify genes with high variance relative to their own expression levels, rather than just highly abundant genes.
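The preprocessing steps above can be sketched in a few lines of NumPy. This is a minimal illustration, not a reference pipeline: the function name `preprocess_counts`, its parameters, and the choice of counts-per-million scaling followed by log1p are assumptions made for the example. For real data, the normalization method should be chosen as discussed in this protocol.

```python
import numpy as np

def preprocess_counts(counts, min_count=10, min_frac=0.1, scale=False):
    """Filter, normalize, and center a genes x samples raw count matrix.

    Returns a samples x genes matrix ready for PCA and the boolean mask
    of genes that passed the expression filter.
    """
    counts = np.asarray(counts, dtype=float)
    n_samples = counts.shape[1]

    # Keep genes with at least `min_count` reads in at least `min_frac` of samples.
    min_samples = max(1, int(np.ceil(min_frac * n_samples)))
    keep = (counts >= min_count).sum(axis=1) >= min_samples

    # Library-size normalization (counts per million), then log1p.
    # Library sizes are taken from the unfiltered matrix (an assumption).
    lib_size = counts.sum(axis=0)
    logged = np.log1p(counts[keep] / lib_size * 1e6)

    # Transpose to samples x genes and center each gene at zero.
    X = logged.T
    X = X - X.mean(axis=0)

    if scale:
        # Optional unit-variance scaling; constant genes are left unscaled.
        sd = X.std(axis=0, ddof=1)
        sd[sd == 0] = 1.0
        X = X / sd
    return X, keep
```

Setting `scale=True` makes the subsequent PCA operate on the gene-gene correlation matrix rather than the covariance matrix, which is the distinction the optional standardization step refers to.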

Table 2: Common Normalization Methods and Their Impact on PCA

| Normalization Method | Mathematical Principle | Impact on PCA & Gene Selection |

|---|---|---|

| Transcripts Per Million (TPM) | Normalizes for sequencing depth and gene length. | Can be effective but may emphasize highly expressed genes. Biological interpretation of PCs can be heavily dependent on the normalization used [19]. |

| DESeq2's Median-of-Ratios | Estimates a size factor for each sample as the median ratio of its counts to a per-gene geometric-mean reference; these factors feed DESeq2's negative binomial model. | Robust to composition bias. A subsequent log or variance-stabilizing transformation helps stabilize variance, leading to more stable PCA results. |

| Log-Transformation (log1p) | Applies a natural log after adding a pseudocount of 1, i.e., log(1 + x). | Stabilizes variance across the dynamic range of expression data, preventing highly expressed genes from dominating the PCA purely due to their count magnitude. |
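To make the median-of-ratios principle in Table 2 concrete, the following sketch computes size factors with NumPy. It illustrates the idea only and is not DESeq2's implementation; the function name is hypothetical, and restricting the calculation to genes with non-zero counts in every sample is a simplifying assumption.

```python
import numpy as np

def median_of_ratios_size_factors(counts):
    """Return one size factor per sample for a genes x samples count matrix."""
    counts = np.asarray(counts, dtype=float)

    # Use only genes observed in every sample so the log geometric mean is finite.
    expressed = (counts > 0).all(axis=1)
    log_counts = np.log(counts[expressed])

    # Pseudo-reference sample: per-gene geometric mean across samples (log scale).
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)

    # Size factor: median over genes of each sample's ratio to the reference.
    return np.exp(np.median(log_counts - log_geo_mean, axis=0))
```

Dividing each sample's counts by its size factor places the samples on a common scale before log transformation and PCA.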

Principal Component Analysis and Loading Extraction

Time Taken: Several minutes to an hour, depending on data dimensions.

- Perform PCA: Execute PCA on the preprocessed gene expression matrix (samples x genes). The function will return the principal components (the coordinates of samples in the new space, called scores) and the loadings (the coefficients of the genes, sometimes called rotations).

- In R, using the prcomp() function is standard.

- In Python, sklearn.decomposition.PCA can be used.

- Determine Significant PCs: Use a scree plot (a bar plot of the variance explained by each PC) to identify the number of components that capture the majority of the biological signal. Look for an "elbow" point where the explained variance drops off markedly [34]. This is Cattell's scree criterion: retain the PCs that precede the point at which the explained variance levels off into a roughly linear decline.

- Extract Loadings: Extract the loading matrix from the PCA result. This is a matrix of size g x c, where g is the number of genes and c is the number of computed components. Each column represents a PC, and each row contains a gene's loading for that component.
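The three steps above can be sketched with a singular value decomposition in NumPy. This is a minimal example under stated assumptions: the function name `pca_loadings` is hypothetical, and the input is assumed to be a samples x genes matrix that has already been normalized. The returned loading matrix corresponds to the `rotation` element of R's prcomp() and to the transpose of `components_` in scikit-learn's PCA.

```python
import numpy as np

def pca_loadings(X, n_components=None):
    """PCA via SVD on a samples x genes matrix.

    Returns (scores, loadings, explained_variance_ratio), where loadings
    has shape genes x components and each column is a unit-length vector.
    """
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)                      # center each gene

    # Xc = U * diag(s) * Vt; rows of Vt are the principal axes.
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)

    # Variance explained by each PC, as a fraction of the total.
    variances = s**2 / (X.shape[0] - 1)
    ratio = variances / variances.sum()

    k = n_components or len(s)
    loadings = Vt[:k].T                          # genes x components
    scores = U[:, :k] * s[:k]                    # samples x components
    return scores, loadings, ratio[:k]
```

Plotting `ratio` as a bar chart gives the scree plot described above; `np.cumsum(ratio)` gives the cumulative variance explained, which can help when the elbow is ambiguous.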

Interpreting Loadings and Selecting Genes

Time Taken: 1-2 hours.

- Apply Varimax Rotation (Optional but Recommended): To enhance the interpretability of the loadings, apply a varimax rotation to the subset of significant PCs. This rotation simplifies the loading structure, encouraging each gene to have a high loading on a single component and near-zero loadings on others, which facilitates clearer biological interpretation [17].

- Identify Informative Genes: For each biologically relevant PC (e.g., PC1 separates treatment from control), identify genes with the highest absolute loading values. A common practice is to select the top N genes (e.g., top 50-200) per component.

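- Set a Threshold: Instead of a fixed number N, one can set a loading magnitude threshold (e.g., |loading| > 0.05) to select genes. When loadings are unit-length eigenvectors, as returned by prcomp() or scikit-learn, typical magnitudes shrink roughly as 1/sqrt(g) for g genes, so any cutoff should be calibrated to the size of the dataset rather than reused across studies.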

- Consider Composite Scores: For a more robust gene set, aggregate high loadings across multiple significant PCs.

- Validate Gene Selection: Cross-reference the selected gene list with known marker genes from the literature or public databases to assess biological plausibility.
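The selection strategies above can be sketched as follows. All function names are hypothetical. The varimax routine is the commonly used SVD-based iteration, and the composite score (squared loadings weighted by each PC's explained-variance ratio) is one possible aggregation chosen for illustration, since the protocol does not prescribe a specific formula.

```python
import numpy as np

def varimax(loadings, max_iter=100, tol=1e-6):
    """Orthogonal varimax rotation; returns (rotated loadings, rotation matrix)."""
    L = np.asarray(loadings, dtype=float)
    p, k = L.shape
    R = np.eye(k)
    d = 0.0
    for _ in range(max_iter):
        LR = L @ R
        # Gradient of the varimax criterion, projected back onto rotations via SVD.
        grad = L.T @ (LR**3 - LR @ np.diag((LR**2).sum(axis=0)) / p)
        U, s, Vt = np.linalg.svd(grad)
        R = U @ Vt
        d_new = s.sum()
        if d_new < d * (1 + tol):                # stop when improvement stalls
            break
        d = d_new
    return L @ R, R

def top_genes(loadings, gene_names, component, n_top=50):
    """Names of the n_top genes with the largest absolute loading on one PC."""
    order = np.argsort(-np.abs(loadings[:, component]))[:n_top]
    return [gene_names[i] for i in order]

def genes_above_threshold(loadings, gene_names, component, threshold):
    """Names of genes whose absolute loading on one PC exceeds the threshold."""
    mask = np.abs(loadings[:, component]) > threshold
    return [g for g, m in zip(gene_names, mask) if m]

def composite_scores(loadings, explained_ratio):
    """Per-gene score: squared loadings weighted by each PC's variance share."""
    return (np.asarray(loadings) ** 2) @ np.asarray(explained_ratio, dtype=float)
```

Note that after rotation the components no longer follow the original variance ordering, so the explained-variance weights used in `composite_scores` apply to unrotated loadings.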

Downstream Analysis and Validation

Time Taken: 2-4 hours.

- Functional Enrichment Analysis: Input the list of selected genes into a functional enrichment tool such as clusterProfiler [36] to identify over-represented Gene Ontology terms or KEGG pathways. This step translates the statistical gene list into biological insight.

- Evaluate in Predictive Models: Validate the biological relevance of the selected genes by using them as features in a machine learning task, such as classifying sample groups (e.g., disease vs. healthy). A tool like gSELECT can be used to evaluate the classification performance of the predefined gene set [37]. High predictive accuracy reinforces the functional importance of the selected genes.

- Compare with Alternative Methods: If available, compare the PCA-based gene selection results with those from other feature selection methods, such as XGBoost feature importance [38] or WGCNA hub genes [36], to build consensus on the most critical genes. Agreement across methods is supporting evidence rather than ground truth.
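Two of the checks above reduce to simple set arithmetic. The sketch below uses only the Python standard library: a one-sided hypergeometric test of the kind that over-representation analysis is commonly based on, and a Jaccard index for comparing gene lists produced by different selection methods. The function names are hypothetical, and dedicated enrichment tools additionally handle annotation databases and multiple-testing correction, which this example omits.

```python
from math import comb

def hypergeom_enrichment_p(n_universe, n_pathway, n_selected, n_overlap):
    """P(overlap >= n_overlap) when n_selected genes are drawn at random,
    without replacement, from a universe containing n_pathway pathway genes."""
    total = comb(n_universe, n_selected)
    upper = min(n_pathway, n_selected)
    tail = sum(
        comb(n_pathway, k) * comb(n_universe - n_pathway, n_selected - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total

def jaccard(genes_a, genes_b):
    """Jaccard similarity between two gene lists (0 = disjoint, 1 = identical)."""
    a, b = set(genes_a), set(genes_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0
```

For example, `jaccard(pca_genes, xgboost_genes)` (both names hypothetical) quantifies how much two selection methods agree, while the hypergeometric p-value asks whether a pathway is over-represented among the selected genes relative to all tested genes.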

Application Notes

- Normalization is Critical: The choice of normalization method is not merely a preprocessing step but a major determinant of the PCA outcome. Different methods can lead to different correlation patterns in the data, ultimately affecting which genes are highlighted by the loadings [19]. It is good practice to test the robustness of key findings across normalization schemes.

- Interpretation Over Variance: The PC that explains the most variance (PC1) may not always be the most biologically informative. It may represent a strong technical batch effect or other confounding factor. Always correlate PC scores with known sample metadata to assign biological meaning to components before interpreting their loadings.

- Beyond PCA: For specific data types, consider spatially-aware dimensionality reduction methods like GraphPCA [35] or RASP [39] for spatial transcriptomics data, or sciRED [17] for single-cell data, which can better account for data structure and confounders.

- Leverage Loadings for Targeted Assays: The gene lists generated from PCA loadings are excellent candidates for designing targeted panels in validation studies using technologies like targeted spatial transcriptomics [40].
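The recommendation to correlate PC scores with sample metadata can be sketched as below. The function name is hypothetical, and the example assumes a numeric covariate (for instance a 0/1 batch or treatment indicator); categorical metadata with more than two levels would need a different test, such as an ANOVA.

```python
import numpy as np

def correlate_scores_with_covariate(scores, covariate):
    """Pearson correlation between each PC's scores and one numeric covariate.

    scores: samples x components matrix; covariate: length-samples vector.
    Returns one correlation coefficient per component.
    """
    scores = np.asarray(scores, dtype=float)
    cov = np.asarray(covariate, dtype=float)

    sc = scores - scores.mean(axis=0)            # center each PC's scores
    cc = cov - cov.mean()                        # center the covariate

    denom = np.sqrt((sc**2).sum(axis=0) * (cc**2).sum())
    return (sc * cc[:, None]).sum(axis=0) / denom
```

A PC whose scores correlate strongly with a batch indicator is a candidate technical artifact, and its loadings should be interpreted with caution even if it explains the most variance.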

In modern computational pharmacogenomics, a central challenge is extracting meaningful biological signals from the high-dimensional transcriptomic data generated by drug perturbation studies [41]. The Connectivity Map (CMap) database stands as a pivotal resource, containing millions of gene expression profiles from cell lines treated with thousands of chemical compounds [42]. However, the extreme dimensionality of this data—tens of thousands of genes measured under different conditions—poses significant obstacles for analysis and interpretation [41] [4].

This case study explores the application of Principal Component Analysis (PCA) to overcome the "curse of dimensionality" in CMap data analysis. PCA is a linear dimensionality reduction technique that transforms high-dimensional gene expression data into a lower-dimensional space of principal components (PCs) while retaining as much of the data's variance as possible [43]. In transcriptomics, these PCs function as "metagenes": linear combinations of the original gene expression values that capture coordinated biological variation [1]. The PCA loadings, the coefficients that weight each original variable within each PC, define the transformation used to calculate these components and identify the genes that contribute most to each dimension of variation [43].

For researchers investigating drug response signatures, PCA loadings offer a powerful mechanism for gene selection by highlighting features that drive the most variance in drug perturbation responses, thereby enabling more efficient downstream analyses and enhanced biological interpretation [1].

Background

The Connectivity Map (CMap) Resource

The CMap initiative was established to create a comprehensive catalog of cellular signatures representing systematic perturbations with genetic and pharmacologic agents [42]. The core hypothesis was that signatures with high similarity might reveal previously unrecognized connections between proteins operating in the same pathway, between small molecules and their protein targets, or between compounds with similar functions but structural dissimilarity [42].

The CMap database contains over 1.5 million gene expression profiles from approximately 5,000 small-molecule compounds and 3,000 genetic reagents tested across multiple cell types [42]. To generate data at this scale, the Broad Institute developed the L1000 high-throughput gene expression profiling technology, which provides a relatively inexpensive and rapid method for generating transcriptional signatures [42]. These vast datasets are housed and made accessible through the CLUE (CMap and LINCS Unified Environment) cloud-based compute infrastructure [42].

The standard CMap analytical approach involves comparing disease-specific gene expression signatures against reference profiles of drug-induced transcriptional changes [41]. A positive connectivity score indicates similarity between disease and drug signatures, while a negative score suggests the drug may reverse the disease signature [41]. However, working directly with the full gene expression matrix (typically containing >20,000 genes) presents substantial computational and analytical challenges that dimensionality reduction techniques like PCA can effectively address [41] [4].

Principal Component Analysis in Transcriptomics

PCA is a multivariate technique that identifies orthogonal directions of maximum variance in high-dimensional data [43]. When applied to transcriptomic data, PCA:

- Reduces dimensionality by transforming correlated gene expressions into a smaller set of uncorrelated principal components [1]

- Preserves covariance structure by retaining the directions that account for most of the variance and covariance in the original data [3]

- Orders components by variance explained with the first PC capturing the largest proportion of variance, the second PC the next largest, and so on [43]

The mathematical transformation in PCA is defined as:

\[ T = XW \]

where \(X\) is the original data matrix (samples × genes), \(W\) is the loading matrix (genes × components), and \(T\) is the scores matrix (samples × components) [43]. The loadings matrix \(W\) contains the coefficients that define how each original variable contributes to each principal component, with each column of \(W\) representing a different PC [43].
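A small numerical check can make the transformation concrete. The sketch below uses toy random data (an assumption for illustration, not CMap data) and obtains the loading matrix from the eigendecomposition of the sample covariance matrix, with the data matrix column-centered beforehand.

```python
import numpy as np

rng = np.random.default_rng(0)
X = rng.normal(size=(6, 4))                      # toy data: 6 samples x 4 genes
X = X - X.mean(axis=0)                           # column-center each gene

# Columns of W are eigenvectors of the sample covariance matrix,
# sorted by decreasing eigenvalue (variance explained).
cov = X.T @ X / (X.shape[0] - 1)
eigvals, eigvecs = np.linalg.eigh(cov)
order = np.argsort(eigvals)[::-1]
eigvals, W = eigvals[order], eigvecs[:, order]

T = X @ W                                        # scores: samples x components

# The loadings are orthonormal, and the scores are uncorrelated with
# variances equal to the eigenvalues.
print(np.allclose(W.T @ W, np.eye(4)))
print(np.allclose(T.T @ T / (X.shape[0] - 1), np.diag(eigvals)))
```

Because \(W\) is orthonormal, keeping all components allows exact reconstruction of the centered data (`T @ W.T` recovers `X`), while keeping only the first few columns of `W` gives the reduced representation used for gene selection.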

In bioinformatics applications, PCA has been extensively used for exploratory analysis, data visualization, clustering, and as a preprocessing step for regression analysis [1]. When analyzing drug-induced transcriptomic data, PCA can effectively address the "large d, small n" problem (where the number of genes greatly exceeds the number of samples) that commonly occurs in pharmacogenomic studies [1] [4].

Computational Framework

PCA Loading Calculation for Gene Selection

The process of calculating PCA loadings for gene selection in CMap data involves a structured workflow with distinct stages:

Figure 1: Analytical workflow for PCA loading calculation and gene selection from CMap data.

Data Preprocessing