PCA on Microarray vs RNA-seq Data: A Practical Guide for Performance and Application in Biomedical Research

Abstract

This article provides a comprehensive comparison of Principal Component Analysis (PCA) performance on microarray and RNA-seq transcriptomic data. Tailored for researchers and drug development professionals, it explores the foundational principles of each technology's data structure and its implications for PCA. The content covers practical methodological approaches for applying PCA, addresses common troubleshooting and optimization challenges, and validates performance through comparative analysis of real-world case studies. By synthesizing findings from recent benchmarking studies, this guide offers actionable insights for selecting the appropriate platform and analytical strategy to maximize the biological insights gained from transcriptomic dimensionality reduction.

Microarray vs RNA-seq: Understanding Core Data Structures and Their Impact on PCA

Gene expression analysis is a cornerstone of modern molecular biology, enabling researchers to understand cellular processes, disease mechanisms, and drug responses. Over recent decades, two principal technological approaches have emerged for transcriptome profiling: hybridization-based methods (primarily microarrays) and sequencing-based methods (including RNA sequencing, RNA-Seq). These technologies operate on fundamentally different principles for detecting and quantifying nucleic acids. Hybridization-based techniques rely on the binding of fluorescently labeled nucleic acids to complementary probes immobilized on a solid surface, with signal intensity corresponding to expression levels. In contrast, sequencing-based methods utilize next-generation sequencing platforms to directly determine the nucleotide sequence of cDNA molecules, providing digital counts of transcript abundance through computational alignment and enumeration.

The evolution of these platforms has created important considerations for researchers designing transcriptomic studies, particularly as both technologies remain in active use. While RNA-seq has gained substantial market share, microarray data still comprises a significant portion of existing gene expression repositories and continues to be used in new studies due to specific advantages in certain applications. Understanding the fundamental operational differences, performance characteristics, and appropriate use cases for each technology is essential for robust experimental design and data interpretation in genomics research, especially in pharmaceutical development and biomarker discovery.

Core Technological Principles and Workflows

Hybridization-Based Technologies

Hybridization-based technologies, predominantly represented by DNA microarrays, function through the principle of complementary base pairing between target sequences and immobilized probes. The experimental workflow begins with RNA extraction from biological samples, followed by reverse transcription to create complementary DNA (cDNA). This cDNA is then fluorescently labeled and hybridized to a microarray chip containing hundreds of thousands of predefined oligonucleotide probes spotted at specific locations. After extensive washing to remove non-specifically bound molecules, the chip is scanned to measure fluorescence intensity at each probe location, which corresponds to the abundance of the corresponding transcript in the original sample.

The fundamental characteristics of hybridization-based approaches include their dependence on predefined probes, which limits detection to known sequences included in the array design, and a signal output that is analog in nature, representing continuous fluorescence intensity values. This analog nature creates limitations at both low and high expression levels, where background noise and signal saturation respectively affect accurate quantification. Microarray technology matured rapidly throughout the 1990s and 2000s, becoming the workhorse method for large-scale gene expression studies and generating the bulk of data in repositories such as the Gene Expression Omnibus (GEO) during that period [1].

Sequencing-Based Technologies

Sequencing-based technologies for transcriptome quantification, primarily RNA sequencing (RNA-Seq), employ a fundamentally different approach based on direct nucleotide determination. The typical workflow begins with RNA extraction, followed by enrichment for specific RNA types (e.g., poly-A selection for mRNA). The RNA is then converted to a sequencing library through fragmentation, reverse transcription to cDNA, adapter ligation, and possible amplification. These prepared libraries are loaded onto next-generation sequencing platforms that perform massive parallel sequencing, generating millions of short DNA reads. These reads are then computationally mapped to a reference genome or transcriptome, with expression levels quantified by counting the number of reads aligned to each gene.

Key advantages of sequencing-based methods include their hypothesis-free nature, as they do not require prior knowledge of transcript sequences, enabling discovery of novel genes, splice variants, and mutations. Unlike the analog signals from microarrays, RNA-Seq provides digital read counts as its primary output, offering a wider dynamic range for quantification. Since its emergence in the mid-2000s, RNA-Seq has gradually become the predominant transcriptomic profiling method, comprising approximately 85% of all submissions to GEO as of 2023 [1].

Table 1: Core Fundamental Differences Between Hybridization and Sequencing Technologies

| Feature | Hybridization-Based (Microarrays) | Sequencing-Based (RNA-Seq) |
|---|---|---|
| Basic Principle | Complementary base pairing to immobilized probes | Direct nucleotide sequencing of cDNA |
| Detection Dependency | Requires predefined probe sequences | Does not require prior sequence knowledge |
| Output Signal | Analog fluorescence intensity | Digital read counts |
| Dynamic Range | Limited (~10³) due to background and saturation | Wide (>10⁵) with digital counting |
| Target Limitations | Limited to probes on the array | Virtually unlimited potential targets |
| Primary Applications | Profiling known transcripts, focused studies | Discovery work, novel transcript identification |

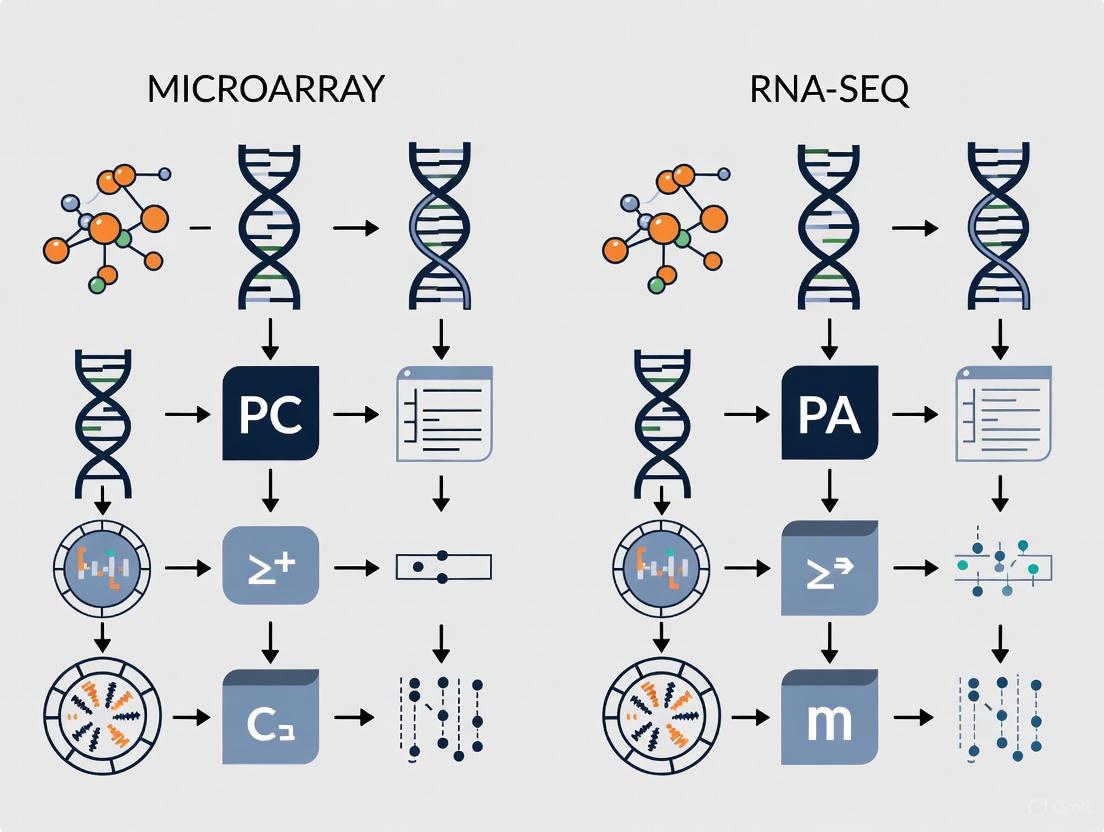

Visualizing Fundamental Workflow Differences

The diagram below illustrates the core procedural differences between hybridization-based and sequencing-based quantification workflows, highlighting key stages where methodological divergences occur.

Performance Comparison and Experimental Data

Technical Performance Metrics

Direct comparisons between hybridization and sequencing technologies reveal distinct performance characteristics that influence their suitability for different research applications. Microarray technology demonstrates good sensitivity for moderate to highly expressed transcripts but suffers from limited dynamic range (approximately 10³) due to background fluorescence at low expression levels and signal saturation at high abundances. In contrast, RNA-Seq provides a significantly wider dynamic range (>10⁵) due to its digital counting nature, enabling more accurate quantification of both lowly and highly expressed genes. This technical advantage translates to practical benefits, with RNA-Seq demonstrating higher specificity and sensitivity, particularly for detecting differentially expressed genes with low abundance [2].

The capability for novel discovery represents another fundamental differentiator between the platforms. Microarrays can only detect transcripts with complementary probes on the array, making them inherently biased toward known sequences. RNA-Seq, as an unbiased method, can identify novel transcripts, gene fusions, splice variants, and sequence polymorphisms without prior knowledge of their existence. This discovery potential makes RNA-Seq particularly valuable for exploratory research in less-characterized biological systems or for comprehensive transcriptome characterization [2].

Concordance in Gene Expression Profiling

Despite their technical differences, multiple studies have demonstrated reasonable concordance between hybridization and sequencing platforms when analyzing the same biological samples. A 2025 study comparing microarray and RNA-Seq technologies using identical blood samples from 35 participants found a median Pearson correlation coefficient of 0.76 for gene expression profiles, indicating strong overall agreement. In differential expression analysis, RNA-Seq identified 2,395 differentially expressed genes (DEGs), while microarray identified 427 DEGs, with 223 DEGs shared between the platforms. Pathway analysis revealed 205 perturbed pathways identified by RNA-Seq and 47 by microarray, with 30 pathways overlapping between the technologies [1].
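To put these overlap figures in proportion, the short Python sketch below computes the share of each platform's calls that are shared, plus a Jaccard index. The counts are the ones quoted from [1]; the helper name `overlap_summary` is ours and purely illustrative.

```python
# Counts as reported in the study cited above [1].
deg_rnaseq, deg_microarray, deg_shared = 2395, 427, 223
pathways_rnaseq, pathways_microarray, pathways_shared = 205, 47, 30


def overlap_summary(n_rnaseq, n_microarray, n_shared):
    """Return the shared fraction for each platform and the Jaccard index."""
    union = n_rnaseq + n_microarray - n_shared
    return {
        "shared_fraction_rnaseq": n_shared / n_rnaseq,
        "shared_fraction_microarray": n_shared / n_microarray,
        "jaccard": n_shared / union,
    }


deg_overlap = overlap_summary(deg_rnaseq, deg_microarray, deg_shared)
pathway_overlap = overlap_summary(pathways_rnaseq, pathways_microarray, pathways_shared)

for name, summary in [("DEGs", deg_overlap), ("Pathways", pathway_overlap)]:
    print(name, {key: round(value, 3) for key, value in summary.items()})
```

With these counts, roughly 52% of the microarray DEGs are also called by RNA-Seq, while only about 9% of the RNA-Seq DEGs are recovered by microarray. The asymmetry mostly reflects the much larger RNA-Seq call set rather than disagreement on the shared genes.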

An earlier comparison study published in 2007 examining microarray and Massively Parallel Signature Sequencing (MPSS) on biological replicates found that DNA microarray platforms generally provided highly correlated data, while moderate correlations between microarrays and MPSS were obtained. The study attributed disagreements between the technologies to limitations inherent to both approaches, including challenges with low-abundance transcripts, tag-to-gene mapping ambiguity, and absence of restriction sites for enzyme-based methods [3]. These findings underscore that while both methods can generate biologically meaningful data, they should be considered complementary rather than directly interchangeable.

Table 2: Experimental Performance Comparison Between Microarray and RNA-Seq

| Performance Metric | Microarray | RNA-Seq | Experimental Context |
|---|---|---|---|
| Gene Detection | 15,828 genes detected [1] | 22,323 genes detected [1] | Analysis of human whole blood samples |
| Differentially Expressed Genes | 427 DEGs identified [1] | 2,395 DEGs identified [1] | Youth with HIV vs. controls |
| Shared DEGs | 223 DEGs shared between platforms [1] | 223 DEGs shared between platforms [1] | Same samples, same statistical analysis |
| Pathway Detection | 47 perturbed pathways [1] | 205 perturbed pathways [1] | IPA pathway analysis |
| Dynamic Range | ~10³ [2] | >10⁵ [2] | Technical comparison studies |
| Correlation Between Platforms | Pearson r = 0.76 [1] | Pearson r = 0.76 [1] | Same blood samples analyzed |

Impact on Transcriptomic Benchmark Concentration Modeling

The performance differences between technologies take on particular importance in regulatory toxicology applications, where transcriptomic benchmark concentration (BMC) modeling provides quantitative information for chemical risk assessment. A 2025 toxicogenomic study comparing microarray and RNA-Seq for concentration-response modeling of cannabinoids found that despite RNA-Seq identifying larger numbers of differentially expressed genes with wider dynamic ranges, both platforms displayed equivalent performance in identifying functions and pathways impacted by compound exposure through gene set enrichment analysis. Most importantly, transcriptomic point of departure values derived through BMC modeling were at similar levels for both platforms, supporting the continued utility of microarray data for chemical risk assessment [4].

This finding has significant practical implications for toxicogenomics and drug development, suggesting that while RNA-Seq offers superior technical capabilities, microarray data remains sufficient and appropriate for many applications, particularly those focused on pathway identification and benchmark concentration modeling. The study authors noted that considering the relatively low cost, smaller data size, and better availability of software and public databases for data analysis and interpretation, "microarray is still a viable method of choice for traditional transcriptomic applications such as mechanistic pathway identification and concentration response modeling" [4].

Experimental Design and Methodological Considerations

Sample Preparation and Protocol Details

Proper experimental design begins with appropriate sample handling and preparation, which varies significantly between hybridization and sequencing approaches. For microarray analysis using the Affymetrix platform, a standard protocol involves using 100 ng of total RNA that undergoes reverse transcription with a T7-linked oligo(dT) primer, followed by second-strand cDNA synthesis. Subsequently, complementary RNA (cRNA) is synthesized through in vitro transcription with biotinylated nucleotides, followed by fragmentation and hybridization to microarray chips. After 16 hours of hybridization at 45°C, chips are washed, stained, and scanned to generate raw image files for analysis [4].

For RNA-Seq library preparation, the Illumina Stranded mRNA Prep protocol typically begins with 100 ng of total RNA followed by poly-A selection to enrich for mRNA. The RNA is then fragmented and reverse-transcribed into cDNA, with subsequent adapter ligation for sequencing. Libraries are quantified and quality-controlled before being loaded onto sequencing platforms. A key distinction is that RNA-Seq requires substantially more sophisticated bioinformatic processing of raw sequencing reads, including quality control, adapter trimming, alignment to reference genomes, and read counting for each gene [4] [2].

Data Processing and Analytical Approaches

Data processing methodologies differ substantially between the technologies due to their fundamentally different data types. Microarray data processing typically includes background correction, quantile normalization, and summarization of probe-level intensities, often using algorithms such as Robust Multi-Array Averaging (RMA). The output is continuous expression values on a logarithmic scale that can be analyzed using conventional statistical methods [1].
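As an illustration of the quantile normalization step mentioned above, here is a minimal NumPy sketch. It is not the RMA implementation: RMA also performs background correction and probe-set summarization, which are omitted here. The function name `quantile_normalize` and the simulated log2 intensities are assumptions made for the example, and ties are not averaged as a production implementation would do.

```python
import numpy as np


def quantile_normalize(values):
    """Quantile-normalize the columns (samples) of a genes x samples matrix."""
    values = np.asarray(values, dtype=float)
    order = np.argsort(values, axis=0)                 # per-sample sort order
    sorted_values = np.take_along_axis(values, order, axis=0)
    reference = sorted_values.mean(axis=1)             # mean of each rank across samples
    normalized = np.empty_like(values)
    # Write the reference value for rank i back to whichever gene held rank i.
    np.put_along_axis(normalized, order, reference[:, None], axis=0)
    return normalized


# Simulated log2 intensities: 1000 probes x 4 arrays with array-specific offsets.
rng = np.random.default_rng(0)
log_intensity = rng.normal(8.0, 2.0, size=(1000, 4)) + np.array([0.0, 0.5, -0.3, 1.0])
normalized = quantile_normalize(log_intensity)

print("Column means before:", log_intensity.mean(axis=0).round(2))
print("Column means after: ", normalized.mean(axis=0).round(2))
```

After normalization every array shares the same empirical distribution, so array-wide intensity shifts no longer contribute variance to a subsequent PCA, while the rank order of probes within each array is preserved.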

RNA-Seq data analysis involves quality control of raw reads, adapter trimming, alignment to a reference genome or transcriptome, and generation of count data for each gene. The count-based nature of RNA-Seq data requires specialized statistical methods that account for its discrete distribution, often using negative binomial models implemented in packages like DESeq2. Normalization approaches must account for factors like sequencing depth and gene length, with measures such as TPM (transcripts per million) or FPKM (fragments per kilobase of transcript per million mapped reads) used to express abundance on a comparable scale [1].
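The depth and length corrections described above can be sketched in a few lines of NumPy. The toy count matrix and gene lengths below are invented for illustration, and the function names `cpm` and `tpm` are ours; real pipelines usually obtain these values from dedicated quantification tools.

```python
import numpy as np


def cpm(counts):
    """Counts per million: corrects for sequencing depth only."""
    counts = np.asarray(counts, dtype=float)
    return counts / counts.sum(axis=0, keepdims=True) * 1e6


def tpm(counts, gene_length_bp):
    """Transcripts per million: corrects for gene length, then for depth."""
    counts = np.asarray(counts, dtype=float)
    length_kb = np.asarray(gene_length_bp, dtype=float)[:, None] / 1e3
    reads_per_kb = counts / length_kb
    return reads_per_kb / reads_per_kb.sum(axis=0, keepdims=True) * 1e6


# Toy data: 3 genes x 2 samples; sample 2 was sequenced twice as deeply.
counts = np.array([[100, 200],
                   [300, 600],
                   [600, 1200]])
gene_length_bp = np.array([1000, 3000, 2000])

cpm_values = cpm(counts)
tpm_values = tpm(counts, gene_length_bp)
print(tpm_values)
```

Because the second sample is simply a twofold deeper copy of the first, both columns end up with identical TPM values, and each column sums to one million.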

A critical consideration in cross-platform comparisons is the application of consistent statistical approaches. The 2025 study found that applying the same non-parametric statistical method (the Mann-Whitney U test) to both microarray and RNA-Seq data from the same samples reduced discrepancies and improved concordance in differential expression results, suggesting that the choice of analytical approach significantly affects cross-platform comparisons [1].
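The idea of running one rank-based test on both platforms can be sketched as follows. This is a simplified stand-in, not the analysis from [1]: the expression values are invented, the function `mann_whitney_u` is our own, and the p-value uses a normal approximation without tie or continuity correction. For real analyses an established implementation such as `scipy.stats.mannwhitneyu` is the safer choice.

```python
import math

import numpy as np


def mann_whitney_u(x, y):
    """Return the U statistic for x versus y and an approximate two-sided p-value."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    diff = x[:, None] - y[None, :]
    # U counts the pairs where x exceeds y; ties count as one half.
    u = float(np.sum(diff > 0) + 0.5 * np.sum(diff == 0))
    n1, n2 = len(x), len(y)
    mean_u = n1 * n2 / 2.0
    sd_u = math.sqrt(n1 * n2 * (n1 + n2 + 1) / 12.0)
    z = (u - mean_u) / sd_u
    p_value = math.erfc(abs(z) / math.sqrt(2.0))  # normal approximation
    return u, p_value


# Hypothetical values for a single gene measured on both platforms.
microarray_log2 = {"case": [8.1, 8.4, 8.9, 9.2], "control": [7.0, 7.3, 7.5, 7.9]}
rnaseq_log2cpm = {"case": [5.6, 6.1, 6.4, 7.0], "control": [4.2, 4.9, 5.1, 5.8]}

u_array, p_array = mann_whitney_u(microarray_log2["case"], microarray_log2["control"])
u_seq, p_seq = mann_whitney_u(rnaseq_log2cpm["case"], rnaseq_log2cpm["control"])
print(f"microarray: U={u_array}, p~{p_array:.3f}")
print(f"RNA-Seq:    U={u_seq}, p~{p_seq:.3f}")
```

Because the test depends only on ranks, it is indifferent to whether the input is a log intensity or a log-transformed count, which is one reason a shared non-parametric test can narrow platform-specific differences in the results.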

PCA Performance on Microarray vs. RNA-Seq Data

The Role of Principal Component Analysis in Transcriptomics

Principal Component Analysis (PCA) serves as an essential computational method for analyzing high-dimensional transcriptomic datasets, enabling dimensionality reduction, visualization of sample relationships, and identification of batch effects. PCA is widely applied to both microarray and RNA-Seq data for quality control, exploratory data analysis, and as a preprocessing step for downstream machine learning applications. In single-cell RNA-sequencing (scRNA-seq) especially, PCA has become an indispensable tool for handling the extreme dimensionality of datasets containing millions of cells, where it is used for feature selection, denoising, and as input for clustering and trajectory inference algorithms [5].

The computational demands of PCA become particularly important with large-scale transcriptomic datasets. Benchmarking studies have revealed that for massive scRNA-seq datasets (e.g., >1 million cells), traditional PCA implementations that load entire data matrices into memory become computationally prohibitive. This has driven the development of memory-efficient PCA algorithms based on Krylov subspace methods and randomized singular value decomposition that maintain accuracy while reducing computational requirements [5].
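To make the randomized approach concrete, the sketch below implements a basic randomized SVD with power iterations in NumPy and compares its leading singular values with an exact SVD. It is a teaching sketch under stated assumptions, not one of the benchmarked implementations from [5]: the function name, the oversampling and iteration defaults, and the simulated data are ours, and the explicit centering step would densify a sparse matrix, which memory-efficient implementations avoid.

```python
import numpy as np


def randomized_pca(x, n_components, n_oversamples=10, n_iter=4, seed=0):
    """Approximate PCA of a samples x genes matrix via randomized SVD."""
    rng = np.random.default_rng(seed)
    xc = x - x.mean(axis=0, keepdims=True)           # center each gene
    k = n_components + n_oversamples
    # Sketch the column space with a random Gaussian test matrix.
    q, _ = np.linalg.qr(xc @ rng.standard_normal((xc.shape[1], k)))
    for _ in range(n_iter):                           # power iterations sharpen the basis
        q, _ = np.linalg.qr(xc.T @ q)
        q, _ = np.linalg.qr(xc @ q)
    small = q.T @ xc                                  # project onto the small subspace
    u_small, s, vt = np.linalg.svd(small, full_matrices=False)
    u = q @ u_small
    scores = u[:, :n_components] * s[:n_components]
    return scores, vt[:n_components], s[:n_components]


# Simulated data: 200 samples x 500 genes with 5 strong latent factors plus noise.
rng = np.random.default_rng(1)
latent = rng.standard_normal((200, 5))
loadings = rng.standard_normal((5, 500))
data = 3.0 * latent @ loadings + rng.standard_normal((200, 500))

scores, components, singular_values = randomized_pca(data, n_components=5)
exact = np.linalg.svd(data - data.mean(axis=0), compute_uv=False)[:5]
print("randomized:", singular_values.round(2))
print("exact:     ", exact.round(2))
```

The expensive decomposition is applied only to a matrix with `n_components + n_oversamples` rows, which is where the savings come from when the number of cells or genes is very large.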

Comparative Performance in Data Structure Resolution

When applied to microarray versus RNA-Seq data, PCA demonstrates different characteristics in resolving biological and technical variance structures. RNA-Seq data, with its wider dynamic range and greater sensitivity to low-abundance transcripts, typically captures more biological variation in initial principal components. However, the higher dimensionality and sparsity of RNA-Seq data can also introduce computational challenges not encountered with microarray data. The digital nature of RNA-Seq data means that proper normalization and transformation (e.g., variance-stabilizing transformation) are particularly critical before PCA application to avoid technical artifacts dominating the variance structure [1].
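The effect of normalization and transformation on PCA can be seen in a small simulation. The sketch below is built on assumptions of our own: Poisson counts, a twofold up to fourfold spread in sequencing depth, and a fourfold change in 50 of 500 genes between two groups. A simple log2(CPM + 1) transform stands in for a variance-stabilizing transformation; it is not the DESeq2 VST.

```python
import numpy as np


def pca_scores(x, n_components=2):
    """PCA via SVD on a samples x genes matrix; returns scores and variance ratios."""
    xc = x - x.mean(axis=0, keepdims=True)
    u, s, _ = np.linalg.svd(xc, full_matrices=False)
    explained = s**2 / np.sum(s**2)
    return u[:, :n_components] * s[:n_components], explained[:n_components]


rng = np.random.default_rng(0)
n_samples, n_genes = 12, 500
group = np.arange(n_samples) % 2                    # alternating biological groups
depth = np.linspace(0.5, 2.0, n_samples)            # relative sequencing depth
base_mean = np.linspace(50.0, 2000.0, n_genes)      # expected count at depth 1
fold = np.ones((n_samples, n_genes))
fold[group == 1, :50] = 4.0                         # 50 genes up fourfold in group 1
counts = rng.poisson(depth[:, None] * base_mean[None, :] * fold)

# PCA on raw counts versus PCA on log2(CPM + 1).
raw_scores, raw_explained = pca_scores(counts.astype(float))
library_size = counts.sum(axis=1, keepdims=True)
log_cpm = np.log2(counts / library_size * 1e6 + 1.0)
log_scores, log_explained = pca_scores(log_cpm)

raw_depth_corr = abs(np.corrcoef(raw_scores[:, 0], depth)[0, 1])
log_depth_corr = abs(np.corrcoef(log_scores[:, 0], depth)[0, 1])
print(f"|corr(PC1, depth)| raw counts: {raw_depth_corr:.2f}")
print(f"|corr(PC1, depth)| log2 CPM:   {log_depth_corr:.2f}")
```

In this simulation the first component of the raw counts mostly tracks sequencing depth, whereas after the transform it separates the two groups. Real data are overdispersed and noisier at low counts, so the contrast is usually less clean than in this idealized case.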

Microarray data, being continuous and approximately normally distributed after log-transformation, often exhibits more stable covariance estimation in PCA, potentially providing more robust separation of major biological effects. Studies comparing PCA results between the two platforms have found that while RNA-Seq typically captures more total transcriptional variance, the major axes of biological variation are generally consistent between platforms when analyzing the same samples. This consistency supports the continued utility of legacy microarray data in meta-analyses and database construction, even as RNA-Seq becomes the dominant transcriptomic profiling technology [1].

Essential Research Reagent Solutions

The following table details key reagents and materials essential for implementing both hybridization-based and sequencing-based gene expression quantification workflows, based on methodologies cited in the literature.

Table 3: Essential Research Reagents for Gene Expression Quantification

| Reagent/Material | Function | Technology Application |
|---|---|---|
| PAXgene Blood RNA Kit | Stabilizes RNA in blood samples during collection and storage | Both platforms [1] |
| GLOBINclear Kit | Depletes globin mRNA to improve signal in blood samples | Both platforms [1] |
| GeneChip 3' IVT Express Kit | Amplifies and labels RNA for microarray hybridization | Microarray [1] |
| GeneChip Human Genome U133 Plus 2.0 Array | Contains probes for 54,675 transcripts across 20,174 genes | Microarray [1] |
| Poly(A) mRNA Magnetic Isolation Module | Enriches for mRNA through poly-A tail selection | RNA-Seq [1] |
| NEBNext Ultra II RNA Library Prep Kit | Prepares sequencing libraries from RNA samples | RNA-Seq [1] |
| Stranded mRNA Prep Kit | Prepares directional RNA-Seq libraries | RNA-Seq [2] |
| Biotinylated Nucleotides | Incorporates label for microarray detection | Microarray [4] |
| Platform-Specific Sequencing Adapters | Enables binding to flow cell and cluster generation | RNA-Seq [6] |
| Quality Control Reagents | Assesses RNA integrity and library quality | Both platforms [4] |

Hybridization-based and sequencing-based technologies for gene expression quantification represent complementary rather than mutually exclusive approaches for transcriptome profiling. While RNA-Seq offers clear technical advantages in dynamic range, sensitivity, and discovery potential, microarray technology maintains relevance due to lower costs, simpler data analysis, and extensive legacy data resources. The choice between platforms should be guided by specific research objectives, with RNA-Seq preferred for exploratory studies requiring novel transcript discovery, and microarrays remaining viable for focused hypothesis testing, especially in contexts like toxicogenomic screening where pathway identification and benchmark concentration modeling are primary goals.

The research community's growing experience with both technologies suggests that appropriate statistical analysis and experimental design can yield highly concordant biological insights regardless of platform. As computational methods continue to evolve, particularly for integrating and reanalyzing legacy datasets, both hybridization and sequencing data will remain valuable resources for understanding gene expression in health, disease, and chemical response.

In the field of transcriptomics, two primary technologies have dominated the landscape for genome-wide gene expression analysis: microarrays and RNA sequencing (RNA-seq). These technologies fundamentally differ in how they capture and represent molecular data, utilizing distinct data structures that significantly influence downstream analytical outcomes. Microarrays generate data based on continuous fluorescence intensity measurements, relying on the hybridization affinity of predefined labeled probes to target cDNA sequences. In contrast, RNA-seq produces discrete digital read counts through direct sequencing of cDNA strands via next-generation sequencing technologies [7]. This fundamental distinction in data acquisition creates ripple effects throughout the analytical pipeline, particularly affecting methods like Principal Component Analysis (PCA) which is sensitive to the underlying data structure and variance composition.

The choice between these technologies extends beyond mere technical preference, influencing the dynamic range, sensitivity, reproducibility, and analytical capabilities of transcriptomic studies. As research increasingly focuses on detecting subtle differential expression patterns in complex biological systems—such as distinguishing between disease subtypes or stages—understanding how these data structures perform in multivariate analyses like PCA becomes critical for drawing accurate biological conclusions [8]. This guide provides an objective comparison of these technologies, with particular emphasis on their performance characteristics in PCA applications.

Technology Comparison: Fundamental Principles and Performance Characteristics

Operational Principles and Data Generation

Microarray Technology: Microarrays employ a hybridization-based approach where fluorescently labeled cDNA molecules bind to complementary DNA probes attached to a solid surface. The resulting signal is a continuous fluorescence intensity value that represents the relative abundance of specific RNA transcripts. This technology requires prior knowledge of the sequence for probe design and detects only predefined transcripts [7]. The data structure is inherently analog in nature, with intensity measurements suffering from limitations including background fluorescence, signal saturation at high abundance levels, and nonspecific binding [4].

RNA-Seq Technology: RNA-seq utilizes direct sequencing of cDNA molecules through next-generation sequencing platforms. This produces discrete, digital read counts that represent the number of times a particular transcript fragment has been sequenced. Unlike microarrays, RNA-seq does not require pre-specified probes and can detect novel transcripts, including previously unannotated genes, splice variants, gene fusions, and non-coding RNAs [2]. The digital nature of counting individual molecules provides a fundamentally different data structure with different statistical properties for downstream analysis.

Performance Comparison and Quantitative Metrics

Table 1: Comprehensive Comparison of Microarray and RNA-Seq Performance Characteristics

| Performance Parameter | Microarray Technology | RNA-Seq Technology |
|---|---|---|
| Basic Principle | Hybridization-based detection | Sequencing-based counting |
| Data Structure | Continuous fluorescence intensity | Discrete digital read counts |
| Dynamic Range | ~10³ [2] | >10⁵ [2] |
| Background Noise | High due to nonspecific binding [4] | Low, especially with unique mapping |
| Dependence on Prior Knowledge | Required for probe design [7] | Not required; can detect novel features |
| Reproducibility | High between technical replicates [9] | Higher stochastic variability [9] |
| Sensitivity for Low-Abundance Transcripts | Limited by background fluorescence [2] | Can be enhanced by increasing sequencing depth [2] |
| Cost Considerations | Lower per sample [4] | Higher per sample, but decreasing |
| Sample Throughput | High for standardized designs | Variable depending on sequencing depth |

Experimental Protocols for Technology Comparison

Microarray Experimental Workflow

The standard protocol for gene expression microarrays follows these key steps:

RNA Extraction and Quality Control: Total RNA is extracted from biological samples using kits such as miRNeasy Mini Kit (Qiagen). RNA quality is assessed using spectrophotometry (NanoDrop) and bioanalyzer systems (Agilent 2100 Bioanalyzer) to ensure RNA Integrity Number (RIN) >7 [9].

cDNA Synthesis and Labeling: RNA (typically 50-100 ng) is reverse-transcribed into complementary DNA (cDNA) while incorporating fluorescent labels (e.g., Cy3 or Cy5 dyes) using kits such as the GeneChip WT Plus Reagent Kit [9].

Hybridization: Labeled cDNA is hybridized to a microarray chip containing immobilized DNA probes. This process typically occurs over 16-20 hours at controlled temperatures to ensure specific binding [9].

Washing and Scanning: After hybridization, the array is washed to remove non-specifically bound cDNA and then scanned using a laser scanner to detect fluorescence signals at each probe location [9].

Image Processing and Data Extraction: The scanned image is processed to convert fluorescence signals into quantitative intensity values. Background correction and normalization are applied to generate final expression values [9].

RNA-Seq Experimental Workflow

The standard protocol for RNA sequencing involves these critical steps:

RNA Extraction and Quality Control: Similar to microarray protocols, total RNA is extracted and quality is verified using RIN scores to ensure sample integrity [9].

Library Preparation: This critical step involves several sub-steps:

- rRNA Depletion or poly-A Selection: Either ribosomal RNA is removed using probes, or mRNA is enriched using oligo(dT) beads to capture polyadenylated transcripts [4].

- Fragmentation: RNA is fragmented into appropriate sizes for sequencing (typically 200-500 bp).

- cDNA Synthesis: Fragmented RNA is reverse-transcribed into double-stranded cDNA.

- Adapter Ligation: Sequencing adapters are ligated to cDNA fragments to facilitate amplification and sequencing. Common kits include Illumina Stranded mRNA Prep kit or TruSeq Total RNA Sample Preparation Kit [4] [9].

Sequencing: The prepared libraries are sequenced using platforms such as Illumina HiSeq, NovaSeq, or similar systems, generating millions to billions of short sequence reads [9].

Bioinformatic Processing:

- Quality Control: Raw sequence data is assessed using tools like FastQC to evaluate base quality scores, GC content, and potential contaminants.

- Alignment: Reads are mapped to a reference genome using aligners such as STAR or HISAT2 [10].

- Quantification: Expression levels are estimated by counting reads that align to specific genomic features using tools like featureCounts or HTSeq.

Figure 1: Comparative Experimental Workflows for Microarray and RNA-Seq Technologies

PCA Performance in Analytical Context

Data Structure Implications for Multivariate Analysis

The performance of Principal Component Analysis on transcriptomic data is significantly influenced by the underlying data structure of each technology. PCA operates by identifying directions of maximum variance in high-dimensional datasets, and the fundamental differences between continuous fluorescence intensities and digital read counts create distinct variance patterns:

Variance Structure: Microarray data, with its continuous intensity measurements, typically shows variance that is closer to homoscedastic across expression levels once log-transformed. RNA-seq count data are commonly modeled with Poisson or negative binomial distributions, in which variance increases with mean expression level, so a normalization and variance-stabilizing step is needed before PCA [10].
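The mean-variance dependence of count data is easy to reproduce numerically. The sketch below simulates negative binomial counts at four expression levels and compares the variance before and after a log2(x + 1) transform; the dispersion value of 0.1 and the chosen means are arbitrary assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
means = np.array([10.0, 100.0, 1000.0, 10000.0])   # expected counts for four genes
dispersion = 0.1                                    # assumed NB dispersion

# NumPy parameterizes the negative binomial by (n, p); with n = 1/dispersion and
# p = n / (n + mean), the variance equals mean + dispersion * mean**2.
size_param = 1.0 / dispersion
prob = size_param / (size_param + means)
counts = rng.negative_binomial(size_param, prob, size=(2000, means.size))

raw_var = counts.var(axis=0, ddof=1)
log_var = np.log2(counts + 1.0).var(axis=0, ddof=1)

for mean, rv, lv in zip(means, raw_var, log_var):
    print(f"mean={mean:>7.0f}  raw variance={rv:>12.1f}  log2 variance={lv:.3f}")
```

On the raw scale the variance spans several orders of magnitude, so the most highly expressed genes would dominate the leading principal components. On the log scale the variances are of similar size, which is closer to what PCA implicitly assumes.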

Signal-to-Noise Characteristics: A multi-center study comparing both technologies found that PCA-based signal-to-noise ratio (SNR) values varied significantly between platforms, with microarrays sometimes demonstrating better reproducibility in detecting subtle differential expression when biological differences between sample groups are small [8]. RNA-seq may show higher stochastic variability, particularly for low-abundance transcripts, which can affect the separation of samples in principal component space [9].
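One plausible way to formalize a PCA-based SNR is the ratio of between-group to within-group squared distances in the space of the leading principal components, expressed in decibels. The sketch below follows that formulation; it is an assumption on our part and may differ from the exact definition used in [8]. The function names and simulated data are illustrative only.

```python
import numpy as np


def top_pc_scores(x, n_components=2):
    """Scores on the leading principal components of a samples x genes matrix."""
    xc = x - x.mean(axis=0, keepdims=True)
    u, s, _ = np.linalg.svd(xc, full_matrices=False)
    return u[:, :n_components] * s[:n_components]


def pca_snr_db(scores, groups):
    """10*log10 of mean between-group over mean within-group squared distance."""
    groups = np.asarray(groups)
    sq_dist = ((scores[:, None, :] - scores[None, :, :]) ** 2).sum(axis=-1)
    same_group = groups[:, None] == groups[None, :]
    not_self = ~np.eye(len(groups), dtype=bool)
    signal = sq_dist[~same_group].mean()
    noise = sq_dist[same_group & not_self].mean()
    return 10.0 * np.log10(signal / noise)


# Three sample groups with three replicates each, 200 genes.
rng = np.random.default_rng(0)
groups = np.repeat([0, 1, 2], 3)
group_means = rng.standard_normal((3, 200))


def simulate(noise_sd):
    """Replicates scatter around their group mean with the given noise level."""
    return group_means[groups] + noise_sd * rng.standard_normal((9, 200))


snr_low_noise = pca_snr_db(top_pc_scores(simulate(0.2)), groups)
snr_high_noise = pca_snr_db(top_pc_scores(simulate(2.0)), groups)
print(f"SNR with low replicate noise:  {snr_low_noise:.1f} dB")
print(f"SNR with high replicate noise: {snr_high_noise:.1f} dB")
```

A metric of this kind falls when replicate scatter grows relative to the distance between groups, which is the situation described above for subtle biological differences.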

Batch Effect Sensitivity: Both technologies are susceptible to batch effects, but RNA-seq demonstrates particularly pronounced technical variations arising from differences in library preparation protocols, sequencing depth, and bioinformatic processing choices. These technical artifacts can dominate the principal components if not properly addressed, potentially obscuring biological signals [8] [10].
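A quick diagnostic for this problem is to measure how much of each principal component's variance is explained by a known batch label. The sketch below computes eta-squared (between-batch sum of squares over total sum of squares) per component on simulated data; the data, the size of the batch shift, and the function names are assumptions for illustration.

```python
import numpy as np


def pc_scores(x, n_components=3):
    """Scores on the leading principal components of a samples x genes matrix."""
    xc = x - x.mean(axis=0, keepdims=True)
    u, s, _ = np.linalg.svd(xc, full_matrices=False)
    return u[:, :n_components] * s[:n_components]


def variance_explained_by_label(scores, labels):
    """Eta-squared per component: between-label over total sum of squares."""
    labels = np.asarray(labels)
    grand_mean = scores.mean(axis=0)
    total = ((scores - grand_mean) ** 2).sum(axis=0)
    between = np.zeros(scores.shape[1])
    for label in np.unique(labels):
        members = scores[labels == label]
        between += len(members) * (members.mean(axis=0) - grand_mean) ** 2
    return between / total


# 12 samples x 300 genes; the second batch carries a gene-specific shift.
rng = np.random.default_rng(0)
batch = np.repeat([0, 1], 6)
batch_shift = 1.5 * rng.standard_normal(300)
expression = rng.standard_normal((12, 300)) + batch[:, None] * batch_shift[None, :]

eta_squared = variance_explained_by_label(pc_scores(expression), batch)
print("Fraction of PC variance explained by batch:", eta_squared.round(3))
```

A value near 1 for the first component indicates that the dominant axis of variation is technical rather than biological, which suggests applying batch correction or including batch as a covariate before interpreting the PCA.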

Impact on Detection of Biologically Relevant Patterns

Table 2: PCA Performance Comparison for Microarray and RNA-Seq Data

| Analytical Consideration | Microarray Performance | RNA-Seq Performance |
|---|---|---|
| Separation of Distinct Sample Types | Effective for large biological differences [8] | Excellent for large biological differences; wider dynamic range helps [2] |
| Detection of Subtle Expression Patterns | More stable for small biological differences [8] | Higher variability can mask subtle differences [8] |
| Reproducibility Across Replicates | Higher consistency in technical replicates [9] | Higher stochastic variability, especially for low-expression genes [9] |
| Handling of Low-Abundance Transcripts | Limited by background fluorescence and saturation [4] [2] | Can detect rare transcripts but with higher technical noise [2] |
| Data Normalization Requirements | Background correction, quantile normalization [9] | Requires specialized methods (e.g., DESeq2, edgeR) for count data [10] |
| Sensitivity to Technical Artifacts | Probe-specific effects, hybridization efficiency | Batch effects from library prep, sequencing depth [8] |

Figure 2: Relationship Between Data Structures and PCA Performance Outcomes

Research Reagent Solutions for Transcriptomics Studies

Table 3: Essential Research Reagents and Platforms for Transcriptomic Technologies

| Reagent/Platform | Function | Technology Application |
|---|---|---|
| Affymetrix GeneChip PrimeView Arrays | Pre-designed microarray chips for gene expression profiling | Microarray analysis [4] |
| Affymetrix HTA 2.0 Arrays | Human Transcriptome Arrays with probes covering exons and junctions | Comprehensive transcriptome analysis [9] |
| Illumina Stranded mRNA Prep Kit | Library preparation for RNA-seq with strand specificity | RNA-seq library construction [4] |
| TruSeq Total RNA Sample Preparation Kit | Library preparation with ribosomal RNA depletion | RNA-seq of total RNA including non-polyadenylated transcripts [9] |
| GeneChip WT Plus Reagent Kit | Target labeling and amplification for microarray analysis | Microarray sample processing [9] |
| miRNeasy Mini Kit (Qiagen) | Total RNA extraction including small RNAs | Sample preparation for both technologies [9] |
| Agilent 2100 Bioanalyzer | RNA quality assessment using microfluidics | Quality control for both technologies [9] |
| EZ1 RNA Cell Mini Kit | Automated RNA purification system | RNA extraction for transcriptomic studies [4] |

The choice between microarray and RNA-seq technologies for transcriptomic studies involves careful consideration of research goals, analytical priorities, and practical constraints. Each technology produces fundamentally different data structures—continuous fluorescence intensities versus digital read counts—that significantly impact PCA performance and biological interpretation.

Microarray technology, with its continuous intensity data structure, demonstrates advantages in reproducibility and cost-efficiency, particularly for studies focused on detecting subtle differential expression between similar sample types. The technology's maturity, standardized analytical pipelines, and lower per-sample cost make it suitable for large-scale studies where the target transcripts are well-annotated and biological differences may be subtle [4] [8] [9].

RNA-seq technology offers unparalleled discovery power through its digital read count data structure, providing a wider dynamic range and ability to detect novel transcripts and isoforms. While demonstrating excellent performance for distinguishing samples with large biological differences, its higher stochastic variability requires careful experimental design and more complex bioinformatic processing. The technology is particularly valuable for exploratory studies, applications requiring detection of novel features, or when analyzing transcriptomes without complete annotation [2] [8].

For PCA applications specifically, researchers should consider that microarray data often provide more stable results for detecting subtle patterns among similar samples, while RNA-seq excels at global profiling of diverse sample types. The decision should weigh study objectives, sample types, bioinformatic capabilities, and budget constraints to select the most appropriate technology for the specific research context.

The choice between microarray and RNA sequencing (RNA-seq) technologies represents a fundamental decision in transcriptomic research, with significant implications for data interpretation and biological conclusions. Within the specific context of performing Principal Component Analysis (PCA)—a core method for visualizing sample relationships and reducing data dimensionality—understanding the inherent technical biases of each platform is crucial. These biases, rooted in the underlying measurement principles of each technology, can directly influence the variance structure of the dataset and consequently, the outcome of PCA. This guide provides an objective, data-driven comparison of microarray and RNA-seq performance, focusing on the key parameters of dynamic range, background noise, and detection limits, and their impact on transcriptomic analysis.

Fundamental Technological Differences and Their Biases

The distinct operational principles of microarrays and RNA-seq are the direct cause of their differing technical biases. The following workflow illustrates the key steps where these biases are introduced.

Experimental Workflow and Source of Bias

Figure 1: Experimental workflows for Microarray and RNA-seq technologies. The points where key technical biases are introduced are highlighted, which subsequently influence the variance structure critical for PCA.

Microarray Technology relies on hybridization-based detection, where fluorescently labeled cDNA molecules bind to complementary DNA probes attached to a solid surface [4] [2]. The signal is measured as fluorescence intensity, an analog measurement. This process is susceptible to cross-hybridization, where non-specific binding occurs, and signal saturation for highly expressed transcripts [11] [2]. The technology is limited to detecting only the transcripts for which probes were pre-designed.

RNA-seq Technology fragments RNA, converts it to a cDNA library, sequences that library (typically by sequencing-by-synthesis), and digitally counts the sequences (reads) that align to a reference genome or transcriptome [4] [2]. This digital counting avoids many of the hybridization-related issues inherent to microarrays and is not constrained by pre-defined probes, allowing for the discovery of novel transcripts [12] [2].

Quantitative Comparison of Technical Parameters

The fundamental differences in technology translate into quantifiable disparities in performance. The following table summarizes the direct comparison of key technical parameters that influence data quality and analytical outcomes.

Table 1: Direct comparison of technical performance parameters between Microarray and RNA-seq.

| Technical Parameter | Microarray | RNA-seq | Supporting Experimental Evidence |
|---|---|---|---|
| Dynamic Range | ~10³ [2] | >10⁵ [2] | RNA-seq's digital counting does not suffer from signal saturation at the high end or background limitation at the low end, unlike analog fluorescence detection in microarrays [12] [2]. |
| Background Noise | High, due to cross-hybridization and non-specific binding [11] [12]. | Low, due to specific alignment of sequences to the genome [12] [13]. | Microarray data shows a consistent background fluorescence level requiring background subtraction algorithms, while RNA-seq noise is more random and can be modeled and filtered computationally [11] [13]. |
| Detection Limit & Sensitivity | Lower sensitivity, especially for low-abundance transcripts [11] [2]. | Higher sensitivity, can detect rare transcripts and weakly expressed genes [11] [2]. | In a T cell activation study, RNA-seq was superior in detecting low-abundance transcripts and identified a larger number of differentially expressed genes (DEGs), particularly those with low expression [11]. |
| Transcript Discovery | Limited to pre-designed probes for known transcripts. | Capable of de novo detection of novel transcripts, splice variants, and gene fusions [2]. | RNA-seq does not rely on existing genome annotation for probe selection, thus avoiding related biases and enabling the discovery of novel features [11] [2]. |
| Data Reproducibility | High intra-platform reproducibility but can suffer from inter-laboratory variability. | Highly reproducible with low technical variation [11]. | A study by Marioni et al. found that RNA-seq data on the Illumina platform was highly reproducible, with relatively little technical variation [11]. |

Impact on PCA and Transcriptomic Analysis

The technical parameters detailed above have a direct and measurable impact on the data structure that serves as input for PCA. The following diagram conceptualizes how platform-specific biases propagate to influence the principal components.

How Biases Influence PCA Results

Figure 2: The propagation of technical biases from raw data to PCA results. The inherent limitations of each platform shape the data's variance structure, which directly determines the principal components.

Variance Structure: PCA operates by identifying the directions of greatest variance in a dataset. RNA-seq's wider dynamic range means that true biological differences in gene expression, from very low to very high, can contribute significantly to these principal components. In contrast, microarray's compressed dynamic range may cause the variance to be dominated by technical factors or a smaller subset of highly expressed genes, potentially obscuring biologically relevant patterns [14].

Impact of Noise: The background noise and cross-hybridization in microarrays introduce a technical variance that is not biologically meaningful. This noise can become a component of the variance captured by the principal components, potentially distorting the sample separation in the PCA plot. RNA-seq's lower background noise helps ensure that the variance analyzed by PCA is more likely to reflect true biological signal [11] [13].

Impact of Detection Limits: The inability of microarrays to detect low-abundance and novel transcripts means that the expression matrix provided to PCA is incomplete. RNA-seq, with its superior sensitivity, provides a more complete picture of the transcriptome. The presence or absence of these additional transcripts can significantly alter the covariance structure of the data, leading to different principal components and sample clustering [11] [2]. For instance, in a study on colorectal cancer, systematic technical biases between platforms led to differences in transcriptomic subtyping, a process often reliant on dimensionality reduction techniques like PCA [14].

Case Study: Experimental Data and Protocols

Experimental Protocol from a Comparative Study

A 2025 study provided a direct, updated comparison using the same biological samples (iPSC-derived hepatocytes exposed to cannabinoids) analyzed on both platforms [4].

- Cell Culture and Exposure: iPSC-derived hepatocytes (iCell Hepatocytes 2.0) were cultured and exposed to a concentration range of cannabichromene (CBC) and cannabinol (CBN) for 24 hours [4].

- RNA Extraction: Total RNA was purified using an automated RNA purification instrument (Qiagen EZ1 Advanced XL) with an on-column DNase digestion step. RNA integrity (RIN) was assessed using an Agilent 2100 Bioanalyzer [4].

- Microarray Processing: Total RNA (100 ng) was processed using the GeneChip 3' IVT PLUS Reagent Kit (Affymetrix) and hybridized to GeneChip PrimeView Human Gene Expression Arrays. Scanned images were processed using the Robust Multi-chip Average (RMA) algorithm for background adjustment, quantile normalization, and summarization [4].

- RNA-seq Processing: Sequencing libraries were prepared from 100 ng of total RNA using the Illumina Stranded mRNA Prep, Ligation Kit, which includes poly-A selection of mRNA. Libraries were sequenced on an Illumina platform to generate a typical output of 50 million paired-end reads per sample [4] [1].

Key Findings and Quantitative Data

Despite the profound technical differences, this study found that the two platforms could yield similar functional conclusions, though with important distinctions in the raw data [4].

Table 2: Key experimental findings from the comparative study of CBC and CBN [4].

| Analysis Metric | Microarray Results | RNA-seq Results | Interpretation |
|---|---|---|---|
| Overall Gene Expression Patterns | Similar concentration-dependent patterns for both CBC and CBN. | Similar concentration-dependent patterns for both CBC and CBN. | Both platforms captured the overall global response to chemical exposure consistently. |
| Number of Differentially Expressed Genes (DEGs) | Fewer DEGs identified. | Larger numbers of DEGs identified, with a wider dynamic range. | RNA-seq's higher sensitivity and dynamic range allowed detection of more subtle and extreme expression changes. |
| Functional Enrichment (GSEA) | Equivalent performance in identifying impacted functions and pathways. | Equivalent performance in identifying impacted functions and pathways. | Downstream functional analysis converged despite differences in the initial DEG list. |
| Transcriptomic Point of Departure (tPoD) | tPoD values were comparable for both compounds. | tPoD values were comparable for both compounds. | For quantitative concentration-response modeling, both platforms performed equivalently in this context. |

Another study on human peripheral blood cells further illustrates the scale of the difference in detection power. RNA-seq identified 2,395 differentially expressed genes (DEGs) between study groups, while microarray identified only 427 DEGs, with an overlap of 223 genes between the platforms [1]. This demonstrates that while there is concordance for a core set of genes, RNA-seq provides access to a much broader spectrum of the transcriptome's dynamics.
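The overlap figures above can be turned into concordance fractions with a few lines of arithmetic. The sketch below uses only the three counts quoted in the text; the variable names and the choice of the Jaccard index as a summary are illustrative assumptions, not part of the cited study.

```python
# DEG counts quoted in the text for the peripheral blood study [1]
rnaseq_degs = 2395
microarray_degs = 427
shared_degs = 223

# Fraction of each platform's DEG list that the other platform also reports
frac_of_microarray = shared_degs / microarray_degs
frac_of_rnaseq = shared_degs / rnaseq_degs

# Jaccard index: shared genes divided by the union of both lists
union = rnaseq_degs + microarray_degs - shared_degs
jaccard = shared_degs / union

print(f"Microarray DEGs confirmed by RNA-seq: {frac_of_microarray:.1%}")
print(f"RNA-seq DEGs confirmed by microarray: {frac_of_rnaseq:.1%}")
print(f"Jaccard index over {union} unique DEGs: {jaccard:.3f}")
```

Roughly half of the microarray DEGs are recovered by RNA-seq, while fewer than one in ten RNA-seq DEGs appear on the microarray list, which is the asymmetry the paragraph describes.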

The Scientist's Toolkit: Essential Reagent Solutions

Table 3: Key research reagents and kits used in the featured experimental protocols.

| Reagent / Kit | Function | Example Use Case |
|---|---|---|
| Qiagen EZ1 RNA Cell Mini Kit | Purification of total intracellular RNA, including an on-column DNase digestion step to remove genomic DNA contamination. | RNA extraction from iPSC-derived hepatocytes for both microarray and RNA-seq [4]. |
| Agilent RNA 6000 Nano Kit | Assessment of RNA integrity (RIN) using the Agilent 2100 Bioanalyzer, a critical quality control step prior to library preparation. | QC of total RNA samples to ensure only high-quality (RIN > 7) RNA is used for downstream analysis [4] [1]. |
| GeneChip 3' IVT PLUS Reagent Kit (Affymetrix) | For amplification, biotin-labeling, and fragmentation of complementary RNA (cRNA) for microarray hybridization. | Target preparation for hybridization to Affymetrix GeneChip arrays [4] [1]. |
| Illumina Stranded mRNA Prep, Ligation Kit | Poly-A selection of mRNA and construction of strand-specific sequencing libraries for Illumina platforms. | RNA-seq library preparation from total RNA [4] [1]. |
| PAXgene Blood RNA Kit | Stabilization of RNA and extraction from whole blood samples, preserving the in vivo transcriptome profile. | RNA isolation for transcriptomic studies using human whole blood [1]. |
| GLOBINclear Kit | Depletion of globin mRNA from whole blood RNA samples to increase sequencing depth on non-globin transcripts. | Globin reduction to improve detection of non-erythrocyte transcripts in human blood studies [1]. |

Both microarray and RNA-seq technologies are capable of generating robust transcriptomic data for PCA and other analyses, as evidenced by their concordance in high-level pathway identification and concentration-response modeling [4]. However, they are not interchangeable. The choice of platform has a profound effect on the underlying data structure.

- RNA-seq offers clear technical advantages in dynamic range, sensitivity, and specificity, which generally provide a more complete and accurate representation of the transcriptome's variance. This leads to PCA results that are more likely to be driven by true biological differences across the full spectrum of gene expression.

- Microarray technology, while lower in cost and benefiting from well-established analysis pipelines and public databases, is constrained by its hybridization-based chemistry. The resulting biases can compress variance and introduce technical noise, which may influence the principal components.

Researchers must align their choice of technology with their experimental goals. For discovery-phase research, detection of low-abundance transcripts, or when analyzing organisms without a well-defined genome, RNA-seq is the superior choice. For focused studies where the transcripts of interest are well-characterized and highly expressed, or where budget and data storage are primary constraints, microarrays remain a viable and effective tool. Critically, when integrating public datasets for meta-analysis or building predictive models, investigators must account for platform-specific technical biases to ensure accurate and reproducible biological insights.

Principal Component Analysis (PCA) remains an essential exploratory tool for transcriptomic studies, serving critical roles in quality assessment, outlier detection, and visualization of sample relationships in high-dimensional gene expression data [15] [5]. The fundamental objective of PCA is dimensionality reduction—transforming thousands of gene expression measurements into a simplified set of uncorrelated principal components that capture the greatest variance within the dataset [16] [17]. The first principal component (PC1) aligns with the largest source of variance, followed by PC2 capturing the next largest remaining variance, and so on [18].

The application of PCA, however, is profoundly influenced by the underlying properties of the input data. This guide provides a systematic comparison of how these data properties—specifically linearity assumptions and variance structure—manifest differently in microarray and RNA-seq technologies, ultimately affecting PCA performance and interpretation. Understanding these technical distinctions is crucial for researchers, scientists, and drug development professionals working with transcriptomic data across platforms.

Fundamental Technological Differences Between Microarray and RNA-Seq

Microarray technology, the established platform for over a decade, employs a hybridization-based approach to profile transcriptome-wide gene expression by measuring fluorescence intensity of predefined transcripts [4]. In contrast, RNA-seq, which emerged in the mid-2000s, is based on counting reads that can be reliably aligned to a reference sequence, providing a wider dynamic range and ability to detect novel transcripts including splice variants and non-coding RNAs [4] [11].

Table 1: Core Technological Differences Between Platforms

| Feature | Microarray | RNA-Seq |
|---|---|---|
| Measurement Basis | Fluorescence intensity from hybridization [4] | Read counting via sequencing [4] |
| Dynamic Range | Limited [4] [11] | Broader [4] [11] |
| Background Noise | Higher due to nonspecific binding [4] | Lower [4] |
| Transcript Coverage | Predefined transcripts only [4] [19] | Whole transcriptome, including novel transcripts [4] [19] |
| Data Structure | Continuous intensity values [4] | Count-based data [11] |

These fundamental technological differences directly impact the data properties relevant to PCA—particularly the variance structure and dynamic range—which we explore in the following sections.

Impact of Variance Structure and Dynamic Range on PCA

Variance Characteristics by Platform

The variance structure embedded in gene expression data directly dictates how PCA prioritizes components. RNA-seq demonstrates a broader dynamic range than microarray, allowing for detection of more differentially expressed genes with higher fold-change [11]. This expanded dynamic range means RNA-seq captures more extreme expression values, which can disproportionately influence principal component directions if not properly addressed.

Microarray data typically exhibits more constrained variance structure due to technological limitations including background noise and nonspecific binding [4]. The predefined transcript detection also means unexpected sources of biological variation may remain undetected, potentially limiting the biological insights obtainable through PCA.

Evidence from Comparative Studies

Comparative studies consistently demonstrate that RNA-seq identifies more differentially expressed protein-coding genes and provides a wider quantitative range of expression level changes compared to microarrays [19]. One toxicogenomic study found that approximately 78% of DEGs identified with microarrays were also identified by RNA-seq, with Spearman's correlation ranging from 0.7 to 0.83 [19]. Although the platforms are not fully concordant, both often identify similar enriched biological pathways, and RNA-seq may provide additional mechanistic insights through detection of more comprehensive gene sets [19].

Normalization Methods and Their Impact on PCA

The Critical Role of Normalization

Normalization of gene expression data represents an essential preprocessing step that significantly impacts subsequent PCA results [20]. As PCA is fundamentally based on covariance patterns [16], normalization methods that alter variance structure will directly influence component derivation. One comprehensive evaluation of twelve normalization methods applied to RNA-seq data found that while PCA score plots often appear similar across normalization techniques, the biological interpretation of the models can depend heavily on the normalization method applied [20].

Platform-Specific Normalization Considerations

For microarray data, the Robust Multi-chip Average (RMA) algorithm is commonly employed, consisting of background adjustment, quantile normalization, and summarization steps [4]. RNA-seq data requires distinct normalization approaches accounting for its count-based nature, with methods like DESeq2's median-of-ratios providing effective normalization [15].
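To make the median-of-ratios idea concrete, here is a minimal sketch of how per-sample size factors can be estimated from a raw count matrix. It is a simplified illustration of the principle, not DESeq2's implementation; the function name and the toy counts are hypothetical, and details such as the handling of zeros are assumptions.

```python
import math
from statistics import median

def median_of_ratios_size_factors(counts):
    """Estimate one size factor per sample.

    counts: list of genes, each a list of raw counts (one per sample).
    """
    n_samples = len(counts[0])
    # Keep genes with a non-zero count in every sample so logs are defined
    usable = [gene for gene in counts if all(c > 0 for c in gene)]
    # Log geometric mean per gene serves as a pseudo-reference sample
    log_geo_means = [sum(math.log(c) for c in gene) / n_samples for gene in usable]
    factors = []
    for j in range(n_samples):
        # Log ratio of each gene in sample j to the pseudo-reference
        log_ratios = [math.log(gene[j]) - ref
                      for gene, ref in zip(usable, log_geo_means)]
        factors.append(math.exp(median(log_ratios)))
    return factors

# Toy data: sample 2 was sequenced twice as deeply as sample 1
toy_counts = [[10, 20], [100, 200], [50, 100], [0, 3]]
size_factors = median_of_ratios_size_factors(toy_counts)
normalized = [[c / f for c, f in zip(gene, size_factors)] for gene in toy_counts]
print(size_factors)
```

Dividing each sample's counts by its size factor puts samples on a common scale before log transformation and PCA. Using the median makes the estimate robust to a minority of strongly differentially expressed genes.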

Table 2: Normalization Methods for Cross-Platform Analysis

| Normalization Method | Mechanism | Effect on PCA Variance Structure |
|---|---|---|
| Quantile Normalization (QN) | Forces all samples to have identical empirical distribution [21] | Standardizes variance across platforms, enabling combined analysis [21] |
| Training Distribution Matching (TDM) | Transforms RNA-seq to match microarray distribution [21] | Makes variance structures comparable for machine learning applications [21] |
| Nonparanormal Normalization (NPN) | Semiparametric approach using truncated empirical distribution [21] | Preserves more platform-specific variance characteristics [21] |
| Z-score Standardization | Centers to mean and scales by standard deviation [21] | Can introduce variability if platforms have different mean-variance relationships [21] |

Cross-Platform Normalization Strategies

When integrating datasets from both platforms, cross-platform normalization becomes essential. Recent research indicates that quantile normalization and Training Distribution Matching allow for supervised and unsupervised model training on microarray and RNA-seq data simultaneously [21]. Nonparanormal normalization and z-scores are also appropriate for some applications, including pathway analysis [21].
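Quantile normalization, as described above, forces every sample to share the same empirical distribution. The sketch below shows the basic rank-and-replace procedure on a small matrix; the function name and example values are hypothetical, and ties are broken by position rather than averaged, which is a simplifying assumption that established implementations handle more carefully.

```python
def quantile_normalize(matrix):
    """Quantile-normalize a genes x samples matrix (list of rows)."""
    n_genes, n_samples = len(matrix), len(matrix[0])
    columns = [[row[j] for row in matrix] for j in range(n_samples)]
    sorted_columns = [sorted(col) for col in columns]
    # Reference distribution: mean across samples at each rank
    reference = [sum(col[i] for col in sorted_columns) / n_samples
                 for i in range(n_genes)]
    normalized = [[0.0] * n_samples for _ in range(n_genes)]
    for j, col in enumerate(columns):
        # Gene indices ordered from lowest to highest value in sample j
        order = sorted(range(n_genes), key=lambda i: col[i])
        for rank, gene_index in enumerate(order):
            normalized[gene_index][j] = reference[rank]
    return normalized

# Toy matrix: 4 genes (rows) measured in 3 samples (columns)
toy_matrix = [
    [5, 4, 3],
    [2, 1, 4],
    [3, 4, 6],
    [4, 2, 8],
]
normalized = quantile_normalize(toy_matrix)
for row in normalized:
    print([round(v, 2) for v in row])
```

After normalization each column contains the same set of values, so differences between samples reflect only the rank order of genes. This is why the method can align platforms with very different raw scales, and also why it can erase genuine global shifts in expression.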

Experimental Protocols for Platform Comparison

Sample Preparation and Processing

For meaningful comparison between platforms, the same RNA samples should be used for both microarray and RNA-seq analysis [19]. In practice, total RNA is extracted from biological samples (e.g., liver tissue from rat toxicity studies), with aliquots of the same total RNA samples used as input for each platform [19].

Microarray Protocol:

- Process samples using platform-specific kits (e.g., GeneChip 3' IVT PLUS Reagent Kit for Affymetrix)

- Hybridize to appropriate arrays (e.g., GeneChip PrimeView Human Gene Expression Arrays)

- Scan chips and process image files using manufacturer's software [4]

- Normalize data using RMA algorithm with background adjustment, quantile normalization, and summarization [4]

RNA-seq Protocol:

- Prepare sequencing libraries using kit-based approaches (e.g., Illumina Stranded mRNA Prep)

- Sequence on appropriate platform (e.g., NextSeq500)

- Generate FastQ files and align to reference genome using specialized tools (e.g., OSA4)

- Quantify expression as counts or RPKM/FPKM values [19]

PCA Implementation and Analysis

Figure: Key steps in performing PCA for transcriptomic data

For RNA-seq data specifically, the computational implementation typically starts from normalized, log-transformed counts arranged with samples as rows and genes as columns, which are then passed to a PCA routine such as R's prcomp().

A critical consideration during implementation is whether to scale variables (divide each by its standard deviation) before PCA. By default, the prcomp() function centers but does not scale the data, which may be appropriate for log-transformed RNA-seq data but should be carefully considered based on the specific research context [18] [16].
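The effect of the scaling choice can be seen without running a full PCA, by checking how much of the total variance each gene contributes under the two options. The sketch below uses hypothetical log2 expression values and standard-library Python; it mirrors the distinction between centering only and centering plus scaling, but it is not a reimplementation of prcomp().

```python
from statistics import mean, stdev, variance

# Hypothetical log2 expression values: one list per gene, one value per sample
expr = {
    "geneA": [2.0, 10.0, 3.0, 11.0],   # large swings between samples
    "geneB": [5.0, 5.5, 5.1, 5.4],     # small swings
    "geneC": [7.0, 7.2, 6.9, 7.3],     # small swings
}

def center(values):
    m = mean(values)
    return [v - m for v in values]

def center_and_scale(values):
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]

def variance_shares(table):
    """Fraction of total variance contributed by each gene."""
    per_gene = {gene: variance(vals) for gene, vals in table.items()}
    total = sum(per_gene.values())
    return {gene: var / total for gene, var in per_gene.items()}

shares_centered = variance_shares({g: center(v) for g, v in expr.items()})
shares_scaled = variance_shares({g: center_and_scale(v) for g, v in expr.items()})

print("centered only:", {g: round(s, 3) for g, s in shares_centered.items()})
print("centered + scaled:", {g: round(s, 3) for g, s in shares_scaled.items()})
```

With centering only, the high-variance gene accounts for nearly all of the variance that PCA will try to explain. After scaling, every gene contributes equally, which gives low-variance genes a voice but can also amplify noise in genes that barely change.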

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools

| Item | Function/Purpose | Example Products/Implementations |
|---|---|---|
| RNA Isolation Kits | Extract high-quality total RNA from biological samples | Qiazol extraction with on-column DNase I treatment [19] |
| Microarray Platforms | Hybridization-based transcriptome profiling | Affymetrix GeneChip PrimeView Arrays [4] |
| RNA-seq Library Prep Kits | Prepare sequencing libraries from RNA | Illumina Stranded mRNA Prep Kit [19] |
| Quality Control Instruments | Assess RNA integrity | Agilent 2100 Bioanalyzer with RNA Nano Kit [19] |
| PCA Implementations | Compute principal components | R's prcomp(), PCA() from FactoMineR [18] [5] |
| Interactive Visualization Tools | Explore PCA results interactively | pcaExplorer R/Bioconductor package [15] |
| Cross-Platform Normalization Methods | Enable integrated analysis of microarray and RNA-seq | Quantile Normalization, Training Distribution Matching [21] |

The pcaExplorer package deserves special mention as it provides a user-friendly Shiny interface for interactive exploration of PCA results, specifically designed for RNA-seq data [15]. This tool enhances standard analysis workflows by providing state saving and automated creation of reproducible reports, facilitating more efficient exploratory data analysis [15].

The properties of input data—particularly variance structure and dynamic range—significantly impact PCA performance and interpretation for transcriptomic studies. RNA-seq technology offers advantages in detecting more differentially expressed genes with wider dynamic range, while microarray benefits from established analysis pipelines and lower computational requirements. The selection between platforms should be guided by research objectives, with RNA-seq preferred for novel discovery and microarray remaining viable for focused hypothesis testing.

Successful application of PCA requires careful consideration of normalization strategies, especially when integrating data across platforms. Quantile normalization and Training Distribution Matching emerge as effective approaches for cross-platform analysis, enabling researchers to leverage the growing volumes of publicly available transcriptomic data. As sequencing costs continue to decrease and analysis methods improve, RNA-seq will likely become the predominant platform, though understanding the variance structure differences between technologies remains essential for proper experimental design and data interpretation.

Principal Component Analysis (PCA) is a fundamental statistical technique for dimensionality reduction, widely used to explore high-dimensional transcriptomic data. It transforms potentially correlated variables into a smaller set of uncorrelated principal components that retain most of the original information [22]. The performance and interpretability of PCA are heavily influenced by data preprocessing decisions, particularly normalization and transformation methods. This guide provides an objective comparison of how these preprocessing choices affect PCA outcomes when applied to the two dominant transcriptomic technologies: microarrays and RNA sequencing (RNA-seq). Understanding these relationships is crucial for researchers, scientists, and drug development professionals seeking to extract meaningful biological insights from their data.

Fundamental Technology Differences

Microarrays and RNA-seq employ fundamentally different principles for transcriptome profiling. Microarrays utilize a hybridization-based approach where fluorescently-labeled cDNA samples bind to predefined probes on a chip, with signal intensity indicating expression levels [7]. This technology requires prior knowledge of the sequences being detected. In contrast, RNA-seq is a sequencing-based method that involves converting RNA to complementary DNA (cDNA) followed by high-throughput sequencing to generate reads that are counted and mapped to a reference genome or transcriptome [7] [23].

Comparative Strengths and Limitations

Table 1: Key Technical Differences Between Microarray and RNA-seq Technologies

| Feature | Microarray | RNA-seq |
|---|---|---|
| Detection Principle | Hybridization to predefined probes | Direct sequencing of cDNA fragments |
| Prior Sequence Knowledge Required | Yes | No |
| Dynamic Range | ~10³ [7] | >10⁵ [7] |
| Ability to Detect Novel Transcripts | Limited | Extensive (splice variants, non-coding RNAs) [4] [7] |
| Background Noise | Higher | Lower |
| Data Type | Fluorescence intensity | Digital read counts |
| Typical Data Size | Smaller | Larger |

RNA-seq offers several technical advantages including a wider dynamic range, higher sensitivity for detecting low-abundance transcripts, and the ability to identify novel genes, splice variants, and non-coding RNAs [7]. However, microarrays maintain benefits including lower cost, simpler data analysis pipelines, and more established analytical software and reference databases [4].

Preprocessing Fundamentals for Transcriptomic Data

The Critical Role of Normalization

Normalization adjusts for technical variations to ensure that expression differences reflect true biological signals rather than artifacts of measurement. For both microarray and RNA-seq data, normalization addresses issues such as varying sample concentrations, hybridization efficiencies, and sequencing depths [10] [23]. The necessity and implementation of normalization, however, differ between platforms.

In RNA-seq analysis, raw counts cannot be directly compared between samples because the number of reads mapped to a gene depends not only on its true expression level but also on the total sequencing depth for that sample [23]. Normalization mathematically adjusts these counts to remove such biases. For microarray data, normalization addresses issues with background fluorescence, uneven hybridization, and probe-specific effects.
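The depth dependence described above can be illustrated with the simplest possible adjustment, counts per million (CPM). The sketch below uses two hypothetical samples with identical composition but a tenfold difference in sequencing depth. It is shown only to illustrate the depth effect; CPM corrects for library size alone, and the normalization comparison later in this guide rates it poorly as input for PCA.

```python
def counts_per_million(sample_counts):
    """Rescale one sample's raw counts so they sum to one million."""
    total = sum(sample_counts)
    return [count * 1_000_000 / total for count in sample_counts]

# Hypothetical samples: same relative expression, 10x difference in depth
shallow = [100, 300, 600]
deep = [1000, 3000, 6000]

cpm_shallow = counts_per_million(shallow)
cpm_deep = counts_per_million(deep)

print(cpm_shallow)
print(cpm_deep)
```

The raw counts differ tenfold for every gene even though the underlying expression profile is the same. After rescaling, the two samples are identical, so a PCA on the adjusted values would no longer separate them by sequencing depth.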

PCA and its Dependence on Data Structure

PCA is a linear dimensionality reduction technique that identifies the directions (principal components) of maximum variance in a dataset [22] [24]. The first principal component (PC1) captures the greatest variance, with subsequent components accounting for remaining variation in decreasing order while being orthogonal to previous components [22]. How variance is distributed across genes and samples directly impacts PCA results, making appropriate preprocessing critical for meaningful analysis.

As demonstrated in [20], normalization methods directly influence correlation patterns in the data, which in turn affect PCA model complexity, sample clustering in the low-dimensional space, and the biological interpretation of the components.
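The variance-maximizing behavior of PC1 can be sketched in standard-library Python. The example below centers a small samples x genes matrix, builds the gene-gene covariance matrix, and finds its leading eigenvector by power iteration. Power iteration is swapped in here for brevity; routines such as prcomp() rely on a singular value decomposition instead. The function name, starting vector, and toy data are assumptions for illustration.

```python
import math
from statistics import mean

def first_principal_component(samples, n_iter=200):
    """Return (PC1 direction, fraction of total variance it explains).

    samples: list of samples, each a list of gene values.
    """
    n, p = len(samples), len(samples[0])
    gene_means = [mean(s[k] for s in samples) for k in range(p)]
    centered = [[s[k] - gene_means[k] for k in range(p)] for s in samples]
    # Sample covariance matrix between genes (p x p)
    cov = [[sum(row[a] * row[b] for row in centered) / (n - 1)
            for b in range(p)] for a in range(p)]
    # Power iteration: repeated multiplication converges to the eigenvector
    # with the largest eigenvalue, provided the start is not orthogonal to it
    v = [1.0 / math.sqrt(p)] * p
    for _ in range(n_iter):
        w = [sum(cov[a][b] * v[b] for b in range(p)) for a in range(p)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Rayleigh quotient gives the variance captured along v
    eigenvalue = sum(v[a] * sum(cov[a][b] * v[b] for b in range(p))
                     for a in range(p))
    total_variance = sum(cov[k][k] for k in range(p))
    return v, eigenvalue / total_variance

# Toy data: 5 samples, 2 genes; gene 2 is roughly twice gene 1
toy_samples = [[1.0, 2.1], [2.0, 3.9], [3.0, 6.2], [4.0, 7.8], [5.0, 10.0]]
pc1, explained = first_principal_component(toy_samples)
print([round(x, 3) for x in pc1], round(explained, 4))
```

Because the two genes are almost perfectly correlated, a single direction close to (1, 2) captures nearly all of the variance. Any preprocessing step that changes the covariance matrix changes this direction, which is why normalization choices carry through to the PCA result.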

Platform-Specific Preprocessing Workflows

Microarray Preprocessing Pipeline

Microarray preprocessing typically involves background correction, normalization, and summarization. The robust multi-chip average (RMA) algorithm is commonly employed, consisting of three steps: background adjustment, quantile normalization, and summarization of probe-level data to generate expression values [4].

Figure 1: Standard microarray preprocessing workflow prior to PCA

RNA-seq Preprocessing Pipeline

RNA-seq preprocessing involves more complex steps including quality control, adapter trimming, read alignment, and quantification. The normalization approach must be carefully selected based on the experimental design and research questions.

Figure 2: Comprehensive RNA-seq preprocessing workflow prior to PCA

Normalization Methods and Their Impact on PCA

RNA-seq Normalization Techniques

Multiple normalization approaches exist for RNA-seq data, each with different implications for PCA outcomes:

- Shifted Logarithm: A simple approach using $\log(y + y_0)$, where $y$ is the count and the choice of pseudo-count $y_0$ significantly affects results [25]. The pseudo-count is related to the overdispersion $\alpha$ by $y_0 = 1/(4\alpha)$ [25].

- Variance-Stabilizing Transformation (VST): Based on the delta method, with the acosh transformation $\frac{1}{\sqrt{\alpha}} \operatorname{acosh}(2\alpha y + 1)$ being theoretically optimal for gamma-Poisson distributed data [25].

- Pearson Residuals: Calculated as $(y_{gc} - \hat{\mu}_{gc}) / \sqrt{\hat{\mu}_{gc} + \hat{\alpha}_g \hat{\mu}_{gc}^2}$, where $y_{gc}$ is the count for gene $g$ in cell (or sample) $c$, and $\hat{\mu}_{gc}$ and $\hat{\alpha}_g$ come from fitting a gamma-Poisson generalized linear model [25]. This approach handles size factor variations better than delta method-based transformations.
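The three transformations listed above can be written directly from their formulas. The sketch below is a minimal illustration that ignores size factors and takes the overdispersion and fitted mean as given inputs rather than estimating them from a gamma-Poisson model; the function names and example values are hypothetical.

```python
import math

def shifted_log(y, alpha):
    """log(y + y0) with the pseudo-count set to y0 = 1 / (4 * alpha)."""
    y0 = 1.0 / (4.0 * alpha)
    return math.log(y + y0)

def acosh_vst(y, alpha):
    """Variance-stabilizing transformation (1 / sqrt(alpha)) * acosh(2*alpha*y + 1)."""
    return math.acosh(2.0 * alpha * y + 1.0) / math.sqrt(alpha)

def pearson_residual(y, mu, alpha):
    """(y - mu) / sqrt(mu + alpha * mu^2), the gamma-Poisson standard deviation."""
    return (y - mu) / math.sqrt(mu + alpha * mu ** 2)

# Assumed values for illustration only
alpha = 0.1          # overdispersion
fitted_mean = 10.0   # mean that a fitted model would supply

for count in (0, 10, 30, 300):
    print(count,
          round(shifted_log(count, alpha), 3),
          round(acosh_vst(count, alpha), 3),
          round(pearson_residual(count, fitted_mean, alpha), 3))
```

The shifted logarithm and acosh transformation depend only on the count and the overdispersion, whereas the Pearson residual measures how far a count lies from its expected value in units of the model's standard deviation.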

Comparative Performance of Normalization Methods

Table 2: Impact of Normalization Methods on PCA Performance

| Normalization Method | PCA Cluster Separation | Technical Noise Removal | Biological Signal Preservation | Recommendation Context |
|---|---|---|---|---|
| Shifted Logarithm | Variable (depends on pseudo-count) | Moderate | Moderate | Good default choice [25] |
| VST (acosh) | Theoretically optimal, with practical limitations | Good | Good | When size factors are similar |
| Pearson Residuals | Good, especially with varying size factors | Excellent | Good | Recommended for datasets with varying sequencing depths [25] |
| Quantile Normalization | Good for cross-platform comparisons | Good | Moderate | Microarray focus, cross-study RNA-seq [10] |
| CPM (Counts Per Million) | Poor (overdispersions underestimated) | Poor | Poor | Not recommended for PCA [25] |

Research indicates that while PCA score plots may appear similar across different normalization methods, the biological interpretation of the models can differ significantly [20]. A comprehensive evaluation of 12 normalization methods found that correlation patterns in normalized data varied substantially depending on the method used, directly impacting PCA interpretation [20].

Experimental Evidence: Platform Comparisons with PCA

Case Study: Toxicogenomic Applications

A comparative study of rat liver samples exposed to hepatotoxicants found that both RNA-seq and microarray platforms revealed similar overall gene expression patterns in PCA [19]. However, RNA-seq identified more differentially expressed protein-coding genes and provided a wider quantitative range of expression level changes [19]. Despite these technical differences, both platforms displayed equivalent performance in identifying functions and pathways impacted by compound exposure through gene set enrichment analysis.

In a study comparing cannabinoids (CBC and CBN), both platforms revealed similar overall gene expression patterns with regard to concentration, and transcriptomic point of departure values derived through benchmark concentration modeling were equivalent between platforms [4]. This suggests that for traditional transcriptomic applications like mechanistic pathway identification, microarrays remain a viable choice.

Performance in Classification Contexts

When PCA is used as a preprocessing step for classification, the choice of normalization significantly impacts outcomes. Research on RNA-seq data preprocessing pipelines for transcriptomic predictions across independent studies found that batch effect correction improved performance when classifying tissue of origin against an independent GTEx test dataset [10]. However, the same preprocessing operations worsened classification performance when the independent test dataset was aggregated from separate studies in ICGC and GEO [10].

Practical Guidelines for Researchers

Platform Selection Guidelines

Table 3: Platform Recommendation Based on Research Objectives

| Research Goal | Recommended Platform | Rationale | Optimal Preprocessing for PCA |
|---|---|---|---|
| Novel Transcript Discovery | RNA-seq | Ability to detect unknown transcripts [7] | Pearson residuals or VST |
| Traditional Pathway Analysis | Either (platforms equivalent) [4] | Similar functional enrichment results | Platform-specific standard methods |
| Large-Scale Studies with Budget Constraints | Microarray | Lower cost, smaller data size [4] | RMA with quantile normalization |
| Detection of Low-Abundance Transcripts | RNA-seq | Superior sensitivity [7] | Pearson residuals with careful quality control |
| Concentration-Response Modeling | Either (platforms equivalent) [4] | Similar point of departure values | Platform-specific standard methods |

Essential Research Reagents and Tools

Table 4: Key Research Reagent Solutions for Transcriptomic Studies

| Item | Function | Platform Application |
|---|---|---|
| TruSeq Stranded mRNA Library Prep Kit | RNA-seq library preparation | RNA-seq [19] |
| GeneChip PrimeView Human Gene Expression Arrays | Microarray hybridization | Microarray [4] |
| Qiazol | RNA extraction and purification | Both platforms [19] |
| DNase I | Genomic DNA removal | Both platforms [19] |
| BioAnalyzer with RNA 6000 Nano Reagent Kit | RNA quality assessment (RIN) | Both platforms [4] |
| STAR Aligner | RNA-seq read alignment | RNA-seq [10] |
| HTSeq-count/featureCounts | Read quantification | RNA-seq [23] |

Decision Framework for Preprocessing Choices

Figure 3: Preprocessing decision framework for optimal PCA performance

The optimal performance of Principal Component Analysis on transcriptomic data is inextricably linked to appropriate preprocessing decisions. While RNA-seq offers technical advantages in detection range and novelty, microarray platforms remain competitive for traditional applications, particularly when considering cost and analytical maturity. The choice of normalization method significantly influences PCA outcomes, with methods like Pearson residuals generally outperforming simpler approaches for RNA-seq data, especially with varying size factors. Researchers must align their preprocessing pipeline with their biological questions, technical resources, and analytical expertise to ensure that PCA reveals meaningful biological patterns rather than technical artifacts. As both technologies continue to evolve, so too will the preprocessing methodologies that maximize their analytical potential.

Implementing PCA: Best Practices and Workflows for Each Platform

In the field of transcriptomics, researchers must make critical decisions regarding experimental design to ensure robust, interpretable, and biologically relevant results. The choice between microarray and RNA-seq technologies, the determination of appropriate sample size, and the proper implementation of replicates are foundational considerations that directly impact data quality and subsequent conclusions. This guide provides an objective comparison of microarray and RNA-seq performance, with a specific focus on their characteristics in Principal Component Analysis (PCA), supported by experimental data and detailed methodologies.

Platform Technologies: Microarray vs. RNA-Seq

Fundamental Technology Principles

Microarray technology is based on a hybridization-based approach where fluorescently labeled cDNA is detected through hybridization to complementary sequences on a solid surface. The output is a continuous fluorescence intensity measurement, which serves as a proxy for gene expression levels [1]. The technology relies on predefined probes, making it suitable for profiling known sequences [26].

RNA sequencing (RNA-seq) utilizes next-generation sequencing (NGS) of cDNA molecules, providing a digital readout of transcript abundance through direct counting of sequence reads. This platform can identify transcripts not typically detectable by microarrays, including splice variants and non-coding RNAs (e.g., miRNA, lncRNA) [4] [27].

Experimental Workflows

The experimental workflows for both platforms share initial steps but diverge in their core detection methodologies. The following diagram illustrates the key stages for each platform:

Research Reagent Solutions

The following table details essential materials and reagents used in transcriptomics studies:

Table 1: Key Research Reagents and Platforms for Transcriptomic Analysis

| Item Category | Specific Examples | Function in Experiment |
|---|---|---|
| Microarray Platforms | Affymetrix GeneChip PrimeView Human Gene Expression Arrays, GeneChip Human Genome U133 Plus 2.0 Array [4] [1] | Solid surface with immobilized probes for hybridization-based gene expression detection |
| RNA-seq Library Prep Kits | Illumina Stranded mRNA Prep Kit, NEBNext Ultra II RNA Library Prep Kit for Illumina [4] [1] | Convert RNA to sequencing-ready libraries with appropriate adapters |
| RNA Isolation Kits | PAXgene Blood RNA Kit, EZ1 RNA Cell Mini Kit [4] [1] | Purify high-quality total RNA from biological samples |
| RNA Quality Assessment | Agilent 2100 Bioanalyzer with RNA 6000 Nano Reagent Kit [4] | Assess RNA Integrity Number (RIN) to ensure sample quality |
| Amplification & Labeling | GeneChip 3' IVT PLUS Reagent Kit [4] | Amplify and fluorescently label cDNA for microarray detection |
| Globin Reduction | GLOBINclear Kit [1] | Deplete abundant globin mRNA from blood samples to improve detection of other transcripts |

Experimental Design Fundamentals

Replicates: Technical vs. Biological

Proper experimental replication is crucial for drawing statistically valid conclusions. The distinction between technical replicates and biological replicates is particularly important:

Technical Replicates: Multiple measurements of the same biological sample to account for measurement error and technical variability. These help assess the precision of the experimental protocol but do not provide evidence of biological reproducibility [28] [29].

Biological Replicates: Measurements from different biological sources (e.g., different animals, primary cell cultures from different donors) that account for biological variability. These are essential for making inferences about the population from which the samples were drawn [28].

As noted in one analysis, "if we have multiple measures on a single suspension from one individual mouse, we can only draw a conclusion about that particular suspension from that particular mouse" [28]. This highlights that without proper biological replication, the generalizability of findings is severely limited.

Sample Size Considerations

Determining appropriate sample size is critical for achieving sufficient statistical power. For small sample sizes, optimization-based approaches can be more effective than random assignment for creating statistically equivalent groups [30]. One proposed method matches experimental groups "to minimize the en-masse discrepancies in means and variances," which makes "statistics much more precise, concentrating them tightly around their nominal values while still being unbiased estimates" [30].
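The idea of matching groups on means and variances can be illustrated with a small exhaustive search. This is a simplified sketch under stated assumptions, not the method of [30]: it handles only two equal-sized groups and one baseline covariate, enumerates every balanced split instead of using a dedicated optimizer, and uses a hypothetical weight `rho` to trade off the variance discrepancy against the mean discrepancy.

```python
from itertools import combinations

import numpy as np

def best_balanced_split(values, rho=0.5):
    """Return the two-group split minimizing |mean diff| + rho * |variance diff|.

    `values` holds one baseline measurement per subject (e.g. body weight).
    `rho` is an illustrative weight, not a value taken from the cited work.
    """
    values = np.asarray(values, dtype=float)
    n = len(values)
    if n % 2 != 0:
        raise ValueError("this sketch expects an even number of subjects")

    best_score, best_group = None, None
    for group_a in combinations(range(n), n // 2):
        if 0 not in group_a:
            continue  # skip mirror-image splits; subject 0 is always in group A
        mask = np.zeros(n, dtype=bool)
        mask[list(group_a)] = True
        a, b = values[mask], values[~mask]
        score = abs(a.mean() - b.mean()) + rho * abs(a.var() - b.var())
        if best_score is None or score < best_score:
            best_score, best_group = score, group_a

    group_b = tuple(i for i in range(n) if i not in best_group)
    return best_group, group_b, best_score

# Two light and two heavy subjects: the balanced split pairs one of each.
group_a, group_b, score = best_balanced_split([1.0, 1.0, 5.0, 5.0])
```

Exhaustive enumeration is only practical for the small sample sizes discussed here, since the number of candidate splits grows combinatorially with the number of subjects.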

In genetic toxicology studies, it has been shown that "for optimal power in statistical testing, it is preferable to use equal total numbers of flies in the control and treated series" [31]. This principle of balanced group sizes applies broadly to transcriptomics experiments.

Performance Comparison: Microarray vs. RNA-Seq

Analytical Capabilities and Data Output

Table 2: Platform Capabilities and Performance Metrics

| Feature | Microarray | RNA-Seq |
|---|---|---|
| Dynamic Range | Limited [4] | Wide [4] |
| Probe/Read Type | Predefined probes [26] | All transcripts, including novel ones [4] |
| Typical DEGs Identified | 427 DEGs (example study) [1] | 2395 DEGs (example study) [1] |
| Pathways Identified | 47 perturbed pathways (example study) [1] | 205 perturbed pathways (example study) [1] |
| Correlation Between Platforms | Median Pearson r = 0.76 [1] | Median Pearson r = 0.76 [1] |
| Cost Considerations | Lower per sample cost [4] | Higher sequencing costs [4] |
| Data Analysis Maturity | Well-established methods [4] | Rapidly evolving algorithms [27] |

Concordance in Differential Expression Analysis

Despite technological differences, studies show significant concordance between platforms when appropriate statistical methods are applied. One comparative analysis that ran the same RNA samples on both platforms found that "the two platforms displayed equivalent performance in identifying functions and pathways impacted by compound exposure through gene set enrichment analysis (GSEA)" [4]. Furthermore, "transcriptomic point of departure (tPoD) values derived by the two platforms through BMC modeling were on the same levels" [4].

Another study reported that "RNA-seq identified 2395 differentially expressed genes (DEGs), while microarray identified 427 DEGs, with 223 DEGs shared between the two platforms" [1]. The overlap in functional interpretation was greater than the gene-level overlap, with "30 pathways shared" out of 47 identified by microarray and 205 by RNA-seq [1].
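The claim that pathway-level overlap exceeds gene-level overlap can be checked directly from the counts reported in [1]. The short Python sketch below computes the shared fraction relative to each platform and the Jaccard index; the function and key names are illustrative choices, and only the six counts come from the text.

```python
def overlap_summary(n_microarray, n_rnaseq, n_shared):
    """Summarize the overlap between two result sets given their sizes."""
    union = n_microarray + n_rnaseq - n_shared
    return {
        "shared_fraction_of_microarray": n_shared / n_microarray,
        "shared_fraction_of_rnaseq": n_shared / n_rnaseq,
        "jaccard": n_shared / union,
    }

# Counts reported in [1].
genes = overlap_summary(n_microarray=427, n_rnaseq=2395, n_shared=223)
pathways = overlap_summary(n_microarray=47, n_rnaseq=205, n_shared=30)

for label, summary in (("DEGs", genes), ("Pathways", pathways)):
    print(label, {name: round(value, 3) for name, value in summary.items()})
```

With these numbers, about 52% of microarray DEGs are also found by RNA-seq, compared with about 64% of microarray pathways, and the Jaccard index rises from roughly 0.09 at the gene level to roughly 0.14 at the pathway level.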

PCA Performance and Data Quality Assessment

Data Characteristics Affecting PCA

Principal Component Analysis (PCA) is commonly used to assess data quality and identify sample relationships and batch effects. The different data structures generated by microarray and RNA-seq influence PCA results:

Microarray Data: Continuous fluorescence intensity values, which are approximately normally distributed after log transformation, are generally suitable for PCA using conventional Euclidean distance metrics [1].

RNA-seq Data: Count-based data typically follow a negative binomial distribution, in which variance grows with the mean. A variance-stabilizing transformation (VST) or regularized log transformation is therefore required before PCA to avoid dominance by highly expressed genes [1].
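The dominance effect described above is easy to reproduce on simulated counts. The following Python sketch is illustrative only: the data are synthetic negative binomial draws with an assumed overdispersion of 0.1 and equal sequencing depth across samples, and a plain log2(count + 1) stands in for the VST or regularized log transformation, which it does not replicate. The `pca` helper is a minimal SVD-based implementation written for this example.

```python
import numpy as np

def pca(x, n_components=2):
    """PCA of a samples-by-genes matrix via SVD of the column-centered data."""
    centered = x - x.mean(axis=0)
    u, s, vt = np.linalg.svd(centered, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    loadings = vt[:n_components]  # each row is a unit-length loading vector
    explained = (s ** 2 / np.sum(s ** 2))[:n_components]
    return scores, loadings, explained

# Synthetic counts: gene 0 is highly expressed, the other 49 genes are not.
rng = np.random.default_rng(0)
n_samples, n_genes, alpha = 12, 50, 0.1
mu = np.full(n_genes, 20.0)
mu[0] = 10_000.0
n = 1.0 / alpha            # negative binomial "size" parameter
p = n / (n + mu)           # gives mean mu and variance mu + alpha * mu^2
counts = rng.negative_binomial(n, p, size=(n_samples, n_genes)).astype(float)

scores_raw, loadings_raw, _ = pca(counts)
scores_log, loadings_log, _ = pca(np.log2(counts + 1.0))

# Share of the PC1 loading vector carried by the highly expressed gene.
raw_share = loadings_raw[0, 0] ** 2
log_share = loadings_log[0, 0] ** 2
print(f"PC1 share of gene 0: raw={raw_share:.3f}, log-transformed={log_share:.3f}")
```

On raw counts the first component is essentially the single highly expressed gene, because its variance is several orders of magnitude larger than that of the others. After the log transformation the variances are comparable and no single gene defines PC1.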

The following diagram illustrates the data processing and PCA evaluation workflow for both platforms:

Metrics for Evaluating PCA Quality

Several metrics can assess PCA quality when comparing platforms:

Percentage of Variance Explained: The cumulative percent variance (CPV) retained by the first k principal components indicates how well the reduced dimensions capture the dataset's structure [32].

Variance of Reconstruction Error (VRE): This method evaluates how well the PCA model reconstructs the original data and can be used to determine the optimal number of components [32].

Information-Theoretic Criteria: Measures such as Rissanen's Minimum Description Length (MDL) provide alternative approaches for component selection [32].

One evaluation concludes that "CPV is convenient and easy, and does a decent job, but VRE and cross-validation methods are usually better" for evaluating PCA quality [32].
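Of the metrics listed, CPV is the simplest to compute. The Python sketch below derives it from the singular values of the centered data matrix and picks the smallest number of components that reaches a threshold. The 90% threshold and the function names are assumptions made for illustration; VRE and cross-validation are not implemented here.

```python
import numpy as np

def cumulative_percent_variance(x):
    """CPV for k = 1..r components of a samples-by-features matrix."""
    centered = x - x.mean(axis=0)
    singular_values = np.linalg.svd(centered, compute_uv=False)
    variances = singular_values ** 2
    return 100.0 * np.cumsum(variances) / variances.sum()

def components_for_cpv(cpv, threshold=90.0):
    """Smallest k whose CPV reaches the threshold (capped at the number available)."""
    k = int(np.searchsorted(cpv, threshold)) + 1
    return min(k, len(cpv))

# Toy data whose two axes carry 80% and 20% of the total variance.
x = np.array([[2.0, 0.0],
              [-2.0, 0.0],
              [0.0, 1.0],
              [0.0, -1.0]])
cpv = cumulative_percent_variance(x)
k_75 = components_for_cpv(cpv, threshold=75.0)
k_90 = components_for_cpv(cpv, threshold=90.0)
```

In this toy example one component is enough for a 75% threshold, while a 90% threshold requires both. The threshold itself is a convention rather than a property of the data, which is one reason the source favors VRE and cross-validation.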

Experimental Protocols for Platform Comparison

Sample Preparation Methodology

For rigorous comparison studies, the same RNA samples should be used for both platforms:

Cell Culture & Treatment: Human iPSC-derived hepatocytes are cultured and exposed to compounds of interest in triplicate, maintaining consistent DMSO concentrations across treatments [4].

RNA Extraction: Total RNA is purified using automated systems (e.g., EZ1 Advanced XL), with DNase digestion to remove genomic DNA contamination [4].

Quality Control: RNA concentration and purity are measured via spectrophotometry (NanoDrop), with RNA integrity determined using microfluidics-based systems (Agilent Bioanalyzer) [4]. Samples should have RIN values above 7 for reliable results [1].

Platform-Specific Processing