PCA vs. MANOVA: Choosing the Right Tool for High-Dimensional Gene Expression Analysis

Abstract

This article provides a comprehensive guide for researchers and bioinformaticians on applying Principal Component Analysis (PCA) and Multivariate Analysis of Variance (MANOVA) to high-dimensional gene expression data. It covers the foundational principles of both methods, detailing their specific applications in genomics—from exploratory data visualization and batch effect detection with PCA to formal hypothesis testing of group differences with MANOVA. The content addresses critical troubleshooting aspects, including managing the curse of dimensionality, correcting for multiple testing, and optimizing power. Finally, it offers a direct comparison of the methods' performance, limitations, and suitability for different research goals, empowering scientists to make informed methodological choices in drug development and clinical research.

Understanding the Core Principles: When to Use PCA vs. MANOVA in Genomics

In high-dimensional gene expression analysis, researchers must navigate a complex landscape of statistical techniques to extract meaningful biological insights. Principal Component Analysis (PCA) and Multivariate Analysis of Variance (MANOVA) represent two fundamental but distinct approaches, serving exploratory and confirmatory data analysis goals, respectively. This guide provides an objective comparison of these methodologies, supported by experimental data and detailed protocols, to inform their application in genomic research and drug development. Framed within the broader thesis of optimizing analytical workflows, we contrast the unsupervised dimensionality reduction capabilities of PCA against the supervised group difference testing of MANOVA, highlighting their complementary roles in the research pipeline.

Core Conceptual Frameworks and Mathematical Foundations

1.1 Exploratory Data Analysis with PCA

Principal Component Analysis is an unsupervised dimensionality reduction technique that transforms high-dimensional data into a set of linearly uncorrelated variables called principal components. These components are ordered so that the first few retain most of the variation present in the original dataset [1]. PCA operates on the original feature matrix, such as gene expression values, and functions by identifying new axes that maximize variance through eigenvalue decomposition of the covariance or correlation matrix [2]. The mathematical goal is an orthogonal transformation that converts potentially correlated variables into a new coordinate system of principal components, where the greatest variance lies on the first coordinate, the second greatest variance on the second coordinate, and so forth. This makes PCA particularly valuable for initial data exploration, noise reduction, and visualizing the overall structure of genomic data.

1.2 Confirmatory Analysis with MANOVA

Multivariate Analysis of Variance is a supervised statistical test that extends ANOVA to scenarios with multiple dependent variables. It assesses whether two or more groups, defined by one or more categorical explanatory variables, differ significantly across multiple outcome variables simultaneously [3]. Whereas ANOVA tests group differences on a single continuous outcome, MANOVA evaluates differences on a combination of outcome variables, making it well suited to testing predefined hypotheses about group separations. The test compares population mean vectors; for example, it can test whether different experimental treatments produce different responses across multiple gene expression profiles. MANOVA works by calculating within-group and between-group covariance matrices, with several test statistics available for significance testing, including Wilks' Lambda, Pillai's Trace, the Hotelling-Lawley Trace, and Roy's Largest Root [3] [1].

Comparative Analysis: PCA vs. MANOVA

Table 1: Key Differences Between PCA and MANOVA

| Characteristic | Principal Component Analysis | Multivariate Analysis of Variance |
|---|---|---|
| Primary Goal | Exploratory dimensionality reduction and visualization | Confirmatory testing of group differences on multiple outcomes |
| Analysis Type | Unsupervised | Supervised |
| Input Data | Original feature matrix | Multiple dependent variables with group structure |
| Key Output | Principal components that maximize variance | Test statistics for significant group differences |
| Variable Role | No distinction between dependent/independent variables | Clear distinction between dependent and independent variables |
| Data Structure | Effective for linear data structures | Requires categorical independent variables |
| Interpretation | Identifies dominant patterns and data structure | Determines if groups have different population mean vectors |
| Common Applications | Initial data exploration, outlier detection, clustering | Hypothesis testing, experimental group comparisons |

2.1 Divergent Analytical Goals and Applications

The fundamental distinction lies in their analytical purposes: PCA serves exploratory data analysis by revealing the inherent structure of data without pre-existing hypotheses, while MANOVA serves confirmatory data analysis by testing specific hypotheses about group differences [4]. In gene expression studies, PCA might help researchers discover previously unknown sample clusters or identify dominant patterns of gene co-expression across all samples [5]. In contrast, MANOVA would formally test whether predefined sample groups show statistically significant differences in their multivariate gene expression profiles.

2.2 Technical Requirements and Data Structures

PCA requires a continuous data matrix without missing values and operates effectively on linear data structures [2]. MANOVA requires categorical independent variables and continuous dependent variables that meet assumptions of multivariate normality, homogeneity of covariance matrices, and independence of observations [3]. The techniques also differ in their outputs: PCA produces principal components that can be visualized in lower-dimensional space, while MANOVA provides test statistics that determine whether to reject null hypotheses about group equality.

Experimental Protocols and Applications in Gene Expression Analysis

3.1 PCA Protocol for Gene Expression Microarray Data

The standard workflow for PCA in gene expression analysis involves the following steps:

Data Preprocessing: Begin with normalized gene expression data from microarray or RNA-seq experiments. For the Affymetrix Human U133A microarray platform, this includes quality control checks using metrics like Relative Log Expression to identify problematic arrays [5].

Data Standardization: Standardize the data matrix to have mean zero and unit variance for each gene to prevent highly expressed genes from dominating the analysis.

Covariance Matrix Computation: Calculate the covariance matrix of the standardized expression data to understand how genes vary together.

Eigenvalue Decomposition: Perform eigenvalue decomposition of the covariance matrix to obtain eigenvectors and eigenvalues. The eigenvectors represent the principal components, while the eigenvalues indicate the variance explained by each component.

Component Selection: Select the first 2-3 principal components for visualization, or use scree plots to determine how many components to retain for further analysis. The leading components often capture only a modest share of the variance: in one large, heterogeneous microarray dataset, the first three PCs explained approximately 36% of the total variance [5].

Interpretation: Interpret the principal components by examining the loading scores to identify which genes contribute most to each component. Biologically relevant interpretations emerge when components separate known sample types.
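The steps above can be sketched in a few lines of NumPy. This is a minimal illustration rather than a reference pipeline: the toy matrix, the group shift, and the function name `pca_eig` are assumptions introduced here, and real data would first go through the normalization and quality-control steps described above.

```python
import numpy as np

def pca_eig(X, n_components=3):
    """PCA of a samples-by-genes matrix via eigendecomposition of the covariance matrix."""
    # Standardize each gene (column) to mean 0 and unit variance.
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # Covariance of the standardized data (this equals the gene-gene correlation matrix).
    C = np.cov(Z, rowvar=False)
    # eigh is meant for symmetric matrices and returns eigenvalues in ascending order.
    eigvals, eigvecs = np.linalg.eigh(C)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    explained = eigvals / eigvals.sum()      # fraction of variance per component
    loadings = eigvecs[:, :n_components]     # genes x components
    scores = Z @ loadings                    # samples x components
    return scores, loadings, explained[:n_components]

# Hypothetical toy data: 40 samples x 50 genes; the first 20 samples are
# shifted upward in the first 10 genes to mimic a group effect.
rng = np.random.default_rng(0)
X = rng.normal(size=(40, 50))
X[:20, :10] += 2.0

scores, loadings, explained = pca_eig(X)
top_genes = np.argsort(np.abs(loadings[:, 0]))[::-1][:5]
print("variance explained by PC1-PC3:", np.round(explained, 3))
print("genes with the largest |loading| on PC1:", top_genes)
```

Plotting `scores[:, 0]` against `scores[:, 1]` gives the usual two-dimensional PCA view, and the loading ranking is one simple way to see which genes drive a component.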

Application Example: In a study analyzing 5,372 samples from 369 different tissues, cell lines, and disease states, the first three PCs separated hematopoietic cells, malignant samples, and neural tissues, respectively [5]. The fourth PC correlated with an array quality metric, representing measurement noise. This demonstrates PCA's utility in identifying major biological and technical patterns in large, heterogeneous datasets.

3.2 MANOVA Protocol for Differential Expression Analysis

The MANOVA protocol for testing group differences in gene expression profiles involves:

Experimental Design: Define clear experimental groups with adequate sample sizes. For example, testing the effect of three different medications on both weight change and cholesterol levels [3].

Assumption Checking: Verify multivariate normality using tests such as Mardia's test, and check homogeneity of covariance matrices using Box's M test [1].

Test Statistic Selection: Choose an appropriate test statistic based on data characteristics. Wilks' Lambda is most commonly used and is calculated as:

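Wilks' Lambda = |E| / |T|

where E is the within-group (error) sum of squares and cross-products (SSCP) matrix, T is the total SSCP matrix, and |·| denotes the determinant [3]. Values close to 0 indicate strong group separation; values close to 1 indicate little or none.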

Hypothesis Testing: Formulate null and alternative hypotheses. For example:

- H₀: The mean vectors of gene expression profiles are equal across all treatment groups.

- H₁: At least one treatment group has a different mean vector of gene expression profiles.

Significance Determination: Convert the test statistic to an F-statistic and obtain a p-value using statistical software. A significance threshold of α = 0.05 is commonly used.

Post-hoc Analysis: If significant differences are found, conduct post-hoc tests to determine which specific groups differ.
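As a rough illustration of the protocol, the sketch below computes Wilks' Lambda for a one-way design and obtains a p-value by permuting group labels. The permutation test is swapped in here for the F-approximation mentioned above, purely to keep the example dependency-free; the function names and the simulated data are assumptions for illustration only.

```python
import numpy as np

def wilks_lambda(Y, groups):
    """Wilks' Lambda = det(E) / det(T) for a one-way layout."""
    Yc = Y - Y.mean(axis=0)
    T = Yc.T @ Yc                                  # total SSCP matrix
    E = np.zeros_like(T)
    for g in np.unique(groups):
        R = Y[groups == g] - Y[groups == g].mean(axis=0)
        E += R.T @ R                               # within-group (error) SSCP matrix
    # slogdet is numerically safer than det for ill-conditioned matrices.
    _, logdet_E = np.linalg.slogdet(E)
    _, logdet_T = np.linalg.slogdet(T)
    return float(np.exp(logdet_E - logdet_T))

def permutation_pvalue(Y, groups, n_perm=999, seed=0):
    """Permutation p-value: how often shuffled labels give a Lambda this small."""
    rng = np.random.default_rng(seed)
    observed = wilks_lambda(Y, groups)
    hits = sum(wilks_lambda(Y, rng.permutation(groups)) <= observed
               for _ in range(n_perm))
    return observed, (hits + 1) / (n_perm + 1)

# Hypothetical data: 3 treatment groups x 15 samples, 4 outcome variables;
# the third group is shifted in the first two outcomes.
rng = np.random.default_rng(0)
groups = np.repeat(np.array([0, 1, 2]), 15)
Y = rng.normal(size=(45, 4))
Y[groups == 2, :2] += 2.0

lam, p = permutation_pvalue(Y, groups)
print(f"Wilks' Lambda = {lam:.3f}, permutation p = {p:.3f}")
```

Note that this only works when the number of outcome variables is well below the number of samples; otherwise E is singular and the determinant ratio is undefined.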

Application Example: In a study of sugarcane quality parameters, researchers used MANOVA Biplot to determine that pre-harvest wilting treatments did not significantly alter quality metrics despite a strong correlation between quality variables such as Brix, Pol, and juice purity [6]. This demonstrates MANOVA's ability to test specific hypotheses about treatment effects on multiple correlated outcome variables.

Integrated Analytical Workflow for Genomic Studies

The relationship between exploratory and confirmatory analysis in genomic studies follows a logical progression that can be visualized as a workflow:

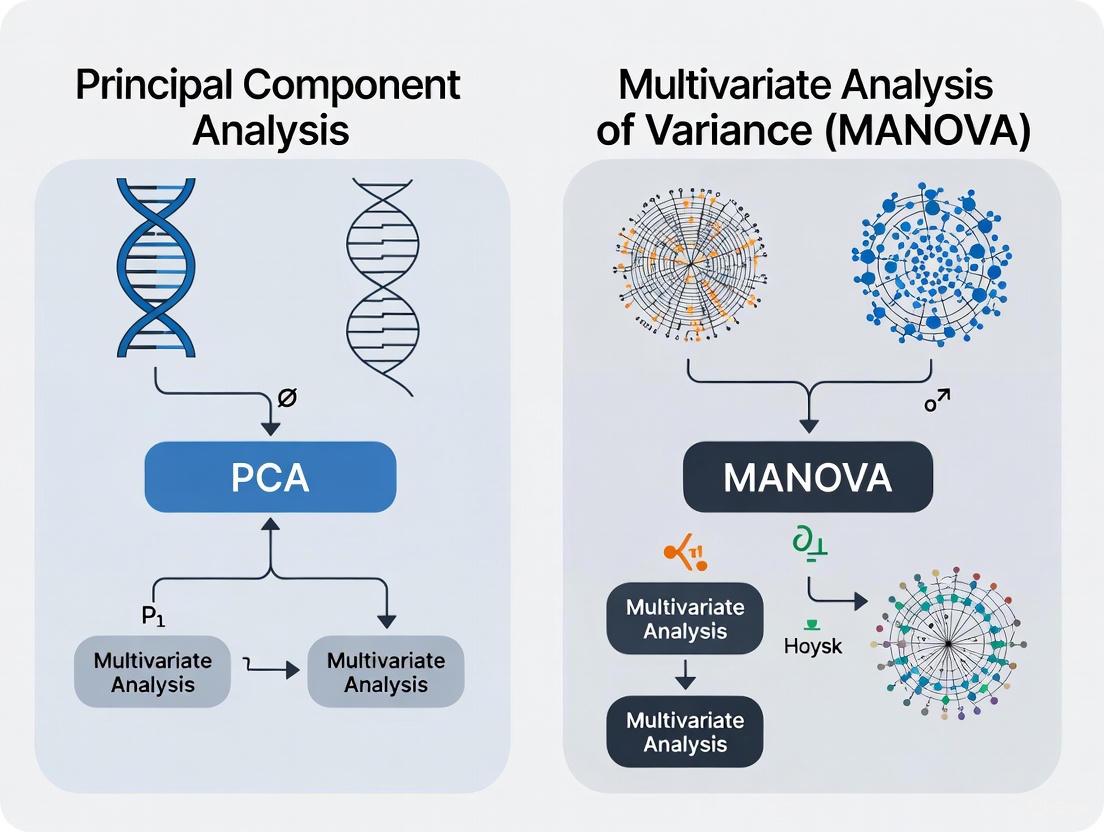

Figure 1: Integrated analytical workflow showing the complementary relationship between PCA and MANOVA in genomic studies.

This workflow illustrates how exploratory and confirmatory analyses are not in opposition but rather work together in a complementary fashion [7]. PCA helps generate hypotheses by revealing patterns in the data, while MANOVA formally tests these hypotheses using rigorous statistical frameworks.

Limitations, Considerations, and Alternative Approaches

5.1 Limitations of PCA

PCA has several important limitations in gene expression analysis. It assumes linear relationships between variables, which may not capture complex biological interactions [2]. The technique is sensitive to sample composition; studies have shown that the specific principal components identified depend strongly on the sample distribution in the dataset [5]. When a dataset contains many samples from a particular tissue type, that tissue may dominate early principal components regardless of its biological significance. Additionally, PCA may fail to detect biologically relevant information embedded in higher-order components, particularly for tissue-specific information that remains in the residual space after subtracting the first three PCs [5].

5.2 Limitations of MANOVA

MANOVA requires meeting several statistical assumptions that can be challenging with genomic data. The test assumes multivariate normality, homogeneity of covariance matrices, and independence of observations [3]. Violations of these assumptions can lead to inaccurate results. MANOVA also becomes increasingly complex to interpret with many dependent variables, and it provides an overall test of significance without immediately indicating which specific variables drive group differences.

5.3 Alternative and Complementary Methods

Several alternative approaches address limitations of both PCA and MANOVA:

Canonical Variates Analysis: Particularly effective for designed experiments with replicates, as it enhances group discrimination by keeping subjects belonging to the same group close together in the transformed space [8].

t-Distributed Stochastic Neighbor Embedding: A nonlinear dimensionality reduction technique particularly effective for visualizing high-dimensional gene expression data and identifying clusters [9].

PCA-Projected F-test: Combines the dimensionality reduction of PCA with rigorous statistical testing, providing better empirical power performance than classical MANOVA Wilks' Lambda-test in high-dimensional settings with small sample sizes [9].

Table 2: Research Reagent Solutions for Gene Expression Analysis

| Reagent/Resource | Function in Analysis | Application Context |
|---|---|---|
| Affymetrix Microarray Platforms | Genome-wide expression profiling | Generating high-dimensional gene expression data [5] |
| R Statistical Software | Implementation of PCA, MANOVA, and related methods | Primary tool for statistical analysis and visualization [5] [6] |
| NCSS Multivariate Analysis Module | Commercial software for MANOVA, PCA, and other multivariate tests | User-friendly implementation of complex statistical models [1] |
| aomisc R Package | Provides Canonical Variates Analysis functions | Enhanced group discrimination for designed experiments [8] |
| vegan R Package | Community ecology package with ordination methods | PCA implementation and biodiversity analysis [8] |

PCA and MANOVA serve distinct but complementary roles in high-dimensional gene expression analysis. PCA excels as an exploratory tool for visualizing data structure, identifying patterns, and reducing dimensionality, while MANOVA provides rigorous confirmatory testing for group differences across multiple outcome variables. The most effective analytical strategies employ both techniques sequentially: using PCA to generate hypotheses from complex genomic data, then applying MANOVA to formally test these hypotheses within a statistical framework. Understanding the strengths, limitations, and proper applications of each method enables researchers to draw more reliable biological conclusions from complex gene expression datasets, ultimately advancing drug development and genomic science.

In high-dimensional gene expression analysis, researchers are often faced with the challenge of extracting meaningful biological signals from datasets where the number of variables (genes) far exceeds the number of observations (samples). This "large p, small n" problem necessitates robust dimensionality reduction techniques that can uncover underlying patterns while managing computational complexity. Principal Component Analysis (PCA) and Multivariate Analysis of Variance (MANOVA) represent two fundamentally different approaches for handling multivariate data. This guide provides an objective comparison of these methodologies, examining their performance characteristics, statistical power, and practical applicability in genomic research to help scientists select the appropriate tool for their analytical needs.

Understanding the Core Methodologies

Principal Component Analysis (PCA): A Dimension Reduction Workhorse

PCA is an unsupervised dimensionality reduction technique that transforms high-dimensional data into a new coordinate system comprised of orthogonal principal components (PCs). These components are linear combinations of the original variables, ordered such that the first PC captures the maximum possible variance in the data, the second PC captures the next highest variance while being orthogonal to the first, and so on [10].

The mathematical foundation of PCA involves several key steps. First, the data is standardized to have zero mean and unit variance, ensuring that variables with larger scales do not disproportionately influence the results. Next, the covariance matrix is computed to capture the relationships between all pairs of variables. Eigen decomposition of this covariance matrix yields eigenvectors (which define the directions of the principal components) and eigenvalues (which represent the amount of variance explained by each component) [10]. The top k eigenvectors are selected based on their corresponding eigenvalues, effectively projecting the data onto a lower-dimensional subspace while preserving the maximal variance structure.
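When genes far outnumber samples, forming the full gene-by-gene covariance matrix is wasteful. A common alternative, sketched below, takes the singular value decomposition of the standardized data matrix directly, which yields the same components. The matrix sizes and the 80% cumulative-variance cutoff are arbitrary choices made for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
n, p = 12, 2000                        # hypothetical: 12 samples, 2000 genes
X = rng.normal(size=(n, p))

# Standardize each gene to zero mean and unit variance.
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

# Thin SVD: Z = U @ diag(S) @ Vt. Rows of Vt are the principal directions.
U, S, Vt = np.linalg.svd(Z, full_matrices=False)
eigvals = S**2 / (n - 1)               # eigenvalues of the covariance matrix
explained = eigvals / eigvals.sum()

# Centering removes one degree of freedom, so at most n - 1 components
# carry any variance, no matter how many genes were measured.
n_nonzero = int(np.sum(eigvals > 1e-10 * eigvals[0]))

# Keep the smallest k whose cumulative explained variance reaches 80%.
k = int(np.searchsorted(np.cumsum(explained), 0.80) + 1)
scores = U[:, :k] * S[:k]              # samples x k projected data

print("components with non-zero variance:", n_nonzero)
print("components kept for 80% of variance:", k)
```

The cap of n − 1 informative components is worth keeping in mind when reading scree plots from small studies: the apparent dimensionality is bounded by the sample size, not by the biology.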

In genetic association studies, PCA has demonstrated particular utility for analyzing multiple correlated phenotypes. Contrary to widespread practice, research has shown that testing only the top PCs often has low power, whereas combining signals across all PCs can significantly improve power to detect genetic variants with opposite effects on positively correlated traits and variants exclusively associated with a single trait [11].

MANOVA: The Traditional Multivariate Approach

MANOVA represents the traditional multivariate generalization of ANOVA, designed to test for statistically significant differences between groups across multiple dependent variables simultaneously. The method tests whether the mean vectors of the groups are equal, while accounting for correlations between response variables [12]. MANOVA models the total variance-covariance matrix by partitioning it into components attributable to different experimental factors and their interactions, followed by hypothesis testing typically using statistics such as Wilks' Lambda, Pillai's Trace, or the Hotelling-Lawley Trace (which reduces to Hotelling's T² when only two groups are compared).

However, MANOVA faces fundamental limitations when applied to high-dimensional biological data. The method has strict requirements for sample size, demanding more observations than variables—a condition rarely met in genomic studies where thousands of genes are measured across relatively few samples [12] [13]. This limitation arises from the need to estimate a full covariance matrix, which becomes singular when the number of variables exceeds the number of observations. Additionally, MANOVA assumes multivariate normality, homogeneity of covariance matrices, and independence of observations—assumptions frequently violated in high-throughput genomic data [12].
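The singularity problem is easy to reproduce. In the sketch below, with hypothetical sizes of 15 samples in 3 groups and 50 genes, the within-group SSCP matrix has rank at most n minus the number of groups, so it cannot be inverted. The ridge-style shrinkage at the end is a generic illustration of regularization and is not claimed to be the specific rMANOVA procedure of [12].

```python
import numpy as np

rng = np.random.default_rng(2)
n_per_group, n_groups, p = 5, 3, 50    # 15 samples, 50 genes (p > n)
n = n_per_group * n_groups
Y = rng.normal(size=(n, p))
groups = np.repeat(np.arange(n_groups), n_per_group)

# Within-group sum of squares and cross-products matrix (p x p).
W = np.zeros((p, p))
for g in range(n_groups):
    R = Y[groups == g] - Y[groups == g].mean(axis=0)
    W += R.T @ R

rank = np.linalg.matrix_rank(W)
print(f"rank of W: {rank} (matrix is {p} x {p}, n - groups = {n - n_groups})")

# Generic ridge-style regularization (illustrative assumption): adding a
# multiple of the identity restores full rank, at the cost of some bias.
delta = 1.0
W_reg = W + delta * np.eye(p)
print("rank after regularization:", np.linalg.matrix_rank(W_reg))
```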

Direct Performance Comparison: PCA vs. MANOVA

Table 1: Methodological Comparison of PCA and MANOVA for High-Dimensional Data Analysis

| Characteristic | PCA | MANOVA |
|---|---|---|
| Data Requirements | No strict sample size requirements | Requires more samples than variables |
| Dimensionality Handling | Excellent for high-dimensional data ("large p, small n") | Fails with high-dimensional data due to singular covariance matrices |
| Statistical Power | High when combining all components [11] | Limited with high-dimensional data |
| Implementation Complexity | Low; efficient algorithms available | High; requires regularization for high-dimensional data |
| Interpretability | Components may lack biological meaning | Direct group difference testing |
| Assumptions | Few assumptions beyond linearity | Multivariate normality, homogeneity of covariance matrices |
| Multiple Testing Burden | Reduced through dimension reduction | Severe without prior dimension reduction |

Table 2: Experimental Performance Comparison Across Biological Data Types

| Application Domain | PCA Performance | MANOVA Performance | Key Findings |
|---|---|---|---|
| Genetic Association Studies | Powerful for detecting pleiotropic variants [11] | Not directly applicable without modification | Combined-PC approach showed near-optimal power across scenarios |
| Imaging Genetics | Extensively used for brain endophenotype analysis [14] | Limited application due to high dimensionality | PCA enables multivariate analysis of correlated neuroimaging phenotypes |
| Metabolomics | ASCA (ANOVA-SCA) effectively handles designed experiments [12] | Requires regularization (rMANOVA) | All ANOVA-based methods detected significant factors, with similar performance |
| Multi-Source Data Integration | Enables integration through shared latent spaces [13] | Cannot directly handle distinct variable spaces | Bayesian multi-way models extend PCA concepts for multi-source data |

Experimental Protocols and Validation

Protocol 1: Evaluating PCA Power in Genetic Association Studies

Objective: To assess the power of different PCA strategies for identifying genetic variants associated with multiple correlated traits.

Methodology:

- Data Generation: Simulate multiple positively correlated, normally distributed phenotypes (Y₁, Y₂, ..., Yₙ) with mean 0 and variance 1, influenced by an unknown variable U and a scaled genotype G (both normally distributed with mean 0 and variance 1).

- Trait Construction: Construct trait vectors using the model: Yᵢ = c∗u + √vᵢ∗g + √(1−c−vᵢ)∗εᵢ, where εᵢ denotes independent random noise normally distributed with mean 0 and variance 1 [11].

- PCA Implementation: Compute principal components of the trait correlation matrix, deriving both top-variance PCs and all PCs.

- Association Testing: Test associations between genotype G and (a) individual traits, (b) top PCs only, and (c) all PCs combined using joint tests.

- Power Calculation: Compute statistical power using noncentral chi-square distributions with appropriate degrees of freedom [11].

Key Findings: Analysis of up to 100 correlated traits demonstrated that testing only the top PCs often has low power, whereas combining signals across all PCs substantially improves power, particularly for detecting genetic variants with opposite effects on positively correlated traits and variants exclusively associated with a single trait [11].
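A small simulation in the spirit of Protocol 1 shows why the top PC can miss a variant that the combined test picks up. Several things below are assumptions made for this sketch and are not taken from [11]: the sample size, five traits, the effect sizes, the use of n·r² as a per-PC score statistic, and in particular the coefficient √c on the shared factor, chosen so that each simulated trait has unit variance as the protocol states (the formula as printed above uses c directly).

```python
import numpy as np

rng = np.random.default_rng(3)
n, k, c = 5000, 5, 0.5                      # individuals, traits, shared variance
v = np.array([0.01, 0.0, 0.0, 0.0, 0.0])    # variant affects trait 1 only

u = rng.normal(size=n)                      # unobserved shared factor U
g = rng.normal(size=n)                      # scaled genotype G
eps = rng.normal(size=(n, k))               # independent noise
# Assumption: sqrt(c) on u, so Var(Y_i) = c + v_i + (1 - c - v_i) = 1.
Y = np.sqrt(c) * u[:, None] + np.sqrt(v) * g[:, None] + np.sqrt(1 - c - v) * eps

# Principal components of the trait correlation matrix, largest variance first.
Z = (Y - Y.mean(axis=0)) / Y.std(axis=0)
eigvals, eigvecs = np.linalg.eigh(np.corrcoef(Y, rowvar=False))
order = np.argsort(eigvals)[::-1]
pcs = Z @ eigvecs[:, order]

# Per-PC score statistic n * r^2 (approximately chi-square with 1 df under the null).
r = np.array([np.corrcoef(pcs[:, j], g)[0, 1] for j in range(k)])
per_pc = n * r**2
combined = per_pc.sum()                     # approximately chi-square with k df under the null

CHI2_5DF_95 = 11.07                         # 0.95 quantile of chi-square, 5 df
print("statistic for top PC only:", round(per_pc[0], 2))
print("combined statistic over all PCs:", round(combined, 2))
print("combined exceeds 5% critical value:", combined > CHI2_5DF_95)
```

Because the sample PCs are mutually uncorrelated, the per-PC statistics can simply be summed. In this setup most of the single-trait signal ends up in the low-variance components, which a top-PC-only analysis discards.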

Protocol 2: Comparing Multivariate Methods in Metabolomics

Objective: To evaluate the performance of ANOVA-based multivariate methods (ASCA, rMANOVA, GASCA) for determining significant experimental factors and relevant variables in metabolomic studies.

Methodology:

- Experimental Design: Generate two LC-MS datasets with different complexity: (1) yeast samples with two extraction protocols (single factor), and (2) zebrafish embryos exposed to two endocrine disruptor chemicals at two concentration levels (multiple factors) [12].

- Data Preprocessing: Process raw chromatograms to obtain total ion current (TIC) profiles and integrated peak areas.

- Method Application: Apply ASCA, rMANOVA, and GASCA to assess statistical significance of experimental factors using permutation tests (typically 10,000 permutations).

- Variable Selection: Identify relevant variables (potential markers) contributing most to factor effects.

- Validation: Compare results with standard methods (univariate tests, PLS-DA with VIP scores) to evaluate reliability [12].
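The core idea behind the permutation-based ANOVA methods in this protocol can be sketched for a single factor: replace each sample by its group mean to obtain an effect matrix, use the effect sum of squares as the test statistic, and compare it against label permutations. This is a simplified, ASCA-style illustration under assumed data sizes, not the published ASCA, rMANOVA, or GASCA implementations; `effect_matrix` and `asca_permutation_test` are hypothetical helper names.

```python
import numpy as np

def effect_matrix(X, groups):
    """Center X, then replace every row by the mean of its group."""
    Xc = X - X.mean(axis=0)
    A = np.zeros_like(Xc)
    for g in np.unique(groups):
        A[groups == g] = Xc[groups == g].mean(axis=0)
    return A

def asca_permutation_test(X, groups, n_perm=999, seed=0):
    """Permutation p-value for the factor's effect sum of squares."""
    rng = np.random.default_rng(seed)
    observed = np.sum(effect_matrix(X, groups) ** 2)
    permuted = np.array([np.sum(effect_matrix(X, rng.permutation(groups)) ** 2)
                         for _ in range(n_perm)])
    p_value = (np.sum(permuted >= observed) + 1) / (n_perm + 1)
    return observed, p_value

# Hypothetical data: 2 extraction protocols x 8 samples, 200 features,
# with the second protocol shifting the first 20 features.
rng = np.random.default_rng(5)
groups = np.repeat(np.array([0, 1]), 8)
X = rng.normal(size=(16, 200))
X[groups == 1, :20] += 2.0

ssq, p_value = asca_permutation_test(X, groups)

# PCA (via SVD) of the effect matrix gives factor scores and feature loadings.
A = effect_matrix(X, groups)
U, S, Vt = np.linalg.svd(A, full_matrices=False)
candidate_markers = np.argsort(np.abs(Vt[0]))[::-1][:10]

print(f"effect sum of squares = {ssq:.1f}, permutation p = {p_value:.3f}")
print("features with the largest loadings:", candidate_markers)
```

Unlike classical MANOVA, nothing here requires inverting a covariance matrix, so the approach works with more features than samples. The smallest p-value the test can report is 1 / (n_perm + 1), which is why strongly significant factors all land on the same floor value.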

Key Findings: All three ANOVA-based methods successfully detected statistically significant factors, with ASCA and rMANOVA producing p-values at the smallest value attainable with the number of permutations used. GASCA showed more variation between ionization modes but identified relevant variables that strongly aligned with those detected by PLS-DA, suggesting higher reliability for biomarker discovery [12].

Visualization of Analytical Workflows

[Figure: PCA Workflow for High-Dimensional Biological Data]

[Figure: MANOVA Limitations and Modern Extensions]

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Essential Research Reagents and Computational Tools for Multivariate Analysis

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| R Statistical Environment | Software Platform | Comprehensive statistical computing and graphics | Implementation of PCA, MANOVA, and specialized packages for omics data |
| Python (scikit-learn, glycowork) | Programming Language | Machine learning and compositional data analysis | PCA implementation and specialized analysis pipelines for glycomics [15] |
| ASCA+ Toolkit | Chemometrics Package | ANOVA-simultaneous component analysis | Designed metabolomic studies with multiple experimental factors [12] |
| Multi-Way CCA | Bayesian Model | Multi-way, multi-source data integration | Integrated analysis of metabolic and gene expression profiles [13] |
| KernelDEEF | Computational Method | Completely data-driven profile comparison | Conversion of single-cell expression data to donor-by-feature matrices [16] |
| mAP Framework | Statistical Framework | Profile strength and similarity evaluation | Assessment of phenotypic activity in high-dimensional profiling data [17] |

The comparative analysis reveals distinct advantages and limitations for both PCA and MANOVA in high-dimensional gene expression research. PCA emerges as the more versatile and practical approach for exploratory analysis and dimension reduction in typical "large p, small n" scenarios, while MANOVA and its modern extensions offer rigorous hypothesis testing frameworks when methodological assumptions can be satisfied.

For researchers designing genomic studies, the following evidence-based recommendations are provided:

- Prioritize PCA-based approaches for initial exploratory analysis of high-dimensional genomic data, particularly when sample sizes are limited relative to the number of variables measured.

- Implement combined-PC testing strategies rather than analyzing only top-variance components, as this approach maintains power to detect diverse genetic association patterns [11].

- Consider regularized MANOVA variants or ASCA when analyzing data from designed experiments with multiple factors, as these methods balance statistical rigor with practical applicability to high-dimensional data [12].

- Adopt multi-source integration methods when combining heterogeneous data types (e.g., transcriptomics and metabolomics), as these specialized techniques can reveal biological insights not apparent from single-source analyses [13].

The choice between PCA and MANOVA ultimately depends on specific research objectives, data characteristics, and analytical requirements. PCA excels in dimension reduction and pattern discovery, while MANOVA and its extensions provide formal statistical testing for experimental factors. Understanding these complementary strengths enables researchers to select optimal strategies for extracting meaningful biological insights from complex genomic datasets.

Multivariate Analysis of Variance (MANOVA) is a sophisticated statistical procedure used to determine whether there are statistically significant differences between the means of multiple groups across several dependent variables simultaneously. As an extension of Analysis of Variance (ANOVA), MANOVA allows researchers to analyze the effect of one or more independent variables on multiple continuous dependent variables while considering the interrelationships between these outcome measures. This multivariate technique is particularly valuable in complex research domains like genomics and drug development, where phenomena are typically influenced by multiple correlated outcome measures rather than isolated variables.

The fundamental principle behind MANOVA is its ability to combine multiple dependent variables into a weighted linear composite, creating a new "latent variate" upon which group differences are tested. This approach provides several advantages over conducting multiple ANOVAs, including enhanced statistical power for detecting specific patterns and better control over experiment-wise Type I error rates. In high-dimensional biological research, such as gene expression analysis, MANOVA offers a framework for understanding how experimental conditions collectively influence multiple molecular outcomes, providing a more holistic view of treatment effects than univariate methods.

Fundamental Concepts and Comparison with ANOVA

Core Differences Between MANOVA and ANOVA

MANOVA expands upon the traditional ANOVA framework by accommodating multiple dependent variables in a single analysis. While ANOVA assesses whether group means differ on a single outcome variable, MANOVA evaluates whether groups differ on a combination of several outcome measures. This fundamental distinction creates significant implications for research design, interpretation, and application across scientific domains.

Table 1: Key Differences Between ANOVA and MANOVA

| Parameter | ANOVA | MANOVA |
|---|---|---|
| Full Name | Analysis of Variance | Multivariate Analysis of Variance |
| Dependent Variables | Single continuous dependent variable | Two or more continuous dependent variables |
| Objective | Determine differences in group means for one outcome | Determine independent variable effects on multiple outcomes and their interactions |
| Nature | Parametric | Multivariate parametric |
| Test Statistics | F-statistic | Wilks' Lambda, Pillai's Trace, Hotelling-Lawley Trace, Roy's Largest Root |
| Variance Assessment | Assesses ratio between group mean differences and within-group variance | Optimally combines variables to enhance group differences using variance-covariance |
| Error Rate Control | Individual test error rate | Controls experiment-wise error rate for multiple dependent variables |

When to Choose MANOVA Over ANOVA

MANOVA provides particular advantages in specific research scenarios. It is ideally suited when dependent variables are moderately correlated conceptually or statistically, as the technique leverages these relationships to identify patterns that might remain hidden in separate univariate analyses. For example, in pharmaceutical research, MANOVA could simultaneously analyze how different drug formulations affect multiple efficacy endpoints (e.g., biomarker levels, symptom scores, functional measures) while accounting for their natural correlations.

The method offers greater statistical power when analyzing correlated dependent variables, enabling detection of smaller effects that might be missed by individual ANOVA tests. This advantage stems from MANOVA's ability to account for variance-covariance structures in the data. Additionally, by conducting one multivariate test instead of multiple univariate tests, researchers maintain better control over the family-wise error rate, reducing the likelihood of false positive findings when examining multiple outcome measures.
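The error-rate argument can be made concrete with a little arithmetic. Assuming, for simplicity, that the separate univariate tests are independent (correlated outcomes change the exact numbers), the chance of at least one false positive across m tests at level α is 1 − (1 − α)^m:

```python
alpha = 0.05

def familywise_error_rate(n_tests, alpha=alpha):
    """Probability of at least one false positive across independent tests."""
    return 1.0 - (1.0 - alpha) ** n_tests

for m in (1, 3, 5, 10):
    fwer = familywise_error_rate(m)
    bonferroni = alpha / m   # per-test level that keeps the family-wise rate <= alpha
    print(f"{m:2d} separate ANOVAs: FWER = {fwer:.3f}, Bonferroni level = {bonferroni:.4f}")
```

With five outcome measures, for instance, the family-wise rate rises to roughly 0.23, which is the inflation a single multivariate test is meant to avoid.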

Mathematical Foundation of MANOVA

The MANOVA Model

The MANOVA procedure operates on the general linear model framework, expressed mathematically as:

Y = Xβ + ε

Where Y is an n × m matrix of dependent variables (n observations on m response variables), X is an n × p matrix of predictor variables, β is a p × m matrix of regression coefficients, and ε is an n × m matrix of residuals. This formulation extends the univariate general linear model to accommodate multiple response variables simultaneously.

The null hypothesis tested in MANOVA is:

H₀: μ₁ = μ₂ = ⋯ = μₖ

Where μᵢ represents the vector of means for the i-th group across all dependent variables. The alternative hypothesis states that at least one group mean vector differs from the others. MANOVA evaluates this hypothesis by partitioning the total variance-covariance matrix into between-groups and within-groups components, analogous to how ANOVA partitions sum of squares.
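The model and the variance partition can be checked numerically. The sketch below uses the dimensions given above (X is n × p, β is p × m, so the fitted values are the matrix product of X and β), fits β by least squares for a one-way design, and verifies that the total SSCP matrix equals the between-groups plus within-groups matrices. The indicator (cell-means) coding of X and the simulated data are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(4)
groups = np.repeat([0, 1, 2], 10)              # k = 3 groups, n = 30 observations
Y = rng.normal(size=(30, 3))                   # m = 3 dependent variables
Y[groups == 2] += 1.0                          # shift the third group's mean vector

# Design matrix X (n x p): one indicator column per group.
X = (groups[:, None] == np.arange(3)).astype(float)

# Least-squares estimate of beta (p x m); with this coding each row of
# beta is the estimated mean vector of one group.
beta, *_ = np.linalg.lstsq(X, Y, rcond=None)
residuals = Y - X @ beta

grand_mean = Y.mean(axis=0)
T = (Y - grand_mean).T @ (Y - grand_mean)                 # total SSCP
B = (X @ beta - grand_mean).T @ (X @ beta - grand_mean)   # between-groups SSCP
W = residuals.T @ residuals                               # within-groups SSCP

print("group mean vectors (rows of beta):")
print(np.round(beta, 2))
print("T equals B + W:", np.allclose(T, B + W))
```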

Test Statistics in MANOVA

MANOVA employs several test statistics to evaluate multivariate significance, each with particular strengths and applications:

Wilks' Lambda (Λ): The most commonly reported MANOVA statistic, calculated as the ratio of the determinant of the within-groups sum of squares and cross-products matrix to the determinant of the total sum of squares and cross-products matrix: Λ = |W|/|T| = |W|/|B + W|, where W is the within-group matrix and B is the between-group matrix. Smaller values of Wilks' Lambda indicate stronger evidence against the null hypothesis.

Pillai's Trace: The sum of the explained variances of the discriminant functions, calculated as V = trace(BT⁻¹), where T = B + W. This statistic is generally more robust to violations of assumptions, particularly when sample sizes are small or homogeneity of covariance is questionable.

Hotelling-Lawley Trace: The sum of the eigenvalues of the matrix BW⁻¹, representing the ratio of between-groups to within-groups variation. This statistic is useful when group sizes are unequal but assumptions are met.

Roy's Largest Root: The largest eigenvalue of BW⁻¹, which tests only the first discriminant function. This statistic is most powerful when one dominant function separates groups but is sensitive to assumption violations.

Table 2: MANOVA Test Statistics and Their Formulas

| Test Statistic | Formula | Interpretation |
|---|---|---|
| Wilks' Lambda | Λ = det(W)/det(T) = det(W)/det(B + W) | Smaller values indicate significant group differences |
| Pillai's Trace | V = trace[B(T)⁻¹] | More robust to assumption violations |
| Hotelling-Lawley Trace | U = trace(BW⁻¹) | Ratio of between to within-group variation |
| Roy's Largest Root | θ = λₘₐₓ(BW⁻¹) | Tests only the first and largest discriminant function |
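All four statistics are functions of the eigenvalues of BW⁻¹, which makes them straightforward to compute together. The sketch below assumes NumPy and simulated data; `manova_statistics` is a hypothetical helper name. It follows the formulas in the table, including the convention of reporting Roy's largest root as the largest eigenvalue itself.

```python
import numpy as np

def manova_statistics(B, W):
    """Compute the four classical MANOVA statistics from SSCP matrices."""
    # Eigenvalues of W^{-1} B coincide with those of B W^{-1}
    eigvals = np.linalg.eigvals(np.linalg.solve(W, B)).real
    eigvals = np.clip(eigvals, 0.0, None)  # remove tiny negative round-off
    return {
        "wilks_lambda": float(np.prod(1.0 / (1.0 + eigvals))),
        "pillai_trace": float(np.sum(eigvals / (1.0 + eigvals))),
        "hotelling_lawley_trace": float(np.sum(eigvals)),
        "roy_largest_root": float(np.max(eigvals)),
    }

# Simulated example: 3 groups of 12 observations on 3 response variables
rng = np.random.default_rng(1)
groups = np.repeat([0, 1, 2], 12)
Y = rng.normal(size=(36, 3))
Y[groups == 1, 0] += 1.5  # mean shift in one variable for one group

grand = Y.mean(axis=0)
B = np.zeros((3, 3))
W = np.zeros((3, 3))
for g in np.unique(groups):
    Yg = Y[groups == g]
    d = Yg.mean(axis=0) - grand
    B += len(Yg) * np.outer(d, d)
    R = Yg - Yg.mean(axis=0)
    W += R.T @ R

stats = manova_statistics(B, W)
for name, value in stats.items():
    print(f"{name}: {value:.4f}")
```

Converting these statistics to p-values requires F approximations that depend on the design; dedicated statistical software handles that step.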

MANOVA in Gene Expression Analysis

Applications in Genomic Research

In high-dimensional gene expression analysis, MANOVA offers distinct advantages for detecting differentially expressed genes across multiple experimental conditions. Traditional approaches often summarize multiple probe-level measurements into single scores before conducting differential expression analysis, risking information loss and potentially reaching inaccurate conclusions. MANOVA addresses this limitation by simultaneously analyzing multiple probe-level measurements, preserving the multivariate nature of the data and potentially increasing detection power.

For oligonucleotide arrays like Affymetrix GeneChips, where multiple probes measure each gene's mRNA abundance, robustified MANOVA approaches have been developed specifically for detecting differentially expressed genes in both one-way and two-way experimental designs. These methods can be extended to identify special patterns of gene expression through profile analysis across multiple populations, utilizing probe-level data without restrictive distributional assumptions through permutation-based testing.

MANOVA vs. PCA in High-Dimensional Data

While both MANOVA and Principal Component Analysis (PCA) handle multivariate data, they serve distinct purposes in gene expression research. PCA is primarily a dimension-reduction technique that transforms correlated variables into a smaller set of uncorrelated principal components, capturing maximum variance in the data. In contrast, MANOVA is a group-comparison method that tests whether population means differ across multiple dependent variables.

In practice, these methods can be complementary. PCA might precede MANOVA to reduce dimensionality while preserving data structure, especially when dealing with thousands of genes where MANOVA would be computationally prohibitive. However, when focusing on specific gene sets or pathways, MANOVA directly tests experimental effects on multiple correlated expression measures, potentially detecting coordinated expression changes that would be missed in univariate analyses.

Figure 1: Comparative Workflow of PCA and MANOVA in Gene Expression Analysis

Experimental Design and Protocols

Implementing MANOVA in Gene Expression Studies

The application of MANOVA to gene expression data requires careful experimental design and execution. A typical protocol involves:

1. Probe-Level Data Preparation: Rather than summarizing probe-level data into single expression values, maintain multiple probe measurements as dependent variables. This preserves the multivariate nature of the data and allows MANOVA to detect patterns across probes.

2. Experimental Design Specification: For one-way MANOVA, different experimental conditions (e.g., treatment vs. control) serve as the grouping variable. For two-way MANOVA, multiple factors (e.g., treatment type and time point) can be incorporated with their interaction terms.

3. Assumption Checking: Verify multivariate normality using Mardia's test or Q-Q plots. Assess homogeneity of variance-covariance matrices using Box's M test (with significance set at α=.001 due to sensitivity). Check for multicollinearity among dependent variables, with correlations ideally below r=.90.

4. Robustified MANOVA Implementation: Apply permutation-based testing when distributional assumptions are violated, as implemented in robustified MANOVA packages specifically designed for gene expression data.

5. Interpretation and Follow-up: Upon finding significant multivariate effects, conduct appropriate post-hoc analyses to identify which specific genes and conditions contribute to the significant results, using methods like discriminant function analysis or protected univariate ANOVAs.
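Step 4 of the protocol can be illustrated with a generic permutation test on Wilks' Lambda. This is a minimal sketch assuming NumPy and simulated probe-level data; it is not the published robustified MANOVA procedure, and the function names, group sizes, and number of permutations are illustrative assumptions.

```python
import numpy as np

def wilks_lambda(Y, groups):
    """Wilks' Lambda = det(W) / det(T) for a one-way design."""
    C = Y - Y.mean(axis=0)
    T = C.T @ C
    W = np.zeros_like(T)
    for g in np.unique(groups):
        Yg = Y[groups == g]
        R = Yg - Yg.mean(axis=0)
        W += R.T @ R
    return np.linalg.det(W) / np.linalg.det(T)

def permutation_pvalue(Y, groups, n_perm=999, seed=0):
    """Permutation p-value: small Lambda is evidence against H0."""
    rng = np.random.default_rng(seed)
    observed = wilks_lambda(Y, groups)
    count = 0
    for _ in range(n_perm):
        permuted = rng.permutation(groups)  # shuffle group labels
        if wilks_lambda(Y, permuted) <= observed:
            count += 1
    # Add-one correction keeps the p-value strictly positive
    return observed, (count + 1) / (n_perm + 1)

# Simulated example: one gene measured by 4 probes, 2 conditions, 8 arrays each
rng = np.random.default_rng(5)
groups = np.repeat([0, 1], 8)
Y = rng.normal(size=(16, 4))
Y[groups == 1] += 3.0  # strong simulated treatment effect on all probes
observed, p_value = permutation_pvalue(Y, groups)
print(f"Wilks' Lambda = {observed:.4f}, permutation p = {p_value:.4f}")
```

Because the null distribution is built from the data themselves, this approach avoids relying on multivariate normality, at the cost of extra computation per gene.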

Research Reagent Solutions for MANOVA Experiments

Table 3: Essential Research Reagents and Materials for Gene Expression MANOVA Studies

| Reagent/Material | Function in MANOVA Experiments |
|---|---|
| Affymetrix GeneChip Arrays | Platform for simultaneous measurement of multiple probe-level expressions for each gene |
| RNA Extraction Kits | Isolation of high-quality RNA for accurate gene expression measurement |
| cDNA Synthesis Kits | Reverse transcription of RNA to cDNA for hybridization to arrays |
| Hybridization Reagents | Facilitate binding of cDNA to array probes for accurate signal detection |
| Statistical Software (R, SPSS, SAS) | Implementation of MANOVA and robustified MANOVA procedures with permutation tests |
| Quantile Normalization Tools | Standardization of data distributions for assumption compliance |

Assumptions and Methodological Considerations

Critical Assumptions for Valid MANOVA

MANOVA relies on several key assumptions that researchers must verify before interpreting results:

Multivariate Normality: Within each group, the dependent variables should jointly follow a multivariate normal distribution; normality of each variable on its own is necessary but not sufficient. While MANOVA is somewhat robust to minor violations, severe non-normality can affect test validity. Transformation of variables or use of non-parametric alternatives may be necessary when this assumption is violated.

Homogeneity of Variance-Covariance Matrices: The population variance-covariance matrices across groups should be equal. This multivariate extension of homogeneity of variance is tested using Box's M statistic, with violations potentially leading to inflated Type I error rates.

Absence of Multicollinearity: Dependent variables should be moderately correlated but not too highly correlated (generally r < .90). Extreme multicollinearity can cause computational problems and interpretation difficulties.

Independence of Observations: All cases should be independent of each other, with no systematic pattern in participant selection or data collection.

Adequate Sample Size: Each group should contain more cases than the number of dependent variables, with larger samples improving power and robustness to assumption violations. A general guideline is N > (p + m), where N is sample size per group, p is number of dependent variables, and m is number of groups.

Addressing Common Challenges in Genomic Applications

High-dimensional gene expression data presents unique challenges for MANOVA implementation. When the number of genes (dependent variables) exceeds sample size, traditional MANOVA becomes infeasible due to rank deficiency in the variance-covariance matrix. In such cases, regularized MANOVA approaches or preliminary dimension reduction techniques like PCA may be employed.
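The rank deficiency is easy to demonstrate. The sketch below, assuming NumPy and simulated data with illustrative dimensions, builds the within-groups matrix W for 10 samples and 50 genes and shows that its rank cannot exceed n − k (samples minus groups), so W cannot be inverted.

```python
import numpy as np

rng = np.random.default_rng(2)
n_per_group, k, p = 5, 2, 50  # 10 samples in 2 groups, 50 genes
groups = np.repeat(np.arange(k), n_per_group)
Y = rng.normal(size=(n_per_group * k, p))

# Within-groups SSCP matrix (p x p)
W = np.zeros((p, p))
for g in range(k):
    Yg = Y[groups == g]
    R = Yg - Yg.mean(axis=0)
    W += R.T @ R

rank_W = np.linalg.matrix_rank(W)
max_rank = n_per_group * k - k  # residual degrees of freedom
print(f"W is {p} x {p}, rank = {rank_W}, upper bound n - k = {max_rank}")
```

Since det(W) = 0 whenever the rank is below p, Wilks' Lambda and the statistics based on W⁻¹ are undefined in this setting.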

For detecting differentially expressed genes, robustified MANOVA methods utilizing permutation tests offer advantages when distributional assumptions are questionable. These approaches have demonstrated superior performance in maintaining false discovery rates while increasing power compared to univariate methods, particularly when the number of experimental groups is small.

Comparative Performance in High-Dimensional Biology

Advantages of MANOVA in Detecting Multivariate Patterns

MANOVA offers several distinct advantages over univariate approaches in genomic and pharmaceutical research:

Enhanced Pattern Detection: By considering multiple dependent variables simultaneously, MANOVA can identify treatment effects that manifest across combinations of variables rather than in individual measures. For example, a drug might not significantly affect individual biomarker levels but could produce a detectable pattern across multiple correlated biomarkers.

Type I Error Control: When analyzing multiple outcome variables, conducting separate ANOVAs inflates the family-wise error rate. MANOVA maintains the experiment-wise error rate at the nominal level (e.g., α=.05) by testing all outcomes simultaneously.

Increased Power for Correlated Outcomes: With moderately correlated dependent variables, MANOVA often demonstrates greater statistical power to detect group differences than separate univariate tests, particularly when group differences lie along a combination of variables rather than in mean differences on any individual variable.
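The error inflation from separate ANOVAs can be quantified with a short calculation. This sketch assumes the tests are independent, which is a simplification (correlated outcomes inflate the rate less), and `familywise_error_rate` is an illustrative helper name.

```python
def familywise_error_rate(alpha, n_tests):
    """Probability of at least one false positive across independent tests."""
    return 1.0 - (1.0 - alpha) ** n_tests

# Error rate when running one ANOVA per outcome at alpha = 0.05
for n_tests in (1, 5, 10, 20):
    rate = familywise_error_rate(0.05, n_tests)
    print(f"{n_tests:>2} tests: family-wise error rate = {rate:.3f}")
```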

Limitations and Alternative Approaches

Despite its advantages, MANOVA presents certain limitations that researchers should consider:

Interpretation Complexity: Results from MANOVA can be more challenging to interpret than simple ANOVA findings, requiring understanding of multivariate statistics and potentially follow-up analyses.

Sensitivity to Assumption Violations: MANOVA is generally more sensitive to violations of assumptions like multivariate normality and homogeneity of variance-covariance matrices than univariate ANOVA.

Sample Size Demands: As the number of dependent variables increases, MANOVA requires larger sample sizes to maintain statistical power and validity.

Limited Suitability for Ultra-High-Dimensional Data: In studies with thousands of genes, traditional MANOVA becomes computationally prohibitive, necessitating dimension reduction or regularized multivariate methods.

When MANOVA assumptions are severely violated or data dimensionality is extremely high, alternative approaches such as Regularized MANOVA, Distance-Based Methods (PERMANOVA), or Machine Learning Algorithms may be more appropriate for detecting multivariate group differences in gene expression data.

In the field of genomics, researchers frequently encounter a significant analytical challenge known as the "Large p, Small n" problem. This scenario occurs when the number of features or variables (p), such as genes, vastly exceeds the number of observations or samples (n). Gene expression studies from technologies like microarrays and RNA sequencing routinely generate data with tens of thousands of genes from only dozens or hundreds of samples, creating substantial statistical challenges for meaningful analysis. This dimensionality problem is particularly pronounced in single-cell RNA sequencing (scRNA-seq) data, where count matrices are "inherently high-dimensional and sparse" [18]. The analytical difficulties arising from this imbalance include increased risk of overfitting, where models memorize noise rather than learning true biological signals; reduced generalizability of findings; and computational inefficiencies. Furthermore, the presence of many irrelevant or redundant features can obscure the detection of genuinely important biological signals, complicating the identification of disease-relevant genes and pathways [19] [20].

Within this challenging landscape, dimensionality reduction and multivariate testing techniques become essential tools for extracting meaningful biological insights. Principal Component Analysis (PCA) and Multivariate Analysis of Variance (MANOVA) represent two fundamentally different approaches to handling high-dimensional data. PCA operates as an unsupervised method that seeks to capture maximum data variance through linear combinations of the original variables, while MANOVA serves as a supervised technique that tests whether group mean vectors differ across multiple response variables. The core challenge with MANOVA in high-dimensional settings is its fundamental requirement that the total sample size must be larger than the data dimension, a condition frequently violated in gene expression studies [9]. This article provides a comprehensive comparison of these methodological approaches within the context of gene expression analysis, examining their relative strengths, limitations, and appropriate applications for addressing the "Large p, Small n" challenge.

Analytical Framework: PCA versus MANOVA

Theoretical Foundations and Methodologies

Principal Component Analysis (PCA) is a cornerstone dimensionality reduction technique that transforms high-dimensional data into a new coordinate system comprised of orthogonal components that sequentially capture the maximum possible variance. The mathematical foundation of PCA relies on eigenvalue decomposition of the covariance matrix or singular value decomposition (SVD) of the data matrix. For a count matrix X with dimensions m × n (where m represents cells and n represents genes), the SVD is expressed as X = UΣVᵀ, where the principal components are derived from the columns of V [18]. PCA functions as an unsupervised method, meaning it does not utilize sample group labels in its dimensionality reduction process. This characteristic makes it particularly valuable for exploratory data analysis, visualization, and noise reduction before conducting formal statistical testing.
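The SVD route to PCA can be sketched in a few lines. The example below assumes NumPy and a simulated matrix with samples in rows and genes in columns; `pca_svd` is an illustrative helper, and the data are column-centered before decomposition, as PCA requires.

```python
import numpy as np

def pca_svd(X, n_components=2):
    """PCA via SVD of the column-centered data matrix."""
    X = np.asarray(X, dtype=float)
    Xc = X - X.mean(axis=0)  # center each gene (column)
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    components = Vt[:n_components]  # rows are principal axes (columns of V)
    scores = Xc @ components.T      # sample coordinates on those axes
    explained_variance = s**2 / (X.shape[0] - 1)
    ratio = explained_variance / explained_variance.sum()
    return scores, components, ratio[:n_components]

# Simulated example: 20 samples, 100 genes
rng = np.random.default_rng(3)
X = rng.normal(size=(20, 100))
scores, components, ratio = pca_svd(X, n_components=2)
print(scores.shape, components.shape)
print("explained variance ratio:", np.round(ratio, 3))
```

Note that no group labels enter the computation, which is what makes the method unsupervised.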

In contrast, Multivariate Analysis of Variance (MANOVA) represents a supervised statistical technique that extends ANOVA to handle multiple dependent variables simultaneously. The method tests the hypothesis that the population means of different groups are equal across multiple response variables, essentially examining whether group classifications explain a significant portion of the variance in the data. The classical MANOVA approach, particularly through tests like Wilks' Lambda, faces fundamental limitations in high-dimensional settings because it "requires a larger total sample size than the data dimension and mostly relies on an asymptotic null distribution" [9]. This requirement becomes problematic in gene expression studies where the number of genes (p) typically far exceeds the number of samples (n).

Comparative Performance in High-Dimensional Settings

Recent research has directly addressed the performance limitations of traditional MANOVA when applied to high-dimensional gene expression data. A novel methodology that combines t-SNE visualization with a PCA-projected exact F-test has demonstrated superior performance compared to classical MANOVA. In a Monte Carlo study, this projected F-test exhibited "better empirical power performance than the classical Wilks' Lambda-test" derived from MANOVA [9]. The key advantage of this approach lies in its accommodation of high-dimensional data with small sample sizes while maintaining an exact null distribution for the test statistic.

The following table summarizes the core methodological differences and performance characteristics of PCA, MANOVA, and the emerging hybrid approach:

Table 1: Comparison of Dimensionality Reduction and Testing Methods for High-Dimensional Gene Expression Data

| Feature | PCA | Classical MANOVA | PCA-Projected F-test |
|---|---|---|---|
| Analysis Type | Unsupervised | Supervised | Supervised |
| Primary Function | Variance capture, dimensionality reduction | Multi-group mean comparison | Multi-group mean comparison |
| Sample Size Requirement | No strict minimum | Sample size > data dimension | Accommodates small sample sizes |
| Theoretical Basis | Eigenvalue decomposition, SVD | Likelihood ratio tests (e.g., Wilks' Lambda) | Exact F-distribution on projected data |
| High-Dimensional Performance | Effective for visualization, noise reduction | Performance degrades with high dimensionality | Maintains power in high dimensions |
| Key Limitation | Does not utilize group information | Relies on asymptotic distributions | Requires initial dimension reduction step |

The superiority of the PCA-projected F-test approach stems from its two-step methodology: first employing dimension reduction (often through t-SNE or PCA) to visualize cluster structures, then applying rigorous statistical testing on the reduced space to validate differences between identified clusters. This integrated approach "bridges the gap between exploratory and confirmatory data analysis" while enhancing interpretability of complex gene expression data [9].

Experimental Protocols and Benchmarking Studies

Experimental Designs for Method Evaluation

Benchmarking studies for dimensionality reduction techniques typically employ carefully designed experiments using both labeled and unlabeled datasets with known ground truths. For labeled datasets, such as the Sorted PBMC Dataset (2,882 cells, 7,174 genes) and the 50/50 Jurkat:293T Cell Mixture Dataset (~3,400 cells), clustering accuracy is measured using the Hungarian algorithm and Mutual Information [18]. These metrics evaluate how well the dimensionality-reduced data preserves the known biological structure. For unlabeled datasets, internal validation metrics such as the Dunn Index and Gap Statistic assess cluster separation quality, while the Within-Cluster Sum of Squares (WCSS) quantifies variability preservation [18].

Experimental protocols typically involve applying multiple dimensionality reduction techniques to the same datasets and evaluating their performance across several criteria. For instance, studies have compared standard PCA (using full SVD), randomized SVD-based PCA, and Random Projection methods including Sparse Random Projection (SRP) and Gaussian Random Projection (GRP) [18]. The benchmarking process evaluates not only the computational efficiency but also the effectiveness in downstream analyses, particularly clustering performance and structure preservation.
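To make the random projection idea concrete, the following sketch applies a Gaussian random projection with NumPy and compares one pairwise distance before and after projection. It is an illustration of the general technique under simulated data, not the benchmarking code of [18]; the function name and the dimensions are assumptions.

```python
import numpy as np

def gaussian_random_projection(X, n_components, seed=0):
    """Project rows of X onto n_components random Gaussian directions."""
    rng = np.random.default_rng(seed)
    # Entries ~ N(0, 1/n_components) so squared distances are preserved on average
    R = rng.normal(scale=1.0 / np.sqrt(n_components),
                   size=(X.shape[1], n_components))
    return X @ R

# Simulated example: 50 cells, 5000 genes, projected to 500 dimensions
rng = np.random.default_rng(7)
X = rng.normal(size=(50, 5000))
Z = gaussian_random_projection(X, n_components=500)

orig_dist = np.linalg.norm(X[0] - X[1])
proj_dist = np.linalg.norm(Z[0] - Z[1])
ratio = proj_dist / orig_dist
print(f"distance ratio after projection: {ratio:.3f}")
```

Unlike PCA, the projection matrix does not depend on the data, which is the source of the speed advantage; the trade-off is that the projected axes carry no variance ordering.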

Key Findings from Comparative Studies

Recent benchmarking studies have revealed several important insights regarding dimensionality reduction methods for high-dimensional gene expression data:

Random Projection (RP) methods have demonstrated competitive performance compared to traditional PCA. In some evaluations, RP "not only surpasses PCA in computational speed but also rivals and, in some cases, exceeds PCA in preserving data variability and clustering quality" [18]. This is particularly valuable for large-scale scRNA-seq studies where computational efficiency is a practical concern.

The projected F-test approach, which combines dimension reduction with rigorous statistical testing, has shown "better empirical power performance than the classical Wilks' Lambda-test" derived from MANOVA, especially in high-dimensional settings with small sample sizes [9].

Alternative feature selection methods specifically designed for high-dimensional genetic data have emerged as valuable alternatives to pure dimension reduction. The copula entropy-based feature selection (CEFS+) approach, which captures full-order interaction gains between features, has demonstrated superior performance in classification tasks, particularly "on high-dimensional genetic datasets" [19].

Knowledge-guided approaches that incorporate biological network information have shown promise in enhancing method performance. For example, the knowledge-slanted random forest integrates protein-protein interaction networks to modify feature selection probabilities, resulting in "improved precision in outcome prediction" compared to conventional methods, especially with very small sample sizes (n ≤ 30) [21].

The following workflow diagram illustrates the relationship between different analytical approaches for addressing the "Large p, Small n" challenge in gene expression studies:

Diagram 1: Analytical Approaches for Large p, Small n Data

Advanced Methodologies Addressing High-Dimensional Challenges

Feature Selection Innovations

Beyond conventional dimensionality reduction techniques, specialized feature selection methods have emerged as powerful alternatives for addressing the "Large p, Small n" challenge. Unlike dimension reduction that transforms features into new components, feature selection identifies informative subsets of original features, maintaining interpretability. The weighted Fisher score (WFISH) approach represents one such innovation that "assigns weights based on gene expression differences between classes" to prioritize biologically significant genes in high-dimensional classification problems [20]. When combined with random forest and k-nearest neighbors classifiers, WFISH has demonstrated lower classification errors compared to existing techniques across multiple benchmark datasets.

Another promising approach, copula entropy-based feature selection (CEFS+), employs a "maximum correlation minimum redundancy strategy for greedy selection" that specifically captures interaction gains between features [19]. This capability is particularly valuable in genomics, where "certain diseases are jointly determined by two or more genes" whose collective value exceeds their individual contributions. In comprehensive evaluations using three classifiers across five datasets, CEFS+ achieved the highest classification accuracy in 10 out of 15 scenarios, with particularly strong performance on high-dimensional genetic datasets.
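Feature selection by scoring can be illustrated with the classical (unweighted) Fisher score, which ranks each gene by between-class separation relative to within-class variance. This sketch assumes NumPy and simulated data; it does not implement the WFISH weighting or CEFS+, whose details are given in [20] and [19].

```python
import numpy as np

def fisher_scores(X, y):
    """Classical Fisher score per feature (higher = more discriminative)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    overall_mean = X.mean(axis=0)
    numerator = np.zeros(X.shape[1])
    denominator = np.zeros(X.shape[1])
    for c in np.unique(y):
        Xc = X[y == c]
        n_c = Xc.shape[0]
        numerator += n_c * (Xc.mean(axis=0) - overall_mean) ** 2
        denominator += n_c * Xc.var(axis=0)
    # Assumes no feature is constant within every class (nonzero denominator)
    return numerator / denominator

# Simulated example: 40 samples, 200 genes, first 5 genes differ between classes
rng = np.random.default_rng(6)
y = np.repeat([0, 1], 20)
X = rng.normal(size=(40, 200))
X[y == 1, :5] += 3.0
scores = fisher_scores(X, y)
top5 = np.argsort(scores)[::-1][:5]
print("top-ranked gene indices:", sorted(top5.tolist()))
```

Because the selected features are original genes rather than linear combinations, the ranking remains directly interpretable, although a per-gene score like this one cannot capture the interaction effects that CEFS+ targets.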

Integrative Learning and Knowledge-Guided Approaches

Integrative learning represents a paradigm shift in addressing the small sample size problem by jointly analyzing multiple datasets containing the same set of variables. This approach "has the potential to mitigate the challenge of small n and large p" by enhancing the detection of weak yet important signals through aggregated information across studies [22]. The Structured Integrative Learning (SIL) framework further advances this concept by incorporating a priori known graphical structures of features, encouraging "joint selection of features that are connected in the graph" [22]. This integration of biological network information enhances statistical power while accounting for heterogeneity across datasets.

Knowledge-guided methods explicitly incorporate existing biological knowledge to improve analytical performance in high-dimensional settings. The knowledge-slanted random forest exemplifies this approach by using "biological networks as prior knowledge into the model to improve its performance and explainability" [21]. Through a random walk with restart algorithm on protein-protein interaction networks, this method modifies feature selection probabilities during random forest construction, resulting in improved prediction precision and identification of more biologically relevant genes, particularly in scenarios with very small sample sizes (n ≤ 30).
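The random walk with restart step can be sketched on a toy network. The example assumes NumPy, a hypothetical five-gene path graph, and an arbitrary restart probability of 0.3; it shows the generic algorithm only and is not the implementation used in the knowledge-slanted random forest [21].

```python
import numpy as np

def random_walk_with_restart(adjacency, seeds, restart=0.3,
                             tol=1e-10, max_iter=1000):
    """Stationary visiting probabilities of a walker that restarts at seeds."""
    A = np.asarray(adjacency, dtype=float)
    col_sums = A.sum(axis=0)
    col_sums[col_sums == 0] = 1.0  # avoid division by zero for isolated nodes
    P = A / col_sums               # column-normalized transition matrix
    p0 = np.zeros(A.shape[0])
    p0[list(seeds)] = 1.0 / len(seeds)
    p = p0.copy()
    for _ in range(max_iter):
        p_next = (1.0 - restart) * (P @ p) + restart * p0
        if np.abs(p_next - p).sum() < tol:
            return p_next
        p = p_next
    return p

# Toy network: 5 genes connected in a path 0-1-2-3-4, seed gene 0
A = np.zeros((5, 5))
for i in range(4):
    A[i, i + 1] = A[i + 1, i] = 1.0
p = random_walk_with_restart(A, seeds=[0])
print(np.round(p, 4))
```

Genes closer to the seed in the network receive higher probability, and such scores can then be used to up-weight network-proximal genes when sampling features.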

Table 2: Advanced Methodologies for Addressing the "Large p, Small n" Challenge

| Methodology | Core Innovation | Advantages | Representative Applications |
|---|---|---|---|
| Projected F-test | Combines dimension reduction with exact F-test | Superior power to MANOVA; exact null distribution | Cluster validation in t-SNE plots [9] |
| Random Projection | Johnson-Lindenstrauss lemma for dimension reduction | Computational efficiency; preserves pairwise distances | Large-scale scRNA-seq analysis [18] |
| WFISH Feature Selection | Weighted differential expression scoring | Prioritizes biologically informative genes | Binary classification of tumor samples [20] |
| CEFS+ Feature Selection | Copula entropy with interaction capture | Identifies synergistic gene relationships | Disease classification from expression data [19] |
| Structured Integrative Learning | Multi-dataset analysis with graph information | Enhances weak signal detection; accounts for heterogeneity | Cross-study biomarker identification [22] |
| Knowledge-Slanted RF | Biological network-guided feature selection | Improved explainability; small sample performance | Disease-relevant gene identification [21] |

Successfully navigating the "Large p, Small n" challenge requires both methodological sophistication and appropriate data resources. The following table outlines key reagents and resources essential for research in this domain:

Table 3: Essential Research Reagents and Resources for High-Dimensional Gene Expression Analysis

| Resource Type | Specific Examples | Function and Application | Key Characteristics |
|---|---|---|---|
| Reference Datasets | GTEx (Genotype-Tissue Expression) [23] | Pan-tissue transcriptome analysis; benchmark studies | ~17,000 transcriptomes across 54 tissues; age and sex metadata |
| Reference Datasets | Sorted PBMC Dataset [18] | Method benchmarking and validation | 2,882 cells with 7 annotated cell populations |
| Biological Networks | Protein-Protein Interaction (PPI) Networks [21] | Prior knowledge for guided learning; pathway context | Encapsulates known functional relationships between genes |
| Software Tools | kslboruta R package [21] | Implementation of knowledge-slanted feature selection | Integrates PPI networks with random forest algorithm |
| Experimental Controls | Jurkat:293T Cell Mixture [18] | Technical validation of analytical pipelines | ~3,400 cells with known 50:50 mixture ratio |
| Annotation Databases | Gene Ontology, KEGG Pathways [22] | Biological interpretation of results | Curated functional and pathway information |

The "Large p, Small n" problem remains a fundamental challenge in gene expression studies, requiring sophisticated analytical approaches that balance statistical rigor with biological interpretability. While traditional methods like MANOVA face significant limitations in high-dimensional settings, emerging approaches such as the PCA-projected F-test offer superior performance for cluster validation by combining the variance-capturing capability of PCA with exact statistical testing. The continuing evolution of feature selection methods (WFISH, CEFS+) and knowledge-guided frameworks (Structured Integrative Learning, knowledge-slanted random forests) represents a promising direction for enhancing signal detection in small sample contexts while maintaining biological relevance.

For researchers navigating this landscape, the optimal strategy often involves selecting methods aligned with specific research objectives: dimension reduction techniques like PCA and random projection for visualization and noise reduction; projected testing approaches for rigorous hypothesis testing in high dimensions; advanced feature selection for identifying interpretable gene subsets; and integrative methods for boosting power through combined datasets. As the field advances, the integration of biological knowledge with statistical innovation will continue to drive progress in unraveling the complexity of gene expression data within the challenging "Large p, Small n" paradigm.

Principal Component Analysis (PCA) has established itself as a fundamental tool in the exploratory analysis of high-dimensional biological data, particularly in gene expression studies. As a dimensionality reduction technique, PCA transforms high-dimensional datasets into a new set of variables called principal components (PCs), which are linear combinations of the original features ordered by the amount of variance they explain. This transformation allows researchers to visualize the overall structure of complex datasets and identify patterns, clusters, and outliers that might otherwise remain hidden in thousands of dimensions. In the context of high-dimensional gene expression research, PCA provides unsupervised information on the dominant directions of highest variability, enabling investigators to compare these patterns with sample annotations or phenotypic information to detect previously unknown relationships or characterize poorly annotated samples.

The application of PCA extends beyond mere dimensionality reduction to critical quality assessment functions, including the detection of technical artifacts known as batch effects. These are systematic non-biological variations between groups of samples that result from experimental features not of biological interest, such as processing date, technician, or reagent batch. Left undetected, batch effects can confound biological interpretation and lead to spurious discoveries. PCA serves as a primary visual tool for determining whether batch effects exist after applying global normalization methods, allowing researchers to identify when samples cluster by technical rather than biological factors. When applying PCA to gene expression data, the standard approach involves computing principal components from a centered and scaled feature matrix, with the resulting components representing directions of maximum variance in the original data. The visualization of samples in the space defined by the first two principal components then provides a powerful overview of the major sources of variation across all samples and features.

Table 1: Core Dimensionality Reduction Techniques for Batch Effect Detection

| Method | Input Data | Distance Measure | Primary Application | Batch Effect Detection Capability |
|---|---|---|---|---|
| PCA | Original feature matrix | Covariance/correlation matrix | Linear data, feature extraction | Moderate (may miss batch effects that aren't the largest variance source) |
| PCoA | Distance matrix | Various (Bray-Curtis, Jaccard, etc.) | Visualization of inter-sample relationships | Good (flexible distance measures can capture technical variations) |
| NMDS | Distance matrix | Rank-order relations | Complex datasets, nonlinear analysis | Good (preserves rank-order of sample relationships) |
| t-SNE/UMAP | Original feature matrix or distance matrix | Probability distributions | Visualization of complex structures | Excellent (can reveal subtle batch effects) |

PCA Workflow for Batch Effect Identification

Standard PCA Protocol for Quality Assessment

Implementing PCA for batch effect identification requires a systematic workflow to ensure reliable detection of technical artifacts. The first step involves data preprocessing, where the feature data (typically a gene expression matrix with samples as columns and genes as rows) undergoes centering and scaling to ensure all features contribute equally regardless of their original measurement scale. This standardization is crucial when analyzing gene expression data where different genes may exhibit vastly different expression ranges. The computational implementation then involves singular value decomposition (SVD) of the preprocessed data matrix, which decomposes the data into orthogonal matrices that represent the principal components and their loadings. For modern omics datasets containing tens of thousands of features and hundreds of samples, specialized computational approaches are necessary to handle this scale efficiently.

The visualization phase involves projecting samples into the reduced dimensional space defined by the first few principal components, typically PC1 and PC2, which capture the largest proportion of variance in the dataset. In this visualization, each point represents a sample, and the spatial arrangement reveals similarities and differences between samples. Batch effects are identified when samples cluster according to technical factors such as processing date, sequencing batch, or laboratory technician rather than biological variables of interest. The interpretation requires careful examination of the principal component loadings to determine which features (genes) drive the separation between batches. This approach enables researchers to distinguish technical artifacts from true biological signals before proceeding with downstream analyses.

Limitations of Standard PCA and Guided PCA Approach

While standard PCA is valuable for initial data exploration, it possesses a critical limitation for batch effect detection: it identifies linear combinations of variables that contribute maximum variance, which means it may not detect batch effects if they are not the largest source of variability in the data. This limitation is particularly problematic in gene expression studies where strong biological signals (e.g., tissue type, disease status) often dominate the variance structure, potentially obscuring more subtle technical artifacts. Research has demonstrated that when batch effects are not the primary source of variation, traditional PCA methods do not work effectively for their detection, potentially leading to undetected technical confounding.

To address this limitation, guided PCA (gPCA) has been developed as an extension that specifically targets batch effect identification. Unlike standard unsupervised PCA, gPCA incorporates a batch indicator matrix into the analysis, guiding the singular value decomposition to explicitly look for batch effects in the data. The method produces a test statistic (δ) that quantifies the proportion of variance attributable to batch effects by comparing the variance of the first principal component from gPCA to that from unguided PCA. Large values of δ (approaching 1) indicate substantial batch effects, and statistical significance can be assessed through permutation testing. This approach provides a quantitative framework for batch effect detection that surpasses the visual inspection of standard PCA plots, offering greater sensitivity for identifying technical artifacts that might otherwise remain hidden beneath biological variation.
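The δ statistic and its permutation test can be sketched as follows, based on the description above: the guided decomposition is taken as the SVD of Y'X, where Y is the batch indicator matrix and X the centered data, and δ is the ratio of first-component variances. The function names, the simulated data, and the "+1" convention in the permutation p-value are assumptions of this sketch, not part of any published gPCA software.

```python
import numpy as np

def first_pc_variance(X, M):
    """Variance of X projected onto the first right singular vector of M."""
    _, _, Vt = np.linalg.svd(M, full_matrices=False)
    return np.var(X @ Vt[0], ddof=1)

def gpca_delta(X, batch):
    """Ratio of first-PC variance: guided (SVD of Y'X) over unguided (SVD of X)."""
    X = X - X.mean(axis=0)                                   # center each gene
    levels = np.unique(batch)
    Y = (batch[:, None] == levels[None, :]).astype(float)    # n x b indicator
    return first_pc_variance(X, Y.T @ X) / first_pc_variance(X, X)

def gpca_permutation_test(X, batch, n_perm=200, seed=0):
    """Permutation p-value for delta, obtained by shuffling batch labels."""
    rng = np.random.default_rng(seed)
    observed = gpca_delta(X, batch)
    perm = np.array([gpca_delta(X, rng.permutation(batch))
                     for _ in range(n_perm)])
    # The +1 terms keep the p-value strictly positive (an assumed convention)
    p_value = (1 + np.sum(perm >= observed)) / (1 + n_perm)
    return observed, p_value

# Hypothetical data: 20 samples x 100 genes, batch 1 shifted in 50 genes
rng = np.random.default_rng(1)
batch = np.repeat([0, 1], 10)
X = rng.normal(size=(20, 100))
X[batch == 1, :50] += 2.0

delta, p_value = gpca_permutation_test(X, batch)
print(f"delta = {delta:.3f}, permutation p = {p_value:.4f}")
```

Because the unguided first component maximizes projected variance by construction, δ cannot exceed 1, which is why values close to 1 are read as evidence that batch is the dominant direction of variation.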

Table 2: Comparison of PCA Approaches for Batch Effect Detection

| Feature | Standard PCA | Guided PCA (gPCA) |
|---|---|---|
| Objective | Identify directions of maximum variance | Specifically detect batch effects |
| Input | Feature matrix only | Feature matrix + batch indicator matrix |
| Detection Method | Visual inspection of PC plots | Quantitative test statistic (δ) |
| Sensitivity | Limited to largest variance sources | Targeted to batch effects regardless of magnitude |
| Output | Qualitative assessment | Quantitative p-value and effect size |
| Best Use Case | Initial exploratory analysis | Formal batch effect testing |

Comparative Performance Evaluation: PCA Versus Alternative Methods

Multivariate Statistical Approaches

Beyond PCA, several multivariate statistical methods offer complementary approaches for batch effect detection and visualization. Principal Coordinate Analysis (PCoA) operates on a distance matrix rather than the original feature matrix, making it suitable for analyzing sample similarities using various distance measures such as Bray-Curtis or Jaccard indices. This flexibility allows PCoA to capture different nuances of interspecies relationships in microbial community studies or technical variations in gene expression datasets. Non-metric Multidimensional Scaling (NMDS) represents another distance-based approach that focuses on preserving the rank-order of sample relationships rather than absolute distances, making it particularly suitable for complex datasets with nonlinear structures where traditional PCA may underperform.

Recent research has applied PERMANOVA (Permutational Multivariate Analysis of Variance) as a powerful multivariate statistical test for batch effect evaluation. Studies comparing PERMANOVA to standard univariate testing methods have demonstrated its superior power in detecting batch effects across different sample sizes, with the Clark and Jaccard distance metrics showing particularly high sensitivity. Unlike traditional ANOVA, PERMANOVA does not assume normality, because significance is assessed by permuting group labels; it does remain sensitive to differences in within-group dispersion, particularly in unbalanced designs. This makes it suitable for the complex distributions often observed in genomic and radiomic features. When combined with effect size measures such as the Robust Effect Size Index (RESI), PERMANOVA provides both statistical significance testing and quantitative assessment of batch effect magnitude, addressing a limitation of p-value-based approaches, which flag extremely small effects as significant in large samples.
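To make the test concrete, the sketch below computes a one-way PERMANOVA pseudo-F statistic from a matrix of squared pairwise distances and assesses it by permuting batch labels. It uses squared Euclidean distance purely for simplicity, which is an assumption of this example; the Clark and Jaccard distances mentioned above would be substituted by changing how `D2` is built. The function names and simulated data are likewise illustrative.

```python
import numpy as np

def pseudo_f(D2, groups):
    """One-way PERMANOVA pseudo-F from a matrix of squared distances."""
    n = len(groups)
    levels = np.unique(groups)
    # Total sum of squares: sum of squared distances over all pairs, divided by n
    ss_total = D2[np.triu_indices(n, k=1)].sum() / n
    ss_within = 0.0
    for g in levels:
        idx = np.flatnonzero(groups == g)
        sub = D2[np.ix_(idx, idx)]
        ss_within += sub[np.triu_indices(len(idx), k=1)].sum() / len(idx)
    ss_between = ss_total - ss_within
    a = len(levels)
    return (ss_between / (a - 1)) / (ss_within / (n - a))

def permanova(X, groups, n_perm=999, seed=0):
    """Permutation test of the pseudo-F statistic (Euclidean distance assumed)."""
    rng = np.random.default_rng(seed)
    diff = X[:, None, :] - X[None, :, :]
    D2 = np.sum(diff**2, axis=-1)
    f_obs = pseudo_f(D2, groups)
    f_perm = np.array([pseudo_f(D2, rng.permutation(groups))
                       for _ in range(n_perm)])
    p_value = (1 + np.sum(f_perm >= f_obs)) / (1 + n_perm)
    return f_obs, p_value

# Hypothetical data: 20 samples x 50 features, batch 1 shifted in 10 features
rng = np.random.default_rng(2)
batch = np.repeat([0, 1], 10)
X = rng.normal(size=(20, 50))
X[batch == 1, :10] += 1.5

f_obs, p_value = permanova(X, batch)
print(f"pseudo-F = {f_obs:.2f}, permutation p = {p_value:.4f}")
```

With a single variable and Euclidean distance, the pseudo-F reduces to the classical one-way ANOVA F statistic, which is a convenient sanity check for any implementation.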

Method Benchmarking and Performance Metrics

Comprehensive benchmarking studies have evaluated the performance of various batch effect detection and correction methods across different experimental scenarios. Quantitative assessments reveal that while PCA remains valuable for initial data exploration, it may be insufficient as a standalone method for comprehensive batch effect identification, particularly when technical artifacts are correlated with biological variables of interest. In comparative analyses, PERMANOVA has demonstrated higher power than standard univariate statistical tests across various sample sizes, with values of 0.952 and 1.0 at sample sizes of 100 and 2500 respectively when using Clark distance, compared to 0.812 and 0.991 for the best-performing univariate test (Anderson-Darling) at the same sample sizes.

The integration of multiple assessment methods creates a more robust framework for batch effect evaluation. A recommended pipeline employs PERMANOVA for initial dataset-level screening to identify the presence of batch effects, followed by RESI to quantify the effect size of batch at the feature level. This combined approach provides both statistical rigor and practical interpretability, enabling researchers to make informed decisions about whether and how to address batch effects in their data. Visual inspection methods like PCA and t-SNE complement these quantitative approaches by providing intuitive representations of data structure and batch-related clustering, creating a comprehensive assessment strategy that leverages the strengths of multiple methodologies.

PCA in the Context of MANOVA for High-Dimensional Data

Theoretical Foundations and Comparative Strengths

In high-dimensional gene expression analysis, researchers often face the choice between PCA and MANOVA (Multivariate Analysis of Variance) for exploring and testing multivariate group differences. While both methods handle multiple dependent variables simultaneously, they approach this task with fundamentally different objectives. PCA is an unsupervised dimension reduction technique that identifies the linear combinations of variables that explain maximum variance in the dataset without reference to group labels or experimental factors. In contrast, MANOVA is a supervised statistical test that evaluates whether population means on multiple dependent variables differ across groups defined by categorical independent variables.

The application of these methods to high-dimensional biological data reveals distinct advantages and limitations for each approach. PCA excels at exploratory analysis, providing visualization of overall data structure and revealing patterns that might not be hypothesized in advance. However, it lacks a formal statistical testing framework for group differences. MANOVA offers rigorous hypothesis testing for group differences but breaks down in high-dimensional settings where the number of variables exceeds the number of samples, a common scenario in genomics research: the within-group covariance matrix becomes singular, so the classical test statistics cannot be computed without first reducing dimensionality or regularizing. When comparing the two methods, PCA demonstrates greater utility for initial data quality assessment and batch effect detection, while MANOVA provides formal testing once batch effects have been addressed and biological hypotheses have been formulated.

Integrated Analytical Approaches

Rather than viewing PCA and MANOVA as competing methods, researchers can leverage them as complementary tools in a comprehensive analytical workflow. PCA serves as the first step for data quality assessment, identifying potential batch effects and outliers that might confound subsequent analyses. Once data quality issues have been addressed, MANOVA can test specific biological hypotheses about group differences in multivariate space. This sequential approach capitalizes on the strengths of both methods while mitigating their individual limitations.
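One way to picture this sequential workflow is sketched below: the within-group sum-of-squares matrix is shown to be rank-deficient on the full gene set, so Wilks' lambda is instead computed on a handful of principal component scores. The function names, the choice of three components, and the simulated data are assumptions of this illustration; converting lambda into a p-value (for example with an F approximation or permutation) is left out.

```python
import numpy as np

def sscp_within_total(X, groups):
    """Within-group (E) and total (T) sums-of-squares-and-cross-products."""
    Xc = X - X.mean(axis=0)
    T = Xc.T @ Xc
    E = np.zeros_like(T)
    for g in np.unique(groups):
        R = X[groups == g] - X[groups == g].mean(axis=0)
        E += R.T @ R
    return E, T

def wilks_lambda(X, groups):
    """Wilks' lambda = det(E) / det(T); requires E to be full rank."""
    E, T = sscp_within_total(X, groups)
    if np.linalg.matrix_rank(E) < E.shape[0]:
        raise np.linalg.LinAlgError(
            "within-group SSCP is singular: too many variables for the sample size")
    _, logdet_e = np.linalg.slogdet(E)
    _, logdet_t = np.linalg.slogdet(T)
    return float(np.exp(logdet_e - logdet_t))

# Hypothetical data: 20 samples x 300 genes, two groups differing in 40 genes
rng = np.random.default_rng(4)
groups = np.repeat([0, 1], 10)
X = rng.normal(size=(20, 300))
X[groups == 1, :40] += 2.0

# Step 1: on the full gene set, E has rank at most n - (number of groups)
E_full, _ = sscp_within_total(X, groups)
print("rank of E on all genes:", np.linalg.matrix_rank(E_full), "of", E_full.shape[0])

# Step 2: reduce to a few PC scores (PCA never sees the group labels)
Xc = X - X.mean(axis=0)
U, s, _ = np.linalg.svd(Xc, full_matrices=False)
pc_scores = U[:, :3] * s[:3]

# Step 3: MANOVA statistic on the reduced data
lam = wilks_lambda(pc_scores, groups)
print(f"Wilks' lambda on 3 PCs = {lam:.4f}")
```

Values of lambda near 0 indicate that most multivariate variation lies between groups, while values near 1 indicate little group separation. The number of retained components is a tuning choice that affects the test and should be fixed before inspecting group labels.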

Advanced hybrid methods have emerged that combine elements of both approaches. Principal Variance Component Analysis (PVCA) integrates the strengths of PCA and variance components analysis to quantify the contributions of different batch variables to overall variance in the dataset. This method provides a breakdown of key sources of variation, with unexplained variation classified as "residual." In ideal circumstances, the variation associated with known batch variables should be low and residual variation high, indicating minimal technical confounding. Similarly, guided PCA represents another hybrid approach that incorporates supervised elements (batch indicators) into the unsupervised PCA framework, creating a targeted method for batch effect detection that overcomes the limitation of standard PCA in detecting non-dominant variance sources.

Experimental Protocols and Implementation Guidelines

Standardized PCA Protocol for Batch Effect Detection

Implementing PCA for batch effect detection requires careful attention to methodological details to ensure reliable and reproducible results. The following protocol provides a standardized approach for gene expression datasets:

Sample Preparation and Data Generation: Process samples across multiple batches intentionally, ensuring that biological groups of interest are distributed across different batches when possible. For gene expression analysis, extract RNA and perform microarray or RNA-seq analysis following standard protocols, carefully documenting all technical parameters including processing date, technician, reagent lots, and instrument details.

Data Preprocessing: Format the data as a sample × gene matrix with expression values. For RNA-seq data, transform raw counts using a variance-stabilizing transformation or log2(CPM + 1). Center and scale each gene to mean = 0 and standard deviation = 1 to ensure equal contribution of all genes regardless of expression level. Address missing values with an appropriate imputation method if necessary; per-gene mean imputation is a common simple choice and is equivalent to filling with zero once the data are centered.

PCA Computation: Perform singular value decomposition (SVD) on the preprocessed data matrix using computational tools such as the prcomp() function in R or the PCA implementation in Python's scikit-learn. Retain all principal components initially for comprehensive assessment. Generate a scree plot showing the proportion of variance explained by each component to inform decisions about how many components to retain for further analysis.

Visualization and Interpretation: Create scatter plots of samples in the space defined by the first two principal components (PC1 vs. PC2) and subsequent component pairs (PC1 vs. PC3, PC2 vs. PC3). Color-code points according to potential batch variables (processing date, technician, etc.) and biological variables (disease status, tissue type, etc.). Interpret results by examining whether samples cluster more strongly by technical factors than biological factors, which indicates potential batch effects.
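The preprocessing and interpretation steps of this protocol can be sketched as follows. As a numerical complement to color-coded score plots, the example reports, for each leading component, the fraction of its variance explained by a batch label and by a biological label (eta-squared). The simulated counts, effect sizes, and function names are assumptions made for illustration only.

```python
import numpy as np

def log2_cpm(counts):
    """log2(CPM + 1) for a samples x genes matrix of raw counts."""
    lib_size = counts.sum(axis=1, keepdims=True)
    return np.log2(counts / lib_size * 1e6 + 1.0)

def standardize(X):
    """Center and scale each gene; genes with zero variance are dropped."""
    sd = X.std(axis=0, ddof=1)
    keep = sd > 0
    return (X[:, keep] - X[:, keep].mean(axis=0)) / sd[keep]

def eta_squared(pc, labels):
    """Fraction of one PC's variance explained by a categorical label."""
    total = np.sum((pc - pc.mean()) ** 2)
    between = sum(np.sum(labels == g) * (pc[labels == g].mean() - pc.mean()) ** 2
                  for g in np.unique(labels))
    return between / total

# Hypothetical counts: 16 samples x 300 genes, balanced batch and biology
rng = np.random.default_rng(5)
batch = np.tile([0, 1], 8)        # alternating processing batches
biology = np.repeat([0, 1], 8)    # e.g. control vs. disease
lam = np.tile(rng.gamma(shape=2.0, scale=50.0, size=300), (16, 1))
lam[batch == 1, :100] *= 3.0        # strong technical effect
lam[biology == 1, 200:230] *= 1.5   # weaker biological effect
counts = rng.poisson(lam)

Z = standardize(log2_cpm(counts))
U, s, _ = np.linalg.svd(Z, full_matrices=False)
scores = U * s
explained = s**2 / np.sum(s**2)

for k in range(3):
    print(f"PC{k + 1}: {explained[k]:.1%} of variance, "
          f"eta2(batch) = {eta_squared(scores[:, k], batch):.2f}, "
          f"eta2(biology) = {eta_squared(scores[:, k], biology):.2f}")
```

A component whose variance is explained mostly by a technical label rather than a biological one is a candidate batch effect; the same scores can be passed to a plotting library and colored by each label for visual confirmation.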

Research Reagent Solutions and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for PCA-Based Batch Effect Analysis

| Resource Category | Specific Tools/Reagents | Function/Purpose |
|---|---|---|
| Statistical Software | R Statistical Environment with packages (pcaMethods, sva, limma) | Primary computational platform for PCA and batch effect analysis |
| Python Libraries | scikit-learn, Scanpy, SciPy | Alternative computational environment for PCA implementation |
| Batch Correction Algorithms | ComBat, Harmony, Mutual Nearest Neighbors (MNN) | Correct identified batch effects while preserving biological variation |
| Visualization Tools | ggplot2, matplotlib, plotly | Create publication-quality visualizations of PCA results |
| Specialized Platforms | MetaBatch, CDIAM Multi-Omics Studio | Integrated web-based platforms for batch effect assessment |
| RNA Sequencing Kits | Illumina TruSeq, SMARTer Ultra Low Input | Generate gene expression data for analysis |
| Quality Control Reagents | Bioanalyzer RNA kits, Qubit quantification assays | Ensure input material quality before expression profiling |

Advanced Applications and Future Directions

Integration with Other Omics Data Types

The application of PCA for batch effect detection has expanded beyond gene expression analysis to encompass diverse omics technologies, including metabolomics, proteomics, and radiomics. In metabolomics studies, platforms like MetaBatch have been developed specifically to assess and correct for batch effects in data from mass spectrometry and NMR spectroscopy. These implementations adapt the core PCA framework to address technology-specific challenges, such as the high proportion of missing values and strong analytical variation typical in metabolomic datasets. Similarly, in radiomics, where features are extracted from medical images, PCA and related multivariate methods help identify batch effects associated with different scanners, acquisition parameters, or reconstruction algorithms.

The growing importance of multi-omics integration presents both challenges and opportunities for PCA-based batch effect detection. When combining data from multiple omics platforms, batch effects can manifest both within and between technologies, creating complex confounding patterns. Advanced implementations of PCA can be applied to concatenated or integrated omics datasets to identify these complex batch effects, though specialized methods like Multi-Omics Factor Analysis (MOFA) may offer enhanced capability for cross-platform batch effect identification. As multi-omics studies become more prevalent, the development of integrated batch effect assessment pipelines that combine PCA with platform-specific quality metrics will become increasingly important for ensuring data quality and biological validity.

Emerging Methodologies and Best Practices