Principal Component Analysis in Bioinformatics: A Comprehensive Guide for Biomedical Researchers

Abstract

This article provides a comprehensive exploration of Principal Component Analysis (PCA) and its pivotal role in bioinformatics. Tailored for researchers, scientists, and drug development professionals, it covers foundational concepts from dimensionality reduction and geometric intuition to the complete methodological workflow. The guide details specialized applications in genomics and metabolomics, addresses common pitfalls and optimization strategies, and offers a critical comparison with alternative methods like Linear Mixed Models. By synthesizing theory with practical, current applications, this resource empowers practitioners to effectively leverage PCA for analyzing high-dimensional biological data, from exploratory analysis to hypothesis generation.

Understanding PCA: Taming High-Dimensional Biological Data

Defining PCA and the 'Curse of Dimensionality' in Omics Data

In bioinformatics research, the analysis of omics data—whether genomics, transcriptomics, proteomics, or metabolomics—presents a unique statistical challenge known as the "curse of dimensionality." This phenomenon occurs when the number of measured variables (P) drastically exceeds the number of biological samples (N), creating a paradigm where P ≫ N [1]. In practical terms, a typical transcriptomic study might measure the expression levels of over 20,000 genes across fewer than 100 samples [2] [1]. This high-dimensional landscape creates substantial mathematical and computational obstacles, including singular variance-covariance matrices that render traditional statistical operations impossible and increase the risk of overfitting in predictive models [1] [3].

Principal Component Analysis (PCA) emerges as a fundamental computational technique to navigate this challenging terrain. As one of the oldest and most widely applied dimension reduction approaches, PCA transforms high-dimensional omics data into a lower-dimensional space while preserving the essential patterns and relationships within the data [4] [5]. By constructing linear combinations of the original variables called principal components (PCs), PCA enables researchers to project complex biological data into an intuitive visual space, identify technical artifacts, detect sample outliers, and uncover underlying biological patterns that might otherwise remain hidden in the high-dimensional wilderness [6] [7].

Understanding the Curse of Dimensionality

Fundamental Concepts and Mathematical Challenges

The "curse of dimensionality" refers to the various phenomena that arise when analyzing and organizing data in high-dimensional spaces that do not occur in low-dimensional settings. In omics research, this curse manifests when the number of features (variables) vastly exceeds the number of observations (samples) [1]. The core mathematical challenge emerges from the fact that the covariance matrix (XᵀX) becomes singular when P > N, meaning it cannot be inverted—a requirement for many statistical operations including multiple linear regression [1]. This creates an underdetermined system where infinite solutions exist for mathematical equations that form the foundation of standard statistical analyses.
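The singularity described above can be checked numerically. The following is a minimal sketch on synthetic data; the sample and feature counts are arbitrary assumptions chosen only to make P larger than N.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, n_features = 10, 50            # hypothetical sizes with P > N
X = rng.normal(size=(n_samples, n_features))
Xc = X - X.mean(axis=0)                   # center each feature

# Sample covariance matrix of the features (50 x 50)
cov = Xc.T @ Xc / (n_samples - 1)

# Centering removes one degree of freedom, so the rank cannot exceed N - 1
rank = np.linalg.matrix_rank(cov)
print(f"rank {rank} of {n_features} -> singular: {rank < n_features}")
```

Because the rank is bounded by the number of samples rather than the number of features, the matrix has no inverse, which is why methods that need one fail in this setting.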

The computational consequences extend beyond theoretical mathematical constraints. As dimensions increase, conventional distance measures lose meaning, clustering becomes increasingly difficult, and the data becomes sparse, requiring exponentially more samples to maintain the same statistical power [3]. This dimensional explosion also severely complicates data visualization, as the human brain cannot intuitively perceive relationships beyond three dimensions [1].

Quantitative Landscape of Omics Data Dimensionality

Table 1: Characteristic Scale of Omics Data Dimensionality

| Omics Data Type | Typical Number of Features (P) | Typical Sample Size (N) | Representative P:N Ratio |
|---|---|---|---|
| Transcriptomics | 20,000+ genes | < 100 samples | 200:1 |
| Metabolomics | 1,000+ metabolites | 50-200 samples | 20:1 |
| Proteomics | 10,000+ proteins | < 100 samples | 100:1 |
| Methylomics | 450,000+ CpG sites | < 500 samples | 900:1 |

Recent analyses of bioinformatics literature reveal that the median number of features in multi-omics studies is approximately 33,415 with a median sample size of 447, though significant outliers exist with some datasets containing over 70,000 features [2]. This substantial dimensional mismatch necessitates specialized statistical approaches that can navigate the high-dimensional landscape without succumbing to its mathematical pitfalls.

Principal Component Analysis: Mathematical Foundations

Core Algorithm and Computational Approach

Principal Component Analysis is an orthogonal linear transformation that repositions data from the original high-dimensional space into a new coordinate system [5]. The fundamental mathematical operation involves eigen-decomposition of the data covariance matrix or singular value decomposition (SVD) of the data matrix itself [4] [5]. Given a centered data matrix X of dimensions n × p (where n is the number of samples and p is the number of variables), PCA identifies a set of new variables, termed principal components, which are linear combinations of the original variables.

The first principal component (PC1) is defined as the linear combination that captures the maximum variance in the data:

$$\mathbf{w}_{(1)} = \underset{\|\mathbf{w}\| = 1}{\arg\max} \; \|\mathbf{X}\mathbf{w}\|^{2}$$

Subsequent components (PC2, PC3, etc.) are computed sequentially, with each additional component capturing the maximum remaining variance while being constrained to be orthogonal to all previous components [5]. The resulting principal components are ordered by the amount of variance they explain, with the first component explaining the most variance, the second component explaining the next most, and so forth [7].
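As an illustration of these definitions, the sketch below computes principal components through SVD of a centered matrix using NumPy. The data are synthetic and the variable names are illustrative assumptions, not part of any cited implementation.

```python
import numpy as np

rng = np.random.default_rng(1)
# Synthetic correlated data: 30 samples, 5 variables
X = rng.normal(size=(30, 5)) @ rng.normal(size=(5, 5))
Xc = X - X.mean(axis=0)                       # centered data matrix

# SVD of the centered data: rows of Vt are the loading vectors w_(1), w_(2), ...
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
components = Vt
explained_var = s**2 / (Xc.shape[0] - 1)      # variance along each component
scores = Xc @ components.T                    # sample coordinates on the PCs

# Compare PC1 with an arbitrary unit direction: PC1 should carry more variance
w = rng.normal(size=5)
w /= np.linalg.norm(w)
var_random = np.var(Xc @ w, ddof=1)
print(explained_var[0] >= var_random)
```

The singular values come back sorted in descending order, so the components are already ranked by the variance they explain.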

Key Mathematical Properties of Principal Components

The principal components derived from PCA exhibit several mathematically valuable properties. First, different PCs are orthogonal to each other, effectively eliminating collinearity problems often encountered with original gene expressions [4]. Second, in bioinformatics data analysis, the number of non-zero eigenvalues is at most min(n-1, p), meaning the dimensionality of PCs can be much lower than that of the original measurements [4]. Third, the variance explained by PCs decreases sequentially, with the first few components typically explaining the majority of variation in the dataset [4]. Finally, any linear function of the original variables can be expressed in terms of the principal components, meaning that when focusing on linear effects, using PCs is equivalent to using the original data [4].

PCA Workflow in Omics Data Analysis

Standardized Processing Pipeline

The application of PCA to omics data follows a systematic workflow designed to ensure robust and interpretable results. The initial critical step involves data preprocessing, including centering and scaling the feature data to ensure all variables contribute equally regardless of their original measurement scale [6]. This step is particularly important in omics datasets where different molecular entities may exhibit orders of magnitude differences in abundance.
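To see why scaling matters, consider a toy case with one high-abundance and one low-abundance feature. This is a sketch on simulated values; the abundance levels and the helper `first_loading` are hypothetical and chosen only for illustration.

```python
import numpy as np

rng = np.random.default_rng(2)
# Two hypothetical features differing by orders of magnitude in abundance
abundant = rng.normal(loc=10_000, scale=1_000, size=40)
rare = rng.normal(loc=5, scale=1, size=40)
X = np.column_stack([abundant, rare])

def first_loading(M):
    """Absolute loadings of PC1 for matrix M (centered internally)."""
    Mc = M - M.mean(axis=0)
    _, _, Vt = np.linalg.svd(Mc, full_matrices=False)
    return np.abs(Vt[0])

# z-score each feature: zero mean, unit variance
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)

raw_loading = first_loading(X)      # dominated by the abundant feature
scaled_loading = first_loading(Z)   # both features weighted equally
print(raw_loading, scaled_loading)
```

Without scaling, PC1 is essentially the abundant feature; after scaling, both features contribute with equal weight.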

Following preprocessing, the algorithm computes the covariance matrix and performs eigen-decomposition to identify the principal components [6]. For large omics datasets containing tens of thousands of features and hundreds or thousands of samples, specialized computational implementations are required to efficiently handle the scale of data [6]. The resulting components are then visualized through various graphical representations, with score plots and scree plots being the most fundamental for initial interpretation [7].

Interpretation Framework for PCA Results

Interpreting PCA results requires a systematic approach to extract meaningful biological insights. The scree plot provides a critical first step, displaying the variance explained by each principal component and guiding researchers in determining how many components to retain for further analysis [8]. The score plot then visualizes sample relationships in the reduced dimensional space, with proximity indicating similarity in molecular profiles [7].
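The numbers behind a scree plot can be turned into a simple retention rule. The sketch below assumes a hypothetical set of eigenvalues and an 80% cumulative-variance threshold; both are illustrative choices rather than recommendations.

```python
import numpy as np

def n_components_for(explained_variance, threshold=0.80):
    """Smallest number of leading components whose cumulative
    variance fraction reaches `threshold`."""
    values = np.asarray(explained_variance, dtype=float)
    ratio = values / values.sum()
    cumulative = np.cumsum(ratio)
    k = int(np.searchsorted(cumulative, threshold)) + 1
    return min(k, len(values)), ratio

eigenvalues = [5.0, 2.5, 1.5, 0.6, 0.4]   # hypothetical, already sorted
k, ratio = n_components_for(eigenvalues, threshold=0.80)
print(k, ratio)
```

Plotting `ratio` against the component index gives the scree plot itself; the threshold rule is one of several ways to read it.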

Table 2: PCA Interpretation Guide for Omics Data

| Pattern Observed | Potential Interpretation | Recommended Action |
|---|---|---|
| Distinct separation along PC1/PC2 | Strong group differences | Proceed with differential analysis |
| Tight clustering of QC samples | High technical reproducibility | Continue with confidence |
| Samples outside confidence ellipses | Potential outliers | Investigate technical/biological causes |
| Group mixing without separation | Weak group differences | Consider supervised methods |
| Batch-based clustering | Batch effects present | Apply batch correction |

Quality control samples play a particularly important role in PCA interpretation. When quality control (QC) samples (technical replicates prepared by pooling sample extracts) cluster tightly together on the score plot, this indicates high analytical consistency and methodological rigor [7]. Similarly, when biological replicates within the same experimental group show tight clustering, this demonstrates low biological variability within that group [7].

Advanced PCA Applications in Bioinformatics

Meta-Analysis and Multi-Study Integration

Meta-analytic PCA (MetaPCA) has emerged as a powerful approach for integrating multiple omics datasets addressing similar biological hypotheses [9]. This framework addresses the common challenge where individual labs generate datasets with moderate sample sizes that benefit from combination with data from other studies. MetaPCA develops common principal components across multiple studies through two primary approaches: decomposition of the sum of variance (SV) or maximization of the sum of squared cosines (SSC) across studies [9].

The SV approach computes a weighted sum of covariance matrices across studies, with weights typically being the reciprocal of the largest eigenvalue of each study's covariance matrix [9]. The SSC approach instead identifies optimal vectors that minimize the sum of angles between the vector and the eigen-space spanned by each individual study [9]. Regularized versions of MetaPCA incorporate sparsity constraints through elastic net (eNet) penalty or penalized matrix decomposition (PMD) to facilitate feature selection alongside dimension reduction [9].
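The SV idea can be sketched in a few lines. The code below is only an illustration of the weighting scheme as described in this section (reciprocal of each study's largest eigenvalue); it is not the MetaPCA package's code, omits the regularized variants, and the function name `sv_meta_pca` is hypothetical.

```python
import numpy as np

def sv_meta_pca(studies, n_components=2):
    """Common components from a weighted sum of per-study covariances.

    studies: list of (n_i x p) arrays that share the same p features.
    """
    p = studies[0].shape[1]
    pooled = np.zeros((p, p))
    for X in studies:
        Xc = X - X.mean(axis=0)
        cov = Xc.T @ Xc / (X.shape[0] - 1)
        top_eig = np.linalg.eigvalsh(cov)[-1]   # largest eigenvalue
        pooled += cov / top_eig                  # weight = 1 / largest eigenvalue
    evals, evecs = np.linalg.eigh(pooled)
    order = np.argsort(evals)[::-1][:n_components]
    return evals[order], evecs[:, order]

rng = np.random.default_rng(0)
studies = [rng.normal(size=(15, 6)), rng.normal(size=(25, 6))]  # synthetic
meta_evals, meta_evecs = sv_meta_pca(studies, n_components=2)
print(meta_evecs.shape)
```

The weighting keeps a single high-variance study from dominating the pooled matrix, since each study's covariance is rescaled to a largest eigenvalue of one.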

Supervised, Sparse, and Functional PCA Variations

Several specialized PCA variants have been developed to address specific analytical challenges in omics data. Supervised PCA incorporates response variables to guide the dimension reduction, often leading to improved empirical performance for predictive modeling [4]. Sparse PCA incorporates regularization to produce principal components with sparse loadings, enhancing biological interpretability by focusing on smaller subsets of meaningful features [4] [9]. Functional PCA extends the framework to analyze time-course gene expression data, capturing dynamic patterns across temporal measurements [4].

Additionally, PCA has been adapted to accommodate biological structures and interactions. In pathway-based analysis, PCA can be conducted on genes within the same biological pathways, with the resulting PCs representing pathway-level effects [4]. Similarly, network-based approaches apply PCA to genes within network modules, creating components that represent modules of tightly connected genes [4]. These advanced applications demonstrate how the core PCA framework can be extended to address the complex hierarchical organization of biological systems.

Experimental Protocols and Methodologies

Standard PCA Implementation Protocol

Implementing PCA for omics analysis requires careful attention to computational details to ensure robust results. The following protocol outlines the key steps for applying PCA to a typical transcriptomics dataset:

Data Preprocessing: Begin with normalized gene expression data (e.g., TPM for RNA-seq or normalized intensities for microarrays). Center each gene to mean zero and scale to unit variance to ensure equal contribution from all features [6].

Covariance Matrix Computation: Calculate the p × p sample covariance matrix from the preprocessed data matrix. For large p, this step may employ computational optimizations to manage memory requirements [4].

Eigen-decomposition: Perform eigen-decomposition of the covariance matrix (or, equivalently, singular value decomposition of the centered data matrix) to obtain eigenvalues and corresponding eigenvectors. Standard implementations include the prcomp function in R or princomp in MATLAB [4].

Component Selection: Determine the number of components to retain using the scree plot or based on cumulative variance explained (often targeting 70-90% of total variance) [8].

Projection and Visualization: Project the original data onto the selected principal components and generate 2D or 3D score plots colored by experimental groups [7].

This protocol typically requires 4-8 hours of computational time depending on dataset size and can be implemented using standard bioinformatics programming environments including R, Python, or specialized platforms like Metware Cloud [6] [7].

Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for PCA in Omics

| Item | Function | Example Implementations |
|---|---|---|
| Data Normalization Tools | Standardize feature scales | RMA for microarrays, TMM for RNA-seq |
| Covariance Computation Libraries | Efficient matrix operations | Numpy, Scipy, R base |
| Eigen-decomposition Algorithms | Compute eigenvalues/vectors | SVD, NIPALS, Power iteration |
| Visualization Packages | Generate score and loading plots | ggplot2, plotly, matplotlib |
| Batch Correction Methods | Address technical variability | ComBat, SVA, ARSyN |
| High-Performance Computing Environment | Handle large-scale data | R, Python, MATLAB, Metware Cloud |

Comparative Analysis with Alternative Methods

While PCA remains a cornerstone technique for exploratory analysis of omics data, several alternative dimensionality reduction methods offer complementary strengths. Non-linear techniques such as t-distributed Stochastic Neighbor Embedding (t-SNE) and Uniform Manifold Approximation and Projection (UMAP) often provide enhanced separation of clusters for visualization purposes [6]. However, these methods lack the mathematical transparency of PCA, as their results depend on hyperparameter selection and the components cannot be directly interpreted as linear combinations of original features [6].

For classification tasks where group labels are known, supervised methods such as Partial Least Squares-Discriminant Analysis (PLS-DA) and Orthogonal PLS-DA (OPLS-DA) often provide better separation between pre-defined groups [7]. These techniques explicitly incorporate class information to maximize separation between groups, unlike the unsupervised nature of PCA that simply captures maximum variance without regard to experimental conditions [7].

The choice between PCA and alternative methods ultimately depends on the analytical objectives. PCA remains superior for quality assessment, noise reduction, and initial data exploration due to its deterministic nature, mathematical transparency, and lack of hyperparameter sensitivity [6]. When the goal shifts to classification or capturing complex non-linear relationships, complementary methods may provide additional insights.

Principal Component Analysis serves as an indispensable computational toolkit in the bioinformatics arsenal, providing a mathematically robust framework for navigating the high-dimensional landscapes characteristic of modern omics data. By transforming overwhelming dimensionality into intelligible patterns, PCA enables researchers to identify technical artifacts, detect biological outliers, visualize sample relationships, and generate mechanistic hypotheses. While the curse of dimensionality presents formidable analytical challenges, PCA and its evolving variants—including sparse, supervised, and meta-analytic implementations—continue to provide essential dimension reduction capabilities that balance computational efficiency with biological interpretability. As omics technologies advance toward increasingly comprehensive molecular profiling, PCA will undoubtedly remain a foundational technique for converting complex data into biological knowledge.

Principal Component Analysis (PCA) is a cornerstone multivariate data analysis technique that provides a general framework for systemic approaches in pharmacology and bioinformatics [10]. At its core, PCA represents a fundamental style of scientific reasoning centered on treating variance as information. In an era of data-intensive biology, where high-throughput technologies generate massive multidimensional datasets, PCA serves as a critical "hypothesis-generating" tool that creates a statistical mechanics framework for biological systems modeling without the need for strong a priori theoretical assumptions [10]. This perspective is particularly valuable for overcoming narrow reductionist approaches in drug discovery and molecular biology, allowing researchers to identify latent structures and patterns in complex biological data that would otherwise remain hidden in high-dimensional spaces.

The technique, known under various names including Factor Analysis, Singular Value Decomposition (SVD), and Essential Dynamics, has a history spanning more than a century, with the first theoretical papers dating back to 1873 [10]. Its applications in bioinformatics range across all main themes of pharmacological and biomedical sciences, from Quantitative Structure-Activity Relationships (QSAR) and data mining to diverse 'omics' approaches including genomics, transcriptomics, and metabolomics [10]. As large-scale studies of gene expression with multiple sources of biological and technical variation become widely adopted, characterizing these drivers of variation becomes essential to understanding disease biology and regulatory genetics [11].

Geometric Foundations of PCA

The Core Geometric Problem

The geometric interpretation of PCA begins with a fundamental observation: scientific investigations often require representing a system of points in multidimensional space by the "best-fitting" straight line or plane [10]. Imagine a dataset represented as a cloud of points in a high-dimensional space, where each dimension corresponds to a measured variable (e.g., gene expression levels, molecular descriptors, or protein coordinates). PCA identifies the directions in this space that optimally capture the spread or variance of the data.

The technique solves two simultaneous geometric problems:

- Minimizing reconstruction error: Finding the lower-dimensional representation that best preserves the original data structure

- Maximizing projected variance: Identifying directions that capture the greatest spread in the data

These two perspectives are mathematically equivalent [12]. In the geometric framework, PCA finds the "best-fitting" line (in 2D), plane (in 3D), or hyperplane (in higher dimensions) to the data cloud by minimizing the perpendicular distances from points to this model subspace, unlike classical regression which minimizes vertical distances with respect to an independent variable [10].
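The equivalence follows from the Pythagorean theorem. A short worked derivation, assuming centered data points x_i and a unit direction w (notation introduced here for illustration):

```latex
% Each point splits into a part along w and a part perpendicular to it
\|x_i\|^2 = (w^\top x_i)^2 + \|x_i - (w^\top x_i)\,w\|^2

% Summing over all N points
\underbrace{\sum_{i=1}^{N} \|x_i\|^2}_{\text{fixed by the data}}
  = \underbrace{\sum_{i=1}^{N} (w^\top x_i)^2}_{\text{projected variance (up to scale)}}
  + \underbrace{\sum_{i=1}^{N} \|x_i - (w^\top x_i)\,w\|^2}_{\text{squared perpendicular distances}}
```

Since the left-hand side does not depend on w, any direction that maximizes the first term on the right necessarily minimizes the second.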

The Curse of Dimensionality in Biological Data

Biological data frequently suffers from the "curse of dimensionality," where the number of variables (P) far exceeds the number of observations (N) [1]. In transcriptomic datasets, for example, researchers commonly analyze more than 20,000 genes across fewer than 100 samples [1]. This P≫N scenario creates significant challenges for visualization, analysis, and mathematical operations:

- Computational challenges: High-dimensional spaces require exponentially more computational resources

- Visualization limitations: Human perception is limited to three dimensions

- Mathematical instability: Statistical operations become unreliable in undersampled high-dimensional spaces

Table 1: Examples of Data Matrices with Varying Dimensionality

| Matrix | Observations (N) | Variables (P) | Visualization Capability |
|---|---|---|---|
| Matrix 1 | 6 | 1 (Gene A) | 1D scatter plot |
| Matrix 2 | 6 | 2 (Genes A, B) | 2D scatter plot |
| Matrix 3 | 6 | 3 (Genes A, B, C) | 3D scatter plot |
| Matrix 4 | 6 | 4 (Genes A, B, C, D) | Partial 3D with color coding |

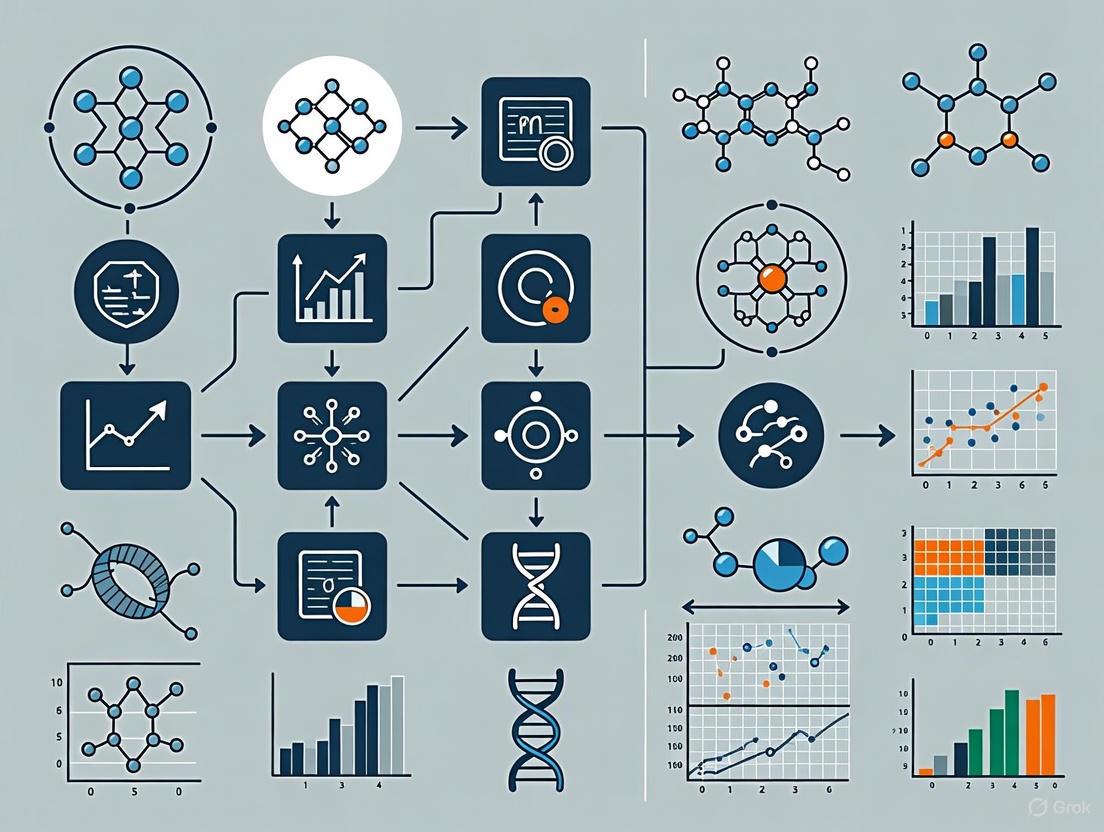

Figure 1: Geometric Intuition of PCA - Projection from High to Low-Dimensional Space

Statistical Interpretation: Variance as Information

The Variance Maximization Principle

The statistical interpretation of PCA reveals why variance is treated as information rather than noise in this framework. PCA works by finding the directions of maximum variance in the data, which become the "principal components" [13]. These components are linear combinations of the original variables according to the formula:

PC = aX₁ + bX₂ + cX₃ + ... + kXₙ

where X₁-Xₙ are the experimental/observational variables, and the coefficients a, b, c,...,k are estimated by least squares optimization [10]. Principal components serve as both the "best summary" of the information present in the n-dimensional data cloud and the directions along which between-variable correlation is maximal [10].

The sequential variance capture follows this pattern:

- First principal component (PC1): Captures the greatest variance in the data

- Second principal component (PC2): Orthogonal (perpendicular) to the first, capturing the largest share of the remaining variance

- Subsequent components: Continue finding additional orthogonal directions of decreasing variance

Eigenvectors and Eigenvalues: The Heart of PCA

The solution to the variance maximization problem emerges from linear algebra through eigenvectors and eigenvalues of the covariance matrix [13]. For a covariance matrix C, eigenvectors represent the principal components (directions of variance), while eigenvalues indicate how much variance each corresponding eigenvector captures [13] [12].

The connection between the geometric optimization problem and linear algebra solution can be understood through Lagrange multipliers [12]. The goal of maximizing wᵀCw (the projected variance) under the constraint ‖w‖=1 (unit vector) leads to the eigenvector equation Cw - λw = 0, which simplifies to Cw = λw [12]. The surprising result is that the directions of maximum variance (principal components) exactly correspond to the eigenvectors of the covariance matrix, with the amount of variance explained given by their corresponding eigenvalues.
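Written out, the Lagrange multiplier argument takes only a few lines, using the same symbols C, w, and λ as above:

```latex
% Constrained objective
\mathcal{L}(w, \lambda) = w^\top C\, w - \lambda\,(w^\top w - 1)

% Stationarity with respect to w
\frac{\partial \mathcal{L}}{\partial w} = 2\,C\,w - 2\,\lambda\,w = 0
\quad\Longrightarrow\quad C\,w = \lambda\,w

% Variance attained at a stationary point (using w^\top w = 1)
w^\top C\, w = \lambda\, w^\top w = \lambda
```

The last line shows that the projected variance at any stationary point equals its eigenvalue, so the maximum is reached at the eigenvector with the largest eigenvalue.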

Table 2: Interpretation of Eigenvectors and Eigenvalues in PCA

| Mathematical Element | Geometric Interpretation | Statistical Interpretation |
|---|---|---|
| Eigenvector | Direction of a principal component in the original variable space | Linear combination coefficients for original variables |
| Eigenvalue | Relative length of the principal component axis | Amount of variance explained by the component |
| Eigenvector Magnitude | Stability of the component direction | Importance of each original variable to the component |
| Eigenvalue Ratio | Relative importance of each component | Percentage of total variance captured |

Methodological Framework: The PCA Process

Step-by-Step Computational Protocol

The standard PCA workflow consists of five key steps that transform raw data into principal components:

Data Standardization (Mean Centering)

Covariance Matrix Calculation

- Compute the covariance matrix of standardized data

- Captures relationships between all pairs of features

- Diagonal elements represent variances of individual features

- Off-diagonal elements represent covariances between feature pairs [13]

Eigenvalue and Eigenvector Decomposition

- Calculate eigenvalues and eigenvectors of the covariance matrix

- Eigenvectors represent principal components (directions of maximum variance)

- Eigenvalues indicate amount of variance captured by each component [13]

Principal Component Selection

- Sort eigenvalues in descending order and select top components

- Common criteria: Kaiser criterion (eigenvalue >1), scree plot elbow, or cumulative variance explained (typically 70-95%) [13]

Data Transformation

- Project original data onto selected principal components

- Create new dataset with reduced dimensionality [13]
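The five steps above can be sketched directly with NumPy. This is a minimal illustration, assuming a samples-by-features matrix with no constant columns; the function name `pca_five_steps` and the 90% variance target are hypothetical choices. For very large P, forming the full P × P covariance matrix is memory-hungry and an SVD of the data matrix is usually preferred.

```python
import numpy as np

def pca_five_steps(X, variance_target=0.90):
    # 1. Standardize: zero mean and unit variance per feature
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # 2. Covariance matrix of the standardized features
    C = np.cov(Z, rowvar=False)
    # 3. Eigen-decomposition (eigh: C is symmetric), sorted descending
    evals, evecs = np.linalg.eigh(C)
    order = np.argsort(evals)[::-1]
    evals, evecs = evals[order], evecs[:, order]
    # 4. Keep the fewest components reaching the cumulative variance target
    cumulative = np.cumsum(evals) / evals.sum()
    k = min(int(np.searchsorted(cumulative, variance_target)) + 1, len(evals))
    # 5. Project the standardized data onto the retained components
    return Z @ evecs[:, :k], evals[:k], evecs[:, :k]

rng = np.random.default_rng(5)
X_demo = rng.normal(size=(25, 6)) @ rng.normal(size=(6, 6))  # synthetic data
scores, kept_evals, kept_evecs = pca_five_steps(X_demo)
print(scores.shape, kept_evals)
```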

Figure 2: PCA Workflow - From Raw Data to Dimensionality Reduction

Implementation in Bioinformatics Research

PCA implementation in bioinformatics requires specialized tools and considerations for biological data:

Python Implementation (Scikit-learn):
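A minimal sketch of a scikit-learn workflow is shown below. The `expression` matrix is a synthetic placeholder (samples in rows, genes in columns), and the choice of five components is an arbitrary assumption for illustration.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
expression = rng.normal(size=(20, 200))   # placeholder: 20 samples x 200 genes

# Scale each gene to zero mean and unit variance before PCA
scaled = StandardScaler().fit_transform(expression)

# Fit PCA and obtain sample coordinates on the first five components
pca = PCA(n_components=5)
scores = pca.fit_transform(scaled)

print(pca.explained_variance_ratio_)      # fraction of variance per component
print(scores.shape)                       # (samples, components)
```

`PCA` centers the data internally, so the explicit scaling step here mainly serves to equalize feature variances; `pca.components_` holds the loadings for interpreting which genes drive each component.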

R Implementation (variancePartition): The variancePartition package in R uses linear mixed models to quantify the contribution of each dimension of variation to each gene, enabling interpretation of complex gene expression studies with multiple sources of variation [11]. The model has the form:

y = ΣXⱼβⱼ + ΣZₖαₖ + ε

where y is gene expression across samples, Xⱼ are fixed effects, Zₖ are random effects, and ε is residual noise [11]. This approach partitions the total variance into fractions attributable to each aspect of the study design.

Table 3: Bioinformatics PCA Toolkit - Essential Research Reagents

| Tool/Software | Application Context | Key Functionality | Implementation |
|---|---|---|---|
| Scikit-learn | General bioinformatics data | Basic PCA, dimensionality reduction | Python |
| variancePartition | Complex gene expression studies | Variance decomposition, linear mixed models | R/Bioconductor |
| MDAnalysis | Molecular dynamics trajectories | Protein conformational analysis | Python |
| NichePCA | Spatial transcriptomics | Spatial domain identification | R |
| VolSurf+ | Drug discovery, QSAR | Molecular descriptor analysis | Commercial |
| Flare V9 | Protein-ligand interactions | MD trajectory analysis with PCA | Commercial |

Applications in Bioinformatics and Drug Discovery

Gene Expression Analysis and Spatial Transcriptomics

PCA has become indispensable for analyzing high-dimensional gene expression data. In complex transcriptomic studies, PCA helps prioritize drivers of variation based on genome-wide summaries and identify genes that deviate from genome-wide trends [11]. Recent advances demonstrate that simple PCA-based algorithms for unsupervised spatial domain identification rival the performance of ten competing state-of-the-art methods across single-cell spatial transcriptomic datasets [15]. The NichePCA approach provides intuitive domain interpretation with exceptional execution speed, robustness, and scalability [15].

In practice, PCA reveals striking patterns of biological and technical variation that are reproducible across multiple datasets [11]. For example, it can simultaneously characterize variation attributable to disease status, sex, cell or tissue type, ancestry, genetic background, experimental stimulus, or technical variables [11].

Molecular Dynamics and Protein Conformational Analysis

In drug discovery, PCA reduces the dimensionality of molecular dynamics (MD) simulation data while preserving significant information about protein conformational spaces [16]. When applied to MD trajectories, PCA transforms the 3D coordinates from all frames into linear orthogonal vectors called principal components, which represent the collective motions of atoms in a protein [16].
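The transformation can be illustrated on a synthetic trajectory. The sketch below builds a toy set of frames with one dominant collective motion plus noise, then recovers that motion with PCA. All sizes and amplitudes are assumptions, and a real trajectory would normally be aligned to a reference structure first to remove overall rotation and translation.

```python
import numpy as np

rng = np.random.default_rng(3)
n_frames, n_atoms = 100, 12                     # hypothetical toy system

# Synthetic trajectory: reference structure + one collective mode + noise
reference = rng.normal(size=(n_atoms, 3))
mode = rng.normal(size=(n_atoms, 3))
mode /= np.linalg.norm(mode)                    # unit-norm collective motion
amplitude = np.sin(np.linspace(0, 4 * np.pi, n_frames))
noise = 0.05 * rng.normal(size=(n_frames, n_atoms, 3))
traj = reference + 2.0 * amplitude[:, None, None] * mode + noise

# Flatten each frame's 3D coordinates into one row of length 3 * n_atoms
coords = traj.reshape(n_frames, n_atoms * 3)
coords_c = coords - coords.mean(axis=0)         # subtract the average structure

# PCA via SVD: rows of Vt are collective motions, ordered by variance
U, s, Vt = np.linalg.svd(coords_c, full_matrices=False)
explained = s**2 / np.sum(s**2)
pc1_motion = Vt[0].reshape(n_atoms, 3)          # per-atom displacement for PC1

# Overlap between PC1 and the planted mode (1.0 means identical direction)
overlap = abs(np.sum(pc1_motion * mode))
print(explained[0], overlap)
```

Projecting each frame onto the first two or three components gives the low-dimensional map in which distinct conformational states can be separated.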

A key application involves comparing PCA with traditional metrics like Root Mean Square Deviation (RMSD). In one case study, while RMSD analysis suggested equivalent conformations at 10, 30, and 45 nanoseconds of simulated time, PCA revealed these conformations were not equivalent and identified three distinct macrostates explored by the protein [16]. This demonstrates PCA's superior ability to capture conformational heterogeneity and identify biologically relevant states.

Drug Discovery and Blood-Brain Barrier Permeability

PCA facilitates drug discovery by analyzing molecular descriptors to improve drug-like properties. In a study of quercetin analogues for neuroprotection, PCA identified descriptors related to intrinsic solubility and lipophilicity (logP) as mainly responsible for clustering compounds with the highest blood-brain barrier (BBB) permeability [17]. Among 34 quercetin analogues, PCA helped classify compounds with respect to structural characteristics that enable BBB penetration while maintaining binding affinity to inositol phosphate multikinase (IPMK), a target for neurodegenerative diseases [17].

The analysis revealed that although all quercetin analogues showed insufficient BBB permeation based on calculated distribution values, four trihydroxyflavone compounds formed a distinct cluster with the most favorable permeability characteristics, guiding future synthetic optimization [17].

Figure 3: PCA Applications - Interpreting Biological Variance Patterns

Advanced Interpretation: Variance Partitioning in Complex Studies

Variance Partitioning Framework

For complex study designs with multiple dimensions of variation, variance partitioning extends PCA's capabilities by quantifying the contribution of each variable to overall expression variation [11]. The linear mixed model framework allows multiple dimensions of variation to be considered jointly and accommodates discrete variables with many categories [11].

The variancePartition approach calculates:

- Variance fractions: The proportion of total variance attributable to each variable

- Genome-wide summaries: Patterns across all genes

- Gene-level resolution: Identification of genes deviating from genome-wide trends

Case Study: Memory and Learning Research

In behavioral neuroscience, PCA helps de-convolve hidden independent factors modulating observed variables [10]. A Morris Water Maze study comparing female rats exposed to enriched versus standard environments used PCA to identify three independent behavioral dimensions: "spatial learning," "visual discrimination," and "reversal learning" [10]. Rather than analyzing 14 correlated performance measurements separately, researchers could interpret these three latent factors, revealing that environmental enrichment specifically enhanced the "spatial learning" dimension without affecting other components [10].

This demonstrates how PCA transforms complex, multidimensional behavioral data into interpretable, biologically meaningful dimensions that more accurately reflect underlying neurobiological processes than any single measurement.

Principal Component Analysis represents more than just a statistical technique—it embodies a fundamental approach to scientific inquiry where variance is treated as meaningful information rather than noise. By providing a "hypothesis-generating" framework [10], PCA enables researchers to explore complex biological systems without strong a priori assumptions, making it particularly valuable in exploratory stages of bioinformatics research and drug discovery.

The geometric intuition of PCA as a projection that minimizes reconstruction error while maximizing preserved variance, coupled with the statistical implementation through eigen decomposition of covariance matrices, creates a powerful unified framework for dimensionality reduction. As biological datasets continue growing in size and complexity, with technologies like spatial transcriptomics [15] and molecular dynamics simulations [16] generating increasingly high-dimensional data, PCA's role as an essential tool for extracting meaningful patterns will only become more critical.

The applications across bioinformatics—from interpreting gene expression variation [11] to analyzing protein dynamics [16] and optimizing drug properties [17]—demonstrate PCA's versatility and power. By embracing the perspective that "variance is information," researchers can continue leveraging PCA to uncover latent structures in biological data that advance our understanding of complex biological systems and accelerate therapeutic development.

Principal Component Analysis (PCA) stands as a cornerstone dimensional reduction technique in bioinformatics, enabling researchers to extract meaningful patterns from high-dimensional biological data. The mathematical foundation of PCA rests upon the concepts of covariance matrices, eigenvectors, and eigenvalues, which together facilitate the transformation of complex datasets into a lower-dimensional space while preserving essential information. In fields ranging from genomics to drug discovery, PCA provides an unsupervised approach to identify population structures, classify samples, and pinpoint key variables driving observed variation.

The core principle involves finding directions of maximum variance in the data, known as principal components, which are encoded mathematically as eigenvectors of the covariance matrix. The corresponding eigenvalues quantify the amount of variance captured along each direction. This mathematical framework has proven particularly valuable in bioinformatics where datasets often contain thousands of variables (e.g., gene expression levels) measured across relatively few samples, creating the "curse of dimensionality" problem where the number of variables P far exceeds the number of observations N [1]. By leveraging covariance relationships and eigen decomposition, PCA effectively mitigates this challenge, enabling robust analysis and interpretation of biological data.

Theoretical Foundations

Covariance Matrix: Capturing Data Relationships

The covariance matrix serves as the fundamental building block for PCA, providing a comprehensive mathematical representation of how variables in a dataset relate to one another. For a dataset with P variables, the covariance matrix Σ is a P × P symmetric matrix where the diagonal elements represent the variances of individual variables, and off-diagonal elements represent the covariances between variable pairs [18]. Formally, for a centered data matrix X (with zero mean for each variable), the covariance matrix is computed as Σ = (XᵀX)/(N-1) for N observations.

The covariance matrix encodes the geometry of the data distribution. When most off-diagonal elements are near zero, variables are largely uncorrelated, and the data cloud appears roughly spherical. Non-zero covariances indicate correlated variables, creating an elongated data distribution oriented along specific directions in the high-dimensional space. PCA leverages this covariance structure to identify the most informative directions for projection [18].
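
The covariance formula above can be checked numerically. The following is a minimal sketch, assuming NumPy is available and using a small made-up data matrix (the values are illustrative only, not from any cited study); it computes Σ from centered data and compares it with NumPy's built-in estimator.

```python
import numpy as np

# Toy data: 5 samples (rows) x 3 variables (columns); values are illustrative only.
X = np.array([
    [2.0, 1.0, 0.5],
    [3.0, 2.5, 0.1],
    [4.0, 3.0, 0.9],
    [5.0, 4.5, 0.4],
    [6.0, 5.0, 0.6],
])

n_samples = X.shape[0]
X_centered = X - X.mean(axis=0)                      # zero mean per variable
cov = X_centered.T @ X_centered / (n_samples - 1)    # P x P covariance matrix

# np.cov treats rows as variables by default, hence rowvar=False.
matches_numpy = np.allclose(cov, np.cov(X, rowvar=False))
is_symmetric = np.allclose(cov, cov.T)
```

The diagonal of `cov` holds the per-variable variances and the off-diagonal entries hold the pairwise covariances, matching the description in the text.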

Eigenvectors and Eigenvalues: Direction and Magnitude of Variance

Eigenvectors and eigenvalues emerge from the eigen decomposition of the covariance matrix, forming the mathematical core of PCA. For a square matrix Σ, an eigenvector v is a non-zero vector that satisfies the equation:

Σv = λv

where λ is a scalar known as the eigenvalue corresponding to eigenvector v [19]. This equation reveals a fundamental property: when the covariance matrix Σ acts on its eigenvector v, the result is simply a scaled version of v—the direction remains unchanged, only the magnitude is modified by λ.

Geometrically, each eigenvector of the covariance matrix represents a direction in the original feature space, while its corresponding eigenvalue quantifies the variance along that direction [18]. The eigenvector with the largest eigenvalue points in the direction of maximum variance in the dataset, making it the first principal component. Subsequent eigenvectors (principal components) are orthogonal to previous ones and capture the next highest variance directions, with their eigenvalues indicating their relative importance [19].
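
As a small worked illustration of Σv = λv, the sketch below decomposes a hypothetical 2 × 2 covariance matrix (chosen for convenience, not taken from the cited sources) with NumPy and confirms that applying the matrix to its leading eigenvector only rescales it.

```python
import numpy as np

# Hypothetical covariance matrix of two positively correlated variables.
cov = np.array([[2.0, 1.0],
                [1.0, 2.0]])

# eigh is intended for symmetric matrices; it returns eigenvalues in ascending order.
eigenvalues, eigenvectors = np.linalg.eigh(cov)

# Reorder so the first column is the direction of maximum variance (PC1).
order = np.argsort(eigenvalues)[::-1]
eigenvalues = eigenvalues[order]
eigenvectors = eigenvectors[:, order]

v1 = eigenvectors[:, 0]
lhs = cov @ v1                 # covariance matrix applied to the eigenvector
rhs = eigenvalues[0] * v1      # the same vector scaled by its eigenvalue
```

For this matrix the eigenvalues are 3 and 1, so the first principal direction (along the diagonal where both variables increase together) carries three quarters of the total variance. The sign of each eigenvector is arbitrary.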

Table 1: Key Mathematical Components of PCA

| Component | Mathematical Role | Geometric Interpretation | Biological Significance |

|---|---|---|---|

| Covariance Matrix | Symmetric P×P matrix capturing variable relationships | Shape and orientation of data distribution | Reveals coordinated patterns in biological features (e.g., gene co-expression) |

| Eigenvectors | Directions that remain unchanged when covariance matrix is applied | Principal axes of the data ellipsoid | Major patterns of biological variation (e.g., population structure, treatment response) |

| Eigenvalues | Scalars representing scaling factors along eigenvectors | Lengths of the principal axes | Amount of variance captured by each pattern; indicates biological importance |

| Principal Components | Orthogonal projections onto eigenvectors | Coordinates along rotated axes | Simplified representation of samples in reduced dimension space |

Computational Methodology

The PCA Algorithm: A Step-by-Step Protocol

The implementation of PCA follows a systematic computational procedure that transforms raw data into its principal components. The following protocol details each step, from data preparation to dimension reduction:

Data Centering: Subtract the mean from each variable to create a centered dataset with zero mean across all dimensions. This ensures the first principal component describes the direction of maximum variance rather than the data centroid [19].

- Mathematical formulation: X_centered = X - μ, where μ is the mean vector.

Covariance Matrix Computation: Calculate the covariance matrix of the centered data.

- Mathematical formulation: Σ = (X_centeredᵀ × X_centered)/(N-1), where N is the number of samples [19].

Eigen Decomposition: Perform eigen decomposition of the covariance matrix to obtain eigenvectors and eigenvalues.

- Solve the characteristic equation: det(Σ - λI) = 0, where I is the identity matrix [19].

- For each eigenvalue λ, solve the equation Σv = λv to find the corresponding eigenvector v.

Sorting by Variance: Sort eigenvectors in descending order of their corresponding eigenvalues. This ranks principal components by the amount of variance they explain [19].

Projection: Select the top-k eigenvectors (principal components) and project the original data onto this subspace to achieve dimension reduction.

- Mathematical formulation: X_reduced = X_centered × V_k, where V_k is the matrix containing the first k eigenvectors as columns [19].
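
The five steps above can be sketched in a few lines of NumPy. This is an illustrative implementation under stated assumptions, not the code used in the cited work: the function name `pca_eig` and the simulated data are hypothetical, and the explicit covariance route shown here is practical only when the number of variables is modest.

```python
import numpy as np

def pca_eig(X, k):
    """Return (scores, components, eigenvalues) using covariance eigendecomposition."""
    X_centered = X - X.mean(axis=0)                          # step 1: centering
    cov = X_centered.T @ X_centered / (X.shape[0] - 1)       # step 2: covariance
    eigenvalues, eigenvectors = np.linalg.eigh(cov)          # step 3: decomposition
    order = np.argsort(eigenvalues)[::-1]                    # step 4: sort by variance
    eigenvalues = eigenvalues[order]
    V_k = eigenvectors[:, order[:k]]
    X_reduced = X_centered @ V_k                             # step 5: projection
    return X_reduced, V_k, eigenvalues

# Simulated data: 50 samples, 4 variables driven by one latent factor plus noise.
rng = np.random.default_rng(0)
latent = rng.normal(size=(50, 1))
X = latent @ np.array([[1.0, 2.0, -1.0, 0.5]]) + 0.1 * rng.normal(size=(50, 4))

scores, components, eigenvalues = pca_eig(X, k=2)
explained_ratio = eigenvalues / eigenvalues.sum()
```

Because the simulated variables share a single latent factor, the first entry of `explained_ratio` dominates, which is the pattern a scree plot would show as a sharp elbow after PC1.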

Workflow Visualization

Figure: Logical flow of the PCA methodology, from data input to dimension-reduced output.

Determining Optimal Component Number

A critical step in PCA implementation is selecting the appropriate number of components to retain. This decision balances dimensionality reduction against information preservation. Two primary approaches guide this selection:

Scree Plot Analysis: Plot eigenvalues in descending order and identify the "elbow point" where the curve flattens, indicating diminishing returns for additional components [19].

Cumulative Variance Threshold: Retain the minimum number of components that capture a predetermined percentage of total variance (typically 90-95%) [19].

- Calculated as: Cumulative Variance(k) = (Σᵢ₌₁ᵏ λᵢ) / (Σᵢ₌₁ᵖ λᵢ)
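
The cumulative variance rule translates directly into code. The sketch below uses only the Python standard library; the function name and the example eigenvalues are hypothetical and chosen so the arithmetic is easy to follow.

```python
def components_for_variance(eigenvalues, threshold=0.90):
    """Smallest k whose top-k eigenvalues reach the given share of total variance."""
    ordered = sorted(eigenvalues, reverse=True)
    total = sum(ordered)
    running = 0.0
    for k, value in enumerate(ordered, start=1):
        running += value
        if running / total >= threshold:
            return k
    return len(ordered)

# Illustrative eigenvalues: cumulative shares are 0.50, 0.80, 0.95, 1.00.
example_eigenvalues = [5.0, 3.0, 1.5, 0.5]
k_90 = components_for_variance(example_eigenvalues, 0.90)
k_75 = components_for_variance(example_eigenvalues, 0.75)
```

With these values, three components are needed to reach 90% of the variance and two suffice for 75%, which shows how sensitive the retained dimensionality is to the chosen threshold.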

Table 2: PCA Performance on Bioinformatics Datasets

| Dataset | Original Dimensions | Optimal Components | Variance Retained or Reported Outcome | Application Context |

|---|---|---|---|---|

| Iris Morphology | 4 features | 2 components | 95.3% | Species classification [19] |

| NIBR-PDXE | 72,545 genomic features | Not specified | Comparable to individual models | Pan-cancer drug response prediction [20] |

| 1000 Genomes Project | 1,055,401 SNPs | 3-4 components | Population structure resolution | Population genetics [21] |

| Spatial Transcriptomics | Variable by experiment | Not specified | Rivals state-of-the-art methods | Spatial domain identification [15] |

Bioinformatics Applications

Genomic Data Analysis

PCA has become an indispensable tool in population genetics and genomic analysis, particularly for elucidating population structure from single nucleotide polymorphism (SNP) data. Tools such as VCF2PCACluster leverage PCA to efficiently analyze tens of millions of SNPs across thousands of samples, enabling researchers to identify genetic clusters corresponding to geographic populations and evolutionary histories [21]. In one representative study, PCA of chromosome 22 data from the 1000 Genomes Project (1,055,401 SNPs across 2,504 samples) clearly distinguished African, Asian, European, and American populations with high accuracy (99.5% concordance with known populations) [21]. The computational efficiency of modern PCA implementations makes such large-scale analyses feasible even on standard workstations, with memory usage independent of SNP count in optimized tools.

Drug Discovery and Development

In pharmaceutical research, PCA enables the integration of multi-omic data for predicting drug response and identifying novel therapeutic targets. The pan-cancer, pan-treatment (PCPT) model represents an advanced application wherein PCA reduces the dimensionality of high-dimensional genomic features (gene expression, copy number variation, mutations) from patient-derived xenograft models before training machine learning classifiers [20]. This approach overcomes limitations of cancer-specific models by appending cancer type and treatment as input features alongside the reduced genomic profiles, creating a unified framework that maintains accuracy while enhancing generalizability across cancer types [20].

PCA also facilitates drug classification and target identification through its integration with deep learning architectures. Stacked autoencoders coupled with optimization algorithms can extract robust features from pharmaceutical datasets, achieving high classification accuracy (95.52%) for druggable targets while reducing computational complexity [22]. Similarly, PCA-based feature selection combined with multi-criteria decision-making provides a robust framework for identifying biologically relevant features in gene expression data, enhancing model interpretability in drug screening applications [23].

Transcriptomics and Pathway Analysis

Pharmacotranscriptomics-based drug screening (PTDS) has emerged as a powerful paradigm where PCA plays a crucial role in analyzing gene expression changes following drug perturbations [24]. By reducing the dimensionality of transcriptomic profiles, PCA enables researchers to identify dominant patterns of drug response, classify compounds based on their transcriptomic signatures, and elucidate mechanisms of action—particularly for complex therapeutics like traditional Chinese medicine [24]. Furthermore, in spatial transcriptomics, simple PCA-based algorithms such as NichePCA rival state-of-the-art methods in identifying biologically meaningful spatial domains, offering intuitive interpretation with exceptional execution speed and scalability [15].

Experimental Protocols

Protocol 1: PCA for Population Genetics

This protocol details the application of PCA to identify population structure from genomic variation data, based on methodologies from [21]:

Data Acquisition: Obtain genotype data in VCF format containing SNP information across multiple samples. Public repositories like the 1000 Genomes Project provide standardized datasets.

Quality Control Filtering:

- Remove non-biallelic sites (singletons and multiallelic SNPs) and indels

- Apply filters for minor allele frequency (MAF > 0.05), missingness per marker (<25%), and Hardy-Weinberg equilibrium (HWE p-value > 0.001)

- Tools: VCF2PCACluster or PLINK2 can implement these filters

Kinship Matrix Calculation: Compute genetic relationship matrix using recommended methods (Normalized_IBS or Centered_IBS) to account for population structure

Eigen Decomposition: Perform PCA on the kinship matrix using efficient numerical libraries (Eigen library)

Cluster Analysis: Apply clustering algorithms (EM-Gaussian, K-means, DBSCAN) to the top principal components to identify genetic populations

Visualization: Generate 2D/3D plots of samples along principal component axes, coloring points by cluster assignment or known population labels
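
The marker-level filters in the quality control step can be illustrated on a small genotype matrix. This is a sketch only: it assumes genotypes are already coded as alternate-allele counts (0, 1, 2) with NaN for missing calls, the function name `filter_snps` is hypothetical, and the Hardy-Weinberg filter is deliberately omitted. In practice these filters would be applied with VCF2PCACluster or PLINK2 as the protocol states.

```python
import numpy as np

def filter_snps(genotypes, min_maf=0.05, max_missing=0.25):
    """Keep SNP columns passing MAF and missingness thresholds.

    genotypes: samples x SNPs array of alt-allele counts (0/1/2), NaN if missing.
    """
    missing_rate = np.isnan(genotypes).mean(axis=0)
    alt_freq = np.nanmean(genotypes, axis=0) / 2.0       # alternate allele frequency
    maf = np.minimum(alt_freq, 1.0 - alt_freq)           # minor allele frequency
    keep = (missing_rate < max_missing) & (maf > min_maf)
    return genotypes[:, keep], keep

# Toy matrix: 6 samples x 4 SNPs (illustrative values only).
G = np.array([
    [0, 2, 2, np.nan],
    [1, 2, 1, np.nan],
    [2, 2, 0, 1],
    [1, 2, 0, np.nan],
    [0, 2, 1, 0],
    [2, 2, 2, 2],
], dtype=float)

G_filtered, keep = filter_snps(G)
```

Here the second SNP is dropped because it is monomorphic (MAF of 0) and the fourth because half of its calls are missing, leaving two markers for the downstream kinship and PCA steps.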

Protocol 2: PCA for Drug Response Prediction

This protocol outlines the integration of PCA with machine learning for predicting cancer treatment response, adapted from [20]:

Data Collection: Compile patient-derived xenograft data including:

- Genomic features: gene expression (GEX), copy number variation (CNV), copy number alterations (CNA), single nucleotide variations (SNV)

- Treatment information: drug names, combinations, doses

- Response outcomes: tumor volume changes classified as sensitive/resistant

Data Preprocessing:

- Normalize gene expression values (e.g., FPKM normalization for RNA-seq)

- Binarize categorical copy number data (Amp5, Amp8, Del0.8)

- Center and scale all genomic features

Dimensionality Reduction:

- Apply PCA separately to each genomic feature type or concatenated features

- Retain sufficient components to explain >90% of variance

- Append cancer type and treatment name as additional input features

Model Training: Train ensemble classifiers (Random Forest) using the reduced feature set to predict treatment response

Validation: Evaluate model performance using cross-validation across different cancer types and treatments
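
The dimensionality reduction step of this protocol can be sketched as follows. The code is an illustration under stated assumptions rather than the pipeline from [20]: the function name `reduce_and_append`, the random feature matrix, and the cancer type labels are all hypothetical, and the classifier itself is left out so that the example depends only on NumPy.

```python
import numpy as np

def reduce_and_append(genomic, categories, variance_target=0.90):
    """Standardize features, keep PCs reaching the variance target, append one-hot labels."""
    std = genomic.std(axis=0, ddof=1)
    std[std == 0] = 1.0                                   # guard against constant features
    Z = (genomic - genomic.mean(axis=0)) / std            # center and scale
    U, s, Vt = np.linalg.svd(Z, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)
    # First index where cumulative variance reaches the target, converted to a count.
    k = int(np.searchsorted(np.cumsum(explained), variance_target) + 1)
    scores = U[:, :k] * s[:k]                             # PCA-reduced genomic profile
    levels = sorted(set(categories))
    one_hot = np.array([[1.0 if c == level else 0.0 for level in levels]
                        for c in categories])
    return np.hstack([scores, one_hot]), k, levels

rng = np.random.default_rng(0)
genomic = rng.normal(size=(20, 30))                       # 20 models x 30 features (simulated)
cancer_types = ["BRCA", "CRC", "NSCLC", "PDAC"] * 5       # hypothetical labels
features, k, levels = reduce_and_append(genomic, cancer_types)
```

The resulting `features` matrix would then be passed to a classifier such as a Random Forest. To avoid information leakage, the standardization and PCA should be fitted on training folds only during cross-validation.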

Table 3: Key Resources for PCA-Based Bioinformatics Research

| Resource Category | Specific Tools/Datasets | Function in PCA Workflow | Application Context |

|---|---|---|---|

| Genomic Data Repositories | 1000 Genomes Project, UK Biobank, 3000 Rice Genomes Project | Source of raw genotype/phenotype data | Population genetics, trait association studies [21] |

| PDX Resources | NIBR-PDXE (Novartis PDX Encyclopedia) | Drug response data with genomic features | Preclinical drug development, biomarker discovery [20] |

| PCA Software Tools | VCF2PCACluster, PLINK2, GCTA, scikit-learn PCA | Implement efficient PCA computation | General-purpose dimensionality reduction [21] |

| Visualization Libraries | matplotlib, seaborn, plotly | Create publication-quality PCA plots | Result interpretation and presentation [19] |

| Drug-Target Databases | DrugBank, Swiss-Prot, ChEMBL | Source of pharmaceutical compound data | Drug discovery, target identification [22] |

Advanced Applications and Future Directions

The mathematical foundation of PCA continues to enable innovative applications across bioinformatics. Recent advances include the integration of PCA with deep learning architectures, where PCA-reduced features serve as input to neural networks for improved classification of druggable targets [22]. Similarly, combining PCA with multi-criteria decision-making methods like MOORA creates powerful hybrid approaches for unsupervised feature selection in high-dimensional bioinformatics data [23].

Future directions focus on scaling PCA to increasingly large datasets while enhancing interpretability. As single-cell technologies and spatial transcriptomics generate ever-larger datasets, efficient PCA implementations like VCF2PCACluster that minimize memory usage will grow in importance [15] [21]. Furthermore, the development of supervised PCA variants that incorporate outcome variables during dimension reduction holds promise for more targeted feature extraction in precision medicine applications.

The enduring relevance of PCA's mathematical foundation—covariance, eigenvectors, and eigenvalues—ensures its continued centrality in bioinformatics research, providing a principled approach to navigating the high-dimensional data landscapes that define modern biology.

Principal Components as 'Metagenes' or Latent Variables

Principal Component Analysis (PCA) is a foundational dimensionality reduction technique in bioinformatics, addressing the "curse of dimensionality" common in high-throughput genomic studies. This whitepaper elucidates the role of PCA in transforming high-dimensional biological data into a lower-dimensional set of latent variables, often termed 'metagenes' or principal components (PCs). We detail the mathematical framework, provide protocols for application in genomic data analysis, and evaluate advanced PCA-based methodologies. Aimed at researchers and drug development professionals, this guide synthesizes current best practices, computational tools, and analytical frameworks to empower robust data-driven discovery in bioinformatics.

Bioinformatics data, particularly from gene expression microarrays or single-cell RNA sequencing (scRNA-seq), is characterized by a "large d, small n" paradigm, where the number of measured variables (e.g., genes) vastly exceeds the number of observations (e.g., samples) [4] [1]. This high-dimensionality presents significant challenges for statistical analysis, visualization, and interpretation. The curse of dimensionality refers to the computational and analytical problems that arise in this context, including the inability to visualize data beyond three dimensions and the mathematical intractability of models when P (variables) >> N (observations) [1]. For instance, in a typical transcriptomic dataset, it is common to analyze over 20,000 genes across fewer than 100 samples [1].

Dimensionality reduction techniques, broadly classified into variable selection and feature extraction, are essential to overcome these challenges. PCA is a classic feature extraction approach that constructs a new set of variables, called principal components (PCs), which are linear combinations of the original genes [4]. These PCs, often conceptualized as 'metagenes', 'super genes', or latent variables, capture the essential patterns of variation in the data while reducing noise and computational burden [4]. This whitepaper frames PCA not just as a statistical tool, but as a critical methodology for generating biologically meaningful latent constructs in bioinformatics research.

Theoretical Foundations of PCA and 'Metagenes'

Mathematical Definition and Properties

PCA is an orthogonal linear transformation that projects data to a new coordinate system wherein the greatest variance lies on the first coordinate (the first PC), the second greatest variance on the second coordinate, and so on [5]. Formally, given a column-centered data matrix X of dimensions n × p (with n samples and p genes), PCA transforms it into a new matrix T = XW, where W is a p × p matrix of weights whose columns are the eigenvectors of XᵀX, which is proportional to the sample covariance matrix XᵀX/(n-1) [5].

The principal components possess several key properties that make them ideal for bioinformatics applications [4]:

- Orthogonality: Different PCs are orthogonal to each other, effectively solving multicollinearity problems in regression analysis.

- Maximal Variance: The first PC explains the largest possible variance in the data, with each subsequent component explaining the maximum remaining variance under the orthogonality constraint.

- Dimensionality Reduction: Often, the first few PCs capture the majority of the data's variance, allowing for a significant reduction from p dimensions to a much smaller number k.

- Equivalence: When all PCs are retained, any linear function of the original genes can be expressed in terms of the PCs, ensuring no loss of linear signal.
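
The transformation T = XW and the orthogonality property can be verified numerically. The sketch below, which assumes NumPy and uses simulated data with far more variables than samples, obtains W from a thin singular value decomposition of the centered matrix instead of forming the p × p covariance matrix explicitly; this is a common computational shortcut and not necessarily the approach of the cited references.

```python
import numpy as np

rng = np.random.default_rng(1)
n, p = 10, 200                        # fewer samples than variables (P >> N)
X = rng.normal(size=(n, p))
Xc = X - X.mean(axis=0)               # column-centered data

# Thin SVD: Xc = U S Vt. The rows of Vt are the principal directions.
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
W = Vt.T                              # p x n weight matrix (only n directions exist here)
T = Xc @ W                            # scores; numerically equal to U * s
eigenvalues = s ** 2 / (n - 1)        # variance along each PC

score_cov = T.T @ T / (n - 1)         # covariance of the scores
```

`score_cov` is diagonal, confirming that the PCs are uncorrelated. After centering, at most n - 1 components carry non-zero variance, so the last eigenvalue here is numerically zero.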

'Metagenes' as Biological Constructs

In gene expression analysis, the principal components are biologically interpreted as metagenes [4]. A metagene represents a coordinated pattern of gene expression across a set of samples. It is a latent variable that may correspond to an unobserved biological factor, such as the activity of a specific pathway, a cellular phenotype, or a response to an experimental perturbation. The loadings (weights) of the original genes on the PC indicate each gene's contribution to that pattern, allowing researchers to infer which genes drive the observed variation.

Practical Applications and Experimental Protocols

PCA and its derived metagenes are applied across diverse areas of bioinformatics. The following table summarizes the primary use cases.

Table 1: Key Applications of PCA in Bioinformatics

| Application Area | Description | Utility |

|---|---|---|

| Exploratory Analysis & Data Visualization [4] | Projecting high-dimensional gene expressions onto 2 or 3 PCs for graphical examination. | Enables visualization of sample clustering, outliers, and broad data structure in 2D or 3D plots. |

| Clustering Analysis [4] | Using the first few PCs (which capture signal) instead of all genes (which contain noise) for clustering genes or samples. | Improves clustering robustness by reducing the influence of noisy variables. |

| Regression Analysis [4] | Using the top k PCs as covariates in predictive models for disease outcomes. | Solves the P >> N problem, making standard regression techniques applicable. |

| Population Genetics [21] | Analyzing genetic variation from millions of SNPs across thousands of individuals to determine population structure. | Identifies genetic ancestry and subpopulations without prior knowledge of group labels. |

| Accommodating Pathway/Network Structure [4] | Performing PCA on genes within a pre-defined pathway or network module. | Generates a single score representing the aggregate activity of a biological pathway or module. |

| Spatial Transcriptomics [15] | Identifying spatially coherent domains in tissue sections based on gene expression patterns. | Unsupervised discovery of tissue microenvironments or niches. |

Standard Protocol: PCA for Gene Expression Analysis

This protocol outlines the steps for a typical PCA on a gene expression matrix (samples × genes) to identify metagenes.

Step-by-Step Methodology

- Input Data Preparation: Begin with a normalized gene expression matrix. For bulk RNA-seq, this is typically a counts matrix transformed to log-counts-per-million. For single-cell RNA-seq, create a pseudo-bulk matrix by aggregating counts per sample or use a properly normalized cell-level matrix [25].

- Quality Control and Filtering: Remove non-informative genes. A common practice is to filter out genes with very low expression or low variance across samples. For single-cell data, additional steps are critical: exclude genes with zero expression in more than 90% of individuals (π₀ ≥ 0.9) to mitigate sparsity-induced skewness [25].

- Data Transformation and Scaling:

- Transformation: Apply a log(x+1) transformation to reduce the right-skewness of expression data [25].

- Centering: Center each gene (variable) to a mean of zero. This is mandatory for PCA.

- Scaling: Scale each gene to unit variance. This is recommended when genes are measured on different scales or when their variances differ substantially. For sequencing data, where variance often depends on mean expression, scaling is crucial.

- Covariance Matrix and Decomposition: Compute the covariance matrix (or the correlation matrix if data was scaled) of the pre-processed data matrix. Perform eigendecomposition on this matrix to obtain eigenvalues and eigenvectors. The eigenvectors are the PCs (metagenes), and the eigenvalues represent the variance explained by each PC.

- Selection of Principal Components: Determining the optimal number of PCs (k) to retain is critical. Conflicting methods exist, and their performance can vary [26].

- Scree Test: Plot eigenvalues in descending order and look for an "elbow" where the curve flattens. Subjective and can be ambiguous.

- Cumulative Variance: Retain enough PCs to explain a pre-specified percentage of total variance (e.g., 70-80%). This method offers greater stability than others [26].

- Kaiser-Guttman Criterion: Retain PCs with eigenvalues greater than 1. This method tends to retain too many PCs in high-dimensional settings [26].

- Interpretation and Downstream Analysis:

- Projected Data: Use the matrix T (n × k) for downstream analyses like clustering or regression.

- Loadings: Examine the loadings (weights in matrix W) to interpret the biological meaning of each metagene. Genes with large absolute loadings drive the variation captured by that PC.
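
The protocol above can be condensed into a short NumPy sketch. It is a simplified illustration, assuming an already normalized samples × genes matrix; the function name `expression_pca`, the simulated Poisson counts, and the choice of the top five genes are hypothetical.

```python
import numpy as np

def expression_pca(counts, max_zero_fraction=0.9):
    """Filter sparse genes, log-transform, standardize, and run PCA via SVD."""
    zero_fraction = (counts == 0).mean(axis=0)
    kept = zero_fraction < max_zero_fraction              # drop genes with pi_0 >= 0.9
    logged = np.log1p(counts[:, kept])                     # log(x + 1) transformation
    centered = logged - logged.mean(axis=0)                # centering (mandatory)
    std = centered.std(axis=0, ddof=1)
    variable = std > 0                                     # constant genes cannot be scaled
    scaled = centered[:, variable] / std[variable]         # unit variance per gene
    U, s, Vt = np.linalg.svd(scaled, full_matrices=False)
    eigenvalues = s ** 2 / (scaled.shape[0] - 1)
    gene_index = np.flatnonzero(kept)[variable]            # original column of each gene
    return U * s, Vt.T, eigenvalues, gene_index

rng = np.random.default_rng(0)
counts = rng.poisson(lam=5, size=(12, 40)).astype(float)   # 12 samples x 40 genes
counts[:, 0] = 0.0                                         # one gene that is never expressed

scores, loadings, eigenvalues, gene_index = expression_pca(counts)
n_kaiser = int(np.sum(eigenvalues > 1.0))                  # Kaiser-Guttman count
top_genes = gene_index[np.argsort(-np.abs(loadings[:, 0]))[:5]]  # drivers of PC1
```

Because each retained gene is scaled to unit variance, the eigenvalues sum to the number of retained genes, which is what makes the "eigenvalue greater than 1" rule interpretable. `top_genes` lists the original column indices of the genes with the largest absolute loadings on the first metagene.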

Advanced Protocol: PCA for Pathway and Interaction Analysis

PCA can be extended to model complex biological hierarchies and interactions [4].

Step-by-Step Methodology

- Pathway/Module Definition: Obtain gene sets from databases like KEGG, Reactome, or define modules from gene co-expression networks.

- Stratified PCA: For each pathway or module, extract the expression matrix for its constituent genes. Perform a separate PCA on each of these sub-matrices.

- Activity Score Generation: From each pathway-specific PCA, retain the first PC (or the first few PCs) to serve as a summary "activity score" for that pathway in each sample.

- Integrated Modeling: Construct a new design matrix where the features are the pathway activity scores instead of individual genes. This matrix has a much lower dimensionality (number of pathways << number of genes).

- Modeling Interactions:

- Approach A1 (Main Effects): Use the activity scores from multiple pathways as covariates in a regression model. Their co-inclusion can capture some interactive effects [4].

- Approach A2 (Explicit Interactions): For two pathways, create a new set of variables that are the second-order interactions (products) between all genes in the first pathway and all genes in the second. Perform PCA on this combined set of original genes and interaction terms to derive latent interaction variables [4].
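
The stratified PCA and activity score steps can be sketched as follows. The gene names, pathway names, and the function `pathway_activity` are hypothetical placeholders; real gene sets would come from KEGG, Reactome, or a co-expression analysis as described above.

```python
import numpy as np

def pathway_activity(expression, gene_names, pathways):
    """Return a samples x pathways matrix of first-PC scores, plus the pathway names used."""
    index = {gene: i for i, gene in enumerate(gene_names)}
    columns, names = [], []
    for name, genes in pathways.items():
        cols = [index[g] for g in genes if g in index]     # ignore genes not measured
        if len(cols) < 2:
            continue                                       # PCA needs at least two genes
        sub = expression[:, cols]
        sub = sub - sub.mean(axis=0)
        U, s, Vt = np.linalg.svd(sub, full_matrices=False)
        columns.append(U[:, 0] * s[0])                     # first PC as the activity score
        names.append(name)
    if not columns:
        return np.empty((expression.shape[0], 0)), names
    return np.column_stack(columns), names

rng = np.random.default_rng(0)
expression = rng.normal(size=(15, 6))                      # 15 samples x 6 genes (simulated)
gene_names = ["G1", "G2", "G3", "G4", "G5", "G6"]
pathways = {
    "pathway_A": ["G1", "G2", "G3"],
    "pathway_B": ["G4", "G5", "G6", "G_missing"],
    "pathway_C": ["G1"],                                   # too small, will be skipped
}
activity, used = pathway_activity(expression, gene_names, pathways)
```

The `activity` matrix can serve as the low-dimensional design matrix for integrated modeling. Note that the sign of a principal component is arbitrary, so the direction of an activity score should be checked against the loadings before it is interpreted as up- or down-regulation.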

Performance and Validation in Bioinformatics

Software and Computational Efficiency

The computational demand of PCA is a key consideration with large genomic datasets. The following table compares the performance of several PCA tools when analyzing tens of millions of single-nucleotide polymorphisms (SNPs).

Table 2: Performance Comparison of PCA Tools on Large-Scale Genotype Data (2,504 samples, ~1 million SNPs) [21]

| Tool | Input Format | Peak Memory Usage | Run Time | Additional Functions |

|---|---|---|---|---|

| VCF2PCACluster | VCF | ~0.1 GB | ~7 min (16 threads) | Kinship estimation, Clustering, Visualization |

| PLINK2 | VCF | >200 GB | Comparable to VCF2PCACluster | Basic GWAS, filtering |

| GCTA | Specific format | High | Comparable | GREML model |

| TASSEL | Specific format | >150 GB | >400 min | Phylogenetics, Diversity analysis |

| GAPIT3 | Specific format | >150 GB | >400 min | GWAS, Kinship |

As shown, VCF2PCACluster demonstrates superior memory efficiency because its processing strategy is independent of the number of SNPs, consuming memory based only on sample size [21]. This makes it suitable for analyzing tens of millions of SNPs on moderately powered computers.

Impact on Statistical Power in Association Studies

Incorporating latent variables from PCA is essential for increasing power in expression quantitative trait locus (eQTL) detection, both in bulk and single-cell RNA-seq data. However, the optimal number of PCs or PEER (probabilistic estimation of expression residuals) factors to include in the association model varies significantly by cell type [25].

- Data Quality is Critical: In single-cell eQTL studies, applying PCA to a pseudo-bulk matrix without proper quality control can result in highly correlated factors (Pearson's r = 0.63 – 0.99) and poor performance. The recommended pre-processing includes excluding genes with >90% zero expression, log(x+1) transformation, and standardization, which reduces skewness and yields valid latent variables [25].

- Optimal Number of Factors: A sensitivity analysis is required. The number of eGenes detected may continually increase with the number of factors in some cell types (e.g., CD4NC), but peak and then decrease in others (e.g., CD4SOX4) due to overfitting [25]. There is no one-size-fits-all number.

- Using Highly Variable Genes: Generating PCs from the top 2000 highly variable genes (HVGs) achieves similar eGene discovery power as using all genes but reduces runtime by approximately 6.2-fold [25].
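
Restricting PCA to highly variable genes amounts to a column selection before decomposition. The sketch below ranks genes by plain sample variance, which is an assumption made for simplicity: dedicated HVG procedures typically model the mean-variance relationship, and the function name and toy matrix are hypothetical.

```python
import numpy as np

def top_variable_genes(expression, n_top=2000):
    """Return sorted column indices of the n_top genes with the highest variance."""
    variances = expression.var(axis=0, ddof=1)
    n_top = min(n_top, expression.shape[1])
    top = np.argsort(variances)[::-1][:n_top]
    return np.sort(top)

# Toy matrix: 4 samples x 4 genes; columns 1 and 3 vary the most.
expression = np.array([
    [1.0,  0.0, 1.0,  5.0],
    [1.0, 10.0, 1.0, -5.0],
    [1.0,  0.0, 2.0,  5.0],
    [1.0, 10.0, 2.0, -5.0],
])

hvg = top_variable_genes(expression, n_top=2)
expression_hvg = expression[:, hvg]        # reduced matrix passed on to PCA
```

Only the selected columns enter the decomposition, which is where the runtime saving reported above comes from.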

Advanced PCA-Based Methodologies

Extensions of Standard PCA

To address specific limitations of standard PCA, several advanced variants have been developed:

- Sparse PCA: Incorporates regularization to produce principal components with sparse loadings (i.e., many loadings are set to zero). This enhances biological interpretability by clearly identifying a small subset of genes that drive each metagene [4].

- Supervised PCA: In this approach, the dimensionality reduction step is guided by the outcome variable. Only genes most correlated with the outcome are used to perform PCA, leading to components with greater predictive power for regression or classification tasks [4].

- Functional PCA: This technique is designed to analyze time-course gene expression data, where measurements are taken over a continuum of time. It models the smooth underlying functional trends, treating the observed data as a set of curves [4].
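
Of the variants above, supervised PCA is the simplest to sketch. The example below follows the description in the text (screen genes by correlation with the outcome, then run PCA on the selected genes) using simulated data; the function name, the number of screened genes, and the planted signal are hypothetical choices for illustration.

```python
import numpy as np

def supervised_pca(X, y, n_genes, k):
    """Select the n_genes most outcome-correlated columns, then return top-k PC scores."""
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    denom = np.sqrt((Xc ** 2).sum(axis=0) * (yc ** 2).sum())
    denom[denom == 0] = np.inf                             # constant columns get correlation 0
    corr = (Xc * yc[:, None]).sum(axis=0) / denom          # Pearson correlation per gene
    selected = np.sort(np.argsort(-np.abs(corr))[:n_genes])
    U, s, Vt = np.linalg.svd(Xc[:, selected], full_matrices=False)
    return U[:, :k] * s[:k], selected

rng = np.random.default_rng(0)
y = rng.normal(size=30)                                    # simulated continuous outcome
X = rng.normal(size=(30, 50))                              # 30 samples x 50 genes of noise
X[:, :5] += 3.0 * y[:, None]                               # first five genes track the outcome

scores, selected = supervised_pca(X, y, n_genes=5, k=2)
```

Because the screening step uses the outcome, it must be repeated inside each training fold when performance is estimated by cross-validation; otherwise the predictive value of the components is overstated.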

Integration with Artificial Intelligence

PCA remains a relevant and widely used unsupervised learning method within the broader context of AI-driven drug discovery. It is categorized as an unsupervised learning technique and is employed for tasks such as chemical clustering, diversity analysis, and dimensionality reduction of large chemical libraries [27]. Its simplicity, speed, and interpretability make it a valuable tool for initial data exploration and preprocessing, even alongside more complex deep learning models.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item / Resource | Type | Function / Application |

|---|---|---|

| VCF2PCACluster [21] | Software Tool | Dedicated tool for fast, memory-efficient PCA and clustering directly from VCF files. Ideal for large-scale genotype data. |

| PLINK2 [21] | Software Tool | A whole-genome association toolset that includes PCA functionality, widely used in population genetics. |

| R prcomp function [4] | Software Function | A standard function in the R statistical environment for performing PCA. Highly flexible and integrable into custom analysis pipelines. |

| SAS PRINCOMP [4] | Software Procedure | A procedure in the SAS software suite for conducting PCA. |

| MATLAB princomp [4] | Software Function | A function in MATLAB for performing PCA. |

| Highly Variable Genes (HVGs) [25] | Analytical Strategy | A pre-filtering step to select genes with the highest variance across samples before PCA. Maintains discovery power while drastically improving computational efficiency. |

| Kinship Estimation Methods (e.g., Normalized_IBS) [21] | Statistical Method | Methods to estimate genetic relatedness matrices, which can be used as input for PCA to improve population structure inference in genetic studies. |

| Clustering Algorithms (e.g., K-Means, EM-Gaussian) [21] | Downstream Tool | Algorithms used to cluster samples based on the top PCs, automatically revealing population structure or sample subgroups. |

Visualizing Population Structure and Sample Clustering with PCA

Principal Component Analysis (PCA) stands as a cornerstone multivariate technique in bioinformatics, providing researchers with a powerful tool to reduce the complexity of high-dimensional datasets while preserving covariance structure. This in-depth technical guide explores the fundamental principles, methodologies, and applications of PCA for visualizing population structure and sample clustering in genetic and biomedical research. We present comprehensive experimental protocols, detailed analytical frameworks, and critical considerations for implementing PCA across diverse research contexts, from population genetics to drug discovery. Within the broader thesis of bioinformatics research, PCA serves as a critical hypothesis-generating tool that enables researchers to identify patterns, substructures, and relationships within complex biological data that would otherwise remain hidden in high-dimensional space.

PCA is a fundamental dimensionality reduction approach used across bioinformatics domains. It transforms high-dimensional data into a new coordinate system in which the directions of greatest variance lie along the first axes (principal components), so the strongest trends in a dataset can be visualized with minimal information loss [4] [10]. The technique is particularly valuable for addressing the "curse of dimensionality", a pervasive challenge in bioinformatics where the number of variables (P) far exceeds the number of observations (N) and creates computational and analytical bottlenecks [1]. For example, transcriptomic studies routinely measure the expression of more than 20,000 genes across fewer than 100 samples, making dimensionality reduction essential for meaningful analysis [1].

The mathematical foundation of PCA involves computing eigenvalues and eigenvectors of the variance-covariance matrix of the original data [4]. This process generates principal components (PCs) that are orthogonal to each other, with the first PC explaining the largest proportion of variance, the second PC the next largest, and so forth [4]. In bioinformatics contexts, these PCs have been variously termed 'metagenes', 'super genes', or 'latent variables' when applied to gene expression data [4]. The ability of PCA to summarize information from multiple correlated variables into fewer artificial variables makes it particularly powerful for clustering analysis and data visualization [28].

Fundamental Principles and Mathematical Foundation

Core Mathematical Concepts

PCA operates on a fundamental mathematical framework centered on eigen decomposition of covariance matrices. Given a genotype matrix G of dimension N×D, where N represents individuals and D represents genetic variants, the data is first mean-centered to create matrix X [29]. The covariance matrix C is then computed as:

$$C_{ij} = \frac{1}{m_{ij}-1} \sum_{s} X_{si} X_{sj} \;-\; \frac{1}{m_{ij}(m_{ij}-1)} \Big(\sum_{s} X_{si}\Big)\Big(\sum_{s} X_{sj}\Big)$$

where sums are over mij sites with non-missing genotypes for both sample i and sample j [29]. The principal components are obtained as eigenvectors of this covariance matrix, normalized to have Euclidean length equal to 1, and ordered by the magnitude of corresponding eigenvalues [29]. The resulting PCs have crucial statistical properties: they are orthogonal to each other, have dimensionality much lower than original measurements, explain decreasing proportions of variance, and can represent any linear function of the original variables [4].
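As a minimal sketch of the covariance formula and eigendecomposition described above, the NumPy code below computes the sample-by-sample covariance using only sites called in both samples, then extracts unit-length eigenvectors ordered by eigenvalue. The function names, the samples × sites array layout (so `genotypes[i, s]` plays the role of X_si), and the synthetic data are assumptions made for illustration, not part of the cited method's software.

```python
import numpy as np

def pairwise_covariance(genotypes):
    """Covariance between samples over the sites observed in both.

    genotypes: (n_samples, n_sites) float array; np.nan marks a missing call.
    """
    observed = ~np.isnan(genotypes)
    filled = np.where(observed, genotypes, 0.0)   # missing calls add 0 to every sum
    obs = observed.astype(float)
    m = obs @ obs.T                  # m_ij: sites called in both sample i and sample j
    sum_prod = filled @ filled.T     # sum over shared sites of X_si * X_sj
    sum_i = filled @ obs.T           # sum of X_si over sites shared with sample j
    sum_j = sum_i.T                  # sum of X_sj over sites shared with sample i
    return sum_prod / (m - 1) - (sum_i * sum_j) / (m * (m - 1))

def principal_components(cov, n_components=2):
    """Unit-length eigenvectors of a symmetric matrix, largest eigenvalue first."""
    eigvals, eigvecs = np.linalg.eigh(cov)
    order = np.argsort(eigvals)[::-1][:n_components]
    return eigvals[order], eigvecs[:, order]

# Synthetic example (hypothetical data): 6 samples, 50 sites, a few missing calls.
rng = np.random.default_rng(0)
G = rng.integers(0, 3, size=(6, 50)).astype(float)
G[0, :5] = np.nan
C = pairwise_covariance(G)
eigvals, pcs = principal_components(C)
```

With no missing genotypes this reduces to the ordinary sample covariance between rows, which gives a simple way to check the implementation.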

Key Parameters and Measurements

Table 1: Key Parameters in PCA Implementation

| Parameter | Description | Considerations |

|---|---|---|

| Number of PCs | The count of principal components to retain for analysis | No consensus; Tracy-Widom statistic, arbitrary selection, or percentage variance explained approaches used [30] |

| Variance Explained | Percentage of total variance captured by each PC | Typically decreases with each subsequent component; first 2-3 PCs often visualized [30] |

| Window Length | Size of genomic segments for local PCA | Balance between signal (longer windows) and resolution (shorter windows) [29] |

| Linkage Threshold | r² value for LD pruning | Commonly 0.1-0.2; removes spurious correlations from physical linkage [31] |

Experimental Design and Methodological Protocols

Population Genetics Workflow

The standard workflow for population structure analysis using PCA involves sequential steps from data preparation through visualization, with particular attention to addressing population stratification and linkage disequilibrium concerns.

Figure 1: PCA Analysis Workflow for Population Genetics

Detailed Protocol for Genetic Data

Step 1: Data Preparation and Quality Control. Begin with variant call format (VCF) files containing the genotype data. Filter on quality metrics including call rate, minor allele frequency, and Hardy-Weinberg equilibrium. Recode genotypes numerically, typically encoding AA, AB, and BB as 0, 1, and 2 respectively [30]. Because N (observations) is usually much smaller than P (variables) in bioinformatics data, proper normalization is essential: variables are typically centered to mean zero and sometimes scaled to unit variance [4].
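The recoding, filtering, and centering in Step 1 can be sketched as follows. This is an illustrative outline only: the function names and the thresholds (95% call rate, 5% minor allele frequency) are assumptions rather than values from the cited protocol, and the Hardy-Weinberg filter is omitted for brevity.

```python
import numpy as np

GENOTYPE_CODES = {"AA": 0, "AB": 1, "BB": 2}   # number of B alleles carried

def encode_genotypes(calls):
    """Convert a samples x variants grid of genotype strings to 0/1/2 (nan if missing)."""
    return np.array(
        [[GENOTYPE_CODES.get(call, np.nan) for call in row] for row in calls],
        dtype=float,
    )

def filter_variants(G, min_call_rate=0.95, min_maf=0.05):
    """Keep variants passing hypothetical call-rate and minor-allele-frequency cutoffs."""
    call_rate = np.mean(~np.isnan(G), axis=0)
    allele_freq = np.nanmean(G, axis=0) / 2.0
    maf = np.minimum(allele_freq, 1.0 - allele_freq)
    keep = (call_rate >= min_call_rate) & (maf >= min_maf)
    return G[:, keep], keep

def center_and_scale(G, scale=False):
    """Center each variant to mean zero; optionally scale to unit variance."""
    X = G - np.nanmean(G, axis=0)
    if scale:
        X = X / np.nanstd(G, axis=0, ddof=1)
    return X

calls = [
    ["AA", "AB", "BB"],
    ["AB", "AB", "BB"],
    ["BB", "AA", "BB"],
    ["AA", "AB", "BB"],
]
G = encode_genotypes(calls)
G_filtered, keep = filter_variants(G)   # the third variant is monomorphic and is dropped
X = center_and_scale(G_filtered)
```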

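Step 2: Linkage Pruning. Prune variants in linkage disequilibrium (LD) with a tool such as PLINK, whose --indep-pairwise option takes a window size, a step size, and an r² threshold. The settings described in [31] are a 50 kb window, a step of 10 (PLINK counts the step in variants, not base pairs), and an r² threshold of 0.1 to remove correlated variants [31]. This step matters because blocks of tightly linked variants carry redundant signal that can dominate the leading PCs and obscure genome-wide population structure.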

Step 3: PCA Calculation. Run PCA on the pruned dataset using implementation-specific commands. In PLINK this is the --pca option applied to the variants retained in Step 2, which writes the eigenvalues and eigenvectors used in subsequent analysis [31].
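Steps 2 and 3 can be sketched as PLINK invocations assembled in Python. The file name `samples.vcf.gz` and the output prefix `study` are hypothetical, and the exact commands used in [31] may differ. The flags shown (`--vcf`, `--set-missing-var-ids`, `--indep-pairwise`, `--extract`, `--pca`, `--out`) are standard PLINK 1.9 options. The code only builds and prints the commands, so it runs without PLINK installed.

```python
import shlex

def plink_prune_command(vcf_path, out_prefix, window="50", step="10", r2="0.1"):
    """Build the LD-pruning command; PLINK writes <out_prefix>.prune.in and .prune.out.

    A bare window number is read by PLINK 1.9 as a variant count; a kilobase
    window needs PLINK's kb modifier (check the documentation for your version).
    """
    return [
        "plink", "--vcf", vcf_path,
        "--set-missing-var-ids", "@:#",        # give unnamed variants a chrom:pos ID
        "--indep-pairwise", window, step, r2,
        "--out", out_prefix,
    ]

def plink_pca_command(vcf_path, out_prefix):
    """Build the PCA command restricted to the variants kept by pruning."""
    return [
        "plink", "--vcf", vcf_path,
        "--set-missing-var-ids", "@:#",
        "--extract", f"{out_prefix}.prune.in",
        "--pca",                               # writes .eigenval and .eigenvec files
        "--out", out_prefix,
    ]

prune_cmd = plink_prune_command("samples.vcf.gz", "study")
pca_cmd = plink_pca_command("samples.vcf.gz", "study")
print(shlex.join(prune_cmd))
print(shlex.join(pca_cmd))
```

Passing the lists to `subprocess.run(cmd, check=True)` would execute them on a machine where PLINK is on the path.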

Step 4: Visualization and Interpretation. Plot individuals on the first two or three PCs, which account for the largest proportions of variance. Color-code points by putative population of origin or other relevant factors. Calculate the percentage of variance explained by PC i as (eigenvalue_i / sum(eigenvalues)) × 100 [31].
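The variance-explained formula in Step 4 is a one-line computation. The sketch below uses made-up eigenvalues, and the helper names are illustrative. Note that if only the leading eigenvalues are available, the percentages are relative to those components and not to the total variance.

```python
import numpy as np

def percent_variance_explained(eigenvalues):
    """Return (eigenvalue_i / sum(eigenvalues)) * 100 for every component."""
    ev = np.asarray(eigenvalues, dtype=float)
    return 100.0 * ev / ev.sum()

def axis_labels(eigenvalues, n_axes=2):
    """Axis labels such as 'PC1 (40.0%)' for a PCA scatterplot."""
    pct = percent_variance_explained(eigenvalues)
    return [f"PC{i + 1} ({pct[i]:.1f}%)" for i in range(n_axes)]

example_eigenvalues = [4.0, 3.0, 2.0, 1.0]   # hypothetical values
pct = percent_variance_explained(example_eigenvalues)
labels = axis_labels(example_eigenvalues)
```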

Alternative Applications Protocol

For non-genetic applications such as drug discovery, the protocol adapts to different data types. In chemical library design, PCA is applied to 20+ structural and physicochemical parameters including molecular weight, hydrogen bond donors/acceptors, rotatable bonds, stereocenter count, topological polar surface area, and octanol/water partition coefficients [32]. The workflow involves:

- Calculating physicochemical descriptors for all compounds

- Standardizing parameters to comparable scales

- Performing PCA on the correlation matrix

- Visualizing compounds in PC space to identify clustering patterns

- Interpreting loadings to understand structural drivers of separation [32]
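The workflow above can be sketched with NumPy alone. The descriptor values here are random numbers standing in for quantities such as molecular weight or hydrogen bond donor counts; they are not real compound data, and the function name is an assumption for illustration. Standardizing each descriptor and decomposing the resulting correlation matrix keeps parameters on very different scales from dominating the components.

```python
import numpy as np

def correlation_pca(descriptors):
    """PCA on the correlation matrix of a compounds x descriptors table."""
    X = np.asarray(descriptors, dtype=float)
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)   # standardize each descriptor
    R = (Z.T @ Z) / (len(Z) - 1)                       # correlation matrix
    eigvals, eigvecs = np.linalg.eigh(R)
    order = np.argsort(eigvals)[::-1]
    eigvals, loadings = eigvals[order], eigvecs[:, order]
    scores = Z @ loadings                              # compound coordinates in PC space
    return scores, loadings, eigvals

# Synthetic descriptors (hypothetical): 30 compounds, 4 parameters on different scales.
rng = np.random.default_rng(0)
table = rng.normal(size=(30, 4)) * [100.0, 1.0, 2.0, 20.0] + [350.0, 2.0, 5.0, 80.0]
scores, loadings, eigvals = correlation_pca(table)
```

Each column of `loadings` shows how strongly each descriptor contributes to the corresponding PC, which is the quantity inspected in the final interpretation step.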

Essential Research Reagents and Computational Tools

Table 2: Essential Research Reagent Solutions for PCA Implementation

| Tool/Resource | Function | Application Context |

|---|---|---|

| PLINK | Genome association analysis | LD pruning and PCA computation for genetic data [31] |

| EIGENSOFT (SmartPCA) | Population genetics analysis | Specialized PCA implementation for genetic studies [30] |

| R Statistical Environment | Data analysis and visualization | Flexible PCA implementation and visualization [4] [31] |

| Instant JChem | Chemoinformatics platform | Calculation of physicochemical parameters for compound analysis [32] |

| VCC Laboratory | Online chemical property calculator | Determination of partition coefficients and solubility [32] |

| MDAnalysis | Molecular dynamics analysis | PCA of protein trajectories and conformational sampling [16] |

Data Interpretation and Analytical Frameworks

Visualizing and Interpreting PCA Results

PCA results are typically visualized as scatterplots with the first two PCs as axes, where each point represents an individual sample. The spatial relationships between points reflect genetic similarities, with closely clustered points indicating shared ancestry or population membership [30] [31]. When interpreting these plots, researchers should note:

- Continuous Variation: Clines or gradients may indicate continuous gene flow between populations [31]

- Discrete Clustering: Distinct, separated clusters suggest population substructure or divergent ancestry [33]

- Outlier Positioning: Samples falling between clusters may represent admixed individuals or technical artifacts [30]

The percentage of variance explained by each PC should be displayed on corresponding axes, providing context for the biological significance of observed patterns [31]. Importantly, PCA plots should be interpreted as approximations of complex relationships, with higher PCs potentially capturing additional biologically relevant structure.

Figure 2: PCA Result Interpretation Framework

Advanced Interpretation Techniques

Local PCA represents an advanced approach that examines heterogeneity in patterns of relatedness across genomic regions [29]. By dividing the genome into windows and performing PCA separately on each, researchers can identify regions where population structure effects vary substantially, potentially indicating selective pressures or chromosomal inversions [29]. The methodology involves:

- Dividing the genome into contiguous windows

- Performing PCA separately on each window

- Measuring dissimilarity in relatedness patterns between windows

- Visualizing resulting dissimilarity matrices using multidimensional scaling

- Combining similar windows to visualize local population structure effects [29]
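The first four steps of this procedure can be sketched as follows. This is a simplified illustration under stated assumptions, not the implementation from [29]: each window is summarized by a rank-k approximation of its sample covariance matrix with eigenvalues rescaled to sum to one, windows are compared by the Frobenius norm of the difference, and classical multidimensional scaling is used for the embedding. The synthetic continuous values stand in for centered genotypes.

```python
import numpy as np

def window_lowrank(window, k=2):
    """Rank-k summary of the sample covariance for one window (samples x sites)."""
    cov = np.cov(window)                          # samples x samples
    vals, vecs = np.linalg.eigh(cov)
    top = np.argsort(vals)[::-1][:k]
    vals, vecs = vals[top], vecs[:, top]
    vals = vals / np.abs(vals).sum()              # compare patterns, not magnitudes
    return (vecs * vals) @ vecs.T                 # unaffected by eigenvector sign flips

def local_pca_distances(G, window_size, k=2):
    """Pairwise dissimilarity between contiguous, non-overlapping windows."""
    n_windows = G.shape[1] // window_size
    summaries = [
        window_lowrank(G[:, w * window_size:(w + 1) * window_size], k)
        for w in range(n_windows)
    ]
    D = np.zeros((n_windows, n_windows))
    for a in range(n_windows):
        for b in range(a + 1, n_windows):
            D[a, b] = D[b, a] = np.linalg.norm(summaries[a] - summaries[b])
    return D

def classical_mds(D, n_dims=2):
    """Embed windows in n_dims dimensions from a distance matrix."""
    n = len(D)
    J = np.eye(n) - np.ones((n, n)) / n
    B = -0.5 * J @ (D ** 2) @ J
    vals, vecs = np.linalg.eigh(B)
    top = np.argsort(vals)[::-1][:n_dims]
    return vecs[:, top] * np.sqrt(np.clip(vals[top], 0.0, None))

# Synthetic data: windows 0-2 follow one sample grouping, windows 3-5 another.
rng = np.random.default_rng(0)
n_samples, window_size = 20, 100
split = np.repeat([1.0, -1.0], n_samples // 2)
alternating = np.tile([1.0, -1.0], n_samples // 2)
blocks = []
for pattern in [split] * 3 + [alternating] * 3:
    effects = rng.normal(0.0, 2.0, size=window_size)
    noise = rng.normal(0.0, 0.5, size=(n_samples, window_size))
    blocks.append(np.outer(pattern, effects) + noise)
G = np.hstack(blocks)
D = local_pca_distances(G, window_size)
coords = classical_mds(D)
```

Windows that share a relatedness pattern end up close together in `coords`, which is the basis for grouping similar windows in the final step.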

This approach has revealed substantial heterogeneity in population structure effects across megabase scales in human, Medicago truncatula, and Drosophila melanogaster datasets [29].

Critical Methodological Considerations and Limitations

Despite its widespread application, PCA carries significant limitations that researchers must acknowledge. A 2022 study demonstrated that PCA results can be artifacts of the data and can be easily manipulated to generate desired outcomes, raising concerns about the validity of numerous genetic studies relying heavily on PCA [30]. Specific critical considerations include:

Sensitivity to Analysis Decisions. PCA outcomes are strongly influenced by marker selection, sample composition, implementation details, and analytical parameters [30]. There is no consensus on how many PCs to retain, with recommendations ranging from 2 to 280 components depending on the study [30]. This flexibility makes it possible to cherry-pick results that support predetermined conclusions.

Data Structure Artifacts. The apparent clusters in PCA plots may reflect technical artifacts rather than biological reality. In one demonstration, PCA of a simple color model (with red, green, and blue as distinct "populations") failed to represent the true distances between colors in the reduced-dimensional space [30]. This suggests that PCA can perform poorly even under ideal conditions with maximal differentiation between groups.

Population Genetics Assumptions. In genetic studies, PCA relies on the assumption that allele frequency differences drive population separation, potentially oversimplifying complex evolutionary histories. The method may struggle to distinguish recently diverged populations or adequately represent admixture patterns [33]. Studies comparing PCA to alternative methods like t-SNE and Generative Topographic Mapping found these non-linear methods could identify more fine-grained population clusters, particularly within continental groups [33].

Statistical Limitations. PCA is largely a parameter-free, assumption-free method that involves no significance testing, effect size evaluation, or error estimation [30]. This "black box" nature makes it difficult to assess result robustness or quantify uncertainty, potentially leading to overinterpretation of visual patterns.

Comparative Methods and Emerging Alternatives

While PCA remains widely used, several alternative dimensionality reduction techniques offer complementary insights:

t-Distributed Stochastic Neighbor Embedding (t-SNE). This non-linear method was reported to capture a higher percentage of data variance and to identify more fine-grained population clusters than PCA [33]. t-SNE excels at preserving local structure, but unlike PCA it cannot project new data without refitting the embedding.

Generative Topographic Mapping (GTM). GTM generates posterior probabilities of class membership, allowing probability-based ancestry assessment [33]. This approach enables both improved visualization and ancestry classification with uncertainty quantification.

Local PCA Applications. Rather than treating PCA as a global analysis, window-based approaches reveal how population structure varies across the genome, potentially identifying regions affected by linked selection or inversions [29].

Table 3: Comparison of Dimensionality Reduction Methods in Genetics

| Method | Key Advantages | Limitations | Appropriate Context |

|---|---|---|---|

| Standard PCA | Fast computation; Simple interpretation; Wide software support | Limited fine-scale resolution; Linear assumptions; Sensitive to parameters | Initial data exploration; Major population structure |

| t-SNE | Captures non-linear patterns; Fine-scale clustering | Cannot project new points; Computational intensity; Parameter sensitivity | Detailed population substructure; Within-continent differentiation |

| GTM | Probability framework; Projection capability; Classification potential | Complex implementation; Limited adoption in genetics | Ancestry classification; Admixed population analysis |

| Local PCA | Identifies genomic heterogeneity; Links to selective processes | Window size selection; Multiple testing concerns | Selection scans; Chromosomal inversion detection |
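The projection property that distinguishes PCA from t-SNE in the comparison above can be illustrated briefly. Because PCA is a linear map, components fitted on a reference panel can be applied to new samples without refitting. The function names and synthetic data below are assumptions for illustration.

```python
import numpy as np

def fit_pca(reference, n_components=2):
    """Learn the variable means and PC directions from a reference panel."""
    mean = reference.mean(axis=0)
    _, _, vt = np.linalg.svd(reference - mean, full_matrices=False)
    return mean, vt[:n_components].T              # variables x components

def project(samples, mean, directions):
    """Place samples in the PC space defined by the reference panel."""
    return (samples - mean) @ directions

# Synthetic data (hypothetical): 40 reference samples, 5 new samples, 30 variables.
rng = np.random.default_rng(0)
reference = rng.normal(size=(40, 30))
new_samples = rng.normal(size=(5, 30))

mean, directions = fit_pca(reference)
reference_scores = project(reference, mean, directions)
new_scores = project(new_samples, mean, directions)   # no refitting required
```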

Applications Across Bioinformatics Domains

Population Genetics and Ancestry Analysis

PCA is a primary analysis in most population genetic studies, used to characterize individuals and populations, draw historical conclusions about origins and dispersion, and identify outliers [30]. In genome-wide association studies (GWAS), PCA is used to correct for population stratification and so prevent spurious associations [4] [29]. The method has been particularly valuable for identifying genetic clusters that correspond to geographic origins, though its resolution for fine-scale population structure remains limited [33].
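The stratification correction mentioned above is commonly done by including the leading PCs as covariates in the association model. The simulation below is a minimal sketch of that idea with made-up parameters: two populations differ in both allele frequency and mean phenotype, and the tested variant has no causal effect. All names and values are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
n_per_pop = 200
pop = np.repeat([0, 1], n_per_pop)
freq = np.where(pop == 1, 0.8, 0.2)               # allele frequency differs by population

# Genome-wide markers used only to estimate population structure.
markers = rng.binomial(2, freq[:, None], size=(2 * n_per_pop, 200)).astype(float)
test_snp = rng.binomial(2, freq).astype(float)
phenotype = 2.0 * pop + rng.normal(size=2 * n_per_pop)   # no causal SNP effect

def top_pcs(G, k):
    """Scores of the first k principal components of a samples x markers matrix."""
    centered = G - G.mean(axis=0)
    U, S, _ = np.linalg.svd(centered, full_matrices=False)
    return U[:, :k] * S[:k]

def snp_effect(y, snp, covariates=None):
    """Least-squares slope for the SNP, optionally adjusting for covariates."""
    columns = [np.ones_like(y), snp]
    if covariates is not None:
        columns.extend(covariates.T)
    design = np.column_stack(columns)
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    return beta[1]

unadjusted = snp_effect(phenotype, test_snp)
adjusted = snp_effect(phenotype, test_snp, top_pcs(markers, 1))
```

In this setup the unadjusted estimate picks up the population difference, while the PC-adjusted estimate shrinks toward zero.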

Drug Discovery and Chemical Informatics