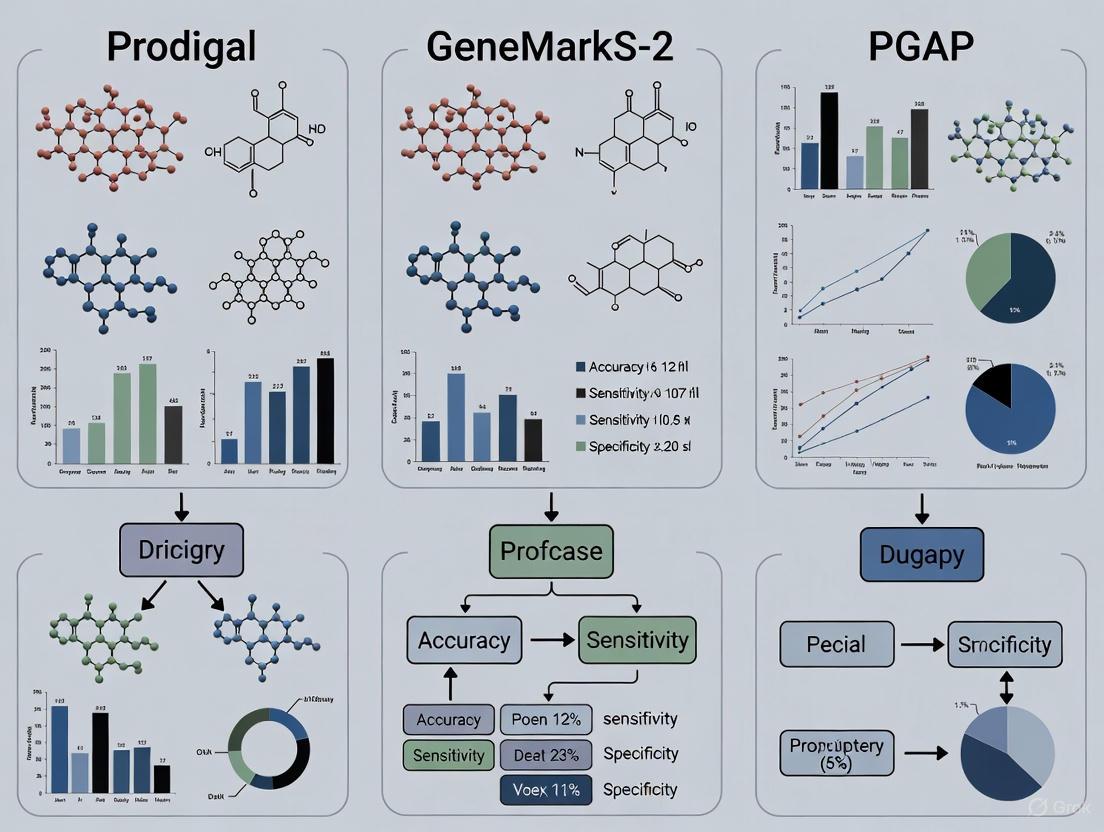

Prokaryotic Gene Prediction Showdown: A Critical Comparison of Prodigal, GeneMarkS-2, and PGAP for Genomic Annotation


Abstract

Accurate gene prediction is foundational for downstream analyses in genomics, from functional annotation to drug target identification. This article provides a comprehensive, evidence-based comparison of three leading prokaryotic gene-finding tools: Prodigal, GeneMarkS-2, and the PGAP pipeline. We explore their foundational algorithms, practical application methodologies, and common challenges, with a focus on the critical issue of discrepant gene start predictions. By synthesizing performance data from recent benchmarks and validation studies, this review offers researchers and drug development professionals a clear framework for selecting and optimizing annotation strategies to enhance the reliability of their genomic data, ultimately supporting more accurate predictions in biomedical and clinical research.

The Prokaryotic Gene Prediction Landscape: Core Algorithms and Divergent Strategies

Accurate computational gene finding represents a foundational step in modern genomics, forming the solid groundwork upon which downstream analyses in basic biology and drug discovery are built [1]. The ability to precisely identify gene structures within nucleotide sequences is crucial for constructing accurate species proteomes, functionally annotating proteins, and inferring cellular networks [1]. In the context of drug development, genetic screening has been shown to roughly double the chance that a preclinical finding will successfully translate to clinical application [2]. As high-throughput sequencing technologies continue to generate vast amounts of genomic data at an unprecedented pace, the critical role of accurate gene prediction has only intensified, with implications for diagnosing genetic disorders, understanding evolutionary relationships, and identifying novel therapeutic targets [3].

Performance Comparison of Major Gene Finding Tools

Three of the most prominent tools in prokaryotic gene finding are Prodigal, GeneMarkS-2, and the PGAP pipeline within the NCBI annotation system. Each employs distinct algorithmic approaches to the challenge of gene identification:

- Prodigal (Prokaryotic Dynamic Programming Gene-finding Algorithm) uses dynamic programming and is primarily oriented toward searching for canonical Shine-Dalgarno ribosome binding sites (RBS), with parameters optimized for Escherichia coli genes with verified starts [1] [4].

- GeneMarkS-2 employs a self-trained hidden Markov model with heuristics that incorporates multiple models of sequence patterns in gene upstream regions within the same genome, allowing it to handle diverse translation initiation mechanisms including Shine-Dalgarno RBSs, non-canonical RBSs, and leaderless transcription [1] [4].

- PGAP (NCBI Prokaryotic Genome Annotation Pipeline) represents an integrated annotation system that combines ab initio gene prediction with alignment-based methods using annotated starts of homologous genes [1].

Comparative Performance Analysis

A comprehensive computational experiment evaluating these three tools was conducted using 5,488 representative prokaryotic genomes from the NCBI collection, with genomes categorized by GC-content "bins" to assess performance across different genomic contexts [1]. The study specifically measured the percentage of genes per genome for which start site predictions differed between the computational tools, providing a robust assessment of annotation consistency.

Table 1: Gene Start Prediction Discrepancies Across GC Content Ranges

| GC Content Range | Avg. % Genes with Differing Start Predictions | Notes |
|---|---|---|
| Low GC Genomes | ~7% | More consistent predictions |
| High GC Genomes | ~15-22% | Highest discrepancy rates |
| Overall Average | 7-22% | Varies significantly by GC content |

The results demonstrated that gene start predictions consistently differed for a substantial proportion of genes in each genome, with high GC genomes showing notably larger differences [1]. This level of disagreement, cited in the same study as 15-25% of genes in a genome, represents a serious challenge for the field, particularly because few experimentally verified gene starts are available for benchmarking and validation [1].
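The discrepancy metric described above can be sketched in a few lines. This is a minimal illustration, not the procedure used in [1]: it assumes two predictions describe the same gene when they share a contig, strand, and stop coordinate, and the function name `start_discrepancy` and the example coordinates are hypothetical.

```python
from typing import Dict, Tuple

# A gene is keyed by (contig, strand, stop coordinate); the value is the predicted start.
# Matching on the stop coordinate is an assumption made for this sketch.
GeneKey = Tuple[str, str, int]


def start_discrepancy(pred_a: Dict[GeneKey, int], pred_b: Dict[GeneKey, int]) -> float:
    """Percentage of genes reported by both tools whose predicted starts differ."""
    shared = pred_a.keys() & pred_b.keys()
    if not shared:
        return 0.0
    differing = sum(1 for key in shared if pred_a[key] != pred_b[key])
    return 100.0 * differing / len(shared)


# Made-up predictions from two hypothetical tools.
tool_a = {("chr", "+", 900): 100, ("chr", "-", 1500): 2400, ("chr", "+", 4000): 3100}
tool_b = {("chr", "+", 900): 130, ("chr", "-", 1500): 2400, ("chr", "+", 5000): 4500}

print(start_discrepancy(tool_a, tool_b))  # 50.0 (two shared genes, one with a different start)
```

Genes found by only one tool are ignored here, so the figure reflects start disagreement only and not differences in gene detection.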

Table 2: Algorithmic Approaches and Strengths of Gene Finding Tools

| Tool | Algorithmic Approach | Strengths | Limitations |
|---|---|---|---|
| Prodigal | Dynamic programming, optimized for canonical SD RBSs | Fast, parameters optimized for E. coli | Primarily oriented toward canonical SD patterns |
| GeneMarkS-2 | Self-trained hidden Markov model with multiple upstream region models | Handles diverse translation initiation mechanisms | Requires sufficient sequence data for effective training |
| PGAP | Combination of ab initio and homology-based methods | Leverages homologous gene annotations | Dependent on quality and availability of homologs in databases |

Advanced Approaches for Improved Accuracy

Hybrid and Integrated Methods

To address the limitations of individual tools, researchers have developed hybrid approaches that combine multiple prediction methods:

StartLink and StartLink+: The StartLink algorithm infers gene starts from conservation patterns revealed by multiple alignments of homologous nucleotide sequences, without using existing gene-start annotations or information on RBS sequence patterns [1]. StartLink+ combines both ab initio and alignment-based methods, with output defined only for genes where independent StartLink and GeneMarkS-2 predictions concur. This approach achieves remarkable accuracy of 98-99% on sets of genes with experimentally verified starts, though it delivers predictions for only 73% of genes per genome on average [1].

Phage Commander: This application exemplifies the multi-tool approach by running bacteriophage genome sequences through nine different gene identification programs simultaneously and integrating the results within a single output table [4]. Benchmarking using eight high-quality bacteriophage genomes with experimentally validated genes demonstrated that the most accurate annotations are obtained by exporting genes identified by at least two or three programs, followed by manual curation [4].
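The "at least two or three programs" rule can be illustrated with a simple vote count. The sketch below is not Phage Commander's implementation: it assumes two calls refer to the same gene only when strand, start, and stop match exactly, and the program names and coordinates are invented.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

Gene = Tuple[str, int, int]  # (strand, start, stop); exact-match identity is an assumption


def consensus_genes(calls: Dict[str, Iterable[Gene]], min_programs: int = 2) -> List[Gene]:
    """Return genes reported by at least `min_programs` of the supplied programs."""
    votes: Counter = Counter()
    for genes in calls.values():
        votes.update(set(genes))  # each program votes at most once per gene
    return sorted(gene for gene, n in votes.items() if n >= min_programs)


# Hypothetical output of three gene callers on the same phage genome.
calls = {
    "program_a": [("+", 100, 900), ("-", 1500, 2400)],
    "program_b": [("+", 100, 900), ("+", 3000, 3600)],
    "program_c": [("+", 130, 900), ("-", 1500, 2400)],
}

print(consensus_genes(calls, min_programs=2))
# [('+', 100, 900), ('-', 1500, 2400)]
```

Raising `min_programs` trades coverage for confidence, and the retained calls would still go to manual curation as the benchmark recommends.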

Experimental Workflow for Gene Finding and Annotation

The following diagram illustrates a comprehensive workflow for computational genome annotation, integrating both structural and functional annotation components:

Figure 1: Comprehensive workflow for computational genome annotation, illustrating the sequential processes from raw sequence to quality-controlled annotation, incorporating multiple prediction methods and validation steps.

Impact on Genomics and Drug Discovery

Applications in Functional Genomics and Therapeutic Development

Accurate gene finding creates essential foundations for multiple downstream applications with significant implications for drug discovery:

Expression Forecasting: Emerging computational methods now offer expression forecasting—prediction of genetic perturbation effects on the transcriptome—which serves as a new type of general-purpose screening tool in drug development [2]. Compared to physical Perturb-seq and similar assays, in silico modeling is cheaper, less labor-intensive, and easier to apply to less accessible cell types [2]. These approaches are currently being used to optimize reprogramming protocols, search for anti-aging transcription factor cocktails, and nominate drug targets for heart disease [2].

Antibiotic Resistance Annotation: Databases such as proGenomes2 provide dedicated antibiotic resistance annotations of both antimicrobial resistance genes and resistance-conveying single nucleotide variants, leveraging resources like the Comprehensive Antibiotic Resistance Database and ResFams [5]. Accurate identification of these genetic elements is crucial for understanding pathogen resistance mechanisms and developing effective countermeasures.

Bacteriophage Therapy: The accurate annotation of bacteriophage genomes is particularly important given the growing interest in phages as alternatives to antibiotics for treating drug-resistant infections [4]. Phages are attractive therapeutic agents because they rapidly lyse their host bacteria, are highly specific to their host, and co-evolve to reduce resistance development [4].

Quality Control and Database Considerations

The critical importance of accurate gene finding extends to database quality and consistency:

Database Consistency: proGenomes2 addresses widespread inconsistencies in genomic databases by providing 87,920 high-quality genomes with consistent taxonomic and functional annotations, normalized identifiers, and improved linkage to NCBI BioSample database [5]. Such standardization is essential for reliable comparative analyses.

Pan-genome Representations: proGenomes2 provides pan-genomes for species clusters, representing the genetic diversity within a species through non-redundant sets of genes [5]. This approach reduces 283 million genes to 63 million non-redundant sequences while providing far greater coverage of the functional repertoire than representative genomes alone.

Table 3: Key Databases and Computational Resources for Gene Annotation

| Resource Name | Type | Primary Function | Application in Research |
|---|---|---|---|
| NCBI RefSeq | Database | Comprehensive collection of genome sequences and annotations | Primary source of genomic data; reference for comparative annotation |
| UniProt | Database | Protein sequences and functional information | Evidence for homology-based gene prediction and functional annotation |
| InterPro | Database | Protein families, domains, and functional sites | Functional characterization of predicted genes |
| proGenomes2 | Database | 87,920 high-quality genomes with consistent annotations | Comparative genomics; pan-genome analyses; habitat-specific studies |
| CARD | Database | Comprehensive Antibiotic Resistance Database | Annotation of antimicrobial resistance genes |
| Dfam | Database | Transposable elements and repeats | Repeat masking in structural annotation |
| eggNOG | Tool | Orthology prediction and functional annotation | General functional annotation of protein-coding genes |
| Phage Commander | Tool | Integration of multiple gene identification programs | Consensus-based gene prediction for bacteriophage genomes |

Methodological Protocols

Experimental Protocol for Gene Finding Benchmarking

The following diagram outlines a standardized methodology for benchmarking gene finding tools, based on published large-scale comparisons:

Figure 2: Benchmarking methodology for gene finding tools, illustrating the systematic comparison approach using thousands of genomes across different GC-content ranges.

Detailed Methodology

Based on the large-scale comparison study involving 5,488 representative prokaryotic genomes [1], the experimental protocol for benchmarking gene finding tools includes:

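Genome Selection and Categorization:

- Select representative prokaryotic genomes from the NCBI collection (5,488 in the study cited above) and group them into GC-content bins so that performance can be assessed across genomic contexts [1].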
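Assigning genomes to GC-content bins can be sketched as follows. The bin width of 10 percentage points and the function names `gc_content` and `gc_bin` are assumptions for illustration; the bin boundaries used in [1] are not stated here.

```python
def gc_content(seq: str) -> float:
    """GC percentage of a nucleotide sequence, ignoring ambiguous bases such as N."""
    seq = seq.upper()
    acgt = sum(seq.count(base) for base in "ACGT")
    if acgt == 0:
        raise ValueError("sequence contains no unambiguous bases")
    return 100.0 * (seq.count("G") + seq.count("C")) / acgt


def gc_bin(gc_percent: float, width: int = 10) -> str:
    """Label of the fixed-width bin containing gc_percent (100% falls in the top bin)."""
    low = min(int(gc_percent // width) * width, 100 - width)
    return f"{low}-{low + width}%"


print(gc_bin(gc_content("ATGCGCNN")))  # 60-70%
```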

Tool Execution and Parameterization:

- Execute each gene finding tool (Prodigal, GeneMarkS-2, PGAP) using default parameters unless specific experimental conditions require customization.

- For Prodigal, maintain orientation toward canonical Shine-Dalgarno RBS detection while recognizing limitations in genomes with non-canonical translation initiation mechanisms [1].

- For GeneMarkS-2, leverage its ability to handle multiple models of sequence patterns in gene upstream regions within the same genome [1].

- For PGAP, utilize its combination of ab initio and homology-based approaches [1].
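As one way to keep tool execution reproducible, the command line can be built programmatically and logged before it is run. The sketch below covers Prodigal only and assumes the option names of the 2.6.x releases (`-i`, `-o`, `-f`, `-a`, `-p`); the helper name `prodigal_command` and the file paths are hypothetical.

```python
import shlex
from pathlib import Path
from typing import List


def prodigal_command(genome_fasta: str, out_dir: str, metagenome: bool = False) -> List[str]:
    """Build a Prodigal invocation that writes GFF coordinates and protein translations."""
    out = Path(out_dir)
    stem = Path(genome_fasta).stem
    return [
        "prodigal",
        "-i", genome_fasta,                         # input nucleotide FASTA
        "-o", (out / f"{stem}.gff").as_posix(),     # gene coordinates
        "-f", "gff",                                # output format
        "-a", (out / f"{stem}.faa").as_posix(),     # protein translations
        "-p", "meta" if metagenome else "single",   # single-genome vs. metagenome mode
    ]


cmd = prodigal_command("genomes/ecoli.fna", "annotations")
print(shlex.join(cmd))
```

The returned list can be passed to `subprocess.run(cmd, check=True)` once Prodigal is installed; the other tools would need their own builders based on their documentation.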

Performance Metrics and Analysis:

- Calculate discrepancy rates by comparing start site predictions across tools for each gene in the dataset.

- Analyze patterns of disagreement relative to genomic features, particularly GC content and presence of alternative translation initiation mechanisms.

- Validate predictions using genes with experimentally verified starts where available, though such datasets remain limited in size [1].
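Relating disagreement to GC content amounts to grouping per-genome discrepancy rates by GC bin and averaging within each bin. The following sketch assumes 10-point bins and uses invented numbers; it is not a reproduction of the published analysis.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, List, Tuple


def mean_discrepancy_by_bin(
    genomes: Iterable[Tuple[float, float]], width: int = 10
) -> Dict[int, float]:
    """Average discrepancy (%) per GC bin, keyed by the bin's lower bound.

    `genomes` yields (gc_percent, discrepancy_percent) pairs, one per genome.
    """
    grouped: Dict[int, List[float]] = defaultdict(list)
    for gc_percent, discrepancy in genomes:
        low = min(int(gc_percent // width) * width, 100 - width)
        grouped[low].append(discrepancy)
    return {low: mean(values) for low, values in sorted(grouped.items())}


# Illustrative values only, not data from the cited study.
per_genome = [(35.2, 6.0), (38.9, 8.0), (67.5, 18.0), (69.0, 22.0)]
print(mean_discrepancy_by_bin(per_genome))  # {30: 7.0, 60: 20.0}
```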

Accurate gene finding remains a critical challenge in genomics with far-reaching implications for basic biological research and drug discovery. The performance comparison between Prodigal, GeneMarkS-2, and PGAP reveals significant discrepancies in gene start predictions, particularly in high GC genomes where disagreement rates reach 15-25% of genes [1]. This inconsistency underscores the need for improved algorithms, standardized benchmarking, and experimental validation. Hybrid approaches such as StartLink+ that combine multiple prediction methods demonstrate that substantially higher accuracy (98-99%) can be achieved when independent predictions concur [1]. Similarly, tools like Phage Commander that integrate multiple gene identification programs show improved accuracy through consensus-based approaches [4]. As genomics continues to play an expanding role in drug discovery and therapeutic development, advancing the accuracy and reliability of computational gene finding will remain essential for extracting meaningful biological insights from the growing wealth of genomic data.

Accurate prokaryotic gene prediction is a fundamental prerequisite for downstream genomic analyses, including functional annotation, metabolic pathway reconstruction, and drug target identification [6]. Among the widely used tools for this purpose, Prodigal (Prokaryotic Dynamic Programming Gene-finding Algorithm) has established itself as a popular choice for high-throughput annotation pipelines due to its computational efficiency and reliability [6]. However, its performance characteristics and underlying biases must be objectively evaluated against contemporary alternatives such as GeneMarkS-2 and the PGAP (Prokaryotic Genome Annotation Pipeline) to provide researchers with evidence-based selection criteria.

This guide synthesizes current research to compare the performance of these three predominant gene prediction tools, with particular emphasis on their accuracy in identifying translation initiation sites (TIS), handling diverse genomic features, and suitability for different research contexts. Understanding the methodological distinctions between these tools—Prodigal's optimized parameters for Escherichia coli Shine-Dalgarno sequences, GeneMarkS-2's multiple model approach for heterogeneous upstream regions, and PGAP's hybrid homology-guided strategy—enables researchers to make informed decisions based on their specific genomic data and research objectives [7].

Experimental evaluations consistently demonstrate that no single gene prediction tool ranks as the most accurate across all genomes or assessment metrics [6]. Performance is inherently dependent on the genomic characteristics of the target organism, with factors such as GC content, ribosomal binding site (RBS) type, and prevalence of leaderless transcription significantly influencing tool-specific accuracy [7].

Table 1: Overall Performance Characteristics Across Prokaryotic Genomes

| Tool | Primary Approach | Optimal Use Cases | Key Limitations |
|---|---|---|---|
| Prodigal | Ab initio statistical model | High-throughput annotation of bacteria with canonical Shine-Dalgarno RBS [7] | Primarily oriented toward canonical SD patterns; parameters optimized for E. coli [7] |
| GeneMarkS-2 | Self-training algorithm with multiple models | Genomes with heterogeneous translation initiation mechanisms (SD, non-SD, leaderless) [7] | Requires sufficiently long sequences for effective training [7] |
| PGAP | Hybrid pipeline combining ab initio and homology-based methods | Annotation when homologous sequences are available; NCBI's standardized pipeline [7] [6] | Dependent on reference database quality and completeness [6] |

Benchmarking analyses reveal substantial discrepancies in gene start predictions between these tools, affecting 15–25% of genes in a typical genome [7]. These inconsistencies present a serious challenge for genomic annotation, particularly given the limited availability of genes with experimentally verified translation initiation sites—approximately 2,900 genes across only 10 species as referenced in recent studies [7].

Comparative Performance Data

Translation Initiation Site (TIS) Prediction Accuracy

Accurate identification of translation initiation sites represents one of the most challenging aspects of prokaryotic gene prediction, with significant implications for defining authentic protein N-termini and upstream regulatory elements [7]. Comparative analyses using genes with experimentally verified starts reveal distinct performance patterns among the tools.

Table 2: TIS Prediction Accuracy on Experimentally Verified Gene Sets

| Evaluation Context | Prodigal Performance | GeneMarkS-2 Performance | PGAP Performance | Notes |
|---|---|---|---|---|
| Genes with verified starts | Not explicitly reported | 98-99% accuracy when combined with StartLink (as StartLink+) [7] | Not explicitly reported | StartLink+ represents consensus between StartLink and GeneMarkS-2 [7] |
| Discrepancy with database annotations | 7-22% of genes per genome [7] | 7-22% of genes per genome [7] | 7-22% of genes per genome [7] | Higher differences observed in GC-rich genomes [7] |
| Genomic GC-content sensitivity | Decreased accuracy in high-GC genomes [7] | Decreased accuracy in high-GC genomes [7] | Decreased accuracy in high-GC genomes [7] | High GC increases potential ORFs and ambiguous start codons [7] |

The StartLink+ approach, which combines GeneMarkS-2 with the alignment-based StartLink algorithm, demonstrates exceptionally high accuracy (98–99%) on verified gene sets, though this comes at the cost of reduced coverage—predicting starts for only 73% of genes per genome on average [7]. This trade-off between accuracy and comprehensiveness represents a critical consideration for researchers prioritizing precise start codon identification.

Tool Performance Across Taxonomic Groups

Different prokaryotic clades exhibit distinct sequence patterns in gene upstream regions, directly impacting tool performance [7]:

- Enterobacterales: Prodigal shows strong performance in these mid-GC genomes with predominant Shine-Dalgarno RBS patterns, reflecting its optimization for E. coli [7].

- Archaea and Actinobacteria: GeneMarkS-2 demonstrates advantages in these clades where leaderless transcription is prevalent, as it employs multiple models for heterogeneous upstream regions [7].

- FCB Group: Both Prodigal and GeneMarkS-2 face challenges in these low-to-mid-GC genomes with non-canonical AT-rich RBS patterns [7].

Experimental Protocols and Methodologies

Benchmarking Gene Prediction Tools

To ensure reproducible and scientifically valid tool comparisons, researchers have established standardized evaluation protocols. The ORForise framework provides a comprehensive set of 12 primary and 60 secondary metrics for assessing coding sequence (CDS) prediction tools [6].

Table 3: Essential Research Reagents and Computational Resources

| Resource Type | Specific Examples | Research Function |
|---|---|---|
| Verified Gene Sets | E. coli (769 genes), M. tuberculosis (701 genes), H. salinarum (530 genes) [7] | Gold-standard benchmarks for TIS prediction accuracy [7] |
| Reference Genomes | Ensembl Bacteria model organisms | Standardized genomes for cross-tool performance comparisons [6] |
| Evaluation Frameworks | ORForise [6] | Systematic assessment using multiple metrics to identify tool strengths/weaknesses [6] |
| Pan-genome Databases | Species-level pan-genomes for human microbiome [8] | Reference databases for homology-dependent methods [8] |

The experimental workflow typically involves:

- Data Collection: Curating complete bacterial genomes from NCBI GenBank with high-quality annotations [9].

- ORF Extraction: Identifying all potential open reading frames using tools like ORFipy [9].

- Tool Execution: Running each gene prediction tool with recommended parameters.

- Validation: Comparing predictions against experimentally verified starts or trusted annotations.

- Metric Calculation: Using frameworks like ORForise to compute precision, recall, and other relevant metrics [6].
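The metric step can be illustrated with gene-level precision and recall. This sketch is not ORForise and does not use its API: it assumes a predicted gene counts as detected when its strand and stop coordinate match a reference gene, and all coordinates are made up.

```python
from typing import Set, Tuple

GeneId = Tuple[str, int]  # (strand, stop coordinate); matching on stop is an assumption


def precision_recall(reference: Set[GeneId], predicted: Set[GeneId]) -> Tuple[float, float]:
    """Gene-level precision and recall of a prediction set against a reference set."""
    true_positives = len(reference & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(reference) if reference else 0.0
    return precision, recall


reference = {("+", 900), ("-", 1500), ("+", 4000), ("-", 7200)}
predicted = {("+", 900), ("-", 1500), ("+", 4000), ("+", 5100), ("-", 8800)}

print(precision_recall(reference, predicted))  # (0.6, 0.75)
```

Start-site accuracy would be computed separately, on the subset of detected genes, by comparing predicted and reference start coordinates.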

StartLink+ Validation Methodology

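The high-accuracy StartLink+ approach validates translation initiation sites by running two independent predictors on the same genes, the alignment-based StartLink and the ab initio GeneMarkS-2, and comparing their outputs [7].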

This conservative approach achieves its 98–99% accuracy by only retaining predictions where both independent methods converge on the same translation initiation site [7].
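The concordance filter and its coverage cost can be sketched as below. This is an illustration of the idea only, not the StartLink+ code: the gene identifiers, coordinates, and the function name `concordant_starts` are hypothetical.

```python
from typing import Dict, Tuple


def concordant_starts(
    ab_initio: Dict[str, int], alignment_based: Dict[str, int]
) -> Tuple[Dict[str, int], float]:
    """Keep genes whose start is identical in both prediction sets.

    Returns the retained predictions and the coverage (%) relative to the ab initio set.
    """
    consensus = {
        gene: start
        for gene, start in ab_initio.items()
        if alignment_based.get(gene) == start  # missing or different start: dropped
    }
    coverage = 100.0 * len(consensus) / len(ab_initio) if ab_initio else 0.0
    return consensus, coverage


ab_initio = {"geneA": 100, "geneB": 2400, "geneC": 3100, "geneD": 5200}
alignment_based = {"geneA": 100, "geneB": 2400, "geneC": 3130}  # no call for geneD

consensus, coverage = concordant_starts(ab_initio, alignment_based)
print(consensus, coverage)  # {'geneA': 100, 'geneB': 2400} 50.0
```

Genes that drop out, such as `geneC` (conflicting starts) and `geneD` (no alignment-based call), are the reason the reported coverage is below 100%.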

Emerging Approaches and Future Directions

Genomic Language Models

Recent advances in genomic language models (gLMs) inspired by natural language processing show promise for overcoming limitations of traditional methods. Models like GeneLM use transformer architectures pretrained on bacterial genomes to identify coding sequences and refine translation initiation site predictions [9]. These approaches capture contextual dependencies in DNA sequences that may be missed by traditional statistical models, potentially offering improved performance across diverse genomic contexts [9].

Pan-genome Informed Annotation

Tools like PGAP2 and GeneMark-HM leverage expanding databases of prokaryotic pan-genomes to improve annotation accuracy [10] [8]. By incorporating information from thousands of genomes, these approaches can identify species-specific patterns and improve gene prediction in novel metagenomic assemblies [8]. The GeneMark-HM pipeline specifically uses a database of species-level pan-genomes for the human microbiome to select optimal models for metagenomic contigs [8].

Prodigal remains a highly efficient and effective choice for high-throughput annotation of prokaryotic genomes, particularly when analyzing bacteria with canonical Shine-Dalgarno ribosomal binding sites. However, evidence from comparative studies indicates that researchers should carefully consider their specific genomic context and accuracy requirements when selecting a gene prediction tool.

For projects prioritizing annotation speed and computational efficiency for large-scale genomic surveys of typical bacterial genomes, Prodigal provides an excellent balance of performance and resource requirements. When maximizing translation initiation site accuracy is paramount, especially for downstream experimental applications, a consensus approach like StartLink+ that combines GeneMarkS-2 with homology-based methods may be worth the additional computational investment. For comprehensive genome annotation incorporating both ab initio prediction and homology evidence, integrated pipelines like PGAP offer a balanced solution.

The ongoing development of machine learning approaches and expansion of pan-genome databases will likely further refine prokaryotic gene prediction, potentially reducing current discrepancies between tools and improving annotation accuracy across diverse taxonomic groups.

Accurate gene prediction is a cornerstone of prokaryotic genomics, forming the essential foundation for downstream analyses in drug development and functional genomics. For years, the conventional view held that prokaryotic genes were led by promoters and initiated via Shine-Dalgarno (SD) ribosome binding sites. However, advanced sequencing technologies have revealed a more complex reality, including widespread leaderless transcription and non-canonical translation initiation mechanisms that challenge traditional gene-finding tools [11] [1].

This comparison guide objectively evaluates three prominent gene prediction tools—GeneMarkS-2, Prodigal, and PGAP—within the broader thesis of understanding their performance characteristics across diverse genomic contexts. We focus particularly on GeneMarkS-2's innovative approach to modeling atypical sequence patterns, presenting experimental data and performance metrics to guide researchers, scientists, and drug development professionals in selecting appropriate bioinformatic tools for their specific applications.

Methodological Comparison: Core Algorithms and Innovation

GeneMarkS-2: Multi-Model Approach for Regulatory Diversity

GeneMarkS-2 introduced groundbreaking algorithmic innovations specifically designed to address the diversity of gene regulatory patterns in prokaryotes. Its core advancement lies in employing a multi-model framework that combines self-training with precomputed heuristic models [11].

- Native and Atypical Gene Detection: The algorithm uses self-training to derive a species-specific model for native genes while simultaneously deploying an array of 41 precomputed bacterial and 41 archaeal atypical models to identify harder-to-detect genes, potentially those acquired through horizontal gene transfer [11].

- Regulatory Signal Classification: GeneMarkS-2 identifies distinct sequence patterns around gene starts, categorizing prokaryotic genomes into five groups (A-D and X) based on their transcription and translation initiation mechanisms. This includes genomes dominated by SD RBSs (Group A), non-SD RBSs (Group B), and those with significant leaderless transcription in bacteria (Group C) and archaea (Group D) [11].

- Leaderless Transcription Modeling: Unlike previous tools, GeneMarkS-2 specifically models sequence patterns characteristic of leaderless transcription, where genes lack 5' untranslated regions and RBSs entirely, a mechanism particularly prevalent in archaea but also observed in numerous bacterial species [11].
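To make the distinction between SD-led and other gene starts concrete, the toy check below looks for a Shine-Dalgarno-like core upstream of a start codon. It is not how GeneMarkS-2 works (that tool learns its motif models by self-training); the AGGAGG-derived 4-mers, the 4-12 nt spacer window, and the function name are all assumptions chosen for illustration.

```python
SD_CORES = ("AGGA", "GGAG", "GAGG")  # 4-mers taken from the AGGAGG consensus (assumed)


def has_sd_like_motif(upstream: str, min_spacer: int = 4, max_spacer: int = 12) -> bool:
    """True if an SD-like core lies within the spacer window before the start codon.

    `upstream` is the sequence immediately 5' of the start codon, with its last
    character adjacent to the start codon.
    """
    upstream = upstream.upper()
    n = len(upstream)
    for core in SD_CORES:
        pos = upstream.find(core)
        while pos != -1:
            spacer = n - (pos + len(core))  # bases between motif end and start codon
            if min_spacer <= spacer <= max_spacer:
                return True
            pos = upstream.find(core, pos + 1)
    return False


print(has_sd_like_motif("TTTTAGGAGGTTTTTTT"))  # True: AGGA ends 9 nt before the start
print(has_sd_like_motif("TTTTTTTTTTTTTTTTT"))  # False: no SD-like core
```

A gene whose upstream region fails such a check is not necessarily leaderless; it may use a non-SD RBS, which is why a multi-model approach is needed.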

Prodigal: Optimized for Canonical Structures

Prodigal (Prokaryotic Dynamic Programming Gene-finding Algorithm) represents an efficient approach optimized primarily for canonical gene structures. Its parameters were originally optimized for Escherichia coli genes with verified starts, making it primarily oriented toward searching for Shine-Dalgarno consensus patterns. While it incorporates models for non-canonical RBSs, its fundamental architecture assumes leadered transcription as the default mechanism [1].

PGAP: Database-Driven Annotation

The Prokaryotic Genome Annotation Pipeline (PGAP) employs a hybrid approach that combines homology-based methods with ab initio prediction. Unlike the self-training methodology of GeneMarkS-2, PGAP relies heavily on comparative analysis against existing databases of annotated gene starts, making its performance dependent on the quality and comprehensiveness of reference data [1]. Recent developments in PGAP2 have expanded its capabilities for pan-genome analysis, focusing on orthologous gene clustering and large-scale genomic comparisons rather than fundamental gene start prediction [12].

Table 1: Core Algorithmic Approaches of Gene Prediction Tools

| Tool | Primary Method | RBS Modeling | Leaderless Transcription | Training Dependency |
|---|---|---|---|---|
| GeneMarkS-2 | Self-training with multiple heuristic models | Explicit models for SD, non-SD, and absent RBS | Direct modeling of leaderless patterns | Self-training; species-specific |
| Prodigal | Dynamic programming with periodic Markov models | Primarily optimized for SD consensus; some non-canonical | Limited explicit modeling | Pre-trained models; E. coli optimized |
| PGAP | Hybrid: homology-based with ab initio prediction | Derived from reference annotations | Indirect through homologous sequences | Dependent on reference database quality |

Performance Comparison: Experimental Data and Benchmarking

Accuracy on Experimentally Verified Gene Starts

Rigorous benchmarking against genes with experimentally verified starts provides the most reliable assessment of prediction accuracy. These validation sets, though limited in size (containing approximately 2,841 genes across multiple species as of December 2019), offer unambiguous ground truth for evaluation [1].

In comparative studies using these verified gene sets, GeneMarkS-2 demonstrated superior accuracy in gene start prediction when compared to other state-of-the-art tools. When combining GeneMarkS-2 with the alignment-based StartLink method (as StartLink+), the accuracy reached an impressive 98-99% on genes where both methods produced concordant predictions [1].

Disagreement Analysis Across Genomic Landscapes

Large-scale comparative analysis across 5,488 representative prokaryotic genomes reveals significant discrepancies in gene start predictions between tools. These disagreements are not random but show systematic patterns correlated with genomic GC content [1].

- GC Content Influence: Prediction disagreements are most pronounced in GC-rich genomes, where differences affect 10-15% of genes on average. In AT-rich genomes, tools show better consensus, with annotations deviating from StartLink+ predictions in only ∼5% of genes [1].

- Archaeal Genomes: In archaeal species, where leaderless transcription is prevalent (affecting 83.6% of species), GeneMarkS-2's specialized models provide distinct advantages. Computational predictions in these genomes have been experimentally validated for species including Halobacterium salinarum, Haloferax volcanii, and Thermococcus onnurineus [1].

- Bacterial Diversity: Among bacterial species, GeneMarkS-2 identified that only 61.5% primarily use SD RBSs, while 10.4% utilize non-SD type RBSs, and 21.6% show significant leaderless transcription (up to 40% of transcripts) [1].

Table 2: Performance Metrics Across Prokaryotic Genomic Groups

| Genomic Category | Representative Species | Key Characteristic | GeneMarkS-2 Advantage | Typical Disagreement Rate |
|---|---|---|---|---|
| Group A (SD-dominated) | Escherichia coli | Strong Shine-Dalgarno consensus | Moderate | ~5-7% |
| Group B (Non-SD RBS) | Bacteroides species | Non-canonical RBS patterns | Significant | ~10-12% |
| Group C (Bacterial leaderless) | Mycobacterium tuberculosis | Up to 40% leaderless transcripts | Substantial | ~12-15% |
| Group D (Archaeal leaderless) | Halobacterium salinarum | High frequency leaderless transcription | Critical | ~15-20% |
| Group X (Weak signals) | Cyanobacteria | Unknown initiation mechanism | Dominant | ~20-25% |

Handling Atypical Genes and Horizontal Transfer

GeneMarkS-2 demonstrates particular strength in identifying atypical genes with compositions deviating from genomic norms, often indicative of horizontal gene transfer. By employing its library of precomputed atypical models covering the GC content range from 30% to 70%, the tool effectively recognizes genes that might escape detection by species-specific models alone [11].
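One way to picture a GC-indexed model library is a lookup keyed by rounded GC percentage. The sketch below assumes one model per 1% step, which is consistent with 41 models spanning 30% to 70% but is not confirmed by the text; the function name is hypothetical and the real tool's selection logic may differ.

```python
def atypical_model_index(gc_percent: float, low: int = 30, high: int = 70) -> int:
    """Index of the precomputed model whose GC value is closest to gc_percent.

    Assumes one model per integer GC percentage from `low` to `high` inclusive
    (41 models for 30-70); values outside the range use the nearest edge model.
    """
    clamped = min(max(round(gc_percent), low), high)
    return clamped - low


print(atypical_model_index(52.4))  # 22 (the model for 52% GC)
print(atypical_model_index(25.0))  # 0 (clamped to the 30% model)
print(atypical_model_index(80.0))  # 40 (clamped to the 70% model)
```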

In real-world applications, such as the annotation of a coronene-degrading Halomonas elongata strain, researchers employed a triple-tool approach using Prokka, Prodigal, and GeneMarkS-2 to ensure comprehensive gene prediction, followed by alignment methods to resolve discrepancies. This strategy highlights the complementary value of these tools [13].

Experimental Protocols and Methodologies

GeneMarkS-2 Training and Prediction Workflow

The experimental protocol for GeneMarkS-2 operation involves a sophisticated self-training procedure that adapts to species-specific patterns while incorporating knowledge of diverse regulatory mechanisms:

[Figure: GeneMarkS-2 algorithmic workflow]

Validation Methods for Gene Start Predictions

Researchers have employed several experimental techniques to validate computational gene start predictions, creating gold-standard datasets for benchmarking:

- N-terminal Protein Sequencing: This method provides direct experimental evidence of protein start positions and has been applied to create verified gene sets for bacteria including E. coli, M. tuberculosis, and R. denitrificans, as well as archaea including H. salinarum and N. pharaonis [1].

- Mass Spectrometry: Used to identify N-terminal peptides and verify translation start sites, complementing sequencing approaches [1].

- Differential RNA Sequencing (dRNA-seq): This technique accurately identifies transcription start sites, enabling reliable operon annotation and detection of promoters and translation initiation sites [11].

- Proteomics Validation: Large-scale identification of N-terminal peptides in species like Halobacterium salinarum has provided experimental support for computational predictions of gene starts [11].

Essential Research Reagent Solutions

Table 3: Key Experimental Resources for Gene Prediction Validation

| Reagent/Resource | Primary Function | Application Context | Key Consideration |

|---|---|---|---|

| dRNA-seq Kit | Identification of transcription start sites | Experimental TSS validation for leaderless gene detection | Requires specialized library preparation |

| N-terminal Sequencing Reagents | Direct protein start confirmation | Creating gold-standard datasets for algorithm benchmarking | Low-throughput and resource-intensive |

| Long-read Sequencing (ONT) | Complete genome assembly without fragmentation | Provides context for operon structure and gene boundaries | Superior for repetitive regions and extreme GC content [13] |

| Prokka Pipeline | Integrated gene prediction and annotation | Rapid initial genome annotation | Combines multiple tools including Prodigal [13] |

| CDD Database | Conserved domain identification | Functional annotation of hypothetical proteins | Uses E-value threshold 0.001 for domain searches [13] |

| BUSCO | Genome completeness assessment | Quality control for gene space coverage | Employs E-value cutoff 0.001 for ortholog detection [13] |

Discussion and Research Implications

The performance differences between GeneMarkS-2, Prodigal, and PGAP have significant implications for genomic research and drug development applications. GeneMarkS-2's superior handling of diverse RBS patterns and leaderless transcription makes it particularly valuable for studying non-model organisms, extremophiles, and pathogens with atypical translation initiation mechanisms.

The biological significance of accurately identifying leaderless genes extends beyond annotation accuracy, as leaderless transcripts exhibit differential responses to antibiotics—some inhibitors of translation initiation affect leadered transcripts but not leaderless ones [1]. This understanding is instrumental for predicting drug effects on pathogens and developing targeted antimicrobial strategies.

For researchers working with metagenomic samples or draft genomes, StartLink+ (combining GeneMarkS-2 with homology-based methods) offers particular promise, though its application is limited by the availability of homologs in databases [1]. In cases where all three tools disagree—particularly prevalent in GC-rich genomes and those with weak regulatory signals—experimental validation remains the gold standard.

As prokaryotic genomics continues to expand into diverse taxonomic groups and environments, tools like GeneMarkS-2 that explicitly model the mechanistic diversity of gene expression will become increasingly essential for accurate genome interpretation and downstream biological insights.

In the field of comparative genomics, accurately identifying orthologs—genes in different species that evolved from a common ancestral gene through speciation—is fundamental to research across evolutionary biology, functional annotation, and drug discovery. Orthologs typically retain the same biological function over evolutionary time, making their correct identification essential for transferring functional knowledge from well-characterized model organisms to less-studied species, understanding evolutionary relationships, and identifying conserved metabolic pathways as potential drug targets [14] [15]. The Prokaryotic Genome Annotation Pipeline (PGAP) has emerged as a sophisticated solution that addresses the limitations of purely ab initio gene prediction tools like Prodigal and GeneMarkS-2 by implementing a hybrid, homology-guided approach to ortholog identification and genome annotation [16] [17].

This guide provides an objective performance comparison between these annotation methodologies, presenting experimental data that illustrates their respective strengths and limitations in various genomic contexts. As the volume of genomic data continues to expand exponentially, with thousands of prokaryotic genomes now available for many species, the development of robust annotation pipelines that can leverage this comparative information has become increasingly important for biological research and therapeutic development [10] [17].

Methodological Frameworks: Contrasting Annotation Approaches

PGAP: A Hybrid, Pan-Genome Informed Pipeline

The NCBI Prokaryotic Genome Annotation Pipeline (PGAP) employs a sophisticated hybrid methodology that integrates both homology-based and ab initio prediction approaches. Unlike pipelines that run ab initio prediction first, PGAP calculates alignment-based evidence for protein-coding and non-protein-coding regions prior to executing ab initio prediction. This evidence is then incorporated by GeneMarkS+ into the gene prediction process, allowing the reconciliation of extrinsic homology evidence with intrinsic sequence patterns [17].

A key innovation in PGAP is its pan-genome approach to protein annotation. For a given taxonomic clade, PGAP defines a set of core proteins that are present in at least 80% of clade members. These core proteins, representing evolutionarily conserved genes, are used to generate a map of protein "footprints" on newly submitted genomic sequences. This approach leverages the growing wealth of comparative genomic information to improve annotation accuracy, particularly for well-studied clades where core genes may comprise up to 75% of the total annotated genes in a single genome [17].
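
The 80% rule for core proteins can be illustrated with a small presence/absence count. This is a minimal sketch, not PGAP's implementation: the function name `core_clusters`, the toy clade, and the cluster labels are all hypothetical, and real clustering of proteins into families is assumed to have happened already.

```python
from collections import defaultdict

def core_clusters(genome_to_clusters, min_fraction=0.8):
    """Return cluster IDs present in at least min_fraction of the genomes."""
    n_genomes = len(genome_to_clusters)
    counts = defaultdict(int)
    for clusters in genome_to_clusters.values():
        for cluster_id in set(clusters):  # count each cluster once per genome
            counts[cluster_id] += 1
    return {c for c, n in counts.items() if n / n_genomes >= min_fraction}

# Toy clade of five genomes; cluster names are made up for illustration.
clade = {
    "g1": ["dnaA", "gyrB", "recA", "blaX"],
    "g2": ["dnaA", "gyrB", "recA"],
    "g3": ["dnaA", "gyrB", "recA"],
    "g4": ["dnaA", "gyrB", "blaX"],
    "g5": ["dnaA", "recA"],
}
core = core_clusters(clade)
print(sorted(core))
```

Here `blaX` occurs in only two of five genomes and is excluded, while clusters found in four or five genomes pass the threshold.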

PGAP's annotation process encompasses multiple levels of genomic features, including:

- Protein-coding gene prediction

- Structural RNA genes (5S, 16S, 23S rRNAs)

- Transfer RNA genes

- Small non-coding RNAs

- CRISPR regions

- Other functional elements [16]

Prodigal and GeneMarkS-2: Ab Initio Prediction Tools

In contrast to PGAP's hybrid approach, Prodigal (PROkaryotic DYnamic programming Gene-finding ALgorithm) employs a purely ab initio methodology based on statistical models of coding sequences. It identifies protein-coding genes by analyzing sequence composition patterns, including codon usage, ribosome binding sites, and sequence periodicity, without relying on external homology evidence. Prodigal's parameters were originally optimized for Escherichia coli genes with verified starts, making it particularly oriented toward searching for canonical Shine-Dalgarno ribosome binding sites [7].

GeneMarkS-2 represents an advancement in ab initio prediction through its self-training approach that uses multiple models of sequence patterns in gene upstream regions within the same genome. This allows it to handle the diversity of translation initiation mechanisms found across prokaryotic taxa, including Shine-Dalgarno RBSs, non-canonical RBSs, and leaderless transcription. The tool can adapt to different translation initiation mechanisms present in the same genome, making it more flexible across diverse taxonomic groups [7].

Table: Comparison of Core Methodological Approaches

| Feature | PGAP | Prodigal | GeneMarkS-2 |

|---|---|---|---|

| Primary approach | Hybrid (homology + ab initio) | Ab initio (statistical) | Ab initio (self-training) |

| Homology evidence | Pre-computed protein clusters & pan-genome | Not utilized | Not utilized |

| Start site prediction | Integrated evidence | Statistical patterns | Multiple RBS models |

| Taxonomic scope | Broad (Bacteria & Archaea) | Primarily Bacteria | Bacteria & Archaea |

| Dependencies | External protein databases | None | None |

Performance Benchmarking: Experimental Data and Comparative Analysis

Gene Start Prediction Accuracy

Accurate prediction of translation initiation sites (TIS) remains one of the most challenging aspects of gene annotation. Experimental validation studies have revealed significant discrepancies between different annotation methods. In a comprehensive analysis of 5,488 representative prokaryotic genomes, researchers observed that gene start predictions differed between methods for 15-25% of genes in a typical genome [7].

To address this challenge, the StartLink algorithm was developed to infer gene starts from conservation patterns revealed by multiple alignments of homologous nucleotide sequences. When combined with GeneMarkS-2 predictions in the StartLink+ pipeline, the accuracy reached 98-99% on sets of genes with experimentally verified starts. This represents a significant improvement over standalone ab initio methods [7].

Comparative analysis revealed that annotated gene starts in databases deviated from StartLink+ predictions for approximately 5% of genes in AT-rich genomes and 10-15% of genes in GC-rich genomes. This suggests that GC-rich genomes present particular challenges for accurate start site annotation, potentially due to increased numbers of potential open reading frames and ambiguous start codon selection [7].
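
The ambiguity in start codon selection is easy to see by listing every in-frame start codon upstream of a given stop. The sketch below is illustrative only: it handles the forward strand, assumes ATG, GTG, and TTG as the candidate start codons, and the name `candidate_starts` is hypothetical rather than taken from any of the tools discussed.

```python
START_CODONS = {"ATG", "GTG", "TTG"}   # assumed candidate starts
STOP_CODONS = {"TAA", "TAG", "TGA"}

def candidate_starts(seq, stop_index):
    """Return 0-based positions of in-frame start codons upstream of a stop.

    Walks upstream codon by codon from stop_index until the previous
    in-frame stop codon or the edge of the sequence is reached.
    """
    seq = seq.upper()
    starts = []
    pos = stop_index - 3
    while pos >= 0:
        codon = seq[pos:pos + 3]
        if codon in STOP_CODONS:
            break
        if codon in START_CODONS:
            starts.append(pos)
        pos -= 3
    return sorted(starts)

# One open reading frame, three possible starts for the same 3' end.
orf = "TAA" "ATG" "AAA" "GTG" "CCC" "TTG" "GGG" "TAA"
print(candidate_starts(orf, stop_index=21))
```

All three candidates share the same stop codon, which is why tools tend to agree on 3' ends while disagreeing on starts.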

Table: Gene Start Prediction Accuracy Across Methods

| Method | Approach | Accuracy on Verified Genes | Coverage | Key Strength |

|---|---|---|---|---|

| StartLink+ | Hybrid (alignment + ab initio) | 98-99% | ~73% of genes/genome | Highest accuracy when predictions agree |

| GeneMarkS-2 | Ab initio (self-training) | ~90-95% | 100% | Handles multiple RBS types |

| Prodigal | Ab initio (statistical) | ~85-90% | 100% | Optimized for SD-RBS |

| PGAP | Hybrid (homology-guided) | ~90-97% | 100% | Integrated evidence |

Ortholog Detection Performance

Standardized benchmarking through the Quest for Orthologs (QfO) consortium has provided comprehensive performance evaluations of orthology inference methods. These benchmarks employ multiple assessment strategies, including species tree discordance tests, reference gene tree comparisons, and functional conservation metrics [14] [15].

In species tree discordance tests, which evaluate the accuracy of species trees reconstructed from putative orthologs, methods demonstrate different precision-recall trade-offs. Tree-based methods like PANTHER and graph-based approaches like OMA show distinct performance profiles, with OMA groups achieving high precision but lower recall, while PANTHER exhibits higher recall but lower precision. PGAP's hybrid approach positions it in the middle of this spectrum, offering a balanced trade-off suitable for many applications [15].

The introduction of Feature Architecture Similarity (FAS) as a new benchmark has provided additional insights into ortholog prediction quality. FAS measures the conservation of protein domains, transmembrane regions, and other structural features between predicted orthologs. Analysis reveals that ortholog pairs unanimously supported by all methods have average bi-directional FAS scores >0.9, while those supported by only one or two methods have scores <0.7, indicating substantial differences in feature architectures [14].

Handling of Genomic Diversity

PGAP's pan-genome approach demonstrates particular strength when annotating genomes within well-populated clades. In analysis of major bacterial groups, core genes represented substantial portions of total gene content:

- Escherichia-Shigella (1,502 genomes): 3,220 core protein clusters

- Salmonella (527 genomes): 3,393 core protein clusters

- Staphylococcus aureus (445 genomes): 2,066 core protein clusters [17]

This conservation of core genes enables PGAP to leverage pre-computed protein clusters to improve annotation accuracy and consistency across related strains. However, for novel genes or those present in only a subset of strains, PGAP still relies on ab initio prediction capabilities, creating a balanced approach that performs well across both conserved and variable genomic regions.

Advanced Methodologies: Experimental Protocols for Annotation Assessment

Orthology Benchmarking Service Protocol

The Quest for Orthologs (QfO) Benchmarking Service provides a standardized framework for evaluating orthology inference methods. The experimental protocol consists of:

Reference Proteome Selection: Curated set of 78 reference proteomes (48 Eukaryotes, 23 Bacteria, 7 Archaea) from UniProtKB, selected for taxonomic diversity and annotation quality [14].

Ortholog Prediction: Methods infer orthologs across the reference proteomes, with predictions converted to pairwise ortholog relationships as a common denominator for comparison.

Benchmark Execution: Multiple benchmark categories are applied:

- Species Tree Discordance: Measures concordance between species trees reconstructed from orthologs and established species phylogenies.

- Reference Gene Tree Assessment: Evaluates concordance with manually curated gene trees from SwissTree and TreeFam-A.

- Feature Architecture Similarity: Assesses conservation of protein domains and structural features.

Performance Quantification: Precision (positive predictive value) and recall (sensitivity) are calculated where possible, with methods compared using standardized metrics [15].
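
As a rough illustration of the quantification step, precision and recall can be computed over unordered ortholog pairs. This sketch assumes a complete reference set of pairs, which the actual benchmarks do not always have; the function name and the protein identifiers are hypothetical.

```python
def precision_recall(predicted_pairs, reference_pairs):
    """Compute precision and recall over unordered ortholog pairs."""
    predicted = {frozenset(p) for p in predicted_pairs}   # (a, b) == (b, a)
    reference = {frozenset(p) for p in reference_pairs}
    true_positives = len(predicted & reference)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(reference) if reference else 0.0
    return precision, recall

predicted = [("A1", "B1"), ("A2", "B2"), ("A3", "B9")]
reference = [("B1", "A1"), ("A2", "B2"), ("A4", "B4"), ("A5", "B5")]
precision, recall = precision_recall(predicted, reference)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Storing each pair as a `frozenset` makes the comparison independent of the order in which a method reports the two proteins.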

Diagram: Orthology Benchmarking Workflow. The standardized protocol evaluates methods across multiple benchmark categories to generate comparable performance metrics.

Gene Start Validation Protocol

Experimental validation of gene start predictions employs a multi-stage process:

Verified Gene Sets Curation: Compilation of genes with experimentally determined translation initiation sites through N-terminal protein sequencing, mass spectrometry, or frame-shift mutagenesis. Key model organisms include:

- Escherichia coli: 769 verified genes

- Mycobacterium tuberculosis: 701 verified genes

- Halobacterium salinarum: 530 verified genes [7]

Computational Prediction: Independent gene start predictions generated by:

- Ab initio methods: GeneMarkS-2, Prodigal

- Homology-based method: StartLink

- Hybrid approach: StartLink+

Accuracy Assessment: Comparison of computational predictions against experimental data, with metrics including:

- Percentage of exact start site matches

- Distance to verified start when incorrect

- Influence of genomic GC content on accuracy
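
The first two metrics above can be sketched as follows. This is a simplified illustration under stated assumptions: starts are plain integer coordinates keyed by a gene identifier, the gene IDs and coordinates are invented, and `start_accuracy` is a hypothetical helper rather than part of any published protocol.

```python
def start_accuracy(predicted, verified):
    """Return (% exact start matches, distances in bp for the misses).

    Only genes present in both dictionaries are scored.
    """
    shared = [gene for gene in verified if gene in predicted]
    offsets = [abs(predicted[g] - verified[g]) for g in shared]
    misses = [d for d in offsets if d != 0]
    if not offsets:
        return 0.0, []
    exact_pct = 100.0 * (len(offsets) - len(misses)) / len(offsets)
    return exact_pct, misses

verified = {"g1": 100, "g2": 500, "g3": 900, "g4": 1300}
predicted = {"g1": 100, "g2": 512, "g3": 900, "g4": 1300, "g5": 2000}
exact_pct, misses = start_accuracy(predicted, verified)
print(exact_pct, misses)
```

Stratifying by GC content would amount to calling the same function separately on genomes grouped by their GC percentage.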

Mechanism Classification: Characterization of translation initiation mechanisms:

- Shine-Dalgarno RBSs

- Leaderless transcription

- Non-canonical RBS patterns [7]

Emerging Technologies: Genomic Language Models and Next-Generation Pipelines

Genomic Language Models (gLMs)

Inspired by advances in natural language processing, genomic Language Models (gLMs) represent a promising new approach to gene prediction. Models like DNABERT treat DNA sequences as structured linguistic data, using k-mer tokenization and transformer architectures to capture contextual dependencies within genetic sequences [9].
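
To make k-mer tokenization concrete, the sketch below splits a DNA string into overlapping k-mers. It is a generic illustration, not DNABERT's actual tokenizer; the function name, default `k`, and `stride` parameter are assumptions.

```python
def kmer_tokens(seq, k=6, stride=1):
    """Split a DNA sequence into k-mers taken every `stride` bases."""
    seq = seq.upper()
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]

tokens = kmer_tokens("ATGCGTAA", k=3)
print(tokens)
```

With a stride of 1 the tokens overlap, so each nucleotide appears in up to k tokens and local context is preserved for the downstream model.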

These models employ a two-stage classification framework:

- CDS Identification: Classification of open reading frames into coding (CDS) and non-coding regions

- TIS Refinement: Identification of correct translation initiation sites within coding regions

In comparative evaluations, gLMs have demonstrated reduced missed CDS predictions and improved TIS identification compared to traditional tools like Prodigal, GeneMark-HMM, and Glimmer, particularly when tested against experimentally verified sites [9].

PGAP2: Scalable Pan-Genome Analysis

The development of PGAP2 addresses the need for scalable pan-genome analysis capable of handling thousands of genomes. This integrated software package employs fine-grained feature analysis within constrained regions to facilitate rapid and accurate identification of orthologous and paralogous genes [10].

Key innovations in PGAP2 include:

- Dual-level regional restriction strategy: Reduces search complexity by focusing on confined identity and synteny ranges

- Quantitative characterization: Introduces parameters derived from distances between clusters

- Enhanced visualization: Interactive HTML and vector plots for feature analysis

Validation with simulated and carefully curated datasets demonstrates that PGAP2 outperforms existing methods in stability and robustness, even under conditions of high genomic diversity [10].

Diagram: PGAP2 Analysis Workflow. The next-generation pipeline incorporates enhanced quality control and orthology inference through dual-network analysis.

Table: Key Bioinformatics Resources for Genome Annotation and Orthology Analysis

| Resource | Type | Primary Function | Application in Research |

|---|---|---|---|

| PGAP | Annotation pipeline | Hybrid genome annotation | Structural & functional annotation of bacterial/archaeal genomes |

| PGAP2 | Pan-genome analysis | Large-scale ortholog identification | Genetic diversity studies & ecological adaptability analysis |

| Quest for Orthologs Benchmark | Evaluation service | Orthology method assessment | Tool selection & method development |

| StartLink+ | Gene start predictor | Translation initiation site identification | Gene annotation refinement & validation |

| DNABERT | Genomic language model | Deep learning-based gene prediction | Alternative approach for CDS & TIS identification |

| Reference Proteomes | Data resource | Standardized protein sequences | Benchmarking & comparative analyses |

| SwissTree | Curated gene trees | High-confidence phylogenetic references | Orthology method validation |

The comparative analysis of PGAP, Prodigal, and GeneMarkS-2 reveals distinctive performance characteristics that inform their appropriate application contexts. PGAP's hybrid, homology-guided approach provides robust ortholog identification, particularly for genomes within well-characterized clades where pan-genome information enhances annotation accuracy. Its balanced performance in orthology benchmarking makes it suitable for comparative genomic studies requiring consistent annotation across multiple related organisms.

Ab initio tools like Prodigal and GeneMarkS-2 remain valuable for annotating genomes with limited comparative data or when computational resources are constrained. GeneMarkS-2's ability to handle diverse translation initiation mechanisms provides an advantage for taxa with non-canonical initiation signals, such as non-SD RBSs and leaderless transcripts, while Prodigal offers computational efficiency for standard bacterial genomes.

Emerging methodologies, particularly genomic language models and next-generation pan-genome pipelines, show promise for addressing persistent challenges in gene prediction, especially for accurate translation initiation site identification and handling genomic diversity. As these technologies mature, they may redefine the standards for genomic annotation, potentially combining the strengths of both homology-based and ab initio approaches through advanced machine learning techniques.

For researchers and drug development professionals, selection of an appropriate annotation pipeline should consider taxonomic context, available comparative data, and specific research objectives. PGAP's hybrid approach offers a compelling solution for many applications, particularly when ortholog identification accuracy is paramount for downstream functional analysis and interpretation.

Accurate gene start prediction is a fundamental challenge in prokaryotic genome annotation. While current ab initio gene prediction tools demonstrate high accuracy in identifying the 3' ends of genes, a significant discrepancy exists in pinpointing the precise translation initiation sites (TIS). Research reveals that predictions for gene starts differ in 15-25% of genes across popular algorithms, creating substantial challenges for researchers relying on precise genome annotations for downstream applications in drug development and functional genomics [1].

This discrepancy persists because determining the exact nucleotide where translation begins is computationally complex. The problem is exacerbated by biological variability in translation initiation mechanisms and the limited availability of genes with experimentally verified starts—only 2,841 genes across five species have such verification [1]. This guide objectively compares the performance of three predominant tools—Prodigal, GeneMarkS-2, and PGAP—in resolving these critical discrepancies.

Quantitative Comparison of Gene Start Predictions

A comprehensive analysis of 5,488 representative prokaryotic genomes reveals the scope of gene start prediction inconsistencies between tools. The rate of disagreement varies significantly with genomic GC content, highlighting the challenge of achieving consensus across diverse organisms [1].

Table 1: Average Percentage of Genes with Differing Start Predictions Per Genome

| GC Content Range | Prodigal vs. GeneMarkS-2 vs. PGAP Disagreement Rate |

|---|---|

| Low GC Genomes | ~7% |

| High GC Genomes | ~15-22% |

Table 2: Experimentally Verified Gene Sets for Benchmarking

| Species | Genes with Experimentally Verified Starts |

|---|---|

| Escherichia coli | 769 |

| Mycobacterium tuberculosis | 701 |

| Roseobacter denitrificans | 526 |

| Halobacterium salinarum | 530 |

| Natronomonas pharaonis | 315 |

| Total | 2,841 |

Biological Mechanisms Underlying Prediction Discrepancies

The divergence in gene start predictions stems from biological complexity that algorithms model differently. Three primary mechanisms govern translation initiation in prokaryotes, and their prevalence varies across species:

Shine-Dalgarno (SD) Driven Initiation

Genomes in this category exhibit canonical Shine-Dalgarno ribosome binding sites upstream of gene starts. Prodigal is primarily optimized for this mechanism, having been trained on E. coli genes with verified starts [1]. Approximately 61.5% of bacterial species predominantly use SD RBSs [1].

Leaderless Transcription

In this mechanism, genes lack 5' untranslated regions (UTRs), with transcription starting immediately at the translation initiation site. Leaderless transcription is particularly prevalent in archaea (83.6% of species), but also appears in bacteria like Mycobacterium tuberculosis [1]. GeneMarkS-2 incorporates specific models for detecting these patterns.

Non-Shine-Dalgarno (Non-SD) Initiation

Approximately 10.4% of bacterial species utilize non-canonical RBS patterns that lack the SD consensus [1]. These alternative sequence patterns require specialized detection approaches that standard SD-focused models may miss.

Diagram: Biological variability in translation initiation mechanisms contributes significantly to prediction discrepancies. Tools optimized for different mechanisms yield conflicting start calls.

Tool-Specific Methodologies and Performance

Prodigal (PROkaryotic DYnamic programming Gene-finding ALgorithm)

Prodigal uses dynamic programming to identify optimal gene configurations based on coding scores derived from GC frame bias analysis [18]. The algorithm connects start and stop codons in a tiling path that maximizes the overall coding potential while respecting constraints on gene overlaps [18].

Performance Characteristics:

- Primarily oriented toward canonical Shine-Dalgarno RBS patterns

- Optimized using E. coli genes with verified starts

- Allows maximum 60 bp overlap for same-strand genes

- Restricts opposite strand gene overlap to 200 bp
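
The two overlap limits listed above can be expressed as a simple pairwise check. This is a sketch of the constraint only, not Prodigal's dynamic programming; the `Gene` class and function names are hypothetical, and any finer rules about which gene ends may overlap are not modeled.

```python
from dataclasses import dataclass

MAX_SAME_STRAND_OVERLAP = 60        # bp, as stated in the list above
MAX_OPPOSITE_STRAND_OVERLAP = 200   # bp, as stated in the list above

@dataclass(frozen=True)
class Gene:
    start: int   # leftmost coordinate, 1-based inclusive
    end: int     # rightmost coordinate, 1-based inclusive
    strand: str  # "+" or "-"

def overlap_bp(a, b):
    """Number of bases shared by two genes (0 if they do not overlap)."""
    return max(0, min(a.end, b.end) - max(a.start, b.start) + 1)

def overlap_allowed(a, b):
    """Check a gene pair against the strand-dependent overlap limit."""
    same_strand = a.strand == b.strand
    limit = MAX_SAME_STRAND_OVERLAP if same_strand else MAX_OPPOSITE_STRAND_OVERLAP
    return overlap_bp(a, b) <= limit

upstream = Gene(100, 1000, "+")
print(overlap_allowed(upstream, Gene(950, 1800, "+")))  # 51 bp, same strand
print(overlap_allowed(upstream, Gene(900, 1800, "+")))  # 101 bp, same strand
print(overlap_allowed(upstream, Gene(900, 1800, "-")))  # 101 bp, opposite strand
```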

GeneMarkS-2

This algorithm employs a multi-model approach that self-trains on input sequences to identify species-specific patterns while simultaneously utilizing pre-computed atypical models for divergent genes [11]. A key advancement is its recognition of five distinct categories of sequence patterns around gene starts (Groups A-D and X) [11].

Performance Characteristics:

- Specifically models leaderless transcription and non-SD RBS patterns

- Uses 41 bacterial and 41 archaeal atypical models covering GC content from 30-70%

- Identifies promoter signals for leaderless transcription in bacteria and archaea

- Accommodates genomes with very weak regulatory signals (Group X)
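
One way to picture the library of 41 atypical models is as a lookup keyed by GC content. The sketch below assumes one model per 1% GC step between 30% and 70% (which yields 41 bins) and clamps values outside that range; this binning, along with the function names, is an illustrative assumption and not a description of GeneMarkS-2 internals.

```python
def gc_percent(seq):
    """GC content of a sequence as a percentage."""
    seq = seq.upper()
    if not seq:
        raise ValueError("empty sequence")
    return 100.0 * (seq.count("G") + seq.count("C")) / len(seq)

def atypical_model_index(gc, low=30, high=70):
    """Map a GC percentage to a bin index in 0..(high - low).

    Assumes one model per 1% GC step; values outside the covered
    range are clamped to the nearest available bin.
    """
    clamped = min(max(round(gc), low), high)
    return clamped - low

fragment = "GGCCAATT"
print(gc_percent(fragment), atypical_model_index(gc_percent(fragment)))
```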

NCBI's Prokaryotic Genome Annotation Pipeline (PGAP)

PGAP utilizes a hybrid approach that combines ab initio prediction with homology-based evidence from aligned homologous genes [1]. This pipeline represents the annotation standard for NCBI's RefSeq database.

Performance Characteristics:

- Leverages conserved start sites across homologs

- Dependent on quality and diversity of existing annotations

- May propagate historical annotation errors through homology chains

Advanced Approaches for Resolution

StartLink and StartLink+ Algorithms

To resolve persistent discrepancies, specialized tools have emerged. StartLink predicts gene starts by analyzing conservation patterns in multiple alignments of homologous nucleotide sequences, while StartLink+ combines both ab initio and alignment-based methods [1].

Performance Metrics:

- StartLink provides predictions for ~85% of genes per genome on average

- StartLink+ achieves 98-99% accuracy on genes with experimentally verified starts

- StartLink+ delivers predictions for ~73% of genes per genome

- When StartLink and GeneMarkS-2 predictions match, error probability is ~1%
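
The trade-off between accuracy and coverage follows from reporting a start only when the two predictors agree. A minimal sketch of that consensus rule, with invented gene IDs and coordinates and a hypothetical function name:

```python
def consensus_starts(startlink, genemarks2):
    """Keep only genes for which both predictors call the same start."""
    return {
        gene: start
        for gene, start in startlink.items()
        if genemarks2.get(gene) == start
    }

genemarks2 = {"g1": 100, "g2": 500, "g3": 900, "g4": 1300}
startlink = {"g1": 100, "g2": 488, "g3": 900}   # no call for g4
consensus = consensus_starts(startlink, genemarks2)
coverage_pct = 100.0 * len(consensus) / len(genemarks2)
print(consensus, coverage_pct)
```

Genes without an alignment-based call (`g4`) or with conflicting calls (`g2`) drop out, which is why coverage falls below that of either tool alone.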

Table 3: StartLink+ Performance Compared to Database Annotations

| Genome Type | Discrepancy Rate Between StartLink+ and Database Annotations |

|---|---|

| AT-rich genomes | ~5% of genes |

| GC-rich genomes | ~10-15% of genes |

Experimental Protocols for Benchmarking

Methodology for Comparative Studies

Standardized benchmarking approaches enable objective performance assessment across tools:

Reference Data Curation: Utilize genes with experimentally verified starts from N-terminal protein sequencing, mass spectrometry, and frame-shift mutagenesis [1].

Whole-Genome Analysis: Execute each gene-finding tool on representative sets of prokaryotic genomes (e.g., 5,488 genomes from NCBI's RefSeq) [1].

Clade-Specific Validation: Conduct computational experiments across diverse taxonomic groups including Archaea, Actinobacteria, Enterobacterales, and FCB group to assess performance across different translation initiation mechanisms [1].

Discrepancy Quantification: Calculate the percentage of genes per genome where start predictions differ between tools, with special attention to GC-content stratification [1].
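
The discrepancy quantification step can be sketched by matching genes across tools on their 3' end and then comparing the predicted starts. The representation below (a dictionary from `(strand, stop_coordinate)` to start coordinate), the function name, and the toy coordinates are assumptions made for illustration.

```python
def start_disagreement_rate(*tool_predictions):
    """Percent of shared genes whose predicted starts are not all identical.

    Each argument maps (strand, stop_coordinate) -> start_coordinate, so
    genes are matched across tools by their 3' end.
    """
    shared = set(tool_predictions[0])
    for predictions in tool_predictions[1:]:
        shared &= set(predictions)
    if not shared:
        return 0.0
    differing = sum(
        1 for key in shared
        if len({predictions[key] for predictions in tool_predictions}) > 1
    )
    return 100.0 * differing / len(shared)

prodigal = {("+", 1000): 100, ("+", 2500): 1600, ("-", 3000): 3900, ("+", 5000): 4100}
genemarks2 = {("+", 1000): 100, ("+", 2500): 1612, ("-", 3000): 3900, ("+", 5000): 4100}
pgap = {("+", 1000): 100, ("+", 2500): 1600, ("-", 3000): 3900, ("+", 5000): 4100,
        ("-", 7000): 7600}
rate = start_disagreement_rate(prodigal, genemarks2, pgap)
print(rate)
```

Computing this rate per genome and grouping genomes by GC content would give the stratified view described in the protocol.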

Diagram: Standardized experimental workflow for benchmarking gene start prediction tools ensures objective performance comparisons across diverse biological contexts.

The Scientist's Toolkit: Essential Research Reagents

Table 4: Key Bioinformatics Tools and Databases for Gene Start Resolution

| Tool/Database | Primary Function | Application in Gene Start Research |

|---|---|---|

| StartLink+ | Gene start prediction | Combines ab initio and homology-based approaches for high-accuracy start calls [1] |

| BASys2 | Genome annotation | Next-generation system providing up to 62 annotation fields per gene with visualization [19] |

| Manual Annotation Studio (MAS) | Collaborative annotation | Enables team-based manual curation with multiple homology search tools [20] |

| UniProtKB/Swiss-Prot | Protein sequence database | Source of high-quality curated sequences for homology-based validation [3] |

| InterPro | Protein family database | Integrates multiple databases for functional domain analysis [3] |

| RNA-seq Data | Transcriptomic evidence | Experimental data for validating expressed regions and start sites [21] |

The 15-25% discrepancy in gene start predictions between Prodigal, GeneMarkS-2, and PGAP stems from fundamental differences in how these tools model biological variability in translation initiation mechanisms. Prodigal excels in SD-dominated genomes, while GeneMarkS-2 provides superior performance for genomes with leaderless transcription and non-SD RBS patterns. PGAP leverages homology but may propagate historical errors.

For researchers requiring maximum accuracy, StartLink+ offers a robust solution with 98-99% verified accuracy, though with reduced coverage. The optimal strategy employs multiple tools with awareness of their respective strengths, particularly considering the target genome's GC content and phylogenetic classification. As annotation technologies evolve—exemplified by next-generation systems like BASys2—integration of multiple evidence types and improved modeling of biological diversity will continue to resolve these critical discrepancies, providing more reliable foundations for drug discovery and functional genomics research.

From Theory to Practice: Implementing and Integrating Annotation Pipelines

Optimal Input Formats and Data Requirements for Each Tool (GFF3, FASTA, GBFF)

Accurate gene prediction is a foundational step in genomic analysis, informing downstream applications in functional annotation and comparative genomics. Researchers primarily rely on tools like Prodigal, GeneMarkS-2, and the NCBI Prokaryotic Genome Annotation Pipeline (PGAP) for prokaryotic gene finding. The performance and utility of these tools are significantly influenced by the input formats and data types they support. This guide objectively compares the input requirements, capabilities, and performance of these three prominent tools, providing a structured framework for selecting the optimal pipeline based on specific research objectives and data availability. Understanding the nuances of supported file formats—such as FASTA, GFF3, and GenBank Flat File (GBFF)—is critical for maximizing prediction accuracy, ensuring compatibility with public databases, and facilitating reproducible research.

Tool Input Formats and Data Requirements at a Glance

The table below summarizes the core input requirements and format support for Prodigal, GeneMarkS-2, and PGAP.

Table 1: Input Format and Data Requirements for Prokaryotic Gene Prediction Tools

| Tool | Primary Input | Supported Annotation Inputs | Key Input Requirements & Features |

|---|---|---|---|

| Prodigal | FASTA (DNA sequence) | Does not accept pre-existing annotation files for its core prediction | • Requires assembled genomic sequence in FASTA format. • Runs ab initio; does not incorporate external gene models. • Well-suited for new, unannotated draft genomes. |

| GeneMarkS-2 | FASTA (DNA sequence) | Can utilize hints from external evidence (e.g., RNA-Seq) in GFF format | • Primary input is genomic FASTA. • Supports a hint-based mechanism to integrate evidence like RNA-Seq alignments (in GFF) to improve prediction accuracy, particularly for start codons. • Self-training algorithm adapts to sequence composition. |

| PGAP | FASTA (DNA sequence) | GFF3, GTF, NCBI TBL (Feature Table) | • FASTA is the minimal required input. • Richly supports annotation input via GFF3/GTF, allowing users to submit, refine, or update existing gene models. • Follows specific NCBI GFF3 conventions for attribute handling (e.g., locus_tag, product). |

Detailed Format Specifications and NCBI Requirements

Submitting annotations to public repositories like GenBank requires adherence to specific formatting standards. The GFF3 specification is a community standard, but the NCBI has specific requirements for submissions via PGAP [22].

GFF3 Format Essentials

The GFF3 format is a 9-column, tab-delimited file that provides a flexible way to represent genomic features and their hierarchical relationships [23]:

- seqid: Name of the chromosome or scaffold.

- source: Name of the program or data source that generated the feature.

- type: Type of feature (e.g., gene, CDS, mRNA), which should be a term from the Sequence Ontology.

- start: Start position of the feature (1-based indexing).

- end: End position of the feature.

- score: A numerical score, or . if unavailable.

- strand: + for forward, - for reverse strand.

- phase: 0, 1, or 2, indicating the reading frame for CDS features.

- attributes: A semicolon-separated list of tag-value pairs providing additional information (e.g., ID, Parent).

Hierarchical structures are defined using ID and Parent attributes. For example, exons are linked to their parent mRNA, and mRNAs are linked to their parent gene [24] [23].
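
The nine-column layout and the ID/Parent attributes can be read with a few lines of code. The parser below is a minimal sketch for a single feature line; the `Gff3Feature` class, the function name, and the example record are hypothetical, and comment lines, directives, and multi-valued attributes are not handled.

```python
from dataclasses import dataclass
from urllib.parse import unquote

@dataclass
class Gff3Feature:
    seqid: str
    source: str
    type: str
    start: int        # 1-based
    end: int
    score: str        # "." when unavailable
    strand: str
    phase: str        # "0", "1", "2", or "." for non-CDS features
    attributes: dict

def parse_gff3_line(line):
    """Parse one tab-delimited GFF3 feature line into a Gff3Feature."""
    cols = line.rstrip("\n").split("\t")
    if len(cols) != 9:
        raise ValueError(f"expected 9 columns, found {len(cols)}")
    attributes = {}
    for pair in cols[8].split(";"):
        if pair:
            tag, _, value = pair.partition("=")
            attributes[tag] = unquote(value)  # GFF3 percent-encodes reserved characters
    return Gff3Feature(cols[0], cols[1], cols[2], int(cols[3]), int(cols[4]),
                       cols[5], cols[6], cols[7], attributes)

record = "contig1\tProdigal\tCDS\t337\t2799\t.\t+\t0\tID=cds1;Parent=gene1;product=hypothetical protein"
feature = parse_gff3_line(record)
print(feature.type, feature.start, feature.end, feature.attributes["Parent"])
```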

NCBI-Specific GFF3 Conventions

When preparing a GFF3 file for NCBI submission via PGAP, several specific rules apply [22]:

- The `locus_tag` qualifier is required for gene features. The GFF3 `ID` attribute is not automatically used as the `locus_tag`.
- For mRNA and CDS features, `transcript_id` and `protein_id` qualifiers are required, respectively. These can be provided in a specific format (`gnl|dbname|ID`) or will be auto-generated.
- The `product` name must be specified on the CDS or RNA feature, not solely on the mRNA or gene. If a CDS lacks a `product` qualifier, it will be named "hypothetical protein," and this name will overwrite any product name on the corresponding mRNA.
- Multi-exon genes can be represented using child exon features or by multiple RNA feature rows sharing the same ID.
- The `Name` attribute in GFF3 is ignored by the NCBI submission process.
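A few of these conventions lend themselves to a quick pre-submission check. The sketch below is not NCBI's validator; it is a hypothetical helper (`check_ncbi_conventions`) that flags only three of the rules listed above, and it assumes the features have already been parsed into dictionaries with a `type` string and an `attributes` mapping. The locus tags and product names are made-up examples.

```python
def check_ncbi_conventions(features):
    """Return warnings for parsed GFF3 features that break the listed rules.

    Each feature is a dict with a 'type' string and an 'attributes' dict.
    Only three rules are covered: locus_tag on genes, product on CDS
    features, and the ignored Name attribute.
    """
    warnings = []
    for index, feature in enumerate(features, start=1):
        ftype = feature["type"]
        attrs = feature["attributes"]
        label = attrs.get("ID", f"feature #{index}")
        if ftype == "gene" and "locus_tag" not in attrs:
            warnings.append(f"{label}: gene has no locus_tag (ID is not used in its place)")
        if ftype == "CDS" and "product" not in attrs:
            warnings.append(f"{label}: CDS has no product and would be named 'hypothetical protein'")
        if "Name" in attrs:
            warnings.append(f"{label}: Name attribute is ignored on submission")
    return warnings


# Hypothetical features: gene1/cds1 break the rules, gene2/cds2 follow them.
features = [
    {"type": "gene", "attributes": {"ID": "gene1", "Name": "dnaA"}},
    {"type": "CDS", "attributes": {"ID": "cds1", "Parent": "gene1"}},
    {"type": "gene", "attributes": {"ID": "gene2", "locus_tag": "ABC_0002"}},
    {"type": "CDS", "attributes": {"ID": "cds2", "Parent": "gene2",
                                   "product": "DNA polymerase III subunit beta"}},
]
problems = check_ncbi_conventions(features)
for message in problems:
    print(message)
```

A check like this only catches the obvious omissions; the submission documentation [22] remains the authority on what is accepted.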

Experimental Benchmarking and Performance Data

Independent benchmarking studies reveal how these tools perform in practice, particularly regarding the challenging task of pinpointing correct translation initiation sites (TIS).

Start Codon Prediction Accuracy

A critical performance differentiator among gene finders is their accuracy in predicting translation initiation sites. A large-scale computational experiment comparing GeneMarkS-2, Prodigal, and PGAP on 5,488 representative prokaryotic genomes revealed significant discrepancies [7]. The study found that the gene start predictions of different tools disagreed for up to 15-25% of genes in a genome, with higher rates of disagreement in GC-rich genomes [7].

The development of tools like StartLink and StartLink+, which combine alignment-based and ab initio methods, highlights this challenge. When StartLink and GeneMarkS-2 predictions agreed, the error rate was remarkably low (~1%). This consensus approach (StartLink+) achieved 98-99% accuracy on genes with experimentally verified starts and suggested that 5-15% of existing database annotations might be incorrect [7].
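The consensus idea can be illustrated in a few lines of Python. This is a sketch of the agreement filter only, not of StartLink or GeneMarkS-2 themselves. It assumes each tool's output has already been reduced to a mapping from a gene key `(seqid, strand, stop_coordinate)` to the coordinate of the first base of the predicted start codon; matching prokaryotic genes by their shared 3' end is a common convention, and the coordinates below are invented.

```python
def compare_starts(pred_a, pred_b):
    """Compare two start-prediction maps keyed by (seqid, strand, stop).

    Returns (consensus, disagreement_rate): consensus holds the genes
    called by both tools with identical starts, and disagreement_rate
    is the fraction of shared genes whose starts differ.
    """
    shared = pred_a.keys() & pred_b.keys()
    consensus = {key: pred_a[key] for key in shared if pred_a[key] == pred_b[key]}
    rate = 1 - len(consensus) / len(shared) if shared else 0.0
    return consensus, rate


# Hypothetical predictions from an ab initio and an alignment-based tool.
ab_initio = {
    ("contig1", "+", 900): 100,
    ("contig1", "+", 2400): 1501,
    ("contig1", "-", 3000): 3899,
    ("contig1", "+", 6000): 5101,
}
alignment_based = {
    ("contig1", "+", 900): 100,
    ("contig1", "+", 2400): 1471,   # same stop, different start
    ("contig1", "-", 3000): 3899,
    ("contig1", "+", 6000): 5101,
    ("contig1", "+", 9000): 8200,   # gene called by this tool only
}
consensus, rate = compare_starts(ab_initio, alignment_based)
print(len(consensus), rate)  # 3 0.25
```

Genes outside the consensus set are not necessarily wrong; they are simply the ones where additional evidence is most useful.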

The AssessORF study, which used proteomics data and evolutionary conservation to benchmark gene predictions, provided a broader overview of tool performance [25].

Table 2: Benchmarking Gene Prediction Performance with AssessORF

| Tool / Annotation Source | Agreement with Evidence | Notable Biases and Issues |
|---|---|---|
| GenBank (PGAP) | 88-95% | Upstream start bias |
| GeneMarkS-2 | 88-95% | Upstream start bias |
| Prodigal | 88-95% | Upstream start bias |
| Glimmer | ~88% (lowest) | Upstream start bias |

All sources showed a bias towards selecting start codons that were further upstream than the actual start. No single tool was a clear winner across all scenarios.

The AssessORF benchmark concluded that while most programs correctly identify coding regions, there remains considerable room for improvement in start codon detection, and all programs are prone to a specific upstream bias [25].

Experimental Protocols for Performance Comparison

To ensure reproducible and objective comparisons between gene prediction tools, a standardized experimental protocol is essential. The following workflow, based on methodologies from the cited literature, outlines a robust framework for performance benchmarking [7] [25].

Workflow for Benchmarking Gene Prediction Tools

Key Methodological Steps

- Input Data Curation: Begin with a high-quality assembled genome in FASTA format. For tests involving annotation submission, prepare a GFF3 file that strictly adheres to the NCBI's specific conventions, including proper use of `locus_tag`, `transcript_id`, `protein_id`, and `product` attributes [22].
- Tool Execution: Run Prodigal, GeneMarkS-2, and PGAP on the identical FASTA sequence. It is critical to use default parameters unless a specific parameter sensitivity analysis is the goal. For PGAP, runs should be configured to simulate both de novo prediction and annotation-refinement scenarios if a GFF3 file is provided.
- Generation of Consensus Sets: To establish a high-confidence set of gene predictions, employ a consensus approach. The method used by StartLink+ is effective: for each gene, compare the TIS predicted by GeneMarkS-2 and an alignment-based tool (or another ab initio tool like Prodigal). Predictions where both tools agree can be considered high-confidence [7].
- Validation and Benchmarking: Compare the tool outputs against a trusted "gold standard" dataset. The most reliable benchmarks use:
  - Genes with experimentally verified starts: Derived from N-terminal protein sequencing or ribosome profiling [7] [25].
  - Evolutionary conservation: AssessORF uses the conservation of start and stop codons across syntenic regions in related genomes to infer correctness [25].
  - Proteomics support: Mass spectrometry data can confirm a gene is translated, providing evidence for its existence, though it may not precisely define the start site [25].
- Performance Analysis: Key metrics include:
  - Sensitivity and Specificity: For overall gene presence/absence.
  - Translation Initiation Site (TIS) Accuracy: The percentage of genes where the predicted start codon matches the verified start.
  - Analysis of Discrepancies: Categorize disagreements between tools and the reference to identify systematic biases (e.g., the noted upstream start bias).
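The performance-analysis step can be sketched as follows. The function name and data are hypothetical, and the sketch assumes that both the predictions and the verified set are stored as mappings from `(seqid, strand, stop_coordinate)` to the coordinate of the first base of the start codon (the higher coordinate for minus-strand genes). Specificity is left out because a set of verified genes contains no true negatives.

```python
from collections import Counter


def benchmark_starts(predicted, verified):
    """Score predicted gene starts against a set of verified starts.

    Genes are matched by their 3' end, i.e. the (seqid, strand, stop) key.
    Returns gene-level sensitivity, TIS accuracy over the matched genes,
    and counts of exact, upstream, and downstream start calls.
    """
    found = [key for key in verified if key in predicted]
    outcome = Counter()
    for key in found:
        strand = key[1]
        p, v = predicted[key], verified[key]
        if p == v:
            outcome["exact"] += 1
        elif (p < v) == (strand == "+"):
            # Lower coordinate on '+', higher on '-': a longer gene.
            outcome["upstream"] += 1
        else:
            outcome["downstream"] += 1
    return {
        "sensitivity": len(found) / len(verified) if verified else 0.0,
        "tis_accuracy": outcome["exact"] / len(found) if found else 0.0,
        "outcome": dict(outcome),
    }


# Hypothetical verified starts and tool predictions.
verified = {
    ("contig1", "+", 900): 100,
    ("contig1", "-", 2000): 2899,
    ("contig1", "+", 5000): 4101,
    ("contig1", "+", 7000): 6401,
}
predicted = {
    ("contig1", "+", 900): 100,     # exact match
    ("contig1", "-", 2000): 2959,   # upstream of the verified start
    ("contig1", "+", 5000): 4131,   # downstream of the verified start
    ("contig1", "+", 9000): 8200,   # not in the verified set
}
report = benchmark_starts(predicted, verified)
print(report["sensitivity"])  # 0.75
print(report["outcome"])      # {'exact': 1, 'upstream': 1, 'downstream': 1}
```

Counting upstream and downstream errors separately is what makes a systematic bias, such as the upstream bias noted above, visible in the results.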

Successful gene prediction and annotation require a suite of computational tools and resources beyond the core prediction algorithms.

Table 3: Essential Resources for Gene Prediction and Annotation Analysis

| Resource / Tool | Function / Purpose | Relevance to Gene Prediction |
|---|---|---|
| AssessORF [25] | An R package for benchmarking prokaryotic gene predictions. | Provides a standardized method to evaluate the accuracy of Prodigal, GeneMarkS-2, and other tools against evidence from proteomics and evolutionary conservation. |
| StartLink/StartLink+ [7] | Tools for inferring gene starts from multiple sequence alignments and consensus with ab initio predictions. | Used to generate high-confidence start codon predictions and to identify potentially mis-annotated genes in databases. |
| Format Converters (e.g., Galaxy, Readseq, EMBOSS Seqret) [26] | Web platforms and command-line tools for converting between biological data formats (e.g., GBK to GFF3). | Crucial for preparing existing annotations in various formats for submission to pipelines like PGAP or for comparative analyses. |
| GFF3 Validators (e.g., from GMOD) [22] | Standalone validators to check GFF3 files for syntactic correctness. | Essential pre-submission step to ensure GFF3 files for NCBI PGAP are properly formatted and avoid processing errors. |
| Genomic Language Models (gLMs) [9] | Emerging deep learning models (e.g., DNABERT) for gene prediction. | Represent the next generation of gene finders, showing promise in improving CDS and TIS prediction accuracy beyond traditional methods. |

The accurate prediction of genes in prokaryotic genomes is a foundational step in genomic, metagenomic, and biotechnological research. The choice of annotation tool directly influences the quality of downstream analyses, including ortholog clustering, phylogenetic inference, and metabolic pathway reconstruction. For years, tools like Prodigal, GeneMarkS-2, and automated pipelines like the NCBI's Prokaryotic Genome Annotation Pipeline (PGAP) have been the mainstays for researchers. However, their performance varies significantly in terms of accuracy, speed, and the biological features they can annotate, making tool selection a critical decision. This guide provides an objective, data-driven comparison of these three major annotation tools, framing their performance within a standard workflow that progresses from raw sequence data to biological insight. We summarize experimental data from benchmark studies and present detailed methodologies to help researchers, scientists, and drug development professionals select the optimal tool for their specific project needs.

Performance Comparison: Prodigal vs. GeneMarkS-2 vs. PGAP

Evaluating gene-finding tools primarily revolves around their accuracy in identifying a gene's coding sequence (CDS) and, more challengingly, its precise translation initiation site (TIS). Discrepancies in TIS prediction can lead to incorrect protein N-terminal sequences, affecting functional and structural predictions. Furthermore, practical considerations like processing speed and the depth of functional annotation are crucial for large-scale projects.

Quantitative Performance Metrics

The following tables consolidate key performance metrics from comparative studies.

Table 1: Gene Start Prediction Accuracy and Agreement [1]

| Metric | Prodigal | GeneMarkS-2 | PGAP | Notes |
|---|---|---|---|---|
| Gene Start Disagreement | 7-22% (varies by GC) | 7-22% (varies by GC) | 7-22% (varies by GC) | Percentage of genes per genome where start predictions differ between tools; higher in GC-rich genomes. |
| StartLink+ Accuracy | - | 98-99% | - | Accuracy achieved when StartLink (alignment-based) and GeneMarkS-2 predictions concur. |
| Disagreement with Annotation | ~15% (GC-rich) | ~15% (GC-rich) | ~15% (GC-rich) | StartLink+ predictions differed from database annotations for 5-15% of genes. |

Table 2: Practical Runtime and Annotation Depth [19] [27] [13]

| Tool / Pipeline | Approx. Runtime (Single Genome) | Annotation Depth | Key Strengths |
|---|---|---|---|
| Prodigal | Minutes [27] | Ab initio gene caller | Speed, efficiency for large-scale metagenomic projects. |
| GeneMarkS-2 | Not explicitly stated | Ab initio with multiple RBS models | Handles diverse translation initiation mechanisms (SD, non-SD, leaderless). |
| PGAP | 2.5 - 3 hours [27] | Comprehensive functional annotation | Integration with curated NCBI databases, high-quality functional assignments. |
| BASys2 | ~30 seconds [19] | Very deep (up to 62 fields/gene) | Extreme speed, metabolite annotation, 3D protein structure data. |

Analysis of Comparative Data

The data reveal a core challenge: even state-of-the-art tools disagree on gene starts for a significant minority of genes. One study found that gene start predictions from different tools did not match for up to 15-25% of genes in a genome, and that this disagreement is more pronounced in GC-rich genomes [1]. This highlights the importance of experimental validation for critical genes.

GeneMarkS-2 demonstrates high accuracy when its predictions are corroborated by homology-based methods. The StartLink+ tool, which combines StartLink (alignment-based) and GeneMarkS-2 predictions, achieved 98-99% accuracy on genes with experimentally verified starts [1].

From a practical standpoint, the choice involves a trade-off between speed and comprehensiveness. Of the three tools compared, Prodigal is the fastest, often completing a genome in minutes, which makes it well suited to high-throughput settings like metagenomics [27]. In contrast, PGAP is more comprehensive but slower, taking roughly 2.5 to 3 hours per genome, as it leverages a broader suite of databases and tools for functional annotation [27] [28]. A next-generation tool like BASys2 attempts to bridge this gap, offering deep annotation (up to 62 data fields per gene) in as little as 30 seconds by using a fast genome-matching and annotation transfer strategy [19].

Experimental Protocols for Benchmarking

To objectively compare annotation tools, researchers employ standardized benchmarking protocols. The methodologies below are derived from published comparative studies.

Protocol 1: Benchmarking Gene Start Prediction Accuracy

This protocol is designed to assess the most challenging aspect of gene prediction: identifying the true translation initiation site.

1. Data Curation:

- Obtain a set of genomes with genes that have experimentally verified translation initiation sites (TIS). These are typically determined via N-terminal protein sequencing or mass spectrometry [1]. Example test sets include genes from E. coli, M. tuberculosis, and H. salinarum [1].

- As a larger, more generalizable test set, use a curated collection of complete bacterial genomes from NCBI GenBank with high-quality annotations [9].

2. ORF Extraction and Labeling:

- Use a tool like ORFipy to scan genome sequences and extract all possible open reading frames (ORFs) that begin with a start codon (ATG, TTG, GTG, CTG) and end with a stop codon [9].

- Label the data for two tasks:

- CDS Dataset: A positive label is assigned to an ORF if its start or end position aligns with an annotated CDS in the reference file [9].

- TIS Dataset: For ORFs that match an annotated CDS, create a sequence window centered on the annotated start codon (e.g., 30 nucleotides upstream and downstream). A positive label indicates a true TIS [9].

3. Tool Execution and Comparison:

- Run the target gene finders (Prodigal, GeneMarkS-2, PGAP) on the genome sequences.

- Compare the TIS predictions of each tool against the verified or curated annotation standard.

- Calculate standard metrics: Precision, Recall, and F1-score for both CDS and TIS identification.
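The ORF extraction, labeling, and scoring steps of this protocol can be sketched in plain Python. This is an illustrative stand-in for a dedicated ORF scanner, not a reproduction of any cited tool: it scans the forward strand only (the reverse strand would be handled by scanning the reverse complement), assumes the standard stop codons TAA, TAG, and TGA, and uses a toy sequence and hypothetical function names.

```python
STARTS = {"ATG", "GTG", "TTG", "CTG"}
STOPS = {"TAA", "TAG", "TGA"}  # assumed standard stop codons


def forward_orfs(seq, min_len=30):
    """Yield (start, end) 1-based inclusive coordinates of forward-strand ORFs.

    Every in-frame start codon with no stop before the next stop codon
    gives one candidate; `end` is the last base of the stop codon.
    """
    seq = seq.upper()
    for frame in range(3):
        open_starts = []
        for i in range(frame, len(seq) - 2, 3):
            codon = seq[i:i + 3]
            if codon in STARTS:
                open_starts.append(i)
            elif codon in STOPS:
                for s in open_starts:
                    if i + 3 - s >= min_len:
                        yield (s + 1, i + 3)
                open_starts = []


def precision_recall_f1(predicted, reference):
    """Compute precision, recall, and F1 for two collections of calls."""
    predicted, reference = set(predicted), set(reference)
    tp = len(predicted & reference)
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(reference) if reference else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1


# Toy sequence: two alternative starts (ATG, GTG) sharing one stop (TAA).
seq = "CC" + "ATG" + "AAA" * 3 + "GTG" + "AAA" * 2 + "TAA" + "CC"
candidates = sorted(forward_orfs(seq, min_len=9))
print(candidates)  # [(3, 26), (15, 26)]

# Hypothetical reference annotation: the CDS starts at the GTG codon.
reference_cds = {(15, 26)}
labels = {orf: orf in reference_cds for orf in candidates}

# Score a hypothetical tool that reported both candidates as genes.
precision, recall, f1 = precision_recall_f1(candidates, reference_cds)
print(precision, recall, round(f1, 3))  # 0.5 1.0 0.667
```

Because both candidates share a stop codon, the example also shows why TIS identification is scored separately from CDS identification: a tool can find the right reading frame and stop while still choosing the wrong start.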

Protocol 2: Evaluating Performance on Novel Sequences

This protocol tests a tool's ability to handle sequences that lack close homologs in databases, assessing its core ab initio capabilities.

1. Data Preparation and Simulation:

- Select a genome with high-quality annotation. To simulate novelty, create a "reference-only" database by removing all sequences from the target species' clade from your BLAST database [1].

- Alternatively, use authentic metagenomic assemblies from environments with poor representation in databases.