Protein Structure Validation Metrics: A Comprehensive Guide for Researchers and Drug Developers



Abstract

This article provides a comprehensive guide to protein structure validation metrics, essential for researchers and drug development professionals who rely on accurate 3D protein models. It covers the foundational principles of structure validation, explains key methodological approaches and their practical applications, offers troubleshooting strategies for optimizing model quality, and presents a comparative analysis of validation tools. By integrating knowledge-based, experimental, and emerging computational metrics, this resource enables scientists to critically assess structural models, improve the reliability of their data for downstream applications like drug design, and understand the evolving landscape of structure validation with the advent of AI-based prediction tools.

The Essentials of Protein Structure Validation: Why Accuracy Matters in Biomedical Research

Defining Protein Structure Validation and Its Critical Role in Drug Design and Basic Research

Protein structure validation is the process of assessing the quality, reliability, and accuracy of three-dimensional protein models. This evaluation ensures that structural models derived from experimental techniques like X-ray crystallography and nuclear magnetic resonance (NMR) spectroscopy, or from computational methods like AI-based prediction, are structurally sound and biologically relevant. The importance of accurate protein models was underscored when the 2024 Nobel Prize in Chemistry recognized the developers of the AI system AlphaFold2, alongside work on computational protein design [1].

In both basic research and drug discovery, protein structures serve as fundamental blueprints for understanding biological mechanisms and designing therapeutic interventions. Structure validation provides the essential quality control measures needed to distinguish reliable models from erroneous ones, thereby ensuring the integrity of scientific conclusions and the efficacy of structure-based drug design campaigns. Without rigorous validation, researchers risk basing their work on incorrect structural data, potentially leading to flawed hypotheses and failed experiments.

Fundamental Concepts and Validation Metrics

The Philosophical and Technical Foundations

The theoretical underpinnings of protein structure prediction and validation face several fundamental challenges. The Levinthal paradox highlights the seemingly impossible task of a protein sampling all possible conformations to find its native structure within a biologically relevant timeframe. Meanwhile, Anfinsen's dogma, which posits that a protein's native structure is determined solely by its amino acid sequence, presents limitations when interpreted too strictly, as it may not fully account for the environmental dependence of protein conformations [1]. These philosophical challenges create real barriers to predicting functional structures through static computational means alone, necessitating robust validation approaches.

Proteins exist as dynamic ensembles of conformations rather than single static structures, particularly those with flexible regions or intrinsic disorders. Current AI approaches face inherent limitations in capturing this dynamic reality of proteins in their native biological environments [1]. This understanding has driven the development of validation metrics that can assess not only static accuracy but also physiological plausibility.

Key Validation Metrics and Scores

A comprehensive array of validation metrics has been developed to evaluate different aspects of protein structure quality. These scores can be categorized into several classes based on their methodological approach and what they measure.

Table 1: Key Protein Structure Validation Metrics

| Metric Name | Type | Description | Optimal Values |
|---|---|---|---|
| DockQ [2] | Global quality | Measures interface quality in protein complexes | >0.8 (High), 0.23-0.8 (Medium), <0.23 (Incorrect) |
| pLDDT [2] | Local accuracy | Predicted local distance difference test | >90 (High), 70-90 (Confident), 50-70 (Low), <50 (Very Low) |
| ipLDDT [2] | Interface-specific | Interface version of pLDDT for complexes | Similar to pLDDT thresholds |
| pTM [2] | Global accuracy | Predicted template modeling score | Higher values indicate better global fold |
| ipTM [2] | Interface-specific | Interface pTM for complex assessment | >0.8 indicates high-quality interfaces |
| pDockQ [2] | Interface quality | Predicts DockQ from interfacial contacts | Higher values indicate better interface quality |
| VoroIF-GNN [2] | Interface quality | Graph neural network using Voronoi tessellation | Higher values indicate better interface accuracy |
| MolProbity [3] | Steric quality | Combines Ramachandran, rotamer, and clash analysis | Lower values indicate better steric quality |
| Verify3D [3] | Profile compatibility | 3D-1D profile compatibility score | >0 usually indicates acceptable environment |
| ProsaII [3] | Energy potential | Knowledge-based energy potential | Negative values indicate favorable energies |

These metrics can be further categorized as either global scores, which assess the overall structure, or interface-specific scores, which focus specifically on protein-protein interaction interfaces in complexes. Studies have demonstrated that interface-specific scores generally provide more reliable evaluation of protein complex predictions compared to their global counterparts [2].
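As a minimal sketch of how the pLDDT bands in Table 1 might be applied per residue, the following Python maps values to the four confidence bands and summarizes a model. The function names are hypothetical, and the handling of values that fall exactly on a boundary (90, 70, 50) is an assumption, since the table does not specify it.

```python
def plddt_band(plddt: float) -> str:
    """Map a per-residue pLDDT value (0-100) to the bands listed in Table 1.

    Assumption: boundary values go to the lower band for 90 (">90" is high)
    and to the upper band for 70 and 50.
    """
    if not 0.0 <= plddt <= 100.0:
        raise ValueError("pLDDT must lie in [0, 100]")
    if plddt > 90:
        return "high"
    if plddt >= 70:
        return "confident"
    if plddt >= 50:
        return "low"
    return "very low"


def band_fractions(plddts):
    """Return the fraction of residues that fall into each confidence band."""
    counts = {"high": 0, "confident": 0, "low": 0, "very low": 0}
    for value in plddts:
        counts[plddt_band(value)] += 1
    total = len(plddts)
    return {band: n / total for band, n in counts.items()}


if __name__ == "__main__":
    # Hypothetical per-residue values for a short model.
    print(band_fractions([96.1, 88.4, 72.0, 55.3, 41.9, 93.7]))
```

A per-band summary like this is often more informative than a single mean pLDDT, because a model can have a confident core and a very low confidence tail at the same time.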

Experimental Protocols and Methodologies

Benchmarking Prediction Methods

Rigorous benchmarking of protein structure prediction methods requires standardized datasets and evaluation protocols. One comprehensive study evaluated predictions from ColabFold (with and without templates) and AlphaFold3 using a benchmark set of 223 heterodimeric high-resolution structures from the Protein Data Bank [2]. The experimental protocol involved:

- Target Selection: Starting with 671 complexes, filtering to 257, then to 223 targets after ensuring biological assembly matched asymmetric unit

- Prediction Generation: Running ColabFold with templates (CF-T), template-free (CF-F), and AlphaFold3 (AF3)

- Parameter Settings: Using three recycles followed by relaxation for ColabFold, generating five predictions per target

- Evaluation: Calculating DockQ and multiple prediction-based scores for 1,115 models per method

The results demonstrated that AlphaFold3 (39.8%) and ColabFold with templates (35.2%) produced the highest proportion of 'high' quality models (DockQ > 0.8), while template-free ColabFold had notably fewer high-quality models (28.9%) [2]. This benchmarking approach provides a standardized methodology for comparing emerging prediction tools.
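The bookkeeping behind such percentages can be sketched as follows: 223 targets with five predictions each give 1,115 models per method, and each model is assigned a class from its DockQ value using the cutoffs quoted in this article. The function names and the example scores are illustrative assumptions, not data from the study.

```python
from collections import Counter

TARGETS = 223
MODELS_PER_TARGET = 5
TOTAL_MODELS = TARGETS * MODELS_PER_TARGET  # 1,115 models per method


def dockq_class(dockq: float) -> str:
    """Classify a model with the cutoffs used in this article.

    Assumption: a value of exactly 0.8 or 0.23 is counted as 'medium'.
    """
    if dockq > 0.8:
        return "high"
    if dockq >= 0.23:
        return "medium"
    return "incorrect"


def class_percentages(scores):
    """Percentage of models in each DockQ class."""
    counts = Counter(dockq_class(s) for s in scores)
    return {
        label: 100.0 * counts[label] / len(scores)
        for label in ("high", "medium", "incorrect")
    }


if __name__ == "__main__":
    # Made-up DockQ values for illustration only.
    print(TOTAL_MODELS)
    print(class_percentages([0.91, 0.85, 0.52, 0.30, 0.10]))
```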

Generalized Linear Model for Validation

A sophisticated statistical approach for combining multiple validation metrics employs a generalized linear model (GLM). This method integrates diverse protein structure quality scores into a single quantity with intuitive meaning: the predicted coordinate root-mean-square deviation (RMSD) between the model and the unavailable "true" structure (GLM-RMSD) [3].

The methodology proceeds as follows:

- Data Collection: Compiling validation scores for known structures with reference RMSD values

- Score Selection: Choosing complementary validation scores (e.g., Verify3D, ProsaII, MolProbity, GNM)

- Model Fitting: Using a gamma distribution from the exponential family with an identity link function, g(μ) = μ

- Validation: Correlating GLM-RMSD with actual RMSD to reference structures

When applied to CASD-NMR and CASP datasets, this approach achieved correlation coefficients of 0.69 and 0.76 between predicted and actual RMSDs, substantially outperforming individual scores (which ranged from -0.24 to 0.68) [3].
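The fitting and validation steps listed above can be illustrated with a small NumPy sketch. It assumes that the identity link means the expected RMSD is modelled directly as a linear combination of validation scores, and it fits the gamma GLM by iteratively reweighted least squares (IRLS). The function names, the synthetic data, and the coefficients are hypothetical; this is not the published GLM-RMSD implementation.

```python
import numpy as np


def fit_gamma_identity_glm(X, y, n_iter=50, tol=1e-10):
    """Fit E[y] = X @ beta with gamma variance (Var proportional to mu^2).

    With an identity link the IRLS working response is y itself and the
    weights are 1 / mu^2, so each step is a weighted least-squares solve.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    beta = np.linalg.lstsq(X, y, rcond=None)[0]  # ordinary least-squares start
    for _ in range(n_iter):
        mu = X @ beta
        if np.any(mu <= 0):
            raise ValueError("identity link produced a non-positive mean")
        sw = 1.0 / mu  # square root of the IRLS weights 1 / mu^2
        new_beta = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)[0]
        converged = np.max(np.abs(new_beta - beta)) < tol
        beta = new_beta
        if converged:
            break
    return beta


def pearson_r(a, b):
    """Pearson correlation between predicted and reference RMSD values."""
    return float(np.corrcoef(a, b)[0, 1])


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    scores = rng.uniform(0.5, 2.0, size=(40, 2))   # two made-up quality scores
    X = np.column_stack([np.ones(len(scores)), scores])
    mean_rmsd = X @ np.array([0.5, 1.0, 0.8])      # made-up "true" relation
    rmsd = rng.gamma(shape=20.0, scale=mean_rmsd / 20.0)
    beta = fit_gamma_identity_glm(X, rmsd)
    print(beta, pearson_r(X @ beta, rmsd))
```

In practice a statistics package with a tested GLM routine would be preferable; the explicit loop is shown only to make the role of the gamma variance and the identity link visible.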

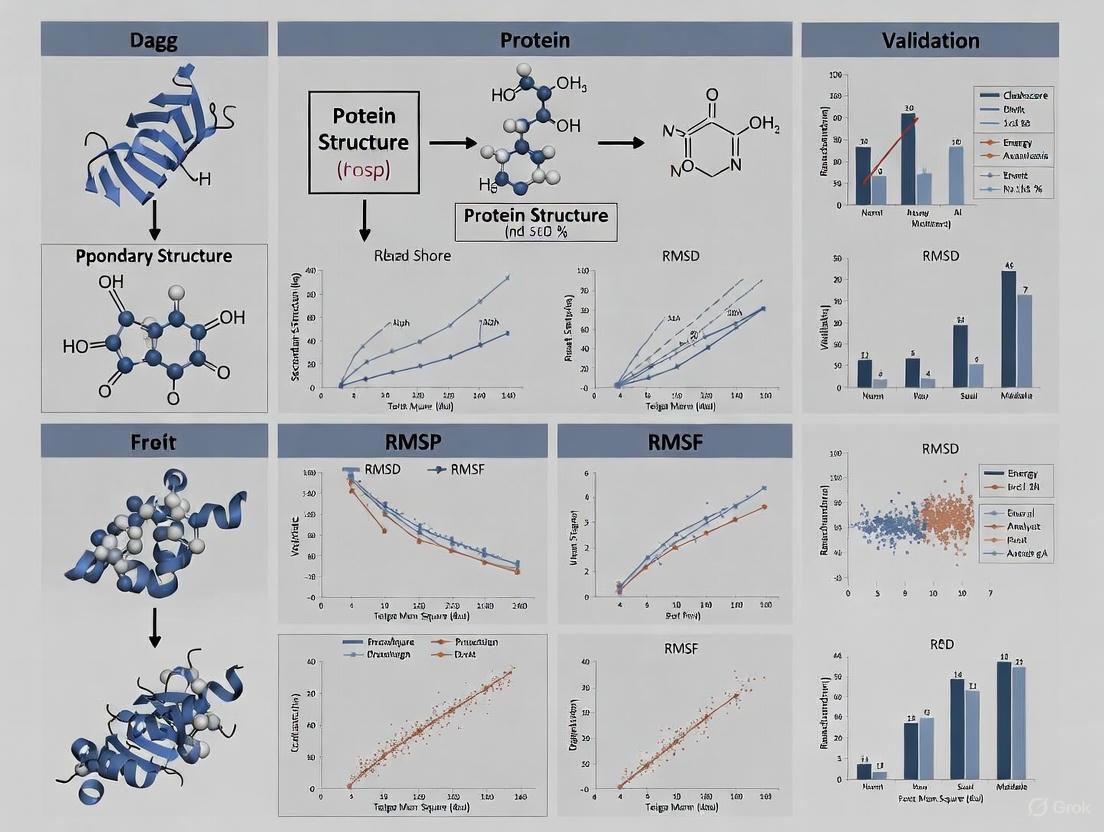

Diagram 1: GLM-RMSD workflow for integrated validation

Advanced Scoring Metrics for AI-Based Structure Prediction

Performance of Current Assessment Scores

The rapid advancement of AI-based protein structure prediction, particularly with AlphaFold2/3 and ColabFold, has necessitated the development of specialized assessment scores. A comprehensive evaluation of widely used scoring metrics examined their performance on predictions from ColabFold (with and without templates) and AlphaFold3 [2]. The study benchmarked optimal cutoffs using a set of 223 heterodimeric, high-resolution protein structures and their predictions.

Key findings included:

- ipTM and model confidence achieved the best discrimination between correct and incorrect predictions

- Interface-specific scores (ipTM, ipLDDT, pDockQ) proved more reliable for evaluating protein complexes than corresponding global scores

- Assessment scores performed best on ColabFold without templates, despite it producing fewer high-quality models

- VoroIF-GNN and pDockQ2 emerged as top-performing interface evaluation methods

The study led to the development of C2Qscore, a weighted combined score designed to improve model quality assessment, which has been integrated into the ChimeraX plug-in PICKLUSTER v.2.0 [2].

C2Qscore Integration and Application

The development of C2Qscore represents the cutting edge in integrated validation approaches for protein complexes. This weighted combined score was trained on predictions for 223 heterodimeric high-resolution structures and tested on two independent datasets: X-ray crystallographic structures and dimers from larger assemblies derived from cryoEM [2].

The power of combined scoring became apparent when analyzing dimers from large assemblies solved by cryoEM, where the study revealed limitations of existing metrics when multiple configurations of heterodimers are possible [2]. This highlights the importance of developing robust validation methods that can handle the complexity of biological systems.

Table 2: Essential Research Tools for Protein Structure Validation

| Tool/Resource | Type | Primary Function | Access Method |
|---|---|---|---|
| PSVS Server [3] | Validation Suite | Comprehensive structure validation | Web server |
| MolProbity [3] | Validation Tool | All-atom contact analysis | Web server/standalone |
| ChimeraX [2] | Visualization | Interactive structure analysis | Desktop application |
| PICKLUSTER v.2.0 [2] | Analysis Plugin | Complex validation with C2Qscore | ChimeraX plugin |
| C2Qscore [2] | Scoring Metric | Combined quality assessment | Command-line tool |
| TEMPy [4] | EM Validation | Assessment of EM density fits | Python library |
| VoroIF-GNN [2] | Interface Scoring | Interface-specific accuracy estimate | Standalone tool |
| PDB Validation Server [4] | Validation Service | wwPDB official validation reports | Web server |

These tools form the essential toolkit for researchers engaged in protein structure validation. The PSVS server provides a comprehensive suite of validation scores, while MolProbity specializes in all-atom contact analysis, identifying steric clashes and poor rotamer placements [3]. ChimeraX with the PICKLUSTER plugin offers interactive visualization and analysis capabilities, integrating the advanced C2Qscore metric for complex assessment [2].

For electron microscopy structures, TEMPy provides specialized assessment of three-dimensional electron microscopy density fits [4]. The wwPDB validation server offers official validation reports for structures deposited in the Protein Data Bank, serving as the gold standard for experimental structure validation [4].

Application in Drug Discovery and Basic Research

Critical Role in Structure-Based Drug Design

In drug discovery, accurate protein structures are crucial for rational drug design, virtual screening, and understanding drug mechanisms of action. Structure validation ensures that these critical applications rest on a solid foundation. The limitations of current AI approaches become particularly relevant in drug design contexts, where precise characterization of binding sites and protein-ligand interactions is paramount [1].

The environmental dependence of protein conformations creates special challenges for structure-based drug design. Proteins in their native biological environments may adopt different conformations than those captured in crystallographic databases, potentially leading to misleading drug design strategies if not properly validated [1]. This underscores the need for validation approaches that can account for physiological relevance beyond mere geometric correctness.

Future Directions and Challenges

Despite significant advances, protein structure validation faces ongoing challenges. The limitations of static models in representing dynamic protein ensembles necessitate the development of validation methods for conformational ensembles rather than single structures [1]. Future approaches must better account for:

- Intrinsically disordered proteins and flexible regions

- Environmental influences on protein conformation

- Multiple biologically relevant states and transitions

- Integration with experimental data from multiple sources

Complementary computational strategies focused on functional prediction and ensemble representation are emerging as essential directions for future development [1]. These approaches will redirect efforts toward more comprehensive biomedical applications of AI technology that acknowledge protein dynamics.

Diagram 2: Protein structure validation in research workflow

Protein structure validation serves as the critical bridge between structure determination and biological application, ensuring that models used in basic research and drug design are accurate and reliable. As AI-based prediction methods continue to advance, robust validation approaches become increasingly important for assessing model quality and guiding appropriate usage.

The development of sophisticated combined scores like GLM-RMSD and C2Qscore represents significant progress in integrating multiple quality measures into unified metrics. Meanwhile, the recognition of inherent limitations in current approaches—particularly regarding protein dynamics and environmental dependence—points toward exciting future directions in the field. As validation methods evolve to address these challenges, they will continue to play an indispensable role in maximizing the impact of structural biology on biomedical research and therapeutic development.

The field of structural biology is undergoing a revolution, driven by the advent of sophisticated artificial intelligence (AI) systems for protein structure prediction, recognized by the 2024 Nobel Prize in Chemistry [1]. These AI tools, such as AlphaFold2, ColabFold, and AlphaFold3, claim to bridge the gap between amino acid sequence and three-dimensional structure, yet beneath this apparent success lies a fundamental challenge: the reliance on experimentally determined structures of known proteins that may not fully represent the thermodynamic environment controlling protein conformation at functional sites [1]. This technical guide examines how knowledge-based metrics, derived from statistical distributions of experimental structures, provide crucial validation frameworks for assessing the accuracy and reliability of both experimental and computationally predicted protein models.

Knowledge-based metrics leverage the rich information contained within the Protein Data Bank (PDB), one of biology's richest open-source repositories housing over 242,000 macromolecular structural models alongside their experimental data [5]. By systematically analyzing patterns across these structures, researchers can establish quantitative benchmarks for model quality assessment, particularly crucial for functional sites where protein dynamics and environmental factors play significant roles [1] [5]. These metrics have become indispensable for drug discovery professionals who require confidence in structural models for downstream applications including functional studies, protein engineering, and rational drug design [2].

Fundamental Concepts and Theoretical Framework

The Foundation in Experimental Structural Data

The PDB serves as the fundamental resource for deriving knowledge-based metrics, providing a vast archive of structures determined through X-ray crystallography, cryo-EM, nuclear magnetic resonance (NMR), and neutron diffraction [5]. Each entry contains an atom table recording atomic coordinates along with key attributes including atom type, residue identity, B-factor (atomic displacement parameter), and occupancy. The adoption of the mmCIF format has enabled a far richer and more extensible representation than the legacy PDB format, accommodating new ligands with five-character identifiers and very large macromolecular assemblies that exceed the capacity of the original format [5].
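To make the atom-table attributes concrete, here is a small sketch that reads one fixed-column ATOM record in the legacy PDB format and extracts the coordinates, occupancy, and B-factor. The function name and the example record are illustrative assumptions; mmCIF files, which the text recommends, are not fixed-column and are better read with a dedicated parser library.

```python
def parse_atom_record(line: str) -> dict:
    """Parse one legacy-PDB ATOM/HETATM line using its fixed column layout."""
    if not line.startswith(("ATOM  ", "HETATM")):
        raise ValueError("not an ATOM/HETATM record")
    return {
        "serial": int(line[6:11]),
        "name": line[12:16].strip(),
        "alt_loc": line[16].strip(),
        "res_name": line[17:20].strip(),
        "chain_id": line[21].strip(),
        "res_seq": int(line[22:26]),
        "x": float(line[30:38]),
        "y": float(line[38:46]),
        "z": float(line[46:54]),
        "occupancy": float(line[54:60]),
        "b_factor": float(line[60:66]),
        "element": line[76:78].strip(),
    }


# Build an example record field by field so the column widths are explicit.
EXAMPLE_LINE = (
    "{:<6}{:>5} {:<4}{:1}{:>3} {:1}{:>4}{:1}   "
    "{:8.3f}{:8.3f}{:8.3f}{:6.2f}{:6.2f}          {:>2}"
).format("ATOM", 1, " N", "", "MET", "A", 1, "", 27.340, 24.430, 2.614, 1.00, 9.67, "N")

if __name__ == "__main__":
    print(EXAMPLE_LINE)
    print(parse_atom_record(EXAMPLE_LINE))
```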

Statistical distributions derived from these experimental structures enable the identification of conserved patterns, such as protein folds, binding-site features, and subtle conformational shifts among related proteins, that would be impossible to detect from any single structure [5]. These distributions form the reference against which new structures, whether experimentally determined or computationally predicted, are evaluated. The fundamental principle underpinning knowledge-based metrics is that protein structures follow recognizable statistical patterns reflecting biophysical constraints and evolutionary optimization.

Key Challenges and Limitations

Despite their power, knowledge-based metrics face several epistemological challenges. The Levinthal paradox highlights that the conformational space available to proteins is astronomically large, while Anfinsen's dogma, that sequence determines structure, requires nuanced interpretation in the context of environmental dependence [1]. Furthermore, the millions of possible conformations that proteins can adopt, especially those with flexible regions or intrinsic disorder, cannot be adequately represented by single static models derived from crystallographic and related databases [1].

Another significant challenge arises from the fact that machine learning methods used to create structural ensembles are based on experimentally determined structures under conditions that may not fully represent the thermodynamic environment controlling protein conformation at functional sites [1]. This limitation creates barriers to predicting functional structures solely through static computational means, emphasizing the continued importance of experimental validation and metrics sensitive to dynamic reality.

Essential Scoring Metrics for Protein Structure Validation

Global vs. Interface-Specific Metrics

For evaluating protein structures, particularly complexes, metrics can be categorized as global or interface-specific. Global metrics assess the overall model quality, while interface-specific metrics focus specifically on protein-protein interaction regions, which are often critical for function. Recent comprehensive benchmarking studies indicate that interface-specific scores generally provide more reliable evaluation for protein complex predictions compared to corresponding global scores [2].

Table 1: Essential Knowledge-Based Metrics for Protein Structure Validation

| Metric Name | Type | Optimal Cutoff | Primary Application | Strengths |
|---|---|---|---|---|
| ipTM (interface pTM) | Interface-specific | >0.8 (high quality) | Protein complexes | Best discrimination between correct/incorrect predictions [2] |
| Model Confidence | Composite | Varies by application | General assessment | High discriminative power [2] |
| pDockQ/pDockQ2 | Interface-specific | >0.8 (high quality) | Protein complexes | Derived from interfacial contacts and residue quality [2] |
| VoroIF-GNN | Interface-specific | Higher values indicate better quality | Protein complexes | Uses Voronoi tessellation for contact-based assessment [2] |
| ipLDDT (interface pLDDT) | Interface-specific | >90 (high quality) | Protein complexes | Adaptation of LDDT for interfaces [2] |
| iPAE (interface PAE) | Interface-specific | Lower values indicate better quality | Protein complexes | Measures interface residue alignment error [2] |
| DockQ | Reference-based | >0.8 (high), 0.23-0.8 (med) | Ground truth assessment | Combines Fnative, LRMS, iRMS [2] |
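The DockQ row notes that the score combines Fnative, LRMS, and iRMS. A minimal sketch of that combination is given below, using the commonly published form in which each RMSD term is rescaled to the range 0 to 1 before averaging. The scaling constants (1.5 Å for iRMS, 8.5 Å for LRMS) come from the original DockQ definition rather than from this article, and the function name is an illustrative choice.

```python
def dockq(fnat: float, irms: float, lrms: float,
          d_irms: float = 1.5, d_lrms: float = 8.5) -> float:
    """Combine Fnat, interface RMSD, and ligand RMSD into a score in [0, 1].

    fnat : fraction of native interface contacts reproduced (0-1)
    irms : interface RMSD in angstroms
    lrms : ligand RMSD in angstroms
    """
    def scaled(rms: float, d: float) -> float:
        # Maps an RMSD of 0 to 1.0 and large RMSDs towards 0.
        return 1.0 / (1.0 + (rms / d) ** 2)

    return (fnat + scaled(irms, d_irms) + scaled(lrms, d_lrms)) / 3.0


if __name__ == "__main__":
    # Hypothetical values for a near-native model and a poor one.
    print(dockq(fnat=0.85, irms=0.9, lrms=2.0))
    print(dockq(fnat=0.05, irms=9.0, lrms=25.0))
```

Because all three terms are bounded between 0 and 1, a perfect model scores 1.0 and the class cutoffs in the table can be applied directly to the result.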

Performance Benchmarks for Current Prediction Methods

Recent systematic evaluations of protein complex prediction methods provide critical benchmarks for expected performance levels. One comprehensive study assessed predictions from ColabFold with templates (CF-T), ColabFold without templates (CF-F), and AlphaFold3 (AF3) using a benchmark set of 223 heterodimeric high-resolution protein structures [2].

Table 2: Performance Comparison of Protein Complex Prediction Methods

| Method | High-Quality Models (DockQ >0.8) | Incorrect Models (DockQ <0.23) | Cases Where All Models Incorrect | Key Strengths |
|---|---|---|---|---|
| AlphaFold3 (AF3) | 39.8% | 19.2% | 91.1% | Best overall performance, lowest incorrect rate [2] |
| ColabFold with Templates (CF-T) | 35.2% | 30.1% | 79.1% | Similar to AF3 when templates available [2] |
| ColabFold without Templates (CF-F) | 28.9% | 32.3% | 81.9% | Assessment scores perform best on CF-F models [2] |

The study revealed that ColabFold with templates and AlphaFold3 perform similarly, with both outperforming ColabFold without templates in generating high-quality models [2]. Notably, the assessment scores themselves perform best on ColabFold without templates, suggesting metric performance may vary depending on the prediction method used.

Experimental Protocols for Metric Implementation

Workflow for Comprehensive Structure Validation

The following diagram illustrates the recommended workflow for implementing knowledge-based metrics in protein structure validation, particularly focused on protein complexes:

Benchmarking and Threshold Establishment

For reliable implementation of knowledge-based metrics, establishing appropriate quality thresholds is essential. Based on benchmarking against 223 heterodimeric high-resolution structures, the following experimental protocol is recommended:

Dataset Curation: Select high-resolution experimental structures relevant to your target. For protein complexes, prefer heterodimers over homodimers as they present more challenging evaluation scenarios. Filter structures to ensure biological assemblies match asymmetric units to avoid alignment issues [2].

Multiple Prediction Generation: Generate multiple models (typically 5) using selected prediction methods (ColabFold with/without templates, AlphaFold3) with three recycles followed by relaxation [2].

Metric Calculation: Compute both global and interface-specific metrics for all models. Critical metrics include ipTM, model confidence, pDockQ2, and VoroIF-GNN, which have demonstrated superior discriminative power [2].

Threshold Application: Apply established cutoffs for quality classification:

- High quality: DockQ >0.8, ipTM >0.8

- Medium quality: DockQ 0.23-0.8

- Incorrect: DockQ <0.23 [2]

Combined Score Implementation: For improved assessment, consider implementing weighted combined scores like C2Qscore, which integrates multiple metrics and has shown enhanced performance for model quality assessment [2].
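The idea of a weighted combined score can be sketched generically as a weighted mean of metrics that have already been scaled to the range 0 to 1, followed by ranking the candidate models. The weights, metric names, and values below are purely hypothetical and are not the published C2Qscore weights or formula; they only illustrate the mechanics.

```python
def combined_score(metrics: dict, weights: dict) -> float:
    """Weighted mean of metrics that are assumed to be scaled to [0, 1]."""
    missing = set(weights) - set(metrics)
    if missing:
        raise KeyError(f"missing metrics: {sorted(missing)}")
    total_weight = sum(weights.values())
    return sum(weights[name] * metrics[name] for name in weights) / total_weight


def rank_models(models: dict, weights: dict) -> list:
    """Return model names ordered from best to worst combined score."""
    return sorted(models, key=lambda name: combined_score(models[name], weights),
                  reverse=True)


if __name__ == "__main__":
    # Hypothetical weights; ipLDDT is divided by 100 beforehand to reach [0, 1].
    weights = {"iptm": 2.0, "pdockq2": 1.0, "iplddt": 1.0}
    models = {
        "model_1": {"iptm": 0.90, "pdockq2": 0.80, "iplddt": 0.85},
        "model_2": {"iptm": 0.30, "pdockq2": 0.20, "iplddt": 0.50},
    }
    for name in rank_models(models, weights):
        print(name, round(combined_score(models[name], weights), 4))
```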

Research Reagent Solutions

Table 3: Essential Research Reagents and Tools for Structural Bioinformatics

| Tool/Resource | Type | Function | Access |
|---|---|---|---|
| PDB (Protein Data Bank) | Database | Primary repository of experimental structural data | https://www.rcsb.org/ [5] |
| ChimeraX | Visualization Software | Interactive visualization with plugin architecture | https://www.cgl.ucsf.edu/chimerax/ [2] |
| PICKLUSTER v.2.0 | ChimeraX Plugin | Integrates C2Qscore for model quality assessment | Plugin installation [2] |
| C2Qscore | Command-line Tool | Weighted combined score for model assessment | https://gitlab.com/topf-lab/c2qscore [2] |
| ColabFold | Prediction Server | Protein structure prediction with/without templates | https://colab.research.google.com/github/sokrypton/ColabFold/ [2] |
| AlphaFold3 | Prediction Server | Protein complex prediction with ligands/nucleic acids | https://alphafoldserver.com/ [2] |
| PISCES Server | Curation Tool | Sequence identity filtering and quality assessment | http://dunbrack.fccc.edu/pisces/ [5] |

Advanced Applications in Drug Discovery

Addressing Dynamic Reality in Functional Sites

While current AI-based protein structure prediction tools have demonstrated remarkable capabilities, they face inherent limitations in capturing the dynamic reality of proteins in their native biological environments [1]. This challenge is particularly relevant for drug discovery applications, where understanding functional sites and their conformational flexibility is critical for rational drug design. Knowledge-based metrics derived from statistical distributions of experimental structures provide essential constraints for evaluating models intended for drug discovery applications.

The limitations of static representations are especially pronounced for proteins with flexible regions or intrinsic disorders, whose millions of possible conformations cannot be adequately represented by single static models derived from crystallographic databases [1]. For these challenging cases, ensemble representations and metrics sensitive to dynamics become increasingly important for meaningful validation.

Future Directions and Integrative Approaches

The field is evolving toward more comprehensive validation approaches that acknowledge protein dynamics and environmental dependencies. Promising directions include:

Ensemble Representation: Moving beyond single static models to represent conformational ensembles that better capture protein dynamics [1].

Functional Prediction Focus: Redirecting efforts from purely structural accuracy toward metrics predictive of biological function [1].

Hierarchical Bayesian Models: Adopting advanced statistical approaches, similar to those used in experimental statistics by companies like Amazon and Etsy, to measure true cumulative experimental impact [6].

Integrative Validation: Combining knowledge-based metrics with experimental data from multiple sources, including cryo-EM maps and spectroscopic data, for comprehensive assessment.

These advances will enable more reliable application of protein structure models in drug discovery, ultimately enhancing our ability to target biologically relevant conformations and dynamics for therapeutic development.

The accurate determination of a protein's three-dimensional structure is fundamental to understanding its biological function and facilitating drug discovery. While advanced AI systems like AlphaFold2 and AlphaFold3 have revolutionized protein structure prediction by achieving accuracy competitive with experimental methods, the critical validation step involves assessing how well these computational models fit experimental data [7] [1]. Proteins are inherently dynamic entities that sample a continuum of conformational states to fulfill their biological roles, yet most prediction methods yield single static conformations, creating a fundamental challenge in structural biology [8]. Experimental techniques such as X-ray crystallography, nuclear magnetic resonance (NMR) spectroscopy, and cryo-electron microscopy (cryo-EM) inherently report on ensemble-averaged data rather than singular static snapshots, necessitating robust metrics to evaluate how well computational models align with experimental observations.

The validation process is particularly crucial because proteins exist as dynamic ensembles of multiple conformations, and these motions are often crucial for their functions [8]. Current structure prediction methods predominantly yield a single conformation, overlooking the conformational heterogeneity revealed by diverse experimental modalities. This limitation is recognized in the PDB, where multi-conformer annotations are widespread, reflecting inherent structural variability captured in crystallography [8]. Underpinning the entire validation framework is the critical balance between computational prediction and experimental verification, ensuring that structural models not only appear physically plausible but also faithfully represent empirical observations across multiple experimental conditions and techniques.

Core Metrics for Electron Density Fit

Crystallographic R-factors and Quality Indicators

In X-ray crystallography, the fit of an atomic model to the experimental electron density map is quantitatively assessed using several key metrics. The most fundamental of these are the R-factors, which measure the agreement between the observed structure-factor amplitudes (from the experimental data) and those calculated from the refined model [9]. The conventional R-factor (_refine_ls_R_factor_gt) and the weighted R-factor (_refine_ls_wR_factor_ref) serve as primary indicators, with lower values generally indicating better fit. A comprehensive survey of over one million crystallographic datasets revealed typical R-factor values and their distributions across the Cambridge Structural Database, providing crucial reference points for evaluating model quality [9].

Beyond R-factors, several additional metrics provide valuable insights into the refinement quality and model accuracy. The maximum and minimum residual electron density values (_refine_diff_density_max and _refine_diff_density_min) indicate regions where the model fails to fully explain the observed density, potentially highlighting areas of disorder, errors in modeling, or unmodeled solvent components [9]. The goodness-of-fit metric (_refine_ls_goodness_of_fit_ref) assesses how well the model agrees with the experimental data relative to the estimated errors, while the maximum parameter shift (_refine_ls_shift/su_max) during the final refinement cycles indicates structural stability [9]. These metrics, when considered collectively, provide a comprehensive picture of how well an atomic model explains the observed crystallographic data.

Table 1: Key Crystallographic Quality Metrics from CIF Data

| Metric Name | CIF Data Item | Interpretation | Typical Values |
|---|---|---|---|
| R-factor | _refine_ls_R_factor_gt | Agreement between observed and calculated structure factors | Lower values indicate better fit (often <0.20) |
| Weighted R-factor | _refine_ls_wR_factor_ref | Weighted agreement of all reflections | Usually higher than R-factor |
| Maximum Residual Density | _refine_diff_density_max | Unexplained positive electron density | Values close to zero preferred |
| Minimum Residual Density | _refine_diff_density_min | Unexplained negative electron density | Values close to zero preferred |
| Goodness of Fit | _refine_ls_goodness_of_fit_ref | Agreement relative to estimated errors | Ideal value close to 1.0 |
| Maximum Shift/Error | _refine_ls_shift/su_max | Structural stability in final refinement | Small values (<0.01) indicate stability |
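As a worked illustration of two entries in this table, the sketch below computes a conventional R-factor, R = Σ| |Fobs| − |Fcalc| | / Σ|Fobs|, and a goodness of fit, S = sqrt(Σ w (Yobs − Ycalc)² / (Nref − Nparam)). The function names and the reflection values are made up for the example; real refinement programs apply additional conventions (such as which reflections count as observed) that are not modelled here.

```python
import math


def r_factor(f_obs, f_calc):
    """Conventional R-factor from observed and calculated amplitudes."""
    numerator = sum(abs(abs(o) - abs(c)) for o, c in zip(f_obs, f_calc))
    denominator = sum(abs(o) for o in f_obs)
    return numerator / denominator


def goodness_of_fit(y_obs, y_calc, weights, n_params):
    """Goodness of fit relative to estimated errors (ideal value near 1)."""
    n_refl = len(y_obs)
    if n_refl <= n_params:
        raise ValueError("need more reflections than refined parameters")
    chi2 = sum(w * (o - c) ** 2 for o, c, w in zip(y_obs, y_calc, weights))
    return math.sqrt(chi2 / (n_refl - n_params))


if __name__ == "__main__":
    # Four made-up reflections, for illustration only.
    f_obs = [100.0, 50.0, 25.0, 25.0]
    f_calc = [90.0, 55.0, 25.0, 30.0]
    print(r_factor(f_obs, f_calc))
    print(goodness_of_fit(f_obs, f_calc, weights=[0.02] * 4, n_params=1))
```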

Real-Space Correlation and Density Fit Analysis

Beyond the reciprocal-space metrics derived from structure factors, real-space correlation coefficients provide crucial information about how well local regions of the model fit the electron density. Recent advancements in computational approaches have enabled more accurate prediction of solution X-ray scattering profiles at wide angles from atomic models by generating high-resolution electron density maps [10]. The DENSS software package implements methods that account for the excluded volume of bulk solvent by calculating unique adjusted atomic volumes directly from atomic coordinates, eliminating the need for one of the free fitting parameters commonly used in existing algorithms and resulting in improved accuracy of calculated SWAXS profiles [10].

The quality of electron density fit is particularly evident in regions of structural heterogeneity. As noted in recent analyses of the PDB, crystallographic refinements now increasingly permit explicit modeling of alternative conformations ("altlocs") within overlapping density regions [8]. Advances in resolution, coupled with more widespread application of room-temperature crystallographic experiments, have facilitated this multi-conformer modeling, reflecting the inherent structural variability captured in modern crystallography. Assessment of model-to-density fit in these regions requires specialized approaches that can handle the continuous conformational heterogeneity often obscured in static electron density maps.

Diagram 1: Crystallographic structure determination and validation workflow. The process involves iterative cycles of model building and refinement, with multiple quality metrics assessed during validation.

Metrics for Experimental Restraints

NMR Restraint Violations and Ensemble Validation

In NMR spectroscopy, protein structures are determined using experimental restraints including nuclear Overhauser effects (NOEs) for distance constraints, J-couplings for torsion angles, and chemical shifts for local structural information. The quality of NMR structures is primarily assessed by analyzing the violations of these experimental restraints—the extent to which the atomic coordinates deviate from the measured constraints [8]. Lower violation energies indicate better agreement with experimental data, with typical quality assessments considering the root-mean-square deviation (RMSD) of violations and the number of significant restraint violations per residue.
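
The violation statistics described above can be sketched for the simplest case of upper-bound distance restraints. This is a minimal illustration, not the procedure of any particular NMR package: the atom identifiers, coordinates, and the 0.5 Å cutoff for a "significant" violation are assumptions chosen for the example.

```python
import math

def noe_violations(coords, restraints):
    """Violation (in Å) of each upper-bound distance restraint; 0.0 if satisfied.

    coords: dict mapping atom id -> (x, y, z)
    restraints: list of (atom_i, atom_j, upper_bound_in_angstrom)
    """
    violations = []
    for atom_i, atom_j, upper in restraints:
        distance = math.dist(coords[atom_i], coords[atom_j])
        violations.append(max(0.0, distance - upper))
    return violations

def summarize(violations, n_residues, cutoff=0.5):
    """RMS violation and count of violations above `cutoff` (cutoff is an assumption)."""
    rms = math.sqrt(sum(v * v for v in violations) / len(violations))
    n_significant = sum(1 for v in violations if v > cutoff)
    return {
        "rms_violation": rms,
        "n_significant": n_significant,
        "significant_per_residue": n_significant / n_residues,
    }

# Hypothetical three-proton example.
coords = {"H1": (0.0, 0.0, 0.0), "H2": (3.0, 4.0, 0.0), "H3": (0.0, 0.0, 2.0)}
restraints = [("H1", "H2", 4.0), ("H1", "H3", 5.0)]
violations = noe_violations(coords, restraints)
print(violations, summarize(violations, n_residues=2))
```

For an ensemble, the same calculation would be repeated per conformer, or applied to ensemble-averaged distances, depending on how the restraints were derived.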

Traditional NMR structure determination employs restrained molecular dynamics (MD) simulations, requiring hundreds of independent trajectories to adequately sample conformational spaces consistent with experimental data [8]. This computationally intensive process struggles to balance accuracy, efficiency, and ensemble diversity. The resulting ensembles must satisfy all experimental restraints while maintaining proper stereochemistry and representing biologically relevant conformational diversity. The validation of such ensembles includes assessing both the agreement with experimental data and the reasonableness of the structural geometry, creating a multi-dimensional validation challenge that no single metric can fully capture.

Hybrid Methods for Integrating Experimental Data

Recent advancements have introduced novel approaches for integrating experimental data directly into structure prediction pipelines. Methods like Distance-AF improve AlphaFold2-predicted models by incorporating user-specified distance constraints through an overfitting mechanism that iteratively updates network parameters until predicted structures satisfy given distance constraints [11]. This approach adds a distance-constraint loss term that measures the divergence between distances in the predicted structure and user-provided distances of pairs of Cα atoms, combined with AlphaFold2's original loss terms [11].

Similarly, experiment-guided AlphaFold3 represents a framework that treats AlphaFold3 as a sequence-conditioned structural prior and casts ensemble modeling as posterior inference of protein structures given experimental measurements [8]. This approach incorporates experimental data during the sampling process of AlphaFold3's diffusion-based structure module, directing conformational exploration toward regions compatible with experimental constraints. For NMR data, this method has demonstrated an ability to generate ensembles that obey NOE-derived distance restraints while dramatically accelerating the structure determination process from many hours to a few minutes [8].

Table 2: Metrics for Experimental Restraint Validation

| Metric Category | Specific Metrics | Application Context | Interpretation Guidelines |

|---|---|---|---|

| NMR Restraint Violations | NOE violation energy, RMSD of violations, Number of violations per residue | NMR structure determination | Lower values indicate better agreement with experimental data |

| Distance Constraints | Mean distance error, Constraint satisfaction rate | Cross-linking MS, FRET, guided prediction | Values should be within experimental error margins |

| Cryo-EM Fit | Map-model correlation, Fourier shell correlation (FSC), Local resolution | Cryo-EM structure determination | Correlation coefficients >0.8 generally indicate good fit |

| Hybrid Method Scores | Combined score (e.g., C2Qscore), Model confidence, ipTM | Integrative structural biology | Higher scores indicate better overall quality |

Assessment Metrics for AI-Predicted Models

Interface and Global Quality Scores

The rapid advancement of AI-based protein structure prediction has necessitated the development of specialized assessment metrics tailored to evaluate predicted models, particularly for protein complexes. Recent comprehensive benchmarking studies have evaluated widely used scoring metrics for assessing models predicted by ColabFold (with and without templates) and AlphaFold3 [2] [12]. The results demonstrate that interface-specific scores are consistently more reliable for evaluating protein complex predictions compared to corresponding global scores, with ipTM (interface pTM) and model confidence achieving the best discrimination between correct and incorrect predictions [2] [12].

The performance of these assessment scores varies across prediction methods. Interestingly, while ColabFold with templates and AlphaFold3 perform similarly in generating high-quality predictions (with 35.2% and 39.8% 'high' quality models respectively, as measured by DockQ > 0.8), the assessment scores perform best on ColabFold without templates [2]. This highlights the complex relationship between prediction accuracy and the metrics used to evaluate them. Based on these comprehensive analyses, researchers have developed weighted combined scores like C2Qscore to improve model quality assessment by integrating multiple individual metrics [2] [12].

PAE, pLDDT, and Confidence Metrics

AlphaFold and related prediction systems provide per-residue and pairwise accuracy estimates that serve as crucial internal validation metrics. The predicted aligned error (PAE) represents AlphaFold's internal estimate of positional uncertainty at different regions of the model, with interface PAE (iPAE) specifically focusing on residue-residue interactions in complexes [2]. The predicted local distance difference test (pLDDT) provides a per-residue estimate of local confidence, with interface pLDDT (ipLDDT) offering a specialized version for evaluating interaction interfaces [2].

These confidence metrics have been shown to reliably predict the actual accuracy of the corresponding predictions, enabling researchers to identify regions of high and low reliability within structural models without experimental validation [7]. However, it is important to recognize that these are predictive metrics based on the model's internal consistency and training, not direct measurements of accuracy against experimental data. They should therefore be used as guides rather than absolute determinants of model quality, particularly for novel folds or proteins with limited homologous sequences in databases.

Diagram 2: AI-predicted model validation metrics. Prediction models are assessed through both internal confidence metrics and external experimental validation, with specialized scores for interface regions.

Integrated Validation Frameworks and Protocols

Experiment-Guided Structure Determination

The integration of experimental data with computational prediction has led to powerful hybrid approaches for structure determination. The experiment-guided AlphaFold3 framework implements a three-stage ensemble-fitting pipeline that combines guided sampling, artifact correction, and ensemble selection [8]. In the first stage, AlphaFold3's diffusion-based structure module is adapted to incorporate experimental measurements during sampling using a non-i.i.d. sampling scheme that jointly samples the ensemble. The second stage addresses artifacts introduced during guided sampling using computationally efficient force-field relaxation to project candidate structures onto physically realistic conformations. The final stage employs a matching-pursuit ensemble selection algorithm to iteratively refine the ensemble by maximizing agreement with experimental data while preserving structural diversity [8].

This approach has demonstrated significant success in both crystallographic and NMR applications. In crystallography, Density-guided AlphaFold3 produces structures that are consistently more faithful to observed electron density maps than unguided AlphaFold3, in some cases even outperforming PDB-deposited structures' faithfulness to the density [8]. For NMR, NOE-guided AlphaFold3 refines structural ensembles to satisfy NOE-derived distance restraints more faithfully than standard predictions, in some cases surpassing the accuracy of existing PDB-deposited NMR ensembles while reducing determination time from hours to minutes [8].

Distance-Constraint Integration Protocols

The Distance-AF method provides a detailed protocol for integrating distance constraints into AlphaFold2 predictions through an overfitting mechanism [11]. The process begins with the standard Evoformer module processing multiple sequence alignments, after which a single sequence embedding is passed to the structure module along with user-specified residue-pair distance constraints [11]. The key innovation is the addition of a distance-constraint loss term that measures the divergence between distances in the predicted structure and user-provided distances of Cα atom pairs, combined with AlphaFold2's original loss terms (FAPE loss, angle loss, and violation terms) [11].
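
To make the idea of a distance-constraint loss term concrete, the sketch below computes a mean squared deviation between Cα-Cα distances in a model and user-provided target distances, then adds it to a placeholder for the original loss. The squared-error form, the `weight` parameter, and all function names are assumptions made for illustration; the source only states that the term measures the divergence between predicted and provided distances, so the actual Distance-AF formulation may differ.

```python
import math

def distance_constraint_loss(ca_coords, constraints):
    """Mean squared deviation between model and target Cα-Cα distances.

    ca_coords: list of (x, y, z) Cα positions, indexed by residue
    constraints: list of (residue_i, residue_j, target_distance_in_angstrom)
    """
    total = 0.0
    for i, j, target in constraints:
        distance = math.dist(ca_coords[i], ca_coords[j])
        total += (distance - target) ** 2
    return total / len(constraints)

def total_loss(original_loss, ca_coords, constraints, weight=1.0):
    """Original loss (e.g. FAPE, angle, and violation terms) plus the weighted constraint term."""
    return original_loss + weight * distance_constraint_loss(ca_coords, constraints)

# Hypothetical three-residue model with two constraints.
ca_coords = [(0.0, 0.0, 0.0), (3.0, 0.0, 0.0), (3.0, 4.0, 0.0)]
constraints = [(0, 1, 3.0), (0, 2, 7.0)]
print(distance_constraint_loss(ca_coords, constraints))
print(total_loss(1.5, ca_coords, constraints))
```

In an overfitting-style procedure, a loss of this kind would be minimized by gradient updates to the network parameters until the predicted distances approach the targets.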

The Distance-AF protocol has demonstrated remarkable effectiveness in modifying domain orientations guided by limited distance constraints, with benchmark studies showing improvements in RMSD to native structures by an average of 11.75 Å compared to standard AlphaFold2 models [11]. The method exhibits sensitivity to constraint quality but maintains reasonable accuracy even with approximate distances biased by up to 5 Å, demonstrating robustness for practical applications where exact distances may be uncertain. This approach has proven valuable for multiple scenarios including fitting structures into cryo-EM density maps, modeling active and inactive conformations of proteins, and generating ensembles consistent with NMR data [11].

Research Reagent Solutions

Table 3: Essential Tools and Software for Structure Validation

| Tool Name | Primary Function | Application Context | Key Features |

|---|---|---|---|

| DENSS | Electron density prediction from atomic models | SAXS/SWAXS data analysis | Calculates unique adjusted atomic volumes, eliminates free parameters [10] |

| C2Qscore | Combined quality assessment for protein complexes | AI model validation | Weighted combination of multiple metrics, integrated in PICKLUSTER v.2.0 [2] [12] |

| Experiment-guided AlphaFold3 | Integration of experimental data with AF3 predictions | Hybrid structure determination | Three-stage pipeline: guided sampling, relaxation, ensemble selection [8] |

| Distance-AF | Incorporation of distance constraints into AF2 | Constraint-driven modeling | Overfitting mechanism with distance-constraint loss term [11] |

| checkCIF | Crystallographic validation | X-ray structure validation | IUCr validation service, comprehensive quality indicators [9] |

| PICKLUSTER ChimeraX plugin | Interactive model analysis | Protein complex validation | Integrates multiple scoring metrics including C2Qscore [2] [12] |

| AlphaLink | Integration of cross-linking MS data | Distance restraint incorporation | Converts XL-MS restraints into distogram bins [11] |

The prediction of protein tertiary structures from amino acid sequences has become a routine part of molecular biology, with numerous servers available for building 3D atomic models [13]. However, the utility of these predicted structures in downstream applications—such as drug design, enzyme mechanism studies, and site-directed mutagenesis—depends entirely on the researcher's ability to assess their quality and reliability [14] [15]. Protein structure validation metrics provide the essential tools for this assessment, answering three fundamental questions: Which 3D protein models are the best? How good are the models? Where are the errors located in the models? [13] These metrics fall into two broad categories: global quality measures that evaluate the overall fold of the protein, and local quality measures that assess residue-specific accuracy [13] [14]. Understanding both types of measures is crucial for researchers to properly interpret computational models and apply them appropriately in biological investigations.

This technical guide provides an in-depth examination of global and local quality assessment methods for protein structures, with a focus on their underlying principles, computational methodologies, and practical applications in biomedical research. We frame this discussion within the broader context of protein structure validation metrics, emphasizing how the integration of both global and local perspectives enables more informed use of computational models in scientific research and drug development.

Global quality measures provide a single value or score that represents the overall accuracy of a protein structural model compared to a reference native structure. These measures are particularly valuable for quickly ranking multiple models of the same protein to identify the most accurate predictions [13] [16].

Key Global Quality Metrics

Table 1: Fundamental Global Quality Assessment Metrics for Protein Structures

| Metric | Description | Interpretation | Optimal Values | Key Applications |

|---|---|---|---|---|

| RMSD (Root Mean Square Deviation) | Average distance between corresponding atoms after optimal alignment [17] | Lower values indicate better agreement; 0Å = perfect match [17] | <2-3Å for reliable models [17] | Overall structural comparison, model refinement tracking |

| TM-score (Template Modeling Score) | Scale-invariant measure quantifying structural similarity, less sensitive to local errors than RMSD [18] | 0-1 scale; >0.5 indicates same fold, <0.17 random similarity [18] | >0.8 for high accuracy models | Fold recognition, template-based modeling |

| GDT (Global Distance Test) | Percentage of Cα atoms within specified distance cutoffs from native structure [16] | Higher percentages indicate better models; 0-100 scale | >80 for high quality | CASP assessment, model ranking |

| pLDDT (predicted Local Distance Difference Test) | Per-residue confidence score: the model's own prediction of its lDDT, reported on a 0-100 scale [7] [15] | 0-100 scale; >90 very high, <50 very low confidence [15] | >70 for reliable regions [17] | AlphaFold2 confidence estimation, model reliability |
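
The superposition-dependent metrics in the table can be sketched for coordinates that are already superposed and matched one-to-one. This is a simplification: real RMSD calculations first find the optimal superposition, and GDT and TM-score implementations search over many superpositions to maximize the score, so the values below are lower bounds for a fixed alignment. The GDT_TS cutoffs (1, 2, 4, 8 Å) and the TM-score length scale d0 = 1.24·(L−15)^(1/3) − 1.8 follow the standard definitions; the 0.5 Å floor on d0 for short chains and the function names are assumptions of this sketch.

```python
import math

def rmsd(model, native):
    """Root mean square deviation over paired, pre-superposed atoms."""
    squared = [math.dist(m, n) ** 2 for m, n in zip(model, native)]
    return math.sqrt(sum(squared) / len(squared))

def gdt_ts(model, native, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Mean percentage of Cα atoms within each cutoff, for a fixed superposition."""
    distances = [math.dist(m, n) for m, n in zip(model, native)]
    fractions = [sum(d <= c for d in distances) / len(distances) for c in cutoffs]
    return 100.0 * sum(fractions) / len(cutoffs)

def tm_score(model, native):
    """TM-score for a fixed superposition, normalized by the native length."""
    length = len(native)
    d0 = 0.5  # floor for short chains (assumption of this sketch)
    if length > 15:
        d0 = max(d0, 1.24 * (length - 15) ** (1.0 / 3.0) - 1.8)
    terms = [1.0 / (1.0 + (math.dist(m, n) / d0) ** 2) for m, n in zip(model, native)]
    return sum(terms) / length

# Hypothetical four-atom example with deviations of 0.5, 1.5, 3.0, and 9.0 Å.
native = [(0.0, 0.0, 0.0), (4.0, 0.0, 0.0), (8.0, 0.0, 0.0), (12.0, 0.0, 0.0)]
model = [(0.0, 0.5, 0.0), (4.0, 1.5, 0.0), (8.0, 3.0, 0.0), (12.0, 9.0, 0.0)]
print(rmsd(model, native), gdt_ts(model, native), tm_score(model, native))
```

The example shows why GDT and TM-score are less sensitive to a single badly placed atom than RMSD: the 9 Å outlier dominates the RMSD but only removes one atom from the GDT counts.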

Methodologies for Global Quality Assessment

Global quality assessment methods typically operate through two primary approaches: single-model methods that evaluate individual structures in isolation, and consensus methods that compare multiple models for the same target [13]. Single-model methods, such as VoroMQA and ProQ3D, analyze physical and statistical properties of the structure including residue contact potentials, torsion angles, and burial propensities [13] [14]. These methods are computationally efficient and provide consistent scoring for individual models.

Consensus or clustering approaches (e.g., ModFOLDclust2, MULTICOM_CLUSTER) leverage the observation that structurally similar regions across multiple models for the same target are more likely to be correct [13]. These methods generally achieve higher accuracy but require generating multiple models, increasing computational costs [13]. Hybrid approaches like ModFOLD8 combine the strengths of both strategies by integrating multiple pure-single and quasi-single model scores using neural networks [13].

Local Quality Assessment: Residue-Specific Accuracy

While global measures provide an overall assessment, local quality measures offer per-residue estimates of accuracy, which is critical for most practical applications of protein structure models [14]. Local errors in otherwise good global folds can significantly impact biological interpretations, particularly in functional sites.

Key Local Quality Metrics

Table 2: Local Quality Assessment Metrics for Residue-Level Validation

| Metric | Description | Scale | Interpretation | Method Examples |

|---|---|---|---|---|

| lDDT (local Distance Difference Test) | Local superposition-free score evaluating distance differences for all atom pairs within a threshold [13] | 0-1 | >0.7 high local accuracy; per-residue evaluation [18] | ModFOLD8, AlphaFold2 |

| S-score | Residue-specific similarity score converted to predicted distance from native (Å) [13] | 0-1 similarity or Å distance | Lower Å values indicate higher accuracy; inverse S-score function: d = 3.5√((1/s)−1) [13] | ModFOLD8 |

| CDA (Contact Distance Agreement) | Agreement between predicted residue contacts and Euclidean distances in the model [13] | Varies | Better agreement indicates more accurate local structure | ModFOLD8 CDA scores |

| RSRZ (Real Space R Z-score) | Measures how well each residue fits experimental electron density [15] | Standard deviations | Values >2 indicate poor fit to experimental data | X-ray validation |

Advanced Local Quality Assessment Methods

Innovative approaches to local quality assessment have emerged that consider spatial context rather than treating residues in isolation. The Graph-based Model Quality assessment method (GMQ) represents protein structures as graphs where residues are connected based on spatial proximity, then uses conditional random fields to explicitly model the influence of neighboring residues' quality on each target residue [14]. This approach recognizes that the accuracy of a residue's position is often correlated with the accuracy of its spatially neighboring residues [14].

ModFOLD8 employs a sophisticated neural network architecture that combines 13 different scoring methods (9 pure single-model and 4 quasi-single-model) using a sliding window of per-residue scores [13]. The network is trained to predict both S-scores and lDDT scores, then converts similarity scores back to predicted distances in Ångströms from the native structure using the inverse S-score function: d = 3.5√((1/s)−1) [13].
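
The inverse S-score conversion quoted above is easy to check numerically. The sketch below implements d = 3.5√((1/s)−1) together with the forward function it inverts algebraically, s = 1/(1 + (d/3.5)²). The function names are hypothetical, and using the same 3.5 Å constant in the forward direction is an assumption made so that the two functions round-trip.

```python
import math

def s_to_distance(s, d0=3.5):
    """Convert a per-residue similarity score s in (0, 1] to a predicted distance in Å."""
    if not 0.0 < s <= 1.0:
        raise ValueError("s must be in the interval (0, 1]")
    return d0 * math.sqrt(1.0 / s - 1.0)

def distance_to_s(d, d0=3.5):
    """Algebraic inverse of s_to_distance: distance in Å back to a 0-1 similarity score."""
    return 1.0 / (1.0 + (d / d0) ** 2)

for s in (1.0, 0.5, 0.2):
    print(s, s_to_distance(s))
```

A score of 1.0 maps to 0 Å, 0.5 maps to 3.5 Å, and 0.2 maps to 7 Å, so predicted errors grow quickly as the similarity score falls.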

Experimental Protocols and Workflows

Integrated Quality Assessment Protocol

Diagram 1: Integrated workflow for comprehensive protein structure quality assessment, combining both global and local validation metrics.

ModFOLD8 Implementation Protocol

The ModFOLD8 server implements a sophisticated hybrid approach for quality assessment through the following detailed protocol:

Input Preparation: Provide the amino acid sequence for the target protein and at least one 3D model for evaluation. Multiple alternative models can be submitted for comparative analysis [13].

Reference Model Generation: For quasi-single model methods, generate 135 reference models using the IntFOLD pipeline or utilize reference models from LOMETS for ResQ scoring [13].

Feature Extraction: Calculate nine pure single-model inputs including ProQ methods (ProQ2, ProQ2D, ProQ3D, ProQ4), VoroMQA, Contact Distance Agreement scores (CDA, CDADMP, CDASC), and Secondary Structure Agreement score (SSA) [13].

Quasi-Single Model Scoring: Compute four quasi-single model inputs including ResQ, Disorder B-factor Agreement (DBA), ModFOLDclustsingle (MF5s), and ModFOLDclustQsingle (MFcQs) by comparing the input model against reference sets [13].

Neural Network Processing: Process the 13 scoring method inputs through neural networks using a sliding window (size=5) of per-residue scores, with 65 input neurons, 33 hidden neurons, and 1 output neuron [13].

Score Conversion: Convert similarity scores to predicted distances in Ångströms from the native structure using the inverse S-score function: d = 3.5√((1/s)−1) for each residue [13].

Output Generation: Produce global scores (ModFOLD8rank for ranking, ModFOLD8cor for correlations, ModFOLD8 for balanced performance) and local quality estimates for each residue [13].
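
The input layer size in the Neural Network Processing step follows from the window arithmetic: 13 scores per residue times a window of 5 residues gives 65 inputs. The sketch below builds such input vectors from a per-residue score table. How the server treats residues near the chain termini is not stated in the text above, so the zero padding used here is an assumption, as are the function and variable names.

```python
def window_inputs(per_residue_scores, window=5, pad=0.0):
    """Build one flat input vector per residue from a sliding window of scores.

    per_residue_scores: list (one entry per residue) of equal-length score lists
    Returns a list of vectors of length window * n_methods.
    """
    half = window // 2
    n_residues = len(per_residue_scores)
    n_methods = len(per_residue_scores[0])
    vectors = []
    for i in range(n_residues):
        vector = []
        for j in range(i - half, i + half + 1):
            if 0 <= j < n_residues:
                vector.extend(per_residue_scores[j])
            else:
                vector.extend([pad] * n_methods)  # zero padding at termini (assumption)
        vectors.append(vector)
    return vectors

# Hypothetical scores: 7 residues, 13 methods, residue r gets the value r + 1 everywhere.
scores = [[float(r + 1)] * 13 for r in range(7)]
vectors = window_inputs(scores)
print(len(vectors), len(vectors[0]))
```

Each of the 7 vectors has 65 entries, matching the 65 input neurons described in the protocol.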

GMQ Local Assessment Protocol

The Graph-based Model Quality assessment method employs the following specialized protocol for local error prediction:

Graph Construction: Represent the protein structure model as a graph where nodes correspond to Cα positions and edges connect residues closer than distance cutoffs (typically 4.0-5.5 Å) [14].

Clique Identification: Identify fully connected sub-graphs (cliques) where all residues are mutually spatially adjacent, recording adjacent cliques as a tree structure [14].

Feature Encoding: For each residue, encode 25 features characterizing its structural environment and sequence properties [14].

Conditional Random Field Application: Apply CRF to compute the probability of accuracy classification using the function:

Pθ(Y|X) = (1/Z(X)) ∏c Ψc(Yc, Xc; θ)

where the product runs over the cliques c, Z(X) is the normalizing partition function, and the factors Ψc combine features of target residues and predicted labels of neighboring residues [14].

Binary Classification: Perform binary prediction indicating whether each residue position is within a specified error cutoff (e.g., 2Å, 4Å) or not, considering four possible label combinations (00, 01, 10, 11) for residue pairs [14].

Iterative Refinement: Refine predictions by considering larger graphs and incorporating secondary structure-specific edge weights to improve accuracy [14].
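
The first two steps of the protocol above, graph construction and clique identification, can be sketched as follows. The code connects Cα atoms closer than a cutoff and enumerates maximal cliques with the basic Bron-Kerbosch recursion. This illustrates the general technique only: the clique-tree bookkeeping, feature encoding, and CRF inference of GMQ are not reproduced, the 5.5 Å cutoff is taken from the upper end of the range quoted above, and the coordinates are invented.

```python
import math
from itertools import combinations

def contact_graph(ca_coords, cutoff=5.5):
    """Adjacency sets for residues whose Cα atoms are closer than `cutoff` Å."""
    adjacency = {i: set() for i in range(len(ca_coords))}
    for i, j in combinations(range(len(ca_coords)), 2):
        if math.dist(ca_coords[i], ca_coords[j]) < cutoff:
            adjacency[i].add(j)
            adjacency[j].add(i)
    return adjacency

def maximal_cliques(adjacency):
    """Enumerate maximal cliques with the basic Bron-Kerbosch algorithm (no pivoting)."""
    cliques = []

    def expand(current, candidates, excluded):
        if not candidates and not excluded:
            cliques.append(sorted(current))
            return
        for vertex in list(candidates):
            expand(current | {vertex},
                   candidates & adjacency[vertex],
                   excluded & adjacency[vertex])
            candidates = candidates - {vertex}
            excluded = excluded | {vertex}

    expand(set(), set(adjacency), set())
    return sorted(cliques)

# Hypothetical Cα coordinates: a tight triangle, one neighbor, one isolated residue.
ca_coords = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (1.9, 3.3, 0.0),
             (7.6, 0.0, 0.0), (20.0, 0.0, 0.0)]
graph = contact_graph(ca_coords)
print(maximal_cliques(graph))
```

Residues 0, 1, and 2 are mutually adjacent and form one clique, residue 3 touches only residue 1, and residue 4 ends up as a singleton.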

Table 3: Essential Tools and Resources for Protein Structure Quality Assessment

| Tool/Resource | Type | Primary Function | Key Features | Access |

|---|---|---|---|---|

| ModFOLD8 | Quality Assessment Server | Global & local quality estimation | Hybrid approach combining 13 scoring methods; CASP top performer [13] | https://www.reading.ac.uk/bioinf/ModFOLD/ |

| AlphaFold2 | Structure Prediction | 3D structure prediction from sequence | Provides pLDDT confidence scores for each residue [7] [15] | https://alphafold.ebi.ac.uk/ |

| GMQ | Local Quality Assessment | Residue-specific error prediction | Graph-based approach using conditional random fields [14] | Contact authors |

| Foldseek | Structure Search & Comparison | Rapid structural similarity search | 3Di alphabet enables fast database searches [18] | https://foldseek.com/ |

| RCSB PDB | Structure Database | Experimental structure repository | Validation reports for experimental structures [15] | https://www.rcsb.org/ |

Applications in Biomedical Research and Drug Discovery

The integration of global and local quality measures enables sophisticated applications of computational protein structures in biomedical research. For drug discovery, global quality measures help identify structurally reliable targets, while local quality assessment is crucial for evaluating binding site accuracy where small errors can significantly impact virtual screening and docking studies [14] [15]. AlphaFold2 models with pLDDT scores >90 in binding regions can be used with higher confidence for initial drug screening, though experimental validation remains essential [17] [15].
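
A simple pre-screening check along these lines is to summarize pLDDT over the residues that line a binding site. In the sketch below, the "very high" (>90) and "very low" (<50) bands follow the values quoted in this guide, while the intermediate "confident" (70-90) and "low" (50-70) labels follow the AlphaFold Protein Structure Database convention. The residue numbers, scores, and function names are hypothetical.

```python
def plddt_band(score):
    """Map a pLDDT value (0-100) to a confidence band label."""
    if score > 90:
        return "very high"
    if score > 70:
        return "confident"
    if score > 50:
        return "low"
    return "very low"

def binding_site_report(plddt_by_residue, site_residues, threshold=90.0):
    """Summarize pLDDT over a set of binding-site residues."""
    site_scores = [plddt_by_residue[r] for r in site_residues]
    return {
        "mean": sum(site_scores) / len(site_scores),
        "min": min(site_scores),
        "all_above_threshold": all(s > threshold for s in site_scores),
        "bands": {r: plddt_band(plddt_by_residue[r]) for r in site_residues},
    }

# Hypothetical per-residue pLDDT values keyed by residue number.
plddt_by_residue = {45: 96.0, 46: 93.0, 89: 91.5, 120: 64.0}
print(binding_site_report(plddt_by_residue, [45, 46, 89]))
print(binding_site_report(plddt_by_residue, [45, 120]))
```

A site that includes even one low-confidence residue, as in the second call, is a candidate for closer inspection or experimental follow-up before docking.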

In enzyme mechanism studies, the accurate positioning of catalytic residues and substrate-binding elements is paramount. Local quality measures such as lDDT and S-scores help identify reliably modeled active sites, guiding mutagenesis experiments and functional analyses [14]. For proteins with multiple domains connected by flexible linkers, global measures may indicate high overall quality while local assessment reveals uncertainties in inter-domain orientations, as reflected in predicted aligned error (PAE) plots from AlphaFold2 [17].

Protein structure validation requires both global and local perspectives to fully understand model limitations and appropriate applications. Global quality measures efficiently identify the best overall folds and enable rapid model ranking, while local quality assessment provides the residue-level resolution needed for most practical applications in biotechnology and drug development [13] [14]. The integration of these approaches through hybrid methods like ModFOLD8 and innovative algorithms like GMQ represents the state-of-the-art in quality assessment [13] [14].

As protein structure prediction methods continue to advance, with AlphaFold2 achieving near-experimental accuracy for many targets [7], quality assessment remains essential for establishing trust in computational models and guiding their biological application [13]. Researchers must consider both global and local quality measures when utilizing predicted structures, recognizing that even high-quality global folds may contain local errors that impact specific functional interpretations [17] [15]. The ongoing development and refinement of validation metrics will continue to enhance the utility of computational structural biology across biomedical research.

Protein structure validation is a critical step in structural biology, ensuring that theoretical models and experimentally determined structures are stereochemically reasonable and biologically relevant. With the increasing reliance on computational models, such as those generated by AlphaFold, and the known presence of errors in some public repository entries [19] [20], the use of robust validation suites is indispensable. These tools provide objective metrics to assess the quality of a protein model, which is foundational for any subsequent research, including rational drug design and understanding biological function [21] [22]. This guide provides an in-depth examination of three cornerstone validation suites: MolProbity, PROCHECK, and Verify3D.

A protein structure validation suite evaluates a model against a set of empirical rules derived from high-resolution structures. The core philosophy is to identify regions of the model that deviate from known physicochemical principles and geometric constraints.

Table 1: Core Functionality of Major Validation Suites

| Validation Suite | Primary Validation Focus | Core Methodology | Typical Output Metrics |

|---|---|---|---|

| MolProbity | Steric clashes, rotamer outliers, and backbone conformation | Analyzes all-atom contacts and torsion angles. | Clashscore, Ramachandran plot outliers, rotamer outliers. |

| PROCHECK | Stereochemical quality of the backbone and side chains | Evaluates residue geometry via Ramachandran plot and other dihedral angles. | Ramachandran plot statistics, G-factor. |

| Verify3D | Sequence-to-structure compatibility and fold recognition | Assesses the compatibility of a 3D model with its own amino acid sequence. | 3D-1D profile score, residue-wise compatibility scores. |

Detailed Methodologies and Protocols

3.1. MolProbity

MolProbity is an all-atom contact analysis tool known for its focus on identifying steric clashes and evaluating side-chain rotamers.

- Experimental Protocol for Use:

- Input Preparation: Prepare your protein structure file in PDB format.

- Submission: Access the MolProbity web server or install the standalone software. Upload your PDB file.

- Analysis Execution: The server performs a series of checks, including:

- All-Atom Contact Analysis: Identifies atoms that are closer than the sum of their van der Waals radii.

- Rotamer Analysis: Evaluates the chi-angle distributions of side chains against a rotamer library to flag outliers.

- Ramachandran Analysis: Assesses the phi/psi torsion angles of the backbone.

- Output Interpretation: Review the generated report, focusing on key metrics like the Clashscore (number of serious steric clashes per 1000 atoms) and the percentage of residues in the favored and allowed regions of the Ramachandran plot.
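
The Clashscore arithmetic can be illustrated with a deliberately simplified sphere-overlap model. MolProbity counts a serious clash when two non-bonded atoms overlap by 0.4 Å or more and reports the number of such clashes per 1000 atoms. The sketch below is not MolProbity's algorithm: the real analysis adds hydrogens and uses a dot-based contact evaluation with its own exclusion rules for nearby bonded atoms. The atom list, van der Waals radii, and the `bonded` exclusion set are illustrative assumptions.

```python
import math
from itertools import combinations

def clashscore(atoms, bonded=frozenset(), min_overlap=0.4):
    """Serious clashes per 1000 atoms under a simple sphere-overlap model.

    atoms: list of ((x, y, z), vdw_radius_in_angstrom)
    bonded: set of frozenset({i, j}) index pairs to skip (e.g. covalently bonded atoms)
    """
    n_clashes = 0
    for i, j in combinations(range(len(atoms)), 2):
        if frozenset((i, j)) in bonded:
            continue
        (xyz_i, radius_i), (xyz_j, radius_j) = atoms[i], atoms[j]
        overlap = radius_i + radius_j - math.dist(xyz_i, xyz_j)
        if overlap >= min_overlap:
            n_clashes += 1
    return 1000.0 * n_clashes / len(atoms)

# Hypothetical four-atom example; radii are illustrative values.
atoms = [
    ((0.0, 0.0, 0.0), 1.7),     # carbon
    ((1.5, 0.0, 0.0), 1.7),     # carbon bonded to atom 0
    ((0.0, 2.5, 0.0), 1.4),     # oxygen overlapping atom 0 by about 0.6 Å
    ((10.0, 10.0, 10.0), 1.7),  # distant carbon
]
bonded = {frozenset((0, 1))}
print(clashscore(atoms, bonded))
```

One clash among four atoms gives a score of 250, which is far above what is seen in well-refined structures and reflects only the tiny size of the example.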

3.2. PROCHECK

PROCHECK is one of the classic tools for assessing the stereochemical quality of a protein structure, with a strong emphasis on the Ramachandran plot.

- Experimental Protocol for Use:

- Input Preparation: Ensure your PDB file contains the necessary header information (e.g., HELIX and SHEET records can improve analysis).

- Software Execution: Run the PROCHECK software via command line or a web interface.

- Analysis Execution: The core of its methodology involves:

- Ramachandran Plot Calculation: Calculates the phi and psi angles of the backbone; the summary percentages are reported for non-glycine, non-proline residues.

- Residue Planarity and Chirality Checks: Verifies the geometry of peptide bonds and chiral centers.

- Output Interpretation: The key output is the Ramachandran plot, which categorizes residues into "most favored," "additional allowed," "generously allowed," and "disallowed" regions. A high-quality model typically has over 90% of residues in the "most favored" regions. The G-factor provides an overall measure of stereochemical quality.
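
The phi and psi angles behind the Ramachandran plot are ordinary dihedral angles over four consecutive backbone atoms: phi uses C(i−1), N(i), Cα(i), C(i), and psi uses N(i), Cα(i), C(i), N(i+1). The sketch below computes a signed dihedral with the standard atan2 formulation. It is a generic geometry routine, not code from PROCHECK, and the function names and test coordinates are made up for illustration.

```python
import math

def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def dihedral(p0, p1, p2, p3):
    """Signed dihedral angle in degrees (range -180 to 180) defined by four points."""
    b1 = _sub(p1, p0)
    b2 = _sub(p2, p1)
    b3 = _sub(p3, p2)
    n1 = _cross(b1, b2)
    n2 = _cross(b2, b3)
    y = math.sqrt(_dot(b2, b2)) * _dot(b1, n2)
    x = _dot(n1, n2)
    return math.degrees(math.atan2(y, x))

def phi_psi(c_prev, n, ca, c, n_next):
    """Backbone phi and psi for one residue from its flanking backbone atoms."""
    return dihedral(c_prev, n, ca, c), dihedral(n, ca, c, n_next)

# Simple geometric checks: cis (0 degrees), +90 degrees, and trans (180 degrees).
print(dihedral((1, 0, 0), (0, 0, 0), (0, 0, 1), (1, 0, 1)))
print(dihedral((1, 0, 0), (0, 0, 0), (0, 0, 1), (0, 1, 1)))
print(dihedral((1, 0, 0), (0, 0, 0), (0, 0, 1), (-1, 0, 1)))
```

Classifying the resulting (phi, psi) pairs into favored or disallowed regions additionally requires the region definitions used by the validation tool, which are not reproduced here.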

3.3. Verify3D

Verify3D operates on a different principle, evaluating the compatibility of a 3D structure with its amino acid sequence, which is particularly useful for assessing the overall fold.

- Experimental Protocol for Use:

- Input Preparation: Provide the protein structure in PDB format.

- Submission: Run the Verify3D program or use its web server.

- Analysis Execution: Its methodology involves:

- 3D-1D Profile Generation: Each residue is assigned to an environment class based on its local 3D environment (e.g., buried side-chain area, the fraction of that area covered by polar atoms, and local secondary structure).

- Profile Comparison: This environmental profile is scored against amino acid preferences derived from a database of known, reliable structures to generate a compatibility score for each residue.

- Output Interpretation: The output is a residue-by-residue plot of the 3D-1D score. A reliable model will have the large majority of its residues scoring at or above a threshold of 0.2; a commonly used pass criterion is at least 80% of residues.
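
The interpretation step can be sketched as a windowed average followed by a threshold count. The code below assumes raw per-residue 3D-1D scores are already available (computing them requires the environment classes and scoring table, which are not reproduced here). The 21-residue window and the truncation of windows at the chain ends are assumptions of this sketch, and the example scores are invented.

```python
def window_average(scores, window=21):
    """Average each residue's score over a centered window, truncated at the chain ends."""
    half = window // 2
    averaged = []
    for i in range(len(scores)):
        start = max(0, i - half)
        end = min(len(scores), i + half + 1)
        averaged.append(sum(scores[start:end]) / (end - start))
    return averaged

def fraction_passing(averaged_scores, threshold=0.2):
    """Fraction of residues whose averaged 3D-1D score is at or above the threshold."""
    return sum(a >= threshold for a in averaged_scores) / len(averaged_scores)

# Hypothetical profile: a well-packed first half and a poorly scoring second half.
raw_scores = [0.6] * 10 + [-0.3] * 10
averaged = window_average(raw_scores, window=3)
print(fraction_passing(averaged))
```

Here only half of the residues reach the 0.2 threshold, so the second half of this hypothetical chain would be flagged for inspection.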

Visualization of the Validation Workflow

The following diagram illustrates a logical workflow for integrating these tools into a comprehensive structure validation pipeline.

Figure 1: A typical workflow for protein structure validation.

Research Reagent Solutions

The following table lists key resources and tools essential for conducting protein structure validation.

Table 2: Essential Research Reagents and Tools for Structure Validation

| Item | Function in Validation | Explanation / Example |

|---|---|---|

| Protein Data Bank (PDB) | Primary data source. | The worldwide repository for 3D structural data of proteins and nucleic acids, used as the input for validation [19]. |

| PDB-REDO Databank | Refined data source. | A resource providing re-refined and rebuilt versions of PDB entries, which can serve as improved inputs for validation studies [20]. |

| Molecular Visualization Software (e.g., PyMOL) | Visualization and analysis. | Used to visually inspect the structure and the specific regions (e.g., steric clashes, loop regions) flagged by validation suites [21] [22]. |

| REFMAC | Crystallographic refinement. | A program for the refinement of macromolecular models against X-ray data, often used in pipelines like PDB-REDO to improve model quality before final validation [20]. |

| Rosetta Force Field | Energy-based scoring. | Used in tools like Foldit to score model quality by evaluating steric clashes, Ramachandran space usage, and other physicochemical properties [20]. |

A Practical Guide to Key Validation Metrics and Their Interpretation

Stereochemical validation is a cornerstone of structural biology, ensuring that three-dimensional atomic models of macromolecules are not only consistent with the experimental data but also conform to known physical and chemical principles. For researchers and drug development professionals, the reliability of a protein structure is paramount, as it forms the basis for understanding biological mechanisms, rational drug design, and virtual screening campaigns. The core metrics of this validation process are the Ramachandran plot, which assesses backbone conformation; rotamer outliers, which evaluate side-chain packing; and the clashscore, which quantifies steric overlaps. These metrics provide complementary views of model quality. Historically, validation was often a final check before deposition; however, a modern, effective refinement strategy integrates these tools throughout the structure solution process to actively guide corrections, leading to more robust and biologically accurate models [23].

The adoption of these metrics by the worldwide Protein Data Bank (wwPDB) as standard validation criteria underscores their critical importance. The wwPDB now incorporates MolProbity's clashscore, Ramachandran, and rotamer analyses into its validation pipeline, providing depositors and users with percentile scores that contextualize a structure's quality against the entire PDB [24]. This shift has had a tangible impact on the quality of the structural database. Since the widespread adoption of tools like MolProbity, the average all-atom clashscores for new depositions in the 1.8-2.2 Å resolution range have improved approximately threefold, demonstrating how rigorous validation drives better modeling practices across the scientific community [24] [23].

Foundational Concepts and Statistical Criteria

The Ramachandran Plot

The Ramachandran plot is a two-dimensional scatter plot of the backbone dihedral angles φ (phi) against ψ (psi) for each residue in a protein structure. It visualizes the sterically allowed and disallowed conformations of the polypeptide backbone. The plot is divided into favored, allowed, and outlier regions based on empirical data from high-quality structures. Modern implementations, such as those in MolProbity, PHENIX, and the wwPDB, use reference data derived from the Top8000 dataset, a curated set of over 7,900 protein chains filtered at the 70% homology level and further refined by excluding residues with high B-factors (> 30 Ų) or alternate conformations [24]. This stringent filtering ensures that the derived conformational distributions are clean and reproducible. The current criteria categorize residues into six distinct groups (general, glycine, isoleucine/valine, pre-proline, trans-proline, and cis-proline), each with its own φ, ψ plot, acknowledging the unique steric constraints of each residue type [24] [23]. The outlier contour is drawn such that only about one in 5,000 high-quality reference residues falls outside it, making any outlier in a model a significant flag for potential error [23].
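To make the definition of φ and ψ concrete, the following minimal sketch computes both angles from backbone atom coordinates using only the Python standard library. The function names (`dihedral`, `phi_psi`) and the input layout (a list of dicts holding `N`, `CA`, and `C` coordinates for one unbroken chain) are assumptions made for this illustration; it is not the code used by MolProbity's Ramalyze, and it does not classify residues against the Top8000 contours.

```python
import math

def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def dihedral(p0, p1, p2, p3):
    """Signed dihedral angle in degrees about the p1-p2 axis."""
    b0 = _sub(p0, p1)
    b1 = _sub(p2, p1)
    b2 = _sub(p3, p2)
    norm = math.sqrt(_dot(b1, b1))
    b1 = tuple(x / norm for x in b1)
    # Remove the components along the central bond so only the twist remains.
    v = _sub(b0, tuple(_dot(b0, b1) * x for x in b1))
    w = _sub(b2, tuple(_dot(b2, b1) * x for x in b1))
    return math.degrees(math.atan2(_dot(_cross(b1, v), w), _dot(v, w)))

def phi_psi(residues):
    """Return a list of (phi, psi) per residue; chain termini get None.

    phi(i) = dihedral(C[i-1], N[i], CA[i], C[i])
    psi(i) = dihedral(N[i], CA[i], C[i], N[i+1])
    """
    angles = []
    for i, res in enumerate(residues):
        phi = psi = None
        if i > 0:
            phi = dihedral(residues[i - 1]["C"], res["N"], res["CA"], res["C"])
        if i < len(residues) - 1:
            psi = dihedral(res["N"], res["CA"], res["C"], residues[i + 1]["N"])
        angles.append((phi, psi))
    return angles
```

Each (φ, ψ) pair would then be looked up in the contour map for the residue's category to decide whether it is favored, allowed, or an outlier. That lookup depends on the reference distributions and is not reproduced here.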

Rotamer Outliers

Rotamer outliers are side-chain conformations that deviate significantly from the low-energy torsional angles (rotamers) observed in high-resolution structures. Their evaluation relies on rotamer libraries, which are statistical distributions of the side-chain dihedral angles χ₁, χ₂, etc. MolProbity's rotamer validation has likewise been updated using the Top8000 dataset, providing a more nuanced and modern picture of preferred side-chain conformations [24]. A rotamer is typically flagged as an outlier if its χ angles fall in a region populated by only a very small fraction of the reference data (e.g., <0.3%). Importantly, a side-chain rotamer outlier often co-occurs with other validation outliers, such as Cβ deviations or steric clashes. The Cβ deviation is a particularly powerful metric; it measures the displacement of the modeled Cβ atom from the ideal position calculated from the backbone coordinates. A significant Cβ deviation indicates that the side chain's orientation is forcing the Cβ into a non-tetrahedral geometry, which is a strong indicator that either the side chain or the local backbone fit is incorrect [23].
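The idea behind the Cβ deviation can be sketched in a few lines: build an idealized Cβ position from the N, Cα, and C atoms, then measure how far the modeled Cβ lies from it. The sketch below is an approximation under stated assumptions. The three coefficients in `ideal_cb` are a widely used empirical construction for a near-tetrahedral virtual Cβ, not MolProbity's own procedure, and the 0.25 Å cutoff is the value commonly quoted for MolProbity rather than a figure given in the text above. All function names are hypothetical.

```python
import math

def _sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def _cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def ideal_cb(n, ca, c):
    """Approximate ideal C-beta position from backbone N, CA, C coordinates."""
    b = _sub(ca, n)
    cv = _sub(c, ca)
    a = _cross(b, cv)
    # Empirical coefficients for a virtual C-beta (an assumption of this sketch).
    return tuple(-0.58273431 * a[k] + 0.56802827 * b[k]
                 - 0.54067466 * cv[k] + ca[k] for k in range(3))

def cb_deviation(n, ca, c, cb):
    """Distance in angstroms between the modeled and the idealized C-beta."""
    return math.dist(ideal_cb(n, ca, c), cb)

CB_DEV_CUTOFF = 0.25  # angstroms; assumed cutoff, see the note above

def is_cb_outlier(n, ca, c, cb, cutoff=CB_DEV_CUTOFF):
    return cb_deviation(n, ca, c, cb) >= cutoff
```

A residue whose modeled Cβ sits well away from the idealized position is worth inspecting together with its rotamer and clash flags, since the three problems frequently share one cause.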

Clashscore

The clashscore is a measure of steric strain within a model, calculated as the number of serious all-atom steric overlaps (≥ 0.4 Å) per 1,000 atoms [24]. This metric is unique to MolProbity and represents a significant advancement over earlier "bump checks" because it includes explicit hydrogen atoms. The methodology involves two key steps. First, the Reduce program adds all hydrogen atoms, optimizes the hydrogen-bond networks, and flips Asn, Gln, and His side chains where necessary to resolve clashes. Second, the Probe program analyzes all non-covalent atom pairs, identifying any pairs whose van der Waals surfaces overlap by 0.4 Å or more [24] [23]. The all-atom contact analysis is exceptionally sensitive to local fitting problems. It is crucial to understand that the goal is not to achieve a clashscore of zero, which is likely impossible and may indicate over-fitting, but to have a score comparable to the best reference structures, which typically have a few small, unresolved clashes [24].
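The arithmetic behind the clashscore is simple once the overlaps are known. The sketch below assumes that the van der Waals overlap for each non-bonded atom pair has already been computed (here as the sum of the two radii minus the interatomic distance) and applies the 0.4 Å threshold and per-1,000-atom normalization described above. The function names are hypothetical, and the real Probe analysis, which rolls a small probe sphere over the surfaces, skips covalently linked atoms, and treats hydrogen bonds specially, is not reproduced.

```python
def vdw_overlap(distance, radius_a, radius_b):
    """Overlap of two van der Waals spheres in angstroms (positive = interpenetration)."""
    return radius_a + radius_b - distance

def clashscore(overlaps, n_atoms, threshold=0.4):
    """Serious overlaps (>= threshold, in angstroms) per 1,000 atoms.

    overlaps: overlap values for non-bonded atom pairs, with hydrogens included.
    n_atoms:  total number of atoms in the model, hydrogens included.
    """
    if n_atoms <= 0:
        raise ValueError("n_atoms must be positive")
    n_clashes = sum(1 for o in overlaps if o >= threshold)
    return 1000.0 * n_clashes / n_atoms

# Example: two of these four overlaps reach 0.4 angstroms in a 2,000-atom model.
example_score = clashscore([0.10, 0.40, 0.55, 0.39], n_atoms=2000)
```

Because the score is normalized by atom count, models of different sizes can be compared directly, which is what allows the wwPDB to report it as a percentile.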

Table 1: Key Stereochemical Quality Metrics and Their Interpretation

| Metric | What It Measures | Calculation Method | Interpretation & Goal |
|---|---|---|---|
| Ramachandran Plot | Backbone torsion angle (φ/ψ) plausibility [23] | Comparison to φ/ψ distributions from the Top8000 reference dataset; residues categorized as favored, allowed, or outlier [24] | >98% in favored regions is excellent for a well-modeled structure at high resolution. Outliers require inspection and justification. |
| Rotamer Outliers | Side-chain conformation plausibility [23] | Comparison of χ angles to rotamer libraries from the Top8000 dataset; rotamers flagged with a percentile score [24] | A low rate of outliers (<1-2%) is expected. Often linked to Cβ deviations and clashes. |
| Clashscore | Steric hindrance or atomic overlaps [24] | Number of all-atom clashes (≥0.4 Å) per 1,000 atoms, calculated by Probe after adding H atoms with Reduce [23] | Lower is better. Compare to the average for the resolution range (e.g., a score of 4 is excellent for mid-resolution X-ray) [23]. |

Experimental and Computational Methodologies

Workflow for Integrated Validation and Correction

A modern structural biology workflow integrates validation not as a final step, but as a cyclical process of diagnosis and correction throughout model building and refinement. The following diagram illustrates this iterative workflow, which leverages tools like MolProbity and Coot to systematically improve model quality.

This integrated workflow ensures that local errors are identified and corrected early, preventing the accumulation of problems that can hinder refinement and map interpretation. The key is to prioritize residues that are flagged by multiple validation metrics, as this strongly indicates a genuine error in the local fit rather than a mere statistical outlier.
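The prioritization rule described here, fixing residues flagged by several metrics first, is easy to express in code. The sketch below assumes the outlier lists have already been extracted from a validation report into a dictionary that maps each metric name to a set of residue identifiers. The metric names, the `(chain, residue number)` identifier format, and the function name are hypothetical choices for this illustration.

```python
from collections import defaultdict

def rank_flagged_residues(outliers):
    """Order residues by how many independent metrics flag them.

    outliers: dict mapping metric name -> iterable of residue identifiers.
    Returns a list of (residue, [metrics]) sorted so that residues with the
    most flags come first; ties are broken by residue identifier.
    """
    flags = defaultdict(set)
    for metric, residues in outliers.items():
        for residue in residues:
            flags[residue].add(metric)
    ranked = [(residue, sorted(metrics)) for residue, metrics in flags.items()]
    ranked.sort(key=lambda item: (-len(item[1]), item[0]))
    return ranked

# Hypothetical outlier lists for a small model.
report = {
    "ramachandran": {("A", 45), ("A", 112)},
    "rotamer": {("A", 45), ("A", 78)},
    "clash": {("A", 45), ("A", 78), ("A", 203)},
}
worklist = rank_flagged_residues(report)
```

In this example residue A 45 heads the worklist with three flags, followed by A 78 with two. Residues flagged by a single metric come last and may turn out to be genuine but unusual conformations once the density is inspected.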

Protocols for Key Validation Experiments

Protocol 1: Running a Full MolProbity Validation Analysis

- Input Preparation: Obtain your coordinate file in PDB or mmCIF format. Ensure structure factors are available if performing a full wwPDB validation.

- Submission: Access the MolProbity web service (http://molprobity.biochem.duke.edu or its mirror at http://molprobity.manchester.ac.uk) and upload your coordinate file.

- Automated Processing: The server runs a suite of tools:

  - Reduce: Adds all H atoms, optimizes hydrogen bonds, and flips Asn/Gln/His side chains.

  - Probe: Calculates all-atom contacts and generates the clashscore.

  - Ramalyze: Evaluates backbone dihedral angles against the Top8000 dataset.

  - Rotalyze: Evaluates side-chain rotamers against the updated library.

  - Cbetadev: Calculates Cβ deviations to identify backbone/side-chain conflicts [24] [23].

- Interpretation: Review the interactive results. The output includes a summary score, detailed tables of outliers, and interactive 3D visualizations in KiNG or through links to Coot for guided correction.

Protocol 2: Correcting a Common Multi-Outlier (e.g., Rotamer Outlier with Clash)

- Identify the Residue: From the MolProbity report, locate a residue flagged as a rotamer outlier that also shows serious steric clashes.

- Visual Inspection: In Coot, center the view on this residue. Display the electron density maps (2mFo-DFc and mFo-DFc) to assess the quality of the fit.

- Analyze Contacts: Use Coot's Probe clashes tool under the Validate menu (which relies on MolProbity's Reduce and Probe all-atom contact analysis) to visualize the specific atomic overlaps as red spikes or dots [23].

- Initiate Correction:

  - If the density is poor, consider rebuilding the side chain or even the local backbone.

  - If the density is clear, use the "Rotamer" tool in Coot to cycle through the most common, low-energy rotamers. Select a rotamer that fits the density and simultaneously eliminates the steric clashes.

  - For Asn, Gln, or His residues, use the "Simple Rotamers" function or let Reduce/Probe automatically determine the correct flip state to resolve clashes and optimize H-bonds [24].

- Real-space Refinement: Perform a final real-space refinement of the residue within Coot to regularize its geometry while maintaining a good fit to the density.

Advanced and Emerging Techniques

Network-Based Validation

Beyond traditional geometric metrics, complex network analysis offers a global perspective on model quality by representing a protein structure as a network. In this representation, amino acid residues are nodes, and close contacts between residues form the edges. Studies analyzing over 50,000 such residue networks have shown that correct protein structures exhibit distinct network properties compared to incorrect models. Specifically, correct models have a higher average node degree (more densely intra-connected), higher graph energy (more stable connections), and a lower shortest path length (more efficient information transfer between residues) [25]. This method can identify global packing errors that might not be apparent from local criteria alone. For instance, an analysis of an incorrect model (PDB id: 2F2M) revealed a group of 22 residues connected to the rest of the protein by only a single link, a topological flaw that was corrected in the later structure (PDB id: 3B5D) [25].
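As an illustration of the residue-network idea, the following sketch builds a contact network from Cα coordinates and computes two of the properties mentioned above: average node degree and average shortest path length. The 7 Å Cα-Cα cutoff and the function names are assumptions made for this example, since the contact definition used in [25] is not given here. Graph energy is omitted because it requires the eigenvalues of the adjacency matrix.

```python
import math
from collections import deque

def contact_network(ca_coords, cutoff=7.0):
    """Adjacency sets linking residues whose C-alpha atoms lie within cutoff (angstroms)."""
    n = len(ca_coords)
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            if math.dist(ca_coords[i], ca_coords[j]) <= cutoff:
                adj[i].add(j)
                adj[j].add(i)
    return adj

def average_degree(adj):
    """Mean number of contacts per residue."""
    return sum(len(neighbors) for neighbors in adj.values()) / len(adj)

def average_shortest_path(adj):
    """Mean shortest path length over all connected residue pairs (breadth-first search)."""
    total = pairs = 0
    for source in adj:
        dist = {source: 0}
        queue = deque([source])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        total += sum(dist.values())
        pairs += len(dist) - 1
    return total / pairs if pairs else float("inf")

# Toy example: four residues on a line, 5 angstroms apart, form a simple chain.
toy = contact_network([(0, 0, 0), (5, 0, 0), (10, 0, 0), (15, 0, 0)])
```

On real structures these values would be compared with those of well-validated models of similar size. A compact, correctly packed fold should show a higher average degree and shorter paths than a loosely connected or mis-threaded model.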

Low-Resolution and Cryo-EM Validation

Validating structures determined by cryo-electron microscopy (cryo-EM) or low-resolution X-ray crystallography presents unique challenges due to decreased map clarity. To address this, the CaBLAM (Cα-Based Low-resolution Annotation Method) approach was developed. CaBLAM uses virtual dihedral angles defined by Cα atoms and carbonyl O atoms to assess the quality of the backbone conformation, particularly the secondary structure. It is exceptionally effective at diagnosing problems in α-helices and β-sheets at resolutions where traditional Ramachandran plots become less sensitive [24]. For RNA in low-resolution models, ERRASER (Enumerative Real-space Refinement ASsisted by Electron density under Rosetta) is available through the Phenix suite to correct backbone conformations [23]. These tools are now part of the standard wwPDB validation pipeline for the corresponding structure types, ensuring robust quality assessment across all resolution ranges.

Table 2: The Scientist's Toolkit: Essential Software for Stereochemical Validation

| Tool / Resource | Function | Access / Integration |
|---|---|---|
| MolProbity | Comprehensive all-atom validation server; calculates clashscore, Ramachandran, rotamer, and CaBLAM outliers [24] | Web server (Duke or Manchester), command line, or integrated within Phenix [24] |
| Phenix Software Suite | Integrated system for structure solution; includes phenix.molprobity and other validation modules for real-time feedback during refinement [24] | GUI or command-line; uses CCTBX libraries shared with MolProbity [24] [23] |
| Coot | Model-building and validation tool; provides interactive visualization of MolProbity outliers and tools for real-space correction [23] | Standalone application; directly links to MolProbity for clash visualization and rotamer fitting [23] |
| Reduce | Adds and optimizes H atoms, assigns His protonation, and flips Asn/Gln/His side chains to resolve clashes [23] | Runs automatically within MolProbity, Phenix, and Coot validation pipelines [24] [23] |
| wwPDB Validation Server | Provides official pre-deposition validation reports using MolProbity and other criteria, giving percentiles vs. the PDB [24] [4] | Online server accessible during PDB deposition; produces a PDF report for journal review [24] |

Stereochemical quality metrics, centered on Ramachandran plots, rotamer analysis, and clashscores, provide an indispensable framework for assessing and ensuring the reliability of macromolecular structures. The integration of these tools, particularly those employing all-atom contact analysis, into cyclical refinement workflows has demonstrably elevated the quality of the entire Protein Data Bank. For researchers in structural biology and drug development, a deep understanding of these metrics is not merely academic—it is a practical necessity. Correctly interpreting and acting upon validation outliers prevents the propagation of errors that could misguide functional interpretations or drug design efforts. The field continues to advance with methods like CaBLAM for low-resolution models and network analysis for global assessment, ensuring that validation practices evolve alongside the techniques used to determine structures. The ultimate goal remains the deposition of structurally sound and biologically meaningful models, a task in which rigorous stereochemical validation is paramount.