Resolving Poor Sample Separation in Heatmap Clustering: A Troubleshooting Guide for Biomedical Researchers

Abstract

Poor sample separation in heatmap clustering is a frequent challenge in biomedical data analysis, often leading to misleading biological interpretations. This article provides a comprehensive, step-by-step framework for diagnosing and resolving these issues, tailored for researchers, scientists, and drug development professionals. We cover foundational principles of clustering algorithms and data requirements, explore advanced methodological choices, detail a systematic troubleshooting protocol for common pitfalls, and guide the validation of results using robust internal and external metrics. By integrating current best practices and validation techniques, this guide empowers researchers to achieve clear, reliable, and biologically meaningful cluster separation in their genomic, proteomic, and other high-dimensional datasets.

Understanding the Core Principles of Clustering and Data Requirements

Frequently Asked Questions (FAQs)

Q1: Why do my case and control samples fail to separate in a clustered heatmap?

Insufficient sample separation in heatmaps often stems from the clustering algorithm's inherent limitations or from data preparation issues. K-means, which some heatmap tools offer for partitioning rows or columns, makes restrictive assumptions about data structure: it assumes clusters are spherical, equally sized, and similar in density [1]. When your biological data violates these assumptions (e.g., contains irregular cluster shapes or varying densities), separation fails. Additionally, inadequate sample size, insufficient differential expression signal, or inappropriate data scaling can contribute to poor separation [2] [3].

Q2: How can I manually control sample order when using column splits in ComplexHeatmap?

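When using `column_split` in ComplexHeatmap, the default alphabetical ordering of the groups overrides a manual column order. The solution is to define `column_split` as a factor with explicitly specified levels in your desired order [4]. Instead of `column_split <- meta_df$Species`, pass a factor, for example `column_split <- factor(meta_df$Species, levels = group_order)`, where `group_order` is an illustrative name for a character vector listing the groups in the order you want.

This ensures your specified group order is preserved while maintaining correct sample-group assignments.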

Q3: When should I choose DBSCAN over K-means for my clustering analysis?

DBSCAN is preferable to K-means in several scenarios [5]:

- Your data contains clusters of arbitrary, non-spherical shapes

- Clusters differ in size or are separated by sparse regions

- You need to automatically detect and separate outliers

- The number of clusters is unknown beforehand

K-means assumes spherical clusters of similar density and fails when these assumptions are violated, whereas DBSCAN groups points by density connectivity, which makes it more robust to the natural cluster variation found in biological data [1]. Note that DBSCAN applies a single density threshold, so clusters with very different densities remain difficult for it.

Q4: What are the best color practices for heatmap interpretation?

Avoid rainbow color scales, as they create misperceptions of value magnitudes and lack consistent directionality [6]. Instead:

- Use sequential scales for non-negative values (e.g., raw TPM) with single-hue progressions

- Use diverging scales when a meaningful center exists (e.g., standardized values)

- Ensure color-blind-friendly combinations (blue-orange, blue-red, blue-brown)

- Maintain color simplicity with 3 consecutive hues maximum [6] [7]

Troubleshooting Guide: Poor Sample Separation

Diagnostic Framework

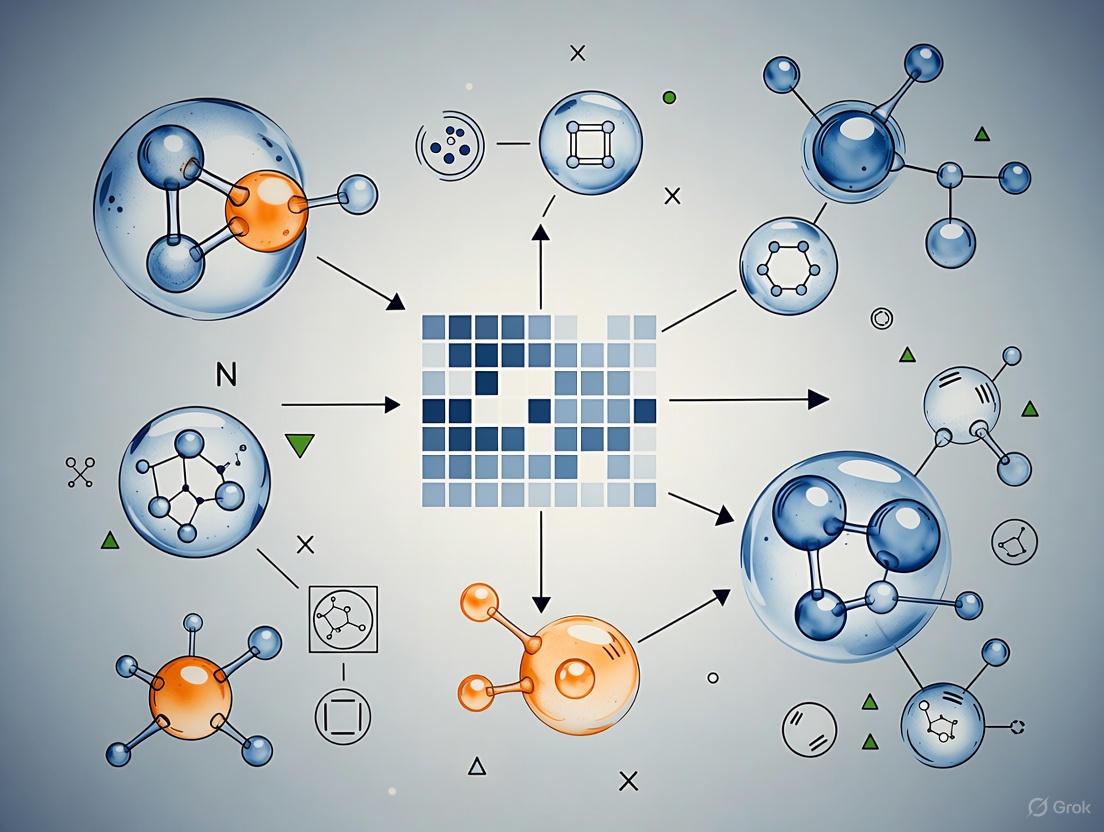

Algorithm Selection Guide

Table 1: Clustering Algorithm Comparison for Biological Data

| Algorithm | Best For | Limitations | Key Parameters | Implementation Tips |
|---|---|---|---|---|
| K-means | Spherical clusters, similar cluster sizes, known cluster count [1] | Fails with non-spherical shapes, different densities, outliers [1] | K (number of clusters) | Scale data first; use gap statistic to determine optimal K [3] |
| DBSCAN | Arbitrary shapes, unknown cluster count, outlier detection [5] | Struggles when clusters differ widely in density; sensitive to parameter selection | eps (neighborhood distance), min_samples (core points) [5] | Start with min_samples = 2×dimensions; use k-distance plot for eps |
| MAP-DP | Unknown cluster count, mixed data types, outlier robustness [1] | Computationally more complex than K-means | prior count, variance parameters [1] | Suitable for biological data with natural clustering uncertainty |
| Hierarchical | Nested cluster structures, dendrogram visualization | Computational cost for large datasets | linkage method, distance metric | Use for heatmap integration; choose appropriate distance metric [3] |

Step-by-Step Resolution Protocol

Protocol 1: Systematic Improvement of Sample Separation

Data Preprocessing

- Scale features using z-score normalization: `z = (value - mean) / standard deviation` [3]

- Check for batch effects and apply correction if needed

- Ensure adequate sample size for statistical power
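The z-score formula in the list above can be sketched in a few lines. This is a minimal, dependency-free illustration, assuming a matrix stored as a list of rows (genes) with one value per sample; the function name `zscore_rows` and the example values are hypothetical, and in practice the scaling built into your heatmap package would normally be used instead.

```python
from statistics import mean, stdev

def zscore_rows(matrix):
    """Return a copy of `matrix` with each row scaled to mean 0 and SD 1."""
    scaled = []
    for row in matrix:
        mu = mean(row)
        sd = stdev(row)  # sample standard deviation (n - 1 denominator)
        if sd == 0:
            # A constant feature carries no information; map it to zeros
            scaled.append([0.0 for _ in row])
        else:
            scaled.append([(v - mu) / sd for v in row])
    return scaled

# Hypothetical expression values: rows are genes, columns are samples
expr = [
    [1000.0, 1200.0, 800.0, 1000.0],  # highly expressed gene
    [1.0, 3.0, 2.0, 2.0],             # lowly expressed gene
]
scaled_expr = zscore_rows(expr)
for row in scaled_expr:
    print([round(v, 2) for v in row])
# [0.0, 1.22, -1.22, 0.0]
# [-1.22, 1.22, 0.0, 0.0]
```

After scaling, both genes span the same range, so the highly expressed gene no longer dominates distance calculations or the color scale.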

Distance Metric Selection

- Euclidean: For linear, continuous data

- Manhattan: For high-dimensional spaces

- Correlation-based: For gene expression patterns

- Implement in pheatmap via the `clustering_distance_rows` and `clustering_distance_cols` arguments [3]
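To see why the choice of metric matters, the sketch below compares the three distances on hypothetical expression profiles. It is an illustration in plain Python rather than the implementation used by any heatmap package; the profile values and function names are assumptions made for the example.

```python
import math
from statistics import mean

def euclidean(a, b):
    """Straight-line distance; sensitive to absolute magnitude."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def manhattan(a, b):
    """Sum of absolute coordinate differences."""
    return sum(abs(x - y) for x, y in zip(a, b))

def correlation_distance(a, b):
    """1 - Pearson r: 0 for identical shapes, 2 for perfectly opposite ones."""
    ma, mb = mean(a), mean(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return 1.0 - cov / math.sqrt(var_a * var_b)

# Hypothetical profiles: b has the same shape as a at ten times the magnitude
a = [1.0, 2.0, 3.0, 2.0, 1.0]
b = [10.0, 20.0, 30.0, 20.0, 10.0]
c = [3.0, 2.0, 1.0, 2.0, 3.0]  # similar magnitude to a, opposite shape

for name, fn in [("euclidean", euclidean),
                 ("manhattan", manhattan),
                 ("correlation", correlation_distance)]:
    print(name, round(fn(a, b), 3), round(fn(a, c), 3))
```

Euclidean and Manhattan distance place `a` closer to `c` (similar magnitude), while correlation distance places `a` closer to `b` (same pattern). Which behavior is appropriate depends on whether magnitude or pattern is the biological signal of interest.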

Alternative Visualization Validation

Advanced Quality Control

Research Reagent Solutions

Table 2: Essential Tools for Clustering Analysis

| Category | Specific Solution | Function | Implementation |
|---|---|---|---|
| Heatmap Packages | ComplexHeatmap [4] | Advanced heatmap customization | R/Bioconductor |
| Heatmap Packages | pheatmap [3] | Publication-quality clustered heatmaps | R |
| Heatmap Packages | heatmaply [3] | Interactive heatmap exploration | R |
| Clustering Algorithms | K-means [1] | Basic spherical clustering | Most platforms |
| Clustering Algorithms | DBSCAN [5] | Density-based clustering | Python: scikit-learn |
| Clustering Algorithms | MAP-DP [1] | Bayesian nonparametric clustering | Specialized implementation |
| Color Solutions | Viridis [6] | Color-blind-friendly sequential palettes | Python/R |
| Color Solutions | ColorBrewer [6] | Curated color schemes | R/Python |
| Color Solutions | Custom diverging palettes [7] | Highlighting midpoints | All platforms |

Advanced Technical Reference

Algorithm Workflows

Experimental Parameters for Robust Clustering

Table 3: Optimal Parameter Settings for Biological Data Clustering

| Data Scenario | Recommended Algorithm | Critical Parameters | Validation Approach |
|---|---|---|---|
| Gene Expression (RNA-seq) | Hierarchical + Heatmap [3] | Distance: correlation, Linkage: Ward's [3] | Biological replicate concordance |
| Unknown Subtypes | MAP-DP [1] | Prior count: 1, Variances: data-driven | Clinical outcome correlation |
| Noisy Data with Outliers | DBSCAN [5] | eps: from k-distance plot, min_samples: 5-10 | Outlier biological significance |
| Clear Spherical Grouping | K-means [1] | K: gap statistic, multiple random starts | Within-cluster sum of squares |

Advanced Troubleshooting Techniques

Cohort-Based Error Analysis

When standard clustering fails, implement cohort-based model inspection [9]:

- Define cohorts based on experimental conditions or sample characteristics

- Assess clustering performance within each cohort separately

- Identify cohorts where separation consistently fails

- Investigate batch effects or technical artifacts specific to problematic cohorts

Most-Wrong Prediction Analysis

Examine samples with high prediction confidence but incorrect cluster assignments [9]:

- Identify misclassified samples with high cluster probability

- Analyze feature patterns in these problematic samples

- Determine if misclassification represents:

  - Biological reality (true intermediate states)

  - Technical artifacts (batch effects)

  - Algorithm limitations

Multi-Algorithm Consensus

Deploy multiple clustering algorithms simultaneously:

- Run K-means, DBSCAN, and hierarchical clustering

- Identify samples with consistent clustering across methods

- Investigate discordantly classified samples

- Use consensus clusters for robust biological interpretation
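One way to make the consensus steps above concrete is a co-association score: for every pair of samples, the fraction of methods that place them in the same cluster. The sketch below is a minimal illustration, not a published consensus-clustering method; the label vectors are hypothetical stand-ins for the output of K-means, hierarchical clustering, and DBSCAN, and treating DBSCAN's `-1` noise label as "clustered with nobody" is an assumption of this example.

```python
from itertools import combinations

def co_association(labelings, noise_label=-1):
    """Fraction of methods that place each pair of samples in the same cluster."""
    n = len(labelings[0])
    scores = {}
    for i, j in combinations(range(n), 2):
        together = sum(
            1 for labels in labelings
            if labels[i] == labels[j] and labels[i] != noise_label
        )
        scores[(i, j)] = together / len(labelings)
    return scores

def sample_stability(scores, n):
    """Per sample, the fraction of its pairings on which all methods agree."""
    unanimous = [0] * n
    for (i, j), s in scores.items():
        if s in (0.0, 1.0):  # every method says "together" or every method says "apart"
            unanimous[i] += 1
            unanimous[j] += 1
    return [u / (n - 1) for u in unanimous]

# Hypothetical cluster labels for six samples; label values need not match across methods
kmeans_labels = [0, 0, 0, 1, 1, 1]
hier_labels = [1, 1, 0, 0, 0, 0]
dbscan_labels = [0, 0, -1, 1, 1, 1]  # -1 marks a noise point

scores = co_association([kmeans_labels, hier_labels, dbscan_labels])
stability = sample_stability(scores, n=6)
print(stability)  # [0.8, 0.8, 0.0, 0.8, 0.8, 0.8]
```

Because the score compares pairs rather than label values, it does not matter that each method numbers its clusters differently. Here the third sample (index 2) has a stability of 0 and is the candidate for closer inspection.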

Critical Data Prerequisites for Successful Sample Separation

Frequently Asked Questions (FAQs)

Q1: My heatmap shows poor visual separation between my predefined sample groups (e.g., Cancer vs. Control). What is the most likely cause?

A: Poor visual separation often indicates that the biological or technical variation driving the clustering is stronger than the variation from your variable of interest. This does not necessarily mean your clustering algorithm has failed; it may simply reflect the underlying data structure. Common causes include:

- Confounding Factors: Unaccounted batch effects, patient subpopulations, or other clinical variables (e.g., age, gender, cancer subtype) can introduce variation that dominates the clustering pattern [10].

- Data Preprocessing Issues: The presence of outliers can dominate the color scale, making true biological differences less visible [10].

- True Biological Reality: In some cases, the data may not support a clear separation between your hypothesized groups [10].

Q2: How can I improve the visual clarity and separation in my heatmap?

A: You can take several steps to enhance visual separation:

- Refine Gene Selection: Use stricter criteria for selecting features (e.g., genes), combining a significance threshold (adjusted p-value) with a minimum fold-change requirement (e.g., |log2FC| > 1). This focuses the heatmap on features with larger and more biologically meaningful differences [10].

- Address Outliers: For visualization purposes, trim the data by scaling values beyond a specific quantile (e.g., 1st and 99th percentiles) back to that quantile's value. This prevents extreme outliers from compressing the color range for the majority of your data [10].

- Ensure Proper Data Scaling: Always apply standardization (e.g., z-scoring) to rows and/or columns so that the color mapping reflects relative differences across features or samples [10].

Q3: What does it mean if my samples cluster well, but not by my experimental groups?

A: This is a critical finding. It suggests that a source of variation other than your primary group designation is the strongest driver of the data structure. You should investigate potential confounding factors, such as batch effects, sample processing dates, or other unmeasured clinical variables [10]. This unexpected clustering can sometimes reveal new biological insights or subtypes.

Q4: How can I validate that my clustering results are generalizable and not specific to my dataset?

A: The gold standard for validating a clustering model is to assess its generalisability on an external dataset from a different cohort or institution [11]. A model that identifies similar, clinically recognisable clusters in an external population demonstrates strong validity and robustness [11].

Troubleshooting Guide: Poor Sample Separation

This guide provides a step-by-step methodology to diagnose and address poor sample separation in heatmap clustering.

Step 1: Diagnose the Problem

Before applying fixes, understand what your data and initial results are telling you.

- Action: Perform a Principal Component Analysis (PCA) and examine the plot. Check if your sample groups separate along any of the principal components.

- Interpretation: If samples do not separate by group in the PCA, it is unlikely they will separate clearly in a heatmap, as both methods are capturing major sources of variation [10].

Step 2: Optimize Feature Selection

The features (e.g., genes) you include are the most critical factor for successful separation.

- Action: Avoid using a simple significance cutoff. Combine statistical significance with a measure of effect size.

- Protocol: When performing differential analysis, filter your results to include only features that meet both of the following criteria:

- Adjusted p-value (FDR) < 0.05

- Absolute log2 Fold Change > 1 [10]

- Advanced Tip: Test different cutoffs (e.g., top 200-500 most variable genes) to see if a more restricted gene list improves separation by reducing noise [10].
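The combined filter described above can be sketched as follows. The record layout, field names (`log2fc`, `padj`), and gene names are hypothetical; adapt them to whatever your differential analysis tool reports.

```python
# Hypothetical differential-expression results; field names are illustrative
de_results = [
    {"gene": "GENE_A", "log2fc": 2.4, "padj": 0.001},
    {"gene": "GENE_B", "log2fc": 0.3, "padj": 0.0001},  # significant, tiny effect
    {"gene": "GENE_C", "log2fc": -1.8, "padj": 0.03},
    {"gene": "GENE_D", "log2fc": 3.1, "padj": 0.20},    # large effect, not significant
    {"gene": "GENE_E", "log2fc": -1.2, "padj": None},   # no adjusted p-value reported
]

def select_features(results, padj_max=0.05, min_abs_log2fc=1.0):
    """Keep genes that pass BOTH the significance and the effect-size cutoff."""
    selected = []
    for r in results:
        if r["padj"] is None:
            continue  # cannot assess significance, so exclude
        if r["padj"] < padj_max and abs(r["log2fc"]) > min_abs_log2fc:
            selected.append(r["gene"])
    return selected

heatmap_genes = select_features(de_results)
print(heatmap_genes)  # ['GENE_A', 'GENE_C']
```

Using the absolute value of the fold change keeps both up- and down-regulated genes, which is usually what a diverging heatmap color scale is meant to display.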

Step 3: Control Data Preprocessing and Quality

The quality of your input data directly impacts the quality of your clusters [12].

- Action 1: Handle Missing Data

- Protocol: Use robust imputation methods, such as Multiple Imputation by Chained Equations (MICE), to estimate missing values without introducing significant bias [11].

- Action 2: Treat Outliers for Visualization

- Protocol: Apply a winsorization or quantile trimming function to your data matrix after initial scaling. This compresses extreme values for a better visual spread without affecting the clustering of most data points [10].
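A minimal sketch of quantile-based winsorization is shown below, assuming an already scaled matrix stored as a list of rows. The helper names and the synthetic matrix are hypothetical, and the linear-interpolation quantile is one common convention among several.

```python
def quantile(sorted_vals, q):
    """Linear-interpolation quantile of an already sorted list (0 <= q <= 1)."""
    pos = q * (len(sorted_vals) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(sorted_vals) - 1)
    frac = pos - lo
    return sorted_vals[lo] * (1 - frac) + sorted_vals[hi] * frac

def winsorize(matrix, lower=0.01, upper=0.99):
    """Clip every value to the [lower, upper] quantiles of the whole matrix."""
    flat = sorted(v for row in matrix for v in row)
    lo_cut, hi_cut = quantile(flat, lower), quantile(flat, upper)
    return [[min(max(v, lo_cut), hi_cut) for v in row] for row in matrix]

# Synthetic scaled matrix: values spread over [-2, 2] plus one extreme outlier
matrix = [[-2 + 4 * (10 * r + c) / 99 for c in range(10)] for r in range(10)]
matrix[3][7] = 25.0

clipped = winsorize(matrix)
print(max(max(row) for row in matrix))             # 25.0
print(round(max(max(row) for row in clipped), 2))  # far below 25
```

Only the values passed to the color mapping need to be clipped; if you want the dendrogram to reflect the untrimmed data, compute the clustering on the original matrix and supply the clipped matrix for display.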

Step 4: Validate and Interpret Clusters

Always assess the quality and meaning of your resulting clusters.

- Action 1: Evaluate Cluster Stability and Quality

- Protocol: Use internal validation metrics to guide the choice of cluster number (k) and assess result quality. Common metrics include [12]:

- Elbow Method: Plots the within-cluster variance against the number of clusters; the "elbow" indicates the optimal k.

- Silhouette Score: Measures how similar an object is to its own cluster compared to other clusters. Values range from -1 to 1, with higher values indicating better-defined clusters.

- Davies-Bouldin Index: Measures the average similarity between each cluster and its most similar one. Lower values indicate better separation.

- Action 2: Interpret Clusters Clinically

- Protocol: Statistically compare the clinical and demographic variables across the identified clusters. A clinically recognisable and interpretable cluster is often more valuable than one defined only by mathematical separation [11].
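As an illustration of the internal metrics listed above, the following sketch computes the mean silhouette score with Euclidean distance in plain Python. It assumes at least two clusters and is intended only to show the calculation; the points and label vectors are hypothetical, and an established library implementation is preferable for real analyses.

```python
import math

def silhouette_score(points, labels):
    """Mean silhouette over all samples (Euclidean distance, >= 2 clusters)."""
    clusters = {}
    for idx, lab in enumerate(labels):
        clusters.setdefault(lab, []).append(idx)

    total = 0.0
    for i, lab in enumerate(labels):
        own = [j for j in clusters[lab] if j != i]
        if not own:
            continue  # a singleton cluster contributes a silhouette of 0
        # a: mean distance to the other members of the sample's own cluster
        a = sum(math.dist(points[i], points[j]) for j in own) / len(own)
        # b: mean distance to the members of the nearest other cluster
        b = min(
            sum(math.dist(points[i], points[j]) for j in members) / len(members)
            for other, members in clusters.items() if other != lab
        )
        total += (b - a) / max(a, b)
    return total / len(points)

# Hypothetical 2-D samples forming two tight, well-separated groups
points = [(0, 0), (0, 1), (1, 0), (10, 10), (10, 11), (11, 10)]
good = silhouette_score(points, [0, 0, 0, 1, 1, 1])   # labels match the groups
mixed = silhouette_score(points, [0, 1, 0, 1, 0, 1])  # labels ignore the groups
print(round(good, 2), round(mixed, 2))
```

The labeling that follows the true groups scores close to 1, while the arbitrary labeling scores much lower, which is the contrast to look for when comparing candidate values of k.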

Experimental Protocol for Validating Cluster Generality

Objective: To determine if the clusters identified in a development dataset are generalizable to an independent external population [11].

Workflow Diagram:

Methodology:

Dataset Preparation:

- Secure two independent datasets: a development dataset (e.g., SICS cohort) for model training and an external validation dataset (e.g., MUMC+ cohort) from a different source [11].

- Align variables and preprocess both datasets identically. Exclude variables with a high percentage (>40%) of missing values in the external dataset from both datasets to ensure comparability [11].

- Handle missing data using a robust method like Multiple Imputation by Chained Equations (MICE) [11].

- Standardize all numerical variables in the development dataset to a z-distribution. Use the mean and standard deviation from the development dataset to standardize the external validation dataset [11].

Model Training and Application:

- Train your chosen clustering model (e.g., K-means, DEC) exclusively on the preprocessed development dataset [11].

- Directly apply the trained model (without retraining) to the preprocessed external validation dataset. This tests the model's ability to generalize.
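The two key points of this protocol, scaling the external data with development-set parameters and applying the model without retraining, can be sketched as follows. The example assumes a centroid-based model such as K-means, for which applying the model means assigning each new sample to its nearest trained centroid; the cohort values, labels, and function names are hypothetical.

```python
import math
from statistics import mean, stdev

def fit_scaler(rows):
    """Per-variable mean and SD, estimated on the development data only."""
    columns = list(zip(*rows))
    return [mean(c) for c in columns], [stdev(c) for c in columns]

def apply_scaler(rows, means, sds):
    """Standardize rows with previously fitted parameters."""
    return [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in rows]

def cluster_centroids(rows, labels):
    """Mean position of each cluster, keyed by cluster label."""
    centroids = {}
    for lab in sorted(set(labels)):
        members = [r for r, l in zip(rows, labels) if l == lab]
        centroids[lab] = [mean(c) for c in zip(*members)]
    return centroids

def assign_to_centroids(rows, centroids):
    """Label each row with its nearest centroid, without retraining."""
    return [min(centroids, key=lambda lab: math.dist(row, centroids[lab]))
            for row in rows]

# Hypothetical development cohort (two variables) with labels from a trained model
dev = [[60, 1.0], [62, 1.2], [58, 0.9], [80, 3.0], [82, 3.2], [78, 2.9]]
dev_labels = [0, 0, 0, 1, 1, 1]
means, sds = fit_scaler(dev)
centroids = cluster_centroids(apply_scaler(dev, means, sds), dev_labels)

# Hypothetical external cohort, scaled with the development parameters
external = [[61, 1.1], [79, 3.1], [59, 1.0]]
external_labels = assign_to_centroids(apply_scaler(external, means, sds), centroids)
print(external_labels)  # [0, 1, 0]
```

Refitting the scaler on the external cohort would shift its data onto a different scale and make the trained centroids meaningless, which is why the development mean and standard deviation are reused.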

Analysis of Generality:

Research Reagent Solutions

The following table details key computational tools and their functions for clustering analysis.

| Tool / Technique | Function in Clustering Analysis |
|---|---|
| K-means | A distance-based partitioning algorithm; simple and fast for well-separated, spherical clusters. Often used with pre-defined 'k' [12]. |
| Deep Embedded Clustering (DEC) | Uses a neural network (autoencoder) to learn feature representations and perform clustering simultaneously; handles non-linear relationships well [11]. |
| X-Shaped VAE (X-DEC) | An adaptation of DEC using a variational autoencoder designed to handle mixed data types (numerical and categorical) more appropriately, improving cluster stability [11]. |
| Multiple Imputation by Chained Equations (MICE) | A statistical technique for handling missing data by creating multiple plausible imputations, reducing bias in the clustering input [11]. |
| Principal Component Analysis (PCA) | A dimensionality reduction technique used to visualize high-dimensional data and identify major sources of variation before clustering [12]. |

What are the primary factors that cause poor sample separation in a clustered heatmap?

Poor sample separation in clustered heatmaps often stems from three key areas: data preparation, distance metric selection, and clustering method choice [13] [3].

- Data Quality and Scaling: Without proper normalization, variables with large values can dominate the clustering process, drowning out signals from variables with lower values [3]. Scaling (e.g., using Z-score) ensures variables contribute equally to distance calculations [3].

- Distance Metric Selection: The choice of distance metric (e.g., Euclidean, Pearson correlation) fundamentally determines which samples appear similar [13] [14]. Selecting an inappropriate metric for your data type can obscure true biological patterns [14].

- Clustering Method: Different linkage methods (e.g., complete, average, Ward) produce different cluster structures [13]. Average-linkage hierarchical clustering, for instance, merges clusters based on the average distance between all pairs of observations [15].

How can I troubleshoot unexpected or weak clustering results?

Follow this systematic approach to diagnose clustering issues [3]:

- Verify Data Preprocessing: Ensure your data is properly normalized and scaled. For gene expression data, consider using log-transformed values (e.g., log2 CPM) and Z-score scaling across rows to highlight relative expression [3].

- Experiment with Distance Metrics: Test different distance measures. Euclidean distance measures magnitude differences, while Pearson correlation captures pattern similarity, which is often more biologically relevant [13] [14].

- Adjust Clustering Algorithms: Try different linkage methods. If results are inconsistent, confirm that manual clustering matches the heatmap function's clustering by checking parameters like dendrogram reordering [16].

- Incorporate Phenotype Data: Use side annotations to color-code samples by clinical or experimental phenotypes (e.g., disease status, treatment). This helps visually assess if clusters correspond to known biological groups [13].

What are the best practices for ensuring reproducible and biologically meaningful clustering?

To achieve robust and interpretable clusters [14]:

- Standardize Analysis Parameters: Document and consistently use the same distance metric and clustering method when comparing datasets. The `pheatmap` and `ComplexHeatmap` packages in R allow explicit parameter specification [3] [16].

- Perform Association Testing: After cutting dendrograms into clusters, statistically test for enrichment of phenotypic traits within clusters using chi-squared tests for categorical annotations or ANOVA for continuous variables [13].

- Validate with Known Groups: If sample subtypes are known, check that the clustering recapitulates these groups. Poor alignment may indicate technical artifacts or stronger confounding biological variables [13] [14].
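The association test mentioned above can be illustrated for the simplest case, two clusters against a binary phenotype. The sketch computes the Pearson chi-squared statistic for a 2x2 table without continuity correction; the counts are hypothetical. For tables with small expected counts, an exact test such as Fisher's is generally more appropriate.

```python
import math

def chi_squared_2x2(table):
    """Pearson chi-squared statistic and p-value for a 2x2 table (1 df)."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / n
            stat += (table[i][j] - expected) ** 2 / expected
    # Survival function of the chi-squared distribution with 1 degree of freedom
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value

# Hypothetical counts: rows are clusters, columns are phenotype (positive, negative)
cluster_by_phenotype = [
    [18, 2],   # cluster 1
    [3, 17],   # cluster 2
]
stat, p_value = chi_squared_2x2(cluster_by_phenotype)
print(round(stat, 2), p_value < 0.001)  # 22.56 True
```

A small p-value indicates that cluster membership and phenotype are associated, which supports the interpretation that the clustering reflects the annotated biology rather than noise.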

Table 1: Common Distance Metrics and Their Impact on Cluster Separation

| Distance Metric | Best Use Case | Sensitivity to Noise | Effect on Separation |
|---|---|---|---|
| Euclidean [15] [3] | Measuring magnitude differences | Moderate | Groups samples by absolute value similarity |
| Pearson Correlation | Identifying co-expression patterns [13] | Lower | Groups samples by expression profile shape |
| Uncentered Pearson | Pattern matching with magnitude dependence [13] | Lower | Hybrid of correlation and magnitude |

Table 2: Hierarchical Clustering Linkage Methods

| Linkage Method | Cluster Shape | Sensitivity to Outliers | Common Application |
|---|---|---|---|
| Average [15] | Balanced, spherical | Low | General purpose, robust |
| Complete [3] | Compact, diameter-sensitive | High | Creates tight, distinct clusters |
| Ward.D [16] | Spherical, variance-minimizing | Moderate | Creates clusters of minimal variance |

Experimental Protocol for Diagnosing Poor Separation

Objective: Systematically identify the cause of poor sample separation in a gene expression heatmap.

Materials:

- Normalized expression matrix (e.g., log2 CPM, TPM)

- R packages: `pheatmap`, `ComplexHeatmap`, or `heatmaply` [3]

- Sample phenotype annotation data

Methodology:

- Data Scaling: Scale the expression matrix by row (gene) using Z-score transformation: `Z = (value - mean) / standard deviation` [3].

- Parameter Testing: Generate multiple heatmaps, systematically varying the clustering settings, such as the distance metric and linkage method.

- Phenotype Overlay: Annotate the heatmap with known sample phenotypes (e.g., ER status, cancer subtype) [13].

- Cluster Validation: Cut the dendrogram to obtain cluster assignments and test for significant phenotype associations using chi-square tests [13].

- Interactive Exploration: For large datasets, use `heatmaply` to create interactive heatmaps for detailed inspection of individual values [3].

Diagnostic Workflow Visualization

Research Reagent Solutions

Table 3: Essential Software Tools for Heatmap Clustering and Diagnostics

| Tool Name | Language | Primary Function | Key Feature for Troubleshooting |
|---|---|---|---|
| pheatmap [3] | R | Static clustered heatmaps | Built-in scaling and diverse distance metrics |
| ComplexHeatmap [16] [13] | R | Advanced annotated heatmaps | Flexible manual dendrogram input and annotation |
| heatmap3 [13] | R | Enhanced heatmap generation | Automatic association tests between clusters and phenotypes |
| seaborn.clustermap [14] | Python | Clustered heatmaps | Integration with Python statistical and ML ecosystems |
| NG-CHM [14] | Web | Interactive heatmaps | Dynamic exploration, zooming, and detailed value inspection |

The Impact of Data Sparsity, High Dimensionality, and Noise on Clustering

Frequently Asked Questions (FAQs)

How do data sparsity and high dimensionality negatively impact the performance of traditional clustering algorithms like K-Means?

Data sparsity and high dimensionality create several interconnected problems that degrade the performance of distance-based clustering algorithms such as K-Means:

- Loss of Meaningful Distance Metrics: In high-dimensional spaces, data points tend to be spread out, causing the concept of "nearest" and "farthest" to become less meaningful. The relative contrast between the nearest and farthest neighbor of a data point diminishes, making it difficult for algorithms that rely on distance measures to form coherent clusters [17].

- Increased Computational Complexity: The cost of each distance calculation grows with the number of dimensions, and the volume of the feature space grows exponentially, so far more samples are needed to cover it. This makes clustering computationally intensive and slow [17].

- High Risk of Overfitting: Models have more flexibility to fit the training data closely in high-dimensional spaces. This often results in them learning spurious correlations and noise instead of the underlying biological signal, leading to poor generalization on new data [17].

- Concentration of Distance Distributions: Euclidean distances between all pairs of points become increasingly similar as dimensionality increases, preventing the formation of well-separated clusters [17].
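The loss of contrast between nearest and farthest neighbors can be observed directly on random data. The sketch below is an illustration with uniformly distributed synthetic points, which is an assumption of the example; real expression data have correlated features and will behave less extremely.

```python
import math
import random

def relative_contrast(n_points, n_dims, seed=0):
    """(farthest - nearest) / nearest distance from one query point to the rest."""
    rng = random.Random(seed)
    points = [[rng.random() for _ in range(n_dims)] for _ in range(n_points)]
    query, others = points[0], points[1:]
    dists = [math.dist(query, p) for p in others]
    return (max(dists) - min(dists)) / min(dists)

# The contrast shrinks as the number of dimensions grows
for d in (2, 20, 200, 2000):
    print(d, round(relative_contrast(200, d), 2))
```

In two dimensions the farthest point is many times farther away than the nearest one; in thousands of dimensions the two distances differ by only a small fraction, so "nearest neighbor" carries little information. This is the motivation for feature selection or dimensionality reduction before clustering.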

What are the most effective preprocessing steps to mitigate the effects of noise in my clustering data?

Effective preprocessing is crucial for mitigating noise. A robust protocol includes the following steps [12] [18] [19]:

- Filtering Low-Information Features: Remove genes or features with nonzero expression in fewer than 1% of cells. This eliminates lowly expressed and noisy genes, retaining only biologically relevant ones [19].

- Handling Missing Values: Most clustering algorithms will not work with missing values, so impute them or remove the affected features or samples before clustering.

- Feature Selection based on Variance: Calculate the variance of each feature and select the top 2000 highest-variance genes for analysis. This focuses the clustering on the most informative features [19].

- Standardization and Normalization: Perform standardization (e.g., Z-score normalization) and normalization on the processed data to bring all features to a common scale, preventing variables with larger ranges from dominating the clustering process [12] [19].
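The filtering and variance-based selection steps above can be sketched as follows. The data layout (a dictionary mapping gene name to per-cell values), the gene names, and the synthetic values are assumptions made for the example; the 1% threshold and top-N selection follow the description in the list.

```python
from statistics import variance

def filter_and_select(expr, min_cell_fraction=0.01, n_top=2000):
    """Drop rarely expressed genes, then return the n_top highest-variance genes."""
    kept = {}
    for gene, values in expr.items():
        n_expressing = sum(1 for v in values if v != 0)
        if n_expressing / len(values) >= min_cell_fraction:
            kept[gene] = values
    ranked = sorted(kept, key=lambda g: variance(kept[g]), reverse=True)
    return ranked[:n_top]

# Synthetic data for 200 cells
n_cells = 200
expr = {
    "gene_rare": [50] + [0] * (n_cells - 1),  # nonzero in 0.5% of cells
    "gene_flat": [5] * n_cells,               # expressed everywhere, no variation
    "gene_var": [0, 10] * (n_cells // 2),     # strongly variable
    "gene_mid": [4, 6] * (n_cells // 2),      # mildly variable
}

selected = filter_and_select(expr, n_top=2)
print(selected)  # ['gene_var', 'gene_mid']
```

Without the expression filter, `gene_rare` would rank second by variance because of a single extreme cell, which shows why the filter is applied before the variance ranking.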

My clusters are not biologically interpretable and show poor separation in a heatmap. What advanced clustering strategies should I consider?

When traditional methods fail, consider these advanced strategies designed for high-dimensional, noisy biological data:

- Adopt Adaptive, Parameter-Free Frameworks: Use frameworks like SONSC (Separation-Optimized Number of Smart Clusters), which automatically infers the optimal number of clusters without supervision. It uses a novel internal validity metric, the Improved Separation Index (ISI), that is robust to noise and jointly evaluates intra-cluster compactness and inter-cluster separation [20].

- Implement Graph-Based Clustering: Employ methods like scGGC, which integrates graph autoencoders. These methods construct a unified cell-gene adjacency matrix to capture complex, non-linear interactions that traditional distance metrics miss. This approach is particularly effective for sparse single-cell RNA-seq data [19].

- Leverage Deep Clustering Models: Utilize models like Deep Embedded Clustering (DEC) or scDeepCluster, which use deep neural networks to learn non-linear feature transformations and cluster assignments simultaneously. These models embed the clustering loss within the encoder, iteratively optimizing the results to reveal complex structural features [20] [19].

- Apply Dimensionality Reduction before Clustering: Use techniques like PCA (Principal Component Analysis) or t-SNE as a preprocessing step to project your data into a lower-dimensional space that retains essential structure while discarding noise. This simplifies the clustering task [17].

Beyond the Elbow Method, what robust techniques can I use to determine the true number of clusters (k) in my high-dimensional dataset?

While the Elbow Method is common, it can be subjective. More robust techniques include:

- Internal Cluster Validity Indices (CVIs): These metrics evaluate clustering quality without ground truth labels.

- Silhouette Score: Measures how similar each point is to its own cluster compared to other clusters. Values range from -1 to 1, with higher values indicating better separation and compactness [12] [20].

- Davies-Bouldin Index: Lower values indicate better separation between clusters [12].

- Improved Separation Index (ISI): A novel, noise-resilient metric that jointly evaluates intra-cluster compactness and inter-cluster separability. It is specifically designed for complex biomedical data and can be maximized to find the optimal number of clusters automatically [20].

- Model-Based Selection: Frameworks like SONSC iteratively maximize the ISI across a range of candidate `k` values, automatically determining the optimal number of clusters without requiring manual parameter tuning [20].

Troubleshooting Guides

Guide: Troubleshooting Poor Sample Separation in Heatmap Clustering

Problem: Clusters in your heatmap appear poorly separated, with indistinct boundaries and mixed expression patterns, making biological interpretation difficult.

Primary Causes & Solutions:

Cause 1: High Data Dimensionality and Sparsity

The high number of features (e.g., genes) introduces noise and dilutes the true biological signal.

Solution: Implement Dimensionality Reduction

- Feature Selection: Select the top `N` (e.g., 2000) most variable features. This focuses the analysis on genes that contribute most to population differences [19].

- Principal Component Analysis (PCA): Apply PCA to project the data onto principal components that capture the maximum variance. Use the reduced-dimensional data for clustering and visualization [17].

- t-SNE/UMAP: For visualization purposes, use `t-SNE` or `UMAP` to further reduce the data to 2 or 3 dimensions. These techniques are excellent for visualizing complex cluster structures [17].

Cause 2: Suboptimal Number of Clusters (k)

Using an incorrect `k` forces the algorithm to either over-split or over-merge natural groups in the data.

Solution: Employ Robust Cluster Validation

- Run Multiple Algorithms: Test a range of `k` values (e.g., from 3 to 15) using K-Means.

- Calculate Validity Indices: For each `k`, calculate the Silhouette Score and Davies-Bouldin Index.

- Visualize and Select: Plot these metrics against `k`. Choose the `k` that maximizes the Silhouette Score and minimizes the Davies-Bouldin Index. For automated and robust results, consider using a framework like SONSC that maximizes the Improved Separation Index [12] [20].

Cause 3: High Noise Level in the Data

Technical noise and outliers can distort cluster centroids and boundaries.

Solution: Enhance Data Preprocessing and Use Robust Algorithms

- Aggressive Filtering: Filter out low-quality cells and low-expression genes more stringently.

- Outlier Detection and Removal: Identify and treat outliers using methods like IQR (Interquartile Range) or Z-score filtering before clustering [12].

- Choose Noise-Resilient Algorithms: Instead of K-Means, try density-based algorithms like DBSCAN, which can handle outliers, or advanced frameworks like scGGC that use graph structures and adversarial training to improve robustness [18] [19].

Summary of Solutions for Poor Sample Separation:

| Primary Cause | Recommended Solution | Brief Rationale |
|---|---|---|
| High Dimensionality & Sparsity | Dimensionality Reduction (PCA, Feature Selection) | Reduces noise, focuses on informative features, mitigates distance concentration [17] [19]. |
| Suboptimal Number of Clusters (k) | Robust Cluster Validation (Silhouette, ISI) | Determines the true number of underlying biological groups, preventing over/under-clustering [12] [20]. |
| High Noise Level | Enhanced Preprocessing & Robust Algorithms (e.g., DBSCAN, scGGC) | Removes distorting outliers and uses algorithms less sensitive to noise [12] [18] [19]. |

Guide: Addressing the "Curse of Dimensionality" in Single-Cell Clustering

Problem: Your single-cell RNA-seq data is high-dimensional and sparse, leading to poor clustering accuracy and failure to identify rare cell types.

Recommended Protocol:

The following workflow, inspired by the scGGC model, is designed to effectively tackle high-dimensional single-cell data [19]:

Step-by-Step Methodology:

Data Preprocessing and Filtering:

- Input: Raw gene expression matrix.

- Action: Remove genes with nonzero expression in fewer than 1% of cells. Select the top 2000 genes with the highest variance. Perform standardization and normalization on the processed data [19].

- Purpose: To eliminate noise and retain biologically relevant, informative features.

Cell-Gene Graph Construction:

- Action: Construct a unified adjacency matrix that incorporates both cell-cell and cell-gene relationships. This is represented as a block matrix: `A = [[Cell-Cell, Cell-Gene], [Gene-Cell, Gene-Gene]]` [19].

- Purpose: To capture the complex, multi-level interactions within the data that simple distance metrics cannot, providing a richer structural context for clustering.

Graph Autoencoder Training:

- Action: Train a graph autoencoder using the constructed adjacency matrix. The encoder uses graph convolution layers to map high-dimensional data into a low-dimensional latent space. The decoder reconstructs the adjacency matrix from this latent representation.

- Loss Function: Minimize the reconstruction loss, typically the Frobenius norm between the original and reconstructed adjacency matrices [19].

- Purpose: To perform non-linear dimensionality reduction that preserves the complex graph structure of the data.

Initial Clustering and High-Confidence Cell Selection:

- Action: Extract the low-dimensional latent features for each cell and perform initial clustering using K-Means. For each resulting cluster, calculate the distance of each cell to the cluster centroid. Select the cells closest to the centroids as "high-confidence" samples [19].

- Purpose: To generate a preliminary clustering and identify the most reliable representatives for each cluster.

Adversarial Training for Cluster Refinement:

- Action: Use the selected high-confidence cells to train a Generative Adversarial Network (GAN). The trained model is then used to optimize and refine the clustering results for the entire dataset.

- Purpose: To improve the generalization ability and accuracy of the final clusters, making them more robust and biologically coherent [19].

The Scientist's Toolkit: Essential Research Reagents & Computational Tools

The following table details key computational tools and reagents used in advanced clustering workflows for high-dimensional biomedical data.

Research Reagent Solutions:

| Item Name | Function / Purpose | Example Use Case |

|---|---|---|

| SONSC Framework | An adaptive, parameter-free clustering framework that automatically determines the optimal number of clusters by maximizing the Improved Separation Index (ISI) [20]. | Ideal for initial exploratory analysis on new datasets where the number of clusters is unknown. |

| Graph Autoencoder | A neural network model that performs non-linear dimensionality reduction on graph-structured data, preserving complex topological relationships [19]. | Used in the scGGC pipeline to learn low-dimensional representations of single-cell data that capture cell-gene interactions. |

| Principal Component Analysis (PCA) | A linear dimensionality reduction technique that projects data onto orthogonal axes of maximum variance [17] [19]. | A standard preprocessing step to reduce dataset dimensionality before applying clustering algorithms. |

| t-SNE | A non-linear dimensionality reduction technique specialized for visualizing high-dimensional data in 2D or 3D by preserving local neighborhoods [17]. | Creating scatter plots to visually assess cluster separation and identify potential subpopulations. |

| Silhouette Score | An internal cluster validity index that measures how well each data point fits its assigned cluster compared to other clusters [12] [20]. | Quantitatively comparing the quality of different clustering results or different values of k. |

| Improved Separation Index (ISI) | A novel internal validity metric that jointly and robustly evaluates intra-cluster compactness and inter-cluster separation, designed for noisy data [20]. | Used within the SONSC framework for unsupervised, robust determination of the optimal cluster number. |

| Adversarial Neural Network | A deep learning model comprising a generator and a discriminator trained in competition, used to refine clusters and improve model generalization [19]. | The final step in the scGGC pipeline to enhance clustering accuracy using high-confidence cells. |

Protocol: Implementing the scGGC Pipeline for Single-Cell Clustering

Objective: To cluster single-cell RNA-seq data by integrating graph representation learning and adversarial training to overcome data sparsity, high dimensionality, and noise.

Workflow Overview:

Detailed Steps:

Input Data:

- Begin with a raw gene expression matrix X_raw of dimensions m x n, where m is the number of genes and n is the number of cells [19].

Data Preprocessing:

- Filtering: Remove any genes that are expressed (have nonzero values) in fewer than 1% of all cells.

- Feature Selection: Calculate the variance of each gene and retain the top 2000 genes with the highest variance to create a filtered matrix X_filtered.

- Normalization: Apply library size normalization and log-transformation (e.g., log1p) to X_filtered. Follow this with Z-score standardization to obtain the final processed matrix X_processed [19].
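The filtering, feature selection, and normalization steps above can be sketched in NumPy as follows. This is a minimal illustration rather than the scGGC implementation: the function name `preprocess_counts`, the counts-per-10,000 scale factor, and the handling of zero-variance genes are assumptions made for the example.

```python
import numpy as np

def preprocess_counts(x_raw, min_cell_fraction=0.01, n_top_genes=2000):
    """Filter, select, and normalize a genes x cells count matrix."""
    x_raw = np.asarray(x_raw, dtype=float)
    n_cells = x_raw.shape[1]

    # Filtering: keep genes with nonzero counts in at least 1% of cells.
    detected_fraction = (x_raw > 0).sum(axis=1) / n_cells
    keep = detected_fraction >= min_cell_fraction
    x = x_raw[keep]

    # Feature selection: retain the genes with the highest variance.
    n_top = min(n_top_genes, x.shape[0])
    top_idx = np.sort(np.argsort(x.var(axis=1))[::-1][:n_top])
    x = x[top_idx]

    # Library-size normalization (assumed scale: counts per 10,000), then log1p.
    library_size = x.sum(axis=0, keepdims=True)
    library_size[library_size == 0] = 1.0  # guard against empty cells
    x = np.log1p(x / library_size * 1e4)

    # Z-score each gene across cells; constant genes are left at zero.
    mean = x.mean(axis=1, keepdims=True)
    std = x.std(axis=1, keepdims=True)
    std[std == 0] = 1.0
    kept_genes = np.flatnonzero(keep)[top_idx]  # indices into the raw matrix
    return (x - mean) / std, kept_genes
```

Returning the indices of the retained genes makes it possible to map rows of the processed matrix back to gene names in the raw data.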

Cell-Gene Graph Construction:

- Cell-Gene Matrix: The normalized matrix X_processed is used directly as the cell-gene adjacency block.

- Cell-Cell Matrix: Compute a cell-cell similarity graph (e.g., using K-Nearest Neighbors on a PCA-reduced version of X_processed).

- Unified Graph: Construct the full adjacency matrix A by combining the cell-cell and cell-gene blocks into a single, large graph structure as previously described [19].
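One way to assemble the block adjacency matrix is sketched below. Several details are assumptions for illustration only: the k-nearest-neighbour graph is built directly on the supplied cell features (no PCA step), it is binary and symmetrized, and the gene-gene block is filled with zeros because the protocol does not specify it. The input `cell_gene` is expected as cells x genes, i.e. the transpose of a genes x cells matrix.

```python
import numpy as np

def knn_adjacency(cell_features, k=5):
    """Symmetric binary k-nearest-neighbour graph over cells (rows)."""
    sq = (cell_features ** 2).sum(axis=1)
    dist = sq[:, None] + sq[None, :] - 2.0 * cell_features @ cell_features.T
    np.fill_diagonal(dist, np.inf)            # a cell is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]
    adj = np.zeros(dist.shape)
    rows = np.repeat(np.arange(len(cell_features)), k)
    adj[rows, neighbours.ravel()] = 1.0
    return np.maximum(adj, adj.T)             # keep an edge if either side chose it

def unified_adjacency(cell_gene, k=5):
    """Assemble A = [[cell-cell, cell-gene], [gene-cell, gene-gene]]."""
    n_cells, n_genes = cell_gene.shape
    cell_cell = knn_adjacency(cell_gene, k=k)
    gene_gene = np.zeros((n_genes, n_genes))  # assumption: no gene-gene edges
    return np.block([[cell_cell, cell_gene], [cell_gene.T, gene_gene]])
```

The resulting matrix has one node per cell followed by one node per gene, so its side length is the number of cells plus the number of genes.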

Graph Autoencoder Training:

- Model Setup: Implement a graph autoencoder where the encoder consists of multiple graph convolutional layers (e.g., using the update rule H^(l+1) = σ(A * H^(l) * W^(l))). The decoder uses a simple inner product: Â = sigmoid(Z * Z^T).

- Training: Train the model to minimize the reconstruction loss L_rec = ||A - Â||_F^2, where ||.||_F is the Frobenius norm. After training, extract the low-dimensional node embeddings Z [19].
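The forward pass and loss described above can be written out in a few lines of NumPy. This sketch only evaluates the model for given weights; it does not train it. Using ReLU as σ, leaving the last encoder layer linear, and feeding the raw (unnormalized) adjacency matrix are assumptions for the example, not details confirmed by the source.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gae_forward(adj, features, weights):
    """Encoder: H <- relu(A H W) per layer (last layer linear). Decoder: sigmoid(Z Z^T)."""
    h = features
    for i, w in enumerate(weights):
        h = adj @ h @ w
        if i < len(weights) - 1:
            h = np.maximum(h, 0.0)    # ReLU on hidden layers only (assumption)
    z = h                             # low-dimensional node embeddings
    adj_hat = sigmoid(z @ z.T)        # inner-product decoder
    return z, adj_hat

def reconstruction_loss(adj, adj_hat):
    """Squared Frobenius norm ||A - A_hat||_F^2."""
    return float(np.sum((adj - adj_hat) ** 2))

# Tiny illustrative graph: a path of three nodes with one-hot features.
rng = np.random.default_rng(0)
adj = np.array([[0, 1, 0], [1, 0, 1], [0, 1, 0]], dtype=float)
weights = [rng.normal(scale=0.1, size=(3, 4)), rng.normal(scale=0.1, size=(4, 2))]
z, adj_hat = gae_forward(adj, np.eye(3), weights)
loss = reconstruction_loss(adj, adj_hat)
```

In practice the weights would be optimized with an automatic-differentiation framework so that `loss` decreases over training epochs.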

Initial Clustering and High-Confidence Selection:

- Clustering: Apply K-Means clustering to the cell embeddings Z to get an initial set of cluster labels.

- Selection: For each cluster, compute the Euclidean distance of every cell to the cluster centroid. The cells with the smallest distances in each cluster are selected as high-confidence samples [19].
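Given embeddings and initial cluster labels, the selection step can be sketched as below. The `fraction` parameter (the share of each cluster that is kept) is a hypothetical knob introduced for this example; the source does not state how many cells per cluster are retained.

```python
import numpy as np

def select_high_confidence(embeddings, labels, fraction=0.2):
    """Return sorted indices of the cells closest to their own cluster centroid."""
    selected = []
    for cluster in np.unique(labels):
        members = np.flatnonzero(labels == cluster)
        centroid = embeddings[members].mean(axis=0)
        dist = np.linalg.norm(embeddings[members] - centroid, axis=1)
        n_keep = max(1, int(np.ceil(fraction * len(members))))  # at least one cell
        selected.extend(members[np.argsort(dist)[:n_keep]])
    return np.sort(np.array(selected))
```

Setting `fraction` very small reduces the selection to a single cell per cluster, while larger values trade confidence for a bigger training set in the refinement step.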

Adversarial Training for Refinement:

- Model Setup: Train a Generative Adversarial Network (GAN) where the generator learns to produce realistic cell embeddings, and the discriminator learns to distinguish between real high-confidence cell embeddings and generated ones.

- Cluster Refinement: Use the trained discriminator's features or the generator's output to refine the cluster assignments for all cells, resulting in the final, robust clusters [19].

Output: Final, biologically coherent cell clusters ready for downstream analysis such as marker gene identification and cell type annotation.

Selecting and Applying the Right Clustering Techniques

Troubleshooting Guide: Poor Sample Separation in Heatmaps

This guide addresses common data preprocessing issues that lead to poor sample separation and clustering in heatmap analysis, a frequent challenge in biomedical and drug development research.

FAQ 1: Why does my heatmap fail to show clear cluster separation between my sample groups (e.g., treated vs. control)?

Poor cluster separation often stems from improper data scaling, which can mask the underlying biological variance. If features (e.g., gene expression levels) are on vastly different scales, algorithms may incorrectly prioritize high-magnitude features over more biologically relevant ones with smaller ranges [21] [22]. This distorts distance calculations, a core component of clustering algorithms, leading to uninformative dendrograms [23].

FAQ 2: My data contains many missing values from failed experiments or sensor dropouts. Should I remove these samples or impute the values?

Removing samples with missing values is a common but often suboptimal approach, as it can introduce significant bias and reduce statistical power [24]. For robust results, use advanced imputation methods that account for data structure. The XGBoost-MICE (Multiple Imputation by Chained Equations) method is highly effective for complex datasets, as it models nonlinear relationships between variables to accurately estimate missing values [25]. Studies show that using such model-based imputation can improve subsequent machine learning classifier accuracy by up to 19.8% compared to simple methods [24].

FAQ 3: Which scaling technique should I use before performing hierarchical clustering for my heatmap?

The optimal scaling method depends on your data's distribution and the presence of outliers. The table below summarizes the best practices.

| Scaling Technique | Best Used For | Sensitivity to Outliers | Impact on Clustering |

|---|---|---|---|

| Standardization (Z-score) [21] [26] | Data that is approximately normally distributed; features with consistent variance. | Moderate | Centers data, giving all features equal weight in distance calculation. Ideal for PCA. |

| Robust Scaling [21] [27] | Data with significant outliers or skewed distributions (common in gene expression). | Low | Uses median and IQR; preserves true structure by minimizing outlier influence. |

| Min-Max Scaling [21] [22] | Data with a bounded range; neural network inputs; images. | High | Compresses all values into a set range (e.g., 0-1). A single outlier can squeeze the remaining points into a narrow band. |

| Log Scaling [26] | Data that follows a power-law distribution (e.g., gene count data, wealth distributions). | Varies | Transforms multiplicative relationships into additive ones, helping to normalize skewed data. |

For most biological data, which often contains outliers, Robust Scaling or Log Scaling followed by standardization is generally recommended to achieve clear and truthful sample separation [21] [26].
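The difference between these scaling choices is easy to see on a feature that contains one extreme value. The sketch below implements robust scaling and Z-score standardization directly in NumPy; the helper names are illustrative, and guarding a zero IQR or standard deviation by substituting 1 is an assumption of this example.

```python
import numpy as np

def robust_scale(x):
    """Center each column on its median and divide by its interquartile range."""
    median = np.median(x, axis=0)
    q1, q3 = np.percentile(x, [25, 75], axis=0)
    iqr = q3 - q1
    iqr[iqr == 0] = 1.0               # avoid division by zero for constant columns
    return (x - median) / iqr

def zscore(x):
    """Center each column on its mean and divide by its standard deviation."""
    std = x.std(axis=0)
    std[std == 0] = 1.0
    return (x - x.mean(axis=0)) / std

# One feature with a single extreme outlier.
x = np.array([[1.0], [2.0], [3.0], [4.0], [1000.0]])
robust = robust_scale(x)              # inliers stay spread out
standard = zscore(x)                  # inliers collapse toward a single value
log_then_standard = zscore(np.log1p(x))
```

With Z-scores the outlier inflates the standard deviation, so the four ordinary values become nearly indistinguishable; robust scaling or a log transform before standardization keeps their differences visible to the distance calculation.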

Experimental Protocol: Data Preprocessing for Optimal Clustering

The following workflow ensures your data is optimally prepared for heatmap and cluster analysis.

Step-by-Step Procedure:

Handle Missing Values:

- Assessment: Identify the proportion and pattern of missing data. If >5% of values are missing, imputation is crucial [24].

- Imputation: Use the XGBoost-MICE method for high accuracy [25].

- Algorithm: Multiple Imputation by Chained Equations (MICE) creates several complete datasets by iteratively regressing each variable with missing data on all other variables.

- Model Integration: Replace the simple regression model within MICE with an XGBoost regressor for each variable. XGBoost's ability to model complex, nonlinear relationships leads to more accurate imputations [25].

- Convergence: Iterate until the imputed values stabilize across rounds (typically 6-10 iterations) [25].
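The chained-equations loop can be illustrated with a deliberately simplified sketch. It is not XGBoost-MICE: the per-variable model is swapped for ordinary least squares so that the example needs only NumPy, and it produces a single completed dataset rather than multiple imputations. The function name and the fixed iteration count are assumptions.

```python
import numpy as np

def chained_imputation(x, n_iter=10):
    """Single-chain, MICE-style imputation with a linear model per column."""
    x = np.array(x, dtype=float)
    missing = np.isnan(x)

    # Initialize every missing entry with its column mean.
    col_means = np.nanmean(x, axis=0)
    x[missing] = np.take(col_means, np.nonzero(missing)[1])

    for _ in range(n_iter):
        for j in range(x.shape[1]):
            if not missing[:, j].any():
                continue
            # Regress column j on all other columns using rows where j was observed.
            others = np.delete(x, j, axis=1)
            design = np.column_stack([np.ones(len(x)), others])
            observed = ~missing[:, j]
            coef, *_ = np.linalg.lstsq(design[observed], x[observed, j], rcond=None)
            # Overwrite only the originally missing entries with predictions.
            x[missing[:, j], j] = design[missing[:, j]] @ coef
    return x
```

Replacing the least-squares fit with a gradient-boosted regressor, and repeating the whole procedure with different random seeds, would move this sketch toward the method described above.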

Diagnose Data Distribution & Scale Features:

Generate and Evaluate Heatmap:

- Perform hierarchical clustering on the preprocessed data to generate the heatmap with dendrograms.

- Assess the improvement in cluster separation between known sample groups (e.g., diseased vs. healthy).

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table lists key computational "reagents" for data preprocessing in bioinformatics research.

| Tool / Solution | Function | Typical Use Case |

|---|---|---|

| RobustScaler (sklearn) [21] | Scales features using median and IQR, minimizing outlier effects. | Preprocessing RNA-seq data or other assays with technical outliers before clustering. |

| XGBoost-MICE [25] | An advanced imputation method that runs MICE with XGBoost regressors to estimate missing values. | Imputing missing values in large-scale omics datasets (e.g., proteomics, metabolomics). |

| MinMaxScaler (sklearn) [21] | Rescales features to a fixed range, usually [0, 1]. | Preparing bounded data for neural network input. |

| StandardScaler (sklearn) [21] | Standardizes features by removing the mean and scaling to unit variance. | Preprocessing normally distributed data for Principal Component Analysis (PCA). |

In heatmap-based research, effective sample separation is paramount. When clusters fail to emerge clearly, the entire analytical foundation becomes questionable. Researchers often face ambiguous results where samples that should separate based on biological hypotheses instead appear intermixed in clustering visualization. This technical guide addresses this critical challenge by comparing three fundamental clustering approaches—K-means, model-based, and density-based methods—to help you diagnose and resolve sample separation issues.

Each algorithm operates on different foundational principles, making them uniquely suited to particular data structures and analytical challenges. K-means partitions data into spherical clusters based on centroid proximity [28]. Model-based clustering assumes data points within clusters follow specific probability distributions [18]. Density-based methods identify clusters as high-density regions separated by low-density areas [29]. Understanding these core mechanisms is the first step toward troubleshooting failed separations in your heatmap visualizations.

Algorithm Comparative Analysis

Fundamental Principles and Mathematical Foundations

K-means Clustering follows an iterative expectation-maximization approach to partition data into K pre-defined clusters. The algorithm aims to minimize the within-cluster sum of squares (WCSS), calculated as the sum of squared Euclidean distances between data points and their respective cluster centroids [30] [31]. The mathematical objective function is:

\[ J = \sum_{i=1}^{k} \sum_{x \in S_i} \lVert x - \mu_i \rVert^2 \]

where \(\mu_i\) represents the centroid of cluster \(S_i\) [31]. The algorithm alternates between assigning points to the nearest centroid (E-step) and updating centroids based on current assignments (M-step) until convergence [28].
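The objective and the two alternating steps translate almost directly into code. The following is a minimal NumPy sketch with plain random initialization (not K-means++); the function names and the toy data are illustrative.

```python
import numpy as np

def wcss(x, labels, centroids):
    """Within-cluster sum of squares: the objective J."""
    return float(np.sum((x - centroids[labels]) ** 2))

def kmeans(x, k, n_iter=100, seed=0):
    rng = np.random.default_rng(seed)
    centroids = x[rng.choice(len(x), size=k, replace=False)]
    for _ in range(n_iter):
        # Assignment step: nearest centroid by squared Euclidean distance.
        d2 = ((x[:, None, :] - centroids[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        # Update step: each centroid becomes the mean of its members.
        new_centroids = np.array([
            x[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break                     # assignments can no longer change
        centroids = new_centroids
    return labels, centroids

# Two compact, well-separated groups of four points each.
square = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
points = np.vstack([square, square + 10.0])
labels, centroids = kmeans(points, k=2)
objective = wcss(points, labels, centroids)
```

Because each step can only lower J or leave it unchanged, the loop converges, although only to a local minimum that depends on the starting centroids.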

Model-Based Clustering assumes data is generated from a mixture of underlying probability distributions, typically Gaussian mixtures. The algorithm calculates the posterior probability of cluster assignments given the data using Bayes' theorem [32]. For an expression data matrix \(X\) with clustering \(C\), the approach maximizes:

\[ P(C \mid X) \propto \prod_{k=1}^{K} \prod_{n=1}^{N} \iint p(\mu,\tau) \prod_{g \in C_k} p(x_{gn} \mid \mu,\tau) \, d\mu \, d\tau \]

where \(p(\mu,\tau)\) represents the normal-gamma prior distribution [32]. This statistical framework allows the method to handle different cluster shapes, sizes, and orientations.

Density-Based Clustering (DBSCAN) identifies clusters as contiguous high-density regions requiring two parameters: ε (eps), the maximum distance between points to be considered neighbors, and MinPts, the minimum number of points required to form a dense region [29]. A point is classified as a core point if it has at least MinPts within its ε-neighborhood. Border points fall within the ε-neighborhood of a core point but lack sufficient neighbors themselves, while noise points belong to neither category [29] [33]. The algorithm connects core points that are density-reachable to form clusters of arbitrary shapes.
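The three point categories can be computed directly from a pairwise distance matrix. The sketch below only classifies points and does not assemble clusters. It assumes that a point counts as a member of its own ε-neighbourhood, which is one common convention; the function name is illustrative.

```python
import numpy as np

def classify_points(x, eps, min_pts):
    """Label each point 'core', 'border', or 'noise' using the DBSCAN definitions."""
    dist = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=2)
    within = dist <= eps                          # neighbourhoods include the point itself
    is_core = within.sum(axis=1) >= min_pts
    near_core = (within & is_core[None, :]).any(axis=1)
    return np.where(is_core, "core", np.where(near_core, "border", "noise"))

# Five points on a line: a dense group, one straggler, one isolated point.
line = np.array([[0.0, 0.0], [0.5, 0.0], [1.0, 0.0], [1.9, 0.0], [10.0, 0.0]])
kinds = classify_points(line, eps=1.0, min_pts=3)
```

Here the first three points are core points, the fourth is a border point because it lies within ε of a core point but has too few neighbours of its own, and the last is noise.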

Comparative Performance Table

Table 1: Algorithm Comparison for Troubleshooting Poor Sample Separation

| Aspect | K-means | Model-Based | Density-Based (DBSCAN) |

|---|---|---|---|

| Cluster Shape Assumption | Spherical clusters [28] [1] | Ellipsoidal clusters, or shapes determined by the chosen distribution [18] | Arbitrary shapes [29] [33] |

| Prior Knowledge Required | Number of clusters (K) [28] | Probability distribution type (optional) [18] | ε and MinPts parameters [29] |

| Handling Outliers | Highly sensitive; outliers distort centroids [1] | Moderate; can model outlier components | Excellent; explicitly identifies noise points [29] [33] |

| Impact on Heatmap Visualization | Clear, equally-sized groupings when assumptions met [30] | Flexible boundaries adapting to data distribution | Reveals irregular patterns; highlights outliers separately |

| Data Distribution Assumptions | Equal cluster densities and sizes [1] | Matches specified distributional forms | Varying densities possible with advanced variants |

| Failure Mode in Heatmaps | Over-segments non-spherical data; creates artificial balance | Overfitting with complex models | Misses global patterns if local density varies greatly |

Troubleshooting Guides & FAQs

Diagnostic Framework for Poor Sample Separation

Diagram: Troubleshooting Pathway for Failed Cluster Separation

Frequently Asked Questions

Q: My heatmap shows samples that should cluster separately appearing mixed. Which algorithm should I try first?

A: Begin with density-based clustering (DBSCAN) if you suspect non-spherical clusters or have outliers. DBSCAN excels at identifying irregular cluster shapes and separating them from noise [29] [33]. If you have strong theoretical reasons to believe your clusters are spherical and well-separated, K-means may suffice, but beware of its sensitivity to violations of these assumptions [1].

Q: How can I determine the optimal K for K-means when my samples aren't separating well?

A: Use the elbow method by plotting within-cluster sum of squares (WCSS) against different K values [30]. The "elbow point" where WCSS decline plateaus suggests an optimal K. For more rigorous selection, consider model-based methods that automatically determine cluster count using Bayesian Information Criterion (BIC) [31].

Q: My data contains significant outliers that are distorting heatmap clustering. What's the best approach?

A: Density-based methods like DBSCAN explicitly identify and separate outliers as noise points [29] [33]. Alternatively, model-based approaches can be more robust to outliers than K-means, particularly if you use distributions with heavier tails than Gaussian [18].

Q: How do I set the ε and MinPts parameters for DBSCAN when my samples have varying densities?

A: Use the k-distance graph approach: calculate the distance to the k-th nearest neighbor for each point, sort these distances, and look for the "elbow" where distances increase sharply [29]. Set ε at this elbow point and MinPts slightly higher than your data dimensionality. For varying densities, consider HDBSCAN, which extends DBSCAN to handle different densities [29].
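The k-distance curve described in this answer can be computed as sketched below. The `suggest_eps` helper is a crude stand-in for reading the elbow off a plot: it returns the value just before the largest jump in the sorted curve, which is an assumption of this example and not a standard rule.

```python
import numpy as np

def k_distance_curve(x, k):
    """Sorted distance from each point to its k-th nearest neighbour."""
    dist = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=2)
    # After sorting each row, column 0 is the zero distance to the point itself.
    kth = np.sort(dist, axis=1)[:, k]
    return np.sort(kth)

def suggest_eps(curve):
    """Heuristic elbow: the curve value immediately before its largest jump."""
    jumps = np.diff(curve)
    return float(curve[int(np.argmax(jumps))])

samples = np.array([[0.0, 0.0], [0.5, 0.0], [1.0, 0.0], [1.9, 0.0], [10.0, 0.0]])
curve = k_distance_curve(samples, k=1)
eps_guess = suggest_eps(curve)
```

Plotting `curve` and inspecting it by eye remains the more reliable approach; the automatic guess is mainly useful as a starting value for a parameter sweep.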

Q: Can I combine multiple clustering approaches to improve sample separation in my heatmaps?

A: Yes, ensemble methods that combine multiple algorithms often provide more robust results. A common approach is to use DBSCAN to identify and remove outliers, then apply K-means or model-based clustering on the remaining data [31]. Model-based multi-tissue clustering algorithms demonstrate how prior information from one context can improve clustering in another [32].

Experimental Protocols for Algorithm Validation

K-means Cluster Validation Protocol

Data Preprocessing: Standardize features to zero mean and unit variance using Z-score normalization to prevent variables with larger scales from dominating the clustering [30] [18].

Centroid Initialization: Employ K-means++ initialization rather than random seeding to improve convergence to optimal solutions [30]. Run the algorithm multiple times (typically 10) with different initializations and select the solution with lowest WCSS [28].

Parameter Tuning: Systematically explore K values from 2 to √n (where n is sample count). Calculate WCSS for each K and identify the elbow point [30].

Stability Assessment: Use silhouette analysis to measure how well each sample lies within its cluster. Calculate the mean silhouette width across all samples, with values approaching 1 indicating better separation [28].

Visual Validation: Project results onto first two principal components and color-code by cluster assignment to visually confirm separation matches heatmap patterns [33].
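The mean silhouette width used in the stability assessment can be computed from pairwise distances as sketched below. The sketch assumes Euclidean distance and at least two clusters, and assigns a score of 0 to singleton clusters, following the usual convention.

```python
import numpy as np

def mean_silhouette(x, labels):
    """Mean silhouette width; requires at least two distinct cluster labels."""
    labels = np.asarray(labels)
    dist = np.linalg.norm(x[:, None, :] - x[None, :, :], axis=2)
    clusters = np.unique(labels)
    scores = np.zeros(len(x))
    for i in range(len(x)):
        own = labels == labels[i]
        n_own = own.sum()
        if n_own == 1:
            continue                  # singleton cluster: score stays 0
        # a: mean distance to the other members of the same cluster.
        a = dist[i, own].sum() / (n_own - 1)
        # b: smallest mean distance to the members of any other cluster.
        b = min(dist[i, labels == c].mean() for c in clusters if c != labels[i])
        scores[i] = (b - a) / max(a, b)
    return float(scores.mean())

pts = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 0.0], [10.0, 1.0]])
good = mean_silhouette(pts, [0, 0, 1, 1])   # labels follow the geometry
bad = mean_silhouette(pts, [0, 1, 0, 1])    # labels cut across the geometry
```

Comparing the score across candidate values of K, or across algorithms, gives a label-free way to check whether a change in the pipeline actually improved separation.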

Model-Based Clustering Selection Protocol

Model Specification: Test multiple distributional forms (spherical, diagonal, elliptical) if using Gaussian Mixture Models. For count data, consider Poisson mixtures; for binary data, Bernoulli mixtures [18].

Bayesian Information Criterion (BIC) Calculation: Compute BIC values across different cluster numbers and models. Select the configuration with the lowest BIC score, indicating optimal balance between fit and complexity [31].

Uncertainty Assessment: Examine posterior probabilities of cluster assignments. Samples whose highest posterior probability is far from 1 (for example, near 0.5 when two clusters compete) indicate ambiguous classification and potential separation issues [32].

Cross-Validation: Implement k-fold cross-validation, ensuring cluster patterns remain stable across data subsets to confirm robustness [1].

DBSCAN Parameter Optimization Protocol

Parameter Exploration: Systematically vary ε (from 0.1 to 1.0 in standardized space) and MinPts (from 3 to 20) while monitoring the proportion of points classified as noise [29].

Differential Separation: For datasets with varying densities, implement HDBSCAN which extends DBSCAN to handle different densities without requiring a single global ε parameter [29].

Cluster Stability: Use Jaccard similarity to compare clusters generated from bootstrapped data samples. Stable clusters will maintain high similarity scores across samples [31].

Boundary Point Analysis: Examine the classification of border points. Consider reducing MinPts if biologically relevant samples are being classified as noise [29].
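The Jaccard-based stability check mentioned in this protocol can be sketched in plain Python. Clusters are represented as sets of sample identifiers; matching each reference cluster to its best counterpart in the resampled run is an assumption about how the comparison is organized.

```python
def jaccard(a, b):
    """Jaccard similarity |A ∩ B| / |A ∪ B| between two collections of sample IDs."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def cluster_stability(reference, resampled):
    """For each reference cluster, the best Jaccard match among resampled clusters."""
    return [max(jaccard(ref, other) for other in resampled) for ref in reference]

reference_run = [{1, 2, 3}, {4, 5, 6}]
bootstrap_run = [{1, 2}, {3, 4, 5, 6}]
stability = cluster_stability(reference_run, bootstrap_run)
```

When comparing against a bootstrap sample, it is sensible to restrict both clusterings to the samples present in both runs before computing the similarity, so that absent samples do not lower the score artificially.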

The Scientist's Toolkit: Essential Research Reagents

Table 2: Essential Computational Tools for Clustering Troubleshooting

| Tool/Resource | Function | Application Context |

|---|---|---|

| Variance Thresholding | Removes low-variance features | Preprocessing step to eliminate uninformative variables before clustering [30] |

| Principal Component Analysis (PCA) | Reduces dimensionality while preserving variance | Visualizing high-dimensional clusters in 2D/3D space; noise reduction [33] |

| StandardScaler | Standardizes features to mean=0, variance=1 | Critical preprocessing for distance-based algorithms like K-means [30] |

| Elbow Method | Identifies optimal cluster number (K) | Determining appropriate K value for K-means when true separation is unknown [30] |

| Silhouette Analysis | Measures cluster cohesion and separation | Quantifying success of clustering when true labels unavailable [28] |

| Distance Metrics | Calculates pairwise sample dissimilarities | Foundation for all clustering algorithms; choice affects results [31] |

Advanced Integration Strategies

Hybrid Approaches for Challenging Separations

When standard algorithms fail, consider hybrid approaches that leverage the strengths of multiple methods. A particularly effective strategy uses DBSCAN first to identify and remove outliers and noise points, then applies model-based clustering on the refined dataset to identify the primary cluster structure [31]. This approach combines DBSCAN's robustness to outliers with the probabilistic flexibility of model-based methods.

For multi-tissue or multi-condition experiments where samples should theoretically align across conditions, consider specialized multi-task clustering algorithms that jointly model multiple related datasets [32]. These methods can transfer cluster information across related domains, improving separation when individual datasets are too sparse for reliable clustering.

Diagnostic Metrics for Algorithm Selection

Beyond the standard performance metrics, several diagnostic approaches can guide algorithm selection for challenging heatmap separations:

Cluster Stability: Measure using the Jaccard similarity between clusters generated from different algorithm initializations or data subsamples. High stability across runs suggests robust clusters [31].

Biological Validation: When ground truth is unknown, validate clusters using enrichment analysis for known biological pathways or functions. Meaningful enrichment suggests successful separation [32].

Neighborhood Preservation: Quantify how well local neighborhoods in high-dimensional space are preserved in cluster assignments using metrics like trustworthiness and continuity.

By systematically applying these troubleshooting approaches and understanding the fundamental assumptions of each clustering algorithm, researchers can significantly improve sample separation in heatmap visualizations, leading to more biologically meaningful results and more confident conclusions in their research.

Leveraging Dimensionality Reduction (PCA, t-SNE) to Enhance Separation

Frequently Asked Questions

1. What is the primary reason my heatmap clusters show poor separation, and how can dimensionality reduction help? Poor separation often occurs because the high-dimensional data contains too much noise or the relationships between features are non-linear. Dimensionality reduction techniques like PCA and t-SNE help by projecting the data into a lower-dimensional space, preserving the most important structures (like clusters) and filtering out noise, thereby enhancing visual separation in your heatmap [34] [35].

2. Should I use PCA or t-SNE prior to clustering and generating my heatmap? The choice depends on your goal and data structure. PCA is a linear technique best for preserving global data variance and is deterministic and fast [36]. t-SNE is a non-linear technique best for preserving local relationships and cluster separation for visualization, though it is stochastic and can produce different results on the same data [36]. For an initial, fast analysis, start with PCA. If you suspect strong non-linear patterns and need the best possible cluster visualization for interpretation, use t-SNE [35].

3. My t-SNE results look different every time I run it. Is this an error? No, this is expected behavior. t-SNE is a stochastic algorithm, meaning it uses random initialization and can produce different results on the same dataset [36]. For reproducible results, you should set a random seed before running the analysis.

4. How do I interpret the distances between clusters in a t-SNE plot? In a t-SNE plot, you can trust that points close together in the low-dimensional plot were also close together in the high-dimensional space. However, the distance between separate clusters is not meaningful [36]. The arrangement of non-neighboring groups should not be used for inference.

5. What are the best color palettes to use for my clustered heatmap to improve readability? The best color palette depends on your data [7]:

- Sequential Palette: Use for numeric data that is entirely positive or entirely negative (e.g., gene expression levels). It uses a gradient from light (low values) to dark (high values), often with a single hue or a warm-to-cool spectrum [37].

- Diverging Palette: Use for numeric data with a meaningful central point, like zero (e.g., log-fold changes). It uses two contrasting hues on either end of a light-colored center [37].

Avoid using too many colors or rainbow palettes, as they can increase cognitive load and lead to misinterpretation [7].

Troubleshooting Guides

Guide 1: Addressing Poor Cluster Separation in Your Heatmap

Problem: After performing clustering and generating a heatmap, the samples (rows or columns) do not form distinct, well-separated clusters.

Investigation & Solution Workflow: The following diagram outlines a systematic approach to diagnose and resolve poor separation.

Detailed Steps:

Preprocess Your Data: The quality of your input data is critical.

- Scaling/Normalization: Variables on different scales can dominate the distance calculations used in clustering and dimensionality reduction. Standardize your data so that each feature has a mean of 0 and a standard deviation of 1 [34].

- Handle Missing Values: Most algorithms cannot handle missing data. Use appropriate methods like imputation (e.g., k-nearest neighbor imputation) or removal, depending on the extent and nature of the missingness [18].

Apply Linear Dimensionality Reduction (PCA):

- Purpose: To perform an initial, fast reduction of dimensionality while preserving global variance. It can denoise the data by focusing on components with the highest variance [34] [35].

- Method: Project your data onto the first n principal components (PCs). The number of PCs can be chosen based on the cumulative variance explained (e.g., enough PCs to explain >80-90% of the variance) [38].
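Choosing the number of components from the cumulative explained variance can be sketched with a singular value decomposition. The function name and the default 90% target are illustrative, and the input is assumed to be a samples x features matrix that has already been scaled.

```python
import numpy as np

def pca_reduce(x, variance_target=0.90):
    """Project onto the fewest principal components reaching the variance target."""
    centered = x - x.mean(axis=0)
    _, s, vt = np.linalg.svd(centered, full_matrices=False)
    explained = s ** 2 / np.sum(s ** 2)           # variance ratio per component
    cumulative = np.cumsum(explained)
    # First position where the cumulative ratio reaches the target.
    k = int(np.searchsorted(cumulative, variance_target)) + 1
    k = min(k, len(s))
    return centered @ vt[:k].T, explained[:k]

# Toy data: one dominant direction plus a little isotropic noise.
rng = np.random.default_rng(0)
signal = rng.normal(size=(100, 1)) * 10.0
toy = signal @ np.ones((1, 3)) + rng.normal(scale=0.1, size=(100, 3))
scores, ratios = pca_reduce(toy)
```

In this toy case a single component carries almost all of the variance, so the three original features collapse to one column of scores.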

Apply Non-Linear Dimensionality Reduction (t-SNE):

- Purpose: If your data has complex, non-linear structures that PCA cannot capture, t-SNE can create a low-dimensional map where local similarities and clusters are emphasized [39] [36].

- Method: Use the PCA-reduced data or the original preprocessed data as input for t-SNE. Using PCA as a first step can improve t-SNE performance and speed [38].

Tune Hyperparameters: If t-SNE still does not yield good separation, adjust its hyperparameters.

- Perplexity: This is the most important parameter. It can be interpreted as the number of effective nearest neighbors. Typical values are between 5 and 50. Low perplexity favors local structure, high perplexity favors more global structure [39].

Guide 2: Choosing Between Dimensionality Reduction Methods

Problem: I am unsure whether to use PCA, t-SNE, or another method for my specific dataset and goal.

Decision Logic: The flowchart below helps select the appropriate method based on the data characteristics and research objective.

Method Comparison Table:

| Feature | PCA (Principal Component Analysis) | t-SNE (t-Distributed Stochastic Neighbor Embedding) |

|---|---|---|

| Primary Goal | Dimensionality reduction; preserving global variance [36] | Data visualization; preserving local neighborhoods and cluster structure [36] |

| Algorithm Type | Linear [35] | Non-linear [35] |

| Preservation | Global data structure and variance [34] [36] | Local data structure and clusters [36] |

| Determinism | Deterministic (same result every time) [36] | Stochastic (different results unless random seed is set) [36] |

| Scalability | Fast and efficient for large datasets [18] | Computationally expensive for very large datasets [39] |

| Interpretability | Output components (PCs) can be interpreted as linear combinations of original features [35] | The low-dimensional map is not directly interpretable; it's for visualization [39] |

| Best Use Case | Initial data exploration, denoising, compression, and as a pre-processing step for other algorithms [38] | Creating illustrative 2D/3D plots of high-dimensional data to reveal cluster patterns [39] [35] |

Experimental Protocols

Protocol 1: Standard Workflow for Enhanced Heatmap Generation

This protocol integrates dimensionality reduction directly into the heatmap generation process to improve cluster separation.

1. Data Preprocessing:

- Input: Raw data matrix (samples x features).

- Handling Missing Values: Apply k-nearest neighbor (KNN) imputation to estimate and fill missing values [18].

- Scaling: Standardize the data by centering each feature to zero mean and scaling to unit variance using the formula (X - mean(X)) / std(X) [34].

2. Dimensionality Reduction (PCA & t-SNE):

- Initial Reduction with PCA:

- Apply PCA to the scaled data matrix.

- Determine the number of principal components (k) to retain by selecting the smallest k where cumulative explained variance is >90% [38].

- Project the data onto the top k components to create a denoised, lower-dimensional representation.

- Non-Linear Projection with t-SNE:

- Use the PCA-reduced data (or the scaled data if no PCA is used) as input for t-SNE.

- Set the hyperparameters: n_components=2 for a 2D plot, perplexity=30 (a good starting point), and set a random_state for reproducibility [39].

- Run t-SNE to obtain a 2D embedding of your data.

3. Clustering and Visualization:

- Clustering on Low-Dimensional Space: Perform clustering (e.g., K-means, hierarchical clustering) on the t-SNE coordinates or the principal components to assign cluster labels to each sample [31].

- Generate Annotated Heatmap: Create a heatmap of the original data (samples x features), but order the samples (rows) based on the clusters identified in the previous step. Use a diverging color palette (e.g., blue-white-red) if the data is centered, or a sequential palette otherwise, to best represent the values [37].
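Reordering the heatmap rows by cluster label is a small step, sketched here in NumPy. The helper name is illustrative; it returns the reordered matrix, the sorted labels, and the row positions where a new cluster begins, which can be used to draw separator lines in whichever plotting library is used.

```python
import numpy as np

def order_rows_by_cluster(data, labels):
    """Group heatmap rows by cluster label, keeping the original order within a cluster."""
    labels = np.asarray(labels)
    order = np.argsort(labels, kind="stable")
    sorted_labels = labels[order]
    # Row positions at which the cluster label changes.
    boundaries = np.flatnonzero(np.diff(sorted_labels)) + 1
    return data[order], sorted_labels, boundaries

matrix = np.arange(8).reshape(4, 2)
ordered, ordered_labels, boundaries = order_rows_by_cluster(matrix, [1, 0, 1, 0])
```

The heatmap is then drawn from `ordered` rather than from the original matrix, so the values shown remain the original measurements and only the row order reflects the low-dimensional clustering.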

Protocol 2: Hyperparameter Tuning for t-SNE

Objective: Systematically optimize the perplexity parameter in t-SNE to achieve the best possible cluster separation for a given dataset.

Procedure:

- Preprocessing: Start with your preprocessed and scaled dataset.

- Define Perplexity Range: Create a list of perplexity values to test (e.g., [5, 15, 30, 50]).

- Iterate and Plot: For each perplexity value in the list:

- Run t-SNE with that perplexity value and a fixed random seed.

- Generate a scatter plot of the resulting 2D embedding.

- Color the points based on known ground-truth labels if available.

- Evaluate: Compare the plots. The optimal perplexity value is the one that produces the tightest, most distinct clusters with minimal overlap and visual "crowding" [39].
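The sweep can be sketched as a simple loop. The protocol relies on visual comparison; the silhouette score computed here against known labels is an added assumption, offered only as a rough numerical companion to the plots. The function name `sweep_perplexity` and the toy data are hypothetical.

```python
import numpy as np
from sklearn.manifold import TSNE
from sklearn.metrics import silhouette_score

def sweep_perplexity(X, perplexities=(5, 15, 30, 50), labels=None, random_state=0):
    """Run t-SNE once per perplexity with a fixed seed; return embeddings and scores."""
    results = {}
    for p in perplexities:
        if p >= X.shape[0]:
            continue  # t-SNE requires perplexity < number of samples
        emb = TSNE(n_components=2, perplexity=p,
                   random_state=random_state).fit_transform(X)
        # Silhouette on the 2D embedding, only if ground-truth labels are given
        score = float(silhouette_score(emb, labels)) if labels is not None else None
        results[p] = (emb, score)
    return results

# Toy data: 90 samples in 3 groups, 10 features (illustrative only)
rng = np.random.default_rng(0)
y_demo = np.repeat([0, 1, 2], 30)
centers = rng.normal(scale=3.0, size=(3, 10))
X_demo = centers[y_demo] + rng.normal(size=(90, 10))
results = sweep_perplexity(X_demo, labels=y_demo)
```

Each embedding in `results` can be drawn as a scatter plot colored by `y_demo`. Because t-SNE distorts distances and cluster sizes, a score on the embedding is best read alongside the plots rather than as a standalone criterion.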

The Scientist's Toolkit

Research Reagent Solutions

| Item | Function |
|---|---|
| Scikit-learn (Python) | A core machine learning library providing robust implementations of PCA, t-SNE, and various clustering algorithms (e.g., K-means). Essential for executing the analysis [34]. |
| RColorBrewer (R) / Seaborn (Python) | Libraries specializing in color palettes for data visualization. They provide colorblind-safe sequential and diverging palettes that are critical for creating interpretable heatmaps [7] [37]. |
| FactoMineR (R) / Scanpy (Python) | Domain-specific packages for multivariate analysis and single-cell genomics, respectively. They offer integrated, optimized pipelines for performing PCA, clustering, and visualization on complex biological data [34]. |
| Stable Random Seed | A simple but crucial tool for ensuring the reproducibility of stochastic algorithms like t-SNE. By setting a seed, you guarantee that your results can be replicated in future runs [36]. |

A frequent challenge in single-cell RNA sequencing (scRNA-seq) analysis is poor sample separation in heatmap clustering, which can obscure true biological variation and complicate the identification of distinct cell types or states. This issue often stems from technical artifacts, suboptimal experimental design, or inappropriate computational choices. This guide addresses the root causes and provides actionable solutions to enhance data quality and clustering resolution.

Frequently Asked Questions

Q1: Why do my heatmaps show poor separation between known cell types? Poor separation often results from high ambient RNA, batch effects, or ineffective feature selection. Ambient RNA can blur distinct expression profiles by adding background noise to all cells [40], while batch effects from processing samples on different days or with different protocols can introduce technical variation that masks biological differences [41]. Furthermore, clustering on too many or too few genes can dilute the signal; selecting features that do not capture relevant biological variation prevents the algorithm from finding clear separations [42].

Q2: My cell viability is high, but my data is still noisy. What could be wrong? High viability is a good start, but other factors can introduce noise. Mitochondrial stress is a key indicator; even in viable cell preparations, cells can experience stress during dissociation, leading to an upregulation of mitochondrial genes that dominates the transcriptomic signal [43] [40]. Low sequencing coverage per cell can also be a factor, as it increases the sparsity of the data and makes it harder to distinguish cell populations [44]. Finally, overly harsh tissue dissociation can trigger stress response genes, creating a transcriptomic signature that overwhelms more subtle, biologically relevant signals [41].

Q3: How can I improve my clustering results bioinformatically? Start by optimizing feature selection. Using Highly Variable Genes (HVGs) is a common and effective practice for improving integration and clustering performance [42]. For complex datasets involving multiple samples, employing batch-aware feature selection or performing lineage-specific feature selection before integration can significantly enhance results [42]. Advanced clustering algorithms like scHSC that use contrastive learning and hard sample mining are specifically designed to improve separation in sparse scRNA-seq data [45].

Troubleshooting Guides

Issue 1: High Ambient RNA Contamination

Problem: Ambient RNA from lysed cells is captured during library preparation, creating a background noise that makes distinct cell populations appear more similar.

Solution:

- Bioinformatic Correction: Use tools like SoupX or CellBender to estimate and subtract the ambient RNA profile from your count matrix [40].

- Experimental Improvement: Minimize cell death during tissue dissociation. Work quickly and keep cells cold (on ice) to arrest metabolism and reduce RNA leakage [41]. Use density centrifugation to remove dead cells and debris before loading cells into your scRNA-seq platform [41].

Issue 2: Technical Batch Effects

Problem: Samples processed in different batches cluster more strongly by batch than by biological condition.

Solution:

- Experimental Design: Plan for biological replication across batches. If possible, use sample multiplexing to process samples from different conditions simultaneously in a single batch [44] [41].

- Data Integration: Use batch correction tools like Harmony or integration methods within frameworks such as Scanpy or Seurat after performing careful quality control on individual samples [46] [40]. Fixing and storing samples for later processing in a single, large batch can also mitigate this issue [41].
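Before applying a correction tool, it can help to quantify whether batch really dominates. The sketch below is one possible diagnostic, not part of any cited tool: it compares silhouette scores computed with batch labels and with condition labels on the same matrix. The helper name `batch_vs_condition` and the simulated data are assumptions.

```python
import numpy as np
from sklearn.metrics import silhouette_score

def batch_vs_condition(X, batch, condition):
    """Silhouette of the same data under batch labels and under condition labels."""
    return {
        "batch": float(silhouette_score(X, batch)),
        "condition": float(silhouette_score(X, condition)),
    }

# Simulated data: a strong batch shift and a weak condition effect (illustrative only)
rng = np.random.default_rng(3)
batch = np.repeat([0, 1], 40)
condition = np.tile([0, 1], 40)
X = rng.normal(size=(80, 10)) + 5.0 * batch[:, None]
X[:, 0] += 0.5 * condition
scores = batch_vs_condition(X, batch, condition)
```

A batch score well above the condition score suggests that technical variation is driving the structure; rerunning the same comparison after integration gives a quick check on whether the correction helped.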

Issue 3: Poor Feature Selection for Clustering

Problem: The selected genes do not capture the biological variation of interest, leading to uninformative clustering.

Solution:

- Select a Robust Set of Features: Benchmarking studies show that using 2,000 Highly Variable Genes (HVGs) selected with a batch-aware method provides a strong baseline for high-quality integrations [42].

- Avoid Common Pitfalls: Do not rely on large, random gene sets or stably expressed "housekeeping" genes for clustering, as these typically result in poor performance [42].

- Consider Advanced Methods: For specialized projects like building a reference atlas, investigate lineage-specific feature selection to improve integration within specific cell lineages [42].
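To make the idea of batch-aware HVG selection concrete, here is a deliberately simplified NumPy sketch: genes are ranked by variance of log-normalized expression within each batch, and the ranks are summed across batches. This is a stand-in for illustration only, not the method benchmarked in [42] or the implementation in Scanpy or Seurat; the function name `select_hvgs` and the simulated counts are assumptions.

```python
import numpy as np

def select_hvgs(counts, batches, n_top=2000):
    """Pick genes that are consistently variable within every batch."""
    counts = np.asarray(counts, dtype=float)
    batches = np.asarray(batches)
    # Library-size normalize to 10,000 counts per cell, then log1p
    lib = counts.sum(axis=1, keepdims=True)
    lib[lib == 0] = 1.0
    logn = np.log1p(counts / lib * 1e4)
    rank_sum = np.zeros(counts.shape[1])
    for b in np.unique(batches):
        var = logn[batches == b].var(axis=0)
        # Rank 0 is the most variable gene within this batch
        rank_sum += np.argsort(np.argsort(-var))
    n_top = min(n_top, counts.shape[1])
    # Lowest summed rank = most variable across batches; return sorted gene indices
    return np.sort(np.argsort(rank_sum, kind="stable")[:n_top])

# Simulated counts: 200 cells x 50 genes, genes 0-4 differ between two cell types
rng = np.random.default_rng(2)
cell_type = np.repeat([0, 1], 100)
batches = np.tile([0, 1], 100)
lam = np.full((200, 50), 5.0)
lam[:, :5] = np.where(cell_type[:, None] == 0, 1.0, 30.0)
counts = rng.poisson(lam)
hvgs = select_hvgs(counts, batches, n_top=5)
```

Ranking within batches means a gene that varies only because of a batch shift does not gain rank from that shift, which is the intuition behind batch-aware selection.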

Diagnostic Metrics and Target Values

Use this table of quality control metrics to diagnose issues in your data before clustering.

| Metric | Description | Target Range | Interpretation |
|---|---|---|---|
| Mitochondrial Read Percentage [43] [40] | Fraction of reads mapping to mitochondrial genes. | <10% for PBMCs; can be higher for other cell types. | High percentage indicates cellular stress or broken cells. |
| Median Genes per Cell [40] | The median number of genes detected per cell barcode. | Protocol- and cell type-dependent. | Too low suggests poor cell quality/coverage; too high may indicate multiplets. |
| Median UMI Counts per Cell [40] | The median number of transcripts detected per cell barcode. | Protocol- and cell type-dependent. | Correlates with sequencing depth; low counts increase data sparsity. |
| Cell Doublet Rate [43] | Estimated percentage of droplets containing more than one cell. | Technology-dependent (e.g., ~0.8% per 1,000 cells recovered). | High rates can artificially connect distinct clusters. Use tools like Scrublet [43]. |
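The first three metrics in the table can be computed directly from a cells x genes count matrix. The sketch below assumes mitochondrial genes are identified by an "MT-" name prefix (a common human gene-naming convention, matched case-insensitively here); the function names and the tiny matrix are hypothetical. The doublet helper simply applies the ~0.8% per 1,000 cells rule of thumb from the table.

```python
import numpy as np

def qc_metrics(counts, gene_names, mito_prefix="MT-"):
    """Per-cell mitochondrial percentage plus median genes and UMIs per cell."""
    counts = np.asarray(counts)
    # Assumption: mitochondrial genes share a name prefix such as "MT-"
    is_mito = np.array([g.upper().startswith(mito_prefix) for g in gene_names])
    total = counts.sum(axis=1)
    pct_mito = 100.0 * counts[:, is_mito].sum(axis=1) / np.maximum(total, 1)
    return {
        "pct_mito": pct_mito,
        "median_genes_per_cell": float(np.median((counts > 0).sum(axis=1))),
        "median_umi_per_cell": float(np.median(total)),
    }

def expected_doublet_pct(n_cells_recovered, pct_per_thousand=0.8):
    """Rule-of-thumb doublet percentage that scales with cells recovered."""
    return pct_per_thousand * n_cells_recovered / 1000.0

# Tiny example: 3 cells x 4 genes (illustrative only)
genes = ["MT-CO1", "MT-ND1", "ACTB", "CD3E"]
counts = np.array([[10, 0, 5, 5],
                   [0, 0, 8, 2],
                   [3, 3, 3, 1]])
qc = qc_metrics(counts, genes)
flagged = qc["pct_mito"] > 10.0  # threshold from the table; adjust per cell type
```

The 10% cutoff used for `flagged` is the PBMC target from the table and should be adjusted for other cell types.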

Advanced Workflow: The scHSC Method for Enhanced Clustering

For datasets with subtle distinctions, advanced deep learning methods can significantly improve separation. The scHSC framework is specifically designed to address the sparsity and noise of scRNA-seq data by focusing on "hard" samples that are difficult to classify [45].

Methodology:

- Data Preprocessing: Begin with a normalized and log-transformed count matrix. Select highly variable genes and standardize the data to zero mean and unit variance [45].

- Topological Structure Capture: Construct a K-nearest neighbor (KNN) graph from the processed data. Apply a Generalized Laplacian Smoothing Filter to denoise the gene expression matrix and preserve local neighborhood structure [45].

- Contrastive Learning with Hard Sample Mining:

- The filtered data and graph structure are passed through separate encoders.

- A similarity matrix is computed to identify "hard positives" (similar cells that appear dissimilar) and "hard negatives" (dissimilar cells that appear similar), often caused by dropout events.

- The model dynamically assigns higher weights to these hard samples during training, forcing the algorithm to learn a more discriminative embedding space [45].

- Output: The result is a refined set of cell embeddings where biologically distinct populations are better separated, leading to cleaner clusters in downstream analyses like heatmap visualization.
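The graph-smoothing idea in the second step can be illustrated with a generic low-pass filter on a KNN graph. This is a sketch of one common form of Laplacian smoothing, H = I - kL applied t times with L the symmetric normalized Laplacian; it is an assumption for illustration and does not reproduce scHSC's exact filter, encoders, or hyperparameters [45]. The function name `laplacian_smooth` and the defaults are hypothetical.

```python
import numpy as np
from sklearn.neighbors import kneighbors_graph

def laplacian_smooth(X, n_neighbors=10, k=0.5, t=2):
    """Smooth features over a KNN graph with the filter (I - k*L)^t."""
    n = X.shape[0]
    A = kneighbors_graph(X, n_neighbors=n_neighbors, mode="connectivity").toarray()
    A = np.maximum(A, A.T)          # make the graph undirected
    A = A + np.eye(n)               # add self-loops
    d = A.sum(axis=1)
    A_norm = A / np.sqrt(np.outer(d, d))   # D^{-1/2} A D^{-1/2}
    L = np.eye(n) - A_norm                  # symmetric normalized Laplacian
    H = np.eye(n) - k * L                   # low-pass filter
    X_s = np.array(X, dtype=float)
    for _ in range(t):
        X_s = H @ X_s
    return X_s

# Toy data: two groups of 30 cells, 5 features (illustrative only)
rng = np.random.default_rng(4)
X = np.vstack([rng.normal(-2, 1, size=(30, 5)),
               rng.normal(2, 1, size=(30, 5))])
X_s = laplacian_smooth(X)
```

Each pass mixes a cell's profile with those of its graph neighbors, which damps dropout-like noise while keeping local neighborhood structure; the dense matrices used here are only practical for small examples.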

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function | Example/Note |
|---|---|---|
| HEPES or Hanks' Balanced Salt Solution (HBSS) [41] | Cell suspension media without Ca²⁺/Mg²⁺ | Prevents cation-induced cell clumping and aggregation. |
| Ficoll or OptiPrep [41] | Density gradient medium | Separates viable cells/nuclei from dead cells and debris during sample prep. |
| Commercial Enzyme Cocktails [41] | Tissue-specific dissociation | Kits from Miltenyi Biotec ensure reproducible single-cell suspensions. |
| Unique Molecular Identifiers (UMIs) [47] [48] | Molecular barcoding | Tags individual mRNA molecules to correct for amplification bias and improve quantification accuracy. |
| GentleMACS Dissociator [41] | Automated tissue dissociation | Provides rapid, standardized, and gentle dissociation of solid tissues. |
| Cell Ranger [40] | Primary data processing | 10x Genomics' pipeline for aligning reads, counting UMIs, and generating feature-barcode matrices. |
| Harmony [46] | Batch effect correction | Algorithm for integrating multiple datasets by removing technical batch effects. |
| Scanpy [45] [43] | Python-based analysis toolkit | A comprehensive environment for scRNA-seq data analysis, including normalization, clustering, and visualization. |

A Systematic Protocol for Diagnosing and Fixing Separation Issues

Frequently Asked Questions

Q1: My heatmap shows poor separation between sample clusters. What are the primary causes? Poor sample separation in heatmap clustering often stems from three main areas: inadequate data preprocessing, inappropriate algorithm selection, or an incorrect number of clusters (k). Unscaled data, which allows variables with larger scales to dominate the distance calculations, is a common culprit. Similarly, using a clustering algorithm unsuited to your data's structure (e.g., using K-means for non-spherical clusters) or choosing a suboptimal 'k' can result in poorly resolved clusters [18] [49].

Q2: How can I determine if my data preprocessing is the issue? You can diagnose preprocessing issues by checking two key factors:

- Feature Scaling: Clustering algorithms are sensitive to the scale of the data. If your variables are on different scales, those with larger ranges will dominate the clustering. You should normalize or standardize your data to ensure each feature contributes equally [18] [49].

- Missing Data: Most clustering algorithms cannot handle missing values, and leaving them in can distort the results. Common strategies are imputation (filling in missing values with the mean, median, or a model-based estimate) or removing incomplete records [18].
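Both checks can be run as a quick report before any clustering. The sketch below is a hypothetical helper, `preprocessing_report`, that counts missing values and compares the largest and smallest per-feature standard deviations; what counts as a "large" ratio is a judgment call and is not specified by the source.

```python
import numpy as np

def preprocessing_report(X):
    """Summarize missing values and the spread of feature scales."""
    X = np.asarray(X, dtype=float)
    stds = np.nanstd(X, axis=0)          # per-feature std, ignoring NaNs
    positive = stds[stds > 0]
    return {
        "n_missing": int(np.isnan(X).sum()),
        "rows_with_missing": int(np.isnan(X).any(axis=1).sum()),
        # A ratio far above 1 means some features would dominate distances
        "std_ratio": float(positive.max() / positive.min()) if positive.size else float("nan"),
    }

# Example: second feature is on a much larger scale and has one gap
X_check = np.array([[1.0, 1000.0],
                    [2.0, 3000.0],
                    [3.0, np.nan],
                    [4.0, 2000.0]])
report = preprocessing_report(X_check)
```

A nonzero `n_missing` points to the imputation or filtering step, and a `std_ratio` far from 1 points to scaling.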

Q3: What does it mean if my clustering is "overfit" or "underfit," and how does that affect my heatmap?

- Overfitting in cluster analysis occurs when the algorithm creates clusters that are overly complex, fitting the noise in the data rather than the underlying pattern. Signs on a heatmap include excessively fragmented clusters with only a few data points or irregular shapes that don't align with the data's natural structure [18].

- Underfitting means the clustering algorithm has failed to capture the true structure of the data, often because the model is too simplistic. This would manifest as a heatmap where distinct groups of samples are incorrectly merged into a small number of broad, non-informative clusters [18].

Q4: My heatmap colors lack contrast, making clusters hard to distinguish. Is this a visualization or a data problem?

This can be both a data and a visualization problem. From a data perspective, a lack of contrast can indicate that the differences in values (e.g., gene expression, protein abundance) between your true biological groups are subtle. From a visualization standpoint, it could mean your color scale is not optimally configured for the range and distribution of your data. You should experiment with different color midpoints (zmid) and limits (zmin, zmax) to improve contrast [50].
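One way to choose the color limits is to derive them from percentiles so that a few extreme values do not flatten the rest of the scale. The helper below is a sketch under that assumption; the name `diverging_limits` and the 2nd/98th percentile defaults are illustrative choices, and the returned keys mirror the zmin, zmid, and zmax settings mentioned above.

```python
import numpy as np

def diverging_limits(Z, lower_pct=2, upper_pct=98, midpoint=0.0):
    """Symmetric color limits around a midpoint, based on robust percentiles."""
    lo, hi = np.nanpercentile(Z, [lower_pct, upper_pct])
    # Use the larger deviation so the midpoint sits at the center of the palette
    half = float(max(abs(hi - midpoint), abs(lo - midpoint)))
    return {"zmin": midpoint - half, "zmid": midpoint, "zmax": midpoint + half}

# Centered data with one extreme outlier (illustrative only)
rng = np.random.default_rng(5)
Z = rng.normal(size=(50, 20))
Z[0, 0] = 100.0
limits = diverging_limits(Z)
```

Values beyond the limits are clipped to the end colors, which trades fidelity at the extremes for contrast in the bulk of the data; if contrast remains poor after this, the underlying group differences are likely small.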

Diagnostic Protocols and Procedures

Protocol 1: Systematic Workflow for Diagnosing Poor Sample Separation

Follow this logical workflow to identify the root cause of poorly defined clusters in your heatmap.

Protocol 2: Determining the Optimal Number of Clusters

A critical step in cluster analysis is determining the correct number of clusters (k). Using an incorrect 'k' is a primary reason for poor sample separation. The following two methods are standard practice.