Running STAR Aligner on HPC: A Complete Guide for Biomedical Researchers

This comprehensive guide details the implementation of the STAR (Spliced Transcripts Alignment to a Reference) RNA-seq aligner on high-performance computing clusters.

Running STAR Aligner on HPC: A Complete Guide for Biomedical Researchers

Abstract

This comprehensive guide details the implementation of the STAR (Spliced Transcripts Alignment to a Reference) RNA-seq aligner on high-performance computing clusters. Covering foundational concepts through advanced optimization, it addresses critical needs for researchers and drug development professionals working with large-scale genomic data. The article provides practical methodologies for deployment, troubleshooting for common performance bottlenecks, and validation techniques to ensure computational efficiency and scientific accuracy in transcriptomic analysis, enabling faster discovery in biomedical research.

Understanding STAR Aligner and HPC Architecture for Genomic Research

Spliced Transcripts Alignment to a Reference (STAR) is an RNA-seq mapper that performs highly accurate spliced sequence alignment at an ultrafast speed [1]. Designed to address the unique challenges of transcriptome data, STAR uses a novel strategy based on sequential maximum mappable seed search in uncompressed suffix arrays followed by seed clustering and stitching procedures [2]. This algorithm allows STAR to outperform other aligners by a factor of more than 50 in mapping speed while simultaneously improving alignment sensitivity and precision [2]. STAR's capability to align hundreds of millions of paired-end reads per hour on modest servers, coupled with its accuracy in detecting canonical and non-canonical splice junctions, makes it particularly valuable for large-scale transcriptome studies such as the ENCODE project [2]. This application note details optimized protocols for implementing STAR on high-performance computing (HPC) clusters, addressing both computational requirements and experimental best practices for the research and drug development community.

STAR Algorithm and Core Technology

Algorithmic Foundation

STAR employs a unique two-step alignment strategy that fundamentally differs from traditional RNA-seq mappers. Rather than extending DNA alignment algorithms, STAR was specifically designed to align non-contiguous sequences directly to the reference genome [2]. The algorithm consists of two distinct phases:

Seed Searching: STAR searches for the Maximal Mappable Prefix (MMP) for every read, defined as the longest substring starting from a read position that matches one or more locations on the reference genome exactly [2] [3]. This sequential searching of only the unmapped portions of reads represents a key innovation that underlies STAR's efficiency. The MMP search is implemented through uncompressed suffix arrays, providing logarithmic scaling of search time with reference genome size [2].

Clustering, Stitching, and Scoring: In the second phase, STAR builds complete read alignments by clustering seeds based on proximity to selected "anchor" seeds, then stitching them together using a dynamic programming algorithm that allows for mismatches and single indels [2] [3]. This approach naturally accommodates spliced alignments while maintaining high precision.

Advanced Detection Capabilities

Beyond basic read alignment, STAR provides several advanced features crucial for comprehensive transcriptome analysis:

Junction Detection: STAR performs unbiased de novo detection of canonical splices without prior knowledge of splice junction loci, enabling discovery of novel splicing events [2]. Experimental validation of 1,960 novel intergenic splice junctions using Roche 454 sequencing demonstrated STAR's high precision, with success rates of 80-90% [2].

Chimeric and Fusion Transcript Detection: The algorithm can identify chimeric alignments where read segments map to distal genomic loci, different chromosomes, or different strands [2]. This capability includes detecting both mate-chimeric alignments and internally chimeric reads, precisely pinpointing fusion transcript locations [2].

Long Read Compatibility: Unlike many early RNA-seq aligners designed for short reads (≤200 bases), STAR can accurately align long reads emerging from third-generation sequencing technologies, potentially reaching full-length RNA molecules [2].

HPC Implementation and Resource Allocation

Computational Resource Requirements

Implementing STAR effectively on HPC clusters requires careful attention to computational resource allocation. The table below summarizes key resource requirements for typical STAR workflows:

Table 1: Computational Resource Requirements for STAR Analysis

| Resource Type | Minimum Requirement | Recommended for Large Genomes | Use Case Specification |

|---|---|---|---|

| CPU Cores | 4-6 cores | 12+ cores | Scales with number of simultaneous alignments [3] |

| Memory | 16 GB | 32+ GB | Genome index size dependent [3] |

| Storage I/O | Standard HDD | High-speed SSD | For temporary files and genome indices [3] |

| Temporary Storage | 50+ GB | 100+ GB | For genome generation output [3] |

STAR's memory intensity stems from its use of uncompressed suffix arrays, which trade increased memory usage for significant speed advantages over compressed implementations used in other aligners [2]. This tradeoff makes HPC deployment particularly advantageous, as cluster environments typically provide sufficient shared memory resources.

HPC Cluster Configuration

Successful STAR implementation on HPC clusters requires proper environment configuration:

Job Scheduler Configuration: STAR jobs should be submitted with explicit core and memory allocation using scheduler-specific directives. For SLURM, this includes

--cpus-per-task,--mem, and--timeparameters to ensure adequate resources [3].Parallel Processing Setup: STAR's

--runThreadNparameter must match the number of cores allocated in the job submission script [3]. Proper thread configuration ensures optimal utilization of cluster resources without overloading compute nodes.Storage Optimization: Genome indices should be stored on high-speed storage systems, with temporary files directed to local scratch space when available to reduce I/O bottlenecks during alignment [3].

Experimental Protocols and Workflows

Genome Index Generation

Creating a custom genome index is the critical first step in STAR analysis. The protocol below outlines the process for HPC implementation:

Table 2: STAR Genome Index Generation Parameters

| Parameter | Setting | Explanation |

|---|---|---|

--runMode |

genomeGenerate |

Specifies index generation mode [3] |

--genomeDir |

/path/to/genome_indices |

Output directory for indices [3] |

--genomeFastaFiles |

/path/to/FASTA_file |

Reference genome FASTA file [3] |

--sjdbGTFfile |

/path/to/GTF_file |

Annotation GTF file [3] |

--sjdbOverhang |

read_length - 1 |

Optimal value for junction databases [3] |

--runThreadN |

6 (or available cores) |

Number of parallel threads [3] |

Step-by-Step Protocol:

Prepare Reference Files: Obtain reference genome FASTA files and annotation GTF files from curated sources like ENSEMBL or GENCODE. For the human genome, these files are often available through shared cluster databases [3].

Configure Storage: Create output directories in scratch space with sufficient storage capacity. Genome indices for large genomes can require substantial storage (e.g., ~30GB for human) [3].

Submit Index Generation Job: Execute the genome generation step using a batch job script. Example SLURM script:

Validate Output: Verify generation of complete index files, including genome parameters, suffix arrays, and junction databases before proceeding to alignment.

Read Alignment Protocol

Once genome indices are prepared, perform read alignment with the following protocol:

Input Preparation: Ensure FASTQ files are available in accessible storage, preferably on high-speed systems. For paired-end reads, specify both files separated by spaces in the

--readFilesInparameter [3].Output Directory Setup: Create dedicated directories for alignment results with appropriate permissions for file writing.

Alignment Execution: Run STAR alignment with optimized parameters. Example command:

Output Management: Process alignment outputs, including BAM files, splice junction tables, and alignment statistics for downstream analysis.

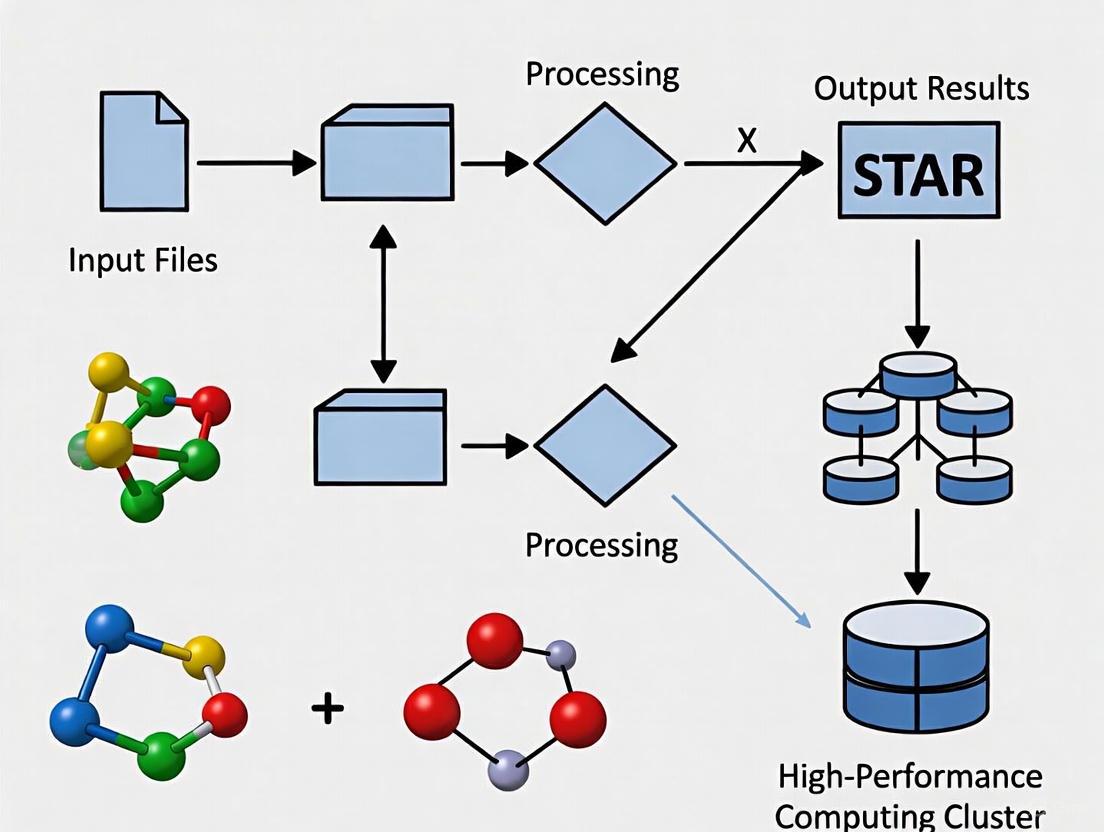

The following workflow diagram illustrates the complete STAR analysis process on an HPC cluster:

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Resources for STAR Analysis

| Item | Function | Specification |

|---|---|---|

| Reference Genome | Genomic coordinate system for read alignment | Species-specific FASTA files (e.g., GRCh38 for human) [3] |

| Annotation File | Gene model information for junction annotation | GTF format from ENSEMBL, GENCODE, or RefSeq [3] |

| RNA-seq Reads | Input sequencing data for alignment | FASTQ format, single or paired-end [3] |

| Genome Indices | Pre-processed reference for ultrafast alignment | STAR-generated index files [3] |

| HPC Cluster | Computational environment for alignment | Multi-core servers with sufficient memory [3] |

Performance Optimization and Troubleshooting

Parameter Optimization for Specific Applications

STAR's alignment algorithm can be controlled by numerous parameters to optimize performance for specific experimental designs:

Table 4: Key STAR Parameters for Performance Optimization

| Parameter | Default | Optimized Setting | Application Context |

|---|---|---|---|

--outFilterMultimapNmax |

10 | 20 | Highly repetitive transcriptomes |

--alignSJoverhangMin |

5 | 10 | Increased stringency for junctions |

--alignSJDBoverhangMin |

3 | 5 | Improved junction detection |

--limitBAMsortRAM |

0 | 50000000000 | Large dataset memory management |

--outFilterMismatchNmax |

10 | 5 | High-quality read libraries |

Troubleshooting Common HPC Issues

Memory Allocation Errors: Increase

--limitBAMsortRAMwhen processing large datasets or use--outSAMtype BAM Unsortedto reduce memory footprint [3].Slow Alignment Performance: Ensure genome indices are stored on local scratch space rather than network-attached storage to reduce I/O bottlenecks.

Incomplete Alignment: Verify that the

--sjdbOverhangparameter is set to read length minus 1, which is critical for accurate junction detection [3].

The following diagram illustrates STAR's core algorithmic approach to alignment:

Comparative Analysis with Alternative Tools

When selecting an alignment tool for RNA-seq analysis, researchers should consider the comparative strengths of STAR versus other approaches:

STAR vs. Kallisto: While Kallisto uses a pseudoalignment approach that is faster and more memory-efficient for transcript quantification, STAR provides base-level resolution through full genome alignment, enabling detection of novel splice junctions and fusion transcripts [4]. Kallisto is preferable for large-scale quantification studies with well-annotated transcriptomes, while STAR is superior for discovery-based applications requiring precise junction mapping and novel isoform detection [4].

Alignment Sensitivity: STAR's exhaustive maximum mappable prefix approach provides higher sensitivity for detecting non-canonical splices and complex splicing patterns compared with traditional split-read aligners [2].

HPC Compatibility: STAR's multi-threading implementation and efficient memory management make it particularly well-suited for HPC environments compared with some alternative aligners that have more limited parallelization capabilities [3].

STAR represents a significant advancement in RNA-seq alignment technology, combining unprecedented speed with accurate spliced alignment detection. Its unique two-step algorithm based on maximal mappable prefix searching and seed stitching enables comprehensive transcriptome characterization, including canonical splicing, non-canonical junctions, and chimeric transcripts. Implementation on HPC clusters effectively addresses STAR's memory requirements while leveraging parallel processing capabilities for large-scale analyses. Following the protocols and optimization strategies outlined in this application note will enable researchers and drug development professionals to maximize STAR's capabilities for their transcriptome studies, from basic research to therapeutic development.

RNA sequencing (RNA-seq) has become an indispensable tool in biomedical research, providing unprecedented insights into the continuously changing cellular transcriptome. Since its emergence in 2008, RNA-seq has experienced exponential adoption, with publications containing RNA-seq data reaching an all-time high of 2,808 in 2016 [5]. This technology enables researchers to identify differentially expressed genes between biological conditions, discover novel transcripts, and unravel complex regulatory networks underlying disease pathogenesis and drug response [6]. The application of RNA-seq spans diverse areas including cancer genomics, infectious disease research, neurodegenerative disorders, and drug development, where it facilitates the identification of novel therapeutic targets and biomarkers.

The fundamental principle of RNA-seq involves converting RNA populations to cDNA libraries followed by high-throughput sequencing to generate millions of short reads. These reads are then computationally aligned to a reference genome or transcriptome to quantify gene expression levels [5]. The critical importance of proper alignment cannot be overstated, as it directly influences all downstream analyses including differential expression, splice variant identification, and variant calling. This protocol focuses specifically on the application of the Spliced Transcripts Alignment to a Reference (STAR) aligner within high-performance computing (HPC) environments, providing researchers with a robust framework for processing RNA-seq data at scale.

RNA-seq Technology and Experimental Design

Library Preparation Strategies

RNA-seq library preparation begins with RNA isolation, followed by critical enrichment or depletion steps to target specific RNA populations. For standard gene expression analysis, two primary strategies exist: polyA selection which enriches for messenger RNA by capturing the polyadenylated tail, and ribosomal RNA depletion which removes abundant rRNA transcripts to reveal other RNA species [6]. The choice between these methods depends on research objectives: polyA selection is ideal for protein-coding gene analysis, while rRNA depletion enables detection of non-coding RNAs and partially degraded samples. For stranded libraries, which preserve information about the transcriptional origin, Illumina's TruSeq kits have become standard, providing strand orientation without significant additional cost [6].

Following RNA selection, fragmentation generates appropriately sized templates for sequencing. The RNA is then reverse transcribed into double-stranded cDNA, and sequencing adapters are ligated to fragment ends. After potential PCR amplification, fragments undergo size selection (typically 300-500bp) to finalize the library [6]. Understanding these preparation steps is crucial, as each decision influences downstream computational approaches and analytical possibilities.

Sequencing Considerations

Two fundamental sequencing configurations exist: single-end and paired-end reads. Single-end sequencing reads only one end of each fragment, while paired-end sequencing reads both ends, providing additional alignment confidence and structural information [6]. Although paired-end sequencing is approximately twice as expensive, it offers significant advantages for detecting splice variants, conducting de novo assemblies, and analyzing genomes with numerous paralogous genes. The choice of Illumina sequencing platform (MiSeq, HiSeq, NextSeq, NovaSeq) affects read length, quality, quantity per run, and required sequencing time [6].

Multiplexing enables efficient sample processing by pooling multiple libraries in a single lane using unique molecular barcodes (indices). This approach requires careful experimental design to balance sequencing depth across samples while maximizing lane capacity. For most gene expression studies, 20-30 million reads per sample provides sufficient coverage, though this requirement varies based on genome complexity and research goals [6].

Experimental Design and Quality Control

Robust experimental design is paramount for generating biologically meaningful RNA-seq data. Batch effects - technical variations introduced during sample processing - can profoundly impact results and must be minimized through careful planning [5]. Key strategies include processing control and experimental samples simultaneously, harvesting at consistent times of day, minimizing personnel changes, and sequencing all samples in a single run when possible [5]. Appropriate replication is equally critical; biological replicates (samples from different biological units) must be distinguished from technical replicates (repeated measurements of the same biological unit) to ensure statistical power accurately reflects biological variation rather than technical noise.

Table 1: Common Sources of Batch Effect and Mitigation Strategies

| Source | Strategy to Mitigate Batch Effect |

|---|---|

| Experimental | |

| User variability | Minimize users or establish inter-user reproducibility |

| Temporal effects | Harvest controls/experimentals simultaneously |

| Environmental conditions | Use intra-animal, littermate, and cage mate controls |

| RNA Isolation & Library Prep | |

| Technical variability | Perform RNA isolation on same day for all samples |

| Handling differences | Standardize freeze-thaw cycles across samples |

| Sequencing | |

| Run effects | Sequence all groups in a single run when possible |

Computational Requirements for RNA-seq Analysis

High-Performance Computing Infrastructure

RNA-seq analysis, particularly with the STAR aligner, demands substantial computational resources best provided by HPC clusters. These systems enable researchers to process large datasets efficiently through parallel computing while maintaining data integrity and reproducibility [7]. The Star HPC cluster at Hofstra University represents one such environment, supported by a $1.5M investment from Hofstra University and the National Science Foundation, but similar infrastructures exist at many research institutions [8]. Access to these clusters typically requires formal application demonstrating legitimate research needs, with approval processes varying by institution [7].

HPC clusters follow a structured architecture centered on a login node that serves as the gateway to the system. This node should not be used for computationally intensive tasks, but rather for job submission, file management, and monitoring. Actual computation occurs on compute nodes accessed through job schedulers like Slurm using sbatch or srun commands [7]. Understanding this architecture is essential for efficient resource utilization.

Storage and Data Management

Cluster storage follows a tiered system with specific purposes for each directory type. The home directory (/home/username) typically provides limited storage (often 10-100GB) for scripts, configuration files, and small datasets. Project directories offer expanded space for active research data and may support collaboration among group members. Scratch space provides high-speed temporary storage for intermediate files during job execution [7]. Researchers must adhere to institutional quotas and data management policies, implementing regular backup strategies for critical analysis code and configuration files.

Table 2: Computational Requirements for RNA-seq Analysis with STAR

| Resource | Minimum Recommendation | Ideal Configuration |

|---|---|---|

| Memory | 16 GB | 32 GB or higher |

| Processors | 4 cores | 12+ cores |

| Storage | 100 GB free space | 500 GB+ free space |

| Operating System | Linux or Mac OS | Linux distribution |

Software Management with Environment Modules

HPC clusters utilize environment modules to manage software versions and dependencies. These modules allow users to load specific software environments without system-wide installations [7]. A typical workflow might involve:

This modular approach maintains conflicting software versions and ensures reproducibility. Researchers should document all loaded modules for their analyses to enable method replication.

STAR Alignment Methodology

STAR (Spliced Transcripts Alignment to a Reference) employs a novel RNA-seq alignment algorithm based on sequential maximum mappable seed search followed by clustering, stitching, and scoring steps. Unlike traditional aligners designed for DNA, STAR specifically addresses the challenges of RNA-seq data, particularly splice junctions where exons join and introns are removed during processing [9]. The aligner achieves high accuracy through a two-step process: first identifying maximal mappable prefixes from read sequences, then clustering these seeds to account for possible splices.

STAR demonstrates superior performance compared to other splice-aware aligners like HISAT2 and TopHat2, with better mapping rates and faster processing times [9]. This performance comes at the cost of higher memory usage, particularly during the genome indexing phase. Additionally, STAR excels at identifying both canonical and non-canonical splice junctions and can detect chimeric (fusion) transcripts, making it particularly valuable in cancer genomics applications [9].

Genome Index Generation

The initial critical step in STAR analysis involves generating genome indices, which dramatically accelerates the subsequent alignment process. This step requires a reference genome in FASTA format and gene annotation in GTF or GFF3 format [9].

For GFF3 annotations, an additional parameter must be specified to define parent-child relationships:

The --sjdbOverhang parameter should be set to read length minus 1 (e.g., 149 for 150bp reads), defining the length of genomic sequence around annotated junctions used for constructing the splice junction database [9].

Read Alignment

Once genome indices are prepared, read alignment proceeds using either single-end or paired-end mode:

For compressed FASTQ files (*.fastq.gz), include --readFilesCommand zcat to enable decompression during alignment. The --outSAMtype BAM SortedByCoordinate parameter generates coordinate-sorted BAM files ready for downstream analysis without additional processing [9].

For studies focused on novel splice junction discovery, a 2-pass mapping approach is recommended, where splice junctions identified in an initial alignment are used to rebuild genome indices for a second alignment round. This method increases sensitivity for detecting novel splicing events but requires approximately double the computation time [9].

Implementation on HPC Clusters

Workflow Orchestration

Implementing STAR analysis within HPC environments requires job submission through workload managers like Slurm. The following script demonstrates a typical implementation:

This script requests appropriate computational resources, loads necessary software modules, and executes the alignment process. The --runThreadN parameter should match the number of CPUs requested in the Slurm configuration to maximize efficiency [10] [9].

Data Management and Transfer

Effective data management is crucial for large-scale RNA-seq analyses. Before initiating alignment, organize directory structures logically:

Transfer input data from personal computers or sequencing facilities to cluster storage using secure copy (scp) or rsync:

Monitor disk usage regularly and clean intermediate files when analyses are complete to avoid exceeding storage quotas [7].

Workflow Integration Platforms

Platforms like Galaxy and Seven Bridges provide graphical interfaces for constructing and executing STAR workflows without direct command-line interaction [10] [11]. These platforms enable researchers to build reproducible analysis pipelines by connecting predefined tools through graphical interfaces. The Seven Bridges implementation, for example, connects STAR Genome Generate with the main STAR aligner, plus quality control tools like FASTQ Quality Detector and Picard SortSAM [11]. While offering accessibility benefits, these platforms may provide less flexibility than direct command-line execution on HPC systems.

Output Analysis and Quality Assessment

Output File Interpretation

STAR generates multiple output files containing alignment results and quality metrics. The primary alignment file Aligned.sortedByCoord.out.bam contains reads sorted by genomic position, ready for variant calling or visualization [9]. Key quality metrics reside in Log.final.out, providing comprehensive alignment statistics:

This file reports critical metrics including uniquely mapped read percentages, splice junction counts, and indel rates. Additional files like SJ.out.tab contain filtered splice junctions detected during mapping, essential for alternative splicing analyses [9].

Quality Control Assessment

Comprehensive quality assessment involves multiple complementary approaches. The Log.final.out file provides primary alignment statistics, where researchers should note:

- Uniquely mapped reads: Ideally >70-80% for high-quality data

- Splice junctions: Total counts should align with expectations for the organism

- Mismatch rates: Typically <1% for high-quality alignments

- Multimapping reads: Expectedly higher in transcriptomes with gene families

Additional quality metrics come from tools like FastQC for sequence quality, RSeQC for RNA-specific metrics, and MultiQC for aggregating results across samples [10]. These complementary assessments identify potential issues like 3' bias, ribosomal RNA contamination, or adapter sequences requiring additional preprocessing.

Downstream Applications

Successfully aligned RNA-seq data enables diverse downstream analyses depending on research objectives. Differential expression analysis identifies genes significantly altered between conditions using tools like edgeR, DESeq2, or limma [5]. Isoform-level analysis leverages splice junction information to detect alternative splicing events using tools like MISO or rMATS. Variant calling identifies single nucleotide polymorphisms and insertions/deletions within transcribed regions, while fusion gene detection can reveal oncogenic rearrangements in cancer studies [9].

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for RNA-seq Analysis

| Item | Function | Examples/Formats |

|---|---|---|

| RNA Isolation Kits | Extract high-quality RNA from samples | PicoPure RNA Isolation Kit |

| Library Prep Kits | Prepare sequencing libraries from RNA | NEBNext Ultra DNA Library Prep Kit |

| PolyA Selection Kits | Enrich for messenger RNA | NEBNext Poly(A) mRNA Magnetic Isolation Kit |

| Reference Genome | Genomic sequence for read alignment | FASTA format (e.g., mm10, GRCh38) |

| Gene Annotation | Genomic feature coordinates | GTF/GFF3 format (e.g., Ensembl, GENCODE) |

| STAR Aligner | Splice-aware read alignment | Pre-compiled binaries or source code |

| SAMtools | Manipulation of alignment files | BAM/SAM format utilities |

| Subread/featureCounts | Read quantification per gene | Gene count tables |

Diagram 1: Comprehensive RNA-seq Analysis Workflow from Sample to Interpretation

HPC Cluster Architecture for STAR Implementation

Diagram 2: HPC Cluster Architecture for RNA-seq Analysis

RNA-seq alignment with STAR on high-performance computing clusters represents a powerful methodology for biomedical research, enabling comprehensive transcriptome analysis across diverse conditions and experimental designs. The integration of robust experimental design with appropriate computational infrastructure creates a foundation for biologically meaningful discoveries. This protocol details the complete workflow from library preparation through alignment and quality assessment, emphasizing the critical interplay between wet-lab procedures and computational execution. As RNA-seq technologies continue evolving toward single-cell and long-read applications, the fundamental principles outlined here provide a scalable framework for adapting to new methodological developments while maintaining analytical rigor and reproducibility in biomedical research.

The alignment of RNA-sequencing data is a foundational step in transcriptomic analysis, and the STAR (Spliced Transcripts Alignment to a Reference) aligner has emerged as a widely used tool for this purpose due to its high accuracy and unique strategy for handling spliced alignments [3]. However, STAR is a resource-intensive application that requires significant computational power, memory, and efficient data handling capabilities [12]. To process large-scale transcriptomic datasets efficiently, researchers must leverage High-Performance Computing (HPC) clusters specifically configured to meet STAR's demands. This application note details the essential HPC cluster components—compute nodes, parallel filesystems, and job schedulers—required to optimize STAR performance, framed within the context of broader thesis research on scalable genomic analysis. We provide detailed technical specifications, performance data, and experimental protocols to guide researchers and drug development professionals in configuring HPC environments for efficient STAR-based analysis.

Compute Node Configuration for STAR

STAR's Computational Demands and Node Selection

STAR's alignment strategy involves a two-step process of seed searching followed by clustering, stitching, and scoring, which creates specific computational requirements [3]. The algorithm achieves highly efficient mapping but is particularly memory-intensive, requiring substantial RAM to operate effectively. STAR's performance scales with available cores, but with diminishing returns beyond optimal core counts, making node selection a critical factor in overall workflow efficiency.

Recent research has quantified STAR's performance characteristics across different cloud instance types, providing valuable data for node selection in on-premises HPC clusters [12]. The studies analyzed cost-efficiency trade-offs and scalability patterns, identifying optimal core counts per node for STAR workflows. These investigations revealed that while STAR can utilize multiple cores, the parallel efficiency decreases as core count increases, necessitating careful balance between per-node performance and cluster-wide resource allocation.

Quantitative Performance Analysis

Table 1: STAR Performance Metrics Across Different Compute Configurations

| Instance Type | CPU Architecture | Core Count | Memory (GB) | Alignment Time | Relative Efficiency | Optimal Use Case |

|---|---|---|---|---|---|---|

| BM.Optimized3.36 | Intel Xeon 6354 | 36 | 384 | Baseline | 100% | Memory-intensive workloads |

| BM.Standard.E5 | AMD EPYC 9J14 | 64 | 512 | -15% | 115% | Balanced compute/memory |

| BM.Standard.E5 | AMD EPYC 9J14 | 128 | 1024 | -23% | 135% | Large-scale batch processing |

| c5n.metal | Intel Xeon 8275L | 72 | 192 | +12% | 92% | Cost-constrained projects |

Research indicates that the optimal core count for a single STAR alignment job typically ranges between 16-32 cores, with performance gains diminishing significantly beyond this point [12]. This finding suggests that HPC configurations should prioritize nodes with moderate core counts but high memory bandwidth rather than maximum core density. For the typical STAR workflow involving multiple simultaneous alignments, clusters should be configured with homogeneous nodes containing 64-128 GB RAM and 16-32 high-performance cores each, allowing efficient scheduling of multiple alignment jobs across the cluster.

Memory and Storage Requirements

STAR's memory requirements are primarily driven by the reference genome index size. For the human genome, the STAR index typically requires ~30GB of RAM [3] [12]. However, additional memory overhead for sequence data processing means that compute nodes should be configured with at least 64GB of RAM to ensure stable operation, with 128GB recommended for processing large datasets or running multiple concurrent alignment tasks on a single node. Local storage performance significantly impacts STAR's operation, particularly during the genome generation phase and when handling large FASTQ files. High-throughput local NVMe SSDs are recommended for temporary workspace directories, while shared input data and final results should reside on parallel filesystems.

Parallel Filesystems for STAR Workflows

The Critical Role of Parallel Filesystems in Transcriptomics

STAR workflows involve substantial data movement, with input FASTQ files often ranging from hundreds of megabytes to tens of gigabytes per sample, and output BAM files potentially exceeding the input size [12]. Parallel filesystems are essential for HPC clusters running STAR because they enable multiple compute nodes to simultaneously access shared reference genomes, input datasets, and write output files without creating I/O bottlenecks [13] [14]. This capability is particularly crucial for transcriptomic atlas projects processing hundreds of terabytes of RNA-seq data across thousands of simultaneous alignments.

Traditional network filesystems like NFS become significant bottlenecks in HPC environments due to their centralized metadata server architecture and limited aggregate bandwidth [14]. In contrast, parallel filesystems distribute data and metadata across multiple storage nodes, enabling linear scaling of bandwidth as the system grows. This architecture is essential for maintaining high throughput when hundreds of compute nodes need simultaneous access to reference genomes and are generating large alignment files concurrently.

Parallel Filesystem Options and Performance Characteristics

Table 2: Parallel Filesystem Comparison for HPC Genomics Workloads

| Filesystem | Strengths | Weaknesses | Metadata Performance | Aggregate Bandwidth | STAR Workload Suitability |

|---|---|---|---|---|---|

| Lustre | Proven exascale scalability, high throughput | Complex deployment/management, steep learning curve | Excellent with multiple MDS | Multi-TB/s possible | Excellent for large-scale production environments |

| BeeGFS | Flexible configuration, easier deployment | Less mature at extreme scale | Good with dynamic metadata | Scales linearly with OSTs | Very good for mid-sized research clusters |

| IBM Spectrum Scale (GPFS) | Enterprise features, multi-site replication | Higher licensing costs, requires expertise | Excellent with distributed metadata | High with proper configuration | Excellent for regulated environments |

| NFS | Simple deployment and management | Single-point bottlenecks, limited concurrency | Poor under concurrent load | Limited by single server | Suitable only for very small clusters |

For STAR workflows, Lustre often represents the optimal balance of performance, scalability, and community support [13] [14]. Its architecture separates metadata servers (MDS) from object storage targets (OSTs), allowing concurrent access patterns that match STAR's operational requirements. A properly configured Lustre filesystem can service data requests from thousands of compute nodes simultaneously, making it ideal for large-scale transcriptomic projects.

Configuration Recommendations for STAR

To optimize STAR performance on parallel filesystems, specific configuration adjustments are necessary. For Lustre, stripe counts should be set between 4-8 for individual files, balancing concurrent access with metadata overhead [14]. Reference genome indices should be stored with higher stripe counts (8-16) to ensure multiple compute nodes can access different portions simultaneously without contention. Experimental results demonstrate that proper Lustre striping configuration can improve STAR alignment throughput by up to 40% compared to default settings, particularly when processing multiple samples concurrently [12].

Diagram 1: Parallel Filesystem Architecture for STAR. The distributed nature of data across OSTs enables high-throughput access for multiple compute nodes.

Job Schedulers and Resource Management

Scheduler Integration with STAR Workflows

Job schedulers play a critical role in HPC clusters by managing resource allocation, job queues, and task distribution across compute nodes [15] [16]. For STAR workflows, schedulers ensure that alignment jobs are matched with nodes containing adequate memory and CPU resources while managing the complex dependencies between different stages of transcriptomic analysis. Common schedulers used in HPC environments include SLURM, Sun Grid Engine (SGE), PBS Pro, and LSF, with SLURM emerging as the de facto standard in many academic and research environments.

The scheduler's primary functions for STAR workflows include: managing job submission and queues, allocating appropriate compute resources (cores, memory, time), handling job arrays for embarrassingly parallel sample processing, managing job dependencies between alignment and post-processing steps, and enforcing fair-share policies among multiple users [15]. Proper scheduler configuration is essential for maintaining high cluster utilization while ensuring timely completion of STAR alignment jobs.

Resource Request Optimization for STAR

Effective resource specification in job scripts is critical for both job performance and efficient cluster utilization. STAR jobs that request insufficient memory will fail, while those requesting excessive resources waste allocation and may experience longer queue times [15] [16]. Based on empirical testing, the following resource requests typically yield optimal performance for human transcriptome alignment:

- CPU cores: 16-32 cores per STAR job (diminishing returns above 32 cores)

- Memory: 64-128 GB RAM (accommodates genome index + working memory)

- Wall time: 4-12 hours depending on input file size and core count

- Temporary storage: 100-500 GB local scratch space for intermediate files

Table 3: Job Scheduler Command Comparison for STAR Workflows

| Task | SLURM Command | SGE Command | Key Parameters for STAR |

|---|---|---|---|

| Submit interactive job | srun |

qrsh |

--cpus-per-task=16 --mem=64G |

| Submit batch job | sbatch <script> |

qsub <script> |

--time=12:00:00 --mem=64G |

| Request resources | --time=12:00:00 --mem=64G |

-l h_rt=12:00:00 -l m_mem_free=64G |

Adjust based on sample size |

| Array job submission | --array=1-100 |

-t 1-100 |

For multi-sample processing |

| Job status check | squeue |

qstat |

Monitor queue position |

| Job termination | scancel <job_id> |

qdel <job_id> |

Clean failed jobs |

Scheduler Configuration Strategies

HPC administrators should implement scheduler configurations specifically optimized for STAR workloads [16]. This includes creating specialized partitions or queues for genomics workloads with appropriate time and memory limits, implementing backfilling algorithms to maximize cluster utilization while respecting job priorities [16], configuring preemption policies for large-scale production runs, and establishing reservation systems for time-sensitive analysis. The scheduler should be integrated with the parallel filesystem to enable data-aware scheduling, where jobs are dispatched to nodes with optimal access to required reference genomes and input datasets [14].

Integrated Experimental Protocol for STAR on HPC

The following integrated protocol describes the complete workflow for executing STAR alignment on an HPC cluster, incorporating performance optimizations based on recent research findings [3] [12]. The protocol assumes a cluster environment running SLURM as the job scheduler and Lustre as the parallel filesystem, though it can be adapted for other technologies.

Diagram 2: STAR HPC Workflow. The optimized process from data transfer through alignment to downstream analysis.

Step-by-Step Execution Protocol

Preliminary Setup and Data Preparation

Data Transfer: Move FASTQ files from sequencing facility or local storage to the parallel filesystem. Use

rsyncfor reliable transfer of large datasets [15]. For very large datasets (multiple TB), consider using dedicated Data Transfer Nodes (DTNs) if available.Reference Genome Preparation: Download the appropriate reference genome (e.g., GRCh38) from Ensembl or GENCODE. Create a dedicated directory on the parallel filesystem with sufficient stripe count (recommended: 8-16 for reference files).

STAR Genome Index Generation: Generate the genome index using a dedicated batch job with high memory allocation. The following SLURM script exemplifies optimal configuration:

Alignment Execution with Optimized Parameters

- Job Script Preparation: Create a SLURM job script tailored to your dataset size. The following script implements optimizations documented in recent performance studies [12]:

Job Submission and Monitoring: Submit the job using

sbatch star_align.slurmand monitor progress usingsqueue -u $USER. For large array jobs, monitor overall progress by tracking output file generation.Performance Validation: After job completion, verify alignment rates and check for any error patterns. Examine the Log.final.out files generated by STAR to ensure consistent performance across samples.

Troubleshooting and Performance Validation

Common issues in STAR HPC execution include memory allocation errors (solved by increasing --mem request), excessively long queue times (addressed by adjusting time requests or using higher-priority queues), and I/O bottlenecks (mitigated by optimizing filesystem striping). Implement the following validation steps:

Resource Usage Audit: Use

sacct -j <jobid> --format=JobID,JobName,MaxRSS,Elapsed,AllocCPUsto analyze actual memory usage and optimize future resource requests.Parallel Efficiency Calculation: Calculate parallel efficiency by comparing alignment times with different core counts: Efficiency = (T₁ / Tₙ) / (N × T₁) where T₁ is time with single core and Tₙ is time with N cores.

Cost-Benefit Analysis: For cloud-based HPC implementations, compute the cost-effectiveness using the formula: Cost Efficiency = (Samples × Read Depth) / (Instance Cost × Alignment Time).

Table 4: Essential Research Reagents and Computational Resources for STAR HPC Workflows

| Resource Category | Specific Resource | Function/Application | Implementation Notes |

|---|---|---|---|

| Reference Genomes | GRCh38 (human) | Primary alignment reference | Download from Ensembl/GENCODE; requires ~3GB storage |

| Annotation Files | GTF/GFF3 annotations | Gene structure annotation for splice junction awareness | Ensembl releases provide comprehensive annotations |

| Software Tools | STAR aligner (v2.7.10b+) | Core alignment algorithm | Requires compilation with GCC or Intel compilers for optimal performance |

| Quality Control | FastQC | Pre-alignment read quality assessment | Run on subset of data to inform alignment parameters |

| Scheduler Environment | SLURM workload manager | Resource allocation and job scheduling | Configure with genomics-optimized partitions |

| Parallel Filesystem | Lustre parallel filesystem | High-throughput data access for multiple nodes | Configure with 4-8 stripes for optimal STAR performance |

| Container Platform | Singularity/Apptainer | Environment reproducibility and software portability | Package STAR with dependencies for consistent execution |

| Performance Tools | Intel VTune, perf Linux profiler | Performance analysis and bottleneck identification | Critical for optimizing memory and CPU utilization |

Configuring HPC clusters with appropriate compute nodes, parallel filesystems, and job schedulers is essential for efficient execution of STAR RNA-seq alignment. The optimal configuration balances high-memory compute nodes (64-128GB RAM) with moderate core counts (16-32 cores), leveraged with high-throughput parallel filesystems like Lustre, and managed through sophisticated schedulers like SLURM. Recent research demonstrates that careful optimization of these components can reduce alignment time by 23% or more through early stopping optimization and proper resource allocation [12]. For researchers undertaking large-scale transcriptomic studies, particularly in drug development and personalized medicine applications, implementing these HPC best practices for STAR workflows will significantly accelerate analysis timelines and improve resource utilization, ultimately enabling more comprehensive and reproducible genomic research.

For researchers running resource-intensive genomic applications like the STAR (Spliced Transcripts Alignment to a Reference) aligner, selecting the appropriate high-performance computing (HPC) environment is a critical strategic decision. This choice fundamentally influences computational throughput, cost structures, and operational workflows. The emergence of sophisticated cloud HPC services from providers like AWS and Azure presents a compelling alternative to traditional university clusters, each with distinct advantages and trade-offs. The STAR aligner, particularly when processing large-scale transcriptomic datasets such as a Transcriptomics Atlas, demands substantial RAM, high-throughput disks, and efficient multi-threading capabilities [12]. This document provides detailed application notes and protocols to guide researchers in effectively leveraging both environments for optimal STAR alignment performance.

Comparative Analysis: University Clusters vs. Cloud Platforms

The decision between university clusters and cloud platforms involves weighing factors including performance, cost, control, and accessibility. The following analysis synthesizes these dimensions to inform platform selection.

Table 1: Platform Comparison for HPC Resources

| Feature | University HPC Cluster | AWS Cloud Platform | Azure Cloud Platform |

|---|---|---|---|

| Performance & Hardware | Fixed, shared hardware; potential queues for GPU/High-CPU nodes [17]. | On-demand i4i/c6i instances; custom Graviton CPUs; high-speed EBS storage; Elastic Fabric Adapter [18] [12]. |

On-demand H-/D- series VMs for HPC; Azure NetApp Files; accelerated networking [19] [20]. |

| Cost Structure | Often subsidized or allocated via grants; no direct usage cost for researchers [17]. | Complex pricing (On-Demand, Savings Plans, Spot Instances); egress fees; can offer >70% savings with commitments [19] [20]. | Similar to AWS; flexible pricing; potential discounts via Microsoft agreements; $200 initial credit [19] [20]. |

| Access & Control | Administered by central IT; user has limited control; shared environment [17]. | Full infrastructure control; infrastructure-as-code (e.g., AWS CDK, Terraform) [18]. | Full infrastructure control; deep integration with Microsoft ecosystem and developer tools [19]. |

| Scalability | Fixed capacity; "cloud bursting" possible but complex to implement [17] [21]. | Instant, elastic scaling; managed services like AWS Batch and Parallel Computing Service (PCS) for dynamic scaling [18]. | Instant, elastic scaling; services like Azure Batch and Arc for hybrid scenarios [19]. |

| Ecosystem & Tools | Pre-configured scientific software stacks; Slurm/PBS schedulers [17]. | Vast service ecosystem (200+); AWS Batch, PCS, ParallelCluster; SageMaker for AI/ML [19] [18]. | Vast service ecosystem (600+); Azure Batch, CycleCloud; tight integration with Microsoft AI services [19] [22]. |

Quantitative Performance and Cost Data for STAR

Informed platform selection requires an understanding of application-specific performance. The table below summarizes key metrics from optimized STAR aligner experiments in the cloud.

Table 2: STAR Aligner Performance and Cost Analysis on AWS Cloud

| Metric | Value/Observation | Experimental Context |

|---|---|---|

| Early Stopping Optimization | 23% reduction in total alignment time [12]. | Implemented by checking for intermediate output files, allowing the pipeline to skip already completed processing stages [12]. |

| Optimal Instance Type | i4i instances identified as most cost-efficient [12]. | Evaluation based on cost-per-alignment metric, balancing compute speed, memory, and storage I/O for STAR's requirements [12]. |

| Spot Instance Suitability | Confirmed suitable for resource-intensive aligners [12]. | Successfully used for STAR alignment workloads, leveraging significantly lower cost Spot Instances for interruptible tasks [12]. |

| Parallelism per Node | Requires finding the optimal core count [12]. | Scalability tests conducted to determine the most cost-efficient number of cores per instance before performance plateaus [12]. |

Experimental Protocols for HPC Environments

Protocol 1: Executing STAR on a University HPC Cluster

This protocol assumes a Slurm-based workload manager, common in many academic environments.

A. Workload Manager Script (Slurm)

B. Key Steps and Considerations

- Resource Specification: Precisely request CPU (

--cpus-per-task), memory (--mem), and time (--time) based on STAR's requirements and dataset size to avoid job termination and ensure efficient scheduling [17]. - Software Management: Use environment modules to load specific, reproducible versions of STAR and SRA-Toolkit.

- Data Management: Stage input data (SRA files, genome indices) on the cluster's high-performance parallel file system (e.g., Lustre, BeeGFS) for optimal I/O [17].

- Job Submission and Monitoring: Submit the script using

sbatch job_script.shand monitor withsqueue -u $USER.

Protocol 2: Executing STAR on AWS Cloud

This protocol uses AWS Batch for orchestration, abstracting underlying infrastructure management.

A. Cloud Architecture and Setup The optimized, cloud-native architecture for running the Transcriptomics Atlas pipeline on AWS involves several managed services working in concert [12].

B. Infrastructure-as-Code (IaC) Template Snippet This YAML snippet, compatible with the AWS Cloud Development Kit (CDK), defines a compute environment optimized for STAR.

C. Containerized STAR Execution Script The pipeline logic, including the critical "early stopping" optimization [12], is implemented within the Docker container.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Reagents for STAR Alignment

| Item | Function/Description | Application Note |

|---|---|---|

| STAR Aligner | Aligns RNA-seq reads to a reference genome, handling splices and chimeric sequences [12]. | Use version 2.7.10b for reproducibility. Requires significant RAM (~64GB for human genome) and benefits from high core counts [12]. |

| SRA-Toolkit | Prefetches and converts sequence files from the NCBI SRA database into FASTQ format [12]. | Run prefetch followed by fasterq-dump. Consider --split-files for paired-end reads. |

| Reference Genome & Index | A species-specific genome (FASTA) and a corresponding pre-built STAR index [12]. | Download from Ensembl. The STAR index must be built for your specific read length and genome version. Pre-building and distributing this is crucial for cloud performance [12]. |

| Docker/Singularity | Containerization technologies to package the entire workflow (STAR, SRA-Toolkit, scripts) [22]. | Ensures consistency between university clusters and cloud environments. The image is stored in AWS ECR or Azure Container Registry for cloud execution [22]. |

| High-Throughput Storage | Parallel file systems (Lustre) or high-performance block storage (AWS EBS io2, Azure Premium SSD) [17] [12]. | Critical for I/O performance. STAR's performance scales with disk speed. On AWS, i4i instances include fast local NVMe storage that can be utilized [12]. |

| DESeq2 | An R package for differential expression analysis of normalized count data from STAR [12]. | Used in the final step of the Transcriptomics Atlas pipeline for normalization and statistical analysis. |

The choice between university HPC clusters and cloud platforms is not merely technical but strategic, impacting research agility and cost.

- For Pilot Studies and Tight Budgets: Utilize university clusters for their cost-effective, subsidized access, ideal for method development and smaller-scale analyses [17].

- For Large-Scale, Time-Sensitive Projects (e.g., Full Transcriptomics Atlases): Leverage cloud platforms (AWS or Azure). Their elastic scalability, combined with optimizations like Spot Instances and early stopping, dramatically reduces time-to-results and can be cost-competitive for massive workloads [12].

- Adopt a Container-First Strategy: Packaging the STAR workflow in a Docker container enables seamless movement between on-premises and cloud environments, providing ultimate flexibility and mitigating vendor lock-in [22] [23].

- Implement Cost-Control Guards: In the cloud, aggressively use Spot Instances, set budget limits with AWS Budgets/Azure Cost Management, and architect for cost efficiency (e.g., the early stopping check) to avoid unexpected expenditures [18] [12].

The modern computational researcher can strategically leverage both worlds, using the university cluster for day-to-day work and bursting to the cloud for monumental tasks, thus accelerating the pace of genomic discovery.

In the analysis of RNA-sequencing (RNA-seq) data, the ability to detect non-linear RNA alignments represents a significant advancement beyond standard gene expression quantification. These chimeric alignments can arise from two primary biological sources: circular RNAs (circRNAs) and fusion transcripts from chromosomal rearrangements. The detection of these events is crucial for a complete understanding of the transcriptome, particularly in disease contexts such as cancer, where fusion transcripts often act as driver mutations [24] [25]. The STAR aligner (Spliced Transcripts Alignment to a Reference) provides a unique and powerful framework for identifying these chimeric events. However, working with this data on a high-performance computing (HPC) cluster presents specific challenges, including managing the high memory footprint of the aligner and the computational burden of processing large, high-dimensional medical omics datasets [24]. This application note details protocols for leveraging STAR's chimeric detection capabilities within an HPC environment, enabling researchers to uncover biologically relevant insights with high precision and efficiency.

STAR's Alignment Strategy and Chimeric Output

Core Algorithmic Approach

The STAR aligner employs a novel two-step strategy that underlies its exceptional speed and accuracy. This process is fundamental to its ability to detect complex splicing events and chimeric junctions [3] [26].

- Seed Searching: For each sequencing read, STAR searches for the longest sequence that exactly matches one or more locations on the reference genome, known as the Maximal Mappable Prefix (MMP). The first matched segment is designated

seed1. The algorithm then searches only the unmapped portion of the read to find the next longest MMP, designatedseed2. This sequential searching of unmapped read portions is a key factor in STAR's efficiency [26]. - Clustering, Stitching, and Scoring: In the second step, the separately mapped seeds are clustered based on their proximity to a set of non-multi-mapping "anchor" seeds. These clusters are then stitched together to form a complete alignment for the read, with the final alignment scored based on mismatches, indels, and gaps [3] [26].

Workflow Visualization

The following diagram illustrates STAR's two-step alignment strategy which enables sensitive detection of splice junctions and chimeric transcripts.

Enabling Chimeric Detection

To activate chimeric read detection, STAR requires the --chimSegmentMin parameter to be set to a positive value, which defines the minimum length in base pairs required for each segment of a chimeric alignment. In practice, a value of 15-30 bp is commonly used, where larger values increase specificity and smaller values increase sensitivity [24]. It is critical to use a STAR index containing all reference chromosomes and unplaced contigs, as reads from unplaced contigs may otherwise map chimerically without a proper reference [24].

Computational Workflow for Chimeric Analysis on HPC

The complete workflow for chimeric analysis extends beyond alignment to include specialized post-processing tools. The following diagram outlines the key stages from raw data to annotated results.

Key Computational Tools for Downstream Analysis

| Tool Name | Primary Function | Input | Key Outputs | Applicable Data |

|---|---|---|---|---|

| STARChip [24] | Processes chimeric alignments from STAR | STAR chimeric output | Annotated circRNA & high-precision fusions | Bulk RNA-seq |

| scFusion [25] | Detects gene fusions in single-cell RNA-seq | STAR-mapped scRNA-seq data | Cellular heterogeneity of gene fusions | Single-cell RNA-seq |

| Arriba [25] | Fusion transcript detection | RNA-seq reads | High-confidence fusion calls | Bulk RNA-seq |

| STAR-Fusion [25] | Fusion detection & quantification | FASTQ or BAM | Annotated fusion events | Bulk RNA-seq |

HPC Resource Requirements and Configuration

Implementing this workflow on an HPC cluster requires careful consideration of computational resources. The table below summarizes key requirements based on published protocols and tools.

Table 1: HPC Resource Requirements for STAR Chimeric Analysis

| Resource Type | Minimum Recommended | Ideal for Large Datasets | Notes |

|---|---|---|---|

| CPU Cores | 6-8 cores [3] | 16-32 cores | Enables parallel processing during alignment and analysis |

| Memory (RAM) | 32 GB [24] | 64-128 GB | STAR is memory-intensive during genome indexing and alignment |

| Storage | 50 GB temporary space | 500 GB+ scratch space | Large FASTQ and intermediate BAM files require substantial storage |

| Job Wall Time | 2-4 hours (alignment) | 12+ hours (full pipeline) | Varies by dataset size and read depth |

A sample SLURM submission script for generating a STAR genome index on an HPC cluster:

Application Note 1: Circular RNA (circRNA) Detection with STARChip

Biological Significance and Detection Principles

Circular RNAs (circRNAs) are a widespread class of RNA molecules formed by back-splicing events, where a downstream 5' splice site joins with an upstream 3' splice site [24]. They are characterized by their:

- Covalently closed loop structure with no free ends

- Tissue-specific expression patterns

- Potential regulatory functions, including microRNA sponging

- Association with various diseases, including cancer

Detailed Protocol for circRNA Detection

Experimental Workflow

The following diagram details the step-by-step process for identifying and validating circRNAs from RNA-seq data using the STARChip pipeline on HPC infrastructure.

Key Filtration and Validation Steps

STARChip implements multiple filtration strategies to eliminate false positives:

- Read Support Requirements: circRNA must be supported by a user-defined minimum number of reads and present in a minimum number of samples when analyzing cohorts [24].

- Completeness Filter: ≥95% of supporting reads must align completely within the proposed circRNA structure [24].

- Strand Imbalance Filter: circRNA with 10× more reads on one strand in at least 50% of samples are removed as potential artifacts [24].

Quantitative Output Metrics

STARChip generates comprehensive quantitative outputs for downstream analysis, including:

Table 2: STARChip circRNA Output Metrics

| Output Metric | Description | Interpretation |

|---|---|---|

| Backsplice Read Count | Number of reads spanning the back-splice junction | Direct measure of circRNA abundance |

| Sample Frequency | Number of samples in which circRNA is detected | Confidence metric; higher frequency increases reliability |

| Genomic Context | Annotation as exonic, intronic, or intergenic | Insight into potential biogenesis mechanism |

| Adjacent Linear Expression | Expression of linear transcripts from the same locus | Context for interpretation of potential functions |

| Reads Per Million (RPM) | Normalized abundance value | Enables cross-sample comparison |

Application Note 2: Fusion Transcript Detection

Biological and Clinical Significance

Gene fusions resulting from chromosomal rearrangements are well-established drivers of oncogenesis [24] [25]. Clinically significant fusions include:

- BCR-ABL1 in chronic myeloid leukemia

- TMPRSS2-ERG in prostate cancer

- ALK fusions in lung cancer These fusions serve as important diagnostic markers and therapeutic targets, with several fusion-targeting drugs approved by the FDA [25].

Bulk RNA-seq Fusion Detection with STARChip

Computational Protocol

The STARChip pipeline implements a high-precision approach for fusion detection in bulk RNA-seq data:

- Chimeric Alignment Processing: STARChip processes the chimeric alignments generated by STAR, identifying unique split-mapped reads and discordant read pairs mapped to different genes [24].

- Automatic Read Thresholding: The tool implements automatic read support thresholds to balance sensitivity and specificity. The default threshold provides approximately 32% sensitivity with minimal false positives in healthy tissues (0.28 fusion reads per million mapped reads) [24].

- Annotation and Filtering: Potential fusions are annotated against reference databases and filtered against known artifacts.

Single-Cell Fusion Detection with scFusion

Specialized Challenges and Solutions

Single-cell RNA-seq data presents unique challenges for fusion detection, including high noise levels, technical artifacts from amplification, and sparse data per cell [25]. The scFusion tool addresses these challenges through a sophisticated multi-stage approach:

- Candidate Generation: Identification of fusion candidates from STAR-mapped reads, followed by initial filtering of pseudogenes, lncRNAs, and genes without approved symbols [25].

- Statistical Modeling: Application of a Zero-Inflated Negative Binomial (ZINB) model to account for over-dispersion and excess zeros in the supporting read counts, with regression modeling for gene expression and GC-content dependencies [25].

- Deep Learning Filtering: A bi-directional Long Short-Term Memory (bi-LSTM) network filters technical artifacts by analyzing junction sequence patterns, achieving a median AUC of 0.884 across multiple cancer datasets [25].

Key Performance Metrics

In validation studies, scFusion demonstrated:

- High sensitivity and precision in simulated data with varying cell numbers and read depths

- Successful detection of known recurrent fusions like IgH-WHSC1 in multiple myeloma

- Identification of invariant TCR gene recombinations in mucosal-associated invariant T cells, which many bulk methods fail to detect [25]

Critical Research Reagents and Software Solutions

| Resource Category | Specific Tool/Reagent | Function/Purpose | Implementation Notes |

|---|---|---|---|

| Alignment Software | STAR aligner [24] [3] | Splice-aware alignment with chimeric detection | Requires 32+ GB RAM for mammalian genomes |

| circRNA Detection | STARChip [24] | Specialized circular RNA detection | Perl/Bash/R pipeline; uses multithreading |

| Fusion Detection (Bulk) | STARChip [24], Arriba [25] | Fusion transcript identification | STARChip optimized for STAR output |

| Fusion Detection (Single-cell) | scFusion [25] | Gene fusion detection in scRNA-seq | Uses statistical & deep learning models |

| Reference Annotations | ENSEMBL GTF files [3] | Gene model annotations for alignment | Critical for accurate splice junction mapping |

| HPC Scheduler | SLURM [3] | Job management on compute clusters | Enables scalable processing of large cohorts |

HPC-Specific Resource Solutions

| HPC Component | Recommended Solution | Benefit for Chimeric Analysis |

|---|---|---|

| Compute Nodes | 16+ core nodes with 64+ GB RAM | Handles memory-intensive STAR alignment |

| Storage System | High-speed scratch space | Accommodates large temporary BAM files |

| Job Scheduler | SLURM or equivalent | Manages multi-sample processing pipelines |

| Module System | Environment modules | Maintains version control for dependencies |

The integration of STAR's chimeric detection capabilities with specialized downstream analysis tools like STARChip and scFusion provides a powerful framework for comprehensive transcriptome characterization. When implementing these workflows on HPC clusters, researchers should adhere to several key best practices:

- Resource Allocation: Always request sufficient memory (≥32 GB) for STAR alignment to prevent job failures [3].

- Parameter Optimization: Adjust

--chimSegmentMinbased on read length and experimental goals, balancing sensitivity and specificity [24]. - Multi-sample Analysis: Leverage the power of cohort analysis in STARChip to improve circRNA detection confidence through sample frequency filters [24].

- Validation Strategies: Plan for orthogonal validation of computationally predicted fusions and circRNAs, particularly for novel discoveries.

- HPC Efficiency: Utilize distributed computing options in STARChip to process large sample cohorts efficiently, reducing overall analysis time [24].

This comprehensive approach enables researchers to fully leverage the unique capabilities of STAR for detecting non-canonical splice junctions and chimeric transcripts, advancing our understanding of transcriptome complexity and its role in health and disease.

Implementing STAR Workflows: From Installation to Production Analysis

In a shared High-Performance Computing (HPC) environment, a diverse array of software applications and versions are installed in non-standard locations for maintenance, practicality, and security reasons [27]. Loading all these versions simultaneously is neither feasible nor desirable due to potential conflicts. Environment modules provide a solution to this complexity by offering a controlled mechanism to manage software environments. The module system, such as Lmod, allows users to dynamically modify their shell environment to make specific software packages available, preventing conflicts and simplifying version control [27] [28]. For researchers using the STAR (Spliced Transcripts Alignment to a Reference) aligner in RNA-sequencing workflows, proper module handling is essential for accessing the correct software versions and dependencies, ensuring reproducible and efficient genomic analyses [3] [12].

Quantitative Analysis of STAR Aligner Performance

STAR is a widely used RNA-seq aligner known for its high accuracy and speed, though it is memory-intensive [3] [12]. The following table summarizes key performance metrics from recent studies, which are critical for resource allocation and experimental planning on HPC systems.

Table 1: Performance Metrics and Resource Requirements for STAR Aligner

| Metric Category | Specific Metric | Value or Range | Context / Conditions |

|---|---|---|---|

| General Performance | Alignment Speed | >50x faster than other aligners | Cited as a key advantage [3] |

| Memory Usage | RAM Requirements | Tens of GiBs | Depends on reference genome size [12] |

| Cloud Optimization | Time Reduction via Early Stopping | 23% | Optimization in cloud-based transcriptomics [12] |

| Data Handling | Typical Data Rates (LCLS-II) | 200 GB/s to >1 TB/s | Example of data volume for alignment [29] |

| Computational Resources | Cores Used in APS Case Study | Up to 1,200 cores | For real-time tomographic reconstruction [29] |

Research Reagent Solutions for STAR Workflows

The following table outlines the essential software and data components required to execute a STAR alignment workflow on an HPC cluster.

Table 2: Essential Research Reagents and Computational Tools for STAR Alignment

| Reagent/Tool Name | Function / Purpose | Key Parameters / Notes |

|---|---|---|

| STAR Aligner | Performs splice-aware alignment of RNA-seq reads to a reference genome. | Requires large amount of RAM; uses a two-step process (seed searching, clustering/stitching/scoring) [3] [12]. |

| Reference Genome (FASTA) | Reference sequence against which reads are aligned. | File in FASTA format (e.g., Homo_sapiens.GRCh38.dna.chromosome.1.fa) [3]. |

| Annotation File (GTF) | Gene annotation file used to guide the alignment of spliced transcripts. | File in GTF format (e.g., Homo_sapiens.GRCh38.92.gtf) [3]. |

| SRA Toolkit | Suite of tools to access and convert sequence data from the NCBI SRA database. | prefetch retrieves SRA files; fasterq-dump converts SRA to FASTQ format [12]. |

| GCC Compiler | Provides necessary software libraries and dependencies for many scientific applications. | Often a prerequisite module that must be loaded before STAR (e.g., gcc/6.2.0) [3]. |

| Environment Modules (Lmod) | Tool for managing software environments and dependencies on HPC systems. | Commands: module load, module list, module avail [27] [28]. |

Experimental Protocols

Protocol 1: Managing Software Environment with Modules

This protocol details the fundamental steps for managing your HPC software environment before running STAR.

- Discover Available Modules: Use the

module availcommand to list all available software packages. To narrow the list, specify the software name (e.g.,module avail starormodule avail gcc) [27] [28]. - Load Prerequisite Modules: Load any required dependencies. For STAR, this often includes a compiler like GCC and the STAR module itself. Multiple modules can be loaded simultaneously. Specific versions can be loaded to ensure reproducibility [3] [28].

- Verify Loaded Modules: Check which modules are currently active in your session using

module list[27] [28]. - Unload or Switch Modules: To remove a module, use

module unload <module_name>. To switch between versions of the same software, usemodule switch <old_module> <new_module>(e.g.,module switch intel intel/2016b) [27]. - Resolve Conflicts: Be mindful of conflicts between incompatible modules, particularly between different MPI implementations. If conflicts occur, unload the conflicting modules before loading new ones [27].

- Set a Default Environment (Optional): To automatically load a set of modules at login, first clear the initial state and then add the desired modules.

Verify with

module initlist[27].

Protocol 2: Genome Index Generation with STAR

Creating a genome index is a critical first step for STAR alignment, which can be submitted as a batch job to the HPC scheduler.

- Create an Index Directory: Create a directory on scratch space with high I/O capacity for the genome indices.

- Create a Job Submission Script: Write a SLURM script (e.g.,

genome_index.run) to run the resource-intensive indexing process. The--sjdbOverhangparameter should be set to (read length - 1) [3]. - Submit the Job: Execute the script using the scheduler (e.g.,

sbatch genome_index.run).

Protocol 3: RNA-seq Read Alignment with STAR

After generating or locating the genome indices, reads can be aligned. The following command exemplifies an alignment run, which can be incorporated into a similar job script as in Protocol 2.

- Prepare the Environment: Ensure the required modules are loaded and you are in your data directory.

- Execute Alignment Command: Run STAR with parameters for alignment. This command specifies the output as a coordinate-sorted BAM file, which is commonly required for downstream analysis [3].

Workflow Visualization

Diagram 1: STAR Module Loading and Alignment Workflow on HPC.

Diagram 2: STAR's Two-Step Alignment Strategy.

For researchers analyzing RNA-seq data on high-performance computing (HPC) clusters, the Spliced Transcripts Alignment to a Reference (STAR) aligner has become an indispensable tool due to its exceptional speed and accuracy. The critical first step in any STAR analysis pipeline is genome indexing, executed via the --runMode genomeGenerate command. This process creates a specialized database of the reference genome that dramatically accelerates the subsequent read alignment phase. Proper configuration of this indexing step is particularly crucial in HPC environments where computational resources must be balanced between efficiency and performance across diverse research applications, from basic transcriptome mapping to comprehensive drug discovery initiatives.

STAR's algorithmic efficiency stems from its innovative two-step process comprising seed searching followed by clustering, stitching, and scoring [2]. Unlike earlier aligners that performed arbitrary splitting of read sequences, STAR employs sequential maximum mappable prefix (MMP) searching using uncompressed suffix arrays (SA), enabling rapid exact match searches against large reference genomes with logarithmic scaling complexity [3] [2]. The genome indexing phase precomputes these data structures, transforming the reference genome into an optimized format that facilitates the ultra-rapid alignment capabilities for which STAR is renowned—outperforming other aligners by more than a factor of 50 in mapping speed while simultaneously improving alignment sensitivity and precision [2].

STAR Algorithm and Indexing Fundamentals

Core Algorithmic Architecture

STAR's genome indexing process constructs specialized data structures that enable its unique alignment strategy. The algorithm operates through two distinct phases that leverage the precomputed index:

Seed Searching Phase: STAR identifies the longest sequences from reads that exactly match one or more locations in the reference genome, known as Maximal Mappable Prefixes (MMPs) [3] [2]. This sequential searching of only unmapped read portions using uncompressed suffix arrays creates a significant speed advantage over compressed suffix array implementations used in other aligners, though it trades off increased memory usage [2]. For each read, STAR begins from the first base and extends until it can no longer find exact matches, with these initial MMPs designated as "seeds." The algorithm then repeats the process for the remaining unmapped portions of the read, creating subsequent seeds until the entire read is processed or no further matches can be found.

Clustering, Stitching, and Scoring Phase: After seed identification, STAR clusters them based on proximity to selected "anchor" seeds—preferentially those with minimal multi-mapping potential [3] [2]. The software then stitches seeds together using a dynamic programming approach that allows for mismatches and indels while reconstructing the complete read alignment. This clustering process occurs concurrently for paired-end reads, treating mate pairs as fragments of the same sequence, which increases alignment sensitivity as correct anchoring from either mate can facilitate proper alignment of the entire read pair [2].

Table: STAR Algorithm Phase Characteristics

| Algorithm Phase | Key Process | Data Structures | Primary Output |

|---|---|---|---|

| Seed Searching | Sequential MMP identification | Uncompressed suffix arrays | Maximal Mappable Prefixes (seeds) |

| Clustering | Anchor-based seed grouping | Genomic coordinate space | Seed clusters |

| Stitching | Dynamic programming assembly | Local genomic windows | Continuous alignments |

| Scoring | Alignment quality assessment | Scoring matrices | Final aligned reads |

Key Data Structures in Genome Indexing

The --runMode genomeGenerate process constructs several critical data structures that enable STAR's rapid alignment performance:

Suffix Arrays (SA): Uncompressed suffix arrays provide the fundamental indexing structure that allows for efficient binary search of genomic sequences, enabling logarithmic-time identification of Maximal Mappable Prefixes during alignment [2]. These arrays contain all possible suffixes of the reference genome sorted lexicographically, facilitating rapid exact match searches without the computational overhead of compression algorithms used in other aligners.

Genome Sequence Storage: The raw reference genome sequences are stored in a format optimized for rapid access during the seed extension phase of alignment, allowing efficient handling of mismatches and indels when exact matching reaches its limits [3].

Junction Databases: When provided with annotation files (GTF format), STAR pre-computes potential splice junctions that inform the clustering and stitching process, though the algorithm maintains the capability for de novo junction discovery during alignment [3].

The memory-intensive nature of these uncompressed data structures means that genome indexing for large mammalian genomes typically requires at least 30 GB of RAM, making HPC resources essential for production-scale analyses [30].

Critical Parameters for Genome Generate Optimization

Essential Genome Indexing Parameters

Optimizing STAR's --runMode genomeGenerate requires careful attention to several key parameters that control the structure and capabilities of the resulting index: