Somatic Short Variant Discovery: Best Practices for Robust Analysis in Cancer Research

Abstract

This article provides a comprehensive guide to somatic short variant discovery, addressing the key challenges and solutions for researchers and drug development professionals. It covers foundational concepts, methodological workflows, advanced troubleshooting, and rigorous validation strategies. By synthesizing current evidence and tool evaluations, the guide establishes best practices for achieving high-precision, high-sensitivity variant calling in cancer genomics, ultimately supporting reliable biomarker identification and therapeutic development.

Understanding the Landscape of Somatic Variation and Its Challenges

Somatic short variants are mutations that occur in the DNA of somatic (non-germline) cells and are not inherited. These variants are pivotal in cancer genomics, as they can drive tumor initiation, progression, and response to therapy. The two primary classes of somatic short variants are Single Nucleotide Variants (SNVs) and short insertions and deletions (indels). An SNV involves a change in a single nucleotide, while an indel involves the insertion or deletion of a small number of base pairs (typically less than 50 bp) [1]. Accurate detection of these variants is a cornerstone of precision oncology, providing critical insights for diagnostic, prognostic, and therapeutic decision-making [2].

The identification of these variants requires specialized next-generation sequencing (NGS) approaches, such as whole-genome or whole-exome sequencing of tumor samples, often with a matched normal sample to distinguish somatic mutations from inherited germline polymorphisms [3] [4]. The subsequent analytical workflow involves complex computational methods to call, filter, and annotate variants, ultimately classifying their oncogenic potential to determine clinical actionability [5] [2].

Methodologies for Discovery and Analysis

The standard workflow for somatic short variant discovery is a multi-step process that transforms raw sequencing data into a curated list of high-confidence, annotated variants.

Core Computational Workflow

The established best-practice pipeline involves several critical stages, each with dedicated tools and analytical goals [3] [2].

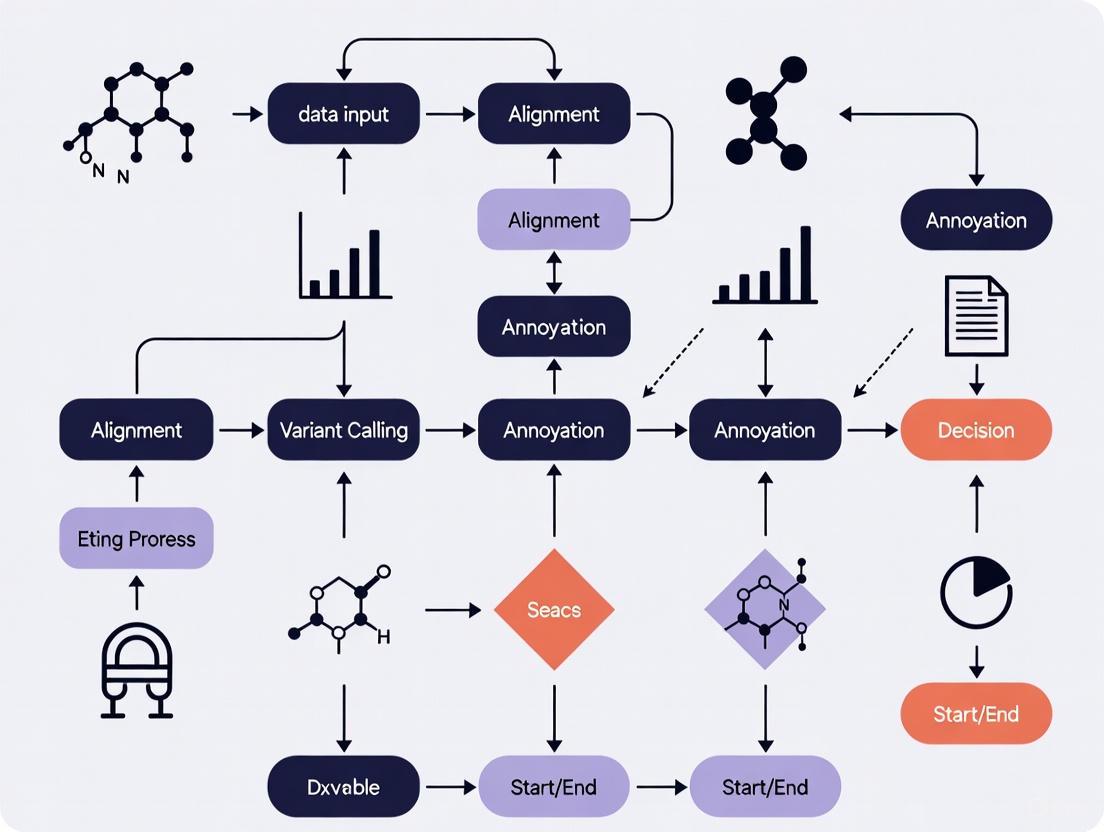

Figure 1. The standard somatic short variant discovery workflow. This pipeline, outlined by the GATK Best Practices, starts with pre-processed BAM files and proceeds through variant calling, quality control, filtering, and functional annotation to produce a final list of somatic variants [3].

- Call Candidate Variants: This initial step uses a variant caller, such as Mutect2, to perform a comprehensive scan of the aligned sequencing data (BAM files) to identify potential variant sites. Mutect2 operates by performing local de-novo assembly of haplotypes in genomic regions that show evidence of variation. It then aligns each read to these candidate haplotypes and applies a Bayesian somatic likelihoods model to calculate the probability that a variant is a true somatic mutation versus a sequencing error [3].

- Calculate Contamination: This quality control step, involving tools like GetPileupSummaries and CalculateContamination, estimates the fraction of reads in the tumor sample that come from cross-sample contamination. This is crucial for avoiding false positives, and modern tools are designed to work effectively even in samples with significant copy number variation and without a matched normal [3].

- Learn Orientation Bias Artifacts: Using LearnReadOrientationModel, this step learns the parameters of a model for sequencing artifacts related to strand orientation. This is particularly important for samples like FFPE (Formalin-Fixed Paraffin-Embedded) tissues, where DNA damage can introduce specific, reproducible biases that mimic real variants [3].

- Filter Variants: The FilterMutectCalls tool applies a series of hard filters and probabilistic models to the raw candidate variants. It accounts for correlated errors, alignment artifacts, strand bias, polymerase slippage (for indels), and contamination. It also uses the contamination and orientation bias models learned in previous steps to refine the variant calls and automatically set a filtering threshold to balance sensitivity and precision [3].

- Annotate Variants: Finally, a tool like Funcotator adds biological and clinical context to the filtered variants. It annotates each variant with information such as the affected gene, the predicted effect on the protein (e.g., missense, frameshift), and known associations from databases like COSMIC, dbSNP, and gnomAD. The output can be in Variant Call Format (VCF) or Mutation Annotation Format (MAF), facilitating downstream interpretation [3].
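The order of these steps, and the way each output feeds a later step, can be sketched as a small Python helper that assembles the GATK command lines for a tumor-normal pair. This is a minimal sketch rather than a validated pipeline: the function name, the file names, and the output prefix are hypothetical, the flags are written as they appear in the GATK4 tool documentation and should be checked against the installed version, and Funcotator is left out because its data-source setup is site-specific.

```python
import shlex


def mutect2_pipeline_commands(ref, tumor_bam, normal_bam, normal_sample,
                              germline_resource, panel_of_normals,
                              common_sites, prefix):
    """Return the GATK command lines (as argument lists) in execution order.

    All paths are placeholders supplied by the caller; nothing is executed here.
    """
    unfiltered = f"{prefix}.unfiltered.vcf.gz"
    f1r2 = f"{prefix}.f1r2.tar.gz"
    orientation_model = f"{prefix}.read-orientation-model.tar.gz"
    tumor_pileups = f"{prefix}.tumor.pileups.table"
    normal_pileups = f"{prefix}.normal.pileups.table"
    contamination = f"{prefix}.contamination.table"
    filtered = f"{prefix}.filtered.vcf.gz"

    return [
        # 1. Call candidate variants and collect F1R2 counts for the orientation model.
        ["gatk", "Mutect2", "-R", ref, "-I", tumor_bam, "-I", normal_bam,
         "-normal", normal_sample,
         "--germline-resource", germline_resource,
         "--panel-of-normals", panel_of_normals,
         "--f1r2-tar-gz", f1r2, "-O", unfiltered],
        # 2. Learn the read orientation artifact model (important for FFPE).
        ["gatk", "LearnReadOrientationModel", "-I", f1r2, "-O", orientation_model],
        # 3. Summarize pileups at common germline sites for tumor and normal.
        ["gatk", "GetPileupSummaries", "-I", tumor_bam,
         "-V", common_sites, "-L", common_sites, "-O", tumor_pileups],
        ["gatk", "GetPileupSummaries", "-I", normal_bam,
         "-V", common_sites, "-L", common_sites, "-O", normal_pileups],
        # 4. Estimate cross-sample contamination in the tumor.
        ["gatk", "CalculateContamination", "-I", tumor_pileups,
         "-matched", normal_pileups, "-O", contamination],
        # 5. Filter the raw calls using both learned models.
        ["gatk", "FilterMutectCalls", "-R", ref, "-V", unfiltered,
         "--contamination-table", contamination,
         "--ob-priors", orientation_model, "-O", filtered],
    ]


if __name__ == "__main__":
    for cmd in mutect2_pipeline_commands(
            "ref.fasta", "tumor.bam", "normal.bam", "NORMAL_SAMPLE",
            "af-only-gnomad.vcf.gz", "pon.vcf.gz",
            "common-biallelic.vcf.gz", "case01"):
        print(shlex.join(cmd))
```

Returning argument lists instead of running the tools keeps the sketch easy to inspect and to hand to a workflow manager or `subprocess.run`.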

Emerging and Specialized Technologies

While short-read NGS is the workhorse of somatic variant discovery, new technologies are pushing the boundaries of sensitivity and accuracy.

- Duplex Sequencing: Techniques like NanoSeq achieve ultra-low error rates (below 5 errors per billion base pairs) by sequencing both strands of each original DNA molecule. This allows for the detection of extremely low-frequency mutations in polyclonal tissues, providing a powerful tool to study early carcinogenesis and the somatic mutation landscape in aging and disease with single-molecule sensitivity [6].

- Long-Read Sequencing: Technologies from PacBio and Oxford Nanopore generate reads that are thousands of base pairs long. A comparative evaluation has shown that while short- and long-read data have similar performance for SNV and small deletion detection, long-read sequencing is significantly more accurate for calling insertions larger than 10 base pairs and for detecting variants in repetitive genomic regions [1].

- Deep Learning: New computational methods like VarNet use weakly supervised deep learning models to accurately identify SNVs and indels from NGS data, representing a shift towards data-driven, rather than rule-based, variant calling [4].

The following table summarizes the key methodological approaches for detecting somatic short variants.

Table 1: Core Methodologies for Somatic Short Variant Discovery

| Method Category | Key Example(s) | Primary Use Case | Key Advantages |

|---|---|---|---|

| Short-Read Bulk NGS | GATK Mutect2 [3], Strelka2 [2] | Standard tumor-normal or tumor-only somatic analysis. | Well-established, high-throughput, cost-effective. |

| Error-Corrected NGS | NanoSeq [6] | Detecting ultra-low frequency variants in polyclonal samples. | Extremely low error rate (< 5×10⁻⁹); single-molecule sensitivity. |

| Long-Read Sequencing | PacBio HiFi, ONT [1] | Resolving complex regions and large insertions. | Excellent for repetitive regions and insertions >10 bp. |

| Deep Learning | VarNet [4] | Accurate SNV/indel detection from tumor tissue. | Data-driven approach; can improve accuracy over traditional methods. |

Technical Protocols and Performance Benchmarking

Implementing a robust somatic variant detection pipeline requires careful consideration of experimental design and bioinformatic tool performance.

Key Experimental Considerations

- Input Material: The workflow requires BAM files derived from tumor and, if available, matched normal samples. These BAM files must be pre-processed according to best practices, including alignment, duplicate marking, and base quality score recalibration [3]. The protocol can be applied to both fresh-frozen and FFPE-derived DNA, though the latter requires specific steps to account for fixation-induced artifacts [3] [4].

- Variant Calling Modes: The Mutect2 tool is designed to identify somatic SNVs and indels in a single tumor sample from one individual, either with or without a matched normal sample [3].

- Addressing Artifacts: For FFPE samples, the LearnReadOrientationModel step is critical to model and filter out artifacts caused by DNA damage during fixation [3].

Performance Benchmarking of Technologies

Recent large-scale benchmarking efforts, such as those by the SMaHT Network, have evaluated sequencing technologies and computational methods for detecting diverse somatic mutations. These studies have shown that using a combination of bulk short-read and long-read sequencing, donor-specific assemblies, and the human pangenome improves variant calling and extends mutation catalogs to challenging genomic regions [7].

A comprehensive evaluation of variant callers using short- and long-read data revealed critical performance differences [1]:

- SNVs and Deletions: The recall and precision for SNV and indel-deletion detection were similar between short- and long-read data in non-repetitive regions.

- Insertions: The detection of insertions larger than 10 bp was significantly less sensitive with short-read-based algorithms compared to long-read-based methods.

- Repetitive Regions: The recall of structural variant (SV) detection with short-read algorithms was significantly lower in repetitive regions, especially for small- to intermediate-sized SVs.

Table 2: Performance Comparison of Sequencing Technologies for Variant Detection

| Variant Type | Region | Short-Read Performance | Long-Read Performance |

|---|---|---|---|

| SNVs | Non-repetitive | High recall & precision [1] | High recall & precision [1] |

| Indels (Deletions) | Non-repetitive | High recall & precision [1] | High recall & precision [1] |

| Indels (Insertions >10bp) | All | Poorly detected [1] | High sensitivity [1] |

| All Variants | Repetitive (e.g., STRs, SegDups) | Lower recall; alignment errors [1] | Higher recall; spans repeats [1] |

| Low-Frequency Variants | N/A | Limited by error rate (~10⁻⁷) [6] | Addressed by duplex (error-corrected) methods rather than long reads (<5×10⁻⁹ error rate) [6] |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Solutions for Somatic Variant Discovery

| Item | Function in the Workflow |

|---|---|

| Pre-processed BAM Files | The starting input for the variant discovery pipeline; contains aligned sequencing reads from tumor and normal samples [3]. |

| Reference Genome (e.g., GRCh38) | A standardized genomic sequence against which tumor samples are compared to identify variants [1]. |

| Panel of Normals (PoN) VCF | A resource of common artifacts and germline variants found in a set of normal samples, used to filter out false positives in tumor-only analyses [3]. |

| Targeted Capture Panel (e.g., for NanoSeq) | A set of baits to enrich specific genes (e.g., 239-gene panel) for high-sensitivity, targeted mutation profiling [6]. |

| Annotation Databases (e.g., COSMIC, dbSNP, gnomAD) | Curated knowledgebases used by annotation tools like Funcotator to provide biological and clinical context to variants [3] [2]. |

| Functional Annotation Tool (e.g., Funcotator, VEP, SnpEff) | Software that determines the functional impact of a variant (e.g., missense, stop-gain) and links it to external datasources [3] [2]. |

Interpretation and Clinical Application

The final and most critical step is the biological and clinical interpretation of the identified somatic short variants.

Standards for Classifying Oncogenicity

To ensure consistent interpretation, professional consortia have developed a Standard Operating Procedure (SOP) for classifying the oncogenicity of somatic variants [5]. Inspired by the ACMG/AMP germline guidelines, this framework assigns variants to one of five categories:

- Oncogenic

- Likely Oncogenic

- Variant of Uncertain Significance (VUS)

- Likely Benign

- Benign

The classification uses a point-based system that weighs evidence from various sources, including:

- Very Strong: Mutations in well-known oncogenic hotspots (e.g., KRAS p.Gly12) or well-established functional studies.

- Strong: Located in a well-curated mutation hotspot or functional domain in an oncogene or tumor suppressor.

- Moderate/Supporting: Computational predictions, population frequency data, and other ancillary evidence [5].
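How a point-based scheme turns evidence into one of the five categories can be sketched as follows. The point values (8, 4, 2, 1) and the category cut-offs are not stated in this article; they are assumptions based on the published SOP and should be verified against [5] before any real use. The function and label names are illustrative only.

```python
# Assumed point weights per evidence strength (verify against the SOP in [5]).
EVIDENCE_POINTS = {"very_strong": 8, "strong": 4, "moderate": 2, "supporting": 1}


def classify_oncogenicity(oncogenic_evidence, benign_evidence=()):
    """Sum oncogenic points, subtract benign points, and map the total to a category.

    Both arguments are iterables of strength labels from EVIDENCE_POINTS.
    The cut-offs below are assumptions, not values taken from this article.
    """
    score = (sum(EVIDENCE_POINTS[s] for s in oncogenic_evidence)
             - sum(EVIDENCE_POINTS[s] for s in benign_evidence))
    if score >= 10:
        category = "Oncogenic"
    elif score >= 6:
        category = "Likely Oncogenic"
    elif score >= 0:
        category = "Variant of Uncertain Significance (VUS)"
    elif score >= -6:
        category = "Likely Benign"
    else:
        category = "Benign"
    return score, category


if __name__ == "__main__":
    # One very strong plus one moderate line of oncogenic evidence.
    print(classify_oncogenicity(["very_strong", "moderate"]))
    # A single supporting line of evidence on its own.
    print(classify_oncogenicity(["supporting"]))
```

Keeping the weights in one table makes it straightforward to correct them if the SOP values differ from the ones assumed here.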

Clinical Reporting Actionability

For clinical reporting, the AMP/ASCO/CAP guidelines provide a tiered system for somatic variants based on their clinical actionability [2]:

- Tier I: Variants with strong clinical significance for diagnosis, prognosis, or therapy.

- Tier II: Variants with potential clinical significance.

- Tier III: Variants with unknown significance.

- Tier IV: Variants deemed benign or likely benign.

This structured approach to interpretation and reporting is fundamental for translating genomic findings into actionable insights for patient care, enabling the use of targeted therapies and personalized treatment strategies.

Figure 2. The somatic variant interpretation workflow. After variant calling, the biological oncogenicity of a variant is classified according to the ClinGen/CGC/VICC SOP. This biological classification then feeds into the determination of clinical actionability based on the AMP/ASCO/CAP tiered system to generate a final clinical report [5] [2].

The accurate detection of somatic short variants is foundational to precision oncology but is substantially challenged by biological and technical factors. Intratumor heterogeneity (ITH) leads to variants with low variant allele frequency (VAF) that are often clinically actionable, while standard sequencing and analysis methods are susceptible to technical artifacts that can be misinterpreted as genuine heterogeneity. This whitepaper synthesizes current evidence on the prevalence and impact of low-VAF variants, outlines the limitations of conventional sequencing approaches, and presents best-practice experimental and computational workflows for distinguishing true biological signals from noise in somatic variant discovery.

The Clinical and Biological Scale of the Challenge

The Pervasiveness of Low VAF Variants in Clinical Samples

Large-scale genomic studies reveal that low VAF variants are not rare occurrences but a common feature in clinical cancer samples. An analysis of 331,503 solid tumors profiled using the FDA-approved FoundationOneCDx test demonstrated that 29% of all patients had at least one somatic variant detected at VAF ≤10%, and 16% had at least one variant at VAF ≤5% [8]. This translates to nearly one-third of patients presenting with potentially consequential low-frequency variants.

The prevalence of these variants varies significantly across cancer types. Among frequently diagnosed tumors, the percentage of cases harboring at least one variant at VAF ≤10% was found to be 37% for pancreatic cancer, 35% for non-small cell lung cancer (NSCLC), 29% for colorectal cancer, and 24% for prostate cancer [8]. This distribution correlates with sample purity, as 68% of pancreatic cancer samples had tumor purity below 40%, higher than other tumor types [8].

Table 1: Prevalence of Low VAF Variants Across Major Cancer Types

| Cancer Type | Patients with ≥1 Variant at VAF ≤10% | Patients with ≥1 Variant at VAF ≤5% | Tumor Purity (overall cohort median, not type-specific) |

|---|---|---|---|

| Pancreatic | 37% | 19% | ~43% (cohort median) |

| NSCLC | 35% | 18% | ~43% (cohort median) |

| Colorectal | 29% | 15% | ~43% (cohort median) |

| Breast | 23% | 11% | ~43% (cohort median) |

| Prostate | 24% | 12% | ~43% (cohort median) |
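The link between low tumor purity and low VAF follows from simple mixture arithmetic, sketched below. The function name and parameters are illustrative, and the model assumes a single mutated locus with known copy number in tumor and normal cells; real samples add sampling noise and copy-number uncertainty on top of this expectation.

```python
def expected_vaf(purity, tumor_copy_number=2, mutated_copies=1,
                 cancer_cell_fraction=1.0, normal_copy_number=2):
    """Expected variant allele fraction for a somatic variant in a mixed sample.

    purity: fraction of cells in the sample that are tumor cells (0 < purity <= 1).
    cancer_cell_fraction: fraction of tumor cells carrying the variant
    (1.0 for a clonal variant, lower for a subclonal one).
    """
    if not 0 < purity <= 1:
        raise ValueError("purity must be in (0, 1]")
    mutant_alleles = purity * cancer_cell_fraction * mutated_copies
    total_alleles = (purity * tumor_copy_number
                     + (1 - purity) * normal_copy_number)
    return mutant_alleles / total_alleles


if __name__ == "__main__":
    # Clonal heterozygous variant, diploid locus, 40% purity.
    print(expected_vaf(0.40))
    # Same locus, but the variant is present in only a quarter of tumor cells.
    print(expected_vaf(0.40, cancer_cell_fraction=0.25))
```

Under these assumptions a clonal heterozygous variant in a 40%-pure sample is expected at about 20% VAF, and a subclone present in a quarter of the tumor cells at about 5%, which is why low-purity tumor types accumulate calls in the ≤10% range.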

Clinically Actionable Variants Frequently Occur at Low VAF

The clinical significance of low VAF variants is particularly evident at specific therapeutic hotspots. Analysis of 5,095 clinical samples sequenced with the CancerSCAN panel showed that a substantial proportion of clinically actionable variants in key driver genes are present at low allele fractions [9]:

Table 2: Prevalence of Low VAF Hotspot Mutations

| Gene/Hotspot | % of Mutations at VAF <5% | % of Mutations at VAF <10% | Clinical Context |

|---|---|---|---|

| EGFR T790M | 24% | Not reported | Resistance to EGFR-TKI |

| PIK3CA E545 | 17% | Not reported | Oncogenic driver |

| KRAS G12 | 12% | Not reported | Oncogenic driver |

| EGFR (all hotspots) | 16% | 28% | Various |

| KRAS (all hotspots) | 11% | 21% | Various |

| PIK3CA (all hotspots) | 12% | 26% | Various |

| BRAF (all hotspots) | 10% | 17% | Various |

Treatment resistance-associated alterations particularly tend to manifest at low VAF. In the FoundationOneCDx cohort, resistance alterations had significantly lower median VAF than driver alterations [8]. This pattern is mechanistically explained by the subclonal expansion of resistant cell populations under therapeutic selective pressure.

Technical Limitations in Detecting True Biological Signals

The Problem of Technical Artifacts in Variant Calling

Standard whole-exome sequencing (WES) approaches demonstrate concerning limitations in reliably distinguishing genuine intratumor heterogeneity from technical artifacts. A rigorous study evaluating WES on three distinct tumor regions with technical replicates found that 69% of somatic variants identified by a cancer-only pipeline were false positives [10].

Even with matched normal DNA, which is considered the gold standard, significant technical noise persists. Between technical replicate pairs, only 36-78% of somatic variants were consistently detected despite using matched normal DNA for filtering [10]. Critically, 34-80% of discordant somatic variants that could be interpreted as ITH were actually technical noise rather than true biological heterogeneity [10].

The sources of these artifacts are multifaceted, arising from library preparation, sequencing errors, and alignment challenges, particularly in low-mappability regions. Without orthogonal validation, detection of subclonal mutations by WES remains unreliable [10].

Limitations of Conventional Sequencing Approaches

Bulk sequencing methodologies, while clinically practical, fundamentally obscure cellular-level heterogeneity by averaging signals across diverse cell populations [11]. This limitation is particularly problematic for detecting rare subclones that may drive resistance or metastasis.

The relationship between sequencing depth and detection sensitivity is quantitatively critical. At typical WES depths of 100-200x, detection of variants below 10-15% VAF becomes statistically challenging. While targeted panels achieve higher depths (500-1000x), their breadth is necessarily limited, potentially missing off-panel drivers [8].
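The depth dependence can be made concrete with a binomial model of read sampling. The sketch below is an idealization under stated assumptions: reads are sampled independently, coverage at the site equals the nominal depth, sequencing error is ignored, and the minimum number of supporting reads (five here) is a hypothetical caller threshold rather than a value from any cited tool.

```python
from math import comb


def detection_probability(depth, vaf, min_alt_reads=5):
    """P(at least min_alt_reads variant-supporting reads) under Binomial(depth, vaf)."""
    p_too_few = sum(
        comb(depth, k) * vaf**k * (1 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_too_few


if __name__ == "__main__":
    # Compare typical WES depths with targeted-panel depths at several VAFs.
    for depth in (100, 200, 500, 1000):
        row = ", ".join(
            f"VAF {vaf:.0%}: {detection_probability(depth, vaf):.3f}"
            for vaf in (0.02, 0.05, 0.10)
        )
        print(f"{depth:>5}x  {row}")
```

Because real coverage is uneven and callers must also reject sequencing errors, practical sensitivity is lower than this idealized figure, which is consistent with the difficulty of calling low-VAF variants at typical WES depths.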

Formalin-fixed paraffin-embedded (FFPE) tissues, representing the majority of clinical samples, present additional technical challenges due to DNA fragmentation, cross-linking, and degradation, which exacerbate coverage variability and artifact generation [8].

Best Practices for Reliable Somatic Variant Discovery

Computational Workflows for Somatic Short Variant Discovery

The Genome Analysis Toolkit (GATK) provides a rigorously validated best practices workflow for somatic short variant discovery (SNVs and Indels) that specifically addresses the challenges of low VAF variants and technical artifacts [3].

Somatic Short Variant Discovery Workflow

This workflow employs Mutect2 for initial variant calling via local de novo assembly of haplotypes, which is particularly important for detecting variants in heterogeneous samples [3]. Subsequent specialized steps address key technical challenges:

- Calculate Contamination: Uses GetPileupSummaries and CalculateContamination tools to estimate cross-sample contamination, specifically designed to work in samples with significant copy number variation [3].

- Learn Read Orientation Model: Particularly crucial for FFPE samples, this step models and corrects for orientation-specific artifacts that can mimic true variants [3].

- Filter Mutect Calls: Applies multiple hard filters and probabilistic models to remove alignment artifacts, strand bias, polymerase slippage artifacts, germline variants, and contamination [3].

The Funcotator annotation tool finally adds functional context to variants, drawing from databases including GENCODE, dbSNP, gnomAD, and COSMIC to assist biological interpretation [3].

Experimental Design and Validation Strategies

Tumor Heterogeneity Quantification

The Tumor Heterogeneity (TH) index, calculated using Shannon's index with VAFs of mutated loci, provides a quantitative measure of ITH. Validation studies have shown that TH indices from targeted panel sequencing (381 genes) correlate well with those from whole-exome sequencing (Spearman rs = 0.70, p < 0.001) [12].

The reliability of TH measurement depends on panel size, with 300-gene panels showing strong correlation (rs = 0.87) with WES-based measurements, while smaller 50-gene panels perform poorly (rs = 0.50) [12]. Clinically, high TH index correlates with advanced pathological stage and worse progression-free survival in colorectal and breast cancers [12].
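A Shannon-type index over VAFs can be sketched as below. The exact formulation used in [12] is not given in this article, so the normalization step (converting VAFs to proportions that sum to one) and the use of the natural logarithm are assumptions made for illustration.

```python
import math


def th_index(vafs):
    """Shannon index over the VAFs of mutated loci.

    VAFs are first rescaled to proportions p_i = VAF_i / sum(VAF);
    the index is then -sum(p_i * ln(p_i)). Zero or negative VAFs are ignored.
    """
    positive = [v for v in vafs if v > 0]
    total = sum(positive)
    if total == 0:
        return 0.0
    proportions = [v / total for v in positive]
    return max(0.0, -sum(p * math.log(p) for p in proportions))


if __name__ == "__main__":
    print(th_index([0.45, 0.44, 0.46]))        # few loci at similar VAF
    print(th_index([0.45, 0.20, 0.08, 0.03]))  # more loci at varied VAF
```

With this formulation the index grows with the number of mutated loci entering the calculation, which is one way to see why very small panels, which capture few loci, track WES-based values poorly.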

Orthogonal Validation Methods

Orthogonal validation remains essential for confirming low-frequency variants. Digital PCR (dPCR) provides highly sensitive and quantitative validation, with studies showing high correlation between dPCR and NGS VAF measurements [9]. For research applications, single-cell whole genome sequencing with methods like Primary Template-directed Amplification (PTA) significantly improves variant detection sensitivity and enables direct observation of cellular heterogeneity without the averaging effects of bulk sequencing [11].

Essential Research Reagents and Tools

Table 3: Key Research Reagents and Solutions for Studying Tumor Heterogeneity

| Reagent/Tool | Function | Application Notes |

|---|---|---|

| FoundationOneCDx | FDA-approved comprehensive genomic profiling | 324-gene panel; validated for low VAF detection down to 5% in clinical samples [8] |

| CancerSCAN Panel | Custom targeted sequencing | 381 cancer-related genes; optimized for hotspot mutation detection [9] |

| GATK Mutect2 | Somatic variant caller | Uses local de novo assembly; part of best practices workflow [3] |

| ResolveDNA with PTA | Single-cell whole genome amplification | Reduces allelic dropout; enables SNV/CNV detection at single-cell level [11] |

| Funcotator | Variant annotation tool | Adds functional context from multiple databases (GENCODE, dbSNP, COSMIC) [3] |

| Northstar Select | Liquid biopsy CGP assay | 84-gene panel; LOD of 0.15% VAF for SNV/Indels; addresses low-shedding tumors [13] |

Tumor heterogeneity, low VAF variants, and sequencing artifacts present interconnected challenges that require integrated computational and experimental solutions. The high prevalence of clinically actionable low VAF variants underscores the necessity for sensitive detection methods, while the substantial rate of technical artifacts in standard approaches demands rigorous validation frameworks. The field is advancing toward more sophisticated single-cell analyses and computational methods that can distinguish true biological heterogeneity from technical noise, ultimately enabling more precise therapeutic targeting in oncology.

The choice between Formalin-Fixed Paraffin-Embedded (FFPE) and fresh frozen (FF) tissue represents a fundamental trade-off in somatic variant discovery, balancing practical availability against technical data quality. FFPE specimens, archived in hospital biobanks for decades, offer an unparalleled resource for retrospective clinical research, with an estimated 400 million to over a billion samples available globally [14]. In contrast, fresh frozen tissues remain the gold standard for nucleic acid quality but present significant logistical challenges for collection, processing, and storage [14]. As next-generation sequencing (NGS) becomes central to precision oncology and biomarker discovery, understanding how preservation methods introduce artifacts and impact variant calling is crucial for developing robust analytical pipelines. This technical guide examines the molecular consequences of each preservation method, provides quantitative comparisons of sequencing artifacts, and outlines mitigation strategies to ensure data reliability within somatic short variant discovery workflows.

Molecular Mechanisms of FFPE-Induced Artifacts

The formalin fixation process chemically modifies DNA through several well-characterized mechanisms that directly impact sequencing accuracy.

Primary Damage Pathways

- Cross-linking and Adduct Formation: Formaldehyde reacts with nucleophilic groups on DNA bases (particularly amino groups), forming hydroxymethyl adducts and methylene bridges that create protein-DNA and DNA-DNA cross-links [15]. These modifications alter base-pairing characteristics and can block polymerase progression during library amplification.

- Deamination: Spontaneous cytosine deamination to uracil represents the most prevalent FFPE artifact, leading to C>T/G>A false substitutions during sequencing [15]. This process occurs post-fixation due to inactivation of cellular repair enzymes, with frequency increasing with sample age.

- Fragmentation: Formalin fixation accelerates glycosidic bond cleavage, generating apurinic/apyrimidinic (AP) sites that undergo β-elimination, resulting in DNA backbone fragmentation [15]. FFPE-DNA typically fragments to 225-300 bp, substantially smaller than the optimal 360-480 bp range for WGS [16].

- Oxidation Damage: Though less common, oxidative damage can introduce C>A/G>T transversions through base oxidation mechanisms [15].

The combination of these processes creates a complex artifact profile where false positive variants coexist with regions of information loss due to severe damage.
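A quick way to see these damage pathways in a call set is to tally the substitution spectrum, folding complementary changes together so that C>T includes G>A and C>A includes G>T. The helper names below are illustrative, and an elevated C>T share is only a screening signal, since true mutational processes can produce the same changes.

```python
from collections import Counter

_COMPLEMENT = {"A": "T", "C": "G", "G": "C", "T": "A"}


def substitution_class(ref, alt):
    """Return the strand-collapsed class of an SNV, e.g. G>A is reported as C>T."""
    ref, alt = ref.upper(), alt.upper()
    if ref in ("A", "G"):
        # Purine reference base: describe the change on the opposite strand.
        ref, alt = _COMPLEMENT[ref], _COMPLEMENT[alt]
    return f"{ref}>{alt}"


def substitution_spectrum(snvs):
    """Fraction of SNVs in each of the six collapsed classes.

    snvs: iterable of (ref, alt) single-base pairs.
    """
    counts = Counter(substitution_class(ref, alt) for ref, alt in snvs)
    total = sum(counts.values())
    return {cls: n / total for cls, n in counts.items()} if total else {}


if __name__ == "__main__":
    calls = [("C", "T"), ("G", "A"), ("G", "T"), ("A", "G")]
    for cls, frac in sorted(substitution_spectrum(calls).items()):
        print(cls, f"{frac:.2f}")
```

Comparing the C>T and C>A shares between an FFPE sample and a fresh frozen reference from the same cohort gives a first indication of deamination or oxidation artifacts before more formal filtering.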

Comparative Sequencing Performance and Artifact Profiles

DNA and RNA Quality Metrics

Table 1: Nucleic Acid Quality Comparison Between FFPE and Fresh Frozen Tissue

| Quality Metric | Fresh Frozen Tissue | FFPE Tissue | Impact on Sequencing |

|---|---|---|---|

| DNA Integrity | High (intact strands) | Fragmented (DIN: 5.5±0.6) [17] | Reduced library complexity, amplification bias |

| RNA Quality | Preserved ribosomal peaks | Degraded (DV200: 59-79%) [14] | 3' bias in RNA-Seq, reduced transcript detection |

| Average Fragment Size | >7,500 bp [17] | ~200-500 bp [16] | Shorter reads, lower mappability |

| Cross-linking | Minimal | Extensive protein-DNA cross-links | Reduced amplification efficiency |

| Chemical Modifications | Minimal | Cytosine deamination, base adducts | False positive variants, base misincorporation |

Artifact Burden in Whole Genome Sequencing

Recent studies quantifying FFPE-derived artifacts reveal substantial challenges for variant calling:

- Small Variant Enrichment: FFPE processing results in a roughly 2-fold enrichment in small variant calls compared to matched fresh frozen samples (2.0x for SNVs and 2.4x for indels; see Table 2) [16]. Single nucleotide variant (SNV) and indel calling precision drops to approximately 50% and 62%, respectively, without specialized processing.

- Variant Class Differences: Structural variant (SV) calling maintains higher precision (80%) but suffers from reduced sensitivity (57%) in FFPE samples due to reduced coverage and shorter read fragments [16].

- Coverage Impacts: FFPE libraries show shorter average insert sizes (166-358 bp versus 356-503 bp for FF) and increased GC bias, resulting in lower effective coverage despite similar raw sequencing depth [16].

Table 2: Quantitative Comparison of Somatic Variant Detection in Matched FFPE-FF Pairs

| Variant Class | Fold-Change (FFPE/FF) | Precision | Sensitivity | Key Challenges |

|---|---|---|---|---|

| SNVs | 2.0x increase [16] | ~50% [16] | 85% [16] | C>T/G>A artifacts from deamination |

| Indels | 2.4x increase [16] | ~62% [16] | 75% [16] | Polymerase slippage at damaged sites |

| Structural Variants | 0.76x (median) [16] | 80% [16] | 57% [16] | Reduced mapping quality, shorter fragments |

| Copy Number Variants | Variable | Lower reliability [16] | Comparable | Higher noise, hyper-segmentation |

Impact on Biomarker Detection

The artifact burden in FFPE samples directly impacts the reliability of clinically relevant biomarkers:

- Tumor Mutational Burden (TMB): FFPE processing artificially elevates genome-wide TMB estimates (median: 10.28 versus 3.45 in FF) due to enrichment of artifacts in non-coding regions, though coding TMB remains relatively unaffected with proper processing [16].

- Mutation Signatures: FFPE damage mimics true mutational processes, with 45 of 56 samples showing enrichment of SBS37 signature (median proportion: 23.4% versus 3.6% in FF) [16].

- Homologous Recombination Deficiency (HRD): FFPE artifacts impair HRD detection. Of 7 samples correctly classified as HRD in FF, all 7 were misclassified in FFPE by HRDetect and 4 were misclassified by CHORD [16].

- Microsatellite Instability (MSI): While not quantified in the current studies, similar context-specific artifacts could impact MSI calling in FFPE samples.

Diagram 1: FFPE artifact propagation from tissue processing to biomarker impact. FFPE tissue shows increased artifacts across variant classes with direct consequences for clinical biomarker assessment.

Experimental Protocols for Artifact Mitigation

Pre-Analytical Quality Control

Rigorous quality assessment before sequencing is essential for reliable FFPE data:

- DNA QC Metrics: Implement multi-parameter assessment including degradation index (DI < 2 recommended), A260/A230 ratios (target >1.8), and fragment size distribution [17]. The Infinium HD FFPE QC kit ΔCt value should be <-1.0 for optimal performance [18].

- RNA QC Metrics: Use DV200 values (>70% optimal, >50% acceptable) rather than RIN for FFPE-RNA assessment [14]. Correlation between DV200 and unique gene detection is stronger in degraded samples.

- Targeted QC Workflow:

  - Extraction: Use FFPE-optimized kits (Maxwell FFPE Plus DNA Kit) with extended de-crosslinking steps [17].

  - Quantification: Employ fluorometric methods (Qubit) rather than spectrophotometry for accurate concentration measurement of fragmented DNA.

  - Integrity: Calculate DNA Integrity Number (DIN) via TapeStation or similar platforms, with DIN >5 considered acceptable for WGS [17].
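The thresholds above lend themselves to an automated gate before library preparation. The sketch below encodes only the cut-offs quoted in this section (DI < 2, A260/A230 > 1.8, DIN > 5, DV200 > 70% optimal and > 50% acceptable); the class, field, and function names are hypothetical, and each laboratory would substitute its own validated limits.

```python
from dataclasses import dataclass


@dataclass
class FfpeDnaQc:
    """Pre-sequencing DNA QC measurements for one FFPE sample."""
    degradation_index: float
    a260_a230: float
    din: float


def dna_qc_failures(qc):
    """Return the list of DNA metrics that miss the thresholds quoted in the text."""
    failures = []
    if not qc.degradation_index < 2:
        failures.append("degradation index >= 2")
    if not qc.a260_a230 > 1.8:
        failures.append("A260/A230 <= 1.8")
    if not qc.din > 5:
        failures.append("DIN <= 5")
    return failures


def rna_dv200_grade(dv200_percent):
    """Grade FFPE RNA by DV200: above 70% optimal, above 50% acceptable."""
    if dv200_percent > 70:
        return "optimal"
    if dv200_percent > 50:
        return "acceptable"
    return "below threshold"


if __name__ == "__main__":
    sample = FfpeDnaQc(degradation_index=2.5, a260_a230=1.9, din=4.0)
    print(dna_qc_failures(sample))
    print(rna_dv200_grade(59))
```

Reporting every failed metric, rather than a single pass or fail flag, makes it easier to decide whether a sample should be re-extracted, repaired, or routed to a targeted panel instead of WGS.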

Wet-Lab Mitigation Strategies

Several laboratory protocols can reduce FFPE artifact burden:

- DNA Repair Treatments: Pre-library preparation treatment with FFPE-specific repair mixes (NEBNext FFPE DNA Repair v2 Kit) addresses deamination and abasic sites through uracil-DNA glycosylase (UDG) and AP endonuclease activities [17].

- Library Preparation Optimization:

  - Enzymatic fragmentation in optimized buffers reduces error transfer between strands compared to sonication methods [6].

  - Duplex sequencing methods (NanoSeq) using dideoxynucleotides during A-tailing prevent extension of single-stranded nicks, achieving error rates <5×10⁻⁹ errors per bp [6].

  - Hybridization capture panels show better performance than amplicon-based approaches for FFPE material due to more even coverage of fragmented DNA.

Computational Correction Methods

Bioinformatic tools specifically address FFPE artifacts in sequencing data:

- FFPErase: A random forest classifier that filters SNV/indel artifacts and improves concordance between matched FF/FFPE datasets, enabling clinical-grade reporting across variant classes [16]. In validation studies, FFPErase demonstrated 99% sensitivity compared to FDA-approved panel tests while reporting 24% more clinically relevant findings.

- Consensus Calling: Employing multiple variant callers and requiring variants to be supported by ≥2 callers reduces FFPE-specific structural variant calls by 98%, though is less effective for SNVs and indels [16].

- Error-Corrected Sequencing Bioinformatics: Specialized pipelines for duplex sequencing data account for family-based consensus calling, significantly reducing false positives in low-VAF variants [6].
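The consensus rule described above (keep a variant only when at least two callers report it) can be sketched with plain set operations. The variant key of chromosome, position, reference allele, and alternate allele, and the caller labels in the usage example, are illustrative assumptions; a production version would first normalize and left-align variants so that equivalent calls compare equal.

```python
from collections import Counter


def consensus_variants(callsets, min_callers=2):
    """Return variants reported by at least min_callers distinct callers.

    callsets: mapping of caller name -> iterable of variant keys,
    where each key is a hashable tuple such as (chrom, pos, ref, alt).
    """
    support = Counter()
    for calls in callsets.values():
        # set() ensures a caller contributes at most one vote per variant.
        support.update(set(calls))
    return {variant for variant, n in support.items() if n >= min_callers}


if __name__ == "__main__":
    callsets = {
        "caller_a": [("chr1", 100, "C", "T"), ("chr2", 200, "G", "A")],
        "caller_b": [("chr1", 100, "C", "T"), ("chr3", 300, "A", "AT")],
        "caller_c": [("chr2", 200, "G", "A"), ("chr1", 100, "C", "T")],
    }
    for variant in sorted(consensus_variants(callsets)):
        print(variant)
```

Raising `min_callers` trades sensitivity for precision, so the threshold is best chosen per variant class, in line with the observation that consensus helps structural variants more than SNVs and indels.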

Diagram 2: Comprehensive FFPE artifact mitigation workflow integrating pre-analytical, wet-lab, and computational strategies to ensure high-confidence variant detection.

Table 3: Key Research Reagents and Computational Tools for FFPE-Focused Variant Discovery

| Resource Category | Specific Product/Tool | Application Context | Performance Notes |

|---|---|---|---|

| DNA Extraction | Maxwell FFPE Plus DNA Kit (Promega) | DNA isolation from FFPE | Higher yield from cross-linked samples vs. standard methods [17] |

| DNA Repair | NEBNext FFPE DNA Repair v2 Kit (NEB) | Pre-library repair | Reduces deamination artifacts via UDG treatment [17] |

| Library Prep | Ultra II FS Library Prep Kit (NEB) | Low-input/damaged DNA | Minimizes error introduction during library construction [17] |

| Error-Corrected Seq | NanoSeq [6] | Ultra-sensitive detection | Error rate <5×10^-9 errors per bp; compatible with targeted capture |

| Targeted Panels | Illumina TruSight Oncology 500 [19] | Comprehensive genomic profiling | Higher success rate with FFPE vs. whole genome approaches |

| Computational Tools | FFPErase [16] | SNV/indel artifact filtering | Random forest classifier; 99% sensitivity vs. clinical panels |

| Variant Callers | Mutect2 (GATK) [3] | Somatic short variant discovery | Includes FFPE-specific filters for orientation bias |

| Quality Control | MultiQC [20] | Sequencing QC aggregation | Integrates metrics across multiple steps in workflow |

The choice between FFPE and fresh frozen tissue necessitates careful consideration of study objectives, available samples, and analytical resources. While fresh frozen tissue remains the gold standard for nucleic acid integrity and variant calling accuracy, methodological advances now enable reliable somatic variant discovery from FFPE samples when appropriate safeguards are implemented. For researchers working within the constraints of clinical samples, we recommend:

- Prioritize FFPE-specific protocols from extraction through analysis, including DNA repair treatments and artifact-aware bioinformatic pipelines.

- Implement rigorous quality control at multiple stages, with clear thresholds for DNA/RNA quality metrics specific to FFPE material.

- Utilize error-corrected sequencing methods like NanoSeq when detecting low-frequency variants is critical, particularly in polyclonal samples [6].

- Apply computational artifact correction tools like FFPErase for FFPE WGS data, significantly improving variant calling precision while maintaining sensitivity [16].

- Validate FFPE-derived biomarkers against known standards when possible, particularly for quantitative applications like TMB and HRD assessment.

As sequencing technologies continue to evolve, the performance gap between FFPE and fresh frozen tissues will likely narrow further, unlocking the immense potential of historical clinical archives for somatic variant discovery in cancer research and therapeutic development.

Ethical Principles and Clinical Validity in Somatic Testing

Somatic genomic testing has become a cornerstone of precision oncology, enabling the detection of acquired mutations that drive cancer progression and guide therapeutic decisions. The clinical application of this technology necessitates a rigorous framework that integrates robust technical validity with steadfast ethical principles. This guide provides an in-depth examination of the standards and methodologies essential for implementing somatic testing within research and clinical development, with a specific focus on best practices for somatic short variant discovery. As testing paradigms evolve from targeted panels to comprehensive whole-exome and whole-genome approaches, researchers and drug development professionals must navigate complex analytical and ethical landscapes to ensure reliable, actionable results while maintaining patient trust and safety.

Ethical Framework for Somatic Testing

The integration of somatic testing into clinical and research workflows introduces unique ethical considerations that extend beyond conventional laboratory validation. A primary ethical concern involves the potential for incidental germline findings. Tumor genomic sequencing, while intended to identify somatic changes, can reveal the presence of pathogenic germline variants with significant implications for both patients and their biological relatives [21]. These findings may indicate inherited cancer susceptibility syndromes, creating complex counseling dilemmas regarding disclosure and family communication.

Effective management of these challenges requires systematic pretest education that clearly explains the benefits, risks, and potential outcomes of testing, including the possibility of identifying germline variants [21]. Studies indicate that patients may experience anxiety or feel overwhelmed by complex genomic information, particularly when unexpected findings emerge. These concerns may be amplified among racial and ethnic minority groups due to historical medical mistrust and fears of genetic discrimination, potentially widening disparities in precision medicine uptake if not adequately addressed.

Health care systems must develop coordinated processes spanning test referral, pretest counseling, result communication, and posttest follow-up [21]. The National Society of Genetic Counselors offers educational resources, including courses on clinical and laboratory perspectives for somatic genetic testing with case examples of common counseling dilemmas. When dedicated genetics personnel are limited, clinicians can employ shared decision-making approaches, such as the Agency for Healthcare Research and Quality's SHARE model, to help patients weigh benefits, harms, and risks according to their personal values and preferences.

Table: Key Ethical Considerations and Recommended Practices in Somatic Testing

| Ethical Consideration | Clinical Implications | Recommended Practice |

|---|---|---|

| Incidental Germline Findings | Identification of hereditary cancer predisposition with implications for patients and families | Implement pretest counseling about potential germline discoveries; establish referral pathways to genetic counselors |

| Health Disparities | Potential widening of equity gaps in precision medicine access | Develop culturally competent educational materials; address medical mistrust through transparent communication |

| Informed Consent | Patients may be unprepared for potential outcomes and limitations of testing | Provide comprehensive pretest education covering benefits, risks, and limitations of somatic testing |

| Result Communication | Complex genomic information may cause patient anxiety or misunderstanding | Utilize layered result reporting; ensure availability of post-test counseling for result interpretation |

| Data Privacy | Concerns about genetic discrimination and data security | Implement robust data protection protocols; provide clear information about privacy safeguards |

Establishing Clinical Validity

Clinical validity refers to how reliably a test result correlates with a clinically relevant outcome. It rests on analytical validity, the test's ability to identify specific genomic alterations accurately and reproducibly. For somatic testing, analytical validity is summarized by analytical sensitivity (true positive rate) and analytical specificity (true negative rate) for detecting various variant types across different tumor types and sample qualities.

The DH-CancerSeq assay validation demonstrates key parameters for establishing clinical validity. In one validation study, 94 patient DNA samples isolated from formalin-fixed, paraffin-embedded (FFPE) tissue with known clinically reported variants were used to assess performance against the TruSight Tumor 170 targeted panel [22]. True positives were defined as variants detected by both methods, while false negatives were those detected only by the comparator method. Sensitivity was calculated as TP/(TP + FN), while specificity was derived as TN/(TN + FP) [22]. This rigorous validation approach helps ensure that variant calling meets the standards required for clinical implementation, particularly for tumor-only WES, which faces challenges with variant calling at low depth of coverage (≤100×) [22].
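The sensitivity and specificity formulas can be made concrete with a small sketch. It follows the definitions given here (true positives detected by both methods, false negatives detected only by the comparator) and adds two assumptions that are not taken from [22]: false positives are calls made only by the assay under test, and true negatives are assessed sites, such as a predefined hotspot list, at which neither method reports a variant. The function and type names are hypothetical.

```python
from typing import NamedTuple, Set, Tuple

# A variant is keyed by (chromosome, 1-based position, ref allele, alt allele).
Variant = Tuple[str, int, str, str]


class Performance(NamedTuple):
    tp: int
    fn: int
    fp: int
    tn: int
    sensitivity: float
    specificity: float


def compare_to_comparator(
    test_calls: Set[Variant],
    comparator_calls: Set[Variant],
    assessed_sites: Set[Variant],
) -> Performance:
    """Score an assay against a comparator over a fixed set of assessed sites."""
    # Only sites that both methods were able to assess are scored.
    test = test_calls & assessed_sites
    comparator = comparator_calls & assessed_sites

    tp = len(test & comparator)   # detected by both methods
    fn = len(comparator - test)   # detected only by the comparator
    fp = len(test - comparator)   # detected only by the assay under test
    tn = len(assessed_sites - test - comparator)  # reported by neither

    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return Performance(tp, fn, fp, tn, sensitivity, specificity)
```

Because specificity depends on how the set of assessed negative sites is defined, that definition should be reported alongside the metric.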

Variant interpretation relies on standardized classification guidelines established by professional organizations including the American College of Medical Genetics and Genomics (ACMG), the Association for Molecular Pathology (AMP), and the College of American Pathologists (CAP) [23]. The ACMG/AMP framework, which was developed for germline sequence variants, recommends a five-tier terminology system: "pathogenic," "likely pathogenic," "uncertain significance," "likely benign," and "benign" [23]. For somatic variants in cancer, the joint AMP/ASCO/CAP guideline instead uses a four-tier system based on clinical significance, ranging from Tier I (strong clinical significance) to Tier IV (benign or likely benign). These standardized approaches facilitate consistent reporting and interpretation across laboratories, though specific disease groups may develop additional gene-specific guidance based on unique evidence considerations.

Table: Performance Metrics for Somatic Variant Detection in Validation Studies

| Metric | Calculation | DH-CancerSeq Validation [22] | DeepSomatic Performance [24] |

|---|---|---|---|

| Analytical Sensitivity | TP/(TP + FN) | Established against TST170 using 94 samples | Outperformed existing callers across technologies |

| Analytical Specificity | TN/(TN + FP) | Evaluated using hotspot variants | Particularly high performance for indels |

| SNV Detection | F1-score | Similar input requirements to targeted panel | Comparator callers in benchmark: F1-score of 0.9521 (Mutect2) to 0.9616 (Strelka2) |

| Indel Detection | F1-score | 86 samples with different insertions/deletions | Consistent outperformance versus existing tools |

| Coverage Requirements | Minimum depth | Considerable DOC needed for reliable VAF ≥5% in tumor-only WES | Evaluated across various sequencing coverages |

Experimental Protocols for Somatic Short Variant Discovery

Sample Processing and Quality Control

Robust somatic variant discovery begins with meticulous sample preparation and quality assessment. DNA extraction from formalin-fixed, paraffin-embedded (FFPE) tissue can be performed using established protocols such as the AllPrep DNA/RNA FFPE Protocol on the QIAcube or the Purigen Ionic FFPE to Pure DNA Kit [22]. Proper extraction is critical for obtaining sufficient quality DNA from clinical specimens, which often have limited quantity and may be compromised by fixation artifacts.

Extracted DNA must undergo rigorous quality assessment through quantification methods such as Qubit dsDNA Quantitation, High Sensitivity [22]. Quality metrics should include DNA concentration, fragment size distribution, and purity assessments to ensure samples meet minimum requirements for library preparation. For FFPE samples, additional quality indicators such as degradation index may inform processing decisions and interpretation of resulting data.

Library Preparation and Sequencing

Library preparation methodologies vary depending on the intended sequencing approach. For whole-exome sequencing, the SureSelect XTHS kit with V8 probe set has been successfully implemented with automation on the Magnis robot [22]. Including no-template controls in every batch is essential for monitoring contamination. Final libraries should be quality-checked and quantified using appropriate methods such as the High Sensitivity D1000 ScreenTape on the 4150 TapeStation system [22].

Sequencing can be performed on platforms such as the Illumina NovaSeq 6000 in batches of up to 64 samples to achieve sufficient depth for somatic variant detection [22]. The specific sequencing depth required depends on the application, with tumor-only WES typically requiring sufficient coverage to call variants reliably at variant allele fractions (VAFs) of ≥5% [22]. The increasing availability of long-read sequencing technologies from Oxford Nanopore Technologies and Pacific Biosciences offers alternative approaches with advantages for complex genomic regions and variant phasing [24].

Bioinformatics Analysis

The bioinformatics pipeline for somatic short variant discovery involves multiple sophisticated steps to distinguish true somatic variants from artifacts and germline polymorphisms:

Data Processing and Quality Control Initial processing includes demultiplexing, adapter trimming, and alignment to reference genomes. Quality control metrics should be assessed at both the FASTQ and binary alignment map levels, including properly paired reads, duplication rates, and depth of coverage [22]. These metrics guide critical decisions regarding sequencing depth and sample inclusion.

Variant Calling For short-read data, Mutect2 (part of the GATK toolkit) employs a Bayesian somatic likelihoods model to call SNVs and indels via local de novo assembly of haplotypes [3]. The tool aligns reads to candidate haplotypes using the Pair-HMM algorithm, then applies a Bayesian model to obtain log odds for alleles being somatic variants versus sequencing errors [3].

DeepSomatic takes a deep learning approach that applies to both short-read and long-read data: it creates tensor-like representations of read features from tumor and normal samples [24]. A convolutional neural network then classifies candidates as reference, germline, or somatic variants [24]. In the published benchmarks, it consistently outperformed existing callers across both short-read and long-read technologies [24].

Variant Filtering and Annotation FilterMutectCalls addresses Mutect2's assumption of independent read errors by implementing hard filters for alignment artifacts and probabilistic models for strand bias, polymerase slippage, germline variants, and contamination [3]. Functional annotation with tools such as Funcotator adds gene-level information, variant classifications, and annotations from databases including GENCODE, dbSNP, gnomAD, and COSMIC [3].

Diagram: Somatic variant discovery workflow.

Computational Methods for Somatic Variant Discovery

Short-Read Sequencing Analysis

The GATK somatic short variant discovery pipeline represents a widely adopted approach for analyzing Illumina sequencing data [3]. This workflow requires BAM files for tumor and, when available, matched normal samples that have undergone appropriate pre-processing according to GATK Best Practices [3]. The process involves two main stages: generating candidate somatic variants and applying filters to obtain a high-confidence call set.

Key steps in the GATK pipeline include:

- Calculate Contamination: Using the GetPileupSummaries and CalculateContamination tools to estimate the cross-sample contamination fraction for each tumor sample. The method is designed to work without a matched normal and in samples with significant copy number variation [3].

- Learn Orientation Bias Artifacts: Applying LearnReadOrientationModel to determine prior probabilities of single-stranded substitution errors, particularly important for FFPE samples with characteristic damage patterns [3].

- Filter Variants: Using FilterMutectCalls to account for correlated errors through hard filters and probabilistic models, automatically setting thresholds to optimize the F-score (harmonic mean of sensitivity and precision) [3].
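The steps above can be laid out as an ordered list of command lines. The sketch below only builds the argument lists and does not run GATK. The tool names come from the workflow described here; the flags reflect the GATK4 documentation as best understood and should be checked against `gatk <Tool> --help` for the installed version. All file names, the `prefix` parameter, and the helper name are hypothetical, and optional Mutect2 inputs such as a germline resource or panel of normals are omitted for brevity.

```python
from typing import List, Optional


def build_somatic_commands(
    reference: str,
    tumor_bam: str,
    common_sites_vcf: str,
    normal_bam: Optional[str] = None,
    normal_sample: Optional[str] = None,
    prefix: str = "out",
) -> List[List[str]]:
    """Return GATK command lines for calling, contamination, and filtering."""
    if (normal_bam is None) != (normal_sample is None):
        raise ValueError("normal_bam and normal_sample must be given together")

    # Hypothetical intermediate file names derived from the prefix.
    f1r2 = f"{prefix}.f1r2.tar.gz"
    unfiltered = f"{prefix}.unfiltered.vcf.gz"
    priors = f"{prefix}.read-orientation-model.tar.gz"
    tumor_pileups = f"{prefix}.tumor.pileups.table"
    normal_pileups = f"{prefix}.normal.pileups.table"
    segments = f"{prefix}.segments.table"
    contamination = f"{prefix}.contamination.table"
    filtered = f"{prefix}.filtered.vcf.gz"

    def pileups(bam: str, out: str) -> List[str]:
        return ["gatk", "GetPileupSummaries", "-I", bam,
                "-V", common_sites_vcf, "-L", common_sites_vcf, "-O", out]

    # Stage 1: candidate calls, also collecting F1R2 counts for orientation bias.
    mutect2 = ["gatk", "Mutect2", "-R", reference, "-I", tumor_bam,
               "--f1r2-tar-gz", f1r2, "-O", unfiltered]
    if normal_bam:
        mutect2 += ["-I", normal_bam, "-normal", normal_sample]

    commands = [
        mutect2,
        ["gatk", "LearnReadOrientationModel", "-I", f1r2, "-O", priors],
        pileups(tumor_bam, tumor_pileups),
    ]

    calc = ["gatk", "CalculateContamination", "-I", tumor_pileups,
            "--tumor-segmentation", segments, "-O", contamination]
    if normal_bam:
        commands.append(pileups(normal_bam, normal_pileups))
        calc += ["--matched-normal", normal_pileups]
    commands.append(calc)

    # Stage 2: filtering with contamination and orientation-bias information.
    commands.append(
        ["gatk", "FilterMutectCalls", "-R", reference, "-V", unfiltered,
         "--contamination-table", contamination,
         "--tumor-segmentation", segments,
         "--ob-priors", priors, "-O", filtered]
    )
    return commands


if __name__ == "__main__":
    for cmd in build_somatic_commands("ref.fasta", "tumor.bam", "common.vcf.gz"):
        print(" ".join(cmd))
```

Building the commands as lists keeps each one ready to pass to `subprocess.run` without shell quoting issues, and makes the tumor-only and matched-normal variants of the workflow easy to compare.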

Long-Read Sequencing Analysis

DeepSomatic adapts the DeepVariant germline calling framework for somatic variant discovery by modifying pileup images to contain both tumor and normal aligned reads [24]. The method employs a three-step process:

- make_examples: Creates tensor-like representations of read features, with normal sample reads on top and tumor reads below [24].

- call_variants: Uses a convolutional neural network to classify candidates as reference, germline, or somatic [24].

- postprocess_variants: Tags each candidate with its classification [24].

DeepSomatic has demonstrated consistently superior performance across sequencing technologies, particularly for indel detection [24]. Its development addressed the critical challenge of limited training data for somatic variants by creating and releasing a dataset of five matched tumor-normal cell line pairs sequenced with Illumina, PacBio HiFi, and Oxford Nanopore Technologies [24].

ClairS-TO is another deep learning method, designed specifically for long-read tumor-only somatic variant calling. It uses an ensemble of two disparate neural networks trained on the same samples but for opposite tasks [25]. The "affirmative network" estimates how likely a candidate is to be a somatic variant, while the "negational network" estimates how likely it is not to be one [25]. This approach is particularly useful in real-world scenarios where matched normal samples are frequently unavailable.

Tumor-Only Analysis Considerations

Tumor-only sequencing analysis presents distinct challenges for distinguishing true somatic variants from germline polymorphisms without a matched normal sample for comparison. Advanced methods address this through:

- Panel of Normals: Creating databases of common germline variants and technical artifacts found in normal samples to filter false positives [3].

- Population Frequency Filtering: Using population databases such as gnomAD to exclude variants with high population frequency (>1%) [22].

- Integrated Classification: Applying statistical methods to classify variants as germline or somatic using estimated tumor purity, ploidy, and copy number profiles [25].
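The first two filters in this list can be sketched as a simple pass over candidate calls. This is an illustration under stated assumptions rather than any specific tool's behavior: the `Call` record, its `population_af` field, and the representation of the panel of normals as a set of variant keys are all hypothetical, and the 1% cutoff is the population-frequency threshold mentioned above.

```python
from dataclasses import dataclass
from typing import Iterable, List, Optional, Set, Tuple

VariantKey = Tuple[str, int, str, str]


@dataclass(frozen=True)
class Call:
    chrom: str
    pos: int
    ref: str
    alt: str
    # Allele frequency in a population database such as gnomAD;
    # None means the variant is absent from the database.
    population_af: Optional[float] = None

    @property
    def key(self) -> VariantKey:
        return (self.chrom, self.pos, self.ref, self.alt)


def filter_tumor_only(
    calls: Iterable[Call],
    panel_of_normals: Set[VariantKey],
    max_population_af: float = 0.01,
) -> List[Call]:
    """Drop calls seen in the panel of normals or common in the population."""
    kept = []
    for call in calls:
        if call.key in panel_of_normals:
            continue  # recurrent in normals: likely germline or technical artifact
        if call.population_af is not None and call.population_af > max_population_af:
            continue  # common in the population: likely germline polymorphism
        kept.append(call)
    return kept
```

Rare germline variants pass both filters, which is why the integrated classification step based on purity, ploidy, and copy number is still needed in tumor-only analysis.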

Diagram: Bioinformatics pipeline architecture.

Essential Research Reagents and Materials

Table: Key Research Reagents for Somatic Variant Discovery

| Reagent/Resource | Specific Example | Application Note |

|---|---|---|

| DNA Extraction Kit | AllPrep DNA/RNA FFPE Protocol (Qiagen); Purigen Ionic FFPE to Pure DNA Kit | Optimized for degraded FFPE material; includes quality assessment steps |

| Library Prep Kit | SureSelect XTHS with V8 probe set (Agilent) | Designed for whole-exome sequencing; compatible with automation platforms |

| Sequencing Platform | Illumina NovaSeq 6000; Oxford Nanopore PromethION; PacBio Revio | Platform choice depends on required read length, accuracy, and application |

| Positive Control | Horizon Discovery HD789 | Validated reference material for assay performance monitoring |

| Bioinformatics Tool | AUGMET; GATK Mutect2; DeepSomatic; ClairS-TO | Selection depends on sequencing technology and available matched normal |

| Reference Database | gnomAD; COSMIC; ClinVar; dbSNP | Essential for variant annotation and filtering of germline polymorphisms |

| Variant Annotation | Funcotator; VEP | Provides functional context and clinical interpretation for called variants |

The integration of ethical principles with rigorous technical standards forms the foundation of responsible somatic testing in precision oncology. Successful implementation requires coordinated processes spanning test selection, wet laboratory procedures, bioinformatics analysis, and result interpretation, all while maintaining patient-centered communication and consent practices. As sequencing technologies evolve toward more comprehensive approaches including whole-exome and whole-genome sequencing, and as computational methods incorporate advanced machine learning techniques, the standards for clinical validity and ethical implementation must correspondingly advance. Researchers and drug development professionals play a critical role in upholding these standards to ensure that somatic testing continues to fulfill its promise in advancing cancer care while maintaining patient trust and equitable access.

Building a Robust Somatic Variant Calling Pipeline

Next-generation sequencing (NGS) has revolutionized genomic research and clinical diagnostics, providing powerful tools for deciphering the genetic basis of disease. For researchers focused on somatic short variant discovery, selecting the appropriate sequencing strategy is paramount to the success of their investigations. The three primary approaches—whole genome sequencing (WGS), whole exome sequencing (WES), and targeted gene panels—each offer distinct advantages and limitations that must be carefully balanced against research goals, resources, and analytical capabilities [26]. This technical guide provides an in-depth comparison of these methodologies within the context of somatic variant discovery, offering researchers a framework for selecting optimal strategies for their specific applications.

The NGS market reflects the growing importance of these technologies. In drug discovery in particular, the market is projected to grow from $1.45 billion in 2024 to $4.27 billion by 2034, with a reported compound annual growth rate of 18.3% [27]. This expansion is driven by the ability of NGS to deliver high-throughput genomic data that accelerates target identification, biomarker discovery, and personalized medicine development. For somatic variant discovery, understanding the technical specifications and performance characteristics of each approach is fundamental to generating reliable, actionable data.

Whole Genome Sequencing (WGS)

WGS sequences the entire genome, including both protein-coding and non-coding regions, providing the most comprehensive view of an individual's genetic makeup [26] [28]. This method enables researchers to identify almost all genetic changes in a patient's DNA, from single nucleotide variants to structural variations [26]. In clinical oncology applications, WGS can identify somatic driver mutations in tumor genomes, constitutional mutations predisposing to cancer, and mutational signatures that may inform about disease mechanisms or environmental mutagens [28].

The comprehensiveness of WGS is particularly valuable for addressing the "missing heritability" problem in complex diseases. A 2025 Nature study analyzing 347,630 WGS samples from the UK Biobank reported that WGS captured nearly 90% of the genetic signal across 34 diseases and traits, measured against heritability estimates from family studies [29]. This represents a significant advance over other methods, with WGS identifying impactful variants in non-coding regions that other approaches would miss.

Whole Exome Sequencing (WES)

WES focuses specifically on the protein-coding regions of the genome (the exome), which constitutes less than 2% of the entire genome but harbors the majority of known disease-causing variants [26] [30]. By sequencing only these coding regions, WES provides a cost-effective method for analyzing a large number of samples while maintaining focus on areas most likely to contain pathogenic variants [26].

In practice, WES is often analyzed using virtual panels—predetermined sets of genes known to be associated with the patient's features [30]. This means that despite all exonic regions being sequenced, analysis may be restricted to clinically relevant genes. Alternatively, a gene-agnostic, family-based approach (such as trio sequencing) can be used to identify novel genetic causes of disease [30]. Research has shown that WES has an overall diagnostic yield of 28.8% in clinical cases, increasing to 31% when three family members are analyzed together [26].

Targeted Gene Panels

Targeted gene panels represent the most focused approach, sequencing a predefined set of genes or genomic regions associated with specific conditions [31]. These panels are designed to target genes implicated in particular pathways, mutations, or diseases, offering high precision and sensitivity for detecting small changes including single nucleotide variants (SNVs), insertions and deletions (indels), and copy number variations (CNVs) [31].

The focused nature of targeted panels generates a concise dataset with reduced data noise compared to WGS or WES, making analysis more manageable and cost-effective [31]. This approach is particularly valuable in oncology, where panels can be designed to include genes with known clinical actionability, enabling streamlined identification of biomarkers and therapeutic targets [31]. The technology has proven so effective that it forms the basis for many companion diagnostics used to guide cancer treatment decisions [27].

Technical Comparison and Performance Metrics

Table 1: Comparative Analysis of Key Sequencing Methodologies for Somatic Variant Discovery

| Parameter | Targeted Gene Panels | Whole Exome Sequencing (WES) | Whole Genome Sequencing (WGS) |

|---|---|---|---|

| Genomic Coverage | Predefined gene sets (dozens to hundreds of genes) | ~1-2% of genome (protein-coding exons) | ~100% of genome (coding + non-coding) |

| Variant Types Detected | SNVs, indels, CNVs (high sensitivity for targeted regions) | SNVs, small indels (some CNVs with lower accuracy) | SNVs, indels, CNVs, structural variants, repeats |

| Typical Read Depth | Very high (500× to 1000×+) | Moderate (100× to 200×) | Lower (30× to 100×) |

| Cost Per Sample | $ | $$ | $$$ |

| Data Volume | Low (GB range) | Moderate (~10-15 GB) | High (~100 GB) |

| Turnaround Time | Days [32] | Weeks [30] | Weeks to months [28] |

| Advantages | Cost-effective, high sensitivity, simplified analysis, ideal for clinical applications [31] | Balanced coverage and cost, useful for novel gene discovery [26] [30] | Most comprehensive, detects non-coding variants, better CNV detection [26] [28] |

| Limitations | Limited to known genes, may miss novel findings [31] | Misses non-coding variants, lower sensitivity for CNVs [30] [33] | Higher cost, complex data analysis, storage challenges [26] [34] |

Table 2: Technical Performance Metrics from Validation Studies

| Metric | Targeted Panel Performance | WES Performance | WGS Performance |

|---|---|---|---|

| Sensitivity | 98.23% for unique variants [32] | High for coding SNVs/indels [30] | Superior for rare variants [29] |

| Specificity | 99.99% [32] | High with appropriate filtering [30] | High but may generate more false positives [26] |

| Variant of Uncertain Significance (VUS) Rate | Lower due to focused analysis | Moderate [30] | Higher due to comprehensive coverage [28] |

| Ability to Detect Novel Associations | Limited to panel content | Good for coding regions [30] | Excellent across genome [29] |

| Heritability Explained | Limited to targeted genes | 17.5% of total genetic variance [29] | ~90% of genetic signal [29] |

Key Technical Considerations for Somatic Variant Discovery

For somatic short variant discovery, several technical factors require special consideration. The limit of detection (LOD) is particularly important when identifying low-frequency somatic mutations. Targeted panels typically achieve the best LOD, with validated assays detecting variants at 2.9% variant allele frequency (VAF) [32]. WES can detect low VAF variants but with less reliability, while WGS performance depends on sequencing depth.
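The dependence of the limit of detection on sequencing depth can be illustrated with a simple binomial model. The sketch assumes that each read samples the variant allele independently with probability equal to the VAF, ignores sequencing error and mapping bias, and treats a variant as detectable once a minimum number of supporting reads is observed. The function name and the `min_alt_reads` threshold are hypothetical choices for the example, not parameters from [32].

```python
from math import comb


def detection_probability(depth: int, vaf: float, min_alt_reads: int) -> float:
    """P(X >= min_alt_reads) for X ~ Binomial(depth, vaf)."""
    if not 0.0 <= vaf <= 1.0:
        raise ValueError("vaf must be between 0 and 1")
    # Probability of seeing fewer than min_alt_reads supporting reads.
    p_below = sum(
        comb(depth, k) * vaf**k * (1.0 - vaf) ** (depth - k)
        for k in range(min_alt_reads)
    )
    return 1.0 - p_below


if __name__ == "__main__":
    # Compare depths typical of WGS, WES, and targeted panels at a 2.9% VAF.
    for depth in (30, 100, 200, 1000):
        p = detection_probability(depth, vaf=0.029, min_alt_reads=5)
        print(f"depth={depth:>4}  P(at least 5 alt reads)={p:.4f}")
```

Under this model, a variant at a few percent VAF is rarely supported by enough reads at WGS-typical depth, which is consistent with targeted panels achieving the best limit of detection.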

Coverage uniformity varies significantly between methods. Targeted panels demonstrate >98% of target regions with coverage ≥100× unique molecules [32], while WES can suffer from uneven coverage due to hybridization efficiency variations in capture probes [26]. WGS provides more uniform coverage across the genome, though some challenging regions (e.g., those with pseudogenes or repetitive elements) may still pose difficulties [28].
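A coverage metric of the kind quoted above (the fraction of target positions at or above a depth threshold) is straightforward to compute once per-position depths are available. The sketch assumes the depths have already been extracted into a plain list, for example from a per-base depth report; the function name is hypothetical and the 100× default mirrors the threshold cited for targeted panels.

```python
from typing import Iterable


def fraction_covered(depths: Iterable[int], min_depth: int = 100) -> float:
    """Fraction of positions whose depth is at least `min_depth`."""
    values = list(depths)
    if not values:
        raise ValueError("no depth values supplied")
    return sum(d >= min_depth for d in values) / len(values)


if __name__ == "__main__":
    # Hypothetical per-position depths for a small target region.
    print(fraction_covered([50, 100, 150, 200]))
```

Note that the panel figure cited above is expressed in unique molecules, so duplicate reads would need to be collapsed before depths are counted for a like-for-like comparison.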

The ability to detect structural variants and CNVs differs substantially across platforms. WGS outperforms both WES and targeted panels for identifying these variant types [26] [28]. WES has limited sensitivity for structural variations, including copy number variants, inversions, and translocations [33], while targeted panels can detect CNVs but only in the predefined target regions.

Methodologies and Experimental Protocols

Workflow Comparison Across Sequencing Methods

The following diagram illustrates the core workflow for targeted NGS panels, highlighting the standardized process from sample to result:

Diagram 1: Targeted NGS panel workflow. This streamlined process enables rapid turnaround times of 4 days for in-house assays [32].

Detailed Methodological Considerations

Sample Collection and Quality Control

The initial sample collection step is critical for all sequencing methods. For somatic variant discovery in oncology, sample types include peripheral blood, tissue biopsies, and liquid biopsies (circulating tumor DNA) [31]. Each sample type has specific considerations: tissue biopsies must be collected under sterile conditions with time-sensitive handling to maintain nucleic acid integrity, while liquid biopsies require specialized tubes to stabilize ctDNA during transport [31].

DNA input requirements vary by methodology. Targeted panels typically require ≥50 ng of DNA input for optimal performance [32], while WES and WGS may have different specifications based on library preparation methods. Sample quality assessment is essential, as degraded samples can lead to incomplete or erroneous sequencing regardless of the platform chosen [31].

Library Preparation and Target Enrichment

Library preparation methodologies differ significantly between the three approaches:

Targeted Panels: Employ either hybrid capture-based enrichment (using probes complementary to target regions) or amplicon-based enrichment (using specific primers to amplify target regions through PCR) [31] [32]. The hybrid capture method generally provides better coverage uniformity, while amplicon approaches can be more efficient for smaller target regions.

WES: Uses hybridization capture to enrich for protein-coding regions specifically. This process involves fragmenting genomic DNA and using probes to capture exonic regions, resulting in sequencing data primarily for these areas and a small amount of adjacent non-coding DNA [30].

WGS: Requires no target enrichment, as the entire genome is sequenced. Patient DNA is fragmented, and sequencing data are generated for the entire genome without selective amplification of specific regions [28].

Sequencing Platforms and Data Generation

Multiple sequencing platforms are available for generating NGS data. Second-generation short-read technologies from Illumina and Thermo Fisher Scientific remain the most commonly used for all three approaches due to their high accuracy and throughput [35]. Third-generation long-read technologies from Oxford Nanopore Technologies and PacBio are gaining popularity for their ability to resolve structural variants and repetitive regions [35].

The choice of platform affects read length, error profiles, and the ability to detect certain variant types. For somatic short variant discovery, short-read platforms generally provide sufficient accuracy for SNV and indel detection, while long-read technologies may be beneficial for complex structural variations [35].

Research Reagent Solutions for Sequencing Workflows

Table 3: Essential Research Reagents and Materials for Sequencing Applications

| Reagent/Material | Function | Application Notes |

|---|---|---|

| Specialized Blood Collection Tubes | Stabilize ctDNA in liquid biopsies | Essential for maintaining sample integrity during transport [31] |

| DNA Extraction Kits | Isolate high-quality nucleic acids | Spin column kits, magnetic beads, or phenol-chloroform extraction [31] |

| Hybrid Capture Probes | Enrich target regions in WES and targeted panels | Design affects coverage uniformity and efficiency [26] [31] |

| Library Preparation Kits | Prepare DNA fragments for sequencing | Compatibility with automation systems reduces human error [32] |

| Sequence Capture Arrays | Immobilized oligonucleotides for exome capture | Critical for WES target enrichment [26] |

| Quality Control Assays | Assess DNA quality and quantity | Bioanalyzer, qPCR; essential for reliable results [31] |

| Barcoded Adapters | Multiplex samples during sequencing | Enable pooling of multiple samples [31] |

| Automated Library Preparation Systems | Standardize library prep process | Reduce contamination risk and improve consistency [32] |

Application-Based Strategy Selection

Decision Framework for Sequencing Approach Selection

The following decision diagram outlines key considerations for selecting the appropriate sequencing method based on research objectives and constraints:

Diagram 2: Decision framework for sequencing strategy selection. Research goals, resources, and technical requirements determine the optimal approach.
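The selection logic can be approximated as a small rule-based function. This is a deliberately simplified sketch derived from the advantages and limitations listed in Table 1, not a reproduction of the diagram: the field names are hypothetical, and the rule ordering (a need for non-coding or structural variants takes priority over everything else) is an assumption made for the example.

```python
from dataclasses import dataclass


@dataclass
class StudyNeeds:
    # Non-coding variants or structural variants are required.
    needs_noncoding_or_structural: bool = False
    # The genes of interest are known in advance.
    genes_of_interest_known: bool = False


def suggest_strategy(needs: StudyNeeds) -> str:
    """Suggest a sequencing approach from coarse study requirements."""
    if needs.needs_noncoding_or_structural:
        return "WGS"  # only WGS covers non-coding regions comprehensively
    if needs.genes_of_interest_known:
        return "targeted panel"  # deepest coverage and simplest analysis
    return "WES"  # coding-region discovery when the causal genes are unknown


if __name__ == "__main__":
    print(suggest_strategy(StudyNeeds(genes_of_interest_known=True)))
```

In practice, budget, sample quality, turnaround time, and required limit of detection would also feed into the decision, so the function is best read as a starting point for discussion.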

Application-Specific Recommendations

Clinical Oncology and Precision Medicine

For clinical oncology applications where timely results and clinical actionability are priorities, targeted gene panels are often the preferred choice [31]. Their focused nature enables faster turnaround times (as short as 4 days for in-house assays) [32], higher sensitivity for low-frequency variants, and simpler data interpretation—critical factors for guiding treatment decisions. Panels can be customized to include genes with established biomarkers for targeted therapies, such as EGFR, BRAF, and KRAS [31].

When designing targeted panels for somatic variant discovery, include genes with established clinical utility and consider incorporating emerging biomarkers to maintain relevance. Validation should establish performance metrics for all variant types included, with particular attention to limit of detection for low-frequency somatic mutations [32].

Rare Disease and Novel Gene Discovery

For rare disease investigation where the genetic cause is unknown, WES provides an optimal balance of comprehensiveness and cost-effectiveness [30]. By sequencing all protein-coding regions, WES enables discovery of novel disease genes while focusing on genomic regions most likely to contain pathogenic variants. The trio sequencing approach (sequencing both parents and the affected child) significantly enhances diagnostic yield by facilitating variant filtering based on inheritance patterns [30].

WES has a reported overall diagnostic yield of 28.8% in clinical cases, increasing to 31% when three family members are analyzed [26]. For rare metabolic disorders, WES has demonstrated the ability to diagnose 32% of previously unspecified developmental disorders [26].

Complex Disease and Comprehensive Variant Discovery

For complex diseases where non-coding variants, structural variations, or comprehensive variant profiling are essential, WGS provides superior capabilities [26] [29]. The ability to capture variation across the entire genome makes WGS particularly valuable for solving "missing heritability" in complex traits [29].

Recent large-scale studies have demonstrated that WGS captures nearly 90% of the genetic signal across diverse diseases and traits, significantly outperforming WES, which explained only 17.5% of total genetic variance in the same study [29]. WGS also shows particular strength in identifying rare variant associations, such as those influencing lipid traits where it recovered over 30% of the rare variant heritability for HDL and LDL cholesterol [29].

Emerging Trends and Future Directions

The field of genomic sequencing continues to evolve rapidly, with several trends shaping future applications in somatic variant discovery:

AI and Machine Learning Integration: The combination of AI and machine learning with NGS is revolutionizing drug discovery through automated genomic data analysis and predictive modeling [27]. These tools can predict gene-drug interactions and functional consequences of mutations more efficiently than traditional bioinformatics methods, ultimately improving target identification and personalized medicine development.

Declining Sequencing Costs: The cost of whole genome sequencing continues to decrease, making comprehensive genomic analysis increasingly accessible [34] [35]. While WGS was once prohibitively expensive for large studies, emerging technologies promise to further reduce costs, potentially making WGS the default approach for many applications.

Cloud-Based Data Analysis: Cloud computing is increasingly used to manage and analyze large genomic datasets due to the scalability and processing power it offers [27]. Cloud platforms enable global collaboration and reduce the need for local computational infrastructure, making large-scale WGS analysis more feasible for individual laboratories.

Long-Read Sequencing Technologies: Third-generation long-read sequencing platforms from Oxford Nanopore and PacBio are maturing, offering improved accuracy and the ability to resolve complex genomic regions that challenge short-read technologies [35]. These platforms are particularly valuable for detecting structural variants and phasing mutations.

Selecting the appropriate sequencing strategy represents a critical decision point in somatic short variant discovery research. Each approach—targeted panels, WES, and WGS—offers distinct advantages that must be aligned with research objectives, resources, and analytical capabilities.

Targeted panels provide the most practical solution for focused clinical applications where known genes are of interest, high sensitivity is required, and rapid turnaround is essential. WES offers a balanced approach for broader discovery efforts within coding regions, particularly for rare disease investigation and novel gene identification. WGS delivers the most comprehensive variant detection across the entire genome, making it ideal for complex disease studies and situations where maximum genetic information is required.

As sequencing technologies continue to advance and costs decline, the landscape of somatic variant discovery will undoubtedly evolve. However, the fundamental principles of aligning methodological capabilities with research needs will remain essential for generating robust, meaningful results in genomic research and precision medicine.

This guide details the core bioinformatics workflow for processing next-generation sequencing (NGS) data, a foundational component of somatic short variant discovery research. The accuracy of identifying somatic mutations in cancer genomes is critically dependent on the quality of the initial data processing steps, from raw sequence reads to aligned BAM files [36]. This document provides researchers, scientists, and drug development professionals with a comprehensive technical guide to these essential procedures, establishing the data integrity foundation required for robust variant calling and interpretation in accordance with best practices.

The journey from raw sequencing data to analysis-ready aligned files involves multiple, interconnected steps. Each stage includes specific quality control checkpoints to ensure data integrity. The following diagram illustrates the complete workflow and its key components:

Raw Data Quality Control

Understanding FASTQ Format and Quality Scores

Raw sequencing data is typically delivered in FASTQ format, which contains both nucleotide sequences and a corresponding quality score for each base [37]. The quality score (Q-score) is expressed on the Phred scale, calculated as Q = -10 log₁₀(P), where P is the probability of an incorrect base call [38]. A Q-score of 30 indicates a 1 in 1,000 error probability (99.9% accuracy), which is generally considered the minimum acceptable quality for most sequencing experiments [37].
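The Phred relationship above can be checked with a few lines of Python. This is a minimal sketch, not part of any specific tool: the function names are illustrative, and the ASCII offset of 33 (the Phred+33 encoding used by current Illumina FASTQ files) is an assumption about how the quality string is encoded.

```python
import math
from typing import List


def phred_to_error_prob(q: float) -> float:
    """Convert a Phred quality score Q to the error probability P = 10^(-Q/10)."""
    return 10 ** (-q / 10)


def error_prob_to_phred(p: float) -> float:
    """Convert an error probability P to a Phred score Q = -10 * log10(P)."""
    if not 0 < p <= 1:
        raise ValueError("error probability must be in (0, 1]")
    return -10 * math.log10(p)


def decode_fastq_quality(quality_string: str, offset: int = 33) -> List[int]:
    """Decode a FASTQ quality string into per-base Phred scores.

    Assumes Phred+33 encoding unless another offset is given.
    """
    return [ord(ch) - offset for ch in quality_string]
```

For example, `phred_to_error_prob(30)` gives an error probability of about 0.001, matching the 1 in 1,000 figure quoted above.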

Essential QC Metrics and Tools

Systematic quality assessment of raw FASTQ files is crucial for identifying issues that could compromise downstream analyses. The following table summarizes the key metrics and tools for this initial QC stage:

Table 1: Essential Quality Control Metrics for Raw Sequencing Data

| QC Metric | Description | Optimal Range | Potential Issues |
|---|---|---|---|
| Per-base Sequence Quality | Quality scores across all sequencing cycles [37] | Q-score > 30 across reads [38] | Quality drops at read ends indicate sequencing chemistry issues [38] |
| GC Content | Distribution of guanine-cytosine pairs across reads [38] | Species-specific (~49-51% for exomes) [38] | Deviations >10% may indicate contamination [38] |
| Adapter Contamination | Presence of library adapter sequences in reads [37] | Minimal to no adapter content | Incomplete adapter removal during library prep [37] |
| Sequence Duplication | Proportion of PCR-amplified duplicate reads [38] | Varies by application; <20% typically good | Over-amplification during library preparation [38] |

FastQC is the most widely used tool for initial quality assessment of raw sequencing data [37] [38] [39]. It provides a comprehensive visual report of these key metrics, flagging any parameters that deviate from typical patterns.
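To make two of the metrics in the table concrete, the sketch below computes the overall GC fraction and the fraction of bases at or above Q30 from FASTQ records. It is a simplified illustration and not a substitute for FastQC: the function names are hypothetical, Phred+33 encoding is assumed, and the parser expects strict four-line records.

```python
from typing import Dict, Iterable, Iterator, Tuple


def read_fastq(lines: Iterable[str]) -> Iterator[Tuple[str, str, str]]:
    """Yield (name, sequence, quality) from strict four-line FASTQ records."""
    rows = [ln.rstrip("\n") for ln in lines if ln.strip()]
    if len(rows) % 4 != 0:
        raise ValueError("FASTQ input is not a multiple of four lines")
    for i in range(0, len(rows), 4):
        header, seq, _separator, qual = rows[i:i + 4]
        yield header[1:], seq, qual


def summarize_reads(records: Iterable[Tuple[str, str, str]],
                    offset: int = 33,
                    q_threshold: int = 30) -> Dict[str, float]:
    """Return the GC fraction and the fraction of bases with Q >= q_threshold."""
    total = gc = high_q = 0
    for _name, seq, qual in records:
        total += len(seq)
        gc += sum(1 for base in seq.upper() if base in "GC")
        high_q += sum(1 for ch in qual if ord(ch) - offset >= q_threshold)
    if total == 0:
        raise ValueError("no bases found")
    return {"gc_fraction": gc / total, "q30_fraction": high_q / total}
```

With a real file, the records would typically come from `read_fastq(open(path))`; the result can then be compared against the ranges in Table 1.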

Read Trimming and Filtering

When quality issues are identified, tools such as Trimmomatic or Cutadapt can be employed to trim low-quality bases and remove adapter sequences [37] [39]. This preprocessing step maximizes the number of reads that can be successfully aligned to the reference genome and improves the accuracy of downstream variant calling [37]. Key trimming parameters typically include:

- Quality Threshold: Remove bases with quality scores below 20 [37]

- Minimum Read Length: Discard reads shorter than 20 bases after trimming [37]

- Adapter Sequences: Remove known adapter sequences used in library preparation [37]
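The three parameters above can be illustrated with a deliberately simplified trimming routine. This sketch is not how Trimmomatic or Cutadapt work internally (both use more tolerant matching and quality algorithms); it assumes exact adapter matching, trimming only from the 3' end, and Phred+33 encoding. The function name and defaults are hypothetical, chosen to mirror the thresholds listed above.

```python
from typing import Optional, Tuple


def trim_read(seq: str,
              qual: str,
              adapter: Optional[str] = None,
              min_quality: int = 20,
              min_length: int = 20,
              offset: int = 33) -> Optional[Tuple[str, str]]:
    """Trim one read; return (sequence, quality), or None if it becomes too short."""
    # 1. Adapter removal: cut at the first exact occurrence of the adapter.
    if adapter:
        idx = seq.find(adapter)
        if idx != -1:
            seq, qual = seq[:idx], qual[:idx]

    # 2. Quality trimming: drop trailing bases whose score is below the threshold.
    end = len(seq)
    while end > 0 and ord(qual[end - 1]) - offset < min_quality:
        end -= 1
    seq, qual = seq[:end], qual[:end]

    # 3. Length filter: discard reads shorter than the minimum length.
    if len(seq) < min_length:
        return None
    return seq, qual
```

Applying adapter removal before quality trimming means low-quality bases sitting just upstream of the adapter are still caught by the second step.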

Read Alignment

Alignment Algorithms and Reference Genomes

The alignment process involves mapping sequencing reads to a reference genome to determine their genomic origin. The choice of alignment algorithm depends on read length and application requirements:

- BWA-MEM: Recommended for reads ≥70 bp, providing optimal alignment accuracy for most modern sequencing platforms [40]

- BWA-aln: Suitable for shorter reads (<70 bp), though largely superseded by BWA-MEM for contemporary applications [40]

The quality of the reference genome significantly impacts alignment accuracy. For human studies, standard references include GRCh38, with some implementations incorporating decoy viral sequences to prevent erroneous alignment of non-human sequences [40].
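The read-length rule above can be expressed as a small helper that picks the BWA algorithm and assembles a `bwa mem` command line. This is a sketch under assumptions: the file names, sample name, thread count, and read-group fields are placeholders, and the command is only built, not executed. The `-t` (threads) and `-R` (read group) options are standard `bwa mem` options.

```python
from typing import List, Optional


def choose_bwa_algorithm(read_length: int) -> str:
    """Return 'mem' for reads of 70 bp or longer, 'aln' for shorter reads."""
    return "mem" if read_length >= 70 else "aln"


def bwa_mem_command(reference: str,
                    fastq1: str,
                    fastq2: Optional[str] = None,
                    sample: str = "sample1",
                    threads: int = 4) -> List[str]:
    """Build an argument list for `bwa mem` (single-end or paired-end)."""
    # bwa expects literal "\t" separators in the read-group string.
    read_group = f"@RG\\tID:{sample}\\tSM:{sample}\\tPL:ILLUMINA"
    cmd = ["bwa", "mem", "-t", str(threads), "-R", read_group, reference, fastq1]
    if fastq2:
        cmd.append(fastq2)
    return cmd
```

The returned list can be passed to `subprocess.run` with standard output redirected to a SAM file. A read group is included because downstream tools such as GATK require one.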

Post-Alignment Processing

Following initial alignment, several processing steps refine the data:

- Sorting and Merging: Coordinate-based sorting of alignments and merging of files from multiple sequencing runs [40]

- Duplicate Marking: Identification and flagging of PCR duplicates using tools like Picard MarkDuplicates to prevent artificial inflation of variant evidence [40]

- Base Quality Score Recalibration (BQSR): Systematic correction of base quality scores using known variant databases to improve variant calling accuracy [40]
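The idea behind duplicate marking can be shown with a toy model. The sketch below groups reads by chromosome, start position, and strand, keeps the read with the highest summed base quality as the representative, and flags the rest. It is a simplification for illustration only: Picard MarkDuplicates works on real alignment records and accounts for mate positions and clipping, and the `AlignedRead` class here is hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class AlignedRead:
    name: str
    chrom: str
    start: int
    strand: str
    base_quality_sum: int
    is_duplicate: bool = False


def mark_duplicates(reads: List[AlignedRead]) -> int:
    """Flag duplicates in place and return how many reads were flagged."""
    groups: Dict[Tuple[str, int, str], List[AlignedRead]] = defaultdict(list)
    for read in reads:
        groups[(read.chrom, read.start, read.strand)].append(read)

    for group in groups.values():
        # Keep the highest-quality read; flag every other read in the group.
        best = max(group, key=lambda r: r.base_quality_sum)
        for read in group:
            read.is_duplicate = read is not best

    return sum(read.is_duplicate for read in reads)
```

Reads are flagged rather than removed, mirroring the "identification and flagging" described above, so that variant callers can decide how to treat them.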

Alignment Quality Control

Essential Alignment Metrics

Quality control of aligned BAM files provides critical insights into sample and technical quality that may not be apparent from raw data alone [38]. The following table outlines key alignment metrics and their interpretations:

Table 2: Key Quality Control Metrics for Aligned BAM Files

| QC Metric | Description | Optimal Range | Potential Issues |
|---|---|---|---|
| Alignment Rate | Percentage of reads successfully mapped to reference [38] | >90% for whole genome; >70% for exome/capture [38] | Poor library quality or reference mismatch [38] |
| Read Depth | Average number of reads covering each base [38] | Varies by application; >100x for somatic variant calling | Inadequate sequencing depth for confident variant calling [38] |
| Insert Size | Length of original DNA fragments [38] | Matches library preparation expectations | Library preparation artifacts [38] |
| Duplicate Rate | Percentage of PCR duplicate reads [40] | <20% typically acceptable | Over-amplification during library preparation [40] |
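The thresholds in Table 2 lend themselves to an automated check. The following sketch flags samples that fall outside those ranges. It assumes the metrics have already been collected (for example from SAMtools or Picard output) into a dictionary with the hypothetical keys shown. Insert size is omitted because its expected range depends on the library preparation.

```python
from typing import Dict, List


def check_alignment_qc(metrics: Dict[str, float], assay: str = "wgs") -> List[str]:
    """Return warnings for metrics outside the Table 2 ranges.

    `metrics` uses fractions for rates (0.95 means 95%) and mean fold
    coverage for depth; `assay` is "wgs" or "exome".
    """
    min_alignment_rate = 0.90 if assay == "wgs" else 0.70
    warnings = []

    if metrics["alignment_rate"] < min_alignment_rate:
        warnings.append("low alignment rate: check library quality or reference")
    if metrics["mean_depth"] < 100:
        warnings.append("mean depth below 100x for somatic variant calling")
    if metrics["duplicate_rate"] >= 0.20:
        warnings.append("duplicate rate at or above 20%: possible over-amplification")

    return warnings
```

An empty list means the sample passed all three checks. The cutoffs are the typical values from the table and would normally be tuned per assay.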

Three-Stage QC Strategy

A comprehensive quality control strategy should be implemented at three distinct stages: raw data, alignment, and variant calling [38]. This multi-layered approach ensures that quality issues are identified early, potentially saving significant computational resources and preventing erroneous conclusions in downstream analyses. QC at the alignment stage evaluates how well reads map to the reference, which is crucial for successful variant detection, while variant calling QC serves as the final opportunity to identify samples with quality issues not detected earlier [38].

The Researcher's Toolkit

Successful implementation of the core bioinformatics workflow requires familiarity with essential software tools and resources. The following table catalogs key solutions for NGS data processing:

Table 3: Essential Research Reagent Solutions for NGS Data Processing

| Tool/Resource | Function | Application Context |
|---|---|---|
| FastQC [37] [38] [39] | Quality control analysis of raw sequencing data | Initial assessment of FASTQ files from any sequencing platform |
| Trimmomatic/Cutadapt [37] [39] | Read trimming and adapter removal | Preprocessing of raw reads before alignment |
| BWA [40] [39] | Read alignment to reference genome | Primary alignment of sequencing reads to reference genomes |
| SAMtools [38] [41] | Processing and analysis of aligned data | Manipulation and QC of SAM/BAM format files |
| Picard [40] | Data processing and QC metrics | Marking duplicates, collection of alignment metrics |
| GATK [3] [42] [40] | Base quality recalibration, variant discovery | Processing of aligned data and subsequent variant calling |

The computational pipeline from raw reads to aligned BAM files constitutes the critical foundation of somatic short variant discovery. Methodical execution of quality control at each processing stage—raw data, alignment, and post-processing—ensures the integrity of downstream variant calls [38]. As somatic variant discovery increasingly informs clinical decision-making in oncology, adherence to these standardized workflows and quality control procedures becomes essential for generating reliable, reproducible results that can effectively guide therapeutic strategies [36] [2].