Sparse PCA vs. Standard PCA for Gene Selection: A Comprehensive Guide for Genomic Research


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to evaluate and apply sparse Principal Component Analysis (PCA) against standard PCA for gene selection in high-dimensional genomic studies. It covers the foundational principles of how sparse PCA addresses key limitations of standard PCA, such as poor interpretability in High-Dimensional, Low-Sample Size (HDLSS) settings. The content details methodological advances, including techniques for incorporating biological information, offers strategies for troubleshooting common issues like over-regularization and non-orthogonality, and provides a rigorous comparative analysis for validating performance. By synthesizing current research and practical applications, this guide aims to empower scientists to make informed choices that enhance the biological insight and reliability of their dimensionality reduction and feature selection workflows.

Unpacking the Core Principles: Why Standard PCA Falls Short in Genomics

In the field of genomic research, the curse of dimensionality presents a fundamental analytical challenge. With studies routinely measuring 20,000+ genes across limited samples, researchers require dimensionality reduction techniques that are both interpretable and consistent. This guide provides an objective comparison between Standard Principal Component Analysis (PCA) and its modern successor, Sparse PCA, for gene selection tasks. Based on current experimental evidence, Sparse PCA demonstrates superior performance in biomarker identification, biological interpretability, and noise resistance, making it particularly valuable for drug development applications where understanding molecular mechanisms is critical.

Technical Comparison: Standard PCA vs. Sparse PCA

The table below summarizes the core technical differences between Standard PCA and Sparse PCA methodologies relevant to genomic analysis.

Table 1: Fundamental Methodological Differences Between Standard PCA and Sparse PCA

| Aspect | Standard PCA | Sparse PCA |
|---|---|---|
| Core Objective | Capture maximum variance with orthogonal components [1] | Capture maximum variance with sparse, interpretable components [1] [2] |
| Loading Vectors | Dense (typically all non-zero coefficients) [1] [3] | Sparse (many zero coefficients enforced via constraints) [1] [3] [2] |
| Interpretability | Low: each component is a linear combination of all original variables [1] [3] | High: components highlight key variable subsets [3] [2] |
| HDLSS Performance | Inconsistent in High-Dimensional, Low-Sample Size settings [1] | Designed for HDLSS contexts via sparsity assumptions [1] |
| Orthogonality | Components are orthogonal by construction [1] | Components are often non-orthogonal, sharing information [1] |

Experimental Performance Benchmarking

Independent validation studies across multiple biological datasets demonstrate the practical advantages of Sparse PCA for gene selection. The following table synthesizes key performance metrics from recent experimental evaluations.

Table 2: Experimental Performance Comparison on Genomic Data Tasks

| Evaluation Metric | Standard PCA | Sparse PCA (AWGE-ESPCA) | Sparse PCA (RMT-Guided) |
|---|---|---|---|
| Pathway Selection Accuracy | Baseline | Superior pathway enrichment selection [4] | Not Reported |
| Noise Resistance | Moderate | High: accurate target gene identification under noise [4] | Not Reported |
| Cell-Type Classification Accuracy | Baseline (e.g., on scRNA-seq) [5] | Not Reported | Consistently outperforms PCA, autoencoders, and diffusion methods [5] |
| Computational Time | Fast | Moderate [2] | Not Reported |
| Key Advantage | Computational speed, simplicity [6] [2] | Biological interpretability, biomarker identification [4] [3] | Hands-off parameter selection, robust denoising [5] |

Detailed Experimental Protocols

AWGE-ESPCA for Cu²⁺-Stressed Hermetia illucens Genomics

Objective: To identify key genes and pathways affecting growth under copper stress using a novel Sparse PCA framework [4].

Methodology:

- Dataset: Newly constructed Cu²⁺-stressed Hermetia illucens (black soldier fly) genomic dataset [4].

- Model Core: The AWGE-ESPCA model integrates two key innovations:

- Validation: Performance was compared against four state-of-the-art Sparse PCA models and baseline supervised/unsupervised models across five independent experiments [4].

RMT-Guided Sparse PCA for Single-Cell RNA-Seq

Objective: To denoise single-cell RNA sequencing data and infer sparse principal components that better approximate the true underlying biological signal [5].

Methodology:

- Preprocessing - Biwhitening: A novel algorithm simultaneously estimates and applies diagonal matrices to stabilize variance across both genes and cells. This transforms the data matrix ( X ) into ( Z = CXD ), where ( C ) and ( D ) are diagonal matrices with positive entries, ensuring cell-wise and gene-wise variances are approximately one [5].

- Random Matrix Theory (RMT) Integration: The spectral distribution of the biwhitened data's covariance matrix is analyzed. RMT provides an analytical mapping to distinguish outlier eigenvalues (potential signal) from the noise bulk, guiding the automatic selection of the sparsity parameter in the subsequent Sparse PCA step [5].

- Benchmarking: The method was tested across seven scRNA-seq technologies and four sparse PCA algorithms. Downstream cell-type classification accuracy was used as the key performance metric against PCA-, autoencoder-, and diffusion-based methods [5].
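The first part of the RMT step, separating outlier eigenvalues from the noise bulk, can be illustrated with the Marchenko-Pastur upper edge. The sketch below is not the implementation from [5]: it assumes the input already has approximately unit noise variance (as biwhitening is meant to provide), and the function name `count_outlier_eigenvalues` and the simulated data are hypothetical. It only counts eigenvalues above the bulk edge; the angle-based choice of the sparsity parameter described in [5] is not reproduced here.

```python
import numpy as np

def count_outlier_eigenvalues(Z):
    """Count eigenvalues of S = Z^T Z / n above the Marchenko-Pastur upper edge.

    Z is an (n_cells x n_genes) matrix whose noise entries are assumed to
    have variance close to one.
    """
    n, p = Z.shape
    gamma = p / n                                  # aspect ratio
    upper_edge = (1.0 + np.sqrt(gamma)) ** 2       # bulk edge for unit-variance noise
    # Non-zero eigenvalues of S are the squared singular values of Z divided by n.
    singular_values = np.linalg.svd(Z, compute_uv=False)
    eigvals = singular_values ** 2 / n             # sorted in descending order
    return int(np.sum(eigvals > upper_edge)), upper_edge, eigvals

# Simulated example (assumption): unit-variance noise plus one sparse signal direction.
rng = np.random.default_rng(0)
n_cells, n_genes = 200, 100
noise = rng.normal(size=(n_cells, n_genes))
v = np.zeros(n_genes)
v[:10] = 1.0 / np.sqrt(10)                         # sparse loading on 10 genes
f = rng.normal(size=(n_cells, 1))                  # latent factor per cell
Z = noise + 4.0 * f * v                            # broadcasts to (n_cells, n_genes)

n_outliers, upper_edge, eigvals = count_outlier_eigenvalues(Z)
print(f"upper edge = {upper_edge:.3f}, outlier eigenvalues = {n_outliers}")
```

In finite samples the largest noise eigenvalue fluctuates around the edge, so a count obtained this way is a rough guide rather than an exact signal rank.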

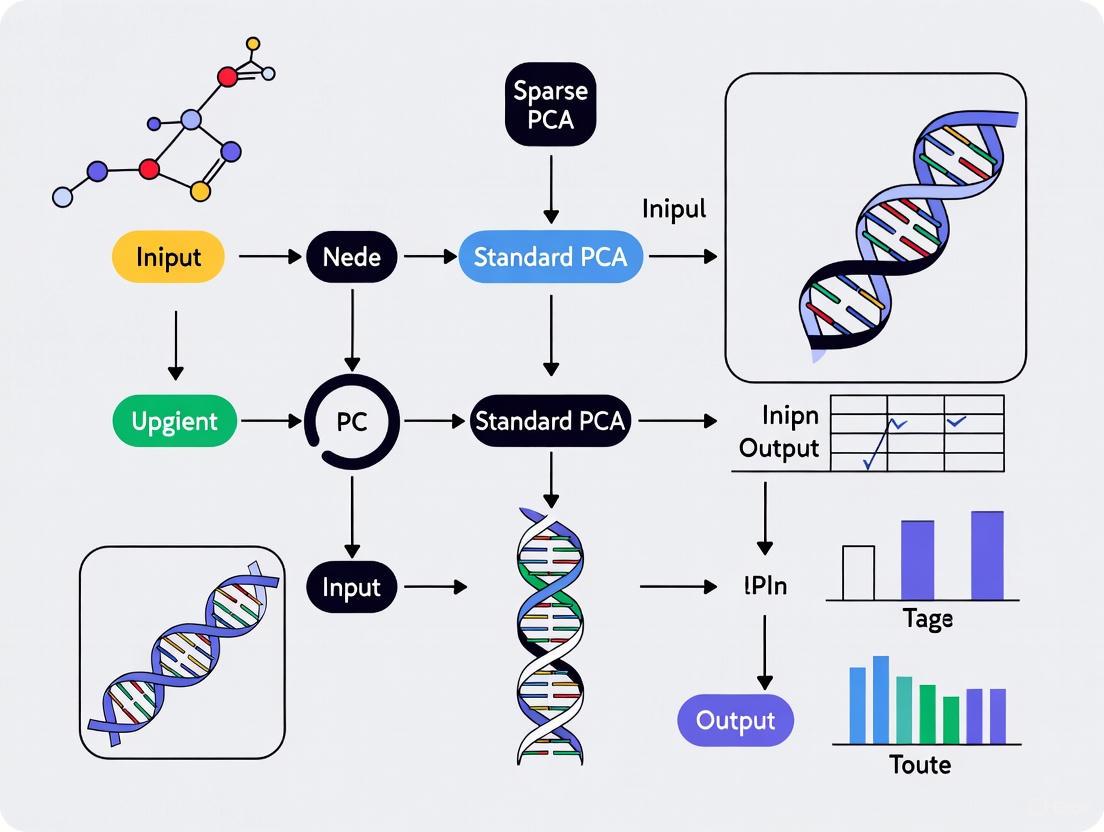

Diagram 1: RMT-Guided Sparse PCA Workflow

Signaling Pathways and Biological Interpretation

Sparse PCA enhances biological discovery by directly linking computational results to known pathway biology. The AWGE-ESPCA model exemplifies this by integrating prior knowledge of gene-pathway relationships.

Diagram 2: Pathway-Aware Gene Selection Logic

Table 3: Key Computational Tools for Implementing Sparse PCA in Genomic Research

| Tool/Resource | Function | Implementation Example |
|---|---|---|
| scikit-learn | Python library providing implementations of standard and Sparse PCA [2] | SparsePCA(n_components=10, alpha=1) [2] |
| R-package ssMRCD | R package for robust covariance estimation for multi-source data, enabling outlier-robust PCA [7] | Used in sparse, outlier-robust PCA for multi-source data [7] |
| Biwhitening Algorithm | Preprocessing step to stabilize variance across cells and genes for RMT-guided Sparse PCA [5] | Simultaneously optimizes diagonal matrices C and D for variance stabilization [5] |
| Random Matrix Theory (RMT) | Mathematical framework to guide sparsity parameter selection, making Sparse PCA nearly parameter-free [5] | Analyzes eigenvalue distribution to distinguish signal from noise [5] |
| Adaptive Noise Elimination Regularizer | Novel regularizer specifically designed to handle noise in non-human genomic data (e.g., insect genomes) [4] | Core component of the AWGE-ESPCA model [4] |

For gene selection in high-dimensional genomic studies, Sparse PCA provides a demonstrable advance over Standard PCA where biological interpretability is a primary concern. Its ability to yield sparse, easily interpretable components that directly highlight key genes and pathways makes it particularly valuable for drug development workflows aimed at identifying novel biomarkers and therapeutic targets.

Future methodological development is likely to focus on integrating Sparse PCA with other data modalities and enhancing robustness further. Promising directions include multi-source Sparse PCA that jointly analyzes related datasets to distinguish global from local patterns [7], and continued refinement of automated parameter selection to make these powerful techniques more accessible to biological researchers [5].

How Standard PCA Works and Where It Fails for Gene Selection

In the field of bioinformatics and precision oncology, analyzing high-dimensional genomic data presents a fundamental challenge. Gene expression data typically contains thousands of variables (genes) measured across relatively few samples, creating what is known as the "high dimension, low sample size" problem. Researchers need powerful dimensionality reduction techniques to identify meaningful biological patterns and select relevant genes for further investigation. Principal Component Analysis (PCA) has served as a foundational tool for this purpose, but its limitations have prompted the development of more advanced sparse PCA methods. This guide provides an objective comparison of these approaches, examining their performance characteristics and practical applications in gene selection research.

Fundamentals of Principal Component Analysis

Principal Component Analysis is a multivariate statistical technique that transforms complex, high-dimensional data into a lower-dimensional representation while preserving as much variance as possible. The method works by identifying new variables, called principal components, which are linear combinations of the original variables and orthogonal to each other.

Mathematically, PCA finds projections $\boldsymbol{\alpha} \in \mathbb{R}^{p}$ that maximize the variance of the standardized linear combination $\mathbf{X}\boldsymbol{\alpha}$, formalized as:

$$ \max_{\boldsymbol{\alpha} \ne \mathbf{0}} \boldsymbol{\alpha}^{\text{T}}\mathbf{X}^{\text{T}}\mathbf{X}\boldsymbol{\alpha} \quad \text{subject to} \quad \boldsymbol{\alpha}^{\text{T}}\boldsymbol{\alpha} = 1 $$

For subsequent components, additional constraints ensure they are uncorrelated with previous components [8]. The solution to this optimization problem is an eigenvalue decomposition of $\mathbf{X}^{\text{T}}\mathbf{X}$, which is proportional to the sample covariance matrix when the columns of $\mathbf{X}$ are centered. The principal component loadings correspond to the eigenvectors, and the amount of variance explained is proportional to the eigenvalues [9].

In practical terms, PCA achieves dimensionality reduction by projecting the original data onto a new coordinate system where the greatest variances lie along the first coordinate (first principal component), the second greatest along the second coordinate, and so on. This allows researchers to summarize large gene expression datasets with far fewer components while retaining the most important structural information.
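The eigen-decomposition view above can be made concrete with a few lines of NumPy. This is a minimal sketch on simulated data, assuming a samples-by-genes matrix; the function name `pca_eig` is illustrative and not taken from any cited work. For real genomic data with tens of thousands of genes, forming the full $p \times p$ matrix is costly, and an SVD of the centered data is usually preferred.

```python
import numpy as np

def pca_eig(X, n_components):
    """PCA via eigen-decomposition of X^T X for a (samples x genes) matrix."""
    Xc = X - X.mean(axis=0)                    # center each gene (column)
    gram = Xc.T @ Xc                           # proportional to the sample covariance
    eigvals, eigvecs = np.linalg.eigh(gram)    # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1][:n_components]
    loadings = eigvecs[:, order]               # unit-norm, mutually orthogonal columns
    explained = eigvals[order] / eigvals.sum() # share of total variance per component
    scores = Xc @ loadings                     # coordinates in the new system
    return loadings, scores, explained

# Simulated data (assumption): 30 samples, 8 genes, gene 0 has inflated variance.
rng = np.random.default_rng(0)
X = rng.normal(size=(30, 8))
X[:, 0] *= 5.0

loadings, scores, explained = pca_eig(X, n_components=3)
print("explained variance ratio:", np.round(explained, 3))
print("non-zero loadings in PC1:", np.count_nonzero(loadings[:, 0]), "of", X.shape[1])
```

Even in this toy case every gene receives a non-zero loading on the first component, which is the density issue discussed in the limitations that follow.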

Critical Limitations of Standard PCA for Gene Selection

Despite its mathematical elegance and widespread use, standard PCA faces significant limitations when applied to gene selection tasks in genomic research, particularly in the context of high-dimensional biological data.

Lack of Interpretability and Biological Meaning

The most significant limitation of standard PCA for gene selection is its lack of interpretability. Since each principal component is a linear combination of all genes in the dataset, interpreting which specific genes drive the observed patterns becomes challenging. As noted in research, "the principal component loadings are linear combinations of all available variables, the number of which can be very large for genomic data" [8]. This means that when researchers identify an interesting pattern in the principal components, they cannot easily determine which specific genes are responsible, undermining the biological interpretability of results.

Inability to Produce Sparse Solutions

In standard PCA, all variables (genes) receive non-zero coefficients in the principal components, making it impossible to perform automatic gene selection. This "all-in" characteristic means that researchers must manually examine loading scores post-hoc to identify important genes, a process that becomes increasingly subjective as dataset dimensionality grows. As one study explains, "It is therefore desirable to obtain interpretable principal components that use a subset of the available data to deal with the problem of interpretability of principal component loadings" [8].

Statistical Inconsistency in High Dimensions

In high-dimensional settings where the number of variables (genes) far exceeds the number of observations, standard PCA can suffer from statistical inconsistency. The computed coefficients may not reliably converge to their true population values as sample size increases, potentially leading to misleading results [9]. This limitation is particularly problematic in genomic studies where measuring thousands of genes across limited patient samples is common.

Ignoring Biological Network Information

Standard PCA operates as a purely mathematical technique without incorporating prior biological knowledge. As researchers have recognized, "complex biological mechanisms occur through concerted relationships of multiple genes working in networks that are often represented by graphs" [8]. By treating all genes as independent variables, standard PCA fails to leverage valuable information about known biological pathways, gene interactions, and functional relationships that could improve both the accuracy and interpretability of results.

Table 1: Key Limitations of Standard PCA for Gene Selection

| Limitation | Impact on Gene Selection | Practical Consequence |
|---|---|---|
| Lack of interpretability | Difficult to identify specific genes driving patterns | Reduced biological insight |
| Dense solutions | No automatic gene selection | Manual, post-hoc gene identification |
| Statistical inconsistency | Unreliable coefficients in high dimensions | Potentially misleading results |
| Ignores biological networks | Misses known gene relationships | Suboptimal use of prior knowledge |

Sparse PCA: Methodological Advances for Gene Selection

Sparse PCA methods address the limitations of standard PCA by incorporating sparsity constraints that force some coefficients to exactly zero, thereby automatically performing gene selection within the dimensionality reduction process. Several methodological approaches have been developed.

Penalized Regression Formulations

Some sparse PCA methods reformulate PCA as a regression-type problem and impose penalty terms such as the lasso ($L_1$-norm) or elastic net penalties on the principal component loadings. These penalties shrink some coefficients to zero, effectively removing irrelevant genes from the components while maintaining most of the explained variance [8] [9].
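To see the effect of an $L_1$ penalty on loadings, the sketch below compares scikit-learn's `PCA` and `SparsePCA` on simulated data. Two caveats: scikit-learn's `SparsePCA` solves an $L_1$-penalized matrix factorization that is related to, but not identical to, the regression formulations cited above, and the data, the five-gene module, and the value `alpha=2.0` are illustrative assumptions rather than recommended settings.

```python
import numpy as np
from sklearn.decomposition import PCA, SparsePCA

# Simulated expression matrix (assumption): 40 samples, 60 genes,
# with a hypothetical module of 5 genes driven by one latent factor.
rng = np.random.default_rng(0)
n_samples, n_genes = 40, 60
X = rng.normal(size=(n_samples, n_genes))
factor = rng.normal(size=(n_samples, 1))
X[:, :5] += 3.0 * factor
X -= X.mean(axis=0)

pca = PCA(n_components=2).fit(X)
spca = SparsePCA(n_components=2, alpha=2.0, random_state=0).fit(X)

# components_ has shape (n_components, n_genes) for both estimators.
dense_nonzero = np.count_nonzero(pca.components_, axis=1)
sparse_nonzero = np.count_nonzero(spca.components_, axis=1)

print("non-zero loadings per component, standard PCA:", dense_nonzero)
print("non-zero loadings per component, sparse PCA:  ", sparse_nonzero)
```

Larger `alpha` values generally zero out more loadings, so in practice the parameter is tuned rather than fixed in advance.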

Structured Sparse PCA Methods

More advanced sparse PCA methods incorporate biological structure into the sparsity constraints. For example, Fused and Grouped sparse PCA methods "enable incorporation of prior biological information in variable selection" by considering how variables are connected within biological pathways or networks [8]. These approaches can identify functionally related gene groups rather than just individual genes.

Bayesian Sparse PCA

Bayesian approaches to sparse PCA, such as SuSiE PCA, add uncertainty quantification to variable selection. SuSiE PCA "evaluates uncertainty in contributing variables through posterior inclusion probabilities" and has demonstrated advantages in "signal detection and model robustness" compared to other sparse PCA approaches [10].

Table 2: Sparse PCA Method Categories and Their Applications

| Method Type | Key Characteristics | Typical Applications |
|---|---|---|
| Penalized regression (SPCA) | L1-norm penalties on loadings | General high-dimensional gene selection |
| Structured sparse PCA | Incorporates biological network information | Pathway analysis, functional genomics |
| Bayesian sparse PCA | Provides uncertainty quantification | Robust gene selection, hypothesis generation |

Comparative Performance Analysis: Experimental Evidence

Multiple studies have conducted empirical comparisons between standard and sparse PCA methods, quantifying their performance differences in gene selection tasks.

Feature Selection Accuracy

In simulation studies, structured sparse PCA methods demonstrate superior performance in identifying true signal variables while effectively ignoring noise. Research shows these methods "achieve higher sensitivity and specificity when the graph structure is correctly specified, and are fairly robust to misspecified graph structures" [8]. This translates to more accurate identification of biologically relevant genes with fewer false positives.

Computational Efficiency

The computational performance of sparse PCA methods varies significantly by implementation. In one comparison, "SuSiE PCA identifies modules with a higher enrichment of ribosome-related genes than sparse PCA, while being ∼ 18x faster" [10]. This computational advantage makes the analysis of larger genomic datasets more feasible.

Biological Relevance

Sparse PCA methods consistently outperform standard PCA in identifying biologically meaningful gene sets. Applications to real genomic data have shown that these methods "identified pathways that are suggested in the literature to be related with glioblastoma" [8], demonstrating their ability to recover known biology more effectively than standard PCA.

Table 3: Performance Comparison of PCA Methods in Genomic Studies

| Performance Metric | Standard PCA | Sparse PCA | Structured Sparse PCA |
|---|---|---|---|
| Feature selection capability | None (manual post-hoc) | Automatic | Automatic with biological context |
| Interpretability | Low | High | Highest |
| Biological relevance | Variable | Improved | Significantly improved |
| Handling of high-dimensional data | Statistical inconsistency | Improved consistency | Best consistency |
| Computational speed | Fast | Variable (slower) | Slowest |

Experimental Protocols for Method Evaluation

To ensure fair and reproducible comparisons between standard and sparse PCA methods, researchers should follow standardized evaluation protocols.

Data Generation and Simulation Design

Simulation studies should generate data with sparseness residing in different model structures. Designs that place sparseness only in the "right singular vectors or the loadings, instead of also incorporating models with sparseness in the weights" [9] cover just part of the settings in which sparse PCA is used, and can lead to over-optimistic conclusions about method performance.

Initialization Strategies

For sparse PCA methods that use iterative optimization, initialization strategies significantly impact results. Studies should compare different initialization approaches rather than relying exclusively on right singular vectors from standard PCA, as this practice "seem[s] to ignore the fact that these quantities represent different model structures" [9].

Evaluation Metrics

Comprehensive evaluation should include multiple metrics:

- Proportion of variance accounted for (VAF): Measures reconstruction accuracy

- Sensitivity and specificity: Assesses feature selection accuracy

- Biological validation: Examines enrichment of known biological pathways

- Stability: Evaluates consistency across subsamples or similar datasets
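Three of the metrics listed above can be computed directly from a loading vector and a known (simulated) set of signal genes. The sketch below uses hypothetical helper names and toy inputs. The VAF definition, which projects the data onto the span of the component scores, is one common convention for non-orthogonal sparse components and is an assumption here, not a definition taken from the cited studies. Stability is illustrated with the Jaccard index of two selected gene sets.

```python
import numpy as np

def selection_metrics(loadings, true_support):
    """Sensitivity and specificity of the genes selected by non-zero loadings."""
    selected = np.abs(np.asarray(loadings)) > 0
    truth = np.asarray(true_support, dtype=bool)
    tp = np.sum(selected & truth)
    fn = np.sum(~selected & truth)
    tn = np.sum(~selected & ~truth)
    fp = np.sum(selected & ~truth)
    return tp / (tp + fn), tn / (tn + fp)

def variance_accounted_for(X, W):
    """VAF = 1 - ||X - X_hat||_F^2 / ||X||_F^2, X_hat = projection onto span(XW)."""
    T = X @ W                                     # component scores
    coef, *_ = np.linalg.lstsq(T, X, rcond=None)  # least-squares reconstruction
    X_hat = T @ coef
    return 1.0 - np.sum((X - X_hat) ** 2) / np.sum(X ** 2)

def jaccard(loadings_a, loadings_b):
    """Overlap of selected gene sets from two fits (e.g., two subsamples)."""
    a = np.abs(np.asarray(loadings_a)) > 0
    b = np.abs(np.asarray(loadings_b)) > 0
    union = np.sum(a | b)
    return np.sum(a & b) / union if union else 1.0

# Toy inputs (assumption): six genes, genes 0, 2 and 3 carry the true signal.
fit_a = np.array([0.5, 0.0, -0.3, 0.0, 0.0, 0.1])
fit_b = np.array([0.4, 0.0, -0.2, 0.1, 0.0, 0.0])
truth = np.array([1, 0, 1, 1, 0, 0])

sens, spec = selection_metrics(fit_a, truth)
stability = jaccard(fit_a, fit_b)

rng = np.random.default_rng(0)
X = rng.normal(size=(20, 6))
X -= X.mean(axis=0)
vaf_two = variance_accounted_for(X, np.eye(6)[:, :2])
vaf_full = variance_accounted_for(X, np.eye(6))
print(sens, spec, stability, vaf_two, vaf_full)
```

Sensitivity and specificity require a known ground truth, so they apply to simulations; on real data, biological validation and stability take their place.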

Implementation Workflow for Gene Selection Studies

The following diagram illustrates a typical workflow for implementing sparse PCA in gene selection studies, incorporating best practices from recent research:

Implementing and evaluating PCA methods for gene selection requires specific data resources and computational tools.

Table 4: Essential Resources for PCA-Based Gene Selection Research

| Resource Category | Specific Examples | Application in Research |
|---|---|---|
| Genomic Databases | GEO [11], TCGA [11], GTEx [12] | Source of gene expression data for analysis |
| Biological Pathway Resources | KEGG [13] [11], Pathway Commons [13] | Provides prior biological knowledge for structured methods |
| Software Tools | FUSION [12], UTMOST [12], SuSiE PCA [10] | Implementation of specialized sparse PCA methods |
| Programming Environments | R, Python with scikit-learn | General-purpose implementation of standard and basic sparse PCA |

Standard PCA remains a valuable tool for initial data exploration and dimensionality reduction, but its limitations for gene selection are significant and well-documented. Sparse PCA methods address these limitations by producing interpretable, sparse solutions that automatically perform gene selection while maintaining statistical consistency in high-dimensional settings. Among sparse PCA variants, structured methods that incorporate biological network information and Bayesian approaches with uncertainty quantification show particular promise for genomic applications.

As research in this field advances, future developments will likely focus on integrating additional biological knowledge, improving computational efficiency for ultra-high-dimensional data, and enhancing methodological robustness. For researchers conducting gene selection studies, the evidence suggests that sparse PCA methods, particularly those incorporating biological structure, generally outperform standard PCA while providing more interpretable and biologically relevant results.

Principal Component Analysis (PCA) has long been a cornerstone of genomic data analysis, valued for its ability to reduce high-dimensional data while preserving maximal variance. However, standard PCA produces principal components (PCs) that are linear combinations of all available genes in the original dataset, creating significant interpretability challenges when researchers attempt to identify which specific genes drive biological patterns. Sparse PCA (sPCA) represents a transformative advancement by introducing sparsity constraints that force many loading coefficients to exactly zero, resulting in components comprised of meaningful gene subsets rather than all measured genes. This paradigm shift enables researchers to pinpoint specific biomarkers and biological mechanisms with unprecedented precision, fundamentally changing how we extract insight from complex biological data.

Conceptual Framework: How Sparse PCA Overcomes Standard PCA Limitations

The Mathematical Foundation of Sparsity

The fundamental difference between standard PCA and sparse PCA lies in their optimization objectives. Standard PCA identifies orthogonal directions that capture maximum variance in the data without constraints on the number of variables contributing to each component. Sparse PCA modifies this objective by incorporating sparsity-inducing penalties:

$$ \min_{\mathbf{W}} \|X - X\mathbf{W}\mathbf{W}^T\|_F^2 + \lambda \|\mathbf{W}\|_1 $$

Where $X$ is the data matrix, $\mathbf{W}$ is the projection matrix, and $\lambda$ controls the sparsity penalty [2]. Larger $\lambda$ values force more coefficients to zero, enhancing interpretability but potentially reducing variance explained. This deliberate trade-off enables researchers to balance biological interpretability against statistical completeness based on their specific research goals.
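Why an $L_1$ penalty produces exact zeros, rather than merely small values, can be seen from the soft-thresholding operator, which is the proximal operator of $\lambda |w|$ and appears as an elementwise update in many $L_1$-penalized solvers. The sketch below applies it to a hypothetical loading vector; it illustrates the mechanism only and is not the update rule of any specific sparse PCA algorithm cited here.

```python
import numpy as np

def soft_threshold(w, lam):
    """Shrink each entry toward zero by lam; entries with |w| <= lam become exactly 0."""
    return np.sign(w) * np.maximum(np.abs(w) - lam, 0.0)

# Hypothetical dense loading vector for five genes.
loadings = np.array([0.90, -0.40, 0.05, -0.02, 0.30])

zero_counts = []
for lam in (0.0, 0.1, 0.35):
    shrunk = soft_threshold(loadings, lam)
    zero_counts.append(int(np.sum(shrunk == 0)))
    print(f"lambda={lam}: {shrunk}")
```

As $\lambda$ grows, more loadings are set to zero and the surviving ones shrink in magnitude, which is the interpretability versus variance trade-off described above.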

Enhanced Biological Interpretability

The primary advantage of sparse PCA in genomic research stems from how it addresses the "dense loading problem" of standard PCA. In standard PCA, when analyzing thousands of genes, each principal component typically contains non-zero loadings for all genes, making it exceptionally difficult to determine which specific genes are biologically relevant [3]. Sparse PCA produces components with only a subset of genes having non-zero loadings, immediately highlighting potentially important biomarkers [2]. This capability is particularly valuable in fields like cancer subtyping, where identifying driver genes among thousands of possibilities can lead to breakthroughs in understanding disease mechanisms and developing targeted therapies.

Table 1: Core Differences Between Standard PCA and Sparse PCA

| Characteristic | Standard PCA | Sparse PCA |
|---|---|---|
| Loading Coefficients | Dense (mostly non-zero) | Sparse (many exact zeros) |
| Interpretability | Challenging, all genes contribute | High, focuses on key gene subsets |
| Variable Selection | Not inherent | Built into the method |
| Biological Insight | Identifies global patterns | Pinpoints specific biomarkers |
| Implementation Complexity | Simple, deterministic | More complex, requires parameter tuning |

Methodological Advances: Robust and Multi-Source Sparse PCA

Random Matrix Theory for Automated Parameter Selection

A significant challenge in traditional sparse PCA implementation has been the sensitivity to penalty parameter selection ($\lambda$), where suboptimal choices could introduce misleading artifacts mistaken for biological signal [5]. Recent advances have integrated Random Matrix Theory (RMT) to guide sparsity parameter selection, rendering sparse PCA nearly parameter-free while maintaining robustness. The RMT-guided approach includes a novel biwhitening procedure that simultaneously stabilizes variance across genes and cells, enabling automatic identification of the optimal sparsity level based on the theoretical properties of large covariance matrices [5] [14]. This methodological innovation addresses a critical limitation that previously hindered widespread sparse PCA adoption in genomic studies.

Handling Multi-Source Data and Outliers

Genomic studies increasingly integrate multiple data sources (e.g., gene expression, DNA methylation, miRNA expression), creating new analytical challenges. Recent sparse PCA extensions simultaneously (i) select important features, (ii) detect global sparse patterns across multiple data sources, (iii) identify local source-specific patterns, and (iv) maintain resistance to outliers [7]. These methods employ regularization problems with penalties that accommodate global-local structured sparsity patterns, using outlier-robust covariance estimators like the spatially smoothed MRCD (ssMRCD) as plug-ins to permit joint, robust analysis across multiple data sources [7]. This multi-source capability is particularly valuable for cancer subtyping, where different molecular data types can provide complementary insights into disease mechanisms.

Experimental Performance Comparison Across Genomic Applications

Single-Cell RNA Sequencing Analysis

In systematic benchmarks across seven single-cell RNA-seq technologies and four sparse PCA algorithms, RMT-guided sparse PCA consistently outperformed standard PCA, autoencoder-, and diffusion-based methods in cell-type classification tasks [5]. The method demonstrated particular strength in accurately estimating the true underlying gene-gene covariance structure ($\mathbb{E}[S]$) when the numbers of cells and genes were large but comparable. This is a common scenario in real-world single-cell experiments, where typical studies capture a few thousand cells while measuring around twenty thousand genes [5].

Table 2: Performance Comparison in Single-Cell RNA-seq Classification

| Method | Cell Type Accuracy | Interpretability | Robustness to Noise | Computational Demand |
|---|---|---|---|---|
| Standard PCA | Baseline | Moderate | Low | Low |
| Sparse PCA (RMT-guided) | Highest | High | High | Moderate |
| Vanilla Autoencoder | High | Low | Moderate | High |
| Variational Autoencoder | High | Low | Moderate | Highest |
| Diffusion Methods | Moderate | Low | Moderate | Moderate |

Cancer Subtype Detection from Multi-Omics Data

In cancer subtype detection using multi-omics data integration, sparse PCA methods have demonstrated superior performance compared to standard linear approaches. A comprehensive evaluation across four cancer types (Glioblastoma multiforme, Colon Adenocarcinoma, Kidney renal clear cell carcinoma, and Breast invasive carcinoma) using three data types (gene expression, DNA methylation, and miRNA expression) revealed that sparse PCA consistently improved subtype separation and marker gene identification compared to standard PCA [15]. However, the study also found that different autoencoder variants (vanilla, sparse, denoising, and variational) sometimes outperformed both standard and sparse PCA in specific cancer types, suggesting that method selection should be context-dependent [15].

Large-Scale Gene Expression Studies

For bulk RNA-seq and large-scale gene expression analyses, sparse PCA has proven particularly valuable in biomarker discovery. The forced sparsity enables identification of compact gene signatures directly from high-dimensional data without requiring pre-filtering steps that might eliminate biologically important but low-variance genes. In practical applications, sparse PCA has successfully identified biologically coherent gene modules in complex diseases where standard PCA produced components lacking clear biological interpretation due to the "dense loading" problem [3] [2].

Experimental Protocols and Workflows

Standard Sparse PCA Implementation

The basic experimental protocol for sparse PCA implementation in genomic studies involves:

Data Preprocessing: Standard normalization and scaling of gene expression data, typically using log-transformation for RNA-seq data followed by z-scoring.

Dimensionality Reduction: Application of sparse PCA to the processed data matrix using algorithms such as:

- The SCoTLASS algorithm introduced by Jolliffe et al. incorporating LASSO constraints

- The regression-based formulation by Zou et al. using elastic-net penalties

- Regularized SVD approaches as proposed by Shen and Huang [7]

Sparsity Parameter Tuning: Selection of optimal sparsity parameters through:

- Cross-validation based on reconstruction error

- Variance explanation thresholds

- RMT-guided automatic selection (recommended) [5]

Component Interpretation: Biological interpretation of sparse components by:

- Identifying genes with non-zero loadings

- Pathway enrichment analysis of gene sets within each component

- Correlation with clinical outcomes or experimental conditions
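The four steps above can be sketched end to end with scikit-learn. Everything specific in this example is an assumption made for illustration: the simulated count matrix, the gene names, the six-gene module, and the fixed `alpha=2.0` (which stands in for a properly tuned sparsity parameter). Pathway enrichment of the selected genes would require an external annotation resource and is not shown.

```python
import numpy as np
from sklearn.decomposition import SparsePCA
from sklearn.preprocessing import StandardScaler

# Simulated counts (assumption): 30 samples, 50 genes; genes 0-5 are
# elevated in the second half of the samples (a hypothetical condition).
rng = np.random.default_rng(1)
n_samples, n_genes = 30, 50
gene_names = np.array([f"gene_{j}" for j in range(n_genes)])
group = np.repeat([0, 1], n_samples // 2)
counts = rng.poisson(lam=20.0, size=(n_samples, n_genes)).astype(float)
counts[:, :6] += rng.poisson(lam=80.0, size=(n_samples, 6)) * group[:, None]

# Step 1: preprocessing (log-transform, then z-score each gene).
Z = StandardScaler().fit_transform(np.log1p(counts))

# Steps 2-3: sparse PCA with a fixed, illustrative sparsity parameter.
n_components = 3
model = SparsePCA(n_components=n_components, alpha=2.0, random_state=0)
scores = model.fit_transform(Z)

# Step 4: genes with non-zero loadings per component, and association
# of each component's scores with the experimental condition.
selected = {
    k: gene_names[np.flatnonzero(model.components_[k])].tolist()
    for k in range(n_components)
}
for k, genes in selected.items():
    r = np.corrcoef(scores[:, k], group)[0, 1] if np.std(scores[:, k]) > 0 else float("nan")
    print(f"component {k}: {len(genes)} genes selected, corr with condition = {r:.2f}")
```

The gene lists in `selected` are the natural input for a downstream enrichment analysis.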

Advanced RMT-Guided Sparse PCA Protocol

For single-cell RNA-seq applications, the advanced RMT-guided protocol provides more robust results:

Biwhitening Procedure: Simultaneously estimate diagonal matrices $C$ and $D$ with positive entries such that cell-wise and gene-wise variances of $Z = CXD$ are approximately 1 using a Sinkhorn-Knopp inspired algorithm [5].

Covariance Matrix Estimation: Compute the sample covariance matrix $S$ from the biwhitened data.

Outlier Eigenspace Identification: Use RMT to identify the outlier eigenspace based on the theoretical spectral distribution $\rho_S$ of the biwhitened data [5].

Sparsity Level Selection: Automatically select the sparsity parameter such that the inferred sparse subspace and the outlier subspace approximately match the angle predicted by RMT [5].

Sparse PCA Application: Apply preferred sparse PCA algorithm (SCoTLASS, elastic-net, or regularized SVD) with the RMT-guided sparsity parameter.
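The biwhitening step can be illustrated with a generic Sinkhorn-Knopp style scaling. This sketch is not the algorithm from [5]: it assumes that a strictly positive matrix `V` of per-entry noise variance estimates is available (for example under a Poisson-like noise model, where the variance tracks the mean), and the function name `sinkhorn_scale` and the simulated rates are hypothetical. It finds positive vectors `r` and `c` such that the scaled variances `r_i * V_ij * c_j` average to one in every row and column, then sets $C = \mathrm{diag}(\sqrt{r})$ and $D = \mathrm{diag}(\sqrt{c})$.

```python
import numpy as np

def sinkhorn_scale(V, n_iter=500, tol=1e-10):
    """Find positive r, c so that diag(r) V diag(c) has row means and column means of 1."""
    m, n = V.shape
    r = np.ones(m)
    c = np.ones(n)
    for _ in range(n_iter):
        r = n / (V @ c)          # make every row sum equal n
        c = m / (V.T @ r)        # make every column sum equal m
        row_sums = r * (V @ c)   # re-check rows after the column update
        if np.max(np.abs(row_sums - n)) < tol * n:
            break
    return r, c

# Simulated example (assumption): Poisson counts whose rates are used as
# variance estimates purely for illustration.
rng = np.random.default_rng(0)
m, n = 40, 25
rates = np.outer(rng.uniform(1, 10, size=m), rng.uniform(1, 5, size=n))
rates += rng.uniform(0.5, 1.5, size=(m, n))
X = rng.poisson(rates).astype(float)
V = rates

r, c = sinkhorn_scale(V)
scaled_var = r[:, None] * V * c[None, :]
# Z = C X D with C = diag(sqrt(r)) and D = diag(sqrt(c))
Z = np.sqrt(r)[:, None] * X * np.sqrt(c)[None, :]

print("row means of scaled variances:   ", np.round(scaled_var.mean(axis=1)[:3], 4))
print("column means of scaled variances:", np.round(scaled_var.mean(axis=0)[:3], 4))
```

With real count data the variance matrix has to be estimated from the data and may contain zeros, which this simplified version does not handle.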

The Scientist's Toolkit: Essential Research Reagents and Computational Solutions

Table 3: Essential Computational Tools for Sparse PCA Implementation

| Tool/Algorithm | Primary Function | Application Context | Key Reference |
|---|---|---|---|
| RMT-guided sPCA | Automated sparsity selection | Single-cell RNA-seq analysis | [5] |
| Elastic-net sPCA | Regression-based sparse PCA | General genomic applications | [7] [16] |
| SCoTLASS | LASSO-constrained PCA | High-dimensional biomarker discovery | [7] |
| Robust Multi-source sPCA | Handling multiple data sources | Multi-omics integration | [7] |
| ssMRCD Estimator | Outlier-robust covariance estimation | Data with quality issues | [7] |

Sparse PCA represents a genuine revolution in genomic data analysis by addressing the critical interpretability limitations of standard PCA. The forced sparsity in component loadings enables direct biological interpretation by highlighting specific genes rather than presenting dense linear combinations of all measured genes. Recent methodological advances, particularly RMT-guided parameter selection and robust multi-source implementations, have addressed earlier limitations and expanded sparse PCA's applicability across diverse genomic contexts.

For researchers implementing these methods, we recommend:

- Prioritize RMT-guided sparse PCA for single-cell RNA-seq applications due to its automated parameter selection and noise resilience

- Consider multi-source sparse PCA when integrating multiple omics data types to capture both global and source-specific patterns

- Validate biological findings through complementary experimental techniques, remembering that while "all sparse PCA models are wrong, some are useful" for generating testable hypotheses [16]

- Balance sparsity with variance explanation by exploring multiple sparsity levels when biological interpretation is prioritized over complete variance capture

Sparse PCA continues to evolve: ongoing work on nonlinear sparse factorization, integration with deep learning architectures, and applications to spatial transcriptomics may further improve our ability to extract meaningful biological insight from increasingly complex genomic datasets.

In the field of genomic research, Principal Component Analysis (PCA) is a fundamental tool for dimensionality reduction. However, a critical divergence exists between its standard form, which produces dense loadings, and its modern sparse variant, which yields sparse, interpretable genesets. This guide objectively compares these methodologies, focusing on their performance and output for gene selection research.

Core Conceptual Differences and Output Characteristics

The fundamental difference lies in the structure and interpretability of the outputs generated by standard PCA and sparse PCA.

- Standard PCA (Dense Loadings): This traditional approach identifies principal components (PCs) as linear combinations of all input variables. The loadings, which are the coefficients of these linear combinations, are typically non-zero for all genes. This "dense" output makes biological interpretation challenging, as it is difficult to discern which specific genes are driving the variation captured by each PC [17].

- Sparse PCA (Sparse, Interpretable Genesets): Sparse PCA introduces constraints or penalties that force the loadings of many genes to be exactly zero. This results in each PC being defined by a compact set of genes, effectively producing interpretable gene sets. This sparsity facilitates the identification of biologically relevant pathways and mechanisms, as the output directly points to a small subset of influential genes [17] [8].

The table below summarizes the key differences in their outputs.

| Feature | Standard PCA (Dense Loadings) | Sparse PCA (Sparse Genesets) |

|---|---|---|

| Loading Structure | Dense (mostly non-zero coefficients) | Sparse (many zero coefficients) |

| Biological Interpretability | Difficult; PCs are combinations of all genes | High; PCs are defined by small, specific gene sets |

| Primary Goal | Maximize explained variance for data summarization | Balance explained variance with interpretable feature selection |

| Use Case in Genomics | Data pre-processing, noise reduction, visualization | Identifying key genes and pathways, generating biological hypotheses |

Experimental Performance and Quantitative Comparison

Empirical studies demonstrate that sparse PCA methods significantly enhance the ability to extract meaningful biological signals from high-dimensional genomic data.

Performance in Identifying Ground Truth

In a benchmark study on single-cell RNA-sequencing (scRNA-seq) data from stimulated immune cells, a supervised method (Spectra) designed to find interpretable gene programs was tested against other factorization techniques. The key performance metric was the association of identified gene programs with known experimental perturbations [18].

| Method | Identified IFNγ Program | Identified LPS Program | Identified TCR Program |

|---|---|---|---|

| Spectra (Sparse) | Yes (Correct cell type) | Yes (Correct cell type) | Yes (Correct cell type) |

| expiMap | No | No | No |

| Slalom | No | No | No |

Robustness in Complex Biological Contexts

When applied to a challenging breast cancer scRNA-seq dataset, sparse methods demonstrated superior robustness and specificity [18].

- Alignment with Known Biology: A large majority (171 out of 197) of the factors identified by Spectra were strongly constrained by prior biological knowledge, with over 50% of their genes overlapping with known gene sets.

- Cell-Type Specificity: Sparse methods can effectively restrict gene programs to biologically relevant cell types. For instance, a CD8+ T cell exhaustion program was correctly confined to T cells, whereas other methods misassigned it to myeloid and NK cells.

- Overcoming Pleiotropy: Sparse factorization correctly identified an IFNγ response program across all cell types. In contrast, a simple gene set scoring method was confounded by baseline expression differences and detected the response almost exclusively in myeloid cells.

Experimental Protocols and Methodologies

The evaluation of these methods relies on specific experimental workflows and data processing steps.

General Workflow for Comparative Analysis

The following diagram illustrates a standard protocol for comparing dense and sparse PCA outputs on genomic data.

Detailed Methodology for Sparse PCA with Biological Information

Advanced sparse PCA methods incorporate prior biological knowledge to guide the selection of genes. The protocol for such a method, like Fused or Grouped Sparse PCA, involves [8]:

Input Preparation:

- Data Matrix: A normalized and centered $n \times p$ gene expression matrix, where $n$ is the number of samples and $p$ is the number of genes.

- Biological Network: A graph $G = (C, E, W)$ representing prior knowledge, where $C$ is the set of genes (nodes), $E$ is the set of edges indicating known interactions, and $W$ is the weight of the nodes.

Optimization Problem: The sparse PCA is formulated as an optimization problem that incorporates the biological graph structure. The objective is to find principal component loadings $\alpha$ that:

- Maximize Variance: Capture the maximum variance in the data ($\alpha^\top X^\top X \alpha$).

- Enforce Sparsity: Use a Lasso or similar penalty (e.g., $\|\alpha\|_1$) to shrink small loadings to zero.

- Incorporate Structure: Apply a smoothing penalty (e.g., a generalized Fused Lasso) that encourages connected genes in the graph $G$ to have similar loadings. This promotes the selection of biologically coherent gene sets.

Algorithm and Computation: An efficient algorithm is used to solve the non-convex optimization problem, often involving alternating minimization or proximal methods to handle the sparsity and structural penalties.

Output Analysis: The resulting sparse loadings are analyzed. Genes with non-zero loadings form interpretable gene sets, which are then validated through pathway enrichment analysis or association with clinical outcomes.
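The three ingredients of the optimization step (variance, sparsity, and graph smoothness) can be written as one generic penalized objective. The form below is a sketch for orientation only. The tuning parameters $\lambda$ and $\tau$ are notation introduced here, and the exact formulation in [8], including how the node weights $W$ enter, may differ.

$$
\hat{\alpha} = \arg\max_{\alpha}\; \alpha^\top X^\top X \alpha \;-\; \lambda \|\alpha\|_1 \;-\; \tau \sum_{(i,j) \in E} |\alpha_i - \alpha_j| \quad \text{subject to } \|\alpha\|_2 \le 1
$$

Setting $\tau = 0$ gives an ordinary Lasso-penalized sparse PCA, and setting $\lambda = \tau = 0$ gives the first standard principal component. Increasing $\tau$ pulls the loadings of genes joined by an edge toward a common value.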

The Scientist's Toolkit: Essential Research Reagents

The following table lists key computational tools and resources essential for conducting research in this field.

| Research Reagent / Resource | Type | Primary Function |

|---|---|---|

| Spectra | Software Algorithm | Supervised discovery of interpretable gene programs from single-cell data by incorporating prior gene sets and cell-type labels [18]. |

| Gene Set Enrichment Analysis (GSEA) | Analytical Method | Evaluates if a pre-defined gene set is statistically enriched at the extremes of a ranked gene list, aiding in the interpretation of sparse genesets [19]. |

| Fused/Grouped Sparse PCA | Analytical Method | Sparse PCA variants that incorporate biological network information to produce more coherent and interpretable gene sets [8]. |

| Immunology Knowledge Base | Prior Knowledge | A curated resource of immunological gene sets (e.g., 231 gene sets for cell types and processes) used as input for supervised methods like Spectra [18]. |

| Molecular Signature Database (MSigDB) | Database | A collection of annotated gene sets for use with GSEA and other interpretation tools [19]. |

The evidence clearly demonstrates that sparse, interpretable genesets offer a substantial advantage over dense loadings for biological discovery in genomics. While standard PCA remains useful for initial data exploration and noise reduction, its dense outputs are often biologically uninterpretable. In contrast, sparse PCA outputs directly generate testable hypotheses by pinpointing specific, and often biologically coherent, groups of genes. For researchers and drug development professionals focused on identifying key genes and pathways underlying complex diseases, sparse PCA methods that incorporate prior biological knowledge represent a superior and more powerful approach.

Understanding the HDLSS Setting and the 'Curse of Dimensionality'

In fields such as genomics and medical imaging, researchers often encounter a data paradigm known as High-Dimensional Low Sample Size (HDLSS). In these scenarios, the number of features (p) for each sample—such as genes in an expression study—drastically exceeds the number of available observations (n). This imbalance presents significant challenges for statistical analysis and machine learning, a problem often termed the "curse of dimensionality" [20].

The curse of dimensionality refers to phenomena that arise in high-dimensional spaces which do not occur in low-dimensional settings. As the number of dimensions grows, the volume of the space increases so rapidly that available data becomes sparse, making it difficult to find meaningful patterns [21]. This is particularly problematic in gene selection research, where the goal is to identify a small subset of biologically relevant genes from thousands of measured candidates. Within this context, Principal Component Analysis (PCA) and its variant, Sparse PCA, are critical tools for dimensionality reduction. This guide provides an objective comparison of these two methods, focusing on their application for gene selection in HDLSS settings.

The HDLSS Challenge and the Curse of Dimensionality

HDLSS data is characterized by a vast feature space with a comparatively tiny sample size. For instance, a genomic study might measure the expression levels of 20,000 genes from only 100 patients [20]. This setup creates several specific obstacles:

- Overfitting: Models trained on HDLSS data can fit the training data exceptionally well by memorizing noise, but they fail to generalize to new, unseen data [20].

- Distance Concentration: In high-dimensional space, the concept of distance becomes less meaningful. The Euclidean distance between all pairs of points tends to become very similar, weakening the effectiveness of algorithms that rely on distance calculations, such as clustering [21] [20].

- Data Sparsity: The data points reside in a tiny fraction of the vast high-dimensional volume, making it difficult to estimate underlying data structures reliably [21].

- Interpretation Difficulty: With thousands of variables, understanding which ones are truly driving the observed outcomes becomes a monumental task.

These challenges necessitate specialized approaches to data analysis, making dimensionality reduction not just beneficial but essential.
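Distance concentration is easy to observe numerically. The sketch below uses synthetic Gaussian data (an assumption, not genomic measurements) and computes the relative contrast, (max − min) / min, of all pairwise Euclidean distances among 100 points as the number of features grows.

```python
import numpy as np

def relative_contrast(X):
    """(max - min) / min over all pairwise Euclidean distances between rows of X."""
    sq = (X ** 2).sum(axis=1)
    d2 = sq[:, None] + sq[None, :] - 2.0 * X @ X.T   # squared distances via the Gram matrix
    iu = np.triu_indices(X.shape[0], k=1)            # each pair once, no self-distances
    d = np.sqrt(np.clip(d2[iu], 0.0, None))          # clip tiny negative rounding errors
    return float((d.max() - d.min()) / d.min())

rng = np.random.default_rng(0)
n = 100                                              # number of samples
contrast = {p: relative_contrast(rng.normal(size=(n, p))) for p in (2, 200, 20000)}
for p, c in contrast.items():
    print(f"p={p:>6}: relative contrast = {c:.3f}")
```

As p grows, the contrast shrinks toward zero: the nearest and farthest neighbours of a sample become nearly equidistant, which is why distance-based methods degrade on raw HDLSS data.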

Dimensionality Reduction: A Necessary Step

Dimensionality reduction techniques aim to mitigate the curse of dimensionality by transforming the high-dimensional data into a lower-dimensional space while preserving its essential structure. These methods are broadly categorized into feature selection and feature extraction [22].

- Feature Selection: This involves identifying and retaining a subset of the most relevant features from the original set. Techniques include variance thresholds, correlation thresholds, and genetic algorithms [22]. In genomics, this is directly related to gene selection [23].

- Feature Extraction: This involves creating a new, smaller set of features that are combinations of the original ones. PCA is the most classic example of this approach [22].

The following workflow illustrates a typical process for analyzing HDLSS genomic data, highlighting where PCA and Sparse PCA fit in:

Sparse PCA vs. Standard PCA: A Head-to-Head Comparison

While both standard PCA and Sparse PCA are feature extraction techniques, their underlying mechanics and outputs differ significantly, leading to distinct advantages and disadvantages.

Core Methodologies

- Standard PCA: This is an unsupervised algorithm that creates new features, called principal components, which are linear combinations of all original variables. These components are orthogonal (uncorrelated) and are ranked by the amount of variance they explain from the original data. The first component (PC1) explains the most variance, PC2 the second-most, and so on [17] [22]. The coefficients used in these linear combinations are called component weights.

- Sparse PCA: Sparse PCA modifies the PCA objective by imposing a "sparsity-inducing" constraint or penalty (e.g., a lasso penalty). This forces many of the component weights to be exactly zero [17]. The result is principal components that are linear combinations of only a small subset of the original variables. It's crucial to note that different sparse PCA methods exist, primarily categorized by whether they impose sparsity on the component loadings (for interpretability) or the component weights (for summarization) [17].
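As a concrete reference point for the standard PCA description above, here is a minimal NumPy sketch that computes components through the singular value decomposition of a centered matrix. The data are simulated and the variable names are illustrative. It shows that the components are orthogonal and ordered by explained variance, and that every gene receives a non-zero weight.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 30, 500                                   # HDLSS toy data: samples x genes
X = rng.normal(size=(n, p))
X[:, :20] += 3.0 * rng.normal(size=(n, 1))       # 20 co-varying "genes" (simulated)

Xc = X - X.mean(axis=0)                          # center each gene
U, s, Vt = np.linalg.svd(Xc, full_matrices=False)

weights = Vt.T                                   # columns are the component weights
scores = Xc @ weights                            # component scores, equal to U * s
explained = s ** 2 / np.sum(s ** 2)              # share of variance per component

print("PC1 variance share:", round(float(explained[0]), 3))
print("non-zero weights in PC1:", int(np.count_nonzero(weights[:, 0])), "of", p)
```

Although only 20 of the 500 simulated genes carry signal, PC1 assigns a non-zero weight to all of them. This is the density that sparse PCA is designed to remove.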

Objective Comparison

The table below summarizes the key differences between the two approaches.

| Aspect | Standard PCA | Sparse PCA |

|---|---|---|

| Core Objective | Maximize variance explained using linear combinations of variables. | Maximize variance explained under a constraint that limits the number of non-zero coefficients. |

| Model Output | Dense components; all original variables contribute to every component. | Sparse components; each component is comprised of only a few original variables. |

| Interpretability | Low. Components are often difficult to interpret as all variables have a non-zero weight. | High. The presence of zero weights clearly indicates which variables are irrelevant to a component. |

| Theoretical Basis | Solved via Singular Value Decomposition (SVD) or Eigenvalue Decomposition. | Solves a modified optimization problem, often using penalties like Lasso. |

| Primary Use Case | General-purpose dimensionality reduction for data compression and visualization. | Exploratory data analysis and feature selection in high-dimensional settings. |

| Handling of Redundant Features | Can be influenced by groups of correlated variables, potentially inflating their contribution. | With a pure Lasso penalty, tends to select a single variable from a group of correlated ones, simplifying the model; elastic-net variants tend to keep correlated variables together. |

Supporting Experimental Data

Empirical studies and benchmarks provide evidence for the performance differences between these methods. The following table summarizes key experimental findings.

| Experiment Context | Standard PCA Performance | Sparse PCA Performance | Key Takeaway |

|---|---|---|---|

| Neuroimaging (Alzheimer's Classification) | Balanced accuracy of 66.3% (with 50 MRIs per class) and 77.7% (with 243/210 samples) [24]. | Balanced accuracy improved to 74.3% and 86.3%, respectively, using a geometry-based variational autoencoder (a sparse-like method) [24]. | Sparse methods can yield significant gains in classification metrics in HDLSS settings by preventing overfitting. |

| Personality Questionnaire & Autism Gene Data | Suitable for general summarization but provides less insight into specific driving items/genes due to dense components [17]. | More effective for exploratory analysis; sparse loadings clearly show which questionnaire items or genes correlate with each component [17]. | Sparse PCA is superior for interpretability, helping researchers understand correlation patterns and identify key features. |

| Theoretical HDLSS Behavior | Inconsistent estimation of component loadings/weights in high dimensions [17]. | Sparse representations are employed to achieve consistency in estimation and improve reliability [17]. | Sparse PCA addresses a fundamental theoretical weakness of standard PCA in the HDLSS context. |

The Scientist's Toolkit: Essential Research Reagents and Solutions

When conducting gene selection research using PCA methods, researchers typically rely on a suite of computational tools and data types. The following table details these essential "research reagents."

| Item | Function in PCA/Gene Selection |

|---|---|

| DNA Microarray / RNA-seq Data | The primary high-dimensional input data, providing expression levels for thousands of genes across a limited sample size [23]. |

| Normalized & Centered Data Matrix | A preprocessed data matrix where each variable (gene) has been centered to have zero mean and scaled to have unit variance. This is a critical prerequisite for PCA to prevent variables with large scales from dominating the components [17] [22]. |

| Computational Environments (Python/R) | Platforms offering libraries that implement standard and sparse PCA algorithms (e.g., scikit-learn in Python; stats for standard PCA and elasticnet or PMA for sparse PCA in R), allowing for direct experimental comparison [22]. |

| Sparsity-Inducing Penalties (L1/Lasso) | The mathematical "reagents" that are added to the PCA optimization problem to force sparsity. The tuning parameter (λ) controls the strength of the penalty and the degree of sparsity [17]. |

| Cross-Validation Framework | A resampling method used to reliably evaluate model performance and tune hyperparameters (like the sparsity parameter) in HDLSS settings where data is scarce [20]. |

In the context of HDLSS data and gene selection research, the choice between standard PCA and Sparse PCA is not merely a matter of preference but of strategic fit. Standard PCA remains a powerful, general-purpose tool for data compression and visualization when interpretability of the components is not the primary concern. However, for the core task of gene selection—where the goal is to identify a parsimonious set of biologically relevant biomarkers—Sparse PCA holds a distinct advantage.

The experimental evidence consistently shows that Sparse PCA enhances interpretability by producing components that are directly linked to a small subset of genes, improves model generalizability by reducing overfitting, and provides more reliable estimates in high-dimensional settings. For researchers and drug development professionals aiming to extract meaningful, actionable insights from complex genomic data, Sparse PCA is often the more appropriate and effective tool for the task.

Implementing Sparse PCA: Methods for Incorporating Biological Knowledge

Principal Component Analysis (PCA) is a cornerstone of multivariate analysis, widely used to summarize large sets of variables into fewer dimensions with minimal information loss [17]. In genomic studies, where data often consists of thousands of genes measured across limited samples, PCA serves as a crucial tool for dimensionality reduction, noise filtering, and pattern discovery. However, traditional PCA produces components that are linear combinations of all variables, making biological interpretation challenging in gene selection research [25]. Sparse PCA addresses this limitation by imposing sparsity constraints on the component coefficients, driving many coefficients to zero to enhance interpretability and restore statistical consistency in high-dimensional settings [9].

The fundamental distinction in sparse PCA methodologies lies in where sparsity is imposed: on the component weights used to compute scores from original variables, or on the component loadings representing correlations between variables and components [17] [9]. This distinction is crucial for genomic applications, as sparse weights are more suitable for creating simplified summary scores for downstream analysis, while sparse loadings better serve exploratory data analysis to understand correlation patterns [17]. This guide provides a comprehensive comparison of sparse PCA algorithms, their performance characteristics, and practical implementation for gene selection research.

Algorithmic Foundations and Methodologies

Traditional Sparse PCA Approaches

Early sparse PCA methods relied on relatively straightforward mathematical techniques to induce sparsity in principal components.

Thresholding and Rotation: Prior to the development of advanced penalized methods, sparse PCA was primarily achieved through post-processing of standard PCA results. The thresholding method improves interpretability by filtering out variables with small loadings and retaining only those with large coefficients [25]. While computationally efficient, this approach works best when clear distinctions exist between large and small loadings. The rotation method (e.g., varimax rotation) finds a transformation matrix that simplifies the loading structure by maximizing the variance of squared loadings, creating a clearer separation between large and small values [25]. A significant limitation is that rotated components no longer successively explain maximum variance, introducing ambiguity in component selection.
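The thresholding idea can be sketched as follows: compute the dense first component, keep only the k loadings with the largest magnitude, renormalize, and compare the variance captured at several values of k. This is a simple illustration on simulated data with hypothetical helper names, not the procedure of any specific cited method.

```python
import numpy as np

def threshold_pc1(Xc, k):
    """Keep the k (k >= 1) largest-magnitude PC1 loadings, zero the rest, renormalize."""
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    v = Vt[0].copy()
    drop = np.argsort(np.abs(v))[:-k]            # indices of the p - k smallest loadings
    v[drop] = 0.0
    return v / np.linalg.norm(v)

def variance_share(Xc, v):
    """Fraction of total variance captured by the unit-norm direction v."""
    return float(np.sum((Xc @ v) ** 2) / np.sum(Xc ** 2))

rng = np.random.default_rng(0)
n, p = 40, 300                                   # samples x genes (simulated)
X = rng.normal(size=(n, p))
X[:, :15] += 3.0 * rng.normal(size=(n, 1))       # 15 genes share one latent factor
Xc = X - X.mean(axis=0)

results = {}
for k in (5, 15, 50, p):
    v = threshold_pc1(Xc, k)
    results[k] = (int(np.count_nonzero(v)), variance_share(Xc, v))
    print(f"k={k:>3}: non-zero loadings = {results[k][0]:>3}, variance share = {results[k][1]:.3f}")
```

Sweeping k in this way makes the trade-off between sparsity and explained variance visible. The dense solution (k = p) always captures at least as much variance as any thresholded version.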

SCoTLASS (Simplified Component Technique-LASSO): As the first method to incorporate LASSO concepts into sparse PCA, SCoTLASS imposes an ℓ₁-norm constraint on the loading vectors as a relaxation of the NP-hard ℓ₀-norm constraint [25]. It solves the optimization problem:

$$\max_{v_i}\; v_i^\top \Sigma v_i \quad \text{subject to} \quad v_i^\top v_i = 1,\quad \|v_i\|_1 \le k,\quad v_j^\top v_i = 0 \ \text{for } j < i$$

where Σ is the covariance matrix and k controls sparsity. When k > √p, SCoTLASS reduces to traditional PCA; when k = 1, only one loading component is nonzero [25]. A significant limitation in genomic applications (where p ≫ n) is that SCoTLASS selects at most n non-zero elements, potentially omitting biologically relevant genes.
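The two limiting cases follow from standard norm inequalities. For any unit-norm vector $v \in \mathbb{R}^p$:

$$
1 = \|v\|_2 \;\le\; \|v\|_1 \;\le\; \sqrt{p}\,\|v\|_2 = \sqrt{p}
$$

The upper bound is the Cauchy-Schwarz inequality and is attained only when all $|v_j|$ equal $1/\sqrt{p}$. The lower bound is attained only when $v$ has a single non-zero entry. An ℓ₁ bound of $\sqrt{p}$ or more is therefore never active, which leaves ordinary PCA, while a bound of exactly 1 forces each loading vector onto a single variable. Useful sparsity levels lie strictly between these two values.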

Regression-Based Sparse PCA

- SPCA (Sparse PCA as a regression problem): Zou, Hastie, and Tibshirani reformulated PCA as a regression-type problem solvable via elastic-net penalties [25]. This approach leverages the singular value decomposition framework and imposes combined ℓ₁ and ℓ₂ norm penalties on loading vectors. The elastic-net penalty effectively promotes sparsity while handling correlated variables, making it particularly suitable for genomic data where genes often exhibit group behaviors. SPCA represents a significant advancement as it doesn't suffer from the same cardinality limitations as SCoTLASS in high-dimensional settings.
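For reference, the regression-type criterion behind SPCA is commonly written as below, for the first $m$ components with observations $x_i \in \mathbb{R}^p$. The notation is ours and should be checked against [25] and the original paper before use.

$$
(\hat{A}, \hat{B}) = \arg\min_{A, B}\; \sum_{i=1}^{n} \| x_i - A B^\top x_i \|_2^2 \;+\; \lambda \sum_{j=1}^{m} \|\beta_j\|_2^2 \;+\; \sum_{j=1}^{m} \lambda_{1,j} \|\beta_j\|_1 \quad \text{subject to } A^\top A = I_m
$$

Here $B = [\beta_1, \dots, \beta_m]$ is $p \times m$, and the sparse loading vectors are the normalized columns $\hat{\beta}_j / \|\hat{\beta}_j\|_2$. The ridge term ($\lambda$) is what removes the cap on the number of selected variables when $p \gg n$. The ℓ₁ terms ($\lambda_{1,j}$) set loadings to exactly zero, with a separate penalty level allowed for each component.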

Penalized Matrix Decomposition (PMD)

The Penalized Matrix Decomposition provides a generalized framework for sparse PCA by incorporating penalty functions directly into the matrix decomposition process. PMD formulations allow for various sparsity-inducing penalties and can be optimized using iterative algorithms. Related approaches include:

Cardinality-Constrained Sparse PCA: d'Aspremont et al. established sparse PCA methods subject to cardinality constraints based on semidefinite programming (SDP) [17]. These approaches directly control the number of nonzero elements but present computational challenges for large-scale genomic data.

Power Method Variations: Journée et al. and Yuan and Zhang introduced modifications of the power method to achieve sparse PCA solutions using sparsity-inducing penalties [17]. These algorithms offer improved computational efficiency for high-dimensional data.
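A minimal rank-one sketch in the spirit of these penalized power iterations is shown below. It alternates between updating the score vector and soft-thresholding the loading vector. It is an illustration on simulated data, not the specific algorithm of any cited paper, and the `frac` parameterization of the penalty (a fraction of the largest initial loading magnitude) is an assumption made here for convenience.

```python
import numpy as np

def soft_threshold(z, lam):
    """Shrink each entry toward zero by lam; entries smaller than lam become exactly 0."""
    return np.sign(z) * np.maximum(np.abs(z) - lam, 0.0)

def sparse_pc1(Xc, frac, n_iter=100):
    """Rank-one sparse PCA via alternating updates with an l1 soft-threshold on v.
    frac in [0, 1) sets the penalty relative to the largest initial loading."""
    _, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    v = Vt[0]                                    # start from the dense PC1
    lam = frac * s[0] * np.max(np.abs(v))        # penalty level (illustrative choice)
    for _ in range(n_iter):
        u = Xc @ v
        u /= np.linalg.norm(u)                   # unit-norm score direction
        v_new = soft_threshold(Xc.T @ u, lam)
        norm = np.linalg.norm(v_new)
        if norm == 0.0:                          # penalty removed every variable
            return v_new
        v = v_new / norm
    return v

rng = np.random.default_rng(0)
n, p = 40, 300                                   # samples x genes (simulated)
X = rng.normal(size=(n, p))
X[:, :15] += 3.0 * rng.normal(size=(n, 1))       # 15 genes share one latent factor
Xc = X - X.mean(axis=0)

v_sparse = sparse_pc1(Xc, frac=0.3)
print("selected genes:", np.flatnonzero(v_sparse).tolist())
```

With `frac=0.0` the iteration stays at the ordinary first principal component. Larger values zero out more loadings, so `frac` plays the role of the sparsity parameter that must be tuned.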

Advanced and Specialized Sparse PCA Methods

Recent research has produced specialized sparse PCA variants addressing specific challenges in genomic data analysis:

RMT-guided Sparse PCA: Chardès developed a Random Matrix Theory-based approach that guides sparse PCA inference using biwhitening and automatic sparsity parameter selection [5]. The method first applies a novel biwhitening algorithm to simultaneously stabilize variance across genes and cells, then uses RMT predictions to select sparsity levels that make inferred subspaces consistent with theoretical angle predictions [5]. This approach addresses the critical challenge of parameter selection in sparse PCA and demonstrates strong performance across diverse single-cell RNA-seq technologies.

AWGE-ESPCA: Miao et al. proposed an edge Sparse PCA model incorporating adaptive noise elimination regularization and weighted gene network information [26]. Specifically designed for genomic data analysis, this method integrates known gene-pathway quantitative information as prior knowledge into the SPCA framework, preferentially selecting genes in pathway-rich regions. The adaptive noise elimination regularization addresses the significant noise challenges present in non-human genomic data.

Automatic Thresholding Sparse PCA: Yata and Aoshima investigated threshold-based SPCA (TSPCA) and proposed a novel thresholding estimator using customized noise-reduction methodology [27]. Their approach provides computational efficiency while maintaining consistency under mild conditions, unaffected by specific threshold values. This method offers practical advantages for large-scale genomic applications where computational resources are constrained.

Table 1: Comparative Overview of Sparse PCA Algorithms

| Algorithm | Sparsity Type | Key Mechanism | Genomic Applications | Key Advantages |

|---|---|---|---|---|

| SCoTLASS | Sparse Loadings | ℓ₁-norm constraint | Exploratory data analysis | Direct sparsity control |

| SPCA | Sparse Weights | Elastic-net penalty | Summary scores for prediction | Handles correlated variables |

| PMD Framework | Both | Penalized matrix decomposition | General purpose | Flexible penalty functions |

| RMT-guided | Both | Biwhitening + RMT criteria | Single-cell RNA-seq | Automatic parameter selection |

| AWGE-ESPCA | Sparse Loadings | Pathway-weighted regularization | Genomic biomarker discovery | Incorporates biological priors |

| Automatic TSPCA | Sparse Loadings | Noise-reduction thresholding | High-dimensional clustering | Computational efficiency |

Performance Comparison and Experimental Evaluation

Methodological Considerations for Evaluation

When evaluating sparse PCA performance, researchers must consider several methodological aspects:

Data Generation Models: Most simulation studies generate data based on structures with sparse singular vectors or sparse loadings, neglecting models with sparse weights [9]. This practice can lead to over-optimistic conclusions about certain methods. Proper evaluation requires data generation schemes that represent all three sparse structures: sparse weights, sparse loadings, and sparse singular vectors.

Initialization Strategies: Sparse PCA methods often employ iterative routines that converge to local optima. A common but questionable practice is initializing exclusively with right singular vectors from standard PCA [9]. This approach ignores that weights, loadings, and singular vectors represent different model structures in the sparse setting.

Performance Metrics: Comprehensive evaluation should include multiple performance measures: squared relative error (accuracy in parameter estimation), misidentification rate (accuracy in sparsity pattern recovery), percentage of explained variance (model fit), and variable selection consistency [17] [9].
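Two of these metrics are simple to compute for a single component once the true coefficients are known, as in a simulation. The definitions below are one plausible reading (an assumption); the exact normalizations used in [17] and [9] may differ.

```python
import numpy as np

def squared_relative_error(w_true, w_hat):
    """||w_hat - w_true||^2 / ||w_true||^2 after resolving the sign ambiguity of PCA."""
    w_true = np.asarray(w_true, dtype=float)
    w_hat = np.asarray(w_hat, dtype=float)
    if np.dot(w_true, w_hat) < 0:                # components are defined up to sign
        w_hat = -w_hat
    return float(np.sum((w_hat - w_true) ** 2) / np.sum(w_true ** 2))

def misidentification_rate(w_true, w_hat, tol=1e-12):
    """Share of variables whose zero / non-zero status is recovered incorrectly."""
    true_support = np.abs(np.asarray(w_true, dtype=float)) > tol
    est_support = np.abs(np.asarray(w_hat, dtype=float)) > tol
    return float(np.mean(true_support != est_support))

w_true = [0.6, 0.8, 0.0, 0.0, 0.0]               # toy ground truth
w_hat = [-0.5, -0.7, 0.0, 0.1, 0.0]              # toy estimate with flipped sign
sre = squared_relative_error(w_true, w_hat)
mr = misidentification_rate(w_true, w_hat)
print(round(sre, 4), round(mr, 4))
```

In this toy case the estimate has the right support except for one spurious gene, so the misidentification rate is one in five, while the squared relative error reflects the small coefficient errors once the sign flip is accounted for.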

Experimental Results from Comparative Studies

Guerra-Urzola et al. conducted an extensive simulation study evaluating sparse PCA methods under different data-generating models and conditions [17]. Their findings provide crucial insights for method selection:

Context-Dependent Performance: No single sparse PCA method dominates across all scenarios. Method performance depends critically on whether the data-generating process aligns with sparse weights, sparse loadings, or sparse singular vectors.

Sparse Loadings Methods demonstrate superior performance for exploratory data analysis tasks where understanding variable-component relationships is primary [17]. These methods more accurately recover the underlying correlation structures between genes and latent components.

Sparse Weights Methods excel in summarization tasks where the goal is creating simplified component scores for downstream prediction or classification [17]. These are particularly valuable when sparse PCA serves as a preprocessing step for regression or clustering.

RMT-guided sparse PCA has consistently outperformed PCA-, autoencoder-, and diffusion-based methods in cell-type classification tasks across seven single-cell RNA-seq technologies [5]. Its automatic parameter selection addresses a major practical limitation in applied genomic research.

Table 2: Quantitative Performance Comparison Across Genomic Applications

| Application Domain | Best Performing Algorithm | Compared Alternatives | Key Performance Metrics | Experimental Results |

|---|---|---|---|---|

| Single-cell RNA-seq Classification | RMT-guided Sparse PCA | Standard PCA, Autoencoders, Diffusion methods | Cell-type classification accuracy | Consistent outperformance across 7 technologies |

| Pathway-Centric Gene Selection | AWGE-ESPCA | Standard SPCA, Supervised/unsupervised baseline models | Pathway and gene selection capability | Superior biological relevance in identified genes |

| High-Dimensional Clustering | Automatic TSPCA | Regularized SPCA, Thresholding SPCA | Computational time, clustering accuracy | Fast computation with satisfactory accuracy |

| Drug Response Prediction | Semi-supervised weighted SPCA | Ridge regression, Deep learning models | Sensitivity, Specificity | 0.92 sensitivity, 0.93 specificity (11-57% improvement) |

Implementation Protocols for Genomic Research

Experimental Workflow for Gene Selection

The following diagram illustrates a comprehensive experimental workflow for applying sparse PCA in genomic research:

Protocol Details and Best Practices

Data Preprocessing: For genomic data, proper preprocessing is critical. This includes standard normalization, variance stabilization, and potentially biwhitening to simultaneously stabilize variance across genes and cells [5]. Gene expression data should be centered and scaled to unit variance before applying sparse PCA [17].

Model Selection Guidance: Choose sparse weights methods (e.g., SPCA) when the primary goal is creating simplified component scores for downstream prediction tasks. Select sparse loadings methods (e.g., SCoTLASS, AWGE-ESPCA) when aiming to understand correlation patterns and identify genes associated with latent factors [17] [9].

Parameter Tuning: Sparsity parameters significantly impact results. Use cross-validation, information criteria, or RMT-based approaches for objective parameter selection [5] [27]. For pathway-centric analyses, incorporate biological priors as in AWGE-ESPCA to guide sparsity patterns [26].

Initialization Strategies: Address the local optima problem through multiple random initializations in addition to singular vector initialization [9]. This approach helps avoid suboptimal solutions that might miss biologically relevant genes.

Validation and Interpretation: Validate sparse PCA results through biological enrichment analysis (e.g., GO, KEGG pathways) and comparison with established gene signatures [26] [13]. Calculate proportion of explained variance to assess model fit [9].
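For the enrichment step, a simple over-representation test based on the hypergeometric distribution is often a first check. Note that this is not GSEA, which works on ranked lists. The sketch below uses only the Python standard library; the gene counts in the example are made up for illustration.

```python
from math import comb

def enrichment_pvalue(n_universe, n_pathway, n_selected, n_overlap):
    """P(overlap >= n_overlap) when n_selected genes are drawn at random from a
    universe of n_universe genes that contains n_pathway pathway members."""
    upper = min(n_pathway, n_selected)
    total = comb(n_universe, n_selected)
    tail = sum(
        comb(n_pathway, k) * comb(n_universe - n_pathway, n_selected - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total

# Hypothetical example: 50 genes with non-zero loadings out of 20,000 measured,
# 5 of which fall in a 100-gene pathway.
p_value = enrichment_pvalue(20000, 100, 50, 5)
print(f"enrichment p-value: {p_value:.2e}")
```

When many pathways are tested for each sparse component, the resulting p-values should be corrected for multiple testing before any biological conclusion is drawn.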

Table 3: Key Research Reagents and Computational Resources for Sparse PCA in Genomics

| Resource Category | Specific Examples | Function in Sparse PCA Research | Implementation Notes |
|---|---|---|---|
| Genomic Databases | GDSC, GEO, Cell Model Passports, EMBL-EBI | Source of gene expression and drug response data | Preprocess for missing values, normalize across platforms |
| Pathway Resources | KEGG, GO, Pathway Commons | Biological validation of selected genes | Used as priors in weighted SPCA (AWGE-ESPCA) |
| Computational Tools | R (elasticnet, PMA), Python (scikit-learn) | Implementation of SPCA and PMD algorithms | Custom modifications needed for specialized methods |
| Validation Benchmarks | Cell type annotations, Drug response measurements (IC₅₀) | Performance assessment of sparse PCA results | Use waterfall distribution for response binarization |
| Biological Specimens | Cell lines (e.g., lymphoblastoid cells), Patient-derived xenografts | Ground truth for experimental validation | Address batch effects and technical variability |

Sparse PCA represents a significant advancement over standard PCA for high-dimensional genomic data, addressing both interpretability challenges and statistical consistency issues in the p ≫ n setting. The choice between sparse weights methods (e.g., SPCA) and sparse loadings methods (e.g., SCoTLASS) should be guided by the primary research objective: summarization for downstream analysis versus exploratory pattern discovery [17] [9].

Emerging approaches that incorporate biological priors (AWGE-ESPCA) [26] or automatic parameter selection through RMT [5] demonstrate how domain-specific knowledge can enhance method performance and practicality. For gene selection research, these specialized methods show promise in bridging the gap between statistical optimality and biological relevance.

Future methodological developments should focus on integrating multiple omics data types within sparse PCA frameworks, addressing the small-n-large-p challenge more effectively, and improving computational efficiency for increasingly large-scale genomic datasets. As sparse PCA methodologies continue to evolve, their application in gene selection research is expected to yield deeper biological insights and better biomarkers for clinical application.

Principal Component Analysis (PCA) is a foundational tool for dimensionality reduction in genomic research. However, standard PCA produces dense loadings: each component is a linear combination of all variables, which makes biological interpretation challenging in high-dimensional settings. Sparse PCA (SPCA) addresses this by setting the loadings of irrelevant variables to exactly zero, enhancing interpretability. A significant advancement in this field is the incorporation of prior biological knowledge into the sparsity process. Fused and Grouped Sparse PCA are two such methods that leverage known biological structures, such as gene networks and pathways, to guide the selection of variables, leading to more biologically insightful and reliable results [8] [28].

This guide objectively compares the performance of these structured SPCA methods against alternative sparse and standard PCA approaches, providing a clear framework for researchers to select the appropriate tool for genomic data analysis.

The core objective of sparse PCA is to obtain principal component loadings where many coefficients are exactly zero. Fused and Grouped Sparse PCA extend this by integrating external biological information.

- Standard Sparse PCA: Conventional SPCA methods impose sparsity through penalties like the lasso (L1-norm) on the loadings, performing variable selection in a purely data-driven manner without external biological context [8] [29].

- Fused Sparse PCA: This method incorporates graph or network information representing relationships between variables (e.g., gene interactions). It uses a fusion penalty that encourages smoothness or similarity between the loadings of variables connected within the network. This promotes the selection of biologically related variables [8].

- Grouped Sparse PCA: This method uses prior knowledge about group structures, such as gene pathways. It employs a group penalty (e.g., similar to group lasso) to encourage the selection of entire groups of variables together, ensuring that all variables within a significant pathway are either included or excluded from a component [8].

- Integrative Sparse PCA (iSPCA): Designed for multiple independent datasets, iSPCA uses a group penalty across datasets to encourage a common sparsity structure, identifying genes consistently relevant across studies [30].

- Sparse Non-Negative Generalized PCA: This method incorporates structural dependencies, sparsity, and non-negativity constraints, making it particularly suitable for data like NMR spectroscopy where the underlying spectra are non-negative [29].
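To make the difference between these penalties concrete, the sketch below evaluates generic textbook forms of the lasso, fusion, and group penalties on two toy loading vectors. The exact penalties in [8] may include weights or sign adjustments not shown here; the function names, the chain graph in `edges`, and the two pathways in `groups` are hypothetical.

```python
import numpy as np

def lasso_penalty(v):
    """Sum of absolute loadings: ignores any structure among variables."""
    return float(np.abs(v).sum())

def fused_penalty(v, edges):
    """Sum of absolute differences between loadings of connected variables."""
    return float(sum(abs(v[i] - v[j]) for i, j in edges))

def group_penalty(v, groups):
    """Group-lasso style penalty: size-weighted L2 norm of each group's loadings."""
    return float(sum(np.sqrt(len(g)) * np.linalg.norm(v[g]) for g in groups))

edges = [(0, 1), (1, 2), (2, 3)]   # hypothetical gene network (a chain)
groups = [[0, 1], [2, 3]]          # hypothetical pathways

v_structured = np.array([1.0, 1.0, 0.0, 0.0])  # one whole pathway active
v_scattered = np.array([1.0, 0.0, 1.0, 0.0])   # same sparsity, no structure

penalties = {
    name: (lasso_penalty(v), fused_penalty(v, edges), group_penalty(v, groups))
    for name, v in [("structured", v_structured), ("scattered", v_scattered)]
}
```

Both vectors receive the same lasso penalty, but the fusion and group penalties are smaller for the vector whose non-zero loadings follow the network and pathway structure, which is how these methods favor biologically coherent selections.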

A critical distinction in SPCA is between sparse loadings and sparse weights. Loadings represent the correlation between the original variables and the components, while weights are the coefficients used to form the component scores. In standard PCA, these are proportional, but in sparse PCA, imposing sparsity on one does not equate to sparsity in the other, affecting the interpretation [31]. Methods like Fused and Grouped SPCA typically aim for sparse loadings to enhance the interpretability of the components themselves.
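A small numerical sketch can illustrate this distinction. Here loadings are computed as the regression coefficients of the variables on the component scores, one common convention that is closely related to the correlation-based definition above; the toy data and the helper `regression_loadings` are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(3)
n_samples, n_vars = 200, 8

# Toy data: a shared latent factor makes all variables correlated.
factor = rng.normal(size=(n_samples, 1))
X = factor @ np.ones((1, n_vars)) + 0.5 * rng.normal(size=(n_samples, n_vars))
Xc = X - X.mean(axis=0)

def regression_loadings(Xc, W):
    """Loadings P such that Xc is approximated by T @ P.T, with scores T = Xc @ W."""
    T = Xc @ W
    return Xc.T @ T @ np.linalg.inv(T.T @ T)

# Standard PCA: weights are the leading right singular vectors.
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
W_pca = Vt[:2].T
P_pca = regression_loadings(Xc, W_pca)        # coincides with W_pca

# Sparse weights: only the first three variables form the score.
w_sparse = np.zeros((n_vars, 1))
w_sparse[:3] = 1.0 / np.sqrt(3)
P_sparse = regression_loadings(Xc, w_sparse)  # dense: every variable loads on the score
```

With standard PCA the two matrices agree, whereas a score built from three variables still has non-zero loadings on all eight, so sparsity in the weights does not carry over to the loadings.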

Comparative Performance Analysis

Simulation studies and real-data applications demonstrate the relative strengths of these methods. The table below summarizes key performance metrics from published research.

Table 1: Quantitative Performance Comparison of PCA Methods

| Method | Key Feature | Sensitivity/Specificity | Interpretability | Data Context |
|---|---|---|---|---|
| Fused/Grouped SPCA | Incorporates biological network/pathway structure | Higher when graph is correctly specified [8] | High due to biologically meaningful sparsity [8] | Single dataset with prior graph/group info [8] |
| Standard SPCA | Purely data-driven sparsity (e.g., lasso) | Lower than structured methods [8] | Moderate, lacks biological context [8] | General-purpose high-dimensional data [8] |
| Integrative SPCA (iSPCA) | Joint analysis of multiple datasets | Outperforms single-dataset analysis & meta-analysis [30] | High, reveals consensus signals [30] | Multiple independent datasets [30] |
| Sparse Non-Negative GPCA | Accounts for dependencies & non-negativity | Improved feature selection for NMR data [29] | High, produces physically plausible loadings [29] | Data with known structure (e.g., spectroscopy) [29] |
| Inherently Sparse PCA | Identifies uncorrelated data blocks | N/A | High, orthogonal by construction [1] | Data with block-diagonal covariance structure [1] |

Table 2: Application-Based Performance in Mendelian Randomization (97 Lipid Metabolites)

| Method | Sparsity Achievement | Instrument Strength (F-statistic) | Biological Insight |
|---|---|---|---|
| Standard MVMR | Not applicable | Very low (mean: 0.81), severe bias [32] | Unstable, unreliable estimates [32] |
| Standard PCA + MR | No sparsity | Good | Major lipid classes identified but loads all traits [32] |
| Sparse Component Analysis (SCA) + MR | High | Good | Superior balance of sparsity and biological grouping [32] |

Key Findings from Experimental Data

- Robustness to Misspecification: Fused and Grouped SPCA methods are not only effective when the biological structure is correctly specified but are also fairly robust to misspecified graph structures, maintaining good performance [8].

- Application in Glioblastoma: Application to a glioblastoma gene expression dataset successfully identified pathways known in the literature to be related to the disease, validating the biological relevance of the method [8] [28].

- Advantage in Multi-Dataset Studies: iSPCA outperforms the approach of simply pooling all datasets together, as it can account for study-specific variations while strengthening the consensus signal [30].

Detailed Experimental Protocols

To ensure reproducibility and provide a clear framework for benchmarking, this section outlines the standard experimental protocols used in the cited studies.

Workflow for Evaluating Fused/Grouped SPCA

The following diagram illustrates a typical workflow for applying and validating structured SPCA methods on genomic data.

Protocol for Simulation Studies

Simulations are crucial for objectively comparing method performance under controlled conditions with a known ground truth.

Data Generation:

- Generate a synthetic data matrix X from a multivariate normal distribution with a pre-specified covariance matrix Σ.

- The covariance structure is designed to embed known sparse patterns in the true principal components. This can be based on:

  - Sparse Loadings/Weights: Only a subset of variables has non-zero contributions to the components [31].

  - Graph Structure: Variables are connected in a predefined network (e.g., scale-free network), and true loadings are smooth across connected nodes [8].

  - Group Structure: Variables are assigned to groups, and true loadings are non-zero for all members of active groups [8].
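The data-generation step can be sketched with a single-spike covariance model, in which one sparse unit vector is planted as the leading eigenvector of Σ. This is an illustrative assumption rather than the exact simulation design of the cited studies; the function name, dimensions, and `signal` strength are hypothetical.

```python
import numpy as np

def simulate_sparse_pc_data(n_samples=50, n_vars=100, n_active=10, signal=5.0, seed=0):
    """Draw X ~ N(0, Sigma) where Sigma has one sparse leading eigenvector."""
    rng = np.random.default_rng(seed)
    # True loading vector: non-zero only on the first n_active variables,
    # which can be read as a single active group.
    v_true = np.zeros(n_vars)
    v_true[:n_active] = 1.0 / np.sqrt(n_active)
    # Spiked covariance: identity noise plus a rank-one signal along v_true.
    Sigma = signal * np.outer(v_true, v_true) + np.eye(n_vars)
    X = rng.multivariate_normal(np.zeros(n_vars), Sigma, size=n_samples)
    return X, v_true, Sigma

X, v_true, Sigma = simulate_sparse_pc_data()

# Sanity check: the leading eigenvector of Sigma matches v_true up to sign.
eigvals, eigvecs = np.linalg.eigh(Sigma)
leading_vec = eigvecs[:, -1]
```

Graph- or group-structured ground truths can be obtained in the same way by choosing which entries of the planted vector are non-zero according to the network or pathway layout.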

Method Application:

- Apply the methods under comparison (e.g., Standard SPCA, Fused SPCA, Grouped SPCA) to the generated data X.

- For Fused/Grouped SPCA, provide the algorithm with the known graph or group structure. Note: To test robustness, experiments can be repeated with a perturbed or misspecified structure [8].

Performance Evaluation:

- Sensitivity & Specificity: Compare the estimated non-zero loadings against the true non-zero loadings. Calculate the True Positive Rate (Sensitivity) and True Negative Rate (Specificity) [8].

- Variance Explained: Measure the proportion of total variance explained by the first few sparse components.

- Parameter Estimation Error: Calculate the L2-norm difference between the true loadings and the estimated loadings.
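The selection and estimation metrics above can be computed as in the following sketch. The helper name `selection_metrics`, the zero tolerance `tol`, and the toy vectors are assumptions; the estimated vector is sign-aligned with the truth before the L2 error is taken, because loading vectors are only identified up to sign.

```python
import numpy as np

def selection_metrics(v_true, v_est, tol=1e-8):
    """Sensitivity, specificity, and sign-aligned L2 error for one loading vector."""
    true_nz = np.abs(v_true) > tol
    est_nz = np.abs(v_est) > tol
    tp = np.sum(true_nz & est_nz)      # truly active variables that were selected
    tn = np.sum(~true_nz & ~est_nz)    # truly inactive variables left at zero
    sensitivity = tp / true_nz.sum() if true_nz.any() else float("nan")
    specificity = tn / (~true_nz).sum() if (~true_nz).any() else float("nan")
    # Loadings are defined up to sign, so align before measuring the error.
    if v_true @ v_est < 0:
        v_est = -v_est
    l2_error = float(np.linalg.norm(v_true - v_est))
    return float(sensitivity), float(specificity), l2_error

v_true = np.array([0.6, 0.8, 0.0, 0.0, 0.0])
v_est = np.array([-0.6, 0.0, 0.0, -0.8, 0.0])  # one hit, one miss, one false positive
sensitivity, specificity, l2_error = selection_metrics(v_true, v_est)
```

In a simulation study these values would typically be averaged over many replicated datasets for each method and penalty setting.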

Protocol for Real Data Analysis (e.g., Glioblastoma or Lipidomics)

Real-data applications validate the biological interpretability of the findings.

Data Preprocessing:

Incorporation of Prior Biology:

- Pathway Databases: Obtain gene set information from sources like Kyoto Encyclopedia of Genes and Genomes (KEGG) or Gene Ontology (GO) for Grouped SPCA [13].

- Interaction Networks: Use protein-protein interaction networks (e.g., from Pathway Commons) or gene regulatory networks to define the graph for Fused SPCA [8].

Analysis Execution:

- Apply the SPCA methods to the preprocessed data matrix, inputting the relevant biological structures.

- Select the optimal tuning parameters (e.g., penalty parameters λ) via cross-validation or information criteria to control the sparsity level.

Validation and Interpretation:

- Pathway Enrichment: For the genes with non-zero loadings in a component, perform over-representation analysis to check if known biological pathways are significantly enriched [8] [28].

- Literature Comparison: Check if the identified genes and pathways have previously established relationships with the disease under study (e.g., glioblastoma [8] or coronary heart disease via lipid traits [32]).
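The over-representation step can be sketched as a one-sided hypergeometric test using SciPy. The gene identifiers, pathway, and universe below are hypothetical, and a real analysis would test many pathways and correct for multiple testing, typically with a dedicated tool such as clusterProfiler.

```python
from scipy.stats import hypergeom

def overrepresentation_pvalue(selected, pathway, universe):
    """P(overlap >= observed) when drawing len(selected) genes from the universe."""
    universe = set(universe)
    selected = set(selected) & universe
    pathway = set(pathway) & universe
    overlap = len(selected & pathway)
    # sf(k - 1) gives P(X >= k) for the hypergeometric distribution.
    p_value = hypergeom.sf(overlap - 1, len(universe), len(pathway), len(selected))
    return overlap, float(p_value)

universe = [f"gene{i}" for i in range(20)]          # all genes entering sparse PCA
pathway = universe[:5]                               # hypothetical pathway members
selected = ["gene0", "gene1", "gene2", "gene10"]     # genes with non-zero loadings

overlap, p_value = overrepresentation_pvalue(selected, pathway, universe)
```

The universe should be the set of genes that were actually eligible for selection, since using the whole genome instead can overstate the enrichment.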

The Scientist's Toolkit

This section details essential reagents, datasets, and software tools required to implement the analyses described in this guide.

Table 3: Essential Research Reagents and Solutions for Structured SPCA

| Item Name | Function / Purpose | Examples / Sources |
|---|---|---|
| Genomic Datasets | Provides the high-dimensional data matrix X for analysis. | GDSC (cancer drug response) [13]; GEO (gene expression) [13]; Glioblastoma datasets [8]; Lipid metabolite GWAS summaries [32] |
| Biological Pathway Databases | Defines group structures for Grouped SPCA. | KEGG [13]; Gene Ontology (GO) [13]; Pathway Commons [13] |
| Biological Network Databases | Defines graph structures for Fused SPCA. | Pathway Commons; STRING (protein-protein interactions) |
| Analysis Software & Packages | Implements the computational algorithms for SPCA. | R packages (e.g., PMA for standard SPCA); Custom algorithms in R/MATLAB for Fused/Grouped SPCA [8]; SCA algorithm for Mendelian randomization [32] |
| Validation Software | Used for biological interpretation of results. | Enrichment analysis tools (e.g., clusterProfiler in R) |

The integration of prior biological information through Fused and Grouped Sparse PCA represents a significant step beyond standard sparse PCA. Experimental data consistently shows that these methods can achieve a superior balance between statistical performance and biological interpretability. They exhibit higher sensitivity and specificity for feature selection when the biological structure is correctly specified and demonstrate robustness to minor misspecifications.

For researchers working with genomic data, the choice of method should be guided by the nature of the available biological knowledge and the analysis goal. When known pathways or gene networks are available and the aim is to generate interpretable, biologically grounded components, Fused or Grouped SPCA are compelling choices. For multi-study integrations, iSPCA is preferred, while for data with specific structures like NMR spectra, Sparse Non-Negative GPCA is highly effective. This comparative guide provides the necessary framework and evidence to inform these critical methodological decisions.

Leveraging Network and Pathway Data in Regularization Penalties