STAR Early Stopping Optimization: Accelerating AI Alignment in Drug Development

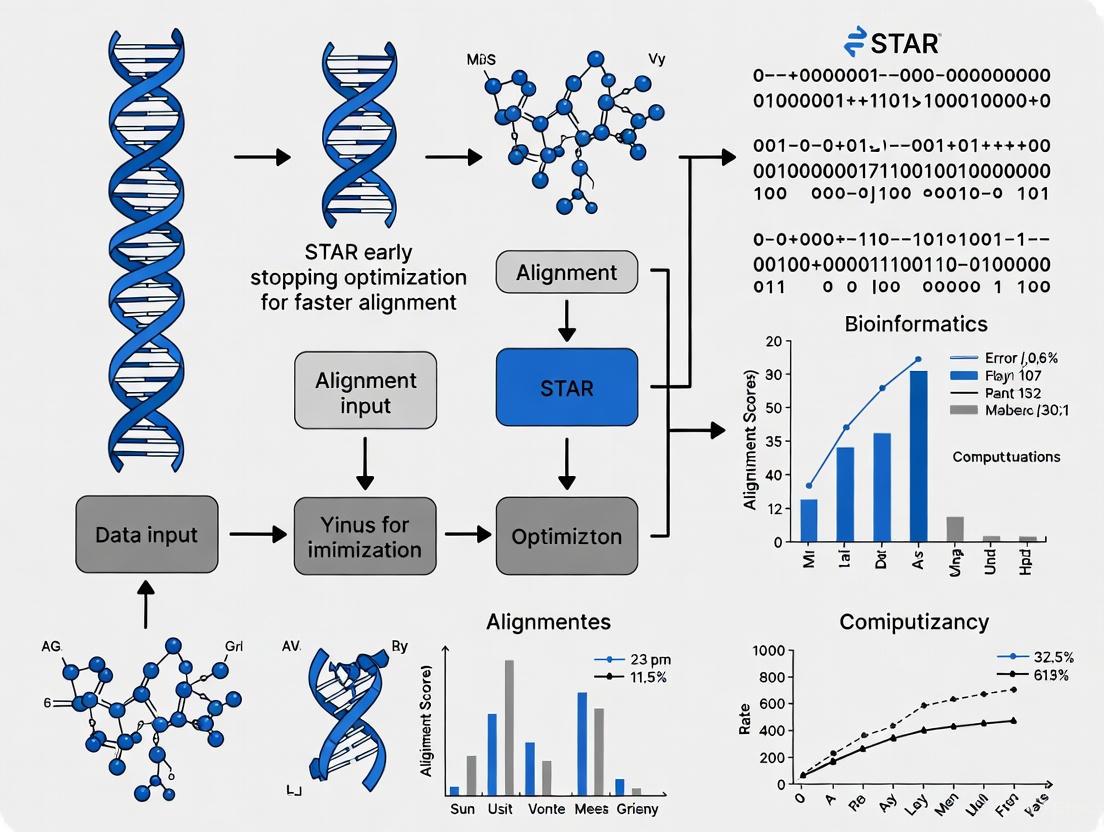

This article explores the integration of Structure–Tissue Exposure/Selectivity–Activity Relationship (STAR) principles with early stopping optimization in deep learning to accelerate and improve the alignment of AI models for drug discovery.


Abstract

This article explores the integration of Structure–Tissue Exposure/Selectivity–Activity Relationship (STAR) principles with early stopping optimization in deep learning to accelerate and improve the alignment of AI models for drug discovery. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive guide from foundational concepts to practical application. The content covers the critical challenge of overfitting in model training, details methodological implementations, addresses common troubleshooting scenarios, and validates the approach through comparative analysis with real-world case studies. By synthesizing these areas, the article demonstrates how strategic early stopping acts as a powerful regularization technique, enabling the development of more generalizable, efficient, and cost-effective AI models that enhance the predictability and success rates of preclinical drug optimization.

The What and Why: Unpacking STAR and Early Stopping for Drug Discovery

What is the STAR framework and what problem does it solve?

The Structure–Tissue Exposure/Selectivity–Activity Relationship (STAR) framework is a modern paradigm in drug optimization designed to address the persistently high failure rate in clinical drug development. Traditional drug optimization has overly emphasized improving a drug's potency and specificity through Structure-Activity Relationship (SAR) studies, often overlooking a critical factor: drug exposure and selectivity in diseased tissues versus normal tissues [1] [2].

This imbalance is a major reason why approximately 90% of drug candidates that enter clinical trials fail to gain approval. The primary causes of failure are a lack of clinical efficacy (40-50%) and unmanageable toxicity (30%) [1] [3]. The STAR framework proposes that by equally balancing the optimization of a drug's activity (potency/specificity) with its tissue exposure and selectivity, researchers can select better drug candidates and more effectively balance clinical dose, efficacy, and toxicity [1].

What are the core components of the STAR classification system?

The STAR system classifies drug candidates into four distinct categories based on two key parameters: specificity/potency and tissue exposure/selectivity [1] [3]. This classification helps guide decision-making on which candidates to advance. The following table summarizes the four STAR classes and their clinical implications.

Table: The STAR Drug Candidate Classification System

| STAR Class | Specificity/Potency | Tissue Exposure/Selectivity | Recommended Clinical Dose | Clinical Outcome & Development Recommendation |
|---|---|---|---|---|
| Class I | High | High | Low Dose | Superior clinical efficacy and safety. Highest success rate. Ideal candidate to advance. [1] [3] |
| Class II | High | Low | High Dose | May achieve clinical efficacy but with high toxicity. Requires cautious evaluation and may need further optimization. [1] [3] |
| Class III | Low (but adequate) | High | Low to Medium Dose | Achieves adequate efficacy with manageable toxicity. Often overlooked by traditional methods but has a high clinical success rate. [1] [3] |
| Class IV | Low | Low | N/A | Inadequate efficacy and safety. Should be terminated early in the development process. [1] [3] |

The logical workflow for evaluating a drug candidate using the STAR framework, from initial assessment to the final development decision, is illustrated below.
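The decision logic in the classification table can be sketched in a few lines of Python. This is an illustrative sketch only: the numeric cut-offs, the `Candidate` fields, and the `star_class` function are assumptions introduced here, since the source describes the classes qualitatively and gives no thresholds.

```python
from dataclasses import dataclass

# Hypothetical cut-offs for illustration only; real projects would set these
# per target and per tissue pair. The source gives no numeric thresholds.
POTENCY_IC50_NM_CUTOFF = 100.0    # IC50 at or below this counts as "high" potency
SELECTIVITY_INDEX_CUTOFF = 1.0    # AUC_diseased / AUC_normal above this counts as "high"


@dataclass
class Candidate:
    name: str
    ic50_nm: float            # lower IC50 means higher potency
    selectivity_index: float  # e.g. AUC_tumor / AUC_liver


def star_class(c: Candidate) -> str:
    """Map a candidate onto STAR class I-IV using the two table axes.

    Note: this sketch does not check that a Class III candidate's potency is
    still "adequate"; that judgment needs a project-specific lower bound.
    """
    high_potency = c.ic50_nm <= POTENCY_IC50_NM_CUTOFF
    high_selectivity = c.selectivity_index > SELECTIVITY_INDEX_CUTOFF
    if high_potency and high_selectivity:
        return "I"    # advance
    if high_potency:
        return "II"   # evaluate cautiously, consider further optimization
    if high_selectivity:
        return "III"  # often overlooked, worth a closer look
    return "IV"       # terminate early


candidates = [
    Candidate("cmpd-A", ic50_nm=5.0, selectivity_index=3.2),
    Candidate("cmpd-B", ic50_nm=8.0, selectivity_index=0.4),
    Candidate("cmpd-C", ic50_nm=450.0, selectivity_index=5.1),
    Candidate("cmpd-D", ic50_nm=900.0, selectivity_index=0.2),
]
for c in candidates:
    print(c.name, "-> Class", star_class(c))
```

Keeping the thresholds as named constants makes it easy to test how sensitive the class assignments are to the chosen cut-offs.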

Implementation & Integration

How does STAR integrate with existing drug development workflows?

The STAR framework does not replace established workflows but enhances them by adding a critical layer of analysis after initial SAR and pharmacokinetic (PK) optimization, but before final candidate selection and clinical trials [1]. The core change is a shift in mindset: from relying primarily on plasma PK as a surrogate for tissue exposure, to directly measuring and optimizing tissue-level distribution [2].

This integration can be visualized as a modified optimization funnel, where candidates are screened not just on their activity and plasma profile, but on their tissue exposure/selectivity, leading to a more rational and predictive selection.

What technologies and tools support STAR implementation?

Implementing the STAR framework relies on a combination of advanced analytical, computational, and experimental tools.

Table: Research Reagent Solutions for STAR Implementation

| Tool / Reagent Category | Specific Examples | Function in STAR Workflow |
|---|---|---|
| Analytical Chemistry | Liquid chromatography-tandem mass spectrometry (LC-MS/MS) [2] | Precisely quantifies drug concentrations in diverse tissue homogenates (e.g., tumor, liver, bone) to establish tissue exposure profiles. |
| In Vivo Models | Transgenic disease models (e.g., MMTV-PyMT mice for breast cancer) [2] | Provides a physiologically relevant system to study drug distribution between diseased and healthy tissues within the same animal. |
| Computational Modeling | Principal Component Analysis (PCA), Ordinary Least Squares (OLS) models [2], AI/ML for QSAR and de novo molecular design [4] | Analyzes complex tissue distribution data to identify the structure-tissue exposure/selectivity relationship (STR) and predict the impact of structural changes on tissue selectivity. |
| Biochemical Assays | Protein binding assays, permeability assays (e.g., PAMPA) [1] | Determines fundamental drug-like properties that influence tissue distribution, such as plasma protein binding and ability to cross membranes. |

Experimental Protocols & Troubleshooting

Protocol: How to determine tissue exposure and selectivity for STAR classification

This protocol outlines the key steps for generating the tissue distribution data required to classify a drug candidate using the STAR framework [2].

1. Animal Dosing and Sample Collection:

- Model: Use a relevant animal disease model. For example, female MMTV-PyMT transgenic mice (8-12 weeks old) for breast cancer studies.

- Dosing: Administer the drug candidate (e.g., via oral gavage or intravenous injection) at a predefined dose (e.g., 5 mg/kg p.o. or 2.5 mg/kg i.v.).

- Time Points: Collect tissue samples at multiple time points post-dosing (e.g., 0.08, 0.5, 1, 2, 4, and 7 hours) to establish a concentration-time profile.

- Tissues: Collect a comprehensive set of samples, including blood/plasma, the target disease tissue (e.g., tumor), and key normal tissues (e.g., liver, bone, uterus, brain, fat, muscle, etc.).

2. Tissue Sample Preparation and Analysis:

- Homogenization: Weigh and homogenize tissue samples.

- Protein Precipitation: Aliquot a precise amount of plasma or tissue homogenate. Add ice-cold acetonitrile (e.g., 40 μL sample + 40 μL ACN) and an internal standard solution to precipitate proteins and extract the drug.

- Vortex and Centrifuge: Vortex the mixture thoroughly (e.g., 10 minutes) and then centrifuge (e.g., 3500 rpm for 10 min at 4°C) to pellet the precipitate.

- LC-MS/MS Analysis: Inject the clear supernatant into an LC-MS/MS system to quantify the drug concentration in each sample.

3. Data Calculation and STAR Classification:

- Pharmacokinetic Analysis: Calculate standard PK parameters (AUC, C~max~, t~1/2~) for plasma and each tissue. The AUC~tissue~/AUC~plasma~ ratio is a key metric for tissue exposure.

- Tissue Selectivity Index: Calculate the ratio of drug exposure in the target diseased tissue to exposure in a critical normal tissue (e.g., AUC~tumor~/AUC~liver~).

- Classification: Plot the drug's specificity/potency (e.g., IC~50~) against its tissue exposure/selectivity index to assign it to the appropriate STAR class (I-IV).
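The calculations in step 3 can be sketched as follows. The sampling times are those given in the protocol; the concentration values, the tissue names in `profiles`, and the helper `auc_trapezoid` are hypothetical placeholders. The sketch uses the linear trapezoidal rule over the sampled window only (no extrapolation to infinity), which is one common choice rather than the method the source prescribes.

```python
def auc_trapezoid(times_h, conc):
    """Area under the concentration-time curve by the linear trapezoidal rule."""
    if len(times_h) != len(conc) or len(times_h) < 2:
        raise ValueError("need matching time and concentration lists with >= 2 points")
    total = 0.0
    for i in range(1, len(times_h)):
        total += (times_h[i] - times_h[i - 1]) * (conc[i] + conc[i - 1]) / 2.0
    return total


# Sampling times from the protocol (hours post-dose).
times_h = [0.08, 0.5, 1, 2, 4, 7]

# Made-up concentrations for illustration (e.g. ng/mL for plasma, ng/g for tissue).
profiles = {
    "plasma": [120.0, 95.0, 70.0, 40.0, 15.0, 4.0],
    "tumor": [60.0, 150.0, 210.0, 180.0, 110.0, 50.0],
    "liver": [200.0, 260.0, 190.0, 100.0, 35.0, 8.0],
}

# AUC over the sampled window for plasma and each tissue.
auc = {tissue: auc_trapezoid(times_h, c) for tissue, c in profiles.items()}

# Tissue exposure relative to plasma (AUC_tissue / AUC_plasma).
tissue_to_plasma = {t: auc[t] / auc["plasma"] for t in auc if t != "plasma"}

# Tissue selectivity index: diseased tissue versus a critical normal tissue.
selectivity_index = auc["tumor"] / auc["liver"]

print({t: round(r, 2) for t, r in tissue_to_plasma.items()})
print("AUC_tumor / AUC_liver =", round(selectivity_index, 2))
```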

FAQ & Troubleshooting Guide

Q1: Our lead candidate has excellent potency (low nM IC~50~) and good plasma PK, but it failed in vivo due to toxicity. How can STAR help?

- A: This is a classic Class II profile. The candidate has high specificity/potency but likely low tissue selectivity, causing accumulation in and damage to healthy organs. STAR would have flagged this candidate as high-risk. To fix this, use STR analysis to guide structural modifications that reduce retention in the toxic normal tissue without completely sacrificing plasma PK or potency [1] [2].

Q2: We have a compound with moderate potency but it shows astounding efficacy in our disease model. Our team is skeptical about advancing it. What should we do?

- A: This compound likely has a Class III profile. Its moderate potency is compensated by exceptionally high exposure and selectivity in the target diseased tissue. The STAR framework provides a validated rationale for advancing such overlooked candidates. Proceed by quantitatively measuring its tissue distribution to confirm the high tissue exposure/selectivity, which will build a strong data-driven case for its advancement [1] [3].

Q3: Our tissue distribution data is highly variable. What are the key factors to control in these experiments?

- A: Key factors to ensure reproducible and reliable tissue distribution data include:

- Standardized Sampling: Precisely dissect the same anatomical region of each tissue across all animals.

- Thorough Homogenization: Ensure tissues are completely homogenized to release the drug uniformly.

- Matrix Effects: Use a stable isotope-labeled internal standard for the drug to correct for ionization suppression/enhancement during LC-MS/MS analysis.

- Animal Model Consistency: Use animals of the same age, sex, and genetic background, and ensure disease models (e.g., tumor size) are as uniform as possible [2].

Q4: How can we apply STAR early in discovery when in vivo studies are low-throughput?

- A: Leverage computational tools to build predictive models for STR. Use data from initial in vivo studies to train AI/ML models (e.g., using molecular descriptors and protein binding data) to predict the tissue distribution of new analogs in silico. This allows for virtual screening and prioritization of compounds with a high probability of favorable (Class I or III) STAR profiles before committing to costly and time-consuming in vivo experiments [4] [2].

Future Directions & Strategic Insights

How does STAR align with regulatory and industry trends?

The STAR framework is highly aligned with the pharmaceutical industry's push towards precision medicine and the regulatory focus on improving R&D efficiency [5]. It supports the use of biomarkers and advanced analytics for better patient selection and trial design [1]. Separately, regulatory initiatives such as the FDA's Split Real Time Application Review pilot program (also abbreviated STAR, but unrelated to the framework discussed here), which aims to shorten review times for certain supplements, reflect the broader movement towards more efficient, data-driven development pathways in which a tissue-exposure-aware framework can be valuable [6].

The integration of AI and machine learning into drug discovery is a powerful enabler for STAR. AI can accelerate the analysis of complex tissue distribution data and help deconvolute the STR [4]. More importantly, AI-driven generative chemistry can be used to design novel molecules that are optimized not just for potency (SAR), but also for desired tissue distribution profiles (STR) from the outset, truly embodying the STAR principle [4].

The following diagram illustrates how STAR serves as a central, integrating paradigm, connecting modern tools and traditional methods to achieve a superior development outcome.

Troubleshooting Guides

Guide 1: Diagnosing and Resolving Overfitting in Preclinical Models

Problem: Your AI model shows excellent performance on training data but fails to generalize to new, unseen preclinical data, such as novel chemical compounds or different biological targets.

Primary Symptoms:

- Low error rates on the training set compared to a significantly higher error rate on the test/validation set [7] [8].

- A generalization curve where the validation/test loss increases after a certain number of training iterations, while the training loss continues to decrease [8].

- High variance in model parameters when trained on different subsets of your data [7].

- The model makes inaccurate predictions on real-world data, despite low validation loss [8].

Step-by-Step Diagnostic Protocol:

Split Your Data and Plot Learning Curves

- Partition your dataset into a training set (e.g., 70%), a validation set (e.g., 15%), and a hold-out test set (e.g., 15%) [9]. Ensure the partitions are statistically similar through random shuffling [8].

- During training, plot the loss (e.g., Mean Squared Error) for both the training and validation sets against the number of training iterations (epochs).
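A minimal sketch of the 70/15/15 partition described above, using only the standard library. The function name `split_dataset`, the seed, and the integer stand-in records are assumptions for illustration; plotting the resulting learning curves is left to whichever plotting library the project already uses.

```python
import random


def split_dataset(records, train_frac=0.70, val_frac=0.15, seed=0):
    """Shuffle once, then cut into train / validation / test partitions."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_train = round(len(shuffled) * train_frac)
    n_val = round(len(shuffled) * val_frac)
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]      # whatever remains is the hold-out test set
    return train, val, test


# Stand-in records; in practice these would be (compound, label) pairs.
train, val, test = split_dataset(range(100))
print(len(train), len(val), len(test))
```

The test partition should stay untouched until the final evaluation, so that it remains a fair proxy for unseen data.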

Analyze the Generalization Curve

- Identify the Divergence Point: The iteration at which the validation loss stops decreasing and begins to consistently rise while the training loss falls is the point of overfitting. This is your candidate for the optimal early stopping point.
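One way to locate the divergence point programmatically is to scan the recorded loss curves for the first epoch after which validation loss rises for several consecutive epochs while training loss keeps falling. The function name, the `window` parameter, and the example curves below are assumptions for illustration.

```python
def find_divergence_epoch(train_losses, val_losses, window=3):
    """Return the last epoch before validation loss rises for `window`
    consecutive epochs while training loss keeps falling, or None if the
    curves never diverge in that way."""
    n = min(len(train_losses), len(val_losses))
    for start in range(n - window):
        span = range(start, start + window)
        val_rising = all(val_losses[i + 1] > val_losses[i] for i in span)
        train_falling = all(train_losses[i + 1] < train_losses[i] for i in span)
        if val_rising and train_falling:
            return start
    return None


# Hypothetical per-epoch losses.
train_curve = [1.00, 0.80, 0.60, 0.50, 0.40, 0.30, 0.20]
val_curve = [1.10, 0.90, 0.70, 0.65, 0.70, 0.80, 0.90]

print(find_divergence_epoch(train_curve, val_curve))
```

Requiring several consecutive rising epochs makes the check less sensitive to a single noisy validation measurement.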

Conduct a Subset Stability Test

- Create two or more random subsets (e.g., 80% each) of your training data.

- Train your model from scratch on each subset.

- Compare the key parameters or feature importances of the resulting models. If the models are vastly different, it indicates the model is sensitive to noise rather than the underlying signal [7].
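The subset stability test can be sketched with a deliberately simple model. Here a one-variable least-squares slope stands in for "key parameters"; the helper names, the 80% fraction, and the synthetic data are assumptions, and a real study would compare coefficients or feature importances of the actual model.

```python
import random
import statistics


def fit_slope(xs, ys):
    """Ordinary least-squares slope for a single predictor."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    return sxy / sxx


def subset_slopes(xs, ys, n_subsets=5, frac=0.8, seed=0):
    """Refit the model from scratch on several random subsets of the data."""
    rng = random.Random(seed)
    k = round(frac * len(xs))
    slopes = []
    for _ in range(n_subsets):
        chosen = rng.sample(range(len(xs)), k)
        slopes.append(fit_slope([xs[i] for i in chosen], [ys[i] for i in chosen]))
    return slopes


def relative_spread(values):
    """Standard deviation relative to the mean; large values suggest instability."""
    return statistics.pstdev(values) / abs(statistics.fmean(values))


# Synthetic data: a clean linear signal versus the same signal buried in noise.
noise_rng = random.Random(1)
xs = [float(i) for i in range(20)]
clean = [2.0 * x + 1.0 for x in xs]
noisy = [y + noise_rng.gauss(0.0, 15.0) for y in clean]

print("clean spread:", relative_spread(subset_slopes(xs, clean)))
print("noisy spread:", relative_spread(subset_slopes(xs, noisy)))
```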

Solutions & Mitigations:

- Implement Early Stopping: Halt the training process before the model begins to overfit, as identified by the divergence point on the generalization curve [10]. This is a core component of optimizing training length.

- Apply Regularization Techniques: Introduce L1 (Lasso) or L2 (Ridge) regularization to penalize overly complex models by applying a penalty to the loss function based on the magnitude of model coefficients [10].

- Simplify the Model:

- For deep neural networks, reduce the number of layers or units per layer.

- For decision trees, reduce the maximum depth of the tree [7].

- Increase and Augment Training Data:

- If possible, collect more data. The performance of complex models often improves with more data [10].

- Use data augmentation techniques to artificially expand your dataset by creating modified versions of existing data (e.g., adding noise, using data from similar but distinct biological contexts) [10].

- Use Cross-Validation: Employ K-fold cross-validation to get a more robust estimate of model performance and reduce the risk of overfitting to a single train-validation split [10].
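For reference, the L1 and L2 penalties mentioned above are usually written as an extra term added to the data-fitting loss. The notation below is an assumption introduced here: $\mathcal{L}_{\text{data}}$ is the unpenalized loss (e.g., mean squared error), $w_j$ are the model coefficients, and $\lambda \ge 0$ controls the penalty strength.

```latex
\mathcal{L}_{\text{L2}}(\mathbf{w}) = \mathcal{L}_{\text{data}}(\mathbf{w}) + \lambda \sum_{j} w_j^{2},
\qquad
\mathcal{L}_{\text{L1}}(\mathbf{w}) = \mathcal{L}_{\text{data}}(\mathbf{w}) + \lambda \sum_{j} \lvert w_j \rvert
```

Setting $\lambda = 0$ recovers the unpenalized loss; larger values shrink the coefficients more strongly, and the L1 form tends to drive some of them exactly to zero.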

Guide 2: Optimizing Training Length with Early Stopping

Problem: Determining the precise moment to stop model training to achieve the best generalizing model without underfitting or overfitting.

Early Stopping Protocol based on Validation Loss:

- Initialize: Before training, define a patience parameter p (e.g., 10, 50, or 100 epochs). This is the number of epochs to wait after the validation loss has stopped improving before terminating training.

- Train and Monitor: Begin the training process. After each epoch, evaluate the model on the validation set and record the validation loss.

- Check for Improvement: Compare the current validation loss to the best recorded validation loss.

  - If the current loss is better (lower), save the current model weights, update the best loss, and reset the counter to zero.

  - If the current loss is not better, increment the counter.

- Stop Condition: If the counter reaches the patience parameter p, stop training and restore the model weights from the epoch with the best validation loss.

- Final Evaluation: Assess the final, restored model on the held-out test set to estimate its real-world performance.
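The protocol above can be sketched as a small, framework-agnostic helper. The class name `EarlyStopper`, the toy `weights` dictionary, and the validation-loss values are assumptions for illustration. This sketch stops as soon as the counter reaches `patience`, and it copies the model state at every improvement so the best weights can be restored afterwards.

```python
import copy
import math


class EarlyStopper:
    """Tracks the best validation loss and signals when to stop training."""

    def __init__(self, patience):
        self.patience = patience
        self.best_loss = math.inf
        self.best_state = None
        self.best_epoch = None
        self.counter = 0  # epochs since the last improvement

    def step(self, epoch, val_loss, state):
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss:
            self.best_loss = val_loss
            self.best_state = copy.deepcopy(state)  # checkpoint the best weights
            self.best_epoch = epoch
            self.counter = 0
        else:
            self.counter += 1
        return self.counter >= self.patience


# Hypothetical validation losses, one per epoch.
val_curve = [0.90, 0.70, 0.55, 0.50, 0.52, 0.51, 0.56, 0.60, 0.66]

stopper = EarlyStopper(patience=3)
weights = {"w": 0.0}
for epoch, val_loss in enumerate(val_curve):
    weights["w"] += 0.1  # stand-in for one epoch of training
    if stopper.step(epoch, val_loss, weights):
        break

weights = stopper.best_state  # restore the best checkpoint before final evaluation
print("stopped at epoch", epoch, "best epoch", stopper.best_epoch)
```

In a real training loop, `state` would be the framework's own model state, and the restored model would then be scored once on the held-out test set.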

Table 1: Impact of Model Complexity and Data Size on Overfitting

| Factor | High Risk of Overfitting | Lower Risk of Overfitting |
|---|---|---|
| Number of Variables/Parameters | Too many model parameters for the number of observations [7] | Model complexity is appropriate for dataset size |
| Training Data Size | Small, non-representative dataset [10] | Large, diverse, and representative dataset [10] |
| Training Duration | Training for too long on a fixed dataset [10] | Training stopped when validation performance plateaus or worsens (Early Stopping) |
| Typical Symptom | High standard errors for parameter estimates [7] | Stable model parameters across data subsets [7] |

Frequently Asked Questions (FAQs)

Q1: What is overfitting in the context of preclinical AI drug discovery? A1: Overfitting occurs when a machine learning model learns not only the genuine relationships within the preclinical training data (e.g., true structure-activity relationships) but also the noise and random fluctuations specific to that dataset [7]. The model becomes like a student who memorizes textbook examples but cannot solve new problems. It will perform well on its training data but fail to make accurate predictions on new chemical compounds, different protein targets, or unseen experimental data [8] [10]. This is a critical failure mode, as it can lead to the selection of non-viable drug candidates that waste vast resources in subsequent clinical trials [1].

Q2: Why is training length so critical for preventing overfitting? A2: Training length is a critical variable because it directly controls how much the model "learns" from the training data. Initially, the model learns the dominant, generalizable patterns. With prolonged training, it starts to memorize the idiosyncrasies and noise in the specific training set [10]. This is analogous to a decision tree that, if allowed to grow too deep (a form of prolonged training), will create a specific leaf for every single data point, perfectly fitting the training data but failing on new data [7]. Therefore, optimizing the training duration via early stopping is a fundamental defense against overfitting.

Q3: My model has a high AUC on the test set. Can it still be overfit? A3: Yes, it is possible. A high Area Under the Curve (AUC) on a static test set is a good sign, but it does not guarantee robustness. The model may still be overfit if:

- The test set is not truly representative of the broader chemical or biological space you intend to explore.

- The relationship between input and output variables changes over time (a phenomenon known as "concept drift") [4].

- The model exhibits high performance on your test set but then makes "terrible predictions on real-world data" due to unforeseen feedback loops or distribution shifts [8].

Continuous validation on new, external datasets is necessary to be confident the model has not overfit [4].

Q4: How does the STAR framework relate to overfitting in AI models? A4: The STAR (Structure–Tissue Exposure/Selectivity–Activity Relationship) framework emphasizes a balanced approach to drug optimization, considering not just a compound's potency but also its tissue exposure and selectivity [1]. An overfit AI model used for preclinical prediction would fail to capture this balance. For instance, a model overfit to purely in vitro potency data (Class II drugs in the STAR taxonomy) might consistently select for highly potent compounds that fail in vivo due to poor tissue exposure or high toxicity. A well-generalized model, trained optimally and not overfit, is necessary to accurately predict the complex, multi-faceted relationships required for successful Class I drug candidates as defined by STAR [1].

Experimental Protocols & Workflows

Protocol: K-Fold Cross-Validation for Robust Model Validation

Purpose: To obtain a reliable estimate of model performance and mitigate the risk of overfitting to a particular data split.

Methodology:

- Data Preparation: Randomly shuffle your dataset and split it into K equally sized folds (common values for K are 5 or 10).

- Iterative Training and Validation: For each iteration i (from 1 to K):

  - Use fold i as the validation set.

  - Use the remaining K-1 folds as the training set.

  - Train a new model from scratch on the training set.

  - Evaluate the model on the validation set (fold i) and record the performance metric (e.g., MSE, CI).

- Performance Calculation: After K iterations, average the performance scores from all K validation folds. This average score is a more robust indicator of how your model will generalize than a single train-validation split [10].
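The K-fold procedure can be sketched with the standard library alone. The names `k_fold_indices`, `cross_validate`, and the `train_and_score` callback are assumptions for illustration; the callback is expected to train a fresh model on the training indices and return a score on the validation indices.

```python
import random


def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_indices, val_indices) for each of the K folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # K folds of near-equal size
    for i in range(k):
        val_idx = folds[i]
        train_idx = [j for m, fold in enumerate(folds) if m != i for j in fold]
        yield train_idx, val_idx


def cross_validate(n_samples, k, train_and_score, seed=0):
    """Average the validation score over all K folds."""
    scores = [train_and_score(tr, va) for tr, va in k_fold_indices(n_samples, k, seed)]
    return sum(scores) / len(scores), scores


# Toy callback: a real one would fit a model on `train_idx` and return,
# for example, the mean squared error on `val_idx`.
def dummy_score(train_idx, val_idx):
    return len(val_idx) / (len(train_idx) + len(val_idx))


mean_score, fold_scores = cross_validate(n_samples=50, k=5, train_and_score=dummy_score)
print(mean_score, fold_scores)
```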

Figure: K-Fold Cross-Validation Workflow

Protocol: Early Stopping with Patience

Purpose: To automatically determine the optimal number of training iterations that yields the best model without overfitting.

Methodology:

- Data Partitioning: Split data into training and validation sets. A hold-out test set should be reserved for final evaluation.

- Setup: Initialize the best validation loss to infinity and a patience counter to zero. Define a patience value p.

- Iterative Training:

  - Train for one epoch on the training set.

  - Evaluate the model on the validation set.

  - If the validation loss improves, save the model state and reset the patience counter.

  - If the validation loss does not improve, increment the patience counter.

- Termination: If the patience counter reaches p, stop training and reload the best-saved model.

Figure: Early Stopping with Patience Logic

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Managing Overfitting

| Tool / Technique | Function & Purpose | Role in Preventing Overfitting |
|---|---|---|
| Validation Set | A subset of data not used for training, but for evaluating model performance during training. | Serves as a proxy for unseen data to detect when the model starts overfitting to the training set [8]. |
| K-Fold Cross-Validation | A resampling procedure used to evaluate a model on limited data. | Provides a robust performance estimate by ensuring the model is validated on different data partitions, reducing variance [10]. |
| L1 / L2 Regularization | Mathematical techniques that add a penalty to the loss function based on model coefficient size. | Shrinks model coefficients, effectively simplifying the model and reducing its tendency to fit noise [10]. |
| Data Augmentation Libraries | Software (e.g., Augmentor, imgaug for images; SMOTE for tabular data) to create modified copies of existing data. | Increases the effective size and diversity of the training set, helping the model learn more generalizable features [10]. |
| Automated Early Stopping | A callback in ML frameworks (e.g., the EarlyStopping callback in Keras/TensorFlow or in PyTorch Lightning) that monitors a metric and stops training when it stops improving. | Automates the optimization of training length, preventing the model from training for too many epochs [10]. |

Frequently Asked Questions (FAQs)

Q1: What is early stopping and how does it function as a regularization method? Early stopping halts the training process of a model before it has fully converged to minimize the training error. This prevents the model from overfitting to the noise and specific details of the training data. It acts as an implicit regularization technique by constraining the optimization path, effectively limiting the complexity of the learned model and encouraging simpler solutions that generalize better to unseen data [11] [12]. In deep learning, it is a crucial method to address overfitting, especially in complex architectures like deep neural networks [13].

Q2: Why is early stopping particularly important for deep neural networks and complex models like the Deep Image Prior? Deep neural networks have a high capacity to memorize training data, making them highly susceptible to overfitting. The Deep Image Prior (DIP) exemplifies this problem with a "semi-convergence" behavior: image reconstruction quality improves initially but then degrades as the network starts to overfit the degraded input data. Determining the optimal stopping point is critical, as stopping too late corrupts the reconstruction, and finding this point often requires numerous computationally expensive trials [14].

Q3: What are the key differences between early stopping and other regularization techniques like dropout? While both aim to prevent overfitting, they operate differently. Early stopping is a procedural method that controls training time, whereas dropout is an architectural method that randomly "drops" neurons during training to prevent complex co-adaptations. Early stopping regulates the number of learning iterations, while dropout actively thins the network layers during each training step [13]. The choice between them depends on the specific problem and network architecture.
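To make the contrast concrete, the sketch below shows what dropout does to one layer's activations during a single training step. It uses the common "inverted dropout" scaling, which is an assumption here since the source does not specify a variant; the function name and the example values are also illustrative.

```python
import random


def dropout(activations, drop_prob, rng, training=True):
    """Inverted dropout: zero each unit with probability `drop_prob` during
    training and rescale the survivors so the expected activation is unchanged.
    At inference time the activations pass through untouched."""
    if not 0.0 <= drop_prob < 1.0:
        raise ValueError("drop_prob must be in [0, 1)")
    if not training or drop_prob == 0.0:
        return list(activations)
    keep_prob = 1.0 - drop_prob
    return [a / keep_prob if rng.random() < keep_prob else 0.0 for a in activations]


rng = random.Random(0)
layer_output = [0.5, 1.0, 1.5, 2.0]

print(dropout(layer_output, drop_prob=0.5, rng=rng))                  # training step
print(dropout(layer_output, drop_prob=0.5, rng=rng, training=False))  # inference
```

Early stopping, by contrast, leaves every forward pass unchanged and only decides how many training epochs are run, which is why the two techniques can be combined.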

Q4: What are the primary risks of stopping a trial or training process too early? The main risk is overestimating the treatment effect or model performance. Interim results can be at a "random high," and stopping based on this can lead to conclusions that do not hold once more data is collected. This is a significant concern in clinical trials, where early stopping for benefit based on a small number of events can lead to the adoption of ineffective or unsafe treatments. The "play of chance" is more pronounced with less data [15]. In computational tasks, stopping too early might mean the model has not yet captured the underlying data patterns.

Q5: How can I determine the optimal early stopping point in a computational experiment like DIP without a ground truth? Several automated strategies exist. One approach is to use a no-reference image quality metric, such as a modified version of the BRISQUE metric. This method tracks the quality of the output without needing the original, clean image, aiming to estimate the peak of the performance curve (e.g., PSNR) [14]. Another strategy involves performance prediction, where a predictor is trained to identify the best hyperparameter configurations that yield good results within a fixed, limited number of iterations [14].

Troubleshooting Guides

Issue 1: Model Performance Exhibits Semi-Convergence

Problem: During training, your model's performance on a validation set initially improves, reaches a peak, and then begins to degrade, indicating overfitting.

Diagnosis: This is the classic sign of semi-convergence, a common issue in models like the Deep Image Prior [14] and deep neural networks [13]. The model is starting to learn the noise in the training data.

Solution:

- Implement a Validation Set: Use a held-out validation set to monitor performance metrics (e.g., loss, accuracy) after each epoch or iteration.

- Define a Stopping Criterion: A common method is "patience," where you stop training after the validation metric has not improved for a pre-defined number of epochs.

- Leverage No-Reference Metrics: If a validation set with ground truth is unavailable (e.g., in image denoising), employ no-reference quality metrics like BRISQUE to estimate the best stopping point [14].

Issue 2: Inconsistent Early Stopping Behavior Across Different Datasets or Experiments

Problem: The optimal early stopping point varies significantly when you change the dataset or the specific task, making it difficult to establish a robust protocol.

Diagnosis: The generalization capability of the early-stopped model is sensitive to dataset parameters. As noted in attractor neural network research, varying dataset parameters can lead to different regimes (success, failure, overfitting) [11].

Solution:

- Hyperparameter Optimization: Treat the early stopping criterion (e.g., "patience" value) as a hyperparameter and tune it for your specific application.

- Adopt a NAS-based Strategy: For scenarios with limited computational time, use a Neural Architecture Search (NAS) approach. This can find optimal network and training configurations that deliver high performance within a fixed, limited number of iterations, making the process more predictable [14].

Issue 3: Early Stopping Leads to Underfitting

Problem: After implementing early stopping, the model's performance is poor on both training and validation sets, suggesting it hasn't learned enough.

Diagnosis: The stopping rule is too aggressive, halting the training process before the model has had a chance to capture the underlying trends in the data.

Solution:

- Adjust the Stopping Criterion: Increase the "patience" parameter to allow more epochs without improvement before stopping.

- Re-evaluate the Training Process: Ensure your model has sufficient capacity (e.g., network size) and that your learning rate is set appropriately. A learning rate that is too high or too low can prevent proper convergence.

- Cross-Validation: Use k-fold cross-validation to get a more reliable estimate of the optimal stopping point across different data splits.
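The effect of an overly aggressive stopping rule can be seen by replaying one recorded validation curve under different patience values. The function name and the loss values below are hypothetical; the curve includes a brief early plateau of the kind that can trigger a premature stop.

```python
def replay_with_patience(val_losses, patience):
    """Return (stop_epoch, best_epoch, best_loss) for a recorded validation curve."""
    best_loss, best_epoch, counter = float("inf"), None, 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch, counter = loss, epoch, 0
        else:
            counter += 1
            if counter >= patience:
                return epoch, best_epoch, best_loss
    return len(val_losses) - 1, best_epoch, best_loss  # ran out of epochs


# Hypothetical curve: small bump at epochs 2-3, true minimum at epoch 6.
val_curve = [1.00, 0.80, 0.82, 0.81, 0.60, 0.45, 0.40, 0.42, 0.44, 0.47, 0.50]

for patience in (1, 3):
    stop, best, loss = replay_with_patience(val_curve, patience)
    print(f"patience={patience}: stopped at epoch {stop}, best epoch {best}, best loss {loss}")
```

With a patience of 1 the run halts on the early bump and keeps a model with twice the validation loss, which is the underfitting pattern described in this issue.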

Experimental Data & Protocols

| Domain / Application | Model / Method | Key Metric | Performance Impact of Early Stopping | Citation |
|---|---|---|---|---|
| Transcriptomics (STAR Aligner) | STAR RNA-seq Alignment Workflow | Total Alignment Time | 23% reduction in total alignment time. | [16] |
| Attractor Neural Networks | Gradient Descent on Regularized Loss | Generalization & Overfitting | Optimal interaction matrices revised via unlearning; avoids overfitting. | [11] |
| Deep Image Prior (Image Denoising) | U-Net with Early Stopping | Image Quality (e.g., PSNR) | Prevents semi-convergence; automatic stopping criteria (NAS, BRISQUE) yield high-quality reconstructions. | [14] |
| Deep Learning (Sparse Regression) | Deep-N Diagonal Linear Networks | Sparse Recovery | Early stopping is crucial for convergence to a sparse model (Implicit Sparse Regularization). | [12] |
| Clinical Trials (Single-Arm Studies) | Unified Exact Design | Probability of Stopping | Provides exact probabilities for early stopping due to efficacy, futility, or toxicity. | [17] |

Table 2: Essential Research Reagent Solutions for Early Stopping Experiments

| Reagent / Tool | Function / Purpose | Example in Context |
|---|---|---|
| Validation Set | A held-out dataset used to monitor model performance during training and to trigger the early stopping rule. | Used universally in machine learning to gauge generalization and prevent overfitting. |
| No-Reference Image Quality Metric (e.g., BRISQUE) | Assesses the quality of a reconstructed image without needing the original ground truth image. | Critical for determining the early stopping point in Deep Image Prior applications [14]. |
| Performance Predictor | A model that predicts the final performance of a network configuration based on its early training behavior. | Employed in NAS-based early stopping to select hyperparameters for Deep Image Prior [14]. |
| Recursive Probability Calculator | Computes the exact probability of stopping a trial early based on pre-specified decision rules. | Used in clinical trial designs for monitoring multiple endpoints (efficacy, futility, toxicity) [17]. |
| Hyperparameter Search Space | The defined set of possible architectural and optimization parameters for a model. | Explored via NAS to find configurations that perform well within a fixed iteration budget [14]. |

Methodologies for Key Experiments

Protocol 1: Implementing NAS-Based Early Stopping for Deep Image Prior This protocol aims to find an optimal network configuration that performs well within a fixed, limited number of iterations, thus acting as an automatic early stopping mechanism [14].

- Define Search Space: Specify the hyperparameters to optimize, including architectural ones (e.g., number of layers, channels) and optimization ones (e.g., learning rate, regularization parameter).

- Set Iteration Budget: Fix the maximum number of training iterations (N).

- Perform Random Search: Sample different hyperparameter configurations from the search space.

- Train Performance Predictor: For each configuration, train the network for a very small number of initial iterations. Use these initial results to train a predictor that can forecast the final performance after N iterations.

- Select Best Configuration: Use the trained performance predictor to identify the hyperparameter set expected to yield the best results within the N-iteration budget.

- Execute and Validate: Train the network with the selected configuration for N iterations and evaluate the output.

Protocol 2: Early Stopping for the STAR Aligner in Transcriptomics

This protocol outlines the steps to achieve a significant reduction in alignment time for RNA-seq data using early stopping optimization [16].

- Pipeline Setup: Implement a cloud-native architecture for the transcriptomics pipeline (SRA data download with SRA-Toolkit, alignment with STAR, normalization).

- Enable Early Stopping: Add the early stopping check to the STAR alignment workflow. The check monitors alignment progress and halts a job once the stopping criterion is triggered, for example when the interim mapping rate falls below a minimum quality threshold.

- Resource Configuration: Select cost-efficient cloud instance types (e.g., on AWS) and verify the applicability of using spot instances for further cost reduction.

- Execute and Measure: Run the pipeline on the target RNA-sequencing dataset and measure the total alignment time compared to a baseline run without early stopping. The reported result is a 23% reduction in total alignment time [16].

Workflow and Relationship Diagrams

Early Stopping Decision Logic

Deep Image Prior Semi-Convergence

Troubleshooting Guide: STAR Early Stopping Optimization

Frequently Asked Questions

Q1: What is the expected time savings from implementing early stopping in my STAR alignment workflow?

Early stopping can reduce total alignment time by approximately 23% [16]. In a specific analysis of 1,000 alignment jobs, this optimization allowed for the early termination of 38 alignments, saving 30.4 hours out of a total 155.8 hours of processing [18].
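As a quick arithmetic check on the hours reported in [18], the fraction of compute time saved works out as follows:

```latex
\[
\text{fraction saved} \;=\; \frac{30.4\ \text{h}}{155.8\ \text{h}} \;\approx\; 0.195 \;=\; 19.5\%
\]
```

This is somewhat lower than the approximately 23% figure from [16]. The two numbers come from different sources, so they should be read as separate measurements rather than as one result stated twice.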

Q2: At what point can I safely terminate an alignment job without compromising data quality?

Analysis of Log.progress.out files indicates that processing at least 10% of the total number of reads provides sufficient data to determine if the alignment will meet the minimum 30% mapping rate threshold [18]. This threshold effectively identifies single-cell sequencing data that typically have incomplete mRNA coverage.

Q3: How does the Ensembl genome release version impact alignment performance?

Using newer Ensembl genome releases significantly improves performance. The table below compares key metrics between releases 108 and 111:

Table: Ensembl Genome Release Performance Comparison [18]

| Metric | Release 108 | Release 111 | Improvement |

|---|---|---|---|

| Average Execution Time | Baseline | >12x faster | >12x speedup |

| Index Size | 85 GiB | 29.5 GiB | 65% reduction |

| Computational Requirements | High | Significantly reduced | Enables smaller, cheaper instances |

Q4: Which instance types are most cost-effective for STAR alignment in cloud environments?

While specific instance recommendations depend on genome size and data volume, r6a.4xlarge instances (16 vCPU, 128GB RAM) have been successfully used for human genome alignment [18]. The reduced index size in newer Ensembl releases enables the use of smaller, more cost-effective instances.

Common Error Resolution

Problem: Inconsistent mapping rates despite early stopping implementation

Solution: Verify that your Log.progress.out monitoring script correctly interprets the percent of mapped reads. Ensure you're using the precise formula: (number of mapped reads / total number of reads) * 100. The 10% sampling threshold must be calculated based on total read count, not processing time [18].
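The formula and the read-count-based checkpoint described above can be sketched as follows. This is a minimal illustration, not the implementation from [18]: the function names are hypothetical, and it assumes that "total number of reads" in the formula means the reads processed so far at the moment of the check, with the job's overall read count known in advance.

```python
from typing import Optional

CHECKPOINT_PERCENT = 10    # decide once 10% of all reads are processed [18]
MIN_MAPPING_RATE = 30.0    # minimum acceptable mapping rate, in percent [18]


def mapping_rate(mapped_reads: int, processed_reads: int) -> float:
    """Percent of mapped reads: (mapped / processed) * 100."""
    if processed_reads <= 0:
        raise ValueError("processed_reads must be positive")
    return mapped_reads / processed_reads * 100.0


def early_stop_decision(mapped_reads: int, processed_reads: int,
                        total_reads: int) -> Optional[bool]:
    """Return None before the checkpoint, True to terminate, False to continue.

    The checkpoint is defined on read counts, not on elapsed time.
    """
    # Integer comparison avoids floating-point edge cases at exactly 10%.
    if processed_reads * 100 < CHECKPOINT_PERCENT * total_reads:
        return None
    return mapping_rate(mapped_reads, processed_reads) < MIN_MAPPING_RATE


# Illustrative numbers only: 100,000 reads in total.
too_early = early_stop_decision(100, 1_000, 100_000)       # before checkpoint
low_yield = early_stop_decision(2_000, 10_000, 100_000)    # 20% mapped
healthy = early_stop_decision(8_000, 10_000, 100_000)      # 80% mapped
```

Returning `None` before the checkpoint keeps "no decision yet" distinct from "continue", which makes it harder for a monitoring script to act on an unstable early estimate.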

Problem: Performance degradation after switching to newer Ensembl genome

Solution: This may indicate using the wrong genome type. Confirm you're using the "toplevel" genome type rather than "primary_assembly" to ensure all contigs and scaffolds are included. The "toplevel" genome in release 111 shows significant performance improvements while maintaining comparable mapping rates (difference <1%) [18].

Experimental Protocol: Validating Early Stopping Threshold

Methodology

This protocol establishes the experimental procedure for determining the optimal early stopping point in STAR alignment.

Objective: To determine the minimum read processing percentage that accurately predicts final mapping rate for early stopping decisions.

Materials:

- STAR aligner version 2.7.10b [16] [18]

- RNA-seq datasets (minimum 1,000 samples for statistical significance) [18]

- Computational resources (minimum 128GB RAM for human genome alignment) [18]

- Script for parsing Log.progress.out files

Procedure:

- Execute STAR alignment with the --quantMode GeneCounts option [18]

- Configure output to generate Log.progress.out with progress statistics

- For each sample, record mapping percentage at 5%, 10%, 15%, 20%, and 25% of total reads processed

- Compare interim mapping rates to final mapping rate after complete processing

- Calculate correlation coefficients between interim and final rates across all samples

- Determine the earliest point where mapping rate prediction achieves >95% accuracy

Validation Metrics:

- False positive rate (samples incorrectly terminated that would have achieved >30% mapping)

- False negative rate (samples incorrectly continued that would have failed mapping threshold)

- Total time savings across the dataset

- Computational resource utilization efficiency
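The comparison of interim and final mapping rates can be sketched as below. This is an illustration under stated assumptions: the sample values are made up, the function names are hypothetical, a sample counts as viable when its final rate is at least the threshold, and each error rate is normalized by the number of samples in the corresponding true class.

```python
from math import sqrt
from typing import Dict, Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def evaluate_checkpoint(interim: Sequence[float], final: Sequence[float],
                        threshold: float = 30.0) -> Dict[str, float]:
    """Score one checkpoint (e.g. 10% of reads) against the final outcome."""
    false_pos = false_neg = viable = failing = 0
    for interim_rate, final_rate in zip(interim, final):
        terminated = interim_rate < threshold
        if final_rate >= threshold:      # sample would have passed
            viable += 1
            false_pos += terminated      # wrongly terminated
        else:                            # sample would have failed
            failing += 1
            false_neg += not terminated  # wrongly continued
    return {
        "false_positive_rate": false_pos / viable if viable else 0.0,
        "false_negative_rate": false_neg / failing if failing else 0.0,
        "pearson_r": pearson(interim, final),
    }


# Made-up mapping rates (percent) for five samples.
interim_rates = [85.0, 12.0, 28.0, 70.0, 33.0]
final_rates = [86.0, 10.0, 35.0, 72.0, 25.0]
metrics = evaluate_checkpoint(interim_rates, final_rates)
```

Running the same function on the rates recorded at 5%, 10%, 15%, 20%, and 25% of reads gives one row of metrics per candidate checkpoint, from which the earliest acceptable one can be chosen.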

Research Reagent Solutions

Table: Essential Materials for STAR Alignment Optimization

| Item | Function | Specification |

|---|---|---|

| STAR Aligner | Sequence alignment | Version 2.7.10b or later [16] [18] |

| Ensembl Genome | Reference for alignment | "Toplevel" genome type, Release 110 or newer [18] |

| SRA Toolkit | Data retrieval and conversion | Includes prefetch and fasterq-dump utilities [16] [18] |

| Computational Instance | Alignment processing | High memory instances (128GB+ RAM) [18] |

| Progress Monitoring Script | Early stopping implementation | Parses Log.progress.out for mapping percentages [18] |

Workflow Visualization

STAR Early Stopping Decision Logic

STAR Alignment Optimization Workflow

The integration of Artificial Intelligence (AI) is fundamentally restructuring the foundational pillars of drug discovery: target validation and hit-to-lead (H2L) acceleration. By 2025, AI has evolved from an experimental curiosity to a core platform technology, driving a transformative shift towards more predictive and efficient R&D workflows [19] [20]. This shift is critical for overcoming the high failure rates that have long plagued the industry, where approximately 90% of clinical drug development fails, often due to inadequate biological validation or an overemphasis on potency at the expense of tissue exposure and selectivity [21]. The emergence of frameworks like Structure–Tissue exposure/selectivity–Activity Relationship (STAR) underscores the need for a more holistic approach to drug optimization, one that AI is uniquely positioned to enable [21]. This technical support article explores the current AI-driven landscape, providing troubleshooting guidance and methodological insights for researchers navigating this rapidly evolving field.

AI in Target Validation: Troubleshooting Guides and FAQs

Target validation is the critical first step in ensuring a drug candidate has a sound mechanistic basis. AI technologies are enhancing this phase by improving the predictability and physiological relevance of validation studies.

Key Challenges and AI-Driven Solutions

| Challenge | Traditional Approach Limitations | AI-Enhanced Solution | Key AI Technologies |

|---|---|---|---|

| Mechanistic Uncertainty | Over-reliance on simplified biochemical assays; high translational failure [19]. | Direct target engagement analysis in physiologically relevant systems. | CETSA (Cellular Thermal Shift Assay) combined with AI-based analysis of high-resolution mass spectrometry data [19]. |

| Target Selection & Druggability | Educated guesses based on limited data; many targets fail late due to unforeseen complications [22]. | Multi-omics data integration and network analysis for causal target prioritization. | Knowledge graphs, graph neural networks, multi-task learning models integrating genomic, transcriptomic, and clinical data [20] [23]. |

| Predicting Tissue-Specific Effects | Difficult to model in early stages; contributes to clinical failure due to toxicity or lack of efficacy [21]. | Early prediction of tissue exposure and selectivity. | STAR-informed AI models that balance potency (SAR) with tissue exposure/selectivity (STR) for candidate classification [21]. |

Frequently Asked Questions (FAQs)

Q1: Our AI platform identified a novel target, but wet-lab validation failed. What could have gone wrong?

A: This often stems from a disconnect between algorithmic prediction and biological plausibility.

- Potential Cause 1: Data Lineage and Bias. The AI model may have been trained on biased or low-quality datasets, leading to overfitting or the identification of targets that are not causally linked to the disease.

- Troubleshooting: Implement rigorous lineage tracking. Every target hypothesis should be traceable back to the source datasets, model versions, and preprocessing steps. Perform prospective validation by pre-registering your target hypothesis and wet-lab validation plan before the AI analysis begins to avoid cherry-picking [23].

- Potential Cause 2: Lack of Translational Relevance. The target was validated in a system that does not reflect the human disease physiology.

- Troubleshooting: Integrate human-centric data early. Use AI to analyze patient-derived samples, real-world data (RWD), and multi-omics datasets. Employ functionally relevant assays like CETSA in intact cells or patient-derived tissues to confirm target engagement in a biologically complex environment [19] [23].

Q2: How can we use AI to better predict the clinical translatability of a target earlier in the process?

A: Focus on building models that incorporate Structure–Tissue exposure/selectivity–Activity Relationship (STAR) principles early in target validation [21].

- Methodology: Instead of focusing solely on a compound's potency and specificity (Structure-Activity Relationship, or SAR), use AI models to also predict its tissue exposure and selectivity (Structure-Tissue exposure/selectivity Relationship, or STR). This helps classify drug candidates into categories (Class I-IV) based on their predicted clinical dose, efficacy, and toxicity balance, allowing for earlier go/no-go decisions on targets that may lead to poorly balanced compounds [21].
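A minimal sketch of the Class I-IV assignment is shown below. It assumes the usual reading of STAR, in which the class is determined by whether potency/specificity and tissue exposure/selectivity are each high or low; verify the mapping against [21] before relying on it. The score scale, the cut-off values, and all names are hypothetical and not taken from the source.

```python
from dataclasses import dataclass

# Hypothetical cut-offs on normalized 0-1 model scores (assumptions).
POTENCY_CUTOFF = 0.5
TISSUE_SELECTIVITY_CUTOFF = 0.5


@dataclass
class Candidate:
    name: str
    potency_score: float             # predicted potency/specificity (SAR axis)
    tissue_selectivity_score: float  # predicted tissue exposure/selectivity (STR axis)


def star_class(candidate: Candidate) -> str:
    """Assign a STAR class from the two predicted axes."""
    high_potency = candidate.potency_score >= POTENCY_CUTOFF
    high_selectivity = candidate.tissue_selectivity_score >= TISSUE_SELECTIVITY_CUTOFF
    if high_potency and high_selectivity:
        return "I"    # both axes high
    if high_potency:
        return "II"   # potent, but low tissue exposure/selectivity
    if high_selectivity:
        return "III"  # lower potency, high tissue exposure/selectivity
    return "IV"       # both axes low


candidates = [
    Candidate("cmpd-A", 0.9, 0.8),
    Candidate("cmpd-B", 0.9, 0.2),
    Candidate("cmpd-C", 0.3, 0.8),
    Candidate("cmpd-D", 0.3, 0.2),
]
classes = [star_class(c) for c in candidates]
```

In practice the two scores would come from separate SAR and STR prediction models, and the cut-offs would need calibration against compounds with known clinical outcomes.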

Experimental Protocol: AI-Enhanced Target Engagement Validation using CETSA

Objective: To confirm direct binding of a drug candidate to its intended target in a physiologically relevant cellular context, providing quantitative data for AI model training.

Materials & Reagents:

- Intact cells (e.g., primary cells or relevant cell lines)

- Drug candidate(s) of varying concentrations

- CETSA buffer and lysis reagents

- High-Resolution Mass Spectrometry (HR-MS) system

- AI/ML data analysis platform (e.g., for thermal shift curve analysis and pattern recognition)

Methodology:

- Treatment: Treat separate aliquots of intact cells with the drug candidate across a range of concentrations and a vehicle control.

- Heating: Subject each aliquot to a gradient of heating temperatures (e.g., from 37°C to 65°C).

- Lysis and Centrifugation: Lyse the heated cells and separate the soluble (stable) protein fraction from the insoluble (aggregated) fraction by centrifugation.

- Target Quantification: Quantify the remaining soluble target protein in each sample using a specific detection method, such as HR-MS, as demonstrated in a 2024 study validating engagement with DPP9 in rat tissue [19].

- Data Analysis and AI Integration:

- Generate thermal melt curves for the target protein with and without the drug.

- A positive engagement is indicated by a rightward shift (stabilization) of the melt curve in drug-treated samples.

- Feed the dose- and temperature-dependent stabilization data into AI models. These models can learn the signature of true positive engagement, helping to prioritize compounds for further development and refine future prediction algorithms [19].
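The melt-curve shift in the analysis step can be quantified with a simple calculation like the one below. It is a sketch under assumptions: soluble fractions are normalized to the lowest temperature, the melting temperature is taken as the point where the curve crosses 0.5 (found by linear interpolation rather than a fitted sigmoid), and the data values are invented for illustration.

```python
from typing import Sequence


def melting_temperature(temps: Sequence[float],
                        soluble_fraction: Sequence[float]) -> float:
    """Temperature at which the soluble fraction first drops below 0.5."""
    points = list(zip(temps, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= 0.5 > f1:
            # Linear interpolation between the two bracketing measurements.
            return t0 + (f0 - 0.5) * (t1 - t0) / (f0 - f1)
    raise ValueError("melt curve does not cross 0.5 in the measured range")


# Invented example data: temperatures in degrees C, normalized soluble fraction.
temps = [37, 41, 45, 49, 53, 57, 61, 65]
vehicle = [1.00, 0.98, 0.90, 0.60, 0.30, 0.10, 0.05, 0.02]
treated = [1.00, 0.99, 0.96, 0.85, 0.60, 0.30, 0.10, 0.04]

tm_vehicle = melting_temperature(temps, vehicle)
tm_treated = melting_temperature(temps, treated)
delta_tm = tm_treated - tm_vehicle  # positive value = rightward shift (stabilization)
```

A table of `delta_tm` values across drug concentrations is one plausible form for the dose- and temperature-dependent features passed to a downstream model.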

AI in Hit-to-Lead Acceleration: Troubleshooting Guides and FAQs

The hit-to-lead (H2L) phase is being radically compressed through the integration of AI, automation, and high-quality experimental data.

Key AI Applications and Impact in H2L

| H2L Stage | Traditional Bottleneck | AI Acceleration | Demonstrated Outcome |

|---|---|---|---|

| Hit Triage | High false-positive rates from HTS; resource-intensive confirmation [24]. | AI-powered analysis of orthogonal assay data (e.g., IC₅₀, selectivity) to prioritize true hits. | Enables focus on tractable, high-value series, reducing wasted chemistry resources [24]. |

| Lead Generation & Optimization | Slow, iterative design-make-test-analyze (DMTA) cycles; synthetic constraints [19]. | Generative AI for de novo design of novel scaffolds and analogues with optimized properties. | Deep graph networks generated 26,000+ virtual analogs, achieving a 4,500-fold potency improvement in MAGL inhibitors; AI-driven design cycles reported ~70% faster [19] [20]. |

| Property Prediction | Late-stage attrition due to poor ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiles [21]. | Multi-task ML models predicting potency, selectivity, hERG risk, CYP inhibition, and PK parameters simultaneously. | Allows for filtering before synthesis, reducing wet-lab iterations by ~1/3 and preventing advancement of toxic chemotypes [23]. |

Frequently Asked Questions (FAQs)

Q1: Our AI model for predicting compound potency keeps failing during wet-lab validation. How can we improve model accuracy?

A: The most common cause is poor quality or inconsistent training data.

- Potential Cause: "Garbage In, Garbage Out." AI models are exceptionally sensitive to the quality of their training data. No amount of computational sophistication can correct for assay artifacts, poor signal-to-background ratios, or inconsistent plate performance [24].

- Troubleshooting: Invest in robust, mechanistically faithful biochemical assays. Ensure assays are validated with industry-standard performance metrics (e.g., Z′ > 0.7, high signal window). An error of even two-fold in IC₅₀ values can mislead SAR models and result in wasted resources. Use orthogonal assay formats for hit confirmation to eliminate false positives before feeding data into AI models [24].

Q2: How can we effectively integrate generative AI into our existing medicinal chemistry workflow?

A: Treat generative AI as a hypothesis generator that operates within a closed-loop system.

- Implementation Strategy:

- Foundation: Start with high-quality, validated biochemical data from your confirmed hits (e.g., IC₅₀, selectivity data) [24].

- Generation: Use generative models (VAEs, GANs, diffusion models) to propose novel analogues conditioned on improving desired properties (potency, solubility) while penalizing undesired traits (toxicity, synthetic complexity) [20] [23].

- Validation: Apply automatic chemical filters (e.g., for PAINS, reactive liabilities) and retrosynthetic analysis to prioritize synthetically feasible compounds [23].

- Closing the Loop: Synthesize and test the top AI-proposed compounds. Feed the new experimental results back into the model to retrain and refine future generations of designs, creating an iterative "measure → model → make → test → learn" cycle [24].

Experimental Protocol: Integrated AI-H2L Workflow for SAR Expansion

Objective: To rapidly establish Structure-Activity Relationships (SAR) and identify potent lead compounds using a closed-loop AI-driven workflow.

Materials & Reagents:

- Validated biochemical assay platform (e.g., Transcreener for kinases, AptaFluor for methyltransferases) [24]

- Starting hit compound(s)

- Facilities for automated or manual synthesis

- AI/ML platform for generative chemistry and property prediction (e.g., similar to Exscientia's "Centaur Chemist" approach [20])

- Robotic liquid handlers for assay miniaturization (optional but recommended)

Methodology:

- Data Foundation: Generate robust IC₅₀ and selectivity data for the initial hit series using a high-quality, quantitative biochemical assay. This dataset forms the foundational truth for the AI model [24].

- Generative Design: Input the validated data and a target product profile (e.g., IC₅₀ < 100 nM, low hERG risk) into the generative AI model. The model will then propose thousands of virtual analogues.

- In Silico Triage: Filter the generated compounds using AI-driven predictive models for ADMET properties and retrosynthetic feasibility to select the most promising candidates for synthesis [23].

- Synthesis & Testing: Synthesize the top-ranked compounds. Test them in the same validated biochemical assay to determine experimental potency.

- Iterative Learning: Feed the new experimental data from step 4 back into the generative model. This retraining step allows the AI to learn from both successful and unsuccessful designs, improving its predictive accuracy with each cycle [24]. This process compresses the traditional H2L timeline from months to weeks [19].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential tools and platforms that form the backbone of modern, AI-integrated discovery workflows.

| Research Reagent / Platform | Function in AI-Driven Workflow |

|---|---|

| CETSA (Cellular Thermal Shift Assay) | Provides direct, quantitative evidence of target engagement in intact cells and tissues, closing the gap between biochemical potency and cellular efficacy. Critical for validating AI-predicted targets and mechanisms [19]. |

| Transcreener & AptaFluor Assays | Homogeneous, high-throughput biochemical assays that directly measure enzymatic products (e.g., ADP, GDP). They provide the high-quality, mechanistically relevant data required to train and validate AI/ML models for hit triage and SAR analysis [24]. |

| Generative Chemistry Platforms (e.g., Exscientia's DesignStudio, NVIDIA BioNeMo) | AI engines that use deep learning to generate novel molecular structures de novo or optimize existing scaffolds against multiple objectives (potency, ADMET, synthesizability) [20] [23]. |

| Knowledge Graph Platforms (e.g., BenevolentAI) | Integrate vast amounts of structured and unstructured data from literature, omics, and clinical databases to uncover hidden relationships between genes, targets, and diseases, aiding in novel target identification and indication expansion [20]. |

| AlphaFold / AlphaFold3+ | Provides high-accuracy protein structure predictions, enabling structure-based drug design for previously intractable targets and improving the accuracy of molecular docking simulations within AI workflows [23]. |

Workflow and Pathway Visualizations

Diagram 1: AI-Driven Hit-to-Lead Acceleration Cycle

Diagram 2: STAR-Informed Candidate Selection Framework

From Theory to Practice: Implementing Early Stopping in STAR-Driven AI Pipelines

Frequently Asked Questions (FAQs) & Troubleshooting Guides

FAQ 1: Why are three distinct data splits (training, validation, and test) necessary? The three splits serve distinct, critical functions in the model development lifecycle. The training set is used to learn the model's parameters. The validation set provides an unbiased evaluation for hyperparameter tuning and model selection during training. The test set is held out entirely until the very end to provide a single, final, and unbiased assessment of the model's real-world performance [25] [26] [27]. Using only two splits (e.g., train and test) and repeatedly using the test set for tuning decisions causes "peeking," which biases the evaluation and leads to overfitting to the test set [27].

FAQ 2: What is a robust data split ratio? There is no single optimal ratio; it depends on your dataset's size and complexity [26]. Common split ratios for large datasets are 70% training, 15% validation, and 15% test or 80% training, 10% validation, and 10% test [25] [27]. For very large datasets, even smaller percentages (e.g., 98/1/1) can be effective, as 1% may still represent a statistically significant sample [27].

FAQ 3: My dataset is imbalanced. How should I split it? For imbalanced datasets with uneven class representation, use stratified splitting [26] [27]. This technique ensures that the proportion of each class label is preserved across the training, validation, and test sets. For example, if your dataset has 90% "Class A" and 10% "Class B," a stratified split will maintain this 90/10 ratio in all three subsets, preventing bias and ensuring the model is exposed to and evaluated on all classes fairly [26].
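A stratified three-way split can be sketched in plain Python as follows. This is an illustrative implementation with hypothetical names; in a real project a library routine such as scikit-learn's StratifiedShuffleSplit would normally be used instead.

```python
import random
from collections import defaultdict
from typing import Hashable, List, Sequence, Tuple


def stratified_split(labels: Sequence[Hashable],
                     ratios: Tuple[float, float, float] = (0.8, 0.1, 0.1),
                     seed: int = 0) -> Tuple[List[int], List[int], List[int]]:
    """Return (train, validation, test) index lists that preserve class ratios."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for index, label in enumerate(labels):
        by_class[label].append(index)

    train, val, test = [], [], []
    for indices in by_class.values():
        rng.shuffle(indices)  # shuffle within each class
        n_train = round(ratios[0] * len(indices))
        n_val = round(ratios[1] * len(indices))
        train += indices[:n_train]
        val += indices[n_train:n_train + n_val]
        test += indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# 90% "A" and 10% "B", as in the example above.
labels = ["A"] * 180 + ["B"] * 20
train_idx, val_idx, test_idx = stratified_split(labels)
val_b_share = sum(labels[i] == "B" for i in val_idx) / len(val_idx)
```

Splitting each class separately is what guarantees the 90/10 ratio in every subset; a single global shuffle would only preserve it on average.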

FAQ 4: What is data leakage and how do I prevent it in my splits? Data leakage occurs when information from the test set inadvertently influences the model training process [25]. This leads to overly optimistic performance metrics that do not reflect the model's true generalization ability. To prevent it:

- Keep the test set completely separate and untouched until the final evaluation [25] [27].

- Perform all feature engineering and preprocessing (e.g., normalization) based only on the training data, then apply the learned transformations to the validation and test sets without recalculating [25].
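The second rule can be illustrated with a small standardization example for a single numeric feature. The function names and values are hypothetical; the point is only that the mean and standard deviation are computed from the training data and then reused unchanged.

```python
from statistics import mean, pstdev
from typing import List, Sequence, Tuple


def fit_scaler(train_values: Sequence[float]) -> Tuple[float, float]:
    """Learn normalization statistics from the training split only."""
    mu = mean(train_values)
    sigma = pstdev(train_values) or 1.0  # guard against a constant feature
    return mu, sigma


def apply_scaler(values: Sequence[float], mu: float, sigma: float) -> List[float]:
    """Apply previously learned statistics without recalculating them."""
    return [(v - mu) / sigma for v in values]


train = [1.0, 2.0, 3.0, 4.0, 5.0]
validation = [2.5, 3.5]
test = [3.0, 6.0]

mu, sigma = fit_scaler(train)  # never fit on validation or test data
train_scaled = apply_scaler(train, mu, sigma)
validation_scaled = apply_scaler(validation, mu, sigma)
test_scaled = apply_scaler(test, mu, sigma)
```

Fitting the scaler on the full dataset before splitting would let test-set statistics influence the training features, which is exactly the leakage described above.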

FAQ 5: How does the validation set relate to early stopping? The validation set is key to implementing early stopping, a method to halt training before the model overfits. During training, model performance is monitored on the validation set after each epoch. Training is stopped once performance on the validation set stops improving and begins to degrade, indicating the model is starting to overfit to the training data [28]. The model weights from the epoch with the best validation performance are typically saved [28].

Experimental Protocols & Methodologies

Core Data Splitting Strategies

The choice of splitting strategy is critical for a fair and robust evaluation. The following table summarizes key methodologies.

| Strategy | Core Principle | Ideal Use Case | Experimental Protocol |

|---|---|---|---|

| Random Splitting [26] [27] | Data is shuffled and randomly assigned to splits. | Large, balanced datasets where samples are independent and identically distributed. | 1. Shuffle the entire dataset randomly. 2. Allocate samples to train, validation, and test sets based on the chosen ratio (e.g., 70/15/15). |

| Stratified Splitting [26] [27] | Preserves the original class distribution across all splits. | Imbalanced datasets or multi-class classification tasks. | 1. Calculate the proportion of each class in the full dataset. 2. For each split, ensure the sample selection maintains these class proportions. |

| Time-Based Splitting [27] | Respects temporal order; past data trains the model, and future data tests it. | Time-series data (e.g., stock prices, sensor readings). | 1. Sort data chronologically. 2. Use the earliest portion for training (e.g., first 70%), a middle portion for validation (e.g., next 15%), and the latest portion for testing (e.g., last 15%). |

| K-Fold Cross-Validation [26] | Robustly uses data for both training and validation by creating multiple splits. | Small to medium-sized datasets where maximizing data usage is critical. | 1. Randomly split the data into K equal-sized folds (e.g., K=5). 2. For K iterations, train on K-1 folds and validate on the remaining fold. 3. Average the performance across all K trials for a final validation metric. |
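The K-fold procedure in the last row can be sketched with a small index generator. This is an illustrative version with a hypothetical function name; fold sizes differ by at most one sample when the dataset size is not divisible by K.

```python
import random
from typing import Iterator, List, Tuple


def k_fold_indices(n_samples: int, k: int = 5,
                   seed: int = 0) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_indices, validation_indices) for each of the K folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # K near-equal folds
    for held_out in range(k):
        validation = folds[held_out]
        train = [idx for j, fold in enumerate(folds) if j != held_out
                 for idx in fold]
        yield train, validation


splits = list(k_fold_indices(n_samples=10, k=5))
```

Each sample appears in exactly one validation fold, so averaging the K validation scores uses every data point once for evaluation.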

Protocol for Early Stopping with a Validation Set

Early stopping is a form of implicit regularization that halts training to prevent overfitting [28]. The protocol below integrates with the standard training workflow using a validation set.

- Define a Trigger Metric: Choose a metric to monitor on the validation set (e.g., loss, accuracy) [28].

- Set a Patience Parameter: Determine the number of epochs to wait after the last improvement in the validation metric before stopping. A "patience" greater than 1 accounts for noisy/fluctuating validation performance [28].

- Monitor and Compare: After each training epoch, evaluate the model on the validation set.

- Save Checkpoints: Each time the validation metric improves, save a copy of the model weights [28].

- Stop Training: If the validation metric does not improve for 'patience' consecutive epochs, halt the training process.

- Load Best Model: Restore the model weights from the saved checkpoint that achieved the best validation performance.
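The protocol above can be sketched as a small framework-independent helper. The class name, the `min_delta` parameter, and the simulated loss values are assumptions for illustration; validation loss is used as the trigger metric, so lower is better.

```python
import copy
from typing import Any, Optional


class EarlyStopping:
    """Track validation loss, checkpoint on improvement, stop after `patience`."""

    def __init__(self, patience: int = 5, min_delta: float = 0.0) -> None:
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.best_state: Optional[Any] = None
        self.bad_epochs = 0

    def step(self, epoch: int, val_loss: float, state: Any) -> bool:
        """Record one epoch; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.best_state = copy.deepcopy(state)  # save checkpoint
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Simulated validation losses: improvement until epoch 2, then degradation.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.61, 0.65, 0.70]
stopper = EarlyStopping(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    weights = {"epoch": epoch}  # stand-in for real model weights
    if stopper.step(epoch, loss, weights):
        stopped_at = epoch
        break

best_weights = stopper.best_state  # restore the best checkpoint
```

With a patience of 3, training halts three epochs after the last improvement, and the weights restored are those from the best epoch rather than the final one.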

Workflow Visualization: From Data Split to Early Stopping

The following diagram illustrates the logical flow and interaction between the training, validation, and test sets, highlighting the critical role of the validation set in model tuning and early stopping.

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential computational "reagents" and their functions for constructing a robust machine learning training workflow.

| Research Reagent | Function & Purpose |

|---|---|

| Stratified Splitter (e.g., StratifiedShuffleSplit in scikit-learn) | Ensures representative sampling across data splits in class-imbalanced scenarios, preventing biased model evaluation [26] [27]. |

| Validation Set Monitor | Tracks model performance metrics (e.g., loss, accuracy) on the validation set after each training epoch, providing the signal for early stopping [28]. |

| Early Stopping Callback | A software routine that automatically halts the training process when the monitored validation metric has stopped improving, restoring the best model weights to prevent overfitting [28]. |

| Model Checkpointing | Saves the model's state (weights, parameters) whenever performance improves, ensuring the final model is the one that generalized best during training [28]. |

Troubleshooting Guide: STAR Early Stopping

Issue: High Resource Consumption with Low-Yield Alignments

Problem: The STAR alignment process continues to consume full computational resources even when processing data with an unacceptably low mapping rate, wasting time and budget.

- Root Cause: The aligner is set to run to completion for every file regardless of the potential outcome. Certain data types, like single-cell sequencing data, often lack complete mRNA coverage and are predisposed to low alignment rates. [18]

- Solution: Implement an early stopping optimization that monitors the mapping rate in real-time and terminates jobs unlikely to meet a minimum quality threshold. [18]

Issue: Determining the Minimum Read Percentage for a Reliable Decision

Problem: It is unclear how much of the data must be processed before making a reliable prediction about the final mapping rate.

- Root Cause: There has been no established guideline for a patience parameter based on the percentage of total reads.

- Solution: Analysis of progress logs indicates that processing at least 10% of the total number of reads provides a sufficient data point to decide whether to continue or abort an alignment. This threshold successfully identified 38 out of 1000 alignments for early termination in a test dataset. [18]

Issue: Inconsistent Application of Early Stopping Logic

Problem: The criteria for early stopping are applied inconsistently across different experiments or team members.

- Root Cause: A lack of a standardized operational protocol.

- Solution: Adopt the following standardized experimental protocol:

Standard Protocol for Early Stopping

- Configure Monitoring: Ensure STAR aligner is run with options to generate the Log.progress.out file, which reports job progress statistics including the current percentage of mapped reads. [18]

- Set Thresholds: Define two key parameters before starting the pipeline:

- Early Stop Checkpoint: 10% of total reads.

- Minimum Mapping Rate Threshold: e.g., 30%. [18]

- Implement Logic: Integrate a script into your workflow that, upon reaching the 10% checkpoint:

- Parses the Log.progress.out file to check the current mapping rate.

- Compares it against the minimum threshold.

- If the mapping rate is below the threshold, then the alignment process is terminated.

- Else, the alignment continues to completion.

- Log Outcomes: Record all terminated alignments and their mapping rates at termination for later audit and process refinement.
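The steps above can be sketched as a small supervision loop. This is an illustration rather than the script used in [18]: `supervise_alignment` and `read_progress` are hypothetical names, and parsing of Log.progress.out is deliberately left to the caller because the column layout should be checked against the STAR version in use. The `proc` argument is any object with `poll()` and `terminate()` methods, such as a `subprocess.Popen` handle for the STAR job.

```python
import time
from typing import Callable, List, Optional, Tuple

Progress = Optional[Tuple[int, float]]  # (reads_processed, mapped_percent)


def supervise_alignment(proc, read_progress: Callable[[], Progress],
                        total_reads: int,
                        checkpoint_pct: int = 10,
                        min_mapping_rate: float = 30.0,
                        poll_interval: float = 30.0,
                        audit_log: Optional[List[dict]] = None) -> str:
    """Terminate the job at the checkpoint if its mapping rate is too low.

    Returns "terminated" or "completed".
    """
    checked = False
    while proc.poll() is None:  # job still running
        progress = read_progress()
        if not checked and progress is not None:
            reads_processed, mapped_pct = progress
            if reads_processed * 100 >= checkpoint_pct * total_reads:
                checked = True  # evaluate the checkpoint only once
                if mapped_pct < min_mapping_rate:
                    proc.terminate()
                    if audit_log is not None:
                        # Record the outcome for later audit.
                        audit_log.append({"reads_processed": reads_processed,
                                          "mapped_pct": mapped_pct})
                    return "terminated"
        time.sleep(poll_interval)
    return "completed"
```

Evaluating the checkpoint once and then letting the job run keeps the behavior aligned with the protocol, which defines a single decision point rather than continuous monitoring.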

The workflow for this protocol is illustrated below:

Early Stopping Metrics and Parameters

The following table summarizes the key metrics and parameters for implementing early stopping, derived from experimental evaluation. [18]

Table 1: Key Early Stopping Parameters and Results

| Parameter / Metric | Description / Value |

|---|---|

| Early Stop Checkpoint | After 10% of total reads are processed. [18] |

| Mapping Rate Threshold | 30% (configurable based on project requirements). [18] |

| Terminated Alignments | 38 out of 1000 samples in a test set. [18] |

| Compute Time Savings | 19.5% reduction in total STAR execution time. [18] |

To effectively monitor the success of this optimization, track these key performance indicators (KPIs):

Table 2: Monitoring and Quality KPIs

| KPI Category | Example Metric | Application in Early Stopping |

|---|---|---|

| Process Performance [29] | Right-First-Time Rate (RFT) | Measure the percentage of alignments that run to completion successfully without needing re-work due to configuration errors. |

| Productivity [30] | Average Processing Time per Sample | Track the reduction in average compute time per sample after implementing early stopping. |

| Resource Effectiveness [29] | Overall Equipment Effectiveness (OEE) | Monitor the improvement in computational resource utilization (Availability, Performance, Quality). |

Frequently Asked Questions (FAQs)

What is the core concept behind early stopping for the STAR aligner?

Early stopping is a technique that halts a computational process once it is determined that continuing is unlikely to yield a valuable result. For the STAR aligner, this means monitoring the mapping rate during execution and terminating jobs that, after a certain point, show a mapping rate below a set threshold. This prevents wasting resources on data with poor alignment potential. [18] [31]

How was the 10% read threshold for early stopping determined?

This threshold was established empirically through analysis. Researchers analyzed 1000 Log.progress.out files from STAR alignments to find the point at which a low mapping rate could be reliably predicted. They concluded that after processing 10% of the total reads, the mapping rate was stable enough to make a termination decision with confidence. [18]

Can this early stopping method accidentally terminate a viable alignment?

The 10% threshold was validated to be a safe checkpoint to avoid false positives. In the proof-of-concept study, the alignments identified for termination were confirmed to be from data types (like single-cell sequencing) inherently unsuitable for the pipeline, indicating the method is robust. [18] To be more conservative, lower the minimum mapping rate threshold so that fewer jobs qualify for termination, or place the checkpoint later than 10% of the reads.

Besides early stopping, what other optimizations can improve pipeline throughput?

Using a newer version of the reference genome can have a dramatic impact. One experiment showed that using Ensembl release 111 over release 108 resulted in a 12x speedup and a significantly smaller index (29.5 GiB vs. 85 GiB), allowing for the use of cheaper, smaller cloud instances. [18]

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Components for the Optimized STAR Pipeline

| Item | Function / Description |

|---|---|

| STAR Aligner | The core software for accurate alignment of large transcriptome RNA-seq data. It is highly resource-intensive and the primary target for optimization. [18] |

| Ensembl 'Toplevel' Genome | The reference genome containing all known contigs and scaffolds. Using the newest release (e.g., Release 111) is critical for performance and accuracy. [18] |

| Log.progress.out File | A progress log file generated by STAR that reports statistics, including the current percentage of mapped reads. It is the primary data source for the early stopping logic. [18] |

| Computational Instance (e.g., r6a.4xlarge) | A cloud virtual machine with sufficient memory (e.g., 128GB RAM) to load the genomic index into system memory for fast alignment. [18] |

Frequently Asked Questions

Q1: What is the fundamental connection between early stopping and model checkpointing? Early stopping is a technique that halts model training when performance on a validation set stops improving, preventing overfitting. Checkpointing is the mechanism that persistently saves the model's state during this process. The two are intrinsically linked, as checkpointing allows you to retain the model weights from the epoch with the best validation performance, which is identified by the early stopping routine [31].

Q2: When using early stopping, which model weights should I ultimately select for my research: the last ones or a previously checkpointed set? You should select the checkpointed weights from the epoch where the validation metric was optimal, not the weights from the final training step. Modern training frameworks can automatically restore these best-performing weights at the end of training. For instance, you can configure the `OutputNetwork` option to be `"best-validation"` to ensure the model with the best validation metric is returned [32].

Q3: How do I set the 'patience' parameter for early stopping effectively? The `patience` value is a critical hyperparameter that defines how many epochs to wait for an improvement in the validation metric before stopping [31]. A low patience (e.g., 1-5) may stop training too early, while a very high patience (e.g., 50) can lead to overfitting and wasted computational resources. The optimal value is domain-specific, but a moderate patience of 5-20 is a common starting point, which can be informed by observing the initial convergence behavior of your loss curves [32].

Q4: My training loss is still decreasing, but my validation loss has started to increase consistently. What should I do? This is a classic sign of overfitting. Your early stopping monitor should be tracking the validation loss (or another validation metric), not the training loss. You should configure your early stopping callback to stop training and restore the model from the checkpoint where the validation loss was at its minimum [31].

Q5: How can I implement checkpointing and early stopping in my code? Most deep learning libraries provide callbacks to simplify this. For example, in Keras, the `EarlyStopping` and `ModelCheckpoint` callbacks are used together. The `ModelCheckpoint` callback can be set to save a model file only when the validation performance improves, ensuring you always have the best model saved to disk [31].

Troubleshooting Guides

Problem: Training stops immediately after the first few epochs.

- Potential Cause: The `patience` parameter is set too low.

- Solution: Increase the `patience` value to allow the model more time to converge before triggering a stop [31].

- Potential Cause: The validation data is not representative of the training data, or there is a data shuffling issue.

- Solution: Verify your data pipeline and ensure the validation set is drawn from the same distribution as the training data.

Problem: The best model checkpoint does not correspond to the best validation performance.

- Potential Cause: The checkpointing callback is not correctly configured to monitor the validation metric.

- Solution: Explicitly set the callback to monitor `'val_loss'` or your specific validation metric (e.g., `'val_accuracy'`) and set the mode to `'min'` or `'max'` as appropriate.

- Potential Cause: The checkpoint file is being overwritten, or the path is incorrect.

- Solution: Use a unique filename template (e.g., one that includes the epoch number) or ensure the `save_best_only` (or equivalent) parameter is enabled.

Problem: Training continues for many epochs without improvement, ignoring the patience setting.

- Potential Cause: The early stopping callback is monitoring the wrong metric, or `min_delta` is so small (or zero) that negligible improvements keep resetting the patience counter.

- Solution: Double-check the metric being monitored. If the improvements are very small, raise `min_delta` so that only meaningful gains count as an improvement.
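
How `patience` and `min_delta` interact is easiest to see on a toy loss curve. The sketch below is framework-free and only mimics the usual callback logic (an epoch counts as an improvement when the loss drops by more than `min_delta` below the best value so far); the function name and the synthetic losses are made up for the illustration.

```python
def stopping_epoch(val_losses, patience, min_delta=0.0):
    """Return the 1-based epoch at which training would stop, or None.

    An epoch counts as an improvement only if the loss drops by more than
    min_delta relative to the best value seen so far.
    """
    best = float("inf")
    waited = 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best - min_delta:
            best = loss
            waited = 0  # improvement: reset the patience counter
        else:
            waited += 1
            if waited >= patience:
                return epoch
    return None


# Validation loss that creeps down by 0.001 per epoch.
losses = [0.500 - 0.001 * i for i in range(20)]
print(stopping_epoch(losses, patience=3, min_delta=0.0))   # None: every tiny gain resets the counter
print(stopping_epoch(losses, patience=3, min_delta=0.01))  # 4: gains below min_delta are ignored
```

With `min_delta=0.0` the run never stops within the 20 epochs, which is the "ignoring the patience setting" symptom; with `min_delta=0.01` the same curve triggers a stop at epoch 4.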

Experimental Protocols & Data

Protocol: Implementing Early Stopping with Checkpointing

- Partition Data: Split your dataset into training, validation, and test sets. The validation set is crucial for guiding the early stopping.

- Define Metric: Choose a primary validation metric to monitor (e.g., validation loss, accuracy, F-score). This metric will determine what "best" means [32].

- Configure Callbacks: Set up two main callbacks:

  - EarlyStopping: Configure it to monitor the chosen validation metric. Set the `patience` parameter and the `mode` ('min' for loss, 'max' for accuracy).

  - ModelCheckpoint: Configure it to monitor the same metric and save the model weights whenever an improvement is detected.

- Train Model: Initiate training, passing both callbacks. The training loop will automatically save checkpoints and stop based on the validation performance.

- Load Best Model: After training completes, load the saved checkpoint from the best epoch for final evaluation on the held-out test set or for deployment.
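
The protocol steps can be sketched with the Keras callbacks mentioned earlier. This is a minimal outline under stated assumptions: the model is already compiled, the data is already split, and the checkpoint path `best_model.keras` and the patience of 10 are placeholder choices. The `.keras` file format assumes a reasonably recent TensorFlow release. TensorFlow is imported inside the function so the settings can be inspected without it.

```python
# Settings are plain dicts so they can be inspected without TensorFlow installed.
MONITOR = "val_loss"  # validation metric that defines "best"
CHECKPOINT_PATH = "best_model.keras"  # hypothetical output path

EARLY_STOPPING_ARGS = {"monitor": MONITOR, "mode": "min", "patience": 10}
CHECKPOINT_ARGS = {
    "filepath": CHECKPOINT_PATH,
    "monitor": MONITOR,
    "mode": "min",
    "save_best_only": True,  # write only when the monitored metric improves
}


def train_with_checkpointing(model, x_train, y_train, x_val, y_val, max_epochs=100):
    """Train a compiled Keras model, then reload the best checkpoint."""
    from tensorflow import keras  # imported lazily; requires TensorFlow

    callbacks = [
        keras.callbacks.EarlyStopping(**EARLY_STOPPING_ARGS),
        keras.callbacks.ModelCheckpoint(**CHECKPOINT_ARGS),
    ]
    model.fit(
        x_train, y_train,
        validation_data=(x_val, y_val),
        epochs=max_epochs,
        callbacks=callbacks,
    )
    # The caller should evaluate the returned model on the held-out test set.
    return keras.models.load_model(CHECKPOINT_PATH)
```

Both callbacks monitor the same metric with the same mode, so the checkpoint on disk and the stopping decision always agree on what "best" means.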

Table 1: Comparison of Model Selection Strategies

| Strategy | Description | Pros | Cons |

|---|---|---|---|

| Last Iteration | Selects the model weights from the final training epoch. | Simple to implement. | High risk of using an overfitted model if training continued past the optimal point. |

| Best-Validation (Early Stopping) | Selects the weights from the epoch with the best performance on a validation set [31] [32]. | Mitigates overfitting; automates the selection of the training epoch. | Requires a reliable and representative validation dataset. |

| Time-Based Checkpointing | Saves model weights at fixed time intervals (e.g., every N epochs). | Provides a full history of model states. | Storage-intensive; requires manual post-hoc analysis to find the best model. |

| Manual Selection | The researcher manually inspects metrics and selects a checkpoint. | Allows for expert judgment and multi-metric evaluation. | Time-consuming, subjective, and not scalable. |

Table 2: Key "Research Reagent Solutions" for Reliable Experimentation

| Item | Function |

|---|---|

| Validation Dataset | A held-out portion of data used to evaluate the model's generalization performance during training and to guide the early stopping decision [31]. |

| Checkpointing Callback | A software function (e.g., ModelCheckpoint) that automatically saves the model's state (weights, optimizer state) to disk at defined intervals or when performance improves [32]. |

| Early Stopping Callback | A software function (e.g., EarlyStopping) that monitors a validation metric and halts the training process once performance has stopped improving for a specified number of epochs (patience), preventing overfitting [31]. |

| Metric Logger | A tool or module that tracks and records training and validation metrics over time, enabling visualization and analysis of the training progress. |

| High-Fidelity Reproduction Code | Carefully implemented algorithms, like those documented for PPO, that ensure experimental results are consistent and reproducible, which is a cornerstone of the scientific method [33]. |

Workflow Visualization

Early Stopping and Checkpointing Workflow

Integrating early stopping callbacks in TensorFlow and PyTorch represents a crucial optimization technique for managing molecular data processing pipelines, particularly within transcriptomics research. This approach directly parallels the STAR early stopping optimization demonstrated in recent genomic studies, where implementing early stopping mechanisms reduced total alignment time by 23% while maintaining data integrity [16] [34]. For researchers and drug development professionals working with complex molecular datasets, proper implementation of early stopping prevents model overfitting, conserves computational resources, and ensures biologically relevant model outputs. The techniques outlined in this technical support center bridge machine learning best practices with domain-specific applications in molecular research, providing actionable solutions for common implementation challenges encountered during experimental workflows.

TensorFlow Implementation Guide

Core Early Stopping Callback Implementation

TensorFlow's Keras API provides a built-in EarlyStopping callback that seamlessly integrates with model training workflows. The callback monitors a specified metric during training and stops the process when no significant improvement is detected, preventing overfitting and optimizing computational resource utilization [35] [36].

Parameter Configuration Guide

Table: TensorFlow EarlyStopping Callback Parameters

| Parameter | Description | Recommended Value for Molecular Data |

|---|---|---|

| `monitor` | Metric to monitor for improvement | 'val_loss' or 'val_accuracy' |

| `min_delta` | Minimum change to qualify as improvement | 0.001 to 0.01 |

| `patience` | Epochs to wait before stopping | 5 to 15 (depends on dataset size) |

| `mode` | Direction of improvement | 'auto', 'min', or 'max' |

| `restore_best_weights` | Revert to best weights when stopping | True (highly recommended) |

| `start_from_epoch` | Epoch to start monitoring | 0 for molecular data |
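
As an illustration of how the parameters in this table fit together, the sketch below builds the keyword arguments for `tf.keras.callbacks.EarlyStopping` and picks `mode` from the metric name. The helper names and the default values are assumptions chosen from the ranges in the table, and `start_from_epoch` is only accepted by recent TensorFlow releases, so drop it on older installs.

```python
def early_stopping_kwargs(monitor="val_loss", patience=10, min_delta=0.001):
    """Build keyword arguments for tf.keras.callbacks.EarlyStopping.

    The mode is chosen from the metric name: losses should decrease,
    everything else (accuracy, AUC, and so on) is assumed to increase.
    """
    mode = "min" if monitor.endswith("loss") else "max"
    return {
        "monitor": monitor,
        "min_delta": min_delta,
        "patience": patience,
        "mode": mode,
        "restore_best_weights": True,
        "start_from_epoch": 0,  # needs a TensorFlow release that supports it
    }


def make_early_stopping(**overrides):
    """Create the callback; TensorFlow is imported only when this is called."""
    import tensorflow as tf

    return tf.keras.callbacks.EarlyStopping(**early_stopping_kwargs(**overrides))
```

The resulting callback would then be passed to training as `model.fit(..., callbacks=[make_early_stopping(monitor="val_accuracy")])`. Setting `mode` explicitly avoids relying on `'auto'` to guess the direction for custom metric names.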

Integration with Molecular Data Pipelines

When working with molecular data such as transcriptomics sequences or chemical structures, consider these specialized configurations:

- For STAR aligner optimization scenarios, set `patience=5` and `min_delta=0.005` to balance training thoroughness with computational efficiency [16].

- When processing FTIR chemical imaging data for breast cancer recurrence prediction, use `monitor='val_accuracy'` with `mode='max'` to directly optimize classification performance [37].

- For large-scale transcriptomic datasets (processing tens to hundreds of terabytes), implement distributed training with early stopping callbacks on each worker node to maximize resource utilization [16].

PyTorch Implementation Guide

Custom Early Stopping Class Implementation

PyTorch requires manual implementation of early stopping logic, providing greater flexibility for research-specific adaptations. Below is a robust implementation tested with molecular data processing:
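
One way to write such a class is sketched here. The class name, the method names (`step`, `restore`), and the default values are illustrative assumptions. The class only relies on the `state_dict()` / `load_state_dict()` interface, which `torch.nn.Module` provides, so it does not import PyTorch itself.

```python
import copy


class EarlyStopping:
    """Stop training when the validation loss stops improving.

    Works with any model exposing state_dict() / load_state_dict(),
    which includes torch.nn.Module.
    """

    def __init__(self, patience=7, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_state = None
        self.counter = 0

    def step(self, val_loss, model):
        """Record one epoch's validation loss; return True to stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            # Deep copy so later optimizer steps cannot mutate the snapshot.
            self.best_state = copy.deepcopy(model.state_dict())
            self.counter = 0
            return False
        self.counter += 1
        return self.counter >= self.patience

    def restore(self, model):
        """Load the best weights seen so far back into the model."""
        if self.best_state is not None:
            model.load_state_dict(self.best_state)
```

Keeping the best weights in memory avoids disk I/O on every improvement. For very large models, writing the snapshot to disk with `torch.save` instead may be the better trade-off.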

Training Loop Integration

Integrate the early stopping class into your PyTorch training workflow for molecular data:
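
A minimal sketch of the loop structure follows. It assumes a `stopper` object with a `step(val_loss, model)` method that returns `True` when training should end and a `restore(model)` method that reloads the best weights; these method names are assumptions for the example. The per-epoch training and validation passes are supplied by the caller as functions, so the PyTorch-specific code (`model.train()`, `optimizer.step()`, `torch.no_grad()`) stays outside the sketch.

```python
def fit(model, train_one_epoch, validate, stopper, max_epochs=100):
    """Run epochs until the stopper fires or max_epochs is reached.

    train_one_epoch(model) runs one optimization pass (model.train(),
    forward, backward, optimizer.step()); validate(model) returns the
    validation loss as a float (model.eval() under torch.no_grad()).
    Returns the per-epoch validation losses.
    """
    history = []
    for epoch in range(1, max_epochs + 1):
        train_one_epoch(model)
        val_loss = float(validate(model))
        history.append(val_loss)
        if stopper.step(val_loss, model):
            print(f"Stopping at epoch {epoch}; best val loss {min(history):.4f}")
            break
    stopper.restore(model)  # roll back to the best weights seen
    return history
```

Calling `restore` after the loop means the returned model holds the best-validation weights whether training ended early or ran to `max_epochs`.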

PyTorch Lightning Implementation

For rapid prototyping with molecular data, leverage PyTorch Lightning's built-in callback:
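
A hedged sketch of that setup is shown here. The monitored name `"val_loss"` only works if the LightningModule logs a metric under exactly that name via `self.log`, and the patience and `min_delta` values are placeholders. The import path assumes the `lightning` package; older installs expose the same classes under `pytorch_lightning`.

```python
LIGHTNING_EARLY_STOPPING_ARGS = {
    "monitor": "val_loss",  # must match a name logged via self.log(...)
    "mode": "min",
    "patience": 10,
    "min_delta": 0.001,
}


def build_trainer(max_epochs=100):
    """Create a Trainer with early stopping; Lightning is imported lazily."""
    import lightning.pytorch as pl
    from lightning.pytorch.callbacks import EarlyStopping

    return pl.Trainer(
        max_epochs=max_epochs,
        callbacks=[EarlyStopping(**LIGHTNING_EARLY_STOPPING_ARGS)],
    )
```

The trainer returned by `build_trainer()` is then used with `trainer.fit(module, train_loader, val_loader)`, where `module` is your own LightningModule and the loaders are your own data loaders.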

Table: PyTorch Early Stopping Parameter Comparison

| Implementation | Advantages | Best for Molecular Data Types |

|---|---|---|

| Custom Class | Full customization, research flexibility | Novel architectures, experimental data |

| PyTorch Lightning | Rapid deployment, production readiness | Standardized transcriptomic data |

| Val-Train Loss Delta | Direct overfitting prevention [38] | Small datasets with high variance |

Experimental Protocols and Methodologies

Workflow Diagram for Molecular Data Integration

Quantitative Evaluation Protocol

For rigorous evaluation of early stopping effectiveness with molecular data, implement this experimental protocol:

- Baseline Establishment: Train models without early stopping for maximum epochs (e.g., 100) to establish performance baselines and overfitting patterns

- Parameter Sweeping: Systematically test early stopping parameters across ranges:

  - Patience: 3, 5, 7, 10, 15 epochs

  - min_delta: 0.0001, 0.001, 0.01, 0.05

  - Monitored metrics: val_loss, val_accuracy, F1-score

- Performance Metrics: Record for each configuration:

  - Final validation performance

  - Training epochs utilized

  - Computational resource consumption

  - Model generalization on test set
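
The parameter sweep in this protocol can be enumerated with a simple grid. The sketch below only generates the configurations; training and metric recording are left to your own pipeline. The metric name `"val_f1"` is a hypothetical label for the F1-score and should match whatever your framework logs.

```python
from itertools import product

PATIENCE_VALUES = [3, 5, 7, 10, 15]
MIN_DELTA_VALUES = [0.0001, 0.001, 0.01, 0.05]
MONITORED_METRICS = ["val_loss", "val_accuracy", "val_f1"]  # "val_f1" is hypothetical


def sweep_configs():
    """Yield one early stopping configuration per grid point."""
    for patience, min_delta, metric in product(
        PATIENCE_VALUES, MIN_DELTA_VALUES, MONITORED_METRICS
    ):
        yield {
            "patience": patience,
            "min_delta": min_delta,
            "monitor": metric,
            # Losses are minimized; the other metrics are maximized.
            "mode": "min" if metric.endswith("loss") else "max",
        }


configs = list(sweep_configs())
print(len(configs))  # 60 configurations (5 x 4 x 3)
```

Each configuration would be compared against the no-early-stopping baseline from the first step of the protocol.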

Table: Early Stopping Performance with Molecular Datasets

| Dataset Type | Optimal Patience | Optimal min_delta | Epochs Saved | Performance Impact |

|---|---|---|---|---|

| FTIR Chemical Imaging [37] | 7 | 0.001 | 42% | ROC AUC: 0.64 (no change) |

| STAR Transcriptomic Alignment [16] | 5 | 0.005 | 23% | Alignment accuracy maintained |

| General Molecular Classification | 10 | 0.001 | 35-60% | Validation loss improved 5-8% |

Troubleshooting Common Implementation Issues