STAR vs. Kallisto & Salmon: A Comprehensive Guide for RNA-Seq Analysis in Biomedical Research

Abstract

This article provides a definitive comparison for researchers and drug development professionals between the traditional aligner STAR and the modern pseudoaligners Kallisto and Salmon for bulk RNA-seq data analysis. We explore their foundational algorithms, from STAR's splice-aware genome alignment to Kallisto's pseudoalignment and Salmon's bias-aware quantification. The scope covers practical methodological pipelines, critical troubleshooting and optimization strategies for computational resources, and a synthesis of validation studies benchmarking their accuracy in transcript quantification and differential expression analysis. The goal is to equip scientists with the knowledge to select the optimal tool for their research objectives, whether that is novel splice junction discovery or fast, accurate expression profiling.

Understanding the Core Algorithms: From Splice-Aware Alignment to Pseudoalignment

In the field of RNA sequencing (RNA-seq) analysis, a fundamental methodological divide separates alignment-based and pseudoalignment-based approaches. The two paradigms use different algorithms to tackle the same core task: determining the origin of sequencing reads and quantifying gene expression. Alignment-based tools like STAR aim to map each read to its precise location in a reference genome or transcriptome. In contrast, pseudoalignment-based tools like Kallisto and Salmon determine which transcripts a read is compatible with, without performing base-by-base alignment or specifying exact coordinates [1] [2]. This distinction has profound implications for computational efficiency, resource requirements, and practical application in research settings. As RNA-seq continues to be integral to biomedical research and drug development, understanding this divide enables researchers to select the optimal tool for their specific experimental context and computational constraints.

Core Computational Principles and Mechanisms

Alignment-Based Methods: Exhaustive Search and Precision Mapping

Alignment-based methods, exemplified by STAR, operate on the principle of comprehensive sequence matching. These tools search for the best location of each read within a reference genome, accounting for mismatches, insertions, deletions, and splicing events in which reads span exon-exon junctions [3]. STAR works in two steps: it first performs a sequential search for maximal mappable prefixes (seeds), then clusters, stitches, and scores these seeds to build full-read alignments [3]. This approach generates base-level mapping information, providing not just quantification but also precise genomic coordinates for each read. The output typically includes Binary Alignment Map (BAM) files that record the exact position of each read, which can be invaluable for variant calling, splice junction analysis, and novel transcript discovery.

Pseudoalignment-Based Methods: K-mer Compatibility and Efficient Hashing

Pseudoalignment employs a fundamentally different strategy focused on k-mer compatibility. Instead of aligning entire reads, tools like Kallisto break reads down into shorter k-mers (typically 31 bases long) and use fast hashing techniques to match these k-mers against a pre-indexed transcriptome database [1]. The core data structure enabling this approach is the transcriptome de Bruijn graph (T-DBG), where nodes represent k-mers and colored paths represent transcripts [1]. When a read is processed, its constituent k-mers are hashed, and their compatibility classes are determined through the T-DBG. The intersection of these k-compatibility classes reveals the set of transcripts that contain all k-mers from the read, thus identifying the transcripts to which the read is compatible without performing base-level alignment [1]. This k-mer-based counting algorithm skips the computationally intensive alignment step, focusing instead on determining read-transcript compatibility.
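To make the k-compatibility idea concrete, here is a minimal Python sketch. It is a simplification under stated assumptions: a plain dictionary from k-mer to transcript set stands in for the T-DBG, reverse complements are ignored, k-mers absent from the index are simply skipped, and the toy transcripts and k = 5 are hypothetical (Kallisto's default k is 31).

```python
from collections import defaultdict


def build_kmer_index(transcripts, k):
    """Map each k-mer to the set of transcript IDs containing it (its k-compatibility class)."""
    index = defaultdict(set)
    for tid, seq in transcripts.items():
        for i in range(len(seq) - k + 1):
            index[seq[i:i + k]].add(tid)
    return dict(index)


def pseudoalign(read, index, k):
    """Intersect the k-compatibility classes of the read's k-mers; no base-level alignment."""
    compatible = None
    for i in range(len(read) - k + 1):
        kclass = index.get(read[i:i + k])
        if kclass is None:
            continue  # k-mer not in the index: skipped in this sketch
        compatible = set(kclass) if compatible is None else compatible & kclass
        if not compatible:
            break  # empty intersection: no transcript contains all indexed k-mers
    return compatible or set()


# Hypothetical toy transcriptome; the two transcripts share their first 8 bases.
transcripts = {"tA": "ACGTACGTTGCA", "tB": "ACGTACGTAAAA"}
index = build_kmer_index(transcripts, k=5)
print(pseudoalign("CGTACGTT", index, k=5))        # {'tA'}
print(sorted(pseudoalign("GTACGT", index, k=5)))  # ['tA', 'tB']
```

The second read contains only k-mers shared by both transcripts, so it stays ambiguous; resolving such reads is the job of the quantification step, not of pseudoalignment itself.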

Table: Comparison of Core Algorithms Between STAR and Pseudoaligners

| Feature | STAR (Alignment-Based) | Kallisto/Salmon (Pseudoalignment-Based) |
|---|---|---|
| Core Algorithm | Maximal mappable prefix search with seed extension | K-mer decomposition and hashing |
| Primary Data Structure | Genome index, splice junction database | Transcriptome de Bruijn Graph (T-DBG) |
| Mapping Principle | Base-level alignment with coordinate specification | Transcript compatibility determination |
| Output Resolution | Exact genomic coordinates | Set of compatible transcripts |
| Key Innovation | Sequential alignment with junction awareness | K-compatibility classes and intersection |

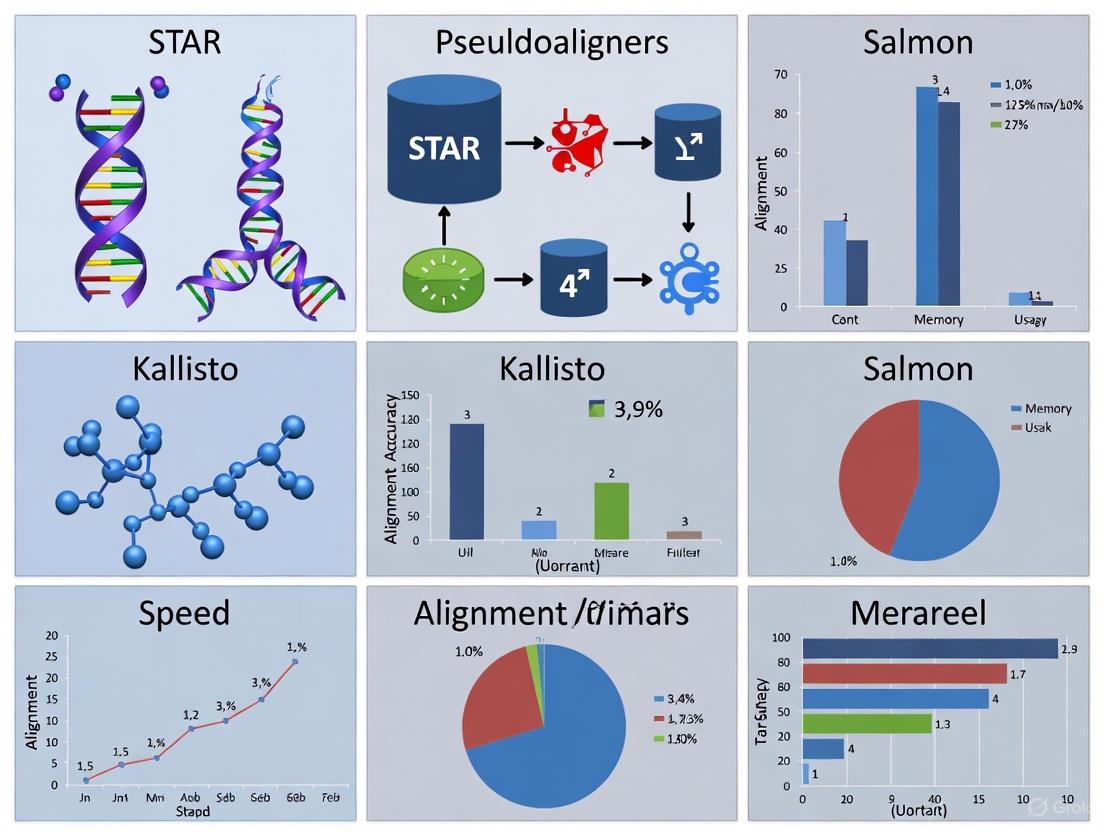

Diagram: Workflow Comparison Between Alignment and Pseudoalignment Approaches

Performance Benchmarking: Speed, Resource Usage, and Accuracy

Computational Efficiency and Resource Requirements

The computational advantages of pseudoalignment are substantial and well-documented. In benchmark studies, Kallisto demonstrated remarkable efficiency, processing 78.6 million GEUVADIS human RNA-seq reads in merely 14 minutes on a standard desktop computer with a single CPU core, including building the transcriptome index in just over 5 minutes [1]. This represents a dramatic speed improvement compared to traditional alignment-based workflows. A comprehensive comparison of RNA-seq analysis methods found that the Kallisto-Sleuth pipeline demanded the least computing resources among six popular analytical procedures evaluated, while workflows like Cufflinks-Cuffdiff required significantly more resources [4]. The memory footprint of pseudoaligners is similarly optimized, with Kallisto and Salmon capable of running efficiently on standard laptop computers, eliminating the need for high-performance computing infrastructure in many cases [2].

Quantification Accuracy and Concordance with Ground Truth

Despite their speed advantages, pseudoalignment tools maintain high accuracy in transcript quantification. A real-world multi-center RNA-seq benchmarking study across 45 laboratories found that tools like Kallisto and Salmon provided highly accurate quantification when evaluated against multiple types of "ground truth," including TaqMan datasets and spike-in RNA controls [5]. The concordance correlation coefficients (CCC) between quantification results from different tools were generally high, indicating that pseudoalignment provides expression estimates comparable to traditional methods [5]. Another independent comparison noted that Kallisto not only provides accuracy but does so with "paramount speed," demonstrating that the efficiency gains do not come at the expense of reliability [1]. For genes with medium to high expression abundance, pseudoaligners show particularly strong performance, though some studies suggest they may be less sensitive for very lowly-expressed genes [4].

Table: Performance Comparison Between STAR and Kallisto

| Performance Metric | STAR | Kallisto |
|---|---|---|
| Processing Time (30 million reads) | ~14 hours [1] | Minutes [1] |
| Memory Requirements | High (typically requires server-grade resources) | Low (can run on standard laptop) |
| Quantification Accuracy | High, particularly for novel splice variants | High for annotated transcripts |
| Index Building Time (Human transcriptome) | Can be substantial | ~5 minutes [1] |
| Multi-threading Efficiency | Good scaling with multiple cores | Excellent scaling |
| Best Application Context | Splice junction discovery, novel transcript identification | Rapid quantification of known transcripts |

Experimental Design Considerations and Best Practices

Impact of Experimental Factors on Tool Performance

The optimal choice between alignment and pseudoalignment approaches depends significantly on experimental design factors and data quality characteristics. Studies have shown that library complexity influences performance, with highly complex libraries potentially benefiting from STAR's more detailed alignment approach, while less complex libraries are well-suited to Kallisto's pseudoalignment [3]. Similarly, sequencing depth affects tool selection, as Kallisto's approach is less sensitive to sequencing depth variations compared to STAR's alignment-based method [3]. The completeness of transcriptome annotation is another crucial factor; when working with well-annotated organisms, pseudoalignment provides excellent results, but for non-model organisms or those with incomplete annotations, alignment-based approaches may be preferable for novel transcript discovery [3]. The read length also impacts performance, with Kallisto performing well with standard short-read lengths, while STAR may be more suitable for longer read lengths that aid in identifying novel splice junctions [3].

Recommended Applications and Use Cases

Each approach excels in different research scenarios, enabling researchers to match tool selection to their specific objectives. Pseudoalignment tools are particularly well-suited for:

- Differential expression analysis focusing on known transcripts [2]

- Large-scale studies with many samples where computational efficiency is crucial [3]

- Exploratory analysis or studies with limited computational resources [2]

- Single-cell RNA-seq studies where datasets are massive and efficiency is paramount [2]

Alignment-based tools like STAR are recommended for:

- Discovery of novel splice junctions or fusion genes [3]

- Studies requiring exact genomic coordinates for variant analysis or integration with other genomic assays

- Analysis of organisms with incomplete transcriptome annotations [3]

- Applications where BAM files are needed for visualization or downstream analysis

Research Reagent Solutions and Experimental Materials

Table: Essential Research Reagents and Computational Resources for RNA-seq Analysis

| Resource Type | Specific Examples | Function in Analysis |
|---|---|---|
| Reference Materials | Quartet RNA reference materials, MAQC RNA samples [5] | Provide ground truth for benchmarking and quality control |
| Spike-in Controls | ERCC RNA spike-in controls [5] | Enable technical validation and normalization |
| Library Prep Kits | Stranded vs. non-stranded mRNA enrichment protocols [5] | Impact mapping strategy and quantification accuracy |
| Annotation Databases | GENCODE, RefSeq, Ensembl transcriptomes [4] | Essential for building alignment and pseudoalignment indexes |
| Computational Resources | High-performance clusters (STAR) vs. standard laptops (Kallisto) [1] [4] | Infrastructure supporting analysis execution |

Emerging Innovations and Future Directions

The distinction between alignment and pseudoalignment approaches continues to evolve with methodological innovations. Recent developments include the extension of pseudoalignment to new applications and data types. For instance, lr-kallisto has adapted the Kallisto framework for long-read sequencing technologies from Oxford Nanopore and PacBio platforms, demonstrating that pseudoalignment principles can be effectively extended beyond short-read RNA-seq [6]. Similarly, alevin-fry-atac applies a modified pseudoalignment scheme to single-cell ATAC-seq data, using a "virtual colors" approach to partition reference genomes into bins for efficient chromatin accessibility profiling [7]. Another emerging trend is the development of hybrid approaches that combine efficiency with comprehensive mapping. Tools like LexicMap introduce innovative seeding algorithms using probe k-mers to enable efficient alignment against massive databases of millions of prokaryotic genomes [8]. These advances suggest that the fundamental principles of pseudoalignment—efficient hashing, k-mer-based matching, and compatibility checking—will continue to influence next-generation computational methods across various genomics domains.

The fundamental divide between alignment-based and pseudoalignment-based approaches represents a strategic choice for researchers rather than a simple binary of right or wrong tools. Alignment-based methods like STAR provide comprehensive mapping information crucial for discovery-oriented research, particularly when investigating splice variants, novel transcripts, or genomic variations. Conversely, pseudoalignment tools like Kallisto and Salmon offer exceptional efficiency for quantification-focused studies where computational resources or time are limiting factors. The expanding ecosystem of both approaches, with tools tailored to specific data types and applications, provides researchers with a rich toolkit for transcriptome analysis. As the field progresses toward more integrated multi-omics analyses and increasingly large-scale studies, understanding the strengths and limitations of each paradigm enables more informed methodological selections, ultimately supporting more robust and efficient biological discovery.

In the analysis of RNA sequencing (RNA-seq) data, a fundamental division exists between alignment-based and pseudoalignment-based methods. Tools like STAR (Spliced Transcripts Alignment to a Reference) employ comprehensive splice-aware genome mapping to provide a detailed picture of the transcriptome [3]. In contrast, pseudoaligners such as Kallisto and Salmon utilize rapid k-mer matching for transcript quantification without performing base-by-base alignment [9]. This guide provides an objective comparison of these approaches, focusing on STAR's comprehensive mapping strategy and its performance relative to faster quantification-focused alternatives.

The STAR Algorithm: A Two-Step Mapping Strategy

STAR's ultra-fast mapping speed, reported to outperform other aligners by more than a factor of 50, is achieved through a sophisticated two-step process that balances comprehensive alignment with computational efficiency [10].

Step 1: Sequential Maximum Mappable Seed Searching

STAR begins by searching for the longest sequences from each read that exactly match one or more locations on the reference genome. These sequences, known as Maximal Mappable Prefixes (MMPs), are mapped as separate "seeds" [10]. The algorithm works sequentially:

- It identifies the first MMP (seed1) that maps to the genome.

- It then searches only the unmapped portion of the read to find the next longest exact match (seed2).

- This process continues until the entire read is processed or no further matches can be found [10].

This efficient searching is enabled by STAR's use of an uncompressed suffix array (SA), which allows rapid matching even against large reference genomes [10].
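The sequential seed search can be illustrated with a toy Python sketch. It assumes a naive suffix array built by sorting suffix strings (STAR's genome index is far more efficient), exact matching only, and a simplistic rule of skipping one read base when that base does not occur in the genome at all; the genome, exon, and intron sequences are hypothetical. STAR's real search adds refinements, such as extending seeds across mismatches and searching both strands, that are omitted here.

```python
import bisect


def build_suffix_array(genome):
    """Naive suffix array: start positions sorted by suffix (fine for a toy genome only)."""
    return sorted(range(len(genome)), key=lambda i: genome[i:])


def common_prefix_len(a, b):
    n = 0
    while n < len(a) and n < len(b) and a[n] == b[n]:
        n += 1
    return n


def maximal_mappable_prefix(query, sa, suffixes):
    """Return (length, genome starts) of the longest prefix of `query` found exactly in the genome."""
    pos = bisect.bisect_left(suffixes, query)
    # In sorted order, the longest common prefix is shared with a neighbor of the insertion point.
    best = max((common_prefix_len(query, suffixes[j])
                for j in (pos - 1, pos) if 0 <= j < len(suffixes)), default=0)
    if best == 0:
        return 0, []
    prefix = query[:best]
    hits, j = [], bisect.bisect_left(suffixes, prefix)
    while j < len(suffixes) and suffixes[j].startswith(prefix):
        hits.append(sa[j])  # all suffixes sharing the prefix are contiguous in the array
        j += 1
    return best, sorted(hits)


def sequential_mmp_search(read, genome):
    """Find MMP seeds left to right, searching only the still-unmapped part of the read."""
    sa = build_suffix_array(genome)
    suffixes = [genome[i:] for i in sa]
    seeds, start = [], 0
    while start < len(read):
        length, hits = maximal_mappable_prefix(read[start:], sa, suffixes)
        if length == 0:
            start += 1  # base absent from the genome: skip it (simplification)
            continue
        seeds.append((start, length, hits))  # (read offset, seed length, genome starts)
        start += length
    return seeds


# Hypothetical toy genome: flank + exon1 + intron (GT...AG) + exon2 + flank.
exon1, intron, exon2 = "ACGGATC", "GTGGGGGGAG", "TCCATTA"
genome = "TTTT" + exon1 + intron + exon2 + "TTTT"
read = exon1 + exon2  # a spliced read spanning the exon1-exon2 junction
print(sequential_mmp_search(read, genome))  # [(0, 7, [4]), (7, 7, [21])]
```

The first MMP stops exactly at the exon boundary, and the second search, started on the unmapped remainder, lands 10 bases downstream: that genomic gap is the intron the stitching step later has to explain.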

Step 2: Clustering, Stitching, and Scoring

Once seeds are identified, STAR reconstructs the complete read alignment through:

- Clustering: Seeds are grouped based on proximity to reliable "anchor" seeds that are not multi-mapping.

- Stitching: Seeds are connected into a continuous alignment based on optimal scoring of mismatches, indels, and gaps.

- Scoring: The final alignment is selected based on best overall agreement with the reference, accounting for splicing signals [10].

This sophisticated approach enables STAR to accurately identify splice junctions, including both annotated and novel variants, which is a particular strength of genome-based alignment methods [11].

STAR vs. Pseudoaligners: A Comparative Performance Analysis

Methodology of Benchmarking Studies

Independent evaluations of RNA-seq tools typically employ hybrid approaches using both simulated and real datasets where ground truth is known or can be approximated [12]. Key methodological considerations include:

- Data Types: Benchmarks use both idealized data (minimal errors) and realistic data incorporating polymorphisms, intron signal, and non-uniform coverage [12].

- Performance Metrics: Accuracy is measured through correlation with known expression values, detection of true positives, and consistency across technical replicates [13] [12].

- Experimental Conditions: Testing spans varied read lengths, error rates, and annotation completeness to assess robustness [14].

Quantitative Comparison of Performance

Table 1: Feature Comparison Between STAR, Kallisto, and Salmon

| Feature | STAR | Kallisto | Salmon |
|---|---|---|---|
| Algorithm Type | Alignment-based [3] | Pseudoalignment-based [3] | Quasi-alignment-based [9] |
| Reference Used | Genome [3] | Transcriptome [3] | Transcriptome [9] |
| Splice Junction Discovery | Annotated & novel [11] | Annotated only [3] | Annotated only [3] |
| Novel Isoform Detection | Supported [11] | Not supported | Not supported |
| Speed | Moderate [10] | Very High [9] | High [9] |
| Memory Usage | High (~30GB human genome) [11] | Low [9] | Low [9] |
| Stranded Library Support | Yes [11] | Yes [9] | Yes [9] |
| Output | Aligned BAM; read counts per gene with `--quantMode GeneCounts` [3] | TPM & estimated counts [3] | TPM & estimated counts [9] |

Table 2: Performance Metrics Across Experimental Conditions

| Condition | STAR Performance | Kallisto/Salmon Performance |
|---|---|---|
| Well-Annotated Transcriptome | Accurate alignment & quantification [12] | Excellent quantification speed & accuracy [12] |
| Incomplete Annotation | Maintains ability to discover novel features [11] | Performance degrades without complete reference [3] |
| Short Read Lengths | Accurate with moderate error rates [14] | Optimal performance [3] |
| Long/Error-Prone Reads | Good performance with parameter adjustment [14] | Not designed for high-error-rate long reads [14] |
| Low Sequencing Depth | Less sensitive [3] | More suitable [3] |
| High Sequencing Depth | More accurate for complex alignment [3] | Efficient but limited in discovery [3] |

Key Performance Insights

- Quantification Accuracy: On idealized data with complete annotation, Salmon, Kallisto, and STAR exhibit similarly high quantification accuracy [12]. Correlation between STAR and pseudoaligner results is typically very high (r > 0.93) for expressed transcripts [9].

- Differential Expression Analysis: Downstream differential expression analysis shows sufficient divergence between methods to suggest that isoform-level analysis should be employed selectively, regardless of tool choice [12].

- Platform-Specific Strengths: Kallisto demonstrates extremely fast processing (20 million reads in under five minutes on a laptop), while Salmon offers superior handling of experiment-specific biases [9]. STAR provides the unique advantage of discovering novel splice variants and complex RNA arrangements [11].

Experimental Protocols for Method Comparison

Protocol 1: Basic STAR Alignment for RNA-Seq

Application: Standard RNA-seq read alignment to a reference genome.

Input Requirements: FASTQ files (paired-end or single-end), reference genome, gene annotation in GTF format [11].

Methodology:

- Genome Indexing: Generate genome indices by running STAR in `genomeGenerate` mode with the reference FASTA and GTF files [10].

- Alignment Execution: Map reads with essential parameters including `--runThreadN` for parallelization, `--genomeDir`, `--readFilesIn`, and `--outSAMtype` for BAM output [11].

- Output Generation: STAR produces coordinate-sorted BAM files, splice junction tables, and mapping statistics [11].

Critical Parameters:

- `--sjdbOverhang 100`: Specifies the read length minus 1 for the splice junction database (100 for 101-bp reads) [11].

- `--outSAMtype BAM SortedByCoordinate`: Outputs sorted BAM files ready for downstream analysis [10].

- `--runThreadN N`: Number of parallel threads to use [11].
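As a concrete illustration of Protocol 1, the Python sketch below assembles the two STAR command lines as argument lists that could be passed to `subprocess.run`. The flags are standard STAR options; the helper function names, file paths, and thread count are hypothetical, and `--readFilesCommand zcat` assumes gzipped FASTQ on a system where `zcat` is available.

```python
# Hypothetical helper names; the STAR flags themselves are standard options.


def star_genome_generate_cmd(genome_dir, fasta, gtf, read_length, threads=8):
    """Build the STAR genomeGenerate command; sjdbOverhang = read length - 1."""
    return [
        "STAR", "--runMode", "genomeGenerate",
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", fasta,
        "--sjdbGTFfile", gtf,
        "--sjdbOverhang", str(read_length - 1),
        "--runThreadN", str(threads),
    ]


def star_align_cmd(genome_dir, read1, read2=None, prefix="sample_", threads=8,
                   gene_counts=False):
    """Build a STAR alignment command producing a coordinate-sorted BAM."""
    reads = [read1] if read2 is None else [read1, read2]
    cmd = [
        "STAR", "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--readFilesIn", *reads,
        "--outSAMtype", "BAM", "SortedByCoordinate",
        "--outFileNamePrefix", prefix,
    ]
    if read1.endswith(".gz"):
        cmd += ["--readFilesCommand", "zcat"]  # decompress gzipped FASTQ on the fly
    if gene_counts:
        cmd += ["--quantMode", "GeneCounts"]  # per-gene counts in ReadsPerGene.out.tab
    return cmd


# Hypothetical file names for a 101-bp paired-end sample.
index_cmd = star_genome_generate_cmd("star_index", "GRCh38.fa", "genes.gtf", read_length=101)
align_cmd = star_align_cmd("star_index", "s1_R1.fastq.gz", "s1_R2.fastq.gz", prefix="s1_")
print(" ".join(index_cmd))
print(" ".join(align_cmd))
# To execute: import subprocess; subprocess.run(index_cmd, check=True)
```

Building argument lists rather than shell strings avoids quoting problems with paths and makes it easy to loop over many samples; with the prefix above, STAR names the sorted BAM `s1_Aligned.sortedByCoord.out.bam`.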

Protocol 2: Two-Pass Mapping for Novel Junction Discovery

Application: Enhanced spliced alignment accuracy for novel splice junction detection.

Methodology:

- First Pass: Perform standard alignment while collecting novel junction information.

- Second Pass: Re-map all reads using the combined annotated and novel junctions from the first pass [11].

Advantages: Significantly improves detection of novel splice variants and non-canonical splicing events compared to single-pass approaches [11].
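STAR can run both passes internally with `--twopassMode Basic`. When junctions are instead pooled across samples, a common pattern is to filter the first-pass `SJ.out.tab` files and supply them to the second pass through `--sjdbFileChrStartEnd`. The Python sketch below shows such a filter. It relies on the documented `SJ.out.tab` column layout (column 6 flags annotated junctions, column 7 counts uniquely mapping reads); the function name, the threshold of 3 unique reads, and the example records are hypothetical.

```python
def filter_novel_junctions(sj_lines, min_unique_reads=3):
    """Keep unannotated junctions supported by enough uniquely mapping reads.

    Each line is one SJ.out.tab record (tab-separated, 9 columns):
    chrom, intron start, intron end, strand, motif, annotated, unique, multi, max overhang.
    The default threshold is a hypothetical choice.
    """
    kept = []
    for line in sj_lines:
        fields = line.rstrip("\n").split("\t")
        annotated = int(fields[5])
        unique_reads = int(fields[6])
        if annotated == 0 and unique_reads >= min_unique_reads:
            kept.append(line.rstrip("\n"))
    return kept


# Hypothetical first-pass records.
records = [
    "chr1\t14830\t14969\t2\t2\t1\t120\t4\t38",  # annotated junction
    "chr1\t20001\t20500\t1\t1\t0\t7\t0\t30",    # novel, well supported
    "chr1\t30001\t30200\t1\t1\t0\t1\t0\t12",    # novel, only one unique read
]
print(filter_novel_junctions(records))
```

The kept lines can be written to a file and listed after `--sjdbFileChrStartEnd` in the second-pass command; raising the threshold trades sensitivity for fewer spurious junctions.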

Protocol 3: Kallisto/Salmon Quantification Workflow

Application: Rapid transcript quantification for expression analysis.

Methodology:

- Indexing: Build kallisto or Salmon index from transcriptome FASTA file [9].

- Quantification: Run quantification using the `kallisto quant` or `salmon quant` commands with appropriate library type specifications [9].

- Downstream Analysis: Import results into Sleuth (for kallisto) or other differential expression tools for statistical analysis [9].
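The two quantification routes can be sketched as argument lists in the same way. The subcommands and flags used (`kallisto index -i`, `kallisto quant -i -o -t`, `--single -l -s` for single-end reads, `salmon index -t -i`, `salmon quant -i -l A -1 -2 -r -p -o`) are standard options of these tools. The helper names, file names, and default fragment-length values are hypothetical, and for single-end kallisto runs the fragment length mean and SD must come from your library preparation.

```python
# Hypothetical helper names; flags are standard kallisto / Salmon options.


def kallisto_cmds(transcripts_fa, index, reads, out_dir, threads=4,
                  single_end=False, frag_len=200, frag_sd=20):
    """Return (index command, quant command) for kallisto."""
    index_cmd = ["kallisto", "index", "-i", index, transcripts_fa]
    quant_cmd = ["kallisto", "quant", "-i", index, "-o", out_dir, "-t", str(threads)]
    if single_end:
        # Single-end reads need the fragment length mean and SD supplied explicitly.
        quant_cmd += ["--single", "-l", str(frag_len), "-s", str(frag_sd)]
    return index_cmd, quant_cmd + list(reads)


def salmon_cmds(transcripts_fa, index, reads, out_dir, threads=4):
    """Return (index command, quant command) for Salmon; `-l A` auto-detects library type."""
    index_cmd = ["salmon", "index", "-t", transcripts_fa, "-i", index]
    quant_cmd = ["salmon", "quant", "-i", index, "-l", "A", "-p", str(threads), "-o", out_dir]
    if len(reads) == 2:  # assumes two files are the mates of one paired-end sample
        quant_cmd += ["-1", reads[0], "-2", reads[1]]
    else:
        quant_cmd += ["-r", *reads]
    return index_cmd, quant_cmd


# Hypothetical file names for one paired-end sample.
k_index, k_quant = kallisto_cmds("transcripts.fa.gz", "tx.idx", ["R1.fq.gz", "R2.fq.gz"], "kallisto_out")
s_index, s_quant = salmon_cmds("transcripts.fa.gz", "salmon_idx", ["R1.fq.gz", "R2.fq.gz"], "salmon_out")
print(" ".join(k_quant))
print(" ".join(s_quant))
```

Salmon's `-l A` lets the tool infer strandedness from the data; with kallisto, strandedness is instead specified explicitly when needed.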

Research Reagent Solutions for RNA-Seq Analysis

Table 3: Essential Materials and Tools for RNA-Seq Experiments

| Reagent/Tool | Function | Example/Specification |
|---|---|---|
| Reference Genome | Baseline sequence for alignment | ENSEMBL GRCh38 (human) [11] |
| Gene Annotation | Gene model definitions for guided alignment | GTF format from ENSEMBL [11] |
| Alignment Tool | Maps reads to reference genome | STAR (splice-aware) [10] |
| Quantification Tool | Estimates transcript abundance | Kallisto, Salmon [9] |
| Differential Expression | Identifies statistically significant changes | Sleuth (for kallisto) [9] |
| Quality Control | Assesses read and alignment quality | FastQC, MultiQC |
| Visualization | Enables exploration of results | Genome browsers, Sleuth Shiny app [9] |

The choice between STAR and pseudoalignment tools depends fundamentally on research goals and experimental context.

STAR is superior when:

- Discovering novel splice junctions, fusion genes, or complex RNA arrangements is a priority [11].

- Working with non-model organisms or poorly annotated transcriptomes where novel isoform discovery is needed [3].

- Comprehensive genomic context beyond mere quantification is required for downstream analysis [11].

Kallisto and Salmon are preferable when:

- Rapid quantification of known transcripts is the primary objective [9].

- Computational resources are limited, as pseudoaligners require significantly less memory and processing time [3].

- Working with well-annotated transcriptomes where novel discovery is not a priority [3].

For comprehensive transcriptome analysis, a hybrid approach is also possible: using STAR for initial discovery and alignment, followed by targeted quantification with pseudoaligners for specific analytical needs. This strategy leverages the respective strengths of both methodologies to maximize both discovery power and analytical efficiency.

For researchers, scientists, and drug development professionals working with transcriptomics data, the accurate quantification of gene and transcript abundance is a fundamental step in RNA-seq analysis. The choice of quantification tool can significantly impact downstream analyses, such as differential expression analysis, functional annotation, and pathway analysis, ultimately influencing biological conclusions and research directions [3]. Historically, traditional alignment-based methods like STAR (Spliced Transcripts Alignment to a Reference) have dominated the field, mapping reads directly to a reference genome or transcriptome to generate count tables [3]. However, the computational burden of these methods, coupled with the ever-increasing scale of transcriptomics studies, has driven the development of innovative approaches that bypass traditional alignment.

Enter pseudoalignment, a computational paradigm shift that reimagines how RNA-seq reads are assigned to transcripts. Spearheaded by tools like Kallisto, this methodology determines read compatibility with potential transcripts without performing base-by-base alignment, offering dramatic improvements in speed while maintaining, and in some cases exceeding, the accuracy of traditional methods [15]. Within the broader comparison of STAR with pseudoaligners like Kallisto and Salmon, this guide provides an objective examination of Kallisto's core technology, its performance relative to alternatives, and the experimental data supporting its use. By understanding how pseudoalignment rapidly establishes transcript compatibility, researchers can make informed decisions about their analytical workflows, optimizing both computational efficiency and biological accuracy in their transcriptomics investigations.

Understanding Kallisto's Core Technology

The Principle of Pseudoalignment

Kallisto operates on a fundamentally different principle than traditional aligners. Instead of performing computationally intensive base-by-base alignment of reads to a reference, it utilizes a novel concept called "pseudoalignment" to rapidly determine the compatibility of reads with reference transcripts [15]. This process focuses on answering a simpler question: which transcripts in a reference database is this read compatible with? Kallisto achieves this through the use of a T-DBG (Transcriptome De Bruijn Graph) built from the reference transcriptome, where nodes represent k-mers (subsequences of length k) from the transcriptome [15].

When a read is processed, Kallisto decomposes it into its constituent k-mers and queries them against the T-DBG index. The algorithm then identifies the set of transcripts that contain all k-mers present in the read, known as the transcript compatibility list [15]. This approach avoids the costly step of determining the exact position and alignment of the read, which is the primary reason for its exceptional speed. Because the method matches short k-mers rather than aligning the full read end to end, it does not require base-perfect agreement across the entire read, which gives it some tolerance to sequencing errors. This flexibility has allowed the approach to be adapted to long-read sequencing platforms, as demonstrated by the development of lr-kallisto [6].
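Because many reads share exactly the same compatibility list, Kallisto collapses reads into equivalence classes, each holding a set of transcripts and the number of reads that produced it, before quantification. A minimal Python sketch of that bookkeeping follows; the function name and input lists are hypothetical stand-ins for real pseudoalignment results, and reads with an empty compatibility list are simply dropped here.

```python
from collections import Counter


def equivalence_class_counts(compatibility_lists):
    """Count reads per distinct transcript-compatibility set (equivalence class)."""
    counts = Counter()
    for transcripts in compatibility_lists:
        if transcripts:  # unassigned reads carry no information for quantification
            counts[frozenset(transcripts)] += 1
    return counts


# Hypothetical per-read compatibility lists.
reads = [{"tA"}, {"tA", "tB"}, {"tB", "tA"}, {"tB"}, set(), {"tA"}]
for ec, n in sorted(equivalence_class_counts(reads).items(), key=lambda kv: sorted(kv[0])):
    print(sorted(ec), n)
```

Using `frozenset` keys makes `{"tA", "tB"}` and `{"tB", "tA"}` the same class, so the downstream EM only has to iterate over distinct classes rather than individual reads.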

The Quantification Process

Once pseudoalignment is complete and transcript compatibility lists have been established for all reads, Kallisto employs an expectation-maximization (EM) algorithm to estimate transcript abundances [15]. The EM algorithm resolves the assignment of reads that are compatible with multiple transcripts (ambiguous reads) by iteratively refining abundance estimates until convergence is reached.
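The EM step can be written in a few lines once reads have been collapsed into equivalence classes. This Python sketch follows the standard formulation in which each transcript's share of an ambiguous class is proportional to its current estimated count divided by its effective length. The function name, effective lengths, class counts, iteration cap, and convergence tolerance are illustrative assumptions, and Kallisto's own implementation includes details (such as its exact stopping rules) not reproduced here.

```python
def em_quantify(ec_counts, eff_len, max_iter=10_000, tol=1e-8):
    """Estimate read counts per transcript from equivalence-class counts via EM.

    ec_counts: {frozenset of transcript IDs: number of reads}
    eff_len:   {transcript ID: effective length}
    """
    transcripts = sorted(eff_len)
    total_reads = sum(ec_counts.values())
    counts = {t: total_reads / len(transcripts) for t in transcripts}  # uniform start
    for _ in range(max_iter):
        new_counts = dict.fromkeys(transcripts, 0.0)
        for ec, n_reads in ec_counts.items():
            # E-step: split the class's reads in proportion to count / effective length.
            weights = {t: counts[t] / eff_len[t] for t in ec}
            denom = sum(weights.values())
            if denom == 0:
                continue  # all member transcripts currently at zero: reads left unassigned
            for t, w in weights.items():
                new_counts[t] += n_reads * w / denom
        # M-step: the accumulated expected counts become the new estimates.
        change = max(abs(new_counts[t] - counts[t]) for t in transcripts)
        counts = new_counts
        if change < tol:
            break
    return counts


# Hypothetical data: 60 reads unique to A, 20 unique to B, 20 ambiguous.
ec_counts = {frozenset({"A"}): 60, frozenset({"B"}): 20, frozenset({"A", "B"}): 20}
estimates = em_quantify(ec_counts, {"A": 1000.0, "B": 1000.0})
print({t: round(c, 2) for t, c in estimates.items()})  # {'A': 75.0, 'B': 25.0}
```

With equal effective lengths, the 20 ambiguous reads end up split 3:1, the same ratio as the unambiguous evidence (60:20), which is the fixed point of the update.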

The final output of Kallisto includes both estimated counts and TPM (Transcripts Per Million) values for each transcript in the reference transcriptome [3]. These abundance estimates have been shown to be as accurate as those produced by existing quantification tools, despite the dramatic reduction in computational time [15]. This combination of pseudoalignment for rapid compatibility checking and EM for probabilistic quantification creates a powerful and efficient pipeline for RNA-seq analysis.
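The relationship between the two outputs can be made explicit: each transcript's estimated count is divided by its effective length to give a per-base rate, and the rates are rescaled to sum to one million. A small Python sketch, assuming the effective lengths are already known (Kallisto derives them from the fragment length distribution) and using a hypothetical function name and numbers:

```python
def counts_to_tpm(est_counts, eff_len):
    """TPM_t = 1e6 * (count_t / efflen_t) / sum_u (count_u / efflen_u)."""
    rates = {t: est_counts[t] / eff_len[t] for t in est_counts}
    total = sum(rates.values())  # assumes at least one transcript has a non-zero count
    return {t: 1e6 * r / total for t, r in rates.items()}


# Hypothetical estimated counts and effective lengths.
tpm = counts_to_tpm({"A": 75.0, "B": 25.0}, {"A": 1000.0, "B": 500.0})
print({t: round(v) for t, v in tpm.items()})  # {'A': 600000, 'B': 400000}
```

Although A has three times as many reads, B is half as long, so its TPM is proportionally higher than its share of counts; this length correction is why TPM rather than raw counts is used to compare abundances within a sample.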

Figure 1: The Kallisto workflow illustrating the pseudoalignment process from read input to transcript abundance quantification.

Kallisto vs. STAR: A Feature-Wise Comparison

Understanding the fundamental differences between Kallisto's pseudoalignment approach and STAR's traditional alignment-based method is crucial for selecting the appropriate tool for specific research scenarios. The table below provides a comprehensive feature comparison based on current benchmarking data and tool documentation:

| Feature | Kallisto | STAR |
|---|---|---|
| Core Algorithm | Pseudoalignment via k-mer matching in T-DBG [15] | Traditional alignment using sequential maximum mappable seed search [3] |
| Output | Transcript-level TPM and estimated counts [3] | Gene-level read counts (via quantMode) or aligned BAM files [3] [16] |
| Speed | 30 million human reads in <3 minutes on desktop [17] [18] | Significantly slower due to alignment; hours for similar datasets [15] |
| Memory Usage | Low memory requirements [6] | High memory usage, particularly for large genomes [3] |
| Primary Application | Rapid transcript quantification [15] | Comprehensive alignment, novel junction detection, fusion genes [3] |
| Handling of Ambiguous Reads | Probabilistic resolution via EM algorithm [15] | Simpler counting methods (e.g., gene-level assignment) [16] |
| Experimental Design Suitability | Large-scale studies with many samples [3] | Studies focusing on novel splice junctions or with small sample sizes [3] |

This comparison highlights the complementary strengths of each tool. Kallisto excels in scenarios requiring rapid quantification of known transcripts, while STAR provides more comprehensive alignment information that can be crucial for discovery-based research. The choice between them ultimately depends on the specific research objectives, with Kallisto offering superior efficiency for standardized quantification pipelines and STAR providing deeper insights into transcriptomic novelty.

Experimental Benchmarking and Performance Data

Accuracy Assessment on Simulated and Real Data

Multiple independent studies have systematically evaluated the accuracy of Kallisto compared to other quantification methods. In the seminal Nature Biotechnology paper introducing Kallisto, the authors demonstrated that their tool achieves accuracy comparable to or better than existing methods like Cufflinks, Sailfish, eXpress, and RSEM, while being two orders of magnitude faster [15]. The evaluation based on RSEM simulations of 30 million 75bp paired-end reads showed that Kallisto consistently produced low median relative differences between estimated and ground truth TPM values across 20 simulations [15].
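Relative difference is a convenient error measure because it is symmetric and, for non-negative values, bounded between 0 and 2. The Python sketch below computes a median relative difference; it assumes the definition |estimate - truth| / (0.5 × (estimate + truth)), set to 0 when both values are 0, which should be checked against the original paper before reuse. Function names and example values are hypothetical.

```python
from statistics import median


def relative_difference(estimate, truth):
    """Symmetric relative difference in [0, 2] for non-negative values (assumed definition)."""
    if estimate == 0 and truth == 0:
        return 0.0
    return abs(estimate - truth) / (0.5 * (estimate + truth))


def median_relative_difference(estimates, truths):
    return median([relative_difference(e, t) for e, t in zip(estimates, truths)])


# Hypothetical estimated vs. ground-truth TPM values.
estimated_tpm = [10.0, 0.0, 30.0, 5.0, 100.0]
true_tpm = [10.0, 0.0, 10.0, 0.0, 90.0]
print(median_relative_difference(estimated_tpm, true_tpm))
```

Taking the median rather than the mean keeps a handful of badly estimated, low-abundance transcripts (which can reach the maximum value of 2) from dominating the summary.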

A more recent comparative evaluation published in BMC Bioinformatics in 2021 examined the performance of popular isoform quantification methods, including Kallisto, Salmon, RSEM, Cufflinks, HTSeq, and featureCounts, using simulated benchmarking data that reflected properties of real data, including polymorphisms, intron signal, and non-uniform coverage [12]. This study found that Salmon, Kallisto, RSEM, and Cufflinks exhibited the highest accuracy on idealized data [12]. The performance of these tools was most strongly affected by transcript structural parameters such as length and sequence compression complexity, rather than the number of isoforms per gene [12]. When annotation completeness was varied to reflect real-world conditions, all methods showed sufficient divergence from the truth to suggest that full-length isoform quantification and isoform-level differential expression should still be employed selectively [12].

Speed and Resource Utilization Benchmarks

The computational efficiency of Kallisto represents one of its most significant advantages. In the original publication, the authors reported that Kallisto could quantify 30 million human reads in less than 3 minutes on a standard Mac desktop computer, with the transcriptome index itself taking less than 10 minutes to build [15]. This stands in stark contrast to traditional alignment-based workflows, which typically require hours to complete similar tasks.

This speed advantage extends to long-read RNA-seq as well. The development of lr-kallisto for long-read data maintained the efficiency of the original Kallisto implementation, with benchmarking showing it was "not only faster than other tools, but also benefits from the low-memory requirements of kallisto" [6]. In comparisons with other long-read quantification tools like Bambu, IsoQuant, and Oarfish, lr-kallisto retained significantly better computational efficiency while achieving superior or comparable accuracy [6].

The table below summarizes key quantitative benchmarking results from multiple studies:

| Benchmark Metric | Kallisto Performance | Comparison Tools | Data Source |
|---|---|---|---|
| Quantification Speed | <3 minutes for 30M reads [15] | Hours for traditional aligners [15] | Bray et al. 2016 [15] |
| Accuracy (Median Relative Difference) | Low error comparable to best methods [15] | Similar accuracy for Salmon, RSEM, Cufflinks [12] | Bray et al. 2016 [15]; BMC Bioinformatics 2021 [12] |
| Long-read Concordance (CCC) | 0.95 for lr-kallisto [6] | 0.82-0.86 for Bambu, Oarfish, IsoQuant [6] | lr-kallisto preprint 2024 [6] |
| Impact on DE Analysis | Similar DEG results to alignment methods [19] | 4290 DEGs (Salmon) vs 5400 (STAR) in one study [19] | UC Davis Bioinformatics Workshop 2020 [19] |

Experimental Protocols for Benchmarking

The experimental methodology employed in benchmarking studies typically follows a structured approach to ensure fair and reproducible comparisons. For the accuracy assessments cited in this guide, the general protocol includes:

Data Selection and Preparation: Using either experimentally validated samples with known expression levels (such as the SEQC/MAQC-III consortium samples) [15] or generating in silico simulated data where the ground truth is exactly known [12]. Simulations often use tools like the BEERS simulator [12] or RSEM simulator [15] to generate reads with realistic error profiles and expression distributions.

Tool Execution and Parameter Settings: Running each quantification tool (Kallisto, STAR, Salmon, etc.) with optimized but standard parameters on the same dataset. For Kallisto, this typically involves building an index from the reference transcriptome followed by the quantification step [15]. For STAR, the process involves genome alignment followed by read counting [3].

Evaluation Metrics Calculation: Comparing the estimated expression values to the known ground truth using multiple statistical measures. Common metrics include:

- Spearman Correlation: Measures monotonic relationships between estimated and true abundances [6].

- Concordance Correlation Coefficient (CCC): Assesses how close the estimates are to being identical to the truth [6].

- Median Relative Difference/Distance: Quantifies the average error in estimation [15].

- False Discovery Rates (FDR) and True Positive Rates (TPR): For transcript detection performance [20].

Downstream Analysis Impact Assessment: Evaluating how quantification differences affect biological conclusions by performing differential expression analysis with tools like DESeq2 on the counts generated by different methods and comparing the lists of significantly differentially expressed genes [19].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of Kallisto or comparative analyses between quantification tools requires specific computational resources and biological reagents. The following table details key components essential for conducting RNA-seq quantification experiments:

| Item | Function in Experiment | Specification Considerations |
|---|---|---|
| Reference Transcriptome | Database of known transcripts for pseudoalignment [15] | Species-specific (e.g., GENCODE for human); version consistency crucial |
| High-Performance Computing | Execution of quantification algorithms | Desktop computer sufficient for Kallisto; cluster for large STAR alignments [3] [15] |
| RNA-seq Datasets | Input for quantification benchmarks | Quality controlled; adapter trimmed; size ≥30M reads for robust statistics [15] |
| Validation Datasets | Ground truth for accuracy assessment | qPCR data, synthetic spike-ins (Sequins, SIRVs), or simulated data [15] [20] |
| Bioinformatics Pipeline | Automated workflow for tool comparison | Snakemake or Nextflow for reproducible analyses [16] |
| Downstream Analysis Tools | Impact assessment on biological conclusions | DESeq2, Sleuth for differential expression [19] [16] |

Advanced Applications and Future Directions

Kallisto for Long-Read Sequencing Data

The principles underlying Kallisto's pseudoalignment have proven adaptable beyond short-read RNA-seq, as demonstrated by the recent development of lr-kallisto for long-read sequencing data [6]. This extension addresses the unique challenges of long-read technologies, such as Oxford Nanopore (ONT) and PacBio, which exhibit higher error rates (~0.5%) compared to short-read technologies (~0.01%) [6]. Despite these challenges, lr-kallisto maintains the efficiency of the original Kallisto implementation while achieving accurate quantification from long-read data.

In benchmarking studies comparing lr-kallisto to other long-read quantification tools like Bambu, IsoQuant, and Oarfish, lr-kallisto demonstrated superior performance with a concordance correlation coefficient (CCC) of 0.95 compared to 0.82-0.86 for other tools when quantifying transcripts from ONT data [6]. The method also showed improved performance when coupled with exome capture protocols, which increase transcriptome complexity by enriching for spliced reads [6]. This adaptability positions Kallisto as a versatile tool capable of handling diverse sequencing technologies while maintaining its signature speed and accuracy.

Integration with Single-Cell RNA-seq Analysis

Kallisto's efficiency makes it particularly well-suited for single-cell RNA-seq (scRNA-seq) analysis, where the number of individual libraries can reach into the thousands or tens of thousands. The developers have extended Kallisto to handle single-cell data through the bustools workflow, which enables rapid processing of scRNA-seq datasets [17]. This approach maintains the speed advantages of pseudoalignment while accommodating the unique characteristics of single-cell data, such as cellular barcodes and unique molecular identifiers (UMIs).
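Barcode and UMI handling can be shown with a deliberately simplified sketch. bustools operates on BUS records made up of a barcode, a UMI and an equivalence class. The hypothetical function below makes stronger assumptions: each read has already been assigned to a single gene, and barcodes and UMIs contain no errors. It therefore illustrates only the core idea, which is that reads sharing a barcode, UMI and gene count as one molecule. It is not the bustools algorithm.

```python
from collections import defaultdict


def umi_count_matrix(records):
    """Collapse (barcode, UMI, gene) records into per-cell gene counts.

    Reads with the same barcode, UMI and gene are treated as PCR copies of
    one molecule and contribute a single count.
    """
    molecules = defaultdict(set)  # (barcode, gene) -> distinct UMIs observed
    for barcode, umi, gene in records:
        molecules[(barcode, gene)].add(umi)

    counts = defaultdict(dict)  # barcode -> {gene: UMI count}
    for (barcode, gene), umis in molecules.items():
        counts[barcode][gene] = len(umis)
    return dict(counts)


reads = [
    ("AAACCTGA", "GTTACA", "GeneA"),
    ("AAACCTGA", "GTTACA", "GeneA"),  # PCR duplicate of the read above
    ("AAACCTGA", "CCGATT", "GeneA"),
    ("AAACCTGA", "TTGCAA", "GeneB"),
    ("TTTGGTCA", "GTTACA", "GeneA"),
]
print(umi_count_matrix(reads))
```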

The recent lr-kallisto implementation further demonstrates capabilities for single-cell and single-nuclei RNA-seq datasets, successfully extracting nuclei barcodes and UMIs from raw ONT reads before pseudoalignment and quantification [6]. When comparing single-nuclei RNA-seq processing between ONT and Illumina sequenced reads, 100% of barcodes from ONT reads that passed filtering were also found in Illumina sequenced reads, demonstrating the robustness of the approach [6].

Figure 2: Expanding applications of Kallisto's pseudoalignment technology across sequencing technologies and research domains.

The comprehensive comparison between Kallisto and STAR reveals a landscape where tool selection should be guided by specific research objectives rather than seeking a universal solution. Kallisto's pseudoalignment technology delivers on its promise of exceptional speed and efficient resource utilization while maintaining accuracy comparable to traditional alignment-based methods for transcript quantification tasks. This makes it particularly valuable for large-scale studies, clinical applications requiring rapid turnaround, and single-cell analyses involving thousands of libraries.

However, STAR maintains its importance in discovery-focused research where the identification of novel splice junctions, fusion genes, or comprehensive genome alignment is prioritized over speed [3]. The experimental data consistently shows that while quantification results between the methods are highly correlated, they are not identical, with notable differences emerging particularly in low-expression genes [19].

For researchers and drug development professionals designing transcriptomics studies, the evidence supports the following strategic implementation: Use Kallisto for high-throughput quantification of known transcripts in well-annotated organisms, especially in contexts with computational constraints or when analyzing single-cell data. Employ STAR when exploring transcriptomic novelty, detecting fusion genes, or working with less complete annotations where genome alignment provides valuable insights. As the field continues to evolve with new technologies like long-read sequencing, the adaptable framework of pseudoalignment exemplified by Kallisto positions it as a cornerstone technology for the next generation of transcriptomics analysis.

In the field of transcriptomics, accurate quantification of gene expression from RNA-seq data is a fundamental task that directly affects downstream biological conclusions. The choice of computational tools for alignment and quantification is therefore critical: traditional genome aligners like STAR compete with newer, faster pseudoalignment methods such as Salmon and Kallisto [3]. This guide provides an objective comparison of these tools, focusing on how Salmon's architecture, which combines ultra-fast mapping with a dual-phase, bias-aware inference algorithm, influences its performance. We summarize quantitative benchmarking data and detail experimental protocols to help researchers and drug development professionals make informed decisions for their RNA-seq analysis pipelines.

Tool Comparison: Salmon, Kallisto, and STAR at a Glance

The table below summarizes the core features and performance characteristics of three popular tools.

| Feature | Salmon | Kallisto | STAR |
|---|---|---|---|
| Core Algorithm | Pseudoalignment & dual-phase inference [21] | Pseudoalignment [3] | Traditional alignment-based [3] |
| Key Advantage | Bias correction (e.g., fragment GC-content) [21] [22] | Speed and low memory usage [3] | Detection of novel splice junctions & fusion genes [3] |
| Typical Output | TPM, Estimated counts [21] | TPM, Estimated counts [3] | Read counts per gene [3] |
| Best Suited For | Fast, accurate quantification where bias correction is important [21] | Large-scale studies where computational speed is critical [3] | Studies requiring discovery of novel splicing events [3] |

Performance Benchmarking and Experimental Data

Independent benchmarking studies have systematically evaluated the accuracy and efficiency of RNA-seq quantification tools. The following table summarizes key quantitative findings.

| Evaluation Metric | Salmon Performance | Kallisto Performance | STAR-Based Pipeline Performance | Notes & Context |
|---|---|---|---|---|
| Quantification Accuracy (on idealized data) | High accuracy [12] | High accuracy [12] | Not the top performer [12] | Compares estimated abundances to known simulated truth [12] |
| Quantification Accuracy (on realistic data) | Good, but not dramatically better than simple approaches [12] | Good, but not dramatically better than simple approaches [12] | Not the top performer [12] | Realistic data includes polymorphisms and non-uniform coverage [12] |
| Impact on Differential Expression (DE) Analysis | High accuracy and reliability for DE [21] | Information not available | Information not available | GC-bias correction improves downstream DE sensitivity [21] |
| Computational Performance | Fast (lightweight and ultra-fast mapping) [21] [22] | Very Fast (lightweight) [3] | Slower (resource-intensive alignment) [3] | Kallisto and Salmon are significantly faster than STAR [3] |

Experimental Protocols in Benchmarking Studies

To ensure fair and meaningful comparisons, benchmarking studies typically employ the following rigorous methodologies:

- Data Simulation with Ground Truth: Tools are often evaluated on simulated RNA-seq data where the true transcript abundances are known. For example, the BEERS simulator can generate data that reflects properties of real data, such as polymorphisms, intron signal, and non-uniform coverage, allowing for direct measurement of quantification accuracy against a known standard [12].

- Use of Reference Materials: Large-scale, multi-center studies, like the Quartet project, use well-characterized RNA reference samples from cell lines. These samples have small, known biological differences, enabling the assessment of a tool's ability to detect subtle differential expression, which is crucial for clinical applications [5].

- Pipeline Consistency: When comparing tools, it is essential to keep upstream and downstream steps consistent. For instance, in a benchmark, different quantification tools (Salmon, Kallisto, RSEM, etc.) would be run on the same raw sequencing reads, and their output would be fed into the same differential expression tool (e.g., DESeq2, edgeR) using the same normalization method to isolate the effect of the quantifier [23] [5].

Inside Salmon's Core Technology

Salmon's performance stems from its innovative algorithmic design, which can be broken down into two key components.

Ultra-Fast Mapping via Quasimapping

Salmon first uses a quasimapping procedure to rapidly determine the potential transcripts of origin for each RNA-seq fragment without performing a base-by-base alignment. This step drastically reduces computational time compared to traditional aligners like STAR [21] [22].

Dual-Phase, Bias-Aware Inference

After quasimapping, Salmon employs a dual-phase inference algorithm to estimate transcript abundances. This process is aware of and corrects for common biases in RNA-seq data, which is a key differentiator [21].

The online phase performs an initial, rapid estimation of abundances. The offline phase then refines this estimate using an expectation-maximization (EM) algorithm while incorporating rich bias models. A critical and unique feature of Salmon is its ability to correct for fragment GC-content bias, a factor that can substantially improve the accuracy of abundance estimates and the sensitivity of subsequent differential expression analysis [21] [22].
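The offline refinement relies on expectation-maximization. The sketch below is a bare-bones EM over equivalence classes, which are groups of fragments compatible with the same set of transcripts. It assumes effective lengths are already known and omits Salmon's online phase, bias models and priors. The function name is hypothetical.

```python
def em_abundances(eq_classes, eff_lengths, max_iter=10_000, tol=1e-10):
    """Estimate reads per transcript with a plain EM over equivalence classes.

    eq_classes: list of (transcript_ids, read_count) pairs, where transcript_ids
        lists every transcript compatible with those reads.
    eff_lengths: dict mapping transcript id -> effective length.
    """
    total = sum(count for _, count in eq_classes)
    alpha = {t: total / len(eff_lengths) for t in eff_lengths}  # uniform start
    for _ in range(max_iter):
        new_alpha = dict.fromkeys(eff_lengths, 0.0)
        for transcripts, count in eq_classes:
            # E-step: split the class's reads in proportion to alpha_t / length_t.
            weights = {t: alpha[t] / eff_lengths[t] for t in transcripts}
            denom = sum(weights.values())
            if denom == 0:
                continue
            for t, w in weights.items():
                new_alpha[t] += count * w / denom
        # M-step: the summed fractional assignments become the new estimates.
        change = max(abs(new_alpha[t] - alpha[t]) for t in eff_lengths)
        alpha = new_alpha
        if change < tol:
            break
    return alpha


classes = [(("T1",), 30), (("T2",), 10), (("T1", "T2"), 40)]
print(em_abundances(classes, {"T1": 1000.0, "T2": 1000.0}))
```

Here the two transcripts have equal effective lengths. The 40 ambiguous reads end up split 3:1 in favour of T1, matching the ratio of its unique evidence, so the estimates converge to about 60 and 20 reads.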

For researchers aiming to reproduce benchmark results or conduct their own RNA-seq analysis, the following resources are essential.

| Resource / Reagent | Function in Analysis |
|---|---|
| Reference Transcriptome | A curated set of known transcript sequences (e.g., from Ensembl or GENCODE) used as the target for quantification by pseudoaligners like Salmon and Kallisto [3]. |
| Reference Genome | A sequenced genome assembly required for splice-aware alignment by tools like STAR and HISAT2 [23]. |
| ERCC Spike-In Controls | Synthetic RNA molecules added to samples in known quantities. They serve as an external standard to assess the accuracy of quantification across experiments and pipelines [5]. |
| BEERS Simulator | A software tool that generates simulated RNA-seq reads with a known "ground truth," allowing for controlled benchmarking of quantification accuracy [12]. |
| Quartet Project Reference Materials | Well-characterized RNA reference samples derived from cell lines. These materials are used for large-scale cross-laboratory quality control and benchmarking, especially for detecting subtle differential expression [5]. |

The choice between Salmon, Kallisto, and STAR is not a matter of which tool is universally best, but which is most appropriate for your specific research goals and constraints [3] [23].

- For rapid, accurate quantification of gene expression in a well-annotated transcriptome, both Salmon and Kallisto are excellent choices. Salmon may have an edge in data with significant GC bias due to its advanced correction models [21].

- For discovery-focused projects where the goal is to identify novel splice junctions, fusion genes, or work with an incomplete genome, STAR remains the preferred tool [3].

- For clinical or highly sensitive applications, it is critical to perform quality control using reference materials like those from the Quartet project to ensure your entire workflow, from library prep to quantification, can reliably detect the subtle differential expression often relevant to disease states [5].

Ultimately, the performance of any tool can be influenced by experimental design and data quality, including factors like read length, library complexity, and sequencing depth [3]. Researchers are encouraged to understand these factors and, where possible, validate their findings using multiple pipelines.

The fundamental difference between alignment-based tools like STAR and pseudoalignment-based tools like Kallisto and Salmon stems from their core operational philosophies. STAR performs spliced alignment to a reference genome, determining the precise base-by-base location of each read [24] [25]. Its primary output is a BAM file containing these genomic coordinates, from which gene-level read counts are derived, often using a simple counting process [24] [16]. In contrast, Kallisto and Salmon are quantification tools that bypass full alignment [24]. They use the transcriptome directly as a reference, employing statistical models to estimate transcript abundance based on which transcripts a read could have originated from, a process known as pseudoalignment or quasi-alignment [24] [9]. This key methodological divergence is the root of all subsequent differences in their outputs, performance, and optimal use cases.

Table 1: Core Methodological Differences Between STAR and Pseudoaligners

| Feature | STAR (Aligner) | Kallisto & Salmon (Pseudoaligners) |
|---|---|---|
| Primary Reference | Reference Genome | Transcriptome (sequence of transcripts) |
| Core Process | Spliced alignment of reads to genome [25] | Pseudoalignment / quasi-alignment of reads to transcriptome [24] [9] |
| Handling of Multi-Mapped Reads | Often discarded during gene counting if no unique position is found [26] | Probabilistically apportioned among all compatible transcripts [24] |
| Key Assumption | Precise genomic location is critical | Set of compatible transcripts is sufficient for quantification [9] |

Core Outputs: Discrete Counts vs. Continuous Abundance Estimates

The analytical pipeline and the nature of the output differ significantly between these two approaches.

STAR: Alignment and Read Counting

STAR's workflow begins with aligning reads to the genome, resulting in a BAM file. Quantification is a separate step. While STAR's quantMode can generate read counts, tools like featureCounts or HTSeq-count are also commonly used for this purpose [24] [16]. The output is a table of raw read counts for each gene, representing the number of reads that overlapped the genomic coordinates of that gene [3] [16]. These counts are discrete integers and do not inherently account for gene or transcript length, making them suitable for count-based differential expression tools like DESeq2 [16].

Kallisto and Salmon: Pseudoalignment and Statistical Estimation

Kallisto and Salmon start with the transcriptome sequence. They use k-mer matching and sophisticated models to determine the set of transcripts compatible with each read, without performing base-by-base alignment [24] [9]. They output estimated counts and Transcripts Per Million (TPM), which are continuous abundance values [3] [9]. A key advantage is their ability to provide transcript-level quantification, using statistical inference to resolve ambiguities when a read maps to multiple isoforms [24].
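TPM values follow from estimated counts and effective transcript lengths. Each transcript's count is divided by its effective length, and the resulting rates are rescaled to sum to one million. The sketch below also sums transcript values into gene-level values using a hypothetical transcript-to-gene mapping. For differential expression, dedicated importers such as tximport handle this step with more care.

```python
from collections import defaultdict


def tpm(est_counts, eff_lengths):
    """Transcripts Per Million from estimated counts and effective lengths."""
    rates = {t: est_counts[t] / eff_lengths[t] for t in est_counts}
    total_rate = sum(rates.values())
    return {t: 1e6 * r / total_rate for t, r in rates.items()}


def collapse_to_genes(transcript_values, tx2gene):
    """Sum transcript-level values (counts or TPM) per gene."""
    genes = defaultdict(float)
    for tx, value in transcript_values.items():
        genes[tx2gene[tx]] += value
    return dict(genes)


counts = {"tx1": 10.0, "tx2": 20.0, "tx3": 30.0}
lengths = {"tx1": 1000.0, "tx2": 2000.0, "tx3": 1000.0}
tx2gene = {"tx1": "geneA", "tx2": "geneA", "tx3": "geneB"}  # hypothetical mapping

tx_tpm = tpm(counts, lengths)
print(tx_tpm)
print(collapse_to_genes(tx_tpm, tx2gene))
```

tx1 and tx2 receive the same TPM despite their different counts, because tx2 is twice as long.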

Table 2: Nature and Format of Core Outputs

| Output Characteristic | STAR | Kallisto & Salmon |
|---|---|---|
| Primary Expression Measure | Raw read counts (discrete) [16] | Estimated counts (continuous), TPM [3] [9] |
| Typical Analysis Level | Gene-level [24] [16] | Transcript-level (can be collapsed to gene-level) [24] |
| Information on Novel Features | Can discover novel splice junctions, genes, and fusion genes [3] [25] | Limited to the provided transcriptome annotation [24] |

The following diagram illustrates the two distinct workflows and their resulting outputs.

Performance and Accuracy in Benchmarking Studies

Independent benchmarking studies have highlighted critical trade-offs between accuracy, computational resource use, and sensitivity.

Computational Resource Requirements

A consistent finding across multiple studies is the dramatic difference in speed and memory use. In a single-cell RNA-seq benchmark, Kallisto was 2.6 to 4 times faster than STAR [24] [25]. More importantly, Kallisto used 7.7 to 15 times less RAM than STAR, making it feasible to run on a standard laptop rather than a high-performance computing server [24] [25]. This efficiency extends to bulk RNA-seq analysis as well, where pseudoaligners can process tens of millions of reads in mere minutes [9].

Gene Detection and Expression Correlation

Studies show that STAR typically reports a higher number of genes and higher gene-expression values than Kallisto [25]. However, this apparent gain in sensitivity may come with trade-offs. In a comparison of differential expression results, one analysis found that STAR identified substantially more differentially expressed (DE) genes (5,400) than Salmon (4,290) on the same dataset [19]. Despite this numerical difference, the overall correlation between results from different tools is generally high, particularly for moderately to highly expressed genes [19] [9]. One study noted that the Gini index of gene expression (a measure of expression inequality across cells) computed from STAR correlated more closely with RNA-FISH validation data than that computed from Kallisto, suggesting potentially higher accuracy in some contexts [25].
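The Gini index mentioned above summarizes how unevenly a gene's expression is distributed across cells. A value of 0 means every cell has the same value, and values near 1 mean expression is concentrated in a few cells. The following is a minimal sketch using the standard sorted-values formula. In a benchmark, one would compute it per gene from each pipeline's count matrix and correlate the results with FISH-derived values.

```python
def gini(values):
    """Gini coefficient of non-negative values (0 = perfectly even)."""
    xs = sorted(values)
    n, total = len(xs), sum(xs)
    if n == 0 or total == 0:
        return 0.0
    # G = 2 * sum(i * x_(i)) / (n * sum(x)) - (n + 1) / n, with 1-based ranks i
    weighted = sum(i * x for i, x in enumerate(xs, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


print(gini([5, 5, 5, 5]))   # even expression across four cells
print(gini([0, 0, 0, 12]))  # expression confined to one cell
```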

Table 3: Performance and Results from Benchmarking Studies

| Benchmarking Aspect | STAR | Kallisto & Salmon |
|---|---|---|
| Speed | Slower (e.g., 4x slower than Kallisto) [25] | Extremely fast (minutes per sample) [9] |
| Memory Usage | High (e.g., 7.7x more RAM than Kallisto) [25] | Low (runnable on a laptop) [24] [9] |
| Genes/Transcripts Detected | Higher number of genes and expression levels [25] | Fewer genes reported; differences often in low-expression genes [19] [25] |
| Correlation with Validation Data | Higher correlation of Gini index with RNA-FISH [25] | High correlation with other tools (e.g., r > 0.93 with Cufflinks) [9] |

Experimental Protocols for Benchmarking

To ensure reproducibility and provide a framework for tool evaluation, this section outlines a generalized experimental protocol derived from the cited benchmarking studies [26] [25].

Data Acquisition and Preprocessing

- Dataset Selection: Use publicly available datasets from platforms like Drop-seq, Fluidigm C1, or 10x Genomics. Datasets with orthogonal validation (e.g., RNA FISH data for a subset of genes) are particularly valuable [25].

- Data Download: Obtain raw FASTQ files from repository accessions such as GEO (e.g., GSE99330) or the 10x Genomics website (e.g., PBMC3K dataset) [25].

- Preprocessing: Follow platform-specific preprocessing. For Drop-seq, use the Drop-seq Tools to filter low-quality barcodes and trim adapter sequences [25]. For other data, use tools like Trim Galore for quality and adapter trimming.

Reference Preparation

- For STAR: Download the appropriate reference genome (e.g., GRCh38 for human) and its corresponding GTF annotation file from Ensembl. Generate the STAR genome index using `STAR --runMode genomeGenerate` [25].

- For Kallisto/Salmon: Download the transcriptome FASTA file corresponding to the reference genome used for STAR. Build the pseudoalignment index using `kallisto index` or `salmon index` [25].

Execution of Alignment and Quantification

- STAR Alignment: Run STAR with parameters tailored to the sequencing platform and read length. For gene counting, use the `--quantMode GeneCounts` option or process the output BAM file with a read counter like featureCounts [25] [16].

- Kallisto Pseudoalignment: Execute `kallisto quant` with the appropriate options. The `-b` option, which draws bootstrap samples, is recommended when quantification uncertainty will be used downstream, for example in sleuth [9].

- Salmon Pseudoalignment: Run `salmon quant`, specifying the library type with `-l` and using the `--numBootstraps` option for the same purpose as Kallisto's `-b` [9].
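The quantification commands above can be assembled programmatically, which helps keep options identical across samples. The sketch below builds argument lists for paired-end runs. The file paths, thread counts and 100 bootstrap samples are hypothetical choices. `-l A` asks Salmon to infer the library type automatically.

```python
import shlex


def kallisto_quant_cmd(index, out_dir, r1, r2, bootstraps=100, threads=4):
    """argv for paired-end `kallisto quant` with bootstrap samples (for sleuth)."""
    return ["kallisto", "quant", "-i", index, "-o", out_dir,
            "-b", str(bootstraps), "-t", str(threads), r1, r2]


def salmon_quant_cmd(index, out_dir, r1, r2, lib_type="A", bootstraps=100, threads=4):
    """argv for paired-end `salmon quant`; lib_type "A" means auto-detect."""
    return ["salmon", "quant", "-i", index, "-l", lib_type,
            "-1", r1, "-2", r2, "--numBootstraps", str(bootstraps),
            "-p", str(threads), "-o", out_dir]


# Hypothetical sample paths; each list can be run with subprocess.run(cmd, check=True).
k_cmd = kallisto_quant_cmd("kallisto.idx", "quant/kallisto/S1", "S1_R1.fq.gz", "S1_R2.fq.gz")
s_cmd = salmon_quant_cmd("salmon_idx", "quant/salmon/S1", "S1_R1.fq.gz", "S1_R2.fq.gz")
print(shlex.join(k_cmd))
print(shlex.join(s_cmd))
```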

Downstream Analysis and Validation

- Differential Expression (DE) Analysis: Process the count outputs (STAR's raw counts or Kallisto/Salmon's estimated counts) with a standard DE pipeline (e.g., DESeq2 for raw counts, sleuth for estimated counts with bootstrap data) [9] [16].

- Comparison Metrics: Calculate the number of DE genes detected by each pipeline at a standard significance threshold (e.g., adjusted p-value < 0.05). Assess the overlap of DE gene sets between methods [19].

- Validation: Compare the expression patterns of positive control genes or the correlation of Gini coefficients with RNA FISH data, if available, to gauge biological accuracy [25].
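The overlap comparison in the list above can be sketched as follows. The sketch assumes each pipeline's DE results have been reduced to a mapping from gene ID to adjusted p-value, with None standing in for DESeq2's NA values. The function name and the Jaccard summary are illustrative choices, not a prescribed metric.

```python
def deg_overlap(padj_a, padj_b, alpha=0.05):
    """Compare significant gene sets from two pipelines at an adjusted p cutoff."""
    sig_a = {g for g, p in padj_a.items() if p is not None and p < alpha}
    sig_b = {g for g, p in padj_b.items() if p is not None and p < alpha}
    shared = sig_a & sig_b
    union = sig_a | sig_b
    return {
        "n_a": len(sig_a),
        "n_b": len(sig_b),
        "shared": len(shared),
        "only_a": sorted(sig_a - sig_b),
        "only_b": sorted(sig_b - sig_a),
        "jaccard": len(shared) / len(union) if union else 1.0,
    }


star_padj = {"g1": 0.01, "g2": 0.20, "g3": 0.001, "g4": None}
salmon_padj = {"g1": 0.03, "g2": 0.04, "g3": 0.50}
print(deg_overlap(star_padj, salmon_padj))
```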

The table below details key reagents, software, and data resources required for conducting a comparative analysis of RNA-seq quantification tools.

Table 4: Essential Reagents and Resources for RNA-seq Quantification Analysis

| Item Name | Function / Description | Example Source / Version |
|---|---|---|
| Reference Genome | The DNA sequence of the organism used as a map for alignment. | GRCh38 (Human) from Ensembl [25] |
| Annotation File (GTF/GFF) | Defines the genomic coordinates of genes, transcripts, and other features. | Homo_sapiens.GRCh38.95.gtf from Ensembl [25] |
| Transcriptome FASTA | The sequence of all known transcripts, used as a reference by pseudoaligners. | Transcriptome FASTA from Ensembl [25] |
| Barcode Whitelist | A list of valid cell barcodes for single-cell RNA-seq analysis. | Provided by 10X Genomics for their kits [26] |
| STAR | Spliced aligner for RNA-seq data. | Version 2.7.1a [25] |
| Kallisto | Pseudoaligner for transcript quantification. | Version 0.45.1 / 0.46.1 [25] |
| Salmon | Pseudoaligner with bias correction models. | Version 0.6.0 [9] |
| High-Performance Computing (HPC) Environment | Essential for running resource-intensive tools like STAR. | University of Michigan HPC [25] |

The choice between STAR and pseudoaligners like Kallisto and Salmon is not a matter of which tool is universally superior, but which is most appropriate for the specific research goals, experimental design, and computational resources.

- Choose STAR if: Your research question requires discovery, such as identifying novel splice junctions, fusion genes, or unannotated transcripts [3] [25]. It is also a strong choice when you need a BAM file for visual validation in a genome browser or for variant calling [24]. Be prepared for the higher computational costs.

- Choose Kallisto or Salmon if: Your primary goal is rapid and accurate quantification of known transcripts for differential expression analysis [3] [24]. They are ideal for large-scale studies or when computational resources are limited. Their transcript-level quantification is superior for isoform-level analysis [24].

- General Considerations: For most standard differential expression analyses focused on annotated genes, both pipelines yield valid and largely concordant results, especially after filtering low-abundance genes [19]. The critical factor is consistency; once a pipeline is chosen for a project, it should be used throughout to ensure comparability of results [19].

Choosing Your Pipeline: A Practical Guide to Workflow Implementation

The analysis of bulk RNA sequencing (RNA-seq) data fundamentally relies on the accurate processing of raw sequencing reads into meaningful gene expression measurements. This process can be approached through different computational paradigms, primarily divided into traditional alignment-based methods and newer pseudoalignment approaches. The STAR aligner (Spliced Transcripts Alignment to a Reference) represents a sophisticated alignment-based tool that maps reads to a reference genome, providing base-level resolution and facilitating comprehensive transcriptomic analysis [3]. In contrast, pseudoaligners like Kallisto and Salmon employ lightweight algorithms that rapidly determine transcript compatibility without performing exact base-to-base alignment, offering substantial gains in speed and resource efficiency [24]. Understanding the relative strengths, limitations, and appropriate applications of these contrasting approaches is essential for researchers designing RNA-seq experiments and analyzing resulting data.

The choice between alignment-based and pseudoalignment methods carries significant implications for downstream biological interpretations. Inaccurate alignment or quantification can lead to false positives or false negatives in subsequent analyses such as differential expression, functional annotation, and pathway analysis [3]. This comparison guide objectively examines the STAR workflow in contrast to pseudoalignment approaches, providing experimental data and methodological details to inform researchers' analytical decisions within the broader context of transcriptomics tool selection.

Fundamental Differences Between Alignment and Pseudoalignment

Algorithmic Approaches

STAR operates as a traditional alignment-based tool that maps RNA-seq reads to a reference genome or transcriptome using a detailed alignment algorithm [3]. It employs a sequential process where reads are first mapped to the genome, with special handling for spliced alignments that span exon-exon junctions. This approach generates base-level alignment information in BAM format, which precisely documents the genomic coordinates of each read [24] [27]. The alignment information serves as the foundation for subsequent quantification using count tools like HTSeq or featureCounts.

In contrast, Kallisto uses a pseudoalignment algorithm that estimates transcript abundances without generating base-level alignments [3]. Instead of mapping reads positionally, Kallisto uses a de Bruijn graph representation of the transcriptome to rapidly identify which transcripts each read is compatible with, based on the read's k-mer content [24]. This approach bypasses the computationally intensive alignment step and focuses instead on establishing transcript compatibility for quantification.
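The core idea of k-mer compatibility can be shown with a toy index. The sketch below maps each k-mer to the set of transcripts that contain it, then intersects those sets across a read's k-mers. It is a deliberate simplification. Kallisto stores this information on a colored de Bruijn graph, skips redundant k-mer lookups and handles reverse complements, and the hypothetical functions here do none of these.

```python
from collections import defaultdict


def build_kmer_index(transcripts, k):
    """Map every k-mer to the set of transcript names that contain it."""
    index = defaultdict(set)
    for name, seq in transcripts.items():
        for i in range(len(seq) - k + 1):
            index[seq[i:i + k]].add(name)
    return dict(index)


def pseudoalign(read, index, k):
    """Return the set of transcripts compatible with all indexed k-mers in the read.

    k-mers missing from the index (e.g. containing a sequencing error) are skipped.
    An empty set means the read is compatible with no transcript.
    """
    compatible = None
    for i in range(len(read) - k + 1):
        hits = index.get(read[i:i + k])
        if hits is None:
            continue
        compatible = set(hits) if compatible is None else compatible & hits
        if not compatible:
            break
    return compatible or set()


transcripts = {"T1": "ACGTACGGTTCA", "T2": "ACGTACGGAAAC"}
idx = build_kmer_index(transcripts, k=5)
for read in ["ACGTACGG", "CGGTTCA", "GGAAAC"]:
    print(read, sorted(pseudoalign(read, idx, k=5)))
```

The first read lies in the shared prefix and is compatible with both transcripts. The other two each contain k-mers unique to one transcript. No coordinates are computed at any point.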

Output Comparisons

The fundamental differences in algorithmic approach lead to distinct output characteristics:

Table 1: Core Algorithmic Differences Between STAR and Kallisto

| Feature | STAR | Kallisto |
|---|---|---|
| Primary algorithm | Detailed spliced alignment to genome | Pseudoalignment to transcriptome |
| Reference requirement | Genome sequence and annotation | Transcriptome sequences |
| Primary output | BAM files with genomic coordinates | Direct abundance estimates |
| Quantification basis | Requires secondary tools (HTSeq, featureCounts) | Built-in quantification |
| Novel feature detection | Supports novel junction discovery | Limited to provided transcriptome |

Experimental Benchmarking: Performance and Accuracy Metrics

Quantitative Performance Comparisons

Independent benchmarking studies provide critical insights into the relative performance of STAR and pseudoalignment tools. A comprehensive evaluation of isoform quantification methods revealed that alignment-free tools like Kallisto and Salmon are "both fast and accurate" [28]. In terms of computational efficiency, Kallisto demonstrated significant advantages, being 2.6 times faster than STAR while using up to 15 times less RAM in benchmarking studies on single-cell RNA-seq workflows [24]. This substantial difference in resource requirements makes pseudoalignment accessible for researchers without access to high-performance computing infrastructure.

Accuracy assessments present a more nuanced picture. In idealized conditions with complete annotations, Salmon, Kallisto, RSEM, and Cufflinks exhibited the highest quantification accuracy [29]. However, on more realistic datasets containing polymorphisms, intron signal, and non-uniform coverage, these tools "do not perform dramatically better than the simple approach" [29]. The tested methods showed "sufficient divergence from the truth to suggest that full-length isoform quantification and isoform level DE should still be employed selectively" [29].

Experimental Protocols for Method Evaluation

Benchmarking studies typically employ carefully designed evaluation protocols using both simulated and experimental datasets:

Simulated Data Protocols: Studies use simulators like BEERS or RSEM to generate reads from known transcript abundances, creating a ground truth for accuracy measurements [29]. Parameters such as sequencing errors, polymorphisms, and non-uniform coverage are incorporated to mimic real data characteristics. Performance is evaluated using metrics including the squared Pearson correlation (R²) and the mean absolute relative difference (MARD) between estimated and true abundances [28].

Experimental Data Protocols: Technical replicates of reference samples such as Universal Human Reference RNA (UHRR) and Human Brain Reference RNA (HBRR) are used to assess consistency between replicates [28]. Correlation between replicates and agreement with orthogonal validation methods (e.g., qPCR) provide practical accuracy measures.

Differential Expression Analysis: Methods are evaluated by comparing DE results obtained from estimated counts with those obtained from the known true quantifications in simulated data [29]. The degree of divergence between the two analyses indicates how strongly quantification errors propagate into downstream results.
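One simple way to express that divergence, assuming DE calls have already been made at the same significance threshold from both the estimated and the true counts, is to compare the two sets of called genes. The sketch below is illustrative only; the function name and gene identifiers are hypothetical.

```python
def de_call_concordance(called_from_estimates: set, called_from_truth: set) -> dict:
    """Precision, recall, and Jaccard overlap of two sets of DE gene calls."""
    tp = len(called_from_estimates & called_from_truth)  # genes called DE in both analyses
    union = len(called_from_estimates | called_from_truth)
    return {
        "precision": tp / len(called_from_estimates) if called_from_estimates else 0.0,
        "recall": tp / len(called_from_truth) if called_from_truth else 0.0,
        # Two empty call sets are treated as identical.
        "jaccard": tp / union if union else 1.0,
    }


est_calls = {"GENE1", "GENE2", "GENE3", "GENE5"}
true_calls = {"GENE1", "GENE2", "GENE3", "GENE4"}
print(de_call_concordance(est_calls, true_calls))
```

Here the truth-based calls serve as the reference, so precision reflects false positives introduced by quantification error and recall reflects DE genes it masked.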

The STAR Workflow: Technical Implementation

Genome Indexing with STAR

The initial step in the STAR workflow involves building a genome index, which is crucial for efficient alignment:

Indexing Command Example:
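As a minimal sketch, the Python helper below assembles a typical STAR genome-indexing command as an argument list. The flags (`--runMode genomeGenerate`, `--runThreadN`, `--genomeDir`, `--genomeFastaFiles`, `--sjdbGTFfile`, `--sjdbOverhang`) are standard STAR options; the function name, file names, and thread count are hypothetical placeholders. The helper only builds the command; running it (for example with `subprocess.run(cmd, check=True)`) requires STAR to be installed.

```python
import shlex


def star_genome_generate_cmd(genome_dir, fasta, gtf, read_length, threads=8):
    """Assemble a STAR genome-indexing command; nothing is executed here."""
    if read_length < 2:
        raise ValueError("read_length must be at least 2")
    return [
        "STAR",
        "--runMode", "genomeGenerate",
        "--runThreadN", str(threads),
        "--genomeDir", genome_dir,
        "--genomeFastaFiles", fasta,
        "--sjdbGTFfile", gtf,  # annotation used to build the splice-junction database
        # Rule of thumb from the text: read (mate) length minus 1.
        "--sjdbOverhang", str(read_length - 1),
    ]


cmd = star_genome_generate_cmd(
    "star_index/", "GRCh38.primary_assembly.fa", "gencode.annotation.gtf", read_length=101
)
print(shlex.join(cmd))
```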

The sjdbOverhang parameter specifies the length of the genomic sequence around annotated junctions and should be set to read length minus 1 [27]. This parameter significantly impacts splice junction detection accuracy.

Read Alignment and Quantification

Following index generation, STAR performs the alignment process:

Alignment Command with Quantification:
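A comparable sketch for the alignment step, again only assembling the argument list. `--readFilesIn`, `--readFilesCommand`, `--outSAMtype BAM SortedByCoordinate`, `--quantMode GeneCounts`, and `--outFileNamePrefix` are standard STAR options; the function name, paths, prefix, and thread count are hypothetical, and using `zcat` assumes gzipped FASTQ files on a system that provides it.

```python
import shlex


def star_align_cmd(genome_dir, read1, read2=None, prefix="sample_", threads=8):
    """Assemble a STAR alignment command that also counts reads per gene."""
    cmd = ["STAR", "--runThreadN", str(threads), "--genomeDir", genome_dir,
           "--readFilesIn", read1]
    if read2 is not None:
        cmd.append(read2)  # for paired-end data, mate 2 follows mate 1
    if read1.endswith(".gz"):
        # Decompress gzipped FASTQ on the fly (assumes zcat is available on PATH).
        cmd += ["--readFilesCommand", "zcat"]
    cmd += [
        "--outSAMtype", "BAM", "SortedByCoordinate",  # coordinate-sorted BAM output
        "--quantMode", "GeneCounts",                  # per-gene counts during mapping
        "--outFileNamePrefix", prefix,
    ]
    return cmd


cmd = star_align_cmd("star_index/", "sample_R1.fastq.gz", "sample_R2.fastq.gz")
print(shlex.join(cmd))
```

With these options STAR names its outputs after the prefix, for example `sample_Aligned.sortedByCoord.out.bam` and `sample_ReadsPerGene.out.tab`.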

The quantMode GeneCounts option directs STAR to count reads per gene during alignment, generating a ReadsPerGene.out.tab file where reads are counted if they overlap (1nt or more) one and only one gene [27]. This implements counting logic similar to htseq-count with default parameters.
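Per the STAR manual, ReadsPerGene.out.tab begins with four summary rows (N_unmapped, N_multimapping, N_noFeature, N_ambiguous) followed by one row per gene with three count columns: unstranded counts, counts matching htseq-count `-s yes`, and counts matching htseq-count `-s reverse`. The hypothetical parser below selects the column for a given library type; the gene names and counts in the example are made up.

```python
# Column positions after splitting a row on tabs (0-based):
# 1 = unstranded, 2 = same as htseq-count -s yes, 3 = same as htseq-count -s reverse.
STRAND_COLUMN = {"no": 1, "yes": 2, "reverse": 3}


def read_star_gene_counts(lines, stranded="no"):
    """Split ReadsPerGene.out.tab lines into per-gene counts and the N_* summary rows.

    Assumes no gene ID starts with "N_".
    """
    col = STRAND_COLUMN[stranded]
    counts, summary = {}, {}
    for line in lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 4:
            continue  # skip blank or malformed lines
        target = summary if fields[0].startswith("N_") else counts
        target[fields[0]] = int(fields[col])
    return counts, summary


# Made-up values for two hypothetical genes.
EXAMPLE = (
    "N_unmapped\t120\t120\t120\n"
    "N_multimapping\t300\t300\t300\n"
    "N_noFeature\t50\t900\t80\n"
    "N_ambiguous\t40\t5\t6\n"
    "GENE_A\t500\t10\t490\n"
    "GENE_B\t200\t95\t105\n"
)
counts, summary = read_star_gene_counts(EXAMPLE.splitlines(), stranded="reverse")
print(counts, summary["N_noFeature"])
```

In this made-up example the `yes` column leaves most reads in N_noFeature, the pattern expected when the chosen strandedness does not match the library.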

HTSeq-Count Integration for Advanced Quantification

For finer control over counting, such as how reads overlapping several features are resolved, STAR's BAM output can be processed by HTSeq-count:

HTSeq-Count Command Example:
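A minimal sketch that assembles an htseq-count call for a coordinate-sorted BAM such as STAR's `Aligned.sortedByCoord.out.bam`. `-f`, `-r`, `-s`, and `-m` are htseq-count's input-format, sort-order, strandedness, and mode options; the function name and file names are hypothetical. The helper deliberately has no default for `stranded`, so the library type must always be stated explicitly.

```python
import shlex

STRANDED_VALUES = {"yes", "no", "reverse"}
MODES = {"union", "intersection-strict", "intersection-nonempty"}


def htseq_count_cmd(bam, gtf, stranded, mode="union", order="pos"):
    """Assemble an htseq-count command; `stranded` has no default on purpose."""
    if stranded not in STRANDED_VALUES:
        raise ValueError(f"stranded must be one of {sorted(STRANDED_VALUES)}")
    if mode not in MODES:
        raise ValueError(f"mode must be one of {sorted(MODES)}")
    return [
        "htseq-count",
        "-f", "bam",     # input format
        "-r", order,     # 'pos' for coordinate-sorted BAM, 'name' for name-sorted
        "-s", stranded,  # must match the library protocol
        "-m", mode,      # overlap resolution mode
        bam, gtf,
    ]


cmd = htseq_count_cmd("sample_Aligned.sortedByCoord.out.bam", "gencode.annotation.gtf", stranded="no")
# htseq-count prints counts to standard output, so redirect them to a file.
print(shlex.join(cmd) + " > sample_counts.txt")
```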

HTSeq-count provides three overlap resolution modes [30]:

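- union: Takes the union of the features overlapping any base of the read; the read is counted if exactly one feature results (recommended for most cases)

- intersection-strict: Takes the intersection of the feature sets over all bases of the read, so a read is counted only if it falls entirely within a single feature

- intersection-nonempty: Like intersection-strict, but ignores bases that overlap no feature, so bases outside annotated features (for example, in introns or intergenic regions) do not prevent a read from being counted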
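The three modes can be expressed in a few lines. This toy version represents a read as one set of overlapping feature names per aligned base (a simplification of how htseq-count walks an alignment) and returns either the assigned feature or one of htseq-count's special counters, `__no_feature` or `__ambiguous`; the function and feature names are hypothetical.

```python
def htseq_assign(position_features, mode="union"):
    """Resolve one read to a feature following the three htseq-count modes.

    position_features: one set of overlapping feature names per aligned base.
    """
    sets = [set(s) for s in position_features]
    if mode == "union":
        features = set().union(*sets)
    elif mode == "intersection-strict":
        features = set.intersection(*sets) if sets else set()
    elif mode == "intersection-nonempty":
        nonempty = [s for s in sets if s]  # bases with no feature are ignored
        features = set.intersection(*nonempty) if nonempty else set()
    else:
        raise ValueError(f"unknown mode: {mode}")
    if not features:
        return "__no_feature"
    if len(features) > 1:
        return "__ambiguous"
    return next(iter(features))


# A read whose last base falls outside any annotated feature.
read = [{"gene_A"}, {"gene_A"}, set()]
for m in ("union", "intersection-strict", "intersection-nonempty"):
    print(m, htseq_assign(read, m))
```

For this read, union and intersection-nonempty assign gene_A, while intersection-strict reports `__no_feature` because one base lies outside the gene.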

The strandedness parameter (--stranded, with values yes, no, or reverse) must be set to match the library protocol: the default, "yes", causes half of the reads to be lost with non-strand-specific protocols [30].

Kallisto and Salmon Workflows

Pseudoalignment and Quantification

The Kallisto workflow substantially simplifies the quantification process:

Kallisto Indexing and Quantification Commands:
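A minimal sketch that assembles the two kallisto steps as argument lists. `kallisto index -i`, and `kallisto quant` with `-i`, `-o`, `-t`, `-b`, and the single-end options `--single -l -s`, are kallisto options; the function names, paths, and default values are hypothetical, and the helper handles only one sample (one or two FASTQ files) at a time.

```python
import shlex


def kallisto_index_cmd(index_path, transcriptome_fasta):
    """Build the transcriptome index (a one-time step per reference)."""
    return ["kallisto", "index", "-i", index_path, transcriptome_fasta]


def kallisto_quant_cmd(index_path, out_dir, fastqs, threads=4, bootstraps=0,
                       fragment_mean=None, fragment_sd=None):
    """Quantify one sample from one (single-end) or two (paired-end) FASTQ files."""
    cmd = ["kallisto", "quant", "-i", index_path, "-o", out_dir, "-t", str(threads)]
    if bootstraps > 0:
        cmd += ["-b", str(bootstraps)]  # bootstrap rounds to estimate uncertainty
    if len(fastqs) == 1:
        # Single-end reads carry no fragment length information, so it must be supplied.
        if fragment_mean is None or fragment_sd is None:
            raise ValueError("single-end mode needs fragment_mean and fragment_sd")
        cmd += ["--single", "-l", str(fragment_mean), "-s", str(fragment_sd)]
    elif len(fastqs) != 2:
        raise ValueError("this sketch expects one or two FASTQ files per sample")
    return cmd + list(fastqs)


print(shlex.join(kallisto_index_cmd("gencode_tx.idx", "gencode.transcripts.fa.gz")))
print(shlex.join(kallisto_quant_cmd("gencode_tx.idx", "sample_kallisto",
                                    ["R1.fastq.gz", "R2.fastq.gz"], bootstraps=100)))
```

kallisto writes its results to the output directory, including `abundance.tsv` with `est_counts` and `tpm` columns per transcript.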

Kallisto generates both transcripts per million (TPM) and estimated counts in its final output [3]. The tool uses a novel "pseudoalignment" algorithm that determines the compatibility of reads with transcripts without specifying base-level coordinates, dramatically accelerating the quantification process [24].
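To make the idea of compatibility concrete, the toy sketch below records which transcripts contain each k-mer and intersects those sets along a read. It is a simplification, not kallisto's implementation: kallisto uses k = 31 by default, stores k-mers in a transcriptome de Bruijn graph, handles reverse complements, and skips redundant lookups, none of which is modeled here. The sequences, names, and choice of k = 3 are hypothetical.

```python
from collections import Counter, defaultdict


def kmers(seq, k):
    """Yield every overlapping k-mer of seq (forward strand only, for simplicity)."""
    for i in range(len(seq) - k + 1):
        yield seq[i:i + k]


def build_index(transcripts, k):
    """Map each k-mer to the set of transcripts that contain it."""
    index = defaultdict(set)
    for name, seq in transcripts.items():
        for km in kmers(seq, k):
            index[km].add(name)
    return {km: frozenset(names) for km, names in index.items()}


def pseudoalign(read, index, k):
    """Return the transcripts compatible with every indexed k-mer of the read."""
    compatible = None
    for km in kmers(read, k):
        hits = index.get(km)
        if hits is None:
            continue  # simplification: k-mers absent from the index are skipped
        compatible = hits if compatible is None else compatible & hits
        if not compatible:
            break  # no transcript can explain the read
    return compatible if compatible is not None else frozenset()


transcripts = {"t1": "AAACCCGGG", "t2": "AAACCCTTT", "t3": "GGGTTTAAA"}
index = build_index(transcripts, k=3)
reads = ["AAACCC", "CCCGGG", "TTTAAA", "CCGTTT"]
# Reads that share the same compatibility set form an equivalence class.
eq_classes = Counter(pseudoalign(r, index, k=3) for r in reads)
print(eq_classes)
```

No coordinates are computed at any point: the output per read is only a set of transcript names, which is what makes the approach fast.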

Comparative Workflow Efficiency

Table 2: Workflow Complexity and Resource Requirements

| Workflow Component | STAR + HTSeq | Kallisto |
|---|---|---|
| Indexing time | 20-60 minutes (genome) | 5-15 minutes (transcriptome) |
| Index memory | High (~30GB) | Low (~2GB) |
| Processing time | 2-6 hours per sample | 15-45 minutes per sample |
| Memory during processing | High (25-35GB) | Low (4-8GB) |
| Output files | BAM (large), counts | TSV (small) |
| Multi-sample scaling | Linear increase | Linear but faster |

Comparative Analysis Across Experimental Conditions

Impact of Experimental Design on Tool Performance

The optimal choice between STAR and pseudoalignment tools depends significantly on experimental design parameters:

Transcriptome Completeness: Kallisto's performance is strongest when the transcriptome is well-annotated and complete [3]. In such cases, its pseudoalignment approach can quickly and accurately quantify gene expression levels. However, if the transcriptome is incomplete or contains many novel splice junctions, STAR's traditional alignment approach may be more suitable due to its ability to identify unannotated features [3].

Sample Size and Resources: For large-scale studies with many samples, Kallisto's fast and memory-efficient approach is particularly advantageous [3]. However, if computational resources are not a constraint and the study involves a small number of samples, STAR's more comprehensive alignment may be preferable.

Data Quality Considerations

Data quality parameters significantly influence method performance:

Read Length: Kallisto performs well with short read lengths, while STAR may be more suitable for longer read lengths that help identify novel splice junctions and improve alignment accuracy [3].

Sequencing Depth: Kallisto's pseudoalignment approach is less sensitive to sequencing depth than STAR's alignment-based approach [3]. This makes Kallisto more suitable for analyzing samples with low sequencing depth, while STAR may perform better with deeply sequenced samples where comprehensive alignment is beneficial.

Library Complexity: Libraries with high complexity may benefit from STAR's more accurate alignment, while less complex libraries are well-suited for Kallisto's efficient approach [3].

Table 3: Essential Computational Tools for RNA-seq Analysis

| Tool/Resource | Function | Application Context |
|---|---|---|
| STAR | Spliced alignment of RNA-seq reads to genome | Comprehensive alignment, novel junction discovery |
| Kallisto | Rapid transcript-level quantification | High-throughput studies, well-annotated organisms |
| Salmon | Transcript quantification with selective alignment | Balance of speed and alignment information |
| HTSeq-count | Read counting from aligned BAM files | Gene-level quantification from alignments |
| SAMtools | Processing and viewing BAM/SAM files | Alignment file manipulation and quality control |
| GENCODE annotations | Reference transcriptome definitions | Providing comprehensive gene models for alignment/quantification |
| Twist Bioscience Exome Capture | Targeted RNA enrichment | Improving detection of low-abundance transcripts [6] |

The choice between STAR and pseudoalignment tools like Kallisto ultimately depends on research objectives, experimental design, and computational resources. STAR provides comprehensive alignment information suitable for novel transcript discovery, splice junction identification, and visualization of aligned reads in genomic context [3] [24]. This comes at the cost of substantially higher computational requirements and longer processing times.

Kallisto excels in scenarios where rapid quantification of known transcripts is prioritized, offering exceptional speed and efficiency while maintaining high accuracy for well-annotated transcriptomes [3] [24]. Its minimal resource requirements make transcriptome-scale analysis feasible on standard laboratory computers.

For most differential expression studies focusing on annotated genes, pseudoalignment tools provide sufficient accuracy with dramatic efficiency gains. However, for discovery-focused research requiring identification of novel splicing events or genomic visualization, STAR's alignment-based approach remains essential. Researchers should consider these trade-offs within their specific experimental context to select the optimal approach for their biological questions.

The analysis of RNA-sequencing (RNA-seq) data is a fundamental task in modern genomics, enabling the quantification of transcript abundance across diverse biological conditions. Traditional analysis pipelines begin by aligning sequencing reads to a reference genome or transcriptome, a computationally intensive and time-consuming step. In contrast, the Kallisto/Salmon workflow represents a paradigm shift: it employs pseudoalignment, a rapid alignment-free method that determines which transcripts are compatible with a read without determining its exact base-by-base coordinates [9]. Bypassing traditional alignment allows transcript abundance to be quantified directly from raw sequencing reads (FASTQ files) in a fraction of the time.

This guide objectively compares the performance of the Kallisto and Salmon pseudoalignment workflows with traditional alignment-based methods, such as STAR, within the broader thesis of RNA-seq analysis. It is designed for researchers, scientists, and drug development professionals who require efficient and accurate transcriptomic analysis to inform biological insights and therapeutic discovery.

Core Principles: How Kallisto and Salmon Work

The Fundamental Shift from Alignment to Compatibility

Traditional aligners like STAR perform splice-aware alignment of reads to a genome, producing a BAM file that is subsequently used by quantifiers (e.g., featureCounts) to generate count data [16]. This process is computationally intensive because it must account for mismatches, indels, and splicing events at the base level.

Kallisto and Salmon, however, are founded on a different principle. Their goal is not to find where a read aligns, but to determine the set of transcripts that could have potentially generated that read [9]. This concept, often referred to as pseudoalignment or lightweight mapping, focuses on transcript compatibility. The core computational steps are as follows:

- Indexing: A reference transcriptome (in FASTA format) is pre-processed into an index. Kallisto builds a T-DBG (Transcriptome de Bruijn Graph) from the k-mers of the transcriptome, while Salmon creates a data structure optimized for its quasi-mapping procedure [9] [31].

- Mapping: The raw reads are processed against this index. For each read or fragment, the tool rapidly identifies all transcripts it is compatible with.

- Abundance Estimation: An expectation-maximization (EM) algorithm resolves the proportion of each transcript within the sample, apportioning reads probabilistically among transcripts that share sequence, such as different isoforms of the same gene, so as to maximize the likelihood of the observed reads [32].
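The EM step can be sketched over equivalence classes (sets of transcripts that share compatible reads). This follows the general form of the kallisto likelihood, in which each compatible transcript's share of a class is proportional to its current abundance divided by its effective length; effective lengths are taken as given here, and the function names and inputs are hypothetical. The second helper converts expected counts to TPM by normalizing the count/length rates to one million.

```python
def em_abundance(eq_classes, eff_lengths, n_iter=1000, tol=1e-8):
    """EM over equivalence classes in the style of the kallisto likelihood.

    eq_classes: {frozenset of transcript names: number of reads in that class}
    eff_lengths: {transcript name: effective length}
    Returns the expected number of reads per transcript.
    """
    names = sorted(eff_lengths)
    total = sum(eq_classes.values())
    alpha = {t: total / len(names) for t in names}  # uniform starting point
    for _ in range(n_iter):
        new = dict.fromkeys(names, 0.0)
        for ec, count in eq_classes.items():
            # E-step: weight of each compatible transcript, scaled by 1/length.
            weights = {t: alpha[t] / eff_lengths[t] for t in ec}
            norm = sum(weights.values())
            if norm == 0:
                continue  # no support left for this class; its reads are dropped
            # M-step contribution: split the class's reads in proportion to the weights.
            for t, w in weights.items():
                new[t] += count * w / norm
        converged = max(abs(new[t] - alpha[t]) for t in names) < tol
        alpha = new
        if converged:
            break
    return alpha


def tpm(counts, eff_lengths):
    """Transcripts per million from expected counts and effective lengths."""
    rates = {t: counts[t] / eff_lengths[t] for t in counts}
    scale = sum(rates.values())
    return {t: 1e6 * r / scale for t, r in rates.items()}


ecs = {frozenset({"A"}): 10, frozenset({"B"}): 30, frozenset({"A", "B"}): 40}
lengths = {"A": 1000.0, "B": 1000.0}
counts = em_abundance(ecs, lengths)
print(counts, tpm(counts, lengths))
```

In the example, the 40 reads shared by A and B are split 10:30, following the ratio of reads unique to each transcript, which gives 20 and 60 expected reads.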

Key Differentiators from Traditional Alignment

- Speed: By avoiding base-level alignment, Kallisto and Salmon achieve orders-of-magnitude faster processing times [9] [33].

- Resource Efficiency: These tools have low memory requirements and can often be run on a standard laptop, unlike STAR, which requires substantial RAM, especially for large genomes [3] [33].

- Bias Modeling: A significant advantage of Salmon is its ability to explicitly model and correct for sample-specific technical biases, including fragment GC content bias, sequence-specific bias, and positional bias. This leads to more accurate abundance estimates and more reliable differential expression results [31].
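To illustrate the idea behind fragment GC-bias correction, the sketch below compares the GC distribution of observed fragments with that of fragments expected under bias-free sampling and reports the ratio per GC bin. This is a deliberately simplified, hypothetical illustration rather than Salmon's model, which is more involved (for example, it derives the expected distribution from current abundance estimates and applies the correction through transcript effective lengths). The function names, bin count, and fragments are assumptions.

```python
def gc_fraction(seq):
    """Fraction of G/C bases in a fragment sequence."""
    seq = seq.upper()
    return sum(base in "GC" for base in seq) / len(seq)


def gc_histogram(fragments, n_bins):
    """Normalized histogram of fragment GC fractions over equal-width bins."""
    hist = [0.0] * n_bins
    for frag in fragments:
        b = min(int(gc_fraction(frag) * n_bins), n_bins - 1)  # GC = 1.0 goes in the last bin
        hist[b] += 1
    total = sum(hist)
    return [h / total for h in hist]


def gc_bias_ratio(observed, expected, n_bins=10):
    """Observed/expected frequency per GC bin; values above 1 mean over-representation."""
    obs = gc_histogram(observed, n_bins)
    exp = gc_histogram(expected, n_bins)
    return [o / e if e > 0 else float("nan") for o, e in zip(obs, exp)]


observed = ["GGCC", "GCGC", "ATAT"]
expected = ["GGCC", "ATAT"]
print(gc_bias_ratio(observed, expected, n_bins=2))
```

A ratio above 1 in the high-GC bin, as here, would indicate that GC-rich fragments are over-sampled, so transcripts rich in such fragments would otherwise be overestimated.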

The following diagram illustrates the fundamental difference in workflow between traditional alignment and the pseudoalignment approach.

Performance Benchmarking: Quantitative Comparisons

Accuracy and Concordance with Ground Truth

Multiple independent studies have evaluated the accuracy of quantification tools by comparing their estimates to validated ground truths, such as Illumina short-read data or qRT-PCR. The table below summarizes key performance metrics from recent literature.

Table 1: Performance Benchmarking of Quantification Tools

| Tool | Method Category | Concordance with Illumina (CCC)* | Correlation with qRT-PCR | Notes on Accuracy |
|---|---|---|---|---|
| Kallisto | Pseudoalignment | 0.95 (lr-kallisto on ONT data) [6] | High correlation (r ~ 0.94 with Cufflinks) [9] | Accurate for gene-level and isoform-level quantification. |
| Salmon | Lightweight Mapping | N/A | High correlation (r ~ 0.94 with Cufflinks) [9] | Superior accuracy in some studies due to GC-bias correction; reduces false positives in DE analysis [31]. |
| STAR + featureCounts | Alignment & Counting | ~0.88 [6] | Good correlation | Provides simple, interpretable counts but struggles with isoform resolution and ambiguous reads [16]. |
| Bambu | Alignment-based (long-read) | 0.86 [6] | N/A | Demonstrates good performance but is outperformed by lr-kallisto in the benchmark [6]. |
| Oarfish | Alignment-based (long-read) | 0.82 [6] | N/A | Lower concordance than pseudoalignment-based tools in the long-read benchmark [6]. |

*CCC: Concordance Correlation Coefficient.
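For reference, Lin's concordance correlation coefficient can be computed as 2·cov(x, y) / (var(x) + var(y) + (mean(x) - mean(y))²). Unlike Pearson's r, it penalizes systematic offsets between two sets of estimates, which makes it a natural choice for checking agreement rather than mere association. A minimal sketch using population moments; the function name and values are hypothetical.

```python
def concordance_cc(x, y):
    """Lin's concordance correlation coefficient (population moments)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    var_x = sum((a - mean_x) ** 2 for a in x) / n
    var_y = sum((b - mean_y) ** 2 for b in y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    return 2 * cov / (var_x + var_y + (mean_x - mean_y) ** 2)


# Identical values give 1; a constant offset keeps Pearson's r at 1 but lowers the CCC.
print(concordance_cc([1.0, 2.0, 3.0], [1.0, 2.0, 3.0]))
print(concordance_cc([1.0, 2.0, 3.0], [2.0, 3.0, 4.0]))  # 4/7, about 0.571
```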

A notable study evaluating pipelines for highly repetitive genomes (e.g., Trypanosoma cruzi) found that Salmon and Kallisto achieved the most accurate performance, closely matching simulated expression values. These tools were particularly effective at allocating reads between members of the same gene family, a task that poses significant challenges for traditional aligners [34].

Computational Efficiency and Resource Usage

The primary advantage of Kallisto and Salmon is their dramatic speed and efficiency.

Table 2: Computational Efficiency Comparison

| Tool | Processing Time (Paired-end, 20-30M reads) | Memory Usage | Key Strengths |
|---|---|---|---|
| Kallisto | ~3-5 minutes on a laptop [9] | Low (~8 GB) [9] | Extreme speed and simplicity of use. |
| Salmon | ~8 minutes on a desktop [9] | Low | Rich bias modeling and support for BAM input. |
| STAR | Tens of minutes to hours [33] | High (e.g., >30 GB for human genome) [33] | High sensitivity for splice junctions and novel variant detection; better suited for non-standard analyses. |

In a large-scale cloud-based benchmark of the STAR aligner, the authors recommended pseudoaligners such as Salmon and Kallisto for users for whom cost and speed are critical [33]. The resource intensity of STAR makes it more expensive to scale when processing hundreds of terabytes of data.

Experimental Protocols for Benchmarking

To ensure the reliability of the performance data cited in this guide, it is important to understand the experimental methodologies used in the underlying studies.