Strategies for Reducing False Positives in Prokaryotic Gene Prediction: A Guide for Biomedical Researchers

Abstract

Accurate prokaryotic gene annotation is critical for functional genomics and drug discovery, yet high false-positive rates persistently undermine the reliability of automated predictions. This article synthesizes current methodologies and best practices for mitigating false positives, addressing a critical need for researchers and drug development professionals. We first explore the foundational causes of erroneous predictions, from algorithmic biases to biological complexities. We then detail a suite of methodological solutions, from multi-tool frameworks to advanced machine learning classifiers, providing practical application guidance. The article further covers essential troubleshooting and optimization techniques for parameter adjustment and data curation. Finally, we present a rigorous framework for the validation and comparative assessment of gene finders, empowering scientists to make informed, data-driven tool selections for their specific genomic projects and ultimately enhancing the fidelity of downstream biomedical research.

Understanding the Root Causes of False Positives in Prokaryotic Gene Finders

Systematic Biases in Historical Data and Model Organism Focus

Frequently Asked Questions

Q1: What are the main types of systematic bias that affect prokaryotic gene finders? Systematic biases in gene prediction primarily stem from historical data imbalances and algorithmic design limitations. Key biases include:

- Training Data Bias: Early gene finders were developed when few prokaryotic genomes were available, creating a historical bias toward certain well-studied organisms. This limits their accuracy on novel, divergent, or environmental species not represented in training sets [1] [2].

- GC-Content Bias: The performance of many gene finders degrades for genomes with extremely high or low GC content, as their statistical models are often tuned to "typical" genomic compositions [3].

- Confirmation Bias in Annotation: Automated pipelines may perpetuate past errors. If a gene was incorrectly annotated in a key reference genome, subsequent tools trained on that data may reinforce the false positive [2].

Q2: How can a "universal model" like Balrog help reduce false positives? Traditional gene finders like Glimmer3 and Prodigal require genome-specific training, which can overfit the model to a particular genome's noise and lead to excess predictions of "hypothetical proteins" [1] [2]. Balrog employs a universal model trained on a large, diverse collection of prokaryotic genomes. This approach learns the fundamental signature of a protein-coding sequence across the tree of life. Because it is not fine-tuned to any single genome's idiosyncrasies, it is less likely to over-predict false positives, thereby reducing the number of hypothetical gene calls while maintaining high sensitivity [1] [2].

Q3: My research involves a GC-rich archaeal genome. Which gene finder should I use? GC-rich and archaeal genomes are particularly challenging for many gene finders due to their divergent sequence patterns and translation initiation mechanisms [3]. Evaluations have shown that algorithms specifically designed to handle a wider variety of genomic patterns, such as MED 2.0 and Balrog, demonstrate a competitive advantage for these genomes [3] [2]. MED 2.0 uses a non-supervised learning process to derive genome-specific parameters without prior training data, making it robust for atypical genomes [3].

Troubleshooting Guides

Issue: Suspected High False Positive Rate in Gene Predictions

Problem: Your genome annotation contains an unexpectedly high number of "hypothetical protein" calls, and you suspect many may be false positives.

Investigation and Solutions:

Benchmark with a Universal Model

- Action: Run your genomic sequence through a universal model-based gene finder like Balrog and compare its output to your current results.

- Rationale: As shown in the table below, Balrog consistently predicts fewer total genes while maintaining high sensitivity for known genes, indicating a potential reduction in false positives [1] [2].

- Protocol: Install Balrog from GitHub and execute it on your target genome with default parameters. Compare the consolidated list of predicted genes against the output from your standard tool.

Validate with an Orthology-Based Filter

- Action: Use a tool like GeneWaltz to filter predicted genes.

- Rationale: GeneWaltz uses a codon-substitution matrix built from orthologous gene pairs. It assigns higher scores to genuine coding regions and can be used to test the significance of predictions from other gene finders, effectively weeding out false positives, especially among short genes [4].

- Protocol: Input the gene predictions from your primary gene finder into GeneWaltz alongside a closely related reference genome. Use the significance score (e.g., P-value < 0.01) to filter the candidate genes.

Experimentally Validate Selected Predictions

- Action: For critical genes or a random sample of hypothetical proteins, design experimental validation.

- Rationale: Computational predictions are not conclusive. Techniques like RT-PCR or proteomic mass spectrometry can provide direct evidence for gene expression.

- Protocol:

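- Design PCR primers that anneal within the predicted gene. Because prokaryotic genes lack introns, primers cannot be designed to span an intron; include a no-reverse-transcriptase control to rule out amplification from contaminating genomic DNA.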

- Perform RT-PCR using RNA extracted from the organism under study.

- Sequence the PCR product to confirm it matches the predicted gene sequence.

Issue: Poor Gene Prediction Performance on a GC-Rich Genome

Problem: Standard gene-finding tools are performing poorly on your newly sequenced GC-rich bacterial or archaeal genome, missing known genes or making implausible predictions.

Solution:

Switch to a Robust Algorithm

- Action: Use a gene finder known to perform well on GC-rich genomes, such as MED 2.0 or Balrog [3] [2].

- Rationale: These algorithms use models (the Entropy Density Profile, EDP, in MED 2.0; a temporal convolutional network in Balrog) that are less sensitive to strong compositional biases and do not rely on training data from a narrow GC range [3] [2].

Verify with a Complementary Method

- Action: Perform a homology-based search using BLASTX against a non-redundant protein database.

- Rationale: This independent method relies on sequence similarity rather than intrinsic genomic signals. Regions with significant similarity to known proteins can help confirm true coding regions and refine the boundaries of ab initio predictions [3].

Performance Data & Experimental Protocols

Table 1: Comparative Performance of Gene Finders on Diverse Prokaryotic Genomes

Table showing the average number of known genes detected and "extra" genes predicted across a test set of 30 bacteria and 5 archaea. A lower number of "extra" genes indicates a reduction in potential false positives [1] [2].

| Gene Finder | Average Known Genes Detected (Bacteria) | Average "Extra" Genes Predicted (Bacteria) | Average Known Genes Detected (Archaea) | Average "Extra" Genes Predicted (Archaea) |
|---|---|---|---|---|
| Balrog | 2,248 | 664 | 1,661 | 565 |
| Prodigal | 2,250 | 747 | 1,663 | 689 |
| Glimmer3 | 2,245 | 949 | 1,670 | 949 |

Protocol 1: Evaluating Gene Finder Accuracy with a Test Genome

Purpose: To benchmark the false positive rate of a gene finder using a genome with a well-established "truth set" of known genes.

Materials:

- A reference genome with a validated annotation (e.g., Escherichia coli K-12 MG1655).

- Gene finding software (e.g., Balrog, Prodigal).

- Computing environment with Unix command line.

Methods:

- Data Preparation: Download the genomic FASTA file and the corresponding annotation file (GFF format) for the reference genome.

- Gene Prediction: Run the gene finder on the genomic FASTA file.

- Example Balrog command:

balrog -i genome.fna -o balrog_predictions.gff

- Result Parsing: Extract the list of predicted genes from the output GFF file.

- Comparison: Compare the predictions to the validated annotation. A prediction is counted as a "true positive" if its stop codon position (3' end) matches that of a known gene. Predictions with no match in the validated set are tallied as "extra" genes [1] [2].

- Analysis: Calculate sensitivity (True Positives / All Known Genes) and the false discovery rate (Extra Genes / All Predicted Genes).
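The comparison and analysis steps above can be sketched in a few lines of Python. This is a minimal illustration, not part of any tool's distribution: the function names (`stop_key`, `cds_stop_keys`, `benchmark`) are hypothetical, and it assumes both files are tab-delimited GFF with `CDS` features. Because "extra" genes are not proven false, the computed false discovery rate is best read as an upper-bound estimate.

```python
def stop_key(seqid, start, end, strand):
    """Identify a gene by its 3' end (the stop codon side)."""
    # On the + strand the gene ends at `end`; on the - strand at `start`.
    return (seqid, strand, end if strand == "+" else start)


def cds_stop_keys(gff_lines):
    """Collect 3'-end keys for every CDS feature in GFF-formatted lines."""
    keys = set()
    for line in gff_lines:
        if not line.strip() or line.startswith("#"):
            continue  # skip blank lines and comments/directives
        cols = line.rstrip("\n").split("\t")
        if len(cols) < 8 or cols[2] != "CDS":
            continue  # assumption: genes are reported as CDS features
        keys.add(stop_key(cols[0], int(cols[3]), int(cols[4]), cols[6]))
    return keys


def benchmark(predicted, known):
    """Compute sensitivity and the 'extra gene' rate from two key sets."""
    true_pos = predicted & known
    extra = predicted - known
    return {
        "true_positives": len(true_pos),
        "extra": len(extra),
        "sensitivity": len(true_pos) / len(known) if known else 0.0,
        "fdr": len(extra) / len(predicted) if predicted else 0.0,
    }


# Toy data standing in for the reference annotation and a tool's output.
known_gff = [
    "##gff-version 3",
    "chr\tref\tCDS\t100\t400\t.\t+\t0\tID=k1",
    "chr\tref\tCDS\t500\t900\t.\t-\t0\tID=k2",
    "chr\tref\tCDS\t1000\t1300\t.\t+\t0\tID=k3",
]
predicted_gff = [
    "chr\ttool\tCDS\t130\t400\t.\t+\t0\tID=p1",    # same stop, different start
    "chr\ttool\tCDS\t500\t870\t.\t-\t0\tID=p2",    # same stop on the - strand
    "chr\ttool\tCDS\t1500\t1700\t.\t+\t0\tID=p3",  # no match: "extra" gene
]
result = benchmark(cds_stop_keys(predicted_gff), cds_stop_keys(known_gff))
print(result)
```

Matching on the 3' end alone means a prediction with the wrong start codon still counts as detected, which mirrors the stop-codon criterion described in the protocol.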

Protocol 2: Filtering Predictions with GeneWaltz

Purpose: To reduce false positives from an initial gene prediction set using the GeneWaltz orthology-based filter [4].

Materials:

- A list of gene predictions (nucleotide sequences) from a primary gene finder.

- A genomic sequence from a closely related organism.

- GeneWaltz software.

Methods:

- Alignment: Create a global alignment between the target genome and the related genome.

- Scoring: Run GeneWaltz on the alignment file and the list of candidate genes. GeneWaltz will calculate a significance score (P-value) for each candidate based on its codon substitution matrix [4].

- Filtering: Apply a significance threshold (e.g., P < 0.01) to the list of candidates. Genes that do not meet the threshold are considered less likely to be true coding sequences and can be flagged for further review or removal [4].

The Scientist's Toolkit

Table 2: Key Research Reagents and Computational Tools

Essential materials and software for conducting gene prediction and validation experiments.

| Item Name | Type | Function/Brief Explanation |
|---|---|---|
| Balrog | Software Tool | A universal prokaryotic gene finder that uses a deep learning model to reduce false positives without genome-specific training [1] [2]. |
| MED 2.0 | Software Tool | A non-supervised gene prediction algorithm effective for GC-rich and archaeal genomes [3]. |
| GeneWaltz | Software Tool | A filtering tool that uses a codon-substitution matrix to identify and reduce false positive gene predictions [4]. |
| GIAB Reference Samples | Biological Standard | Well-characterized human genome samples (e.g., NA12878) used for benchmarking and training validation methods in sequencing studies [5]. |
| Sanger Sequencing | Experimental Method | The gold-standard method for orthogonal confirmation of computationally predicted genetic variants [5]. |

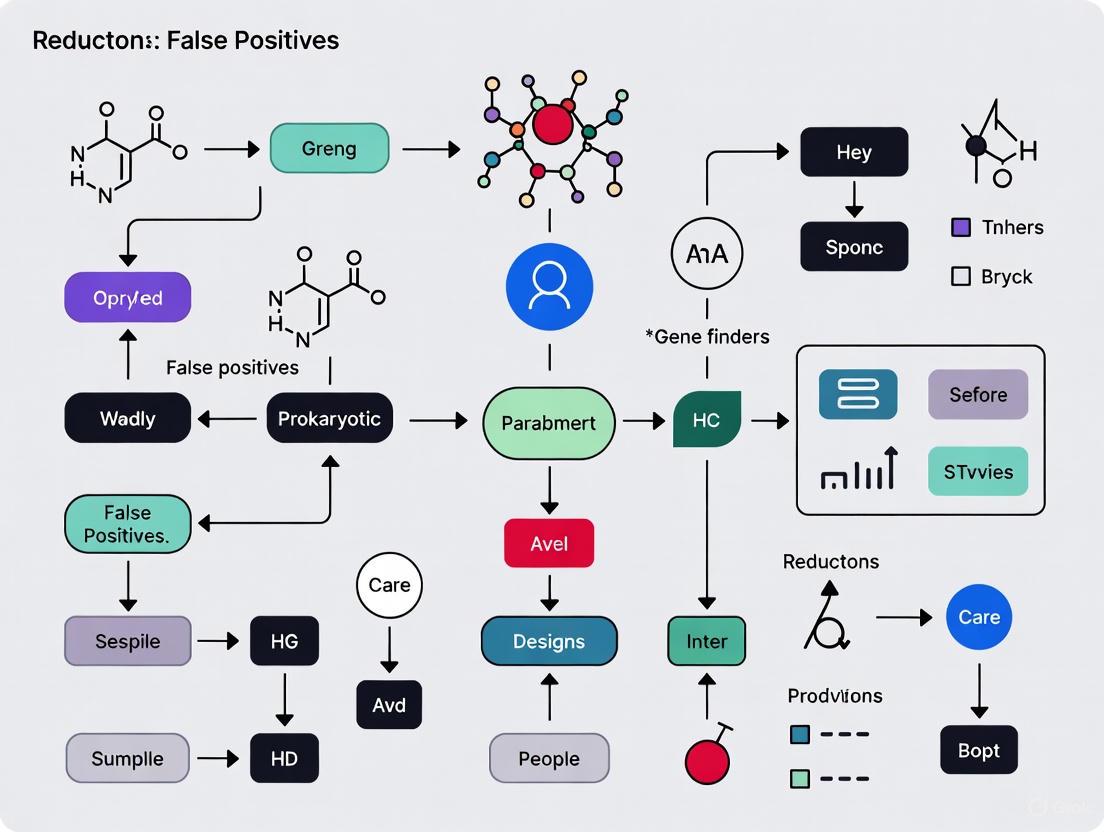

Workflow Visualization

Gene Finder Evaluation & Bias Mitigation

Universal vs. Traditional Gene Finder Models

Algorithmic Limitations in Detecting Non-Standard Genetic Features

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the major sources of false positives in prokaryotic gene prediction, and how can I mitigate them? Traditional gene finders like Prodigal and Glimmer often mispredict short ORFs as coding sequences (CDSs), especially in high-GC genomes, where the number of potential ORFs increases dramatically [6]. To mitigate this, consider using genomic language models (gLMs) such as GeneLM, which have been reported to reduce false positives substantially by learning contextual dependencies in DNA sequences rather than relying only on statistical and homology-based signals [6].

Q2: My analysis revealed a potential disease variant from a direct-to-consumer (DTC) test. How reliable is this result? DTC raw data have a high documented false-positive rate. A clinical study found that 40% of variants reported in DTC raw data were not confirmed upon clinical diagnostic testing [7]. You should always confirm any potentially significant finding in a clinical laboratory that uses validated methods like Sanger sequencing or next-generation sequencing with Sanger confirmation [7].

Q3: Are there specialized tools for detecting foreign genetic material in eukaryotic genomes, which might be misannotated? Yes, non-standard integrations like endogenous viral elements (EVEs) and bacterial sequences can be identified using dedicated tools. EEfinder is a general-purpose tool designed for this specific task, automating the steps of similarity search, taxonomy assignment, and merging of truncated elements with a reported sensitivity of 97% compared to manual curation [8].

Q4: How can AI help in delineating complex syndromes with overlapping genetic features? AI-driven approaches can objectively split syndromic subgroups. For example, combining GestaltMatcher for facial phenotype analysis with DNA methylation (DNAm) episignature analysis using a Support Vector Machine (SVM) model has proven effective. This multi-omics approach can differentiate disorders with minimal sample requirements, validating splitting decisions even for ultra-rare diseases [9].

Key Experimental Protocols

Protocol 1: Two-Stage Gene Prediction Using a Genomic Language Model (gLM)

This protocol, based on the GeneLM study, uses a transformer architecture for accurate CDS and Translation Initiation Site (TIS) prediction [6].

- Data Collection and Processing:

- Obtain complete bacterial genomes from NCBI GenBank. Use only those with "complete" status and "reference genome" classification.

- Extract potential Open Reading Frames (ORFs) using a tool like ORFipy. Retain ORFs that begin with a start codon (ATG, TTG, GTG, or CTG) and end with a stop codon (TAA, TAG, or TGA). Do not filter out nested or overlapping ORFs.

- Dataset Labeling:

- CDS Dataset: Compare extracted ORF coordinates with annotated CDSs in the GFF file. Label an ORF as positive (1) if its start or end aligns with an annotated CDS. Perform length-based downsampling on negative samples to balance the dataset.

- TIS Dataset: Use only ORFs that match an annotated CDS. Extract a 60-nucleotide sequence centered on the start codon (30 bp upstream and 30 bp downstream). Label the sequence as positive (1) if it contains a true TIS.

- Tokenization and Model Fine-Tuning:

- Tokenize DNA sequences using a k-mer tokenizer (e.g., k=6). Use the DNABERT model, which provides pre-trained 768-dimensional embeddings for each k-mer.

- Employ a two-stage fine-tuning:

- Stage 1: Fine-tune the model on the CDS dataset to classify coding vs. non-coding regions.

- Stage 2: Fine-tune a separate model on the TIS dataset to refine start site predictions.

- Validation:

- Compare GeneLM predictions against those from traditional tools (Prodigal, GeneMark) and, if available, experimentally verified TIS sites to assess accuracy.
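Two of the data-preparation steps above, extracting the 60-nucleotide TIS window and k-mer tokenization, can be sketched as follows. This is an illustrative sketch rather than the GeneLM code: `tis_window` and `kmer_tokenize` are hypothetical helper names, the window is assumed to run from 30 nt before the first base of the start codon to 30 nt after it, and tokenization is assumed to use overlapping k-mers with a stride of 1.

```python
def tis_window(genome, start_index, flank=30):
    """Return the 2*flank-nt window around a forward-strand start codon.

    start_index is the 0-based position of the first base of the start
    codon. Returns None when the window would run off the sequence.
    """
    lo, hi = start_index - flank, start_index + flank
    if lo < 0 or hi > len(genome):
        return None
    return genome[lo:hi]


def kmer_tokenize(seq, k=6):
    """Split a sequence into overlapping k-mers (stride 1)."""
    seq = seq.upper()
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


# Toy sequence: 30 nt upstream, a start codon, then 40 nt downstream.
genome = "A" * 30 + "ATG" + "C" * 40
window = tis_window(genome, 30)
tokens = kmer_tokenize(window, k=6)
print(len(window), len(tokens), tokens[:3])
```

A 60-nt window yields 55 overlapping 6-mers, which is the token sequence a k-mer-based model would receive for each candidate start site.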

Protocol 2: Clinical Confirmation of Variants from Direct-to-Consumer (DTC) Tests

This protocol outlines the steps for validating DTC raw data results in a clinical lab setting [7].

- Sample Receipt & Requisition: The ordering clinician must provide a detailed test requisition form and the specific DTC genetic variant report (gene, nucleotide change, protein change).

- Test Selection & Methodological Validation:

- The clinical lab selects the appropriate diagnostic test (e.g., single-site analysis, full-gene sequencing, or a multi-gene panel).

- Testing is performed using clinically validated methods. This typically involves Next-Generation Sequencing for multi-gene panels or comprehensive analysis, followed by Sanger sequencing confirmation of the specific variant in question. This two-method approach ensures high accuracy.

- Variant Classification & Reporting:

- The identified variant is classified according to professional guidelines (e.g., ACMG) as Benign, Likely Benign, Variant of Uncertain Significance, Likely Pathogenic, or Pathogenic.

- A formal clinical report is issued, confirming or refuting the presence of the variant and providing its clinical classification to guide patient management.

Performance Data of Gene Prediction Tools

Table 1: Comparative Accuracy of Gene Prediction Methods

| Method | Type | Key Strengths | Documented Limitations |
|---|---|---|---|
| Prodigal, Glimmer, GeneMark | Traditional (Statistical/HMM) | Fast, widely adopted | Struggles with high-GC genomes; prone to overpredicting short ORFs (false positives) [6] |
| GeneLM (gLM) | Deep Learning (Transformer) | Reduces false CDS predictions; superior TIS accuracy; captures long-range contextual dependencies [6] | Higher computational demand; requires large, high-quality datasets for training [6] |

Table 2: Documented Error Rates in Genetic Testing

| Test Type | Scenario | Error / Limitation Rate | Reference / Context |
|---|---|---|---|
| Direct-to-Consumer (DTC) Raw Data | False positive variants upon clinical confirmation | 40% [7] | Clinical lab study of 49 patient samples |
| BeginNGS Newborn Screening | False positive reduction using purifying hyperselection method | 97% reduction (to <1 in 50 subjects) [10] | Comparison against gold standard diagnostic sequencing |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Tools for Genomic Analysis

| Item | Function / Application |
|---|---|
| NCBI GenBank Database | Primary public database for obtaining annotated reference genomes and sequence data [6]. |
| ORFipy | A fast, flexible Python tool for extracting Open Reading Frames (ORFs) from genomic sequences [6]. |
| DNABERT | A pre-trained genomic language model based on the BERT architecture; used to generate context-aware embeddings for k-mer tokens of DNA sequences [6]. |
| EEfinder | A specialized tool for identifying endogenized viral and bacterial elements (EVEs) in eukaryotic genomes, aiding in the detection of horizontal gene transfer and removing contamination in metagenomic studies [8]. |
| TileDB | A database technology used for federated querying of genomic data, enabling analysis across biobanks without moving sensitive data, as utilized in the BeginNGS platform [10]. |
| Sanger Sequencing | Gold-standard method for clinical confirmation of genetic variants due to its high accuracy; used to validate NGS and DTC findings [7]. |

Workflow Diagrams

Two-Stage gLM Gene Prediction

Clinical DTC Variant Confirmation

FAQs and Troubleshooting Guides

FAQ 1: How does genomic context contribute to false positive gene predictions in prokaryotes?

Genomic context, particularly the prevalence of short open reading frames (ORFs) in non-coding regions, is a primary source of false positives in prokaryotic gene finders. In any random sequence, a large number of ORFs exist, and many are too short to be genuine protein-coding genes. However, distinguishing these random ORFs from real genes becomes difficult below a certain length threshold, which varies by organism and is heavily influenced by GC content. This leads to over-annotation, where many short, random ORFs are incorrectly annotated as genes [11].
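The dependence on GC content can be made concrete with a back-of-the-envelope calculation. The sketch below is a simplification, not a method from the cited work: it assumes bases are drawn independently, with A and T equally frequent and G and C equally frequent, and the function names are hypothetical. Because all three stop codons (TAA, TAG, TGA) are AT-rich, they become rarer as GC content rises, so long stop-free stretches arise by chance more often.

```python
def stop_codon_probability(gc):
    """Chance that a random codon is TAA, TAG, or TGA at a given GC fraction."""
    p_at = (1.0 - gc) / 2.0  # P(A) = P(T) under the independence assumption
    p_gc = gc / 2.0          # P(G) = P(C)
    taa = p_at * p_at * p_at
    tag = p_at * p_at * p_gc
    tga = p_at * p_gc * p_at
    return taa + tag + tga


def prob_open_run(gc, codons):
    """Chance that `codons` consecutive random codons contain no stop."""
    return (1.0 - stop_codon_probability(gc)) ** codons


for gc in (0.3, 0.5, 0.7):
    p_stop = stop_codon_probability(gc)
    print(f"GC={gc:.1f}  P(stop)={p_stop:.4f}  "
          f"mean codons to first stop={1 / p_stop:.1f}  "
          f"P(100 codons open)={prob_open_run(gc, 100):.4f}")
```

Under these assumptions, a 100-codon stretch is more than ten times as likely to remain open by chance at 70% GC as at 50% GC, which is why a single fixed length cut-off does not transfer between organisms.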

FAQ 2: What strategies do modern gene finders use to distinguish short real genes from random ORFs?

Modern gene-finding algorithms employ several advanced strategies to address this challenge:

- Statistical Significance Testing: Tools like EasyGene estimate the statistical significance of a predicted gene. Instead of relying on arbitrary length cut-offs, they calculate the expected number of ORFs in one megabase of random sequence at the same significance level. This measure, often denoted as 'R', properly accounts for the length distribution of random ORFs [11].

- Hidden Markov Models (HMMs): HMMs can model the complex statistical differences between coding and non-coding regions, including codon usage biases and signals like ribosome binding sites (RBS). These models are trained on a reliable set of genes from the target genome to learn organism-specific patterns [11].

- Dynamic Programming and GC Bias Analysis: Prodigal uses dynamic programming to select an optimal set of genes from all possible ORFs. It analyzes the GC frame plot bias—the preference for G's and C's in each of the three codon positions—within ORFs to construct preliminary coding scores, helping to filter out spurious ORFs [12].
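To illustrate the idea behind GC frame bias, the following sketch measures the GC fraction at each of the three codon positions of an ORF. It is a simplified illustration of the signal only and does not reproduce Prodigal's scoring; the function name `gc_by_codon_position` is hypothetical.

```python
def gc_by_codon_position(orf):
    """Return the GC fraction at codon positions 1, 2, and 3 of an ORF.

    The ORF is assumed to start in frame; trailing bases that do not
    complete a codon are ignored.
    """
    orf = orf.upper()
    usable = len(orf) - len(orf) % 3
    n_codons = usable // 3
    if n_codons == 0:
        return (0.0, 0.0, 0.0)
    counts = [0, 0, 0]
    for i in range(usable):
        if orf[i] in "GC":
            counts[i % 3] += 1
    return tuple(c / n_codons for c in counts)


# Codons: ATG GCC GCG TAA
print(gc_by_codon_position("ATGGCCGCGTAA"))
```

In real coding sequence, one codon position typically carries noticeably more G and C than the others, whereas a random ORF tends to show similar values in all three positions.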

FAQ 3: Why is accurate Translation Initiation Site (TIS) prediction difficult, and how can errors be minimized?

Accurate TIS prediction is challenging because longer ORFs in genomic sequences contain multiple potential start codons. Errors in TIS identification can lead to incorrect N-terminal protein sequence annotation. Minimization strategies include:

- Integrated RBS Modeling: Gene finders like EasyGene and Prodigal incorporate explicit sub-models for the Ribosome Binding Site and the nucleotides between the RBS and the start codon, which improves the identification of the correct start site [11] [12].

- Organism-Specific Training: Prodigal operates in a fully unsupervised fashion, automatically learning the properties of the input genome—such as start codon usage (ATG, GTG, TTG) and RBS motif patterns—to build a tailored profile for more accurate TIS prediction [12].

FAQ 4: How can I improve gene prediction accuracy in high GC-content genomes?

High GC-content genomes present a particular challenge because they contain fewer stop codons and a higher number of spurious, long ORFs, which increases false positive predictions [12]. To improve accuracy:

- Use Organism-Specific Gene Finders: Avoid one-size-fits-all approaches. Use gene finders like Prodigal or EasyGene that automatically construct a training profile from the input genome itself. This allows the algorithm to learn and adapt to the specific codon usage and statistical biases of the high-GC organism [11] [12].

- Leverage Comparative Genomics: Use tools like VISTA or PipMaker to visualize and compare your genomic sequence with orthologous sequences from related, well-annotated organisms. Conserved coding sequences will stand out as regions of high homology, helping to validate predictions [13].

FAQ 5: What are the best practices for annotating a newly sequenced prokaryotic genome to minimize false positives?

Following standardized annotation guidelines is crucial for minimizing false positives and ensuring consistency.

- Systematic Gene Identification: Assign a systematic locus_tag identifier to all genes. This should be a unique alphanumeric identifier (e.g., OBB_0001) and should not confer functional meaning [14].

- Accurate Protein Naming: Use concise, neutral names for proteins based on established nomenclature where possible. For proteins of unknown function, use "hypothetical protein" or "uncharacterized protein." Avoid descriptive phrases, references to homology, molecular weight, or species of origin in the product name [14].

- Proper Pseudogene Annotation: If a gene is a pseudogene, do not add "pseudo" to the gene name. Instead, use the /pseudogene qualifier on the gene feature in the annotation table [14].

Troubleshooting Common Experimental Issues

Problem 1: Annotated genes are unusually short and lack homology to known proteins.

- Potential Cause: The gene finder is likely annotating random, non-functional ORFs as genes.

- Solution:

- Re-analyze your genome using a gene finder that provides a statistical significance measure for its predictions, such as EasyGene.

- Filter the output based on this significance value (e.g., the expected value R). A lower R value indicates higher significance.

- For the remaining short ORFs, perform a careful homology search. If no significant matches are found and the statistical support is weak, consider removing them from the final annotation.

Problem 2: There is a high rate of overlapping gene predictions.

- Potential Cause: The gene finder may be misinterpreting the genomic context or lacking rules to handle gene overlaps.

- Solution: Check the overlap rules of your chosen gene finder. For instance, Prodigal allows a maximal overlap of 60 bp for genes on the same strand and 200 bp for 3' ends of genes on opposite strands, while prohibiting 5' end overlaps [12]. If overlaps exceed these boundaries, it may indicate a false positive. Manual inspection and validation using RNA-Seq data or comparative genomics are recommended for conflicting regions.
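The overlap limits quoted above can be turned into a simple screening check. The sketch below is an illustration under stated assumptions, not Prodigal code: the `Gene` tuple and `overlap_status` function are hypothetical, coordinates are 1-based and inclusive, and fully nested genes are not treated as a special case.

```python
from collections import namedtuple

Gene = namedtuple("Gene", "start end strand")  # 1-based, inclusive

SAME_STRAND_MAX = 60       # bp, limit cited above for same-strand genes
OPPOSITE_3PRIME_MAX = 200  # bp, limit cited above for 3'-end overlaps


def overlap_length(a, b):
    """Number of shared positions between two genes (0 if disjoint)."""
    return max(0, min(a.end, b.end) - max(a.start, b.start) + 1)


def overlap_status(a, b):
    """Classify a gene pair as 'none', 'allowed', or 'suspicious'."""
    left, right = sorted((a, b), key=lambda g: g.start)
    shared = overlap_length(left, right)
    if shared == 0:
        return "none"
    if left.strand == right.strand:
        return "allowed" if shared <= SAME_STRAND_MAX else "suspicious"
    if left.strand == "+" and right.strand == "-":
        # Convergent pair: the two 3' ends overlap.
        return "allowed" if shared <= OPPOSITE_3PRIME_MAX else "suspicious"
    # Divergent pair (- then +): the two 5' ends overlap, which is prohibited.
    return "suspicious"


pairs = [
    (Gene(100, 400, "+"), Gene(380, 700, "+")),  # 21 bp, same strand
    (Gene(100, 400, "+"), Gene(300, 700, "+")),  # 101 bp, same strand
    (Gene(100, 400, "+"), Gene(250, 700, "-")),  # 151 bp, 3' ends
    (Gene(100, 400, "-"), Gene(390, 700, "+")),  # 11 bp, 5' ends
]
for a, b in pairs:
    print(a, b, overlap_status(a, b))
```

Pairs flagged as suspicious are candidates for the manual inspection described above rather than automatic removal.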

Problem 3: Suspected Horizontal Gene Transfer (HGT) regions are poorly annotated.

- Potential Cause: Standard, organism-specific gene finders may perform poorly in genomic islands acquired via HGT because these regions often have different sequence composition (e.g., GC content, codon usage) from the rest of the genome.

- Solution:

- First, identify potential HGT regions using tools that detect compositional biases (e.g., atypical GC content, codon adaptation index).

- For these specific regions, consider using a second, more general gene-finding algorithm or performing a targeted homology-based search (e.g., using BLAST against non-redundant databases) to supplement the primary annotation.
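As a rough illustration of the first step, the sketch below flags sliding windows whose GC content deviates from the genome-wide average. It is a simple compositional screen, not a dedicated HGT detection tool: the function names and the default window size, step, and deviation threshold are arbitrary assumptions chosen for illustration and should be tuned per genome.

```python
def gc_fraction(seq):
    """Fraction of G and C bases in a sequence (0.0 for an empty one)."""
    seq = seq.upper()
    return sum(base in "GC" for base in seq) / len(seq) if seq else 0.0


def atypical_gc_windows(genome, window=5000, step=2500, max_deviation=0.08):
    """Return (start, end, gc) for windows far from the genome-wide GC.

    Coordinates are 0-based and half-open.
    """
    genome_gc = gc_fraction(genome)
    flagged = []
    last_start = max(len(genome) - window, 0)
    for start in range(0, last_start + 1, step):
        end = min(start + window, len(genome))
        window_gc = gc_fraction(genome[start:end])
        if abs(window_gc - genome_gc) > max_deviation:
            flagged.append((start, end, round(window_gc, 3)))
    return flagged


# Toy genome: 50% GC background with a 2 kb AT-only "island" in the middle.
host = "GCAT" * 2500
island = "AT" * 1000
genome = host + island + host
flagged = atypical_gc_windows(genome, window=2000, step=1000)
print(flagged)
```

Flagged windows mark regions where a second gene finder or a targeted homology search, as suggested above, is most likely to change the annotation.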

Table 1: Comparison of Prokaryotic Gene-Finding Tools and Their Strategies for Reducing False Positives.

| Tool | Core Algorithm | Approach to Short ORFs & False Positives | Key Strengths |
|---|---|---|---|
| EasyGene | Hidden Markov Model (HMM) | Estimates statistical significance (expected number in random sequence, R); uses HMM to score ORFs based on length and sequence patterns [11]. | Provides a statistical confidence measure; fully automated and organism-specific [11]. |
| Prodigal | Dynamic Programming | Uses GC-frame plot bias and dynamic programming to select a maximal tiling path of high-confidence genes; excludes very short ORFs (<90 bp) to reduce false positives [12]. | Fast, lightweight, and optimized for TIS prediction; works well on draft genomes and metagenomes [12]. |
| Glimmer | Interpolated Markov Models | Uses variable-order Markov models to capture coding signatures; relies on a training set of known genes to distinguish coding from non-coding [11] [12]. | Highly accurate for many bacterial genomes; included in NCBI's annotation pipeline [12]. |

Experimental Protocols

Protocol 1: Automated Extraction of a High-Quality Training Set for Gene Finder Training

This protocol, based on the method used by EasyGene and Orpheus, allows for the automatic construction of a reliable set of genes from a raw genome sequence for training organism-specific gene finders [11].

- Maximal ORF Extraction: Extract all maximal ORFs longer than 120 bases from the query genome.

- Homology Search: Translate the ORFs and search for significant matches against a curated protein database (e.g., Swiss-Prot) using BLASTP. Use a strict significance threshold (e.g., E-value < 10^-5) and exclude proteins annotated as "putative," "hypothetical," etc.

- Identify Certain Starts: For each ORF with a significant match, find the most upstream position of the match. If there is no alternative start codon between this position and the ORF's original start, place the ORF in a set of genes with certain starts (Set A').

- Reduce Sequence Similarity: To avoid bias, reduce sequence similarity within Set A'. Compare all genes using BLASTN and iteratively remove genes with the largest number of neighbors until no similar pairs remain. The resulting set (Set A) is a high-quality, non-redundant training set.

- Final Preparation: Add 50 bases of upstream flank and 10 bases of downstream flank to each gene in the training set to capture regulatory signals like the RBS.
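The redundancy-reduction step can be sketched as a greedy procedure on a similarity graph. This is a minimal sketch under assumptions: the function name `reduce_redundancy` is hypothetical, the list of similar pairs is assumed to come from a prior all-against-all BLASTN comparison, and ties are broken by gene name purely to make the result deterministic.

```python
def reduce_redundancy(genes, similar_pairs):
    """Drop the gene with the most similar neighbors until no pair remains.

    genes: iterable of gene identifiers.
    similar_pairs: iterable of (gene_a, gene_b) pairs judged similar.
    Returns the sorted list of genes that are kept.
    """
    neighbors = {gene: set() for gene in genes}
    for a, b in similar_pairs:
        if a != b and a in neighbors and b in neighbors:
            neighbors[a].add(b)
            neighbors[b].add(a)

    while neighbors:
        # Gene with the largest neighbor count; name breaks ties.
        worst = max(neighbors, key=lambda g: (len(neighbors[g]), g))
        if not neighbors[worst]:
            break  # no similar pairs are left
        for other in neighbors.pop(worst):
            neighbors[other].discard(worst)
    return sorted(neighbors)


genes = ["g1", "g2", "g3", "g4", "g5"]
pairs = [("g1", "g2"), ("g1", "g3"), ("g1", "g4")]
print(reduce_redundancy(genes, pairs))
```

Removing the most connected gene first tends to keep more genes overall than removing one member of each similar pair at random, since a single removal can resolve several pairs at once.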

Protocol 2: Gene Prediction and Statistical Validation Using EasyGene

This protocol outlines the steps for using a tool like EasyGene to predict genes and assign a statistical confidence measure [11].

- Model Estimation: Provide the raw genomic sequence to EasyGene. The algorithm will automatically execute a process similar to Protocol 1 to extract a training set and then estimate the parameters for its Hidden Markov Model (HMM).

- ORF Scoring: The program will score all potential ORFs in the genome using the trained HMM. The score reflects how well the ORF matches the coding statistics of the training set.

- Calculate Statistical Significance: For each ORF, the algorithm calculates its statistical significance (R), defined as the expected number of ORFs in one megabase of random sequence with a score at least as high. The random sequence is modeled as a third-order Markov chain with the same statistics as the target genome.

- Filter and Annotate: Apply a significance threshold (e.g., R < 0.001) to filter out low-confidence predictions. The remaining ORFs are considered high-confidence genes for annotation.

Research Reagent Solutions

Table 2: Essential Tools and Databases for Prokaryotic Genome Annotation.

| Item Name | Function in Annotation | Usage Notes |
|---|---|---|
| Swiss-Prot Database | A curated protein sequence database providing high-quality, manually annotated data. | Used for homology searches to build reliable training sets and validate predicted genes [11]. |
| BLAST Suite | A tool for comparing nucleotide or protein sequences to sequence databases. | Critical for identifying homologous genes (BLASTP) and reducing redundancy in training sets (BLASTN) [11]. |
| Prodigal Software | A prokaryotic dynamic programming gene-finding algorithm. | Used for primary gene prediction, especially effective for identifying correct Translation Initiation Sites [12]. |
| NCBI Feature Table | A standardized five-column, tab-delimited format for genomic annotation. | Used as input for table2asn to generate the final GenBank submission file [14]. |
| VISTA/PipMaker | Comparative genomics visualization tools. | Used to align and visualize conserved coding and non-coding regions between different species, validating gene predictions [13]. |

Signaling Pathways and Workflow Diagrams

Gene Prediction with Statistical Filtering

False Positive Risk Assessment Logic

Inconsistencies Across Tools and the Myth of a Single Best Solution

Frequently Asked Questions

What are the most common sources of false positives in prokaryotic gene annotation? A significant source of false positives is the spurious translation of non-coding repetitive sequences. Research has confirmed that reference protein databases are contaminated with erroneous sequences translated from Clustered Regularly Interspaced Short Palindromic Repeats (CRISPR) regions. These non-coding DNA sequences can contain open reading frames (ORFs) that are mistakenly identified as protein-coding genes by automated prediction tools [15]. Another common issue is the under-prediction of small genes (often < 100 amino acids), which can lead to an over-correction where other short, non-coding ORFs are falsely predicted as genes [16].

My gene finder predicts a large number of small, unknown genes. Should I trust these results? Exercise caution. While some may be genuine missing genes, a high number of small, uncharacterized ORFs is a red flag. A systematic study discovered 1,153 candidate missing gene families that were consistently overlooked across prokaryotic genomes, the vast majority of which were small [16]. This indicates that current gene finders have systematic problems with small genes. It is recommended to use conservative criteria, such as requiring evidence of conservation across phylogenetically distant taxa (e.g., different taxonomic families), to distinguish real genes from genomic artifacts [16].

I am getting conflicting results from different gene finders. Which one is correct? This is a fundamental challenge in the field; there is no single "correct" tool. Different algorithms use distinct models and training data, making them susceptible to various error types. For instance, a tool optimized for sensitivity might report more putative genes, including false positives, while a more specific tool might miss genuine genes (false negatives). The key is not to seek one perfect tool but to understand the inherent biases and failure modes of each. The best practice is to use an ensemble approach, combining multiple tools and evidence sources to reach a consensus [17] [18].
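One simple form of the ensemble idea is a support count across tools. The sketch below is an illustration, not a published consensus method: the function name `consensus_calls` is hypothetical, each gene call is assumed to be identified by its sequence ID, strand, and stop codon position, and the two-tool support threshold is an arbitrary choice.

```python
from collections import Counter


def consensus_calls(predictions_by_tool, min_support=2):
    """Split gene calls into consensus and disputed sets.

    predictions_by_tool maps a tool name to a set of
    (seqid, strand, stop_position) tuples.
    """
    support = Counter()
    for calls in predictions_by_tool.values():
        support.update(set(calls))  # each tool votes once per call
    consensus = {call for call, n in support.items() if n >= min_support}
    disputed = {call for call, n in support.items() if n < min_support}
    return consensus, disputed


# Toy predictions from three tools on one contig.
predictions = {
    "tool_a": {("chr", "+", 400), ("chr", "-", 500), ("chr", "+", 1700)},
    "tool_b": {("chr", "+", 400), ("chr", "-", 500)},
    "tool_c": {("chr", "+", 400), ("chr", "+", 2100)},
}
consensus, disputed = consensus_calls(predictions)
print(sorted(consensus))
print(sorted(disputed))
```

Disputed calls are not necessarily wrong; they are the ones where additional evidence, such as homology or expression data, is most useful.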

How can I reduce false positives when analyzing data from a single sequencing platform? For scenarios where using multiple sequencing platforms is not feasible, you can employ computational filtering techniques. One effective method is ensemble genotyping, which integrates the results of multiple variant calling algorithms to filter out calls that are not consistently supported. This approach has been shown to exclude over 98% of false positives in mutation discovery while retaining more than 95% of true positives [17]. Alternatively, machine learning models (e.g., logistic regression) can be trained on variant quality metrics to prioritize high-confidence calls [17].

Troubleshooting Guide: Reducing False Positives

Problem: Suspected False Positives from CRISPR Regions

Issue: Your automated gene annotation predicts multiple short, hypothetical genes in close proximity, and you suspect they may originate from mis-annotated CRISPR arrays.

Investigation and Solution Protocol:

- Identify Repeat Patterns: Translate the predicted protein sequences and search for short, perfectly repeated peptide sequences. CRISPR-derived proteins often contain repeats of 7–20 amino acids separated by spacers of a similar length [15].

- Check Genomic Context: Examine the DNA sequence surrounding the suspected false genes. Search for the presence of CRISPR-associated (cas) genes, such as cas1 and cas2, within a 10 kb region upstream or downstream. The co-location of a putative gene with a cas gene cluster is strong evidence that the genomic region contains a CRISPR-Cas system and that the predicted protein is likely spurious [15].

- Database Search: Perform a BLAST search of the predicted protein against UniProtKB. Check if the top hits are annotated as "CRISPR-associated" or have reviewer comments indicating they are suspected false positives.

- Use Specialized Tools: Run the genomic sequence through a dedicated CRISPR array finder such as CRISPRCasFinder, or consult the CRISPRCasdb database of known arrays, to independently identify CRISPR repeats. Compare the coordinates of these repeats with your predicted genes [15].
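
The coordinate comparison in the last step can be sketched in a few lines of Python. This is a minimal illustration, assuming gene and CRISPR array coordinates have already been parsed into 1-based inclusive (start, end) pairs; the function names and the example coordinates are hypothetical and not part of any CRISPR tool.

```python
from typing import Dict, List, Tuple

Interval = Tuple[int, int]  # (start, end), 1-based inclusive, as in GFF


def overlaps(a: Interval, b: Interval) -> bool:
    """Return True if two closed intervals share at least one base."""
    return a[0] <= b[1] and b[0] <= a[1]


def flag_crispr_overlaps(genes: Dict[str, Interval],
                         arrays: List[Interval]) -> List[str]:
    """Return IDs of predicted genes that overlap any CRISPR array."""
    return sorted(
        gene_id
        for gene_id, interval in genes.items()
        if any(overlaps(interval, array) for array in arrays)
    )


# Hypothetical example: one array reported at 1480-1800.
predicted = {"g1": (100, 400), "g2": (1500, 1650), "g3": (1700, 1790)}
crispr_arrays = [(1480, 1800)]
suspect = flag_crispr_overlaps(predicted, crispr_arrays)
print(suspect)
```

Genes flagged this way are candidates for manual review rather than automatic deletion, since the array boundaries reported by a finder may themselves be approximate.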

This workflow is summarized in the diagram below:

Problem: Systematic Omission of Small Genes

Issue: Standard gene finders are not predicting small but biologically real genes, leading to a high false negative rate for this class.

Investigation and Solution Protocol:

- Generate All Possible ORFs: As a first step, identify all maximal Open Reading Frames (ORFs) of a set minimum length (e.g., ≥ 99 bp or 33 amino acids) across the entire genome, regardless of their location in annotated regions [16].

- Perform Comparative Genomics: Compare the ORFs that fall in unannotated (intergenic) regions against a database of proteins and ORFs from other prokaryotic genomes using a tool like BLASTP. The goal is to find conserved ORFs that are currently unannotated [16].

- Apply Conservative Filtering: To minimize false positives, require strong evidence for conservation. A highly effective filter is to demand that conserved ORFs are found in organisms from different taxonomic families. This ensures the signal is not due to recent common ancestry or conserved non-functional sequences [16].

- Cluster Homologous ORFs: Group the conserved intergenic ORFs into clusters (e.g., using single-linkage clustering based on BLAST alignments). These clusters represent candidate missing gene families [16].
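
As an illustration of the first step, the sketch below enumerates maximal ORFs on both strands. It is not the implementation used in [16]; the start codon set (ATG, GTG, TTG), the choice to measure length without the stop codon, and all names are assumptions made for the example.

```python
from typing import Iterator, NamedTuple, Tuple

STARTS = {"ATG", "GTG", "TTG"}  # assumed start codon set
STOPS = {"TAA", "TAG", "TGA"}
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


class Orf(NamedTuple):
    strand: str
    start: int  # 0-based, inclusive, forward-strand coordinate
    end: int    # 0-based, exclusive, stop codon included


def reverse_complement(seq: str) -> str:
    return seq.translate(_COMPLEMENT)[::-1]


def _scan_strand(seq: str, min_len: int) -> Iterator[Tuple[int, int]]:
    """Yield (start, end) of maximal ORFs in the three frames of one strand."""
    for frame in range(3):
        first_start = None  # most upstream start since the last stop
        for i in range(frame, len(seq) - 2, 3):
            codon = seq[i:i + 3]
            if codon in STOPS:
                # Length is measured without the stop codon (assumption).
                if first_start is not None and i - first_start >= min_len:
                    yield first_start, i + 3
                first_start = None
            elif first_start is None and codon in STARTS:
                first_start = i


def find_maximal_orfs(genome: str, min_len: int = 99) -> Iterator[Orf]:
    """Enumerate maximal ORFs of at least min_len nucleotides on both strands."""
    genome = genome.upper()
    n = len(genome)
    for start, end in _scan_strand(genome, min_len):
        yield Orf("+", start, end)
    for start, end in _scan_strand(reverse_complement(genome), min_len):
        # Map reverse-strand positions back to forward-strand coordinates.
        yield Orf("-", n - end, n - start)


toy = "ATG" + "AAA" * 32 + "TAA"  # 33 codons followed by a stop
print(list(find_maximal_orfs(toy)))
```

The ORFs produced this way are only candidates; the conservation and taxonomic filters described in the later steps are what separate likely genes from chance ORFs.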

The following table summarizes the scale of missing genes found using this methodology:

Table 1: Candidate Missing Genes Discovered via Comparative Genomics

| Category | Count | Key Characteristic |
|---|---|---|
| Candidate Missing Gene Families | 1,153 | Novel, conserved ORFs with no strong database similarity [16] |
| Absent Annotations | 38,895 | Intergenic ORFs with clear similarity to annotated genes in other genomes [16] |
| Typical Length | < 100 aa | Vast majority of missing genes are small [16] |

Quantitative Evidence of Method Inconsistencies

The problem of inconsistencies and false positives is not unique to gene prediction. A benchmark study on differential expression analysis for RNA-seq data provides a stark, quantitative example of how popular methods can fail to control false discoveries, especially with larger sample sizes [19].

Table 2: False Discovery Rate (FDR) Failures in Differential Expression Tools

| Method | Type | Reported FDR Issue |
|---|---|---|
| DESeq2 | Parametric | Actual FDR sometimes exceeded 20% when the target was 5% [19] |
| edgeR | Parametric | Similar FDR inflation; identified up to 60.8% spurious DEGs in one case [19] |
| limma-voom | Parametric | Failed to control FDR consistently in benchmarks [19] |
| Wilcoxon Rank-Sum Test | Non-parametric | Consistently controlled FDR across sample sizes and thresholds [19] |

DEGs: Differentially Expressed Genes

Table 3: Essential Resources for Robust Prokaryotic Gene Annotation

| Resource / Tool | Function / Purpose |
|---|---|
| CRISPRCasFinder | Identifies CRISPR arrays and Cas gene clusters to flag a major source of false positives [15] |
| BLAST Suite | Core tool for comparative genomics to find conserved ORFs and validate predictions [16] |
| UniProtKB | Reference protein database; used for homology searches but should be used critically knowing it contains some spurious entries [15] |
| BRAKER2 | Gene prediction pipeline that uses RNA-Seq and protein evidence to improve annotation accuracy [18] |
| FINDER | Automated annotation package that processes raw RNA-Seq data to annotate genes and transcripts, optimizing for comprehensive discovery [18] |
| Ensemble Methods | A strategy, not a single tool; combines multiple algorithms to improve consensus and reduce errors from any single method [17] |
| Taxonomically Diverse Genomes | Using comparison genomes from different families is a critical "reagent" for filtering false positives in novel gene discovery [16] |

Our Core Thesis: A Pathway to Robust Annotations

The central tenet of this technical support center is that a single, perfect bioinformatics tool is a myth. Robust results come from a rigorous, multi-faceted strategy that anticipates and mitigates specific error modes. The following diagram outlines a general workflow for achieving reliable gene annotations by embracing this philosophy.

Practical Methods and Tools to Minimize False-Positive Predictions

Frequently Asked Questions (FAQs)

Q1: What is the primary purpose of the ORForise platform? ORForise is a Python-based platform designed for the analysis and comparison of Prokaryote CoDing Sequence (CDS) gene predictions. It allows researchers to compare novel genome annotations to reference annotations (such as those from Ensembl Bacteria) or to directly compare the outputs of different prediction tools against each other on a single genome. This facilitates the systematic identification of annotation accuracy and false positives [20].

Q2: What are the common failure points when running an ORForise comparison, and how can I avoid them?

Common failures often relate to incorrect file preparation. Ensure you have the correct corresponding Genome DNA file in FASTA format (.fa) and that your annotation and prediction files are in a compatible GFF format. Also, verify that the tool prediction file corresponds to the tool specified with the -t argument. Using the precomputed testing data available in the ~ORForise/Testing directory is recommended to validate your installation and workflow [20].

Q3: My gene prediction tool shows high accuracy on model organisms but performs poorly on my novel prokaryotic species. How can ORForise help?

This is a common challenge, as many ab initio methods can learn species-specific patterns, causing their accuracy to drop when applied to non-model organisms [21]. ORForise can quantitatively benchmark the performance of one or multiple prediction tools against your best available reference genome for the novel species. The Aggregate-Compare function is particularly useful for identifying which tool, or combination of tools, yields the highest "Perfect Match" rate and the fewest "Missed Genes" for your specific organism [20].

Q4: What do "Perfect Matches," "Partial Matches," and "Missed Genes" mean in the ORForise output? These are core metrics provided by ORForise after a comparison [20]:

- Perfect Matches: The predicted CDS perfectly matches the reference gene annotation.

- Partial Matches: The predicted CDS overlaps with a reference gene but has discrepancies in the start and/or stop coordinates.

- Missed Genes: A gene present in the reference annotation was not detected by the prediction tool.
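
To make the three categories concrete, the sketch below classifies each reference gene against a set of predictions. It is a simplified illustration, not ORForise's own matching logic, which may apply additional criteria; the `Cds` type and the rule that any same-strand overlap counts as a partial match are assumptions for the example.

```python
from typing import Dict, Iterable, NamedTuple


class Cds(NamedTuple):
    start: int   # 1-based inclusive
    end: int     # 1-based inclusive
    strand: str  # "+" or "-"


def classify_reference_genes(reference: Iterable[Cds],
                             predictions: Iterable[Cds]) -> Dict[str, int]:
    """Count reference genes that are perfectly, partially, or not matched."""
    preds = list(predictions)
    pred_set = set(preds)
    counts = {"perfect": 0, "partial": 0, "missed": 0}
    for ref in reference:
        if ref in pred_set:
            counts["perfect"] += 1  # identical start, stop, and strand
        elif any(p.strand == ref.strand
                 and p.start <= ref.end and ref.start <= p.end
                 for p in preds):
            counts["partial"] += 1  # same-strand overlap, different coordinates
        else:
            counts["missed"] += 1   # no overlapping prediction at all
    return counts


reference = [Cds(1, 300, "+"), Cds(500, 800, "-"), Cds(1000, 1200, "+")]
predicted = [Cds(1, 300, "+"), Cds(530, 800, "-"), Cds(2000, 2300, "+")]
print(classify_reference_genes(reference, predicted))
```

In this toy case the third prediction matches no reference gene at all, which is the kind of call that contributes to the false discovery rate.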

Q5: How does multi-tool assessment with ORForise help reduce false positives? By aggregating results from several prediction tools, ORForise helps you identify genes that are consistently predicted across multiple algorithms. A gene predicted by multiple, independent tools is less likely to be a false positive. Conversely, genes that are only predicted by a single tool can be flagged for further scrutiny, allowing you to focus experimental validation efforts more efficiently and reduce wasted resources on false leads [20] [4].
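
The consensus idea can be sketched independently of any particular platform. The example below is a minimal illustration, assuming each tool's predictions are available as (start, end, strand) tuples; it keys predictions on strand and stop coordinate, since tools often agree on the stop but disagree on the start. The tool names and the `min_tools` parameter are hypothetical.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

Prediction = Tuple[int, int, str]  # (start, end, strand), 1-based inclusive
Key = Tuple[str, int]              # (strand, stop coordinate)


def stop_key(start: int, end: int, strand: str) -> Key:
    """The stop codon lies at the end on '+' and at the start on '-'."""
    return (strand, end if strand == "+" else start)


def tool_support(predictions_by_tool: Dict[str, List[Prediction]]) -> Dict[Key, Set[str]]:
    """Map each stop-anchored prediction to the set of tools reporting it."""
    support: Dict[Key, Set[str]] = defaultdict(set)
    for tool, cds_list in predictions_by_tool.items():
        for start, end, strand in cds_list:
            support[stop_key(start, end, strand)].add(tool)
    return dict(support)


def split_by_consensus(predictions_by_tool: Dict[str, List[Prediction]],
                       min_tools: int = 2) -> Tuple[List[Key], List[Key]]:
    """Return (consensus, flagged) keys based on how many tools agree."""
    support = tool_support(predictions_by_tool)
    consensus = sorted(k for k, tools in support.items() if len(tools) >= min_tools)
    flagged = sorted(k for k, tools in support.items() if len(tools) < min_tools)
    return consensus, flagged


calls = {
    "toolA": [(1, 300, "+"), (500, 800, "-")],
    "toolB": [(31, 300, "+"), (900, 1100, "+")],
}
consensus, flagged = split_by_consensus(calls)
print(consensus, flagged)
```

Predictions in the flagged list are not necessarily wrong; they are simply the ones for which additional evidence is most worth seeking.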

Troubleshooting Guide

Problem 1: Installation and Dependency Issues

| Symptom | Cause | Solution |
|---|---|---|
| Error message about missing modules or failure to install via pip. | The NumPy library, a core dependency, was not installed automatically. | Manually install NumPy using pip install numpy before installing ORForise. Use the --no-cache-dir flag with pip to ensure you are downloading the newest version of ORForise [20]. |
| Scripts like Annotation-Compare are not recognized as commands. | The Python environment or PATH is not configured correctly after pip installation. | Ensure you are using a compatible Python version (3.6-3.9). Try running the tool as a module: python -m ORForise Annotation-Compare -h [20]. |

Problem 2: Analysis Execution Errors

| Symptom | Cause | Solution |
|---|---|---|
| "Tool Prediction file error" or failure to read input files. | The provided GFF or FASTA file is malformed, in an incorrect format, or does not correspond to the specified tool. | Validate your input file formats. Ensure the genome DNA FASTA file is the same one used for generating the predictions. When comparing multiple tools, provide the prediction file locations for each tool as a comma-separated list without spaces [20]. |
| Low "Perfect Match" rates and high "Missed Genes" across all tools. | The reference annotation and tool predictions may be based on different genome assemblies or versions. | Verify that all annotations and predictions are based on the exact same genome assembly. Inconsistent underlying sequences will lead to invalid comparisons [20]. |

Problem 3: Interpreting Quantitative Results

ORForise provides two levels of metrics: 12 "Representative" metrics for a high-level overview and 72 "All" metrics for a deep dive. The table below summarizes key metrics for assessing false positive rates and general accuracy [20].

Table 1: Key ORForise Metrics for False Positive Assessment

| Metric Name | Description | Interpretation for False Positives |
|---|---|---|
| `Percentage_of_Genes_Detected` | How many reference genes were found. | Low values indicate high false negatives. |
| `False_Discovery_Rate` | Proportion of predicted ORFs that do not correspond to a reference gene. | A primary false positive metric. Lower is better. |
| `Precision` | The ratio of correct positive predictions to all positive predictions. | Higher precision indicates fewer false positives. |
| `Percentage_Difference_of_All_ORFs` | How many more or fewer ORFs were predicted vs. the reference. | A large positive value suggests over-prediction and potential false positives. |
| `Percentage_Difference_of_Matched_Overlapping_CDSs` | Indicates if matched ORFs overlap with each other. | High values may suggest fragmented or erroneous predictions. |

Experimental Protocol: Benchmarking a Novel Gene Finder with ORForise

Objective

To evaluate the performance of a novel or existing ab initio gene prediction tool against a trusted reference annotation for a prokaryotic genome, quantifying accuracy and false positive rates.

Materials and Reagents

Table 2: Research Reagent Solutions

| Item | Function / Description | Example / Note |
|---|---|---|
| Prokaryotic Genome DNA | The underlying DNA sequence for the analysis in FASTA format. | Ensure the sequence is complete and of high quality. |
| Reference Annotation File | A trusted GFF file containing the coordinates of known genes. | Often sourced from Ensembl Bacteria for prokaryotes [20]. |
| Tool Prediction File(s) | The GFF output from the gene prediction tool(s) being evaluated. | Ensure the file is properly formatted for the target tool. |
| ORForise Software | The analysis platform. | Install via pip: pip3 install ORForise [20]. |
| Python Environment (v3.6-3.9) | The runtime environment for ORForise. | NumPy is the only required library [20]. |

Step-by-Step Methodology

Preparation of Input Files:

- Obtain the genome DNA sequence in FASTA format.

- Obtain or prepare the reference annotation file in GFF format.

- Run your chosen gene prediction tool(s) (e.g., Prodigal, GeneMarkS) on the genome FASTA file to generate prediction files in GFF format.

Software Installation:

- Install ORForise using the command `pip3 install ORForise`. It is recommended to use the `--no-cache-dir` flag to get the latest version [20].

Running a Single Tool Comparison:

- Use the `Annotation-Compare` function, for example to compare a Prodigal prediction to an Ensembl reference.

- The tool will output a summary to the screen and a detailed CSV file to the specified directory [20].

Running a Multi-Tool Aggregate Comparison:

- Use the `Aggregate-Compare` function to evaluate several tools at once.

- Provide the tool names and their corresponding file paths as comma-separated lists.

- This generates a consolidated output, allowing for direct comparison of tool performance [20].

Data Analysis and Interpretation:

- Examine the output summary for the counts and percentages of Perfect Matches, Partial Matches, and Missed Genes.

- Analyze the detailed CSV file. Focus on the `False_Discovery_Rate` and `Precision` metrics from the "Representative_Metrics" to directly assess the level of false positives.

- A high `False_Discovery_Rate` means a large proportion of the tool's predictions are likely not real genes, a key insight for refining prediction algorithms or filtering results [20] [4].

Workflow Visualization

The following diagram illustrates the logical workflow and data flow for a typical ORForise analysis, from input preparation to result interpretation.

ORForise Analysis Workflow

Integrating Ab Initio and Evidence-Based Prediction Pipelines

Core Concepts and Workflow

What is the fundamental difference between ab initio and evidence-based gene prediction methods?

Ab initio methods predict genes solely based on the genomic DNA sequence, using statistical models trained on known gene signatures like codon usage, splice sites, and other sequence features. In contrast, evidence-based methods rely on external data sources such as RNA-Seq, EST libraries, or protein homology to identify genes. Ab initio approaches are highly sensitive but can produce more false positives, while evidence-based methods are more specific but may miss novel genes not present in reference databases [22].

How does integrating these approaches reduce false positives in prokaryotic gene finders?

Integration leverages the strengths of both methodologies. The high sensitivity of ab initio prediction is balanced by the specificity of evidence-based support. True positive genes are likely to be identified by both methods, whereas false positives from ab initio finders often lack supporting evidence. Tools like IPred implement this by requiring that ab initio predictions overlap significantly (e.g., >80%) with evidence-based predictions, effectively filtering out unsupported calls [22]. Furthermore, ensemble methods, which combine multiple prediction algorithms, have been shown to significantly reduce false positives in genomic studies [17].
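
The overlap requirement can be illustrated with a short filter. This is a minimal sketch of the idea rather than IPred's actual algorithm: it assumes predictions are 1-based inclusive (start, end) intervals on the same strand, defines overlap as the fraction of the ab initio prediction covered by an evidence-based one, and uses hypothetical function names.

```python
from typing import List, Tuple

Interval = Tuple[int, int]  # (start, end), 1-based inclusive


def overlap_fraction(pred: Interval, evidence: Interval) -> float:
    """Fraction of the prediction's length covered by the evidence interval."""
    shared = min(pred[1], evidence[1]) - max(pred[0], evidence[0]) + 1
    return max(shared, 0) / (pred[1] - pred[0] + 1)


def supported_predictions(ab_initio: List[Interval],
                          evidence: List[Interval],
                          min_overlap: float = 0.8) -> List[Interval]:
    """Keep ab initio calls covered by more than min_overlap by any evidence call."""
    return [
        pred for pred in ab_initio
        if any(overlap_fraction(pred, ev) > min_overlap for ev in evidence)
    ]


ab_initio_calls = [(100, 199), (300, 399), (1000, 1099)]
evidence_calls = [(90, 210), (350, 500)]
kept = supported_predictions(ab_initio_calls, evidence_calls)
print(kept)
```

Here only the first call is fully covered by evidence; the second is half covered and the third has no support, so both would be set aside for further review.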

The following diagram illustrates a generalized workflow for integrating these pipelines:

Implementation and Methodology

What are the key steps to implement an integrated gene prediction pipeline?

A robust integration pipeline follows a structured process. First, you must run your genomic sequence through selected ab initio and evidence-based prediction tools. Next, convert all prediction outputs to a consistent format (like GTF). Then, use an integration tool to process the results, classifying predictions based on support between methods. Finally, generate a consolidated, non-redundant gene set with quality annotations [22]. The NCBI Prokaryotic Genome Annotation Pipeline (PGAP) exemplifies this approach, combining ab initio algorithms with homology-based methods using protein family models [23] [24].

What specific experimental protocols are used for method evaluation?

Researchers typically use benchmark datasets where the true gene structures are known. The following protocol evaluates prediction accuracy:

- Data Preparation: Obtain a reference genome with validated gene annotations. For prokaryotes, this could be a well-annotated Escherichia coli strain.

- Execution: Run the target genome sequence through the ab initio, evidence-based, and integrated pipelines.

- Comparison: Use a framework like Cuffcompare to compare the predictions from each method against the validated reference [22].

- Metric Calculation: Calculate standard accuracy metrics, including:

- Sensitivity (Sn): The proportion of true genes correctly identified.

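- Specificity (Sp): The proportion of predicted genes that are true genes, TP/(TP+FP). This gene-prediction convention corresponds to precision and differs from the TN-based specificity, TN/(TN+FP), used for machine learning classifiers.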

- False Discovery Rate (FDR): The proportion of predicted genes that are incorrect.

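The table below summarizes quantitative data from gene prediction studies, illustrating the performance of different methods. GENSCAN, GeneWise, and Procrustes are eukaryotic gene-structure predictors, so their exon-level values are general reference points rather than prokaryotic benchmarks.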

Table 1: Gene Prediction Accuracy Metrics

| Program | Nucleotide Sn | Nucleotide Sp | Exon Sn | Exon Sp | Source |
|---|---|---|---|---|---|
| GENSCAN | 0.93 | 0.90 | 0.78 | 0.75 | [25] |
| GeneWise | 0.98 | 0.98 | 0.88 | 0.91 | [25] |
| Procrustes | 0.93 | 0.95 | 0.76 | 0.82 | [25] |
| IPred | Improved accuracy compared to single-method predictions | n/a | n/a | n/a | [22] |
| Ensemble Genotyping | Reduced false positives by >98% in de novo mutation discovery | n/a | n/a | n/a | [17] |

Troubleshooting Common Issues

A high number of putative novel genes are reported without evidence-based support. How should these be handled?

Predictions supported only by ab initio methods should be treated with caution as potential false positives. It is recommended to:

- Manually inspect the genomic context of these predictions using a genome browser.

- Check for the presence of promoter elements and ribosome binding sites (in prokaryotes).

- Use a tool like PSAURON, which employs a machine learning model to assess the protein-coding likelihood of a sequence and can help flag potentially spurious annotations [26].

- If possible, perform experimental validation via transcriptomics or proteomics.

The integrated pipeline is missing known genes. What could be causing this low sensitivity?

False negatives can arise from several sources:

- Evidence Limitations: The evidence-based data (e.g., RNA-Seq) might be from a specific condition where the gene is not expressed.

- Parameter Stringency: The overlap threshold in the integration tool may be set too high. Consider lowering the minimum required overlap between ab initio and evidence-based calls.

- Algorithm Bias: Ab initio predictors trained on one organism may perform poorly on another with different codon usage. Ensure your tools are appropriate for your target organism.

How can the overall quality of a final annotated genome be assessed?

For a genome-wide assessment, use universal ortholog benchmarks like BUSCO to estimate completeness. For protein-coding sequence quality, the PSAURON tool provides a proteome-wide score (0-100) representing the percentage of annotated proteins that are likely to be genuine [26]. The table below shows example scores for different organisms.

Table 2: PSAURON Proteome-Wide Assessment Scores

| Genome | # Proteins | Proteome-wide PSAURON Score |
|---|---|---|
| H. sapiens (RefSeq) | 136,194 | 97.7 |
| E. coli | 4,403 | 97.2 |
| A. thaliana | 27,448 | 95.3 |
| C. elegans | 19,827 | 96.4 |
| M. jannaschii | 1,787 | 99.4 |

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Primary Function |
|---|---|---|
| NCBI PGAP | Pipeline | Automated annotation of bacterial/archaeal genomes by integrating ab initio and homology-based methods [23] [24]. |
| IPred | Software | Integrates ab initio and evidence-based GTF prediction files into a consolidated, more accurate gene set [22]. |
| PSAURON | ML Tool | Assesses the quality of protein-coding gene annotations by assigning a confidence score to each prediction [26]. |
| BUSCO | Benchmark | Estimates the completeness of a genome assembly and annotation based on universal single-copy orthologs [26]. |
| TIGRFAMs | Database | Curated collection of protein families and HMMs used for functional annotation in pipelines like PGAP [24]. |
| GeneMarkS-2+ | Algorithm | Ab initio gene prediction algorithm often incorporated within larger annotation pipelines [24]. |

Employing Machine Learning Classifiers for Enhanced Specificity

Frequently Asked Questions (FAQs)

Q1: My model has high accuracy but is still predicting many false positive genes. What could be wrong? This is a classic sign of an imbalanced dataset [27] [28]. A prokaryotic genome contains far more candidate ORFs that are non-coding than true genes. If your dataset has too many negative (non-coding) examples, the model can become biased. Solution: Apply sampling strategies like downsampling the majority class (non-coding ORFs) to match the distribution of the positive class (true CDS), forcing the model to learn discriminative features beyond simple length or composition.

Q2: How can I prevent information from the test set from influencing the model training? This is known as data leakage, and it leads to deceptively high performance during testing that doesn't hold up in production [27] [28]. Solution: Ensure a strict separation of training, validation, and test sets at the very beginning of your pipeline. For genomic data, this should be done at the genome level to avoid homologous sequences contaminating the splits. Perform all data preprocessing steps (like normalization) after the split, fitting the parameters only on the training data.

Q3: What is the most common mistake in evaluating a gene-finding model? Relying solely on accuracy is misleading for genomic data [28]. Solution: Use a suite of metrics that are robust to class imbalance. Precision is critical for measuring specificity and reducing false positives, while Recall measures sensitivity. The F1-score provides a balanced view of both. Always use a confusion matrix for detailed error analysis [27].

Q4: My model isn't capturing complex gene patterns. Is it too simple? This could be a case of underfitting [27]. Solution: Consider increasing your model's complexity. For genomic sequences, transformer-based models like DNABERT can capture long-range contextual dependencies better than simpler models [6]. Alternatively, you can add more relevant biological features or reduce the strength of regularization in your current model.

Q5: How can I trust the predictions of a complex "black box" model? This is addressed by model explainability [27] [28]. Solution: Use tools like SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to interpret the model's decisions. These tools can help you identify which nucleotides or k-mers the model found most important for a prediction, building trust and providing biological insights [28].

Troubleshooting Guides

Problem: High False Positive Rate in CDS Predictions

| # | Step | Action | Rationale |
|---|---|---|---|
| 1 | Verify Data Balance | Check the ratio of CDS vs. non-CDS sequences in your training dataset. | An imbalanced dataset is the most common cause of high false positives [28]. |
| 2 | Analyze Error Patterns | Use a confusion matrix to confirm that false positives are the primary error. | Confirms the nature of the problem and quantifies its severity [27]. |
| 3 | Review Feature Set | Perform feature importance analysis; eliminate highly correlated or irrelevant features. | Irrelevant features degrade performance and can lead to spurious correlations [27]. |
| 4 | Tune Hyperparameters | Optimize probability threshold or use Grid Search/Random Search to fine-tune model parameters. | The default threshold (e.g., 0.5) may not be optimal for your specific data distribution [27]. |
| 5 | Apply Explainability Tools | Use SHAP on problematic false positive predictions to see what features drove the decision. | Reveals if the model is learning correct biological signals or noise [28]. |
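
Step 4 can be illustrated with a small threshold search. The sketch below assumes a classifier has already produced a coding probability for each ORF in a validation set; the scores, labels, target precision, and function names are hypothetical, and the search simply returns the lowest threshold that reaches the target.

```python
from typing import List, Optional, Tuple


def precision_recall_at(scores: List[float], labels: List[int],
                        threshold: float) -> Tuple[float, float]:
    """Precision and recall when calling 'CDS' for scores >= threshold."""
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def lowest_threshold_for_precision(scores: List[float], labels: List[int],
                                   target: float) -> Optional[Tuple[float, float, float]]:
    """Return (threshold, precision, recall) for the lowest qualifying threshold."""
    for threshold in sorted(set(scores)):
        precision, recall = precision_recall_at(scores, labels, threshold)
        if precision >= target:
            return threshold, precision, recall
    return None  # no threshold reaches the target precision


val_scores = [0.95, 0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3]
val_labels = [1, 1, 1, 0, 1, 0, 0, 0]  # 1 = annotated CDS, 0 = non-coding ORF
print(precision_recall_at(val_scores, val_labels, 0.5))
print(lowest_threshold_for_precision(val_scores, val_labels, target=0.8))
```

Choosing the lowest threshold that meets the precision target keeps recall as high as possible for that target. The threshold should be selected on validation data and then confirmed on held-out genomes.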

Problem: Model Performs Well on Training Data but Poorly on New Genomes

| # | Step | Action | Rationale |
|---|---|---|---|
| 1 | Check for Data Leakage | Audit your pipeline to ensure no test sequences were used in training or preprocessing. | Data leakage creates an unrealistic performance benchmark [28]. |
| 2 | Validate Data Splits | Ensure your train/test split is by genome, not by random sequences, to prevent homology bias. | Prevents the model from memorizing specific genomes instead of general gene patterns. |
| 3 | Simplify the Model | Apply regularization (L1/L2) or reduce model complexity to combat overfitting [27]. | A model that is too complex will memorize the training data instead of generalizing. |
| 4 | Increase Training Data | Collect more diverse genomic sequences from different bacterial species for training. | Helps the model learn a more robust and generalizable representation of a gene [27]. |

Experimental Protocols

Protocol 1: Building a Benchmark Dataset for Prokaryotic Gene Finding

This protocol outlines the creation of a high-quality, balanced dataset for training and evaluating ML-based gene finders, as described in recent literature [6].

1. Data Collection:

- Source: Download complete bacterial genomes from the NCBI GenBank database. Filter for genomes with a "complete" assembly status and "reference" classification to ensure quality [6].

- Files: For each genome, retrieve the `genome.fna` (FASTA nucleotide sequences) and the `annotation.gff` (annotation file).

2. ORF Extraction:

- Tool: Use ORFipy [6] or a similar ORF prediction tool.

- Parameters: Scan both forward and reverse strands. Define start codons (ATG, TTG, GTG, CTG) and stop codons (TAA, TAG, TGA). Retain all ORFs, including nested and overlapping ones.

3. Labeling for CDS Classification:

- Create a dataset where each data point is a nucleotide sequence.

- Positive Label (CDS): Assign to an ORF if its start or end coordinates match an annotated CDS in the GFF file.

- Negative Label (Non-Coding): Assign to an ORF that does not match any annotated CDS.

- Sequence Length: Truncate sequences to a maximum length (e.g., 510 nucleotides) for model compatibility [6].

4. Labeling for TIS Refinement:

- Create a separate dataset from ORFs that are positive CDS hits.

- Sequence: Extract a 60-nucleotide window centered on the start codon (30bp upstream + 30bp downstream).

- Positive Label (True TIS): The authentic translation initiation site from the annotation.

- Negative Label (False TIS): Other ATG/TTG/GTG codons within the same CDS.

5. Dataset Balancing and Splitting:

- CDS Dataset: Downsample the negative (non-coding) samples to match the length distribution of the positive (CDS) samples [6].

- TIS Dataset: For fixed-length sequences, use random undersampling to achieve a 1:1 class balance [6].

- Splits: Partition the data into training, testing, and evaluation sets, ensuring no genome is represented in more than one split.
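
The genome-level partitioning in step 5 can be sketched as follows. This is a minimal illustration, assuming each labeled ORF is a dictionary with a "genome" field; the split names, the 80/10/10 fractions, and the function name are assumptions and are not taken from [6].

```python
import random
from typing import Dict, List, Tuple


def split_by_genome(records: List[dict],
                    fractions: Tuple[float, float, float] = (0.8, 0.1, 0.1),
                    seed: int = 0) -> Dict[str, List[dict]]:
    """Assign whole genomes to splits so no genome appears in more than one."""
    genomes = sorted({r["genome"] for r in records})
    random.Random(seed).shuffle(genomes)  # reproducible shuffle of genome IDs
    n_train = int(len(genomes) * fractions[0])
    n_val = int(len(genomes) * fractions[1])

    assignment = {}
    for i, genome in enumerate(genomes):
        if i < n_train:
            assignment[genome] = "train"
        elif i < n_train + n_val:
            assignment[genome] = "validation"
        else:
            assignment[genome] = "test"

    splits: Dict[str, List[dict]] = {"train": [], "validation": [], "test": []}
    for record in records:
        splits[assignment[record["genome"]]].append(record)
    return splits


# Hypothetical dataset: 10 genomes with 3 labeled ORFs each.
dataset = [{"genome": f"G{i}", "orf": j} for i in range(10) for j in range(3)]
splits = split_by_genome(dataset)
print({name: len(items) for name, items in splits.items()})
```

Splitting on genome identifiers prevents identical sequences from the same genome from appearing in both training and test data. Closely related strains can still leak similar sequences across splits, so grouping by species or genus may be worth considering.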

Protocol 2: Implementing a Transformer (DNABERT) Model for Gene Prediction

This protocol details the two-stage fine-tuning of a pre-trained genomic language model for gene finding [6].

1. Tokenization and Embedding:

- Tokenizer: Use a k-mer tokenizer with k=6.

- Stride: For CDS classification, use a stride of 3. For TIS classification, use a stride of 1.

- Embedding: Map each k-mer token to a 768-dimensional vector using the pre-trained DNABERT model. Add [CLS] and [EOS] special tokens.

2. Model Architecture:

- Base Model: DNABERT, which uses a BERT architecture with 12 transformer layers, 768 hidden dimensions, and 12 attention heads [6].

- Task-Specific Head: For classification, add a linear layer on top of the [CLS] token's output representation.

3. Two-Stage Fine-Tuning:

- Stage 1 - CDS Classification: Fine-tune the model on the CDS dataset to distinguish coding from non-coding sequences.

- Stage 2 - TIS Classification: Using the CDS-tuned model as a starting point, further fine-tune it on the TIS dataset to identify the correct start site within coding regions.

4. Evaluation:

- Compare the model's predictions against traditional tools (Prodigal, GeneMark-HMM, Glimmer) on held-out test genomes.

- Key Metrics: Calculate Precision, Recall, and F1-score for both CDS and TIS predictions. A successful model will show a significant increase in precision, indicating a reduction in false positives.
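
The tokenization settings in step 1 can be illustrated without any deep learning library. The sketch below only shows how k-mer tokens with k=6 are produced at strides of 3 and 1; it does not load DNABERT, and the function names are hypothetical.

```python
from typing import List


def kmer_tokens(seq: str, k: int = 6, stride: int = 1) -> List[str]:
    """Split a DNA sequence into k-mers taken every `stride` nucleotides."""
    seq = seq.upper()
    return [seq[i:i + k] for i in range(0, len(seq) - k + 1, stride)]


def with_special_tokens(tokens: List[str]) -> List[str]:
    """Wrap a token list with the [CLS] and [EOS] markers named in the protocol."""
    return ["[CLS]"] + tokens + ["[EOS]"]


orf = "ATGAAACGTTAG"
cds_tokens = kmer_tokens(orf, k=6, stride=3)  # stride 3 follows the codon frame
tis_tokens = kmer_tokens(orf, k=6, stride=1)  # stride 1 gives single-base resolution
print(with_special_tokens(cds_tokens))
print(len(tis_tokens))
```

With a stride of 3 every token starts on a codon boundary, which fits the CDS task, while a stride of 1 preserves positional detail around the start codon for TIS classification.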

Table 1: Performance Comparison of Gene Prediction Tools on Bacterial Genomes

This table summarizes the expected performance improvements, as demonstrated by advanced models like GeneLM, which employs a transformer architecture [6].

| Tool / Method | Type | CDS Prediction F1-Score | TIS Prediction Precision | Key Strength / Weakness |
|---|---|---|---|---|
| Prodigal | Traditional | Baseline | Baseline | Fast, widely used but can overpredict short ORFs [6]. |
| Glimmer | Traditional | Lower than Prodigal | Lower than Prodigal | Sensitive but high false positive rate [6]. |
| GeneMark-HMM | Traditional | Comparable to Prodigal | Comparable to Prodigal | Uses hidden Markov models; performance varies with genome [6]. |
| CNN/RNN Models | Deep Learning | Higher than Traditional | Higher than Traditional | Better at pattern recognition than traditional tools [6]. |
| GeneLM (gLM) | Genomic Language Model | Highest | Highest | Reduces missed CDS and increases matched annotations; superior TIS accuracy [6]. |

Table 2: Key Metrics for Evaluating Specificity in Gene Finders

| Metric | Formula | Interpretation | Focus on Specificity |
|---|---|---|---|
| Accuracy | (TP+TN)/(P+N) | Overall correctness | Less reliable for imbalanced data [28]. |
| Precision | TP/(TP+FP) | How many of the predicted genes are real? | The primary metric for reducing false positives. |
| Recall (Sensitivity) | TP/(TP+FN) | How many of the real genes were found? | Important for ensuring true genes are not missed. |
| F1-Score | 2 × (Precision × Recall)/(Precision + Recall) | Harmonic mean of Precision and Recall | Balances the trade-off between false positives and false negatives. |
| Specificity | TN/(TN+FP) | How many of the non-genes were correctly rejected? | Equals 1 − false positive rate, so higher values mean fewer false positives. |
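
A worked example shows why accuracy alone is misleading on imbalanced data. The sketch below applies the formulas from the table to hypothetical confusion-matrix counts (100 true genes among 10,000 candidate ORFs); the counts and the function name are invented for illustration.

```python
from typing import Dict


def classification_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Compute the table's metrics from confusion-matrix counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return {
        "accuracy": (tp + tn) / (tp + fp + tn + fn),
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / (precision + recall),
        "specificity": tn / (tn + fp),
        "false_discovery_rate": fp / (tp + fp),
    }


# Hypothetical counts: 100 real genes, 9,900 non-coding ORFs.
metrics = classification_metrics(tp=90, fp=60, tn=9840, fn=10)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these counts, accuracy and specificity both exceed 0.99 even though 40% of the predicted genes are false positives (precision 0.60). This is why precision and the false discovery rate are the more informative measures when non-coding ORFs dominate.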

Research Reagent Solutions

Table 3: Essential Computational Tools for ML-Based Gene Finding

| Item | Function | Example Tools / Libraries |
|---|---|---|
| Genomic Data Source | Provides high-quality, annotated bacterial genomes for training and testing. | NCBI GenBank [6] |
| ORF Extraction Tool | Identifies all potential open reading frames in a genome sequence. | ORFipy [6] |
| Sequence Tokenizer | Splits DNA sequences into discrete tokens (k-mers) for model input. | DNABERT (k=6 tokenizer) [6] |
| Pre-trained gLM | Provides foundational knowledge of genomic sequence patterns; enables transfer learning. | DNABERT [6] |
| ML Framework | Provides the environment for building, training, and evaluating deep learning models. | PyTorch, TensorFlow, Hugging Face Transformers |
| Explainability Toolkit | Interprets model predictions to build trust and uncover biological insights. | SHAP, LIME [28] |
| Experiment Tracking | Manages, logs, and compares different model runs and hyperparameters. | MLflow, Weights & Biases [27] |
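To illustrate the "Sequence Tokenizer" row, the sketch below splits a DNA sequence into overlapping k-mers with k=6. It is a plain-Python illustration of the idea and not the DNABERT tokenizer itself; the function name `kmer_tokenize` and the stride of one base are assumptions.

```python
from typing import List


def kmer_tokenize(sequence: str, k: int = 6) -> List[str]:
    """Split a DNA sequence into overlapping k-mers (stride of one base)."""
    seq = sequence.upper()
    # A sequence shorter than k yields no tokens.
    return [seq[i:i + k] for i in range(len(seq) - k + 1)]


# Hypothetical 10-nt sequence; it yields 10 - 6 + 1 = 5 tokens.
tokens = kmer_tokenize("ATGGCTAAGT", k=6)
print(tokens)
```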

Workflow and Model Diagrams

Two-Stage ML Pipeline for Gene Finding

DNABERT Model Architecture for Sequence Classification

Utilizing Specialized Tools for Challenging Genes like Short ORFs and smORFs

Frequently Asked Questions (FAQs)

General Tools and Concepts

Q1: What are smORFs and why are they challenging for standard gene finders?

A1: Small Open Reading Frames (smORFs) are typically defined as open reading frames with a length of less than 100 codons, encoding microproteins of ≤ 100 amino acids [29] [30]. They are challenging because standard prokaryotic gene prediction tools often impose arbitrary length cut-offs (e.g., 300 bases) to minimize false positives, which inadvertently filters out genuine smORFs [31] [30]. These tools also rely on features like evolutionary conservation, which can be weak or absent in short sequences, and they are frequently biased by training data from existing annotations of model organisms, which historically overlooked smORFs [31] [32].

Q2: Are there specialized tools for predicting prokaryotic smORFs?

A2: Yes, specialized tools have been developed to address the limitations of standard gene finders. These include:

- smORFer: A tool that specializes in finding short ORFs through the use of RNA-seq data, which can detect condition-specific transcription events [31].

- smORFunction: A method that predicts smORF function using a speed-optimized correlation algorithm based on gene expression data from microarrays [29].

- Prodigal: While a general prokaryotic gene finder, it uses dynamic programming and GC-frame plot analysis, which can help in identifying smaller genes, though it still primarily focuses on longer ORFs [12].

Troubleshooting Prediction and Validation

Q3: My gene finder predicts many putative smORFs. How can I prioritize them for validation?

A3: You can prioritize candidates using a multi-faceted filtering approach. The table below summarizes key metrics and strategies for prioritizing smORFs to reduce false positives.

Table: Prioritization Strategies for Putative smORF Predictions

| Priority Filter | Description | Supporting Tool/Method |
|---|---|---|
| Ribosome Binding | Evidence of ribosome association is a strong indicator of translation potential. | Ribo-seq (Ribosome Profiling) [30] |
| Evolutionary Conservation | Sequence conservation across related species suggests functional importance. | BLAST, PhyloCSF [32] [33] |
| Transcriptional Evidence | Presence of RNA sequencing reads confirms the smORF is transcribed. | RNA-seq [32] [30] |
| Proteomic Validation | Direct detection of the translated microprotein is the most definitive evidence. | Mass Spectrometry (MS) [29] [30] |

Q4: I have a candidate smORF with Ribo-seq support, but MS validation failed. What could be the reason?

A4: This is a common challenge. Failure in MS validation can occur due to several reasons:

- Low Abundance: The microprotein may be expressed at levels below the detection limit of standard MS protocols [34].

- Instability: The microprotein might be rapidly degraded and not accumulate to detectable concentrations [30].

- Technical Challenges: Small proteins and peptides can be lost during standard protein extraction and preparation workflows, or their ionizable peptides may not be amenable to MS detection [30].

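- Alternative Start Codons: Translation might initiate from a non-AUG start codon (e.g., GUG, UUG) that is not considered by your prediction model, so the predicted N-terminus, and therefore the protein sequence searched against the MS spectra, may be wrong [35].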

Technical and Experimental Considerations

Q5: What is a comprehensive experimental workflow to go from prediction to functional characterization?

A5: A robust multi-omics workflow is recommended to confidently move from smORF prediction to functional characterization.

Q6: How does the genetic code table affect smORF prediction?

A6: The choice of genetic code (translation table) is critical. Gene finders assume a default code, which for prokaryotes is normally the bacterial, archaeal, and plant plastid code (transl_table=11), but some bacterial lineages use alternative codes [35]. For example, in Mycoplasma, UGA is not a stop codon but codes for tryptophan (transl_table=4) [35]. Applying a code in which UGA is a stop codon to such organisms would prematurely truncate smORF predictions. Always verify the correct genetic code for your target organism in databases like NCBI Taxonomy [35].
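The effect of the translation table can be seen in a small example. The sketch below compares the stop codon sets of NCBI translation tables 11 (bacterial, archaeal, and plant plastid code) and 4 (the code used by Mycoplasma, in which TGA encodes tryptophan). The helper `orf_length_codons` and the input sequence are hypothetical.

```python
# Stop codons under two NCBI translation tables (DNA alphabet).
STOP_CODONS = {
    11: {"TAA", "TAG", "TGA"},  # bacterial, archaeal, and plant plastid code
    4: {"TAA", "TAG"},          # Mycoplasma/Spiroplasma: TGA encodes Trp
}


def orf_length_codons(seq: str, transl_table: int) -> int:
    """Count codons from the start of seq up to the first in-frame stop."""
    stops = STOP_CODONS[transl_table]
    n_codons = 0
    for i in range(0, len(seq) - 2, 3):
        if seq[i:i + 3] in stops:
            break
        n_codons += 1
    return n_codons


# Hypothetical sequence: ATG AAA TGA GGT CTT TAA
seq = "ATGAAATGAGGTCTTTAA"
length_table_11 = orf_length_codons(seq, transl_table=11)  # stops at TGA
length_table_4 = orf_length_codons(seq, transl_table=4)    # reads through TGA
print(length_table_11, length_table_4)
```

Under table 11 the reading frame ends at the internal TGA after two codons, while under table 4 it continues to TAA and spans five codons.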

Troubleshooting Guides

Problem: High False Positive smORF Predictions in a Novel Genome

Symptoms

- An unusually high number of predicted smORFs with no supporting transcriptional or evolutionary evidence.

- Overlap between many predicted smORFs and known functional elements or antisense strands without clear regulatory logic.

Solution: A Multi-Filter Verification Protocol

Follow this sequential protocol to filter out likely false positives.

- Apply Coding Potential Filters: Use tools that calculate coding potential based on sequence composition (e.g., codon usage bias, GC frame plot analysis [12]). This removes ORFs that look random.

- Require Transcriptional Evidence: Cross-reference predictions with RNA-seq data from the same organism under relevant conditions. Discard smORFs with no RNA-seq read support [32] [30].

- Demand Translational Evidence: Integrate Ribo-seq data to confirm that the smORF is bound by ribosomes. This is a powerful indicator of true translation potential [30] [34].

- Check for Evolutionary Conservation: Use BLAST or similar tools with non-stringent parameters to search for homologs in related species. Conserved smORFs are more likely to be functional [32] [33].
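The sequential protocol above can be sketched as a chain of filters that records the first filter each candidate fails. All field names and thresholds below are hypothetical placeholders; suitable cut-offs depend on the organism, sequencing depth, and scoring tool.

```python
from dataclasses import dataclass


@dataclass
class SmorfCandidate:
    name: str
    coding_score: float   # hypothetical coding-potential score in [0, 1]
    rnaseq_reads: int     # RNA-seq reads overlapping the smORF
    riboseq_reads: int    # ribosome footprints overlapping the smORF
    has_homolog: bool     # True if a homolog was found in a related species


def first_failed_filter(
    c: SmorfCandidate,
    min_coding_score: float = 0.5,   # assumed threshold
    min_rnaseq_reads: int = 10,      # assumed threshold
    min_riboseq_reads: int = 5,      # assumed threshold
) -> str:
    """Apply the four filters in order; return the first one that fails."""
    if c.coding_score < min_coding_score:
        return "coding_potential"
    if c.rnaseq_reads < min_rnaseq_reads:
        return "transcription"
    if c.riboseq_reads < min_riboseq_reads:
        return "translation"
    if not c.has_homolog:
        return "conservation"
    return "pass"


# Hypothetical candidates for illustration only.
candidates = [
    SmorfCandidate("smorf_a", 0.9, 120, 40, True),
    SmorfCandidate("smorf_b", 0.2, 300, 80, True),
    SmorfCandidate("smorf_c", 0.8, 0, 0, False),
    SmorfCandidate("smorf_d", 0.7, 50, 12, False),
]
results = {c.name: first_failed_filter(c) for c in candidates}
print(results)
```

Recording the failing filter, rather than only a pass/fail flag, makes it easier to revisit candidates later. For instance, lineage-specific smORFs that fail only the conservation step may still merit follow-up.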

Problem: Failure to Detect a Known, Validated smORF

Symptoms

A smORF previously identified by experimental methods (e.g., Ribo-seq, MS) is not called by your standard gene prediction pipeline.

Solution

- Check Tool Parameters:

  - Disable Length Filters: Ensure the minimum ORF length parameter is set to a very low value (e.g., 2-6 codons) or is disabled entirely [32].

  - Verify Start Codon Set: Confirm that the tool is configured to recognize non-AUG start codons (GTG, TTG, etc.), which are common for smORFs [35] [12].

  - Use the Correct Genetic Code: As highlighted in FAQ A6, an incorrect translation table will lead to missed genes [35].

- Use a Specialized Tool: Run a tool specifically designed for smORF detection, such as smORFer [31], which is optimized for the unique challenges of short sequences.

- Combine Ab Initio and Homology-Based Methods: Use an ab initio predictor and supplement its results with predictions based on sequence homology to known smORFs or microproteins [31] [30].

Research Reagent Solutions

The following table lists key reagents and materials essential for smORF research, as cited in experimental methodologies.

Table: Essential Research Reagents for smORF and Microprotein Studies

| Reagent / Material | Function in smORF Research | Key Considerations |
|---|---|---|
| Ribo-seq Kit | Captures ribosome-protected mRNA fragments, providing direct evidence of translation [29] [30]. | Critical for distinguishing translated smORFs from non-coding transcripts. |
| Mass Spectrometer | Detects and sequences the microproteins translated from smORFs [29] [30]. | Sensitivity is key due to low abundance; specialized protocols may be needed for small peptides. |
| RNA-seq Library Prep Kit | Confirms the smORF is transcribed from the genome [32] [30]. | Strand-specific kits help determine the correct orientation of the smORF. |
| CRISPR/Cas9 System | Enables gene knockout for functional characterization of the smORF [30]. | Used to study phenotypic consequences of smORF loss. |
| Antibodies (Custom) | Used for immunodetection (Western blot, immunofluorescence) of specific microproteins [30]. | Challenging to produce due to small size; often require tagging strategies. |
| Plasmids for Tagging (e.g., GFP, HA) | Allows for overexpression, localization, and pull-down assays of microproteins [29] [30]. | Tags must be chosen carefully to avoid interfering with the microprotein's small size and function. |

Troubleshooting and Optimizing Gene Finder Performance and Parameters

Frequently Asked Questions (FAQs)

1. How does minimum gene length setting affect false positive rates in prokaryotic gene prediction?

Setting the minimum gene length parameter is a critical step because it trades false positives against false negatives. Thresholds that are too low increase the risk of calling random, non-coding Open Reading Frames (ORFs) as genes, whereas thresholds that are too high miss genuine short genes. Evidence shows that many gene prediction tools are biased against short genes, which has led to their systematic under-representation in databases. One study noted that although many tools are designed to report CDSs as short as 110 nucleotides, a systematic overview found high rates of missed genes below 300 nt, indicating that short genes remain a challenge [31].
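A back-of-the-envelope calculation shows why low thresholds admit random ORFs. The sketch below estimates the probability that a run of random codons contains no stop codon, under the simplifying assumptions of equal base frequencies, independent codons, and three stop codons out of 64. Real genomes deviate from this; in particular, stop codons are rarer in GC-rich genomes, so random ORFs there tend to be longer. The function name is hypothetical.

```python
def prob_random_orf(n_codons: int, n_stop_codons: int = 3) -> float:
    """Probability that n_codons random codons contain no stop codon."""
    p_sense = (64 - n_stop_codons) / 64
    return p_sense ** n_codons


# Compare the two length thresholds mentioned in the text.
for nt in (110, 300):
    codons = nt // 3
    print(f"{nt} nt ({codons} codons): {prob_random_orf(codons):.4f}")
```

Under these assumptions, a stretch of 36 codons (about 110 nt) is stop-free by chance roughly 18% of the time, whereas a stretch of 100 codons (300 nt) is stop-free less than 1% of the time.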

2. What are the best practices for setting statistical confidence thresholds?

Relying solely on p-values from univariate tests without correcting for multiple comparisons can lead to a high false discovery rate (FDR). For example, in one proteomics study, 80% of calls deemed significant by a traditional method were false positives. To avoid this, using q-values to control the FDR is recommended. This approach provides a measure of significance for each gene or protein, allowing researchers to maintain statistical power while achieving an acceptable level of false positives [36]. Furthermore, when working with spatially correlated data (e.g., from transcriptomic brain atlases), standard gene-category enrichment analysis (GCEA) can produce over 500-fold inflation of false-positive associations. Using ensemble-based null models that account for gene-gene coexpression and spatial autocorrelation is crucial to overcome this bias [37].
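As an illustration of FDR control, the sketch below computes Benjamini-Hochberg adjusted p-values, which are commonly used as q-value estimates. This is a named substitute and may differ from the q-value procedure used in [36], since other estimators also estimate the proportion of true null hypotheses. The function name and the example p-values are hypothetical.

```python
from typing import List, Sequence


def benjamini_hochberg(p_values: Sequence[float]) -> List[float]:
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical p-values for four candidate genes.
p_vals = [0.01, 0.04, 0.03, 0.005]
q_vals = benjamini_hochberg(p_vals)
significant = [q <= 0.05 for q in q_vals]
print(q_vals, significant)
```

Calling a prediction significant when its adjusted value is at most 0.05 targets an expected false discovery proportion of about 5% among the accepted calls, rather than a 5% error rate per test.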

3. How does the choice of scoring scheme impact the discrimination between coding and non-coding regions?

Scoring schemes based on codon substitution patterns can effectively distinguish protein-coding regions from non-coding ones. The GeneWaltz method, for instance, uses a codon-to-codon substitution matrix constructed by comparing orthologous gene pairs. This matrix assigns lod scores to codon pairs, where positive scores indicate pairs commonly observed in coding regions [4].

Scoring Function: $S_{ijk,lmn} = \log\left( \dfrac{o_{ijk,lmn}}{e_{ijk,lmn}} \right)$

where $S_{ijk,lmn}$ is the score for codons $ijk$ and $lmn$, $o_{ijk,lmn}$ is the observed frequency in coding regions, and $e_{ijk,lmn}$ is the expected frequency by chance. Regions with high aggregate scores are considered candidate coding regions. The statistical significance of these scores can then be tested using methods like Karlin-Altschul statistics to minimize false positives [4].
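The log-odds score can be sketched from counts of aligned codon pairs. The example below is illustrative only and is not the GeneWaltz implementation. It assumes that the expected frequency is the product of the two codons' marginal frequencies and that the logarithm is natural; the source states neither choice. The codon pairs are hypothetical toy data.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple

CodonPair = Tuple[str, str]


def codon_pair_lod_scores(aligned_pairs: List[CodonPair]) -> Dict[CodonPair, float]:
    """Log-odds score log(o / e) for each observed aligned codon pair."""
    pair_counts = Counter(aligned_pairs)
    total = sum(pair_counts.values())
    left_counts = Counter(a for a, _ in aligned_pairs)
    right_counts = Counter(b for _, b in aligned_pairs)
    scores = {}
    for (a, b), n in pair_counts.items():
        observed = n / total
        # Assumption: chance expectation is the product of the marginals.
        expected = (left_counts[a] / total) * (right_counts[b] / total)
        scores[(a, b)] = math.log(observed / expected)
    return scores


# Hypothetical toy alignment of codons from orthologous coding regions.
pairs = [("GCT", "GCC")] * 3 + [("GCT", "AAA")] + [("AAA", "AAA")] * 4
scores = codon_pair_lod_scores(pairs)
for pair, score in sorted(scores.items()):
    print(pair, round(score, 3))
```

In this toy data the synonymous pair GCT/GCC is seen twice as often as expected and receives a positive score, while the non-synonymous pair GCT/AAA is seen less often than expected and receives a negative score.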

Troubleshooting Guides

Problem: Unacceptably High Ratio of False Positives in Predictions

Potential Causes and Solutions:

- Cause: Inadequate statistical correction for multiple testing.

- Solution: Implement FDR control using q-values instead of relying on uncorrected p-values. This provides a measure of significance that accounts for the hundreds or thousands of simultaneous tests performed in genomic or proteomic studies [36].

- Cause: Scoring system is not optimized for your target organism's genomic characteristics.

- Solution: Utilize scoring schemes that reflect the evolutionary signatures of protein-coding genes. For homology-based approaches, use codon substitution matrices derived from related organisms. Be aware that machine-learning models trained on existing genes can inherit historical biases and may perform poorly on novel gene types underrepresented in training data [4] [31].

- Cause: Minimum gene length parameter is set too low.

- Solution: Increase the minimum length threshold. Be aware that this involves a trade-off, as it may increase false negatives for genuine short genes. Refer to known benchmarks for your organism of interest [31].

Problem: Failure to Detect Short or Novel Genes

Potential Causes and Solutions:

- Cause: Inherent bias in gene prediction tools against short genes.

- Solution: Use a combination of tools or specialized algorithms designed to detect short ORFs (sORFs). Be aware that no single tool performs best across all genomes and metrics. Evaluation frameworks like ORForise can help identify the best-performing tool for your specific genome and gene type of interest [31].

- Cause: Low sequencing depth in supporting RNA-seq or other -omics data.