Supervised vs. Unsupervised PCA in Genomics: A Practical Guide for Biomedical Researchers

Abstract

Principal Component Analysis (PCA) is a cornerstone of genomic data analysis, but the choice between its supervised and unsupervised implementations carries significant implications for discovery and interpretation. This article provides a comprehensive evaluation of both paradigms for researchers and drug development professionals. We cover the foundational principles of unsupervised PCA for exploratory analysis and the targeted nature of supervised PCA for hypothesis-driven research. The content details specific methodologies, including Supervised Categorical PCA (SCPCA) and integration with deep learning frameworks like REGLE, alongside critical troubleshooting guidance on known biases and artifacts. Through a comparative validation of applications across genome-wide association studies (GWAS), population genetics, and drug response prediction, this guide offers evidence-based recommendations to optimize genomic analysis pipelines and improve the reliability of biological insights.

Laying the Groundwork: Core Principles and Exploratory Power of Unsupervised PCA in Genomics

In genomic studies, Principal Component Analysis (PCA) is a fundamental tool for navigating high-dimensional data. It is applied under two distinct paradigms, unsupervised and supervised, which differ in objectives, methodology, and typical applications. Unsupervised PCA is an exploratory technique that analyzes the intrinsic structure of the data without reference to external labels or outcomes, making it well suited to hypothesis generation and discovery [1]. Supervised PCA, in contrast, brings known outcomes or labels into the analysis, typically as a dimension-reduction step before predictive modeling, making it well suited to hypothesis testing and prediction [2].

The distinction is crucial: unsupervised methods describe what the data are, while supervised methods model what the data predict. As [2] highlights, "PCA is currently overused, at least in part because supervised approaches, such as PLS, are less familiar." This guide provides a structured comparison of these approaches, supported by experimental data and protocols from genomic research, to help researchers select the appropriate tool for their specific analytical needs.

Fundamental Principles and Workflows

Unsupervised PCA: The Discovery Engine

Unsupervised PCA operates without utilizing outcome variables, functioning as a pure pattern-discovery tool. It identifies the principal components (PCs) that capture the maximum variance in the predictor variables alone [1]. This approach is particularly valuable in early exploratory stages where researchers seek to understand the underlying structure of genomic data without preconceived hypotheses.

The mathematical foundation of unsupervised PCA involves eigen-decomposition of the covariance matrix of the data, producing linear combinations of original variables (principal components) that are orthogonal to each other. These components are ordered by the proportion of total variance they explain, with the first component capturing the largest possible variance [3].
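To make this computation concrete, here is a minimal NumPy sketch of PCA by eigendecomposition of the covariance matrix. The function name `pca_eig` and the toy data are illustrative assumptions, not part of any cited tool.

```python
import numpy as np

def pca_eig(X, n_components=2):
    """PCA via eigendecomposition of the sample covariance matrix.

    X is an (n_samples, n_features) array; returns PC scores, loadings,
    and the fraction of total variance explained by each retained PC.
    """
    Xc = X - X.mean(axis=0)                  # center each variable
    cov = np.cov(Xc, rowvar=False)           # (n_features, n_features) covariance
    eigvals, eigvecs = np.linalg.eigh(cov)   # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1]        # reorder: largest variance first
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    loadings = eigvecs[:, :n_components]     # orthonormal PC directions
    scores = Xc @ loadings                   # sample coordinates on each PC
    explained = eigvals[:n_components] / eigvals.sum()
    return scores, loadings, explained

# Toy data (assumption): 5 variables with very different spreads
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5)) * np.array([5.0, 2.0, 1.0, 0.5, 0.1])
scores, loadings, explained = pca_eig(X)
print(explained.round(3))
```

For genotype matrices with far more SNPs than samples, taking the SVD of the centered data matrix is usually cheaper than forming the full feature-by-feature covariance matrix; both yield the same components.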

Supervised PCA: The Prediction Framework

Supervised PCA incorporates knowledge of outcome variables into the dimension-reduction workflow. Because standard PCA is inherently unsupervised, the supervised approach typically involves two stages: first performing PCA on the predictor variables, then using the resulting components as inputs to predictive models of the outcome [1]. In this two-stage form the components themselves are still computed without reference to the outcome, so the highest-variance components are not guaranteed to be the most predictive; the outcome enters only when choosing which components to retain and when fitting the model.

In genomic prediction, supervised PCA often appears as Principal Component Regression (PCR), where selected principal components become predictors in regression models. As [1] notes, "Principal Component Regression (PCR) is the process of performing multiple linear regression using a specified outcome (dependent) variable, and the selected PCs from PCA as predictor variables."
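A minimal sketch of PCR as described above, assuming NumPy and simulated data; `pcr_fit` and `pcr_predict` are hypothetical helper names. The PCA step never sees `y`; the outcome is used only in the regression on the component scores.

```python
import numpy as np

def pcr_fit(X, y, k):
    """Principal component regression: PCA on X (ignoring y), then OLS of y on the top-k scores."""
    x_mean = X.mean(axis=0)
    Xc = X - x_mean
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)  # rows of Vt are PC directions
    V = Vt[:k].T                                       # (p, k) loadings of the top-k PCs
    Z = Xc @ V                                         # (n, k) PC scores used as predictors
    A = np.column_stack([np.ones(len(y)), Z])          # add an intercept column
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return {"x_mean": x_mean, "V": V, "coef": coef}

def pcr_predict(model, X_new):
    Z = (X_new - model["x_mean"]) @ model["V"]         # project with the training loadings
    return model["coef"][0] + Z @ model["coef"][1:]

# Simulated data (assumption): outcome driven by the first three predictors
rng = np.random.default_rng(1)
X = rng.normal(size=(200, 20))
y = X[:, :3].sum(axis=1) + rng.normal(scale=0.5, size=200)
model = pcr_fit(X, y, k=5)
pred = pcr_predict(model, X)
```

When `k` equals the number of predictors, PCR reproduces ordinary least squares on all of them, which is a convenient sanity check.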

Experimental Protocols and Methodologies

Protocol 1: Unsupervised Genomic Discovery with REGLE

The REGLE (Representation Learning for Genetic Discovery on Low-Dimensional Embeddings) framework provides a sophisticated protocol for unsupervised discovery in high-dimensional clinical data (HDCD) [4]:

- Data Preparation: Collect high-dimensional clinical data such as spirograms or photoplethysmograms. For genomic applications, use genotype data from biobank-scale datasets.

- Model Training: Train a variational autoencoder (VAE) to compress and reconstruct HDCD. The encoder summarizes input data into a low-dimensional "bottleneck" layer, while the decoder reconstructs data from this summary.

- Representation Learning: The VAE objective implicitly encourages the learned encodings to be disentangled, that is, to have relatively uncorrelated coordinates, each capturing a separable biological factor.

- Genetic Association Analysis: Perform genome-wide association studies (GWAS) independently on each encoding coordinate to discover genetic variants associated with the learned representations.

- Polygenic Risk Scoring: Use polygenic risk scores (PRSs) from encoding coordinates as genetic scores of general biological functions, potentially combining them to create disease-specific PRSs.

This protocol successfully identified novel genetic loci for lung and circulatory function not detected through traditional expert-defined features [4].

Protocol 2: Supervised Genomic Prediction

For supervised genomic prediction, the following protocol demonstrates the integration of PCA with prediction models [5]:

- Population Stratification: Perform PCA on genotype data to account for population structure. In one pig genomic study, for example, "Principal component analysis (PCA) was performed using PLINK v1.90" to characterize genetic relationships between populations.

- Reference Population Construction: Assemble training populations with both genotypic and phenotypic data. Studies show that "the predictive accuracy of GS is influenced by the size of the reference population" and genetic relatedness between reference and target populations.

- Model Training: Apply genomic prediction models such as:

  - GBLUP: Genomic best linear unbiased prediction using a genomic relationship matrix

  - Bivariate GBLUP: Treats the same trait in different populations as distinct traits

  - GFBLUP: Incorporates prior biological knowledge from GWAS into the prediction model

- Validation: Use cross-validation strategies (e.g., five-fold) and independent tests to evaluate prediction accuracy for traits like backfat thickness and days to reach 100 kg body weight.
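The GBLUP and validation steps can be sketched with a VanRaden-style genomic relationship matrix and five-fold cross-validation. This is a simplified illustration on simulated genotypes: it assumes the variance ratio `lam` is known (real analyses estimate variance components, e.g. by REML, and add fixed effects), and the helper names are hypothetical.

```python
import numpy as np

def vanraden_G(M):
    """Genomic relationship matrix (VanRaden's first method) from 0/1/2 genotype codes."""
    p = M.mean(axis=0) / 2.0                      # allele frequency of each SNP
    Z = M - 2.0 * p                               # center each SNP column
    return Z @ Z.T / (2.0 * np.sum(p * (1.0 - p)))

def gblup_predict(G, y, train, test, lam):
    """Genomic values of `test` individuals predicted from `train` phenotypes.

    lam = sigma_e^2 / sigma_g^2 is ASSUMED known; in practice it comes from
    variance-component estimation such as REML.
    """
    V = G[np.ix_(train, train)] + lam * np.eye(len(train))
    Vinv_1 = np.linalg.solve(V, np.ones(len(train)))
    mu = Vinv_1 @ y[train] / Vinv_1.sum()         # GLS estimate of the overall mean
    alpha = np.linalg.solve(V, y[train] - mu)
    return G[np.ix_(test, train)] @ alpha         # BLUP of the test animals' genomic values

# Simulated data (assumption): 400 animals, 200 unlinked SNPs, heritability 0.8
rng = np.random.default_rng(42)
n, m = 400, 200
freqs = rng.uniform(0.1, 0.9, size=m)
M = rng.binomial(2, freqs, size=(n, m)).astype(float)
g_true = (M - M.mean(axis=0)) @ rng.normal(size=m)
g_true *= np.sqrt(0.8 / g_true.var())             # genetic variance 0.8
y = 10.0 + g_true + rng.normal(scale=np.sqrt(0.2), size=n)

G = vanraden_G(M)
lam = 0.2 / 0.8
accs = []
for test in np.array_split(rng.permutation(n), 5):   # five-fold cross-validation
    train = np.setdiff1d(np.arange(n), test)
    gebv = gblup_predict(G, y, train, test, lam)
    accs.append(np.corrcoef(gebv, y[test])[0, 1])
print(f"mean predictive correlation: {np.mean(accs):.2f}")
```

The predictive correlation between GEBVs and observed phenotypes in held-out folds is one common way to report the "prediction accuracy" figures quoted in such studies.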

In a related study of Rongchang pigs, combining machine learning methods with traditional genomic prediction approaches improved prediction accuracy by 6.6-8.1% [6].

Performance Comparison: Quantitative Findings

Discovery Power in Genomic Applications

Table 1: Performance Comparison in Genomic Discovery Applications

| Metric | Unsupervised PCA | Supervised PCA | Experimental Context |
|---|---|---|---|
| Novel Locus Identification | Replicated known loci while identifying previously undetected loci [4] | Limited to signals related to specific target traits | REGLE applied to spirograms and PPG data |
| Variance Explained | 88% with first two PCs in color model [3] | Varies based on trait-relevant components | Color-based model with maximized FST |
| Biological Interpretability | Enables discovery of features not captured by expert-defined features [4] | Constrained by pre-specified outcomes | REGLE vs. expert-defined features (EDFs) |
| Population Structure Detection | Effectively reveals genetic stratification and outliers [3] | May miss structure unrelated to target trait | Analysis of modern and ancient human populations |

Prediction Accuracy in Genomic Selection

Table 2: Performance Comparison in Genomic Prediction Applications

| Metric | Unsupervised PCA | Supervised PCA | Experimental Context |
|---|---|---|---|
| Prediction Accuracy | Not primarily designed for prediction | 0.36-0.53 for backfat thickness; 0.26-0.46 for carcass weight [7] | Pig genomic prediction using crossbred reference populations |
| Model Improvement | N/A | 6.6-8.1% improvement over traditional methods [6] | Machine learning with genomic data in Rongchang pigs |
| Trait Specificity | General-purpose data reduction | Optimized for specific target traits | Multi-population genomic evaluation in pigs |
| Handling of Population Structure | Effective as covariate to control confounding [8] | Integrated into prediction models | GWAS population structure adjustment |

Critical Implementation Considerations

Component Selection Strategies

The selection of principal components differs fundamentally between unsupervised and supervised paradigms:

Unsupervised Setting: "[I]n the SNP-set setting, principal components with large eigenvalues tend to have increased power, whereas the opposite holds true in the multiple phenotype setting" [8]. Lower-order PCs (with large eigenvalues) are generally preferred in SNP-set analysis, while higher-order PCs (with small eigenvalues) often yield better power in multiple phenotype analysis.

Supervised Setting: Component selection should be guided by predictive performance on validation data rather than by variance explained alone. For the PCA step itself, [1] recommends Parallel Analysis over traditional eigenvalue-based methods for deciding how many components to retain.
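Parallel Analysis (Horn's method) retains components whose eigenvalues exceed those expected from data with no correlation structure. A minimal permutation-based sketch follows, assuming NumPy, correlation-matrix eigenvalues, and a 95th-percentile null threshold; these are common conventions, not necessarily the exact choices in [1], and `parallel_analysis` is a hypothetical helper.

```python
import numpy as np

def parallel_analysis(X, n_iter=200, quantile=95, seed=0):
    """Horn's parallel analysis, permutation variant, on the correlation matrix.

    Returns the number of leading components whose eigenvalues exceed the
    chosen percentile of eigenvalues from column-permuted (structure-free) data.
    """
    rng = np.random.default_rng(seed)
    obs = np.linalg.eigvalsh(np.corrcoef(X, rowvar=False))[::-1]   # descending
    null = np.empty((n_iter, X.shape[1]))
    for b in range(n_iter):
        # Permuting each column separately keeps its distribution but breaks correlations
        Xp = np.column_stack([rng.permutation(col) for col in X.T])
        null[b] = np.linalg.eigvalsh(np.corrcoef(Xp, rowvar=False))[::-1]
    threshold = np.percentile(null, quantile, axis=0)
    above = obs > threshold
    n_keep = len(above) if above.all() else int(np.argmin(above))  # stop at first failure
    return n_keep, obs, threshold

# Simulated data (assumption): two latent factors, each driving five of ten variables
rng = np.random.default_rng(3)
F = rng.normal(size=(300, 2))
W = np.zeros((2, 10))
W[0, :5] = 1.0
W[1, 5:] = 1.0
X = F @ W + rng.normal(scale=0.7, size=(300, 10))
n_keep, obs, threshold = parallel_analysis(X)
print(n_keep)
```

With two correlated blocks of five variables, two components should clear the permutation threshold.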

Limitations and Biases

Both approaches carry important limitations that researchers must consider:

Unsupervised PCA results "can be artifacts of the data and can be easily manipulated to generate desired outcomes" [3]. Because PCA is a linear method, it cannot capture nonlinear relationships between variables, and its results are sensitive to data scaling and preprocessing.

Supervised PCA can overfit if it is not properly validated, particularly when the number of components is tuned without an independent validation set. Separately, [3] reports that "PCA adjustment also yielded unfavorable outcomes in association studies" in some cases.
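One way to guard against this kind of overfitting is to choose the number of components by cross-validation, refitting the PCA inside each training fold so that held-out samples never influence the components. The sketch below assumes NumPy and simulated data; `cv_pcr_error` is a hypothetical helper.

```python
import numpy as np

def cv_pcr_error(X, y, ks, n_folds=5, seed=0):
    """Cross-validated mean squared error of PCR for each candidate number of PCs.

    PCA is refit on each training fold, so held-out samples never shape the components.
    """
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), n_folds)
    errors = {k: [] for k in ks}
    for test in folds:
        train = np.setdiff1d(np.arange(len(y)), test)
        mu = X[train].mean(axis=0)
        _, _, Vt = np.linalg.svd(X[train] - mu, full_matrices=False)
        for k in ks:
            Z_tr = (X[train] - mu) @ Vt[:k].T
            Z_te = (X[test] - mu) @ Vt[:k].T
            A = np.column_stack([np.ones(len(train)), Z_tr])
            coef, *_ = np.linalg.lstsq(A, y[train], rcond=None)
            pred = coef[0] + Z_te @ coef[1:]
            errors[k].append(np.mean((y[test] - pred) ** 2))
    return {k: float(np.mean(v)) for k, v in errors.items()}

# Simulated data (assumption): three latent factors with decreasing variance;
# the outcome depends only on the weakest one.
rng = np.random.default_rng(11)
F = rng.normal(size=(150, 3)) * np.array([3.0, 2.0, 1.0])
W = rng.normal(size=(3, 40))
X = F @ W + rng.normal(scale=0.5, size=(150, 40))
y = F[:, 2] + rng.normal(scale=0.3, size=150)
errs = cv_pcr_error(X, y, ks=range(1, 6))
best_k = min(errs, key=errs.get)
print(errs, best_k)
```

Here models with one or two components predict poorly even though those components explain most of the variance in `X`; the cross-validated error drops sharply once the third component is included.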

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Analytical Tools and Their Applications

| Tool/Software | Primary Function | Application Context | Reference |
|---|---|---|---|
| EIGENSOFT (SmartPCA) | Population structure analysis | Unsupervised discovery of genetic stratification | [3] |
| PLINK | Genome-wide association analysis | Data management and basic PCA | [5] |
| REGLE Framework | Unsupervised deep learning | Representation learning for genetic discovery | [4] |
| GBLUP Models | Genomic prediction | Supervised breeding value estimation | [5] |
| Variational Autoencoders | Nonlinear dimension reduction | Learning disentangled representations of HDCD | [4] |
| Parallel Analysis | Component selection | Determining significant PCs in unsupervised PCA | [1] |

The choice between unsupervised and supervised PCA depends fundamentally on the research objective. Unsupervised PCA excels in exploratory analysis, hypothesis generation, and characterizing unknown population structures—making it ideal for early-stage genomic discovery. Supervised PCA provides superior performance for predictive modeling, trait prediction, and genomic selection—making it essential for applied breeding programs and risk prediction.

As genomic datasets continue growing in both dimension and complexity, the strategic application of both paradigms will remain crucial. Unsupervised methods will continue driving novel discoveries by revealing patterns beyond current biological knowledge, while supervised approaches will translate these discoveries into predictive models with practical applications in medicine and agriculture. By understanding the distinct strengths, limitations, and appropriate implementations of each paradigm, researchers can more effectively leverage the full potential of multivariate analysis in genomic studies.

In the field of genomics, researchers are frequently confronted with datasets containing millions of genetic variants across thousands of individuals. This high-dimensional data poses significant challenges for analysis and interpretation. Principal Component Analysis (PCA) has emerged as a fundamental, unsupervised technique to navigate this complexity, reducing dimensionality while preserving the essential structure of genetic data. Unlike supervised methods that require predefined labels, unsupervised PCA identifies patterns and population stratification directly from the genetic variation data itself, making it indispensable for exploring population structure in diverse studies, from human biomedical research to plant and animal genetics. This guide objectively compares the performance of unsupervised PCA with alternative approaches, providing experimental data and protocols to inform researchers and drug development professionals in their genomic studies.

Unsupervised PCA is a multivariate statistical technique that reduces the dimensionality of data by transforming original variables into a new set of uncorrelated variables called principal components (PCs). These PCs are ordered so that the first few retain most of the variation present in the original data. In population genetics, when applied to genotype data, PCA summarizes the major axes of variation in allele frequencies, producing coordinates that can visualize genetic relatedness and population structure without prior population labels [9] [10].

The table below summarizes the core characteristics of unsupervised PCA and its main alternatives in genomic studies:

| Method | Core Mechanism | Key Inputs | Primary Applications in Genomics |
|---|---|---|---|
| Unsupervised PCA | Identifies eigenvectors/values of the covariance matrix of allele frequencies [10]. | Genotype matrix (e.g., VCF file) [9]. | Population structure visualization [11], outlier detection [12], data exploration. |
| Supervised PCA | Integrates PCA outcomes into a classification machine learning framework [12]. | Genotype matrix + phenotypic labels. | Enhancing diagnostic models [12], trait prediction. |
| Model-Based Clustering (e.g., STRUCTURE) | Uses a likelihood model with Bayesian MCMC to estimate ancestry proportions [10]. | Genotype matrix + assumed number of populations (K). | Inferring ancestry proportions, admixture analysis. |
| Nonlinear Dimensionality Reduction (e.g., UMAP) | Preserves local data structure using Riemannian geometry and topological data analysis [13]. | Genotype matrix + hyperparameters (e.g., neighbors). | Visualizing complex population clusters [14]. |
| Deep Learning (e.g., VAE/Autoencoder) | Learns a compressed, non-linear data representation using an encoder-decoder neural network [4]. | High-dimensional raw data (e.g., spirograms, PPG). | GWAS on complex clinical data, creating polygenic risk scores [4]. |

Experimental Protocols and Performance Benchmarks

Standard Protocol for Unsupervised PCA on Genetic Data

A typical workflow for performing unsupervised PCA on genetic data involves several key steps to ensure robust and interpretable results [9] [15]:

- Data Input and Quality Control (QC): The process begins with genotype data in Variant Call Format (VCF). Standard QC filters are applied, including removing non-biallelic sites and excluding variants based on metrics such as Minor Allele Frequency (MAF), missingness per marker, and Hardy-Weinberg Equilibrium (HWE) [15]. For example, settings such as `-MAF 0.05 -Miss 0.25 -HWE 0` might be used.

- Linkage Pruning: SNPs in high linkage disequilibrium (LD) are pruned so that blocks of correlated markers do not dominate the leading PCs. A common approach uses the `plink` command with parameters such as `--indep-pairwise 50 10 0.1` [9]. In PLINK 1.9 these values specify a window of 50 variants, a step of 10 variants, and an r² threshold of 0.1, not a 50 kb window and a 10 bp step; a window measured in kilobases requires the `kb` modifier on the window size.

- Covariance Matrix and PC Calculation: The pruned genotype data is mean-centered, and the sample covariance matrix is computed. The eigenvectors and eigenvalues of this covariance matrix are then calculated, which correspond to the principal components and the amount of variance each explains, respectively [11].

- Determining Significant PCs: The number of statistically significant PCs can be determined using the Tracy-Widom distribution [10]. In practice, researchers may also use an arbitrary number (e.g., the first 10) or select the number where the eigenvalues appear to level off in a scree plot.

- Visualization and Clustering: The top PCs (typically PC1 and PC2) are plotted on a scatterplot to visualize population structure. Furthermore, generic clustering algorithms like K-means or model-based clustering like soft K-means can be applied to the significant PCs to assign individuals to subpopulations [10].
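The QC, pruning, PCA, and clustering steps can be strung together in a compact NumPy sketch on simulated genotypes. All function names are hypothetical; `ld_prune` is a simplified greedy pruner with windows counted in SNPs that mirrors the idea behind PLINK's `--indep-pairwise` but is not a reimplementation of it. Real pipelines would run PLINK, EIGENSOFT, or VCF2PCACluster on VCF input.

```python
import numpy as np

def qc_filter(G, maf_min=0.05, miss_max=0.25):
    """Boolean mask of SNP columns passing MAF and per-marker missingness filters.

    G holds 0/1/2 allele counts with NaN for missing calls.
    """
    miss = np.isnan(G).mean(axis=0)
    p = np.nanmean(G, axis=0) / 2.0
    maf = np.minimum(p, 1.0 - p)
    return (miss <= miss_max) & (maf >= maf_min)

def ld_prune(G, window=50, step=10, r2_max=0.1):
    """Greedy pairwise LD pruning; window and step are counted in SNPs."""
    m = G.shape[1]
    keep = np.ones(m, dtype=bool)
    for start in range(0, m, step):
        idx = np.arange(start, min(start + window, m))
        idx = idx[keep[idx]]                  # only compare SNPs still retained
        if len(idx) < 2:
            continue
        r2 = np.corrcoef(G[:, idx], rowvar=False) ** 2
        for a in range(len(idx)):
            if not keep[idx[a]]:
                continue
            for b in range(a + 1, len(idx)):
                if keep[idx[b]] and r2[a, b] > r2_max:
                    keep[idx[b]] = False      # drop the later SNP of a high-LD pair
    return keep

def pca_scores(G, n_pc=2):
    Gc = G - G.mean(axis=0)                   # mean-center each SNP
    U, s, _ = np.linalg.svd(Gc, full_matrices=False)
    return U[:, :n_pc] * s[:n_pc]             # PC scores for each sample

def kmeans(Z, k, n_iter=50, seed=0):
    """Plain Lloyd's K-means on the PC scores."""
    rng = np.random.default_rng(seed)
    centers = Z[rng.choice(len(Z), size=k, replace=False)]
    for _ in range(n_iter):
        dist = ((Z[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dist.argmin(axis=1)
        centers = np.array([Z[labels == c].mean(axis=0) if np.any(labels == c)
                            else centers[c] for c in range(k)])
    return labels

# Simulated data (assumption): two populations, 150 SNPs, each SNP duplicated
rng = np.random.default_rng(7)
p = rng.uniform(0.2, 0.8, size=150)
d = rng.normal(0.0, 0.1, size=150)
p_pop = np.clip(np.stack([p + d, p - d]), 0.01, 0.99)  # per-population allele frequencies
pop = np.repeat([0, 1], 100)
G = rng.binomial(2, p_pop[pop]).astype(float)          # 200 samples x 150 SNPs
G = np.repeat(G, 2, axis=1)                            # adjacent copies = perfect-LD pairs
G[rng.random(G.shape) < 0.02] = np.nan                 # 2% missing calls

qc = qc_filter(G)
Gq = G[:, qc]
Gq = np.where(np.isnan(Gq), np.nanmean(Gq, axis=0), Gq)  # mean-impute missing calls
keep = ld_prune(Gq)
scores = pca_scores(Gq[:, keep])
labels = kmeans(scores, k=2)
print(f"{qc.sum()} SNPs pass QC, {keep.sum()} remain after pruning")
```

Because every SNP was duplicated, pruning should keep at most one member of each pair, and two-cluster K-means on PC1 and PC2 should recover the simulated populations up to label swapping.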

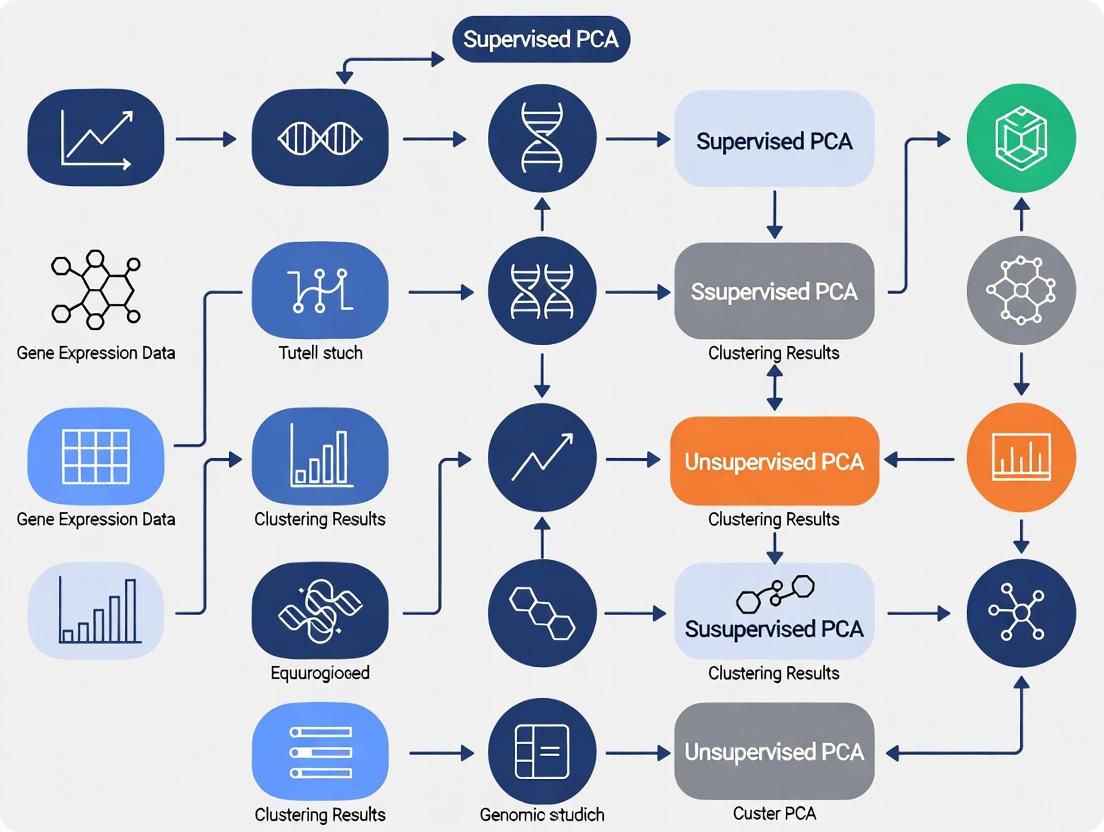

Figure 1: Standard PCA Workflow for Genetic Data.

Performance Comparison of PCA Software Tools

Different software tools are available to execute PCA on large-scale genomic data. Their performance, particularly in terms of computational efficiency and memory usage, varies significantly.

| Software Tool | Input Format | Key Features | Reported Performance (Test Data: 1000 Genomes, Chr22) | Reference |
|---|---|---|---|---|
| VCF2PCACluster | VCF | Kinship estimation, built-in clustering, and visualization. | Time: ~7 min (16 threads); Memory: ~0.1 GB (independent of SNP count). | [15] |
| PLINK2 | VCF | Widely used, extensive GWAS and QC functionalities. | Time: Comparable to VCF2PCACluster; Memory: >200 GB for 81.2M SNPs. | [15] |
| GCTA | PLINK binary | Tool for complex trait analysis, includes PCA. | Accuracy identical to VCF2PCACluster and PLINK2. | [15] |
| TASSEL/GAPIT3 | Various | GUI interface, popular in plant genetics. | Time: >400 min; Memory: >150 GB (deemed unsuitable for large-scale SNP data). | [15] |

The data shows that VCF2PCACluster demonstrates a distinct advantage in memory efficiency, maintaining a low memory footprint (~0.1 GB) even with tens of millions of SNPs, whereas PLINK2's memory consumption scales with the number of SNPs, becoming prohibitive for very large datasets [15].

Comparative Analysis: Unsupervised PCA vs. Advanced Alternatives

The performance of unsupervised PCA can be evaluated against more advanced, non-linear techniques in specific applications.

| Method | Application Context | Reported Performance and Findings | Reference |
|---|---|---|---|
| Unsupervised PCA | Population structure in the "All of Us" cohort (n=297,549). | Successfully revealed substantial population structure and genetic diversity, identifying K=7 genetic clusters. | [14] |
| UMAP (Non-linear) | Population structure in the "All of Us" cohort. | Revealed almost twice as many clusters (K=13) as PCA, though with broad concordance. Noted to preserve local structure at the expense of global patterns. | [14] [13] |
| VAE (Non-linear) | GWAS on high-dimensional clinical data (spirograms). | Reconstruction Accuracy: Outperformed PCA with same latent dimensions. Genetic Discovery: Replicated known loci and identified novel ones not found using expert-defined features. | [4] |
| PCA + Supervised ML | Classifying Autism Spectrum Disorder (ASD). | A novel implementation integrated unsupervised PCA for feature selection with supervised ML, creating a robust model to navigate complex genetic and microstructural data. | [12] |

Essential Research Reagent Solutions

The following table details key computational tools and resources essential for conducting PCA and related population structure analyses.

| Research Reagent | Function and Utility |
|---|---|
| VCF2PCACluster | A dedicated tool for fast, memory-efficient PCA, clustering, and visualization directly from VCF files [15]. |
| PLINK (1.9/2.0) | A whole-genome association toolset that provides robust functions for data management, QC, linkage pruning, and PCA [9]. |
| EIGENSOFT (SmartPCA) | A widely cited software package specifically designed for performing PCA on genetic data, includes tools to account for LD [3] [10]. |
| GENOME | A coalescent-based simulator used to generate simulated genotype data for validating and testing population structure inference methods [10]. |
| HGDP-CEPH Panel | A publicly available reference dataset of 1,064 individuals from 51 global populations, used as a benchmark for evaluating population structure [10]. |
| All of Us Researcher Workbench | A cloud-based platform providing access to genomic and health data from a diverse US cohort, enabling large-scale analyses like PCA [14] [13]. |

Critical Considerations and Best Practices

While unsupervised PCA is a powerful tool, researchers must be aware of its limitations and potential biases. One widely discussed study showed that PCA results can be highly sensitive to data composition and can be manipulated, generating artifacts or desired outcomes depending on the choice of markers, samples, and analysis parameters [3]. Its author argues that PCA results are therefore not always reliable, robust, or replicable, and that a large number of genetic studies may need reevaluation.

Best practices to mitigate these issues include:

- Rigorous Quality Control: Applying strict QC filters to genetic markers.

- Linkage Pruning: Always pruning SNPs in strong LD before analysis.

- Sensitivity Analysis: Testing the stability of results by varying sample compositions and parameters.

- Complementary Methods: Using PCA in conjunction with other methods, such as model-based ancestry inference (e.g., STRUCTURE) or local PCA [11], which can reveal heterogeneity in population structure patterns across the genome caused by factors like linked selection or chromosomal inversions. No single method should be relied upon exclusively for drawing historical or ethnobiological conclusions [3].
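A simple form of sensitivity analysis is to recompute PCA on random subsets of samples and check whether the leading axis stays put. The sketch below (NumPy, simulated data, hypothetical function names) reports the absolute cosine similarity between PC1 loadings from each subset and from the full data; absolute values are used because the sign of a PC is arbitrary.

```python
import numpy as np

def leading_axis(X):
    """PC1 loading vector of X (rows = samples)."""
    _, _, Vt = np.linalg.svd(X - X.mean(axis=0), full_matrices=False)
    return Vt[0]

def pc1_stability(X, n_rep=50, frac=0.8, seed=0):
    """|cosine| between PC1 from random sample subsets and PC1 from all samples.

    Values near 1 mean the main axis is robust to which samples are included.
    """
    rng = np.random.default_rng(seed)
    ref = leading_axis(X)
    sims = []
    for _ in range(n_rep):
        idx = rng.choice(len(X), size=int(frac * len(X)), replace=False)
        sims.append(abs(leading_axis(X[idx]) @ ref))   # PC signs are arbitrary
    return np.array(sims)

# Simulated data (assumption): one strong axis of variation vs. pure noise
rng = np.random.default_rng(2)
axis = rng.normal(size=50)
axis /= np.linalg.norm(axis)
structured = rng.normal(scale=8.0, size=(100, 1)) * axis + rng.normal(size=(100, 50))
unstructured = rng.normal(size=(100, 50))             # no dominant axis
stable = pc1_stability(structured)
unstable = pc1_stability(unstructured)
print(stable.mean().round(3), unstable.mean().round(3))
```

A dataset with one strong axis of variation gives similarities close to 1, while pure noise gives lower and more variable values, a warning sign that apparent PC patterns may not be reproducible. Dropping whole populations instead of random samples is a natural extension of the same check.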

Unsupervised PCA remains a cornerstone technique for dimensionality reduction and initial exploration of population structure in genomic studies due to its simplicity, speed, and interpretability. Its utility is evident in large-scale biobank studies, where it efficiently reveals major axes of genetic variation. However, performance comparisons show that while tools like VCF2PCACluster offer superior memory efficiency for massive datasets, non-linear methods like VAEs can capture more complex features in certain data types, leading to improved genetic discovery. The choice between unsupervised PCA and its alternatives, including supervised frameworks, should be guided by the specific research question, data characteristics, and computational constraints. Researchers are encouraged to apply PCA with a critical understanding of its limitations, employing robust protocols and validating findings with complementary methods to ensure the generation of reliable and impactful scientific insights.

In genomic studies, Principal Component Analysis (PCA) serves as a critical first step in exploratory data analysis, enabling researchers to uncover key patterns within high-dimensional data. This guide evaluates supervised and unsupervised PCA methodologies for identifying sample outliers, batch effects, and major genetic clusters. While unsupervised PCA remains a cornerstone technique for visualizing inherent data structures, supervised approaches incorporating biological priors are emerging as powerful alternatives for specific genomic applications. We objectively compare the performance of these methodologies using experimental data from recent genomic studies, providing researchers with a framework for selecting appropriate analytical tools based on their specific research objectives and data characteristics.

Fundamental PCA Concepts in Genomics

Principal Component Analysis is a multivariate statistical technique that reduces the dimensionality of genomic datasets while retaining as much of their variance as possible. PCA transforms high-dimensional genomic data into a set of linearly uncorrelated variables termed principal components (PCs), which are ordered by the amount of variance they explain. The first few PCs typically capture the most significant biological and technical variation, allowing sample relationships to be visualized in two or three dimensions [16] [3].

In population genetics, PCA as implemented in widely cited packages such as EIGENSOFT and PLINK is often the first analysis performed. PCA outcomes are used to shape study design, characterize individuals and populations, and draw historical conclusions about origins and relatedness. The technique is particularly valuable for visualizing genetic distances between populations, and sample overlap in PC space is often interpreted as evidence of shared ancestry or identity [3].

Unsupervised PCA Workflows and Applications

Standard Protocol for Unsupervised PCA

The standard unsupervised PCA protocol begins with data preprocessing, including centering and scaling the feature data so that all features contribute equally. The algorithm decomposes the processed data matrix into principal components, with visualization typically focusing on the first two or three PCs, which explain the greatest variance. Outlier identification employs statistical thresholds, commonly standard deviation ellipses in PCA space drawn at 2.0 and 3.0 standard deviations [16]. Along a single normally distributed PC these thresholds cover roughly 95% and 99.7% of samples; for a two-dimensional ellipse under a bivariate normal model the expected coverage is lower, about 86.5% and 98.9%, respectively.
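The ellipse rule can be sketched as a Mahalanobis distance in the PC1-PC2 plane. This NumPy version is an illustrative assumption rather than the exact procedure of [16]; because the two PCs are uncorrelated, scaling each score by its standard deviation is enough, and the coverage of an r-SD ellipse under a bivariate normal model is 1 - exp(-r²/2).

```python
import numpy as np

def pca_ellipse_outliers(X, n_sd=3.0):
    """Flag samples outside an n_sd standard-deviation ellipse in the PC1-PC2 plane."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :2] * s[:2]                         # PC1 and PC2 scores
    z = scores / scores.std(axis=0, ddof=1)           # PCs are uncorrelated, so scale each axis
    dist = np.sqrt((z ** 2).sum(axis=1))              # Mahalanobis distance in the PC plane
    return dist > n_sd, dist

# Expected share of a bivariate normal cloud inside an r-SD ellipse
for r in (2.0, 3.0):
    print(r, round(1 - np.exp(-r ** 2 / 2), 3))       # 0.865 for r = 2, 0.989 for r = 3

# Simulated data (assumption): 50 samples x 100 genes, sample 0 shifted on every gene
rng = np.random.default_rng(4)
X = rng.normal(size=(50, 100))
X[0] += 4.0
flags, dist = pca_ellipse_outliers(X)
print(np.flatnonzero(flags))
```

Because the simulated outlier is shifted on every gene, it largely defines PC1 on its own, which illustrates the sensitivity of classical PCA to outlying observations.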

Robust PCA for Outlier Detection

Classical PCA (cPCA) is highly sensitive to outlying observations, which can disproportionately influence the first components and obscure true data patterns. Robust PCA (rPCA) methods address this limitation using statistical techniques to obtain principal components that remain stable despite outliers. Two prominent algorithms include PcaHubert, which demonstrates high sensitivity in outlier detection, and PcaGrid, which maintains the lowest estimated false positive rate [17].

In RNA-seq data analysis with small sample sizes, rPCA has demonstrated superior performance compared to classical approaches. In one study evaluating mouse cerebellar gene expression data, both PcaHubert and PcaGrid detected the same two outlier samples that cPCA failed to identify. This accurate detection significantly improved differential expression analysis outcomes, highlighting the practical importance of robust methods for genomic quality control [17].
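The rrcov methods above are R implementations. As a compact illustration of the robust idea, the sketch below implements spherical (spatial-sign) PCA, a different and simpler robust method (Locantore et al.; rrcov provides it as `PcaLocantore`), not PcaGrid or PcaHubert. For simplicity it centers on the coordinate-wise median, whereas the published method uses the spatial median; data and function names are assumptions.

```python
import numpy as np

def spherical_pca(X):
    """Spherical (spatial-sign) PCA: a simple robust alternative to classical PCA.

    Each sample is centered on the coordinate-wise median and rescaled to unit
    length, so a single extreme sample carries no more weight than any other.
    """
    Xc = X - np.median(X, axis=0)
    norms = np.linalg.norm(Xc, axis=1, keepdims=True)
    S = Xc / np.where(norms > 0, norms, 1.0)      # project samples onto the unit sphere
    _, _, Vt = np.linalg.svd(S, full_matrices=False)
    return Vt                                     # rows are robust PC directions

def classical_pca(X):
    _, _, Vt = np.linalg.svd(X - X.mean(axis=0), full_matrices=False)
    return Vt

# Simulated data (assumption): true main axis is feature 0, plus one gross outlier
rng = np.random.default_rng(5)
X = rng.normal(size=(60, 10)) * np.r_[3.0, np.ones(9)]
X[0] = 0.0
X[0, 1] = 50.0                                    # the outlier lies along feature 1
print("classical PC1:", np.abs(classical_pca(X)[0]).round(2))
print("spherical PC1:", np.abs(spherical_pca(X)[0]).round(2))
```

The single outlier drags the classical PC1 onto feature 1, while the spherical PC1 stays aligned with the true axis of variation.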

Table 1: Performance Comparison of Robust PCA Methods in RNA-Seq Data Analysis

| Method | Sensitivity (%) | Specificity (%) | Key Strength | Implementation |
|---|---|---|---|---|
| PcaGrid | 100 | 100 | Lowest false positive rate | rrcov R package |
| PcaHubert | 100 | 100 | Highest sensitivity | rrcov R package |
| Classical PCA | Variable | Variable | Standard approach | Multiple packages |
| PcaCov | Not reported | Not reported | Robust covariance estimation | rrcov R package |

Batch Effect Identification

Batch effects represent systematic technical variations introduced during sample processing that can confound biological interpretation. In PCA plots, batch effects manifest as distinct clustering of samples according to batch labels rather than biological variables of interest. Research indicates that approximately 50% of publicly available RNA-seq datasets show significant batch effects when analyzed with PCA-based methods [18].
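A quick check for this pattern is to measure how much of each leading PC's variance is explained by batch labels. The sketch assumes NumPy, known batch labels, and simulated expression data; it is a generic diagnostic with a hypothetical function name, not the quality-score method of [18].

```python
import numpy as np

def pc_batch_r2(X, batch, n_pc=3):
    """Fraction of each top PC's variance explained by batch membership (one-way ANOVA R^2)."""
    Xc = X - X.mean(axis=0)
    U, s, _ = np.linalg.svd(Xc, full_matrices=False)
    scores = U[:, :n_pc] * s[:n_pc]
    r2 = []
    for pc in scores.T:
        fitted = np.zeros_like(pc)
        for b in np.unique(batch):
            fitted[batch == b] = pc[batch == b].mean()   # replace each score by its batch mean
        r2.append(1.0 - np.sum((pc - fitted) ** 2) / np.sum((pc - pc.mean()) ** 2))
    return np.array(r2)

# Simulated data (assumption): 40 samples in two batches, 500 genes on a log scale
rng = np.random.default_rng(8)
batch = np.repeat([0, 1], 20)
X = rng.normal(size=(40, 500))
X[batch == 1, :200] += 2.0                               # technical shift on 200 genes in batch 1
print(pc_batch_r2(X, batch).round(2))
```

An R² near 1 for PC1 means the dominant axis of variation separates batches rather than biology, the signature described above.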

One effective approach for batch effect detection combines PCA with machine learning-derived quality scores. This method achieved performance comparable or superior to that of reference methods using a priori batch knowledge in 11 of the 12 datasets evaluated (92%). When coupled with outlier removal, the correction performed better than reference methods in 6 of 12 datasets [18]. These findings demonstrate how quality-aware PCA approaches can identify technical artifacts without prior batch information.

Supervised PCA Frameworks

Incorporating Biological Priors

Supervised PCA frameworks integrate biological knowledge to enhance pattern discovery in genomic data. The AWGE-ESPCA model represents an advanced implementation specifically designed for genomic studies, incorporating two key innovations: adaptive noise elimination regularization to address noise challenges in non-human genomic data, and integration of known gene-pathway quantitative information as prior knowledge within the Sparse PCA framework [19].

This model demonstrates how supervised approaches can prioritize biologically meaningful features—in this case, gene probes located in pathway enrichment regions—that might be overlooked by unsupervised methods. By combining these elements, AWGE-ESPCA effectively filters for genes in pathway-rich regions while maintaining the dimensionality reduction advantages of traditional PCA [19].

Experimental Protocol for Supervised PCA

The supervised PCA protocol begins with the incorporation of biological priors, such as pathway information or quality metrics, into the model structure. For the AWGE-ESPCA model, researchers first established a Cu²⁺-stressed Hermetia illucens growth genome dataset, then applied adaptive noise elimination regularization to address data-specific noise challenges [19].

The supervised phase involves weighted gene network analysis that prioritizes features with established biological significance. In genomic applications, this typically means emphasizing genes with known pathway associations or established functional roles. The model then performs sparse PCA with feature constraints, which drives most loadings to zero so that each component is defined by a small, identifiable set of genes rather than a dense combination of all features, enhancing biological interpretability [19].
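AWGE-ESPCA itself is not reproduced here. As a generic illustration of how sparsity preserves feature identity, the sketch below computes a first sparse PC by alternating power iterations with soft-thresholding, in the spirit of the regularized-SVD sparse PCA of Shen and Huang. The `weights` argument is a hypothetical illustration of how a prior could lower the penalty for selected genes; it is not the AWGE-ESPCA weighting scheme.

```python
import numpy as np

def soft_threshold(z, t):
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)

def sparse_pc1(X, lam, weights=None, n_iter=200):
    """First sparse PC by alternating soft-thresholded power iterations.

    weights: HYPOTHETICAL per-feature penalty multipliers; values below 1 make
    it easier for a feature (for example, a gene in a prior pathway) to enter.
    """
    Xc = X - X.mean(axis=0)
    w = np.ones(X.shape[1]) if weights is None else np.asarray(weights, dtype=float)
    v = np.linalg.svd(Xc, full_matrices=False)[2][0]   # warm start: ordinary PC1
    for _ in range(n_iter):
        u = Xc @ v
        u /= np.linalg.norm(u)                       # sample-side direction
        v = soft_threshold(Xc.T @ u, lam * w)        # L1 shrinkage zeroes small loadings
        if not np.any(v):
            break                                    # penalty too strong: nothing survives
    norm = np.linalg.norm(v)
    return v / norm if norm > 0 else v

# Simulated data (assumption): 5 of 50 genes share one latent signal
rng = np.random.default_rng(9)
X = rng.normal(size=(100, 50))
X[:, :5] += rng.normal(scale=2.0, size=(100, 1))
v = sparse_pc1(X, lam=4.0)
print(np.flatnonzero(v))                             # genes with nonzero loadings
```

Unlike an ordinary PC, whose loadings are nonzero on all 50 genes, the sparse component here is expected to load only on the five co-varying genes.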

Validation employs independent experiments comparing performance against unsupervised benchmarks. In the AWGE-ESPCA evaluation, researchers conducted five independent experiments comparing four state-of-the-art Sparse PCA models alongside representative supervised and unsupervised baseline models [19].

Comparative Performance Analysis

Outlier Detection Capabilities

Robust PCA methods demonstrate significant advantages in outlier detection compared to classical approaches. In simulation studies with positive control outliers, PcaGrid achieved 100% sensitivity and 100% specificity across tests with varying degrees of outlier divergence. The method performed effectively for both high-"outlierness" samples with completely different expression patterns and low-"outlierness" samples with partial overlap in differentially expressed genes [17].

The practical impact of accurate outlier detection was demonstrated in a mouse cerebellar gene expression study, where removal of rPCA-identified outliers significantly improved differential expression detection between control and conditional SnoN knockout mice. Downstream validation confirmed that outlier removal enhanced biological interpretation without introducing spurious findings [17].

Table 2: Outlier Detection Performance Across PCA Methods

| Method Type | Representative Tool | Sensitivity | Specificity | Use Case Recommendation |
|---|---|---|---|---|
| Classical PCA | SmartPCA (EIGENSOFT) | Variable | Variable | Initial data exploration |
| Robust PCA | PcaGrid (rrcov) | 100% | 100% | RNA-seq with small sample sizes |
| Robust PCA | PcaHubert (rrcov) | 100% | 100% | Maximum sensitivity needs |
| Supervised PCA | AWGE-ESPCA | Not explicitly reported | Not explicitly reported | Noisy data with biological priors |

Genetic Ancestry and Population Structure

Unsupervised PCA remains widely used in population genetics to characterize genetic ancestry and population structure. Analysis of the All of Us Research Program cohort (n=297,549) using unsupervised PCA revealed substantial population structure, with clusters of closely related participants interspersed among less related individuals [14]. The cohort showed diverse genetic ancestry with major contributions from European (66.4%), African (19.5%), Asian (7.6%), and American (6.3%) continental ancestry components [14].

However, concerns about potential biases in PCA interpretations have emerged. Research demonstrates that PCA results can be strongly influenced by data composition and analytical choices, potentially generating artifacts that misrepresent population relationships [3]. Studies using intuitive color-based models alongside human population data show that PCA outcomes can be manipulated to produce desired results, raising concerns about the reliability and replicability of findings derived solely from PCA [3].

Batch Effect Correction

Comparative studies evaluating batch effect correction methods demonstrate that PCA-based approaches using quality metrics can effectively address technical variation. In analyses of 12 publicly available RNA-seq datasets, correction using machine learning-predicted sample quality scores (Plow) performed comparably to or better than methods using a priori batch knowledge in 11 of 12 datasets (92%) [18].

The integration of quality-aware approaches with PCA enhances batch effect identification and correction. When combined with outlier removal, quality-based correction outperformed standard batch correction in half of the evaluated datasets, demonstrating the value of incorporating technical quality metrics into the analytical framework [18].
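The exact adjustment used with Plow in [18] is not described in this section. One simple way to use a per-sample quality score as a correction covariate is to regress it out of every gene before running PCA; the sketch below assumes that linear adjustment. The function name `regress_out_quality` is hypothetical.

```python
import numpy as np

def regress_out_quality(X, quality):
    """Remove the linear effect of a per-sample quality score from every gene.

    X: samples x genes (e.g., log-expression); quality: length-n vector of scores
    (for example, a predicted probability that a sample is low quality).
    Gene means are preserved; only the part of each gene explained by quality is removed.
    """
    q = np.asarray(quality, dtype=float)
    qc = q - q.mean()
    Xc = X - X.mean(axis=0)
    slopes = (qc @ Xc) / (qc @ qc)              # OLS slope of each gene on the quality score
    return X - np.outer(qc, slopes)

# Toy data: 30 samples x 100 genes where the quality score drives a shared technical shift.
rng = np.random.default_rng(1)
quality = rng.uniform(0.0, 1.0, size=30)
X = rng.normal(size=(30, 100)) + np.outer(quality, rng.normal(scale=3.0, size=100))
X_adj = regress_out_quality(X, quality)
```

After the adjustment every gene is uncorrelated with the quality score, so a PCA on `X_adj` can no longer place the quality gradient on its leading components. A linear adjustment will not remove nonlinear quality effects.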

Table 3: Batch Effect Correction Performance (12 RNA-seq Datasets)

| Correction Method | Datasets Better than Reference | Datasets Comparable to Reference | Datasets Worse than Reference |
|---|---|---|---|
| Quality Score (Plow) Only | 1 | 10 | 1 |
| Quality Score + Outlier Removal | 6 | 5 | 1 |
| Reference Batch Correction | Baseline | Baseline | Baseline |

Research Reagent Solutions

Table 4: Essential Computational Tools for PCA in Genomic Studies

| Tool/Package | Function | Application Context | Key Features |
|---|---|---|---|
| rrcov R Package | Robust PCA implementation | Outlier detection in high-dimensional data | Multiple algorithms (PcaGrid, PcaHubert) |
| EIGENSOFT (SmartPCA) | Population genetics PCA | Genetic ancestry and population structure | Standard in population genetics |
| seqQscorer | Quality score prediction | Batch effect detection | Machine learning-based quality assessment |
| AWGE-ESPCA | Supervised sparse PCA | Genomic data with biological priors | Pathway-integrated feature selection |
| PLINK | Genome-wide association studies | Population stratification | PCA for association studies |

Unsupervised PCA methods remain essential for initial data exploration, quality control, and identifying major genetic clusters, with robust variants offering superior outlier detection. However, supervised PCA frameworks demonstrate increasing value for targeted analyses incorporating biological knowledge, particularly for noisy genomic data or studies focused on specific functional elements. The choice between these approaches should be guided by research objectives: unsupervised methods for broad exploratory analysis and supervised approaches for hypothesis-driven investigations with established biological priors. As genomic datasets grow in complexity and scale, combining both approaches may provide the most comprehensive analytical strategy, balancing discovery of novel patterns with focused investigation of biological mechanisms.

Principal Component Analysis (PCA) stands as one of the most widely used multivariate statistical techniques in genomic studies, valued for its ability to reduce the complexity of high-dimensional datasets while retaining as much of the original variance as possible. As an unsupervised learning method, PCA operates without prior knowledge of sample classes or experimental groups, identifying patterns solely from the intrinsic structure of the data [20]. This characteristic creates a fundamental duality: while PCA excels in exploratory data analysis, it can mislead when applied to problems requiring discrimination between predefined groups. The technique transforms the original variables into new orthogonal variables called principal components (PCs): the first PC captures the maximum variance in the data, and each subsequent PC captures the maximum remaining variance while being uncorrelated with the previous ones [20]. Understanding when this unsupervised approach succeeds and when it fails has become critical for researchers, scientists, and drug development professionals working with genomic data.

The distinction between unsupervised and supervised analyses represents a fundamental methodological divide in multivariate ecological data analysis [2]. Unsupervised analyses like PCA summarize variation in the data without regard to any specific response variable, while supervised approaches evaluate variables to find the combination that best explains a causal relationship [2]. These approaches are not interchangeable, particularly when the variables most responsible for a causal relationship are not the greatest source of overall variation in the data—a situation ecologists (and genomic researchers) frequently encounter [2].

Theoretical Foundations: How Unsupervised PCA Operates

The Mechanical Steps of PCA

The mathematical execution of PCA follows a standard series of operations. First, the data are centered so that each variable has a mean of zero; when variables are measured on different scales, they are usually also scaled to a standard deviation of one so that all variables contribute comparably to the analysis [20]. Next, the algorithm calculates the covariance matrix, which represents the relationships between all variables in the dataset [20]. The third step extracts the eigenvalues and eigenvectors of this covariance matrix; each eigenvalue gives the variance explained by its eigenvector, and the pairs are sorted in descending order of eigenvalue [20]. Researchers then select the principal components with the highest eigenvalues, as these capture the most variance in the data [20]. Typically, only a few principal components are needed to represent most of the variability. Finally, the original data are projected onto the low-dimensional subspace spanned by the selected components [20].
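The five steps above map directly onto a few lines of NumPy. This is a minimal textbook sketch, assuming a samples x features matrix with no constant columns; `pca_eig` is an illustrative name, not a library function.

```python
import numpy as np

def pca_eig(X, k):
    """Textbook PCA on a samples x features matrix via the covariance eigendecomposition."""
    # Step 1: center and scale each feature to mean 0, SD 1 (assumes no constant features).
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    # Step 2: covariance matrix of the standardized data (features x features).
    C = np.cov(Z, rowvar=False)
    # Step 3: eigendecomposition; eigh returns eigenvalues in ascending order, so re-sort descending.
    evals, evecs = np.linalg.eigh(C)
    order = np.argsort(evals)[::-1]
    evals, evecs = evals[order], evecs[:, order]
    # Step 4: keep the k components with the largest eigenvalues.
    W = evecs[:, :k]
    explained = evals[:k] / evals.sum()        # fraction of total variance per kept component
    # Step 5: project the samples onto the k-dimensional subspace.
    scores = Z @ W
    return scores, W, explained

rng = np.random.default_rng(2)
X = rng.normal(size=(100, 5)) @ rng.normal(size=(5, 5))   # 100 samples, 5 correlated features
scores, W, explained = pca_eig(X, k=2)
```

Forming the features x features covariance matrix is fine for a handful of variables; for genome-scale feature counts it is usually cheaper to take the SVD of the standardized data matrix directly, which yields the same components.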

Visualizing the PCA Workflow

The following diagram illustrates the standardized PCA procedure and its primary applications in genomic research:

When Unsupervised PCA Succeeds: Strengths in Genomic Applications

Effective Dimensionality Reduction and Exploratory Analysis

Unsupervised PCA demonstrates particular strength in exploratory data analysis of high-dimensional genomic data. By reducing dimensionality while preserving essential patterns, PCA enables researchers to visualize complex datasets in two or three dimensions, revealing underlying structures that might not be apparent in the original high-dimensional space [20]. This capability proves invaluable in gene expression analysis, where PCA helps identify gene expression patterns and discover relationships between different biological samples [20]. By projecting data onto a reduced set of principal components, researchers can visualize how genes behave under various experimental conditions, facilitating identification of key regulatory pathways and biomarkers without prior hypotheses about sample groupings.

The dimensionality reduction capability of PCA also addresses computational challenges inherent to genomic research. High-dimensional clinical data (HDCD) provide unique opportunities to reveal the genetic architecture of diseases and complex traits when coupled with biobank-scale genetic data [21]. However, standard genome-wide association studies (GWAS) require each phenotype to be encoded as a single scalar, which is difficult to achieve for HDCD [21]. PCA helps mitigate this by summarizing the high-dimensional measurements in a small number of components, whose scores can be analyzed as scalar phenotypes while preserving the major patterns of biological variability.

Integration with Modern Biotechnology Platforms

PCA has demonstrated remarkable adaptability when integrated with advanced biotechnology platforms. In forestry research—a field with genomic applications—PCA has been successfully combined with hyperspectral imaging, LiDAR, unmanned aerial vehicles (UAVs), and remote sensing platforms [22]. These integrations have led to substantial improvements in detection and monitoring applications, demonstrating PCA's flexibility across data modalities [22]. Similarly, PCA has been combined with other analytical methods and machine learning models including Lasso regression, support vector machines, and deep learning algorithms, resulting in enhanced data classification, feature extraction, and ecological modeling accuracy [22].

The technique also shows particular utility in metabolomic studies, where it helps identify patterns in complex biochemical profiling data. One investigation compared five unsupervised machine learning methods to identify metabolomic signatures in patients with localized breast cancer, finding that PCA-based approaches could effectively stratify patients into prognosis groups with distinct clinical and biological profiles [23].

Table 1: Principal Advantages of Unsupervised PCA in Genomic Research

| Advantage | Mechanism | Typical Applications |
|---|---|---|
| Data Simplification | Reduces high-dimensional data to manageable dimensions | Preprocessing for downstream analysis, computational efficiency |
| Feature Extraction | Identifies most impactful features influencing data variance | Biomarker discovery, pattern recognition |
| Data Visualization | Projects data into low-dimensional space | Exploratory analysis, quality control, outlier detection |
| Noise Reduction | Filters extraneous signals, emphasizes dominant features | Data cleaning, signal enhancement |
| Linearity Assumption | Leverages straightforward linear transformations | Linearly separable data structures |

When Unsupervised PCA Misleads: Limitations and Pitfalls

Fundamental Statistical and Methodological Limitations

Despite its widespread application, unsupervised PCA carries significant limitations that can mislead researchers. Most critically, PCA maximizes variance without regard to class separation or biological outcomes, meaning that components capturing the greatest variation may not reflect biologically or clinically relevant patterns [2] [24]. This fundamental characteristic explains why supervised analyses often outperform PCA for discrimination tasks. As one study noted, "if the goal of a given study is to discriminate between two or more groups, then applying standard PCA for feature reduction can undesirably eliminate features that discriminate and primarily keep features that best represent both groups" [24].
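A two-feature toy example makes the quoted point concrete. In the sketch below (simulated data, not from any cited study), one feature has large variance but no group difference, while a low-variance feature carries the entire group signal. PC1 follows the high-variance feature and barely separates the groups; the discriminating signal ends up on PC2, which a "keep the top components" rule would discard first.

```python
import numpy as np

def group_gap(s, labels):
    """Absolute difference in group means divided by the pooled within-group SD."""
    a, b = s[labels == 0], s[labels == 1]
    pooled_sd = np.sqrt((a.var(ddof=1) + b.var(ddof=1)) / 2.0)
    return abs(a.mean() - b.mean()) / pooled_sd

rng = np.random.default_rng(9)
n = 200
labels = np.repeat([0, 1], n // 2)
# Feature 0: large variance, unrelated to the groups (a stand-in for a nuisance axis).
f0 = rng.normal(0.0, 10.0, size=n)
# Feature 1: small variance, but shifted by 2 units in group 1.
f1 = rng.normal(0.0, 0.5, size=n) + 2.0 * labels
X = np.column_stack([f0, f1])

Xc = X - X.mean(axis=0)
_, _, Vt = np.linalg.svd(Xc, full_matrices=False)
scores = Xc @ Vt.T                             # column 0 = PC1, column 1 = PC2
gap_pc1 = group_gap(scores[:, 0], labels)      # highest-variance component
gap_pc2 = group_gap(scores[:, 1], labels)      # low-variance component carrying the group signal
```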

The technique also relies on a linearity assumption that constrains its effectiveness in capturing nonlinear patterns present in many biological systems [20]. This limitation becomes particularly problematic in complex genomic datasets where gene interactions and regulatory networks often exhibit nonlinear behavior. Additionally, the process of dimensionality reduction through variance maximization can result in loss of valuable information, especially when biological signals are distributed across many variables rather than concentrated in a few dominant components [20].

Specific Failures in Genetic Association Studies

In genetic association studies, PCA has particular limitations with family data and structured populations. Research has shown that "PCA is known to be inadequate for family data," a problem referred to as 'cryptic relatedness' when the relationships are unknown to researchers [25] [26]. This deficiency extends to genetically diverse human datasets, where poor PCA performance is driven by "large numbers of distant relatives more than the smaller number of closer relatives" [25] [26]. Notably, the problem persists even after close relatives are pruned from the analysis [25].

Comparative studies between PCA and linear mixed-effects models (LMMs) have revealed systematic limitations in PCA's performance. One comprehensive evaluation found that "LMM without PCs usually performs best, with the largest effects in family simulations and real human datasets and traits without environment effects" [25] [26]. The same study concluded that "environment effects driven by geography and ethnicity are better modeled with LMM including those labels instead of PCs" [25] [26].

Interpretation Challenges and Manipulation Risks

Perhaps most concerning are findings that "PCA results can be artifacts of the data and can be easily manipulated to generate desired outcomes" [3]. This manipulation risk stems from PCA's sensitivity to methodological choices: results are affected by the choice of markers, samples, populations, specific implementations, and various flags in PCA packages, each of which can have unpredictable effects [3].

Interpretation challenges further complicate PCA's application. The authors of one study remarked that "interpreting the real-world significance of the main components can be a challenging endeavor" [20], requiring deep domain expertise and careful validation. This difficulty is compounded by the lack of consensus on determining the number of meaningful components to analyze, with different researchers employing arbitrary selection criteria [3].

Table 2: Principal Limitations of Unsupervised PCA in Genomic Research

| Limitation | Consequence | Contexts of Concern |
|---|---|---|
| Maximizes Variance, Not Discrimination | Biologically irrelevant components may dominate | Supervised classification, predictive modeling |
| Linearity Assumption | Fails to capture nonlinear relationships | Complex trait architectures, gene interactions |
| Information Loss | Potential loss of biologically relevant signals | When signals are distributed across many variables |
| Inadequate for Family Data | Poor control for relatedness leads to false positives | Genetic association studies with related individuals |
| Interpretation Difficulty | Challenges in biological interpretation of components | All applications without strong validation |
| Manipulation Vulnerability | Results can be influenced by analytical choices | All applications without rigorous standardization |

Supervised Alternatives and Hybrid Approaches

Direct Supervised Competitors

Partial Least Squares (PLS) represents the most direct supervised alternative to PCA. Unlike PCA, which finds components that maximize variance in the predictor space, PLS identifies components that maximize covariance between predictors and response variables [2]. This fundamental difference makes PLS particularly effective when researchers have specific outcomes of interest. As one study emphasized, "PCA is currently overused, at least in part because supervised approaches, such as PLS, are less familiar" to many researchers [2].
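The difference between the two objectives shows up in their first directions. PC1 is the top right singular vector of the centered predictors, while the first PLS1 weight vector is proportional to the centered cross-product Xᵀy, because that unit vector maximizes cov(Xw, y). The sketch below uses simulated data and illustrative function names; it is not a full PLS implementation (no deflation or further components).

```python
import numpy as np

def first_pca_direction(X):
    """Unit loading vector of PC1: the direction of maximum variance in X alone."""
    Xc = X - X.mean(axis=0)
    return np.linalg.svd(Xc, full_matrices=False)[2][0]

def first_pls_direction(X, y):
    """First PLS1 weight vector: the unit direction w that maximizes cov(Xw, y)."""
    Xc = X - X.mean(axis=0)
    w = Xc.T @ (y - y.mean())                  # proportional to the predictor-response covariances
    return w / np.linalg.norm(w)

# Toy data: feature 0 is high-variance noise; feature 1 has low variance but drives the response.
rng = np.random.default_rng(3)
n = 2000
X = np.column_stack([rng.normal(scale=5.0, size=n), rng.normal(scale=1.0, size=n)])
y = 3.0 * X[:, 1] + rng.normal(scale=0.5, size=n)
pca_w = first_pca_direction(X)                 # points along feature 0
pls_w = first_pls_direction(X, y)              # points along feature 1
```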

Linear Mixed Models (LMMs) have also demonstrated superior performance to PCA for genetic association studies, particularly with structured populations. Comprehensive evaluations have found that "LMM without PCs usually performs best, with the largest effects in family simulations and real human datasets and traits without environment effects" [25] [26]. The same research noted that "poor PCA performance on human datasets is driven by large numbers of distant relatives more than the smaller number of closer relatives" [25].

Advanced Supervised Frameworks

The REGLE (REpresentation learning for Genetic discovery on Low-dimensional Embeddings) framework exemplifies sophisticated supervised approaches that address PCA's limitations in genomic applications. REGLE uses convolutional variational autoencoders to compute non-linear, low-dimensional, disentangled embeddings of data with highly heritable individual components [21]. This approach provides a framework to create accurate disease-specific polygenic risk scores in datasets with minimal expert phenotyping [21].

When applied to respiratory and circulatory systems, genome-wide association studies on REGLE embeddings identified "more genome-wide significant loci than existing methods and replicate known loci" for both spirograms and photoplethysmograms, demonstrating the framework's generality and superior performance [21]. Furthermore, these embeddings were associated with overall survival and produced polygenic risk scores with improved predictive performance for asthma, chronic obstructive pulmonary disease, hypertension, and systolic blood pressure across multiple biobanks [21].

Hybrid and Modified Approaches

Discriminant PCA (DPCA) represents a hybrid approach that modifies traditional PCA for better discrimination performance. This method orders eigenvectors to maximize the Mahalanobis distance between predefined groups rather than simply explaining variance [24]. In one application to diffusion tensor-based fractional anisotropy images, DPCA distinguished age-matched schizophrenia subjects from healthy controls with significantly better performance than conventional PCA [24]. The classification error with 60 components was close to the minimum error, and the Mahalanobis distance was twice as large with DPCA than with standard PCA [24].
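The reordering idea can be sketched in a simplified form. The version below is an assumption-laden reading, not the published DPCA procedure: it scores each ordinary PC by its squared group-mean difference over the pooled within-group variance, ranks components by that score, and then compares the Mahalanobis distance between group means for the top two components chosen by variance versus by separation. The data are simulated and the function names are hypothetical.

```python
import numpy as np

def dpca_order(X, labels):
    """Rank ordinary PCs by per-component group separation instead of variance explained."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    T = Xc @ Vt.T                                  # PC scores, columns in variance order
    a, b = T[labels == 0], T[labels == 1]
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * a.var(axis=0, ddof=1) + (nb - 1) * b.var(axis=0, ddof=1)) / (na + nb - 2)
    separation = (a.mean(axis=0) - b.mean(axis=0)) ** 2 / pooled_var
    order = np.argsort(separation)[::-1]           # most discriminating component first
    return order, T

def mahalanobis_between_groups(T, labels):
    """Mahalanobis distance between two group means using the pooled within-group covariance."""
    a, b = T[labels == 0], T[labels == 1]
    S = ((len(a) - 1) * np.cov(a, rowvar=False) + (len(b) - 1) * np.cov(b, rowvar=False)) / (len(a) + len(b) - 2)
    d = a.mean(axis=0) - b.mean(axis=0)
    return float(np.sqrt(d @ np.linalg.solve(np.atleast_2d(S), d)))

rng = np.random.default_rng(4)
labels = np.repeat([0, 1], 100)
scales = np.array([10, 8, 6, 4, 3, 2, 2, 2, 2, 0.3])
X = rng.normal(size=(200, 10)) * scales
X[labels == 1, 9] += 2.0                           # group difference only in the lowest-variance feature
order, T = dpca_order(X, labels)
d_variance = mahalanobis_between_groups(T[:, :2], labels)        # top-2 PCs by variance
d_discrim = mahalanobis_between_groups(T[:, order[:2]], labels)  # top-2 PCs by separation
```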

Another innovative approach combines PCA with projection pursuit (PP) to enhance feature selection in genomic analyses. This integration helps rationalize "PCA- and tensor decomposition-based unsupervised feature extraction" by relating "the space spanned by singular value vectors with that spanned by the optimal cluster centroids obtained from K-means" [27]. This theoretical advancement helps explain why PCA-based methods can outperform conventional statistical tests in some genomic applications despite being unsupervised [27].

Experimental Comparisons and Performance Metrics

Quantitative Performance Comparisons

Direct comparisons between unsupervised PCA and supervised alternatives reveal measurable performance differences across multiple genomic applications. In one analysis of high-dimensional clinical data, REGLE embeddings demonstrated superior capability for genetic discovery compared to PCA-based approaches [21]. When applied to spirograms, REGLE consistently "outperformed an equivalent number of PCs in terms of reconstruction accuracy at small latent dimensions" [21].
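For such comparisons, the PCA baseline reconstruction error at a given latent dimension is simple to compute; REGLE itself is not reproduced here. A minimal sketch, assuming relative squared error as the accuracy measure:

```python
import numpy as np

def pca_reconstruction_error(X, k):
    """Relative squared error of reconstructing X (samples x features) from its top-k PCs."""
    mu = X.mean(axis=0)
    Xc = X - mu
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    X_hat = mu + (Xc @ Vt[:k].T) @ Vt[:k]      # project onto k PCs, then map back
    return float(np.sum((X - X_hat) ** 2) / np.sum(Xc ** 2))

rng = np.random.default_rng(5)
X = rng.normal(size=(50, 8)) @ rng.normal(size=(8, 8))
errors = [pca_reconstruction_error(X, k) for k in range(9)]
```

By the Eckart–Young theorem, no linear rank-k method can beat this error, which equals the share of squared singular values beyond the k-th. A nonlinear encoder that reconstructs better at small k is therefore exploiting nonlinear structure rather than a better linear fit.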

In classification tasks, DPCA demonstrated substantially improved discrimination power compared to standard PCA. When distinguishing schizophrenia subjects from healthy controls using fractional anisotropy data, "the Mahalanobis distance was twice as large with DPCA, than with PCA" [24]. This enhanced separation translated to practical diagnostic improvements, with the study reporting that "with six optimally chosen tracts the classification error was zero" [24].

Methodological Protocols for Comparative Studies

Robust evaluation of PCA against supervised alternatives requires careful experimental design. For genomic association studies, researchers should:

- Account for Population Structure: Include both family data and population cohorts to test method robustness [25] [26]

- Evaluate Multiple Performance Metrics: Assess both type I error control (false positives) and power (true positives) [25]

- Test Across Trait Architectures: Include both quantitative and case-control traits with varying genetic architectures [25]

- Validate in Independent Datasets: Verify findings in external cohorts to ensure generalizability [21]

For method comparisons in descriptive applications, protocols should include:

- Reconstruction Accuracy Assessment: Measure how well methods reconstruct original data from reduced dimensions [21]

- Cluster Separation Quantification: Calculate between-group distances relative to within-group variation [24]

- Biological Validation: Connect mathematical components to established biological knowledge [27]

- Stability Testing: Evaluate result consistency across subsamples and parameter choices [3]
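The stability check in the last item can be sketched by recomputing PC1 on random subsamples and comparing it with PC1 from the full data. The sketch assumes absolute cosine similarity as the agreement measure, since PC signs are arbitrary. The subsample fraction, the number of repeats, and the function name are illustrative choices.

```python
import numpy as np

def pc1_stability(X, n_subsamples=50, frac=0.8, seed=0):
    """Sign-invariant cosine similarity between full-data PC1 and PC1 from random row subsamples."""
    rng = np.random.default_rng(seed)

    def pc1(M):
        Mc = M - M.mean(axis=0)
        return np.linalg.svd(Mc, full_matrices=False)[2][0]   # unit-length loading vector

    ref = pc1(X)
    n = X.shape[0]
    m = int(round(frac * n))
    sims = []
    for _ in range(n_subsamples):
        idx = rng.choice(n, size=m, replace=False)            # subsample without replacement
        sims.append(abs(ref @ pc1(X[idx])))                   # abs() because PC signs are arbitrary
    return np.array(sims)

rng = np.random.default_rng(6)
X = rng.normal(size=(100, 10))
X[:, 0] *= 10.0                                # one dominant axis of variation
sims = pc1_stability(X)
```

Values close to 1 across subsamples indicate a PC1 that does not hinge on a few samples; a wide spread is a warning that the component may be an artifact of sample composition.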

Decision Framework for Method Selection

The following diagram illustrates a systematic approach for selecting between unsupervised PCA and supervised alternatives based on research objectives and data characteristics:

Essential Research Reagents and Computational Tools

Critical Research Solutions for Genomic PCA Applications

Table 3: Essential Research Reagent Solutions for PCA in Genomic Studies

| Tool/Category | Specific Examples | Function in Analysis |
|---|---|---|
| Genomic Data Platforms | UK Biobank, TCGA, GEO | Provide large-scale genomic datasets for analysis [21] [27] |
| PCA Software Packages | EIGENSOFT, PLINK, SmartPCA | Implement specialized PCA algorithms for genetic data [3] |
| Supervised Alternatives | REGLE, PLS, DPCA | Offer supervised dimensionality reduction capabilities [21] [24] |
| Mixed Model Packages | GCTA, GEMMA, EMMAX | Control for population structure and relatedness [25] [26] |
| Visualization Tools | VOSviewer, ggplot2, matplotlib | Enable visualization of PCA results and component patterns [22] |
| Validation Frameworks | Cross-validation, bootstrap, permutation tests | Assess stability and significance of PCA findings [27] |

Unsupervised PCA remains a powerful tool for exploratory genomic analysis, particularly when researchers lack prior hypotheses about group structure or seek to reduce dimensionality for visualization and noise reduction. Its strengths in revealing intrinsic data patterns, integrating with diverse biotechnology platforms, and simplifying complex datasets ensure its continued relevance in genomic research. However, evidence consistently demonstrates that PCA misleads when applied to discrimination tasks, family-based genetic studies, and analyses requiring biological interpretation of components.

The strategic researcher must recognize that unsupervised and supervised approaches address fundamentally different questions. As one study concluded, "there are many applications for both unsupervised and supervised approaches in ecology [and genomics]. However, PCA is currently overused, at least in part because supervised approaches, such as PLS, are less familiar" [2]. Moving forward, the genomic research community would benefit from more nuanced methodological selections based on explicit research objectives rather than defaulting to familiar techniques. By aligning analytical approaches with specific scientific questions—employing unsupervised methods for exploration and supervised alternatives for prediction and discrimination—researchers can maximize insights while minimizing misinterpretation risks in genomic studies.

Methodologies in Action: Implementing Supervised PCA and Advanced Hybrid Models

Principal Component Analysis (PCA) is a foundational unsupervised dimensionality reduction technique widely used in genomic studies. Its primary objective is to find a sequence of best linear approximations to a given high-dimensional dataset by identifying directions of maximum variance in the covariate data alone [28]. However, this unsupervised nature becomes a significant limitation in supervised tasks where the goal is to predict a dependent response variable. Conventional PCA ignores the response variable entirely, potentially discovering components with high variability but little predictive power for the target outcome [28].

Supervised PCA addresses this fundamental limitation by generalizing the PCA framework to incorporate response variable information. Rather than seeking components with maximal variance, supervised PCA aims to find principal components with maximal dependence on the response variables [28]. This paradigm shift makes it uniquely effective for regression and classification problems with high-dimensional input data, particularly in domains like genomics where the number of predictors (e.g., genes, SNPs) greatly exceeds the number of observations.

Mathematical Framework of Supervised PCA

Core Algorithmic Principles

Supervised PCA operates on the fundamental principle of identifying a subspace in which the dependency between predictors (X) and response variables (Y) is maximized. Formally, given a p-dimensional explanatory variable X and an ℓ-dimensional response variable Y, the algorithm seeks an orthogonal transformation that maximizes the dependence between the projected data UᵀX and the outcome Y [28].

The mathematical implementation relies on the Hilbert-Schmidt Independence Criterion (HSIC) as the dependence measure. The algorithm maximizes tr(HKHL), where K is a kernel of UᵀX, L is a kernel of Y, and H = I − n⁻¹11ᵀ is the n × n centering matrix [28]. With a linear kernel on the projected data, K = XᵀUUᵀX (X being the p × n data matrix), the objective becomes tr(UᵀXHLHXᵀU). Under the constraint UᵀU = I, this is maximized in closed form by the top eigenvectors of XHLHXᵀ, which can be computed efficiently even for high-dimensional data through a dual formulation.
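The closed-form solution translates directly into code. This is a minimal sketch of the primal form only (no dual formulation for p ≫ n), keeping the text's convention that columns of X are samples. Using a linear kernel on the responses, L = YᵀY, is an assumed choice; for classification, a delta kernel on class labels is another common option. The function name is illustrative.

```python
import numpy as np

def supervised_pca(X, Y, d):
    """Supervised PCA via HSIC with a linear kernel on the projected data.

    X: p x n predictors (columns are samples); Y: l x n responses.
    Returns U (p x d): the top-d eigenvectors of X H L H X^T.
    """
    n = X.shape[1]
    H = np.eye(n) - np.ones((n, n)) / n        # centering matrix
    L = Y.T @ Y                                # linear kernel on the responses (assumed choice)
    Q = X @ H @ L @ H @ X.T                    # p x p, symmetric positive semidefinite
    evals, evecs = np.linalg.eigh(Q)           # ascending eigenvalues
    return evecs[:, np.argsort(evals)[::-1][:d]]

rng = np.random.default_rng(7)
n = 1000
X = np.vstack([rng.normal(scale=3.0, size=n),    # feature 0: high variance, unrelated to Y
               rng.normal(scale=1.0, size=n)])   # feature 1: low variance, drives Y
Y = (2.0 * X[1] + rng.normal(scale=0.5, size=n))[None, :]   # 1 x n response
U_sup = supervised_pca(X, Y, d=1)                # aligns with feature 1
U_pca = supervised_pca(X, np.eye(n), d=1)        # L = I recovers ordinary PCA (feature 0)
```

Setting L to the identity makes XHLHXᵀ equal to XHXᵀ, the unnormalized covariance of the centered data, which is why the second call reproduces ordinary PCA. With a single response and a linear kernel, Q has rank one and its top eigenvector is the centered cross-covariance direction.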

Key Differentiators from Unsupervised PCA

Table 1: Fundamental Differences Between Supervised and Unsupervised PCA

| Aspect | Unsupervised PCA | Supervised PCA |
|---|---|---|
| Objective | Maximize variance of covariates | Maximize dependence on response variable |
| Response Variable Usage | Ignored entirely | Central to component identification |
| Component Interpretation | Directions of maximum data spread | Directions most predictive of outcome |
| Mathematical Foundation | Eigendecomposition of covariance matrix | HSIC maximization |
| Applicability | Exploratory data analysis | Regression and classification tasks |

Conventional PCA is a special case of this framework: when L is the identity matrix, XHLHXᵀ reduces to XHXᵀ (H being idempotent), the unnormalized covariance matrix of the centered data. Beyond that special case, supervised PCA explicitly considers the quantitative value of the target variable, making it applicable to both classification and regression problems [28]. This contrasts with many supervised dimensionality reduction techniques that consider only similarities and dissimilarities between labels, which limits them to classification tasks.

Experimental Protocols and Performance Validation

Genomic Application Case Studies

Studies that pair carefully designed marker panels with supervised learning illustrate the gains available when labels guide the analysis. In population genetics, researchers have developed frameworks that combine ancestry-informative SNP (AISNP) panels with machine learning to jointly determine genetic ancestry and geographic origins. These studies typically employ multiple classification algorithms, including logistic regression, support vector machines, k-nearest neighbors, random forest, convolutional neural networks, and XGBoost, with optimized XGBoost models achieving 95.6% accuracy and an AUC of 0.999 with 2,000 AISNPs [29].

For geographic localization, deep neural network models like Locator predict latitude and longitude directly from unphased genotypes. Notably, when trained on just 2,000 AISNPs, these models perform nearly as well as those built on high-density genomic data (597,569 SNPs) [29]. This demonstrates the power of combining carefully designed marker sets with supervised learning techniques.

Performance Comparison with Unsupervised Methods

Table 2: Performance Comparison in Genomic Studies

| Method | Accuracy | AUC | Key Strengths | Limitations |
|---|---|---|---|---|
| Supervised PCA | Not reported in [29] | Not reported in [29] | Maximizes predictive power for specific response variables | Requires labeled data |
| Unsupervised PCA | Not directly applicable | Not directly applicable | Preserves covariance structure without labels | May miss biologically relevant patterns |
| XGBoost | 95.6% [29] | 0.999 [29] | Handles complex nonlinear relationships | Less interpretable than linear methods |
| Conventional GPS | Varies by implementation | Varies by implementation | Provides geographic localization | Performance depends on marker density |

In genomic studies, unsupervised PCA applications have faced significant criticism. Recent evaluations demonstrate that PCA results can be highly biased artifacts of the data and can be easily manipulated to generate desired outcomes [3]. One comprehensive analysis of twelve test cases using both color-based models and human population data revealed that PCA results may not be reliable, robust, or replicable as the field assumes [3]. These findings raise concerns about the validity of results in population genetics literature that place disproportionate reliance upon PCA outcomes.

Implementation Workflows and Signaling Pathways

The implementation of supervised PCA follows a structured workflow that incorporates response variables at critical stages, unlike unsupervised approaches that operate solely on the input data.

Workflow Comparison: Supervised vs. Unsupervised PCA

The critical distinction in workflows lies in the incorporation of the response variable. While unsupervised PCA processes only the predictor matrix X, supervised PCA integrates both X and Y through the HSIC calculation step, ensuring the resulting components maximize dependence on the response variable [28].

In practical genomic applications, tools like VCF2PCACluster have emerged to handle the computational challenges of large-scale SNP data. This tool implements kinship estimation methods (NormalizedIBS, CenteredIBS) that improve PCA by considering genetic relatedness and mitigating confounding factors [15]. The memory-efficient processing strategy operates in a line-by-line manner, with memory usage influenced solely by sample size rather than the number of SNPs [15].
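The memory property described above follows from accumulating an n × n matrix one SNP at a time. The sketch below illustrates this with a standardized, GRM-style kinship estimator. That estimator is an assumption for illustration and not necessarily the NormalizedIBS or CenteredIBS formula VCF2PCACluster uses, and no VCF parsing is shown; the genotype rows come from a simulated in-memory matrix.

```python
import numpy as np

def streaming_kinship(snp_rows, n_samples):
    """Accumulate a standardized (GRM-style) kinship matrix one SNP at a time.

    snp_rows: iterable of length-n genotype dosage arrays (0, 1, 2); np.nan marks missing calls.
    Memory is O(n_samples^2) no matter how many SNPs are streamed.
    """
    K = np.zeros((n_samples, n_samples))
    m = 0
    for g in snp_rows:
        g = np.asarray(g, dtype=float)
        p = np.nanmean(g) / 2.0                # allele frequency estimate
        if not 0.0 < p < 1.0:                  # skips monomorphic (and all-missing) SNPs
            continue
        z = (g - 2.0 * p) / np.sqrt(2.0 * p * (1.0 - p))
        z = np.nan_to_num(z)                   # missing genotypes contribute 0 (mean imputation)
        K += np.outer(z, z)
        m += 1
    if m == 0:
        raise ValueError("no polymorphic SNPs were streamed")
    return K / m

def kinship_pcs(K, k):
    """Top-k eigenvectors of the kinship matrix, used as sample-level PCs."""
    evals, evecs = np.linalg.eigh(K)
    order = np.argsort(evals)[::-1][:k]
    return evecs[:, order], evals[order]

# Simulated example: two populations with diverged allele frequencies.
rng = np.random.default_rng(8)
n_per_pop, n_snps = 20, 500
p_a = rng.uniform(0.1, 0.9, size=n_snps)
p_b = np.clip(p_a + rng.normal(scale=0.2, size=n_snps), 0.05, 0.95)
G = np.vstack([rng.binomial(2, p_a, size=(n_per_pop, n_snps)),
               rng.binomial(2, p_b, size=(n_per_pop, n_snps))])   # samples x SNPs
K = streaming_kinship((G[:, j] for j in range(n_snps)), n_samples=2 * n_per_pop)
pcs, eigenvalues = kinship_pcs(K, k=2)
```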

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Research Reagent Solutions for Supervised PCA Implementation

| Tool/Resource | Function | Application Context | Key Features |
|---|---|---|---|
| VCF2PCACluster | Kinship estimation, PCA, and clustering | Population genetics, large-scale SNP data [15] | Memory-efficient (0.1 GB for 2,504 samples), VCF input, clustering visualization |
| REGLE Framework | Representation learning for genetic discovery | High-dimensional clinical data [4] | Variational autoencoders for nonlinear embeddings, combined with GWAS |
| EIGENSOFT/SmartPCA | Population genetics analysis | Ancestry inference, population structure [3] | Traditional unsupervised PCA, widely cited but potentially biased |
| AWGE-ESPCA | Sparse PCA with noise elimination | Non-human genomic data analysis [19] | Adaptive noise regularization, weighted gene networks |
| GO-PCA | PCA with gene ontology enrichment | Transcriptomic data exploration [30] | Combines PCA with functional annotation, generates interpretable signatures |

Critical Evaluation and Methodological Considerations

Limitations and Challenges of Supervised PCA

While supervised PCA offers significant advantages for predictive modeling, it comes with important methodological considerations. The dependence on labeled response variables limits its application in purely exploratory settings where no outcome variable is defined. Additionally, the method's performance is contingent on the quality and relevance of the response variable, with poorly chosen targets leading to suboptimal components.

In genetic studies, unsupervised methods face fundamental challenges. Recent critical evaluations suggest that PCA results may be highly biased artifacts rather than true representations of population structure [3]. One extensive analysis demonstrated that PCA outcomes can be easily manipulated by altering population selection, sample sizes, or marker choices, generating contradictory results and potentially absurd conclusions [3].

Emerging Hybrid Approaches

Recent advancements have introduced hybrid approaches that blend supervised and unsupervised elements. The REGLE framework employs variational autoencoders to compute nonlinear, low-dimensional embeddings of high-dimensional clinical data, which then become inputs for genome-wide association studies [4]. This approach has demonstrated superior performance in genetic discovery, replicating known loci while identifying new associations not detected through conventional methods [4].

Similarly, GO-PCA represents another hybrid approach that systematically combines PCA with nonparametric GO enrichment analysis to identify sets of genes that are both strongly correlated and closely functionally related [30]. This method automatically generates functionally labeled expression signatures that provide readily interpretable representations of biological heterogeneity.
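The core idea of testing PC loadings for functional enrichment can be sketched in a few lines. This sketch substitutes a simple fixed-cutoff hypergeometric test on the top-N genes by absolute loading for the nonparametric enrichment test GO-PCA actually uses. The gene names, the gene set, and the helper names are hypothetical.

```python
from math import comb

def hypergeom_sf(k, N, K, n):
    """P(X >= k) for X ~ Hypergeometric(population N, K successes, n draws), using exact integers."""
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, min(K, n) + 1))
    return hits / comb(N, n)

def loading_enrichment(loadings, gene_names, gene_set, top_n=50):
    """Overlap and p-value of a gene set among the top_n genes ranked by |loading|."""
    ranked = sorted(zip(gene_names, loadings), key=lambda t: abs(t[1]), reverse=True)
    top = {g for g, _ in ranked[:top_n]}
    universe = set(gene_names)
    members = gene_set & universe
    k = len(top & members)
    return k, hypergeom_sf(k, len(universe), len(members), top_n)

# Hypothetical example: 200 genes, where the 10 members of the set load strongly on one PC.
genes = [f"g{i}" for i in range(200)]
loadings = [5.0 if i < 10 else 0.1 * (i % 7) for i in range(200)]
k, p_value = loading_enrichment(loadings, genes, gene_set={f"g{i}" for i in range(10)}, top_n=20)
```

Repeating such a test over many GO terms and several components requires multiple-testing control, which this sketch omits.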

Supervised PCA represents a significant advancement over unsupervised approaches for genomic studies where specific response variables are of interest. By maximizing dependence between projected data and outcome variables, it addresses a fundamental limitation of conventional PCA in predictive modeling contexts. Experimental results across multiple genomic applications demonstrate its superior performance in tasks ranging from ancestry inference to disease subtype identification.

Future methodological development will likely focus on increasing scalability for biobank-scale datasets, enhancing interpretability of supervised components, and developing more robust implementations resistant to overfitting. As genomic data continue to grow in volume and complexity, the strategic selection between supervised, unsupervised, and hybrid PCA approaches will remain critical for maximizing biological insight while maintaining methodological rigor.

Principal Component Analysis (PCA) stands as a classical unsupervised technique for dimensionality reduction in high-throughput genomic studies, where the number of features (e.g., genes, SNPs) vastly exceeds sample sizes [31]. However, conventional PCA operates without considering phenotype labels (e.g., disease status, treatment response), potentially capturing variance in the data unrelated to the biological question of interest [28]. This limitation has driven the development of supervised PCA frameworks that explicitly incorporate response variables to guide dimension reduction, enhancing biological discovery power in genomic applications ranging from Genome-Wide Association Studies (GWAS) to single-cell analysis [32] [31] [28].

A particularly advanced evolution in this domain is Supervised Categorical PCA (SCPCA), which addresses a critical challenge in genomic data: the categorical nature of fundamental data types like single-nucleotide polymorphisms (SNPs) [32]. Unlike traditional PCA and even some supervised variants that assume continuous, normally distributed data or make inherent assumptions about genetic risk models, SCPCA explicitly models categorical SNP data without imposing restrictive effect model assumptions, providing unique advantages for aggregated association analyses in complex disease studies [32].

Theoretical Foundations: From PCA to Specialized Frameworks

The PCA Foundation and Its Limitations

Traditional PCA finds orthogonal linear projections that minimize the mean squared reconstruction error between the original data points and their low-dimensional projections [32]. Equivalently, it maximizes the variance retained along each projection. For a genomic data matrix \( X \) with \( n \) samples and \( p \) features, the principal components (PCs) are the eigenvectors of the covariance matrix \( \Sigma_x \). The \( k \)-th component solves:

\[ \text{argmax}_{v_k} \sigma_{v_k}^2 = \text{argmax}_{v_k} v_k^T \Sigma_x v_k \quad \text{subject to} \quad v_k^T v_k = 1 \]

Here \( \sigma_{v_k}^2 \) is the variance of the data projected onto \( v_k \). For \( k > 1 \), \( v_k \) is additionally constrained to be orthogonal to \( v_1, \dots, v_{k-1} \) [33]. This unsupervised approach is effective at preserving variance. In genomic studies, however, it may prioritize technical artifacts or biologically irrelevant variation, potentially obscuring the signal from disease-associated loci [32] [3].
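Below is a minimal NumPy sketch of the eigendecomposition described above. It assumes a small dense matrix; biobank-scale data would call for randomized or truncated SVD instead. It also checks numerically that the variance of the data projected onto each \( v_k \) equals the corresponding eigenvalue, i.e. \( \sigma_{v_k}^2 = v_k^T \Sigma_x v_k \). The function name `pca_eig` and the toy data are illustrative.

```python
import numpy as np


def pca_eig(X: np.ndarray, k: int):
    """Top-k principal axes (as columns) and their variances for an n x p matrix X."""
    Xc = X - X.mean(axis=0)                  # center each feature
    cov = Xc.T @ Xc / (X.shape[0] - 1)       # p x p sample covariance Sigma_x
    evals, evecs = np.linalg.eigh(cov)       # eigh returns ascending eigenvalues
    order = np.argsort(evals)[::-1][:k]      # indices of the k largest
    return evecs[:, order], evals[order]


rng = np.random.default_rng(1)
X = rng.normal(size=(100, 5)) * np.array([3.0, 2.0, 1.0, 0.5, 0.1])  # toy data
V, variances = pca_eig(X, k=2)
proj_var = ((X - X.mean(axis=0)) @ V).var(axis=0, ddof=1)  # variance along each v_k
print(np.allclose(proj_var, variances))                    # True
```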

The Supervised PCA Revolution

Supervised PCA extends this framework by incorporating response variable information to find components with maximal dependence on the outcome rather than merely maximum variance [28]. The core optimization problem becomes:

\[ \text{argmax}_V \text{tr}(V^T Q V) \quad \text{subject to} \quad V^T V = I \]

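Here \( Q \) is a \( p \times p \) matrix capturing the relationship between predictors and response. It is typically built from a dependence measure such as the Hilbert-Schmidt Independence Criterion (HSIC) [28] [33]. In the HSIC formulation with a linear kernel on the predictors, \( Q = X^T H L H X \). Here \( H = I - \frac{1}{n}\mathbf{1}\mathbf{1}^T \) is the centering matrix and \( L \) is an \( n \times n \) kernel matrix on the response. The optimal \( V \) consists of the leading eigenvectors of \( Q \). Setting \( L = I \) makes \( Q \) proportional to the sample covariance matrix, which recovers conventional PCA.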
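The HSIC formulation can be sketched directly in NumPy. This is an illustration under stated assumptions, not a reference implementation from [28] or [33]. It assumes a linear kernel on the predictors and forms \( Q = X^T H L H X \) explicitly, which is feasible only for modest \( n \). The toy data set is hypothetical: its highest-variance feature is unrelated to the response. With \( L = y y^T \) (a linear kernel on a continuous response), the leading direction tracks the response-relevant feature. With \( L = I \), the method falls back to ordinary PCA and picks the high-variance feature.

```python
import numpy as np


def supervised_pca(X: np.ndarray, L: np.ndarray, d: int) -> np.ndarray:
    """Leading d eigenvectors of Q = X^T H L H X (linear kernel on X, kernel L on the response)."""
    n = X.shape[0]
    H = np.eye(n) - np.ones((n, n)) / n       # centering matrix
    Q = X.T @ H @ L @ H @ X                   # p x p matrix in tr(V^T Q V)
    evals, evecs = np.linalg.eigh(Q)          # Q is symmetric
    return evecs[:, np.argsort(evals)[::-1][:d]]   # columns satisfy V^T V = I


rng = np.random.default_rng(2)
n = 500
X = rng.normal(size=(n, 3)) * np.array([3.0, 1.0, 1.0])  # feature 0: high variance, irrelevant
y = X[:, 2] + 0.1 * rng.normal(size=n)                    # response driven by feature 2

v_sup = supervised_pca(X, np.outer(y, y), d=1)[:, 0]      # supervised: L = y y^T
v_pca = supervised_pca(X, np.eye(n), d=1)[:, 0]           # L = I: ordinary PCA
print(np.round(np.abs(v_sup), 2), np.round(np.abs(v_pca), 2))
```

For a categorical response such as case/control status, a delta kernel is a common choice for \( L \): \( L_{ij} = 1 \) when samples \( i \) and \( j \) share a label, and 0 otherwise.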

SCPCA: Specialization for Categorical Genomic Data

SCPCA further advances this framework by specifically addressing the categorical nature of SNP data, representing genotypes as {00, 10/01, 11} without imposing numerical assumptions about risk effect models [32]. This contrasts with traditional approaches that encode SNPs as {0, 1, 2} representing minor allele counts, implicitly assuming proportional risk effects that may not reflect biological reality [32].

The method forms optimal linear combinations of categorical SNP genotypes. It extracts principal components with maximal discriminating power for disease outcomes while respecting the inherent structure of genomic variant data [32].
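The difference between the two encodings can be made concrete. The sketch below is a hypothetical illustration, not the SCPCA implementation. It gives each genotype class its own indicator column: homozygous 00, heterozygous 10/01, and homozygous 11, assuming allele count 1 corresponds to the heterozygote. This is one straightforward way to avoid the ordering and equal spacing implied by minor-allele counts. The function names are illustrative.

```python
import numpy as np


def additive_encoding(genotypes: np.ndarray) -> np.ndarray:
    """Minor-allele count {0, 1, 2}: each extra allele implies the same increment."""
    return np.asarray(genotypes, dtype=float)[:, None]


def categorical_encoding(genotypes: np.ndarray) -> np.ndarray:
    """One indicator column per genotype class (00, 10/01, 11); no order or spacing implied."""
    g = np.asarray(genotypes)
    return np.stack([(g == c).astype(float) for c in (0, 1, 2)], axis=1)


g = np.array([0, 1, 2, 1, 0])    # toy genotypes for one SNP, coded as minor-allele counts
print(additive_encoding(g).ravel())
print(categorical_encoding(g))
```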

Table 1: Evolution of PCA Frameworks for Genomic Data

| Method | Key Characteristic | Data Assumptions | Genomic Applications |
|---|---|---|---|
| Traditional PCA | Unsupervised; maximizes variance | Continuous, normally distributed data | Population structure visualization, batch effect detection [31] [3] |
| Supervised PCA | Incorporates response variables | General continuous data | Pathway analysis, expression quantitative trait loci [28] |
| Sparse Supervised PCA | Adds sparsity constraints for variable selection | Linear or nonlinear input-response relationships | High-dimensional feature selection [33] |
| SCPCA | Models categorical data explicitly | Categorical genotypes without risk effect model assumptions | Aggregated association analysis, pathway-based GWAS [32] |

Performance Comparison: SCPCA vs. Alternative Approaches

Experimental Framework and Benchmarks

Comprehensive evaluation of SCPCA against traditional supervised PCA (SPCA) and Supervised Logistic PCA (SLPCA) has been conducted using both simulated genotype data generated by HAPGEN2 and real Crohn's Disease genotype data from the Wellcome Trust Case Control Consortium (WTCCC) [32]. Performance assessment focused on detection power for identifying disease-associated SNPs through aggregated association analysis based on predefined functional regions like genes and pathways [32].

Table 2: Performance Comparison Across PCA Methods in Genomic Studies

| Method | Detection Power | Model Flexibility | Data Representation | Computational Efficiency |
|---|---|---|---|---|
| SCPCA | Highest based on preliminary results [32] | Maximum; no specific risk effect model assumptions [32] | Explicit categorical modeling [32] | Closed-form solution [28] |
| SPCA | Moderate [32] | Limited; assumes continuous data [32] | Continuous numerical representation [32] | Closed-form solution [28] |
| SLPCA | Lower than SCPCA [32] | Limited; assumes recessive/dominant model [32] | Binary transformation [32] | Requires iterative optimization [32] |

Key Advantages of SCPCA in Genomic Applications

SCPCA demonstrates superior performance in detecting potential disease SNPs with weak individual effects but strong joint contributions to disease phenotypes, a common scenario in complex diseases [32]. This advantage stems from two fundamental properties:

- Appropriate Data Modeling: By explicitly treating SNP data as categorical without imposing numerical interpretations, SCPCA avoids potential biases introduced by assuming risk proportional to minor allele count [32].

- Model Flexibility: Without pre-specified risk effect models, SCPCA can adapt to various underlying genetic architectures, capturing associations that methods with stronger assumptions might miss [32].
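A small worked example shows why model flexibility matters. Consider a hypothetical recessive architecture, where only the homozygous minor genotype shifts the trait mean. A linear fit on the additive {0, 1, 2} coding cannot reproduce the three genotype means, whereas an indicator (categorical) coding fits them exactly. This is a least-squares illustration on noise-free genotype means, not a reanalysis of the SCPCA results.

```python
import numpy as np

genotype = np.array([0, 1, 2])              # minor-allele count
trait_mean = np.array([0.0, 0.0, 1.0])      # hypothetical recessive effect

# Additive coding: intercept + slope * allele count.
A_add = np.column_stack([np.ones(3), genotype]).astype(float)
# Categorical coding: intercept + indicators for genotypes 1 and 2 (0 is the reference).
A_cat = np.column_stack([np.ones(3), genotype == 1, genotype == 2]).astype(float)


def residual_ss(A: np.ndarray, y: np.ndarray) -> float:
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    return float(np.sum((y - A @ coef) ** 2))


rss_add = residual_ss(A_add, trait_mean)
rss_cat = residual_ss(A_cat, trait_mean)
print(round(rss_add, 4), round(rss_cat, 4))  # 0.1667 0.0
```

The additive fit is forced to place the heterozygote halfway between the homozygotes, leaving a residual sum of squares of 1/6. The categorical coding has one free parameter per genotype class and therefore no residual.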

Experimental Protocols and Implementation

SCPCA Workflow for Genomic Association Studies

The following diagram illustrates the complete SCPCA analytical workflow for genomic association studies:

SCPCA Algorithm Implementation

The SCPCA implementation involves these key methodological steps:

- Categorical Data Representation: SNP genotypes are represented using their natural categorical encoding {00, 10/01, 11} rather than numerical transformations that impose effect size assumptions [32].

- Dependence Maximization: The algorithm identifies principal components with maximum dependence on the trait of interest using specialized optimization for categorical data [32].

- Supervised Component Selection: Components most strongly associated with the response variable are selected for downstream association testing, excluding noise components unrelated to the trait [32].

- Aggregated Association Testing: The selected components are entered into a logistic regression model of disease status, which tests the aggregated genetic effect of multiple SNPs jointly [32].
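The four steps can be strung together in a compact sketch. It is a hypothetical stand-in, not the published SCPCA algorithm, and it rests on several assumptions:

- The categorical representation uses one indicator column per genotype class.
- Dependence maximization uses the HSIC formulation with a delta kernel on case/control labels.
- The genotypes and effect sizes are simulated toy values.
- The logistic regression is fitted with a small hand-written Newton (IRLS) loop so that only NumPy is needed.

```python
import numpy as np

rng = np.random.default_rng(3)
n, m = 400, 10                                      # samples, SNPs in one toy gene region
G = rng.integers(0, 3, size=(n, m))                 # toy genotypes (minor-allele counts)
logit = -0.5 + 1.2 * (G[:, 0] == 2) + 0.8 * (G[:, 3] == 1)   # hypothetical non-additive effects
y = (rng.random(n) < 1 / (1 + np.exp(-logit))).astype(float)  # case/control status

# 1. Categorical representation: one indicator column per genotype class per SNP.
Xcat = np.concatenate([(G == c).astype(float) for c in (0, 1, 2)], axis=1)

# 2. Dependence maximization: HSIC objective with a delta kernel on the labels.
H = np.eye(n) - 1.0 / n                             # centering matrix I - 11^T / n
L = (y[:, None] == y[None, :]).astype(float)        # L_ij = 1 if same label
Q = Xcat.T @ H @ L @ H @ Xcat
evals, evecs = np.linalg.eigh(Q)

# 3. Supervised component selection: keep the leading d components.
d = 1
V = evecs[:, np.argsort(evals)[::-1][:d]]
Z = (Xcat - Xcat.mean(axis=0)) @ V                  # component scores


# 4. Aggregated association test: logistic regression on Z vs. intercept only.
def logistic_deviance(A: np.ndarray, y: np.ndarray, iters: int = 50) -> float:
    beta = np.zeros(A.shape[1])
    for _ in range(iters):                          # Newton-Raphson / IRLS updates
        mu = 1 / (1 + np.exp(-A @ beta))
        W = mu * (1 - mu)
        beta += np.linalg.solve(A.T @ (A * W[:, None]), A.T @ (y - mu))
    mu = np.clip(1 / (1 + np.exp(-A @ beta)), 1e-12, 1 - 1e-12)
    return float(-2 * np.sum(y * np.log(mu) + (1 - y) * np.log(1 - mu)))


ones = np.ones((n, 1))
lrt = logistic_deviance(ones, y) - logistic_deviance(np.hstack([ones, Z]), y)
print(round(lrt, 2))                                # likelihood-ratio statistic, d df
```

Two design notes apply. First, with a binary label the centered delta kernel \( HLH \) has rank one, so \( Q \) carries a single informative direction and \( d = 1 \) is the natural choice. Second, because \( V \) is chosen using \( y \), referring the statistic to a chi-square distribution on the same data is anti-conservative. Permuting \( y \) and repeating the dependence-maximization through testing steps, or using a held-out split, is a safer way to calibrate it.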

Benchmarking Protocols

Performance evaluation follows rigorous benchmarking standards similar to those used in computational genomics [34] [35]:

- Data Splitting: Datasets are divided into training and testing subsets with fixed random seeds to ensure reproducibility [36].

- Multiple Metrics Assessment: Evaluation incorporates multiple metrics at different analysis stages (embedding quality, graph structure, final partitions) to comprehensively assess performance [34].

- Comparison Baselines: Methods are compared against established alternatives (traditional SPCA, SLPCA) under identical conditions [32].
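A reproducible split of the kind described in the data-splitting bullet can be sketched as follows. The helper name `split_indices` and the 80/20 proportion are illustrative assumptions. For supervised PCA specifically, the components should be learned on the training indices only. The projection itself uses the labels, so fitting it on all samples would leak test information into the embedding.

```python
import numpy as np


def split_indices(n_samples: int, test_fraction: float, seed: int):
    """Return (train, test) index arrays; the same seed always gives the same partition."""
    rng = np.random.default_rng(seed)
    perm = rng.permutation(n_samples)
    n_test = int(round(test_fraction * n_samples))
    return perm[n_test:], perm[:n_test]


train_a, test_a = split_indices(100, 0.2, seed=42)
train_b, test_b = split_indices(100, 0.2, seed=42)
print(len(train_a), len(test_a), np.array_equal(test_a, test_b))  # 80 20 True
```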

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Supervised PCA in Genomic Studies

| Tool/Resource | Function | Application Context |
|---|---|---|
| HAPGEN2 | Simulated genotype data generation | Method validation and benchmarking [32] |
| WTCCC Data | Real Crohn's Disease genotype data | Performance evaluation on complex disease [32] |
| HSIC Criterion | Dependence measurement between variables | Supervised component identification [28] [33] |
| EIGENSOFT/PLINK | Established PCA implementation in genetics | Baseline comparison for traditional methods [3] |
| Genomic Benchmarks | Curated datasets for sequence classification | Standardized performance assessment [36] |

Interpretation Guidelines and Limitations

Critical Interpretation of Results

While SCPCA demonstrates superior performance in aggregated association analysis, researchers should consider several interpretation aspects:

- Component Selection: The number of components to retain requires careful consideration, as arbitrary selection (e.g., always using the first two PCs) may miss important biological signal [3].

- Population Structure: In GWAS, SCPCA components may still reflect population stratification rather than disease association, necessitating additional controls [3].

- Reproducibility: Like all PCA-based methods, SCPCA results can be sensitive to data preprocessing, marker selection, and sample composition [3].
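Instead of a fixed choice such as the first two PCs, the number of components can be tied to a stated criterion. The sketch below uses a cumulative explained-variance threshold, with these caveats:

- It is only one common heuristic; scree inspection, permutation-based tests, or cross-validated prediction are others.
- The 0.9 threshold is an arbitrary assumption.
- For supervised variants, the analogous quantity would come from the eigenvalues of \( Q \) rather than of the covariance matrix.

The helper name and toy data are illustrative.

```python
import numpy as np


def n_components_for_variance(X: np.ndarray, threshold: float = 0.9):
    """Smallest k whose leading k PCs explain at least `threshold` of the total variance."""
    Xc = X - X.mean(axis=0)
    s = np.linalg.svd(Xc, compute_uv=False)        # singular values, descending
    ratio = s**2 / np.sum(s**2)                    # explained-variance ratio per PC
    k = int(np.searchsorted(np.cumsum(ratio), threshold)) + 1
    return k, ratio


# Toy data: three latent factors plus small noise across 50 features.
rng = np.random.default_rng(4)
latent = rng.normal(size=(200, 3)) @ rng.normal(size=(3, 50))
X = latent + 0.1 * rng.normal(size=(200, 50))
k, ratio = n_components_for_variance(X, threshold=0.9)
print(k, np.round(ratio[:4], 3))
```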

Limitations and Considerations

SCPCA, while powerful, has certain limitations:

- Computational Intensity: The specialized categorical processing may increase computational demands compared to standard PCA [32].

- Implementation Accessibility: Specialized implementations may be less readily available than established tools like EIGENSOFT [3].

- Interpretation Complexity: The categorical components may require additional effort for biological interpretation compared to traditional approaches.

SCPCA represents a significant methodological advancement for genomic association studies, particularly for analyzing categorical SNP data in complex disease research. By explicitly modeling the categorical nature of genetic variants without imposing restrictive effect model assumptions, SCPCA achieves higher detection power for variants with weak individual effects but important joint contributions to disease phenotypes.

The integration of supervision enables targeted discovery of biologically relevant patterns, while the categorical framework ensures appropriate treatment of fundamental genomic data types. As genomic studies increasingly focus on aggregating weak effects across functional units like genes and pathways, SCPCA provides a statistically sound and powerful framework for uncovering the complex genetic architecture of diseases.

Future development directions include integration with deep learning approaches, extension to multi-omics data integration, and adaptation for emerging single-cell genomics applications where categorical data types and high dimensionality present similar analytical challenges [34] [37].

In genomic studies, Principal Component Analysis (PCA) has long been a cornerstone technique for dimensionality reduction, enabling researchers to visualize population structure, identify patterns in gene expression, and manage the challenges of high-dimensional data. Traditional PCA operates as an unsupervised method, identifying principal components solely based on the maximum variance within the predictor variables without considering biological outcomes or known groupings. While effective for exploratory analysis, this approach often misses critical biological insights by ignoring existing knowledge about gene functions, pathways, and phenotypic outcomes.

The emergence of knowledge-integrated approaches represents a paradigm shift in genomic data analysis. These methods, including supervised PCA and Gene Ontology-PCA (GO-PCA), systematically incorporate prior biological knowledge to guide the dimensionality reduction process. By integrating established information from databases such as Gene Ontology, KEGG, and Reactome, these techniques transform PCA from a purely mathematical tool into a biologically intelligent analysis framework. This integration is particularly valuable in pharmaceutical development and precision medicine, where understanding the functional context of genomic signatures can significantly accelerate target identification and validation.