Taming Overfitting: Robust Machine Learning Strategies for Genomic Data

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on addressing the critical challenge of overfitting in machine learning models for genomics. As genomic datasets are often characterized by high dimensionality and limited samples, models are prone to learning noise instead of biological signal, leading to poor generalization and unreliable clinical predictions. We explore the foundational causes and consequences of overfitting, detail state-of-the-art mitigation methodologies from regularization to novel data augmentations, present a troubleshooting framework for model optimization, and compare the validation performance of leading algorithms. By synthesizing current best practices and emerging trends, this resource aims to equip scientists with the knowledge to build more generalizable, accurate, and trustworthy genomic predictive models for precision medicine.

The Overfitting Problem in Genomics: Why High-Dimensional Data Poses a Unique Challenge

Defining Overfitting and Underfitting in the Context of Genomic Data

Frequently Asked Questions (FAQs)

Q1: What are overfitting and underfitting in the context of genomic machine learning?

In genomic machine learning, overfitting occurs when a model learns the training data too well, including the noise and random fluctuations specific to that dataset. This results in a model that performs excellently on its training data but fails to generalize to new, unseen genomic data, such as a validation cohort or data from a different population [1] [2]. For example, a model might memorize technical artifacts from a specific sequencing batch rather than true biological signals.

Underfitting is the opposite problem. It happens when a model is too simple to capture the underlying complex patterns in the genomic data, such as the polygenic nature of many traits. An underfitted model performs poorly on both the training data and any new test data, as it has failed to learn the relevant relationships [1] [2].

Q2: Why is overfitting a particularly high risk in genomic studies?

Overfitting is a major risk in genomics due to the classic "large p, small n" problem, where the number of features (p; e.g., SNPs, genes) is vastly larger than the number of observations (n; e.g., patients, samples) [3]. Genomic datasets often contain hundreds of thousands to millions of genetic markers, while cohort sizes may be in the thousands. Since most genetic variants have no effect on a given trait, a model that uses all features is likely to fit a large number of "null variants," mistaking noise for true signal and leading to overfitting and inflated performance metrics [4] [5].
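The effect of fitting "null variants" can be reproduced with a small simulation. The sketch below is illustrative only: the sample sizes, the feature count, and the use of purely random data are assumptions, not values from any cited study. It fits an unpenalized linear model to a phenotype that is independent of every feature and compares training and test R².

```python
import numpy as np

def r_squared(y_true, y_pred):
    """Coefficient of determination; it can be negative on held-out data."""
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

rng = np.random.default_rng(0)
n_train, n_test, p = 50, 200, 500  # hypothetical sizes with p >> n

# Every feature is a "null variant": the phenotype is independent of X.
X_train = rng.standard_normal((n_train, p))
X_test = rng.standard_normal((n_test, p))
y_train = rng.standard_normal(n_train)
y_test = rng.standard_normal(n_test)

# Unpenalized least squares. With p > n, lstsq returns the minimum-norm
# solution, which reproduces the training phenotypes exactly.
beta, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)

train_r2 = r_squared(y_train, X_train @ beta)
test_r2 = r_squared(y_test, X_test @ beta)
print(f"train R^2 = {train_r2:.3f}, test R^2 = {test_r2:.3f}")
```

Because there is no signal to learn, the near-perfect training fit is entirely noise, and the held-out R² stays near or below zero.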

Q3: How can I tell if my genomic model is overfitted or underfitted?

You can diagnose these issues by comparing the model's performance on training versus held-out testing data:

- Sign of Overfitting: High performance (e.g., high R², accuracy) on the training set but significantly lower performance on the test set [1] [2].

- Sign of Underfitting: Poor performance on both the training set and the test set [1].

A well-fitted model should have performance metrics on the test set that are close to those on the training set, indicating good generalization [2]. The diagram below illustrates this diagnostic logic.
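The comparison described above can be written as a small helper. This is a heuristic sketch: the function name `diagnose_fit` and both thresholds (`min_acceptable`, `max_gap`) are hypothetical and should be chosen per metric and per study.

```python
def diagnose_fit(train_score, test_score, min_acceptable=0.70, max_gap=0.10):
    """Classify a model from its training and test scores (higher is better).

    min_acceptable and max_gap are illustrative thresholds, not standards.
    """
    if train_score < min_acceptable and test_score < min_acceptable:
        return "underfitting"   # poor on both training and test data
    if train_score - test_score > max_gap:
        return "overfitting"    # large train/test performance gap
    return "well-fitted"        # test performance close to training

print(diagnose_fit(0.99, 0.62))  # large gap between train and test
print(diagnose_fit(0.55, 0.53))  # poor on both sets
print(diagnose_fit(0.85, 0.82))  # similar performance on both sets
```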

Q4: What are some best practices to prevent overfitting when building a genomic prediction model?

Several strategies can help mitigate overfitting:

- Use Cross-Validation: Use k-fold cross-validation to tune model parameters and to obtain a more robust estimate of performance than a single train-test split provides [2] [5].

- Increase Sample Size: Using more training data helps the model learn generalizable patterns rather than noise [1] [3].

- Apply Regularization: Techniques like Ridge (L2) and Lasso (L1) regularization penalize model complexity, preventing coefficients for irrelevant features from becoming too large [1] [4].

- Perform Feature Selection: Reduce the number of input features to include only the most biologically relevant markers, thus lowering the model's capacity to overfit [4].

- Use Dropout (for Neural Networks): Randomly "dropping out" units during training prevents complex co-adaptations and reduces overfitting [1] [2].
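As one illustration of the regularization strategy above, the following sketch fits Ridge (L2) regression with NumPy and shows how a larger penalty shrinks the coefficient vector. The data are simulated, and the helper name `ridge_fit` and the penalty values are assumptions. The dual form is used only because it is cheaper when features far outnumber samples.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Ridge coefficients via the dual form, which is cheap when p >> n.

    Computes beta = X^T (X X^T + lam * I)^{-1} y. This equals the usual
    (X^T X + lam * I)^{-1} X^T y but only solves an n x n system.
    """
    n = X.shape[0]
    dual = np.linalg.solve(X @ X.T + lam * np.eye(n), y)
    return X.T @ dual

rng = np.random.default_rng(1)
n, p = 80, 2000                      # hypothetical cohort and marker counts
X = rng.standard_normal((n, p))
true_beta = np.zeros(p)
true_beta[:10] = 1.0                 # only 10 simulated markers carry signal
y = X @ true_beta + rng.standard_normal(n)

for lam in (0.1, 10.0, 1000.0):
    beta = ridge_fit(X, y, lam)
    print(f"lambda = {lam:>7}: ||beta||_2 = {np.linalg.norm(beta):.3f}")
```

In practice the penalty strength would be chosen by cross-validation rather than fixed in advance.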

Q5: My model is underfitting the genomic data. What should I do?

If your model is underfitting, consider these actions to increase its capacity to learn:

- Increase Model Complexity: Use a more flexible algorithm (e.g., switch from linear regression to a non-linear model like a support vector machine or neural network) that can capture the complex architecture of genomic influences [1] [2].

- Add More Features: Perform feature engineering to include a broader set of potentially relevant genomic features or interaction terms [1].

- Reduce Regularization: Lower the strength of regularization parameters, as excessive regularization can overly constrain the model [1].

- Increase Training Duration: For iterative models like neural networks, train for more epochs to allow the model to learn more from the data [1].

Troubleshooting Guide: Common Problems and Solutions

The table below summarizes common symptoms, their likely causes, and specific corrective actions for overfitting and underfitting in genomic analyses.

Table 1: Troubleshooting Guide for Genomic Model Fitting Issues

| Problem & Symptoms | Likely Cause | Specific Corrective Actions |

|---|---|---|

| Overfitting: high accuracy on training data; low accuracy on test/validation data; model has high variance [1] [2] | Model is too complex for the data [1]; too many features (e.g., SNPs) relative to samples [4] [5]; training data contains noise or batch effects [3] | 1. Apply Regularization: Use L1 (Lasso) or L2 (Ridge) regularization to shrink coefficients [1] [4]. 2. Perform Feature Selection: Use GWAS p-value thresholds or other methods to select relevant variants before modeling [4]. 3. Increase Training Data: Collect more samples or use data augmentation techniques [1] [3]. 4. Use Ensemble Methods: Implement random forests, which are less prone to overfitting [6]. |

| Underfitting: poor accuracy on both training and test data; model has high bias [1] [2] | Model is too simple for the data's complexity [1]; key predictive features are missing [1]; excessive regularization [1] | 1. Increase Model Complexity: Choose a more flexible algorithm (e.g., deep learning, non-linear SVMs) [1] [2]. 2. Add Relevant Features: Incorporate additional omics data layers (e.g., transcriptomics, epigenomics) for a more complete picture [6]. 3. Reduce Regularization: Weaken or remove regularization constraints on the model [1]. 4. Engineer New Features: Create interaction terms or polynomial features to capture non-linearities [1]. |

Experimental Protocol: Implementing Cross-Validation to Control Overfitting

The following workflow outlines a standard k-fold cross-validation procedure, a critical methodology for obtaining an unbiased estimate of model performance and reducing overfitting in genomic selection and prediction studies [2] [5].

Objective: To obtain a robust and unbiased estimate of a machine learning model's performance on genomic data and to aid in tuning model hyperparameters without overfitting.

Materials:

- Genomic dataset (e.g., genotype matrix, gene expression matrix)

- Computing environment with machine learning libraries (e.g., scikit-learn in Python, Caret in R) [7]

Methodology:

- Dataset Preparation: Begin with a complete dataset where the phenotypes and genotypes are known for each subject [5].

- Splitting: Randomly partition the dataset into k equally sized subsets (folds). A common choice is k=5 or k=10 [2].

- Iterative Training and Validation: For each unique fold i (where i ranges from 1 to k):

  - Set aside fold i to be used as the validation set.

  - Use the remaining k-1 folds combined as the training set.

  - Train your chosen machine learning model (e.g., ridge regression, random forest) on the training set.

  - Use the trained model to predict the phenotypes of the samples in the validation set (fold i).

  - Calculate and store the performance metric (e.g., R², mean squared error) for this iteration.

- Performance Calculation: After completing all k iterations, calculate the final performance metric as the average of the k stored metrics. This average provides a more reliable estimate of how the model will perform on unseen data than a single train-test split [2] [5].

Interpretation: A large discrepancy between the average cross-validation performance and the performance on the final independent test set may indicate that the model or its parameters are still overfitting to the specific partitions of the cross-validation, or that there is a data shift between your initial dataset and the final test set [3].
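The protocol above can be sketched directly in NumPy. Everything specific here is an assumption made for illustration: the simulated 0/1/2 genotype matrix, the ridge model, the penalty value, and the helper names `ridge_fit`, `r_squared`, and `k_fold_cv`.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Ridge coefficients via the dual form (efficient when p >> n)."""
    dual = np.linalg.solve(X @ X.T + lam * np.eye(X.shape[0]), y)
    return X.T @ dual

def r_squared(y_true, y_pred):
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

def k_fold_cv(X, y, k=5, lam=1.0, seed=0):
    """Return the mean validation R^2 and the list of per-fold scores."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(len(y)), k)   # Splitting
    scores = []
    for i in range(k):
        val_idx = folds[i]                               # fold i is held out
        train_idx = np.concatenate([folds[j] for j in range(k) if j != i])
        # Centering uses training-fold statistics only, to avoid leakage.
        x_mean = X[train_idx].mean(axis=0)
        y_mean = y[train_idx].mean()
        beta = ridge_fit(X[train_idx] - x_mean, y[train_idx] - y_mean, lam)
        y_pred = (X[val_idx] - x_mean) @ beta + y_mean
        scores.append(r_squared(y[val_idx], y_pred))     # store the metric
    return float(np.mean(scores)), scores                # average over folds

rng = np.random.default_rng(42)
n, p = 200, 500
X = rng.integers(0, 3, size=(n, p)).astype(float)  # simulated genotypes
y = X[:, :5].sum(axis=1) + rng.standard_normal(n)  # 5 causal markers

mean_r2, fold_scores = k_fold_cv(X, y, k=5, lam=100.0)
print(f"mean CV R^2 = {mean_r2:.3f}")
print("per-fold R^2:", [round(s, 3) for s in fold_scores])
```

The spread of the per-fold scores is worth reporting alongside the mean, since a wide spread signals an unstable estimate.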

Research Reagent Solutions

Table 2: Key Computational Tools for Genomic Machine Learning

| Tool Name | Type | Primary Function in Genomic Analysis |

|---|---|---|

| PyCaret [8] [9] | Software Library | Low-code library that automates many steps in the machine learning workflow, making ML more accessible to non-specialists. |

| Caret [7] | Software Library | An R package for streamlined model training, hyperparameter tuning, and evaluation for classification and regression. |

| TensorFlow / PyTorch [7] | Software Framework | Open-source libraries used for building and training deep learning models, such as CNNs and RNNs, on genomic sequences. |

| GSMX [5] | R Package | An R package specifically designed for genomic selection analyses, including methods for controlling heritability overfitting. |

| STMGP [4] | Algorithm | A specialized prediction algorithm (Smooth-Threshold Multivariate Genetic Prediction) designed to avoid overfitting in polygenic risk prediction. |

Troubleshooting Guide: Solving Common High-Dimensional Data Problems

Problem 1: My model achieves perfect training accuracy but fails on new samples. Is this overfitting?

Answer: Yes, this is a classic sign of overfitting. It occurs when your model learns not only the underlying biological signal but also the noise and random fluctuations specific to your training dataset [10] [11]. In genomics, this is particularly common due to the high feature-to-sample ratio, where the number of genomic features (e.g., genes, SNPs) vastly exceeds the number of patient samples [10] [12].

Diagnostic Steps:

- Performance Gap: Monitor the difference in performance metrics (e.g., Accuracy, AUC) between your training and validation/test sets. A large gap indicates overfitting [10] [11].

- Cross-Validation: Use k-fold cross-validation to get a more robust estimate of model performance. This involves partitioning your data into k subsets, iteratively training on k-1 folds, and validating on the held-out fold [13].

- Benchmark with a Simple Model: Start with a simple model as a benchmark. If increasingly complex models do not show meaningful improvement on the validation set, the additional complexity may be leading to overfitting [11].

Solutions:

- Apply Regularization: Techniques like L1 (Lasso) and L2 (Ridge) regularization add a penalty for model complexity, discouraging over-reliance on any single feature [10] [13].

- Use Feature Selection: Before modeling, reduce the feature space by selecting only the most biologically relevant markers [14] [12].

- Implement Ensemble Methods: Methods like Random Forests (bagging) combine predictions from multiple models to "smooth out" their predictions and improve generalization [13] [11].

Problem 2: My biomarker panel identifies many significant markers, but they fail to validate in an independent cohort.

Answer: This often results from the model identifying spurious correlations that are not generalizable, a direct consequence of overfitting high-dimensional data [10]. The "significant" markers may be statistical artifacts rather than true biological signals.

Diagnostic Steps:

- Check Cohort Homogeneity: Ensure your training and validation cohorts are well-matched for key clinical and demographic variables. Differences in cohort composition can cause validation failure.

- Analyze Model Complexity: A model with too many parameters relative to the number of samples is high-risk. The goal is to find a parsimonious model.

Solutions:

- Employ Strict Validation: Always use a held-out test set or external cohort from a different institution for final evaluation [15] [16]. Do not tune your model based on test set performance.

- Prioritize Interpretable Models: When possible, use models that offer insight into feature importance. This helps in prioritizing biomarkers that are biologically plausible.

- Leverage Domain Knowledge: Integrate biological pathway information to constrain the model, focusing on features with known relevance to the disease [16].

Problem 3: My models are computationally expensive to train and difficult to interpret.

Answer: High dimensionality directly increases computational cost and can lead to "black box" models where the reasoning behind predictions is unclear [14] [16].

Diagnostic Steps:

- Profile Feature Dimensions: Analyze the number of input features. Training time and memory grow with dimensionality, and exhaustive searches over feature subsets or interactions grow exponentially.

- Audit Model Interpretability: Determine if you can explain why the model made a specific prediction.

Solutions:

- Apply Dimensionality Reduction: Use techniques like Principal Component Analysis (PCA) to transform your data into a lower-dimensional space while preserving most of the important information [14].

- Build a Surrogate Model: For complex "black box" models like deep learning networks, you can train an interpretable model (e.g., logistic regression, decision tree) to approximate its predictions. Analyzing the simpler model can provide insights into the important features [16].

Table 1: Summary of Common Problems and Mitigation Strategies

| Problem | Root Cause | Primary Solution | Key Technique Examples |

|---|---|---|---|

| High training accuracy, low test accuracy | Model learns noise in training data | Simplify model and reduce overfitting | Regularization (L1/L2), Early Stopping, Dropout [10] [13] |

| Biomarkers fail to validate | Spurious correlations from high feature-to-sample ratio | Robust validation and feature selection | Independent test sets, External validation cohorts, RFE, VSURF [15] [12] [16] |

| High computational cost & poor interpretability | Curse of dimensionality; "black box" models | Reduce dimensions and increase transparency | PCA, t-SNE, UMAP, Surrogate models (PLS) [14] [16] |

Experimental Protocols for Robust Genomics Research

Protocol 1: A Workflow for Building a Generalizable Predictive Model

This protocol outlines a structured approach to develop a machine learning model for genomic data that mitigates overfitting, inspired by recent research in metabolomics and fetal growth restriction [15] [16].

Model Development Workflow

Key Steps:

- Initial Data Splitting: Immediately partition the entire dataset into a training set (e.g., 75%) and a locked test set (e.g., 25%). The test set should not be used for any aspect of model development or tuning; it serves solely for the final evaluation of generalization performance [15] [16].

- Preprocessing and Feature Selection: Within the training set only, perform normalization and apply feature selection algorithms (e.g., VSURF, Boruta) to identify the most predictive markers. This drastically reduces the feature-to-sample ratio [12] [16].

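- Model Training with Cross-Validation: Train your model using the selected features on the training set. Use k-fold cross-validation within the training set to tune hyperparameters [13]. The cross-validated score is an unbiased estimate of performance only if normalization and feature selection are refit inside each fold; selecting features once on the whole training set and then cross-validating leaks information and inflates the estimate.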

- Final Evaluation: Apply the final model, trained on the entire training set with the chosen hyperparameters, to the locked test set. The performance on this set is the key metric for reporting how well the model is expected to perform on new data [15].
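The four steps above can be sketched with scikit-learn as follows. This is a minimal illustration under stated assumptions: the data are simulated, and `SelectKBest` with an F-test stands in for VSURF or Boruta, which are separate packages and are not used here. Wrapping scaling and selection in a `Pipeline` means both are refit inside every cross-validation fold.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Simulated data (assumption): 300 samples, 1000 features, 5 informative.
rng = np.random.default_rng(0)
n, p = 300, 1000
X = rng.standard_normal((n, p))
y = (X[:, :5].sum(axis=1) + 0.5 * rng.standard_normal(n) > 0).astype(int)

# Step 1: lock away 25% of the data; it is not touched until the end.
X_dev, X_test, y_dev, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# Steps 2-3: preprocessing, feature selection, and the model live in one
# pipeline, so each CV fold refits all of them on its own training part.
pipeline = Pipeline([
    ("scale", StandardScaler()),
    ("select", SelectKBest(score_func=f_classif)),
    ("model", LogisticRegression(max_iter=1000)),
])
param_grid = {"select__k": [10, 50], "model__C": [0.01, 0.1, 1.0]}
search = GridSearchCV(pipeline, param_grid, cv=5, scoring="roc_auc")
search.fit(X_dev, y_dev)  # refits the best setting on all development data

# Step 4: a single evaluation on the locked test set.
test_auc = roc_auc_score(y_test, search.predict_proba(X_test)[:, 1])
print("best params:", search.best_params_)
print(f"CV AUC = {search.best_score_:.3f}, locked test AUC = {test_auc:.3f}")
```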

Protocol 2: Validating with an Independent External Cohort

For the highest level of evidence, validate your model on a completely independent cohort collected under different conditions (e.g., different clinic, protocol, or population) [15].

External Validation Process

Procedure: Take the final model trained on your entire development dataset (from Protocol 1) and use it to make predictions on the pristine external cohort. Calculate performance metrics based on these predictions. A significant drop in performance suggests the model may have overfit to nuances of the original dataset and is not broadly applicable [15].

Table 2: Key Techniques to Combat Overfitting in Genomics

| Technique Category | Purpose | Key Methods | Relevant Context in Genomics |

|---|---|---|---|

| Feature Selection | Reduce input variables to most informative markers | Filter (Correlation), Wrapper (RFE), Embedded (L1 Regularization) [14] [12] | Selecting 5 key metabolites from 96 for MCI diagnosis [16] |

| Dimensionality Reduction | Transform data into lower-dimensional space | PCA, t-SNE, UMAP [14] | Reducing 20,000 genes to 50 principal components for single-cell analysis [14] |

| Regularization | Penalize model complexity during training | L1 (Lasso), L2 (Ridge), Dropout (in Neural Networks) [10] [13] | Applying L1 regularization to identify key cancer-associated genes [10] |

| Validation | Estimate real-world performance | Train/Test Split, k-Fold Cross-Validation, External Validation [13] [15] | Prospective validation of FGR model in two independent cohorts [15] |

| Ensemble Methods | Combine multiple models to improve stability | Bagging (Random Forests), Boosting (XGBoost) [13] [11] | Multi-filter enhanced genetic ensemble for gene selection [12] |

FAQs on High Feature-to-Sample Ratio

What is the "curse of dimensionality" and how does it relate to my genomics data?

The "curse of dimensionality" refers to the various phenomena that occur when analyzing data in high-dimensional spaces (e.g., thousands of genes) that do not occur in low-dimensional settings [14]. Key aspects include:

- Data Sparsity: As dimensions increase, the available data becomes sparse. The amount of data needed to maintain statistical power grows exponentially with the number of features [14].

- Distance Concentration: In high dimensions, the distance between data points becomes less meaningful, as the relative difference between the nearest and farthest point diminishes, harming distance-based algorithms [14].

- Increased Overfitting Risk: With more features, the probability of a model finding chance correlations (noise) that appear predictive increases dramatically [10] [14].
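Distance concentration is easy to observe numerically. The sketch below uses uniformly random points, which is an assumption made purely for illustration, and measures the relative contrast between the farthest and nearest neighbor of a query point as the number of dimensions grows.

```python
import numpy as np

def relative_contrast(n_points, n_dims, rng):
    """(farthest - nearest) / nearest distance from a random query point."""
    points = rng.random((n_points, n_dims))
    query = rng.random(n_dims)
    dists = np.linalg.norm(points - query, axis=1)
    return (dists.max() - dists.min()) / dists.min()

rng = np.random.default_rng(0)
for n_dims in (2, 20, 200, 2000):
    contrast = relative_contrast(200, n_dims, rng)
    print(f"{n_dims:>5} dimensions: relative contrast = {contrast:.3f}")
```

As the contrast shrinks toward zero, "nearest" and "farthest" neighbors become nearly indistinguishable, which is why distance-based methods such as k-nearest neighbors degrade on raw high-dimensional data.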

What is the difference between feature selection and dimensionality reduction? When should I use each?

These are two primary strategies for reducing the number of input variables, but they work differently [14]:

- Feature Selection chooses a subset of the original features. For example, it might select 20 specific genes from a pool of 20,000 based on their statistical association with the outcome. The output is the original, unchanged features.

- Dimensionality Reduction transforms the features into a new, lower-dimensional space. For example, PCA might create 50 new "synthetic" features (principal components), each being a linear combination of all 20,000 original genes.

When to choose:

- Use Feature Selection when interpretability is crucial, and you need to know exactly which original biomarkers (e.g., specific genes or metabolites) are important for your model [14] [16].

- Use Dimensionality Reduction when features are highly correlated, when you need to visualize data, or when you believe a lower-dimensional latent structure (e.g., a biological pathway effect) drives your data [14].
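The difference between the two strategies shows up clearly in code. The sketch below runs on simulated expression data, with feature counts and a correlation filter chosen as assumptions for illustration. It keeps 20 original columns by absolute correlation with the outcome, then separately builds 10 principal components with an SVD.

```python
import numpy as np

rng = np.random.default_rng(0)
n, p = 100, 2000                        # hypothetical samples x genes
X = rng.standard_normal((n, p))
y = X[:, 0] - X[:, 1] + 0.5 * rng.standard_normal(n)

Xc = X - X.mean(axis=0)                 # center each gene
yc = y - y.mean()

# Feature selection: rank genes by |Pearson r| with the outcome and keep
# the top 20. The output columns are original, unchanged genes.
corr = (Xc.T @ yc) / (np.linalg.norm(Xc, axis=0) * np.linalg.norm(yc))
selected = np.argsort(-np.abs(corr))[:20]
X_selected = X[:, selected]

# Dimensionality reduction: PCA via SVD of the centered matrix. Each
# component is a linear combination of all 2000 genes.
U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
n_components = 10
loadings = Vt[:n_components]            # shape (10, 2000)
X_pca = Xc @ loadings.T                 # shape (100, 10)

print("selected gene indices:", sorted(selected.tolist())[:5], "...")
print("genes with non-zero weight in PC1:", np.count_nonzero(loadings[0]))
```

Because the filter uses the outcome, it should be fit on training data only in a real analysis; the PCA step is unsupervised but is still best fit on the training split.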

How can I interpret a complex "black box" model to understand the biology behind its predictions?

For complex models like deep neural networks or large ensembles, you can use interpretability techniques:

- Global Surrogate Models: Train an interpretable model (e.g., a linear model like Partial Least Squares - PLS or a decision tree) to approximate the predictions of your complex "black box" model. You can then interpret the simpler model to gain insights into the overall logic of the complex one [16].

- Feature Importance: Most algorithms provide metrics of feature importance (e.g., Mean Decrease Accuracy in Random Forests). Analyzing these can highlight which features the model relies on most for its predictions [16].

The Scientist's Toolkit: Essential Research Reagents & Computational Solutions

Table 3: Key Tools and Resources for Managing High-Dimensional Data

| Tool / Resource | Function | Application Example in Genomics |

|---|---|---|

| Scikit-learn | A comprehensive Python library for machine learning, offering built-in tools for regularization, cross-validation, and feature selection [10]. | Implementing L1 regularization for gene selection and using k-fold cross-validation to tune model parameters. |

| Bioconductor | A bioinformatics-specific R package repository that offers specialized tools for preprocessing and analyzing high-throughput genomic data [10]. | Normalizing gene expression data from microarrays or RNA-seq before differential expression analysis. |

| Random Forest with VSURF/Boruta | Ensemble algorithm combined with sophisticated feature selection packages to identify the most relevant features [12] [16]. | Selecting a compact panel of 5 plasma metabolites from 96 for mild cognitive impairment diagnosis [16]. |

| PCA & UMAP | Dimensionality reduction techniques to visualize and compress high-dimensional data into 2 or 3 dimensions while preserving structure [14]. | Reducing 20,000 gene expressions to 2D for visualizing cell clusters in single-cell RNA sequencing data [14]. |

| Amazon SageMaker | A cloud platform that can automate machine learning workflows, including detecting and alerting when overfitting occurs during model training [13]. | Managing the training of large deep learning models on genomic data with automatic early stopping to prevent overfitting. |

The Overfitting Problem in Genomics and Biomarker Discovery

In the field of genomics research, overfitting occurs when a machine learning model learns not only the underlying biological patterns in the training data but also the noise and random fluctuations [10]. This results in a model that performs exceptionally well on training data but fails to generalize to unseen data, leading to misleading conclusions and wasted resources [10].

The consequences are particularly severe in biomarker discovery, where 95% of biomarker candidates fail between discovery and clinical use [17]. This high failure rate is often attributable to models that cannot generalize beyond the specific dataset on which they were trained.

Table 1: Statistical Performance Targets for Biomarker Validation

| Validation Type | Key Metric | Minimum Performance Target | Regulatory Reference |

|---|---|---|---|

| Analytical Validation | Coefficient of Variation | < 15% | [17] |

| Diagnostic Biomarker | Sensitivity & Specificity | Typically ≥80% (varies by indication) | FDA, 2007 [17] |

| Clinical Utility | ROC-AUC | ≥ 0.80 | [17] |

Troubleshooting Guide: Identifying and Resolving Overfitting

Q1: How can I detect if my genomic model is overfitting?

A: Monitor the performance gap between your training and validation datasets. Key indicators of overfitting include:

- High Training Accuracy, Low Validation Accuracy: Your model achieves near-perfect performance on training data (e.g., >95% accuracy) but performance drops significantly (e.g., by 15% or more) on a held-out validation set or external cohort [10] [18].

- Perfect Fit to Noise: The model's predictions align perfectly with all data points, including outliers and noise, rather than capturing the general trend. This is often visualized by an overly complex decision boundary that doesn't reflect known biology [18].

- Performance Metrics Divergence: A significant and growing difference between training and validation loss (or error) during the model's training process [18].

Q2: What are the most effective techniques to prevent overfitting in genomic models?

A: Successful strategies combine multiple approaches:

Apply Regularization Techniques:

- L1 (Lasso) & L2 (Ridge) Regularization: Add penalty terms to the model's loss function to discourage over-reliance on any single feature, promoting simpler models [10] [18]. L1 can drive feature coefficients to zero, effectively performing feature selection.

- Dropout: Randomly "drop out" a subset of neurons during training in neural network models to prevent co-adaptation and over-reliance on specific nodes [10] [18].
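The contrast between L1 and L2 penalties can be seen on simulated data with scikit-learn. The sketch below is illustrative only: the sample size, the number of features, the five "causal" features, and the penalty strengths are assumptions rather than recommended settings.

```python
import numpy as np
from sklearn.linear_model import Lasso, Ridge

rng = np.random.default_rng(0)
n, p = 100, 500                          # hypothetical samples x features
X = rng.standard_normal((n, p))
true_coef = np.zeros(p)
true_coef[:5] = 2.0                      # only 5 simulated features matter
y = X @ true_coef + 0.5 * rng.standard_normal(n)

# L1 penalty: many coefficients are set exactly to zero.
lasso = Lasso(alpha=0.3, max_iter=10000).fit(X, y)
# L2 penalty: coefficients shrink but generally stay non-zero.
ridge = Ridge(alpha=1.0).fit(X, y)

n_lasso = int(np.count_nonzero(lasso.coef_))
n_ridge = int(np.count_nonzero(ridge.coef_))
print(f"non-zero coefficients: Lasso = {n_lasso}, Ridge = {n_ridge}")
print("Lasso keeps the simulated causal features:",
      bool(np.all(lasso.coef_[:5] != 0)))
```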

Implement Robust Data Handling:

- Data Augmentation: Artificially increase the size and diversity of your training dataset. In genomics, this can involve introducing controlled noise to gene expression data or simulating mutations in genomic sequences [10].

- Proper Data Splitting: Rigorously split data into training, validation, and test sets. The validation set is used for hyperparameter tuning and model selection, while the test set provides a final, unbiased evaluation [18].
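A minimal sketch of noise-based augmentation for an expression matrix is shown below. The helper name `augment_with_noise`, the number of copies, and the noise scale are assumptions; an appropriate noise level depends on the assay. To avoid leakage, augmentation should be applied to the training split only, after the data have been split.

```python
import numpy as np

def augment_with_noise(X, y, n_copies=2, noise_scale=0.05, seed=0):
    """Append noisy copies of each training sample.

    Noise is Gaussian, scaled per feature to a fraction (noise_scale) of
    that feature's standard deviation. Labels are copied unchanged.
    """
    rng = np.random.default_rng(seed)
    feature_sd = X.std(axis=0, keepdims=True)
    X_parts, y_parts = [X], [y]
    for _ in range(n_copies):
        noise = rng.standard_normal(X.shape) * noise_scale * feature_sd
        X_parts.append(X + noise)
        y_parts.append(y)
    return np.vstack(X_parts), np.concatenate(y_parts)

rng = np.random.default_rng(1)
X_train = rng.lognormal(mean=2.0, sigma=1.0, size=(30, 1000))  # simulated
y_train = rng.integers(0, 2, size=30)

X_aug, y_aug = augment_with_noise(X_train, y_train, n_copies=2)
print(X_train.shape, "->", X_aug.shape)
```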

Utilize Cross-Validation:

- Use k-fold cross-validation to assess model performance more reliably. The data is divided into k subsets; the model is trained on k-1 folds and validated on the remaining fold, repeating this process k times [18]. This maximizes the use of available data for both training and validation.

Control Model Complexity:

- Early Stopping: Halt the training process when performance on the validation set stops improving and begins to degrade [10] [18].

- Feature Selection: Reduce the number of input features (e.g., genes, SNPs) to include only the most biologically relevant ones, lowering the model's capacity to overfit [18].
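Early stopping reduces to a small amount of bookkeeping on the validation loss. The sketch below is framework-independent; the function name `early_stopping_epoch` and the `patience` and `min_delta` parameters are assumptions that mirror options commonly offered by deep learning libraries.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (best_epoch, stop_epoch) for a sequence of validation losses.

    Training stops once the loss has failed to improve by more than
    min_delta for `patience` consecutive epochs. The weights saved at
    best_epoch are the ones to keep.
    """
    best_loss = float("inf")
    best_epoch = -1
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            return best_epoch, epoch
    return best_epoch, len(val_losses) - 1

# Validation loss falls, then rises as the model starts to overfit.
losses = [0.90, 0.70, 0.60, 0.58, 0.59, 0.61, 0.65, 0.70]
best, stopped = early_stopping_epoch(losses, patience=3)
print(f"best epoch = {best}, training stopped at epoch {stopped}")
```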

Table 2: Troubleshooting Checklist for Overfitting

| Symptom | Potential Cause | Corrective Action |

|---|---|---|

| High variance in model performance across different datasets | Small sample size; high feature-to-sample ratio | Increase training data via augmentation; apply strong regularization (L1/L2) [10] [18] |

| Model identifies spurious biomarkers that lack biological plausibility | Noisy data; model capturing random fluctuations | Improve data preprocessing; implement feature selection; use ensemble methods [10] [19] |

| Performance drops significantly on external validation cohorts | Model learned site-specific biases | Use cross-validation; collect multi-site data; apply domain adaptation techniques [20] |

| Training loss continues to decrease while validation loss increases | Overly complex model; training for too many epochs | Apply early stopping; reduce model complexity (fewer layers/parameters) [10] [18] |

Experimental Protocol: A Rigorous Workflow for Robust Biomarker Discovery

This protocol outlines a best-practice workflow for developing genomic biomarkers while mitigating overfitting, incorporating key steps from discovery to validation [17] [21].

Phase 1: Discovery (6-12 months)

- Step 1 - Define Intended Use: Pre-specify the biomarker's purpose (diagnostic, prognostic, predictive) and the target patient population [21].

- Step 2 - Sample Size Estimation: Ensure adequate sample size. A minimum of 50-200 samples is required to establish meaningful statistical associations, with power calculations guiding exact numbers [17].

- Step 3 - Blinded Analysis: Keep personnel who generate biomarker data blinded to clinical outcomes to prevent bias during the discovery phase [21].

Phase 2: Analytical Validation (12-24 months)

- Step 4 - Assay Development: Prove your test measures the biomarker accurately and reproducibly. Key statistical benchmarks must be met [17]:

- Coefficient of variation < 15% for repeat measurements.

- Recovery rates between 80-120%.

- Step 5 - Inter-laboratory Validation: Validate the assay across multiple labs and technicians. Approximately 60% of biomarkers fail at this stage when developed in a single lab [17].
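The Step 4 benchmarks are straightforward to check programmatically. The sketch below uses only the Python standard library; the function names and the replicate values are hypothetical, while the 15% and 80-120% limits are the ones quoted above.

```python
import statistics

def coefficient_of_variation(replicates):
    """Percent CV: sample standard deviation divided by the mean."""
    return 100.0 * statistics.stdev(replicates) / statistics.mean(replicates)

def recovery_percent(measured, expected):
    """Percent of a known spiked amount that the assay recovers."""
    return 100.0 * measured / expected

def passes_analytical_benchmarks(replicates, spiked_measured, spiked_expected):
    cv = coefficient_of_variation(replicates)
    recovery = recovery_percent(spiked_measured, spiked_expected)
    return cv < 15.0 and 80.0 <= recovery <= 120.0

stable = [10.1, 9.8, 10.3, 9.9, 10.0]   # hypothetical repeat measurements
noisy = [10.0, 14.0, 7.0, 12.0, 8.0]

print(f"stable CV = {coefficient_of_variation(stable):.2f}%")
print(f"noisy CV = {coefficient_of_variation(noisy):.2f}%")
print("stable assay passes:", passes_analytical_benchmarks(stable, 9.5, 10.0))
print("noisy assay passes:", passes_analytical_benchmarks(noisy, 9.5, 10.0))
```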

Phase 3: Clinical Validation (24-48 months)

- Step 6 - Independent Validation Cohort: Test the biomarker on a completely independent set of hundreds to thousands of patient samples [17].

- Step 7 - Demonstrate Clinical Utility: Prove that using the biomarker actually changes treatment decisions and improves patient outcomes, which is required for regulatory approval [17] [21].

The Scientist's Toolkit: Key Research Reagents and Solutions

Table 3: Essential Tools for Robust Genomic Model Development

| Tool Category | Specific Examples | Function in Preventing Overfitting |

|---|---|---|

| Programming Frameworks | Scikit-learn, TensorFlow, PyTorch [10] | Provide built-in implementations of regularization (L1/L2), dropout, and cross-validation functions. |

| Bioinformatics Libraries | Bioconductor, BioPython [10] | Offer specialized preprocessing and feature selection methods tailored for high-dimensional genomic data. |

| Data Harmonization Tools | LOINC (Logical Observation Identifier Names and Codes) [20] | Standardize laboratory test names and units when combining datasets from multiple institutions, reducing technical batch effects. |

| Model Interpretation | SHAP (SHapley Additive exPlanations) [22] | Interprets model predictions to identify which features are driving decisions, helping flag potential overfitting to noise. |

Frequently Asked Questions (FAQs)

Q3: My model performs well internally but fails on external data. Is this always overfitting?

A: Not necessarily, although overfitting is the most common cause. Other factors can also produce this failure, a phenomenon sometimes called "dataset shift." These include:

- Batch Effects: Technical variations between data collected at different sites or times [21] [20].

- Population Differences: The external data may come from a population with different genetic backgrounds, comorbidities, or environmental exposures [17] [21].

- Assay Variability: Differences in laboratory protocols, reagents, or platforms can alter measurements [20].

Troubleshooting Step: Before concluding overfitting, ensure you have performed proper data normalization and harmonization across datasets [20].

Q4: How much data do I really need to avoid overfitting in genomics?

A: There is no universal number, as it depends on the model's complexity and the effect size you are trying to detect. However, general guidelines exist:

- Discovery Phase: A minimum of 50-200 samples is required to identify initial statistical associations [17].

- Validation Phase: Hundreds to thousands of patient samples are typically needed to achieve adequate statistical power for clinical validation [17].

- Rule of Thumb: The number of features (e.g., genes) should be significantly smaller than the number of samples. A high feature-to-sample ratio is a primary risk factor for overfitting [10].

Q5: Can AI and machine learning actually improve biomarker validation success rates?

A: Yes, when applied correctly. Modern AI-powered discovery platforms are transforming the field by:

- Accelerating Timelines: Cutting discovery and validation timelines from 5+ years to 12-18 months through automated analysis of multi-omics data [17].

- Improving Success Rates: Recent studies show machine learning approaches can improve validation success rates by 60% by identifying more robust biomarker signatures from the start [17].

- Identifying Complex Patterns: Discovering complex, multi-feature patterns across genomics, proteomics, and clinical data that are invisible to traditional statistical methods [17].

Distinguishing Biological Signal from Technical and Random Noise in Sequencing Data

High-throughput sequencing (HTS) has become a standard tool in life science studies, offering unprecedented resolution for quantifying biological molecules. However, this high sensitivity magnifies the impact of technical noise: non-biological variation introduced by library preparation, amplification, sequencing bias, or random hexamer priming [23]. This noise is particularly prevalent in low-abundance genes, owing to coverage bias and the stochasticity of the sequencing process, and it can obscure true biological signals and produce spurious patterns in downstream analyses [23]. For machine learning in genomics, these technical variations present a significant risk: a model that learns noise-derived patterns, which are not reproducible, is overfitted. Such a model performs well on its training data but fails to generalize to new, unseen datasets, compromising its predictive power and biological utility [24] [25].

Frequently Asked Questions (FAQs)

1. What is the difference between technical noise and biological signal in my data?

A biological signal represents consistent, reproducible patterns resulting from actual biological processes, such as the differential expression of a gene across two conditions. Technical noise, on the other hand, comprises random, non-biological fluctuations introduced during the sequencing workflow. These can include low-level expression variations due to intrinsic sequencing variability, coverage bias of lower abundance genes, or biases from library preparation [23]. A key danger in machine learning is that complex models may overfit by learning this technical noise, mistaking it for a real signal, which leads to poor performance on validation data [24] [25].

2. How can I tell if my machine learning model is overfitting to technical noise?

Signs of overfitting include:

- Performance Discrepancy: The model achieves high accuracy on the training data but performs poorly on the validation or test data [24] [1].

- Excessive Complexity: The model has learned an overly complex function that perfectly fits the training data points, including their noise. Visually, this might look like a squiggly line passing through every data point instead of capturing the smooth underlying trend [24].

- High Variance: The model's predictions are highly sensitive to small changes in the training data [24] [1].

3. My dataset has a low number of replicates. Are my analyses more susceptible to noise?

Yes, datasets with a low number of replicates are particularly vulnerable. Statistical methods for batch correction and normalization are designed to mitigate biases, but their effectiveness is often limited with few replicates. A noise filter for pre-processing data can help reduce the further amplification of these biases before they impact downstream analyses [23].

4. What are some common sources of technical noise I should check for in my NGS data?

Technical noise can originate from multiple stages of an NGS experiment. The seqQscorer tool highlights several key quality features to audit [26]:

- Mapping Features: The number of reads that map uniquely to the reference genome is a strong, broad indicator of data quality.

- Raw Sequence Features: "Overrepresented sequences" (indicating adapter contamination) and "Per sequence GC content" (deviations from expected distribution) are highly predictive of quality issues.

- Experimental Variation: Differences in hybridization kinetics between probes in targeted sequencing panels can lead to grossly non-uniform coverage [27].
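To make the "Per sequence GC content" feature concrete, here is a minimal sketch that computes the GC fraction of each read and flags reads far from an expected value. The expected value and tolerance are hypothetical placeholders, and this is a simplification for intuition, not how seqQscorer or FastQC derive their features.

```python
def gc_fraction(read):
    """Fraction of G/C among unambiguous bases (A, C, G, T); N is ignored."""
    read = read.upper()
    gc = read.count("G") + read.count("C")
    at = read.count("A") + read.count("T")
    total = gc + at
    return gc / total if total else 0.0

def flag_gc_outliers(reads, expected, tolerance):
    """Indices of reads whose GC fraction deviates from `expected` by more than `tolerance`."""
    return [i for i, r in enumerate(reads)
            if abs(gc_fraction(r) - expected) > tolerance]

# Toy reads; the last one contains ambiguous bases.
reads = ["ATGCATGC", "GGGGCCCC", "ATATATAT", "ACGTNNGT"]

fractions = [gc_fraction(r) for r in reads]
outliers = flag_gc_outliers(reads, expected=0.5, tolerance=0.3)
print(fractions, outliers)
```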

Troubleshooting Guides

Guide 1: Implementing a Noise Filtering Pipeline with noisyR

The noisyR package provides an end-to-end pipeline to quantify and remove technical noise from HTS datasets, helping to prevent models from learning these non-biological patterns [23] [28].

- Objective: To characterize and filter out random technical noise from count matrices or alignment files before downstream analysis or model training.

- Principle: The method assesses the consistency of signal distribution across replicates and samples, identifying a noise threshold below which gene expression is considered unreliable [23].

Workflow Diagram: noisyR Noise Filtering

Protocol Steps:

Similarity Calculation:

- For a Count Matrix: Use calculate_expression_similarity_counts(). The function compares the similarity of gene ranks or abundances across your samples using a sliding window approach. You can choose from over 45 similarity metrics [28].

- For BAM Files: Use calculate_expression_similarity_transcript(). This function calculates the point-to-point similarity of expression across the length of transcripts for each exon in a pairwise manner [28].

Noise Quantification:

- Use the expression-similarity relationship from step one to determine a sample-specific noise threshold.

- The package provides functionality to determine an optimal, data-driven threshold, such as by selecting the expression level where the similarity consistently exceeds a certain value. The goal is to choose a threshold that results in the lowest variance across samples [28].

Noise Removal:

- For a Count Matrix: Use remove_noise_from_matrix(). Genes with expression below the noise threshold in every sample are removed. To preserve data structure, the average noise threshold is added to every remaining entry [28].

- For BAM Files: Use remove_noise_from_bams(). Genes whose exons are all below the noise threshold in every sample are removed from the BAM files [28].

Expected Outcome: A denoised count matrix or set of BAM files. This leads to improved convergence in downstream analyses like differential expression calling and gene regulatory network inference, as predictions are less biased by technical artifacts [23].
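The count-matrix removal rule described above can be sketched in a few lines. This is a Python illustration of the logic only (drop genes below the threshold in every sample, then add the average threshold to what remains); it is not the noisyR implementation, and the function name, gene names, counts, and thresholds are all hypothetical.

```python
def remove_noise(counts, thresholds):
    """counts: gene -> per-sample counts; thresholds: per-sample noise thresholds."""
    mean_threshold = sum(thresholds) / len(thresholds)
    denoised = {}
    for gene, row in counts.items():
        # Drop a gene only if it is below the noise threshold in every sample.
        if all(c < t for c, t in zip(row, thresholds)):
            continue
        # Shift the remaining entries by the average threshold.
        denoised[gene] = [c + mean_threshold for c in row]
    return denoised

counts = {
    "geneA": [0, 2, 1],     # below threshold everywhere -> removed
    "geneB": [50, 3, 40],   # above threshold in two samples -> kept
    "geneC": [4, 5, 9],     # below threshold everywhere -> removed
}
thresholds = [10, 8, 12]

denoised = remove_noise(counts, thresholds)
print(denoised)
```

Note that geneB is kept even though one of its samples falls below the threshold; the rule only removes genes that are unreliable in every sample.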

Guide 2: Automated Quality Control with seqQscorer

Poor-quality sequencing files introduce systematic biases that act as a major source of technical noise. The seqQscorer tool uses machine learning to automate the quality control of NGS data [26].

- Objective: To automatically and objectively classify NGS data files (e.g., from RNA-seq, ChIP-seq) as high or low quality, flagging problematic datasets before analysis.

- Principle: The tool trains predictive models on a large collection of labeled NGS files from the ENCODE repository, leveraging a comprehensive set of quality features [26].

Workflow Diagram: seqQscorer Quality Control

Protocol Steps:

Feature Extraction: Run seqQscorer on your raw NGS data (FastQ files) and their mapped results. The tool extracts four sets of features [26]:

- RAW: Features from raw sequencing reads (e.g., "Overrepresented sequences," "Per sequence GC content").

- MAP: Mapping statistics (e.g., "Overall mapping rate," "Uniquely mapped reads").

- LOC: Genomic localization of reads.

- TSS: Spatial distribution of reads near transcription start sites.

Model Application: The tool applies a pre-trained model (e.g., a Random Forest or Multilayer Perceptron) that has been validated on human and mouse data for RNA-seq, ChIP-seq, and DNase-seq/ATAC-seq assays. These models combine the predictive power of multiple features to make a robust classification [26].

Interpretation: The model outputs a classification of the file's quality. Files predicted to be low-quality should be investigated further or excluded from downstream analysis and model training to reduce the introduction of systematic technical noise [26].

Guide 3: Addressing Overfitting in Genomic Machine Learning Models

This guide provides direct strategies to mitigate overfitting when training models on genomic data.

- Objective: To ensure your model generalizes well to new genomic data by learning the underlying biological signal rather than technical noise.

- Principle: Balance model complexity with the amount of available data, and use techniques that prevent the model from fitting spurious correlations [24] [1] [25].

Protocol Steps:

- Increase Training Data: Where possible, increase the number of biological replicates in your sequencing experiment. More data helps the model learn the true data distribution rather than memorizing noise [1].

- Apply Regularization: Use techniques like Lasso (L1) or Ridge (L2) regularization, which penalize overly complex models by adding a constraint on the size of the model coefficients. This effectively discourages the model from relying too heavily on any one feature [1].

- Use Dropout: If training a neural network, dropout is a highly effective technique. It works by randomly "dropping out" (i.e., temporarily removing) a percentage of neurons during training, which prevents the network from becoming overly reliant on specific connections and encourages a more robust learned representation [25].

- Perform Rigorous Validation: Always hold out a validation dataset or use cross-validation. Monitor the model's performance on both the training and validation sets. If training performance continues to improve while validation performance worsens, this is a clear sign of overfitting [24].

- Simplify the Model: Start with a simpler model architecture. A model with fewer parameters is less capable of memorizing noise. You can gradually increase complexity if the model is found to be underfitting [1].

Table 1: Comparison of Noise Filtering and QC Approaches

| Tool / Approach | Primary Input | Core Methodology | Key Outcome / Advantage |

|---|---|---|---|

| noisyR [23] [28] | Count matrix or BAM files | Assesses expression consistency across replicates to determine a data-driven noise threshold. | Outputs a denoised expression matrix; improves convergence in DE analysis and network inference. |

| seqQscorer [26] | Raw FastQ files & mapping stats | Machine learning classifier trained on ENCODE data using multiple quality feature sets. | Provides an automated, objective quality classification for NGS files, reducing human bias. |

| DLM for NGS Depth [27] | DNA probe sequences | A bidirectional RNN that uses nucleotide identity and unpaired probability to predict coverage. | Predicts sequencing depth from probe sequence, allowing for optimization of panel uniformity. |

Table 2: Performance of Predictive Models in Genomics

| Model / Tool | Application Area | Performance Metric | Result |

|---|---|---|---|

| DLM for NGS Depth [27] | Predicting sequencing depth from probe design | Accuracy (within a factor of 3) | 93% accuracy for a 39k-plex SNP panel; 89% accuracy when trained on one panel and tested on another. |

| seqQscorer ML Models [26] | Classifying NGS file quality (e.g., Human ChIP-seq) | auROC (Area Under ROC Curve) | > 0.9 auROC when using all quality features, indicating high prediction accuracy. |

| noisyR [23] | Enhancing biological signal | Impact on downstream analysis | Leads to consistent differential expression calls and enrichment results across different methods. |

The Scientist's Toolkit: Essential Research Reagents & Software

| Item Name | Function / Purpose | Example / Note |

|---|---|---|

| noisyR Package [28] | An R package for quantifying and removing technical noise from sequencing datasets. | Implements both count-based and transcript-based noise filtering. Available on GitHub. |

| seqQscorer [26] | A machine learning-based tool for automated quality control of NGS data files. | Validated on human and mouse RNA-seq, ChIP-seq, and DNase-seq/ATAC-seq data. |

| NUPACK Software [27] | Calculates DNA folding probabilities and thermodynamic properties. | Used by the DLM to compute the probability that a nucleotide is unpaired, informing hybridization kinetics. |

| FastQC [26] | A popular tool for initial quality control of raw sequencing data. | Provides various analyses (e.g., per-base sequence quality, adapter contamination) but requires manual interpretation. |

| Deep Learning Model (DLM) [27] | Predicts NGS sequencing depth from DNA probe sequence to improve panel uniformity. | Employs a bidirectional recurrent neural network (RNN) with GRUs. |

Technical FAQs: Core Concepts and Diagnostics

What is overfitting in the context of genetic prediction models? Overfitting occurs when a model learns the specific patterns, including noise, in a training dataset so well that it performs poorly on new, unseen data. In genetic prediction, this means a model might incorporate effects from null genetic variants (those with no true biological effect) that appear significant due to random chance or limitations in the training sample. This results in a model that seems highly accurate in the original study but fails to generalize to independent populations [29] [30] [24].

Why are polygenic psychiatric phenotypes particularly susceptible to overfitting? Psychiatric phenotypes are highly polygenic, meaning they are influenced by thousands of genetic variants, each with a very small individual effect. Because the number of candidate genetic variants (predictors) far exceeds the number of individuals in typical studies, there is a high risk of including null variants. Limited statistical power to distinguish truly associated variants from null variants is a primary driver of overfitting in this field [29] [30] [31].

How can I quickly diagnose if my model is overfit? The most telling sign of overfitting is a significant drop in performance between the training and test sets. For example, a model might show high accuracy or R² on the data it was trained on, but these metrics deteriorate when applied to a validation cohort [32] [24]. Other diagnostic indicators include:

- Low "Events per Variable" (EPV) ratio: An EPV below roughly 10-20 is a strong indicator of potential overfitting [31].

- Over-optimistic performance metrics: Reporting only the apparent (training set) accuracy without validation on an independent test set [32].

What are the consequences of using an overfit model in practice? Using an overfit model for clinical prediction can lead to inaccurate risk estimates for individuals, potentially misinforming clinical decision-making. In research, overfit models are not replicable and can misdirect scientific inquiry by highlighting false genetic associations, thereby wasting resources and slowing progress toward genuine biological insights [24] [31].

Troubleshooting Guides: Common Problems and Solutions

Problem 1: Poor Model Performance in an Independent Validation Cohort

Symptoms:

- High prediction accuracy (e.g., R², AUC) in the training dataset but significantly lower accuracy in an independent validation cohort.

- Poor calibration where predicted risks do not match observed outcome frequencies in the new dataset [30] [31].

Solutions:

- Implement Regularization: Use penalized regression methods like ridge regression or LASSO, which constrain the size of the coefficients for genetic variants, preventing any single variant from having an unduly large effect based on noise [29] [30].

- Apply Machine Learning Algorithms Designed for High-Dimensional Data: Consider methods like Smooth-Threshold Multivariate Genetic Prediction (STMGP), which combines variant selection with penalized regression, or gradient-boosted regression trees (GraBLD), which sequentially corrects weak predictors. These are specifically designed to handle the "large p, small n" problem in genomics [29] [33].

- Conduct a Power Analysis: Ensure your study has an adequate sample size. A widely adopted benchmark is to have at least 10-20 outcome events per variable (EPV) considered in the model [31].
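The EPV benchmark is easy to check before any model is fitted. The sketch below assumes the common convention that "events" means the smaller outcome class and that all candidate predictors are counted, not only those retained in the final model; the cohort numbers are hypothetical.

```python
def events_per_variable(n_events, n_nonevents, n_candidate_predictors):
    """EPV based on the smaller outcome class and all candidate predictors."""
    return min(n_events, n_nonevents) / n_candidate_predictors

def max_predictors(n_events, n_nonevents, target_epv=10):
    """Largest number of candidate predictors that keeps EPV at or above the target."""
    return min(n_events, n_nonevents) // target_epv

# Hypothetical cohort: 120 cases, 880 controls, 40 candidate variants.
epv = events_per_variable(120, 880, 40)
budget_10 = max_predictors(120, 880, target_epv=10)
budget_20 = max_predictors(120, 880, target_epv=20)
print(epv, budget_10, budget_20)
```

Here an EPV of 3 falls well below the 10-20 benchmark, suggesting that either the candidate set should shrink to about 6-12 variants or the cohort should grow.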

Problem 2: Model is Too Complex and Incorporates Too Many Null Variants

Symptoms:

- The model includes a very large number of genetic variants, many of which have no established biological plausibility.

- Performance is highly sensitive to small changes in the training data.

Solutions:

- Use Prior Biological Knowledge for Feature Selection: When possible, select candidate genetic variants based on existing research evidence or functional annotations rather than relying solely on data-driven, hypothesis-free selection from GWAS [31].

- Employ Bayesian Methods: Methods like LDpred or BayesR incorporate prior assumptions about the distribution of genetic effect sizes, which can help shrink the effects of null variants toward zero [30] [33].

- Validate with External Summary Statistics: If individual-level data is limited, use summary-data-based methods like SBLUP that can leverage large-scale GWAS summary statistics from independent consortia for validation and improvement [30].

Problem 3: Inflated Apparent Heritability or Prediction Accuracy

Symptoms:

- The model explains a surprisingly high proportion of heritability in the training set, which is not replicated elsewhere.

- The number of predictors (genetic variants) is very close to or even exceeds the number of observations.

Solutions:

- Never Rely on Training-Set Performance Alone: Always report performance metrics derived from a held-out test set or, ideally, an externally validated cohort [32] [31].

- Use Internal Validation Techniques: Implement resampling methods like cross-validation or bootstrapping within your training dataset to obtain a more realistic estimate of model performance before proceeding to external validation [24].

- Simplify the Model: Use a more stringent (lower) P-value threshold for variant inclusion in a polygenic risk score (PRS), or use more stringent LD clumping parameters, to reduce the number of null variants [30].
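The effect of the P-value threshold on model size can be seen in a toy polygenic score. The sketch below implements thresholding only (no LD clumping), with hypothetical effect sizes, P-values, and allele dosages for a single individual; a smaller threshold admits fewer variants into the score.

```python
def polygenic_score(dosages, betas, pvalues, p_threshold):
    """Sum of beta * allele dosage over SNPs with P below the threshold."""
    included = [j for j, p in enumerate(pvalues) if p < p_threshold]
    score = sum(betas[j] * dosages[j] for j in included)
    return score, len(included)

# Hypothetical GWAS estimates for four SNPs and one person's dosages (0, 1, or 2).
betas = [0.20, -0.10, 0.05, 0.30]
pvalues = [1e-8, 1e-4, 0.03, 0.40]
dosages = [2, 1, 0, 1]

results = {thr: polygenic_score(dosages, betas, pvalues, thr)
           for thr in (5e-8, 1e-3, 0.05, 1.0)}
for thr, (score, n_snps) in results.items():
    print(f"P < {thr:g}: {n_snps} SNPs, score = {score:.2f}")
```

In this toy example the most lenient threshold pulls in a SNP with P = 0.40 whose large estimated effect is most likely noise, which is the mechanism by which lenient thresholds inflate training-set fit.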

Experimental Protocols & Data

Detailed Methodology: Implementing the STMGP Algorithm

The following protocol outlines the key steps for implementing the Smooth-Threshold Multivariate Genetic Prediction (STMGP) algorithm, a method specifically developed to mitigate overfitting in genetic predictions [29] [30].

1. Data Preparation and Quality Control (QC)

- Genotyping & Imputation: Perform genome-wide genotyping using a standardized array (e.g., HumanOmniExpressExome BeadChip). Impute to a reference panel to increase genomic coverage.

- Standard QC: Apply standard QC filters: remove samples with call rate < 0.98, exclude variants with call rate < 0.99, Hardy-Weinberg equilibrium P < 1×10⁻⁴, and minor allele frequency < 0.01.

- Population Structure: Calculate principal components (PCs) to account for population stratification.

- Phenotype: Define the target polygenic psychiatric phenotype (e.g., depressive symptoms measured by CES-D score). Consider transformations (e.g., Box-Cox) if the distribution is non-normal.
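The QC thresholds listed above translate directly into simple filters. The sketch below applies them to per-variant and per-sample summary statistics that are assumed to have been computed already (for example by a QC pipeline); the variant and sample identifiers and their values are hypothetical.

```python
def passes_variant_qc(call_rate, hwe_p, maf):
    """Keep variants with call rate >= 0.99, HWE P >= 1e-4, and MAF >= 0.01."""
    return call_rate >= 0.99 and hwe_p >= 1e-4 and maf >= 0.01

def passes_sample_qc(call_rate):
    """Keep samples with call rate >= 0.98."""
    return call_rate >= 0.98

# Hypothetical summaries: (call rate, HWE P-value, minor allele frequency).
variants = {
    "snp_a": (0.999, 0.50, 0.200),   # passes all filters
    "snp_b": (0.985, 0.50, 0.200),   # fails call rate
    "snp_c": (0.999, 1e-6, 0.200),   # fails Hardy-Weinberg equilibrium
    "snp_d": (0.999, 0.50, 0.005),   # fails minor allele frequency
}
samples = {"s1": 0.995, "s2": 0.970}

kept_variants = [v for v, stats in variants.items() if passes_variant_qc(*stats)]
kept_samples = [s for s, cr in samples.items() if passes_sample_qc(cr)]
print(kept_variants, kept_samples)
```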

2. Training and Test Set Split

- Split the full dataset into a training cohort for model building and a completely independent test cohort for validation. The cited study used 3,685 subjects for training and 3,048 for validation [30].

3. Genome-Wide Association Study (GWAS)

- Conduct a GWAS on the training cohort, regressing the phenotype on each SNP, typically while adjusting for covariates like age, sex, and PCs.

4. STMGP Model Training

- Variant Selection: Select a set of SNPs from the GWAS based on a P-value threshold.

- Smooth-Thresholding: Assign weights to the selected SNPs. Unlike standard PRS which uses effect size estimates, STMGP uses a function of the P-value to reflect the certainty of a variant's inclusion, which helps stabilize predictions.

- Penalized Regression: Build a generalized ridge regression model using all selected SNPs as predictors. This multivariate approach accounts for linkage disequilibrium (correlation between SNPs) between predictors, unlike simple clumping and thresholding (P+T) methods. The ridge penalty helps to further control overfitting by shrinking coefficients.

5. Model Validation

- Internal Validation: Apply the trained STMGP model to the held-out test cohort.

- Performance Metrics: Calculate prediction accuracy (e.g., R² for continuous traits, AUC for binary traits) and calibration metrics.

- Benchmarking: Compare performance against other state-of-the-art methods (e.g., PRS, GBLUP, SBLUP, BayesR, ridge regression) on the same test dataset [30].

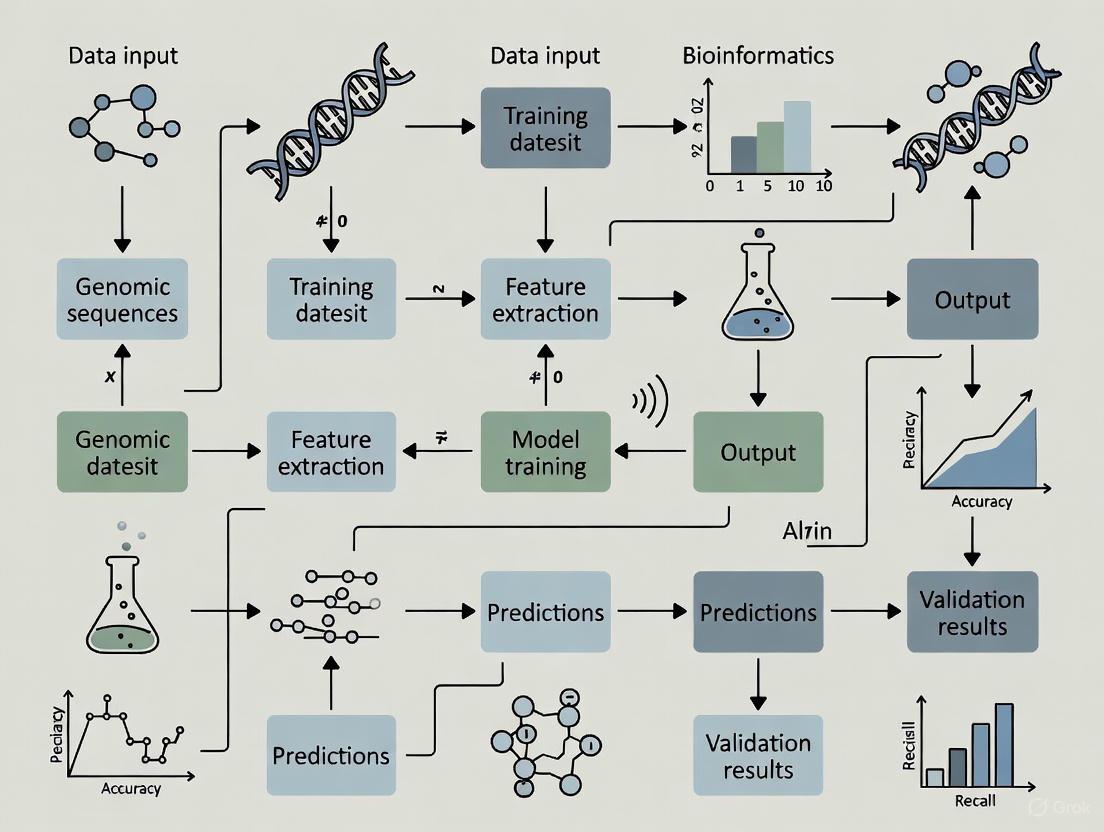

Experimental Workflow: From Data to Validated Model

The diagram below illustrates the core workflow for developing and validating a genetic prediction model while guarding against overfitting.

Quantitative Performance Comparison of Prediction Methods

The table below summarizes a comparison of different genetic prediction methods for a phenotype of depressive symptoms, as reported in a study using real-world data. STMGP demonstrated the highest accuracy with the lowest degree of overfitting [30].

| Method | Acronym | Key Mechanism | Prediction Accuracy (R²)* | Relative Overfitting Risk |

|---|---|---|---|---|

| Smooth-Threshold Multivariate Genetic Prediction | STMGP | Penalized regression with variant selection and weighting | Highest | Lowest |

| Polygenic Risk Score | PRS | P-value thresholding & LD clumping | Low | High |

| Genomic Best Linear Unbiased Prediction | GBLUP | Linear mixed model; all variants included as random effects | Low | High |

| Summary-Data-Based Best Linear Unbiased Prediction | SBLUP | Uses external GWAS summary statistics | Moderate | Moderate |

| BayesR | BayesR | Bayesian hierarchical model | Moderate | Moderate |

| Ridge Regression | RR | L2-penalized regression on clumped SNPs | Moderate | Moderate |

Note: *Reported values are relative comparisons from the cited study; specific R² values were not significantly different between the top performers, but STMGP consistently showed the most robust performance [30].

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function in Experiment |

|---|---|

| Genotyping Array (e.g., HumanOmniExpressExome) | Provides the raw genotype data for all samples. The foundation of the analysis [30]. |

| Quality Control (QC) Pipelines (e.g., in PLINK) | Software to filter out low-quality samples and genetic variants, ensuring data integrity before analysis [30]. |

| GWAS Summary Statistics (e.g., from PGC, GIANT) | Pre-computed association statistics from large consortia; can be used for methods like SBLUP or as a prior for Bayesian methods [33]. |

| Reference Panels (e.g., 1000 Genomes) | Used for genotype imputation (to infer untyped variants) and for estimating linkage disequilibrium (LD) in methods like LDpred [33]. |

| STMGP Software | Implements the specific STMGP algorithm, which combines SNP selection with generalized ridge regression [29] [30]. |

| LDpred / BayesR Software | Implements Bayesian methods for polygenic risk prediction that shrink effect sizes based on priors and LD information [30] [33]. |

| Validation Cohort | An independently recruited sample with genotypic and phenotypic data, essential for externally validating any prediction model to test for overfitting [30] [31]. |

Building Defenses: Methodologies to Prevent Overfitting in Genomic Models

FAQs: Core Concepts and Technique Selection

Q1: What is overfitting in the context of genomics research, and why is it a critical problem?

Overfitting occurs when a machine learning model learns the training data too well, including its noise and random fluctuations, rather than the underlying biological pattern. This results in a model that performs excellently on training data but generalizes poorly to new, unseen data [34] [35]. In genomics, this is a severe issue due to the high dimensionality of the data, where the number of features (e.g., genetic variants) often far exceeds the number of samples [34]. The consequences include:

- Misleading Biomarker Discovery: Identification of spurious genetic associations [34].

- Ineffective Clinical Applications: Models that fail to generalize can lead to incorrect diagnoses or treatment recommendations [34].

- Wasted Resources: Significant time and funding spent on validating false-positive findings [34].

Q2: When should I use L1 (Lasso) vs. L2 (Ridge) regularization for genomic data?

The choice depends on your data characteristics and research goal [35].

- Use L1 (Lasso) Regularization when you need feature selection. It is ideal for high-dimensional genomic data (e.g., from GWAS) where you suspect many features (like SNPs) are irrelevant. L1 drives the coefficients of less important features to exactly zero, creating a sparse and often more interpretable model [36] [35].

- Use L2 (Ridge) Regularization when you are dealing with multicollinearity (highly correlated features) and believe most features contribute to the prediction. L2 shrinks all coefficients proportionally but rarely zeroes them out, leading to a more stable solution when features are correlated, which is common in biological pathways [36] [35].
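The different behaviour of the two penalties is easiest to see in a simplified setting. Assuming orthonormal predictors (XᵀX = I) and the objectives ½‖y − Xβ‖² + λ‖β‖₁ for Lasso and ½‖y − Xβ‖² + (λ/2)‖β‖² for Ridge, each coefficient has a closed form in terms of its unpenalized estimate b: soft-thresholding for Lasso and b / (1 + λ) for Ridge. Real genomic predictors are correlated, so this sketch is for intuition only, and the coefficient values are hypothetical.

```python
def lasso_shrink(b, lam):
    """Soft-thresholding: coefficients with |b| <= lam become exactly zero."""
    magnitude = abs(b) - lam
    if magnitude <= 0:
        return 0.0
    return magnitude if b > 0 else -magnitude

def ridge_shrink(b, lam):
    """Ridge rescales every coefficient toward zero but never reaches it."""
    return b / (1.0 + lam)

# Hypothetical unpenalized estimates: two strong effects, two weak ones.
ols = [3.0, -1.5, 0.4, -0.2]
lam = 0.5

lasso = [lasso_shrink(b, lam) for b in ols]
ridge = [ridge_shrink(b, lam) for b in ols]
print(lasso)
print(ridge)
```

Lasso removes the two weak effects entirely, while Ridge keeps all four at reduced size, which matches the selection-versus-stability trade-off described above.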

Q3: What is Elastic Net regularization, and what specific genomic challenge does it solve?

Elastic Net combines L1 and L2 regularization to overcome the limitations of using either one alone [36] [35]. It is particularly valuable in genomics for solving the "group effect" problem: when multiple genes or genetic markers in a pathway are highly correlated, Lasso might arbitrarily select only one from the group. Elastic Net can select entire groups of correlated variables together, providing more robust biological insight [35]. Its penalty term is a weighted combination: λ * (α * Σ|βj| + (1-α) * Σβj²), where α controls the mix between L1 and L2 [36].
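The penalty formula can be evaluated directly to see how α interpolates between the two extremes. The sketch below follows the expression as written in the text; some libraries scale the L2 term by ½ or name the parameters differently, so treat the exact parameterization as an assumption. The coefficient vector is hypothetical.

```python
def elastic_net_penalty(beta, lam, alpha):
    """lam * (alpha * sum|b| + (1 - alpha) * sum b^2), as written in the text."""
    l1 = sum(abs(b) for b in beta)
    l2 = sum(b * b for b in beta)
    return lam * (alpha * l1 + (1.0 - alpha) * l2)

beta = [1.0, -2.0, 0.0, 0.5]   # hypothetical coefficients

pure_l1 = elastic_net_penalty(beta, lam=0.1, alpha=1.0)   # Lasso penalty
pure_l2 = elastic_net_penalty(beta, lam=0.1, alpha=0.0)   # Ridge penalty
mixed = elastic_net_penalty(beta, lam=0.1, alpha=0.5)     # equal mix
print(pure_l1, pure_l2, mixed)
```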

Q4: How does Dropout regularization work in neural networks, and why is it useful for genomic deep learning?

Dropout is a technique used primarily in neural networks where, during each training iteration, a random subset of neurons is temporarily "dropped out," meaning their output is set to zero [37] [38]. This prevents the network from becoming too reliant on any single neuron and forces it to learn redundant, robust representations [35]. It acts as an approximation of training a large ensemble of thinner networks and averaging their predictions. This is useful in deep learning applications in genomics, such as analyzing sequence data, to prevent complex models from memorizing the training dataset [39].
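A minimal sketch of the mechanism, written without a deep learning framework, is shown below. It uses the common "inverted dropout" convention, in which surviving activations are rescaled by 1 / (1 − rate) during training so that no adjustment is needed at inference time; that convention, the function name, and the toy activations are assumptions for illustration.

```python
import random

def dropout(activations, rate, training, rng):
    """Inverted dropout on a list of activations; `rate` must be in [0, 1)."""
    if not training or rate == 0.0:
        return list(activations)          # inference: pass values through unchanged
    keep = 1.0 - rate
    # Each unit survives with probability `keep` and is rescaled; others are zeroed.
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

rng = random.Random(0)
hidden = [1.0] * 10                        # toy hidden-layer outputs

train_out = dropout(hidden, rate=0.5, training=True, rng=rng)
eval_out = dropout(hidden, rate=0.5, training=False, rng=rng)
print(train_out)
print(eval_out)
```

Because a different random subset is zeroed on every call, no single unit can be relied upon during training, which is the source of the ensemble-like behaviour.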

Q5: What is Early Stopping, and how does it function as a regularizer?

Early Stopping is an implicit regularization technique that halts the model's training process before it begins to overfit [37] [38]. During training, model performance is monitored on a validation set. Training is stopped once the performance on the validation set (e.g., validation loss) stops improving and starts to degrade, indicating the onset of overfitting [36] [35]. This method saves computational resources and prevents the model from learning noise in the training data by limiting the effective number of training iterations [35].
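The stopping rule can be expressed independently of any training framework. The sketch below takes a sequence of per-epoch validation losses and applies a patience criterion; the `patience` and `min_delta` parameters and the loss values are hypothetical choices, not settings taken from the cited sources.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch), both 0-based.

    Training stops once the validation loss has failed to improve by more
    than `min_delta` for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    best_epoch = -1
    waited = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss, best_epoch, waited = loss, epoch, 0
        else:
            waited += 1
            if waited >= patience:
                return epoch, best_epoch
    return len(val_losses) - 1, best_epoch   # never triggered: ran to the end

# Validation loss improves until epoch 3, then degrades.
val = [0.90, 0.70, 0.60, 0.55, 0.56, 0.58, 0.61, 0.65]
stop, best = early_stopping_epoch(val, patience=3)
print(stop, best)
```

In practice the model weights from `best_epoch` are the ones to keep, not those from the epoch at which training stopped.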

Troubleshooting Guides

Issue 1: Model Performance is Poor on Validation Set Despite Good Training Performance

Problem: Your model shows a large gap between high performance on training data (e.g., low loss, high accuracy) and poor performance on the validation set. This is a classic sign of overfitting [35].

Solutions:

- Implement Cross-Validation: Never test your model on the same data it was trained on [40]. Use k-fold cross-validation to obtain a robust estimate of model performance and tune hyperparameters [34] [35].

- Apply L2 Regularization: If you are not using regularization, introduce it. Start with L2 regularization to penalize large weights and encourage a simpler model. The strength of the penalty is controlled by the λ parameter, which can be tuned via cross-validation [36] [37].

- Increase Regularization Strength: If you are already using regularization, try increasing the λ parameter. This applies a stronger penalty on model complexity [36].

- Simplify the Model Architecture: For neural networks, reduce the number of layers or neurons per layer. A model that is too complex for the amount of available data is prone to overfitting [34].

Issue 2: Unstable Feature Selection with L1 Regularization in Genomic Studies

Problem: When using L1 regularization on genomic data with highly correlated features (e.g., genes in the same biological pathway), the selected features change significantly with slight changes in the training data.

Solutions:

- Switch to Elastic Net: The L2 component in Elastic Net helps handle correlated variables more gracefully, promoting the selection of groups of correlated features rather than picking one arbitrarily [36] [35]. This leads to more stable and biologically meaningful feature sets.

- Use Domain Knowledge for Feature Pre-selection: Before applying machine learning, leverage existing biological knowledge (e.g., Gene Ontology, known pathways) to filter features, reducing dimensionality and correlation in the input data [34] [41].

Issue 3: Determining the Optimal Regularization Strength (λ)

Problem: It is challenging to choose the right value for the regularization parameter λ.

Solutions:

- Systematic Hyperparameter Tuning: Use cross-validation to evaluate model performance for a range of λ values (e.g., on a logarithmic scale like 0.001, 0.01, 0.1, 1, 10) [35]. Select the λ that gives the best validation performance.

- Visualize the Regularization Path: For L1 and Elastic Net, plot how model coefficients change as λ varies. This helps understand feature importance and the point at which coefficients stabilize or become zero [35].

- Monitor Validation Metrics: Use a validation set to monitor metrics like loss during training. For early stopping, this is crucial to determine the optimal stopping point [37].
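A grid search over λ with k-fold cross-validation can be sketched with the standard library alone. To keep it short, the example below uses ridge regression with a single predictor and no intercept, for which the estimate is Σxy / (Σx² + λ); the simulated data, seed, and helper names are hypothetical, and a real genomic analysis would use a multi-feature model from an established library.

```python
import random

def ridge_slope(xs, ys, lam):
    """Closed-form ridge estimate for one predictor without an intercept."""
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)

def k_fold_indices(n, k, rng):
    """Shuffle sample indices and deal them into k disjoint folds."""
    idx = list(range(n))
    rng.shuffle(idx)
    return [idx[i::k] for i in range(k)]

def cv_mse(xs, ys, lam, folds):
    """Mean held-out squared error across folds for a given lambda."""
    errors = []
    for held_out in folds:
        held = set(held_out)
        train = [i for i in range(len(xs)) if i not in held]
        slope = ridge_slope([xs[i] for i in train], [ys[i] for i in train], lam)
        errors.append(sum((ys[i] - slope * xs[i]) ** 2 for i in held_out) / len(held_out))
    return sum(errors) / len(errors)

rng = random.Random(42)
xs = [rng.gauss(0, 1) for _ in range(30)]
ys = [0.5 * x + rng.gauss(0, 1) for x in xs]      # weak signal plus noise
folds = k_fold_indices(len(xs), k=5, rng=rng)     # same folds reused for every lambda

grid = [0.001, 0.01, 0.1, 1, 10]
scores = {lam: cv_mse(xs, ys, lam, folds) for lam in grid}
best_lam = min(scores, key=scores.get)
print(scores, best_lam)
```

Reusing the same folds for every candidate value keeps the comparison between λ values fair, since each one is scored on identical held-out samples.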

Experimental Protocols and Methodologies

Protocol 1: Implementing a Regularized Machine Learning Pipeline for Genomic Data

This protocol outlines a standard workflow for building a regularized classifier for a genomic dataset (e.g., gene expression data with associated phenotypes).

1. Data Preprocessing and Partitioning

- Load and clean the genomic data (e.g., yeast.csv with 186 genes and 79 expression features) [40].

- Critical Step: Partition the data into three sets: Training (e.g., 60%), Validation (e.g., 20%), and Hold-out Test (e.g., 20%).

- Preprocess the features (e.g., normalization, handling missing values).
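The partitioning step might look like the following sketch, which shuffles row indices once and cuts them into three disjoint sets. The 186-row size mirrors the example dataset mentioned above; the function name and seed are hypothetical, and the split is not stratified by class, which may matter for imbalanced phenotypes.

```python
import random

def three_way_split(n_samples, train_frac=0.6, val_frac=0.2, seed=0):
    """Return disjoint (train, validation, test) index lists."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    n_train = round(train_frac * n_samples)
    n_val = round(val_frac * n_samples)
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

train_idx, val_idx, test_idx = three_way_split(186)
print(len(train_idx), len(val_idx), len(test_idx))
```

Any preprocessing statistics (for example normalization means) should be estimated on the training indices only and then applied to the other two sets, so that no information leaks from the held-out data.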

2. Model Training with Cross-Validation

- Choose a model (e.g., Logistic Regression with L2 penalty).

- Use the training set to perform k-fold (e.g., 5-fold) cross-validation to find the optimal regularization parameter λ.

- Train multiple models on the training folds with different λ values and evaluate them on the validation folds.

3. Model Validation and Final Evaluation

- Train a final model on the entire training set using the best λ found in step 2.

- Evaluate this final model's performance on the held-out test set, which was not used in any part of training or tuning, to get an unbiased estimate of its generalization error [40].

The following workflow diagram illustrates this experimental protocol.

Protocol 2: Demonstrating Overfitting with a Hands-On Exercise

This exercise, inspired by educational literature, effectively demonstrates the danger of overfitting and the importance of a proper train-test split [40].

1. The Deceptive Workflow

- Load a genomic dataset (e.g., the yeast dataset).

- Immediately train a model (e.g., a classification tree) on the entire dataset.

- Use the same data to evaluate the model. You will observe high performance, creating a false sense of success.

2. Exposing the Problem

- Insert a data randomization step before training. Use a widget/tool to randomly shuffle the class labels, simulating a scenario where no real pattern exists [40].

- Run the same training and evaluation workflow. You will see that the model still reports high performance, proving it is learning noise and memorizing the data because it is being tested on its training set.

3. Implementing the Correct Workflow

- Introduce a Data Sampler widget to split the randomized data into training and testing sets.

- Train the model on the training set only.

- Evaluate the model on the separate test set. The performance will now be poor, correctly indicating that the model cannot generalize from the randomized data.
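The same exercise can be reproduced outside a visual tool. The sketch below is an assumption-laden stand-in for the widget workflow: it uses scikit-learn instead of Orange, random numbers in place of the yeast expression values, and an arbitrary 70/30 split. Because the labels are randomized, any apparent skill must come from memorization.

```python
import numpy as np
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

rng = np.random.default_rng(42)

# Stand-in for the expression matrix: 186 samples x 79 features.
X = rng.normal(size=(186, 79))
# Randomized class labels: by construction there is no pattern to learn.
y_shuffled = rng.permutation(np.repeat([0, 1], 93))

# Deceptive workflow: train and evaluate on the same data.
tree = DecisionTreeClassifier(random_state=0).fit(X, y_shuffled)
resubstitution_accuracy = tree.score(X, y_shuffled)

# Correct workflow: fit on the training split, score on unseen samples.
X_train, X_test, y_train, y_test = train_test_split(
    X, y_shuffled, test_size=0.3, stratify=y_shuffled, random_state=0
)
tree_split = DecisionTreeClassifier(random_state=0).fit(X_train, y_train)
test_accuracy = tree_split.score(X_test, y_test)

print(f"accuracy when tested on training data: {resubstitution_accuracy:.2f}")
print(f"accuracy when tested on held-out data: {test_accuracy:.2f}")
```

An unpruned tree can separate every training sample, so the first score is perfect even though the labels are noise; the held-out score should hover near chance.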

Data Presentation: Comparison of Regularization Techniques

The table below summarizes the key characteristics of the core regularization techniques.

Table 1: Comparison of Core Regularization Techniques for Genomic Data

| Technique | Mechanism | Key Strengths | Common Use Cases in Genomics | Considerations |

|---|---|---|---|---|

| L1 (Lasso) | Adds absolute value of coefficients to loss function; λ * Σ\|βj\| [36]. | Creates sparse models; performs implicit feature selection; improves interpretability [36] [35]. | Genome-Wide Association Studies (GWAS) to identify key genetic markers; high-dimensional data with many irrelevant features [35]. | Unstable with highly correlated features; may arbitrarily select one feature from a correlated group [35]. |

| L2 (Ridge) | Adds squared value of coefficients to loss function; λ * Σβj² [36]. | Handles multicollinearity well; stable solution; computationally efficient [36] [35]. | Polygenic risk score models where many small effects are expected; data with correlated predictors [35]. | Does not perform feature selection; all features remain in the model [36]. |

| Elastic Net | Combines L1 and L2 penalties; λ * (α * Σ\|βj\| + (1-α) * Σβj²) [36]. | Balances sparsity and stability; selects groups of correlated variables [36] [35]. | Gene expression studies with correlated genes in pathways; general-purpose regularizer for high-dimensional genomic data [35]. | Introduces an additional hyperparameter (α) to tune [36]. |

| Dropout | Randomly drops neurons during training [37] [38]. | Prevents co-adaptation of neurons; acts as an implicit ensemble method [35]. | Deep neural networks for sequence analysis (e.g., DNA, RNA); image-based genomic analyses [39]. | Specific to neural networks; requires careful tuning of dropout rate [38]. |

| Early Stopping | Halts training when validation performance degrades [37]. | Simple to implement; saves computational resources; requires no change to loss function [36] [35]. | Training large neural networks or gradient boosting models on genomic data where training can be time-consuming [35]. | Requires a validation set to monitor; choice of 'patience' parameter can affect results [37]. |
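The three penalty terms in the table can be written out directly. The sketch below is illustrative only: the function names and the example coefficient vector are hypothetical, and the formulas follow the table's parameterization (λ as overall strength, α as the L1/L2 mix).

```python
import numpy as np

def l1_penalty(beta, lam):
    """Lasso penalty: lam * sum(|beta_j|)."""
    beta = np.asarray(beta, dtype=float)
    return lam * np.sum(np.abs(beta))

def l2_penalty(beta, lam):
    """Ridge penalty: lam * sum(beta_j ** 2)."""
    beta = np.asarray(beta, dtype=float)
    return lam * np.sum(beta ** 2)

def elastic_net_penalty(beta, lam, alpha):
    """Elastic Net penalty: lam * (alpha * sum|beta_j| + (1 - alpha) * sum beta_j^2)."""
    beta = np.asarray(beta, dtype=float)
    return lam * (alpha * np.sum(np.abs(beta)) + (1 - alpha) * np.sum(beta ** 2))

# Hypothetical coefficient vector: two features already shrunk to zero.
beta = [0.0, 0.5, -2.0, 0.0, 1.0]
print(l1_penalty(beta, lam=0.1))                      # sum|beta| = 3.5
print(l2_penalty(beta, lam=0.1))                      # sum beta^2 = 5.25
print(elastic_net_penalty(beta, lam=0.1, alpha=0.5))  # equal mix of the two
```

Setting `alpha=1` recovers the L1 penalty and `alpha=0` recovers the L2 penalty, which is why Elastic Net is often described as interpolating between the two.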

The Scientist's Toolkit: Essential Research Reagents and Computational Materials

Table 2: Essential Tools for Regularized Machine Learning in Genomics

| Tool / Resource | Type | Primary Function | Example Use Case |

|---|---|---|---|

| scikit-learn | Software Library | Provides robust tools for ML in Python, including implementations of L1, L2, and Elastic Net regularization, and cross-validation [34]. | Building a regularized logistic regression model to predict disease status from SNP data. |

| TensorFlow / PyTorch | Software Library | Open-source libraries for building and training deep learning models, featuring built-in support for Dropout, L2, and Early Stopping [34] [37]. | Constructing a deep neural network with Dropout layers to classify genomic sequences. |

| Bioconductor | Software Suite | A suite of R packages specifically designed for the analysis and comprehension of genomic data, including preprocessing and dimensionality reduction tools [34]. | Preprocessing and normalizing raw gene expression data before applying regularized models. |

| Orange | Visual Programming Tool | An open-source data visualization and analysis tool that allows workflow-based design of ML pipelines, ideal for education and exploratory data analysis [40]. | Visually demonstrating the concepts of overfitting and the impact of train-test splits to students or collaborators. |

| Cross-Validation | Methodological Technique | A resampling procedure used to evaluate a model's ability to generalize to an independent dataset and to tune hyperparameters like λ [34] [40]. | Reliably estimating the performance of a regularized classifier and selecting the optimal regularization strength. |

Visual Guide: Logical Relationship Between Overfitting and Regularization

The following diagram illustrates the core problem of overfitting in genomics and how different regularization techniques address it.

Troubleshooting Guide: Common Experimental Issues & Solutions

Poor Model Generalization After Augmentation

Problem: My model performs well on training data but poorly on validation/test sets, even after implementing data augmentation.

Investigation & Solutions:

- Check Augmentation Intensity: Overly aggressive augmentations can distort true biological signals.

- Action: Systematically reduce the probability or magnitude of your augmentations (e.g., lower mutation rates, reduce number of insertions/deletions). Retrain and monitor validation performance [42].

- Verify the Fine-Tuning Step (for EvoAug): The second-stage fine-tuning on original data is crucial for removing bias introduced by synthetic perturbations.

- Evaluate Augmentation Relevance: Not all evolution-inspired perturbations are equally suitable for every genomic task.

- Action: Consult the table below to diagnose which augmentations may be harming your specific task and which combinations are generally effective.

Table 1: Troubleshooting Evolution-Inspired Augmentations for Genomic DNNs

| Augmentation Type | Potential Pitfall | Affected Biological Assumption | Recommended Use & Performance Insight |

|---|---|---|---|

| Random Mutation | May reduce effect size of nucleotide variants, leading to poorer variant effect prediction [42]. | Mutations do not alter the regulatory function. | Use with caution; performance can be recovered during fine-tuning stage [42]. |

| Insertion/Deletion | Assumes distance between regulatory motifs is not critical [42]. | The spatial relationship between elements is flexible. | Can be highly effective; improves model robustness to indels [42]. |

| Translocation | Assumes the order of regulatory motifs is not critical [42]. | The order of regulatory elements can be changed without functional loss. | Effective for learning motif representations; improves generalization [42]. |

| Reverse Complement | Can be redundant if the model already uses reverse-complement invariance [42]. | Sequence function is strand-agnostic. | May not provide additional benefit if invariance is already encoded [42]. |

| Combination (Multiple Types) | Increased computational cost and training time [42]. | Multiple invariances hold true simultaneously. | Often yields the best performance, mitigating overfitting more effectively than single augmentations [42]. |
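To make the biological assumptions in the table concrete, the sketch below applies three of the perturbations to plain DNA strings. It is not the EvoAug API: the function names are hypothetical, translocation is modelled as a circular shift, and deletion pads the sequence end with random bases to keep the length fixed. Both modelling choices are assumptions made for illustration.

```python
import random

COMPLEMENT = str.maketrans("ACGT", "TGCA")

def reverse_complement(seq):
    """Return the opposite strand; assumes function is strand-agnostic."""
    return seq.translate(COMPLEMENT)[::-1]

def translocate(seq, shift):
    """Circularly shift the sequence; assumes motif order is not critical."""
    shift %= len(seq)
    return seq[shift:] + seq[:shift]

def delete_segment(seq, start, length, rng):
    """Remove a segment and pad the end with random bases to keep the length.

    Assumes the distance between flanking motifs is not critical.
    """
    kept = seq[:start] + seq[start + length:]
    padding = "".join(rng.choice("ACGT") for _ in range(len(seq) - len(kept)))
    return kept + padding

rng = random.Random(0)
seq = "AACGTACGT"
print(reverse_complement(seq))
print(translocate(seq, 2))
print(delete_segment(seq, start=2, length=3, rng=rng))
```

If a task depends on motif spacing or order, the last two functions change exactly the property the model needs to learn, which is the pitfall the table flags.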

Synthetic Data Lacks Realism and Utility

Problem: The synthetic genomic data I've generated does not capture key statistical properties of the real data, leading to poor model performance when trained on it.

Investigation & Solutions:

- Assess Fidelity with Downstream Tasks: The ultimate test of synthetic data is its utility.

- Validate Statistical Similarity: Use quantitative metrics to ensure the synthetic data distribution matches the real data.

- Action: Compare measures like feature distributions, correlation matrices, and principal component analysis (PCA) plots between real and synthetic datasets [45].

- Review Generation Methodology: The choice of algorithm is critical for generating high-quality, privacy-preserving synthetic data.

- Action: Consider switching to or testing with more advanced generative models. See the table below for a comparison of tools and methods.

Table 2: Comparison of Synthetic Data Generation Tools and Methods

| Tool / Method | Key Feature | Best For | Evidence of Utility |

|---|---|---|---|

| Gretel.ai | ML-powered API for generating realistic, privacy-preserving synthetic data [45]. | Enterprise-scale synthetic data generation. | Successfully used to create synthetic mouse genotype/phenotype data that replicated GWAS results from a real study [44]. |

| Synthea | Open-source platform for generating synthetic patient health records, including genomic data [45]. | Academic research and prototyping. | Enables simulation of patient populations for research where real data is scarce or restricted [45]. |

| GANs (General) | Use a generator/discriminator architecture to produce highly realistic data [45]. | Complex, high-dimensional data generation. | Can capture intricate dependencies in genomic data, but require significant data and computational resources [45]. |

| VAEs (General) | Learn a latent representation of the data to generate new samples [45]. | Dimensionality reduction and data imputation. | Often more stable to train than GANs and can be effective for genomics [45]. |
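The statistical-similarity check from the troubleshooting steps above can be sketched with NumPy. Everything here is an illustrative assumption: the `similarity_report` helper is hypothetical, the "real" data are simulated from two latent factors, and the gap measures are simple choices rather than a standard benchmark.

```python
import numpy as np

def similarity_report(real, synthetic):
    """Compare two (samples x features) matrices on simple summary statistics."""
    mean_gap = np.abs(real.mean(axis=0) - synthetic.mean(axis=0)).max()
    std_gap = np.abs(real.std(axis=0) - synthetic.std(axis=0)).max()
    # Frobenius norm of the difference between feature correlation matrices.
    corr_gap = np.linalg.norm(
        np.corrcoef(real, rowvar=False) - np.corrcoef(synthetic, rowvar=False)
    )
    return {
        "max_mean_gap": float(mean_gap),
        "max_std_gap": float(std_gap),
        "corr_frobenius_gap": float(corr_gap),
    }

rng = np.random.default_rng(0)
# Simulated "real" data: 10 correlated features driven by 2 latent factors.
loadings = rng.normal(size=(2, 10))

def sample(n):
    return rng.normal(size=(n, 2)) @ loadings + 0.3 * rng.normal(size=(n, 10))

real = sample(500)
good_synthetic = sample(500)                  # same generating process
poor_synthetic = rng.normal(size=(500, 10))   # ignores feature correlations

good = similarity_report(real, good_synthetic)
poor = similarity_report(real, poor_synthetic)
print("good:", good)
print("poor:", poor)
```

A generator that matches marginal distributions but loses the correlation structure would show a small mean gap and a large correlation gap, so both kinds of statistic are worth inspecting.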

Frequently Asked Questions (FAQs)

Q1: Why is overfitting a particularly severe problem in genomics compared to other machine learning domains?

Overfitting is acute in genomics primarily due to the "small n, large p" problem (wide data), where the number of features (e.g., SNPs, genes) far exceeds the number of samples [34] [46]. This high dimensionality allows models to easily memorize noise and spurious correlations in the training data, failing to generalize to new data. The consequences are dire, leading to misleading biomarker discovery, ineffective clinical applications, and wasted resources [34].
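A short numerical illustration of the "small n, large p" effect, using simulated noise rather than genomic data (the sample and feature counts are arbitrary assumptions): with more features than samples, an unregularized linear model can fit targets that contain no signal at all.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, n_features = 20, 200          # far more features than samples

X_train = rng.normal(size=(n_samples, n_features))
y_train = rng.normal(size=n_samples)     # pure noise: nothing to learn

# Minimum-norm least-squares fit; with p > n it interpolates the targets.
beta, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)
train_mse = np.mean((X_train @ beta - y_train) ** 2)

# Fresh data from the same noise process exposes the lack of generalization.
X_new = rng.normal(size=(n_samples, n_features))
y_new = rng.normal(size=n_samples)
new_mse = np.mean((X_new @ beta - y_new) ** 2)

print(f"training MSE: {train_mse:.2e}")
print(f"new-data MSE: {new_mse:.2f}")
```

The training error is numerically zero even though the targets are random, while the error on new samples is not, which is the memorization behavior described in the answer.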

Q2: How does EvoAug improve the interpretability of genomic deep neural networks, not just their performance?

EvoAug-trained models learn more robust and accurate representations of transcription factor binding motifs. Studies have shown that the first-layer convolutional filters of models trained with EvoAug capture a wider repertoire of motifs that better reflect known motifs, both quantitatively and qualitatively [42]. Furthermore, attribution maps (such as saliency maps) from these models are cleaner, with more identifiable motifs and less spurious noise, making model decisions easier to interpret [42].

Q3: My dataset is very small. Can these augmentation strategies still help?

Yes, they can be particularly beneficial in low-data regimes. Research on EvoAug demonstrated that a model trained with augmentations on only 25% of the original training data could outperform the same model trained with standard methods on the entire dataset [43]. Synthetic data generation is also explicitly designed to overcome the limitations of small sample sizes by creating large, high-quality datasets for training [45].

Q4: What are the key ethical considerations when using synthetic genomic data?

The primary ethical benefit is privacy preservation, as synthetic data contains no real patient information, mitigating re-identification risks [45]. However, it is crucial to ensure that synthetic data does not perpetuate or amplify biases present in the original data. Best practices include using diverse source datasets and applying fairness metrics during the generation process to produce more equitable data [45].

Experimental Protocol: Implementing EvoAug for a Genomic DNN

This protocol outlines the methodology for training a deep neural network using the EvoAug-TF framework, based on experiments conducted in the referenced studies [43] [42].

Objective: To improve the generalization and interpretability of a genomic DNN (e.g., for transcription factor binding prediction) by incorporating evolution-inspired data augmentations.

Materials & Computational Setup:

- Software: Python, TensorFlow 2.7+, EvoAug-TF package.

- Hardware: A computer with a GPU is recommended for faster training.

- Data: A set of aligned DNA sequences (e.g., ChIP-seq peaks) and corresponding binary labels.

Procedure:

Data Preprocessing:

- Convert genomic sequences to one-hot encoded format (A: [1,0,0,0], C: [0,1,0,0], etc.).

- Split the data into training, validation, and test sets (e.g., 70/15/15).
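The preprocessing steps above can be sketched as follows. The helper names are hypothetical; the column order A, C, G, T extends the two encodings given in the text, and leaving ambiguous bases such as N as all-zero rows is an assumption rather than a requirement of the protocol.

```python
import numpy as np

ALPHABET = "ACGT"  # assumed column order: A, C, G, T

def one_hot_encode(seq):
    """Encode a DNA string as a (length, 4) array, one row per base."""
    encoded = np.zeros((len(seq), 4), dtype=np.float32)
    for i, base in enumerate(seq.upper()):
        j = ALPHABET.find(base)
        if j >= 0:                      # unknown bases (e.g. N) stay all-zero
            encoded[i, j] = 1.0
    return encoded

def split_indices(n, fractions=(0.70, 0.15, 0.15), seed=0):
    """Shuffle indices 0..n-1 and cut them into train/validation/test parts."""
    order = np.random.default_rng(seed).permutation(n)
    n_train = int(round(fractions[0] * n))
    n_val = int(round(fractions[1] * n))
    return order[:n_train], order[n_train:n_train + n_val], order[n_train + n_val:]

sequences = ["ACGTAC", "TTGACA", "GGNCAT", "CATGCA"]
X = np.stack([one_hot_encode(s) for s in sequences])   # shape (4, 6, 4)
train_idx, val_idx, test_idx = split_indices(100)
print(X.shape, len(train_idx), len(val_idx), len(test_idx))
```

The split is made on indices so that sequences and labels can be sliced with the same arrays and the test indices can be stored for later evaluation.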

Stage 1: Augmentation Training:

- Configure the EvoAug augmentations. A recommended starting combination is: random mutation, insertion, deletion, and translocation.

- Set hyperparameters (e.g., mutation rate = 0.1, probability of applying any augmentation = 0.5).

- Train the model for a fixed number of epochs. During each mini-batch, EvoAug-TF will stochastically apply the selected augmentations to the sequences. Critical: The labels for the augmented sequences remain the same as the original wild-type sequence.
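The stochastic, label-preserving behavior of Stage 1 can be illustrated with a plain NumPy sketch. This is not the EvoAug-TF implementation or its API: `random_mutation` and `augment_batch` are hypothetical helpers, only the mutation augmentation is shown, and applying it independently per sequence is a simplifying assumption.

```python
import numpy as np

def random_mutation(x, mutation_rate, rng):
    """Resample the base at a random fraction of positions in a one-hot (length, 4) array."""
    x = x.copy()
    length = x.shape[0]
    n_mut = int(round(mutation_rate * length))
    positions = rng.choice(length, size=n_mut, replace=False)
    new_bases = rng.integers(0, 4, size=n_mut)
    x[positions] = 0.0                  # clear the old base
    x[positions, new_bases] = 1.0       # write the new one (may equal the old)
    return x

def augment_batch(batch_x, batch_y, mutation_rate=0.1, apply_prob=0.5, rng=None):
    """Mutate each sequence with probability apply_prob; labels pass through unchanged."""
    if rng is None:
        rng = np.random.default_rng()
    augmented = np.stack([
        random_mutation(x, mutation_rate, rng) if rng.random() < apply_prob else x
        for x in batch_x
    ])
    return augmented, batch_y

rng = np.random.default_rng(0)
# Toy mini-batch: 8 random one-hot sequences of length 50 with binary labels.
batch_x = np.eye(4, dtype=np.float32)[rng.integers(0, 4, size=(8, 50))]
batch_y = np.array([0, 1, 0, 1, 1, 0, 1, 0])
aug_x, aug_y = augment_batch(batch_x, batch_y, rng=rng)
print(aug_x.shape, np.array_equal(aug_y, batch_y))
```

Calling the function anew for every mini-batch means the model rarely sees the same perturbed sequence twice, while the unchanged labels encode the assumption that the perturbation does not alter function.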

Stage 2: Fine-Tuning:

- Use the model weights from the end of Stage 1 as the starting point.

- Continue training the model, but only on the original, un-augmented training data.

- Use the validation set to determine when to stop training (early stopping).
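The early-stopping rule used in Stage 2 can be sketched in a framework-independent way. The `EarlyStopper` class, its `patience` and `min_delta` parameters, and the validation losses below are hypothetical illustrations, not values from the referenced studies.

```python
class EarlyStopper:
    """Signal a stop once validation loss has not improved for `patience` epochs."""

    def __init__(self, patience=3, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = float("inf")
        self.best_epoch = -1
        self.epochs_without_improvement = 0

    def update(self, epoch, val_loss):
        """Record one epoch's validation loss; return True when training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience

# Made-up validation losses for successive fine-tuning epochs.
val_losses = [0.62, 0.55, 0.51, 0.52, 0.53, 0.54, 0.50]
stopper = EarlyStopper(patience=3)
stopped_at = None
for epoch, loss in enumerate(val_losses):
    if stopper.update(epoch, loss):
        stopped_at = epoch
        break

print(f"stopped at epoch {stopped_at}; best epoch was {stopper.best_epoch}")
```

In practice the weights from the best epoch, not the last one, are the ones to keep. In TensorFlow, the built-in `tf.keras.callbacks.EarlyStopping` callback offers the same logic through its `patience` and `restore_best_weights` arguments.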

Model Evaluation:

- Finally, evaluate the model on the held-out test set to assess its generalization performance.

- Compare metrics (e.g., AUC, accuracy) against a baseline model trained without any augmentations.
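For the comparison step, AUC can be computed from its pairwise definition without extra dependencies. The `roc_auc` helper is a hypothetical name, and the labels and scores are toy values chosen for illustration, not results from any study.

```python
import numpy as np

def roc_auc(y_true, scores):
    """AUC as the chance that a random positive outranks a random negative.

    Ties between a positive and a negative score count as half a win.
    """
    y_true = np.asarray(y_true)
    scores = np.asarray(scores, dtype=float)
    pos = scores[y_true == 1]
    neg = scores[y_true == 0]
    diff = pos[:, None] - neg[None, :]          # every positive-negative pair
    wins = (diff > 0).sum() + 0.5 * (diff == 0).sum()
    return float(wins / diff.size)

# Toy test-set labels and predicted scores for the two models.
y_test = [1, 1, 1, 0, 0, 0]
baseline_scores = [0.9, 0.4, 0.6, 0.5, 0.3, 0.7]
augmented_scores = [0.9, 0.6, 0.8, 0.5, 0.3, 0.7]

baseline_auc = roc_auc(y_test, baseline_scores)
augmented_auc = roc_auc(y_test, augmented_scores)
print(f"baseline AUC:  {baseline_auc:.3f}")
print(f"augmented AUC: {augmented_auc:.3f}")
```

Both models must be scored on the same held-out test sequences for the comparison to be meaningful. The pairwise form is quadratic in the number of samples, so a rank-based implementation is preferable for large test sets.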

Table 3: Key Software Tools and Resources for Genomic Data Augmentation

| Item Name | Category | Function & Application | Reference / Source |

|---|---|---|---|

| EvoAug-TF | Software Library | A TensorFlow implementation of evolution-inspired data augmentations (mutations, indels, etc.) for training genomic DNNs. | PyPI: evoaug-tf [43] |