Validating RNA-seq Data with qPCR: A Comprehensive Guide for Robust Gene Expression Analysis

Abstract

This article provides a complete framework for researchers and drug development professionals to validate RNA-seq findings using qPCR. It covers the foundational principles of why validation is critical, even with advanced RNA-seq technologies, and delivers actionable methodological protocols for selecting reference genes and designing assays. The guide includes detailed troubleshooting for common pitfalls and a comparative analysis of validation performance across different RNA-seq workflows. By synthesizing current best practices and emerging trends, this resource empowers scientists to enhance the reproducibility, reliability, and clinical translatability of their transcriptomic data.

Why Validate? The Critical Role of qPCR in Confirming RNA-seq Findings

RNA sequencing (RNA-seq) has revolutionized gene expression analysis, providing an unbiased, comprehensive view of the transcriptome. Yet, a persistent question remains in molecular biology laboratories and manuscript review processes: are quantitative PCR (qPCR) validations of RNA-seq findings still required? This question sparks considerable debate among researchers, with perspectives varying based on technological capabilities, journal requirements, and research objectives.

The validation debate centers on balancing RNA-seq's discovery power with qPCR's precision. While RNA-seq can detect novel transcripts, splice variants, and provide genome-wide expression profiles, qPCR remains the gold standard for targeted gene expression analysis due to its sensitivity, reproducibility, and technical accessibility. This guide examines the evidence, protocols, and decision frameworks to help researchers navigate this ongoing scientific discussion.

The Technological Landscape: RNA-seq vs qPCR

Core Methodological Differences

Understanding the technical distinctions between these platforms clarifies their respective strengths and limitations.

RNA-seq employs next-generation sequencing to capture a complete snapshot of RNA populations, enabling hypothesis-free investigation. It detects both known and novel features including alternative splicing, fusion genes, and non-coding RNAs without prior sequence knowledge [1]. In contrast, qPCR provides highly accurate quantification of predefined targets through enzymatic amplification, making it ideal for confirming specific observations but unsuitable for discovery [2].

Performance Comparison

The table below summarizes key technical parameters distinguishing these technologies:

| Parameter | RNA-seq | qPCR |
|---|---|---|
| Discovery Power | High (detects novel transcripts) [1] | None (limited to known sequences) [1] |
| Throughput | High (thousands of genes simultaneously) [3] | Low (typically 1-10 genes per assay) [2] |
| Sensitivity | Can detect expression changes down to 10% [1] | High, but limited by amplification bias at extreme inputs [2] |
| Dynamic Range | >5 orders of magnitude [1] | ~7 orders of magnitude [2] |
| Sample Requirements | High-quality RNA often needed [2] | Compatible with degraded samples (e.g., FFPE) [2] |
| Turnaround Time | Days to weeks (includes bioinformatics) [2] | 1-3 days [2] |
| Cost per Sample | Higher for full transcriptome [3] | Lower for limited targets [3] |
| Bioinformatics Demand | Substantial [4] | Minimal [4] |

The Case For Validation: When qPCR Confirmation Remains Crucial

Technical Reproducibility Concerns

The RNA-seq workflow encompasses numerous steps where technical artifacts can emerge, including library preparation (e.g., biases from random hexamers versus oligo-dT priming), sequencing depth limitations affecting low-abundance transcript detection, and bioinformatic processing challenges [4]. These technical variables create potential false positives requiring confirmation.

For HLA gene expression analysis, one study demonstrated only moderate correlation between RNA-seq and qPCR (0.2 ≤ rho ≤ 0.53), highlighting how extreme polymorphism in certain gene families complicates RNA-seq quantification [5]. Such discrepancies underscore scenarios where orthogonal validation remains valuable.

Biological Reproducibility Assessment

qPCR validation using independent biological samples provides critical evidence that observations extend beyond the original experimental context. This approach tests whether differential expression patterns persist in similar samples under equivalent conditions, distinguishing robust biological effects from cohort-specific anomalies [4].

Institutional and Publishing Requirements

Many high-impact journals continue to require qPCR validation of RNA-seq findings, particularly for key results [4]. This conservative stance reflects peer review's cautious interpretation of relatively novel methodologies compared to qPCR's established track record.

The Case Against Routine Validation: When RNA-seq Stands Alone

Sufficient Biological Replication

When RNA-seq experiments incorporate adequate biological replicates (typically at least 3) that show strong agreement, this internal consistency is itself substantial evidence for the findings, and qPCR validation can often be dispensed with [4] [6].

Resource Allocation Considerations

Validation studies consume significant time, financial resources, and precious samples. When RNA-seq represents an initial discovery phase followed by extensive functional characterization (e.g., protein-level assays), qPCR validation may represent an unnecessary intermediate step [6].

Technical Maturation of RNA-seq

As RNA-seq methodologies mature with improved library prep protocols, sequencing depth, and bioinformatic tools, its standalone reliability has increased substantially. Targeted RNA-seq panels now offer high-depth coverage of specific gene sets at lower cost, blurring the distinction between discovery and validation platforms [2].

Experimental Design: Validation Protocols and Methodologies

Effective Validation Workflow

Candidate Gene Selection Strategies

Effective validation begins with strategic gene selection from RNA-seq data. Researchers should include genes representing different expression patterns: significantly upregulated, downregulated, and unchanged transcripts [4]. Computational tools like GSV (Gene Selector for Validation) leverage RNA-seq data to identify optimal reference genes and variable targets based on expression stability and abundance thresholds [7].

For normalization, a paradigm shift is emerging where combinations of non-stable genes can outperform traditional housekeeping genes when their expression patterns balance each other across experimental conditions [8]. This approach uses RNA-seq databases to identify optimal gene combinations mathematically.
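The idea of genes balancing each other can be illustrated with a small search. The sketch below is not the method of [8]; it is a minimal illustration, assuming log2 expression values are available per gene and per sample, that picks the combination of `k` genes whose per-sample average varies least. The function name `best_reference_combination`, the gene names, and all values are hypothetical.

```python
from itertools import combinations
from statistics import mean, pstdev


def best_reference_combination(log_expr, k=2):
    """Return the k-gene combination whose per-sample mean log2 expression varies least.

    log_expr maps gene name -> list of log2 expression values, one per sample.
    This exhaustive search is only practical for a small candidate set.
    """
    best_genes, best_sd = None, float("inf")
    for genes in combinations(sorted(log_expr), k):
        # Average the k genes within each sample, then measure spread across samples.
        per_sample = [mean(values) for values in zip(*(log_expr[g] for g in genes))]
        sd = pstdev(per_sample)
        if sd < best_sd:
            best_genes, best_sd = genes, sd
    return best_genes, best_sd


# Hypothetical log2(TPM) values for three genes across four samples.
example = {
    "geneA": [8.0, 9.0, 8.0, 9.0],  # higher in samples 2 and 4
    "geneB": [9.0, 8.0, 9.0, 8.0],  # lower in samples 2 and 4
    "geneC": [8.4, 8.9, 8.2, 8.8],  # moderately variable on its own
}
genes, sd = best_reference_combination(example, k=2)
print(genes, sd)
```

In this toy data neither `geneA` nor `geneB` is stable alone, yet their average is constant across samples, which is the behavior the combination approach exploits. Any combination chosen this way would still need experimental confirmation by qPCR.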

Sample Preparation for Validation Studies

Crucially, qPCR validation should use independent biological samples, not the RNA that was sequenced, so that it assesses both technical and biological reproducibility [4] [6]. Reusing the sequenced RNA or its cDNA tests only technical concordance between platforms and says nothing about biological variability.

Research Reagent Solutions

| Reagent/Category | Function | Considerations |
|---|---|---|
| RNA Extraction Kits | Isolate high-quality RNA | Select based on sample type (e.g., FFPE-compatible) [2] |
| Reverse Transcriptase | cDNA synthesis | Choice between random hexamers vs oligo-dT affects coverage [4] |
| qPCR Master Mix | Amplification reaction | Contains polymerase, dNTPs, buffer, fluorescence detection chemistry |
| Reference Genes | Normalization controls | Validate stability across conditions; avoid traditional HKGs without verification [7] |
| Target-Specific Primers/Probes | Gene quantification | Design for known sequences; efficiency impacts quantification accuracy |
| RNA-seq Library Prep Kits | Library construction | Method influences GC bias and transcript representation [4] |

Decision Framework: To Validate or Not to Validate?

Circumstances Requiring Validation

- Limited Biological Replicates: When RNA-seq was performed on few biological replicates (or just one), preventing robust statistical assessment [6]

- Novel or Unexpected Findings: When results contradict established literature or reveal surprising biological mechanisms

- High-Stakes Conclusions: When findings form the foundation for extensive future research or clinical applications

- Journal Requirements: When targeting publications with mandatory validation policies [4]

- Budget-Constrained Discovery: When RNA-seq is run on a subset of samples and qPCR is then used to extend the analysis to additional samples or conditions [4]

Circumstances Where Validation May Be Unnecessary

- Adequate Biological Replication: When RNA-seq includes sufficient replicates (≥3) showing strong agreement [4]

- Hypothesis Generation: When RNA-seq serves as exploratory analysis followed by dedicated functional studies [6]

- Independent Replication: When additional RNA-seq datasets confirm initial findings in independent samples [6]

- Resource Constraints: When validation would consume limited samples needed for subsequent experiments

The question of RNA-seq validation persists because its answer depends on context rather than universal principles. As RNA-seq methodologies continue maturing, the validation imperative is shifting from routine practice to strategic implementation. Researchers should base validation decisions on their specific experimental design, biological system, and research goals rather than defaulting to tradition.

In clinical applications where diagnostic or therapeutic decisions hinge on results, validation remains crucial—as demonstrated by rigorous clinical RNA-seq test development for Mendelian disorders [9]. In discovery research, the field is gradually accepting well-designed RNA-seq studies without obligatory qPCR confirmation, particularly as internal replication and orthogonal functional assays provide alternative validation pathways.

The enduring partnership between RNA-seq and qPCR reflects their complementary strengths: RNA-seq for unbiased discovery and qPCR for targeted confirmation. As both technologies evolve, their optimal integration will continue refining transcriptome analysis, ensuring scientific conclusions rest on solid experimental foundations.

In the field of molecular biology, accurate gene expression analysis is fundamental to advancing our understanding of biological processes, disease mechanisms, and drug development. Two predominant technologies have emerged as the standard for transcript quantification: quantitative PCR (qPCR) and RNA sequencing (RNA-seq). While qPCR has long been considered the gold standard for targeted gene expression analysis due to its sensitivity and specificity, RNA-seq offers a comprehensive, hypothesis-free approach that enables discovery of novel transcripts and splicing variants [10] [11]. The relationship between these technologies is often complementary rather than competitive, with RNA-seq frequently employed for genome-scale discovery and qPCR serving as a validation tool for specific targets of interest [11].

Understanding the technical biases inherent in each method is crucial for proper experimental design, data interpretation, and validation strategies. Both techniques involve multi-step workflows where biases can be introduced at various stages, potentially compromising data accuracy and reliability. This guide provides a systematic comparison of the technical limitations of RNA-seq and qPCR, supported by experimental data and detailed methodologies, to assist researchers in making informed decisions about their gene expression analysis pipelines and validation approaches.

Technical Biases in RNA-seq

The RNA-seq workflow is exceptionally complex, with numerous steps where technical artifacts can be introduced, ultimately affecting the quality and interpretation of the resulting data [12]. These biases can originate from sample preservation, library preparation, sequencing, and data analysis stages. The table below summarizes the major sources of bias and potential improvement strategies:

Table 1: Key Sources of Bias in RNA-seq and Improvement Strategies

| Bias Source | Description | Suggested Improvement Strategies |
|---|---|---|
| Sample Preservation | Tissue autolysis and formalin-fixed paraffin-embedded (FFPE) preparation degrade and cross-link nucleic acids [12]. | Use non-cross-linking organic fixatives; minimize processing and freeze-thaw cycles; use high sample input for degraded samples [12]. |
| RNA Extraction | TRIzol extraction can cause small RNA loss at low concentrations; different purification methods yield varying RNA quality [12]. | Use high RNA concentrations or avoid TRIzol; apply alternative protocols like mirVana miRNA isolation kit [12]. |
| mRNA Enrichment | 3'-end capture bias during poly(A) enrichment; rRNA depletion efficiency varies [12]. | Use rRNA depletion instead of poly(A) enrichment for certain applications; select method based on RNA species of interest [12]. |
| RNA Fragmentation | Non-random fragmentation using RNase III reduces complexity [12]. | Use chemical treatment (e.g., zinc) rather than RNase III; fragment cDNA instead of RNA [12]. |
| Primer Bias | Random hexamer priming bias; mispriming; nonspecific binding [12]. | Ligate sequencing adapters directly onto RNA fragments; use read count reweighting schemes to adjust for bias [12]. |
| Adapter Ligation | Substrate preferences of T4 RNA ligases [12]. | Use adapters with random nucleotides at ligation extremities [12]. |
| Reverse Transcription | Enzyme-specific biases in cDNA synthesis [13]. | Systematically evaluate reverse transcriptase performance for specific applications [13]. |
| PCR Amplification | Preferential amplification of sequences with specific GC content; unequal cDNA molecule amplification [12]. | Use Kapa HiFi rather than Phusion polymerase; reduce amplification cycles; use PCR additives for AT/GC-rich genomes [12]. |

Special Challenges for Polymorphic Gene Families

RNA-seq analysis faces particular challenges when quantifying genes within highly polymorphic families, such as the human leukocyte antigen (HLA) loci. The extreme polymorphism at HLA genes complicates read alignment, as short reads may fail to align properly due to significant differences from the reference genome [5]. Additionally, the high similarity between paralogs within this gene family often results in cross-alignments between genes, leading to biased expression quantification [5]. These challenges have motivated the development of specialized computational pipelines that account for known HLA diversity during alignment, significantly improving expression quantification accuracy for these immunologically crucial genes [5].

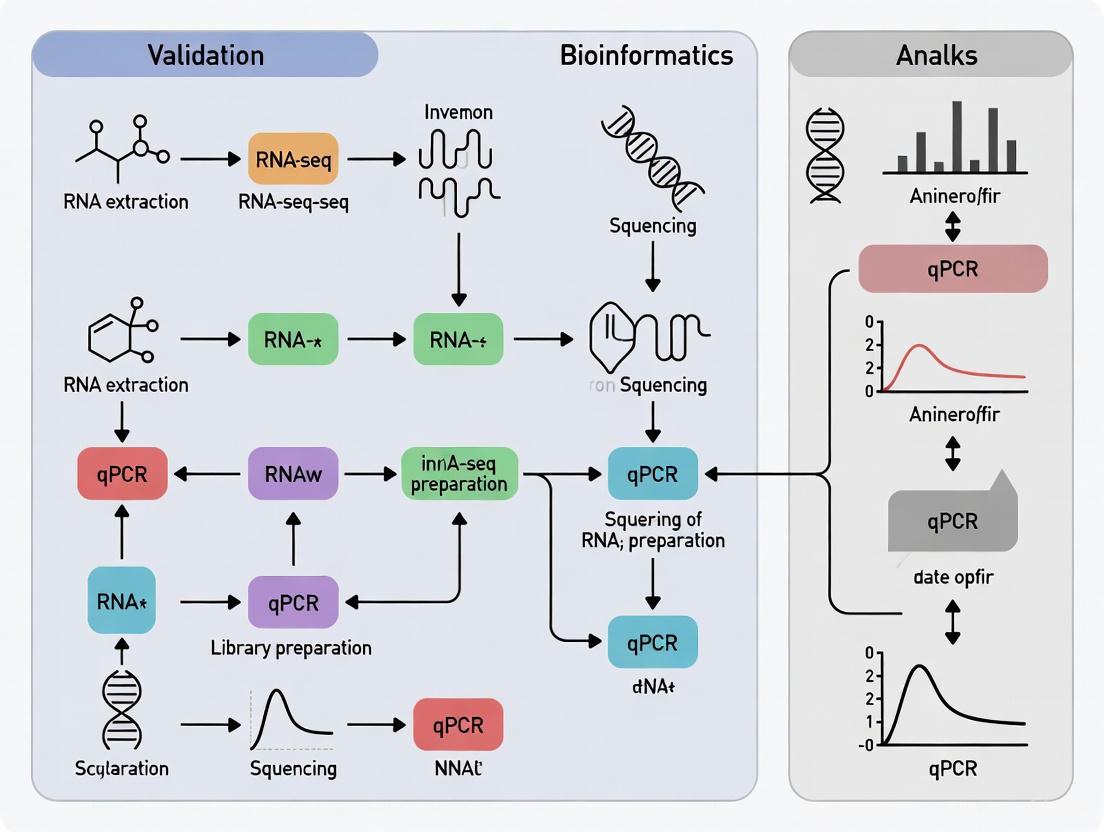

Figure 1: RNA-seq Workflow and Major Sources of Technical Bias

Impact of Library Preparation Choices

The choice of library preparation method significantly influences the type and magnitude of technical biases in RNA-seq data. Researchers must select between 3' mRNA-seq and whole transcriptome approaches based on their specific research questions [14]. While 3' mRNA-seq is highly convenient for multiplexing large sample numbers and provides accurate gene expression quantification with minimal computational resources, it is unsuitable for investigating alternative splicing, differential transcript usage, or novel isoform identification due to reads being localized to the 3' ends of transcripts [14]. Whole transcriptome library preparations, which typically require either poly(A) enrichment or rRNA depletion, provide complete transcript coverage but introduce their own biases through the selection method and may require more extensive bioinformatic processing [14].

Technical Biases in qPCR

Reverse Transcription: A Significant Source of Bias

The initial step of reverse transcribing RNA to cDNA introduces substantial quantitative biases that are frequently overlooked in qPCR experimental design [13]. Systematic experiments have demonstrated that reverse transcription exhibits both amplicon-specific and transcriptase-specific biases that can render standard calculations (e.g., ΔΔCq) of relative gene expression inaccurate or even erroneous [13]. Different commercial reverse transcriptase kits can produce markedly different results, with studies showing kit-dependent biases where the apparent differential expression between the same RNA samples varied by more than 5-fold depending on the enzyme used [13].

The integrity of RNA templates also significantly impacts reverse transcription efficiency. Experiments comparing intact and partially degraded RNA from the same source have demonstrated that RNA degradation affects different targets variably, potentially due to the structured nature of certain RNAs conferring higher resistance to cleavage [13]. This has important implications for the use of structured non-coding RNAs (such as U1 snRNA) as reference genes, as they may appear stable under conditions where mRNA integrity is compromised, leading to normalization artifacts [13].
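For readers less familiar with the ΔΔCq calculation referred to above, the following minimal sketch shows the standard efficiency-corrected ratio, which reduces to 2^(-ΔΔCq) when both assays amplify with a factor of exactly 2 per cycle. The function name and the Cq values are hypothetical. Note that the formula assumes reverse transcription is equally efficient for every target and sample, which is exactly the assumption the experiments in [13] call into question.

```python
def relative_expression(cq_target_ctrl, cq_target_trt, cq_ref_ctrl, cq_ref_trt,
                        e_target=2.0, e_ref=2.0):
    """Efficiency-corrected expression ratio of treated versus control.

    e_target and e_ref are amplification factors per cycle (2.0 means 100%
    efficiency). With both left at 2.0 the result equals 2 ** (-ddCq).
    """
    # Cq difference (control minus treated) for the target and the reference gene.
    d_target = cq_target_ctrl - cq_target_trt
    d_ref = cq_ref_ctrl - cq_ref_trt
    return (e_target ** d_target) / (e_ref ** d_ref)


# Hypothetical Cq values: the target appears 2 cycles earlier after treatment,
# while the reference gene is unchanged.
ratio_ideal = relative_expression(25.0, 23.0, 18.0, 18.0)
ratio_corrected = relative_expression(25.0, 23.0, 18.0, 18.0, e_target=1.9)
print(ratio_ideal, ratio_corrected)
```

The gap between the two ratios shows how a modest deviation in assumed amplification efficiency propagates into the reported fold change; biases introduced upstream at the reverse transcription step are not corrected by this calculation at all.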

Additional Technical Considerations in qPCR

While qPCR is often considered more straightforward than RNA-seq, it nonetheless presents several technical challenges that can introduce bias if not properly addressed:

Table 2: Key Technical Biases in qPCR and Recommended Practices

| Bias Source | Impact on Results | Recommended Practices |
|---|---|---|
| Reverse Transcription Efficiency | Enzyme- and gene-specific biases; non-linear cDNA synthesis [13]. | Systematically evaluate RT enzymes; implement controls for RT efficiency; report RT conditions following MIQE guidelines [13]. |
| PCR Amplification Efficiency | Variations between targets affect quantification accuracy [15]. | Validate amplification efficiency for each assay (90-110% ideal); use standard curves; avoid primer-dimer formation [15]. |
| Reference Gene Selection | Inappropriate normalization leads to misinterpretation of results [15]. | Use empirically validated reference genes; employ multiple reference genes; avoid single reference gene normalization [15] [13]. |
| Sample Quality | Degraded RNA affects different targets variably [13]. | Assess RNA integrity; use internal controls for degradation; apply consistent sample processing protocols [13]. |
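The amplification efficiency check recommended in the table is usually derived from the slope of a standard curve (Cq plotted against log10 of template input), using efficiency = 10^(-1/slope) - 1. The sketch below fits that slope by ordinary least squares; the dilution series and Cq values are hypothetical and the function name is an assumption made for illustration.

```python
import math


def standard_curve_efficiency(quantities, cq_values):
    """Return (slope, efficiency in percent) from a qPCR dilution series.

    quantities are relative template inputs (e.g. a 10-fold dilution series),
    cq_values the matching quantification cycles.
    """
    xs = [math.log10(q) for q in quantities]
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(cq_values) / n
    # Least-squares slope of Cq against log10(input).
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, cq_values))
    slope = sxy / sxx
    efficiency_pct = (10 ** (-1.0 / slope) - 1.0) * 100.0
    return slope, efficiency_pct


# Hypothetical 10-fold dilution series.
quantities = [1e5, 1e4, 1e3, 1e2, 1e1]
cq_values = [16.6, 19.9, 23.3, 26.6, 29.9]
slope, efficiency = standard_curve_efficiency(quantities, cq_values)
print(round(slope, 2), round(efficiency, 1))
```

A slope near -3.32 corresponds to perfect doubling per cycle, so an assay falling inside the 90-110% window has a slope of roughly -3.6 to -3.1.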

Detection Chemistry and Assay Design Considerations

The selection of detection chemistry (e.g., TaqMan probes vs. SYBR Green dye) and assay design significantly influences qPCR specificity and sensitivity [15]. TaqMan assays provide greater specificity through the use of a target-specific probe but are more expensive and require careful validation. SYBR Green is more cost-effective but is susceptible to non-specific amplification, necessitating meticulous melt curve analysis [15]. Additionally, researchers must decide between one-step and two-step RT-qPCR protocols, with one-step offering convenience and reduced contamination risk, while two-step provides flexibility in primer selection and the ability to store cDNA for future analyses [15].

Figure 2: qPCR Workflow and Major Sources of Technical Bias

Comparative Performance: Experimental Data

Correlation Between RNA-seq and qPCR

Multiple studies have systematically compared gene expression measurements between RNA-seq and qPCR to evaluate their concordance. A comprehensive benchmarking study comparing five RNA-seq analysis workflows (Tophat-HTSeq, Tophat-Cufflinks, STAR-HTSeq, Kallisto, and Salmon) with whole-transcriptome qPCR data for 18,080 protein-coding genes revealed generally high expression correlations [16]. The squared Pearson correlation coefficients (R²) ranged from 0.798 to 0.845 depending on the computational workflow used [16]. When comparing gene expression fold changes between samples, approximately 85% of genes showed consistent results between RNA-seq and qPCR data [16].

However, a significant proportion of genes (15-20%) displayed non-concordant expression measurements between the two technologies, defined as instances where methods yielded differential expression in opposing directions or where one method showed differential expression while the other did not [16] [17]. Importantly, the majority (approximately 93%) of these non-concordant genes exhibited relatively small fold changes (ΔFC < 2), suggesting that discrepancies are most prevalent for subtle expression differences [17]. The small fraction (approximately 1.8%) of severely non-concordant genes were typically characterized by lower expression levels and shorter transcript length [17].

Similar findings were observed in HLA expression studies, where comparisons between RNA-seq and qPCR revealed moderate correlations (0.2 ≤ rho ≤ 0.53) for HLA-A, -B, and -C genes [5]. This highlights the challenges in quantifying expression of highly polymorphic genes and suggests that technical and biological factors must be carefully considered when comparing quantifications from different platforms [5].
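The two correlation statistics quoted in this section measure different things: the squared Pearson coefficient (R²) reflects linear agreement, while Spearman's rho reflects agreement in rank order. A minimal standard-library sketch of both is given below; the paired values are hypothetical and the variable names are assumptions, not data from the cited studies.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))


# Hypothetical paired measurements for five genes (arbitrary units).
rnaseq_expr = [1.0, 2.0, 3.0, 4.0, 5.0]
qpcr_expr = [1.0, 4.0, 9.0, 16.0, 25.0]
r_squared = pearson_r(rnaseq_expr, qpcr_expr) ** 2
rho = spearman_rho(rnaseq_expr, qpcr_expr)
print(round(r_squared, 3), rho)
```

In this toy case the ranking agrees perfectly while the linear fit does not, which is why the two statistics should not be compared directly across studies.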

Table 3: Comparative Performance of RNA-seq and qPCR Based on Experimental Studies

| Performance Metric | RNA-seq Results | qPCR Results | Concordance |
|---|---|---|---|
| Expression Correlation | Varies by workflow (R² = 0.798-0.845) [16]. | Gold standard reference | High overall correlation |
| Fold Change Correlation | Varies by workflow (R² = 0.927-0.934) [16]. | Gold standard reference | ~85% genes show consistent fold changes [16] |
| Non-concordant Genes | 15-20% of genes show discrepancies with qPCR [17]. | 15-20% of genes show discrepancies with RNA-seq [17]. | Majority (93%) have ΔFC < 2 [17] |
| Problematic Gene Features | Shorter, lower expressed genes with fewer exons [16]. | Performance issues with structured RNAs and degraded samples [13]. | Severe discrepancies in ~1.8% of genes [17] |
| HLA Gene Expression | Moderate correlation with qPCR (0.2 ≤ rho ≤ 0.53) [5]. | Traditional reference method | Technical challenges for polymorphic genes [5] |

Technology-Specific Strengths and Limitations

The comparative analysis of RNA-seq and qPCR reveals distinct advantages and limitations for each technology, which should guide their application in research and validation workflows:

Table 4: Technology Comparison - Key Strengths and Limitations

| Feature | RNA-seq | qPCR |
|---|---|---|
| Discovery Power | High - detects novel transcripts, splicing variants, and fusion genes without prior knowledge [1]. | None - limited to detection of known, predefined sequences [1]. |
| Throughput | High - can profile thousands of genes across multiple samples simultaneously [1]. | Low to Medium - practical for up to approximately 30 targets; becomes cumbersome for larger numbers [10]. |
| Sensitivity | High - can detect subtle expression changes (down to 10%) and rare transcripts [1]. | Exceptional - wide dynamic range, detection down to single copy level [15] [11]. |
| Technical Biases | Complex - multiple sources including mapping, GC content, and library preparation artifacts [12]. | Simpler but Significant - primarily reverse transcription and amplification efficiency issues [13]. |
| Cost and Accessibility | Higher cost - requires specialized equipment and bioinformatics expertise [10]. | Lower cost - equipment accessible in most molecular biology labs [1]. |
| Data Complexity | High - massive datasets requiring substantial storage and computational resources [10]. | Low - straightforward data analysis with established analysis methods [15]. |

Experimental Design and Validation Strategies

Framework for Validation of RNA-seq Findings

The question of whether RNA-seq results require validation by qPCR has evolved as RNA-seq methodologies have matured. Current evidence suggests that when all experimental steps and data analyses are performed according to state-of-the-art practices, RNA-seq results are generally reliable and may not require systematic validation for all findings [17]. However, validation remains crucial in specific circumstances, particularly when research conclusions heavily depend on differential expression of a small number of genes, especially if those genes are lowly expressed or show relatively small fold changes [17].

A strategic approach to validation should consider the following scenarios where qPCR confirmation adds value:

- Critical Findings: When the entire biological story depends on differential expression of only a few genes [17].

- Low Expression Targets: When focusing on genes with low expression levels or small fold changes (<2) where technical artifacts are more likely [16] [17].

- Extended Sample Sets: When using qPCR to measure expression of selected genes in additional samples not included in the original RNA-seq study [17].

- Technical Concerns: When sample quality issues or other technical challenges may have compromised RNA-seq results [13].

Essential Research Reagents and Controls

Proper experimental design for both RNA-seq and qPCR requires careful selection of reagents and implementation of appropriate controls to minimize technical biases:

Table 5: Essential Research Reagents and Controls for Minimizing Technical Biases

| Reagent/Control Category | Specific Examples | Function and Importance |
|---|---|---|
| Reverse Transcriptase Enzymes | iScript, Transcriptor, SuperScript [13]. | Critical choice affecting quantitative accuracy; systematic evaluation recommended for each application [13]. |
| Reference Standards | ERCCs, SIRVs, Stratagene QPCR Human Reference Total RNA [13]. | Assess technical performance; normalize across platforms; identify protocol-specific biases [13]. |
| qPCR Assay Types | TaqMan probes, SYBR Green [15]. | TaqMan offers greater specificity; SYBR Green is more cost-effective; selection impacts detection accuracy [15]. |
| RNA Quality Assessment | RNA Integrity Number (RIN), degradation checks [13]. | RNA integrity significantly impacts reverse transcription efficiency and quantitative accuracy [13]. |
| Reference Genes | eEF1A1, 18S rRNA, U1 snRNA, empirically validated sets [13]. | Essential for normalization; must be empirically validated for specific experimental conditions; using multiple references is recommended [15] [13]. |

Both RNA-seq and qPCR technologies offer powerful approaches for gene expression analysis but are susceptible to distinct technical biases that researchers must acknowledge and address. RNA-seq biases predominantly stem from its complex workflow, including library preparation, sequencing, and data analysis steps, with particular challenges for polymorphic gene families and low-abundance transcripts. qPCR, while more straightforward, introduces significant biases primarily through reverse transcription efficiency and amplification artifacts. The moderate correlation (0.2 ≤ rho ≤ 0.53) observed between these technologies for challenging targets like HLA genes underscores the importance of understanding their limitations [5].

Strategic validation employing both technologies throughout the experimental workflow—using qPCR to check cDNA integrity prior to RNA-seq and to verify critical findings afterward—represents the most robust approach [11]. This integrated methodology leverages the complementary strengths of each technology while mitigating their respective limitations, ultimately leading to more reliable and reproducible gene expression data for basic research and drug development applications.

The validation of RNA sequencing (RNA-seq) findings using reverse transcription quantitative PCR (RT-qPCR) has been a long-standing practice in transcriptomics research. While RNA-seq provides an unbiased, genome-wide view of the transcriptome, RT-qPCR is often regarded as the "gold standard" for gene expression quantification due to its high sensitivity, specificity, and reproducibility [7] [17]. However, the assumption that qPCR necessarily serves as the definitive validation method requires careful examination in light of advancing RNA-seq technologies and improved bioinformatics pipelines. This guide objectively examines the performance concordance between these technologies, explores the factors influencing agreement, and provides evidence-based recommendations for researchers and drug development professionals navigating transcriptome validation.

Quantitative Comparison of RNA-seq and qPCR Performance

Extensive benchmarking studies have systematically compared gene expression measurements between RNA-seq and qPCR platforms. The correlation between these technologies varies based on experimental conditions, analysis workflows, and gene characteristics.

Table 6: Overall Correlation Between RNA-seq and qPCR Expression Measurements

| Comparison Metric | Correlation Range | Influencing Factors | Key Findings |
|---|---|---|---|
| Expression Intensity | Pearson R²: 0.798-0.845 [16] | Analysis workflow, expression level | Pseudoalignment methods (Salmon, Kallisto) showed slightly higher correlations |
| Fold Change Correlation | Pearson R²: 0.927-0.934 [16] | Effect size, biological context | High concordance for genes with large expression differences |
| Differential Expression Concordance | 80.6%-84.9% agreement [16] | Fold change magnitude, expression level | ~15-19% of genes show non-concordant results, mostly with small fold changes |

A comprehensive benchmark using whole-transcriptome RT-qPCR data for 18,080 protein-coding genes revealed that the fraction of genes with non-concordant results between RNA-seq and qPCR ranged from 15.1% to 19.4%, depending on the RNA-seq analysis workflow [16]. Importantly, the majority of these non-concordant genes (93%) showed relatively small fold changes (ΔFC < 2) between experimental conditions, with the most severe discrepancies typically occurring in lowly expressed and shorter genes [16].
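The concordance definition used in these benchmarks can be made concrete with a short sketch. It labels a gene non-concordant when the two platforms call differential expression in opposite directions, or when only one of them calls it at all. Several details are assumptions made for illustration: the differential expression cutoff of |log2 fold change| > 1, the reading of ΔFC as the absolute difference between the two platforms' log2 fold changes, and all gene names and values.

```python
def classify_concordance(lfc_rnaseq, lfc_qpcr, de_threshold=1.0):
    """Return (status, delta_fc) for one gene.

    lfc_rnaseq and lfc_qpcr are log2 fold changes between two conditions.
    A gene counts as differentially expressed when |log2FC| > de_threshold.
    """
    de_seq = abs(lfc_rnaseq) > de_threshold
    de_pcr = abs(lfc_qpcr) > de_threshold
    delta_fc = abs(lfc_rnaseq - lfc_qpcr)
    if de_seq and de_pcr:
        # Both platforms call a change: they must agree on its direction.
        same_direction = (lfc_rnaseq > 0) == (lfc_qpcr > 0)
        status = "concordant" if same_direction else "non-concordant"
    elif not de_seq and not de_pcr:
        status = "concordant"
    else:
        # Only one platform calls the gene differentially expressed.
        status = "non-concordant"
    return status, delta_fc


# Hypothetical (RNA-seq, qPCR) log2 fold changes.
genes = {
    "g1": (2.5, 2.1),    # both up
    "g2": (1.2, 0.8),    # straddles the cutoff
    "g3": (1.5, -1.4),   # opposite directions
    "g4": (0.3, -0.2),   # unchanged on both platforms
}
results = {name: classify_concordance(*lfc) for name, lfc in genes.items()}
non_concordant = [name for name, (status, _) in results.items() if status == "non-concordant"]
small_delta = [name for name in non_concordant if results[name][1] < 2]
print(non_concordant, small_delta)
```

Gene `g2` illustrates why many non-concordant calls are benign: both platforms report nearly the same fold change, but the values fall on either side of the cutoff.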

Table 7: Characteristics of Genes with Poor RNA-seq/qPCR Concordance

| Gene Feature | Impact on Concordance | Practical Implications |
|---|---|---|
| Expression Level | Lower expression → Reduced concordance [16] | High-confidence results primarily for medium-high expression genes |
| Transcript Length | Shorter transcripts → Reduced concordance [16] | Potential quantification bias for genes with shorter isoforms |
| Fold Change Magnitude | Smaller ΔFC → Higher discordance rate [16] | Greater confidence in genes with large expression differences |
| Complexity | Multi-exonic genes show better concordance [16] | Single-exon genes may require additional validation |

Experimental Protocols for Method Comparison

Benchmarking Study Design

Robust comparison of RNA-seq and qPCR requires carefully controlled experimental designs. The MAQCA (Universal Human Reference RNA) and MAQCB (Human Brain Reference RNA) samples from the MAQC-I consortium have served as well-established reference materials for such comparisons [16]. The standard protocol involves:

- Sample Preparation: Isolate high-quality RNA from biological samples using standardized kits (e.g., RNeasy Mini Kit) with DNase treatment to remove genomic DNA contamination [5].

- RNA Quality Control: Measure RNA concentration and assess integrity before library preparation (e.g., Qubit Fluorometer for quantification, TapeStation for integrity) [18].

- Library Preparation and Sequencing: For RNA-seq, prepare libraries using stranded mRNA preparation kits (e.g., Illumina Stranded mRNA prep kit) and sequence on appropriate platforms (e.g., Illumina NovaSeq) to a target depth of 20-30 million reads per sample [18] [19].

- qPCR Assay Design: Design and validate primers for the target genes, ensuring high amplification efficiency and specificity. Include stable reference genes for normalization [7].

- Data Analysis: Process RNA-seq data through multiple workflows (e.g., STAR-HTSeq, Kallisto, Salmon) and compare with qPCR results using correlation and concordance metrics [16].

RNA-seq Analysis Workflows

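Different RNA-seq processing methods can impact concordance with qPCR results. In the benchmark described above, the fraction of non-concordant genes ranged from 15.1% to 19.4% depending on the analysis workflow [16].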

Reference Gene Selection Protocol

Appropriate reference gene selection is critical for both technologies. The "Gene Selector for Validation" (GSV) software provides a systematic approach for identifying optimal reference genes from RNA-seq data based on stability and expression level [7]:

Input Preparation: Compile transcripts per million (TPM) values for all genes across all samples.

Stability Filtering: Apply sequential filters to identify stable, highly expressed genes:

- Expression > 0 TPM in all samples

- Standard deviation of log₂(TPM) < 1

- No exceptional expression in any library (within 2× of log₂(TPM) average)

- Average log₂(TPM) > 5

- Coefficient of variation < 0.2 [7]

Candidate Validation: Select top candidate reference genes for experimental validation by RT-qPCR using stability assessment algorithms (GeNorm, NormFinder) [7].
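The sequential filters above can be sketched in a few lines of Python. This is a minimal illustration, not the GSV implementation: the function name and the example gene labels are hypothetical, the outlier filter follows one literal reading of the stated rule (no log₂(TPM) value above twice the average), and the coefficient of variation is assumed to be computed on log₂(TPM) values.

```python
import math
import statistics


def passes_reference_filters(tpm_values):
    """Apply the five sequential filters to one gene's TPM values.

    Hypothetical helper for illustration; needs at least two samples.
    """
    # Filter 1: expression > 0 TPM in all samples
    if any(v <= 0 for v in tpm_values):
        return False
    log_vals = [math.log2(v) for v in tpm_values]
    mean_log = statistics.mean(log_vals)
    sd_log = statistics.stdev(log_vals)
    # Filter 2: standard deviation of log2(TPM) < 1
    if sd_log >= 1:
        return False
    # Filter 3 (assumed reading): no log2(TPM) value above twice the average
    if any(v > 2 * mean_log for v in log_vals):
        return False
    # Filter 4: average log2(TPM) > 5
    if mean_log <= 5:
        return False
    # Filter 5 (assumption: CV computed on log2 values): CV < 0.2
    return sd_log / mean_log < 0.2


# Made-up TPM values for three hypothetical genes
example = {
    "stable_gene": [40, 42, 38, 41],
    "low_gene": [1, 2, 1, 2],
    "variable_gene": [40, 400, 40, 400],
}
candidates = [g for g, tpm in example.items() if passes_reference_filters(tpm)]
print(candidates)
```

Genes that pass these filters are only candidates; their stability still needs to be confirmed experimentally by RT-qPCR as described in the final step.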

Decision Framework for Orthogonal Validation

The necessity of qPCR validation depends on several factors, including experimental goals, gene characteristics, and resource constraints. The criteria below provide guidance for determining when orthogonal validation is most valuable:

When qPCR Validation Provides Maximum Value

- Low-Expression Genes: Genes with TPM < 10 show higher technical variability in RNA-seq [16].

- Small Effect Sizes: Fold changes < 1.5 have higher rates of non-concordance between platforms [17] [16].

- Critical Findings: When research conclusions depend heavily on a small number of genes [17].

- Extended Applications: Using qPCR to measure expression in additional samples, conditions, or strains beyond the original RNA-seq study [17].

When RNA-seq Stands Alone

- Genome-Scale Analyses: When conclusions are based on patterns across hundreds of genes rather than individual genes [17].

- High-Quality Data: When using state-of-the-art RNA-seq protocols with sufficient biological replicates (≥3) and sequencing depth (≥20M reads) [19] [17].

- Large Effect Sizes: For genes with high expression and large fold changes (>2) [16].

- Limited Resources: When budget or sample material constraints prevent orthogonal validation [17].
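As a rough illustration, the gene-level criteria above can be encoded as a simple screening rule. The function name and arguments are hypothetical, the thresholds (TPM < 10, fold change < 1.5) are the ones quoted in the lists, and the fold change is assumed to be a positive linear ratio rather than a log₂ value. The sketch flags genes for follow-up; it does not replace judgment about experimental goals or resources.

```python
def qpcr_validation_reasons(tpm, fold_change, critical_finding=False):
    """Return the reasons (possibly none) why qPCR validation may add value.

    tpm: expression level of the gene in TPM
    fold_change: linear expression ratio between conditions (must be > 0)
    critical_finding: True if conclusions hinge on this gene
    """
    reasons = []
    if tpm < 10:
        reasons.append("low expression")
    # Treat up- and down-regulation symmetrically (0.5 counts as 2-fold)
    magnitude = max(fold_change, 1.0 / fold_change)
    if magnitude < 1.5:
        reasons.append("small effect size")
    if critical_finding:
        reasons.append("critical finding")
    return reasons


print(qpcr_validation_reasons(tpm=5, fold_change=3.0))
print(qpcr_validation_reasons(tpm=100, fold_change=0.8))
print(qpcr_validation_reasons(tpm=100, fold_change=4.0))
```

An empty list corresponds to the "RNA-seq stands alone" case for that gene, provided the dataset itself meets the replicate and depth conditions listed above.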

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents and Tools for RNA-seq/qPCR Comparison Studies

| Category | Specific Products/Tools | Application & Function |

|---|---|---|

| RNA Isolation | RNeasy Mini Kit (Qiagen), AllPrep DNA/RNA Kit [18] [20] | RNA isolation; AllPrep allows simultaneous DNA/RNA extraction from limited samples |

| RNA Quality Control | Qubit Fluorometer, TapeStation, Bioanalyzer [18] [20] | Quantification and integrity assessment |

| Library Preparation | Illumina Stranded mRNA Prep Kit, TruSeq Stranded mRNA [18] [20] | RNA-seq library construction with strand specificity |

| qPCR Reagents | SYBR Green Master Mix, TaqMan assays [7] | Fluorescence-based detection of amplification |

| Reference Materials | Universal Human Reference RNA, Human Brain Reference RNA [16] | Standardized samples for cross-platform comparison |

| Data Analysis Software | GSV (Gene Selector for Validation) [7], GeNorm [7], NormFinder [7] | Reference gene selection and validation |

| RNA-seq Pipelines | STAR-HTSeq [16], Kallisto [16], Salmon [16] | Read alignment and quantification |

RNA-seq and qPCR show strong overall concordance, particularly for medium-to-highly expressed genes with large fold changes. Under optimal conditions with sufficient replicates and modern analysis workflows, RNA-seq can provide reliable expression data without mandatory qPCR validation. However, targeted qPCR validation remains valuable for specific scenarios, including low-expression genes, small effect sizes, and when critical research conclusions depend on a limited number of genes. As RNA-seq technologies continue to mature and benchmarking studies provide more comprehensive guidance, the scientific community is increasingly recognizing RNA-seq as a validated quantitative method rather than merely a screening tool requiring blanket confirmation by qPCR.

In the field of gene expression analysis, RNA sequencing (RNA-seq) has emerged as a powerful, discovery-oriented tool that provides an unbiased view of the entire transcriptome. However, this high-throughput technology generates massive datasets that require sophisticated bioinformatic processing, introducing potential sources of technical variance that demand confirmation through independent methods. Quantitative PCR (qPCR), with its well-established precision, sensitivity, and reproducibility, has maintained its position as the gold standard for validating gene expression measurements obtained from RNA-seq experiments [21] [6]. This guide objectively compares the performance characteristics of these two technologies and provides detailed experimental protocols for researchers seeking to confirm transcriptomic findings through rigorous analytical validation.

The necessity for validation stems from the fundamental differences in how these technologies quantify nucleic acids. While RNA-seq involves cDNA library preparation, massive parallel sequencing, and complex bioinformatic processing of short reads, qPCR employs targeted amplification with fluorescence-based detection in real time, resulting in a simpler workflow with less potential for technical bias [6]. This distinction becomes particularly important when RNA-seq data forms the basis for significant biological conclusions or clinical applications, where independent verification is not just beneficial but essential for scientific rigor.

Performance Comparison: qPCR versus RNA-Seq

Technical Foundations and Performance Characteristics

Table 1: Fundamental Technical Differences Between qPCR and RNA-Seq

| Parameter | qPCR | RNA-Seq |

|---|---|---|

| Throughput | Low to medium (typically 10s-100s of targets) | High (entire transcriptome) |

| Dynamic Range | ~7-8 logs of magnitude [22] | ~5 logs of magnitude [21] |

| Sensitivity | Can detect single copies [22] | Limited for low-abundance transcripts [21] |

| Sample Requirement | Low (nanograms of RNA) | Moderate to high (micrograms of RNA) |

| Quantification Basis | Fluorescence threshold cycle (Cq) | Read counts aligned to reference |

| Multiplexing Capability | Limited (typically 2-5 plex) | Virtually unlimited |

| Discovery Power | None (hypothesis-driven) | High (hypothesis-generating) |

Analytical Performance in Validation Studies

Direct benchmarking studies have revealed important insights about the correlation between these technologies. A comprehensive assessment using well-established MAQCA and MAQCB reference samples demonstrated that multiple RNA-seq workflows (Tophat-HTSeq, Tophat-Cufflinks, STAR-HTSeq, Kallisto, and Salmon) showed high gene expression correlations with qPCR data, with squared Pearson correlation coefficients (R²) ranging from 0.798 to 0.845 [21]. When comparing gene expression fold changes between samples, approximately 85% of genes showed consistent results between RNA-seq and qPCR data [21].

However, each RNA-seq analysis method revealed a small but specific gene set with inconsistent expression measurements, representing roughly 15-19% of analyzed genes depending on the workflow [21]. These inconsistent genes were typically characterized by shorter length, fewer exons, and lower expression levels, suggesting that qPCR validation remains particularly crucial for this specific gene subset [21].

Table 2: Correlation Performance Between RNA-Seq Workflows and qPCR

| Analysis Workflow | Expression Correlation with qPCR (R²) | Fold Change Correlation with qPCR (R²) | Non-Concordant Genes |

|---|---|---|---|

| Salmon | 0.845 | 0.929 | 19.4% |

| Kallisto | 0.839 | 0.930 | 16.8% |

| Tophat-HTSeq | 0.827 | 0.934 | 15.1% |

| STAR-HTSeq | 0.821 | 0.933 | 15.3% |

| Tophat-Cufflinks | 0.798 | 0.927 | 18.2% |

A more recent study focusing on the challenging HLA gene family revealed more moderate correlations between qPCR and RNA-seq (0.2 ≤ rho ≤ 0.53 for HLA class I genes), highlighting that correlation performance can vary significantly depending on the specific gene targets and the RNA-seq analysis pipeline employed [5].

Experimental Design for Validation Studies

When is qPCR Validation Appropriate?

According to established best practices, qPCR validation is particularly recommended in these scenarios:

- Confirmatory Studies: When a second method is necessary to confirm a specific observation, particularly for publications where reviewers expect verification using different technological approaches [6].

- Limited Replication: When RNA-seq data is based on a small number of biological replicates, limiting the statistical power of the sequencing experiment [6].

- Focus on Specific Targets: When the biological story hinges on expression changes in a relatively small number of critical genes [6].

- Clinical Applications: When findings may have diagnostic, prognostic, or therapeutic implications requiring the highest level of technical validation.

Conversely, qPCR validation may be less essential when RNA-seq data serves primarily for hypothesis generation that will be tested through other means (e.g., protein-level assays), or when conducting additional RNA-seq experiments on larger sample sets serves as its own validation [6].

Optimal Sample Selection for Validation

To maximize the value of validation studies, researchers should employ a different set of samples with proper biological replication rather than simply repeating measurements on the same RNA used for initial RNA-seq. This approach validates not only the technological consistency but also the biological reproducibility of the findings [6]. The sample size for qPCR validation should be determined based on statistical power considerations, typically requiring sufficient biological replicates to account for expected biological variability.

Methodologies: qPCR Experimental Protocols

RNA Quality Control and Reverse Transcription

Begin with high-quality RNA (RNA Integrity Number ≥ 8) to ensure reliable results. For the reverse transcription step, select either one-step or two-step RT-qPCR based on experimental needs:

One-Step RT-qPCR combines reverse transcription and PCR amplification in a single reaction, offering reduced hands-on time, lower contamination risk, and higher throughput capability [23]. This approach is ideal for high-throughput studies with limited targets.

Two-Step RT-qPCR separates reverse transcription from amplification, providing greater flexibility as the synthesized cDNA can be stored and used for multiple different targets across multiple reactions [23]. This approach is preferable when analyzing many targets from limited sample material.

qPCR Detection Chemistry Selection

Two primary detection chemistries are available for qPCR, each with distinct advantages:

DNA-Binding Dyes (e.g., SYBR Green): These dyes bind nonspecifically to double-stranded DNA, producing increased fluorescence with accumulating PCR product. The main advantage is their cost-effectiveness and compatibility with standard primers, though they require melt curve analysis to verify amplification specificity [23].

Probe-Based Detection (e.g., TaqMan Probes): These sequence-specific probes provide enhanced specificity through a reporter-quencher mechanism. Hydrolysis probes are cleaved during amplification, releasing fluorescence, while hairpin probes (molecular beacons) undergo conformational changes when bound to target sequences [23]. Probe-based methods enable multiplexing through different fluorescent labels but require specialized probe design and increased costs.

Standard Curve Method for Relative Quantification

The standard curve method provides a reliable approach for relative quantification that avoids potential inaccuracies in PCR efficiency estimation [24]. The procedure consists of these critical steps:

Noise Filtering: Process raw fluorescence data by applying smoothing algorithms (e.g., 3-point moving average), baseline subtraction, and amplitude normalization to reduce technical noise [24].

Threshold Selection: Automatically determine the optimal quantification threshold by identifying the value that yields the maximum coefficient of determination (r²) for the standard curve, typically r² > 0.99 [24].

Crossing Point Calculation: Derive crossing points (CPs) directly from coordinates where the threshold line intersects the fluorescence curves after noise filtering.

Standard Curve Generation: Create a standard curve by plotting the logarithms of known template concentrations against their corresponding CP values, applying least-squares linear regression.

Relative Quantification: Calculate relative expression values from sample CPs using the standard curve equation, followed by exponentiation (base 10) to obtain non-normalized quantities.

Reference Gene Normalization: Divide target gene quantities by a normalization factor derived from stable reference genes (preferably using geometric mean of multiple validated references) [24].
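The last three steps (standard curve generation, relative quantification, and reference gene normalization) can be sketched as follows. This is a minimal illustration under stated assumptions: the function names are hypothetical, the dilution series and crossing points are made-up numbers, and noise filtering and threshold selection are assumed to have been done already.

```python
import math


def fit_standard_curve(concentrations, crossing_points):
    """Least-squares fit of CP = slope * log10(concentration) + intercept."""
    xs = [math.log10(c) for c in concentrations]
    ys = list(crossing_points)
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def quantity_from_cp(cp, slope, intercept):
    """Invert the curve, then exponentiate (base 10) to get a quantity."""
    return 10 ** ((cp - intercept) / slope)


def normalize(target_quantity, reference_quantities):
    """Divide by the geometric mean of the reference gene quantities."""
    log_mean = sum(math.log(q) for q in reference_quantities) / len(reference_quantities)
    return target_quantity / math.exp(log_mean)


# Hypothetical 10-fold dilution series (arbitrary units) and its crossing points
slope, intercept = fit_standard_curve([1000, 100, 10, 1], [20.0, 23.32, 26.64, 29.96])
target_qty = quantity_from_cp(26.64, slope, intercept)
print(slope, intercept, target_qty)
print(normalize(8.0, [2.0, 8.0]))
```

Because quantities are read off the fitted curve, the calculation does not need a separate per-assay efficiency estimate, which is the advantage the method claims.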

Experimental Quality Control

Adherence to MIQE (Minimum Information for Publication of Quantitative Real-Time PCR Experiments) guidelines ensures generation of reproducible, high-quality data [22] [25]. Essential quality parameters include:

- PCR Efficiency: Determined from standard curve slope, with ideal efficiency ranging from 90-110% (slope of -3.6 to -3.1) [22].

- Dynamic Range: Linear range should span at least 3-4 orders of magnitude with R² ≥ 0.98 [22].

- Specificity Verification: Confirm amplification specificity through melt curve analysis (for dye-based methods) or sequence verification.

- No-Template Controls: Include controls to detect contamination or primer-dimer formation.

- Replication: Perform both technical replicates (assessing pipetting variance) and biological replicates (assessing biological variance).
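The link between standard curve slope and PCR efficiency follows from the standard relation E = 10^(-1/slope) - 1, where a slope of about -3.32 corresponds to perfect doubling per cycle. A short sketch, with hypothetical function names and the 90-110% window taken from the list above:

```python
def efficiency_from_slope(slope):
    """Amplification efficiency as a fraction (1.0 means 100%).

    slope: slope of Cq versus log10(template quantity); negative for a valid curve.
    """
    return 10 ** (-1.0 / slope) - 1.0


def efficiency_acceptable(slope, low=0.90, high=1.10):
    """Check the efficiency against the 90-110% acceptance window."""
    return low <= efficiency_from_slope(slope) <= high


for s in (-3.32, -3.6, -3.1, -4.0):
    print(s, round(efficiency_from_slope(s) * 100, 1), efficiency_acceptable(s))
```

Slopes of exactly -3.6 and -3.1 land just outside the window (about 89.6% and 110.2%), which shows that the slope range quoted above is a rounded equivalent of the efficiency range.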

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Essential Research Reagent Solutions for qPCR Validation

| Reagent/Material | Function | Selection Considerations |

|---|---|---|

| Reverse Transcriptase | Synthesizes cDNA from RNA template | Processivity, fidelity, ability to handle complex RNA |

| qPCR Master Mix | Provides optimized buffer, enzymes, dNTPs for amplification | Detection chemistry (dye vs. probe), compatibility, robustness |

| Assay Primers | Target-specific amplification | Specificity, efficiency, minimal dimer formation |

| Fluorescent Probes | Sequence-specific detection (probe-based methods) | Quencher system, reporter dyes, specificity |

| DNA-Binding Dyes | Non-specific detection (dye-based methods) | Signal strength, background fluorescence, cost |

| Reference Genes | Normalization control | Stable expression across experimental conditions |

| Nuclease-Free Water | Reaction preparation | Purity, absence of contaminating nucleases |

Data Analysis Framework for Validation Studies

Comparative Analysis Workflow

Establishing a structured framework for comparing RNA-seq and qPCR results ensures objective assessment of validation success.

Interpretation Guidelines

Successful validation is demonstrated when:

- Fold change correlations between RNA-seq and qPCR show R² > 0.85 [21]

- Direction of change is consistent for statistically significant results

- Magnitude of change shows reasonable agreement (within 2-fold for most genes)

- Inconsistent results are investigated for technical artifacts or biological explanations

The 15% of genes that typically show discrepant results between the technologies deserve special attention, as these may represent either technical artifacts or biologically interesting phenomena worthy of further investigation [21].
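The first three interpretation criteria can be computed from paired log₂ fold changes, as in the sketch below. The function names and the example values are hypothetical, and "within 2-fold" is interpreted here as an absolute difference of at most 1 between the two log₂ fold changes.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def summarize_validation(seq_log2fc, qpcr_log2fc):
    """Summarize agreement between RNA-seq and qPCR log2 fold changes."""
    pairs = list(zip(seq_log2fc, qpcr_log2fc))
    n = len(pairs)
    r = pearson_r(seq_log2fc, qpcr_log2fc)
    return {
        "r_squared": r * r,
        # Same sign means both platforms agree on up- or down-regulation
        "same_direction": sum(1 for s, q in pairs if s * q > 0) / n,
        # A difference of 1 on the log2 scale equals a 2-fold disagreement
        "within_twofold": sum(1 for s, q in pairs if abs(s - q) <= 1.0) / n,
    }


# Made-up log2 fold changes for four genes
summary = summarize_validation([2.0, -1.5, 3.0, 0.5], [1.8, -1.2, 2.5, -0.2])
print(summary)
```

In this made-up example the fourth gene changes direction between platforms even though its magnitudes are close, which is the kind of small-effect discrepancy that warrants a closer look.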

qPCR maintains its critical role as the gold standard for analytical validation of RNA-seq findings due to its superior sensitivity, precision, and methodological simplicity. While RNA-seq provides unparalleled discovery power for transcriptome-wide exploration, qPCR delivers the verification rigor required for confirmatory studies. The experimental frameworks and methodologies presented in this guide provide researchers with a standardized approach for conducting these essential validation studies, ensuring that genomic findings meet the highest standards of technical reliability before progressing to functional studies or clinical applications.

By implementing these standardized protocols and analysis frameworks, researchers can bridge the technological gap between high-throughput discovery and targeted verification, advancing genomic science with findings that are both novel and robustly validated.

In the pipeline of modern biomedical research, biomarker discovery and validation represent two critical, sequential phases. Next-Generation Sequencing (NGS) technologies, particularly RNA sequencing (RNA-seq), have become the gold standard for unbiased, genome-wide discovery due to their ability to profile thousands of molecules without prior knowledge of the transcriptome [26] [16]. However, the transition of promising biomarkers from high-throughput discovery to clinically applicable research assays requires a method that is quantitative, reproducible, and accessible. Here, quantitative PCR (qPCR) and its digital counterpart (dPCR) play an indispensable role, serving as the bridge that validates RNA-seq findings and transforms them into reliable tools for clinical research and diagnostic development [26] [27]. This guide objectively compares the performance of these technologies, providing the experimental data and protocols essential for researchers and drug development professionals to make informed decisions.

Technology Comparison: RNA-seq vs. (d)PCR

The table below summarizes the core performance characteristics of RNA-seq, qPCR, and dPCR, highlighting their complementary roles in the biomarker workflow.

Table 1: Performance Comparison of RNA-seq, qPCR, and dPCR

| Feature | RNA-seq | qPCR | dPCR |

|---|---|---|---|

| Primary Role | Biomarker discovery, whole-transcriptome analysis [16] | Targeted validation, gene expression quantification [28] | Absolute quantification, rare target detection [29] [30] |

| Throughput | High (thousands of targets) | Medium (dozens of targets) | Low to Medium (single to multiplex targets) |

| Dynamic Range | Broad (>10^5) [16] | Broad (>10^7) [30] | Linear over a wide range [30] |

| Sensitivity | High (can detect low-abundance transcripts) | High | Very High (capable of detecting single molecules) [29] |

| Quantification | Relative (e.g., TPM, FPKM) | Relative (Ct) or absolute with standard curve | Absolute (copies/μL), no standard curve required [30] |

| Precision (Variability) | N/A | CV ~5.0% [30] | CV ~2.3% (2-fold lower than qPCR) [30] |

| Cost per Sample | High (~$1000/sample for RNA-seq [26]) | Low ($2-50/reaction [26]) | Moderate |

| Ease of Data Analysis | Complex, requires advanced bioinformatics | Straightforward, standardized software | Straightforward, standardized software |

Validating RNA-seq Findings with qPCR/dPCR

Correlation and Concordance in Expression Measurement

The reliability of using qPCR to validate RNA-seq data is well-established, with studies showing high overall correlation. A landmark benchmarking study comparing five major RNA-seq workflows against whole-transcriptome RT-qPCR data for over 18,000 protein-coding genes demonstrated high expression correlation, with squared Pearson correlation coefficients (R²) ranging from 0.798 to 0.845 [16]. When comparing gene expression fold changes, a more relevant metric for most studies, the correlations were even higher, with R² values between 0.927 and 0.934 [16]. This indicates strong concordance between the technologies for identifying differentially expressed genes.

However, a small but significant fraction of genes (15-19%) can show non-concordant results between RNA-seq and qPCR when assessing differential expression status. The majority of these discrepancies have relatively small differences in fold change (ΔFC < 1) [16]. This underscores the importance of careful assay design and validation, rather than questioning the fundamental agreement between the platforms.

Advantages of dPCR for High-Precision Validation

Digital PCR offers a key advantage in validation workflows through its superior precision and reproducibility. A direct technical comparison demonstrated that Crystal Digital PCR had a roughly 2-fold lower coefficient of variation (%CV) than qPCR (2.3% vs. 5.0%) when quantifying the same target from a single master mix [30]. This precision is derived from dPCR's method of partitioning a sample into thousands of individual reactions for end-point detection and absolute quantification without the need for a standard curve [29] [30]. This makes dPCR particularly suited for validating biomarkers where small fold-changes are biologically significant, or for quantifying low-abundance targets.

Experimental Protocols for Cross-Platform Validation

Protocol 1: Validation of RNA-seq-Derived Biomarkers via qPCR

This protocol ensures robust validation of transcriptomic discoveries.

- Candidate Selection: From RNA-seq differential expression analysis, select candidate biomarkers based on statistical significance (e.g., p-value ≤ 0.05) and fold-change magnitude (e.g., |log2FC| > 1) [26].

- Endogenous Control Identification: Critically, do not default to "universal" reference genes (e.g., GAPDH, ACTB). Use tools like the HeraNorm R Shiny application to identify and validate the most stable endogenous controls specific to your dataset. HeraNorm analyzes RNA-seq count data to nominate genes with minimal expression variability (e.g., |log2FC| < 0.02, p-value ≥ 0.8) [26].

- qPCR Assay Design: Design primers and probes with the following criteria:

- Amplicon size: 70-200 bp.

- Primer Tm: 58-60°C, with <1°C difference between forward and reverse primers.

- Validate amplification efficiency (90-110%) and linearity (R² > 0.99) using a standard curve [28].

- PCR-Stop Analysis for In-Depth Validation: Perform PCR-Stop analysis to evaluate assay performance during initial cycles [28].

- Prepare multiple batches of the same sample.

- Subject batches to 0 to 5 pre-run amplification cycles.

- Run all batches in a final qPCR run together.

- Analyze the consistency of Cq shifts and amplification efficiency against the theoretical doubling of product. This reveals quantitative resolution and confirms the assay starts with its average efficiency [28].

- Data Analysis: Use the comparative Cq (2^–ΔΔCq) method to calculate relative expression changes, normalizing to the validated endogenous controls [26].
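The comparative Cq calculation in the final step can be sketched as below. The function name and Cq values are hypothetical. The sketch assumes amplification efficiencies close to 100% for all assays and averages the Cq values of multiple endogenous controls, which is equivalent to taking the geometric mean of their quantities.

```python
def relative_expression(target_cq_test, ref_cqs_test, target_cq_control, ref_cqs_control):
    """Fold change of a target gene (test vs. control) by the 2^-ddCq method.

    ref_cqs_*: Cq values of one or more validated endogenous controls.
    Assumes about 100% amplification efficiency for every assay.
    """
    # dCq: target Cq minus the mean Cq of the endogenous controls
    delta_test = target_cq_test - sum(ref_cqs_test) / len(ref_cqs_test)
    delta_control = target_cq_control - sum(ref_cqs_control) / len(ref_cqs_control)
    # ddCq: difference between the test and control conditions
    delta_delta = delta_test - delta_control
    return 2 ** (-delta_delta)


# Made-up Cq values: the target crosses threshold 2 cycles earlier in the test sample
fold_change = relative_expression(22.0, [18.0, 18.0], 24.0, [18.0, 18.0])
print(fold_change)
```

When assay efficiencies differ noticeably from 100%, an efficiency-corrected model or a standard curve is the safer choice.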

Protocol 2: dPCR for Absolute Quantification of Circulating Biomarkers

This protocol is ideal for liquid biopsy applications, such as quantifying circulating tumor DNA (ctDNA) or viral loads.

- Sample Preparation: Extract cell-free DNA (cfDNA) from plasma or other liquid biopsy sources using specialized kits that maximize the yield of short fragments [31].

- Assay Selection: Use pre-validated dPCR assays or design custom assays as in Step 3 of Protocol 1. dPCR is more tolerant of PCR inhibitors, but sample purity should still be assessed [30].

- Partitioning and Amplification: Load the sample and PCR mix into a dPCR chip or droplet generator. The Naica System (Crystal Digital PCR), for example, creates thousands of droplets or microchambers [29] [30]. Perform PCR amplification with a standard thermocycling protocol.

- Endpoint Reading and Analysis: After amplification, read each partition for fluorescence. Use the system's software (e.g., Crystal Miner) to automatically distinguish positive (target-present) from negative (target-absent) partitions and apply Poisson statistics to calculate the absolute concentration of the target in copies/μL [30].
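The Poisson step in the endpoint analysis can be illustrated with a short calculation. This is a generic sketch of the standard dPCR correction, not the vendor software's implementation: the function name is hypothetical and the partition volume must be supplied by the user for their platform. If a fraction p of partitions is positive, the mean number of copies per partition is -ln(1 - p), and dividing by the partition volume gives copies/μL.

```python
import math


def copies_per_microliter(positive, total, partition_volume_ul):
    """Absolute target concentration in the reaction from partition counts.

    positive: number of partitions scored positive
    total: number of partitions analyzed
    partition_volume_ul: volume of one partition in microliters (platform-specific)
    """
    if total <= 0 or not 0 <= positive < total:
        # With every partition positive the sample is saturated and
        # the Poisson estimate is undefined
        raise ValueError("need 0 <= positive < total")
    # Poisson correction for partitions that received more than one copy
    mean_copies_per_partition = -math.log(1 - positive / total)
    return mean_copies_per_partition / partition_volume_ul


# Made-up counts; a 1 uL "partition" makes the result equal to copies per partition
print(copies_per_microliter(5000, 20000, 1.0))
```

The correction matters most at high occupancy: simply counting positives would underestimate the concentration because some partitions hold several target molecules.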

Workflow Visualization

[Figure: Integrated pathway from biomarker discovery to clinical research assay, highlighting the distinct and complementary roles of RNA-seq and (d)PCR technologies.]

The Scientist's Toolkit: Essential Research Reagent Solutions

The table below details key reagents and materials critical for successful experimentation in this field.

Table 2: Key Research Reagent Solutions and Their Functions

| Reagent/Material | Function | Key Considerations |

|---|---|---|

| Reference RNA Samples (e.g., MAQCA/MAQCB) | Benchmarking and cross-platform calibration of gene expression measurements [16]. | Well-characterized transcriptomes allow for performance assessment of both RNA-seq and qPCR workflows. |

| Stable Endogenous Controls | Normalization of qPCR data to account for technical variation (e.g., RNA input, RT efficiency) [26]. | Context-specific validation is critical. Tools like HeraNorm can identify stable genes from RNA-seq data instead of relying on unstable "universal" controls (e.g., GAPDH, miR-16). |

| Reverse Transcription Kits | Conversion of RNA to complementary DNA (cDNA) for qPCR/dPCR analysis. | High efficiency and fidelity are required to accurately represent the original RNA population and avoid bias. |

| dPCR Chips / Droplet Generators | Microfluidic devices that partition samples into thousands of nanoliter reactions for absolute quantification [29] [30]. | Materials (e.g., silicon, PDMS, COC) offer thermal conductivity and optical clarity. The number of partitions impacts precision. |

| Hot-Start Polymerases | DNA polymerases activated only at high temperatures, improving specificity and yield of PCR reactions [28]. | Reduces non-specific amplification and primer-dimer formation, which is crucial for both qPCR and dPCR sensitivity. |

| Probe-Based Chemistry (e.g., TaqMan) | Sequence-specific fluorescent detection of the amplified target in qPCR/dPCR [28]. | Provides higher specificity than intercalating dyes, essential for multiplex assays and distinguishing closely related sequences. |

The journey from biomarker discovery to a robust clinical research assay is a process of increasing specificity and validation. RNA-seq is the powerful discovery engine that identifies candidate molecules from the entire transcriptome. qPCR serves as the versatile and accessible workhorse for validating these findings in larger cohorts. Finally, dPCR provides the precision tool for applications demanding absolute quantification and the highest level of accuracy, such as in liquid biopsies and rare event detection. By understanding their complementary strengths and implementing rigorous validation protocols, researchers can confidently translate genomic discoveries into reliable assays that advance clinical research and drug development.

From Data to Validation: A Step-by-Step Protocol for qPCR Assay Design

Leveraging RNA-seq Data to Identify Optimal Reference Genes

The validation of RNA-seq findings through quantitative real-time PCR (RT-qPCR) is a cornerstone of reliable transcriptomic research. This process, however, is heavily dependent on the use of stably expressed reference genes for accurate data normalization. The selection of inappropriate reference genes remains a major source of error, potentially leading to the misinterpretation of gene expression data. With the growing accumulation of RNA-seq datasets, a powerful strategy has emerged: leveraging these vast transcriptomic resources to systematically identify optimal, stably expressed reference genes for subsequent qPCR experiments. This guide compares the different computational and experimental approaches for this purpose, evaluates their performance, and provides a structured framework for implementation, complete with supporting experimental data.

Computational Selection Strategies from RNA-seq Data

The process of selecting candidate reference genes from RNA-seq data primarily relies on analyzing gene expression stability across samples. The following table summarizes the core computational approaches and tools available.

Table 1: Computational Methods for Identifying Reference Genes from RNA-seq Data

| Method/Software | Core Metric | Key Criteria | Advantages | Limitations |

|---|---|---|---|---|

| GSV (Gene Selector for Validation) [7] | Expression stability (Standard Deviation, Coefficient of Variation) | TPM > 0 in all samples; SD(log2(TPM)) < 1; no log2(TPM) value above twice the average; average log2(TPM) > 5; CV < 0.2 | User-friendly GUI; Filters low-expression genes; Identifies both stable and variable genes. | Less established compared to traditional methods. |

| Coefficient of Variation (CV) Method [32] | Coefficient of Variation (CV) | Low CV across samples. | Simple, intuitive calculation. | Does not account for systematic inter-group variation. |

| Fold Change Cut-off Method [32] | Maximum Fold Change | Minimal fold-change across sample comparisons. | Simple, intuitive calculation. | Less statistical rigor than other methods. |

The GSV software represents a specialized tool that formalizes the filtering process [7]. Its algorithm applies a series of sequential filters to transcripts per million (TPM) values from RNA-seq data to select ideal reference gene candidates:

- Expression Filter: The gene must have an expression value (TPM) greater than zero in all analyzed libraries [7].

- Variability Filter: The standard deviation of the log2(TPM) values must be less than 1, ensuring low variability [7].

- Outlier Filter: No single log2(TPM) value can be more than twice the average log2(TPM), preventing exceptional expression in any one sample [7].

- Abundance Filter: The average log2(TPM) must be greater than 5, guaranteeing sufficient expression for easy detection by qPCR [7].

- Consistency Filter: The coefficient of variation must be less than 0.2, confirming stable expression relative to the mean [7].

Performance Comparison: RNA-seq-Derived vs. Traditional Reference Genes

The critical question is whether reference genes selected from RNA-seq data outperform traditional housekeeping genes. Evidence from multiple studies, summarized in the table below, shows that while RNA-seq preselection is effective, it is not universally superior to a robust statistical evaluation of traditional candidates.

Table 2: Experimental Validation of Reference Gene Performance

| Study System | RNA-seq-Derived Candidates | Traditional Candidates | Key Finding | Correlation with RNA-seq (Pearson r) |

|---|---|---|---|---|

| Human Cell Lines (TempO-seq vs. RNA-seq) [33] | Genes with concordant expression (15,480 genes) | Genes with non-concordant expression (3,810 genes) | 80% of genes showed concordant expression. Platform differences resolved by Relative Log2 Expression (RLE). | 0.77 (95% CI: 0.76–0.78) [33] |

| Abelmoschus Manihot [34] | eIF, PP2A1 (from transcriptome) | ACT2, TUA, GAPDH | eIF and PP2A1 showed the highest stability; TUA the lowest. | Not explicitly measured, but reference genes enabled validation of transcriptomics data. |

| Human iPSC Microglia & Mouse Sciatic Nerves [35] | Stable genes from RNA-seq | Conventional housekeeping genes | A robust statistical workflow for conventional candidates performed equally well. | RNA-seq preselection offered no significant advantage [35]. |

A study on human iPSC-derived microglia and mouse sciatic nerves directly challenged the necessity of RNA-seq for reference gene selection [35]. The research demonstrated that applying a robust statistical workflow—combining coefficient of variation (CV) analysis and the NormFinder algorithm—to a panel of conventional reference genes yielded normalization results that were equivalent to those obtained using stable genes pre-selected from RNA-seq data [35]. This indicates that the statistical approach for validation can be more critical than the source of the candidate genes themselves.

Integrated Workflow for Selection and Experimental Validation

A robust pipeline for establishing reference genes combines computational selection with rigorous experimental validation. The following workflow outlines the key steps from initial RNA-seq analysis to final confirmation.
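
The computational screening step can be illustrated with a minimal sketch, assuming the RNA-seq quantification is available as TPM values per gene and sample. The function name `rank_by_cv`, the expression floor `min_mean_tpm`, and the toy gene names are hypothetical and not taken from the cited studies; the sketch only ranks genes by coefficient of variation (CV) and does not replace the multi-algorithm qPCR validation described in the protocol.

```python
from statistics import mean, stdev


def rank_by_cv(tpm_by_gene, min_mean_tpm=10.0):
    """Rank genes by coefficient of variation (CV) of TPM across samples.

    tpm_by_gene: dict mapping gene name -> list of TPM values (one per sample).
    min_mean_tpm: hypothetical expression floor (an assumption); genes with
        very low abundance are skipped because they are hard to measure by qPCR.
    Returns a list of (gene, cv) tuples, most stable (lowest CV) first.
    """
    ranked = []
    for gene, values in tpm_by_gene.items():
        average = mean(values)
        if average < min_mean_tpm:
            continue  # too weakly expressed to be a practical reference gene
        ranked.append((gene, stdev(values) / average))
    return sorted(ranked, key=lambda item: item[1])


# Toy data: made-up values for three hypothetical genes across four samples.
tpm = {
    "GENE_A": [100.0, 105.0, 95.0, 102.0],  # abundant and stable
    "GENE_B": [50.0, 150.0, 20.0, 90.0],    # abundant but variable
    "GENE_C": [1.0, 2.0, 0.5, 1.5],         # below the expression floor
}
ranking = rank_by_cv(tpm)
for gene, cv in ranking:
    print(f"{gene}: CV = {cv:.3f}")
```

Genes at the top of such a ranking are only candidates; their stability still has to be confirmed on qPCR Cq values across all experimental conditions.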

Detailed Experimental Protocol for Validation

Primer Design and Validation: Design gene-specific primers for the shortlisted candidate genes. Validate primer specificity using agarose gel electrophoresis (to confirm a single product of the expected size) and melt curve analysis (to confirm a single unique peak) [34]. The amplification efficiency (E) should be between 90% and 110%, and the standard curve should have a coefficient of determination (R²) > 0.985 [34].

qPCR Profiling and Stability Analysis: Run qPCR assays on cDNA samples representing all experimental conditions. Analyze the resulting quantification cycle (Cq) values using multiple algorithms for a comprehensive assessment [34] [36]:

- geNorm: Calculates an average expression stability value (M). Genes with M < 1.5 are generally acceptable, and lower values indicate higher stability. geNorm also determines the pairwise variation (Vn/Vn+1) to indicate whether an additional reference gene is needed (V < 0.15 suggests n genes are sufficient) [34].

- NormFinder: Calculates a stability value based on intra- and inter-group variation, making it sensitive to systematic changes between sample groups [36].

- BestKeeper: Relies on the standard deviation (SD) and coefficient of variation (CV) of the Cq values. Genes with an SD < 1 are considered stable [36].

- RefFinder: Integrates results from geNorm, NormFinder, BestKeeper, and the ΔCq method to provide a comprehensive ranking.
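
To make the geNorm stability value more concrete, the following is a minimal sketch of a single-pass M calculation from Cq values. It assumes roughly 100% amplification efficiency, so that the log2 expression ratio between two genes in a sample is the difference of their Cq values. The function name `genorm_m_values` and the toy data are hypothetical, and the sketch omits geNorm's stepwise exclusion of the least stable gene and the pairwise variation (V) analysis.

```python
from statistics import mean, stdev


def genorm_m_values(cq_by_gene):
    """Compute a geNorm-style stability value M for each candidate gene.

    cq_by_gene: dict mapping gene name -> list of Cq values, with samples in
        the same order for every gene.
    For gene j, M is the mean, over all other genes k, of the standard
    deviation across samples of the log2 ratio between j and k.
    Assumption: ~100% efficiency, so log2(ratio j/k) = Cq_k - Cq_j.
    """
    genes = list(cq_by_gene)
    m_values = {}
    for j in genes:
        pairwise_sd = []
        for k in genes:
            if k == j:
                continue
            log2_ratios = [cq_k - cq_j
                           for cq_j, cq_k in zip(cq_by_gene[j], cq_by_gene[k])]
            pairwise_sd.append(stdev(log2_ratios))
        m_values[j] = mean(pairwise_sd)
    return m_values


# Toy data: REF1 and REF2 move together across samples; VAR does not.
cq = {
    "REF1": [20.0, 20.5, 21.0, 20.2],
    "REF2": [22.0, 22.5, 23.0, 22.2],
    "VAR": [25.0, 23.0, 27.0, 24.0],
}
m = genorm_m_values(cq)
for gene, value in sorted(m.items(), key=lambda item: item[1]):
    print(f"{gene}: M = {value:.2f}")
```

Lower M indicates that a gene's expression ratio to the other candidates is more constant across samples, which is why co-varying genes such as the two toy references score better than the variable one.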

Table 3: Key Reagents and Software for Reference Gene Identification and Validation

| Item | Function/Purpose | Examples/Specifications |
|---|---|---|
| RNA-seq Quantification File | Source data for computational screening. | File containing TPM or FPKM values for all genes across all samples. |
| Stability Analysis Software | Identify stable genes from RNA-seq data or qPCR Cq values. | GSV, geNorm, NormFinder, BestKeeper, RefFinder. |
| qPCR Instrument | Platform for performing real-time quantitative PCR. | Applied Biosystems, Bio-Rad, Roche. |
| Reverse Transcription Kit | Converts purified RNA to cDNA for qPCR. | Includes reverse transcriptase, buffers, primers (oligo dT/random hexamers). |
| SYBR Green qPCR Master Mix | Chemistry for detecting PCR product accumulation. | Contains DNA polymerase, dNTPs, buffer, and fluorescent dye. |

Leveraging RNA-seq data provides a powerful, hypothesis-free method for identifying stable reference genes, moving beyond the potentially flawed assumption that traditional housekeeping genes are always suitable. The emerging consensus indicates that while RNA-seq is a valuable tool for discovering novel and optimal candidates, a rigorous statistical evaluation of a panel of genes—which may include both RNA-seq-derived and conventional candidates—is paramount. The integrated workflow of computational screening followed by multi-algorithmic validation of qPCR data provides the most reliable path to accurate gene expression normalization, thereby solidifying the foundation for validating RNA-seq findings.

In the context of validating RNA-seq findings with qPCR, robust primer design is not merely a preliminary step but a critical determinant of data reliability. The exquisite sensitivity of quantitative PCR (qPCR) means that even minor imperfections in primer design can compromise specificity and efficiency, leading to the misinterpretation of transcript abundance changes identified in RNA-seq experiments. Adherence to established primer design best practices provides the foundation for generating accurate, reproducible qPCR data that can confidently validate high-throughput sequencing results, thereby forming a crucial bridge between discovery-based transcriptomics and targeted molecular validation in drug development research.

Fundamental Principles of Primer Design

The thermodynamic and structural characteristics of primers directly govern their performance in PCR assays. Optimal design parameters ensure that primers bind specifically to their intended target with high efficiency while avoiding interactions that could generate artifactual results.

Core Design Parameters

The table below summarizes the key numerical parameters for designing effective PCR primers, as established by consensus guidelines from industry leaders and peer-reviewed literature [37] [38] [39].

| Parameter | Recommended Range | Rationale |
|---|---|---|
| Primer Length | 18–30 nucleotides [38] [40] | Balances specificity (longer) with hybridization efficiency (shorter) [37]. |
| Melting Temperature (Tm) | 60–65°C [37] [38] | Ensures specific binding at optimal polymerase activity temperatures. |
| Tm Difference Between Primers | ≤ 2°C [38] [40] | Allows simultaneous and efficient binding of both primers. |
| GC Content | 40–60% [37] [38] | Provides balanced binding strength; extremes can promote non-specific binding or secondary structures. |
| GC Clamp | 1–2 G/C bases at the 3' end [37] [40] | Stabilizes the primer-template complex at the critical point of polymerase extension. |
| Amplicon Length | 70–150 bp (qPCR) [38] | Enables efficient amplification under standard cycling conditions. |
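
As an illustration, the sequence-level parameters in the table can be screened with a few lines of code. This is a minimal sketch: the function name `check_primer_basics` and the example sequence are hypothetical, and the GC clamp is interpreted here simply as a G or C at the 3'-terminal base. Tm is deliberately left out because a reliable value depends on a nearest-neighbor model and the reaction conditions, which dedicated design tools handle.

```python
def check_primer_basics(seq, min_len=18, max_len=30, gc_min=40.0, gc_max=60.0):
    """Check primer length, GC content, and a simple 3' GC clamp.

    The default thresholds mirror the recommended ranges in the table above.
    Assumption: the GC clamp is satisfied when the 3'-terminal base is G or C.
    """
    seq = seq.upper()
    gc_percent = 100.0 * sum(base in "GC" for base in seq) / len(seq)
    return {
        "length_ok": min_len <= len(seq) <= max_len,
        "gc_percent": round(gc_percent, 1),
        "gc_ok": gc_min <= gc_percent <= gc_max,
        "gc_clamp_ok": seq[-1] in "GC",
    }


# Hypothetical 20-mer used purely for illustration.
report = check_primer_basics("AGCTGACCTGAAGGATCTGC")
print(report)
```

A primer that passes these checks still needs Tm matching with its partner, a specificity search, and empirical efficiency testing.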

Avoiding Common Structural Pitfalls

Secondary structures and inter-primer interactions are a frequent source of assay failure. Design practices must proactively avoid these issues:

- Hairpins: Intramolecular folding within a primer can block its binding site. Avoid regions where three or more nucleotides within the primer are complementary to each other [37] [41].

- Self-Dimers and Cross-Dimers: Self-dimers form when two copies of the same primer hybridize; cross-dimers form when the forward and reverse primers hybridize with each other. Both reduce the available primer concentration and can be amplified as primer-dimer artifacts [37] [38]. Assess potential dimer formation using thermodynamic tools (e.g., OligoAnalyzer) and aim for a free energy (ΔG) weaker (less negative) than -9.0 kcal/mol for any predicted structure [38].

- Sequence Repeats: Avoid runs of four or more identical bases (e.g., AAAA) or dinucleotide repeats (e.g., ATATAT), as they can cause primer slippage and mispriming [41] [39].
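
The repeat rules above lend themselves to a quick automated screen before a thermodynamic analysis. The sketch below is a crude, sequence-only filter under stated assumptions: the function names, the three-unit threshold for dinucleotide repeats, and the four-base 3' window are hypothetical choices. It does not compute ΔG, so it complements rather than replaces a tool such as OligoAnalyzer.

```python
import re

COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Return the reverse complement of a DNA sequence (A, C, G, T only)."""
    return seq.translate(COMPLEMENT)[::-1]


def find_repeat_problems(seq):
    """Flag homopolymer runs (4+ identical bases) and dinucleotide repeats."""
    seq = seq.upper()
    problems = []
    if re.search(r"(.)\1{3,}", seq):
        problems.append("homopolymer run of 4+ bases")
    # Two different bases repeated as a unit three or more times, e.g. ATATAT.
    if re.search(r"((.)(?!\2).)\1{2,}", seq):
        problems.append("dinucleotide repeat of 3+ units")
    return problems


def tail_can_self_anneal(seq, window=4):
    """Crude screen: does the reverse complement of the 3' tail occur in the primer?

    A hit means the 3' end could pair with another part of the same primer
    sequence (hairpin or self-dimer risk); it is not a free-energy estimate.
    """
    seq = seq.upper()
    return reverse_complement(seq[-window:]) in seq


print(find_repeat_problems("GCATATATGC"))
print(find_repeat_problems("ACGTAAAACGT"))
print(tail_can_self_anneal("GCAGTTTACTGC"))
```

Primers flagged by either function are worth redesigning or checking more carefully for predicted secondary structures.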

Specialized Design for RNA-seq Validation

Validating RNA-seq data with qPCR introduces unique challenges, primarily ensuring that primers measure the intended transcriptional changes without confounding effects from genomic DNA contamination or alternative splicing.

Targeting Constitutive Exons for Gene-Level Validation

When the goal is to validate differential expression at the gene level—as is common with bulk RNA-seq analyses—primers should be designed to target a region present across all transcript isoforms of that gene [42]. This is achieved by:

- Identifying Constitutive Exons: Determine which exons are universally present in every known and expressed isoform of the target gene. This often involves analyzing RNA-seq data or transcript annotations to find exons with a percent-spliced-in (PSI) value close to 100% [43].

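- Placing Primers Across a Constitutive Intron: Design the forward and reverse primers to bind in two neighboring constitutive exons, so that the resulting amplicon spans the exon-exon junction. Mature mRNA then yields the short expected product, whereas contaminating genomic DNA, which retains the intervening intron, yields a much longer product that amplifies poorly or not at all under short qPCR extension times [38] [42]. This discrimination weakens when the intron is short, so DNase treatment and a no-RT control remain advisable.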
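
The two steps above can be sketched in code, assuming the transcript annotation has already been reduced to an ordered list of exon coordinates per isoform. The data layout, function names, and coordinates below are hypothetical; a real analysis would parse a GTF/GFF file or use PSI estimates from the RNA-seq data, and would also account for strand and for exons with alternative boundaries.

```python
def constitutive_exons(isoform_exons):
    """Return exons present in every isoform, sorted by coordinate.

    isoform_exons: dict mapping isoform ID -> list of (start, end) exon tuples
        in transcript order (plus strand assumed for simplicity).
    """
    exon_sets = [set(exons) for exons in isoform_exons.values()]
    return sorted(set.intersection(*exon_sets))


def adjacent_constitutive_pairs(isoform_exons):
    """Return pairs of constitutive exons that are spliced together in every isoform.

    Such pairs share one junction across all isoforms, so a primer pair placed
    in the two exons measures the gene regardless of isoform.
    """
    shared = constitutive_exons(isoform_exons)
    pairs = []
    for left, right in zip(shared, shared[1:]):
        if all(exons.index(right) - exons.index(left) == 1
               for exons in isoform_exons.values()):
            pairs.append((left, right))
    return pairs


# Toy annotation: the second isoform skips the third exon.
isoforms = {
    "tx1": [(100, 200), (300, 400), (500, 600), (700, 800)],
    "tx2": [(100, 200), (300, 400), (700, 800)],
    "tx3": [(100, 200), (300, 400), (500, 600), (700, 800)],
}
shared_exons = constitutive_exons(isoforms)
primer_sites = adjacent_constitutive_pairs(isoforms)
print(shared_exons)
print(primer_sites)
```

In the toy example only the first two exons form a junction that is common to all isoforms, so they would be the natural location for a gene-level assay.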

The following workflow diagram illustrates this strategic design process for creating RNA-seq validation assays.

Leveraging RNA-seq Data for Informed Design

RNA-seq datasets themselves can be powerful resources for guiding primer design, moving beyond static genome annotations:

- Informatics-Driven Design: Tools like PrimerSeq utilize aligned RNA-seq reads (BAM files) to directly visualize splicing patterns and estimate exon inclusion levels. This allows for the systematic design of primers targeting alternative splicing events discovered in the RNA-seq data or for confirming the constitutively spliced regions most suitable for gene-level validation [43].

- Experimental Verification: Before large-scale validation, test primer efficiency using a dilution series of cDNA to generate a standard curve. The ideal reaction efficiency is 100%, corresponding to a slope of -3.32, with an acceptable range of 90–110% (slopes of approximately -3.6 to -3.1) [38].

Experimental Protocols for Validation

Protocol 1: In Silico Primer Design and Specificity Check

This protocol ensures primers are specific and optimal before synthesis.

- Sequence Retrieval: Obtain the precise transcript sequence of your target from a curated database like NCBI RefSeq or Ensembl.

- Primer Design: Use NCBI Primer-BLAST, inputting the sequence and setting parameters (e.g., product size 70–150 bp, Tm 60–64°C, organism). Primer-BLAST integrates the design engine of Primer3 with a specificity check via BLAST [41].

- Thermodynamic Analysis: Analyze the top candidate sequences using a tool like IDT's OligoAnalyzer. Input reaction conditions (e.g., 50 mM K+, 3 mM Mg2+) to calculate precise Tm and check for secondary structures (hairpins, dimers) with ΔG > -9.0 kcal/mol [38].

- Specificity Validation: Review the Primer-BLAST output to confirm the primers only hit the intended gene and that the in silico amplicon matches the expected product [41] [40].

Protocol 2: Empirical Validation of Primer Efficiency

This protocol tests synthesized primers to confirm performance in actual reactions.

- cDNA Synthesis and Dilution: Convert a representative RNA sample to cDNA. Prepare a 5-point serial dilution (e.g., 1:5 or 1:10 dilutions).

- qPCR Run: Amplify each dilution in triplicate using the new primer set and your standard qPCR master mix.

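- Standard Curve Analysis: Plot the mean Cq values against the log10 of the relative template amount (e.g., 1, 0.1, 0.01 for a 10-fold series), so that Cq rises as template decreases and the slope is negative. Calculate the slope of the linear trendline.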

- Efficiency Calculation: Apply the formula: Efficiency (%) = (10^(-1/slope) - 1) * 100. Primers with an efficiency between 90% and 110% are typically considered acceptable for accurate relative quantification [38].
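
The standard curve analysis and efficiency calculation can be sketched as follows. This is a minimal example using an ordinary least-squares fit written out by hand; the function name `standard_curve_efficiency` and the Cq values are made up for illustration, and the x-axis is assumed to be the log10 of the relative template amount so that the slope is negative.

```python
def standard_curve_efficiency(log10_input, mean_cq):
    """Fit Cq = slope * log10(input) + intercept and derive PCR efficiency.

    log10_input: log10 of the relative template amount for each dilution.
    mean_cq: mean Cq of the technical replicates at each dilution.
    Returns (slope, efficiency_percent, r_squared).
    """
    n = len(log10_input)
    mean_x = sum(log10_input) / n
    mean_y = sum(mean_cq) / n
    sxx = sum((x - mean_x) ** 2 for x in log10_input)
    syy = sum((y - mean_y) ** 2 for y in mean_cq)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(log10_input, mean_cq))
    slope = sxy / sxx
    r_squared = sxy ** 2 / (sxx * syy)
    efficiency_percent = (10 ** (-1 / slope) - 1) * 100
    return slope, efficiency_percent, r_squared


# Hypothetical 5-point, 10-fold dilution series (relative amounts 1 to 0.0001).
log10_amounts = [0, -1, -2, -3, -4]
cq_means = [18.1, 21.4, 24.8, 28.1, 31.5]

slope, efficiency, r2 = standard_curve_efficiency(log10_amounts, cq_means)
print(f"slope = {slope:.2f}, efficiency = {efficiency:.1f}%, R^2 = {r2:.4f}")
print("acceptable" if 90 <= efficiency <= 110 else "redesign or re-optimize")
```

Reporting the slope, efficiency, and R² together makes it easier to spot a curve that meets the efficiency window only because of one outlying dilution point.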

Comparison of Primer Design Strategies

The choice between different primer design methodologies involves a trade-off between convenience, specificity, and the ability to account for sample-specific transcriptome complexity. The table below compares these approaches.

| Design Strategy | Key Features | Best Suited For | Limitations |
|---|---|---|---|
| Traditional Tools (e.g., Primer3, Manual Design) | Designs based on a single input sequence; uses algorithms to meet standard parameters [41]. | Validating stable, well-annotated genes; general PCR applications. | May not reflect the actual splicing landscape or novel isoforms present in the specific RNA-seq samples [43]. |
| Integrated Specificity Tools (e.g., NCBI Primer-BLAST) | Combines Primer3 design with in silico specificity checking against a selected genome database [41] [40]. | Standard gene validation where the primary concern is off-target amplification. | Relies on reference genomes and annotations; does not incorporate sample-specific expression data. |
| RNA-seq Informed Design (e.g., PrimerSeq) | Uses aligned RNA-seq reads (BAM) from the experiment to visualize coverage and design primers based on empirical evidence of expressed isoforms [43]. | Validating alternative splicing events or genes with complex isoform profiles; ensures primers target expressed regions. | Requires bioinformatic preprocessing of RNA-seq data; more complex workflow. |
| Pre-Validated Assay Databases (e.g., PrimerBank, TaqMan Gene Expression Assays) | Access to commercially or publicly available primers that are often experimentally validated [44]. | Rapid startup for common model organisms (human, mouse, rat). | Cost; limited availability for non-model organisms or novel targets; sequences are sometimes not disclosed. |

Successful implementation of a qPCR validation pipeline requires both wet-lab reagents and bioinformatic tools. The following table details key solutions.

| Category / Item | Function / Application |
|---|---|
| Bioinformatics Tools | |
| Primer-BLAST (NCBI) | Integrated primer design and specificity checking against genomic databases [41]. |
| Primer3 / Primer3Plus | Core algorithm for custom primer design with extensive parameter control [42] [41]. |
| OligoAnalyzer (IDT) | Analyzes oligonucleotide properties: Tm, hairpins, dimers, and ΔG calculations [38]. |
| PrimerSeq | Stand-alone software for designing RT-PCR primers using RNA-seq data as input [43]. |
| Wet-Lab Reagents & Kits | |
| DNase I, RNase-free | Treatment of RNA samples to remove contaminating genomic DNA prior to reverse transcription [38]. |
| Reverse Transcription Kit | Conversion of purified RNA to cDNA for qPCR amplification. |
| Hot-Start DNA Polymerase | Reduces non-specific amplification and primer-dimer formation during PCR setup by requiring heat activation [38]. |
| SYBR Green or TaqMan Master Mix | Ready-to-use reaction buffers containing dyes, enzymes, and dNTPs for qPCR [38] [44]. |
| Controls | |
| Artificial Spike-in RNAs (e.g., SIRVs) | Internal controls for RNA-seq and qPCR to assess technical performance, dynamic range, and quantification accuracy [45]. |
| No-RT Control | cDNA reaction without reverse transcriptase to detect genomic DNA contamination. |
| No-Template Control (NTC) | qPCR reaction without cDNA to detect reagent contamination or primer-dimer amplification. |