Variance Stabilizing Transformations for RNA-seq PCA: A Practical Guide for Biomedical Researchers


Abstract

This article provides a comprehensive guide to variance stabilizing transformations, a critical preprocessing step for Principal Component Analysis (PCA) of RNA-seq data. Tailored for researchers and drug development professionals, it covers the foundational need for normalization to address heteroskedasticity in count data, compares established and emerging transformation methodologies, and offers practical troubleshooting advice. By integrating theoretical explanations with empirical benchmarks and real-world applications in biomarker discovery, this guide empowers scientists to make informed decisions that enhance the reliability and biological relevance of their transcriptomic analyses.

Why Variance Matters: The Critical Foundation of RNA-seq Data Preprocessing

RNA-sequencing count data exhibits a fundamental statistical property known as heteroskedasticity, where the variance of gene expression measurements depends systematically on their mean expression level [1] [2]. This mean-variance relationship presents a significant challenge for downstream statistical analyses, particularly principal component analysis (PCA), which assumes uniform variance across measurements [1] [2]. In practical terms, this means that a change in a gene's counts from 0 to 100 between different cells carries different statistical importance than a change from 1,000 to 1,100, even though the absolute difference is identical [2]. This technical artifact arises from the molecular sampling process during sequencing and affects both bulk and single-cell RNA-seq technologies, though it is particularly pronounced in single-cell data due to its extreme sparsity [3].

The gamma-Poisson distribution (also referred to as the negative binomial distribution) provides a theoretically and empirically well-supported model for unique molecular identifier (UMI)-based count data [1] [2]. Under this model, the variance of the counts Y can be expressed as Var[Y] = μ + αμ², where μ represents the mean expression and α denotes the overdispersion parameter quantifying additional biological variation beyond technical sampling noise [1] [2]. The quadratic term αμ² captures the heteroskedastic nature of the data, with highly expressed genes exhibiting disproportionately higher variance compared to lowly expressed genes.
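A minimal Python sketch of this variance function makes the point numerically. The overdispersion α = 0.5 is an illustrative assumption, not a value estimated from data. The loop reports what share of the total variance comes from the quadratic term αμ² at each mean.

```python
def gamma_poisson_variance(mu: float, alpha: float) -> float:
    """Var[Y] = mu + alpha * mu^2 for a gamma-Poisson (negative binomial) count."""
    return mu + alpha * mu ** 2


ALPHA = 0.5  # illustrative overdispersion (assumed, not estimated from data)

for mu in (0.1, 1.0, 10.0, 100.0, 1000.0):
    var = gamma_poisson_variance(mu, ALPHA)
    quadratic_share = ALPHA * mu ** 2 / var  # fraction of variance from the alpha*mu^2 term
    print(f"mu={mu:>7}  Var={var:>10.1f}  quadratic share={quadratic_share:.2f}")
```

With these settings the quadratic term contributes under 5% of the variance at μ = 0.1 but essentially all of it at μ = 1,000. This is why, on raw counts, highly expressed genes dominate PCA.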

Experimental Evidence and Empirical Characterization

Quantitative Demonstration of Heteroskedasticity

Table 1: Empirical Characteristics of Heteroskedasticity in RNA-seq Data

| Characteristic | Mathematical Representation | Experimental Manifestation | Impact on Analysis |
|---|---|---|---|
| Mean-Variance Relationship | Var[Y] = μ + αμ² | Higher variance for highly expressed genes | PCA dominated by highly variable genes |
| Sequencing Depth Effect | Strong correlation between UMI counts and cellular sequencing depth | Nearly identical regression slopes across gene abundance tiers | Technical confounder of biological variation |
| Transformation Performance | Varies by gene abundance level | Delta method does not fully stabilize variance for low-expression genes | Residuals-based methods more effective |
| Overdispersion Range | Typical α = 0.1-1.0 in single-cell data | CPM followed by log(y/s + 1) implies α = 50 | Pseudo-count far too small; differences between zero and small counts are exaggerated |

Experimental analyses of diverse RNA-seq datasets consistently demonstrate these theoretical relationships. In a study of 33,148 human peripheral blood mononuclear cells (PBMCs), researchers observed a strong linear relationship between unnormalized gene UMI counts and cellular sequencing depth across genes of all abundance levels [3]. This confounding effect persists even after conventional log-normalization, particularly for high-abundance genes, indicating inherent limitations in scaling-factor-based normalization strategies [3].

Further investigation reveals that gene variance remains confounded with sequencing depth after standard normalization approaches. When binning cells by their overall sequencing depth, cells with low total UMI counts exhibit disproportionately higher variance for high-abundance genes, effectively dampening the variance contribution from other gene groups [3]. This imbalance compromises downstream analyses by overemphasizing technical rather than biological sources of variation.

Experimental Protocol: Quantifying Mean-Variance Relationships

Purpose: To empirically characterize the mean-variance relationship in RNA-seq count data and assess the effectiveness of variance stabilization transformations.

Materials:

- RNA-seq count matrix (genes × cells/samples)

- Computational environment (R/Python with appropriate packages)

- Normalization algorithms (sctransform, DESeq2, Seurat)

Procedure:

- Data Preparation: Load count matrix and filter low-quality cells/genes using standard QC thresholds.

- Calculate Summary Statistics: Compute the mean and variance of each gene across cells/samples.

- Bin Genes by Expression Level: Group genes into 6-10 equal-width bins of log mean expression.

- Plot Mean-Variance Relationship: Create scatterplots of log(mean) versus log(variance) for each bin.

- Fit Trend Lines: Apply LOESS regression or polynomial fits to characterize the relationship.

- Assess Transformation Efficacy: Apply variance-stabilizing transformations and recalculate mean-variance relationships.

Validation Metrics:

- Residual technical correlation with sequencing depth

- Homogeneity of variance across expression levels

- Preservation of biological heterogeneity in positive controls
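The core of this protocol can be sketched end to end in plain Python on simulated data. Every setting here is an assumption made for illustration: counts are drawn from a gamma-Poisson model with α = 0.1 for 30 genes and 300 cells, bins are equal-width on log10 of the mean, and the acosh delta-method transform (described in the next section) serves as the variance-stabilizing step. The spread statistic (largest over smallest binned median variance) is a simple stand-in for the homogeneity-of-variance metric, not a standard measure.

```python
import math
import random
import statistics


def sample_gamma_poisson(mu, alpha, rng):
    """Draw one count from gamma-Poisson(mu, alpha): Poisson(lam), lam ~ Gamma(1/alpha, alpha*mu)."""
    lam = rng.gammavariate(1.0 / alpha, alpha * mu)
    # Poisson(lam) = number of unit-rate exponential arrivals before time lam.
    k, t = 0, rng.expovariate(1.0)
    while t < lam:
        k += 1
        t += rng.expovariate(1.0)
    return k


def acosh_vst(y, alpha):
    """Delta-method VST for gamma-Poisson counts."""
    return math.acosh(2 * alpha * y + 1) / math.sqrt(alpha)


def binned_median_variance(means, variances, n_bins):
    """Median per-gene variance within equal-width bins of log10(mean); empty bins skipped."""
    logs = [math.log10(m) for m in means]
    lo, hi = min(logs), max(logs)
    width = (hi - lo) / n_bins
    bins = {}
    for lm, v in zip(logs, variances):
        idx = min(int((lm - lo) / width), n_bins - 1)
        bins.setdefault(idx, []).append(v)
    return [statistics.median(bins[i]) for i in sorted(bins)]


rng = random.Random(42)
ALPHA = 0.1     # assumed overdispersion of the simulated genes
N_CELLS = 300
true_means = [10 ** (-1 + 3 * i / 29) for i in range(30)]  # 0.1 to 100, log-spaced

gene_means, raw_vars, vst_vars = [], [], []
for mu in true_means:
    counts = [sample_gamma_poisson(mu, ALPHA, rng) for _ in range(N_CELLS)]
    gene_means.append(statistics.mean(counts))
    raw_vars.append(statistics.variance(counts))
    vst_vars.append(statistics.variance([acosh_vst(y, ALPHA) for y in counts]))

raw_bins = binned_median_variance(gene_means, raw_vars, n_bins=6)
vst_bins = binned_median_variance(gene_means, vst_vars, n_bins=6)
raw_spread = max(raw_bins) / min(raw_bins)
vst_spread = max(vst_bins) / min(vst_bins)
print(f"max/min binned variance, raw counts: {raw_spread:.0f}")
print(f"max/min binned variance, acosh VST:  {vst_spread:.1f}")
```

Expect the raw spread to be orders of magnitude larger than the transformed spread. Most of what remains after the transform comes from the lowest-expression bin, where the delta method does not fully stabilize variance.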

Variance-Stabilizing Transformation Methodologies

Theoretical Foundations and Algorithmic Approaches

Table 2: Comparison of Variance-Stabilizing Transformation Methods

| Method Category | Key Transformations | Theoretical Basis | Advantages | Limitations |
|---|---|---|---|---|
| Delta Method | Shifted logarithm: log(y/s + y₀); inverse hyperbolic cosine: acosh(2αy+1)/√α | Delta method applied to gamma-Poisson mean-variance relationship | Computational efficiency; intuitive interpretation | Sensitive to size factor estimation; does not fully stabilize variance for low-expression genes |
| Residuals-Based | Pearson residuals: (y-μ̂)/√(μ̂+α̂μ̂²) | Regularized negative binomial regression | Effective removal of technical variation; preserves biological heterogeneity | Computationally intensive; potential overfitting without regularization |
| Latent Expression | Sanity, Dino, Normalisr | Bayesian inference of latent expression states | Direct estimation of biological signal | Complex implementation; theoretical assumptions |
| Factor Analysis | GLM PCA, NewWave | Gamma-Poisson factor models | Integrated dimension reduction | Specialized software requirements |

Several computational approaches have been developed to address heteroskedasticity in RNA-seq data, each with distinct theoretical foundations and practical considerations.

Delta Method Transformations

The delta method derives variance-stabilizing transformations based on the assumed mean-variance relationship [1] [2]. For the gamma-Poisson distribution with overdispersion α, the theoretically optimal transformation is:

g(y) = (1/√α) × acosh(2αy + 1)

In practice, researchers often use the more familiar shifted logarithm as an approximation:

g(y) = log(y + y₀)

where the pseudo-count y₀ should be set to 1/(4α) based on the relationship between pseudo-count and overdispersion [2]. This transformation is typically applied after size factor normalization (y/s) to account for differences in sampling efficiency and cell size [1].
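A small Python sketch of both transformations follows; α = 0.5 (so y₀ = 0.5) is an assumed value. When y₀ = 1/(4α), (1/√α)·log(4αy + 1) = (1/√α)·[log(y + y₀) + log(4α)], so the loop compares the acosh form with a rescaled, shifted copy of the shifted logarithm. The two agree closely for large counts and diverge at small non-zero counts.

```python
import math


def acosh_transform(y, alpha):
    """Delta-method VST for gamma-Poisson counts: acosh(2*alpha*y + 1) / sqrt(alpha)."""
    return math.acosh(2 * alpha * y + 1) / math.sqrt(alpha)


def shifted_log(y, size_factor, alpha):
    """Shifted logarithm log(y/s + y0) with pseudo-count y0 = 1/(4*alpha)."""
    y0 = 1 / (4 * alpha)
    return math.log(y / size_factor + y0)


ALPHA = 0.5  # assumed overdispersion, so y0 = 0.5

# (1/sqrt(alpha)) * log(4*alpha*y + 1) is the shifted log up to scale and an additive
# constant; compare it with the exact acosh form across the count range.
for y in (0, 1, 10, 100, 1000):
    exact = acosh_transform(y, ALPHA)
    scaled_log = math.log(4 * ALPHA * y + 1) / math.sqrt(ALPHA)
    print(f"y={y:>4}  acosh={exact:6.3f}  scaled shifted log={scaled_log:6.3f}")
```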

Pearson Residuals-Based Transformation

Hafemeister and Satija [3] proposed an alternative approach based on Pearson residuals from regularized negative binomial regression. The methodology proceeds as follows:

Model Specification: For each gene g and cell c, assume:

Y_gc ~ gamma-Poisson(μ_gc, α_g)

log(μ_gc) = β_g,intercept + β_g,slope × log(s_c)

where s_c represents the size factor for cell c.

Parameter Regularization: Share information across genes with similar abundances to obtain stable parameter estimates and prevent overfitting.

Residual Calculation: Compute Pearson residuals as:

r_gc = (y_gc - μ̂_gc) / √(μ̂_gc + α̂_g × μ̂_gc²)

This approach successfully removes the influence of technical characteristics while preserving biological heterogeneity, making the residuals suitable for downstream analyses like dimensionality reduction and differential expression [3].
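The residual formula itself is short. The Python sketch below evaluates it for one gene in three cells. The coefficients β_intercept = log 2, β_slope = 1 and α̂ = 0.1 are hypothetical stand-ins for regularized estimates; the fitting and regularization steps are not shown.

```python
import math


def fitted_mean(beta_intercept, beta_slope, size_factor):
    """mu_gc from log(mu_gc) = beta_intercept + beta_slope * log(s_c)."""
    return math.exp(beta_intercept + beta_slope * math.log(size_factor))


def pearson_residual(y, mu_hat, alpha_hat):
    """(y - mu) / sqrt(mu + alpha * mu^2): the gamma-Poisson Pearson residual."""
    return (y - mu_hat) / math.sqrt(mu_hat + alpha_hat * mu_hat ** 2)


# Hypothetical (already regularized) parameters for one gene; not fitted to real data.
BETA_INTERCEPT, BETA_SLOPE, ALPHA_HAT = math.log(2.0), 1.0, 0.1
size_factors = [0.5, 1.0, 2.0]
observed = [1, 2, 8]

residuals = [
    pearson_residual(y, fitted_mean(BETA_INTERCEPT, BETA_SLOPE, s), ALPHA_HAT)
    for y, s in zip(observed, size_factors)
]
print([round(r, 3) for r in residuals])
```

The first two cells match their fitted means (1 and 2) and get residuals of zero. The third has twice its expected count of 4 and a residual of about 1.69.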

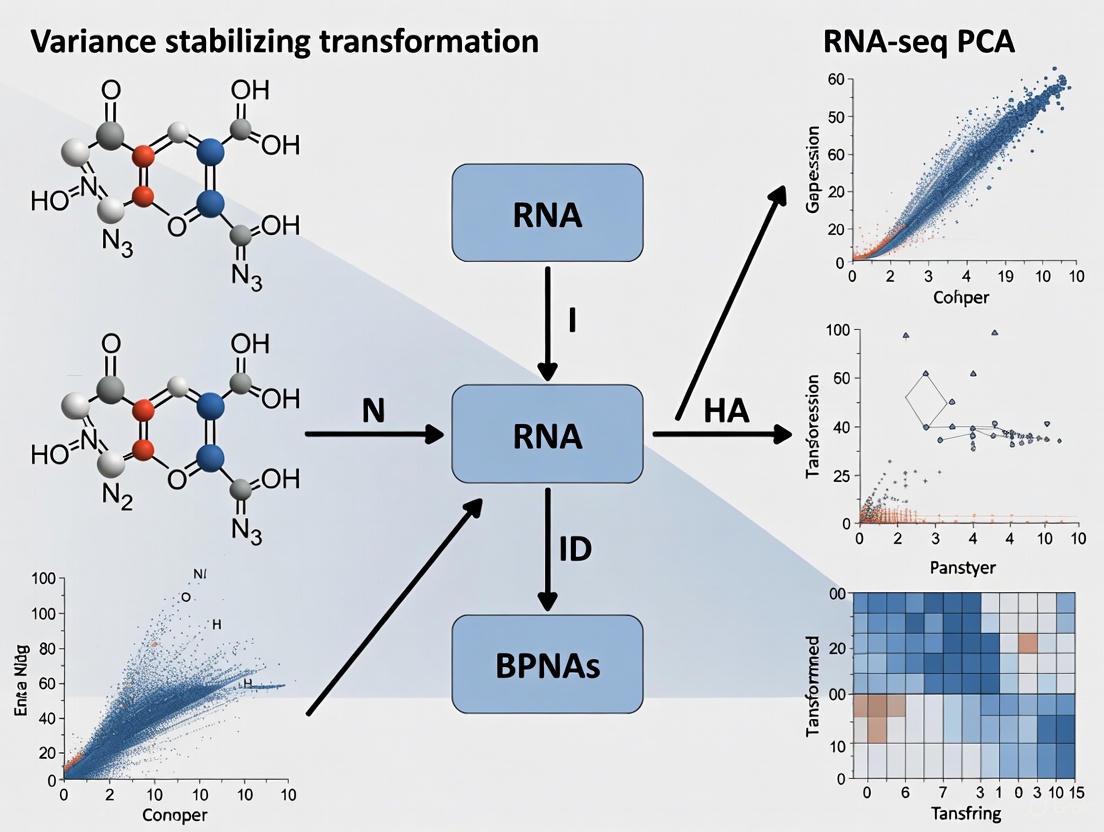

Figure 1: Workflow for Pearson Residuals-Based Variance Stabilization

Experimental Protocol: Implementation of sctransform

Purpose: To implement regularized negative binomial regression for variance stabilization of single-cell RNA-seq data.

Materials:

- UMI count matrix

- R environment with sctransform package

- Computational resources adequate for dataset size

Procedure:

- Data Input: Load UMI count matrix with genes as rows and cells as columns.

- Size Factor Estimation: Calculate sequencing depth per cell:

  s_c = (∑_g y_gc) / L, where L is a scaling constant, commonly the average total count across all cells (Seurat's log-normalization instead uses a fixed L = 10,000).

- Model Fitting: For each gene, fit a negative binomial GLM with log(s_c) as a covariate.

- Parameter Regularization: Apply regularization by pooling information across genes with similar mean expression.

- Residual Calculation: Compute Pearson residuals using regularized parameters.

- Output: Generate variance-stabilized residual matrix for downstream analysis.

Quality Control:

- Check for residual correlation with sequencing depth

- Verify preservation of biological signal in known cell type markers

- Assess homogeneity of variance across expression levels
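The procedure and the first quality-control check can be sketched in plain Python. This is not sctransform. It fixes the slope on log(s_c) at 1, estimates the intercept with the closed-form Poisson maximum-likelihood solution, and takes α as given instead of estimating and regularizing it. All counts are simulated (four hypothetical genes, 400 cells, α = 0.01). The check compares the correlation of one gene with log sequencing depth before and after computing residuals.

```python
import math
import random
import statistics


def draw_count(mu, alpha, rng):
    """One gamma-Poisson draw: Poisson(lam) with lam ~ Gamma(1/alpha, alpha * mu)."""
    lam = rng.gammavariate(1.0 / alpha, alpha * mu)
    k, t = 0, rng.expovariate(1.0)
    while t < lam:  # count unit-rate exponential arrivals before time lam
        k += 1
        t += rng.expovariate(1.0)
    return k


def pearson_corr(x, y):
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def offset_model_residuals(counts, size_factors, alpha):
    """Pearson residuals under log(mu_c) = b0 + log(s_c), i.e. slope fixed at 1.

    Simplifications (not sctransform itself): exp(b0) is the closed-form Poisson MLE
    sum(y) / sum(s), and alpha is supplied instead of being estimated and regularized.
    """
    rate = sum(counts) / sum(size_factors)
    return [(y - rate * s) / math.sqrt(rate * s + alpha * (rate * s) ** 2)
            for y, s in zip(counts, size_factors)]


rng = random.Random(7)
ALPHA = 0.01                              # assumed overdispersion
gene_levels = [10.0, 20.0, 40.0, 80.0]    # hypothetical expression levels
depth = [rng.uniform(0.5, 2.0) for _ in range(400)]  # hypothetical per-cell capture efficiency
matrix = [[draw_count(level * d, ALPHA, rng) for d in depth] for level in gene_levels]

# Size factors s_c = (sum_g y_gc) / L with L = mean total count across cells.
totals = [sum(cell) for cell in zip(*matrix)]
L = statistics.mean(totals)
size_factors = [t / L for t in totals]

gene = matrix[1]
residuals = offset_model_residuals(gene, size_factors, ALPHA)
log_depth = [math.log(t) for t in totals]
raw_corr = pearson_corr(gene, log_depth)
resid_corr = pearson_corr(residuals, log_depth)
print(f"corr(raw counts, log depth) = {raw_corr:.2f}")
print(f"corr(residuals, log depth)  = {resid_corr:.2f}")
```

The raw counts should correlate strongly with depth, while the residuals should be close to uncorrelated. In real data, a sizeable residual correlation would suggest that the depth term is not modeled adequately.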

Comparative Performance Assessment

Benchmarking Studies and Practical Recommendations

Comprehensive benchmarking of transformation approaches yields several practical insights. Despite the appealing theoretical properties of more sophisticated methods such as Pearson residuals and latent expression estimation, a rather simple approach, the logarithm with a pseudo-count followed by PCA, often performs as well as or better than these alternatives in empirical tests [1] [2]. This counterintuitive result suggests that current theoretical analyses do not fully predict performance in bottom-line benchmarks.

However, important caveats apply to specific implementations, because the parameters of simple transformations strongly affect performance. For example, using counts per million (CPM, L = 10⁶) followed by the log(y/s + 1) transformation is equivalent to setting the pseudo-count to y₀ = 0.005, which assumes an overdispersion of α = 50, two orders of magnitude larger than the overdispersion typically observed in single-cell datasets [2]. In contrast, Seurat's default L = 10,000 implies a pseudo-count of y₀ = 0.5 and an overdispersion of α = 0.5, which better matches the values observed in real data [2].
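The arithmetic behind these figures can be checked in a few lines. The key identity, derived in the docstring, is that log(y/s + 1) with s_c = total_c / L equals log(y/s′ + ȳ/L) plus a constant. Here s′ are mean-one size factors and ȳ is the mean total count, so the effective pseudo-count is ȳ/L. The mean of 5,000 UMIs per cell is an assumption: it is the value implied by the numbers quoted above (0.005 × 10⁶ = 0.5 × 10⁴ = 5,000).

```python
import math


def implied_pseudocount(mean_total_counts, scale_L):
    """Pseudo-count y0 implied by log(y/s + 1) with s_c = total_c / L.

    With mean-one size factors s'_c = total_c / mean_total, one has
    log(y/s_c + 1) = log(L / mean_total) + log(y/s'_c + mean_total / L),
    so y0 = mean_total / L.
    """
    return mean_total_counts / scale_L


def implied_overdispersion(y0):
    """Invert the delta-method link y0 = 1 / (4 * alpha)."""
    return 1 / (4 * y0)


MEAN_TOTAL = 5_000  # assumed average UMIs per cell (the value implied by the text's numbers)
for label, L in (("CPM", 1e6), ("Seurat default", 1e4)):
    y0 = implied_pseudocount(MEAN_TOTAL, L)
    print(f"{label:>14}: y0 = {y0:g}, alpha = {implied_overdispersion(y0):g}")
```

Under that assumption the loop reproduces y₀ = 0.005, α = 50 for CPM and y₀ = 0.5, α = 0.5 for L = 10,000. A dataset with a different average depth changes both values.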

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Variance Stabilization

| Tool/Package | Methodology | Application Context | Key Functions |
|---|---|---|---|
| sctransform | Regularized negative binomial regression | Single-cell RNA-seq | Variance stabilization, feature selection |
| DESeq2 | Median-of-ratios normalization + variance-stabilizing (vst) or regularized-log (rlog) transformation | Bulk RNA-seq | Differential expression, transformation |
| Seurat | Log-normalization or sctransform integration | Single-cell analysis | End-to-end analysis workflow |
| SCnorm | Quantile regression grouping | Single-cell RNA-seq | Scale factor estimation |
| BASiCS | Bayesian hierarchical modeling | Single-cell RNA-seq with spike-ins | Technical noise quantification |
| Linnorm | Linear model and normalization | Single-cell RNA-seq | Transformation to homoscedasticity |

Advanced Applications and Integration with Downstream Analyses

Integration with Principal Component Analysis

The primary motivation for variance stabilization in RNA-seq data is to enable valid application of dimensionality reduction techniques like PCA, which assume homoskedasticity [1] [4]. Successful transformation removes the dependence of variance on mean expression, ensuring that principal components capture biological rather than technical sources of variation.

Experimental evidence shows that effective variance stabilization changes the resulting PCA embedding in characteristic ways. In a homogeneous sample of droplets that all contained aliquots of the same RNA, transformations based on Pearson residuals and latent expression estimation mixed droplets with different size factors more evenly than delta method-based transformations, for which the size factor remained a strong variance component [1] [2].

Experimental Protocol: PCA-Based Quality Assessment

Purpose: To assess the effectiveness of variance stabilization through principal component analysis.

Materials:

- Variance-stabilized data matrix

- PCA implementation (prcomp in R, scikit-learn in Python)

- Visualization tools (ggplot2, matplotlib)

Procedure:

- Data Centering: Center the variance-stabilized data matrix.

- Dimension Reduction: Perform PCA using singular value decomposition.

- Variance Calculation: Compute percentage of variance explained by each principal component.

- Visualization: Create scree plots and PC score plots colored by technical and biological covariates.

- Correlation Analysis: Calculate correlations between principal components and technical factors (e.g., sequencing depth).

Interpretation Guidelines:

- Successful transformation: minimal correlation between early PCs and technical factors

- Biological validation: separation of known cell types or conditions in early PCs

- Quality threshold (heuristic): more than 70% of the variance in the first 10-20 PCs should be attributable to biological rather than technical factors
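For a small matrix, the core of this protocol fits in plain Python. As a simplification, the sketch computes only PC1, using power iteration on the gene covariance matrix rather than a full SVD. It runs on a hypothetical 5-cell by 3-gene matrix in which two genes track sequencing depth. With real data, prcomp() in R or sklearn.decomposition.PCA (its explained_variance_ratio_ attribute) would be the practical choice.

```python
import math


def center_columns(matrix):
    """Subtract each column (gene) mean; rows are cells/samples."""
    n = len(matrix)
    means = [sum(col) / n for col in zip(*matrix)]
    return [[x - m for x, m in zip(row, means)] for row in matrix]


def covariance(centered):
    n, p = len(centered), len(centered[0])
    return [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(p)] for i in range(p)]


def leading_eigenpair(cov, iterations=500):
    """Power iteration: largest eigenvalue and unit eigenvector of a symmetric PSD matrix."""
    p = len(cov)
    v = [1.0] * p
    for _ in range(iterations):
        w = [sum(cov[i][j] * v[j] for j in range(p)) for i in range(p)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    lam = sum(v[i] * cov[i][j] * v[j] for i in range(p) for j in range(p))
    return lam, v


def pearson(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / math.sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))


def pc1_quality(matrix, technical):
    """Fraction of total variance on PC1 and correlation of PC1 scores with a technical covariate."""
    centered = center_columns(matrix)
    cov = covariance(centered)
    lam1, loading = leading_eigenpair(cov)
    total = sum(cov[i][i] for i in range(len(cov)))
    scores = [sum(x * w for x, w in zip(row, loading)) for row in centered]
    return lam1 / total, pearson(scores, technical)


# Toy stabilized matrix (5 cells x 3 genes): genes A and B track depth, gene C does not.
depth = [1.0, 2.0, 3.0, 4.0, 5.0]
toy = [[d, 2 * d, c] for d, c in zip(depth, [1.0, -2.0, 2.0, -2.0, 1.0])]
frac, r = pc1_quality(toy, depth)
print(f"PC1 explains {frac:.1%} of variance; corr(PC1, depth) = {r:+.2f}")
```

Here PC1 carries about 78% of the variance and correlates perfectly with depth, which is exactly the pattern the interpretation guidelines treat as a failed transformation.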

Figure 2: Integration of Variance Stabilization with Downstream Analyses

Heteroskedasticity represents a fundamental challenge in RNA-seq data analysis that must be addressed to ensure valid biological interpretation. Variance-stabilizing transformations provide a crucial preprocessing step that enables the application of conventional statistical methods, particularly PCA, to count-based sequencing data. While multiple theoretical approaches exist, empirical benchmarking suggests that both simple and sophisticated methods can achieve satisfactory results when appropriately parameterized.

Future methodological development should focus on robust parameter estimation, computational efficiency for increasingly large datasets, and integrated approaches that combine variance stabilization with downstream analysis tasks. As single-cell technologies continue to evolve with increasing cell numbers and spatial context, adapting these fundamental statistical principles to novel data structures will remain an active area of research.

Principal Component Analysis (PCA) has become a fundamental tool for exploring high-dimensional biological data, particularly in transcriptomic studies such as RNA sequencing (RNA-seq) and single-cell RNA sequencing (scRNA-seq). This unsupervised method aims to reduce dimensionality while preserving major sources of variation, allowing researchers to identify patterns, clusters, and outliers in their data. However, the application of PCA to raw, unprocessed data presents substantial risks that can compromise biological interpretation. When technical artifacts and systematic errors dominate the variance structure, they can effectively mask biological signals and lead to misleading conclusions in scientific research and drug development.

The core issue lies in PCA's inherent sensitivity to variance structure. The algorithm identifies directions of maximum variance in the dataset, but makes no distinction between biologically relevant variation and technical noise. In RNA-seq data, this technical variance can arise from multiple sources including batch effects, library preparation protocols, sequencing depth variations, and sample quality differences. When these technical factors systematically differ between sample groups, they can create the illusion of biological separation where none exists, or alternatively, obscure genuine biological differences.

Understanding these pitfalls is particularly crucial in the context of pharmaceutical research and development, where decisions about drug targets and patient stratification may rely on accurate interpretation of transcriptomic data. A flawed PCA analysis could potentially lead to misdirected research efforts, incorrect biomarker identification, or failure to recognize important biological subgroups. This application note examines the specific mechanisms through which technical variance can overtake biological signals in PCA, provides evidence of these effects from published studies, and outlines practical strategies to mitigate these risks through appropriate experimental design and data transformation.

Evidence of Technical Variance Dominance in Genomic Studies

Systematic Evidence from scRNA-seq Studies

Comprehensive analysis of single-cell RNA sequencing (scRNA-seq) data reveals how technical variability can disproportionately influence PCA results. A survey of 15 publicly available scRNA-seq datasets demonstrated striking variations in the proportion of genes reporting zero expression across cells and studies [5]. These "dropout" events, where genes expressing RNA fail to be detected due to technical limitations, create a variance structure that often dominates the principal components.

The problem intensifies because this technical variation is not consistent across cells. The probability of a gene being detected varies substantially from cell to cell, creating a technical variance structure that can be mistakenly interpreted as biological heterogeneity [5]. In one demonstrated case, differences in cell-specific detection rates driven by batch effects resulted in the false discovery of new cell populations—a critical concern for researchers identifying cell subtypes for therapeutic targeting.

Table 1: Sources of Technical Variance in RNA-seq Data and Their Impact on PCA

| Technical Variance Source | Impact on Data Structure | Effect on PCA Results |
|---|---|---|
| Dropout Events (Technical zeros) | Excess zeros, especially for lowly expressed genes | Alters distance metrics between samples; distorts cluster separation |
| Batch Effects | Systematic differences between processing batches | Creates artificial grouping that can be mistaken for biological groups |
| Library Size Variation | Differences in total read counts between samples | Positions samples along PC1 based on technical rather than biological factors |
| RNA Quality Differences | Degradation patterns affecting 3' bias | Groups samples by quality metrics rather than biological state |

Case Study: Sampling Errors in Breast Cancer Transcriptomics

A compelling example of how technical and sample quality factors can impact PCA comes from a breast cancer study analyzing RNA-seq data from invasive ductal carcinoma and adjacent normal tissues [6]. Researchers performed PCA using both gene expression values (FPKM) and transcript integrity numbers (TIN scores)—a measure of RNA quality.

The gene expression PCA plot revealed one cancer sample (C0) located far from other cancer samples, suggesting potential biological distinctness. However, the RNA quality PCA plot positioned this sample near the cancer cluster, indicating that its unusual position in the expression PCA was not driven by poor RNA quality but potentially different cellular composition [6]. Conversely, another cancer sample (C3) appeared slightly outside the cancer cluster in the gene expression PCA but was positioned far from other cancer samples in the RNA quality PCA, confirming low RNA quality as the driver of its unusual position.

Most strikingly, when the authors evaluated how including these atypical samples affected differential expression analysis, they found that incorporating the low RNA-quality sample (C3) or the potentially spatially distinct sample (C0) substantially reduced the number of identified differentially expressed genes [6]. This demonstrates concretely how technical and sampling artifacts that manifest in PCA can significantly impact downstream biological interpretation.

The Mathematics of Variance Structure in PCA

Principal Component Analysis operates on a simple mathematical principle: it identifies a new set of orthogonal axes (principal components) that successively capture the maximum possible variance in the data [7]. The first principal component (PC1) aligns with the direction of greatest variance, the second component (PC2) captures the next greatest variance orthogonal to PC1, and so on. This variance-maximization approach makes PCA exceptionally sensitive to any systematic sources of variation in the data, regardless of whether they originate from biological or technical processes.

The algorithm computes these components through eigendecomposition of the covariance matrix or singular value decomposition of the centered data matrix. The eigenvalues (λ₁, λ₂, ..., λₚ) represent the amount of variance captured by each corresponding principal component, while the eigenvectors (or "loadings") define the direction of each component in the original high-dimensional space [7]. The percentage of total variance explained by the i-th principal component is calculated as:

$$\text{Variance Explained}(\mathrm{PC}_i) = \frac{\lambda_i}{\sum_{j=1}^{p} \lambda_j} \times 100\%$$

This mathematical framework reveals why technical artifacts can dominate biological signals: if technical factors (e.g., batch effects, library size differences) generate larger magnitude variances than biological factors of interest, PCA will inevitably prioritize these technical sources in the first several components.
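The variance-explained formula translates directly into code. The example is hypothetical: a batch effect with variance 9 and a biological contrast with variance 4 lie along uncorrelated axes, so the covariance matrix is diagonal and these variances are its eigenvalues.

```python
def variance_explained(eigenvalues):
    """Percentage of total variance captured by each principal component."""
    total = sum(eigenvalues)
    return [100.0 * lam / total for lam in eigenvalues]


# Hypothetical uncorrelated axes: for a diagonal covariance matrix the variances are the eigenvalues.
technical_var, biological_var = 9.0, 4.0
pcs = variance_explained(sorted([technical_var, biological_var], reverse=True))
print([round(p, 1) for p in pcs])
```

The technical axis becomes PC1 with about 69% of the variance, even though it carries no biological information.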

The Log-Transformation Paradox in RNA-seq Data

RNA-seq data analysis presents a particular challenge related to data transformation. It is common practice to log-transform count data before performing PCA, as this helps stabilize variance across the dynamic range of expression values. However, this transformation can introduce mathematical artifacts that further amplify technical variance.

As noted in scRNA-seq studies, "the proportion of genes reporting the expression level to be zero varies substantially across single cells compared to RNA-seq samples" [5]. When data in the original scale contains many zeros (common in both scRNA-seq and low-expression genes in bulk RNA-seq), applying a log transformation (typically log(x+1)) and then computing distances between samples can create a situation where the number of zeros becomes a primary driver of sample separation.

This occurs because the log transformation compresses differences among large values, while zeros stay fixed at exactly zero after the log(x+1) transformation, so the gap between a zero and a small non-zero count remains large. Samples with similar patterns of zeros therefore cluster together in PCA space, regardless of whether those zeros reflect biological absence of expression or technical dropouts. The effect is strongest for lowly expressed genes, for which scRNA-seq produces more zeros than expected, with the excess growing as expression decreases [5].
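A two-number illustration of this effect, using hypothetical counts: after log(x + 1), a single dropout of a lowly expressed gene separates two cells more than a genuine two-fold change in a highly expressed gene.

```python
import math


def log1p_gap(a, b):
    """Absolute difference between two counts after the log(x + 1) transformation."""
    return abs(math.log1p(a) - math.log1p(b))


# Hypothetical counts for one lowly and one highly expressed gene in two cells.
dropout_gap = log1p_gap(0, 5)      # detected at 5 counts in one cell, missed in the other
twofold_gap = log1p_gap(100, 200)  # genuine two-fold change of a highly expressed gene
print(f"0 vs 5:     {dropout_gap:.2f}")
print(f"100 vs 200: {twofold_gap:.2f}")
```

The dropout contributes a difference of about 1.79 on the log scale, against about 0.69 for the two-fold change.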

Variance Stabilizing Transformations as a Solution

Theoretical Foundation of Variance Stabilization

Variance stabilizing transformations (VSTs) comprise a family of mathematical techniques designed to address the fundamental issue of heteroscedasticity in data—when the variance of a variable correlates with its mean value [8]. In RNA-seq data, this relationship is particularly pronounced, as count data typically exhibits variance that increases with the mean expression level. VSTs apply a function to the data that renders the variance approximately constant across the entire measurement range, creating a more stable foundation for downstream multivariate analysis like PCA.

The underlying principle of variance stabilization recognizes that for different types of data distributions, specific mathematical transformations can create a more homoscedastic structure [8]. For RNA-seq count data that often follows a negative binomial distribution, an appropriate VST can simultaneously address both the mean-variance relationship and the skewness typical of count data, thereby preventing highly expressed genes from disproportionately influencing the principal components simply due to their larger variance.

Practical Transformation Methods for RNA-seq Data

Several variance stabilizing transformations have been developed specifically for genomic data, each with particular strengths and applications:

Table 2: Variance Stabilizing Transformations for RNA-seq Data

| Transformation Method | Mathematical Formula | Ideal Use Case | Considerations |
|---|---|---|---|
| Log Transformation | y = log(x) or y = log(x+1) | Variance ∝ mean²; right-skewed distributions [8] | Simple, widely used; the +1 constant handles zeros but can introduce bias |
| Square Root Transform | y = √x or y = √(x + c) [8] | Poisson-like count data (variance = mean) [8] | Less aggressive than log; c = 0.5 often used for small counts |
| Box-Cox Transformation | y = (x^λ - 1)/λ for λ ≠ 0; y = log(x) for λ = 0 [8] | Power-based adjustments with parameter optimization [8] | Flexible; selects the optimal λ by maximum likelihood; requires strictly positive values, so zeros need an offset |
| DESeq2 VST | Complex variance-model-based transformation | RNA-seq count data with size factors and dispersion estimates | Specifically designed for RNA-seq; accounts for library size differences |

The selection of an appropriate transformation depends on the specific characteristics of the dataset. As a general guideline, the Box-Cox transformation offers the most flexibility through its parameter optimization, while the DESeq2 VST has been specifically engineered to address the statistical properties of RNA-seq count data.
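Box-Cox is the only transformation in the table with a free parameter. A minimal Python sketch of selecting λ by maximizing the standard profile log-likelihood over a grid follows. The grid (-2 to 2 in steps of 0.1) and the log-normal-like toy values are assumptions for illustration.

```python
import math


def boxcox(x, lam):
    """Box-Cox transform; requires strictly positive values."""
    if any(v <= 0 for v in x):
        raise ValueError("Box-Cox requires strictly positive values (add an offset for zeros)")
    if lam == 0:
        return [math.log(v) for v in x]
    return [(v ** lam - 1) / lam for v in x]


def boxcox_loglik(x, lam):
    """Profile log-likelihood of lam, assuming the transformed values are normal."""
    z = boxcox(x, lam)
    n = len(z)
    mean = sum(z) / n
    var = sum((v - mean) ** 2 for v in z) / n  # MLE variance
    return -0.5 * n * math.log(var) + (lam - 1) * sum(math.log(v) for v in x)


def boxcox_mle(x, grid=None):
    """Choose lam by maximizing the profile log-likelihood over a grid (default -2.0 ... 2.0)."""
    if grid is None:
        grid = [i / 10 for i in range(-20, 21)]
    return max(grid, key=lambda lam: boxcox_loglik(x, lam))


# Log-normal-like toy values: their logs are symmetric, so lam = 0 (the log) should win.
values = [math.exp(u) for u in (-1.5, -1.0, -0.5, 0.0, 0.5, 1.0, 1.5)]
lam_hat = boxcox_mle(values)
print(f"selected lambda = {lam_hat}")
```

Because the logs of the toy values are symmetric, the likelihood peaks at λ = 0, i.e. the plain logarithm. For count data containing zeros, an offset such as x + 1 has to be added before Box-Cox can be applied.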

Experimental Protocols for Reliable PCA in RNA-seq Studies

Comprehensive Preprocessing Workflow

RNA-seq PCA Preprocessing and Validation Workflow

This diagram outlines the essential steps for preparing RNA-seq data for PCA analysis, highlighting three critical validation checkpoints to assess potential technical confounding.

Step-by-Step Protocol for PCA with Variance Stabilization

Phase 1: Data Preprocessing and Quality Control

Initial Quality Assessment: Begin by calculating key quality metrics including library sizes, gene detection rates, and proportion of zeros across samples. For bulk RNA-seq, generate a TIN score PCA plot to evaluate RNA quality separate from gene expression patterns [6].
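A minimal Python sketch of these per-sample metrics, on a hypothetical 4-gene by 3-sample matrix, follows; the TIN-score PCA is not covered.

```python
def sample_qc_metrics(counts):
    """Per-sample library size, detected genes (count > 0) and zero fraction.

    `counts` is a genes x samples matrix given as a list of rows.
    """
    n_genes = len(counts)
    metrics = []
    for column in zip(*counts):
        detected = sum(1 for c in column if c > 0)
        metrics.append({
            "library_size": sum(column),
            "detected_genes": detected,
            "zero_fraction": 1 - detected / n_genes,
        })
    return metrics


toy_counts = [  # hypothetical 4 genes x 3 samples
    [10, 0, 3],
    [0, 0, 7],
    [5, 2, 0],
    [1, 0, 9],
]
for i, m in enumerate(sample_qc_metrics(toy_counts)):
    print(f"sample {i}: {m}")
```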

Filtering Low-Quality Features: Remove genes with consistently low counts across samples. A common threshold is requiring at least 10 counts in a minimum of 10% of samples, though this should be adjusted based on dataset size and characteristics.
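The filtering rule can be written as a single pass over genes. The defaults mirror the example threshold in the text (at least 10 counts in at least 10% of samples) and should be tuned as noted. Comparing the fraction of passing samples directly avoids rounding problems when 10% of the sample count is not a whole number.

```python
def filter_low_count_genes(counts, min_count=10, min_fraction=0.10):
    """Keep genes (rows) with >= min_count counts in at least min_fraction of samples."""
    n_samples = len(counts[0])
    kept = []
    for row in counts:
        passing = sum(1 for c in row if c >= min_count)
        if passing / n_samples >= min_fraction:
            kept.append(row)
    return kept


toy = [  # hypothetical 3 genes x 10 samples
    [12] + [0] * 9,   # 1 of 10 samples reaches 10 counts -> kept (exactly 10%)
    [5] * 10,         # never reaches 10 counts -> removed
    [10] * 10,        # all samples pass -> kept
]
print(len(filter_low_count_genes(toy)))
```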

Normalization for Library Size: Apply appropriate normalization methods to account for differences in sequencing depth between samples. Established methods include TMM (edgeR), median ratio (DESeq2), or upper quartile normalization.
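The median-of-ratios idea behind DESeq2's size factors can be sketched in plain Python. This illustrates the method; it is not DESeq2's implementation. Each sample's factor is the median, over genes with no zero counts, of its count divided by that gene's geometric mean across samples.

```python
import math
import statistics


def median_of_ratios_size_factors(counts):
    """Size factor per sample: median over genes (no zeros) of count / geometric mean.

    `counts` is a genes x samples matrix given as a list of rows.
    """
    usable = [row for row in counts if all(c > 0 for c in row)]
    if not usable:
        raise ValueError("no gene has non-zero counts in every sample")
    log_geo_means = [statistics.mean([math.log(c) for c in row]) for row in usable]
    factors = []
    for j in range(len(counts[0])):
        log_ratios = [math.log(row[j]) - lg for row, lg in zip(usable, log_geo_means)]
        factors.append(math.exp(statistics.median(log_ratios)))
    return factors


toy_counts = [  # hypothetical 4 genes x 2 samples
    [10, 20],
    [40, 80],
    [5, 10],
    [0, 3],   # contains a zero, so it is excluded
]
print(median_of_ratios_size_factors(toy_counts))
```

In the toy matrix every usable gene is twice as abundant in the second sample, so the factors come out as 1/√2 and √2, a ratio of exactly 2.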

Phase 2: Variance Stabilization Implementation

Transformation Selection: Based on data characteristics, select an appropriate variance stabilizing method. For most RNA-seq applications, the DESeq2 VST or a Box-Cox transformation with optimized parameters provides robust performance [8].

Application of Transformation: Implement the selected transformation using standard bioinformatics tools:

DESeq2 VST Example in R:

Box-Cox Transformation Example in R:

Phase 3: PCA Execution and Technical Artifact Assessment

PCA Implementation: Perform PCA on the transformed data matrix using the prcomp() function in R, making sure the data are centered (the default setting) [9]. Consider scaling (standardizing to unit variance) carefully, as it gives equal weight to all genes regardless of expression level.

Technical Correlation Testing: Systematically test correlations between principal components and technical variables:
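A minimal Python sketch of this test follows. It assumes the PC scores are already available, for example from the x component of an R prcomp() result or from scikit-learn's PCA.fit_transform. It flags every PC/covariate pair whose Pearson correlation reaches a threshold; the 0.5 cutoff and the toy values are arbitrary illustrative choices.

```python
import math


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def flag_technical_pcs(pc_scores, covariates, threshold=0.5):
    """Return (pc, covariate, r) for every pair with |r| >= threshold.

    pc_scores and covariates map names to per-sample value lists in the same sample order.
    """
    flagged = []
    for pc, scores in pc_scores.items():
        for name, values in covariates.items():
            r = pearson(scores, values)
            if abs(r) >= threshold:
                flagged.append((pc, name, round(r, 3)))
    return flagged


pc_scores = {  # hypothetical scores for five samples
    "PC1": [2.0, 1.0, 0.0, -1.0, -2.0],
    "PC2": [1.0, -1.0, 0.0, -1.0, 1.0],
}
covariates = {"library_size": [5.0, 4.0, 3.0, 2.0, 1.0]}
print(flag_technical_pcs(pc_scores, covariates))
```

Only PC1 is flagged: its scores track library size exactly, while PC2 is uncorrelated with it.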

Visualization and Interpretation: Create PCA score plots colored by both biological conditions and technical factors to visually assess potential confounding. Generate scree plots to evaluate the proportion of variance explained by each component [9] [7].

Research Reagent Solutions for Technical Variance Mitigation

Table 3: Essential Reagents and Tools for Technical Variance Control

| Reagent/Tool Category | Specific Examples | Function in Variance Control |
|---|---|---|
| RNA Quality Assessment | Bioanalyzer RNA Integrity Number (RIN), TIN scores [6] | Prevents RNA degradation artifacts from influencing PCA results |
| UMI Barcodes | Unique Molecular Identifiers in scRNA-seq protocols [5] | Reduces technical noise in amplification and sequencing |
| Batch Tracking Systems | Laboratory information management systems (LIMS) | Enables statistical modeling and correction of batch effects |
| Spike-In Controls | ERCC RNA Spike-In Mix, SIRVs | Distinguishes technical from biological variation |
| Normalization Tools | DESeq2, edgeR, limma-voom | Correct for library size and composition biases before PCA |

The application of Principal Component Analysis to RNA-seq data requires careful consideration of the inherent technical variances that can dominate biological signals. Through evidence from multiple studies, we have demonstrated how batch effects, detection rate variations, RNA quality differences, and transformation artifacts can substantially influence PCA results and lead to incorrect biological interpretations.

The core recommendation emerging from this analysis is that researchers must implement comprehensive variance stabilization approaches before performing PCA on RNA-seq data. Additionally, systematic assessment of technical confounders should be a standard component of PCA interpretation. By adopting the protocols and best practices outlined in this application note, researchers and drug development professionals can significantly improve the reliability of their transcriptomic analyses and ensure that biological signals rather than technical artifacts drive their scientific conclusions.

Future developments in this field will likely include more sophisticated transformation methods specifically designed for the unique characteristics of different RNA-seq protocols, as well as improved integration of variance stabilization with downstream multivariate analysis techniques.

In RNA-sequencing (RNA-seq) analysis, the raw count data generated by high-throughput sequencers presents specific statistical challenges that must be addressed before meaningful biological interpretations can be made. The core goals of data transformation are twofold: to stabilize variance across the dynamic range of expression values and to correct for differences in sequencing depth between samples. These procedures are essential prerequisites for downstream applications such as Principal Component Analysis (PCA), which underpins much of exploratory data analysis in transcriptomics [10] [2].

RNA-seq data is inherently heteroskedastic, meaning that the variance of gene counts depends strongly on their mean expression level. Highly expressed genes typically show greater variability than lowly expressed genes, violating the assumption of uniform variance that many statistical methods require for optimal performance [2]. Additionally, the total number of sequencing reads (sequencing depth) varies substantially between samples, creating technical artifacts that can confound biological signals [10] [3]. Effective transformation strategies must simultaneously address both issues to enable accurate data exploration and interpretation.

Theoretical Foundation

The Nature of RNA-seq Count Data

RNA-seq data originates from high-throughput sequencing of RNA molecules that have been reverse transcribed into more stable complementary DNA (cDNA). The raw output consists of millions of short sequences (reads) that are mapped to genomic features, resulting in a count matrix where each element represents the number of reads mapped to a particular gene in a specific sample [10]. This data structure exhibits two fundamental properties that necessitate transformation:

- Mean-variance relationship: The variance of counts increases with their mean expression level, typically following a quadratic mean-variance relationship characteristic of the gamma-Poisson (negative binomial) distribution [2] [3].

- Compositional nature: The total number of reads per sample (library size) is fixed by sequencing capacity, meaning counts for individual genes represent relative rather than absolute abundances [10].

These properties create analytical challenges, as standard statistical methods assuming homoskedasticity (constant variance) and independence of measurements perform poorly on raw count data.

Mathematical Principles of Variance Stabilization

Variance stabilization aims to transform the count data such that the variance becomes approximately constant across all expression levels. For UMI-based data with a gamma-Poisson distribution and a quadratic mean-variance relationship, $\mathbb{V}\text{ar}[Y] = \mu + \alpha\mu^2$, the delta method yields the variance-stabilizing transformation:

$$ g(y) = \frac{1}{\sqrt{\alpha}} \text{acosh}(2\alpha y + 1) $$

In practice, this is often approximated by the more familiar shifted logarithm:

$$ g(y) = \log(y + y_0) $$

where the pseudo-count $y_0$ is optimally set to $1/(4\alpha)$ based on the relationship between pseudo-count and overdispersion [2]. Regularized negative binomial regression represents an alternative approach that models counts directly while addressing overfitting through information sharing across genes [3].
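As a quick numerical illustration, the two transformations can be written directly in base R. This is a sketch and not code from [2]. The function names acosh_vst and shifted_log_vst are hypothetical, and α = 0.05 is an assumed example value. The finite-difference check confirms the delta-method property: the slope of the acosh transformation at μ equals 1/sqrt(μ + αμ²), the reciprocal of the gamma-Poisson standard deviation.

```r
# Delta-method VST for Var[Y] = mu + alpha * mu^2
acosh_vst <- function(y, alpha) {
  # g(y) = acosh(2 * alpha * y + 1) / sqrt(alpha)
  acosh(2 * alpha * y + 1) / sqrt(alpha)
}

# Shifted logarithm with the pseudo-count y0 = 1 / (4 * alpha)
shifted_log_vst <- function(y, alpha) {
  log(y + 1 / (4 * alpha))
}

alpha <- 0.05                      # assumed overdispersion
y <- c(0, 1, 10, 100, 1000)
acosh_vst(y, alpha)
shifted_log_vst(y, alpha)

# Central finite difference: g'(mu) should equal 1 / sqrt(mu + alpha * mu^2)
mu <- 10
h <- 1e-4
slope <- (acosh_vst(mu + h, alpha) - acosh_vst(mu - h, alpha)) / (2 * h)
c(numerical = slope, expected = 1 / sqrt(mu + alpha * mu^2))
```

With α = 0.05, the pseudo-count is 1/(4 × 0.05) = 5. This is much larger than the y₀ = 1 conventionally used for bulk data.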

Table 1: Mathematical Transformations for Variance Stabilization

| Transformation | Formula | Key Parameters | Applicable Data Types |
|---|---|---|---|
| Shifted Logarithm | $\log(y/s + y_0)$ | Size factor ($s$), pseudo-count ($y_0$) | Bulk and single-cell RNA-seq |
| Acosh Transformation | $\frac{1}{\sqrt{\alpha}} \text{acosh}(2\alpha y + 1)$ | Overdispersion ($\alpha$) | UMI-based data |
| Pearson Residuals | $\frac{y_{gc} - \hat{\mu}_{gc}}{\sqrt{\hat{\mu}_{gc} + \hat{\alpha}_g \hat{\mu}_{gc}^2}}$ | Fitted mean ($\hat{\mu}$), dispersion ($\hat{\alpha}$) | Single-cell RNA-seq |
| Variance Stabilizing Transformation (VST) | Complex (see DESeq2 documentation) | Trended dispersion | Bulk RNA-seq |

Critical Normalization Strategies

Sequencing Depth Correction

Sequencing depth variation represents a major technical confounder in RNA-seq analysis, as samples with more total reads will naturally have higher counts for most genes regardless of true biological expression levels [10]. Several approaches address this challenge:

Size factor normalization estimates sample-specific scaling factors that adjust for differences in library size. The simplest approach uses the total number of reads per sample:

$$ s_c = \frac{\sum_g y_{gc}}{L} $$

where $s_c$ is the size factor for cell $c$, $y_{gc}$ is the count for gene $g$ in cell $c$, and $L$ is a scaling constant (often $10^6$ for CPM, or the average library size across cells) [2]. More advanced methods, such as DESeq2's median-of-ratios approach or edgeR's TMM (Trimmed Mean of M-values), are robust to extreme expression values. They achieve this by assuming that most genes are not differentially expressed and summarizing across genes with a median or a trimmed mean [10] [11].
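Both kinds of size factor can be sketched in a few lines of base R. The helper names library_size_factors and median_of_ratios are hypothetical. The second function follows the median-of-ratios idea: for each sample, it takes the median of its ratios to the gene-wise geometric mean, using only genes with no zero counts. It is meant for illustration and is not a replacement for DESeq2's estimateSizeFactors().

```r
# Library-size size factors: s_c = sum_g y_gc / L (L defaults to the mean library size)
library_size_factors <- function(counts, L = mean(colSums(counts))) {
  colSums(counts) / L
}

# Median-of-ratios sketch: median over genes of count / geometric mean
median_of_ratios <- function(counts) {
  log_counts <- log(counts)
  # Log geometric mean per gene; genes with any zero count give -Inf and are skipped
  log_geo_mean <- rowMeans(log_counts)
  use <- is.finite(log_geo_mean)
  apply(log_counts[use, , drop = FALSE], 2,
        function(x) exp(median(x - log_geo_mean[use])))
}

# Toy matrix (hypothetical): s2 and s3 are exact multiples of s1
counts <- cbind(s1 = c(10, 20, 40, 80, 100),
                s2 = c(20, 40, 80, 160, 200),
                s3 = c(5, 10, 20, 40, 50))
library_size_factors(counts)
median_of_ratios(counts)
```

Because s2 and s3 are multiples of s1, the median-of-ratios factors are 1, 2 and 0.5. Passing L = 1e6 to library_size_factors() gives the divisor that converts raw counts into CPM.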

Approaches that do not rely on scaling factors, such as Pearson residuals from regularized negative binomial regression, model counts directly. Instead of dividing by a size factor, they include sequencing depth as a covariate in a generalized linear model [3]. The residuals from this regression serve as normalized expression values that are largely free from the influence of the modeled technical covariates.

Library Composition Effects

Library composition biases occur when a few highly expressed genes consume a substantial fraction of the sequencing budget, distorting the apparent expression levels of other genes [10]. Methods that assume a constant scaling factor across all genes struggle with this issue, as the effective normalization required differs between lowly and highly expressed genes [3].

Advanced normalization techniques address composition biases by:

- Using reference samples (TMM) that serve as a baseline for comparison

- Employing robust statistical measures (median-of-ratios) that are resistant to outliers

- Applying gene-specific normalization (SCnorm), which accounts for the different normalization requirements of genes at different abundance levels [3]

Table 2: Normalization Methods for RNA-seq Data

| Method | Sequencing Depth Correction | Library Composition Correction | Suitable for DE Analysis | Key Tools |
|---|---|---|---|---|
| CPM | Yes | No | No | Base R, edgeR |
| TPM | Yes | Partial | No | RSEM, StringTie |
| RPKM/FPKM | Yes | No | No | Cufflinks, StringTie |
| Median-of-Ratios | Yes | Yes | Yes | DESeq2 |
| TMM | Yes | Yes | Yes | edgeR |
| Pearson Residuals | Via GLM | Yes | Yes | sctransform |

Experimental Protocols

Protocol 1: Shifted Logarithm Transformation

The shifted logarithm remains one of the most widely used transformations due to its computational simplicity and interpretability [2].

Materials Required:

- Raw count matrix (genes × samples)

- Computational environment: R or Python

- Size factor estimates (e.g., from DESeq2 or calculated directly)

Procedure:

1. Calculate size factors for each sample. These can come from library sizes ($s_c = \sum_g y_{gc} / L$) or from a robust estimator such as DESeq2's median-of-ratios.

2. Estimate an appropriate pseudo-count:

   - For bulk RNA-seq: $y_0 = 1$ is commonly used

   - For UMI-based single-cell data: Estimate the overdispersion parameter $\alpha$ and set $y_0 = 1/(4\alpha)$ for optimal approximation of the acosh transformation [2]

3. Apply the transformation:

$$ y'_{gc} = \log\left(\frac{y_{gc}}{s_c} + y_0\right) $$

4. Validate transformation effectiveness:

   - Check that variance is approximately constant across expression levels

   - Verify absence of correlation between transformed expression and sequencing depth
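The procedure can be sketched in base R on simulated data. Every input here is an assumption made for illustration:

- the simulated gamma-Poisson counts;
- the overdispersion α = 0.05, so that y₀ = 1/(4α) = 5;
- the helper name shifted_log.

The size factors are library sizes divided by their mean, which is one of the choices of L mentioned earlier. The natural logarithm is used, as in the formula.

```r
set.seed(1)
# Hypothetical simulated data: 500 genes x 20 samples of gamma-Poisson counts
n_genes <- 500
n_samples <- 20
alpha <- 0.05                                  # assumed overdispersion
gene_mean <- 2^runif(n_genes, -2, 6)
depth <- runif(n_samples, 0.5, 2)              # relative sequencing depth
mu <- outer(gene_mean, depth)
counts <- matrix(rnbinom(length(mu), mu = mu, size = 1 / alpha), nrow = n_genes)

shifted_log <- function(counts, pseudo_count = 1) {
  # Step 1: library-size size factors, scaled to average 1 across samples
  lib_size <- colSums(counts)
  s <- lib_size / mean(lib_size)
  # Step 3: y' = log(y / s + y0), dividing each column by its size factor
  log(sweep(counts, 2, s, "/") + pseudo_count)
}

# Step 2: pseudo-count choice
y_bulk <- shifted_log(counts, pseudo_count = 1)                # bulk convention
y_umi  <- shifted_log(counts, pseudo_count = 1 / (4 * alpha))  # y0 = 5

# Step 4: validation diagnostics on the transformed scale
gene_mean_t <- rowMeans(y_umi)
gene_sd_t   <- apply(y_umi, 1, sd)
# Rank correlation between per-gene mean and SD (near 0 suggests stabilization)
cor(gene_mean_t, gene_sd_t, method = "spearman")
# Correlation between per-sample average expression and log library size
cor(colMeans(y_umi), log(colSums(counts)))
```

The two correlations are diagnostics rather than pass/fail thresholds. A clear mean-SD trend, or a strong association with log library size, points to the troubleshooting tips that follow.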

Troubleshooting Tips:

- If variance still correlates with mean after transformation, try increasing the pseudo-count

- If transformation introduces too much noise in low counts, consider filtering weakly expressed genes first

- For single-cell data with high sparsity, consider alternative approaches like Pearson residuals

Protocol 2: Variance Stabilizing Transformation (VST) via DESeq2

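DESeq2's VST uses the fitted dispersion-mean trend (rather than individual gene-wise dispersion estimates) to derive a transformation that approximately stabilizes variance across the range of mean counts [11].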

Materials Required:

- DESeq2 package in R

- Raw count matrix

- Sample metadata table

Procedure:

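1. Create a DESeqDataSet object from the count matrix and sample information (DESeqDataSetFromMatrix()).

2. Estimate size factors using the median-of-ratios method (estimateSizeFactors()).

3. Estimate dispersion parameters (estimateDispersions()).

4. Apply the variance stabilizing transformation (varianceStabilizingTransformation(), or its faster variant vst()).

5. Extract the transformed matrix for downstream analysis (assay()).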

Validation Steps:

- Plot mean versus standard deviation before and after transformation

- Perform PCA and check that first principal component does not correlate with sequencing depth

- Compare sample distances with and without transformation
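The protocol can be sketched as follows, assuming DESeq2 is installed from Bioconductor. The simulated counts and the sample table are hypothetical stand-ins for real data. The call uses blind = FALSE so that the transformation reuses the size factors and dispersion trend estimated in the preceding steps. For purely exploratory QC, DESeq2 also supports blind = TRUE, which re-estimates dispersions while ignoring the design.

```r
library(DESeq2)

set.seed(42)
# Hypothetical simulated data: 2,000 genes x 6 samples in two conditions
n_genes <- 2000
gene_mean <- 2^runif(n_genes, 2, 10)
counts <- matrix(rnbinom(n_genes * 6, mu = rep(gene_mean, 6), size = 10),
                 nrow = n_genes,
                 dimnames = list(paste0("gene", seq_len(n_genes)),
                                 paste0("sample", 1:6)))
storage.mode(counts) <- "integer"
sample_info <- data.frame(condition = factor(rep(c("A", "B"), each = 3)),
                          row.names = colnames(counts))

# Create the DESeqDataSet
dds <- DESeqDataSetFromMatrix(countData = counts, colData = sample_info,
                              design = ~ condition)
# Median-of-ratios size factors
dds <- estimateSizeFactors(dds)
# Gene-wise dispersions and the fitted dispersion-mean trend
dds <- estimateDispersions(dds)
# VST based on the fitted trend; blind = FALSE reuses the estimates above
vsd <- varianceStabilizingTransformation(dds, blind = FALSE)
# Transformed matrix (genes x samples) for downstream analysis
vst_mat <- assay(vsd)

# Validation: per-gene mean versus SD, and PC1 versus sequencing depth
mean_sd <- data.frame(mean = rowMeans(vst_mat), sd = apply(vst_mat, 1, sd))
pca <- prcomp(t(vst_mat))
cor(pca$x[, 1], log(colSums(counts)))
```

If the vsn package is available, vsn::meanSdPlot(vst_mat) draws the mean-versus-SD diagnostic. DESeq2's plotPCA(vsd, intgroup = "condition") produces the PCA plot directly.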

Protocol 3: Pearson Residuals Transformation for Single-Cell Data

The Pearson residuals approach implemented in sctransform effectively handles the high sparsity and technical noise characteristic of single-cell RNA-seq data [3].

Materials Required:

- sctransform R package

- UMI count matrix (genes × cells)

- High-performance computing resources for large datasets

Procedure:

1. Fit a regularized negative binomial regression for each gene:

$$ \mathbb{E}[Y_{gc}] = \mu_{gc} = \exp(\beta_{g0} + \beta_{g1} \log s_c) $$

where $s_c$ represents the sequencing depth (total UMI count) for cell $c$ [3].

2. Calculate Pearson residuals:

$$ r_{gc} = \frac{y_{gc} - \hat{\mu}_{gc}}{\sqrt{\hat{\mu}_{gc} + \hat{\alpha}_g \hat{\mu}_{gc}^2}} $$

3. Clip residuals to mitigate the influence of extreme values:

$$ r'_{gc} = \max\left(-\sqrt{N}, \min\left(\sqrt{N}, r_{gc}\right)\right) $$

where $N$ is the total number of cells.

4. Use the residuals as normalized expression values for downstream analysis.
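The residual and clipping steps can be illustrated with a simplified base-R sketch. Note that this is an explicit substitution for sctransform's regularized per-gene regression:

- The fitted means come from the closed-form offset model μ_gc = (Σ_c y_gc)(Σ_g y_gc) / Σ_gc y_gc, as used by the "analytic Pearson residuals" approach.
- A single overdispersion α = 0.01 is assumed for all genes.
- The function name pearson_residuals and the simulated Poisson counts are hypothetical.

```r
# Simplified Pearson residuals with a closed-form offset model (not sctransform)
pearson_residuals <- function(counts, alpha = 0.01) {
  # Drop genes with no counts, whose fitted mean would be zero
  counts <- counts[rowSums(counts) > 0, , drop = FALSE]
  # Fitted means: gene total x cell total / grand total
  mu <- outer(rowSums(counts), colSums(counts)) / sum(counts)
  # Pearson residuals under Var = mu + alpha * mu^2
  r <- (counts - mu) / sqrt(mu + alpha * mu^2)
  # Clip to [-sqrt(N), sqrt(N)], where N is the number of cells
  n_cells <- ncol(counts)
  pmin(pmax(r, -sqrt(n_cells)), sqrt(n_cells))
}

set.seed(3)
umi <- matrix(rpois(200 * 50, lambda = 0.5), nrow = 200)  # hypothetical counts
res <- pearson_residuals(umi)
dim(res)
range(res)
```

For real data, sctransform::vst() performs the regularized fit described in the procedure.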

Implementation Notes:

- Regularization is critical to prevent overfitting; sctransform pools information across genes with similar abundances

- For genes that are not differentially expressed, the resulting residuals should have approximately zero mean and unit variance

- This approach automatically corrects for sequencing depth without explicit size factors

Workflow Visualization

Figure 1: RNA-seq Data Transformation Workflow. The process begins with raw counts, proceeds through quality control and sequential normalization steps, and culminates in transformed data suitable for downstream analyses like PCA and differential expression testing.

Figure 2: Relationship Between Technical Challenges and Transformation Goals. Effective transformation strategies must address multiple technical artifacts simultaneously while preserving biological signal of interest.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for RNA-seq Transformation Analysis

| Tool/Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| R/Bioconductor Packages | DESeq2, edgeR, limma-voom | Differential expression analysis with built-in normalization | Bulk RNA-seq analysis |
| Normalization Tools | sctransform, SCnorm, BASiCS | Specialized normalization for specific data types | Single-cell RNA-seq, complex designs |
| Quality Assessment | FastQC, MultiQC, Qualimap | Initial data quality evaluation | Pre-processing stage |
| Visualization Platforms | pcaExplorer, IDEAL, GeneTonic | Interactive exploratory data analysis | Post-normalization exploration |
| Quantification Software | Salmon, kallisto, HTSeq | Expression quantification (Salmon and kallisto are alignment-free; HTSeq counts reads from alignments) | Generation of count matrices |
| Programming Environments | RStudio, Jupyter Notebooks | Implementation of analysis workflows | Entire analytical pipeline |

Performance Benchmarks and Validation

Comparative Method Performance

Evaluations of transformation methods reveal context-dependent performance characteristics. In comprehensive comparisons, no single method emerges as superior across all scenarios, though clear patterns inform practical recommendations [12] [13].

For bulk RNA-seq differential expression analysis, DESeq2 and edgeR generally perform similarly, with extensive overlap in identified genes and comparable false discovery rate control [13]. The limma-voom approach shows advantages for large sample sizes (hundreds of samples) due to computational efficiency, though it may have reduced sensitivity with small sample sizes (n=3 per group) or small fold changes [13].

For single-cell RNA-seq, the shifted logarithm with appropriate pseudo-count selection performs surprisingly well against more sophisticated alternatives, though Pearson residuals from regularized negative binomial regression better handle the high sparsity and technical noise characteristic of these datasets [2] [3].

Validation Metrics and Procedures

Rigorous validation of transformation effectiveness should include both diagnostic plots and quantitative metrics:

Visual Diagnostics:

- Mean-variance plots showing stabilization of variance across expression levels

- PCA plots with coloring by technical covariates (sequencing depth, batch)

- Scatterplots of transformed expression versus sequencing depth

Quantitative Metrics:

- Correlation between principal components and technical variables

- Variance homogeneity tests across expression strata

- Preservation of biological signal in positive control genes
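The first quantitative metric can be computed with a small helper. The name pc_covariate_cor is hypothetical. The helper runs PCA on samples (the columns of a transformed genes × samples matrix) and correlates each of the first k component scores with a technical covariate, such as log library size. The simulated matrix is deliberately built so that depth drives PC1, to show what a problematic result looks like.

```r
# Correlate each of the first k principal components with a technical covariate
pc_covariate_cor <- function(expr, covariate, k = 5) {
  # expr: genes x samples transformed matrix; PCA is run on samples
  pca <- prcomp(t(expr), center = TRUE, scale. = FALSE)
  k <- min(k, ncol(pca$x))
  apply(pca$x[, seq_len(k), drop = FALSE], 2, cor, y = covariate)
}

set.seed(11)
log_depth <- log(c(1, 2, 3, 4, 5, 6, 7, 8) * 1e6)   # hypothetical library sizes
# Expression that is (almost) a linear function of depth: a depth-driven artifact
expr <- outer(rnorm(200), log_depth) + matrix(rnorm(200 * 8, sd = 0.01), 200)
round(pc_covariate_cor(expr, log_depth), 2)
```

Correlations close to ±1 for leading components indicate that a technical variable, not biology, dominates those axes.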

Transformation success is ultimately determined by improved performance in downstream tasks like differential expression analysis, where effective normalization should increase power while maintaining false discovery rate control [10] [11].

Effective transformation of RNA-seq data to stabilize variance and correct for sequencing depth remains a critical component of transcriptomic analysis pipelines. The choice of specific method should be guided by data characteristics (bulk versus single-cell, sample size, sparsity) and analytical goals. While theoretical considerations provide important guidance, empirical validation using the metrics and protocols outlined here ensures that transformations successfully facilitate biological discovery rather than introduce analytical artifacts. As RNA-seq technologies continue to evolve, transformation methodologies must similarly advance to address new computational and statistical challenges.

RNA sequencing (RNA-Seq) has revolutionized transcriptomics by enabling genome-wide quantification of RNA abundance. However, the raw count data generated by this high-throughput technology is not directly comparable between samples. Normalization is therefore an essential computational step to adjust for technical variability, thereby ensuring that observed differences in gene expression reflect true biological signals rather than artifacts of the data generation process [10] [14]. Technical factors requiring correction include sequencing depth (the total number of reads obtained per sample), gene length, and library composition (the distribution of RNA populations across samples) [10] [15]. The choice of normalization technique is critical, as it directly impacts the sensitivity and reliability of all downstream analyses, including differential expression testing, principal component analysis (PCA), and co-expression network construction [16] [15].

Within the specific context of variance-stabilizing transformation for RNA-Seq PCA research, normalization takes on heightened importance. PCA and other multivariate exploratory tools are highly sensitive to the underlying data structure. Without proper normalization, the largest sources of variation in the PCA often correlate with technical confounders like sequencing depth, potentially obscuring the biological variation of interest [16] [17]. This article provides a detailed overview of three common normalization techniques—CPM, TPM, and TMM—framed within the workflow of RNA-Seq analysis and supplemented with practical protocols for their application.

The Normalization Workflow in RNA-Seq Analysis

Normalization is not a single step but a process integrated within a larger analytical workflow. The journey from raw sequencing reads to normalized, comparable gene expression values involves several stages, each addressing different technical challenges. The figure below outlines the key decision points for applying CPM, TPM, and TMM normalization within a typical RNA-Seq analysis pipeline.

Techniques in Detail: CPM, TPM, and TMM

Counts Per Million (CPM)

CPM is a straightforward within-sample normalization method that controls for differences in sequencing depth—the total number of reads obtained per sample [14]. It is calculated as follows:

Formula: CPM = (Number of reads mapped to a gene / Total number of mapped reads in sample) * 1,000,000 [14]

By scaling counts to a common total of one million reads, CPM allows for the direct comparison of the relative abundance of a specific gene within a single sample. However, it does not correct for gene length bias, meaning longer genes will naturally have higher counts independent of their true expression level. Furthermore, CPM does not account for differences in library composition between samples. If a few genes are extremely highly expressed in one sample, they consume a large fraction of the sequencing reads, skewing the counts for all other genes and making cross-sample comparisons unreliable [10]. Consequently, CPM is not recommended for differential gene expression (DGE) analysis [10].

Transcripts Per Million (TPM)

TPM is an evolution of RPKM/FPKM and represents a within-sample normalization method that corrects for both sequencing depth and gene length [10] [14]. Its calculation is a two-step process:

- Normalize reads for gene length: Reads per Kilobase (RPK) = Number of reads mapped to a gene / Gene length in kilobases

- Normalize for sequencing depth: TPM = (RPK / Sum of all RPK values in the sample) * 1,000,000 [14]

A key advantage of TPM is that the sum of all TPM values in every sample is the same (one million), which reduces variation between samples caused by sequencing depth and makes cross-sample comparison more intuitive than with RPKM/FPKM [14]. TPM is suitable for comparing the relative expression of different genes within a single sample. However, like CPM, it does not effectively correct for library composition effects and is therefore not ideal for rigorous differential expression analysis, where more robust between-sample normalization methods are preferred [10].

Trimmed Mean of M-values (TMM)

TMM is a between-sample normalization method designed specifically for differential expression analysis. It operates on the core assumption that most genes are not differentially expressed (DE) across samples [10] [14]. To calculate scaling factors for adjusting library sizes, TMM first selects one sample as a reference. For each other sample, it calculates two gene-wise quantities relative to that reference: log-fold changes (M-values) and average log expression levels (A-values). It then trims away two sets of genes [14]:

- genes with the most extreme M-values (by default, the top and bottom 30%);
- genes with extremely large or small A-values (by default, the top and bottom 5%).

A weighted mean of the remaining M-values is then computed. Because M-values are log2 ratios, this trimmed mean is converted back to the linear scale and used as the scaling factor for that sample relative to the reference.

This method is robust to situations where a subset of genes is highly differentially expressed or abundant, as these are likely to be trimmed away. TMM, and the similar "median-of-ratios" method used in DESeq2, effectively correct for both sequencing depth and library composition, making them the gold standard for DGE analysis [10] [15]. These methods are implemented in popular Bioconductor packages like edgeR (TMM) and DESeq2 (median-of-ratios).
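The trimming logic can be made concrete with a simplified base-R re-implementation, intended only to illustrate the steps described above. It rests on several assumptions:

- The reference column is supplied by the user; edgeR chooses one automatically.
- Genes with a zero count in either sample are dropped.
- The trimming defaults (30% on M-values, 5% on A-values) mirror edgeR's logratioTrim and sumTrim arguments.

The kept M-values are averaged with inverse asymptotic-variance weights, and the factors are rescaled to multiply to one. For real analyses, use edgeR::calcNormFactors().

```r
# Simplified TMM factor for one sample against a reference (illustration only)
tmm_factor <- function(obs, ref, logratio_trim = 0.3, sum_trim = 0.05) {
  n_obs <- sum(obs)
  n_ref <- sum(ref)
  # Drop genes with a zero count in either sample (log-ratios would be infinite)
  pos <- obs > 0 & ref > 0
  obs <- obs[pos]
  ref <- ref[pos]
  # M-values: log2 ratio of proportions; A-values: average log2 proportion
  m <- log2((obs / n_obs) / (ref / n_ref))
  a <- (log2(obs / n_obs) + log2(ref / n_ref)) / 2
  # Approximate variance of each M-value, used for inverse-variance weights
  v <- (n_obs - obs) / n_obs / obs + (n_ref - ref) / n_ref / ref
  # Trim the most extreme M-values and A-values by rank
  n <- length(m)
  lo_m <- floor(n * logratio_trim) + 1
  hi_m <- n + 1 - lo_m
  lo_a <- floor(n * sum_trim) + 1
  hi_a <- n + 1 - lo_a
  keep <- rank(m) >= lo_m & rank(m) <= hi_m &
          rank(a) >= lo_a & rank(a) <= hi_a
  # Weighted trimmed mean on the log2 scale, back-transformed
  2^(sum(m[keep] / v[keep]) / sum(1 / v[keep]))
}

# Factors for all samples, rescaled so that they multiply to one
tmm_norm_factors <- function(counts, ref_col = 1) {
  f <- apply(counts, 2, tmm_factor, ref = counts[, ref_col])
  f / exp(mean(log(f)))
}

# Toy data: identical samples except that one gene dominates library s2
cc <- cbind(s1 = (1:100) * 10, s2 = (1:100) * 10)
cc[100, "s2"] <- cc[100, "s2"] * 1000
f <- tmm_norm_factors(cc)
f
colSums(cc) * f  # effective library sizes
```

In the toy example, one gene makes up most of library s2. TMM trims that gene away, so the effective library sizes colSums(cc) * f come out essentially equal, reflecting that the other 99 genes are unchanged between the two samples.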

Table 1: Comparison of Common RNA-Seq Normalization Methods

| Method | Sequencing Depth Correction | Gene Length Correction | Library Composition Correction | Primary Use Case |
|---|---|---|---|---|
| CPM | Yes | No | No | Within-sample comparison; input for downstream between-sample methods [10] |
| TPM | Yes | Yes | Partial | Within-sample comparison; cross-sample visualization [10] [14] |
| TMM | Yes | No | Yes | Between-sample differential expression analysis [10] |

Practical Application and Protocols

Protocol 1: Calculating CPM and TPM

This protocol details the steps to perform CPM and TPM normalization starting from a raw count matrix.

Key Reagent Solutions:

- Raw Count Matrix: A gene-by-sample matrix containing the number of reads mapped to each gene. This is typically generated by tools like featureCounts or HTSeq-count [10].

- Gene Length File: A data file containing the length (in kilobases) of each gene/transcript in the reference genome.

Procedure:

- Data Input: Load the raw count matrix into your analytical environment (e.g., R/Python).

- CPM Calculation:

a. For each sample, calculate the total library size (sum of all counts).

b. For each gene in the sample, divide its raw count by the library size and multiply by 1,000,000.

- R code snippet:

```r
# counts: genes x samples matrix of raw counts
# Divide each column by its library size (column sum), then scale to one million
cpm <- sweep(counts, 2, colSums(counts), "/") * 1e6
```

- TPM Calculation:

a. Length Normalization: Divide the raw counts for each gene by its length in kilobases.

b. Sample-Specific Scaling: For each sample, calculate the sum of all length-normalized counts.

c. Final Calculation: Divide each length-normalized count by the sample-specific sum from step b and multiply by 1,000,000.

- R code snippet:
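A base-R sketch of steps a to c follows, using a hypothetical three-gene matrix and gene lengths. The helper name tpm is an assumption. Gene lengths must be given in kilobases and in the same order as the rows of the count matrix; the function checks this with stopifnot().

```r
# Hypothetical toy inputs: 3 genes x 2 samples, gene lengths in kilobases
counts <- matrix(c(100, 200, 300,
                   150, 250, 600),
                 nrow = 3,
                 dimnames = list(c("geneA", "geneB", "geneC"), c("s1", "s2")))
gene_length_kb <- c(geneA = 1, geneB = 2, geneC = 3)

tpm <- function(counts, gene_length_kb) {
  # Lengths must line up with the rows of the count matrix
  stopifnot(identical(names(gene_length_kb), rownames(counts)))
  # Step a: reads per kilobase (each row divided by its gene length)
  rpk <- counts / gene_length_kb
  # Steps b and c: divide by each sample's RPK total, then scale to one million
  sweep(rpk, 2, colSums(rpk), "/") * 1e6
}

tpm_values <- tpm(counts, gene_length_kb)
tpm_values
colSums(tpm_values)
```

In sample s1, every gene has 100 reads per kilobase, so each receives one third of a million TPM. In both samples, the TPM values sum to one million.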

Protocol 2: Applying TMM Normalization for Differential Expression

This protocol describes the application of TMM normalization using the edgeR package in R, prior to differential expression analysis.

Key Reagent Solutions:

- Raw Count Matrix: As described in Protocol 1.

- edgeR Package: A Bioconductor package for differential expression analysis of digital gene expression data.

Procedure:

- Package Installation: Install and load the edgeR package in R.

```r
if (!require("BiocManager", quietly = TRUE))
    install.packages("BiocManager")
BiocManager::install("edgeR")
library(edgeR)
```

- Create DGEList Object: Create a DGEList object, which is the core data structure in edgeR.

```r
dge <- DGEList(counts = count_matrix)
```

- Calculate TMM Factors: Use the calcNormFactors function to perform TMM normalization.

```r
dge <- calcNormFactors(dge, method = "TMM")
```

- Output and Use: The normalization factors are stored in dge$samples$norm.factors. These factors are automatically used in downstream edgeR functions for differential expression testing (e.g., estimateDisp, glmQLFit). The normalized, comparable counts can be obtained as counts per million using cpm(dge).
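Because the context here is PCA, a common next step is to compute log2-CPM values from the TMM-adjusted library sizes and run PCA on them. This sketch assumes edgeR is installed. The simulated count_matrix stands in for real data. Before taking logs, cpm() adds prior.count = 2 (its default), which damps the variance of low counts.

```r
library(edgeR)

set.seed(5)
# Hypothetical count matrix: 1,000 genes x 6 samples
count_matrix <- matrix(rnbinom(1000 * 6, mu = rep(2^runif(1000, 2, 10), 6), size = 10),
                       nrow = 1000,
                       dimnames = list(paste0("gene", 1:1000), paste0("sample", 1:6)))

dge <- DGEList(counts = count_matrix)
dge <- calcNormFactors(dge, method = "TMM")
# log2-CPM based on TMM-adjusted library sizes; prior.count damps low counts
logcpm <- cpm(dge, log = TRUE, prior.count = 2)
# PCA on samples; inspect the share of variance captured by PC1 to PC3
pca <- prcomp(t(logcpm))
summary(pca)$importance[2, 1:3]
```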

The Scientist's Toolkit: Essential Research Reagents and Software

Successful normalization and analysis require a suite of computational tools and reagents. The following table details key resources mentioned in this protocol.

Table 2: Key Research Reagent Solutions for RNA-Seq Normalization

| Item Name | Function/Brief Explanation | Example Use Case |
|---|---|---|
| Raw Count Matrix | The foundational input data; a table of raw read counts per gene per sample, generated by aligners or quantifiers [10]. | Serves as the direct input for all normalization methods (CPM, TPM, TMM). |
| Gene Length File | A reference file containing the length of each gene/transcript in the genome. | Required for calculating TPM and other length-sensitive normalizations (RPKM, FPKM) [14]. |
| edgeR | A Bioconductor software package for differential expression analysis. | Implements the TMM normalization method via its calcNormFactors function [10]. |
| DESeq2 | A Bioconductor software package for differential expression analysis. | Uses the "median-of-ratios" method, a robust normalization technique comparable to TMM [10]. |
| Size Factors | Sample-specific scaling factors calculated by methods like TMM and median-of-ratios to correct for library size and composition [10]. | Applied to raw counts to make them comparable across samples for DGE analysis. |

The choice of normalization method is a pivotal decision in RNA-Seq data analysis. CPM, TPM, and TMM each serve distinct purposes: CPM offers a simple depth correction, TPM allows for within-sample comparisons by also accounting for gene length, and TMM provides a robust framework for between-sample differential expression analysis by correcting for sequencing depth and library composition. Within the context of variance stabilization for PCA, failure to adequately normalize data using appropriate methods like TMM can result in technical artifacts, such as sample clustering driven by sequencing depth rather than biology [16] [17]. Therefore, researchers must align their choice of normalization technique with the specific analytical goals and biological questions at hand to ensure accurate and meaningful interpretation of their transcriptomic data.

A Practical Toolkit: Implementing Key Variance-Stabilizing Transformations

Single-cell RNA-sequencing (scRNA-seq) data presents unique analytical challenges due to its fundamental statistical characteristics. The raw count tables, structured as genes × cells matrices, exhibit significant heteroskedasticity, meaning that the variance of counts depends strongly on their mean expression level. Specifically, counts for highly expressed genes demonstrate substantially more variability than counts for lowly expressed genes. This property makes application of standard statistical methods problematic, as these techniques typically perform best with data exhibiting uniform variance across its dynamic range.

The Gamma-Poisson model (also referred to as the negative binomial distribution) has emerged as a theoretically and empirically well-supported framework for modeling the sampling variability of scRNA-seq counts. This model accounts for the quadratic mean-variance relationship inherent to this data type, where the variance is expressed as Var[Y] = μ + αμ², with μ representing the mean expression and α quantifying the overdispersion beyond Poisson sampling noise. Within this statistical framework, Pearson residuals provide a powerful approach for normalizing count data, stabilizing variance across the expression range, and enabling downstream applications such as principal component analysis (PCA) and differential expression testing.
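The quadratic mean-variance relationship can be checked by simulation in base R. In R's rnbinom() parameterization with mean mu and size, the variance is mu + mu^2/size. Setting size = 1/α therefore reproduces Var[Y] = μ + αμ². The value α = 0.1 and the three means are arbitrary example values.

```r
set.seed(2024)
alpha <- 0.1
mu <- c(1, 10, 100)
# With size = 1 / alpha, R's rnbinom() has variance mu + alpha * mu^2
observed_var <- sapply(mu, function(m) var(rnbinom(1e5, mu = m, size = 1 / alpha)))
expected_var <- mu + alpha * mu^2   # 1.1, 20, 1100
rbind(mean = mu, observed = observed_var, expected = expected_var)
```

At μ = 1 the Poisson term dominates (variance 1.1). At μ = 100 the quadratic term contributes 1,000 of the 1,100 total, which is why raw counts of highly expressed genes dominate an untransformed PCA.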

Theoretical Foundation of the Gamma-Poisson Model and Pearson Residuals

The Gamma-Poisson Modeling Framework

The Gamma-Poisson model for scRNA-seq data operates on the fundamental principle that the observed count $Y_{gc}$ for gene $g$ in cell $c$ follows a gamma-Poisson distribution with parameters $\mu_{gc}$ (mean) and $\alpha_g$ (gene-specific overdispersion). The model is formally specified through a generalized linear model (GLM) framework:

$$ \begin{aligned} Y_{gc} &\sim \text{gamma-Poisson}(\mu_{gc}, \alpha_g) \\ \log(\mu_{gc}) &= \beta_{g,\text{intercept}} + \beta_{g,\text{slope}} \log(s_c) \end{aligned} $$

where $s_c$ represents the size factor for cell $c$, accounting for technical variability in sampling efficiency and cell size, while $\beta_{g,\text{intercept}}$ and $\beta_{g,\text{slope}}$ are gene-specific intercept and slope parameters [1] [2].

Pearson Residuals Calculation

Within this fitted model, Pearson residuals are calculated as standardized differences between observed counts and their expected values under the model:

$$ r_{gc} = \frac{y_{gc} - \hat{\mu}_{gc}}{\sqrt{\hat{\mu}_{gc} + \hat{\alpha}_g \hat{\mu}_{gc}^2}} $$

where $y_{gc}$ is the observed count, $\hat{\mu}_{gc}$ is the fitted mean, and $\hat{\alpha}_g$ is the estimated overdispersion parameter [1] [2]. The denominator represents the standard deviation of a gamma-Poisson random variable, ensuring that the residuals are appropriately scaled relative to the expected variability for each gene's expression level.

Statistical Properties of Pearson Residuals

Pearson residuals possess several important statistical properties that make them particularly suitable for scRNA-seq analysis. When the model is correctly specified, these residuals exhibit approximately zero mean and unit variance, making them suitable for downstream statistical methods that assume homoskedasticity. Theoretical research has shown that through careful adjustment using matrix formulae of order $n^{-1}$, these residuals can be further refined to more closely approximate the ideal properties of zero mean and unit variance, even in finite samples [18].

The adjustment accounts for the inherent dependence between the residuals and the model parameters, particularly addressing the fact that the raw Pearson residuals tend to be underestimated in magnitude due to the shrinkage effect of estimating parameters from the same data used to calculate residuals. These adjusted Pearson residuals demonstrate improved performance in diagnostic applications and provide more reliable inference in practical settings [18].

Comparative Analysis of Transformation Methods

While Pearson residuals represent a powerful normalization strategy, several alternative transformation methods are commonly employed in scRNA-seq analysis, each with distinct theoretical foundations and practical implications:

Delta method-based transformations: Include the acosh transformation ($g(y) = \frac{1}{\sqrt{\alpha}} \text{acosh}(2\alpha y + 1)$) and the shifted logarithm ($g(y) = \log(y + y_0)$), which provide approximate variance stabilization based on the delta method from mathematical statistics [1] [2].

Latent expression transformations: Methods such as Sanity, Dino, and Normalisr that infer parameters of postulated generative models to estimate latent gene expression values based on observed counts [1].

Factor analysis approaches: Techniques including GLM PCA and NewWave that directly produce low-dimensional latent space representations without explicit transformation of counts [1].

Performance Comparison of Transformation Methods

Table 1: Comparative analysis of transformation methods for scRNA-seq data

| Method Category | Representative Methods | Theoretical Foundation | Variance Stabilization | Handling of Size Factors |
|---|---|---|---|---|
| Delta method | acosh, shifted logarithm | Delta method applied to mean-variance relationship | Approximate, fails for lowly expressed genes | Problematic: size factor remains a strong variance component |
| Pearson residuals | sctransform, transformGamPoi | Gamma-Poisson GLM with standardization | Effective across dynamic range | Effective: properly accounts for cell-to-cell variability |
| Latent expression | Sanity, Dino, Normalisr | Bayesian inference or mixture models | Varies by method | Generally effective |
| Factor analysis | GLM PCA, NewWave | Gamma-Poisson factor model | Direct dimension reduction | Built into model structure |

Empirical Performance Benchmarks

Empirical evaluations using both simulated and real-world datasets have revealed interesting performance characteristics. While methods based on Pearson residuals and latent expression inference demonstrate appealing theoretical properties, benchmarks have shown that a relatively simple approach—the logarithm with a pseudo-count followed by PCA—often performs as well as or better than more sophisticated alternatives in practical applications [1] [2].

However, a significant limitation of delta method-based transformations, including the shifted logarithm, is their inadequate handling of size factors. Even after size factor scaling, the size factor remains a strong variance component in the transformed data, potentially confounding biological signal with technical artifacts. In contrast, Pearson residuals-based transformations better mix droplets with different size factors, more effectively removing this technical source of variation [1] [2].

Another key advantage of Pearson residuals is their superior performance in stabilizing the variance of lowly expressed genes. While delta method-based transformations show practically zero variance for genes with mean expression <0.1, residuals-based transformations maintain a weaker dependence on mean expression across the dynamic range [1].

Computational Implementation Protocols

Software Tools and Packages

Table 2: Essential computational tools for Gamma-Poisson modeling and Pearson residuals calculation

| Tool/Package | Primary Function | Implementation | Key Features |
|---|---|---|---|
| glmGamPoi | Fitting Gamma-Poisson GLMs | R/Bioconductor | Fast estimation, handles large datasets, works on disk without loading full data into RAM |
| transformGamPoi | Variance-stabilizing transformations | R/Bioconductor | Multiple transformations (acosh, shifted log, Pearson residuals, randomized quantile) |
| sctransform | Pearson residuals normalization | R | Original implementation of Pearson residuals approach by Hafemeister and Satija |
| DESeq2 | Generalized linear modeling | R/Bioconductor | Robust GLM fits, differential expression testing |
| edgeR | Generalized linear modeling | R/Bioconductor | Proven methods for count data, differential expression |

Protocol: Calculating Pearson Residuals for scRNA-seq Data

Purpose: To compute Pearson residuals for single-cell RNA-seq count data using the Gamma-Poisson model for downstream analyses including PCA and clustering.

Materials:

- Count matrix (genes × cells)

- R statistical environment (version 4.0 or higher)

- Bioconductor packages: glmGamPoi, transformGamPoi, SingleCellExperiment

Procedure:

Data Preparation:

- Load count data into R, ensuring proper formatting as a matrix or SingleCellExperiment object

- Perform basic quality control to remove low-quality cells and genes

- Check for and handle extreme outliers in library sizes

Size Factor Estimation:

- Calculate size factors to normalize for differences in sequencing depth.

Model Fitting:

- Fit a Gamma-Poisson GLM to the count data with glmGamPoi, including the size factors from the previous step.

Pearson Residuals Calculation:

- Compute Pearson residuals from the fitted model, for example with transformGamPoi.
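To make the residual computation concrete, here is a dependency-free Python sketch. It is not the glmGamPoi or transformGamPoi implementation, and it rests on several assumptions:

- normed-sum size factors (s_c = cell total / mean cell total);
- a single overdispersion α shared by all genes;
- the closed-form intercept-only estimate μ_gc = s_c · Σ_c y_gc / Σ_c s_c in place of an iterative GLM fit;
- clipping at ±√(number of cells), a convention from the Pearson residuals literature.

```python
import math

def normed_sum_size_factors(counts):
    """s_c = cell total / mean cell total, for a genes x cells matrix."""
    totals = [sum(column) for column in zip(*counts)]
    mean_total = sum(totals) / len(totals)
    return [t / mean_total for t in totals]

def pearson_residuals(counts, alpha, clip=True):
    """Clipped Pearson residuals under an intercept-only gamma-Poisson model.

    Assumptions for illustration: one overdispersion alpha shared by all genes,
    and fitted means mu_gc = s_c * beta_g with beta_g = sum_c y_gc / sum_c s_c
    (the closed-form Poisson maximum-likelihood estimate, used as a stand-in
    for an iterative gamma-Poisson GLM fit).
    """
    size_factors = normed_sum_size_factors(counts)
    bound = math.sqrt(len(size_factors))  # clip at +/- sqrt(number of cells)
    sum_s = sum(size_factors)
    residuals = []
    for gene in counts:
        beta = sum(gene) / sum_s
        row = []
        for y, s in zip(gene, size_factors):
            mu = s * beta
            if mu == 0:
                row.append(0.0)  # gene or cell with no counts: nothing to standardize
                continue
            r = (y - mu) / math.sqrt(mu + alpha * mu * mu)
            row.append(max(-bound, min(bound, r)) if clip else r)
        residuals.append(row)
    return residuals

# Toy data: gene 0 has an outlier in the last of 10 cells.
counts = [[1] * 9 + [60], [50] * 10]
res = pearson_residuals(counts, alpha=0.05)
print([round(r, 2) for r in res[0]])
```

The outlier's residual is capped at √10. Without clipping, a single extreme count could dominate the variance of that gene in downstream PCA.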

Downstream Application:

- Use the residuals as input for PCA and visualization.
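The PCA step can be sketched without dependencies by extracting the first principal component with power iteration on the gene-by-gene covariance matrix. This is an illustrative stand-in, not the routine an R workflow would call (such workflows typically use a truncated SVD). The residual values in the example are made up to show two cell groups.

```python
import math
import random

def center_rows(matrix):
    """Center each gene (row) to mean zero across cells."""
    return [[x - sum(row) / len(row) for x in row] for row in matrix]

def first_principal_component(matrix, n_iter=500, seed=0):
    """Top PC of a genes x cells matrix by power iteration.

    Genes are the variables and cells the observations. Returns
    (loadings over genes, scores over cells).
    """
    x = center_rows(matrix)
    n_genes, n_cells = len(x), len(x[0])
    # Gene-by-gene sample covariance matrix.
    cov = [[sum(a * b for a, b in zip(x[i], x[j])) / (n_cells - 1)
            for j in range(n_genes)] for i in range(n_genes)]
    rng = random.Random(seed)
    v = [rng.gauss(0.0, 1.0) for _ in range(n_genes)]
    for _ in range(n_iter):
        w = [sum(cov[i][j] * v[j] for j in range(n_genes)) for i in range(n_genes)]
        norm = math.sqrt(sum(t * t for t in w))
        v = [t / norm for t in w]
    scores = [sum(v[g] * x[g][c] for g in range(n_genes)) for c in range(n_cells)]
    return v, scores

# Hypothetical residuals: genes 0 and 1 separate cells 0-2 from cells 3-5.
residuals = [
    [1.5, 1.2, 1.4, -1.3, -1.5, -1.2],
    [-1.4, -1.3, -1.5, 1.2, 1.6, 1.4],
    [0.1, -0.2, 0.1, 0.0, 0.2, -0.1],
]
loadings, scores = first_principal_component(residuals)
print([round(s, 2) for s in scores])
```

Because the residuals are already on a comparable scale across genes, no per-gene standardization is applied before the covariance is computed.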

Troubleshooting:

- For large datasets (>10,000 cells), use `on_disk = TRUE` to reduce memory usage.

- If convergence issues occur, try increasing `max_iter` in glmGamPoi.

- For problematic genes, consider filtering them out prior to analysis.


Advanced Applications and Considerations

Integration with Downstream Analyses

Pearson residuals transform count data into a normalized space that is suitable for various downstream analytical techniques:

Principal Component Analysis: The variance-stabilized nature of Pearson residuals makes them ideal for PCA, as the technique assumes homoskedasticity across variables. The residuals can be directly input to PCA without additional scaling in most cases.

Clustering and Cell Type Identification: The improved signal-to-noise ratio in Pearson residuals enhances clustering performance, enabling more accurate identification of cell types and states.

Differential Expression Analysis: While specialized methods exist for formal differential expression testing, Pearson residuals can provide initial insights into expression differences between conditions.

Data Visualization: The normalized residuals facilitate effective visualization through t-SNE, UMAP, and other dimensionality reduction techniques.

Addressing Analytical Challenges

The application of Pearson residuals to scRNA-seq data addresses several specific analytical challenges:

Handling of Zero Inflation: scRNA-seq data exhibits an abundance of zero counts, arising both from biological absence of expression and from technical dropout events. The Gamma-Poisson model underlying Pearson residuals accounts for these zeros through its distributional form: at low means it places most of its probability mass at zero, so many zeros are expected without adding a separate zero-inflation component.

Overdispersion Adjustment: Biological count data typically exhibits overdispersion relative to the Poisson distribution. The Gamma-Poisson model explicitly parameterizes this overdispersion through the α parameter, which is incorporated into the denominator of the Pearson residuals formula.
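For reference, the Pearson residual for gene g in cell c standardizes the deviation of the observed count from its fitted mean by the gamma-Poisson standard deviation, which is where α enters. The log-linear mean with a size-factor offset shown alongside is the simplest (intercept-only) specification and is an assumption here; richer designs add covariates to the linear predictor.

$$
r_{gc} = \frac{y_{gc} - \hat{\mu}_{gc}}{\sqrt{\hat{\mu}_{gc} + \hat{\alpha}_g \, \hat{\mu}_{gc}^{2}}},
\qquad
\log \hat{\mu}_{gc} = \hat{\beta}_g + \log s_c
$$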

Compositional Effects: The use of size factors in the GLM specification helps account for the compositional nature of sequencing data, where counts are relative rather than absolute measures.

Limitations and Alternative Approaches

While Pearson residuals provide a powerful normalization approach, they have certain limitations that researchers should consider:

Computational Intensity: For extremely large datasets (millions of cells), the GLM fitting process can be computationally demanding, though packages like glmGamPoi have significantly improved efficiency [19].

Dependence on Model Fit: The quality of the residuals depends on the adequacy of the Gamma-Poisson model for the specific dataset. Model diagnostics should be employed to verify assumptions.

Alternative Parameterizations: Some implementations offer variations such as clipped Pearson residuals (to handle extreme values) or randomized quantile residuals (which follow a standard normal distribution when the model is correct) [20].
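Randomized quantile residuals (Dunn and Smyth) map each discrete count onto a continuous scale. For each count y, draw u uniformly between the model's cumulative probabilities F(y − 1) and F(y), then return Φ⁻¹(u), the standard normal quantile. The sketch below assumes a gamma-Poisson model with a known mean μ and overdispersion α for each count. It is not the transformGamPoi implementation, and the function names are hypothetical.

```python
import math
import random
from statistics import NormalDist

def gp_pmf(k, mu, alpha):
    """Gamma-Poisson pmf with mean mu > 0 and Var = mu + alpha * mu**2 (alpha > 0)."""
    size = 1.0 / alpha
    p = size / (size + mu)
    log_pmf = (math.lgamma(k + size) - math.lgamma(size) - math.lgamma(k + 1)
               + size * math.log(p) + k * math.log1p(-p))
    return math.exp(log_pmf)

def gp_cdf(y, mu, alpha):
    """P(Y <= y); zero for y < 0."""
    return sum(gp_pmf(k, mu, alpha) for k in range(y + 1)) if y >= 0 else 0.0

def randomized_quantile_residual(y, mu, alpha, rng):
    """Draw u ~ Uniform(F(y - 1), F(y)) and return the standard normal quantile of u."""
    lower, upper = gp_cdf(y - 1, mu, alpha), gp_cdf(y, mu, alpha)
    u = rng.uniform(lower, upper)
    u = min(max(u, 1e-12), 1.0 - 1e-12)  # keep the quantile finite
    return NormalDist().inv_cdf(u)

rng = random.Random(1)
for y in [0, 1, 3, 8]:
    r = randomized_quantile_residual(y, mu=3.0, alpha=0.2, rng=rng)
    print(f"y={y}: residual={r:+.3f}")
```

The randomization is what removes the discreteness. Two cells with the same count receive slightly different residuals, but each residual stays within the normal-quantile band implied by its count.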

In conclusion, Pearson residuals derived from the Gamma-Poisson model provide a statistically rigorous approach for normalizing scRNA-seq data, effectively addressing heteroskedasticity and enabling reliable application of downstream statistical methods. Their integration into comprehensive analysis workflows supports robust biological discovery from single-cell transcriptomic studies.

Single-cell RNA-sequencing (scRNA-seq) data analysis grapples with a fundamental challenge: count tables are inherently heteroskedastic, meaning counts for highly expressed genes demonstrate substantially more variance than those for lowly expressed genes. This property violates the assumptions of many standard statistical methods, including principal component analysis (PCA), which perform optimally with uniform variance across the data's dynamic range. Variance-stabilizing transformations (VSTs) serve as a critical preprocessing step to address this heteroskedasticity, enabling more reliable application of downstream analytical techniques [2] [1].

The choice of transformation is pivotal for principal component analysis, as it directly influences which biological signals are captured most effectively. This application note explores two advanced approaches for preprocessing scRNA-seq data: the acosh transformation, derived from the delta method, and methods based on latent expression inference. We frame this discussion within the broader thesis that proper variance stabilization is a prerequisite for biologically meaningful PCA in RNA-seq research, providing detailed protocols for their implementation and evaluation.

Theoretical Foundations of Transformation Approaches

The Gamma-Poisson Model and Mean-Variance Relationship

The foundation of most VSTs for unique molecular identifier (UMI)-based scRNA-seq data is the gamma-Poisson model (also known as the negative binomial model). This model accurately captures the technical noise properties of UMI data, characterized by a quadratic mean-variance relationship expressed as Var[Y] = μ + αμ², where μ is the mean expression and α represents the overdispersion parameter, quantifying additional biological variation beyond Poisson sampling noise [2] [1].
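A few lines of arithmetic show how the two terms trade off. The Poisson term μ dominates for lowly expressed genes, while the overdispersion term αμ² dominates once μ exceeds 1/α. The value α = 0.1 below is a hypothetical choice for illustration.

```python
def gamma_poisson_variance(mu, alpha):
    """Var[Y] = mu + alpha * mu**2, split into Poisson and overdispersion parts."""
    poisson_part = mu
    extra_part = alpha * mu ** 2
    return poisson_part, extra_part, poisson_part + extra_part

alpha = 0.1  # hypothetical overdispersion
for mu in [0.1, 1, 10, 100]:
    poisson_part, extra_part, total = gamma_poisson_variance(mu, alpha)
    print(f"mu={mu:>6}: Var={total:9.2f}  overdispersion share={extra_part / total:.1%}")
```

At μ = 1/α = 10 the two parts are equal. Above that point, the variance grows roughly with the square of the mean, which is the heteroskedasticity a VST must remove.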

Four principal families of transformations are employed in scRNA-seq analysis, each with distinct theoretical underpinnings:

- Delta Method VSTs: These apply a non-linear function designed to render the variance approximately constant across the expression range, based on the assumed mean-variance relationship [2].

- Residuals-based VSTs: This approach uses residuals from a generalized linear model (GLM), such as Pearson residuals, which account for technical covariates [2] [1].

- Latent Expression Inference: These methods infer a latent, "true" expression level by modeling the count data as emanating from an underlying generative process, effectively denoising the observations [2] [1].

- Count-Based Factor Analysis: This family bypasses explicit transformation, instead directly generating a low-dimensional latent representation using models like gamma-Poisson factor analysis [2].

Table 1: Core Transformation Approaches for scRNA-seq Data

| Approach Family | Key Principle | Example Methods | Primary Output |
|---|---|---|---|
| Delta Method | Applies a variance-stabilizing function derived via the delta method | acosh, Shifted Logarithm | Transformed normalized counts |
| Model Residuals | Uses residuals from a fitted gamma-Poisson GLM | Pearson residuals (sctransform) | Standardized residuals |
| Latent Expression | Infers latent expression values from a generative model | Sanity, Dino, Normalisr | Estimated latent expression |
| Factor Analysis | Directly models counts for dimensionality reduction | GLM PCA, NewWave | Low-dimensional embedding |

The acosh Transformation: Theory and Protocol

Mathematical Derivation and Rationale

The acosh transformation is the theoretically justified variance-stabilizing transformation for the gamma-Poisson distribution. Given the mean-variance relationship Var[Y] = μ + αμ², the delta method yields the following closed-form VST:

g(y) = (1/√α) * acosh(2αy + 1) [2]

This transformation is closely approximated by the more familiar shifted logarithm g(y) = log(y + y₀), especially when the pseudo-count y₀ is set to 1/(4α) [2] [1]. The acosh function, or inverse hyperbolic cosine, is defined for real numbers ≥ 1 and returns a non-negative real number. For real values x > 1, it satisfies acosh(x) = ln(x + √(x² - 1)) [21] [22].
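The sketch below compares the two transformations numerically for a hypothetical α = 0.05, scaling both by 1/√α. Their difference is not zero. It equals log(4α)/√α at y = 0 and approaches that same constant as y grows, which is one way to see why y₀ = 1/(4α) is the matching pseudo-count. The two curves deviate from this constant offset only at intermediate counts.

```python
import math

def acosh_vst(y, alpha):
    """Gamma-Poisson delta-method VST: (1/sqrt(alpha)) * acosh(2*alpha*y + 1)."""
    return math.acosh(2.0 * alpha * y + 1.0) / math.sqrt(alpha)

def shifted_log_vst(y, alpha):
    """Shifted logarithm log(y + y0) with y0 = 1/(4*alpha), rescaled by 1/sqrt(alpha)."""
    y0 = 1.0 / (4.0 * alpha)
    return math.log(y + y0) / math.sqrt(alpha)

def gap(y, alpha):
    return acosh_vst(y, alpha) - shifted_log_vst(y, alpha)

alpha = 0.05  # hypothetical overdispersion
limit = math.log(4.0 * alpha) / math.sqrt(alpha)
for y in [0, 1, 10, 100, 1000, 10**6]:
    print(f"y={y:>7}: acosh={acosh_vst(y, alpha):7.3f}  "
          f"shifted log={shifted_log_vst(y, alpha):7.3f}  gap={gap(y, alpha):7.3f}")
print(f"log(4*alpha)/sqrt(alpha) = {limit:.3f}")
```

Because PCA is invariant to adding a constant to every value, a constant offset between the two transformations is harmless. Only the intermediate-count deviation changes the analysis.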

Experimental Protocol: Implementing the acosh Transformation

The following protocol details the steps for applying the acosh transformation to a UMI count matrix prior to PCA.

Input: A raw UMI count matrix (genes × cells). Output: A variance-stabilized matrix ready for PCA.

Step 1: Size Factor Normalization

- Calculate the total UMIs per cell: cell_total_c = Σ_g y_gc.

- Compute the average total UMIs across all cells: L = mean(cell_total).

- For each cell c, estimate its size factor: s_c = cell_total_c / L [2] [1].

- Because L is the mean of the cell totals, these size factors already average to 1. If size factors are obtained another way, scale them to a mean of 1 by dividing each s_c by the mean of all s_c.
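The size-factor step translates directly into code. The sketch below follows the formulas above on a small genes × cells matrix. The function names are hypothetical, and the rescaling helper applies only to size factors produced by some other method.

```python
def size_factors(counts):
    """Normed-sum size factors for a genes x cells count matrix.

    cell_total_c = sum_g y_gc, L = mean(cell_total), s_c = cell_total_c / L.
    """
    n_cells = len(counts[0])
    cell_totals = [sum(row[c] for row in counts) for c in range(n_cells)]
    L = sum(cell_totals) / n_cells
    if L == 0:
        raise ValueError("all cells are empty")
    return [t / L for t in cell_totals]

def rescale_to_mean_one(factors):
    """Rescale size factors from any method so that they average to 1."""
    mean = sum(factors) / len(factors)
    return [f / mean for f in factors]

counts = [
    [0, 3, 1, 4],   # gene 1
    [2, 5, 0, 9],   # gene 2
    [1, 0, 1, 7],   # gene 3
]
s = size_factors(counts)
print([round(x, 3) for x in s])
```

Cells with zero total counts would receive a size factor of 0 and cannot be normalized. These cells should be removed during quality control, before this step.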

Step 2: Overdispersion Estimation

- Estimate the gene-wise overdispersion parameter α_g for each gene. This can be achieved using functions from packages like glmGamPoi or edgeR; the transformGamPoi package offers integrated workflows for this purpose.
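glmGamPoi and edgeR use likelihood-based estimators. A simpler method-of-moments estimate is easier to follow and is sketched here as a swapped-in technique, not either package's method. It rests on two steps:

- Because E[(y_c − s_c μ)²] = s_c μ + α (s_c μ)², summing over cells and solving for α gives α̂ = (Σ(y − sμ̂)² − μ̂Σs) / (μ̂² Σs²), with μ̂ = Σy / Σs.
- The data are simulated from a gamma-Poisson model with hypothetical parameters, so the estimate can be compared with the true value.

```python
import math
import random

def sample_gamma_poisson(mu, alpha, rng):
    """Y ~ Poisson(lam) with lam ~ Gamma(shape=1/alpha, scale=alpha*mu), so Var = mu + alpha*mu^2."""
    lam = rng.gammavariate(1.0 / alpha, alpha * mu)
    total = 0
    while lam > 0:  # sum Knuth draws over chunks of at most 30 to avoid exp underflow
        chunk = min(lam, 30.0)
        threshold, p = math.exp(-chunk), rng.random()
        while p > threshold:
            total += 1
            p *= rng.random()
        lam -= chunk
    return total

def moment_overdispersion(counts, size_factors):
    """Method-of-moments estimate of alpha for one gene (negative values truncated at 0)."""
    mu = sum(counts) / sum(size_factors)
    if mu == 0:
        return 0.0
    squared = sum((y - s * mu) ** 2 for y, s in zip(counts, size_factors))
    poisson = mu * sum(size_factors)
    denom = mu ** 2 * sum(s * s for s in size_factors)
    return max(0.0, (squared - poisson) / denom)

rng = random.Random(42)
true_alpha, mu = 0.2, 5.0  # hypothetical gene parameters
sf = [rng.choice([0.5, 1.0, 1.5, 2.0]) for _ in range(20_000)]
y = [sample_gamma_poisson(s * mu, true_alpha, rng) for s in sf]
print(f"estimated alpha: {moment_overdispersion(y, sf):.3f} (true {true_alpha})")
```

Moment estimates are noisy for genes with few counts, which is one reason likelihood-based estimators with shrinkage across genes are preferred in practice.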

Step 3: Apply the acosh Transformation

- For each count y_gc (for gene g in cell c), compute the normalized and transformed value: z_gc = (1 / √α_g) * acosh(2 * α_g * (y_gc / s_c) + 1) [2].

- The resulting matrix Z is variance-stabilized and can be used as input to PCA.
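Steps 1 to 3 can be combined into a small check of the stabilization itself. The sketch assumes equal size factors of 1 and a shared α = 0.1 (hypothetical values) and simulates two genes whose means differ tenfold. The raw counts differ in variance by more than an order of magnitude, while the acosh-transformed values have a variance close to 1 for both genes.

```python
import math
import random

def sample_gamma_poisson(mu, alpha, rng):
    """Y ~ Poisson(lam) with lam ~ Gamma(shape=1/alpha, scale=alpha*mu)."""
    lam = rng.gammavariate(1.0 / alpha, alpha * mu)
    total = 0
    while lam > 0:  # sum Knuth draws over chunks of at most 30 to avoid exp underflow
        chunk = min(lam, 30.0)
        threshold, p = math.exp(-chunk), rng.random()
        while p > threshold:
            total += 1
            p *= rng.random()
        lam -= chunk
    return total

def acosh_transform(counts, size_factors, alphas):
    """z_gc = (1/sqrt(alpha_g)) * acosh(2 * alpha_g * y_gc / s_c + 1) for a genes x cells matrix."""
    return [[math.acosh(2.0 * a * y / s + 1.0) / math.sqrt(a)
             for y, s in zip(row, size_factors)]
            for row, a in zip(counts, alphas)]

def sample_variance(values):
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)

rng = random.Random(0)
n_cells, alpha = 10_000, 0.1          # hypothetical settings
size_factors = [1.0] * n_cells        # assumption: equal sequencing depth
means = [10.0, 100.0]                 # two genes with a tenfold difference in mean
counts = [[sample_gamma_poisson(m, alpha, rng) for _ in range(n_cells)] for m in means]
z = acosh_transform(counts, size_factors, [alpha, alpha])

raw_var = [sample_variance(row) for row in counts]
vst_var = [sample_variance(row) for row in z]
print("raw variances:  ", [round(v, 1) for v in raw_var])
print("acosh variances:", [round(v, 3) for v in vst_var])
```

The delta method only guarantees approximate stabilization, so the transformed variances are near 1 rather than exactly 1. The approximation is weakest for genes with very low means.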

Figure 1: Workflow for the acosh transformation. The process involves sequential steps of count normalization and application of the variance-stabilizing function.

Critical Parameter Considerations

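The relationship between the shifted logarithm and the acosh transformation highlights a common pitfall. Normalizing to a fixed target sum L, as in log(y / cell_total × L + 1), is equivalent, up to an additive constant, to a shifted logarithm of size-factor-normalized counts with pseudo-count y₀ = (mean cell total) / L. Assuming an average of about 5,000 UMIs per cell, CPM (Counts Per Million) normalization with L = 10^6 therefore sets a tiny pseudo-count (y₀ = 0.005), which implies an unrealistically high overdispersion (α = 1/(4y₀) = 50). Similarly, Seurat's default L = 10,000 gives y₀ = 0.5 and implies α = 0.5. Instead, it is recommended to directly estimate a typical overdispersion from the data and parameterize the transformation accordingly using y₀ = 1/(4α) [2] [1].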
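The arithmetic behind these numbers is short enough to check directly. The helper names below are hypothetical. The average of 5,000 UMIs per cell is the assumption that reproduces the quoted values, and the α estimate in the last step is likewise a made-up example.

```python
def implied_pseudocount(mean_cell_total, target_sum):
    """log(y / total * target_sum + 1) = log(y / s + y0) + log(target_sum / mean_cell_total),
    with s = total / mean_cell_total and y0 = mean_cell_total / target_sum."""
    return mean_cell_total / target_sum

def implied_overdispersion(y0):
    """Invert y0 = 1 / (4 * alpha)."""
    return 1.0 / (4.0 * y0)

mean_total = 5_000  # assumed average UMIs per cell
for name, target in [("CPM", 1e6), ("Seurat default", 1e4)]:
    y0 = implied_pseudocount(mean_total, target)
    print(f"{name}: y0 = {y0:g}, implied alpha = {implied_overdispersion(y0):g}")

# Recommended direction: start from an alpha estimated from the data.
alpha_hat = 0.05  # hypothetical estimate
print(f"alpha = {alpha_hat} -> y0 = {1 / (4 * alpha_hat):g}")
```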

Latent Expression Inference: Theory and Protocol

Conceptual Framework

Latent expression inference methods operate on a different principle than direct transformations. They postulate a generative model where the observed counts are a noisy reflection of an underlying, true (latent) expression state. These methods use statistical inference, often within a Bayesian framework, to estimate these latent expression values, effectively denoising the data [2] [1].
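To make the idea of inferring a latent value concrete, the sketch below computes a maximum a posteriori (MAP) estimate for a single count under a deliberately simple Poisson-log-normal model with a fixed normal prior on log expression. It is a toy, not Sanity's algorithm. Sanity fits its model to the whole dataset with variational inference, whereas here the prior mean, prior variance, and size factor are hypothetical inputs. The point is the behavior: a zero count yields a finite estimate below the prior mean, and larger counts are shrunk toward the prior.

```python
import math

def latent_log_expression_map(y, s, prior_mean, prior_var, tol=1e-10):
    """MAP of latent log-expression x for one count under a toy Poisson-log-normal model.

    Model (an illustrative assumption): y ~ Poisson(s * exp(x)), x ~ Normal(prior_mean, prior_var).
    The log posterior y*x - s*exp(x) - (x - prior_mean)^2 / (2*prior_var) is concave,
    so its gradient is strictly decreasing and its root can be found by bisection.
    """
    def gradient(x):
        return y - s * math.exp(x) - (x - prior_mean) / prior_var

    lo, hi = prior_mean - 1.0, prior_mean + 1.0
    while gradient(lo) < 0:   # expand the bracket downward
        lo -= 2.0 * (hi - lo)
    while gradient(hi) > 0:   # expand the bracket upward
        hi += 2.0 * (hi - lo)
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if gradient(mid) > 0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

prior_mean, prior_var = math.log(0.5), 1.0  # hypothetical gene-level prior
for y in [0, 1, 5]:
    x = latent_log_expression_map(y, 1.0, prior_mean, prior_var)
    print(f"y={y}: latent log-expression MAP = {x:+.3f}")
```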

Key Methods:

- Sanity: A fully Bayesian model that infers latent expression using a variational mean-field approximation for a log-normal Poisson mixture. It can output a posterior mean and standard deviation (Sanity Distance) or the maximum a posteriori estimate (Sanity MAP) [2].

- Dino: Fits mixtures of gamma-Poisson distributions and returns random samples from the posterior distribution [2].

- Normalisr: Infers logarithmic latent gene expression using a binomial generative model, returning the minimum mean square error estimate [2].

Experimental Protocol: Using Sanity for Latent Expression

This protocol outlines the use of the Sanity tool to obtain latent expression estimates.

Input: A raw UMI count matrix (genes × cells). Output: A matrix of inferred latent expression values.

Step 1: Data Preparation

- Format the count data into a genes × cells matrix compatible with the Sanity package (e.g., as a matrix or DataFrame object in R).

Step 2: Model Configuration

- Choose the Sanity variant based on the downstream need:

- Sanity MAP: For a single "best" estimate of latent expression.

- Sanity Distance: To also obtain uncertainty estimates, useful for calculating cell-to-cell distances that account for this uncertainty.

Step 3: Model Fitting

- Execute the Sanity algorithm, which performs variational inference to approximate the posterior distribution of the latent expression levels. This involves iteratively updating the parameters of the approximating distribution until convergence, yielding the closest approximation to the true posterior within the chosen mean-field family.

Step 4: Extract Latent Expression

- For Sanity MAP, retrieve the maximum a posteriori estimate for each gene in each cell.

- For Sanity Distance, retrieve the mean of the posterior distribution for each gene-cell combination. The resulting matrix represents the inferred, denoised expression and can be used directly for PCA.

Figure 2: Workflow for latent expression inference with Sanity. The core process involves specifying a generative model and performing statistical inference to estimate the underlying expression.

Comparative Analysis and Benchmarking

Performance Evaluation Framework

Evaluating transformation efficacy requires benchmarking against defined biological and technical tasks. Key benchmarks include:

- Preservation of Biological Variance: The ability to reveal meaningful separation of cell types or states, even within an apparently homogeneous cell population.

- Suppression of Technical Variance: The effectiveness in removing variation due to technical artifacts like library size (size factor).

- Downstream Task Performance: The impact on the accuracy of clustering, differential expression analysis, and trajectory inference.
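One simple way to quantify the technical-variance criterion is to check how strongly a principal component tracks sequencing depth. The helper below is a hypothetical diagnostic, not the metric used in [2]. It returns the absolute Pearson correlation between per-cell scores and log size factors, where values near 1 indicate a component dominated by library size.

```python
import math

def size_factor_association(scores, size_factors):
    """Absolute Pearson correlation between per-cell scores and log size factors."""
    logs = [math.log(s) for s in size_factors]
    n = len(scores)
    ms, ml = sum(scores) / n, sum(logs) / n
    cov = sum((a - ms) * (b - ml) for a, b in zip(scores, logs))
    var_s = sum((a - ms) ** 2 for a in scores)
    var_l = sum((b - ml) ** 2 for b in logs)
    if var_s == 0 or var_l == 0:
        return 0.0
    return abs(cov) / math.sqrt(var_s * var_l)

sf = [0.5, 1.0, 2.0, 4.0]
print(size_factor_association([math.log(x) for x in sf], sf))  # component that is pure depth
print(size_factor_association([1.0, -1.0, -1.0, 1.0], sf))     # component unrelated to depth
```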

Results from Comparative Studies

A comprehensive 2023 benchmark study compared the four transformation families across simulated and real-world datasets [2]. The results highlighted several critical findings:

Table 2: Benchmarking Results of Transformation Methods

| Method | Handling of Library Size Effects | Variance Stabilization for Lowly Expressed Genes | Overall Benchmark Performance |