Visualizing High-Dimensional Gene Expression with PCA: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive guide to Principal Component Analysis (PCA) for visualizing and interpreting high-dimensional gene expression data from technologies like RNA-seq and microarrays. Aimed at researchers, scientists, and drug development professionals, it covers foundational concepts, practical methodologies, advanced troubleshooting for noisy biological data, and validation techniques to ensure biological relevance. By exploring both standard and novel PCA applications, this guide empowers scientists to uncover hidden patterns, identify sample clusters, and drive discoveries in genomics and clinical research.

Understanding PCA and Its Critical Role in Gene Expression Analysis

In the realm of modern biology, particularly in genomics and transcriptomics, researchers increasingly encounter datasets where the number of measured variables (d) far exceeds the number of observations (n). This "large d, small n" paradigm presents significant analytical challenges collectively known as the curse of dimensionality. This technical guide explores these challenges within the context of gene expression analysis and demonstrates how Principal Component Analysis (PCA) serves as an essential statistical tool for visualizing and interpreting high-dimensional biological data. We provide a comprehensive examination of the mathematical foundations, practical implementations, and methodological considerations for applying PCA to transcriptomic datasets, enabling researchers to extract meaningful patterns from complex biological systems.

The Curse of Dimensionality in Biological Data

Defining the "Large d, Small n" Problem

High-dimensional data in biology is characterized by a fundamental imbalance: the number of features (dimensions) is much larger than the number of observations. In transcriptomic studies, it is common to analyze the expression of over 20,000 genes (the features, P) across fewer than 100 samples (the observations, N) [1]. This creates a scenario where P ≫ N, leading to what is statistically known as an "underdetermined system" with infinitely many possible solutions [1].

The term "curse of dimensionality" refers to the computational, analytical, clustering, and visualization challenges that arise when working with such high-dimensional data [1] [2]. These challenges become particularly acute in digital medicine, where multiple high-dimensional data streams—including medical imaging, clinical variables, genome sequencing, and wearable sensor data—create an enormously complex representation of a patient's health state [2].

Mathematical and Practical Consequences

The curse of dimensionality manifests through several critical mathematical consequences:

- Data sparsity: As dimensions increase, data points become increasingly scattered through the high-dimensional space. The average distance between points grows, creating expansive "blind spots"—contiguous regions of feature space without any observations [2].

- Covariance matrix singularity: When P ≫ N, the variance-covariance matrix becomes singular, meaning it cannot be inverted, causing failures in many statistical calculations [1].

- Performance misestimation: Models trained on high-dimensional data with inadequate sample sizes often show highly variable performance estimates, leading to unpredictable real-world performance after deployment [2].
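The covariance singularity described above can be checked numerically. The following is a minimal sketch on simulated data; the matrix sizes and the random seed are arbitrary assumptions chosen for illustration, not values from any cited study.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, n_genes = 20, 500            # hypothetical sizes with P >> N
X = rng.normal(size=(n_samples, n_genes))

# Sample covariance of the genes (a 500 x 500 matrix).
cov = np.cov(X, rowvar=False)

# Centering removes one degree of freedom, so the rank is at most N - 1,
# far below the 500 needed for the matrix to be invertible.
rank = np.linalg.matrix_rank(cov)
print(f"covariance shape: {cov.shape}, rank: {rank}")
```

Because the rank is bounded by the number of samples rather than the number of genes, any method that needs the inverse of this matrix fails unless the dimension is reduced first.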

Table 1: Characteristics of High-Dimensional Biological Datasets

| Data Type | Typical Dimensions | Primary Challenges | Common Applications |
|---|---|---|---|
| RNA-seq Gene Expression | 20,000+ genes, <100 samples [1] | Visualization, overfitting, computational complexity | Differential expression, biomarker discovery [3] |
| Medical Imaging | 1,000,000+ voxels, 100s-1000s of images [2] | Storage, processing power, feature extraction | Disease diagnosis, treatment monitoring |
| Genomic Data | 1,000,000+ SNPs, 1000s of individuals [4] | Multiple testing, population stratification | GWAS, personalized medicine |
| Speech Biomarkers | 1000s of features, 10s-100s of patients [2] | Generalizability, model robustness | Neurological disease detection |

Principal Component Analysis: Mathematical Foundations

Core Conceptual Framework

Principal Component Analysis (PCA) is a dimensionality reduction technique that identifies the most important directions—called principal components—in a dataset [5]. These components are orthogonal directions that maximize variance, allowing researchers to reduce the number of dimensions while retaining as much information as possible [5].

PCA can be understood through three complementary perspectives:

- A Rotation Procedure: PCA rotates the original coordinate system to align with directions of maximum variance [6].

- Eigenvalue Decomposition: PCA decomposes the covariance matrix into eigenvalues and eigenvectors that define the new coordinate system [6].

- Linear Combinations: PCs are linear combinations of the original variables, weighted by coefficients that maximize variance capture [6].

Algorithmic Steps

The computational implementation of PCA follows a standardized workflow:

- Centering the Data: Subtract the mean of each feature so the dataset is centered at zero: X_centered = X − X̄ [5].

- Computing the Covariance Matrix: Capture relationships between features: C = (1/n) X_centeredᵀ X_centered [5].

- Performing Eigen Decomposition: Decompose the covariance matrix into eigenvalues and eigenvectors: C = VΛVᵀ [5]. The eigenvectors (principal components) represent the new coordinate axes, and the eigenvalues indicate the amount of variance each one captures.

- Selecting Top k Components: Choose the k eigenvectors with the largest eigenvalues and project the centered data onto them to form the reduced dataset [5].
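The four steps above can be sketched directly with NumPy. This is an illustrative sketch on random data, not a reference implementation; the matrix size, the seed, and the choice k = 2 are assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)
X = rng.normal(size=(30, 6))             # hypothetical: 30 samples x 6 genes
n = X.shape[0]

# Step 1: center each gene (column) at zero.
X_centered = X - X.mean(axis=0)

# Step 2: covariance matrix, using the 1/n convention from the text.
C = (X_centered.T @ X_centered) / n

# Step 3: eigen decomposition. eigh is meant for symmetric matrices and
# returns eigenvalues in ascending order, so sort them in descending order.
eigenvalues, V = np.linalg.eigh(C)
order = np.argsort(eigenvalues)[::-1]
eigenvalues, V = eigenvalues[order], V[:, order]

# Step 4: keep the top k eigenvectors and project the samples onto them.
k = 2
scores = X_centered @ V[:, :k]
print(scores.shape)
```

With this convention, the variance of each column of `scores` (computed with the same 1/n divisor) equals the corresponding eigenvalue.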

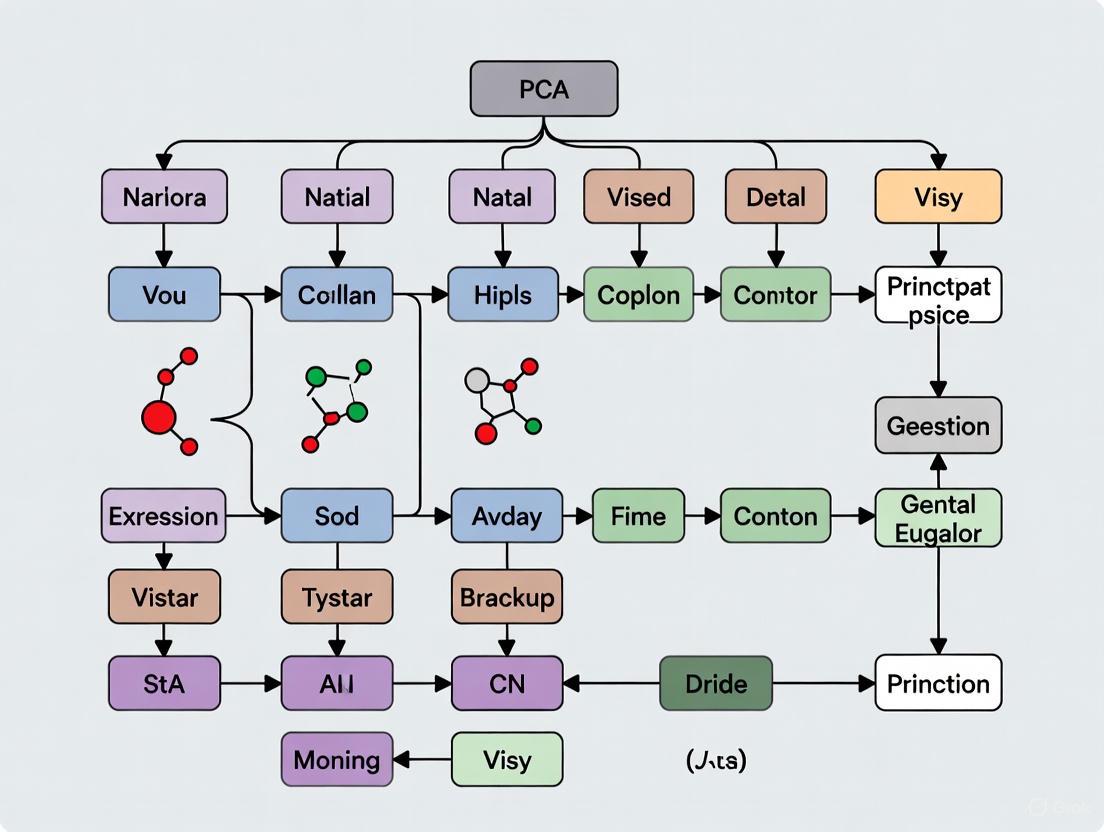

Figure 1: PCA Computational Workflow. The process transforms high-dimensional gene expression data into a lower-dimensional representation while preserving maximal variance.

Practical Implementation for Gene Expression Data

Data Preprocessing Considerations

Proper data preprocessing is critical for successful PCA of transcriptomic data. Key considerations include:

- Centering vs. Standardizing: PCA can be performed on either covariance matrices (using centered data) or correlation matrices (using standardized data). Standardization—centering and dividing by standard deviation—is recommended when variables are on different scales to prevent high-magnitude features from dominating the components [3] [6].

- Data Transformation: For RNA-seq data, normalization and transformation (e.g., log transformation) are often necessary before PCA to address mean-variance relationships and make the data more suitable for linear methods [3].
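As one possible illustration of the transformation step, the sketch below converts raw counts to log-scaled counts per million. The count values are invented, and counts per million with a log2(x + 1) transform is only one of several reasonable choices; it is an assumption here, not the method prescribed by the cited sources.

```python
import numpy as np

# Hypothetical raw count matrix: 4 samples (rows) x 5 genes (columns).
counts = np.array([
    [100, 0, 250, 30, 620],
    [80, 5, 300, 10, 605],
    [400, 2, 900, 60, 2638],
    [350, 0, 1000, 45, 2605],
], dtype=float)

# Scale each sample to counts per million to remove sequencing-depth effects.
library_size = counts.sum(axis=1, keepdims=True)
cpm = counts / library_size * 1e6

# log2(x + 1) keeps zero counts finite and compresses the range of
# highly expressed genes, weakening the mean-variance relationship.
log_cpm = np.log2(cpm + 1.0)
print(log_cpm.round(2))
```

The resulting `log_cpm` matrix (samples in rows) is the kind of input that would then be centered, and optionally standardized, before PCA.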

Computing PCA in R

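In R, PCA of transcriptome data is commonly computed with the prcomp() function, for example sample_pca <- prcomp(expression_matrix, scale. = TRUE) [3]. prcomp() treats rows as observations, so a gene-by-sample matrix must first be transposed with t().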

Interpreting PCA Output

PCA generates three fundamental types of information essential for biological interpretation:

- PC Scores: Coordinates of samples in the new PC space, used to visualize sample relationships [3].

- Eigenvalues: Represent the variance explained by each PC, used to determine the importance of each component [3].

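- Variable Loadings: Reflect the weight each original variable contributes to a PC. When the variables are standardized and the weights are scaled by the square root of the corresponding eigenvalue, they can be interpreted as the correlation between the PC and the original variables [3].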

Table 2: Essential Components of a PCA Result for Gene Expression Data

| Component | Description | Biological Interpretation | Example Extraction in R |
|---|---|---|---|
| PC Scores | Coordinates of samples in the new PC space | Reveals sample clustering, outliers, and batch effects | sample_pca$x |
| Loadings | Weight of each gene on PCs | Identifies genes driving observed patterns | sample_pca$rotation |
| Eigenvalues | Variance captured by each PC | Determines how many PCs to retain | sample_pca$sdev^2 |
| Variance Explained | Percentage of total variance per PC | Assesses information retention in reduced dimensions | sample_pca$sdev^2 / sum(sample_pca$sdev^2) * 100 |

Advanced PCA Applications in Bioinformatics

Supervised and Sparse PCA Variations

Standard PCA has been extended in several directions to address specific bioinformatics challenges:

- Supervised PCA: Incorporates outcome variables to guide dimension reduction, potentially improving predictive performance for specific biological questions [4].

- Sparse PCA: Modifies PCA to produce components with sparse loadings (many loadings set to zero), enhancing interpretability by identifying smaller gene subsets driving each component [4].

- Functional PCA: Specifically designed for time-course gene expression data, capturing dynamic patterns across multiple time points [4].

Pathway and Network-Informed PCA

Rather than applying PCA to all genes simultaneously, researchers can incorporate biological hierarchy:

- Pathway-Based PCA: Conduct separate PCA on genes within predefined pathways, using the resulting PCs to represent pathway activity in downstream analyses [4].

- Network Module PCA: Perform PCA on genes within network modules identified through co-expression or protein-protein interaction networks, capturing coordinated biological programs [4].
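A pathway-based analysis of this kind can be sketched as follows: PCA is run separately on the genes of each pathway, and the PC1 score serves as a per-sample pathway activity value. The gene names, pathway memberships, and expression values below are all hypothetical; real gene sets would come from a resource such as KEGG, GO, or Reactome.

```python
import numpy as np

def first_pc_score(X):
    """Return the PC1 score of each sample for a samples x genes matrix."""
    Xc = X - X.mean(axis=0)
    # SVD of the centered matrix: the columns of U * S are the PC scores.
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    return U[:, 0] * S[0]

rng = np.random.default_rng(2)
genes = [f"gene{i}" for i in range(12)]           # hypothetical gene names
expr = rng.normal(size=(10, len(genes)))          # 10 samples x 12 genes

# Hypothetical pathway membership, for illustration only.
pathways = {
    "pathway_A": ["gene0", "gene1", "gene2", "gene3"],
    "pathway_B": ["gene4", "gene5", "gene6"],
}

gene_index = {g: i for i, g in enumerate(genes)}
pathway_activity = {
    name: first_pc_score(expr[:, [gene_index[g] for g in members]])
    for name, members in pathways.items()
}
print({name: score.shape for name, score in pathway_activity.items()})
```

The sign of a PC1 score is arbitrary, so in practice the direction of each activity value would need to be checked against the member genes before interpretation.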

Figure 2: Advanced PCA Applications in Bioinformatics. Beyond standard applications, PCA variants address specific biological questions and data structures.

Table 3: Research Reagent Solutions for PCA in Transcriptomics

| Tool/Resource | Function | Application Context | Implementation Example |
|---|---|---|---|
| R Statistical Environment | Primary computing platform | Data preprocessing, analysis, and visualization | Comprehensive ecosystem for statistical computing |
| prcomp() function | PCA computation | Core algorithm implementation | sample_pca <- prcomp(expression_matrix, scale. = TRUE) [3] |
| ggplot2 package | Visualization of results | Creating publication-quality PCA plots | Visualizing PC scores with sample groupings |
| FactoMineR package | Enhanced PCA implementation | Additional metrics and visualization options | Supplementary tools for biplots and dimension interpretation |
| Gene Expression Matrix | Structured input data | Formatted gene-by-sample data | Normalized, transformed count data from RNA-seq |
| Pathway Databases | Biological context interpretation | Relating PCs to known biological processes | KEGG, GO, Reactome for functional interpretation |

Experimental Protocol: PCA for Transcriptome-Wide Pattern Discovery

Sample Preparation and Data Generation

- Experimental Design: Ensure adequate sample size and biological replication. While "large d, small n" is common, extremely small sample sizes (n < 10) severely limit statistical power and generalizability [2].

- RNA Extraction and Sequencing: Follow standardized protocols for RNA extraction, library preparation, and sequencing to minimize technical artifacts.

- Quality Control: Assess RNA integrity, sequencing depth, and alignment metrics before proceeding to analysis.

Data Preprocessing Pipeline

- Normalization: Apply an appropriate normalization method (e.g., TPM, RLE, or quantile normalization for RNA-seq data) to address technical variability.

- Filtering: Remove lowly expressed genes and outliers that may disproportionately influence PCA results.

- Transformation: Apply variance-stabilizing or log-transformation to make data more suitable for linear methods like PCA.

- Standardization: Standardize genes to mean=0 and variance=1 if they are on different scales, particularly when using correlation matrix-based PCA.

PCA Execution and Validation

- Component Selection: Use scree plots and cumulative variance explained to determine the number of meaningful components to retain.

- Batch Effect Assessment: Color samples by batch in PCA plots to identify potential technical confounders.

- Biological Validation: Relate PC patterns to known biological covariates (e.g., treatment groups, patient characteristics).

- Stability Assessment: Use bootstrap or subsampling approaches to assess stability of principal components.
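The stability assessment step can be sketched with a simple bootstrap: samples are resampled with replacement, PC1 is recomputed, and its direction is compared with PC1 from the full dataset. The simulated data, the number of resamples (200), and the use of absolute cosine similarity as the stability measure are assumptions made for illustration.

```python
import numpy as np

def pc1_direction(X):
    """Unit-length weight vector of the first principal component."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Vt[0]

rng = np.random.default_rng(3)
# Simulated data: 40 samples x 50 genes sharing one strong latent factor.
factor = rng.normal(size=(40, 1))
weights = rng.normal(size=(1, 50))
X = 3.0 * factor @ weights + rng.normal(size=(40, 50))

reference = pc1_direction(X)
similarities = []
for _ in range(200):
    # Resample the samples (rows) with replacement.
    idx = rng.integers(0, X.shape[0], size=X.shape[0])
    boot = pc1_direction(X[idx])
    # The sign of a PC is arbitrary, so compare absolute cosine similarity.
    similarities.append(abs(float(reference @ boot)))
similarities = np.array(similarities)

print(f"mean |cosine| with reference PC1: {similarities.mean():.3f}")
```

Values close to 1 indicate that PC1 is reproducible under resampling; values that scatter widely suggest the component reflects noise or a few influential samples.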

The challenge of high-dimensionality in gene expression data represents both a fundamental analytical obstacle and an opportunity for discovery. Principal Component Analysis serves as a powerful approach to navigate this complexity, transforming the "curse of dimensionality" into a manageable framework for biological insight. When properly implemented and interpreted, PCA enables researchers to visualize global patterns, identify key sources of variation, and generate hypotheses about underlying biological processes. As high-dimensional data continue to proliferate across biomedical research, mastering these dimensionality reduction techniques remains essential for advancing our understanding of complex biological systems.

What is PCA? Defining Principal Components, Loadings, and Explained Variance

In fields ranging from drug development to genetics, researchers increasingly face the challenge of analyzing high-dimensional data, where the number of variables measured can reach into the tens of thousands. Single-cell RNA sequencing (scRNA-seq), for instance, routinely generates datasets with approximately 20,000 genes across thousands to millions of cells [7] [8]. Visualizing, exploring, and interpreting such data in its raw form is practically impossible. Principal Component Analysis (PCA) addresses this challenge by performing dimensionality reduction, transforming complex, high-dimensional datasets into a simpler format while preserving the most critical patterns and trends [9] [10] [11]. By reducing data to its essential components, PCA enables researchers to identify underlying structures, classify samples, and generate testable hypotheses about biological systems. This technical guide explores the core concepts of PCA—principal components, loadings, and explained variance—with a specific focus on its application in visualizing high-dimensional gene expression data.

Core Concepts of PCA: A Technical Definition

What is Principal Component Analysis?

Principal Component Analysis (PCA) is a linear dimensionality reduction technique that simplifies complex datasets by identifying new, uncorrelated variables called principal components [9]. These components are linear combinations of the original variables, constructed such that the first component captures the largest possible variance in the data, the second component captures the next highest variance under the constraint of being orthogonal (uncorrelated) to the first, and so on [9] [10] [12]. The result is a reoriented coordinate system where the axes are ranked by their importance in describing the data structure. PCA was invented by Karl Pearson in 1901 and later independently developed by Harold Hotelling in the 1930s [9]. It is known by different names across disciplines, including the discrete Karhunen–Loève transform in signal processing and empirical orthogonal functions in meteorological science [9].

The Mathematics Behind PCA

Mathematically, PCA is performed through eigendecomposition of the data's covariance matrix or singular value decomposition (SVD) of the data matrix itself [9]. For a data matrix ( \mathbf{X} ) with ( n ) samples (rows) and ( p ) variables (columns), where each variable is centered (mean-zero), the PCA transformation is defined by a set of weight vectors ( \mathbf{w}_{(k)} = (w_1, \dots, w_p)_{(k)} ), with the projection of a data vector ( \mathbf{x}_{(i)} ) onto these vectors giving the principal component scores:

[ t_{k(i)} = \mathbf{x}_{(i)} \cdot \mathbf{w}_{(k)} \quad \text{for} \quad i=1,\dots,n \quad k=1,\dots,l ]

This can be expressed in matrix form as:

[ \mathbf{T} = \mathbf{X} \mathbf{W} ]

where ( \mathbf{T} ) is the matrix of scores, and ( \mathbf{W} ) is the matrix of weights whose columns are the eigenvectors of ( \mathbf{X}^T \mathbf{X} ) [9]. The eigenvectors determine the direction of the principal components, while the corresponding eigenvalues represent the amount of variance captured by each component [10] [11].
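The matrix form can be verified with a short SVD-based sketch. The data are random and the variable names are illustrative; the n − 1 divisor for the eigenvalues is an assumption that matches the sample-variance convention used by R's prcomp().

```python
import numpy as np

rng = np.random.default_rng(4)
X = rng.normal(size=(25, 8))             # hypothetical: 25 samples x 8 variables
X = X - X.mean(axis=0)                   # center each variable

# Thin SVD: X = U diag(S) W^T.
U, S, Wt = np.linalg.svd(X, full_matrices=False)
W = Wt.T                                 # columns are eigenvectors of X^T X

# Scores: T = X W, which is the same matrix as U * S.
T = X @ W

# Variance captured by each component (sample variance, n - 1 divisor).
eigenvalues = S**2 / (X.shape[0] - 1)
print(eigenvalues.round(3))
```

Working from the SVD avoids forming the p × p covariance matrix explicitly, which matters when p is in the tens of thousands.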

Table 1: Key Mathematical Entities in PCA

| Entity | Symbol | Description | Role in PCA |
|---|---|---|---|
| Data Matrix | ( \mathbf{X} ) | ( n \times p ) matrix of original data | Input to the PCA algorithm |
| Eigenvectors | ( \mathbf{w}_{(k)} ) | Direction vectors of maximum variance | Define orientation of principal components |
| Eigenvalues | ( \lambda_k ) | Scalars representing magnitude of variance | Quantify variance explained by each PC |
| Scores | ( \mathbf{T} ) | Transformed coordinates in PC space | Represent projected data points |
| Loadings | ( \mathbf{L} ) | Eigenvectors scaled by ( \sqrt{\lambda_k} ) | Relate original variables to PCs |

The Principal Components

Defining Principal Components

Principal components (PCs) are synthetic variables constructed as linear combinations of the original variables [11]. They are designed to be orthogonal to each other, ensuring they capture non-redundant information [12]. The first principal component (PC1) is the direction through the data that captures the maximum possible variance [9] [10]. Formally, the first weight vector ( \mathbf{w}_{(1)} ) is defined as:

[ \mathbf{w}_{(1)} = \arg\max_{\|\mathbf{w}\|=1} \left\{ \sum_i \left( \mathbf{x}_{(i)} \cdot \mathbf{w} \right)^2 \right\} ]

This is equivalent to finding the eigenvector of the covariance matrix corresponding to the largest eigenvalue [9]. Geometrically, PC1 is the line that best fits the cloud of data points, where "best" is defined as minimizing the sum of squared perpendicular distances from points to the line [12].

Higher-Order Components

The second principal component (PC2) accounts for the next highest variance under the constraint that it must be orthogonal to the first component [9] [10]. More generally, the ( k )-th component is obtained by subtracting the first ( k-1 ) components from the data matrix:

[ \mathbf{\hat{X}}_k = \mathbf{X} - \sum_{s=1}^{k-1} \mathbf{X} \mathbf{w}_{(s)} \mathbf{w}_{(s)}^{\mathsf{T}} ]

and then finding the weight vector that extracts the maximum variance from this residual matrix [9]. This process continues until ( p ) components have been calculated, equal to the original number of variables [12]. However, in practice, only the first few components are typically retained, as they capture the majority of the interesting variance in the data.
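The variance-maximization and deflation steps can be illustrated with power iteration, which is one simple way (among several) to approximate the maximizing weight vector. The simulated data, the fixed iteration count, and the function name `leading_direction` are assumptions for this sketch.

```python
import numpy as np

def leading_direction(X, n_iter=500):
    """Power iteration on X^T X: approximates the unit vector w that
    maximizes the sum over samples of (x_i . w)^2."""
    w = np.ones(X.shape[1]) / np.sqrt(X.shape[1])
    for _ in range(n_iter):
        w = X.T @ (X @ w)
        w /= np.linalg.norm(w)
    return w

rng = np.random.default_rng(5)
# Simulated data with clearly separated variances per direction.
X = rng.normal(size=(200, 4)) * np.array([5.0, 3.0, 1.0, 0.5])
X = X - X.mean(axis=0)

# First component: direction of maximum variance.
w1 = leading_direction(X)

# Deflation: remove the part of X explained by w1, then repeat.
X_hat = X - np.outer(X @ w1, w1)
w2 = leading_direction(X_hat)

print(f"w1 . w2 = {w1 @ w2:.2e}")
```

Up to sign, `w1` and `w2` agree with the two leading eigenvectors of `X.T @ X`, and their dot product is numerically zero, reflecting the orthogonality constraint.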

Loadings: Interpreting the Relationship Between Variables and Components

What Are Loadings?

Loadings are crucial for interpreting the relationship between the original variables and the principal components. Mathematically, loadings are defined as eigenvectors scaled by the square root of their corresponding eigenvalues [13]:

[ \text{Loadings} = \text{Eigenvectors} \cdot \sqrt{\text{Eigenvalues}} ]

While eigenvectors are unit vectors indicating direction, loadings incorporate information about the variance along these directions [13]. Loadings can be understood as the covariances or correlations between the original variables and the unit-scaled principal components [13].
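The link between scaled eigenvectors and correlations can be checked numerically. The sketch below uses simulated, standardized data (an assumption: the equality with correlations holds for PCA on the correlation matrix) and compares the loadings with directly computed variable-to-component correlations.

```python
import numpy as np

rng = np.random.default_rng(6)
raw = rng.normal(size=(50, 4))           # hypothetical: 50 samples x 4 variables
raw[:, 1] += 0.8 * raw[:, 0]             # make two variables correlated

# Standardize, so PCA operates on the correlation matrix.
Z = (raw - raw.mean(axis=0)) / raw.std(axis=0, ddof=1)
R = np.corrcoef(Z, rowvar=False)

eigenvalues, eigenvectors = np.linalg.eigh(R)
order = np.argsort(eigenvalues)[::-1]
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]

# Loadings: each eigenvector (column) scaled by sqrt of its eigenvalue.
loadings = eigenvectors * np.sqrt(eigenvalues)

# Direct computation: correlation of variable j with the scores of PC k.
scores = Z @ eigenvectors
corr = np.array([
    [np.corrcoef(Z[:, j], scores[:, k])[0, 1] for k in range(4)]
    for j in range(4)
])
print(np.allclose(loadings, corr))
```

For standardized data the squared loadings of each variable sum to 1 across all components, which is why individual loadings cannot exceed 1 in absolute value.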

Interpreting Loadings

Loadings provide a means to interpret what each principal component represents in terms of the original variables [13] [12]. A loading value indicates how much a specific original variable contributes to a particular principal component. The sign of the loading indicates the nature of this relationship:

- Positive loading: The variable increases with the component

- Negative loading: The variable decreases as the component increases [14]

The magnitude of a loading reflects its importance, with larger absolute values indicating stronger contributions to the component [14] [12]. When PCA is performed on standardized variables, loadings are correlations between variables and components and therefore range from -1 to +1 [14]. In practical terms, variables with loadings near ±1 for a specific component strongly influence that component, while variables with near-zero loadings contribute little.

Table 2: Comparison of PCA Terminology Across Applications

| Concept | General Definition | Gene Expression Context |
|---|---|---|
| Variables | Original measured features | Genes (20,000+ in scRNA-seq) |
| Samples | Individual observations | Single cells (thousands to millions) |
| Loadings | Relationship between variables and PCs | Which genes define each component |
| Scores | Projection of samples onto PCs | Cell coordinates in reduced space |
| Variance Explained | Proportion of total variance captured by a PC | Amount of transcriptional variance captured |

Explained Variance: Quantifying Information Content

Defining Explained Variance

In PCA, "variance" refers to the summative variance or total variability across all original variables [15]. The explained variance of a principal component is equal to the eigenvalue associated with that component [16]. The total variance in the data is the sum of all eigenvalues, which equals the sum of variances of the original variables when the data is centered [15]. The proportion of total variance explained by the ( k )-th principal component is given by:

[ \text{Explained Variance Ratio}_k = \frac{\lambda_k}{\sum_{j=1}^{p} \lambda_j} ]

where ( \lambda_k ) is the eigenvalue of the ( k )-th component [16]. This ratio represents the relative amount of variance explained by each component [16].

The Scree Plot and Component Selection

A scree plot is a visual tool used to decide how many principal components to retain [10] [12]. It displays eigenvalues in descending order, showing the decreasing rate at which variance is explained by additional components [12]. Several criteria help determine the optimal number of components:

- Elbow Method: Identify the point where the scree plot bends (the "elbow") and retain the components before the curve flattens out [10]

- Percentage Threshold: Keep enough components to explain a predetermined percentage of total variance (e.g., 90%) [12]

- Kaiser Criterion: Retain components with eigenvalues greater than 1 when using correlation matrices, or greater than the average eigenvalue [12]

Table 3: Example of Variance Explanation in a PCA Result

| Principal Component | Eigenvalue | Explained Variance | Cumulative Explained Variance |
|---|---|---|---|
| PC1 | 1.651 | 47.9% | 47.9% |
| PC2 | 1.220 | 35.4% | 83.3% |
| PC3 | 0.577 | 16.7% | 100.0% |
| Total | 3.448 | 100% | |

Data adapted from [15]
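The percentages in Table 3 follow directly from the eigenvalues, and the selection criteria can be applied to them in a few lines. In this sketch the 90% threshold is the example value from the text, and the Kaiser criterion is applied in its "greater than the average eigenvalue" form because these eigenvalues do not sum to the number of variables.

```python
eigenvalues = [1.651, 1.220, 0.577]      # eigenvalues listed in Table 3

total = sum(eigenvalues)
explained = [ev / total for ev in eigenvalues]

# Running sum of the explained variance ratios.
cumulative = []
running = 0.0
for ratio in explained:
    running += ratio
    cumulative.append(running)

# Percentage threshold: fewest components reaching 90% cumulative variance.
n_threshold = next(i + 1 for i, c in enumerate(cumulative) if c >= 0.90)

# Kaiser criterion (average-eigenvalue form): keep eigenvalues above the mean.
mean_eigenvalue = total / len(eigenvalues)
n_kaiser = sum(ev > mean_eigenvalue for ev in eigenvalues)

print([round(100 * r, 1) for r in explained])    # [47.9, 35.4, 16.7]
print(n_threshold, n_kaiser)                     # 3 2
```

The two criteria disagree here (three components versus two), which illustrates why component selection is usually treated as a judgment call informed by several rules rather than a single cutoff.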

The PCA Workflow: A Step-by-Step Protocol

The standard PCA workflow involves several sequential steps that transform raw data into its principal components. The following diagram illustrates this complete process:

Figure 1: The PCA Analysis Workflow. This diagram illustrates the five key steps in performing Principal Component Analysis, from data preparation to final results.

Step 1: Data Standardization

The first step involves standardizing the data to ensure each variable contributes equally to the analysis [10] [11]. This is critical when variables are measured on different scales, as PCA is sensitive to differences in variance [11]. Standardization transforms each variable to have a mean of zero and standard deviation of one:

[ z = \frac{x - \mu}{\sigma} ]

where ( \mu ) is the variable mean and ( \sigma ) is its standard deviation [11]. In gene expression studies, this prevents highly expressed genes from dominating the analysis simply due to their larger numerical values [12].
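The effect of standardization can be seen in a small simulation. Three hypothetical genes follow the same underlying signal, but one is recorded on a scale 100 times larger; the sketch compares the PC1 weights before and after z-scoring. All values are simulated and the scale factor is an arbitrary assumption.

```python
import numpy as np

def pc1_weights(X):
    """Unit-length weight vector of the first principal component."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Vt[0]

rng = np.random.default_rng(7)
shared = rng.normal(size=100)

# Three genes driven by the same signal; gene 0 is on a 100x larger scale.
X = np.column_stack([
    100.0 * (shared + 0.3 * rng.normal(size=100)),
    shared + 0.3 * rng.normal(size=100),
    shared + 0.3 * rng.normal(size=100),
])

w_raw = pc1_weights(X)

# z = (x - mu) / sigma, applied per gene (column).
Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
w_std = pc1_weights(Z)

print("PC1 weights, raw:         ", np.abs(w_raw).round(3))
print("PC1 weights, standardized:", np.abs(w_std).round(3))
```

On the raw data PC1 is almost entirely gene 0, whereas after standardization the three genes receive roughly equal weight, as expected when they carry the same signal.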

Steps 2-3: Covariance Matrix and Eigen Decomposition

After standardization, the covariance matrix is computed to understand how variables vary from the mean with respect to each other [11]. This symmetric ( p \times p ) matrix contains all possible pairwise covariances between variables [11]. The signs of the covariance values indicate the nature of relationships:

- Positive covariance: Variables increase or decrease together

- Negative covariance: One variable increases when the other decreases

- Zero covariance: No linear relationship between variables [11]

Eigendecomposition is then performed on this covariance matrix to extract eigenvectors (principal component directions) and eigenvalues (amount of variance explained by each component) [9] [11].

Steps 4-5: Component Selection and Data Projection

Using the scree plot and variance explanation criteria, the number of components to retain is determined [12]. The selected eigenvectors form a feature vector that is used to project the original data into the new principal component space [11]. This projection creates the scores—the coordinates of each sample in the new coordinate system defined by the principal components [14] [12].

PCA in Gene Expression Research: Applications and Protocols

PCA for Single-Cell RNA-Sequencing Data

In single-cell RNA-sequencing (scRNA-seq) analysis, PCA is routinely applied for dimensionality reduction prior to clustering and visualization [7] [8]. Typical scRNA-seq datasets have extreme dimensionality, with ~20,000 genes (variables) across thousands to millions of cells (samples) [7]. The compositional nature of scRNA-seq data (where the total mRNA content per cell is fixed) makes it particularly suited for PCA, as the technique focuses on relative relationships rather than absolute values [7]. PCA helps mitigate the "dropout" problem in scRNA-seq, where genes randomly show zero counts due to technical limitations [7].

Experimental Protocol: PCA for scRNA-seq Analysis

The following protocol outlines a standard PCA workflow for single-cell gene expression data:

Data Preprocessing:

- Filter cells with unusually high or low gene counts

- Filter genes expressed in very few cells

- Normalize counts to account for sequencing depth differences

Transformations:

PCA Implementation:

- Use specialized tools (e.g., prcomp_irlba in R, from the irlba package that Seurat also relies on) capable of handling large matrices

- Run PCA on the transposed expression matrix (cells × genes)

Visualization and Interpretation:

- Create scree plot to determine significant components

- Plot PC1 vs. PC2 to visualize cell relationships

- Examine loadings to identify genes driving component separation
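The protocol can be sketched end to end on simulated counts. Everything specific below is an assumption for illustration: the Poisson-simulated matrix, the percentile and minimum-cell cutoffs, the scaling to 10,000 counts per cell, and the choice of 20 components. A full SVD is used for simplicity, whereas the truncated solvers mentioned in the protocol would be preferred for large matrices.

```python
import numpy as np

rng = np.random.default_rng(8)
# Hypothetical count matrix: 300 cells (rows) x 1000 genes (columns).
gene_means = rng.gamma(shape=0.5, scale=2.0, size=1000)
counts = rng.poisson(lam=gene_means, size=(300, 1000)).astype(float)

# Preprocessing: drop cells with unusually few or many detected genes.
genes_per_cell = (counts > 0).sum(axis=1)
lo, hi = np.percentile(genes_per_cell, [1, 99])      # illustrative cutoffs
keep_cells = (genes_per_cell >= lo) & (genes_per_cell <= hi)
counts = counts[keep_cells]

# Preprocessing: drop genes detected in fewer than 3 cells (illustrative).
keep_genes = (counts > 0).sum(axis=0) >= 3
counts = counts[:, keep_genes]

# Normalize for sequencing depth, then log-transform.
depth = counts.sum(axis=1, keepdims=True)
norm = np.log1p(counts / depth * 1e4)

# PCA on the cells x genes matrix via SVD of the centered data.
centered = norm - norm.mean(axis=0)
U, S, Vt = np.linalg.svd(centered, full_matrices=False)

n_pcs = 20
cell_scores = U[:, :n_pcs] * S[:n_pcs]       # cell coordinates for plotting
gene_loadings = Vt[:n_pcs].T                 # gene weights per component
variance_ratio = S**2 / np.sum(S**2)         # values for a scree plot

print(cell_scores.shape, gene_loadings.shape)
```

Plotting the first two columns of `cell_scores` gives the PC1 versus PC2 view, and sorting a column of `gene_loadings` by absolute value lists the genes that contribute most to that component.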

Table 4: Research Reagent Solutions for PCA in Genomic Studies

| Tool/Resource | Function | Application Context |
|---|---|---|
| Seurat | R package for single-cell genomics | Comprehensive toolkit for scRNA-seq analysis including PCA |
| Scanpy | Python-based single-cell analysis | PCA implementation optimized for large-scale genomic data |
| Bioconductor | Open source software for bioinformatics | Multiple packages offering robust PCA implementations |
| Random Matrix Theory (RMT) | Statistical framework for high-dimensional data | Guides selection of significant components in noisy data [8] |
| Centered Log-Ratio (CLR) Transformation | Compositional data transformation | Handles compositional nature of gene expression data [7] |

Advanced Topics and Recent Developments

Sparse PCA for Enhanced Interpretability

A limitation of standard PCA is that all original variables typically have non-zero loadings, making biological interpretation challenging with thousands of genes. Sparse PCA addresses this by imposing constraints that force some loadings to be exactly zero, resulting in principal components defined by only a subset of genes [8]. Recent advances combine sparse PCA with Random Matrix Theory (RMT) to automatically determine the optimal sparsity level, making the method nearly parameter-free [8]. This approach has shown consistent improvement over standard PCA in cell-type classification tasks across multiple single-cell RNA-seq technologies [8].

Compositional Data Analysis and PCA

Recognizing that scRNA-seq data is inherently compositional (relative abundances rather than absolute counts) has led to new preprocessing approaches. The centered log-ratio (CLR) transformation, part of the Compositional Data Analysis (CoDA) framework, can be applied before PCA to better account for the data's compositional nature [7]. Studies have shown that CLR-transformed data can provide more distinct and well-separated clusters in dimensionality reduction visualizations and improve trajectory inference in developmental studies [7].
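The CLR transformation itself is short: each value is log-transformed and the mean log value of its cell is subtracted, which is equivalent to taking the log of the ratio to the cell's geometric mean. In the sketch below the pseudocount of 1 used to handle zero counts is an assumption, as is the toy count matrix; the handling of zeros in the cited work may differ.

```python
import numpy as np

def clr(counts, pseudocount=1.0):
    """Centered log-ratio per cell (row): log(x) minus the row mean of log(x).
    The pseudocount is an illustrative way to keep zero counts finite."""
    logged = np.log(counts + pseudocount)
    return logged - logged.mean(axis=1, keepdims=True)

# Hypothetical counts: 3 cells (rows) x 4 genes (columns).
counts = np.array([
    [10, 0, 5, 85],
    [1, 1, 1, 1],
    [0, 0, 3, 7],
], dtype=float)

clr_values = clr(counts)
print(clr_values.round(3))
```

Each row of the result sums to zero, and a cell with identical counts for every gene maps to all zeros, reflecting that CLR encodes only relative abundances within a cell.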

Principal Component Analysis remains a cornerstone technique for exploring and visualizing high-dimensional data in biological research. By understanding its core concepts—principal components as variance-maximizing directions, loadings as variable-component relationships, and explained variance as an information content metric—researchers can effectively apply PCA to extract meaningful patterns from complex gene expression data. As genomic technologies continue to evolve, producing ever-larger and more complex datasets, variations like sparse PCA and integration with compositional data analysis will ensure PCA remains an essential tool in the scientist's computational toolkit.

Principal Component Analysis (PCA) stands as a foundational technique in the analysis of high-dimensional biological data. This whitepaper elucidates the core principles and applications of PCA, with a specific focus on visualizing gene expression data, reducing noise, and facilitating exploratory analysis. Framed within the context of genomic research and drug development, we provide a detailed examination of how PCA addresses the curse of dimensionality, enhances data interpretability, and serves as a critical preprocessing step for downstream analyses. The document includes standardized protocols, computational toolkits, and visual guides to empower researchers in effectively implementing PCA within their investigative workflows.

The advent of high-throughput profiling technologies has transformed biomedical research, enabling simultaneous measurement of tens of thousands of molecular features. Transcriptomic datasets routinely analyze over 20,000 genes across fewer than 100 samples, creating a classic high-dimensionality problem [1]. This "curse of dimensionality" presents significant challenges for visualization, analysis, and mathematical operations [1]. Principal Component Analysis (PCA), invented by Karl Pearson in 1901, remains a go-to solution for navigating this complexity [9]. As a linear dimensionality reduction technique, PCA transforms correlated variables into a smaller set of uncorrelated components that capture the essence of the data, making it indispensable for researchers confronting multidimensional genomic datasets [4] [9].

Core Principles of Principal Component Analysis

Mathematical Foundations

PCA operates through a structured mathematical process that transforms original variables into a new coordinate system. The technique identifies principal components (PCs) as eigenvectors of the data's covariance matrix, with corresponding eigenvalues representing the amount of variance captured by each component [9] [17]. The first principal component (PC1) corresponds to the direction of maximum variance in the data, with each subsequent component capturing the next highest variance while being orthogonal to previous components [9].

The transformation is defined by:

- Weight vectors (w₁, w₂, ..., wₚ) that form the principal components

- Projected values (t₁, t₂, ..., tₗ) representing the original data in the new coordinate system

- The fundamental relation: ( t_{k(i)} = \mathbf{x}_{(i)} \cdot \mathbf{w}_{(k)} ) for i = 1,...,n and k = 1,...,l [9]

The PCA Workflow

The standard PCA implementation follows a systematic workflow:

Figure 1: The standardized PCA workflow, from data preprocessing to transformed output for downstream analysis.

PCA for Visualizing High-Dimensional Gene Expression Data

Overcoming Visualization Barriers

In gene expression analysis, PCA enables visualization of high-dimensional data by projecting it onto a reduced number of principal components. The technique effectively addresses the fundamental limitation of human perception, which cannot intuitively visualize beyond three dimensions [1]. By transforming >20,000 gene dimensions into a 2D or 3D space, PCA allows researchers to identify patterns, clusters, and outliers that would otherwise remain hidden in the high-dimensional space [4].

Practical Implementation for Transcriptomic Data

The application of PCA to gene expression datasets follows a structured protocol:

- Data Preprocessing: Normalize read counts across samples, typically centering to median counts and transforming to natural log scale [18]

- Standardization: Scale each gene expression measurement to have mean = 0 and standard deviation = 1, ensuring comparable influence across genes [17]

- Dimensionality Reduction: Project the normalized data onto the top principal components (typically 2-3 for visualization) [4]

- Visual Interpretation: Examine the projected data for clustering patterns, outliers, and biological gradients

Table 1: Key Software Packages for PCA Implementation in Genomic Research

| Software Platform | Function/Command | Primary Use Case | Access |

|---|---|---|---|

| R Statistical Environment | prcomp() | General bioinformatics analysis | Open source |

| Python (scikit-learn) | PCA() in sklearn.decomposition | Machine learning pipelines | Open source |

| SAS | PRINCOMP and FACTOR procedures | Clinical and pharmaceutical research | Commercial |

| MATLAB | pca() (formerly princomp()) | Engineering and computational biology | Commercial |

| SPSS | Factor function (data reduction) | Social and behavioral sciences | Commercial |

Noise Reduction and Data Denoising Through PCA

The Mechanism of Noise Filtering

PCA contributes to noise reduction by segregating variance structure in the data. While not specifically designed for noise removal, PCA inherently separates strong, systematic patterns (typically captured in early PCs) from weaker, more random variations (often represented in later PCs) [19]. By retaining only the components with the highest eigenvalues, researchers effectively filter out dimensions contributing minimally to the overall variance structure, which frequently correspond to measurement noise and stochastic variations [17].

Empirical Evidence in Genomic Studies

In practical applications, PCA has demonstrated utility for denoising, although the evidence cited here comes from a non-genomic benchmark. When applied to the U.S. Postal Service (USPS) handwritten digits dataset, PCA reduced the influence of artificially added Gaussian noise while preserving the underlying signal structure [19]. The same principle carries over to gene expression matrices: the denoising performance is tunable through the parameter k (number of components retained), allowing researchers to balance noise reduction against signal preservation [19].
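
The role of k can be illustrated with a small simulation. This is a sketch under stated assumptions: the data are synthetic (a rank-2 signal plus Gaussian noise), and the helper name `pca_denoise` is hypothetical rather than part of any library.

```python
import numpy as np

def pca_denoise(X, k):
    """Reconstruct X (samples x genes) from its top-k principal components."""
    mean = X.mean(axis=0)
    U, s, Vt = np.linalg.svd(X - mean, full_matrices=False)
    return (U[:, :k] * s[:k]) @ Vt[:k] + mean

rng = np.random.default_rng(1)
# Synthetic rank-2 "signal" for 40 samples and 200 genes, plus Gaussian noise
signal = rng.normal(size=(40, 2)) @ rng.normal(size=(2, 200))
noisy = signal + rng.normal(scale=0.5, size=signal.shape)

denoised = pca_denoise(noisy, k=2)
err_noisy = np.mean((noisy - signal) ** 2)        # error before denoising
err_denoised = np.mean((denoised - signal) ** 2)  # error after keeping 2 PCs
```

Keeping every component returns the noisy matrix unchanged, while a k that is too small starts discarding signal. In real data the true rank is unknown, so k has to be chosen from the variance profile or by validation.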

Table 2: Variance Explained by Principal Components in a Typical Gene Expression Dataset

| Principal Component | Variance Explained (%) | Cumulative Variance (%) | Typical Interpretation |

|---|---|---|---|

| PC1 | 42.5 | 42.5 | Major biological signal (e.g., tissue type) |

| PC2 | 18.3 | 60.8 | Secondary biological signal (e.g., disease state) |

| PC3 | 8.7 | 69.5 | Technical batch effects or subtle biology |

| PC4 | 5.2 | 74.7 | Often includes mixed signals and noise |

| PC5+ | <3.0 each | >75.0 | Decreasing biological signal, increasing noise |

PCA in Exploratory Data Analysis and Bioinformatics

Hypothesis Generation and Data Quality Assessment

In exploratory analysis, PCA serves as a primary tool for initial data interrogation, allowing researchers to:

- Identify major sources of variation and potential confounding factors

- Detect sample outliers and technical artifacts

- Assess batch effects and data quality issues

- Generate hypotheses about underlying biological structure [4] [20]

The application of PCA to the entire dataset provides a comprehensive overview of the data structure, while focused PCA on specific gene sets (e.g., within pathways or network modules) can reveal more targeted biological insights [4].

Integration with Downstream Analysis

PCA frequently serves as a preprocessing step for various downstream analyses:

- Clustering Analysis: The first few PCs capturing most variation are used for clustering genes or samples instead of original expressions [4]

- Regression Analysis: PCs overcome collinearity problems in regression models, enabling construction of predictive models for clinical outcomes [4]

- Interaction Studies: PCA can accommodate interactions among genes, pathways, and network modules through innovative applications [4]

Figure 2: PCA as the foundational step in a comprehensive genomic analysis pipeline, enabling multiple downstream applications.

Advanced PCA Applications in Genomic Research

Supervised, Sparse, and Functional PCA

Recent methodological advances have extended PCA's utility in bioinformatics:

- Supervised PCA: Incorporates response variable information to guide component identification, improving empirical performance [4]

- Sparse PCA: Generates components with sparse loadings, enhancing interpretability by focusing on smaller gene subsets [4]

- Functional PCA: Analyzes time-course gene expression data, capturing dynamic patterns across experimental conditions [4]

Pathway and Network Integration

Innovative applications of PCA now accommodate biological hierarchy and structure:

- Pathway-Level PCA: Conducted on genes within predefined biological pathways, with PCs representing pathway effects [4]

- Network-Module PCA: Applied to genes within network modules, where PCs represent effects of functionally related gene groups [4]

- Interaction PCA: Accommodates gene-gene interactions by incorporating second-order terms in the analysis [4]

Experimental Protocol: Standardized PCA for Gene Expression Data

Data Preprocessing and Standardization

Data Normalization

- Normalize raw read counts using median scaling

- Apply log transformation (typically natural log) to stabilize variance

- Code example (Python):
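
The original example is not available here. One possible sketch, assuming that "median scaling" means rescaling every sample to the median library size and that the log transform is ln(1 + x), is shown below. The function name and the simulated counts are hypothetical.

```python
import numpy as np

def median_scale_log(counts):
    """counts: samples x genes raw counts.

    Rescale each sample to the median library size, then apply ln(1 + x).
    """
    counts = np.asarray(counts, dtype=float)
    lib_sizes = counts.sum(axis=1, keepdims=True)   # total counts per sample
    target = np.median(lib_sizes)                   # common library size
    scaled = counts / lib_sizes * target
    return np.log1p(scaled)                         # natural log with pseudocount 1

rng = np.random.default_rng(2)
raw = rng.poisson(lam=20, size=(6, 100)).astype(float)
raw[0] *= 3                                         # one sample sequenced more deeply
log_norm = median_scale_log(raw)
```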

Quality Control

- Remove genes with excessive missing values (>20%)

- Filter low-expression genes (based on counts per million thresholds)

- Identify and address technical batch effects
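
The first two filters can be sketched as a single boolean mask over genes. The 20% missingness cutoff comes from the list above; the CPM threshold of 1 in at least 3 samples is an assumption chosen for illustration, and `qc_filter` is a hypothetical helper name.

```python
import numpy as np

def qc_filter(counts, max_missing=0.20, min_cpm=1.0, min_samples=3):
    """counts: samples x genes, with NaN marking missing values.

    Returns a boolean mask of genes that pass both filters.
    """
    counts = np.asarray(counts, dtype=float)
    missing_frac = np.isnan(counts).mean(axis=0)            # per-gene missingness
    lib_sizes = np.nansum(counts, axis=1, keepdims=True)    # per-sample totals
    cpm = counts / lib_sizes * 1e6
    # Missing entries count as "not expressed" for the CPM filter
    expressed = (np.nan_to_num(cpm) >= min_cpm).sum(axis=0) >= min_samples
    return (missing_frac <= max_missing) & expressed

toy = np.array([
    [100, 0, 50,     30],
    [120, 0, np.nan, 25],
    [ 90, 0, np.nan, np.nan],
    [110, 0, 60,     35],
    [105, 0, 55,     28],
])
keep = qc_filter(toy)   # gene 1 is never expressed; gene 2 is 40% missing
```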

PCA Implementation and Component Selection

Covariance Matrix Computation

- Calculate the covariance matrix of standardized data

- Perform eigendecomposition to obtain eigenvectors and eigenvalues

Optimal Component Selection

- Use scree plots to visualize variance explained by each component

- Apply Kaiser criterion (eigenvalue >1) for initial selection

- Consider cumulative variance explained (typically 70-90%)

- Code example (Python):
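
The original example is not available here. As a hedged sketch, the selection rules listed above could be computed as follows; the 80% cumulative threshold, the simulated data, and the function name `select_components` are assumptions for illustration.

```python
import numpy as np

def select_components(X, cum_threshold=0.80):
    """X: samples x genes, already standardized.

    Returns eigenvalues, explained-variance ratios, the number of components
    passing the Kaiser criterion, and the number needed to reach cum_threshold.
    """
    Xc = X - X.mean(axis=0)
    s = np.linalg.svd(Xc, compute_uv=False)          # singular values, descending
    eigvals = s ** 2 / (X.shape[0] - 1)              # covariance eigenvalues
    ratio = eigvals / eigvals.sum()                  # values for a scree plot
    k_kaiser = int((eigvals > 1.0).sum())
    k_cum = int(np.searchsorted(np.cumsum(ratio), cum_threshold)) + 1
    return eigvals, ratio, k_kaiser, min(k_cum, len(ratio))

rng = np.random.default_rng(4)
X = rng.normal(size=(30, 500))
X[:15, :50] += 2.0                                   # simulated group effect
X = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)     # gene-wise z-scores
eigvals, ratio, k_kaiser, k_cum = select_components(X, cum_threshold=0.80)
```

When genes far outnumber samples, the eigenvalues of standardized data sum to the number of genes but are spread over at most n - 1 components, so the Kaiser criterion tends to retain almost every component. The scree plot and cumulative variance are usually more informative in that setting.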

Interpretation and Validation

Component Interpretation

- Examine loadings to identify genes contributing most to each component

- Correlate component scores with sample metadata

- Perform biological pathway enrichment on high-loading genes
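
The first two steps can be sketched on simulated data in which five genes differ between two groups. The gene names, group labels, and the helper `top_loading_genes` are hypothetical; pathway enrichment is not shown because it depends on an external annotation resource.

```python
import numpy as np

def top_loading_genes(X, gene_names, pc=0, n_top=5):
    """X: samples x genes. Returns the n_top genes with the largest absolute
    loading on the chosen component, plus that component's sample scores."""
    Xc = X - X.mean(axis=0)
    U, s, Vt = np.linalg.svd(Xc, full_matrices=False)
    loadings = Vt[pc]                                 # one coefficient per gene
    idx = np.argsort(np.abs(loadings))[::-1][:n_top]
    scores = U[:, pc] * s[pc]                         # one score per sample
    return [gene_names[i] for i in idx], scores

rng = np.random.default_rng(5)
group = np.repeat([0, 1], 10)                         # hypothetical sample metadata
X = rng.normal(size=(20, 100))
X[group == 1, :5] += 6.0                              # genes 0-4 differ by group
genes = [f"gene{i}" for i in range(100)]

top_genes, pc1_scores = top_loading_genes(X, genes, pc=0, n_top=5)
r = np.corrcoef(pc1_scores, group)[0, 1]              # association with metadata
```

The sign of a component is arbitrary, so only the magnitude of the correlation and of the loadings should be interpreted.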

Validation Procedures

- Split-data reproducibility: Apply PCA to training/test splits

- Bootstrap stability assessment: Evaluate component robustness

- Biological validation: Correlate components with known biological factors
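
A bootstrap stability check might look like the sketch below, which resamples samples with replacement and compares each resampled PC1 direction with the reference direction using absolute cosine similarity. The number of resamples, the simulated data, and the function names are assumptions for illustration.

```python
import numpy as np

def first_pc(X):
    """Unit-length PC1 direction of X (samples x genes)."""
    Xc = X - X.mean(axis=0)
    return np.linalg.svd(Xc, full_matrices=False)[2][0]

def bootstrap_pc1_stability(X, n_boot=100, seed=0):
    """Absolute cosine similarity between the reference PC1 and the PC1 of
    each bootstrap resample (values near 1 indicate a stable component)."""
    rng = np.random.default_rng(seed)
    ref = first_pc(X)
    n = X.shape[0]
    sims = np.empty(n_boot)
    for b in range(n_boot):
        idx = rng.integers(0, n, size=n)          # resample samples with replacement
        sims[b] = abs(ref @ first_pc(X[idx]))     # abs() because PC sign is arbitrary
    return sims

rng = np.random.default_rng(6)
X = rng.normal(size=(20, 100))
X[10:, :10] += 10.0                               # strong simulated group effect
sims = bootstrap_pc1_stability(X)
```

The same comparison can be applied to the two halves of a train/test split for the split-data check.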

Research Reagent Solutions

Table 3: Essential Computational Tools for PCA in Biomedical Research

| Tool Category | Specific Solution | Function/Purpose | Access |
|---|---|---|---|
| Programming Environments | R Statistical Environment | Data manipulation, statistical analysis | Open source |
| Programming Environments | Python with scikit-learn | Machine learning implementation | Open source |
| Visualization Packages | matplotlib (Python) | Basic plotting and visualization | Open source |
| Visualization Packages | seaborn (Python) | Enhanced statistical visualizations | Open source |
| Visualization Packages | ggplot2 (R) | Grammar of graphics implementation | Open source |
| Bioinformatics Suites | Scanpy | Single-cell RNA sequencing analysis | Open source |
| Bioinformatics Suites | NIA Array Analysis | Web-based microarray analysis | Open source |
| Commercial Platforms | SAS JMP | Pharmaceutical and clinical analytics | Commercial |
| Commercial Platforms | MATLAB Bioinformatics | Engineering-focused implementations | Commercial |

Principal Component Analysis remains an indispensable tool in the analysis of high-dimensional genomic data, successfully addressing fundamental challenges in visualization, noise reduction, and exploratory analysis. Its mathematical robustness, computational efficiency, and interpretive flexibility make it particularly valuable for researchers and drug development professionals navigating the complexity of gene expression datasets. While newer dimensionality reduction techniques continue to emerge, PCA's proven utility, particularly when enhanced with modern adaptations like supervised and sparse implementations, ensures its continued relevance in biomedical research. As genomic technologies evolve toward even higher dimensionality through multi-omics integration and spatial profiling, PCA's role as a foundational analytical method appears secure, providing a critical bridge between raw data and biological insight.

In the field of genomics, researchers frequently encounter what is known as the "curse of dimensionality" when analyzing gene expression data. Modern transcriptomic studies, including those using RNA sequencing (RNA-seq) technologies, typically measure the expression levels of tens of thousands of genes across multiple biological samples. This creates a fundamental visualization challenge: while each gene represents a dimension in mathematical space, the human brain cannot perceive beyond three dimensions. In practical terms, this means that a dataset with 20,000 genes exists in a 20,000-dimensional space that we cannot directly observe or navigate. The curse of dimensionality refers to the computational, analytical, clustering, and visualization challenges that arise when dealing with such high-dimensional data [1]. As the number of variables (genes) increases, the data becomes increasingly sparse in the mathematical space, making it difficult to identify patterns, relationships, or groupings without specialized dimensionality reduction techniques [21].

This dimensional complexity is particularly pronounced in biological research where the quantity of genetic variables far outweighs the number of observations. In transcriptomic datasets, it is common to analyze more than 20,000 genes across fewer than 100 samples, creating a classic high-dimensionality problem where P (variables) ≫ N (observations) [1]. Such datasets pose significant practical concerns for analysis, including increased computation time, storage challenges, and potentially decreased accuracy in statistical models due to overfitting [22]. Principal Component Analysis (PCA) addresses these challenges by transforming the high-dimensional gene expression space into a lower-dimensional representation while preserving the most biologically meaningful information.

Mathematical Foundations of PCA in Gene Expression Analysis

The Core Mathematical Framework

Principal Component Analysis operates on the fundamental principle of identifying directions of maximum variance in high-dimensional data through eigenvalue decomposition of the data covariance matrix. For a gene expression matrix X with n samples (columns) and p genes (rows), where each element x_ij represents the expression level of gene i in sample j, PCA begins by centering the data by subtracting the mean expression of each gene. The algorithm then computes the covariance matrix C = (1/(n-1)) X X^T, which captures the relationships between genes across samples [21]. The principal components are derived from the eigenvectors of this covariance matrix, with the eigenvalues representing the amount of variance captured by each component.

Mathematically, the first principal component (PC1) is the linear combination of the original variables (genes) that captures the maximum variance in the data: PC1 = w1^T X, where w1 is the eigenvector corresponding to the largest eigenvalue of the covariance matrix. Each subsequent principal component is obtained similarly, with the constraint that it must be orthogonal to all previous components. This process generates a new coordinate system where the axes (principal components) are uncorrelated and ordered by the amount of original variance they explain [1] [21].

Data Transformation and Dimensionality Reduction

The transformation from gene expression space to principal component space can be represented as Y = W^T X, where W is the matrix of eigenvectors and Y is the transformed data in the principal component space. The dimensionality reduction occurs when we select only the first k principal components (where k ≪ p), effectively projecting the data from a p-dimensional space to a k-dimensional space while retaining most of the relevant biological information [23].

The proportion of total variance explained by the i-th principal component is given by λi/Σλ, where λi is the eigenvalue corresponding to that component. In practice, researchers often visualize the first two or three principal components, which typically capture the most significant sources of variation in the data, potentially including biological signals of interest, batch effects, or other technical artifacts [24].
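
The following sketch works through these formulas in the genes-by-samples orientation used in this section. Because p is much larger than n, it uses the singular value decomposition of X instead of forming the p × p covariance matrix explicitly, which is an implementation choice assumed here; the matrix sizes and random data are illustrative.

```python
import numpy as np

rng = np.random.default_rng(3)
p, n, k = 2000, 12, 2                      # hypothetical: genes, samples, PCs kept
X = rng.normal(size=(p, n))                # genes x samples
X = X - X.mean(axis=1, keepdims=True)      # subtract each gene's mean

# The left singular vectors of X are the eigenvectors of C = X X^T / (n - 1)
U, s, Vt = np.linalg.svd(X, full_matrices=False)
eigvals = s ** 2 / (n - 1)                 # lambda_i
W = U[:, :k]                               # first k eigenvectors (p x k)

Y = W.T @ X                                # k x n: samples in PC space
explained = eigvals / eigvals.sum()        # lambda_i / sum(lambda)
```

Each column of `Y` gives one sample's coordinates on the first k components, and `explained[:k]` gives the percentages typically printed on the axis labels of a PCA plot.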

Table 1: Key Mathematical Components of PCA for Gene Expression Analysis

| Mathematical Component | Biological Interpretation | Computational Consideration |

|---|---|---|

| Covariance Matrix | Captures co-expression patterns between genes across samples | Requires centered data; dimensions: p×p |

| Eigenvectors (Principal Components) | Directions of maximum variance in gene expression space | Orthogonal; ordered by variance explained |

| Eigenvalues | Amount of variance captured by each principal component | Determines significance of each PC |

| Projected Coordinates | Samples positioned in reduced-dimensional space | Enables 2D/3D visualization of sample relationships |

Practical Implementation: PCA Workflow for Transcriptomic Data

Data Preprocessing and Normalization

The successful application of PCA to gene expression data requires careful preprocessing to ensure that technical artifacts do not dominate the biological signal. For RNA-seq data, this typically begins with raw count normalization to account for differences in sequencing depth between samples. The DESeq2 package, commonly used in genomics, employs a median-of-ratios method to calculate size factors that normalize for library size [24]. Alternatively, transformation using the variance stabilizing transformation (VST) or regularized logarithm (rlog) can be applied to count data to stabilize variance across the mean expression range and make the data more suitable for PCA [24].
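
To make the median-of-ratios idea concrete, here is a simplified NumPy sketch. It is an illustration of the principle, not DESeq2's implementation, which should be preferred for real analyses; the function name and toy counts are hypothetical.

```python
import numpy as np

def median_of_ratios_size_factors(counts):
    """counts: genes x samples raw counts. Returns one size factor per sample.

    Each gene's counts are compared with that gene's geometric mean across
    samples; the size factor is the median of those ratios within a sample.
    """
    counts = np.asarray(counts, dtype=float)
    positive = (counts > 0).all(axis=1)               # skip genes with any zero count
    log_counts = np.log(counts[positive])
    log_geo_mean = log_counts.mean(axis=1, keepdims=True)
    return np.exp(np.median(log_counts - log_geo_mean, axis=0))

rng = np.random.default_rng(9)
base = rng.poisson(lam=50, size=200) + 1              # strictly positive toy counts
toy_counts = np.column_stack([base, 2 * base, 3 * base])   # depths of 1x, 2x, 3x
size_factors = median_of_ratios_size_factors(toy_counts)
normalized = toy_counts / size_factors                # depth-corrected counts
```

In this toy case the three samples differ only by sequencing depth, so the size factors come out in a 1:2:3 ratio and the normalized columns are identical.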

Gene filtering is another critical step, as excluding genes with low expression or minimal variance across samples can reduce noise and improve the signal-to-noise ratio in PCA. A common approach is to remove genes with very low counts (e.g., those with less than 10 counts across all samples) or those showing minimal variability (e.g., the bottom percentage of genes by variance) [23]. Missing data must also be addressed, as most PCA implementations require complete data matrices. While some methods like pcaMethods::pca can handle limited missing data, the prcomp function used in many genomics packages does not tolerate missing values, requiring either filtering of incomplete features or imputation [23].

Computational Implementation in R/Bioconductor

The Bioconductor project provides several specialized packages for performing PCA on gene expression data. The pcaExplorer package offers a user-friendly, interactive Shiny-based interface that simplifies exploratory data analysis with PCA for RNA-seq datasets [24]. This package automatically handles normalization and transformation steps while providing comprehensive visualization options. A typical analysis begins by loading the count matrix and sample metadata, then calling the pcaExplorer() function to launch the interactive application [24].

For large-scale genomic data, such as genome-wide association studies (GWAS), the SNPRelate package implements optimized algorithms for principal component analysis using a genomic data structure (GDS) file format. This format efficiently stores genotype data, with each byte encoding up to four SNP genotypes, thereby reducing file size and access time [25]. The package supports parallel computing to accelerate the calculation of the genetic covariance matrix, which is particularly valuable when working with large datasets [25].

Table 2: R/Bioconductor Packages for PCA Analysis of Genomic Data

| Package | Primary Application | Key Features | Data Input Format |

|---|---|---|---|

| pcaExplorer | RNA-seq exploratory analysis | Interactive Shiny interface, automated reporting | Count matrix + sample metadata |

| SNPRelate | Genome-wide association studies | Parallel computing, efficient GDS format | GDS format with genotype matrix |

| exvar | Integrated gene expression and variant analysis | Shiny apps for visualization, multiple species support | Fastq files or count matrices |

| DESeq2 | Differential expression analysis | Built-in PCA plotting, variance stabilizing transformation | DESeqDataSet object |

Interpreting PCA Results in Biological Context

Sample-Level Patterns and Batch Effects

PCA plots of gene expression data primarily reveal relationships between samples rather than between genes. When samples cluster together in PCA space, it indicates they have similar gene expression profiles across the entire transcriptome. Conversely, samples that are distant from each other have divergent expression patterns. The direction of separation along specific principal components often correlates with biological or technical variables recorded in the sample metadata [23].

A critical application of PCA in quality control is identifying batch effects - technical artifacts introduced when samples are processed in different batches, by different personnel, or using different reagents. When coloring points in a PCA plot by batch, overlapping clusters suggest minimal batch effects, while clear separation by batch indicates potential technical confounding that should be addressed before biological interpretation [23]. Similarly, PCA can reveal outlier samples that diverge dramatically from others in the same experimental group, potentially indicating sample contamination, poor RNA quality, or other technical issues that might warrant exclusion or additional investigation [24].
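
Visual inspection can be complemented with a simple numerical check: the fraction of a component's variance explained by a categorical variable (the R² of a one-way ANOVA). The sketch below is an assumption-laden illustration with simulated batches and a hypothetical helper name, not a substitute for formal batch-correction tools.

```python
import numpy as np

def variance_explained_by_factor(scores, labels):
    """Fraction of variance in one PC's scores explained by a categorical
    label (between-group sum of squares over total sum of squares)."""
    scores = np.asarray(scores, dtype=float)
    labels = np.asarray(labels)
    grand = scores.mean()
    ss_total = ((scores - grand) ** 2).sum()
    ss_between = sum(
        (labels == g).sum() * (scores[labels == g].mean() - grand) ** 2
        for g in np.unique(labels)
    )
    return ss_between / ss_total

rng = np.random.default_rng(7)
batch = np.repeat(["A", "B"], 8)                  # hypothetical processing batches
condition = np.tile(["ctrl", "treated"], 8)       # balanced within each batch
X = rng.normal(size=(16, 300))                    # samples x genes
X[batch == "B"] += 3.0                            # simulated batch shift

Xc = X - X.mean(axis=0)
U, s, _ = np.linalg.svd(Xc, full_matrices=False)
pc1 = U[:, 0] * s[0]
r2_batch = variance_explained_by_factor(pc1, batch)
r2_condition = variance_explained_by_factor(pc1, condition)
```

A high value for batch on an early component, paired with a low value for the biological condition, is the numerical counterpart of a PCA plot in which samples separate by batch.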

Biological Interpretation of Principal Components

Interpreting the biological meaning of principal components requires examining which genes contribute most strongly to each component. Genes with the highest absolute loading values (coefficients in the eigenvector) for a particular principal component have the greatest influence on that component's direction. Biplots, which overlay sample positions and gene loadings on the same plot, can help visualize which genes are driving the separation between sample groups [23].

Functional interpretation can be enhanced by performing gene set enrichment analysis on genes with high loadings for each principal component. The pcaExplorer package automates this process through integration with gene ontology (GO) databases, identifying biological processes, molecular functions, or cellular compartments overrepresented among genes with extreme loading values [24]. For example, in a study of neuronal gene expression, researchers observed that genes with negative fold changes connected to mitochondria, nucleoplasm, and the synaptic region of neurons were driving separation along specific principal components [26].

Advanced Applications and Integration with Other Technologies

Integration with Spatial Transcriptomics and Imaging

Recent advances in spatial transcriptomics technologies have created new opportunities and challenges for dimensionality reduction techniques. These datasets combine gene expression measurements with spatial coordinates in tissue sections, requiring specialized visualization approaches. The spatial transcriptomics imaging framework (STIM) builds on imaging-based computational frameworks to enable interactive visualization and alignment of high-throughput spatial sequencing datasets [27]. Such tools represent a convergence of PCA with computer vision techniques, allowing researchers to visualize how gene expression patterns vary across tissue architecture while managing the high dimensionality of spatial transcriptomics data.

Another innovative approach comes from the expressyouRcell package, which generates dynamic cellular pictographs to represent variations in gene expression at subcellular and organelle-level resolution. This tool uses the concept of choropleth maps to visualize fluctuations in gene and protein expression levels across multiple time points or experimental conditions [26]. By mapping quantitative gene expression changes to specific cellular compartments, researchers can intuitively communicate complex expression patterns that might be obscured in traditional PCA plots.

Cross-Disciplinary Integration with Remote Sensing

In a groundbreaking application of transcriptomics to ecology, researchers at the University of Notre Dame are partnering with NASA to correlate gene expression patterns in forest trees with remote sensing data from space-based instruments [28]. This project uses PCA and related techniques to link transcriptomic data from leaf samples with spectral signatures captured by the GEDI, ECOSTRESS, and DESIS instruments on the International Space Station. The spectral patterns reflected by leaves are strongly correlated with their chemical structure, which in turn reflects gene expression variation [28]. By reducing the dimensionality of both transcriptomic and spectral data, researchers can model how genetic expression for entire forests changes over time in response to environmental factors like drought, pest infestation, or disease.

Experimental Design and Protocol Considerations

Sample Size and Replication Requirements

The reliability of PCA results in gene expression studies depends heavily on appropriate experimental design. While there are no fixed rules for sample size in PCA, the stability of principal components improves with larger sample sizes. As a general guideline, studies with fewer than 10 samples per group may yield unstable PCA results, while studies with 20 or more samples per condition typically provide more robust component definitions. Biological replication is essential for distinguishing technical artifacts from biologically meaningful variation [24].

When designing experiments that will include PCA, researchers should ensure that metadata collection encompasses all potential sources of variation, including batch information, processing dates, technician identifiers, and relevant biological covariates. This comprehensive metadata enables post-hoc investigation of patterns observed in PCA plots and facilitates appropriate coloring and labeling of samples during exploratory analysis [23].

Protocol for PCA-Based Quality Assessment

A standardized protocol for PCA-based quality assessment of gene expression data includes the following steps:

Data Preparation: Begin with a raw count matrix from RNA-seq alignment, ensuring row names are gene identifiers and column names are sample identifiers. Prepare a metadata table with complete sample annotations [24].

Normalization: Apply appropriate normalization for the data type. For RNA-seq count data, use the DESeq2 median-of-ratios method or a similar approach to account for library size differences [24].

Variance Stabilization: Transform normalized counts using a variance-stabilizing transformation (VST) or regularized log transformation to address mean-variance relationships in count data [24].

PCA Computation: Perform principal component analysis on the transformed data using tools like prcomp in R or specialized functions in packages like pcaExplorer [24].

Visualization: Create scatter plots of the first few principal components, coloring points by key biological and technical variables from the metadata. Include variance explained percentages on axis labels [23].

Interpretation: Identify patterns of clustering, separation, or outliers. Investigate potential batch effects and biological signals. Examine gene loadings for biologically interpretable patterns [24].

Documentation: Generate reproducible reports that capture both the code and interactive visualization state, facilitating collaboration and publication [24].

Table 3: Troubleshooting Common PCA Challenges in Gene Expression Analysis

| Problem | Potential Causes | Solutions |

|---|---|---|

| First PC dominated by technical artifacts | Batch effects, library size variation | Improve normalization, include batch correction |

| Low percentage of variance in early PCs | High noise, insufficient biological signal | Filter low-variance genes, increase sample size |

| Unexpected outlier samples | Sample contamination, poor RNA quality | Check RNA quality metrics, verify sample identity |

| Poor separation by experimental group | Weak treatment effect, heterogeneous responses | Verify experimental manipulation, consider subgroup analysis |

Computational Tools and Platforms

The effective application of PCA to gene expression data requires access to specialized computational tools and platforms. The following table summarizes key resources available to researchers:

Table 4: Essential Computational Resources for PCA in Gene Expression Analysis

| Resource | Type | Primary Function | Access |

|---|---|---|---|

| pcaExplorer | R/Bioconductor Package | Interactive exploration of RNA-seq data with PCA | Bioconductor: http://bioconductor.org/packages/pcaExplorer/ |

| exvar | R Package | Integrated analysis of gene expression and genetic variation | GitHub: https://github.com/omicscodeathon/exvar |

| SNPRelate | R/Bioconductor Package | High-performance PCA for genome-wide association studies | Bioconductor: http://bioconductor.org/packages/SNPRelate/ |

| DESeq2 | R/Bioconductor Package | Differential expression analysis with PCA visualization | Bioconductor: http://bioconductor.org/packages/DESeq2/ |

| Docker container for exvar | Containerized Environment | Reproducible analysis environment with pre-installed dependencies | Docker Hub: imraandixon/exvar |

Beyond the core computational packages, several resources specialize in the visualization and interpretation of PCA results:

The expressyouRcell package provides unique pictographic representations of gene expression changes in specific cellular compartments, offering an intuitive complement to traditional PCA plots [26]. This approach is particularly valuable for communicating results to interdisciplinary collaborators or non-specialist audiences.

For studies involving genetic variants, the VCF (Variant Call Format) file format serves as a standard for storing gene sequence variations, while the GDS (Genomic Data Structure) format implemented in SNPRelate offers efficient storage and access to large-scale genotype data [25]. These standardized formats facilitate the application of PCA to diverse genomic data types across different research contexts.

Principal Component Analysis remains an indispensable tool for navigating the high-dimensional landscapes generated by modern genomic technologies. By transforming gene expression space into its most informative dimensions, PCA provides researchers with a powerful approach to visualize complex datasets, identify quality issues, detect batch effects, and generate biological hypotheses. The integration of PCA with interactive visualization tools, spatial transcriptomics, and even remote sensing technologies continues to expand its utility across biological disciplines. As genomic datasets grow in size and complexity, the geometric perspective offered by PCA will remain essential for extracting meaningful biological insights from the multidimensional complexity of gene expression space.

A Step-by-Step Guide to Implementing PCA on RNA-seq and Microarray Data

In high-dimensional genomic studies, particularly those utilizing Principal Component Analysis (PCA) for visualization and exploration, the initial data preprocessing steps are not merely cosmetic; they are fundamental to the biological validity of the results. Gene expression data from technologies like RNA-sequencing (RNA-seq) and single-cell RNA-sequencing (scRNA-seq) is inherently messy, containing substantial technical noise and unwanted variation that can obscure the underlying biological signals [29]. Techniques like normalization, centering, and scaling are employed to mitigate these technical artefacts, allowing researchers to distinguish true biological variation from confounding factors.

The primary challenge stems from the fact that in raw genomic data, the differences between samples or cells can be dominated by technical effects, such as variations in library size (the total number of sequenced reads per sample) or the presence of a few highly expressed genes [29]. If left unaddressed, these technical variations can become the principal drivers of the apparent structure in the data, leading to PCA plots that reflect experimental artefacts rather than biological states. Therefore, the careful application of preprocessing techniques is a prerequisite for generating meaningful visualizations and insights from high-dimensional gene expression data.

Core Concepts and Their Impact on PCA

The Mechanics of PCA and Preprocessing

Principal Component Analysis (PCA) is a mathematical procedure that transforms a number of possibly correlated variables (e.g., the expression of thousands of genes) into a smaller number of uncorrelated variables called principal components (PCs) [30]. These PCs are ordered so that the first component (PC1) accounts for as much of the variability in the data as possible, and each succeeding component accounts for the highest remaining variance under the constraint of orthogonality [29]. The goal of PCA in genomics is often to reduce dimensionality to visualize an overview of the data and assess the relationship between samples based on their global gene expression profiles.

The application of PCA is deeply sensitive to the scale and distribution of the input data. Because PCA seeks directions of maximum variance, features with naturally large ranges (e.g., highly expressed genes) will dominate the component structure, even if their variation is technically driven or biologically uninteresting. Preprocessing steps directly manipulate the data matrix to ensure that the resulting principal components reflect the most biologically relevant sources of variation.

Defining the Preprocessing Triad

Normalization: This process adjusts for systematic technical differences between samples. The most common form is library size normalization, which corrects for the fact that some samples may have been sequenced more deeply than others, leading to larger total counts. Without this correction, samples would appear separated in PCA based on their total RNA output rather than their biological type [29]. Methods include Counts Per Million (CPM) and others like TMM and RLE, which can be more robust [31] [32].

Centering: This involves subtracting the mean expression of each gene across all samples. Gene-wise centering ensures that the principal components describe directions of variation around the mean, rather than the absolute expression level. This is a standard step in PCA that shifts the cloud of data points to be centered on the origin of the new component axes [30].

Scaling (Standardization): Also known as calculating Z-scores, this process involves dividing the centered expression values for each gene by its standard deviation across samples. This gives every gene unit variance, ensuring that highly variable genes do not automatically dominate the early principal components simply because of their scale. This step places an equal a priori weight on each gene for the downstream analysis, which can de-emphasize a small handful of highly expressed genes that might otherwise dominate the data [29].

The following workflow diagram illustrates the logical sequence and key decision points in preprocessing genomic data for PCA.

Detailed Methodologies and Experimental Protocols

Standard Protocol for Bulk RNA-Sequencing Data

This protocol is designed for a typical bulk RNA-seq dataset where the goal is to visualize sample relationships using PCA. The example uses R and common Bioconductor packages.

1. Data Loading and Initialization:

- Load your raw count matrix and sample metadata (colData). The count matrix should have genes as rows and samples as columns.

- Create a DESeqDataSet object (the DESeq2 container class), specifying the experimental design.

2. Library Size Normalization (CPM Method):

- Calculate size factors to adjust for sequencing depth. The counts_per_million method effectively normalizes each sample's total count.

3. Transformation for Variance Stabilization:

- Apply a logarithmic transformation to the normalized counts. The pseudocount (+1) keeps the transformation defined for zero counts, since the logarithm of zero is undefined.

4. Centering and Scaling the Data:

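- For each gene, subtract its mean expression and divide by its standard deviation. This is a gene-wise Z-score calculation. Remove genes with zero variance beforehand, because their standard deviation is zero and the division is undefined.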

5. Performing and Visualizing PCA:

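- Execute PCA on the preprocessed matrix. The prcomp function is standard in R. Because prcomp treats rows as observations, transpose the genes-by-samples matrix so that samples are rows.

- Visualize the PCA results using a scores plot (e.g., PC1 vs. PC2), coloring samples by experimental condition.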
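Steps 2 through 5 can be sketched end to end as follows. The protocol above is written for R and Bioconductor; this is an illustrative Python/NumPy analogue rather than the protocol's own code. The function name `preprocess_and_pca` and the simulated counts are assumptions, and PCA is computed through a singular value decomposition of the samples-by-genes matrix.

```python
import numpy as np

def preprocess_and_pca(counts, n_components=2):
    """CPM -> log2(x + 1) -> gene-wise Z-score -> PCA.

    counts: genes x samples array of raw counts.
    Returns sample scores and the proportion of variance per component.
    """
    counts = np.asarray(counts, dtype=float)
    # Step 2: counts per million, dividing each sample by its library size.
    cpm = counts / counts.sum(axis=0) * 1e6
    # Step 3: log transform with a pseudocount of 1.
    log_cpm = np.log2(cpm + 1.0)
    # Genes with zero variance cannot be Z-scored, so they are dropped.
    gene_sd = log_cpm.std(axis=1, ddof=1)
    keep = gene_sd > 0
    log_cpm = log_cpm[keep]
    # Step 4: gene-wise centering and scaling.
    z = (log_cpm - log_cpm.mean(axis=1, keepdims=True)) / gene_sd[keep][:, None]
    # Step 5: PCA with samples as observations (rows of z.T).
    u, s, _ = np.linalg.svd(z.T, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]
    explained = s**2 / np.sum(s**2)
    return scores, explained[:n_components]

# Simulated data (assumption): 200 genes, 6 samples, two conditions,
# unequal sequencing depth, and 40 genes raised four-fold in condition 1.
rng = np.random.default_rng(1)
condition = np.array([0, 0, 0, 1, 1, 1])
base = rng.gamma(shape=2.0, scale=50.0, size=200)
fold = np.ones((200, 2))
fold[:40, 1] = 4.0
depth = np.array([1.0, 2.0, 0.5, 1.5, 0.8, 3.0])
counts = rng.poisson(base[:, None] * fold[:, condition] * depth[None, :])

scores, explained = preprocess_and_pca(counts)
print("PC1 scores:", scores[:, 0].round(2))
print("Variance explained by PC1 and PC2:", explained.round(3))
```

In this simulation the PC1 scores take opposite signs for the two conditions, despite the six-fold range in sequencing depth. A scores plot would place `scores[:, 0]` on the x-axis and `scores[:, 1]` on the y-axis, colored by `condition`.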

Specialized Considerations for Single-Cell RNA-Seq Data

Single-cell data presents unique challenges, such as an even higher prevalence of zero counts and strong batch effects between groups of cells that were processed together.

- Normalization: Methods tailored for scRNA-seq, like those in the scran package, pool cells to compute more robust size factors, which are then deconvolved into cell-specific size factors.

- Highly Expressed Gene Removal: It can be beneficial to exclude genes that are extremely and ubiquitously highly expressed (e.g., 'Rn45s' in mouse data), as they can consume a disproportionate share of the variance in early PCs [29].

- Batch Effect Correction: For data integrating multiple batches, advanced methods like ComBat (from the sva package) or MNN Correct should be applied after normalization but before PCA to remove technical batch effects.
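The removal of ubiquitously dominant genes can be sketched as a simple filter. This is a hedged Python/NumPy illustration, not a function from scran, sva, or any other package named above. The helper `drop_dominant_genes`, the 5% threshold, and the toy gene names other than 'Rn45s' are assumptions chosen for the example.

```python
import numpy as np

def drop_dominant_genes(counts, gene_names, max_fraction=0.05):
    """Drop genes that exceed max_fraction of the total counts in every cell.

    counts: genes x cells array; every cell is assumed to have a nonzero total.
    The 5% default is an illustrative assumption, not a published cutoff.
    """
    counts = np.asarray(counts, dtype=float)
    fraction = counts / counts.sum(axis=0, keepdims=True)  # share per cell
    dominant = (fraction > max_fraction).all(axis=1)
    kept_names = [name for name, flag in zip(gene_names, dominant) if not flag]
    return counts[~dominant], kept_names

# Toy matrix: one gene absorbs most of the reads in all four cells.
gene_names = ["Rn45s", "GeneA", "GeneB", "GeneC", "GeneD"]
counts = np.array([
    [500, 800, 650, 900],
    [10, 0, 5, 3],
    [0, 7, 2, 0],
    [4, 4, 0, 9],
    [1, 0, 0, 2],
])

filtered, kept = drop_dominant_genes(counts, gene_names)
print(kept)
```

Requiring the threshold to be exceeded in every cell keeps genes that are highly expressed in only some cells, since those may mark a cell type rather than a ubiquitous background signal.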

Comparative Analysis of Normalization and Scaling Methods

The choice of normalization and scaling strategy can dramatically alter the outcome of a PCA. The table below summarizes the properties, strengths, and weaknesses of common methods.

Table 1: Comparison of Data Preprocessing Methods for Genomic PCA

| Method | Type | Key Function | Impact on PCA | Best Used For |
|---|---|---|---|---|
| CPM | Library Size Normalization | Adjusts for total sequencing depth per sample. | Prevents sample separation based purely on library size. | Initial exploration; when biological assumption of constant total RNA holds. |
| TMM / RLE | Library Size Normalization | Robustly estimates size factors, less sensitive to outliers. | Similar to CPM, but often more robust performance in differential analysis [32]. | Bulk RNA-seq with expected compositional differences between samples. |
| Log Transformation | Variance Stabilization | Compresses dynamic range, making data more symmetric. | Reduces dominance of very highly expressed genes; prepares data for Gaussian-based methods [29]. | Almost always after normalization, before centering/scaling. Essential for count data. |
| Centering | Mean Adjustment | Sets the mean expression of each gene to zero. | Ensures PCs describe variation, not mean levels. A standard prerequisite for PCA [30]. | Always performed before PCA. |
| Scaling (Z-score) | Variance Standardization | Gives every gene unit variance. | Allows all genes to contribute equally to PCA; prevents high-variance genes from dominating [29]. | When the research goal requires giving equal weight to all genes (e.g., finding subtle co-expression patterns). |
| No Scaling | - | Retains the original variance of each gene. | PCA will be dominated by the most variable genes, which may be biologically interesting but technically confounded. | When prior knowledge suggests highly variable genes are of primary interest. |
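The robustness of ratio-based size factors compared with total-count scaling can be seen in a short example. This is a simplified Python/NumPy sketch of the median-of-ratios idea behind RLE-style normalization, not the implementation used by DESeq2 or edgeR. The function name and the toy counts are assumptions.

```python
import numpy as np

def median_of_ratios_size_factors(counts):
    """Simplified median-of-ratios size factors for a genes x samples matrix."""
    counts = np.asarray(counts, dtype=float)
    # Keep genes with nonzero counts in every sample so all logs are finite.
    expressed = (counts > 0).all(axis=1)
    log_counts = np.log(counts[expressed])
    # Per-gene log geometric mean across samples acts as a pseudo-reference.
    log_reference = log_counts.mean(axis=1, keepdims=True)
    # Size factor = median over genes of the sample-to-reference ratio.
    return np.exp(np.median(log_counts - log_reference, axis=0))

# Toy data: one expression profile sequenced at depths 1x, 2x, and 4x.
base = np.array([10.0, 200.0, 35.0, 1200.0, 60.0])
depth = np.array([1.0, 2.0, 4.0])
counts = base[:, None] * depth[None, :]
size_factors = median_of_ratios_size_factors(counts)

# A single gene becomes 50 times more abundant in the third sample.
perturbed = counts.copy()
perturbed[3, 2] *= 50
size_factors_perturbed = median_of_ratios_size_factors(perturbed)

print("size factors:                 ", size_factors)
print("size factors after outlier:   ", size_factors_perturbed)
print("library-size ratio (sample 3):", perturbed[:, 2].sum() / counts[:, 2].sum())
```

Because the size factor is a median across genes, the single extreme gene leaves it unchanged, while the library size of that sample, and therefore its CPM denominator, grows many-fold.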

Another critical consideration is the selection of features (genes) used as input for the normalization and PCA procedures. Using non-differentially expressed genes (NDEGs) as a stable reference set for normalization has shown promise, particularly for cross-platform prediction models [33].

Table 2: Impact of Gene Selection on Normalization and Model Performance

| Gene Set | Basis for Selection | Role in Preprocessing | Impact on Downstream Analysis |
|---|---|---|---|
| All Genes | No selection; uses all detected features. | Standard approach for most protocols. | Can introduce noise if many genes are uninformative; technical variation may be prominent. |
| Differentially Expressed Genes (DEGs) | Statistical tests (e.g., ANOVA p < 0.05) for association with a trait. | Used for classification after normalization. | Improves classification model focus but is not suitable for defining the normalization itself. |
| Non-Differentially Expressed Genes (NDEGs) | Statistical stability (e.g., ANOVA p > 0.85) across sample groups. | Can serve as a robust internal reference set for normalization methods [33]. | May improve cross-platform performance and generalizability of models by reducing technical bias. |

The Scientist's Toolkit: Essential Research Reagents and Software

Table 3: Essential Tools for Preprocessing and PCA of Genomic Data

| Tool / Reagent | Category | Function in Workflow |
|---|---|---|
| DESeq2 | R/Bioconductor Package | Performs statistical normalization (median-of-ratios), differential expression, and supplies normalized counts for PCA. |
| edgeR | R/Bioconductor Package | Offers alternative robust normalization methods (TMM) for bulk RNA-seq data. |
| Scanpy / scran | Python / R Package | Comprehensive toolkits for single-cell RNA-seq analysis, including cell-specific normalization and PCA. |
| prcomp() | R Function | The standard function in R for performing Principal Component Analysis on a numeric matrix. |
| High-Quality RNA Samples | Wet-Lab Reagent | The foundational input; integrity (RIN > 8) is crucial for minimizing technical noise from degradation. |
| Stable Reference Genes | Bioinformatics Resource | A set of pre-validated, stable non-differentially expressed genes (NDEGs) for robust normalization [33]. |

The path from a raw count matrix to an interpretable PCA plot is paved with critical decisions regarding normalization, transformation, centering, and scaling. There is no single "correct" pipeline for all experiments; the optimal strategy depends on the data modality (e.g., bulk vs. single-cell), the specific biological question, and the nature of the technical variation present. However, a robust and widely applicable approach involves normalizing for library size using a method like TMM or CPM, followed by a log transformation, and culminating in centering and scaling the data prior to PCA. This process systematically minimizes technical artefacts, stabilizes variance, and standardizes gene contributions, thereby ensuring that the resulting visualization captures the most biologically meaningful sources of variation in the data. By rigorously applying these preprocessing steps, researchers can confidently use PCA to uncover true biological insights from high-dimensional genomic datasets.

Principal Component Analysis (PCA) is a foundational dimensionality reduction technique in bioinformatics, critically important for analyzing high-dimensional data like gene expression profiles from RNA-seq and single-cell RNA-seq (scRNA-seq) experiments. The method operates by transforming high-dimensional interrelated variables into a new set of uncorrelated variables called principal components (PCs), where the first component captures the largest variance in the data, followed by subsequent components in descending order of variance explained [34]. This transformation enables researchers to visualize global data structures, identify patterns, and detect sample clustering in two or three dimensions that would be impossible to discern in the original high-dimensional space [1] [4].

In bioinformatics, PCA addresses the "curse of dimensionality" problem, where the number of variables (P) - such as genes in transcriptomic studies - far exceeds the number of observations (N) - such as biological samples or single cells [1] [4]. This P≫N scenario presents significant challenges for computational analysis, statistical modeling, and data visualization. For example, in a typical RNA-seq experiment, researchers might analyze more than 20,000 genes across fewer than 100 samples, creating a classic high-dimensionality problem where conventional statistical methods fail [1]. PCA effectively reduces this dimensionality by constructing linear combinations of original measurements (principal components) that explain the majority of variation with far fewer dimensions [4].

Theoretical Foundations of PCA

Mathematical Principles

PCA operates on the covariance matrix of the original data, performing eigenvalue decomposition to identify directions of maximum variance. Given a data matrix X with N samples and P variables, centered so that each variable has mean zero (and sometimes also scaled to unit variance), PCA computes the covariance matrix Σ = (XᵀX)/(N-1). The eigenvalue decomposition of this symmetric, positive semi-definite matrix yields eigenvalues (λ) and eigenvectors (v) that satisfy Σv = λv [35]. The eigenvectors define the principal components (PCs), while the eigenvalues represent the variance captured by each corresponding PC [35].

The principal components are ordered by the magnitude of their eigenvalues, with the first PC (PC1) representing the direction of maximum variance, the second PC (PC2) capturing the next highest variance orthogonal to PC1, and so on [35]. Any linear function of the original variables can be expressed in terms of these principal components, making them statistically equivalent to the original measurements for analyzing linear effects [4].
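The eigendecomposition described above can be reproduced numerically. The following is a minimal Python/NumPy sketch on random data, so the matrix sizes and variable names are assumptions. It builds the covariance matrix of a centered matrix, sorts the eigenpairs by decreasing eigenvalue, and projects the samples onto the eigenvectors.

```python
import numpy as np

rng = np.random.default_rng(42)
n_samples, n_vars = 8, 5

# Random correlated variables, then column-wise centering.
X = rng.normal(size=(n_samples, n_vars)) @ rng.normal(size=(n_vars, n_vars))
X = X - X.mean(axis=0)

# Covariance matrix of the centered data.
sigma = X.T @ X / (n_samples - 1)

# eigh is meant for symmetric matrices and returns ascending eigenvalues,
# so the eigenpairs are reordered from largest to smallest.
eigenvalues, eigenvectors = np.linalg.eigh(sigma)
order = np.argsort(eigenvalues)[::-1]
eigenvalues, eigenvectors = eigenvalues[order], eigenvectors[:, order]

# Each column v of eigenvectors satisfies sigma @ v = lambda * v.
residual = sigma @ eigenvectors - eigenvectors * eigenvalues

# Projecting the samples onto the eigenvectors gives the PC scores;
# the sample variance of each score column equals its eigenvalue.
scores = X @ eigenvectors
print("eigenvalues:       ", eigenvalues.round(4))
print("variance of scores:", scores.var(axis=0, ddof=1).round(4))
```

In practice PCA is usually computed through a singular value decomposition of X instead, which avoids forming the P × P covariance matrix when the number of genes is large.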

Key Statistical Properties

Principal components possess several important statistical properties that make them invaluable for bioinformatics applications. First, different PCs are orthogonal to each other (vᵢᵀvⱼ = 0 for i ≠ j), effectively solving collinearity problems often encountered with gene expression data [4]. Second, when P ≫ N, the dimensionality of PCs can be much lower than that of the original gene expressions, alleviating high-dimensionality challenges [4]. Third, the variance explained by PCs decreases sequentially, with the first few components typically capturing the majority of variation in the data [4]. Fourth, the total variance in the data equals the sum of all eigenvalues, allowing calculation of the proportion of variance explained by each PC [34].
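These four properties can be checked directly on a matrix with far more variables than samples. This is an illustrative Python/NumPy sketch using random data; the dimensions (10 samples, 500 genes) are assumptions.

```python
import numpy as np

rng = np.random.default_rng(7)
n_samples, n_genes = 10, 500

X = rng.normal(size=(n_samples, n_genes))
X = X - X.mean(axis=0)  # gene-wise centering

# SVD-based PCA: rows of vt are principal axes, s**2 / (n - 1) are eigenvalues.
_, s, vt = np.linalg.svd(X, full_matrices=False)
eigenvalues = s**2 / (n_samples - 1)

# 1. Principal axes are mutually orthogonal (and unit length).
gram = vt @ vt.T

# 2. After centering, at most n_samples - 1 components carry variance.
n_informative = int(np.sum(eigenvalues > 1e-10 * eigenvalues[0]))

# 3. Explained variance is non-increasing across components.
is_sorted = bool(np.all(np.diff(eigenvalues) <= 0))

# 4. Total variance equals the sum of eigenvalues.
total_variance = X.var(axis=0, ddof=1).sum()
proportion = eigenvalues / eigenvalues.sum()

print("components with variance:", n_informative)
print("total variance vs sum of eigenvalues:", total_variance, eigenvalues.sum())
print("proportion explained by PC1 to PC3:", proportion[:3].round(3))
```

With 500 genes and 10 samples, only 9 components carry variance, which is why the dimensionality of the PCs is bounded by the sample size rather than the number of genes.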

The standard PCA workflow involves several sequential steps: data preparation, preprocessing, PCA computation, component selection, and result interpretation. Each stage requires careful consideration of methodological choices that can significantly impact the biological conclusions drawn from the analysis. The following diagram illustrates the complete PCA workflow in bioinformatics:

Data Preprocessing for PCA

Bioinformatics PCA analyses commonly begin with various data types, each requiring specific preprocessing approaches. Gene expression matrices from RNA-seq or microarray experiments typically contain tens of thousands of genes (features) across tens to hundreds of samples (observations) [4]. Single-cell RNA-seq data presents additional challenges with extreme sparsity and higher dimensionality [7]. Genetic variant data from VCF (Variant Call Format) files contains genotype information across numerous genomic positions [36]. Each data type has unique characteristics that influence preprocessing decisions.

For gene expression analysis, data is typically structured in an N × P matrix where N represents the number of observations (cells, individuals, samples) and P represents the number of variables (gene expression levels, peak accessibility) [1]. Each variable constitutes a dimension - an axis in space along which data points can vary. More dimensions mean increased complexity, as data spreads out into additional directions, making analysis, clustering, and visualization more challenging [1].

Normalization Methods

Normalization is essential for making accurate comparisons of gene expression between cells or samples. The counts of mapped reads for each gene are proportional to both biological expression of interest and technical artifacts that must be accounted for [37]. The main factors considered during normalization include:

- Sequencing depth: Accounting for sequencing depth is necessary for comparison of gene expression between cells. Without normalization, differences in total read counts between samples can dominate the apparent expression patterns [37].

- Gene length: For full-length sequencing protocols, accounting for gene length is necessary for comparing expression between different genes within the same cell. However, for 3' or 5' droplet-based methods, transcript length may have minimal effect [37].