Why Your PCA Clusters Aren't Separating: A Biomedical Researcher's Guide to Diagnosis and Solutions

Abstract

This guide provides a comprehensive framework for researchers and drug development professionals struggling with poor cluster separation in PCA plots. It covers foundational principles of PCA and clustering, explores advanced methodological approaches, details a systematic troubleshooting protocol for optimizing results, and establishes robust validation techniques. By addressing common pitfalls in high-dimensional, noisy biomedical data—such as genomic, metabolomic, and patient stratification datasets—this article delivers practical strategies to enhance analytical reproducibility, ensure biological interpretability, and derive meaningful insights from unsupervised learning.

Understanding PCA and Clustering: Why Your Biomedical Data Resists Clear Grouping

The Core Objective of PCA in Exploratory Data Analysis

Frequently Asked Questions

Q1: What is the primary goal of PCA in exploratory data analysis? The core objective of Principal Component Analysis (PCA) is to reduce the dimensionality of a dataset while retaining as much of the original variation as possible. It does this by transforming the data to a new coordinate system, where the new axes (principal components) are ordered by the amount of variance they capture from the data. [1] [2] In the context of clustering, this simplification helps to reveal the intrinsic grouping structure of the data in a lower-dimensional space that is easier to visualize and interpret. [3] [1]
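As a minimal sketch of this idea, the snippet below computes PCA directly from a singular value decomposition with NumPy. The data are synthetic and the column scales are arbitrary assumptions chosen for illustration; the point is only that the components come out ordered by the variance they capture.

```python
import numpy as np

rng = np.random.default_rng(13)
# Synthetic data: four independent features with decreasing spread (assumed values)
X = rng.normal(size=(100, 4)) * np.array([5.0, 2.0, 1.0, 0.5])

# Center the data, then decompose; rows of Vt are the principal axes
Xc = X - X.mean(axis=0)
U, S, Vt = np.linalg.svd(Xc, full_matrices=False)

explained_var = S**2 / (len(X) - 1)          # variance captured by each PC
ratio = explained_var / explained_var.sum()  # fraction of total variance
scores = Xc @ Vt.T                           # samples in the new coordinate system

print("explained variance ratio:", ratio.round(3))
```

The variance of each column of `scores` equals the corresponding entry of `explained_var`, which is what "ordered by the amount of variance they capture" means in practice.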

Q2: I performed PCA, but the clusters in my plot are not well-separated. What does this mean? Poor cluster separation in a PCA plot can indicate several things. It might mean that distinct groups do not exist in your data based on the features you provided. Alternatively, it could signal that the principal components you are visualizing do not capture the data patterns that differentiate the clusters. [4] It is not guaranteed that the first few PCs, which capture the most variance, are also the most informative for clustering. [4] Finally, it could mean that your clusters are inherently overlapping and not well-defined, which is common when characterizing closely related cell types or subtypes. [5]

Q3: Should I always standardize my data before performing PCA? Standardization (scaling your features to have a mean of 0 and a standard deviation of 1) is generally recommended, especially when your variables are on different scales. [3] [1] Without standardization, variables with larger numeric ranges will dominate the principal components, potentially leading to a biased analysis. [3] However, there are specific situations where standardization might "ruin" your results, for instance, if the relative scale of your variables is meaningful for your biological question. [6] It is good practice to try both approaches and see which leads to more interpretable results.
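The effect of scale can be illustrated with a small sketch using scikit-learn's `StandardScaler` and `PCA`. The three features and their scales are hypothetical; the comparison of PC1 loadings before and after standardization is the part that matters.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 200
# Hypothetical data: feature 0 is on a large scale (e.g. a raw intensity),
# features 1 and 2 are on small scales (e.g. ratios).
X = np.column_stack([
    rng.normal(1000.0, 200.0, n),
    rng.normal(0.5, 0.1, n),
    rng.normal(0.2, 0.05, n),
])

pca_raw = PCA(n_components=2).fit(X)
pca_std = PCA(n_components=2).fit(StandardScaler().fit_transform(X))

# Absolute weight of each original feature on PC1
raw_pc1 = np.abs(pca_raw.components_[0])
std_pc1 = np.abs(pca_std.components_[0])
print("PC1 loadings (raw):         ", raw_pc1.round(3))
print("PC1 loadings (standardized):", std_pc1.round(3))
print("PC1 variance ratio (raw):   ", round(float(pca_raw.explained_variance_ratio_[0]), 4))
```

In this sketch the raw PC1 is essentially the large-scale feature on its own, whereas after standardization PC1 is no longer tied to that single feature. Running both variants side by side on your own data is one way to follow the "try both approaches" advice.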

Q4: How many principal components should I use for clustering? There is no definitive rule, but a common strategy is to choose the number of components that capture a sufficient amount of your data's total variance. You can use a scree plot (a plot of the variance explained by each component) and look for an "elbow" point where the explained variance starts to level off. [1] You can also consider the total cumulative variance explained. For example, you might choose the smallest number of components that explain more than 90% of the total variance. [1] [4] For clustering, you can also evaluate cluster separation (e.g., using silhouette width) for different numbers of PCs. [5]
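A cumulative-variance rule like the 90% example can be sketched as follows. The data are synthetic (five latent signals mixed into 30 features, an assumption made purely for illustration), and the 0.90 cutoff is the example threshold from the text, not a universal recommendation.

```python
import numpy as np
from sklearn.decomposition import PCA

rng = np.random.default_rng(1)
# Hypothetical data: 5 latent signals mixed into 30 features, plus noise
latent = rng.normal(size=(150, 5))
mixing = rng.normal(size=(5, 30))
X = latent @ mixing + 0.3 * rng.normal(size=(150, 30))

# Fit all components first so the full variance profile is available
pca = PCA(n_components=None).fit(X)
cum_var = np.cumsum(pca.explained_variance_ratio_)

# Smallest number of components whose cumulative variance reaches 90%
n_keep = int(np.searchsorted(cum_var, 0.90) + 1)
print("components kept:", n_keep)
print("cumulative variance:", cum_var[:n_keep].round(3))
```

Plotting `pca.explained_variance_ratio_` against the component index gives the scree plot described above.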

Troubleshooting Guide: Poor Cluster Separation in PCA Plots

This guide walks you through a systematic approach to diagnose and address unclear clustering results.

Step 1: Evaluate Your Clustering Quality

Before changing your approach, quantify the current cluster separation.

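- Metric to Use: Silhouette Score. It measures how similar a data point is to its own cluster compared to other clusters. Scores range from -1 to 1: values near +1 indicate a point that sits well inside its cluster, values near 0 indicate a point on the boundary between clusters, and negative values suggest the point may be assigned to the wrong cluster.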

- How to Proceed: Calculate the average silhouette score for your clustering. A low or negative average score confirms poor separation. You can also compute the score per cluster to identify which specific clusters are poorly defined. [3] [5]
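A minimal sketch of this step, assuming scikit-learn is available: `silhouette_score` gives the average and `silhouette_samples` gives per-point values that can be averaged per cluster. The 2D data are synthetic, with one isolated group and two overlapping groups chosen to show a mixed result.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.metrics import silhouette_samples, silhouette_score

rng = np.random.default_rng(2)
# Hypothetical 2D scores: one isolated group and two overlapping groups
X = np.vstack([
    rng.normal([0, 0], 0.5, size=(60, 2)),
    rng.normal([8, 8], 0.5, size=(60, 2)),
    rng.normal([8.8, 8], 0.5, size=(60, 2)),
])
labels = KMeans(n_clusters=3, n_init=10, random_state=0).fit_predict(X)

avg = silhouette_score(X, labels)            # average over all points
per_point = silhouette_samples(X, labels)    # one value per point
per_cluster = {
    int(c): float(per_point[labels == c].mean()) for c in np.unique(labels)
}

print("average silhouette:", round(float(avg), 3))
print("per-cluster silhouette:", {c: round(s, 3) for c, s in per_cluster.items()})
```

The per-cluster breakdown is what identifies which groups are poorly defined: here the isolated group scores higher than the two overlapping ones.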

Step 2: Diagnose the Cause of Poor Separation

| Potential Cause | Diagnostic Questions | Supporting Metric/Tool |

|---|---|---|

| Insufficient PCs Used | Does your 2D/3D plot ignore higher PCs that might contain cluster information? [4] | Scree Plot: Look for components beyond the "elbow" that still explain meaningful variance. |

| Irrelevant Features | Are all provided features relevant for distinguishing the groups you expect? | Variable Loadings: Examine the PCA loadings (the weight of each original variable in the PC). PCs driven by uninformative features won't aid separation. |

| Incorrect Data Preprocessing | Was the data standardized? Would a different transformation (e.g., log) be more appropriate? [6] | Data Summary: Check the mean and variance of your original variables. |

| Genuine Overlap | Is the biological reality that your subgroups are very similar? [5] | Domain Knowledge: Consult the biological context of your experiment. |

Step 3: Apply Corrective Methodologies

Based on your diagnosis from Step 2, apply the following experimental protocols.

Protocol 1: Feature Selection and Engineering

- Objective: Ensure the features fed into PCA are relevant for distinguishing clusters.

- Methodology:

- Leverage Domain Knowledge: Choose attributes known to be biologically relevant (e.g., specific gene markers for cell types). [3]

- Drop Correlated Features: Use a correlation matrix to identify and remove highly correlated features that provide redundant information. [3]

- Use Feature Importance: If you have any labeled data, use supervised models like Random Forest to identify the most important features for classification and use those for PCA. [3]

- Expected Outcome: Principal components are constructed from meaningful features, improving the potential for clear cluster separation.
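The correlation-based pruning step in this protocol can be sketched with NumPy alone. The helper `drop_correlated` and the 0.9 threshold are hypothetical choices for illustration, and the greedy rule (keep a feature only if it is not highly correlated with any feature already kept) is one simple option among several.

```python
import numpy as np

def drop_correlated(X, threshold=0.9):
    """Return indices of features to keep.

    Greedy rule: walk the columns in order and keep a feature only if its
    absolute correlation with every already-kept feature is <= threshold.
    """
    corr = np.abs(np.corrcoef(X, rowvar=False))
    keep = []
    for j in range(X.shape[1]):
        if all(corr[j, k] <= threshold for k in keep):
            keep.append(j)
    return keep

rng = np.random.default_rng(3)
base = rng.normal(size=(100, 3))
# Feature 3 is a near-copy of feature 0 (a hypothetical redundant measurement)
X = np.column_stack([base, base[:, 0] + 0.01 * rng.normal(size=100)])

kept = drop_correlated(X)
print("kept feature indices:", kept)
X_reduced = X[:, kept]
```

Because the rule is order-dependent, placing features you trust most first (for example, known markers) determines which member of a correlated pair survives.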

Protocol 2: Systematic PCA Dimensionality and Algorithm Tuning

- Objective: Find the optimal number of principal components and clustering algorithm for your data.

- Methodology:

- Determine Optimal PCs: Generate a scree plot and calculate cumulative explained variance. Don't just use the first 2-3 PCs by default; experiment with more. [1] [4]

- Choose a Clustering Algorithm: Test different algorithms on the PCA-transformed data. K-Means is common, but may not work for complex, non-spherical clusters. [3]

- Determine Optimal Clusters (k): If using K-Means, use the Elbow Method on within-cluster variance or directly optimize the Average Silhouette Score for different values of k. [3]

- Expected Outcome: A more robust clustering based on a principled choice of parameters.
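One way to sketch the tuning loop in this protocol is to scan k and score each K-Means solution on the PCA-transformed data. Everything about the data here is an assumption (three synthetic groups in 20 features), as are the choices of 5 retained components and the range of k.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.metrics import silhouette_score
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(4)
# Hypothetical data: 3 groups, each shifted on its own block of features
centers = np.zeros((3, 20))
centers[0, :7] = 5.0
centers[1, 7:14] = 5.0
centers[2, 14:] = 5.0
X = np.vstack([c + rng.normal(size=(50, 20)) for c in centers])

# Standardize, then keep more than the 2-3 PCs used for plotting
scores_pca = PCA(n_components=5).fit_transform(StandardScaler().fit_transform(X))

sil = {}
for k in range(2, 7):
    labels = KMeans(n_clusters=k, n_init=10, random_state=0).fit_predict(scores_pca)
    sil[k] = float(silhouette_score(scores_pca, labels))

best_k = max(sil, key=sil.get)
print({k: round(s, 3) for k, s in sil.items()}, "best k:", best_k)
```

The same loop can be repeated for different numbers of retained components to see whether the preferred k is stable.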

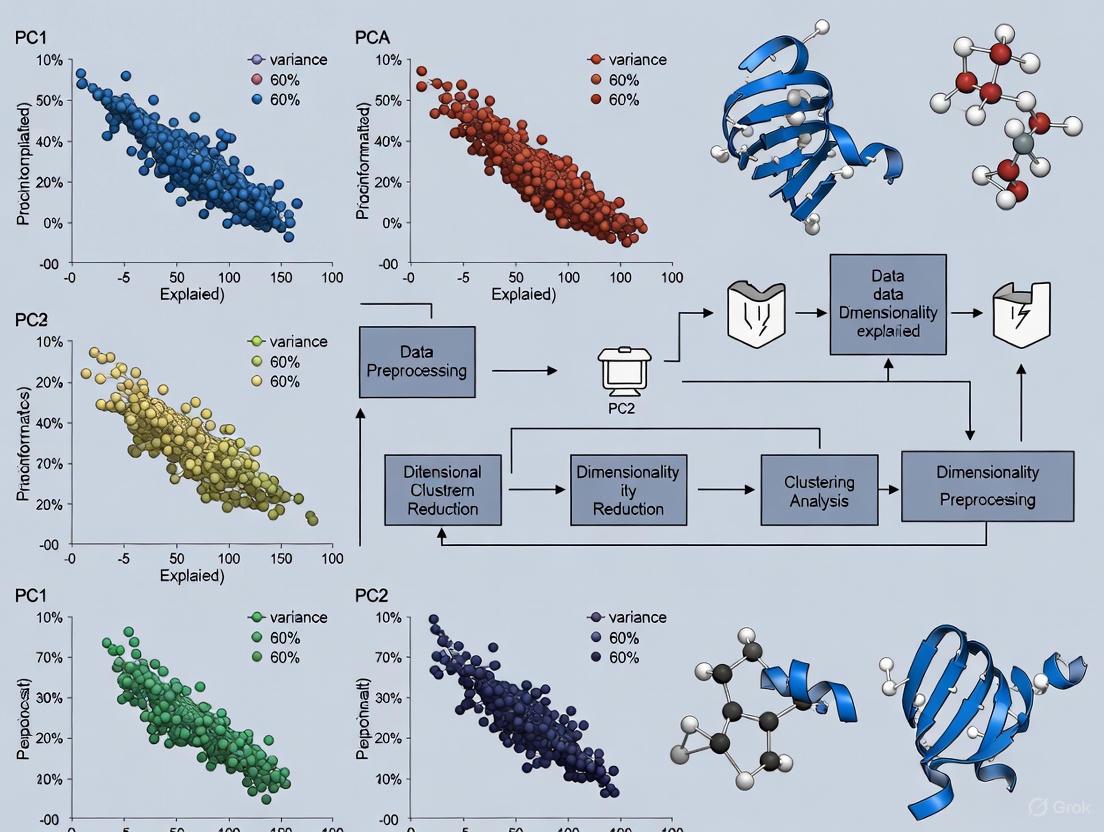

The following workflow summarizes the troubleshooting process:

The Scientist's Toolkit: Key Research Reagents & Materials

The following table details key computational "reagents" and metrics essential for diagnosing and troubleshooting PCA-based clustering.

| Research Reagent / Metric | Function & Purpose in Analysis |

|---|---|

| Silhouette Score | A diagnostic metric that quantifies the separation and compactness of resulting clusters. Values near +1 indicate well-defined clusters. [3] [5] |

| Scree Plot | A visual tool (plot of eigenvalues) used to decide how many principal components to retain by showing the variance explained by each component. [1] |

| Elbow Method | A heuristic that looks for an "elbow" point where a curve levels off: in a plot of within-cluster variance against k to choose the number of clusters, or in a scree plot to choose the number of principal components. [3] |

| PCA Loadings | The weights assigned to each original variable in the linear combination that forms a principal component. Critical for interpreting what each PC represents biologically. [1] [7] |

| Correlation Matrix | Used during feature selection to identify and remove highly correlated variables that can bias the PCA transformation and subsequent clustering. [3] |

| StandardScaler / Z-score | A standard preprocessing step that normalizes features to have a mean of 0 and standard deviation of 1, preventing variables with large scales from dominating the PCA. [3] [1] |

Frequently Asked Questions (FAQs)

Q1: My PCA plot shows poor separation between presumed clusters. Does this mean my data has no meaningful groups?

A: Not necessarily. Poor separation in a Principal Component Analysis (PCA) plot can indicate that the underlying cluster structure in your data is non-linear. PCA is a linear dimensionality reduction technique and may fail to preserve complex cluster shapes, making distinct clusters appear overlapped [8]. Before abandoning your analysis, consider applying non-linear dimensionality reduction techniques (such as t-SNE) prior to clustering, or using clustering algorithms capable of identifying non-spherical clusters [9] [8].

Q2: Why does my K-Means clustering produce biologically implausible results on gene expression data?

A: This is a common issue. K-Means operates on several restrictive assumptions that are often violated in biomedical data:

- It assumes clusters are spherical and of similar size [10].

- It is sensitive to outliers and noise, which are common in experimental data [10].

- It requires you to pre-specify the number of clusters (k), which is rarely known a priori in exploratory research [10].

Biomedical data often contains clusters of irregular shapes, varying densities, and outliers. Using K-Means in such contexts can lead to unreliable results [11] [10].

Q3: How can I objectively determine the optimal number of clusters for my data?

A: There is no single best method, but several established techniques can guide your decision:

- Elbow Method: Plot the within-cluster sum of squares (WSS) against the number of clusters. The "elbow" point, where the rate of WSS decrease sharply slows, suggests a suitable k.

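- Gap Statistic: Compares the total intra-cluster variation of your data to its expected value under a reference null dataset with no cluster structure. The k that maximizes the "gap" is a common choice [12]; the original procedure instead selects the smallest k whose gap is within one standard error of the gap at k+1.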

- Model-Based Methods: For model-based clustering, use statistical metrics like the Bayesian Information Criterion (BIC) to choose the best-fitting model and number of clusters [9].

It is best practice to use multiple indices and combine them with domain knowledge for a biologically defensible conclusion [13].
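The model-based option can be sketched with scikit-learn's `GaussianMixture`, whose `bic` method returns the criterion for a fitted model (lower is better). The three synthetic groups and the range of k are assumptions for illustration only.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

rng = np.random.default_rng(5)
# Hypothetical 2D data with three well-separated groups
X = np.vstack([
    rng.normal([0, 0], 1.0, size=(100, 2)),
    rng.normal([6, 0], 1.0, size=(100, 2)),
    rng.normal([3, 6], 1.0, size=(100, 2)),
])

bic = {}
for k in range(1, 6):
    gmm = GaussianMixture(n_components=k, n_init=5, random_state=0).fit(X)
    bic[k] = float(gmm.bic(X))   # lower BIC = better trade-off of fit and complexity

best_k = min(bic, key=bic.get)
print({k: round(v, 1) for k, v in bic.items()}, "best k:", best_k)
```

Comparing the k chosen here with the one suggested by the elbow or silhouette criteria is a simple way to apply the "use multiple indices" advice.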

Troubleshooting Guide: Poor Cluster Separation

Problem Diagnosis

The following table outlines common symptoms, their potential causes, and initial diagnostic steps.

| Symptom | Potential Cause | Diagnostic Check |

|---|---|---|

| Overlapping clusters in PCA plot | Non-linear cluster structure [8] | Visualize data with t-SNE or UMAP. Check if separation improves. |

| Inconsistent cluster results | Noise and outliers in the data [11] | Conduct exploratory data analysis to identify and inspect outliers. |

| K-Means splits elongated clusters or cuts across them | Violation of spherical cluster assumption [10] | Run a density-based algorithm like DBSCAN and compare the results. |

| High variability in cluster assignments | Incorrect number of clusters (k) [9] | Apply the Elbow Method or Gap Statistic to re-estimate k. |

| Clusters seem driven by a few strong variables | Features on different scales dominating the distance calculation [9] | Ensure all features were standardized (e.g., Z-score normalization) before clustering. |

Solution Protocols

Protocol 1: Addressing Non-Linear Data and Poor PCA Separation

Objective: To achieve effective clustering when linear separation methods fail.

- Dimensionality Reduction: Apply a non-linear dimensionality reduction technique to your data.

- t-SNE: Effective for visualizing high-dimensional data in 2D or 3D, often revealing complex structures.

- UMAP: A newer technique that often preserves more of the global data structure than t-SNE.

- Algorithm Selection: Choose a clustering algorithm that does not assume spherical clusters.

- Validation: Cluster the data in the new non-linear space and validate the biological coherence of the results using domain knowledge and internal validation indices.

Protocol 2: Handling Noisy Biomedical Data with Outliers

Objective: To obtain robust and reliable clusters from data containing outliers and noise.

- Data Preprocessing:

- Robust Clustering:

- Consider Trimmed Clustering: Use algorithms that automatically "trim" or exclude a proportion of potential outliers during the clustering process, enhancing robustness [11].

- Use DBSCAN: This algorithm explicitly defines outliers as points in low-density regions, effectively separating them from core clusters [12].

- Validation: Use cluster validity indices that are robust to noise. Compare the stability of your results with and without the suspected outliers.
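The DBSCAN behavior described in this protocol can be sketched as follows. The data are synthetic (two dense groups plus three stray points), and `eps=0.5` and `min_samples=5` are assumed values that suit this toy example; real data will need its own tuning.

```python
import numpy as np
from sklearn.cluster import DBSCAN

rng = np.random.default_rng(6)
clusters = np.vstack([
    rng.normal([0, 0], 0.3, size=(80, 2)),
    rng.normal([5, 5], 0.3, size=(80, 2)),
])
# Hypothetical stray samples far from both groups
outliers = np.array([[2.5, 2.5], [-4.0, 6.0], [9.0, -1.0]])
X = np.vstack([clusters, outliers])

labels = DBSCAN(eps=0.5, min_samples=5).fit_predict(X)

# DBSCAN marks low-density points with the label -1
n_clusters = len(set(labels)) - (1 if -1 in labels else 0)
n_noise = int(np.sum(labels == -1))
print("clusters found:", n_clusters, "| points marked as noise:", n_noise)
```

Re-running the downstream analysis with and without the points labeled -1 is one way to carry out the stability comparison suggested in the validation step.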

Algorithm Selection Workflow

The following diagram outlines a logical decision process for selecting an appropriate clustering algorithm based on your data characteristics and research goals.

The Scientist's Toolkit: Research Reagent Solutions

Essential Computational Tools

| Tool / Resource | Function | Application Notes |

|---|---|---|

| R Programming Language | A statistical computing environment with extensive packages for clustering and PCA. | Essential packages: evaluomeR (for automated trimmed clustering) [11], cluster, factoextra (for visualization and validation). |

| Python (Scikit-learn) | A machine learning library providing robust implementations of major clustering algorithms. | Modules: sklearn.cluster, sklearn.decomposition (for PCA), sklearn.preprocessing (for data scaling) [15]. |

| StandardScaler / Z-Normalization | A data preprocessing technique to standardize feature scales. | Critical for K-Means and PCA, which are sensitive to variable magnitude. Ensures all features contribute equally to distance calculations [9] [15]. |

| Silhouette Score | An internal validation metric to evaluate cluster quality and aid in determining k. | Values range from -1 to 1; higher positive values indicate better-defined clusters [12]. |

| Gap Statistic | A statistical method to estimate the optimal number of clusters by comparing data to a null reference. | More objective than the Elbow Method for choosing k [12]. |

| DBSCAN Algorithm | A density-based clustering algorithm that identifies arbitrary-shaped clusters and marks outliers. | Ideal for noisy biomedical datasets where the number of clusters is unknown and clusters are non-spherical [12] [14]. |

The 'Variance-as-Relevance' Assumption and Its Pitfalls in Biological Data

You've run your experiment, processed your high-dimensional biological data, and generated a Principal Component Analysis (PCA) plot, only to find a messy overlap of data points instead of the distinct clusters you expected. This common frustration often stems from a fundamental misconception known as the "Variance-as-Relevance" assumption—the flawed expectation that the directions of greatest variance in your dataset always correspond to biologically meaningful patterns.

In reality, the largest sources of variance in biological data often represent technical noise, batch effects, or biologically irrelevant variation that can obscure the signals you care about. This technical support guide will help you diagnose and resolve the issues causing poor cluster separation in your PCA plots, providing practical methodologies to extract meaningful biological insights from your data.

Frequently Asked Questions (FAQs)

FAQ 1: My PCA shows poor cluster separation despite strong biological signals in my raw data. What's wrong?

Answer: Poor cluster separation often indicates that technical variance is dominating your biologically relevant variance. The "Variance-as-Relevance" assumption fails when systematic errors create larger data dispersion than your experimental effects.

Common causes include:

- Batch effects: Samples processed at different times or locations exhibit systematic technical differences

- Sample quality issues: Degradation or contamination affecting subsets of samples

- Inadequate normalization: Failure to account for technical variance before dimensionality reduction

- Hidden covariates: Unrecorded experimental variables influencing your measurements

FAQ 2: How can I determine if my variance structure is problematic?

Answer: Investigate the relationship between variance and signal intensity in your data. In many biological measurements, particularly gene expression studies, variance is intensity-dependent—with low-abundance features exhibiting proportionally higher variance that can dominate PCA results [16].

Diagnostic approach:

- Create a mean-variance relationship plot to identify problematic patterns

- Check if the features contributing most to principal components are biologically relevant or known technical artifacts

- Use spike-in controls if available to distinguish technical from biological variance
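The mean-variance check can be sketched numerically before any plotting. The count matrix below is simulated with Poisson noise only (an assumption standing in for real counts), and the slope of log(CV²) against log(mean) is used as a compact summary of intensity dependence.

```python
import numpy as np

rng = np.random.default_rng(7)
# Hypothetical count matrix: 12 samples x 500 features, Poisson noise only
true_means = np.exp(rng.uniform(np.log(1), np.log(1000), size=500))
counts = rng.poisson(true_means, size=(12, 500))

mean = counts.mean(axis=0)
var = counts.var(axis=0, ddof=1)
keep = (mean > 0) & (var > 0)              # avoid log(0) below

cv2 = var[keep] / mean[keep] ** 2          # squared coefficient of variation

# A slope near -1 means relative variance shrinks as abundance grows,
# i.e. low-abundance features are proportionally noisier.
slope = np.polyfit(np.log(mean[keep]), np.log(cv2), 1)[0]
print("slope of log(CV^2) vs log(mean):", round(float(slope), 2))
```

A scatter plot of `np.log(mean[keep])` against `np.log(cv2)` gives the visual version of the same diagnostic.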

FAQ 3: What are the practical alternatives when PCA fails due to non-linear data structures?

Answer: When your data contains non-linear relationships that PCA cannot capture, consider these alternatives:

- t-SNE: Effective for visualizing complex local structures but distances between clusters are not meaningful

- UMAP: Generally preserves more global structure than t-SNE with similar local clustering capabilities

- PHATE: Specifically designed for biological data with trajectory structures

- Non-linear PCA variants: Kernel PCA or autoencoder-based approaches

Table: Dimensionality Reduction Methods for Different Data Structures

| Method | Best For | Limitations | Non-Linear Capture |

|---|---|---|---|

| PCA | Linear data, Gaussian distributions | Fails with circular/non-linear patterns | No |

| t-SNE | Local structure visualization | Loses global structure, computational cost | Yes |

| UMAP | Preserving local and global structure | Parameter sensitivity | Yes |

| Kernel PCA | Non-linear manifolds | Computational complexity, kernel choice | Yes |
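To make the PCA versus Kernel PCA row concrete, here is a small sketch on scikit-learn's `make_circles` toy data (a small blob inside a ring). The RBF kernel and `gamma=10` are assumed settings that happen to suit this toy example, and the helper `gap_between_classes` is a hypothetical separation measure introduced only for this illustration.

```python
import numpy as np
from sklearn.datasets import make_circles
from sklearn.decomposition import PCA, KernelPCA

# Two classes with the same center: linear projections cannot pull them apart
X, y = make_circles(n_samples=300, factor=0.1, noise=0.05, random_state=0)

pc1_linear = PCA(n_components=1).fit_transform(X)[:, 0]
pc1_kernel = KernelPCA(n_components=1, kernel="rbf", gamma=10).fit_transform(X)[:, 0]

def gap_between_classes(z, y):
    """Distance between the two class means in units of pooled standard deviation."""
    a, b = z[y == 0], z[y == 1]
    pooled = np.sqrt((a.var() + b.var()) / 2)
    return abs(a.mean() - b.mean()) / pooled

gap_linear = gap_between_classes(pc1_linear, y)
gap_kernel = gap_between_classes(pc1_kernel, y)
print("class gap on linear PC1:", round(float(gap_linear), 2))
print("class gap on kernel PC1:", round(float(gap_kernel), 2))
```

The kernel choice and `gamma` strongly affect the result, which is the "kernel choice" limitation listed in the table.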

Troubleshooting Guide: Step-by-Step Protocols

Protocol 1: Data Quality Assessment and Preprocessing

Objective: Identify and mitigate data quality issues before PCA.

Generate quality control metrics

- For sequencing data: Calculate Phred scores, alignment rates, and GC content

- Use tools like FastQC to identify issues in sequencing runs or sample preparation [17]

- Establish minimum quality thresholds before proceeding with analysis

Assess mean-variance relationship

- Plot feature variance against mean expression/intensity

- Identify if low-abundance features with high relative variance are dominating your data

- Consider variance-stabilizing transformations if needed

Implement appropriate normalization

- Select normalization method based on your data type (e.g., TPM for RNA-seq, RLE for count data)

- Account for library size differences and other technical biases

- Validate normalization by checking if technical artifacts are reduced

Protocol 2: Batch Effect Detection and Correction

Objective: Identify and correct for batch effects that may obscure biological signals.

Detect batch effects

- Color PCA plot by potential batch variables (processing date, operator, etc.)

- Use statistical methods like PVCA (principal variance component analysis) or surrogate variable analysis

- Check if batch variables explain more variance than biological variables

Apply batch correction methods

- Choose appropriate method: ComBat, limma's removeBatchEffect, or SVA

- Preserve biological variance of interest while removing technical variance

- Validate correction by confirming batch variables no longer drive clustering

Experimental design to minimize batch effects

- Randomize samples across batches when possible

- Include technical replicates across batches

- Balance biological groups within batches
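The detect-then-correct logic of this protocol can be sketched with NumPy. Note the swap: the correction below is plain per-batch mean centering, a deliberately simplified stand-in and not ComBat, SVA, or limma's removeBatchEffect. The data, effect sizes, and helper names (`r2_with_batch`, `pc1_scores`) are all hypothetical.

```python
import numpy as np

def r2_with_batch(pc, labels):
    """Fraction of a PC's variance explained by a grouping (one-way ANOVA R^2)."""
    grand = pc.mean()
    ss_total = np.sum((pc - grand) ** 2)
    ss_between = sum(
        np.sum(labels == g) * (pc[labels == g].mean() - grand) ** 2
        for g in np.unique(labels)
    )
    return float(ss_between / ss_total)

def pc1_scores(X):
    """Scores on the first principal component, computed via SVD."""
    Xc = X - X.mean(axis=0)
    U, S, _ = np.linalg.svd(Xc, full_matrices=False)
    return U[:, 0] * S[0]

rng = np.random.default_rng(8)
n, p = 80, 50
batch = np.repeat([0, 1], n // 2)
group = np.tile([0, 1], n // 2)                  # groups balanced within each batch
X = rng.normal(size=(n, p))
X[group == 1, :10] += 1.5                        # biological effect on 10 features
X[batch == 1] += rng.normal(2.0, 0.5, size=p)    # larger technical shift everywhere

before = r2_with_batch(pc1_scores(X), batch)

# Simplified correction: remove each batch's feature means, restore the overall mean
X_corr = X.copy()
for b in np.unique(batch):
    X_corr[batch == b] -= X[batch == b].mean(axis=0)
X_corr += X.mean(axis=0)

after_batch = r2_with_batch(pc1_scores(X_corr), batch)
after_group = r2_with_batch(pc1_scores(X_corr), group)
print("PC1 R^2 with batch, before:", round(before, 3))
print("PC1 R^2 with batch, after: ", round(after_batch, 3))
print("PC1 R^2 with group, after: ", round(after_group, 3))
```

This only preserves the biological effect because the groups are balanced within batches; with a confounded design, centering by batch would remove part of the signal as well, which is why the design recommendations above matter.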

Protocol 3: Variance Modeling for Improved Signal Detection

Objective: Use advanced variance modeling approaches to enhance biological signal detection.

Select appropriate variance modeling approach

- For small sample sizes: Implement information-borrowing methods like Cyber-T or limma [16]

- For complex experimental designs: Consider VAMPIRE's global variance modeling

- For RNA-seq data: Use DESeq2 or edgeR's dispersion estimation

Implement variance-stabilizing transformation

- Apply techniques that account for mean-variance dependence

- For count data: Consider regularized log transformation or variance stabilizing transformation (VST)

- Validate that transformation reduces technical noise while preserving biological signal

Feature selection based on biologically relevant variance

- Identify features with high biological coefficient of variation

- Prioritize features with consistent patterns within biological groups

- Avoid selecting features based solely on overall variance
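To illustrate what "variance-stabilizing" means, the sketch below applies the Anscombe square-root transform to simulated Poisson counts. This is a classical textbook transform used here as a stand-in; it is not the regularized log or VST implementation from DESeq2, and the means are assumed values.

```python
import numpy as np

rng = np.random.default_rng(9)
# Hypothetical features with very different abundance levels
true_means = np.array([5.0, 20.0, 100.0, 500.0, 2000.0])
counts = rng.poisson(true_means, size=(5000, 5))

# On the raw scale, variance grows with the mean
raw_var = counts.var(axis=0, ddof=1)

# Anscombe transform: approximately unit variance for Poisson data
anscombe = 2.0 * np.sqrt(counts + 3.0 / 8.0)
stab_var = anscombe.var(axis=0, ddof=1)

print("raw variance:        ", raw_var.round(1))
print("stabilized variance: ", stab_var.round(2))
```

After the transform, high-abundance features no longer dominate a variance-based method like PCA simply because they are abundant. Real count data are usually overdispersed relative to Poisson, so a method designed for that case is generally a better fit in practice.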

Experimental Workflow for Robust Dimensionality Reduction

The diagram below illustrates a comprehensive workflow for addressing variance-related issues in PCA analysis:

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table: Key Reagents and Computational Tools for Variance Troubleshooting

| Item | Function | Application Notes |

|---|---|---|

| Spike-in Controls | Distinguish technical from biological variance | Use ERCC RNA spike-ins for RNA-seq; add at known concentrations |

| Quality Control Tools | Assess data quality before analysis | FastQC for sequencing data; Qualimap for alignment metrics |

| Variance Modeling Software | Improve signal detection in small samples | Cyber-T, limma, VAMPIRE, DESeq2 |

| Batch Correction Packages | Remove technical artifacts | ComBat, sva, limma's removeBatchEffect in R |

| Alternative Dimensionality Tools | Handle non-linear data structures | UMAP, t-SNE, PHATE, Kernel PCA |

| Visualization Libraries | Create diagnostic plots | ggplot2, plotly, seaborn, matplotlib |

Advanced Technique: Global Variance Modeling

For researchers dealing with particularly challenging datasets where traditional approaches fail, global variance modeling provides a powerful alternative:

Implementation protocol:

- Model the variance structure using the relationship: σ² = μ²A + B, where A represents expression-dependent variance and B represents expression-independent variance [16]

- Estimate parameters using Markov chain Monte Carlo (MCMC) algorithms for maximum likelihood estimation

- Incorporate variance estimates into statistical testing to identify truly significant changes

- Validate model fit by comparing observed versus expected variance patterns
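The variance relationship σ² = μ²A + B is linear in A and B, so a rough fit can be sketched with ordinary least squares on per-feature means and variances. This is a simplified stand-in for illustration, not the MCMC-based estimation described above, and the simulated parameters and replicate count are assumptions.

```python
import numpy as np

rng = np.random.default_rng(10)
A_true, B_true = 0.04, 25.0                      # hypothetical parameters
mu = rng.uniform(1.0, 100.0, size=2000)          # per-feature true means
sigma = np.sqrt(A_true * mu**2 + B_true)
data = rng.normal(mu, sigma, size=(10, 2000))    # 10 replicates per feature

m = data.mean(axis=0)                            # observed means
v = data.var(axis=0, ddof=1)                     # observed (noisy) variances

# Pool all features: v is modeled as A * m^2 + B
design = np.column_stack([m**2, np.ones_like(m)])
coef, *_ = np.linalg.lstsq(design, v, rcond=None)
A_hat, B_hat = coef

# Model-based variance for each feature, usable in place of the noisy per-feature v
v_model = A_hat * m**2 + B_hat
print("A estimate:", round(float(A_hat), 4), "| B estimate:", round(float(B_hat), 1))
```

Each per-feature variance is noisy with so few replicates, but pooling thousands of features gives usable estimates of A and B. The estimates are approximate because the regressor is itself measured with error and the noise is heteroscedastic.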

This approach is particularly valuable for studies with limited replicates, where traditional methods like the t-test have low power and high false-positive rates for low-abundance features [16].

Successfully troubleshooting poor cluster separation in PCA requires abandoning the simplistic "Variance-as-Relevance" assumption and adopting a more nuanced understanding of data variance. By implementing the quality control measures, variance modeling techniques, and diagnostic approaches outlined in this guide, researchers can significantly improve their ability to extract meaningful biological insights from high-dimensional data.

Remember that PCA is just one tool in your dimensionality reduction arsenal—when your data contains complex non-linear structures, don't hesitate to explore alternative methods that might better capture the biological relationships you're studying.

Frequently Asked Questions (FAQs)

Why do my clusters separate well in raw data but disappear after standardization? This occurs when the original cluster separation was driven primarily by differences in feature scales rather than underlying correlations. Variables with larger ranges dominate the first principal components in unstandardized PCA, creating illusory clusters. Standardization ensures all features contribute equally, revealing the true underlying structure, which may show poorer separation [18] [6]. This is particularly common when data features have different measurement units or scales.

What does it mean when my PCA plot shows two distinct clusters for what should be identical gestures or samples? This typically indicates a preprocessing or data collection inconsistency between batches. In motion capture data, for example, slight differences in sensor calibration or positioning between recording sessions can cause identical gestures to form separate clusters in PCA space. This signals that technical artifacts, rather than biological or meaningful variation, are driving your principal components [19].

Why does my PCA clustering not correspond to known sample groupings? The principal components capturing the most variance may represent noise, batch effects, or biologically irrelevant variation (like population structure in genetics) rather than variation relevant to your grouping of interest. This violates the "variance-as-relevance" assumption that high-variance components necessarily contain meaningful cluster information [20].

How can I determine if my lack of cluster separation indicates genuine similarity or a methodological issue? First, verify that your data preprocessing pipeline includes proper standardization, as scale differences can mask true separation [18]. Next, calculate the variance explained by your principal components; if the first few components capture only a modest share of the cumulative variance (e.g., <70%), the plot may be hiding structure in later components, or your data may be too noisy for clear separation. Finally, conduct sensitivity analyses with different preprocessing approaches to see if separation improves [20].

Troubleshooting Guide: Poor Cluster Separation in PCA

Quick Diagnosis Table

| Symptom | Possible Causes | Diagnostic Steps | Potential Solutions |

|---|---|---|---|

| Distinct clusters disappear after standardization [6] | Clusters driven by scale differences, not correlation | Compare feature variances pre/post standardization; check if high-variance features defined original clusters | Focus on biological interpretation; use domain knowledge to select relevant features |

| Multiple clusters for identical sample types [19] | Batch effects, sensor calibration drift, collection protocol variations | Color points by collection date/batch; check for technical correlations with PCs | Implement batch correction; apply sensor calibration; standardize protocols |

| Diffuse, overlapping clusters with no clear separation | High noise-to-signal ratio; too many irrelevant features; genuine sample similarity | Calculate variance explained by first 2-3 PCs; assess feature quality; add known positives | Apply feature selection; increase sample size; use regularization; try alternative methods (t-SNE, UMAP) |

| Known groups don't separate in expected directions | PC axes capture irrelevant variance; group differences are subtle | Color points by known groups; check which features load strongly on early PCs | Apply supervised approaches (LDA); use weighted PCA; select group-informative features |

Comprehensive Experimental Protocol for Diagnosing Separation Issues

Step 1: Data Quality Assessment

Begin by examining your raw data structure. Calculate basic descriptive statistics (mean, variance, range) for each feature to identify variables with dramatically different scales. For the sarcoidosis radiomics data discussed in the literature, researchers found that 9,706 feature pairs had correlations above 0.9, indicating severe redundancy that can distort PCA results [20]. Document any missing data patterns and assess whether they correlate with potential batch effects.

Step 2: Systematic Preprocessing Evaluation

Process your data through multiple preprocessing pathways in parallel:

- Raw data (unstandardized)

- Z-score standardized data (mean-centered, unit variance)

- Range-scaled data (scaled to [0,1])

- Log-transformed data (if appropriate for your data type)

For each pathway, apply PCA and generate 2D and 3D plots of the first 2-3 principal components. Color points by known experimental factors (batch, date, operator) and hypothesized biological groups.
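The parallel pathways can be sketched as a dictionary of transformed copies of the same matrix, each passed through PCA. The data are synthetic log-normal measurements on four different scales (an assumption), and the summary reported is only the variance captured by the first two components.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import MinMaxScaler, StandardScaler

rng = np.random.default_rng(11)
# Hypothetical strictly positive, right-skewed measurements on different scales
X = rng.lognormal(mean=[0.0, 2.0, 4.0, 6.0], sigma=0.5, size=(120, 4))

pathways = {
    "raw": X,
    "zscore": StandardScaler().fit_transform(X),
    "minmax": MinMaxScaler().fit_transform(X),
    "log": np.log(X),   # valid here only because every value is positive
}

summary = {}
for name, data in pathways.items():
    pca = PCA(n_components=2).fit(data)
    summary[name] = float(pca.explained_variance_ratio_.sum())

for name, frac in summary.items():
    print(f"{name:>7}: PC1+PC2 explain {frac:.1%} of variance")
```

A very high figure for the raw pathway in a case like this reflects one large-scale feature dominating, not better structure, so the pathways should be compared on the colored score plots and not on explained variance alone.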

Step 3: Principal Component Analysis

Compute PCA for each preprocessed dataset. Examine the scree plot to determine the variance explained by each component. By construction, the first component captures the most variance, and each subsequent component captures no more than the one before it [18] [21]. Calculate the cumulative variance explained by the first 2-3 components, because this determines how much of the data a 2D or 3D plot can show. If these components capture less than 60-70% of total variance, the plot omits a large share of the data's structure and cluster separation will likely look poor, even if groups are separable in later components.

Step 4: Cluster Validation Metrics

Apply multiple clustering algorithms (K-means, Gaussian Mixture Models) to the principal components. Calculate silhouette scores, within-cluster sum of squares, and other validity measures for different numbers of hypothesized clusters. Compare these metrics across preprocessing methods to identify optimal analysis conditions.

Step 5: Sensitivity Analysis

Systematically investigate how robust your results are to different analytical choices. This includes testing different feature subsets, applying various normalization schemes, and using alternative dimension reduction techniques. The goal is to determine whether poor separation persists across methodological variations or is specific to certain analysis decisions [20].

Advanced Diagnostic Framework

Research Reagent Solutions for PCA Cluster Analysis

| Reagent/Resource | Function | Application Notes |

|---|---|---|

| StandardScaler (sklearn.preprocessing) | Standardizes features by removing mean and scaling to unit variance | Essential for preventing high-variance features from dominating PCA [19] [18] |

| PCA (sklearn.decomposition) | Performs principal component analysis | Use n_components=None initially to examine all components; random_state for reproducibility [21] |

| Shapiro-Wilk Filter | Preprocessing filter to counter variance-as-relevance assumption | Identifies and removes features where high variance doesn't correlate with cluster relevance [20] |

| VarSelLCM Package (R) | Variable selection for model-based clustering | Implements diagonal GMM with models indexed by variable relevance; uses BIC for model selection [20] |

| Dynamic Time Warping | Aligns time-series data before PCA | Critical for motion capture or temporal data to align sequences despite timing variations [19] |

| Procrustes Analysis | Shape-based alignment of datasets | Aligns new recordings with reference gestures to ensure consistency in PCA space [19] |

Quantitative Thresholds for Cluster Separation Assessment

| Metric | Good Separation | Marginal Separation | Poor Separation |

|---|---|---|---|

| Variance Explained (PC1+PC2) | >80% | 60-80% | <60% |

| Silhouette Score | 0.7-1.0 | 0.5-0.7 | <0.5 |

| Between:Within Cluster SS Ratio | >3.0 | 1.5-3.0 | <1.5 |

| Cluster Distinctness (Visual) | Clear separation, minimal overlap | Partial separation, some overlap | No clear boundaries, heavy overlap |
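The between:within sum-of-squares ratio in the table can be computed with a few lines of NumPy. The helper `between_within_ratio` and the two synthetic datasets are hypothetical illustrations; the thresholds themselves are the ones stated in the table.

```python
import numpy as np

def between_within_ratio(X, labels):
    """Ratio of between-cluster to within-cluster sum of squares."""
    grand = X.mean(axis=0)
    between = 0.0
    within = 0.0
    for c in np.unique(labels):
        pts = X[labels == c]
        centroid = pts.mean(axis=0)
        between += len(pts) * np.sum((centroid - grand) ** 2)
        within += np.sum((pts - centroid) ** 2)
    return float(between / within)

rng = np.random.default_rng(12)
labels = np.repeat([0, 1], 100)
# Two hypothetical cases: centroids far apart vs. centroids close together
tight = np.vstack([rng.normal([0, 0], 1, (100, 2)), rng.normal([6, 0], 1, (100, 2))])
loose = np.vstack([rng.normal([0, 0], 1, (100, 2)), rng.normal([1, 0], 1, (100, 2))])

ratio_tight = between_within_ratio(tight, labels)
ratio_loose = between_within_ratio(loose, labels)
print("well separated:", round(ratio_tight, 2), "| overlapping:", round(ratio_loose, 2))
```

The ratio depends on the number of dimensions and clusters, so it is most useful for comparing preprocessing choices on the same dataset.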

Intervention Protocol Based on Diagnostic Results

Troubleshooting Guide: Poor Cluster Separation in PCA

Is the observed cluster separation in my PCA plot real or an artifact?

Problem: You have run a single-cell RNA-sequencing experiment and performed PCA. The resulting plot shows clear clusters, but you are unsure if these groups represent true biological cell types or are technical artifacts.

Explanation: Cluster separation in PCA can be driven by both biological and technical sources of variation. Batch effects (technical variations from processing cells in different laboratories, at different times, or with different reagents) create consistent fluctuations in gene expression that can be mistaken for biological signal [22]. Furthermore, the inherent population structure of your cells, such as a hierarchical relationship between cell types, can be misinterpreted by standard clustering algorithms, leading to either over-clustering or the false discovery of novel cell populations [23] [24].

Solution: Follow the diagnostic workflow below to systematically evaluate your clustering results. This will help you determine if your clusters need correction for batch effects or merging due to over-clustering.

How do I definitively identify a batch effect in my data?

Problem You suspect a batch effect but are not sure how to confirm it.

Explanation A batch effect is present when technical factors (e.g., sequencing date, lane, or protocol) systematically explain more of the variance in your data than biological factors. This can be observed visually and confirmed with quantitative metrics [22].

Solution Follow this experimental protocol to detect batch effects.

Experimental Protocol: Batch Effect Detection

- Step 1: Visual Inspection with PCA. Perform PCA on your raw, uncorrected gene expression matrix. Create a scatter plot of the top principal components (e.g., PC1 vs. PC2). Color the data points by their batch of origin (e.g., experiment date). If cells cluster strongly by batch rather than by expected biological condition, a batch effect is likely present [22].

- Step 2: Visualization with UMAP/t-SNE. Similarly, generate a UMAP or t-SNE plot from your data and color the points by batch. The presence of separate, batch-specific sub-clusters within a group of cells that should be biologically homogeneous is a key indicator of a batch effect [22].

- Step 3: Calculate Quantitative Metrics. Use metrics to objectively measure the degree of batch integration. Common metrics include:

- kBET (k-nearest neighbor batch effect test): Rejects the null hypothesis (good batch mixing) if batches are not well mixed [22].

- ARI (Adjusted Rand Index): Measures the similarity between two clusterings. A low ARI between batch labels and cluster labels is desirable [24].

- NMI (Normalized Mutual Information): Measures the information shared between batch and cluster labels. Lower values indicate less influence from batch [22].

Table: Key Quantitative Metrics for Batch Effect Assessment

| Metric | What It Measures | Interpretation | Desired Value |

|---|---|---|---|

| kBET | Mixing of batches in local neighborhoods | Lower rejection rate indicates better mixing | Closer to 0 |

| ARI | Agreement between batch labels and cluster labels | Lower value indicates batch has less impact on clustering | Closer to 0 |

| NMI | Shared information between batch and cluster labels | Lower value indicates batch and clusters are independent | Closer to 0 |
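
ARI and NMI between batch labels and cluster labels are available in scikit-learn. The sketch below is an illustration on simulated data: one homogeneous population is clustered with and without an artificial batch shift. The shift size, the use of k-means with two clusters, and the helper name `batch_scores` are assumptions. kBET is distributed as an R package and is not shown.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.metrics import adjusted_rand_score, normalized_mutual_info_score

rng = np.random.default_rng(0)
n_per_batch, n_genes = 100, 50
batch = np.repeat([0, 1], n_per_batch)

# Hypothetical data: a single biological population measured in two batches
clean = rng.normal(size=(2 * n_per_batch, n_genes))
shifted = clean + 3.0 * batch[:, None]  # add a systematic offset to batch 1

def batch_scores(X, batch_labels):
    """Cluster in PC space, then score agreement between clusters and batches."""
    pcs = PCA(n_components=10, random_state=0).fit_transform(X)
    clusters = KMeans(n_clusters=2, n_init=10, random_state=0).fit_predict(pcs)
    ari = adjusted_rand_score(batch_labels, clusters)
    nmi = normalized_mutual_info_score(batch_labels, clusters)
    return ari, nmi

ari_clean, nmi_clean = batch_scores(clean, batch)
ari_shift, nmi_shift = batch_scores(shifted, batch)
```

Values near 0 for the clean data and near 1 for the shifted data reflect the interpretation in the table: the closer the scores are to 0, the less the clustering is driven by batch.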

My clusters are statistically significant but don't make biological sense. What now?

Problem Your data has passed batch effect checks and clustering algorithms report statistically distinct groups, but these groups lack known cell type markers or have unstable definitions.

Explanation This is a classic sign of over-clustering. Widely used clustering algorithms like Louvain and Leiden are heuristic and will partition data even when only random variation is present [23]. They do not formally account for statistical uncertainty, leading to overconfidence in the discovery of novel cell types [23]. This is especially problematic when the true biological structure of the cell population is hierarchical (e.g., T-cells and B-cells are both lymphocytes), but the clustering metric treats all groups as unrelated [24].

Solution Incorporate significance analysis into your clustering workflow.

Experimental Protocol: Significance Analysis for Clustering

- Step 1: Use Model-Based Hypothesis Testing. Employ methods like single-cell Significance of Hierarchical Clustering (sc-SHC). This approach defines a realistic parametric distribution for a cell population and tests whether a proposed split into two clusters could have arisen by chance from a single population [23].

- Step 2: Assess Pre-computed Clusters. If you have already generated clusters with a tool like Seurat, you can apply a significance analysis framework retrospectively. The method will hierarchically cluster the centers of your provided clusters and recursively apply statistical tests to determine which clusters should be merged [23].

- Step 3: Use Hierarchical Metrics for Evaluation. When comparing your results to a reference, use metrics that account for cell type hierarchy.

- Weighted Rand Index (wRI): Assigns different weights to pairwise cell relationships based on their place in the known cell type hierarchy. Mistakes between closely related subtypes (e.g., CD4 and CD8 T-cells) are penalized less than mistakes between distinct types (e.g., T-cells and neurons) [24].

- Weighted Normalized Mutual Information (wNMI): Uses a structured entropy that considers hierarchical relationships to reflect the accuracy of recovering the true cell population structure [24].
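
The idea behind Step 1 (asking whether a proposed two-way split could arise by chance from a single population) can be illustrated with a much simpler Monte Carlo test. This is not sc-SHC, which is an R package with a count-based parametric model. The sketch assumes a single multivariate Gaussian as the null and uses the 2-means cluster index (within-cluster over total sum of squares) as the statistic. The function names are hypothetical.

```python
import numpy as np
from sklearn.cluster import KMeans

def cluster_index(X):
    """Within-cluster SS of the best 2-means split, divided by total SS."""
    km = KMeans(n_clusters=2, n_init=5, random_state=0).fit(X)
    total = np.sum((X - X.mean(axis=0)) ** 2)
    return km.inertia_ / total

def split_p_value(X, n_sim=99, seed=0):
    """Monte Carlo p-value for 'this split is no tighter than a Gaussian null'."""
    rng = np.random.default_rng(seed)
    observed = cluster_index(X)
    mean = X.mean(axis=0)
    cov = np.cov(X, rowvar=False)
    null = np.array([
        cluster_index(rng.multivariate_normal(mean, cov, size=len(X)))
        for _ in range(n_sim)
    ])
    # Small cluster index = tight split; count null draws at least as tight
    return (1 + np.sum(null <= observed)) / (1 + n_sim)

rng = np.random.default_rng(1)
one_population = rng.normal(size=(200, 5))
two_populations = np.vstack([
    rng.normal(size=(100, 5)),
    rng.normal(size=(100, 5)) + 4.0,  # second group shifted on every feature
])

p_one = split_p_value(one_population)
p_two = split_p_value(two_populations)
```

A split of the single population would usually not be flagged as significant, while the split of the two shifted groups yields the smallest p-value the simulation can produce.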

How do I choose and apply a batch effect correction method?

Problem You have identified a batch effect and need to correct it without removing true biological signal.

Explanation Batch effect correction methods use various algorithms to align cells from different batches in a shared space, assuming that a subset of the cell population is shared across batches [25]. The goal is to remove technical variation while preserving biological variation. Different methods are suited to different data types and sizes.

Solution Select an appropriate algorithm and be vigilant for overcorrection.

Table: Comparison of Common Batch Effect Correction Methods

| Method | Core Algorithm | Key Principle | Best For |

|---|---|---|---|

| Harmony [22] | Iterative clustering and correction | Removes batch effects by clustering similar cells across batches and maximizing diversity within each cluster. | Datasets with complex batch structures. |

| MNN Correct [25] [22] | Mutual Nearest Neighbors (MNNs) | Finds cells in different batches that have similar expression profiles (MNNs) and uses them as anchors to correct the data. | Datasets where not all cell types are present in all batches. |

| Seurat CCA [22] | Canonical Correlation Analysis (CCA) & MNNs | Projects data into a subspace using CCA, finds MNNs in this subspace, and uses them as anchors for integration. | Integrating large, complex datasets. |

| Scanorama [22] | Mutual Nearest Neighbors in reduced space | Finds MNNs in dimensionally reduced spaces and uses a similarity-weighted approach for integration. | Large datasets with high computational demands. |

Warning: Signs of Overcorrection After applying batch correction, check for these signs that you may have removed biological signal along with the batch effect [22]:

- Cluster-specific markers are dominated by common, non-informative genes (e.g., ribosomal genes).

- Significant overlap exists between markers for different clusters.

- Expected canonical cell type markers are absent.

- Few differential expression hits are found for pathways known to be active in your samples.

The Scientist's Toolkit

Table: Essential Research Reagents & Computational Tools

| Item | Function / Purpose | Example Tools / R Packages |

|---|---|---|

| Batch Effect Correction | Algorithms to remove technical variation from different experiments. | Harmony, MNN Correct, Seurat (CCA), Scanorama [22] |

| Significance Testing for Clusters | Statistically validates whether clusters represent distinct populations. | sc-SHC (single-cell Significance of Hierarchical Clustering) [23] |

| Hierarchical Evaluation Metrics | Evaluates clustering results while accounting for known cell type relationships. | Weighted Rand Index (wRI), Weighted NMI (wNMI) [24] |

| Dimensionality Reduction | Visualizes high-dimensional data to assess clustering and batch effects. | PCA, UMAP, t-SNE [22] |

| Quantitative Integration Metrics | Provides objective scores to assess the success of batch correction. | kBET, ARI, NMI [22] |

Experimental Protocol: A Rigorous Clustering Workflow

For robust results, follow this integrated protocol that incorporates batch correction and significance testing.

Workflow: An Integrated Approach to Valid Clustering

Step-by-Step Instructions:

- Start with Normalized Data. Begin with a properly normalized count matrix (cells x genes) to control for sequencing depth and other library-size biases [22].

- Perform Dimensionality Reduction. Run PCA on a set of highly variable genes. This reduces noise and computational cost for subsequent steps [23].

- Diagnose Batch Effects. As detailed under "How do I definitively identify a batch effect in my data?", use PCA/UMAP plots and quantitative metrics (kBET, ARI) to check for batch effects [22].

- Apply Batch Correction. If a batch effect is detected, select and apply a correction method from the table above (e.g., Harmony). Visualize and re-run the quantitative metrics to confirm the effect has been mitigated [22].

- Perform Clustering. Use a standard algorithm (e.g., Louvain in Seurat) to get an initial partition of the data into clusters [23].

- Run Significance Analysis. Apply a method like sc-SHC to your clusters. This will test each proposed split in the clustering tree and automatically merge clusters that do not represent statistically distinct populations, effectively correcting for over-clustering [23].

- Annotate and Validate Clusters. Use known marker genes to assign biological cell type labels to the statistically validated clusters. The use of hierarchical metrics (wRI, wNMI) for comparison with a reference can provide a more biologically plausible evaluation [24].
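
The core of this workflow (normalize, select variable genes, reduce, cluster) can be sketched with NumPy and scikit-learn alone. Everything below is an assumption for illustration: the counts are simulated, k-means stands in for Louvain clustering, and the batch-correction and significance-testing steps are omitted because they rely on dedicated packages.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.decomposition import PCA
from sklearn.metrics import adjusted_rand_score

rng = np.random.default_rng(1)
n_cells, n_genes = 300, 200
cell_type = np.repeat([0, 1, 2], n_cells // 3)

# Hypothetical counts: each cell type over-expresses its own block of 20 genes
rates = np.full((n_cells, n_genes), 5.0)
for t in range(3):
    rates[cell_type == t, t * 20:(t + 1) * 20] = 20.0
counts = rng.poisson(rates)

# 1. Normalize for library size, then log-transform
depth = counts.sum(axis=1, keepdims=True)
norm = np.log1p(counts / depth * 1e4)

# 2. Keep the most variable genes
top_genes = np.argsort(norm.var(axis=0))[-60:]

# 3. Reduce dimensionality with PCA
pcs = PCA(n_components=10, random_state=0).fit_transform(norm[:, top_genes])

# 4. Initial clustering (k-means used here in place of Louvain)
clusters = KMeans(n_clusters=3, n_init=10, random_state=0).fit_predict(pcs)

# With simulated data the true labels are known, so recovery can be scored
ari = adjusted_rand_score(cell_type, clusters)
```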

Beyond Basic PCA: Advanced Preprocessing and Clustering Techniques for Robust Analysis

Troubleshooting Guide: Poor Cluster Separation in PCA Plots

This guide addresses common data preparation issues that lead to poor cluster separation in Principal Component Analysis (PCA), a key step in many drug development and research pipelines. Proper data preprocessing is critical because PCA is sensitive to the scale, quality, and consistency of your input data [19].

Problem 1: Inconsistent Data Scaling

The Issue After applying PCA, your data forms unexpected or poorly separated clusters, even when you know the underlying groups should be similar. This often manifests as identical gestures or samples splitting into two distinct clusters [19].

Root Causes

- Dominant Features: Variables with larger numerical ranges (e.g., 0-1000) dominate the PCA, overshadowing variables with smaller ranges (e.g., 0-0.1), as PCA is sensitive to variance [19] [26].

- Inconsistent Preprocessing: Data collected in different batches or with slightly different sensor calibrations may have different baseline scales, causing them to cluster separately [19].

Solutions

- Apply Standardization: Use StandardScaler (Z-score normalization) to transform features to have a mean of 0 and a standard deviation of 1. This ensures all features contribute equally to the PCA [19] [26].

- Validate Across Batches: Ensure the same scaler fit to your original reference data is applied to new datasets to maintain consistency [19].

Experimental Protocol: Standardization
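
A minimal sketch of this protocol with scikit-learn, assuming two hypothetical features on very different scales: the scaler and the PCA are fit once on the reference data and then reused, unchanged, for the new batch.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Hypothetical features: one in the hundreds, one in the hundredths
reference = np.column_stack([rng.normal(500, 200, 100), rng.normal(0.05, 0.02, 100)])
new_batch = np.column_stack([rng.normal(500, 200, 20), rng.normal(0.05, 0.02, 20)])

# Fit the scaler on the reference data only
scaler = StandardScaler().fit(reference)
reference_std = scaler.transform(reference)
new_std = scaler.transform(new_batch)  # reuse the fitted scaler; do not refit

# Fit PCA on the standardized reference, then project both datasets
pca = PCA(n_components=2).fit(reference_std)
reference_pcs = pca.transform(reference_std)
new_pcs = pca.transform(new_std)
```

Refitting the scaler on each new batch would silently re-center that batch, which can either hide a real shift or create an artificial one.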

Problem 2: Sensor Drift or Misalignment

The Issue Newly recorded time-series data (e.g., from motion sensors) does not align with previous recordings in the PCA plot, despite representing the same biological or physical phenomenon [19].

Root Cause Small, consistent errors in sensor calibration, such as a 5-degree rotational offset, can systematically shift the data in the high-dimensional space, leading PCA to perceive it as a different cluster [19].

Solutions

- Sensor Calibration: Apply rotational or translational transformations to realign new data to a reference frame. The scipy.spatial.transform.Rotation class can be used for this purpose [19].

- Advanced Alignment: For time-series data, use Dynamic Time Warping (DTW) or Procrustes analysis to align sequences before applying PCA [19].

Experimental Protocol: Sensor Calibration
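
A minimal sketch of a rotational correction with `scipy.spatial.transform.Rotation`, assuming simulated 3-D sensor vectors and a 5-degree offset about the z-axis. In practice the offset is unknown, so the sketch estimates it from paired reference and drifted vectors with `Rotation.align_vectors`.

```python
import numpy as np
from scipy.spatial.transform import Rotation

rng = np.random.default_rng(0)

# Hypothetical reference recording: 50 three-axis sensor vectors
reference = rng.normal(size=(50, 3))

# Simulate a sensor mounted with a 5-degree rotational offset about z
offset = Rotation.from_euler("z", 5, degrees=True)
drifted = offset.apply(reference)

# Estimate the rotation that best maps the drifted vectors back onto the reference
estimated = Rotation.align_vectors(reference, drifted)[0]
realigned = estimated.apply(drifted)
```

The realigned vectors can then go through the same scaler and PCA that were fit on the reference data.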

Problem 3: High-Dimensional, Correlated, and Noisy Data

The Issue In high-dimensional data (e.g., from genomics, metabolomics, or imaging), the first few Principal Components (PCs) capture a low percentage of the total variance, and cluster separation is poor [4] [20].

Root Causes

- "Variance as Relevance" Fallacy: PCA prioritizes high-variance features. In biological data, the largest sources of variance (e.g., population structure, batch effects) may not be relevant for discriminating the disease subtypes of interest [20].

- Correlated Noise: Highly correlated and noisy features can create large-variance principal components that are irrelevant for clustering, masking the true discriminatory signal [20].

Solutions

- Filter for Relevant Variance: Instead of using all PCs, employ a Shapiro-Wilk (SW) filter to select PCs that deviate from a normal distribution, as they are more likely to contain cluster structure [20].

- Explore Alternative Preprocessing: Investigate decorrelation filters or other dimensionality reduction techniques like autoencoders that do not rely solely on variance [19] [20].
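
One plausible reading of the Shapiro-Wilk filter is to test each PC's scores for normality and keep the components that reject it. The sketch below follows that reading on simulated data; the significance level, the data layout, and the decision to test every PC are assumptions, and the procedure in [20] may differ in detail.

```python
import numpy as np
from scipy.stats import shapiro
from sklearn.decomposition import PCA

rng = np.random.default_rng(0)
n = 200
groups = np.repeat([0, 1], n // 2)

X = rng.normal(size=(n, 6))
X[:, 0] *= 5.0            # high-variance but unimodal nuisance feature
X[:, 1] += 4.0 * groups   # lower-variance feature that carries the group signal

pca = PCA(n_components=6).fit(X)
scores = pca.transform(X)

# Shapiro-Wilk p-value for each PC's scores
alpha = 0.01
p_values = np.array([shapiro(scores[:, k])[1] for k in range(scores.shape[1])])

# Keep the components whose scores deviate from normality
selected = np.where(p_values < alpha)[0]
filtered_scores = scores[:, selected]
```

Here the highest-variance component is approximately Gaussian noise, while the bimodal second component is the one that carries the cluster structure.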

Problem 4: Improper Handling of Missing Values

The Issue Clusters appear distorted, or the analysis fails entirely due to the presence of missing values in the dataset.

Root Causes

- Information Loss: Simply removing cases with missing values (complete case analysis) can introduce bias and reduce the effective sample size, leading to overfitting and imprecise clusters [9] [27].

- Inaccurate Imputation: Replacing missing values with a simple mean can distort the relationships between variables and the natural structure of the data [9].

Solutions

- Use Advanced Imputation: Apply multiple imputation or k-nearest neighbor (KNN) imputation to estimate missing values in a way that better preserves data structure [9].

- Avoid Dichotomania: Do not handle missingness by dichotomizing continuous variables, as this wastes information and reduces statistical power [27].
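
The difference between mean imputation and KNN imputation is easy to see on correlated features. The sketch below uses scikit-learn's `SimpleImputer` and `KNNImputer` on simulated data in which three features share one underlying driver; the data and the choice of five neighbors are assumptions.

```python
import numpy as np
from sklearn.impute import KNNImputer, SimpleImputer

rng = np.random.default_rng(0)
n = 200
driver = rng.normal(size=n)

# Three correlated features that all depend on the same underlying driver
X_true = np.column_stack([
    driver,
    2.0 * driver + rng.normal(scale=0.1, size=n),
    -driver + rng.normal(scale=0.1, size=n),
])

# Remove 40 values from the second feature
X_missing = X_true.copy()
missing_rows = rng.choice(n, size=40, replace=False)
X_missing[missing_rows, 1] = np.nan

mean_filled = SimpleImputer(strategy="mean").fit_transform(X_missing)
knn_filled = KNNImputer(n_neighbors=5).fit_transform(X_missing)

def rmse(filled):
    """Root-mean-square error on the entries that were removed."""
    diff = filled[missing_rows, 1] - X_true[missing_rows, 1]
    return np.sqrt(np.mean(diff ** 2))

mean_rmse = rmse(mean_filled)
knn_rmse = rmse(knn_filled)
```

Mean imputation places every missing value at the feature average, which flattens the correlation with the other features. KNN imputation borrows values from samples that look similar on the observed features.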

Frequently Asked Questions (FAQs)

Q1: Why do my identical biological replicates form separate clusters in the PCA plot?

This is a classic sign of batch effects or inconsistent preprocessing. Ensure that all data is scaled using the same parameters (e.g., the same StandardScaler object fit on your control data). Investigate whether technical artifacts (e.g., different sample preparation days) are introducing systematic variation that PCA is detecting [19].

Q2: My explained variance for the first few PCs is low (~20%). Can I still use PCA for clustering? Yes, but with caution. A low explained variance suggests that the key differences between your clusters might not be the largest sources of variance in the data. The PCs that capture the most variance are not guaranteed to be the ones that are informative for clustering. Investigate later PCs (those ranked below the first few by variance) or use pre-processing filters (like the Shapiro-Wilk filter) to find components that better separate your clusters [4] [20].

Q3: What is the single most important preprocessing step for PCA-based clustering? Standardization (Z-score normalization) is often the most critical step. Without it, PCA will be unduly influenced by the scale of your measurements, and variables whose values span a larger numeric range (e.g., a concentration in mmol/L) will dominate those spanning a smaller range (e.g., an expression fold-change), regardless of their biological importance [19] [26].

Q4: How can I align new data with my original reference dataset in PCA space? Beyond standardization, you may need a calibration or alignment step. For kinematic data, this could be a rotational transformation. For other data types, Procrustes analysis can be used to rotate, translate, and scale the new dataset to match the configuration of the original reference data as closely as possible [19].

Q5: Can autoencoders be a better alternative to PCA for clustering? Yes, in some cases. Autoencoders are neural networks that can learn non-linear latent representations of your data. By training an autoencoder on your original data, you can map new recordings into a shared latent space, which can be more robust to certain types of noise and variation, potentially leading to better-aligned clusters [19].

Data Presentation: Scaling and Normalization Techniques

The table below summarizes key techniques to prepare your data for PCA and clustering.

| Technique | Method Description | Sensitivity to Outliers | Best Use Cases for Clustering |

|---|---|---|---|

| Standardization (Z-Score) | Centers data to mean=0 and scales to standard deviation=1 [26]. | Moderate | Most common starting point; assumes near-normal data [26]. |

| Min-Max Scaling | Scales data to a specified range (e.g., [0, 1]) [26]. | High | Neural networks; data with bounded ranges [26]. |

| Robust Scaling | Centers data using the median and scales using the Interquartile Range (IQR) [26]. | Low | Data with significant outliers or skewed distributions [26]. |

| Absolute Maximum Scaling | Divides values by the maximum absolute value per feature. Scales to [-1, 1] [26]. | High | Sparse data; simple scaling needs. |

| Vector Normalization | Scales each individual sample (row) to have a unit norm (length=1) [26]. | Varies | Algorithms relying on cosine similarity or sample direction. |
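
The "Sensitivity to Outliers" column can be checked directly. The sketch below scales a toy feature with five ordinary values and one extreme value, then measures how much of the scaled range is left for the ordinary values. The numbers are illustrative only.

```python
import numpy as np
from sklearn.preprocessing import MinMaxScaler, RobustScaler, StandardScaler

# Toy feature: five ordinary measurements and one extreme outlier
values = np.array([[1.0], [2.0], [3.0], [4.0], [5.0], [100.0]])

scalers = {
    "standard": StandardScaler(),
    "minmax": MinMaxScaler(),
    "robust": RobustScaler(),
}

# Spread of the five ordinary values after each scaling
inlier_spread = {}
for name, scaler in scalers.items():
    scaled = scaler.fit_transform(values).ravel()
    inlier_spread[name] = scaled[:5].max() - scaled[:5].min()
```

The more a scaler is affected by the outlier, the more it compresses the ordinary values. Min-max scaling compresses them the most and robust scaling the least.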

The Scientist's Toolkit: Essential Research Reagents & Solutions

| Item | Function in Data Preparation |

|---|---|

| StandardScaler (sklearn) | Standardizes features by removing the mean and scaling to unit variance. Critical for PCA [19] [26]. |

| RobustScaler (sklearn) | Scales features using statistics that are robust to outliers. Use when your dataset contains many extreme values [26]. |

| Multiple Imputation | A statistical technique for handling missing data by creating several complete datasets and pooling results. Superior to mean imputation [27] [9]. |

| Dynamic Time Warping (DTW) | An algorithm for measuring similarity between two temporal sequences. Useful for aligning time-series data before clustering [19]. |

| Shapiro-Wilk (SW) Filter | A pre-processing filter used to select Principal Components that deviate from normality, as they are more likely to contain cluster-relevant information [20]. |

Experimental Workflow Diagrams

Data Preprocessing for Optimal PCA Clustering

Addressing Poor Cluster Separation

Automated and Sparse Clustering Methods for High-Dimensional Biomarker Data

Frequently Asked Questions

Q1: My PCA plot shows poor cluster separation. Does this mean my biomarkers have no meaningful patterns? Not necessarily. PCA can fail to separate clusters if the data has a non-linear structure or if the primary source of variance is not aligned with class boundaries [8]. Before abandoning your analysis, investigate using Linear Discriminant Analysis (LDA), which is designed specifically to maximize separation between known groups [28], or explore non-linear dimensionality reduction techniques.

Q2: What is the fundamental difference between traditional clustering and automated clustering for biomarker discovery? Traditional clustering methods (like k-means) often require you to specify the number of clusters in advance and can struggle with high-dimensional noise. Automated Clustering solves the Automatic Clustering Problem (ACP) by simultaneously determining the optimal number of clusters and the best assignment of data objects, maximizing intra-cluster cohesion and inter-cluster separation without prior information [29].

Q3: My high-dimensional proteomics data is very noisy. Which clustering method should I use? For high-dimensional, noisy biomarker data (e.g., from mass spectrometry), Automated Trimmed and Sparse Clustering (ATSC) is highly suitable. It automatically determines the optimal number of clusters while suppressing noise by emphasizing significant features and excluding outliers, all without manual parameter tuning [11].

Q4: How can I ensure my clustering results are biologically interpretable and not a black box? Seek out methods that provide interpretable results. For instance, the Interpretable Graph Neural Additive Network (GNAN) can be used to analyze sparse temporal biomarker data, providing node and feature importance metrics that trace which biomarkers and time points contribute most to a classification decision [30]. Furthermore, algorithms generated by Automatic Generation of Algorithms (AGA) are symbolic and human-readable, allowing researchers to understand and refine their structure [29].

Q5: What is a key advantage of using sparse clustering methods like ST-CS? Sparse clustering methods, such as Soft-Thresholded Compressed Sensing (ST-CS), integrate feature selection directly into the model training. This results in a parsimonious feature set, identifying a small subset of the most discriminative biomarkers. This enhances model interpretability and predictive accuracy by eliminating redundant or non-informative features [31].

Troubleshooting Guides

Guide 1: Troubleshooting Poor Cluster Separation in PCA

Poor cluster separation in a PCA plot is a common issue in biomarker research. The flowchart below outlines a systematic diagnostic and resolution process.

Resolution Steps:

- If Class Labels Are Known: Apply Linear Discriminant Analysis (LDA). Unlike PCA, which maximizes total variance, LDA finds the axes that maximize the separation between multiple predefined classes [28].

- If Class Labels Are Unknown:

- Investigate Non-Linearity: PCA is a linear technique and will fail if the data has a complex, non-linear structure (e.g., a circular distribution) [8]. Plot your raw data to check for such patterns.

- Apply Non-Linear Dimensionality Reduction: If non-linearity is suspected, use a technique like t-Distributed Stochastic Neighbor Embedding (t-SNE) [28] or UMAP. These methods are designed to reveal complex, non-linear cluster structures in low-dimensional projections. Neighbourhood Components Analysis (NCA) [28] is a supervised method that needs class labels, so consider it alongside LDA when labels are known rather than here.

- Address Data Quality Issues:

- Outliers and Noise: PCA is sensitive to outliers [32]. Use outlier detection methods from Exploratory Data Analysis (EDA) to identify and remove them [32].

- Data Scaling: If variables are on different scales (e.g., heart rate vs. a 1-5 symptom score), scaling is crucial before PCA to ensure that variables with larger values don't disproportionately influence the components [33].
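
The contrast between PCA and LDA when labels are known can be sketched on simulated data. In the example below, five correlated nuisance features dominate the variance while a single feature carries the class difference. The data layout and the helper `separation` (difference in class means divided by pooled standard deviation) are assumptions for illustration.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 200
y = np.repeat([0, 1], n // 2)

# Five features sharing one latent nuisance factor, unrelated to class
nuisance = rng.normal(size=(n, 1)) + 0.3 * rng.normal(size=(n, 5))
# One feature that actually differs between the classes
signal = 2.5 * y + rng.normal(size=n)

X = StandardScaler().fit_transform(np.column_stack([nuisance, signal]))

pc1 = PCA(n_components=1).fit_transform(X).ravel()
ld1 = LinearDiscriminantAnalysis(n_components=1).fit_transform(X, y).ravel()

def separation(projection, classes):
    """Absolute difference in class means, in units of pooled standard deviation."""
    a, b = projection[classes == 0], projection[classes == 1]
    pooled_sd = np.sqrt((a.var() + b.var()) / 2)
    return abs(a.mean() - b.mean()) / pooled_sd

pca_sep = separation(pc1, y)
lda_sep = separation(ld1, y)
```

Because LDA uses the labels, its projection should be validated on held-out samples before it is read as evidence of separable groups.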

Guide 2: Optimizing Clustering in High-Dimensional, Noisy Biomarker Data

High-dimensional biomarker data from proteomics or transcriptomics is often plagued by noise and redundant features. The following workflow is designed for this specific challenge.

Detailed Methodologies:

Automated Trimmed and Sparse Clustering (ATSC) Protocol [11]:

- Implementation: The ATSC method is available within the evaluomeR package for R.

- Process: The method automatically calibrates its tuning parameters—the trimming proportion (to exclude outliers) and the sparsity level (to suppress noisy features).

- Output: It outputs a robust clustering solution where the optimal number of clusters is determined without manual intervention, effectively handling noise and redundancy.


Soft-Thresholded Compressed Sensing (ST-CS) Protocol [31]:

- Objective: Recover a sparse coefficient vector (ω) where non-zero coefficients correspond to discriminative biomarkers.

- Optimization Framework: The coefficients are estimated by solving a constrained optimization problem that includes dual ℓ₁ and ℓ₂ regularization. The ℓ₁-norm enforces sparsity, while the ℓ₂-norm stabilizes estimates and handles multicollinearity.

- Automated Feature Selection: Instead of manual thresholding, the resulting coefficients are partitioned into "signal" vs. "noise" using K-Medoids clustering on the coefficient magnitudes, fully automating the selection of the most important biomarkers.
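
The two-stage logic described above (sparse coefficient estimation, then automatic partitioning of the coefficient magnitudes) can be approximated with standard tools. The sketch below is not the published ST-CS implementation: an elastic-net regression on ±1 class labels stands in for the constrained optimization, and k-means with two clusters stands in for K-Medoids. The simulated data and all parameter values are assumptions.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.linear_model import ElasticNet

rng = np.random.default_rng(0)
n_samples, n_features = 300, 30

# Hypothetical biomarker matrix: only the first three features drive the class
X = rng.normal(size=(n_samples, n_features))
labels = np.sign(X[:, 0] + X[:, 1] + X[:, 2] + 0.5 * rng.normal(size=n_samples))

# Stage 1: sparse coefficients with combined l1 and l2 regularization
model = ElasticNet(alpha=0.1, l1_ratio=0.5).fit(X, labels)
magnitudes = np.abs(model.coef_).reshape(-1, 1)

# Stage 2: split the coefficient magnitudes into "signal" and "noise" groups
km = KMeans(n_clusters=2, n_init=10, random_state=0).fit(magnitudes)
signal_cluster = np.argmax(km.cluster_centers_.ravel())
selected = np.where(km.labels_ == signal_cluster)[0]
```

Clustering the magnitudes replaces a hand-picked cutoff: the boundary between kept and discarded features is set by the gap in the coefficient distribution itself.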

Automatic Generation of Algorithms (AGA) for Clustering [29]:

- Concept: Uses Genetic Programming (GP) to assemble fundamental algorithmic components into novel, complete clustering algorithms.

- Output: The result is a human-readable and executable algorithm that is specifically tailored to a given dataset, potentially outperforming state-of-the-art general-purpose methods.

Comparative Analysis of Clustering Methods

The following table summarizes key automated and sparse clustering methods relevant for biomarker research.

| Method Name | Core Functionality | Key Advantages | Ideal Use Case in Biomarker Research |

|---|---|---|---|

| Automated Trimmed & Sparse Clustering (ATSC) [11] | Automatically determines cluster number (k) with noise trimming & sparsity. | Fully automated; robust to outliers & high-dimensional noise. | Unsupervised patient stratification from noisy transcriptomic/proteomic data. |

| Soft-Thresholded Compressed Sensing (ST-CS) [31] | Integrates classification with automated, sparse feature selection. | Outputs a minimal, discriminative biomarker panel; high specificity. | Identifying a parsimonious serum protein signature for disease diagnosis. |

| Automatic Algorithm Generation (AGA) [29] | Automatically constructs novel clustering algorithms from components. | Generates a custom, interpretable algorithm for a specific dataset. | Tackling novel, complex dataset structures where standard methods fail. |

| Interpretable Graph Learning (GNAN) [30] | Models sparse temporal biomarker data as graphs for classification. | Provides feature & time-point importance; no data imputation needed. | Analyzing irregularly sampled blood test data to find critical pre-diagnostic windows. |

The Scientist's Toolkit: Essential Research Reagents & Solutions

This table lists key computational tools and their functions for implementing the methods discussed.

| Item | Function in Analysis | Key Parameter / Consideration |

|---|---|---|

| evaluomeR Package (R) [11] | Implements the Automated Trimmed and Sparse Clustering (ATSC) method. | Accessible via Bioconductor; requires minimal computational background. |

| ST-CS Framework (Python/MATLAB) [31] | Provides the code for Soft-Thresholded Compressed Sensing. | Look for published code alongside the manuscript (e.g., on GitHub). |

| Genetic Programming (GP) Library (e.g., DEAP) | Serves as the engine for Automatic Algorithm Generation (AGA) [29]. | Requires definition of a set of elementary algorithmic components. |

| Silhouette Index (SI) [29] | An internal validation metric used as an objective function to evaluate clustering quality. | Does not assume cluster shape; values range from -1 (poor) to +1 (excellent). |

| 1-Bit Compressed Sensing [31] | A signal processing technique that quantizes data to binary values for robust sparse recovery. | Reduces noise and computational complexity, aligning with classification tasks. |

Integrating Sensor Calibration and Data Alignment to Correct for Technical Variance

Core Problem: Technical Variance Masks Biological Signals

In high-dimensional biological data analysis, technical variances from sensor drift or misalignment can obscure true biological clusters in Principal Component Analysis (PCA). These inconsistencies cause identical experimental conditions to appear as separate clusters, complicating interpretation [19]. Proper sensor calibration and data alignment are critical for ensuring that PCA visualizations reflect biological reality rather than technical artifacts.

Troubleshooting Guide: Poor Cluster Separation in PCA

| Problem | Root Cause | Diagnostic Steps | Solution |

|---|---|---|---|

| Separate PCA clusters for identical gestures/conditions [19] | Inconsistent sensor calibration or improper data scaling [19]. | Check for unit-to-unit sensor variation; review preprocessing and scaling pipelines [19] [34]. | Apply sensor calibration and use StandardScaler before PCA [19]. |

| Cluster drift between experimental batches | Sensor sensitivity changes over time and use (e.g., piezoelectric accelerometers) [34]. | Compare initial calibration certificates with recent performance data [34]. | Recalibrate sensors annually or after heavy use [34]. |

| Failure of new data to align with reference in PCA space | Slight changes in sensor placement or environmental conditions [19]. | Use Dynamic Time Warping (DTW) or Procrustes analysis to quantify misalignment [19]. | Apply rotation transformations or affine alignment to new datasets [19]. |

Experimental Protocols for Calibration & Alignment

Sensor Calibration Procedure

This protocol corrects for structural errors in inertial measurement units (IMUs) like accelerometers and gyroscopes [35].

- Objective: Determine scale factor, misalignment, and bias parameters for each sensor axis using a linear sensor model [35].

- Equipment: Precision rate table (for gyroscopes), multi-axis turntable (for accelerometer tumble test), thermal chamber [35].

- Methodology:

- Accelerometer Tumble Test: Mount the sensor and collect static measurements in multiple orientations so that each axis is aligned with, opposite to, and perpendicular to gravity, providing +1 g, -1 g, and 0 g reference measurements [35].

- Gyroscope Rate Table Calibration: Secure the sensor on a rate table and rotate it across a range of precise angular rates to characterize response [35].

- Thermal Calibration: Perform the above processes inside a thermal chamber across the sensor's operational temperature range to model temperature-dependent parameter changes [35].

- Data Processing: A least-squares fit is performed on the collected data to populate the parameters in the sensor model for correcting future measurements [35].
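
The least-squares step can be sketched for a simulated tumble test. The linear model assumed here is measured = M · true + bias, where the 3×3 matrix M holds scale factors on its diagonal and misalignment terms off the diagonal. The parameter values, noise level, and six axis-aligned orientations are illustrative assumptions, and thermal dependence is ignored.

```python
import numpy as np

# Known gravity vector (in g) for six static orientations: +/- x, y, z
true_g = np.array([
    [1, 0, 0], [-1, 0, 0],
    [0, 1, 0], [0, -1, 0],
    [0, 0, 1], [0, 0, -1],
], dtype=float)

# Hypothetical sensor errors used to simulate the raw readings
M_true = np.array([[1.02, 0.01, 0.00],
                   [0.00, 0.98, 0.02],
                   [0.01, 0.00, 1.01]])
bias_true = np.array([0.03, -0.02, 0.01])

rng = np.random.default_rng(0)
measured = true_g @ M_true.T + bias_true + rng.normal(scale=1e-4, size=true_g.shape)

# Least-squares fit of [M^T; bias] from the design matrix [true_g, 1]
design = np.hstack([true_g, np.ones((len(true_g), 1))])
params, *_ = np.linalg.lstsq(design, measured, rcond=None)
M_est = params[:3].T
bias_est = params[3]

def correct(raw):
    """Invert the fitted sensor model to recover calibrated readings."""
    return np.linalg.solve(M_est, (raw - bias_est).T).T

calibrated = correct(measured)
```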

Data Alignment Protocol for PCA

This corrects for misalignment between new recordings and a reference dataset in PCA space [19].

- Objective: Apply transformations so that identical biological conditions or gestures form unified clusters in a PCA plot.

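- Preprocessing: Standardize features (e.g., with StandardScaler) using the scaler fit on the reference data before applying PCA [19].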

- Alignment Techniques:

- Rotation Transformation: For rotational misalignment, use Euler angles to realign data [19].

- Procrustes Analysis: Apply scaling, rotation, and translation to optimally align one dataset (new recording) to another (reference) [19].

- Dynamic Time Warping (DTW): Align time-series data by minimizing temporal distortions between sequences [19].
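
A Procrustes alignment with scaling, rotation, and translation can be built on `scipy.linalg.orthogonal_procrustes`. The sketch below assumes the new recording and the reference contain the same points in the same order. The helper name `procrustes_align` and the simulated distortion are illustrative. Note that an orthogonal fit also permits reflections.

```python
import numpy as np
from scipy.linalg import orthogonal_procrustes

rng = np.random.default_rng(0)
reference = rng.normal(size=(40, 3))

# Hypothetical new recording: the reference rotated 20 degrees, scaled, and shifted
theta = np.deg2rad(20)
rot = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                [np.sin(theta),  np.cos(theta), 0.0],
                [0.0,            0.0,           1.0]])
new = 1.5 * reference @ rot.T + np.array([2.0, -1.0, 0.5])

def procrustes_align(moving, target):
    """Scale, rotate, and translate `moving` onto `target` (paired rows)."""
    mu_moving, mu_target = moving.mean(axis=0), target.mean(axis=0)
    A, B = moving - mu_moving, target - mu_target
    # R minimizes ||A @ R - B||; sigma is the sum of singular values of A.T @ B
    R, sigma = orthogonal_procrustes(A, B)
    scale = sigma / np.sum(A ** 2)
    return scale * A @ R + mu_target

aligned = procrustes_align(new, reference)
```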

The following workflow integrates these protocols into a cohesive analysis pipeline to ensure data integrity from collection to visualization.

Cluster Diagnostics and Visualization

After calibration and alignment, validate clustering performance.

- Determining Cluster Number (k):

- Elbow Plot: Use the yellowbrick package to visualize the within-cluster sum of squares against the number of clusters (k). The optimal k is often at the "elbow" of the plot [36].

- Silhouette Analysis: Plot silhouette scores for different k values. The highest average score suggests the most coherent cluster structure [36].


- Dimensionality Reduction for Visualization:

- PaCMAP Recommendation: For 2D visualization of clusters, use PaCMAP, which better preserves both local and global data structure compared to PCA, t-SNE, or UMAP [36].

- Linear Discriminant Analysis (LDA): If cluster labels are known, LDA can be used to find the projection that maximizes between-cluster separation, directly addressing the visualization goal [28].
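
The numbers behind an elbow plot and a silhouette analysis can be computed with scikit-learn alone. The sketch below uses four simulated, well-separated groups; the data and the k range are assumptions, and plotting (for example with yellowbrick) is left out.

```python
import numpy as np
from sklearn.cluster import KMeans
from sklearn.datasets import make_blobs
from sklearn.metrics import silhouette_score

# Simulated data with four well-separated groups
X, _ = make_blobs(n_samples=300,
                  centers=[[0, 0], [10, 0], [0, 10], [10, 10]],
                  cluster_std=1.0, random_state=0)

inertia = {}      # within-cluster sum of squares, used for the elbow plot
silhouette = {}   # mean silhouette score for each k
for k in range(2, 8):
    km = KMeans(n_clusters=k, n_init=10, random_state=0).fit(X)
    inertia[k] = km.inertia_
    silhouette[k] = silhouette_score(X, km.labels_)

best_k = max(silhouette, key=silhouette.get)
```

Inertia always tends to fall as k grows, which is why the elbow is judged by eye. The silhouette score gives a single value per k that can be compared directly.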

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function |

|---|---|

| Precision Rate Table | Provides precise angular rates for gyroscope calibration, characterizing scale factor and bias [35]. |

| Multi-Axis Turntable | Enables accelerometer tumble testing by rotating the sensor into multiple static orientations relative to gravity [35]. |

| Thermal Chamber | Allows calibration across a range of temperatures to model and correct for temperature-sensitive parameter drift [35]. |

| Reference Accelerometer | A NIST-traceable, calibrated reference sensor used to validate and calibrate the sensors under test [34]. |

| StandardScaler | A preprocessing tool that standardizes features by removing the mean and scaling to unit variance, preventing high-variance features from dominating PCA [19]. |

Frequently Asked Questions (FAQs)

Q1: Why do my identical gestures or experimental conditions form two separate clusters in my PCA plot? This is typically caused by technical variance, such as inconsistent sensor calibration between recording sessions or slight changes in sensor placement. PCA is sensitive to these systematic differences and will interpret them as separate sources of variance, breaking what should be one cluster into two [19].

Q2: How often should I recalibrate my sensors? The need for recalibration depends on the sensor technology and usage. Piezoelectric accelerometers can show noticeable sensitivity drift over time and may require annual recalibration. In contrast, MEMS-based sensors (variable capacitance, piezoresistive) are often more stable, with many units showing gain variations of less than 2% over time, making frequent recalibration less critical [34].

Q3: I have calibrated my sensors, but my new data still doesn't align with my original reference set in the PCA space. What else can I do? Calibration corrects internal sensor errors. For external misalignment (e.g., different orientation), apply data alignment techniques before PCA. Use Procrustes analysis to find the optimal rotation, translation, and scaling to align your new dataset to the reference. For time-series data, Dynamic Time Warping (DTW) can correct temporal misalignments [19].

Q4: Is PCA the best method for visualizing my clusters? PCA is excellent for preserving the global structure of your data. However, if your goal is to maximize the visual separation between known clusters, Linear Discriminant Analysis (LDA) is a more suitable technique, as it explicitly finds axes that maximize between-cluster variance [28]. For a more balanced preservation of local and global structure, consider PaCMAP [36].

Q5: Can machine learning solve this clustering issue without manual calibration? Advanced techniques like autoencoders can learn a shared latent space that is more robust to minor technical variations. By training a model on your original data, it can potentially map new, slightly misaligned recordings into the correct cluster. However, this requires a large and well-characterized training set, and proper sensor calibration remains the most reliable foundation [19].
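The Procrustes alignment mentioned in Q3 can be sketched as follows. This is an illustration only, assuming that the new and reference datasets contain the same samples in the same row order (a requirement of `scipy.spatial.procrustes`); the rotation, scale, offset, and noise level used to simulate the misaligned session are hypothetical.

```python
import numpy as np
from scipy.spatial import procrustes

rng = np.random.default_rng(0)

# Hypothetical reference recordings: 50 samples x 3 features.
reference = rng.normal(size=(50, 3))

# Simulate a second session of the same samples that is rotated, rescaled,
# shifted, and slightly noisy (all values are illustrative assumptions).
theta = np.deg2rad(30)
rotation = np.array([
    [np.cos(theta), -np.sin(theta), 0.0],
    [np.sin(theta),  np.cos(theta), 0.0],
    [0.0,            0.0,           1.0],
])
new_session = (
    1.5 * reference @ rotation.T
    + np.array([2.0, -1.0, 0.5])
    + rng.normal(scale=0.01, size=reference.shape)
)

# procrustes() standardizes both matrices, then finds the rotation and scaling
# of the second that best matches the first. `disparity` is the remaining
# sum of squared differences (0 means a perfect match).
ref_std, new_aligned, disparity = procrustes(reference, new_session)

print(f"disparity after alignment: {disparity:.2e}")
```

Both returned matrices live in a standardized coordinate frame, so any downstream PCA would be fitted on `ref_std` and `new_aligned` together rather than on the raw inputs.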

Frequently Asked Questions

1. Why would my classification model perform well even when my PCA plot shows poor cluster separation?

This common scenario occurs because a PCA plot shows only the first two or three principal components. These components maximize the variance of the entire dataset, but they may not capture the features most relevant for class discrimination. Your classification model likely uses many more components or the original features, allowing it to detect subtle patterns that are invisible in a 2D PCA plot [37]. The separation might be present in higher, un-plotted principal components.

2. I am using PCA for clustering, but the results are poor. What is the issue?

PCA is a linear technique designed to preserve global data variance, not to identify clusters, which are concentrations of data points (neighborhoods) [38]. Using neighborhood-preserving methods like t-SNE or UMAP before clustering often yields better results because their objective aligns directly with the goal of clustering [39] [38].

3. When should I avoid using PCA altogether?

PCA has known limitations in specific, advanced research contexts. In quantitative genetic association studies on human data, especially with family or multiethnic cohorts, PCA can perform poorly compared to Linear Mixed Models (LMMs) due to its inability to adequately model complex relatedness structures [40]. It is also generally inadequate for data with strong non-linear relationships [39] [41].

4. My t-SNE plot looks different every time I run it. Is this normal?

Yes, this is expected. The t-SNE algorithm is stochastic, meaning it contains random elements during the optimization process. While the random_state parameter can be set for reproducibility, different initializations can lead to visually distinct layouts, though the core cluster relationships should remain similar [39] [42].

5. For visualizing a very large dataset (e.g., >100,000 points), is t-SNE a good choice?

For very large datasets, UMAP is generally recommended over t-SNE. t-SNE is computationally intensive and slow on large data, while UMAP is designed for scalability and can handle millions of points efficiently, producing results in a fraction of the time [43] [44].
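The situation in question 1 above (a good classifier alongside a poorly separated PCA plot) can be reproduced on synthetic data. The sketch below is an assumption-laden illustration: two hypothetical latent factors drive 20 correlated "background" features that are unrelated to the class label, while a single extra feature carries the class signal. Logistic regression and 5-fold cross-validation are arbitrary choices for measuring separability.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 400
y = rng.integers(0, 2, size=n)  # two hypothetical classes

# 20 correlated features driven by two latent factors that ignore the class label.
latent = rng.normal(size=(n, 2))
loadings = rng.normal(size=(2, 20))
background = latent @ loadings + 0.3 * rng.normal(size=(n, 20))

# One feature that actually separates the classes.
signal = (3.0 * y + rng.normal(size=n)).reshape(-1, 1)

X = StandardScaler().fit_transform(np.hstack([background, signal]))

# The first two PCs follow the dominant (class-unrelated) background variation.
pcs = PCA(n_components=5).fit_transform(X)

clf = LogisticRegression(max_iter=1000)
acc_2d = cross_val_score(clf, pcs[:, :2], y, cv=5).mean()  # what a 2D PCA plot "sees"
acc_full = cross_val_score(clf, X, y, cv=5).mean()         # what the classifier sees

print(f"accuracy on PC1-PC2 only: {acc_2d:.2f}")
print(f"accuracy on all features: {acc_full:.2f}")
```

The gap between the two accuracies is the point of the example: the class information exists, but it sits outside the plotted components.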

Troubleshooting Guides

Guide 1: Troubleshooting Poor Cluster Separation in PCA Plots

This guide helps diagnose and resolve situations where PCA fails to reveal expected data clusters.

Step 1: Confirm the Nature of Your Data

- Action: Determine if your data has non-linear relationships. PCA can only capture linear patterns.

- Interpretation: If the underlying data manifold is non-linear (e.g., a spiral or "S" curve), PCA will be ineffective. This is the primary reason to switch to a non-linear method [41].

Step 2: Check the Variance Explained by Plotted Components

- Action: Examine the `explained_variance_ratio_` of your PCA model. A low cumulative variance for the first two components indicates that your 2D plot is missing most of the data's information [37].

- Interpretation: If the first two components explain a small percentage of the total variance (e.g., <30%), separation may exist in higher components not visualized.
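The variance check in Step 2 can be sketched as below. The random matrix is only a placeholder for a real samples-by-features matrix, the 30% cutoff is the example threshold quoted above, and the 80% target is an arbitrary illustrative choice.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 50))  # placeholder data: 200 samples x 50 features (assumption)

X_scaled = StandardScaler().fit_transform(X)
pca = PCA().fit(X_scaled)  # keep all components so the cumulative curve is complete

cumulative = np.cumsum(pca.explained_variance_ratio_)
two_pc_share = cumulative[1]  # variance captured by PC1 + PC2
# Smallest number of components whose cumulative variance reaches 80%.
n_for_80 = int(np.searchsorted(cumulative, 0.80) + 1)

print(f"PC1 + PC2 explain {two_pc_share:.1%} of the variance")
print(f"components needed for 80%: {n_for_80}")
if two_pc_share < 0.30:
    print("A 2D PCA plot omits most of the variance; inspect higher components.")
```

If `two_pc_share` is low, plotting other component pairs (for example PC2 against PC3) is a cheap next step before switching methods.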

Step 3: Switch to a Non-Linear Dimensionality Reduction Method

- Action: Apply t-SNE or UMAP to your data using the workflow below.

- Interpretation: If clear, separable clusters appear with t-SNE or UMAP but not with PCA, your data contains non-linear structures that PCA cannot capture.

The following workflow outlines the decision path and primary considerations when troubleshooting poor PCA results:

Guide 2: Choosing Between t-SNE and UMAP

Once you've decided a non-linear method is needed, this guide helps select the most appropriate one.

Step 1: Evaluate Your Need for Speed and Scalability

Step 2: Determine Your Structural Priorities

- Action: Decide if understanding tight local neighborhoods or the global layout of clusters is more important for your analysis.

- Interpretation: t-SNE excels at creating tight, well-separated clusters that emphasize local similarities. UMAP provides a more balanced view, preserving more of the global structure (e.g., the relative distances between clusters) [44] [45].

Step 3: Consider Parameter Tuning and Reproducibility

The table below summarizes the core differences to guide your choice:

| Feature | t-SNE | UMAP |

|---|---|---|

| Primary Strength | Excellent for visualizing tight local clusters [44] | Balances local and global structure preservation [39] [44] |

| Speed | Slow, especially on large datasets [39] [43] | Fast and highly scalable [39] [43] |

| Global Structure | Poor; can distort relative positions of clusters [44] [45] | Better; more faithfully represents overall data layout [44] [45] |

| Parameter Sensitivity | High sensitivity to perplexity [39] [44] | Less sensitive; more robust to parameter changes [44] |

| Ideal Use Case | Exploring small/medium datasets for fine-grained clustering (e.g., single-cell RNA-seq) [39] [44] | Visualizing large datasets and understanding broader relationships between groups [39] [44] |

Experimental Protocols

Protocol 1: Implementing t-SNE for Cluster Visualization

This protocol provides a standard method for using t-SNE to visualize clusters in a 2D scatter plot.

1. Research Reagent Solutions

- Python (v3.8+): Programming language environment.

- scikit-learn (`sklearn.manifold`): Library containing the `TSNE` implementation [39].

- Matplotlib/Seaborn: Libraries for creating static, publication-quality visualizations [39].

- StandardScaler (`sklearn.preprocessing`): (Recommended) For standardizing features before analysis [39].

2. Methodology

- Data Preprocessing: Standardize your data matrix `X` using `StandardScaler`. This ensures all features contribute equally to the distance calculations [39].

- Model Initialization: Create a `TSNE` object and set its key parameters (for example, `perplexity` and `random_state`).

- Model Fitting and Transformation: Call the `.fit_transform()` method on your standardized data `X` to generate the 2D embedding.

- Visualization: Create a scatter plot of the resulting embedding, coloring points by their known labels if available.

3. Code Template
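A minimal sketch of the protocol above, not a definitive template. The Iris dataset is a stand-in for real data, and `perplexity=30`, `init="pca"`, and `random_state=42` are illustrative assumptions rather than recommended settings. The helper `plot_embedding` is a hypothetical name introduced here.

```python
import numpy as np
from sklearn.datasets import load_iris
from sklearn.manifold import TSNE
from sklearn.preprocessing import StandardScaler

# 1. Data preprocessing: standardize so every feature contributes equally to distances.
X, labels = load_iris(return_X_y=True)  # stand-in dataset (assumption)
X_scaled = StandardScaler().fit_transform(X)

# 2. Model initialization: parameter values are illustrative, not tuned.
tsne = TSNE(n_components=2, perplexity=30, init="pca", random_state=42)

# 3. Model fitting and transformation: one 2D coordinate pair per sample.
embedding = tsne.fit_transform(X_scaled)
print("embedding shape:", embedding.shape)


def plot_embedding(embedding, labels, path="tsne_embedding.png"):
    """4. Visualization: save a scatter plot of the embedding, colored by label."""
    # Matplotlib is imported here so the embedding step runs without a plotting backend.
    import matplotlib
    matplotlib.use("Agg")  # non-interactive backend, suitable for headless runs
    import matplotlib.pyplot as plt

    fig, ax = plt.subplots(figsize=(6, 5))
    points = ax.scatter(embedding[:, 0], embedding[:, 1], c=labels, cmap="viridis", s=15)
    ax.set_xlabel("t-SNE 1")
    ax.set_ylabel("t-SNE 2")
    fig.colorbar(points, ax=ax, label="class label")
    fig.savefig(path, dpi=150, bbox_inches="tight")
    plt.close(fig)
```

Calling `plot_embedding(embedding, labels)` writes the figure to disk. Because t-SNE is sensitive to perplexity, it is worth repeating the run with a few different values before interpreting the layout.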

Protocol 2: Implementing UMAP for Scalable Dimensionality Reduction

This protocol details the use of UMAP for efficient visualization of both small and large datasets.

1. Research Reagent Solutions

2. Methodology

- Data Preprocessing: While UMAP is less sensitive to scaling than PCA, standardizing your data is still considered good practice.

- Model Initialization: Create a `UMAP` object. Key parameters are:

  - `n_components=2`: For 2D projection.

  - `random_state`: For reproducibility.

  - `n_neighbors`: (Default=15) Controls the scale of structure captured. Lower values focus on local, higher values on global structure [44].

  - `min_dist`: (Default=0.1) Controls the minimum distance between points in the embedding, affecting cluster tightness.

- Model Fitting and Transformation: Call `.fit_transform()` on your data.

- Visualization: Generate a scatter plot from the UMAP embedding.

3. Code Template
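A minimal sketch of the methodology above, assuming the third-party `umap-learn` package is installed (it provides the `umap` module and its `UMAP` class). The names `UMAP_PARAMS` and `umap_embed` are hypothetical helpers introduced for illustration, and `random_state=42` is an arbitrary choice.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Parameters named in the methodology above. n_neighbors and min_dist use the
# stated defaults; random_state=42 is an arbitrary value for reproducibility.
UMAP_PARAMS = {
    "n_components": 2,
    "n_neighbors": 15,
    "min_dist": 0.1,
    "random_state": 42,
}


def umap_embed(X, **overrides):
    """Standardize X and return its UMAP embedding (requires `umap-learn`)."""
    # Imported lazily because umap-learn is an optional, third-party dependency.
    import umap

    params = {**UMAP_PARAMS, **overrides}
    X_scaled = StandardScaler().fit_transform(np.asarray(X, dtype=float))
    return umap.UMAP(**params).fit_transform(X_scaled)
```

A typical call would be `embedding = umap_embed(X)`, and `umap_embed(X, n_neighbors=50)` shifts the emphasis toward global structure. The resulting two columns can be passed to any scatter-plot routine.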

Comparative Analysis & Data

For a quantitative comparison, the table below summarizes benchmark performance and key characteristics of PCA, t-SNE, and UMAP.

| Feature | PCA | t-SNE | UMAP |

|---|---|---|---|

| Type / Preserved Structure | Linear / Global variance [39] | Non-linear / Local neighborhoods [39] [44] | Non-linear / Local & some Global [39] [44] |

| Speed (Relative) | Very Fast [39] [43] | Slow [39] [43] | Fast (slower than PCA, faster than t-SNE) [39] [43] |

| Use in ML Pipelines | Yes (e.g., as feature preprocessor) [39] | No (visualization only) [39] | Yes [39] |

| Inverse Transform | Yes [39] | No [39] | Partial (umap-learn provides an approximate `inverse_transform`) |

| Handles Non-Linear Data | No [39] | Yes [39] | Yes [39] |

| Typical Runtime on 70k samples (MNIST) | ~Seconds [43] | ~Hours (sklearn) / ~Minutes (Multicore) [43] | ~Minutes [43] |

The following diagram illustrates the fundamental algorithmic differences that lead to the performance and structural preservation characteristics outlined in the table above.

In the analysis of high-dimensional biological and chemical data, particularly in drug development research, Principal Component Analysis (PCA) is a fundamental technique for dimensionality reduction and visualization. However, researchers frequently encounter poor cluster separation in PCA plots, which can obscure meaningful patterns in datasets related to compound screening, genomic profiling, or patient stratification. This technical support guide covers robust preprocessing and model-based clustering workflows in R and Python for diagnosing and resolving these separation issues.

Poor cluster separation often stems from inappropriate data scaling, high-dimensional noise, or the inherent limitations of linear techniques like PCA when applied to complex biological relationships. Through systematic troubleshooting methodologies and optimized code implementations, researchers can enhance their analytical workflows to extract more reliable insights from their experimental data.

Technical Support Center: Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: Why do my clusters appear poorly separated in PCA plots despite clear experimental groupings?

Poor cluster separation in PCA visualization can result from several factors:

- Inadequate preprocessing: Data may not be properly scaled, allowing features with larger variances to dominate the principal components disproportionately [46].

- High-dimensional noise: Biological datasets often contain technical noise that obscures meaningful biological signal in the first few principal components.

- Non-linear relationships: PCA is a linear technique and may fail to capture complex non-linear relationships present in the data [46].

- Insufficient variance capture: The first two principal components may not explain enough of the total variance in your dataset to reveal separation.
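The first cause listed above (unscaled features dominating the principal components) is easy to demonstrate. The sketch below uses synthetic, independent features whose scales differ by a factor of 1000; the feature count and scales are illustrative assumptions.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n = 300

# One feature measured on a much larger scale than the other four (assumption).
large = rng.normal(scale=1000.0, size=(n, 1))
small = rng.normal(scale=1.0, size=(n, 4))
X = np.hstack([large, small])

pca_raw = PCA(n_components=1).fit(X)
pca_scaled = PCA(n_components=1).fit(StandardScaler().fit_transform(X))

raw_share = pca_raw.explained_variance_ratio_[0]        # PC1 share without scaling
scaled_share = pca_scaled.explained_variance_ratio_[0]  # PC1 share after scaling
raw_loading = abs(pca_raw.components_[0][0])            # weight of the large feature in PC1

print(f"unscaled: PC1 explains {raw_share:.2%}, loading on large feature = {raw_loading:.3f}")
print(f"scaled:   PC1 explains {scaled_share:.2%}")
```

Without scaling, PC1 is essentially a copy of the large-scale feature. After standardization, no single feature dominates, so any remaining structure in the plot reflects correlations between features rather than measurement units.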

Q2: What Python and R packages are most suitable for implementing preprocessing and clustering workflows?

For Python:

- Preprocessing: Scikit-learn's `StandardScaler`, `MinMaxScaler`, and `PCA` modules [46]

- Clustering: Scikit-learn's `KMeans`, `DBSCAN`, and `AgglomerativeClustering` [47]

- Visualization: Matplotlib, Seaborn, and Plotly

For R:

- Preprocessing: Built-in `scale()` function and `factoextra` package

- Clustering: